Beyond Charge Balance: Why Modern Machine Learning is Redefining Material Synthesizability


Abstract

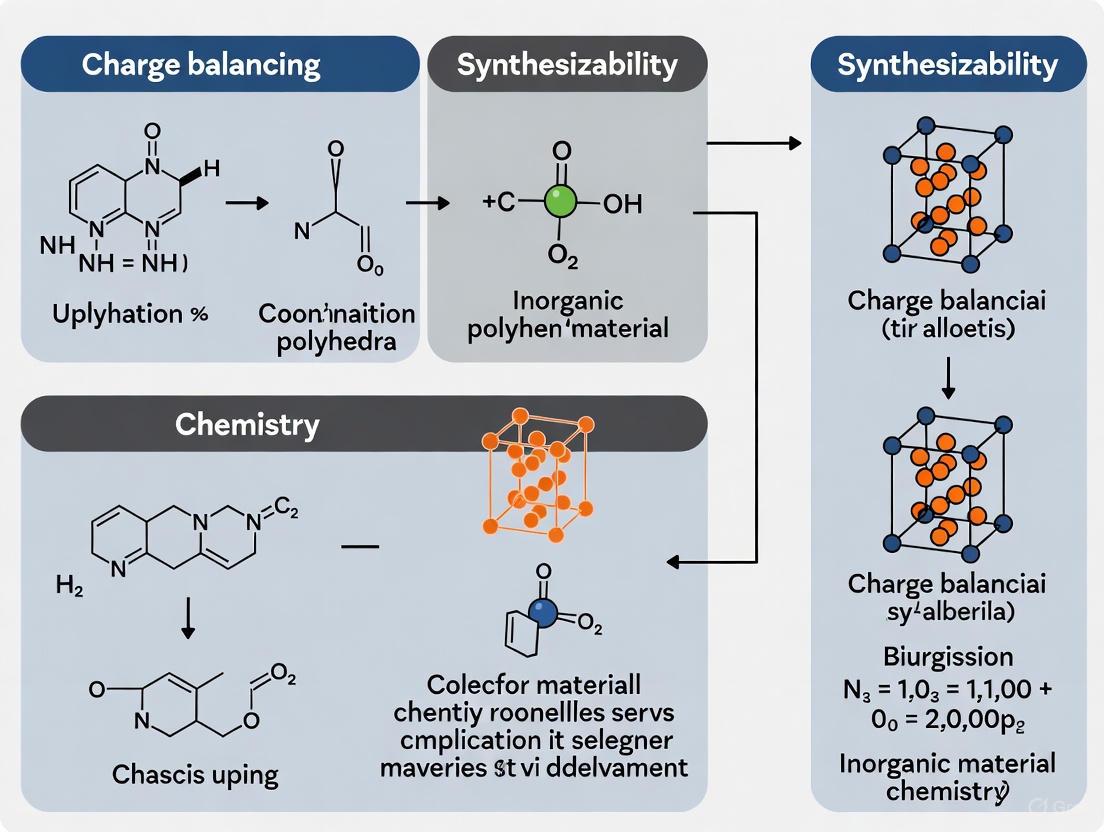

For researchers and drug development professionals, accurately predicting which computationally designed materials can be synthesized is a critical bottleneck. This article explores the fundamental limitations of the traditional charge-balancing heuristic, demonstrating that it fails to classify the majority of known synthesized materials. We then detail the rise of sophisticated machine learning models, from semi-supervised to co-training frameworks, that learn the complex principles of synthesizability directly from experimental data. A comparative analysis reveals that these data-driven approaches significantly outperform traditional methods, offering a more reliable pathway for prioritizing novel, synthetically accessible materials and accelerating the discovery of new therapeutics.

The Charge Balancing Fallacy: Exposing the Limitations of a Classic Heuristic

Charge balancing, the principle of ensuring electrochemical neutrality in chemical compounds, has long served as a heuristic for predicting synthesizability in materials science and solid-state chemistry. This guide examines the technical foundation of this approach, its historical application, and its critical limitations within modern synthesizability research. While useful for initial screening of stable compounds, charge balancing fails to account for kinetic barriers, synthetic pathway complexities, and non-equilibrium conditions that ultimately determine whether a material can be successfully synthesized. Through analysis of contemporary research methodologies and experimental data, this technical review establishes why charge balancing alone provides insufficient predictive power for synthesizability, necessitating integrated approaches that combine thermodynamic stability with kinetic and process-based factors.

Historical Context and Theoretical Foundation

The use of charge balancing as a synthesizability proxy emerged from foundational principles in solid-state chemistry and crystallography. The approach is rooted in the concept that crystalline materials tend toward charge neutrality, where the sum of positive and negative charges in a unit cell equals zero. This principle provided an invaluable screening tool for predicting stable compounds, particularly in fields like oxide chemistry and ionic compound research.
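
The neutrality rule described above can be sketched as a short check. This is a minimal illustration, not a production screening tool: the oxidation-state table is a small, hand-picked assumption, and the function name `is_charge_balanced` is hypothetical. It asks whether any assignment of one common oxidation state per element makes the formula unit sum to zero.

```python
from itertools import product

# Illustrative, deliberately incomplete table of common oxidation states
# (an assumption made for this sketch).
COMMON_OXIDATION_STATES = {
    "Na": [1], "Cs": [1], "Mg": [2], "Fe": [2, 3], "Ti": [2, 3, 4],
    "O": [-2], "S": [-2], "Cl": [-1],
}

def is_charge_balanced(composition):
    """Return True if one common oxidation state per element sums to zero.

    `composition` maps element symbols to integer counts in the formula unit.
    """
    elements = list(composition)
    choices = [COMMON_OXIDATION_STATES[el] for el in elements]
    for states in product(*choices):
        total = sum(q * composition[el] for q, el in zip(states, elements))
        if total == 0:
            return True
    return False

print(is_charge_balanced({"Na": 1, "Cl": 1}))  # True: (+1) + (-1) = 0
print(is_charge_balanced({"Fe": 2, "O": 3}))   # True: 2(+3) + 3(-2) = 0
print(is_charge_balanced({"Fe": 3, "O": 4}))   # False: needs mixed Fe(II)/Fe(III)
print(is_charge_balanced({"Cs": 1, "O": 2}))   # False: superoxide, O is not -2
```

Fe3O4 and CsO2 are both well-known synthesized compounds, yet this simple form of the rule rejects them, which illustrates how a single-valence, fixed-oxidation-state check can exclude real materials.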

Early computational materials discovery relied heavily on formation energy calculations derived from charge-balanced scenarios. Researchers would perform density functional theory (DFT) calculations on hypothetical compounds with balanced oxidation states, assuming those with negative formation energies would be synthesizable. This approach successfully predicted many stable phases, particularly in simple binary and ternary systems where ionic models provided reasonable approximations.

The theoretical foundation rests on several key assumptions:

- Thermodynamic equilibrium governs phase stability

- Ionic model approximations accurately describe bonding character

- Ground-state properties determine synthesizability

- Kinetic factors present minimal barriers to formation

While these assumptions hold for many simple compounds, they break down dramatically for metastable materials, complex bonding environments, and systems with significant kinetic barriers. The historical reliance on charge balancing created a selection bias in predicted materials, systematically overlooking compounds that violate simple ionic model assumptions yet remain synthesizable through non-equilibrium approaches.

Limitations of Charge Balancing in Synthesizability Prediction

Thermodynamic versus Kinetic Factors

Charge balancing primarily addresses thermodynamic stability while ignoring kinetic barriers to synthesis. A compound may be thermodynamically favorable yet practically unsynthesizable due to:

- High energy barriers for nucleation or phase transformation

- Competitive reaction pathways leading to more stable polymorphs

- Limited atomic mobility at practical synthesis temperatures

- Metastable intermediates that trap reactions from reaching global minima

The insufficiency of thermodynamic proxies is particularly evident in materials requiring specialized synthesis techniques. For instance, materials stabilized only through non-equilibrium methods like physical vapor deposition, laser annealing, or flux-mediated synthesis frequently exhibit charge configurations that would be considered unstable based purely on thermodynamic grounds.

Complexity of Modern Material Systems

As materials science has progressed toward more complex systems including multi-anion compounds, heterostructures, and doped materials, the limitations of simple charge balancing have become increasingly apparent:

Table: Limitations of Charge Balancing in Advanced Material Systems

| Material System | Charge Balancing Shortcoming | Practical Consequence |
|---|---|---|
| Multi-anion compounds | Assumes fixed oxidation states | Fails to predict novel compounds with mixed anions |
| Metastable phases | Only considers global minima | Overlooks synthesizable metastable structures |
| Complex doping | Simplistic redox balancing | Cannot predict optimal dopant combinations |
| Nanomaterials | Neglects surface energy contributions | Inaccurate stability predictions at nanoscale |

The Data Scarcity Challenge

Traditional charge balancing approaches suffer from reporting bias in experimental data. As noted in synthesizability prediction research, "the scarcity of negative data, as failed synthesis attempts are often unpublished or context-specific" creates fundamental challenges for training accurate models [1]. This missing information about synthesis failures creates systematic gaps in understanding the relationship between charge balancing and actual synthesizability.

Modern Approaches to Synthesizability Prediction

High-Throughput Experimentation (HTE)

Contemporary materials research has increasingly adopted high-throughput experimentation to overcome charge balancing limitations. HTE enables "the evaluation of miniaturized reactions in parallel," allowing researchers to "explore multiple factors simultaneously in contrast to the traditional one variable at a time (OVAT) method" [2]. This approach generates comprehensive datasets that capture both successful and failed synthesis attempts, providing crucial information about kinetic and process-dependent factors.

Modern HTE workflows integrate automated synthesis, characterization, and data analysis to rapidly explore parameter spaces far beyond charge balancing considerations. These systems can test thousands of reaction conditions simultaneously, varying parameters such as:

- Precursor stoichiometries and compositions

- Temperature and pressure profiles

- Reaction atmospheres and environments

- Synthesis time scales and heating rates

The data generated through HTE reveals complex relationships between synthesis parameters and outcomes that simple charge balancing cannot capture. This experimental approach has been particularly valuable for mapping non-equilibrium synthesis spaces where traditional thermodynamic predictors perform poorly.

Machine Learning and PU-Learning Frameworks

Modern machine learning approaches have emerged to address the specific challenge of synthesizability prediction beyond charge balancing. The SynCoTrain framework exemplifies this evolution, employing "a semi-supervised machine learning model designed to predict the synthesizability of materials" using "a co-training framework leveraging two complementary graph convolutional neural networks: SchNet and ALIGNN" [1].

This approach specifically addresses the "scarcity of negative data" through Positive and Unlabeled (PU) learning, which "iteratively refines predictions through collaborative learning" [1]. Unlike charge balancing, these models can incorporate diverse features including:

- Structural descriptors beyond oxidation states

- Synthetic history and pathway information

- Experimental parameters and conditions

- Known similar compounds and analogs
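
The PU-learning idea above can be illustrated with a toy example. The sketch below is not the SynCoTrain implementation: it assumes a single numeric descriptor per material and swaps in a nearest-centroid classifier in place of graph neural networks, and the function name `pu_bagging_scores` is hypothetical. It uses a bagging-style PU scheme, in which random subsets of the unlabeled pool are treated as provisional negatives and each unlabeled sample is scored only in rounds where it was left out.

```python
import random

def centroid(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)

def pu_bagging_scores(positives, unlabeled, n_rounds=200, seed=0):
    """Fraction of out-of-bag rounds in which each unlabeled sample looks positive.

    Each round draws a random subset of the unlabeled samples as provisional
    negatives, fits a nearest-centroid classifier against the known positives,
    and votes on the unlabeled samples that were NOT drawn.
    """
    bag_size = min(len(positives), len(unlabeled) - 1)
    if bag_size < 1:
        raise ValueError("need at least two unlabeled samples")
    rng = random.Random(seed)
    votes = [0] * len(unlabeled)
    counts = [0] * len(unlabeled)
    pos_centre = centroid(positives)
    for _ in range(n_rounds):
        bag = set(rng.sample(range(len(unlabeled)), bag_size))
        neg_centre = centroid([unlabeled[i] for i in bag])
        for i, x in enumerate(unlabeled):
            if i in bag:
                continue  # score only out-of-bag samples
            counts[i] += 1
            if abs(x - pos_centre) < abs(x - neg_centre):
                votes[i] += 1
    # Samples never left out of a bag get a neutral score of 0.5.
    return [v / c if c else 0.5 for v, c in zip(votes, counts)]

known_synthesized = [0.9, 1.0, 1.1, 0.95]            # labeled positives
candidates = [1.05, 0.98, -1.0, -0.9, -1.1, -0.95]   # unlabeled pool
print(pu_bagging_scores(known_synthesized, candidates))
```

Candidates whose descriptor resembles the known positives receive scores near 1, while the rest receive scores near 0, even though no sample was ever labeled as a confirmed negative.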

Table: Comparison of Prediction Approaches for Synthesizability

| Prediction Method | Features Considered | Strengths | Weaknesses |
|---|---|---|---|
| Charge Balancing | Oxidation states, stoichiometry | Simple, fast, intuitive | Neglects kinetics, limited accuracy |
| Formation Energy (DFT) | Electronic structure, thermodynamics | Quantitative, fundamental | Computationally expensive, still thermodynamic |
| HTE Mapping | Experimental parameters, outcomes | Empirical, incorporates kinetics | Resource intensive, limited scope |
| ML (SynCoTrain) | Structural, compositional, historical data | Comprehensive, improves with data | Black box, requires training data |

The performance advantage of modern machine learning approaches demonstrates the insufficiency of charge balancing alone. These models achieve "high recall on internal and leave-out test sets" by considering factors far beyond simple charge equilibrium [1].

Experimental Methodologies and Protocols

High-Throughput Synthesis Workflow

Modern synthesizability research employs integrated workflows that combine computational prediction with experimental validation. The following diagram illustrates a representative high-throughput experimentation protocol for synthesizability assessment:

High-Throughput Synthesizability Assessment Workflow - This diagram illustrates the integrated computational-experimental approach for synthesizability prediction that moves beyond simple charge balancing.

This workflow exemplifies how modern synthesizability research integrates multiple data sources, moving far beyond charge balancing as a standalone predictor. The iterative refinement loop enables continuous model improvement based on experimental feedback.

Automated Purification and Analysis Protocols

Contemporary synthesizability research often incorporates automated purification and analysis to rapidly characterize reaction products. As demonstrated in integrated automation platforms, "tailored conditions for preparative reversed-phase (RP) HPLC-MS on microscale based on analytical data" enable "rapid purification of chemical libraries" [3]. This automated workflow "eliminates the need to weigh or handle solids," which increases "process efficiency and creates a link between high-throughput synthesis and profiling" [3].

The experimental protocol typically includes:

- Microscale Synthesis: Parallel reactions in 1536-well microtiter plates

- Automated Purification: Reversed-phase HPLC-MS with fraction collection

- Online Quality Control: Real-time analysis of fraction purity and composition

- Automated Reformatting: Preparation of standardized stock solutions

- Multi-modal Characterization: Structural and compositional analysis

This integrated approach generates comprehensive data linking synthesis conditions to successful outcomes, capturing the complex relationship between charge configurations and actual synthesizability.

Essential Research Tools and Reagents

Research Reagent Solutions

The transition from charge balancing to comprehensive synthesizability prediction requires specialized research tools and platforms:

Table: Essential Research Reagents and Platforms for Modern Synthesizability Research

| Reagent/Platform | Function | Role Beyond Charge Balancing |
|---|---|---|
| Microtiter Plates (1536-well) | Miniaturized reaction vessels | Enables high-throughput parameter screening |
| Automated Liquid Handlers | Precise reagent dispensing | Ensures reproducibility across thousands of conditions |
| Multi-mode Spectrometers | Parallel reaction monitoring | Captures kinetic and mechanistic data |
| HPLC-MS Systems | Separation and characterization | Identifies successful synthesis outcomes |
| Machine Learning Platforms | Data analysis and prediction | Identifies complex patterns beyond simple heuristics |
| Specialized Atmospheres | Controlled reaction environments | Enables non-equilibrium synthesis pathways |

These tools collectively enable the collection of comprehensive datasets that capture the multifaceted nature of synthesizability, moving far beyond the limitations of charge balancing as a standalone predictor.

Charge balancing remains a valuable initial filter for materials discovery, providing useful heuristics for thermodynamic stability assessment. However, its insufficiency as a comprehensive predictor of synthesizability has been unequivocally demonstrated through modern high-throughput experimentation and machine learning approaches. The future of synthesizability prediction lies in integrated frameworks that combine:

- Thermodynamic stability assessments (including charge balancing)

- Kinetic and mechanistic understanding of synthesis pathways

- Materials-aware machine learning models

- High-throughput experimental validation

- Standardized data reporting including failed attempts

As the field progresses toward "fully integrated, flexible, and democratized platforms" [2], the role of charge balancing will likely evolve into one component of multifaceted prediction frameworks. These integrated approaches will ultimately accelerate materials discovery by providing more accurate synthesizability predictions that account for the complex interplay of thermodynamic, kinetic, and process-specific factors that determine successful synthesis.

In the pursuit of novel therapeutic agents, particularly for rare diseases, charge balancing—the management of molecular stability and properties governed by charge distribution—has emerged as a pivotal yet underperforming factor. The ability to predict and control molecular charge characteristics directly influences the synthesizability, efficacy, and safety of candidate drugs. Research demonstrates that failures in molecular charge management contribute significantly to late-stage drug attrition, with one study reporting that approximately 27% of charging attempts result in complete failure [4]. This high failure rate underscores a critical vulnerability in modern drug development pipelines.

The pharmaceutical industry faces mounting pressure to accelerate development cycles while maintaining rigorous safety standards. Regulatory processes have evolved to include accelerated approval pathways, such as Health Canada's Notice of Compliance with conditions (NOC/c) and the US Food and Drug Administration's accelerated approval pathway, which allow for faster market access based on promising clinical evidence [5]. However, this acceleration often comes at the cost of comprehensive charge characterization, creating a problematic evidence gap. This whitepaper examines the empirical evidence surrounding charge balancing success rates, details experimental methodologies for its assessment, and frames these findings within the broader thesis of why current charge balancing approaches remain insufficient for robust synthesizability research.

Quantitative Landscape: Measuring Charge Balancing Performance

Large-scale empirical analyses provide critical benchmarks for evaluating charge balancing performance across different contexts and systems. The following data, drawn from operational assessments, reveals significant reliability challenges.

Table 1: Quarterly Performance Metrics for Charge Balancing Systems (Q1 2025)

| Performance Metric | North America Average | United States | Canada |
|---|---|---|---|
| Successful balancing without issues | 61.6% | 60% | 64% |
| Successful, but with reduced performance | 8% | 8% | 8% |
| Initiated but difficult or interrupted | 3% | 4% | 3% |
| Complete balancing failure | 27% | 28% | 23% |

Data from ChargeHub's Charging Experience Barometer, which aggregates user feedback from over a million annual users, show that roughly 38% of attempts in the North American average (8% with reduced performance, 3% difficult or interrupted, and 27% complete failures) experience some form of performance degradation or complete failure [4]. This finding is particularly striking given that these results represent a notable improvement from previous years, suggesting that historical performance was even more deficient.

The temporal analysis reveals another critical dimension of the reliability challenge. Performance fluctuates significantly across seasons and operational conditions, with winter months traditionally showing more pronounced failure rates. This temporal instability indicates that charge balancing systems lack the robustness required for consistent synthesizability research, where reproducible conditions are paramount [4].

Table 2: Comparative Performance Analysis by Vehicle Type (2020-2021)

| Vehicle Type | Daytime High-Power Utilization | Spatial Load Concentration | Key Balancing Challenges |
|---|---|---|---|
| Taxis & Rental Cars | Highest | City centers | Rapid depletion, frequency of balancing needs |
| Private EVs | Moderate | Mixed | Irregular patterns, diverse user behavior |
| Buses | High | Central corridors | Scheduled operations, high energy demands |
| Special Purpose Vehicles | Variable | Industrial areas | Unique operational profiles, specialized systems |

A large-scale empirical study of 1.6 million electric vehicles across seven major Chinese cities revealed significant heterogeneity in usage patterns and charging behavior across different vehicle types [6]. This diversity creates substantial challenges for developing universal charge balancing solutions, as optimal approaches must be tailored to specific operational contexts—a requirement that directly parallels the need for molecule-specific charge balancing strategies in pharmaceutical development.

Experimental Protocols: Methodologies for Assessing Charge Balancing

Large-Scale Empirical Data Collection

Objective: To collect granular data on charge balancing performance across diverse operational conditions and system types.

Materials:

- Data Acquisition System: Multi-parameter logging capability (73 parameters including energy capacity, state of charge, power consumption) [6]

- Vehicle/System Types: Private, taxi, rental, official, bus, and special purpose vehicles [6]

- Geographic Coverage: Seven major cities with varying environmental and operational conditions [6]

- Temporal Framework: Minimum one-year observation period to account for seasonal variations [6]

Procedure:

- Define Operational Events: Establish clear criteria for "balancing events" as uninterrupted cycles with precise start/end timestamps [6]

- Parameter Monitoring: Continuously record energy capacity, state parameters, consumption metrics, and operational states [6]

- Parking/Pause Analysis: Create a comprehensive database documenting all non-operational periods with associated state readings [6]

- Performance Classification: Implement the valley-seeking method to identify natural thresholds in power delivery distributions, defining three cutoff levels (P1, P2, P3) that represent slow, medium, and fast balancing for each system type [6]

- Spatiotemporal Mapping: Map all balancing events within defined boundaries to hexagonal grids (0.74 km²) and filter out grids without sufficient event density for statistical significance [6]

- Cluster Analysis: Normalize data and perform K-means clustering (k=3), with optimal cluster count determined by the Elbow Method and Silhouette Score [6]
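
The performance-classification step in the procedure above relies on finding natural thresholds in a distribution. The following is a minimal sketch of one way to do valley seeking on a histogram, not the procedure used in the cited study: the bin counts, the 10 kW bin width, and the function names are hypothetical, and practical pipelines usually smooth the histogram first.

```python
def find_valleys(counts):
    """Return bin indices of interior local minima in a histogram.

    A run of equal counts that is lower than the bins on both sides counts
    as one valley, reported at the middle of the run.
    """
    valleys = []
    n = len(counts)
    i = 1
    while i < n - 1:
        j = i
        while j + 1 < n and counts[j + 1] == counts[i]:
            j += 1  # extend over a plateau of equal counts
        if j < n - 1 and counts[i - 1] > counts[i] and counts[j + 1] > counts[i]:
            valleys.append((i + j) // 2)
        i = j + 1
    return valleys

def valley_cutoffs(counts, bin_width):
    """Convert valley bin indices to cutoff values at the bin centres."""
    return [(i + 0.5) * bin_width for i in find_valleys(counts)]

# Hypothetical histogram of event power with 10 kW wide bins.
power_counts = [4, 9, 3, 7, 12, 2, 2, 6, 10, 1, 5]
print(find_valleys(power_counts))          # [2, 5, 9]
print(valley_cutoffs(power_counts, 10.0))  # [25.0, 55.0, 95.0]
```

In this toy histogram the three valleys would play the role of the cutoffs P1, P2, and P3, separating the peaks without an arbitrarily chosen threshold.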

Cyclic Voltammetric Measurement for Biomedical Applications

Objective: To determine charge storage capacity (CSC) as a key metric for evaluating charge balancing performance in biomedical electrode systems.

Materials:

- Potentiostat: Standard electrochemical measurement system (e.g., CHI 620) [7]

- Electrode Configuration: Three-electrode setup with working, reference, and auxiliary electrodes [7]

- Electrode Materials: Platinum, gold, and glassy carbon disc electrodes (d = 1 mm) [7]

- Electrolyte Medium: Phosphate buffered saline (PBS), 0.9% NaCl, or 0.1 M KCl to simulate physiological conditions [7]

Procedure:

- System Setup: Configure the three-electrode system in the selected physiologically relevant medium [7]

- Potential Window Determination: Establish the "electrochemical water window" for each electrode material, that is, the potential range within which water electrolysis does not occur [7]

- Scan Rate Selection: Employ standardized scan rates (typically 50 mV/s to 100 mV/s) to ensure comparable results across experiments [7]

- Deoxygenation: Remove dissolved oxygen from the medium to better simulate in vivo conditions (pO₂ = 11.4–53.2 mmHg in adult brain) [7]

- CV Measurement: Perform cyclic voltammetric sweeps within the established potential window [7]

- CSC Calculation: Calculate charge storage capacity by integrating the area under the cyclic voltammetric curve [7]

- Parameter Variation: Systematically vary potential ranges, scan rates, and oxygen content to assess their impact on CSC measurements [7]
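
The CSC calculation step above can be sketched numerically. This is a hedged illustration under stated assumptions: the sweep is sampled at a constant scan rate, charge is taken as the time integral of the absolute current over one cycle (conventions differ between studies, and some report only the cathodic part), and the function name and synthetic data are hypothetical.

```python
def charge_storage_capacity(potentials_v, currents_a, scan_rate_v_s,
                            area_cm2, cathodic_only=False):
    """Charge storage capacity in C/cm^2 from one CV cycle (trapezoidal rule).

    With a constant scan rate, the time step between two samples is
    dt = |dE| / scan_rate, so charge = sum(|i| * dt).
    """
    charge = 0.0
    for k in range(1, len(potentials_v)):
        dt = abs(potentials_v[k] - potentials_v[k - 1]) / scan_rate_v_s
        i0, i1 = currents_a[k - 1], currents_a[k]
        if cathodic_only:
            # Keep only negative (cathodic) current contributions.
            i0, i1 = min(i0, 0.0), min(i1, 0.0)
        charge += 0.5 * (abs(i0) + abs(i1)) * dt
    return charge / area_cm2

# Synthetic, idealized cycle: +1 uA on the forward sweep, -1 uA on the reverse.
potentials = [-0.6, 0.1, 0.8, 0.8, 0.1, -0.6]   # V
currents = [1e-6, 1e-6, 1e-6, -1e-6, -1e-6, -1e-6]  # A
area = 0.01  # cm^2, hypothetical geometric area

total = charge_storage_capacity(potentials, currents, 0.05, area)
cathodic = charge_storage_capacity(potentials, currents, 0.05, area,
                                   cathodic_only=True)
print(total, cathodic)
```

Because the result scales with the width of the potential window and inversely with the scan rate, the same electrode can yield different CSC values under different settings, which is why the protocol fixes these parameters before comparing materials.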

Diagram 1: Experimental protocol for charge balancing assessment.

The Researcher's Toolkit: Essential Materials for Charge Balancing Research

Table 3: Essential Research Reagents and Materials for Charge Balancing Experiments

| Item | Function | Application Context |
|---|---|---|
| Multi-Parameter Data Loggers | Capture 73+ operational parameters including SOC, power consumption, and vehicle state [6] | Large-scale empirical studies of usage patterns |
| Potentiostat System (e.g., CHI 620) | Perform cyclic voltammetric measurements for charge storage capacity determination [7] | Biomedical electrode characterization |
| Standard Electrode Materials (Pt, Au, Glassy Carbon) | Provide consistent interfaces for electrochemical measurements [7] | CSC analysis across different material types |
| Physiologically Relevant Media (PBS, 0.9% NaCl, 0.1 M KCl) | Simulate in vivo conditions for biomedical electrode testing [7] | Pre-clinical evaluation of implantable devices |
| Spatial Mapping Software | Analyze geographical distribution of balancing events using hexagonal grid systems [6] | Infrastructure planning and load management |
| Cluster Analysis Tools (K-means, Elbow Method, Silhouette Score) | Identify patterns in large datasets through unsupervised learning [6] | Data mining from operational records |
| Deoxygenation Equipment | Remove dissolved oxygen to better simulate in vivo conditions [7] | Biomedical testing under physiological O₂ levels |
| Valley-Seeking Algorithm | Identify natural thresholds in power delivery distributions [6] | Performance classification without arbitrary cutoffs |

Implications for Synthesizability Research: Why Charge Balancing Falls Short

The empirical evidence demonstrating a 27-28% complete failure rate in charge balancing systems reveals fundamental limitations that directly impact synthesizability research [4]. This performance deficit manifests through several critical mechanisms that undermine drug development efforts.

First, inadequate charge balancing forces problematic trade-offs between development speed and evidence quality. Accelerated regulatory pathways like Health Canada's Priority Review (180 days instead of 345 days) and the US FDA's accelerated approval program enable faster patient access to promising therapies but often rely on limited charge characterization data [5]. This creates an evidence gap where drugs reach patients without comprehensive understanding of their charge-dependent stability and reactivity profiles, potentially compromising both safety and efficacy.

Second, the heterogeneity of charge balancing requirements across molecular systems mirrors the diversity observed in electric vehicle charging patterns, where taxis, private cars, and buses exhibit fundamentally different usage and charging behaviors [6]. This variability necessitates molecule-specific charge balancing approaches, yet current methodologies often rely on generalized protocols that fail to account for structural and electronic peculiarities of individual compounds. The resulting one-size-fits-all approach leads to predictable failures when molecules encounter unanticipated charge distribution challenges during synthesis or formulation.

Third, standardized measurement protocols for charge characterization are notably lacking, particularly in biomedical applications. As highlighted by Lipus and Krukiewicz, charge storage capacity (CSC) measurements are highly sensitive to experimental conditions including potential range, scan rate, and oxygen content, yet these parameters are often selected arbitrarily rather than through standardized protocols [7]. This methodological inconsistency produces unreliable data that cannot be meaningfully compared across studies or correlated with synthesizability outcomes.

Diagram 2: Impact of poor charge balancing on synthesizability research.

The integration of both qualitative and quantitative assessment methods is essential for advancing charge balancing research [8]. Quantitative data reveals performance patterns and failure rates, while qualitative insights provide crucial context about the operational conditions and user experiences that contribute to these outcomes. A balanced approach that leverages both data types would enable more nuanced understanding of charge balancing limitations and more targeted interventions to address them.

The empirical evidence is unequivocal: current approaches to charge balancing exhibit unacceptably high failure rates that directly compromise synthesizability research and drug development outcomes. The 27-28% complete failure rate observed in operational systems, coupled with an additional 11% of attempts experiencing significant performance degradation, represents a substantial vulnerability in pharmaceutical development pipelines [4]. These deficiencies are exacerbated by heterogeneous molecular requirements, inadequate standardized protocols, and the inherent tensions between accelerated development timelines and comprehensive charge characterization.

Addressing these limitations requires a fundamental re-evaluation of charge balancing methodologies in synthesizability research. Priority areas for improvement include developing molecule-specific balancing strategies that account for structural and electronic diversity, establishing standardized measurement protocols for reliable cross-study comparisons, and implementing integrated assessment frameworks that combine quantitative performance metrics with qualitative contextual insights. Until these advancements are realized, charge balancing will remain an insufficient foundation for robust synthesizability research, perpetuating the high failure rates that currently undermine efficient therapeutic development.

For decades, the charge-balancing criterion has served as one of the foundational heuristics in inorganic materials science, providing chemists with an intuitive rule for predicting which compounds might be synthetically viable. This principle, derived from classical chemical intuition, suggests that synthesizable ionic compounds should exhibit a net neutral charge under common oxidation states of their constituent elements. However, a striking statistic challenges this long-held assumption: among experimentally observed Cs binary compounds listed in the Inorganic Crystal Structure Database (ICSD), only 37% meet the charge-balancing criterion under common oxidation states [9]. This remarkable finding indicates that nearly two-thirds of successfully synthesized cesium binary compounds defy this conventional wisdom, exposing a significant gap in our understanding of the factors governing materials synthesizability.

The persistence of charge-balancing as a screening tool reflects the broader challenge in materials science: the lack of universal principles for predicting synthesis feasibility. While thermodynamic stability has emerged as an alternative proxy, it too provides an incomplete picture, often failing to account for kinetic stabilization and experimental constraints that enable metastable phases to persist [10]. This whitepaper examines the technical evidence undermining charge-balancing as a comprehensive synthesizability filter, explores advanced computational and machine learning approaches that offer more nuanced solutions, and provides detailed methodological frameworks for researchers moving beyond outdated heuristics in materials design and development.

Quantitative Analysis: The Numerical Case Against Charge Balancing

The inadequacy of the charge-balancing criterion becomes evident when examining comprehensive materials databases. The disparity between theoretically predicted and experimentally synthesized materials reveals that factors beyond simple electron counting govern synthetic accessibility.

Table 1: Success Rates of Charge-Balancing Criterion Across Material Classes

| Material Class | Adherence to Charge-Balancing | Data Source | Implications |
|---|---|---|---|
| Cs Binary Compounds | 37% | ICSD [9] | Majority of synthesized compounds violate the criterion |
| Experimental Materials in MP Database | ~50% | Materials Project [10] | Roughly half of synthesized materials do not meet the criterion |

The failure of charge-balancing stems from its oversimplified view of chemical bonding. This criterion does not adequately consider the diverse bonding environments in different classes of materials, including ionic materials, metallic alloys, and covalent networks, each with distinct electronic structure principles [9]. Furthermore, the criterion operates under the assumption of common oxidation states, ignoring the prevalence of mixed-valence compounds and non-integer oxidation states that frequently occur in solid-state materials with delocalized electrons.

Beyond Simple Heuristics: The Complex Determinants of Synthesizability

The synthesis feasibility of inorganic materials is governed by a complex interplay of thermodynamic, kinetic, and experimental factors that extend far beyond simple charge neutrality considerations.

Thermodynamic Considerations

Formation energy and energy above hull ($E_{\text{hull}}$) serve as crucial thermodynamic metrics for synthesizability assessment. Materials with DFT-calculated $E_{\text{hull}}$ of zero are, by definition, on the convex hull surface and considered thermodynamically stable [11]. However, thermodynamic stability alone proves insufficient for predicting synthesizability, as many metastable materials (with positive $E_{\text{hull}}$) can be successfully synthesized through kinetic stabilization [10]. These materials can be synthesized under alternative thermodynamic conditions where they become the ground state, remaining "trapped" in metastable structures after removal of the favorable thermodynamic field due to high activation energy barriers [10].
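
To make the energy-above-hull definition concrete, the sketch below computes it for a hypothetical binary system A(1-x)B(x). It is an illustration under simplifying assumptions: the formation energies are invented, only two components are handled (real phase diagrams need higher-dimensional hulls), and the function names are hypothetical.

```python
def lower_hull(entries):
    """Lower convex hull of (composition x, formation energy) points.

    Uses the lowest energy at each composition, then Andrew's monotone chain.
    """
    best = {}
    for x, e in entries:
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            # Drop the last vertex if it lies on or above the line to p.
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull

def energy_above_hull(hull, x, energy):
    """Vertical distance from (x, energy) down to the hull; zero means stable."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return energy - (y1 + (y2 - y1) * (x - x1) / (x2 - x1))
    raise ValueError("composition lies outside the hull range")

# Hypothetical formation energies (eV/atom) versus fraction of B.
entries = [(0.0, 0.0), (1.0, 0.0), (0.5, -1.0), (0.25, -0.3), (0.75, -0.4)]
hull = lower_hull(entries)
for x, e in entries:
    print(x, round(energy_above_hull(hull, x, e), 3))
```

Here the phase at x = 0.5 sits on the hull, while the phases at x = 0.25 and x = 0.75 lie 0.2 and 0.1 eV/atom above it. Such phases are metastable by this metric, yet, as the paragraph notes, they may still be synthesizable.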

Kinetic and Experimental Factors

The practical synthesizability of a material is significantly influenced by kinetic factors and technological constraints:

- Activation energy barriers: High barriers between a material and common precursors can prevent synthesis even for thermodynamically stable compounds [10]

- Synthesis method limitations: Some materials require specific techniques (e.g., Carbothermal Shock method for high-entropy alloys) unavailable through conventional approaches [10]

- Extreme condition requirements: Certain compounds only form under high pressure, specific solvents (e.g., liquid ammonia), or other specialized conditions [10]

- Precursor availability and earth abundance: Practical considerations including toxicity and accessibility of starting materials [11]

Diagram 1: Synthesizability extends far beyond charge balancing to multiple interdependent factors

Modern Computational Approaches for Synthesizability Prediction

Machine Learning Frameworks

Advanced machine learning approaches have emerged to address the limitations of traditional heuristics, leveraging representation learning and semi-supervised frameworks to capture complex patterns in materials data:

- Fourier-Transformed Crystal Properties (FTCP): Represents crystal structures in both real space and reciprocal space, with reciprocal-space features formed using elemental property vectors and discrete Fourier transform of real-space features [11]

- SynCoTrain: A semi-supervised co-training framework employing two complementary graph convolutional neural networks (SchNet and ALIGNN) that iteratively exchange predictions to mitigate model bias [10]

- Positive and Unlabeled (PU) Learning: Addresses the absence of explicit negative data (failed synthesis attempts are rarely published) by iteratively refining predictions through collaborative learning [10]

Table 2: Performance Comparison of ML Synthesizability Prediction Models

| Model | Architecture | Accuracy/Precision | Recall | Application Scope |

|---|---|---|---|---|

| FTCP-based Model [11] | Deep Learning on FTCP | 82.6% precision | 80.6% recall | Ternary crystal materials |

| SynCoTrain [10] | Dual GCNN Co-training | Not specified | High on test sets (exact % not specified) | Oxide crystals |

| XGBoost-C [12] | Gradient Boosting | 0.96 AUROC | N/A | CVD-grown MoS₂ |

Experimental Protocol: ML-Guided Material Synthesis

The application of machine learning to guide material synthesis involves a structured workflow with distinct experimental and computational phases:

Phase 1: Data Collection and Feature Engineering

- Data Acquisition: Synthesis data (e.g., 300 experimental data points for MoS₂ CVD) collected from archived laboratory notebooks, with successful synthesis defined by specific criteria (e.g., sample size > 1 μm for MoS₂) [12]

- Feature Selection: Identify essential synthesis parameters (e.g., gas flow rate, reaction temperature, reaction time, boat configuration) while eliminating fixed parameters and those with missing data [12]

- Feature Analysis: Calculate Pearson's correlation coefficients between feature pairs to quantify their linear redundancy, confirming that the retained features overlap minimally [12]
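The pairwise redundancy check in the feature-analysis step can be sketched as follows. This is a minimal illustration in plain Python; the feature names, the toy values, and the 0.9 threshold are assumptions made for the example and are not taken from [12].

```python
from math import sqrt
from typing import Dict, List, Sequence, Tuple


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    norm_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if norm_x == 0 or norm_y == 0:
        raise ValueError("a constant feature has no defined correlation")
    return cov / (norm_x * norm_y)


def redundant_pairs(
    features: Dict[str, Sequence[float]], threshold: float = 0.9
) -> List[Tuple[str, str, float]]:
    """Feature pairs whose absolute correlation meets the threshold."""
    names = sorted(features)
    flagged = []
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            r = pearson_r(features[first], features[second])
            if abs(r) >= threshold:
                flagged.append((first, second, r))
    return flagged


# Hypothetical synthesis parameters; the values are made up for illustration.
features = {
    "temperature_C": [650.0, 700.0, 750.0, 800.0, 850.0],
    "temperature_K": [923.15, 973.15, 1023.15, 1073.15, 1123.15],
    "gas_flow_sccm": [20.0, 80.0, 40.0, 100.0, 60.0],
}
flagged = redundant_pairs(features)
print(flagged)
```

Here the two temperature columns carry the same information and are flagged, so one of them would be dropped before training. Pearson's coefficient only captures linear relationships, so nonlinear dependence between parameters would need a different measure.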

Phase 2: Model Training and Validation

- Model Selection: Employ multiple algorithms (XGBoost, SVM, Naïve Bayes, MLP) with nested cross-validation (ten-fold outer, ten-fold inner) to prevent overfitting [12]

- Performance Evaluation: Assess models using receiver operating characteristic (ROC) curves and learning curves to ensure generalization to unseen data [12]

- Progressive Adaptive Model (PAM): Implement feedback loops to maximize experimental outcomes while effectively reducing the number of trials [12]
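The nested cross-validation scheme mentioned above can be illustrated by its index bookkeeping alone. The sketch below is an assumption-laden outline in plain Python (the helper names and seeds are hypothetical); it omits model fitting and shows only how the inner folds, used for hyperparameter selection, never touch the outer test fold, used for the performance estimate.

```python
import random
from typing import Iterator, List, Tuple

Split = Tuple[List[int], List[int]]  # (training indices, held-out indices)


def k_fold(indices: List[int], k: int, seed: int = 0) -> Iterator[Split]:
    """Yield k (train, held_out) splits over a shuffled copy of the indices."""
    shuffled = indices[:]
    random.Random(seed).shuffle(shuffled)
    folds = [shuffled[i::k] for i in range(k)]
    for i, held_out in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, held_out


def nested_cv_splits(
    n_samples: int, outer_k: int = 10, inner_k: int = 10
) -> Iterator[Tuple[List[int], List[int], List[Split]]]:
    """Yield (outer_train, outer_test, inner_splits) for each outer fold."""
    for outer_train, outer_test in k_fold(list(range(n_samples)), outer_k):
        # Inner folds are drawn only from the outer training portion.
        inner_splits = list(k_fold(outer_train, inner_k, seed=1))
        yield outer_train, outer_test, inner_splits


# 300 samples mirrors the dataset size quoted in the text.
splits = list(nested_cv_splits(300))
print(len(splits), len(splits[0][1]), len(splits[0][2][0][1]))
```

With 300 samples and ten folds at both levels, each outer test fold holds 30 samples and each inner validation fold holds 27, which shows how little data each inner model sees on a dataset of this size.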

Phase 3: Prediction and Experimental Validation

- Condition Recommendation: Use trained models to recommend optimal synthesis conditions with highest probability of success [12]

- Importance Extraction: Quantify the influence of each synthesis parameter on experimental outcomes to guide parameter tuning [12]

Diagram 2: ML-guided synthesis follows a structured three-phase workflow

Essential Research Tools and Reagents

The experimental and computational methodologies discussed require specific tools and resources for implementation:

Table 3: Essential Research Toolkit for Advanced Synthesizability Research

| Tool/Resource | Type | Function | Example Applications |

|---|---|---|---|

| ICSD & Materials Project | Databases | Source of experimental crystal structures and computed material properties | Training data for ML models [11] [10] |

| FTCP Representation | Computational | Crystal representation in real and reciprocal space | Capturing periodicity and elemental properties [11] |

| ALIGNN & SchNet | Graph Neural Networks | Encoding atomic bonds/angles and continuous-filter convolution | Complementary classifiers in co-training frameworks [10] |

| XGBoost | Machine Learning | Gradient boosting for classification/regression | Predicting synthesis success from parameters [12] |

| Pymatgen | Software Library | Materials analysis and ICSD access | Processing crystal structures and valences [10] |

| QMTP | Machine Learning Potential | Molecular dynamics with charge information | Simulating interface reactions in battery materials [13] |

The evidence against charge-balancing as a reliable synthesizability criterion is both compelling and quantitatively substantial. With more than three-quarters of experimentally realized binary cesium compounds, and nearly two-thirds of all known inorganic crystalline materials, defying this heuristic, the materials research community must embrace more sophisticated computational approaches that account for the complex thermodynamic, kinetic, and experimental factors governing synthesis outcomes. Machine learning frameworks that leverage rich structural representations and address the fundamental challenge of negative data scarcity offer a promising path forward, enabling researchers to move beyond outdated rules of thumb toward predictive models grounded in comprehensive materials data. As these computational tools continue to evolve and integrate more diverse synthesis knowledge, they hold the potential to dramatically accelerate the discovery and realization of novel functional materials addressing pressing global challenges.

Charge balancing, the practice of ensuring a chemical formula has a net neutral ionic charge based on common oxidation states, has long been a foundational heuristic in predicting the synthesizability of inorganic crystalline materials. Framed within the broader thesis of why this method is insufficient for modern synthesizability research, this review demonstrates that charge balancing is an inflexible constraint that fails to account for the diverse bonding environments in metallic and covalent solids. Quantitative evidence reveals that this rule excludes a majority of experimentally realized materials, thereby limiting its utility in the discovery of novel functional compounds. By exploring advanced computational models and experimental methodologies, this work provides a roadmap for moving beyond this traditional paradigm to develop more accurate, data-driven frameworks for synthesizability prediction.

The targeted discovery of new inorganic materials is a primary driver of technological innovation. The first step in this process is identifying synthesizable materials—those that are synthetically accessible through current methodologies but may not have been reported yet. For decades, charge balancing has served as a computationally inexpensive proxy for synthesizability. This approach filters candidate materials by ensuring a net neutral ionic charge for any of the elements' common oxidation states, operating on the chemically motivated principle that ionic compounds tend toward charge neutrality [14].
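The filter described above can be sketched in a few lines. This is a minimal illustration that assumes every atom of an element takes the same oxidation state and that uses a small, hand-picked table of common states; both are simplifying assumptions for the example rather than the exact procedure of [14].

```python
from itertools import product
from typing import Dict, Tuple

# Illustrative subset of common oxidation states (an assumption of this sketch).
COMMON_OXIDATION_STATES: Dict[str, Tuple[int, ...]] = {
    "Na": (1,),
    "Cs": (1,),
    "Ba": (2,),
    "Ti": (4,),
    "Fe": (2, 3),
    "Cl": (-1,),
    "O": (-2,),
}


def is_charge_balanced(composition: Dict[str, int]) -> bool:
    """True if some assignment of common oxidation states sums to zero.

    `composition` maps element symbols to integer atom counts,
    e.g. {"Fe": 2, "O": 3} for Fe2O3.
    """
    elements = list(composition)
    choices = [COMMON_OXIDATION_STATES[el] for el in elements]
    return any(
        sum(state * composition[el] for el, state in zip(elements, states)) == 0
        for states in product(*choices)
    )


print(is_charge_balanced({"Na": 1, "Cl": 1}))  # NaCl
print(is_charge_balanced({"Fe": 2, "O": 3}))   # Fe2O3
print(is_charge_balanced({"Fe": 3, "O": 4}))   # Fe3O4, mixed valence
print(is_charge_balanced({"Cs": 1, "O": 2}))   # CsO2, a superoxide
```

Under these assumptions NaCl and Fe₂O₃ pass, while the well-known compounds Fe₃O₄ (mixed Fe²⁺/Fe³⁺) and CsO₂ (superoxide anion) are rejected. This is the kind of false negative that makes the rule a poor standalone filter.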

However, the central thesis of this review is that charge balancing is an inadequate standalone criterion for synthesizability prediction in modern materials research. Its fundamental failure stems from an over-reliance on ionic bonding models, rendering it incapable of accurately describing the complex bonding environments in metallic alloys and covalent solids. Recent data-driven analyses confirm that this rule cannot account for the majority of known synthesized materials, highlighting the urgent need for more sophisticated predictive frameworks [14].

Quantitative Evidence: The Statistical Failure of Charge Balancing

The limitations of charge balancing become starkly evident when its predictions are compared against databases of experimentally synthesized materials. Performance metrics reveal its significant shortcomings as a reliable screening tool.

Table 1: Performance of Charge Balancing in Predicting Synthesized Materials

| Material Category | Percentage Charge-Balanced | Key Finding |

|---|---|---|

| All Inorganic Crystalline Materials (ICSD) | 37% | Majority (63%) of known synthesized materials are not charge-balanced [14] |

| Ionic Binary Cesium Compounds | 23% | Even in typically ionic systems, the rule performs poorly [14] |

| Artificially Generated Compositions | N/A | Poor precision in identifying synthesizable candidates [14] |

The data in Table 1 underscores a critical point: enforcing a charge-balancing constraint would incorrectly eliminate nearly two-thirds of all known inorganic materials from consideration. This demonstrates that while charge neutrality might be a factor in some ionic solids, it is far from a universal synthesizability principle.

Fundamental Reasons for Failure: Bonding Environment Complexity

The failure of charge balancing is rooted in its inability to accommodate different chemical bonding paradigms.

The Metallic Bonding Challenge

In metallic bonding, valence electrons are delocalized and shared among a lattice of positive metal ions. This electron "sea" does not conform to the discrete electron transfers assumed by ionic charge-balancing models. Metallic alloys derive their stability from the collective interaction of these delocalized electrons with the ion cores, and not from the pairwise charge neutrality of their constituent atoms. Consequently, many stable metallic compounds have formulas that appear charge-imbalanced when analyzed through a purely ionic lens [14].

The Covalent Bonding Challenge

Covalent compounds are formed when atoms share electron pairs, a mechanism not governed by the complete electron transfer of ionic bonding. The sharing is often unequal, leading to polar covalent bonds, but the resulting partial charges are not accurately captured by the integer oxidation states used in traditional charge-balancing exercises [15]. For instance, in a covalent molecule like carbon tetrachloride (CCl₄), applying common oxidation states (C⁴⁺ and Cl⁻) suggests a balanced formula, but this is a descriptive formalism—the actual bonding involves shared electrons, not a literal transfer of four electrons from carbon to chlorine atoms. This model breaks down entirely for complex solid-state covalent networks.

Beyond Bonding: Kinetic Stabilization and Synthesis

Synthesizability is not determined by thermodynamics alone. A material can be kinetically stabilized even if it is not the most thermodynamically stable phase in its chemical space. Synthesis pathways can selectively nucleate a target material by minimizing unwanted side-products or leveraging specific reaction conditions that provide kinetic stabilization [14]. Charge balancing, being a static thermodynamic heuristic, cannot account for these dynamic synthetic realities.

Advanced Predictive Models: Moving Beyond the Heuristic

The limitations of charge balancing have spurred the development of sophisticated computational models that learn the complex, multi-faceted nature of synthesizability directly from experimental data.

Machine Learning and Deep Learning Frameworks

Machine learning models, particularly deep learning networks like SynthNN, represent a paradigm shift. These models leverage the entire space of synthesized inorganic chemical compositions from databases like the Inorganic Crystal Structure Database (ICSD) [14].

Table 2: Comparison of Synthesizability Prediction Methods

| Method | Key Principle | Advantages | Limitations |

|---|---|---|---|

| Charge Balancing | Net neutral ionic charge | Computationally cheap; simple to implement | Inflexible; poor accuracy (37% recall); fails for metals/covalents [14] |

| DFT Formation Energy | Thermodynamic stability relative to competing phases | Provides energy landscape; well-established | Misses kinetically stable phases; computationally expensive [14] |

| SynthNN (Deep Learning) | Data-driven patterns from all known synthesized materials | High precision (7x higher than DFT formation energy); accounts for complex factors [14] | Requires large datasets; "black box" nature |

These models utilize learned atom embeddings (atom2vec) to represent chemical formulas, optimizing the representation alongside other network parameters without pre-defined chemical rules. Remarkably, without explicit programming, SynthNN learns fundamental chemical principles such as charge-balancing, chemical family relationships, and ionicity, and integrates them into a more nuanced predictive framework [14].

The workflow for this data-driven approach to material discovery is outlined below.

Experimental Validation: The Role of Hydrogen Embrittlement Studies

Research on hydrogen embrittlement provides a compelling experimental case study in how materials behave in reactive environments beyond simple charge considerations. Hydrogen embrittlement is an environmentally induced failure where hydrogen atoms are absorbed on a metal surface, penetrate the bulk, and degrade mechanical properties, leading to a loss of ductility and toughness [16].

Experimental protocols for studying this phenomenon often involve:

- Electrochemical Hydrogen Charging: The material sample acts as the cathode in an electrochemical cell, with a platinum or graphite anode [17]. A power supply provides a controlled current density.

- Gaseous Hydrogen Charging: Exposure to high-pressure hydrogen gas at specified temperatures and durations to simulate service conditions [16] [17].

- Analysis: Subsequent mechanical testing (tensile, fatigue) and microstructural analysis quantify the embrittling effects of hydrogen [16].

Table 3: Key Research Reagents in Hydrogen Embrittlement Studies

| Reagent / Material | Function in Experimental Protocol |

|---|---|

| Sulphuric Acid (H₂SO₄) Electrolyte | Common electrolyte solution serving as the hydrogen source in electrochemical charging [17]. |

| Poisoning Agents (e.g., Thiourea, As₂O₃) | Added to the electrolyte to inhibit hydrogen molecule (H₂) formation, thereby enhancing hydrogen absorption into the metal lattice [17]. |

| Platinum or Graphite Anode | Serves as the counter electrode in the electrochemical charging circuit [17]. |

| High-Pressure Hydrogen Gas | Used in gaseous charging methods to simulate exposure in hydrogen storage or transport applications [16] [17]. |

This experimental domain highlights that material performance and failure are governed by complex interactions (e.g., hydrogen diffusion, dislocation density) that simple stoichiometric rules cannot predict [17].

The Scientist's Toolkit for Synthesizability Research

For researchers moving beyond charge balancing, the following tools and concepts are essential.

Table 4: Essential Toolkit for Modern Synthesizability Research

| Tool or Concept | Description | Role in Overcoming Charge Balancing Limits |

|---|---|---|

| Positive-Unlabeled (PU) Learning | A machine learning paradigm that treats non-synthesized materials as "unlabeled" rather than "negative" examples. | Accounts for the reality that unsynthesized materials may be synthesizable but not yet reported [14]. |

| Inorganic Crystal Structure Database (ICSD) | A comprehensive database of published inorganic crystal structures. | Provides the foundational data for training data-driven synthesizability models [14]. |

| Atom Embeddings (atom2vec) | A learned numerical representation of chemical elements. | Allows models to discover chemical relationships (e.g., ionicity, family trends) from data without explicit rules [14]. |

| Electrochemical Hydrogen Charging | An experimental method for introducing hydrogen into a material's structure. | Reveals material degradation mechanisms in hydrogen environments, relevant for functional material design [17]. |

The evidence is clear: charge balancing is a chemically intuitive but statistically and mechanistically insufficient criterion for predicting the synthesizability of inorganic materials. Its failure is rooted in a fundamental incompatibility with the physical nature of metallic and covalent bonding and its inability to incorporate kinetic and synthetic realities. The path forward lies in data-driven approaches that learn the complex, multi-dimensional patterns of synthesizability from the full expanse of experimental knowledge. Integrating deep learning models like SynthNN into computational screening workflows promises to dramatically increase the reliability and efficiency of material discovery, finally moving the field beyond the rigid constraints of the ionic bond paradigm. Future research will focus on enriching these models with synthetic pathway data and operational constraints, further closing the gap between computational prediction and laboratory realization.

In computational materials science and drug discovery, the initial design of novel molecules and materials often relies on fundamental stability criteria, with charge balancing being a primary consideration. While ensuring electroneutrality is a necessary first step, it is profoundly insufficient for predicting whether a proposed compound can be successfully synthesized in a laboratory. Real-world synthesizability is governed by a complex web of kinetic and technological factors that extend far beyond simple thermodynamic stability [18]. A material may be thermodynamically stable yet remain practically impossible to synthesize due to kinetic barriers, competing reaction pathways, or technological bottlenecks in the experimental process [19] [18]. This whitepaper delves into these complexities, providing researchers with a technical guide to the multifaceted challenges of synthesizability.

The Thermodynamic Foundation and Its Limits

The Principle of Detailed Balance

Thermodynamic feasibility, particularly the observance of detailed balance (or microscopic reversibility), is a fundamental constraint for any physically possible reaction system. Detailed balance demands that in thermodynamic equilibrium, the forward rate of a reaction equals its backward rate, resulting in a net flux of zero [19]. Violating this principle leads to thermodynamically infeasible models that describe "chemical perpetual-motion machines" [19].

For a cyclic reaction network, this imposes the Wegscheider condition, which requires that the product of the equilibrium constants around any closed cycle must equal one [19]. Failure to enforce this condition in kinetic models can result in spurious predictions and misleading sensitivity analyses, as parameters may be varied in physically impossible ways [19].
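A kinetic model can be screened for this constraint with a short check. The sketch below assumes the equilibrium constants are listed in a consistent direction around one closed cycle; the function names and the numerical values are illustrative assumptions.

```python
from math import isclose, prod
from typing import Sequence


def equilibrium_constant(k_forward: float, k_backward: float) -> float:
    """Equilibrium constant of one elementary step from its rate constants."""
    return k_forward / k_backward


def satisfies_wegscheider(
    cycle_constants: Sequence[float], rel_tol: float = 1e-9
) -> bool:
    """True if the product of equilibrium constants around a cycle equals one."""
    return isclose(prod(cycle_constants), 1.0, rel_tol=rel_tol)


# Hypothetical cycle A -> B -> C -> A with made-up rate constants.
consistent_cycle = [
    equilibrium_constant(2.0, 1.0),   # A <-> B
    equilibrium_constant(5.0, 1.0),   # B <-> C
    equilibrium_constant(1.0, 10.0),  # C <-> A
]
inconsistent_cycle = [2.0, 5.0, 0.2]

print(satisfies_wegscheider(consistent_cycle))
print(satisfies_wegscheider(inconsistent_cycle))
```

If the check fails, at least one rate constant in the cycle must be adjusted before the model is used for sensitivity analysis. Otherwise the model implies a net flux around the loop at equilibrium.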

Table 1: Key Thermodynamic vs. Kinetic Concepts in Synthesis

| Concept | Thermodynamic Role | Kinetic Role |

|---|---|---|

| Detailed Balance | Ensures existence of thermodynamic equilibrium; imposes constraints on equilibrium constants [19]. | Governs forward/backward reaction rates at equilibrium; violated models predict impossible behavior [19]. |

| Stability | Determines if a material is stable at a given temperature and pressure (ΔG < 0) [18]. | Determines if a material forms within a practical timeframe, regardless of final stability [18]. |

| Reaction Pathway | Defines the overall energy difference between reactants and products. | Defines the specific route and energy barriers (activation energies) of the synthesis. |

| Competing Phases | Identifies which impurity phases are also thermodynamically stable. | Determines which impurities form fastest, often dominating the final product [18]. |

The Synthesizability Gap: Stable ≠ Synthesizable

The critical distinction between thermodynamic stability and practical synthesizability is a central challenge. As noted in materials discovery, "thermodynamically stable ≠ synthesizable" [18]. A compound can have a negative formation energy yet be inaccessible because all feasible synthesis pathways are blocked by high kinetic barriers or lead to metastable intermediates.

For example:

- Bismuth Ferrite (BiFeO₃): A promising multiferroic material that is thermodynamically stable only over a narrow window of conditions. Conventional synthesis attempts frequently produce unwanted impurities like Bi₂Fe₄O₉ or Bi₂₅FeO₃₉ because these competing phases are kinetically favorable to form [18].

- LLZO (Li₇La₃Zr₂O₁₂): A solid-state battery electrolyte. Its synthesis requires high temperatures (~1000 °C), which volatilizes lithium and promotes the formation of the impurity La₂Zr₂O₇. Solving one problem (achieving the correct phase) can exacerbate another (elemental loss) [18].

Kinetic Factors Governing Synthesizability

Kinetic Balance in Computational Models

In computational chemistry, the kinetic balance requirement is crucial for meaningful four-component relativistic calculations. This principle mandates a specific relationship between the basis sets used to describe the large and small components of wavefunctions [20]. An imbalance can lead to unpredictable results and a plethora of superfluous solutions, cluttering the variational space for small components and causing numerical difficulties [20]. Although this requirement concerns the kinetic-energy coupling between wavefunction components rather than reaction kinetics, it offers a loose analogy for materials modeling: a successful simulation must correctly balance the dynamic, kinetic processes that govern atomic and molecular assembly, not just the final, static electronic structure.

The Pathway Problem and Reaction Networks

Synthesizing a chemical compound is fundamentally a pathway problem, akin to crossing a mountain range where one cannot simply go straight over the top but must find a viable pass [18]. A material becomes difficult to synthesize when all obvious pathways encounter insurmountable kinetic barriers.

Reaction network-based approaches are increasingly used to address this. These methods generate hundreds of thousands of potential reaction pathways for a target compound, starting from various precursors. Some routes may begin with common precursors, while others involve rare intermediate phases. The goal is to identify low-barrier synthesis routes—the shortcuts around the mountain—by modeling pathways with thermodynamic principles and simulating phase evolution in a virtual reactor [18].
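One simplified way to picture the search for a low-barrier route is a graph in which each edge carries an effective barrier and the best route is the one whose highest barrier is lowest. The sketch below implements that bottleneck-path search with a Dijkstra-style priority queue. The graph, the node names, and the barrier values are hypothetical, and real reaction-network tools use richer thermodynamic cost functions than this single number.

```python
import heapq
from typing import Dict, List, Tuple

Graph = Dict[str, List[Tuple[str, float]]]  # node -> [(next node, barrier)]


def lowest_barrier_route(
    graph: Graph, start: str, target: str
) -> Tuple[float, List[str]]:
    """Route from start to target that minimizes the highest barrier crossed."""
    best = {start: 0.0}
    heap: List[Tuple[float, str, List[str]]] = [(0.0, start, [start])]
    while heap:
        bottleneck, node, path = heapq.heappop(heap)
        if node == target:
            return bottleneck, path
        if bottleneck > best.get(node, float("inf")):
            continue  # stale queue entry
        for nxt, barrier in graph.get(node, []):
            new_bottleneck = max(bottleneck, barrier)
            if new_bottleneck < best.get(nxt, float("inf")):
                best[nxt] = new_bottleneck
                heapq.heappush(heap, (new_bottleneck, nxt, path + [nxt]))
    raise ValueError("no route from start to target")


# Hypothetical network; barriers are in arbitrary units.
network: Graph = {
    "precursors": [
        ("target", 3.0),
        ("intermediate_A", 1.0),
        ("intermediate_B", 0.5),
    ],
    "intermediate_A": [("target", 1.5)],
    "intermediate_B": [("target", 2.5)],
}
bottleneck, route = lowest_barrier_route(network, "precursors", "target")
print(bottleneck, route)
```

In this toy network the direct reaction has the highest barrier, and the detour through one intermediate is the "pass" through the mountain range. The route that starts with the easiest first step is not the best overall, because its second step is harder.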

Diagram 1: Reaction Network for Synthesis

Case Study: The BaTiO₃ Synthesis Bottleneck

The synthesis of barium titanate (BaTiO₃) illustrates how kinetic convenience can dominate synthetic practice, often to the detriment of optimal performance. The conventional route uses BaCO₃ and TiO₂ as precursors. However, this reaction is known to proceed indirectly through intermediate phases (e.g., Ba₂TiO₄) and typically requires high temperatures (1000-1100°C) with long heating times (4-8 hours) [18].

Despite its inefficiency, this route remains the go-to approach because it is "good enough" and well-established. Analysis of published synthesis recipes reveals a striking lack of diversity: 144 out of 164 entries for BaTiO₃ use the same precursor combination [18]. This highlights a significant human bias in chemical experiment planning, which can sometimes lead to worse outcomes than randomly selected experiments [18]. Overcoming synthesizability challenges, therefore, requires systematically exploring beyond conventional, kinetically entrenched pathways.

Technological and Data Bottlenecks

The Data Scarcity Problem in Synthesis

While AI has shown remarkable progress in predicting material structures and properties, its application to synthesis is severely hampered by a data scarcity problem [18]. The fundamental issue is that simulating synthesis is vastly more complex than simulating an atomic structure. Reaction pathways involve numerous factors operating across vast spatiotemporal scales: time, temperature, atmosphere, pressure, defects, and grain boundaries [18].

Efforts to mine the scientific literature for synthesis data face significant limitations:

- Negative results (failed synthesis attempts) are almost never published [18] [21].

- The scope of published chemical reactions is surprisingly narrow, with researchers often avoiding unconventional "wacky" synthesis routes [18].

- Once a convenient route is established as "good enough," it becomes the conventional standard, regardless of potential alternatives that might offer superior performance [18].

Building a comprehensive synthesis dataset through experimentation alone is practically intractable. Testing just the pairwise reactions among 1,000 compounds would require roughly 500,000 experiments (499,500 unique pairs), even if each pair were tested only once, far beyond the capacity of most high-throughput laboratories [18].
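The experiment count quoted above follows from counting unordered pairs of compounds. A short check, assuming each pair is tested once under a single set of conditions (the function name is hypothetical):

```python
from math import comb


def pairwise_reaction_count(n_compounds: int) -> int:
    """Number of unordered compound pairs, i.e. n choose 2."""
    return comb(n_compounds, 2)


n_experiments = pairwise_reaction_count(1000)
print(n_experiments)  # 499500
```

Varying temperature, atmosphere, or time for each pair multiplies this number further, which is why the text treats exhaustive experimentation as out of reach.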

The AI-Driven Solution: Co-Designing Molecules and Pathways

A promising frontier in addressing synthesizability is the co-design of molecules and their synthesis pathways using advanced AI frameworks. The CGFlow method, for instance, introduces a dual-design approach that enables AI to simultaneously model a molecule's compositional structure and its continuous 3D state [22]. This integration is essential for generating molecules that are both biologically effective and chemically feasible to produce.

Built upon CGFlow, 3DSynthFlow is designed for target-based drug design, where a generated molecule must bind to a given target protein. Unlike traditional models that focus solely on structure or binding, 3DSynthFlow co-designs a molecule's binding pose and synthetic pathway [22]. This has yielded impressive results:

- Achieved state-of-the-art binding affinity on all 15 protein targets tested on the LIT-PCBA benchmark.

- Demonstrated 5.8 times greater efficiency in sampling viable candidates than previous 2D synthesis-based models.

- Reached a 62.2% synthesis success rate on the CrossDocked benchmark, vastly outperforming comparable models like MolCRAFT-large (3.9%) [22].

Diagram 2: AI-Driven Co-Design Workflow

Experimental Protocols and Research Toolkit

Protocol for Validating Synthesis Pathways

Objective: To experimentally validate a computationally predicted synthesis pathway for a novel material, assessing both thermodynamic and kinetic feasibility.

Materials & Equipment:

- High-purity precursor materials

- Programmable tube furnace with controlled atmosphere capability

- Quartz or alumina crucibles

- X-ray Diffractometer (XRD)

- Scanning Electron Microscope (SEM) with Energy-Dispersive X-ray Spectroscopy (EDS)

- Thermal Gravimetric Analyzer (TGA)

- Ball mill or mortar and pestle for powder mixing

Procedure:

1. Precursor Preparation: Weigh precursors according to the stoichiometric ratio predicted by the computational model. Use a ball mill to homogenize the mixture for 1-2 hours.

2. Reaction Profiling: Using TGA, heat a small sample (10-20 mg) at a constant rate (e.g., 10°C/min) to 100°C beyond the predicted synthesis temperature under the appropriate atmosphere (air, N₂, Ar). Monitor mass changes to identify key transition temperatures and potential intermediate phases.

3. Phase Evolution Study: Seal larger batches (0.5-1 g) of the homogenized precursor in crucibles. Heat in the tube furnace using a series of temperatures and dwell times identified in Step 2. Quench samples after each temperature step for XRD analysis.

4. Kinetic Parameter Determination: For each major phase transformation identified, perform isothermal experiments at multiple temperatures. Use XRD to quantify the fraction of product formed over time. Apply the Avrami or other appropriate kinetic model to extract activation energies.

5. Optimization & Scale-Up: Based on the kinetic data, optimize the time-temperature profile to maximize target phase yield while minimizing impurities. Scale the reaction to 10-50 g to assess reproducibility and the impact of scaling on phase purity and morphology.

Analysis:

- Use XRD Rietveld refinement to quantify phase percentages in each sample.

- Perform SEM/EDS to examine morphology and elemental distribution, checking for homogeneity.

- Compare experimental activation energies with computational predictions to validate the model.
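The kinetic analysis in the procedure above can be sketched as a two-stage fit. First, the Avrami form X(t) = 1 - exp(-(kt)^n) is fitted at each temperature through its linearization ln(-ln(1 - X)) = n ln t + n ln k. Second, an Arrhenius fit of ln k against 1/T gives the activation energy. The code is a minimal illustration under those assumptions; the function names are hypothetical, and the data are synthetic values generated inside the example, not measurements.

```python
from math import exp, log
from typing import List, Sequence, Tuple

R_GAS = 8.314462618  # molar gas constant in J/(mol K)


def linear_fit(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares slope and intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx
    return slope, mean_y - slope * mean_x


def fit_avrami(times: Sequence[float], fractions: Sequence[float]) -> Tuple[float, float]:
    """Return (n, k) for X(t) = 1 - exp(-(k t)^n); fractions must lie in (0, 1)."""
    xs = [log(t) for t in times]
    ys = [log(-log(1.0 - x)) for x in fractions]
    n, intercept = linear_fit(xs, ys)
    return n, exp(intercept / n)  # intercept = n * ln k


def fit_arrhenius(temps_k: Sequence[float], rates: Sequence[float]) -> float:
    """Activation energy in J/mol from ln k = ln A - Ea / (R T)."""
    slope, _ = linear_fit([1.0 / t for t in temps_k], [log(k) for k in rates])
    return -slope * R_GAS


# Synthetic self-check with made-up parameters (not experimental data).
true_n, true_ea, prefactor = 2.0, 150_000.0, 1.0e3
times_s = [600.0, 1200.0, 1800.0, 2400.0]
temps_k = [1100.0, 1150.0, 1200.0]
fitted_rates: List[float] = []
for temp in temps_k:
    k_true = prefactor * exp(-true_ea / (R_GAS * temp))
    fractions = [1.0 - exp(-((k_true * t) ** true_n)) for t in times_s]
    n_fit, k_fit = fit_avrami(times_s, fractions)
    fitted_rates.append(k_fit)
ea_fit = fit_arrhenius(temps_k, fitted_rates)
print(n_fit, ea_fit)
```

With real XRD phase fractions the linearized fit is sensitive to points near X = 0 and X = 1, so those are usually excluded or the model is fitted nonlinearly. The sketch only shows how the quantities in the protocol relate to one another.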

The Scientist's Toolkit for Synthesis Research

Table 2: Essential Research Reagents and Materials for Synthesis Validation

| Reagent/Material | Function | Application Example |

|---|---|---|

| Enamine Reaction Rules | A standardized set of chemical transformation rules used by AI systems to generate plausible synthetic pathways [22]. | Used in 3DSynthFlow to limit generation to practical synthesis steps for drug-like molecules [22]. |

| High-Purity Precursors | Starting materials with minimal impurities to avoid unintended side reactions and phase impurities. | Critical for synthesizing pure-phase LLZO, where precursor quality affects lithium volatility and phase purity [18]. |

| Controlled-Atmosphere Furnace | Enables synthesis under inert (Ar, N₂) or reactive (O₂) gases to control oxidation states and prevent decomposition. | Essential for reactions involving air-sensitive intermediates or precursors in multiferroic material synthesis [18]. |

| SCADA System Data | Provides real-time monitoring and control of industrial synthesis parameters (temperature, pressure, flow rates) [23]. | Integrated into AI platforms for real-time underperformance detection and power forecasting to reduce imbalance costs [24]. |

The journey from a computationally designed compound to a physically synthesized material navigates a complex web of interdependent factors. Charge balancing and thermodynamic stability are necessary but insufficient conditions for success. Real-world synthesizability is dominantly controlled by kinetic factors—the availability of a viable, low-energy barrier pathway—and technological bottlenecks, including data scarcity and the limitations of conventional synthesis intuition. The most promising approaches moving forward integrate computational design with pathway prediction from the outset, using AI frameworks that co-design the target material and its synthesis route simultaneously. By embracing this holistic view of synthesizability, researchers can transform the design-synthesis gap from a formidable barrier into a manageable, and ultimately solvable, set of technical challenges.

The New Synthesizability Toolkit: From PU-Learning to Integrated Models

For decades, charge-balancing has served as a foundational, chemically intuitive proxy for predicting whether a hypothetical inorganic crystalline material can be synthesized. This approach filters candidate materials based on a net neutral ionic charge calculated from common oxidation states. However, emerging evidence reveals this method to be critically insufficient for reliable synthesizability prediction. A quantitative analysis demonstrates that among all synthesized inorganic materials, only 37% are charge-balanced according to common oxidation states. The performance is even more deficient for specific material classes; among ionic binary cesium compounds, only 23% of known compounds are charge-balanced [14].

The fundamental failure of this heuristic stems from its inflexibility to account for the diverse bonding environments across different material classes, such as metallic alloys, covalent materials, and ionic solids. Furthermore, synthesizability depends on a complex array of factors beyond thermodynamic stability, including kinetic stabilization, selective nucleation, reactant costs, equipment availability, and human-perceived importance of the final product. Consequently, charge-balancing alone cannot accurately distinguish synthesizable from non-synthesizable materials, creating an urgent need for more sophisticated, data-driven approaches that learn the underlying principles of synthesizability directly from experimental data [14].

Machine Learning Approach: Learning Synthesizability from Data

Reformulating the Discovery Paradigm

A transformative alternative to rule-based heuristics involves reformulating material discovery as a synthesizability classification task. This approach employs deep learning models trained directly on comprehensive databases of known materials, enabling them to learn the complex, often implicit "rules" of synthesizability from the collective history of experimental success [14].

The SynthNN (Synthesizability Neural Network) model exemplifies this paradigm. It leverages the entire space of synthesized inorganic chemical compositions through a deep learning architecture that uses the atom2vec representation. This framework represents each chemical formula by a learned atom embedding matrix optimized alongside all other neural network parameters, automatically learning an optimal representation of chemical formulas directly from the distribution of previously synthesized materials without requiring pre-defined chemical knowledge or proxy metrics [14].

Key Methodological Components

Several technical innovations enable this data-driven approach:

Positive-Unlabeled Learning Framework: Because unsuccessful syntheses are rarely reported in the scientific literature, SynthNN compensates for the lack of negative examples by augmenting its training set with artificially generated "unsynthesized" materials. A semi-supervised approach then probabilistically reweights these artificial examples according to their likelihood of being synthesizable [14].
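The reweighting idea can be illustrated with the scheme proposed by Elkan and Noto for positive-unlabeled data, shown below as a minimal sketch. Whether SynthNN uses exactly this formula is not stated here, so treat it as one well-known instance of probabilistic reweighting. The function names are hypothetical, `g_x` is assumed to be the output of a classifier trained to separate labeled from unlabeled examples, and `c` (the fraction of true positives that are labeled) is assumed to be known or estimated separately.

```python
def unlabeled_positive_weight(g_x, c):
    """Probability that an unlabeled example is actually positive.

    g_x: estimate of P(labeled | x) from a labeled-vs-unlabeled classifier.
    c:   label frequency P(labeled | positive), with 0 < c <= 1.
    """
    if not 0.0 < c <= 1.0:
        raise ValueError("c must lie in (0, 1]")
    if g_x >= 1.0:
        return 1.0
    weight = (1.0 - c) / c * g_x / (1.0 - g_x)
    # Clip to [0, 1] because g_x is only an estimate.
    return min(1.0, max(0.0, weight))


def reweight_unlabeled(examples, scores, c):
    """Turn each unlabeled example into a weighted positive and negative copy.

    Returns a list of (example, label, weight) triples suitable for a
    learner that accepts per-sample weights.
    """
    weighted = []
    for x, g_x in zip(examples, scores):
        w = unlabeled_positive_weight(g_x, c)
        weighted.append((x, 1, w))        # counted as positive with weight w
        weighted.append((x, 0, 1.0 - w))  # counted as negative with weight 1 - w
    return weighted


# Two artificial "unsynthesized" formulas with hypothetical classifier scores.
for row in reweight_unlabeled(["CsAu", "Na3Cl"], [0.25, 0.02], c=0.5):
    print(row)
```

An artificial example that the model scores as likely synthesizable therefore contributes mostly as a positive, which limits the damage done by mislabeling genuinely synthesizable compositions as negatives.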

Integration with Discovery Workflows: Unlike standalone tools, synthesizability models like SynthNN are designed for seamless integration with computational material screening and inverse design workflows, serving as a synthesizability constraint to increase the reliability of identifying synthetically accessible materials [14].

Dual Active Learning Cycles: Advanced implementations incorporate nested active learning cycles where generative models propose new molecules that are evaluated through chemoinformatics oracles (drug-likeness, synthetic accessibility) and molecular modeling oracles (docking scores). Molecules meeting threshold criteria are used to fine-tune the generative model, creating a self-improving discovery cycle [25].

Experimental Validation and Performance Benchmarks

Quantitative Performance Comparison

Rigorous benchmarking against traditional methods demonstrates the superior performance of the data-driven approach:

Table 1: Performance Comparison of Synthesizability Prediction Methods

| Method | Reported Performance | Key Limitations | Computational Efficiency |
|---|---|---|---|
| Charge-Balancing | Low (only 23-37% of known materials are charge-balanced) | Inflexible to different bonding environments; ignores kinetic and non-physical factors | Computationally inexpensive |
| DFT-Calculated Formation Energy | Captures only 50% of synthesized materials | Fails to account for kinetic stabilization; requires crystal structure | Computationally intensive |
| SynthNN (ML Approach) | 7× higher precision than DFT-based methods | Requires large databases of known materials; black-box interpretation | Enables screening of billions of candidates |

The performance advantage extends beyond computational metrics. In a head-to-head material discovery comparison against 20 expert material scientists, SynthNN outperformed all experts, achieving 1.5× higher precision and completing the task five orders of magnitude faster than the best human expert [14].

Case Study: Drug Discovery with Integrated Synthesizability

The CGFlow framework exemplifies the next generation of synthesizability-aware design, introducing a dual-design approach that enables AI to simultaneously model a molecule's compositional structure and its 3D spatial configuration. This combination is essential for generating molecules that are both biologically effective and chemically feasible to produce [22].

Built on CGFlow, the 3DSynthFlow platform specifically addresses target-based drug design, where generated molecules must bind to disease-causing proteins. Unlike traditional models focusing solely on structure or binding, 3DSynthFlow co-designs a molecule's binding pose and synthetic pathway. Implementation results demonstrate its effectiveness [22]:

- Superior Binding: Achieved state-of-the-art binding affinity on all 15 protein targets tested on the LIT-PCBA benchmark

- Exceptional Efficiency: 5.8 times more efficient in sampling viable candidates than previous 2D synthesis-based models

- Unmatched Synthesizability: Achieved 62.2% synthesis success rate on the CrossDocked benchmark, vastly outperforming comparable models like MolCRAFT-large (3.9%)

Diagram 1: Integrated synthesizability prediction and active learning workflow

Essential Research Reagents and Computational Tools

Table 2: Key Research Reagents and Computational Tools for ML-Driven Material Discovery

| Tool/Database Name | Type | Primary Function | Application in Synthesizability Research |
|---|---|---|---|
| ICSD (Inorganic Crystal Structure Database) | Database | Comprehensive repository of synthesized inorganic crystalline structures | Provides training data for synthesizability models; represents collective experimental knowledge [14] |
| atom2vec | Algorithm | Learned atom embedding representation | Represents chemical formulas as optimized vectors without pre-defined chemical knowledge [14] |
| CGFlow/3DSynthFlow | Framework | Molecular and synthesis pathway co-design | Simultaneously designs molecule structure and synthetic pathway; ensures manufacturability [22] |
| GFlowNets (Generative Flow Networks) | Algorithm | Exploration of high-reward molecular structures | Efficiently explores chemical space for synthesizable candidates with high binding affinity [22] |
| VAE with Active Learning | Architecture | Generative model with iterative refinement | Generates novel molecules refined through chemoinformatics and molecular modeling oracles [25] |
| IBM RXN/AiZynthFinder | Software | Retrosynthetic analysis and reaction prediction | Predicts feasible synthetic pathways for candidate molecules [26] |

Detailed Experimental Protocols

Protocol 1: Training a Synthesizability Classification Model

Data Collection and Curation

- Extract known synthesized inorganic materials from ICSD (Inorganic Crystal Structure Database)

- Represent chemical compositions as tokenized formulas or feature vectors

- Generate artificial "unsynthesized" materials through combinatorial enumeration or perturbation of known materials
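The combinatorial-enumeration step can be sketched as follows. This is a simplified illustration restricted to binary compositions; the canonical form (a tuple of `(element, count)` pairs sorted by element) and the function names are assumptions made for this example, and the `known` set stands in for compositions extracted from a database such as ICSD.

```python
from itertools import combinations, product
from math import gcd


def canonical(composition):
    """Canonical hashable form: ((element, count), ...) sorted by element."""
    return tuple(sorted(composition.items()))


def enumerate_binary_formulas(elements, max_count=3):
    """Enumerate reduced binary formulas A_m B_n with 1 <= m, n <= max_count."""
    formulas = set()
    for a, b in combinations(sorted(elements), 2):
        for m, n in product(range(1, max_count + 1), repeat=2):
            if gcd(m, n) == 1:  # skip non-reduced duplicates such as A2B2
                formulas.add(((a, m), (b, n)))
    return formulas


def artificial_unsynthesized(elements, known, max_count=3):
    """Candidate compositions that do not appear in the known-materials set."""
    return enumerate_binary_formulas(elements, max_count) - known


known = {canonical({"Na": 1, "Cl": 1}), canonical({"Na": 2, "O": 1})}
candidates = artificial_unsynthesized(["Na", "Cl", "O"], known, max_count=2)
for formula in sorted(candidates):
    print(formula)
```

Some of these generated compositions may in fact be synthesizable, which is why they are treated as unlabeled rather than as verified negatives in the training setup.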

Model Architecture Setup

- Implement atom2vec embedding layer with tunable dimensionality

- Design neural network architecture (typically multilayer perceptron or convolutional)

- Configure for positive-unlabeled learning to handle lack of verified negative examples

Training Procedure

- Split data into training/validation sets with temporal holdout to prevent data leakage

- Train model to distinguish known materials from artificial negatives

- Optimize hyperparameters (embedding dimension, network depth, learning rate) via cross-validation

- Employ early stopping based on validation performance
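Two of the steps above, the temporal holdout and early stopping, can be sketched independently of any particular deep learning library. The record field `year` (year of first report) and the callables `train_step` and `validate` are hypothetical placeholders for this example; in practice they would wrap the actual model update and validation metric.

```python
def temporal_split(records, cutoff_year):
    """Train on materials reported up to cutoff_year, validate on later ones.

    Splitting by time rather than at random mimics the real task of
    predicting materials that have not yet been made.
    """
    train = [r for r in records if r["year"] <= cutoff_year]
    valid = [r for r in records if r["year"] > cutoff_year]
    return train, valid


def train_with_early_stopping(train_step, validate, max_epochs=100, patience=5):
    """Stop once the validation score has not improved for `patience` epochs.

    train_step(epoch) performs one epoch of training;
    validate(epoch) returns a score where higher is better.
    """
    best_score = float("-inf")
    best_epoch = -1
    for epoch in range(max_epochs):
        train_step(epoch)
        score = validate(epoch)
        if score > best_score:
            best_score, best_epoch = score, epoch
        elif epoch - best_epoch >= patience:
            break
    return best_epoch, best_score


records = [
    {"formula": "NaCl", "year": 1913},
    {"formula": "Fe2O3", "year": 1925},
    {"formula": "CsAu", "year": 1959},
]
train, valid = temporal_split(records, cutoff_year=1950)
print(len(train), len(valid))

# Toy validation curve standing in for a real model.
curve = [0.50, 0.60, 0.70, 0.65, 0.66, 0.64, 0.90]
print(train_with_early_stopping(lambda e: None, lambda e: curve[e],
                                max_epochs=len(curve), patience=3))
```

In the toy run, training stops before the late improvement at the end of the curve is seen, which illustrates the usual trade-off in choosing `patience`.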

Validation and Integration

- Benchmark against charge-balancing and formation energy baselines

- Integrate trained model as filter in high-throughput computational screening pipelines

- Deploy for prioritization of candidate materials for experimental synthesis [14]

Protocol 2: Active Learning for Generative Molecular Design

Initial Model Training

- Train variational autoencoder (VAE) on general molecular dataset (e.g., ZINC, ChEMBL)

- Fine-tune on target-specific training set to increase initial target engagement

Inner Active Learning Cycle (Chemical Optimization)

- Sample generated molecules from VAE

- Evaluate chemical validity, drug-likeness, and synthetic accessibility

- Calculate similarity to existing training set to promote novelty

- Add molecules meeting thresholds to temporal-specific set

- Fine-tune VAE on updated temporal-specific set

- Repeat for predetermined number of iterations

Outer Active Learning Cycle (Affinity Optimization)

- Perform docking simulations on accumulated molecules from temporal-specific set

- Transfer molecules with favorable docking scores to permanent-specific set

- Fine-tune VAE on permanent-specific set

- Iterate with nested inner cycles
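The control flow of the inner and outer cycles can be summarized in a short sketch. Everything here is a stand-in: `sample`, `passes_chem_filters`, `docking_score`, and `fine_tune` are hypothetical callables representing the VAE sampler, the chemoinformatics oracle, the docking oracle, and the fine-tuning step, and the sketch assumes that lower docking scores are more favorable.

```python
import itertools


def nested_active_learning(sample, passes_chem_filters, docking_score, fine_tune,
                           outer_iters=2, inner_iters=3, batch_size=4,
                           docking_threshold=-8.0):
    """Run nested active learning cycles and return both molecule sets."""
    temporal_set = []   # molecules that passed the chemical filters
    permanent_set = []  # molecules that also passed the docking filter
    for _ in range(outer_iters):
        # Inner cycle: chemical optimization.
        for _ in range(inner_iters):
            for mol in sample(batch_size):
                if passes_chem_filters(mol) and mol not in temporal_set:
                    temporal_set.append(mol)
            fine_tune(temporal_set)
        # Outer cycle: affinity optimization on the accumulated molecules.
        for mol in temporal_set:
            if docking_score(mol) <= docking_threshold and mol not in permanent_set:
                permanent_set.append(mol)
        fine_tune(permanent_set)
    return temporal_set, permanent_set


# Toy stand-ins: "molecules" are integers, even ones pass the chemical
# filters, and larger integers dock better (more negative score).
counter = itertools.count()
temporal, permanent = nested_active_learning(
    sample=lambda n: [next(counter) for _ in range(n)],
    passes_chem_filters=lambda m: m % 2 == 0,
    docking_score=lambda m: -float(m),
    fine_tune=lambda molecules: None,
)
print(temporal)
print(permanent)
```

Keeping the inexpensive chemical filters in the inner loop and the costly docking step in the outer loop means the expensive oracle is only called on molecules that have already cleared the cheaper checks.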

Candidate Selection and Validation

- Apply stringent filtration based on binding pose analysis and interaction patterns

- Conduct absolute binding free energy simulations for top candidates

- Select final candidates for synthesis and experimental testing [25]

Diagram 2: Nested active learning cycles for generative molecular design

Discussion and Future Directions

The paradigm shift from heuristic-based to data-driven synthesizability prediction represents a fundamental transformation in materials discovery and drug development. By directly learning from the entire corpus of known synthesized materials, machine learning models can internalize complex chemical principles that elude simplified rules like charge-balancing. Remarkably, without explicit programming of chemical knowledge, models like SynthNN learn fundamental chemical principles including charge-balancing relationships, chemical family trends, and ionicity, but do so in a nuanced, context-aware manner that explains their superior performance [14].

The integration of synthesizability prediction directly into generative molecular design frameworks addresses a critical bottleneck in the transition from computational prediction to experimental realization. The dramatically improved synthesis success rate demonstrated by 3DSynthFlow on the CrossDocked benchmark (62.2% vs. 3.9% for MolCRAFT-large) highlights the practical impact of this paradigm shift [22].

Future developments will likely focus on expanding the chemical reaction space covered by these models, incorporating more complex transformations such as ring-forming reactions, and enhancing the integration of synthetic pathway prediction with molecular property optimization. As these technologies mature, they promise to significantly accelerate the discovery and development of new functional materials and therapeutic compounds by ensuring that computational designs are not only theoretically optimal but also practically achievable.

The Synthesizability Prediction Challenge: Beyond Charge Balancing

The prediction of material synthesizability is a cornerstone of accelerating materials discovery. Historically, physico-chemical heuristics like the Pauling Rules and charge-balancing criteria have been used as proxies for stability and synthesizability. However, these simplified approaches are often insufficient; more than half of the experimental materials in the Materials Project database do not meet these traditional criteria for synthesizability [27]. Charge balancing, while contributing to thermodynamic stability, fails to account for the complex kinetic factors and technological constraints that fundamentally influence synthesis outcomes [27]. For instance, many interesting metastable materials, which do not reside at the convex hull minimum of formation energy, can be synthesized under specific thermodynamic conditions and remain kinetically stabilized [27]. Furthermore, the failure to publish unsuccessful synthesis attempts creates a significant data gap, making it difficult to apply standard supervised classification methods that require well-defined positive and negative examples [27] [28]. This limitation necessitates advanced machine-learning approaches that can learn from incomplete data.

What is Positive and Unlabeled (PU) Learning?

Positive and Unlabeled (PU) learning is a semi-supervised classification method that trains an accurate binary classifier using only positive examples (e.g., known synthesizable materials) and unlabeled examples (a mixture of synthesizable and non-synthesizable materials whose status is unknown) [29]. This framework is particularly apt for materials science, where compiling a set of reliable negative examples (confirmed unsynthesizable materials) is notoriously challenging, expensive, and often impractical [30]. In essence, PU learning reframes the synthesizability classification problem from a supervised task requiring both positive and negative labels to a more realistic scenario that mirrors the actual data available to researchers.

Core Assumptions and General Workflow

The application of PU learning typically rests on the "selected completely at random" (SCAR) assumption. This assumes that the labeled positive examples are a random sample from the entire set of true positives. The general workflow involves several key steps [30]:

- Identification of Positive Samples: Gathering a set of confirmed synthesizable materials from experimental databases (e.g., ICSD).

- Construction of Unlabeled Set: Assembling a large set of theoretical or hypothetical materials whose synthesizability is unknown.

- Model Training: Employing specialized PU learning algorithms to identify patterns from the positive set and iteratively identify likely negative examples from the unlabeled set.

- Classifier Application: Using the trained model to predict the synthesizability of new, unseen material candidates.
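Under the SCAR assumption, the probability that an example is labeled is a constant fraction c of the probability that it is positive, so a classifier trained to separate labeled from unlabeled examples can be rescaled into a synthesizability estimate. The sketch below follows the estimator of Elkan and Noto and is only illustrative: the function names are hypothetical, and the scores are assumed to come from some labeled-vs-unlabeled classifier evaluated on held-out labeled positives.

```python
def estimate_label_frequency(scores_on_heldout_positives):
    """Estimate c = P(labeled | positive).

    Under SCAR, c can be estimated as the average classifier output
    P(labeled | x) over held-out examples that are known to be positive.
    """
    scores = list(scores_on_heldout_positives)
    if not scores:
        raise ValueError("need at least one held-out positive")
    return sum(scores) / len(scores)


def corrected_probability(g_x, c):
    """Convert P(labeled | x) into an estimate of P(positive | x) = g(x) / c."""
    return min(1.0, g_x / c)


# Hypothetical classifier outputs on held-out known materials.
c = estimate_label_frequency([0.4, 0.5, 0.6])
print(c)

# Hypothetical outputs on new candidates, rescaled to synthesizability estimates.
for g_x in (0.1, 0.3, 0.55):
    print(g_x, corrected_probability(g_x, c))
```

If the labeled positives are not a random sample of all positives (for example, if well-studied chemistries are over-represented), the SCAR assumption is violated and this rescaling becomes unreliable.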

Key Methodologies and Algorithms in PU Learning

PU learning algorithms can be broadly categorized by their strategy for handling unlabeled data. The following table summarizes the primary approaches.

Table 1: Categories of PU Learning Algorithms

| Category | Core Methodology | Key Characteristics | Examples |
|---|---|---|---|
| Two-Step Strategy | Identifies reliable negative examples from the unlabeled set, then applies standard supervised learning [29]. | Performance highly dependent on the quality of identified negative samples; can be prone to error propagation. | - |
| Biased Learning | Treats all unlabeled instances as negative examples, often with a weighting scheme to account for noise [29]. | Simple to implement but performance degrades if the unlabeled set contains many positive samples. | - |