Validating Transfer Learning Across Biological and Chemical Domains: From Materials Science to Precision Oncology

Abstract

This article explores the rigorous validation of transfer learning (TL) as a powerful framework for overcoming data scarcity in biomedical research and drug development. It examines the foundational principles of TL, including cross-modality and cross-property knowledge transfer, and details methodological advances from real-world applications in predicting drug response and material properties. The scope includes a critical analysis of common challenges such as domain shift and model optimization, and provides a comparative evaluation of TL performance against traditional methods. Aimed at researchers and drug development professionals, this review synthesizes evidence from recent, high-impact studies to offer a practical guide for validating and deploying TL strategies that accelerate discovery and enhance predictive accuracy in clinical and materials science contexts.

The Core Principles and Imperative of Cross-Domain Knowledge Transfer

Transfer learning (TL) is a machine learning technique where a model developed for a specific source task is reused as the starting point for a model on a different, but related, target task [1] [2]. This approach leverages knowledge gained from solving one problem and applies it to a new problem, significantly improving computational efficiency and model performance, particularly in scenarios where labeled data is scarce [1] [2]. The technique is formally defined using the concepts of domains and tasks: a domain \(D = \{\mathcal{X}, P(X)\}\) consists of a feature space \(\mathcal{X}\) and a marginal probability distribution \(P(X)\), while a task \(\mathcal{T} = \{\mathcal{Y}, f(\cdot)\}\) consists of a label space \(\mathcal{Y}\) and an objective predictive function \(f(\cdot)\) [3]. Transfer learning aims to improve the learning of the target predictive function \(f_T(\cdot)\) in the target domain \(D_T\) by leveraging knowledge from the source domain \(D_S\) and the source task \(\mathcal{T}_S\) [3].

In essence, transfer learning allows models to benefit from learned representations, enabling effective knowledge transfer to new tasks and resulting in improved learning performance and generalization [2]. This capability is especially valuable in scientific fields like biomedical engineering and materials science, where experimental data is often limited, expensive to produce, and requires specialized expertise [4] [5].

Fundamental Principles and Comparative Approaches

Key Mechanisms of Knowledge Transfer

Transfer learning operates through several distinct technical approaches, each with specific mechanisms for transferring knowledge:

Feature-representation Transfer: This approach uses the features extracted from the hidden layers of a pre-trained model as inputs for a new model. The convolutional layers of the source model are typically frozen and not updated during training on the target task [4] [3]. This method is particularly effective when the target dataset is small, as it prevents overfitting while leveraging general feature representations learned from large source datasets.

Fine-tuning (Parameter Transfer): This method involves not just using the feature representations but updating the pre-trained model's parameters (weights) on the target dataset. Typically, the earlier layers (which capture general features) are kept frozen or lightly tuned, while the later layers (which capture task-specific features) are more extensively updated [4] [3]. This approach is beneficial when the target dataset is sufficiently large to allow for safe parameter updates without catastrophic forgetting.

Sim2Real Transfer: A specialized form of transfer learning that bridges the gap between simulation and real-world data. This approach is particularly valuable in materials science, where extensive computational databases can be leveraged to predict real-world material properties despite the inherent domain shift between simulated and experimental data [5].
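
The difference between the first two mechanisms can be sketched in a few lines of NumPy. This is a toy illustration, not code from the cited studies: the "pre-trained" layer `W_pre` is just a random matrix standing in for weights learned on a source task, and the names `features`, `fit_head`, and `fine_tune` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumption: random weights stand in for a hidden layer pre-trained on a
# large source dataset.
W_pre = rng.normal(size=(8, 16))

def features(X, W):
    """Hidden-layer activations used as transferred features."""
    return np.tanh(X @ W)

def fit_head(H, y, ridge=1e-2):
    """Closed-form ridge regression head trained on fixed features."""
    A = H.T @ H + ridge * np.eye(H.shape[1])
    return np.linalg.solve(A, H.T @ y)

def mse(X, y, W, w_head):
    return float(np.mean((features(X, W) @ w_head - y) ** 2))

def fine_tune(X, y, W, w_head, lr=1e-2, steps=200):
    """Gradient descent on MSE that updates BOTH the hidden layer and the head."""
    W, w_head = W.copy(), w_head.copy()
    n = len(y)
    for _ in range(steps):
        H = features(X, W)
        err = H @ w_head - y
        grad_head = 2 * H.T @ err / n
        grad_H = 2 * np.outer(err, w_head) / n
        grad_W = X.T @ (grad_H * (1 - H ** 2))  # chain rule through tanh
        w_head -= lr * grad_head
        W -= lr * grad_W
    return W, w_head

# Small synthetic target dataset (illustrative only).
X_t = rng.normal(size=(40, 8))
y_t = np.sin(X_t[:, 0]) + 0.1 * rng.normal(size=40)

# Feature-representation transfer: W_pre stays frozen, only the head is fit.
w_head = fit_head(features(X_t, W_pre), y_t)
loss_frozen = mse(X_t, y_t, W_pre, w_head)

# Fine-tuning (parameter transfer): start from W_pre and update everything.
W_ft, w_ft = fine_tune(X_t, y_t, W_pre, w_head)
loss_tuned = mse(X_t, y_t, W_ft, w_ft)
```

In the frozen variant only 16 head parameters are estimated from the 40 target samples, which is why it tends to be the safer choice for very small datasets.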

Comparative Analysis with Self-Supervised Learning

While transfer learning leverages knowledge from pre-trained models on labeled source datasets, self-supervised learning (SSL) represents a different approach to addressing data scarcity. The table below compares these two pivotal techniques:

Table 1: Comparison Between Transfer Learning and Self-Supervised Learning

| Aspect | Transfer Learning | Self-Supervised Learning |
|---|---|---|
| Primary Approach | Leverages knowledge from pre-training on a large-scale labeled dataset (e.g., ImageNet) [2] | Trains models using pretext tasks that don't require manual annotation [2] |
| Data Requirements | Source domain requires extensive labeled data | Utilizes large amounts of unlabeled data |
| Domain Considerations | May face domain mismatch issues between pre-training and target domains [2] | Requires careful design of pretext tasks to ensure meaningful representations [2] |
| Implementation Complexity | Relatively straightforward implementation with available pre-trained models | Higher complexity in designing effective pretext tasks |
| Typical Applications | Medical image classification, materials property prediction [3] [5] | Natural language processing, video recognition [2] |

Both approaches have demonstrated remarkable achievements in various fields, enabling breakthroughs in areas such as disease diagnosis, object recognition, and language understanding [2]. The selection between these approaches depends on the specific application constraints, particularly the availability of labeled data in related domains and computational resources.

Transfer Learning in Biomedical Applications

Clinical Non-Image Data Analysis

Transfer learning has seen rapid adoption in clinical research for non-image data, with a recent scoping review identifying 83 studies applying these techniques, 63% of which were published within just 12 months of the search date [4]. The applications span diverse data types, with time series data being the most common (61%), followed by tabular data (18%), audio (12%), and text (8%) [4].

A significant finding from this review is that 40% of studies applied image-based models to non-image data by first transforming the data into image formats (e.g., spectrograms for audio data or similar transformations for time series) [4]. This innovative approach leverages powerful pre-trained computer vision models like those trained on ImageNet, demonstrating the flexibility of transfer learning methodologies. The review also highlighted an interdisciplinary gap, with 35% of studies lacking any authors with health-related affiliations, underscoring the need for greater collaboration between technical and clinical researchers [4].
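
As a rough sketch of the time-series-to-image transformation described above, the snippet below computes a magnitude spectrogram with a short-time Fourier transform and packs it into a three-channel 8-bit array of the kind an ImageNet-style model expects. The window length, hop size, and the synthetic test signal are illustrative assumptions, not settings reported in the review.

```python
import numpy as np

def spectrogram(signal, win=64, hop=32):
    """Magnitude STFT in dB, shape (freq_bins, frames)."""
    window = np.hanning(win)
    starts = range(0, len(signal) - win + 1, hop)
    frames = np.stack([signal[s:s + win] * window for s in starts])
    mag = np.abs(np.fft.rfft(frames, axis=1)).T
    return 20 * np.log10(mag + 1e-10)

def to_rgb_image(spec):
    """Min-max scale to 8-bit and repeat over three channels."""
    lo, hi = spec.min(), spec.max()
    gray = (255 * (spec - lo) / (hi - lo + 1e-12)).astype(np.uint8)
    return np.repeat(gray[:, :, None], 3, axis=2)

# Synthetic 4-second signal sampled at 256 Hz (stand-in for a clinical trace).
fs = 256
t = np.arange(4 * fs) / fs
x = np.sin(2 * np.pi * 10 * t) + 0.5 * np.sin(2 * np.pi * 40 * t)

img = to_rgb_image(spectrogram(x))
```

In practice the array would still need resizing and normalization to match the input convention of whichever pre-trained vision model is used.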

Medical Image Classification

In medical image analysis, transfer learning has become a fundamental tool to overcome data scarcity problems. A comprehensive literature review of 121 studies revealed distinct patterns in how transfer learning is implemented for medical image classification:

Table 2: Model Selection and TL Approaches in Medical Image Classification

| Aspect | Trends in Literature | Most Popular Examples |
|---|---|---|
| Model Selection | Majority empirically evaluated multiple models [3] | Inception most employed [3] |
| Model Depth | Deep models (33 studies), Shallow models (24 studies) [3] | ResNet, Inception (deep); AlexNet (shallow) [3] |
| TL Approach Selection | Majority benchmarked multiple approaches [3] | Feature extractor and fine-tuning from scratch most favored [3] |
| Single TL Approach | Feature extractor (38 studies), Fine-tuning from scratch (27 studies) [3] | Feature extractor hybrid (7 studies), Fine-tuning (3 studies) less common [3] |

The review demonstrated that despite data scarcity in medical domains, transfer learning consistently delivers effective performance. Based on the aggregated evidence, the study recommends using deep models like ResNet or Inception as feature extractors, which can save computational costs and time without degrading predictive power [3].

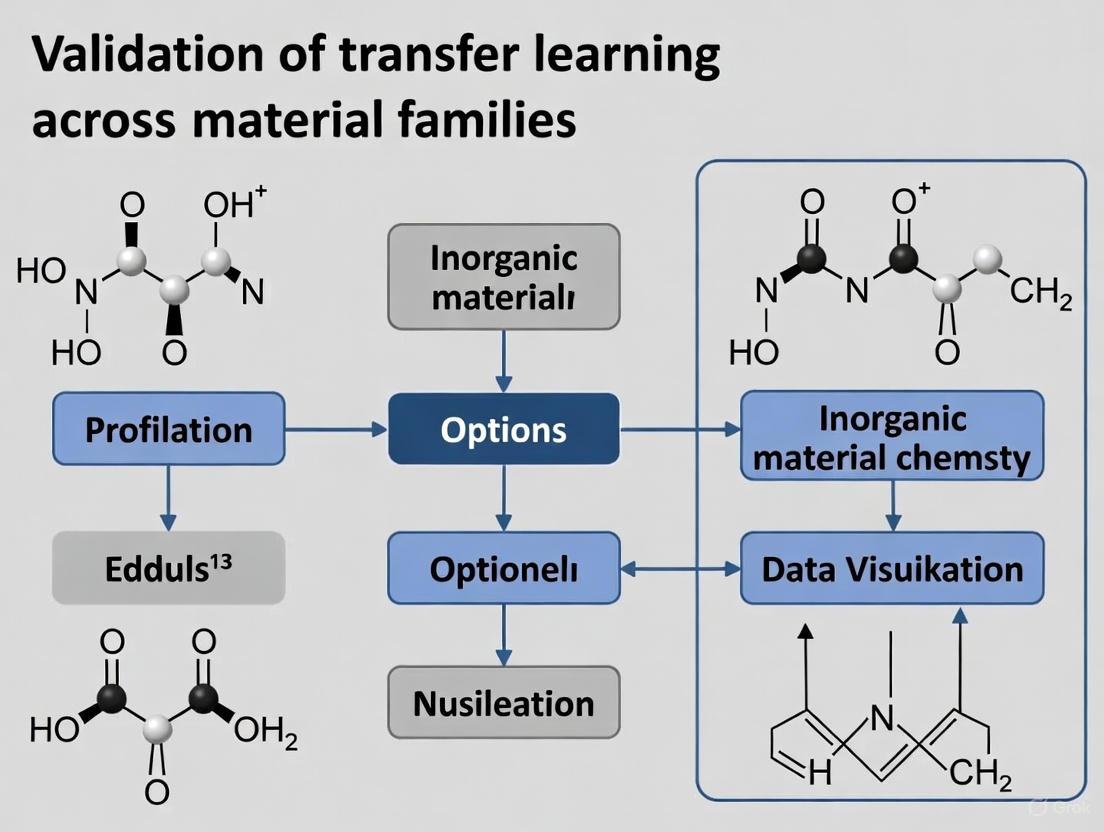

Figure 1: Transfer Learning Workflow for Biomedical Applications

Experimental Protocols in Biomedical Research

The experimental methodology for applying transfer learning in biomedical research typically follows a structured protocol:

Source Model Selection: Researchers typically select pre-trained models established on large datasets like ImageNet, with Inception and ResNet being particularly popular choices due to their depth and proven performance [3].

Data Preprocessing: For non-image data, transformation to image formats may be employed. This includes generating spectrograms from audio signals or converting time-series data into visual representations [4].

Transfer Learning Implementation: Based on the target dataset size and similarity to the source domain, researchers either:

- Use the pre-trained model as a feature extractor with frozen convolutional layers, or

- Employ fine-tuning where some layers are updated using the target dataset [3].

Performance Evaluation: Models are validated using standard metrics appropriate to the clinical task (e.g., accuracy, AUC-ROC for classification tasks) with careful separation of training, validation, and test sets [4].
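
The evaluation step can be illustrated with a small, dependency-free sketch: a seeded three-way index split and a rank-based AUC-ROC. The split fractions and function names are assumptions chosen for illustration; the cited reviews do not prescribe them.

```python
import random

def three_way_split(n, val_frac=0.15, test_frac=0.15, seed=0):
    """Return disjoint (train, val, test) index lists for n samples."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_test = int(n * test_frac)
    n_val = int(n * val_frac)
    return idx[n_test + n_val:], idx[n_test:n_test + n_val], idx[:n_test]

def roc_auc(y_true, scores):
    """AUC as the probability that a positive outranks a negative (ties count 0.5)."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

train_idx, val_idx, test_idx = three_way_split(100)
auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
```

The pairwise AUC is quadratic in the number of samples, which is acceptable for the small test sets typical of clinical transfer learning studies.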

Recent trends show increased use of foundation models and low-rank adaptation (LoRA) for time series forecasting in clinical contexts, which reduces training time and supports Green AI by lowering computational costs through model reuse [6].
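
To make the cost argument concrete, here is a minimal NumPy sketch of the general LoRA idea: the pre-trained weight is frozen and only a low-rank update is trained. The layer size, rank `r`, and scaling `alpha` are illustrative assumptions and do not describe the configuration of any model cited here.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, r, alpha = 64, 64, 4, 8  # illustrative sizes, not from the source

W0 = rng.normal(size=(d_out, d_in))          # frozen pre-trained weight
A = rng.normal(scale=0.01, size=(r, d_in))   # trainable low-rank factor
B = np.zeros((d_out, r))                     # trainable, zero-initialized

def lora_forward(x, W0, A, B, alpha, r):
    """Compute x @ (W0 + (alpha / r) * B @ A).T without forming the full update."""
    return x @ W0.T + (alpha / r) * (x @ A.T) @ B.T

x = rng.normal(size=(5, d_in))
y_base = x @ W0.T
y_lora = lora_forward(x, W0, A, B, alpha, r)

full_params = W0.size            # parameters updated by full fine-tuning
lora_params = A.size + B.size    # parameters updated by LoRA
```

Because `B` starts at zero, the adapted layer initially reproduces the pre-trained output exactly, and in this toy layer only 512 of 4,096 weights would be trained.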

Transfer Learning in Materials Science

Simulation-to-Real (Sim2Real) Transfer

Materials science faces unique data challenges, as experimental data is often scarce due to time-consuming, multi-stage workflows involving synthesis, sample preparation, and property measurements [5]. To overcome these limitations, researchers are developing extensive computational databases using molecular dynamics simulations and first-principles calculations [5]. Transfer learning enables the integration of these extensive simulation data with limited experimental data through Simulation-to-Real (Sim2Real) transfer.

This approach has been successfully applied to various materials systems, including:

- Polymer Properties: Predicting refractive index, density, specific heat capacity, and thermal conductivity using all-atom classical MD simulations from databases like RadonPy [5].

- Polymer-Solvent Miscibility: Integrating quantum chemistry datasets with limited experimental data to predict miscibility across wide chemical spaces [5].

- Inorganic Materials: Predicting lattice thermal conductivity by leveraging first-principles calculations as the source task when experimental data is limited to as few as 45 samples [5].

Scaling Laws in Sim2Real Transfer

A groundbreaking finding in materials science transfer learning is the existence of scaling laws that govern how prediction performance improves with increasing computational data. Theoretical and experimental studies have demonstrated that the generalization error in Sim2Real transfer follows a power-law relationship [5]:

For a fixed number of experimental samples \(m\), the upper bound for the generalization error is expressed as:

\[ \mathbb{E}[L(f_{n,m})] \le R(n) := D n^{-\alpha} + C \]

where \(n\) is the number of computational samples, \(D\) and \(\alpha\) are scaling factors, and \(C\) is the transfer gap representing the irreducible error due to domain differences between simulation and reality [5].

Table 3: Scaling Law Parameters in Materials Science Transfer Learning

| Parameter | Interpretation | Influence Factors |
|---|---|---|
| \(n\) | Number of computational samples | Simulation throughput, database size |
| \(D\) | Scaling factor | Task complexity, model architecture |
| \(\alpha\) | Decay rate | Relevance between source and target domains |
| \(C\) | Transfer gap | Consistency of simulations to real-world scenarios |

This scaling relationship has profound implications for materials informatics, as it offers a quantitative framework for planning computational database development. Researchers can estimate the sample size necessary to achieve desired performance levels and make informed decisions about resource allocation between computational and experimental approaches [5].
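
The planning use of the bound \(R(n) = D n^{-\alpha} + C\) can be sketched as follows. Solving \(R(n) \le \varepsilon\) for \(n\) gives \(n \ge (D / (\varepsilon - C))^{1/\alpha}\), which only has a solution when the target error \(\varepsilon\) exceeds the transfer gap \(C\). The parameter values in the example are placeholders, not fitted values from [5].

```python
import math

def error_bound(n, D, alpha, C):
    """Upper bound R(n) = D * n**(-alpha) + C on the generalization error."""
    return D * n ** (-alpha) + C

def samples_needed(target, D, alpha, C):
    """Smallest n with R(n) <= target, or None if target is at or below the transfer gap C."""
    if target <= C:
        return None  # no amount of simulated data closes the transfer gap
    return math.ceil((D / (target - C)) ** (1 / alpha))

# Placeholder parameters for illustration only.
D, alpha, C = 2.0, 0.5, 0.25
n_required = samples_needed(0.75, D, alpha, C)
unreachable = samples_needed(0.2, D, alpha, C)
```

The `None` branch captures the practical message of the scaling law: once the error approaches \(C\), further simulation is wasted and the budget is better spent on experiments or on more faithful simulations.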

Experimental Protocols in Materials Science

The standard experimental protocol for Sim2Real transfer in materials science involves:

Computational Database Creation: Using high-throughput computational experiments (e.g., molecular dynamics simulations via RadonPy or first-principles calculations) to generate source data [5]. The polymer property prediction case study generated approximately 70,000 amorphous polymer samples through fully automated all-atom classical MD simulations [5].

Descriptor Engineering: Representing materials using compositional and structural feature vectors. In polymer research, a 190-dimensional descriptor vector represents the chemical structure of polymer repeating units [5].

Source Model Pretraining: Training property predictors using neural networks that map descriptor vectors to properties of interest. The model architecture typically consists of fully connected multi-layer neural networks [5].

Transfer to Experimental Domain: Fine-tuning the pre-trained models on limited experimental data (e.g., from databases like PoLyInfo for polymer properties) [5]. The fine-tuning process typically uses 80% of the experimental datasets for training, with the remainder held out for evaluation [5].

Performance Validation: Repeated random subsampling validation (e.g., 500 independent iterations) to obtain stable performance estimates and quantify their variability, which is particularly important when working with small experimental datasets [5].
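
The validation step above can be sketched with the standard library alone. The 80/20 split and 500 repetitions follow the protocol as described; the mean-value "model", the MAE score, and the 45-point synthetic dataset are placeholders standing in for a fine-tuned network and real experimental data.

```python
import random
import statistics

def repeated_subsampling(X, y, fit, score, n_iter=500, train_frac=0.8, seed=0):
    """Mean and standard deviation of a score over repeated random train/test splits."""
    rng = random.Random(seed)
    idx = list(range(len(y)))
    n_train = int(train_frac * len(y))
    scores = []
    for _ in range(n_iter):
        rng.shuffle(idx)
        tr, te = idx[:n_train], idx[n_train:]
        model = fit([X[i] for i in tr], [y[i] for i in tr])
        scores.append(score(model, [X[i] for i in te], [y[i] for i in te]))
    return statistics.mean(scores), statistics.stdev(scores)

def fit_mean(X_train, y_train):
    """Placeholder model: always predicts the training mean."""
    return statistics.mean(y_train)

def mae(model, X_test, y_test):
    return statistics.mean([abs(model - t) for t in y_test])

# Synthetic stand-in for a small experimental dataset.
X = list(range(45))
y = [0.5 * x + 1.0 for x in X]

mean_mae, sd_mae = repeated_subsampling(X, y, fit_mean, mae)
```

Reporting the spread alongside the mean matters here because a single 9-sample test split can easily look much better or worse than the typical case.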

Figure 2: Sim2Real Transfer Learning Framework in Materials Science

Successful implementation of transfer learning across biomedical and materials science domains relies on specialized computational resources and databases:

Table 4: Essential Research Reagents for Transfer Learning Applications

| Resource Type | Specific Examples | Function and Application |
|---|---|---|
| Source Models | Inception, ResNet, VGG, AlexNet [3] | Pre-trained neural networks providing foundational feature extraction capabilities |
| Target-Task Datasets | PoLyInfo [5], Monash Forecasting Repository [6] | Domain-specific datasets for target task fine-tuning |
| Materials Databases | RadonPy [5], Materials Project [5], AFLOWLIB [5] | Computational databases for polymer and inorganic materials properties |
| Simulation Tools | LAMMPS [5], First-principles calculation packages | Generate computational data for source tasks in materials science |
| Benchmarking Suites | Monash Forecasting Repository [6], ETT dataset [6] | Standardized datasets for comparing TL algorithm performance |

Implementation Tools and Techniques

Beyond data resources, specific implementation tools and techniques are essential for effective transfer learning:

Low-Rank Adaptation (LoRA) Techniques: Methods like LLIAM enable efficient fine-tuning of foundation models for time series forecasting, reducing training time and computational costs while maintaining performance [6].

Contrast Checking Tools: Tools like Polypane, Colour Contrast Checker, and Color Contrast Analyser help ensure visualizations meet accessibility standards, which is particularly important for interpreting model results and creating scientific communications [7].

Reproducibility Frameworks: Code sharing platforms and version control systems, utilized by only 27% of clinical transfer learning studies according to recent reviews, are critical for advancing reproducible research principles in the field [4].

Transfer learning has emerged as a transformative methodology that bridges multiple scientific disciplines, from biomedical research to materials science. The technique's fundamental value lies in its ability to leverage knowledge from data-rich domains to solve problems in data-scarce environments, effectively addressing the critical data scarcity challenge that pervades many scientific fields.

The comparative analysis presented in this guide reveals both universal principles and domain-specific considerations. While the underlying mechanisms of feature-representation transfer and fine-tuning remain consistent across domains, the implementation details vary significantly—from transforming non-image clinical data into spectrograms to leveraging computational materials databases for Sim2Real property prediction. The emergence of scaling laws in materials informatics provides a quantitative framework for resource planning, demonstrating the field's maturation toward predictive science.

As transfer learning continues to evolve, key challenges and opportunities emerge: the need for greater interdisciplinary collaboration between technical and domain experts, more widespread adoption of reproducible research principles, and continued development of efficient adaptation techniques like low-rank adaptations. The convergence of these approaches across biomedical and materials science domains highlights the unifying potential of transfer learning as a foundational methodology for scientific discovery in data-limited environments.

Transfer learning has emerged as a powerful strategy to overcome data scarcity in scientific domains. This guide objectively compares two foundational frameworks: cross-property and cross-modality transfer learning. Cross-property transfer learning leverages knowledge from large datasets of one material property to build accurate models for different properties with small datasets [8] [9]. Cross-modality transfer learning overcomes a more fundamental challenge: transferring knowledge between different types of data representations, such as from crystal structures to chemical compositions [10]. This comparison examines their experimental performance, methodologies, and applicability in materials and drug discovery research.

Experimental Performance Comparison

The table below summarizes quantitative performance comparisons for cross-property and cross-modality transfer learning frameworks against traditional machine learning approaches.

Table 1: Performance Comparison of Transfer Learning Frameworks

| Framework | Domain/Application | Baseline Model Performance | Transfer Learning Model Performance | Key Metric |
|---|---|---|---|---|
| Cross-Property (ElemNet) [8] | Predicting 39 computational material properties | ML/DL models trained from scratch outperformed for only 12/39 properties | TL models outperformed for 27/39 (≈69%) properties | Win Rate (Properties) |
| Cross-Property (ElemNet) [9] | Predicting 39 computational material properties | ML/DL models trained from scratch outperformed for only 2/39 properties | TL models outperformed for 37/39 (≈95%) properties | Win Rate (Properties) |
| Cross-Modality (CroMEL) [10] | Predicting experimental formation enthalpy | Not specified | R² Score > 0.95 | R² Score |
| Cross-Modality (CroMEL) [10] | Predicting experimental band gaps | Not specified | R² Score > 0.95 | R² Score |
| Cross-Modal (imKT) [11] | 18 tasks on JARVIS-DFT dataset | MatBERT model (SOTA) | MAE decreased by 15.7% on average | Mean Absolute Error (MAE) ↓ |
| Interproperty (GNN) [12] | Predicting PBEsol formation energy | No transfer: 26 meV/atom MAE | Full transfer: 19 meV/atom MAE (27% improvement) | Mean Absolute Error (MAE) ↓ |
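
The derived percentages in the table follow from simple arithmetic, shown here as a quick check. The function names are illustrative.

```python
def relative_improvement(baseline_mae, tl_mae):
    """Fractional reduction in MAE relative to the no-transfer baseline."""
    return (baseline_mae - tl_mae) / baseline_mae

def win_rate(wins, total):
    """Fraction of properties on which the transfer learning model was better."""
    return wins / total

mae_gain = relative_improvement(26, 19)   # meV/atom values from the table
rate_a = win_rate(27, 39)
rate_b = win_rate(37, 39)
```

Note that the win rate only counts which model was better per property; it says nothing about the size of the margin, so it is best read alongside an error metric such as MAE.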

Detailed Experimental Protocols

Cross-Property Deep Transfer Learning

The foundational protocol for cross-property transfer learning in materials science, as detailed by Gupta et al., involves a two-step process [8]:

- Source Model Training: A deep learning model (specifically ElemNet) is trained from scratch on a large source dataset (e.g., the OQMD with over 300,000 compounds) for an available property like formation energy. This model uses only raw elemental fractions as input.

- Knowledge Transfer to Target Property: The pre-trained source model is adapted to a small target dataset of a different property. This is achieved via:

  - Fine-tuning: The weights of the source model are used as initializations and are further updated during training on the target dataset.

  - Feature Extraction: The source model serves as a fixed feature extractor. The learned representations for the target data are used to train a separate, simpler predictive model.

A critical step in this protocol is data pre-processing to remove duplicates and overlapping compositions between source and target datasets, ensuring a fair evaluation and preventing data leakage [8].
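
One way to implement this de-duplication step is to reduce each formula to normalized elemental fractions and compare those keys. The sketch below is an assumption about how such a filter could look, not the procedure from [8]: it handles only simple formulas without parentheses, it removes the overlap from the source side, and the property values are made up.

```python
import re
from fractions import Fraction

def composition_key(formula):
    """Canonical key: sorted (element, fraction) pairs, so Fe2O3 == Fe4O6 == O3Fe2."""
    counts = {}
    for element, amount in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[element] = counts.get(element, 0) + Fraction(amount or "1")
    total = sum(counts.values())
    return tuple(sorted((el, c / total) for el, c in counts.items()))

def remove_overlap(source, target):
    """Drop duplicate source compositions and any source composition found in the target."""
    target_keys = {composition_key(formula) for formula, _ in target}
    seen, kept = set(), []
    for formula, value in source:
        key = composition_key(formula)
        if key in target_keys or key in seen:
            continue
        seen.add(key)
        kept.append((formula, value))
    return kept

# Made-up values for illustration.
source = [("Fe2O3", -1.0), ("Fe4O6", -1.1), ("NaCl", -2.0), ("SiO2", -3.0)]
target = [("ClNa", 0.5)]
clean_source = remove_overlap(source, target)
```

Comparing normalized fractions rather than raw strings matters because the same compound can appear under different formula spellings in different databases.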

Cross-Modality Transfer Learning with CroMEL

The CroMEL framework addresses the challenge of transferring knowledge from a data-rich modality (e.g., calculated crystal structures) to a data-poor, different modality (e.g., chemical compositions) [10]. Its experimental protocol is:

- Problem Formulation: A structure encoder \(\pi\) is trained on source data containing crystal structures and their properties. The goal is to create a composition encoder \(\psi\) that can generate embeddings from a chemical composition alone that are consistent with the structure encoder's embeddings.

- CroMEL Optimization: The core of the protocol is minimizing a divergence \(D_{div}\) between the probability distribution of structure embeddings \(P_\pi\) and that of composition embeddings \(P_\psi\). This is implemented using a computable form based on the Wasserstein distance.

- Target Model Training: The optimized composition encoder \(\psi^*\) is then used as a feature extractor in the target domain. A prediction model \(f\) is trained on top of these features using the small experimental dataset where only chemical compositions are available, thus successfully transferring knowledge across modalities [10].

Two-Step Transfer Learning in Drug Response Prediction

A specialized two-step transfer learning protocol, in which pre-training is followed by two successive transfer steps, was developed for predicting Temozolomide (TMZ) response in Glioblastoma (GBM) [13]:

- Initial Pre-training: A DL model is pre-trained on a large source dataset (GDSC) containing cell cultures treated with various drugs (e.g., Oxaliplatin).

- Domain-Specific Refinement: The pre-trained model is refined on a domain-specific dataset (HGCC) containing TMZ-treated GBM cell cultures.

- Target Fine-tuning: The refined model is finally fine-tuned on the small, specific target dataset (GSE232173) for final validation. This two-step transfer was shown to be superior to both no transfer learning and single-step transfer learning [13].
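
The staged structure of this protocol can be sketched with a warm-started linear model, where each stage starts from the weights left by the previous one. Everything here is synthetic: the three datasets are random stand-ins for the large source, domain-specific, and small target sets, and the model, learning rate, and epoch counts are illustrative assumptions rather than the setup of [13].

```python
import numpy as np

def train(X, y, w_init, lr=0.05, epochs=200):
    """Gradient descent on MSE for a linear model, warm-started at w_init."""
    w = w_init.copy()
    for _ in range(epochs):
        w -= lr * 2 * X.T @ (X @ w - y) / len(y)
    return w

rng = np.random.default_rng(0)
d = 5
w_true = rng.normal(size=d)

def make_data(n, shift, noise=0.1):
    """Synthetic regression data whose true weights are offset by `shift`."""
    X = rng.normal(size=(n, d))
    return X, X @ (w_true + shift) + noise * rng.normal(size=n)

X_src, y_src = make_data(2000, shift=0.5)  # stand-in: large, loosely related source set
X_mid, y_mid = make_data(200, shift=0.1)   # stand-in: domain-specific set
X_tgt, y_tgt = make_data(10, shift=0.0)    # stand-in: small target set

w = train(X_src, y_src, np.zeros(d))       # initial pre-training
w = train(X_mid, y_mid, w)                 # transfer step 1: domain-specific refinement
w = train(X_tgt, y_tgt, w, epochs=20)      # transfer step 2: brief target fine-tuning
```

The final stage uses far fewer epochs because ten samples cannot support long training without overwriting what the earlier stages learned.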

Workflow and Relationship Diagrams

Cross-Property Transfer Learning Workflow

[Diagram not reproduced: the two-stage process of cross-property transfer learning.]

Cross-Modality Knowledge Transfer Logic

[Diagram not reproduced: the logical structure of cross-modality transfer, focusing on aligning embeddings from different data types.]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Resources for Transfer Learning Experiments

| Resource Name/Type | Function in Research | Specific Example(s) / Notes |
|---|---|---|
| Large-Scale Materials Databases | Serve as source domains for pre-training models. Provide large volumes of consistent data. | Open Quantum Materials Database (OQMD) [8], Materials Project (MP) [8], JARVIS-DFT [8] [11], AFLOW [12]. |
| Experimental Materials Datasets | Act as small target domains for evaluating transfer learning efficacy. | Experimentally measured formation enthalpies and band gaps [10], various chemical application datasets (thermoelectric, battery materials) [10]. |
| Pre-trained Model Architectures | Provide the foundational models whose knowledge is transferred. | ElemNet (for compositions) [8] [11], Graph Neural Networks (for crystal graphs) [11] [12], Chemical Language Models (CLMs) [11]. |
| Bioactivity & Drug Datasets | Enable transfer learning in drug discovery, from related drugs or cell lines to a specific target. | Genomics of Drug Sensitivity in Cancer (GDSC) [13], Human Glioblastoma Cell Culture (HGCC) [13], SARS-CoV-2 dataset (RxRx19a) [14]. |
| Molecular Representations | Act as input features or modalities for models. | Elemental Fractions (EF) [8], Physical Attributes (PA) [8], Crystal Structures, SMILES strings [15], Extended Connectivity Fingerprints (ECFP4) [16]. |
| Meta-Learning Algorithms | Complement transfer learning by identifying optimal training samples and mitigating negative transfer. | Used to balance negative transfer in protein kinase inhibitor prediction [16]. |

The experimental data demonstrates that both cross-property and cross-modality frameworks significantly enhance predictive modeling in small-data regimes. Cross-property transfer learning provides a robust, general-purpose approach, showing consistent improvements across dozens of material properties, especially when using simple but powerful inputs like elemental fractions [8] [9]. Its primary strength is leveraging existing large datasets for related but distinct prediction tasks.

Cross-modality transfer learning, particularly with frameworks like CroMEL, represents a more advanced paradigm. It breaks the constraint of identical input descriptors, enabling knowledge transfer from rich computational data (crystal structures) to practical experimental settings (chemical compositions) [10]. This offers a practical solution for real-world applications where acquiring detailed material descriptors is infeasible.

The emergence of meta-learning frameworks to mitigate negative transfer—where performance decreases due to low similarity between source and target tasks—highlights the growing sophistication of this field [16]. For researchers, the choice of framework depends on data availability and modality. Cross-property is ideal for leveraging existing property databases, while cross-modality is essential for bridging different types of data. These foundational frameworks validate transfer learning as a critical tool for accelerating discovery in materials science and drug development.

In the pursuit of accelerating scientific discovery, particularly in fields with scarce experimental data like materials science and drug development, transfer learning has emerged as a powerful paradigm. Its success, however, hinges on understanding and managing three interconnected concepts: domain shift, feature spaces, and the latent representation hypothesis. Domain shift refers to the problem where the data a model is trained on (the source domain) and the data it encounters in practice (the target domain) have different statistical distributions, leading to degraded performance [17]. A feature space is a structured, often lower-dimensional, representation of data constructed by a model, where similar items are positioned close to one another. The Latent Representation Hypothesis posits that data from different but related domains (e.g., different classes of materials or biological assays) can be mapped into a shared, low-dimensional latent space where their fundamental properties are aligned, thereby enabling effective knowledge transfer even when the raw data distributions differ [10] [18].

This guide objectively compares recent methodological approaches that operationalize this hypothesis, focusing on their performance in validating transfer learning across material families—a critical challenge in developing new materials and pharmaceuticals.

Experimental Comparisons of Cross-Domain Transfer Methods

To evaluate the practical efficacy of different strategies, we summarize quantitative results from recent studies that performed cross-domain knowledge transfer. The following table compares the performance of several key methods on their respective benchmarks.

Table 1: Experimental Performance of Cross-Domain Transfer Learning Methods

| Method | Source Domain | Target Domain | Key Metric | Performance | Reference |
|---|---|---|---|---|---|
| CroMEL (Cross-modality Material Embedding Loss) | Calculated Crystal Structures | Experimental Chemical Compositions | Average R²-score (14 datasets) | > 0.95 (Formation Enthalpies & Band Gaps) | [10] |
| DTL-PSO Framework (Deep Transfer Learning & Particle Swarm Optimization) | Porous Carbons | Metal-Organic Frameworks (MOFs) | R²-score | 0.982 | [19] |
| GUIDE (Generalization using Inferred Domains) | Web, DSLR Images (TerraIncognita dataset) | Unseen Camera Domains | Test Accuracy Improvement | +4.3% vs. Empirical Risk Minimization (ERM) | [20] |
| LCDA (Latent variable represented Conditional Distribution Alignment) | Various Manufacturing Source Domains | Target Industrial Regression Tasks | Prediction Accuracy | State-of-the-Art on Battery & Tool Wear Estimation | [21] |

The data demonstrates that methods explicitly designed for cross-domain transfer, such as CroMEL (across data modalities) and the DTL-PSO framework (across material classes), can achieve remarkably high predictive accuracy (R² > 0.95) even when the source and target data are structurally different [10] [19]. Furthermore, the GUIDE results suggest that leveraging rich feature spaces from modern generative models (like diffusion models) can improve generalization to entirely unseen domains, a common scenario in real-world applications [20].

Detailed Methodologies and Protocols

This section details the experimental protocols and workflows for the leading methods cited in this guide.

CroMEL: Cross-Modality Material Embedding Loss

The CroMEL framework addresses the challenge of transferring knowledge from calculated crystal structures (source domain) to prediction models that only have access to experimental chemical compositions (target domain) [10].

Workflow Overview:

- Input Data: A source dataset of calculated crystal structures and their properties, and a target dataset of chemical compositions and experimentally measured properties.

- Model Architecture: Two encoders are trained simultaneously: a structure encoder \(\pi\) for the source domain and a probabilistic composition encoder \(\psi\) for the target domain.

- Core Protocol (CroMEL Loss): The model is trained by minimizing a combined loss function on the source data:

  - Prediction Loss: A standard regression loss (e.g., mean squared error) that ensures the structure encoder can accurately predict material properties from crystal structures.

  - Distribution Alignment Loss \(D_{div}\): This is the novel CroMEL component. It minimizes the statistical divergence (e.g., Wasserstein distance) between the latent distribution of the composition encoder \(P_\psi\) and the latent distribution of the structure encoder \(P_\pi\). This forces the composition encoder to generate latent embeddings that are statistically indistinguishable from those of the crystal structures, thereby transferring the structural knowledge.

- Transfer: The optimized composition encoder \(\psi^*\) is then used with a new prediction model \(f\) trained solely on the target domain's experimental data, enabling accurate prediction of experimental properties from compositions alone [10].
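
The shape of such a combined objective can be sketched as a regression loss plus a weighted distribution-alignment term. The source states only that the divergence uses a computable form based on the Wasserstein distance, so the sliced Wasserstein estimate below is a swapped-in stand-in, and the weight `lam` and all function names are assumptions rather than the CroMEL implementation.

```python
import numpy as np

def sliced_wasserstein(Z_a, Z_b, n_proj=64, seed=0):
    """Sliced Wasserstein-1 estimate between two equal-sized embedding batches."""
    rng = np.random.default_rng(seed)
    dirs = rng.normal(size=(Z_a.shape[1], n_proj))
    dirs /= np.linalg.norm(dirs, axis=0, keepdims=True)
    # In 1-D, the Wasserstein-1 distance between equal-sized samples is the
    # mean absolute difference of the sorted projections.
    proj_a = np.sort(Z_a @ dirs, axis=0)
    proj_b = np.sort(Z_b @ dirs, axis=0)
    return float(np.mean(np.abs(proj_a - proj_b)))

def combined_loss(y_pred, y_true, Z_struct, Z_comp, lam=1.0):
    """Prediction loss (MSE) plus lam times the alignment divergence."""
    prediction = float(np.mean((y_pred - y_true) ** 2))
    return prediction + lam * sliced_wasserstein(Z_struct, Z_comp)

rng = np.random.default_rng(1)
Z_struct = rng.normal(size=(128, 16))   # stand-in structure embeddings
y_true = rng.normal(size=128)
y_pred = y_true + 0.1

aligned = combined_loss(y_pred, y_true, Z_struct, Z_struct)
misaligned = combined_loss(y_pred, y_true, Z_struct, Z_struct + 1.0)
```

With identical embedding batches the alignment term vanishes and only the prediction loss remains, which is the behavior the alignment objective is driving the composition encoder toward.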

DTL-PSO Framework for CO2 Adsorbent Forecasting

This hybrid framework combines deep transfer learning (DTL) with particle swarm optimization (PSO) to predict and optimize CO2 uptake across different classes of porous materials [19].

Workflow Overview:

- Data Compilation: A comprehensive dataset is compiled from literature, containing structural, chemical, and operational parameters (e.g., surface area, pore volume, heteroatom content, temperature, pressure) for porous carbons and Metal-Organic Frameworks (MOFs).

- Feature Extraction: An autoencoder is trained on the entire dataset to extract non-linear, low-dimensional latent features that capture the essential characteristics of all materials.

- Transfer Learning for Regression: The encoder part of the pre-trained autoencoder is used as a feature extractor. A regression model (e.g., a neural network) is then trained on the latent features from the source domain (porous carbons) and fine-tuned or directly applied to the target domain (MOFs). This constitutes the deep transfer learning (DTL) step.

- Optimization: The Particle Swarm Optimization (PSO) algorithm operates on the latent feature space to find the combination of material and operational parameters that maximizes the predicted CO2 uptake, as output by the DTL model [19].
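The optimization step can be sketched with a basic global-best particle swarm. This is an illustration under stated assumptions, not the DTL-PSO implementation: `toy_uptake` is a hypothetical stand-in for the trained DTL model, the two-dimensional search box stands in for the latent feature space, and the inertia and acceleration values are commonly used defaults chosen here for illustration.

```python
import random

def pso_maximize(objective, bounds, n_particles=30, n_iters=200, seed=0,
                 inertia=0.7, c_personal=1.5, c_global=1.5):
    """Maximize `objective` over a box using a basic global-best PSO."""
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    best_pos = [p[:] for p in pos]              # best position seen by each particle
    best_val = [objective(p) for p in pos]
    g = max(range(n_particles), key=best_val.__getitem__)
    g_pos, g_val = best_pos[g][:], best_val[g]  # best position seen by the swarm

    for _ in range(n_iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (inertia * vel[i][d]
                             + c_personal * r1 * (best_pos[i][d] - pos[i][d])
                             + c_global * r2 * (g_pos[d] - pos[i][d]))
                lo, hi = bounds[d]
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)  # stay in the box
            val = objective(pos[i])
            if val > best_val[i]:
                best_val[i], best_pos[i] = val, pos[i][:]
                if val > g_val:
                    g_val, g_pos = val, pos[i][:]
    return g_pos, g_val

def toy_uptake(z):
    """Hypothetical surrogate for predicted CO2 uptake; peaks at z = (1, -2)."""
    return 5.0 - (z[0] - 1.0) ** 2 - (z[1] + 2.0) ** 2

best_z, best_uptake = pso_maximize(toy_uptake, bounds=[(-5.0, 5.0), (-5.0, 5.0)])
```

In a real workflow the objective would call the trained regression model, and the best latent point would still need to be mapped back to physically meaningful material and operating parameters.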

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" and resources essential for implementing the cross-domain validation research discussed in this guide.

Table 2: Essential Research Reagents for Cross-Domain Transfer Learning

| Research Reagent / Resource | Type | Function in Research | Exemplar Use Case |

|---|---|---|---|

| Pre-trained Diffusion Models | Software Model | Provides a rich feature space for unsupervised discovery of domain-specific variations, improving generalization to unseen domains. | GUIDE method for domain generalization in image analysis [20]. |

| Variational Autoencoders (VAEs) | Software Architecture | Learns a low-dimensional, generative latent representation from high-dimensional data, enabling mapping of different domains. | Mapping different medical measurement instruments to a joint latent space [18]. |

| Calculated Crystal Structure Databases | Dataset | Serves as a large, information-rich source domain for transfer learning to experimental data. | CroMEL framework for predicting experimental material properties [10]. |

| Particle Swarm Optimization (PSO) | Algorithm | A bio-inspired optimization algorithm that searches complex parameter spaces to find optimal configurations, such as maximizing material performance. | DTL-PSO framework for optimizing CO2 adsorbent materials [19]. |

| Wasserstein Distance / Maximum Mean Discrepancy (MMD) | Statistical Measure | Quantifies the divergence between two probability distributions; used as a loss function to align source and target latent distributions. | CroMEL loss [10] and other domain adaptation methods [21]. |

| Public Chemical Databases (e.g., PubChem, ChemDB, DrugBank) | Database | Provides vast virtual chemical spaces for virtual screening and as source data for pre-training models. | In silico drug discovery and material screening [22]. |

Implementing Transfer Learning: Architectures, Strategies, and Real-World Workflows

Transfer learning (TL) has emerged as a pivotal methodology for overcoming data sparsity constraints in scientific domains, particularly in drug discovery and materials science. By leveraging knowledge from data-rich source domains to improve performance on data-scarce target tasks, TL enables more effective machine learning applications where experimental data is expensive or time-consuming to acquire. The paradigm is especially valuable in discovery processes that rely on screening funnels, where different stages generate data at various scales and fidelities [23]. Within this framework, two architectural families have demonstrated particular promise: Graph Neural Networks (GNNs), which naturally operate on graph-structured data such as molecular representations, and Transformer-based models, which leverage attention mechanisms to capture complex dependencies across sequential and structured data. Understanding their comparative strengths, limitations, and optimal application domains is essential for researchers seeking to validate transfer learning approaches across material families.

Architectural Foundations: GNNs vs. Transformers

Graph Neural Networks (GNNs)

GNNs are specifically designed to process graph-structured data, where entities and their interrelations are represented as nodes and edges. The core learning mechanism involves message passing, where nodes iteratively aggregate feature information from their neighbors to learn rich hierarchical representations of graph-structured data [24]. Popular GNN variants include:

- Graph Convolutional Networks (GCNs): Update node representations by aggregating feature information from neighboring nodes [25]

- Graph Attention Networks (GATs): Assign different attention weights to neighbors during aggregation, focusing more on relevant nodes [25]

- Graph Isomorphism Networks (GINs): Use a sum aggregator to capture neighbor features without information loss, combined with MLPs for increased model capacity [25]
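The difference between aggregators can be made concrete with a small sketch of a single message-passing step. It is a simplified illustration: it omits the self-term, learned weights, and MLPs that real GCN and GIN layers include, and the toy graph and the `aggregate` helper are hypothetical.

```python
def aggregate(features, neighbors, how):
    """One message-passing step: combine each node's neighbor features."""
    out = {}
    for node, nbrs in neighbors.items():
        msgs = [features[n] for n in nbrs]
        total = [sum(col) for col in zip(*msgs)]  # element-wise sum over neighbors
        if how == "sum":
            out[node] = total
        elif how == "mean":
            out[node] = [t / len(msgs) for t in total]
        else:
            raise ValueError(f"unknown aggregator: {how}")
    return out

# Node "a" has two identical neighbors, node "b" has three.
features = {"x": [1.0], "y": [1.0], "z": [1.0]}
neighbors = {"a": ["x", "y"], "b": ["x", "y", "z"]}

mean_out = aggregate(features, neighbors, "mean")
sum_out = aggregate(features, neighbors, "sum")
```

The mean aggregator returns the same vector for both nodes, while the sum aggregator separates them, which is the kind of neighborhood information a sum-based aggregator is meant to retain.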

In transfer learning contexts, GNNs typically employ pre-training and fine-tuning strategies, where models first learn generalizable representations from large-scale source domains (e.g., low-fidelity screening data) before being adapted to specific target tasks with limited high-fidelity data [23].

Transformer-Based Models

Transformers utilize a self-attention mechanism to dynamically weight the importance of different elements in input sequences when generating representations. The Query-Key-Value (QKV) mechanism allows Transformers to compute attention scores based on current node features, enabling them to adapt to varying relational contexts [26]. While originally developed for sequential data, Transformer adaptations for scientific applications include:

- Graph Transformers: Incorporate graph structural information through positional encodings or attention masking [27]

- Chemical Language Models: Process molecular representations (e.g., SMILES) as sequences using natural language processing techniques [28]

- Multi-modal Transformers: Integrate diverse data types (e.g., molecular structures, protein sequences, and experimental readings) through embedding alignment [29]
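The query-key-value mechanism mentioned above can be sketched as plain scaled dot-product attention. This minimal version assumes queries, keys, and values are the raw token vectors; in a real Transformer each would be a learned linear projection of the input, and multiple heads would run in parallel.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention for lists of equal-length vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Each output is the attention-weighted average of the value vectors.
        out = [sum(w * v[d] for w, v in zip(weights, values))
               for d in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)
```

Each row of `weights` sums to one, and the first token attends more strongly to the tokens it overlaps with than to the orthogonal one.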

Fundamental Differences and Similarities

Despite their architectural differences, GNNs and Transformers share significant similarities in their feature refinement strategies. Both architectures employ mechanisms for interacting with features from nodes of interest, with Transformers using query-key scores and GNNs utilizing edges [26]. The critical distinction lies in their handling of positional information: Transformers leverage dynamic attention to represent relative relationships, making them superior for sequential data where position is crucial, while GNNs rely on static adjacency matrices, making them potentially more efficient for position-agnostic domains [26].

Table 1: Fundamental Architectural Comparison

| Feature | Graph Neural Networks (GNNs) | Transformer-Based Models |

|---|---|---|

| Primary Operating Domain | Graph-structured data | Sequential and structured data |

| Core Mechanism | Message passing between connected nodes | Self-attention across all input elements |

| Positional Encoding | Typically not required; structure inherent in graph | Crucial for sequential data; requires explicit encoding |

| Computational Complexity | Generally lower; leverages graph sparsity | Generally higher; attends to all element pairs |

| Typical TL Approach | Pre-training on large molecular graphs, fine-tuning on target tasks | Domain-adaptive pre-training, prompt tuning, fine-tuning |

| Key Strength | Natural handling of topological relationships | Capturing long-range dependencies |

Performance Comparison: Experimental Evidence

Computational Efficiency

Empirical studies demonstrate significant differences in computational requirements between GNNs and Transformers. In position-agnostic domains such as single-cell transcriptomics, GNNs achieve competitive performance compared to Transformers while consuming substantially fewer resources – approximately 1/8 of the memory and about 1/4 to 1/2 of the computational resources in comparable implementations [26]. This efficiency advantage makes GNNs particularly valuable in resource-constrained environments or when scaling to extremely large datasets.

Transfer Learning Effectiveness

The relative performance of GNNs versus Transformers in transfer learning scenarios depends critically on domain characteristics and data availability:

Drug Discovery Applications: GNNs with adaptive readout functions have demonstrated substantial improvements in multi-fidelity learning, improving performance on sparse high-fidelity tasks by up to 8 times while using an order of magnitude less high-fidelity training data [23]. In transductive learning settings (where low-fidelity and high-fidelity labels are available for all data points), GNN-based transfer learning outperformed label augmentation approaches in 80% of experiments [23].

Molecular Property Prediction: For quantum mechanics problems, standard GNNs remain competitive with Transformer approaches, particularly when employing extensive and non-local architectures [23]. However, in complex drug discovery tasks, vanilla GNNs significantly underperform without appropriate transfer learning strategies and adaptive readouts.

Edge-Set Attention Architectures: Recent hybrid approaches that combine GNN and Transformer principles demonstrate state-of-the-art performance across diverse tasks. The Edge-Set Attention (ESA) architecture, which considers graphs as sets of edges and employs masked attention mechanisms, outperforms both tuned GNN baselines and complex Transformer-based models across more than 70 node and graph-level tasks [27].

Table 2: Experimental Performance Comparison Across Domains

| Domain/Task | Best-Performing Architecture | Key Performance Metrics | Data Requirements |

|---|---|---|---|

| Single-Cell Transcriptomics | GNNs | Competitive accuracy with 1/8 memory, 1/4-1/2 computation [26] | Position-agnostic datasets |

| Drug Discovery (Multi-fidelity) | GNNs with adaptive readouts | 8x improvement, order of magnitude less high-fidelity data [23] | Low-fidelity source domain |

| Quantum Mechanics | Standard GNNs (extensive/non-local) | Competitive with Transformers [23] | Moderate dataset sizes |

| Broad Benchmark Tasks (70+ datasets) | Edge-Set Attention (Hybrid) | Outperforms both GNNs and Transformers [27] | Variable domain requirements |

| TMZ Response Prediction | Two-step TL with GNNs | Superior to single-step TL and benchmark methods [13] | Small target datasets |

Experimental Protocols and Methodologies

GNN Transfer Learning for Multi-Fidelity Molecular Data

Protocol Overview: This methodology addresses the screening cascade paradigm common in drug discovery, where low-fidelity, high-throughput data is abundant but high-fidelity experimental data is sparse [23].

Key Steps:

- Source Domain Pre-training: Train GNN on large-scale low-fidelity data (e.g., high-throughput screening results)

- Adaptive Readout Implementation: Replace standard readout functions (sum, mean, max) with neural network-based adaptive readouts

- Target Domain Fine-tuning: Transfer learned representations to high-fidelity prediction tasks with limited data

- Multi-fidelity Integration: Optionally incorporate actual low-fidelity labels as input features for high-fidelity models

Architecture Specifications:

- Base GNN: MPNN, GCN, or GAT variants

- Adaptive Readout: Attention-based pooling mechanisms

- Regularization: Variational graph autoencoders for structured latent space learning
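An attention-based readout of the kind listed above can be sketched as follows. This is a minimal illustration, not the implementation from [23]: `score_weights` stands in for parameters that would normally be learned, and the node embeddings are toy values.

```python
import math

def attention_readout(node_embeddings, score_weights):
    """Pool node embeddings into one graph vector with softmax attention."""
    scores = [sum(w * h for w, h in zip(score_weights, emb))
              for emb in node_embeddings]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    alphas = [e / total for e in exps]          # one attention weight per node
    dim = len(node_embeddings[0])
    pooled = [sum(a * emb[d] for a, emb in zip(alphas, node_embeddings))
              for d in range(dim)]
    return pooled, alphas

nodes = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
uniform_pooled, uniform_alphas = attention_readout(nodes, [0.0, 0.0])
focused_pooled, focused_alphas = attention_readout(nodes, [1.0, 1.0])
```

With zero scoring weights the readout reduces to mean pooling, whereas non-zero weights let it concentrate on the nodes that score highest, which is what makes the readout adaptive.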

Validation Framework: Performance evaluation under both transductive (low-fidelity labels available for all molecules) and inductive (low-fidelity labels only for source domain) settings across 37 protein targets and 12 quantum properties [23].

Transformer-Based Pre-training for Chemical Data

Protocol Overview: This approach leverages large-scale molecular representations (e.g., SMILES strings, molecular graphs with structural encodings) through Transformer architectures [28].

Key Steps:

- Chemical Language Pre-training: Train Transformer models on massive molecular datasets using masked token prediction objectives

- Domain-Adaptive Fine-tuning: Adapt pre-trained models to specific property prediction tasks with limited data

- Multi-modal Integration: Incorporate additional data modalities (protein sequences, experimental conditions) through embedding alignment

Architecture Specifications:

- Graphormer: Utilizes spatial encodings, centrality encodings, and edge encodings [27]

- Attention Mechanisms: Multi-head self-attention with structural biases

- Positional Encodings: Laplacian eigenvectors, spatial distances, or learned positional embeddings

Validation Framework: Benchmarking against GNN baselines across molecular property prediction tasks, with emphasis on out-of-distribution generalization [27].

Two-Step Transfer Learning for Limited Data Scenarios

Protocol Overview: This specialized protocol addresses extreme data sparsity scenarios, such as predicting drug response in rare cancers with limited patient samples [13].

Key Steps:

- Initial Pre-training: Train model on large source dataset with diverse samples (e.g., multiple cancer types and drugs)

- Domain-Specific Refinement: Fine-tune on intermediate dataset more closely related to target task

- Target Task Adaptation: Final fine-tuning on small target dataset

Implementation Example:

- Step 1: Pre-train on GDSC dataset (miscellaneous cell cultures treated by multiple drugs)

- Step 2: Refine on HGCC dataset (glioblastoma cell cultures treated with TMZ)

- Step 3: Final adaptation to small target dataset (GSE232173 with 22 GBM cell cultures) [13]
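The staged procedure can be sketched with a deliberately tiny model. Everything here is a toy assumption: a one-parameter linear model replaces the neural network, the three synthetic datasets only mimic the source, intermediate, and target roles, and the tasks are constructed to be related, so the benefit shown does not carry over automatically to real data.

```python
def fit_slope(w, data, lr=0.1, epochs=5):
    """Full-batch gradient descent on MSE for the model y = w * x."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

xs = [0.5, 1.0, 1.5]
source = [(x, 2.0 * x) for x in xs]        # stand-in for the large, generic dataset
intermediate = [(x, 2.5 * x) for x in xs]  # closer to the target relationship
target = [(x, 3.0 * x) for x in xs]        # small target dataset

w = fit_slope(0.0, source, epochs=200)     # step 1: pre-train
w = fit_slope(w, intermediate)             # step 2: domain-specific refinement
w_two_step = fit_slope(w, target)          # step 3: target adaptation
w_scratch = fit_slope(0.0, target)         # baseline: same target budget, no transfer
```

Given the same small training budget on the target data, the staged model ends closer to the target slope of 3.0 than the model trained from scratch, because each stage starts from weights that are already near the next task.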

Diagram 1: Generalized Transfer Learning Workflow for GNNs and Transformers. This framework illustrates the common pre-training and fine-tuning paradigm used for both architectural families.

Table 3: Key Research Reagents and Computational Resources

| Resource | Type | Function in TL Research | Example Instances |

|---|---|---|---|

| Molecular Datasets | Data | Source and target domains for transfer learning | ChEMBL, BindingDB, DrugBank, QMugs, GDSC [25] [23] [30] |

| GNN Frameworks | Software | Implementation of graph neural network architectures | PyTorch Geometric, Deep Graph Library (DGL) |

| Transformer Libraries | Software | Implementation of attention-based models | Hugging Face Transformers, fairseq |

| Benchmark Suites | Evaluation | Standardized performance assessment | MoleculeNet, OGB (Open Graph Benchmark) [25] |

| Pre-trained Models | Model Weights | Starting point for transfer learning | Graphormer, ChemBERTa, pre-trained GNNs on large molecular datasets [29] |

| Adaptive Readout Modules | Algorithmic Component | Enhanced graph-level representation learning | Attention-based pooling, neural readout functions [23] |

Implementation Guidelines and Decision Framework

Architecture Selection Criteria

Choosing between GNNs and Transformers for transfer learning applications depends on multiple factors:

- Data Modality: GNNs are preferable for inherently graph-structured data (molecules, protein interactions), while Transformers excel with sequential representations (protein sequences, SMILES strings) [26] [28]

- Positional Sensitivity: If relative positions between elements are crucial, Transformers' dynamic attention provides advantages; for position-agnostic data, GNNs offer computational benefits [26]

- Data Volume: For limited target data, GNNs with effective transfer learning strategies often outperform Transformers; with abundant data, Transformers may capture more complex relationships

- Computational Constraints: GNNs typically require fewer resources, making them suitable for deployment in resource-limited environments [26]

Mitigating Negative Transfer

A significant challenge in transfer learning is negative transfer – when knowledge from the source domain adversely affects target task performance. Combined meta-learning and transfer learning frameworks help identify optimal subsets of source samples for pre-training, algorithmically balancing negative transfer between domains [16]. Techniques include:

- Meta-Weight Learning: Deriving sample weights based on classification loss to guide learning

- Task Similarity Assessment: Quantifying similarity between source and target tasks before transfer

- Progressive Fine-tuning: Gradually adapting models from general to specific domains through intermediate steps [13]

Diagram 2: Architecture Selection Decision Framework. This flowchart guides researchers in selecting between GNNs, Transformers, or hybrid approaches based on project requirements.

Future Directions and Emerging Trends

The landscape of transfer learning architectures continues to evolve rapidly, with several promising directions emerging:

Foundation Models for Drug Discovery: The number of foundation models in pharmaceutical R&D has surged since 2022, with over 200 models published to date, supporting diverse applications from target discovery to molecular optimization [29]. These large-scale pre-trained models represent a significant shift toward general-purpose molecular AI systems.

Hybrid Architectures: Approaches like Edge-Set Attention (ESA) that combine the strengths of GNNs and Transformers demonstrate potential for outperforming both architectural families across diverse benchmarks [27]. These methods consider graphs as sets of edges and employ masked attention mechanisms while avoiding complex pre-processing steps.

Multi-Modal Transfer Learning: Integrating diverse data types (molecular structures, omics profiles, clinical outcomes) through unified Transformer-based architectures enables more comprehensive predictive modeling [30]. This approach aligns with the systems pharmacology perspective essential for multi-target drug discovery.

Meta-Learning Enhancements: Advanced meta-learning algorithms designed specifically to complement transfer learning show promise in identifying optimal training subsets and determining weight initializations for base models, effectively mitigating negative transfer [16].

As these architectural innovations mature, the validation of transfer learning approaches across material families will increasingly rely on systematic benchmarking across diverse domains, with careful attention to data characteristics, computational constraints, and application requirements.

In the field of artificial intelligence and machine learning, leveraging knowledge from pre-trained models has become a cornerstone for accelerating research, particularly in domains plagued by data scarcity. Within materials science and drug development, the validation of transfer learning techniques across diverse material families presents a critical challenge. Researchers are often faced with a strategic choice: whether to fully adapt a pre-trained model (fine-tuning), use it as a fixed feature extractor (feature extraction), or employ dimensionality reduction techniques (projection-based methods) to maximize predictive performance with limited data. Each approach offers distinct trade-offs in accuracy, computational demand, and data requirements that must be carefully considered within specific experimental contexts. This guide provides an objective comparison of these strategic approaches, supported by experimental data and detailed protocols from recent studies, to inform researchers and scientists in selecting optimal methodologies for their transfer learning validation across material families.

Conceptual Frameworks and Definitions

Fine-Tuning

Fine-tuning represents a comprehensive adaptation approach where a pre-trained model's parameters are further trained on a target task's dataset. This strategy involves unfreezing some or all layers of a frozen pre-trained base model and jointly training both the newly added classifier layers and the unfrozen layers of the base model [31] [32]. This process allows the model to evolve not only the additional layers but also some of the earlier layers of the pre-trained model to better suit the target domain. Fine-tuning typically requires a relatively large dataset similar to the original pre-training data to prevent overfitting and is computationally intensive, but offers potentially higher accuracy by adapting pre-trained features to the specifics of the target dataset [32] [33]. Regularization methods such as dropout and early stopping are often employed to mitigate overfitting risks, especially when the new task has significantly different features from the original pre-training task [31].

Feature Extraction

Feature extraction, in contrast, uses the pre-trained model as a fixed feature extractor where the learned representations are utilized to extract meaningful features from new data without modifying the pre-trained weights [31] [32]. In this approach, all layers of the pre-trained model remain frozen during training on the target task, and only newly added layers are trained from scratch. This method is particularly valuable when the target task has a small dataset or when computational resources are limited [31]. The underlying principle is that earlier layers of a pre-trained model comprise more generic features (e.g., edge detectors in images) that could be beneficial across numerous tasks, while later layers contain more specific details of the classes contained in the original dataset [31]. If the target task dataset is similar to the source task, training should focus on features from higher layers; for dissimilar datasets, features from lower layers (general features) are more appropriate [31].
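The contrast between the two strategies comes down to which parameters receive gradient updates. The sketch below uses a hypothetical two-parameter model, `y_hat = head * (base * x)`, where `base` plays the role of the pre-trained layers and `head` the newly added layer; the data and starting weights are toy assumptions.

```python
def train(base, head, data, freeze_base, lr=0.02, epochs=50):
    """Gradient descent on MSE for the model y_hat = head * (base * x)."""
    for _ in range(epochs):
        g_base = g_head = 0.0
        for x, y in data:
            feature = base * x                  # output of the "pre-trained" layer
            err = head * feature - y
            g_head += 2 * err * feature / len(data)
            g_base += 2 * err * head * x / len(data)
        head -= lr * g_head
        if not freeze_base:                     # fine-tuning also updates the base
            base -= lr * g_base
    return base, head

data = [(1.0, 3.0), (2.0, 6.0)]                # toy target task: y = 3x
pretrained_base = 2.0                           # hypothetical pre-trained weight

fe_base, fe_head = train(pretrained_base, 1.0, data, freeze_base=True)
ft_base, ft_head = train(pretrained_base, 1.0, data, freeze_base=False)
```

Both runs fit the toy task, but only the fine-tuning run changes the pre-trained weight. In deep learning frameworks the same choice is usually expressed by excluding the base parameters from the optimizer or disabling their gradients.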

Projection-Based Methods

Projection-based methods, often referred to as dimensionality reduction techniques, aim to transform high-dimensional data into a lower-dimensional space while preserving the essential structure and relationships within the data. These include techniques such as Principal Component Analysis (PCA), Independent Component Analysis (ICA), Dictionary Learning (DL), and Non-Negative Matrix Factorization (NNMF) [34]. Unlike fine-tuning and feature extraction, which leverage pre-trained models, projection methods typically operate directly on the dataset to extract representative features that can compactly describe the data distribution. These methods are particularly valuable for tackling the "curse of dimensionality" in domains with high-dimensional data and limited samples, such as neuroimaging and materials science [34]. The goal is to find a weight matrix W that can linearly transform the original n×p data matrix X into a new set of k features, where k < p, so that the reduced feature matrix is F = XW [34].
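The linear projection F = XW can be sketched for the simplest case, k = 1, using PCA computed by power iteration. This is a standard-library illustration on a small made-up dataset with p = 2; a practical analysis would typically use a numerical library and keep several components.

```python
import math

def top_principal_component(X, n_iters=100):
    """Return (w, F): the leading PCA direction and the projected feature F = Xw."""
    n, p = len(X), len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(p)]
    Xc = [[row[j] - means[j] for j in range(p)] for row in X]   # center each column
    cov = [[sum(r[i] * r[j] for r in Xc) / (n - 1) for j in range(p)]
           for i in range(p)]
    w = [1.0] * p
    for _ in range(n_iters):                                     # power iteration
        w = [sum(cov[i][j] * w[j] for j in range(p)) for i in range(p)]
        norm = math.sqrt(sum(v * v for v in w))
        w = [v / norm for v in w]
    F = [sum(r[j] * w[j] for j in range(p)) for r in Xc]
    return w, F

def variance(values):
    """Sample variance with the n - 1 denominator."""
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values) / (len(values) - 1)

X = [[2.5, 2.4], [0.5, 0.7], [2.2, 2.9], [1.9, 2.2], [3.1, 3.0],
     [2.3, 2.7], [2.0, 1.6], [1.0, 1.1], [1.5, 1.6], [1.1, 0.9]]
w, F = top_principal_component(X)
```

The single projected feature carries more variance than either original column, which is why a small number of components can often summarize correlated high-dimensional measurements.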

Comparative Performance Analysis

Experimental Data from Materials Science

Recent research in materials science provides compelling experimental data for comparing the efficacy of different transfer learning approaches. A 2025 study introduced Cross-Modality Material Embedding Loss (CroMEL) for transferring knowledge between heterogeneous material descriptors, specifically from calculated crystal structures to experimental chemical compositions [10]. The prediction models based on transfer learning with CroMEL showed state-of-the-art prediction accuracy on 14 experimental materials datasets, achieving R²-scores greater than 0.95 in predicting experimentally measured formation enthalpies and band gaps of synthesized materials [10].

In organic photovoltaics research, transfer learning within graph neural networks (GNNs) addressed data scarcity for conjugated oligomers [35]. Using a pre-trained model from the PubChemQC dataset and fine-tuning with an original oligomer dataset, researchers achieved low mean absolute errors of 0.74 eV for HOMO, 0.46 eV for LUMO, and 0.54 eV for the HOMO-LUMO gap [35]. This approach successfully identified 46 promising conjugated oligomer candidates from a dataset of 3710 compounds, demonstrating the power of transfer learning in accelerating materials discovery.

Table 1: Performance Comparison of Transfer Learning Approaches in Materials Science

| Study | Domain | Approach | Performance Metrics | Dataset Size |

|---|---|---|---|---|

| Cross-modality material embedding [10] | Materials Science | Cross-modality transfer learning | R² > 0.95 for formation enthalpies and band gaps | 14 experimental datasets |

| Organic photovoltaics [35] | Conjugated oligomers | Fine-tuning pre-trained GNN | MAE: 0.46-0.74 eV for electronic properties | 610 original + 100K pre-training |

| Semantic segmentation [36] | Cell micrographs | Feature extraction with U-Net | Dice coefficient: 0.876, Jaccard index: 0.781 | 320 images |

Performance in Other Domains

Beyond materials science, comparative studies across domains provide additional insights into the relative strengths of these approaches. In biomedical image analysis, a 2025 comparative study of deep transfer learning models for semantic segmentation of human mesenchymal stem cell micrographs found that U-Net with feature extraction demonstrated the best segmentation accuracy with a Dice coefficient of 0.876 and Jaccard index of 0.781 [36]. DeepLabV3+ and Mask R-CNN also showed high performance, though slightly lower than U-Net [36].

In automated ICD coding for medical texts, research compared bag-of-words (BoW), word2vec (W2V), and BERT variants [37]. The optimal feature extraction method depended on code frequency thresholds: for frequent codes (threshold ≥140), fine-tuning the whole network of BERT variants was optimal (Micro-F1: 93.9%), while for infrequent codes (threshold <140), BoW performed best (Micro-F1: 83%) [37].

For predicting neuropsychological scores from functional connectivity data of stroke patients, a comparison of feature extraction methods found that Principal Component Analysis (PCA) and Independent Component Analysis (ICA) were the two best methods at extracting representative features, followed by Dictionary Learning (DL) and Non-Negative Matrix Factorization (NNMF) [34]. PCA-based models, especially when combined with L1 (LASSO) regularization, provided optimal balance between prediction accuracy, model complexity, and interpretability [34].

Table 2: Cross-Domain Performance Comparison of Feature Extraction Methods

| Domain | Best Performing Methods | Key Metrics | Considerations |

|---|---|---|---|

| Medical text coding [37] | BERT fine-tuning (frequent codes), BoW (infrequent codes) | Micro-F1: 93.9% (frequent), 83% (infrequent) | Code frequency threshold determines optimal method |

| Neuropsychological score prediction [34] | PCA, ICA | Optimal balance of accuracy and interpretability | Combined with L1 regularization |

| Biomedical image segmentation [36] | U-Net with feature extraction | Dice: 0.876, Jaccard: 0.781 | Computational efficiency varies by model |

Decision Framework and Workflows

Selecting the appropriate strategic approach depends on multiple factors including dataset size, similarity to pre-training data, computational resources, and performance requirements. The following decision framework visualizes the strategic selection process:

Diagram 1: Strategic Approach Selection Workflow

This decision framework highlights key considerations: feature extraction is ideal for small datasets with high similarity to pre-training data and limited computational resources [31] [32]; fine-tuning suits larger datasets with adequate computational resources [32] [33]; while projection-based methods are valuable when data similarity is low or when dealing with high-dimensional data with limited samples [34]. A hybrid approach that begins with feature extraction to establish a baseline and then progresses to fine-tuning can be optimal when resources permit [32].
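The selection rules in this paragraph can be written down as a small helper. It is only a sketch of the narrative logic: the function name, its arguments, and especially the `small_data_cutoff` threshold are hypothetical and would need to be set per project.

```python
def choose_strategy(n_target_samples, similar_to_source, ample_compute,
                    high_dimensional=False, small_data_cutoff=1000):
    """Map the narrative decision rules to a suggested starting strategy.

    `small_data_cutoff` is an arbitrary illustrative threshold (assumption).
    """
    small = n_target_samples < small_data_cutoff
    if not similar_to_source or (high_dimensional and small):
        return "projection-based"
    if small or not ample_compute:
        return "feature extraction"
    return "fine-tuning"

suggestions = {
    "small, similar, limited compute": choose_strategy(320, True, False),
    "large, similar, ample compute": choose_strategy(50_000, True, True),
    "dissimilar source domain": choose_strategy(200, False, True),
}
```

A helper like this is best treated as a starting point; a feature-extraction baseline followed by fine-tuning remains a reasonable way to confirm the choice empirically.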

Detailed Experimental Protocols

Cross-Modality Transfer Learning in Materials Science

The CroMEL framework demonstrates an advanced protocol for cross-modality knowledge transfer [10]. The methodology addresses the challenge of transferring knowledge from calculated crystal structures to composition-based prediction models trained on experimentally collected materials datasets, where collecting informative material descriptors beyond chemical compositions is often expensive or infeasible [10].

The mathematical formulation of the training problem for source feature extractors is defined as: ( g^*, \pi^*, \psi^* = \arg\min_{g, \pi, \psi} \sum L(y_s, g(\pi(x_s))) + D_{div}(P_\pi \| P_\psi) ), where g is a trainable prediction network, π is a structure encoder, ψ is a probabilistic composition encoder, and ( D_{div} ) is a statistical distance that measures the divergence between distributions [10].

Key steps in the CroMEL protocol:

- Data Preparation: Gather source dataset with calculated crystal structures and target dataset with experimental chemical compositions

- Encoder Training: Train structure encoder (π) and composition encoder (ψ) to minimize divergence between their probability distributions

- Cross-Modality Transfer: Replace conventional source feature extractor with the optimized probabilistic composition encoder

- Target Model Training: Train final prediction model on target dataset using f(ψ(x_t)) for predictions [10]

The following workflow diagram illustrates the CroMEL experimental protocol:

Diagram 2: CroMEL Cross-Modality Transfer Learning Protocol

Fine-Tuning Protocol for Graph Neural Networks

The organic photovoltaics study provides a detailed protocol for fine-tuning graph neural networks with transfer learning [35]. The methodology involves:

- Pre-training Phase: Train SchNet architecture on PubChemQC-100K dataset containing 100,000 organic small molecules and their electronic properties

- Model Architecture: Employ continuous-filter convolution layers that model atomic interactions as a function of interatomic distance, ideal for predicting quantum chemical properties

- Fine-tuning Phase: Transfer pre-trained model to conjugated oligomer dataset (CO-610) containing 610 unique oligomers with polymerization degrees from 4 to 10

- High-Throughput Screening: Integrate models with density functional theory for validation and candidate identification [35]

This approach achieved mean absolute errors of 0.46-0.74 eV for electronic properties despite data scarcity, demonstrating the effectiveness of transfer learning for materials discovery [35].

Feature Extraction Protocol for Biomedical Imaging

The semantic segmentation study outlines a feature extraction protocol for biomedical images [36]:

- Model Selection: Choose pre-trained models (U-Net, DeepLabV3+, SegNet, Mask R-CNN) with ImageNet weights

- Feature Extraction: Freeze encoder layers to retain pre-trained feature representations

- Classifier Adaptation: Replace and train final layers for specific segmentation task

- Performance Validation: Evaluate using Dice coefficient, Jaccard index, and pixel accuracy metrics [36]

This protocol yielded Dice coefficients up to 0.876 with only 320 training images, demonstrating the data efficiency of feature extraction approaches [36].
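The two overlap metrics used for validation can be computed as in the sketch below, which assumes flat binary masks of equal length. The example masks are made up for illustration and are unrelated to the study's data.

```python
def dice_and_jaccard(pred, truth):
    """Dice coefficient and Jaccard index for two flat binary masks."""
    intersection = sum(1 for p, t in zip(pred, truth) if p and t)
    pred_area, truth_area = sum(map(bool, pred)), sum(map(bool, truth))
    union = pred_area + truth_area - intersection
    if union == 0:
        return 1.0, 1.0   # convention chosen here: two empty masks agree perfectly
    dice = 2 * intersection / (pred_area + truth_area)
    return dice, intersection / union

pred = [1, 1, 1, 0, 0, 0, 1, 0]
truth = [1, 1, 0, 0, 0, 1, 1, 0]
dice, jaccard = dice_and_jaccard(pred, truth)
```

For a single pair of masks the two metrics are linked by J = D / (2 - D), so they rank individual predictions identically; averages over many images need not satisfy that identity exactly.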

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Category | Specific Tools/Datasets | Function | Application Context |

|---|---|---|---|

| Pre-trained Models | SchNet [35], BERT [37], U-Net [36], ResNet [31] | Provide foundational feature representations | Computer vision, natural language processing, materials informatics |

| Datasets | ImageNet [31], PubChemQC [35], CO-610 [35], CES/ANES [38] | Source and target tasks for transfer learning | Model pre-training and domain-specific fine-tuning |

| Software Frameworks | TensorFlow, PyTorch [39], RDKit [35], Hugging Face Transformers [39] | Model implementation and training | General-purpose machine learning and specialized computational chemistry |

| Analysis Tools | PCA, ICA [34], Dictionary Learning [34] | Dimensionality reduction and feature extraction | Handling high-dimensional data and improving model interpretability |

The strategic selection between fine-tuning, feature extraction, and projection-based methods represents a critical decision point in validating transfer learning across material families. Experimental evidence demonstrates that fine-tuning excels when sufficient target domain data is available, enabling model specialization at higher computational cost. Feature extraction provides an efficient alternative for data-scarce environments, particularly when source and target domains share common characteristics. Projection-based methods offer robust solutions for high-dimensional data spaces where traditional transfer learning faces limitations. As materials science and drug development continue to embrace data-driven approaches, the thoughtful application of these strategic methodologies—informed by dataset characteristics, computational constraints, and performance requirements—will accelerate discovery and validation cycles across diverse material families.

The discovery of high-performance organic photovoltaic (OPV) materials has traditionally been a time-consuming and costly process, heavily reliant on experimental trial-and-error and incremental molecular modifications. However, a transformative shift is underway through the application of transfer learning, particularly using pre-trained Graph Neural Networks (GNNs). This approach addresses a fundamental challenge in materials informatics: the scarcity of high-quality, experimentally validated data for specific material properties like power conversion efficiency (PCE). Transfer learning enables models to acquire fundamental chemical knowledge from large-scale computational datasets and then fine-tune this knowledge on smaller, targeted experimental datasets. This paradigm is proving especially valuable in OPV research, where it accelerates the discovery of efficient donor-acceptor pairs by capturing intricate structure-property relationships that would be difficult to learn from limited experimental data alone.

This case study examines how pre-trained GNN frameworks are being validated across different material families and research institutions, demonstrating their growing role as a robust methodology for accelerating OPV discovery. We compare the performance of these approaches against traditional methods and provide detailed experimental protocols supporting their effectiveness.

Methodological Comparison of OPV Discovery Frameworks

Several research groups have developed distinct yet complementary deep-learning frameworks that leverage transfer learning for OPV material discovery. The table below systematically compares their architectures, data strategies, and key innovations.

Table 1: Comparison of Deep Learning Frameworks for OPV Discovery

| Framework | Core Architecture | Transfer Learning Strategy | Dataset Size | Key Innovation |

|---|---|---|---|---|

| SolarPCE-Net [40] | Dual-channel residual network with self-attention | Not explicitly pretrained; uses attention to capture D-A interactions | HOPV15 dataset | Quantifies interfacial donor-acceptor coupling effects through attention-weighted feature fusion |

| GNN + GPT-2 RL [41] [42] | Pretrained GNN with GPT-2 reinforcement learning | GNN pretrained on 51k molecules with HOMO/LUMO data; fine-tuned on OPV data | ~2,500 D-A pairs (targeting 3,000) | Combines predictive GNN with generative RL for end-to-end molecular design |

| DeepAcceptor [43] | abcBERT (GNN integrated with BERT) | Pretrained on 51k computational acceptors; fine-tuned on 1,027 experimental NFAs | 1,027 NFAs | Uses atom, bond, and connection information with masked molecular graph pretraining |

| GNN + LightGBM [44] | GNN with ensemble learning (LightGBM) | Two-stage: GNN predicts molecular properties; LightGBM predicts PCE from properties | 440 small molecule/fullerene pairs | Separates property prediction from efficiency modeling for interpretability |

Critical Performance Metrics and Validation

Quantitative validation is essential for establishing the reliability of these frameworks. The following table compares their predictive performance based on reported experimental results.

Table 2: Experimental Performance Comparison of OPV Discovery Frameworks

| Framework | Prediction Accuracy | Experimental Validation | Key Advantages | Limitations |

|---|---|---|---|---|

| SolarPCE-Net [40] | Superior to traditional methods (specific metrics not provided) | Screened undeveloped D-A combinations | Captures synergistic D-A coupling effects; interpretable via attention weighting | Limited dataset size; performance metrics not quantified |

| GNN + GPT-2 RL [41] [42] | Lower MSE vs. baselines; candidates with predicted PCE ~21% | Planned with experimental teams | Generates novel molecular structures; identifies efficiency-enhancing motifs | Predicted efficiencies require experimental validation |

| DeepAcceptor [43] | MAE = 1.78; R² = 0.67 on test set | 3 candidates synthesized with best PCE = 14.61% | User-friendly interface; specifically focused on acceptors for PM6 donor | Limited to acceptor design; dependent on specific donor pairing |

| GNN + LightGBM [44] | High accuracy (exact metrics not provided) | Validated with newly synthesized molecules | Fast prediction without DFT calculations; handles small datasets effectively | Limited transparency in accuracy reporting |
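
Because two of the four frameworks report no quantitative metrics, it helps to be explicit about what the reported ones measure. The sketch below computes MAE and R² in plain Python; the function names and the toy values in the usage note are illustrative assumptions, not code or data from any of the cited studies.

```python
from typing import Sequence


def mean_absolute_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Average absolute difference between measured and predicted values."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r_squared(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when all measured values are identical")
    return 1.0 - ss_res / ss_tot
```

For hypothetical measured PCEs of `[10, 12, 14, 16]` and predictions of `[11, 11, 15, 15]`, these functions give an MAE of 1.0 and an R² of 0.8. Reporting both is useful because MAE is expressed in PCE percentage points, while R² describes how much of the variance across molecules the model explains.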

Experimental Protocols and Workflows

End-to-End AI-Driven OPV Discovery Workflow

The following diagram illustrates the complete integrated workflow for OPV discovery combining pretrained GNNs with generative reinforcement learning, as implemented in cutting-edge approaches [41] [42]:

Figure 1: Complete workflow for AI-driven OPV discovery integrating pretrained GNNs with generative models

GNN Pretraining Methodology

The pretraining phase is critical for transferring fundamental chemical knowledge. The following diagram details the specific methodology used in advanced frameworks [42] [43]:

Figure 2: Two-task GNN pretraining methodology combining reconstruction and property prediction

Detailed Experimental Protocols

Data Preparation:

- Collect ~51,000 organic molecules with SMILES notations and HOMO/LUMO data from quantum calculations

- Convert SMILES to graph representations with atom types, bond types, and adjacency matrices

- Implement masking strategy: randomly mask 15% of atoms and bonds for reconstruction task
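
The masking step above can be sketched in plain Python. This is a minimal illustration, assuming a molecule has already been converted to a list of atom types and a list of `(i, j, bond_type)` tuples; the `mask_graph` function, the `[MASK]` token, and the choice to keep connectivity visible while hiding only the types are all assumptions for illustration rather than details taken from the cited frameworks.

```python
import random
from typing import Dict, List, Tuple

MASK = "[MASK]"  # hypothetical placeholder token
Bond = Tuple[int, int, str]  # (atom index, atom index, bond type)


def mask_graph(atoms: List[str], bonds: List[Bond], frac: float = 0.15, seed: int = 0):
    """Hide a random fraction of atom and bond types.

    Returns the masked atoms, the masked bonds, and the hidden labels that
    a reconstruction head would be trained to recover.
    """
    rng = random.Random(seed)
    # Mask at least one item whenever there is something to mask.
    n_atoms = max(1, round(frac * len(atoms))) if atoms else 0
    n_bonds = max(1, round(frac * len(bonds))) if bonds else 0
    atom_idx = set(rng.sample(range(len(atoms)), n_atoms))
    bond_idx = set(rng.sample(range(len(bonds)), n_bonds))

    masked_atoms = [MASK if i in atom_idx else a for i, a in enumerate(atoms)]
    # Connectivity (i, j) stays visible; only the bond type is hidden.
    masked_bonds = [
        (u, v, MASK if k in bond_idx else t) for k, (u, v, t) in enumerate(bonds)
    ]
    targets: Dict[str, Dict[int, str]] = {
        "atoms": {i: atoms[i] for i in sorted(atom_idx)},
        "bonds": {k: bonds[k][2] for k in sorted(bond_idx)},
    }
    return masked_atoms, masked_bonds, targets
```

In a real pipeline the atom and bond lists would come from a cheminformatics toolkit such as RDKit, and the masked positions would normally be resampled every epoch so the model does not memorize a fixed mask.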

Model Architecture:

- Implement Graph Neural Network with message-passing mechanism

- Configure Transformer encoder layers with multi-head self-attention

- Set embedding dimensions (typically 256-512) based on model capacity requirements

Training Procedure:

- Initialize model with random weights

- Train with combined loss function: \(L_{\text{total}} = L_{\text{reconstruction}} + \lambda L_{\text{HOMO/LUMO}}\)

- Use Adam optimizer with learning rate 10⁻⁴ and batch size 32-64

- Apply early stopping based on validation reconstruction accuracy
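
The weighted two-task objective and the early-stopping rule from the training procedure can be expressed compactly. The following is a framework-agnostic sketch: `combined_loss`, the `EarlyStopping` class, and the `patience` and `min_delta` parameters are hypothetical names and settings, since the source does not specify the weighting factor λ or the stopping criterion in detail.

```python
from dataclasses import dataclass


def combined_loss(l_reconstruction: float, l_homo_lumo: float, lam: float) -> float:
    """Total pretraining loss: reconstruction term plus lam times the HOMO/LUMO term."""
    return l_reconstruction + lam * l_homo_lumo


@dataclass
class EarlyStopping:
    """Stop when validation reconstruction accuracy stops improving."""

    patience: int = 5        # assumed value; not given in the source
    min_delta: float = 0.0   # minimum improvement that counts as progress
    best: float = float("-inf")
    bad_epochs: int = 0

    def step(self, val_accuracy: float) -> bool:
        """Record one epoch; return True when training should stop."""
        if val_accuracy > self.best + self.min_delta:
            self.best = val_accuracy
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

A training loop would call `stopper.step(accuracy)` once per epoch and break when it returns `True`. The value of λ controls how strongly the supervised HOMO/LUMO signal competes with the self-supervised reconstruction signal, so it is typically tuned on the validation set.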

Dataset Curation:

- Collect 1,027 experimentally characterized non-fullerene acceptors with PCE values

- Split data 80:10:10 for training, validation, and testing

- Ensure test set contains molecules with PCE distribution similar to training set
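
One common way to keep the PCE distribution similar across splits is to bin the PCE values and split each bin 80:10:10. The sketch below illustrates that idea in plain Python; the `stratified_split` function, the equal-width binning, and the default of five bins are assumptions, and the cited study may have used a different procedure.

```python
import random
from typing import Dict, List, Sequence, Tuple


def stratified_split(
    pce: Sequence[float],
    n_bins: int = 5,
    fractions: Tuple[float, float, float] = (0.8, 0.1, 0.1),
    seed: int = 0,
) -> Dict[str, List[int]]:
    """Return train/val/test index lists with similar PCE distributions."""
    rng = random.Random(seed)
    lo, hi = min(pce), max(pce)
    width = (hi - lo) / n_bins or 1.0  # guard against all-identical values

    # Group sample indices by equal-width PCE bin.
    bins: Dict[int, List[int]] = {}
    for i, value in enumerate(pce):
        b = min(int((value - lo) / width), n_bins - 1)
        bins.setdefault(b, []).append(i)

    split: Dict[str, List[int]] = {"train": [], "val": [], "test": []}
    for b in sorted(bins):
        members = bins[b]
        rng.shuffle(members)
        n_val = round(fractions[1] * len(members))
        n_test = round(fractions[2] * len(members))
        split["val"] += members[:n_val]
        split["test"] += members[n_val:n_val + n_test]
        split["train"] += members[n_val + n_test:]  # remainder goes to training
    return split
```

Because rounding happens per bin, the overall proportions are only approximately 80:10:10 when bins are small. For chemically diverse acceptor sets, a scaffold-based split is a stricter alternative that tests generalization to unseen structural families rather than to unseen members of known ones.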

Fine-Tuning Process:

- Replace pretraining heads with PCE regression head

- Initialize with pretrained weights, freeze early layers initially

- Train with mean absolute error (MAE) loss: \(L_{\text{PCE}} = |\text{PCE}_{\text{predicted}} - \text{PCE}_{\text{experimental}}|\)

- Use reduced learning rate (10⁻⁵) and smaller batch sizes (16-32)

- Gradually unfreeze layers for full network fine-tuning
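
Gradual unfreezing can be described as a schedule that maps the current epoch to the set of trainable layers. The sketch below is a framework-independent illustration; the `trainable_layers` function, the layer names, and the five-epoch stage length are hypothetical, as the source does not state how quickly layers are released.

```python
from typing import List


def trainable_layers(
    layer_names: List[str], epoch: int, epochs_per_stage: int = 5
) -> List[str]:
    """Return the layers that should receive gradient updates at this epoch.

    `layer_names` is ordered from input to output. The last entry (the new
    PCE regression head) is trainable from epoch 0, and one additional layer
    is unfrozen, working backwards towards the input, every
    `epochs_per_stage` epochs.
    """
    if not layer_names:
        return []
    n_unfrozen = min(len(layer_names), 1 + epoch // epochs_per_stage)
    return layer_names[-n_unfrozen:]


def mae_loss(predicted: List[float], experimental: List[float]) -> float:
    """Batch-averaged absolute error between predicted and measured PCE."""
    if len(predicted) != len(experimental) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - e) for p, e in zip(predicted, experimental)) / len(predicted)
```

In PyTorch, such a schedule would typically be applied by setting `requires_grad` to `True` only on the parameters of the returned layers before each epoch. Keeping early layers frozen at first protects the pretrained chemical representations from large, noisy gradients produced by the randomly initialized regression head.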

Generator Setup:

- Initialize GPT-2 architecture with molecular SMILES tokenization

- Pretrain on existing OPV molecular structures for syntax learning

- Configure policy network with temperature sampling for exploration

Reinforcement Learning Loop:

- Use pretrained PCE predictor as reward function

- Implement policy gradient update with advantage estimation

- Apply balanced loss function: \(\mathcal{L}(x;\theta) = [\log P_{\text{Prior}}(x) - \log P_{\text{Agent}}(x;\theta) + \sigma \cdot s(x)]^2\)

- Include chemical validity constraints via RDKit validation

- Run for multiple epochs with reward shaping for stability
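
The balanced loss in the reinforcement learning loop penalizes the squared gap between the agent's log-likelihood for a molecule and the prior's log-likelihood shifted by the scaled score. The sketch below evaluates that loss for a single generated molecule; the function names, the `is_valid` flag, and the convention that invalid molecules receive a score of zero are illustrative assumptions, not details confirmed by the source.

```python
def reward(predicted_score: float, is_valid: bool) -> float:
    """Score s(x) for a generated SMILES string.

    Assumption: chemically invalid molecules (for example, strings that fail
    a validity check) earn zero reward regardless of the predictor output.
    """
    return predicted_score if is_valid else 0.0


def agent_loss(
    log_p_prior: float, log_p_agent: float, score: float, sigma: float
) -> float:
    """Squared difference between augmented prior and agent log-likelihoods.

    Implements [log P_Prior(x) - log P_Agent(x) + sigma * s(x)]^2.
    """
    return (log_p_prior - log_p_agent + sigma * score) ** 2
```

The loss is zero exactly when the agent assigns a log-likelihood equal to `log_p_prior + sigma * score`, so high-scoring molecules are pushed to become more likely under the agent than under the prior, while the prior term keeps the agent close to the SMILES syntax it learned during pretraining. The scalar `sigma` sets the balance between those two pressures and has to be chosen to match the scale of the score.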

Successful implementation of pre-trained GNN approaches requires specific computational tools and datasets. The following table details the essential components of the OPV discovery toolkit.

Table 3: Essential Research Reagents and Computational Resources for OPV Discovery

| Resource Category | Specific Tools/Datasets | Function/Purpose | Accessibility |

|---|---|---|---|

| Benchmark Datasets | HOPV15 [40], CEPDB [44], Curated OPV Dataset [42] | Training and benchmarking models; contains molecular structures and experimental PCEs | Publicly available (CEPDB); Others may require permission |

| Molecular Representations | SMILES [42], Molecular Graphs [43], Molecular Fingerprints [44] | Convert chemical structures to machine-readable formats | Open-source tools (RDKit, OEChem) |

| GNN Architectures | Graph Convolutional Networks, Message-Passing Neural Networks [42] | Learn from graph-structured molecular data | Open-source frameworks (PyTorch Geometric, DGL) |

| Pretraining Resources | QM9 [42], Computational NFA Dataset [43] | Provide large-scale data for self-supervised pretraining | Publicly available |

| Validation Tools | RDKit [42], DFT Calculations [44] | Ensure chemical validity and predict electronic properties | Open-source (RDKit) and commercial (Gaussian) |

| Generative Models | GPT-2 [42], VAE [43], BRICS [43] | Create novel molecular structures for exploration | Open-source implementations |

The validation of pre-trained GNNs across multiple OPV research initiatives demonstrates the growing maturity of transfer learning approaches in materials science. DeepAcceptor's abcBERT model reached an MAE of 1.78 and an R² of 0.67 on its test set, and the GNN-GPT-2 pipeline generated candidates with predicted PCE approaching 21%, although those predictions still await experimental confirmation [43] [42]. The consistent finding that pretraining on quantum chemical properties improves PCE prediction accuracy supports the transferability of fundamental chemical knowledge across material families.

The emerging paradigm combines several powerful elements: transfer learning to overcome data limitations, attention mechanisms to capture donor-acceptor interactions, and generative reinforcement learning for autonomous molecular design. As these frameworks continue to be refined and validated through experimental collaboration, they promise to significantly accelerate the discovery of high-efficiency organic photovoltaics, potentially reducing development timelines from years to months. Future research directions include developing more sophisticated cross-material transfer learning strategies, creating larger open-source datasets, and improving the interpretability of model predictions to provide clearer design guidelines for synthetic chemists.

A significant challenge in precision oncology is the accurate prediction of individual patient responses to anticancer drugs. While patient-derived organoids (PDOs) better preserve the characteristics of primary tumors than traditional 2D cell lines, their clinical application is hindered by time-consuming culture processes, high costs, and limited availability of large-scale pharmacogenomic data [45] [46]. This creates a "small data problem" familiar to materials science researchers, where limited datasets restrict the application of advanced deep learning models.

PharmaFormer addresses this bottleneck through a sophisticated transfer learning (TL) framework that integrates abundant drug sensitivity data from pan-cancer cell lines with the limited but biologically superior data from tumor-specific organoids [46] [47]. This approach mirrors TL strategies successfully applied in materials science, where models pre-trained on large datasets for one property are adapted to predict different properties with limited data [8] [48] [12].

Methodological Framework: The PharmaFormer Architecture

Model Architecture and Design Principles

PharmaFormer employs a custom Transformer-based neural network architecture specifically designed for clinical drug response prediction. The model processes two distinct input types through separate feature extractors [46]:

- Gene Expression Profiles: Bulk RNA-seq data from cell lines, organoids, or patient tumors processed through a feature extractor consisting of two linear layers with ReLU activation.

- Drug Molecular Structures: Simplified Molecular-Input Line-Entry System (SMILES) representations processed using Byte Pair Encoding followed by a linear layer and ReLU activation.

The extracted features are concatenated and passed through a Transformer encoder comprising three layers, each equipped with eight self-attention heads. The encoder output is flattened and processed through two linear layers with ReLU activation to generate the final drug response prediction [46].

Three-Stage Transfer Learning Workflow

PharmaFormer implements a sophisticated three-stage knowledge transfer pipeline that progressively adapts general patterns to specific clinical contexts.

Stage 1: Pre-training on Cell Line Data. The model is initially pre-trained on extensive pharmacogenomic data from the Genomics of Drug Sensitivity in Cancer (GDSC) database, comprising gene expression profiles of over 900 cell lines and area under the dose–response curve (AUC) measurements for over 100 drugs. This stage uses 5-fold cross-validation to establish baseline predictive capabilities [46].

Stage 2: Organoid-Specific Fine-tuning. The pre-trained model is subsequently fine-tuned using limited datasets of tumor-specific organoid drug response data. This stage employs L2 regularization and other optimization techniques to adapt the model parameters to the more clinically relevant organoid context without overfitting [46].