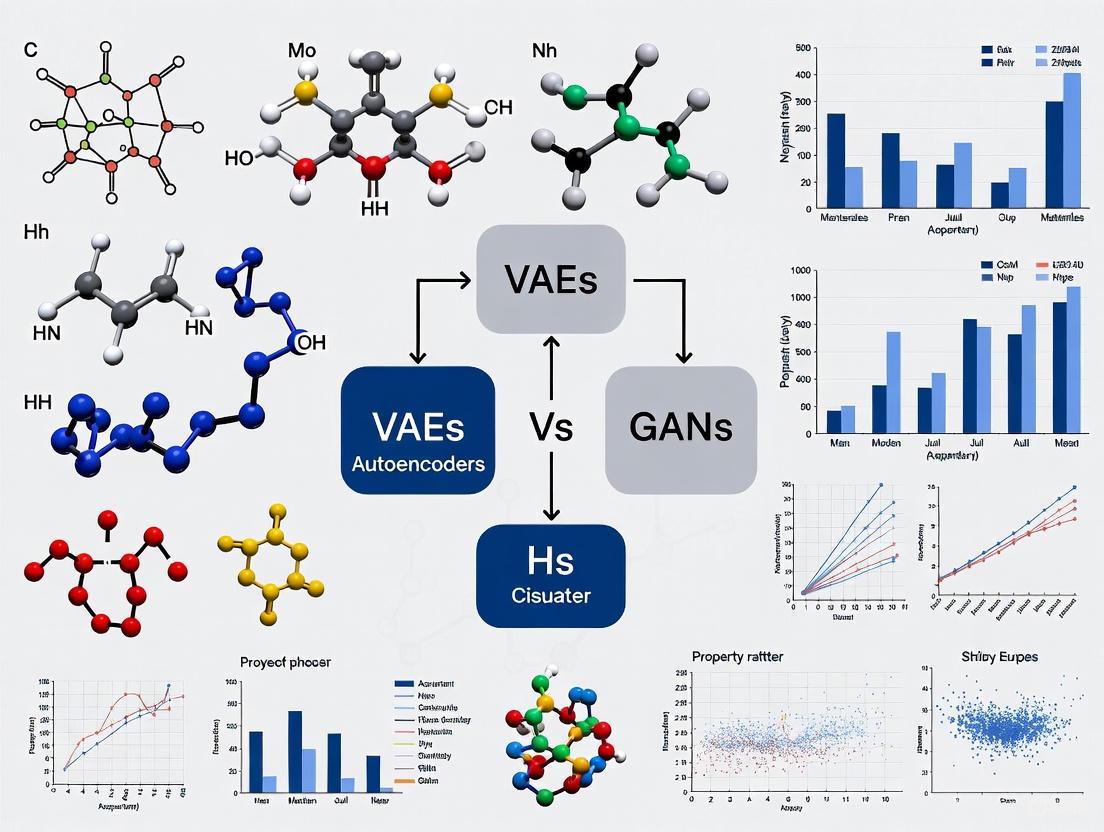

VAE vs. GAN for Materials Discovery: A Comparative Analysis for Accelerated Drug Development and Innovation


Abstract

This article provides a comprehensive comparative analysis of two pivotal deep generative models—Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs)—in the context of materials discovery. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of how these AI models learn material representations and enable inverse design. The scope extends to their methodological applications in generating novel candidates for catalysts, semiconductors, and drug-like molecules, addressing critical challenges like data scarcity and computational cost. We delve into troubleshooting common issues such as GAN training instability and VAE output blurriness, and present optimization strategies, including hybrid models. Finally, the article offers a rigorous validation and comparison of their performance, synthesizing key takeaways to guide model selection and outline future directions for AI-accelerated biomedical research.

Generative AI Fundamentals: How VAEs and GANs Power Inverse Materials Design

The Paradigm Shift from Edisonian Trial-and-Error to AI-Driven Inverse Design

The discovery of new materials and drug molecules has historically been a painstaking process, characterized by extensive Edisonian trial-and-error experimentation in laboratories worldwide. This conventional approach, while responsible for many breakthroughs, is often time-consuming, resource-intensive, and limited by human intuition and the practical constraints of exploring vast chemical spaces. The emergence of artificial intelligence (AI), particularly deep generative models, has initiated a paradigm shift toward inverse design—a computational framework where target properties are specified first, and AI algorithms generate candidate structures that meet these requirements [1] [2]. This approach effectively inverts the traditional discovery pipeline, promising accelerated development timelines and access to novel, high-performing materials and therapeutics.

Among the various generative AI models, Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs) have emerged as two prominent architectures with distinct mechanisms and applications in scientific discovery. Understanding their comparative strengths, limitations, and optimal use cases is crucial for researchers aiming to harness AI's potential in materials science and drug development [3] [4]. This guide provides an objective comparison of these two technologies, supported by experimental data and detailed protocols from current research.

VAEs and GANs are both deep generative models, but they operate on fundamentally different principles and architectural philosophies, leading to divergent performance characteristics.

Variational Autoencoders (VAEs) utilize an encoder-decoder structure based on probabilistic principles. The encoder maps each input to the parameters of a distribution in a structured latent space, typically a Gaussian, and the decoder reconstructs the data from samples drawn from that space. This architecture explicitly learns a compressed, continuous latent representation of the data, enabling smooth interpolation and meaningful exploration of the design space [3] [4]. Training maximizes a lower bound on the data likelihood (the ELBO), which balances reconstruction accuracy against the Kullback-Leibler (KL) divergence between the learned latent distribution and a prior distribution; this objective generally leads to stable training [4].

Generative Adversarial Networks (GANs) employ a game-theoretic framework involving two competing neural networks: a generator and a discriminator. The generator creates synthetic data from random noise, while the discriminator evaluates its authenticity against real data. This adversarial competition drives the generator to produce increasingly realistic outputs [4]. However, this process can be less stable than VAE training and susceptible to mode collapse, where the generator produces limited diversity [3] [4].

Table 1: Fundamental Differences Between VAE and GAN Architectures

| Feature | Variational Autoencoder (VAE) | Generative Adversarial Network (GAN) |
|---|---|---|
| Core Architecture | Encoder-Decoder with probabilistic latent space [4] | Generator-Discriminator in adversarial setup [4] |
| Training Objective | Likelihood maximization & KL divergence minimization [4] | Adversarial loss; generator fools discriminator [4] |
| Latent Space | Explicit, probabilistic (e.g., Gaussian), interpretable [3] [4] | Implicit, often random noise, less interpretable [4] |
| Training Stability | Generally more stable and consistent [3] [4] | Can be unstable; requires careful tuning [3] [4] |
| Output Quality | Can be blurrier; may lack fine detail [3] [4] | Often high-quality, sharp, and highly realistic [3] [4] |
| Output Diversity | Better coverage of data distribution; less prone to mode collapse [4] | High potential but susceptible to mode collapse [3] [4] |

Performance and Application in Scientific Discovery

Quantitative data from recent studies highlights how the theoretical differences between VAEs and GANs translate into practical performance in research settings. The choice of model often involves a trade-off between output quality, diversity, and training stability.

Table 2: Comparative Performance in Scientific Applications

| Application & Metric | VAE Performance | GAN Performance |
|---|---|---|
| Image Reconstruction (Materials) | 98.85% accuracy for 2D particle shapes [5] | High-quality, sharp synthetic microscopy images [6] |
| Inverse Design Accuracy (R²) | Sphericity: 0.9955, Packing Fraction: 0.9463 [5] | Probabilistic reconstruction of intermediate material states [6] |
| Latent Space Interpretation | High interpretability; disentangled geometric features [5] | Local smoothness used for Monte Carlo simulation of pathways [6] |
| Primary Scientific Use Cases | Inverse design with property constraints [5] [7], anomaly detection [3] | Data augmentation [3], simulating dynamic processes [6] |
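The inverse-design row above reports accuracy as a coefficient of determination, R² = 1 − SS_res/SS_tot, between requested and achieved property values. As a minimal sketch of how such a score is computed (the arrays are made-up illustrative numbers, not data from [5]):

```python
import numpy as np

def r_squared(targets, achieved):
    """Coefficient of determination R^2 = 1 - SS_res / SS_tot.

    targets:  requested property values (e.g., target sphericity)
    achieved: property values measured on the generated shapes
    """
    targets = np.asarray(targets, dtype=float)
    achieved = np.asarray(achieved, dtype=float)
    ss_res = np.sum((targets - achieved) ** 2)        # residual sum of squares
    ss_tot = np.sum((targets - targets.mean()) ** 2)  # total sum of squares
    return 1.0 - ss_res / ss_tot

# Hypothetical values, for illustration only
psi_target = [0.70, 0.80, 0.90, 0.95]
psi_achieved = [0.71, 0.79, 0.89, 0.96]
print(round(r_squared(psi_target, psi_achieved), 4))
```

An R² close to 1 means the generated shapes track the requested targets closely relative to the spread of the targets themselves.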

Case Study: VAE for Inverse Design of Particle Shapes

A 2025 study demonstrated the application of a rotation- and reflection-invariant VAE for the inverse design of two-dimensional convex particle shapes with target sphericity (ψ) and saturated packing fraction (ϕ_S) [5].

Experimental Protocol:

- Dataset: A dataset of 1,689 convex particle shapes was constructed, comprising 1,278 generated random shapes and 411 shapes from prior studies.

- Model Architecture: A VAE with an invariant architecture was designed to ensure that different spatial orientations of the same shape were mapped to a unified latent representation.

- Training: The VAE was trained to encode the 2D shapes into a low-dimensional latent space. A Conditional VAE (CVAE) was then employed for inverse design, taking target property labels (ψ, ϕ_S) as input.

- Validation: The framework achieved an accurate latent representation with a reconstruction accuracy of 98.85%. The CVAE demonstrated high accuracy in generating shapes for target ψ and ϕ_S, with R² values of 0.9955 and 0.9463, respectively [5].
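In a CVAE, property labels are commonly concatenated to the latent vector before decoding, so inverse design amounts to fixing the target labels and sampling the latent vector from the prior. The numpy sketch below shows only that data flow; the single linear "decoder" with random weights and all sizes are hypothetical stand-ins, not the architecture used in [5].

```python
import numpy as np

rng = np.random.default_rng(0)
LATENT_DIM, N_LABELS, OUT_DIM = 8, 2, 64  # hypothetical sizes

# Stand-in decoder: one linear layer + sigmoid (untrained, random weights)
W_dec = rng.normal(scale=0.1, size=(LATENT_DIM + N_LABELS, OUT_DIM))
b_dec = np.zeros(OUT_DIM)

def decode(z, y):
    """Decode latent vectors z conditioned on property labels y."""
    zy = np.concatenate([z, y], axis=-1)  # conditioning by concatenation
    return 1.0 / (1.0 + np.exp(-(zy @ W_dec + b_dec)))

def inverse_design(target_psi, target_phi, n_samples=5):
    """Sample z ~ N(0, I) and decode with the target labels held fixed."""
    z = rng.standard_normal((n_samples, LATENT_DIM))
    y = np.tile([target_psi, target_phi], (n_samples, 1))
    return decode(z, y)

candidates = inverse_design(target_psi=0.9, target_phi=0.8)
print(candidates.shape)  # (5, 64)
```

Each row of `candidates` would correspond to one proposed shape; with a trained decoder, different `z` draws give different shapes that should all share the requested ψ and ϕ_S.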

Case Study: GAN for Analyzing Material Dynamics

Another 2025 study utilized a deep generative model, specifically a GAN, to probabilistically reconstruct intermediate stages in nanoscale material evolution, such as phase transitions and chemical reactions, from sparse temporal observations [6].

Experimental Protocol:

- Imaging Data: Sequential snapshots of material transformations were obtained via techniques like coherent X-ray diffraction imaging (CXDI).

- Model Training: A GAN with a generator (G) and discriminator (D) was trained on the experimental images. The generator learned to create realistic material images from latent vectors, while the discriminator learned to distinguish real from generated images. The Wasserstein loss function with a gradient penalty was used to stabilize training.

- Monte Carlo Simulation: The trained generator was integrated into a Monte Carlo sampling scheme. Latent vectors were perturbed to explore the local latent space and generate ensembles of plausible intermediate material states not captured experimentally.

- Application: The framework was successfully applied to phenomena including gold nanoparticle diffusion and copper sulfidation, revealing previously unrecognized dynamic behaviors for future experimental validation [6].
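The Monte Carlo step can be illustrated as sampling a cloud of latent vectors around a point between the codes of two observed snapshots and passing each through the generator. A minimal sketch, assuming an isotropic Gaussian perturbation with a hypothetical step size `sigma` and a toy stand-in generator; the actual generator, perturbation scheme, and any acceptance criteria used in [6] are not reproduced here.

```python
import numpy as np

rng = np.random.default_rng(42)
LATENT_DIM = 16  # hypothetical

# Toy stand-in for a trained generator G(z): a fixed random nonlinear map
W_g = rng.normal(size=(LATENT_DIM, 32 * 32))

def generator(z):
    return np.tanh(z @ W_g).reshape(-1, 32, 32)

def sample_intermediates(z_start, z_end, alpha=0.5, sigma=0.1, n=100):
    """Monte Carlo ensemble around an interpolated latent point.

    z_start, z_end: latent codes associated with two observed snapshots
    alpha: fractional position between them (0 = start, 1 = end)
    sigma: std. dev. of the isotropic Gaussian latent perturbation
    """
    z_mid = (1 - alpha) * z_start + alpha * z_end
    z_samples = z_mid + sigma * rng.standard_normal((n, z_mid.size))
    return generator(z_samples)

z_a, z_b = rng.standard_normal(LATENT_DIM), rng.standard_normal(LATENT_DIM)
ensemble = sample_intermediates(z_a, z_b)
mean_state = ensemble.mean(axis=0)  # ensemble-average intermediate image
spread = ensemble.std(axis=0)       # per-pixel variability across samples
print(ensemble.shape, mean_state.shape)
```

The per-pixel `spread` is one simple way to express how uncertain the reconstructed intermediate state is.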

The Scientist's Toolkit: Essential Reagents and Models

Successfully implementing generative AI requires more than just choosing an algorithm. It involves a suite of computational "reagents" and methodologies that form the foundation of a robust inverse design workflow.

Table 3: Key Research Reagent Solutions for AI-Driven Inverse Design

| Tool / Solution | Function | Relevance to VAE/GAN |
|---|---|---|
| Wasserstein GAN with Gradient Penalty (WGAN-GP) | A GAN variant that improves training stability by using a loss function based on Wasserstein distance and enforcing a soft Lipschitz constraint via a gradient penalty [6]. | Critical for stabilizing GAN training in scientific applications, mitigating mode collapse, and generating high-quality physical data [6]. |
| Conditional Variational Autoencoder (CVAE) | A VAE extension where the generation process is conditioned on specific labels (e.g., target properties) [5]. | Enables direct inverse design by generating structures that match user-defined property values, such as sphericity or packing fraction [5]. |
| Rotation- & Reflection-Invariant Architecture | A specialized neural network design that ensures a shape's learned representation is independent of its spatial orientation [5]. | Enhances VAE interpretability and generalizability by producing a unified latent code for geometrically equivalent structures [5]. |
| Monte Carlo (MC) Sampling in Latent Space | A statistical method for probabilistically exploring the local neighborhood of a data point in the latent space [6]. | Used with trained generators (GAN or VAE) to sample ensembles of plausible structural variations or transformation pathways [6]. |
| Graph-Based Representation | A method for representing material structures (e.g., truss metamaterials, molecules) as graphs with nodes and edges [7]. | Provides a compact, meaningful input for both VAEs and GANs, encoding topology and geometry for generative tasks [7]. |
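To make the WGAN-GP entry concrete: the gradient penalty evaluates the critic's input gradient at random interpolates between real and generated samples and penalizes deviations of its norm from 1. In practice this gradient comes from automatic differentiation (e.g., PyTorch or TensorFlow); the numpy sketch below instead assumes a hypothetical one-hidden-layer critic whose input gradient can be written analytically. The penalty weight λ = 10 is the value commonly used since the original WGAN-GP paper, not a setting taken from [6].

```python
import numpy as np

rng = np.random.default_rng(0)
DIM, HIDDEN = 4, 16
W = rng.normal(size=(HIDDEN, DIM))  # hypothetical critic weights
v = rng.normal(size=HIDDEN)

def critic(x):
    """Toy critic D(x) = v . tanh(W x), applied row-wise to a batch."""
    return np.tanh(x @ W.T) @ v

def critic_input_grad(x):
    """Analytic gradient dD/dx = W^T (v * (1 - tanh^2(W x))), row-wise."""
    h = np.tanh(x @ W.T)             # (batch, HIDDEN)
    return (v * (1.0 - h ** 2)) @ W  # (batch, DIM)

def critic_loss_wgan_gp(x_real, x_fake, lam=10.0):
    """Critic loss E[D(fake)] - E[D(real)] + lam * E[(||grad D(x_hat)|| - 1)^2]."""
    eps = rng.uniform(size=(x_real.shape[0], 1))  # one mixing weight per sample
    x_hat = eps * x_real + (1.0 - eps) * x_fake   # random interpolates
    grad_norm = np.linalg.norm(critic_input_grad(x_hat), axis=1)
    penalty = np.mean((grad_norm - 1.0) ** 2)
    wasserstein_term = critic(x_fake).mean() - critic(x_real).mean()
    return wasserstein_term + lam * penalty, penalty

x_real = rng.normal(loc=1.0, size=(32, DIM))
x_fake = rng.normal(loc=0.0, size=(32, DIM))
loss, gp = critic_loss_wgan_gp(x_real, x_fake)
print(f"critic loss = {loss:.3f}, gradient penalty = {gp:.3f}")
```

The critic is trained to minimize this loss, while the generator minimizes −E[D(G(z))]; the penalty term replaces the weight clipping of the original WGAN.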

The transition from Edisonian trial-and-error to AI-driven inverse design represents a fundamental acceleration in the pace of scientific discovery. For researchers and development professionals, the choice between VAE and GAN is not a matter of which is universally superior, but which is optimal for a specific problem.

- Choose a VAE when your priority is a stable training process, an interpretable and smooth latent space for reasoning, and tasks like inverse design under explicit property constraints or anomaly detection. Its probabilistic foundation is a key asset for exploring design spaces systematically [5] [3] [4].

- Choose a GAN when the primary objective is to generate high-fidelity, realistic data, such as in data augmentation for limited experimental datasets or simulating high-resolution structural evolution. The trade-off involves managing training instability and vigilance against mode collapse [6] [3].

As the field evolves, hybrid models and emerging architectures like diffusion models and generative flow networks (GFlowNets) are gaining traction [1] [8]. However, VAEs and GANs have laid a strong foundation, providing the scientific community with powerful and versatile tools to navigate the vast complexity of materials and molecular space in a targeted, rational, and efficient manner.

Exploring chemical and materials space, which is estimated to contain more than 10⁶⁰ feasible organic molecules, is a monumental challenge in accelerated materials discovery [9]. Generative artificial intelligence (GAI) has emerged as a transformative approach to navigate this vast space, with Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs) standing as two prominent architectures [9] [2]. While both can generate novel molecular structures, their underlying mechanisms and suitability for scientific discovery differ substantially. VAEs, introduced in 2013 by Kingma and Welling, are probabilistic generative models that learn a continuous, structured latent representation of input data [10] [11]. Their unique "compress-before-reconstruct" approach, which maps inputs into a probabilistic latent space, aligns naturally with the needs of semantic communication and efficient feature extraction in scientific applications [11]. This article provides a comparative analysis of the core architecture of VAEs against GANs, focusing on their application in materials discovery research. We dissect the probabilistic encoding and decoding mechanisms, present experimental performance data, and provide detailed methodologies for researchers seeking to implement these models.

Core Architectural Comparison: VAE vs. GAN

The fundamental difference between VAEs and GANs lies in their learning objectives and architectural design. VAEs are rooted in variational Bayesian inference, optimizing a lower bound (ELBO) on the data likelihood [10] [12]. In contrast, GANs establish a zero-sum game between two networks: a generator that creates candidates and a discriminator that evaluates them [13].

Table 1: Fundamental Architectural Differences Between VAE and GAN

| Feature | Variational Autoencoder (VAE) | Generative Adversarial Network (GAN) |
|---|---|---|
| Core Principle | Probabilistic encoding/decoding, variational inference [10] | Adversarial training, game theory between generator and discriminator [13] |
| Learning Objective | Maximize the Evidence Lower Bound (ELBO) [10] [12] | Minimax optimization: $\min_G \max_D L(G,D)$ [13] |
| Latent Space | Continuous, probabilistic (e.g., Gaussian) [10] [11] | Noise drawn from a simple prior and mapped deterministically by the generator; no encoder |
| Key Advantage | Stable training, meaningful latent space, principled uncertainty quantification [11] | Potential for generating highly realistic, sharp data samples [14] |
| Key Disadvantage | Generated samples can be blurrier than GANs [14] | Training instability, mode collapse (limited diversity) [13] [15] |

The Probabilistic Framework of VAEs

A VAE's architecture consists of two probabilistic neural networks: an encoder and a decoder [10] [11]. The encoder, $q_\phi(z \mid x)$, takes input data $x$ (e.g., a molecular structure) and outputs parameters (mean $\mu$ and standard deviation $\sigma$) defining a probability distribution over the latent variable $z$ [10] [16]. This differs from a standard autoencoder, which outputs a single point in the latent space; the VAE's probabilistic output enables the generation of new, varied samples [12].

The decoder, $p_\theta(x \mid z)$, maps a latent vector $z$ back to the data space, reconstructing the input or generating a new sample [10]. A critical component linking these two is the reparameterization trick, which allows for gradient-based optimization through the random sampling process [10] [12]. The trick expresses the latent vector as $z = \mu + \sigma \odot \epsilon$ (elementwise product), where $\epsilon$ is noise sampled from a standard normal distribution $\mathcal{N}(0, I)$. Because the randomness is isolated in $\epsilon$, $z$ becomes a differentiable function of $\mu$ and $\sigma$ [12] [16].
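The reparameterization step can be sketched in a few lines of numpy. As is common in VAE implementations (an assumption here, not something the cited works prescribe), the encoder is taken to output a log-variance rather than $\sigma$ directly, so that $\sigma = \exp(\tfrac{1}{2}\log\sigma^2)$ is always positive. The mean and log-variance values are arbitrary placeholders.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, I).

    Randomness enters only through eps, so z is a differentiable
    function of mu and log_var (dz/dmu = 1, dz/dsigma = eps).
    """
    sigma = np.exp(0.5 * log_var)
    eps = rng.standard_normal(np.shape(mu))
    return mu + sigma * eps

rng = np.random.default_rng(0)
mu = np.array([0.5, -1.0, 2.0])  # placeholder encoder outputs
log_var = np.array([-2.0, 0.0, -4.0])
samples = np.stack([reparameterize(mu, log_var, rng) for _ in range(10_000)])
print(samples.mean(axis=0))  # close to mu
print(samples.std(axis=0))   # close to exp(0.5 * log_var)
```

In a framework with automatic differentiation, the same expression lets gradients of the loss flow back into the encoder through `mu` and `log_var`.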

The Adversarial Framework of GANs

A GAN consists of a generator (G) and a discriminator (D) [13]. The generator takes random noise from a prior distribution (e.g., a multivariate normal) and maps it to the data space. The discriminator receives both real data and synthetic data from the generator and attempts to distinguish between them. The two networks are trained jointly (in practice by alternating updates) in a competitive minimax game: the generator strives to produce data that fools the discriminator, while the discriminator works to correctly identify fake samples [13]. The objective function is:

$$\min_G \max_D L(G,D) = \mathbb{E}_{x \sim p_{\text{data}}}\left[\ln D(x)\right] + \mathbb{E}_{z \sim p_z}\left[\ln\left(1 - D(G(z))\right)\right]$$

This adversarial training can produce highly realistic samples but is notoriously unstable and may suffer from mode collapse, where the generator fails to capture the full diversity of the training data [13] [15].
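Given discriminator outputs on a batch, the value function and the two players' losses reduce to a few lines. The sketch below uses made-up discriminator probabilities. It also shows the "non-saturating" generator loss, $-\ln D(G(z))$, which Goodfellow et al. suggested as a practical substitute for minimizing $\ln(1 - D(G(z)))$ because it gives stronger gradients early in training when the discriminator easily rejects fakes.

```python
import numpy as np

def gan_value(d_real, d_fake):
    """Monte Carlo estimate of L(G, D) = E[ln D(x)] + E[ln(1 - D(G(z)))].

    d_real: discriminator probabilities on real samples, in (0, 1)
    d_fake: discriminator probabilities on generated samples, in (0, 1)
    """
    return np.mean(np.log(d_real)) + np.mean(np.log1p(-d_fake))

def discriminator_loss(d_real, d_fake):
    # D maximizes L, i.e. minimizes -L (binary cross-entropy with labels 1 / 0)
    return -gan_value(d_real, d_fake)

def generator_loss_minimax(d_fake):
    # G minimizes E[ln(1 - D(G(z)))]: the original minimax form
    return np.mean(np.log1p(-d_fake))

def generator_loss_nonsaturating(d_fake):
    # Common practical alternative: G minimizes -E[ln D(G(z))]
    return -np.mean(np.log(d_fake))

d_real = np.array([0.9, 0.8, 0.95])  # hypothetical D outputs on real data
d_fake = np.array([0.1, 0.2, 0.05])  # hypothetical D outputs on fakes
print(discriminator_loss(d_real, d_fake))
print(generator_loss_minimax(d_fake), generator_loss_nonsaturating(d_fake))
```

When the discriminator outputs 0.5 everywhere, `gan_value` equals $-\ln 4$, the value of the game at its theoretical equilibrium.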

The VAE Loss Function: A Dual Objective

The VAE is trained by maximizing the Evidence Lower Bound (ELBO), which combines two distinct loss terms [10] [12]:

$$\mathcal{L}_{\theta,\phi}(x) = \mathbb{E}_{z \sim q_\phi(\cdot \mid x)}\left[\ln p_\theta(x \mid z)\right] - D_{\mathrm{KL}}\left(q_\phi(z \mid x) \,\|\, p(z)\right)$$

- Reconstruction Loss: The first term, $\mathbb{E}_{z \sim q_\phi(\cdot \mid x)}[\ln p_\theta(x \mid z)]$, measures how well the decoder reconstructs the input data from its latent representation; its negative is the reconstruction loss. For binary data (e.g., binarized MNIST), this is often a binary cross-entropy loss, while for continuous data, it can be a mean-squared error [10] [16].

- KL Divergence Loss: The second term, $D_{\mathrm{KL}}(q_\phi(z \mid x) \,\|\, p(z))$, acts as a regularizer. It penalizes the divergence between the encoder's distribution $q_\phi(z \mid x)$ and a simple prior $p(z)$, typically the standard normal distribution $\mathcal{N}(0, I)$ [10] [12]. This encourages the latent space to be compact, continuous, and smooth, facilitating meaningful interpolation and sample generation.

The training loss is the negative ELBO, that is, the sum of the reconstruction loss and the KL term, and the model parameters $(\phi, \theta)$ are updated via backpropagation, with gradients flowing through the reparameterization trick [16].
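For a diagonal-Gaussian encoder and a standard normal prior, the KL term has the closed form $D_{\mathrm{KL}} = -\tfrac{1}{2}\sum_j \left(1 + \ln\sigma_j^2 - \mu_j^2 - \sigma_j^2\right)$, so the negative ELBO can be computed directly. A minimal numpy sketch for binary data with a Bernoulli decoder (binary cross-entropy), assuming a single latent sample per input; the input and decoder outputs are placeholders.

```python
import numpy as np

def kl_diag_gaussian(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), summed over latent dims."""
    return -0.5 * np.sum(1.0 + log_var - mu ** 2 - np.exp(log_var), axis=-1)

def bce_reconstruction(x, x_prob, eps=1e-7):
    """Negative Bernoulli log-likelihood -ln p(x|z), summed over features."""
    x_prob = np.clip(x_prob, eps, 1.0 - eps)
    return -np.sum(x * np.log(x_prob) + (1 - x) * np.log(1 - x_prob), axis=-1)

def negative_elbo(x, x_prob, mu, log_var):
    """Per-example training loss = reconstruction loss + KL term."""
    return bce_reconstruction(x, x_prob) + kl_diag_gaussian(mu, log_var)

x = np.array([[1, 0, 1, 1]], dtype=float)  # placeholder binary input
x_prob = np.array([[0.9, 0.2, 0.8, 0.7]])  # placeholder decoder output
mu = np.array([[0.3, -0.1]])
log_var = np.array([[-0.5, 0.2]])
print(negative_elbo(x, x_prob, mu, log_var))
```

Logging the two components separately, rather than only their sum, makes it easier to spot the KL term dominating during training.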

Performance Comparison in Materials Discovery

The theoretical differences between VAEs and GANs lead to distinct performance characteristics in practical materials discovery applications. The table below summarizes quantitative comparisons based on experimental findings in the literature.

Table 2: Experimental Performance Comparison in Materials Discovery Applications

| Application Domain | Model Variant | Key Performance Metric | Result | Reference |
|---|---|---|---|---|
| General Molecular Generation | Probability Distribution-Learning Models (VAE, GAN) | Success in discovering molecules with 7 extreme target properties | Failed to discover target-hitting molecules [15] | Kim et al., 2024 [15] |
| General Molecular Generation | RL-Guided Combinatorial Chemistry (Non-probabilistic) | Success in discovering molecules with 7 extreme target properties | Discovered 1,315 target-hitting molecules out of 100,000 trials [15] | Kim et al., 2024 [15] |
| Image Generation (MNIST, CIFAR-10) | Standard VAE | Sample Quality (Qualitative Evaluation) | Generates blurry images with less distinct edges [14] | Huang et al., 2020 [14] |
| Image Generation | GAN with Decoder-Encoder Noises (DE-GAN) | Sample Quality / Training Convergence | Faster convergence and higher quality images than standard GAN [14] | Huang et al., 2020 [14] |
| Semantic Communication | VAE-enabled Architecture | Communication Overhead Reduction | Significant reduction vs. traditional systems [11] | Ren et al., 2024 [11] |

Case Study: The Extrapolation Challenge in Molecular Discovery

A critical challenge in materials discovery is extrapolation—discovering materials with properties superior to existing ones, often lying outside the distribution of training data [15]. Models that learn the empirical probability distribution of training data, including VAEs and GANs, struggle with this task because they are designed to generate data that approximates the training distribution [15]. As demonstrated in a toy problem aimed at discovering molecules hitting seven extreme target properties, both VAE and GAN models failed, while a reinforcement learning-guided combinatorial chemistry approach succeeded [15]. This highlights a fundamental limitation of standard VAEs and GANs in goal-directed discovery of materials with extreme or novel properties.

Hybrid Models: Combining Strengths

To overcome the limitations of individual models, researchers have developed hybrid approaches. For instance, GANs with decoder-encoder output noises (DE-GANs) use a pre-trained VAE to map random noise vectors to "informative" ones that carry the intrinsic distribution of the training images [14]. This hybrid model feeds these informative noises to the GAN's generator, which accelerates convergence and improves the quality of the generated images compared to standard GANs [14]. This demonstrates the potential of combining the stable representation learning of VAEs with the high-fidelity generation of GANs.

Experimental Protocols for Materials Research

For researchers aiming to implement these models, understanding the standard experimental workflow is crucial. Below is a detailed protocol for a typical molecular generation and validation pipeline using a generative model.

Step 1: Data Curation and Representation

- Action: Assemble a dataset of known molecules (e.g., from databases like ChEMBL or ZINC) relevant to the target material property [9] [15].

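- Representation: Convert molecular structures into a machine-readable format. Common representations include SMILES strings, which suit sequence models such as RNNs and transformers, and molecular graphs, which suit graph neural networks.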

- Critical Step: Split data into training, validation, and test sets.
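As an illustration of the representation step, a character-level one-hot encoding of SMILES strings (one common input format for sequence-based encoders) can be sketched with numpy. Treating each character as a token is a simplification: multi-character atoms such as `Cl` or `Br` would normally be handled by a proper tokenizer, and the padding symbol and maximum length here are arbitrary choices.

```python
import numpy as np

def build_vocab(smiles_list, pad="_"):
    """Map every character seen in the dataset (plus a pad symbol) to an index."""
    chars = sorted(set("".join(smiles_list)))
    return {ch: i for i, ch in enumerate([pad] + chars)}

def one_hot_encode(smiles, vocab, max_len, pad="_"):
    """Encode a SMILES string as a (max_len, vocab_size) one-hot matrix."""
    if len(smiles) > max_len:
        raise ValueError(f"SMILES longer than max_len={max_len}: {smiles}")
    padded = smiles + pad * (max_len - len(smiles))
    matrix = np.zeros((max_len, len(vocab)), dtype=np.float32)
    matrix[np.arange(max_len), [vocab[ch] for ch in padded]] = 1.0
    return matrix

dataset = ["CCO", "c1ccccc1", "CC(=O)O"]  # ethanol, benzene, acetic acid
vocab = build_vocab(dataset)
X = np.stack([one_hot_encode(s, vocab, max_len=10) for s in dataset])
print(X.shape)  # (3, 10, 8)
```

The vocabulary should be built on the training split only, so that validation and test molecules are encoded with the same fixed mapping.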

Step 2: Model Selection and Architecture Design

- Action: Choose a base model (e.g., VAE, GAN, or a hybrid) and design its architecture.

- For a VAE:

- Encoder: For SMILES input, an RNN or transformer can be used; for graphs, a graph neural network. The output layer must parameterize the latent distribution (mean and variance vectors) [9] [16].

- Decoder: Typically mirrors the encoder architecture to reconstruct the input representation.

- Latent Space Dimension: A key hyperparameter to tune (e.g., 2 to 256 dimensions) [16].

- For a GAN:

- Generator: An RNN or multi-layer perceptron that maps noise to a molecular representation.

- Discriminator: A classifier (e.g., CNN or RNN) that distinguishes real from generated molecules.

Step 3: Model Training and Validation

- Action: Train the model on the prepared dataset.

- For VAE Training:

- Loss Function: Implement the combined loss (Reconstruction + KL Divergence) [16].

- Optimization: Use gradient-based optimizers like Adam. The reparameterization trick is essential for gradient flow [12] [16].

- Validation: Monitor both loss components to ensure the model is learning meaningful representations without over-regularizing (which would cause the KL loss to dominate and lead to poor reconstruction).

- For GAN Training:

- Training Loop: Implement an alternating training regimen, updating the discriminator and generator in separate steps [13].

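- Validation: Monitor for mode collapse (e.g., a drop in the diversity of generated samples) and use distribution-level metrics such as the Inception Score or a Fréchet distance (for molecules, the Fréchet ChemNet Distance) where applicable.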
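Fréchet-type metrics compare Gaussians fitted to feature embeddings of real and generated samples: $d^2 = \lVert\mu_r - \mu_g\rVert^2 + \operatorname{Tr}\left(\Sigma_r + \Sigma_g - 2(\Sigma_r\Sigma_g)^{1/2}\right)$. A minimal numpy sketch, assuming the feature vectors have already been extracted by some pretrained network; random features stand in for them here.

```python
import numpy as np

def _sqrtm_psd(m):
    """Symmetric square root of a positive semi-definite matrix."""
    vals, vecs = np.linalg.eigh(m)
    return (vecs * np.sqrt(np.clip(vals, 0.0, None))) @ vecs.T

def frechet_distance(feats_real, feats_gen):
    """Squared Fréchet distance between Gaussians fitted to two feature sets."""
    mu_r, mu_g = feats_real.mean(axis=0), feats_gen.mean(axis=0)
    cov_r = np.cov(feats_real, rowvar=False)
    cov_g = np.cov(feats_gen, rowvar=False)
    # Tr((S_r S_g)^{1/2}) equals Tr((S_r^{1/2} S_g S_r^{1/2})^{1/2}),
    # and the inner matrix is symmetric PSD, so eigh-based sqrt is valid
    sqrt_r = _sqrtm_psd(cov_r)
    cross = _sqrtm_psd(sqrt_r @ cov_g @ sqrt_r)
    return float(np.sum((mu_r - mu_g) ** 2) + np.trace(cov_r + cov_g - 2.0 * cross))

rng = np.random.default_rng(0)
real = rng.normal(size=(2000, 8))
close = rng.normal(size=(2000, 8))
shifted = rng.normal(loc=1.0, size=(2000, 8))
print(frechet_distance(real, close), frechet_distance(real, shifted))
```

A mode-collapsed generator typically shows up as a shrunken $\Sigma_g$, which raises the trace term even when the means match.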

Step 4: Sampling and Candidate Generation

- Action: Generate novel candidate materials.

- VAE Sampling: Sample a latent vector $z$ from the prior distribution $\mathcal{N}(0, I)$ and pass it through the decoder to generate a new molecule [10] [12].

- GAN Sampling: Sample a noise vector and pass it through the generator.
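Both sampling routes reduce to drawing from a simple prior and calling the trained network; because a VAE's latent space is regularized toward $\mathcal{N}(0, I)$, one can also decode points along the line between two latent codes to propose intermediates. The sketch below uses a hypothetical `decoder` placeholder (a fixed random linear map with a sigmoid) in place of a trained model; for a GAN, the generator would take its place.

```python
import numpy as np

rng = np.random.default_rng(7)
LATENT_DIM, OUT_DIM = 32, 120  # hypothetical sizes

# Placeholder for a trained decoder/generator (random linear map + sigmoid)
W = rng.normal(scale=0.2, size=(LATENT_DIM, OUT_DIM))

def decoder(z):
    return 1.0 / (1.0 + np.exp(-(z @ W)))

def sample_from_prior(n):
    """Draw n latent vectors from the prior N(0, I) and decode them."""
    z = rng.standard_normal((n, LATENT_DIM))
    return decoder(z)

def interpolate(z_a, z_b, steps=5):
    """Decode evenly spaced points on the line segment between z_a and z_b."""
    alphas = np.linspace(0.0, 1.0, steps)[:, None]
    return decoder((1.0 - alphas) * z_a + alphas * z_b)

new_candidates = sample_from_prior(10)
path = interpolate(rng.standard_normal(LATENT_DIM), rng.standard_normal(LATENT_DIM))
print(new_candidates.shape, path.shape)  # (10, 120) (5, 120)
```

For a sequence decoder, each output row would then be turned back into a SMILES string, for example by taking the most probable token at each position.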

Step 5: In-Silico Validation

- Action: Filter generated candidates using computational methods before experimental synthesis.

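- Methods: Screen candidates with fast surrogate property-prediction models (e.g., random forests, support vector machines, or graph neural networks) and confirm the most promising ones with higher-fidelity simulations such as density functional theory (DFT) or molecular dynamics (MD) [9].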
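A common first filter, before any property prediction, is to report the validity, uniqueness, and novelty of the generated set, as benchmark suites such as MOSES do. The sketch below computes these fractions. `toy_is_valid` is a hypothetical placeholder predicate; in practice one would parse each SMILES with a cheminformatics toolkit such as RDKit and compare canonical SMILES rather than raw strings.

```python
def generation_metrics(generated, training_set, is_valid):
    """Validity, uniqueness and novelty fractions for generated SMILES.

    validity   = valid / generated
    uniqueness = unique valid / valid
    novelty    = unique valid not in training set / unique valid
    """
    valid = [s for s in generated if is_valid(s)]
    unique_valid = set(valid)
    novel = unique_valid - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique_valid) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique_valid) if unique_valid else 0.0,
    }

def toy_is_valid(smiles):
    """Placeholder check only: non-empty with balanced parentheses."""
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return depth == 0 and len(smiles) > 0

train = ["CCO", "CC(=O)O"]
gen = ["CCO", "CCN", "CCN", "CC(C", "c1ccccc1"]
print(generation_metrics(gen, train, toy_is_valid))
```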

Step 6: Experimental Synthesis and Testing

- Action: The most promising candidates are synthesized in the lab and their properties are experimentally validated [9]. This closes the discovery loop and can provide new data to refine the generative model.

Table 3: Essential "Research Reagent Solutions" for Computational Materials Discovery

| Item / Resource | Function / Purpose | Example Tools / Libraries |
|---|---|---|
| Molecular Datasets | Provides structured data for training generative models. | ChEMBL, ZINC, MOSES, QM9 [15] |
| Fragmentation Rules | Defines how molecular building blocks can be combined, enabling combinatorial generation. | BRICS (Breaking of Retrosynthetically Interesting Chemical Substructures) [15] |
| Differentiable Programming Framework | Provides the core infrastructure for building, training, and evaluating neural network models. | PyTorch, TensorFlow/Keras [16] |
| High-Throughput Simulation | Provides accurate property data for training and validation where experimental data is scarce. | Density Functional Theory (DFT), Molecular Dynamics (MD) [9] |
| Property Prediction Models | Fast, surrogate models for screening generated molecules and predicting their properties. | Random Forests, Support Vector Machines, Graph Neural Networks [9] |

VAEs and GANs offer powerful but distinct approaches to generative modeling in materials science. The probabilistic encoding and decoding architecture of VAEs provides a principled framework for learning a continuous and smooth latent space, enabling meaningful interpolation and relatively stable training [10] [11]. However, they can produce less sharp samples and, like GANs, struggle with the extrapolation required to discover materials with extreme properties because they model the empirical distribution of training data [15] [14]. GANs can achieve high sample fidelity but face challenges with training instability and mode collapse [13] [15]. The choice between them is application-dependent. For exploratory tasks where a structured latent space is valuable, VAEs are a robust choice. For achieving maximum realism in generated structures, GANs or hybrid models like DE-GAN [14] may be preferable. Future work will likely focus on hybrid models that combine the strengths of both architectures and on reinforcement learning methods that can more effectively navigate the chemical space towards desired goals without being constrained by the probability distribution of known data [15] [2].

Generative Adversarial Networks (GANs) represent a groundbreaking adversarial approach to generative modeling, fundamentally differing from traditional methods. Introduced by Ian Goodfellow in 2014, GANs frame the generation problem as a two-player contest between a generative network and a discriminative network [17]. This adversarial framework has proven exceptionally powerful in capturing complex, high-dimensional data distributions, producing outputs of remarkable realism in domains ranging from image synthesis to molecular design [18] [19].

In materials discovery research, where the chemical space is vast and the rules governing stable formations are complex, GANs offer a promising data-driven alternative to traditional rational design methods [20]. They operate on a "design without understanding" paradigm, capable of learning implicit chemical rules and constraints from known material data without requiring explicit programming of all physical laws [20] [21]. This review examines the core architectural principles of GANs, with particular emphasis on their adversarial training dynamics and the minimax game foundation, while contextualizing their performance against Variational Autoencoders (VAEs) for materials science applications.

Core Architectural Framework

The Adversarial Duo: Generator and Discriminator

The GAN architecture consists of two distinct neural networks that engage in competitive learning [17] [19]:

Generator (G): The "counterfeiter" that transforms random noise (typically from a Gaussian or uniform distribution) into synthetic samples attempting to mimic real data. The generator's objective is to produce outputs indistinguishable from genuine samples.

Discriminator (D): The "detective" that acts as a binary classifier, receiving both real samples from the training dataset and synthetic samples from the generator, then assigning probability estimates of authenticity to each.

This adversarial dynamic creates a self-improving feedback loop: as the discriminator enhances its detection capabilities, it forces the generator to refine its forgeries, which in turn pushes the discriminator to become more discerning [17]. During training, these networks alternate updates—the discriminator learns to better distinguish real from fake, while the generator learns to better fool the discriminator [17].

Table: Component Roles in GAN Architecture

| Component | Role | Input | Output | Analogy |
|---|---|---|---|---|
| Generator (G) | Creates synthetic data | Random noise | Synthetic samples | Counterfeiter |
| Discriminator (D) | Evaluates authenticity | Real & synthetic samples | Probability of authenticity | Detective |

The Minimax Game: Mathematical Foundation

The training process is formalized through a minimax game where the generator and discriminator have opposing objectives [17]. The value function V(D, G) is expressed as:

$$\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z))\right)\right]$$

Where:

- $\mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)]$ represents the discriminator's reward for correctly identifying real data.

- $\mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))]$ represents the discriminator's reward for correctly identifying fake data and the generator's penalty for producing detectable fakes.

The discriminator's objective is to maximize this function, effectively maximizing the probability of correctly classifying both real and generated samples [17]. Conversely, the generator's objective is to minimize the function, specifically minimizing the term $\log(1 - D(G(z)))$, which occurs when the discriminator is fooled into assigning high probabilities to generated samples [17].
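A standard worked consequence of this objective, from the original GAN analysis, shows what the game converges to. Writing $p_g$ for the distribution of $G(z)$, the inner maximization can be solved pointwise for a fixed generator, because $a \log y + b \log(1 - y)$ is maximized at $y = a/(a+b)$:

$$
\begin{aligned}
V(D, G) &= \int_x \left[\, p_{\text{data}}(x) \log D(x) + p_g(x) \log\left(1 - D(x)\right) \right] dx, \\
D^*_G(x) &= \frac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)}, \\
V(D^*_G, G) &= -\log 4 + 2\,\mathrm{JSD}\left(p_{\text{data}} \,\|\, p_g\right).
\end{aligned}
$$

Because the Jensen-Shannon divergence is non-negative and zero only when the two distributions coincide, the generator's optimum is $p_g = p_{\text{data}}$, at which point $D^* = 1/2$ everywhere and $V = -\log 4$.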

Figure 1: GAN Training Workflow illustrating the adversarial relationship between generator and discriminator

Comparative Analysis: GANs vs. VAEs in Materials Discovery

Architectural and Philosophical Differences

While both GANs and VAEs are deep generative models, their underlying architectures and training objectives differ substantially, leading to complementary strengths and limitations for materials research [20].

VAEs (Variational Autoencoders) employ an encoder-decoder architecture based on variational inference [22] [23]. The encoder maps input data to a latent space characterized by mean and variance parameters, while the decoder reconstructs data from this latent representation [22]. A critical distinction is that VAEs learn to represent inputs as probability distributions rather than fixed points, enabling generation of new samples through sampling from the learned latent space [22]. Their training incorporates a reconstruction loss (typically mean squared error) combined with a KL divergence term that regularizes the latent space to approximate a standard Gaussian distribution [22] [23].

GANs, in contrast, utilize an adversarial framework without explicit reconstruction objectives or latent space regularization [17]. This fundamental difference leads to GANs typically generating samples with higher perceptual quality and sharper characteristics, while VAEs often produce more diverse but sometimes blurrier outputs [17] [22].

Table: Architectural Comparison Between GANs and VAEs

| Feature | Generative Adversarial Networks (GANs) | Variational Autoencoders (VAEs) |
|---|---|---|
| Core Architecture | Two competing networks: generator and discriminator | Encoder-decoder with variational inference |
| Training Objective | Minimax game | Evidence Lower Bound (ELBO) maximization |
| Loss Components | Adversarial loss | Reconstruction loss + KL divergence |
| Latent Space | No explicit structure; arbitrary prior | Regularized to approximate standard Gaussian |
| Sample Quality | Typically sharper, more realistic | Sometimes blurrier but more diverse |
| Training Stability | Often unstable; mode collapse issues | Generally more stable |
| Materials Applications | MATGAN for composition generation [20] | Conditional VAEs for inverse design [20] |

Performance Metrics in Materials Discovery

Quantitative evaluation of generative models for materials science presents unique challenges, as standard image quality metrics don't directly translate to material validity. Key performance indicators instead focus on chemical validity, novelty, and property optimization.

For material composition generation, the "needle in a haystack" problem is particularly acute—the feasible chemical space is exceedingly sparse within all possible element combinations [20]. For ternary materials, possible combinations exceed 10⁹, with only a minute fraction satisfying basic chemical rules like charge neutrality and electronegativity balance [20].

Table: Performance Comparison in Materials Generation Tasks

| Model | Charge Neutrality | Electronegativity Balance | Novelty | Training Stability |
|---|---|---|---|---|
| MATGAN (GAN) | 84.5% [20] | 84.5% [20] | High | Moderate |
| Material Transformer | 97.54% [20] | 91.40% [20] | High | High |
| Conditional VAE | <60% [20] | <60% [20] | Moderate | High |
| Crystal Transformer | Best performance [21] | Best performance [21] | High | High |

Experimental results demonstrate that discrete representation strategies significantly impact performance. Early approaches using real-valued vectors with conditional VAEs and GANs yielded less than 60% chemical validity [20]. Subsequent models like MATGAN employed one-hot binary matrix representations, increasing chemical validity to 84.5% by better capturing chemical constraints [20]. Transformer-based architectures have achieved the highest performance, with charge neutrality reaching 97.54% by treating material compositions as sequential data (e.g., representing SrTiO₃ as "SrTiOOO") [20].
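To make the sequence view concrete, the sketch below expands a composition formula into one token per atom, reproducing the "SrTiOOO" example. It is a minimal sketch assuming simple formulas with integer counts (no parentheses, hydrates, or fractional occupancies); the function names are hypothetical, and the cited Material Transformer's actual tokenizer may differ.

```python
import re

# An element symbol (capital letter + optional lowercase letter) followed by an optional count.
_ELEMENT_COUNT = re.compile(r"([A-Z][a-z]?)(\d*)")

def formula_to_tokens(formula: str) -> list[str]:
    """Expand e.g. 'SrTiO3' into one element token per atom: ['Sr', 'Ti', 'O', 'O', 'O'].

    Assumes integer stoichiometry; characters that do not match the pattern are ignored.
    """
    tokens = []
    for symbol, count in _ELEMENT_COUNT.findall(formula):
        tokens.extend([symbol] * (int(count) if count else 1))
    return tokens

def formula_to_sequence(formula: str) -> str:
    """String form of the same expansion, as in the 'SrTiOOO' example."""
    return "".join(formula_to_tokens(formula))

print(formula_to_sequence("SrTiO3"))  # SrTiOOO
```

The token-list form is what a sequence model would actually consume, with one vocabulary entry per element symbol; the joined string is only a readable rendering of it.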

Experimental Protocols for Materials Generation

MATGAN Implementation for Composition Generation

The MATGAN framework exemplifies a tailored GAN architecture for materials discovery [20]. Key implementation details include:

Representation Strategy: Materials compositions are encoded as one-hot binary matrices rather than real-valued vectors, enabling convolutional networks to better learn chemical patterns and constraints [20].

Training Dataset: Models are trained on known materials from the Inorganic Crystal Structure Database (ICSD) and Materials Project database, which provide examples of chemically valid compositions and their structures [20].

Evaluation Metrics: Generated compositions are assessed against charge neutrality and electronegativity balance requirements—fundamental chemical rules that determine whether a composition can form a stable compound [20].

Validation Pipeline: Promising candidates are further evaluated using crystal structure prediction algorithms and property prediction models before experimental synthesis [20].
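The representation and the first screening step can be sketched together: encode a composition as a one-hot binary matrix whose column for each element marks that element's atom count, decode a (possibly soft) generator output by taking the most likely count per column, and screen the result for charge neutrality. The element vocabulary, the count cap of 8, and the oxidation-state table below are hypothetical illustrative choices, not MATGAN's published configuration, and the electronegativity-balance check is omitted.

```python
from itertools import product

import numpy as np

# Hypothetical, truncated element vocabulary and count cap (illustration only).
ELEMENTS = ["Li", "Na", "Mg", "Sr", "Ti", "Mn", "Fe", "O", "Cl"]
MAX_COUNT = 8  # rows encode atom counts 0..7

# Assumed common oxidation states for the elements above (not a curated table).
OXIDATION_STATES = {
    "Li": (1,), "Na": (1,), "Mg": (2,), "Sr": (2,), "Ti": (2, 3, 4),
    "Mn": (2, 3, 4, 7), "Fe": (2, 3), "O": (-2,), "Cl": (-1,),
}

def encode_composition(composition):
    """{element: count} -> (MAX_COUNT x len(ELEMENTS)) one-hot binary matrix."""
    unknown = set(composition) - set(ELEMENTS)
    if unknown:
        raise ValueError(f"elements not in vocabulary: {sorted(unknown)}")
    matrix = np.zeros((MAX_COUNT, len(ELEMENTS)), dtype=np.int8)
    for col, element in enumerate(ELEMENTS):
        count = composition.get(element, 0)
        if not 0 <= count < MAX_COUNT:
            raise ValueError(f"{element}: count {count} outside 0..{MAX_COUNT - 1}")
        matrix[count, col] = 1  # exactly one 1 per column, in the row equal to the count
    return matrix

def decode_matrix(matrix):
    """Argmax per column, so real-valued generator outputs can be decoded too."""
    counts = np.asarray(matrix).argmax(axis=0)
    return {el: int(c) for el, c in zip(ELEMENTS, counts) if c > 0}

def is_charge_neutral(composition):
    """True if choosing one oxidation state per element can give zero total charge."""
    if not composition:
        return False
    elements = list(composition)
    for states in product(*(OXIDATION_STATES[el] for el in elements)):
        if sum(s * composition[el] for el, s in zip(elements, states)) == 0:
            return True
    return False

decoded = decode_matrix(encode_composition({"Sr": 1, "Ti": 1, "O": 3}))
print(decoded, is_charge_neutral(decoded))  # {'Sr': 1, 'Ti': 1, 'O': 3} True
```

The single-oxidation-state rule is deliberately crude: mixed-valence compounds such as magnetite (Fe₃O₄, which needs both Fe²⁺ and Fe³⁺) fail it, so a fuller screen would need to allow different oxidation states for atoms of the same element.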

GFlowNets for Sequential Molecular Building

Generative Flow Networks (GFlowNets) represent an alternative probabilistic framework particularly suited for chemical design problems [20]. Unlike GANs, GFlowNets construct objects through a sequential decision process by sampling from a probability distribution over possible building blocks [20]. They are trained to sample compositional structures with probability proportional to a given reward function, making them effective for exploration-exploitation tradeoffs in vast chemical spaces [20].

Figure 2: GFlowNet Sampling Process for sequential construction of materials
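The source does not state which training objective is meant; one widely used GFlowNet objective is trajectory balance (Malkin et al., 2022). For a complete trajectory ending in object x, it drives log Z + Σ log P_F toward log R(x) + Σ log P_B, and a policy that makes this loss zero for every trajectory samples x with probability R(x)/Z. The sketch below computes the loss for one trajectory; the forward and backward policy networks and the learned log Z are assumed to exist elsewhere.

```python
import math

def trajectory_balance_loss(log_z: float, log_pf: list[float],
                            log_pb: list[float], reward: float) -> float:
    """Squared trajectory-balance residual for one complete trajectory s0 -> ... -> x.

    log_z  : current estimate of the log partition function (a learned scalar)
    log_pf : log forward-policy probability of each step that was taken
    log_pb : log backward-policy probability of each step (0.0 when every state
             has a single parent, e.g. append-only construction)
    reward : R(x) > 0 for the terminal object x
    """
    residual = log_z + sum(log_pf) - math.log(reward) - sum(log_pb)
    return residual ** 2
```

In a toy case with two objects built in one step from the start state, with rewards 1 and 3, setting Z = 4 and forward probabilities 0.25 and 0.75 gives zero loss for both trajectories, i.e. each object is sampled in proportion to its reward.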

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for Generative Materials Research

| Tool/Resource | Type | Function | Application Example |
|---|---|---|---|
| ICSD Database | Materials Database | Repository of known crystal structures | Training data for generative models [20] |
| Materials Project | Computational Database | DFT-calculated material properties | Training and validation dataset [20] |
| pymatgen | Python Library | Materials analysis and structure manipulation | Processing crystal structures and descriptors [24] |
| MATGAN | GAN Implementation | Materials composition generation | Generating novel chemically valid compositions [20] |
| Material Transformer | Transformer Model | Sequence-based material generation | High-validity composition design [20] |
| CryoDiff | Diffusion Model | Crystal structure generation with symmetry constraints | Topological insulator design [24] |

Optimization Strategies and Challenges

Addressing Training Instabilities

GAN training is notoriously unstable, with common failure modes including:

Mode Collapse: The generator produces limited varieties of samples, failing to capture the full diversity of the training distribution [17] [19].

Vanishing Gradients: The discriminator becomes too effective early in training, so the generator's loss saturates and yields gradients too small for the generator to improve [17].

Advanced variants have been developed to address these limitations:

Wasserstein GAN (WGAN): Replaces Jensen-Shannon divergence with Earth-Mover distance, providing more stable training and meaningful loss metrics [19].

Deep Convolutional GAN (DCGAN): Uses convolutional architectures with design guidelines that stabilize training, including strided convolutions in place of pooling, batch normalization, and ReLU activations in the generator with LeakyReLU in the discriminator [17].
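The motivation for WGAN can be seen numerically. When the real and generated distributions have disjoint supports, the Jensen-Shannon divergence is stuck at log 2 however far apart they are, so it says nothing about which direction to move; the Earth-Mover (Wasserstein-1) distance grows with the separation. The sketch below compares the two on point masses over an assumed 11-bin grid. It illustrates the divergences themselves, not WGAN training, which estimates W1 with a Lipschitz-constrained critic network.

```python
import math

def js_divergence(p, q):
    """Jensen-Shannon divergence (natural log) between two discrete distributions."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]

    def kl(a, b):
        # Terms with a_i = 0 contribute nothing; b_i > 0 whenever a_i > 0 because b = m.
        return sum(ai * math.log(ai / bi) for ai, bi in zip(a, b) if ai > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def wasserstein_1d(xs, ys):
    """Earth-Mover (W1) distance between two equal-size 1-D empirical samples."""
    return sum(abs(a - b) for a, b in zip(sorted(xs), sorted(ys))) / len(xs)

def point_mass(index, bins=11):
    """Histogram with all probability mass in a single bin."""
    return [1.0 if i == index else 0.0 for i in range(bins)]

for shift in (1, 5, 10):
    js = js_divergence(point_mass(0), point_mass(shift))
    w1 = wasserstein_1d([0.0] * 100, [float(shift)] * 100)
    print(f"shift={shift:2d}  JS={js:.4f}  W1={w1:.1f}")
# JS stays at 0.6931 (= log 2) for every shift, while W1 is 1.0, 5.0, 10.0
```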

Integration with Domain Knowledge

In materials science applications, successful GAN implementations often incorporate domain-specific constraints to guide the generation process:

Chemical Rule Embedding: Models can be conditioned on chemical properties or trained with reward functions that incorporate domain knowledge, such as charge balance constraints [20] [18].

Multi-objective Optimization: Reinforcement learning frameworks can be integrated with GAN training to simultaneously optimize for multiple material properties, such as conductivity, stability, and synthesizability [18].

Transfer Learning: Models pre-trained on large datasets can be fine-tuned for specific material classes with limited data, accelerating discovery in specialized domains [24].

The comparative analysis reveals that both GANs and VAEs offer distinct advantages for materials discovery, with the optimal choice dependent on specific research goals.

GAN architectures excel in generating high-quality, realistic samples when sufficient training data exists and exploration of the chemical space is desired [20] [17]. Their adversarial training produces sharp, convincing outputs but requires careful stabilization and monitoring. The demonstrated success of MATGAN in generating chemically valid compositions highlights GANs' potential for materials design [20].

VAEs provide greater training stability and a well-structured latent space suitable for interpolation and systematic exploration [22] [23]. While sometimes producing less sharp outputs, their probabilistic foundation and inherent regularization make them valuable for inverse design tasks where navigating the latent space is prioritized [22].

Emerging approaches increasingly leverage hybrid frameworks that combine the strengths of both architectures—using VAEs for initial exploration and GANs for refinement—or integrate transformer-based architectures that treat material design as a sequence generation problem [20] [21]. As generative AI continues evolving, its integration with autonomous laboratories and high-throughput computation promises to accelerate materials discovery from conceptual design to experimental realization [25] [24].

The Critical Role of the Latent Space in Navigating the Vast Chemical Universe

The structural diversity of the chemical universe is vast, with estimates exceeding 10^60 possible compounds for small molecules alone [26]. This immense scale renders traditional, experiment-led discovery processes impractical for exhaustive exploration. The field of materials science is consequently undergoing a paradigm shift, moving from experiment-driven approaches to artificial intelligence (AI)-driven inverse design [1]. In this new paradigm, generative models learn the probability distribution of existing materials data, enabling them to propose novel structures with targeted properties. Central to the success of this approach is the latent space—a compressed, abstract representation of data where essential features and underlying patterns are captured [27] [28]. This article provides a comparative analysis of how two leading generative models, Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs), construct and utilize this critical latent space for navigating the chemical universe in materials discovery and drug development.

Latent Space: The Core of Generative AI

What is Latent Space?

In deep learning, a latent space is an abstract, lower-dimensional representation of data that captures its essential features and underlying patterns [28]. It is "latent" because it encodes hidden characteristics not directly observable in the raw input data. By mapping high-dimensional data (like molecular structures) into this compressed space, machine learning models can more effectively understand, manipulate, and generate new data points [27]. The latent space acts as a bridge between the complex, high-dimensional world of raw data and a simplified representation where meaningful operations can be performed.

Key Properties of a Functional Latent Space

For a latent space to be useful in scientific discovery, it must exhibit two crucial properties [27]:

- Continuity: Nearby points in the latent space should decode into similar, meaningful content.

- Completeness: Sampling any point from the latent space should yield a valid, meaningful data instance.

These properties enable researchers to navigate the space systematically, interpolate between known structures, and generate novel, viable candidates.
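Interpolating between known structures amounts to walking a path between their latent vectors and decoding each point. The sketch below, assuming plain Python lists as latent vectors and a hypothetical trained decoder (not shown), produces such a path with either linear or spherical interpolation. Spherical interpolation is a common choice for Gaussian latent spaces because, in high dimensions, the linear midpoint of two typical samples has a noticeably smaller norm than a typical sample.

```python
import math

def lerp(z0, z1, t):
    """Linear interpolation between two latent vectors."""
    return [(1 - t) * a + t * b for a, b in zip(z0, z1)]

def slerp(z0, z1, t):
    """Spherical linear interpolation along the arc between z0 and z1."""
    dot = sum(a * b for a, b in zip(z0, z1))
    n0 = math.sqrt(sum(a * a for a in z0))
    n1 = math.sqrt(sum(b * b for b in z1))
    omega = math.acos(max(-1.0, min(1.0, dot / (n0 * n1))))
    if math.sin(omega) < 1e-6:  # (anti)parallel vectors: the arc is ill-defined
        return lerp(z0, z1, t)
    s0 = math.sin((1 - t) * omega) / math.sin(omega)
    s1 = math.sin(t * omega) / math.sin(omega)
    return [s0 * a + s1 * b for a, b in zip(z0, z1)]

def interpolation_path(z0, z1, steps=5, method=slerp):
    """Evenly spaced latent points from z0 to z1; each would be passed to a decoder."""
    return [method(z0, z1, i / (steps - 1)) for i in range(steps)]
```

Continuity is what makes such a path useful: if nearby latent points decode to similar structures, the decoded sequence changes gradually from one known structure to the other.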

Comparative Analysis: VAEs vs. GANs for Materials Discovery

Variational Autoencoders (VAEs)

VAEs are probabilistic generative models that learn a structured latent space for data generation [1]. They consist of an encoder that maps input data to a probability distribution (typically a Gaussian defined by a mean μ and a standard deviation σ, or equivalently the variance σ²), and a decoder that reconstructs data from samples drawn from this distribution [27] [29]. Training minimizes a loss function that combines a reconstruction loss with a Kullback-Leibler (KL) divergence term, which regularizes the latent space to approximate a standard Gaussian distribution [30].

Key Advantages for Chemical Space:

- Provides a continuous, interpretable latent space suitable for optimization [30].

- The probabilistic nature allows for the generation of diverse samples [29].

- The encoded low-dimensional space enables efficient inverse design [1].

Generative Adversarial Networks (GANs)

GANs employ an adversarial training process between two networks: a generator that creates samples from random noise in the latent space, and a discriminator that distinguishes between real and generated samples [31] [28]. The generator learns to map points from a simple latent distribution to complex data distributions, while the discriminator pushes the generator toward producing increasingly realistic outputs.

Key Advantages for Chemical Space:

- Capable of generating high-resolution, realistic samples [31].

- The adversarial training can lead to sharp, detailed outputs [32].

- Can model complex, multi-modal data distributions effectively.

Performance Comparison in Materials Science Applications

The table below summarizes reported results from recent studies; entries are quantitative where the cited study gives a number and qualitative otherwise.

Table 1: Performance Comparison of Generative Models in Materials Discovery

| Model | Task | Dataset | Performance Metric | Score | Key Advantage |
|---|---|---|---|---|---|
| NP-VAE (Variant) [26] | Molecular Reconstruction & Generation | St. John et al. dataset (76k train, 5k test) | Reconstruction Accuracy | Higher than CVAE, CG-VAE, JT-VAE, HierVAE | Superior generalization ability |
| NP-VAE (Variant) [26] | Molecular Generation | Evaluation Dataset [26] | Generation Success Rate | 100% (via fragment-based generation) | Always produces chemically valid structures |
| VAE-based (Microstructure) [30] | Material Microstructure Reconstruction | Diverse material microstructures | Reconstruction Quality | Blurrier outputs | Provides compact, optimizable latent space |
| GAN-based (Scientific imaging) [32] | Scientific Image Generation | Astronomy, Medical Imaging | Visual Realism | High perceptual quality | Produces sharp, visually convincing outputs |

Latent Space Characteristics Comparison

The fundamental differences between VAEs and GANs lead to distinct latent space properties, which directly impact their applicability for materials discovery.

Table 2: Latent Space Characteristics: VAE vs. GAN

| Characteristic | Variational Autoencoders (VAEs) | Generative Adversarial Networks (GANs) |
|---|---|---|
| Space Structure | Probabilistic, explicitly regularized | Implicit, defined by generator mapping |
| Training Stability | Generally more stable | Can suffer from mode collapse |
| Interpretability | High: continuous, smooth transitions | Lower: less structured interpolation |
| Inverse Design Capability | Direct encoding/decoding | Requires additional optimization (no encoder) |
| Sample Diversity | Good coverage of the data distribution, though outputs can be blurry | Can be high, but mode collapse reduces it |
| Theoretical Guarantees | Optimizes a tractable lower bound (ELBO) on the log-likelihood | Convergence shown only under idealized assumptions |

Experimental Protocols and Methodologies

Benchmarking Protocol for Molecular Generation

To evaluate the reconstruction accuracy and generation capabilities of molecular models, researchers typically follow this rigorous protocol [26]:

Dataset Preparation: Split a standardized dataset (e.g., St. John et al.'s dataset containing 86,000 total compounds) into training (76,000), validation (5,000), and test sets (5,000).

Model Training: Train generative models on the training set to learn the distribution of molecular structures.

Reconstruction Accuracy Assessment:

- For each test compound, perform 10 encodings and 10 decodings per encoding (100 total outputs per input).

- Calculate the proportion of compound structures that exactly match between input and output.

Validity Assessment:

- Sample 1000 latent vectors from the prior distribution N(0, I).

- Decode each vector 100 times.

- Calculate the proportion of chemically valid output compounds using toolkits like RDKit [26].

Comparison: Benchmark against state-of-the-art models (CVAE, GVAE, JT-VAE, HierVAE) using the same dataset and evaluation metrics.
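The two measurements in this protocol can be written as a small harness. It is a sketch that assumes hypothetical `encode`, `decode`, and `is_valid` callables: `encode` samples a latent vector for an input (so repeated calls can differ), `decode` may also be stochastic, and `is_valid` wraps a chemistry check. With RDKit such a check is commonly `Chem.MolFromSmiles(s) is not None`. Exact matches should be judged on canonicalized structures; plain string equality is assumed here for brevity.

```python
import random
from typing import Callable, Sequence

def reconstruction_accuracy(test_smiles: Sequence[str], encode: Callable, decode: Callable,
                            n_encodings: int = 10, n_decodings: int = 10) -> float:
    """Fraction of all (encoding, decoding) outputs that exactly reproduce the input.

    encode/decode are hypothetical model callables; strings are assumed canonical.
    """
    hits = total = 0
    for smiles in test_smiles:
        for _ in range(n_encodings):
            z = encode(smiles)  # stochastic draw from q(z|x)
            for _ in range(n_decodings):
                hits += decode(z) == smiles
                total += 1
    return hits / total

def validity_rate(decode: Callable, is_valid: Callable, latent_dim: int,
                  n_latents: int = 1000, n_decodings: int = 100, seed: int = 0) -> float:
    """Fraction of decodings of prior samples z ~ N(0, I) judged valid by is_valid."""
    rng = random.Random(seed)
    valid = total = 0
    for _ in range(n_latents):
        z = [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]
        for _ in range(n_decodings):
            valid += bool(is_valid(decode(z)))
            total += 1
    return valid / total
```

The defaults mirror the protocol's 10 × 10 decodings per test compound and 1000 prior samples decoded 100 times each.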

Workflow: VAE for Molecular Discovery

The following diagram illustrates the complete experimental workflow for using VAEs in molecular discovery, from data preparation to inverse design.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The table below details key computational tools and resources essential for conducting research in generative models for chemistry.

Table 3: Essential Research Reagents and Solutions for Generative Chemical AI

| Tool/Resource | Type | Primary Function | Example Applications |
|---|---|---|---|
| RDKit [26] | Cheminformatics Library | Cheminformatics analysis and molecule validation | Check chemical validity of generated structures, calculate molecular descriptors |
| DrugBank [26] | Chemical Database | Repository of approved drug and drug-like molecules | Source of training data for generative models targeting drug discovery |
| QM9 [33] | Molecular Dataset | Dataset of quantum chemical properties for small molecules | Benchmarking generative models, training property predictors |
| TensorFlow/PyTorch [29] | Deep Learning Framework | Building and training neural network models | Implementing VAE, GAN, and other generative architectures |
| t-SNE/PCA [28] | Visualization Algorithm | Dimensionality reduction for latent space visualization | Projecting high-dimensional latent spaces to 2D/3D for analysis |
| Grammar VAE (GVAE) [26] | Specialized VAE Model | Generating valid SMILES strings by incorporating grammatical rules | Molecular generation with enforced syntactic validity |
| NP-VAE [26] | Specialized VAE Model | Handling large molecular structures with 3D complexity | Processing natural products and complex drug molecules with chirality |

Case Study: NP-VAE for Natural Product-Inspired Drug Discovery

The development of NP-VAE (Natural Product-oriented Variational Autoencoder) demonstrates how targeted improvements to VAE architecture can overcome specific challenges in navigating chemical space [26].

Experimental Methodology

Model Architecture: NP-VAE combines graph-based decomposition of compound structures into fragment units with Tree-LSTM networks, specifically designed to handle large molecular structures with 3D complexity, including chirality [26].

Training Data: The model was trained on heterogeneous data from DrugBank and natural product compound libraries, enabling it to learn features from both approved drugs and complex natural compounds [26].

Latent Space Construction: The model constructs a continuous latent space that incorporates both structural and functional information, enabling optimization for target properties.

Evaluation: The model was evaluated on its reconstruction accuracy, generation success rate, and ability to produce novel compounds with optimized functions when combined with docking analysis.

Latent Space Structure in NP-VAE

The following diagram illustrates how NP-VAE processes complex molecular structures to construct a meaningful latent space for drug discovery.

Results and Implications

NP-VAE demonstrated higher reconstruction accuracy compared to previous state-of-the-art models (CVAE, CG-VAE, JT-VAE, HierVAE) while maintaining a 100% generation success rate due to its fragment-based approach [26]. By exploring the acquired latent space, researchers succeeded in comprehensively analyzing compound libraries containing natural compounds and generating novel structures with optimized functions. This case highlights how tailoring the latent space construction to specific chemical challenges (large molecules, chirality) enables more effective navigation of relevant chemical spaces.

Future Directions and Hybrid Approaches

Integrating Predictive and Generative Capabilities

Recent research focuses on integrating the strengths of different approaches. The VAE-DKL (Deep Kernel Learning Variational Autoencoder) framework combines the generative power of VAEs with the predictive precision of Gaussian Process regression by structuring the latent space in alignment with target properties [33]. This enables high-precision property prediction while maintaining generative flexibility.
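The Gaussian Process half of this idea can be sketched on its own. The code below fits an exact GP with a fixed squared-exponential (RBF) kernel to property values attached to latent vectors and returns posterior means and variances. It is a minimal sketch, assuming the latent vectors come from an already trained VAE and that kernel hyperparameters are set by hand; in deep kernel learning the kernel would instead act on features from a neural network trained jointly with the GP, which is not shown.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0, variance=1.0):
    """Squared-exponential kernel between row-vector sets a (n x d) and b (m x d)."""
    sq_dists = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return variance * np.exp(-0.5 * sq_dists / length_scale**2)

def gp_posterior(z_train, y_train, z_test, noise=1e-4, **kernel_args):
    """Exact GP posterior mean and variance of a property at latent points z_test."""
    k_tt = rbf_kernel(z_train, z_train, **kernel_args) + noise * np.eye(len(z_train))
    k_st = rbf_kernel(z_test, z_train, **kernel_args)
    k_ss = rbf_kernel(z_test, z_test, **kernel_args)
    chol = np.linalg.cholesky(k_tt)                      # k_tt = L L^T
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    mean = k_st @ alpha
    v = np.linalg.solve(chol, k_st.T)
    var = np.diag(k_ss) - (v**2).sum(axis=0)
    return mean, var
```

The posterior variance is what makes the GP useful for navigating the latent space: it is small near encoded training compounds and reverts to the prior variance far from them, which an optimizer can use to balance exploitation and exploration.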

Combining VAEs with Diffusion Models

Another promising direction is the integration of VAEs with Denoising Diffusion Probabilistic Models (DDPM). The VAE-CDGM (VAE-guided Conditional Diffusion Generative Model) leverages the compact latent space of VAEs while utilizing diffusion models to refine the outputs, addressing the trade-off between reconstruction quality and optimization efficiency [30]. In this architecture, the VAE provides a low-dimensional, continuous latent space for efficient optimization, while the conditional diffusion model enhances the quality of the generated microstructures or molecules.
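For reference, the forward (noising) process that a denoising diffusion model learns to reverse fits in a few lines. This is the standard DDPM formulation, q(x_t | x_0) = N(√ᾱ_t x_0, (1 − ᾱ_t) I) with ᾱ_t = ∏_{s≤t} (1 − β_s), using the linear β schedule from 10⁻⁴ to 0.02 over 1,000 steps of the original DDPM paper as an assumed default. Whether VAE-CDGM applies diffusion to latent vectors or to decoded microstructures, and which schedule it uses, is not specified here, so `x0` is simply a generic array.

```python
import numpy as np

def make_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule and cumulative products alpha_bar_t = prod_s (1 - beta_s)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    alpha_bar = np.cumprod(1.0 - betas)
    return betas, alpha_bar

def q_sample(x0, t, alpha_bar, rng):
    """Draw x_t ~ N(sqrt(alpha_bar_t) x0, (1 - alpha_bar_t) I).

    Also returns the noise, which is the regression target of a noise-prediction network.
    """
    noise = rng.standard_normal(np.shape(x0))
    xt = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * noise
    return xt, noise
```

A conditional model would learn to predict `noise` from `xt`, `t`, and the conditioning information, and then run the process in reverse to refine a sample.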

The latent space serves as the critical navigational map for exploring the vast chemical universe, enabling a shift from traditional trial-and-error discovery to rational inverse design. Our comparative analysis reveals that VAEs and GANs offer complementary strengths: VAEs provide structured, interpretable latent spaces suitable for optimization-driven discovery, while GANs excel at producing high-fidelity, realistic samples. The choice between them depends on the specific research goals, in particular whether exploration and optimization (favoring VAEs) or visual realism and detail (favoring GANs) is the priority. Future advancements will likely emerge from hybrid models that integrate the strengths of multiple approaches, coupled with continued improvements in latent space interpretability and integration with experimental workflows. For researchers and drug development professionals, understanding these nuances in latent space design is paramount to leveraging generative AI for accelerated materials and drug discovery.

The exploration of chemical space represents one of the most formidable challenges in modern materials science, with the number of chemically feasible organic molecules alone estimated to exceed 10^60 candidates [9]. Traditional experimental approaches to materials discovery often require 10-20 years from initial discovery to deployment, creating an urgent need for computational methods that can accelerate this timeline [9]. Generative artificial intelligence models, particularly Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs), have emerged as transformative technologies capable of navigating this vast complexity by learning meaningful representations of molecular and crystalline structures. The effectiveness of these models, however, fundamentally depends on how matter is represented in digital form—from simplified molecular input line entry system (SMILES) strings and graph-based representations to sophisticated image-like encodings that capture spatial and structural relationships.

Each representation scheme carries distinct advantages and limitations for materials discovery applications. SMILES strings offer compact, sequential representations of molecular structures but struggle with capturing complex spatial relationships essential for understanding material properties. Crystal graph representations encode connectivity information within crystalline materials but present significant inversion challenges for generative modeling [34]. Image-like encodings, including 3D voxel representations and point cloud models, provide rich spatial information but often at substantial computational cost [35]. This comparative analysis examines how VAEs and GANs leverage these diverse representation schemes to advance materials discovery, evaluating their relative performance across multiple scientific domains through quantitative metrics and experimental validation.

Molecular Representations: SMILES, Graphs, and Image-like Encodings

SMILES-Based Representations

The Simplified Molecular Input Line Entry System (SMILES) provides a line notation for representing molecular structures using ASCII strings, encoding atoms, bonds, branching, and cyclic structures in a compact format [36]. This sequential representation has proven particularly amenable to generative models employing recurrent neural network architectures, though both VAEs and GANs have successfully utilized SMILES for molecular generation. The primary advantage of SMILES lies in its compactness and direct interpretability by chemical experts. Its limitations include the absence of explicit three-dimensional conformational information, and stereochemistry is captured only when isomeric SMILES annotations (such as @, @@, / and \) are used.

In practice, SMILES strings are converted into numerical representations before being processed by generative models, for example as one-hot encoded token sequences or as molecular fingerprints. For VAEs, these encodings are mapped into a continuous latent space that follows a predefined probability distribution, enabling smooth interpolation between molecular structures [9]. GANs utilize SMILES-derived representations by training generators to produce realistic molecular fingerprints that discriminators cannot distinguish from real examples. The VGAN-DTI framework exemplifies this approach, combining GANs, VAEs, and multilayer perceptrons to improve drug-target interaction predictions with reported accuracy of 96% [36].
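A typical first step for sequence models is tokenizing SMILES and one-hot encoding the tokens. The sketch below uses a regular-expression tokenizer that keeps bracket atoms, the two-letter halogens Cl and Br, and two-digit ring closures (%NN) as single tokens; the vocabulary, padding token, and maximum length are assumptions for illustration. Fingerprint inputs, as used in VGAN-DTI, would instead be computed with a cheminformatics toolkit and are not shown.

```python
import re

# Bracket atoms, two-letter halogens, %NN ring closures, then any single character.
SMILES_TOKEN = re.compile(r"(\[[^\]]+\]|Br|Cl|%\d{2}|.)")
PAD = "<pad>"  # assumed padding token

def tokenize(smiles: str) -> list[str]:
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:  # tokenization must be lossless
        raise ValueError(f"could not tokenize {smiles!r}")
    return tokens

def one_hot(tokens: list[str], vocab: list[str], max_len: int) -> list[list[int]]:
    """(max_len x len(vocab)) one-hot matrix; positions past the end use PAD."""
    if len(tokens) > max_len:
        raise ValueError("sequence longer than max_len")
    index = {tok: i for i, tok in enumerate(vocab)}
    rows = []
    for pos in range(max_len):
        row = [0] * len(vocab)
        row[index[tokens[pos] if pos < len(tokens) else PAD]] = 1
        rows.append(row)
    return rows

tokens = tokenize("CC(=O)Cl")
vocab = [PAD] + sorted(set(tokens))
matrix = one_hot(tokens, vocab, max_len=10)
```

Keeping "Cl" as one token matters: a character-level split would produce "C" followed by "l", which is not a valid atom and would teach the model spurious patterns.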

Graph-Based Representations

Graph representations conceptualize molecules as networks of atoms (nodes) connected by chemical bonds (edges), naturally capturing connectivity patterns and functional group relationships [34]. This representation has shown particular promise for inorganic materials discovery, where traditional SMILES notations are insufficient for describing crystalline structures with periodic boundary conditions. Crystal graph representations specifically encode unit cell parameters, atomic coordinates, and bond connectivity information, providing a comprehensive framework for generative modeling of crystalline materials [34].
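One common way to build the connectivity of such a graph is a radius cutoff that also counts neighbors in adjacent periodic images of the unit cell. The sketch below does this in plain Python; the cutoff rule and the function name are illustrative assumptions rather than the construction used in [34], and because it searches only the 27 cells around the home cell, it assumes the cutoff is smaller than the cell dimensions.

```python
import itertools
import math

def crystal_graph_edges(frac_coords, lattice, cutoff):
    """Edges (i, j, distance) between atoms within `cutoff`, including periodic images.

    frac_coords: fractional (x, y, z) per atom
    lattice:     three lattice vectors as rows, in the same length unit as cutoff
    """
    def to_cart(f):
        # Cartesian position = sum_k f_k * lattice_vector_k
        return [sum(f[k] * lattice[k][d] for k in range(3)) for d in range(3)]

    edges = []
    for i, fi in enumerate(frac_coords):
        ci = to_cart(fi)
        for j, fj in enumerate(frac_coords):
            for shift in itertools.product((-1, 0, 1), repeat=3):
                if i == j and shift == (0, 0, 0):
                    continue  # no self-loop within the home cell
                cj = to_cart([fj[k] + shift[k] for k in range(3)])
                dist = math.dist(ci, cj)
                if dist <= cutoff:
                    edges.append((i, j, round(dist, 6)))
    return edges
```

An atom can be bonded to a periodic image of itself, which is why a one-atom simple cubic cell still has six edges at a cutoff just above the lattice constant.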

Despite their representational power, graph-based approaches present significant challenges for generative models, particularly regarding inversion—the process of converting the generated representation back to a physically valid 3D structure [35]. VAEs addressing this challenge typically employ sophisticated encoder networks that map graph structures to latent distributions, with decoder networks reconstructing the graph features. GANs approach graph generation through adversarial training of graph generators against discriminators that evaluate structural validity. Recent innovations have developed invertible graph representations that minimize information loss during the encoding-decoding process, though these approaches remain computationally intensive for complex crystalline systems [35].

Image-like Encodings

Image-like encodings represent molecular and crystalline structures as 2D or 3D arrays, analogous to pixel-based image representations in computer vision. These encodings can take various forms, including 2D molecular depictions, 3D voxel grids of electron densities, and point cloud representations of crystal structures [37] [35]. The primary advantage of image-like encodings is their compatibility with well-established convolutional neural network architectures that excel at capturing spatial relationships and patterns.

For crystalline materials, point cloud representations have emerged as a particularly efficient encoding, representing crystal structures as sets of atomic coordinates and cell parameters with significantly reduced memory requirements compared to 3D voxel representations (by a factor of 400 in one reported study) [35]. This representation forms the basis for crystal structure generative models that avoid the inversion challenges associated with graph-based approaches. VAEs utilizing image-like encodings typically employ 3D convolutional encoders to map structural representations to latent distributions, with decoder networks reconstructing the spatial arrays. GANs leverage convolutional generators that transform random noise into realistic structural representations, with discriminators trained to distinguish generated from experimental structures [35].
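The memory gap between the two encodings is easy to see for a single small cell. The sketch below, assuming a single-channel occupancy grid at an illustrative 32³ resolution and the ideal cubic SrTiO₃ perovskite cell (a ≈ 3.905 Å), builds both encodings. The reduction factor it shows depends entirely on these assumed settings and is not meant to reproduce the factor of 400 reported in [35].

```python
import numpy as np

def voxelize(frac_coords, grid=32):
    """Single-channel occupancy grid: 1 in each voxel containing an atom.

    Assumes fractional coordinates in [0, 1); element identity is not encoded.
    """
    vox = np.zeros((grid, grid, grid), dtype=np.float32)
    idx = np.floor(np.asarray(frac_coords) * grid).astype(int) % grid
    vox[idx[:, 0], idx[:, 1], idx[:, 2]] = 1.0
    return vox

def point_cloud(frac_coords, cell_params):
    """N x 3 fractional coordinates flattened, followed by the 6 cell parameters."""
    coords = np.asarray(frac_coords, dtype=np.float32).ravel()
    return np.concatenate([coords, np.asarray(cell_params, dtype=np.float32)])

# Ideal cubic perovskite SrTiO3: Sr, Ti, and three O sites.
srtio3 = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5), (0.5, 0.5, 0.0), (0.5, 0.0, 0.5), (0.0, 0.5, 0.5)]
vox = voxelize(srtio3)
pts = point_cloud(srtio3, (3.905, 3.905, 3.905, 90.0, 90.0, 90.0))
print(vox.size, pts.size)  # 32768 21
```

A practical voxel encoding would usually add one channel per element or smear atoms with Gaussians, which makes the grid larger still; the point cloud grows only linearly with the number of atoms.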

Table 1: Comparison of Molecular and Material Representation Schemes

| Representation Type | Key Features | Advantages | Limitations | Suitable for |
|---|---|---|---|---|
| SMILES Strings | Sequential ASCII representation | Compact, interpretable, works with RNNs | Limited 3D information, stereochemistry challenges | Organic molecules, drug-like compounds |
| Graph Representations | Nodes (atoms) and edges (bonds) | Captures connectivity, functional groups | Inversion challenges for crystals | Organic molecules, some crystalline materials |
| Image-like Encodings | 2D/3D spatial arrays | Rich spatial information, CNN-compatible | Memory intensive for 3D voxels | Crystalline materials, molecular surfaces |
| Point Cloud Representations | Atomic coordinates + cell parameters | Inversion-free, memory efficient | Not inherently invariant to atom ordering, rotation, or translation | Crystal structure generation |

Comparative Performance: VAE vs. GAN Across Representation Schemes

Performance with SMILES and Molecular Representations

When applied to SMILES-based molecular representations, GANs and VAEs demonstrate distinct performance characteristics reflecting their underlying architectural differences. The VGAN-DTI framework exemplifies a hybrid approach that achieves 96% accuracy, 95% precision, 94% recall, and 94% F1 score in drug-target interaction prediction by combining the strengths of both architectures [36]. In this framework, VAEs excel at producing synthetically feasible molecules through their probabilistic encoder-decoder structure, while GANs generate structurally diverse compounds with desirable pharmacological characteristics [36].

Independent comparative studies on standard image datasets (MNIST, FashionMNIST, CIFAR10, and CelebA) have found that GANs typically produce higher-quality samples with greater perceptual sharpness, while VAEs generate more diverse samples with better coverage of the data distribution [38]. A similar pattern is often reported for molecular generation, where GANs tend to create more realistic-looking molecular structures while VAEs explore chemical space more broadly. The Fréchet Inception Distance (FID), an image-based metric commonly used to evaluate generative models, often favors GANs on simpler datasets, while VAEs may outperform on more complex ones [38].
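FID is the Fréchet distance between two Gaussians fitted to feature vectors of real and generated samples, d² = ‖μ_r − μ_g‖² + Tr(Σ_r + Σ_g − 2(Σ_r Σ_g)^{1/2}). The sketch below computes that distance with NumPy, assuming the feature vectors have already been extracted (for FID proper, from an Inception network; for molecules, some chemistry-aware feature extractor would be needed). It uses the identity Tr((Σ_r Σ_g)^{1/2}) = Tr((Σ_r^{1/2} Σ_g Σ_r^{1/2})^{1/2}) so that only symmetric matrix square roots are required.

```python
import numpy as np

def _sqrtm_psd(m):
    """Square root of a symmetric positive semi-definite matrix via eigendecomposition."""
    m = 0.5 * (m + m.T)                # remove tiny asymmetries from round-off
    w, v = np.linalg.eigh(m)
    w = np.clip(w, 0.0, None)          # clamp small negative eigenvalues to zero
    return (v * np.sqrt(w)) @ v.T

def frechet_distance(feats_real, feats_gen):
    """Squared Frechet distance between Gaussian fits of two (n x d) feature arrays."""
    mu_r, mu_g = feats_real.mean(axis=0), feats_gen.mean(axis=0)
    cov_r = np.cov(feats_real, rowvar=False)
    cov_g = np.cov(feats_gen, rowvar=False)
    root_r = _sqrtm_psd(cov_r)
    cross = _sqrtm_psd(root_r @ cov_g @ root_r)
    return float(np.sum((mu_r - mu_g) ** 2) + np.trace(cov_r + cov_g - 2.0 * cross))
```

Lower is better; identical feature statistics give zero, and a pure mean shift contributes exactly its squared length.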

Performance with Crystal Structure Representations

For crystalline materials discovery, representation choice significantly influences the relative performance of VAEs and GANs. In a comprehensive study comparing generative models for crystal structure prediction, diffusion models (a different class of generative models) outperformed both GANs and Wasserstein GANs, though GAN-based approaches demonstrated particular strengths when paired with appropriate representations [39]. The study utilized CrysTens, a specialized crystal encoding designed for deep learning models, and evaluated model performance using over fifty thousand Crystallographic Information Files from Pearson's Crystal Database [39].

GANs have demonstrated remarkable effectiveness with point cloud representations of crystal structures, successfully generating novel ternary Mg–Mn–O materials with reasonable calculated stability and band gaps [35]. This approach enabled the discovery of 23 new crystal structures with promising photoanode properties for water splitting applications—structures that conventional substitution-based discovery methods had overlooked [35]. VAEs applied to crystalline materials often struggle with the "latent space smoothness" problem, where the continuous latent space fails to capture discrete topological transitions between different crystal structures, sometimes resulting in generated structures with topological defects [40].

Quantitative Performance Comparison

Table 2: Quantitative Performance Comparison of VAE vs. GAN in Materials Discovery Applications

| Application Domain | Model Architecture | Representation Scheme | Key Performance Metrics | Reference |
|---|---|---|---|---|
| Drug-Target Interaction | VGAN-DTI (Hybrid) | Molecular fingerprints | 96% accuracy, 95% precision, 94% recall, 94% F1 | [36] |
| Crystal Structure Generation | GAN | Point cloud | 23 novel stable Mg–Mn–O structures discovered | [35] |
| Crystal Structure Generation | Diffusion Model | CrysTens | Outperformed GAN and WGAN | [39] |
| Topological Magnetic Structures | VAE-GAN Hybrid | 2D spin structures | Improved diversity and fidelity over standalone models | [40] |
| Scientific Image Generation | StyleGAN (GAN) | Image-like encodings | High perceptual quality and structural coherence | [37] |
| Scientific Image Generation | Diffusion Models | Image-like encodings | High realism but struggled with scientific accuracy | [37] |

Experimental Protocols and Methodologies

VAE Architecture and Training Protocol

Variational Autoencoders employ a probabilistic encoder-decoder structure that encodes input data into a distribution over a latent space rather than a single point [36] [38]. The standard VAE architecture consists of an encoder network that maps input data to parameters of a latent distribution (typically mean and variance of a Gaussian distribution), and a decoder network that reconstructs data from samples drawn from this latent distribution [36]. The training objective combines a reconstruction loss term (measuring the similarity between input and reconstructed data) with a Kullback-Leibler (KL) divergence term that regularizes the latent distribution to approximate a prior distribution (typically standard normal).

The mathematical formulation of the VAE loss function is:

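$$
\mathcal{L}_{\mathrm{VAE}} = -\mathbb{E}_{q(z\mid x)}\left[\log p(x\mid z)\right] + \beta\, D_{\mathrm{KL}}\left(q(z\mid x)\,\|\,p(z)\right)
$$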

where the first term represents the reconstruction loss, the second term is the KL divergence between the learned latent distribution q(z|x) and the prior p(z), and β is a coefficient controlling the regularization strength [36] [40]. For molecular generation, VAEs typically process structural representations through multiple fully-connected layers with ReLU activations, with output layers generating SMILES strings or molecular graph representations [36].
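In practice this objective is minimized as a loss: the negative reconstruction log-likelihood plus β times the KL term, with the expectation estimated from a single latent sample. The sketch below assumes a diagonal-Gaussian encoder, a standard-normal prior (so the KL term has a closed form) and, as an illustrative choice, a Bernoulli decoder over binary features such as fingerprint bits; a SMILES or graph decoder would use a different likelihood. Setting β = 1 gives the standard VAE objective, while β > 1 strengthens the regularization, as in β-VAE.

```python
import math


def kl_to_standard_normal(mu, log_var):
    """Closed-form D_KL(N(mu, diag(exp(log_var))) || N(0, I)), summed over latent dims."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))


def bernoulli_nll(x, x_prob, eps=1e-7):
    """-log p(x|z) for binary features x under decoder probabilities x_prob."""
    nll = 0.0
    for xi, p in zip(x, x_prob):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        nll -= xi * math.log(p) + (1 - xi) * math.log(1.0 - p)
    return nll


def beta_vae_loss(x, x_prob, mu, log_var, beta=1.0):
    """Single-sample estimate of -E_q[log p(x|z)] + beta * KL(q(z|x) || p(z))."""
    recon = bernoulli_nll(x, x_prob)
    kl = kl_to_standard_normal(mu, log_var)
    return recon + beta * kl


# Toy example: a 4-bit binary fingerprint and a 2-D latent code.
loss = beta_vae_loss(x=[1, 0, 1, 1], x_prob=[0.9, 0.2, 0.7, 0.8],
                     mu=[0.3, -0.1], log_var=[-0.2, 0.1], beta=4.0)
print(loss)
```

Here `x_prob` stands for the decoder's output for one sampled latent code; the names are hypothetical and not taken from [36] or [40].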

GAN Architecture and Training Protocol

Generative Adversarial Networks employ an adversarial training framework comprising two neural networks: a generator that creates synthetic samples from random noise, and a discriminator that distinguishes between real and generated samples [4]. The training process follows a minimax game where the generator aims to produce samples indistinguishable from real data, while the discriminator improves its ability to detect synthetic samples [4]. The standard GAN loss functions are:

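Discriminator Loss:

$$
\mathcal{L}_D = -\mathbb{E}_{x}\left[\log D(x)\right] - \mathbb{E}_{z}\left[\log\left(1 - D(G(z))\right)\right]
$$

Generator Loss:

$$
\mathcal{L}_G = -\mathbb{E}_{z}\left[\log D(G(z))\right]
$$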

where x represents real data samples, z represents latent noise vectors, G is the generator function, and D is the discriminator function [36] [4]. For materials discovery applications, GAN generators typically transform random noise vectors into material representations (molecules or crystals) through a series of fully-connected or convolutional layers, while discriminators process these representations through similar architectures to produce binary real/fake classifications [35].
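Given discriminator outputs as probabilities, both losses reduce to batch averages of log terms. This sketch uses plain Python and assumes D already ends in a sigmoid; frameworks typically compute the same quantities from logits for numerical stability.

```python
import math

EPS = 1e-7  # guards against log(0) when the discriminator saturates


def _mean(values):
    return sum(values) / len(values)


def discriminator_loss(d_real, d_fake):
    """L_D = -E[log D(x)] - E[log(1 - D(G(z)))], using batch means.

    d_real: discriminator probabilities on real samples x.
    d_fake: discriminator probabilities on generated samples G(z).
    """
    real_term = -_mean([math.log(max(p, EPS)) for p in d_real])
    fake_term = -_mean([math.log(max(1.0 - p, EPS)) for p in d_fake])
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss L_G = -E[log D(G(z))]."""
    return -_mean([math.log(max(p, EPS)) for p in d_fake])


# When D outputs 0.5 everywhere (it cannot tell real from fake):
print(discriminator_loss([0.5, 0.5], [0.5, 0.5]))  # 2 ln 2, about 1.386
print(generator_loss([0.5, 0.5]))                   # ln 2, about 0.693
```

The generator loss above is the non-saturating variant proposed in the original GAN paper. Minimizing log(1 - D(G(z))) directly, as the literal minimax game suggests, gives weak gradients early in training when D confidently rejects generated samples.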

Hybrid VAE-GAN Architecture

Hybrid VAE-GAN architectures combine the representation learning capabilities of VAEs with the adversarial training framework of GANs to leverage the respective strengths of both approaches [40]. In these architectures, the VAE encoder learns a structured latent representation of input data, while the VAE decoder serves as the generator in the adversarial framework [40]. The discriminator evaluates both reconstructed samples (from the VAE) and generated samples (from the generator/decoder), providing additional training signal beyond the standard VAE reconstruction loss.

The loss functions for the hybrid model incorporate both VAE and GAN objectives:

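Encoder Loss:

$$
\mathcal{L}_E = \mathcal{L}_{\mathrm{VAE}}
$$

Discriminator Loss:

$$
\mathcal{L}_D = -\mathbb{E}\left[\log D(x_d)\right] - \tfrac{1}{2}\mathbb{E}\left[\log\left(1 - D(x_p)\right)\right] - \tfrac{1}{2}\mathbb{E}\left[\log\left(1 - D(\tilde{x})\right)\right]
$$

Generator Loss:

$$
\mathcal{L}_G = \mathcal{L}_{\mathrm{VAE}} + \gamma\left(-\tfrac{1}{2}\mathbb{E}\left[\log D(x_p)\right] - \tfrac{1}{2}\mathbb{E}\left[\log D(\tilde{x})\right]\right)
$$

where $x_d$ represents real data samples, $x_p$ represents generated samples from prior noise, $\tilde{x}$ represents reconstructed samples, and $\gamma$ controls the GAN loss contribution [40]. This hybrid approach has demonstrated particular effectiveness for generating topological magnetic structures, where it achieved improved diversity and fidelity compared to standalone VAE or GAN models [40].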
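The three hybrid objectives can be assembled from the same discriminator outputs. This is a minimal sketch, assuming the VAE loss for the batch has already been computed (for example as reconstruction plus β times KL) and that D returns probabilities. In an actual training loop each loss would update only its own network's parameters (encoder, decoder/generator, discriminator), which plain Python cannot show.

```python
import math

EPS = 1e-7  # clip probabilities away from 0 before taking logs


def mean_log(probs):
    """Batch mean of log(p)."""
    return sum(math.log(max(p, EPS)) for p in probs) / len(probs)


def hybrid_losses(vae_loss, d_real, d_prior, d_recon, gamma):
    """Return (L_E, L_D, L_G) for a VAE-GAN.

    vae_loss: scalar L_VAE for the batch.
    d_real:   D outputs on real samples x_d.
    d_prior:  D outputs on samples decoded from prior noise, x_p.
    d_recon:  D outputs on reconstructions x~.
    gamma:    weight of the adversarial term in the generator loss.
    """
    loss_e = vae_loss
    loss_d = (-mean_log(d_real)
              - 0.5 * mean_log([1.0 - p for p in d_prior])
              - 0.5 * mean_log([1.0 - p for p in d_recon]))
    loss_g = vae_loss + gamma * (-0.5 * mean_log(d_prior) - 0.5 * mean_log(d_recon))
    return loss_e, loss_d, loss_g


print(hybrid_losses(vae_loss=3.0, d_real=[0.5], d_prior=[0.5], d_recon=[0.5], gamma=0.1))
```

Splitting the fake term into two halves weights prior samples and reconstructions equally, so the discriminator sees both kinds of decoder output while real data keeps full weight.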

Workflow Visualization

Research Reagent Solutions: Essential Tools for Implementation

Table 3: Essential Research Tools for Generative Materials Discovery

| Tool Category | Specific Solutions | Function | Compatibility |
|---|---|---|---|
| Representation Libraries | CrysTens [39], Point Cloud Encodings [35], SMILES Tokenizers [36] | Convert material structures to model-readable formats | VAE, GAN, Hybrid |
| Model Architectures | VAE with Probabilistic Encoder [36], GAN with Convolutional Networks [35], VAE-GAN Hybrid [40] | Core generative model implementation | Domain-dependent |
| Training Frameworks | TensorFlow, PyTorch, Custom Training Loops [4] | Model optimization and training | VAE, GAN, Hybrid |
| Evaluation Metrics | Fréchet Inception Distance (FID) [38], Reconstruction Loss [36], Formation Energy [35] | Quantify model performance and sample quality | VAE, GAN, Hybrid |
| Validation Tools | DFT Calculations [35], BindingDB [36], Domain Expert Assessment [37] | Validate generated materials scientifically | Experimental validation |
| Data Sources | Pearson's Crystal Database [39], Materials Project [35], BindingDB [36] | Training data for generative models | Domain-specific |
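Of the evaluation metrics listed, FID has the most specific definition. Embeddings of real and generated samples are each summarized by a mean and covariance, and the Fréchet distance between the two fitted Gaussians is reported; lower is better, and the value is zero when the fitted Gaussians coincide. For materials or scientific images, the choice of embedding network deserves care, because the standard Inception features were trained on natural images.

$$
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\left( \Sigma_r \Sigma_g \right)^{1/2} \right)
$$

Here $\mu_r, \Sigma_r$ and $\mu_g, \Sigma_g$ are the mean and covariance of the embeddings of real and generated samples, respectively, and $(\Sigma_r \Sigma_g)^{1/2}$ is the matrix square root.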

The comparative analysis of VAEs and GANs across diverse representation schemes suggests that the choice of representation often matters more than the choice of architecture in generative materials discovery. SMILES strings make organic molecule generation accessible but lack the spatial fidelity required for crystalline materials. Graph representations encode connectivity intuitively but are difficult to invert back into valid structures. Image-like and point cloud encodings show growing promise for complex crystalline systems because they balance representational richness with computational efficiency.

While GANs frequently excel at generating high-fidelity, realistic structures, VAEs explore chemical space more comprehensively, with better coverage of possible structures [38]. Emerging hybrid models leverage the complementary strengths of both architectures: the VAE learns a meaningful latent representation, and adversarial training refines output quality [40]. As materials discovery increasingly prioritizes inverse design, that is, generating structures with predefined target properties, the interplay between representation schemes and generative architectures is likely to drive further innovation in this rapidly evolving field.

Domain-specific validation is essential, because standard quantitative metrics often fail to capture scientific relevance and physical plausibility [37]. Successful generative materials discovery therefore requires tight integration of model architecture, representation scheme, and experimental validation, so that validation results feed back into both model improvement and scientific discovery.

From Theory to Synthesis: Practical Applications of VAE and GAN in Material Science

The discovery of new functional materials is fundamental to technological progress in fields such as renewable energy, electronics, and healthcare. Traditional materials discovery, often characterized by trial-and-error experimentation, is time-consuming and resource-intensive. Generative artificial intelligence (AI) shifts this paradigm by enabling the inverse design of materials: discovering new structures with user-defined properties. Among generative models, Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs) have shown significant potential. This guide compares VAE and GAN methodologies in materials discovery research, focusing on a detailed case study of a VAE-driven advance in crystal structure prediction (CSP). It synthesizes reported performance, experimental protocols, and practical resources to give researchers an objective comparison of these competing approaches.

Comparative Performance: VAE vs. GAN for Materials Discovery

The table below summarizes the reported performance of recent VAE, GAN, and hybrid models in the materials discovery literature.

Table 1: Performance Comparison of VAE and GAN Models in Materials Discovery

| Model Name | Model Type | Primary Application | Key Performance Metrics | Reference / Dataset |
|---|---|---|---|---|
| Cond-CDVAE [41] | Conditional VAE | Crystal Structure Prediction | Accurately predicted 59.3% of 3,547 unseen experimental structures within 800 sampling attempts; 83.2% accuracy for structures with fewer than 20 atoms per cell [41]. | MP60-CALYPSO (670,979 structures) [41] |
| VGAN-DTI [36] | Hybrid (VAE+GAN+MLP) | Drug-Target Interaction | Achieved 96% accuracy, 95% precision, 94% recall, and 94% F1 score for interaction prediction [36]. | BindingDB [36] |
| TransVAE-CSP [42] | Transformer-Enhanced VAE | Crystal Structure Generation | Outperformed existing methods in structure reconstruction and generation tasks across multiple metrics on the carbon24, perov5, and mp_20 datasets [42]. | carbon24, perov5, mp_20 [42] |
| GAN Electrocatalyst Design [43] | GAN | Electrocatalyst Discovery | Generated 400,000 unique candidate compositions with 99.94% uniqueness; 70% met chemical validity and stability criteria [43]. | Materials Project (5,000+ compounds) [43] |
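Uniqueness and validity rates like those reported for the GAN electrocatalyst study are simple ratios over the generated set. The sketch below computes both. The element-whitelist filter `toy_is_valid` and the formulas are hypothetical; they stand in for the chemical-validity and stability criteria of [43], which are not reproduced here.

```python
import re
from typing import Callable, Hashable, Iterable, Optional


def uniqueness_rate(candidates: Iterable[str],
                    canonicalize: Optional[Callable[[str], Hashable]] = None) -> float:
    """Fraction of generated candidates that are distinct after optional canonicalization."""
    items = list(candidates)
    keys = [canonicalize(c) if canonicalize else c for c in items]
    return len(set(keys)) / len(items)


def validity_rate(candidates: Iterable[str], is_valid: Callable[[str], bool]) -> float:
    """Fraction of candidates passing a user-supplied validity/stability filter."""
    items = list(candidates)
    return sum(1 for c in items if is_valid(c)) / len(items)


def element_tokens(formula: str) -> list:
    """Split a simple formula like 'Mg2MnO4' into ['Mg2', 'Mn', 'O4'] (no parentheses)."""
    return re.findall(r"[A-Z][a-z]?\d*", formula)


ALLOWED_ELEMENTS = {"Mg", "Mn", "O"}  # hypothetical chemical system


def toy_is_valid(formula: str) -> bool:
    """Hypothetical filter: every element symbol must belong to the allowed set."""
    tokens = element_tokens(formula)
    return bool(tokens) and all(re.sub(r"\d+", "", t) in ALLOWED_ELEMENTS for t in tokens)


generated = ["MgO", "MgO", "MnO2", "Mg2MnO4", "Qq"]
print(uniqueness_rate(generated))              # 0.8 (4 distinct of 5)
print(validity_rate(generated, toy_is_valid))  # 0.8 ("Qq" fails)
```

Uniqueness is only meaningful after canonicalizing formulas, so that, for example, "MgO" and "OMg" count once. Sorting the element tokens, as in `canonicalize=lambda f: tuple(sorted(element_tokens(f)))`, is a crude illustration; a production pipeline would more likely rely on a chemistry library's composition parser.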

Key Performance Insights

- Accuracy vs. Diversity: VAE-based models, particularly in structured domains like crystals, can generate physically plausible and accurate structures; the high prediction accuracy of Cond-CDVAE for small-unit-cell structures illustrates this precision [41]. In contrast, the GAN application highlighted above excelled at generating a vast number of diverse, unique candidate compositions, a different strength [43].

- Architectural Trends: State-of-the-art performance often involves hybrid or enhanced architectures rather than pure VAEs or GANs. The integration of transformers for better symmetry handling [42] or the combination of VAE and GAN in a single framework [36] is a prevalent trend to overcome the limitations of individual model types.

Experimental Protocols: A Deep Dive into VAE-driven CSP

This section details the methodology of a study that applied a conditional VAE to crystal structure prediction, providing a template for experimental design.

Cond-CDVAE Model Workflow

The Conditional Crystal Diffusion Variational Autoencoder (Cond-CDVAE) is an advanced framework for predicting crystal structures under specified conditions, such as composition and pressure [41].

Table 2: Core Components of the Cond-CDVAE Model Architecture

| Component | Function | Architecture Details |
|---|---|---|
| Encoder | Maps a crystal structure to a probabilistic latent space. | Composed of SE(3)-equivariant graph neural networks to respect crystal symmetries. It outputs parameters (mean and variance) of a Gaussian distribution in the latent space [41]. |
| Decoder | Reconstructs/generates a crystal structure from a latent code. | A diffusion model that performs denoising steps. It uses a noise conditional score network and Langevin dynamics to relax atoms into stable positions, conditioned on the desired composition and pressure [41]. |
| Conditioning Mechanism | Allows user control over generated structures. | Compositions and pressure values are fed as additional inputs to the decoder, guiding the generation process toward structures that meet these specific criteria [41]. |
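The decoder's use of a noise conditional score network with Langevin dynamics can be illustrated with annealed Langevin dynamics in the style of Song and Ermon. This is a one-dimensional toy under stated assumptions: an analytic Gaussian score replaces the trained, periodic, symmetry-aware score network of Cond-CDVAE, and the scalar `condition` is a hypothetical stand-in for composition/pressure conditioning that simply shifts the target. The noise schedule and step sizes are illustrative, not values from [41].

```python
import math
import random


def toy_score(x, sigma, condition, data_std=0.1):
    """Analytic score d/dx log p_sigma(x) for data ~ N(condition, data_std^2)
    perturbed by Gaussian noise of scale sigma.

    Hypothetical stand-in for a trained noise-conditional score network;
    `condition` plays the role of a conditioning input such as pressure.
    """
    var = data_std ** 2 + sigma ** 2
    return [-(xi - condition) / var for xi in x]


def annealed_langevin(x, condition, rng, sigma_max=1.0, sigma_min=0.01,
                      n_levels=10, steps_per_level=100, step_lr=1e-5):
    """Annealed Langevin dynamics: x <- x + (a/2) * score + sqrt(a) * noise,
    with step size a = step_lr * (sigma / sigma_min)^2 at each noise level."""
    ratio = sigma_min / sigma_max
    sigmas = [sigma_max * ratio ** (i / (n_levels - 1)) for i in range(n_levels)]
    for sigma in sigmas:  # from coarse (large sigma) to fine (small sigma)
        alpha = step_lr * (sigma / sigma_min) ** 2
        for _ in range(steps_per_level):
            score = toy_score(x, sigma, condition)
            x = [xi + 0.5 * alpha * s + math.sqrt(alpha) * rng.gauss(0.0, 1.0)
                 for xi, s in zip(x, score)]
    return x


rng = random.Random(0)
# 500 independent 1-D "coordinates", all started far from the target at 0.0.
final = annealed_langevin([0.0] * 500, condition=2.0, rng=rng)
mean = sum(final) / len(final)
std = math.sqrt(sum((v - mean) ** 2 for v in final) / len(final))
print(round(mean, 2), round(std, 2))  # mean near 2.0, std near 0.1
```

Changing `condition` moves where the samples settle, which is the intuition behind conditioning the score on composition and pressure. Large noise levels move samples quickly toward the right region; small ones refine them.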

Cond-CDVAE Workflow Diagram

Training and Validation Protocol

- Dataset Curation (MP60-CALYPSO): The model was trained on a massive, curated dataset of 670,979 locally stable crystal structures. This dataset amalgamated ambient-pressure structures from the Materials Project (MP) and high-pressure structures from CALYPSO community simulations, ensuring broad chemical and structural diversity across 86 elements [41].

- Conditional Training: The model was trained to learn the distribution of crystal structures conditioned on composition and pressure. This enables targeted generation for specific research goals [41].

- Benchmarking: Performance was validated by testing the model's ability to reproduce known, but unseen, experimental structures from databases like the Inorganic Crystal Structure Database (ICSD). The high success rate (59.3% overall, 83.2% for sub-20 atom cells) within a limited number of sampling attempts demonstrated efficiency superior to traditional CSP methods [41].
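The benchmarking statistic above is a "success within k samples" rate: for each known structure, generate up to k candidates (k = 800 in [41]) and count the target as reproduced if any candidate matches. The sketch assumes the matching is done elsewhere and only aggregates the results. Structure-matching tools such as pymatgen's `StructureMatcher` are a common choice, though the source does not say which matcher [41] used. For scale, 59.3% of 3,547 targets corresponds to roughly 2,100 reproduced structures.

```python
def success_rate_within_k(match_flags_per_target, k):
    """Fraction of target structures reproduced by at least one of the first k samples.

    match_flags_per_target: one list per target, holding a boolean per generated
    sample (in generation order) that says whether it matched the known structure.
    """
    if not match_flags_per_target:
        raise ValueError("need at least one target")
    hits = sum(1 for flags in match_flags_per_target if any(flags[:k]))
    return hits / len(match_flags_per_target)


# Three hypothetical targets with their per-sample match results.
flags = [
    [False, True, False],   # matched on the 2nd attempt
    [False, False, False],  # never matched
    [True],                 # matched immediately
]
print(success_rate_within_k(flags, k=1))  # 1 of 3 targets
print(success_rate_within_k(flags, k=3))  # 2 of 3 targets
```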

The Scientist's Toolkit: Essential Research Reagents

For researchers aiming to implement similar generative workflows, the following computational and data resources are essential.

Table 3: Key Research Reagents for Generative Materials Discovery

| Reagent / Resource | Type | Function in Research | Example in Use |
|---|---|---|---|
| Stable Materials Databases | Data | Provides training data on thermodynamically stable structures and their properties. | Materials Project (MP) [41] [43], JARVIS [34], AFLOWLIB [34]. |
| High-Throughput Computation Data | Data | Expands training data to include hypothetical, metastable, or high-pressure structures. | CALYPSO dataset [41]. |
| Structure Representations | Computational Method | Encodes crystal structure into a format readable by AI models while preserving physical invariances. | Crystal Graphs [34], Irreducible Representations [42], Adaptive Distance Expansion [42]. |
| Equivariant Neural Networks | Software/Model | Neural networks designed to inherently respect physical symmetries (rotation, translation), improving model accuracy and data efficiency. | SE(3)-Equivariant GNNs [41], E3nn framework [42]. |
| Generative Model Framework | Software/Model | The core AI architecture (e.g., VAE, GAN, Diffusion) used for the inverse design task. | Cond-CDVAE [41], TransVAE-CSP [42], GAN for electrocatalysts [43]. |
| Density Functional Theory (DFT) | Software/Validation | The computational workhorse for validating the stability and properties of AI-generated candidates through first-principles calculations. | Used to relax and verify the energy of generated structures in [41] and [43]. |
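Equivariant networks help because physically meaningful quantities do not change when a structure is rigidly moved. The sketch below checks the simplest such invariant, the sorted list of interatomic distances, under a rotation and a translation. It deliberately ignores the periodic lattice (a real crystal model must also handle periodic images), and SE(3)-equivariant networks go further than invariant features by producing vector outputs that rotate along with the input. The code illustrates the symmetry only; it does not use or reproduce any library named in the table.

```python
import math


def sorted_pairwise_distances(points):
    """All interatomic distances, sorted: unchanged by rotation, translation and atom relabeling."""
    dists = [math.dist(points[i], points[j])
             for i in range(len(points)) for j in range(i + 1, len(points))]
    return sorted(dists)


def rotate_z(points, theta):
    """Rotate 3-D points by angle theta (radians) about the z axis."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in points]


def translate(points, shift):
    """Shift every point by the same vector."""
    return [tuple(p + t for p, t in zip(pt, shift)) for pt in points]


atoms = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 2.0, 0.0), (0.0, 0.0, 3.0)]
moved = translate(rotate_z(atoms, 0.7), (5.0, -1.0, 2.0))
before, after = sorted_pairwise_distances(atoms), sorted_pairwise_distances(moved)
print(all(math.isclose(a, b) for a, b in zip(before, after)))  # True
```

The raw coordinates in `moved` differ from `atoms`, yet the distance features are identical, so a model built on such features cannot mistake a rotated copy of a structure for a new one.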

Architectural Comparison: How VAE and GAN Approaches Differ

Understanding the fundamental operational differences between VAEs and GANs is key to selecting the appropriate model.

VAE vs GAN Architecture Diagram

Core Operational Principles