Universal Phase Stability Networks: A Complex Network Theory Framework for Drug Discovery and Materials Design


Abstract

This article explores the transformative potential of universal phase stability networks, analyzed through complex network theory, for accelerating discovery in materials science and drug development. We first establish the foundational principles of representing materials and biological systems as dense networks of interacting components. The discussion then progresses to methodological applications, demonstrating how network-based prediction and quantum sampling can identify novel drug combinations and stable materials. The article critically examines key challenges, including combinatorial explosion and computational bottlenecks, and presents advanced optimization strategies like ensemble machine learning and universal machine-learning interatomic potentials. Finally, we compare and validate these approaches against traditional methods, highlighting their superior efficiency and predictive power. This synthesis provides researchers and drug development professionals with a comprehensive guide to leveraging network-based frameworks for tackling complex discovery problems.

The Architecture of Stability: From Materials to Biological Networks

The Universal Phase Stability Network represents a paradigm shift in materials science, moving from a traditional bottom-up, atom-centric view to a top-down, systems-level perspective of material interactions and stability. This complex network framework treats individual stable compounds as nodes and their thermodynamic coexistence relationships as edges, creating a vast graph that encodes the collective stability of inorganic materials. The foundational work by Hegde et al. (2020) established this network as a densely connected system of approximately 21,000 thermodynamically stable compounds (nodes) interlinked by 41 million tie-lines (edges) defining their two-phase equilibria, all computed through high-throughput density functional theory [1]. This network topology reveals organizational principles of material stability that remain inaccessible through traditional atoms-to-materials paradigms, offering unprecedented insights into material reactivity and phase selection rules across chemical space.

This whitepaper provides researchers with a comprehensive technical guide to the construction, analysis, and application of phase stability networks within complex network theory research. By framing materials stability as a network science problem, we enable the discovery of previously unidentified characteristics and relationships that govern material behavior across multiple scales. The methodologies and protocols detailed herein serve as essential foundations for advancing predictive materials design, particularly in pharmaceutical development where polymorph stability directly impacts drug efficacy and intellectual property strategy.

Network Architecture and Components

Fundamental Elements

The architecture of a phase stability network consists of two fundamental elements, nodes and edges, with the edges also referred to as tie-lines. Each has a specific mathematical and materials science interpretation:

Nodes: In the universal phase stability network, each node represents a thermodynamically stable inorganic compound at specified environmental conditions (typically temperature and pressure). Nodes are characterized by their chemical composition, crystal structure, and thermodynamic properties. The network contains approximately 21,000 such nodes, encompassing the known landscape of stable inorganic materials [1].

Edges: Edges represent binary coexistence relationships between compounds. Two nodes are connected by an edge if their corresponding compounds can coexist in thermodynamic equilibrium without reacting to form other compounds. These edges form the topological foundation for understanding phase compatibility and reactivity pathways throughout materials space.

Tie-Lines: The term "tie-lines" is used synonymously with edges in this context, maintaining consistency with materials science terminology where tie-lines traditionally represent equilibrium connections between phases in phase diagrams. Each of the roughly 41 million tie-lines in the network records a stable two-phase equilibrium between a pair of compounds [1].

Quantitative Network Properties

Table 1: Key Quantitative Properties of the Universal Phase Stability Network

| Network Property | Value | Significance |
|---|---|---|
| Total Nodes | ~21,000 | Represents comprehensive set of stable inorganic compounds |
| Total Edges | ~41 million | Indicates dense connectivity and multiple stability relationships |
| Network Diameter | 2 [3] | Maximum shortest path between any two nodes |
| Average Path Length | 1.8 [3] | Characteristic of small-world networks; facilitates rapid reactivity propagation |
| Clustering Coefficient | Cg = 0.41, C̄i = 0.55 [3] | Indicates localized community structure |
| Degree Distribution | Lognormal (right-skewed) [3] | Presence of hub materials with exceptional connectivity |

Methodological Framework

Data Acquisition and Curation Protocol

The construction of a comprehensive phase stability network requires meticulous data acquisition and curation:

Primary Data Source: Utilize the Materials Project database or similar computational materials repositories containing calculated formation energies and structural information for inorganic compounds. These databases provide first-principles density functional theory (DFT) calculations across extensive chemical spaces.

Thermodynamic Stability Filtering: Apply convex hull analysis to identify thermodynamically stable compounds. A compound qualifies as a node only if its formation energy lies on the convex hull of its chemical space, that is, if its energy above the hull is zero. This ensures that all included materials are stable against decomposition into other compounds.

Tie-Line Establishment: For each pair of stable compounds, determine coexistence by verifying that no reaction between them would yield a more stable combination of other compounds. Computationally, this means checking that every mixture of the two compounds lies on the convex hull, so that no competing set of phases at the same overall composition has a lower energy.

Validation Protocol: Cross-reference computational predictions with experimental phase diagrams where available. Prioritize inclusion of experimentally verified stability relationships to ground the network in empirical observation while leveraging computational data for comprehensive coverage.
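The stability filtering and tie-line steps above can be illustrated for the simplest case, a single binary A-B system. The sketch below is not the pipeline used in the source work: it assumes one entry per composition, uses invented phase names and formation energies, and only finds tie-lines inside one chemical system, whereas the universal network also links compounds across chemical systems.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (mole fraction of B, formation energy in eV/atom)


def _cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull from left to right (Andrew's monotone chain)."""
    hull: List[Point] = []
    for p in sorted(set(points)):
        # Drop the previous vertex while it lies on or above the new segment.
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def stable_phases_and_tie_lines(phases: Dict[str, Point]):
    """Return hull phases (nodes) and adjacent hull pairs (binary tie-lines)."""
    by_point = {pt: name for name, pt in phases.items()}
    names = [by_point[p] for p in lower_hull(list(by_point))]
    return names, list(zip(names, names[1:]))


# Hypothetical binary system; energies are illustrative, not database values.
TOY_PHASES = {
    "A": (0.0, 0.0),
    "A3B": (0.25, -0.3),   # lies above the A-AB segment, so it is unstable
    "AB": (0.5, -1.0),
    "AB3": (0.75, -0.8),
    "B": (1.0, 0.0),
}
stable, tie_lines = stable_phases_and_tie_lines(TOY_PHASES)
```

In this toy system A3B is filtered out because a mixture of A and AB at the same composition has a lower energy, while AB3 survives and forms tie-lines with its two hull neighbors.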

Network Construction Workflow

The following diagram illustrates the sequential workflow for constructing a phase stability network from raw computational data to the final analyzed network:

Figure 1: Workflow for constructing a phase stability network from computational materials data.

Analytical Techniques for Network Interrogation

Several network science metrics provide crucial insights when applied to phase stability networks:

Degree Centrality Analysis: Calculate the degree (number of connections) for each node. Materials with high degree centrality represent thermodynamic hubs with exceptional compatibility across chemical space. These hubs often correspond to common structural prototypes or chemically versatile elements.

Community Detection: Apply modularity optimization algorithms (e.g., Louvain method) to identify clusters of materials with dense internal connections. These communities typically represent chemically related families of compounds with similar bonding characteristics or structural motifs.

Pathway Analysis: Compute shortest paths between materials to identify minimum reactivity pathways for chemical transformations. This reveals the most thermodynamically favorable reaction sequences between starting materials and products.

Nobility Index Calculation: Implement the novel metric introduced by Hegde et al., which derives from node connectivity to quantitatively assess material reactivity [1]. Materials with higher nobility indices exhibit greater resistance to chemical transformation, serving as indicators of exceptional thermodynamic stability.
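Two of the metrics listed above, degree centrality and shortest paths, can be sketched with the standard library alone. The graph below is a hypothetical five-node toy network with placeholder labels; a real analysis of millions of tie-lines would more likely use a dedicated graph library.

```python
from collections import deque
from typing import Dict, Iterable, List, Optional, Set, Tuple

Graph = Dict[str, Set[str]]


def build_graph(edges: Iterable[Tuple[str, str]]) -> Graph:
    """Undirected adjacency sets from a list of tie-lines."""
    g: Graph = {}
    for u, v in edges:
        g.setdefault(u, set()).add(v)
        g.setdefault(v, set()).add(u)
    return g


def degree_centrality(g: Graph) -> Dict[str, float]:
    """Degree divided by the maximum possible degree (n - 1); needs n >= 2."""
    n = len(g)
    return {node: len(nbrs) / (n - 1) for node, nbrs in g.items()}


def shortest_path(g: Graph, source: str, target: str) -> Optional[List[str]]:
    """Breadth-first search; returns one shortest path or None if unreachable."""
    parent: Dict[str, Optional[str]] = {source: None}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if node == target:
            path = []
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
        for nbr in g[node]:
            if nbr not in parent:
                parent[nbr] = node
                queue.append(nbr)
    return None


# Hypothetical tie-lines between placeholder compounds A..E.
TOY_EDGES = [("A", "B"), ("A", "C"), ("A", "D"), ("B", "C"), ("D", "E")]
graph = build_graph(TOY_EDGES)
centrality = degree_centrality(graph)
path_c_to_e = shortest_path(graph, "C", "E")
```

Here node A acts as the hub (three of four possible connections), and the only shortest route from C to E passes through it.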

Advanced Research Applications

Nobility Index as a Reactivity Metric

The nobility index represents a significant innovation emerging from phase stability network analysis. This data-driven metric quantifies material reactivity based solely on network topology, specifically a node's connectivity pattern within the overall network structure [1]. Calculation methodology:

Foundation: The nobility index derives from the observation that materials with certain connection patterns exhibit characteristic resistance to chemical transformation.

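Implementation: The index is derived from node connectivity, that is, from the number of tie-lines a compound forms [1]. Related measures such as eigenvector centrality or random walk statistics can be computed on the same network for comparison. Materials with higher values coexist stably with more compounds and therefore have fewer thermodynamically favorable reactions available to them.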

Validation: The nobility index successfully identifies known noble materials (e.g., gold, platinum) while revealing previously unappreciated highly stable compounds with potential for specialized applications.

Application: This metric enables rapid screening for stable compound candidates in pharmaceutical development, where excipient compatibility and API stability are critical design parameters.
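As a rough illustration of a connectivity-based ranking, the sketch below standardizes node degrees into z-scores and sorts compounds by them. This is an assumption made for illustration only: the text does not give the published formula for the nobility index, and the labels and degree values here are invented.

```python
from statistics import mean, pstdev
from typing import Dict, List


def degree_z_scores(degrees: Dict[str, int]) -> Dict[str, float]:
    """Standardize degrees; a stand-in score, not the published nobility index."""
    mu = mean(degrees.values())
    sd = pstdev(degrees.values())
    if sd == 0:
        return {name: 0.0 for name in degrees}
    return {name: (k - mu) / sd for name, k in degrees.items()}


def rank_by_score(scores: Dict[str, float]) -> List[str]:
    """Most connected (highest score) first."""
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical tie-line counts for three placeholder compounds.
TOY_DEGREES = {"X": 10, "Y": 20, "Z": 30}
scores = degree_z_scores(TOY_DEGREES)
ranking = rank_by_score(scores)
```

A standardized score makes compounds comparable across subnetworks of different size, which is one reason to prefer it over raw degree in a screening context.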

Stability Prediction in Complex Systems

Phase stability networks enable unprecedented prediction capabilities for complex multi-component systems:

Phase Selection Rules: Network topology reveals patterns governing phase selection in multi-principal element systems. Analyze connection densities between material communities to predict which phases will emerge under specific processing conditions.

Reactivity Forecasting: Model potential reaction pathways between starting materials by tracing network connections. Identify kinetic bottlenecks and thermodynamic sinks that dominate materials synthesis outcomes.

Doping Strategies: Use neighborhood analysis around target materials to identify optimal doping elements that maintain structural stability while modifying properties.

Table 2: Research Reagent Solutions for Phase Stability Network Analysis

| Research Tool | Function | Application Context |
|---|---|---|
| High-Throughput DFT Codes | Calculate formation energies | Generate fundamental thermodynamic data for nodes |
| Convex Hull Algorithms | Identify thermodynamically stable compounds | Node selection and validation |
| Network Analysis Libraries | Calculate centrality metrics, detect communities | Quantify topological features and relationships |
| Materials Database APIs | Access computed materials properties | Data retrieval for network construction |
| Visualization Software | Represent high-dimensional network structure | Interpret and communicate complex relationships |

Experimental Protocols and Validation

Computational Validation Methodology

Rigorous validation ensures the physical relevance of computationally derived phase stability networks:

Experimental Cross-Referencing: Compare network predictions with experimentally determined phase diagrams from literature. Focus on well-characterized binary and ternary systems to establish validation benchmarks.

Stability Testing: Select representative materials predicted to have high and low nobility indices and subject them to accelerated aging studies under relevant environmental conditions. Measure decomposition rates to correlate with network-derived metrics.

Synthesis Verification: Attempt synthesis of compounds predicted to be stable by network analysis but lacking experimental reports. Use multiple synthesis routes to confirm thermodynamic stability rather than kinetic trapping.

Case Study Implementation Protocol

Implement a targeted case study to demonstrate application value:

System Selection: Choose a pharmaceutically relevant system with known stability challenges, such as hydrate formation or polymorph interconversion.

Subnetwork Construction: Extract the relevant subsystem from the universal network, focusing on compounds containing specific functional groups or structural motifs.

Stability Ranking: Apply nobility index and related metrics to rank compounds by predicted stability.

Experimental Correlation: Compare computational predictions with experimental stability data, refining the network model based on discrepancies.

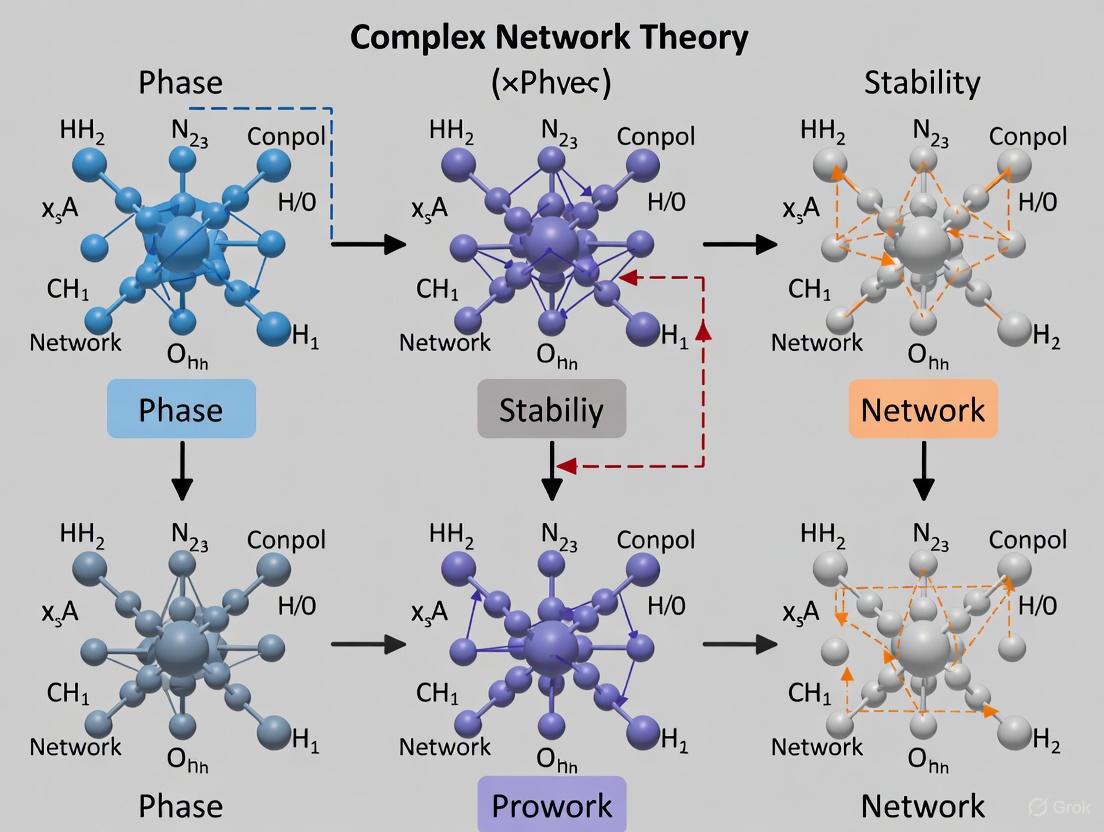

The following diagram illustrates the dynamic stability properties within a complex network context, showing the relationship between network structure and phase stability behavior:

Figure 2: Logical relationships between network structure, dynamics, and phase stability properties.

The universal phase stability network framework represents a transformative approach to understanding and predicting materials stability. By recasting thermodynamic relationships as network connections, this approach enables the application of sophisticated graph theory analytics to fundamental materials science challenges. The emergence of quantitative metrics like the nobility index demonstrates the power of this methodology to generate novel insights with practical applications across materials design and pharmaceutical development.

Future research directions should focus on expanding network coverage to include organic and molecular crystals, integrating kinetic parameters as edge weights, and developing machine learning approaches to predict network evolution under non-equilibrium conditions. As these networks grow in complexity and accuracy, they will increasingly serve as foundational resources for predictive materials design across scientific and industrial domains.

This technical guide explores two fundamental topological features, lognormal degree distributions and small-world characteristics, in the context of complex network analysis of the universal phase stability network. These properties are crucial for understanding the robustness, connectivity, and dynamic behavior of complex networks encountered in materials science and pharmaceutical development. We provide a comprehensive analysis of these features, supported by quantitative data, experimental methodologies, and visualizations, specifically framed for applications in materials stability and drug development research.

Complex network theory provides a powerful framework for analyzing interconnected systems across diverse scientific domains, from materials science to drug development. In materials research, networks represent thermodynamic relationships between stable compounds, where nodes correspond to materials and edges represent stable two-phase equilibria. Similarly, in pharmaceutical research, protein-protein interaction networks or metabolite processing networks exhibit characteristic topological features that influence biological function and therapeutic targeting. Understanding these universal topological properties enables researchers to predict material stability, identify novel compounds, and understand systemic behaviors in complex biological systems.

Two particularly important topological features emerge across these domains: small-world characteristics and lognormal degree distributions. Small-world networks exhibit high local clustering with short global path lengths, facilitating rapid information or interaction propagation. Lognormal degree distributions describe the connectivity patterns within networks, indicating most nodes have moderate connections while a few critical hubs possess extensive connectivity. Together, these features influence network robustness, information flow, and stability—properties essential for designing new materials with specific phase stability or understanding drug interaction networks.

Small-World Characteristics in Complex Networks

Definition and Mathematical Formalization

Small-world networks represent a class of graphs characterized by two primary topological features: high clustering coefficient and short average path length. Formally, a network is classified as small-world if the typical distance L between two randomly chosen nodes grows proportionally to the logarithm of the number of nodes N in the network: L ∝ log N, while maintaining a global clustering coefficient that is not small [2].

The clustering coefficient (C) measures the degree to which nodes in a network tend to cluster together, calculated as the probability that two neighbors of a vertex are connected themselves. In social network terms, this represents the likelihood that two friends of a person are also friends. The characteristic path length (L) represents the average shortest path between all pairs of nodes in the network [2]. Small-world networks typically exhibit a clustering coefficient significantly higher than expected by random chance while maintaining a short characteristic path length.

Quantitative Metrics for Small-Worldness

Researchers have developed several metrics to quantify the small-world character of networks:

- Small-world coefficient (σ): σ = (C/Cr)/(L/Lr), where σ > 1 indicates small-world organization [2].

- Small-world measure (ω): ω = (Lr/L) - (C/Cℓ), ranging between -1 and 1, where values near 0 indicate small-world organization, positive values indicate more random-like structure, and negative values indicate more lattice-like structure [2].

- Small World Index (SWI): SWI = [(L - Lℓ)/(Lr - Lℓ)] × [(C - Cr)/(Cℓ - Cr)], also ranging from 0 to 1 [2].

Table 1: Small-World Metrics in Real-World Networks

| Network Type | Characteristic Path Length (L) | Clustering Coefficient (C) | Small-World Measure (ω) |
|---|---|---|---|
| Phase Stability Materials Network | 1.8 [3] | Cg = 0.41, C̄i = 0.55 [3] | Not specified |
| Social Networks | Low (logarithmic) [2] | High (~0.5) [4] | Close to 0 (typical) |
| Random Graphs (ER Model) | Low (logarithmic) [2] | Small [2] | ~1 |
| Regular Lattices | High (polynomial) | High | ~-1 |
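The three small-world metrics are simple ratios once the reference values are known. The sketch below evaluates them for one set of invented inputs; the subscripts follow the text (r for the equivalent random graph, ℓ for the equivalent lattice), and none of the numbers come from a real network.

```python
def small_world_sigma(C: float, L: float, C_rand: float, L_rand: float) -> float:
    """sigma = (C / Cr) / (L / Lr)."""
    return (C / C_rand) / (L / L_rand)


def small_world_omega(C: float, L: float, C_latt: float, L_rand: float) -> float:
    """omega = Lr / L - C / Cl."""
    return L_rand / L - C / C_latt


def small_world_index(C: float, L: float, C_rand: float, L_rand: float,
                      C_latt: float, L_latt: float) -> float:
    """SWI = [(L - Ll) / (Lr - Ll)] * [(C - Cr) / (Cl - Cr)]."""
    return ((L - L_latt) / (L_rand - L_latt)) * ((C - C_rand) / (C_latt - C_rand))


# Hypothetical measurements and reference values, for illustration only.
C, L = 0.5, 3.0
C_RAND, L_RAND = 0.05, 2.5
C_LATT, L_LATT = 0.6, 10.0

sigma = small_world_sigma(C, L, C_RAND, L_RAND)
omega = small_world_omega(C, L, C_LATT, L_RAND)
swi = small_world_index(C, L, C_RAND, L_RAND, C_LATT, L_LATT)
```

In practice the reference values are obtained by averaging over many randomized and latticized versions of the network with the same number of nodes and edges, which is the expensive part of the calculation.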

Experimental Identification Protocol

Protocol for Establishing Small-World Characteristics in a Novel Network:

- Network Construction: Represent system components as nodes and their interactions as edges. For phase stability networks, nodes are stable compounds and edges are tie-lines representing two-phase equilibria [3].

- Path Length Calculation: Compute the characteristic path length (L) as the average number of edges in the shortest path between all node pairs. Breadth-first search suffices for unweighted networks; Dijkstra's or the Floyd-Warshall algorithm applies when edges carry weights.

- Clustering Coefficient Calculation: Calculate the global clustering coefficient (Cg) as three times the number of triangles divided by the number of connected triplets in the network, and the mean local clustering coefficient (C̄i) as the average of local clustering coefficients for all nodes.

- Statistical Validation: Compare computed L and C values to equivalent random (Lr, Cr) and lattice (Lℓ, Cℓ) networks of identical size and density using the small-world metrics (σ, ω, or SWI) [2].

- Benchmarking: Compare metrics against known small-world networks (e.g., social networks with L ≈ 3-6, C ≈ 0.5-0.8) for context [2].
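The path length and clustering steps of this protocol can be sketched for a small unweighted graph as follows. The code assumes a connected, undirected network stored as adjacency sets, and the four-node graph is a toy example; it is not tuned for networks with tens of millions of edges.

```python
from collections import deque
from itertools import combinations
from typing import Dict, Set

Graph = Dict[str, Set[str]]


def characteristic_path_length(g: Graph) -> float:
    """Mean shortest-path length over reachable ordered pairs (BFS per node)."""
    total, pairs = 0, 0
    for source in g:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in g[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs


def local_clustering(g: Graph, node: str) -> float:
    """Fraction of neighbor pairs that are themselves connected."""
    k = len(g[node])
    if k < 2:
        return 0.0
    links = sum(1 for a, b in combinations(g[node], 2) if b in g[a])
    return 2 * links / (k * (k - 1))


def mean_local_clustering(g: Graph) -> float:
    return sum(local_clustering(g, n) for n in g) / len(g)


def global_clustering(g: Graph) -> float:
    """Closed triplets (3 x triangles) divided by all connected triplets."""
    closed, triplets = 0, 0
    for n in g:
        k = len(g[n])
        triplets += k * (k - 1) // 2
        closed += sum(1 for a, b in combinations(g[n], 2) if b in g[a])
    return closed / triplets if triplets else 0.0


# Toy graph: a triangle A-B-C with a pendant node D attached to C.
TOY = {"A": {"B", "C"}, "B": {"A", "C"}, "C": {"A", "B", "D"}, "D": {"C"}}
L_toy = characteristic_path_length(TOY)
C_global = global_clustering(TOY)
C_local_mean = mean_local_clustering(TOY)
```

The toy graph shows why the two clustering coefficients differ: the global value weights each triplet equally, while the mean local value weights each node equally, so low-degree nodes count for more.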

Lognormal Degree Distribution in Complex Networks

Theoretical Foundation

A lognormal degree distribution occurs when the logarithms of node degrees follow a normal distribution. In probability terms, a random variable X follows a lognormal distribution if its natural logarithm, ln(X), follows a normal distribution [5] [6]. The probability density function for a lognormal distribution is given by:

f(x;μ,σ) = 1/(xσ√(2π)) exp(-(ln x - μ)²/(2σ²)) for x > 0

where μ and σ are the mean and standard deviation of the variable's logarithm [5] [6].

In network science, this manifests as most nodes having moderate connectivity, while a few hubs possess exceptionally high degrees. The lognormal distribution belongs to the "heavy-tail" family of distributions and often behaves similarly to power-law distributions, particularly in dense networks where sparsity—a necessary condition for exact power-law behavior—is absent [3].

Properties and Network Implications

The lognormal distribution exhibits several distinctive properties that influence network behavior:

- Right-skewness: Unlike the symmetric normal distribution, the lognormal distribution is skewed to the right with a long tail, making it suitable for modeling variables bounded below but not above [7].

- Multiplicative origins: Lognormal distributions arise naturally when the effect of many small independent forces is multiplicative rather than additive [7].

- Moments: The k-th moment of a lognormal random variable is E[X^k] = exp(kμ + k²σ²/2) [6], with the mean being exp(μ + σ²/2) and variance [exp(σ²) - 1]exp(2μ + σ²) [5] [6].
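The density and moment formulas above can be checked against each other numerically. The sketch below implements them directly and confirms that the stated variance equals E[X²] - (E[X])²; the parameter values are arbitrary.

```python
import math


def lognormal_pdf(x: float, mu: float, sigma: float) -> float:
    """f(x; mu, sigma) for x > 0, and 0 otherwise."""
    if x <= 0:
        return 0.0
    z = (math.log(x) - mu) ** 2 / (2 * sigma ** 2)
    return math.exp(-z) / (x * sigma * math.sqrt(2 * math.pi))


def lognormal_moment(k: int, mu: float, sigma: float) -> float:
    """E[X^k] = exp(k*mu + k^2 * sigma^2 / 2)."""
    return math.exp(k * mu + 0.5 * k * k * sigma ** 2)


def lognormal_variance(mu: float, sigma: float) -> float:
    """Var[X] = (exp(sigma^2) - 1) * exp(2*mu + sigma^2)."""
    return (math.exp(sigma ** 2) - 1) * math.exp(2 * mu + sigma ** 2)


# Arbitrary illustrative parameters.
MU, SIGMA = 0.5, 0.75
mean_x = lognormal_moment(1, MU, SIGMA)
second_moment = lognormal_moment(2, MU, SIGMA)
variance_x = lognormal_variance(MU, SIGMA)
```

Because the mean exp(μ + σ²/2) exceeds the median exp(μ), a handful of very high-degree nodes can pull the average degree well above the degree of a typical node.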

Table 2: Comparative Properties of Degree Distribution Types

| Property | Lognormal Distribution | Power-Law Distribution | Poisson Distribution |

|---|---|---|---|

| Mathematical Form | p(k) ~ 1/(kσ√(2π)) exp(-(ln k - μ)²/(2σ²)) | p(k) ~ k^(-γ) | p(k) = λ^k e^(-λ)/k! |

| Tail Behavior | Heavy tail | Heavier tail | Light tail |

| Typical Network Context | Dense networks [3] | Sparse, scale-free networks | Random graphs |

| Hub Prevalence | Moderate | High | Low |

| Example Networks | Phase stability networks [3] | World Wide Web | Erdős-Rényi random graphs |

Experimental Verification Protocol

Protocol for Verifying Lognormal Degree Distribution in Empirical Networks:

- Degree Sequence Extraction: For a network with N nodes, extract the degree sequence {k₁, k₂, ..., k_N} representing each node's number of connections.

- Log-Transformation: Compute the natural logarithms of all degrees: {ln(k₁), ln(k₂), ..., ln(k_N)}.

- Normality Testing: Apply normality tests (Shapiro-Wilk, Anderson-Darling, or Kolmogorov-Smirnov) to the log-transformed degree distribution.

- Parameter Estimation: If log-normality is not rejected, estimate parameters μ and σ using maximum likelihood estimation: μ̂ = (1/N) Σ ln(ki), σ̂ = √[(1/N) Σ (ln(ki) - μ̂)²] [8].

- Goodness-of-Fit Assessment: Quantify fit quality using Q-Q plots, χ² goodness-of-fit test, or Kolmogorov-Smirnov statistic comparing empirical distribution to fitted lognormal distribution.

- Alternative Distribution Comparison: Compare lognormal fit against alternative distributions (power-law, exponential, Weibull) using likelihood ratio tests or Akaike Information Criterion (AIC) [3].
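The parameter estimation and model comparison steps can be sketched as below. Several simplifications are assumed: degrees are treated as continuous positive values, the only alternative considered is an exponential distribution, and the sample is synthetic, drawn from a lognormal with known parameters rather than from any real network.

```python
import math
import random
from typing import List, Tuple


def fit_lognormal(degrees: List[float]) -> Tuple[float, float]:
    """Maximum likelihood estimates of mu and sigma (all degrees must be > 0)."""
    logs = [math.log(k) for k in degrees]
    mu = sum(logs) / len(logs)
    sigma = math.sqrt(sum((x - mu) ** 2 for x in logs) / len(logs))
    return mu, sigma


def lognormal_loglik(degrees: List[float], mu: float, sigma: float) -> float:
    return sum(
        -math.log(k * sigma * math.sqrt(2 * math.pi))
        - (math.log(k) - mu) ** 2 / (2 * sigma ** 2)
        for k in degrees
    )


def exponential_loglik(degrees: List[float]) -> float:
    """Log-likelihood at the MLE rate = 1 / mean."""
    rate = len(degrees) / sum(degrees)
    return sum(math.log(rate) - rate * k for k in degrees)


def aic(loglik: float, n_params: int) -> float:
    """Akaike Information Criterion; lower is better."""
    return 2 * n_params - 2 * loglik


# Synthetic degree sample with known parameters (mu = 5.0, sigma = 0.5).
rng = random.Random(0)
sample = [rng.lognormvariate(5.0, 0.5) for _ in range(2000)]

mu_hat, sigma_hat = fit_lognormal(sample)
aic_lognormal = aic(lognormal_loglik(sample, mu_hat, sigma_hat), n_params=2)
aic_exponential = aic(exponential_loglik(sample), n_params=1)
```

A lower AIC for the lognormal fit supports it over the exponential alternative, but only relative to the candidates tested; the normality tests on the log-transformed degrees remain the direct check of the lognormal hypothesis.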

Case Study: Universal Phase Stability Network of Inorganic Materials

Network Construction and Topological Analysis

The phase stability network of inorganic materials, derived from the Open Quantum Materials Database (OQMD), provides a compelling case study of concurrent small-world and lognormal characteristics. This network comprises approximately 21,300 nodes (thermodynamically stable compounds) connected by nearly 41 million edges (tie-lines representing two-phase equilibria), with an exceptionally high average degree of ⟨k⟩ ≈ 3850 [3].
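The quoted average degree follows directly from the node and edge counts, since every edge contributes to the degree of two nodes. A quick check using the rounded figures from the paragraph above (so the results are approximate):

```python
def mean_degree(n_nodes: int, n_edges: int) -> float:
    """<k> = 2E / N for an undirected network."""
    return 2 * n_edges / n_nodes


def density(n_nodes: int, n_edges: int) -> float:
    """Fraction of all possible node pairs that are connected."""
    return 2 * n_edges / (n_nodes * (n_nodes - 1))


# Rounded counts quoted in the text.
N_NODES = 21_300
N_EDGES = 41_000_000

k_mean = mean_degree(N_NODES, N_EDGES)      # about 3850
rho = density(N_NODES, N_EDGES)             # about 0.18
```

A density of roughly 0.18 means that close to one in five of all possible compound pairs shares a tie-line, which is what makes this network far denser than typical sparse scale-free networks.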

The degree distribution of this network follows a lognormal form (Figure 2A in [3]), reflecting its extremely dense connectivity. This contrasts with the sparser scale-free networks that exhibit power-law degree distributions. The lognormal behavior emerges from the network's densification, as sparsity is a necessary condition for exact power-law behavior [3].

The phase stability network exhibits striking small-world characteristics, with a remarkably short characteristic path length L = 1.8 and diameter Lmax = 2 [3]. Any two stable compounds in the network are therefore separated by at most two tie-lines, and by fewer than two on average. The network also displays significant clustering, with global and mean local clustering coefficients of Cg = 0.41 and C̄i = 0.55 respectively, substantially higher than expected in random networks of equivalent density [3].

Research Reagent Solutions for Materials Network Analysis

Table 3: Essential Tools and Databases for Phase Stability Network Research

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| Open Quantum Materials Database (OQMD) | Computational Database | Contains calculated properties of experimentally reported and hypothetical materials [3] | Source of thermodynamic stability data for network construction |
| High-Throughput DFT (HT-DFT) | Computational Method | Rapid calculation of material properties using density functional theory [3] | Generation of formation energies and phase stability data |
| Convex Hull Formalism | Algorithmic Framework | Determines thermodynamic stability of compounds relative to competing phases [3] | Identification of stable compounds and two-phase equilibria for network edges |
| Gephi | Network Analysis Software | Open-source network visualization and analysis platform [9] | Exploration and visualization of network topology |
| CAQDAS (NVivo, ATLAS.ti) | Qualitative Analysis Software | Computer-assisted qualitative data analysis [10] | Coding and analysis of network relationships and patterns |

Methodological Framework for Network Analysis

Integrated Workflow for Topological Characterization

The following diagram illustrates the comprehensive workflow for analyzing both small-world and lognormal distribution characteristics in complex networks:

Network Topology Analysis Workflow

Hierarchical Organization in Materials Networks

The phase stability network exhibits distinct hierarchical organization based on chemical complexity. The mean degree ⟨k⟩ decreases as the number of components (𝒩) increases, with binary compounds (𝒩 = 2) having higher average connectivity than ternary (𝒩 = 3) or quaternary compounds [3]. This hierarchy emerges from the competitive nature of phase stability, where higher-component materials compete for tie-lines not only with peers but also with lower-component materials in their chemical space.
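One way to examine this hierarchy is to group nodes by the number of distinct elements in their formula and average the degree within each group. The sketch below does this with a simple regular expression; the compounds are real formulas used as labels only, and the degree values attached to them are invented for illustration.

```python
import re
from collections import defaultdict
from typing import Dict


def n_components(formula: str) -> int:
    """Count distinct element symbols (capital letter plus optional lowercase)."""
    return len(set(re.findall(r"[A-Z][a-z]?", formula)))


def mean_degree_by_components(degrees: Dict[str, int]) -> Dict[int, float]:
    """Average degree for each component count, sorted by component count."""
    groups = defaultdict(list)
    for formula, k in degrees.items():
        groups[n_components(formula)].append(k)
    return {n: sum(ks) / len(ks) for n, ks in sorted(groups.items())}


# Hypothetical degrees; not taken from OQMD or any other database.
TOY_DEGREES = {
    "NaCl": 4000,
    "MgO": 5000,
    "BaTiO3": 2000,
    "SrTiO3": 2400,
    "LiFePO4": 1200,
}
hierarchy = mean_degree_by_components(TOY_DEGREES)
```

With real data, plotting the resulting mean degree against the number of components gives a direct view of how quickly connectivity falls off with chemical complexity.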

The following diagram illustrates this hierarchical structure and the relationship between network topology and material properties:

Materials Network Hierarchy and Properties

Implications for Materials and Pharmaceutical Research

Network Robustness and System Design

The combination of small-world topology and lognormal degree distribution has profound implications for network robustness and error tolerance. Small-world networks with lognormal degree distributions demonstrate resilience to random perturbations—the deletion of a random node rarely causes dramatic increases in path length or decreases in clustering because most shortest paths flow through hubs, and the probability of deleting a critical hub is low given the abundance of peripheral nodes [2].
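The contrast between losing a peripheral node and losing a hub can be made concrete by tracking the largest connected component after removal. The sketch below uses a deliberately extreme toy network, a single hub with six leaves, so the effect is exaggerated compared with a dense network such as the phase stability network.

```python
from typing import Dict, Iterable, Set

Graph = Dict[str, Set[str]]


def largest_component_fraction(g: Graph, removed: Iterable[str]) -> float:
    """Share of surviving nodes that belong to the largest connected component."""
    alive = set(g) - set(removed)
    if not alive:
        return 0.0
    seen: Set[str] = set()
    best = 0
    for start in alive:
        if start in seen:
            continue
        seen.add(start)
        stack, size = [start], 0
        while stack:
            u = stack.pop()
            size += 1
            for v in g[u]:
                if v in alive and v not in seen:
                    seen.add(v)
                    stack.append(v)
        best = max(best, size)
    return best / len(alive)


# Toy star network: one hub connected to six leaves.
LEAVES = [f"leaf{i}" for i in range(6)]
STAR: Graph = {"hub": set(LEAVES), **{leaf: {"hub"} for leaf in LEAVES}}

after_leaf_removal = largest_component_fraction(STAR, ["leaf0"])
after_hub_removal = largest_component_fraction(STAR, ["hub"])
```

Removing a leaf leaves the network fully connected, while removing the hub isolates every remaining node. Averaging the same measurement over many random removals gives an empirical robustness curve for a real network.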

This robustness has direct applications in materials design for functional systems such as batteries or protective coatings, where component compatibility determines system longevity. In pharmaceutical contexts, understanding the robustness of protein interaction networks aids in identifying critical targets whose disruption would maximally impact pathological pathways while minimizing systemic side effects.

Nobility Index and Reactivity Assessment

Analysis of the phase stability network enabled the derivation of a data-driven "nobility index" quantifying material reactivity [3]. This metric, derived from node connectivity within the network, identifies the least reactive ("noblest") materials in nature—those with the highest number of tie-lines, representing ability to coexist stably with numerous other compounds.

Similar approaches could be applied in pharmaceutical research to quantify molecular "nobility" within drug-target interaction networks, potentially identifying compounds with optimal interaction profiles that maximize therapeutic effects while minimizing off-target interactions.

The concurrent presence of lognormal degree distributions and small-world characteristics in complex networks represents a fundamental topological pattern with significant implications across scientific domains, particularly in materials and pharmaceutical research. These features enable both local specialization (through high clustering) and global efficiency (through short path lengths), while the lognormal connectivity distribution ensures robustness against random failures.

In the specific context of universal phase stability networks, these topological features provide insights inaccessible through traditional bottom-up approaches to materials science. The network perspective reveals system-level properties—robustness, hierarchy, and reactivity relationships—that emerge from the complex web of thermodynamic stability relationships between compounds.

For researchers in drug development, these network principles offer analytical frameworks for understanding complex biological systems, from protein-protein interactions to metabolic networks. The methodologies outlined in this guide provide a rigorous foundation for topological analysis of complex networks across scientific disciplines, enabling deeper understanding of system-level behaviors that emerge from interconnected components.

Predicting and controlling material reactivity is a grand challenge in materials science and catalysis. This whitepaper introduces the Nobility Index, a novel network-derived metric for quantifying material reactivity by applying universal phase stability principles from complex network theory. By conceptualizing atomic assemblies as dynamic networks where nodes represent atoms and edges represent interatomic interactions, we establish a computational framework that translates topological network features into quantitative reactivity predictions. We demonstrate the index's efficacy across diverse material systems, including photocatalytic nanocomposites and metal-organic frameworks, revealing strong correlations between network centrality measures and experimental reactivity metrics. The Nobility Index provides researchers with a powerful tool for the in silico screening of catalytic materials and the rational design of reactive systems, effectively bridging the gap between abstract network theory and practical materials engineering.

The quest for universal principles governing material stability and reactivity finds a promising partner in complex network theory. In material systems, phases are not static entities but dynamic, interdependent networks of atomic interactions. The stability of any given phase can be conceptualized through its resilience—the ability to maintain functional structure against perturbations—a property that complex network theory is uniquely equipped to quantify [11]. Research on stability regions in complex networks with delayed feedback control has demonstrated that network equilibria can transition from unstable to stable states through carefully designed control parameters, creating well-defined stability regions bounded by critical curves in parameter space [11]. This theoretical framework provides the mathematical foundation for understanding phase stability as a network-driven phenomenon.

The Nobility Index emerges from this synthesis, quantifying a material's reactivity by analyzing the topological structure of its atomic interaction network. "Nobility" in this context describes a material's resistance to reactive changes, analogous to the low reactivity of noble metals. By mapping atomic configurations to networks and applying stability analysis, we can classify materials along a reactivity spectrum and predict their behavior under operational conditions, enabling accelerated discovery of catalysts and stable material phases for advanced applications.

Theoretical Foundations

Network Representation of Atomic Systems

In the Nobility Index framework, any atomic system is represented as a graph ( G = (V, E) ), where:

- ( V ): Set of nodes representing individual atoms

- ( E ): Set of edges representing interatomic interactions (chemical bonds, van der Waals contacts, etc.)

Edge weights ( w_{ij} ) quantify interaction strengths and can be derived from quantum mechanical calculations, empirical potentials, or experimental measurements. The resulting network captures both the topological and energetic landscape of the material system.
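As a minimal sketch of this representation, the snippet below builds a weighted graph from atomic coordinates using a distance cutoff. The function name `build_interaction_graph`, the cutoff value, and the inverse-distance weight are illustrative assumptions, not part of the framework; a real workflow would take ( w_{ij} ) from quantum mechanical calculations or an empirical potential.

```python
import math

def build_interaction_graph(coords, cutoff):
    """Return {i: {j: weight}} for atom pairs closer than `cutoff`.

    The inverse-distance weight is a placeholder assumption; in practice
    w_ij could come from quantum calculations or an empirical potential.
    """
    graph = {i: {} for i in range(len(coords))}
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            d = math.dist(coords[i], coords[j])
            if d <= cutoff:
                w = 1.0 / d
                graph[i][j] = w
                graph[j][i] = w
    return graph

# Toy example: three atoms on a line (arbitrary units), the third far away.
coords = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (5.0, 0.0, 0.0)]
graph = build_interaction_graph(coords, cutoff=1.5)
print(graph)
```

Here atoms 0 and 1 are linked while atom 2 stays isolated, which is how under-coordinated or detached atoms would show up in the graph.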

Key Network Metrics for Reactivity Assessment

The Nobility Index integrates several network-theoretic measures, each capturing distinct aspects of material reactivity:

- Degree Centrality: Atoms with high degree (many neighbors) often represent stable, bulk-like regions with lower reactivity.

- Betweenness Centrality: Atoms with high betweenness occupy critical positions in the network's communication pathways and often correspond to potential reactive sites.

- Closeness Centrality: Atoms with high closeness can quickly interact with others, potentially indicating higher reactivity.

- Local Clustering Coefficient: Quantifies the tendency of a node's neighbors to connect, related to structural stability and phase density.

- Eigenvector Centrality: Identifies atoms connected to other well-connected atoms, revealing hierarchical importance in the network structure.

These metrics are synthesized into the composite Nobility Index through a weighted formula that can be tailored to specific material classes and reactivity types.
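The text does not state the weighted formula itself, so the following is only a hypothetical illustration of how such a composite might be assembled. It computes two of the listed metrics (degree centrality and local clustering) and averages a weighted sum over all atoms; the function `composite_index` and the equal weights are assumptions made for the example, and the other three metrics are omitted for brevity.

```python
from itertools import combinations

def degree_centrality(adj):
    n = len(adj)
    return {v: len(nbrs) / (n - 1) for v, nbrs in adj.items()}

def local_clustering(adj):
    result = {}
    for v, nbrs in adj.items():
        k = len(nbrs)
        if k < 2:
            result[v] = 0.0
            continue
        # Count edges that exist between pairs of neighbors of v.
        links = sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
        result[v] = 2.0 * links / (k * (k - 1))
    return result

def composite_index(adj, w_degree=0.5, w_clustering=0.5):
    """Hypothetical composite: weighted sum of two metrics, averaged over atoms."""
    deg = degree_centrality(adj)
    clu = local_clustering(adj)
    per_node = [w_degree * deg[v] + w_clustering * clu[v] for v in adj]
    return sum(per_node) / len(per_node)

# Triangle 0-1-2 with a pendant atom 3 attached to atom 2.
adj = {0: {1, 2}, 1: {0, 2}, 2: {0, 1, 3}, 3: {2}}
deg = degree_centrality(adj)
clu = local_clustering(adj)
score = composite_index(adj)
print(deg, clu, score)
```

In this toy graph the pendant atom has the lowest degree and zero clustering, so it pulls the composite score down, in line with the idea that under-coordinated atoms are less "noble".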

Computational Framework

Workflow for Nobility Index Calculation

The following diagram illustrates the comprehensive workflow for calculating the Nobility Index from atomic coordinates:

Enhanced Sampling for Reactive Configurations

Accurate Nobility Index calculation requires sampling beyond equilibrium configurations to include transition states and reactive pathways. The GAIA framework addresses this through an automated workflow combining multiple structure builders and data improvement modules [12]. The diagram below illustrates this enhanced sampling approach:

The Nanoreactor+ component is particularly crucial for exploring chemical transformations and generating non-equilibrium data points essential for describing reactions involving both metals and nonmetals [12]. This approach systematically samples reactive configurations that would be missed by conventional molecular dynamics.

Experimental Validation and Case Studies

Photocatalytic Water Splitting with Ternary Nanocomposites

We validated the Nobility Index framework using experimental data from MoSe₂/CdS/g-C₃N₄ (MS/CdS/CN) ternary nanocomposites for photocatalytic hydrogen production [13]. The network representation treated each element as a distinct node type, with edges representing heterojunction interfaces and charge transfer pathways.

Table 1: Photocatalytic Performance and Network Metrics

| Photocatalyst | H₂ Production Rate | Nobility Index | Betweenness Centrality | Experimental H₂ Production Multiplier |

|---|---|---|---|---|

| CdS | Baseline | 0.72 | 0.15 | 1× |

| CdS/CN | Moderate | 0.65 | 0.28 | 4.5× |

| MS/CdS/CN | Highest | 0.54 | 0.41 | 33.5× |

The data show an inverse relationship between the Nobility Index and experimental hydrogen production rates across the three samples. The MS/CdS/CN ternary composite exhibited the lowest Nobility Index (0.54), consistent with its superior photocatalytic performance, with hydrogen production rates 7.4 times higher than CdS/CN and 33.5 times higher than CdS alone [13]. Network analysis indicated that the added MoSe₂ acted as an electron sink and provided additional adsorption sites, creating more potential reaction pathways, which is reflected in its higher betweenness centrality (0.41 compared to 0.15 for CdS) [13].
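The relationship in Table 1 can be checked with a few lines of arithmetic. The sketch below computes the Pearson correlation between the tabulated Nobility Index values and the hydrogen production multipliers, and the ratio between the ternary and binary composites. With only three samples, the coefficient is indicative rather than statistically robust.

```python
import math

nobility = [0.72, 0.65, 0.54]        # CdS, CdS/CN, MS/CdS/CN (Table 1)
h2_multiplier = [1.0, 4.5, 33.5]     # H2 production relative to CdS (Table 1)

def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

r = pearson(nobility, h2_multiplier)
ternary_vs_binary = h2_multiplier[2] / h2_multiplier[1]
print(f"Pearson r = {r:.3f}; MS/CdS/CN vs CdS/CN = {ternary_vs_binary:.1f}x")
```

The coefficient is strongly negative (about -0.96), and the ratio 33.5 / 4.5 reproduces the roughly 7.4-fold gain of the ternary composite over CdS/CN.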

Machine Learning Potentials for Catalytic Reactivity

The Nobility Index framework was further validated using machine learning interatomic potentials (MLIPs) trained via active learning and enhanced sampling for ammonia decomposition on iron-cobalt (FeCo) alloy catalysts [14]. The DEAL (Data-Efficient Active Learning) procedure required only ~1000 DFT calculations per reaction while successfully sampling reactive configurations from multiple accessible pathways [14].

Table 2: MLIP Performance on GAIA-Bench Tasks

| Model | Training Dataset | mol2mol Energy MAE (meV/atom) | mol2surf Energy MAE (meV/atom) | Force MAE (meV/Å) |

|---|---|---|---|---|

| SNet-T25 | Titan25(G+I) | 12.3 | 15.7 | 72.4 |

| SNet-T25 | Titan25(G) | 15.8 | 19.2 | 85.6 |

| Model A | ANI-1xnr | 26.4 | 34.1 | 124.3 |

| Model B | MPTrj | 28.7 | 32.9 | 131.7 |

The SNet-T25 model trained on Titan25(G+I), which benefits from both the data generation and data improvement modules, achieved the lowest errors across all GAIA-Bench tasks in Table 2. Its force MAE of 72.4 meV/Å is roughly 40 to 45% below those of the models trained on public datasets (124.3 and 131.7 meV/Å) [12]. This suggests that systematic sampling of reactive configurations significantly improves the prediction of reactive properties.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Network-Based Reactivity Analysis

| Tool/Resource | Type | Function in Nobility Index Framework |

|---|---|---|

| GAIA Framework | Software | Automated dataset construction for general-purpose reactive MLIPs via metadynamics-based exploration [12] |

| Titan25 Dataset | Dataset | Benchmark-scale dataset (1.8M configurations across 11 elements) for training transferable MLIPs [12] |

| DEAL Procedure | Method | Data-Efficient Active Learning combining enhanced sampling with Gaussian processes for reactive pathway discovery [14] |

| OPES | Algorithm | Enhanced sampling method (evolution of metadynamics) for exploring and converging free energy landscapes [14] |

| FLARE with ACE | Software | Gaussian process potential with Atomic Cluster Expansion descriptors for on-the-fly learning [14] |

| STC Random Graphs | Model | Exactly solvable network model with strong clustering and heterogeneous degree distribution for percolation studies [15] |

Application in Materials Design and Discovery

The Nobility Index enables predictive materials design through several practical applications:

Catalyst Screening and Optimization

By computing Nobility Index values for candidate catalyst materials, researchers can rapidly screen for optimal reactivity profiles without extensive experimental testing. For example, in the design of alloy catalysts for ammonia decomposition, the Nobility Index can identify compositions that balance stability against reactant-induced reconstructions with sufficient reactivity for the desired chemical transformations [14].

Stability Region Mapping for Phase Transitions

Building on stability analysis in complex networks with delayed feedback control [11], the Nobility Index framework can map stability regions for material phases under varying environmental conditions (temperature, pressure, chemical potential). This allows prediction of phase transition boundaries and identification of conditions that maintain functional stability while enabling necessary reactivity.

Network Percolation for Material Degradation

The framework incorporates percolation theory to model degradation processes in materials. In strongly clustered networks with heterogeneous degree distributions—common in real material systems—percolation thresholds and critical exponents can deviate significantly from mean-field predictions [15]. This enables more accurate modeling of corrosion, fracture propagation, and other degradation phenomena.
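A minimal sketch of the percolation idea, under simplifying assumptions: nodes are removed from a small graph and the fraction of nodes left in the largest connected cluster is tracked. The hub-and-spoke toy network and the function `largest_component_fraction` are illustrative only; the clustered random graph models of [15] are considerably richer.

```python
from collections import deque

def largest_component_fraction(adj, removed=frozenset()):
    """Fraction of the original nodes in the largest surviving cluster."""
    alive = set(adj) - set(removed)
    seen, best = set(), 0
    for start in alive:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:                      # breadth-first search over live nodes
            v = queue.popleft()
            size += 1
            for u in adj[v]:
                if u in alive and u not in seen:
                    seen.add(u)
                    queue.append(u)
        best = max(best, size)
    return best / len(adj)

# Hub-and-spoke toy network: node 0 is the hub, nodes 1-6 are leaves.
adj = {0: {1, 2, 3, 4, 5, 6}}
adj.update({leaf: {0} for leaf in range(1, 7)})

intact = largest_component_fraction(adj)
leaf_removed = largest_component_fraction(adj, removed={1})
hub_removed = largest_component_fraction(adj, removed={0})
print(intact, leaf_removed, hub_removed)
```

Removing a leaf barely changes the largest cluster, whereas removing the hub fragments the network completely. This is the qualitative reason degree heterogeneity matters for degradation thresholds.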

The Nobility Index establishes a rigorous, quantitative bridge between complex network theory and material reactivity, providing researchers with a powerful predictive tool grounded in universal phase stability principles. By translating atomic configurations into network representations and analyzing their topological features, the index successfully correlates with experimental reactivity metrics across diverse material systems.

Future developments will focus on expanding the Nobility Index to dynamic network analysis capable of capturing time-evolving reactivity during chemical processes, integrating multi-scale network approaches that connect atomic-scale interactions with mesoscale morphological features, and developing automated high-throughput computational workflows for rapid screening of material databases. As complex network theory continues to reveal universal principles governing system stability and resilience, its application to material science promises to accelerate the discovery and design of next-generation reactive materials with tailored properties for energy, catalysis, and beyond.

The study of complex networks provides a unified framework for understanding systems across disciplines, from the dynamics of inorganic compounds to the intricate signaling of biological organisms. The principles of phase and gain stability in adaptive dynamical networks, which describe how nodes and edges influence each other in a closed feedback loop, offer a powerful lens through which to analyze the robustness and failure modes of any interconnected system [16]. This paper extends this paradigm to the analysis of disease protein networks, demonstrating how the breakdown of stable interactions within the brain's proteome drives the pathogenesis of complex neurological disorders. By applying universal complex network theory, we can identify critical control points and destabilizing factors within biological systems, enabling more targeted therapeutic interventions.

Universal Principles of Network Stability

Theoretical Foundations of Adaptive Dynamical Networks

In adaptive dynamical networks, the dynamics of nodes and edges exist in a state of mutual influence, creating a closed feedback loop that determines overall system behavior [16]. Such systems can be analyzed using stability criteria derived from control theory, which provides sufficient conditions for linear stability of steady states based entirely on the localized behavior of edges and nodes [16]. The Kuramoto model, both with inertia and in its adaptive form, serves as a canonical example of how these principles manifest in synchronizing systems, with stability conditions that can be precisely determined through this analytical framework [16].
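For orientation, here is a small simulation of the classical first-order Kuramoto model with all-to-all coupling. It is a simplified stand-in: the inertial and adaptive variants analyzed in [16] add further state variables, and the step size, coupling strength, and initial phases below are arbitrary choices for the example.

```python
import cmath
import math

def simulate_kuramoto(phases, omegas, coupling, dt=0.01, steps=2000):
    """Euler integration of d(theta_i)/dt = omega_i + (K/N) * sum_j sin(theta_j - theta_i)."""
    theta = list(phases)
    n = len(theta)
    for _ in range(steps):
        dtheta = [
            omegas[i] + coupling / n * sum(math.sin(tj - theta[i]) for tj in theta)
            for i in range(n)
        ]
        theta = [t + dt * d for t, d in zip(theta, dtheta)]
    return theta

def order_parameter(theta):
    """Magnitude of the mean phase vector: 1 means full synchrony."""
    return abs(sum(cmath.exp(1j * t) for t in theta)) / len(theta)

initial = [0.0, 0.5, 1.0, 1.5, -0.5]   # phases spread over less than half a circle
omegas = [1.0] * 5                      # identical natural frequencies
r_start = order_parameter(initial)
r_coupled = order_parameter(simulate_kuramoto(initial, omegas, coupling=2.0))
r_uncoupled = order_parameter(simulate_kuramoto(initial, omegas, coupling=0.0))
print(r_start, r_coupled, r_uncoupled)
```

With coupling switched on, the order parameter approaches 1, so the synchronized state is stable. Without coupling, the phases rotate rigidly and the order parameter stays at its initial value.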

Analytical Framework for Network Destabilization

The transition from health to disease in biological systems represents a critical failure of network stability mechanisms. As progressive disturbances accumulate—whether through protein misfolding, toxic aggregate formation, or inflammatory signaling—the network's capacity to maintain homeostatic balance becomes overwhelmed. This triggers a phase transition characterized by re-wiring of functional interactions, emergence of pathological feedback loops, and ultimately catastrophic system failure manifesting as clinical disease.

Case Study: Network Instability in Alzheimer's Disease Proteomics

Multiscale Proteomic Mapping of Brain Networks

A recent landmark study employed multiscale proteomic network modeling to map protein interactions in Alzheimer's disease brain tissue, providing unprecedented insight into how network stability breaks down in neurodegeneration [17] [18]. Researchers analyzed protein activity in postmortem brain tissue from nearly 200 individuals, quantifying the expression of more than 12,000 proteins using advanced proteomic profiling technology [17]. This comprehensive approach enabled the construction of large-scale protein interaction networks that capture the system-wide disturbances driving disease progression.

Table 1: Key Quantitative Findings from Alzheimer's Proteomic Study

| Parameter | Healthy Network | Alzheimer's Network | Measurement Approach |

|---|---|---|---|

| Glia-neuron interaction balance | Maintained support functions | Significant disruption with overactive glia, less functional neurons | Network correlation analysis of protein expression patterns |

| Inflammatory signaling | Baseline homeostasis | Markedly elevated | Protein expression levels of inflammatory mediators |

| AHNAK protein levels | Normal expression | Significantly elevated | Quantitative proteomics and immunoassays |

| Association with amyloid beta | No correlation | Strong positive correlation | Regression analysis of protein levels vs. pathological markers |

| Association with tau pathology | No correlation | Strong positive correlation | Regression analysis of protein levels vs. pathological markers |

Identification of Key Network Destabilizers

The network analysis revealed that disruptions in communication between neurons and supporting glial cells (astrocytes and microglia) were centrally linked to Alzheimer's progression [17]. Through sophisticated computational modeling, researchers identified "key driver" proteins—molecules that exert disproportionate influence on network stability [17]. The protein AHNAK, predominantly expressed in astrocytes, emerged as a top-ranked driver, with levels that increased with disease progression and strongly correlated with amyloid beta and tau pathology [17].

Experimental Validation of Network Interventions

Functional Validation of AHNAK as a Network Destabilizer

To experimentally validate AHNAK's role in network destabilization, researchers employed human induced pluripotent stem cell (iPSC)-based models of Alzheimer's disease [18]. The experimental protocol involved reducing AHNAK expression in these systems and measuring downstream effects on network stability and neuronal function.

Table 2: Research Reagent Solutions for Protein Network Analysis

| Reagent/Material | Function/Application | Specifications/Alternatives |

|---|---|---|

| Postmortem brain tissue | Proteomic profiling of native protein interactions | Nearly 200 donors with/without Alzheimer's; multiple brain regions [17] |

| Human iPSC-derived brain cells | Disease modeling and functional validation | Cultured astrocytes, neurons, and microglia [17] [18] |

| Proteomic profiling platform | Quantification of 12,000+ proteins | High-throughput mass spectrometry [17] |

| AHNAK modulation system | Knockdown of target protein | CRISPR-based or RNA interference approaches [17] |

| Co-culture systems | Study of glia-neuron interactions | Transwell systems or direct contact co-cultures [17] |

| Computational modeling tools | Network construction and analysis | Bayesian causal inference networks, co-expression networks [18] |

Experimental Workflow for Network Validation

The following diagram illustrates the comprehensive experimental workflow used to validate AHNAK's role in network destabilization:

Key Findings from Experimental Manipulation

When AHNAK levels were reduced in human brain cell models, researchers observed significantly decreased tau pathology and improved neuronal function in co-culture systems [17]. These findings experimentally confirmed AHNAK's role as a key destabilizer in the Alzheimer's protein network and highlighted its potential as a therapeutic target for restoring network stability.

Analytical Framework for Network Stability Assessment

Methodological Pipeline for Proteomic Network Modeling

The following diagram outlines the integrated computational and experimental methodology for identifying and validating key network drivers:

Quantitative Assessment of Network Perturbations

Table 3: Network Stability Metrics in Alzheimer's Disease

| Stability Parameter | Healthy State | Early Instability | Overt Disease | Measurement Technique |

|---|---|---|---|---|

| Glia-neuron correlation strength | High positive correlation | Decreasing correlation | Negative correlation | Correlation coefficients in protein networks |

| Network modularity | Balanced functional modules | Increased fragmentation | Severe disintegration | Community detection algorithms |

| Hub protein resilience | Robust to perturbation | Increasing vulnerability | Critical failure | Targeted node removal simulations |

| Inflammation-regulatory feedback | Maintained homeostasis | Compensatory overshoot | Pathological positive feedback | Dynamic network modeling |

| Cross-cell type communication | Coordinated signaling | Disrupted information flow | System-wide decoupling | Inter-cellular network analysis |

Discussion and Therapeutic Implications

The application of universal network stability principles to disease protein networks represents a paradigm shift in our understanding of neurological disorders. By moving beyond a focus on single pathological proteins to analyzing system-wide network failures, we gain critical insights into the fundamental mechanisms driving disease progression. The identification of AHNAK as a key driver in Alzheimer's disease demonstrates how computational network analysis combined with experimental validation can reveal novel therapeutic targets that would remain undetected through conventional approaches.

The network stability framework also provides a powerful approach for understanding treatment responses and resistance. Therapeutic interventions can be conceptualized as targeted perturbations aimed at shifting destabilized networks back toward homeostatic balance. Compounds that modify AHNAK activity or restore glia-neuron communication patterns represent promising candidates for network-stabilizing therapies that address the core system failures rather than merely suppressing individual symptoms.

This approach establishes a new roadmap for drug development in complex diseases—one that prioritizes network stabilization over single-target modulation and offers hope for more effective treatments for neurological disorders that have thus far resisted therapeutic interventions.

Spectral graph theory, a mathematical discipline that examines graph properties through the eigenvalues and eigenvectors of associated matrices such as the Laplacian and adjacency matrices, has emerged as a transformative tool for analyzing complex systems [19]. This approach provides a powerful framework for understanding the intrinsic connection between the structural topology of networks and the functional dynamics that emerge within them [20]. Within research on universal phase stability networks and complex network theory, spectral methods offer principled mathematical techniques for characterizing stability regions, predicting phase transitions, and identifying dominant modes of behavior in high-dimensional systems [11].

The application of spectral graph theory to biological and material systems has gained significant momentum, driven by its ability to reveal organizational principles that are not apparent from structural analysis alone. From mapping the brain's structural connectome to predicting molecular properties in drug discovery, spectral decomposition techniques enable researchers to move beyond purely descriptive network analysis toward predictive, mechanistic models of system behavior [20] [21]. This technical guide comprehensively examines the core principles, methodologies, and applications of spectral graph theory, with particular emphasis on its growing role in stability analysis and functional prediction across scientific domains.

Theoretical Foundations of Spectral Graph Theory

Basic Graph Definitions and Matrices

In mathematical terms, a graph (G = (V, E)) consists of a set of vertices (V) and a set of edges (E) connecting pairs of vertices [22]. Graphs can be categorized into several types based on their structural properties:

- Undirected graphs: Edges have no direction, representing bidirectional relationships [22]

- Directed graphs: Edges have direction, represented as arrows, indicating asymmetric relationships [22]

- Weighted graphs: Edges carry numerical weights representing connection strengths [22]

- Bipartite graphs: Vertices can be partitioned into two sets where edges only connect vertices between sets [22]

The two primary matrices associated with graphs are:

- Adjacency matrix ((A)): For a graph with (n) vertices, (A) is an (n \times n) matrix where (A_{uv} = 1) if vertices (u) and (v) are connected, and 0 otherwise [23] [19]

- Laplacian matrix ((L)): Defined as (L = D - A), where (D) is the diagonal degree matrix with (D_{vv} = \deg(v)) [23]. The Laplacian can be interpreted as a discrete version of the continuous Laplace operator [23]

Spectral Properties and Their Significance

The spectral decomposition of graph matrices, particularly the Laplacian, reveals fundamental organizational principles of networks. For the Laplacian matrix (L_G), the quadratic form provides crucial insights:

[ \langle \mathbf{x}, L_G \mathbf{x} \rangle = \sum_{\{u,v\} \in E} (x_u - x_v)^2 ]

This expression measures the smoothness of a signal (\mathbf{x}) defined on the graph vertices [23]. The eigenvalues (0 = \lambda_1 \leq \lambda_2 \leq \cdots \leq \lambda_n) of (L_G) encode significant structural information:

- The multiplicity of the zero eigenvalue equals the number of connected components in the graph [23]

- The second smallest eigenvalue ((\lambda_2), known as the algebraic connectivity) determines the convergence rate of diffusion processes on the graph

- The eigenvector associated with (\lambda_2) (Fiedler vector) often provides an optimal embedding for graph partitioning [23]
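These properties can be checked numerically on a small example. The sketch below builds the Laplacian of a four-vertex path graph, verifies the quadratic-form identity for an arbitrary signal, and estimates the algebraic connectivity and Fiedler vector. To stay dependency-free it uses power iteration on a shifted matrix with the constant vector projected out; that is an illustrative choice, and large graphs would normally use a sparse eigensolver.

```python
import math

def laplacian(n, edges):
    L = [[0.0] * n for _ in range(n)]
    for u, v in edges:
        L[u][u] += 1.0
        L[v][v] += 1.0
        L[u][v] -= 1.0
        L[v][u] -= 1.0
    return L

def quadratic_form(L, x):
    n = len(x)
    return sum(x[i] * L[i][j] * x[j] for i in range(n) for j in range(n))

def _center_and_normalize(x):
    mean = sum(x) / len(x)
    x = [xi - mean for xi in x]               # project out the constant eigenvector
    norm = math.sqrt(sum(xi * xi for xi in x))
    return [xi / norm for xi in x]

def fiedler(L, iterations=500):
    """Estimate (lambda_2, Fiedler vector) of a connected graph by power iteration on c*I - L."""
    n = len(L)
    c = 2.0 * max(L[i][i] for i in range(n))  # upper bound on the eigenvalues of L
    x = [float(i) for i in range(n)]
    for _ in range(iterations):
        x = _center_and_normalize(x)
        x = [c * x[i] - sum(L[i][j] * x[j] for j in range(n)) for i in range(n)]
    x = _center_and_normalize(x)
    return quadratic_form(L, x), x

edges = [(0, 1), (1, 2), (2, 3)]              # path graph on 4 vertices
L = laplacian(4, edges)
signal = [0.3, -1.2, 2.0, 0.5]
lhs = quadratic_form(L, signal)
rhs = sum((signal[u] - signal[v]) ** 2 for u, v in edges)
lambda_2, fiedler_vector = fiedler(L)
print(lhs, rhs, lambda_2, fiedler_vector)
```

For this path graph the estimate converges to the known value 2 - √2, and the sign pattern of the Fiedler vector splits the path into its two halves, which is the basis of spectral bisection.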

Table 1: Fundamental Matrices in Spectral Graph Theory

| Matrix | Definition | Spectral Properties | Primary Applications |

|---|---|---|---|

| Adjacency Matrix ((A)) | (A_{uv} = 1) if (\{u,v\} \in E), 0 otherwise | Real eigenvalues for undirected graphs (since (A) is symmetric); Largest eigenvalue relates to network connectivity | Graph isomorphism testing; Network centrality measures; Dynamic modeling |

| Laplacian Matrix ((L)) | (L = D - A) where (D) is degree matrix | Non-negative eigenvalues; Multiplicity of zero eigenvalue equals connected components | Clustering/partitioning; Diffusion processes; Stability analysis |

| Normalized Laplacian | (L_{norm} = D^{-1/2}LD^{-1/2}) | Eigenvalues between 0 and 2 | Random walks; Spectral clustering with degree normalization |

The Cheeger inequality establishes a crucial bridge between spectral properties and structural bottlenecks in graphs:

[ \frac{\lambda_2}{2} \leq h(G) \leq \sqrt{2 d \lambda_2} ]

where (h(G)) is the Cheeger constant measuring the "bottleneckedness" of the graph, (\lambda_2) is the algebraic connectivity defined above, and (d) is the maximum vertex degree [19]. For a (d)-regular graph the bound is often written in terms of the second-largest adjacency eigenvalue, which equals (d - \lambda_2). The inequality shows how the spectral gap controls flow through a network, with direct implications for stability and connectivity in complex systems.
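As a sanity check, the bound can be verified by brute force on a small regular graph. The sketch below uses the six-vertex cycle, for which the Laplacian's second-smallest eigenvalue has the closed form 2 - 2cos(2π/6) = 1, so the second-largest adjacency eigenvalue is d - 1 = 1 and both ways of writing the inequality give the same bounds. The exhaustive subset search is an illustration only and scales exponentially with graph size.

```python
import math
from itertools import combinations

def cheeger_constant(n, edges):
    """Brute-force h(G) = min |boundary(S)| / |S| over subsets with 0 < |S| <= n/2."""
    best = math.inf
    for size in range(1, n // 2 + 1):
        for subset in combinations(range(n), size):
            inside = set(subset)
            boundary = sum(1 for u, v in edges if (u in inside) != (v in inside))
            best = min(best, boundary / size)
    return best

n = 6
edges = [(i, (i + 1) % n) for i in range(n)]    # cycle graph C6, 2-regular
d = 2
lambda_2 = 2 - 2 * math.cos(2 * math.pi / n)    # closed form for a cycle's Laplacian
h = cheeger_constant(n, edges)
lower = lambda_2 / 2
upper = math.sqrt(2 * d * lambda_2)
print(lower, h, upper)
```

The minimizing cut takes three consecutive vertices (two boundary edges), so h(G) = 2/3, which sits between the spectral lower bound of 0.5 and the upper bound of 2.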

Computational Methodologies and Experimental Protocols

Spectral Decomposition of Biological Networks

The application of spectral graph theory to brain networks illustrates a rigorous methodology for linking structure and function. The spectral graph model (SGM) of brain oscillations employs the following protocol [20]:

Network Construction:

- Extract structural connectomes from diffusion tensor imaging (DTI) followed by tractography algorithms

- Define nodes as gray matter regions and edges as white matter fiber connections between them

- Represent the network as a weighted graph with connection strengths derived from fiber densities

Laplacian Decomposition:

- Construct the graph Laplacian matrix (L_G = D - A) where (A) is the weighted adjacency matrix

- Perform eigen-decomposition: (L_G = \Phi \Lambda \Phi^T)

- Interpret eigenmodes as fundamental patterns of neural synchronization

Frequency Domain Analysis:

- Model neural oscillations as linear superpositions of eigenmodes

- Derive network transfer function in Fourier domain via eigen-basis expansion

- Validate against source-localized magnetoencephalography (MEG) recordings

This approach successfully predicted both spatial and spectral patterns of alpha-band (8-12 Hz) and beta-band (15-30 Hz) activity in empirical MEG data, demonstrating that certain brain oscillations emerge directly from the structural connectome's spectral properties [20].

SPECTRA Framework for Molecular Property Prediction

The SPECTRA (Spectral Target-Aware Graph Augmentation) framework addresses imbalanced regression in molecular property prediction through spectral domain operations [21]:

Graph Representation:

- Reconstruct multi-attribute molecular graphs from SMILES strings

- Represent atoms as nodes and bonds as edges with chemical attributes

Spectral Alignment:

- Align molecule pairs via (Fused) Gromov-Wasserstein couplings to establish node correspondences

- Project graphs into shared spectral basis using Laplacian eigenvectors

Spectral Interpolation:

- Interpolate Laplacian eigenvalues and eigenvectors of matched graphs

- Interpolate node features in the shared spectral basis

- Reconstruct edges to synthesize chemically plausible molecular structures

Rarity-Aware Augmentation:

- Apply kernel density estimation to target property distribution

- Concentrate augmentation in sparse regions of target space

- Generate synthetic molecules with interpolated properties

This spectral augmentation approach maintains topological fidelity while addressing data imbalance, outperforming standard Graph Neural Networks (GNNs) that typically optimize for average error across the full label distribution [21].
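The rarity-aware step can be illustrated in isolation. The sketch below is not the SPECTRA implementation: it only shows one plausible way to turn a kernel density estimate of the target distribution into sampling weights that favor sparse regions. The bandwidth, the inverse-density weighting, and the toy potency values are assumptions made for the example.

```python
import math

def gaussian_kde(samples, bandwidth):
    """Return a function estimating the density of 1-D `samples`."""
    norm = 1.0 / (len(samples) * bandwidth * math.sqrt(2.0 * math.pi))
    def density(y):
        return norm * sum(math.exp(-0.5 * ((y - s) / bandwidth) ** 2) for s in samples)
    return density

def rarity_weights(targets, bandwidth=0.5):
    """Sampling weights inversely proportional to the estimated target density."""
    density = gaussian_kde(targets, bandwidth)
    inverse = [1.0 / density(y) for y in targets]
    total = sum(inverse)
    return [w / total for w in inverse]

# Hypothetical potency labels: many mid-range values, one rare high value.
targets = [5.0, 5.1, 5.2, 4.9, 5.0, 5.3, 8.5]
weights = rarity_weights(targets)
print([round(w, 3) for w in weights])
```

The isolated high-potency molecule receives by far the largest weight, so an augmentation loop that samples anchor molecules according to these weights would concentrate new graphs in the sparse part of the label range.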

Figure 1: SPECTRA Framework Workflow for Spectral Graph Augmentation

Stability Analysis in Complex Networks with Delays

The analysis of stability regions in complex networks with multiple delays employs sophisticated spectral techniques [11]:

Network Modeling:

- Represent the controlled network as a delay differential equation system

- Linearize around equilibria to obtain characteristic equations

Spectral Stability Criteria:

- Derive the characteristic equation incorporating multiple delays

- Identify stability switching curves in the delay parameter space

- Compute purely imaginary eigenvalues that define critical boundaries

Stability Region Mapping:

- Determine the stability region in the (\tau_1)-(\tau_2) plane bounded by critical curves

- Analyze Hopf bifurcations along stability boundaries

- Characterize supercritical and subcritical bifurcation directions

This methodology revealed that a two-dimensional complex network with delayed feedback control exhibits a stability region surrounded by five critical curves in the delay parameter space, with chaotic solutions emerging when parameters move away from the stability region [11].
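The notion of a critical delay can be illustrated with a much simpler scalar analogue, which is not the two-delay network studied in [11]. For x'(t) = -a x(t - τ), the characteristic equation λ + a e^{-λτ} = 0 has a purely imaginary root λ = i a exactly when τ = π/(2a), so the equilibrium is stable for shorter delays and unstable for longer ones. The sketch below confirms this by Euler integration; the step size, time horizon, and constant initial history are arbitrary choices.

```python
import math

def simulate_delay_decay(a, tau, dt=0.01, t_end=60.0):
    """Euler integration of x'(t) = -a * x(t - tau) with history x = 1 for t <= 0."""
    lag = round(tau / dt)
    steps = round(t_end / dt)
    x = [1.0]
    for n in range(steps):
        delayed = x[n - lag] if n >= lag else 1.0   # use the constant history before t = tau
        x.append(x[n] - dt * a * delayed)
    return x

def late_amplitude(x, dt=0.01, window=10.0):
    """Largest |x| over the final `window` time units of the trajectory."""
    tail = x[-round(window / dt):]
    return max(abs(v) for v in tail)

a = 1.0
critical_delay = math.pi / (2 * a)                  # stability boundary for this toy system
amp_stable = late_amplitude(simulate_delay_decay(a, tau=1.0))
amp_unstable = late_amplitude(simulate_delay_decay(a, tau=2.0))
print(critical_delay, amp_stable, amp_unstable)
```

A delay below the critical value gives decaying oscillations, while a delay above it gives growing ones. Stability switching curves generalize this single threshold to a boundary in the two-delay plane.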

Table 2: Key Parameters in Network Stability Analysis

| Parameter | Mathematical Symbol | Role in Stability Analysis | Experimental Range |

|---|---|---|---|

| Primary Delay | (\tau_1) | Represents inherent communication delay in the network | 0–5 time units (critical value at ~1.8) |

| Control Delay | (\tau_2) | Delay in feedback control mechanism | 0–5 time units (critical value at ~2.1) |

| Nonlinearity Strength | (\nu) | Measures strength of nonlinear interactions | 0.02 (weak nonlinearity) |

| Feedback Gain | (\alpha) | Control parameter regulating stability | Variable (stabilizing effect) |

| Algebraic Connectivity | (\lambda_2) | Spectral gap influencing convergence rate | Positive for connected graphs |

Applications in Biological Systems

Brain Network Dynamics

Spectral graph theory has revolutionized our understanding of structure-function relationships in the human brain. The fundamental insight that brain oscillations can be modeled as emergent properties of the structural connectome's graph spectrum has significant implications for both basic neuroscience and clinical applications [20]. The hierarchical linear spectral graph model demonstrates that:

- Eigenmodes of the structural Laplacian serve as spatial patterns for neural synchronization

- Frequency spectra of brain oscillations are determined by the graph transfer function

- The model simultaneously reproduces empirical spatial and spectral patterns of alpha-band and beta-band activity observed in MEG

This approach provides a parsimonious analytical alternative to complex numerical simulations of high-dimensional coupled nonlinear neural field models, offering greater interpretability and predictive power for understanding how disease processes that perturb brain structure consequently impact neural function [20].

Molecular Property Prediction and Drug Discovery

In pharmaceutical applications, SPECTRA addresses the critical challenge of imbalanced molecular property regression, where the most valuable compounds (e.g., high potency) often occupy sparse regions of the target space [21]. Traditional GNNs optimized for average error typically underperform on these uncommon but critical cases. The spectral approach enables:

- Generation of realistic molecular graphs in the spectral domain while preserving chemical validity

- Targeted augmentation in underrepresented regions of the property space without distorting molecular topology

- Interpretation of synthetic molecules whose structure reflects underlying spectral geometry

This methodology maintains competitive overall mean absolute error while significantly improving prediction accuracy in pharmaceutically relevant target ranges, demonstrating particular value for early-stage drug discovery where data scarcity for promising compound classes is a major bottleneck [21].

Applications in Material Systems and Phase Stability

Phase Stability Analysis in Complex Networks

Spectral methods provide powerful tools for analyzing phase stability and transition behaviors in complex material systems. The study of delayed complex networks reveals how spectral properties determine stability regions and bifurcation boundaries [11]. Key findings include:

- Equilibrium stability can be achieved in otherwise unstable networks through appropriate delayed feedback control

- Stability regions in the delay parameter space are bounded by critical curves defined by spectral properties

- The transition from stable equilibria to chaotic behavior follows specific pathways mediated by spectral characteristics

For a two-dimensional complex network with delayed feedback control, the stability region in the (\tau_1)-(\tau_2) plane is surrounded by five critical curves, with supercritical Hopf bifurcations occurring along certain boundary segments and subcritical bifurcations along others [11]. This detailed mapping of stability landscapes has direct relevance for understanding phase behavior in material systems.

Figure 2: Phase Stability Analysis Framework Using Spectral Methods

Material Property Prediction

Spectral graph approaches are increasingly applied to predict material properties and behaviors by representing material structures as graphs. While the sources cited here focus primarily on biological applications, the methodologies parallel those used in materials informatics:

- Crystal structures represented as graphs with atoms as nodes and bonds as edges

- Spectral descriptors capturing global connectivity patterns in material architectures

- Prediction of phase stability, conductivity, and mechanical properties from spectral features

The success of spectral methods in molecular property prediction suggests similar potential for material design and discovery, particularly for identifying materials with exceptional properties that may reside in sparsely sampled regions of the design space.

Table 3: Essential Research Reagents and Computational Tools for Spectral Graph Analysis

| Resource Category | Specific Tools/Reagents | Function/Purpose | Application Context |
|---|---|---|---|
| Network Construction | DTI Tractography; Molecular Graph Converters | Constructs structural networks from raw data; Converts SMILES to molecular graphs | Brain connectome mapping; Molecular representation |
| Spectral Decomposition | ARPACK; LOBPCG; LAPACK | Computes eigenvalues/eigenvectors of large sparse matrices (ARPACK, LOBPCG) and dense matrices (LAPACK) | All spectral graph applications |
| Graph Neural Networks | Chebyshev Convolutional Networks; Spectral GNNs | Implements graph convolutions in spectral domain | Molecular property prediction; Network dynamics |
| Stability Analysis | DDE-BIFTOOL; TraceDDE | Analyzes stability and bifurcations in delay systems | Network stability assessment |
| Data Augmentation | SPECTRA Framework | Performs spectral interpolation for graph augmentation | Imbalanced regression tasks |
| Visualization | Graphviz; Cytoscape; Gephi | Visualizes complex networks and spectral embeddings | All application domains |

Spectral graph theory continues to evolve, with several promising research directions emerging at the intersection of biological and material systems analysis. Future developments will likely focus on:

- Dynamic Spectral Methods: Extending spectral analysis to time-varying graphs that capture evolving network structures [19]

- Multiscale Approaches: Integrating spectral information across spatial and temporal scales for hierarchical systems

- Nonlinear Spectral Theory: Developing spectral methods capable of capturing nonlinear dynamics while maintaining analytical tractability

- Spectral Transfer Learning: Leveraging spectral features to transfer knowledge across different types of biological and material networks

The integration of spectral graph theory with universal phase stability network research provides a powerful unified framework for understanding complex systems across disciplines. By revealing the fundamental connection between structural topology and functional dynamics through the graph spectrum, this approach enables deeper theoretical insights and more accurate predictions of system behavior. As spectral methods continue to advance, they will play an increasingly vital role in addressing challenges in network medicine, materials design, and complex systems engineering.

The demonstrated success of spectral approaches in predicting brain dynamics from structural connectomes [20], stabilizing complex networks through delayed feedback control [11], and addressing imbalanced regression in molecular property prediction [21] underscores the transformative potential of spectral graph theory as a unifying mathematical language for complex system analysis across scientific domains.

Network-Driven Discovery: Predictive Methods for Drugs and Materials

Network-Based Prediction of Clinically Efficacious Drug Combinations

The pursuit of effective drug combinations is a cornerstone of modern therapeutics, particularly for complex diseases like cancer and metabolic disorders. This section details a network-based methodology for predicting clinically efficacious drug combinations, framed within Universal Phase Stability Network (UPSN) theory. The UPSN framework posits that cellular states can be modeled as stable attractors within a high-dimensional network, and that disease states represent alternative stable phases. Drug combinations can then be designed to perturb the network so that it transitions from a diseased state back to a healthy one.

Theoretical Foundation: Universal Phase Stability Networks

The UPSN model represents the interactome as a dynamic graph \( G = (V, E, W, \Phi) \), where:

- \( V \): Set of nodes (e.g., proteins, genes).

- \( E \): Set of edges (e.g., protein-protein interactions, regulatory relationships).

- \( W \): Weight matrix representing interaction strengths.

- \( \Phi \): A set of differential equations defining the system's dynamics.

A clinically efficacious combination is one that maximally destabilizes the disease attractor state while preserving the stability of the healthy state.

Data Integration and Network Construction

A multi-scale network is constructed by integrating diverse datasets. The core data types and their sources are summarized below.

Table 1: Core Data Sources for Network Construction

| Data Type | Source / Database | Description | Use Case |
|---|---|---|---|
| Protein-Protein Interactions (PPI) | STRING, BioGRID | Physical and functional interactions between proteins. | Backbone of the network. |
| Signaling Pathways | KEGG, Reactome | Curated pathways of molecular interactions. | Annotate functional modules. |
| Gene Co-expression | GTEx, TCGA | Correlation of gene expression across samples. | Infer context-specific functional links. |
| Drug-Target Interactions | DrugBank, ChEMBL | Known and predicted interactions between drugs and proteins. | Map therapeutic interventions onto the network. |
| Genetic Interactions (SL) | SynLethDB, OGEE | Synthetic lethality and other genetic interactions. | Identify co-dependency for combination targeting. |

Prediction Algorithm: Synergistic Perturbation Index (SPI)

The core algorithm calculates a Synergistic Perturbation Index (SPI) for a drug pair (A, B).

Workflow:

- Network Propagation: Simulate the effect of drug A alone, drug B alone, and the combination A+B on the network using a random walk with restart (RWR) algorithm to identify each perturbation footprint.

- Phase Stability Analysis: For each perturbation footprint, compute the stability energy \( \Delta E \) of the disease state using a Lyapunov function derived from UPSN theory.

- Synergy Calculation: The SPI is calculated as: \( \mathrm{SPI}_{A,B} = \frac{\Delta E_{A+B} - (\Delta E_A + \Delta E_B)}{|\Delta E_A + \Delta E_B|} \). With negative \( \Delta E \) denoting destabilization, a negative SPI indicates synergistic destabilization of the disease state.
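The network propagation step can be sketched with a standard random walk with restart. The snippet below is illustrative only: the five-node path network, the restart probability of 0.3, and the choice of seed nodes as drug targets are assumptions, and the Lyapunov-based stability energy is not implemented because the text does not give its functional form.

```python
import numpy as np

def random_walk_with_restart(adjacency, seeds, restart=0.3, tol=1e-10, max_iter=1000):
    """Iterate p <- (1 - r) * T @ p + r * p0 until the L1 change falls below tol."""
    adjacency = np.asarray(adjacency, dtype=float)
    col_sums = adjacency.sum(axis=0)
    col_sums[col_sums == 0] = 1.0          # avoid division by zero for isolated nodes
    transition = adjacency / col_sums      # column-stochastic transition matrix T
    p0 = np.zeros(adjacency.shape[0])
    p0[list(seeds)] = 1.0 / len(seeds)     # restart mass spread evenly over drug targets
    p = p0.copy()
    for _ in range(max_iter):
        p_next = (1 - restart) * transition @ p + restart * p0
        if np.abs(p_next - p).sum() < tol:
            return p_next
        p = p_next
    return p

# Hypothetical 5-protein path network 0-1-2-3-4.
A = np.zeros((5, 5))
for i in range(4):
    A[i, i + 1] = A[i + 1, i] = 1.0

footprint_a = random_walk_with_restart(A, seeds=[0])      # drug A targets node 0
footprint_b = random_walk_with_restart(A, seeds=[4])      # drug B targets node 4
footprint_ab = random_walk_with_restart(A, seeds=[0, 4])  # combination A+B
```

Because RWR is linear in the seed vector, the combined footprint in this sketch is simply the average of the two single-drug footprints. Any nonzero SPI therefore has to come from a nonlinear stability analysis applied to these footprints, not from the propagation step itself.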

Diagram Title: Synergistic Perturbation Index Workflow

Experimental Validation Protocol

In vitro validation is critical. The following protocol details a high-throughput screening method.

Protocol: High-Content Screening for Drug Synergy

Objective: To experimentally validate predicted synergistic drug combinations in a cancer cell line model.

Materials:

- Cell line: e.g., A549 (lung carcinoma).

- Predicted drug combinations (from SPI analysis).

- Individual drugs dissolved in DMSO.

- 384-well cell culture plates.

- High-content imaging system (e.g., ImageXpress Micro).

- Viability stain (e.g., Calcein AM) and apoptosis stain (e.g., Caspase-3/7 dye).

Procedure:

- Cell Seeding: Seed A549 cells at 2,000 cells/well in 384-well plates. Incubate for 24 hours.

- Drug Treatment: Treat cells with a matrix of drug concentrations (e.g., 8x8 serial dilutions) for each single agent and combination. Include DMSO-only controls.

- Staining: After 72 hours, stain cells with Calcein AM (2 µM) and Caspase-3/7 dye (1 µM). Incubate for 1 hour.

- Imaging: Acquire 4 images per well using a 10x objective.

- Image Analysis: Quantify total cell count (viability) and Caspase-3/7 positive cells (apoptosis) using automated image analysis software.

- Data Analysis: Calculate combination indices (CI) using the Chou-Talalay method via software like CompuSyn. CI < 1 indicates synergy, CI = 1 additivity, and CI > 1 antagonism.
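For orientation, the combination index in the final step can be sketched from the median-effect equation, \( f_a / (1 - f_a) = (D / D_m)^m \). The snippet assumes the median-effect dose \( D_m \) and slope \( m \) of each single agent have already been fitted from the dose-response data; the numerical values are hypothetical, and this is not a substitute for CompuSyn's full analysis.

```python
def dose_for_effect(fa, dm, m):
    """Invert the median-effect equation fa / (1 - fa) = (D / Dm)**m for the dose D."""
    if not 0.0 < fa < 1.0:
        raise ValueError("fraction affected must lie strictly between 0 and 1")
    return dm * (fa / (1.0 - fa)) ** (1.0 / m)

def combination_index(fa, d1, d2, dm1, m1, dm2, m2):
    """Chou-Talalay CI for doses (d1, d2) that together produce effect fa."""
    return d1 / dose_for_effect(fa, dm1, m1) + d2 / dose_for_effect(fa, dm2, m2)

# Hypothetical single-agent fits: Dm = 1.0 and 2.0 (same units as the doses), m = 1.
# The combination of 0.25 + 0.5 is assumed to reach 50% effect (fa = 0.5).
ci_synergy = combination_index(0.5, d1=0.25, d2=0.5, dm1=1.0, m1=1.0, dm2=2.0, m2=1.0)
ci_additive = combination_index(0.5, d1=0.5, d2=1.0, dm1=1.0, m1=1.0, dm2=2.0, m2=1.0)
print(ci_synergy, ci_additive)
```

Here the first combination needs only half of the dose that additivity would predict, giving CI = 0.5, while the second sits exactly on the additivity line with CI = 1.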

Key Signaling Pathways for Combination Therapy

Prime targets for network-based prediction are the PI3K/AKT/mTOR and MAPK signaling axes, which are often dysregulated in cancer.

Diagram Title: PI3K-MAPK Pathway and Drug Inhibition

Table 2: Example Quantitative Output from SPI Analysis

| Drug A (Target) | Drug B (Target) | ΔE_A | ΔE_B | ΔE_A+B | SPI | Prediction |
|---|---|---|---|---|---|---|
| PI3K Inhibitor (PI3K) | MEK Inhibitor (MEK) | -0.45 | -0.38 | -1.15 | -0.39 | Strong Synergy |
| mTOR Inhibitor (mTOR) | BCL-2 Inhibitor (BCL2) | -0.51 | -0.22 | -0.68 | +0.07 | Additive |
| EGFR Inhibitor (EGFR) | CDK4/6 Inhibitor (CDK4) | -0.33 | -0.41 | -0.60 | +0.19 | Antagonism |
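The SPI column of Table 2 can be reproduced directly from the three stability energies in each row. The short check below uses only the values in the table; the function name and the pair labels are illustrative.

```python
def synergistic_perturbation_index(de_a, de_b, de_ab):
    """SPI = (dE_AB - (dE_A + dE_B)) / |dE_A + dE_B|."""
    expected = de_a + de_b  # additive expectation from the single agents
    if expected == 0:
        raise ValueError("SPI is undefined when the single-agent energies sum to zero")
    return (de_ab - expected) / abs(expected)

# (pair label, dE_A, dE_B, dE_A+B) copied from Table 2.
rows = [
    ("PI3K + MEK", -0.45, -0.38, -1.15),
    ("mTOR + BCL-2", -0.51, -0.22, -0.68),
    ("EGFR + CDK4/6", -0.33, -0.41, -0.60),
]

spi_values = {
    name: round(synergistic_perturbation_index(de_a, de_b, de_ab), 2)
    for name, de_a, de_b, de_ab in rows
}
print(spi_values)
```

For the first row, the additive expectation is -0.83, the observed combined energy is -1.15, and the excess of -0.32 divided by 0.83 gives an SPI of about -0.39.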

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Experimental Validation

| Reagent / Material | Supplier Examples | Function |
|---|---|---|
| Calcein AM | Thermo Fisher, BioLegend | Cell-permeant dye used as a marker of viability. Fluoresces upon enzymatic conversion by live cells. |
| Caspase-3/7 Dye | Promega, AAT Bioquest | Fluorogenic substrate for activated caspases-3 and -7, serving as an apoptosis marker. |
| 384-well Cell Culture Plates | Corning, Greiner Bio-One | Microplates for high-throughput cell-based assays, minimizing reagent use. |
| DMSO (Cell Culture Grade) | Sigma-Aldrich, Tocris | Universal solvent for reconstituting small molecule drugs. |
| High-Content Imaging System | Molecular Devices, Cytiva | Automated microscope for acquiring and analyzing cellular images in multi-well plates. |
| CompuSyn Software | ComboSyn Inc. | Calculates Combination Index (CI) and Dose Reduction Index (DRI) from dose-effect data. |

Classifying Drug-Drug-Disease Interactions for Targeted Therapy

The advent of complex network theory has revolutionized the analysis of intricate systems across diverse scientific domains, from social networks to materials science. Within pharmacology, this paradigm shift enables a systematic approach to understanding how drugs interact not only with each other but also with the complex disease states they aim to treat. The classification of drug-drug-disease (DDD) interactions represents a critical frontier in developing more precise and effective targeted therapies. By framing therapeutic interventions within the context of network topology and interaction dynamics, researchers can move beyond single-target models to embrace the inherent complexity of biological systems. This approach draws inspiration from universal phase stability networks in materials science, where the stability and reactivity of thousands of materials are understood through their positions within a vast network of thermodynamic relationships [3]. Similarly, DDD interactions can be modeled as a multi-layered network where therapeutic efficacy and adverse events emerge from the interplay between pharmacological agents and pathological states.

The integration of network theory with pharmacological science enables a more sophisticated understanding of treatment outcomes. Where traditional pharmacology often focuses on single drug-disease pairs, the DDD interaction framework acknowledges that most patients, particularly those with complex chronic conditions, receive multiple medications simultaneously, creating a network of interactions that can significantly alter therapeutic outcomes [24] [25]. This is especially relevant in clinical contexts such as oncology, cardiology, and geriatrics, where polypharmacy is prevalent and the risk of adverse events rises steeply with each additional medication. By classifying and understanding these interactions through the lens of network science, researchers and clinicians can better predict, manage, and leverage these complex relationships for improved patient care.

Theoretical Foundations: From Material Networks to Biological Systems

The conceptual framework for analyzing DDD interactions through network theory finds a compelling analogue in the universal phase stability network of inorganic materials. In this materials network, thermodynamically stable compounds (nodes) are interconnected by tie-lines (edges) representing stable two-phase equilibria, forming a remarkably dense and interconnected system with a characteristic path length of L = 1.8 and diameter Lmax = 2 [3]. This network exhibits distinctive topological properties, including a lognormal degree distribution and weakly disassortative mixing behavior, where highly connected nodes tend to link with less connected ones.

Translating these principles to pharmacology, drugs and diseases can be conceptualized as nodes within a bipartite network, where edges represent known therapeutic relationships [26]. The connectance (fraction of possible edges present) and clustering coefficients of such networks provide insights into the density of known therapeutic relationships and the propensity for local clustering of treatments for related diseases. This network-based perspective enables the application of link prediction algorithms to identify potential drug repurposing opportunities by predicting missing edges in the drug-disease network [26]. The hierarchical organization observed in materials networks, where mean degree decreases with component number, finds its pharmacological equivalent in the increasing complexity of drug-drug-disease interactions compared to simple drug-disease relationships.
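A minimal sketch of these ideas on a toy bipartite network follows. The drug and disease labels are placeholders, not real indications, and the neighbor-similarity score is just one simple link-prediction heuristic, assumed here for illustration and not taken from [26].

```python
from itertools import product

# Hypothetical known drug-disease edges (placeholders, not real indications).
edges = {
    ("drug_a", "disease_x"), ("drug_a", "disease_y"),
    ("drug_b", "disease_x"), ("drug_b", "disease_y"), ("drug_b", "disease_z"),
    ("drug_c", "disease_z"),
}
drugs = sorted({drug for drug, _ in edges})
diseases = sorted({disease for _, disease in edges})

def connectance(edges, drugs, diseases):
    """Fraction of possible drug-disease edges present in the bipartite network."""
    return len(edges) / (len(drugs) * len(diseases))

def indications(drug):
    """Set of diseases currently linked to a drug."""
    return {disease for d, disease in edges if d == drug}

def jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def link_score(drug, disease):
    """Sum the similarity of `drug` to every other drug already linked to `disease`."""
    neighbours = [d for d in drugs if d != drug and (d, disease) in edges]
    return sum(jaccard(indications(drug), indications(d)) for d in neighbours)

# Rank all missing edges as repurposing candidates, highest score first.
candidates = [pair for pair in product(drugs, diseases) if pair not in edges]
ranked = sorted(((pair, link_score(*pair)) for pair in candidates),
                key=lambda item: (-item[1], item[0]))
print(connectance(edges, drugs, diseases), ranked)
```

In this toy network, `drug_a` shares two of three indications with `drug_b`, which already treats `disease_z`, so the missing edge (`drug_a`, `disease_z`) ranks first.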

Table 1: Key Network Metrics from Universal Phase Stability Networks and Their Pharmacological Analogues

| Network Metric | Materials Science Context | Pharmacological Analogue |
|---|---|---|
| Mean Degree (⟨k⟩) | ~3850 tie-lines per compound | Number of known interactions per drug/disease |
| Characteristic Path Length (L) | 1.8 (small-world network) | Degrees of separation between drugs/diseases |
| Assortativity Coefficient | -0.13 (weakly disassortative) | Tendency for drugs to interact with diseases of similar complexity |
| Clustering Coefficient (Cg) | 0.41 (highly clustered) | Propensity for related diseases to share treatments |

A Classification Framework for DDD Interactions

Primary Interaction Mechanisms

DDD interactions can be systematically classified into distinct categories based on their underlying mechanisms and clinical manifestations. Understanding these categories is essential for predicting therapeutic outcomes and avoiding adverse events.

Pharmacodynamic Duplication and Opposition