The New Alchemy: How AI and Automation Are Revolutionizing the Discovery of Inorganic Crystalline Materials


Abstract

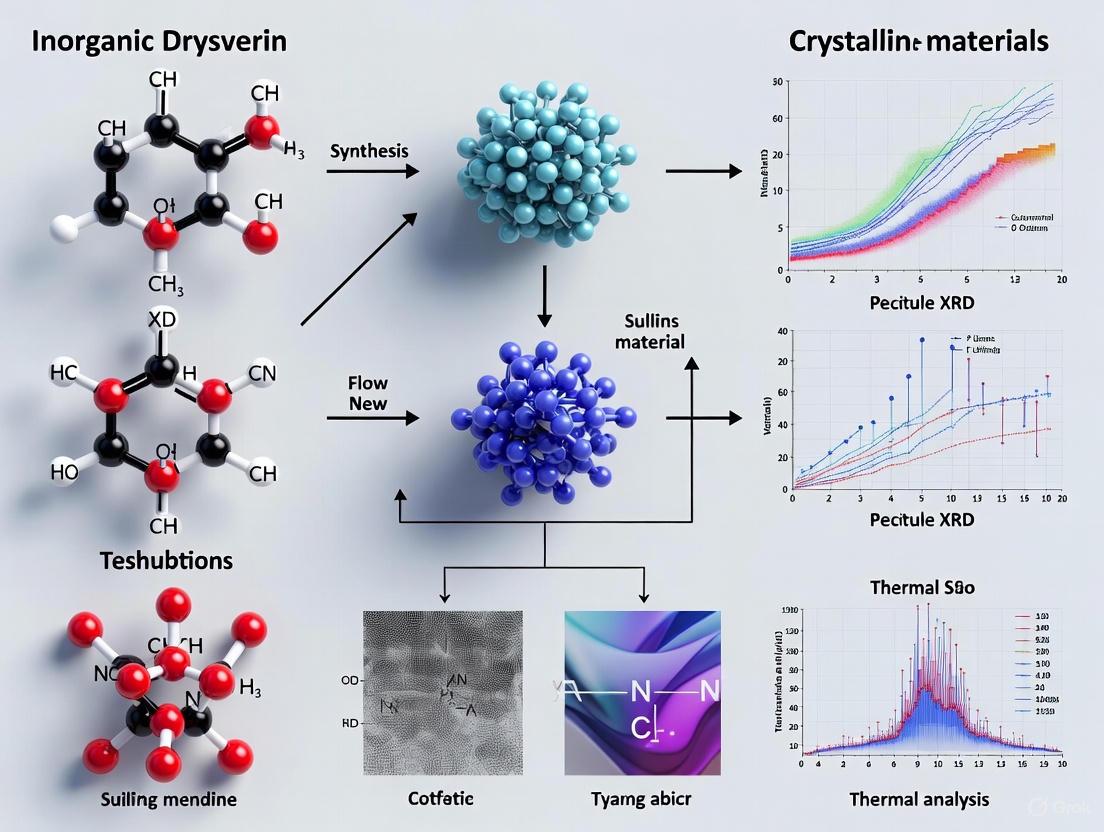

The discovery of inorganic crystalline materials is undergoing a paradigm shift, moving from serendipitous finds to a targeted, data-driven science. This article explores the foundational principles mapping the vast chemical space, the breakthrough AI models like GNoME and MatterGen generating millions of candidates, and the critical challenges of synthesizability and practicality. We examine how machine learning models now rival or surpass human experts in predicting stable compounds and how robotic labs are closing the loop from prediction to synthesis. For researchers in drug development and beyond, these advances promise to accelerate the creation of next-generation materials for energy, electronics, and medicine, provided the field can overcome hurdles in validation, scalability, and the integration of human chemical intuition.

Mapping the Unknown: Charting the Vast Combinatorial Space of Inorganic Crystals

The discovery of new inorganic crystalline materials is a cornerstone of technological advancement, driving innovations in areas from energy storage and catalysis to semiconductor design and carbon capture [1]. The fundamental challenge in this field, often termed the "needle in a haystack" problem, stems from the astronomical scale of possible compositions and structures. The combinatorial search space arising from the interplay of structural, chemical, and microstructural degrees of freedom is vast, with only a tiny fraction having been experimentally investigated [2]. This article delineates the scale of this challenge, quantifying the search space, and details the advanced computational strategies developed to navigate it efficiently.

Quantifying the Combinatorial Challenge

The scale of the challenge is not merely large; it grows combinatorially with the number of elements and structural degrees of freedom. High-throughput explorations of unknown crystalline materials have typically covered on the order of 10^6 to 10^7 materials, which represents only a minuscule fraction of the potentially stable inorganic compounds [1]. This immense space arises from several combinatorial factors:

- Elemental Diversity: With 118 known elements, roughly 80 of which have at least one stable isotope, the number of possible multi-element combinations grows rapidly.

- Structural Configurations: For any given chemical composition, atoms can arrange themselves in a multitude of crystal structures, each defined by a space group, lattice parameters, and atomic coordinates (Wyckoff positions) [3] [4].

- Compositional Ratios: Even for a fixed set of elements, the stoichiometric ratios can vary, further expanding the possibilities.
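To make the growth in the list above concrete, the short sketch below counts only the distinct element sets (ignoring stoichiometry and structure) that can be drawn from a pool of elements. The pool size of 80 is an illustrative assumption, not a figure from the cited sources, and `count_element_sets` is a hypothetical helper name.

```python
from math import comb


def count_element_sets(n_elements: int, max_components: int) -> dict:
    """Return {k: number of distinct k-element sets} for k = 1..max_components."""
    return {k: comb(n_elements, k) for k in range(1, max_components + 1)}


# Assumption: a pool of 80 usable elements, chosen only for illustration.
counts = count_element_sets(80, 5)
for k, n in counts.items():
    print(f"{k}-component chemical systems: {n:,}")
```

Each of these chemical systems then multiplies further once stoichiometric ratios and crystal structures are considered, which is why exhaustive enumeration is impractical.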

This vastness makes traditional discovery methods, which rely on human intuition and trial-and-error experimentation, fundamentally inadequate. The following table summarizes the quantitative scale of the problem and current computational capabilities.

Table 1: Scale of the Materials Discovery Challenge and Generative Model Performance

| Aspect | Quantitative Measure | Reference / Context |
|---|---|---|
| Explored Search Space | On the order of 10^6 to 10^7 materials screened | State of high-throughput screening efforts [1] |
| Total Potential Space | Billions of potentially stable inorganic compounds | Fraction of explored vs. potential materials [1] |
| Generative Model Success Rate | >78% of generated structures are stable (within 0.1 eV/atom of convex hull) | MatterGen model performance [1] |
| Novelty of Generated Structures | 61% of generated structures are new (not in existing databases) | MatterGen evaluation on Alex-MP-ICSD dataset [1] |
| Structural Relaxation Quality | 95% of structures have RMSD < 0.076 Å from their DFT-relaxed structures | MatterGen output proximity to DFT local energy minimum [1] |
| Benchmark Prediction Accuracy | 93.3% accuracy in crystal structure prediction | ShotgunCSP benchmark on 90 different crystal structures [4] |
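The stability criterion in the table (energy within 0.1 eV/atom of the convex hull) can be illustrated for the simplest case, a binary system A(1-x)B(x). The sketch below is a minimal pure-Python version under stated assumptions: the reference phases and the candidate are made-up numbers, the function names are hypothetical, and real workflows use multi-component hulls built from DFT energies.

```python
def lower_hull(phases):
    """Lower convex hull of (x, formation energy per atom) points, x in [0, 1]."""
    best = {}
    for x, e in phases:  # keep only the lowest-energy phase at each composition
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        # Drop the last vertex while it lies on or above the line hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull


def energy_above_hull(x, energy, hull):
    """Vertical distance (eV/atom) from a candidate to the hull at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return energy - (y1 + (y2 - y1) * (x - x1) / (x2 - x1))
    raise ValueError("composition must lie between the hull end points")


# Hypothetical reference phases: pure A, pure B, and two compounds.
REFERENCE_PHASES = [(0.0, 0.0), (0.25, -0.10), (0.5, -0.40), (1.0, 0.0)]
hull = lower_hull(REFERENCE_PHASES)

e_hull = energy_above_hull(0.75, -0.15, hull)  # hypothetical candidate
print(f"energy above hull: {e_hull:.3f} eV/atom, within threshold: {e_hull <= 0.1}")
```

Note that the x = 0.25 reference phase sits above the tie line between pure A and the x = 0.5 compound, so it is not a hull vertex even though its formation energy is negative.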

Methodological Frameworks for Navigating the Search Space

Generative Models for Inverse Design

A paradigm shift from high-throughput screening to inverse design has been enabled by generative models. These models directly generate candidate material structures that satisfy target property constraints, thereby focusing computational resources on the most promising regions of the search space [1].

MatterGen: A Diffusion-Based Foundational Model

MatterGen is a diffusion model specifically tailored for designing crystalline materials across the periodic table [1]. Its methodology involves:

- Pretraining: A base model is trained on a large, diverse dataset of stable structures (e.g., Alex-MP-20 with 607,683 structures) to learn the underlying principles of inorganic crystals.

- Customized Diffusion Process: The model defines a crystalline material by its unit cell (atom types A, coordinates X, and periodic lattice L) and uses a corruption process that respects periodic boundaries and crystal symmetries.

- Fine-Tuning with Adapters: The base model can be fine-tuned on smaller, property-specific datasets using adapter modules. This allows the generation of materials with desired chemical composition, symmetry, and target properties (e.g., magnetic density, electronic properties) [1].
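One ingredient of a corruption process that respects periodic boundaries can be sketched in a few lines: noise is added to fractional coordinates and the result is wrapped back into the unit cell. This is only an illustration of the idea, not MatterGen's actual noising scheme; the function names, the noise level, and the two-atom cell are assumptions.

```python
import math
import random


def wrap(value):
    """Map a fractional coordinate into [0, 1), guarding against rounding to 1.0."""
    wrapped = value % 1.0
    return 0.0 if wrapped == 1.0 else wrapped


def corrupt_fractional_coords(frac_coords, sigma, rng):
    """Add Gaussian noise to each fractional coordinate, then wrap periodically."""
    return [tuple(wrap(c + rng.gauss(0.0, sigma)) for c in site) for site in frac_coords]


def periodic_distance(a, b):
    """Distance in fractional units using the nearest periodic image per axis."""
    return math.sqrt(sum(min(abs(x - y), 1.0 - abs(x - y)) ** 2 for x, y in zip(a, b)))


rng = random.Random(0)  # fixed seed so the sketch is reproducible
clean = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.99)]  # hypothetical two-atom cell
noisy = corrupt_fractional_coords(clean, sigma=0.1, rng=rng)
for before, after in zip(clean, noisy):
    print(before, "->", tuple(round(c, 3) for c in after))
```

Because of the wrap, an atom pushed past one face of the cell re-enters through the opposite face, so distances should be measured with the nearest periodic image, as `periodic_distance` does.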

Table 2: Key Research Reagents and Computational Tools for Materials Discovery

| Tool / Solution | Type | Primary Function | Relevance to the Challenge |
|---|---|---|---|
| MatterGen | Generative AI Model | Generates stable, diverse inorganic materials across the periodic table | Directly addresses the scale challenge via inverse design [1] |
| ShotgunCSP | Machine Learning Workflow | Performs high-throughput virtual screening of candidate crystal structures | Reduces the need for iterative DFT calculations, lowering computational cost [4] |
| Density Functional Theory (DFT) | Computational Method | Calculates electronic structure and energy of material systems | The "oracle" that validates stability and properties; used for final candidate refinement [1] [4] |
| CGCNN (Crystal Graph Convolutional Neural Network) | Machine Learning Model | Predicts formation energies of crystal structures | Acts as a surrogate for DFT to rapidly pre-screen millions of candidates [4] |
| VESTA | Visualization Software | 3D visualization of structural models and volumetric data | Enables researchers to analyze and interpret generated crystal structures [5] |
| MOCU (Mean Objective Cost of Uncertainty) | Experimental Design Framework | Quantifies which experiment will most reduce model uncertainty | Guides optimal experimental resource allocation in the vast search space [2] |

Shotgun Crystal Structure Prediction (ShotgunCSP)

The ShotgunCSP method approaches the problem as a high-throughput virtual screening task, significantly reducing computational demands compared to conventional iterative methods [4]. Its workflow is a useful example of a detailed computational protocol for navigating a vast space of candidate structures.

Detailed Protocol for ShotgunCSP [4]:

Energy Predictor Development:

- Pretraining: A Crystal Graph Convolutional Neural Network (CGCNN) is pretrained on a large dataset of diverse crystals with known DFT formation energies (e.g., 126,210 crystals from the Materials Project).

- Transfer Learning: For a target composition X, thousands of virtual crystal structures are randomly generated. Their formation energies are computed via single-point DFT calculations. This dataset is used to fine-tune the pretrained CGCNN, creating a specialized "local model" for accurately predicting energy differences between configurations of X.

Virtual Crystal Library Generation:

- Method 1 (ShotgunCSP-GT - Element Substitution): For a query composition, template crystal structures with the same composition ratio are collected from databases. Constituent elements are substituted, and atomic coordinates are slightly perturbed. A cluster-based selection (e.g., using DBSCAN on compositional descriptors) ensures template diversity and relevance.

- Method 2 (ShotgunCSP-GW - Wyckoff Position Generator): For novel compositions, crystal structures are generated de novo. A machine-learning predictor first suggests probable space groups and Wyckoff-letter assignments for the composition. The generator then creates symmetry-restricted atomic coordinates from all possible combinations of these Wyckoff positions.

Virtual Screening and Validation:

- The fine-tuned CGCNN model is used to predict the formation energies of millions of candidates in the virtual library.

- The most promising candidates (dozens to a hundred with the lowest predicted energies) are selected for full structural relaxation using DFT calculations, which serves as the final validation step.
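The final screening stage of the protocol above (score every candidate with a cheap surrogate, keep only the lowest-energy few for DFT relaxation) can be sketched as follows. The surrogate here is a toy stand-in for a fine-tuned CGCNN, and the candidate records, field names, and `screen_candidates` helper are all hypothetical.

```python
import heapq


def screen_candidates(candidates, predict_energy, top_k):
    """Return the top_k candidates with the lowest surrogate-predicted energy."""
    return heapq.nsmallest(top_k, candidates, key=predict_energy)


def toy_surrogate(candidate):
    """Placeholder energy model (eV/atom); a real workflow would call a trained network."""
    return (candidate["descriptor"] - 0.32) ** 2 - 1.0


# Hypothetical virtual library: each entry stands in for one generated structure.
library = [{"id": i, "descriptor": i / 10} for i in range(11)]

shortlist = screen_candidates(library, toy_surrogate, top_k=3)
for entry in shortlist:
    print(entry["id"], round(toy_surrogate(entry), 4))
```

Using `heapq.nsmallest` avoids sorting the whole library, which matters when the library holds millions of entries and only dozens are passed on to DFT.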

Optimal Experimental Design

The Mean Objective Cost of Uncertainty (MOCU) framework is a materials design strategy that integrates computational models with physical knowledge to guide experiments [2]. Instead of random probes, it systematically identifies which measurement (e.g., synthesizing a specific doped alloy) will most effectively reduce model uncertainty and steer the search towards materials with targeted properties.
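A small discrete example may help show how MOCU ranks experiments. The sketch assumes a finite set of candidate models with prior probabilities, a finite set of design choices, and experiments whose outcome is fully determined by the true model; the cost matrix and all names are hypothetical, and the cited framework [2] handles far richer settings.

```python
def mocu(prior, cost):
    """Expected extra cost of the robust design versus each model's own optimum.

    prior[i] is the probability of model i; cost[i][a] is the cost of design a
    if model i is the true one.
    """
    n_designs = len(cost[0])
    expected = [sum(p * row[a] for p, row in zip(prior, cost)) for a in range(n_designs)]
    robust = min(range(n_designs), key=expected.__getitem__)
    return sum(p * (row[robust] - min(row)) for p, row in zip(prior, cost))


def expected_remaining_mocu(prior, cost, outcome_of_model):
    """Average MOCU after an experiment whose outcome depends only on the true model."""
    total = 0.0
    for outcome in set(outcome_of_model):
        members = [i for i, o in enumerate(outcome_of_model) if o == outcome]
        p_outcome = sum(prior[i] for i in members)
        if p_outcome == 0.0:
            continue
        posterior = [prior[i] / p_outcome for i in members]
        total += p_outcome * mocu(posterior, [cost[i] for i in members])
    return total


# Hypothetical setup: three candidate models, two possible designs.
PRIOR = [0.5, 0.25, 0.25]
COST = [[0.0, 4.0], [3.0, 1.0], [5.0, 0.0]]
EXPERIMENTS = {
    "A": [0, 1, 1],  # separates model 0 from models 1 and 2
    "B": [0, 1, 0],  # separates model 1 from models 0 and 2
}

current = mocu(PRIOR, COST)
remaining = {name: expected_remaining_mocu(PRIOR, COST, o) for name, o in EXPERIMENTS.items()}
best = min(remaining, key=remaining.get)
print(f"current MOCU = {current:.2f}, remaining = {remaining}, run experiment {best}")
```

In this toy case experiment A removes all design-relevant uncertainty while experiment B leaves most of it, so A is the measurement worth making first.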

The challenge of enumerating billions of possible compositions in inorganic materials discovery is profound, but the development of sophisticated computational tools has created a viable path forward. Generative models like MatterGen enable direct inverse design, machine-learning surrogates like those in ShotgunCSP allow for exhaustive virtual screening at unprecedented scale, and optimal experimental design frameworks like MOCU intelligently guide resource allocation. While the combinatorial space remains astronomically large, these methodologies effectively map its most promising regions, dramatically accelerating the discovery of new functional materials that will power future technologies. The integration of AI-driven generative design, high-throughput computation, and targeted experimentation represents the modern, powerful toolkit for conquering the scale of the materials discovery challenge.

In the discovery of new inorganic crystalline materials, the initial screening and separation of chemical constituents often rely on the fundamental principles of filtration. Chemical filtration extends far beyond simple sieving; it is a complex process governed by interactions at the molecular and atomic levels, where concepts of charge neutrality and electronegativity play decisive roles [6]. As researchers develop advanced materials such as metal-organic frameworks (MOFs) for sustainable applications, understanding these filtration mechanisms becomes crucial for efficient materials synthesis and characterization [7]. This technical guide examines how filtration principles, particularly those involving electrostatic interactions and electronegativity differences, serve as critical first-pass methods in separating and preparing components for inorganic materials research, ultimately accelerating the discovery of novel crystalline compounds with tailored properties.

The paradigm of filtration has evolved from a mere mechanical separation technique to a sophisticated process that exploits subtle electrochemical gradients. In contemporary materials science, this approach enables researchers to selectively isolate intermediate compounds, purify precursor solutions, and engineer crystalline structures with specific functionality [7]. The integration of these principles is particularly relevant for developing next-generation materials like MOFs, where controlled assembly of metal ions and organic linkers dictates the resulting material's porosity, stability, and adsorption capacity [7].

Theoretical Foundations: From Macro-Separation to Atomic Interactions

The Dual Mechanisms of Filtration

Filtration physics is fundamentally divided into two sequential processes: transport and attachment [6]. Transport mechanisms deliver particles from the bulk suspension to the immediate vicinity of filter media, while attachment mechanisms secure them to the media surface.

Transport Mechanisms include [6]:

- Diffusion: Brownian motion moves particles randomly through collision with fluid molecules.

- Interception: Particles following fluid streamlines collide with filter media when their center of mass passes within one particle radius of the media surface.

- Inertia: Particles with sufficient mass deviate from streamlines to impact filter media.

- Sedimentation: Gravitational forces cause heavier particles to settle onto filter surfaces.

- Hydrodynamic action: Fluid flow patterns direct particles toward collection surfaces.

Attachment Mechanisms involve [6]:

- Electrostatic attraction between oppositely charged particles and filter media.

- van der Waals forces that operate at very short ranges.

- Chemical bonding through specific functional groups.

- Electronegativity potential differences that create localized charge imbalances.

The Electronegativity Framework in Chemical Bonding

Electronegativity, defined as an atom's ability to attract electrons in chemical bonds, directly influences filtration efficiency through charge distribution phenomena [8]. Since Pauling's initial formulation in 1932, electronegativity has been a cornerstone concept for predicting electron density rearrangements in molecular systems [8]. The modern understanding recognizes electronegativity as a multidimensional property that can be refined through artificial intelligence approaches analyzing vast chemical datasets [8].

In filtration contexts, electronegativity differences between particles and filter media create electrostatic potentials that significantly impact attachment efficiency [9]. Atoms with higher electronegativity (e.g., fluorine, oxygen, chlorine) create regions of negative electrostatic potential (shown in red in computational models), while less electronegative atoms generate positive potentials (blue in visualization models) [9]. These potential differences drive the initial attachment phase in chemical filtration systems designed for materials separation.

Table 1: Electronegativity Values and Their Impact on Filtration Interactions

| Element | Pauling Electronegativity | Electrostatic Potential | Filtration Relevance |
|---|---|---|---|
| Fluorine (F) | 3.98 | Strongly negative | Enhances capture of cationic species |
| Oxygen (O) | 3.44 | Negative | Effective for metal ion adsorption |
| Nitrogen (N) | 3.04 | Moderately negative | Intermediate binding affinity |
| Carbon (C) | 2.55 | Slightly negative | Baseline interaction potential |
| Hydrogen (H) | 2.20 | Slightly positive | Weak electrostatic attraction |
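As a small worked example, the Pauling values in the table can be turned into pairwise electronegativity differences, the quantity that indicates which atom of a bonded pair carries the partial negative charge. The helper name is hypothetical and the snippet uses only the five values tabulated above.

```python
# Pauling electronegativities copied from the table above.
PAULING = {"F": 3.98, "O": 3.44, "N": 3.04, "C": 2.55, "H": 2.20}


def polarity(a, b):
    """Return (difference, symbol of the more electronegative atom) for a bonded pair."""
    diff = abs(PAULING[a] - PAULING[b])
    negative_end = a if PAULING[a] >= PAULING[b] else b
    return round(diff, 2), negative_end


for pair in [("F", "H"), ("O", "H"), ("N", "H"), ("C", "H")]:
    diff, negative_end = polarity(*pair)
    print(f"{pair[0]}-{pair[1]}: difference {diff:.2f}, partial negative charge on {negative_end}")
```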

Advanced Filtration Media: Materials and Mechanisms

Electret Media in Advanced Separation

Electret media represent a significant advancement in filtration technology, employing electrically charged fibers to enhance particle collection through additional electrostatic mechanisms [10]. These media achieve higher filtration efficiencies while maintaining lower pressure drops compared to purely mechanical filters, making them particularly valuable for energy-efficient separation processes in materials research laboratories [10].

The electrostatic enhancement in electret media operates through three primary mechanisms [10]:

- Coulombic forces between charged fibers and oppositely charged particles

- Induction forces where charged fibers polarize nearby neutral particles

- Image forces where charged particles induce polarization in neutral fibers

The performance of electret media is quantified through the single fiber efficiency model, which accounts for these electrostatic contributions through parameters such as the Coulombic force parameter (Kc) and inductive force parameter (KIn) [10]. Recent research has demonstrated that these efficiencies vary with operational pressure, requiring modified models for accurate prediction under different research conditions [10].

Metal-Organic Frameworks as Molecular Filters

Metal-organic frameworks (MOFs) represent a revolutionary class of porous materials that function as "crystalline sponges" with molecular-level filtration capabilities [7]. These structures consist of metal atoms joined by carbon-containing linkers, creating cage-like configurations with precisely tunable cavities [7]. The empty spaces within MOF structures can be engineered for specific separation tasks, including gas capture, water harvesting, and selective molecular filtration [7].

The development of MOFs by Nobel laureates Susumu Kitagawa, Richard Robson, and Omar M. Yaghi has opened new frontiers in filtration science [7]. Their cage-like structures with molecular-scale pores enable selective capture based on both size exclusion and electrochemical affinity, making them ideal for precision separation tasks in materials research pipelines [7]. Dr. Martin Attfield, a researcher at the University of Manchester's Centre for Nanoporous Materials, describes them as "crystalline material with lots of pores and spaces of molecular dimensions" that can be tailored through various metal-linker combinations [7].

Table 2: Filtration Media Classification and Applications in Materials Research

| Media Type | Mechanism Dominance | Research Applications | Limitations |
|---|---|---|---|
| Granulated Media | Depth filtration, attachment mechanisms | Precursor purification, byproduct removal | Requires backwashing, media degradation |
| Electret Media | Electrostatic attraction, Coulombic forces | Aerosol separation, cleanroom environments | Charge decay over time, humidity sensitivity |
| Membrane Filters | Straining, size exclusion | Sterile filtration, particle size classification | Fouling potential, pressure limitations |
| Metal-Organic Frameworks | Molecular recognition, adsorption | Gas separation, water harvesting, catalyst support | Cost of synthesis, stability issues |

Experimental Protocols and Methodologies

Quantifying Electronegativity in Filter Media

Modern approaches to electronegativity measurement have evolved from Pauling's original thermodynamic method to computational techniques leveraging artificial intelligence and large chemical datasets [8]. The protocol below outlines the process for generating multidimensional electronegativity values for filtration media characterization:

Materials and Equipment:

- QM9 dataset or equivalent computational chemistry database

- Graph Neural Network (GNN) framework, preferably PyTorch Geometric

- High-performance computing resources for quantum chemical calculations

- Standard reference compounds for validation

Procedure:

- Data Preparation: Compile a dataset of molecular structures and their corresponding atomization energies (U0) from the QM9 dataset or equivalent source [8].

- Model Architecture: Implement a Graph Convolutional Network (GCN) using the following framework:

  - Molecular graphs with atoms as nodes and bonds as edges

  - Node feature matrix initialized with one-hot encoding for element identity

  - Normalized adjacency matrix representing molecular connectivity

  - SiLU (Sigmoid Linear Unit) activation function for improved performance over ReLU

- Electronegativity Optimization: Train the model to minimize the difference between predicted and actual molecular stability using the modified Pauling equation:

  - Transformation of one-hot encoding vectors into χML through linear regression: χML = Wx + b [8]

  - Iterative update of atomic features through message passing between bonded atoms

- Validation: Compare predicted electronegativity values with known experimental outcomes for chemical systems.

This AI-driven approach generates multidimensional electronegativity vectors that more accurately predict filtration interactions and binding affinities than traditional Pauling values [8].
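The architectural pieces named in the procedure (one-hot element features, a normalized adjacency matrix, the linear map χML = Wx + b, and a SiLU-activated message-passing step) can be sketched without any deep-learning framework. This is a minimal forward pass on a water molecule under loud assumptions: the weights are untrained placeholders (the first row is simply seeded with Pauling values), the per-layer weight matrix is left as identity, and the code is not the implementation from [8]. A practical version would use a framework such as PyTorch Geometric and train on QM9.

```python
import math

ELEMENTS = ["H", "C", "N", "O", "F"]  # element types present in QM9


def one_hot(symbol):
    return [1.0 if symbol == e else 0.0 for e in ELEMENTS]


def silu(x):
    """SiLU activation: x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))


def normalized_adjacency(n_atoms, bonds):
    """Symmetric normalization D^-1/2 (A + I) D^-1/2 with self-loops."""
    a = [[1.0 if i == j else 0.0 for j in range(n_atoms)] for i in range(n_atoms)]
    for i, j in bonds:
        a[i][j] = a[j][i] = 1.0
    deg = [sum(row) for row in a]
    return [[a[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n_atoms)]
            for i in range(n_atoms)]


def embed(symbols, weight, bias):
    """Per-atom chi_ML = W x + b, where x is the one-hot element vector."""
    return [[sum(w * xi for w, xi in zip(row, one_hot(s))) + b
             for row, b in zip(weight, bias)] for s in symbols]


def message_pass(a_hat, feats):
    """One propagation step: SiLU(A_hat @ feats); layer weights omitted (identity)."""
    n, d = len(feats), len(feats[0])
    return [[silu(sum(a_hat[i][j] * feats[j][k] for j in range(n))) for k in range(d)]
            for i in range(n)]


# Placeholder parameters for a 2-dimensional chi_ML (assumption, not trained values).
W = [[2.20, 2.55, 3.04, 3.44, 3.98], [0.0, 0.0, 0.0, 0.0, 0.0]]
B = [0.0, 0.0]

symbols, bonds = ["O", "H", "H"], [(0, 1), (0, 2)]  # water
a_hat = normalized_adjacency(len(symbols), bonds)
chi = embed(symbols, W, B)
updated = message_pass(a_hat, chi)
print(updated)
```

Training would adjust `W` and `B` (and the omitted layer weights) so that a readout over the updated atom features reproduces the reference atomization energies.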

Testing Electret Filtration Efficiency

The following protocol measures the particle collection efficiency of electret media under varying pressure conditions, relevant for materials research applications:

Materials and Equipment:

- DMA-classified particles (10-600 nm size range)

- Electret filter media samples (charged and discharged states)

- Pressure-controlled filtration apparatus (0.33-3 atm range)

- Particle counting and sizing instrumentation

- Charge neutralization equipment for particle charge state control

Procedure:

- Sample Preparation: Condition electret media samples at controlled humidity and temperature for 24 hours prior to testing [10].

- Particle Classification: Generate aerosols with precise electrical mobility sizes (10-600 nm) using a Differential Mobility Analyzer (DMA) [10].

- Charge State Control: Prepare particles in three distinct charge states:

  - Neutral (charge-equilibrated)

  - Singly charged (unipolar)

  - Fuchs' bipolar charge state

- Pressure Variation: Conduct filtration experiments across pressure range of 0.33-3 atm to determine Knudsen number (Kn) dependence [10].

- Efficiency Calculation: Measure particle penetration and calculate single fiber efficiency for each condition.

- Model Fitting: Determine Coulombic (ηC) and induced (ηIn) efficiency components using modified single fiber theory with pressure-dependent terms [10].

This methodology provides critical data for optimizing electret filters in research environments with varying pressure conditions, particularly relevant for gas separation processes in inorganic materials synthesis.
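Two of the calculations in the procedure can be sketched numerically: the Knudsen number of a fiber at different pressures, and the single fiber efficiency recovered from a measured penetration. The sketch uses the classical fibrous-filter relation P = exp(-4αηt / (π d_f (1 - α))) and an air mean free path of about 66 nm at 1 atm and room temperature; it does not reproduce the pressure-modified model of [10], and the fiber diameter, solidity, and thickness are hypothetical values.

```python
import math

MEAN_FREE_PATH_AIR_1ATM_M = 66e-9  # approximate value near 20 °C and 1 atm


def knudsen_number(pressure_atm, fiber_diameter_m):
    """Kn = 2 * lambda / d_f, with lambda scaling as 1/pressure at fixed temperature."""
    mean_free_path = MEAN_FREE_PATH_AIR_1ATM_M / pressure_atm
    return 2.0 * mean_free_path / fiber_diameter_m


def penetration(eta, solidity, thickness_m, fiber_diameter_m):
    """Fraction of particles passing the filter for single fiber efficiency eta."""
    exponent = 4.0 * solidity * eta * thickness_m
    return math.exp(-exponent / (math.pi * fiber_diameter_m * (1.0 - solidity)))


def single_fiber_efficiency(measured_penetration, solidity, thickness_m, fiber_diameter_m):
    """Invert the penetration relation to recover eta from a measurement."""
    return (-math.log(measured_penetration) * math.pi * fiber_diameter_m
            * (1.0 - solidity) / (4.0 * solidity * thickness_m))


# Hypothetical filter: 2 µm fibers, 5% solidity, 0.5 mm thick.
D_F, ALPHA, T = 2e-6, 0.05, 0.5e-3

for p_atm in (0.33, 1.0, 3.0):
    print(f"{p_atm:4.2f} atm -> Kn = {knudsen_number(p_atm, D_F):.3f}")

p_measured = penetration(0.05, ALPHA, T, D_F)
print(f"penetration {p_measured:.3f} -> eta = "
      f"{single_fiber_efficiency(p_measured, ALPHA, T, D_F):.3f}")
```

Repeating the inversion for charged and discharged media at each pressure gives the efficiency data from which the Coulombic and induced components are then fitted.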

Computational Approaches and Data Analysis

AI-Driven Electronegativity Modeling

Recent advances in machine learning have enabled the development of multidimensional electronegativity scales that outperform traditional Pauling values in predicting molecular properties and interactions [8]. By applying graph neural networks to the QM9 dataset containing approximately 134,000 organic molecules, researchers can generate electronegativity values (χML) that more accurately reflect chemical behavior in complex environments [8].

The key innovation in this approach is the treatment of electronegativity as a learnable, multidimensional vector rather than a fixed scalar value [8]. This allows the property to capture subtleties in chemical environment that influence filtration interactions, such as:

- Bond orders and conjugation effects

- Neighboring atom influences

- Stereochemical constraints

- Solvation effects

Implementation of relational graph convolutional networks (RGCNs) further enhances this approach by separately handling different bond types within molecules, allowing for more precise prediction of interaction strengths in filtration contexts [8].

Filtration Process Workflow

[Diagram not reproduced: the integrated filtration process from initial transport to final attachment, highlighting the role of electrochemical properties.]

Research Reagent Solutions for Filtration Studies

Table 3: Essential Materials for Filtration Research in Materials Discovery

| Reagent/Material | Function | Application Example |
|---|---|---|
| Electret Media | Provides electrostatic enhancement to mechanical filtration | Respirators, cleanroom filters, analytical separation |
| Metal-Organic Frameworks | Molecular-level selective capture | CO₂ sequestration, water harvesting, catalyst support |
| Granulated Activated Carbon | Adsorptive filtration of organic compounds | Water purification, solvent recovery, emissions control |
| Diatomaceous Earth | Pre-coat filtration media with high surface area | Beverage clarification, pharmaceutical processing |
| Activated Alumina | Selective adsorption of specific molecules | Water defluoridation, drying of gases and liquids |
| Zeolite Materials | Molecular sieving through precise pore structures | Petroleum cracking, gas separation, ion exchange |

Applications in Inorganic Crystalline Materials Research

MOFs for Sustainable Chemistry Applications

Metal-organic frameworks represent one of the most promising applications of filtration principles in materials discovery [7]. Their remarkable porosity and tunable cavities enable precise molecular separation capabilities that support sustainable chemistry initiatives:

Carbon Capture Applications: MOFs can capture CO₂ from industrial emissions with higher capacity and selectivity than traditional materials [7]. Their cage-like structures with molecular-scale pores can be functionalized to target specific greenhouse gases while excluding other atmospheric components.

Water Harvesting Systems: In arid environments, MOFs can extract atmospheric moisture during cool night periods and release potable water during daytime heating cycles [7]. This application demonstrates how molecular filtration principles can address critical resource challenges.

Catalytic Support Structures: The high surface area and selective permeability of MOFs make them ideal supports for catalytic processes in inorganic materials synthesis [7]. Their confined spaces can pre-organize reactant molecules, increasing reaction efficiency and selectivity.

Charge-Mediated Separation in Materials Synthesis

The strategic application of electronegativity differences and charge interactions enables precise separation of precursor materials in inorganic synthesis:

Ion-Selective Filtration: By engineering filter media with specific electronegativity profiles, researchers can selectively capture target ions from complex mixtures [11]. For instance, incorporating highly electronegative fluorine atoms into filter media enhances binding with cationic species, facilitating purification of metal salt precursors [11].

Crystal Habit Modification: Controlled filtration during crystallization can influence crystal growth patterns by selectively removing specific growth modifiers or impurities [6]. This approach enables finer control over crystal morphology and size distribution in synthesized materials.

Byproduct Removal: Continuous filtration systems can maintain reaction equilibrium by selectively removing reaction byproducts that would otherwise inhibit forward progress [6]. This is particularly valuable in multi-step inorganic synthesis pathways.

The integration of advanced filtration principles, particularly those leveraging charge neutrality and electronegativity concepts, provides powerful first-pass separation methodologies in inorganic crystalline materials research. As the field progresses, several emerging trends warrant attention:

The development of AI-refined electronegativity scales will enable more precise prediction of filtration interactions at the molecular level [8]. These data-driven approaches can account for complex chemical environments that influence separation efficiency. Additionally, the synthesis of novel MOF architectures with stimuli-responsive pores will create adaptive filtration systems that modify their selectivity based on environmental conditions [7]. Furthermore, hybrid systems combining multiple filtration mechanisms (electret, MOF, membrane) will address complex separation challenges in materials discovery pipelines.

As research continues, the refinement of chemical filters based on fundamental electrochemical principles will accelerate the discovery and optimization of inorganic crystalline materials with tailored properties for sustainable energy, environmental remediation, and advanced manufacturing applications.

The systematic discovery of new inorganic crystalline materials represents a cornerstone of technological advancement, fueling innovations across sectors including renewable energy, electronics, and medicine. Historically guided by intuition and serendipity, materials research has undergone a paradigm shift towards data-driven approaches enabled by comprehensive crystallographic databases. These repositories of known materials serve as the essential foundation upon which new discoveries are built, allowing researchers to identify trends, predict new stable compounds, and optimize properties without starting from first principles. The Inorganic Crystal Structure Database (ICSD) and the Materials Project (MP) are two pivotal resources that exemplify this approach, each offering unique capabilities for accelerating materials innovation. By centralizing and curating vast amounts of structural and computational data, these platforms provide researchers with unprecedented access to the collective knowledge of inorganic chemistry and solid-state physics, effectively creating a starting genome for materials design that dramatically reduces both development time and experimental costs.

The fundamental premise underlying these databases is that the known structures of inorganic compounds contain implicit rules and patterns that can be extracted through careful analysis. As articulated by the Materials Project, their decade-long effort to pre-compute properties of materials aims to accelerate discovery in applications ranging from "better batteries, solar energy, water splitting, optoelectronics, catalysts and more" [12]. This mirrors the practical philosophy behind ICSD, which through "continuous quality assurance" ensures that its collection of structures serves as a reliable basis for research [13]. Within the context of modern materials research, these resources have become indispensable tools, particularly as the integration of machine learning techniques with comprehensive materials data creates new pathways for identifying promising candidate materials with specific functional properties.

Database Architectures and Core Capabilities

The Inorganic Crystal Structure Database (ICSD): A Repository of Experimental Knowledge

The ICSD stands as the world's largest database for completely identified inorganic crystal structures, maintained by FIZ Karlsruhe with records dating back to 1913 [13]. This historically deep collection contains over 210,000 entries [14], with approximately 12,000 new experimental structures added annually [13]. The database's distinctive value lies in its curation of experimental results from published literature, providing researchers with experimentally verified structural information. Each entry in ICSD undergoes thorough quality checks before inclusion, with data quality certified by the CoreTrustSeal since 2023 [15]. The scope encompasses inorganic and organometallic structures, with recent enhancements including expanded analysis of coordination polyhedra and standardized mineral classification [15].

The technical capabilities of ICSD support sophisticated materials investigation through multiple search modalities. Researchers can query by empirical formula, ANX formula, mineral names, crystal system, space group, and unit cell parameters [16]. The database provides comprehensive crystal structure data including unit cell parameters, space group, complete atomic parameters, site occupation factors, Wyckoff sequence, and mineral group classification [13]. A particularly powerful feature is the organization of approximately 80% of structures into about 9,000 structure types, enabling systematic searches across substance classes [13]. This structural typification allows researchers to recognize homologous compounds and identify families of materials with related characteristics.

The Materials Project: A Platform for Computed Materials Properties

The Materials Project represents a complementary approach, originating from a Department of Energy initiative to pre-compute properties of materials and make this data publicly available [12]. Rather than focusing exclusively on experimental results, MP employs high-throughput density functional theory (DFT) calculations to generate a massive repository of computed materials properties. This computational paradigm enables the systematic characterization of materials across multiple dimensions, including electronic structure, thermodynamic stability, and mechanical properties. The platform provides a sophisticated API (Application Programming Interface) that allows researchers to programmatically query materials data using property filters, material identifiers, and chemical systems [17].

The architecture of MP supports complex queries that integrate multiple material criteria. For example, researchers can search for materials containing specific elements with defined band gap ranges [17], identify stable materials on the convex hull with large band gaps [18], or query structures by their association with ICSD entries [18]. A critical aspect of MP's data is the transparency regarding computational methods, as different functionals (PBE, PBE+U, and r2SCAN) have been used for structure relaxation [17]. This allows researchers to understand the theoretical underpinnings of the computed properties and select appropriately validated data for their investigations.
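The notion of a material being "on the convex hull" can be made concrete with a small worked example. The sketch below is a toy under stated assumptions, not the Materials Project's own phase-diagram code: it handles only a binary A-B system, takes each phase as a pair of (fraction of B, formation energy per atom), and the function names `lower_hull` and `energy_above_hull` are illustrative.

```python
from typing import Dict, List, Tuple

# (fraction of B in an A-B system, formation energy per atom in eV)
Point = Tuple[float, float]


def _cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); positive means a left turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(phases: List[Point]) -> List[Point]:
    """Lower convex hull of the phases, with the pure elements fixed at 0 eV."""
    # Keep the lowest energy per composition; phases above 0 eV can never
    # lie on the hull, so they are capped at the elemental tie-line.
    best: Dict[float, float] = {0.0: 0.0, 1.0: 0.0}
    for x, e in phases:
        best[x] = min(best.get(x, 0.0), e)
    hull: List[Point] = []
    for p in sorted(best.items()):
        # Pop points that would make the chain non-convex (monotone chain).
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def energy_above_hull(x: float, e: float, hull: List[Point]) -> float:
    """Vertical distance from a phase at (x, e) down to the hull."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            e_hull = e0 + (e1 - e0) * (x - x0) / (x1 - x0)
            return e - e_hull
    raise ValueError("composition x must lie in [0, 1]")


# Hypothetical phases: AB is strongly bound, A3B and AB3 are not.
PHASES = [(0.5, -1.0), (0.25, -0.3), (0.75, -0.5)]
HULL = lower_hull(PHASES)
```

In this made-up system only the AB phase sits on the hull; the A3B phase lies above it and would be predicted to decompose into A and AB, while AB3 lies exactly on the AB-B tie-line.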

Table 1: Comparative Capabilities of ICSD and Materials Project

| Feature | ICSD | Materials Project |
|---|---|---|
| Data Type | Experimental structures [13] | Computed properties via DFT [12] |
| Entry Count | >210,000 [14] | Not explicitly stated (massive scale) [12] |
| Temporal Coverage | 1913 to present [13] | Contemporary computational focus |
| Update Frequency | ~12,000 new structures/year [13] | Continuous addition of computed materials |
| Primary Access Method | Web interface [19] | API programmatic access [17] |
| Key Search Capabilities | Composition, mineral name, space group, cell parameters [16] | Material IDs, elements, chemical systems, property ranges [17] |
| Quality Assurance | Thorough experimental checks, Core Trust Seal [15] | Consistency of computational methods [17] |
| Unique Strengths | Historical experimental data, mineral classification [15] | Pre-computed properties for discovery [12] |

Methodologies for Database-Driven Research

Experimental Protocol: Querying and Extracting Structural Data

The practical application of these databases begins with formulating and executing precise queries to extract relevant structural information. The following methodologies outline standard protocols for leveraging each resource effectively.

ICSD Query Methodology: Accessing the ICSD typically begins with navigating to the institutional portal and authenticating [19]. The advanced search interface provides multiple chemistry-focused filters:

- Composition Search: Under the Chemistry option in the navigation menu, researchers can enter empirical formulas (with spaces between elements) and specify the number of permitted elements [19].

- Structure Type Search: Using the roughly 9,000 classified structure types to find isostructural compounds [13].

- Crystallographic Search: Querying by space group, unit cell parameters, or symmetry [16].

- Mineral Group Search: Leveraging the standardized mineral classification [15].

After executing a search, results appear in a Brief View showing the ICSD accession number, structural formula, crystal type, and publication reference [19]. Entries deemed high-quality are marked with a star icon. Researchers can select promising entries and switch to Detailed View for comprehensive crystallographic data, including bond lengths and angles within the unit cell [19]. The interface also allows structures to be downloaded as Crystallographic Information Files (CIFs) for further analysis in specialized software.

Materials Project API Protocol: Programmatic access to MP data employs the MPRester client within a Python environment, requiring an API key for authentication [17]:
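A minimal sketch of such a query is given here, assuming the `mp-api` package is installed. The environment variable name `MP_API_KEY`, the helper `fetch_summary`, and the choice of returned fields are illustrative assumptions rather than details from the cited documentation. The helper takes the client as an argument so that it can be exercised without network access.

```python
import os


def fetch_summary(mpr, material_id: str):
    """Return summary documents for a single Materials Project ID.

    `mpr` is expected to be an open `mp_api.client.MPRester` instance.
    """
    return mpr.materials.summary.search(
        material_ids=[material_id],
        # Restricting the fields keeps the response small.
        fields=["material_id", "formula_pretty", "band_gap", "energy_above_hull"],
    )


def main() -> None:
    """Run the query against the live API (needs mp-api and a valid key)."""
    from mp_api.client import MPRester  # imported lazily: optional dependency

    api_key = os.environ["MP_API_KEY"]  # assumed variable name
    with MPRester(api_key) as mpr:
        for doc in fetch_summary(mpr, "mp-149"):  # mp-149 is silicon
            print(doc.material_id, doc.formula_pretty, doc.band_gap)
```

Calling `main()` performs the actual request; nothing is sent to the API when the module is merely imported.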

For more sophisticated investigations, researchers can implement property-filtered searches:
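One way such a filtered search might look is sketched below, again assuming the `mp-api` client. The helper name `search_by_elements_and_gap` and the selected fields are illustrative; the `elements`, `band_gap`, and `is_stable` filters are those of the client's summary search, and should be checked against the current API documentation before use.

```python
from typing import Optional, Sequence, Tuple


def search_by_elements_and_gap(
    mpr,
    elements: Sequence[str],
    gap_range: Tuple[Optional[float], Optional[float]],
    stable_only: bool = False,
):
    """Find materials containing `elements` with a band gap inside `gap_range`.

    `mpr` is an open `mp_api.client.MPRester`; `gap_range` is (min, max) in eV,
    where `None` leaves that side of the range open.
    """
    query = {
        "elements": list(elements),
        "band_gap": tuple(gap_range),
        "fields": ["material_id", "formula_pretty", "band_gap", "is_stable"],
    }
    if stable_only:
        # Restrict to phases on the convex hull.
        query["is_stable"] = True
    return mpr.materials.summary.search(**query)


# Example call against a live client (not executed here):
#     docs = search_by_elements_and_gap(mpr, ["Si", "O"], (0.5, 1.0))
#     wide_gap = search_by_elements_and_gap(mpr, ["O"], (3.0, None), stable_only=True)
```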

To establish correlations between experimental and computed data, researchers can identify structures with ICSD associations:
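A hedged sketch of this step follows. It assumes that summary documents expose a `database_IDs` mapping whose `"icsd"` key lists the associated ICSD identifiers, and that `theoretical=False` selects experimentally observed materials; both should be verified against the current Materials Project schema. The helper name `icsd_crosswalk` is illustrative.

```python
from typing import Dict, List


def icsd_crosswalk(mpr, chemsys: str) -> Dict[str, List[str]]:
    """Map Materials Project IDs to associated ICSD IDs for one chemical system.

    `mpr` is an open `mp_api.client.MPRester`; `chemsys` is a string such as
    "Si-O". Materials without any ICSD association are left out.
    """
    docs = mpr.materials.summary.search(
        chemsys=chemsys,
        theoretical=False,  # keep only experimentally observed materials
        fields=["material_id", "database_IDs"],
    )
    table: Dict[str, List[str]] = {}
    for doc in docs:
        # Assumed layout: {"icsd": ["icsd-...", ...], ...}
        icsd_ids = (doc.database_IDs or {}).get("icsd", [])
        if icsd_ids:
            table[str(doc.material_id)] = list(icsd_ids)
    return table
```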

This protocol enables the creation of a cross-walk between experimental structures in ICSD and computed properties in MP, facilitating validation and complementary analysis [18].

Data Integration and Workflow Design

The most powerful applications emerge from integrating data across these complementary resources. The following workflow diagram illustrates a systematic approach to database-driven materials discovery:

Diagram 1: Integrated materials discovery workflow

This methodology enables what is known as high-throughput virtual screening, where thousands of potential materials can be evaluated computationally before committing resources to synthesis and testing. The machine learning component is particularly powerful when trained on the rich features derived from crystal structures, such as coordination environments, symmetry operations, and electronic configurations. As demonstrated in research on rare-earth magnetic materials, these approaches "efficiently analyze vast experimental and computational datasets, thereby accelerating the exploration and development" of new functional materials [20].

Case Study: Accelerating Rare-Earth Magnetic Materials Development

The integration of database resources with machine learning has demonstrated particular efficacy in the development of rare-earth magnetic materials, which are critical for numerous technologies including renewable energy systems, electric vehicles, and data storage devices. Rare-earth elements possess unique atomic structures characterized by multiple unpaired 4f orbital electrons in inner shells, high atomic magnetic moments, and strong spin-orbit coupling [20]. These attributes create complex magnetic configurations that present both challenges and opportunities for materials design.

In one representative study, researchers combined data from ICSD and Materials Project to develop machine learning models predicting key magnetic properties such as Curie temperature and magnetic anisotropy [20]. The research workflow encompassed several specific applications:

- Property Prediction: Using structural descriptors derived from database entries to predict magnetic characteristics without resource-intensive computations [20].

- Composition Optimization: Identifying promising elemental substitutions to enhance performance while reducing dependence on critical rare-earth elements [20].

- Microstructural Analysis: Correlating crystal structure features with domain structure and hysteresis properties [20].

The coordination of experimental data from ICSD with computed properties from Materials Project enabled the training of more accurate and transferable models than would be possible with either resource alone. For instance, the combination of experimentally verified crystal structures with computationally derived magnetic moments created a robust training set for predicting new permanent magnet materials with improved energy product. This integrated approach exemplifies how database-informed discovery can address complex materials challenges that have resisted traditional investigative methods.

Table 2: Research Reagent Solutions for Materials Database Research

| Research Tool | Function | Application Example |
|---|---|---|
| MPRester API Client | Programmatic access to Materials Project data [17] | Querying materials by composition and properties [18] |
| CIF File Format | Standard format for crystallographic data exchange [19] | Transferring structures between databases and analysis tools |
| StructureMatcher (pymatgen) | Determining structural similarity between crystals [18] | Identifying equivalent structures across databases |
| JMol Visualization | Interactive 3D crystal structure viewing [16] | Visualizing coordination environments and symmetry |
| Bond Distance Analysis | Calculating interatomic distances and angles [19] | Verifying structural stability and bonding patterns |

Emerging Capabilities and Research Frontiers

The ongoing development of materials databases continues to expand their utility for discovery. The recently released ICSD Scientific Manual 2025 highlights significant enhancements including expanded representation and analysis of coordination polyhedra, uniform naming and classification of minerals, and integration of external links to additional data sources [15]. These improvements facilitate more sophisticated structure-property correlation studies and enable researchers to extract deeper insights from the curated structural data.

Concurrently, the Materials Project is advancing its capabilities for representing complex materials phenomena, including protocols for querying amorphous materials and handling multi-functional calculations [18]. A particularly important development is the integration of different computational functionals (PBE, PBE+U, and r2SCAN) with transparent documentation of which method was used for each calculated property [17]. This transparency is crucial for researchers who need to assess the reliability of computational predictions before proceeding with experimental validation.

The integration of machine learning with comprehensive materials data represents perhaps the most promising frontier. As noted in research on rare-earth magnetic materials, data mining techniques enable researchers to "efficiently analyze vast experimental and computational datasets, thereby accelerating the exploration and development of rare-earth magnetic materials" [20]. This synergistic combination of rich data resources and advanced analytics is creating new paradigms for materials discovery that transcend traditional trial-and-error approaches.

The transformative impact of comprehensive materials databases on inorganic crystalline materials research is undeniable. The ICSD provides an indispensable foundation of experimental knowledge, while the Materials Project offers powerful computational insights into material properties and behaviors. Together, these resources enable a systematic, knowledge-driven approach to materials discovery that leverages the collective understanding embodied in known structures to inform the design of new materials with targeted functionalities.

The integration of these databases into research workflows, as demonstrated through the methodologies and case studies presented herein, empowers scientists to navigate the vast complexity of inorganic materials space with unprecedented efficiency. By identifying patterns across known compounds, predicting promising candidates computationally, and prioritizing the most viable candidates for experimental synthesis, researchers can dramatically accelerate the development cycle for new materials addressing critical technological needs.

As these databases continue to evolve in scope and sophistication, and as machine learning techniques become increasingly integrated with materials informatics platforms, the pace of discovery will further accelerate. The systematic learning from known materials embodied in these resources represents nothing less than a fundamental transformation in how we approach the design and development of the inorganic crystalline materials that underpin modern technology and drive innovation across the scientific landscape.

The discovery of new inorganic crystalline materials is pivotal for advancements in technology and medicine. However, a vast region of chemically plausible compounds remains synthetically inaccessible, representing a significant frontier in materials science. This whitepaper examines the computational and experimental methodologies accelerating the identification and synthesis of these "missing" materials. We explore the integration of machine learning models like SynthNN for synthesizability prediction [21], crystal structure prediction (CSP) algorithms such as CALYPSO and USPEX for determining stable arrangements [22] [23], and expert-informed AI frameworks like Materials Expert-AI (ME-AI) for descriptor discovery [24]. A critical evaluation of current CSP algorithms using the CSPBench benchmark suite reveals that performance, while promising, is far from satisfactory, with many algorithms struggling to identify correct space groups [22]. Furthermore, we detail experimental validation protocols, including synthetic procedures and characterization techniques like single-crystal X-ray diffraction and second-harmonic generation, essential for confirming theoretical predictions [25]. By framing these developments within the broader thesis of materials discovery, this guide provides researchers with a comprehensive toolkit for navigating the challenges and opportunities in uncovering the next generation of functional inorganic materials.

The history of modern science is replete with breakthroughs enabled by the discovery of novel materials. Despite the vast number of known inorganic crystals, the chemical space of plausible but unsynthesized compounds is estimated to be significantly larger. The primary challenge in discovering these "missing" materials lies in reliably identifying which hypothetical compounds are synthetically accessible [21]. Traditional proxies for synthesizability, such as charge-balancing rules or thermodynamic stability calculated from density functional theory (DFT), have proven inadequate. For instance, charge-balancing criteria only apply to about 37% of synthesized inorganic materials, while DFT-based formation energy calculations fail to capture kinetic stabilization effects and miss approximately 50% of known compounds [21]. This gap between chemical plausibility and synthetic reality necessitates new approaches that move beyond simple heuristics.
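To make the charge-balancing heuristic concrete, the following sketch tests whether any assignment of common oxidation states sums to zero for a given formula. The oxidation-state table is a small illustrative subset chosen here, and each element is restricted to a single oxidation state per formula, which is an assumption of this sketch rather than a detail from [21].

```python
from itertools import product
from typing import Dict, List

# Illustrative subset of common oxidation states (not exhaustive).
COMMON_OXIDATION_STATES: Dict[str, List[int]] = {
    "Na": [1],
    "Ba": [2],
    "Ti": [2, 3, 4],
    "Fe": [2, 3],
    "O": [-2],
    "Cl": [-1],
}


def is_charge_balanced(
    composition: Dict[str, int],
    states: Dict[str, List[int]] = COMMON_OXIDATION_STATES,
) -> bool:
    """True if one oxidation state per element can give zero net charge."""
    elements = list(composition)
    missing = [el for el in elements if el not in states]
    if missing:
        raise ValueError(f"no oxidation states tabulated for {missing}")
    # Try every combination of one oxidation state per element.
    for assignment in product(*(states[el] for el in elements)):
        net = sum(q * composition[el] for q, el in zip(assignment, elements))
        if net == 0:
            return True
    return False
```

With this table, `{"Fe": 2, "O": 3}` passes, whereas `{"Fe": 3, "O": 4}` (magnetite, a well-known mixed-valence oxide) fails because no single iron oxidation state balances it. This is one simple way in which a rigid charge-balancing rule can reject a real material.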

The field is now undergoing a transformation driven by the emergence of large materials databases, sophisticated machine learning algorithms, and powerful crystal structure prediction methods. These tools allow researchers to systematically explore compositional and structural space, learning the complex patterns that distinguish synthesizable materials from those that are not. This guide provides an in-depth examination of these methodologies, offering a technical roadmap for researchers engaged in the discovery of new inorganic crystalline materials.

Computational Prediction Frameworks

Machine Learning for Synthesizability Classification

Machine learning models trained on comprehensive databases of known materials have emerged as powerful tools for predicting synthesizability directly from chemical composition.

SynthNN is a deep learning model that leverages the entire space of synthesized inorganic chemical compositions from databases like the Inorganic Crystal Structure Database (ICSD) [21]. Its architecture utilizes an atom2vec embedding matrix that learns optimal representations of chemical formulas directly from the distribution of synthesized materials, without requiring pre-defined features or assumptions about underlying chemical principles. Remarkably, this model demonstrates the ability to learn fundamental chemical concepts such as charge-balancing, chemical family relationships, and ionicity through data exposure alone [21].

In benchmark tests, SynthNN significantly outperforms both traditional approaches and human experts. It identifies synthesizable materials with 7× higher precision than DFT-calculated formation energies and achieves 1.5× higher precision than the best human expert, while completing the classification task five orders of magnitude faster [21]. The model employs a semi-supervised, positive-unlabeled (PU) learning approach to handle the lack of definitive negative examples, as unsynthesized materials may become accessible with advancing methodologies.

Materials Expert-AI (ME-AI) represents a different paradigm that incorporates human expertise into machine learning [24]. This framework translates the intuition of materials growers into quantitative descriptors by training on expert-curated experimental data. In one implementation, ME-AI was applied to a set of 879 square-net compounds described using 12 experimental features, training a Dirichlet-based Gaussian-process model with a chemistry-aware kernel [24].

Notably, ME-AI not only recovered the known structural descriptor ("tolerance factor") for identifying topological semimetals but also discovered new emergent descriptors, including one related to hypervalency and the Zintl line [24]. The model demonstrated surprising transferability, correctly classifying topological insulators in rocksalt structures despite being trained only on square-net topological semimetal data [24].

Crystal Structure Prediction (CSP) Algorithms

Crystal structure prediction involves determining the most stable crystalline arrangement of atoms given only a chemical composition. This represents a fundamental challenge in materials science due to the vast combinatorial space of possible arrangements [3] [23].
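The simplest search strategy, random sampling under physical constraints, can be illustrated with a toy. The sketch below is not AIRSS or any published code: it places atoms at random in a cubic periodic cell, rejects placements closer than a minimum distance, scores each structure with a Lennard-Jones energy as a stand-in for DFT, and omits the local relaxation that real random-search methods apply. All names and parameter values are illustrative.

```python
import math
import random
from typing import List, Tuple

Position = Tuple[float, float, float]


def min_image_distance(a: Position, b: Position, box: float) -> float:
    """Distance between two points in a cubic periodic cell of edge `box`."""
    total = 0.0
    for ai, bi in zip(a, b):
        d = abs(ai - bi) % box
        d = min(d, box - d)  # nearest periodic image
        total += d * d
    return math.sqrt(total)


def lj_energy(positions: List[Position], box: float) -> float:
    """Lennard-Jones energy in reduced units (sigma = epsilon = 1)."""
    energy = 0.0
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            r = min_image_distance(positions[i], positions[j], box)
            sr6 = (1.0 / r) ** 6
            energy += 4.0 * (sr6 * sr6 - sr6)
    return energy


def random_structure(n_atoms: int, box: float, d_min: float,
                     rng: random.Random, max_tries: int = 10_000) -> List[Position]:
    """Place atoms one by one, rejecting any closer than `d_min` to another."""
    positions: List[Position] = []
    tries = 0
    while len(positions) < n_atoms:
        tries += 1
        if tries > max_tries:
            raise RuntimeError("could not satisfy the distance constraint")
        trial = (rng.uniform(0, box), rng.uniform(0, box), rng.uniform(0, box))
        if all(min_image_distance(trial, p, box) >= d_min for p in positions):
            positions.append(trial)
    return positions


def random_search(n_atoms: int, box: float, d_min: float,
                  n_samples: int, seed: int = 0) -> Tuple[float, List[Position]]:
    """Return the lowest-energy structure among `n_samples` random candidates."""
    rng = random.Random(seed)
    best: Tuple[float, List[Position]] = (math.inf, [])
    for _ in range(n_samples):
        structure = random_structure(n_atoms, box, d_min, rng)
        energy = lj_energy(structure, box)
        if energy < best[0]:
            best = (energy, structure)
    return best
```

Even in this toy, the number of samples needed grows quickly with the number of atoms, which hints at why the combinatorial space makes the real problem hard.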

Table 1: Major Categories of Crystal Structure Prediction Algorithms

| Category | Representative Algorithms | Key Features | Limitations |
|---|---|---|---|
| Ab Initio/DFT-based | CALYPSO [22], USPEX [22] [23], CrySPY [22] | Combines global optimization (e.g., particle swarm, evolutionary algorithms) with DFT energy calculations; considers symmetry and physical constraints | Computationally expensive; limited by DFT accuracy for certain properties |
| Machine Learning Potential-based | GN-OA [22], AGOX with M3GNet [22], GOFEE [22] | Uses ML potentials for faster energy evaluations; active learning for potential refinement | Quality dependent on ML potential accuracy and training data |
| Template-based | TCSP [22], CSPML [22] | Leverages known structural prototypes; computationally efficient | Limited to known structural families; less effective for truly novel structures |
| Random Sampling | AIRSS [22] [23] | Stochastic generation of structures with physical/chemical constraints | Can require extensive sampling for complex systems |

Recent benchmarking studies using CSPBench, which includes 180 test structures, reveal that the performance of current CSP algorithms remains limited [22]. Most algorithms struggle to identify structures with correct space groups, except for template-based approaches when applied to test structures with similar templates [22]. However, ML potential-based CSP algorithms are achieving competitive performance compared to DFT-based methods, with their effectiveness strongly determined by both the quality of the neural potentials and the global optimization algorithms employed [22].

Benchmarking CSP Performance

Quantitative evaluation of CSP algorithms remains challenging. The CSPBench benchmark suite provides standardized metrics for assessing algorithm performance across diverse material classes [22]. Key findings from recent benchmarks include:

- ML potential-based methods are closing the gap with DFT-based approaches in terms of prediction accuracy while offering significant computational savings [22].

- Template-based methods perform well when similar structural prototypes exist but fail for truly novel structure types [22].

- The success of evolutionary algorithms like USPEX and CALYPSO depends heavily on proper handling of symmetry and implementation of specialized search techniques [22] [23].

Despite these advances, CSP performance is far from satisfactory, with no single algorithm dominating across all material classes [22]. This highlights the need for continued development of more robust and accurate CSP methodologies.

Experimental Validation Protocols

Synthesis Methodologies

Successfully synthesizing predicted materials requires careful selection of appropriate synthetic techniques based on the target material's composition and predicted stability.

High-Temperature Solid-State Reaction is a fundamental method for inorganic crystalline materials. A typical protocol involves:

- Precursor Preparation: Stoichiometric amounts of precursor compounds (e.g., oxides, carbonates, or metals) are accurately weighed and thoroughly mixed using mortar and pestle or ball milling.

- Reaction Process: The mixture is heated in a controlled atmosphere furnace (air, inert gas, or reducing atmosphere) at temperatures typically ranging from 500°C to 1500°C for several hours to days.

- Thermal Treatment: Multiple heating cycles with intermediate grinding are often employed to improve homogeneity and reaction completeness.

- Product Isolation: The resulting solid is cooled slowly (often at controlled rates) to promote crystal growth and minimize defects.
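The precursor-preparation step reduces to simple stoichiometric arithmetic. As a worked sketch, consider the textbook reaction BaCO3 + TiO2 → BaTiO3 + CO2; this example compound is chosen here for illustration and does not come from the cited sources. Atomic masses are standard values rounded to three decimals.

```python
from typing import Dict, Tuple

Formula = Dict[str, int]

# Standard atomic masses in g/mol (rounded).
ATOMIC_MASS = {"Ba": 137.327, "Ti": 47.867, "C": 12.011, "O": 15.999}


def molar_mass(formula: Formula) -> float:
    """Molar mass in g/mol of a formula given as {element: count}."""
    return sum(ATOMIC_MASS[el] * n for el, n in formula.items())


def precursor_masses(
    target: Formula,
    precursors: Dict[str, Tuple[Formula, float]],
    target_mass_g: float,
) -> Dict[str, float]:
    """Mass of each precursor (g) needed for `target_mass_g` of product.

    `precursors` maps a label to (formula, moles needed per mole of target).
    """
    moles_target = target_mass_g / molar_mass(target)
    return {
        name: coeff * moles_target * molar_mass(formula)
        for name, (formula, coeff) in precursors.items()
    }


BATIO3: Formula = {"Ba": 1, "Ti": 1, "O": 3}
PRECURSORS = {
    "BaCO3": ({"Ba": 1, "C": 1, "O": 3}, 1.0),
    "TiO2": ({"Ti": 1, "O": 2}, 1.0),
}

# Masses to weigh out for a 5 g batch of BaTiO3.
BATCH = precursor_masses(BATIO3, PRECURSORS, 5.0)
```

The combined precursor mass exceeds the product mass because CO2 is lost during heating, which is also why mass loss on firing is a convenient check on reaction completeness.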

Chemical Vapor Transport is particularly effective for growing single crystals of layered or low-dimensional materials, such as the helical GaSI crystals recently reported [25]. The protocol typically includes:

- Ampoule Preparation: Precursor materials are sealed under vacuum in a quartz ampoule with a transport agent (e.g., iodine).

- Gradient Establishment: The ampoule is placed in a multi-zone furnace with a temperature gradient (e.g., 350°C to 400°C for GaSI [25]).

- Crystal Growth: Volatile compounds transport material from the hot zone to the cold zone, where crystals nucleate and grow over periods of days to weeks.

- Crystal Harvesting: The ampoule is carefully opened in a controlled environment to prevent oxidation or hydrolysis of the product.

Characterization Techniques

Confirming the structure and properties of newly synthesized materials requires multiple complementary characterization methods.

Single Crystal X-ray Diffraction (SCXRD) is the gold standard for determining crystal structure. The experimental workflow involves:

- Crystal Selection: A high-quality single crystal of appropriate size (typically 0.1-0.3 mm) is selected under a microscope.

- Data Collection: The crystal is mounted on a diffractometer and exposed to X-ray radiation while being rotated through various orientations.

- Structure Solution: The phase problem is solved using direct methods or Patterson synthesis.

- Structure Refinement: Atomic positions and thermal parameters are iteratively refined against the diffraction data.

For the helical GaSI crystals, SCXRD confirmed a non-centrosymmetric primitive unit cell (space group P-4) with a stable, non-natural helical cross-section described as a "squircle" geometry [25].

Second Harmonic Generation (SHG) is particularly valuable for characterizing non-centrosymmetric crystals. The experimental setup includes:

- Excitation Source: A pulsed laser (commonly Nd:YAG at 1064 nm) is focused onto the crystalline sample.

- Detection System: The frequency-doubled output (532 nm for Nd:YAG) is collected using photomultiplier tubes or CCD detectors.

- Signal Analysis: The SHG intensity is measured relative to known standards to quantify the non-linear optical response.

In GaSI, pronounced SHG activity provided additional confirmation of its non-centrosymmetric structure [25].

Additional characterization techniques include:

- Energy-Dispersive X-ray Spectroscopy (EDS): For elemental composition verification.

- X-ray Photoelectron Spectroscopy (XPS): For chemical state analysis.

- Electron Microscopy (SEM/TEM): For morphological and structural analysis at micro- to nanoscale.

- Thermal Analysis (TGA/DSC): For stability and phase transition studies.

Workflow Visualization

The following diagram illustrates the integrated computational and experimental workflow for identifying and synthesizing novel inorganic materials:

Integrated Workflow for Materials Discovery

Table 2: Key Computational Tools for Predicting Novel Materials

| Tool/Platform | Type | Primary Function | Access |
|---|---|---|---|
| SynthNN [21] | Deep Learning Model | Predicts synthesizability from chemical composition | Research Use |
| ME-AI [24] | Machine Learning Framework | Discovers descriptors from expert-curated data | Research Use |
| CSPBench [22] | Benchmark Suite | Evaluates CSP algorithm performance | Open Source |
| CALYPSO [22] [23] | CSP Algorithm | Particle swarm optimization-based structure prediction | Academic Free |
| USPEX [22] [23] | CSP Algorithm | Evolutionary algorithm for structure prediction | Academic Free |
| AIRSS [22] [23] | CSP Algorithm | Ab initio random structure searching | Open Source |
| ChemFH [26] | Screening Platform | Identifies assay false positives in drug discovery | Free Access |

Table 3: Essential Experimental Resources for Synthesis and Characterization

| Resource Category | Specific Examples | Key Applications |
|---|---|---|
| Synthesis Equipment | Tube furnaces with atmosphere control, glove boxes, high-pressure reactors | Material synthesis under controlled conditions |
| Structure Determination | Single-crystal X-ray diffractometer, powder X-ray diffractometer | Determining crystal structure and phase purity |
| Property Characterization | Second harmonic generation setup, UV-Vis-NIR spectrophotometer, PPMS | Measuring optical, electronic, and magnetic properties |
| Chemical Databases | Inorganic Crystal Structure Database (ICSD) [24] [21], Materials Project | Reference data for known structures and properties |

Research Methodology Visualization

The following diagram outlines the core research methodology for identifying plausible but unsynthesized compounds, integrating both computational and experimental approaches:

Core Research Methodology

Challenges and Future Directions

Despite significant advances, several challenges remain in the systematic identification of synthesizable inorganic materials:

Data Quality and Availability: Machine learning approaches require large, high-quality datasets. Current materials databases contain inconsistencies and reporting biases that can limit model performance. Future efforts should focus on standardizing data reporting and developing more comprehensive databases that include both successful and unsuccessful synthesis attempts [21].

Algorithmic Limitations: As demonstrated by CSPBench, current CSP algorithms still struggle with complex structures and accurate energy ranking [22]. Improving the accuracy of machine learning potentials and developing better global optimization algorithms represent key research priorities.

Multi-objective Optimization: In practice, researchers seek materials that combine synthesizability with specific functional properties. Future tools need to integrate synthesizability prediction with property optimization in multi-objective frameworks.

Transferability and Generalization: Models trained on known materials may perform poorly on truly novel composition spaces. Developing approaches that can extrapolate beyond training data, perhaps through improved physics-informed machine learning, remains an important challenge [24] [21].

The integration of human expertise with artificial intelligence, as exemplified by the ME-AI framework, offers a promising path forward [24]. By combining the pattern recognition capabilities of machine learning with the deep chemical intuition of experienced materials scientists, the field can accelerate progress toward the systematic discovery of the "missing" compounds that will enable future technological innovations.

The identification of plausible but unsynthesized inorganic compounds represents both a grand challenge and significant opportunity in materials science. Through the integrated application of machine learning-based synthesizability prediction, advanced crystal structure algorithms, and targeted experimental validation, researchers are developing systematic approaches to navigate this unexplored chemical space. Frameworks like ME-AI that combine human expertise with artificial intelligence are particularly promising for discovering meaningful descriptors and patterns [24]. While current methodologies still face limitations in accuracy and generalizability, the rapid pace of advancement in computational materials science suggests that the systematic discovery of new functional materials is an increasingly achievable goal. The continued development and integration of these tools will ultimately transform materials discovery from a largely empirical process to a more rational and efficient endeavor, unlocking novel compounds with tailored properties for applications across technology, medicine, and energy.

The AI Arsenal: From Generative Models to Autonomous Synthesis Labs

The discovery of new inorganic crystalline materials is a fundamental driver of technological progress, influencing sectors ranging from renewable energy and electronics to healthcare. Traditional material discovery has relied on a slow, expensive process of trial-and-error experimentation, often guided by human intuition and limited computational screening. This paradigm is being transformed by generative artificial intelligence (AI), which enables the direct design of novel, stable crystal structures. This whitepaper provides an in-depth technical overview of three pioneering AI systems (GNoME, MatterGen, and SynthNN), framed within the broader context of a new computational paradigm for inorganic materials research. GNoME and MatterGen propose new candidate structures, while SynthNN assesses whether a candidate composition is likely to be synthesizable. Together, these tools represent a significant shift from screening known materials to generating previously unenvisioned candidates with targeted properties, thereby accelerating the entire materials discovery pipeline.

Core Architectures and Methodologies

GNoME (Graph Networks for Materials Exploration)

GNoME, developed by Google DeepMind, is a state-of-the-art deep learning tool designed to predict the stability of novel crystalline materials at an unprecedented scale [27] [28]. Its architecture and training methodology are engineered for high-throughput discovery.

- Architecture: GNoME utilizes graph neural networks (GNNs), a class of deep learning models particularly suited to representing crystalline structures [27] [29]. In this framework, a crystal is represented as a graph where atoms are nodes and the connections between them are edges. This representation allows the model to naturally capture the local chemical environments and bonding interactions that determine material stability [29].

- Training and Active Learning: The model was initially trained on crystal structure and stability data from the Materials Project [27]. A key to its success is an active learning pipeline. The process is iterative: GNoME generates candidate crystal structures, which are then evaluated using Density Functional Theory (DFT) calculations, a first-principles computational method for investigating material properties [27] [28]. The results of these DFT calculations are fed back into the model as high-quality training data, creating a self-improving discovery flywheel. This process boosted the discovery rate of stable materials from under 10% to over 80% [27] [29].

- Discovery Pipelines: GNoME employs two parallel pipelines for candidate generation [29] [28]:

  - Structural Pipeline: Creates candidates by making modifications (e.g., symmetry-aware partial substitutions) to known crystal structures.

  - Compositional Pipeline: Explores randomized chemical formulas without structural prerequisites, using AI to predict stability from composition alone.
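The active learning cycle described above can be sketched in a few lines. The following is a deliberately small toy under loud assumptions, not GNoME's implementation: candidates are reduced to a single numeric descriptor, the function `toy_oracle` stands in for a DFT calculation, and the surrogate model is a nearest-neighbour lookup rather than a graph neural network.

```python
from typing import Callable, List, Sequence, Tuple

Labelled = List[Tuple[float, float]]  # (descriptor, computed energy)


def toy_oracle(x: float) -> float:
    """Stand-in for DFT: a decomposition energy, negative meaning stable."""
    return (x - 0.7) ** 2 - 0.05


def predict(x: float, labelled: Labelled) -> float:
    """Surrogate model: energy of the nearest already-labelled descriptor."""
    return min(labelled, key=lambda pair: abs(pair[0] - x))[1]


def active_learning(
    candidates: Sequence[float],
    seeds: Sequence[float],
    rounds: int,
    batch: int,
    oracle: Callable[[float], float] = toy_oracle,
) -> Tuple[Labelled, List[float]]:
    """Run predict -> select -> verify -> retrain for a number of rounds.

    Returns the labelled data and, per round, the fraction of selected
    candidates that the oracle confirmed as stable (the "hit rate").
    """
    labelled: Labelled = [(x, oracle(x)) for x in seeds]
    pool = [x for x in candidates if x not in seeds]
    hit_rates: List[float] = []
    for _ in range(rounds):
        if not pool:
            break
        # 1. Rank unexplored candidates by predicted energy.
        pool.sort(key=lambda x: predict(x, labelled))
        # 2. Send the most promising batch to the expensive oracle.
        chosen, pool = pool[:batch], pool[batch:]
        results = [(x, oracle(x)) for x in chosen]
        hit_rates.append(sum(e < 0 for _, e in results) / len(results))
        # 3. Feed the verified results back as new training data.
        labelled.extend(results)
    return labelled, hit_rates
```

The returned hit rates play the role of the discovery rate mentioned above: as verified results accumulate, the surrogate's ranking is informed by more data near the stable region.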

MatterGen

MatterGen, developed by Microsoft, introduces a different paradigm: a generative model that directly creates novel inorganic materials conditioned on desired property constraints [30] [1] [31].

- Architecture: MatterGen is a diffusion model customized for the unique symmetries and periodicity of crystalline materials [30] [1]. Similar to how diffusion models generate images by iteratively denoising random pixels, MatterGen generates crystal structures by gradually refining atom types, their coordinates, and the periodic lattice from a noisy, random initial state [1] [32].

- Conditioning and Fine-Tuning: A core innovation of MatterGen is its use of adapter modules for fine-tuning [1] [31]. After pre-training a base model on a large dataset of stable structures, these modules allow the model to be fine-tuned on smaller, labeled datasets. This enables the generation of materials that satisfy specific constraints, such as a target chemical composition, symmetry (space group), or mechanical, electronic, and magnetic properties [30] [1].

- Handling Compositional Disorder: The model incorporates a novel structure-matching algorithm that accounts for compositional disorder—a common phenomenon where different atoms can randomly occupy the same crystallographic site [30]. This provides a more realistic definition of novelty and uniqueness for generated materials.
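To give a feel for the periodicity aspect of the architecture described above, the sketch below shows only the forward (noising) half of a diffusion process on fractional atomic coordinates. It assumes a wrapped Gaussian perturbation as one natural choice for periodic coordinates; the learned denoising network, the lattice, and the atom types are all omitted, and the noise schedule is arbitrary. None of the names come from the MatterGen code base.

```python
import random
from typing import List, Sequence, Tuple

Site = Tuple[float, ...]  # fractional coordinates, each in [0, 1)


def wrap(x: float) -> float:
    """Map a coordinate back into the unit cell, i.e. into [0, 1)."""
    x = x % 1.0
    # Guard against floating-point rounding returning exactly 1.0.
    return 0.0 if x >= 1.0 else x


def noise_step(frac_coords: Sequence[Site], sigma: float,
               rng: random.Random) -> List[Site]:
    """Add Gaussian noise to every coordinate and wrap periodically."""
    return [
        tuple(wrap(c + rng.gauss(0.0, sigma)) for c in site)
        for site in frac_coords
    ]


def forward_trajectory(frac_coords: Sequence[Site], sigmas: Sequence[float],
                       seed: int = 0) -> List[List[Site]]:
    """Apply successive noising steps and return every intermediate state."""
    rng = random.Random(seed)
    states: List[List[Site]] = [list(frac_coords)]
    for sigma in sigmas:
        states.append(noise_step(states[-1], sigma, rng))
    return states


# Two-atom cell pushed towards a structureless state by growing noise.
START = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)]
TRAJECTORY = forward_trajectory(START, [0.01, 0.05, 0.2, 0.5], seed=42)
```

Because the coordinates wrap around the cell, large noise drives them towards a uniform distribution over the unit cell rather than letting atoms drift away. A generative model is then trained to reverse such a trajectory.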

SynthNN

While GNoME and MatterGen focus on generating stable crystal structures, SynthNN addresses a critical subsequent challenge: predicting the synthesizability of a material—that is, whether it can be experimentally realized with current methodologies [33].

- Architecture and Objective: SynthNN is a deep learning classification model that predicts synthesizability directly from a chemical formula, without requiring structural information [33]. This is crucial for screening vast numbers of hypothetical compositions.

- Training Data and Approach: The model is trained on data from the Inorganic Crystal Structure Database (ICSD), which contains experimentally synthesized materials [33]. A major challenge is the lack of definitive data on unsynthesizable materials. To address this, SynthNN uses a positive-unlabeled (PU) learning approach, where it is trained on known synthesized materials (positive examples) and artificially generated unsynthesized materials, which are treated as unlabeled and probabilistically weighted [33].

- Learned Chemical Principles: Remarkably, without being explicitly programmed with chemical rules, SynthNN learns fundamental principles like charge-balancing and chemical family relationships from the data itself [33]. It has been shown to outperform both human experts and traditional proxy metrics like charge-balancing in identifying synthesizable materials [33].
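For context, the charge-balancing proxy that SynthNN is compared against can be written in a few lines. The sketch below is a minimal version under stated assumptions: the `OXIDATION_STATES` table is a small illustrative subset rather than a complete reference, and each element is restricted to a single oxidation state per formula. It is not SynthNN's code.

```python
from itertools import product

# Small illustrative subset of common oxidation states (an assumption,
# not a complete or authoritative table).
OXIDATION_STATES = {
    "Li": [1], "Na": [1], "Mg": [2], "Al": [3], "Ti": [2, 3, 4],
    "Fe": [2, 3], "O": [-2], "Cl": [-1], "S": [-2, 4, 6],
}


def is_charge_balanced(composition):
    """Return True if one oxidation state per element can sum to zero charge.

    `composition` maps element symbols to integer counts, e.g. {"Fe": 2, "O": 3}.
    """
    elements = list(composition)
    choices = (OXIDATION_STATES[el] for el in elements)
    for states in product(*choices):
        total = sum(q * composition[el] for q, el in zip(states, elements))
        if total == 0:
            return True
    return False


print(is_charge_balanced({"Na": 1, "Cl": 1}))  # True
print(is_charge_balanced({"Fe": 2, "O": 3}))   # True
print(is_charge_balanced({"Fe": 3, "O": 4}))   # False
```

The last case shows why such a rule is only a rough proxy: Fe3O4 (magnetite) is a well-known synthesized compound, but it contains iron in two oxidation states and so fails a one-state-per-element check.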

Table 1: Summary of Core AI Model Architectures

| Model | Primary Approach | Core Input | Primary Output | Key Innovation |
|---|---|---|---|---|
| GNoME | Graph Neural Network (GNN) | Crystal Structure or Composition | Stability Prediction | Active learning with DFT validation [27] [28] |
| MatterGen | Diffusion Model | Property Constraints / Noise | Novel Crystal Structure | Adapter modules for property-conditioned generation [30] [1] |
| SynthNN | Deep Learning Classifier | Chemical Formula | Synthesizability Score | Positive-unlabeled (PU) learning from experimental data [33] |

Performance and Experimental Validation

The efficacy of these AI tools is demonstrated not only by computational metrics but also through experimental synthesis in laboratories.

Quantitative Performance Metrics

- GNoME has discovered 2.2 million new crystal structures predicted to be stable, a volume described as equivalent to nearly 800 years' worth of knowledge at the pace of traditional methods [27]. Of these, 380,000 are identified as the most stable and promising for experimental synthesis [27]. External researchers have already independently synthesized 736 of the predicted structures, which supports the model's predictions [27] [28].

- MatterGen generates structures that are more than twice as likely to be new and stable compared to previous generative models [1] [31]. Furthermore, 95% of its generated structures are very close to their local energy minimum (within 0.076 Å RMSD after DFT relaxation), indicating they require minimal structural adjustment [1]. In a proof-of-concept, a material generated by MatterGen (TaCr₂O₆) was synthesized, and its measured bulk modulus was within 20% of the target value [30] [1].

- SynthNN demonstrates a 7x higher precision in identifying synthesizable materials compared to using DFT-calculated formation energies alone [33]. In a head-to-head challenge, it achieved 1.5x higher precision than the best human expert and completed the task five orders of magnitude faster [33].

Table 2: Summary of Key Performance and Discovery Metrics

| Metric | GNoME | MatterGen | SynthNN |
|---|---|---|---|
| Primary Output Volume | 2.2 million new crystals [27] | N/A (Generative) | N/A (Classifier) |
| Stable Candidates | 380,000 most stable materials [27] | >2x more SUN* materials vs. prior models [1] | N/A |
| Experimental Validation | 736 independently synthesized [27] [28] | 1 novel material (TaCr₂O₆) synthesized [30] | 1.5x higher precision than the best human expert in a head-to-head test [33] |
| Key Performance Gain | Discovery rate raised from under 10% to over 80% [27] [29] | 95% of structures near DFT local minimum [1] | 7x higher precision vs. formation energy [33] |

*SUN: Stable, Unique, and New

Detailed Experimental Protocols

The validation of AI-predicted materials involves a multi-step process combining computational validation and experimental synthesis.

Computational Validation via Density Functional Theory (DFT)

For a generated crystal structure to be considered viable, it must first be validated as stable using DFT.

- Structure Relaxation: The AI-generated crystal structure is used as the input for a DFT calculation. The calculation iteratively adjusts atomic positions and lattice parameters to find the lowest-energy (relaxed) configuration of that structure [1] [28].

- Stability Assessment (Convex Hull): The energy of the relaxed structure is compared to a reference database of known stable materials (e.g., from the Materials Project) to construct a convex hull of stability [27] [1]. A material is typically considered stable if its energy per atom lies on or very close to this convex hull (e.g., within 50-100 meV/atom). A material lying exactly on the hull has no combination of competing phases into which it can decompose to lower its energy [27] [1] [28].

- Property Calculation: Once stability is confirmed, additional DFT calculations can be performed to predict functional properties, such as electronic band structure, magnetic moments, or ionic conductivity [28].
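The convex hull test can be made concrete for the simplest case, a binary A-B system. The sketch below is illustrative only: the phase energies are invented, the function names are hypothetical, and real workflows build hulls over many elements (for example with pymatgen's `PhaseDiagram` class) rather than in two dimensions. Each phase is a pair of (fraction of B, formation energy in eV/atom), with the pure elements at zero.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points via Andrew's monotone chain."""
    best = {}
    for x, e in points:  # keep only the lowest energy at each composition
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            # Drop hull[-1] if it lies on or above the line hull[-2] -> p.
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the hull range")


def energy_above_hull(known_phases, x, energy):
    """Distance (eV/atom) of a candidate from the hull of the known phases.

    A negative value means the candidate lies below the existing hull.
    """
    return energy - hull_energy(lower_hull(known_phases), x)


def is_stable(known_phases, x, energy, tol=0.05):
    """Apply a tolerance such as the 50 meV/atom lower bound quoted above."""
    return energy_above_hull(known_phases, x, energy) <= tol


# Invented reference data: pure A, pure B, and two known compounds.
known = [(0.0, 0.0), (1.0, 0.0), (0.5, -0.40), (0.25, -0.10)]
print(energy_above_hull(known, 0.25, -0.17))  # about 0.03 eV/atom
```

Here the known compound at x = 0.25 sits above the tie line between pure A and the x = 0.5 phase, so it does not appear on the hull, while a candidate at the same composition with -0.17 eV/atom falls within the tolerance.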

Autonomous Robotic Synthesis (A-Lab)

The integration of AI discovery with automated synthesis represents a groundbreaking advance.

- Recipe Generation: An AI system (like GNoME) provides a target stable crystal structure. A separate AI planner then devises potential solid-state synthesis recipes, identifying precursor compounds and proposing reaction conditions [27].

- Robotic Execution: In a facility like the A-Lab at Lawrence Berkeley National Laboratory, robotic arms execute the synthesis recipes. They perform tasks such as weighing powdered solid precursors, mixing them, and loading them into crucibles [27].

- Reaction and Analysis: The robotic system places the crucible in a furnace and runs the reaction under the specified conditions (temperature, time, atmosphere). After the reaction, the resulting powder is automatically transported to an X-ray diffractometer for structural characterization [27].

- Iterative Learning: The synthesized material's X-ray diffraction pattern is compared to the pattern expected from the target structure. If the synthesis is unsuccessful, the AI planner analyzes the result, formulates a new hypothesis, and initiates another synthesis attempt with modified conditions. This creates a closed-loop, autonomous discovery and synthesis pipeline [27].
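The pattern comparison in the final step can be sketched with Bragg's law, λ = 2d sin θ. The example below makes several simplifying assumptions that a real analysis would not: the target is a primitive cubic cell (so d = a / sqrt(h² + k² + l²) and no reflections are systematically absent), only peak positions are compared, intensities are ignored, and the tolerance is arbitrary. The function names are hypothetical and do not describe the A-Lab's software.

```python
import math

CU_K_ALPHA = 1.5406  # X-ray wavelength in angstroms (Cu K-alpha1)


def two_theta_cubic(a, hkl, wavelength=CU_K_ALPHA):
    """Diffraction angle 2-theta (degrees) for plane hkl of a cubic cell of edge a.

    Returns None when the reflection cannot satisfy Bragg's law.
    """
    h, k, l = hkl
    d = a / math.sqrt(h * h + k * k + l * l)
    s = wavelength / (2.0 * d)
    if s > 1.0:
        return None
    return 2.0 * math.degrees(math.asin(s))


def match_fraction(expected, measured, tol=0.2):
    """Fraction of expected peaks with a measured peak within tol degrees."""
    if not expected:
        return 0.0
    matched = sum(1 for e in expected if any(abs(e - m) <= tol for m in measured))
    return matched / len(expected)


planes = [(1, 0, 0), (1, 1, 0), (1, 1, 1), (2, 0, 0)]
target_peaks = [two_theta_cubic(4.0, p) for p in planes]    # predicted structure
product_peaks = [two_theta_cubic(4.2, p) for p in planes]   # a different phase
print(match_fraction(target_peaks, product_peaks))  # 0.0
```

A low match fraction is the kind of signal that would send the planner back to propose modified conditions, while a high one would count the attempt as a success.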

Workflow Visualization

The following diagrams, generated with the Graphviz DOT language, illustrate the core workflows of the featured AI models and the integrated discovery-synthesis pipeline.

[Diagram: GNoME Active Learning Workflow]

[Diagram: MatterGen Conditional Generation Workflow]

[Diagram: Integrated AI-Driven Discovery Pipeline]

The Scientist's Toolkit: Research Reagent Solutions

The experimental validation of AI-generated materials relies on a suite of computational and physical resources. The following table details key components of this research toolkit.

Table 3: Essential Research Tools for AI-Driven Materials Discovery

| Tool / Resource | Type | Primary Function | Example/Provider |
|---|---|---|---|
| Density Functional Theory (DFT) | Computational Method | Validates stability and predicts properties of generated structures via quantum mechanical calculations [27] [1]. | VASP (Vienna Ab initio Simulation Package) [28] |
| Materials Database | Data Resource | Provides structured, curated data on known materials for model training and stability assessment (convex hull construction) [27] [33]. | Materials Project (MP) [27], Inorganic Crystal Structure Database (ICSD) [33] |
| Solid-State Precursors | Laboratory Reagent | High-purity powdered elements or compounds used as starting materials in robotic solid-state synthesis [27]. | Commercial chemical suppliers (e.g., Sigma-Aldrich, Alfa Aesar) |
| Automated Robotic Lab | Physical Infrastructure | Executes high-throughput synthesis and characterization, enabling rapid experimental validation of AI predictions [27]. | A-Lab (Lawrence Berkeley National Lab) [27] |
| X-Ray Diffractometer (XRD) | Analytical Instrument | Characterizes synthesized powders to determine if the experimental crystal structure matches the AI-predicted structure [27]. | Powder X-ray Diffractometer |

Discussion and Future Outlook

The advent of GNoME, MatterGen, and SynthNN marks a pivotal shift in materials science. GNoME demonstrates the power of scale and active learning for large-scale exploration of chemical space. MatterGen establishes the potential of generative models for inverse design, where materials are engineered from a set of desired properties rather than discovered through modification of known ones. SynthNN adds a critical layer of practical insight by predicting which computationally stable materials are most likely to be synthesizable, bridging the gap between prediction and realization.

Looking forward, the integration of these tools into a cohesive pipeline is the logical next step. One can envision a workflow where MatterGen generates candidates for specific applications, GNoME filters them for thermodynamic stability, and SynthNN prioritizes the most synthesizable targets for autonomous robotic synthesis in facilities like the A-Lab. This would create a high-throughput, closed-loop materials discovery engine.
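The envisioned workflow amounts to a generate, filter, rank composition of three components. A minimal sketch follows, with every component replaced by a stand-in: `generate`, `energy_above_hull`, and `synth_score` are hypothetical callables, and the `TOY` lookup table holds invented numbers in place of real model outputs. None of this reflects an existing integration of the three tools.

```python
def discovery_pipeline(generate, energy_above_hull, synth_score,
                       hull_tol=0.05, top_k=2):
    """Generate candidates, keep near-hull ones, rank by synthesizability."""
    candidates = generate()
    stable = [c for c in candidates if energy_above_hull(c) <= hull_tol]
    return sorted(stable, key=synth_score, reverse=True)[:top_k]


# Invented data: formula -> (energy above hull in eV/atom, synthesizability score).
TOY = {
    "A2B": (0.00, 0.9),
    "AB": (0.03, 0.4),
    "AB3": (0.20, 0.8),
    "A3B": (0.01, 0.7),
}

targets = discovery_pipeline(
    generate=lambda: list(TOY),                  # stand-in for a generative model
    energy_above_hull=lambda c: TOY[c][0],       # stand-in for a stability model
    synth_score=lambda c: TOY[c][1],             # stand-in for a synthesizability model
)
print(targets)  # ['A2B', 'A3B']
```

Passing the stages as callables keeps each model replaceable, which matters because the ordering matters: AB3 scores well on synthesizability but is removed by the stability filter before ranking.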

Challenges remain, including the need for more and higher-quality experimental data, the development of models that better account for kinetic stability and synthesis pathways, and the extension of these approaches to more complex material systems such as disordered crystals and nano-structured materials. Nevertheless, by providing researchers with these powerful AI tools, the field is poised to dramatically accelerate the development of next-generation technologies, from better batteries and carbon capture materials to advanced semiconductors.

The discovery of new inorganic crystalline materials is fundamental to technological progress, from developing better batteries to creating novel semiconductors. Traditional methods for crystal structure prediction, such as density functional theory (DFT), provide high accuracy but are computationally intensive and time-consuming [34]. The field is now undergoing a transformative shift with the adoption of artificial intelligence, particularly Graph Neural Networks and Transformer architectures. These models offer a powerful framework for representing and predicting crystal structures by directly encoding their innate graph-like nature, where atoms naturally form nodes and chemical bonds constitute edges [35] [36]. This paradigm shift enables researchers to rapidly screen thousands of potential materials in silico, significantly accelerating the discovery cycle for new inorganic crystalline materials with targeted properties.

Graph Neural Networks for Crystal Structures

Core Architectural Principles

Graph Neural Networks operate on a fundamental principle: they represent a crystal structure as a graph where atoms serve as nodes and chemical bonds form edges. This representation allows GNNs to learn from the structural relationships within the crystal lattice. Most GNNs for materials science employ a message-passing framework, where information is iteratively exchanged between connected nodes (atoms) and their local environments [35]. This process enables the network to capture complex atomic interactions and chemical environments that determine material properties. The Crystal Graph Convolutional Neural Network (CGCNN) exemplifies this approach, creating graph representations from crystal structures that encode atomic information and bonding relationships to predict material properties [36].
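The graph representation and a single round of message passing can be sketched without any learned parameters. The example below is a simplification under stated assumptions: the cell is cubic, only the nearest periodic images are searched (so the cutoff must not exceed the cell edge), edges are defined by a distance cutoff, and messages are neighbor features weighted by exp(-distance) instead of the learned, gated convolutions used in models such as CGCNN. The CsCl-type cell with a = 4.12 Å is used purely as an illustration.

```python
import math
from itertools import product


def build_crystal_graph(frac_coords, a, cutoff):
    """Directed edges (i, j, distance) between atoms and periodic images.

    Assumes a cubic cell of edge a and cutoff <= a, so that searching the
    27 neighbouring cells is sufficient.
    """
    edges = []
    for i, ri in enumerate(frac_coords):
        for j, rj in enumerate(frac_coords):
            for shift in product((-1, 0, 1), repeat=3):
                if i == j and shift == (0, 0, 0):
                    continue  # an atom is not its own neighbour
                d = a * math.sqrt(sum((xj + s - xi) ** 2
                                      for xi, xj, s in zip(ri, rj, shift)))
                if d <= cutoff:
                    edges.append((i, j, d))
    return edges


def message_passing_step(features, edges):
    """One update: each node adds distance-weighted features of its neighbours."""
    updated = [list(h) for h in features]
    for i, j, d in edges:
        weight = math.exp(-d)  # fixed weighting; a real GNN learns this
        for k, value in enumerate(features[j]):
            updated[i][k] += weight * value
    return updated


# CsCl-type cell: one atom at the corner, one at the body centre.
coords = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)]
edges = build_crystal_graph(coords, a=4.12, cutoff=3.6)
features = [[1.0, 0.0], [0.0, 1.0]]  # one-hot element labels
print(len(edges))  # 16
print(message_passing_step(features, edges))
```

Each atom ends up with eight neighbors of the other species, all reached through periodic images, and after one step its feature vector carries information about that local environment.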

Advanced GNN Architectures and Their Applications

Recent advancements have produced specialized GNN architectures tailored to the unique challenges of crystalline materials. The MatDeepLearn (MDL) framework implements various graph-based models including CGCNN, Message Passing Neural Networks (MPNN), MEGNet, SchNet, and Graph Convolutional Networks (GCNs) [35]. These architectures have demonstrated exceptional performance in predicting material properties across diverse systems. For high-entropy materials—complex systems with multiple principal elements—researchers have developed Kolmogorov-Arnold GNNs (KA-GNNs) that integrate KAN modules into node embedding, message passing, and readout components [37]. These networks utilize Fourier-series-based univariate functions to enhance function approximation, providing improved expressivity, parameter efficiency, and interpretability for molecular property prediction [37].

Transformer Architectures for Crystalline Materials

Self-Attention Mechanisms for Global Relationships