SynthNN: How Deep Learning Predicts Material Synthesizability to Accelerate Drug Discovery

Abstract

This article explores SynthNN, a groundbreaking deep learning model designed to predict the synthesizability of inorganic crystalline materials—a critical challenge in materials science and drug development. We delve into the foundational principles of synthesizability prediction, moving beyond traditional proxies like thermodynamic stability. The discussion covers SynthNN's unique methodology, which leverages positive-unlabeled learning and data from known material compositions without requiring prior chemical knowledge. For researchers and drug development professionals, we provide a comparative analysis against expert judgment and other computational methods, address common implementation challenges, and showcase its practical application in successful experimental pipelines. Finally, we examine the model's validation and its performance against newer AI approaches, concluding with its profound implications for streamlining the discovery of synthetically accessible materials and therapeutics.

The Synthesizability Challenge: Why Predicting Material Creation is Hard

Defining Synthesizability in Materials Science and Drug Development

Synthesizability is a critical concept in both materials science and drug development, referring to the feasibility of successfully creating a proposed molecule or material through chemical synthesis in a laboratory setting. It is not merely an inherent property of a substance, but a multifaceted assessment contingent on available starting materials, known reaction pathways, equipment, cost, and time [1]. The accurate prediction of synthesizability is a cornerstone for accelerating the discovery of new functional materials and therapeutic compounds, as it ensures that computationally designed candidates can be translated into physical entities for testing and application.

Defining Synthesizability Across Disciplines

The core definition of synthesizability shares common ground across fields, but the specific challenges and emphases differ, particularly between inorganic crystalline materials and organic drug-like molecules.

Inorganic Materials Science

For inorganic crystalline materials, synthesizability is defined as a material being synthetically accessible through current synthetic capabilities, regardless of whether it has been synthesized yet [2]. The primary challenge lies in the lack of well-understood reaction mechanisms compared to organic chemistry. Synthesis often depends on a complex interplay of thermodynamic and kinetic stabilization, reaction pathway selection, and selective nucleation of the target material [2] [3]. Furthermore, the decision to synthesize a material involves non-physical considerations such as reactant cost, equipment availability, and the perceived importance of the final product [2]. This makes synthesizability difficult to predict based on thermodynamic constraints alone.

Drug Development

In drug development, a molecule is considered synthesizable if a viable synthesis route of reactions from readily available starting materials to the target molecule can be found [1]. However, synthesizability is not a binary judgment but a matter of degree, heavily influenced by the stage of the drug discovery project and the resources one is willing to commit [4]. In early stages like hit-finding, the focus is on simple, tractable molecules that can be made quickly. In later stages like lead optimization, if a molecule shows high promise, chemists may engage in complex "synthetic heroics" to make it, effectively "teaching it to fly" [4]. A key emerging concept is "in-house synthesizability," which tailors the synthesizability assessment to the specific, limited collection of building blocks available in a particular laboratory, rather than assuming near-infinite commercial availability [5].

Quantitative Synthesizability Prediction: The SynthNN Model

A significant advance in computational materials science is the development of the deep learning synthesizability model, SynthNN, designed for inorganic crystalline materials.

Model Rationale and Workflow

Traditional proxies for synthesizability, such as enforcing a charge-balancing criterion, have proven inadequate: only 37% of known synthesized inorganic materials are charge-balanced [2]. SynthNN reformulates material discovery as a synthesizability classification task. It draws on the entire space of synthesized inorganic chemical compositions in the Inorganic Crystal Structure Database (ICSD) and uses a semi-supervised learning approach to learn the chemistry of synthesizability directly from the data of all experimentally realized materials [2] [6] [7].

Table 1: Key Features and Performance of the SynthNN Model

| Aspect | Description |
|---|---|
| Model Type | Deep learning classification model (SynthNN) [2] |
| Input | Chemical formulas (no structural information required) [2] |
| Core Methodology | Uses atom2vec learned atom embeddings; positive-unlabeled (PU) learning [2] |
| Key Advantage | Learns chemical principles (e.g., charge-balancing, ionicity) from data without prior knowledge [2] [7] |
| Performance vs. DFT | 7x higher precision than DFT-calculated formation energies [2] [6] |
| Performance vs. Experts | 1.5x higher precision and 100,000x faster than best human expert [2] |

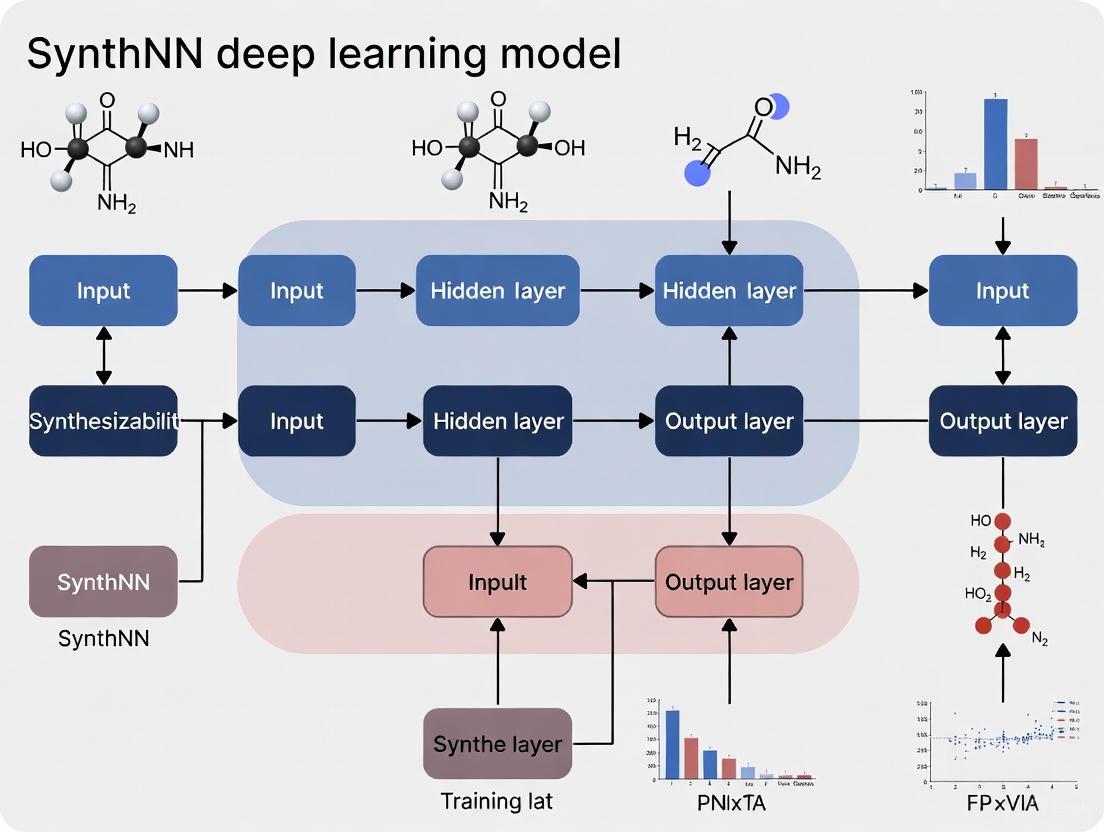

Figure 1: The SynthNN prediction workflow, which transforms a chemical formula into a synthesizability classification through learned embeddings and a deep neural network [2] [6] [7].
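The first step of this workflow, turning a chemical formula into a numeric composition, can be sketched as follows. This is a minimal illustration, not the SynthNN preprocessing code: the function names `parse_formula` and `to_fractions` are hypothetical, and the parser assumes flat formulas without parentheses or hydrate notation.

```python
import re

# One element symbol followed by an optional integer or decimal count.
_TOKEN = re.compile(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?")
# A whole formula made only of such tokens (assumption: no parentheses).
_FLAT_FORMULA = re.compile(r"(?:[A-Z][a-z]?(?:\d+(?:\.\d+)?)?)+")


def parse_formula(formula: str) -> dict[str, float]:
    """Parse a flat formula such as 'Li2FeO3' into element counts."""
    if not _FLAT_FORMULA.fullmatch(formula):
        raise ValueError(f"unsupported formula syntax: {formula!r}")
    counts: dict[str, float] = {}
    for symbol, amount in _TOKEN.findall(formula):
        # A missing count means one atom; repeated symbols are summed.
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    return counts


def to_fractions(counts: dict[str, float]) -> dict[str, float]:
    """Normalize element counts so that they sum to 1."""
    total = sum(counts.values())
    return {element: n / total for element, n in counts.items()}


print(to_fractions(parse_formula("Li2FeO3")))
```

Normalizing to fractions makes Na2Cl2 and NaCl map to the same input, which is usually what a composition-only model wants.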

Experimental Protocol: SynthNN Model Training and Validation

Objective: To train and validate a deep learning model (SynthNN) for predicting the synthesizability of inorganic crystalline materials from their chemical composition.

Materials and Reagents:

- Software: Python environment with deep learning libraries (e.g., TensorFlow, PyTorch).

- Training Data: Chemical formulas extracted from the Inorganic Crystal Structure Database (ICSD) [2] [8].

- Compute Resource: Standard workstation or high-performance computing node with GPU acceleration.

Procedure:

- Data Curation: Extract and clean chemical formulas of synthesized inorganic materials from the ICSD to form the set of positive (synthesized) examples [2].

- Generation of Unlabeled Data: Artificially generate a large set of chemical formulas that are not present in the ICSD. This set constitutes the unlabeled data, as these materials could be unsynthesizable or simply not yet synthesized [2].

- Model Architecture Setup: Implement a neural network using an atom embedding layer (atom2vec) to represent each chemical formula. The embedding dimensionality is a key hyperparameter [2].

- Positive-Unlabeled (PU) Training: Train the SynthNN model using a semi-supervised PU learning algorithm. This involves treating the artificially generated formulas as unlabeled data and probabilistically reweighting them according to their likelihood of being synthesizable [2] [8].

- Model Validation: Benchmark the trained model against a hold-out test set. Compare its performance against baseline methods like random guessing, charge-balancing, and predictions from human experts [2].
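The "Generation of Unlabeled Data" step above can be sketched as random sampling of compositions that are absent from the positive set. The sampling scheme below (uniform choice of elements and small integer coefficients) is an assumption made for illustration; the source does not specify how SynthNN generates its artificial formulas, and `generate_unlabeled` and `reduce_formula` are hypothetical names.

```python
import random
from functools import reduce
from math import gcd


def reduce_formula(comp: dict[str, int]) -> dict[str, int]:
    """Divide all coefficients by their greatest common divisor (Na2Cl2 -> NaCl)."""
    divisor = reduce(gcd, comp.values())
    return {element: n // divisor for element, n in comp.items()}


def generate_unlabeled(known, elements, n_samples, max_elements=3, max_coeff=5, seed=0):
    """Sample reduced compositions that do not appear in the known (positive) set."""
    rng = random.Random(seed)
    known_keys = {frozenset(reduce_formula(c).items()) for c in known}
    generated, seen = [], set()
    for _ in range(100 * n_samples):  # bounded number of attempts
        if len(generated) == n_samples:
            break
        chosen = rng.sample(elements, rng.randint(2, max_elements))
        comp = reduce_formula({el: rng.randint(1, max_coeff) for el in chosen})
        key = frozenset(comp.items())
        if key in known_keys or key in seen:
            continue  # skip synthesized materials and duplicates
        seen.add(key)
        generated.append(comp)
    return generated


positives = [{"Na": 1, "Cl": 1}]
unlabeled = generate_unlabeled(positives, ["Na", "Cl", "K", "O", "Fe"], n_samples=20)
```

The generated set is unlabeled rather than negative: some of these compositions may be synthesizable but not yet reported.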

Synthesizability in De Novo Drug Design

In drug development, ensuring synthesizability is paramount for the practical application of generative models that design novel molecules de novo.

In-House Synthesizability Scoring

A key innovation is the development of rapidly retrainable in-house synthesizability scores. These models predict whether a molecule can be synthesized using a specific, limited inventory of building blocks available in a researcher's own laboratory [5]. This approach contrasts with traditional Computer-Aided Synthesis Planning (CASP) that assumes access to millions of commercial building blocks.

Experimental Findings: A study transferring CASP from 17.4 million commercial building blocks (Zinc) to a small laboratory setting with only ~6,000 in-house building blocks (Led3) showed a relatively modest decrease of about 12% in the CASP success rate for solving synthesis routes. The primary trade-off was that routes using in-house blocks were, on average, two reaction steps longer than those using the vast commercial library [5].

Protocol: Implementing an In-House Synthesizability Workflow

Objective: To generate and experimentally validate novel, biologically active drug candidates that are synthesizable exclusively from an in-house collection of building blocks.

Materials and Reagents:

- Software: AiZynthFinder or similar CASP toolkit; QSAR modeling software; generative molecular design software (e.g., based on recurrent neural networks or variational autoencoders) [5].

- Building Blocks: Curated list of in-house available building blocks (e.g., 5,000-6,000 compounds) with associated chemical data [5].

- Laboratory Equipment: Standard synthetic chemistry apparatus for organic synthesis and purification (e.g., fume hood, glassware, rotary evaporator, HPLC). Biochemical assay kits for target protein activity evaluation [5].

Procedure:

- Workflow Setup and Synthesizability Score Training:

  - Deploy a CASP tool (e.g., AiZynthFinder) configured with your in-house building block list.

  - Generate a dataset of molecules and run synthesis planning to determine which are solvable with in-house blocks.

  - Use this data to train a fast, random forest-based synthesizability classification model that acts as a proxy for full CASP [5].

- Multi-Objective De Novo Molecular Generation:

  - Use a generative model to propose new molecular structures.

  - Employ a multi-objective optimization function that combines:

    - A QSAR model predicting the desired biological activity (e.g., IC50 for a target protein).

    - The in-house synthesizability score to prioritize molecules that can be made [5].

  - Generate a library of candidate molecules ranked by the multi-objective score.

- Synthesis and Experimental Validation:

  - Select top-ranking candidates for further analysis.

  - For each candidate, run the full CASP tool to obtain detailed, multi-step synthesis routes using only in-house blocks.

  - Synthesize the candidates following the AI-suggested routes.

  - Purify the compounds and experimentally evaluate their biochemical activity in assays to confirm the computational predictions [5].
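The multi-objective ranking step in this procedure can be illustrated with a small sketch. The source does not say how the two objectives are combined, so the weighted sum below is an assumption, as are the `Candidate` fields, the weight `w_activity`, and the example scores.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    smiles: str
    activity: float          # hypothetical QSAR prediction scaled to [0, 1]
    synthesizability: float  # hypothetical in-house synthesizability score in [0, 1]


def combined_score(c: Candidate, w_activity: float = 0.5) -> float:
    """Weighted sum of the two objectives (assumed aggregation, not from the source)."""
    return w_activity * c.activity + (1.0 - w_activity) * c.synthesizability


def rank_candidates(candidates, w_activity: float = 0.5):
    """Return candidates sorted from highest to lowest combined score."""
    return sorted(candidates, key=lambda c: combined_score(c, w_activity), reverse=True)


library = [
    Candidate("CCO", activity=0.9, synthesizability=0.2),
    Candidate("c1ccccc1", activity=0.6, synthesizability=0.8),
    Candidate("CC(=O)O", activity=0.3, synthesizability=0.9),
]
for c in rank_candidates(library):
    print(c.smiles, round(combined_score(c), 2))
```

With equal weights, the highly active but hard-to-make first candidate drops to last place, which is the behavior the synthesizability term is meant to produce.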

Table 2: Research Reagent Solutions for In-House Drug Design

| Reagent / Resource | Function in the Workflow |
|---|---|
| In-House Building Block Collection | Provides the foundational chemical resources for all proposed synthesis routes, defining the space of in-house synthesizable molecules [5]. |
| CASP Tool (e.g., AiZynthFinder) | Performs retrosynthetic analysis to deconstruct target molecules into available building blocks and plans feasible synthetic routes [5]. |
| Generative Molecular Model | Proposes novel molecular structures that are optimized for desired properties like target activity and synthesizability [5] [1]. |
| QSAR Model | Provides a fast computational prediction of a molecule's biological activity, serving as one of the primary objectives for optimization [5]. |

Figure 2: In-house de novo drug design workflow that integrates building block availability, molecular generation, multi-objective scoring, and experimental validation [5].

The definition of synthesizability is evolving from a simplistic, binary concept to a nuanced, context-dependent one. In materials science, models like SynthNN demonstrate that synthesizability can be learned from historical data, dramatically accelerating the discovery of new inorganic crystals. In drug development, the focus is shifting towards pragmatic in-house synthesizability, which aligns computational design with practical laboratory constraints. Together, these advanced computational approaches are closing the gap between in-silico design and real-world synthesis, making the process of molecular and materials discovery more efficient and reliable.

The acceleration of materials discovery hinges on the accurate identification of synthesizable compounds. For decades, the computational materials science community has relied on two fundamental approaches for this task: the heuristic principle of charge-balancing and energy-based assessments via density functional theory (DFT). These methods serve as preliminary filters to distinguish potentially synthesizable materials from those that are not. However, within the context of developing deep learning models like SynthNN for synthesizability prediction, understanding the specific limitations of these traditional approaches becomes paramount. This document details the quantitative shortcomings and procedural constraints of charge-balancing and DFT calculations, providing a foundational rationale for the development and adoption of more advanced, data-driven synthesizability models.

Quantitative Comparison of Synthesizability Assessment Methods

The table below summarizes the key performance metrics and limitations of traditional synthesizability assessment methods compared to modern machine learning approaches.

Table 1: Performance Comparison of Synthesizability Assessment Methods

| Method | Key Principle | Reported Precision/Accuracy | Primary Limitations |
|---|---|---|---|
| Charge-Balancing | Net neutral ionic charge based on common oxidation states [2] | Only 37% of known synthesized inorganic materials are charge-balanced [2] | Overly inflexible; fails for metallic/covalent systems; poor for ionic binaries (e.g., only 23% of Cs compounds) [2] |
| DFT Formation Energy | Thermodynamic stability relative to decomposition products [2] | Captures only ~50% of synthesized inorganic materials [2] | Fails to account for kinetic stabilization and non-physical synthesis factors [2] |
| DFT (Kinetic Stability) | Absence of imaginary phonon frequencies [9] | 82.2% Accuracy [9] | Computationally expensive; materials with imaginary frequencies can be synthesized [9] |
| SynthNN (Deep Learning) | Data-driven model learning from known compositions [2] | 7x higher precision than DFT formation energy [2] | Requires large datasets; performance depends on data quality and representation |
| CSLLM (Large Language Model) | Fine-tuned LLM on crystal structure data [9] | 98.6% Accuracy [9] | Requires sophisticated text representation of crystal structures; risk of "hallucination" [9] |

Limitations of the Charge-Balancing Principle

Protocol for Charge-Balancing Assessment

Application Note: This protocol outlines the procedure for evaluating the synthesizability of an inorganic crystalline material using the charge-balancing heuristic.

Materials & Reagents:

- Chemical Formula: The stoichiometric composition of the target material.

- Oxidation State Table: A reference of common oxidation states for elements (e.g., O: -2, Alkali metals: +1).

Procedure:

- Assign Oxidation States: For each element in the chemical formula, assign its most common oxidation state.

- Calculate Total Charge: Multiply each oxidation state by its stoichiometric coefficient in the formula and sum the results to obtain the total charge.

- Assess Synthesizability: If the total charge equals zero, the material is predicted to be synthesizable. A non-zero total charge leads to a prediction of non-synthesizability.

Limitations & Data Interpretation: The critical limitation of this method is its extremely low recall. As evidenced in Table 1, this method incorrectly labels a majority of known, synthesized materials as non-synthesizable. Its performance is notably poor even for typically ionic systems like binary cesium compounds, where only 23% are charge-balanced [2]. The method fails because it cannot account for diverse bonding environments (e.g., metallic or covalent bonds) and real-world synthesis conditions that stabilize non-charge-neutral compositions [2].
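The three-step protocol above is simple enough to write down directly. The sketch below follows the protocol as stated (one most-common oxidation state per element); the oxidation-state table is a small illustrative subset, and the function names are hypothetical.

```python
# Illustrative subset of most-common oxidation states (assumption: one state per element).
COMMON_OXIDATION_STATE = {
    "Na": 1, "K": 1, "Cs": 1, "Mg": 2, "Ca": 2, "Al": 3,
    "O": -2, "S": -2, "F": -1, "Cl": -1,
}


def total_charge(composition: dict[str, float]) -> float:
    """Sum of oxidation state times stoichiometric coefficient over all elements."""
    return sum(COMMON_OXIDATION_STATE[el] * n for el, n in composition.items())


def is_charge_balanced(composition: dict[str, float]) -> bool:
    """Predict 'synthesizable' only when the total charge is zero."""
    return abs(total_charge(composition)) < 1e-9


print(is_charge_balanced({"Na": 1, "Cl": 1}))  # NaCl
print(is_charge_balanced({"Al": 2, "O": 3}))   # Al2O3
print(is_charge_balanced({"Cs": 1, "O": 2}))   # CsO2, cesium superoxide
```

Cesium superoxide (CsO2) is a known compound, yet the heuristic rejects it because oxygen is forced to the -2 state. This is the kind of false negative that drives the low coverage discussed above.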

Workflow and Failure Analysis of Charge-Balancing

The following diagram illustrates the charge-balancing protocol and its primary points of failure when applied to real-world material systems.

Limitations of Density Functional Theory (DFT)

Protocol for DFT-Based Synthesizability Assessment

Application Note: This protocol describes the use of DFT-calculated formation energy and energy above the convex hull to assess thermodynamic stability, a common proxy for synthesizability.

Materials & Reagents:

- Crystal Structure: An initial atomic structure of the target material (e.g., in POSCAR or CIF format).

- DFT Software: An electronic-structure code that supports periodic systems (e.g., VASP, Quantum ESPRESSO).

- Computational Resources: High-performance computing (HPC) cluster.

- Reference Database: A database of stable phases (e.g., the Materials Project) to construct the convex hull.

Procedure:

- Structure Relaxation: Perform a full geometry optimization of the target crystal structure using DFT to find its ground state configuration.

- Formation Energy Calculation: Calculate the formation energy (ΔH~f~) of the relaxed structure relative to its constituent elements in their standard states.

- Convex Hull Construction: Compute the phase diagram for the relevant chemical system. The convex hull is defined by the set of thermodynamically stable phases with the lowest formation energies at their specific compositions.

- Energy Above Hull Calculation: Determine the energy above the convex hull (E~hull~) for the target material. This represents the energy difference between the target and the most stable combination of other phases on the convex hull at the same composition.

- Assess Synthesizability: A material is often considered potentially synthesizable if its E~hull~ is at or below a threshold, commonly 0 eV/atom (thermodynamically stable) or a small positive value (e.g., 10-50 meV/atom, metastable).
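For a binary system, the convex hull and energy-above-hull steps reduce to a short geometric calculation. The sketch below is a simplified illustration, not a replacement for a phase-diagram library: it assumes formation energies per atom are already available, represents each phase as a pair (fraction of element B, formation energy in eV/atom), and uses hypothetical function names and example numbers.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points via the monotone chain method."""
    best = {}
    for x, e in points:
        best[x] = min(e, best.get(x, e))  # keep the lowest-energy phase per composition
    hull = []
    for p in sorted(best.items()):
        while len(hull) >= 2:
            (x1, e1), (x2, e2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - e1) - (e2 - e1) * (p[0] - x1)
            if cross > 0:  # counter-clockwise turn: the last vertex stays on the hull
                break
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return e1 + (e2 - e1) * (x - x1) / (x2 - x1)
    raise ValueError("composition lies outside the hull range")


def energy_above_hull(x, e_form, reference_phases):
    """Energy of the target relative to the hull built from the other phases.

    A negative value means the target would itself become a new hull vertex.
    """
    return e_form - hull_energy(lower_hull(reference_phases), x)


# Hypothetical A-B system: elemental end members at 0 eV/atom plus two compounds.
references = [(0.0, 0.0), (0.25, -0.2), (0.5, -1.0), (1.0, 0.0)]
e_hull = energy_above_hull(0.25, -0.4, references)
print(f"E_hull = {e_hull:.3f} eV/atom")
```

In this toy system the hull at x = 0.25 lies at -0.5 eV/atom, so a target with a formation energy of -0.4 eV/atom sits 0.1 eV/atom above the hull and would fail a 50 meV/atom threshold.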

Limitations & Data Interpretation: While DFT is a powerful and robust electronic structure method [10], its use for synthesizability prediction has profound limitations:

- Incomplete Picture: Thermodynamic stability is a necessary but not sufficient condition for synthesizability. DFT typically overlooks finite-temperature effects, entropic factors, and kinetic barriers that govern synthetic accessibility [11].

- Metastable Materials: Many successfully synthesized materials are metastable (E~hull~ > 0) and are missed by strict hull-based filters [9].

- Functional and Basis Set Dependence: The accuracy of results is highly dependent on the choice of exchange-correlation functional and atomic orbital basis set. Outdated defaults (e.g., B3LYP/6-31G*) can yield poor results, necessitating careful selection of modern, robust method combinations [10].

- Computational Cost: Although faster than high-level wavefunction theories, DFT calculations for complex crystals remain computationally expensive, limiting high-throughput screening [2] [10].

Workflow and Failure Analysis of DFT-Based Assessment

The diagram below outlines the DFT-based assessment workflow and highlights where its fundamental approximations lead to failures in predicting real-world synthesizability.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Computational and Data Resources for Synthesizability Research

| Item Name | Function/Application | Relevance to SynthNN Development |
|---|---|---|
| ICSD (Inorganic Crystal Structure Database) | Primary source of positive (synthesized) training data [2] [9]. | Provides the foundational dataset of experimentally realized structures for model training. |
| Materials Project / OQMD / JARVIS | Databases of calculated (including theoretical) crystal structures [9] [11]. | Source for generating negative or unlabeled training examples; benchmark for performance. |
| DFT Software (VASP, Quantum ESPRESSO) | Calculates formation energy and energy above hull for stability assessment [2]. | Provides baseline metrics for comparing and validating ML model performance. |
| Atom2Vec / Material String | Learned or engineered representation of chemical compositions or structures [2] [9]. | Converts raw chemical data into a format suitable for deep learning model input. |
| Positive-Unlabeled (PU) Learning Algorithms | Machine learning framework to learn from positive (synthesized) and unlabeled data [2] [9]. | Critical for handling the lack of definitive negative examples in materials data. |

The limitations of traditional charge-balancing and DFT-based methods are both quantitative and fundamental. Charge-balancing acts as an overly restrictive filter, while DFT's thermodynamic focus fails to capture the kinetic and pathway-dependent nature of real-world synthesis. These shortcomings, validated by low precision and accuracy metrics, create a significant bottleneck in computational materials discovery pipelines. It is this precise gap in capability that justifies the development and integration of advanced deep learning models like SynthNN. By learning directly from the full distribution of synthesized materials, SynthNN and subsequent models such as CSLLM internalize complex chemical principles beyond simple heuristics or total energy calculations, thereby offering a more reliable and effective tool for predicting material synthesizability.

A significant challenge in data-driven materials and drug discovery is the inherent bias in available data. Public databases are overwhelmingly populated with successful synthesis reports, while data on failed attempts are rarely published. This creates a "data problem" where machine learning models must learn the concept of synthesizability—whether a material or compound can be successfully synthesized—from only positive examples and artificially generated negatives. Within the context of SynthNN deep learning model research, addressing this data imbalance is crucial for developing accurate synthesizability predictors. This application note details the methodologies, protocols, and computational tools required to construct effective training datasets and models under these constrained data conditions, with applications spanning both inorganic crystalline materials and organic compound synthesis.

Dataset Construction Methods

Constructing representative datasets for synthesizability prediction requires careful consideration of data sources, labeling strategies, and augmentation techniques. The approaches vary between domains but share common principles for handling positive-unlabeled learning scenarios.

Table 1: Primary Data Sources for Synthesizability Prediction

| Data Type | Source Name | Content Description | Domain |
|---|---|---|---|
| Positive Examples | Inorganic Crystal Structure Database (ICSD) | Experimentally synthesized inorganic crystalline materials [2] | Materials Science |
| Positive Examples | ChEMBL, ZINC15 | Commercially available or synthesized molecules [12] | Drug Discovery |
| Theoretical Structures | Materials Project, OQMD, JARVIS | Computationally predicted structures [9] | Materials Science |
| Artificial Negatives | GDBChEMBL, Nonpher | Computationally generated unsynthesized molecules [12] | Drug Discovery |
| Text-Mined Synthesis Data | Literature-extracted datasets | Synthesis parameters extracted from scientific articles [13] | Cross-Domain |

Positive-Unlabeled Learning Frameworks

The core challenge in synthesizability prediction is the lack of verified negative examples. Positive-unlabeled learning provides a principled framework for this scenario, where models are trained using confirmed positive samples and "unlabeled" samples that are treated as potential negatives.

The SynthNN model addresses this through a semi-supervised approach that treats unsynthesized materials as unlabeled data and probabilistically reweights these materials according to their likelihood of being synthesizable [2]. This approach falls under the broader category of positive-unlabeled learning algorithms, which have been successfully applied to predict synthesizability across various domains:

- 2D MXenes and 3D crystals: Transductive bagging PU learning approaches have achieved over 75% and 87.9% accuracy respectively [9]

- General perovskites: Inductive PU learning with domain-specific transfer learning has demonstrated superior performance compared to tolerance factor-based approaches [13]

- Ternary oxides: Human-curated literature data enables PU learning models to predict solid-state synthesizability [13]
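One common way to express probabilistic reweighting in a loss function is to let each unlabeled example count partly as a positive and partly as a negative. The sketch below shows that idea with a weighted binary cross-entropy. It is an assumption-laden illustration, not the SynthNN loss: the source does not give the exact weighting formula, and `pu_loss` and `synth_likelihood` are hypothetical names.

```python
import math


def pu_loss(probs, is_positive, synth_likelihood):
    """Mean cross-entropy where unlabeled examples are split between both classes.

    probs            -- model outputs P(synthesizable) per example
    is_positive      -- True for known synthesized materials, False for unlabeled ones
    synth_likelihood -- for unlabeled examples, the estimated chance that the
                        example is actually synthesizable (ignored for positives)
    """
    eps = 1e-12
    total = 0.0
    for p, positive, s in zip(probs, is_positive, synth_likelihood):
        p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
        w_pos = 1.0 if positive else s   # weight given to the "synthesizable" label
        total += -(w_pos * math.log(p) + (1.0 - w_pos) * math.log(1.0 - p))
    return total / len(probs)


# An unlabeled example predicted as synthesizable is penalized less when it is
# believed likely to be synthesizable.
print(pu_loss([0.9], [False], [0.8]))
print(pu_loss([0.9], [False], [0.0]))
```

Setting every unlabeled likelihood to zero recovers ordinary binary cross-entropy with the unlabeled set treated as hard negatives.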

In the drug discovery domain, DeepSA addresses similar challenges by training on molecules labeled by retrosynthetic analysis, where compounds requiring ≤10 synthetic steps are considered easy-to-synthesize (ES) and those requiring >10 steps or failing route prediction are labeled hard-to-synthesize (HS) [12].
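The labeling rule described here is a simple threshold on route length. A minimal sketch follows, where `n_steps=None` is an assumed convention for "retrosynthesis found no route" and the function name is hypothetical.

```python
from typing import Optional


def label_by_route_length(n_steps: Optional[int], max_easy_steps: int = 10) -> str:
    """Return 'ES' (easy-to-synthesize) or 'HS' (hard-to-synthesize).

    n_steps is the number of synthetic steps in the predicted route, or None
    when route prediction failed.
    """
    if n_steps is None:
        return "HS"
    return "ES" if n_steps <= max_easy_steps else "HS"


print([label_by_route_length(n) for n in (3, 10, 11, None)])
```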

SynthNN Architecture and Implementation

Model Framework

The SynthNN framework implements a deep learning approach to synthesizability prediction that leverages the entire space of synthesized inorganic chemical compositions. Key architectural components include:

- Atom2Vec Representation: Chemical formulas are represented by a learned atom embedding matrix optimized alongside all other parameters of the neural network [2]

- Domain Adaptation: The model learns chemical principles of charge-balancing, chemical family relationships, and ionicity directly from data without prior chemical knowledge [2]

- Positive-Unlabeled Training: Artificially generated unsynthesized materials are treated as unlabeled data and probabilistically reweighted during training
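To make the role of the atom embedding matrix concrete, the sketch below runs a forward pass for one composition. Several simplifications are assumptions rather than details from the source: the composition is pooled as a fraction-weighted sum of element embeddings, a single logistic output layer stands in for the deep network, and all names and numbers are hypothetical. In a real model the embeddings and weights would be trained jointly by backpropagation.

```python
import math
import random


def init_embeddings(elements, dim, seed=0):
    """Random initial embedding vector per element (trainable in a real model)."""
    rng = random.Random(seed)
    return {el: [rng.gauss(0.0, 0.1) for _ in range(dim)] for el in elements}


def embed_composition(fractions, embeddings):
    """Fraction-weighted sum of element embeddings (assumed pooling scheme)."""
    dim = len(next(iter(embeddings.values())))
    pooled = [0.0] * dim
    for element, fraction in fractions.items():
        for i, value in enumerate(embeddings[element]):
            pooled[i] += fraction * value
    return pooled


def predict(fractions, embeddings, weights, bias):
    """Sigmoid of a linear layer over the pooled embedding: P(synthesizable)."""
    x = embed_composition(fractions, embeddings)
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


embeddings = init_embeddings(["Na", "Cl", "O"], dim=4)
nacl = {"Na": 0.5, "Cl": 0.5}
print(predict(nacl, embeddings, weights=[0.1, -0.2, 0.3, 0.0], bias=0.0))
```

Because the embeddings are ordinary parameters, chemically similar elements can end up with similar vectors if that helps classification, which is one way such a model could pick up chemical family relationships from data.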

The model reformulates material discovery as a synthesizability classification task. It identifies synthesizable materials with 7× higher precision than DFT-calculated formation energies, and it achieves 1.5× higher precision than the best human expert while completing the task five orders of magnitude faster [2].

Advanced Architectures

Recent advancements have extended beyond SynthNN's composition-based approach. The Crystal Synthesis Large Language Models framework utilizes three specialized LLMs to predict synthesizability, synthetic methods, and suitable precursors for 3D crystal structures [9]. This multi-component architecture achieves state-of-the-art accuracy of 98.6% in synthesizability prediction, significantly outperforming traditional methods based on thermodynamic and kinetic stability [9].

Performance Benchmarks and Validation

Quantitative Performance Metrics

Table 2: Performance Comparison of Synthesizability Prediction Models

| Model Name | Domain | Accuracy | Precision | Key Differentiators |
|---|---|---|---|---|
| SynthNN | Inorganic Crystalline Materials | Not specified | 7× higher than DFT [2] | Composition-based; outperforms human experts |
| CSLLM | 3D Crystal Structures | 98.6% [9] | Not specified | Structure-based; suggests methods & precursors |
| DeepSA | Organic Compounds | 89.6% AUROC [12] | Not specified | SMILES-based; discriminates synthesis difficulty |
| PU Learning (Jang et al.) | Hypothetical Compounds | Not specified | Not specified | CLscore for non-synthesizable identification |
| Solid-state PU Model | Ternary Oxides | Not specified | Not specified | Human-curated literature data |

Experimental Validation Protocols

Validating synthesizability predictions requires rigorous experimental protocols to confirm model accuracy:

Protocol 1: Experimental Synthesis Verification

- Objective: Validate computationally predicted synthesizable materials through laboratory synthesis

- Materials: Predicted synthesizable compounds, precursor materials, synthesis equipment

- Procedure:

  - Select high-priority candidates based on synthesizability scores (e.g., RankAvg > 0.95) [11]

  - Apply retrosynthetic planning to generate viable precursor combinations

  - Balance chemical reactions and compute precursor quantities

  - Execute solid-state synthesis using predicted temperature parameters

  - Characterize products using X-ray diffraction (XRD) for phase identification

- Validation: Compare XRD patterns with target crystal structures to confirm successful synthesis

Protocol 2: Cross-Database Benchmarking

- Objective: Evaluate model performance across multiple materials databases

- Materials: Structures from Materials Project, GNoME, Alexandria, and ICSD

- Procedure:

  - Curate balanced dataset with synthesizable and non-synthesizable examples

  - Calculate CLscores for all structures using pre-trained PU learning model [9]

  - Set CLscore threshold (e.g., <0.1) for non-synthesizable classification

  - Train model on subset and evaluate on held-out test set

  - Assess generalization on structures with complexity exceeding training data

Protocol 3: Human Expert Comparison

- Objective: Benchmark model performance against domain experts

- Materials: Set of candidate materials for synthesizability assessment

- Procedure:

  - Select diverse set of material compositions for evaluation

  - Have domain experts assess synthesizability using traditional methods

  - Run model predictions on same material set

  - Compare precision, recall, and assessment time

  - Statistically analyze performance differences
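The precision and recall comparison in this protocol can be computed with a few lines. The sketch below uses made-up predictions for a model and an expert on the same candidate set; the data and the function name are illustrative only.

```python
def precision_recall(predicted, actual):
    """Precision and recall for boolean 'synthesizable' predictions."""
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p and a)      # predicted and truly synthesized
    fp = sum(1 for p, a in pairs if p and not a)  # predicted but not synthesized
    fn = sum(1 for p, a in pairs if not p and a)  # missed synthesized materials
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical ground truth and two sets of predictions on the same candidates.
actual = [True, False, False, True, False, True]
model = [True, False, False, True, True, True]
expert = [True, True, False, False, True, True]

model_p, model_r = precision_recall(model, actual)
expert_p, expert_r = precision_recall(expert, actual)
print(f"model:  precision={model_p:.2f} recall={model_r:.2f}")
print(f"expert: precision={expert_p:.2f} recall={expert_r:.2f}")
print(f"precision ratio model/expert: {model_p / expert_p:.2f}")
```

In a positive-unlabeled setting, some "false positives" may be materials that are synthesizable but not yet reported, so measured precision is best read as a lower bound.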

Computational Implementation Protocols

Data Preprocessing Workflow

Protocol 4: Training Data Preparation

- Input: Raw composition data from ICSD and generated compositions

- Processing Steps:

  - Filter compositions by element count (e.g., ≤7 elements) and atom count (e.g., ≤40 atoms) [9]

  - Exclude disordered structures to focus on ordered crystal structures

  - Convert compositions to atom2vec representations

  - Apply standardization to chemical formulas

  - Split data into training/validation/test sets (typical ratio: 90/5/5)

- Output: Processed dataset ready for model training
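The filtering and splitting steps of Protocol 4 can be sketched as below. Applying the atom-count limit to the sum of formula coefficients is an assumption, and the function names and the seeded shuffle are illustrative choices rather than details from the source.

```python
import random


def passes_filters(counts: dict[str, int], max_elements: int = 7, max_atoms: int = 40) -> bool:
    """Keep compositions with at most max_elements elements and max_atoms atoms."""
    return len(counts) <= max_elements and sum(counts.values()) <= max_atoms


def split_dataset(items, percents=(90, 5, 5), seed=0):
    """Shuffle and split into train/validation/test by integer percentages."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = len(shuffled) * percents[0] // 100
    n_val = len(shuffled) * percents[1] // 100
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


compositions = [{"Na": 1, "Cl": 1}, {"Ba": 1, "Ti": 1, "O": 3}, {"C": 60}]
kept = [c for c in compositions if passes_filters(c)]
print(len(kept))
```

Fixing the shuffle seed keeps the split reproducible, which matters when several models are compared on the same held-out set.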

Protocol 5: Negative Example Generation

- Input: Theoretical structures from computational databases

- Processing Steps:

  - Collect theoretical structures from Materials Project, OQMD, JARVIS

  - Calculate CLscores using pre-trained PU learning model [9]

  - Select structures with lowest CLscores (e.g., <0.1) as non-synthesizable examples

  - Balance dataset with approximately equal synthesizable and non-synthesizable examples

  - Visualize dataset diversity using t-SNE for crystal systems and element distribution

- Output: Balanced dataset for synthesizability classification
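The selection and balancing steps of Protocol 5 amount to thresholding and truncating a sorted list. In the sketch below the CLscores are assumed to be precomputed by a PU model; the structure identifiers, scores, and function name are hypothetical.

```python
def select_negatives(cl_scores: dict[str, float], n_positive: int, threshold: float = 0.1):
    """Pick the lowest-CLscore structures below the threshold, at most n_positive of them."""
    below = sorted((score, sid) for sid, score in cl_scores.items() if score < threshold)
    return [sid for _, sid in below[:n_positive]]


# Hypothetical CLscores for four theoretical structures.
scores = {"struct-a": 0.05, "struct-b": 0.50, "struct-c": 0.02, "struct-d": 0.09}
print(select_negatives(scores, n_positive=2))
```

Capping the negatives at the number of positives gives the approximately balanced dataset the protocol asks for.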

Model Training and Optimization

Protocol 6: SynthNN Model Training

- Framework: Deep learning with atom embeddings

- Hyperparameters:

  - Atom embedding dimension (tuned as a hyperparameter) [2]

  - Neural network architecture (number of layers, neurons)

  - Learning rate and optimization algorithm

  - Positive-unlabeled sampling ratio N_synth (artificially generated formulas per synthesized formula)

  - Batch size and training epochs

- Training Procedure:

  - Initialize atom embedding matrix with random weights

  - Forward pass: compute synthesizability probability

  - Calculate loss with PU-weighted unlabeled examples

  - Backpropagate errors and update parameters

  - Validate on held-out set and apply early stopping

- Output: Trained SynthNN model for synthesizability prediction
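The validate-and-stop logic at the end of the training procedure is independent of the model, so it can be sketched with two callbacks. The function name, the `patience` parameter, and the canned validation losses are illustrative assumptions; a real run would also save a checkpoint whenever the validation loss improves.

```python
def train_with_early_stopping(train_epoch, validate, max_epochs=100, patience=5):
    """Run epochs until validation loss has not improved for `patience` epochs.

    train_epoch(epoch) performs one pass over the training data;
    validate(epoch) returns the validation loss after that pass.
    """
    best_loss, best_epoch = float("inf"), -1
    for epoch in range(max_epochs):
        train_epoch(epoch)
        val_loss = validate(epoch)
        if val_loss < best_loss:
            best_loss, best_epoch = val_loss, epoch  # a checkpoint would be saved here
        elif epoch - best_epoch >= patience:
            break
    return best_epoch, best_loss


# Canned validation losses standing in for a real model.
losses = [1.0, 0.8, 0.7, 0.75, 0.72, 0.71, 0.9]
epochs_run = []
best = train_with_early_stopping(epochs_run.append, lambda e: losses[e],
                                 max_epochs=len(losses), patience=3)
print(best, len(epochs_run))
```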

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Resources

| Tool/Resource | Type | Function | Example Sources |
|---|---|---|---|
| ICSD Database | Data Resource | Source of verified synthesizable inorganic materials [2] | FIZ Karlsruhe |
| Materials Project | Data Resource | Source of theoretical structures for negative examples [9] | LBNL |
| atom2vec | Algorithm | Learns optimal representation of chemical formulas [2] | Custom implementation |
| Positive-Unlabeled Learning | Framework | Handles lack of verified negative examples [2] [13] | Various implementations |
| Retrosynthetic Analysis | Software | Generates synthetic routes and identifies precursors [9] | Retro*, AiZynthFinder |
| DFT Calculations | Computational Method | Provides formation energies for stability assessment [2] | VASP, Quantum ESPRESSO |
| XRD Characterization | Experimental Method | Verifies successful synthesis of predicted materials [11] | Laboratory equipment |
| Text-Mining Pipelines | Data Extraction | Extracts synthesis information from literature [13] | Custom NLP pipelines |

Application Protocols

Materials Discovery Pipeline

Protocol 7: Integrated Synthesizability-Guided Discovery

- Objective: Identify novel synthesizable materials computationally and verify experimentally

- Workflow:

- Screen computational databases (e.g., 4.4 million structures) [11]

- Apply synthesizability score (e.g., RankAvg > 0.95) to identify candidates

- Filter by element constraints (exclude platinum group, toxic elements)

- Apply retrosynthetic planning to suggest precursors

- Predict synthesis parameters (temperature, atmosphere)

- Execute high-throughput synthesis

- Characterize products via XRD

- Expected Outcomes: A recent study using this type of pipeline reported successful synthesis of 7 out of 16 targets [11]
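
The score cutoff and element filter in this workflow can be sketched as below. The scores are assumed to come from an external synthesizability model; the function names are hypothetical, and the list of toxic elements is an illustrative assumption rather than the set used in the cited study.

```python
import re

PLATINUM_GROUP = {"Ru", "Rh", "Pd", "Os", "Ir", "Pt"}
TOXIC = {"As", "Cd", "Hg", "Pb", "Tl"}   # illustrative choice, an assumption
EXCLUDED = PLATINUM_GROUP | TOXIC

def elements_in(formula):
    """Return the set of element symbols in a flat formula such as 'LiFePO4'."""
    return set(re.findall(r"[A-Z][a-z]?", formula))

def select_candidates(scored, min_score=0.95, excluded=EXCLUDED):
    """scored: iterable of (formula, score); keep high scorers without excluded elements."""
    keep = [(f, s) for f, s in scored
            if s > min_score and not (elements_in(f) & excluded)]
    # Highest-scoring candidates first, ready for retrosynthetic planning.
    return sorted(keep, key=lambda fs: fs[1], reverse=True)
```

For example, `select_candidates([("LiFePO4", 0.97), ("PtO2", 0.99), ("NaCl", 0.90)])` keeps only `LiFePO4`: `PtO2` contains a platinum-group element and `NaCl` falls below the cutoff.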

Drug Discovery Implementation

Protocol 8: Compound Prioritization for Medicinal Chemistry

- Objective: Identify synthesizable drug candidates from virtually generated compounds

- Workflow:

- Generate candidate compounds using AI-based molecular generation models

- Convert structures to SMILES representation

- Apply synthesizability predictor (e.g., DeepSA, SAscore, SCScore)

- Rank compounds by synthesizability score

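- Filter out compounds whose scores fall on the unfavorable side of the chosen threshold (score direction differs between tools; for SAscore, lower values indicate easier synthesis)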

- Proceed with experimental synthesis of top candidates

- Validation: Compare predicted synthesizability with actual laboratory synthesis outcomes
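
A minimal sketch of the ranking, filtering, and validation steps, assuming scores have already been produced by an external predictor and are supplied as a dictionary keyed by SMILES string. The function names and the `higher_is_better` flag are hypothetical; the flag exists because different tools score in different directions.

```python
def prioritize(scores, threshold, higher_is_better=True):
    """Return SMILES strings passing the threshold, best candidates first."""
    if higher_is_better:
        kept = {smi: v for smi, v in scores.items() if v >= threshold}
    else:
        kept = {smi: v for smi, v in scores.items() if v <= threshold}
    return sorted(kept, key=kept.get, reverse=higher_is_better)

def hit_rate(selected, outcomes):
    """Fraction of attempted compounds that were actually synthesized.

    outcomes: dict mapping SMILES -> True/False laboratory result.
    Returns None when none of the selected compounds were attempted.
    """
    attempted = [smi for smi in selected if smi in outcomes]
    if not attempted:
        return None
    return sum(outcomes[smi] for smi in attempted) / len(attempted)
```

Tracking the hit rate over successive batches gives a simple check on whether the chosen threshold is too permissive or too strict for a given chemistry.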

The "data problem" in synthesizability prediction—learning from successful syntheses and artificial negatives—represents both a challenge and opportunity in computational materials and drug discovery. The SynthNN framework and related approaches demonstrate that through careful data curation, positive-unlabeled learning strategies, and domain-adapted model architectures, it is possible to develop accurate predictors that significantly accelerate the discovery of novel materials and compounds. The protocols and methodologies outlined in this application note provide researchers with practical tools to implement these approaches in their own workflows, ultimately bridging the gap between computational prediction and experimental realization.

The discovery of novel inorganic crystalline materials is a cornerstone of technological advancement. A critical, unsolved challenge in this field is the reliable prediction of whether a hypothetical chemical composition is synthesizable—that is, synthetically accessible with current capabilities, regardless of whether its synthesis has been reported yet [2]. Traditional proxies for synthesizability, such as charge-balancing rules and density functional theory (DFT)-calculated formation energies, have proven inadequate as they fail to capture the complex and multi-factorial nature of real-world synthesis [2]. The SynthNN deep learning model represents a paradigm shift by leveraging the entire space of known inorganic compositions to directly predict synthesizability, offering a robust, data-driven solution to this complex chemical problem [2].

Core Innovation: Learning Chemistry from Data

SynthNN's foundational innovation lies in its reformulation of material discovery as a synthesizability classification task. Unlike traditional methods that rely on pre-defined chemical rules or thermodynamic calculations, SynthNN employs an atom2vec framework [2]. This approach uses a learned atom embedding matrix that is optimized alongside all other parameters of the neural network.

- Data-Driven Representation: The model learns an optimal representation of chemical formulas directly from the distribution of previously synthesized materials, without requiring prior chemical knowledge or structural information [2].

- Learned Chemical Principles: Experiments indicate that SynthNN autonomously learns fundamental chemical principles from the data, including charge-balancing, chemical family relationships, and ionicity, and utilizes these to generate predictions [2].

Quantitative Performance and Benchmarking

SynthNN was benchmarked against established computational methods and human experts [2]. Table 1 summarizes the reported results.

Table 1: Performance Comparison of Synthesizability Prediction Methods

| Method | Key Metric | Performance | Key Advantage |

|---|---|---|---|

| SynthNN | Precision | 7x higher than DFT-based formation energy [2] | Data-driven, learns from all known compositions |

| Charge-Balancing | Coverage of Known Materials | Only 37% of known synthesized materials are charge-balanced [2] | Chemically intuitive but inflexible |

| DFT Formation Energy | Coverage of Synthesized Materials | Captures only ~50% of synthesized inorganic crystalline materials [2] | Accounts for thermodynamics but not kinetics |

| Human Experts | Precision & Speed | SynthNN achieved 1.5x higher precision than the best human expert and completed the task 5 orders of magnitude faster [2] | Applies domain knowledge, but does not scale to large chemical spaces |

Experimental Protocol and Workflow

Implementing SynthNN involves a structured workflow from data preparation to model inference. The following protocol details the key steps.

Data Preparation and Curation

- Positive Data Source: Extract chemical formulas of synthesized inorganic crystalline materials from the Inorganic Crystal Structure Database (ICSD) [2].

- Handling Unlabeled Data: Generate a large set of artificial chemical formulas not present in the ICSD to represent unsynthesized/unsynthesizable materials. This creates a Positive-Unlabeled (PU) learning scenario [2].

- Semi-Supervised Learning: Employ a PU learning algorithm that treats unsynthesized materials as unlabeled data and probabilistically reweights them according to their likelihood of being synthesizable [2].

Model Architecture and Training

- Input Representation: Chemical formulas are fed into the model using the atom2vec framework, which learns a continuous vector representation for each element [2].

- Network Structure: A deep neural network architecture processes the atom embeddings. The specific number of layers and the dimensionality of the embeddings are treated as hyperparameters [2].

- Training Regime: The model is trained on the prepared synthesizability dataset. The ratio of artificially generated formulas to synthesized formulas, (N_{synth}), is a key hyperparameter tuned during model development [2].

Model Inference and Screening

- Input: A candidate chemical formula.

- Processing: The model computes a synthesizability score.

- Output: A classification (synthesizable/not synthesizable) or a probability score that can be used to prioritize candidates in large-scale computational screens [2].

Figure 1: The SynthNN development and application workflow, illustrating the flow from data preparation to synthesizability prediction.

Figure 2: The core learning mechanism of SynthNN, demonstrating how chemical principles are derived directly from data.

Table 2: Essential Resources for Synthesizability Prediction Research

| Resource / Tool | Type | Primary Function in Research |

|---|---|---|

| Inorganic Crystal Structure Database (ICSD) | Database | The primary source of positive examples (synthesized materials) for model training [2]. |

| Atom2Vec | Algorithm / Framework | Learns optimal, continuous vector representations of chemical elements directly from data, forming the input layer of SynthNN [2]. |

| Positive-Unlabeled (PU) Learning | Machine Learning Paradigm | A semi-supervised approach that handles the lack of definitive negative data (unsynthesizable materials) by treating them as unlabeled examples [2]. |

| Density Functional Theory (DFT) | Computational Method | Provides thermodynamic stability metrics (e.g., formation energy) used as a baseline for comparing SynthNN's performance [2]. |

| Deep Neural Network (DNN) | Model Architecture | The core classifier that processes atom embeddings to output a synthesizability probability [2]. |

Inside SynthNN: Architecture, Training, and Real-World Deployment

The challenge of predicting whether a hypothetical inorganic crystalline material is synthetically accessible is a fundamental bottleneck in accelerating materials discovery. Traditional computational approaches, such as density functional theory (DFT) calculations of formation energy, serve as imperfect proxies for synthesizability, while the expert judgment of solid-state chemists, though valuable, does not scale for the rapid exploration of vast chemical spaces [2]. The SynthNN deep learning model represents a paradigm shift by directly addressing the synthesizability classification task, achieving a reported 1.5× higher precision than the best human expert and completing the task five orders of magnitude faster [2]. A cornerstone of this model's architecture is its use of learned, distributed representations of atoms, a concept pioneered by the atom2vec embedding framework.

The core analogy behind atom2vec is that "if one may know a word by the company it keeps, then the same might be said of an atom" [14]. Inspired by the Word2Vec algorithm in natural language processing (NLP), atom2vec aims to derive vector representations of atoms that encapsulate their chemical nature and relationships by analyzing their co-occurrence patterns within a large database of known crystal structures [14] [15]. Within the SynthNN architecture, these embeddings are not pre-defined but are learned end-to-end. The model leverages an atom embedding matrix that is optimized alongside all other parameters of the neural network, allowing it to learn the optimal representation of chemical formulas directly from the distribution of previously synthesized materials [2]. This enables SynthNN to infer complex chemical principles such as charge-balancing, chemical family relationships, and ionicity directly from data, without prior explicit programming of these rules [2] [16].

Technical Architecture and Dataflow

The architecture integrating atom2vec principles within a synthesizability prediction model like SynthNN involves a sequential flow from chemical composition to a final synthesizability probability. The following workflow diagram delineates this process.

Diagram 1: High-level dataflow of the SynthNN model, from chemical composition to synthesizability prediction.

Input and Atom Embedding Layer

The model input is a chemical formula, represented as a set of constituent atoms. For instance, the formula "CsCl" would be decomposed into the atoms {Cs, Cl}. In the initial embedding layer, each atom in the periodic table is associated with a dense, continuous vector of a predefined dimensionality d (a model hyperparameter). This layer is implemented as a lookup table, often called an embedding matrix, where the row corresponding to an atom's index is its d-dimensional vector [2].

- Function: This layer converts an atomic symbol (a categorical value) into a numerical, differentiable representation that the neural network can process.

- Initialization: The embedding vectors are typically initialized randomly and are then updated during model training via backpropagation [14] [2].

- Learning Objective: Through training, the model adjusts these vectors so that atoms frequently found in similar chemical environments across the training database have similar vector representations. This process captures latent chemical properties [14] [15].

Compositional Representation via Pooling

A single material composition comprises multiple atoms. To create a fixed-length, composition-level representation from its constituent atom vectors, a pooling operation is applied. This step is analogous to forming a sentence representation from its constituent word vectors in NLP [14].

Common pooling strategies include:

- Sum Pooling: The vectors of all atoms in the formula are summed element-wise.

- Average Pooling: The vectors of all atoms are averaged element-wise.

For a formula like "SiO₂", the pooling layer would execute an operation such as vec(Si) + 2 * vec(O), where vec() denotes the embedding lookup. The resulting pooled vector is a single, d-dimensional representation of the entire chemical formula, which is then passed to downstream neural network layers [14] [2].
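
The embedding lookup and pooling steps can be sketched as follows. This is an illustration under stated assumptions: a five-element toy vocabulary, a randomly initialized embedding matrix of dimension 4, and a parser that handles only flat formulas without parentheses. The names `parse_formula` and `pool` are hypothetical.

```python
import random
import re

ELEMENTS = ["H", "O", "Si", "Cs", "Cl"]          # toy vocabulary (assumption)
DIM = 4                                          # embedding dimensionality d
INDEX = {el: i for i, el in enumerate(ELEMENTS)}

_rng = random.Random(0)
# Embedding matrix: one row of length DIM per element, randomly initialized.
EMBEDDING = [[_rng.gauss(0.0, 0.1) for _ in range(DIM)] for _ in ELEMENTS]

def parse_formula(formula):
    """'SiO2' -> {'Si': 1.0, 'O': 2.0}; flat formulas only."""
    counts = {}
    for el, num in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[el] = counts.get(el, 0.0) + (float(num) if num else 1.0)
    return counts

def pool(formula, mode="sum"):
    """Combine atom vectors into one DIM-length vector for the whole formula."""
    counts = parse_formula(formula)
    vec = [0.0] * DIM
    for el, n in counts.items():
        row = EMBEDDING[INDEX[el]]               # lookup-table access
        for k in range(DIM):
            vec[k] += n * row[k]                 # e.g. vec(Si) + 2 * vec(O)
    if mode == "mean":
        total = sum(counts.values())
        vec = [v / total for v in vec]
    return vec
```

Sum pooling preserves information about the absolute number of atoms in the formula unit, whereas mean pooling depends only on atomic fractions, so `Si2O4` and `SiO2` map to the same vector under `mode="mean"`.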

Classification Backbone

The pooled compositional representation is fed into a standard multilayer perceptron (MLP), which consists of a series of fully connected (dense) layers with non-linear activation functions (e.g., ReLU, sigmoid). This MLP acts as the classifier, learning the complex, non-linear mapping between the composed material representation and its probability of being synthesizable [2].

The final layer typically uses a sigmoid activation function to output a value between 0 and 1, interpreted as the probability that the input chemical formula is synthesizable. During training, the model's weights, including the entire embedding matrix, are updated to minimize a classification loss on the training data. At inference, a decision threshold (e.g., 0.5) is applied to this probability to make a binary classification [16].
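
A forward pass through such a classifier can be sketched in a few lines. The layer sizes, the initialization scale, and the function names below are assumptions for illustration; the actual SynthNN layer configuration is a tuned hyperparameter and is not reproduced here.

```python
import math
import random

def dense(x, weights, bias):
    # Fully connected layer: one output per row of the weight matrix.
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]

def relu(v):
    return [max(0.0, a) for a in v]

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def init_mlp(sizes, seed=0):
    """sizes = [d, hidden_1, ..., 1]; returns a list of (weights, bias) layers."""
    rng = random.Random(seed)
    return [([[rng.gauss(0.0, 0.1) for _ in range(n_in)] for _ in range(n_out)],
             [0.0] * n_out)
            for n_in, n_out in zip(sizes, sizes[1:])]

def synthesizability_probability(pooled, layers):
    """ReLU hidden layers followed by a single sigmoid output unit."""
    h = pooled
    for weights, bias in layers[:-1]:
        h = relu(dense(h, weights, bias))
    weights, bias = layers[-1]
    return sigmoid(dense(h, weights, bias)[0])

def classify(prob, threshold=0.5):
    # Thresholding happens after the probability is computed, at inference time.
    return prob >= threshold
```

Keeping `classify` separate from the network makes it straightforward to change the threshold later without retraining.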

Key Variants of Atomic Embeddings

The foundational atom2vec concept has been extended in several ways. The table below summarizes the prominent unsupervised approaches for generating distributed atomic representations.

Table 1: Comparison of Key Atomic Embedding Techniques

| Method | Core Data Source | Learning Algorithm | Key Principle |

|---|---|---|---|

| Atom2Vec [15] | Database of material compositions & structures | Matrix Factorization (SVD) | Derives atom vectors from a co-occurrence matrix of atoms and their chemical environments. |

| Mat2Vec [14] | Scientific text (abstracts from materials science literature) | Word2Vec (Skip-gram) | Learns atom representations from their context in millions of scientific abstracts. |

| SkipAtom [14] | Crystal structure graphs from materials databases | Skip-gram with Negative Sampling | Predicts neighboring atoms in a crystal structure graph to learn atom embeddings. |

The SkipAtom variant is of particular note for structural property prediction. It explicitly models a crystal structure as a graph, where atoms are nodes and bonds are edges. The unsupervised learning task is formulated to maximize the log-probability of predicting a context atom given a target atom within the same local structural environment [14]. The objective function is:

[ \frac{1}{|M|} \sum_{m \in M} \sum_{a \in A_m} \sum_{n \in N(a)} \log p(n|a) ]

Here, (M) is the set of materials, (A_m) is the set of atoms in material (m), and (N(a)) are the neighbors of atom (a) in the structure graph. The probability (p(n|a)) is typically computed using a softmax function over the inner product of the target and context atom vectors [14].
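
To make the objective concrete, the sketch below evaluates it on a toy dataset. It assumes separate target and context vector tables (as in Word2Vec-style models) and a full softmax over the element vocabulary; the data layout and function names are hypothetical, and no optimization step is shown.

```python
import math

def log_softmax_prob(target_vec, context, n):
    """log p(n | a): softmax over inner products with every context vector."""
    scores = {el: sum(t * c for t, c in zip(target_vec, vec))
              for el, vec in context.items()}
    m = max(scores.values())                     # subtract max for stability
    log_z = m + math.log(sum(math.exp(s - m) for s in scores.values()))
    return scores[n] - log_z

def skipatom_objective(materials, target, context):
    """materials: list of materials, each a list of (atom, [neighbor atoms]).

    Returns (1/|M|) * sum over materials, atoms, and neighbors of log p(n | a).
    """
    total = 0.0
    for atoms in materials:
        for a, neighbors in atoms:
            for n in neighbors:
                total += log_softmax_prob(target[a], context, n)
    return total / len(materials)
```

With all vectors set to zero, every neighbor is equally likely, so each term equals the negative logarithm of the vocabulary size. Training would adjust the vectors to raise this value for atom pairs that actually co-occur.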

Experimental Protocol and Benchmarking

Model Training and Data Curation for SynthNN

The development of a synthesizability prediction model like SynthNN requires a specific dataset and a tailored training protocol to handle the inherent lack of confirmed negative examples.

Table 2: SynthNN Performance at Different Decision Thresholds [16]

| Decision Threshold | Precision | Recall |

|---|---|---|

| 0.10 | 0.239 | 0.859 |

| 0.20 | 0.337 | 0.783 |

| 0.30 | 0.419 | 0.721 |

| 0.40 | 0.491 | 0.658 |

| 0.50 | 0.563 | 0.604 |

| 0.60 | 0.628 | 0.545 |

| 0.70 | 0.702 | 0.483 |

| 0.80 | 0.765 | 0.404 |

| 0.90 | 0.851 | 0.294 |

Dataset Construction:

- Positive Examples: Sourced from the Inorganic Crystal Structure Database (ICSD), which contains compositions of known, synthesized crystalline inorganic materials [2] [16].

- Negative Examples: Artificially generated from a vast space of plausible but unsynthesized chemical compositions. This creates a Positive-Unlabeled (PU) learning scenario, as some "negative" examples could be synthesizable but are simply absent from the ICSD [2].

Training Protocol (PU Learning):

- The model is trained on a mixture of positive (synthesized) and artificially generated negative examples.

- A semi-supervised learning approach is employed, which treats the artificially generated materials as unlabeled data. These examples are probabilistically reweighted according to their likelihood of being synthesizable to account for the incomplete labeling [2].

- A key hyperparameter is (N_{synth}), the ratio of artificially generated formulas to synthesized formulas used during training [2].
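
One way to express the reweighting idea in a loss function is sketched below. The exact weighting scheme used by SynthNN is not given here, so this sketch assumes each unlabeled example carries a weight in [0, 1] that shrinks as its estimated likelihood of being synthesizable grows. The function name and signature are hypothetical.

```python
import math

def pu_weighted_bce(probs, labels, unlabeled_weights, eps=1e-12):
    """Weighted binary cross-entropy for positive-unlabeled data.

    probs:             model outputs in (0, 1)
    labels:            1 for ICSD positives, 0 for generated (unlabeled) formulas
    unlabeled_weights: per-example weight applied only when the label is 0
    """
    total, norm = 0.0, 0.0
    for p, y, w in zip(probs, labels, unlabeled_weights):
        p = min(max(p, eps), 1.0 - eps)          # guard against log(0)
        if y == 1:
            total += -math.log(p)
            norm += 1.0
        else:
            # An unlabeled example that is probably synthesizable gets a small
            # weight, so it is penalized less for receiving a high score.
            total += -w * math.log(1.0 - p)
            norm += w
    return total / norm if norm > 0 else 0.0
```

A weight of 0 removes an unlabeled example from the loss entirely, while a weight of 1 treats it as a confirmed negative; intermediate values interpolate between the two.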

Evaluation:

- Model performance is benchmarked against baselines like random guessing and the charge-balancing rule.

- As shown in Table 2, the choice of decision threshold on the output probability allows for a precision-recall trade-off tailored to the specific application (e.g., high-recall for broad screening vs. high-precision for candidate selection) [16].
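
The trade-off in Table 2 can be explored with a short script. The precision and recall values are copied from the table; the helper names are hypothetical, and choosing a threshold by F1 or by a precision floor is only one of several reasonable selection rules.

```python
# (decision threshold, precision, recall) rows from Table 2
TABLE_2 = [
    (0.10, 0.239, 0.859), (0.20, 0.337, 0.783), (0.30, 0.419, 0.721),
    (0.40, 0.491, 0.658), (0.50, 0.563, 0.604), (0.60, 0.628, 0.545),
    (0.70, 0.702, 0.483), (0.80, 0.765, 0.404), (0.90, 0.851, 0.294),
]

def f1(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

def best_f1_threshold(rows):
    """Threshold whose (precision, recall) pair gives the highest F1."""
    return max(rows, key=lambda r: f1(r[1], r[2]))[0]

def lowest_threshold_with_precision(rows, min_precision):
    """Smallest threshold meeting a precision floor, which keeps recall highest."""
    for threshold, precision, _ in sorted(rows):
        if precision >= min_precision:
            return threshold
    return None
```

On the Table 2 values, F1 peaks at a threshold of 0.60 (about 0.58), while a precision floor of 0.70 is first met at a threshold of 0.70, at the cost of recall dropping to 0.483.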

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Resources for Implementing and Experimenting with atom2vec and SynthNN

| Resource | Function/Description | Example/Reference |

|---|---|---|

| Materials Databases | Provides structured data on crystal structures and compositions for training embedding models. | Inorganic Crystal Structure Database (ICSD) [2] [16], Materials Project [17] |

| Local Environment Analysis | Algorithm for identifying coordination environments and structure motifs (e.g., octahedra, tetrahedra) in crystal structures. | Implementation in pymatgen [17] |

| Graph Construction Algorithm | Method to convert a crystal structure into a graph of atomic connections for models like SkipAtom. | Voronoi decomposition with solid angle weights [14] |

| Positive-Unlabeled Learning Algorithm | A semi-supervised learning framework to handle datasets without confirmed negative examples. | Class-weighting of unlabeled examples [2] |

| Pre-trained Models & Code | Provides a starting point for prediction and further model development. | Official SynthNN GitHub Repository [16] |

Advanced Architectural Evolutions

The principle of using learned embeddings for fundamental units has inspired architectures beyond simple compositional models. A significant advancement is the Atom-Motif Dual Graph Network (AMDNet), which incorporates higher-order building blocks into the graph representation [17].

Whereas atom-based graph networks represent crystals as graphs with atoms as nodes, AMDNet introduces structure motifs—such as SiO₄ tetrahedra or MnO₆ octahedra—as additional nodes. This creates a dual graph where motif nodes and atom nodes are connected, allowing the graph neural network to explicitly process both atomic and supra-atomic structural information. This motif-centric approach has been shown to improve the prediction of electronic properties like band gaps, demonstrating the value of embedding and combining multi-scale features [17]. The following diagram illustrates this enhanced architecture.

Diagram 2: The Atom-Motif Dual Graph Network (AMDNet) architecture, which incorporates structure motifs as explicit nodes in the graph to enhance predictive performance for electronic properties.

The discovery of novel, synthesizable materials is a fundamental driver of innovation across numerous scientific and industrial fields. However, the challenge of reliably predicting whether a hypothetical inorganic crystalline material is synthetically accessible has long hindered autonomous materials discovery. Traditional approaches, such as density-functional theory (DFT) calculations for thermodynamic stability or the enforcement of charge-balancing rules, have proven insufficient, capturing only a fraction of synthesized materials [2]. This application note details the methodology for developing a deep learning synthesizability model (SynthNN) that overcomes these limitations by integrating the Inorganic Crystal Structure Database (ICSD) with a Positive-Unlabeled (PU) Learning framework. This protocol is designed for researchers and scientists engaged in computational materials discovery and drug development, providing a robust workflow for identifying synthetically accessible candidates with high precision [2].

Core Components and Research Reagents

The experimental framework relies on several key "research reagents" – critical datasets, software tools, and algorithms. The table below catalogues these essential components.

Table 1: Key Research Reagents and Solutions

| Reagent/Solution | Type | Primary Function | Key Specifications |

|---|---|---|---|

| ICSD [18] [19] | Database | Serves as the authoritative source of positive (synthesized) material examples. | >210,000 entries; data from 1913 onwards; ~12,000 new entries annually. |

| Atom2Vec [2] | Algorithm | Generates optimal vector representations (embeddings) of chemical formulas directly from data. | Learned embedding dimensionality is a key hyperparameter. |

| PU Learning Framework [2] [20] | Machine Learning Paradigm | Enables model training using only positive (ICSD) and unlabeled (generated) examples. | Handles lack of confirmed negative data; employs semi-supervised class-weighting. |

| SynthNN Model [2] | Deep Learning Architecture | The core classifier that predicts the synthesizability of a given inorganic chemical formula. | A neural network that leverages atom embeddings and operates without structural input. |

| Artificially Generated Formulas | Dataset | Creates a pool of "unlabeled" examples, representing potentially unsynthesizable compositions. | The ratio of generated formulas to ICSD formulas, (N_{synth}), is a critical hyperparameter. |

Workflow and Data Flow

The following workflow diagram outlines the logical sequence and data flow for training the SynthNN model, from data acquisition to final model deployment.

Diagram 1: SynthNN training and deployment workflow.

Detailed Experimental Protocols

Protocol: Curation of the Positive Dataset from ICSD

Objective: To extract a high-quality set of synthesized inorganic crystalline materials to serve as positive examples for model training.

- Data Source Access: Obtain a subscription to the ICSD, available via FIZ Karlsruhe or the National Institute of Standards and Technology (NIST) [18] [19].

- Data Extraction: Download the entire database or a curated subset. The database contains over 210,000 entries, with all important crystal structure data, including unit cell parameters, space group, and complete atomic parameters [18].

- Data Parsing: Extract the chemical composition (chemical formula) for each entry. For the initial SynthNN model, structural information is not required as input [2].

- Quality Control: Rely on the ICSD's internal quality assurance processes, which involve thorough checks and continuous updates to modify, supplement, or remove duplicates [18]. The processed list of chemical formulas constitutes the set of Positive Examples.

Protocol: Generation of the Unlabeled Dataset

Objective: To create a large and diverse set of chemical formulas that represent the space of potentially unsynthesizable materials.

- Strategy: Artificially generate a vast number of plausible inorganic chemical formulas that are not present in the ICSD. The ratio of these generated formulas to the positive ICSD formulas, (N_{synth}), is a critical hyperparameter [2].

- Considerations: It is crucial to recognize that this unlabeled set is not a pure collection of negative examples. It will contain some materials that are synthesizable but have not been reported in the ICSD, or that have yet to be synthesized. This is the core challenge that PU learning is designed to address [2].
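
A minimal sketch of one way to generate such a pool, assuming uniformly random element choices and small integer stoichiometries. The sampling scheme actually used for SynthNN is not specified here, and the function names, the alphabetical canonical form, and the default limits are all hypothetical.

```python
import math
import random
from functools import reduce

def canonical_formula(counts):
    """Reduce counts by their GCD and order elements alphabetically."""
    g = reduce(math.gcd, counts.values())
    return "".join(f"{el}{n // g if n // g > 1 else ''}"
                   for el, n in sorted(counts.items()))

def generate_unlabeled(known, elements, n_synth, max_elements=3, max_count=4, seed=0):
    """Return about n_synth generated formulas per known formula, none of them known.

    known must already be in the same canonical form (e.g. 'ClNa', 'O2Si').
    """
    rng = random.Random(seed)
    known = set(known)
    target = n_synth * len(known)
    generated = set()
    attempts = 0
    # The attempt cap prevents an endless loop when the formula space is small.
    while len(generated) < target and attempts < 100 * target:
        attempts += 1
        chosen = rng.sample(elements, rng.randint(2, max_elements))
        formula = canonical_formula({el: rng.randint(1, max_count) for el in chosen})
        if formula not in known:
            generated.add(formula)
    return sorted(generated)
```

Canonicalizing before the membership test matters: without it, `Na2Cl2` would slip into the unlabeled pool even though it is the same composition as the known `NaCl`.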

Protocol: Implementation of the PU Learning Algorithm

Objective: To train a classifier that distinguishes synthesizable materials from the unlabeled pool, accounting for the ambiguous nature of the unlabeled data.

- Feature Representation: Employ the atom2vec algorithm to convert each chemical formula into a numerical vector. The dimensionality of this learned representation is a key hyperparameter to be optimized [2].

- Model Architecture: Construct a deep neural network (SynthNN) that takes the atom2vec representations as input. The specific architecture (number of layers, nodes, activation functions) must be defined and tuned.

- PU Training Loop:

- The model is trained on the combined set of positive (ICSD) and unlabeled (generated) examples.

- Implement a semi-supervised learning approach that treats the unlabeled materials as having uncertain labels. The algorithm probabilistically reweights these examples during training according to their likelihood of being synthesizable [2].

- This process allows SynthNN to learn the underlying "chemistry of synthesizability"—such as charge-balancing principles, chemical family relationships, and ionicity—directly from the data distribution, without explicit human-defined rules [2].

Protocol: Model Benchmarking and Performance Evaluation

Objective: To quantitatively assess the performance of the trained SynthNN model against established baselines and human expertise.

- Establish Baselines: Compare SynthNN against two primary baselines:

- Random Guessing: Predictions weighted by class imbalance.

- Charge-Balancing: Predicts a material as synthesizable only if it is charge-balanced according to common oxidation states [2].

- Define Metrics: Calculate standard binary classification metrics, including Precision, Recall, and F1-score. Due to the PU learning context, the F1-score is particularly informative [2].

- Human Expert Comparison: Conduct a head-to-head discovery task where the model's candidate materials are compared against those identified by a panel of expert material scientists [2].

- Quantitative Analysis: The performance data should be summarized in a clear table for easy comparison.

Table 2: Performance Benchmarking of Synthesizability Prediction Methods

| Method | Key Principle | Precision | Relative Speed | Key Limitation |

|---|---|---|---|---|

| Charge-Balancing [2] | Net neutral ionic charge | Low (23-37% of known compounds) | Fast | Inflexible; fails for metallic/covalent materials. |

| DFT Formation Energy [2] | Thermodynamic stability | 1x (Baseline) | Slow (Calculation intensive) | Fails to account for kinetic stabilization. |

| Human Expert [2] | Specialized domain knowledge | 1x (Baseline) | 1x (Baseline) | Limited to narrow chemical domains. |

| SynthNN (PU Learning) [2] | Data-driven classification from ICSD | 7x higher than DFT; 1.5x higher than human experts | 100,000x faster than human experts | Requires a robust database like ICSD. |
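
The charge-balancing baseline from the protocol above can be sketched as follows. The oxidation-state table is a small illustrative subset chosen for this example, and the check assumes every atom of an element takes the same oxidation state; both are simplifying assumptions, and the function name is hypothetical.

```python
from itertools import product

# Illustrative subset of common oxidation states (assumption, not exhaustive).
OXIDATION_STATES = {
    "Na": [1], "Cs": [1], "Cl": [-1], "O": [-2],
    "Fe": [2, 3], "Si": [4], "Ti": [4],
}

def is_charge_balanced(composition, states=OXIDATION_STATES):
    """composition: dict element -> integer count in the formula unit.

    Returns True if some assignment of one listed oxidation state per element
    gives a net charge of zero.
    """
    elements = list(composition)
    if any(el not in states for el in elements):
        return False
    for combo in product(*(states[el] for el in elements)):
        if sum(q * composition[el] for q, el in zip(combo, elements)) == 0:
            return True
    return False
```

Under the single-state assumption, `{"Fe": 2, "O": 3}` passes but the mixed-valence oxide `{"Fe": 3, "O": 4}` fails, which illustrates the inflexibility noted in the table.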

The integration of the ICSD with a Positive-Unlabeled learning framework provides a powerful and efficient pipeline for predicting the synthesizability of inorganic crystalline materials. This protocol outlines a data-driven approach that surpasses traditional physical proxies and human intuition in both precision and speed. By following these application notes, researchers can implement and refine SynthNN-type models, thereby significantly enhancing the reliability of computational material screening and accelerating the discovery of novel, synthetically accessible materials.

The SynthNN model represents a significant methodological shift in predicting the synthesizability of inorganic crystalline materials by relying exclusively on chemical composition data, completely bypassing the need for atomic structural information [2]. This approach reformulates material discovery as a synthesizability classification task, leveraging the entire space of synthesized inorganic chemical compositions to generate predictions [2]. By operating solely on compositional data, SynthNN addresses a critical bottleneck in computational materials screening: the unavailability of precise crystal structures for hypothetical or yet-to-be-discovered materials. This capability is particularly valuable for high-throughput virtual screening of novel material compositions where structural details remain unknown, enabling researchers to prioritize synthetic efforts toward the most promising candidates before investing resources in structural determination or prediction.

Technical Implementation and Architecture

Atom2Vec Representation Framework

SynthNN employs a deep learning architecture based on the atom2vec framework, which represents each chemical formula through a learned atom embedding matrix that is optimized alongside all other neural network parameters [2]. This approach automatically learns optimal representations of chemical formulas directly from the distribution of previously synthesized materials without requiring pre-defined feature engineering. The dimensionality of this representation is treated as a hyperparameter determined during model development [2]. Notably, this method requires no prior chemical knowledge or assumptions about factors influencing synthesizability, as the underlying "chemistry" of synthesizability is learned entirely from the data of experimentally realized materials. The model demonstrates an ability to learn fundamental chemical principles including charge-balancing, chemical family relationships, and ionicity from composition data alone, utilizing these learned principles to generate synthesizability predictions [2].

Positive-Unlabeled Learning Methodology

A fundamental challenge in synthesizability prediction is the lack of confirmed negative examples (definitively unsynthesizable materials) in scientific literature. SynthNN addresses this through a semi-supervised positive-unlabeled (PU) learning approach that treats potentially synthesizable but unsynthesized materials as unlabeled data and probabilistically reweights them according to their likelihood of being synthesizable [2]. The training dataset is constructed from the Inorganic Crystal Structure Database (ICSD) for positive examples (confirmed synthesized materials), augmented with artificially generated unsynthesized materials. The ratio of artificially generated formulas to synthesized formulas used in training is a key model hyperparameter (N_synth) [2]. This methodology allows SynthNN to effectively learn from incomplete labeling, a common scenario in materials informatics where negative examples are rarely documented.

Comparative Performance Analysis

Quantitative Performance Metrics

The performance of SynthNN has been systematically evaluated against traditional computational methods and human experts, demonstrating significant advantages in both accuracy and efficiency.

Table 1: Performance Comparison of Synthesizability Assessment Methods

| Method | Reported Performance | Key Advantages | Computational Requirements |

|---|---|---|---|

| SynthNN (Composition-Only) | 7× higher precision than DFT formation energy [2] | No structural data needed; high throughput | Computationally efficient for screening billions of candidates [2] |

| DFT Formation Energy | ~50% of synthesized materials captured [2] | Well-established physical basis | Computationally intensive; requires structural data |

| Charge-Balancing Approach | Only 37% of known compounds correctly identified [2] | Simple heuristic; no computation | Minimal computation but poor accuracy |

| Human Experts | 1.5× lower precision than SynthNN [2] | Domain knowledge application | Time-consuming; limited to specialized domains |

Advantages Over Structure-Dependent Approaches

The composition-only approach of SynthNN provides several distinct advantages over structure-dependent methods. By eliminating the requirement for atomic coordinates, space group information, and lattice parameters, SynthNN can evaluate materials for which no structural data exists, including completely novel compositions outside existing structural databases [2]. This capability is particularly valuable for exploring uncharted regions of chemical space where structural analogs are unavailable. Additionally, the computational efficiency of composition-based screening enables evaluation of billions of candidate materials, a scale impractical for structure-based methods that typically require resource-intensive density functional theory calculations [2]. In direct benchmarking against expert materials scientists, SynthNN achieved 1.5× higher precision while completing the classification task five orders of magnitude faster than the best human expert [2].

Experimental Validation Protocols

Model Training and Validation Workflow

The experimental protocol for developing and validating SynthNN follows a structured workflow to ensure robust performance evaluation and minimize overfitting.

Performance Benchmarking Protocol

To ensure meaningful evaluation, SynthNN undergoes rigorous benchmarking against multiple established methods following a standardized protocol:

- Dataset Preparation: Curate a balanced dataset containing known synthesized materials from ICSD and artificially generated unsynthesized compositions [2]

- Baseline Establishment: Implement random guessing and charge-balancing baselines for performance comparison [2]

- Expert Comparison: Conduct head-to-head material discovery comparison against domain experts with timing and precision metrics [2]

- Statistical Validation: Calculate standard performance metrics including precision, recall, and F1-score with appropriate adjustments for PU learning scenarios [2]

- Cross-Validation: Employ spatial k-fold cross-validation techniques to ensure model generalizability and avoid overfitting [11]

This comprehensive validation strategy ensures that performance claims are statistically robust and comparable across different synthesizability assessment methods.
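The statistical validation step can be sketched as follows. This is an illustrative calculation under stated assumptions, not the evaluation code from [2]: labels are 1 for known synthesized materials and 0 for unlabeled ones, and the PU-specific score is the recall² / Pr(ŷ = 1) criterion proposed by Lee and Liu for positive-unlabeled settings, used here as one possible adjustment. The function name `pu_metrics` and the example labels are hypothetical.

```python
def pu_metrics(labels, predictions):
    """Compute metrics when label 1 = known positive and label 0 = unlabeled.

    Naive precision treats every unlabeled item predicted positive as a false
    positive, so it is a lower bound on true precision if some unlabeled items
    are in fact synthesizable.
    """
    if len(labels) != len(predictions) or not labels:
        raise ValueError("labels and predictions must be non-empty and equal length")
    pairs = list(zip(labels, predictions))
    tp = sum(1 for y, p in pairs if y == 1 and p == 1)
    fn = sum(1 for y, p in pairs if y == 1 and p == 0)
    predicted_pos = sum(1 for _, p in pairs if p == 1)

    recall = tp / (tp + fn) if (tp + fn) else 0.0
    naive_precision = tp / predicted_pos if predicted_pos else 0.0
    denom = naive_precision + recall
    naive_f1 = 2 * naive_precision * recall / denom if denom else 0.0

    # Lee-Liu style PU criterion: needs only recall on known positives and the
    # overall fraction of items predicted positive.
    frac_predicted_pos = predicted_pos / len(pairs)
    pu_score = recall ** 2 / frac_predicted_pos if frac_predicted_pos else 0.0

    return {
        "recall": recall,
        "naive_precision": naive_precision,
        "naive_f1": naive_f1,
        "pu_score": pu_score,
    }


# Hypothetical toy data: four known positives followed by six unlabeled items.
example_labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
example_predictions = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
print(pu_metrics(example_labels, example_predictions))
```

Recall is the only quantity here that can be measured without bias from positive-only labels, which is why the PU score is built from it rather than from precision.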

Research Reagent Solutions

Table 2: Essential Research Materials and Computational Tools for Synthesizability Prediction

| Resource/Tool | Function | Application Context |
|---|---|---|
| Inorganic Crystal Structure Database (ICSD) | Source of positive training examples [2] | Provides confirmed synthesized materials for model training |
| Atom2Vec Framework | Composition representation learning [2] | Converts chemical formulas to optimized feature representations |
| Positive-Unlabeled Learning Algorithms | Handling of unlabeled negative examples [2] | Manages lack of confirmed unsynthesizable materials in literature |
| Deep Learning Framework (e.g., TensorFlow, PyTorch) | Neural network implementation [2] | Enables model architecture development and training |
| High-Performance Computing (HPC) Resources | Training and inference acceleration [2] | Facilitates screening of billions of candidate compositions |
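As a rough illustration of the input side of composition-based models, the sketch below turns a chemical formula into a vector of element fractions. It is not the Atom2Vec implementation: a learned embedding would be applied on top of a representation like this one. The helper names (`parse_formula`, `composition_vector`) and the short element ordering are assumptions made for the example, and formulas with parentheses or hydrates are not handled.

```python
import re
from collections import defaultdict


def parse_formula(formula):
    """Parse a flat formula such as 'Fe2O3' into {'Fe': 2.0, 'O': 3.0}."""
    counts = defaultdict(float)
    # An element symbol followed by an optional integer or decimal amount.
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[symbol] += float(amount) if amount else 1.0
    if not counts:
        raise ValueError(f"no elements found in formula: {formula!r}")
    return dict(counts)


def composition_vector(formula, element_order):
    """Return element fractions arranged in a fixed element ordering."""
    counts = parse_formula(formula)
    unknown = set(counts) - set(element_order)
    if unknown:
        raise ValueError(f"elements not in ordering: {sorted(unknown)}")
    total = sum(counts.values())
    return [counts.get(el, 0.0) / total for el in element_order]


# Hypothetical five-element ordering; a real model would cover the periodic table.
ELEMENT_ORDER = ["Li", "Na", "O", "Cl", "Fe"]
print(composition_vector("Fe2O3", ELEMENT_ORDER))
print(composition_vector("NaCl", ELEMENT_ORDER))
```

Because the vector depends only on element fractions, any two polymorphs of the same composition map to the same input.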

Integration with Materials Discovery Workflows

Screening Pipeline Implementation

The composition-only approach of SynthNN enables seamless integration into computational materials screening pipelines, providing an efficient filter to prioritize candidates for further investigation.

Complementary Role in Multi-Stage Screening

While SynthNN operates exclusively on composition data, it serves as a critical first-pass filter in multi-stage materials discovery pipelines. Compositions flagged as highly synthesizable by SynthNN can be prioritized for subsequent computational and experimental validation, including:

- Crystal structure prediction algorithms

- Density functional theory calculations for property assessment

- Experimental synthesis planning and execution

This integrated approach maximizes resource efficiency by focusing expensive computational and experimental resources on the most promising candidates identified through initial composition-based screening [2].

Limitations and Boundary Conditions

The composition-only approach of SynthNN, while broadly applicable, has specific limitations that researchers should consider. The model cannot distinguish between polymorphs of the same chemical composition, because it lacks the structural information that separates alternative crystal arrangements [2]. This matters whenever synthesizability depends on specific structural features rather than overall composition. In addition, although SynthNN learns chemical principles such as charge balancing from data, its predictions remain constrained by the distribution of materials in its training dataset, which may limit extrapolation to chemical spaces that are poorly represented in existing databases. Nevertheless, for high-throughput screening of novel compositions where structural data is unavailable, SynthNN offers a practical way to identify synthesizable candidates for further investigation.

The accelerating capability of computational models to design novel functional materials has starkly revealed a critical bottleneck: the profound difficulty of predicting whether a theoretically proposed material can be successfully synthesized in a laboratory. Traditional computational screening methods have heavily relied on thermodynamic stability metrics, particularly the energy above the convex hull (Ehull), as a proxy for synthesizability. However, synthesis is a complex process governed by kinetic pathways, precursor selection, and experimental conditions that extend far beyond thermodynamic equilibrium. This limitation has created a formidable barrier to the experimental realization of computationally discovered materials, necessitating a paradigm shift toward data-driven synthesizability prediction.

The development of the SynthNN deep learning model represents a significant advancement in this domain. By training directly on the distribution of known synthesized compositions from the Inorganic Crystal Structure Database (ICSD), SynthNN learns the complex chemical principles that influence synthesizability without relying on predefined rules or structural information [2]. This approach reformulates material discovery as a synthesizability classification task, enabling the identification of synthesizable materials with 7× higher precision than traditional formation energy calculations and outperforming human experts by achieving 1.5× higher precision in significantly less time [2].

Integrating synthesizability prediction early in the discovery workflow is particularly crucial for inverse design applications, where generative models produce novel material structures optimized for specific properties. Without synthesizability constraints, these generated materials often remain theoretical curiosities. This application note details protocols for embedding SynthNN and related synthesizability models into end-to-end computational workflows, bridging the gap between virtual screening and experimental realization.

Computational Frameworks for Synthesizability Prediction

Model Architectures and Performance Benchmarks

Current synthesizability prediction frameworks employ diverse architectural approaches, each with distinct advantages for integration into discovery pipelines. The table below summarizes the quantitative performance of leading models.

Table 1: Performance Comparison of Synthesizability Prediction Models

| Model Name | Input Type | Architecture | Key Performance Metric | Reference |
|---|---|---|---|---|
| SynthNN | Chemical composition | Deep learning (atom2vec) | 7× higher precision than DFT formation energy | [2] |
| CSLLM | Crystal structure | Fine-tuned Large Language Model | 98.6% accuracy, outperforms Ehull (74.1%) and phonon stability (82.2%) | [9] |
| PU Learning Model | Crystal structure | Positive-unlabeled learning | Generates CLscore for synthesizability; used to curate negative samples | [9] |
| InvDesFlow-AL | Crystal structure | Active learning-based diffusion model | Identifies 1,598,551 materials with Ehull < 50 meV/atom | [21] |

The exceptional performance of CSLLM demonstrates how large language models, when fine-tuned on comprehensive crystallographic data, can achieve unprecedented accuracy in synthesizability classification. This model utilizes a specialized text representation of crystal structures—termed "material string"—that encodes essential lattice, composition, atomic coordinate, and symmetry information in a format amenable to LLM processing [9]. This approach has shown remarkable generalization capability, maintaining 97.9% prediction accuracy even for complex structures with large unit cells that considerably exceed the complexity of its training data [9].

Workflow Integration Strategies

The integration of synthesizability prediction into computational workflows follows two principal paradigms: sequential filtering and embedded constraint. In the sequential approach, virtual screening generates candidate materials based on target properties, after which synthesizability filters (like SynthNN or CSLLM) prioritize candidates for experimental validation. This method benefits from modularity, allowing independent improvement of property prediction and synthesizability models.

In contrast, the embedded constraint approach incorporates synthesizability directly into the objective function of generative models. The InvDesFlow-AL framework exemplifies this strategy through its active learning cycle, where a generative model produces candidate structures that undergo DFT relaxation and synthesizability assessment [21]. The most promising candidates are then used to iteratively refine the generative model, gradually steering it toward regions of chemical space rich in synthesizable, high-performance materials. This tight integration has proven effective in inverse design tasks, notably identifying Li2AuH6 as a candidate conventional BCS superconductor with a predicted transition temperature of 140 K [21].
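The generate, score, select, and refine cycle described above can be illustrated with a deliberately simple toy. The sketch below is not InvDesFlow-AL: the "generator" is a one-dimensional Gaussian, the scoring function `toy_score` is a hypothetical stand-in for combined property and synthesizability assessment, and the update rule is a basic cross-entropy-method refit to the top-scoring candidates.

```python
import random
import statistics


def toy_score(x):
    """Hypothetical combined score; peaks at x = 2.0 (stand-in for real assessment)."""
    return -(x - 2.0) ** 2


def active_learning_loop(rounds=15, n_samples=200, n_keep=20, seed=0):
    """Iteratively refit a Gaussian 'generator' to its best-scoring samples."""
    rng = random.Random(seed)
    mean, std = 0.0, 1.5  # initial generator parameters (arbitrary starting point)
    history = []
    for _ in range(rounds):
        # 1. Generate candidates from the current generator.
        candidates = [rng.gauss(mean, std) for _ in range(n_samples)]
        # 2. Score candidates and keep the most promising ones.
        best = sorted(candidates, key=toy_score, reverse=True)[:n_keep]
        # 3. Refine the generator using the selected candidates.
        mean = statistics.fmean(best)
        std = max(statistics.pstdev(best), 0.05)  # floor keeps some exploration
        history.append(mean)
    return mean, history


final_mean, mean_history = active_learning_loop()
print(f"generator mean after refinement: {final_mean:.3f}")
```

In a real workflow, step 2 would be the expensive part (DFT relaxation plus a synthesizability model), which is why the number of candidates evaluated per round matters far more there than in this toy.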

Diagram 1: Integrated discovery workflow with synthesizability prediction. The workflow combines generative design and high-throughput screening, with synthesizability assessment acting as a critical gate before computationally intensive DFT validation.

Application Protocols for Discovery Workflows

Protocol 1: Virtual Screening with Synthesizability Filtering

This protocol details a sequential workflow for large-scale virtual screening of material databases, incorporating synthesizability as a critical filtering step.

Materials and Computational Resources:

- Starting Database: Materials Project, OQMD, JARVIS, or other crystallographic databases

- Property Prediction Models: Graph neural networks or other machine learning models for target properties

- Synthesizability Model: Pre-trained SynthNN (for composition) or CSLLM (for full structure)

- Computing Infrastructure: High-performance computing cluster for parallel screening

Procedure:

- Define Target Properties: Establish quantitative criteria for the desired material functionality (e.g., band gap range, formation energy, specific conductivity).

- Initial Database Filtering: Apply basic filters based on composition, element count, or structural complexity to reduce the search space to manageable dimensions.

- Property Prediction: Apply specialized property prediction models to identify candidates meeting target specifications. For composition-based screening without structural information, use models trained exclusively on compositional features.

- Synthesizability Assessment: Apply synthesizability classification to property-optimized candidates:

  - For composition-based assessment using SynthNN:

    - Input chemical formulas into the pre-trained model

    - Retrieve synthesizability probability scores

    - Apply threshold (typically >0.5) to identify synthesizable candidates [2]

  - For structure-based assessment using CSLLM:

    - Convert crystal structures to "material string" representation

    - Process through fine-tuned LLM

    - Classify based on output probabilities with >98% reported accuracy [9]

- Priority Ranking: Combine property optimization and synthesizability scores to generate a ranked candidate list for experimental pursuit.

Validation: In benchmark studies, this approach identified synthesizable materials with 7× higher precision than screening based solely on DFT-calculated formation energies [2].
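The final two steps of this protocol, thresholding and priority ranking, might be sketched as below. The `Candidate` fields, the equal weighting of the two scores, and the placeholder candidates are assumptions for illustration; the protocol itself does not prescribe how the scores are combined, and the synthesizability probabilities would come from a pre-trained model rather than being typed in by hand.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    formula: str
    property_score: float      # assumed normalized to [0, 1], higher is better
    synth_probability: float   # synthesizability model output in [0, 1]


def rank_candidates(candidates, threshold=0.5, property_weight=0.5):
    """Drop candidates at or below the threshold, then rank by a weighted score."""
    kept = [c for c in candidates if c.synth_probability > threshold]

    def combined(c):
        # Simple linear blend; the weighting is a design choice, not a prescription.
        return (property_weight * c.property_score
                + (1.0 - property_weight) * c.synth_probability)

    return sorted(kept, key=combined, reverse=True)


# Placeholder candidates with made-up scores, for illustration only.
pool = [
    Candidate("candidate_A", property_score=0.9, synth_probability=0.3),
    Candidate("candidate_B", property_score=0.6, synth_probability=0.9),
    Candidate("candidate_C", property_score=0.8, synth_probability=0.6),
]
for c in rank_candidates(pool):
    print(c.formula, c.property_score, c.synth_probability)
```

Applying the threshold before ranking means a candidate with excellent predicted properties but low synthesizability (candidate_A here) never reaches the experimental queue, which is the intended effect of the filter.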

Protocol 2: Inverse Design with Embedded Synthesizability Constraints

This protocol implements an active learning framework where synthesizability is directly embedded into the generative process, enabling inverse design of novel, synthesizable materials.

Materials and Computational Resources:

- Generative Model: Diffusion model (e.g., InvDesFlow-AL), variational autoencoder, or other structure-generating architecture

- Property Prediction: Differentiable property predictors for guidance during generation

- Synthesizability Model: Differentiable synthesizability estimator or surrogate model

- DFT Calculator: For final validation of generated structures

Procedure:

- Model Initialization: Pre-train a generative model on a broad database of known inorganic crystals (e.g., Alex-MP-20, GNoME) to learn fundamental chemical and structural principles [21].

- Conditional Generation: Generate candidate structures conditioned on target properties through:

- Conditional diffusion processes with property-based guidance