Stacked Generalization Machine Learning: Advanced Predictive Modeling for Material Stability and Drug Development

This article provides a comprehensive exploration of stacked generalization, an advanced ensemble machine learning technique, and its application in predicting material stability and properties crucial for drug development.

Stacked Generalization Machine Learning: Advanced Predictive Modeling for Material Stability and Drug Development

Abstract

This article provides a comprehensive exploration of stacked generalization, an advanced ensemble machine learning technique, and its application in predicting material stability and properties crucial for drug development. It covers foundational concepts, methodological implementation, optimization strategies, and comparative validation. Tailored for researchers and pharmaceutical professionals, the content demonstrates how stacking integrates multiple base models with a meta-learner to achieve superior predictive accuracy and robustness compared to single-model approaches. By incorporating real-world case studies and interpretability frameworks like SHAP, this guide serves as a practical resource for developing reliable predictive models that can accelerate material discovery and formulation in biomedical research.

Stacked Generalization Fundamentals: From Core Concepts to Material Science Applications

Stacked generalization, commonly known as stacking, represents an advanced ensemble machine learning method that integrates multiple predictive models through a meta-learning framework to achieve superior performance compared to any single constituent model. This approach systematically deduces and corrects the biases of base learners by combining their predictions in an optimally weighted manner, typically through cross-validation and a meta-learner architecture. First introduced by Wolpert in 1992, stacked generalization has evolved into formal implementations like the Super Learner, with proven theoretical guarantees that it performs asymptotically at least as well as the best individual model in the ensemble [1] [2]. While initially conceptualized decades ago, stacking has gained significant traction in recent years across diverse fields including genomic selection, energy systems prognostics, and medical diagnostics, demonstrating its capacity to enhance predictive accuracy, improve robustness, and mitigate overfitting [3] [4] [5]. This protocol outlines the fundamental principles, implementation workflows, and practical applications of stacked generalization, providing researchers with a structured framework for deploying this advanced ensemble method in computational research, particularly in material stability and drug development contexts.

Conceptual Foundation and Historical Development

Stacked generalization operates on the principle of combining multiple "level-zero" base algorithms through a "higher-level" meta-model that learns how to optimally integrate their predictions [1]. Unlike simpler ensemble methods like bagging or boosting that typically combine homogeneous model types through averaging or weighted voting, stacking leverages heterogeneous models that capture different aspects of the underlying patterns in the data [6]. The meta-model in stacking effectively learns the relative competencies of each base learner across different regions of the feature space, creating a sophisticated combination that capitalizes on their collective strengths while mitigating individual weaknesses.

The methodology was first formally introduced by David Wolpert in 1992 as a scheme for minimizing generalization error by deducing the biases of generalizers with respect to a provided learning set [2]. Wolpert demonstrated that stacked generalization could be viewed as a more sophisticated version of cross-validation, employing strategies beyond simple winner-takes-all combinations. Later developments by Breiman and van der Laan et al. established theoretical foundations, including constraints for convex combinations and proofs of asymptotic optimality [1] [3]. The formalization of the Super Learner algorithm by van der Laan and colleagues provided a rigorous implementation framework with V-fold cross-validation at its core, establishing stacking as a theoretically grounded approach with guaranteed performance properties [1].

Theoretical Advantages and Performance Guarantees

The principal theoretical advantage of stacked generalization lies in its asymptotic performance guarantee – in large samples, the algorithm will perform at least as well as the best individual predictor included in the ensemble [1]. This property makes stacking particularly valuable in research contexts where model selection uncertainty exists, as it provides insurance against selecting a suboptimal single model. The diversity of the base learner library is crucial to this performance; a larger and more diverse library enhances the potential for superior performance [1].

Stacking demonstrates particular effectiveness in addressing the bias-variance tradeoff inherent in predictive modeling. By combining multiple models with different inductive biases, stacking reduces both variance (through averaging effects) and bias (through complementary model strengths) [6]. Furthermore, the cross-validation framework inherent in proper stacking implementation provides robust protection against overfitting, as the meta-learner is trained on out-of-sample predictions from the base models [1] [7]. This makes stacking particularly valuable for small to moderate-sized datasets common in scientific research, where overfitting presents a significant concern.

Fundamental Principles and Methodology

Core Architecture and Components

The architecture of stacked generalization consists of two primary layers: the base model layer (level-zero models) and the meta-model layer (level-one learner) [4]. The base models comprise a diverse collection of prediction algorithms, which can include parametric regression models, non-parametric methods, and complex machine learning approaches. These models are chosen specifically for their complementary strengths and diverse inductive biases. The meta-model is a learning algorithm that takes the predictions from the base models as its input features and learns to combine them optimally to produce the final prediction [3] [4].

The combination process in stacking is typically implemented under specific constraints to ensure stability and performance. Commonly, a convex combination is enforced, requiring non-negative weights that sum to one [1]. This constraint improves numerical stability and interpretability while maintaining theoretical performance guarantees. The objective function for this combination is typically aligned with the research goal, such as minimizing mean squared error for continuous outcomes or maximizing area under the ROC curve for classification tasks [1].

The Cross-Validation Foundation

A critical methodological component of stacked generalization is the use of V-fold cross-validation to generate inputs for the meta-model [1] [7]. This process involves:

- Splitting the training data into V mutually exclusive and exhaustive folds

- For each fold v, training each base model on all data except fold v

- Using these trained models to generate predictions for the held-out fold v

- Collecting these cross-validated predictions for all observations to create a new dataset (the "level-one" data) where each instance contains the base model predictions and the true outcome value [1]

This cross-validation framework ensures that the predictions used to train the meta-model are truly out-of-sample for each base model, preventing information leakage and overfitting. The resulting level-one data has the same size as the original training set but contains the base models' generalized predictions rather than the original features [7].

The following diagram illustrates the complete stacked generalization workflow, from initial data partitioning to final model generation:

Implementation Protocols

Standard Super Learner Protocol

The following step-by-step protocol implements the standard Super Learner, which represents a formalized implementation of stacked generalization:

Table 1: Step-by-Step Super Learner Protocol

| Step | Action | Key Considerations |

|---|---|---|

| 1. Data Partitioning | Split data into V mutually exclusive folds (typically V=5 or V=10) | Ensure folds maintain distribution of outcome; stratify for classification |

| 2. Base Model Training | For each fold v, train each base model on all data except fold v | Use diverse algorithms (GAM, splines, random forests, etc.) [1] |

| 3. Cross-validation Prediction | Use each trained base model to predict outcomes for held-out fold v | Store these out-of-sample predictions for all observations |

| 4. Risk Estimation | For each algorithm, compute average performance across all folds | Use appropriate loss function (MSE for continuous, rank loss for AUC) [1] |

| 5. Level-One Data Creation | Create new dataset with CV predictions as features and true outcomes as response | This dataset has same number of rows as original training data |

| 6. Meta-Learner Training | Train meta-model on level-one data to combine base model predictions | Use non-negative least squares or constrained regression [1] |

| 7. Full Model Training | Retrain all base models on complete training dataset | Maintains maximum information for final predictions |

| 8. Prediction Generation | Combine full base model predictions using meta-learner weights | Apply to new data using the complete stacked system |

This protocol emphasizes the critical distinction between the cross-validation phase (steps 2-4) used to generate training data for the meta-learner, and the final model building phase (steps 7-8) that utilizes the entire dataset for maximum predictive performance [1]. The risk estimation in step 4 provides a honest assessment of each base model's performance and can be used for the Discrete Super Learner (selecting the single best model) even if proceeding to full stacking.

Advanced Implementation: Evolutionary Stacked Generalization

For complex prediction problems with high-dimensional feature spaces, such as those encountered in material stability research or genomic selection, an evolutionary optimization approach can enhance standard stacking:

Table 2: Evolutionary Stacking Enhancement Protocol

| Component | Standard Approach | Evolutionary Enhancement |

|---|---|---|

| Feature Selection | Use all available features or manual selection | Implement MIC and AIC for automated input variable selection [4] |

| Hyperparameter Tuning | Manual tuning or grid search | Apply improved Grasshopper Optimization Algorithm (IGOA) [4] |

| Base Model Selection | Pre-specified model types | Algorithmic selection of complementary models with low correlation [4] |

| Meta-Learner Training | Standard regression or classification | Regularized Extreme Learning Machine (RELM) for enhanced generalization [4] |

| Validation | Standard V-fold cross-validation | Nested cross-validation with optimization in inner loops |

The evolutionary approach introduces intelligent optimization at multiple stages of the stacking pipeline, addressing key challenges in complex domains. The Maximum Information Coefficient (MIC) and Akaike Information Criterion (AIC) component selects input variables by measuring correlation between features and outputs, reducing dimensionality while retaining predictive information [4]. The Improved Grasshopper Optimization Algorithm (IGOA) enhances hyperparameter tuning through Chebyshev chaotic mapping initialization and spiral position update mechanisms, avoiding local optima while searching for optimal model configurations [4].

Application Case Studies Across Domains

Genomic Selection in Plant and Animal Breeding

Stacked generalization has demonstrated significant value in genomic selection, where the goal is to predict breeding values for desirable traits based on genome-wide markers:

Table 3: Stacking Performance in Genomic Selection Applications

| Species | Dataset Characteristics | Base Models | Performance Results |

|---|---|---|---|

| Rice | 3,686 SNPs from 198 accessions, 30 quantitative traits [3] | Six linear mixed and Bayesian models [3] | Lower or comparable MSE to individual methods; reduced overfitting [3] |

| Barley | 5,160 SNPs from 307 accessions, 8 traits [3] | rrBLUP, gBLUP, Bayesian models [3] | Superior stability across different trait types [3] |

| Maize | 45,438 SNPs from 262 accessions, 11 traits [3] | Linear mixed models and nonlinear alternatives [3] | Consistent performance across environmental conditions [3] |

| Mice | 10,346 SNPs from 1,181 samples, 20 traits [3] | Bayesian and mixed effect models [3] | Resistance to overfitting demonstrated through hypothesis testing [3] |

In these genomic selection applications, stacking integrated methods including rrBLUP (ridge regression BLUP), gBLUP (genomic BLUP), and various Bayesian models (BayesA, BayesB) [3]. The meta-model employed was typically a neural network (multi-layer perceptron) that learned to weight the contributions of each base model according to their predictive strengths for specific traits [3]. This approach proved particularly valuable given that no single method demonstrated universal superiority across all traits, species, and environmental conditions, echoing the fundamental premise that stacking performance depends on library diversity [3].

Energy Systems Prognostics

In prognostics and health management for energy systems, stacking has addressed the challenging problem of predicting remaining useful life (RUL) of proton exchange membrane fuel cells (PEMFC):

This architecture combines support vector regression (SVR) for its high-dimensional data processing capabilities with gated recurrent units (GRU) for their strong sequence learning capacity [4]. The meta-model employs a regularized extreme learning machine (RELM) that provides stable generalization ability [4]. Experimental results demonstrated that this stacked approach outperformed individual models across different operating conditions, achieving superior prediction accuracy for future degradation trend and remaining useful life [4].

Medical Diagnostics and Cancer Detection

Stacked generalization has shown remarkable performance in medical diagnostics, particularly in cancer detection where accurate classification is critical:

Table 4: Stacking for Cancer Detection Performance

| Dataset | Characteristics | Base Models | Meta-Model | Performance |

|---|---|---|---|---|

| WBC (Breast Cancer) | 569 patients, 30 features, 37.2% malignant cases [5] | Logistic Regression, Naïve Bayes, Decision Tree [5] | Multilayer Perceptron [5] | 100% accuracy with 6 selected features [5] |

| LCP (Lung Cancer) | 15 features, binary classification [5] | Logistic Regression, Naïve Bayes, Decision Tree [5] | Multilayer Perceptron [5] | 100% accuracy with 5 selected features [5] |

The cancer detection application employed a sophisticated multistage feature selection process combining filter, wrapper, and embedded methods to reduce the feature space while maintaining diagnostic information [5]. The stacking ensemble leveraged the complementary strengths of logistic regression (linear relationships), Naïve Bayes (probabilistic structure), and decision trees (nonlinear interactions) [5]. The multilayer perceptron meta-model learned to optimally combine these diverse perspectives, achieving perfect classification performance on benchmark datasets with reduced feature sets [5]. This demonstrates stacking's capacity to integrate multiple modeling paradigms while maintaining interpretability through feature selection.

Research Reagent Solutions

Implementing effective stacked generalization requires both computational frameworks and methodological components. The following table details essential "research reagents" for constructing stacked models:

Table 5: Essential Research Reagents for Stacked Generalization

| Reagent Category | Specific Examples | Function/Purpose | Implementation Considerations |

|---|---|---|---|

| Base Model Algorithms | Generalized additive models (GAM) with splines [1]; Multivariate adaptive regression splines (MARS/earth) [1]; Bayesian GLMs [1]; Polynomial adaptive regression splines [1]; Support vector regression [4]; Gated recurrent units [4] | Capture diverse patterns and relationships in data | Select for complementarity rather than individual performance [4] |

| Meta-Learners | Non-negative least squares [1]; Regularized extreme learning machine [4]; Multilayer perceptron [5]; Logistic regression [3] | Optimally combine base model predictions | Constrain to convex combinations for stability [1] |

| Optimization Tools | Improved grasshopper optimization algorithm (IGOA) [4]; V-fold cross-validation [1]; Maximum information coefficient [4] | Tune hyperparameters and select features | Implement chaotic decreasing factors to avoid local optima [4] |

| Feature Selection Methods | Maximum information coefficient (MIC) [4]; Akaike information criterion (AIC) [4]; Hybrid filter-wrapper approaches [5] | Identify informative feature subsets | Balance relevance with redundancy reduction [5] |

| Validation Frameworks | 5-fold or 10-fold cross-validation [1]; Nested cross-validation; Statistical hypothesis testing [3] | Provide honest performance assessment and prevent overfitting | Ensure computational feasibility with complex ensembles |

These research reagents constitute the essential methodological toolkit for implementing stacked generalization across diverse domains. The selection of base models should prioritize architectural diversity and complementary inductive biases rather than simply choosing the best-performing individual models [4]. Similarly, meta-learners should be matched to the characteristics of the prediction task, with regularization employed to maintain generalization performance [1] [4]. The optimization and validation components ensure that the stacked ensemble achieves its theoretical performance advantages in practical applications.

Stacked generalization represents a sophisticated ensemble methodology that transcends simple model averaging or voting schemes by implementing a principled, meta-learning approach to combination. Through its cross-validation foundation and theoretical performance guarantees, stacking provides researchers with a robust framework for maximizing predictive accuracy while mitigating overfitting. The protocol outlined in this document provides both standard and advanced implementations suitable for various research contexts, from genomic selection to energy prognostics and medical diagnostics.

The case studies demonstrate stacking's versatility across domains with distinct data characteristics and modeling challenges. In genomic selection, stacking integrated diverse linear and Bayesian models to achieve stable performance across species and traits [3]. In energy systems, it combined temporal and structural models for accurate remaining useful life prediction [4]. In medical diagnostics, stacking with feature selection achieved perfect classification while maintaining interpretability [5]. These successes highlight stacking's capacity to synthesize diverse modeling perspectives into superior predictive performance.

For researchers pursuing material stability studies or drug development applications, stacked generalization offers a powerful approach to navigating model uncertainty and complexity. By implementing the protocols and leveraging the reagent solutions described herein, scientists can build ensembles that adapt to their specific data environments and research questions, ultimately accelerating discovery through enhanced predictive capability.

Stacked generalization, or stacking, is an advanced ensemble machine learning technique designed to enhance predictive performance by combining multiple models. Unlike bagging or boosting, which aggregate homogeneous models, stacking integrates diverse, or heterogeneous, models through a layered learning architecture [8] [9]. This approach is particularly powerful in complex research domains such as material stability and drug development, where it leverages the strengths of various algorithms to achieve superior accuracy and generalization [10] [11]. This article details the core architecture of stacking—encompassing base learners, meta-learners, and the operational workflow—framed within the context of computational material science research.

Core Concepts of Stacked Generalization

The architecture of stacking is structured into two primary layers: the base layer and the meta-layer.

Base Learners (Level-0 Models): This layer consists of multiple, diverse machine learning models that are trained directly on the original dataset [9]. The strength of stacking relies on this diversity; using different algorithms (e.g., Decision Trees, Support Vector Machines, and linear models) ensures that each model captures unique patterns and relationships within the data [8]. The predictions of these models form the basis for the next layer of learning.

Meta-Learner (Level-1 Model): The meta-learner is a model that learns how to best combine the predictions made by the base learners [8] [9]. Instead of being trained on the original features, it is trained on a new dataset composed of the cross-validated predictions from the base models. Its purpose is to discern when each base model is most reliable and to integrate their outputs optimally for a final prediction [8].

The "Super Learner" algorithm, a formalization of stacking, provides a theoretical guarantee that the stacked ensemble will perform as well as or better than any single base model included in the ensemble, asymptotically [8].

The Stacking Workflow: A Detailed Protocol

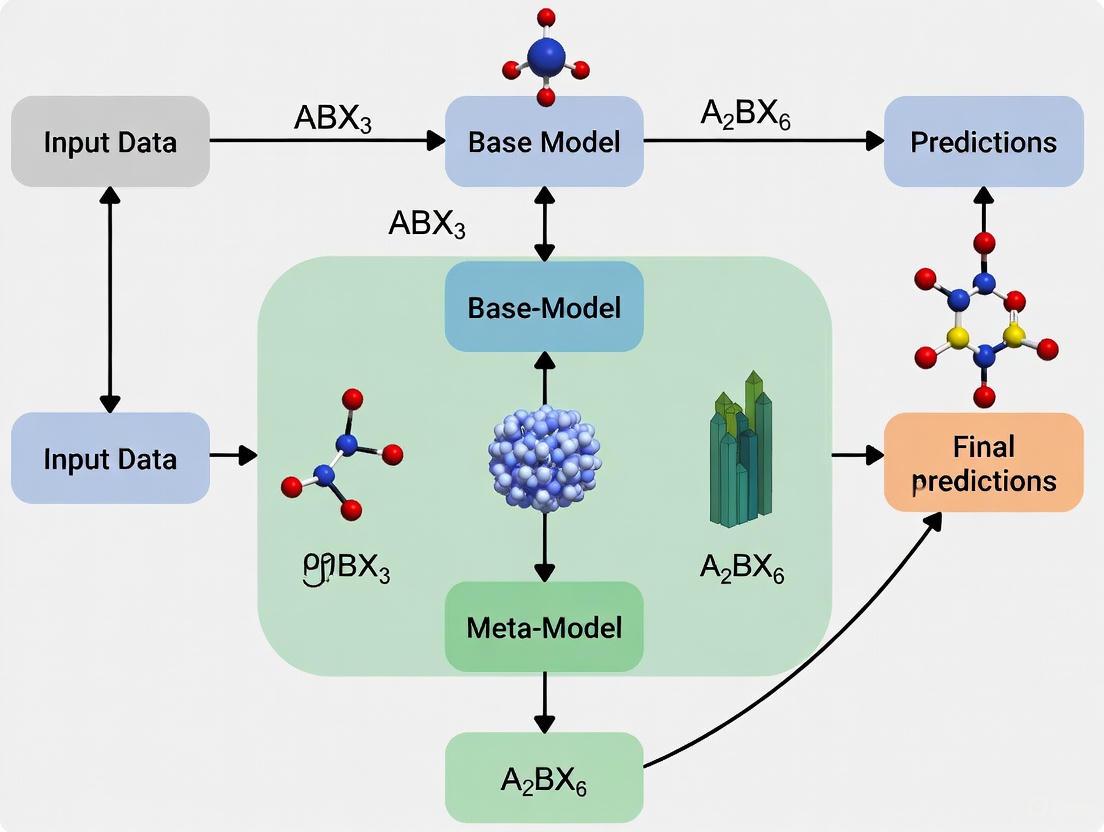

The process of building a stacked ensemble requires a systematic, multi-stage workflow to prevent data leakage and ensure robust generalization. The following protocol, illustrated in Figure 1, outlines these critical steps.

Figure 1. Stacked Generalization Workflow. This diagram illustrates the two-stage training process involving base learners and a meta-learner, using k-fold cross-validation to prevent overfitting.

Protocol 1: Construction of the Stacked Ensemble

This protocol describes the end-to-end process for creating a stacked model, from data partitioning to final model generation [8] [9].

- Step 1: Data Preparation and Partitioning. Split the full dataset into a training set and a hold-out test set. The training set will be used for all model development and validation, while the test set will be reserved for the final evaluation of the stacked ensemble.

- Step 2: Base Learner Training with k-Fold Cross-Validation. For each base learner, train the model using k-fold cross-validation on the training set.

- Use the same k-fold splits for every base learner to ensure consistency [8].

- For each fold, train the model on (k-1) folds and generate predictions on the withheld validation fold.

- Collect these out-of-fold predictions for each data point in the training set. These are known as the cross-validated predictions.

- Step 3: Create the Level-One Data Matrix. Combine the cross-validated predictions from all base learners into a new feature matrix, often denoted as Z. Each column in Z represents the predictions from one base learner, and each row corresponds to a data point in the original training set. The true target values (y) for the training set are retained as the labels for this new matrix.

- Step 4: Train the Meta-Learner. Train the chosen meta-learning algorithm on the level-one data matrix (Z) and the true target values. The meta-learner learns the optimal way to weight and combine the predictions of the base learners.

- Step 5: Refit Base Learners and Finalize Ensemble. Retrain each of the base learners on the entire, original training set (without cross-validation). The final stacked ensemble consists of these fully-trained base learners and the trained meta-learner.

Protocol 2: Generating Predictions with the Stacked Ensemble

This protocol outlines the procedure for using the trained stacked ensemble to make predictions on new, unseen data [9].

- Step 1: Generate Base-Level Predictions. Pass the new data instance through each of the trained base learners to obtain a set of initial predictions.

- Step 2: Create Level-One Instance. Collect the predictions from all base learners for this instance and form them into a new feature vector, which is a single row with the same structure as the level-one data matrix Z.

- Step 3: Generate Final Prediction. Feed this new feature vector into the trained meta-learner, which will produce the final, ensemble prediction.

Performance Metrics and Quantitative Comparison

The effectiveness of a stacked ensemble is validated through rigorous comparison against its constituent models. The following table summarizes typical performance metrics from a materials informatics study predicting the work function of MXenes, where a stacked model achieved state-of-the-art results [10].

Table 1: Comparative Performance of Stacked Model vs. Base Learners in MXene Work Function Prediction

| Model / Metric | Coefficient of Determination (R²) | Mean Absolute Error (MAE) | Root Mean Square Error (RMSE) |

|---|---|---|---|

| Stacked Model | 0.95 | 0.20 | N/A |

| Base Learner A | 0.89 | 0.28 | N/A |

| Base Learner B | 0.91 | 0.25 | N/A |

| Base Learner C | 0.87 | 0.30 | N/A |

Note: Data adapted from a study on predicting MXenes' work function using stacked machine learning [10].

The superior performance of the stacked ensemble, as evidenced by the higher R² and lower MAE, highlights its capacity to integrate the strengths of individual models and mitigate their weaknesses. This leads to more accurate and robust predictions, which is critical in research applications.

Essential Research Reagents and Computational Tools

Implementing a stacked ensemble requires a suite of software libraries and computational tools. The table below functions as a "Scientist's Toolkit," detailing key "research reagents" for a successful stacking experiment.

Table 2: Research Reagent Solutions for Stacking Experiments

| Reagent / Tool Name | Type / Category | Primary Function in Stacking Protocol |

|---|---|---|

| Scikit-learn | Python Library | Provides a unified API for implementing base learners (e.g., RF, SVM) and meta-learners, as well as tools for data preprocessing and k-fold cross-validation [10]. |

| H2O.ai | AutoML Platform | Offers an automated and highly scalable implementation for training and tuning stacked ensembles, including built-in cross-validation management [8] [11]. |

| MLxtend | Python Library | Contains a user-friendly StackingClassifier and StackingRegressor that simplifies the process of building stacked models without manual level-one data creation [9]. |

| Pandas | Python Library | Essential for data manipulation, feature engineering, and the construction of the level-one data matrix from cross-validated predictions [10] [9]. |

| SHAP | Interpretation Tool | Explains the output of the stacked ensemble by quantifying the contribution of each base learner's prediction to the final meta-learner's output, enhancing interpretability [10] [11]. |

Advanced Architectural Considerations

For researchers aiming to optimize a stacked ensemble, several advanced considerations are critical.

- Base Learner Diversity: The greatest gains from stacking are realized when the base learners are highly variable and make uncorrelated errors [8]. Combining a diverse set of algorithms (e.g., tree-based models, linear models, kernel-based models) is more effective than using multiple instances of the same algorithm type.

- Meta-Learner Selection: While any algorithm can serve as the meta-learner, simpler models are often preferred. Regularized linear models (e.g., LASSO or Ridge Regression) are common choices as they help prevent overfitting by assigning weights to the base learners' predictions. Random Forests or Gradient Boosting Machines can also be effective, particularly for capturing non-linear combinations [8].

- Mitigating Overfitting: The use of k-fold cross-validated predictions to build the level-one data matrix is the primary defense against overfitting [8]. This ensures that the meta-learner is trained on predictions that the base models made on data they were not directly trained on. An additional measure is to use a hold-out set, distinct from the training set used for base learner CV, to train the meta-learner.

- Interpretability with SHAP: The "black box" nature of complex ensembles can be mitigated using SHapley Additive exPlanations (SHAP). SHAP values can be applied to the meta-learner to interpret its behavior, showing how much each base model's prediction pushed the final output higher or lower for a given instance, thus providing global and local interpretability [10] [11].

The architectural relationship between data, base learners, and the meta-learner in a functioning stacked ensemble is summarized in the following system diagram.

Figure 2. Stacking Ensemble Inference Architecture. This diagram shows the data flow when a new instance is processed by the trained ensemble to produce a final prediction.

Stacked generalization, commonly known as stacking, is an advanced ensemble machine learning method that combines multiple base models through a meta-learning framework to enhance predictive performance and generalization capabilities. Unlike conventional ensemble approaches that use simple averaging or voting, stacking employs a learned combination mechanism where predictions from diverse base models serve as input features for a meta-model that generates final predictions. This architecture enables the ensemble to capitalize on the unique strengths of individual algorithms while mitigating their weaknesses, typically reducing prediction variance and improving robustness on unseen data.

In materials science research, particularly in stability prediction and property optimization, stacking has demonstrated remarkable effectiveness in addressing complex nonlinear relationships between composition, processing parameters, and material performance. Recent applications across diverse domains—from predicting MXenes' work functions to forecasting hardness in high-entropy nitride coatings and assessing dump slope stability in geotechnical engineering—consistently show that stacked ensembles outperform individual models, achieving performance improvements of approximately 10% or higher in multiple studies [10] [11] [12]. The method's ability to manage high-dimensional data with complex interactions makes it particularly valuable for materials informatics where traditional trial-and-error approaches are computationally prohibitive.

Theoretical Foundations: Variance Reduction Mechanisms

The Bias-Variance Decomposition Framework

The theoretical superiority of stacking originates from its nuanced approach to the bias-variance tradeoff, fundamental to supervised learning. A model's expected prediction error can be decomposed into three components: bias (error from erroneous assumptions), variance (error from sensitivity to fluctuations in training data), and irreducible error. Single complex models often achieve low bias but high variance, making them prone to overfitting. Stacking addresses this limitation through two primary mechanisms:

Diversity Integration: By combining multiple algorithms with different inductive biases (e.g., tree-based methods, linear models, support vector machines), stacking creates an ensemble where individual models make uncorrelated errors. When these diverse predictions are optimally combined, errors tend to cancel out, significantly reducing overall variance without substantially increasing bias [13] [8].

Meta-Learning Optimization: The meta-model learns optimal weighting schemes for base model predictions, effectively functioning as a adaptive bias-variance balancer. Theoretical work has established that super learners represent an asymptotically optimal system for learning, guaranteed to perform at least as well as the best single base model in large samples [1] [8].

Mathematical Formalization of Stacking

The stacking framework can be formally described as a two-level learning process. Given a set of L base models ( M1, M2, ..., ML ) and a dataset ( D = {(xi, yi)}{i=1}^N ), the first level generates cross-validated predictions from each base model. These predictions form a new feature matrix ( Z ) where each column ( Zj ) contains predictions from model ( Mj ). The meta-model then learns the mapping:

[ f_{\text{meta}}: Z \rightarrow Y ]

This secondary learning process enables the ensemble to identify contexts where specific base models excel, creating a specialized delegation system that no single model can achieve independently [1] [8]. The convex combination constraint often applied to meta-learning weights (( \alphak \geq 0, \sum{k=1}^L \alpha_k = 1 )) further enhances stability by preventing extreme weight assignments [1].

Quantitative Performance Evidence Across Domains

Recent empirical studies across multiple scientific domains provide compelling evidence of stacking's effectiveness for improving generalization. The following table summarizes key performance comparisons between stacked ensembles and individual models:

Table 1: Performance Comparison of Stacked Models vs. Individual Algorithms

| Application Domain | Best Individual Model Performance (R²) | Stacked Model Performance (R²) | Performance Improvement | Key Metrics |

|---|---|---|---|---|

| MXenes Work Function Prediction [10] | 0.85-0.90 (estimated) | 0.95 | ~10% | MAE: 0.2 eV |

| Refractory HEN Coating Hardness [12] | ~0.82 | 0.901 | 10% | - |

| Dump Slope Stability Prediction [11] | Varies by algorithm | H2O AutoML best performer | Outperformed all base models | R², MAE, RMSE |

| Boston Housing Price Prediction [8] | Varies by algorithm | Superior to individual models | Optimal combination | RMSE reduction |

The consistency of these improvements across different domains and data characteristics underscores stacking's robustness. Particularly notable is the MXenes study, where stacking achieved a remarkably low mean absolute error of 0.2 eV while maintaining high interpretability through SHAP analysis [10]. In the refractory metal high-entropy nitride coatings research, the 10% accuracy improvement translated to practically significant gains in predicting mechanical properties under extreme conditions [12].

Table 2: Error Metric Comparisons Across Stacking Applications

| Study | Base Model MAE/RMSE | Stacked Model MAE/RMSE | Variance Reduction |

|---|---|---|---|

| MXenes Work Function [10] | ~0.26 eV (literature) | 0.2 eV | 23% improvement |

| Dump Slope Stability [11] | Varies by model | H2O AutoML minimal | Significant variance reduction |

| Super Learner Demonstration [1] | GAM: 2.58, Earth: 2.48 (MSE) | 0.387×GAM + 0.613×Earth | Optimal weighted combination |

Beyond accuracy metrics, stacking demonstrates superior stability in creation—the consistency of model outputs when trained with different random seeds or environmental variations. While individual models like random forests and deep neural networks can exhibit significant output variations based on initialization parameters, stacked ensembles show greater stability, particularly when base model diversity is properly managed [14].

Implementation Protocols for Materials Research

Standardized Stacking Workflow

The following experimental protocol outlines a systematic approach for implementing stacked generalization in materials stability research:

Phase 1: Data Preparation and Feature Engineering

- Curate high-quality datasets with comprehensive feature representation, including composition descriptors, processing parameters, and stability metrics

- Implement rigorous data cleaning procedures addressing missing values using appropriate imputation methods (Random Forest imputation demonstrated superior performance in HEN coatings study [12])

- Conduct feature selection to reduce dimensionality while preserving physical significance (e.g., using Pearson correlation thresholds |R| = 0.85 for feature grouping as in MXenes research [10])

- Partition data into training (80%), validation (10%), and test sets (10%) with stratification to maintain distribution consistency

Phase 2: Base Model Selection and Training

- Select diverse, high-performance algorithms spanning different learning paradigms (e.g., Random Forests, Gradient Boosting Machines, XGBoost, Support Vector Regressors, Neural Networks)

- Implement k-fold cross-validation (typically V=5 or V=10) with identical fold assignments across all base models to ensure consistent comparison

- Train each base model on the training set while preserving cross-validated predictions using settings like

keep_cross_validation_predictions = TRUEin h2o [8] - Perform hyperparameter optimization for each base model using Bayesian optimization or grid search

Phase 3: Meta-Model Development

- Construct level-one data by combining cross-validated predictions from all base models into an N×L feature matrix, where N is training sample size and L is number of base models

- Select an appropriate meta-learning algorithm (linear models, regularized regression, and random forests are common choices)

- Train the meta-model on level-one data to learn optimal combination weights while avoiding overfitting through regularization

- Validate the stacking ensemble on held-out validation data and refine as needed

Phase 4: Evaluation and Interpretation

- Assess final model performance on untouched test data using multiple metrics (R², MAE, RMSE, MAPE)

- Perform model interpretability analysis using SHAP (SHapley Additive exPlanations) to quantify feature importance and validate physical relevance [10] [11]

- Compare stacked ensemble performance against individual base models and simple averaging ensembles

- Conduct sensitivity analysis to evaluate model stability under data perturbations [14]

Research Reagent Solutions: Computational Tools

Table 3: Essential Software Tools for Stacking Implementation

| Tool/Category | Specific Examples | Function in Stacking Pipeline | Implementation Considerations |

|---|---|---|---|

| Automated Machine Learning Platforms | H2O AutoML [11] [8], Lazy Predict [11] | Automated base model selection and hyperparameter optimization | Reduces manual tuning effort; provides performance baselines |

| Ensemble Learning Libraries | Scikit-learn, SuperLearner [1], subsemble [8], caretEnsemble [8] | Pre-built stacking implementation with cross-validation | Varies in computational efficiency and meta-learners available |

| Interpretability Frameworks | SHAP (SHapley Additive exPlanations) [10] [11] [12] | Model interpretation and feature importance quantification | Essential for materials insight generation beyond prediction |

| Hyperparameter Optimization | Bayesian optimization, grid search, random search | Tuning base model and meta-model parameters | Critical for maximizing ensemble performance |

Advanced Methodological Considerations

Domain-Specific Adaptation Strategies

Successful application of stacking in materials stability research requires careful adaptation to domain-specific characteristics:

Data Scarcity Mitigation: Materials datasets often face limitations in sample size. Techniques such as SISSO (Sure Independence Screening and Sparsifying Operator)-constructed descriptors can enhance feature representation in small-data regimes, as demonstrated in MXenes work function prediction [10]. Additionally, synthetic data generation through physical simulations can expand effective training sets.

Physical Constraint Integration: Unlike generic machine learning applications, materials informatics benefits from incorporating domain knowledge directly into the stacking architecture. This can include:

- Constraining meta-model weights to maintain physical interpretability

- Incorporating known structure-property relationships as prior knowledge

- Using materials-specific feature representations (e.g., crystal graphs, composition descriptors)

Multi-fidelity Data Integration: Materials data often combines high-fidelity experimental measurements with lower-fidelity computational results. Stacking architectures can be extended to leverage multi-fidelity learning by treating predictions from physics-based models as additional base models.

Variance Reduction Optimization Techniques

Several advanced strategies can further enhance stacking's variance reduction capabilities:

Heterogeneous Base Model Selection: Prioritize algorithm diversity over individual performance when selecting base models. Combining tree-based methods (RF, GBM), kernel methods (SVM), linear models (regularized regression), and neural networks creates error decorrelation essential for variance reduction [13].

Multi-Level Stacking Architectures: For exceptionally complex problems, deep stacking hierarchies with multiple meta-learning layers can capture intricate interaction patterns, though at the cost of interpretability and computational requirements.

Dynamic Weighting Schemes: Implement context-aware meta-models that adapt base model weights based on input characteristics, creating specialized sub-ensembles for different regions of the feature space.

Visualization of Stacking Architecture

The following diagram illustrates the complete stacking workflow with emphasis on the variance reduction mechanism:

Diagram 1: Stacked Generalization Architecture for Variance Reduction

Stacked generalization represents a paradigm shift in predictive modeling for materials stability research, offering systematic variance reduction and enhanced generalization through its sophisticated multi-layer learning architecture. The consistent demonstration of 10% or higher accuracy improvements across diverse materials domains, combined with robust theoretical foundations, positions stacking as an essential methodology for modern materials informatics. The provided protocols and implementation frameworks enable materials researchers to leverage this powerful approach while maintaining physical interpretability through advanced explanation techniques. As materials datasets continue to grow in size and complexity, stacking's ability to integrate diverse modeling paradigms while controlling variance will become increasingly valuable for accelerating materials discovery and optimization.

Predicting material stability is a cornerstone of materials science, crucial for applications from catalysis and electronics to drug development. However, this task presents significant challenges that traditional computational and experimental approaches struggle to address efficiently. The fundamental hurdle lies in the complex relationship between a material's composition, structure, and its thermodynamic stability. As highlighted in recent benchmarking efforts, a key disconnect exists between commonly used computational proxies, such as formation energy calculated via Density Functional Theory (DFT), and true thermodynamic stability, which is more accurately represented by the energy above the convex hull [15]. Performing high-throughput DFT calculations for each candidate material is often impractical due to enormous computational and time costs, rendering traditional trial-and-error approaches less feasible [10].

Furthermore, the sheer scale of unexplored chemical space is vast. While ~10^5 material combinations have been tested experimentally and ~10^7 simulated, upwards of ~10^10 possible quaternary materials are allowed by chemical rules [15]. This combinatorial explosion makes exhaustive screening impossible, creating an urgent need for methods that can accelerate discovery. Machine learning (ML) offers promising alternatives by efficiently identifying patterns within large datasets, handling multidimensional data, and quantifying prediction uncertainty [15]. However, standard ML models face their own challenges, including limited predictive accuracy, susceptibility to overfitting with high-dimensional features, and a lack of interpretability—the notorious "black box" problem [10]. This application note explores how stacked generalization, an advanced ensemble ML technique, is uniquely suited to overcome these challenges and provides a robust framework for predicting material stability.

Key Challenges in Material Stability Prediction

The Fundamental Obstacles

The prediction of material stability is fraught with intrinsic and methodological difficulties. The core challenges can be summarized as follows:

- Prospective vs. Retrospective Performance: Models performing well on retrospective test splits often fail in real-world discovery campaigns due to unrealistic data splits and substantial covariate shift between training and application distributions [15].

- Misaligned Metrics: Global regression metrics like Mean Absolute Error (MAE) can be misleading. Models with strong MAE can produce high false-positive rates if accurate predictions lie close to the decision boundary (e.g., 0 eV/atom above hull), leading to wasted resources [15].

- Data Scarcity and Bias: For many promising material classes, such as Metal-Organic Frameworks (MOFs) and Transition Metal Complexes (TMCs), large, high-quality experimental datasets are scarce. Furthermore, data extracted from the literature suffers from publication bias, lacking "failed" experiments crucial for training robust stability classifiers [16].

- Interpretability Gap: The "black box" nature of many complex ML models makes it difficult to extract physical insights or understand the intrinsic relationship between material features and stability, limiting their utility for guiding design [10].

Quantitative Evidence: Performance Gaps in Stability Prediction

Table 1: Challenges in material stability prediction evidenced by performance variations across methodologies.

| Challenge Area | Evidence / Manifestation | Impact on Discovery |

|---|---|---|

| Model Generalization | Performance drop between retrospective validation and prospective application [15]. | High opportunity cost from false positives and missed stable candidates. |

| Data Fidelity | Reliance on computed formation energy rather than energy above convex hull [15]. | Inaccurate assessment of true thermodynamic stability. |

| Feature Dimensionality | 98 initial features for MXenes required reduction to 15 key features to avoid overfitting [10]. | Models fail to generalize to new, unseen compositions or structures. |

| Experimental Data Curation | Only ~3,000 thermal stability (Td) values and ~2,000 solvent removal stability labels extracted from ~4,000 MOF manuscripts [16]. | Data scarcity limits model accuracy and chemical space coverage. |

Stacked Generalization: A Suited Solution

Core Principles and Workflow

Stacked generalization, or stacking, is an ensemble machine learning technique designed to minimize the generalization error rate. It operates by integrating the predictions of multiple base models (level-0 models) through a meta-model (level-1 model) that learns how to best combine them [17]. This approach recognizes that different ML algorithms capture distinct patterns in the data; stacking leverages their collective strengths and mitigates individual weaknesses.

The process involves generating predictions from diverse base models (e.g., Random Forest, Gradient Boosting, Support Vector Machines) on a training set. These predictions then become the input features for a meta-model, which undergoes secondary training to produce the final, refined prediction [10]. This workflow effectively reduces overfitting, bias, and variance, leading to enhanced predictive performance and superior generalization on unseen data compared to any single model [10] [17].

Why Stacking is Suited for Stability Prediction

Stacking directly addresses the core challenges of stability prediction:

- Enhanced Accuracy and Generalization: By combining multiple models, stacking achieves a more robust and accurate prediction than individual models. For instance, in predicting MXene work functions, a stacked model integrating high-quality descriptors achieved a coefficient of determination (R²) of 0.95 and a Mean Absolute Error (MAE) of 0.2 eV, significantly improving predictive performance [10].

- Mitigation of Overfitting: The stacked framework inherently reduces the risk of overfitting. A study on psychosocial maladjustment found that a stacked model (LDS-R) demonstrated the best comprehensive performance and generalization error in external validation, outperforming its constituent base models [17]. This robustness is critical for reliable prospective screening.

- Compatibility with Interpretability Frameworks: Stacking can be effectively combined with model interpretation tools like SHapley Additive exPlanations (SHAP). SHAP analysis can be applied to the final stacked model to quantify the contribution of each input feature, transforming the "black box" into a transparent "glass box" and revealing structure-property relationships [10] [11] [17].

The following diagram illustrates the typical workflow for applying stacked generalization to material stability prediction, integrating both the model architecture and the critical steps for ensuring interpretability and reliability.

Experimental Protocols for Stacked Generalization

Protocol: Implementing a Stacked Model for Stability Prediction

This protocol outlines the key steps for developing a stacked generalization model to predict material stability, drawing from successful applications in materials science [10] and other fields [17].

Objective: To build a robust, generalized predictive model for material stability (e.g., energy above hull, thermal decomposition temperature) using stacked generalization.

Step-by-Step Methodology:

Data Curation and Partitioning

- Source: Gather a dataset of known materials with target stability property. Sources include computational databases (The Materials Project, AFLOW, OQMD, C2DB [10] [15]) or literature-curated experimental data [16].

- Split: Partition data into training (~80%) and hold-out test sets (~20%). Ensure the test set is representative of the prospective application or originates from a new, external source to simulate real-world performance [15].

Feature Engineering and Descriptor Construction

- Initial Screening: Calculate a comprehensive set of features (compositional, structural, electronic). Reduce dimensionality by removing highly correlated features (|Pearson R| > 0.85) [10].

- Advanced Descriptors: Employ the SISSO (Sure Independence Screening and Sparsifying Operator) method to construct powerful, non-linear descriptors that capture fundamental physical relationships between features and the target property [10].

Base Model Training and Validation

- Selection: Choose diverse, high-performing algorithms as base models (Level-0). Common choices include Random Forest (RF), Gradient Boosting Decision Tree (GBDT), LightGBM (LGB), and Support Vector Classification/Regression (SVC/SVR) [10] [17].

- Training: Train each base model on the training set using k-fold cross-validation. The cross-validated predictions on the training set are saved—these become the meta-features for the next step.

Meta-Model Training and Stacking

- Assembly: Create a new dataset where the features are the cross-validated predictions (meta-features) from all base models, and the target is the true value.

- Training: Train a meta-model (Level-1) on this new dataset. A linear model or a simple, robust algorithm like Random Forest is often effective [17]. This model learns the optimal way to combine the base predictions.

Model Interpretation and Prospective Validation

- Interpretation: Apply SHAP (SHapley Additive exPlanations) analysis to the final stacked model. This identifies the most important features and quantifies their impact on stability predictions, providing crucial scientific insight [10] [11] [17].

- Validation: Rigorously evaluate the model on the held-out test set. For a true test of utility, perform prospective benchmarking by predicting the stability of a new, independently sourced set of candidate materials and validate top candidates with higher-fidelity methods (e.g., DFT) [15].

Table 2: Key computational tools and resources for building stacked models for material stability prediction.

| Category | Tool / Resource | Function & Application |

|---|---|---|

| Data Sources | C2DB (Computational 2D Materials Database) [10] [18] | Provides calculated properties for thousands of 2D materials for training and validation. |

| Materials Project, AFLOW, OQMD [15] | High-throughput DFT databases for bulk inorganic crystals, containing formation energies and stability data. | |

| CoRE MOF Database [16] | A collection of experimentally synthesized, computationally refined Metal-Organic Framework structures. | |

| Feature Engineering | SISSO [10] | A "glass-box" ML method that constructs optimal, interpretable descriptors from a large feature space. |

| Machine Learning Libraries | Scikit-learn [10] | A Python library providing implementations of base models (RF, GBM, SVR) and meta-modeling utilities. |

| LazyPredict [11] | An AutoML library useful for rapidly benchmarking multiple base models to select the best performers. | |

| Model Interpretation | SHAP (SHapley Additive exPlanations) [10] [11] [17] | A unified framework to explain the output of any ML model, quantifying feature importance and effects. |

| Validation Frameworks | Matbench Discovery [15] | An evaluation framework for benchmarking ML models on prospective materials discovery tasks. |

Predicting material stability remains a formidable challenge due to data limitations, model generalization issues, and interpretability gaps. Stacked generalization emerges as a powerfully suited technique to address these challenges head-on. By integrating diverse base models through a meta-learner, it delivers superior predictive accuracy and enhanced robustness crucial for prospective materials discovery. Its compatibility with interpretability frameworks like SHAP ensures that these advanced models yield not just predictions, but also actionable physical insights. The provided protocols and toolkit offer a clear roadmap for researchers to implement this powerful approach, accelerating the rational design of novel, stable materials for energy, electronics, and pharmaceutical applications.

Stacked generalization, or stacking, is an advanced ensemble machine learning technique designed to enhance predictive performance by combining multiple models. This methodology operates through a two-layer structure: a set of base learners (individual models) that make initial predictions from the original data, and a meta-learner that integrates these predictions to generate a final, refined output [19]. This approach is particularly valuable in scientific domains like materials stability research and drug development, where it effectively leverages the strengths of diverse algorithms to improve accuracy and robustness beyond the capabilities of any single model [19] [20]. The core principle is that by combining models with different inductive biases, the meta-learner can learn how to best weigh their opinions, often leading to superior generalization on complex tasks where the optimal model type is not known a priori [19].

Common Base Learners in Scientific Research

Base learners are heterogeneous, meaning they employ a variety of learning algorithms to ensure diversity in their predictive approaches. This diversity is crucial for the success of the ensemble, as it captures different patterns and relationships within the data [19] [20]. Three of the most widely used base learners for classification and regression tasks are Random Forest, Support Vector Machine, and Decision Trees.

Summary of Common Base Learners

| Algorithm | Core Learning Principle | Key Advantages | Common Applications in Science |

|---|---|---|---|

| Decision Tree (DT) | A tree-like model that splits data into branches based on feature values, using criteria like Gini impurity or information gain to make sequential decisions [21] [22] [23]. | High interpretability, requires minimal data preprocessing, and can model non-linear relationships [22] [23]. | Customer segmentation, credit scoring [22]. |

| Random Forest (RF) | An ensemble of multiple decorrelated Decision Trees, using bagging and feature randomness to produce a final prediction through averaging or majority voting [21] [24] [23]. | Reduces overfitting risk, robust to outliers and noise, provides feature importance scores, and generally offers high accuracy [21] [24] [23]. | Materials discovery [15], drug target prediction [25] [26], gene expression classification [24]. |

| Support Vector Machine (SVM) | Finds the optimal hyperplane that best separates classes in a high-dimensional space, maximizing the margin between them. The "kernel trick" handles non-linear problems [21] [22]. | Effective in high-dimensional spaces, versatile with different kernels, and robust to overfitting, especially in complex, non-linearly separable problems [21] [22]. | Bioinformatics (e.g., protein sequence classification), image recognition, text classification [22]. |

Meta-Learners for Predictive Integration

The meta-learner is a model trained on the predictions of the base learners to produce a final output. Its role is to discern under which circumstances each base learner performs best and to combine their strengths optimally [19].

Overview of Prominent Meta-Learners

| Meta-Learner | Description | Role in Stacking |

|---|---|---|

| Linear/Logistic Regression (LR) | A linear model used for regression (Linear) or classification (Logistic) tasks. As a meta-learner, it learns the optimal linear combination of the base learners' predictions [19]. | Provides a simple, interpretable, and often effective way to weight the contributions of different base models. It can serve as a strong baseline for more complex meta-learners [19]. |

| Multi-Layer Perceptron (MLP) | A class of feedforward neural network with multiple layers (input, hidden, output) that learns non-linear mappings using backpropagation [21] [19]. | Capable of learning complex, non-linear interactions between the predictions of the base learners. This can capture higher-order patterns that a linear meta-learner might miss, potentially leading to performance gains [19]. |

Experimental Protocol for Stacked Generalization

The following protocol outlines the key steps for developing a stacked ensemble, drawing from methodologies successfully applied in pharmaceutical and materials science research [19] [20].

Data Preprocessing and Feature Engineering

- Handling Missing Data: Impute missing values in categorical variables with the mode and in continuous variables with the mean of the respective column [23].

- Categorical Variable Encoding: Convert categorical features into numerical values using techniques like label encoding (e.g., mapping 'Male' to 1 and 'Female' to 0) or one-hot encoding [23].

- Feature Selection: For high-dimensional data, employ feature selection methods to identify the most predictive subset. A random forest-based sequential forward feature selection can be used for this purpose [20].

Training Base Learners with Out-of-Sample Predictions

To prevent data leakage and overfitting when training the meta-learner, it is critical to generate cross-validated, out-of-sample predictions from the base learners.

- Resampling: Use a resampling method like bootstrapping or stratified k-fold cross-validation (e.g., 5-fold or 10-fold) on the training set [19] [20].

- Model Fitting and Prediction: For each resample, fit each base learner (e.g., Random Forest, SVM) on the training fold and use it to generate predictions for the validation fold (out-of-sample).

- Aggregation: Repeat the process multiple times until every data point in the original training set has one or more out-of-sample predictions. For bootstrapping, average multiple predictions for each patient to obtain a single, robust out-of-sample prediction per base learner [19].

- Test Set Predictions: To make predictions on a hold-out test set, the base learners can be refitted on the entire training set, or their predictions can be averaged from multiple bootstrap models [19].

Training the Meta-Learner

- Construct Meta-Features: The out-of-sample predictions from all base learners form a new dataset, the "meta-features," which serve as the input for the meta-learner.

- Model Training: Train the chosen meta-learner (e.g., Logistic Regression or an MLP) on this new dataset, using the true target variable as the label [19].

- Hyperparameter Tuning: Optimize the hyperparameters of both the base learners and the meta-learner. This can be done using the out-of-sample predictions framework within the training data or a hold-out validation set. Advanced optimization techniques like Hierarchically Self-Adaptive Particle Swarm Optimization (HSAPSO) can be employed for complex models like deep neural networks [25].

Model Evaluation and Interpretation

- Performance Metrics: Evaluate the final stacked model on a held-out test set. Use metrics appropriate to the task:

- Interpretation: Use techniques like permutation feature importance to assess the contribution of each base learner's predictions to the meta-learner's final decision [19]. For individual predictions, Local Interpretable Model-agnostic Explanations (LIME) can provide insight [20].

Diagram 1: Stacked Generalization Workflow illustrating the two-level architecture where base learners generate out-of-sample predictions, which are then combined by a meta-learner.

This table details key computational "reagents" and their functions for building stacked ensembles in scientific discovery.

Research Reagent Solutions for Stacked Generalization

| Category / Item | Function in the Workflow | Example Use-Case |

|---|---|---|

| Data & Feature Processing | ||

| Bootstrap Samples | Random sampling with replacement to create multiple training datasets for generating out-of-sample predictions and reducing variance [19] [24]. | Creating diverse subsets for training multiple Random Forest trees [19]. |

| Feature Selection Algorithm (e.g., Random Forest based) | Identifies the optimal subset of features from a high-dimensional dataset to improve model efficiency and performance [20]. | Selecting 9 key patient attributes from 472 EHR features for predicting drug concentration [20]. |

| Base Learner Algorithms | ||

| Random Forest | Serves as a robust, high-performance base learner that reduces overfitting through ensemble averaging [21] [24]. | Pre-screening thermodynamically stable inorganic crystals in a high-throughput materials discovery pipeline [15]. |

| Support Vector Machine (SVM) | Acts as a powerful base learner for high-dimensional data, effective with non-linear kernels [21] [22]. | Classifying protein sequences or predicting molecular structures in bioinformatics [22]. |

| Decision Tree | Provides a simple, interpretable base model; often used as a component within Random Forest [21] [23]. | Creating a baseline model for credit approval decisions [23]. |

| Meta-Learner Algorithms | ||

| Logistic Regression | A linear model that learns to weight the predictions of base learners for a final classification output [19]. | Combining ridge regression and MLP predictions to classify patient dropout risk [19]. |

| Multi-Layer Perceptron (MLP) | A neural network meta-learner that captures complex, non-linear interactions between base learner predictions [19] [25]. | Serving as a meta-learner to non-linearly combine predictions from statistical and ML models for depression outcome prediction [19]. |

| Optimization & Interpretation | ||

| Hyperparameter Optimization (e.g., HSAPSO) | Automates the tuning of model parameters (e.g., in an MLP) to maximize predictive performance and stability [25]. | Fine-tuning the layers and neurons of a Stacked Autoencoder for drug classification, achieving 95.5% accuracy [25]. |

| Model Interpretation (LIME, PDP) | Provides post-hoc explanations for the model's global and local predictions, enhancing trust and insight [20]. | Explaining the prediction of an individual's olanzapine drug concentration using a stacked regressor [20]. |

Diagram 2: Stacking Model Architecture showing the flow from raw features through heterogeneous base learners to the meta-learner for final integration.

Building Robust Stacking Models: A Step-by-Step Methodology for Material and Pharmaceutical Data

Data Preparation and Feature Engineering for Material Stability Datasets

Within the framework of a broader thesis on stacked generalization for machine learning (ML) in material stability research, the integrity and predictive power of the final model are fundamentally dependent on the quality of the underlying data. Stacked generalization, or stacking, combines multiple base models to improve predictive performance, making the preparation of its input features a critical step. This document provides detailed application notes and protocols for preparing material stability datasets, with a specific focus on the requirements for building robust stacked ML systems. We summarize quantitative data from large-scale computational studies, provide methodologies for key experiments, and outline essential feature engineering techniques to create a reliable foundation for predictive modeling of both stable and metastable materials [27].

Material Stability Dataset Characterization

Large-scale density-functional theory (DFT) calculations have generated extensive datasets that map the landscape of stable and metastable materials. The following table summarizes key properties of a prominent dataset, providing a quantitative overview essential for planning ML projects [27].

Table 1: Characterization of a Large-Scale Material Stability Dataset

| Property | Description | Value/Example |

|---|---|---|

| Dataset Size | Number of crystalline materials | ~175,000 compounds [27] |

| Stability Scope | Includes stable and nearly stable materials | On convex hull or within 100 meV/atom [27] |

| Computational Method | Primary DFT functionals used | PBEsol (geometry), SCAN (energies) [27] |

| Chemical Diversity | Number of different elements covered | Up to Bismuth (excluding noble gases) [27] |

| Composition Types | Distribution of materials by element count | Dominated by ternary, then binary and quaternary [27] |

| Structural Complexity | Typical number of atoms in primitive cell | Peak centered around five atoms [27] |

| Crystal Systems | Most represented crystal systems | Trigonal and Orthorhombic [27] |

The thermodynamic stability of a material is typically evaluated by its energy above the convex hull ((E{\text{hull}})), which represents the energy difference per atom from the most stable phase mixture at that composition. For ML purposes, materials with an (E{\text{hull}}) within 0.1 eV/atom are often considered relevant for training, as this cutoff encompasses metastable, experimentally accessible phases and helps account for potential errors in DFT-calculated formation energies [27].

Data Preparation Protocols

Data Acquisition and Consolidation

The starting point for constructing a comprehensive stability dataset often involves consolidating data from multiple sources, such as the AFLOW database and the Materials Project, to ensure broad chemical and structural coverage [27].

Protocol 1: Data Sourcing and Homogenization

- Objective: To assemble a homogeneous dataset from multiple computational databases.

- Materials & Software:

- Procedure: a. Data Retrieval: Download crystal structures, formation energies, and calculated band gaps. b. Functional Homogenization: Filter calculations to ensure consistent use of exchange-correlation functional (e.g., PBE), pseudopotentials, and Hubbard U-parameters [27]. c. Duplicate Removal: Identify and remove entries with identical space group, composition, and total energy (rounded to a predefined precision, e.g., the 4th digit) [27]. d. Outlier Removal: Apply strategies to filter out ill-converged or physically implausible calculations, such as removing specific flagged prototypes [27]. e. Convex Hull Construction: Calculate the phase diagram and convex hull of thermodynamic stability for all remaining structures, applying necessary energy corrections [27].

- Output: A curated, consistent dataset of material formations and their calculated properties, ready for feature engineering.

Stability Labeling and Validation

Protocol 2: Convex Hull Analysis and Stability Labeling

- Objective: To classify materials as stable or metastable based on their energy above the convex hull.

- Materials & Software:

- Input: Curated dataset from Protocol 1.

- Software: Phase diagram construction tools in Pymatgen.

- Procedure: a. Compute the formation energy for each material in the dataset. b. Construct the convex hull for all chemical compositions present. c. For each material, calculate (E{\text{hull}}), its energy above the convex hull. d. Apply a stability cutoff (e.g., 100 meV/atom) to define the target variable for ML [27]. e. Labeling: Assign stability labels (e.g., "Stable" for (E{\text{hull}} = 0), "Metastable" for (0 < E{\text{hull}} \leq \text{cutoff}), "Unstable" for (E{\text{hull}} > \text{cutoff})).

- Output: A labeled dataset where each material has a definitive stability classification.

Feature Engineering for Stability Prediction

Feature engineering transforms raw material data into informative descriptors that ML models can use to learn the underlying patterns of stability. The table below catalogs key feature categories.

Table 2: Feature Engineering Taxonomy for Material Stability

| Feature Category | Description | Example Descriptors | Relevance to Stability |

|---|---|---|---|

| Stoichiometric Attributes | Features derived from composition alone, ignoring structure. | Elemental fractions, atomic fractions, weight fractions. | Captures basic chemical tendencies. |

| Elemental Property Statistics | Statistical moments (mean, range, std, mode) of elemental properties. | Electronegativity, atomic radius, valence electrons, melting point [27]. | Encodes chemical similarity and bonding character. |

| Crystal Structure | Descriptors of the atomic arrangement. | Space group number, crystal system, Wyckoff positions, site symmetries [27]. | Directly related to thermodynamic stability. |

| Electronic Structure | Features derived from electronic calculations. | Band gap (PBEsol/SCAN), density of states, band centers [27]. | Provides insight into bonding and stability. |

| Volume-Derived Metrics | Properties related to the unit cell volume. | Volume per atom, density. | Correlates with bonding and phase stability. |

Integrated Workflow for Stacked Generalization

The following diagram illustrates the complete data preparation and feature engineering pipeline, designed to feed into a stacked generalization model.

The Scientist's Toolkit

This section details the essential computational reagents and software solutions required to implement the protocols described in this document.

Table 3: Essential Research Reagent Solutions for Computational Material Stability

| Reagent / Software Solution | Function | Application Note |

|---|---|---|

| Vienna Ab initio Simulation Package (VASP) | Performs DFT calculations for geometry optimization and single-point energy evaluations [27]. | Used with PBEsol for structures and SCAN for accurate energies. Requires significant CPU resources [27]. |

| Pymatgen Library | Python library for materials analysis [27]. | Critical for structure manipulation, parsing VASP outputs, phase diagram analysis, and feature generation [27]. |

| AFLOW & Materials Project APIs | Programmatic interfaces to large materials databases. | Primary sources for initial data retrieval and consolidation into a homogeneous set [27]. |

| Stability Protocol Template | A structured document detailing the stability study design. | Defines the "what, why, and how" of the stability assessment, including test parameters, acceptance criteria, and storage of data results [28]. |

| High-Performance Computing (HPC) Cluster | Provides the computational power for DFT calculations and ML model training. | Essential for processing the ~175k materials, requiring millions of CPU hours [27]. |

Within the framework of a broader thesis on stacked generalization for machine learning-based material stability research, the selection of high-quality descriptors is paramount. The predictive performance and interpretability of the final stacked model are directly contingent on the input features provided by the base learners. This protocol outlines advanced feature selection methodologies, specifically the integration of the Sure Independence Screening and Sparsifying Operator (SISSO) with hybrid filter-wrapper approaches. These techniques are designed to navigate the high-dimensional feature spaces typical in materials science, such as those encountered in predicting corrosion resistance or phase stability in multi-principal element alloys (MPEAs), to yield robust, physically interpretable, and computationally efficient descriptors [29] [30].

Theoretical Foundation and Comparative Analysis

Core Methodologies

SISSO Algorithm: SISSO is a powerful symbolic regression method that constructs a vast feature space by applying a set of mathematical operators (e.g.,

+,-,×,÷,exp,log,^2) to primary features [29]. It then employs a two-step process: Sure Independence Screening (SIS) to select features highly correlated with the target property, followed by a Sparsifying Operator (SO), typically L0-regularization, to identify a minimal set of descriptors that form a predictive model [29]. Its primary advantage is the generation of compact, white-box models that can offer deep physical insights [29].Hybrid Filter-Wrapper Methods: These methods combine the computational efficiency of filter methods with the high accuracy of wrapper methods [31]. The filter stage first ranks or weights features using fast, model-agnostic statistical measures (e.g., Mutual Information, F-score) [32] [33]. The wrapper stage then employs an evolutionary or metaheuristic algorithm (e.g., Whale Optimization Algorithm, Harris Hawks Optimization) guided by a classifier's performance to search for an optimal feature subset from the pre-filtered candidates [31] [32]. This synergy effectively mitigates the "curse of dimensionality" and computational cost associated with pure wrapper methods on high-dimensional data [33].

Comparative Analysis of Feature Selection Techniques

Table 1: A comparison of key feature selection methodologies relevant for materials informatics.

| Method Category | Mechanism | Advantages | Limitations | Ideal Use Case |

|---|---|---|---|---|

| SISSO | Constructs features via operators; Uses SIS & L0-SO for selection [29]. | Generates interpretable, analytical models; Exhaustive search of defined space [29]. | Combinatorial explosion with high feature complexity [34]. | Deriving physical laws from primary features; Symbolic regression. |

| i-SISSO | Integrates mRMR (filter) before the SO step to reduce candidate space [34]. | Drastically reduces computation time; Maintains model accuracy [34]. | Dependent on the effectiveness of the mRMR pre-selection. | High-dimensional problems where pure SISSO is computationally prohibitive. |

| Hybrid Filter-Wrapper | Filter stage ranks features; Wrapper stage uses an optimizer for final selection [31] [32]. | Balances speed and accuracy; Effective for high-dimensional data [31] [33]. | Performance depends on choice of filter metric and optimizer [32]. | Gene expression data; Classifying material properties from vast feature sets. |

| Pure Wrapper | Uses a learning algorithm (e.g., KNN, SVM) to evaluate subsets [31]. | High classification accuracy; Considers feature interactions. | Computationally very expensive; Risk of overfitting [31]. | Datasets with a moderate number of features. |

| Pure Filter | Ranks features based on statistical scores (e.g., Pearson correlation) [33]. | Fast and computationally efficient; Model-independent. | May select redundant features; Ignores interaction with classifier [31]. | Pre-screening for very high-dimensional data. |

Integrated Application Protocols

This section provides detailed workflows for implementing these advanced feature selection methods.

Protocol A: Implementing the SISSO Algorithm

SISSO is ideal for discovering compact, analytical expressions that describe material properties.

1. Data Preparation and Input File Formatting:

- Format training data in a space-delimited file named

train.dat. - The first column is the material name, the second is the target property, and subsequent columns are primary features [29].

- Ensure the data is properly normalized to ensure stable feature construction.

2. Configuration of SISSO.in File:

Key parameters to set in the input file include [29]: