Robustness in the Wild: A 2025 Guide to Evaluating Generative AI Against Noisy Biomedical Data


Abstract

This article provides a comprehensive framework for researchers and drug development professionals to evaluate and enhance the robustness of generative AI models against noisy training data. It covers foundational principles, cutting-edge evaluation metrics, and practical mitigation strategies tailored for biomedical applications. By exploring methods from automated metrics to human evaluation protocols, and highlighting real-world case studies in AI-driven drug discovery, this guide aims to equip scientists with the tools to build more reliable, generalizable, and clinically viable generative models.

Defining Robustness: Why Noisy Data is a Critical Challenge for Generative AI in Biomedicine

What is Model Robustness? Core Definitions and Significance for Reliable AI

Model robustness is a foundational property for trustworthy Artificial Intelligence (AI) systems, defined as the capacity of a machine learning model to sustain stable predictive performance when confronted with variations and changes in input data [1]. In practical terms, a robust model maintains reliability when faced with real-world uncertainties that differ from ideal training conditions [2] [3]. For researchers and drug development professionals, ensuring model robustness is particularly crucial when deploying AI in sensitive domains where erroneous predictions could have serious consequences [2] [1].

The significance of robustness extends beyond mere performance metrics, forming a cornerstone of Trustworthy AI alongside other critical aspects like fairness, transparency, privacy, and accountability [1]. Robust AI systems demonstrate resilience against various challenges including noisy data, distribution shifts, and adversarial manipulations [3]. This resilience enables reliable deployment in dynamic real-world environments, from clinical decision support systems to autonomous vehicles and fraud detection [2] [1] [3].

Core Concepts and Definitions: Beyond Accuracy

Distinguishing Accuracy from Robustness

While often conflated, accuracy and robustness serve distinct purposes in model evaluation. Accuracy reflects how well a model performs on clean, familiar, and representative test data, whereas robustness measures how reliably the model performs when inputs are noisy, incomplete, adversarial, or from a different distribution [2]. This distinction reveals why a model achieving 99% laboratory accuracy might fail completely when deployed in production environments with real-world variability [2].

The Robustness vs. Accuracy Trade-off

In many cases, a fundamental trade-off exists between model robustness and accuracy [3]. Maximizing accuracy on a specific dataset may result in overfitting, where models learn patterns too specific to the training set and fail to generalize [2] [3]. Conversely, excessive simplification to improve robustness can lead to underfitting, where models fail to capture essential data complexities [3]. Striking the appropriate balance requires careful model design and evaluation tailored to the specific application context and risk tolerance [1].

Complementary Relationship with Generalizability

Robustness complements but extends beyond traditional i.i.d. (independent and identically distributed) generalizability. While i.i.d. generalization ensures stable performance under static environmental conditions with in-distribution data, robustness focuses on maintaining predictive performance in dynamic environments where input data constantly changes [1]. Thus, i.i.d. generalization represents a necessary but insufficient condition for robustness [1].

Table: Key Characteristics of Robust vs. Fragile Models

| Aspect | Robust Model | Fragile Model |
|---|---|---|
| Performance Stability | Maintains performance with input variations | Performance degrades with slight input changes |
| Handling of Noisy Data | Resilient to noise and corruptions | Sensitive to noise and artifacts |
| Distribution Shifts | Adapts to gradual data drift | Fails with distribution shifts |
| Adversarial Examples | Resists manipulated inputs | Vulnerable to adversarial attacks |
| Real-world Deployment | Consistent performance in production | Unpredictable performance in production |

Key Challenges to Achieving Robustness

Data-Centric Challenges

Multiple data-related factors undermine model robustness. Overfitting to training data occurs when models learn patterns too specific to the training set [2]. Lack of data diversity in training datasets fails to capture the full range of scenarios models will encounter in production [2]. Biases in data from skewed or imbalanced datasets lead to unfair or unstable predictions [2]. Additionally, distribution shifts between training and real-world data significantly challenge model performance [2] [3].

Model-Centric Challenges

Model architecture and training approaches introduce additional robustness challenges. Exploitation of irrelevant patterns and spurious correlations that don't hold in production settings can undermine reliability [1]. Difficulty adapting to edge-case scenarios that are underrepresented in training samples limits comprehensive understanding [1]. Susceptibility to adversarial attacks targets vulnerabilities in overparameterized modern ML models [1]. Furthermore, inability to generalize to gradually-drifted data leads to concept drift as learned concepts become obsolete [1].

Methodologies for Robustness Assessment

Performance on Out-of-Distribution (OOD) Data

Testing with out-of-distribution data evaluates how models handle inputs that differ from the training distribution [2]. For example, testing a model trained on clean handwritten digits with blurred or distorted digits reveals performance limitations [2]. OOD detection involves identifying test-time instances that differ significantly from the in-distribution data and might therefore be mispredicted [1].

Stress Testing with Noisy or Corrupted Inputs

Stress testing introduces controlled modifications to model inputs to observe response behaviors [2]. This includes adding random noise to images, replacing words in sentences, or applying simulated corruptions [2]. For security-sensitive systems, these tests include adversarial examples that deliberately probe failure modes to assess adversarial robustness [2].
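As a minimal sketch of such a stress test (not a protocol from the cited sources), the snippet below measures how accuracy changes as additive Gaussian noise of increasing standard deviation is applied to the inputs. It assumes NumPy; the toy two-blob dataset, the nearest-centroid `predict` function, and the chosen noise levels are all hypothetical stand-ins for a real model and dataset.

```python
import numpy as np

def accuracy_under_noise(predict, X, y, sigmas, seed=0):
    """Return {sigma: accuracy} after adding zero-mean Gaussian noise to X."""
    rng = np.random.default_rng(seed)
    results = {}
    for sigma in sigmas:
        X_noisy = X + rng.normal(0.0, sigma, size=X.shape)
        results[sigma] = float(np.mean(predict(X_noisy) == y))
    return results

# Toy data (assumption): two well-separated Gaussian blobs in 2D.
rng = np.random.default_rng(42)
X = np.vstack([rng.normal(-2.0, 0.5, size=(200, 2)),
               rng.normal(2.0, 0.5, size=(200, 2))])
y = np.array([0] * 200 + [1] * 200)
centroids = np.stack([X[y == 0].mean(axis=0), X[y == 1].mean(axis=0)])

def predict(X_in):
    """Hypothetical model: assign each point to the nearest class centroid."""
    dists = np.linalg.norm(X_in[:, None, :] - centroids[None, :, :], axis=2)
    return dists.argmin(axis=1)

curve = accuracy_under_noise(predict, X, y, sigmas=[0.0, 1.0, 4.0])
for sigma, acc in curve.items():
    print(f"sigma={sigma:.1f}  accuracy={acc:.3f}")
```

Plotting accuracy against noise level gives a degradation curve; a robust model shows a slow, smooth decline rather than a sharp drop at low noise.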

Confidence Calibration and Uncertainty Estimation

Robust models should provide well-calibrated confidence estimates alongside predictions [2]. In a well-calibrated model, predictions made with 99% confidence should be correct about 99% of the time [2]. Miscalibrated models may display excessive confidence in incorrect predictions, creating safety risks in critical applications [2]. Techniques such as temperature scaling and Bayesian methods help improve the reliability of model confidence estimates [2].
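One common way to quantify calibration is the expected calibration error (ECE), which bins predictions by confidence and averages the gap between confidence and accuracy in each bin. The sketch below assumes NumPy and equal-width bins; the bin count and the example values are illustrative choices, not taken from the cited sources.

```python
import numpy as np

def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average of |accuracy - confidence| over equal-width confidence bins."""
    confidences = np.asarray(confidences, dtype=float)
    correct = np.asarray(correct, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    ece = 0.0
    for lo, hi in zip(edges[:-1], edges[1:]):
        # Bins are (lo, hi]; a confidence of exactly 0 falls in no bin.
        in_bin = (confidences > lo) & (confidences <= hi)
        if in_bin.any():
            gap = abs(correct[in_bin].mean() - confidences[in_bin].mean())
            ece += in_bin.mean() * gap
    return float(ece)

# Overconfident model: claims 99% confidence but is right only 70% of the time.
overconfident = expected_calibration_error([0.99] * 10, [1] * 7 + [0] * 3)
# Calibrated model: claims 80% confidence and is right 80% of the time.
calibrated = expected_calibration_error([0.8] * 10, [1] * 8 + [0] * 2)
print(overconfident, calibrated)
```

An ECE near zero indicates that stated confidence tracks observed accuracy; the overconfident example yields a gap of 0.29.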

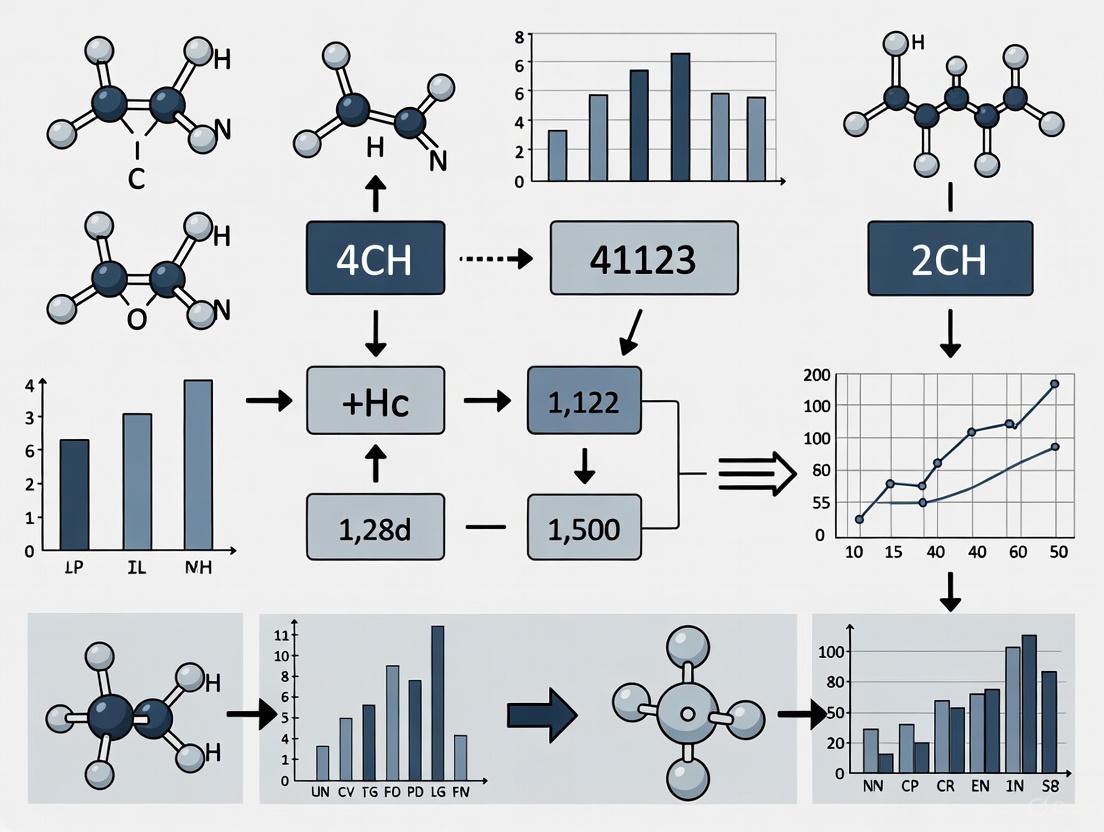

Diagram 1: Comprehensive robustness assessment workflow integrating multiple evaluation methodologies.

Experimental Protocols for Robustness Evaluation

Cross-Validation for Robustness Improvement

Cross-validation determines model performance across diverse data splits, enhancing reliability and reducing overfitting risks [2]. k-fold cross-validation partitions data into k equal parts, training on k-1 parts and testing on the remainder, repeating k times [2]. Stratified sampling maintains consistent class distribution across folds, particularly valuable for imbalanced datasets [2]. Nested cross-validation uses outer and inner loops for hyperparameter tuning and performance estimation, preventing data leakage and providing realistic performance estimates [2].
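The stratified k-fold procedure described above can be sketched with NumPy alone. The function name, the fold count, and the imbalanced toy labels are assumptions made for illustration; in practice an established library implementation would normally be used.

```python
import numpy as np

def stratified_kfold_indices(y, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs whose test folds preserve class proportions."""
    y = np.asarray(y)
    rng = np.random.default_rng(seed)
    folds = [[] for _ in range(k)]
    for cls in np.unique(y):
        # Shuffle the indices of this class, then deal them out across the k folds.
        idx = rng.permutation(np.flatnonzero(y == cls))
        for i, chunk in enumerate(np.array_split(idx, k)):
            folds[i].extend(chunk.tolist())
    all_idx = np.arange(len(y))
    for fold in folds:
        test_idx = np.array(sorted(fold))
        train_idx = np.setdiff1d(all_idx, test_idx)
        yield train_idx, test_idx

# Imbalanced toy labels (assumption): 90 negatives and 10 positives.
y = np.array([0] * 90 + [1] * 10)
splits = list(stratified_kfold_indices(y, k=5))
for train_idx, test_idx in splits:
    print(len(train_idx), len(test_idx), int((y[test_idx] == 1).sum()))
```

Each test fold here holds 20 samples with 2 positives, so the 10% minority rate is preserved in every split.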

Noise Robustness Evaluation in Quantum Neural Networks

Recent research has systematically evaluated robustness against various quantum noise channels in Hybrid Quantum Neural Networks (HQNNs) [4]. Experimental protocols assessed three HQNN algorithms—Quantum Convolution Neural Network (QCNN), Quanvolutional Neural Network (QuanNN), and Quantum Transfer Learning (QTL)—under different noise conditions [4]. Researchers introduced five quantum gate noise models (Phase Flip, Bit Flip, Phase Damping, Amplitude Damping, and Depolarization Channel) at varying probabilities to measure performance degradation [4].

Table: Experimental Results - Noise Robustness in Quantum Neural Networks [4]

| Model Architecture | Noise-Free Accuracy | Phase Flip Resilience | Bit Flip Resilience | Depolarization Channel Resilience | Overall Robustness Ranking |
|---|---|---|---|---|---|
| Quanvolutional Neural Network (QuanNN) | 92.3% | High | High | Medium | 1 |
| Quantum Convolution Neural Network (QCNN) | 87.1% | Medium | Low | Low | 3 |
| Quantum Transfer Learning (QTL) | 89.6% | Medium | Medium | Medium | 2 |

Uncertainty Quantification and OOD Detection

Uncertainty quantification methodologies evaluate uncertainties in model predictions, assessing confidence levels considering data variance and model error [1]. This includes distinguishing between aleatoric uncertainty (non-reducible, inherent data randomness) and epistemic uncertainty (reducible, from model limitations) [1]. Effective uncertainty quantification enables AI systems to "know what they don't know," allowing uncertain predictions to be excluded from decision-making flows to mitigate risks [1].
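One widely used way to separate the two kinds of uncertainty, sketched here under the assumption that an ensemble of classifiers is available, is an entropy decomposition: the entropy of the averaged prediction is the total uncertainty, the average entropy of the individual members approximates the aleatoric part, and their difference (the disagreement between members) approximates the epistemic part. The function names and the toy probabilities are illustrative, and this is not claimed to be the method used in [1].

```python
import numpy as np

def entropy(p, axis=-1):
    """Shannon entropy in nats, with clipping to avoid log(0)."""
    p = np.clip(p, 1e-12, 1.0)
    return -np.sum(p * np.log(p), axis=axis)

def decompose_uncertainty(member_probs):
    """member_probs has shape (n_members, n_samples, n_classes)."""
    member_probs = np.asarray(member_probs, dtype=float)
    total = entropy(member_probs.mean(axis=0))       # entropy of the mean prediction
    aleatoric = entropy(member_probs).mean(axis=0)   # mean entropy of each member
    epistemic = total - aleatoric                    # disagreement between members
    return total, aleatoric, epistemic

# Sample 0: both members agree the classes are equally likely (inherent ambiguity).
# Sample 1: the members are each certain but contradict one another (model doubt).
probs = [[[0.5, 0.5], [1.0, 0.0]],
         [[0.5, 0.5], [0.0, 1.0]]]
total, aleatoric, epistemic = decompose_uncertainty(probs)
print(total, aleatoric, epistemic)
```

Both samples have the same total uncertainty (ln 2), but only the second would be expected to improve with more training data or a better model, which is why the split is useful when deciding which predictions to defer.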

Technical Approaches to Enhance Robustness

Data-Centric Enhancement Strategies

Data augmentation creates diversified training datasets through techniques like rotation, scaling, or color jittering for images, or synonym replacement for text [3]. Data cleaning and normalization address inconsistencies and missing values while normalizing feature scales [3]. Debiasing techniques identify and mitigate sampling and representation biases in training data [1].

Model-Centric Enhancement Strategies

Regularization methods including L1/L2 regularization, dropout, and early stopping prevent overfitting by constraining model complexity [3]. Adversarial training explicitly incorporates adversarial examples during training to build resilience against malicious manipulations [1]. Transfer learning and domain adaptation leverage pre-trained models and adapt them to handle distribution shifts [3]. Randomized smoothing creates certifiably robust models by adding noise during training and inference [1].
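The inference side of randomized smoothing can be illustrated as a majority vote over noisy copies of an input. The sketch below assumes NumPy and a hypothetical base classifier; it shows only the voting step and omits both noise-augmented training and the statistical computation of a certified radius.

```python
import numpy as np

def smoothed_predict(predict, x, sigma=0.25, n_samples=200, n_classes=2, seed=0):
    """Classify x by majority vote of `predict` over Gaussian-perturbed copies of x."""
    rng = np.random.default_rng(seed)
    noisy = x[None, :] + rng.normal(0.0, sigma, size=(n_samples, x.shape[0]))
    votes = np.bincount(predict(noisy), minlength=n_classes)
    return int(votes.argmax()), votes / n_samples

def base_predict(X):
    """Hypothetical base classifier: class 1 when the first feature is positive."""
    return (X[:, 0] > 0).astype(int)

label, vote_share = smoothed_predict(base_predict, np.array([1.0, 0.0]))
print(label, vote_share)
```

The vote share of the winning class is the quantity from which a certified radius would be derived in a full implementation.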

Ensemble and Post-Training Methods

Bagging (Bootstrap Aggregating) trains multiple models on different random data samples and aggregates predictions, reducing variance and sensitivity to specific training instances [2]. Random Forest algorithms exemplify bagging by combining multiple decision trees [2]. Ensemble learning combines diverse models with different strengths and weaknesses, creating more robust overall systems through techniques like stacking and boosting [3]. Model pruning and repair techniques remove redundant parameters or directly fix robustness flaws post-training [1].

Diagram 2: Multi-faceted approach to enhancing model robustness through complementary technical strategies.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table: Essential Materials for Robustness Research Experiments

| Research Component | Function/Purpose | Example Applications |
|---|---|---|
| Adversarial Attack Libraries | Generate controlled adversarial examples | Testing model resilience (e.g., FGSM, PGD attacks) |
| Data Augmentation Tools | Create training data variations | Improving generalization to OOD data |
| Uncertainty Quantification Frameworks | Measure predictive uncertainty | Identifying low-confidence predictions |
| Noise Injection Modules | Simulate realistic data corruptions | Stress testing under noisy conditions |
| Cross-Validation Pipelines | Assess performance stability | Detecting overfitting and variance issues |
| Ensemble Modeling Frameworks | Combine multiple model predictions | Improving stability through diversity |
| Benchmark Datasets with Shifts | Evaluate OOD performance | Testing on deliberately distribution-shifted data |
| Robustness Metrics Packages | Quantify resilience aspects | Measuring adversarial accuracy, consistency |

Model robustness represents an essential requirement for deploying trustworthy AI systems in critical domains including healthcare, finance, and drug development [2] [1]. By understanding core robustness concepts, implementing comprehensive assessment methodologies, and applying appropriate enhancement techniques, researchers can develop AI systems that maintain reliable performance under real-world conditions [2] [3]. The continuing advancement of robustness assurance techniques remains vital for realizing AI's full potential while minimizing operational risks and ensuring safety [1].

Future research directions include developing more efficient robustness evaluation protocols, creating standardized benchmarks for comparative analysis, and establishing formal certifications for robust AI systems in regulated industries [1] [4]. For drug development professionals and researchers, prioritizing model robustness ensures that AI-powered discoveries and decisions maintain their validity when applied to diverse populations and real-world clinical settings [2] [1].

The increasing deployment of machine learning and generative artificial intelligence in high-stakes fields, from healthcare to finance, has placed a critical spotlight on model robustness. A common adversary in these real-world applications is noise, which can manifest as corrupted input data, inaccurate training labels, or domain shifts between training and deployment environments. The ability of a model to withstand such noise is not merely a performance metric but a determinant of its real-world viability, influencing its generalizability, the fairness of its outcomes, and its ultimate success in clinical settings. This guide provides a comparative analysis of contemporary noise-robust generative models, evaluating their performance, experimental methodologies, and applicability within a rigorous research framework focused on robustness against noisy training data.

Comparative Analysis of Noise-Robust Generative Models

The table below objectively compares three advanced approaches designed to handle different types of noise, summarizing their core noise-handling strategies, performance on key benchmarks, and primary limitations.

| Model / Approach | Core Noise Handling Mechanism | Reported Performance Highlights | Key Limitations |
|---|---|---|---|
| Noise-Robust qGANs (Quantum Generative Adversarial Networks) [5] | Hybrid architectures (Wasserstein GAN with Gradient Penalty, Quantum CNN) trained with seamless PyTorch-Qiskit integration for stability on noisy quantum hardware [5]. | Up to 80% lower Wasserstein distance under 5% depolarizing noise vs. prior qGANs; below 1% pricing error in European call option pricing on IBM 20-qubit systems [5]. | Specialized for quantum computing hardware; performance is tied to specific circuit ansätze (e.g., EfficientSU2) and may not translate directly to classical models [5]. |
| GeNRT (Generative Noise-Robust Training) [6] [7] | Uses generative models (normalizing flows) to model target domain class-wise distributions for feature augmentation (D-CFA) and enforces generative-discriminative classifier consistency (GDC) to mitigate pseudo-label noise [6] [7]. | Achieves performance comparable to the state of the art on Office-Home, VisDA-2017, PACS, and Digit-Five UDA benchmarks; effective in single-source and multi-source domain adaptation [6]. | Relies on the quality of initial pseudo-labels to learn initial class-wise distributions; computational overhead from training multiple generative models per class [6]. |
| NRFlow [8] [9] | Incorporates second-order dynamics (acceleration fields) into flow-based generative models, providing theoretical noise robustness guarantees and enhancing trajectory smoothness [8] [9]. | Demonstrates improved smoothness and stability in learned transport trajectories in complex, noisy environments; formal robustness guarantees derived [8] [9]. | Increased model complexity due to the joint training of first-order and high-order fields; a very recent model (2025) with empirical benchmarks still being fully established [8]. |

Detailed Experimental Protocols and Workflows

A critical factor in evaluating any model is the transparency and rigor of its experimental protocol. Below, we detail the methodologies used to generate the performance data for the featured models.

Protocol for Noise-Robust qGANs

This protocol is designed to validate quantum models on near-term noisy hardware [5].

1. Data Preparation: The model is trained on 2D Gaussian and log-normal distributions, which are directly relevant to financial applications like risk assessment and option pricing [5].

2. Model Training: A hybrid qGAN is constructed, combining a Wasserstein GAN with Gradient Penalty (WGAN-GP) or Maximum Mean Discrepancy (MMD) loss function with expressive quantum circuits like Quantum Convolutional Neural Networks (QCNNs) or the EfficientSU2 ansatz. Training is performed using a seamless PyTorch-Qiskit integration [5].

3. Noise Injection & Evaluation: The model's performance is evaluated under a simulated 5% depolarizing noise channel, a common model for quantum hardware errors. Fidelity is assessed using the Wasserstein distance between the generated and target distributions. Final validation involves applying the trained model to a Quantum Amplitude Estimation (QAE) algorithm for European call option pricing, with the error rate versus classical pricing models calculated [5].
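Two ingredients of the evaluation step can be illustrated classically with NumPy. The sketch below applies a depolarizing channel to a density matrix using one common parametrization, in which the state is replaced by the maximally mixed state with probability p, and computes the Wasserstein-1 distance between two equal-sized one-dimensional samples. It is a toy illustration under those assumptions and does not reproduce the simulator, circuits, or 2D distributions used in [5].

```python
import numpy as np

def depolarize(rho, p):
    """Depolarizing channel: rho -> (1 - p) * rho + p * I / d."""
    d = rho.shape[0]
    return (1.0 - p) * rho + p * np.eye(d) / d

def wasserstein_1d(samples_a, samples_b):
    """Wasserstein-1 distance between equal-sized 1D samples (sorted matching)."""
    a = np.sort(np.asarray(samples_a, dtype=float))
    b = np.sort(np.asarray(samples_b, dtype=float))
    if a.shape != b.shape:
        raise ValueError("this sketch assumes equal sample sizes")
    return float(np.mean(np.abs(a - b)))

# Single-qubit state |0><0| under 5% depolarizing noise.
rho = np.array([[1.0, 0.0], [0.0, 0.0]], dtype=complex)
noisy = depolarize(rho, p=0.05)
fidelity = float(np.real(noisy[0, 0]))  # overlap <0|rho'|0> with the original state
print(fidelity)

# Distance between a target sample and a shifted "generated" sample.
print(wasserstein_1d([0.0, 1.0, 2.0], [3.0, 2.0, 1.0]))
```

With p = 0.05 the single-qubit fidelity drops to 0.975, which gives a sense of how much signal a 5% depolarizing channel removes per application.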

Protocol for GeNRT in Unsupervised Domain Adaptation (UDA)

This protocol tests robustness against label noise arising from domain shift [6] [7].

1. Problem Setup: A labeled source dataset (e.g., real-world photos) and an unlabeled target dataset from a different distribution (e.g., clip art) are defined. The goal is to train a model on the source data that performs well on the target data.

2. Baseline Pseudo-Labeling: An initial discriminative classifier (e.g., a CNN) is trained on the source data and used to generate initial (noisy) pseudo-labels for the target data [6].

3. Generative Model Training: A set of normalizing flow-based generative models is trained, one for each class, to learn the class-wise feature distribution of the pseudo-labeled target data [6].

4. Noise-Robust Training via D-CFA and GDC:

   - D-CFA (Distribution-based Class-wise Feature Augmentation): For each pseudo-labeled target instance, a new feature is sampled from the generative model of its assigned class. This "clean" feature is mixed with the original feature to create an augmented, less noisy representation for training [6].

   - GDC (Generative-Discriminative Consistency): The predictions from the discriminative classifier are regularized to match the posterior probabilities calculated by an ensemble of all the class-wise generative models, improving robustness [6].

5. Evaluation: The final model is evaluated on the true labels of the target domain across standard UDA benchmarks like Office-Home and VisDA-2017, reporting top-1 classification accuracy [6].
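The D-CFA and GDC steps can be sketched in simplified form. The example below deliberately swaps the normalizing flows for one diagonal Gaussian per class so that it stays self-contained with NumPy; the mixing weight `alpha`, the uniform class prior, and the KL-based consistency penalty are assumptions made for illustration and are not taken from [6].

```python
import numpy as np

def fit_class_gaussians(feats, pseudo_labels, n_classes):
    """Fit one diagonal Gaussian per pseudo-labeled class (stand-in for a flow)."""
    means = np.stack([feats[pseudo_labels == c].mean(axis=0) for c in range(n_classes)])
    stds = np.stack([feats[pseudo_labels == c].std(axis=0) + 1e-6 for c in range(n_classes)])
    return means, stds

def dcfa_augment(feats, pseudo_labels, means, stds, alpha=0.5, seed=0):
    """Mix each feature with a sample drawn from its assigned class distribution."""
    rng = np.random.default_rng(seed)
    sampled = rng.normal(means[pseudo_labels], stds[pseudo_labels])
    return alpha * feats + (1.0 - alpha) * sampled

def generative_posterior(feats, means, stds):
    """Class posterior from the class-wise densities, assuming a uniform prior."""
    z = (feats[:, None, :] - means[None, :, :]) / stds[None, :, :]
    log_lik = -0.5 * np.sum(z ** 2 + 2.0 * np.log(stds[None, :, :]) + np.log(2.0 * np.pi), axis=2)
    log_lik -= log_lik.max(axis=1, keepdims=True)  # stabilize before exponentiating
    post = np.exp(log_lik)
    return post / post.sum(axis=1, keepdims=True)

def gdc_penalty(disc_probs, gen_post):
    """Mean KL(gen_post || disc_probs), one possible consistency regularizer."""
    p = np.clip(gen_post, 1e-12, 1.0)
    q = np.clip(disc_probs, 1e-12, 1.0)
    return float(np.mean(np.sum(p * (np.log(p) - np.log(q)), axis=1)))

# Toy target features (assumption): two clusters with five wrong pseudo-labels.
rng = np.random.default_rng(0)
feats = np.vstack([rng.normal(-3.0, 1.0, size=(50, 4)), rng.normal(3.0, 1.0, size=(50, 4))])
pseudo = np.array([0] * 50 + [1] * 50)
pseudo[:5] = 1
means, stds = fit_class_gaussians(feats, pseudo, n_classes=2)
augmented = dcfa_augment(feats, pseudo, means, stds)
posterior = generative_posterior(feats, means, stds)
print(augmented.shape, posterior[:3].round(3))
```

In a training loop, `augmented` would replace the raw target features and `gdc_penalty` would be added to the classification loss, with the discriminative classifier's softmax outputs passed as `disc_probs`.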

Visualizing Model Architectures and Workflows

The following diagrams illustrate the core logical workflows of the featured models, providing a clear schematic of their approach to handling noise.

Diagram 1: GeNRT Workflow for UDA

This diagram outlines the process by which GeNRT uses generative models to correct noisy pseudo-labels in domain adaptation [6] [7].

Diagram 2: Noise-Robust qGAN Training

This diagram shows the hybrid classical-quantum architecture used to train qGANs robust to quantum hardware noise [5].

Diagram 3: NRFlow's High-Order Mechanism

This diagram illustrates how NRFlow extends flow-based models with second-order dynamics for robust trajectory estimation [8] [9].

The Scientist's Toolkit: Key Research Reagents and Solutions

For researchers aiming to implement or build upon these models, the following table details essential computational tools and platforms referenced in the studies.

| Tool / Material | Function in Research | Relevant Model / Context |
|---|---|---|
| PyTorch with Qiskit Integration | Enables seamless hybrid classical-quantum workflow, allowing model training that is stable on both simulators and real quantum hardware [5]. | Noise-Robust qGANs [5] |
| Normalizing Flows | A class of generative models used to learn flexible, invertible transformations of probability densities, enabling precise sampling for feature augmentation [6]. | GeNRT [6] [7] |
| IBM's 20-Qubit Superconducting Systems | Real, noisy intermediate-scale quantum (NISQ) hardware used for the final validation of model performance in an applied financial task [5]. | Noise-Robust qGANs [5] |
| SPI-1005 (Ebselen) | An investigational new drug that mimics glutathione peroxidase activity, used in clinical research to target oxidative stress in noise-induced hearing loss [10]. | Clinical Audiology / Drug Development [10] |

The pursuit of noise-robust generative models is a multi-faceted challenge spanning quantum computing, classical domain adaptation, and novel theoretical frameworks. As evidenced by the comparative data, models like GeNRT excel in mitigating label noise in domain adaptation, while noise-robust qGANs demonstrate a clear path toward practical quantum advantage on noisy hardware. The emerging NRFlow framework promises enhanced theoretical guarantees through high-order dynamics. For researchers and drug development professionals, the choice of model hinges on the specific nature of the noise and the deployment context. The experimental protocols and tools outlined herein provide a foundational toolkit for rigorously evaluating model robustness, a non-negotiable prerequisite for successful deployment in high-stakes clinical and real-world environments.

The robustness of generative models is a cornerstone of reliable artificial intelligence (AI) research, particularly for high-stakes fields like drug development. A model's performance in controlled, clean laboratory conditions often proves brittle when confronted with the messy reality of real-world data. This fragility frequently stems from three pervasive types of noisy data: label errors, textual inconsistencies, and distribution shifts. Systematically evaluating a model's resilience to these imperfections is not merely an academic exercise; it is a critical step in ensuring that AI tools can be trusted in clinical and research settings. This guide provides a structured framework for conducting such evaluations, comparing the effectiveness of various mitigation strategies through objective experimental data and standardized metrics.

Label Errors

Label errors occur when the annotated output of a dataset does not match the true, underlying value. These inaccuracies can severely degrade model performance, as the model learns incorrect associations from the training data.

Experimental Protocol for Assessing Impact

To evaluate a model's susceptibility to label errors, a common methodology involves the controlled introduction of label noise into a clean dataset.

- Noise Simulation: A dataset with verified, high-quality labels is selected. A predetermined proportion of the training labels (e.g., 30-45%) is then randomly corrupted, either by a uniform flip to an incorrect class or by more complex, structured noise patterns.

- Model Training: The generative model is trained on this partially corrupted dataset.

- Performance Benchmarking: The model's performance is evaluated on a held-out test set with clean, verified labels. Standard metrics such as F1-score, accuracy, and precision are recorded and compared against a baseline model trained on the pristine dataset [11].
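The noise-simulation step of this protocol can be sketched as a uniform (symmetric) label flip. The example assumes NumPy and integer class labels; the function name, the four-class toy labels, and the 45% rate are illustrative choices.

```python
import numpy as np

def flip_labels(y, noise_rate, n_classes, seed=0):
    """Flip a fixed fraction of labels uniformly to a different class."""
    rng = np.random.default_rng(seed)
    y = np.asarray(y)
    y_noisy = y.copy()
    n_flip = int(round(noise_rate * len(y)))
    flip_idx = rng.choice(len(y), size=n_flip, replace=False)
    # A random offset in 1..n_classes-1 guarantees the new label differs from the old.
    offsets = rng.integers(1, n_classes, size=n_flip)
    y_noisy[flip_idx] = (y[flip_idx] + offsets) % n_classes
    return y_noisy, flip_idx

y_clean = np.arange(1000) % 4
y_noisy, flipped = flip_labels(y_clean, noise_rate=0.45, n_classes=4)
print(int((y_noisy != y_clean).sum()))
```

Training one model on `y_noisy` and a baseline on `y_clean`, then scoring both on the same clean test set, yields the comparison described in the benchmarking step.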

Mitigation Strategies and Comparative Performance

GMM-cGAN for Encrypted Traffic Classification: This hybrid approach sequentially tackles label correction and data augmentation. It first employs a Gaussian Mixture Model (GMM) to probabilistically identify and correct mislabeled samples based on their feature-space density. A Conditional Generative Adversarial Network (cGAN) then generates high-quality synthetic samples conditioned on the corrected labels, mitigating data scarcity [11].

Experimental Data: The table below summarizes the performance of GMM-cGAN against a state-of-the-art baseline (RAPIER) on three network security datasets under conditions of extreme data scarcity (1,000 samples) and high label noise (45%) [11].

Table 1: Performance Comparison of Label-Noise Mitigation Methods

| Dataset | Baseline (RAPIER) F1-Score | GMM-cGAN F1-Score | Improvement (%) |
|---|---|---|---|
| CIRA-CIC-DoHBrw-2020 | 0.73 | 0.89 | 22.1 |
| CSE-CIC-IDS2018 | 0.78 | 0.88 | 13.4 |
| TON-IoT | 0.85 | 0.91 | 6.4 |

Diagram 1: GMM-cGAN label correction and data augmentation pipeline.

Textual Inconsistencies

Textual inconsistencies encompass a range of issues in language data, including paraphrasing, spelling errors, and syntactic variations. For Vision-Language Models (VLMs), this also includes noise in the visual domain, such as blur or compression artifacts, that affects textual understanding.

Experimental Protocol for Assessing Impact

Evaluating robustness to textual and visual noise requires a systematic corruption of input data.

- Synthetic Noise Introduction: A clean dataset (e.g., Flickr30k, NoCaps) is selected. Synthetic noise is applied to the images at incremental severity levels. This can include:

  - Pixel-level distortions: Gaussian noise with varying standard deviation.

  - Blurring: Motion blur with different kernel sizes.

  - Compression artifacts: JPEG compression at different quality levels [12].

- Model Evaluation: VLMs are tasked with generating captions for these corrupted images. Their outputs are compared to ground-truth captions using a suite of metrics.

- Metric Suite:

  - Lexical Metrics: BLEU, METEOR, ROUGE-L, and CIDEr, which measure n-gram overlap and fluency.

  - Neural Metrics: Sentence embeddings from models like Sentence Transformers are used to compute cosine similarity, capturing semantic alignment beyond literal word matching [12].
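To make the difference between the two metric families concrete, the sketch below pairs a clipped unigram precision (a simplified stand-in for BLEU-1 without the brevity penalty) with cosine similarity between embedding vectors. It uses only the Python standard library; the captions and the embedding vectors are hypothetical, and a real evaluation would use established metric implementations and a trained sentence-embedding model.

```python
import math
from collections import Counter

def unigram_precision(candidate, reference):
    """Fraction of candidate tokens that also appear in the reference (clipped counts)."""
    cand_tokens = candidate.lower().split()
    if not cand_tokens:
        return 0.0
    ref_counts = Counter(reference.lower().split())
    clipped = sum(min(n, ref_counts[tok]) for tok, n in Counter(cand_tokens).items())
    return clipped / len(cand_tokens)

def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

# A paraphrased caption scores imperfectly on word overlap...
lexical = unigram_precision("a dog runs", "a dog is running")
# ...while embeddings of paraphrases (hypothetical vectors) can remain close.
semantic = cosine_similarity([0.9, 0.1, 0.3], [0.8, 0.2, 0.3])
print(round(lexical, 3), round(semantic, 3))
```

Tracking both scores across corruption severities helps distinguish captions that merely change wording from captions that lose the meaning of the image.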

Mitigation Strategies and Comparative Performance

Deep Learning-Based Audio Enhancement: In the medical domain, this method acts as a preprocessing step to clean noisy inputs. For respiratory sound classification, deep learning models (e.g., time-domain Wave-U-Net or time-frequency-domain Conformer-based networks) are trained to denoise audio recordings. This provides a cleaner signal for both downstream AI models and human clinicians, improving diagnostic confidence and system trust [13].

Experimental Data: The table below shows the performance improvement from integrating an audio enhancement module for respiratory sound classification on noisy data [13].

Table 2: Performance of Audio Enhancement on Noisy Medical Data

| Dataset | Baseline (Noise Augmentation) ICBHI Score | With Audio Enhancement ICBHI Score | Improvement (Percentage Points) |
|---|---|---|---|
| ICBHI Respiratory Sound | - | - | 21.88 |
| Formosa Breath Sound | - | - | 4.1 |

VLM Robustness Findings: Studies on VLMs reveal that larger model size does not universally confer greater robustness. The descriptiveness of ground-truth captions significantly influences measured performance, and certain noise types like JPEG compression and motion blur cause dramatic performance degradation across models [12].

Distribution Shifts

Distribution shifts occur when the data a model encounters during deployment differs from its training data. This is a fundamental challenge for deploying models in new environments or with underrepresented populations.

Experimental Protocol for Assessing Impact

Robustness to distribution shifts is typically measured through out-of-distribution (OOD) testing.

- Dataset Selection: Models are trained on a "source" domain dataset (e.g., data from one hospital) and evaluated on a separate "target" domain dataset (e.g., data from a different hospital with different imaging equipment or patient demographics) [14] [15].

- Shift Measurement: Statistical two-sample tests can be employed to quantify the shift between training and test distributions across different data representations, such as word frequency, sentence-level embeddings, or feature-space densities [16].

- Performance and Fairness Metrics: Beyond overall accuracy, it is critical to measure the performance gap between overrepresented and underrepresented subgroups in the OOD data (e.g., different ethnicities, hospitals, or age groups) to assess fairness [14].
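Two of the steps above lend themselves to a short illustration: a two-sample test for shift and a subgroup performance gap. The sketch below assumes NumPy and uses a permutation test on the difference of means over a single scalar feature, which is only one of many possible two-sample tests and is not claimed to be the one used in [16]. The data, group labels, and function names are hypothetical.

```python
import numpy as np

def permutation_test(a, b, n_perm=2000, seed=0):
    """Two-sided permutation p-value for a difference in means between a and b."""
    rng = np.random.default_rng(seed)
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    observed = abs(a.mean() - b.mean())
    pooled = np.concatenate([a, b])
    count = 0
    for _ in range(n_perm):
        perm = rng.permutation(pooled)
        stat = abs(perm[:len(a)].mean() - perm[len(a):].mean())
        count += int(stat >= observed)
    return (count + 1) / (n_perm + 1)

def subgroup_accuracy_gap(y_true, y_pred, groups):
    """Per-group accuracy and the gap between the best and worst group."""
    accs = {g: float(np.mean(y_pred[groups == g] == y_true[groups == g]))
            for g in np.unique(groups).tolist()}
    return accs, max(accs.values()) - min(accs.values())

# Feature values from a source site and a shifted target site (toy data).
rng = np.random.default_rng(1)
source = rng.normal(0.0, 1.0, size=200)
target = rng.normal(1.0, 1.0, size=200)
p_value = permutation_test(source, target)

# Predictions from two hypothetical hospitals, "A" and "B".
y_true = np.array([1, 1, 0, 0, 1, 0])
y_pred = np.array([1, 1, 0, 0, 0, 1])
groups = np.array(["A", "A", "A", "B", "B", "B"])
accs, gap = subgroup_accuracy_gap(y_true, y_pred, groups)
print(p_value, accs, gap)
```

A small p-value signals that the two sites differ on the tested feature, and the gap makes explicit how much worse the model performs on its weakest subgroup.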

Mitigation Strategies and Comparative Performance

Diffusion Models for Data Augmentation: This approach uses diffusion models to learn the underlying distribution of available data (both labeled and unlabeled) and generate synthetic samples to strategically augment the training set. The generative model can be conditioned on labels and sensitive attributes (e.g., "hospital ID" or "ethnicity") to create a more balanced and diverse dataset, specifically enhancing representation for underrepresented groups [14].

Experimental Data: In medical imaging tasks, supplementing real training data with synthetic samples generated by diffusion models has been shown to improve OOD diagnostic accuracy and reduce fairness gaps.

Table 3: Diffusion-Based Augmentation for Distribution Shifts

| Modality / Task | Primary Metric | Key Finding |
|---|---|---|
| Histopathology (CAMELYON17) | Top-1 Accuracy | Improved OOD accuracy and closed fairness gap between hospitals [14]. |
| Dermatology | High-Risk Sensitivity | Improved diagnostic accuracy for underrepresented groups OOD [14]. |
| Chest X-Ray | ROC-AUC | Improved overall OOD performance and subgroup fairness [14]. |

Automated Shift Detection (MedShift): For medical data where sharing raw data is infeasible, the MedShift pipeline uses unsupervised anomaly detectors (e.g., Autoencoders, GANs) trained on an internal "source" dataset. These detectors are then shared with external institutions, which use them to compute anomaly scores for their own "target" data, identifying potential shift samples without violating privacy [15].

Diagram 2: Privacy-preserving distribution shift detection with MedShift.
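The idea of sharing a detector rather than raw data can be sketched with a linear stand-in for the autoencoder. In the example below, the source site fits a PCA model and shares only its mean and principal components; the target site then scores its own samples by reconstruction error. NumPy is assumed, PCA is substituted for the autoencoders and GANs named in [15], and the data, threshold quantile, and function names are hypothetical.

```python
import numpy as np

def fit_detector(X_source, n_components):
    """Source site: learn a low-dimensional 'normal' subspace from internal data."""
    mean = X_source.mean(axis=0)
    _, _, vt = np.linalg.svd(X_source - mean, full_matrices=False)
    return mean, vt[:n_components]

def anomaly_scores(X, detector):
    """Target site: squared reconstruction error of each sample under the detector."""
    mean, components = detector
    centered = X - mean
    recon = centered @ components.T @ components
    return np.sum((centered - recon) ** 2, axis=1)

# Source data lies close to a 2D subspace of a 5D feature space (toy data).
rng = np.random.default_rng(0)
basis = rng.normal(size=(2, 5))
source = rng.normal(size=(300, 2)) @ basis + 0.01 * rng.normal(size=(300, 5))
shifted = 3.0 * rng.normal(size=(100, 5))  # external data that ignores that structure

detector = fit_detector(source, n_components=2)  # only this object is shared
threshold = np.quantile(anomaly_scores(source, detector), 0.99)
flagged = anomaly_scores(shifted, detector) > threshold
print(float(flagged.mean()))
```

Only the fitted parameters leave the source institution, which mirrors the privacy motivation of the pipeline even though the detector here is far simpler.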

Standardized Evaluation Metrics for Generative Model Robustness

To objectively compare generative models, standardized evaluation metrics are essential. The field is moving beyond simple fidelity measures to more comprehensive statistical tests.

Table 4: Novel Metrics for Evaluating Generative Models on Tabular Data

| Metric | Full Name | Principle | Strengths |
|---|---|---|---|
| FAED | Fréchet AutoEncoder Distance | Measures the Fréchet Distance between real and synthetic data in the latent space of a pre-trained Autoencoder. | Effectively captures quality decrease, mode drop, and mode collapse [17]. |
| FPCAD | Fréchet PCA Distance | Measures the Fréchet Distance after projecting real and synthetic data onto principal components. | Lightweight, does not require model training [17]. |
| RFIS | - | Inspired by the Inception Score (IS), it assesses the quality and diversity of generated samples. | Adapted from a proven image domain metric for tabular data [17]. |
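The principle behind a PCA-based Fréchet distance can be sketched as follows: fit principal components on the real data, project both datasets, and compute the Fréchet distance between Gaussians fitted to the two projections, that is, the squared distance between the means plus Tr(C1 + C2 - 2(C1 C2)^(1/2)). The example assumes NumPy and is an illustration of the idea rather than the reference implementation from [17]; the number of components and the toy data are arbitrary choices.

```python
import numpy as np

def frechet_distance(mu1, cov1, mu2, cov2):
    """Frechet distance between Gaussians N(mu1, cov1) and N(mu2, cov2)."""
    cov1, cov2 = np.atleast_2d(cov1), np.atleast_2d(cov2)
    # Tr((cov1 cov2)^(1/2)) equals the sum of square roots of the product's eigenvalues.
    eigvals = np.linalg.eigvals(cov1 @ cov2)
    tr_sqrt = np.sum(np.sqrt(np.clip(eigvals.real, 0.0, None)))
    return float(np.sum((mu1 - mu2) ** 2) + np.trace(cov1) + np.trace(cov2) - 2.0 * tr_sqrt)

def pca_frechet_distance(real, synth, n_components=2):
    """Project both datasets onto the real data's top principal components, then compare."""
    mean = real.mean(axis=0)
    _, _, vt = np.linalg.svd(real - mean, full_matrices=False)
    proj = vt[:n_components].T
    r, s = (real - mean) @ proj, (synth - mean) @ proj
    return frechet_distance(r.mean(axis=0), np.cov(r, rowvar=False),
                            s.mean(axis=0), np.cov(s, rowvar=False))

# Toy tabular data (assumption): four columns with very different scales.
rng = np.random.default_rng(0)
real = rng.normal(size=(500, 4)) * np.array([5.0, 3.0, 0.5, 0.5])
d_same = pca_frechet_distance(real, real)         # identical data: distance near 0
d_shift = pca_frechet_distance(real, real + 2.0)  # shifted "synthetic" data
print(d_same, d_shift)
```

Because the projection is fitted without training a model, this variant stays lightweight, which matches the strength listed for FPCAD in the table.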

This table details key computational "reagents" and methodologies essential for conducting robustness evaluations.

Table 5: Essential Resources for Robustness Evaluation Experiments

| Resource / Solution | Function in Evaluation | Exemplar Use-Case |
|---|---|---|
| Adversarial Training | Improves model resistance to maliciously crafted input perturbations [18]. | Securing models in safety-critical applications like autonomous vehicles. |
| Statistical Two-Sample Tests | Provides a principled methodology for detecting distribution shifts between datasets [16]. | Quantifying the shift between training data from one hospital and test data from another. |
| Fréchet Distance Metrics (FAED/FPCAD) | Quantifies the similarity between the distributions of real and synthetic data [17]. | Benchmarking the performance of different generative models for tabular data synthesis. |
| Lexical & Neural Evaluation Metrics | Provides a multi-faceted assessment of generative text output quality under noise [12]. | Evaluating the robustness of Vision-Language Models to image corruptions. |
| Diffusion Models | Generates high-fidelity, steerable synthetic data to augment underrepresented classes or conditions [14]. | Improving model fairness and OOD performance for medical image classification. |
| Unsupervised Anomaly Detectors (e.g., Autoencoders) | Learns a representation of "normal" in-distribution data to identify OOD samples [15]. | Privacy-preserving curation of external medical datasets. |

The noise shift phenomenon represents a critical challenge in the development and deployment of denoising generative models. This issue manifests as a performance degradation that occurs when there is a mismatch between the noise distributions encountered during training and those present during inference. As generative models increasingly serve as foundational tools across scientific domains—including drug development where they model molecular structures and predict protein folding—understanding and mitigating noise shift has become paramount. This guide examines the pervasiveness of this phenomenon through a comparative analysis of recent methodological approaches, providing researchers with experimental data and protocols to evaluate model robustness.

The core of the problem lies in the inherent vulnerability of denoising-based generative models to discrepancies in noise characteristics. These models, including diffusion models and flow matching techniques, learn to reverse a predefined noising process; when the actual noise during deployment diverges from this training specification, their generative capabilities deteriorate substantially. This guide systematically compares contemporary solutions, analyzing their experimental performance and providing methodologies for assessing robustness in research applications.

Understanding Noise Shift: Mechanisms and Manifestations

Theoretical Foundations of Noise Conditioning

Most denoising generative models operate on the principle of learning to reverse a carefully controlled noising process. During training, a data point x is corrupted according to the equation z = a(t)x + b(t)ε, where t represents a timestep or noise level, a(t) and b(t) are schedule functions, and ε is noise typically sampled from a standard normal distribution [19]. The model is then trained to recover the original data from this corrupted version, with noise conditioning—providing the noise level t as an input—being widely regarded as essential for learning the reverse process across all noise levels [19].
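The corruption step z = a(t)x + b(t)ε can be illustrated with a minimal sketch. The cosine/sine schedule below is an assumption chosen only so that a(t)² + b(t)² = 1; the cited work [19] covers several schedules, and the function names `a`, `b`, and `corrupt` are hypothetical.

```python
import math
import random
from typing import List, Tuple

def a(t: float) -> float:
    """Signal scale a(t); a cosine schedule assumed purely for illustration."""
    return math.cos(0.5 * math.pi * t)

def b(t: float) -> float:
    """Noise scale b(t); paired with a(t) so that a(t)^2 + b(t)^2 = 1."""
    return math.sin(0.5 * math.pi * t)

def corrupt(x: List[float], t: float, rng: random.Random) -> Tuple[List[float], List[float]]:
    """Return (z, eps) with z = a(t) * x + b(t) * eps and eps ~ N(0, I)."""
    eps = [rng.gauss(0.0, 1.0) for _ in x]
    z = [a(t) * xi + b(t) * ei for xi, ei in zip(x, eps)]
    return z, eps

rng = random.Random(0)
x = [1.0, -2.0, 0.5]
z_clean, _ = corrupt(x, 0.0, rng)    # t = 0: no corruption, z equals x
z_noisy, eps = corrupt(x, 1.0, rng)  # t = 1: a(1) is ~0, so z is almost pure noise
```

A noise-conditioned model would receive both z and t; the noise shift discussed next arises when the t supplied at inference no longer describes the noise actually present in z.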

The noise shift phenomenon occurs when this carefully constructed training paradigm breaks down during inference. This can happen through several mechanisms:

- Resolution-dependent perceptual effects: The same absolute noise level removes disproportionately more perceptual information from lower-resolution images than from higher-resolution ones [20].

- Exposure bias: The discrepancy between training-time noise distributions and those encountered during inference [20].

- Hardware-induced noise: On quantum devices, noise from imperfect qubits and gates can degrade model performance unless specifically mitigated [5].

- Domain-specific shifts: In unsupervised domain adaptation, noise in pseudo-labels creates distributional shifts that corrupt the learning process [6].

Visualizing the Noise Shift Phenomenon

The following diagram illustrates how the noise shift phenomenon manifests across different resolutions due to perceptual disparities in noise impact:

This perceptual disparity creates a fundamental train-test mismatch where models must denoise images drawn from distributions increasingly distant from their training data as resolution changes, leading to the characteristic performance degradation of the noise shift phenomenon [20].

Comparative Analysis of Noise-Robust Methodologies

Performance Benchmarking Across Approaches

The table below summarizes the performance of various methods addressing noise shift, as measured by the Fréchet Inception Distance (FID) on standard datasets:

| Method | Core Approach | Dataset | Performance (FID) | Noise Conditioning |

|---|---|---|---|---|

| NoiseShift [20] | Resolution-aware noise recalibration | LAION-COCO (SD3.5) | 15.89% improvement | Required, but recalibrated |

| Noise-Unconditional EDM Variant [19] | Removal of explicit noise conditioning | CIFAR-10 | 2.23 FID | Not required |

| EDM (Baseline) [19] | Standard noise-conditioned diffusion | CIFAR-10 | 1.97 FID | Required |

| GeNRT [6] | Generative-discriminative consistency | Office-Home | State-of-the-art | Required (implicitly) |

| Quantum GAN with WGAN-GP [5] | Hybrid quantum-classical architecture | 2D Gaussian | 80% lower Wasserstein distance | Required |

Performance metrics reveal that while noise conditioning has been considered essential for denoising generative models, recent approaches challenge this paradigm. The noise-unconditional EDM variant achieves competitive performance (2.23 FID) while eliminating explicit noise conditioning, suggesting that models can implicitly learn noise level estimation [19]. Meanwhile, NoiseShift demonstrates that calibrating existing noise conditioning to specific resolutions yields substantial improvements (15.89% FID improvement for SD3.5) [20].

Application-Specific Performance Characteristics

| Method | Target Application | Strengths | Computational Overhead | Limitations |

|---|---|---|---|---|

| NoiseShift [20] | Low-resolution generation | Training-free, compatible with existing models | Minimal (one-time calibration) | Resolution-specific calibration needed |

| Noise-Unconditional Models [19] | General-purpose generation | Simplified architecture, enables Langevin dynamics | Reduced (no conditioning inputs) | Performance gap in some configurations |

| GeNRT [6] | Unsupervised domain adaptation | Robust to pseudo-label noise | Moderate (generative feature augmentation) | Complex training pipeline |

| Quantum GAN [5] | Distribution learning on quantum hardware | Noise-robust on near-term devices | High (quantum resources required) | Specialized hardware needed |

Application-specific analysis reveals a trade-off between specialization and generality. NoiseShift excels in resolution generalization without retraining, making it suitable for deployment scenarios requiring multi-resolution support [20]. In contrast, GeNRT's approach of generative-discriminative consistency provides robustness against label noise in domain adaptation, addressing a different manifestation of the noise shift phenomenon [6].

Experimental Protocols and Methodologies

NoiseShift Calibration Protocol

The NoiseShift method employs a systematic approach to address resolution-dependent noise miscalibration:

Problem Identification: Recognize that identical noise levels have unequal perceptual impacts across resolutions, with low-resolution images losing semantic content more rapidly [20].

Coarse-to-Fine Grid Search: For each target resolution, perform a search to identify the optimal surrogate timestep t̃ that minimizes denoising prediction error compared to the nominal timestep t.

Calibration Mapping: Establish a resolution-specific mapping function f(t, resolution) → t̃ that aligns the reverse diffusion process with the appropriate noise distribution for that resolution.

Inference Application: During sampling at non-training resolutions, preserve the standard schedule but feed the network the calibrated timestep conditioning t̃ instead of the nominal value t.

This protocol requires no model retraining or architectural modifications, making it readily applicable to existing deployed models. The calibration needs to be performed only once per resolution and can be reused for all subsequent generations at that resolution [20].
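The search and mapping steps of this protocol can be sketched as follows. This is not the NoiseShift implementation from [20]: the function names are hypothetical, and `toy_denoising_error` is an invented stand-in for measuring a real model's denoising error at one resolution (it simply pretends the best surrogate timestep is 0.8 times the nominal one).

```python
from typing import Callable, Dict, List

def coarse_to_fine_search(error_fn: Callable[[float], float],
                          lo: float = 0.0, hi: float = 1.0,
                          points: int = 11, rounds: int = 4) -> float:
    """Return the grid point in [lo, hi] with the lowest error, refining the grid each round."""
    lower, upper, best = lo, hi, lo
    for _ in range(rounds):
        step = (upper - lower) / (points - 1)
        grid = [lower + i * step for i in range(points)]
        best = min(grid, key=error_fn)
        # Zoom in on the neighbourhood of the current best candidate.
        lower, upper = max(lo, best - step), min(hi, best + step)
    return best

def toy_denoising_error(t_nominal: float) -> Callable[[float], float]:
    # HYPOTHETICAL stand-in for a real denoising-error measurement.
    return lambda t_tilde: (t_tilde - 0.8 * t_nominal) ** 2

# One-time calibration for a single resolution: nominal t -> surrogate t_tilde.
nominal_timesteps: List[float] = [0.25, 0.5, 0.75]
calibration: Dict[float, float] = {
    t: coarse_to_fine_search(toy_denoising_error(t)) for t in nominal_timesteps
}
```

At inference, the sampler would keep its usual schedule and look up `calibration[t]` to obtain the timestep passed to the network, with one such table stored per resolution.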

Noise-Unconditional Training Methodology

The approach for training generative models without explicit noise conditioning involves:

Architecture Modification: Remove all noise-level conditioning inputs from the model architecture while maintaining the same core network structure (e.g., U-Net) [19].

Training Objective Adjustment: Maintain the standard denoising objective ℒ(θ) = 𝔼_{x,ε,t}[w(t)∥NN_θ(z) - r(x,ε,t)∥²], but without providing t as an input to the network [19].

Blind Denoising Leverage: Rely on the network's ability to implicitly estimate noise levels from the corrupted input z alone, similar to classical blind image denoising approaches.

Error Bound Analysis: Apply theoretical error analysis to predict performance degradation, with the finding that most models exhibit only graceful degradation without noise conditioning [19].

This methodology challenges the long-standing assumption that noise conditioning is indispensable for denoising generative models, potentially simplifying architectures and enabling applications of classical sampling techniques like Langevin dynamics [19].
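To make the objective concrete, the sketch below estimates it by Monte Carlo for scalar data. Several choices are assumptions made for illustration rather than details from [19]: the regression target r(x, ε, t) is taken to be ε, the weighting w(t) defaults to 1, the schedule is cosine/sine, and `zero_net` is a trivial placeholder for a trained network.

```python
import math
import random

def a(t):
    return math.cos(0.5 * math.pi * t)  # assumed signal schedule

def b(t):
    return math.sin(0.5 * math.pi * t)  # assumed noise schedule

def unconditional_loss(net, data, rng, n_samples=2000, w=lambda t: 1.0):
    """Monte Carlo estimate of E[w(t) * (net(z) - r)^2] with target r = eps."""
    total = 0.0
    for _ in range(n_samples):
        x = rng.choice(data)
        t = rng.random()
        eps = rng.gauss(0.0, 1.0)
        z = a(t) * x + b(t) * eps
        prediction = net(z)  # the network receives z only; t is never passed in
        total += w(t) * (prediction - eps) ** 2
    return total / n_samples

zero_net = lambda z: 0.0  # placeholder "network" that always predicts zero noise
loss = unconditional_loss(zero_net, data=[-1.0, 1.0], rng=random.Random(0))
```

The only difference from a noise-conditioned setup is the call signature `net(z)` instead of `net(z, t)`; everything else in the training loop is unchanged.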

Research Reagent Solutions Toolkit

| Research Tool | Function | Implementation Notes |

|---|---|---|

| Normalizing Flows [6] | Models class-wise target distributions for feature augmentation | Used in GeNRT for Distribution-based Class-wise Feature Augmentation (D-CFA) |

| Wasserstein GAN with Gradient Penalty (WGAN-GP) [5] | Provides training stability under noisy conditions | Combined with quantum circuits for noise-robust training on quantum hardware |

| Quantum Convolutional Neural Networks (QCNNs) [5] | Expressive quantum circuits for noisy quantum data | Enhances capacity to model complex, multi-modal distributions on quantum devices |

| EfficientSU2 Ansätze [5] | Parameterized quantum circuit architecture | Offers expressive quantum states while maintaining trainability under noise |

| PyTorch-Qiskit Integration [5] | Enables hybrid quantum-classical model training | Facilitates stable optimization on both simulators and real quantum hardware |

| U-Net Architecture [19] | Backbone for denoising networks | Effective for both noise-conditional and unconditional variants |

This toolkit provides essential components for developing noise-robust generative models across both classical and quantum computing paradigms. The selection of appropriate tools depends on the specific manifestation of noise shift being addressed and the computational platform available.

Visualizing the NoiseShift Calibration Workflow

The following diagram illustrates the end-to-end NoiseShift calibration and inference process:

The noise shift phenomenon presents a fundamental challenge to the real-world deployment of denoising generative models across scientific domains, including drug development where reliable generation under varying conditions is crucial. This comparative analysis demonstrates that while the phenomenon manifests differently across contexts—from resolution dependencies to quantum hardware noise—recent methodologies offer promising mitigation strategies.

The experimental evidence suggests that no single approach universally dominates; rather, the selection of an appropriate noise robustness strategy depends on the specific application requirements and constraints. Training-free calibration methods like NoiseShift offer immediate practical benefits for existing models, while architectural innovations in noise-unconditional models may provide longer-term foundations for more robust generative modeling. As these technologies continue to evolve, rigorous evaluation of noise shift robustness will remain essential for ensuring reliable performance in scientific and clinical applications.

The Evaluator's Toolkit: Key Metrics and Methods for Assessing Robustness

The evaluation of generative models presents a significant challenge in artificial intelligence research, particularly as these models are increasingly deployed in high-stakes fields like drug development. Quantitative metrics provide essential tools for objectively measuring model performance and progress. Within the specific research context of evaluating model robustness against noisy training data, understanding the strengths and limitations of these metrics becomes paramount. Noisy conditions—such as corrupted labels in image data or unreliable observations in robotic control—can severely degrade model performance, making the choice of evaluation metric critical for accurate assessment.

This guide provides a comparative analysis of four cornerstone automatic evaluation metrics: BLEU (Bilingual Evaluation Understudy), ROUGE (Recall-Oriented Understudy for Gisting Evaluation), Perplexity, and the Fréchet Inception Distance (FID). We examine their underlying mechanisms, ideal applications, and how they behave when confronted with the challenging conditions of noisy data, providing researchers with the experimental protocols and contextual understanding necessary for their effective application.

Metric Fundamentals and Comparative Analysis

◆ BLEU (Bilingual Evaluation Understudy)

BLEU is a string-matching algorithm developed to evaluate machine translation (MT) systems by measuring the similarity between machine-generated output and human-produced reference translations [21] [22]. Its core premise is that "the closer a machine translation is to a professional human translation, the better it is" [21]. Despite its known flaws, BLEU remains widely used as a primary metric in MT research [21].

Mechanism: BLEU operates by calculating n-gram precision between the candidate and reference texts. It compares contiguous sequences of words (unigrams, bigrams, trigrams, etc.), giving higher weight to longer matching word sequences [21] [22]. The score is primarily based on precision (how many words in the candidate appear in the reference) with a brevity penalty to prevent overly short outputs. Scores are typically reported on a 0 to 1 scale, though they are often communicated as 0 to 100 for simplicity [22].
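A simplified, sentence-level version of this computation is sketched below. It assumes a single reference, whitespace tokenization, n-grams up to length 2 with equal weights, and no smoothing, so its scores will not match standardized tools such as SacreBLEU; the function names are illustrative.

```python
import math
from collections import Counter

def ngram_counts(tokens, n):
    """Count contiguous n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def simple_bleu(candidate, reference, max_n=2):
    """Geometric mean of clipped n-gram precisions times a brevity penalty."""
    cand, ref = candidate.split(), reference.split()
    log_precision = 0.0
    for n in range(1, max_n + 1):
        cand_counts, ref_counts = ngram_counts(cand, n), ngram_counts(ref, n)
        # Clip each candidate n-gram count by its count in the reference.
        clipped = sum(min(c, ref_counts[g]) for g, c in cand_counts.items())
        total = sum(cand_counts.values())
        if total == 0 or clipped == 0:
            return 0.0  # no smoothing in this sketch
        log_precision += math.log(clipped / total) / max_n
    # Brevity penalty: candidates shorter than the reference are penalized.
    bp = 1.0 if len(cand) > len(ref) else math.exp(1 - len(ref) / len(cand))
    return bp * math.exp(log_precision)

exact = simple_bleu("the cat sat", "the cat sat")
short = simple_bleu("the cat", "the cat sat on the mat")
```

In the second call every n-gram in the candidate is correct, so precision is perfect, yet the brevity penalty pulls the score down to exp(-2) because the candidate covers only a third of the reference length.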

◆ ROUGE (Recall-Oriented Understudy for Gisting Evaluation)

ROUGE is a set of metrics for evaluating automatic summarization and machine translation. Unlike BLEU's precision-oriented approach, ROUGE is fundamentally recall-oriented, measuring how much of the reference content is captured by the generated text [23]. It is case-insensitive and widely used in Natural Language Processing (NLP) for its robustness in quantifying how consistently a generation model preserves relevant content compared to reference summaries [23].

Mechanism: The ROUGE family includes several variants:

- ROUGE-N: Measures n-gram recall between a candidate summary and reference summaries.

- ROUGE-L: Assesses the longest common subsequence (LCS) between texts, capturing sentence-level structure and fluency.

ROUGE's recall-focused nature makes it particularly valuable for tasks where capturing the essential information from the source material is more critical than the precise wording of the output [23] [12].
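The two variants can be sketched in a few lines. This is a recall-only illustration with lowercase whitespace tokenization and a single reference; full ROUGE implementations also report precision and F-measure and may apply stemming. The function names are illustrative.

```python
from collections import Counter

def rouge_n_recall(candidate, reference, n=1):
    """Fraction of reference n-grams that also appear in the candidate."""
    cand, ref = candidate.lower().split(), reference.lower().split()
    cand_counts = Counter(tuple(cand[i:i + n]) for i in range(len(cand) - n + 1))
    ref_counts = Counter(tuple(ref[i:i + n]) for i in range(len(ref) - n + 1))
    total = sum(ref_counts.values())
    if total == 0:
        return 0.0
    overlap = sum(min(count, cand_counts[g]) for g, count in ref_counts.items())
    return overlap / total

def lcs_length(a_tokens, b_tokens):
    """Length of the longest common subsequence via dynamic programming."""
    prev = [0] * (len(b_tokens) + 1)
    for x in a_tokens:
        cur = [0]
        for j, y in enumerate(b_tokens, 1):
            cur.append(prev[j - 1] + 1 if x == y else max(prev[j], cur[j - 1]))
        prev = cur
    return prev[-1]

def rouge_l_recall(candidate, reference):
    """LCS length divided by the reference length."""
    cand, ref = candidate.lower().split(), reference.lower().split()
    return lcs_length(cand, ref) / len(ref) if ref else 0.0

reference = "the cat sat on the mat"
candidate = "The cat lay on a mat"
r1 = rouge_n_recall(candidate, reference, n=1)
rl = rouge_l_recall(candidate, reference)
```

Here four of the six reference unigrams are recovered, and the longest common subsequence ("the cat on mat") also has length four, so both scores equal 4/6.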

◆ Perplexity

Perplexity is an information-theoretic metric that quantifies how well a probability model predicts a sample. For language models, it measures the uncertainty a model experiences when predicting the next token in a sequence [24] [25]. It serves as a proxy for model confidence, with lower perplexity indicating that the model is more certain in its predictions and is generally considered to be performing better [25].

Mechanism: Perplexity is defined as the exponential of the average negative log-likelihood of a sequence of words or tokens [24] [26]. Mathematically, for a sequence of tokens, it is calculated as:

PPL = exp(1/N * ∑_{i=1}^N -log P(w_i | w_1, ..., w_{i-1}))

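where P(w_i | w_1, ..., w_{i-1}) is the model's predicted probability for the i-th token given the preceding context, and N is the total number of tokens [26]. Perplexity can be read as an effective branching factor: a model with perplexity k is, on average, as uncertain as if it were choosing uniformly among k equally likely tokens at each step, so lower values indicate sharper predictions.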
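The formula translates directly into code once the per-token probabilities are available. In the sketch below those probabilities are supplied by hand; in practice they would come from a language model's output distribution.

```python
import math

def perplexity(token_probs):
    """exp of the average negative log-likelihood over the sequence."""
    n = len(token_probs)
    avg_nll = -sum(math.log(p) for p in token_probs) / n
    return math.exp(avg_nll)

uniform_over_four = perplexity([0.25, 0.25, 0.25, 0.25])  # as uncertain as a 4-way guess
fully_confident = perplexity([1.0, 1.0])                   # no uncertainty at all
```

A model that assigns probability 0.25 to every correct token has perplexity 4, while a model that is always certain and correct reaches the minimum value of 1.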

◆ Fréchet Inception Distance (FID)

FID is a metric for evaluating the quality of images generated by generative models, particularly Generative Adversarial Networks (GANs). It measures the distance between feature vectors calculated for real and generated images, providing a statistical similarity measure between the two distributions [27]. Lower FID scores indicate that the two groups of images are more similar, with a perfect score of 0.0 signifying identical image sets [27].

Mechanism: FID uses the pre-trained Inception v3 model to extract feature vectors from both real and generated images [27]. The computation involves:

- Computing the mean (µ) and covariance (Σ) of the feature activations for both real and generated image sets.

- Calculating the Fréchet distance (Wasserstein-2 distance) between these two multivariate Gaussian distributions using the formula:

FID = ||µ_r - µ_g||^2 + Tr(Σ_r + Σ_g - 2*(Σ_r*Σ_g)^(1/2))

where Tr is the trace of the matrix [27]. This approach captures visual quality and diversity in a way that correlates well with human perception.
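The distance itself can be computed from any two sets of feature vectors. The sketch below assumes NumPy is available and uses random vectors in place of Inception v3 activations, so it demonstrates the formula only, not a full FID pipeline. It evaluates the trace of the matrix square root through the eigenvalues of Σ_r Σ_g, which is one of several numerically reasonable options.

```python
import numpy as np

def frechet_distance(feats_real, feats_gen):
    """Frechet distance between Gaussians fitted to two feature sets (rows = samples)."""
    mu_r, mu_g = feats_real.mean(axis=0), feats_gen.mean(axis=0)
    sigma_r = np.cov(feats_real, rowvar=False)
    sigma_g = np.cov(feats_gen, rowvar=False)
    # Tr((S_r S_g)^(1/2)) equals the sum of square roots of the eigenvalues of S_r S_g,
    # which are real and non-negative for covariance matrices (up to rounding error).
    eigvals = np.linalg.eigvals(sigma_r @ sigma_g)
    trace_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    diff = mu_r - mu_g
    return float(diff @ diff + np.trace(sigma_r) + np.trace(sigma_g) - 2.0 * trace_sqrt)

rng = np.random.default_rng(0)
real = rng.normal(size=(500, 4))       # stand-in for real-image features
same = frechet_distance(real, real)    # identical sets: distance ~0
shifted = frechet_distance(real, real + 3.0)  # mean shift of 3 in each of 4 dimensions
```

Shifting every feature by 3 leaves the covariance unchanged, so the distance reduces to the squared mean difference, 4 × 3² = 36.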

Table 1: Fundamental Characteristics of Automatic Evaluation Metrics

| Metric | Primary Domain | Core Principle | Optimal Value | Key Strengths |

|---|---|---|---|---|

| BLEU | Machine Translation | N-gram Precision | Higher (Closer to 1) | Fast, inexpensive, correlates with human judgment when properly used [21] |

| ROUGE | Summarization/Translation | N-gram Recall | Higher | Recall-oriented, effective for content preservation assessment [23] |

| Perplexity | Language Modeling | Predictive Uncertainty | Lower | Computationally efficient, intuitive, useful for real-time training monitoring [24] [25] |

| FID | Image Generation | Distribution Distance | Lower (0.0 is perfect) | Correlates with human perception of image quality, uses robust feature extraction [27] |

Performance Under Noisy Training Conditions

The robustness of evaluation metrics becomes critically important when generative models are trained on noisy data—a common scenario in real-world applications where clean, perfectly labeled datasets are often unavailable. Recent research provides insights into how these metrics perform under such challenging conditions.

Noise in Conditional Generation: Studies on conditional diffusion models reveal that their performance significantly degrades with noisy conditions, such as corrupted labels in image generation or unreliable observations in visuomotor policy generation [28]. One study introduced a robust learning framework employing pseudo conditions and Reverse-time Diffusion Condition (RDC) to address extremely noisy conditions, achieving state-of-the-art performance across various noise levels [28]. This highlights the importance of developing noise-resistant training methodologies and the metrics to evaluate them.

Vision-Language Model Robustness: Comprehensive evaluations of Vision-Language Models (VLMs) under controlled perturbations (lighting variation, motion blur, compression artifacts) have shown that lexical-based metrics like BLEU, METEOR, ROUGE, and CIDEr remain valuable for quantifying performance degradation [12]. The study found that certain noise types, such as JPEG compression and motion blur, dramatically degrade performance across models, which these metrics reliably detect [12]. However, neural-based similarity measures using sentence embeddings often provide additional insights into semantic alignment that purely lexical metrics might miss.

Language Model Fine-tuning: Research into LLM fine-tuning robustness has discovered a strong relationship between token-level perplexity and model generalization. Studies show that fine-tuning with data containing a reduced prevalence of high-perplexity tokens significantly improves out-of-domain (OOD) robustness [26]. This suggests that perplexity itself can be a valuable indicator for constructing training datasets that maintain model performance under distribution shifts, and that selectively masking high-perplexity tokens during training can preserve OOD performance comparable to using LLM-generated data [26].

Table 2: Metric Performance and Considerations Under Noisy Conditions

| Metric | Sensitivity to Noise | Robustness Characteristics | Noise-Specific Considerations |

|---|---|---|---|

| BLEU | High | Vulnerable to lexical variations; different correct translations of the same source can score poorly [21] | Single reference tests problematic; multiple references improve robustness [22] |

| ROUGE | Moderate | Recall-orientation can be advantageous when precise wording varies but meaning persists [23] | More resilient to paraphrasing than BLEU, but still primarily surface-level [12] |

| Perplexity | Variable | Directly measures model uncertainty, which increases with noisy data [26] | Can guide robust training strategies (e.g., masking high-perplexity tokens) [26] |

| FID | Moderate | Measures distributional similarity rather than exact matches [27] | Statistical approach provides inherent robustness to minor image perturbations |

Experimental Protocols and Methodologies

BLEU/ROUGE Evaluation Protocol for Noisy Conditions

The evaluation of text generation models under noisy conditions typically follows this workflow:

Dataset Preparation: Select a standardized dataset appropriate for the task (translation, summarization). Introduce controlled noise into the training data, such as:

- Label noise: Randomly corrupt a percentage of labels in classification tasks.

- Textual noise: Introduce spelling errors, paraphrasing, or syntactic noise [12].

- Semantic noise: Alter meaning while preserving grammatical correctness.

Model Training: Train multiple model versions or architectures on both clean and noisy variants of the dataset to establish performance baselines.

Reference Collection: For the test set, obtain multiple high-quality human reference translations or summaries. Using multiple references is critical as it accounts for legitimate variation in correct outputs [21] [22].

Metric Calculation:

Validation: Correlate automatic metric scores with human judgments of quality to ensure metric reliability under noisy conditions [21].
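The label-noise option in the dataset preparation step can be sketched as a symmetric label flip at a controlled rate. The function name, the uniform choice of the replacement class, and the fixed seed are assumptions for illustration; real studies may use asymmetric or class-dependent noise.

```python
import random

def inject_label_noise(labels, num_classes, noise_rate, seed=0):
    """Return a copy of labels with a fixed fraction flipped to a different class."""
    rng = random.Random(seed)
    noisy = list(labels)
    n_flip = round(noise_rate * len(labels))
    for i in rng.sample(range(len(labels)), n_flip):
        # Choose uniformly among the classes other than the current one.
        alternatives = [c for c in range(num_classes) if c != labels[i]]
        noisy[i] = rng.choice(alternatives)
    return noisy

clean_labels = [i % 5 for i in range(100)]
noisy_labels = inject_label_noise(clean_labels, num_classes=5, noise_rate=0.2)
n_changed = sum(c != n for c, n in zip(clean_labels, noisy_labels))
```

Because each selected label is always replaced by a different class, exactly 20 of the 100 labels change here, which keeps the realized noise rate equal to the requested one.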

Perplexity Evaluation Protocol for Robust Fine-tuning

Recent research into robust fine-tuning employs perplexity analysis as an active component of the training strategy rather than just an evaluation metric [26]:

Baseline Perplexity Calculation: Compute the perplexity of the ground truth training data using the pre-trained model before fine-tuning. This establishes a baseline understanding of how "familiar" the training data is to the model [26].

High-Perplexity Token Identification: Analyze the distribution of token-level perplexity across the dataset. Identify tokens with perplexity values above a determined threshold that correlates with performance degradation [26].

Selective Token Masking (STM): Implement a masking strategy that removes or masks high-perplexity tokens during training. This creates a lower-perplexity training subset that has been shown to improve out-of-domain robustness [26].

Comparative Evaluation: Fine-tune models on:

- The original ground truth data

- The selectively masked low-perplexity data

- LLM-generated data (for comparison)

Evaluate all models on both in-domain and out-of-domain tasks to measure robustness preservation [26].
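The token identification and masking steps can be sketched as a loss mask computed from token-level perplexity. The threshold value, the function names, and the choice to drop masked tokens from the loss are assumptions for illustration; the cited study [26] should be consulted for the settings it actually used. The log-probabilities below are hand-written rather than produced by a model.

```python
import math

def token_perplexities(token_log_probs):
    """Per-token perplexity: exp(-log p) for each token."""
    return [math.exp(-lp) for lp in token_log_probs]

def selective_token_mask(token_log_probs, threshold):
    """1 keeps a token in the training loss, 0 masks it out."""
    return [1 if ppl <= threshold else 0 for ppl in token_perplexities(token_log_probs)]

def masked_nll(token_log_probs, mask):
    """Average negative log-likelihood over the tokens that were kept."""
    kept = [-lp for lp, m in zip(token_log_probs, mask) if m]
    return sum(kept) / len(kept) if kept else 0.0

# Hypothetical log-probabilities assigned by a pre-trained model to four target tokens.
log_probs = [math.log(0.9), math.log(0.5), math.log(0.01), math.log(0.8)]
mask = selective_token_mask(log_probs, threshold=10.0)
loss_all = masked_nll(log_probs, [1, 1, 1, 1])
loss_masked = masked_nll(log_probs, mask)
```

The third token has a perplexity of about 100 and is masked, so the remaining training signal comes only from tokens the pre-trained model already finds plausible.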

FID Evaluation Protocol for Noisy Image Data

Evaluating image generation models under noisy conditions with FID requires careful experimental design:

Noise Introduction: Create synthetically noisy variants of standard image datasets (e.g., CIFAR-10, Flickr30k) with controlled perturbations [12]:

Model Training: Train generative models (GANs, diffusion models) on both clean and noisy training sets.

Feature Extraction: For both real validation images and generated images:

- Use the Inception v3 model (trained on ImageNet) with the final classification layer removed.

- Extract feature vectors from the last pooling layer (2,048 dimensions) [27].

Statistical Calculation:

- Compute the mean (µ) and covariance (Σ) of the feature vectors for both real and generated image sets.

- Calculate the FID score using the formula:

FID = ||µ_r - µ_g||² + Tr(Σ_r + Σ_g - 2*(Σ_r*Σ_g)^(1/2)) [27].

Benchmarking: Compare FID scores across different noise conditions and model architectures to identify robustness patterns [12].

Table 3: Key Experimental Resources for Robust Generative Model Evaluation

| Resource Category | Specific Examples | Function in Evaluation | Relevance to Noise Robustness |

|---|---|---|---|

| Standardized Datasets | CIFAR-10/100 [28], Flickr30k [12], NoCaps [12], MBPP [26], MATH [26] | Provides controlled benchmarks for fair comparison | Enable systematic introduction of synthetic noise at controlled levels |

| Pre-trained Models | Inception v3 [27], Sentence Transformers [12], Llama3-8B [26], BLIP-2 [12] | Feature extraction (FID) or baseline for perplexity calculation | Establish baseline performance and feature representations |

| Evaluation Toolkits | SacreBLEU, TorchMetrics, Hugging Face Evaluate | Standardized metric implementation | Ensure reproducibility and comparability across studies |

| Noise Injection Tools | Custom perturbation pipelines, albumentations, torchvision transforms | Systematic creation of noisy training and test conditions | Enable controlled robustness testing across noise types and levels |

| Analysis Frameworks | Selective Token Masking (STM) [26], RDC [28], MMIO [12] | Specialized techniques for robustness enhancement and measurement | Provide mechanistic insights into model behavior under noise |

Automatic quantitative metrics provide indispensable tools for evaluating generative models, each with distinct strengths and limitations in the context of noisy training data. BLEU offers precision-focused translation assessment but exhibits sensitivity to lexical variation. ROUGE's recall-oriented approach better captures content preservation in summarization. Perplexity provides unique insights into model uncertainty and can actively guide robust training strategies. FID delivers distribution-based image quality assessment that correlates well with human perception.

Under noisy conditions—increasingly common in real-world applications—the behavior of these metrics becomes more complex. Research shows that while all metrics detect performance degradation under noise, their interpretability varies significantly. The most effective evaluation approaches combine multiple metrics with human judgment and domain-specific validation. Furthermore, metrics like perplexity are evolving from passive evaluation tools to active components in robust training methodologies, highlighting the dynamic nature of generative model assessment. For researchers evaluating model robustness, a multifaceted approach that understands both the mathematical foundations and practical behaviors of these metrics under challenging conditions is essential for accurate performance characterization.

Within the broader context of research on the robustness of generative models trained on noisy data, the selection of evaluation metrics is paramount. Noisy, mislabeled, or uncurated training datasets can cause models to generate low-quality or irrelevant outputs, making reliable evaluation critical for diagnosing and correcting these failures [29]. While human evaluation is the gold standard, it is expensive, time-consuming, and prone to bias [30]. Therefore, researchers largely depend on automated, quantitative metrics.

The Inception Score (IS) and the CLIP Score are two such metrics that approach the evaluation problem from fundamentally different angles. IS, one of the earlier proposed metrics, assesses the quality and diversity of generated images based on a pre-trained image classification model [30]. In contrast, the more recent CLIP Score measures the alignment between a generated image and its conditioning text prompt using a vision-language model [31] [32]. This guide provides a detailed, objective comparison of these two metrics, focusing on their application in robust generative model research, particularly in scenarios involving noisy training data.

Metric Fundamentals: A Head-to-Head Comparison

At their core, IS and CLIP Score are designed for different evaluation paradigms: IS for unconditional or class-conditional generation, and CLIP Score for text-conditional generation.

Inception Score (IS) measures the quality and diversity of generated images without direct reference to real images [30]. It uses a pre-trained Inception-v3 model (typically trained on ImageNet) to compute:

- Image Fidelity: Whether an image contains a clear, recognizable object. This is reflected in a low entropy (high confidence) for the conditional label distribution p(y|x) [30].

- Diversity: Whether the set of generated images covers a wide range of classes. This is reflected in a high entropy for the marginal class distribution p(y) [30].

The score is formally computed as IS = exp( E_x [ KL( p(y|x) || p(y) ) ] ), where a higher score indicates better perceived quality and diversity [30] [33].
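Given a matrix of class probabilities p(y|x), one row per generated image, the score follows directly from this definition. The sketch below assumes NumPy and uses hand-written probability rows in place of Inception-v3 outputs; the small `eps` added inside the logarithms is a numerical-stability choice, not part of the definition.

```python
import numpy as np

def inception_score(probs, eps=1e-12):
    """exp of the mean KL divergence between each p(y|x) and the marginal p(y)."""
    probs = np.asarray(probs, dtype=float)
    p_y = probs.mean(axis=0, keepdims=True)  # marginal class distribution
    kl = (probs * (np.log(probs + eps) - np.log(p_y + eps))).sum(axis=1)
    return float(np.exp(kl.mean()))

confident_and_diverse = inception_score(np.eye(3))            # each image a different class
mode_collapsed = inception_score([[1.0, 0.0, 0.0]] * 3)       # every image the same class
unrecognizable = inception_score([[1 / 3, 1 / 3, 1 / 3]] * 3)  # classifier cannot decide
```

With three classes the score is bounded by 3, reached only when predictions are both confident and evenly spread across classes; either mode collapse or uniformly uncertain predictions drive it to the minimum of 1.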

CLIP Score measures the compatibility between an image and a text caption. It leverages OpenAI's CLIP model, which is pre-trained on hundreds of millions of image-text pairs to create a shared embedding space [31] [32]. The score is calculated as the cosine similarity between the image and text embeddings extracted by the CLIP model [32] [33]. A higher CLIP Score indicates stronger semantic alignment between the generated image and the prompt [31].
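The core of the computation is a cosine similarity between two embedding vectors. The sketch below uses short hand-written vectors as hypothetical stand-ins for CLIP image and text embeddings, which in practice have hundreds of dimensions; some implementations additionally rescale the similarity and clamp it at zero.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(ui * vi for ui, vi in zip(u, v))
    norm_u = math.sqrt(sum(ui * ui for ui in u))
    norm_v = math.sqrt(sum(vi * vi for vi in v))
    return dot / (norm_u * norm_v)

# Hypothetical embeddings; real ones would come from CLIP's image and text encoders.
text_embedding = [0.9, 0.1, 0.0]
matching_image = [0.8, 0.2, 0.1]
unrelated_image = [0.0, 0.1, 0.9]

aligned = cosine_similarity(matching_image, text_embedding)
misaligned = cosine_similarity(unrelated_image, text_embedding)
```

The image whose embedding points in nearly the same direction as the prompt embedding receives the higher score, which is the sense in which the metric measures prompt alignment rather than visual quality.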

The table below summarizes their fundamental characteristics.

Table 1: Fundamental Characteristics of IS and CLIP Score

| Feature | Inception Score (IS) | CLIP Score |

|---|---|---|

| Primary Objective | Assess image quality & diversity (intrinsic) [30] | Assess text-image alignment (extrinsic) [31] [32] |

| Core Mechanism | KL divergence of class distributions from an Inception-v3 model [30] | Cosine similarity in CLIP's vision-language embedding space [32] |

| Requires Real Images? | No (unreferenced metric) [33] | No (unreferenced metric) [33] |

| Typical Use Case | Unconditional or class-conditional image generation [33] | Text-conditional image generation [31] [33] |

| Key Weaknesses | Does not compare to real data; sensitive to model weights; fails on non-ImageNet classes [30] | Depends on CLIP's training data and biases; may not fully capture visual quality [30] |

Experimental Comparison and Performance

Evaluating metrics against a common standard—human judgment—reveals their practical strengths and weaknesses. The following diagram illustrates the logical workflow for calculating each score, highlighting their distinct operational pathways.

Quantitative Benchmarking

A comparative analysis on the TikTok dataset for video generation (where metrics are applied frame-wise or feature-wise) demonstrates the alignment of these metrics with human judgment. While this involves video, the principles translate to image evaluation.

Table 2: Metric Performance on a Video Benchmark (Correlation with Human Judgment) [34]

| Metric | Correlation with Human Ratings | Key Observation |

|---|---|---|

| Inception Score (IS) | Used as a unary metric (no reference), but correlation not explicitly stated [34]. | As an unreferenced metric, it may not reliably capture gradual quality improvements from model refinements [30]. |

| CLIP Score | Not the highest correlation in the benchmark [34]. | Effective for measuring prompt alignment but may not correlate perfectly with human ratings of visual or motion quality [34]. |

The data suggests that while CLIP Score directly measures an important aspect of conditional generation (prompt alignment), it may not be a holistic measure of quality. IS, being unreferenced, provides an intrinsic measure of quality and diversity but may not reflect a model's ability to mimic a target dataset.

Robustness to Noisy Training Data

The central concern of this guide is robustness against noisy labels, and here IS has specific vulnerabilities. Because IS relies on a classifier's confidence, a model can learn to "fool" the Inception network into giving high-confidence predictions, generating adversarial examples that achieve a high IS but lack perceptual quality [30] [35]. This is a critical failure mode when models are trained on noisy data, as they may learn spurious correlations that exploit the evaluation metric rather than learning the true data manifold.

CLIP Score, by virtue of using a much larger and more diverse training set (400x more data than Inception-v3) and a different learning objective (contrastive image-text alignment), offers a different and often more robust feature space [30]. Newer metrics like CLIP-Maximum Mean Discrepancy (CMMD) are being proposed to replace FID for two reasons: CLIP embeddings provide a more robust feature space, and the MMD distance, unlike the Fréchet distance used by FID, does not assume that features are normally distributed. Together these properties make the metric less prone to manipulation and more aligned with human perception [30].
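To make the distribution-free nature of an MMD-based metric concrete, the sketch below estimates the squared MMD between two sets of embedding vectors using a Gaussian RBF kernel. It is a minimal illustration, not the reference CMMD implementation: the function names, the unbiased estimator, and the default bandwidth `sigma=10.0` are assumptions chosen for this example, and random vectors stand in for real CLIP embeddings.

```python
import numpy as np


def rbf_kernel(a: np.ndarray, b: np.ndarray, sigma: float) -> np.ndarray:
    """Gaussian RBF kernel matrix between the rows of a and the rows of b."""
    sq_dist = (
        np.sum(a ** 2, axis=1)[:, None]
        + np.sum(b ** 2, axis=1)[None, :]
        - 2.0 * a @ b.T
    )
    # Clamp tiny negative values caused by floating-point round-off.
    return np.exp(-np.maximum(sq_dist, 0.0) / (2.0 * sigma ** 2))


def mmd_squared(x: np.ndarray, y: np.ndarray, sigma: float = 10.0) -> float:
    """Unbiased estimate of squared MMD between samples x (m, d) and y (n, d).

    sigma is an illustrative default (an assumption), not a tuned value.
    """
    x = np.asarray(x, dtype=np.float64)
    y = np.asarray(y, dtype=np.float64)
    m, n = len(x), len(y)
    k_xx = rbf_kernel(x, x, sigma)
    k_yy = rbf_kernel(y, y, sigma)
    k_xy = rbf_kernel(x, y, sigma)
    # Exclude the diagonal (self-similarity) terms for the unbiased estimate.
    term_xx = (k_xx.sum() - np.trace(k_xx)) / (m * (m - 1))
    term_yy = (k_yy.sum() - np.trace(k_yy)) / (n * (n - 1))
    return float(term_xx + term_yy - 2.0 * k_xy.mean())


# Stand-in "embeddings": random vectors, not outputs of a real CLIP model.
rng = np.random.default_rng(0)
reference = rng.normal(size=(200, 8))
same_dist = rng.normal(size=(200, 8))
shifted = rng.normal(loc=3.0, size=(200, 8))

mmd_same = mmd_squared(reference, same_dist)
mmd_shifted = mmd_squared(reference, shifted)
print(mmd_same, mmd_shifted)  # the shifted set should score clearly higher
```

No mean or covariance is fitted anywhere in the estimate, which is the practical meaning of "does not assume a normal distribution": the comparison is made directly on kernel similarities between samples.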

Experimental Protocols for Researchers

To ensure reproducible and comparable results, follow these standardized protocols when using IS and CLIP Score.

Protocol for Inception Score (IS)

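- Model and Setup: Use a pre-trained Inception-v3 model. It is critical to use the same model implementation and weights across all experiments (for example, do not compare scores computed with a PyTorch port against scores computed with a Keras port), as the metric is sensitive to weight differences [30].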

- Image Generation: Generate a large set of images (typically 50,000) from the model under evaluation [30].

- Inference: Pass each generated image through the Inception-v3 model to obtain the conditional class probability distribution p(y|x).

- Calculation:

  - Compute the marginal class distribution p(y) by averaging all p(y|x) over the entire set of generated images.

  - For each image, compute the KL divergence KL( p(y|x) || p(y) ).

  - Average the KL divergences over all images and take the exponential of the result [30].

Key Considerations: IS is best suited for models trained on ImageNet-like classes. It does not measure diversity within a class and can be gamed, so it should not be used as the sole metric [30] [33].
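The calculation steps of the IS protocol can be sketched in a few lines of NumPy. The example assumes the class probabilities p(y|x) have already been obtained from an Inception-v3 model and are passed in as an array; the function name `inception_score` and the small `eps` constant are choices made for this illustration. Common implementations also average the score over several splits of the image set, which is omitted here for brevity.

```python
import numpy as np


def inception_score(probs: np.ndarray, eps: float = 1e-12) -> float:
    """Inception Score from an (N, C) array of class probabilities p(y|x)."""
    probs = np.asarray(probs, dtype=np.float64)
    # Marginal class distribution p(y): average of p(y|x) over all images.
    marginal = probs.mean(axis=0, keepdims=True)
    # Per-image KL( p(y|x) || p(y) ); eps guards against log(0).
    kl = np.sum(probs * (np.log(probs + eps) - np.log(marginal + eps)), axis=1)
    # Exponential of the mean KL divergence.
    return float(np.exp(kl.mean()))


# Toy inputs with 4 classes (hand-made probabilities, not real model outputs).
confident_and_diverse = np.eye(4)        # each image confidently in a different class
uninformative = np.full((4, 4), 0.25)    # every image spread evenly over all classes

print(inception_score(confident_and_diverse))  # approximately 4.0 (the number of classes)
print(inception_score(uninformative))          # approximately 1.0 (the minimum)
```

The toy case also illustrates why the metric can be gamed: any set of confident, evenly spread predictions reaches the maximum score, regardless of whether the underlying images look realistic.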

Protocol for CLIP Score

- Model and Setup: Use a pre-trained CLIP model (e.g., openai/clip-vit-base-patch16).

- Data Preparation: Have the set of generated images and their corresponding text prompts.

- Inference:

  - Pass all images through the CLIP image encoder to get image embeddings.

  - Pass all text prompts through the CLIP text encoder to get text embeddings [31].

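- Calculation: Compute the cosine similarity between each image embedding and the embedding of its corresponding text prompt, then average the similarities over all image-prompt pairs [32].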

Key Considerations: The CLIP Score reflects semantic alignment but not necessarily pixel-level visual quality. It is influenced by the domain and biases present in CLIP's training data [31].
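As a rough illustration of the CLIP Score protocol, the sketch below computes the score from embeddings that are assumed to have been extracted already by the CLIP image and text encoders. The function name `clip_score`, the scaling factor of 100, and the clipping of negative similarities to zero are assumptions that follow one common convention; other implementations may scale differently, so scores are only comparable within a single implementation.

```python
import numpy as np


def clip_score(image_emb: np.ndarray, text_emb: np.ndarray, scale: float = 100.0) -> float:
    """Mean scaled cosine similarity between paired image and text embeddings.

    image_emb and text_emb are (N, D) arrays; row i of each forms one pair.
    """
    image_emb = np.asarray(image_emb, dtype=np.float64)
    text_emb = np.asarray(text_emb, dtype=np.float64)
    if image_emb.shape != text_emb.shape:
        raise ValueError("image and text embeddings must have the same shape")
    # L2-normalize so that the dot product equals the cosine similarity.
    img = image_emb / np.linalg.norm(image_emb, axis=1, keepdims=True)
    txt = text_emb / np.linalg.norm(text_emb, axis=1, keepdims=True)
    cos = np.sum(img * txt, axis=1)
    # Scale and clip negative similarities to zero (an assumed convention).
    return float(np.mean(np.maximum(scale * cos, 0.0)))


# Hand-made 2-D vectors standing in for real CLIP embeddings.
images = np.array([[1.0, 0.0], [0.0, 1.0]])
prompts = np.array([[1.0, 0.0], [1.0, 0.0]])  # first pair aligned, second orthogonal
print(clip_score(images, prompts))  # 50.0
```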

The Scientist's Toolkit: Essential Research Reagents

Implementing these evaluation metrics requires specific software tools and models, which function as the essential "reagents" in computational experiments.

Table 3: Key Research Reagents for Evaluation Metrics

| Reagent / Resource | Function / Description | Role in Evaluation |

|---|---|---|

| Inception-v3 Model | A pre-trained convolutional neural network for image classification [30]. | The foundational network for extracting image features and class probabilities required to compute the Inception Score. |

| CLIP Model | A vision-language model pre-trained on a vast corpus of image-text pairs to align visual and textual concepts [31] [32]. | Provides the joint embedding space necessary for calculating the semantic alignment between an image and a text prompt. |

| TorchMetrics | A library of standardized metrics for machine learning, often including implementations of FID and IS [36]. | Provides reliable, pre-written code for calculating metrics, ensuring consistency and reducing implementation errors. |

| Clean Evaluation Dataset | A curated dataset, such as ImageNet-1k, with reliable labels [36]. | Serves as a ground truth for reference-based metrics (like FID) and for benchmarking the performance of generative models. |

| Benchmark Prompts | Curated prompt datasets (e.g., DrawBench, PartiPrompts) for standardized qualitative and quantitative evaluation [31]. | Enables fair and consistent comparison of text-conditional models by testing performance across diverse and challenging prompts. |

The choice between Inception Score and CLIP Score is not a matter of which is universally superior, but which is fit-for-purpose within a specific research context, especially when dealing with noisy training data.

- Inception Score remains a useful, though dated, metric for quickly assessing the intrinsic quality and diversity of unconditionally generated images. Its primary vulnerability in robustness research is its susceptibility to adversarial manipulation and its lack of a direct comparison to real data [30] [35].

- CLIP Score has emerged as the standard for evaluating text-to-image generation, directly measuring a model's ability to follow instructions. Its robustness stems from CLIP's rich, semantically grounded feature space, making it less prone to certain adversarial attacks and more aligned with human judgment of semantic content [30] [31] [32].

For a comprehensive evaluation of generative models, particularly in the challenging context of noisy data, relying on a single metric is insufficient. A robust evaluation framework should combine CLIP Score to measure conditional alignment, complemented by a distribution-based metric like Fréchet Inception Distance (FID) or its robustified variants (e.g., using CLIP embeddings) to assess realism and diversity against a clean reference set [30] [36]. Finally, qualitative human evaluation on benchmark prompts remains an essential step to validate and interpret the quantitative results provided by these automated metrics [31].

The application of generative models in drug discovery has revolutionized the pharmaceutical industry, enabling the rapid analysis of vast chemical spaces and prediction of compound efficacy. However, the performance of these models is critically dependent on the quality of their training data. Noisy datasets, containing mislabeled examples or corrupted text, can significantly degrade model reliability and generalizability, presenting a substantial challenge in high-stakes fields like drug development. This article explores the robustness of generative models against noisy training data, with a specific focus on TDRanker, a novel noise identification technique. Framed within the context of drug discovery, we compare the performance of TDRanker against alternative methodologies, providing experimental data and detailed protocols to guide researchers and scientists in selecting optimal strategies for data refinement.

The Critical Need for Noise-Robust Models in Drug Discovery

In pharmaceutical research, generative models are deployed across the entire drug development lifecycle, from initial drug screening and lead compound optimization to predicting physicochemical properties and ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) profiles [37]. These models often rely on large-scale annotated datasets derived from scientific literature, high-throughput screening, and clinical trials, which are inherently prone to label and text noise [38] [39].

The immense volume of available chemical compounds, a virtual space exceeding 10^60 molecules, creates a significant challenge in the drug discovery process [37]. When generative models are trained on noisy data, the resulting predictions of compound potency, binding affinity, or toxicity can be unreliable. For instance, Graph Neural Networks (GNNs) trained to predict protein-ligand affinities have been shown to primarily memorize chemically similar molecules from their training set rather than genuinely learn protein-ligand interactions, leading to overestimated and potentially misleading predictions [40]. This noise sensitivity underscores the necessity for robust data-cleaning techniques like TDRanker to ensure that AI applications in drug development are both accurate and reliable.

Comparative Analysis of Noise Identification Approaches

TDRanker: A Novel Approach for Generative Models

TDRanker (Training Dynamics Ranker) is a recently proposed methodology specifically designed to identify noise in datasets used for instruction fine-tuning of autoregressive language models (ArLMs), such as GPT-2 and LaMini [38] [39]. Its core innovation lies in leveraging training dynamics to rank datapoints from easy-to-learn to hard-to-learn. Noisy instances, which are often ambiguous or mislabeled, typically manifest as consistently hard-to-learn throughout the training process.
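The general idea of ranking datapoints by training dynamics can be sketched as follows. This is an illustrative stand-in, not the TDRanker algorithm itself: the text above does not specify which statistic TDRanker computes, so the example assumes a simple one (the mean per-example loss across epochs), and the function names, the `fraction` parameter, and the toy loss values are all hypothetical.

```python
import numpy as np


def rank_by_training_dynamics(loss_history: np.ndarray) -> np.ndarray:
    """Return example indices ordered from easy-to-learn to hard-to-learn.

    loss_history is an (epochs, n_examples) array of per-example losses
    recorded during fine-tuning. Mean loss is an assumed difficulty score.
    """
    loss_history = np.asarray(loss_history, dtype=np.float64)
    mean_loss = loss_history.mean(axis=0)
    return np.argsort(mean_loss, kind="stable")


def flag_hardest(loss_history: np.ndarray, fraction: float = 0.1) -> np.ndarray:
    """Indices of the hardest `fraction` of examples (candidate noisy instances)."""
    order = rank_by_training_dynamics(loss_history)
    k = max(1, int(round(fraction * len(order))))
    return order[-k:]


# Toy losses for 5 examples over 3 epochs; example 3 never gets easier.
toy_losses = np.array([
    [2.0, 2.1, 1.9, 2.2, 2.0],
    [1.0, 1.2, 0.9, 2.1, 1.1],
    [0.4, 0.6, 0.3, 2.0, 0.5],
])
print(rank_by_training_dynamics(toy_losses))   # [2 0 4 1 3]
print(flag_hardest(toy_losses, fraction=0.2))  # [3]
```

Flagged examples are candidates for review or removal rather than confirmed noise, since genuinely difficult but correct examples can also remain hard to learn.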

Unlike previous noise detection techniques designed for autoencoder models (AeLMs), TDRanker accounts for the fundamental differences in learning dynamics exhibited by generative, autoregressive architectures [39]. It demonstrates robust performance across multiple model architectures and varying dataset noise levels, achieving at least 2x faster denoising compared to previous techniques [38]. When applied to real-world classification and generative tasks, TDRanker significantly improves both data quality and final model performance, offering a scalable solution for refining instruction-tuning datasets [38].

Alternative Methodologies for Noise Robustness

Other research avenues have approached the noise problem from different angles. The GeNRT (Generative models for Noise-Robust Training) framework, for instance, was developed for Unsupervised Domain Adaptation (UDA) in computer vision [41]. It integrates normalizing flow-based generative modeling with discriminative convolutional neural networks (CNNs) to mitigate label noise from pseudo-labels in unlabeled target domains. Its two key components are:

- Distribution-based Class-wise Feature Augmentation (D-CFA): Models the class-wise distributions of the target domain to generate clean, synthetic features that augment the original, potentially noisy data [41].

- Generative and Discriminative Consistency (GDC): Enforces prediction consistency between a generative classifier (formed by class-wise generative models) and the standard discriminative classifier, thereby improving robustness against label noise [41].
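The feature-augmentation idea behind D-CFA can be illustrated with a deliberately simplified sketch. GeNRT models class-wise feature distributions with normalizing flows; the example below swaps in independent per-dimension Gaussians purely to keep the code short, so it should be read as an analogy rather than as the GeNRT method. All function names and the toy data are hypothetical.

```python
import numpy as np


def fit_classwise_gaussians(features: np.ndarray, labels: np.ndarray) -> dict:
    """Fit a diagonal Gaussian (mean, std) to the features of each class.

    Simplified stand-in for the class-wise normalizing flows used by GeNRT.
    """
    stats = {}
    for c in np.unique(labels):
        class_feats = features[labels == c]
        # Small constant keeps the standard deviation strictly positive.
        stats[int(c)] = (class_feats.mean(axis=0), class_feats.std(axis=0) + 1e-6)
    return stats


def sample_augmented_features(stats: dict, per_class: int, rng: np.random.Generator):
    """Draw synthetic features and matching labels from each class-wise model."""
    feats, labs = [], []
    for c, (mu, sd) in stats.items():
        feats.append(rng.normal(mu, sd, size=(per_class, mu.shape[0])))
        labs.append(np.full(per_class, c))
    return np.concatenate(feats), np.concatenate(labs)


# Toy target-domain features with (possibly noisy) pseudo-labels.
rng = np.random.default_rng(0)
toy_features = np.concatenate([
    rng.normal(0.0, 1.0, size=(50, 4)),
    rng.normal(5.0, 1.0, size=(50, 4)),
])
toy_pseudo_labels = np.array([0] * 50 + [1] * 50)

class_stats = fit_classwise_gaussians(toy_features, toy_pseudo_labels)
aug_features, aug_labels = sample_augmented_features(class_stats, per_class=5, rng=rng)
print(aug_features.shape, aug_labels)  # (10, 4) [0 0 0 0 0 1 1 1 1 1]
```

Because the synthetic features are sampled from a class-level model rather than copied from individual pseudo-labeled points, a small number of mislabeled points has a limited effect on what is generated, which is the intuition the D-CFA component relies on.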

In the quantum computing domain, progress has been made with noise-robust quantum Generative Adversarial Networks (qGANs). Hybrid qGAN architectures combining Wasserstein GAN with gradient penalty (WGAN-GP) and maximum mean discrepancy (MMD) losses have shown improved capacity to model complex distributions and better resilience to noise on near-term quantum hardware, achieving up to 80% lower Wasserstein distance under 5% depolarizing noise [5].

Table 1: Comparative Overview of Noise-Robust Methods for Generative Models

| Method | Core Principle | Model Architecture Suitability | Key Reported Advantage |

|---|---|---|---|

| TDRanker [38] [39] | Ranks data by training dynamics (easy-to-learn to hard-to-learn) | Autoregressive LMs (GPT-2, LaMini), Autoencoders (BERT) | 2x faster denoising; Improved performance on classification/generation tasks |

| GeNRT [41] | Generative feature augmentation & generative-discriminative consistency | CNNs for Unsupervised Domain Adaptation (UDA) | State-of-the-art on UDA benchmarks (Office-Home, VisDA-2017); mitigates pseudo-label noise |

| Noise-Robust qGANs [5] | Hybrid quantum-classical loss functions (WGAN-GP, MMD) | Quantum Generative Adversarial Networks (qGANs) | 80% lower Wasserstein distance under 5% noise; stable training on real quantum hardware |

Quantitative Performance Comparison

Experimental evaluations across different domains highlight the relative strengths of these approaches. The following table summarizes key quantitative findings from the reviewed research.

Table 2: Summary of Experimental Performance Data

| Method | Dataset(s) | Key Metric | Result | Comparison Baseline |

|---|---|---|---|---|

| TDRanker [38] | Classification & Generative Tasks | Data Denoising Speed | At least 2x faster | Previous noise detection techniques |

| TDRanker [38] | Classification & Generative Tasks | Model Performance | Significant Improvement | Models trained on non-denoised data |

| GeNRT [41] | Office-Home, VisDA-2017, PACS, Digit-Five | Classification Accuracy | Comparable to SOTA | State-of-the-art UDA methods |

| Noise-Robust qGANs [5] | 2D Gaussian, log-normal distributions | Wasserstein Distance | Up to 80% lower | Prior qGAN designs under 5% depolarizing noise |

| Noise-Robust qGANs [5] | European call option pricing | Pricing Error | Below 1% | - |

Experimental Protocols for Noise Identification and Robustness

TDRanker Methodology and Workflow

The TDRanker framework operates through a defined workflow to identify and mitigate noisy data instances.

Diagram Title: TDRanker Noise Identification Workflow

Detailed Protocol:

- Model Fine-Tuning: Begin with the standard instruction fine-tuning process of the target autoregressive language model (e.g., GPT-2, LaMini-Cerebras-256M) on the dataset suspected to contain noise [38] [39].