Reinforcement Learning for Molecular Optimization: A Guide to Generative AI in Drug Discovery

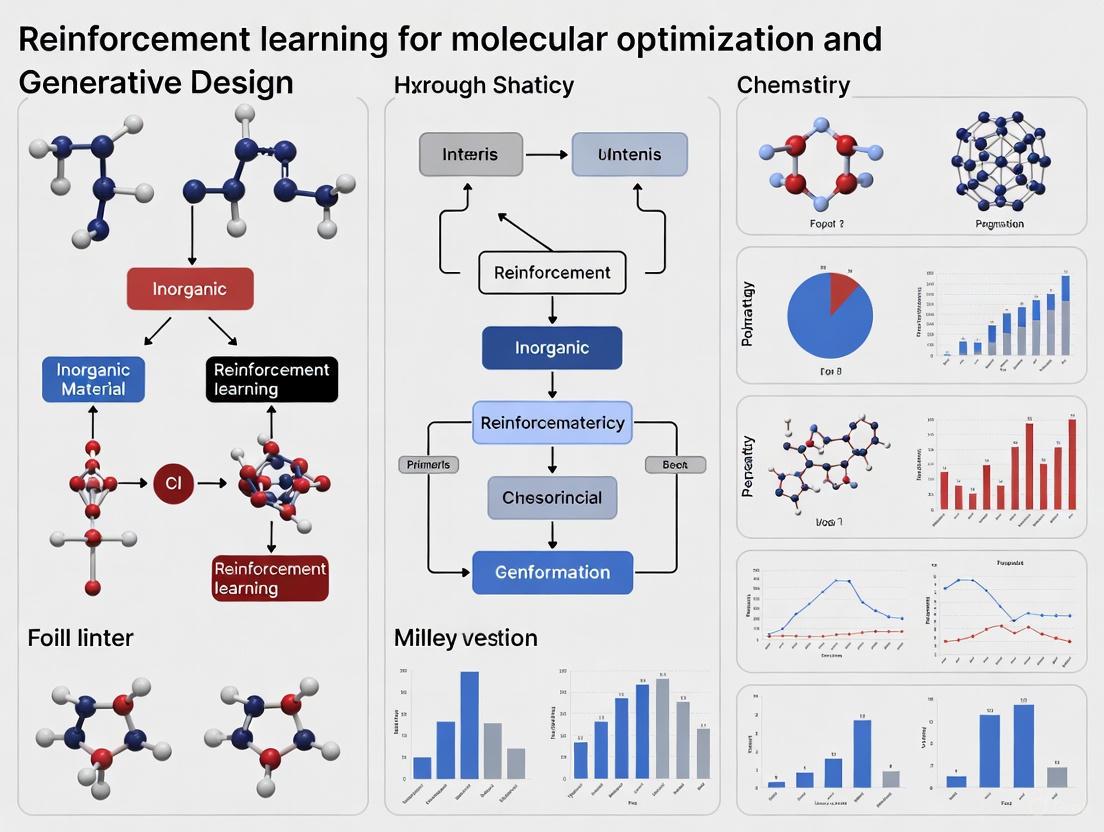

Abstract

This article provides a comprehensive overview of the application of Reinforcement Learning (RL) in molecular optimization and generative design for drug discovery. It covers the foundational setup in which RL agents learn to optimize molecules by interacting with a chemical environment and receiving rewards for improved properties. It then examines key methodological frameworks, including REINVENT, MolDQN, and latent space optimization, highlighting their use in tasks like scaffold hopping and multi-parameter optimization. It further addresses critical challenges such as sparse rewards and chemical validity, presenting technical solutions like experience replay and transfer learning. Finally, the article discusses validation strategies, from benchmarking on tasks like penalized LogP optimization to experimental confirmation of generated bioactive compounds, offering researchers and drug development professionals a roadmap for implementing and evaluating RL in their workflows.

Core Concepts: How Reinforcement Learning is Revolutionizing Molecular Design

Fundamental Concepts: MDPs in Molecular Design

The application of Reinforcement Learning (RL) to chemistry fundamentally relies on framing molecular design as a Markov Decision Process (MDP). This formulation provides a mathematical structure for sequential decision-making, which is inherent to the process of constructing or optimizing a molecule step-by-step.

An MDP is defined by the quintuple ( \langle S, A, R, P, \rho_0 \rangle ) [1]. In the context of molecular design:

- ( S ) represents the state space, where each state ( s \in S ) is a tuple ( (m, t) ), containing a valid molecule ( m ) and the current step number ( t ) [2].

- ( A ) represents the action space, which is the set of all valid chemical modifications that can be applied to a molecule, such as adding an atom or changing a bond [2].

- ( R ) is the reward function ( R: S \times A \times S \to \mathbb{R} ), which assigns a numerical score to transitions, guiding the RL agent toward molecules with desired properties [1].

- ( P ) is the state transition probability ( P(s_{t+1} \mid s_t, a_t) ), which for molecular design is often deterministic: a given action on a molecule leads to a single, predictable new molecule [2].

- ( \rho_0 ) is the initial state distribution, typically a starting molecule or a set of starting conditions [1].

This MDP framework allows an RL agent to learn a policy ( \pi_\theta ) for sequentially building molecules, one token or one structural modification at a time, with the goal of maximizing the cumulative reward, which reflects the success of the final molecule [1].
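The state and transition definitions above can be made concrete with a minimal sketch, assuming molecules are stored as SMILES strings and an action is any function that maps one molecule to exactly one new molecule (the deterministic case described for ( P )). The names `State`, `transition`, `is_terminal`, and the toy `add_carbon` action are hypothetical illustrations, not part of any framework cited here.

```python
from dataclasses import dataclass
from typing import Callable

Action = Callable[[str], str]  # maps molecule m_t to exactly one molecule m_{t+1}


@dataclass(frozen=True)
class State:
    """MDP state s = (m, t): the current molecule and the step number."""
    molecule: str  # SMILES string (assumed representation)
    step: int


def is_terminal(state: State, max_steps: int) -> bool:
    """A state is terminal once the step limit T has been reached."""
    return state.step >= max_steps


def transition(state: State, action: Action, max_steps: int) -> State:
    """Deterministic transition: applying one action yields one next state."""
    if is_terminal(state, max_steps):
        raise ValueError("cannot act from a terminal state")
    return State(action(state.molecule), state.step + 1)


def add_carbon(smiles: str) -> str:
    """Hypothetical toy action: append a carbon atom to the SMILES string."""
    return smiles + "C"


T = 3
s = State("CCO", 0)  # s_0 = (m_0, 0): a single starting molecule drawn from rho_0
while not is_terminal(s, T):
    s = transition(s, add_carbon, T)
print(s)  # State(molecule='CCOCCC', step=3)
```

Because ( t ) is part of the state, the same molecule reached at different steps corresponds to different states, which is what allows a fixed horizon ( T ) to define the terminal states.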

Defining Molecular States and Actions

The precise definition of states and actions is critical for creating an efficient and chemically valid MDP.

State Representation: The state ( s = (m, t) ) must encode the current molecule. This can be achieved through several representations, each with advantages and drawbacks, as shown in Table 1. A step limit ( T ) is often explicitly included in the state to define terminal states and control how far the agent can explore from the starting point in chemical space [2].

Action Space Design: The action space must be defined to ensure that all generated molecules are chemically valid. The MolDQN framework [2] [3] achieves this by defining a discrete action space encompassing three core types of modifications:

- Atom Addition: Adding an atom from a predefined set of elements (e.g., C, O) and simultaneously forming a valence-allowed bond between this new atom and the existing molecule.

- Bond Addition: Increasing the bond order between two atoms that have free valence. This includes creating new single, double, or triple bonds, or increasing the order of an existing bond.

- Bond Removal: Decreasing the bond order of an existing bond, or completely removing it. To avoid fragmented molecules, a bond is only fully removed if the resulting molecule has zero or one disconnected atom; a single disconnected atom, if present, is then deleted.

To generate chemically reasonable structures, heuristic rules can be incorporated, such as prohibiting bond formation between atoms that are already in rings to avoid generating molecules with high strain [2].
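A rough sketch of how such a valence-aware action set can be enumerated follows, assuming a molecule is held as a list of element symbols plus a dictionary of bond orders, with hydrogens implicit. The valence table, function names, and tuple encoding of actions are hypothetical. Aromaticity, charges, and the ring-strain heuristic are deliberately omitted, and bond removal is allowed to lower the order to any smaller value, which is a simplification.

```python
from itertools import combinations

# Assumed maximum valences for neutral atoms (illustrative, not exhaustive).
MAX_VALENCE = {"C": 4, "N": 3, "O": 2}


def free_valence(atoms, bonds, i):
    """Valence still available on atom i; unused valence holds implicit hydrogens."""
    used = sum(order for pair, order in bonds.items() if i in pair)
    return MAX_VALENCE[atoms[i]] - used


def removal_keeps_molecule(n_atoms, bonds):
    """True if the graph stays connected or splits off exactly one isolated atom."""
    neighbors = {i: set() for i in range(n_atoms)}
    for (i, j), order in bonds.items():
        if order > 0:
            neighbors[i].add(j)
            neighbors[j].add(i)
    seen, sizes = set(), []
    for start in range(n_atoms):
        if start in seen:
            continue
        seen.add(start)
        stack, size = [start], 0
        while stack:  # depth-first search over one connected component
            node = stack.pop()
            size += 1
            for nb in neighbors[node] - seen:
                seen.add(nb)
                stack.append(nb)
        sizes.append(size)
    return len(sizes) == 1 or (len(sizes) == 2 and min(sizes) == 1)


def enumerate_actions(atoms, bonds, elements=("C", "N", "O")):
    """Valence-respecting atom-addition, bond-addition and bond-removal actions.

    atoms: element symbols; bonds: {(i, j): order} with i < j and order in 1..3.
    """
    actions = []
    for i in range(len(atoms)):
        for element in elements:
            max_order = min(free_valence(atoms, bonds, i), MAX_VALENCE[element], 3)
            for order in range(1, max_order + 1):
                actions.append(("add_atom", i, element, order))
    for i, j in combinations(range(len(atoms)), 2):
        current = bonds.get((i, j), 0)
        room = min(free_valence(atoms, bonds, i), free_valence(atoms, bonds, j))
        for new_order in range(current + 1, min(3, current + room) + 1):
            actions.append(("add_bond", i, j, new_order))
    for (i, j), order in bonds.items():
        for new_order in range(order):  # any lower order, down to no bond
            if new_order == 0:
                remaining = {pair: o for pair, o in bonds.items() if pair != (i, j)}
                if not removal_keeps_molecule(len(atoms), remaining):
                    continue  # full removal would split the molecule in two
            actions.append(("remove_bond", i, j, new_order))
    return actions


methanol_actions = enumerate_actions(["C", "O"], {(0, 1): 1})  # C-O with implicit H
print(len(methanol_actions))  # 13
```

Because invalid modifications are never generated, every action the agent can choose leads to a chemically valid molecule. This is the property that lets graph-based frameworks avoid a separate validity penalty.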

Key RL Algorithms and Implementation Frameworks

Different RL algorithms can be applied to solve the molecular MDP. The choice of algorithm often depends on the molecular representation (e.g., graph, SMILES string, latent vector) and the desired trade-off between stability, sample efficiency, and exploration.

Policy Gradient and REINFORCE

The REINFORCE algorithm [1] is a policy gradient method that directly optimizes the policy parameters ( \theta ) by following the gradient of the expected reward. This gradient is: [ \nabla_\theta J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T} \nabla_\theta \log \pi_\theta(a_t \mid s_t) \cdot R(\tau) \right] ] where ( \tau ) is a full trajectory (a complete molecule generation sequence) and ( R(\tau) ) is its reward.
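To make the estimator concrete, the toy sketch below applies it to a deliberately simple policy: each of ( L ) token positions has its own softmax over a three-token vocabulary and ignores earlier tokens, so ( s_t ) reduces to the position. The vocabulary, reward, learning rate, and batch size are hypothetical. A real chemical language model would condition each token on the prefix and use automatic differentiation instead of the hand-derived log-softmax gradient used here.

```python
import math
import random

VOCAB = ["C", "O", "N"]


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sample_trajectory(theta, rng):
    """Sample one token index per position from that position's softmax."""
    return [rng.choices(range(len(VOCAB)), weights=softmax(logits))[0] for logits in theta]


def reinforce_gradient(theta, batch, reward_fn):
    """Monte Carlo estimate of E[sum_t grad log pi(a_t | s_t) * R(tau)]."""
    grad = [[0.0] * len(VOCAB) for _ in theta]
    for actions in batch:
        R = reward_fn(actions)
        for t, a in enumerate(actions):
            probs = softmax(theta[t])
            for v in range(len(VOCAB)):
                # d log softmax(theta_t)[a] / d theta_t[v] = 1[v == a] - p_v
                grad[t][v] += (float(v == a) - probs[v]) * R / len(batch)
    return grad


def toy_reward(actions):
    """Hypothetical reward: fraction of positions that emitted 'O'."""
    return sum(VOCAB[a] == "O" for a in actions) / len(actions)


rng = random.Random(0)
L, lr = 4, 1.0
theta = [[0.0] * len(VOCAB) for _ in range(L)]
for _ in range(300):
    batch = [sample_trajectory(theta, rng) for _ in range(64)]
    grad = reinforce_gradient(theta, batch, toy_reward)
    # Gradient ascent on the expected reward J(theta).
    theta = [[w + lr * g for w, g in zip(row, g_row)] for row, g_row in zip(theta, grad)]

print([round(softmax(row)[VOCAB.index("O")], 3) for row in theta])
```

Every sampled sequence contributes to the update in proportion to its reward, which is why the variance-reduction tricks that follow matter in practice.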

REINFORCE is particularly well-suited for pre-trained chemical language models because it allows for large policy updates and treats the entire sequence of tokens needed to generate a molecule (e.g., a SMILES string) as a single action [1]. Several extensions enhance its performance:

- Baselines: Subtracting a baseline ( b ) from the reward reduces the variance of the gradient estimate, speeding up learning. Common choices are a moving-average baseline (MAB) or a leave-one-out baseline (LOO) [1].

- Hill Climbing: This strategy retains only the top-k molecules from a generated batch for policy updates, which has been shown to improve learning efficiency [1].
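The two baselines and the hill-climbing filter can be sketched in a few lines, assuming rewards arrive as a list of floats for one sampled batch. The exponential-moving-average form of the MAB and its `decay` value are assumptions. The LOO baseline for each sample is the mean reward of the other samples in the same batch.

```python
def moving_average_baseline(batch_rewards, previous_baseline, decay=0.9):
    """MAB (assumed EMA form): blend the running baseline with the batch mean."""
    batch_mean = sum(batch_rewards) / len(batch_rewards)
    return decay * previous_baseline + (1.0 - decay) * batch_mean


def leave_one_out_advantages(batch_rewards):
    """LOO: R_i minus the mean reward of the other n - 1 samples in the batch."""
    n = len(batch_rewards)
    if n < 2:
        raise ValueError("LOO baseline needs at least two samples")
    total = sum(batch_rewards)
    return [r - (total - r) / (n - 1) for r in batch_rewards]


def hill_climb_top_k(molecules, batch_rewards, k):
    """Keep the k highest-reward molecules; only these enter the policy update."""
    ranked = sorted(zip(batch_rewards, molecules), key=lambda pair: pair[0], reverse=True)
    return [molecule for _, molecule in ranked[:k]]


rewards = [1.0, 2.0, 3.0]
print(leave_one_out_advantages(rewards))  # [-1.5, 0.0, 1.5]
print(hill_climb_top_k(["CCO", "CCN", "CCC"], rewards, k=2))  # ['CCC', 'CCN']
```

The LOO advantages of a batch always sum to zero, so above-average molecules are pushed up and below-average ones pushed down without an extra learned value function.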

Value-Based Learning and MolDQN

The MolDQN framework [2] [3] utilizes value-based deep reinforcement learning, specifically Deep Q-Networks (DQN). Instead of learning a policy directly, it learns a Q-function ( Q(s, a) ) that estimates the future expected reward for taking action ( a ) in state ( s ). It incorporates advanced RL techniques like double Q-learning and randomized value functions to improve stability. A key feature of MolDQN is that it operates without pre-training on any dataset, avoiding biases inherent in the training data and enabling exploration of novel chemical regions [2] [3].
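The double Q-learning idea can be illustrated through the target computation alone, assuming the Q-values of every valid action in the next state are already available from an online network and a target network. The function names and the explicit `gamma` argument are illustrative; this is not MolDQN's code.

```python
def double_q_target(reward, next_q_online, next_q_target, gamma, terminal):
    """TD target with double Q-learning.

    The online network chooses the next action (argmax) and the separate
    target network scores it, which reduces the overestimation bias of a plain max.
    """
    if terminal or not next_q_online:
        return reward  # no bootstrapping past the step limit T
    best = max(range(len(next_q_online)), key=lambda a: next_q_online[a])
    return reward + gamma * next_q_target[best]


def plain_q_target(reward, next_q_target, gamma, terminal):
    """Standard DQN target for comparison: max over the target network."""
    if terminal or not next_q_target:
        return reward
    return reward + gamma * max(next_q_target)


online = [0.2, 0.8, 0.5]  # hypothetical Q-values for three valid next actions
target = [1.0, 0.3, 2.0]
print(double_q_target(1.0, online, target, gamma=0.5, terminal=False))  # 1.15
print(plain_q_target(1.0, target, gamma=0.5, terminal=False))  # 2.0
```

The network is then trained to move ( Q(s, a) ) toward this target, i.e. to shrink the temporal difference error.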

Latent Space Reinforcement Learning

The MOLRL framework [4] converts the problem into a continuous optimization task. It uses a pre-trained generative model, such as a Variational Autoencoder (VAE), to map discrete molecules into a continuous latent space. An RL agent trained with Proximal Policy Optimization (PPO) then navigates this latent space to find regions that decode into molecules with desired properties. This approach bypasses the need to explicitly define chemical rules for actions, relying instead on the generative model's decoder to produce chemically valid molecules [4]. The quality of the latent space, namely its reconstruction ability, validity rate, and continuity, is therefore paramount for this method's success [4].
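A single latent-space transition can be sketched as follows, assuming a decoder that returns a SMILES string or `None` when decoding fails. `toy_decode` and `toy_score` are hypothetical stand-ins for a pre-trained VAE decoder and a property oracle, and the penalty value for invalid decodes is an assumption.

```python
from typing import Callable, List, Optional, Sequence, Tuple


def latent_step(
    z: Sequence[float],
    delta_z: Sequence[float],
    decode: Callable[[List[float]], Optional[str]],
    score: Callable[[str], float],
    invalid_penalty: float = -1.0,
) -> Tuple[List[float], Optional[str], float]:
    """One latent-space transition: z_{t+1} = z_t + delta_z, decode, then score.

    Invalid decodes (None) receive `invalid_penalty` instead of a property score.
    """
    z_next = [a + b for a, b in zip(z, delta_z)]
    smiles = decode(z_next)
    reward = invalid_penalty if smiles is None else score(smiles)
    return z_next, smiles, reward


def toy_decode(z: List[float]) -> Optional[str]:
    """Hypothetical decoder: valid only when the coordinates sum to a positive value."""
    return "CCO" if sum(z) > 0 else None


def toy_score(smiles: str) -> float:
    """Hypothetical property oracle: string length as a fake score."""
    return float(len(smiles))


print(latent_step([0.1, -0.2], [0.5, 0.0], toy_decode, toy_score)[1:])  # ('CCO', 3.0)
print(latent_step([0.1, -0.2], [-1.0, 0.0], toy_decode, toy_score)[1:])  # (None, -1.0)
```

The agent only ever outputs ( \Delta z ). Whether the result is a valid molecule depends entirely on the decoder, which is why the validity rate of the latent space matters.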

Table 1: Comparison of Molecular Representation and Action Spaces in RL Frameworks

| Framework | Molecular Representation | Action Space | Core Algorithm | Key Feature |
|---|---|---|---|---|
| MolDQN [2] [3] | Molecular Graph | Discrete, graph modifications (add atom, add/remove bond) | Deep Q-Network (DQN) | 100% chemical validity via defined actions; no pre-training. |
| REINFORCE for CLMs [1] | SMILES String | Discrete, next-token prediction | REINFORCE Policy Gradient | Leverages pre-trained chemical language models; high sample efficiency. |
| MOLRL [4] | Latent Vector (from VAE) | Continuous, vector manipulation | Proximal Policy Optimization (PPO) | Continuous space optimization; agnostic to underlying generative model. |
| IB-MDP [5] | Explicit Environment Model | Model-based actions | Implicit Bayesian MDP | Integrates historical data via similarity metric for robust planning. |

Quantitative Performance Comparison

Evaluating RL methods requires standardized benchmarks. A common single-objective task is the constrained optimization of penalized LogP (pLogP), which measures a molecule's hydrophobicity while penalizing synthetic inaccessibility and the presence of long cycles. The goal is to significantly improve the pLogP of a set of starting molecules while maintaining a threshold of similarity to the original structure [4].
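The pLogP score is commonly computed as logP minus the synthetic accessibility (SA) score minus a ring penalty for rings larger than six atoms. The sketch below assumes logP, SA, ring sizes, and fingerprints have already been computed (for example with RDKit) and uses the unnormalized form. Some benchmark implementations standardize each term with dataset statistics, so check the exact definition before comparing numbers. All function names and values are illustrative.

```python
from typing import Iterable, Optional, Set


def ring_penalty(ring_sizes: Iterable[int]) -> int:
    """Number of atoms by which the largest ring exceeds six (0 if none does)."""
    return max(max(ring_sizes, default=0) - 6, 0)


def penalized_logp(logp: float, sa_score: float, ring_sizes: Iterable[int]) -> float:
    """Unnormalized pLogP = logP - SA - ring penalty."""
    return logp - sa_score - ring_penalty(ring_sizes)


def tanimoto(fp_a: Set[int], fp_b: Set[int]) -> float:
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 1.0


def constrained_gain(plogp_start: float, plogp_new: float, similarity: float,
                     delta: float) -> Optional[float]:
    """pLogP improvement if the candidate is similar enough to the start, else None."""
    if similarity < delta:
        return None
    return plogp_new - plogp_start


start = penalized_logp(logp=2.0, sa_score=2.5, ring_sizes=[6])         # -0.5
candidate = penalized_logp(logp=4.0, sa_score=2.0, ring_sizes=[6, 8])  # 0.0
sim = tanimoto({1, 2, 3, 4}, {2, 3, 4, 5})                              # 0.6
print(constrained_gain(start, candidate, sim, delta=0.4))  # 0.5
```

Candidates that fall below the similarity threshold ( \delta ) are excluded rather than scored, which is what makes the task a constrained optimization rather than an unconstrained search for the highest pLogP.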

Table 2: Performance on the pLogP Optimization Benchmark

| Method | Representation | Average pLogP Improvement | Notable Strength |
|---|---|---|---|
| Jin et al. (2018) [4] | Graph | Baseline | -- |
| MolDQN [2] [3] | Graph | Comparable or superior to state-of-the-art | Effective multi-objective optimization. |
| MOLRL (VAE-CYC) [4] | Latent (VAE) | High performance | Demonstrates effectiveness of a continuous, structured latent space. |
| MOLRL (MolMIM) [4] | Latent (MolMIM) | High performance | Shows framework's adaptability to different generative models. |

For real-world drug discovery, multi-objective optimization is essential. The MolDQN framework was extended to simultaneously maximize drug-likeness (QED) while maintaining similarity to a starting molecule, a common requirement in lead optimization [2] [3]. The IB-MDP algorithm also demonstrated significant improvements over traditional rule-based methods by making more efficient decisions on resource allocation, effectively balancing the dual objectives of reducing state uncertainty and optimizing expected costs [5].
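One simple way to combine two objectives, and the kind of linear weighting used in MolDQN's multi-objective setting, is a weighted sum of a similarity term and QED. The sketch below assumes both scores are already normalized to [0, 1]; the weight ( w ) and the candidate values are hypothetical.

```python
def scalarized_reward(qed: float, similarity: float, w: float) -> float:
    """Linear scalarization: w * similarity + (1 - w) * QED, with w in [0, 1]."""
    if not 0.0 <= w <= 1.0:
        raise ValueError("w must lie in [0, 1]")
    return w * similarity + (1.0 - w) * qed


# Hypothetical candidates as (name, QED, similarity to the starting molecule).
candidates = [("close_analog", 0.55, 0.85), ("drug_like_jump", 0.90, 0.30)]
for w in (0.2, 0.8):
    best = max(candidates, key=lambda c: scalarized_reward(c[1], c[2], w))
    print(w, best[0])
# 0.2 drug_like_jump
# 0.8 close_analog
```

Sweeping ( w ) shifts the preferred molecule from a large drug-likeness gain to a conservative analog, which is how a single weight trades off the two objectives.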

Experimental Protocols and Workflows

Protocol: Molecule Optimization with MolDQN

Objective: To optimize a molecule for a specific property (e.g., pLogP or QED) using graph-based modifications and Deep Q-Learning [2] [3].

Initialization:

- Define the initial molecule ( m_0 ) and set the state to ( s_0 = (m_0, 0) ).

- Set the maximum number of steps per episode, ( T ).

- Initialize the replay buffer and the Q-network with random weights.

Action Selection & Execution:

- For the current state ( s_t = (m_t, t) ), the agent selects an action ( a_t ) from the valid action space (atom addition, bond addition, bond removal).

- Validity Check: The environment only allows actions that do not violate chemical valence rules. Invalid actions are masked out.

- The action is applied deterministically, resulting in a new molecule ( m_{t+1} ).

Reward Calculation:

- A reward ( r_t ) is calculated based on the property of the new molecule ( m_{t+1} ). To emphasize the final result, rewards are discounted by ( \gamma^{T-t} ) (e.g., with ( \gamma = 0.9 )).

Learning:

- The transition ( (s_t, a_t, r_t, s_{t+1}) ) is stored in the replay buffer.

- The Q-network is updated by sampling mini-batches from the replay buffer and minimizing the temporal difference error, using techniques like double Q-learning to stabilize training.

Termination:

- The episode terminates when ( t = T ). The process is repeated for multiple episodes until convergence.
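The replay and reward-discounting steps of this protocol can be sketched with the standard library, assuming transitions are plain tuples. The class and function names are hypothetical. The exponent convention (the molecule reached at step ( t ) is weighted by ( \gamma^{T-t} ), so the final molecule at ( t = T ) gets full weight) is an assumption about how steps are counted.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-capacity FIFO store of (s, a, r, s_next, done) transitions."""

    def __init__(self, capacity: int):
        self._buffer = deque(maxlen=capacity)  # oldest transitions drop out first

    def add(self, state, action, reward, next_state, done):
        self._buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size: int, rng: random.Random):
        """Uniform mini-batch without replacement (smaller if the buffer is short)."""
        return rng.sample(list(self._buffer), min(batch_size, len(self._buffer)))

    def __len__(self):
        return len(self._buffer)


def discounted_step_reward(raw_reward: float, t: int, max_steps: int, gamma: float = 0.9) -> float:
    """Weight the reward of the molecule reached at step t by gamma^(T - t)."""
    return raw_reward * gamma ** (max_steps - t)


buffer = ReplayBuffer(capacity=2)
molecules = ["CC", "CCO", "CCOC", "CCOCC"]  # hypothetical trajectory m_0 .. m_3
for t in range(1, 4):
    r = discounted_step_reward(1.0, t, max_steps=3)
    buffer.add((molecules[t - 1], t - 1), "hypothetical_action", r, (molecules[t], t), t == 3)
print(len(buffer))  # 2
batch = buffer.sample(8, random.Random(0))
```

Sampling mini-batches from a buffer rather than learning only from the latest transition breaks the correlation between consecutive steps of one episode, which helps stabilize the Q-network updates.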

Protocol: Latent Space Optimization with MOLRL

Objective: To optimize molecules by navigating the latent space of a pre-trained generative model using the PPO algorithm [4].

Model Pre-training:

- Train a generative model (e.g., a VAE with a cyclical annealing schedule) on a large corpus of molecules (e.g., the ZINC database). Ensure the model has a high reconstruction rate and a continuous latent space.

Environment Setup:

- The state is a latent vector ( z_t ), sampled from the prior distribution or encoded from a starting molecule.

- The action is a vector ( \Delta z ) that perturbs the current latent vector: ( z_{t+1} = z_t + \Delta z ).

- The new state ( z_{t+1} ) is decoded into a molecule ( m_{t+1} ).

Reward Calculation:

- If the decoded SMILES is invalid, the reward is 0 or a negative penalty.

- If valid, the reward is a function of the molecule's properties (e.g., pLogP, binding affinity).

Policy Optimization:

- The PPO algorithm is used to train a policy ( \pi_\theta(\Delta z \mid z) ) that outputs perturbations. PPO's clipped surrogate objective limits how far each update can move the policy (an approximation to a trust region), which helps keep training stable in the complex latent landscape.

- The policy is updated to maximize the expected cumulative reward.
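The clipped surrogate that PPO maximizes can be written down directly, assuming the log-probabilities of the sampled perturbations under the new and old policies, plus advantage estimates, are already available. A diagonal Gaussian policy is one common (here assumed) choice for continuous ( \Delta z ), and `clip_eps = 0.2` is a typical but hypothetical setting.

```python
import math
from typing import Sequence


def gaussian_log_prob(x: Sequence[float], mean: Sequence[float], std: Sequence[float]) -> float:
    """Log-density of a diagonal Gaussian policy pi(delta_z | z) at action x."""
    return sum(
        -0.5 * ((xi - mi) / si) ** 2 - math.log(si) - 0.5 * math.log(2 * math.pi)
        for xi, mi, si in zip(x, mean, std)
    )


def ppo_clip_objective(logp_new, logp_old, advantages, clip_eps=0.2):
    """Mean of min(r * A, clip(r, 1 - eps, 1 + eps) * A), with r = pi_new / pi_old."""
    total = 0.0
    for new, old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(new - old)
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)


# A ratio of 1.5 with a positive advantage is capped at 1 + eps = 1.2, so the
# objective gains nothing from pushing the new policy further in that direction.
print(round(ppo_clip_objective([math.log(1.5)], [0.0], [1.0]), 6))  # 1.2
```

Taking the minimum of the clipped and unclipped terms makes the objective pessimistic: large policy changes are never rewarded, but changes that make the objective worse are still penalized.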

Protocol: REINFORCE for Chemical Language Models

Objective: To fine-tune a pre-trained chemical language model (CLM) to generate molecules with desired properties [1].

Prior Policy:

- Start with a CLM (e.g., a transformer) that has been pre-trained on a large dataset of SMILES strings. This model serves as the initial policy ( \pi_\theta ).

Molecule Generation:

- The policy autoregressively generates a molecule token-by-token, forming a complete SMILES string (a trajectory ( \tau )).

Reward Assignment:

- The generated molecule is evaluated by a reward function ( R(\tau) ) based on its properties.

- A baseline ( b ) (e.g., a moving average of recent rewards) is subtracted from the reward to reduce variance.

Policy Update:

- The REINFORCE gradient is computed: [ \nabla_\theta J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T} \nabla_\theta \log \pi_\theta(a_t \mid s_t) \cdot (R(\tau) - b) \right] ]

- The policy parameters ( \theta ) are updated via gradient ascent. A regularization term is often added to keep the policy from straying too far from the pre-trained prior, which helps generated molecules remain drug-like.
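One common way to implement the prior regularization mentioned in the last step (an assumption here, not necessarily the exact form used in [1]) is to subtract a scaled log-likelihood ratio between the agent and the prior from each sequence's reward. This ratio acts as a single-sample estimate of the KL divergence. The function names, the per-token probabilities, and ( \beta ) are hypothetical.

```python
import math
from typing import Sequence


def sequence_log_prob(token_probs: Sequence[float]) -> float:
    """log pi(tau) = sum over tokens of log pi(a_t | s_t)."""
    return sum(math.log(p) for p in token_probs)


def regularized_reward(reward: float, logp_agent: float, logp_prior: float, beta: float) -> float:
    """R(tau) - beta * (log pi_theta(tau) - log pi_prior(tau))."""
    return reward - beta * (logp_agent - logp_prior)


agent_logp = sequence_log_prob([0.9, 0.8, 0.9])  # hypothetical per-token probabilities
prior_logp = sequence_log_prob([0.5, 0.6, 0.7])
print(round(regularized_reward(1.0, agent_logp, prior_logp, beta=0.1), 4))
```

Sequences that the agent has made much more likely than the prior are penalized, so the agent is discouraged from collapsing onto strings the pre-trained model considers implausible. The regularized reward would then replace ( R(\tau) ) in the gradient above.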

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software and Computational Tools for RL in Chemistry

| Tool / "Reagent" | Function | Application Example |
|---|---|---|
| RDKit | An open-source cheminformatics toolkit. | Used to parse SMILES strings, check molecular validity, calculate molecular descriptors (e.g., LogP, QED), and handle chemical reactions [2] [4]. |
| ZINC Database | A freely available database of commercially available compounds. | Serves as a standard dataset for pre-training generative models and benchmarking optimization algorithms [4]. |
| SMILES/DeepSMILES | String-based representations of molecular structure. | The "language" for chemical language models (CLMs) [1]. A generated string is valid only if it follows the grammar; DeepSMILES revises the ring-closure and branch syntax to reduce syntactically invalid outputs. |
| Chemical Language Model (CLM) | A pre-trained neural network (e.g., Transformer) on SMILES strings. | Provides a prior policy for REINFORCE, enabling efficient exploration of chemically plausible space [1]. |
| Variational Autoencoder (VAE) | A generative model that maps molecules to a continuous latent space. | Creates a smooth space for continuous optimization with algorithms like PPO in the MOLRL framework [4]. |
| Docking Simulation Software | Predicts the binding pose and affinity of a small molecule to a protein target. | Acts as a physics-based reward oracle in outer active learning cycles, guiding generation toward bioactive molecules [6]. |
| Active Learning (AL) Framework | An iterative process that selects the most informative data points for evaluation. | Integrated with VAEs to iteratively refine the generative model using feedback from expensive physics-based oracles [6]. |

The Problem of Sparse Rewards in Molecular Optimization and Its Impact on Learning

In the field of computational drug discovery, reinforcement learning (RL) has emerged as a powerful paradigm for de novo molecular design. A significant obstacle within this domain is the problem of sparse rewards, a phenomenon where the vast majority of generated molecules receive no meaningful feedback from the environment during training. This sparsity arises because specific bioactivity is a target property existing only in a small fraction of molecules, unlike fundamental physical properties that every molecule possesses [7]. When a generative model trained on a generic dataset begins optimization, the probability of randomly sampling a molecule with high activity for a specific protein target is exceptionally low. Consequently, the RL agent is predominantly trained on negative examples (inactive molecules), causing it to struggle with exploration and fail to learn an optimal strategy for maximizing expected reward [7]. This sparse reward problem represents a critical bottleneck, limiting the efficiency and success of RL in designing novel bioactive compounds.

Quantifying the Sparse Reward Challenge

The sparsity of rewards is particularly acute when optimizing for complex biological activities compared to simple physicochemical properties. The table below summarizes performance comparisons that highlight this challenge and the efficacy of proposed solutions.

Table 1: Analysis of Sparse Reward Solutions in Molecular Optimization

| Method / Aspect | Key Finding / Performance Metric | Implication for Sparse Rewards |
|---|---|---|
| Naive Policy Gradient [7] | Failed to discover molecules with high active class probability for EGFR. | Demonstrates complete failure mode under sparse rewards. |
| Policy Gradient + Fine-Tuning & Experience Replay [7] | Successfully generated molecules with high predicted activity; experimental validation confirmed potent EGFR inhibitors. | Overcomes sparsity by leveraging prior knowledge and reusing successful experiences. |
| MOLRL (Latent Space PPO) [4] | Achieved comparable or superior performance on benchmark tasks (e.g., penalized LogP optimization). | Transforms problem to continuous space; PPO's robustness aids exploration. |
| RL vs. Bayesian Optimization (BO) [8] | PPO succeeded on 31% of complex samples (5-segment gradient) vs. 24% for BO. | RL can outperform other methods in high-complexity, potentially sparse environments. |
| Multi-Objective Optimization [9] [10] | Generated compounds with a good balance of conflicting pharmacological attributes. | Mitigates sparsity by providing multiple, richer feedback signals. |

Table 2: Impact of Technical Strategies on Model Performance

| Technical Strategy | Effect on Validity/Uniqueness | Effect on Activity |
|---|---|---|
| Policy Gradient Only [7] | Low | Low |
| + Fine-Tuning [7] | Moderate | Moderate |
| + Experience Replay [7] | Moderate | Moderate |
| + Fine-Tuning + Experience Replay [7] | High | High |

Experimental Protocols for Addressing Reward Sparsity

Protocol 1: Experience Replay and Fine-Tuning

This protocol is designed to overcome sparse rewards by retaining and leveraging successful examples [7].

- Pre-training: A generative model (e.g., a Recurrent Neural Network) is initially trained on a vast dataset of drug-like molecules (e.g., ChEMBL) in a supervised manner to produce valid SMILES strings. This is the "naive" generator.

- Experience Replay Buffer Initialization: The pre-trained model generates an initial set of molecules. Those with predicted active class probabilities exceeding a predefined threshold are admitted into an experience replay buffer.

- Reinforcement Learning Cycle:

  - Generation: The current policy (generator) is used to produce a batch of molecules.

  - Evaluation: A Reward Predictor, such as a Random Forest ensemble QSAR model, evaluates the generated molecules and assigns rewards based on the target property (e.g., active class probability for EGFR).

  - Optimization: The policy is updated using a policy gradient algorithm, utilizing the rewards.

  - Buffer Update: Molecules with high reward scores from the current batch are added to the experience replay buffer.

- Fine-Tuning: The policy is periodically fine-tuned on the curated contents of the experience replay buffer, reinforcing the generation of successful patterns.
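The replay buffer in this protocol stores molecules rather than transitions. A minimal sketch, assuming SMILES strings and scalar scores from the reward predictor, keeps the best unique molecules above the admission threshold; its contents would then serve as the supervised fine-tuning set. The class name, capacity, and threshold are hypothetical.

```python
import heapq


class TopKMemory:
    """Best unique molecules whose score clears an admission threshold."""

    def __init__(self, capacity: int, threshold: float):
        self.capacity = capacity
        self.threshold = threshold
        self._heap = []     # min-heap of (score, smiles): the weakest entry sits at index 0
        self._seen = set()  # SMILES currently stored, to keep entries unique

    def add(self, smiles: str, score: float) -> bool:
        """Try to admit a molecule; return True if it was stored."""
        if score < self.threshold or smiles in self._seen:
            return False
        if len(self._heap) < self.capacity:
            heapq.heappush(self._heap, (score, smiles))
        elif score > self._heap[0][0]:
            _, evicted = heapq.heapreplace(self._heap, (score, smiles))
            self._seen.discard(evicted)
        else:
            return False
        self._seen.add(smiles)
        return True

    def best(self) -> list:
        """Stored SMILES from highest to lowest score (e.g. a fine-tuning set)."""
        return [smiles for _, smiles in sorted(self._heap, reverse=True)]


memory = TopKMemory(capacity=2, threshold=0.5)
for smiles, score in [("CCO", 0.9), ("CCN", 0.4), ("CCC", 0.6), ("CCCl", 0.7), ("CCO", 0.95)]:
    memory.add(smiles, score)
print(memory.best())  # ['CCO', 'CCCl']
```

Because rare active molecules stay in memory after the batch that produced them, the generator keeps seeing positive examples even when most new samples score zero, which is the mechanism this protocol relies on to counter sparse rewards.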

Protocol 2: Latent Space Optimization with PPO

This protocol converts the discrete molecular optimization problem into a continuous one, facilitating more efficient exploration [4].

- Generative Model Pre-training: An autoencoder (e.g., a Variational Autoencoder with cyclical annealing or a MolMIM model) is pre-trained on a large chemical database (e.g., ZINC). The model must achieve high reconstruction performance and validity to ensure a meaningful latent space.

- Latent Space Evaluation: The continuity of the latent space is evaluated by perturbing latent vectors with Gaussian noise and measuring the structural similarity (e.g., Tanimoto) between original and decoded molecules. A smooth decay in similarity is desirable.

- RL Agent Training:

  - State: The current state is a latent vector ( z ) representing a molecule.

  - Action: The action is a change in the latent space, ( \Delta z ), defining a movement to a new region.

  - Transition: The new state is ( z' = z + \Delta z ).

  - Reward: The new latent vector ( z' ) is decoded into a molecule, and a reward is calculated based on the molecule's properties. For a single-property task like optimizing penalized LogP (pLogP), the reward is the pLogP value. For scaffold-constrained optimization, the reward can be a weighted sum of pLogP and a penalty for dissimilarity from a target scaffold.

  - Learning: A PPO agent is trained to maximize the cumulative reward by learning a policy that maps states to actions in the latent space.
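The continuity check in the latent space evaluation step can be sketched as follows, assuming a decoder that returns a fingerprint-like set of on-bits (or `None` for an invalid decode) and Tanimoto as the similarity. `toy_decode`, the noise scales, and the sample counts are hypothetical placeholders for a pre-trained VAE and real molecular fingerprints.

```python
import random
from typing import Callable, Dict, FrozenSet, List, Optional, Sequence


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def continuity_curve(
    latents: Sequence[List[float]],
    decode: Callable[[List[float]], Optional[FrozenSet[int]]],
    sigmas: Sequence[float],
    n_samples: int = 20,
    seed: int = 0,
) -> Dict[float, float]:
    """Mean similarity between decode(z) and decode(z + noise) for each noise scale."""
    rng = random.Random(seed)
    curve = {}
    for sigma in sigmas:
        sims = []
        for z in latents:
            reference = decode(z)
            if reference is None:
                continue  # only start from latents that decode to a valid molecule
            for _ in range(n_samples):
                noisy = [x + rng.gauss(0.0, sigma) for x in z]
                decoded = decode(noisy)
                # Invalid decodes count as zero similarity.
                sims.append(0.0 if decoded is None else tanimoto(reference, decoded))
        curve[sigma] = sum(sims) / len(sims) if sims else 0.0
    return curve


def toy_decode(z: List[float]) -> Optional[FrozenSet[int]]:
    """Hypothetical decoder: bit i is on wherever coordinate i is positive."""
    bits = frozenset(i for i, x in enumerate(z) if x > 0)
    return bits or None


curve = continuity_curve([[0.5, 1.0, -0.3, 2.0], [1.5, -0.5, 0.2, 0.8]], toy_decode, [0.0, 0.5, 2.0])
print(curve)
```

A latent space suitable for RL should show similarity near 1 at small noise and a gradual, not abrupt, decline as ( \sigma ) grows.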

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Reagents for Molecular RL

| Research Reagent | Function in Experimental Protocol |
|---|---|
| Generative Model (e.g., RNN, VAE, Graph Neural Network) [7] [10] | The core "policy" that proposes new molecular structures; often pre-trained on general chemical databases. |
| Reward Predictor (e.g., Random Forest QSAR Model, Docking Score Function) [7] | Provides the reward signal by predicting the property or activity of a generated molecule; a key source of sparsity if highly selective. |
| Experience Replay Buffer [7] | A memory that stores high-reward molecules; used to fine-tune the generative model and mitigate forgetting of successful strategies. |
| Latent Space Model (e.g., pre-trained VAE) [4] | Encodes molecules into a continuous vector representation, enabling the use of efficient continuous-space optimization algorithms like PPO. |
| Reference Database (e.g., ChEMBL, ZINC) [7] [4] | Provides the initial training data for the generative model and benchmarks for evaluating chemical diversity and novelty. |

Workflow and Logical Diagrams

Diagram 1: Combating sparse rewards with experience replay and fine-tuning.

Diagram 2: Molecular optimization in the latent space using PPO.

Molecular representation is a foundational step in computational chemistry and drug discovery, converting chemical structures into a format that machine learning models can process. The choice of representation directly influences a model's ability to predict properties, optimize structures, and generate novel candidates. Within the context of reinforcement learning (RL) for molecular optimization, the representation forms the state space upon which agents operate. This article details three pivotal representations: SMILES strings, a line notation; molecular graphs, a graph-based model; and latent space embeddings, a compressed feature vector. We provide a structured comparison, experimental protocols for their application in RL, and a toolkit for implementation.

Molecular Representation Formats: A Comparative Analysis

The following table summarizes the core characteristics, advantages, and disadvantages of the three primary molecular representation formats.

Table 1: Comparative Analysis of Molecular Representation Formats

| Feature | SMILES Strings | Molecular Graphs | Latent Space Embeddings |
|---|---|---|---|
| Representation Type | Line notation (string) | Mathematical graph (nodes & edges) | Continuous vector (compressed features) |
| Primary Data Structure | ASCII string | Tuple ( G = (\mathcal{V}, \mathcal{E}, X, E) ) [11] | Dense vector (e.g., 128-512 dimensions) |
| Human Readability | High (for trained chemists) | Low (requires visualization) | None (black-box model) |
| Machine Learning Suitability | Sequential models (RNNs, Transformers) | Graph Neural Networks (GNNs) | Any dense vector model (MLPs) |
| Handles 3D/Stereochemistry | Stereochemistry only (with isomeric SMILES); no 3D coordinates [12] | Yes (via 3D coordinate extension ( G^{(3D)} )) [11] | Implicitly, if 3D info is encoded |
| Key Advantage | Compact, simple to generate [13] | Structurally faithful, 100% validity in RL [2] | Dimensionality reduction, enables interpolation [14] |
| Key Challenge in RL | High invalid rate during generation [2] [15] | Complex action space definition [2] | Decoupling and interpreting dimensions [16] |

Detailed Representations and Methodologies

SMILES (Simplified Molecular-Input Line-Entry System)

SMILES is a line notation using ASCII characters to represent molecular structures [12]. It is a linguistic construct with a simple vocabulary and grammar rules, containing the same information as an extended connection table but in a more compact form [13].

Specification Rules:

- Atoms: Atoms are represented by their atomic symbols. Elements in the "organic subset" (B, C, N, O, P, S, F, Cl, Br, I) can be written without brackets if they have the implied number of hydrogens. All other elements, atoms with formal charges, or non-standard isotopes must be enclosed in brackets, with hydrogens and charges specified (e.g., `[Na+]`, `[OH-]`) [12] [13].

- Bonds: Single (`-`), double (`=`), triple (`#`), and aromatic (`:`) bonds are used. Single and aromatic bonds can be omitted between adjacent atoms [12].

- Branches: Side chains are specified using parentheses, which can be nested (e.g., `CC(C)C(=O)O` for isobutyric acid) [13].

- Cyclic Structures: Rings are opened by breaking one bond per cycle, with matching numerical labels placed after the connected atoms to indicate closure (e.g., `c1ccccc1` for benzene) [12].

- Stereochemistry: Tetrahedral chirality is specified using the `@` and `@@` symbols (e.g., `N[C@@H](C)C(=O)O` for L-alanine) [13].
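Chemical language models usually split SMILES into tokens along the rules above: bracket atoms, two-letter halogens, bond symbols, branches, and ring-closure labels. The regex tokenizer below is adapted from a pattern widely used in the SMILES-modeling literature and is treated here as an assumption rather than a standard. The ring-closure parity check is a simple hypothetical sanity test, not a full validity check.

```python
import re

# One token per match: bracket atoms, Br/Cl before B/C, organic-subset atoms,
# aromatic atoms, branches, bonds, stereo marks, and %nn or single-digit ring labels.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize(smiles: str) -> list:
    """Split a SMILES string into tokens; fail loudly on characters the pattern skips."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens


def ring_closures_balanced(tokens: list) -> bool:
    """Each ring-closure label must occur an even number of times (open + close)."""
    counts = {}
    for tok in tokens:
        if tok.isdigit() or tok.startswith("%"):
            counts[tok] = counts.get(tok, 0) + 1
    return all(n % 2 == 0 for n in counts.values())


print(tokenize("N[C@@H](C)C(=O)O"))
# ['N', '[C@@H]', '(', 'C', ')', 'C', '(', '=', 'O', ')', 'O']
print(ring_closures_balanced(tokenize("c1ccccc1")), ring_closures_balanced(tokenize("c1ccccc")))
# True False
```

Tokenizing bracket atoms as single units keeps charges, hydrogens, and chirality attached to their atom, so the model's vocabulary mirrors the grammar described above.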

A key concept is canonical SMILES, where an algorithm generates a unique, standardized string for a given molecular structure, which is crucial for database indexing [12].

Molecular Graphs

A molecular graph ( G ) is formally defined as a tuple ( G = (\mathcal{V}, \mathcal{E}, X, E) ), where:

- ( \mathcal{V} ) is the set of vertices (atoms).

- ( \mathcal{E} ) is the set of edges (bonds).

- ( X ) is a matrix of node features (e.g., atom type, formal charge).

- ( E ) contains edge features (e.g., bond type, stereochemistry) [11].

This representation naturally captures the connectivity and local environment of atoms, making it powerful for graph-based machine learning. Recent advances include hierarchical representations that decompose the graph into atom, motif (functional group), and molecule tiers, improving interpretability and prediction accuracy [11]. Extensions to 3D molecular graphs ( G^{(3D)} ) incorporate spatial coordinates ( \mathcal{C} ) to model geometric and non-covalent interactions [11].
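The tuple definition maps directly onto the arrays a GNN consumes. The sketch below assumes a small hand-written element vocabulary and bond orders 1 to 3 (aromatic bonds and 3D coordinates omitted). It builds one-hot node features ( X ), a directed edge list for ( \mathcal{E} ), and one-hot edge features ( E ). The names are hypothetical and do not mirror any particular library's API.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

ATOM_TYPES = ["C", "N", "O"]
BOND_ORDERS = [1, 2, 3]  # single, double, triple


def one_hot(value, choices) -> List[float]:
    return [1.0 if value == c else 0.0 for c in choices]


@dataclass
class MolGraph:
    vertices: List[int]               # V: atom indices
    edges: List[Tuple[int, int]]      # edge set: directed pairs, both directions per bond
    node_features: List[List[float]]  # X: one row per atom
    edge_features: List[List[float]]  # E: one row per directed edge


def build_graph(atoms: List[str], bonds: Dict[Tuple[int, int], int]) -> MolGraph:
    node_features = [one_hot(a, ATOM_TYPES) for a in atoms]
    edges, edge_features = [], []
    for (i, j), order in bonds.items():
        for src, dst in ((i, j), (j, i)):  # message passing usually needs both directions
            edges.append((src, dst))
            edge_features.append(one_hot(order, BOND_ORDERS))
    return MolGraph(list(range(len(atoms))), edges, node_features, edge_features)


# Acetaldehyde, CC=O: C0-C1 single bond, C1=O2 double bond.
g = build_graph(["C", "C", "O"], {(0, 1): 1, (1, 2): 2})
print(g.edges)  # [(0, 1), (1, 0), (1, 2), (2, 1)]
```

Storing each bond in both directions lets a message-passing layer update every atom from all of its neighbors with a single loop over edges.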

Latent Spaces

A latent space is a compressed, lower-dimensional representation of data that preserves the underlying essential structure [14]. In machine learning, data points (like molecules) are mapped to vectors (embeddings) in this space, where proximity implies similarity [16]. This process is a form of dimensionality reduction [14].

Learning Latent Spaces with Autoencoders: Autoencoders are neural networks designed for this compression. They consist of an encoder that maps input data to a latent vector, and a decoder that attempts to reconstruct the original input from this vector. The model is trained to minimize the difference (reconstruction loss) between the original and reconstructed input [14]. Variational Autoencoders (VAEs) are a probabilistic variant that encodes each input as a distribution over the latent space (with mean μ and standard deviation σ) rather than as a single point, which enables the generation of novel, realistic samples by drawing latent vectors and decoding them [14]. For this to work well, the latent space should exhibit continuity (nearby points decode to similar structures) and completeness (points sampled from the latent space decode to meaningful, valid structures) [14].
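Two ingredients distinguish a VAE from a plain autoencoder: sampling through the reparameterization ( z = \mu + \sigma \epsilon ), and a KL penalty toward a standard normal prior. Both can be written out with the standard library. The cyclical schedule for the KL weight ( \beta ), which the MOLRL protocol mentions, is sketched in one simple assumed form: a linear ramp over the first part of each cycle, then held at 1. In a real model these values would be tensors in an autodiff framework, and all names here are hypothetical.

```python
import math
import random
from typing import List, Sequence


def reparameterize(mu: Sequence[float], log_var: Sequence[float], rng: random.Random) -> List[float]:
    """z = mu + sigma * eps with eps ~ N(0, 1) per dimension and sigma = exp(log_var / 2).

    Keeping the randomness in eps means that, in an autodiff framework,
    gradients can flow to mu and log_var.
    """
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu: Sequence[float], log_var: Sequence[float]) -> float:
    """KL(N(mu, sigma^2) || N(0, I)) = 0.5 * sum(mu^2 + sigma^2 - 1 - log sigma^2)."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var))


def cyclical_beta(step: int, period: int, ramp_fraction: float = 0.5) -> float:
    """Assumed cyclical schedule: beta rises linearly from 0 to 1, then stays at 1."""
    position = (step % period) / period
    return min(1.0, position / ramp_fraction)


def vae_loss(reconstruction_loss: float, mu, log_var, step: int, period: int) -> float:
    """Reconstruction loss plus the annealed KL term."""
    return reconstruction_loss + cyclical_beta(step, period) * kl_to_standard_normal(mu, log_var)


print(kl_to_standard_normal([0.0, 0.0], [0.0, 0.0]))  # 0.0
print(kl_to_standard_normal([1.0], [0.0]))  # 0.5
print([cyclical_beta(s, period=100) for s in (0, 25, 50, 99, 100)])  # [0.0, 0.5, 1.0, 1.0, 0.0]
```

Resetting ( \beta ) to zero at the start of each cycle periodically lets the model focus on reconstruction. This is the motivation usually given for cyclical annealing as a guard against the KL term collapsing the latent code.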

Application in Reinforcement Learning for Molecular Optimization

Reinforcement Learning (RL) formulates molecular optimization as a Markov Decision Process (MDP). An agent modifies a molecular structure (state) through a series of valid actions to maximize a reward signal based on desired properties.

RL Protocol Using Molecular Graph Representation (MolDQN)

The MolDQN framework ensures 100% chemical validity by defining actions directly on the molecular graph [2].

Protocol:

- State Definition: The state ( s ) is defined as ( (m, t) ), where ( m ) is the current molecule and ( t ) is the step number, with a maximum step limit ( T ) [2].

- Action Space Definition: The action space ( \mathscr{A} ) consists of chemically valid modifications:

  - Atom Addition: A new atom from a predefined set ( \mathcal{E} ) is added, connected to the existing molecule by a valence-allowed bond. All possible bond orders are considered as separate actions [2].

  - Bond Addition: The bond order between two atoms with free valence is increased (e.g., no bond → single, single → double) [2].

  - Bond Removal: The bond order between two atoms is decreased (e.g., triple → double, double → single, single → no bond). If bond removal results in a disconnected atom, it is removed [2].

- State Transition: ( {P_{sa}} ) is deterministic; applying an action ( a ) to molecule ( m ) leads to a unique new molecule ( m' ) [2].

- Reward Function: A reward ( \mathcal{R} ) is assigned based on the molecule's properties. Rewards are given at each step but are discounted by ( \gamma^{T-t} ) (where ( \gamma ) is typically 0.9) to prioritize the final state's reward [2].

- RL Algorithm: The Deep Q-Network (DQN) algorithm is used to learn the action-value function, which estimates the expected cumulative reward of taking a given action in a given state [2].

Diagram 1: MolDQN RL Workflow for Graph-Based Optimization

RL Protocol Using SMILES and Transformer-Based Representation

An alternative approach uses transformer models, pre-trained to generate molecules similar to an input, which are then fine-tuned with RL for property optimization [15].

Protocol (REINVENT framework):

- Pre-training (Prior): A transformer model is trained on a large dataset of molecular pairs (e.g., from PubChem) to learn the probability ( \mathrm{P}(T|X; \boldsymbol{\uptheta}_{\text{prior}}) ) of generating a tokenized SMILES sequence ( T ) given an input molecule ( X ) [15].

- Reinforcement Learning Fine-tuning:

  - Agent Initialization: The RL agent is initialized with the pre-trained transformer model (the "prior") [15].

  - Sampling: In each RL step, the agent (with parameters ( \boldsymbol{\uptheta} )) generates a batch of SMILES strings given an input molecule [15].

  - Scoring: A scoring function ( S(T) ), which aggregates multiple desired properties, evaluates the generated molecules. A diversity filter is applied to penalize frequently generated structures and encourage novelty [15].

  - Loss Calculation & Update: The agent is updated by minimizing a loss function that favors high scores while discouraging excessive deviation from the prior, which helps the generated SMILES remain valid [15]: ( \mathcal{L}(\boldsymbol{\uptheta}) = \left( \mathrm{NLL}_{\text{aug}}(T|X) - \mathrm{NLL}(T|X; \boldsymbol{\uptheta}) \right)^2 ), where ( \mathrm{NLL}_{\text{aug}}(T|X) = \mathrm{NLL}(T|X; \boldsymbol{\uptheta}_{\text{prior}}) - \sigma S(T) ) and ( \sigma ) is a scaling factor on the score [15].
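The loss above can be checked numerically with a small sketch, assuming per-molecule negative log-likelihoods from the prior and the agent, plus a scalar score ( S(T) \in [0, 1] ), are already available. The simplified diversity filter zeroes the score once a bucket key (for example a scaffold string) has been seen more than `bucket_size` times. It is a hypothetical stand-in for REINVENT's own filters, not its API.

```python
from collections import Counter
from typing import Sequence


def reinvent_loss(nll_prior: Sequence[float], nll_agent: Sequence[float],
                  scores: Sequence[float], sigma: float) -> float:
    """Batch mean of (NLL_aug - NLL_agent)^2, where NLL_aug = NLL_prior - sigma * S(T)."""
    total = 0.0
    for prior, agent, score in zip(nll_prior, nll_agent, scores):
        nll_aug = prior - sigma * score
        total += (nll_aug - agent) ** 2
    return total / len(scores)


class BucketDiversityFilter:
    """Hypothetical simplified filter: the score is zeroed once a bucket is full."""

    def __init__(self, bucket_size: int):
        self.bucket_size = bucket_size
        self.counts = Counter()

    def __call__(self, bucket_key: str, score: float) -> float:
        self.counts[bucket_key] += 1
        return score if self.counts[bucket_key] <= self.bucket_size else 0.0


# The agent already matches the augmented likelihood, so the loss is zero.
print(reinvent_loss([10.0], [8.0], [0.5], sigma=4.0))  # 0.0
# A high-scoring molecule the agent has not yet up-weighted gives a positive loss.
print(reinvent_loss([10.0], [10.0], [1.0], sigma=2.0))  # 4.0

dfilter = BucketDiversityFilter(bucket_size=2)
print([dfilter("c1ccccc1", 0.9) for _ in range(3)])  # [0.9, 0.9, 0.0]
```

Minimizing this loss pulls the agent's NLL toward ( \mathrm{NLL}_{\text{aug}} ), so high-scoring molecules become more likely than under the prior, while the prior term keeps the agent anchored to chemically plausible SMILES.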

Diagram 2: Transformer-Based RL (REINVENT) Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Computational Tools for Molecular Representation and RL

| Tool Name / Resource | Type | Primary Function in Research |
|---|---|---|
| RDKit | Cheminformatics Library | Processes SMILES strings, calculates molecular properties, handles canonicalization, and generates molecular graphs from structures [2] [15]. |
| MolGraph [11] / Deep Graph Library (DGL) | Graph Neural Network Framework | Provides APIs for building GNNs, automating the featurization of molecular graphs into tensors, and training models for property prediction. |
| TensorFlow/PyTorch | Deep Learning Framework | Enables the construction and training of autoencoders, transformer models, and RL agents for molecular design tasks [14] [15]. |
| REINVENT [15] | RL Framework for Molecular Design | A specialized platform for integrating generative models (like transformers) with reinforcement learning, facilitating multi-parameter optimization. |
| QuickVina 2 (QVina2) [17] | Molecular Docking Software | Used in structure-based drug design to predict the binding pose and affinity of generated ligands against a protein target, validating design hypotheses. |
| Ziv-Lempel Compression | Data Compression | Demonstrates the high compressibility of SMILES strings, reducing database storage requirements significantly [13]. |

In the context of reinforcement learning (RL) for molecular optimization, the reward function is the central mechanism that guides the generative agent toward designing molecules with desirable characteristics. It translates complex, multi-faceted design goals into a single, computable score that the RL agent seeks to maximize. For generative design in drug discovery, an effective reward function must balance the pursuit of biological activity with essential pharmaceutical developability criteria. This document details the protocol for constructing a robust reward function that integrates predictive Quantitative Structure-Activity Relationship (QSAR) models, Quantitative Estimate of Drug-likeness (QED), and Synthetic Accessibility (SA) scores, providing a framework for the de novo design of viable drug candidates [18] [10].

Core Components of the Reward Function

The proposed reward function is a weighted sum of multiple components, each quantifying a critical aspect of a successful drug molecule. The general form is:

$$R(m) = w_1 \cdot R_{\text{QSAR}}(m) + w_2 \cdot R_{\text{QED}}(m) + w_3 \cdot R_{\text{SA}}(m)$$

Where:

- m: The generated molecule.

- R_QSAR(m): The component based on the predicted bioactivity from the QSAR model.

- R_QED(m): The component quantifying drug-likeness.

- R_SA(m): The component estimating the ease of synthesis.

- w₁, w₂, w₃: Weights that balance the importance of each objective.
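A minimal sketch of the weighted sum follows. It assumes each component has already been normalized to [0, 1], as described in the sections below. The default weights are the Lead Optimization scheme from Table 2 of this section. The range check is an added safeguard, not part of the source protocol.

```python
def composite_reward(r_qsar, r_qed, r_sa, weights=(0.50, 0.25, 0.25)):
    """R(m) = w1 * R_QSAR(m) + w2 * R_QED(m) + w3 * R_SA(m).

    Each component is expected in [0, 1]; when the weights sum to 1,
    the composite reward also stays in [0, 1].
    """
    components = (r_qsar, r_qed, r_sa)
    for value in components:
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"reward component {value} is outside [0, 1]")
    return sum(w * c for w, c in zip(weights, components))
```

All three weighting schemes in Table 2 sum to 1, so the composite score stays directly comparable across runs that use different schemes.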

The following sections break down the formulation and calculation of each component.

QSAR-Based Bioactivity Reward (R_QSAR)

The QSAR component rewards molecules predicted to have high potency against the biological target.

Rationale: A stacking-ensemble QSAR model, which combines multiple machine learning algorithms, can achieve state-of-the-art predictive performance for biological activity (e.g., pIC50 or pKi), as demonstrated by a model for Syk inhibitors that achieved a correlation coefficient of 0.78 on the test set [18]. This model serves as a fast, computational proxy for expensive and time-consuming wet-lab experiments during the generative phase.

Calculation Protocol:

- Input: SMILES string of the generated molecule m.

- Featurization: Convert the SMILES string into a molecular fingerprint or descriptor vector using a standardized method (e.g., ECFP4 fingerprints) that matches the input requirements of the pre-trained QSAR model.

- Prediction: Input the feature vector into the pre-trained stacking-ensemble QSAR model to obtain the predicted bioactivity value, typically pIC50 (negative log of the half-maximal inhibitory concentration).

- Normalization: Scale the predicted pIC50 value to a normalized reward score between 0 and 1:

$$R_{\text{QSAR}}(m) = \frac{\text{pIC50}_{\text{pred}} - \text{pIC50}_{\min}}{\text{pIC50}_{\max} - \text{pIC50}_{\min}}$$

Here, pIC50_min and pIC50_max are the minimum and maximum pIC50 values observed in the training dataset, defining the bounds for normalization. Predictions outside this range map outside [0, 1] unless they are clipped.
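A sketch of the normalization step is shown below. `pic50_min` and `pic50_max` are whatever bounds the QSAR training set provides. Clipping to [0, 1] is an added assumption, so that a prediction outside the training range cannot yield a reward below 0 or above 1.

```python
def qsar_reward(pic50_pred, pic50_min, pic50_max):
    """Min-max scale a predicted pIC50 to [0, 1] using training-set bounds.

    Clipping out-of-range predictions is an assumption of this sketch.
    """
    if pic50_max <= pic50_min:
        raise ValueError("pic50_max must be greater than pic50_min")
    scaled = (pic50_pred - pic50_min) / (pic50_max - pic50_min)
    return min(1.0, max(0.0, scaled))
```

For a training set spanning pIC50 4 to 10, a prediction of 7 maps to 0.5. A prediction of 11 is clipped to 1.0.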

Drug-Likeness Reward (R_QED)

This component rewards molecules that exhibit properties typical of successful oral drugs.

Rationale: The Quantitative Estimate of Drug-likeness (QED) is a quantitative metric that encapsulates the desirability of a molecule's physicochemical profile. It is built from eight molecular properties: molecular weight, logP, the numbers of hydrogen bond donors and acceptors, polar surface area, rotatable bonds, aromatic rings, and structural alerts [10]. A higher QED score indicates a property profile closer to that of known oral drugs.

Calculation Protocol:

- Input: SMILES string of the generated molecule m.

- Calculation: Use a cheminformatics toolkit (e.g., RDKit) to calculate the QED score from the molecular structure. RDKit's QED function expects a parsed molecule object rather than a SMILES string:

qed_score = rdkit.Chem.QED.qed(rdkit.Chem.MolFromSmiles(m))

- Reward Assignment: The QED score itself, which ranges from 0 to 1, can be used directly as the reward component:

$$R_{\text{QED}}(m) = \text{qed\_score}$$

Synthetic Accessibility Reward (R_SA)

This component penalizes molecules that are predicted to be difficult or impractical to synthesize in a laboratory.

Rationale: De novo generated molecules can often be synthetically complex. The Synthetic Accessibility (SA) score estimates the ease of synthesis, often based on molecular complexity and fragment contributions. Rewarding high synthetic accessibility is crucial for ensuring that generated molecules are not just computationally plausible but also practically viable [10].

Calculation Protocol:

- Input: SMILES string of the generated molecule m.

- Calculation: Compute a synthetic accessibility score, for example with the Ertl and Schuffenhauer SA score distributed in RDKit's Contrib/SA_Score directory (sascorer.py). This score combines fragment contributions with a molecular complexity penalty. Like QED, it takes a parsed molecule object:

sa_score = sascorer.calculateScore(rdkit.Chem.MolFromSmiles(m))

- Normalization and Inversion: Raw SA scores are lower for easier-to-synthesize molecules. The score must therefore be inverted and normalized to create a reward where higher values are better:

$$R_{\text{SA}}(m) = 1 - \frac{\text{sa\_score} - \text{sa\_min}}{\text{sa\_max} - \text{sa\_min}}$$

Here, sa_min and sa_max are the lower and upper bounds of the SA scorer used (1 and 10 for the Ertl and Schuffenhauer score).
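The inversion can be sketched the same way. The defaults `sa_min = 1` and `sa_max = 10` assume the Ertl and Schuffenhauer scale. Clipping to [0, 1] is again an added safeguard rather than part of the source protocol.

```python
def sa_reward(sa_score, sa_min=1.0, sa_max=10.0):
    """Invert and normalize an SA score so that easier synthesis gives a higher reward.

    Defaults assume the 1 (easy) to 10 (hard) Ertl and Schuffenhauer scale.
    """
    scaled = (sa_score - sa_min) / (sa_max - sa_min)
    return 1.0 - min(1.0, max(0.0, scaled))
```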

Quantitative Metrics and Tuning Parameters

The table below summarizes the core metrics and typical parameters for each reward component, providing a reference for implementation and tuning.

Table 1: Summary of Reward Function Components and Parameters

| Reward Component | Core Metric | Data Source for Model | Typical Value Range | Implementation Notes |
|---|---|---|---|---|
| QSAR (R_QSAR) | pIC50 (predicted) | Public/Proprietary IC50 data (e.g., ChEMBL) [18] | Normalized to [0, 1] | A stacking ensemble of RFR, XGB, and SVR is recommended for robust prediction [18]. |
| Drug-likeness (R_QED) | QED Score | Based on known drug property distributions [10] | 0 (low) to 1 (high) | Can be calculated directly with RDKit. A desirable target is >0.7. |
| Synthetic Accessibility (R_SA) | SA Score | Based on fragment contributions and complexity [10] | Normalized to [0, 1] | Invert the raw score so that higher reward = easier synthesis. |

Table 2: Example Weighting Schemes for Different Objectives

| Research Objective | w₁ (QSAR) | w₂ (QED) | w₃ (SA) | Use Case Scenario |
|---|---|---|---|---|
| High-Potency Hit Finding | 0.80 | 0.10 | 0.10 | Early-stage discovery, prioritizing maximum activity. |
| Lead Optimization | 0.50 | 0.25 | 0.25 | Balancing potency with developability for candidate selection. |
| Library Enhancement | 0.20 | 0.40 | 0.40 | Designing diverse, synthesizable compounds with good properties. |

Integrated Experimental Protocol

This protocol outlines the end-to-end process for implementing and executing an RL-based molecular generation campaign using the defined reward function.

Phase 1: Preparation of the QSAR Model

- Data Curation: Collect and curate a dataset of molecules with experimentally determined IC50 values for the target of interest from databases like ChEMBL [18].

- Data Preprocessing: Remove duplicates and outliers. Convert IC50 to pIC50 = -log10(IC50), with IC50 expressed in mol/L. Split the data into training and test sets.

- Model Training and Validation:

- Featurization: Encode molecules using ECFP4 fingerprints or other relevant descriptors.

- Training: Train multiple machine learning models (e.g., Random Forest, XGBoost, Support Vector Regression).

- Ensemble Construction: Implement a stacking ensemble model, using the predictions of the base models as input to a final meta-regressor (e.g., Linear Regression) to achieve superior predictive performance (e.g., R² > 0.75) [18].

- Validation: Validate the model's performance on the held-out test set.
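Phase 1 can be sketched with scikit-learn under three stated assumptions:

- IC50 values arrive in nanomolar;
- `GradientBoostingRegressor` stands in for XGBoost, so no extra dependency is needed;
- featurization (e.g., ECFP4 bit vectors computed with RDKit) happens elsewhere, so `X` is any numeric feature matrix.

`StackingRegressor` with `cv=5` trains the `LinearRegression` meta-regressor on out-of-fold predictions of the base models. The hyperparameters shown are illustrative, not tuned values from the source.

```python
import math

import numpy as np
from sklearn.ensemble import (GradientBoostingRegressor, RandomForestRegressor,
                              StackingRegressor)
from sklearn.linear_model import LinearRegression
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split
from sklearn.svm import SVR


def pic50_from_ic50_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L) = 9 - log10(IC50 in nM)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    return 9.0 - math.log10(ic50_nm)


def build_stacking_qsar(random_state=0):
    """RF + gradient boosting (XGBoost stand-in) + SVR, combined by linear regression."""
    base_models = [
        ("rf", RandomForestRegressor(n_estimators=100, random_state=random_state)),
        ("gbr", GradientBoostingRegressor(random_state=random_state)),
        ("svr", SVR(kernel="rbf", C=10.0)),
    ]
    # cv=5: the meta-regressor only sees out-of-fold base-model predictions.
    return StackingRegressor(estimators=base_models,
                             final_estimator=LinearRegression(), cv=5)


def fit_and_score(X, y, test_size=0.2, random_state=0):
    """Hold out a test split, fit the stacking model, and return (model, test R^2)."""
    X_tr, X_te, y_tr, y_te = train_test_split(
        np.asarray(X, dtype=float), np.asarray(y, dtype=float),
        test_size=test_size, random_state=random_state)
    model = build_stacking_qsar(random_state).fit(X_tr, y_tr)
    return model, r2_score(y_te, model.predict(X_te))
```

Typical use is `y = [pic50_from_ic50_nm(v) for v in ic50_values_nm]` followed by `model, r2 = fit_and_score(X, y)`. The returned model's `predict` method then supplies the pIC50 predictions consumed by the QSAR reward.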

Phase 2: RL-Based Molecular Generation

- Generative Model Setup: Select a suitable RL-based generative model, such as a graph-based model (e.g., GCPN, GraphAF) [10] or a fragment-based approach (e.g., FREED++) [18].

- Reward Function Integration: Program the reward function R(m) as described under Core Components of the Reward Function, integrating the pre-trained QSAR model and the QED and SA calculators.

- Agent Training:

- The agent (generative model) iteratively proposes new molecules.

- For each proposed molecule m, the reward R(m) is computed.

- The agent's policy is updated using a policy gradient method (e.g., Proximal Policy Optimization, PPO) [4] to maximize the expected cumulative reward.

- Training continues for a set number of episodes or until convergence, indicated by the stable generation of high-reward molecules.

Phase 3: Post-Generation Analysis

- Selection: Filter the generated molecules based on a high composite reward score and thresholds for individual components (e.g., pIC50 > 7, QED > 0.6).

- Diversity and Novelty Check: Assess the structural diversity and novelty of the top candidates compared to known inhibitors in the training set.

- Experimental Validation: Synthesize the top-ranked, novel candidates and subject them to in vitro biological testing to validate the model predictions.
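Phase 3's selection and novelty check can be sketched with fingerprints stored as sets of on-bit indices (for example, ECFP4 bits computed elsewhere). The pIC50 > 7 and QED > 0.6 thresholds come from the text. The `min_reward = 0.6` cutoff and the 0.4 Tanimoto novelty cutoff are hypothetical values to be tuned per project.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def select_candidates(generated, known_fps, min_reward=0.6, min_pic50=7.0,
                      min_qed=0.6, max_sim_to_known=0.4):
    """Keep high-scoring molecules that are also dissimilar to known inhibitors.

    generated: list of dicts with keys 'smiles', 'reward', 'pic50', 'qed', 'fp'
    known_fps: on-bit sets of known inhibitors from the QSAR training set
    """
    selected = []
    for mol in generated:
        if mol["reward"] < min_reward or mol["pic50"] <= min_pic50 or mol["qed"] <= min_qed:
            continue
        # Novelty: similarity to the nearest known inhibitor must stay below the cutoff.
        nearest = max((tanimoto(mol["fp"], k) for k in known_fps), default=0.0)
        if nearest <= max_sim_to_known:
            selected.append({**mol, "max_sim_to_known": nearest})
    return sorted(selected, key=lambda m: m["reward"], reverse=True)
```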

Workflow and Signaling Pathways

The following diagram illustrates the logical workflow and data flow of the integrated reinforcement learning system for molecular generation.

Molecular Optimization via RL

The Scientist's Toolkit: Research Reagent Solutions

This section lists the essential computational tools and data resources required to implement the described protocol.

Table 3: Essential Research Reagents and Tools

| Tool / Resource | Type | Primary Function in Protocol | Reference/Source |
|---|---|---|---|
| ChEMBL Database | Data Repository | Source of experimental bioactivity (IC50) data for QSAR model training. | [18] |
| RDKit | Cheminformatics Library | Calculates molecular descriptors, fingerprints, QED scores, and SA scores. | [4] [10] |
| scikit-learn / PyCaret | ML Library / AutoML | Framework for building and evaluating the stacking-ensemble QSAR model. | [18] |
| FREED++ / GCPN | Generative Model | RL-based molecular generation frameworks that can be customized with a reward function. | [18] [10] |
| ZINC Database | Compound Database | Provides a source of drug-like molecules for pre-training generative models or benchmarking. | [4] |
| Optuna | Hyperparameter Optimization | Automates the tuning of hyperparameters for the QSAR and RL models. | [18] |

Frameworks in Action: REINVENT, MolDQN, and Latent Space Optimization

Generative artificial intelligence (GenAI) has emerged as a transformative tool in molecular design, enabling the exploration of vast chemical spaces to discover novel compounds with desired properties [19]. Within this field, policy-based reinforcement learning (RL) represents a cornerstone methodology for guiding the generation of Simplified Molecular-Input Line-Entry System (SMILES) strings toward specific biological and physicochemical objectives. The REINVENT platform, primarily built upon the REINFORCE algorithm, has established itself as a reference implementation for AI-driven molecular design, successfully supporting real-world drug discovery projects [20]. These methods frame molecular generation as an inverse design problem, aiming to map a set of desired properties back to the vastness of chemical space [20]. This Application Note provides a detailed examination of the REINFORCE algorithm's implementation within molecular generation frameworks like REINVENT, including standardized protocols for its application in lead optimization and scaffold hopping scenarios.

Core Principles and Algorithmic Foundations

The REINFORCE Algorithm in Chemical Language Models

The REINFORCE algorithm, a policy gradient method, is particularly well-suited for optimizing chemical language models (CLMs) due to its compatibility with pre-trained models and its effectiveness in handling the sequential nature of SMILES generation [21] [22]. In this framework, the process of generating a molecule one token at a time is treated as a Markov Decision Process (MDP) [21] [22].

The fundamental objective of REINFORCE is to maximize the expected reward of generated molecular sequences. The policy parameters θ are updated using the gradient of the performance measure J(θ), as defined by the policy gradient theorem [21] [22]:

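$$\nabla_\theta J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta}\left[ \sum_{t} \nabla_\theta \log \pi_\theta(a_t \mid s_t) \cdot R(\tau) \right]$$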

Where:

- ( \pi_\theta(a_t \mid s_t) ) represents the probability of taking action ( a_t ) (selecting the next token) given the current state ( s_t ) (the sequence of tokens generated so far)

- R(τ) denotes the cumulative reward for the complete trajectory τ (the fully generated SMILES string)

A critical enhancement to this basic formulation involves incorporating a baseline b to reduce the variance of gradient estimates, leading to more stable training [21] [22]:

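$$\nabla_\theta J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta}\left[ \sum_{t} \nabla_\theta \log \pi_\theta(a_t \mid s_t) \cdot \left( R(\tau) - b \right) \right]$$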

Common baseline implementations include the moving average baseline (MAB) and leave-one-out baseline (LOO), which have demonstrated improved learning efficiency in molecular optimization tasks [22].
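The sketch below shows the baselined gradient on a toy problem. It assumes a single-step softmax policy over a small token vocabulary (effectively a one-token "molecule") rather than a full chemical language model, and it uses the leave-one-out baseline, which needs a batch of at least two samples. The gradient of ( \log \pi_\theta(a) ) with respect to the logits of a softmax is the one-hot vector of ( a ) minus the probability vector, which is what the update uses. The learning rate, batch size and reward function are hypothetical.

```python
import numpy as np


def loo_baseline(rewards):
    """Leave-one-out baseline: b_i is the mean reward of the other N - 1 samples."""
    rewards = np.asarray(rewards, dtype=float)
    if rewards.size < 2:
        raise ValueError("the LOO baseline needs at least two samples")
    return (rewards.sum() - rewards) / (rewards.size - 1)


def softmax(logits):
    shifted = np.exp(logits - logits.max())
    return shifted / shifted.sum()


def reinforce_step(logits, reward_fn, rng, batch_size=64, lr=0.5):
    """One REINFORCE update with a LOO baseline for a single-step softmax policy."""
    probs = softmax(logits)
    actions = rng.choice(logits.size, size=batch_size, p=probs)
    rewards = np.array([reward_fn(int(a)) for a in actions], dtype=float)
    advantages = rewards - loo_baseline(rewards)          # R(tau) - b
    # d log softmax(logits)[a] / d logits = onehot(a) - probs
    onehots = np.eye(logits.size)[actions]
    grad = (advantages[:, None] * (onehots - probs)).mean(axis=0)
    return logits + lr * grad                             # gradient ascent on J
```

Starting from uniform logits over five tokens and rewarding only token 2, a few hundred updates should concentrate most of the probability mass on that token. Batches in which every reward is identical produce zero advantage and leave the policy unchanged.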

REINVENT Architecture and Components

REINVENT 4 implements REINFORCE within a comprehensive generative framework that utilizes recurrent neural networks (RNNs) and transformer architectures to drive molecule generation [20]. The software integrates several machine learning paradigms, including transfer learning, reinforcement learning, and curriculum learning, within a unified architecture [20].

Key components of the REINVENT ecosystem include:

- Prior Agent: A foundation model trained in an unsupervised fashion on large public datasets of molecules (e.g., ChEMBL, ZINC) that captures the underlying probability distribution of SMILES strings and serves as the initial policy [20].

- Agent Model: The model being optimized through RL, which starts as a copy of the prior and is progressively updated to maximize the reward signal.

- Scoring Function: A modular component that evaluates generated molecules based on multiple criteria and returns a scalar reward value between 0 and 1.

- Experience Replay: A mechanism that stores high-reward molecules from previous iterations for reuse in training. This helps the agent retain previously discovered high-scoring chemistry and improves sample efficiency [22].

Table 1: Core Components of the REINVENT Framework

| Component | Function | Implementation in REINVENT |
|---|---|---|
| Prior Agent | Unbiased molecule generator; represents chemical space of training data | RNN or Transformer trained on 1.5M+ drug-like molecules |
| Agent Model | Learnable policy optimized for specific objectives | Copy of prior updated via policy gradient |
| Scoring Function | Evaluates generated molecules against design goals | Python class with modular components (e.g., QED, SA Score, custom predictors) |
| Experience Replay | Stores high-performing molecules from previous iterations | Buffer with configurable capacity and sampling strategy |

Quantitative Performance Benchmarks

The performance of REINVENT and its underlying REINFORCE algorithm has been extensively evaluated across multiple molecular optimization benchmarks. The platform has demonstrated superior sample efficiency in molecular optimization tasks compared to many alternative methods [20].

Table 2: Performance Benchmarks for REINFORCE-based Molecular Optimization

| Benchmark/Task | Algorithm | Performance Metrics | Comparative Results |
|---|---|---|---|
| Penalized LogP Optimization | REINFORCE + Prior | 80% of generated molecules achieve pLogP > 5.0 | Outperforms graph-based and VAE approaches in sample efficiency [20] |
| DRD2 Activity Optimization | REINVENT (REINFORCE) | >90% predicted activity at convergence | Surpasses GAN and random search in success rate [21] |
| Scaffold-Constrained Optimization | MOLRL (PPO in latent space) | 60-70% success rate in generating active compounds | Comparable to state-of-the-art methods while maintaining scaffold constraints [4] |
| Multi-Objective Optimization | REINVENT 4 (RL/CL) | Generates molecules satisfying 3+ constraints simultaneously | Demonstrated in prospective studies for in-house drug discovery [20] |

Recent advancements have demonstrated that REINFORCE-based approaches can successfully generate molecules with specific substructure constraints while simultaneously optimizing molecular properties, a task highly relevant to real drug discovery scenarios [4]. When compared to other RL algorithms like Proximal Policy Optimization (PPO) or Advantage Actor-Critic (A2C), REINFORCE has shown particular strength in scenarios involving pre-trained policies, as is the case with chemical language models initialized on large molecular datasets [22].

Experimental Protocols

Protocol 1: Lead Optimization with REINVENT

Objective: Optimize a hit compound for improved binding affinity while maintaining drug-like properties.

Materials and Reagents:

- REINVENT 4 software (available under Apache 2.0 license)

- Pre-trained prior model (included in repository)

- Target-specific activity prediction model (e.g., Random Forest, CNN, or docking integration)

- Starting molecule(s) in SMILES format

Procedure:

Configuration Setup:

- Create a TOML configuration file defining the run parameters

- Set num_epochs: 500-1000

- Set batch_size: 128 (adjust based on GPU memory)

- Configure learning_rate: 0.0001-0.0005

Scoring Function Design:

- Implement a composite scoring function with the following components:

- Activity Score: Weight: 0.6 - Output from target-specific prediction model

- Drug-likeness Score: Weight: 0.2 - Quantitative Estimate of Drug-likeness (QED)

- Synthetic Accessibility: Weight: 0.2 - SA Score penalty

- Normalize each component to [0,1] range

- Apply thresholding if necessary (e.g., minimum QED = 0.5)


Prior-Agent Initialization:

- Initialize the agent model as a copy of the pre-trained prior

- Set the sigma parameter to 120-256. Sigma scales the score term in the augmented likelihood, so larger values pull the agent further from the prior.

Training Loop:

- For each epoch:

- Agent generates a batch of SMILES strings

- Scoring function evaluates each molecule

- Policy gradient update computed using REINFORCE

- Top 20% of molecules added to the experience replay buffer

- Molecules sampled from the replay buffer make up 10% of the next batch


Validation and Analysis:

- Monitor average reward and diversity metrics across epochs

- Inspect top-performing molecules for chemical novelty and validity

- Validate top candidates through molecular docking or experimental assays

Troubleshooting:

- Low Diversity: Reduce sigma parameter; increase experience replay sampling proportion

- Training Instability: Implement gradient clipping; decrease learning rate

- Poor Validity Rate: Ensure proper tokenization; consider alternative representations (SELFIES)
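The replay steps in Protocol 1's training loop can be sketched as a small bounded buffer. Those steps are: add the top 20% of each scored batch to the buffer, and draw replayed molecules for 10% of the next batch. The class and method names are hypothetical and are not REINVENT 4's API. The capacity limit, eviction of the lowest-scoring entry and deduplication by SMILES string are added assumptions.

```python
import heapq
import random


class ExperienceReplay:
    """Bounded store of the highest-scoring unique SMILES seen so far."""

    def __init__(self, capacity=100):
        self.capacity = capacity
        self._heap = []        # min-heap of (score, smiles); lowest score at index 0
        self._seen = set()

    def add_top_fraction(self, batch, fraction=0.2):
        """Add the top `fraction` of a scored batch given as [(smiles, score), ...]."""
        ranked = sorted(batch, key=lambda item: item[1], reverse=True)
        k = max(1, int(len(ranked) * fraction))
        for smiles, score in ranked[:k]:
            if smiles in self._seen:
                continue
            if len(self._heap) < self.capacity:
                heapq.heappush(self._heap, (score, smiles))
                self._seen.add(smiles)
            elif score > self._heap[0][0]:
                # Evict the current lowest-scoring entry.
                _, evicted = heapq.heapreplace(self._heap, (score, smiles))
                self._seen.discard(evicted)
                self._seen.add(smiles)

    def sample(self, batch_size, fraction=0.1, rng=random):
        """Return up to fraction * batch_size stored SMILES to mix into the next batch."""
        n = min(len(self._heap), int(batch_size * fraction))
        return [smiles for _, smiles in rng.sample(self._heap, n)]
```

With `batch_size = 128` and the default fraction, each call returns at most 12 replayed SMILES. Those molecules would be scored and included in the next policy-gradient update alongside freshly sampled ones.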

Protocol 2: Scaffold Hopping with Constrained Generation

Objective: Generate novel molecular scaffolds with similar biological activity to a reference compound.

Materials and Reagents:

- REINVENT 4 with conditional generator capabilities

- Reference active compound in SMILES format

- 3D molecular shape comparison tool (e.g., ROCS implementation)

- Activity prediction model for target of interest

Procedure:

Conditional Generator Setup:

- Utilize REINVENT 4's conditional agent architecture

- Configure as ( P(T|S) ), where ( S ) is the reference scaffold [20]

Multi-component Scoring Function:

- Shape Similarity: Weight: 0.4 - 3D molecular shape overlap to reference (Tanimoto Combo)

- Pharmacophore Match: Weight: 0.3 - Key interaction pattern preservation

- Scaffold Diversity: Weight: 0.2 - Bemis-Murcko scaffold dissimilarity to reference

- Activity Prediction: Weight: 0.1 - Predicted target activity

Staged Learning Configuration:

- Stage 1 (Epochs 1-200): Focus on shape similarity and pharmacophore match

- Stage 2 (Epochs 201-500): Gradually increase scaffold diversity weight

- Stage 3 (Epochs 501+): Fine-tune with balanced objective weights

Hill Climbing Strategy:

Output Analysis:

- Cluster generated molecules by scaffold

- Evaluate scaffold novelty relative to training set

- Confirm maintained activity through prediction models or experimental testing

Implementation Workflow

The following diagram illustrates the complete REINFORCE-based molecular optimization workflow as implemented in REINVENT:

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Resource | Type | Function | Availability |
|---|---|---|---|
| REINVENT 4 | Software Framework | Open-source generative AI for molecular design | GitHub: MolecularAI/REINVENT4 (Apache 2.0) |
| ChEMBL Database | Data Resource | Curated bioactive molecules for prior training | https://www.ebi.ac.uk/chembl/ |
| ZINC Database | Data Resource | Commercially available compounds for training | http://zinc.docking.org/ |
| RDKit | Cheminformatics Library | SMILES processing, descriptor calculation, and chemical validity checks | Open-source (BSD license) |
| SA Score | Predictive Model | Synthetic accessibility assessment | Distributed with RDKit (Contrib/SA_Score) |
| QED | Computational Metric | Quantitative estimate of drug-likeness | Integrated in RDKit |
| SELFIES | Molecular Representation | Grammar ensuring 100% valid molecular generation | GitHub: https://github.com/aspuru-guzik-group/selfies |
| Pre-trained Prior Models | AI Model | Foundation models for initializing REINVENT agents | Included with REINVENT 4 repository |

The integration of policy-based methods, particularly the REINFORCE algorithm within the REINVENT platform, provides researchers with a powerful and validated framework for directed molecular generation. The protocols outlined in this Application Note represent current best practices for leveraging these tools in practical drug discovery scenarios. As the field evolves, emerging techniques such as latent space diffusion models [23], alternative baseline strategies [22], and multi-objective optimization schemes continue to enhance the capabilities of reinforcement learning-based molecular design. The REINFORCE algorithm's particular strength when combined with pre-trained chemical language models ensures its continued relevance in the generative molecular design toolkit, striking an effective balance between exploration of novel chemical space and exploitation of known bioactive regions.

Molecular optimization, a critical process in drug discovery, involves designing novel chemical compounds with enhanced properties, such as improved drug-likeness or biological activity. Reinforcement Learning (RL) presents a powerful framework for this task by formulating molecular design as a sequential decision-making process. Among RL approaches, value-based methods, particularly those utilizing Double Q-learning, offer distinct advantages in stability and sample efficiency. The Molecule Deep Q-Network (MolDQN) framework exemplifies this approach, combining domain knowledge from chemistry with advanced RL to enable direct, valid modifications of molecular structures [2]. Unlike generative models that may rely on pre-training and struggle with chemical validity, MolDQN operates by defining a chemically constrained action space, ensuring 100% validity of generated molecules while achieving competitive performance on benchmark tasks [2] [3]. This document details the application, protocols, and key resources for implementing MolDQN, providing a practical guide for researchers and scientists in drug development.

Core Principles and Methodologies

The MolDQN Framework

The MolDQN framework formulates molecular optimization as a Markov Decision Process (MDP), which is then solved using a value-based RL algorithm featuring Double Q-learning and randomized value functions [2]. Its key innovations include:

- Chemically Valid Action Space: It defines a set of permissible actions that correspond to chemically plausible modifications, thereby guaranteeing that every intermediate and final molecule in the optimization trajectory is valid [2].

- Operation Without Pre-training: MolDQN learns from scratch, avoiding the biases inherent in pre-training on existing datasets, which can limit the exploration of novel chemical space [2].

- Multi-Objective Optimization: The framework can be extended to simultaneously optimize multiple properties, a common requirement in real-world drug discovery projects where, for example, one might aim to maximize drug-likeness while maintaining structural similarity to a lead compound [2] [3].

Formulating the Molecular MDP

The MDP in MolDQN is formally defined by the tuple (S, A, {P_sa}, R):

- State Space ( S ): A state ( s \in S ) is a tuple ( (m, t) ), where ( m ) is a valid molecule and ( t ) is the number of steps taken so far. The process terminates when ( t ) reaches a predefined maximum ( T ) [2].

- Action Space ( A ): An action ( a \in A ) is a valid modification of a molecule ( m ), falling into one of three categories:

- Atom Addition: Adding an atom from a predefined set of elements (e.g., C, O, N) and connecting it to the existing molecule with a valence-allowed bond. This action typically replaces an implicit hydrogen atom [2].

- Bond Addition: Increasing the bond order between two atoms with free valence. This includes creating new single, double, or triple bonds or increasing the order of an existing bond (e.g., single to double) [2].

- Bond Removal: Decreasing the bond order between two atoms (e.g., triple to double, double to single, or single to no bond). To avoid fragmented molecules, a bond is only completely removed if the resulting molecule has zero or one disconnected atom; in the latter case the lone atom is discarded as well [2].

- Transition Probability ( \{P_{sa}\} ): The state transitions are deterministic. Applying an action ( a ) to a state ( s ) always leads to the same new molecule state [2].

- Reward Function ( R ): The reward is based on the molecular properties of interest (e.g., penalized logP or QED). Rewards are provided at every step but are discounted by a factor of ( \gamma^{T-t} ) to emphasize the value of the final state [2].
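The step-wise discounting can be sketched as below. It assumes `step_scores[t - 1]` holds the property value (e.g., QED) of the molecule after step ( t ), for ( t = 1, \dots, T ). The value `gamma = 0.9` is illustrative, not a setting taken from the source.

```python
def discounted_step_rewards(step_scores, gamma=0.9):
    """Scale the property score after step t by gamma ** (T - t), t = 1..T.

    The final step (t = T) keeps its full score; earlier steps are down-weighted.
    """
    T = len(step_scores)
    return [score * gamma ** (T - t) for t, score in enumerate(step_scores, start=1)]
```

For three steps with scores 0.5, 0.6 and 0.7, the rewards become 0.5 × 0.81, 0.6 × 0.9 and 0.7. Intermediate molecules therefore still provide a learning signal, while the terminal molecule dominates.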

The Double Q-Learning Advantage

MolDQN employs Double Q-learning to mitigate the overestimation bias of standard Q-learning. In this paradigm, two Q-networks are used: a primary network for action selection and a target network for value evaluation. The target network's parameters are periodically updated from the primary network, leading to more stable and reliable training [2] [24]. The loss function used to train the network is a Huber loss between the model's predicted Q-value and the target reward, which is computed as target_reward = reward(state) + gamma * baseline_model(next_state) [24].
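A NumPy sketch of the Double Q-learning target and the Huber loss is shown below. MolDQN's valid-action set varies from state to state; this sketch assumes instead that the Q-values for all candidate next actions are already arranged in a fixed-width array (for example after padding). The `dones` flag, which switches off bootstrapping at ( t = T ), is also an assumption of the sketch. The main network picks the next action and the target network scores it, which is the step that distinguishes Double Q-learning from a plain target-network update.

```python
import numpy as np


def double_dqn_targets(rewards, q_main_next, q_target_next, dones, gamma=0.9):
    """y = r + gamma * Q_target(s', argmax_a Q_main(s', a)), with no bootstrap at t = T."""
    rewards = np.asarray(rewards, dtype=float)
    q_main_next = np.asarray(q_main_next, dtype=float)
    q_target_next = np.asarray(q_target_next, dtype=float)
    best = np.argmax(q_main_next, axis=1)                  # select with the main network
    next_q = q_target_next[np.arange(rewards.size), best]  # evaluate with the target network
    not_done = 1.0 - np.asarray(dones, dtype=float)
    return rewards + gamma * next_q * not_done


def huber_loss(pred, target, delta=1.0):
    """Mean Huber loss: 0.5 * e**2 for |e| <= delta, delta * (|e| - 0.5 * delta) beyond."""
    err = np.abs(np.asarray(pred, dtype=float) - np.asarray(target, dtype=float))
    quad = np.minimum(err, delta)
    return float(np.mean(0.5 * quad ** 2 + delta * (err - quad)))
```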

Experimental Protocols and Benchmarks

Performance Benchmarking

MolDQN has been evaluated on standard molecular optimization tasks and performs strongly against contemporary models. The tables below summarize its performance on two objectives [25]:

- penalized logP: the octanol-water partition coefficient (logP), penalized by the synthetic accessibility score and by rings larger than six atoms;
- QED: a quantitative estimate of drug-likeness.
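One common unnormalized form of penalized logP can be sketched as logP minus the SA score minus a cycle penalty. The cycle penalty is the size of the largest ring beyond six atoms. This sketch assumes logP, the SA score and the ring sizes are computed elsewhere, for instance with RDKit's `Crippen.MolLogP`, the Contrib SA scorer and `mol.GetRingInfo().AtomRings()`. Some benchmark implementations additionally standardize each term with ZINC statistics; that step is omitted here, so absolute values may not match the tables.

```python
def penalized_logp(logp, sa_score, ring_sizes):
    """Penalized logP = logP - SA score - max(0, largest ring size - 6).

    ring_sizes: atom count of each ring in the molecule (empty if acyclic).
    """
    largest = max(ring_sizes, default=0)
    cycle_penalty = max(0, largest - 6)
    return logp - sa_score - cycle_penalty
```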

Table 1: Benchmarking MolDQN on Penalized logP and QED Optimization

| Method | Penalized logP (1st/2nd/3rd) | Validity | QED (1st/2nd/3rd) | Validity |
|---|---|---|---|---|
| Random Walk | -0.65 / -1.72 / -1.88 | 100% | 0.64 / 0.56 / 0.56 | 100% |
| JT-VAE | 5.30 / 4.93 / 4.49 | 100% | 0.925 / 0.911 / 0.910 | 100% |
| GCPN | 7.98 / 7.85 / 7.80 | 100% | 0.948 / 0.947 / 0.946 | 100% |
| MolDQN-naive | 8.69 / 8.68 / 8.67 | 100% | 0.934 / 0.931 / 0.930 | 100% |
| MolDQN-bootstrap | 9.01 / 9.01 / 8.99 | 100% | 0.948 / 0.944 / 0.943 | 100% |

Table 2: Constrained Optimization (Similarity ≥ δ) Performance (Improvement in pLogP)

| Similarity (δ) | JT-VAE Improvement | GCPN Improvement | MolDQN-naive Improvement | MolDQN-bootstrap Improvement |
|---|---|---|---|---|
| 0.0 | 1.91 ± 2.04 | 4.20 ± 1.28 | 4.83 ± 1.30 | 4.88 ± 1.30 |
| 0.2 | 1.68 ± 1.85 | 4.12 ± 1.19 | 3.79 ± 1.32 | 3.80 ± 1.30 |
| 0.4 | 0.84 ± 1.45 | 2.49 ± 1.30 | 2.34 ± 1.18 | 2.44 ± 1.25 |
| 0.6 | 0.21 ± 0.71 | 0.79 ± 0.63 | 1.40 ± 0.92 | 1.30 ± 0.98 |

Protocol: Implementing a MolDQN Experiment

The following workflow details the key steps in conducting a molecular optimization experiment using the MolDQN framework.

Step-by-Step Protocol:

Problem Formulation:

MDP Initialization:

Agent Setup:

- Initialize the main and target Q-networks. These are typically multi-layer perceptrons (MLPs) that take a molecular fingerprint concatenated with the remaining steps as input [2] [24].

- Choose an optimizer (e.g., Adam) and set hyperparameters (learning rate, batch size, target network update frequency).

Experience Generation (Rollout):

Q-Learning Update:

- Gather experiences (state, action, reward, next state) in a dataset.

- For a batch of experiences, compute the target Q-value ( y = r(s_t) + \gamma \, Q_{\text{target}}(s_{t+1}, \arg\max_a Q_{\text{main}}(s_{t+1}, a)) ). This is the Double Q-learning step [24].

- Train the main Q-network by minimizing the Huber loss between its predictions and the target values.

Iteration and Termination:

- Periodically update the target Q-network by copying weights from the main network.

- Repeat experience generation and the Q-learning update. Each episode ends when the agent reaches the terminal state ( t = T ), and training stops when performance converges.

- The final output is the molecule from the terminal state with the highest cumulative reward.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for MolDQN

| Tool/Resource | Type | Primary Function in MolDQN |
|---|---|---|
| RDKit | Software Library | Cheminformatics toolkit used to represent molecules, enumerate chemically valid actions, and calculate molecular properties [2] [24]. |
| PyTorch/TensorFlow | Deep Learning Framework | Provides the environment for building, training, and evaluating the Deep Q-Networks. |
| Double Q-Learning | Algorithm | RL algorithm used to reduce overestimation bias in Q-value updates, enhancing training stability [2] [24]. |
| Molecular Fingerprint (e.g., ECFP6) | Molecular Representation | Converts a molecule into a fixed-length bit vector that serves as input features for the Q-network [24]. |
| Huber Loss | Loss Function | A robust loss function used for regression that is less sensitive to outliers than mean squared error, used to train the Q-network [24]. |
| ZINC/ChEMBL | Molecular Database | Source of initial molecules for optimization or benchmarking. |

MolDQN establishes a robust, value-based approach for molecular optimization in drug discovery. Its core strength lies in the seamless integration of deep reinforcement learning with fundamental chemical principles, ensuring the generation of valid and novel molecules. By providing a detailed protocol and listing essential tools, this document aims to equip researchers with the knowledge to apply and extend the MolDQN framework for their molecular design challenges, thereby accelerating the efficient exploration of chemical space.

The exploration of chemical space for novel molecules with desired properties is a fundamental challenge in drug discovery. Traditional methods often struggle with the vastness of this space and the complex, multi-objective nature of molecular optimization. Latent Space Optimization (LSO) has emerged as a powerful computational strategy, converting the problem of discrete molecular generation into a continuous optimization task within the compressed latent representation of a deep generative model [4] [26] [27]. By navigating this latent space, researchers can indirectly design valid and syntactically correct molecules without explicitly defining chemical rules.

This application note details the integration of Proximal Policy Optimization (PPO), a state-of-the-art reinforcement learning (RL) algorithm, with the latent spaces of autoencoder-based generative models for molecular design. We frame this methodology within a broader thesis on reinforcement learning for molecular optimization, presenting it as a robust and sample-efficient framework for de novo drug design. The content is structured to provide researchers and drug development professionals with both the theoretical foundation and the practical protocols necessary to implement this approach.

Theoretical Foundation

Latent Space Optimization in Molecular Design

Latent Space Optimization (LSO) reframes the problem of molecular generation as a continuous search problem. It leverages generative models, such as autoencoders, which are trained to encode molecules into a lower-dimensional latent vector and decode these vectors back into molecular structures [4]. The core LSO objective is defined as:

$$\bm{z}^* = \arg\max_{\bm{z} \in \mathcal{Z}} f(g(\bm{z}))$$

Here, ( g: \mathcal{Z} \to \mathcal{X} ) is the generative model that maps a latent vector ( \bm{z} ) to a molecule ( \bm{x} ), and ( f ) is a black-box objective function that scores the molecule based on a desired property (e.g., bioactivity, solubility) [26]. Operating in the latent space ( \mathcal{Z} ) is advantageous because it is often more structured and smooth than the original data manifold, simplifying the optimization process [26].
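The LSO objective can be made concrete with a deliberately simple optimizer. The sketch below approximates ( \bm{z}^* ) by greedy random perturbation around the current best point. This black-box search is a stand-in chosen for brevity, not the PPO-based method described next. `decode` and `score` are hypothetical callables playing the roles of ( g ) and ( f ).

```python
import numpy as np

def latent_random_search(decode, score, z0, n_iters=200, step=0.1, seed=0):
    """Approximate z* = argmax_z f(g(z)) by greedy random perturbation.

    decode plays the role of g (latent vector -> molecule) and score the role of f;
    both are hypothetical user-supplied callables.
    """
    rng = np.random.default_rng(seed)
    best_z = np.asarray(z0, dtype=float)
    best_f = score(decode(best_z))
    for _ in range(n_iters):
        candidate = best_z + step * rng.standard_normal(best_z.shape)  # small move in Z
        f = score(decode(candidate))
        if f > best_f:  # keep only improvements
            best_z, best_f = candidate, f
    return best_z, best_f

# Toy usage: an identity "decoder" and a smooth score that peaks at (1, 1).
toy_decode = lambda z: z
toy_score = lambda x: -float(np.sum((x - 1.0) ** 2))
z_best, f_best = latent_random_search(toy_decode, toy_score, np.zeros(2))
```

Because ( f ) is only queried, never differentiated, the same loop works with a docking score or a QSAR model as the oracle. Its weakness is that it learns nothing transferable between runs, which is what a learned policy adds.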

Proximal Policy Optimization (PPO)

PPO is a policy gradient algorithm renowned for its stability and sample efficiency in complex environments [28]. Its key innovation is a clipped surrogate objective function that prevents destructively large policy updates, maintaining a trust region without the computational expense of second-order optimization methods like its predecessor, TRPO [28].

The PPO objective function is:

$$L^{CLIP}(\theta) = \hat{\mathbb{E}}_t \left[ \min\left( r_t(\theta) \hat{A}_t, \text{clip}(r_t(\theta), 1-\epsilon, 1+\epsilon) \hat{A}_t \right) \right]$$

where ( r_t(\theta) = \frac{\pi_\theta(a_t \mid s_t)}{\pi_{\theta_{\text{old}}}(a_t \mid s_t)} ) is the probability ratio, ( \hat{A}_t ) is the estimated advantage at timestep ( t ), and ( \epsilon ) is a hyperparameter that clips the probability ratio, thus limiting the policy update [28]. This makes PPO particularly suited for optimizing in the continuous, high-dimensional latent spaces of generative models.
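The clipped surrogate objective translates directly into array operations. This NumPy sketch computes the sample estimate of ( L^{CLIP} ) for a batch. The function name and the default ( \epsilon = 0.2 ) are illustrative assumptions. A real training loop would minimize the negative of this value with automatic differentiation, and library implementations such as stable-baselines3 also add value-function and entropy terms.

```python
import numpy as np

def ppo_clip_objective(logp_new, logp_old, advantages, epsilon=0.2):
    """Sample estimate of L^CLIP(theta) over a batch of timesteps.

    logp_new:   log pi_theta(a_t | s_t) under the current policy
    logp_old:   log pi_theta_old(a_t | s_t) under the policy that collected the data
    advantages: advantage estimates A_hat_t
    """
    ratio = np.exp(np.asarray(logp_new, dtype=float) - np.asarray(logp_old, dtype=float))  # r_t(theta)
    advantages = np.asarray(advantages, dtype=float)
    unclipped = ratio * advantages
    clipped = np.clip(ratio, 1.0 - epsilon, 1.0 + epsilon) * advantages
    return float(np.mean(np.minimum(unclipped, clipped)))  # pessimistic (lower) bound

# A ratio of 1.5 on a positive advantage is capped at 1 + epsilon = 1.2.
gain = ppo_clip_objective(np.log([1.5]), np.log([1.0]), [1.0])
# A ratio of 0.5 on a negative advantage: min(-0.5, 0.8 * -1) = -0.8.
loss_side = ppo_clip_objective(np.log([0.5]), [0.0], [-1.0])
```

In both cases, once the ratio leaves ( [1-\epsilon, 1+\epsilon] ) in the direction the advantage favors, the clipped term is constant in ( \theta ). This removes any incentive to push the policy further in a single update.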

Integrated Framework: PPO for Latent Space Navigation

The MOLRL (Molecule Optimization with Latent Reinforcement Learning) framework exemplifies the synergy between PPO and autoencoders [4] [29]. In this paradigm:

- Agent: The PPO policy, which proposes new points in the latent space.

- Action: A step in the continuous latent space, defined as a vector ( \Delta z ).

- State: The current location in the latent space, represented by the latent vector ( z ).

- Reward: The score from a property prediction model (oracle) for the molecule decoded from the new latent vector ( z + \Delta z ) [4].

The PPO agent learns a policy for traversing the latent space, seeking regions that decode to molecules with optimized properties. This approach is architecture-agnostic, having been successfully paired with both Variational Autoencoders (VAEs) and Mutual Information Machine (MolMIM) autoencoders [4] [29].
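The agent, action, state, and reward definitions above can be collected into a small environment object. The class below is a hypothetical sketch, not the MOLRL code. `decode` and `oracle` are user-supplied callables. The fixed episode length, the cap on ( \lVert \Delta z \rVert ), and the zero reward for invalid decodes are all assumptions. It returns a simplified `(z, reward, done)` triple rather than the five-value tuple of the Gymnasium API, so it would need a thin wrapper before being handed to a library such as stable-baselines3.

```python
import numpy as np

class LatentSpaceEnv:
    """Latent-space MDP: state z, action delta_z, reward = oracle(decode(z + delta_z))."""

    def __init__(self, decode, oracle, z_init, max_steps=10, max_step_norm=1.0):
        self.decode = decode                # hypothetical: latent vector -> molecule, or None if invalid
        self.oracle = oracle                # hypothetical: molecule -> property score
        self.z_init = np.asarray(z_init, dtype=float)
        self.max_steps = max_steps          # assumption: fixed episode length
        self.max_step_norm = max_step_norm  # assumption: bound on the step size ||delta_z||
        self.z = self.z_init.copy()
        self.t = 0

    def reset(self):
        """Start a new episode from the initial latent vector z_0."""
        self.z = self.z_init.copy()
        self.t = 0
        return self.z.copy()

    def step(self, delta_z):
        """Apply z_{t+1} = z_t + delta_z, decode the new point, and score it."""
        delta_z = np.asarray(delta_z, dtype=float)
        norm = np.linalg.norm(delta_z)
        if norm > self.max_step_norm:       # rescale overly large moves
            delta_z = delta_z * (self.max_step_norm / norm)
        self.z = self.z + delta_z
        self.t += 1
        molecule = self.decode(self.z)
        # assumption: an invalid decode earns zero reward
        reward = float(self.oracle(molecule)) if molecule is not None else 0.0
        done = self.t >= self.max_steps
        return self.z.copy(), reward, done
```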

Essential Research Reagents and Computational Tools

Table 1: Key Research Reagents and Computational Tools for PPO-based Latent Space Optimization.

| Tool / Reagent | Type | Function in the Workflow | Exemplars & Notes |
|---|---|---|---|
| Generative Model | Software Model | Creates the continuous latent space for optimization; encodes and decodes molecules. | Variational Autoencoder (VAE) [4], MolMIM Autoencoder [4], Diffusion/Flow Matching models [26]. |
| Property Predictor (Oracle) | Software Model | Provides the reward signal by scoring generated molecules on target properties. | QSAR Model [7], Docking Software [30], Calculated Properties (e.g., QED, LogP) [4]. |
| Reinforcement Learning Library | Software Library | Provides the implementation of the PPO algorithm. | stable-baselines3 [28], other deep RL frameworks. |
| Chemical Database | Dataset | Pre-training the generative model and, optionally, the property predictor. | ZINC [4], ChEMBL [7]. |
| Cheminformatics Toolkit | Software Library | Handles molecular validation, feature calculation, and similarity assessment. | RDKit [4] (for validity checks and Tanimoto similarity). |

Detailed Experimental Protocol

The following diagram illustrates the end-to-end workflow for molecular optimization using PPO in an autoencoder's latent space.

Protocol Steps

Phase 1: Generative Model Preparation and Validation

- Model Selection and Pre-training:

- Select an autoencoder architecture (e.g., VAE, MolMIM). Train the model on a large, diverse chemical database (e.g., ZINC, ChEMBL) to learn meaningful molecular representations [4].

- Critical Validation: Before proceeding with LSO, the generative model must be rigorously validated on:

- Reconstruction Accuracy: The average Tanimoto similarity between original and reconstructed molecules should be high [4].

- Validity Rate: The percentage of randomly sampled latent vectors that decode to valid SMILES strings should be high (>90%) to ensure the RL agent does not waste steps on invalid states [4].

- Latent Space Continuity: Small perturbations in the latent space should lead to structurally similar molecules. This can be tested by adding Gaussian noise to latent vectors and measuring the decay in Tanimoto similarity of the decoded molecules [4].
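The three validation checks can be computed with a few helper functions. In this sketch, fingerprints are represented as sets of on-bit indices (in practice, for example, the on-bits of an RDKit Morgan/ECFP fingerprint). `encode`, `decode`, and `fingerprint` are hypothetical callables, and `decode` is assumed to return `None` for an invalid structure. Calling `continuity_curve` with a noise scale of 0 gives the reconstruction similarity, and larger scales trace the decay in similarity.

```python
import numpy as np

def tanimoto(bits_a, bits_b):
    """Tanimoto similarity of two fingerprints given as collections of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    if not a and not b:
        return 1.0  # two empty fingerprints are treated as identical
    return len(a & b) / len(a | b)

def validity_rate(decode, latent_samples):
    """Fraction of latent vectors that decode to a valid molecule (decode returns None otherwise)."""
    decoded = [decode(z) for z in latent_samples]
    return sum(m is not None for m in decoded) / len(decoded)

def continuity_curve(encode, decode, fingerprint, molecules, sigmas, seed=0):
    """Mean Tanimoto similarity to the original molecule after Gaussian noise of each scale.

    sigma = 0 measures reconstruction accuracy; a smooth latent space shows a gradual decay.
    """
    rng = np.random.default_rng(seed)
    curve = []
    for sigma in sigmas:
        sims = []
        for mol in molecules:
            z = np.asarray(encode(mol), dtype=float)
            noisy = decode(z + sigma * rng.standard_normal(z.shape))
            sims.append(tanimoto(fingerprint(mol), fingerprint(noisy)) if noisy is not None else 0.0)
        curve.append(float(np.mean(sims)))
    return curve
```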

Phase 2: PPO-based Latent Space Optimization

Problem Formulation:

- Define Objective: Formally define the objective function ( f(x) ) that scores a molecule ( x ). This can be a single property (e.g., penalized LogP) or a weighted sum of multiple properties [4] [27].

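- Initialize Agent: Initialize the PPO policy network. Its input dimension must match the dimensionality of the autoencoder's latent space. Because each action ( \Delta z ) is added to the current latent vector, its action output must have the same dimensionality.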
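A weighted-sum objective can be assembled from individual property scorers. The helper below is an illustrative sketch. The stand-in "properties" in the usage line are placeholders rather than real predictors. In practice, the individual properties are usually rescaled to comparable ranges before weighting so that no single term dominates.

```python
from typing import Callable, Sequence

def make_weighted_objective(property_fns: Sequence[Callable[[str], float]],
                            weights: Sequence[float]) -> Callable[[str], float]:
    """Build f(x) = sum_i w_i * p_i(x) from per-property scoring functions."""
    if len(property_fns) != len(weights):
        raise ValueError("expected one weight per property function")

    def objective(molecule: str) -> float:
        return sum(w * p(molecule) for p, w in zip(property_fns, weights))

    return objective

# Toy usage with placeholder "properties" computed on the SMILES string itself
# (stand-ins for real scorers such as QED, penalized LogP, or a QSAR model).
f = make_weighted_objective([len, lambda s: s.count("N")], [0.5, 2.0])
```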

Training Loop:

- For each training episode:

  - State Initialization: Start from an initial latent vector ( z_0 ), which can be random or the encoding of a starting molecule.

  - Action Selection: The PPO policy, given the current state ( z_t ), samples an action ( \Delta z_t ) (a step in the latent space).

  - State Transition: Apply the action to obtain a new latent vector: ( z_{t+1} = z_t + \Delta z_t ).

  - Decoding and Reward: Decode ( z_{t+1} ) into a molecule ( x_{t+1} ). Evaluate ( x_{t+1} ) using the oracle to obtain the reward ( r_{t+1} = f(x_{t+1}) ).

  - Policy Update: Store the transition ( (z_t, \Delta z_t, r_{t+1}, z_{t+1}) ). Use a batch of such transitions to update the PPO policy network by maximizing the clipped surrogate objective [4] [28].

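The episode loop above can be sketched as a rollout function plus a discounted-return computation that feeds the advantage estimate. This is a minimal sketch under several assumptions. The environment exposes `reset()` and `step(delta_z) -> (z, reward, done)`. `ToyEnv` is a hypothetical one-dimensional stand-in for a decoder plus oracle. Returns minus a mean baseline stand in for the generalized advantage estimation that PPO implementations commonly use. The clipped-objective update itself is omitted.

```python
import numpy as np

class ToyEnv:
    """Hypothetical stand-in: 1-D latent space, reward -|z - 2|, episodes of `horizon` steps."""

    def __init__(self, horizon=3):
        self.horizon = horizon
        self.z, self.t = np.zeros(1), 0

    def reset(self):
        self.z, self.t = np.zeros(1), 0
        return self.z.copy()

    def step(self, delta_z):
        self.z = self.z + delta_z              # z_{t+1} = z_t + delta_z_t
        self.t += 1
        reward = -abs(float(self.z[0]) - 2.0)  # toy oracle score of the decoded point
        return self.z.copy(), reward, self.t >= self.horizon

def rollout_episode(env, policy):
    """Run one episode; return visited states z_t, actions delta_z_t, and rewards r_{t+1}."""
    states, actions, rewards = [], [], []
    z, done = env.reset(), False
    while not done:
        delta_z = policy(z)                    # sample a step in latent space
        z_next, reward, done = env.step(delta_z)
        states.append(z)
        actions.append(delta_z)
        rewards.append(reward)
        z = z_next
    return states, actions, rewards

def discounted_returns(rewards, gamma=0.99):
    """Return-to-go G_t = r_{t+1} + gamma * G_{t+1}, computed backwards through the episode."""
    returns = np.zeros(len(rewards))
    running = 0.0
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns

# Usage: a fixed +1 step policy, then advantages as returns minus their mean (a crude baseline).
states, actions, rewards = rollout_episode(ToyEnv(), lambda z: np.ones(1))
returns = discounted_returns(rewards, gamma=0.5)
advantages = returns - returns.mean()
```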

Advanced Configuration for Sparse Rewards:

- In tasks like designing bioactive compounds, rewards can be sparse (most molecules are inactive). To improve learning, incorporate transfer learning, experience replay, and reward shaping [7].
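Experience replay, one of the three sparse-reward remedies reported for the EGFR case study [7], can be sketched as a buffer that retains the highest-scoring unique molecules for replay during fine-tuning. The class name, the top-k retention rule, and the uniform sampling are assumptions made for illustration, not the published implementation.

```python
import heapq
import random

class TopKReplayBuffer:
    """Keeps the `capacity` highest-scoring unique molecules seen so far."""

    def __init__(self, capacity=100):
        self.capacity = capacity
        self._heap = []     # min-heap of (score, smiles); the lowest score sits at index 0
        self._seen = set()  # SMILES currently stored, used to skip duplicates

    def add(self, smiles, score):
        if smiles in self._seen:
            return
        if len(self._heap) < self.capacity:
            heapq.heappush(self._heap, (score, smiles))
            self._seen.add(smiles)
        elif score > self._heap[0][0]:  # better than the worst stored molecule
            _, evicted = heapq.heapreplace(self._heap, (score, smiles))
            self._seen.discard(evicted)
            self._seen.add(smiles)

    def sample(self, n, rng=random):
        """Uniformly sample up to n stored (score, smiles) pairs for replay."""
        return rng.sample(self._heap, min(n, len(self._heap)))

    def __len__(self):
        return len(self._heap)
```

Replaying the rare high-scoring molecules alongside fresh samples keeps a positive learning signal in each batch even when almost every newly generated molecule is predicted inactive.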

Key Experimental Results and Benchmarks

The following tables summarize quantitative results from studies applying latent space optimization, including the MOLRL framework, to common molecular optimization tasks.

Table 2: Performance on Constrained Single-Property Optimization (pLogP Maximization).

| Method | Generative Model | Average pLogP Improvement ↑ | Key Achievement |
|---|---|---|---|
| MOLRL (PPO) [4] | VAE (Cyclical Annealing) | Comparable or superior to state-of-the-art | Effectively navigates the latent space under similarity constraints |
| MOLRL (PPO) [4] | MolMIM | Comparable or superior to state-of-the-art | Demonstrates the method's agnosticism to the underlying architecture |
| JT-VAE [4] | VAE | Baseline performance | A commonly cited benchmark in the field |

Table 3: Performance on Multi-Objective and Scaffold-Constrained Tasks.

| Task Type | Method | Performance Summary |
|---|---|---|
| Multi-Objective Optimization | Multi-Objective LSO [27] | Significantly improves the Pareto front for multiple properties (e.g., bioactivity and synthetic accessibility) via iterative weighted retraining. |
| Scaffold-Constrained Optimization | MOLRL (PPO) [4] [29] | Successfully generates molecules containing a pre-specified substructure while simultaneously optimizing for target molecular properties. |
| Bioactive Compound Design (Sparse Reward) | RL with Fine-Tuning [7] | Overcame sparse rewards using transfer learning, experience replay, and reward shaping, leading to experimentally validated EGFR inhibitors. |

Troubleshooting and Advanced Applications

Common Challenges and Solutions

- Challenge: Poor Quality or Invalid Generated Molecules

- Solution: Verify the validity and reconstruction rate of the pre-trained generative model. Techniques like cyclical annealing for VAEs can mitigate posterior collapse and improve latent space organization [4].

- Challenge: Unstable or Slow PPO Training

- Challenge: Handling Multiple, Competing Objectives

- Solution: Implement a multi-objective LSO approach. Use Pareto efficiency to rank molecules and guide the optimization process, or employ a weighted sum of objectives in the reward function [27].
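Pareto ranking can be implemented with a dominance test followed by repeated peeling of non-dominated fronts. This plain-Python sketch assumes every objective is to be maximized (negate any objective that should be minimized). The quadratic-time loop is fine for illustration but slow for large candidate pools.

```python
def dominates(a, b):
    """True if a is no worse than b on every objective and strictly better on at least one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_ranks(scores):
    """Rank 0 = non-dominated front; rank 1 = front after removing rank 0; and so on."""
    ranks = [0] * len(scores)
    remaining = set(range(len(scores)))
    rank = 0
    while remaining:
        front = {i for i in remaining
                 if not any(dominates(scores[j], scores[i]) for j in remaining if j != i)}
        for i in front:
            ranks[i] = rank
        remaining -= front
        rank += 1
    return ranks

# Made-up example: (predicted activity, synthesizability score), both higher-is-better.
candidate_scores = [(0.9, 0.2), (0.5, 0.5), (0.4, 0.4), (0.1, 0.9)]
ranks = pareto_ranks(candidate_scores)
```

Unlike a weighted sum, this ranking needs no choice of weights and keeps trade-off molecules such as (0.9, 0.2) and (0.1, 0.9) in the top front, although it does not by itself say which point on the front to prefer.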

Advanced Application: Activity Cliff-Aware Optimization

Activity cliffs, where small structural changes lead to large activity shifts, pose a significant challenge. The ACARL framework integrates an Activity Cliff Index (ACI) and a contrastive loss into the RL process [30]. This biases the PPO agent to explore regions of the latent space near known activity cliffs, potentially leading to more potent compounds.
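Activity cliffs can be flagged numerically by combining structural similarity with activity differences. The exact ACI used by ACARL is defined in [30] and is not reproduced here. As a hedged stand-in, this sketch uses the established Structure-Activity Landscape Index, ( \text{SALI}_{ij} = \frac{|A_i - A_j|}{1 - \text{sim}(i,j)} ), which grows when two similar molecules differ strongly in activity. The activities, similarity matrix, and threshold in the usage lines are made-up illustrative values.

```python
def sali(activity_i, activity_j, similarity, eps=1e-6):
    """Structure-Activity Landscape Index; eps avoids division by zero for identical structures."""
    return abs(activity_i - activity_j) / max(1.0 - similarity, eps)

def find_activity_cliffs(activities, similarity, threshold):
    """Return (i, j, SALI) for every pair whose SALI meets the threshold, largest first."""
    n = len(activities)
    cliffs = []
    for i in range(n):
        for j in range(i + 1, n):
            score = sali(activities[i], activities[j], similarity[i][j])
            if score >= threshold:
                cliffs.append((i, j, score))
    return sorted(cliffs, key=lambda c: c[2], reverse=True)

# Made-up example: pIC50 values and a Tanimoto similarity matrix for three compounds.
pic50 = [6.0, 8.5, 6.2]
sim = [[1.0, 0.9, 0.3],
       [0.9, 1.0, 0.35],
       [0.3, 0.35, 1.0]]
cliffs = find_activity_cliffs(pic50, sim, threshold=10.0)
```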

The integration of Proximal Policy Optimization with the latent spaces of autoencoder models represents a powerful and flexible paradigm for targeted molecular generation. This approach bypasses the need for explicit chemical rules by navigating a continuous representation of chemical space efficiently, relying on the decoder rather than hand-written rules to produce valid molecules. As demonstrated by the MOLRL framework and related methods, this technique performs comparably to or better than state-of-the-art methods on standard benchmarks such as constrained pLogP optimization. It is also readily adaptable to complex, real-world drug discovery tasks, including multi-property optimization and scaffold-constrained design. By providing detailed protocols and benchmarks, this application note equips researchers with the tools to implement and advance this promising methodology for generative molecular design.

Transformer-Based Generative Models Enhanced with Reinforcement Learning

The convergence of transformer-based generative models with reinforcement learning (RL) is forging new pathways in molecular optimization and generative design. This paradigm addresses a critical limitation of generative models trained solely with likelihood-based objectives: they are often misaligned with complex, real-world goals such as specific physicochemical properties or biological activity in drug candidates [31]. RL provides a principled framework for steering these powerful generative processes toward predefined, often multi-faceted, objectives.

In molecular design, this synergy allows researchers to reframe generative tasks as sequential decision-making problems. An agent learns to optimize a policy for generating molecular structures, receiving rewards based on the properties of the created molecules [4] [32]. Transformer architectures are particularly well-suited for this integration. Their attention mechanism excels at managing long-range dependencies and high-dimensional data, effectively tackling classic RL challenges like credit assignment and operating in partially observable environments [33]. This document details the practical application notes and experimental protocols for implementing these hybrid models in molecular optimization research.

Application Notes

Core Principles and Current Applications

The integration of RL with transformer-based generative models transforms the model from a passive generator into an active, goal-oriented agent. The transformer serves as the policy network, and its outputs are guided by reward signals derived from the properties of the generated molecules. This approach is particularly valuable in goal-directed molecular generation, where the objective is to discover molecules with optimized properties such as drug-likeness (QED), lipophilicity (LogP), or binding affinity [4] [32].
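With the transformer acting as the policy, the simplest way to turn rewards into a training signal is the REINFORCE policy-gradient loss. In this loss, each sampled sequence's log-likelihood is weighted by its reward minus a baseline. The NumPy sketch below only evaluates that loss for per-token log-probabilities that are assumed to come from the model. In a framework such as PyTorch, the same expression on tensors would be backpropagated. It is a generic illustration, not the specific objective of REINVENT or any other cited method.

```python
import numpy as np

def reinforce_loss(token_logprobs, rewards, baseline=None):
    """REINFORCE loss for autoregressively generated sequences (e.g. SMILES).

    token_logprobs: list of 1-D arrays, log pi(token_t | prefix) for each sampled sequence
    rewards:        one scalar reward per sequence (e.g. a property score)
    baseline:       variance-reduction baseline; defaults to the batch-mean reward
    """
    rewards = np.asarray(rewards, dtype=float)
    if baseline is None:
        baseline = rewards.mean()
    seq_logp = np.array([np.sum(lp) for lp in token_logprobs])  # log pi(whole sequence)
    # Minimizing this raises the likelihood of above-baseline sequences and lowers the rest.
    return float(-np.mean((rewards - baseline) * seq_logp))

# Two sampled sequences: the first scored 1.0, the second 0.0 (baseline = 0.5).
loss = reinforce_loss([np.array([-0.1, -0.2]), np.array([-1.0, -1.0])], [1.0, 0.0])
```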