Reducing Computational Cost in DFT Stability Calculations: 2025 Guide with Machine Learning & Best Practices


Abstract

Density Functional Theory (DFT) is a cornerstone of computational chemistry and materials science, but its high computational cost remains a major bottleneck for high-throughput screening and large-scale dynamic simulations, particularly in drug development and materials discovery. This article provides a comprehensive guide for researchers and scientists on modern strategies to drastically reduce this cost without sacrificing accuracy. We explore the foundational challenges of traditional DFT, detail cutting-edge methodological alternatives like machine-learned Neural Network Potentials (NNPs) and learned exchange-correlation functionals, and offer practical troubleshooting and optimization protocols for existing DFT workflows. Finally, we present a rigorous framework for validating and comparing the performance of these accelerated methods against gold-standard computational and experimental data, empowering professionals to make informed choices for their specific stability calculation needs.

Why is DFT So Expensive? Understanding the Bottlenecks in Stability Calculations

Density Functional Theory (DFT) is a pivotal computational method used across physics, chemistry, and materials science for studying the electronic structure of many-body systems. At its core lies the Kohn-Sham (KS) equation, which must be solved to determine the ground-state energy and electron density of a system. Despite its widespread use, a significant challenge limits its application: the substantial computational resources required to construct and solve the Kohn-Sham Hamiltonian [1]. The computational cost of traditional KS-DFT calculations typically scales as $O(N^3)$ to $O(N^4)$, where $N$ represents the number of electrons in the system [2] [1]. This polynomial scaling means that as researchers study larger and more complex systems (such as nanostructures, interfaces, or biological molecules), the computational time and memory requirements can become prohibitive, creating a major bottleneck in computational materials science and drug development [2].
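To make the scaling concrete, the following minimal sketch estimates how the cost grows when the system size increases. It assumes a pure power law with no prefactors or lower-order terms, and the function name `relative_cost` is illustrative rather than taken from any DFT package.

```python
def relative_cost(n_ref: float, n_new: float, exponent: float = 3.0) -> float:
    """Cost ratio when the system grows from n_ref to n_new under O(N^exponent)."""
    return (n_new / n_ref) ** exponent

# Doubling the system size under cubic scaling multiplies the cost by 8;
# a tenfold increase multiplies it by 1000.
print(relative_cost(100, 200))        # 8.0
print(relative_cost(100, 1000))       # 1000.0
print(relative_cost(100, 200, 4.0))   # 16.0
```

Real timings deviate from this idealization, since prefactors, parallel efficiency, and memory traffic all matter, but the estimate shows why a modest increase in system size can exhaust a compute budget.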

Frequently Asked Questions (FAQs)

Q1: Why do my DFT calculations slow down so sharply when I study larger molecular systems?

The slowdown is steep but polynomial rather than exponential, and it arises primarily from the mathematical operations involved in solving the Kohn-Sham equations. In conventional DFT implementations using atomic orbitals or plane-wave basis sets, the Hamiltonian matrix that must be constructed and diagonalized is dense, and the diagonalization step scales cubically with system size ($O(N^3)$) [2]. Additionally, the self-consistent field (SCF) procedure requires multiple iterations to achieve convergence, with each iteration involving this expensive matrix manipulation [1]. For systems containing hundreds to thousands of atoms, this combination of factors leads to dramatically increased computation times.

Q2: What are the main computational bottlenecks in a standard Kohn-Sham DFT calculation?

The primary bottlenecks occur in several key areas:

- Hamiltonian Construction: Building the Kohn-Sham Hamiltonian, which consists of kinetic energy, external potential, Hartree (Coulomb) potential, and exchange-correlation potential terms [1]

- Matrix Diagonalization: Solving the large eigenvalue problem to obtain Kohn-Sham orbitals and energies ($O(N^3)$ scaling) [2]

- SCF Convergence: The need for multiple iterations to achieve self-consistency between the electron density and the potential [3]

- Memory Requirements: Storage of large Hamiltonian, overlap, and density matrices that grow with system size [2]

Q3: Are there alternative DFT approaches that offer better computational scaling?

Yes, several advanced approaches address scaling limitations:

- Real-space KS-DFT: Discretizes the KS Hamiltonian directly on finite-difference grids in real space, producing sparse matrices that enable better parallelization [2]

- Orbital-free DFT (OF-DFT): Bypasses the Kohn-Sham orbitals entirely but requires accurate kinetic energy functionals [2]

- Linear Scaling DFT: Exploits the "nearsightedness" of electronic matter to achieve $O(N)$ scaling for insulating systems [2]

- Machine Learning Accelerations: Deep learning models can predict molecular Hamiltonians directly from atomic configurations, potentially bypassing expensive SCF iterations [1]

Troubleshooting Guides

Problem: Slow SCF Convergence

Symptoms:

- Self-consistent field iterations failing to converge within the default number of cycles

- Oscillating or divergent total energy during SCF cycles

- Extended computation time even for moderately sized systems

Solutions:

- Optimize Mixing Parameters: Implement Bayesian optimization algorithms to determine optimal charge mixing parameters, which can systematically reduce the number of SCF iterations required for convergence [3]

- Use Improved Initial Guess: Start from better initial electron densities, such as those from machine learning predictions or superposition of atomic densities

- Adjust Convergence Thresholds: Implement a multi-stage convergence strategy with looser thresholds initially, tightening as you approach self-consistency

Experimental Protocol: Bayesian Optimization for SCF Convergence

- Run preliminary calculations to establish baseline convergence behavior

- Define parameter space for charge mixing parameters (mixing mode, mixing amplitude, number of Kerker cycles)

- Set up Bayesian optimization with total energy convergence as the objective function

- Run optimization cycle across multiple systems to find robust parameter sets

- Validate optimized parameters on test systems not included in training

- Implement optimized parameters in production calculations [3]
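The effect of the mixing amplitude can be illustrated with a toy model. The sketch below is not the Bayesian optimization procedure of [3]: it uses a made-up linear "SCF map" and a plain grid scan as a stand-in for the optimizer, and all names (`M`, `c`, `scf_iterations`) are hypothetical.

```python
import numpy as np

# Toy linear "SCF map" F(rho) = M @ rho + c. The eigenvalue -1.5 makes the
# unmixed iteration diverge, loosely mimicking charge sloshing. Illustrative only.
M = np.diag([-1.5, 0.5])
c = np.array([1.0, 1.0])

def scf_iterations(alpha, tol=1e-8, max_iter=500):
    """Iterations needed with linear mixing rho <- rho + alpha * (F(rho) - rho).

    Returns None if the residual never drops below tol within max_iter.
    """
    rho = np.zeros(2)
    for iteration in range(1, max_iter + 1):
        residual = M @ rho + c - rho
        if np.linalg.norm(residual) < tol:
            return iteration
        rho = rho + alpha * residual
    return None

# Plain grid scan over the mixing amplitude (a stand-in for Bayesian optimization).
alphas = np.linspace(0.1, 1.0, 10)
counts = {round(float(a), 1): scf_iterations(a) for a in alphas}
converged = {a: n for a, n in counts.items() if n is not None}
best_alpha = min(converged, key=converged.get)
print(counts)
print("best mixing amplitude:", best_alpha)
```

In this toy model, large amplitudes fail to converge and very small ones converge slowly, so an intermediate value minimizes the iteration count. A Bayesian optimizer would search the same kind of landscape with fewer evaluations, which matters when each evaluation is a full DFT run.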

Problem: Memory Limitations for Large Systems

Symptoms:

- Calculation termination due to insufficient memory

- Inability to handle systems with more than 500 atoms

- Severe performance degradation due to memory swapping

Solutions:

- Switch to Real-space DFT: Implement finite-difference or finite-element discretization that produces sparse matrices instead of dense ones [2]

- Use Parallelization: Distribute computational load across multiple nodes using space-filling curves for efficient domain decomposition [2]

- Employ Linear-scaling Methods: Implement algorithms that exploit spatial locality of electronic structure [2]

Problem: Inaccurate Results with Smaller Grids

Symptoms:

- Energy differences sensitive to integration grid size

- Inconsistent forces during geometry optimization

- Poor comparison with experimental observables

Solutions:

- Use UltraFine Grids: Employ the UltraFine integration grid (or equivalent) as the default for production calculations [4]

- Perform Convergence Tests: Systematically test key properties (energy, forces) against grid size before production runs

- Maintain Consistency: Use identical grids for all calculations when comparing energies or computing energy differences [4]
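A convergence test of this kind can be automated with a few lines of code. The sketch below is a minimal illustration: the grid labels and energies are invented placeholder values, not results from any calculation, and `first_converged` is a hypothetical helper.

```python
def first_converged(settings, energies, tol):
    """Return the first setting whose energy differs from the next finer
    setting by less than tol (same units as energies), or None."""
    for i in range(len(settings) - 1):
        if abs(energies[i + 1] - energies[i]) < tol:
            return settings[i]
    return None

# Hypothetical total energies (eV) for increasingly fine grids; not real data.
grids = ["coarse", "medium", "fine", "ultrafine", "superfine"]
energies_ev = [-100.120, -100.310, -100.342, -100.3435, -100.3436]

print(first_converged(grids, energies_ev, tol=0.005))  # fine
```

The same helper applies to any convergence parameter (cutoff energy, k-point density, basis set size), provided the tolerance is chosen for the property of interest rather than for the total energy alone.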

Performance Comparison of Computational Approaches

Table 1: Comparison of DFT Methodologies and Their Computational Characteristics

| Method | Scaling / Cost Behavior | Key Features | Best Use Cases |
|---|---|---|---|
| Traditional KS-DFT (GGA) | $O(N^3)$ to $O(N^4)$ [2] [1] | Dense Hamiltonian matrix; Well-established | Small molecules (< 100 atoms) |
| Real-space KS-DFT | Better parallelization efficiency [2] | Sparse Hamiltonian; High parallelization | Large nanostructures (100-10,000 atoms) [2] |
| Machine Learning Hamiltonians | Reduced SCF iterations [1] | Direct Hamiltonian prediction; Physical constraints | Large molecular systems [1] |
| Orbital-free DFT | $O(N)$ [2] | No Kohn-Sham orbitals; Approximate kinetic energy | Very large metallic systems |

Table 2: Quantitative Performance Improvements of Advanced Methods

| Methodology | Performance Improvement | System Tested | Key Innovation |
|---|---|---|---|
| Real-space KS-DFT with parallelization | Simulation of 20 nm Si nanocluster (200,000+ atoms) using 8192 nodes [2] | Silicon nanoclusters | Finite-difference grids; Massive parallelization [2] |
| WALoss with Hamiltonian learning | 18% faster SCF convergence; 1347x reduction in total energy error [1] | Molecules (40-100 atoms) | Wavefunction Alignment Loss [1] |
| Bayesian optimized mixing | Reduced SCF iterations [3] | Various molecular systems | Systematic parameter optimization [3] |

Experimental Protocols

Protocol: Implementing Real-space KS-DFT for Large Systems

Objective: Utilize real-space discretization to enable DFT calculations for systems containing thousands of atoms [2].

Methodology:

- Domain Discretization: Represent the simulation domain using finite-difference grids instead of traditional plane-wave or atomic orbital basis sets

- Sparse Hamiltonian Construction: Build the Kohn-Sham Hamiltonian directly on the grid points, resulting in a sparse matrix structure

- Parallelization Strategy: Implement space-filling curves for efficient domain decomposition across multiple processors [2]

- Iterative Diagonalization: Use subspace filtering and Rayleigh-Ritz methods to solve the sparse eigenvalue problem efficiently [2]

- Poisson Equation Solution: Employ multigrid methods for efficient solution of the electrostatic potential [2]
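The sparsity that real-space discretization produces can be seen in a one-dimensional toy problem. The sketch below builds a second-order finite-difference kinetic-energy operator for a particle in a box; it is an illustration only, stored as a dense NumPy array for brevity (a production code would use a sparse format and an iterative eigensolver), and `kinetic_fd_1d` is a hypothetical helper.

```python
import numpy as np

def kinetic_fd_1d(n_points, length=1.0):
    """Second-order finite-difference matrix for -1/2 d^2/dx^2 on a 1D grid
    with zero (Dirichlet) boundary values, in atomic units."""
    h = length / (n_points + 1)
    main = np.full(n_points, 1.0 / h**2)
    off = np.full(n_points - 1, -0.5 / h**2)
    return np.diag(main) + np.diag(off, 1) + np.diag(off, -1)

H = kinetic_fd_1d(200)
fill = np.count_nonzero(H) / H.size          # fraction of nonzero entries
e0 = np.linalg.eigvalsh(H)[0]                # lowest eigenvalue
print(f"nonzero fraction: {fill:.4f}")
print(f"lowest eigenvalue: {e0:.4f} (analytic: {np.pi**2 / 2:.4f})")
```

Only the three central diagonals are nonzero, so the fill fraction shrinks as the grid is refined, while the lowest eigenvalue approaches the analytic particle-in-a-box value of $\pi^2/2$ in atomic units.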

Validation:

- Compare binding energies, electronic densities, and structural properties against conventional DFT for small systems

- Benchmark parallel scaling efficiency on target architecture

- Verify conservation of key physical invariants (total charge, virial theorem)

Protocol: Machine Learning Accelerated Hamiltonian Construction

Objective: Use deep learning models to predict Kohn-Sham Hamiltonians directly from atomic structures, reducing reliance on expensive SCF iterations [1].

Methodology:

- Dataset Generation: Create training set of molecular structures and corresponding Hamiltonians (e.g., PubChemQH dataset for molecules with 40-100 atoms) [1]

- Model Architecture: Implement SE(3)-equivariant neural network (e.g., WANet) using eSCN convolution and sparse mixture of experts [1]

- Loss Function: Employ Wavefunction Alignment Loss (WALoss) that aligns eigenspaces of predicted and ground-truth Hamiltonians [1]

- Training: Optimize model parameters to minimize WALoss while maintaining physical constraints

- Inference: Use predicted Hamiltonian as initial guess or direct replacement for conventional Hamiltonian construction

Validation Metrics:

- Total energy error relative to conventional DFT

- Molecular orbital energy differences

- HOMO-LUMO gap accuracy

- SCF convergence acceleration factor [1]
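Two of these metrics can be computed directly from a predicted and a reference Hamiltonian. The sketch below is a simplified illustration that assumes an orthonormal basis (overlap matrix equal to the identity); real atomic-orbital Hamiltonians require a generalized eigenvalue problem with the overlap matrix. The function name and the 4x4 matrices are hypothetical.

```python
import numpy as np

def hamiltonian_metrics(h_pred, h_ref, n_occupied):
    """Compare two symmetric Hamiltonian matrices in an orthonormal basis.

    Returns the mean absolute orbital-energy error and the absolute error in
    the HOMO-LUMO gap, both in the units of the input matrices.
    """
    eps_pred = np.linalg.eigvalsh(h_pred)   # ascending orbital energies
    eps_ref = np.linalg.eigvalsh(h_ref)
    orbital_mae = float(np.mean(np.abs(eps_pred - eps_ref)))
    gap_pred = eps_pred[n_occupied] - eps_pred[n_occupied - 1]
    gap_ref = eps_ref[n_occupied] - eps_ref[n_occupied - 1]
    return orbital_mae, float(abs(gap_pred - gap_ref))

# Hypothetical 4x4 example: a reference matrix and a uniformly shifted prediction.
h_ref = np.diag([-1.0, -0.5, 0.2, 0.8])
h_pred = h_ref + 0.01 * np.eye(4)           # shifts every level by 0.01
print(hamiltonian_metrics(h_pred, h_ref, n_occupied=2))
```

A uniform shift changes every orbital energy but leaves the gap untouched, which is why orbital-energy error and gap error are worth reporting separately.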

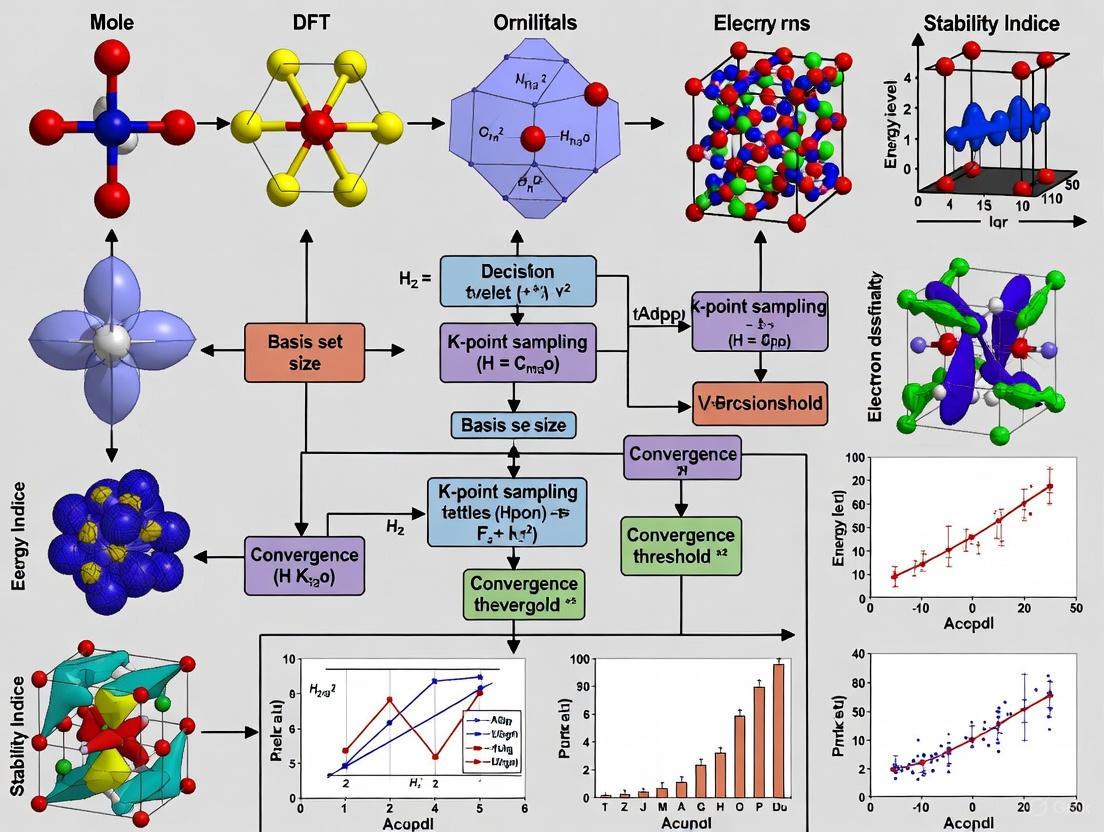

Computational Workflow Visualization

Computational Scaling Bottlenecks in KS-DFT

Research Reagent Solutions

Table 3: Computational Tools for Addressing Kohn-Sham Scaling Challenges

| Tool/Software | Function | Key Features for Scalability |
|---|---|---|
| Real-space DFT Codes (PARSEC, ARES, SPARC, OCTOPUS) | Large-scale electronic structure simulations | Sparse Hamiltonian representation; Massive parallelization capabilities [2] |
| Machine Learning Frameworks (PyTorch, TensorFlow) | Hamiltonian prediction and acceleration | SE(3)-equivariant networks; Wavefunction Alignment Loss [1] |
| Bayesian Optimization Libraries | Parameter optimization | Automated convergence optimization; Reduced SCF iterations [3] |
| Hybrid Functional Implementations (HSE06, ωB97XD) | Accurate electronic structure calculation | Balanced accuracy/computational cost; Range-separated hybrids [4] [5] |

Troubleshooting Guides and FAQs

FAQ: System Setup and Fundamental Costs

What are the primary factors that determine the computational cost of a DFT stability calculation?

The computational cost is primarily driven by three factors: the system size (number of electrons and atoms), the choice of the exchange-correlation functional (with more advanced functionals being more expensive), and the type of property being predicted. Ground-state energy calculations are considered "primary" properties and are less costly, while "secondary" properties like mechanical moduli or dynamic simulations require additional, more expensive computations [6] [7].

Why should I avoid using the popular B3LYP/6-31G* method combination?

Despite its historical popularity, the B3LYP/6-31G* combination is now considered outdated. It suffers from known inherent errors, including missing London dispersion effects and a strong basis set superposition error (BSSE). Today, more accurate, robust, and sometimes computationally cheaper composite methods are available, such as B3LYP-3c or r2SCAN-3c [7].

Is DFT a suitable method for all chemical systems?

No. DFT is highly effective for systems with a single-reference electronic structure, such as most diamagnetic closed-shell organic molecules. However, its performance can be poor for systems with significant multi-reference character, such as some radicals, systems with low band gaps, or strongly correlated systems. For these, more advanced wavefunction-theory-based approaches may be necessary [7].

FAQ: Managing Computational Expense

How can I accurately model intermolecular interactions like van der Waals forces without excessive cost?

Standard DFT functionals often fail to describe long-range van der Waals (dispersion) forces correctly. The recommended practice is to use dispersion-corrected DFT. This involves adding an empirical dispersion correction to the exchange-correlation functional, which significantly improves the accuracy for systems dominated by or competing with dispersion interactions, such as biomolecules or noble gas atoms [8] [9].

My project involves predicting mechanical properties. What specific challenges should I anticipate?

Predicting mechanical properties like elastic constants (Young's modulus, shear modulus) is more costly than calculating formation energies. These are "secondary properties" that require additional calculations involving applied perturbations (e.g., structural strain) to probe the material's response. This process is computationally intensive, which is why such data is scarcer in public databases [6] [9].

What are my options for studying very large systems or performing high-throughput screening?

For large systems or high-throughput studies, consider these strategies:

- Multi-level Approaches: Use a cheaper but robust method for initial screening or geometry optimizations, and a more accurate (and expensive) method for final single-point energy calculations [7].

- Machine Learning (ML): Train ML models on existing DFT databases to predict properties like formation energy or stability instantly, bypassing direct DFT calculations for initial screening [10] [6].

- Orbital-Free DFT (OFDFT): A less widely used approach that stays closer to the original Hohenberg-Kohn (HK) theorems. It uses approximate functionals for the kinetic energy instead of Kohn-Sham orbitals, which can reduce cost for large systems [8].

Troubleshooting Guide: Common Problems and Solutions

| Problem | Possible Cause | Solution |
|---|---|---|
| Inaccurate intermolecular interaction energies | Lack of proper dispersion correction [8]. | Employ a dispersion-corrected functional (e.g., DFT-D3) [9]. |
| Calculation is too slow for a large system | Use of a high-level functional/basis set is computationally prohibitive. | Implement a multi-level protocol: use a cost-effective composite method (e.g., r2SCAN-3c) for pre-optimization, then a higher-level method for final energy [7]. |
| Lack of thermodynamic stability data for screening | Energy above convex hull (E$_Hull$) calculations require competing phase data and are computationally intensive [6] [10]. | Use a composition-based machine learning model (e.g., ECSG, Roost) trained on large materials databases for rapid preliminary stability assessment [10]. |
| Predicted band gaps are inaccurate | Well-known limitation of standard DFT functionals (band gap problem) [8]. | Use more advanced functionals (e.g., hybrid functionals) or many-body perturbation theory (GW), though these are more computationally expensive. |
| System has suspected multi-reference character | Standard DFT is not designed for biradicals, some transition states, or strongly correlated systems [7]. | Check for low-lying triplet states using an unrestricted broken-symmetry DFT calculation. For confirmed multi-reference cases, switch to wavefunction-based methods. |

Experimental Protocols and Workflows

General Decision Workflow for DFT Calculations

The following diagram outlines a general decision tree for setting up a computational chemistry project, from defining the chemical problem to selecting the appropriate electronic structure method.

Protocol 1: Calculating Thermodynamic Stability with DFT and ML

Aim: To determine the thermodynamic stability of a compound by computing its energy above the convex hull (E$_Hull$).

- Define the Chemical Space: Identify all known and competing phases in the relevant chemical phase diagram [10].

- Geometry Optimization: For the target compound and all competing phases, perform a DFT calculation to relax the atomic coordinates and cell parameters until the ground-state geometry and energy are found [8].

- Calculate Formation Energies: Compute the formation energy (E$_f$) for each compound from its elemental constituents.

- Construct the Convex Hull: Plot the formation energies of all compounds against composition. The convex hull is the set of points connecting the most stable phases at each composition [10].

- Determine E$_Hull$: The energy above the convex hull for a compound is the vertical energy difference between its E$_f$ and the hull. A value of 0 eV/atom indicates thermodynamic stability [6].

- (Optional) ML Screening: For high-throughput discovery, use a pre-trained ML model (e.g., ECSG framework) to predict E$_Hull$ directly from composition or structure, bypassing steps 2-5 for initial screening [10].
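The hull construction and the energy-above-hull step can be sketched for a binary system in a few lines of pure Python. This is a minimal illustration under stated assumptions: the phases and formation energies are invented, the function names are hypothetical, and multi-component systems need a general convex hull routine rather than this two-dimensional one.

```python
def lower_hull(points):
    """Lower convex hull of (composition, formation_energy) points."""
    lowest = {}
    for x, e in points:                      # keep the lowest energy per composition
        lowest[x] = min(e, lowest.get(x, e))
    hull = []
    for p in sorted(lowest.items()):
        # Pop the previous vertex while it lies on or above the new edge.
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull

def _cross(o, a, b):
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def energy_above_hull(x, e_form, hull):
    """Vertical distance from (x, e_form) to the hull, by linear interpolation."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            e_hull = e0 + (e1 - e0) * (x - x0) / (x1 - x0)
            return e_form - e_hull
    raise ValueError("composition outside the hull range")

# Hypothetical binary A-B system: x is the fraction of B, energies in eV/atom.
phases = [(0.0, 0.0), (0.25, -0.30), (0.5, -1.00), (1.0, 0.0)]
hull = lower_hull(phases)
print(hull)
print(energy_above_hull(0.25, -0.30, hull))
print(energy_above_hull(0.5, -1.00, hull))
```

In this made-up example the phase at x = 0.25 lies above the tie line between pure A and the x = 0.5 compound, so it has a positive energy above the hull and is predicted to decompose, while the x = 0.5 phase sits on the hull.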

Protocol 2: A Multi-Level Approach for Cost-Effective Geometry and Energy Calculation

Aim: To balance accuracy and computational cost for systems with 50-100 atoms or many conformers.

- Initial Geometry Optimization: Use a computationally efficient composite method (e.g., r2SCAN-3c or B97M-V/def2-SVPD with empirical corrections) to obtain a reasonable molecular structure [7].

- High-Level Single-Point Energy Calculation: Using the optimized geometry from step 1, perform a more accurate (and expensive) single-point energy calculation with a higher-level functional (e.g., a hybrid functional like ωB97M-V) and a larger basis set (e.g., def2-QZVP). This provides a more reliable final energy [7].

- Frequency Calculation (if needed): To confirm the structure is a minimum and to compute thermodynamic corrections, a frequency calculation can be performed. For large systems, this can be done at the lower level of theory used in step 1 to save resources [7].
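For the many-conformer case, the multi-level idea amounts to a funnel: rank everything cheaply, then spend the expensive method only on the shortlist. The sketch below is a hedged illustration in which lookup tables stand in for real low-level and high-level calculations; the conformer names, energies, and the `multilevel_ranking` helper are all hypothetical.

```python
def multilevel_ranking(candidates, cheap_energy, accurate_energy, window):
    """Rank candidates with a cheap method, then re-evaluate only those within
    `window` of the cheap minimum using the accurate method."""
    cheap = {c: cheap_energy(c) for c in candidates}
    e_min = min(cheap.values())
    shortlist = [c for c in candidates if cheap[c] - e_min <= window]
    refined = {c: accurate_energy(c) for c in shortlist}
    return sorted(refined.items(), key=lambda item: item[1])

# Hypothetical conformer energies (kcal/mol) standing in for real calculations.
cheap_table = {"conf_a": 0.0, "conf_b": 1.2, "conf_c": 6.5, "conf_d": 2.8}
accurate_table = {"conf_a": 0.4, "conf_b": 0.0, "conf_c": 5.9, "conf_d": 3.1}

ranking = multilevel_ranking(
    list(cheap_table), cheap_table.get, accurate_table.get, window=3.0
)
print(ranking)  # conf_c is never sent to the expensive method
```

The energy window is a judgment call: it should be wide enough to cover the expected error of the cheap method, since a conformer discarded at the first stage is never reconsidered.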

The Scientist's Toolkit: Research Reagent Solutions

Key Computational Models and Functionals

| Item Name | Function / Application | Key Consideration |
|---|---|---|
| Kohn-Sham DFT (KS DFT) | The most common DFT framework. Reduces the many-electron problem to a system of non-interacting electrons moving in an effective potential [8]. | The accuracy depends heavily on the approximation used for the exchange-correlation functional. |
| Hybrid Functionals | A class of functionals (e.g., B3LYP) that mix a portion of exact Hartree-Fock exchange with DFT exchange-correlation. Generally more accurate but more expensive than pure DFT functionals [7]. | Recommended for more accurate thermochemistry but requires more computational resources. |
| Composite Methods | Methods (e.g., r2SCAN-3c, B3LYP-3c) that combine a functional with a specific basis set and empirical corrections to correct for systematic errors like dispersion and BSSE [7]. | Offer excellent accuracy-to-cost ratios, often outperforming outdated popular choices like B3LYP/6-31G*. |
| Dispersion Corrections | Add-on terms (e.g., DFT-D3, D4) that account for long-range van der Waals interactions, which are poorly described by standard functionals [8] [9]. | Essential for modeling molecular crystals, supramolecular systems, and any system where dispersion is significant. |
| Machine Learning (ML) Models | Surrogate models (e.g., CrysCo, ECSG, Roost) trained on DFT databases to predict material properties directly from composition or structure [6] [10]. | Drastically reduces computational cost for high-throughput screening; performance depends on the quality and size of training data. |

Workflow for a Hybrid ML-DFT Materials Discovery Pipeline

This diagram illustrates how machine learning can be integrated with DFT to create an efficient, multi-stage pipeline for discovering new materials with desired properties.

Frequently Asked Questions (FAQs)

1. What is the fundamental trade-off between accuracy and speed in molecular simulations?

The core trade-off is between the high accuracy but low computational speed of quantum mechanical methods like Density Functional Theory (DFT) and the high speed but lower accuracy of classical force fields. DFT provides quantum-level accuracy but its high computational cost limits the accessible system sizes and simulation timescales. Classical force fields enable larger and longer simulations but often struggle to accurately describe complex interactions, such as bond formation and breaking, without extensive, system-specific parameterization [11] [12].

2. Why do my simulations of chemical reactions or high-energy materials yield inaccurate results with classical force fields?

Classical force fields often use fixed bond connections and pre-defined parameters, making them inherently unsuitable for simulating processes where chemical bonds are formed or broken. While reactive force fields (ReaxFF) exist, they may still exhibit "significant deviations" from DFT-level accuracy and require complex parameterization for new systems. This is particularly critical for high-energy materials, where inaccuracies in describing reaction potential energy surfaces can lead to wrong predictions of material stability and decomposition mechanisms [12].

3. My molecular dynamics simulations are too slow to reach biologically relevant timescales. What is the bottleneck?

The primary bottleneck is the requirement for small integration time steps (femtoseconds) to maintain numerical stability in traditional Molecular Dynamics (MD). Atomic forces must be computed at every step, which is computationally expensive even with classical force fields. This fundamentally limits the physical timescales that can be practically simulated [11].
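A quick estimate shows the size of the problem. Assuming, for illustration, a 1 fs time step and a target trajectory of 1 µs (both values are example choices, not figures from the cited work), the number of force evaluations is:

```latex
N_{\text{steps}} = \frac{t_{\text{total}}}{\Delta t}
= \frac{1\ \mu\text{s}}{1\ \text{fs}}
= \frac{10^{-6}\ \text{s}}{10^{-15}\ \text{s}}
= 10^{9}
```

Each of those billion steps requires a full force evaluation, so the per-step cost of the method (DFT, classical force field, or neural network potential) sets the reachable timescale.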

4. How can I improve the accuracy of my force field without making simulations prohibitively expensive?

Traditional force-field parameter optimization is itself slow, because each candidate parameter set is typically evaluated by running time-consuming MD simulations. These repeated simulations are the main bottleneck, and replacing them with a machine learning surrogate model can shorten the optimization considerably [13].

Troubleshooting Guides

Issue: Slow Convergence in DFT Self-Consistent Field (SCF) Calculations

Problem: DFT calculations, while cheaper than some quantum methods, still require considerable computational power. A major contributor to this cost is the number of self-consistent field (SCF) iterations needed to achieve electronic convergence [3].

Solution:

- Action: Optimize charge mixing parameters instead of using default values.

- Protocol: Implement a data-efficient Bayesian optimization algorithm to find the optimal charge mixing parameters for your specific system.

- Expected Outcome: This can significantly reduce the number of SCF iterations required for convergence, leading to faster DFT simulations without sacrificing accuracy [3].

- Verification: This optimization procedure should become a standard part of convergence testing, alongside traditional cutoff-energy and k-point convergence tests [3].

Issue: Inaccurate Force Field for Predicting Material Properties

Problem: A classical force field fails to reproduce key experimental properties, such as elastic constants or lattice parameters, or shows poor transferability to systems not included in its parameterization.

Solution:

- Action: Adopt a machine learning (ML)-driven force field parameter optimization strategy.

- Protocol: Substitute the most time-consuming part of the optimization—the MD simulations—with a machine learning surrogate model.

- Implementation Details:

- Acquire training data by running a subset of MD simulations across the parameter space.

- Train a neural network surrogate model to predict target properties (e.g., conformational energies, bulk-phase density) from force field parameters.

- Use this fast surrogate model to guide the optimization process.

- Expected Outcome: This workflow can reduce the required optimization time by a factor of approximately 20 while producing force fields of similar quality [13].

Issue: Need for Quantum Accuracy in Large-Scale or Long-Timescale MD

Problem: Your research requires the accuracy of quantum methods (DFT) for simulating reactive processes or complex material behaviors, but the system size or simulation timeframe makes this computationally infeasible.

Solution:

- Action: Utilize a general neural network potential (NNP) trained on DFT data.

- Protocol: The EMFF-2025 model is an example of a general NNP for C, H, N, O systems. It uses a transfer learning strategy, building upon a pre-trained model (DP-CHNO-2024) and incorporating minimal new DFT data via the DP-GEN framework.

- Validation: The model should achieve DFT-level accuracy, with mean absolute errors (MAE) for energy within ± 0.1 eV/atom and forces within ± 2 eV/Å [12].

- Application: Such a model can accurately predict crystal structures, mechanical properties, and thermal decomposition behaviors of complex materials like high-energy materials at a fraction of the computational cost of direct DFT-MD [12].
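The validation step reduces to computing mean absolute errors between NNP predictions and DFT reference values. The sketch below shows the calculation on a few invented numbers; the arrays are placeholders rather than EMFF-2025 results, and `mean_absolute_error` is a hypothetical helper.

```python
import numpy as np

def mean_absolute_error(predicted, reference):
    """MAE over all array elements."""
    return float(np.mean(np.abs(np.asarray(predicted) - np.asarray(reference))))

# Hypothetical values: energies in eV/atom, force components in eV/Å.
e_ref = np.array([-5.10, -5.32, -4.98])
e_nnp = np.array([-5.08, -5.35, -5.00])
f_ref = np.array([[0.10, -0.20, 0.05], [0.00, 0.30, -0.15]])
f_nnp = np.array([[0.12, -0.18, 0.02], [0.03, 0.27, -0.10]])

print("energy MAE:", mean_absolute_error(e_nnp, e_ref))
print("force MAE:", mean_absolute_error(f_nnp, f_ref))
```

Energies are normalized per atom before comparison so that systems of different sizes can share one threshold, while force errors are averaged over all Cartesian components.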

Experimental Protocols & Workflows

Protocol 1: Fused Data Training for High-Accuracy Machine Learning Potentials

This protocol outlines a method to create a highly accurate ML potential by combining data from DFT calculations and experimental measurements, correcting for inherent DFT inaccuracies [14].

DFT Database Generation:

- Perform DFT calculations on a diverse set of atomic configurations (e.g., equilibrated, strained, and randomly perturbed structures for different phases).

- The target outputs are energy, forces, and virial stress for each configuration. A typical database may contain thousands of samples [14].

Experimental Data Collection:

- Gather target experimental properties. For a titanium model, this included temperature-dependent elastic constants and lattice parameters of the hcp phase across a temperature range (e.g., 4-973 K) [14].

Model Training with Alternating Trainers:

- Step A - DFT Trainer: For one epoch, modify the ML potential's parameters (θ) to match the predicted energies, forces, and virial stress with the target DFT values from the database.

- Step B - EXP Trainer: For one epoch, optimize parameters (θ) so that properties (e.g., elastic constants) computed from ML-driven MD simulations match the experimental values. Use methods like Differentiable Trajectory Reweighting (DiffTRe) to compute gradients without backpropagating through the entire simulation [14].

- Iterate between Step A and Step B until convergence.

This fused approach results in an ML potential that faithfully reproduces both the DFT training data and key experimental observables [14].
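The alternating structure of the two trainers can be shown with a deliberately trivial model. The sketch below uses a single parameter and two quadratic toy losses in place of the DFT and experimental objectives; it does not implement DiffTRe or any real potential, and every name and number in it is a hypothetical stand-in.

```python
def grad_dft_loss(theta, dft_target=1.0):
    """Gradient of a toy bottom-up loss (theta - dft_target)^2."""
    return 2.0 * (theta - dft_target)

def grad_exp_loss(theta, exp_target=2.0):
    """Gradient of a toy top-down loss (theta - exp_target)^2."""
    return 2.0 * (theta - exp_target)

theta, lr = 0.0, 0.1
for epoch in range(100):
    theta -= lr * grad_dft_loss(theta)   # Step A: one "epoch" on DFT targets
    theta -= lr * grad_exp_loss(theta)   # Step B: one "epoch" on experimental targets

print(theta)  # settles between the two targets
```

When the two data sources disagree, alternating updates settle on a compromise between them, which is the behavior the fused protocol relies on to correct DFT inaccuracies without discarding the DFT data.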

Protocol 2: Accelerating Force Field Parameter Optimization with a Surrogate Model

This protocol details how to speed up the multi-scale optimization of force-field parameters, specifically Lennard-Jones parameters for carbon and hydrogen [13].

Training Data Acquisition:

- Define the parameter space for the force field parameters you wish to optimize.

- Run a set of traditional MD simulations across this parameter space to compute your target properties (e.g., n-octane's relative conformational energies and its bulk-phase density).

Data Preparation and Model Selection:

- Prepare the data: the inputs are the force field parameters, and the outputs are the resulting properties from the MD simulations.

- Select and train a machine learning model (e.g., a neural network) to act as a surrogate. This model will learn the mapping from parameters to properties.

Gradient-Based Optimization:

- Substitute the slow MD simulations with the fast ML surrogate model in the optimization loop.

- Use a gradient-based optimizer to find the parameter set that minimizes the difference between the surrogate-predicted properties and the target properties.

Validation:

- Run a final MD simulation using the optimized parameters to confirm that the target properties are reproduced as expected.
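The four stages above can be sketched end to end on a toy problem. In this illustration a simple analytic function stands in for the MD simulation and a quadratic least-squares fit stands in for the neural network surrogate; both substitutions, and all names and numbers, are assumptions made for brevity rather than the setup used in [13].

```python
import numpy as np

def expensive_simulation(p):
    """Stand-in for an MD run mapping a parameter to a property (toy function)."""
    return 0.5 * p**2 + 2.0 * p

# 1. Training data: a handful of "simulations" across the parameter range.
p_train = np.linspace(0.0, 4.0, 9)
y_train = expensive_simulation(p_train)

# 2. Surrogate: quadratic least-squares fit (in place of a neural network).
surrogate = np.poly1d(np.polyfit(p_train, y_train, deg=2))
surrogate_grad = surrogate.deriv()

# 3. Gradient descent on the squared mismatch to the target, using only the surrogate.
target = 6.0
p = 1.0
for _ in range(500):
    mismatch = surrogate(p) - target
    p -= 0.01 * 2.0 * mismatch * surrogate_grad(p)

# 4. Validation: one final "simulation" at the optimized parameter.
print(p, expensive_simulation(p))
```

The optimization loop calls only the surrogate, so its cost is negligible next to the nine training "simulations" and the single validation run. The final check matters because a surrogate can be inaccurate away from its training points.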

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and methods discussed in this guide.

| Research Reagent / Method | Function / Description |
|---|---|
| Density Functional Theory (DFT) | A quantum mechanical method for electronic structure calculations. Provides high accuracy for energy and forces but is computationally expensive for large systems [3] [12] [15]. |
| Classical Force Fields | Empirical potentials that compute atomic interactions using pre-defined functional forms and parameters. Fast but can lack accuracy and transferability, especially for reactive systems [12]. |
| Reactive Force Fields (ReaxFF) | A class of force fields that can model bond formation and breaking. More versatile than classical FFs but may still have accuracy limitations compared to DFT [12]. |
| Neural Network Potentials (NNPs) | Machine learning models trained on quantum mechanical data that can achieve near-DFT accuracy with much lower computational cost during simulation [12] [14]. |
| Bayesian Optimization | A data-efficient algorithm for global optimization. Used to find optimal simulation parameters (e.g., charge mixing in DFT) to accelerate convergence [3]. |
| Differentiable Trajectory Reweighting (DiffTRe) | A method that enables training ML potentials directly on experimental data without backpropagating through the entire MD simulation, making top-down learning feasible [14]. |
| DP-GEN (Deep Potential Generator) | An active learning framework for generating training datasets and building accurate neural network potentials in a robust and automated manner [12]. |

Workflow Diagrams

[Figure: Traditional vs. Modern Simulation Trade-offs]

[Figure: Machine Learning Potential Development Workflow]

[Figure: Fused Data Training Protocol]

Frequently Asked Questions (FAQs)

1. What is 'chemical accuracy' and why is it a 1 kcal/mol target?

Chemical accuracy is the ability of computational methods to calculate thermochemical properties, such as enthalpies of formation, to within 1 kilocalorie per mole (kcal/mol) (approximately 4 kJ/mol) of experimentally determined values [16]. This specific threshold was established as a pragmatic goal by pioneers like John Pople, who recognized that for computational chemistry to be a truly predictive tool, it needed to match the typical uncertainty of experimental thermochemical measurements [16].

2. Why is achieving chemical accuracy so important for computational chemistry?

Reaching this accuracy threshold signifies a shift from qualitative modeling to quantitative prediction [16]. It allows computational simulations to reliably predict experimental outcomes, which can dramatically accelerate the design of new molecules and materials (from drugs to batteries) by reducing the reliance on costly and time-consuming laboratory trial-and-error [17] [16]. At room temperature, a 1.4 kcal/mol difference translates to about a 10-fold change in equilibrium or rate constants, making the 1 kcal/mol target directly relevant to predicting chemical behavior [16].
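The 10-fold figure follows from the exponential dependence of equilibrium and rate constants on free energy. As a short worked check, taking room temperature as 298.15 K:

```latex
\frac{K_2}{K_1} = \exp\!\left(-\frac{\Delta\Delta G}{RT}\right),
\qquad
RT \ln 10
= \left(1.987\times10^{-3}\ \tfrac{\text{kcal}}{\text{mol K}}\right)(298.15\ \text{K})(2.303)
\approx 1.36\ \text{kcal/mol}
```

A free-energy difference of roughly 1.4 kcal/mol therefore changes the ratio by one order of magnitude, and an error of that size in a computed barrier or reaction energy shifts the predicted constant by the same factor.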

3. My DFT calculations are not converging. What are the common causes? Non-convergence in DFT simulations is a frequent issue. Here are the most common culprits and their solutions:

- Incorrect SCF Parameters: The self-consistent field (SCF) iteration process may fail to converge due to suboptimal charge-mixing parameters. Using data-efficient algorithms like Bayesian optimization can systematically optimize these parameters and reduce the number of SCF steps required [3].

- Problematic Input Data: The presence of missing values in your input data can cause immediate failures in the underlying R code or other computational engines. Always use data investigation tools to summarize your data and filter out or replace missing values before calculation [18].

- Insufficient System Resources: Large-scale DFT simulations require considerable computational power. Ensure you have allocated enough memory and processing time, especially when increasing system size or using more complex functionals [17] [3].
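To illustrate why the mixing parameter matters, the sketch below applies linear mixing to a toy fixed-point problem. The map g(x) = 4·exp(-x) is only a stand-in for the density update of a real SCF cycle, and the coarse grid scan is a simplification of the Bayesian optimization described in [3]; all names and values are assumptions.

```python
import math

def scf_iterations(alpha, x0=1.0, tol=1e-8, max_iter=200):
    """Count iterations of linear mixing x <- x + alpha * (g(x) - x).

    g(x) = 4 * exp(-x) is a toy stand-in for an SCF density update.
    Returns the iteration count, or None if the residual never drops below tol.
    """
    x = x0
    for iteration in range(1, max_iter + 1):
        residual = 4.0 * math.exp(-x) - x
        if abs(residual) < tol:
            return iteration
        x += alpha * residual
    return None

# Coarse scan over mixing parameters (a simple substitute for Bayesian optimization).
candidates = [0.1, 0.3, 0.5, 0.7, 1.0]
results = {alpha: scf_iterations(alpha) for alpha in candidates}
converged = {alpha: n for alpha, n in results.items() if n is not None}
best_alpha = min(converged, key=converged.get)

for alpha, n in results.items():
    print(f"alpha={alpha}: {'no convergence' if n is None else f'{n} iterations'}")
print("best mixing parameter in this scan:", best_alpha)
```

In this toy problem, full mixing (alpha = 1.0) oscillates without converging and very small mixing converges slowly, which mirrors the behavior that motivates tuning the parameter.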

4. How can I reduce the computational cost of my DFT stability calculations?

- Optimize Convergence Parameters: As mentioned, optimizing charge-mixing parameters via Bayesian optimization can significantly reduce the number of SCF iterations, leading to direct time savings [3].

- Leverage Machine-Learned Models: For extensive molecular dynamics simulations, consider Neural Network Potentials (NNPs) such as EMFF-2025. These models are trained on high-accuracy DFT data and can achieve DFT-level accuracy for properties like structure and mechanical stability at a fraction of the computational cost [12]. Where the accuracy of the functional itself is the limiting factor, a learned exchange-correlation functional such as Skala is an alternative that stays within the DFT framework [17].

- Adopt a Systematic Workflow: Perform standard convergence tests (e.g., for cutoff energy and k-points) to avoid using unnecessarily high computational settings that do not improve your result [3].
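A convergence test of this kind can be sketched as a loop that stops at the first setting whose energy changes by less than a tolerance when the setting is refined. The energy function below is a hypothetical mock; in practice it would launch a single-point DFT calculation at the given cutoff.

```python
import math

def converge_parameter(energy_fn, values, tol=1e-3):
    """Return the first value whose energy differs from the next-finer value by less than tol."""
    previous_value = values[0]
    previous_energy = energy_fn(previous_value)
    for value in values[1:]:
        energy = energy_fn(value)
        if abs(energy - previous_energy) < tol:
            return previous_value
        previous_value, previous_energy = value, energy
    return None  # not converged within the tested range

def mock_energy(cutoff_ev):
    """Hypothetical energy (eV/atom) that converges exponentially with cutoff."""
    return -5.0 + 2.0 * math.exp(-cutoff_ev / 60.0)

cutoffs = list(range(200, 650, 50))  # 200, 250, ..., 600 eV
chosen_cutoff = converge_parameter(mock_energy, cutoffs, tol=1e-3)
print("converged cutoff (eV):", chosen_cutoff)
```

The same loop applies to k-point meshes by passing a list of mesh densities instead of cutoffs.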

5. What is the fundamental challenge preventing DFT from achieving chemical accuracy? The fundamental bottleneck is the exchange-correlation (XC) functional [17]. In DFT, the many-electron Schrödinger equation is reformulated to be computationally tractable, but this introduces a universal term called the XC functional, for which the exact form is unknown [17]. For decades, scientists have relied on hundreds of different approximations for this functional, but their limited accuracy (with errors typically 3 to 30 times larger than the 1 kcal/mol target) has prevented DFT from being a fully predictive tool [17].

Troubleshooting Guide: Common DFT Error Messages

| Error Message / Symptom | Likely Cause | Solution |

|---|---|---|

| SCF convergence failure | Suboptimal charge mixing parameters; insufficient SCF iterations [3]. | Use Bayesian optimization to find better mixing parameters; increase the maximum SCF steps [3]. |

| "Missing values in object" (R-based tools) | Input data contains NA or blank values [18]. | Run a data summary tool to identify fields with missing data. Use a Filter or Formula tool to remove or impute these values [18]. |

| "Estimation and validation samples exceed 100%" | The sample sizes for model estimation and validation are set to sum to more than 100% of the available data [18]. | Adjust the sample settings so that the estimation and validation percentages sum to 100% [18]. |

| High computational cost for large systems | Using standard DFT on large molecules or long time-scale MD simulations [17] [12]. | Switch to a machine-learned potential like an NNP that has been trained for your chemical system, offering near-DFT accuracy with lower cost [17] [12]. |

| Low predictive accuracy vs. experiment | Using an XC functional with inherent inaccuracies for your specific chemical property [17]. | Adopt a next-generation, deep-learning-based XC functional like Skala, which is designed to learn the functional directly from high-accuracy data and reach chemical accuracy [17]. |

Experimental Protocols for High-Accuracy Computation

Protocol 1: Generating a Machine-Learned Density Functional

This methodology is based on the approach used by Microsoft Research to develop the Skala functional [17].

1. Objective: To create a deep-learning-based exchange-correlation (XC) functional that achieves chemical accuracy (1 kcal/mol) for molecular atomization energies.

2. Research Reagent Solutions (Key Materials)

| Item | Function / Description |

|---|---|

| High-Accuracy Wavefunction Methods | Computationally expensive "gold-standard" quantum chemistry methods (e.g., CCSD(T)) used to generate the reference energy data for training [17]. |

| Diverse Molecular Dataset | A large set of molecular structures covering a specific region of chemical space (e.g., main-group molecules). Diversity is critical for model generalizability [17]. |

| Scalable Compute Pipeline | Cloud or high-performance computing (HPC) resources (e.g., Microsoft Azure) to manage the massive data generation and model training workload [17]. |

| Deep-Learning Architecture (Skala) | A specialized neural network designed to learn meaningful representations directly from the electron density, avoiding hand-crafted features [17]. |

3. Workflow Diagram: High-Level Workflow for ML Functional Development

4. Detailed Procedure:

- Step 1: Data Generation. Build a scalable pipeline to produce a vast and highly diverse set of molecular structures. The Microsoft team generated a dataset two orders of magnitude larger than previous efforts [17].

- Step 2: Reference Energy Calculation. Use substantial computational resources to compute the corresponding atomization energy labels for these structures. This involves employing high-accuracy wavefunction methods, a process guided by domain experts to ensure data quality at the target accuracy level [17].

- Step 3: Model Training. Design and train a dedicated deep-learning architecture on the generated data. The key innovation is to let the model learn relevant representations of the electron density directly from the data, moving beyond the traditional "Jacob's Ladder" hierarchy of hand-designed descriptors [17].

- Step 4: Validation. Rigorously assess the trained model's performance on a well-known, independent benchmark dataset (like W4-17) that was not part of the training set. The goal is to confirm that the model generalizes and achieves chemical accuracy [17].

Protocol 2: Developing a General Neural Network Potential (NNP)

This protocol is adapted from the development of the EMFF-2025 potential for energetic materials [12].

1. Objective: To create a general NNP for molecular systems (e.g., C, H, N, O-based) that provides DFT-level accuracy for both mechanical properties and chemical reactivity at a lower computational cost.

2. Workflow Diagram: NNP Development via Transfer Learning

3. Detailed Procedure:

- Step 1: Leverage a Pre-trained Model. Start with an existing, broadly pre-trained NNP model. This model already contains learned representations of atomic interactions from a large database of DFT calculations [12].

- Step 2: Targeted Data Generation. For the specific class of materials you are interested in (e.g., high-energy materials), perform a limited number of new DFT calculations to generate structural and energetic data. This is much more efficient than generating a massive dataset from scratch [12].

- Step 3: Transfer Learning. Fine-tune the pre-trained NNP model using the new, targeted dataset. This process allows the model to adapt its general knowledge to the specific characteristics of your materials while maintaining high data efficiency [12].

- Step 4: Model Validation. Validate the final NNP (e.g., EMFF-2025) by comparing its predictions of energies and forces directly with DFT results. Further validation involves applying the NNP in molecular dynamics simulations to predict crystal structures, mechanical properties, and decomposition behaviors, benchmarking these results against available experimental data [12].

Modern Solutions: Leveraging Machine Learning for DFT-Level Accuracy at a Fraction of the Cost

Frequently Asked Questions (FAQs)

Q1: What are Neural Network Potentials, and how do they fundamentally differ from traditional force fields and density functional theory (DFT) calculations? Neural Network Potentials are machine-learned models that approximate the solution of the Schrödinger equation, enabling atomistic simulations with quantum-level accuracy at a fraction of the computational cost. Unlike traditional molecular mechanics force fields, which use simple parametric equations and are often limited in accuracy and transferability, NNPs learn complex relationships from quantum mechanical data. They are also orders of magnitude faster than direct DFT calculations, whose cost grows steeply with system size, which makes NNPs a scalable alternative for molecular dynamics simulations [19].

Q2: My NNP produces high-energy forces and unphysical molecular geometries. What could be wrong? This is a classic sign of the model operating outside its training domain. NNPs struggle to extrapolate to unseen atomic configurations. To troubleshoot:

- Verify Training Data Coverage: Ensure the chemical elements and molecular motifs in your system are well-represented in the NNP's original training data (e.g., an NNP trained only on organic molecules H, C, N, O will fail on a system containing sulfur) [19].

- Inspect the Input Structure: Check for highly strained bonds, steric clashes, or unusual coordination geometries that were not present in the training set. A quick single-point DFT calculation on the problematic structure can help confirm if the issue is with the NNP or the structure itself.

- Solution - Transfer Learning: If your system is underrepresented, the most effective strategy is to perform transfer learning. Augment the pre-trained NNP with a small amount of new, high-quality DFT data specific to your system of interest, as demonstrated by the development of the EMFF-2025 model [12].
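The coverage check in the first bullet can be automated before any simulation is launched. A minimal sketch, assuming a hypothetical model whose training set covers only H, C, N, and O (the set and function name are illustrative, not taken from any particular package):

```python
def unsupported_elements(system_elements, trained_elements):
    """Return the elements in the system that the model never saw in training."""
    return sorted(set(system_elements) - set(trained_elements))

# Hypothetical training coverage of the NNP.
TRAINED_ELEMENTS = {"H", "C", "N", "O"}

# Methanethiol (CH3SH) contains sulfur, which is outside the assumed coverage.
system = ["C", "H", "H", "H", "S", "H"]
missing = unsupported_elements(system, TRAINED_ELEMENTS)

if missing:
    print("Outside training domain:", ", ".join(missing))
else:
    print("All elements are covered by the training set.")
```

Element coverage is only a necessary condition: a covered element in an unusual bonding environment can still fall outside the training domain.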

Q3: When I run a hybrid NNP/MM simulation in GROMACS, I get unphysical results at the boundary between the regions. How can I fix this? This is a common challenge in hybrid simulations. The GROMACS NNP/MM interface uses a mechanical embedding scheme, and cutting through chemical bonds is not properly handled. To address this [20]:

- Avoid Cutting Bonds: Redefine your NNP region (`nnp-input-group`) to include complete molecules or functional groups. Do not have covalent bonds crossing the NNP/MM boundary.

- Check Coupling Terms: Remember that the coupling term ( E_{NNP-MM} ) only includes non-bonded interactions. There is currently no cap (like a link atom) for broken bonds, which can create unrealistic chemical environments that the NNP cannot correctly interpret [20].

- Validate the Subsystem: Run a short pure NNP simulation on your defined subsystem alone to confirm it remains stable and physical before attempting the full hybrid simulation.
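Whether a chosen NNP region cuts any covalent bond can be checked directly from the topology. The sketch below assumes the bonds are available as pairs of atom indices and the NNP region as a collection of atom indices; it does not use any GROMACS API, and the example data are hypothetical.

```python
def boundary_bonds(bonds, nnp_atoms):
    """Return the bonds with exactly one atom inside the NNP region."""
    nnp = set(nnp_atoms)
    return [(i, j) for i, j in bonds if (i in nnp) != (j in nnp)]

# Hypothetical four-atom chain 0-1-2-3 with atoms 0 and 1 in the NNP region.
bonds = [(0, 1), (1, 2), (2, 3)]
crossing = boundary_bonds(bonds, nnp_atoms=[0, 1])
print("bonds crossing the NNP/MM boundary:", crossing)
```

An empty result means the region contains only complete bonded fragments, which is the condition the first bullet asks for.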

Q4: How do I export a pre-trained PyTorch NNP model for use in simulation software like GROMACS? Most modern simulation packages require models to be exported in a specific, portable format. For GROMACS, you must export your model using TorchScript. The exported module wraps the model (for example, ANI-2x) and also handles unit conversions between the software and the model [20].

Q5: What are the key metrics to benchmark the accuracy of a new NNP against DFT? The standard approach is to compare the NNP's predictions on a held-out test dataset of DFT calculations. The key quantitative metrics are [12]:

- Mean Absolute Error (MAE) of Energy: Typically reported in eV/atom. A well-trained general-purpose NNP should achieve an energy MAE below about 0.1 eV/atom across a diverse test set.

- Mean Absolute Error (MAE) of Forces: Reported in eV/Å. Force MAE is often a more sensitive metric of model quality and should ideally stay below about 2 eV/Å.

Predicted energies and forces should also be plotted against their DFT references to confirm that the points lie close to the diagonal [12].
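Both metrics are simple to compute once predictions and references are paired. A minimal sketch, assuming energies are total energies per structure in eV and forces are given as per-atom (x, y, z) tuples in eV/Å; the numbers are made up for illustration:

```python
def energy_mae_per_atom(predicted, reference, n_atoms):
    """Mean absolute energy error per atom (eV/atom) over a set of structures."""
    errors = [abs(p - r) / n for p, r, n in zip(predicted, reference, n_atoms)]
    return sum(errors) / len(errors)

def force_mae(predicted, reference):
    """Mean absolute error over all Cartesian force components (eV/Å)."""
    errors = [
        abs(p - r)
        for f_pred, f_ref in zip(predicted, reference)
        for p, r in zip(f_pred, f_ref)
    ]
    return sum(errors) / len(errors)

# Illustrative data: two structures with 2 and 4 atoms.
e_mae = energy_mae_per_atom([-10.2, -20.1], [-10.0, -20.5], [2, 4])
f_mae = force_mae([(0.1, 0.0, -0.2)], [(0.0, 0.0, 0.0)])
print(f"energy MAE = {e_mae:.3f} eV/atom, force MAE = {f_mae:.3f} eV/Å")
```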

Troubleshooting Guide: Common NNP Error Messages and Solutions

| Error Message / Symptom | Likely Cause | Solution |

|---|---|---|

| "Model output is NaN" or simulation crashes with unphysical forces. | Input configuration is far outside the model's training domain (OOD). | Verify the chemical composition and geometry of your input structure. Perform transfer learning with relevant data [12]. |

| High energy/force MAE during validation on a known test set. | Insufficient or low-quality training data; inadequate model architecture or training procedure. | Curate a more diverse and representative training dataset. Re-tune hyperparameters or consider a more modern architecture (e.g., graph neural networks) [19] [12]. |

| Slow performance during NNP/MM simulation. | NNP inference is computationally expensive; running on CPU instead of GPU. | Use the GMX_NN_DEVICE=cuda environment variable to run the NNP on a GPU, ensuring GROMACS is linked with a CUDA-enabled LibTorch [20]. |

| GROMACS fails to load the model file (model.pt). | Version mismatch between training and inference libraries; incorrect model export. | Ensure the LibTorch version linked to GROMACS matches the one used to export the model. Use the TorchScript export method described in the FAQ [20]. |

Quantitative Performance Comparison: NNPs vs. Traditional Methods

The primary value of NNPs lies in their ability to approach quantum-level accuracy at dramatically reduced computational costs. The table below summarizes a typical performance benchmark, as demonstrated by state-of-the-art models like EMFF-2025.

Table 1: Benchmarking NNP performance and cost against traditional computational methods. [19] [12]

| Method | Typical System Size | Time Scale | Accuracy (Energy MAE) | Key Limitation |

|---|---|---|---|---|

| Density Functional Theory (DFT) | 100s of atoms | Picoseconds | Ground Truth | Prohibitively high computational cost for large systems/long times [19]. |

| Classical Force Fields (MM) | Millions of atoms | Microseconds+ | Low (System-specific) | Poor accuracy for chemical reactions; requires parameterization for each system [19]. |

| Neural Network Potentials (NNPs) | 10,000s to 100,000s of atoms [21] | Nanoseconds | High (e.g., ~0.1 eV/atom) [12] | Dependency on quality and breadth of training data [19]. |

Experimental Protocol: Validating an NNP for Material Property Prediction

This protocol outlines the steps to validate a general-purpose NNP, like EMFF-2025, for predicting the mechanical properties and thermal stability of high-energy materials (HEMs), ensuring reliability before application in production research [12].

1. Model Acquisition and System Setup

- Obtain a pre-trained model (e.g., EMFF-2025, ANI-2x, Egret-1) and integrate it with your MD engine (e.g., GROMACS, LAMMPS).

- Prepare the initial crystal structure of the material (e.g., an HEM like RDX or CL-20) using data from repositories like the Materials Project [19].

2. Property Prediction and Validation

- Energy and Forces: Run a single-point calculation on a relaxed crystal structure and compare the energy and atomic forces against a reference DFT calculation. Plot the results to confirm they align with the diagonal, and calculate the MAE to ensure it meets benchmarks (e.g., energy MAE < 0.1 eV/atom) [12].

- Mechanical Properties: Perform MD simulations at low temperatures (e.g., 300 K) to calculate elastic constants and bulk moduli. Benchmark these predicted properties against known experimental data or high-level DFT results [12].

- Thermal Decomposition: Run high-temperature MD simulations (e.g., 2000-3000 K) to observe initial decomposition reactions and mechanisms. Use Principal Component Analysis (PCA) to map the chemical space and identify common decomposition pathways across different materials [12].

The workflow for this validation process is summarized in the following diagram:

Table 2: Key software, datasets, and models for NNP-driven research, crucial for reducing DFT computational costs. [19] [21] [12]

| Category | Item | Function & Application |

|---|---|---|

| Simulation Software | GROMACS (with NNPot) | Molecular dynamics engine; performs pure NNP and hybrid NNP/MM simulations [20]. |

| | PyTorch / LibTorch | Machine learning library; used for training new NNPs and running inference in MD codes [20]. |

| Pre-trained Models | ANI (e.g., ANI-2x) | Accurate NNP for organic molecules containing H, C, N, O (ANI-2x also covers F, S, and Cl); good for drug discovery [19]. |

| | EMFF-2025 | General NNP for C, H, N, O-based high-energy materials; predicts mechanical and chemical properties [12]. |

| | Egret-1 / AIMNet2 | Family of open-source NNPs for organic chemistry; powers fast, accurate simulations [21]. |

| Training Datasets | Materials Project (MPtrj) | Open repository of periodic DFT data for inorganic materials; used for training solid-state NNPs [19]. |

| | Open Catalyst (OC20/OC22) | Massive dataset of DFT relaxations for surface catalysis and adsorbates [19]. |

| | QM9 | Dataset of DFT calculations for ~134k small organic molecules; used for molecular NNP training [19]. |

Technical Specifications & Performance Data

The following tables summarize the key technical specifications and quantitative performance metrics of the EMFF-2025 potential, enabling researchers to quickly assess its capabilities.

Table 1: Core Model Specifications of EMFF-2025

| Specification Category | Detail |

|---|---|

| Model Type | General Neural Network Potential (NNP) |

| Target System | High-energy materials (HEMs) with C, H, N, O elements [12] |

| Architecture Basis | Deep Potential (DP) scheme [12] |

| Key Innovation | Transfer learning from a pre-trained model (DP-CHNO-2024) with minimal new DFT data [12] |

| Primary Applications | Predicting crystal structures, mechanical properties, and thermal decomposition characteristics of HEMs [12] |

Table 2: Model Performance and Accuracy Metrics

| Performance Metric | Result |

|---|---|

| Energy Prediction Accuracy | Mean Absolute Error (MAE) predominantly below 0.1 eV/atom [12] |

| Force Prediction Accuracy | Mean Absolute Error (MAE) mainly below 2 eV/Å [12] |

| Validation Method | Systematic benchmarking against DFT calculations and experimental data [12] |

| Key Scientific Finding | Uncovered that most HEMs follow similar high-temperature decomposition mechanisms [12] |

Frequently Asked Questions (FAQs) & Troubleshooting

This section addresses common practical challenges and conceptual questions encountered when integrating EMFF-2025 into research workflows.

Q1: Our molecular dynamics (MD) simulations using EMFF-2025 fail to converge or yield unrealistic structures during geometry optimization. What could be the issue?

- A: This is a common challenge often related to the choice of geometry optimizer. Benchmark tests on drug-like molecules show that the success rate and number of imaginary frequencies in optimized structures are highly dependent on the optimizer-NNP pairing [22].

- Recommended Action: For the EMFF-2025 class of NNP, consider using the Sella optimizer with internal coordinates. One study found this combination led to a high number of successful optimizations (20-25 out of 25) and a low average number of steps, indicating robust convergence [22].

- Avoid: The geomeTRIC (tric) optimizer showed poor performance with several NNPs, successfully optimizing only 1 out of 25 systems in one benchmark [22].

Q2: How can I improve the prediction of decomposition temperatures (Td) for energetic materials to better match experimental values?

- A: Conventional periodic models in MD simulations are known to overestimate decomposition temperatures, sometimes by over 400 K. An optimized MD protocol has been developed to address this [23].

- Solution 1: Use Nanoparticle Models. Replace periodic bulk crystal models with nanoparticle structures. This incorporates surface effects that initiate decomposition more realistically, significantly reducing the Td overestimation [23].

- Solution 2: Reduce Heating Rates. Use lower heating rates (e.g., 0.001 K/ps) in your simulations. This approach has been shown to reduce the deviation from experimental Td to as low as 80 K [23].

- Result: Applying this optimized protocol to eight representative EMs resulted in a thermal stability ranking with excellent agreement to experiments (R² = 0.969) [23].
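Low heating rates translate directly into long trajectories, so it helps to estimate the run length before committing resources. The sketch below does that arithmetic; the 300 K to 1000 K ramp and the 1 fs timestep are assumptions chosen for illustration, not values from [23].

```python
def heating_run_length(t_start_k, t_end_k, rate_k_per_ps, timestep_fs=1.0):
    """Return (duration in ps, number of MD steps) for a linear heating ramp."""
    duration_ps = (t_end_k - t_start_k) / rate_k_per_ps
    n_steps = duration_ps * 1000.0 / timestep_fs  # 1 ps = 1000 fs
    return duration_ps, n_steps

duration_ps, n_steps = heating_run_length(300.0, 1000.0, rate_k_per_ps=0.001)
print(f"simulated time: {duration_ps / 1e6:.2f} microseconds")
print(f"MD steps at 1 fs: {n_steps:.2e}")
```

Under these assumptions, a 700 K ramp at 0.001 K/ps already requires 0.7 µs of simulated time.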

Q3: How does EMFF-2025 improve upon traditional ReaxFF for simulating reactive processes?

- A: While ReaxFF has been widely used, it can struggle to achieve the accuracy of density functional theory (DFT) in describing reaction potential energy surfaces, sometimes leading to significant deviations [12]. EMFF-2025, as an NNP, is designed to overcome the long-standing trade-off between computational accuracy and efficiency: it offers DFT-level accuracy at a far lower cost than DFT calculations, and higher accuracy than traditional reactive force fields [12].

Q4: Is EMFF-2025 suitable for studying mechanical properties, or is it only for chemical reactions?

- A: It is suitable for both. EMFF-2025 is a versatile framework designed for the comprehensive prediction of mechanical properties at low temperatures as well as chemical behavior at high temperatures [12]. It has been validated for predicting the structure and mechanical properties of 20 high-energy materials [12].

Experimental Protocols & Workflows

Optimized Protocol for Thermal Stability Assessment

This detailed protocol allows for the reliable prediction of decomposition temperatures, a critical property for energetic material safety and performance.

Step 1: Model Construction

- Do not use a perfect periodic bulk crystal.

- Construct a nanoparticle model of the energetic material. Studies show that surface effects dominate over particle size in initiating decomposition [23].

Step 2: Simulation Parameters

- Set a low heating rate. A rate of 0.001 K/ps is recommended to achieve Td values within 80 K of experimental results [23].

- Use the EMFF-2025 potential to run the molecular dynamics simulation.

Step 3: Data Analysis

- Monitor the simulation for the onset of decomposition reactions.

- Record the temperature at which rapid decomposition begins as the predicted Td.

- For a set of materials, the protocol yields a thermal stability ranking that can be directly compared to experimental data [23].
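The analysis in Step 3 needs an operational definition of "onset of decomposition". One possible choice, used here purely as an assumption, is the first temperature at which the fraction of intact parent molecules drops below a threshold; the trajectory data below are invented for illustration.

```python
def decomposition_temperature(temperatures, intact_fraction, threshold=0.9):
    """Return the first temperature at which the intact fraction falls below threshold."""
    for temperature, fraction in zip(temperatures, intact_fraction):
        if fraction < threshold:
            return temperature
    return None  # no decomposition detected in the sampled range

# Hypothetical heating trajectory sampled every 100 K.
temperatures = [300, 400, 500, 600, 700, 800]
intact = [1.00, 1.00, 0.98, 0.95, 0.60, 0.10]

td = decomposition_temperature(temperatures, intact, threshold=0.9)
print("predicted Td (K):", td)
```

Other criteria, such as a sharp drop in potential energy, could be substituted without changing the structure of the function.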

The following workflow diagram visualizes this optimized protocol for thermal stability ranking:

General Workflow for Model Application and Validation

This broader workflow outlines the steps for employing the EMFF-2025 potential in a typical research scenario, from problem definition to result validation.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Computational Tools for EMFF-2025 Research

| Tool / Reagent | Function / Description | Relevance to EMFF-2025 |

|---|---|---|

| DeePMD-kit | A deep learning package for many-body potential energy representation and molecular dynamics [24]. | The software framework used to develop and apply the DP-based EMFF-2025 potential [12]. |

| DP-GEN (Deep Potential Generator) | A framework for sampling the configuration space and generating a training database via active learning [12]. | Used in the development of EMFF-2025 to incorporate new training data efficiently [12]. |

| Sella Optimizer | An open-source optimizer for geometry optimization, effective with internal coordinates [22]. | Recommended for robust geometry optimization when using NNPs like EMFF-2025 [22]. |

| L-BFGS Optimizer | A classic quasi-Newton algorithm for optimization [22]. | An alternative optimizer; performance is NNP-dependent and may require more steps [22]. |

| FIRE Optimizer | A first-order, molecular-dynamics-based minimizer for fast structural relaxation [22]. | An alternative optimizer; can be faster but potentially less precise for complex molecules [22]. |

Troubleshooting Guide: Common Issues and Solutions

Q1: The predicted charge density leads to inaccurate total energies and forces in non-self-consistent calculations (NSCF). How can this be improved?

A1: This common issue often stems from the model learning the total charge density (TCD) from scratch, which can be numerically challenging. Implement the Δ-SAED (Superposition of Atomic Electron Densities) method.

- Root Cause: Machine learning models must learn the complex spatial variations of the total charge density, including core electron regions, which can dominate the learning objective and reduce accuracy for valence electrons critical for chemical bonding.

- Solution: Instead of predicting `ρ_total`, train your model to predict the difference charge density (DCD), `ρ_d(r) = ρ_total(r) - ρ_SAED(r)`, where `ρ_SAED(r)` is the simple superposition of isolated atomic electron densities [25].

- Procedure:

  - Data Preparation: For your training structures, compute `ρ_SAED` using your DFT code's atomic plugins or a standalone tool.

  - Target Calculation: Calculate the DCD for your training set: `ρ_d = ρ_DFT - ρ_SAED`.

  - Model Training: Train your deep learning model to map atomic structures to `ρ_d`.

  - Inference: During prediction, obtain the final charge density as `ρ_predicted = ρ_SAED + ρ_d_predicted`.

- Expected Outcome: This approach introduces a strong physical prior. The model only needs to learn the deviation from the atomic superposition, which is typically smoother and chemically more relevant. This has been shown to improve prediction accuracy for over 90% of structures in benchmark datasets like QM9 and Materials Project, leading to more stable NSCF calculations [25].
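The decomposition can be sketched on a one-dimensional grid. Everything below is a toy under stated assumptions: Gaussians stand in for isolated-atom densities, the "DFT" density is a mock built from the SAED plus a small bonding term, and no ML model is trained.

```python
import math

def atomic_density(r, center, n_electrons, width=0.5):
    """Normalized 1D Gaussian as a stand-in for an isolated-atom density."""
    norm = n_electrons / (width * math.sqrt(2.0 * math.pi))
    return norm * math.exp(-0.5 * ((r - center) / width) ** 2)

def saed(grid, atoms):
    """Superposition of atomic densities; atoms is a list of (position, electrons)."""
    return [sum(atomic_density(r, c, n) for c, n in atoms) for r in grid]

grid = [i * 0.1 for i in range(-50, 51)]
atoms = [(-0.7, 1.0), (0.7, 1.0)]

rho_saed = saed(grid, atoms)
# Mock reference density: SAED plus charge accumulated between the atoms.
rho_dft = [s + 0.05 * math.exp(-r * r) for r, s in zip(grid, rho_saed)]

rho_d = [t - s for t, s in zip(rho_dft, rho_saed)]           # training target
rho_reconstructed = [s + d for s, d in zip(rho_saed, rho_d)]  # inference step

print("max |rho_total|:", max(rho_dft))
print("max |rho_d|    :", max(abs(d) for d in rho_d))
```

The target `rho_d` is much smaller in magnitude than the total density, which is the sense in which the model only has to learn a correction.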

Q2: My model suffers from poor transferability and fails to generalize to larger systems or unseen configurations.

A2: Transferability is a key challenge that can be addressed through fingerprint design and a two-step prediction strategy.

- Root Cause: The model's atomic fingerprints may not sufficiently capture the chemical environment, or the training data may lack the required diversity in system sizes and configurations.

- Solution 1: Use Advanced Fingerprints. Employ rotation-invariant atomic descriptors like the Atom-Centered Symmetry Functions (ACSF) or AGNI fingerprints [26]. These systematically describe an atom's local environment, ensuring model invariance to translation, rotation, and atom permutation.

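- Solution 2: Adopt a Two-Step Learning Workflow. Emulate the logical structure of DFT itself: in Step 1, predict the electronic charge density from the atomic structure; in Step 2, use that predicted density as an additional input when predicting the remaining properties [26].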

- Procedure:

- For Step 1, represent the charge density using a basis set like Gaussian-type orbitals (GTOs), allowing the model to learn the optimal basis coefficients from data [26].

- Ensure your training dataset includes a wide variety of system types (molecules, polymers, crystals) and snapshots from molecular dynamics trajectories to introduce configurational diversity [26].

- Expected Outcome: This workflow aligns the model with DFT's first principles, where all ground-state properties are determined by the electron density. This leads to more accurate and transferable predictions for systems outside the immediate training set [26].
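The invariance properties mentioned under Solution 1 can be verified numerically. The sketch below implements a radial symmetry function of the Behler-Parrinello G2 type with a cosine cutoff; the parameter values and the three-neighbor geometry are arbitrary assumptions.

```python
import math

def cutoff(r, r_c):
    """Smooth cosine cutoff that goes to zero at r_c."""
    if r >= r_c:
        return 0.0
    return 0.5 * (math.cos(math.pi * r / r_c) + 1.0)

def radial_symmetry_function(center, neighbors, eta=0.5, r_s=0.0, r_c=6.0):
    """G2-type radial ACSF: Gaussians of neighbor distances weighted by the cutoff."""
    total = 0.0
    for position in neighbors:
        r = math.dist(center, position)
        total += math.exp(-eta * (r - r_s) ** 2) * cutoff(r, r_c)
    return total

def rotate_about_z(point, angle):
    """Rotate a 3D point about the z axis by angle (radians)."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y, z)

center = (0.0, 0.0, 0.0)
neighbors = [(1.0, 0.0, 0.0), (0.0, 1.5, 0.0), (0.0, 0.0, 2.0)]
# Rotate every neighbor and reverse their order (a permutation).
transformed = [rotate_about_z(p, 0.7) for p in reversed(neighbors)]

g_original = radial_symmetry_function(center, neighbors)
g_transformed = radial_symmetry_function(center, transformed)
print(g_original, g_transformed)
```

Because the descriptor depends only on interatomic distances and sums over neighbors, the two values agree to floating-point precision.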

Q3: Solving the response equations (Sternheimer equations) in Density-Functional Perturbation Theory (DFPT) is computationally expensive and unstable.

A3: This is a known numerical challenge, particularly for metallic systems. A novel Schur complement approach can enhance efficiency.

- Root Cause: The Sternheimer equations can be ill-conditioned, and standard iterative solvers may require many expensive Hamiltonian applications to converge [27].

- Solution: Implement a Schur complement-based algorithm that leverages extra orbitals (e.g., from a previous self-consistent field calculation) as a preconditioner [27].

- Procedure: This method is mathematically complex but has been implemented in codes like DFTK. The core idea is to use a subspace spanned by the ground-state and a few extra orbitals to project the response equations, creating a smaller, better-conditioned problem to solve [27].

- Expected Outcome: This approach can reduce the number of required Hamiltonian applications—the most expensive step in DFPT—by up to 40%, leading to a significant reduction in computational cost and improved numerical stability [27].

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of an end-to-end ML-DFT framework over traditional DFT?

A1: The primary advantage is a massive reduction in computational cost while maintaining chemical accuracy. A well-trained ML model can emulate the essence of DFT, mapping an atomic structure directly to its electronic charge density and derived properties, bypassing the explicit, iterative solution of the Kohn-Sham equations. This results in orders of magnitude speedup, with computational cost that scales linearly with system size, enabling the study of large systems and long timescales that are currently inaccessible to routine DFT [26].

Q2: Which properties can a comprehensive ML-DFT framework predict?

A2: A robust framework can predict a wide range of electronic and atomic properties.

- Electronic Structure: Electronic charge density, density of states (DOS), band gap (E_g), valence band maximum (VBM), and conduction band minimum (CBM) [26].

- Atomic & Global Properties: Total potential energy, atomic forces, and the stress tensor [26]. The prediction of atomic forces is particularly crucial for performing stable molecular dynamics simulations.

Q3: My DFT+U calculation produces unrealistic occupation matrices or over-elongates chemical bonds. What could be wrong?

A3: This is a common pitfall in DFT+U calculations.

- Unphysical Occupations: This may arise from non-normalized projections. Try switching the `U_projection_type` to `'norm-atomic'` to check if this yields more reasonable results [28].

- Over-elongated Bonds: Large U values can over-correct delocalization error, leading to exaggerated bond lengths. Consider using a structurally-consistent U procedure, where U is calculated on the DFT geometry, then the structure is relaxed with that U, and the process is repeated until consistency is achieved. For covalent systems, a DFT+U+V approach with an intersite V term may be necessary to correctly describe hybridization [28].
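The structurally-consistent U procedure is a fixed-point loop over geometry and U. In the sketch below, `compute_u` and `relax` are hypothetical callables that stand in for a linear-response U calculation and a DFT+U relaxation; the mock versions use a single bond length as the "geometry" and invented linear relations.

```python
def structurally_consistent_u(geometry, compute_u, relax, tol=0.01, max_cycles=20):
    """Alternate U evaluation and relaxation until U changes by less than tol (eV)."""
    u = compute_u(geometry)
    for cycle in range(1, max_cycles + 1):
        geometry = relax(geometry, u)
        u_new = compute_u(geometry)
        if abs(u_new - u) < tol:
            return u_new, geometry, cycle
        u = u_new
    raise RuntimeError("U did not reach self-consistency with the structure")

def mock_compute_u(bond_length):
    """Hypothetical: U (eV) grows slightly with bond length (Å)."""
    return 4.0 + 0.5 * (bond_length - 2.0)

def mock_relax(bond_length, u):
    """Hypothetical: relaxed bond length (Å) elongates with U (eV)."""
    return 2.0 + 0.02 * u

u_final, geometry_final, cycles = structurally_consistent_u(2.0, mock_compute_u, mock_relax)
print(f"U = {u_final:.4f} eV, bond = {geometry_final:.4f} Å after {cycles} cycles")
```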

Quantitative Performance Data

Table 1: Benchmarking ML-DFT Model Performance on Standard Datasets

This table summarizes the performance of a state-of-the-art charge density model (Charge3Net) when trained on Total Charge Density (TCD) versus Difference Charge Density (DCD, i.e., Δ-SAED). The metric ε_mae is the mean absolute error in the charge density prediction, normalized by the total charge [25].

| Dataset | Description | Model Target | ε_mae (Mean Absolute Error) | Key Outcome |

|---|---|---|---|---|

| QM9 | ~134k organic molecules [25] | TCD (Baseline) | Benchmark Value | — |

| | | DCD (Δ-SAED) | Reduction for >99% of structures | Robust improvement in accuracy [25] |

| NMC | Nickel Manganese Cobalt oxide battery materials [25] | TCD (Baseline) | Benchmark Value | — |

| | | DCD (Δ-SAED) | Reduction for >99% of structures | Robust improvement in accuracy [25] |

| Materials Project (MP) | Diverse inorganic crystals [25] | TCD (Baseline) | Benchmark Value | — |

| | | DCD (Δ-SAED) | Reduction for ~90% of structures | Significant improvement for most structures [25] |
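For reference, a normalized density error of this kind can be computed as the summed absolute deviation divided by the summed reference density. The exact definition used in [25] is not reproduced in this section, so the form below is an assumption consistent with the description "normalized by the total charge"; on a uniform grid the volume element cancels.

```python
def epsilon_mae(rho_predicted, rho_reference):
    """Normalized mean absolute error between two densities on the same uniform grid."""
    numerator = sum(abs(p - r) for p, r in zip(rho_predicted, rho_reference))
    denominator = sum(abs(r) for r in rho_reference)
    return numerator / denominator

# Illustrative three-point densities.
reference = [1.0, 2.0, 1.0]
predicted = [1.1, 1.9, 1.0]
error = epsilon_mae(predicted, reference)
print(f"epsilon_mae = {100 * error:.1f}%")
```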

Table 2: Computational Efficiency of ML-DFT Emulation

| Computational Aspect | Traditional DFT | ML-DFT Emulation | Implication |

|---|---|---|---|

| Kohn-Sham Solving | O(N^3) scaling (N = number of electrons) [25] | Bypassed entirely [26] | Fundamental shift to inference cost |

| Overall Cost Scaling | Cubic (O(N^3)) or slightly better [25] | Linear (O(N)) with a small prefactor [26] | Enables large-scale simulations |

| DFPT Response Equations | Iterative solution, can be unstable [27] | Schur complement solver: up to 40% fewer Hamiltonian applications [27] | Direct and significant speedup for property calculations |
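The practical meaning of cubic versus linear scaling is easiest to see as a growth factor. The sketch below ignores prefactors, which differ between methods and are not given in the table, and simply compares how cost grows when the system is enlarged.

```python
def cost_growth(size_factor, exponent):
    """Factor by which cost grows when system size grows by size_factor, for cost ~ N**exponent."""
    return size_factor ** exponent

for factor in (2, 10, 100):
    cubic = cost_growth(factor, 3)
    linear = cost_growth(factor, 1)
    print(f"{factor}x larger system: O(N^3) cost x{cubic}, O(N) cost x{linear}")
```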

Experimental Protocols

Protocol 1: Implementing the Δ-SAED Method for Charge Density Prediction

Purpose: To enhance the accuracy and transferability of machine learning charge density predictions by leveraging the physical prior of superposition of atomic electron densities.

Materials:

- Software: A DFT code (e.g., VASP, Quantum ESPRESSO), a deep learning framework (e.g., PyTorch, TensorFlow), and a charge density model (e.g., Charge3Net).

- Data: A dataset of atomic structures and their corresponding DFT-calculated total charge densities.

Steps:

- Compute Reference SAED: For every atomic structure in your dataset, calculate ρ_SAED(r) = Σ_i ρ_atomic_i(|r - R_i|), where ρ_atomic_i is the electron density of an isolated atom of type i at position R_i. This can often be done using plugins or utilities in standard DFT codes.

- Calculate Difference Charge Density (DCD): For each structure, compute the target for the machine learning model: ρ_DCD(r) = ρ_DFT_total(r) - ρ_SAED(r).

- Model Training:

  - Use atomic fingerprints (e.g., AGNI, ACSF) as the model's input features.

  - Set the model's training target to be ρ_DCD instead of ρ_total.

  - Train the model using a standard regression loss function (e.g., Mean Absolute Error) between the predicted and true DCD.

- Inference and Reconstruction: To predict the total charge density of a new structure:

  - Compute its ρ_SAED.

  - Use the trained model to predict ρ_DCD_predicted.

  - Reconstruct the final charge density: ρ_total_predicted = ρ_SAED + ρ_DCD_predicted.

Troubleshooting Tip: If the model performance is poor, verify the accuracy of your generated ρ_SAED by visualizing it for a simple molecule (e.g., H₂) and comparing it with a known standard [25].
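The bookkeeping in Protocol 1 can be sketched on a small real-space grid. This is a toy illustration under strong assumptions: isolated-atom densities are modeled as normalized Gaussians, the "DFT" density is synthetic, and the trained model is replaced by a stand-in that recovers 95% of the true DCD. None of the names below come from Charge3Net or any DFT code.

```python
import numpy as np


def atomic_density(dist, n_electrons, width):
    """Hypothetical isolated-atom density: a 3D Gaussian integrating to n_electrons."""
    norm = n_electrons / (width**3 * (2.0 * np.pi) ** 1.5)
    return norm * np.exp(-0.5 * (dist / width) ** 2)


def saed(grid_points, positions, n_electrons, widths):
    """rho_SAED(r) = sum_i rho_atomic_i(|r - R_i|) evaluated on all grid points."""
    rho = np.zeros(len(grid_points))
    for center, n, w in zip(positions, n_electrons, widths):
        dist = np.linalg.norm(grid_points - center, axis=1)
        rho += atomic_density(dist, n, w)
    return rho


# Cubic grid (arbitrary length units) and an H2-like pair of atoms.
axis = np.linspace(-4.0, 4.0, 33)
grid = np.stack(np.meshgrid(axis, axis, axis, indexing="ij"), axis=-1).reshape(-1, 3)
positions = np.array([[-0.7, 0.0, 0.0], [0.7, 0.0, 0.0]])

# Step 1: reference SAED.
rho_saed = saed(grid, positions, [1.0, 1.0], [0.8, 0.8])

# Synthetic stand-in for the DFT total density: some charge moves into the bond.
rho_dft = saed(grid, positions, [0.9, 0.9], [0.8, 0.8]) + atomic_density(
    np.linalg.norm(grid, axis=1), 0.2, 0.6
)

# Step 2: the difference charge density is the training target.
rho_dcd = rho_dft - rho_saed

# Steps 3-4: a stand-in "model" output, followed by reconstruction.
rho_dcd_predicted = 0.95 * rho_dcd
rho_total_predicted = rho_saed + rho_dcd_predicted

voxel = (axis[1] - axis[0]) ** 3
print(f"electrons in SAED: {rho_saed.sum() * voxel:.3f}")
print(f"max |error| of SAED alone:      {np.abs(rho_saed - rho_dft).max():.2e}")
print(f"max |error| after adding DCD:   {np.abs(rho_total_predicted - rho_dft).max():.2e}")
```

Checking that the SAED integrates to the expected electron count, as in the first print statement, is a quick version of the sanity check suggested in the troubleshooting tip.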

Protocol 2: End-to-End ML-DFT Workflow for Property Prediction

Purpose: To predict a comprehensive set of material properties (energy, forces, DOS, etc.) from an atomic structure using a deep learning framework that emulates DFT.

Materials:

- Software: As in Protocol 1.

- Data: A database of atomic structures with corresponding DFT-calculated properties (charge density, energy, forces, DOS, etc.). The database should include configurational diversity, ideally from MD snapshots [26].

Steps:

- Data Preparation and Fingerprinting:

- Procure a diverse set of atomic structures (molecules, polymers, crystals).

- For each atomic configuration, compute rotation-invariant atomic fingerprints (e.g., AGNI fingerprints) for every atom [26].

- Step 1 - Charge Density Model:

- Train a deep neural network (DNN) whose input is the atomic fingerprints and whose output is a representation of the electronic charge density (e.g., coefficients of a Gaussian-type orbital basis set) [26].

- The model learns the optimal basis set from the data.

- Step 2 - Property Prediction Models:

- For each property of interest (e.g., total energy, atomic forces), train a separate DNN.

- The input to these networks is a combination of the original atomic fingerprints and the predicted charge density descriptors from Step 1 [26].

- This two-step approach mirrors the first-principles concept of DFT, where the charge density determines all ground-state properties.

- Validation: Test the model on a held-out set of structures. Evaluate the accuracy of the charge density (using ε_mae), energies (Mean Absolute Error in eV/atom), and forces (MAE in eV/Å) against DFT reference data [26].
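The data flow of the two-step approach can be sketched with simple regressors in place of the deep networks. The example below is a toy under stated assumptions: the fingerprints, density coefficients, and energies are synthetic, and least-squares fits (with a quadratic feature expansion in Step 1) stand in for the DNNs of [26]. It only illustrates how predicted charge density descriptors are fed into the property model and how held-out MAE is evaluated.

```python
import numpy as np

rng = np.random.default_rng(0)


def fit_linear(x, y):
    """Least-squares fit with a bias column; a stand-in for training a DNN."""
    design = np.hstack([x, np.ones((len(x), 1))])
    weights, *_ = np.linalg.lstsq(design, y, rcond=None)
    return weights


def predict_linear(weights, x):
    return np.hstack([x, np.ones((len(x), 1))]) @ weights


def expand(x):
    """Quadratic feature expansion so the Step 1 stand-in is nonlinear in x."""
    return np.hstack([x, x**2])


# Synthetic per-atom data: fingerprints, density coefficients, energies.
n_atoms, n_fp, n_coef = 200, 8, 4
fingerprints = rng.normal(size=(n_atoms, n_fp))
true_map = rng.normal(size=(n_fp, n_coef))
density_coeffs = (fingerprints**2) @ true_map
atom_energies = density_coeffs.sum(axis=1) + 0.1 * fingerprints[:, 0]

train, test = slice(0, 150), slice(150, None)

# Step 1: fingerprints -> charge density descriptors.
w_rho = fit_linear(expand(fingerprints[train]), density_coeffs[train])
coeffs_pred = predict_linear(w_rho, expand(fingerprints))

# Step 2: fingerprints + predicted descriptors -> property (here, energy).
features = np.hstack([fingerprints, coeffs_pred])
w_energy = fit_linear(features[train], atom_energies[train])
energy_pred = predict_linear(w_energy, features[test])

# Validation on held-out atoms, compared with a fingerprint-only baseline.
mae_two_step = np.mean(np.abs(energy_pred - atom_energies[test]))
w_base = fit_linear(fingerprints[train], atom_energies[train])
mae_fp_only = np.mean(
    np.abs(predict_linear(w_base, fingerprints[test]) - atom_energies[test])
)
print(f"MAE two-step: {mae_two_step:.2e}, MAE fingerprints only: {mae_fp_only:.2e}")
```

In this synthetic setup the energy depends on the density descriptors by construction, so the second model benefits from them; real data would show a smaller and noisier gain.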

Workflow Diagrams

Diagram Title: ML-DFT Two-Step Prediction Workflow

Diagram Title: Δ-SAED Charge Density Training and Prediction

Research Reagent Solutions

Table 3: Essential Computational Tools and Datasets for ML-DFT

This table lists key software, datasets, and methodological "reagents" required for building and testing end-to-end ML-DFT frameworks.

| Item Name | Type | Function / Purpose | Key Features / Notes |
|---|---|---|---|
| VASP [26] | Software | First-principles DFT code | Used to generate the reference training data (charge densities, energies, forces). |
| AGNI Fingerprints [26] | Method | Atomic-scale descriptor | Creates rotation-invariant fingerprints of an atom's chemical environment for ML input. |
| Δ-SAED Method [25] | Algorithm | Charge density learning | Improves ML model accuracy by using difference charge density as the training target. |
| Charge3Net [25] | Software / Model | E(3)-equivariant neural network | A state-of-the-art grid-based model for predicting electron charge density. |
| Schur Complement Solver [27] | Algorithm | DFPT equation solver | Increases efficiency and stability of response property calculations in DFPT. |
| QM9 Dataset [25] | Dataset | Benchmark organic molecules | Contains ~134k small organic molecules; standard for benchmarking quantum ML models. |
| Materials Project Database [25] | Dataset | Inorganic crystal structures | A vast database of computed crystal structures and properties for training and testing. |

Frequently Asked Questions (FAQs) and Troubleshooting

General Questions about the Skala Model

Q1: What is the Skala model and how does it differ from traditional functionals? Skala is a modern, deep learning-based exchange-correlation (XC) functional for Density Functional Theory (DFT). Unlike traditional functionals constructed with hand-crafted features, Skala bypasses these approximations by learning complex, non-local representations directly from vast amounts of high-accuracy data [29] [30]. It aims to achieve the accuracy of higher-rung "Jacob's Ladder" functionals (like hybrids) at the computational cost of semi-local functionals (GGA or meta-GGA), thereby breaking the traditional trade-off paradigm [29].

Q2: What specific computational cost reductions can I expect with Skala? Independent analysis suggests that Skala can reduce processing time by up to 90% while maintaining high accuracy, effectively combining hybrid-level accuracy with semi-local computational costs [31]. This is achieved because the deep learning model captures complex effects without explicitly solving the more expensive equations found in higher-rung functionals.

Q3: On what types of systems was Skala trained and validated? Skala was trained on an unprecedented volume of diverse, high-accuracy reference data, including coupled cluster atomization energies and other public benchmarks for small molecules [29] [32]. It reaches roughly chemical accuracy for atomization energies of small molecules (chemical accuracy is conventionally taken as errors of about 1 kcal/mol; the reported benchmark error is 1.06 kcal/mol) and is competitive with the best-performing hybrid functionals across general main group chemistry [29] [31] [30].

Installation and Setup

Q4: Where can I access the Skala functional? The Skala functional is available for research purposes through several channels [30] [32]:

- The Azure AI Foundry catalog

- As a Python package (microsoft-skala) on PyPI, which includes a PyTorch implementation and hookups to quantum chemistry packages like PySCF and ASE.

- A development version of a C++ library (GauXC) with an add-on supporting PyTorch-based functionals like Skala, which can be used to integrate Skala into third-party DFT codes.

Q5: I am getting import errors when trying to use the Python package. What should I check?

- Ensure you have installed the correct package using pip install microsoft-skala [32].

- Verify that all dependencies, such as PyTorch, are installed and compatible with your version of the package.

- Check that your environment is correctly configured, especially if you are using hookups to PySCF or ASE.
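A quick way to work through this checklist is to ask the active Python environment which distributions it can actually see. The sketch below uses only the standard library; the distribution names for PyTorch, PySCF, and ASE (`torch`, `pyscf`, `ase`) are their usual PyPI names, and the list should be adjusted if your setup installs them differently.

```python
from importlib import metadata


def installed_versions(distributions=("microsoft-skala", "torch", "pyscf", "ase")):
    """Return {distribution name: version string, or None if not installed}."""
    report = {}
    for name in distributions:
        try:
            report[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = None
    return report


report = installed_versions()
for name, version in report.items():
    print(f"{name:16s} {version or 'NOT INSTALLED'}")
```

Running this with the same interpreter that raises the import error helps distinguish a missing package from one that was installed into a different environment.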

Performance and Accuracy

Q6: Skala's result for my molecule's atomization energy is not near the benchmark value. What could be wrong? First, verify that your system falls within the "chemical space" that Skala was trained on, which is currently main group chemistry [30]. Performance for transition metal complexes or systems with strong correlation (e.g., localized d- or f-states) may be less reliable, as these are known challenges for density functional approximations (DFAs) and are a focus for future versions of Skala [33] [30].

Q7: Why does my band structure calculation for a solid (like silicon) show spurious oscillations or an unreasonable band gap when using a machine-learned functional? This is a known issue for some machine-learned functionals trained solely on molecular data. The failure often stems from a lack of the homogeneous electron gas constraint [33]. A modified functional like DM21mu, which includes this constraint, demonstrates that it is possible to correct these spurious band structures and predict reasonable band gaps [33]. When applying Skala to extended solids, check its documentation for similar physical constraints.

Functional Comparison and the Jacob's Ladder Paradigm

The following table summarizes how Skala's performance compares to traditional functionals on Jacob's Ladder.

Table 1: Comparing Skala to Traditional Functionals on Jacob's Ladder

| Functional Type | Representative Examples | Typical Accuracy for Atomization Energies | Computational Cost | Key Differentiator of Skala |
|---|---|---|---|---|
| Semi-Local (GGA) | PBE [33] | Low accuracy (errors of e.g. >5 kcal/mol) | Low | Skala achieves much higher accuracy at a similar cost [29]. |
| Hybrid | B3LYP [34] | Moderate to high accuracy (errors of ~2-4 kcal/mol) | High (due to exact exchange) | Skala aims for competitive accuracy at a fraction of the cost [29] [30]. |
| Machine Learned (Skala) | Skala | Near chemical accuracy (~1.06 kcal/mol) [31] | Low (similar to semi-local) | Learns non-local effects directly from data, bypassing hand-crafted features [29]. |

Experimental Protocols and Troubleshooting

Protocol 1: Validating Skala's Performance on Molecular Atomization Energies

This protocol outlines how to reproduce the core accuracy claim of the Skala model for small molecules.

Objective: To calculate the atomization energy of a small organic molecule (e.g., from the ANI-1 or other benchmark dataset) and verify that the error is within chemical accuracy (1 kcal/mol).

Materials and Software:

- Quantum Chemistry Code: PySCF or another code integrated with the Skala package [32].

- Skala Functional: Installed via the microsoft-skala Python package [32].

- Reference Data: High-accuracy coupled-cluster or experimental atomization energies for your test molecules [29].

Step-by-Step Workflow:

- System Setup: Define the molecular geometry and basis set for your calculation.

- Functional Selection: Configure the DFT calculator to use the Skala exchange-correlation functional.

- Energy Calculation: Run a single-point energy calculation for the molecule and its constituent atoms.

- Result Analysis: Calculate the atomization energy as: Atomization Energy = Σ E(atoms) - E(molecule), which is positive for a bound molecule. Compare this value to the high-accuracy reference data.

- Troubleshooting:

- Symptom: The calculated atomization energy is significantly off.

- Action: Double-check that the molecular geometry is correct and that the system consists of main-group elements. Ensure you are using a sufficiently large basis set.
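The result-analysis step reduces to a small amount of arithmetic, sketched below independently of any particular DFT code. It uses the convention that the atomization energy is the sum of the atomic energies minus the molecular energy, so that a bound molecule gives a positive value. The energies and the reference value are illustrative placeholders, not Skala output or benchmark data.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509474


def atomization_energy(e_molecule, atom_energies):
    """Sum of atomic energies minus molecular energy (inputs in Hartree), in kcal/mol."""
    return (sum(atom_energies) - e_molecule) * HARTREE_TO_KCAL_PER_MOL


def within_chemical_accuracy(computed, reference, threshold=1.0):
    """True if |computed - reference| is at most `threshold` kcal/mol."""
    return abs(computed - reference) <= threshold


# Placeholder single-point energies (Hartree) for a diatomic and its two atoms.
e_mol = -1.175
e_atoms = [-0.5, -0.5]
reference = 109.5  # placeholder reference atomization energy, kcal/mol

ae = atomization_energy(e_mol, e_atoms)
print(f"atomization energy: {ae:.2f} kcal/mol")
print(f"within 1 kcal/mol of reference: {within_chemical_accuracy(ae, reference)}")
```

Keeping the unit conversion in one named constant makes it harder to mix Hartree, eV, and kcal/mol, which is a frequent source of apparent disagreement with reference tables.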

Protocol 2: Comparing Computational Cost and Workflow

This protocol helps you quantitatively compare the cost of Skala against a standard hybrid functional.

Objective: To measure the wall-time and self-consistent field (SCF) iteration count for a medium-sized organic molecule using Skala versus a standard hybrid functional like PBE0.

Materials and Software:

- DFT Code: A code that supports both Skala and standard hybrid functionals (e.g., via the GauXC library) [32].

- Molecule: A suitable test molecule (e.g., a drug-like molecule with 20-50 atoms).

Step-by-Step Workflow:

- Baseline Measurement: Run a DFT calculation for your test molecule using the PBE0 hybrid functional. Record the total wall time and the number of SCF iterations to convergence.

- Skala Measurement: Run the same calculation under identical conditions (same hardware, convergence criteria, basis set, etc.) but using the Skala functional. Record the same metrics.

- Data Analysis: Calculate the percentage reduction in time and SCF iterations. The expectation is a significant reduction (e.g., up to 90% time savings) with Skala while maintaining similar accuracy [31].

- Troubleshooting:

- Symptom: The SCF cycle with Skala fails to converge.

- Action: Adjust the charge mixing parameters. Bayesian optimization of these parameters has been shown to systematically improve SCF convergence [3].
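A small timing harness keeps the comparison in this protocol honest by measuring both runs the same way. The sketch below is a generic pattern, not part of any Skala tooling: `fake_hybrid_scf` and `fake_skala_scf` are hypothetical stand-ins that sleep briefly and return an SCF iteration count, and should be replaced by functions that launch the real calculations.

```python
import time


def timed(run):
    """Call run() and return (result, elapsed wall time in seconds)."""
    start = time.perf_counter()
    result = run()
    return result, time.perf_counter() - start


def percent_reduction(baseline, candidate):
    """Percentage by which `candidate` is smaller than `baseline`."""
    return 100.0 * (baseline - candidate) / baseline


# Hypothetical stand-ins for the two calculations; each returns an SCF iteration count.
def fake_hybrid_scf():
    time.sleep(0.05)
    return 14


def fake_skala_scf():
    time.sleep(0.01)
    return 12


iters_hybrid, t_hybrid = timed(fake_hybrid_scf)
iters_skala, t_skala = timed(fake_skala_scf)

print(f"wall time reduction:     {percent_reduction(t_hybrid, t_skala):.0f}%")
print(f"SCF iteration reduction: {percent_reduction(iters_hybrid, iters_skala):.0f}%")
```

For real measurements, repeating each run a few times and reporting the median reduces the influence of disk caching and machine load.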

Table 2: Essential Computational Tools for Working with Skala

| Tool / Resource | Function / Purpose | Access / Link |
|---|---|---|
| Azure AI Foundry | Cloud platform to run and experiment with the Skala model. | https://labs.ai.azure.com/ [30] |
| microsoft-skala PyPI Package | Python package to integrate Skala into local workflows with PySCF and ASE. | pip install microsoft-skala [32] |