PU Learning for Synthesizability Classification: A Practical Guide for Drug Development and Materials Discovery

Abstract

This comprehensive guide explores the implementation of Positive-Unlabeled (PU) learning to solve the critical challenge of synthesizability classification in drug development and materials science. Traditional methods relying on thermodynamic stability often fail to account for kinetic factors and experimental constraints, while the scarcity of published negative data (failed synthesis attempts) makes conventional supervised learning impractical. This article details how PU learning frameworks leverage known synthesizable compounds and large unlabeled datasets to build accurate classifiers. Covering foundational concepts, methodological implementations like co-training and large language models, troubleshooting for false positives, and rigorous validation techniques, we provide researchers and drug development professionals with actionable strategies to prioritize synthesizable candidates, bridging the gap between computational prediction and experimental realization.

Bridging the Virtual and Physical Worlds: The Critical Role of PU Learning in Synthesizability Prediction

The discovery of new functional materials and therapeutic compounds is a fundamental driver of technological and medical progress. However, the transition from a computationally designed candidate to a physically realized entity remains a major bottleneck. For decades, researchers have relied on thermodynamic stability metrics, such as formation energy and energy above the convex hull (\(E_{\text{hull}}\)), as proxies for synthesizability. Similarly, in drug discovery, heuristic scores like Synthetic Accessibility (SA) have been used to estimate how readily a molecule can be made. While these tools provide valuable initial guidance, they consistently fall short because they fail to capture the complex, kinetically driven reality of synthetic processes. Stability is a necessary but insufficient condition for synthesizability; a material or compound can be thermodynamically stable yet practically impossible to synthesize due to insurmountable kinetic barriers, unknown reaction pathways, or specific technological constraints.

This application note argues for a paradigm shift from these traditional proxies towards data-driven, machine learning approaches, specifically Positive and Unlabeled (PU) learning. This shift is necessitated by a critical data challenge: while examples of successfully synthesized compounds (positive data) are often recorded, documented failures (negative data) are exceptionally rare in scientific literature. PU learning provides a robust framework for learning from this inherently one-sided data, enabling more accurate and realistic synthesizability predictions to guide experimental efforts.

The Limitations of Traditional Synthesizability Proxies

The Stability-Synthesizability Disconnect

Traditional stability metrics offer an incomplete picture of synthesizability. Energy above the convex hull (\(E_{\text{hull}}\)) measures a material's thermodynamic stability relative to its competing phases. While a low \(E_{\text{hull}}\) is a good indicator of stability, it does not guarantee that a material can be synthesized.

- Kinetic Barriers: \(E_{\text{hull}}\) is calculated from internal energies at 0 K and 0 Pa, ignoring the kinetic factors that dominate real-world synthesis. A reaction may be thermodynamically favorable but have a kinetic barrier that prevents it from occurring under reasonable conditions [1]. A classic example is martensite, which is synthesized not through a low-energy pathway but via rapid quenching of austenite [1].

- Entropic and Environmental Factors: Standard \(E_{\text{hull}}\) calculations do not account for entropic contributions to stability or the specific reaction conditions (e.g., temperature, pressure, atmosphere) required for synthesis [1]. The actual thermodynamic stability of a material varies with synthesis conditions.

The following table summarizes key limitations of traditional stability metrics and heuristics:

Table 1: Limitations of Traditional Synthesizability Proxies

| Proxy Metric | Primary Function | Key Limitations |
|---|---|---|
| Energy Above Hull (\(E_{\text{hull}}\)) [2] [1] | Measures thermodynamic stability of a crystal structure relative to competing phases. | Ignores kinetic barriers, entropic effects, and synthesis condition dependence. A low value is not a guarantee of synthesizability. |
| Formation Energy [3] | Calculates the energy released upon forming a material from its elements. | A thermodynamic property that does not correlate directly with the feasibility of the synthetic pathway. |
| Synthetic Accessibility (SA) Score [4] | Heuristic based on molecular fragment complexity and frequency. | Correlates with molecular complexity rather than explicit synthesizability; can miss route-specific challenges. |
| Tolerance Factors [1] | Empirical rules (e.g., for perovskites) to predict crystal structure stability. | Often oversimplified; may exclude synthesizable compositions and include non-synthesizable ones. |

The Data Scarcity Problem

A fundamental obstacle in training data-driven synthesizability models is the scarcity of negative data. Scientific publications and lab notebooks overwhelmingly report successful syntheses, while failed attempts are rarely documented in a structured, accessible way [3] [1]. This creates a scenario where researchers have a set of confirmed positive examples and a much larger set of unlabeled examples that may contain both positive (not-yet-synthesized) and negative (unsynthesizable) candidates. Treating the unlabeled set as definitively negative introduces significant label noise and biases models towards overly optimistic predictions [5] [6] [7].

PU Learning: A Framework for Learning from Partial Data

Core Concept and Workflow

Positive and Unlabeled (PU) learning is a semi-supervised machine learning paradigm designed to learn from only positive and unlabeled data, without confirmed negative examples. This directly addresses the data scarcity problem in synthesizability prediction. The core idea is to identify reliable negative examples from the unlabeled data and iteratively refine a classifier.

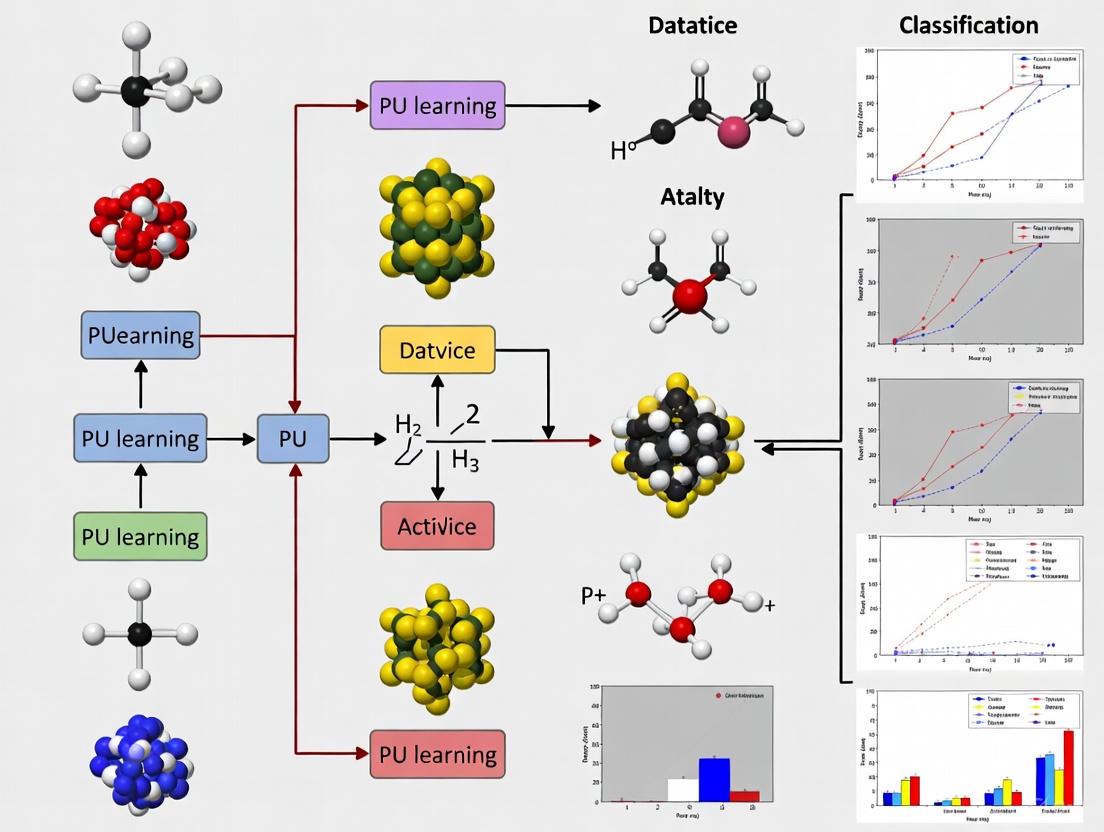

The general workflow for applying PU learning to synthesizability prediction involves several key stages, from data preparation to model deployment, as visualized below:

Key PU Learning Techniques for Synthesizability Prediction

Several PU learning strategies have been successfully adapted for scientific discovery:

- Bagging and Ensemble Methods: Frameworks like NAPU-bagging SVM train multiple SVM classifiers on resampled "bags" containing positive, negative, and unlabeled data. This ensemble approach manages false positive rates while maintaining high recall, which is critical for compiling a list of viable candidate compounds for further testing [5].

- Dual-Classifier Co-Training: The SynCoTrain framework employs two complementary graph convolutional neural networks (SchNet and ALIGNN) that iteratively exchange predictions. This co-training process mitigates individual model bias and enhances generalizability by leveraging different architectural inductive biases [3].

- Reliable Negative Sampling via OCSVM and KNN: The DDI-PULearn method uses a One-Class Support Vector Machine (OCSVM) under a high-recall constraint and a K-Nearest Neighbors (KNN) approach based on cosine similarity to generate initial "seeds" of reliable negative samples. An iterative SVM then identifies the full set of reliable negatives from the unlabeled data for final model training [7].
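The similarity-based seeding idea in the last item can be illustrated with a minimal sketch. It is not the DDI-PULearn implementation: the function names, the toy vectors, and the rule of averaging over the `k` most similar positives are assumptions made for illustration, and the OCSVM stage is omitted.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def seed_reliable_negatives(positives, unlabeled, k=2, n_seeds=2):
    """Return indices of the unlabeled samples least similar to the positives.

    Each unlabeled sample is scored by its mean cosine similarity to its
    k most similar positives; the n_seeds lowest-scoring samples become seeds.
    """
    scores = []
    for idx, u in enumerate(unlabeled):
        sims = sorted((cosine(u, p) for p in positives), reverse=True)[:k]
        scores.append((sum(sims) / len(sims), idx))
    scores.sort()
    return [idx for _, idx in scores[:n_seeds]]

# Hypothetical 2-D feature vectors (assumed data, for illustration only).
positives = [[1.0, 0.1], [0.9, 0.2], [1.0, 0.0]]
unlabeled = [[0.95, 0.1], [0.1, 1.0], [0.0, 0.9], [0.8, 0.3]]
seeds = seed_reliable_negatives(positives, unlabeled)
print(seeds)  # indices of the two unlabeled samples pointing away from the positives
```

In a full pipeline these seeds would only initialize the negative set; a classifier would then expand it iteratively, as the text describes.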

Application Notes and Protocols

This section provides detailed methodologies for implementing a PU learning framework for synthesizability prediction, based on proven approaches from recent literature.

Protocol 1: Solid-State Synthesizability Prediction for Ternary Oxides

Objective: To predict the likelihood that a hypothetical ternary oxide can be synthesized via solid-state reaction.

Background: This protocol is adapted from the work of Chung et al. (2025), which utilized a human-curated dataset to train a PU learning model [1] [8].

- Step 1: Data Curation and Feature Engineering
  - Data Source: Extract known ternary oxides and their synthesis information from the Materials Project database and the Inorganic Crystal Structure Database (ICSD). Manually curate records from literature to confirm synthesis via solid-state reaction [1] [8].
  - Positive Labeling: Label a composition as positive if at least one literature record confirms its synthesis via solid-state reaction (e.g., 3,017 entries) [1].
  - Feature Set: Compute a comprehensive set of features for each composition, including:
    - Stoichiometric Attributes: Mean atomic number, electronegativity, atomic radii, valence electron counts.
    - Structural Descriptors: Energy above hull (\(E_{\text{hull}}\)), volume per atom, density.
    - Thermodynamic Properties: Formation energy, entropy-forming ability descriptors.
- Step 2: Model Training with PU Learning
  - Algorithm Selection: Implement a bagging SVM or iterative Bayesian classifier.
  - Training Loop:
    1. Train an initial classifier using the confirmed positive set and a small set of randomly sampled unlabeled data (treated as temporary negatives).
    2. Use the trained classifier to score the entire unlabeled set. Extract the most confidently predicted negative samples as "reliable negatives."
    3. Retrain the classifier using the original positives and the newly identified reliable negatives.
    4. Iterate steps 2-3 until model performance on a hold-out validation set converges.
- Step 3: Validation and Prospective Prediction
  - Validation: Evaluate the model using a temporal hold-out, where materials discovered after a certain date are used as the test set, simulating a real-world discovery campaign [2].
  - Prediction: Apply the final model to a large set of hypothetical ternary oxides from the Materials Project to rank them by predicted synthesizability.
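The training loop in Step 2 can be sketched in a few lines. This is a simplified illustration, not the model of Chung et al.: a nearest-centroid scorer stands in for the SVM or Bayesian classifier, the loop stops when the reliable-negative set stops changing (rather than on hold-out validation performance), and the function names, parameters, and toy 2-D features are all assumptions.

```python
import random

def centroid(rows):
    """Component-wise mean of a list of feature vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def score(x, pos_c, neg_c):
    """Positive when x lies closer to the positive centroid than the negative one."""
    return sq_dist(x, neg_c) - sq_dist(x, pos_c)

def iterative_pu(positives, unlabeled, n_init=6, n_reliable=6, max_iter=10, seed=0):
    rng = random.Random(seed)
    pos_c = centroid(positives)
    # Loop step 1: a random unlabeled subset serves as temporary negatives.
    negatives = rng.sample(unlabeled, n_init)
    previous = None
    for _ in range(max_iter):
        neg_c = centroid(negatives)
        # Loop step 2: score all unlabeled data, keep the most confident negatives.
        ranked = sorted(range(len(unlabeled)),
                        key=lambda i: score(unlabeled[i], pos_c, neg_c))
        reliable = sorted(ranked[:n_reliable])
        # Loop step 4 (assumed criterion): stop once the set is stable.
        if reliable == previous:
            break
        previous = reliable
        # Loop step 3: retrain on positives plus the reliable negatives.
        negatives = [unlabeled[i] for i in reliable]
    return pos_c, centroid(negatives), reliable

# Hypothetical toy features: positives near (1, 1), hidden negatives near (-1, -1).
positives = [[1 + 0.1 * (i % 3 - 1), 1 + 0.1 * (i % 2)] for i in range(12)]
hidden_pos = [[0.9 + 0.05 * i, 1.0] for i in range(5)]
hidden_neg = [[-1.0 + 0.02 * i, -1.0] for i in range(15)]
unlabeled = hidden_pos + hidden_neg

pos_c, neg_c, reliable = iterative_pu(positives, unlabeled)
print(reliable)  # indices into `unlabeled` chosen as reliable negatives
```

In this toy setup every reliable negative comes from the hidden-negative block (indices 5 and above), even though the initial temporary negatives may include hidden positives.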

Table 2: Key Research Reagents and Computational Tools for Solid-State Synthesizability Prediction

| Tool / Reagent | Type | Function in Protocol |
|---|---|---|
| Materials Project API [1] | Database | Source of crystal structures, formation energies, and energy above hull for hypothetical and known materials. |
| pymatgen [1] | Python Library | Used for materials analysis and feature generation (e.g., computing structural and electronic descriptors). |
| Human-Curated Dataset [1] [8] | Data | Provides high-quality, verified positive examples for model training, overcoming noise in text-mined data. |
| Scikit-learn | Python Library | Provides implementations of SVM and other classifiers, along with utilities for data preprocessing and validation. |

Protocol 2: Multi-Target Drug Ligand Screening with NAPU-Bagging SVM

Objective: To identify multi-target-directed ligands (MTDLs) with high recall and controlled false positive rates.

Background: This protocol is based on the NAPU-bagging SVM method developed for drug discovery, which is particularly suited for scenarios where high recall is critical [5].

- Step 1: Data Preparation and Molecular Representation
  - Data Source: Collect bioactivity data from public repositories like ChEMBL. Assemble known actives (positives) for the target proteins of interest.
  - Molecular Representation: Convert molecular structures into numerical features using Extended-Connectivity Fingerprints (ECFP4) or learned representations from graph neural networks [5].
  - Unlabeled Set: The unlabeled set consists of all other compounds in the chemical space of interest without confirmed activity data.
- Step 2: NAPU-Bagging SVM Implementation
  - Bagging: Create multiple bootstrap samples (bags) from the training data. Each bag contains all positive samples, a subset of generated negative samples, and a subset of the unlabeled data.
  - Negative Augmentation: In each bag, augment the reliable negatives with additional putative negatives sampled from the unlabeled set.
  - Ensemble Training: Train an independent SVM classifier on each bag.
  - Prediction Aggregation: For a new molecule, aggregate the prediction scores from all SVM classifiers in the ensemble to produce a final score (here, the predicted likelihood of multi-target activity).
- Step 3: Virtual Screening and Validation
  - Screening: Rank a large virtual library of compounds using the ensemble model's score.
  - Validation: Validate top-ranking candidates using molecular docking and molecular dynamics simulations to assess binding modes and affinity before experimental testing.
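The bagging and aggregation steps can be illustrated with a minimal sketch of plain PU bagging. It is not NAPU-bagging SVM itself: there is no negative augmentation, a nearest-centroid scorer stands in for each SVM, and the 4-bit "fingerprints", function names, and parameter values are assumptions chosen to keep the example dependency-free.

```python
import random

def centroid(rows):
    """Component-wise mean of a list of feature vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def train_bag(positives, unlabeled, bag_size, rng):
    """Fit one base scorer on all positives plus a bootstrap bag of unlabeled data."""
    bag = [rng.choice(unlabeled) for _ in range(bag_size)]  # sampled with replacement
    return centroid(positives), centroid(bag)

def train_ensemble(positives, unlabeled, n_bags=25, bag_size=6, seed=0):
    rng = random.Random(seed)
    return [train_bag(positives, unlabeled, bag_size, rng) for _ in range(n_bags)]

def ensemble_score(x, models):
    """Fraction of base scorers that place x closer to the positive centroid."""
    votes = sum(sq_dist(x, pos_c) < sq_dist(x, neg_c) for pos_c, neg_c in models)
    return votes / len(models)

# Hypothetical 4-bit fingerprints (assumed data): actives share the first two bits.
positives = [[1, 1, 0, 0], [1, 1, 1, 0], [1, 1, 0, 0], [1, 0, 0, 0]]
unlabeled = [[1, 1, 0, 0]] * 3 + [[0, 0, 1, 1], [0, 0, 0, 1], [0, 1, 1, 1]] * 3

models = train_ensemble(positives, unlabeled)
active_like = ensemble_score([1, 1, 0, 0], models)
inactive_like = ensemble_score([0, 0, 1, 1], models)
print(active_like, inactive_like)
```

Averaging over many bags is what dampens the effect of any single bag that happens to draw mostly hidden positives as its putative negatives.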

[Figure: logical flow of the NAPU-bagging SVM process, illustrating how the ensemble model is constructed and applied.]

Performance and Validation

Evaluating synthesizability predictors requires moving beyond standard regression metrics to task-relevant classification metrics. As highlighted in the Matbench Discovery framework, a model with excellent mean absolute error (MAE) can still have an unacceptably high false-positive rate if its predictions cluster near the decision boundary [2]. The following table compares the performance of various modern approaches as reported in the literature.

Table 3: Performance Comparison of Synthesizability Prediction Methods

| Method / Model | Application Domain | Key Performance Highlights | Validation Approach |
|---|---|---|---|
| SynCoTrain [3] | Synthesizability of Inorganic Crystals (Oxides) | Achieved high recall on internal and leave-out test sets; robust performance by mitigating model bias through co-training. | Retrospective splitting and leave-out sets. |
| NAPU-bagging SVM [5] | Multi-Target-Directed Ligands (Drug Discovery) | Maintained high true positive rate (recall) while managing false positive rate; identified novel MTDL hits for ALK-EGFR. | Case studies on specific target pairs (e.g., ALK-EGFR, dopamine receptors) with docking validation. |
| Human-Curated PU Model [1] [8] | Solid-State Synthesizability of Ternary Oxides | Identified 134 out of 4,312 hypothetical compositions as synthesizable; superior data quality enabled more reliable predictions. | Analysis of \(E_{\text{hull}}\) vs. synthesizability; outlier detection in text-mined data. |
| DDI-PULearn [7] | Drug-Drug Interaction Prediction | Significantly outperformed methods using random negatives and other state-of-the-art methods on multiple datasets (Enzymes, Ion Channels, GPCRs). | Comparison with 5 state-of-the-art methods using AUC metrics. |
| Universal Interatomic Potentials (UIPs) [2] | Crystal Stability Prediction (as a synthesizability proxy) | Surpassed other ML methodologies in accuracy and robustness for pre-screening thermodynamically stable materials; reduced false-positive rates. | Prospective benchmarking using the Matbench Discovery framework. |
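The earlier point that a low MAE can coexist with a high false-positive rate is easy to check numerically. The sketch below uses made-up energies above hull (in eV/atom) and assumes that "stable" means a value at or below 0; both the data and the threshold are illustrative assumptions, not results from any cited study.

```python
def mae(y_true, y_pred):
    """Mean absolute error between true and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def false_positive_rate(y_true, y_pred, threshold=0.0):
    """Share of truly unstable materials (value > threshold) predicted stable."""
    negatives = [(t, p) for t, p in zip(y_true, y_pred) if t > threshold]
    false_pos = sum(1 for _, p in negatives if p <= threshold)
    return false_pos / len(negatives)

# Hypothetical energies above hull, clustered near the decision boundary.
true_e = [0.02, 0.03, 0.01, 0.04, -0.01, -0.02]
pred_e = [-0.01, -0.01, -0.02, 0.05, -0.02, -0.01]

model_mae = mae(true_e, pred_e)
model_fpr = false_positive_rate(true_e, pred_e)
print(f"MAE = {model_mae:.3f} eV/atom, FPR = {model_fpr:.2f}")
```

Here the regression error is only about 0.02 eV/atom, yet three of the four unstable materials are classified as stable because small errors flip their sign.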

To facilitate the adoption of PU learning for synthesizability classification, the following table details key computational tools and data resources.

Table 4: Research Reagent Solutions for PU Learning in Synthesizability

| Resource Name | Type | Description and Function |
|---|---|---|
| Matbench Discovery [2] | Evaluation Framework | A Python package and leaderboard for benchmarking ML energy models, helping to evaluate model performance on realistic prospective tasks. |
| Materials Project [1] | Database | A core database of computed materials properties for over 100,000 inorganic compounds, essential for feature generation and sourcing hypothetical candidates. |
| ChEMBL [5] | Database | A manually curated database of bioactive molecules with drug-like properties, providing positive data for drug-target interaction and synthesizability models. |
| AiZynthFinder [4] | Retrosynthesis Tool | A retrosynthesis software used as an oracle to assess the synthesizability of generated molecules by predicting viable synthetic routes. |
| Scikit-learn | Software Library | A fundamental Python library providing implementations of SVM, ensemble methods, and data preprocessing tools needed to build PU learning models. |
| ICSD [1] | Database | The Inorganic Crystal Structure Database, a primary source for experimentally confirmed crystal structures used to define positive examples. |
| OCSVM & KNN Algorithms [7] | Algorithm | Techniques used within PU learning frameworks (e.g., DDI-PULearn) to generate initial reliable negative samples from unlabeled data. |

In data-driven materials science and drug development, predicting whether a novel material can be synthesized or a drug candidate can be successfully developed is a critical challenge. This task is fundamentally a binary classification problem, requiring both positive examples (successfully synthesized materials, effective drugs) and negative examples (failed syntheses, ineffective compounds) to train accurate predictive models. However, a pervasive data scarcity problem exists: while positive data are often documented in research articles and databases, reliable negative data are frequently absent from the scientific record. Failed experiments and unsuccessful synthesis attempts are systematically underpublished due to publication bias, leaving a critical gap in the data landscape.

This absence of confirmed negative data renders traditional supervised machine learning approaches suboptimal, as they rely on balanced, fully-labeled datasets. Positive-Unlabeled (PU) Learning has emerged as a powerful semi-supervised framework to address this exact challenge. PU learning algorithms enable the training of classifiers using only a set of confirmed positive examples and a set of unlabeled data that contains a mixture of both positive and hidden negative instances. Within the context of synthesizability classification and drug development, this approach allows researchers to leverage the wealth of available positive data (e.g., from the Inorganic Crystal Structure Database - ICSD) and vast unlabeled data (e.g., hypothetical structures from the Materials Project) without needing explicitly confirmed negative samples, thus overcoming a major bottleneck in predictive model development [9] [10] [3].

Quantitative Landscape of Data Scarcity in Materials Science

The scale of the negative data gap and the corresponding application of PU learning can be quantified from recent landmark studies in materials science. The table below summarizes key metrics that illustrate the data landscape and model performance.

Table 1: Quantitative Data Scarcity and PU Learning Performance in Recent Synthesizability Studies

| Study & Material Focus | Positive Data Source (Count) | Unlabeled/Negative Data Source (Count) | PU Learning Performance |
|---|---|---|---|
| Chung et al. (2025) [9] [1] (Ternary Oxides) | Human-curated literature (4,103 entries) | Hypothetical compositions (4,312) | 134 hypothetical compositions predicted as synthesizable |
| SynCoTrain (2025) [3] (Oxide Crystals) | Experimental Data (Not Specified) | Not Specified | High recall on internal and leave-out test sets |
| CSLLM (2025) [10] (3D Crystal Structures) | ICSD (70,120 structures) | Theoretical Databases (80,000 non-synthesizable structures identified via PU learning) | 98.6% synthesizability prediction accuracy |

The success of PU learning is further validated by its ability to identify data quality issues. For instance, a simple screening of a text-mined dataset using a human-curated PU dataset identified 156 outliers from a subset of 4,800 entries, of which only 15% were extracted correctly, highlighting the critical need for reliable data in model training [9].

Experimental Protocols for PU Learning in Synthesizability Classification

This section provides detailed methodologies for implementing PU learning, drawing from proven frameworks in recent literature.

Protocol: Human-Curated Data Collection for Solid-State Synthesizability

Application Note: This protocol is designed for building a high-quality, reliable dataset for training synthesizability prediction models, specifically addressing the inaccuracies of fully automated text-mining approaches [9] [1].

- Candidate Identification: Download a list of potential candidate materials from a computational database (e.g., 21,698 ternary oxides from the Materials Project).
- Positive Data Proxy Filtering: Filter entries using a proxy for successful synthesis (e.g., the presence of an Inorganic Crystal Structure Database (ICSD) ID), yielding a reduced set (e.g., 6,811 entries).
- Domain Refinement: Apply domain-specific filters (e.g., remove non-metal elements and silicon) to finalize the dataset for manual inspection (e.g., 4,103 entries).
- Manual Literature Curation: Systematically search the scientific literature for each entry using:
  - The original paper associated with the ICSD ID.
  - The first 50 search results sorted from oldest to newest in Web of Science using the chemical formula.
  - The top 20 relevant results from Google Scholar using the chemical formula.
- Data Labeling and Extraction:
  - Label: Assign a "solid-state synthesized" label if at least one record of solid-state synthesis is found.
  - Extract: For positive entries, collect associated reaction conditions: highest heating temperature, pressure, atmosphere, mixing/grinding conditions, number of heating steps, cooling process, precursors, and single-crystalline status.
  - Alternative Labels: Label as "non-solid-state synthesized" if the material was synthesized by another method, or "undetermined" if evidence is insufficient.
- Data Validation: Perform random spot-checking (e.g., 100 entries) by a second domain expert to ensure labeling accuracy and consistency.
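The labeling rule in the protocol can be written down as a small function, which helps keep manual curation consistent between annotators. This is a sketch under assumptions: the `SynthesisRecord` type, its field names, the method strings, and the choice to treat "no records found" as insufficient evidence are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class SynthesisRecord:
    formula: str   # placeholder formula string
    method: str    # e.g. "solid-state", "sol-gel", "hydrothermal" (assumed vocabulary)

def label_entry(records):
    """Apply the protocol's labeling rule to the literature records of one composition."""
    if any(r.method == "solid-state" for r in records):
        return "solid-state synthesized"
    if records:
        return "non-solid-state synthesized"
    return "undetermined"  # assumption: no usable record means insufficient evidence

# Hypothetical curated records for three placeholder compositions.
label_a = label_entry([SynthesisRecord("ABO3", "sol-gel"),
                       SynthesisRecord("ABO3", "solid-state")])
label_b = label_entry([SynthesisRecord("A2BO4", "hydrothermal")])
label_c = label_entry([])
print(label_a, "|", label_b, "|", label_c)
```

Only entries labeled "solid-state synthesized" would enter the positive set; the other two labels remain outside it rather than being treated as confirmed negatives.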

Protocol: The SynCoTrain Dual Classifier Co-Training Framework

Application Note: The SynCoTrain framework mitigates model bias and enhances generalizability by leveraging two complementary graph neural networks that iteratively refine predictions on unlabeled data [3].

- Model Selection and Initialization: Select two complementary graph neural network architectures, such as SchNet (which focuses on atomic environments) and ALIGNN (which incorporates bond-angle information). Initialize these models with random weights.
- Prepare Training Sets: Create a positive training set P from confirmed positive examples (e.g., synthesized materials from ICSD) and an unlabeled training set U containing both positive and hidden negative examples (e.g., hypothetical structures from the Materials Project).
- Initial Training Phase: Independently train both classifiers (SchNet and ALIGNN) on the initial positive set P and a small, randomly selected subset of U.
- Iterative Co-Training Loop:
  a. Prediction: Each classifier predicts labels for all instances in the unlabeled set U.
  b. Selection: For each classifier, select the most confident predictions (both positive and negative) from U. The specific negative examples identified by one model are based on its current state of learning.
  c. Exchange: The two classifiers exchange their sets of confidently labeled instances.
  d. Update: Each classifier's training data is augmented with the new labeled instances provided by its peer.
  e. Retraining: Both classifiers are retrained on their newly augmented training sets.
- Convergence Check: Repeat the co-training loop until a stopping criterion is met (e.g., a fixed number of iterations or minimal change in the models' parameters).
- Inference: For final synthesizability prediction on a new candidate material, use the consensus or averaged prediction from the two trained classifiers.
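The exchange mechanism in the co-training loop can be sketched without any deep learning stack. In the illustration below, two one-feature scorers stand in for SchNet and ALIGNN, each seeing a different "view" of the data; the class and function names, the confidence rule, the fixed number of rounds, and the toy data are assumptions, not the SynCoTrain code.

```python
import random

class ViewScorer:
    """Toy classifier on a single feature; a stand-in for one of the two GNNs."""
    def __init__(self, view):
        self.view = view

    def fit(self, pos, neg):
        self.pos_m = sum(x[self.view] for x in pos) / len(pos)
        self.neg_m = sum(x[self.view] for x in neg) / len(neg)
        return self

    def score(self, x):
        # Positive when x is closer to the positive mean on this view.
        v = x[self.view]
        return (v - self.neg_m) ** 2 - (v - self.pos_m) ** 2

def co_train(P, U, n_rounds=3, n_pick=2, n_init=4, seed=0):
    rng = random.Random(seed)
    models = [ViewScorer(0), ViewScorer(1)]
    # Initial phase: P plus a small random subset of U as provisional negatives.
    train = [{"pos": list(P), "neg": rng.sample(U, n_init)} for _ in models]
    pool = list(range(len(U)))
    for _ in range(n_rounds):
        for m, t in zip(models, train):
            m.fit(t["pos"], t["neg"])
        picks = []
        for m in models:  # (a) predict and (b) select confident instances
            ranked = sorted(pool, key=lambda i: m.score(U[i]))
            pos = [i for i in ranked[-n_pick:] if m.score(U[i]) > 0]
            neg = [i for i in ranked[:n_pick] if m.score(U[i]) < 0]
            picks.append((pos, neg))
        for k, t in enumerate(train):  # (c) exchange and (d) update
            peer_pos, peer_neg = picks[1 - k]
            t["pos"] += [U[i] for i in peer_pos]
            t["neg"] += [U[i] for i in peer_neg]
        used = {i for pos, neg in picks for i in pos + neg}
        pool = [i for i in pool if i not in used]
    for m, t in zip(models, train):  # (e) final retraining
        m.fit(t["pos"], t["neg"])
    return models

def consensus(models, x):
    """Averaged score of the two classifiers; above zero means 'synthesizable'."""
    return sum(m.score(x) for m in models) / len(models)

# Hypothetical 2-D data: positives near (1, 1), hidden negatives near (-1, -1).
P = [[1.0, 0.9], [0.9, 1.1], [1.1, 1.0]]
U = [[1.0, 1.0], [0.8, 1.2], [1.2, 0.9],
     [-1.0, -0.9], [-0.9, -1.1], [-1.1, -1.0], [-0.8, -1.2],
     [-1.2, -0.8], [-1.0, -1.0], [-0.9, -0.9]]
models = co_train(P, U)
print(consensus(models, [1.0, 1.0]), consensus(models, [-1.0, -1.0]))
```

The key design point is that each model is trained on labels proposed by its peer rather than by itself, which limits the self-reinforcement of a single model's mistakes.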

Protocol: Leveraging Large Language Models (LLMs) for Synthesizability

Application Note: This protocol uses fine-tuned LLMs to achieve high-accuracy synthesizability classification by transforming crystal structures into a text-based representation that the model can process [10].

- Dataset Curation:
  - Positive Examples: Collect confirmed synthesizable crystal structures from a reliable database like the ICSD. Apply filters (e.g., maximum of 40 atoms, 7 elements) to ensure data homogeneity (e.g., 70,120 structures).
  - Negative Examples: Use a pre-trained PU learning model to assign a synthesizability score (e.g., CLscore) to a large pool of theoretical structures from multiple databases (e.g., over 1.4 million structures). Select structures with the lowest scores (e.g., CLscore < 0.1) as high-confidence negative examples (e.g., 80,000 structures).
- Text Representation Creation: Convert all crystal structures into a condensed text format, or "material string," that includes space group, lattice parameters, and a minimal set of atomic coordinates with their Wyckoff positions to eliminate redundancy.
- LLM Fine-Tuning: Fine-tune a base LLM (e.g., LLaMA) on the curated dataset of positive and negative examples, using the material strings as input and the synthesizability label as the target output.
- Model Validation: Rigorously test the fine-tuned LLM on a held-out test set. Evaluate its performance against traditional metrics like energy above hull and phonon stability.
- Prediction and Deployment: Use the fine-tuned Synthesizability LLM to predict the synthesizability of new, hypothetical crystal structures by converting them into the material string format and querying the model.
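Two of the data-preparation steps above lend themselves to a short sketch: thresholding PU scores to pick high-confidence negatives, and serializing a structure into a one-line text record. The string layout below is a hypothetical format invented for illustration, not the material string defined by CSLLM [10], and the function names and score dictionary keys are likewise assumptions.

```python
def select_negatives(scored, threshold=0.1, max_count=None):
    """Keep structures whose PU score falls below the threshold, lowest first."""
    low = sorted((s for s in scored if s["clscore"] < threshold),
                 key=lambda s: s["clscore"])
    return low if max_count is None else low[:max_count]

def material_string(space_group, lattice, sites):
    """Serialize symmetry, lattice parameters, and Wyckoff sites (hypothetical format)."""
    a, b, c, alpha, beta, gamma = lattice
    cell = f"{a:g} {b:g} {c:g} {alpha:g} {beta:g} {gamma:g}"
    atoms = "; ".join(f"{el} {wyckoff} {x:g} {y:g} {z:g}"
                      for el, wyckoff, (x, y, z) in sites)
    return f"SG {space_group} | {cell} | {atoms}"

# Hypothetical scores for four theoretical structures.
scored = [{"id": "s1", "clscore": 0.03}, {"id": "s2", "clscore": 0.45},
          {"id": "s3", "clscore": 0.08}, {"id": "s4", "clscore": 0.10}]
negative_ids = [s["id"] for s in select_negatives(scored)]

# Rock-salt NaCl: one symmetry-distinct site per element instead of eight atoms.
nacl = material_string(225, (5.64, 5.64, 5.64, 90, 90, 90),
                       [("Na", "4a", (0, 0, 0)), ("Cl", "4b", (0.5, 0.5, 0.5))])
print(negative_ids)
print(nacl)
```

Listing only symmetry-distinct sites is what removes the redundancy mentioned in the protocol: the space group regenerates the remaining atoms.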

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational tools and data resources essential for implementing the protocols described in this article.

Table 2: Essential Tools and Resources for PU Learning in Synthesizability Research

| Tool/Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| ICSD [10] | Database | Source of confirmed synthesizable crystal structures (positive examples). | Data Curation |
| Materials Project [1] [10] | Database | Source of hypothetical/unlabeled crystal structures for training and prediction. | Data Curation, PU Learning |
| SchNet [3] | Graph Neural Network | A deep learning model for molecular and material systems that learns representations based on atomic interactions. | SynCoTrain Protocol |
| ALIGNN [3] | Graph Neural Network | A graph neural network that incorporates both bond and bond-angle information for improved material property prediction. | SynCoTrain Protocol |
| Material String [10] | Data Representation | A condensed text representation of a crystal structure that includes lattice, atomic coordinates, and symmetry for LLM processing. | LLM Protocol |
| Pre-trained PU Model [10] | Software Model | A model used to generate proxy labels (e.g., CLscore) for unlabeled data, helping to identify reliable negative examples. | Data Curation for LLM Protocol |
| CSLLM Framework [10] | Software Framework | An integrated framework of three fine-tuned LLMs for predicting synthesizability, synthesis method, and precursors. | LLM Protocol & Deployment |

Positive-Unlabeled (PU) learning is a specialized branch of machine learning designed for scenarios where training data consists of confirmed positive examples and a set of unlabeled examples that may contain both positive and negative instances [11]. This learning paradigm addresses a fundamental challenge present in many scientific domains: the absence of explicitly confirmed negative data. In traditional binary classification, models learn from both positive and negative examples to establish a decision boundary. However, in numerous real-world applications, including materials science and drug development, obtaining reliable negative examples is often impractical, expensive, or theoretically unsound [12] [13]. Failed synthesis attempts or unsuccessful clinical trials are frequently unpublished, and the absence of evidence for a property cannot be treated as definitive evidence of its absence [12] [14]. PU learning provides a framework to overcome this data limitation by developing classifiers that can distinguish between positive and negative classes using only positive and unlabeled data, making it particularly valuable for synthesizability classification and drug repositioning research [15] [13].

The core challenge of PU learning stems from what is known as the "open world" setting in knowledge representation [14]. In this setting, the observation of a phenomenon (e.g., a synthesizable material, an effective drug) definitively establishes its presence, but the lack of observation cannot be reliably interpreted as evidence of absence. This is because negative outcomes may result from methodological limitations, technological constraints, or simply a lack of investigation under appropriate conditions [12] [14]. PU learning algorithms navigate this ambiguity by making carefully considered assumptions about the underlying data distribution and labeling mechanism to extract meaningful signals from partially labeled datasets.

Fundamental Assumptions and Mechanisms

Core Theoretical Assumptions

PU learning methodologies rely on several key assumptions that enable learning from partially labeled data. The Selected Completely At Random (SCAR) assumption posits that labeled positive examples constitute a random sample from all positive examples, meaning the probability of a positive example being labeled is independent of its features [11]. Under SCAR, the labeled positive distribution matches the overall positive distribution. A more flexible assumption is Selected At Random (SAR), where the probability of a positive example being labeled may depend on its attributes [11]. In this case, the labeling mechanism is described by a propensity score e(x) = Pr(s=1|y=1,x), representing the probability that a positive example x is selected to be labeled [11].
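A short derivation makes the practical value of these assumptions concrete. Because only positive examples can be labeled, the probability of being labeled factors through the propensity score. The constant c below (the label frequency under SCAR) is notation introduced here for illustration.

```latex
\begin{align*}
\Pr(s=1 \mid x) &= \Pr(s=1 \mid y=1, x)\,\Pr(y=1 \mid x) = e(x)\,\Pr(y=1 \mid x) \\[4pt]
\text{SCAR } (e(x) = c): \quad \Pr(y=1 \mid x) &= \frac{\Pr(s=1 \mid x)}{c} \\[4pt]
\text{SAR}: \quad \Pr(y=1 \mid x) &= \frac{\Pr(s=1 \mid x)}{e(x)}
\end{align*}
```

Under SCAR, a classifier trained to separate labeled from unlabeled examples therefore differs from the true positive-class probability only by a constant factor, so it ranks candidates in the same order.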

Two additional assumptions about data structure enable the identification of reliable negative examples: the smoothness assumption (similar instances have similar probabilities of being positive) and separability assumption (a natural division exists between positive and negative classes) [16]. These assumptions facilitate the identification of reliable negative examples from the unlabeled set, which forms the basis for most PU learning algorithms.

Data Scenarios and Probabilistic Framework

PU learning operates under two primary data scenarios. The single-training-set scenario occurs when positive and unlabeled examples come from the same dataset, representing an i.i.d. sample from the true distribution where only a fraction of positive examples are labeled [11]. This scenario commonly arises in applications such as personalized advertising and survey data with under-reporting. The case-control scenario involves positive and unlabeled examples drawn from two independent datasets, where the unlabeled set represents an i.i.d. sample from the true population distribution [11]. This scenario typically occurs when one dataset is known to contain only positive examples, such as specialized collection centers for positive cases.

The probabilistic foundation of PU learning defines several key quantities [14]. Let γ represent the true positive rate (probability a positive example is correctly classified), η the false positive rate (probability a negative example is incorrectly classified as positive), and ρ the precision (probability a positive prediction is correct). The class prior π = Pr(y=1) represents the proportion of positive examples in the underlying distribution, while θ = Pr(ŷ=1) denotes the probability of a positive prediction. These fundamental probabilities relate through the equation θ = πγ + (1-π)η, forming the basis for deriving performance metrics in PU settings [14].
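As a worked example with illustrative values (π = 0.2, γ = 0.9, η = 0.1, chosen here only for arithmetic convenience), the identity gives the prediction rate, and Bayes' rule then gives the precision:

```latex
\begin{align*}
\theta &= \pi\gamma + (1-\pi)\eta = 0.2 \times 0.9 + 0.8 \times 0.1 = 0.26 \\[4pt]
\rho &= \Pr(y=1 \mid \hat{y}=1) = \frac{\pi\gamma}{\theta} = \frac{0.18}{0.26} \approx 0.69
\end{align*}
```

Even with a 90% true positive rate and a 10% false positive rate, roughly three in ten positive predictions are wrong when positives make up only 20% of the population.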

Performance Evaluation in PU Learning

Evaluation Challenges and Metrics

Evaluating classifier performance in PU learning presents unique challenges because traditional metrics computed on positive versus unlabeled data do not reflect true performance on positive versus negative data [14]. Standard binary classification metrics become distorted when the unlabeled set contains unknown positives, leading to potentially misleading conclusions about model quality. The relationship between observed performance (on positive vs. unlabeled data) and true performance (on positive vs. negative data) depends critically on two factors: the fraction of positive examples in the unlabeled data and potential mislabeling noise in the positive set [14].

Table 1: Traditional Performance Metrics and Their PU Learning Corrections

| Metric | Standard Formula | PU Correction Requirement | Corrected Formula |
|---|---|---|---|
| Accuracy | πγ + (1-π)(1-η) | Requires π and labeling noise estimate | acc = πγ + (1-π)(1-η) |
| Balanced Accuracy | (γ + (1-η))/2 | Requires π and labeling noise estimate | bacc = (1 + γ - η)/2 |
| F-measure | 2πγ/(π+θ) | Requires π and θ | F = 2πγ/(π+θ) |
| Matthews Correlation Coefficient | (π(1-π)(γ-η))/√(θ(1-θ)π(1-π)) | Requires π and θ | mcc = √(π(1-π)/(θ(1-θ)))·(γ-η) |

Performance estimation can be corrected with knowledge or accurate estimates of class priors in the unlabeled data and potential labeling noise in the positive set [14]. Research has demonstrated that without appropriate correction, performance estimates can be wildly inaccurate, potentially leading to incorrect conclusions about model efficacy and deployment decisions with significant practical consequences [14].
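The correction can be sketched as follows. The sketch assumes SCAR with no labeling noise, so that recall measured on labeled positives estimates γ, and it treats the unlabeled set as a mixture with positive fraction α, so that the positive-prediction rate observed on unlabeled data is η_pu = αγ + (1-α)η. The names `eta_pu` and `alpha` are introduced here for illustration; the remaining formulas are those of Table 1.

```python
import math


def true_false_positive_rate(eta_pu, gamma, alpha):
    """Recover eta from the rate observed on U: eta_pu = alpha*gamma + (1-alpha)*eta."""
    if not 0.0 <= alpha < 1.0:
        raise ValueError("alpha must lie in [0, 1)")
    eta = (eta_pu - alpha * gamma) / (1.0 - alpha)
    return min(1.0, max(0.0, eta))


def corrected_metrics(gamma, eta, pi):
    """Evaluate the Table 1 formulas from gamma, eta and the class prior pi."""
    theta = pi * gamma + (1.0 - pi) * eta  # probability of a positive prediction
    spread = theta * (1.0 - theta)
    return {
        "accuracy": pi * gamma + (1.0 - pi) * (1.0 - eta),
        "balanced_accuracy": (1.0 + gamma - eta) / 2.0,
        "f_measure": 2.0 * pi * gamma / (pi + theta) if pi + theta > 0 else 0.0,
        "mcc": math.sqrt(pi * (1.0 - pi) / spread) * (gamma - eta) if spread > 0 else 0.0,
    }


# Illustrative numbers only: 26% of unlabeled items are predicted positive.
eta = true_false_positive_rate(eta_pu=0.26, gamma=0.9, alpha=0.2)
metrics = corrected_metrics(gamma=0.9, eta=eta, pi=0.2)
print(round(eta, 3), {k: round(v, 3) for k, v in metrics.items()})
```

Treating the raw rate of 0.26 as the false positive rate would understate performance, since most of those "false positives" are hidden positives in the unlabeled set.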

Estimating Class Priors

Accurate estimation of the class prior (π) - the proportion of positive examples in the entire population - is crucial for both learning algorithms and performance evaluation in PU settings [11]. Various methods have been developed for class prior estimation, including AlphaMax [14], which addresses the challenge of differentiating between true positives and mislabeled negatives in the labeled set. The class prior enables the derivation of the actual positive and negative distributions from the unlabeled data, facilitating proper model training and evaluation. In practice, domain knowledge often complements statistical approaches for class prior estimation, particularly in scientific domains where theoretical understanding of the problem can inform reasonable bounds on this parameter.
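As a simple baseline (not AlphaMax, which is considerably more involved), the class prior in the single-training-set scenario under SCAR follows from Pr(s=1) = c · π, where c is the label frequency Pr(s=1|y=1). The sketch below assumes c has been estimated separately and lets domain knowledge enter as optional bounds; the function and parameter names are hypothetical.

```python
def estimate_class_prior(n_labeled, n_total, label_frequency, bounds=(0.0, 1.0)):
    """Estimate pi = Pr(y=1) from Pr(s=1) = c * pi, then clip to domain-informed bounds."""
    if n_total <= 0 or not 0 <= n_labeled <= n_total:
        raise ValueError("need n_total > 0 and 0 <= n_labeled <= n_total")
    if not 0.0 < label_frequency <= 1.0:
        raise ValueError("label_frequency must lie in (0, 1]")
    lower, upper = bounds
    raw = (n_labeled / n_total) / label_frequency
    return min(upper, max(lower, raw))


# 100 labeled positives among 1000 examples, with an assumed c of 0.25.
print(estimate_class_prior(100, 1000, 0.25))
# Same data, but domain knowledge caps the prior at 0.3.
print(estimate_class_prior(100, 1000, 0.25, bounds=(0.0, 0.3)))
```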

Implementation Protocols and Methodologies

Two-Step Framework Protocol

The two-step approach represents the most widely adopted methodology for PU learning, consisting of identification of reliable negative examples followed by classifier training [16].

Step 1: Reliable Negative Identification

- Train a classifier to distinguish between labeled positive instances (P) and unlabeled instances (U)

- Identify instances in U with the lowest predicted probability Pr(s=1|x) of belonging to the labeled class

- Select these low-probability instances as initial reliable negatives (RN)

- Optional refinement: Use spy instances or expectation-maximization to improve RN set quality

Step 2: Classifier Training

- Train a binary classifier using labeled positives (P) and reliable negatives (RN)

- Apply trained classifier to remaining unlabeled instances

- Iteratively refine model through self-training or co-training approaches

The Spy-EM (Spy with Expectation Maximization) method enhances this basic framework by introducing "spy" instances - randomly selected positive examples added to the unlabeled set - to better estimate the probability threshold for reliable negative identification [16].
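The spy step can be sketched as below. The sketch assumes a classifier has already been trained on (P minus spies) versus (U plus spies) and has produced a score for every spy and every unlabeled instance. The threshold is set so that only a small fraction of the spies, which are known positives, would fall below it. The `noise_level` parameter name and its default are illustrative choices.

```python
def spy_threshold(spy_scores, noise_level=0.05):
    """Return the score below which roughly `noise_level` of the spies fall."""
    if not spy_scores:
        raise ValueError("need at least one spy score")
    ranked = sorted(spy_scores)
    index = min(len(ranked) - 1, int(noise_level * len(ranked)))
    return ranked[index]


def reliable_negatives(unlabeled_scores, threshold):
    """Indices of unlabeled instances scoring strictly below the spy threshold."""
    return [i for i, score in enumerate(unlabeled_scores) if score < threshold]


# Hypothetical scores: one spy scores unusually low and is treated as noise.
threshold = spy_threshold([0.9, 0.8, 0.7, 0.6, 0.2], noise_level=0.2)
print(threshold, reliable_negatives([0.1, 0.65, 0.3, 0.95], threshold))
```

Unlabeled instances that score lower than almost every known positive are the safest candidates for the RN set used in Step 2.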

Advanced Implementation: Co-Training Protocol

Co-training represents an advanced PU learning methodology that leverages multiple complementary classifiers to improve generalization and mitigate model bias [12]. The SynCoTrain framework demonstrates this approach for materials synthesizability prediction:

Co-training workflow for PU learning

Co-Training Protocol Steps:

- Initialize Two Classifiers: Select two classifiers with complementary inductive biases (e.g., ALIGNN with bond-angle encoding and SchNet with continuous convolution filters) [12]

- Independent Reliable Negative Identification: Each classifier identifies reliable negatives from the unlabeled set using its unique feature representation

- Classifier Training: Train each classifier using original positives and reliable negatives identified by the other classifier

- Prediction Exchange: Classifiers exchange predictions on uncertain unlabeled instances

- Iterative Refinement: Repeat the reliable-negative identification, training, and prediction-exchange steps for multiple rounds to expand training sets and refine decision boundaries

- Final Model Creation: Combine classifier predictions through averaging or stacking to produce final synthesizability scores

This co-training approach demonstrates robust performance in synthesizability prediction, achieving high recall on internal and leave-out test sets by balancing individual model biases [12].
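The control flow of the protocol can be illustrated with a deliberately small toy. In this sketch each "classifier" is a one-dimensional nearest-centroid model operating on its own feature view, standing in for ALIGNN and SchNet. This is an assumption made purely to keep the example self-contained; it is not the SynCoTrain implementation, and the parameter names (`n_seed`, `low`) are hypothetical.

```python
from statistics import fmean


def fit_centroids(pos, neg):
    """Toy 1-D classifier: remember the mean of each class."""
    return fmean(pos), fmean(neg)


def score(model, x):
    """Pseudo-probability of the positive class from distances to the two centroids."""
    d_pos, d_neg = abs(x - model[0]), abs(x - model[1])
    return 0.5 if d_pos + d_neg == 0 else d_neg / (d_pos + d_neg)


def co_train(pos, unl, rounds=3, n_seed=2, low=0.2):
    """pos and unl are lists of (view_a, view_b) pairs; returns averaged scores for unl."""
    # Each view seeds its reliable negatives with the unlabeled points
    # farthest from the positive mean in that view.
    rn = []
    for v in (0, 1):
        centre = fmean(p[v] for p in pos)
        ranked = sorted(range(len(unl)), key=lambda i: -abs(unl[i][v] - centre))
        rn.append(set(ranked[:n_seed]))
    scores = [[0.5] * len(unl), [0.5] * len(unl)]
    for _ in range(rounds):
        new_rn = [set(rn[0]), set(rn[1])]
        for v in (0, 1):
            other = 1 - v
            # Train view v on positives plus negatives proposed by the other view.
            model = fit_centroids([p[v] for p in pos], [unl[i][v] for i in rn[other]])
            scores[v] = [score(model, u[v]) for u in unl]
            # Record this view's confident negatives for the other view to use next round.
            new_rn[v] |= {i for i, s in enumerate(scores[v]) if s < low}
        rn = new_rn
    # Final scores average the two views.
    return [(a + b) / 2 for a, b in zip(scores[0], scores[1])]


pos = [(1.0, 1.1), (0.9, 1.0), (1.1, 0.9)]
unl = [(1.0, 1.0), (0.1, 0.0), (0.0, 0.2), (0.95, 1.05), (0.2, 0.1)]
print([round(s, 2) for s in co_train(pos, unl)])
```

The essential point is the crossing of information: each model is trained on negatives chosen by the other, so an error specific to one inductive bias is less likely to reinforce itself.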

Table 2: Essential Research Reagents for PU Learning Implementation

| Resource Category | Specific Tools/Methods | Function/Purpose |
|---|---|---|
| Base Classifiers | SchNet, ALIGNN, Random Forest, SVM | Encode domain-specific structures and patterns for initial classification |
| Feature Encoders | Graph Neural Networks, Molecular descriptors | Transform raw data (e.g., crystal structures, molecular graphs) into feature representations |
| Prior Estimation | AlphaMax, CDME, EN algorithms | Estimate class prior π essential for performance correction and risk estimation |
| Reliable Negative Identification | Spy-EM, Ranking-based methods, Density-based selection | Identify high-confidence negative examples from unlabeled set |
| Performance Evaluation | Corrected Accuracy, Balanced Accuracy, F-measure, MCC | Assess true classifier performance accounting for PU data characteristics |
| AutoML Systems | GA-Auto-PU, BO-Auto-PU, EBO-Auto-PU | Automate model selection and hyperparameter tuning for PU problems |
| Domain-Specific Tools | Materials Project database, ClinicalTrials.gov parser | Provide domain-specific positive and unlabeled data sources |

Applications in Scientific Research

Synthesizability Prediction for Materials Discovery

PU learning has demonstrated significant utility in predicting material synthesizability, where the positive class consists of experimentally synthesized materials and unlabeled data includes computationally predicted but experimentally untested candidates [12] [15]. The SynCoTrain model exemplifies this application, specifically designed for oxide crystals and employing a dual-classifier co-training framework with SchNet and ALIGNN architectures [12]. This approach addresses the critical limitation in materials discovery where traditional stability metrics (e.g., formation energy, distance from convex hull) provide incomplete synthesizability assessments by ignoring kinetic factors and technological constraints [12].

The synthesizability prediction protocol involves:

- Positive Set Curation: Collect experimentally synthesized materials from databases (e.g., Materials Project)

- Unlabeled Set Construction: Include hypothetical materials with negative formation energy but unknown synthesizability

- Feature Extraction: Encode crystal structures using graph representations capturing atomic interactions

- Model Training: Implement co-training framework with dual classifiers to mitigate bias

- Validation: Assess performance through hold-out testing and comparison with stability predictions

A related semi-supervised model focused on inorganic stoichiometries has achieved a recall of 83.4% with an estimated precision of 83.6% on its test data, successfully guiding experimental exploration of quaternary oxide compositional spaces and leading to new phase discovery [15].

Drug Repositioning and Polypharmacy Side Effect Prediction

In pharmaceutical applications, PU learning enables drug repositioning by identifying new therapeutic uses for existing drugs when negative clinical trial data is scarce or unavailable [13]. Similarly, PU-MLP applies multi-layer perceptrons with feature extraction to predict polypharmacy side effects, achieving AUPR scores of 0.99 through sophisticated handling of positive and unlabeled drug combinations [17].

Drug repositioning with PU learning

The drug repositioning protocol incorporates LLMs for enhanced negative data identification:

- Positive Data Collection: Compile drugs with proven efficacy for specific disease indications

- Clinical Trial Analysis: Extract terminated or failed trials from databases (e.g., ClinicalTrials.gov)

- LLM-Powered Negative Identification: Employ GPT-4 to analyze trial outcomes and identify true negatives based on efficacy failure or toxicity

- Feature Representation: Create optimal drug representations using random forests, GNNs, and dimensionality reduction

- Model Training: Implement PU learning with multi-layer perceptrons or ensemble methods

- Candidate Scoring: Rank drug repositioning candidates by predicted efficacy

This approach has demonstrated substantial improvement in predictive accuracy, achieving Matthews Correlation Coefficient of 0.76 compared to 0.55 for conventional PU learning methods in prostate cancer drug repositioning [13].

Future Directions and Advanced Methodologies

Emerging research in PU learning explores automated machine learning (AutoML) systems specifically designed for PU problems [16]. Systems like GA-Auto-PU, BO-Auto-PU, and EBO-Auto-PU address the method selection challenge through genetic algorithms, Bayesian optimization, and hybrid approaches, significantly outperforming baseline PU learning methods across diverse datasets [16]. The integration of large language models for negative data labeling represents another advancement, particularly in domains with complex textual data like clinical trial outcomes [13].

Future developments will likely address current limitations in handling high-dimensional data, improving theoretical understanding of generalization bounds, and developing more robust class prior estimation techniques. As synthetic data generation methods using GANs, VAEs, and LLMs mature [18], they may provide additional strategies for addressing data scarcity in PU learning scenarios, particularly for synthesizability classification where experimental data remains limited.

Predicting whether a hypothetical material or molecular compound can be successfully synthesized is a critical challenge in materials science and drug discovery. Traditional methods that rely on thermodynamic stability metrics often fail to account for kinetic factors and technological constraints, leading to a significant gap between computational predictions and experimental success [12]. Positive-Unlabeled (PU) learning has emerged as a powerful machine learning framework to address this challenge. It is specifically designed for scenarios where only positive examples (e.g., successfully synthesized crystals or bioactive molecules) are available, alongside a large set of unlabeled data (e.g., hypothetical structures or untested compounds), with no confirmed negative examples [12] [19] [20]. This semi-supervised approach mitigates the pervasive problem of missing negative data, as failed synthesis attempts are seldom published [12]. By learning the characteristics of known positives and iteratively refining predictions on unlabeled data, PU learning enables accurate and generalizable synthesizability classification, bridging the gap between in-silico design and real-world laboratory synthesis.

Quantitative Performance of PU Learning Models

The performance of PU learning models for synthesizability prediction has been quantitatively evaluated across various material systems and benchmarks. The following tables summarize key performance metrics and model characteristics from recent state-of-the-art research.

Table 1: Performance Metrics of Recent Synthesizability Prediction Models

| Model / Framework | Material Type | Key Performance Metric | Value | Reference / Benchmark |
|---|---|---|---|---|
| CSLLM (Synthesizability LLM) | 3D Inorganic Crystals | Accuracy | 98.6% | [10] |
| SynCoTrain (Dual Classifier) | Oxide Crystals | Recall (Internal & Leave-out Test Sets) | High (Specific value not reported) | [12] |
| PU-GPT-embedding | General Inorganic Crystals | Performance vs. Graph-based Models | Outperforms PU-CGCNN | [21] |
| Composition + Structure Ensemble | General Inorganic Crystals | Ranking-based Ensemble | RankAvg Score Used | [22] |
| Pre-trained PU Learning Model (Jang et al.) | 3D Crystals (for screening) | CLscore Threshold for Negatives | < 0.1 | [10] |

Table 2: Data and Algorithmic Characteristics of PU Learning Approaches

| Aspect | SynCoTrain [12] | Composition/Structure Ensemble [22] | LLM-based Approaches [21] [10] |
|---|---|---|---|
| Core PU Method | Mordelet and Vert base PU learner; Co-training | Binary cross-entropy on labeled data | Fine-tuning on balanced datasets; PU-classifier on embeddings |
| Data Source | Materials Project | Materials Project | ICSD (Positive), Materials Project et al. (Negative via PU screening) |
| Positive Data | Synthesizable oxides | Compositions with synthesized polymorphs | Experimentally validated structures from ICSD |
| Unlabeled/Negative Data | Hypothetical structures | Compositions with only theoretical polymorphs | Structures with low CLscore from pre-trained PU model |
| Key Innovation | Dual GCNN classifiers (SchNet & ALIGNN) | Rank-average fusion of composition & structure models | "Material string" text representation; Fine-tuned specialist LLMs |

Application Notes & Protocols

Protocol 1: Implementing a Dual-Classifier Co-Training Framework for Inorganic Crystals

This protocol, based on the SynCoTrain framework, is designed for predicting the synthesizability of inorganic crystal structures, such as oxides [12].

1. Data Curation and Preprocessing

- Data Source: Acquire crystal structure data from public databases like the Materials Project (MP) [12] [22] [21].

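- Labeling: Treat experimentally synthesized structures as the positive set and hypothetical (theoretical) structures as the unlabeled set [12].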

- Stratification: Randomly hold out a portion (e.g., 20%) of both positive and unlabeled data as a test set for final model evaluation [21].

2. Model Architecture and Training (Co-Training)

- Classifier A (ALIGNN): Implement the Atomistic Line Graph Neural Network, which explicitly encodes atomic bonds and bond angles [12].

- Classifier B (SchNet): Implement the SchNet model, which uses continuous-filter convolutional layers to represent atomic interactions [12].

- PU Learning Base: Utilize the base PU learning method by Mordelet and Vert, which functions as a robust binary classifier for the positive and unlabeled data [12].

- Co-Training Loop:

- Independently train both classifiers on the initial labeled positive set and the entire unlabeled set using the base PU learning algorithm.

- Each classifier predicts labels for the unlabeled set.

- The classifiers exchange their most confident predictions, effectively expanding the training set for the other model.

- Iterate this process, allowing the models to collaboratively refine the decision boundary.
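The base PU learner attributed to Mordelet and Vert is a bagging scheme: repeatedly draw a bootstrap sample of the unlabeled set, treat it as provisional negatives, train a classifier against the positives, and average each unlabeled point's scores over the rounds in which it was not drawn. The sketch below illustrates that loop on one-dimensional toy data. The nearest-centroid scorer is a stand-in chosen for brevity (an assumption; a real pipeline would use a GNN or SVM), and all names are hypothetical.

```python
import random
from statistics import fmean


def centroid_score(x, pos_mean, neg_mean):
    """Pseudo-probability of the positive class from distances to two class means."""
    d_pos, d_neg = abs(x - pos_mean), abs(x - neg_mean)
    return 0.5 if d_pos + d_neg == 0 else d_neg / (d_pos + d_neg)


def bagging_pu_scores(pos, unl, n_rounds=50, seed=0):
    """Average out-of-bag scores for each unlabeled point."""
    if not pos or not unl:
        raise ValueError("need non-empty positive and unlabeled sets")
    rng = random.Random(seed)
    pos_mean = fmean(pos)
    totals, counts = [0.0] * len(unl), [0] * len(unl)
    for _ in range(n_rounds):
        # Bootstrap as many provisional negatives as there are positives.
        draws = [rng.randrange(len(unl)) for _ in pos]
        neg_mean = fmean(unl[i] for i in draws)
        in_bag = set(draws)
        for i, x in enumerate(unl):
            if i not in in_bag:  # score only points left out of this bag
                totals[i] += centroid_score(x, pos_mean, neg_mean)
                counts[i] += 1
    # Points that were never out of bag fall back to an uninformative 0.5.
    return [t / c if c else 0.5 for t, c in zip(totals, counts)]


pos = [1.0, 0.9, 1.1]
unl = [1.0, 0.1, 0.0, 0.95, 0.2, 0.05, 0.15]
print([round(s, 2) for s in bagging_pu_scores(pos, unl)])
```

In the co-training loop, each of the two classifiers would run a procedure of this kind before the confident predictions are exchanged.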

3. Model Evaluation

- Primary Metric: Use Recall (True Positive Rate) as the primary metric, as precision and false positive rates cannot be directly calculated without true negative labels [12] [21].

- Validation: Assess performance on the held-out test set. High recall indicates the model successfully identifies most synthesizable materials [12].
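A minimal sketch of the hold-out split and recall calculation follows. It assumes the model emits a synthesizability score per structure and that 0.5 is used as the decision threshold; both the threshold and the helper names are illustrative choices rather than values taken from the cited work.

```python
import random


def hold_out(items, fraction=0.2, seed=0):
    """Shuffle a copy of `items` and split it into (train, test)."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(fraction * len(shuffled)))
    return shuffled[n_test:], shuffled[:n_test]


def recall_on_positives(scores, threshold=0.5):
    """Fraction of held-out known positives scored at or above the threshold."""
    if not scores:
        raise ValueError("need at least one held-out positive")
    return sum(s >= threshold for s in scores) / len(scores)


train_ids, test_ids = hold_out(range(10))
print(len(train_ids), len(test_ids))
# Hypothetical model scores for four held-out synthesized structures.
print(recall_on_positives([0.9, 0.8, 0.4, 0.7]))
```

Because recall uses only known positives, it can be computed without any confirmed negative examples.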

Protocol 2: LLM-Based Synthesizability Prediction with Explainability

This protocol leverages Large Language Models (LLMs) for high-accuracy prediction and, uniquely, provides human-readable explanations for its predictions [21] [10].

1. Data Preparation and Text Representation

- Data Sourcing: Curate a balanced dataset with known synthesizable (e.g., from ICSD) and non-synthesizable examples. Non-synthesizable examples can be generated by screening theoretical databases with a pre-trained PU model to select low-scoring structures [10].

- Text Representation: Convert crystal structures from CIF format into a human-readable text description. Use tools like Robocrystallographer to generate descriptions that include space group, lattice parameters, atomic coordinates, and local coordination environments [21]. Alternatively, develop a custom condensed "material string" representation for efficiency [10].

2. Model Selection and Fine-Tuning

- Option A: Fine-tuning an LLM Classifier

- Model: Select a capable base LLM (e.g., GPT-4o-mini).

- Fine-tuning: Fine-tune the LLM on the dataset of text-described crystals and their synthesizability labels. This teaches the model to directly classify structures from their text description [21].

- Option B: LLM Embeddings with a PU Classifier

- Embedding Generation: Use a pre-trained text embedding model (e.g., OpenAI's text-embedding-3-large) to convert the text descriptions of crystals into high-dimensional vector representations [21].

- Classifier Training: Train a standard binary PU-learning classifier (e.g., a neural network) on these LLM-generated embeddings. This approach often yields superior performance and is more cost-effective than full LLM fine-tuning [21].

3. Prediction and Explanation Generation

- Synthesizability Score: The fine-tuned LLM or the PU classifier outputs a probability score indicating synthesizability.

- Explanation Generation: Use the fine-tuned LLM in a chat interface. Prompt it to explain the reasoning behind its classification for a specific crystal structure. The LLM can generate narratives highlighting structural or chemical features that influence synthesizability [21].

Table 3: Key Computational Tools and Datasets for PU Learning in Synthesizability

| Tool / Resource | Type | Function in Research | Example/Reference |
|---|---|---|---|
| Materials Project (MP) | Database | Primary source of crystal structures (both synthesized and hypothetical) for training and evaluation. | [12] [22] [21] |
| Inorganic Crystal Structure Database (ICSD) | Database | Source of confirmed synthesizable (positive) crystal structures. | [21] [10] |
| ALIGNN | Software Model | Graph Neural Network classifier that incorporates bond and angle information. | [12] |
| SchNet | Software Model | Graph Neural Network classifier using continuous-filter convolutions. | [12] |
| Robocrystallographer | Software Tool | Generates human-readable text descriptions from crystal structure files (CIF). | [21] |
| Pre-trained Text Embedding Models | Software Model | Converts text descriptions of crystals into numerical vector representations for machine learning. | text-embedding-3-large [21] |
| PU-Bench | Benchmark | Standardized framework for fairly evaluating and comparing different PU learning algorithms. | [23] [24] |
| Large Language Model (LLM) | Software Model | Base model for fine-tuning on synthesizability tasks or for generating explanatory text. | GPT-4o-mini [21] |

Building Effective PU Learning Models: From Co-Training Frameworks to LLM Applications

In materials science, predicting whether a theoretical material can be successfully synthesized—a property known as synthesizability—is a critical bottleneck in the discovery pipeline. Traditional computational methods often rely on thermodynamic proxies like formation energy, but these fail to account for kinetic factors and technological constraints that significantly influence synthesis outcomes [3] [25]. A major complication is the scarcity of reliable negative data; failed synthesis attempts are rarely published in scientific literature or recorded in public databases [25] [10]. This creates an ideal scenario for Positive and Unlabeled (PU) Learning, a semi-supervised machine learning approach that trains a classifier using only labeled positive examples and a set of unlabeled examples (which contain both positive and hidden negative instances) [3] [25]. The SynCoTrain framework represents a significant architectural advancement in this domain, employing a dual-classifier, co-training mechanism to accurately predict the synthesizability of inorganic crystals, particularly oxides, while effectively addressing the challenges of model bias and data scarcity [3] [25].

SynCoTrain is a semi-supervised machine learning model specifically designed for synthesizability prediction. Its core innovation lies in a co-training framework that leverages two complementary Graph Convolutional Neural Networks (GCNNs): SchNet and ALIGNN [25].

- SchNet (SchNetPack): This GCNN uses a unique continuous convolution filter suitable for encoding atomic structures, which can be interpreted as providing a physicist's perspective on the data [25].

- ALIGNN (Atomistic Line Graph Neural Network): This GCNN directly encodes both atomic bonds and bond angles into its architecture, offering a perspective that aligns with a chemist's view of the data [25].

These two models possess different inductive biases. By combining their predictions, SynCoTrain mitigates the inherent bias of any single model, thereby enhancing the generalizability of its predictions—a crucial feature for forecasting outcomes on novel, out-of-distribution materials [25]. The framework operates iteratively. In each co-training cycle, the two learning agents exchange the knowledge they have gained from the data. The final labels are determined based on the average of their predictions. This collaborative process increases prediction reliability and accuracy, analogous to two experts reconciling their views before finalizing a complex decision [25].

Performance Metrics and Quantitative Data

SynCoTrain and other modern synthesizability prediction models have demonstrated robust performance, significantly outperforming traditional stability-based proxies. The following table summarizes key quantitative metrics from recent studies.

Table 1: Performance Comparison of Synthesizability Prediction Models

| Model / Approach | Accuracy | Recall / True Positive Rate | Estimated Precision | Key Application Area |
|---|---|---|---|---|
| SynCoTrain (Co-training + PU) | Not Explicitly Reported | High recall on internal and leave-out test sets [25] | Not Explicitly Reported | Oxide Crystals [3] [25] |
| CSLLM (Synthesizability LLM) | 98.6% [10] | Not Explicitly Reported | Not Explicitly Reported | Arbitrary 3D Crystal Structures [10] |
| Semi-Supervised Learning (Stoichiometry Focus) | Not Explicitly Reported | 83.4% [15] | 83.6% [15] | Inorganic Compositions/Stoichiometries [15] |
| Teacher-Student Dual Network | 92.9% [10] | Not Explicitly Reported | Not Explicitly Reported | 3D Crystals [10] |
| PU Learning Model (Jang et al.) | 87.9% [10] | Not Explicitly Reported | Not Explicitly Reported | 3D Crystals [10] |
| Thermodynamic Proxy (Energy Above Hull ≥0.1 eV/atom) | 74.1% [10] | Not Explicitly Reported | Not Explicitly Reported | General Screening [10] |
| Kinetic Proxy (Lowest Phonon Frequency ≥ -0.1 THz) | 82.2% [10] | Not Explicitly Reported | Not Explicitly Reported | General Screening [10] |

Detailed Experimental Protocol for SynCoTrain

This protocol outlines the steps for implementing the SynCoTrain framework to predict the synthesizability of oxide crystals, as derived from the foundational research [25].

Data Acquisition and Preprocessing

- Source Data: Obtain crystal structure data for oxide crystals from the Inorganic Crystal Structure Database (ICSD), accessed via the Materials Project API [25].

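- Define Classes: Label experimentally synthesized structures as positive and theoretical structures as unlabeled [25].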

- Data Filtering:

- Use the get_valences function from pymatgen to include only oxides where the oxidation state of oxygen is -2 and the oxidation numbers of all elements are determinable [25].

- Perform data cleaning by removing potential outliers from the experimental data, such as the ~1% of records with an energy above hull greater than 1 eV/atom, as these may indicate corrupt entries [25].

- For the initial training described, this process resulted in 10,206 experimental (positive) and 31,245 theoretical (unlabeled) data points [25].
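The filtering rules above can be sketched as follows. The source names only `get_valences` from pymatgen; this sketch assumes it is reached through pymatgen's `BVAnalyzer` class and that each input is an ordered pymatgen `Structure`. The helper names, the `max_e_hull` parameter, and the choice to pass the energy above hull in as a plain number are illustrative. The import is deferred so that the pure helper can be used without pymatgen installed.

```python
def oxygen_is_minus_two(symbols, valences):
    """True if the entry contains oxygen and every oxygen site has valence -2."""
    oxygen = [v for s, v in zip(symbols, valences) if s == "O"]
    return bool(oxygen) and all(v == -2 for v in oxygen)


def keep_oxide(structure, e_above_hull, max_e_hull=1.0):
    """Apply the oxidation-state and outlier filters to one ordered Structure."""
    # Deferred import: only needed when real structures are processed.
    from pymatgen.analysis.bond_valence import BVAnalyzer

    if e_above_hull > max_e_hull:  # eV/atom; likely a corrupt experimental entry
        return False
    try:
        valences = BVAnalyzer().get_valences(structure)
    except ValueError:  # oxidation states could not be determined
        return False
    symbols = [site.specie.symbol for site in structure]
    return oxygen_is_minus_two(symbols, valences)


# The pure helper on hand-written examples: TiO2-like and peroxide-like entries.
print(oxygen_is_minus_two(["Ti", "O", "O"], [4, -2, -2]))
print(oxygen_is_minus_two(["Ba", "O", "O"], [2, -1, -1]))
```

In the source protocol the energy-above-hull cut is applied to experimental records, so a caller would typically invoke the outlier check only for the positive set.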

Model Training and Co-Training Procedure

- Base Classifier Initialization: Initialize two distinct GCNN models, SchNet and ALIGNN, which will serve as the dual classifiers in the co-training framework [25].

- PU Learning Iteration:

- Each base classifier (SchNet and ALIGNN) independently learns the distribution of synthesizable crystals using the base PU Learning method introduced by Mordelet and Vert [25].

- In this method, each classifier is trained on the labeled positive set P and the unlabeled set U. The objective is to iteratively refine the ability to identify positive instances within U [25].

- Cross-Prediction and Data Exchange:

- After each training iteration, each classifier predicts labels for the unlabeled data U.

- The classifiers then exchange a subset of their most confident predictions to augment the other model's training pool [25].

- Label Reconciliation:

- Final synthesizability labels for the unlabeled data are determined based on the average of the predictions from both classifiers [25].

- Performance Validation:

- Validate the model's performance by measuring recall on a held-out internal test set and a leave-out test set to ensure it achieves high sensitivity in identifying synthesizable materials [25].
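The exchange and reconciliation steps can be sketched in a few lines. The sketch assumes each classifier emits a score in [0, 1] per unlabeled structure, measures confidence as distance from 0.5, and thresholds the averaged score at 0.5. These choices and the function names are illustrative; the source states only that the most confident predictions are exchanged and that final labels come from the average.

```python
def most_confident(scores, k):
    """Indices of the k scores farthest from 0.5, i.e. the most confident predictions."""
    ranked = sorted(range(len(scores)), key=lambda i: abs(scores[i] - 0.5), reverse=True)
    return ranked[:k]


def reconcile(scores_a, scores_b, threshold=0.5):
    """Average two classifiers' scores and threshold the mean into 0/1 labels."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both classifiers must score the same instances")
    means = [(a + b) / 2 for a, b in zip(scores_a, scores_b)]
    return means, [int(m >= threshold) for m in means]


# Hypothetical scores from the two classifiers on three unlabeled structures.
schnet_scores = [0.9, 0.2, 0.6]
alignn_scores = [0.7, 0.4, 0.3]
print(most_confident(schnet_scores, 2))
print(reconcile(schnet_scores, alignn_scores)[1])
```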

Workflow and System Architecture Diagrams

SynCoTrain Co-Training Workflow

Dual-Classifier PU Learning Architecture

Table 2: Key Resources for Implementing Dual-Classifier Synthesizability Models

| Resource / Reagent | Type | Function / Application | Example / Source |
|---|---|---|---|
| Inorganic Crystal Structure Database (ICSD) | Data Source | Primary source of experimentally synthesized (positive) and theoretical (unlabeled) crystal structures [25] [10]. | FIZ Karlsruhe |
| Materials Project API | Data Access Tool | Programmatic access to crystal structure data and computed properties, including theoretical structures [25]. | materialsproject.org |
| pymatgen | Software Library | Python library for materials analysis; used for structure manipulation, oxidation state analysis, and data preprocessing [25]. | Python Package |
| SchNetPack | Deep Learning Model | Graph CNN using continuous-filter convolutions to model atomic interactions from a physics-based perspective [25]. | GitHub Repository |
| ALIGNN | Deep Learning Model | Graph CNN that incorporates bond and angle information via line graphs, providing a chemistry-informed perspective [25]. | GitHub Repository |
| Positive and Unlabeled (PU) Learning Algorithm | Machine Learning Method | Core learning algorithm that enables training with only positive and unlabeled examples, mitigating the lack of negative data [25]. | Mordelet & Vert Method |
| High-Performance Computing (HPC) Cluster | Computational Resource | Essential for training large graph neural networks on thousands of crystal structures within a reasonable time frame. | Local/Cloud Infrastructure |

Leveraging Graph Neural Networks for Crystal and Molecular Representation

Graph neural networks (GNNs) have emerged as transformative tools for representing non-Euclidean data in chemical and materials science. Their inherent capacity to model atoms as nodes and bonds as edges aligns perfectly with structural representations of molecules and crystals. This document provides detailed application notes and protocols for implementing GNNs, with a specific focus on integrating Positive-Unlabeled (PU) learning frameworks for synthesizability classification—a critical bottleneck in materials discovery and drug development. We summarize performance benchmarks across molecular property prediction tasks, outline step-by-step experimental methodologies, and provide accessible visualization code to bridge the gap between theoretical model development and practical application.

In computational chemistry and materials informatics, the representation of crystals and molecules is a foundational challenge. Traditional fingerprint-based or descriptor-based methods often struggle to capture complex topological features. Graph Neural Networks (GNNs) offer a powerful alternative by directly operating on the inherent graph structure of molecular systems, where atoms are represented as nodes and chemical bonds as edges [26]. This paradigm has led to breakthroughs in predicting molecular properties, drug-target interactions, and toxicity assessment [26].

A significant application of these representations is in predicting material synthesizability—whether a theoretically proposed material can be experimentally realized. Most computational screening approaches rely on thermodynamic stability metrics like energy above hull (E_hull), but this is an insufficient proxy as it ignores kinetic barriers and experimental conditions [1]. Furthermore, a major impediment to data-driven synthesizability prediction is the lack of negative examples (failed synthesis attempts) in scientific literature [1] [27]. Positive-Unlabeled (PU) Learning directly addresses this by training classifiers using only positive and unlabeled data, making it perfectly suited for synthesizability classification [1] [27]. This document details the integration of GNN-based representation with PU learning to create powerful models for materials discovery.

Data Presentation: Quantitative Benchmarks of GNN Architectures

Extensive evaluations on benchmark datasets demonstrate the performance of various GNN architectures. The following tables summarize key quantitative results for property prediction and synthesizability classification.

Table 1: Performance Comparison of GNN Models on Molecular Property Prediction (QM9 Dataset)

| Model Architecture | Accuracy (%) | F1-Score | Primary Application |
|---|---|---|---|
| Graph Isomorphism Network (GIN) | 92.7 | 0.924 | Molecular Point Group Prediction [28] |
| Kolmogorov-Arnold GNN (KA-GNN) | Consistent outperformance | N/A | General Molecular Property Prediction [29] |
| KA-Graph Convolutional Network (KA-GCN) | Superior to conventional GCN | N/A | Molecular Property Prediction [29] |
| KA-Graph Attention Network (KA-GAT) | Superior to conventional GAT | N/A | Molecular Property Prediction [29] |

Table 2: PU-Learning Frameworks for Synthesizability Prediction

| Model Name | Core Methodology | Target Material Class | Key Performance |
|---|---|---|---|
| SynCoTrain [27] | Dual classifier co-training (SchNet & ALIGNN) | Oxide Crystals | High recall on internal & leave-out test sets |
| PU Learning Model [1] | Positive-Unlabeled learning from literature | Ternary Oxides | 134 of 4312 hypothetical compositions predicted synthesizable |
| Gu et al. Model [1] | Inductive PU learning & transfer learning | Perovskites | Outperformed tolerance factor-based approaches |

Experimental Protocols

This section provides a detailed, actionable protocol for implementing a GNN-driven PU learning pipeline for synthesizability classification, drawing from established methodologies [1] [27].

Protocol: Synthesizability Classification via GNN-based PU Learning

I. Data Preparation and Curation

- Source Raw Data: Download crystal structures from databases such as the Materials Project or the Inorganic Crystal Structure Database (ICSD) [1].

- Define and Label Data:

- Positive (P) Set: Curate entries with confirmed synthesis via solid-state reaction from literature. Manual curation is often necessary for reliability [1].

- Unlabeled (U) Set: All remaining entries, which constitute a mixture of synthesizable (unreported) and non-synthesizable materials.

- Featurization: Convert each crystal structure into a graph representation.

- Nodes: Represent atoms. Initialize node features using atomic properties (e.g., atomic number, radius).

- Edges: Represent bonds or atomic interactions. Initialize edge features using bond properties (e.g., bond type, length) [29].

- Data Split: Partition the P and U sets into training, validation, and test sets (e.g., 80/10/10). Ensure no data leakage by splitting based on unique compositions or chemical systems.
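The leakage-free split described above can be sketched as follows. This is a minimal illustration, not the procedure used in [1] or [27]: the `formula` key, the helper names `chemical_system` and `grouped_split`, and the greedy fill strategy are all assumptions made for the example. The idea is to group entries by chemical system first and then assign whole groups to a split, so that no system appears in more than one split.

```python
import random
import re
from collections import defaultdict


def chemical_system(formula: str) -> str:
    """Return the sorted, dash-joined element set, e.g. 'BaTiO3' -> 'Ba-O-Ti'."""
    elements = set(re.findall(r"[A-Z][a-z]?", formula))
    return "-".join(sorted(elements))


def grouped_split(entries, fractions=(0.8, 0.1, 0.1), seed=0):
    """Split entries into (train, val, test) without sharing chemical systems.

    `entries` is a list of dicts with a 'formula' key (hypothetical schema).
    Whole groups are assigned greedily, so the split sizes are approximate.
    """
    groups = defaultdict(list)
    for entry in entries:
        groups[chemical_system(entry["formula"])].append(entry)

    keys = sorted(groups)
    random.Random(seed).shuffle(keys)

    targets = [f * len(entries) for f in fractions]
    splits = ([], [], [])
    idx = 0
    for key in keys:
        # Advance to the next split once the current one has reached its target.
        while idx < 2 and len(splits[idx]) >= targets[idx]:
            idx += 1
        splits[idx].extend(groups[key])
    return splits


formulas = ["BaTiO3", "Ba2TiO4", "SrTiO3", "Fe2O3", "Fe3O4",
            "LiCoO2", "NaCl", "MgO", "ZnO", "TiO2"]
train, val, test = grouped_split([{"formula": f} for f in formulas])
print(len(train), len(val), len(test))
```

The same function would be applied separately to the P and U sets. Because groups differ in size, small datasets can end up with an uneven or even empty test split, so the resulting sizes are worth checking before training.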

II. Model Architecture and Training Setup

- Select GNN Backbone: Choose a GNN architecture for graph representation learning. Suitable choices include:

- Graph Isomorphism Network (GIN): For its strong discriminative power in capturing graph topology [28].

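- ALIGNN or SchNet: For capturing 3D geometric information. ALIGNN explicitly encodes bond angles through a line graph, while SchNet applies continuous-filter convolutions over interatomic distances [27].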

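- Kolmogorov-Arnold GNN (KA-GNN): For enhanced expressivity and parameter efficiency, obtained by replacing fixed-activation MLPs with learnable univariate activation functions on the network's connections (not on the bonds of the crystal graph) [29].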

- Integrate PU Learning Framework: Implement a co-training strategy using two complementary GNN classifiers (e.g., SchNet and ALIGNN as in SynCoTrain) [27].

- Each classifier predicts the probability of a sample being synthesizable.

- The classifiers iteratively exchange high-confidence predictions on the U set to refine each other's decision boundaries.

- Define Loss Function: The total loss is a combination of the supervised loss on the P set and the unsupervised consistency loss between the two classifiers on the U set.

- \( \mathcal{L} = \mathcal{L}_{\text{supervised}}(P) + \lambda \, \mathcal{L}_{\text{consistency}}(U) \)

- where \( \lambda \) is a weighting hyperparameter.
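The loss and the exchange of high-confidence predictions described above can be sketched in plain Python. This is an illustrative sketch under stated assumptions, not SynCoTrain's implementation: binary cross-entropy is assumed for the supervised term, mean squared disagreement for the consistency term, and the function names and the 0.1/0.9 confidence thresholds are hypothetical.

```python
import math


def bce_positive(p_probs):
    """Supervised term: binary cross-entropy on the P set, where every label is 1."""
    eps = 1e-12  # guards against log(0)
    return -sum(math.log(max(p, eps)) for p in p_probs) / len(p_probs)


def consistency(u_probs_a, u_probs_b):
    """Consistency term: mean squared disagreement of the two classifiers on U."""
    return sum((a - b) ** 2 for a, b in zip(u_probs_a, u_probs_b)) / len(u_probs_a)


def total_loss(p_probs, u_probs_a, u_probs_b, lam=0.5):
    """L = L_supervised(P) + lambda * L_consistency(U)."""
    return bce_positive(p_probs) + lam * consistency(u_probs_a, u_probs_b)


def confident_pseudo_labels(u_probs, low=0.1, high=0.9):
    """Return {index: label} for unlabeled samples a classifier is confident about.

    In co-training, these labels are handed to the *other* classifier.
    """
    labels = {}
    for i, p in enumerate(u_probs):
        if p >= high:
            labels[i] = 1
        elif p <= low:
            labels[i] = 0
    return labels


# Toy probabilities from two classifiers (A and B), for illustration only.
p_probs_a = [0.9, 0.8, 0.95]
u_probs_a = [0.95, 0.50, 0.05]
u_probs_b = [0.85, 0.40, 0.20]
print(total_loss(p_probs_a, u_probs_a, u_probs_b, lam=0.5))
print(confident_pseudo_labels(u_probs_a))
```

Note that a supervised term computed on positives alone is minimized trivially by predicting 1 everywhere. The pseudo-negatives exchanged between the classifiers are what keep the decision boundary informative.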

III. Model Evaluation and Validation

- Performance Metrics: Evaluate the model using standard metrics on the held-out test set: Accuracy, F1-Score, and especially Recall (to capture the ability to identify true synthesizable materials) [27].

- Validation: Perform a manual literature check for a subset of the model's high-confidence predictions on the test set to empirically assess the rate of false positives [1].

Visualizations

The following diagrams illustrate the core model architecture and workflow.

[Diagram: GNN-PU Model Architecture]

[Diagram: KA-GNN Layer Design]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for GNN-based Synthesizability Prediction

| Item Name | Function / Role | Example / Note |
|---|---|---|
| GNN Backbones | Core model for learning from graph-structured data. | Graph Isomorphism Network (GIN) [28], ALIGNN, SchNet [27]. |
| PU Learning Framework | Manages the semi-supervised learning paradigm. | SynCoTrain's dual-classifier co-training [27]. |
| KAN Modules | Enhances model expressivity and interpretability. | Replaces MLPs in GNNs with learnable activation functions [29]. |
| Materials Databases | Source of crystal structures and properties. | Materials Project, ICSD [1]. |
| Human-Curated Datasets | Provides high-quality, reliable labels for training. | Manually extracted synthesis data from literature [1]. |

Fine-Tuning Large Language Models (LLMs) for High-Accuracy Classification

The accurate classification of data into categories is a cornerstone of scientific research, particularly in fields like materials science and drug development. Traditional supervised learning requires large, fully-labeled datasets, which are often unavailable for emerging research problems. This challenge is pronounced in synthesizability classification, where the goal is to predict whether a hypothetical material can be successfully synthesized. The scientific literature and experimental databases are rich with examples of successful syntheses (positive instances) but contain scarce, if any, confirmed reports of failures (negative instances). This creates an ideal scenario for Positive-Unlabeled (PU) learning, a semi-supervised learning technique. This Application Note details a methodology for fine-tuning Large Language Models (LLMs) to achieve high-accuracy classification within a PU learning framework, specifically for predicting material synthesizability.

Background and Principles

Positive-Unlabeled (PU) Learning

PU learning is a specialized branch of semi-supervised binary classification that trains a model using only a set of labeled positive examples and a set of unlabeled examples, the latter containing both unknown positive and negative instances [14] [30]. This framework is particularly suited to scientific domains like synthesizability prediction, where failed synthesis attempts are rarely published, making explicit negative data scarce [12] [31]. The core challenge in PU learning is that metrics computed by treating unlabeled examples as negatives do not reflect a model's true ability to distinguish positive from negative examples, so correction methods are required to estimate true performance [14].
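To make the need for correction concrete, the sketch below recovers a false-positive rate from PU measurements. It is not necessarily the procedure of [14]. It relies on one standard identity and two assumptions: the labeled positives are a random sample of all positives, and the class prior \( \alpha \) (the fraction of positives hidden in the unlabeled set) is known or estimated. Under these assumptions, the fraction of unlabeled examples predicted positive equals \( \alpha \cdot \text{TPR} + (1 - \alpha) \cdot \text{FPR} \), which can be solved for the FPR. The function name and the example numbers are hypothetical.

```python
def corrected_rates(tpr, unlabeled_positive_rate, class_prior):
    """Recover the true FPR and balanced accuracy from PU measurements.

    tpr: recall measured on held-out labeled positives
    unlabeled_positive_rate: fraction of unlabeled examples predicted positive
    class_prior: assumed fraction of true positives inside the unlabeled set
    """
    if not 0.0 <= class_prior < 1.0:
        raise ValueError("class_prior must be in [0, 1)")
    fpr = (unlabeled_positive_rate - class_prior * tpr) / (1.0 - class_prior)
    fpr = min(max(fpr, 0.0), 1.0)  # clip sampling noise into a valid range
    balanced_accuracy = 0.5 * (tpr + (1.0 - fpr))
    return fpr, balanced_accuracy


# Illustrative numbers only: 90% recall on known positives, 30% of the
# unlabeled pool predicted positive, and an assumed class prior of 0.2.
tpr, u_rate, alpha = 0.9, 0.3, 0.2
naive_ba = 0.5 * (tpr + (1.0 - u_rate))  # treats every unlabeled example as negative
fpr, corrected_ba = corrected_rates(tpr, u_rate, alpha)
print(f"naive BA={naive_ba:.3f}  corrected FPR={fpr:.3f}  corrected BA={corrected_ba:.3f}")
```

In this toy case the naive estimate understates balanced accuracy, because some of the apparent false positives in the unlabeled pool are in fact unreported positives.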

LLMs for Scientific Classification

LLMs, pre-trained on vast corpora of text, possess a deep understanding of language and complex relationships. Through fine-tuning, these general-purpose models can be specialized for specific tasks, such as classification. The process involves adapting a pre-trained LLM to a new domain or task by continuing the training process on a specialized dataset [32]. For classification, a common technique is to replace the model's final output layer (designed for next-token prediction) with a new classification head, effectively turning the LLM into a powerful feature extractor and classifier [33].

Application in Synthesizability Classification

The fusion of PU learning with fine-tuned LLMs presents a powerful solution for synthesizability prediction. Recent studies demonstrate the efficacy of this approach. For instance, the Crystal Synthesis LLM (CSLLM) framework fine-tunes LLMs to predict the synthesizability of 3D crystal structures. By representing crystal structures as text and training on a balanced dataset of synthesizable and non-synthesizable materials identified via a PU learning model, CSLLM achieved a state-of-the-art 98.6% accuracy in testing [31]. This significantly outperformed traditional methods based on thermodynamic stability (74.1% accuracy) and kinetic stability (82.2% accuracy) [31]. Similarly, the SynCoTrain framework employs a dual-classifier co-training approach with graph neural networks within a PU learning context, demonstrating robust performance for predicting the synthesizability of oxide crystals [12]. These successes highlight the potential of combining structured scientific data with advanced language model fine-tuning.

Table 1: Performance Comparison of Synthesizability Prediction Methods

| Method | Approach | Reported Accuracy | Key Features |
|---|---|---|---|
| CSLLM [31] | LLM fine-tuning + PU learning | 98.6% | Uses text representation of crystals; predicts methods and precursors |
| SynCoTrain [12] | Dual-classifier co-training + PU learning | High recall | Uses SchNet & ALIGNN; mitigates model bias |
| Thermodynamic Screening [31] | Energy above convex hull | 74.1% | Based on formation energy |
| Kinetic Screening [31] | Phonon spectrum analysis | 82.2% | Based on lattice dynamics |

Experimental Protocols

Protocol 1: Fine-Tuning an LLM for Classification

This protocol outlines the process of converting a pre-trained generative LLM into a classifier for a binary task such as spam detection, which is analogous to distinguishing between synthesizable and non-synthesizable materials.

Key Reagents and Resources:

- Pre-trained LLM: A base model (e.g., from the LLaMA family) [31] [32].

- Dataset: A labeled dataset for the target classification task. For balanced results, ensure similar amounts of data for each class [33].

- Computing Hardware: A GPU with sufficient VRAM (e.g., an RTX 3090, with training times potentially under 30 seconds for smaller tasks) [33].

- Software: PyTorch or TensorFlow, and associated libraries for data loading and training.

Methodology:

- Model Architecture Modification ("Decapitation"): Remove the final output layer of the LLM, which projects to the vocabulary space. Replace it with a new, randomly initialized linear layer (the "classification head") that maps the model's hidden dimension to a 2-dimensional output (for binary classification) [33].

- Input Representation and Tokenization: Convert input text (e.g., a crystal's text representation or a sentence) into tokens. For classification, the model's prediction is based on the last token's hidden state, as it contains information from the entire sequence [33].

- Training Configuration:

- Loss Function: Use cross-entropy loss, calculated between the logits vector for the last token and the target category [33].

- Optimization: Use a standard optimizer (e.g., AdamW) with a low learning rate.

- Parameter Freezing: To reduce computational cost and prevent overfitting, it is common practice to freeze the gradients of most of the original LLM's layers, training only the final layers and the new classification head [33].

- DataLoader Setup: Use `drop_last=True` in the training DataLoader to discard the last incomplete batch, ensuring consistent batch sizes and stable gradient updates [33].

- Validation and Testing: Monitor accuracy on validation and test sets. Performance can be visualized with loss and accuracy curves over training epochs.
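The mechanics of the steps above (last-token pooling, a linear classification head, cross-entropy on the resulting logits, and dropping the incomplete final batch) can be illustrated without a deep learning framework. The sketch below is a framework-free toy, not the code from [33]: the hidden states stand in for the outputs of a frozen LLM, and all function names and dimensions are hypothetical. In practice these pieces would typically be a PyTorch linear layer, its cross-entropy loss, and a DataLoader created with `drop_last=True`.

```python
import math
import random


def last_token_logits(hidden_states, weights, bias):
    """Apply a linear classification head to the final token's hidden state.

    hidden_states: per-token vectors, shape (seq_len, hidden_dim)
    weights: shape (num_classes, hidden_dim); bias: shape (num_classes,)
    """
    last = hidden_states[-1]  # the last token has attended to the whole sequence
    return [sum(w * h for w, h in zip(row, last)) + b
            for row, b in zip(weights, bias)]


def cross_entropy(logits, target):
    """Cross-entropy between a logits vector and an integer class label."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


def batches(items, batch_size, drop_last=True):
    """Yield consecutive batches; with drop_last=True the short tail is discarded."""
    for start in range(0, len(items), batch_size):
        batch = items[start:start + batch_size]
        if len(batch) < batch_size and drop_last:
            return
        yield batch


rng = random.Random(0)
hidden_dim, num_classes = 4, 2
# Randomly initialized head, as in the "decapitation" step.
weights = [[rng.uniform(-0.1, 0.1) for _ in range(hidden_dim)]
           for _ in range(num_classes)]
bias = [0.0] * num_classes

# Stand-in for frozen LLM outputs: 3 tokens, each a hidden_dim vector.
hidden_states = [[rng.gauss(0, 1) for _ in range(hidden_dim)] for _ in range(3)]
logits = last_token_logits(hidden_states, weights, bias)
print("loss:", cross_entropy(logits, target=1))
print("batch sizes:", [len(b) for b in batches(list(range(10)), 4)])
```

Only `weights` and `bias` would receive gradient updates in the frozen-backbone setting. Unfreezing the last transformer blocks adds their parameters to the trainable set.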

Diagram 1: LLM fine-tuning for classification. The final layer is replaced, and only the classification head and sometimes the last LLM layers are trained.

Protocol 2: Implementing PU Learning for Synthesizability Prediction

This protocol describes the workflow for applying PU learning to predict material synthesizability, a process that can be enhanced by using a fine-tuned LLM as the classifier.

Key Reagents and Resources:

- Positive Data: Experimentally confirmed synthesizable materials from databases like the Inorganic Crystal Structure Database (ICSD) [31] [20].

- Unlabeled Data: A large set of hypothetical or computationally generated material structures from sources like the Materials Project (MP) [12] [31].

- Feature Representation: Materials must be converted into a model-readable format. For LLMs, this involves creating a text-based "material string" that includes essential crystal information (composition, lattice parameters, atomic coordinates) [31]. Alternative approaches use graph convolutional networks like ALIGNN or SchNet to learn from atomic structures directly [12].

- PU Learning Algorithm: A chosen PU learning method, such as the two-step strategy (identifying reliable negatives followed by supervised learning) [30].
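The text-based representation mentioned in the list above can be illustrated with a small serializer. The format below is hypothetical and is not the "material string" defined in [31]. It only shows the general idea of flattening composition, lattice parameters, and atomic coordinates into a single line that a tokenizer can consume. The function name, field separators, and example cell are assumptions.

```python
def material_string(formula, lattice, sites, decimals=3):
    """Serialize a crystal into one line of text (hypothetical format).

    formula: composition string, e.g. 'NaCl'
    lattice: (a, b, c, alpha, beta, gamma), lengths in angstroms, angles in degrees
    sites: list of (element, (x, y, z)) with fractional coordinates
    """
    lattice_part = " ".join(f"{value:.{decimals}f}" for value in lattice)
    site_part = "; ".join(
        f"{element} " + " ".join(f"{c:.{decimals}f}" for c in xyz)
        for element, xyz in sites
    )
    return f"{formula} | {lattice_part} | {site_part}"


# Toy two-site cubic cell with illustrative values, not a curated database entry.
text = material_string(
    "NaCl",
    lattice=(5.64, 5.64, 5.64, 90, 90, 90),
    sites=[("Na", (0, 0, 0)), ("Cl", (0.5, 0.5, 0.5))],
)
print(text)
```

Fixing the number of decimals keeps the token count per structure predictable, which matters when long structures approach the model's context limit.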

Methodology:

- Data Curation:

- Positive Set: Collect known synthesizable materials (e.g., 70,120 crystal structures from ICSD) [31].

- Unlabeled Set: Assemble a large pool of theoretical structures. A pre-trained PU model can be used to assign a synthesizability score (e.g., CLscore) to these structures. Those with the lowest scores can be treated as a proxy for negative examples to create a balanced dataset for initial model training [31].

- Model Training with PU Framework:

- The base classifier (e.g., a fine-tuned LLM or a GCNN) is trained to distinguish the labeled positive examples from the unlabeled set.

- Advanced frameworks like SynCoTrain employ co-training, where two different classifiers (e.g., SchNet and ALIGNN) iteratively exchange predictions on the unlabeled data to refine the decision boundary and reduce model bias [12].

- Performance Estimation:

- Standard performance metrics (accuracy, precision, recall) calculated on the PU data are not representative of true performance. Correction methods that account for the class prior (the fraction of positive examples in the unlabeled data) are necessary to recover true accuracy, balanced accuracy, F-measure, and Matthews correlation coefficient [14].
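The data curation step in the methodology above, in which the lowest-scoring unlabeled structures serve as proxy negatives, can be sketched as follows. This is a minimal illustration under stated assumptions rather than the pipeline of [31]: scores are assumed to lie in [0, 1] with higher values meaning more likely synthesizable, and the function name and the optional `max_score` cutoff are hypothetical.

```python
def build_balanced_dataset(positive_ids, unlabeled_scores, max_score=None):
    """Pair known positives with an equal number of low-scoring proxy negatives.

    positive_ids: identifiers of experimentally confirmed structures
    unlabeled_scores: {structure_id: synthesizability score from a PU model}
    max_score: optional cutoff; candidates scoring above it are never used
    Returns a list of (structure_id, label) pairs with label 1 or 0.
    """
    ranked = sorted(unlabeled_scores, key=unlabeled_scores.get)  # lowest first
    if max_score is not None:
        ranked = [sid for sid in ranked if unlabeled_scores[sid] <= max_score]
    negatives = ranked[:len(positive_ids)]
    return [(sid, 1) for sid in positive_ids] + [(sid, 0) for sid in negatives]


# Toy identifiers and scores, for illustration only.
positives = ["p1", "p2"]
scores = {"u1": 0.90, "u2": 0.05, "u3": 0.20, "u4": 0.60}
dataset = build_balanced_dataset(positives, scores)
print(dataset)
```

Proxy negatives remain unverified, so a conservative `max_score` trades dataset size for label reliability. If too few candidates pass the cutoff, the dataset will no longer be balanced.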

Diagram 2: PU learning workflow for synthesizability classification. The model iteratively learns from positive and unlabeled data to identify reliable negatives.

The Scientist's Toolkit

Table 2: Essential Research Reagents and Resources for LLM-based Synthesizability Classification

| Item Name | Function / Purpose | Examples / Specifications |
|---|---|---|
| Pre-trained LLM | Serves as the base model for feature extraction and subsequent fine-tuning. | LLaMA, GPT models [31] [32]. |
| Material Datasets | Provides positive and unlabeled data for training and evaluation. | ICSD (positive), Materials Project (unlabeled) [12] [31]. |
| Text Representation | Converts crystal structures into a text format processable by an LLM. | "Material String", CIF, or POSCAR formats [31]. |
| PU Learning Algorithm | Enables model training in the absence of confirmed negative data. | Two-step methods, co-training (SynCoTrain) [12] [30]. |
| Computational Framework | Provides the software environment for model training and inference. | PyTorch, TensorFlow, Hugging Face Transformers [33]. |
| High-Performance Computing | Accelerates the computationally intensive fine-tuning process. | GPU clusters (e.g., NVIDIA RTX 3090, A100) [33]. |

Developing In-House Synthesizability Scores for Resource-Limited Environments