Predicting Synthesis Feasibility of Inorganic Materials: AI, Machine Learning, and Data-Driven Approaches


Abstract

The acceleration of inorganic materials discovery is critically dependent on accurately predicting synthesis feasibility. This article provides a comprehensive overview for researchers and development professionals on the computational methods transforming this field. We explore the foundational challenge of defining 'synthesizability' beyond simple thermodynamics, cover cutting-edge machine learning models like deep learning synthesizability classifiers (SynthNN) and retrosynthesis planners (Retro-Rank-In, ElemwiseRetro), and examine the emerging role of large language models. The content details troubleshooting for common pitfalls in autonomous discovery workflows and presents rigorous validation metrics for comparing model performance. By integrating insights from recent breakthroughs, this article serves as a guide for reliably integrating synthesizability predictions into the materials discovery pipeline, thereby reducing costly experimental failures.

Defining Synthesizability: The Core Challenge in Inorganic Materials Discovery

The discovery of new inorganic materials is undergoing a paradigm shift, driven by computational power and artificial intelligence (AI). High-throughput calculations and generative models can now propose thousands of candidate materials with exceptional predicted properties in hours [1]. However, one obstacle impedes this pipeline: the synthesizability bottleneck, the gap between computationally designed materials and their successful experimental realization in the laboratory. A predicted material, no matter how promising its properties, has little practical value without a viable pathway to synthesize it. As McDermott (2025) notes, "Most of these predicted materials will never be successfully made in the lab" [1]. The difficulty is that thermodynamic stability, a common computational filter, does not equate to synthesizability; a material may be stable but lack a kinetically accessible pathway to form under practical conditions [1]. This article provides a technical guide to the core challenges of synthesizability prediction and the computational methodologies being developed to bridge this gap, framing the discussion within the broader thesis that predicting synthesis feasibility is the next frontier in inorganic materials research.

The Core Challenge: Why Synthesis is a Bottleneck

Synthesizing a chemical compound is fundamentally a pathway problem. It is not merely about the stability of the final destination but about finding a viable route to get there. As McDermott analogizes, it is "like crossing a mountain range; you can’t simply go straight over the top. You need a viable path" [1]. This path-dependency introduces immense complexity, governed by kinetic barriers, competing phases, and sensitive reaction conditions.

The Data Problem in Synthesis Prediction

A primary reason AI has not yet solved synthesis is a fundamental data problem. While large, well-curated datasets of atomic structures (e.g., the Materials Project) have enabled AI models for property prediction, no equivalent comprehensive database exists for synthesis recipes [1]. Building one would be a monumental, if not intractable, task. It would require experimentally testing millions of reaction combinations—including failed attempts—across every possible set of temperature, pressure, atmosphere, and precursor conditions [1]. This scale is well beyond the capacity of even the most advanced high-throughput laboratories.

Furthermore, data mined from scientific literature is inherently biased and incomplete. Failed synthesis attempts are almost never published, meaning machine learning models are trained on a curated set of successful outcomes without learning from negative examples, which are equally informative [1] [2]. The literature also suffers from a "convention bias," where researchers repeatedly use the same well-established precursors and routes. For example, in the case of barium titanate (BaTiO₃), the majority of published recipes use the same two precursors (BaCO₃ + TiO₂), despite the fact that this route requires high temperatures and long heating times and proceeds through intermediates [1]. This bias limits the diversity of synthesis knowledge available for AI training.

Limitations of Traditional Stability Metrics

Computational materials science has long relied on thermodynamic stability as a proxy for synthesizability. The most common metric is the energy above hull (E_hull), which measures the energy difference between a material and the most stable combination of competing phases at the same composition [2]. While a low E_hull is a necessary condition for stability, it is insufficient to guarantee synthesizability.

Kinetic barriers can prevent the formation of an otherwise thermodynamically favorable material. A well-known example is martensite, a metastable phase of steel synthesized through rapid quenching, a process governed by kinetics, not equilibrium thermodynamics [2]. Moreover, E_hull is typically calculated from internal energies at 0 K and 0 Pa, ignoring entropic contributions and the actual conditions (e.g., high temperature) under which synthesis occurs [2]. Consequently, a non-negligible number of hypothetical materials with low E_hull have never been synthesized, while many metastable materials with higher E_hull are routinely made in labs [2] [3].
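To make the energy-above-hull idea concrete, the following is a minimal sketch for a hypothetical binary A-B system, assuming formation energies per atom are already known. The function names, the toy energies, and the restriction to two components are illustrative assumptions; production workflows use multi-component phase diagrams built from DFT databases.

```python
from typing import Dict, List, Tuple

# (fraction of B in a hypothetical A-B system, formation energy in eV/atom)
Point = Tuple[float, float]


def _cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); positive means a left turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull (monotone chain), keeping the lowest energy per composition."""
    lowest: Dict[float, float] = {}
    for x, e in points:
        lowest[x] = min(e, lowest.get(x, e))
    hull: List[Point] = []
    for p in sorted(lowest.items()):
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def energy_above_hull(x: float, e_form: float, known: List[Point]) -> float:
    """Energy of a candidate at composition x relative to the hull of known phases."""
    # The pure elements define zero formation energy at both ends of the axis.
    hull = lower_hull(known + [(0.0, 0.0), (1.0, 0.0)])
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            e_on_hull = e0 + (e1 - e0) * (x - x0) / (x1 - x0)
            return e_form - e_on_hull
    raise ValueError("composition must lie in [0, 1]")


# Toy data: one stable compound at x = 0.5 and one phase that sits above the hull.
known_phases = [(0.5, -1.0), (0.75, -0.2)]
print(energy_above_hull(0.25, -0.4, known_phases))  # roughly 0.1 eV/atom
```

In this toy case the candidate has a negative formation energy (-0.4 eV/atom) yet still lies about 0.1 eV/atom above the hull, which illustrates why formation energy and hull distance answer different questions.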

Computational Methodologies for Predicting Synthesizability

To overcome the limitations of stability metrics, researchers are developing sophisticated machine learning approaches that learn directly from experimental synthesis data. The table below summarizes the dominant methodologies and their key characteristics.

Table 1: Computational Methodologies for Predicting Material Synthesizability

| Methodology | Core Principle | Key Advantage | Reported Performance | Primary Reference |
|---|---|---|---|---|
| Positive-Unlabeled (PU) Learning | Learns from confirmed synthesizable (positive) data, treating unlabeled data as a mixture of positive and negative examples. | Overcomes the lack of confirmed negative (non-synthesizable) data. | 83.4% recall, 83.6% precision for stoichiometry [4]; 87.9% accuracy for 3D crystals [3] | [2] [4] [3] |
| Large Language Models (LLMs) | Fine-tunes LLMs on text representations of crystal structures to predict synthesizability, methods, and precursors. | High accuracy and generalization; can predict synthesis routes and precursors. | 98.6% accuracy for synthesizability; >90% for method classification [3] | [3] |
| Ranking-Based Retrosynthesis | Embeds targets and precursors in a shared latent space and ranks precursor sets by their compatibility with the target. | Can recommend novel precursors not seen in training data. | State-of-the-art in out-of-distribution generalization [5] | [5] |
| Reaction Network Modeling | Generates hundreds of thousands of potential reaction pathways and models them using thermodynamics and machine learning. | Grounded in chemistry principles; finds non-obvious, low-energy synthesis routes. | Identifies viable, scalable recipes [1] | [1] |
| Quantum Calculations | Uses quantum mechanics (e.g., DFT) to simulate reaction energy profiles and transition states. | Provides fundamental physical insights into kinetic and thermodynamic feasibility. | Predicts feasibility before lab work [6] | [6] |

Detailed Experimental Protocol: Positive-Unlabeled Learning for Solid-State Synthesizability

The following protocol is adapted from the work of Chung et al. (2025) in Digital Discovery [2], which provides a robust framework for building a PU learning model for synthesizability prediction.

1. Data Collection and Curation:

- Source Data: Download ternary oxide entries from the Materials Project database. Use Inorganic Crystal Structure Database (ICSD) IDs as an initial proxy for "synthesized" materials.

- Manual Labeling: Manually extract synthesis information from the scientific literature for each composition. This critical step involves:

  - Examining papers associated with ICSD IDs.

  - Searching Web of Science and Google Scholar using the chemical formula as a query.

- Labeling Schema: For each ternary oxide, assign one of three labels:

  - Solid-State Synthesized: At least one record of synthesis via a solid-state reaction.

  - Non-Solid-State Synthesized: The material has been synthesized, but not via a solid-state reaction.

  - Undetermined: Insufficient evidence to confirm solid-state synthesis.

- Data Extraction: For solid-state synthesized entries, extract available parameters: highest heating temperature, pressure, atmosphere, mixing/grinding conditions, number of heating steps, cooling process, and precursors.

2. Data Processing and Feature Engineering:

- Define Solid-State Reaction: Establish clear criteria for what constitutes a solid-state reaction (e.g., no melting of all starting materials, no use of flux for crystal growth).

- Feature Calculation: Compute relevant features for each composition, which may include:

  - Elemental descriptors (electronegativity, atomic radius, etc.).

  - Thermodynamic features from DFT (e.g., formation energy, E_hull).

  - Structural descriptors.

3. Model Training with PU Learning:

- Algorithm Selection: Implement a PU learning algorithm, such as the transductive bagging approach by Mordelet et al. [2].

- Training Set: Use the manually labeled "Solid-State Synthesized" data as positive (P) examples. Treat all other data (unlabeled, U) as a mixture of potential positive and negative examples.

- Training: The model learns to identify patterns that distinguish the known positive examples from the unlabeled set, effectively learning to identify likely negative examples within the U set.

4. Model Validation and Testing:

- Validation: Use a held-out test set of manually verified data to evaluate performance metrics like recall, precision, and accuracy.

- Outlier Detection: The curated dataset can also be used to identify and correct errors in fully automated text-mined datasets, improving the quality of data available for future models [2].
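The training step of this protocol can be sketched as a transductive bagging loop: repeatedly draw a bootstrap sample from the unlabeled set, treat it as provisional negatives, train a classifier against the positives, and score only the unlabeled points left out of that sample. The sketch below is an illustration under stated assumptions. The nearest-centroid base learner is a stand-in chosen to keep the example dependency-free, not the classifier used in [2], and the feature vectors, function name, and parameters are hypothetical.

```python
import random
from typing import List, Sequence

Vector = Sequence[float]


def _centroid(rows: List[Vector]) -> List[float]:
    """Component-wise mean of a list of feature vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def _sq_dist(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def pu_bagging_scores(
    positives: List[Vector],
    unlabeled: List[Vector],
    n_rounds: int = 200,
    seed: int = 0,
) -> List[float]:
    """Average out-of-bag vote that each unlabeled point looks like a positive."""
    rng = random.Random(seed)
    pos_centroid = _centroid(positives)
    votes = [0] * len(unlabeled)
    counts = [0] * len(unlabeled)
    for _ in range(n_rounds):
        # Bootstrap sample of U (with replacement), same size as P, used as negatives.
        bag = [rng.randrange(len(unlabeled)) for _ in positives]
        in_bag = set(bag)
        neg_centroid = _centroid([unlabeled[i] for i in bag])
        # Score only the points that were not used as provisional negatives.
        for i, x in enumerate(unlabeled):
            if i in in_bag:
                continue
            counts[i] += 1
            if _sq_dist(x, pos_centroid) < _sq_dist(x, neg_centroid):
                votes[i] += 1
    return [v / c if c else float("nan") for v, c in zip(votes, counts)]


# Hypothetical two-feature descriptors: three known solid-state-synthesized
# compositions and six unlabeled ones (two resemble the positives).
P = [[1.0, 1.0], [1.2, 0.9], [0.9, 1.1]]
U = [[1.1, 1.0], [1.0, 0.95], [-1.0, -1.0], [-1.2, -0.8], [-0.9, -1.1], [-1.1, -1.0]]
scores = pu_bagging_scores(P, U)
```

Unlabeled entries with scores near 1 are candidates for "likely synthesizable"; scores near 0 mark likely negatives. Any threshold on these scores would need to be calibrated against the manually verified hold-out set.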

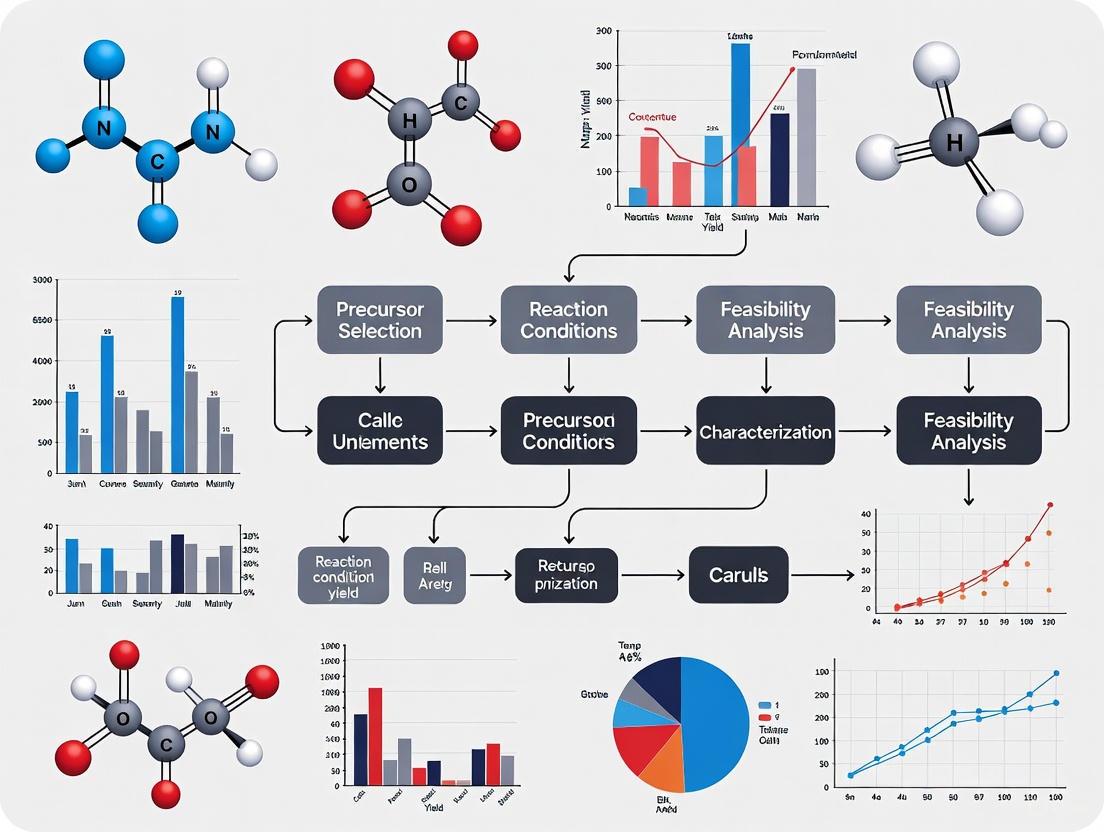

Workflow Diagram: Integrating Computational and Experimental Efforts

The following diagram visualizes a modern, closed-loop workflow for overcoming the synthesizability bottleneck by integrating computational predictions with experimental validation.

Diagram 1: Closed-loop workflow for materials discovery.

The Scientist's Toolkit: Key Research Reagents and Solutions

The experimental validation of synthesizability predictions relies on a suite of standard and advanced techniques. The following table details key reagents, instruments, and computational tools essential for research in this field.

Table 2: Essential Research Toolkit for Synthesis Feasibility Research

| Tool/Reagent | Function/Description | Application in Synthesizability |
|---|---|---|
| Solid-State Precursors | High-purity metal oxides, carbonates, hydroxides, etc., used as starting materials. | Reacted at high temperatures to form target ternary/quaternary oxides. Purity is critical to avoid impurities [1] [2]. |
| Autonomous Laboratory | Robotic system that executes high-throughput synthesis and characterization. | Enables rapid, 24/7 experimental validation of computationally predicted materials and recipes [2]. |
| Crystal Synthesis LLM (CSLLM) | A specialized large language model fine-tuned on crystal structure data. | Predicts synthesizability of 3D structures (>98% accuracy), suggests synthetic methods, and identifies precursors [3]. |
| X-ray Diffraction (XRD) | Analytical technique for determining the crystal structure of a material. | The primary method for verifying successful synthesis of the target phase and detecting unwanted impurity phases [1]. |
| Positive-Unlabeled Learning Model | A semi-supervised machine learning model. | Predicts the likelihood that a material with a given stoichiometry is synthesizable, despite lacking negative data [2] [4]. |
| Retro-Rank-In Framework | A ranking-based machine learning model for retrosynthesis. | Recommends and ranks viable precursor sets for a target material, including novel precursors not in its training data [5]. |
| Density Functional Theory (DFT) | Computational method for modeling electronic structure. | Calculates key stability metrics like energy above hull (E_hull) and simulates reaction energy profiles [2] [6]. |

The synthesizability bottleneck represents the most significant impediment to the full realization of computational materials design. While formidable, the challenge is being met with a new generation of sophisticated, data-driven tools. The shift from relying solely on thermodynamic metrics toward models that learn directly from experimental data—using PU learning, large language models, and ranking-based retrosynthesis—is a profound and necessary evolution. The future of materials discovery lies in closed-loop workflows, where computational predictions directly guide automated experiments, and the results of those experiments, including failures, are fed back to refine and retrain the models. As these tools mature and synthesis databases grow in both quantity and quality, the bottleneck will slowly but surely open, accelerating the translation of groundbreaking theoretical materials into real-world technologies that address critical challenges in energy, electronics, and beyond.

In inorganic materials research, the thermodynamic property of formation energy has traditionally served as a primary indicator for predicting synthesis feasibility. This whitepaper examines the critical limitations of relying solely on this metric, arguing that formation energy provides an incomplete picture of synthesizability. By exploring kinetic barriers, precursor reactivity, and non-equilibrium conditions, we demonstrate why materials with negative formation energies may remain stubbornly unsynthesizable, while others with positive formation energies can be successfully realized. The paper further presents a modern framework integrating computational guidelines and data-driven methods to create a more comprehensive approach to synthesis prediction, ultimately accelerating the discovery and development of novel functional materials.

Formation energy, calculated from the energy difference between a compound and its constituent elements in their standard states, has long served as a foundational metric in computational materials science. A negative formation energy indicates that a compound is thermodynamically stable with respect to its constituent elements, suggesting that it should form spontaneously under equilibrium conditions. This principle has guided initial materials screening for decades, with high-throughput computational searches often prioritizing compounds with increasingly negative formation energies.
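As a reference point, the definition above is commonly written per atom as follows. The symbols are assumptions introduced here for illustration: \( E_{\text{tot}} \) is the total energy of the compound, \( n_i \) is the number of atoms of element \( i \) in the formula unit, and \( \mu_i \) is the energy per atom of element \( i \) in its standard-state reference phase.

\[
E_f = \frac{E_{\text{tot}} - \sum_i n_i \mu_i}{\sum_i n_i}
\]

Under this convention, \( E_f < 0 \) only states that the compound is lower in energy than the separated elements. It does not compare the compound against other compounds at the same composition.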

However, this thermodynamic focus presents a significant bottleneck in the materials discovery pipeline. The persistent challenge in experimental synthesis lies in the multitude of conditions that must be optimized in synthesis routes, creating a complex multidimensional challenge that cannot be captured by a single thermodynamic parameter [7]. In practice, chemists can only evaluate a limited subset of experimental conditions, traditionally relying on chemical literature, experience, and simple heuristics to identify influential factors for reaction success [8]. This review examines why formation energy alone is insufficient for predicting synthesis outcomes and explores the advanced computational and data-driven methodologies that are reshaping synthesis feasibility prediction in inorganic materials research.

The Critical Limitations of Formation Energy

Kinetic Barriers and Synthesis Pathways

While formation energy describes the thermodynamic favorability of a final product, it provides no information about the energy landscape between reactants and products. Kinetic barriers, determined by intermediate states and transition energies, often dictate whether a synthesis will succeed or fail under practical conditions.

- Activation Energies: Synthesis reactions require overcoming activation barriers that formation energy calculations do not capture. These kinetic limitations can prevent the formation of thermodynamically stable compounds.

- Alternative Pathways: Materials with unfavorable bulk formation energies might be accessible through alternative synthesis pathways that bypass thermodynamic limitations through metastable intermediates or non-equilibrium conditions.

- Complex Landscapes: The energy landscape of materials synthesis involves multiple dimensions including temperature, pressure, and chemical potential, which single-formation-energy values cannot represent [7].
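One way to see why activation barriers matter is a back-of-the-envelope Arrhenius estimate. The sketch below assumes a simple Arrhenius rate law, a hypothetical 1.0 eV barrier, and an arbitrary attempt frequency; none of these values come from the cited sources, and real solid-state reactions involve many coupled steps.

```python
import math

K_B_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def arrhenius_rate(prefactor_hz: float, barrier_ev: float, temperature_k: float) -> float:
    """Rate k = A * exp(-Ea / (kB * T)) for a single thermally activated step."""
    return prefactor_hz * math.exp(-barrier_ev / (K_B_EV_PER_K * temperature_k))


# Hypothetical step: 1.0 eV barrier, 1e13 Hz attempt frequency (illustrative values).
rate_room = arrhenius_rate(1e13, 1.0, 300.0)
rate_furnace = arrhenius_rate(1e13, 1.0, 1500.0)
ratio = rate_furnace / rate_room
print(f"speed-up from 300 K to 1500 K: {ratio:.2e}")
```

For these assumed numbers the same step is roughly thirteen orders of magnitude faster at furnace temperature than at room temperature, even though the formation energy of the product is unchanged.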

The Metastability Challenge

The synthesis of metastable materials represents a particularly compelling case where formation energy alone fails to predict experimental outcomes.

Table 1: Relationship Between Material Stability and Synthesis Feasibility

| Material Type | Thermodynamic Stability | Synthesis Feasibility | Key Determining Factors |
|---|---|---|---|
| Stable Phase | Negative formation energy | High | Thermodynamics drive synthesis |
| Metastable Phase | Positive formation energy | Variable | Kinetic barriers, precursor selection, processing conditions |
| Severely Metastable | Highly positive formation energy | Low | Requires specialized non-equilibrium techniques |

Metastable materials, which possess higher energy than the global thermodynamic minimum, often exhibit exceptional functional properties but defy traditional formation energy-based predictions. Their synthesis requires careful navigation of kinetic pathways to avoid conversion to more stable phases [9]. The thermodynamic scale of inorganic crystalline metastability demonstrates that many promising functional materials lie outside the realm of thermodynamic stability, necessitating prediction methods beyond formation energy [9].

The Multi-dimensional Nature of Synthesis Parameters

Experimental synthesis represents a complex optimization problem across numerous parameters that formation energy cannot capture. Synthesis feasibility depends on multiple interacting variables including:

- Precursor Reactivity: The chemical reactivity of starting materials significantly influences reaction pathways.

- Temperature Profiles: Heating rates, maximum temperatures, and dwell times affect phase formation.

- Atmospheric Conditions: Oxygen partial pressure, inert gas flow, and other atmospheric factors can determine synthesis success.

- Processing Techniques: The specific synthesis method (solid-state, sol-gel, vapor deposition) introduces different kinetic constraints.

This multidimensional parameter space explains why chemists in typical laboratory settings can only evaluate a limited subset of experimental conditions, and why simple heuristics based on formation energy often prove inadequate [7].

Computational and Data-Driven Advancements

Physical Models Beyond Thermodynamics

Modern computational guidelines incorporate physical models based on both thermodynamics and kinetics to provide more comprehensive synthesis guidance. By embedding the interplay between thermodynamics and kinetics as domain-specific knowledge, both predictive performance and interpretability of models are markedly enhanced [7]. This "bottom-up" strategy constructs mathematical models from the atomistic level for complex chemical synthesis processes, facilitating deeper understanding of the relevant factors.

These advanced models consider:

- Phase Stability under different chemical potentials

- Reaction Kinetics and diffusion barriers

- Nucleation Barriers and growth mechanisms

- Surface and Interface energies that dominate in nanoscale systems

Machine Learning in Materials Synthesis

Machine learning (ML) techniques have emerged as powerful tools for addressing the limitations of traditional metrics like formation energy. ML can reduce reliance on time-consuming trial-and-error synthesis and uncover structure-property relationships, with the potential to identify materials with high synthesis feasibility and suggest suitable experimental conditions [7]. ML applications in inorganic material synthesis have established closed-loop optimization frameworks that form an intelligent research paradigm and significantly increase the success rate of experiments [9].

Table 2: Machine Learning Approaches in Materials Synthesis

| ML Technique | Application in Synthesis | Data Requirements | Limitations |
|---|---|---|---|
| Supervised Learning | Predicting synthesis outcomes from parameters | Large labeled datasets | Limited by data scarcity |
| Unsupervised Learning | Identifying patterns in synthesis data | Unlabeled experimental data | Interpretation challenges |
| Transfer Learning | Leveraging knowledge across material systems | Multiple related datasets | Domain adaptation issues |
| Active Learning | Guiding iterative experimentation | Initial small dataset | Requires experimental validation |

The primary data acquisition approaches for ML include high-throughput experimental data collection and scientific literature knowledge mining [7]. Applications of ML-assisted inorganic material synthesis are now being categorized according to different data sources, creating a more systematic approach to the field.

Experimental Data Infrastructure

High-Throughput Experimental Databases

The development of large-scale experimental databases has been crucial for advancing beyond formation-energy-based predictions. The High Throughput Experimental Materials (HTEM) Database represents a significant step forward, containing 140,000 sample entries characterized by structural (100,000), synthetic (80,000), chemical (70,000), and optoelectronic (50,000) properties of inorganic thin film materials [8].

This database infrastructure enables:

- Data Mining across diverse materials systems

- Pattern Recognition in synthesis parameters

- Machine Learning model training

- Hypothesis Generation for new syntheses

The HTEM database demonstrates how high-throughput experimental (HTE) approaches can generate the comprehensive datasets needed to move beyond simple thermodynamic descriptors. These datasets include synthesis conditions such as temperature (83,600 entries), X-ray diffraction patterns (100,848), composition and thickness (72,952), optical absorption spectra (55,352), and electrical conductivities (32,912) [8].

Laboratory Information Management Systems

The data infrastructure supporting modern synthesis prediction relies on sophisticated laboratory information management systems (LIMS). These systems automatically harvest materials data from synthesis and characterization instruments into a data warehouse, then use extract-transform-load (ETL) processes to align synthesis and characterization data and metadata into databases with object-relational architecture [8].

This infrastructure enables consistent interaction between client applications and materials databases through application programming interfaces (APIs), allowing both materials scientists and computer scientists to access materials datasets for visualization, data mining, and machine learning purposes [8].
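The alignment step of such an ETL process can be pictured as a join on a shared sample identifier. The sketch below is purely illustrative: the field names (`sample_id`, `temperature_c`, `technique`) and the function are hypothetical and do not reflect the actual HTEM schema or API.

```python
from typing import Any, Dict, List

Record = Dict[str, Any]


def align_records(synthesis: List[Record], characterization: List[Record]) -> Dict[str, Record]:
    """Group characterization rows under the synthesis row with the same sample_id."""
    merged: Dict[str, Record] = {}
    for row in synthesis:
        merged[row["sample_id"]] = {"synthesis": row, "measurements": []}
    for row in characterization:
        entry = merged.get(row["sample_id"])
        if entry is not None:  # skip measurements that have no synthesis record
            entry["measurements"].append(row)
    return merged


# Hypothetical harvested rows from a deposition tool and two instruments.
synthesis_rows = [
    {"sample_id": "S1", "temperature_c": 400},
    {"sample_id": "S2", "temperature_c": 600},
]
characterization_rows = [
    {"sample_id": "S1", "technique": "XRD"},
    {"sample_id": "S1", "technique": "optical"},
    {"sample_id": "S9", "technique": "XRD"},
]
aligned = align_records(synthesis_rows, characterization_rows)
```

Keeping synthesis conditions and measurements under one key is what lets downstream models relate process parameters to observed phases.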

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Advanced Synthesis Prediction

| Tool/Resource | Function | Application in Synthesis Feasibility |
|---|---|---|
| High-Throughput Experimental Systems | Parallel synthesis of material libraries | Generates large-scale synthesis data for ML training |
| Computational Thermodynamics Software | Calculates phase diagrams and stability | Provides baseline thermodynamic assessment |
| Kinetic Modeling Tools | Simulates reaction pathways and barriers | Predicts synthesis pathways beyond thermodynamics |
| Material Descriptors | Quantifies chemical and physical properties | Enables feature-based ML predictions |
| HTEM Database | Stores and serves experimental data | Provides training data for synthesis prediction models |
| Domain Knowledge | Expert understanding of synthesis mechanisms | Guides model development and interpretation |

Methodologies and Workflows

Integrated Synthesis Prediction Workflow

The following diagram illustrates the modern workflow for synthesis feasibility prediction that integrates computational guidance with data-driven methods:

Data-Driven Synthesis Optimization Framework

This diagram details the closed-loop optimization framework that enables continuous improvement of synthesis predictions:

Challenges and Future Perspectives

Despite promising advancements, the use of ML techniques in inorganic material synthesis remains a nascent and evolving field. Even state-of-the-art ML models cannot yet reliably predict optimal synthesis routes and outcomes [7]. Several critical challenges persist:

- Data Scarcity: Despite databases like HTEM, comprehensive synthesis data covering diverse material systems remains limited.

- Class Imbalance: Successful synthesis outcomes are typically underrepresented compared to failed attempts in experimental records.

- Interpretability: Complex ML models often function as "black boxes," providing limited insight into underlying synthesis mechanisms.

- Domain Integration: Bridging the gap between computation-guided/ML-assisted strategies and experiments requires both theorists and experimentalists to contribute their respective expertise [7].

Future progress will require development of high-quality experimental datasets as a prerequisite for seeking global phenomenological descriptions of synthesis processes. Material descriptors based on thermodynamics and kinetics must be integrated into ML models to improve both performance and interpretability [7]. From the theoretical perspective, "bottom-up" strategies that construct mathematical models from the atomistic level for complex chemical synthesis processes will facilitate deeper understanding of thermodynamics and kinetics.

Formation energy remains a valuable but incomplete metric for predicting synthesis feasibility in inorganic materials research. Its limitations in addressing kinetic barriers, metastability, and multidimensional synthesis parameters necessitate more comprehensive approaches. The integration of computational guidelines based on both thermodynamics and kinetics with data-driven machine learning methods represents a transformative advancement in the field. By establishing closed-loop optimization frameworks that connect computational prediction with high-throughput experimental validation, the materials research community is developing an intelligent paradigm for synthesis design. This approach significantly increases experimental success rates and accelerates the discovery of novel functional materials, ultimately bridging the gap between computational prediction and experimental realization in inorganic materials synthesis.

The discovery of novel inorganic materials is pivotal for advancements in energy and electronics. Traditional heuristic rules, particularly charge-balancing, have long served as a foundational filter for predicting stable compounds. However, this reliance on simplistic chemical principles often fails to accurately predict synthesizable materials, overlooking complex thermodynamic and kinetic factors governing real-world synthesis. This whitepaper details the inherent limitations of traditional heuristics and presents a modern, data-driven synthesizability assessment framework. By integrating compositional and structural predictors with machine learning, this approach demonstrates superior capability in identifying experimentally viable materials, as validated through high-throughput laboratory experiments.

The search for new inorganic materials with target properties traditionally navigates an immense compositional space. Forming a four-component compound from the first 103 elements of the periodic table, for example, results in more than 10^12 combinations, an intractable space for exhaustive experimentation or first-principles computation [10]. To manage this complexity, researchers have historically relied on heuristic rules—simplified principles based on chemical intuition and empirical observation.

The most prominent among these is the charge-balancing heuristic, which applies principles of valency to filter chemically implausible compositions. This rule posits that stable, neutral compounds tend to form when the total positive charge from cations balances the total negative charge from anions [10]. While this and other heuristics like electronegativity balance have reduced the quaternary compositional space from over 10^12 to a more manageable 10^10 combinations [10], they constitute a coarse filter. They were never designed to capture the intricate finite-temperature effects, kinetic barriers, and complex synthesis pathway dependencies that ultimately determine whether a predicted material can be realized in a laboratory [11]. This whitepaper examines the specific shortfalls of charge-balancing heuristics and frames a modern, data-driven alternative within the critical context of synthesis feasibility prediction for inorganic materials research.
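The charge-balancing rule is simple enough to express in a few lines. The sketch below assumes each element takes a single oxidation state across the whole formula and uses a small, illustrative table of common oxidation states; both the table and the function name are assumptions for this example, not a complete chemical reference.

```python
from itertools import product
from typing import Dict, Tuple

# Illustrative subset of common oxidation states (not exhaustive).
OXIDATION_STATES: Dict[str, Tuple[int, ...]] = {
    "Ba": (2,),
    "Ti": (2, 3, 4),
    "Fe": (2, 3),
    "Na": (1,),
    "O": (-2,),
    "Cl": (-1,),
}


def is_charge_balanced(composition: Dict[str, int]) -> bool:
    """True if some assignment of one oxidation state per element sums to zero."""
    elements = list(composition)
    for states in product(*(OXIDATION_STATES[el] for el in elements)):
        total = sum(q * composition[el] for q, el in zip(states, elements))
        if total == 0:
            return True
    return False


print(is_charge_balanced({"Ba": 1, "Ti": 1, "O": 3}))  # True: +2 +4 -6 = 0
print(is_charge_balanced({"Na": 1, "Cl": 2}))          # False: +1 -2 != 0
print(is_charge_balanced({"Fe": 3, "O": 4}))           # False under this single-state rule
```

The last case shows the coarseness of the filter: Fe₃O₄ (magnetite) is a well-known mixed-valence oxide, yet a check that allows only one oxidation state per element rejects it.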

Limitations of Traditional Charge-Balancing Heuristics

Traditional heuristics, while useful for initial screening, introduce significant limitations that hinder the discovery of novel, synthesizable materials.

Oversimplification of Chemical Stability

Charge-balancing is typically paired with assessments of thermodynamic stability at zero kelvin, often using density functional theory (DFT) to compute convex-hull stabilities [11]. This combined approach overlooks critical real-world factors:

- Finite-Temperature Effects: Entropic contributions and kinetic barriers that govern synthetic accessibility at experimental conditions are ignored [11].

- Metastable Phases: Many experimentally accessible and functional materials are metastable. For instance, the cristobalite phase of SiO₂, a common material, is not listed among the 21 lowest-energy SiO₂ structures identified by the Materials Project [11].

- Synthesis Pathway Dependence: The heuristic does not account for the specific precursors or reaction kinetics required to form a phase, which can be the decisive factor in successful synthesis [11].

Inability to Predict Synthesizability

The core failure of traditional heuristics is their conflation of computational stability with experimental synthesizability.

- Abundance of Predicted Materials: Current databases like the Materials Project, GNoME, and Alexandria contain millions of predicted structures, vastly outnumbering known synthesized compounds [11]. The charge-balancing heuristic, and the DFT stability calculations it often accompanies, offer little guidance for prioritizing which of these many "stable" candidates are truly synthesizable.

- The Synthesizability Gap: A structure predicted to be stable on a convex hull is not necessarily synthesizable. The practical likelihood of laboratory synthesis depends on additional compositional and structural constraints not captured by charge-balancing alone [11].

Neglect of Structural and Compositional Complexity

Heuristics like charge-balancing operate on a simplified compositional model.

- Structural Signals: They completely ignore the crystal structure, which contains critical signals for stability, such as local coordination environments, motif stability, and packing, which influence a compound's viability [11].

- Elemental Constraints: Rules based on valency and electronegativity may fail to account for practical constraints like precursor availability, elemental volatility, and redox potential during solid-state reactions [11].
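
To make the compositional simplification concrete, the sketch below shows one minimal way a charge-balancing filter can be written. It is an illustration under stated assumptions rather than the rule set used in the cited work: the `COMMON_OXIDATION_STATES` table is a small hypothetical subset, and the check assumes each element adopts a single oxidation state.

```python
from itertools import product

# Hypothetical, deliberately small table of common oxidation states.
# A practical filter would need a much fuller table.
COMMON_OXIDATION_STATES = {
    "Na": [1], "Cl": [-1], "O": [-2], "Sr": [2],
    "Ti": [2, 3, 4], "Fe": [2, 3],
}

def is_charge_balanced(composition):
    """Return True if one oxidation state per element can sum to zero.

    composition maps element symbol -> number of atoms per formula unit.
    Elements missing from the table raise KeyError.
    """
    elements = list(composition)
    options = [COMMON_OXIDATION_STATES[el] for el in elements]
    for states in product(*options):
        total = sum(q * composition[el] for q, el in zip(states, elements))
        if total == 0:
            return True
    return False

print(is_charge_balanced({"Sr": 1, "Ti": 1, "O": 3}))  # True
print(is_charge_balanced({"Fe": 3, "O": 4}))           # False
```

The second call illustrates the rigidity of the rule: Fe₃O₄ is a well-known mixed-valence oxide, yet it fails a check that allows only one oxidation state per element, and the check never looks at crystal structure at all.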

A Modern Framework for Synthesizability Prediction

To overcome the limitations of traditional heuristics, a new paradigm integrates machine learning with complementary compositional and structural descriptors to directly predict synthesizability.

Problem Formulation and Model Architecture

The goal is to learn a synthesizability score, \( s(x) \in [0,1] \), that estimates the probability that a compound \( x \), represented by its composition \( x_c \) and crystal structure \( x_s \), can be experimentally synthesized [11].

The model architecture integrates two parallel encoders:

- Compositional Encoder (\( f_c \)): A fine-tuned transformer model (e.g., MTEncoder) that processes the chemical stoichiometry [11].

- Structural Encoder (\( f_s \)): A graph neural network (e.g., JMP model) that processes the crystal structure graph [11].

The outputs of these encoders, \( \mathbf{z}_c \) and \( \mathbf{z}_s \), are fed into separate multi-layer perceptron (MLP) heads that output independent synthesizability scores. The model is trained end-to-end on a dataset of known synthesized and non-synthesized materials from databases like the Materials Project, minimizing binary cross-entropy loss [11].
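
The two-head scoring and the binary cross-entropy objective can be sketched in a few lines. This is a toy illustration, not the published architecture: the embeddings, weights, and the single-layer `mlp_head` are hypothetical stand-ins, and summing the two heads' losses is an assumption about how they are combined.

```python
import math

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def mlp_head(z, weights, bias):
    """Stand-in for an MLP head: one linear layer followed by a sigmoid."""
    return sigmoid(sum(w * zi for w, zi in zip(weights, z)) + bias)

def binary_cross_entropy(y, s, eps=1e-12):
    """BCE for one example with label y in {0, 1} and score s in [0, 1]."""
    s = min(max(s, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(y * math.log(s) + (1 - y) * math.log(1.0 - s))

# Hypothetical encoder outputs for one compound.
z_c = [0.2, -0.1, 0.7]   # composition embedding
z_s = [0.5, 0.3, -0.4]   # structure embedding

s_c = mlp_head(z_c, weights=[1.0, 0.5, -0.2], bias=0.0)
s_s = mlp_head(z_s, weights=[0.3, -0.8, 0.6], bias=0.1)

y = 1  # this compound is labeled as synthesized
loss = binary_cross_entropy(y, s_c) + binary_cross_entropy(y, s_s)
print(s_c, s_s, loss)
```

Keeping the two heads independent means each score can later be ranked on its own before any fusion step.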

Key Experimental Protocols and Data Curation

A detailed methodology for implementing and validating a synthesizability prediction pipeline is outlined below.

Table 1: Data Curation Protocol for Synthesizability Model Training

| Step | Description | Key Considerations |
|---|---|---|
| 1. Data Source | Extract compositions and structures from the Materials Project (MP). | MP ensures consistency between composition and relaxed crystal structure [11]. |
| 2. Labeling | Label compositions as synthesizable (\( y=1 \)) if any polymorph has a matching entry in the Inorganic Crystal Structure Database (ICSD). Label as unsynthesizable (\( y=0 \)) if all polymorphs are flagged as "theoretical" in MP [11]. | Avoids artifacts from experimental entries (e.g., non-stoichiometry, dopants) [11]. |
| 3. Dataset Splitting | Stratify the final dataset (e.g., 49k synthesizable, 129k unsynthesizable compositions) into train/validation/test splits. | Ensures representative distribution of positive and negative examples during model development [11]. |
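
The labeling rule and the stratified split in Table 1 can be sketched as follows. The record keys (`has_icsd_match`, `theoretical`), the 80/10/10 fractions, and the choice to exclude compositions that satisfy neither rule are assumptions made for illustration; they are not specified in the source.

```python
import random
from collections import defaultdict

def label_composition(polymorphs):
    """Label one composition from its polymorph records (hypothetical keys)."""
    if any(p["has_icsd_match"] for p in polymorphs):
        return 1      # at least one polymorph matches an ICSD entry
    if all(p["theoretical"] for p in polymorphs):
        return 0      # every polymorph is flagged as theoretical
    return None       # assumption: ambiguous cases are left out

def stratified_split(labels, fractions=(0.8, 0.1, 0.1), seed=0):
    """Split composition keys so each split keeps the class ratio."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for key, y in labels.items():
        by_label[y].append(key)
    splits = {"train": [], "val": [], "test": []}
    for keys in by_label.values():
        keys = sorted(keys)   # fixed starting order for reproducibility
        rng.shuffle(keys)
        n_train = int(fractions[0] * len(keys))
        n_val = int(fractions[1] * len(keys))
        splits["train"] += keys[:n_train]
        splits["val"] += keys[n_train:n_train + n_val]
        splits["test"] += keys[n_train + n_val:]
    return splits
```

Splitting within each class separately is what keeps the positive-to-negative ratio roughly constant across the three splits.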

Table 2: Model Training and Screening Protocol

| Step | Description | Implementation Details |
|---|---|---|
| 1. Model Training | Fine-tune compositional and structural encoders end-to-end. | Training is typically performed on GPU-equipped high-performance computing clusters (e.g., with NVIDIA H200 GPUs) with early stopping based on validation AUPRC [11]. |
| 2. Screening | Apply the trained model to a large pool of candidate structures (e.g., 4.4 million). | For each candidate, the model outputs a synthesizability probability [11]. |
| 3. Ranking | Aggregate predictions from both composition and structure models using a rank-average ensemble (Borda fusion). | Ranks candidates by RankAvg(i) score, which ranges from 1/N to 1, rather than applying a probability threshold. Candidates with scores >0.95 are considered highly synthesizable [11]. |
| 4. Synthesis Planning | Use precursor-suggestion models (e.g., Retro-Rank-In) and condition-prediction models (e.g., SyntMTE) on top-ranked candidates to predict viable solid-state precursors and calcination temperatures [11]. | Models are trained on literature-mined corpora of solid-state synthesis recipes [11]. |
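
The rank-average fusion in step 3 can be illustrated with a short sketch. It assumes that each model's scores are converted to ranks normalized by N, so the best candidate under a model gets 1 and the worst gets 1/N, and that ties are broken by input order; the exact tie handling in the cited work is not stated.

```python
def rank_average(comp_scores, struct_scores):
    """Borda-style fusion: mean of each candidate's normalized rank."""
    n = len(comp_scores)

    def normalized_ranks(scores):
        # Ascending sort: lowest score gets rank 1/n, highest gets n/n = 1.
        order = sorted(range(n), key=lambda i: scores[i])
        ranks = [0.0] * n
        for position, i in enumerate(order, start=1):
            ranks[i] = position / n
        return ranks

    r_comp = normalized_ranks(comp_scores)
    r_struct = normalized_ranks(struct_scores)
    return [(a + b) / 2 for a, b in zip(r_comp, r_struct)]

# Three hypothetical candidates scored by both models.
fused = rank_average([0.9, 0.1, 0.5], [0.8, 0.2, 0.6])
print(fused)
```

Because only ranks enter the fused score, the two models do not need calibrated or comparable probability scales, which is one reason to prefer this over averaging raw probabilities.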

The following workflow diagram illustrates the complete synthesizability-guided pipeline from computational screening to experimental validation.

Synthesizability Guided Discovery Pipeline

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational and experimental resources essential for implementing a modern synthesizability-guided discovery pipeline.

Table 3: Essential Research Reagents and Resources

| Item / Resource | Function / Description | Role in the Workflow |
|---|---|---|
| Materials Project Database | A database of computed materials properties and crystal structures. | Provides the foundational data for training synthesizability models and sourcing candidate structures [11]. |
| MTEncoder / JMP Model | Pre-trained machine learning models for composition and structure encoding. | Serve as the backbone encoders in the synthesizability model, providing a powerful starting point through transfer learning [11]. |
| Retro-Rank-In | A precursor-suggestion model. | Generates a ranked list of viable solid-state precursors for a given target composition [11]. |
| SyntMTE | A synthesis condition prediction model. | Predicts the calcination temperature required to form the target phase from given precursors [11]. |
| High-Throughput Laboratory Platform | Automated systems for solid-state synthesis. | Enables rapid experimental validation of computationally predicted candidates [11]. |

Comparative Analysis: Heuristics vs. Data-Driven Prediction

The performance gap between traditional heuristics and modern data-driven approaches is stark, as demonstrated by experimental outcomes.

Table 4: Comparison of Filtering Methodologies

| Criterion | Traditional Heuristics (e.g., Charge-Balancing) | Data-Driven Synthesizability Model |
|---|---|---|
| Basis of Prediction | Rules of thumb (valency, electronegativity) and zero-K DFT stability [10]. | Machine learning trained on experimental synthesis data [11]. |
| Input Features | Primarily composition. | Composition and full crystal structure [11]. |
| Output | Binary classification (plausible/implausible). | Probabilistic synthesizability score and ranked candidate list [11]. |
| Handling of Metastability | Poor; favors thermodynamic ground-state phases. | Good; can identify metastable phases that are kinetically accessible [11]. |
| Experimental Success Rate | Not specifically designed to predict synthesis. | Successfully guided the synthesis of 7 out of 16 characterized target structures, including novel compounds [11]. |

The following diagram visualizes the conceptual shift from a heuristic-based filter to an integrated ML-based prioritization system, highlighting the additional signals considered.

Paradigm Shift from Heuristics to ML

The limitations of traditional charge-balancing heuristics are clear and consequential. Their oversimplified view of chemical stability, inability to reliably predict synthesizability, and neglect of structural complexity render them insufficient for navigating the vast landscape of predicted inorganic materials. The emerging paradigm, which leverages integrated machine learning models trained on both composition and structure, offers a powerful and empirically validated alternative. This synthesizability-guided framework successfully bridges the gap between computational prediction and experimental realization, dramatically accelerating the discovery of novel, feasible inorganic materials. As the field progresses, the adoption of such data-driven methodologies will be indispensable for the efficient advancement of materials science and its applications in drug development, energy storage, and beyond.

The prediction of synthesis feasibility stands as a critical bottleneck in the discovery cycle for novel inorganic materials. While high-throughput computational screening can rapidly identify thousands of theoretically stable compounds with promising properties, the experimental realization of these predictions often proves challenging, if not impossible [12]. This discrepancy highlights the crucial role of experimental materials databases as foundational resources for developing data-driven synthesis models. The Inorganic Crystal Structure Database (ICSD) represents the world's largest repository of completely identified inorganic crystal structures, with its first records dating back to 1913 and approximately 12,000 new structures added annually [13]. This whitepaper examines the ICSD and related data resources within the context of synthesis feasibility prediction, analyzing the inherent data biases that influence machine learning (ML) models and providing methodological frameworks for mitigating these limitations in research practice.

Core Materials Databases: Characteristics and Applications

The Inorganic Crystal Structure Database (ICSD)

Maintained by FIZ Karlsruhe and the National Institute of Standards and Technology (NIST), the ICSD provides comprehensive crystal structure data including unit cell parameters, space group, atomic coordinates, site occupation factors, and derived properties [13] [14]. Its historical depth and rigorous quality control make it particularly valuable for studying structural trends across chemical systems. The database contains over 210,000 entries, serving as a critical reference for materials characterization and comparative analysis [14].

Table 1: Key Features of Major Materials Databases for Synthesis Prediction

| Database | Primary Content | Data Sources | Key Applications in Synthesis Prediction | Notable Limitations |
|---|---|---|---|---|
| ICSD [13] [14] | Inorganic crystal structures (over 210,000 entries) | Peer-reviewed literature (1913-present) | Structure type analysis (80% allocated to ~9,000 types); identification of synthesizable phases; precursor selection | Crystallographic focus with limited synthesis protocol details |
| Materials Project [12] | Computed material properties via DFT | High-throughput first-principles calculations | Predicting thermodynamic stability; formation energy calculations | Theoretical predictions may diverge from experimental synthesizability |
| Text-Mined Synthesis Data [15] | Experimental parameters from literature | Natural language processing of scientific papers | Training ML models for parameter optimization; predicting synthesis outcomes | Sparse, high-dimensional data requiring specialized processing |

Beyond the ICSD, researchers increasingly rely on computationally generated databases like the Materials Project, which contains density functional theory (DFT) calculations for hundreds of thousands of materials [12]. While these resources provide consistent thermodynamic data at scale, they often lack experimental synthesis information. Specialized datasets extracted via text-mining of scientific literature help bridge this gap by capturing experimental parameters such as heating temperatures, reaction times, and precursor choices [15]. The integration of these complementary data types—experimental structures, computed properties, and synthesis protocols—creates a more comprehensive foundation for predictive synthesis models.

Critical Data Biases and Their Impact on Synthesis Prediction

Data Scarcity and Sparsity

The most significant challenge in ML-guided inorganic materials synthesis is data scarcity—for any specific material system of interest, only limited synthesis data may be available. For instance, a study on SrTiO₃ synthesis had to work with fewer than 200 text-mined synthesis descriptors [15]. This problem is compounded by data sparsity, where synthesis routes exist in a high-dimensional parameter space (including precursors, temperatures, times, atmospheres, and processing methods) with most parameter combinations unexplored in literature [15]. This combination creates a "combinatorial explosion" of possible synthesis conditions with relatively few documented examples, making it difficult for ML models to learn robust structure-synthesis relationships.

Reporting and Selection Biases

Experimental materials databases exhibit substantial reporting biases, as successfully synthesized and characterized materials are overwhelmingly represented compared to failed attempts. This creates a significant "positive-only" bias in training data, where ML models learn from successful syntheses but lack explicit information about which parameter combinations lead to failure [12]. Furthermore, the scientific literature demonstrates a pronounced selection bias toward materials with novel or technologically relevant properties, certain structural families, and compositions from well-established synthetic protocols. This results in uneven coverage across chemical spaces, with some regions densely populated with data while others remain virtually unexplored.

Thermodynamic versus Kinetic Prioritization

The ICSD and similar structural databases primarily contain thermodynamically stable compounds that can be synthesized through conventional methods, creating a systematic underrepresentation of metastable phases that may possess unique functional properties [12]. This thermodynamic bias is particularly problematic for synthesis prediction of novel materials, as many computationally predicted compounds with promising properties are metastable. The focus on final crystalline products rather than intermediate phases or reaction pathways further limits understanding of kinetic factors that ultimately determine synthesis feasibility, such as activation energies for nucleation and diffusion [12].

Methodological Frameworks for Bias Mitigation

Data Augmentation and Representation Learning

To address data scarcity, researchers have developed innovative data augmentation techniques that incorporate synthesis data from related material systems. One effective approach uses ion-substitution similarity functions to create an augmented dataset with an order of magnitude more data (e.g., increasing from <200 to 1,200+ synthesis descriptors for SrTiO₃) by weighting syntheses of chemically similar compounds [15].
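
A minimal sketch of similarity-weighted augmentation is shown below. The similarity values, the record fields, and the 0.5 cutoff are all hypothetical and chosen only for illustration; the actual ion-substitution similarity function from [15] is not reproduced here.

```python
# Hypothetical similarity of each material to the target SrTiO3.
SIMILARITY_TO_SRTIO3 = {
    "SrTiO3": 1.0, "BaTiO3": 0.8, "CaTiO3": 0.7, "LiCoO2": 0.1,
}

def augment(records, similarity, min_similarity=0.5):
    """Keep syntheses of similar materials and attach a sample weight."""
    augmented = []
    for record in records:
        weight = similarity.get(record["target"], 0.0)
        if weight >= min_similarity:
            augmented.append({**record, "weight": weight})
    return augmented

# Hypothetical text-mined records (temperatures are placeholders).
records = [
    {"target": "SrTiO3", "temperature_C": 1000},
    {"target": "BaTiO3", "temperature_C": 1100},
    {"target": "CaTiO3", "temperature_C": 1200},
    {"target": "LiCoO2", "temperature_C": 800},
]
augmented = augment(records, SIMILARITY_TO_SRTIO3)
print(len(augmented), [r["weight"] for r in augmented])
```

The weights can then be passed to a downstream model as per-sample weights, so that syntheses of closely related compounds count for more than those of distant ones.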

For handling sparse, high-dimensional synthesis data, variational autoencoders (VAEs) have demonstrated superior performance compared to linear dimensionality reduction techniques like Principal Component Analysis (PCA). VAEs learn compressed, lower-dimensional representations of synthesis parameters that preserve critical information while reducing the "curse of dimensionality" [15]. In synthesis target prediction tasks between SrTiO₃ and BaTiO₃, VAE-processed features achieved 74% accuracy, matching the performance of using original canonical features and significantly outperforming PCA-reduced features (68% accuracy for 10-D PCA) [15].
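
For reference, the standard training objective of a VAE (the evidence lower bound) is given below in conventional notation. The symbols are not taken from the source: \( \mathbf{x} \) denotes a vector of synthesis parameters, \( \mathbf{z} \) its latent representation, \( q_\phi \) the encoder, \( p_\theta \) the decoder, and \( p(\mathbf{z}) \) the latent prior. Whether the cited study modifies this objective is not stated here.

```latex
\mathcal{L}(\theta, \phi; \mathbf{x}) =
  \mathbb{E}_{q_\phi(\mathbf{z} \mid \mathbf{x})}
    \big[ \log p_\theta(\mathbf{x} \mid \mathbf{z}) \big]
  - D_{\mathrm{KL}}\big( q_\phi(\mathbf{z} \mid \mathbf{x}) \,\|\, p(\mathbf{z}) \big)
```

The first term rewards faithful reconstruction of the synthesis parameters, while the KL term regularizes the latent space. The non-linear encoder and decoder are what distinguish this from PCA, which is restricted to a linear projection.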

Diagram 1: ML workflow for handling sparse synthesis data.

Integrating Expert Knowledge and Multi-Fidelity Data

The Materials Expert-Artificial Intelligence (ME-AI) framework addresses data limitations by incorporating experimental intuition into ML models through curated, measurement-based data and chemistry-aware kernels [16]. This approach effectively "bottles" the insights of expert materials growers, translating them into quantitative descriptors that can guide synthesis predictions. In one implementation, ME-AI successfully identified hypervalency as a decisive chemical descriptor for topological semimetals in square-net compounds, demonstrating how domain knowledge enhances model interpretability and performance [16].

Multi-fidelity learning integrates data from diverse sources with varying levels of accuracy and completeness, including high-throughput computations, experimental literature, and targeted experiments. This approach maximizes information extraction while acknowledging the different uncertainty levels associated with each data type.

Table 2: Research Reagent Solutions for Synthesis Data Science

| Reagent/Tool | Function | Application Example | Considerations |
|---|---|---|---|
| Variational Autoencoder (VAE) [15] | Non-linear dimensionality reduction of sparse synthesis parameters | Compressing 100+ synthesis parameters to 10-20 latent features | Requires data augmentation for small datasets; superior to PCA for non-linear relationships |
| Ion-Substitution Similarity [15] | Data augmentation using chemically related compounds | Expanding SrTiO₃ dataset with BaTiO₃, CaTiO₃ syntheses | Domain knowledge crucial for defining appropriate similarity metrics |
| Gaussian Process with Chemistry-Aware Kernel [16] | Property prediction with uncertainty quantification | Identifying topological materials from structural descriptors | Incorporates domain knowledge directly into model architecture |
| Text-Mining Pipelines [15] | Extraction of synthesis parameters from literature | Converting unstructured experimental sections to structured data | Natural language ambiguity requires careful validation |

Experimental Validation and Active Learning

Closed-loop experimental validation systems integrate computational prediction with automated synthesis and characterization, progressively refining models with real-world feedback. This active learning approach directly addresses reporting biases by generating targeted data for uncertain parameter regions [12]. High-throughput experimental synthesis combined with rapid characterization techniques (such as in situ X-ray diffraction) provides the dense, consistent data required for robust model training, effectively filling gaps in existing literature-derived datasets [12].
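
One simple way to pick "uncertain parameter regions" is uncertainty sampling, sketched below. This is a common acquisition rule chosen for illustration; the source does not specify which rule closed-loop systems use, and the candidate names and probabilities are hypothetical.

```python
def select_most_uncertain(predictions, batch_size):
    """Pick the candidates whose predicted success probability is closest to 0.5.

    predictions maps a candidate identifier to the model's predicted
    probability that the synthesis succeeds.
    """
    ranked = sorted(predictions, key=lambda name: abs(predictions[name] - 0.5))
    return ranked[:batch_size]

# Hypothetical model outputs for four candidate recipes.
predictions = {"A": 0.95, "B": 0.52, "C": 0.40, "D": 0.05}
next_batch = select_most_uncertain(predictions, batch_size=2)
print(next_batch)  # ['B', 'C']
```

Running the selected experiments and adding both successes and failures to the training set is what lets the loop counteract the positive-only reporting bias of literature data.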

Case Studies and Applications

SrTiO₃ and BaTiO₃ Synthesis Prediction

In a benchmark study, the VAE approach achieved 74% accuracy in distinguishing between synthesis parameters for SrTiO₃ and BaTiO₃, close to human expert intuition, which achieves approximately 78% accuracy on similar prediction tasks [15]. This outperformed classifiers using PCA-reduced features (68% accuracy for 10-dimensional PCA), highlighting the value of non-linear dimensionality reduction for sparse synthesis data [15].

TiO₂ Polymorph and MnO₂ Phase Selection

VAE-learned latent representations have enabled visual exploration of synthesis parameter spaces to identify driving factors for specific polymorph outcomes. For TiO₂ systems, this approach helped identify parameters favoring brookite phase formation over anatase or rutile [15]. Similarly, for MnO₂, analysis of the latent space revealed correlations between alkali-ion intercalation and polymorph selection, providing insights for targeting specific structural variants [15].

Diagram 2: Data biases in materials databases and corresponding mitigation strategies.

The ICSD and related materials databases provide indispensable foundations for data-driven synthesis prediction, yet their inherent biases and limitations necessitate careful methodological approaches. Successful synthesis feasibility prediction requires acknowledging and addressing data scarcity, sparsity, and reporting biases through techniques such as data augmentation, variational autoencoders, and expert knowledge integration. As these methods mature and experimental data continue to grow, the materials science community moves closer to robust predictive frameworks that can significantly accelerate the discovery and synthesis of novel functional materials. Future progress will depend on continued development of specialized algorithms for materials data, increased data standardization and sharing, and tighter integration between computational prediction and experimental validation.

AI and Machine Learning Methodologies for Synthesis Prediction

The discovery of novel inorganic crystalline materials is a cornerstone of technological advancement, enabling breakthroughs across applications from clean energy to information processing [17]. However, the first and most critical step in this discovery process—identifying which hypothetical chemical compositions are synthetically accessible—remains a significant challenge [18] [19]. Synthesizability classification refers to the computational task of predicting whether a proposed inorganic material can be experimentally realized through current synthetic capabilities, regardless of whether it has been previously reported [18]. This problem is distinct from thermodynamic stability prediction, as synthesizability incorporates kinetic factors, experimental constraints, and human decision-making that cannot be captured by formation energy calculations alone [19].

Traditional approaches to assessing synthesizability have relied heavily on expert intuition, trial-and-error experimentation, and computational proxies such as charge-balancing rules or density functional theory (DFT)-calculated formation energies [18] [19]. However, these methods face fundamental limitations. Charge-balancing criteria, while chemically intuitive, prove insufficient as they incorrectly classify many known synthesized materials; remarkably, only 37% of synthesized inorganic compounds in the Inorganic Crystal Structure Database (ICSD) satisfy common charge-balancing rules [18]. Similarly, formation energy thresholds fail to account for kinetic stabilization and experimental realities, capturing only approximately 50% of known synthesized materials [18]. The development of deep learning models for synthesizability classification represents a paradigm shift, enabling data-driven predictions informed by the entire landscape of previously synthesized materials rather than relying on simplified physical proxies.

Deep Learning Approaches for Synthesizability Classification

Model Architectures and Representations

Deep learning models for synthesizability prediction employ diverse architectures and material representations to overcome the limitations of traditional approaches:

SynthNN: This model utilizes an atom2vec representation that learns optimal embeddings for chemical elements directly from the distribution of synthesized materials [18]. The approach reformulates material discovery as a classification task, processing chemical formulas through a deep neural network without requiring crystal structure information. Remarkably, without explicit programming of chemical rules, SynthNN learns fundamental principles including charge-balancing, chemical family relationships, and ionicity through data exposure alone [18].

Fourier-Transformed Crystal Properties (FTCP) Models: Some approaches represent crystal structures in both real and reciprocal space, using discrete Fourier transforms of elemental property vectors to capture periodicity and convoluted elemental properties [19]. These representations are processed through convolutional neural network encoders to predict synthesizability scores, achieving high precision in classifying ternary and quaternary compounds.

Graph Neural Networks (GNNs): Models like the Graph Networks for Materials Exploration (GNoME) process crystal structures as graphs with atoms as nodes and bonds as edges [17]. These architectures have demonstrated exceptional capability in predicting stability, with active learning frameworks enabling the discovery of millions of potentially stable crystals through iterative prediction and DFT verification.

Table 1: Deep Learning Models for Synthesizability Classification

| Model Name | Input Representation | Architecture | Key Advantages |
|---|---|---|---|
| SynthNN | atom2vec embeddings | Deep neural network | Requires only chemical composition; learns chemical principles implicitly |
| FTCP-SC | Fourier-transformed crystal properties | CNN encoder with classifier | Captures crystal periodicity in reciprocal space; suitable for structured materials |
| GNoME | Crystal graph | Graph neural network | Excellent for stability prediction; enables active learning discovery |
| CGCNN | Crystal graph | Graph convolutional neural network | Processes both atomic properties and bonding information |

The Synthesizability Classification Workflow

The process of developing and applying synthesizability classification models involves several critical steps, from data preparation through model deployment, as visualized below:

Diagram 1: Synthesizability Classification Workflow

Addressing the Positive-Unlabeled Learning Challenge

A fundamental challenge in synthesizability classification is the lack of definitive negative examples—materials confirmed to be unsynthesizable—since unsuccessful syntheses are rarely reported in scientific literature [18]. To address this, models employ positive-unlabeled (PU) learning frameworks:

Training Data Construction: Models are trained on known synthesized materials from databases like the Inorganic Crystal Structure Database (ICSD) as positive examples, augmented with artificially generated chemical formulas treated as unsynthesized (but potentially synthesizable) examples [18].

Semi-supervised Learning: The artificially generated "unsynthesized" materials are treated as unlabeled data and probabilistically reweighted according to their likelihood of being synthesizable [18]. This approach acknowledges that some materials in the "unsynthesized" set may be synthesizable but haven't been reported or discovered yet.

Transductive Learning: Some implementations use bagging support vector machines to handle the large amount of unlabeled data resulting from the tiny fraction of chemical space that has been experimentally explored [18].
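
The probabilistic reweighting of unlabeled examples can be sketched with a weighted cross-entropy loss. This follows a common PU-learning pattern in which each unlabeled example counts partly as a positive and partly as a negative; the function name, the tuple layout, and the way `p_synth` is obtained are assumptions, and the exact scheme in [18] is not reproduced.

```python
import math

def pu_weighted_loss(examples, eps=1e-12):
    """Mean cross-entropy over positive and unlabeled examples.

    examples: list of (score, is_positive, p_synth) tuples, where
      score       is the model's predicted synthesizability in [0, 1],
      is_positive is True for known synthesized materials,
      p_synth     is the estimated chance that an unlabeled example is
                  actually synthesizable (ignored for positives).
    """
    total = 0.0
    for score, is_positive, p_synth in examples:
        s = min(max(score, eps), 1.0 - eps)  # clamp to avoid log(0)
        if is_positive:
            total += -math.log(s)
        else:
            # Unlabeled: weight p_synth as positive, (1 - p_synth) as negative.
            total += -(p_synth * math.log(s)
                       + (1.0 - p_synth) * math.log(1.0 - s))
    return total / len(examples)

batch = [(0.9, True, 0.0), (0.2, False, 0.1), (0.7, False, 0.6)]
print(pu_weighted_loss(batch))
```

With `p_synth = 0` an unlabeled example is treated as a hard negative, so the weighting only departs from ordinary binary classification where there is reason to believe an unreported material is in fact synthesizable.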

Performance Comparison and Experimental Validation

Quantitative Performance Metrics

Deep learning models for synthesizability classification have demonstrated remarkable performance advantages over traditional computational methods and human experts:

Table 2: Performance Comparison of Synthesizability Prediction Methods

| Method | Precision | Recall | Key Limitations |
|---|---|---|---|
| SynthNN | 7× higher than DFT formation energy | Not specified | Cannot differentiate polymorphs of same composition |
| FTCP-SC Model | 82.6% (ternary crystals) | 80.6% (ternary crystals) | Requires crystal structure information |
| Charge-Balancing | 37% of known materials satisfy | Poor recall for ionic compounds | Inflexible; fails for metallic/covalent materials |
| DFT Formation Energy | 50% of known materials captured | Limited by kinetic factors | Computationally expensive; ignores experimental factors |
| Human Experts | 1.5× lower precision than SynthNN | Varies by specialization | Domain-specific knowledge; slow evaluation |

In head-to-head material discovery comparisons, SynthNN outperformed all 20 expert materials scientists, achieving 1.5× higher precision and completing the classification task five orders of magnitude faster than the best human expert [18]. For newly discovered materials, FTCP-based models demonstrated an 88.6% true positive rate when tested on compounds added to databases after 2019, indicating strong predictive capability for novel chemical spaces [19].
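
For readers comparing the figures above, precision and recall follow directly from the counts of true positives, false positives, and false negatives. The sketch below uses a small hypothetical set of labels and predictions, not data from the cited studies.

```python
def precision_recall(y_true, y_pred):
    """Compute precision and recall for binary labels (1 = synthesizable)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall

# Hypothetical labels and predictions for five compositions.
y_true = [1, 1, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1]
precision, recall = precision_recall(y_true, y_pred)
print(precision, recall)
```

In a positive-unlabeled setting, some apparent false positives may be synthesizable materials that have simply not been reported yet, so measured precision is best read as a lower bound.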

Integration with Materials Screening Workflows

The practical value of synthesizability classifiers emerges when integrated into computational materials discovery pipelines:

Pre-screening Filter: SynthNN can process billions of candidate compositions to identify promising synthesizable materials before resource-intensive DFT calculations [18]. This dramatically improves the efficiency of computational discovery efforts.

Stability-Ranked Discovery: The GNoME framework combines stability predictions with ab initio random structure searching (AIRSS) to discover potentially stable crystals, successfully identifying 2.2 million structures with stability competitive to known materials [17].

Composition-Focused Exploration: For materials where crystal structure is unknown, composition-based models like SynthNN enable exploration across the entire chemical composition space without structural constraints [18].

Experimental Protocols and Implementation

Data Preparation and Model Training

Implementing synthesizability classification requires careful data curation and model configuration:

Data Sources: The primary data source is the Inorganic Crystal Structure Database (ICSD), containing nearly all reported synthesized inorganic crystalline materials [18] [19]. Additional computational data from the Materials Project provides formation energies and structural information for stability benchmarking.

Feature Engineering: For composition-only models, atom2vec embeddings are learned directly from the data distribution. For structure-aware models, crystal graphs or FTCP representations encode atomic properties, bonding, and periodicity information [19].

Hyperparameter Optimization: Critical hyperparameters include the embedding dimension for atom vectors, the ratio of artificially generated formulas to synthesized formulas (N_synth), and network architecture details optimized through cross-validation [18].

Table 3: Essential Resources for Synthesizability Research

| Resource | Type | Function | Access |
|---|---|---|---|
| Inorganic Crystal Structure Database (ICSD) | Database | Comprehensive repository of synthesized inorganic crystals; ground truth for training | Commercial license |
| Materials Project (MP) | Database | DFT-calculated properties for known and hypothetical materials; stability benchmarks | Public API |
| Python Materials Genomics (pymatgen) | Software Library | Materials analysis and workflow management | Open source |
| Fourier-Transformed Crystal Properties (FTCP) | Representation | Encodes crystal structures in real and reciprocal space | Open implementation |
| atom2vec | Representation | Learned elemental embeddings from material distribution | Research implementation |

Future Directions and Implementation Considerations

The development of deep learning models for synthesizability classification represents a transformative advancement in materials informatics, yet several challenges and opportunities remain. Future research directions include integrating synthetic pathway prediction with synthesizability assessment, enabling not just identification of synthesizable materials but also recommendations for potential synthesis routes [18]. Additionally, developing models that can explicitly incorporate experimental constraints such as precursor availability, required pressure/temperature conditions, and reaction kinetics would bridge the gap between computational prediction and laboratory realization [19].

For researchers implementing these methodologies, key considerations include the trade-off between composition-based and structure-aware models. Composition-only approaches enable broader exploration of chemical space but cannot differentiate between polymorphs of the same composition [18]. Structure-aware models provide greater specificity but require crystal structure information that may not be available for novel materials [19]. The integration of synthesizability classifiers with high-throughput computational screening and inverse design frameworks will continue to accelerate the discovery of novel functional materials by ensuring that computational predictions align with experimental feasibility.

As these models evolve, they develop emergent capabilities including accurate prediction of materials with five or more unique elements—previously challenging for human intuition—and improved generalization across diverse chemical spaces [17]. The scaling laws observed in models like GNoME suggest that continued expansion of materials data and model complexity will yield further improvements in prediction accuracy and reliability [17].

Retrosynthesis planning is a critical strategic process that works backward from a desired target compound to identify simpler, readily available precursor compounds from which it can be synthesized. In organic chemistry, this process can be broken down into multiple steps with smaller building blocks. However, in inorganic chemistry, this approach is largely inapplicable due to the periodic, three-dimensional arrangement of atoms in inorganic materials. The synthesis of inorganic materials typically remains a one-step process where a set of precursors react to form the target compound, with no general unifying theory to guide the process. This complexity has traditionally forced researchers to rely on trial-and-error experimentation, creating a significant bottleneck in the discovery of new materials for technologies such as renewable energy and electronics [5].

The advent of machine learning (ML) presents an opportunity to bridge this knowledge gap by learning directly from synthesis data. The core task of precursor recommendation, suggesting a set of precursors {A, B, ...} for a target material C, has become a focal point for computational research. This whitepaper details and compares the operational frameworks of two significant ML approaches in this domain: the established ElemwiseRetro and the novel ranking-based framework, Retro-Rank-In, situating them within the broader research objective of predicting synthesis feasibility in inorganic materials research [5].

Core Frameworks and Methodologies

ElemwiseRetro: A Template-Based Classification Approach

ElemwiseRetro represents an earlier class of ML models that frame retrosynthesis as a multi-label classification problem. This method employs domain heuristics and a classifier for template completions [5].

- Core Learning Problem: The model functions as a multi-label classifier (θ_MLC) over a predefined set of precursor classes. During training, it learns to map a target material to a combination of precursors from a fixed library.

- Inference and Limitations: In practice, for a given target material, ElemwiseRetro selects and recombines precursors that exist within its training set. A significant limitation of this approach is its inability to recommend precursors outside its training vocabulary. Since precursors are represented via one-hot encoding in the final classification layer, the model cannot propose novel precursor materials, thereby restricting its utility in exploratory materials discovery where new precursors are often considered [5].
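The closed-vocabulary limitation can be illustrated with a minimal sketch. It is not ElemwiseRetro's implementation: the vocabulary `PRECURSOR_VOCAB`, the helper `predict_precursors`, and the logits are all hypothetical stand-ins for a classification head with one output per known precursor.

```python
import math

# Hypothetical fixed precursor vocabulary (illustrative, not the model's library).
PRECURSOR_VOCAB = ["Li2CO3", "Fe2O3", "NH4H2PO4", "TiO2", "BaCO3"]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_precursors(logits, threshold=0.5):
    """Multi-label decoding: one independent yes/no decision per vocabulary entry."""
    if len(logits) != len(PRECURSOR_VOCAB):
        raise ValueError("one logit per vocabulary entry is required")
    return [p for p, z in zip(PRECURSOR_VOCAB, logits) if sigmoid(z) >= threshold]

# Made-up logits standing in for the classifier head's output for one target.
logits = [2.1, 1.3, 0.8, -3.0, -2.5]
predicted = predict_precursors(logits)
```

Because the output layer has exactly one slot per vocabulary entry, every prediction is a subset of `PRECURSOR_VOCAB`; a precursor that never appeared in training has no slot and cannot be proposed.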

Retro-Rank-In: A Novel Ranking-Based Framework

Retro-Rank-In is a recently proposed framework that fundamentally reformulates the retrosynthesis problem to overcome the limitations of classification-based models like ElemwiseRetro [5] [20].

- Core Learning Problem: Instead of multi-label classification, Retro-Rank-In learns a pairwise ranker (θ_Ranker). This ranker evaluates the chemical compatibility between a target material and a candidate precursor, predicting the likelihood that they can co-occur in a viable synthetic route. This reformulation allows for inference on entirely novel precursors and precursor sets [5].

- Model Architecture: The framework consists of two core components:

  - Composition-Level Transformer-Based Encoder: This module generates chemically meaningful representations for both target and precursor materials. It processes a sequence constructed from elemental embeddings and stoichiometric fractions. The encoder is pretrained on large-scale datasets using multi-task learning, including masked element prediction and regression on computed material properties, which fosters generalizability [20].

  - Pairwise Ranker: A binary classifier that takes the representations of the target and a precursor candidate and outputs a compatibility score. During inference, these scores are used to rank potential precursor sets, with the joint probability of a set calculated assuming independence among precursors [5] [20].
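A toy sketch of the pairwise scoring idea follows. The `encode` function is only a stand-in for the pretrained transformer encoder (it returns normalized element fractions), and the scoring head, its `weights`, and its `bias` are invented for illustration rather than taken from the published model.

```python
import math

ELEMENTS = ["Cr", "Al", "B", "O"]  # toy element basis (assumption)

def encode(composition):
    """Stand-in for the pretrained encoder: normalized element fractions."""
    total = sum(composition.values())
    return [composition.get(el, 0.0) / total for el in ELEMENTS]

def compatibility(target_vec, precursor_vec, weights, bias):
    """Pairwise ranker head: sigmoid over a weighted elementwise interaction."""
    z = bias + sum(w * t * p for w, t, p in zip(weights, target_vec, precursor_vec))
    return 1.0 / (1.0 + math.exp(-z))

weights, bias = [4.0, 4.0, 4.0, 4.0], -1.0  # made-up parameters, not trained values

target = encode({"Cr": 2, "Al": 1, "B": 2})
candidates = {
    "CrB": encode({"Cr": 1, "B": 1}),
    "Al": encode({"Al": 1}),
    "Al2O3": encode({"Al": 2, "O": 3}),
}
scores = {name: compatibility(target, vec, weights, bias)
          for name, vec in candidates.items()}
ranking = sorted(scores, key=scores.get, reverse=True)
```

The point of the design is that targets and precursors pass through the same encoder, so any candidate that can be encoded can be scored, including one absent from the training data.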

The following workflow diagram illustrates the end-to-end process of the Retro-Rank-In framework.

Quantitative Performance Comparison

The performance of retrosynthesis models is typically evaluated using Top-K accuracy metrics, which measure the frequency with which the verified precursor set appears within the model's top K recommendations. Evaluations are conducted on challenging dataset splits designed to test generalization by ensuring no material system overlaps between training and test sets [5] [20].
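As a minimal sketch of the metric, the function below computes Top-K accuracy over ranked precursor sets. The function name, the data layout (a dict of ranked candidate sets per target), and the toy entries are assumptions chosen for illustration.

```python
def top_k_accuracy(ranked_predictions, ground_truth, k):
    """Fraction of targets whose verified precursor set is in the top-k ranked sets."""
    hits = 0
    for target, true_set in ground_truth.items():
        top_k = ranked_predictions.get(target, [])[:k]
        # Compare as sets so the order of precursors within a set does not matter.
        if any(frozenset(candidate) == frozenset(true_set) for candidate in top_k):
            hits += 1
    return hits / len(ground_truth)

# Toy data: each target maps to candidate precursor sets, best first.
ground_truth = {
    "Cr2AlB2": {"CrB", "Al"},
    "BaTiO3": {"BaCO3", "TiO2"},
}
ranked_predictions = {
    "Cr2AlB2": [("CrB", "Al"), ("Cr", "AlB2")],
    "BaTiO3": [("BaO", "TiO2"), ("BaCO3", "TiO2")],
}

top1 = top_k_accuracy(ranked_predictions, ground_truth, k=1)
top2 = top_k_accuracy(ranked_predictions, ground_truth, k=2)
```

Here one of the two targets is matched at rank 1 and the other only at rank 2, which is why the metric is usually reported for several values of K.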

Table 1: Comparative Performance of Retrosynthesis Frameworks

| Model | Core Methodology | Ability to Discover New Precursors | Top-K Accuracy (Representative) | Generalization to New Systems |
|---|---|---|---|---|
| ElemwiseRetro | Multi-label Classification | ✗ No | Medium (e.g., ~45% Top-3) | Medium |
| Synthesis Similarity | Retrieval of Known Syntheses | ✗ No | Low | Low |
| Retrieval-Retro | Retrieval + Multi-label Classification | ✗ No | Medium | Medium |
| Retro-Rank-In | Pairwise Ranking | ✓ Yes | High (e.g., ~60% Top-3) | High |

The quantitative results indicate that Retro-Rank-In sets a new state of the art, particularly in out-of-distribution generalization and candidate set ranking. For instance, Retro-Rank-In correctly predicted the verified precursor pair \ce{CrB + Al} for the target \ce{Cr2AlB2} despite never encountering this specific combination during training, a capability absent in prior classification-based work [5].

Detailed Experimental Protocol

To ensure reproducibility and provide a clear roadmap for researchers, this section outlines a detailed experimental protocol for implementing and evaluating the Retro-Rank-In framework, based on the methodologies cited in the source material.

Table 2: Research Reagent and Computational Solutions

| Item / Resource | Function / Description | Example / Specification |
|---|---|---|
| Inorganic Solid-State Reaction Dataset | Primary data for training and evaluation. Contains historical synthesis routes from scientific literature. | Databases like the one used by Prein et al., containing reactions in a (Target, {Precursor1, Precursor2, ...}) format [5]. |
| Materials Project DFT Database | Source of domain knowledge for pretraining; provides computed formation enthalpies and material properties. | ~80,000 computed compounds; used for multi-task pretraining of the encoder [5]. |
| Compositional Featurization | Converts a material's chemical formula into a machine-readable input. | Represented as a stoichiometric vector \(\mathbf{x}_T = (x_1, x_2, \dots, x_d)\) for a target material \(T\) [5]. |
| Transformer Encoder | Core neural network architecture for generating material representations. | A model pretrained on tasks like masked element prediction and property regression [20]. |
| Pairwise Ranker (Binary Classifier) | Scores the compatibility between a target and a precursor candidate. | A neural network that outputs a probability score for viable co-occurrence [5] [20]. |

Implementation Workflow

The logical flow of the experimental procedure, from data preparation to model inference, is depicted in the following diagram.

Step 1: Data Preparation and Preprocessing

- Data Collection: Assemble a comprehensive dataset of inorganic solid-state synthesis reactions. Each data point should be a (Target, {Precursor_Set}) pair, derived from curated scientific literature.

- Data Splitting: Partition the dataset into training, validation, and test sets. To rigorously evaluate generalization, use splits that ensure no overlap of material systems (e.g., no chemical elements or crystal structures in common) between the training and test sets.

- Featurization: Convert the elemental composition of each target and precursor material into a stoichiometric vector, \(\mathbf{x}\).
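Step 1 could be sketched as follows. The parser handles only simple formulas without parentheses or hydrates, and the split treats a "material system" as the set of elements in the target; both are simplifying assumptions, and all function names are hypothetical.

```python
import re

def parse_formula(formula):
    """Parse a simple formula such as 'Cr2AlB2' (no parentheses or hydrates)."""
    counts = {}
    for element, amount in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[element] = counts.get(element, 0.0) + (float(amount) if amount else 1.0)
    return counts

def stoichiometric_vector(formula, element_order):
    """Normalized element fractions in a fixed element order."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    return [counts.get(el, 0.0) / total for el in element_order]

def chemical_system(formula):
    """Assumed definition of a material system: the set of elements present."""
    return frozenset(parse_formula(formula))

def split_by_system(reactions, test_systems):
    """Send every reaction whose target's system is in test_systems to the test set."""
    train, test = [], []
    for target, precursors in reactions:
        bucket = test if chemical_system(target) in test_systems else train
        bucket.append((target, precursors))
    return train, test

# Toy (Target, {Precursor_Set}) records for illustration only.
reactions = [
    ("Cr2AlB2", ["CrB", "Al"]),
    ("BaTiO3", ["BaCO3", "TiO2"]),
    ("LiFePO4", ["Li2CO3", "FeC2O4", "NH4H2PO4"]),
]
vec = stoichiometric_vector("Cr2AlB2", ["Cr", "Al", "B"])
train, test = split_by_system(reactions, {chemical_system("Cr2AlB2")})
```

Splitting on the system rather than on individual reactions keeps all Cr-Al-B targets out of training, which is what makes the held-out evaluation a test of generalization.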

Step 2: Encoder Pretraining

- Input Sequence Construction: For each material composition, create an input sequence for the transformer. This involves combining high-dimensional elemental embeddings with sinusoidal embeddings representing stoichiometric fractions. A special [CPD] token is prepended to aggregate the compound-level representation.

- Multi-task Learning: Pretrain the transformer encoder on a large, unlabeled dataset of inorganic compositions (e.g., from the Materials Project). The pretraining objectives should include:

  - Masked Element Prediction: Randomly masking elements in the input sequence and training the model to predict them.

  - Property Regression: Predicting computed properties like formation enthalpy to infuse domain knowledge.

  - Space Group Classification: Classifying the crystal system to incorporate structural information [20].
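The input construction and the masking objective in Step 2 might look like the sketch below. The embedding dimension, the `scale` factor, the `[MASK]` token name, and the choice to mask exactly one element are assumptions; a real encoder would also combine each fraction code with a learned elemental embedding.

```python
import math
import random

def sinusoidal_embedding(fraction, dim=8, scale=100.0):
    """Transformer-style sinusoidal code for a stoichiometric fraction in [0, 1]."""
    position = fraction * scale
    emb = []
    for i in range(0, dim, 2):
        freq = 1.0 / (10000 ** (i / dim))
        emb.extend([math.sin(position * freq), math.cos(position * freq)])
    return emb

def build_sequence(fractions):
    """Return tokens and fraction codes, with a leading [CPD] summary token."""
    tokens = ["[CPD]"] + list(fractions)
    codes = [sinusoidal_embedding(0.0)]
    codes += [sinusoidal_embedding(f) for f in fractions.values()]
    return tokens, codes

def mask_one_element(tokens, rng):
    """Mask a single element token (never [CPD]); return masked tokens and the label."""
    idx = rng.randrange(1, len(tokens))
    masked = list(tokens)
    label = masked[idx]
    masked[idx] = "[MASK]"
    return masked, label

fractions = {"Cr": 0.4, "Al": 0.2, "B": 0.4}  # Cr2AlB2 as element fractions
tokens, codes = build_sequence(fractions)
masked, label = mask_one_element(tokens, random.Random(0))
```

The pretraining task is then to recover `label` from `masked` and the fraction codes, which pushes the encoder to learn which elements plausibly complete a composition.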

Step 3: Ranker Training

- Pairwise Data Sampling: Construct training pairs for the ranker. For a known synthesis pair (Target, Precursor_Set), create positive examples by pairing the target with each valid precursor. Generate negative examples through sampling, such as pairing the target with random, unlikely precursors from the chemical space.

- Model Training: Train the pairwise ranker (a binary classifier) using the fixed, pretrained encoder. The model learns to assign a high compatibility score to (target, precursor) pairs that are known to react and a low score to negative pairs. The loss function is typically a ranking loss that maximizes the score difference between positive and negative examples.
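The pairwise sampling in Step 3 can be sketched as below. Uniform random sampling from a candidate pool is just one possible negative-sampling strategy, and the function name, pool contents, and ratio of negatives to positives are illustrative assumptions.

```python
import random

def build_pairs(reactions, precursor_pool, negatives_per_positive=2, seed=0):
    """Create (target, precursor, label) triples with label 1 for known precursors."""
    rng = random.Random(seed)
    pairs = []
    for target, precursors in reactions:
        true_set = set(precursors)
        # Negatives are drawn only from precursors not used in this reaction.
        negatives_pool = [p for p in precursor_pool if p not in true_set]
        for precursor in precursors:
            pairs.append((target, precursor, 1))
            k = min(negatives_per_positive, len(negatives_pool))
            for negative in rng.sample(negatives_pool, k):
                pairs.append((target, negative, 0))
    return pairs

# Toy inputs for illustration only.
reactions = [("Cr2AlB2", ["CrB", "Al"])]
precursor_pool = ["CrB", "Al", "Al2O3", "Cr2O3", "B2O3", "TiO2"]
pairs = build_pairs(reactions, precursor_pool)
```

Randomly drawn negatives are not guaranteed to be chemically invalid, only unobserved, so the labels are noisy; this is a known property of sampling negatives from unlabeled data.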

Step 4: Inference and Evaluation

- Candidate Generation: For a novel target material, generate a candidate pool of potential precursors. This pool can be constructed using heuristic rules or sampled from a large database of known inorganic compounds.

- Scoring and Ranking: Encode the target and all candidate precursors using the pretrained encoder. Use the trained ranker to compute a compatibility score for each (target, candidate) pair.

- Set Ranking: To rank precursor sets \(\mathbf{S} = \{P_1, P_2, \dots, P_m\}\), calculate the joint probability score, often under an assumption of independence: \(\text{score}(\mathbf{S}) = \prod_{P_i \in \mathbf{S}} \text{Ranker}(T, P_i)\).

- Performance Assessment: Evaluate the model using Top-K accuracy on the held-out test set, reporting the percentage of test targets for which the ground-truth precursor set is found within the top K ranked suggestions.
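The set-ranking rule, the product of per-precursor ranker scores under the independence assumption, can be sketched as follows. The per-precursor scores are invented, and exhaustively enumerating sets of one fixed size is an assumption made to keep the example small.

```python
from itertools import combinations
from math import prod

def rank_precursor_sets(pair_scores, set_size=2, top_k=3):
    """Score each candidate set as the product of its members' ranker scores."""
    ranked = []
    for subset in combinations(sorted(pair_scores), set_size):
        ranked.append((subset, prod(pair_scores[p] for p in subset)))
    ranked.sort(key=lambda item: item[1], reverse=True)
    return ranked[:top_k]

# Made-up Ranker(T, P) outputs for the target Cr2AlB2.
pair_scores = {"CrB": 0.9, "Al": 0.8, "Al2O3": 0.3, "Cr2O3": 0.2}
best_sets = rank_precursor_sets(pair_scores)
```

Since every score lies between 0 and 1, the product shrinks as a set grows, so comparing sets of different sizes with this raw score favors smaller sets and needs some care.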

The comparison between ElemwiseRetro and Retro-Rank-In highlights a pivotal evolution in computational retrosynthesis for inorganic materials: the shift from a closed-world classification paradigm to an open-world ranking paradigm. While ElemwiseRetro is limited to recombining known precursors, Retro-Rank-In's reformulation of the problem as a pairwise ranking task enables the discovery of novel precursors, a critical capability for de novo materials discovery [5].

The superior performance of Retro-Rank-In, particularly in challenging generalization scenarios, underscores the importance of its key innovations: the use of a shared latent space for targets and precursors, the integration of broad chemical knowledge via large-scale pretraining, and its flexible ranking architecture. For researchers and development professionals, these frameworks represent powerful tools that can accelerate the design-synthesis cycle. Future directions in this field may involve the integration of structural data beyond composition, the incorporation of kinetic and thermodynamic constraints more explicitly, and further refinement of ranking methodologies to better model the interdependencies within precursor sets [5] [20]. By moving beyond the limitations of trial-and-error, these data-driven approaches offer a robust foundation for predicting synthesis feasibility and unlocking the vast potential of the inorganic materials space.

The discovery and synthesis of new inorganic materials are fundamental to technological progress in fields ranging from renewable energy to electronics. However, the transition from a computationally predicted material to a physically synthesized one remains a severe bottleneck, often relying on empirical trial-and-error methods that are slow and resource-intensive [21] [22]. The central challenge in inorganic materials research is twofold: first, identifying thermodynamically stable compounds, and second, assessing their synthesizability—evaluating metastable lifetimes, reaction energies, and feasible synthetic routes [21].

In this context, network science has emerged as a powerful paradigm. By representing complex chemical spaces as graphs, where nodes are materials and edges represent thermodynamic or reaction relationships, researchers can apply topological analysis to navigate the high-dimensional space of inorganic synthesis [21]. This approach provides a formal framework to systematically explore the synthesizability of inorganic compounds, thereby bridging the critical gap between virtual materials design and their actual experimental fabrication [21] [22]. This whitepaper serves as a technical guide to the core concepts, methodologies, and applications of network science in predicting the synthesis feasibility of inorganic materials.

Theoretical Foundations of Materials Networks

Graph Theory Basics for Materials Science

A network, or graph, is a mathematical structure used to represent a complex system composed of interacting parts. It is defined as a set of nodes (vertices) connected by edges (links) [23]. In materials reaction networks, the nodes typically represent crystalline compounds, while the edges can represent different types of relationships:

- Undirected edges may represent thermodynamic relationships or similarity metrics [23].

- Directed edges often represent successful chemical reactions proceeding from precursors to products [21] [23].

- Weighted edges can incorporate additional information such as reaction energies, kinetic barriers, or similarity scores [23].

This graph-based representation is particularly suited to chemical reaction spaces because it naturally handles their high-dimensionality without requiring coordinate systems or dimensionality reduction, thus avoiding information loss [21].
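A minimal sketch of such a representation is a directed, weighted adjacency map from precursors to products. The class name `ReactionNetwork`, its methods, and the edge weights are hypothetical; the weights are placeholder numbers, not computed reaction energies.

```python
from collections import defaultdict

class ReactionNetwork:
    """Directed graph: an edge precursor -> product carries a numeric weight."""

    def __init__(self):
        self.nodes = set()
        self.edges = defaultdict(dict)  # edges[source][destination] = weight

    def add_reaction(self, precursors, product, weight):
        """Add one directed edge from each precursor to the product."""
        self.nodes.add(product)
        for precursor in precursors:
            self.nodes.add(precursor)
            self.edges[precursor][product] = weight

    def successors(self, node):
        """Products reachable in one step from the given compound."""
        return dict(self.edges.get(node, {}))

network = ReactionNetwork()
# Placeholder weights standing in for reaction energies (not real values).
network.add_reaction(["BaCO3", "TiO2"], "BaTiO3", weight=-1.0)
network.add_reaction(["CrB", "Al"], "Cr2AlB2", weight=-0.5)
```

No coordinates are assigned to the compounds; the structure is carried entirely by which nodes are connected, which is why the representation scales to high-dimensional chemical spaces.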

Key Network Topological Metrics

The power of network analysis lies in quantifying topological features that reveal a node's structural importance and the overall system's organization. Key metrics relevant to materials synthesis include:

- Degree: The number of connections a node has to other nodes. A high degree may indicate a commonly used precursor or a thermodynamically stable compound [21].

- Betweenness centrality: Measures how often a node acts as a bridge along the shortest path between two other nodes. Nodes with high betweenness may represent critical intermediates in synthesis pathways [21].

- Clustering coefficient: Quantifies the degree to which nodes tend to cluster together, potentially identifying communities of chemically similar compounds [21].
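The three metrics can be computed on a small undirected toy graph as sketched below. The graph is abstract (nodes A to D are not real compounds), betweenness is left unnormalized, and Brandes' algorithm is used for it as one standard choice for unweighted graphs.

```python
from collections import deque
from itertools import combinations

def degree(graph):
    return {v: len(nbrs) for v, nbrs in graph.items()}

def clustering(graph):
    """Local clustering: fraction of a node's neighbor pairs that are connected."""
    result = {}
    for v, nbrs in graph.items():
        k = len(nbrs)
        if k < 2:
            result[v] = 0.0
            continue
        links = sum(1 for a, b in combinations(nbrs, 2) if b in graph[a])
        result[v] = 2.0 * links / (k * (k - 1))
    return result

def betweenness(graph):
    """Unnormalized betweenness centrality via Brandes' algorithm (unweighted)."""
    score = {v: 0.0 for v in graph}
    for s in graph:
        stack, preds = [], {v: [] for v in graph}
        sigma = {v: 0 for v in graph}   # number of shortest paths from s
        dist = {v: -1 for v in graph}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:                    # breadth-first search from s
            v = queue.popleft()
            stack.append(v)
            for w in graph[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in graph}
        while stack:                    # accumulate dependencies, farthest first
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    # Each unordered pair is visited from both endpoints in an undirected graph.
    return {v: b / 2 for v, b in score.items()}

# Toy graph: a triangle A-B-C with a pendant node D attached to C.
graph = {"A": {"B", "C"}, "B": {"A", "C"}, "C": {"A", "B", "D"}, "D": {"C"}}
deg, clus, btw = degree(graph), clustering(graph), betweenness(graph)
```

In this toy graph, node C has the highest degree and the only nonzero betweenness because every path to D passes through it, while A and B sit in a fully connected neighborhood and have clustering coefficient 1.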