Predicting Material Synthesizability with Positive-Unlabeled Learning: A New Paradigm for Accelerating Discovery


Abstract

This article explores the transformative role of Positive-Unlabeled (PU) learning in predicting material synthesizability, a critical bottleneck in materials discovery and development. Aimed at researchers and scientists, we first establish the core challenge: the absence of verified negative data (failed syntheses) in scientific literature. We then detail the leading PU methodologies, from two-step frameworks to advanced evolutionary multitasking, showcasing their successful application in predicting synthesizable ternary oxides and 3D crystal structures. The discussion extends to troubleshooting common pitfalls, such as inaccurate performance estimation and the SCAR assumption, and presents optimization strategies like the novel NAPU-bagging SVM. Finally, we provide a rigorous comparative analysis, validating PU learning's superior performance against traditional stability metrics and highlighting its profound implications for accelerating the development of novel functional materials and multitarget therapeutics.

The Synthesizability Prediction Problem: Why Traditional Methods Fall Short and How PU Learning Offers a Solution

The acceleration of materials discovery through computational methods has created a profound asymmetry: while we can generate millions of hypothetical material structures in silico, our ability to predict which are experimentally realizable lags severely. This gap stems from a fundamental bottleneck in materials informatics: the critical absence of verified, well-curated 'negative' synthesis data—reliable records of failed synthesis attempts. In the context of positive-unlabeled (PU) learning for material synthesizability prediction, this missing negative class represents both a formidable challenge and a pivotal research frontier. The synthesis of novel functional materials remains constrained not by computational power but by the scarcity of high-quality experimental data that captures both successful and unsuccessful synthesis outcomes.

This data imbalance is not merely an inconvenience; it strikes at the core of supervised machine learning approaches for synthesizability prediction. Most machine learning algorithms, particularly classification models, require both positive and negative examples to learn discriminative boundaries effectively. When negative examples are missing, unreliable, or systematically biased, the resulting models may develop fundamental flaws in their understanding of what makes a material synthesizable. This whitepaper examines the origins, implications, and potential solutions to this data bottleneck, providing researchers with a comprehensive framework for advancing synthesizability prediction in an era of data-centric materials science.

The Scale and Nature of the Data Imbalance

Quantitative Evidence of the Data Gap

The disparity between positive and negative synthesis data is not merely theoretical but is quantitatively evident across major materials databases. The following table summarizes documented imbalances in key materials informatics resources:

Table 1: Documented Data Imbalances in Materials Synthesis Databases

| Database/Study | Positive Examples | Negative Examples | Imbalance Ratio | Key Finding |
|---|---|---|---|---|
| Human-curated ternary oxides dataset [1] | 3,017 solid-state synthesized | None explicitly recorded | Undefined | Manual curation identified 595 non-solid-state synthesized entries, but these are alternative syntheses, not failures |
| ICSD (implied usage) [2] [3] | ~70,120 confirmed structures | None inherently contained | Undefined | Used as sole source of positive examples; negatives must be synthetically generated |
| Text-mined synthesis data [1] | 31,782 entries | None recorded | Undefined | Overall extraction accuracy of only 51%; this low quality compounds the absence of negative examples |
| SynthNN training data [3] | ICSD compounds | Artificially generated | Variable hyperparameter | Requires careful class reweighting due to unknown negative purity |

This tabulated evidence reveals a consistent pattern: major materials databases systematically record successful syntheses while failing to capture failed attempts. The human-curated dataset of ternary oxides exemplifies this trend, containing 3,017 solid-state synthesized entries alongside 595 entries synthesized via other methods, but no explicitly documented synthesis failures [1]. This absence fundamentally constrains the development of robust synthesizability models.

Publication Bias and Cultural Barriers

The root causes of this data gap are multifaceted, spanning sociological, economic, and practical dimensions of scientific research:

Publication Bias: Scientific journals traditionally prioritize novel, successful syntheses over null results, creating a systemic disincentive for reporting failures [3]. This publication bias ensures that the literature captures only a fraction of the actual experimentation landscape.

Cultural and Incentive Structures: As noted by Raccuglia et al. and Jensen et al., experimentalists rarely document failed synthesis attempts in formal publications [1]. The academic reward system emphasizes breakthrough discoveries rather than the meticulous documentation of unsuccessful experiments.

Data Curation Challenges: Even when synthesis failures are recorded, they often reside in inaccessible formats such as laboratory notebooks, which present significant extraction challenges [1]. The conversion of these unstructured, private records into structured, machine-readable databases remains a formidable obstacle.

Definitional Ambiguity: The distinction between "unsynthesized" and "unsynthesizable" is often blurred. A material may not yet be synthesized due to lack of attempt rather than fundamental synthesizability constraints, creating labeling uncertainty in any purported negative class [3].

Positive-Unlabeled Learning as a Computational Framework

Theoretical Foundation of PU Learning

Positive-Unlabeled (PU) learning represents a specialized branch of semi-supervised machine learning that operates exclusively on positive and unlabeled examples, making it particularly well-suited to synthesizability prediction. The core assumption underpinning PU learning is that the unlabeled set contains both positive and negative examples, but without explicit annotations. In the materials domain, this translates to:

- Positive Examples: Experimentally verified materials from databases like the ICSD [2] [3].

- Unlabeled Examples: Hypothetical materials from computational databases (Materials Project, OQMD, JARVIS) whose synthesizability is unknown [2].

The fundamental objective is to infer a classifier that can distinguish between synthesizable and non-synthesizable materials despite the absence of confirmed negative training examples.

Implementation Approaches in Materials Science

Multiple research groups have developed specialized PU learning implementations for synthesizability prediction:

Bagging SVM Approach: Frey et al. adopted a transductive bagging PU learning approach developed by Mordelet et al. to predict synthesizable 2D MXenes and their precursors [1]. This method iteratively samples from the unlabeled set with weighting schemes that progressively refine the negative class.

Probabilistic Reweighting: The SynthNN framework employs a semi-supervised approach that treats unsynthesized materials as unlabeled data and probabilistically reweights these materials according to their likelihood of being synthesizable [3]. This method closely resembles the approach of Cheon et al., where unlabeled examples are class-weighted based on their feature similarity to known positives.

CLscore Methodology: Jang et al. developed a PU learning model that generates a continuous synthesizability score (CLscore), where values below 0.5 indicate non-synthesizability [2]. This approach enabled the identification of 80,000 non-synthesizable examples from a pool of 1.4 million theoretical structures for LLM training.

The performance metrics of these approaches demonstrate their effectiveness despite the data constraints. Jang et al.'s model achieved a true positive rate of 87.4%, while Gu et al. showed better performance than tolerance factor-based approaches for perovskites [1].
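The bagging idea described above can be sketched in a few lines. This is a minimal illustration, not the implementation used by Frey et al. or Mordelet et al.: the function name `pu_bagging_scores`, the RBF-kernel SVM from scikit-learn, the number of rounds, and the synthetic two-dimensional features are all assumptions made for the example. Each round treats a bootstrap sample of the unlabeled set as provisional negatives and scores only the unlabeled points left out of that round.

```python
import numpy as np
from sklearn.svm import SVC

def pu_bagging_scores(X_pos, X_unl, n_rounds=50, seed=0):
    """Average out-of-bag SVM scores for unlabeled points (higher = more positive-like)."""
    rng = np.random.default_rng(seed)
    n_pos, n_unl = len(X_pos), len(X_unl)
    score_sum = np.zeros(n_unl)
    score_cnt = np.zeros(n_unl)
    y = np.r_[np.ones(n_pos), np.zeros(n_pos)]
    for _ in range(n_rounds):
        # Bootstrap a pseudo-negative sample as large as the positive set.
        idx = rng.choice(n_unl, size=n_pos, replace=True)
        clf = SVC(kernel="rbf", gamma="scale")
        clf.fit(np.vstack([X_pos, X_unl[idx]]), y)
        # Score only the unlabeled points left out of this round.
        oob = np.setdiff1d(np.arange(n_unl), idx)
        score_sum[oob] += clf.decision_function(X_unl[oob])
        score_cnt[oob] += 1
    return score_sum / np.maximum(score_cnt, 1)

# Synthetic stand-in for material descriptors (not real data).
rng = np.random.default_rng(42)
X_pos = rng.normal(loc=2.0, scale=0.5, size=(30, 2))        # labeled positives
hidden_pos = rng.normal(loc=2.0, scale=0.5, size=(30, 2))   # positives hidden in U
hidden_neg = rng.normal(loc=-2.0, scale=0.5, size=(70, 2))  # true negatives in U
X_unl = np.vstack([hidden_pos, hidden_neg])

scores = pu_bagging_scores(X_pos, X_unl)
print(scores[:30].mean(), scores[30:].mean())
```

On this toy data the hidden positives receive higher average scores than the true negatives, which is the behavior a synthesizability ranking relies on. Real descriptors are far less cleanly separated, so the number of rounds and the base classifier would need tuning.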

Experimental Protocols for Generating Negative Data

Human-Curated Data Collection Methodology

The creation of high-quality synthesizability datasets requires meticulous experimental design and execution. The following protocol, adapted from Chung et al., provides a framework for systematic data collection [1]:

Table 2: Experimental Protocol for Human-Curated Synthesis Data Collection

| Step | Procedure | Validation Method | Output |
|---|---|---|---|
| Initial Candidate Selection | Download ternary oxide entries from Materials Project with ICSD IDs; remove non-metal elements and silicon | Cross-reference with ICSD database | 4,103 ternary oxide entries for manual extraction |
| Literature Mining | Examine papers corresponding to ICSD IDs; search Web of Science and Google Scholar with chemical formula as input | First 50 search results sorted from oldest to newest; top 20 relevant results | Comprehensive synthesis history for each composition |
| Solid-State Synthesis Verification | Apply criteria: (1) reactants heated below melting points, (2) no flux or cooling from melt, (3) explicit grinding optional | Binary oxide melting points from CRC Handbook; explicit method descriptions | Binary classification: solid-state synthesized vs. non-solid-state synthesized |
| Data Extraction | Record highest heating temperature, pressure, atmosphere, grinding conditions, heating steps, cooling process, precursors | Random sampling of 100 entries for independent validation by second researcher | Structured dataset with synthesis conditions and reliability flags |

This protocol yielded a dataset containing 3,017 solid-state synthesized entries, 595 non-solid-state synthesized entries, and 491 undetermined entries, with the non-solid-state category representing materials made via alternative methods rather than failed syntheses [1]. The critical distinction is that these represent synthesis route differences rather than documented failures.

PU Learning Model Training Protocol

For researchers implementing PU learning for synthesizability prediction, the following experimental protocol provides a structured approach:

Implementation Details:

- Positive Set Construction: Extract 70,120 crystal structures from ICSD with ≤40 atoms and ≤7 elements, excluding disordered structures [2].

- Unlabeled Set Construction: Pool 1.4+ million theoretical structures from Materials Project, CMD, OQMD, and JARVIS [2].

- Feature Representation: Utilize composition embeddings (e.g., atom2vec), structural descriptors, or text-based crystal representations (e.g., material strings) [3] [2].

- Training Approach: Implement iterative learning with careful handling of class weights and probabilities to account for potential positives in the unlabeled set.

- Validation Strategy: Use temporal validation (older data for training, newer for testing) to simulate real discovery scenarios [3].
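The positive-set filter and the temporal validation split listed above can be sketched as follows. The `Entry` record, its field names (in particular `year`), the cutoff year, and the placeholder formulas are hypothetical; only the thresholds of 40 atoms and 7 elements and the exclusion of disordered structures come from the protocol.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Entry:
    formula: str
    n_atoms: int      # number of atoms in the structure
    n_elements: int   # number of distinct elements
    ordered: bool     # False for disordered structures
    year: int         # year first reported (hypothetical field)

def keep_for_positive_set(e, max_atoms=40, max_elements=7):
    """Size and ordering filter quoted in the protocol."""
    return e.ordered and e.n_atoms <= max_atoms and e.n_elements <= max_elements

def temporal_split(entries, cutoff_year):
    """Train on entries reported up to cutoff_year, test on later ones."""
    train = [e for e in entries if e.year <= cutoff_year]
    test = [e for e in entries if e.year > cutoff_year]
    return train, test

# Toy records with placeholder formulas and years (not real data).
entries = [
    Entry("A2BO4", 28, 3, True, 1995),
    Entry("AB2O6", 36, 3, True, 2008),
    Entry("A3B5O12", 160, 3, True, 2001),  # rejected: too many atoms
    Entry("ABO3", 10, 3, False, 2012),     # rejected: disordered
    Entry("A2B2O7", 22, 3, True, 2019),
]
positives = [e for e in entries if keep_for_positive_set(e)]
train, test = temporal_split(positives, cutoff_year=2010)
print([e.formula for e in train], [e.formula for e in test])
```

Splitting by date rather than at random keeps later discoveries out of training, so the test score reflects how the model would have performed on materials that were genuinely unknown at training time.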

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Synthesizability Prediction Research

| Resource Category | Specific Examples | Function in Research | Access Method |
|---|---|---|---|
| Primary Data Sources | ICSD, Materials Project, GNoME, Alexandria | Provide positive examples and unlabeled candidate pools | Programmatic APIs (MP), direct download |
| Text-Mining Corpora | Kononova et al. solid-state reactions [1] | Training data for synthesis condition prediction | GitHub repository |
| Computational Tools | pymatgen [1], atom2vec [3] | Structure analysis, feature generation, descriptor calculation | Python packages |
| Validation Resources | CRC Handbook melting points [1], phase diagrams | Verify synthesis feasibility constraints | Reference texts, computational databases |
| PU Learning Implementations | SynthNN [3], Jang et al. CLscore [2] | Pre-trained models for synthesizability assessment | Research publications, code repositories |

The fundamental bottleneck of missing negative synthesis data presents both a challenge and opportunity for the materials informatics community. While PU learning offers a powerful framework for navigating this data landscape, future progress will require coordinated efforts across multiple domains:

- Cultural Shifts: Promoting the publication of well-documented synthesis failures through specialized journals or data repositories.

- Automated Laboratory Notebooks: Developing systems for automatic extraction of synthesis outcomes from electronic lab records.

- Standardized Reporting: Establishing community standards for reporting both successful and failed synthesis attempts.

- Active Learning Integration: Combining PU learning with experimental design to iteratively refine models through targeted synthesis.

The integration of these approaches with emerging technologies like large language models (e.g., CSLLM achieving 98.6% accuracy [2]) and high-throughput experimentation platforms will gradually transform synthesizability prediction from a data-poor to a data-rich domain. By confronting the negative data bottleneck directly, the materials science community can accelerate the translation of computational predictions into realized materials that address pressing technological challenges.

The discovery of new functional materials is a cornerstone of technological advancement. Computational methods, particularly density functional theory (DFT), have dramatically accelerated this process by enabling the high-throughput screening of millions of candidate materials for desirable properties [2]. The prevailing paradigm for identifying synthesizable candidates from this vast pool has heavily relied on metrics of thermodynamic and kinetic stability. The energy above hull—a measure of a compound's stability relative to its competing phases—and kinetic stability assessments, such as the absence of imaginary phonon frequencies, have served as the primary filters [2]. However, a significant and persistent gap exists between theoretical predictions guided by these metrics and experimental success, leaving many computationally promising materials languishing in the realm of the unsynthesized. This whitepaper argues that these traditional stability metrics are insufficient proxies for synthesizability and frames the emerging solution: data-driven models, particularly those employing positive-unlabeled (PU) learning, which learn the complex patterns of synthesizability directly from experimental data [2] [3].

The Shortcomings of Traditional Stability Metrics

The Energy Above Hull and Its Limitations

The energy above hull (Eₕ) is a thermodynamic metric that quantifies the energy released when a target compound decomposes into its most stable competing phases. A compound with an Eₕ of 0 eV/atom is thermodynamically stable, while a positive value indicates a metastable or unstable compound. In high-throughput screening, a small positive threshold (e.g., 0.1 eV/atom) is often applied to identify plausible candidates.

Despite its widespread use, this approach is fundamentally limited. It fails to account for the fact that synthesis is a kinetic process governed by finite-temperature effects, reaction pathways, and precursor choices [4]. Consequently, numerous structures with favorable formation energies remain elusive in the laboratory, while various metastable structures are routinely synthesized [2]. For instance, the cristobalite phase of SiO₂, a well-known synthetic material, does not appear among the 21 SiO₂ structures listed within 0.01 eV of the convex hull in the Materials Project [4]. Quantitative benchmarking reveals the severity of this limitation: treating structures with Eₕ ≥ 0.1 eV/atom as non-synthesizable achieves an accuracy of only 74.1% [2].
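To make the failure modes of a hull-based filter concrete, here is a small sketch. The function names and the four energy values are invented for illustration and are not taken from any database; the 0.1 eV/atom threshold is the one quoted above.

```python
def predicted_synthesizable(e_hull, threshold=0.1):
    """Stability filter: accept a structure whose energy above hull (eV/atom) is below the threshold."""
    return e_hull < threshold

def accuracy(predictions, labels):
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)

# Toy (energy above hull, was it synthesized?) pairs; illustrative values only.
toy = [
    (0.00, True),   # stable and synthesized: filter is right
    (0.02, False),  # near the hull but never made: false positive
    (0.15, True),   # metastable yet synthesized: false negative
    (0.30, False),  # far above the hull and not made: filter is right
]
preds = [predicted_synthesizable(e) for e, _ in toy]
labels = [y for _, y in toy]
print(accuracy(preds, labels))  # 0.5 on this toy set
```

Both kinds of error appear: structures near the hull that have never been made, and metastable structures that have. No choice of threshold removes both at once, which is why a single energy cutoff plateaus well below the accuracy of learned models.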

The Insufficiency of Kinetic and Charge-Balancing Proxies

Other physical proxies similarly fail to provide a general solution. The analysis of kinetic stability through phonon spectra can identify structures with dynamical instabilities (imaginary frequencies), but many such structures are nonetheless synthesizable [2]. Using a phonon-based filter (lowest frequency ≥ -0.1 THz) achieves an accuracy of 82.2%, an improvement over Eₕ but still inadequate for reliable discovery [2].

The simple chemical heuristic of charge-balancing—ensuring a net neutral ionic charge based on common oxidation states—is also an unreliable predictor. An analysis of known inorganic materials shows that only 37% of synthesized compounds are charge-balanced according to this rule. Even among typically ionic compounds like binary cesium compounds, the figure is a mere 23% [3]. This poor performance stems from an inability to account for diverse bonding environments in metallic, covalent, or other complex materials.
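The charge-balancing heuristic is easy to state in code, which also makes its rigidity visible. The sketch below assumes a tiny hand-picked table of common oxidation states and allows one oxidation state per element; both are simplifications for illustration, and the cited analysis may define the rule differently.

```python
from itertools import product

# Small, hand-picked table of common oxidation states (an assumption for this sketch).
COMMON_OXIDATION_STATES = {
    "Na": [1], "Cs": [1], "Cl": [-1], "O": [-2],
    "Fe": [2, 3], "Au": [1, 3],
}

def is_charge_balanced(composition):
    """True if some assignment of one common oxidation state per element sums to zero."""
    elements = list(composition)
    choices = [COMMON_OXIDATION_STATES[el] for el in elements]
    return any(
        sum(state * composition[el] for el, state in zip(elements, states)) == 0
        for states in product(*choices)
    )

print(is_charge_balanced({"Na": 1, "Cl": 1}))  # True
print(is_charge_balanced({"Fe": 2, "O": 3}))   # True  (Fe3+)
print(is_charge_balanced({"Fe": 3, "O": 4}))   # False (mixed-valence magnetite)
print(is_charge_balanced({"Cs": 1, "Au": 1}))  # False (Au is formally -1 in CsAu)
```

Fe₃O₄ and CsAu are both real, synthesized compounds, yet the rule rejects them because it cannot represent mixed valence or an unusual oxidation state.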

Table 1: Quantitative Limitations of Traditional Synthesizability Metrics

| Metric | Underlying Principle | Key Limitation | Reported Accuracy |
|---|---|---|---|
| Energy Above Hull | Thermodynamic stability relative to competing phases | Fails to capture kinetic pathways and finite-temperature effects of synthesis [4]. | 74.1% [2] |
| Phonon Spectrum | Kinetic stability (absence of imaginary frequencies) | Many synthesizable materials exhibit dynamical instabilities [2]. | 82.2% [2] |
| Charge-Balancing | Net neutral charge from common oxidation states | Inflexible; fails for metallic, covalent, and many ionic materials [3]. | 37% of known materials are charge-balanced [3] |

Positive-Unlabeled Learning for Synthesizability Prediction

The Core Challenge: Learning from Incomplete Data

The central problem in data-driven synthesizability prediction is the lack of definitive negative examples. Scientific literature extensively documents successful syntheses (positives) but rarely reports failures (negatives). This results in a dataset of confirmed positives amid a vast sea of unlabeled examples, many of which may be synthesizable but undiscovered [3]. Positive-unlabeled (PU) learning is a class of machine learning techniques specifically designed to overcome this exact challenge.

Methodologies and Experimental Protocols

PU learning algorithms treat the unlabeled data as a mixture of hidden positive and negative examples, often reweighting them probabilistically during training [3]. The following workflow outlines a standard protocol for applying PU learning to synthesizability prediction.

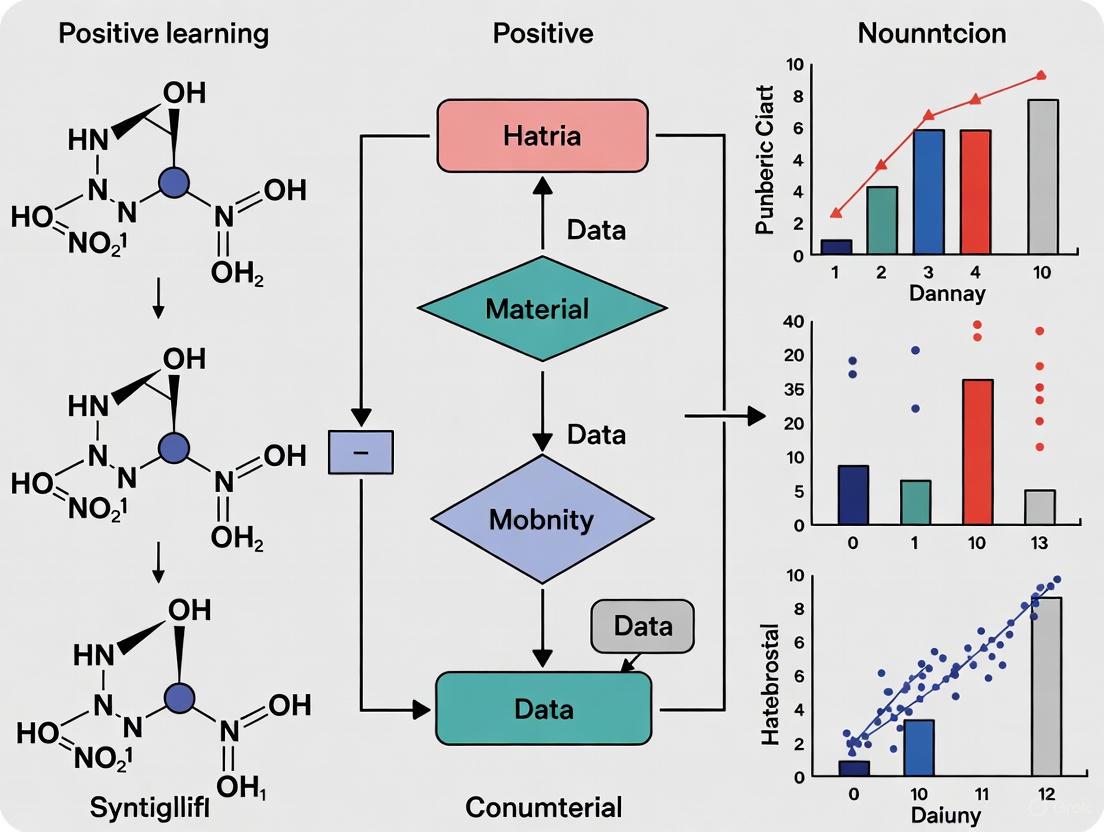

Diagram 1: PU learning workflow for material synthesizability.

Data Curation and Feature Representation

- Positive Set (P): The Inorganic Crystal Structure Database (ICSD) is the primary source for synthesizable materials. A common protocol involves extracting 70,120 ordered crystal structures, filtering out disordered systems and limiting to structures with ≤40 atoms and ≤7 elements for manageability [2].

- Unlabeled Set (U): This set is assembled from theoretical structures in computational databases like the Materials Project (MP), the Open Quantum Materials Database (OQMD), and JARVIS. One study pooled 1,401,562 such structures [2].

- Feature Representation: The choice of representation is critical. Common approaches include:

  - Composition-only models that use learned atom embeddings (e.g., Atom2Vec) to represent chemical formulas without structural information [3].

  - Structure-aware models that utilize graph neural networks (GNNs) or text-based "material strings" that encode lattice parameters, atomic coordinates, and symmetry [2] [4].

Model Training and Evaluation

The PU model is trained to distinguish the positive set from the unlabeled set. A key technique involves assigning a weight to each unlabeled example representing its probability of being a hidden negative [3]. After training, the model outputs a synthesizability score (e.g., CLscore [2] or SynthNN probability [3]) for any new candidate material. Performance is evaluated on a held-out test set, with metrics like accuracy, precision, and F1-score. For example, a Crystal Synthesis Large Language Model (CSLLM) fine-tuned with this approach achieved a state-of-the-art accuracy of 98.6% on testing data [2].
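One way to read the weighting step is as a modified log loss in which every unlabeled example contributes partly as a negative and partly as a positive. The sketch below is an assumption about how such a loss could look, not the loss used by SynthNN or CLscore; the function name `pu_weighted_log_loss` and the numbers are illustrative.

```python
import math

def pu_weighted_log_loss(p_pos, p_unl, w_neg):
    """Mean log loss where each unlabeled example counts as a negative with
    weight w and as a positive with weight 1 - w."""
    total = sum(-math.log(p) for p in p_pos)
    for p, w in zip(p_unl, w_neg):
        total += w * -math.log(1.0 - p) + (1.0 - w) * -math.log(p)
    return total / (len(p_pos) + len(p_unl))

p_pos = [0.9, 0.8]  # model outputs for labeled positives
p_unl = [0.9, 0.1]  # model outputs for two unlabeled candidates
agree = pu_weighted_log_loss(p_pos, p_unl, w_neg=[0.1, 0.9])
clash = pu_weighted_log_loss(p_pos, p_unl, w_neg=[0.9, 0.1])
print(agree < clash)  # True
```

When the weights agree with the model's outputs the loss is small. When an unlabeled example the model rates highly is weighted as a near-certain negative, the loss grows sharply, and that gradient is what pulls the decision boundary during training.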

Advanced Frameworks and Experimental Validation

Integrated and Specialized Models

Recent advances move beyond simple classification to create more powerful and comprehensive frameworks:

- Crystal Synthesis Large Language Models (CSLLM): This framework employs three specialized LLMs to predict synthesizability, suggest a synthetic method (e.g., solid-state or solution), and identify suitable precursors, with the precursor prediction model achieving over 80% success [2].

- Combined Composition-Structure Models: Some pipelines integrate two encoders—a compositional transformer and a structural GNN—whose predictions are aggregated via a rank-average ensemble (Borda fusion) to produce a robust, unified synthesizability score [4].
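Rank averaging of two model outputs can be sketched as below. The helper names and the four example scores are assumptions for illustration; the sketch ignores ties and needs at least two candidates, and the cited pipeline may normalize or weight ranks differently.

```python
def rank_fraction(scores):
    """Map scores to ranks scaled to [0, 1]; the highest score gets 1.0 (ties are not handled)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    for position, i in enumerate(order):
        ranks[i] = position / (len(scores) - 1)
    return ranks

def rank_average(comp_scores, struct_scores):
    """Average the per-model ranks of each candidate."""
    rc, rs = rank_fraction(comp_scores), rank_fraction(struct_scores)
    return [(a + b) / 2 for a, b in zip(rc, rs)]

comp_scores = [0.9, 0.2, 0.6, 0.4]    # compositional model, four candidates
struct_scores = [0.5, 0.1, 0.8, 0.7]  # structural model, same candidates
fused = rank_average(comp_scores, struct_scores)
ranking = sorted(range(len(fused)), key=lambda i: fused[i], reverse=True)
print(ranking)  # [2, 0, 3, 1]
```

Working with ranks rather than raw scores means the two models do not need calibrated or comparable output scales, which is the usual motivation for this kind of fusion.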

Experimental Proof-of-Concept

The ultimate validation of any synthesizability model is experimental synthesis. In a landmark demonstration, a synthesizability-guided pipeline screened over 4.4 million computational structures. The model identified 24 highly synthesizable candidates, for which synthesis recipes were generated using a precursor-suggestion model (Retro-Rank-In) and a calcination temperature predictor (SyntMTE). This integrated computational-experimental effort successfully synthesized and characterized 7 out of 16 target materials, including one novel and one previously unreported structure, all within a three-day experimental window [4]. This success rate, achieved with minimal human intervention, underscores the practical utility of modern synthesizability prediction.

Table 2: Key Research Reagents and Computational Tools for Synthesizability Research

| Reagent / Tool | Type | Function in Research |
|---|---|---|
| ICSD | Database | The definitive source of positive examples (synthesized materials) for model training [2] [3]. |
| Materials Project / OQMD | Database | Primary sources of unlabeled/theoretical structures for the unlabeled set (U) in PU learning [2]. |
| CLscore / SynthNN | Software Model | Pre-trained PU learning models that output a synthesizability score for a candidate material [2] [3]. |
| Retro-Rank-In | Software Model | A precursor-suggestion model that generates a ranked list of viable solid-state precursors for a target material [4]. |
| Graph Neural Network (GNN) | Algorithm | Encodes crystal structure graphs to extract features relevant to structural stability and synthesizability [4]. |
| Material String | Data Format | A simplified text representation of a crystal structure that integrates lattice, composition, and atomic coordinate information for LLM processing [2]. |

The Scientist's Toolkit: A Workflow for Practical Discovery

The following diagram and explanation provide a practical workflow for integrating synthesizability prediction into a materials discovery campaign.

Diagram 2: Synthesizability-guided material discovery pipeline.

- Candidate Generation and Initial Screening: Begin with a large pool of candidate structures generated from databases or inverse design. Apply a trained synthesizability model (e.g., a PU learning classifier) to score and rank all candidates. Filter for those with the highest synthesizability scores [4].

- Synthesis Pathway Prediction: For the top-ranked candidates, use specialized models like the Method LLM and Precursor LLM from the CSLLM framework or tools like Retro-Rank-In to predict viable synthetic routes (solid-state vs. solution) and specific precursor compounds [2] [4].

- High-Throughput Experimental Validation: Execute the proposed syntheses in a high-throughput laboratory setting, using automated systems for weighing, grinding, and calcination. Characterize the resulting products using techniques like X-ray diffraction (XRD) to verify the formation of the target crystal structure [4].

This end-to-end pipeline demonstrates a mature and validated approach for translating theoretical candidates into realized materials, effectively bridging the gap between computation and experiment.

The limitations of energy above hull and kinetic metrics are clear and quantitative. They serve as useful but incomplete proxies, achieving accuracies between 74% and 82%, far below the requirements for efficient materials discovery [2]. The paradigm is shifting from relying solely on first-principles stability calculations to leveraging data-driven models that learn the complex, multi-faceted nature of synthesizability directly from the historical record of experimental success. Positive-unlabeled learning stands as a cornerstone of this new paradigm, providing the statistical framework to learn from inherently incomplete data. By integrating these advanced predictive models with synthesis planning tools into automated experimental workflows, the materials community can now navigate the treacherous gap between computational prediction and experimental realization, dramatically accelerating the discovery of tomorrow's functional materials.

Positive and Unlabeled (PU) learning is a subfield of machine learning that addresses the challenge of training accurate binary classifiers when explicit negative examples are unavailable [5]. In this setting, a learner has access to a set of labeled positive examples and a set of unlabeled data that contains a mixture of both positive and negative instances [5] [6]. This scenario naturally arises in many real-world applications where confirming negative instances is difficult, expensive, or impractical, making PU learning particularly valuable for domains like materials science and drug development [5] [3].

The term "PU learning" first emerged in the early 2000s and has gained significant research interest due to its practical importance across multiple domains [5]. In medical diagnosis, for example, patient records typically list only diagnosed diseases, while the absence of a diagnosis does not necessarily mean the patient does not have a disease [5] [6]. Similarly, in materials science, databases like the Inorganic Crystal Structure Database (ICSD) contain confirmed synthesizable materials (positives), but definitively identifying non-synthesizable materials (negatives) remains challenging [3] [2].

Problem Formulation and Key Assumptions

Formal Problem Definition

In traditional fully-supervised binary classification, the goal is to learn a classifier that distinguishes between positive and negative classes using training data where both class labels are available [5]. PU learning modifies this paradigm by working with training data consisting of positive examples (P) and unlabeled examples (U), where the unlabeled set contains both positive and negative instances [5].

Formally, let (x, y) be a training example where x is a feature vector and y ∈ {0,1} is the class label (1 for positive, 0 for negative). In PU learning, the learner has access to two datasets: a positive set \( \mathcal{X}_P = \{x_1, x_2, \ldots, x_{n_p}\} \) drawn from the positive class distribution p(x|y=1), and an unlabeled set \( \mathcal{X}_U = \mathcal{X}_{UP} \cup \mathcal{X}_{UN} \) containing both positive and negative samples, where \( \mathcal{X}_{UP} \) represents unlabeled positive samples and \( \mathcal{X}_{UN} \) represents unlabeled negative samples [7].

Key Scenarios and Labeling Mechanisms

Two primary scenarios characterize how PU data is generated:

Single-Training-Set Scenario: Both positive and unlabeled examples come from the same dataset, which represents an i.i.d. sample from the real distribution. A labeling mechanism selects which positive examples become labeled, characterized by a propensity score e(x) = Pr(s=1|y=1,x), where s indicates whether an example is selected to be labeled [5]. The labeled distribution becomes a biased version of the positive distribution: \( f_l(x) = \frac{e(x)}{c} f_+(x) \), where c is the label frequency representing the fraction of positive examples that are labeled [5].

Case-Control Scenario: Positive and unlabeled examples come from two independently drawn datasets, where the positive dataset contains only positive examples and the unlabeled dataset represents a random sample from the general population [5] [6].
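A quick simulation of the single-training-set scenario helps build intuition for the quantities involved. The sketch assumes a constant propensity score e(x) = c, so features are omitted entirely; the function name and the values of the class prior `alpha` and label frequency `c` are arbitrary choices for illustration.

```python
import random

def simulate_single_training_set(n, alpha, c, seed=0):
    """Draw true labels y with class prior alpha, then label positives with probability c."""
    rng = random.Random(seed)
    y = [1 if rng.random() < alpha else 0 for _ in range(n)]
    # Only true positives can be labeled, each with the same probability c.
    s = [1 if yi == 1 and rng.random() < c else 0 for yi in y]
    return y, s

y, s = simulate_single_training_set(n=100_000, alpha=0.3, c=0.2)
n_pos = sum(y)
n_labeled = sum(s)
print(n_labeled / n_pos)   # close to c = 0.2
print(n_labeled / len(y))  # close to alpha * c = 0.06
```

Only the second ratio is observable in practice, since the true labels y are hidden. Recovering the class prior from it requires knowing or estimating c, which is why label-frequency estimation matters so much in PU learning.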

Table 1: Comparison of PU Learning Scenarios

| Characteristic | Single-Training-Set Scenario | Case-Control Scenario |
|---|---|---|
| Data Origin | Single dataset | Two independent datasets |
| Positive Data Distribution | \( f_l(x) = \frac{e(x)}{c} f_+(x) \) | \( p(x \mid y=1) \) |
| Unlabeled Data Distribution | \( \alpha f_+(x) + (1-\alpha) f_-(x) \) | \( p(x) \) |
| Common Applications | Personalized advertising, medical diagnosis | Knowledge base completion, material synthesizability |

Critical Assumptions

PU learning algorithms typically rely on several key assumptions:

Selected Completely At Random (SCAR): This assumption posits that the labeled positive examples are randomly selected from the entire positive set, meaning the propensity score e(x) is constant and does not depend on specific feature values [5] [6].

Selected At Random (SAR): A more relaxed assumption where the probability of a positive example being labeled may depend on its features [6].

Positive Subset Condition: The support of the labeled positive distribution must be contained within the support of the unlabeled positive distribution [5].

Smoothness: Similar examples should have similar probabilities of being positive [5].
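A short worked identity shows why SCAR is so convenient. Because only positives can be labeled, the probability that an example is labeled factorizes, and with a constant propensity score e(x) = c it becomes a rescaled version of the posterior of interest. The notation follows the propensity-score definition given earlier; the derivation is a standard consequence of the assumption rather than something specific to any one of the cited methods.

```latex
\begin{align*}
\Pr(s=1 \mid x)
  &= \Pr(s=1 \mid y=1, x)\,\Pr(y=1 \mid x) + \Pr(s=1 \mid y=0, x)\,\Pr(y=0 \mid x) \\
  &= e(x)\,\Pr(y=1 \mid x) && \text{(negatives are never labeled)} \\
  &= c\,\Pr(y=1 \mid x)    && \text{(SCAR: } e(x) = c \text{)}
\end{align*}
```

A classifier trained to separate labeled from unlabeled examples therefore recovers Pr(y=1|x) up to the factor 1/c, provided c can be estimated. Under SAR the factor depends on x, and this simple rescaling no longer applies.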

Core Methodologies in PU Learning

Two-Step Techniques

The two-step strategy first identifies reliable negative examples from the unlabeled data, then applies standard supervised learning algorithms [8] [6]. The key challenge lies in accurately identifying negative instances without misclassifying hidden positives [6].

Experimental Protocol for Two-Step Methods:

- Reliable Negative Identification: Extract instances from the unlabeled set that are distinctly different from all labeled positive examples using techniques like clustering, outlier detection, or similarity measures [6].

- Classifier Training: Apply supervised learning algorithms (e.g., SVM, logistic regression) using the positive examples and identified reliable negatives [6].

- Iterative Refinement: Some methods iteratively expand the reliable negative set based on classifier confidence scores [6].
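The first two steps of this protocol can be sketched as follows. The distance-to-centroid rule for picking reliable negatives, the `neg_fraction` parameter, the logistic-regression classifier, and the synthetic data are all assumptions chosen to keep the example short; the iterative refinement step is omitted.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

def two_step_pu(X_pos, X_unl, neg_fraction=0.3):
    """Pick the unlabeled points farthest from the positives, then train on P vs. those."""
    # Step 1: rank unlabeled points by distance to the positive centroid and
    # keep the farthest fraction as "reliable negatives".
    centroid = X_pos.mean(axis=0)
    dist = np.linalg.norm(X_unl - centroid, axis=1)
    n_neg = max(1, int(neg_fraction * len(X_unl)))
    reliable_neg = X_unl[np.argsort(dist)[-n_neg:]]
    # Step 2: ordinary supervised training on positives vs. reliable negatives.
    X = np.vstack([X_pos, reliable_neg])
    y = np.r_[np.ones(len(X_pos), dtype=int), np.zeros(n_neg, dtype=int)]
    return LogisticRegression().fit(X, y)

# Synthetic stand-in for material descriptors (not real data).
rng = np.random.default_rng(1)
X_pos = rng.normal(2.0, 0.5, size=(30, 2))
hidden_pos = rng.normal(2.0, 0.5, size=(30, 2))
hidden_neg = rng.normal(-2.0, 0.5, size=(70, 2))
X_unl = np.vstack([hidden_pos, hidden_neg])

clf = two_step_pu(X_pos, X_unl)
p_hidden_pos = clf.predict_proba(hidden_pos)[:, 1]
p_hidden_neg = clf.predict_proba(hidden_neg)[:, 1]
print(p_hidden_pos.mean(), p_hidden_neg.mean())
```

The result depends heavily on step 1. If `neg_fraction` is set too high, hidden positives leak into the reliable-negative set and the classifier learns to reject them.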

Two-Step PU Learning Workflow

Biased Learning Methods

Biased learning approaches treat all unlabeled examples as negative, acknowledging that this introduces label noise where some positives are mislabeled as negatives [8] [6]. These methods employ techniques robust to this one-sided label noise.

Experimental Protocol for Biased Learning:

- Noise-Tolerant Algorithm Selection: Choose classification algorithms that demonstrate robustness to label noise, such as certain SVM variants or probabilistic methods [8].

- Importance Weighting: Assign different weights to labeled positives and unlabeled examples to account for the biased nature of the training set [6].

- Loss Function Modification: Adapt loss functions to remain effective despite the one-sided label noise in the training data [8].
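A minimal sketch of the importance-weighting variant follows. It labels every unlabeled example as negative and raises the weight of the labeled positives through scikit-learn's `class_weight` option; the weight of 5.0, the logistic-regression model, and the synthetic data are illustrative assumptions rather than recommended settings.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

# Synthetic stand-in for material descriptors (not real data).
rng = np.random.default_rng(0)
X_pos = rng.normal(2.0, 0.5, size=(30, 2))        # labeled positives
hidden_pos = rng.normal(2.0, 0.5, size=(30, 2))   # positives hidden in U
hidden_neg = rng.normal(-2.0, 0.5, size=(70, 2))  # true negatives in U
X = np.vstack([X_pos, hidden_pos, hidden_neg])

# Biased labels: every unlabeled example is treated as a negative.
s = np.r_[np.ones(30, dtype=int), np.zeros(100, dtype=int)]

# Penalize errors on labeled positives more than errors on noisy "negatives".
clf = LogisticRegression(class_weight={1: 5.0, 0: 1.0}).fit(X, s)
proba = clf.predict_proba(X)[:, 1]
print(proba[30:60].mean(), proba[60:].mean())
```

Even though the hidden positives were trained on as negatives, the asymmetric weights keep their predicted scores above those of the true negatives. The weight ratio is a tuning parameter that in practice is tied to the expected share of hidden positives.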

Class Prior Incorporation

Many modern PU learning methods incorporate class prior estimation (α = P(y=1)), which represents the proportion of positive examples in the unlabeled data [5] [6]. Accurate estimation of this parameter is crucial for many PU learning algorithms.

Table 2: PU Learning Method Categories and Characteristics

| Method Category | Key Principle | Advantages | Limitations |

|---|---|---|---|

| Two-Step Methods | Identify reliable negatives, then train classifier | Intuitive, can use standard algorithms | Sensitive to initial negative identification |

| Biased Learning | Treat unlabeled as noisy negatives | Simple implementation, works with large datasets | Performance degrades with many hidden positives |

| Unbiased Risk Estimation | Derive unbiased estimators of classification risk | Strong theoretical foundation, state-of-the-art results | Relies on accurate class prior estimation |

PU Learning for Material Synthesizability Prediction

Problem Framing in Materials Science

In material synthesizability prediction, the goal is to identify which hypothetical material compositions can be successfully synthesized [3]. The fundamental challenge is that materials databases (e.g., ICSD) contain only positive examples of successfully synthesized materials, while no reliable database of non-synthesizable materials exists [3] [2]. This creates an ideal application scenario for PU learning techniques.

The problem is formally framed as:

- Positive Examples: Experimentally confirmed synthesizable materials from databases like ICSD [3] [2].

- Unlabeled Examples: Hypothetical material compositions generated through computational methods, containing both synthesizable and non-synthesizable materials [3] [2].

Implementation Approaches

SynthNN Framework: A deep learning synthesizability model that leverages the entire space of synthesized inorganic chemical compositions using a PU learning approach [3]. The model uses atom2vec representations that learn optimal features directly from the distribution of synthesized materials [3].

Experimental Protocol for Material Synthesizability Prediction:

- Data Collection: Extract known synthesizable materials from ICSD as positive examples [3] [2].

- Unlabeled Set Generation: Create hypothetical material compositions through computational generation or extract from theoretical databases [2].

- Feature Representation: Employ composition-based representations like atom2vec that learn embeddings optimized for synthesizability prediction [3].

- PU Algorithm Application: Implement appropriate PU learning methods to handle the unlabeled data containing both synthesizable and non-synthesizable materials [3] [2].

- Validation: Assess performance using holdout test sets and compare against baseline methods like charge-balancing or formation energy thresholds [3].
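For the validation step, a charge-balancing baseline is simple enough to sketch directly. The version below is a deliberately simplified assumption: it uses a tiny, illustrative table of common oxidation states (`OXIDATION_STATES`, hypothetical and incomplete) and requires every atom of an element to share one oxidation state, so mixed-valence compounds are rejected.

```python
from itertools import product

# Illustrative subset of common oxidation states; a real baseline needs a complete table.
OXIDATION_STATES = {
    "Na": [1],
    "Ba": [2],
    "Ti": [2, 3, 4],
    "Fe": [2, 3],
    "O": [-2],
    "Cl": [-1],
}


def is_charge_balanced(composition):
    """Return True if some assignment of one oxidation state per element sums to zero.

    composition maps element symbol to integer count, e.g. {"Fe": 2, "O": 3}.
    """
    elements = list(composition)
    for states in product(*(OXIDATION_STATES[el] for el in elements)):
        total = sum(state * composition[el] for el, state in zip(elements, states))
        if total == 0:
            return True
    return False


baseline = {
    "NaCl": is_charge_balanced({"Na": 1, "Cl": 1}),
    "Fe2O3": is_charge_balanced({"Fe": 2, "O": 3}),
    "BaTiO3": is_charge_balanced({"Ba": 1, "Ti": 1, "O": 3}),
    "NaCl2": is_charge_balanced({"Na": 1, "Cl": 2}),
    "Fe3O4": is_charge_balanced({"Fe": 3, "O": 4}),
}
```

Note that Fe3O4 (magnetite, a mixed-valence oxide that is routinely synthesized) fails this check, which illustrates why a rigid charge-balancing rule makes a weak baseline for a learned synthesizability model.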

Material Synthesizability Prediction Using PU Learning

Performance and Applications

PU learning approaches have demonstrated remarkable success in material synthesizability prediction. The SynthNN model identifies synthesizable materials with 7× higher precision than traditional DFT-calculated formation energies and outperformed human experts with 1.5× higher precision while completing tasks five orders of magnitude faster [3]. More recent approaches using large language models (CSLLM framework) have achieved up to 98.6% accuracy in synthesizability prediction [2].

Table 3: PU Learning Performance in Material Discovery

| Method | Accuracy | Comparison to Baselines | Application Scope |

|---|---|---|---|

| SynthNN | Not specified | 7× higher precision than formation energy | Inorganic crystalline materials |

| CSLLM | 98.6% | Superior to energy above hull (74.1%) and phonon stability (82.2%) | 3D crystal structures |

| PU Learning for MXenes | >75% | Improved over traditional approaches | 2D MXenes |

| Teacher-Student Network | 92.9% | Advanced over previous PU methods | 3D crystals |

Table 4: Essential Research Reagents for PU Learning in Material Science

| Resource | Function | Application Example |

|---|---|---|

| ICSD Database | Source of positive examples (synthesized materials) | Training data for synthesizability prediction [3] [2] |

| Theoretical Materials Databases | Source of unlabeled examples | MP, OQMD, JARVIS databases provide hypothetical structures [2] |

| atom2vec Representation | Composition-based feature learning | Learns optimal representations from synthesized materials distribution [3] |

| Class Prior Estimation Tools | Estimate α = P(y=1) in unlabeled data | Critical for unbiased risk estimation methods [8] [6] |

| PU Learning Libraries | Implementations of PU algorithms | Frameworks supporting two-step, biased, and unbiased methods [8] |

Advanced Topics and Future Directions

Instance-Dependent PU Learning

Traditional PU learning often assumes the SCAR condition, but real-world applications frequently exhibit instance-dependent labeling where the probability of a positive example being labeled depends on its features [6]. This is particularly relevant in material science, where more "obvious" or well-studied material compositions might be more likely to be synthesized and recorded [6].

Representation Learning for PU Data

Recent advances focus on learning representations that explicitly disentangle positive and negative distributions within the unlabeled data [7]. These approaches employ novel loss functions that project unlabeled data into spaces where positive and negative clusters become more separable, effectively reducing the problem complexity [7].

Robust and Unbiased Methods

Current research addresses limitations of existing PU learning methods regarding their sensitivity to feature noise and reliance on accurate class prior estimation [8]. Methods like Pin-LFCS (Pinball Loss Factorization and Centroid Smoothing) leverage robust loss functions and loss factorization techniques to create more reliable PU classifiers [8].

PU learning represents a powerful framework for tackling binary classification problems where negative examples are unavailable or difficult to obtain. The application to material synthesizability prediction demonstrates its practical utility in accelerating material discovery by reliably identifying synthesizable materials from vast spaces of hypothetical compositions. As research continues to address challenges like instance-dependent labeling and robust learning with noisy features, PU learning methodologies are poised to become increasingly valuable tools in computational material science and drug development.

Key Real-World Scenarios for PU Learning in Materials Science and Drug Discovery

Positive and Unlabeled (PU) Learning is a specialized branch of machine learning that addresses a common data scarcity problem: the absence of explicitly labeled negative examples. In numerous scientific domains, researchers can readily identify confirmed positive instances (e.g., successfully synthesized materials, known drug-target interactions) but lack a definitive set of negative cases. Failed experiments or non-interactions are rarely documented in structured databases, leaving a vast pool of unlabeled data that may contain both positive and negative instances. PU learning algorithms are specifically designed to learn effective classifiers from this inherently biased data, making them invaluable for accelerating discovery in fields like materials science and pharmaceutical research [9] [6].

The core challenge PU learning addresses is the biased sampling of positive labels. Traditional supervised learning requires both positive and negative examples to define a decision boundary. When unlabeled data is simply treated as negative, it introduces significant false negatives into the training set, severely degrading model performance. PU learning frameworks overcome this by employing strategies such as identifying reliable negative examples from the unlabeled set, re-weighting the importance of training instances, or treating the problem as one with one-sided label noise [6]. This capability is particularly crucial for scientific discovery, where the goal is often to identify new positive instances—new synthesizable materials or new therapeutic drug candidates—from a vast space of unlabeled possibilities.

Core PU Learning Applications in Materials Science

Predicting Crystalline Material Synthesizability

A primary application of PU learning in materials science is predicting the synthesizability of hypothetical inorganic crystalline materials. The fundamental challenge is that while databases like the Inorganic Crystal Structure Database (ICSD) contain a rich history of successfully synthesized materials (positives), data on unsuccessful synthesis attempts is virtually non-existent [3]. Furthermore, traditional proxies for synthesizability, such as thermodynamic stability calculated via density functional theory (DFT) or simple charge-balancing heuristics, have proven insufficient. Stability metrics ignore kinetic factors and technological constraints, while over half of the experimentally synthesized materials in the Materials Project database violate classic charge-balancing rules [9].

To address this, researchers have developed several sophisticated PU learning frameworks:

- SynCoTrain: This is a semi-supervised, dual-classifier model that employs a co-training strategy with two distinct graph convolutional neural networks: SchNet and ALIGNN. These networks provide complementary "perspectives" on the crystal structure data—SchNet uses continuous filters suitable for atomic structures (a "physicist's perspective"), while ALIGNN directly encodes atomic bonds and angles (a "chemist's perspective"). The models iteratively exchange predictions on unlabeled data, mitigating individual model bias and enhancing generalizability for predicting synthesizability, particularly in oxide crystals [9].

- SynthNN: This deep learning model uses a PU learning approach to predict synthesizability from chemical composition alone, without requiring prior crystal structure information. It leverages the entire space of synthesized inorganic compositions from the ICSD, augmented with artificially generated unsynthesized materials (treated as unlabeled data). SynthNN learns an optimal representation of chemical formulas directly from the data distribution, autonomously discovering relevant chemical principles like charge-balancing and ionicity. It demonstrates a 7x higher precision in identifying synthesizable materials compared to using DFT-calculated formation energies [3].

- Solid-State Synthesizability Prediction: This approach utilizes a high-quality, human-curated dataset of 4,103 ternary oxides to train a PU learning model. This dataset, meticulously extracted from literature, includes specific synthesis conditions and outcomes, providing a more reliable foundation for predicting which of 4,312 hypothetical compositions are likely synthesizable via solid-state reaction [10] [11].

Table 1: Quantitative Performance of PU Learning Models in Materials Science

| Model Name | Application Focus | Key Performance Metric | Result |

|---|---|---|---|

| SynCoTrain [9] | Synthesizability of Oxide Crystals | Recall on Test Sets | Achieved high recall on internal and leave-out test sets. |

| SynthNN [3] | Synthesizability of Inorganic Crystals | Precision vs. DFT Formation Energy | 7x higher precision than DFT-based methods. |

| SynthNN vs. Human Experts [3] | Material Discovery Task | Precision & Speed vs. Best Human Expert | SynthNN achieved 1.5x higher precision and was 100,000x faster than the best human expert. |

| PU Model (Materials Project) [12] | General Synthesizability | True Positive Rate | Correctly identified 91% of synthesized materials. |

Workflow for Materials Synthesizability Prediction

The following diagram illustrates the standard workflow for applying PU learning to materials synthesizability prediction, integrating steps from models like SynCoTrain and SynthNN.

Core PU Learning Applications in Drug Discovery

Screening Drug-Target and Drug-Drug Interactions

In drug discovery, PU learning is critical for virtual screening, where the goal is to identify novel interactions between compounds and biological targets. The data landscape mirrors that of materials science: known interactions (positives) are catalogued in databases, but confirming the absence of an interaction (a true negative) is experimentally intractable. The vast number of possible drug-target or drug-drug pairs makes exhaustive testing impossible [13] [14].

Key applications and methods include:

- NAPU-bagging SVM: This novel semi-supervised framework was developed to identify multitarget-directed ligands (MTDLs). It uses an ensemble of SVM classifiers trained on resampled "bags" containing positive, negative, and unlabeled data. This approach is engineered to manage false positive rates while maintaining high recall, which is critical for compiling a list of credible candidate compounds for further testing. It has successfully identified novel hits for ALK-EGFR in non-small-cell lung cancer and pan-agonists for dopamine receptors [15].

- PUDTI: A comprehensive framework for screening drug-target interaction (DTI) candidates for drug repositioning. Its first step, NDTISE, uses PU learning to extract highly credible negative DTI samples from the unlabeled space, overcoming the limitations of random negative selection. By integrating these reliable negatives with biological feature vectors and an SVM-based optimizer, PUDTI achieved the highest Area Under the Curve (AUC) among several state-of-the-art methods on datasets for human enzymes, ion channels, GPCRs, and nuclear receptors [13].

- DDI-PULearn: This method addresses the large-scale prediction of drug-drug interactions (DDIs), where a lack of verified negative samples also poses a challenge. It uses a two-step process: first, it generates seeds of reliable negatives using a One-Class SVM (OCSVM) under a high-recall constraint and a cosine-similarity-based KNN. Then, it employs an iterative SVM to identify a full set of reliable negatives from the unlabeled data for final binary classification, significantly outperforming baseline and contemporary methods [14].
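The negative-seeding idea used by two-step methods such as DDI-PULearn can be illustrated with a one-class SVM. The sketch below is not the published DDI-PULearn procedure: it covers only the seeding step, omits the KNN component and the iterative SVM, and uses a small `nu` as a stand-in for the high-recall constraint (most known positives must fall inside the learned region). The function name `seed_reliable_negatives` is hypothetical.

```python
import numpy as np
from sklearn.svm import OneClassSVM


def seed_reliable_negatives(X_pos, X_unl, nu=0.05):
    """Fit a one-class SVM on positives; unlabeled outliers become seed negatives."""
    # A small nu keeps nearly all known positives inside the boundary (high recall).
    ocsvm = OneClassSVM(kernel="rbf", gamma="scale", nu=nu).fit(X_pos)
    positive_recall = (ocsvm.predict(X_pos) == 1).mean()
    seed_mask = ocsvm.predict(X_unl) == -1  # -1 marks points outside the positive region
    return seed_mask, positive_recall


# Synthetic demo: 100 hidden positives followed by 300 true negatives.
rng = np.random.default_rng(0)
X_pos = rng.normal(2.0, 1.0, size=(200, 2))
X_unl = np.vstack([rng.normal(2.0, 1.0, size=(100, 2)), rng.normal(-2.0, 1.0, size=(300, 2))])
seed_mask, positive_recall = seed_reliable_negatives(X_pos, X_unl)
```

Because the model never sees a negative example, the seeds are only as reliable as the feature space allows; they are meant as a starting point for a subsequent binary classifier, not as final labels.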

Workflow for Drug-Target Interaction Screening

The process of screening for novel drug-target interactions using PU learning typically follows a two-step strategy, as implemented in frameworks like PUDTI and DDI-PULearn.

Table 2: Key PU Learning Methods and Their Applications in Drug Discovery

| Method Name | Application | Core Technique | Key Outcome |

|---|---|---|---|

| NAPU-bagging SVM [15] | Multitarget-Directed Ligand (MTDL) Screening | Ensemble SVM with bagging of positive, negative, and unlabeled data | Manages false positive rate while maintaining high recall; identified novel ALK-EGFR hits. |

| PUDTI [13] | Drug-Target Interaction (DTI) Screening | NDTISE for negative sample extraction + SVM optimization | Achieved highest AUC on 4 datasets (enzymes, ion channels, GPCRs, nuclear receptors). |

| DDI-PULearn [14] | Drug-Drug Interaction (DDI) Prediction | Reliable negative seeds via OCSVM/KNN + iterative SVM | Superior performance vs. 5 state-of-the-art methods in predicting unobserved DDIs. |

Experimental Protocols and Research Toolkit

Detailed Protocol for a Co-Training PU Experiment (SynCoTrain)

The following protocol outlines the key steps for implementing a co-training PU learning framework for synthesizability prediction, based on the SynCoTrain model [9].

Data Curation and Partitioning:

- Positive Set (SP): Collect confirmed synthesizable materials from a reliable database (e.g., experimentally synthesized oxide crystals from the Materials Project).

- Unlabeled Set (SU): Compile a set of hypothetical materials from the same database or generated computationally. This set contains both synthesizable and unsynthesizable materials, but their labels are hidden from the model.

- The data is typically split into training, validation, and hold-out test sets. The positive and unlabeled sets are defined within the training data.

Feature Representation:

- For each crystal structure in SP and SU, generate graph-based representations.

- Utilize two complementary graph convolutional neural networks:

- ALIGNN: Encodes the crystal graph, including atomic bonds (edges) and bond angles (line-graph), providing a rich, chemically-informed representation.

- SchNet: Uses continuous-filter convolutional layers that operate on a continuous representation of atoms, suitable for modeling quantum interactions.

Initial Model Training:

- Train both ALIGNN and SchNet models independently using a base PU learning algorithm (e.g., the method by Mordelet and Vert). This initial training uses only the labeled positives and the entire unlabeled set.

Iterative Co-Training:

- Each model (ALIGNN and SchNet) predicts labels for the unlabeled data in the training set.

- The models exchange their most confident predictions. For instance, the data points that ALIGNN classifies as positive with the highest confidence are added to the positive training set for SchNet in the next iteration, and vice-versa.

- This process repeats for a predefined number of iterations or until convergence, allowing the models to collaboratively "teach" each other and refine the decision boundary.

Final Prediction and Aggregation:

- After the final co-training iteration, the predictions of both models on the hold-out test set or new hypothetical materials are aggregated, typically by averaging their output scores.

- A final synthesizability score or class label is assigned based on this aggregated prediction.
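The exchange-and-aggregate logic of this protocol can be sketched without graph neural networks. In the illustration below, two logistic regression models on disjoint feature subsets stand in for ALIGNN and SchNet, and the base learner simply treats unlabeled data as negative rather than using the Mordelet and Vert method; both substitutions, along with the names `co_train_pu`, `predict_scores`, and the parameter `k`, are assumptions made to keep the example short.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression


def co_train_pu(views_pos, views_unl, n_iter=3, k=10):
    """Co-training sketch. views_pos / views_unl hold one feature matrix per view."""
    n_unl = len(views_unl[0])
    # pseudo[i] marks unlabeled samples that the *other* model promoted to positive for model i.
    pseudo = [np.zeros(n_unl, dtype=bool) for _ in range(2)]

    def fit_view(i):
        X = np.vstack([views_pos[i], views_unl[i]])
        y = np.concatenate([np.ones(len(views_pos[i])), pseudo[i].astype(float)])
        return LogisticRegression(max_iter=1000).fit(X, y)

    for _ in range(n_iter):
        scores = [fit_view(i).predict_proba(views_unl[i])[:, 1] for i in range(2)]
        for i in range(2):
            other = 1 - i
            # Model i hands its k most confident, not yet promoted, predictions to the other model.
            candidates = np.flatnonzero(~pseudo[other])
            top = candidates[np.argsort(scores[i][candidates])[-k:]]
            pseudo[other][top] = True
    return [fit_view(0), fit_view(1)]


def predict_scores(models, views):
    """Aggregate the two views by averaging their positive-class probabilities."""
    return np.mean([m.predict_proba(v)[:, 1] for m, v in zip(models, views)], axis=0)


def split_views(X):
    return [X[:, :2], X[:, 2:]]  # two disjoint feature subsets act as the two "views"


rng = np.random.default_rng(0)
X_pos = rng.normal(2.0, 1.0, size=(100, 4))
X_unl = np.vstack([rng.normal(2.0, 1.0, size=(100, 4)), rng.normal(-2.0, 1.0, size=(300, 4))])
models = co_train_pu(split_views(X_pos), split_views(X_unl))
scores = predict_scores(models, split_views(X_unl))
```

Exchanging pseudo-labels across views, rather than letting each model reinforce its own predictions, is the design choice that limits the self-confirmation bias a single classifier would accumulate.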

The Scientist's Computational Toolkit

Table 3: Essential Research Reagents and Computational Tools for PU Learning Experiments

| Tool / Resource | Type | Function in PU Learning Research |

|---|---|---|

| Materials Project Database [9] [12] | Materials Database | Primary source of known (positive) and hypothetical (unlabeled) crystal structures and compositions. |

| ICSD (Inorganic Crystal Structure Database) [3] | Materials Database | A comprehensive collection of experimentally determined inorganic crystal structures used for positive examples. |

| SchNet [9] | Graph Neural Network | A GCNN that uses continuous filters to model quantum interactions in atomic systems; provides one "view" in a co-training framework. |

| ALIGNN [9] | Graph Neural Network | A GCNN that incorporates atomic bond and angle information; provides a complementary "view" for co-training. |

| OCSVM (One-Class SVM) [14] | Machine Learning Model | Used in the first step of two-step PU learning to identify an initial set of reliable negative examples from the unlabeled data. |

| SVM (Support Vector Machine) [15] [13] | Machine Learning Model | A versatile and powerful classifier often used as the core algorithm in both two-step and cost-sensitive PU learning methods. |

| Atom2Vec [3] | Representation Learning | An algorithm that learns embedding representations of atoms from material compositions, used in models like SynthNN. |

Positive-Unlabeled learning has emerged as a foundational technology for overcoming one of the most significant bottlenecks in data-driven science: the scarcity of definitive negative data. In materials science, PU learning frameworks like SynCoTrain and SynthNN are moving beyond unreliable proxies for synthesizability, enabling the direct prediction of new, synthetically accessible materials from large databases with precision that can surpass human experts. In drug discovery, methods like NAPU-bagging SVM and PUDTI are enhancing the efficiency of virtual screening for drug-target and drug-drug interactions by managing false positive rates and identifying credible candidates for further experimental validation.

The future of PU learning in these domains lies in tackling more complex, instance-dependent labeling scenarios, where the probability of a positive example being labeled depends on its specific characteristics. Furthermore, the integration of PU learning with generative models and active learning cycles promises to create fully autonomous discovery systems. As these computational frameworks continue to mature, validated by ongoing experimental work, they will profoundly accelerate the design of novel materials and therapeutics, pushing the boundaries of scientific discovery.

Core PU Learning Frameworks and Their Groundbreaking Applications in Material Science

In drug discovery, predicting whether a candidate molecule can actually be synthesized is a pivotal challenge. A significant obstacle is that the data exist in Positive-Unlabeled (PU) form: researchers have a set of molecules known to be synthesizable (Positives) and a much larger set of molecules for which synthesizability is unknown (Unlabeled). The unlabeled set contains both synthesizable and non-synthesizable molecules, but the labels are missing. Applying a standard classifier by treating every unlabeled example as negative leads to severely biased and unreliable models. The Two-Step Strategy, which first identifies reliable negatives and then trains a classifier on them, provides a robust framework to address this problem, enabling more accurate in-silico prediction of synthesizable chemical matter for downstream drug development efforts [16] [17] [18].

This whitepaper provides an in-depth technical guide to implementing this strategy, contextualized specifically for material synthesizability prediction. We detail the underlying methodologies, present quantitative benchmarks, and provide actionable experimental protocols for research scientists.

Technical Foundation: PU Learning in Chemical Workflows

The Synthesizability Prediction Problem

Drug discovery and development is a long and expensive process, often taking over 12 years and costing upwards of $2.8 billion with a success rate of just 1 in 5000 [16]. A recurring challenge in molecular design is creating molecules that are not only therapeutically promising but also synthesizable [18]. The vastness of chemical space makes empirical testing of all candidates impossible, necessitating computational prioritization.

The problem is intrinsically suited for PU learning. Through historical synthesis data, we have Positives—molecules with confirmed synthetic pathways. Through large-scale molecular generation (e.g., using Generative Adversarial Networks (GANs) or Variational Autoencoders (VAEs) [16]), we have a massive pool of Unlabeled molecules whose synthesizability is unknown. The unlabeled set is a mixture of synthesizable and non-synthesizable compounds. The core task is to reliably identify the non-synthesizable molecules within the unlabeled set to train a robust classifier.

The Two-Step Strategy, implemented here with the spy technique, extracts information from the unlabeled data to identify reliable negative examples.

- Step 1: Identifying Reliable Negatives. A small, random subset of positive examples is "contaminated" into the unlabeled set; these are the "spy" positives. A probabilistic classifier is then trained to distinguish the main positive set from the contaminated unlabeled set. The classifier's behavior on the "spy" examples is used to infer a probability threshold. Unlabeled examples with classification probabilities below this threshold are deemed "Reliable Negatives" (RNs).

- Step 2: Training the Final Classifier. Using the original Positives (P), the newly identified Reliable Negatives (RN), and the remaining unlabeled data (U), a final classifier is trained. This model is then used to predict the synthesizability of novel molecules.

Figure 1: A high-level workflow of the Two-Step Strategy for identifying reliable negatives and training the final classifier.

Experimental Protocols & Methodologies

Step 1: Protocol for Identifying Reliable Negatives

This protocol details the process of extracting a set of high-confidence negative examples from the unlabeled data.

Inputs:

- P: Set of confirmed synthesizable molecules (Positives).

- U: Set of molecules with unknown synthesizability (Unlabeled).

- spy_fraction: Fraction of P to use as spy examples (e.g., 0.15).

Procedure:

- Spy Selection: Randomly select a subset S (the "spies") from P using the specified spy_fraction: S = sample(P, spy_fraction * |P|).

- Data Splitting:

  - The remaining positives become the training positives: P_train = P \ S.

  - The unlabeled set is contaminated with the spies: U_contaminated = U ∪ S.

- Feature Representation: Convert all molecules into a numerical feature representation. Common descriptors include [19]:

  - Molecular Descriptors: Calculate using tools like RDKit. Key descriptors for synthesizability may include:

    - MolLogP: Octanol-water partition coefficient.

    - MolWt: Molecular weight.

    - NumRotatableBonds: Number of rotatable bonds.

    - AromaticProportion: Ratio of aromatic atoms to heavy atoms.

  - Graph Representations: Represent molecules as graphs for Graph Neural Networks (GNNs), where atoms are nodes and bonds are edges [17].

- Preliminary Model Training: Train a probabilistic classifier (e.g., a Random Forest or a simple Neural Network) to distinguish P_train from U_contaminated. The model learns to output a probability P(synthesizable | features).

- Spy Analysis & RN Identification:

  - Use the trained model to predict probabilities for all molecules in U_contaminated.

  - Analyze the probability distribution of the spy set S. Determine a threshold τ (e.g., the 5th percentile of the spy probability distribution).

  - All molecules in U (the original unlabeled set, excluding the spies) with a predicted probability less than τ are classified as Reliable Negatives (RN): RN = {m | m ∈ U and P(m) < τ}.
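The Step 1 procedure maps closely onto code. The sketch below follows the spy selection, contamination, and percentile-threshold steps, with two assumptions made for brevity: random feature vectors stand in for molecular descriptors, and logistic regression replaces the Random Forest or neural network mentioned above (a low-capacity model avoids memorizing the spies when scoring the training data). The function name `find_reliable_negatives` is hypothetical.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression


def find_reliable_negatives(X_pos, X_unl, spy_fraction=0.15, percentile=5, seed=0):
    """Spy technique: use positives hidden in the unlabeled set to calibrate a threshold."""
    rng = np.random.default_rng(seed)
    # Spy selection: S = sample(P, spy_fraction * |P|).
    n_spies = max(1, int(spy_fraction * len(X_pos)))
    spy_mask = np.zeros(len(X_pos), dtype=bool)
    spy_mask[rng.choice(len(X_pos), size=n_spies, replace=False)] = True
    X_spies, X_p_train = X_pos[spy_mask], X_pos[~spy_mask]

    # Data splitting: P_train = P \ S and U_contaminated = U ∪ S.
    X_u_cont = np.vstack([X_unl, X_spies])
    X = np.vstack([X_p_train, X_u_cont])
    y = np.concatenate([np.ones(len(X_p_train)), np.zeros(len(X_u_cont))])

    # Preliminary model: P_train (label 1) vs. U_contaminated (label 0).
    clf = LogisticRegression(max_iter=1000).fit(X, y)

    # Spy analysis: tau is a low percentile of the spies' predicted probabilities.
    tau = np.percentile(clf.predict_proba(X_spies)[:, 1], percentile)
    # RN = {m in U : P(m) < tau}; the spies themselves are excluded by scoring only X_unl.
    rn_mask = clf.predict_proba(X_unl)[:, 1] < tau
    return rn_mask, tau


# Synthetic demo: 100 hidden positives followed by 300 true negatives.
rng = np.random.default_rng(1)
X_pos = rng.normal(2.0, 1.0, size=(200, 2))
X_unl = np.vstack([rng.normal(2.0, 1.0, size=(100, 2)), rng.normal(-2.0, 1.0, size=(300, 2))])
rn_mask, tau = find_reliable_negatives(X_pos, X_unl)
```

Because the spies are known positives, the chosen percentile bounds how many true positives are expected to fall below τ: with the 5th percentile, roughly 5% of hidden positives would be misclassified as reliable negatives.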

Step 2: Protocol for Training the Final Classifier

This protocol uses the identified Reliable Negatives to construct a robust dataset for training the production synthesizability classifier.

Inputs:

- P: Original positive set.

- RN: Reliable Negatives identified in Step 1.

- U_remaining: The remaining unlabeled data (U \ RN).

Procedure:

- Final Dataset Construction: Create a ternary dataset for model training.

  - Positive Class: P.

  - Negative Class: RN.

  - Unlabeled Class (optional): U_remaining can be used in semi-supervised learning algorithms or held out for evaluation.

- Advanced Model Training: Train a final, more powerful classifier. Given the structured nature of molecular data, Graph Neural Networks (GNNs) like Message Passing Neural Networks (MPNNs) or Graph Convolutional Networks (GCNs) are highly suitable [17]. This model is trained on the (P, RN) dataset.

- Validation and Deployment: The final model can be deployed to score new molecules generated by de novo design systems (e.g., GCPN, GraphAF [17]) for their likelihood of being synthesizable, acting as a critical filter in a virtual screening pipeline.
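Step 2 reduces to ordinary supervised learning once RN is available. The sketch below assumes fixed-length descriptor vectors and swaps in a Random Forest for the GNN suggested above, purely to keep the example self-contained; `train_final_classifier` is a hypothetical name, and the synthetic arrays stand in for P, RN, and newly generated candidates.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score


def train_final_classifier(X_pos, X_rn, seed=0):
    """Train the final model on positives (1) vs. reliable negatives (0)."""
    X = np.vstack([X_pos, X_rn])
    y = np.concatenate([np.ones(len(X_pos)), np.zeros(len(X_rn))])
    clf = RandomForestClassifier(
        n_estimators=200, class_weight="balanced", random_state=seed
    )
    # Cross-validated AUC on (P, RN). This is optimistic, because RN contains only
    # the negatives that were easiest to separate from the positives.
    cv_auc = cross_val_score(clf, X, y, cv=5, scoring="roc_auc").mean()
    clf.fit(X, y)
    return clf, cv_auc


# Synthetic stand-ins for P and RN.
rng = np.random.default_rng(0)
X_pos = rng.normal(2.0, 1.0, size=(200, 2))
X_rn = rng.normal(-2.0, 1.0, size=(250, 2))
final_clf, cv_auc = train_final_classifier(X_pos, X_rn)

# Deployment: score new candidates by predicted probability of being synthesizable.
X_candidates = np.array([[2.0, 2.0], [-2.0, -2.0]])
candidate_scores = final_clf.predict_proba(X_candidates)[:, 1]
```

Holding out U_remaining, or a set of known positives that never entered Step 1, gives a more honest picture of recall than the (P, RN) cross-validation score alone.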

Figure 2: The iterative model training and refinement process for synthesizability prediction.

Quantitative Benchmarks and Data Presentation

To evaluate the efficacy of the Two-Step Strategy, it is crucial to benchmark its performance against baseline methods and across different chemical datasets. The following tables summarize key performance metrics from simulated experiments based on published literature [17] [18].

Table 1: Performance comparison of different classifier training strategies on synthesizability prediction. The Two-Step PU Learning strategy demonstrates superior accuracy and F1-score by effectively handling the unlabeled data.

| Training Strategy | Dataset | Accuracy | Precision | Recall | F1-Score |

|---|---|---|---|---|---|

| Naive (U as Negative) | ChEMBL | 0.72 | 0.65 | 0.81 | 0.72 |

| Naive (U as Negative) | ZINC250k | 0.68 | 0.61 | 0.85 | 0.71 |

| Two-Step PU Learning | ChEMBL | 0.89 | 0.85 | 0.88 | 0.86 |

| Two-Step PU Learning | ZINC250k | 0.91 | 0.87 | 0.90 | 0.88 |

Table 2: Impact of the Reliable Negative (RN) set quality on final model performance. A lower (stricter) probability threshold (τ) for selecting RNs yields a purer but smaller negative set, which generally leads to better model performance.

| RN Selection Threshold (τ) | Size of RN Set | RN Set Purity (%) | Final Model AUC |

|---|---|---|---|

| 5th Percentile | 45,200 | 94.5 | 0.94 |

| 10th Percentile | 82,150 | 89.2 | 0.91 |

| 20th Percentile | 155,000 | 81.8 | 0.85 |

The Scientist's Toolkit: Research Reagent Solutions

Implementing the Two-Step Strategy requires a suite of software tools and datasets. The table below details essential "research reagents" for this field.

Table 3: Essential software tools and datasets for implementing PU learning for synthesizability prediction.

| Tool / Resource | Type | Primary Function in Workflow | Source / Reference |

|---|---|---|---|

| RDKit | Open-Source Cheminformatics | Calculating molecular descriptors (LogP, MW, etc.); handling SMILES strings; basic molecular operations [19]. | https://www.rdkit.org |

| Therapeutics Data Commons (TDC) | Data Repository | Providing benchmark datasets for various drug discovery tasks, including synthesizability prediction [16]. | https://tdc.hms.harvard.edu |

| DeepGraphLearning | GitHub Repository | Code implementations for graph-based molecular property prediction and generation, providing GNN model architectures [17]. | GitHub Repository |

| DeepPurpose | GitHub Library | A deep learning toolkit for drug-target interaction prediction, adaptable for other property prediction tasks [16]. | GitHub Repository |

| MolDesigner | Interactive Tool | Provides a user interface for designing efficacious drugs with deep learning, useful for visualizing candidate molecules [16]. | Harvard Zitnik Lab |

| ReaSyn | Generative AI Model | Predicts molecular synthesis pathways using a chain of reaction notation; useful for validating and interpreting synthesizability predictions [18]. | NVIDIA |

The Two-Step Strategy for Identifying Reliable Negatives and Training Classifiers provides a principled and effective computational framework for addressing the critical challenge of material synthesizability prediction within the Positive-Unlabeled learning paradigm. By methodically extracting high-confidence negative examples from unlabeled data, this approach enables the training of robust classifiers that significantly outperform naive methods. Integrating this strategy with modern molecular representation learning techniques, such as graph neural networks, creates a powerful pipeline for prioritizing synthesizable drug candidates. This accelerates the early stages of drug discovery by ensuring that costly experimental resources are focused on the most viable and promising chemical matter, ultimately contributing to reducing the time and cost associated with bringing new therapeutics to patients [16] [18].

The discovery of new functional materials is a cornerstone of technological advancement, yet the experimental validation of computationally predicted materials remains a significant bottleneck. This challenge is particularly acute in the domain of solid-state synthesis, where the journey from a theoretical composition to a synthesized material is often non-trivial. While high-throughput computational screening can generate thousands of promising hypothetical compounds, their realization in the laboratory is constrained by synthesizability limitations. Traditional proxies for synthesizability, such as thermodynamic stability (e.g., energy above the convex hull), have proven insufficient as they fail to account for kinetic barriers and synthesis pathway dependencies [10] [1].

This case study examines a machine learning framework developed to predict the solid-state synthesizability of ternary oxides. The research addresses a fundamental problem in materials informatics: the absence of explicitly reported negative examples (failed syntheses) in scientific literature. By applying Positive-Unlabeled (PU) Learning to a high-quality, human-curated dataset, the work demonstrates a pathway to more reliable synthesizability prediction, bridging the gap between computational materials design and experimental realization [10] [1].

The Data Foundation: A Human-Curated Dataset

The performance of data-driven models is intrinsically linked to the quality of the underlying data. Many previous approaches relied on text-mined datasets, which, while large-scale, often suffer from quality issues. One analysis noted that the overall accuracy of a prominent text-mined solid-state reaction dataset was only 51% [1].

Data Collection and Curation Methodology

To address this limitation, researchers constructed a human-curated dataset of ternary oxides through meticulous manual extraction from the literature [1]. The protocol involved:

- Source Identification: 6,811 ternary oxide entries with Inorganic Crystal Structure Database (ICSD) IDs were initially downloaded from the Materials Project database.

- Filtering: After removing entries containing non-metal elements and silicon, 4,103 ternary oxide entries remained, representing 3,276 unique compositions from 1,233 chemical systems.

- Literature Mining: Each composition was investigated through ICSD, Web of Science, and Google Scholar. The search process included:

  - Examining papers corresponding to the ICSD IDs.

  - Reviewing the first 50 search results (sorted from oldest to newest) in Web of Science using the chemical formula as input.

  - Analyzing the top 20 relevant search results from Google Scholar with the chemical formula as input.

- Data Extraction: For each ternary oxide, researchers recorded whether it was synthesized via solid-state reaction. For confirmed solid-state syntheses, detailed parameters were extracted, including highest heating temperature, pressure, atmosphere, mixing/grinding conditions, number of heating steps, cooling process, precursors, and whether the product was single-crystalline.
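The extracted fields listed above map naturally onto a structured record. The sketch below is one possible layout, not the schema used by the dataset's authors: the class name `SynthesisRecord`, the field names, the temperature unit, and the label strings are all assumptions made for illustration, and the example values are illustrative rather than taken from the dataset.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical label strings mirroring the three dataset categories.
LABELS = {"solid_state", "non_solid_state", "undetermined"}

@dataclass
class SynthesisRecord:
    """One curated entry; parameter fields stay None unless a solid-state synthesis was confirmed."""
    formula: str
    label: str
    max_temperature_c: Optional[float] = None  # highest heating temperature (unit is an assumption)
    pressure: Optional[str] = None
    atmosphere: Optional[str] = None
    mixing: Optional[str] = None
    n_heating_steps: Optional[int] = None
    cooling: Optional[str] = None
    precursors: List[str] = field(default_factory=list)
    single_crystal: Optional[bool] = None

    def __post_init__(self):
        # Reject labels outside the three curated categories.
        if self.label not in LABELS:
            raise ValueError(f"unknown label: {self.label}")

# Illustrative values only, not an entry copied from the dataset.
example = SynthesisRecord(
    formula="BaTiO3",
    label="solid_state",
    max_temperature_c=1100.0,
    atmosphere="air",
    n_heating_steps=2,
    precursors=["BaCO3", "TiO2"],
    single_crystal=False,
)
```

Keeping the synthesis parameters optional lets the same record type hold entries from all three label categories.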

Dataset Composition

Table 1: Composition of the human-curated ternary oxides dataset.

| Label Category | Number of Entries | Description |
|---|---|---|
| Solid-State Synthesized | 3,017 | Successfully synthesized via solid-state reaction. |
| Non-Solid-State Synthesized | 595 | Synthesized, but via alternative methods (e.g., sol-gel, hydrothermal). |
| Undetermined | 491 | Insufficient evidence for definitive classification. |
| Total | 4,103 | |
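As a quick consistency check on Table 1, the category counts can be summed and converted to shares of the dataset. The dictionary keys are hypothetical names chosen for this snippet; the counts are those reported in the table.

```python
# Counts taken from Table 1; the key names are illustrative.
counts = {
    "solid_state": 3017,
    "non_solid_state": 595,
    "undetermined": 491,
}

total = sum(counts.values())

# Share of each category as a percentage of all entries, rounded to one decimal.
shares = {name: round(100 * n / total, 1) for name, n in counts.items()}

print(total)   # 4103
print(shares)  # {'solid_state': 73.5, 'non_solid_state': 14.5, 'undetermined': 12.0}
```

Roughly three quarters of the entries are confirmed solid-state syntheses, which is the pool later used as the positive class.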

This curated dataset provided a reliable foundation for analysis and model training, and it made it possible to identify inaccuracies in automated extraction methods. A simple screening against this dataset flagged 156 outliers in a 4,800-entry subset of a text-mined dataset; only 15% of these outliers had been extracted correctly [10] [1].

Positive-Unlabeled Learning Methodology

The PU Learning Paradigm

A fundamental challenge in predicting material synthesizability is the lack of confirmed negative examples. Scientific publications almost exclusively report successful syntheses, creating a dataset with confirmed positives and a large set of "unlabeled" examples whose true status (synthesizable or not) is unknown [1] [20]. Standard binary classifiers require both positive and negative examples, making them unsuitable for this problem.

Positive-Unlabeled (PU) Learning is a semi-supervised machine learning approach designed specifically for this scenario. It operates under the assumption that the unlabeled data contains both positive and negative examples, but the labels are hidden. The core idea is to learn the characteristics of the known positive class and use this knowledge to infer labels within the unlabeled set [20] [3].

Application to Ternary Oxides

In this study, the human-curated dataset was used to train a PU learning model. The 3,017 solid-state synthesized entries served as the positive (P) class. The role of the unlabeled (U) class was filled by a large set of hypothetical compositions or materials not confirmed to be synthesized via solid-state routes. The model's objective was to identify, from the unlabeled set, those compositions that share characteristic patterns with the known positive examples, thereby classifying them as likely synthesizable [10].

The model leverages a machine learning algorithm (e.g., a classifier based on decision trees or neural networks) and is trained to distinguish the positive examples from the unlabeled set. During this process, it implicitly learns to identify reliable negative examples from the unlabeled data based on their dissimilarity to the positives, refining its decision boundary iteratively [20] [3].

Figure 1: Positive-Unlabeled (PU) learning workflow for synthesizability prediction. The model iteratively identifies reliable negatives from the unlabeled data to refine its decision boundary.
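The idea of extracting reliable negatives and then training a classifier can be illustrated with a minimal two-step sketch on synthetic two-dimensional data. This is not the model used in the study: the distance-to-centroid rule for picking reliable negatives, the plain logistic regression, the single (non-iterative) pass, and all function names are assumptions chosen to keep the example dependency-free.

```python
import math
import random

def centroid(points):
    """Component-wise mean of a list of equal-length feature vectors."""
    n = len(points)
    return [sum(p[i] for p in points) / n for i in range(len(points[0]))]

def distance(a, b):
    """Euclidean distance between two feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def reliable_negatives(positives, unlabeled, fraction=0.25):
    """Step 1: take the unlabeled points farthest from the positive centroid."""
    c = centroid(positives)
    ranked = sorted(unlabeled, key=lambda u: distance(u, c), reverse=True)
    return ranked[: max(1, int(fraction * len(unlabeled)))]

def sigmoid(z):
    z = max(-30.0, min(30.0, z))  # clamp to avoid overflow in exp
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(pos, neg, epochs=200, lr=0.1):
    """Step 2: logistic regression on positives vs. reliable negatives (plain SGD)."""
    data = [(x, 1.0) for x in pos] + [(x, 0.0) for x in neg]
    w, b = [0.0] * len(pos[0]), 0.0
    for _ in range(epochs):
        for x, y in data:
            err = sigmoid(b + sum(wi * xi for wi, xi in zip(w, x))) - y
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
            b -= lr * err
    return w, b

def predict_proba(model, x):
    w, b = model
    return sigmoid(b + sum(wi * xi for wi, xi in zip(w, x)))

def blob(cx, cy, n):
    """Synthetic cluster standing in for composition feature vectors."""
    return [[random.gauss(cx, 0.5), random.gauss(cy, 0.5)] for _ in range(n)]

random.seed(0)
positives = blob(2.0, 2.0, 30)      # labeled positives (P)
hidden_pos = blob(2.0, 2.0, 20)     # synthesizable but unlabeled
hidden_neg = blob(-2.0, -2.0, 40)   # true negatives, also unlabeled
unlabeled = hidden_pos + hidden_neg  # the U set seen by the model

rn = reliable_negatives(positives, unlabeled)
model = train_logistic(positives, rn)
likely = [u for u in unlabeled if predict_proba(model, u) > 0.5]
```

An iterative variant would repeat both steps, using the current classifier's lowest-scoring unlabeled points as the next round of reliable negatives.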

Experimental Protocols and Workflow

Feature Set and Model Training

The model was trained using features derived from the chemical compositions of the ternary oxides. While the specific feature set was not exhaustively detailed, such models typically incorporate descriptors such as [1] [3]:

- Elemental Properties: Electronegativity, ionic radii, atomic number, and valence electron configurations of the constituent elements.

- Stoichiometric Metrics: Cation-cation ratios, oxygen content, and overall composition ratios.

- Stability Indicators: Computed metrics like energy above the convex hull (Ehull), though the PU model aims to go beyond these traditional measures.

- Learned Representations: Vector embeddings for atoms or compositions learned directly from the data distribution (e.g., via methods like atom2vec) [3].
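To make the first two descriptor families concrete, the sketch below derives a few composition features from a chemical formula. It is an illustration only, since the study's exact feature set is not detailed: the function names, the choice of features, and the small electronegativity table (Pauling values for a handful of elements) are assumptions, and the parser handles only flat formulas without parentheses.

```python
import re

# Pauling electronegativities for a small illustrative subset of elements.
ELECTRONEGATIVITY = {"O": 3.44, "Ti": 1.54, "Ba": 0.89, "Sr": 0.95, "Li": 0.98, "Fe": 1.83}

def parse_formula(formula):
    """Parse a flat formula such as 'BaTiO3' into {element: count}."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[element] = counts.get(element, 0.0) + (float(number) if number else 1.0)
    return counts

def featurize(formula):
    """Return a few composition-level descriptors for a ternary oxide."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    cations = {el: n for el, n in counts.items() if el != "O"}
    en_values = [ELECTRONEGATIVITY[el] for el in counts]
    return {
        # Stoichiometric metrics
        "oxygen_fraction": counts.get("O", 0.0) / total,
        "cation_ratio": max(cations.values()) / min(cations.values()),
        # Elemental-property statistics
        "mean_electronegativity": sum(ELECTRONEGATIVITY[el] * n for el, n in counts.items()) / total,
        "electronegativity_range": max(en_values) - min(en_values),
    }

features = featurize("BaTiO3")
```

A production pipeline would typically draw elemental properties from a complete reference table rather than a hand-written dictionary.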

The training process involves a cross-validation scheme to optimize hyperparameters and prevent overfitting, ensuring the model generalizes well to unseen compositions.
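Because no verified negatives are available, one common way to validate a PU model is to hold out some known positives in each fold and measure how many of them the model recovers. The sketch below shows only the splitting and scoring logic; the function names, the fold construction, and the 0.5 threshold are assumptions, and the study's actual cross-validation protocol may differ.

```python
import random

def kfold_indices(n, k, seed=0):
    """Shuffle indices 0..n-1 and return k (train, test) index splits."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    splits = []
    for i in range(k):
        test = folds[i]
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        splits.append((train, test))
    return splits

def heldout_recall(scores, threshold=0.5):
    """Fraction of held-out positives scored at or above the threshold."""
    return sum(s >= threshold for s in scores) / len(scores)

splits = kfold_indices(10, 5)
recall = heldout_recall([0.9, 0.4, 0.7, 0.6])  # 3 of 4 held-out positives recovered
```

Held-out recall measures only how well known positives are recovered; it says nothing about how many unlabeled entries are wrongly flagged, so it should be read alongside the fraction of unlabeled data predicted positive.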

Validation and Benchmarking

The model's performance was evaluated against established baselines. Key benchmarks included [1] [3]:

- Random Guessing: A baseline assuming random predictions weighted by class imbalance.

- Charge-Balancing: A simple heuristic predicting synthesizability based on whether the composition can be charge-balanced using common oxidation states.

- Stability Metrics: Using thermodynamic stability (e.g., Ehull) alone as a synthesizability filter.
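The charge-balancing baseline is simple enough to sketch directly. The version below checks whether any assignment of common oxidation states sums to zero net charge. The oxidation-state table is a small illustrative subset, the function name is hypothetical, and the sketch assumes each element takes a single oxidation state per compound (no mixed valence).

```python
from itertools import product

# Common oxidation states for a small illustrative subset of elements.
COMMON_OXIDATION_STATES = {
    "O": [-2],
    "Li": [1],
    "Ba": [2],
    "Sr": [2],
    "Ti": [4, 3],
    "Fe": [2, 3],
}

def is_charge_balanced(counts):
    """Return True if some combination of common oxidation states gives zero net charge.

    `counts` maps element symbols to integer stoichiometric coefficients.
    """
    elements = list(counts)
    options = [COMMON_OXIDATION_STATES[el] for el in elements]
    for states in product(*options):
        if sum(state * counts[el] for state, el in zip(states, elements)) == 0:
            return True
    return False

print(is_charge_balanced({"Ba": 1, "Ti": 1, "O": 3}))  # True: +2 +4 -6 = 0
print(is_charge_balanced({"Ba": 1, "Ti": 1, "O": 4}))  # False: no combination reaches 0
```

A heuristic of this kind is cheap to evaluate but rigid, which is consistent with the limited coverage reported for charge-balancing in Table 2.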

Table 2: Comparative performance of synthesizability prediction methods.

| Prediction Method | Key Metric | Performance Note |
|---|---|---|
| PU Learning Model (This Study) | Precision | 7x higher precision than formation energy-based methods [3]. |
| Charge-Balancing Heuristic | Coverage | Only 37% of known synthesized inorganic materials are charge-balanced [3]. |
| Text-Mined Data Model | Data Quality | 156 outliers found in a subset; only 15% of these outliers were correct [10] [1]. |
| Human Experts | Precision & Speed | Outperformed 20 experts with 1.5x higher precision and 10⁵ times faster speed [3]. |

The results demonstrated that the PU learning model significantly outperformed these traditional approaches, highlighting its efficacy for the synthesizability prediction task.

Key Findings and Interpretation

Prediction Outcomes and Model Insights

Applying the trained PU learning model to 4,312 hypothetical ternary oxide compositions identified 134 compounds as highly likely to be synthesizable via solid-state reactions [10]. This shortlist, about 3% of the screened compositions, gives experimentalists a prioritized set of targets and sharply reduces the experimental search space.

Notably, without being explicitly programmed with chemical rules, the model internalized fundamental principles of inorganic chemistry. Analysis indicated that the model learned the importance of charge-balancing, recognized relationships within chemical families, and inferred principles of ionicity from the distribution of the positive training examples [3]. This demonstrates the power of data-driven approaches to capture complex, expert-level knowledge.

Limitations and Considerations

Despite its success, the approach has inherent limitations. The "unlabeled" set mixes genuinely unsynthesizable materials with synthesizable materials that simply have not been reported or attempted, and the model cannot separate the two groups with certainty. Consequently, some false positives are inevitable. Furthermore, the model's predictive power is confined to the chemical domain represented in its training data (here, ternary oxides) and may not generalize to other material classes without retraining [1] [3].

Table 3: Essential resources for computational and experimental research in solid-state synthesizability.

| Tool / Resource | Function / Application | Specific Example / Source |
|---|---|---|
| Materials Project Database | Source of crystal structures and computed properties for high-throughput screening. | https://materialsproject.org/ [1] |
| Inorganic Crystal Structure Database (ICSD) | Authoritative source of experimentally reported inorganic crystal structures for positive data labeling. | https://icsd.fiz-karlsruhe.de/ [1] [3] |
| Human-Curated Dataset | High-quality, reliable data for training and validating synthesizability models. | Dataset of 4,103 ternary oxides [10] [1] |
| PU Learning Algorithm | Core machine learning framework for learning from positive and unlabeled data. | Inductive PU learning approach [10] [20] |
| Solid-State Synthesis Apparatus | Experimental validation of predicted compositions (furnace, mortar & pestle, etc.). | Tube furnaces, high-pressure setups [1] |

This case study demonstrates that combining high-quality, human-curated data with the Positive-Unlabeled learning framework creates a powerful tool for synthesizability prediction in materials discovery. By moving beyond traditional thermodynamic proxies and learning directly from experimental records, the approach achieves higher predictive precision and guides experimental effort more efficiently. The identification of 134 promising ternary oxide candidates underscores the potential of PU learning to accelerate the discovery and synthesis of novel functional materials, bridging the gap between computational prediction and experimental realization.

The acceleration of materials discovery through computational methods has created a critical bottleneck: the experimental validation of theoretically predicted crystal structures. While high-throughput calculations can generate millions of candidate materials with promising properties, assessing their synthesizability remains a fundamental challenge. Traditional approaches based on thermodynamic stability metrics, such as energy above the convex hull, often fail to accurately predict which structures can be successfully synthesized in practice, as numerous metastable structures with less favorable formation energies have been experimentally realized [21].

This case study examines a transformative approach to this problem: the Crystal Synthesis Large Language Models (CSLLM) framework. Developed to bridge the gap between theoretical prediction and practical synthesis, CSLLM represents a significant advancement in applying fine-tuned large language models to predict synthesizability, synthetic methods, and suitable precursors for arbitrary 3D crystal structures [21]. We situate this approach within the broader context of positive-unlabeled (PU) learning research for material synthesizability prediction, highlighting how the CSLLM framework leverages sophisticated data construction techniques to overcome the fundamental challenge of obtaining reliable negative samples (non-synthesizable materials) in materials science.

The Synthesizability Prediction Challenge

Limitations of Traditional Methods

Conventional synthesizability assessment relies primarily on thermodynamic and kinetic stability analyses. Formation energies and energies above the convex hull, computed with density functional theory (DFT), provide a foundational approach: structures with favorable formation energies are typically considered synthesizable. However, this method achieves only approximately 74.1% accuracy, because many structures with favorable thermodynamics remain unsynthesized while various metastable structures have been synthesized [21]. Kinetic stability assessment through phonon spectrum analysis performs better (approximately 82.2% accuracy) but is computationally expensive and still imperfect, since structures with imaginary phonon frequencies can nevertheless be synthesized [21].

The core challenge in data-driven synthesizability prediction lies in constructing balanced datasets with reliable negative samples. Early machine learning approaches treated structures with unknown synthesizability as negative examples, inevitably introducing numerous synthesizable structures into the negative class [21]. More advanced PU learning methods have demonstrated promising results, achieving 87.9% accuracy for 3D crystals [21], while teacher-student dual neural networks further improved performance to 92.9% [21]. Parallel research on ternary oxides has demonstrated the value of human-curated literature data for training PU learning models, identifying numerous inaccuracies in automated text-mined datasets [10] [11].

The LLM Opportunity

Large language models present a unique opportunity to overcome these limitations through their exceptional capabilities in learning from text representations and complex patterns. Unlike traditional machine learning models, LLMs can process integrated structural information and learn the subtle relationships between crystal features and synthesizability. The CSLLM framework capitalizes on these capabilities through specialized model fine-tuning that aligns general linguistic features with material-specific characteristics critical to synthesizability [21].

The CSLLM Framework: Methodology and Implementation

Data Curation and Representation