Phase Stability Networks: A New Paradigm for Materials Science and Drug Development


Abstract

This article explores the phase stability network of inorganic materials as a transformative framework for understanding material reactivity and thermodynamic relationships. Moving beyond traditional atoms-to-materials approaches, we examine how complex network analysis of thousands of stable compounds reveals previously inaccessible characteristics. For researchers and drug development professionals, we detail methodological advances from high-throughput computation and machine learning, address key challenges in stability prediction and optimization, and validate these approaches through comparative analysis with experimental data. This synthesis provides critical insights for accelerating materials design and enhancing drug product stability in clinical development.

Unraveling the Universal Phase Stability Network of Inorganic Materials

::: {.abstract}
A fundamental transformation is underway in materials science: a shift from a traditional, atomistic, trial-and-error approach to a holistic, network-based, AI-driven paradigm. This whitepaper details this shift in the context of the phase stability network of all inorganic materials. We present quantitative network metrics, outline experimental and computational protocols for high-throughput data generation, and survey the generative AI models that are accelerating the inverse design of novel materials for research and drug development.
:::

Historically, materials discovery has been an experiment-driven process, reliant on intuition and painstaking laboratory work, with timelines that often span decades from conception to deployment [1] [2]. Efforts to unlock structure-property relationships have largely been bottom-up investigations of atoms and bonding. A complementary, top-down approach is now emerging: the study of the organizational structure of networks of materials themselves [3]. This paradigm treats entire materials, rather than atoms, as the fundamental units and analyzes their equilibrium relationships as a network. It reveals the complete "phase stability network of all inorganic materials" as a densely connected complex network of thousands of thermodynamically stable compounds (nodes) interlinked by millions of tie-lines (edges) that define their two-phase equilibria [3]. Analyzing the topology of this network uncovers characteristics inaccessible from traditional atoms-to-materials paradigms, such as a data-driven metric for material reactivity known as the "nobility index" [3].

Quantitative Frameworks: The Phase Stability Network

The phase stability network maps the relationships between inorganic materials at a systems level and is a major achievement of computational materials science. The key quantitative characteristics of this network, derived from high-throughput density functional theory (DFT) calculations, are summarized in Table 1 [3].

Table 1: Quantitative Metrics of the Phase Stability Network

| Network Metric | Quantitative Value | Significance |
|---|---|---|
| Stable Compounds (Nodes) | 21,000 | The set of thermodynamically stable inorganic materials forming the network's basis. |
| Tie-Lines (Edges) | 41 million | Represent two-phase equilibria between compounds, defining the network's connectivity. |
| Key Derived Metric | Nobility Index | A quantitative, data-driven measure of material reactivity derived from node connectivity. |

The Scientist's Toolkit: Research Reagent Solutions

The construction and interrogation of the phase stability network, along with the subsequent generative design of new materials, rely on a suite of advanced computational and data tools. These are the essential "research reagents" for modern, data-driven materials science.

Table 2: Essential Research Reagents for AI-Driven Materials Discovery

| Tool / Reagent | Type | Primary Function |
|---|---|---|
| High-Throughput DFT | Computational Method | Generates foundational energy and stability data for thousands of compounds from first-principles quantum-mechanical calculations [3] [1]. |
| Curated Materials Databases | Data Resource | Provide structured, accessible repositories of experimental and computational data for model training and validation [1]. |
| Generative AI Models (e.g., GFlowNets, VAEs, Diffusion Models) | AI Software | Enable inverse design by learning probability distributions of material structures to generate novel, stable candidates matching desired properties [1]. |
| Machine-Learned Potentials (MLPs) | Computational Model | Combine near-DFT accuracy with the scale of molecular dynamics, allowing realistic simulation of material behavior under various conditions [1]. |
| Validation Platforms (e.g., MatterSim) | AI Simulation Software | Act as a gatekeeper, applying rigorous computational analysis to predict the stability and viability of AI-generated materials under real-world conditions (e.g., temperature, pressure) [2]. |

Experimental & Computational Protocols

The shift to a network- and AI-driven paradigm requires robust, standardized methodologies for data generation, model training, and material validation.

Protocol for High-Throughput Data Generation and Network Construction

- High-Throughput DFT Calculation: Using an automated high-throughput framework (such as those behind OQMD, the Materials Project, or AFLOW), perform first-principles DFT calculations on a vast space of potential inorganic compounds to determine their formation energies and thermodynamic stability [3] [1].

- Tie-Line Identification: For each chemical space, construct the convex hull of formation energies. Two stable compounds are joined by a tie-line (edge) if they are in stable two-phase equilibrium, i.e., if the segment between them is an edge of the lower convex hull (in a binary system, if they are adjacent hull vertices) [3].

- Network Assembly: Represent each stable compound as a node and each identified tie-line as an edge, constructing the graph structure of the phase stability network.

- Topological Analysis: Apply graph theory algorithms to the assembled network to compute node connectivity, centrality measures, and derive emergent metrics like the nobility index [3].

- Data Reporting: Adhere to standardized reporting guidelines for numerical data, including estimates of both statistical imprecision and systematic inaccuracy, and provide comprehensive descriptions of computational procedures to ensure reproducibility [4].
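The construction steps can be illustrated on the smallest possible case. The sketch below assumes a single binary A-B system with made-up formation energies (one lowest-energy entry per composition); it finds the lower convex hull, treats adjacent hull vertices as tie-lines, and assembles the resulting nodes and edges into an adjacency structure. Real networks repeat this over every chemical space and, beyond binaries, take tie-lines from the edges of higher-dimensional hull facets, which this toy version does not attempt.

```python
from collections import defaultdict

# Hypothetical binary A-B system: (name, mole fraction x_B, formation energy in eV/atom).
# Assumes one (lowest-energy) entry per composition; values are illustrative only.
PHASES = [
    ("A", 0.0, 0.0),
    ("A3B", 0.25, -0.10),
    ("AB", 0.5, -0.50),
    ("AB3", 0.75, -0.30),
    ("B", 1.0, 0.0),
]


def cross(o, a, b):
    """z-component of (a - o) x (b - o); positive means a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(phases):
    """Lower convex hull of (x, energy) points via Andrew's monotone chain."""
    hull = []
    for name, x, e in sorted(phases, key=lambda p: (p[1], p[2])):
        # Remove the last vertex while it lies on or above the chord to the new point.
        while len(hull) >= 2 and cross(hull[-2][1:], hull[-1][1:], (x, e)) <= 0:
            hull.pop()
        hull.append((name, x, e))
    return hull


def tie_lines(hull):
    """In a binary system, consecutive hull vertices coexist in two-phase equilibrium."""
    return [(hull[i][0], hull[i + 1][0]) for i in range(len(hull) - 1)]


def build_network(edges):
    """Adjacency sets: nodes are stable phases, edges are tie-lines."""
    adj = defaultdict(set)
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    return adj


hull = lower_hull(PHASES)
edges = tie_lines(hull)
network = build_network(edges)
degrees = {node: len(nbrs) for node, nbrs in network.items()}
print("stable phases:", [p[0] for p in hull])  # A3B lies above the hull
print("tie-lines:", edges)
print("degrees:", degrees)
```

The monotone-chain algorithm is used only because it is the simplest exact hull in two dimensions; for multicomponent spaces a general convex-hull routine (e.g., Qhull) would take its place.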

Protocol for Generative Inverse Design using AI

- Material Representation: Convert known material structures into a suitable digital representation for AI models, such as graph-based formats (atom-bond graphs), sequence-based (e.g., SMILES), or voxel-based grids [1].

- Model Training: Train a generative model (e.g., a Generative Flow Network (GFlowNet) or a Variational Autoencoder (VAE)) on the represented materials data. The model learns the underlying probability distribution $P(x)$ of the training data, creating a latent space that encodes structure-property relationships [1].

- Property-Guided Generation: Sample the model's latent space, guided by user-defined property constraints (e.g., high thermal conductivity, target bandgap). The model decodes these points in latent space to propose novel, stable material structures that meet the criteria [2].

- Stability and Property Validation: Use independent validation tools to apply rigorous computational checks on the generated candidates. This filters out theoretically unstable or non-viable suggestions before experimental synthesis is attempted [2].

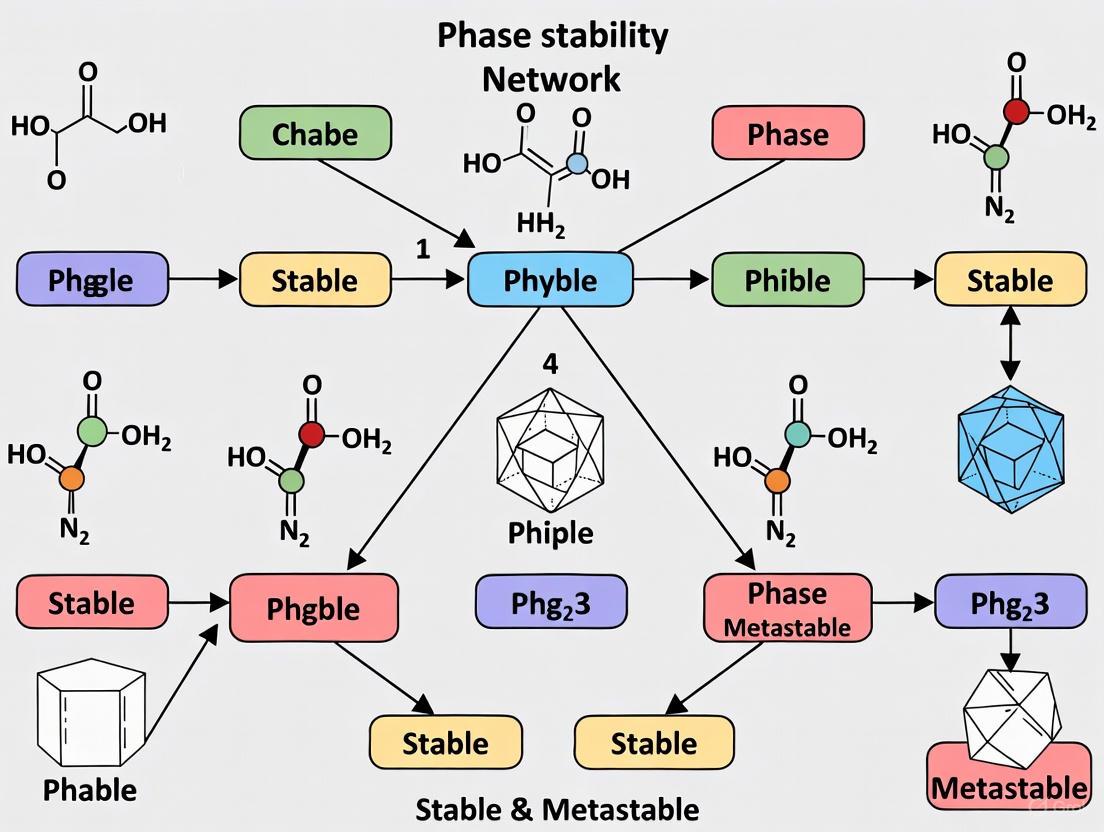

Diagram 1: The shift from traditional, sequential discovery to an integrated, AI-driven workflow.

AI Generative Models: The Engine of Inverse Design

Generative models represent the core engine of the new inverse design capability, moving beyond simple property prediction to the creation of novel materials. As detailed in Table 3, a diverse ecosystem of models exists, each with distinct principles and applications in materials science [1].

Table 3: Generative AI Models for Materials Discovery

| Model Type | Key Principle | Example in Materials Science | Application |
|---|---|---|---|
| Variational Autoencoders (VAEs) | Learn a probabilistic latent space of data; new data is generated by sampling from this space and decoding [1]. | Used for generating novel molecular structures. | Designing new organic molecules and catalysts. |
| Generative Adversarial Networks (GANs) | Use a generator network to create data and a discriminator network to distinguish real from generated data, training adversarially [1]. | Applied to generate crystal structures and optimize material properties. | Discovering new crystalline compounds. |
| Diffusion Models | Iteratively denoise a random signal to generate new data samples that match the training data distribution. | DiffCSP, SymmCD for crystal structure prediction [1]. | Predicting stable crystal structures from noise. |
| Generative Flow Networks (GFlowNets) | Learn a policy to generate compositional objects through a sequence of actions, biasing generation towards high-reward (e.g., high stability) candidates [1]. | Crystal-GFN for generating stable crystals [1]. | Composition-based discovery of inorganic materials. |
| Transformers | Use self-attention mechanisms to understand context and relationships in sequential data. | MatterGPT, Space Group Informed Transformer for generating materials [1]. | Sequence-based design of polymers and molecules. |

Diagram 2: The iterative workflow of AI-driven inverse materials design.

The paradigm shift from atoms to networks, powered by high-throughput computation and generative AI, is restructuring materials science. The phase stability network provides a macroscopic lens through which to understand material relationships and reactivity, while generative models like MatterGen enable the direct, rational design of new materials. This synergistic approach, integrating network theory with AI-driven inverse design, can substantially shorten the discovery timeline. It holds promise for addressing global challenges in sustainability, healthcare, and energy by rapidly delivering advanced materials for applications ranging from drug delivery systems to next-generation batteries.

:::
:::
::: {.footer}
This technical whitepaper synthesizes findings from current peer-reviewed literature and cutting-edge industrial research.
:::

The phase stability network represents a transformative, top-down approach for understanding the relationships between inorganic crystalline materials. Moving beyond traditional bottom-up investigations of atomic structure, this network-based framework models the complete thermodynamic stability landscape of inorganic materials [3]. This paradigm shift allows researchers to uncover material characteristics and reactivity metrics that remain inaccessible through conventional atoms-to-materials paradigms [3]. The construction of this network marks a significant milestone in materials science, enabling systematic exploration of material reactivity and stability across chemical space.

This network approach is particularly valuable for accelerating the design of functional materials essential for technological advances in energy storage, catalysis, and carbon capture [5]. By mapping the complex equilibrium relationships between thousands of compounds, researchers can identify novel materials with desired properties more efficiently than through traditional experimentation and human intuition alone [5]. The phase stability network thus serves as a foundational resource for inverse materials design, where target properties constrain the search for new stable compounds.

Core Network Architecture and Construction

Data Source and Computational Framework

The phase stability network is constructed from first-principles data generated through high-throughput density functional theory (DFT) calculations [3]. This dataset encompasses 21,000 thermodynamically stable inorganic compounds (nodes) interconnected by 41 million tie-lines (edges) representing their two-phase equilibria [3]. The network is a complex, densely connected graph in which nodes correspond to stable compounds and edges represent computed thermodynamic coexistence relationships.

In the generative-design work of [5], the reference data for stability determinations are drawn from comprehensive materials databases including:

- The Materials Project (MP) [5]

- Alexandria dataset [5]

- Inorganic Crystal Structure Database (ICSD) [5]

Stability is quantified by the energy above the convex hull, with structures generally considered stable if their energy per atom after DFT relaxation is within 0.1 eV per atom above the convex hull defined by reference datasets [5].

Quantitative Network Specifications

Table 1: Core Network Architecture Specifications

| Network Component | Specification | Description |
|---|---|---|
| Nodes | 21,000 | Thermodynamically stable inorganic compounds |
| Edges | 41 million | Tie-lines defining two-phase equilibria |
| Stability Criterion | On the convex hull (ΔE_hull = 0) | Nodes lie on the hull; the ≤0.1 eV/atom tolerance in [5] is used to classify generated structures |
| Data Source | High-throughput DFT | Density functional theory calculations |
| Network Type | Complex, densely connected | Non-random topological structure |

Key Methodologies and Experimental Protocols

High-Throughput Density Functional Theory

High-throughput DFT calculations provide the foundational data for constructing phase stability networks. The standard workflow involves:

Structure Relaxation Protocol:

- Initialization: Begin with crystallographic information files (CIFs) from reference databases

- Electronic Structure Calculation: Employ plane-wave basis sets with pseudopotentials

- Geometry Optimization: Iteratively relax atomic positions, cell shape, and volume until forces converge below 0.01 eV/Å

- Energy Calculation: Compute final total energy for the fully relaxed structure

- Validation: Compare calculated lattice parameters with experimental values where available
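The force criterion in the relaxation protocol reduces to a simple check. The sketch assumes per-atom Cartesian forces are already available in eV/Å (the values below are made up) and applies one common convention, the largest per-atom force magnitude. It does not check cell stresses, which a variable-cell relaxation would also need to converge.

```python
import math

# Hypothetical per-atom forces (eV/Angstrom) from the last ionic step.
FORCES = [
    (0.004, -0.002, 0.001),
    (-0.006, 0.007, 0.000),
    (0.000, 0.000, -0.003),
]


def max_force(forces):
    """Largest per-atom force magnitude |F_i| in eV/Angstrom."""
    return max(math.sqrt(fx * fx + fy * fy + fz * fz) for fx, fy, fz in forces)


def is_converged(forces, fmax=0.01):
    """True when every atom's force magnitude is below fmax (eV/Angstrom)."""
    return max_force(forces) < fmax


print(f"max |F| = {max_force(FORCES):.4f} eV/A, converged: {is_converged(FORCES)}")
```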

Stability Assessment:

- Reference State Selection: Identify all competing phases in the relevant chemical space

- Convex Hull Construction: Calculate the lower convex envelope of formation energies

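- Energy Above Hull: Compute $\Delta E_{\text{hull}} = E_{\text{material}} - E_{\text{hull}}$ for each compound, where $E_{\text{hull}}$ is the hull energy at the compound's composition

- Stability Classification: Designate materials with $\Delta E_{\text{hull}} = 0$ (on the hull) as stable; a tolerance such as $\Delta E_{\text{hull}} \le 0.1$ eV/atom is often used to flag near-hull (metastable) candidates, as in [5]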

This methodology ensures consistent thermodynamic data across the entire network, enabling robust stability comparisons between diverse material systems.
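A sketch of the energy-above-hull step, again for a single hypothetical binary system with made-up energies. It builds the lower hull, linearly interpolates the hull energy at each composition, and labels each phase as on the hull (a network node), near the hull (within the 0.1 eV/atom tolerance), or unstable. The phase names, energies, and the small numerical slack `ON_HULL_TOL` are illustrative assumptions.

```python
# Hypothetical binary A-B data: (name, x_B, formation energy in eV/atom).
PHASES = [
    ("A", 0.0, 0.0),
    ("A3B", 0.25, -0.10),
    ("AB", 0.5, -0.50),
    ("AB2", 2 / 3, -0.35),
    ("AB3", 0.75, -0.30),
    ("B", 1.0, 0.0),
]
NEAR_HULL_TOL = 0.1  # eV/atom, tolerance for "near hull" candidates
ON_HULL_TOL = 1e-9   # numerical slack for "exactly on the hull"


def lower_hull(points):
    """Lower convex hull of (x, e) points via Andrew's monotone chain."""
    hull = []
    for x, e in sorted(points):
        while len(hull) >= 2:
            (x0, e0), (x1, e1) = hull[-2], hull[-1]
            # Drop (x1, e1) unless it lies strictly below the chord (x0, e0) -> (x, e).
            if (x1 - x0) * (e - e0) - (e1 - e0) * (x - x0) <= 0:
                hull.pop()
            else:
                break
        hull.append((x, e))
    return hull


def hull_energy(hull, x):
    """Hull energy at composition x by linear interpolation between hull vertices."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            return e0 + (e1 - e0) * (x - x0) / (x1 - x0)
    raise ValueError(f"composition {x} lies outside the hull")


hull = lower_hull([(x, e) for _, x, e in PHASES])
# Clamp tiny negative round-off to zero: no phase can lie below the lower hull.
e_above_hull = {name: max(0.0, e - hull_energy(hull, x)) for name, x, e in PHASES}
labels = {
    name: "on hull" if de <= ON_HULL_TOL else "near hull" if de <= NEAR_HULL_TOL else "unstable"
    for name, de in e_above_hull.items()
}
for name, de in e_above_hull.items():
    print(f"{name:4s} dE_hull = {de:.3f} eV/atom -> {labels[name]}")
```

Only the on-hull phases would become nodes of a phase stability network; the near-hull class corresponds to the looser tolerance used when screening generated structures.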

Network Analysis and the Nobility Index

The topology of the phase stability network enables the derivation of quantitative metrics for material reactivity. The "nobility index" is calculated from the connectivity of nodes within the network [3]. Because each tie-line marks a phase with which a material can coexist without reacting, materials with more tie-lines to other stable phases are more resistant to chemical transformation, analogous to the noble metals in traditional chemistry.

The protocol for nobility index determination involves:

- Degree Centrality Calculation: Quantify the number of direct connections (edges) for each node

- Network Community Analysis: Identify clusters of highly interconnected materials

- Betweenness Centrality Assessment: Measure how often a node appears on shortest paths between other nodes

- Metric Validation: Compare nobility rankings with experimental corrosion resistance data

This data-driven approach successfully identifies the noblest materials in nature based solely on their topological position within the phase stability network [3].
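The connectivity measures in the protocol can be computed with a few lines of standard-library Python. The sketch below uses a hypothetical six-node tie-line graph with placeholder names; it computes normalized degree centrality and betweenness centrality (Brandes' algorithm) and ranks nodes by degree as a simple connectivity proxy. This proxy is an illustration only, not the published definition of the nobility index in [3], and community detection is omitted.

```python
from collections import deque

# Hypothetical tie-line graph; node names are placeholders, not real phases.
EDGES = [("A", "B"), ("A", "C"), ("A", "D"), ("A", "E"), ("B", "C"), ("D", "E"), ("E", "F")]

adj = {}
for u, v in EDGES:
    adj.setdefault(u, set()).add(v)
    adj.setdefault(v, set()).add(u)


def degree_centrality(adj):
    """Degree normalized by the N - 1 possible partners."""
    n = len(adj)
    return {v: len(nbrs) / (n - 1) for v, nbrs in adj.items()}


def betweenness(adj):
    """Brandes' algorithm for unweighted, undirected graphs (unnormalized)."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        order, preds = [], {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)  # number of shortest s-v paths
        dist = dict.fromkeys(adj, -1)
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:  # breadth-first search from s
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(adj, 0.0)
        for w in reversed(order):  # accumulate dependencies, farthest nodes first
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return {v: b / 2 for v, b in bc.items()}  # each undirected pair was counted twice


dc = degree_centrality(adj)
bc = betweenness(adj)
ranking = sorted(adj, key=lambda v: (-dc[v], v))
for v in ranking:
    print(f"{v}: degree centrality {dc[v]:.2f}, betweenness {bc[v]:.1f}")
```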

Generative Models for Inverse Materials Design

Recent advances use the same convex-hull stability data that underlie phase stability networks to train and evaluate generative models that design novel stable materials. MatterGen is a state-of-the-art diffusion-based generative model that creates stable, diverse inorganic materials across the periodic table [5].

The MatterGen workflow comprises:

Diffusion Process for Crystalline Materials:

- Representation: Define materials by unit cell (atom types A, coordinates X, periodic lattice L)

- Corruption Process: Apply customized noise to each component with physically motivated limiting distributions

- Reverse Process: Train score network to denoise structures while respecting crystal symmetries

- Sampling: Generate novel structures by reversing the corruption process from random noise
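The coordinate part of the corruption process can be sketched as follows. This is a simplified illustration, not MatterGen's implementation: it assumes fractional coordinates are perturbed with Gaussian noise and wrapped back into the unit cell (a wrapped normal, so periodic images are respected), and it leaves out the atom-type and lattice components and any noise schedule. The two-atom cell and the noise scales are hypothetical.

```python
import random


def noise_fractional_coords(frac_coords, sigma, rng):
    """Perturb fractional coordinates with Gaussian noise, wrapped into the unit cell.

    Wrapping (mod 1) respects periodicity: an atom pushed through one cell face
    re-enters through the opposite face. As sigma grows, the wrapped normal
    approaches a uniform distribution over the cell, a natural limiting
    distribution for the coordinates.
    """
    return [tuple((c + rng.gauss(0.0, sigma)) % 1.0 for c in atom) for atom in frac_coords]


def min_image_shift(a, b):
    """Per-axis minimum-image displacement from fractional position a to b."""
    return tuple(((y - x + 0.5) % 1.0) - 0.5 for x, y in zip(a, b))


rng = random.Random(0)
x0 = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)]  # hypothetical two-atom cell
for sigma in (0.01, 0.1, 1.0):
    xt = noise_fractional_coords(x0, sigma, rng)
    largest = max(abs(d) for a, b in zip(x0, xt) for d in min_image_shift(a, b))
    print(f"sigma={sigma}: largest per-axis displacement {largest:.3f}")
```

The reverse (denoising) step would be learned by the score network described above; the sketch only shows why the coordinate noise must be periodic.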

Fine-tuning for Property Optimization:

- Adapter Modules: Inject tunable components into base model for property conditioning

- Classifier-Free Guidance: Steer generation toward target properties (chemistry, symmetry, electronic properties)

- Validation: Assess stability through DFT relaxation of generated structures

This approach generates structures that are more than twice as likely to be new and stable compared to previous methods, with generated structures being more than ten times closer to the DFT local energy minimum [5].
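For reference, the generic classifier-free guidance rule mixes unconditional and conditional scores with a guidance scale $\gamma$. This is the standard formulation; the particular scale and conditioning signals used in [5] are not reproduced here.

```latex
\nabla_x \log p_\gamma(x \mid c) = (1-\gamma)\,\nabla_x \log p(x) + \gamma\,\nabla_x \log p(x \mid c),
\qquad
p_\gamma(x \mid c) \propto p(x)\,p(c \mid x)^{\gamma}
```

Setting $\gamma = 0$ recovers unconditional sampling, $\gamma = 1$ samples the conditional distribution, and $\gamma > 1$ pushes samples more strongly toward the target property $c$.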

Research Reagent Solutions and Computational Tools

Table 2: Essential Research Tools and Resources

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| Materials Project | Database | Curated DFT calculations for inorganic materials | Public |
| Alexandria Dataset | Database | Expanded set of computed materials structures | Public |
| ICSD | Database | Experimentally determined crystal structures | Subscription |
| VASP | Software | DFT calculations for electronic structure | Commercial |
| MatterGen | Software | Generative model for materials design | Research |
| pymatgen | Library | Python materials analysis | Open Source |
| AFLOW | Database & Tools | High-throughput computational framework | Public |

Network Visualization and Structural Relationships

Network Architecture and Workflow

Applications in Materials Design and Discovery

The phase stability network enables multiple advanced applications in materials research and development:

Inverse Design of Functional Materials

Generative models like MatterGen, trained and evaluated on the stability data that underlie the phase stability network, enable inverse materials design, creating stable new materials with target properties including specific chemistry, symmetry, and electronic, mechanical, and magnetic characteristics [5]. This approach is particularly valuable for designing materials under multiple property constraints, such as high magnetic density combined with a chemical composition of low supply-chain risk [5].

Reactivity Prediction and Classification

The nobility index derived from network connectivity provides a quantitative, data-driven metric for material reactivity [3]. This enables rapid screening of corrosion-resistant materials and identification of compounds with extreme resistance to chemical transformation, supporting the development of durable materials for harsh environments.

Discovery of Synthesizable Materials

Validation studies show that generative models trained on large datasets of computed stable structures can rediscover thousands of experimentally verified structures not present in their training data [5]. This demonstrates their utility in predicting synthesizable materials: one generated structure was synthesized and measured to have a property value within 20% of the design target [5].

Advanced Experimental Workflow

Materials Discovery Workflow

The phase stability network of 21,000 nodes and 41 million thermodynamic connections represents a paradigm shift in materials research methodology. By modeling the complete stability landscape of inorganic materials as a complex network, researchers can derive fundamental insights into material reactivity and stability relationships that transcend traditional structure-property paradigms. The nobility index exemplifies how network topology can yield quantitative, data-driven metrics for predicting material behavior.

The integration of these networks with generative models like MatterGen demonstrates particular promise for accelerating materials discovery, enabling inverse design of stable materials with targeted functional properties. As these approaches mature, they will increasingly reduce reliance on serendipitous discovery and move the field toward rational, predictive materials design. Future developments will likely focus on expanding network coverage to include metastable phases, incorporating kinetic barriers, and integrating with experimental synthesis databases to create comprehensive materials development frameworks.

The analysis of complex networks has transformed the understanding of diverse systems, from social interactions to biological processes. In materials science, applying network theory to phase stability data has uncovered fundamental organizational principles governing inorganic materials. This technical guide explores two pivotal network metrics, the lognormal degree distribution and small-world characteristics, within the context of the phase stability network of all inorganic materials. We examine how these topological features influence material reactivity, synthesizability, and discovery, providing researchers with experimental protocols, computational methodologies, and visualization tools to advance predictive materials design.

Complex network theory provides powerful analytical frameworks for understanding interconnected systems across biological, social, and technological domains. In materials science, traditional bottom-up approaches investigating atomic structure and bonding are now complemented by top-down network analysis that reveals organizational patterns across thousands of materials [6]. The phase stability network represents a transformative paradigm in which thermodynamically stable compounds form nodes interconnected by edges representing two-phase equilibria [3]. Analysis of this network, constructed from high-throughput density functional theory (HT-DFT) data, reveals consistent architectural features, specifically lognormal degree distributions and small-world characteristics, that encode fundamental principles of material stability and reactivity [7] [6].

These topological properties are not merely statistical curiosities but have practical implications for predicting material behavior. The connectivity distribution across the network influences which materials can stably coexist in multi-component systems, while short path lengths enable efficient exploration of compositional space [6]. Understanding these metrics provides researchers with a powerful framework for accelerating materials discovery and predicting synthesizability, ultimately bridging the gap between computational prediction and experimental realization [8].

Lognormal Degree Distribution in Materials Networks

Theoretical Foundations and Significance

The degree distribution describes how connections are distributed across the nodes of a network, fundamentally shaping its topology and robustness. In the phase stability network of inorganic materials, the degree distribution follows a lognormal form rather than the power-law distribution characteristic of scale-free networks [6]. A lognormal distribution arises when a variable is the product of many independent positive random factors: its logarithm is then a sum of independent terms and, by the central limit theorem, approximately normal [9]. The distribution is single-peaked and right-skewed (bell-shaped on a logarithmic axis) with a heavy right tail, distinguishing it from both normal and power-law distributions [9].

The emergence of a lognormal distribution in the materials network reflects the complex interplay of factors governing material stability. Each material's connectivity (number of tie-lines) can be viewed as the product of multiple chemical and thermodynamic constraints rather than the outcome of a single dominant factor. The lognormal behavior results from the network's extremely dense connectivity, since sparsity is a necessary condition for an exact power law to emerge [6]. This result also offers a middle ground in the longstanding debate over whether real-world networks follow power-law or lognormal distributions, because lognormal tails can approximate power-law behavior [9].
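The multiplicative mechanism behind a lognormal can be demonstrated numerically. The sketch below is not a model of tie-line formation; it assumes, purely for illustration, that each value is the product of 20 independent factors drawn uniformly from [0.5, 1.5]. The raw products come out strongly right-skewed, while their logarithms are nearly symmetric, as the central-limit argument predicts.

```python
import math
import random
import statistics


def skewness(values):
    """Sample skewness: third central moment divided by the cubed (population) std."""
    m = statistics.fmean(values)
    s = statistics.pstdev(values)
    return sum((v - m) ** 3 for v in values) / (len(values) * s**3)


rng = random.Random(42)
N_SAMPLES, N_FACTORS = 5000, 20
# Illustrative assumption: each value is a product of 20 independent positive factors.
products = [
    math.prod(rng.uniform(0.5, 1.5) for _ in range(N_FACTORS)) for _ in range(N_SAMPLES)
]
logs = [math.log(x) for x in products]

print(f"skewness of products:      {skewness(products):.2f}")  # strongly right-skewed
print(f"skewness of log(products): {skewness(logs):.2f}")      # close to 0
```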

Experimental Evidence from Phase Stability Networks

Analysis of the complete inorganic materials network reveals a densely connected system of approximately 21,300 nodes (stable compounds) interconnected by roughly 41 million edges (tie-lines defining two-phase equilibria) [6]. The connectivity distribution across this network follows a distinct lognormal pattern, with an average of approximately 3,850 edges per node [6]. This exceptional density distinguishes materials networks from other complex networks where sparser connections are typical.
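The quoted mean degree follows directly from the node and edge counts, since each tie-line adds one to the degree of both of its endpoints. Dividing by the $N-1$ possible partners of a node gives the edge density $\rho$:

```latex
\langle k \rangle = \frac{2E}{N} \approx \frac{2 \times 4.1 \times 10^{7}}{2.13 \times 10^{4}} \approx 3.85 \times 10^{3},
\qquad
\rho = \frac{\langle k \rangle}{N-1} \approx \frac{3850}{21\,299} \approx 0.18
```

Roughly 18% of all pairs of stable compounds therefore share a tie-line, which is why the network is described as exceptionally dense.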

Table 1: Key Topological Properties of the Phase Stability Network

| Network Property | Value | Significance |
|---|---|---|
| Number of Nodes | ~21,300 | Thermodynamically stable inorganic compounds |
| Number of Edges | ~41 million | Two-phase equilibria between compounds |
| Mean Degree (⟨k⟩) | ~3,850 | Average number of tie-lines per material |
| Degree Distribution | Lognormal | Heavy-tailed distribution of connectivity |
| Characteristic Path Length (L) | 1.8 | Average shortest distance between nodes |
| Network Diameter (Lmax) | 2 | Maximum shortest path between any two nodes |
| Global Clustering Coefficient (Cg) | 0.41 | Fraction of connected node triplets that are closed |
| Mean Local Clustering Coefficient | 0.55 | Average over nodes of the fraction of neighbor pairs that are linked |

The lognormal distribution manifests differently across material classes, with mean degree decreasing as the number of elemental constituents increases [6]. This chemical hierarchy emerges because higher-component materials compete for tie-lines with lower-component materials across multiple chemical subspaces. For example, a ternary compound competes not only with other ternaries but also with binary compounds in relevant subsystems, creating an inherent connectivity constraint [6].

Methodologies for Measuring Degree Distribution

Quantifying degree distribution in materials networks requires constructing the complete phase stability network from thermodynamic data. The following protocol outlines this process:

Data Acquisition: Extract formation energies for all known and hypothetical inorganic compounds from high-throughput DFT databases such as the Open Quantum Materials Database (OQMD), which contains calculations for over 500,000 materials [6] [8].

Convex Hull Construction: For each chemical subsystem, construct the convex hull of formation energies to identify thermodynamically stable phases. Materials lying on the hull surface are considered stable nodes in the network.

Tie-Line Identification: Determine all two-phase equilibria between stable compounds, represented as edges in the network. Each tie-line indicates that two materials can stably coexist without reacting.

Degree Calculation: For each node (material), compute its degree (k) as the number of tie-lines connected to it. This represents how many other materials it can stably coexist with in two-phase equilibria.

Distribution Fitting: Plot the probability distribution p(k) and fit with lognormal, power-law, and exponential functions using maximum likelihood estimation. Statistical tests (e.g., Kolmogorov-Smirnov) determine the best fit, with lognormal expected for dense materials networks [6].
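Distribution fitting can be sketched with closed-form maximum-likelihood estimates. The example below fits lognormal, exponential, and continuous power-law models to a synthetic degree sequence (drawn from a lognormal purely as a stand-in for real data, and treated as continuous), compares the maximized log-likelihoods, and reports the Kolmogorov-Smirnov distance for the lognormal fit. Setting the power-law lower cutoff `xmin` to the smallest observation is a simplifying assumption; a careful analysis would estimate it and use likelihood-ratio tests.

```python
import math
import random


def fit_lognormal(data):
    """MLE lognormal fit; returns (log-likelihood, mu, sigma)."""
    logs = [math.log(x) for x in data]
    n = len(logs)
    mu = sum(logs) / n
    sigma = math.sqrt(sum((y - mu) ** 2 for y in logs) / n)  # the MLE divides by n
    norm = math.log(sigma * math.sqrt(2 * math.pi))
    ll = sum(-y - norm - (y - mu) ** 2 / (2 * sigma**2) for y in logs)
    return ll, mu, sigma


def fit_exponential(data):
    """MLE exponential fit (rate = 1 / mean); returns (log-likelihood, rate)."""
    rate = len(data) / sum(data)
    return sum(math.log(rate) - rate * x for x in data), rate


def fit_power_law(data, xmin):
    """Continuous power-law MLE for x >= xmin; returns (log-likelihood, alpha)."""
    alpha = 1 + len(data) / sum(math.log(x / xmin) for x in data)
    ll = sum(math.log((alpha - 1) / xmin) - alpha * math.log(x / xmin) for x in data)
    return ll, alpha


def ks_lognormal(data, mu, sigma):
    """Kolmogorov-Smirnov distance between the data and a fitted lognormal CDF."""
    xs = sorted(data)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs):
        cdf = 0.5 * (1 + math.erf((math.log(x) - mu) / (sigma * math.sqrt(2))))
        d = max(d, cdf - i / n, (i + 1) / n - cdf)
    return d


rng = random.Random(7)
# Synthetic stand-in for a degree sequence (mean of a few thousand, like the network).
degrees = [rng.lognormvariate(8.0, 0.7) for _ in range(2000)]

ll_ln, mu, sigma = fit_lognormal(degrees)
ll_exp, _ = fit_exponential(degrees)
ll_pl, alpha = fit_power_law(degrees, xmin=min(degrees))
scores = {"lognormal": ll_ln, "exponential": ll_exp, "power law": ll_pl}
best = max(scores, key=scores.get)
print(f"mu={mu:.2f}, sigma={sigma:.2f}, alpha={alpha:.2f}, best fit: {best}")
print(f"KS distance (lognormal): {ks_lognormal(degrees, mu, sigma):.3f}")
```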

Diagram: Experimental workflow for constructing and analyzing the phase stability network.

Small-World Characteristics in Materials Networks

Defining Small-World Properties

Small-world networks represent a distinctive topological class characterized by two defining properties: high clustering coefficient and short characteristic path length [10] [11]. Formally, a small-world network exhibits a characteristic path length (L) that grows proportionally to the logarithm of the number of nodes (L ∝ log N), while maintaining a global clustering coefficient that is not small [10]. This combination creates networks with specialized regions capable of efficient global information transfer [12].

In social networks, this architecture produces the famous "six degrees of separation" phenomenon, where any two people connect through short acquaintance chains [10]. Similarly, in materials networks, small-world topology enables efficient navigation through chemical space despite the network's enormous size. The high clustering reflects localized communities of strongly interconnected materials, while short path lengths ensure minimal intermediate steps between any two compounds [6]. This architecture creates optimal conditions for both specialized processing (through clustering) and efficient information transfer (through short paths) [12].

Quantitative Metrics for Small-World Analysis

Several metrics quantify small-world characteristics in networks:

Characteristic Path Length (L): The average number of edges in the shortest path between all node pairs. For the materials network, L = 1.8, indicating remarkably efficient connectivity [6].

Clustering Coefficient (C): Measures the degree to which nodes cluster together, calculated as the probability that two neighbors of a node are connected themselves. The materials network shows Cg = 0.41 (global) and C̄i = 0.55 (mean local) [6].

Small-World Coefficient (σ): Defined as $\sigma = \frac{C/C_{\text{rand}}}{L/L_{\text{rand}}}$, where $C_{\text{rand}}$ and $L_{\text{rand}}$ represent values from equivalent random networks. Networks with $\sigma > 1$ are considered small-world [10] [12].

Alternative Metric (ω): A more robust measure comparing clustering to lattice networks and path length to random networks: $\omega = \frac{L_{\text{rand}}}{L} - \frac{C}{C_{\text{latt}}}$. Values near zero indicate small-world organization [12] [11].

Table 2: Small-World Metrics for the Phase Stability Network

| Metric | Value | Comparison to Random Network | Interpretation |
|---|---|---|---|
| Characteristic Path Length (L) | 1.8 | Similar to random (L ≈ Lrand) | Enables efficient navigation |
| Global Clustering Coefficient (Cg) | 0.41 | About 2.3× random (Crand ≈ ⟨k⟩/N ≈ 0.18) | Forms specialized communities |
| Network Diameter | 2 | Same as a random network of equal density | At most 2 steps between any two materials |
| Small-World Coefficient (σ) | >1 | About 2.3 (C/Crand ≈ 2.3, L ≈ Lrand) | Consistent with small-world topology |
| Assortativity Coefficient | -0.13 | Weakly disassortative | Hubs tend to connect to less-connected nodes |

Experimental Evidence in Materials Networks

The phase stability network exhibits striking small-world characteristics with an exceptionally short characteristic path length (L = 1.8) and minimal network diameter (Lmax = 2) [6]. This remarkable connectivity arises from the presence of highly connected hub materials—particularly noble gases and stable binary halides—that bridge diverse regions of chemical space. These hubs create shortcuts that dramatically reduce the number of intermediate steps between any two materials [6].
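The reported path length is close to what a diameter of 2 implies on its own. Assuming the network is connected with diameter 2, every pair of compounds is either joined by a tie-line (a fraction $\rho$ of pairs, the edge density) or separated by exactly two steps, so

```latex
L = 1 \cdot \rho + 2\,(1-\rho) = 2 - \rho = 2 - \frac{2E}{N(N-1)} \approx 2 - 0.18 \approx 1.82
```

using $N \approx 21{,}300$ and $E \approx 41$ million, consistent with the reported $L = 1.8$.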

The materials network also shows high clustering (Cg = 0.41) [6], roughly twice the value expected for a random network of equivalent density, whose clustering equals its edge density (Crand ≈ 0.18). This reflects tightly interconnected local communities in which materials sharing chemical or structural features form dense clusters. The combination of short paths and high clustering is well suited to materials discovery, supporting both focused investigation within chemical families and efficient exploration across diverse compositional spaces [8].

Methodologies for Characterizing Small-World Properties

The following experimental protocol enables quantification of small-world characteristics in materials networks:

Network Construction: Build the phase stability network as described in the degree-distribution protocol above, ensuring complete representation of all stable materials and their tie-lines.

Path Length Calculation:

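- Compute the shortest-path length between all node pairs; because tie-lines are unweighted, one breadth-first search per node suffices (Floyd-Warshall or Dijkstra's algorithm also work, but Floyd-Warshall's O(n³) cost becomes prohibitive at the scale of ~21,000 nodes)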

- Calculate the characteristic path length (L) as the mean of all shortest paths

- Identify the network diameter (Lmax) as the longest shortest path
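The path-length step can be sketched in a few lines of Python. This is a minimal illustration, assuming the network is stored as a plain dict that maps each compound to the set of its tie-line partners (a hypothetical in-memory layout; libraries such as NetworkX offer equivalent, more optimized routines). Because tie-lines are unweighted, one breadth-first search per node yields every shortest-path length. The toy graph and its node names are invented for illustration, not real tie-line data.

```python
from collections import deque


def build_graph(edges):
    """Undirected adjacency dict {node: set(neighbours)} from an edge list."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def bfs_distances(adj, source):
    """Hop distances from `source` to every reachable node (unweighted BFS)."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def path_length_and_diameter(adj):
    """Return (characteristic path length L, diameter Lmax) of a connected graph."""
    total = pairs = diameter = 0
    for s in adj:
        dist = bfs_distances(adj, s)
        if len(dist) != len(adj):
            raise ValueError("graph is not connected")
        for t, d in dist.items():
            if t != s:
                total += d
                pairs += 1
                diameter = max(diameter, d)
    return total / pairs, diameter


# Toy network: hub "H" has a tie-line to every other node.
toy = build_graph([("H", "a"), ("H", "b"), ("H", "c"), ("H", "d"),
                   ("a", "b"), ("b", "c")])
L, L_max = path_length_and_diameter(toy)
print(f"L = {L:.2f}, Lmax = {L_max}")  # L = 1.40, Lmax = 2
```

As in the real network, a single hub caps every distance in the toy graph at two hops.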

Clustering Coefficient Computation:

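- For each node i with degree $k_i$, compute the local clustering coefficient $C_i = \frac{2e_i}{k_i(k_i-1)}$, where $e_i$ is the number of edges that actually exist among the neighbors of i

- Calculate the mean local clustering coefficient ($\bar{C}$) by averaging all $C_i$ values

- Compute the global clustering coefficient (Cg) using the triplets method, i.e., as the ratio of closed triplets to all connected triplets (equivalently, three times the number of triangles divided by the number of connected triplets)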
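A companion sketch for the clustering step follows, under the same assumed dict-of-sets layout and the same invented toy graph. It implements the local coefficient $C_i = 2e_i/[k_i(k_i-1)]$, its mean over all nodes, and the triplet-based global coefficient; the convention of assigning $C_i = 0$ to nodes with fewer than two neighbors is one common choice, not a requirement of the method.

```python
from itertools import combinations


def build_graph(edges):
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def closed_pairs(adj, i):
    """e_i: number of edges among the neighbours of node i."""
    return sum(1 for u, v in combinations(adj[i], 2) if v in adj[u])


def local_clustering(adj, i):
    k = len(adj[i])
    if k < 2:
        return 0.0  # convention: C_i = 0 for nodes with fewer than two neighbours
    return 2 * closed_pairs(adj, i) / (k * (k - 1))


def mean_local_clustering(adj):
    return sum(local_clustering(adj, i) for i in adj) / len(adj)


def global_clustering(adj):
    """Triplets method: closed triplets / connected triplets."""
    closed = sum(closed_pairs(adj, i) for i in adj)
    triplets = sum(len(n) * (len(n) - 1) // 2 for n in adj.values())
    return closed / triplets if triplets else 0.0


toy = build_graph([("H", "a"), ("H", "b"), ("H", "c"), ("H", "d"),
                   ("a", "b"), ("b", "c")])
print(round(mean_local_clustering(toy), 3), round(global_clustering(toy), 3))
```

The two averages differ (0.6 versus about 0.545 for this toy graph) because the mean local coefficient weights every node equally, whereas the triplet ratio gives more weight to high-degree nodes, which matters in a network dominated by hubs.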

Control Network Generation:

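- Create equivalent random networks with the same number of nodes and edges using the Erdős-Rényi model

- Generate equivalent lattice networks (e.g., ring lattices with the same number of nodes and mean degree) to supply the reference clustering Clatt used below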

Small-World Metric Calculation:

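- Compute $\sigma = \dfrac{C/C_{\text{rand}}}{L/L_{\text{rand}}}$

- Compute $\omega = \dfrac{L_{\text{rand}}}{L} - \dfrac{C}{C_{\text{latt}}}$

- Compare values to established thresholds for small-world classification: σ > 1 indicates a small-world network, while ω ranges roughly from -1 (lattice-like) through 0 (small-world) to +1 (random-like)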
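The control-network and small-world steps can be sketched as follows. This is a minimal, dependency-free illustration with hypothetical function names; the G(n, m) generator uses simple rejection sampling, and for a network the size of the phase stability network (~41 million edges) an optimized library such as NetworkX or igraph would be preferable. The sanity check applies ω to the two reference graphs themselves, where the lattice should score near -1 and the random graph near +1.

```python
import random
from collections import deque
from itertools import combinations


def transitivity(adj):
    """Global clustering coefficient (closed / connected triplets)."""
    closed = triplets = 0
    for nbrs in adj.values():
        k = len(nbrs)
        triplets += k * (k - 1) // 2
        closed += sum(1 for u, v in combinations(nbrs, 2) if v in adj[u])
    return closed / triplets if triplets else 0.0


def char_path_length(adj):
    """Mean BFS hop distance over all reachable ordered node pairs."""
    total = pairs = 0
    for s in adj:
        dist = {s: 0}
        queue = deque([s])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs


def gnm_random_graph(n, m, rng):
    """Erdős-Rényi G(n, m): m distinct edges placed uniformly at random."""
    if m > n * (n - 1) // 2:
        raise ValueError("too many edges for a simple graph")
    adj = {i: set() for i in range(n)}
    placed = 0
    while placed < m:
        u, v = rng.randrange(n), rng.randrange(n)
        if u != v and v not in adj[u]:  # reject self-loops and duplicates
            adj[u].add(v)
            adj[v].add(u)
            placed += 1
    return adj


def ring_lattice(n, k):
    """Ring lattice: each node linked to its k nearest neighbours (k even)."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for j in range(1, k // 2 + 1):
            adj[i].add((i + j) % n)
            adj[(i + j) % n].add(i)
    return adj


def sigma(C, L, C_rand, L_rand):
    return (C / C_rand) / (L / L_rand)


def omega(C, L, C_latt, L_rand):
    return L_rand / L - C / C_latt


# Sanity check on the reference graphs themselves (n = 200, mean degree 10).
rng = random.Random(0)
latt = ring_lattice(200, 10)
rand = gnm_random_graph(200, 200 * 10 // 2, rng)
C_latt, L_latt = transitivity(latt), char_path_length(latt)
C_rand, L_rand = transitivity(rand), char_path_length(rand)
print(round(omega(C_latt, L_latt, C_latt, L_rand), 2))  # near -1: lattice-like
print(round(omega(C_rand, L_rand, C_latt, L_rand), 2))  # near +1: random-like
```

For a real analysis, C and L of the materials network would be compared against averages over several random and lattice realizations rather than a single draw.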

The relationships between these key metrics and their role in identifying small-world networks can be visualized as follows:

Research Reagent Solutions: Essential Materials for Network Analysis

Conducting network analysis of phase stability requires specific computational tools and data resources. The following table details essential "research reagents" for this emerging field:

Table 3: Essential Research Reagents for Materials Network Analysis

| Resource/Tool | Function | Application in Materials Network Research |
|---|---|---|
| High-Throughput DFT Databases (OQMD, Materials Project) | Provides formation energies for stable and hypothetical compounds | Source data for node creation and convex hull construction |
| Convex Hull Algorithms | Identifies thermodynamically stable phases from formation energies | Determines which materials form nodes in the stability network |
| Network Analysis Libraries (NetworkX, igraph) | Computes network metrics and properties | Calculates degree distribution, path length, clustering coefficients |
| Statistical Testing Frameworks | Determines best-fit distributions for degree data | Differentiates between lognormal, power-law, and exponential distributions |
| Crystallographic Databases (ICSD, CSD) | Provides experimental structural data | Validates computational predictions and establishes discovery timelines |
| Machine Learning Platforms | Builds predictive models from network properties | Predicts synthesizability and identifies promising hypothetical materials |

Implications for Materials Discovery and Design

The topological features of the phase stability network have significant implications for materials research and development. Node connectivity, whose spread across materials is captured by the lognormal degree distribution, enables quantification of material reactivity through the "nobility index," which uses connectivity to identify the most chemically inert compounds [6]. This data-driven metric provides a rational approach to identifying candidate materials for applications requiring extreme stability or corrosion resistance.

The small-world characteristics of the materials network facilitate efficient discovery pathways, as the short distances between nodes enable researchers to navigate chemical space with minimal intermediate steps [8]. Analysis of network evolution reveals that materials discovery follows preferential attachment principles, with new materials more likely to connect to highly connected hubs [8]. This understanding enables predictive modeling of synthesizability, helping prioritize hypothetical materials for experimental investigation.

Furthermore, the decreasing connectivity with increasing number of elemental constituents provides insight into the scarcity of high-component stable materials [6]. This hierarchical structure suggests fundamental constraints on materials discovery that complement traditional energy-based explanations, offering new perspectives on the ultimate limits of stable inorganic compounds.

The phase stability network of inorganic materials exhibits distinctive architectural features—lognormal degree distribution and small-world characteristics—that encode fundamental principles of material behavior. These topological properties provide powerful analytical frameworks for predicting reactivity, guiding materials discovery, and understanding systemic constraints on stable compound formation. As high-throughput computational methods continue to expand materials databases, network-based approaches will play an increasingly vital role in unlocking structure-property relationships and accelerating the design of novel materials with tailored functionalities. The integration of these network metrics with traditional materials science paradigms represents a promising frontier for both fundamental research and practical applications.

The design and discovery of advanced inorganic materials have traditionally been guided by bottom-up investigations of structure-property relationships, focusing on how atomic arrangements and interatomic bonding determine macroscopic behavior. However, a paradigm shift is emerging through top-down studies of the organizational structure of networks of materials themselves. Within this context, research has unraveled the complete "phase stability network of all inorganic materials" as a densely connected complex network of 21,000 thermodynamically stable compounds (nodes) interlinked by 41 million tie lines (edges) that define their two-phase equilibria, as computed by high-throughput density functional theory [3]. Analyzing the topology of this network enables the identification of material characteristics inaccessible from traditional atoms-to-materials paradigms. From this analysis, researchers have derived a rational, data-driven metric for material reactivity known as the "nobility index," which quantitatively identifies the noblest materials in nature [3].

Theoretical Foundation: The Phase Stability Network

The phase stability network represents a groundbreaking approach to understanding materials relationships through complex network theory. This framework reconceptualizes the entire landscape of inorganic materials as an interconnected system rather than a collection of isolated compounds.

Network Structure and Components

Table: Phase Stability Network Components

| Component | Description | Scale |
|---|---|---|
| Nodes | Thermodynamically stable inorganic compounds | 21,000 compounds |
| Edges (Tie Lines) | Two-phase equilibria between stable compounds | 41 million connections |
| Data Source | High-throughput density functional theory calculations | First-principles computations |
| Connectivity | Measures reactivity relationships between materials | Network topology analysis |

The network's structure emerges from thermodynamic stability data computed through high-throughput density functional theory (DFT), creating a comprehensive map of stability relationships across inorganic materials space [3]. Each node represents a thermodynamically stable compound, while each edge represents a computed two-phase equilibrium (tie-line) between two such compounds. Because a tie-line means that the two compounds can coexist without reacting, the web of connections records which pairs of materials are mutually stable at 0 K and, through the missing edges, which pairs would react to form other phases.

Topological Analysis Methodology

The analytical power of the phase stability network derives from graph-theoretical metrics applied to its topology:

- Degree Centrality: The number of direct connections (edges) each node possesses to other nodes in the network

- Betweenness Centrality: The extent to which a node lies on the shortest paths between other nodes

- Community Detection: Identification of densely connected subgroups within the broader network

- Path Length Analysis: Measurement of the shortest connectivity routes between material pairs

These topological metrics enable the quantification of material reactivity in ways previously impossible through conventional materials science approaches, directly leading to the derivation of the nobility index.
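Degree and betweenness centrality can be computed as in the sketch below, again assuming a hypothetical dict-of-sets graph. Betweenness uses Brandes' algorithm for unweighted graphs, normalized so that a node lying on every shortest path between all other pairs scores 1. Community detection is omitted here; it typically relies on modularity optimization (for example, the Louvain method available in common network libraries).

```python
from collections import deque


def build_graph(edges):
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def degree_centrality(adj):
    """Degree divided by (n - 1), the largest possible degree."""
    n = len(adj)
    return {v: len(nbrs) / (n - 1) for v, nbrs in adj.items()}


def betweenness_centrality(adj):
    """Brandes' algorithm for unweighted, undirected graphs, normalised to [0, 1]."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        # Forward BFS: count shortest paths (sigma) and record predecessors.
        sigma = dict.fromkeys(adj, 0)
        sigma[s] = 1
        dist = {s: 0}
        preds = {v: [] for v in adj}
        order = []
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Backward pass: accumulate pair dependencies in reverse BFS order.
        delta = dict.fromkeys(adj, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    n = len(adj)
    denom = (n - 1) * (n - 2)  # each unordered pair was counted twice above
    return {v: (b / denom if denom else 0.0) for v, b in bc.items()}


star = build_graph([("H", leaf) for leaf in "abcd"])
print(degree_centrality(star)["H"], betweenness_centrality(star)["H"])  # 1.0 1.0
```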

The Nobility Index: Definition and Computation

Conceptual Framework

The nobility index represents a data-driven reactivity metric derived from a material's connectivity within the phase stability network. Fundamentally, it quantifies a material's tendency to remain in its elemental or compound form rather than reacting to form other compounds. Materials with high nobility index scores have few reactive pathways to other compounds, making them exceptionally stable and inert: a data-driven generalization of "noble" behavior that extends beyond the traditional noble metals.

The underlying principle follows from the meaning of a tie-line: two compounds joined by a tie-line coexist in equilibrium without reacting. A material with more connections in the phase stability network therefore coexists stably with more of the other compounds and is, in this sense, nobler; this is why inert species such as the noble gases appear as the network's most connected hubs. Poorly connected materials, by contrast, have a thermodynamic driving force to react with most other compounds.

Computational Methodology

The nobility index is computed through the following workflow:

Network Construction:

- Enumerate all stable inorganic compounds from reference databases (Materials Project, ICSD)

- Compute two-phase equilibria between all compound pairs using thermodynamic data

- Construct adjacency matrix representing the phase stability network

Connectivity Analysis:

- Calculate degree centrality for each node (material) in the network

- Normalize connectivity metrics against network size and composition

- Apply logarithmic scaling to handle the wide dynamic range of connectivity values

Index Formulation:

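- Orient the normalized connectivity so that higher connectivity gives higher nobility (no inversion is needed, since each tie-line marks a pair of compounds that coexist without reacting)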

- Rescale to appropriate numerical range for interpretability

- Validate against known noble materials and experimental reactivity data
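The three-stage workflow above can be sketched as a scoring function. This is a hypothetical formulation, not the published definition of the nobility index: normalizing each degree by the mean degree of compounds with the same number of elements N is one assumed reading of "normalize against network size and composition," and `log1p` followed by min-max rescaling is one assumed choice of logarithmic scaling and rescaling. The sketch ranks better-connected compounds as nobler, because a tie-line marks a pair of compounds that coexist without reacting, which is also why inert species such as the noble gases are the network's hubs. Degrees and compound labels in the example are invented.

```python
import math
from collections import defaultdict


def nobility_scores(degree, n_components):
    """Hypothetical nobility-style score in [0, 1] (not the published definition).

    degree:       {compound: number of tie-lines (node degree)}
    n_components: {compound: number of elements N}
    """
    # Normalise by the mean degree of compounds with the same N, because
    # connectivity drops systematically as N grows.
    groups = defaultdict(list)
    for c, k in degree.items():
        groups[n_components[c]].append(k)
    mean_k = {n: sum(ks) / len(ks) for n, ks in groups.items()}
    relative = {c: k / mean_k[n_components[c]] if mean_k[n_components[c]] else 0.0
                for c, k in degree.items()}
    # Logarithmic scaling compresses the wide dynamic range of degrees.
    logged = {c: math.log1p(r) for c, r in relative.items()}
    # Min-max rescale with no inversion: more tie-lines means nobler.
    lo, hi = min(logged.values()), max(logged.values())
    if hi == lo:
        return dict.fromkeys(logged, 1.0)
    return {c: (v - lo) / (hi - lo) for c, v in logged.items()}


deg = {"P1": 12000, "P2": 3000, "P3": 400, "T1": 900, "T2": 150}
N = {"P1": 2, "P2": 2, "P3": 2, "T1": 3, "T2": 3}
scores = nobility_scores(deg, N)
print(max(scores, key=scores.get))  # P1
```

Validation against known noble materials and experimental reactivity data would then decide whether this, or a different normalization, is appropriate.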

Table: Nobility Index Calculation Parameters

| Parameter | Specification | Purpose |
|---|---|---|
| Reference Dataset | 21,000 stable compounds from Materials Project/ICSD | Ensures comprehensive coverage |
| Stability Criterion | On the 0 K convex hull (energy above hull = 0 eV/atom); a negative formation energy alone is necessary but not sufficient | Defines thermodynamic stability |
| Connectivity Metric | Normalized degree centrality | Counts tie-lines, i.e., non-reactive coexistence with other phases |
| Validation Method | Correlation with experimental corrosion/oxidation data | Tests predictive power |

Experimental Validation and Protocols

Computational Validation Framework

The nobility index requires rigorous validation against both computational and experimental benchmarks to establish its predictive credibility:

Stability Prediction Accuracy:

- Compare nobility index rankings with known noble materials (Au, Pt, Ir)

- Calculate correlation with experimental corrosion rates across material classes

- Assess predictive power for novel noble material identification

Cross-Validation Methodology:

- Implement k-fold cross-validation across different network subsets

- Test robustness to missing data and thermodynamic uncertainties

- Validate against materials synthesized after model development

Materials Synthesis and Characterization

Experimental validation of nobility index predictions requires synthesis and characterization of identified materials:

Table: Research Reagent Solutions for Experimental Validation

| Reagent/Material | Function | Specifications |
|---|---|---|
| High-Purity Elements | Precursors for material synthesis | ≥99.99% purity |
| Arc Melting System | Synthesis of intermetallic compounds | Water-cooled copper hearth, argon atmosphere |
| Spark Plasma Sintering | Rapid consolidation of powders | Vacuum environment, programmable temperature |
| Electrochemical Cell | Corrosion resistance testing | Three-electrode setup, potentiostat control |
| X-ray Diffractometer | Phase identification and purity | Cu Kα radiation, Rietveld refinement capability |
| XPS Spectrometer | Surface chemistry analysis | Monochromatic Al Kα source, UHV conditions |

Integration with Modern Materials Design Frameworks

The nobility index finds practical application within contemporary AI-driven materials discovery platforms, enhancing their capability for inverse design. Advanced frameworks like MatterGen and Aethorix v1.0 leverage data-driven metrics to accelerate the discovery of novel inorganic materials with targeted properties [5] [13].

Inverse Design Implementation

Modern generative models for materials design employ diffusion-based approaches to directly generate stable crystal structures. These models represent a crystalline material as $M = (A, X, L)$, where $A$ represents the atom species, $X$ denotes the fractional coordinates, and $L$ is the periodic lattice [13]. The nobility index can serve as a filtering criterion in the generation process, helping ensure that generated materials possess the desired stability characteristics.
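A minimal Python container for the $M = (A, X, L)$ representation makes the notation concrete. The class is hypothetical: it is not the data structure used by MatterGen or any other cited model, and libraries such as pymatgen provide full-featured equivalents. It stores species, fractional coordinates, and lattice vectors, wraps fractional coordinates into [0, 1) to reflect periodicity, and converts them to Cartesian positions via $\mathbf{r} = x\mathbf{a} + y\mathbf{b} + z\mathbf{c}$.

```python
from dataclasses import dataclass


@dataclass
class Crystal:
    """M = (A, X, L): species, fractional coordinates, lattice."""
    species: list      # A: element symbol for each site
    frac_coords: list  # X: one [x, y, z] fractional triple per site
    lattice: list      # L: 3x3, rows are lattice vectors a, b, c (angstrom)

    def wrapped(self):
        """Map fractional coordinates back into [0, 1), as periodicity allows."""
        return Crystal(self.species,
                       [[c % 1.0 for c in f] for f in self.frac_coords],
                       self.lattice)

    def cartesian_coords(self):
        """r = x*a + y*b + z*c for each site."""
        return [[sum(f[j] * self.lattice[j][d] for j in range(3)) for d in range(3)]
                for f in self.frac_coords]


# Illustrative CsCl-type cell with a = 4.0 angstrom (not a fitted value).
cell = Crystal(["Cs", "Cl"],
               [[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]],
               [[4.0, 0.0, 0.0], [0.0, 4.0, 0.0], [0.0, 0.0, 4.0]])
print(cell.cartesian_coords()[1])  # [2.0, 2.0, 2.0]
```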

The integration occurs through a multi-stage workflow:

- Constraint Definition: Specify target chemistry, symmetry, and property constraints including minimal nobility index thresholds

- Candidate Generation: Use diffusion models to generate novel crystal structures satisfying compositional constraints

- Stability Screening: Evaluate generated structures using nobility index and formation energy calculations

- Property Optimization: Select candidates with optimal combinations of nobility and functional properties
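The stability-screening and property-optimization stages can be sketched as a filter followed by a ranking. All names, thresholds, and candidate values here are hypothetical placeholders: the 0.1 eV/atom hull window mirrors the criterion used to report MatterGen's stability rate, and 0.85 echoes the high-temperature-alloy row of the application table, but a real campaign would tune both.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    formula: str
    e_above_hull: float     # eV/atom, from DFT or an ML surrogate
    nobility: float         # hypothetical nobility-style score in [0, 1]
    target_property: float  # predicted functional property to optimise


def screen(candidates, max_e_hull=0.1, min_nobility=0.85, top_n=3):
    """Stability screening followed by property ranking."""
    passed = [c for c in candidates
              if c.e_above_hull <= max_e_hull and c.nobility >= min_nobility]
    return sorted(passed, key=lambda c: c.target_property, reverse=True)[:top_n]


pool = [Candidate("cand-1", 0.02, 0.91, 180.0),
        Candidate("cand-2", 0.15, 0.95, 250.0),  # too far above the hull
        Candidate("cand-3", 0.05, 0.60, 300.0),  # not noble enough
        Candidate("cand-4", 0.00, 0.88, 210.0)]
print([c.formula for c in screen(pool)])  # ['cand-4', 'cand-1']
```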

Industrial Applications and Use Cases

The nobility index enables targeted materials design for specific industrial applications:

Table: Application-Specific Nobility Requirements

| Application Domain | Target Nobility Index | Key Performance Metrics |
|---|---|---|
| High-Temperature Alloys | >0.85 (90th percentile) | Creep resistance, oxidation stability |
| Medical Implants | >0.90 (95th percentile) | Biocompatibility, corrosion resistance |
| Electrocatalysts | 0.70-0.85 (moderate) | Surface activity, dissolution resistance |
| Protective Coatings | >0.95 (99th percentile) | Environmental barrier performance |

Experimental validation of the generative design approach is encouraging: in one case study, a generated material was synthesized with a measured property value within 20% of the target specification [5].

Future Directions and Research Opportunities

The nobility index establishes a foundation for several promising research directions:

- Dynamic Nobility Metrics: Extending the concept to incorporate temperature and pressure dependencies

- Multi-Scale Reactivity Modeling: Integrating nobility index with mesoscale microstructure evolution

- Aqueous/Environmental Stability: Developing environment-specific nobility indices for corrosion applications

- Machine Learning Enhancement: Combining network-based nobility with deep learning property predictors

These advancements will further solidify the nobility index as an essential tool in the computational materials design toolkit, enabling more efficient discovery of materials with tailored stability and reactivity profiles.

The pursuit of understanding structure-property relationships represents a fundamental objective in materials science. Traditionally, this endeavor has been approached through bottom-up investigations focusing on how atomic arrangements and interatomic bonding determine macroscopic behavior. However, a paradigm shift is emerging through the application of complex network theory to analyze the organizational structure of materials themselves. This approach enables a top-down study of material interactions, revealing patterns and characteristics inaccessible through traditional atoms-to-materials paradigms. Central to this new perspective is the concept of the phase stability network—a complex web of thermodynamic relationships that governs material behavior and reactivity across chemical systems. Within this network, a distinct chemical hierarchy emerges, dictated primarily by the number of components in a material, which systematically influences its thermodynamic stability and connectivity within the universal phase diagram [6].

The phase stability network of inorganic materials represents a comprehensive map of thermodynamic relationships between stable compounds. Constructed from high-throughput density functional theory (HT-DFT) calculations, this network encompasses approximately 21,000 thermodynamically stable compounds (nodes) interconnected by roughly 41 million tie-lines (edges) representing stable two-phase equilibria at T = 0 K [6].

Key Network Characteristics

- Connectivity Density: The network exhibits remarkable connectivity with a mean degree (⟨k⟩) of approximately 3,850, indicating each stable compound can form stable two-phase equilibria with thousands of others on average [6]

- Small-World Properties: The network demonstrates small-world characteristics with an extremely short characteristic path length (L = 1.8) and diameter (Lmax = 2), enabling efficient navigation between material nodes [6]

- Topological Structure: Degree distribution follows a lognormal form rather than scale-free behavior, reflecting the network's exceptional density compared to other complex networks [6]
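Fitting the degree distribution can be sketched with maximum-likelihood estimates: for a lognormal, μ and σ are simply the mean and standard deviation of ln k. The sketch compares the fitted lognormal against an exponential by log-likelihood, treating degrees as continuous and positive (an assumption; real degrees are integers, and zero-degree nodes would have to be excluded). The synthetic sample stands in for real degree data. Dedicated packages, such as the Python `powerlaw` package, implement more careful comparisons, including likelihood-ratio tests against power laws.

```python
import math
import random


def fit_lognormal(degrees):
    """MLE of lognormal parameters: mean and std (population) of ln(k)."""
    logs = [math.log(k) for k in degrees]
    mu = sum(logs) / len(logs)
    sigma = math.sqrt(sum((x - mu) ** 2 for x in logs) / len(logs))
    return mu, sigma


def loglik_lognormal(degrees, mu, sigma):
    return sum(-math.log(k * sigma * math.sqrt(2 * math.pi))
               - (math.log(k) - mu) ** 2 / (2 * sigma ** 2) for k in degrees)


def loglik_exponential(degrees):
    lam = len(degrees) / sum(degrees)  # MLE rate = 1 / mean
    return sum(math.log(lam) - lam * k for k in degrees)


# Synthetic degrees drawn from a lognormal (illustrative, not OQMD data).
rng = random.Random(1)
degrees = [rng.lognormvariate(2.0, 0.5) for _ in range(5000)]
mu, sigma = fit_lognormal(degrees)
better = loglik_lognormal(degrees, mu, sigma) > loglik_exponential(degrees)
print(round(mu, 2), round(sigma, 2), better)
```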

Quantitative Evidence: Component Count and Stability

Analysis of the phase stability network reveals a clear hierarchical organization based on the number of chemical components (N) in a material, where N = 2 for binary, N = 3 for ternary compounds, etc.

Table 1: Network Properties by Number of Components

| Number of Components (N) | Average Number of Tie-Lines (⟨k⟩) | Distribution of Stable Materials | Formation Energy Requirement |
|---|---|---|---|
| Binary (N=2) | Highest | Moderate | Less stringent |
| Ternary (N=3) | Intermediate | Peak abundance | Moderate |
| Quaternary (N=4) | Lower | Declining | More stringent |
| Higher (N>4) | Lowest | Rare | Most stringent |

This hierarchical structure emerges from thermodynamic competition. Lower-component materials (e.g., binaries) dominate regions of chemical space and enjoy preferential stability, while higher-component materials must overcome significant energetic hurdles to remain stable [6]. The data reveal that high-N compounds require substantially lower (more negative) formation energies than their low-N counterparts to achieve stability, because they compete not only with other compounds in their own chemical space but also with the lower-order compounds (binaries, ternaries, and so on) in every constituent subsystem [6].

Table 2: Impact of Component Count on Material Properties

| Property | Relationship with Component Count (N) | Scientific Implication |
|---|---|---|
| Mean Degree (⟨k⟩) | Decreases with increasing N | Reduced connectivity in phase space |
| Formation Energy Threshold | Becomes more negative with increasing N | Increased stability requirements |
| Discovery Probability | Peaks at N=3, decreases rapidly | Combinatorial explosion vs. stability loss |
| Competitive Pressure | Increases with N | Competition with lower-N systems |

Theoretical Framework: Mechanisms of Hierarchy

The observed chemical hierarchy stems from fundamental principles of thermodynamics and the geometry of composition space.

Energetic Competition Model

Lower-component materials create a "stability floor" that higher-component materials must surpass. One illustration is the "volcano plot" reported for stable ternary nitrides as a function of their energetic competition with the corresponding binary nitrides [6]. The convex hull construction inherently favors simpler compounds, because a higher-component material must lie below every possible combination of lower-component phases at its composition to remain stable.

Composition Space Topology

As the number of components increases, the composition simplex gains dimensionality while the relative volume-to-surface ratio diminishes. This mathematical reality, combined with combinatorial explosion of possible competing phases, creates intrinsic limitations on the stability of high-component materials. Widom (1981) argued that the peak near N = 3 or 4 in stability distributions arises from this competition between combinatorial explosion and diminishing volume-to-surface ratio in the composition simplex as N increases [6].
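The combinatorial side of this argument can be made concrete. An N-component compound must lie below the combined hull of every lower-order subsystem containing 2 ≤ j < N of its elements, and there are $\sum_{j=2}^{N-1}\binom{N}{j} = 2^N - N - 2$ such subsystems. The sketch counts these alongside the number of N-component chemical systems available from a palette of elements; the 10-element palette is an arbitrary illustration, not a value from the cited work.

```python
from math import comb


def competing_subsystems(n):
    """Lower-order chemical subsystems (2 <= j < n elements) inside an n-element system."""
    return sum(comb(n, j) for j in range(2, n))


def chemical_systems(n_elements, n):
    """Distinct n-component chemical systems buildable from n_elements elements."""
    return comb(n_elements, n)


for n in range(2, 7):
    print(n, competing_subsystems(n), chemical_systems(10, n))
```

The first column of counts grows exponentially with N, while the number of chemical systems eventually turns over, which mirrors the two competing effects described above.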

Experimental and Computational Methodologies

High-Throughput Computational Analysis

The phase stability network was constructed using the Open Quantum Materials Database (OQMD), containing calculations of nearly all crystallographically ordered, structurally unique materials experimentally observed to date and a large number of hypothetical materials—totaling more than half a million structures [6].

Key Protocol Steps:

- Database Curation: Collect calculated properties from HT-DFT databases (OQMD, Materials Project, Alexandria)

- Convex Hull Construction: Calculate stability fields using convex hull formalism at T = 0 K

- Tie-Line Identification: Identify all two-phase equilibria between stable compounds

- Network Representation: Represent stable materials as nodes and two-phase equilibria as edges

- Topological Analysis: Apply complex network theory metrics to characterize connectivity patterns
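For a binary subsystem, the convex-hull and tie-line steps reduce to a few lines: adjacent vertices of the lower hull of formation energy versus composition are exactly the two-phase tie-lines. The sketch below is a simplified illustration under that restriction, using invented phases and energies. The full network requires the facets of the multidimensional hull (for example via `scipy.spatial.ConvexHull` or pymatgen's `PhaseDiagram`) and includes tie-lines between compounds from different chemical systems, which a binary-only pass cannot find.

```python
def cross(o, a, b):
    """z-component of (a - o) x (b - o); > 0 means a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def binary_hull(phases):
    """Lower convex hull of (x_B, E_f per atom, name) tuples (monotone chain).

    Phases collinear with a hull segment are dropped, so only hull vertices remain.
    """
    hull = []
    for p in sorted(phases):
        while len(hull) >= 2 and cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def binary_tie_lines(phases):
    """Adjacent stable phases on a binary hull share a two-phase tie-line."""
    hull = binary_hull(phases)
    return [(a[2], b[2]) for a, b in zip(hull, hull[1:])]


def add_edges(adj, edges):
    """Merge tie-lines into an undirected network {node: set(neighbours)}."""
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


# Hypothetical A-B and A-C systems: elements at E_f = 0 plus invented compounds.
ab = [(0.0, 0.0, "A"), (1.0, 0.0, "B"), (0.25, -0.10, "A3B"),
      (0.5, -0.50, "AB"), (0.75, -0.30, "AB3")]
ac = [(0.0, 0.0, "A"), (1.0, 0.0, "C"), (2 / 3, -0.40, "AC2")]
network = {}
add_edges(network, binary_tie_lines(ab))
add_edges(network, binary_tie_lines(ac))
print(binary_tie_lines(ab))  # [('A', 'AB'), ('AB', 'AB3'), ('AB3', 'B')]
```

In this example "A3B" lies above the A-AB segment, so it is not a node of the network.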

Experimental Phase Diagram Determination

For experimental validation, binary phase diagrams are determined through controlled laboratory studies:

Experimental Protocol:

- Sample Preparation: Create mixtures of pure components in varying proportions

- Thermal Processing: Heat samples to target temperatures in controlled furnaces until equilibrium is established

- Quenching: Rapidly cool samples to preserve high-temperature phase assemblages

- Phase Identification: Analyze quenched products using microscopy and diffraction techniques

- Diagram Construction: Plot phase stability fields against temperature and composition variables [14]

Advanced Research Tools and Applications

Generative Models for Materials Design

Recent advances in generative artificial intelligence have created new pathways for exploring the chemical hierarchy. MatterGen, a diffusion-based generative model, specifically addresses the challenge of designing stable materials across component counts by directly generating crystal structures with target properties [5].

Key Capabilities:

- Stability Rate: 78% of generated structures lie within 0.1 eV/atom of the convex hull

- Property Optimization: Can be fine-tuned for specific chemical, mechanical, electronic, and magnetic properties

- Cross-Component Design: Generates stable materials across binary, ternary, and higher-component systems

Research Reagent Solutions

Table 3: Essential Research Materials and Computational Tools

| Resource/Tool | Function/Role | Application Context |
|---|---|---|
| Open Quantum Materials Database (OQMD) | Provides calculated properties of >500,000 materials via HT-DFT | Phase stability network construction |
| High-Throughput DFT Calculations | Determines thermodynamic stability of crystal structures | Convex hull analysis and tie-line identification |
| MatterGen Generative Model | Directly generates stable crystal structures given property constraints | Inverse materials design across component counts |
| Experimental Phase Diagram Apparatus | Determines phase stability fields through controlled heating/quenching | Validation of computational predictions |

Implications for Materials Design and Discovery

The chemical hierarchy framework fundamentally reshapes materials discovery paradigms. Understanding how component count affects stability enables more efficient exploration of chemical space by prioritizing systems with higher probabilities of yielding stable compounds.

The "nobility index"—derived from node connectivity in the phase stability network—provides a quantitative metric for material reactivity [6]. This data-driven approach reveals that materials with exceptionally high connectivity (such as noble gases and binary halides) serve as network hubs, creating the remarkably short path lengths observed in the materials network [6].

Furthermore, the peak in stable material distribution at N = 3 suggests significant untapped potential in quaternary and higher-component systems, though their discovery requires navigating increasingly stringent stability requirements. This insight guides resource allocation in materials discovery efforts, emphasizing the need for sophisticated computational screening and advanced synthesis techniques to access these challenging regions of chemical space.

Computational Methods and Machine Learning for Phase Stability Analysis

High-Throughput Density Functional Theory (HT-DFT) Foundations

High-Throughput Density Functional Theory (HT-DFT) represents a paradigm shift in computational materials science, enabling the rapid screening and discovery of novel materials by automating thousands of first-principles calculations. This approach has become indispensable for navigating the vast compositional space of inorganic compounds, where traditional trial-and-error methods are prohibitively time-consuming and expensive [15]. Within the specific context of phase stability research, HT-DFT provides the foundational data required to construct comprehensive thermodynamic networks—the complex web of stable compounds and their equilibria that delineates the energy landscape of all inorganic materials [6]. By systematically computing formation energies and decomposition pathways for thousands of compounds, HT-DFT allows researchers to map the phase stability network, revealing quantitative metrics for material reactivity and guiding the targeted discovery of new, thermodynamically stable compounds [8] [6].

Core Methodological Framework

Fundamental DFT Principles in High-Throughput Context

HT-DFT investigations build upon the well-established principles of Density Functional Theory, which formulates the quantum mechanical many-body problem in terms of the electron density. The Hohenberg-Kohn theorems establish that the ground state energy is a unique functional of this density, while the Kohn-Sham equations provide a practical framework for solving the system by introducing a set of non-interacting electrons that reproduce the same density [15]. In HT-DFT workflows, these equations are solved numerically across hundreds or thousands of different chemical compositions and crystal structures, requiring careful attention to numerical convergence parameters including plane-wave energy cutoffs and k-point sampling for Brillouin zone integration [16]. The efficiency of these calculations relies heavily on the choice of exchange-correlation functional, with the Generalized Gradient Approximation (GGA), particularly the Perdew-Burke-Ernzerhof (PBE) parameterization, serving as the most common selection due to its favorable balance between accuracy and computational cost [17] [18].

Thermodynamic Stability Assessment

A central objective of HT-DFT screening is the assessment of thermodynamic stability through the construction of energy convex hulls. The formation energy of an ABO$_3$ compound, $H_f^{ABO_3}$, is calculated according to:

$$H_f^{ABO_3} = E(ABO_3) - \mu_A - \mu_B - 3\mu_O$$

where $E(ABO_3)$ is the total energy of the perovskite, and $\mu_A$, $\mu_B$, and $\mu_O$ are the chemical potentials of the constituent elements [18]. The convex hull distance, $H_{\text{stab}}^{ABO_3}$, representing the energy above the hull, is then defined as:

$$H_{\text{stab}}^{ABO_3} = H_f^{ABO_3} - H_{\text{hull}}$$

where $H_{\text{hull}}$ is the convex hull energy at the composition of interest [18]. Compounds lying on the convex hull ($H_{\text{stab}}^{ABO_3} \leq 0$) are considered thermodynamically stable, while those above the hull are metastable or unstable. In practice, a small positive tolerance (approximately 0.025 eV/atom, close to the thermal energy $k_BT$ at room temperature) is often applied to identify potentially synthesizable compounds [18].
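The two stability equations can be checked numerically. One detail matters in practice: the formation energy as written is per formula unit, whereas hull distances and the ~0.025 eV/atom tolerance are per atom, so the sketch divides by the five atoms of ABO$_3$. Hull energies are interpolated on a binary (one-dimensional) hull purely for illustration; the function names are hypothetical, and all energies and chemical potentials below are invented.

```python
def formation_energy_per_atom(e_total, counts, mu):
    """(E_total - sum_i n_i * mu_i) / sum_i n_i for one formula unit.

    e_total: DFT total energy of one formula unit (eV)
    counts:  {element: atoms per formula unit}, e.g. {"A": 1, "B": 1, "O": 3}
    mu:      {element: chemical potential (eV/atom)}
    """
    n_atoms = sum(counts.values())
    return (e_total - sum(n * mu[el] for el, n in counts.items())) / n_atoms


def hull_energy(hull, x):
    """Linear interpolation on sorted binary hull vertices [(x, E), ...]."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            return e0 + (e1 - e0) * (x - x0) / (x1 - x0)
    raise ValueError("composition outside the hull range")


def energy_above_hull(e_f, hull, x):
    return e_f - hull_energy(hull, x)


# Invented numbers, chosen only to make the arithmetic easy to follow.
print(formation_energy_per_atom(-40.0, {"A": 1, "B": 1, "O": 3},
                                {"A": -2.0, "B": -8.0, "O": -5.0}))  # -3.0
hull = [(0.0, 0.0), (0.5, -0.5), (1.0, 0.0)]
e_stab = energy_above_hull(-0.10, hull, 0.25)  # about 0.15 eV/atom
print(e_stab <= 0.025)                         # False: outside the ~kT window
```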

Table 1: Key Properties Computed in Typical HT-DFT Studies

| Property | Computational Method | Significance in Phase Stability |
|---|---|---|
| Formation Energy | DFT total energy differences | Determines thermodynamic stability relative to competing phases |
| Decomposition Energy | Convex hull construction | Energy penalty for decomposition to stable phases; key stability metric [17] |
| Band Gap | DFT band structure calculation | Critical for optoelectronic applications; influences phase stability through electronic contributions |
| Oxygen Vacancy Formation Energy | Defect supercell calculations [18] | Relevant to catalytic and energy applications |
| Lattice Parameters | Geometry optimization | Influences stability through steric constraints and mechanical stability |

Workflow Implementation and Automation

Standardized HT-DFT Protocol

The implementation of a robust HT-DFT workflow requires meticulous automation at each stage, from initial structure generation to final property analysis. A representative protocol, as applied in the screening of ABX₃ halide perovskite alloys, encompasses several methodical stages [17]:

- Chemical Space Definition: Explicitly define the compositional space by selecting permissible elements for each crystallographic site. For example, a halide perovskite study might incorporate 5 A-site cations (e.g., Cs, MA, FA), 6 B-site divalent metals (e.g., Pb, Sn, Ge), and 3 X-site halogens (I, Br, Cl) [17].

- Structure Generation: For each unique composition, generate initial crystal structures. For ordered compounds, this involves creating pristine unit cells. For alloys, employ approaches like the Special Quasirandom Structure (SQS) method to simulate random site occupancy [17].

- Automated DFT Calculations: Execute DFT calculations with standardized parameters across all structures. This typically employs plane-wave basis sets with PAW pseudopotentials and GGA-PBE functionals. More advanced functionals like HSE06 may be applied to a subset for improved accuracy [17].

- Property Extraction: Programmatically extract target properties from converged calculations, including total energies (for formation energies), electronic densities of states (for band gaps), and optimized geometries [17] [18].

- Stability Analysis and Screening: Construct convex hulls using the computed formation energies and pre-existing thermodynamic data from databases like the OQMD to identify stable compounds [18]. Apply secondary filters based on additional properties (e.g., band gap ranges, structural distortion tolerances) [17].
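The chemical-space definition and secondary screening steps can be sketched with `itertools.product`. The site lists contain only the example species named above (three per site rather than the 5, 6, and 3 species of the cited study, which would give 5 × 6 × 3 = 90 pure compositions); mixed-site alloys that require SQS cells are not enumerated; and the band-gap window is a placeholder rather than a value from the cited study. The function names are hypothetical.

```python
from itertools import product

# Example site species named in the text (a subset of the cited study's lists).
A_SITE = ["Cs", "MA", "FA"]
B_SITE = ["Pb", "Sn", "Ge"]
X_SITE = ["I", "Br", "Cl"]


def enumerate_abx3(a_site, b_site, x_site):
    """All pure (unalloyed) ABX3 compositions in the chemical space."""
    return [f"{a}{b}{x}3" for a, b, x in product(a_site, b_site, x_site)]


def secondary_filter(results, gap_window=(1.0, 2.0), max_e_hull=0.025):
    """Keep compositions whose computed properties pass every screen.

    results: {formula: {"band_gap": eV, "e_above_hull": eV/atom}}
    The band-gap window is a placeholder, not a value from the cited study.
    """
    lo, hi = gap_window
    return sorted(f for f, r in results.items()
                  if lo <= r["band_gap"] <= hi and r["e_above_hull"] <= max_e_hull)


space = enumerate_abx3(A_SITE, B_SITE, X_SITE)
print(len(space), space[0])  # 27 CsPbI3
```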

Diagram 1: HT-DFT Workflow for Phase Stability Analysis. The workflow illustrates the cyclic process of materials discovery, where identified hypothetical compounds can feedback into new DFT calculations, and discovered materials can prompt the expansion of the chemical space.

Uncertainty Quantification and Parameter Optimization

A critical yet often overlooked aspect of HT-DFT is the rigorous quantification of numerical uncertainties. Recent advances enable automated optimization of convergence parameters by treating the target precision as the primary input rather than specific cutoff values [16]. This approach involves:

- Systematic Error Analysis: Mapping the dependence of total energies and derived properties (e.g., bulk modulus) on convergence parameters like plane-wave energy cutoff (ε) and k-point density (κ) across multiple volumes.

- Statistical Error Assessment: Quantifying the variability introduced by basis set changes during volume variations [16].

- Automated Parameter Selection: Implementing algorithms that identify the computational setup minimizing resource usage while guaranteeing errors remain below a user-defined threshold for properties of interest [16].

This methodology has demonstrated that conventional parameter choices in major high-throughput projects can yield errors in bulk modulus predictions exceeding 5-10 GPa for certain elements, highlighting the necessity of element-specific, precision-targeted convergence parameters [16].
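A heavily simplified version of precision-targeted parameter selection is sketched below: given total energies computed at increasing plane-wave cutoffs, it returns the smallest cutoff whose energy, and that of every larger cutoff, stays within a target precision of the highest-cutoff reference. The approach in [16] is more thorough (it treats ε and κ jointly, samples several volumes, and targets derived properties such as the bulk modulus); the function name and the energy series here are hypothetical.

```python
def select_cutoff(energies, tol):
    """Smallest cutoff whose energy, and that of every larger cutoff, lies
    within `tol` of the highest-cutoff (reference) energy.

    energies: {cutoff (eV): total energy per atom (eV)}
    tol:      target precision (eV/atom)
    """
    cutoffs = sorted(energies)
    ref = energies[cutoffs[-1]]  # treat the largest cutoff as converged
    for i, c in enumerate(cutoffs):
        if all(abs(energies[d] - ref) <= tol for d in cutoffs[i:]):
            return c
    return cutoffs[-1]


# Hypothetical convergence series for one element.
series = {300: -5.1000, 400: -5.1400, 500: -5.1495, 600: -5.1502, 700: -5.1503}
print(select_cutoff(series, 1e-3), select_cutoff(series, 2e-2))  # 500 400
```

Requiring every larger cutoff to stay within tolerance guards against a series that passes through the reference value by accident before converging.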

Phase Stability Network Construction and Analysis

From HT-DFT Data to Materials Networks

The thermodynamic stability information generated through HT-DFT enables the construction of phase stability networks: complex graphs in which nodes represent thermodynamically stable compounds and edges (tie-lines) represent stable two-phase equilibria between them [8] [6]. This network perspective shifts our understanding of materials reactivity from isolated binary or ternary systems to a unified, global stability landscape. The resulting network for all inorganic materials is remarkably dense and interconnected. It comprises approximately 21,300 stable compounds (nodes) linked by over 41 million tie-lines (edges), with an average connectivity of ~3,850 tie-lines per compound [6]. This high connectivity arises from the non-reactivity of noble gases and highly stable binary halides, which form tie-lines with nearly all other materials in the network [6].
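Every tie-line contributes to the degree of two compounds, so the mean degree of this undirected network follows directly from the node and edge counts. Using the approximate values in Table 2 (~21,300 nodes and ~41 million edges):

$$ \langle k \rangle = \frac{2E}{N} \approx \frac{2 \times 4.1 \times 10^{7}}{2.13 \times 10^{4}} \approx 3.85 \times 10^{3} $$

This reproduces the reported mean degree of ~3,850 tie-lines per compound.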

Table 2: Key Topological Metrics of the Universal Phase Stability Network [6]

| Network Metric | Value | Interpretation |
|---|---|---|
| Number of Nodes (Stable Compounds) | ~21,300 | Total thermodynamically stable inorganic materials |
| Number of Edges (Tie-Lines) | ~41 million | Stable two-phase equilibria between compounds |
| Mean Degree (⟨k⟩) | ~3,850 | Average number of tie-lines per compound |
| Network Diameter (Lₘₐₓ) | 2 | Maximum number of edges between any two compounds |
| Characteristic Path Length (L) | 1.8 | Average number of edges between any two compounds |
| Global Clustering Coefficient (Cg) | 0.41 | Probability that two neighbors of a node are connected |
| Assortativity Coefficient | -0.13 | Tendency for highly connected nodes to link with less-connected nodes |
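The metrics in Table 2 can be computed from a tie-line list with standard graph algorithms. The dependency-free sketch below does this for a small invented graph in which one hub node (standing in for a noble-gas-like compound) is linked to every other node. Path lengths come from breadth-first search. The global clustering coefficient is the ratio of closed to connected triplets. Assortativity is the Pearson correlation of the degrees at the two ends of each edge. The sketch assumes a connected graph with at least one connected triplet and non-uniform degrees. All-pairs BFS in pure Python would be far too slow for ~41 million edges, so a compiled graph library is the practical choice at that scale.

```python
from collections import deque
from itertools import combinations
from statistics import mean
from typing import Dict, Iterable, Set, Tuple

Graph = Dict[str, Set[str]]

def build_graph(tie_lines: Iterable[Tuple[str, str]]) -> Graph:
    """Undirected adjacency sets from (compound, compound) tie-lines."""
    adj: Graph = {}
    for a, b in tie_lines:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj

def bfs_lengths(adj: Graph, source: str) -> Dict[str, int]:
    """Shortest-path edge counts from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist

def network_metrics(adj: Graph) -> Dict[str, float]:
    degree = {u: len(nbrs) for u, nbrs in adj.items()}
    n_nodes, n_edges = len(adj), sum(degree.values()) // 2
    # Ordered-pair shortest path lengths (graph assumed connected)
    lengths = [d for u in adj for v, d in bfs_lengths(adj, u).items() if v != u]
    # Global clustering: closed triplets / connected triplets (each triangle counted 3 times)
    closed = sum(1 for u in adj for v, w in combinations(adj[u], 2) if w in adj[v])
    triplets = sum(k * (k - 1) // 2 for k in degree.values())
    # Degree assortativity: Pearson correlation of degrees at both ends of every edge,
    # with each edge counted in both directions so the two marginals are identical
    ends = [(degree[u], degree[v]) for u in adj for v in adj[u]]
    mu = mean(a for a, _ in ends)
    var = sum((a - mu) ** 2 for a, _ in ends)
    cov = sum((a - mu) * (b - mu) for a, b in ends)
    return {
        "nodes": n_nodes,
        "edges": n_edges,
        "mean_degree": 2 * n_edges / n_nodes,
        "diameter": max(lengths),
        "path_length": mean(lengths),
        "clustering": closed / triplets,
        "assortativity": cov / var,
    }

# Invented toy network: "Q" plays the role of a hub that coexists with everything
toy = build_graph([("Q", "A"), ("Q", "B"), ("Q", "C"), ("Q", "D"), ("A", "B"), ("B", "C")])
print(network_metrics(toy))
```

As in the real network, the hub keeps the diameter at 2 and drives the assortativity negative.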

Network-Driven Materials Discovery

The topology of the phase stability network reveals fundamental principles governing materials discovery and reactivity:

- Lognormal Degree Distribution: The degree distribution of the network follows a lognormal form rather than a power law, a consequence of its extreme density, so the network is not scale-free in the strict sense. This heavy-tailed distribution indicates the presence of highly connected hub materials that disproportionately influence overall network stability [6].

- Hierarchical Organization: Network connectivity exhibits a clear chemical hierarchy, with mean degree (⟨k⟩) decreasing as the number of components (𝒩) in a compound increases. This reflects the heightened competition for stability that higher-component compounds face against numerous lower-component competitors in their chemical space [6].

- Nobility Index: The connectivity of materials within this network serves as a data-driven metric for reactivity, termed the "nobility index." Materials with higher connectivity (more tie-lines) are less reactive, as they can coexist stably with numerous other compounds. This quantitative framework enables the systematic identification of the most noble (least reactive) materials in nature [6].

- Discovery Prediction: The temporal evolution of network properties, combined with machine learning, enables predictions about which hypothetical compounds are most likely to be synthesizable. By training models on historical discovery patterns and network centrality measures, researchers can prioritize computational predictions for experimental validation [8].
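Two of these analyses reduce to simple bookkeeping on the adjacency structure. The first groups mean degree by the number of elements 𝒩 in each compound. The second ranks compounds by degree. The sketch below uses degree as a simple proxy for nobility. The published nobility index may involve additional normalization, so this sketch illustrates the idea and does not reproduce the definition in [6]. The formula parser handles only simple formulas without parentheses. The tie-lines form an arbitrary toy graph with placeholder element symbols X, Y and Z, not real phase-diagram data.

```python
import re
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Set, Tuple

def build_adjacency(tie_lines: List[Tuple[str, str]]) -> Dict[str, Set[str]]:
    adj: Dict[str, Set[str]] = defaultdict(set)
    for a, b in tie_lines:
        adj[a].add(b)
        adj[b].add(a)
    return dict(adj)

def n_components(formula: str) -> int:
    """Number of distinct element symbols in a simple formula such as 'Fe2O3'."""
    return len(set(re.findall(r"[A-Z][a-z]?", formula)))

def mean_degree_by_components(adj: Dict[str, Set[str]]) -> Dict[int, float]:
    groups: Dict[int, List[int]] = defaultdict(list)
    for compound, neighbours in adj.items():
        groups[n_components(compound)].append(len(neighbours))
    return {n: mean(ks) for n, ks in sorted(groups.items())}

def rank_by_degree(adj: Dict[str, Set[str]], top: int = 3) -> List[str]:
    """Most connected (proxy for most 'noble') compounds first; ties broken by name."""
    return sorted(adj, key=lambda c: (-len(adj[c]), c))[:top]

toy = build_adjacency([("X", "Y"), ("X", "Z"), ("X", "XY"), ("X", "YZ"), ("X", "XYZ"),
                       ("Y", "Z"), ("Y", "XYZ"), ("Z", "XY"), ("XY", "YZ")])
print(mean_degree_by_components(toy))
print(rank_by_degree(toy))  # ['X', 'XY', 'Y']
```

In this toy graph, mean degree falls from about 3.7 for unary nodes to 2.5 for binaries and 2 for the ternary. This mirrors, on a tiny scale, the hierarchy described above.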

Integration with Machine Learning and Future Outlook

The synergy between HT-DFT and machine learning (ML) represents the cutting edge of computational materials design. ML models trained on HT-DFT datasets dramatically accelerate materials screening by learning complex structure-property relationships, enabling property prediction at minimal computational cost [19] [20]. Ensemble methods that integrate diverse feature representations, including elemental statistics, graph-based representations of crystal structures, and electron configuration descriptors, have demonstrated remarkable accuracy in predicting thermodynamic stability. They achieve an area under the curve (AUC) score of 0.988 while requiring only one-seventh of the training data needed by conventional models [19]. These ML-DFT frameworks have successfully identified novel catalyst candidates [20] and predicted previously undiscovered perovskite compositions [17] [19], validating the combined approach as a powerful paradigm for next-generation materials discovery.
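The AUC quoted above is the probability that a randomly chosen stable compound receives a higher predicted score than a randomly chosen unstable one. The sketch below computes it directly from that definition. It then combines three base models by unweighted averaging of their predicted stability probabilities. Simple averaging is a stand-in chosen for brevity. The ensemble in [19] may combine its base models differently, for example with a learned meta-model. The labels and probabilities are invented.

```python
from typing import List, Sequence

def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as P(score of a random positive > score of a random negative); ties count 1/2."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_ensemble(*model_probs: Sequence[float]) -> List[float]:
    """Unweighted mean of each compound's predicted stability probability across models."""
    return [sum(ps) / len(ps) for ps in zip(*model_probs)]

# Invented labels (1 = stable) and per-model probabilities for six compounds
labels = [1, 1, 0, 0, 1, 0]
model_a = [0.9, 0.6, 0.4, 0.2, 0.3, 0.5]
model_b = [0.7, 0.8, 0.3, 0.4, 0.35, 0.1]
model_c = [0.8, 0.5, 0.6, 0.1, 0.7, 0.2]
ensemble = average_ensemble(model_a, model_b, model_c)
for name, scores in [("a", model_a), ("b", model_b), ("c", model_c), ("ensemble", ensemble)]:
    print(name, round(roc_auc(labels, scores), 3))
```

In this invented example, the averaged scores separate the classes better than any single model. That is the intuition behind ensembling. Real gains depend on how complementary the base models' errors are.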

Table 3: Essential Computational Tools for HT-DFT Research

| Tool Category | Representative Examples | Primary Function |
|---|---|---|
| DFT Codes | VASP [18], Quantum ESPRESSO | Perform electronic structure calculations |
| High-Throughput Frameworks | AFLOW, pyiron [16], qmpy [18] | Automate workflow management and job submission |
| Materials Databases | Materials Project [16], OQMD [8] [18] [6], JARVIS [19] | Provide reference data for stability analysis and model training |
| Structure Generation Tools | pymatgen, SQS method [17] | Create initial crystal structures for calculations |
| Machine Learning Libraries | XGBoost [20], Roost [19], ECCNN [19] | Train predictive models on HT-DFT data |

The CALPHAD method, an acronym for CALculation of PHAse Diagrams, is a powerful computational framework designed to model the phase stability and thermodynamic properties of multi-component materials systems [21]. Originating in the early 1970s through the pioneering work of Larry Kaufman and H. Bernstein, CALPHAD was developed to overcome the limitations of purely experimental phase diagram determination, which became increasingly impractical as alloy systems grew more complex [21]. At its core, CALPHAD is a phenomenological approach for predicting the thermodynamic, kinetic, and other properties of multicomponent materials systems by describing the properties of the fundamental building blocks of materials—the phases—starting from pure elements and binary and ternary systems [22]. This methodology has evolved into a central pillar of Integrated Computational Materials Engineering (ICME) and the Materials Genome Initiative, enabling faster, more reliable, and cost-effective development of advanced metallic materials [21].

In the context of research on the phase stability network of all inorganic materials, CALPHAD provides the foundational thermodynamic data and modeling approach that make understanding such large-scale networks possible. This network, revealed through high-throughput density functional theory (HT-DFT), can be represented as a densely connected complex network of thermodynamically stable compounds (nodes) interlinked by tie-lines (edges) defining their two-phase equilibria [6]. Because CALPHAD systematically models the thermodynamic relationships between phases, it is an essential tool for navigating and interpreting this complex network, particularly for predicting material reactivity and identifying stable combinations in multi-component systems [6].

Core Methodology: A Step-by-Step Technical Guide

The CALPHAD methodology transforms a variety of experimental and computational data on materials systems into physically-based mathematical models through a rigorous, iterative process [22]. The core methodology consists of four main steps, with validation as a critical final stage.

Data Capture and Assessment

The first step in CALPHAD modeling involves a rigorous evaluation of all available experimental and theoretical data for the material system of interest [21]. This comprehensive data collection includes:

- Phase equilibrium data from differential thermal analysis (DTA), X-ray diffraction (XRD), and microscopy

- Thermochemical measurements such as enthalpies of mixing from calorimetry, heat capacities, and activities from EMF measurements

- Ab initio calculations (e.g., DFT-based enthalpies of formation) for systems where experimental data is lacking or uncertain [22] [21]

- Estimated or extrapolated data using empirical rules, machine learning, or other estimation techniques [22]

The critical assessment and selection of consistent, reliable data is essential, as the quality of the resulting CALPHAD model depends strongly on the validity and coverage of this foundational dataset [21]. This requires careful human judgment to resolve discrepancies between different data sources and ensure overall consistency.

Thermodynamic Modeling and Model Selection

In this phase, each identified phase in the system is described using an analytical expression for its molar Gibbs free energy as a function of temperature, pressure, and composition [21]. The expression typically includes:

- A reference term based on the pure elements (Gibbs energy of the pure elements in their standard state)

- An ideal mixing term accounting for the configurational entropy of mixing

- An excess term to describe non-ideal interactions between components

- Additional terms for magnetic, elastic, or electronic contributions when relevant [21]
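For a substitutional solution phase at fixed pressure, these contributions combine into the familiar CALPHAD form. Here ${}^{\circ}G_i(T)$ is the Gibbs energy of pure component $i$ in its reference state, $R$ is the gas constant, and $G^{ex}$ is the excess term, commonly a Redlich-Kister polynomial:

$$ G_m = \sum_i x_i \, {}^{\circ}G_i(T) + RT \sum_i x_i \ln x_i + G^{ex} + G^{mag} $$

The magnetic term $G^{mag}$ (or another physical contribution) is included only for phases where it is relevant.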

The Compound Energy Formalism (CEF) is widely used to handle ordered phases, stoichiometric compounds, interstitial solutions, and ionic materials [21]. In CEF, atoms or species are distributed across multiple sublattices, capturing order-disorder transitions, defects, and site preferences. This flexibility makes CEF essential for modeling the complex phases found in real engineering materials.
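As a minimal sketch of the CEF bookkeeping, consider a two-sublattice phase (A,B)_a1(C,D)_a2 with hypothetical species and end-member Gibbs energies. The reference surface weights each end-member compound i:j by the product of its site fractions y'_i y''_j. The ideal configurational term sums y ln y over each sublattice and weights each sum by that sublattice's site multiplicity. The excess term is omitted for brevity.

```python
import math
from itertools import product
from typing import Dict, Tuple

R = 8.314462618  # gas constant, J/(mol K)

def cef_gibbs(y1: Dict[str, float], y2: Dict[str, float],
              g_end: Dict[Tuple[str, str], float],
              a1: float, a2: float, T: float) -> float:
    """Gibbs energy per mole of formula units for (species in y1)_a1 (species in y2)_a2.

    Includes the reference surface and ideal configurational term only (no excess term).
    """
    # Reference surface: end-member energies weighted by products of site fractions
    g_ref = sum(y1[i] * y2[j] * g_end[(i, j)] for i, j in product(y1, y2))

    def y_ln_y(site_fractions: Dict[str, float]) -> float:
        # 0 * ln(0) is taken as 0
        return sum(y * math.log(y) for y in site_fractions.values() if y > 0)

    # Ideal mixing within each sublattice, weighted by the number of sites
    g_id = R * T * (a1 * y_ln_y(y1) + a2 * y_ln_y(y2))
    return g_ref + g_id

# Hypothetical end-member energies (J per mole of formula units)
g_end = {("A", "C"): -50000.0, ("A", "D"): -20000.0,
         ("B", "C"): -30000.0, ("B", "D"): -10000.0}
ordered = cef_gibbs({"A": 1.0, "B": 0.0}, {"C": 1.0, "D": 0.0}, g_end, 1, 3, 1000.0)
mixed = cef_gibbs({"A": 0.5, "B": 0.5}, {"C": 0.5, "D": 0.5}, g_end, 1, 3, 1000.0)
print(ordered, mixed)
```

A fully ordered configuration recovers its end-member energy exactly. Disordering adds the site-weighted configurational entropy term.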

Optimization

After assigning models to phases, the free parameters of these models are fitted to the input data collected in the first step through a process called optimization [22]. This demands extensive human judgment at different stages, mainly because the free parameters of all phases must be thermodynamically consistent with each other [22]. The optimization is typically performed using nonlinear least-squares minimization, as implemented in specialized modules like the PARROT module in Thermo-Calc or the Pandat Optimizer [22] [21].

A key component of this modeling process is the use of Redlich-Kister polynomials to describe the excess Gibbs energy of mixing in solution phases:

$$ G^{ex} = x_A x_B \sum_{i=0}^{n} L_i (x_A - x_B)^i $$

Where $x_A$ and $x_B$ are the mole fractions of components A and B, respectively, and $L_i$ are interaction parameters that may themselves be temperature-dependent [21]. This expression captures non-ideal interactions and can be extended to ternary and multicomponent systems via generalized Redlich-Kister expansions.
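The sketch below covers both the Redlich-Kister expression and the fitting step, under simplifying assumptions: a single binary solution phase, temperature-independent parameters L_0 and L_1, and mixing-enthalpy data only. With temperature-independent L_i the excess entropy vanishes, so the enthalpy of mixing equals G^ex. The model is then linear in the parameters, and ordinary least squares reduces to a 2×2 system of normal equations. Real optimizers such as PARROT fit many data types simultaneously, with weights and nonlinear models, which this sketch does not attempt. The "measured" data are synthetic.

```python
import math
from typing import List, Sequence, Tuple

R = 8.314462618  # gas constant, J/(mol K)

def rk_excess(x_b: float, L: Sequence[float]) -> float:
    """G^ex = x_A x_B sum_i L_i (x_A - x_B)^i for a binary A-B solution."""
    x_a = 1.0 - x_b
    return x_a * x_b * sum(L_i * (x_a - x_b) ** i for i, L_i in enumerate(L))

def g_mix(x_b: float, L: Sequence[float], T: float) -> float:
    """Gibbs energy of mixing: ideal configurational term plus Redlich-Kister excess."""
    x_a = 1.0 - x_b
    ideal = R * T * sum(x * math.log(x) for x in (x_a, x_b) if x > 0)
    return ideal + rk_excess(x_b, L)

def fit_l0_l1(data: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Least-squares L0, L1 from (x_B, H_mix) pairs via the 2x2 normal equations."""
    # Basis functions: f0 = x_A x_B and f1 = x_A x_B (x_A - x_B), with x_A = 1 - x_B
    rows = [(x * (1 - x), x * (1 - x) * (1 - 2 * x), h) for x, h in data]
    s00 = sum(f0 * f0 for f0, _, _ in rows)
    s01 = sum(f0 * f1 for f0, f1, _ in rows)
    s11 = sum(f1 * f1 for _, f1, _ in rows)
    t0 = sum(f0 * h for f0, _, h in rows)
    t1 = sum(f1 * h for _, f1, h in rows)
    det = s00 * s11 - s01 ** 2
    return (t0 * s11 - s01 * t1) / det, (s00 * t1 - s01 * t0) / det

# Synthetic "measurements" generated from L0 = -20000, L1 = 5000 J/mol
data = [(x / 10, rk_excess(x / 10, [-20000.0, 5000.0])) for x in range(1, 10)]
print(fit_l0_l1(data))          # recovers approximately (-20000.0, 5000.0)
print(g_mix(0.5, [-20000.0, 5000.0], 1000.0))
```

At x_B = 0.5 every term with i ≥ 1 vanishes, so only L_0 contributes to the excess energy of the equimolar alloy.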

Database Storage and Validation

Once the parameters for all phases are fitted to the experimental data, the Gibbs energy functions with their optimized parameters are stored in a structured text file, or database, with a specific format readable by CALPHAD software [22]. The final and critical step in developing a CALPHAD database is validation against experimental results not used during the optimization [22]. For multicomponent databases, validation against data from commercial and other multicomponent alloys is essential. If agreement with real multicomponent systems is unsatisfactory, re-optimization of one or more lower-order systems may be necessary to improve predictive capability [22].
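At its simplest, validation compares model predictions with data withheld from the optimization and decides whether re-optimization is needed. The minimal sketch below assumes a scalar property (here an enthalpy of mixing in J/mol), a root-mean-square error criterion, and an acceptance threshold chosen by the assessor. The model and the held-out values are hypothetical.

```python
import math
from typing import Callable, List, Tuple

def validate(model: Callable[[float], float],
             holdout: List[Tuple[float, float]],
             tolerance: float) -> Tuple[float, bool]:
    """Return (RMSE on held-out data, whether re-optimization is indicated)."""
    residuals = [model(x) - observed for x, observed in holdout]
    rmse = math.sqrt(sum(r * r for r in residuals) / len(residuals))
    return rmse, rmse > tolerance

def model(x_b: float) -> float:
    # Hypothetical optimized model: regular-solution excess term with L0 = -20000 J/mol
    return x_b * (1.0 - x_b) * -20000.0

# Hypothetical independent measurements (x_B, H_mix in J/mol) not used in the fit
holdout = [(0.25, -3800.0), (0.5, -5100.0), (0.75, -3700.0)]
rmse, reoptimize = validate(model, holdout, tolerance=100.0)
print(round(rmse, 1), reoptimize)  # 70.7 False
```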

Table 1: Core Steps in the CALPHAD Methodology

| Step | Key Activities | Outputs |
|---|---|---|
| Data Capture & Assessment | Collect experimental phase equilibria, thermochemical data; Perform ab initio calculations; Critically evaluate data quality and consistency | Critically assessed dataset for optimization |
| Thermodynamic Modeling | Select appropriate Gibbs energy models for each phase; Apply Compound Energy Formalism for complex phases; Define model parameters | Mathematical representations of all phases in the system |
| Optimization | Fit model parameters to experimental data using least-squares minimization; Ensure thermodynamic consistency across all phases | Optimized parameters for Gibbs energy models |
| Database Storage & Validation | Store optimized parameters in database format; Validate predictions against independent experimental data; Re-optimize if necessary | Validated thermodynamic database for multicomponent systems |

Workflow Visualization

The following diagram illustrates the iterative CALPHAD methodology workflow, highlighting the critical role of validation and potential re-optimization:

Key Concepts and Mathematical Framework

Thermodynamic Extrapolation to Multicomponent Systems

A fundamental strength of the CALPHAD method is its ability to reliably extrapolate from binary and ternary systems to predict properties of higher-order multicomponent systems [22] [21]. This is achieved through geometric extrapolation schemes that combine lower-order data in a thermodynamically consistent way. The most commonly used models include:

Muggianu Model: Assumes symmetric behavior and averages binary interaction parameters across all components. It ensures smooth extrapolation and is best suited for systems with similar atomic sizes and behaviors [21]. The simplified form of the Muggianu extrapolation expression is:

$$ G^{ex}_{ABC} = x_A x_B L_{AB}^{ABC} + x_B x_C L_{BC}^{ABC} + x_C x_A L_{CA}^{ABC} $$

Where $L_{AB}^{ABC}$, $L_{BC}^{ABC}$, and $L_{CA}^{ABC}$ are the composition-dependent interaction parameters in the ternary system, and $x_A$, $x_B$, and $x_C$ are the mole fractions of components A, B, and C, respectively [21].

Kohler Model: Also symmetric but maintains binary interaction behavior in the ternary by weighting deviations in a way that respects pure component influence. Works well when the system is relatively ideal and composition is evenly distributed [21].
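The sketch below shows how the choice of scheme matters. It extrapolates a ternary excess Gibbs energy from binary Redlich-Kister parameters using both the Muggianu and the Kohler scheme. In the Muggianu scheme, each binary polynomial is evaluated at the ternary mole fractions directly. In the Kohler scheme, the binary is evaluated at the reduced fractions x_i/(x_i + x_j) and scaled by (x_i + x_j)^2. This simplifies to replacing (x_i - x_j) with (x_i - x_j)/(x_i + x_j). The two schemes coincide on the binary edges and when only L_0 terms are present, and they differ inside the ternary once terms with v ≥ 1 appear. Ternary interaction parameters are omitted, and the binary parameter values are hypothetical.

```python
from typing import Dict, Sequence, Tuple

Params = Dict[Tuple[str, str], Sequence[float]]  # binary (i, j) -> [L0, L1, ...] in J/mol

def _rk_sum(L: Sequence[float], d: float) -> float:
    """Sum over v of L_v * d**v."""
    return sum(L_v * d ** v for v, L_v in enumerate(L))

def muggianu(x: Dict[str, float], params: Params) -> float:
    """Excess Gibbs energy: binary RK terms evaluated at the ternary mole fractions."""
    return sum(x[i] * x[j] * _rk_sum(L, x[i] - x[j]) for (i, j), L in params.items())

def kohler(x: Dict[str, float], params: Params) -> float:
    """Excess Gibbs energy: binary RK terms evaluated at reduced fractions x_i/(x_i + x_j)."""
    total = 0.0
    for (i, j), L in params.items():
        s = x[i] + x[j]
        if s > 0:
            total += x[i] * x[j] * _rk_sum(L, (x[i] - x[j]) / s)
    return total

params = {("A", "B"): [-10000.0, 2000.0], ("B", "C"): [5000.0], ("A", "C"): [-3000.0, -1000.0]}
inside = {"A": 0.2, "B": 0.3, "C": 0.5}
print(muggianu(inside, params), kohler(inside, params))
```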