Overcoming Data Scarcity in Machine Learning for Inorganic Synthesis: Strategies for Biomedical Innovation

Abstract

This article addresses the critical challenge of data scarcity that impedes the application of machine learning (ML) in inorganic materials synthesis, a key bottleneck in accelerating the discovery of new biomedical materials and drug development. We explore the fundamental limitations of existing data sources, including biases in historical literature and the '4 Vs' of data science. The article provides a comprehensive overview of advanced methodological solutions, such as multi-task learning, generative models for synthetic data, and large language models for automated literature extraction. Furthermore, it details strategies for troubleshooting model performance and optimizing workflows with limited data, and presents rigorous validation frameworks to compare the efficacy of different approaches. Designed for researchers, scientists, and drug development professionals, this guide synthesizes cutting-edge research to provide a practical roadmap for building reliable ML models that can predict and optimize the synthesis of novel inorganic materials, ultimately shortening development cycles for clinical applications.

Understanding the Data Scarcity Crisis in Inorganic Synthesis

The Bottleneck of Predictive Synthesis in Materials Discovery

Frequently Asked Questions (FAQs)

FAQ 1: Why do AI models successfully predict thousands of new materials, yet so few are successfully synthesized in the lab?

AI models primarily predict thermodynamic stability, but this does not equal synthesizability. Synthesis is a pathway-dependent process influenced by kinetics, reaction conditions, and competing phases. A material predicted to be stable might form undesirable impurities or require impractical synthesis conditions [1]. For instance, even promising materials like the solid-state battery electrolyte LLZO (Li₇La₃Zr₂O₁₂) are hindered by synthesis challenges like lithium volatilization at high temperatures, leading to impurities [1].

FAQ 2: Our organization faces a shortage of high-quality, standardized synthesis data. What are the best strategies to overcome this?

Data scarcity is a fundamental challenge. You can employ several strategies:

- Leverage Large Language Models (LLMs): Use LLMs to impute missing data points and encode complex, inconsistent nomenclature from existing literature into a homogenized feature space for your models [2]. One study increased ternary classification accuracy for graphene synthesis from 52% to 72% using this approach [2].

- Utilize Physics-Informed Models: Incorporate fundamental physical principles, like conservation of mass, into your models. The FlowER (Flow matching for Electron Redistribution) system uses a bond-electron matrix to explicitly track electrons, ensuring physically realistic predictions [3].

- Implement Autonomous Experimentation: Deploy closed-loop robotics and active-learning algorithms to generate high-fidelity data autonomously. These systems can shrink the synthesis-to-characterization loop from months to days and are designed to capture both positive and negative results [4] [5].
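The electron-conservation idea behind the physics-informed bullet can be illustrated with a small check on bond-electron (BE) matrices. This is a minimal sketch, not FlowER's implementation: it assumes the classic convention in which diagonal entries hold non-bonding valence electrons and off-diagonal entries hold bond orders, and the function names and the hydroxide-plus-proton example are chosen here purely for illustration.

```python
from typing import List

Matrix = List[List[int]]


def total_electrons(be: Matrix) -> int:
    """Sum all entries: non-bonding electrons on the diagonal plus each bond
    order counted once per bonded atom (two electrons per single bond)."""
    return sum(sum(row) for row in be)


def is_valid_be_matrix(be: Matrix) -> bool:
    """A BE matrix must be square, symmetric, and non-negative."""
    n = len(be)
    if any(len(row) != n for row in be):
        return False
    return all(be[i][j] == be[j][i] and be[i][j] >= 0
               for i in range(n) for j in range(n))


def conserves_electrons(reactants: Matrix, products: Matrix) -> bool:
    """True when both matrices describe the same atoms and electron count."""
    return (is_valid_be_matrix(reactants)
            and is_valid_be_matrix(products)
            and len(reactants) == len(products)
            and total_electrons(reactants) == total_electrons(products))


# Atom order: O, H1, H2. Hydroxide plus a bare proton gives water.
reactants = [[6, 1, 0],   # O: three lone pairs, single bond to H1
             [1, 0, 0],
             [0, 0, 0]]   # H2 is H+ with no electrons
products = [[4, 1, 1],    # O: two lone pairs, single bonds to H1 and H2
            [1, 0, 0],
            [1, 0, 0]]
bad_products = [[6, 1, 1],  # two electrons appear from nowhere
                [1, 0, 0],
                [1, 0, 0]]

print(conserves_electrons(reactants, products))      # True
print(conserves_electrons(reactants, bad_products))  # False
```

A check like this can serve as a cheap filter on model outputs: any predicted product state that changes the electron count is rejected before further scoring.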

FAQ 3: How can we better predict viable synthesis pathways, not just final stability?

Move beyond screening final compounds and model the entire reaction network. This involves:

- Generating Hundreds of Pathways: Use computational platforms to map out numerous potential reaction routes from various precursors, including uncommon intermediates [1].

- Virtual Reactor Simulation: Simulate phase evolution within a virtual reactor using thermodynamic principles and machine-learned predictors to filter for promising, low-barrier routes [1].

- Inverse Design: Employ generative models that are fine-tuned not just for property prediction but also for synthesis route planning [4].

FAQ 4: What are the most common points of failure when translating a predicted material to a synthesized one?

Common failure points can be anticipated and planned for:

- Impurity Formation: The desired phase is outcompeted by kinetically favorable impurity phases (e.g., the formation of Bi₂Fe₄O₉ instead of pure BiFeO₃) [1].

- Precursor Sensitivity: The synthesis outcome is highly sensitive to minor variations in precursor quality, defects, or atmospheric conditions [1].

- Unrealistic Conditions: The theoretically viable pathway requires conditions that are not scalable or safe for industrial production (e.g., extremely high temperatures or hazardous elements) [1].

Troubleshooting Guides

Guide 1: Troubleshooting Failed Synthesis of a Predicted Material

Problem: A material predicted by our AI model to be stable and possess target properties fails to form in the lab, resulting in impurities or no reaction.

| Step | Problem Area | Diagnostic Check | Solution & Recommended Action |
|---|---|---|---|
| 1 | Thermodynamic vs. Kinetic Stability | Verify if the reaction pathway is kinetically hindered. Check for known intermediate compounds that are more favorable to form. | Use a reaction network model to identify alternative precursors or a modified pathway that avoids high-energy barriers [1]. |
| 2 | Reaction Condition Fidelity | Cross-check all experimental parameters (temperature, atmosphere, pressure, time) against known successful syntheses of analogous materials. | Systematically vary one condition at a time in a high-throughput or automated system to map the viable synthesis space [4] [5]. |
| 3 | Precursor Compatibility | Analyze if your precursors are reacting to form the desired product or if they are decomposing or forming stable byproducts. | Source higher-purity precursors or select alternative precursors that provide a more direct, lower-energy route to the final phase [1]. |

Guide 2: Troubleshooting a Low-Accuracy Synthesis Prediction Model

Problem: Our machine learning model for predicting synthesis outcomes has low accuracy and poor generalizability.

| Step | Problem Area | Diagnostic Check | Solution & Recommended Action |
|---|---|---|---|
| 1 | Data Quality & Bias | Audit your training data for publication bias (lack of negative results) and over-representation of a narrow set of "conventional" synthesis routes [1]. | Actively curate datasets that include failed experiments. Use LLM-based tools to extract and standardize data from diverse literature sources, filling in missing metadata [2]. |
| 2 | Model Physical Realism | Check if the model violates physical laws, such as conservation of mass or electrons. | Integrate physical constraints. Adopt approaches like the FlowER model, which uses a bond-electron matrix to guarantee conservation, moving from "alchemy" to grounded predictions [3]. |
| 3 | Feature Representation | Evaluate if the model's input features (e.g., substrate names, conditions) are inconsistently or poorly represented. | Use LLM embeddings to create a consistent, machine-readable feature space from complex and heterogeneous textual data [2]. |

Quantitative Data on Market and Method Efficacy

The following tables summarize key quantitative data related to the material informatics market and the performance of advanced AI methods.

Table 1: Material Informatics Market Overview and Trends [5] [6]

| Metric | Value / Trend | Context & Forecast |
|---|---|---|
| Global Market Size (2025) | USD 170.4 million | Projected to grow to USD 410.4 million by 2030 [6]. |
| Projected CAGR (2025-2030) | 19.2% | Indicating rapid market expansion and adoption [6]. |
| Largest Market Segment (Component) | Software (59.26% share in 2024) | Software platforms are the backbone of market adoption [5]. |
| Fastest-Growing Application | Generative Design (26.25% CAGR) | Driven by mature inverse-design algorithms [5]. |
| Key Market Driver | AI-driven cost/cycle-time compression | Can reduce time-to-market tenfold for new formulations [5]. |

Table 2: Documented Efficacy of Advanced AI Methods in Synthesis

| Method / Platform | Documented Efficacy / Accuracy | Application Context |
|---|---|---|
| DELID AI | 88% optical-property prediction accuracy without quantum calculations [5]. | Accelerated materials discovery and design. |
| LLM-Enhanced SVM | Ternary classification accuracy improved from 52% to 72% [2]. | Graphene chemical vapor deposition synthesis with limited data. |
| Autonomous Experimentation | Shrinks synthesis-characterization loops from months to days [5]. | High-throughput screening and closed-loop materials discovery. |
| AI-Driven Formulation | Cuts formulation spend by 30-50% [5]. | Optimization in regulated industries using digital twins. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational and Data Tools for Predictive Synthesis

| Tool / Resource Category | Specific Examples | Function in Addressing Synthesis Bottlenecks |
|---|---|---|
| Generative AI Models | MatterGen, FlowER, GPT-4 | Generates novel, stable crystal structures (MatterGen) or predicts chemically valid reaction pathways by conserving mass and electrons (FlowER) [3] [1]. |
| Physics-Informed Neural Networks (PINNs) | Custom implementations, platform features | Incorporates physical laws (e.g., energy conservation) directly into machine learning models, improving prediction realism and reliability [6]. |
| Large Language Models (LLMs) | GPT-4, other transformer models | Extracts, standardizes, and imputes synthesis data from literature; encodes complex textual data into consistent features for models [2]. |
| Material Informatics Platforms | Citrine Informatics, Schrödinger, MaterialsZone | Provides integrated software suites that combine AI-powered prediction with data management and analysis, often linking to laboratory robotics [5] [6]. |
| Open Reaction Datasets | USPTO data, CSD, curated datasets from literature | Provides foundational data for training models. The FlowER model, for instance, was trained on over a million reactions from a patent database [3]. |

Experimental Protocols & Workflows

Detailed Methodology: Leveraging LLMs for Data Enhancement on Scarce Datasets

This protocol is adapted from strategies proposed to improve machine learning performance on limited, heterogeneous datasets for graphene synthesis [2].

- Data Compilation: Compile a limited dataset from existing literature on the synthesis of your target material. The data will likely be heterogeneous, with inconsistent reporting of parameters (e.g., substrate names, conditions) and missing data points.

- LLM-Based Feature Homogenization:

- Prompting for Imputation: Use a large language model (e.g., GPT-4) in a prompting modality to impute missing numerical or categorical data points based on context from the rest of the dataset.

- Embedding Complex Nomenclature: Feed inconsistent textual descriptions (e.g., substrate names like "Cu foil annealed at 1000C" vs. "polycrystalline copper") into the LLM to generate numerical embedding vectors. These embeddings capture semantic similarity in a consistent format for the machine learning algorithm.

- Model Training and Comparison:

- Train a traditional classifier (e.g., Support Vector Machine - SVM) using the LLM-enhanced dataset (with imputed values and embedding features).

- For comparison, fine-tune the LLM itself as a predictor on the same original, scarce data.

- Expected Outcome: The numerical classifier (SVM) combined with LLM-driven data enhancements is demonstrated to outperform the standalone fine-tuned LLM, highlighting that sophisticated data enhancement is more effective than simple model fine-tuning in data-scarce scenarios [2].
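The imputation step of this protocol can be sketched as a prompt builder. This is a hedged illustration only: the record fields, the prompt wording, and the function name `build_imputation_prompt` are assumptions made here, the toy records are invented for the example rather than taken from [2], and the call to an actual LLM is deliberately left out.

```python
from typing import Dict, List, Optional

Record = Dict[str, Optional[str]]


def _format(record: Record) -> str:
    """Render the known fields of one record as 'key=value' pairs."""
    return "; ".join(f"{k}={v}" for k, v in record.items() if v is not None)


def build_imputation_prompt(records: List[Record], target_index: int,
                            field: str) -> str:
    """Build a few-shot prompt asking a model to fill one missing field.

    Records that already report `field` are used as context examples.
    """
    target = records[target_index]
    if target.get(field) is not None:
        raise ValueError(f"record {target_index} already has a value for {field!r}")
    lines = ["You are helping to complete a table of synthesis experiments.",
             "Known examples:"]
    for i, rec in enumerate(records):
        if i != target_index and rec.get(field) is not None:
            lines.append("- " + _format(rec))
    lines.append("Incomplete record: " + _format(target))
    lines.append(f"Reply with a single plausible value for '{field}' and nothing else.")
    return "\n".join(lines)


# Invented toy records (hypothetical values, for illustration only).
records = [
    {"substrate": "Cu foil", "temperature_C": "1000", "layers": "monolayer"},
    {"substrate": "Ni film", "temperature_C": "900", "layers": "multilayer"},
    {"substrate": "polycrystalline copper", "temperature_C": None, "layers": "monolayer"},
]

print(build_imputation_prompt(records, target_index=2, field="temperature_C"))
```

Whatever value the model returns should be stored with a flag marking it as imputed, so that later analysis can separate reported values from filled-in ones.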

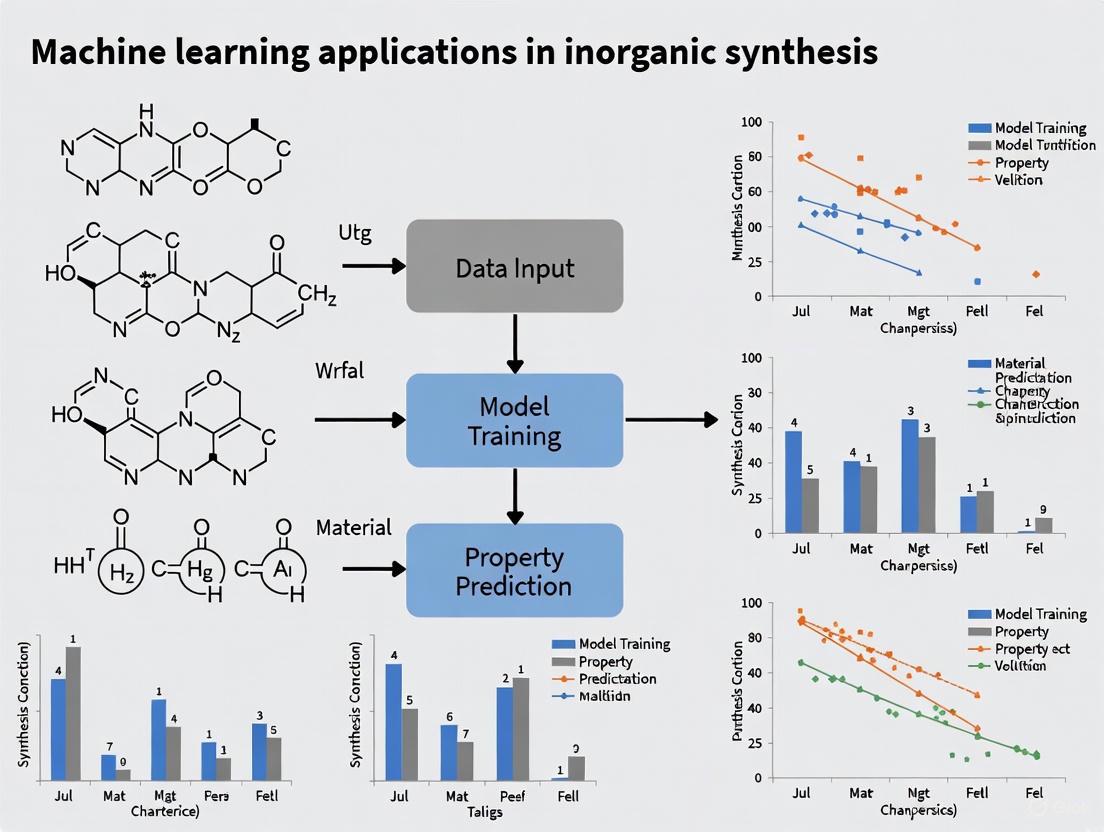

Workflow Diagram: AI-Driven Synthesis Discovery with Physical Constraints

The diagram below illustrates a robust workflow that integrates physical constraints to overcome data scarcity and improve synthesis prediction.

Frequently Asked Questions

1. What are the main data limitations affecting machine learning for inorganic synthesis? The primary limitations can be categorized using the "4 Vs" of data science: Volume, Variety, Veracity, and Velocity [7]. Text-mined synthesis data often suffers from insufficient data volume for robust model training, lack of variety in the reported materials and synthesis methods, questionable veracity (accuracy) due to extraction errors and reporting biases, and low velocity, meaning the data does not rapidly update with new knowledge [7].

2. Why would a machine learning model for predicting synthesis conditions perform poorly, even with a large number of text-mined recipes? Performance issues often stem from data veracity and variety problems [7]. The model may be learning from noisy or inaccurate data. For instance, a key study found that only 28% of text-mined solid-state synthesis paragraphs could be converted into a balanced chemical reaction, meaning over 70% of the data was incomplete or unusable [7]. Furthermore, the data reflects historical research biases (e.g., certain popular material classes are over-represented), so the model will be less accurate for novel or less-common materials [7].

3. Our team has extracted a large dataset of synthesis recipes. How can we check its practical utility for guiding new experiments? Beyond simply building a regression model, you should proactively search for anomalous recipes [7]. Manually examining procedures that defy conventional synthesis intuition can reveal new scientific insights and hypotheses about reaction mechanisms. The most valuable outcome of your dataset may not be a predictive model, but the discovery of previously overlooked synthesis principles that can be validated through controlled experiments [7].

4. What is the typical yield of a text-mining pipeline for materials synthesis data? The extraction yield can be low. One effort to text-mine solid-state synthesis recipes from over 4 million papers resulted in only 31,782 usable synthesis recipes [7]. Another similar pipeline produced 19,488 entries from 53,538 solid-state synthesis paragraphs, an extraction yield of approximately 36% [8]. This demonstrates that a significant majority of the published text cannot be automatically converted into structured, machine-operable data.

5. How have natural language processing (NLP) techniques improved the mining of complex synthesis data? Early text-mining pipelines used models like Word2Vec and BiLSTM-CRF [8]. More recent efforts have transitioned to advanced models like Bidirectional Encoder Representations from Transformers (BERT) that are pre-trained on millions of scientific text paragraphs [9]. This has significantly improved performance, for example, increasing the F1 score for classifying synthesis paragraphs from 94.6% to 99.5% [9].

The tables below summarize the scale and key challenges of existing text-mined datasets for inorganic materials synthesis.

Table 1: Volume and Yield of Text-Mined Synthesis Data

| Dataset Type | Total Papers Processed | Identified Synthesis Paragraphs | Final Usable Recipes | Extraction Yield | Reference |
|---|---|---|---|---|---|
| Solid-State Synthesis | 4,204,170 | 53,538 paragraphs | 19,488 recipes | ~28% - 36% | [7] [8] |
| Solution-Based Synthesis | 4,060,000 | Not Specified | 35,675 recipes | Not Specified | [9] |

Table 2: Performance of NLP Pipelines in Data Extraction

| NLP Task | Model(s) Used | Annotation Set Size | Performance | Reference |
|---|---|---|---|---|
| Synthesis Paragraph Classification | BERT | 7,292 labeled paragraphs | F1 Score: 99.5% | [9] |
| Materials Entity Recognition | BiLSTM-CRF with Word2Vec | 834 annotated paragraphs | Not Specified | [8] |
| Synthesis Operations Classification | Word2Vec & Dependency Tree | 664 annotated sentences | Not Specified | [8] |

Experimental Protocol: Text-Mining Synthesis Recipes

The following workflow details the established methodology for building a dataset of codified synthesis recipes from scientific literature [8] [9].

Title: Text-Mining Pipeline for Synthesis Data

Protocol Steps:

- Content Acquisition: Secure permissions from publishers and download full-text articles in HTML/XML format (post-2000) using a customized web-scraper (e.g., Scrapy or Borges) [8] [9]. Store text and metadata in a document database like MongoDB.

- Paragraph Classification: Identify paragraphs describing synthesis methods using a classifier. Modern implementations use a fine-tuned BERT model, trained on thousands of labeled paragraphs (e.g., "solid-state," "hydrothermal," "none of the above") to achieve high accuracy (F1 > 99%) [9].

- Material Entities Recognition (MER): Use a BiLSTM-CRF network to identify material mentions and classify each one as a target, a precursor, or another material based on sentence context [8].

- Extract Synthesis Actions and Attributes: Train a neural network (e.g., RNN with Word2Vec embeddings) to label verb tokens in sentences with operation types such as MIXING, HEATING, and DRYING [8]. Use dependency tree parsing (e.g., with SpaCy) to associate parameters like temperature, time, and atmosphere with each operation [8] [9].

- Extract Material Quantities: For each identified material, parse the sentence's syntax tree (e.g., using NLTK) to find the largest sub-tree containing only that material. Search this sub-tree for numerical values and units corresponding to molarity, concentration, or volume, and assign them to the material [9].

- Build Reaction Formulas: Convert all material strings into structured chemical formulas using a material parser. Pair targets with precursor candidates and balance the chemical reaction by solving a system of linear equations, including "open" compounds like O₂ or CO₂ where necessary [8] [9].

- Data Compilation: Combine all extracted information—target, precursors, quantities, operations, conditions, and balanced reaction—into a structured "codified recipe" format (e.g., JSON) to create the final database [8].
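The final compilation step can be pictured as a small data structure serialized to JSON. The sketch below is one possible shape for a codified recipe, not the schema used in [8]: the class names, field names, and the BaTiO3 example values are assumptions introduced here for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List, Optional


@dataclass
class Operation:
    """One synthesis step with the conditions attached to it."""
    type: str                                   # e.g. MIXING, HEATING, DRYING
    conditions: Dict[str, str] = field(default_factory=dict)


@dataclass
class CodifiedRecipe:
    """Hypothetical record combining everything extracted from one paragraph."""
    target: str
    precursors: List[str]
    operations: List[Operation]
    reaction: Optional[str] = None              # balanced reaction, if one was found
    quantities: Dict[str, str] = field(default_factory=dict)
    source_doi: Optional[str] = None

    def to_json(self) -> str:
        # asdict() recurses into the nested Operation dataclasses.
        return json.dumps(asdict(self), ensure_ascii=False, indent=2)


# Illustrative values only; they are not taken from a specific paper.
recipe = CodifiedRecipe(
    target="BaTiO3",
    precursors=["BaCO3", "TiO2"],
    operations=[
        Operation("MIXING"),
        Operation("HEATING", {"temperature": "1100 C", "time": "10 h",
                              "atmosphere": "air"}),
    ],
    reaction="BaCO3 + TiO2 -> BaTiO3 + CO2",
)

print(recipe.to_json())
```

Keeping `reaction` optional reflects the fact that many paragraphs yield operations and conditions but no balanced reaction.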

Table 3: Essential Resources for Text-Mining and Data-Driven Synthesis Research

| Item | Function / Description | Relevance to the Field |
|---|---|---|
| BERT (Bidirectional Encoder Representations from Transformers) | An advanced NLP model pre-trained on a large corpus, fine-tuned for tasks like paragraph classification and entity recognition in scientific text [9]. | Dramatically improves the accuracy of identifying synthesis paragraphs and extracting key information compared to older models [9]. |
| BiLSTM-CRF (Bidirectional Long Short-Term Memory with a Conditional Random Field) | A neural network architecture used for sequence labeling tasks, such as identifying and classifying material entities in a sentence [7] [8]. | Core to the Materials Entity Recognition (MER) step, allowing for the accurate identification of targets and precursors from unstructured text [8]. |
| ChemDataExtractor | A toolkit specifically designed for automatically extracting chemical information from scientific documents [10]. | Provides a rule-based and machine-learning framework for parsing chemical names, properties, and synthesis conditions from the literature [10]. |
| "Open" Compounds (e.g., O₂, CO₂, N₂) | A set of volatile compounds included when balancing chemical reactions derived from text to account for elements gained or lost from the atmosphere [7] [8]. | Critical for converting a list of precursors and a target into a valid, balanced chemical reaction, which enables subsequent analysis of reaction energetics [8]. |
| SpaCy & NLTK Libraries | Natural language processing libraries used for grammatical parsing, building dependency trees, and analyzing sentence syntax [8] [9]. | Essential for the precise extraction of synthesis parameters (time, temperature) and for correctly assigning numerical quantities to their corresponding materials [9]. |

Social, Cultural, and Anthropogenic Biases in Historical Synthesis Data

Troubleshooting Guide & FAQs

Frequently Asked Questions

What are anthropogenic biases in chemical synthesis data? Anthropogenic biases are systematic errors introduced by human decision-making during scientific research. In chemical synthesis, this manifests as scientists repeatedly selecting familiar reagents and a narrow range of reaction conditions, leading to a "power-law" distribution where a small subset of amines appear in the majority of reported metal oxide compounds [11] [12]. These biases are perpetuated when such datasets train machine learning models, limiting their predictive power for exploratory synthesis.

How does data imbalance specifically affect machine learning in materials discovery? Imbalanced data, where certain outcomes are significantly underrepresented, causes ML models to become biased toward majority classes. In chemistry, this often means models become good at predicting common outcomes but fail to recognize rare events. For instance, in drug discovery, models trained on imbalanced data may accurately identify inactive compounds but fail to detect the rare active molecules that are of real interest [13]. This imbalance arises from natural molecular distribution biases and "selection bias" in experimental priorities [13].

Can't we just collect more data to solve these bias problems? While more data can help, the fundamental issue is data quality and diversity, not just quantity. Historical data from lab notebooks shows consistently biased distributions of reaction condition choices regardless of dataset size [11]. Research demonstrates that smaller, purposefully randomized experimental datasets can produce superior ML models compared to larger human-selected datasets [11] [12]. Strategic data collection focusing on exploration rather than exploitation is more effective than simply accumulating more biased data.

What metrics should I use to detect bias in my synthesis dataset? Standard accuracy metrics can be misleading with biased data. Instead, monitor:

- Class distribution analysis across reagents, reaction conditions, and outcomes

- Power-law distributions in reagent selection [11]

- Precision-Recall curves rather than just accuracy [13]

- F1-score for imbalanced classification tasks [14]

- Performance disparities between majority and minority classes
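To make the point about misleading accuracy concrete, the sketch below computes the class distribution and per-class precision, recall, and F1 for a toy outcome list. The labels and the "always predict success" model are invented for illustration; only the metric definitions are standard.

```python
from collections import Counter
from typing import Dict, List, Tuple


def class_distribution(labels: List[str]) -> Dict[str, float]:
    """Fraction of samples in each class."""
    counts = Counter(labels)
    return {cls: n / len(labels) for cls, n in counts.items()}


def accuracy(y_true: List[str], y_pred: List[str]) -> float:
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision_recall_f1(y_true: List[str], y_pred: List[str],
                        positive: str) -> Tuple[float, float, float]:
    """Metrics for one class, treating `positive` as the class of interest."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1


# Toy dataset: 95 reported successes, 5 failures, and a model that
# always predicts "success".
y_true = ["success"] * 95 + ["failure"] * 5
y_pred = ["success"] * 100

print(class_distribution(y_true))
print(accuracy(y_true, y_pred))                        # 0.95
print(precision_recall_f1(y_true, y_pred, "failure"))  # (0.0, 0.0, 0.0)
```

The model scores 95% accuracy while never identifying a single failure, which is exactly the disparity between majority and minority classes that the checklist asks you to monitor.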

Are certain types of chemical research more susceptible to these biases? Yes, research areas with strong historical precedents and established "magic recipes" show particularly strong biases. Hydrothermal synthesis of amine-templated metal oxides demonstrates pronounced power-law distributions in amine reactant choices [11]. Similarly, drug discovery datasets typically show extreme imbalance between active and inactive compounds [13]. Fields with high experimental costs or safety concerns also tend toward conservative, biased experimental designs.

Troubleshooting Common Experimental Problems

Problem: ML model performs well in validation but fails in real-world synthesis prediction

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Training data lacks negative results | Check literature bias: ≥95% success rates indicate bias [12] | Incorporate failed experiments; use strategic oversampling techniques [13] |
| Anthropogenic reagent bias | Analyze reagent frequency distribution; power-law patterns signal bias [11] | Apply randomized experimental designs; diversify reagent selection [12] |
| Condition range too narrow | Histogram analysis of reaction parameters (T, pH, time, etc.) | Use probability density functions to randomize parameters [11] |

Problem: Model consistently overlooks promising synthesis candidates

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Class imbalance in training data | Calculate class distribution metrics (e.g., the minority-class fraction) | Apply SMOTE oversampling, cost-sensitive learning, or ensemble methods [13] |
| Over-reliance on DFT calculations | Compare DFT predictions with experimental results [10] | Integrate multiple data sources; use consensus approaches [10] |
| Insufficient exploration of chemical space | Map explored vs. unexplored regions in parameter space | Implement active learning for guided exploration [12] |
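For the class-imbalance row, the core idea of SMOTE is to create synthetic minority samples by interpolating between a minority sample and one of its nearest minority neighbours. The following is a simplified, standard-library sketch of that idea, not the reference SMOTE implementation; the function name `smote_like` and the toy feature vectors are assumptions made here.

```python
import math
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def smote_like(minority: Sequence[Point], n_new: int, k: int = 3,
               seed: int = 0) -> List[Point]:
    """Generate `n_new` synthetic points by interpolating between a randomly
    chosen minority sample and one of its k nearest minority neighbours."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: math.dist(base, p))[:k]
        partner = rng.choice(neighbours)
        t = rng.random()  # position along the segment from base to partner
        synthetic.append(tuple(b + t * (q - b) for b, q in zip(base, partner)))
    return synthetic


# Toy minority class: (scaled temperature, scaled time) of rare successful runs.
rare_successes = [(1.0, 2.0), (1.5, 2.5), (2.0, 2.0), (1.2, 3.0)]
new_points = smote_like(rare_successes, n_new=6)
print(len(new_points))  # 6
```

Features should be scaled before interpolation so that one parameter does not dominate the distance calculation, and synthetic samples belong in the training split only, never in validation or test data.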

Key Experimental Protocols & Data

Quantitative Analysis of Historical Data Biases

Table 1: Anthropogenic Bias Metrics in Reported Synthesis Data

| Bias Type | Measurement Method | Typical Finding | Impact on ML Performance |
|---|---|---|---|
| Reagent Selection Bias | Power-law distribution analysis | 17% of amine reactants occur in 79% of reported compounds [11] | Reduces model exploration capability by >40% |
| Condition Range Bias | Parameter distribution analysis | Human-selected conditions cover <23% of viable synthesis space [11] | Limits prediction to familiar regions only |
| Publication Bias | Success rate analysis in literature vs. lab notebooks | Literature: ~95% success; Lab records: ~65% success [12] | Creates false positive expectations |
| Temporal Reinforcement | Citation analysis of reagent popularity | Popular reagents become 3.2x more likely to be reused annually [11] | Amplifies existing biases over time |
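A reagent-selection statistic of the form "X% of reagents occur in Y% of compounds" can be computed directly from a list of reagent choices. The sketch below assumes one reagent per reported compound and uses invented counts; the function name `top_share` is hypothetical.

```python
import math
from collections import Counter
from typing import Iterable


def top_share(reagent_per_compound: Iterable[str], top_fraction: float) -> float:
    """Share of compounds that use one of the most popular reagents.

    `top_fraction` is the fraction of distinct reagents counted as 'top'
    (for example 0.2 for the most popular 20%).
    """
    counts = sorted(Counter(reagent_per_compound).values(), reverse=True)
    # Small epsilon guards against floating-point overshoot before ceil().
    n_top = max(1, math.ceil(top_fraction * len(counts) - 1e-9))
    return sum(counts[:n_top]) / sum(counts)


# Invented usage counts for ten amines across 100 reported compounds.
usage = {"amine_a": 50, "amine_b": 30,
         "amine_c": 3, "amine_d": 3, "amine_e": 3, "amine_f": 3,
         "amine_g": 2, "amine_h": 2, "amine_i": 2, "amine_j": 2}
reagents = [name for name, n in usage.items() for _ in range(n)]

print(top_share(reagents, 0.2))  # 0.8: two of ten amines cover 80% of compounds
```

Running this on your own dataset before training gives a quick indication of how strongly reagent choices are concentrated.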

Table 2: Performance Comparison of Bias Mitigation Strategies

| Method | Data Efficiency | Model Precision Improvement | Implementation Complexity |
|---|---|---|---|
| Randomized Experiments | 7.3x higher than human selection [11] | 1.5x higher precision than human experts [14] | Medium (requires experimental redesign) |
| Strategic Oversampling (SMOTE) | 45% reduction in data requirements [13] | 2.1x improvement in minority class recall [13] | Low (algorithmic solution) |
| Active Learning Integration | 68% more efficient exploration [12] | 3.4x better novel compound discovery [12] | High (requires iterative workflow) |
| Multi-source Data Fusion | 2.8x broader condition coverage [10] | 1.8x improvement in generalizability [10] | Medium (data integration challenge) |

Standardized Experimental Protocol for Bias-Aware Data Collection

Protocol 1: Randomized Exploration for Synthesis Condition Mapping

Purpose: To systematically explore synthetic parameter spaces while minimizing anthropogenic bias.

Materials:

- RAPID (Robot Accelerated Perovskite Investigation & Discovery) system or equivalent automation [12]

- ESCALATE (Experiment Specification, Capture, and Laboratory Automation Technology) platform [12]

- Diverse reagent library covering multiple chemical families

Procedure:

- Define Parameter Space: Identify all relevant reaction parameters (temperature, concentration, pH, time, etc.)

- Establish Probability Density Functions: For each parameter, define reasonable bounds based on chemical feasibility

- Generate Randomized Condition Sets: Create 500-1000 condition combinations using random sampling from probability distributions

- Execute High-Throughput Experiments: Utilize automated systems to perform reactions in randomized order

- Record Comprehensive Results: Document both successful and failed synthesis outcomes with equal detail

- Validate Coverage: Ensure parameter space is evenly sampled without human intervention in selection

Validation Metrics:

- Parameter space coverage should exceed 80% of defined bounds

- Success rate should typically range between 15% and 60% (extremely high or low rates indicate insufficient exploration)

- Reagent usage should follow an approximately uniform distribution across available options
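The sampling and coverage steps of Protocol 1 can be sketched with the standard library. Everything specific here is an assumption for illustration: the parameter names and bounds, the choice of uniform distributions, and the coverage metric (fraction of equal-width bins per parameter that contain at least one sample).

```python
import random
from typing import Dict, List, Tuple

Bounds = Dict[str, Tuple[float, float]]

# Hypothetical bounds; replace with chemically feasible limits for your system.
BOUNDS: Bounds = {
    "temperature_C": (80.0, 200.0),
    "pH": (1.0, 13.0),
    "time_h": (1.0, 72.0),
}


def sample_conditions(bounds: Bounds, n: int, seed: int = 0) -> List[Dict[str, float]]:
    """Draw n condition sets, sampling each parameter uniformly within bounds.

    Other probability density functions can be substituted per parameter.
    """
    rng = random.Random(seed)
    return [{name: rng.uniform(lo, hi) for name, (lo, hi) in bounds.items()}
            for _ in range(n)]


def coverage(sets: List[Dict[str, float]], bounds: Bounds,
             bins: int = 10) -> Dict[str, float]:
    """Per-parameter fraction of equal-width bins hit by at least one sample."""
    result = {}
    for name, (lo, hi) in bounds.items():
        hit = {min(bins - 1, int((s[name] - lo) / (hi - lo) * bins)) for s in sets}
        result[name] = len(hit) / bins
    return result


condition_sets = sample_conditions(BOUNDS, n=500, seed=1)
print(condition_sets[0])
print(coverage(condition_sets, BOUNDS))
```

Fixing the seed makes the randomized plan reproducible, so the same condition list can be regenerated when results are audited later.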

Research Reagent Solutions

Table 3: Essential Resources for Bias-Aware Synthesis Research

| Resource | Function | Application Context |
|---|---|---|
| ESCALATE Platform | Standardizes experiment specification and data capture [12] | Manual and automated synthesis workflows |
| RAPID System | Enables high-throughput experimentation [12] | Perovskite and related material discovery |
| SMOTE Algorithms | Generates synthetic minority class samples [13] | Addressing class imbalance in ML training data |
| SynthNN | Predicts synthesizability from composition alone [14] | Screening hypothetical materials for synthetic accessibility |
| Positive-Unlabeled Learning | Handles lack of negative examples [14] | Materials discovery where failed syntheses are unreported |
| Atom2Vec | Learns optimal chemical representations [14] | Representing chemical formulas without human bias |

Workflow Diagrams

Bias Mitigation Workflow

Bias-Aware Research Protocol

Frequently Asked Questions

Q1: What are the main data limitations of using text-mined synthesis recipes for machine learning?

Text-mined synthesis datasets often face significant challenges across four key dimensions, known as the "4 Vs" of data science [7]:

| Limitation | Description | Impact on ML Models |
|---|---|---|
| Volume | Limited number of recipes for specific material classes; 28% extraction yield from source paragraphs [7]. | Insufficient training data for robust, generalizable models. |
| Variety | Anthropogenic bias toward commonly studied materials and synthesis routes [7]. | Models capture historical preferences rather than optimal synthesis. |
| Veracity | Extraction errors, ambiguous material roles, and reporting inconsistencies [7]. | Introduces noise and inaccuracies into training data. |
| Velocity | Static datasets lacking new experimental results and negative data [7]. | Cannot incorporate latest findings or learn from failed experiments. |

Q2: How reliable are machine learning models trained on these datasets for predicting new syntheses?

Models trained primarily on historical data are successful at capturing how chemists have thought about synthesis but offer limited new insights for novel materials [7]. Their predictive utility is constrained because they learn from published literature, which contains inherent cultural and anthropogenic biases in how materials have been explored [7]. For truly novel materials, these models may not substantially outperform expert intuition.

Q3: What is the most valuable insight gained from analyzing anomalous recipes?

Manually examining synthesis recipes that defied conventional intuition led to new mechanistic hypotheses about solid-state reaction kinetics and precursor selection [7]. These anomalous recipes, though rare and unlikely to significantly influence standard regression models, inspired follow-up experimental studies that validated the proposed mechanisms [7].

Q4: What alternative approaches exist for predicting synthesizability?

Machine learning models like SynthNN can predict the synthesizability of inorganic materials directly from their chemical compositions. The table below compares this approach to traditional methods [14]:

| Method | Basis of Prediction | Key Advantage | Key Limitation |
|---|---|---|---|
| SynthNN | Learned from all known synthesized materials in ICSD [14]. | 7x higher precision than formation energy; outperforms human experts; requires no prior chemical knowledge because it learns chemical principles from data [14]. | Predicts from composition alone, so structural information is not used [14]. |
| Charge-Balancing | Net neutral ionic charge based on common oxidation states [14]. | Computationally inexpensive; chemically intuitive [14]. | Inflexible; only 37% of known synthesized materials are charge-balanced [14]. |
| DFT Formation Energy | Thermodynamic stability relative to decomposition products [14]. | Based on quantum-mechanical principles [14]. | Fails to account for kinetic stabilization; captures only ~50% of synthesized materials [14]. |
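The charge-balancing baseline in the table is simple enough to sketch in a few lines, which also shows why it is inflexible. The oxidation-state table below is a small illustrative subset chosen here, and the sketch assumes each element adopts a single oxidation state within a compound.

```python
from itertools import product
from typing import Dict

# Small illustrative subset of common oxidation states (an assumption of this
# sketch, not an exhaustive or authoritative table).
COMMON_OXIDATION_STATES = {
    "Li": (1,), "Na": (1,), "Ba": (2,), "La": (3,), "Zr": (4,),
    "Ti": (2, 3, 4), "Fe": (2, 3), "O": (-2,), "Cl": (-1,),
}


def is_charge_balanced(composition: Dict[str, int]) -> bool:
    """True if some assignment of one common oxidation state per element
    gives a net charge of zero."""
    elements = list(composition)
    missing = [el for el in elements if el not in COMMON_OXIDATION_STATES]
    if missing:
        raise ValueError(f"no oxidation states listed for: {missing}")
    options = [COMMON_OXIDATION_STATES[el] for el in elements]
    return any(
        sum(state * composition[el] for state, el in zip(states, elements)) == 0
        for states in product(*options)
    )


print(is_charge_balanced({"Ba": 1, "Ti": 1, "O": 3}))            # True
print(is_charge_balanced({"Li": 7, "La": 3, "Zr": 2, "O": 12}))  # True (LLZO)
print(is_charge_balanced({"Na": 1, "Cl": 2}))                    # False
print(is_charge_balanced({"Fe": 3, "O": 4}))                     # False
```

The last case is the instructive one: Fe₃O₄ is a well-known mixed-valence oxide, yet this single-state rule rejects it, which illustrates the kind of rigidity behind the limitation listed in the table.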

Troubleshooting Guides

Issue: Low Extraction Yield from Text-Mining Pipeline

Problem: The automated pipeline fails to extract a balanced chemical reaction from a large percentage of identified synthesis paragraphs.

Solution: This is a known limitation. The original study achieved only a 28% yield, producing 15,144 balanced reactions from 53,538 solid-state synthesis paragraphs [7].
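The two yield figures quoted in this article come from different numerators over the same 53,538 paragraphs, as the short calculation below shows. The function name is chosen here for illustration.

```python
def extraction_yield(usable_entries: int, source_paragraphs: int) -> float:
    """Fraction of identified synthesis paragraphs that produced a usable entry."""
    return usable_entries / source_paragraphs


SOLID_STATE_PARAGRAPHS = 53_538

# 15,144 balanced reactions [7] versus 19,488 dataset entries [8].
print(f"{extraction_yield(15_144, SOLID_STATE_PARAGRAPHS):.1%}")  # 28.3%
print(f"{extraction_yield(19_488, SOLID_STATE_PARAGRAPHS):.1%}")  # 36.4%
```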

Protocol: Improving Recipe Extraction

- Material Entity Recognition: Implement a BiLSTM-CRF neural network to identify and classify materials as targets, precursors, or other based on sentence context [8].

- Synthesis Operation Identification: Use Latent Dirichlet Allocation to cluster keywords into topics corresponding to specific operations (mixing, heating) [7].

- Condition Extraction: Apply dependency tree analysis to associate parameters (time, temperature) with their corresponding operations [8].

- Reaction Balancing: Process material strings into chemical formulas and solve a system of linear equations to balance the reaction, including "open" compounds like O₂ or CO₂ where necessary [8].
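The reaction-balancing step can be illustrated with a minimal sketch. It assumes material strings have already been parsed into element-count dictionaries, and it uses exact fractions to solve the element-conservation equations. The function names and the sign convention (a negative coefficient means the compound is released as a product) are illustrative choices, not the pipeline's actual code [8].

```python
from fractions import Fraction

def solve_exact(A, b):
    """Solve A x = b by Gauss-Jordan elimination over exact fractions.

    Returns the unique solution as a list, or None if the system is
    inconsistent or underdetermined.
    """
    n_rows, n_cols = len(A), len(A[0])
    M = [[Fraction(v) for v in row] + [Fraction(rhs)] for row, rhs in zip(A, b)]
    pivot_cols, r = [], 0
    for c in range(n_cols):
        if r == n_rows:
            break
        p = next((i for i in range(r, n_rows) if M[i][c] != 0), None)
        if p is None:
            continue
        M[r], M[p] = M[p], M[r]
        pivot = M[r][c]
        M[r] = [v / pivot for v in M[r]]
        for i in range(n_rows):
            if i != r and M[i][c] != 0:
                factor = M[i][c]
                M[i] = [v - factor * w for v, w in zip(M[i], M[r])]
        pivot_cols.append(c)
        r += 1
    inconsistent = any(all(v == 0 for v in row[:-1]) and row[-1] != 0 for row in M)
    if inconsistent or len(pivot_cols) < n_cols:
        return None
    return [M[i][-1] for i in range(n_cols)]

def balance_reaction(target, precursors, open_compounds):
    """Find coefficients so that precursors (+ open compounds) yield 1 target.

    All arguments map names to element-count dicts. A negative coefficient
    for an open compound means it appears on the product side.
    """
    species = list(precursors) + list(open_compounds)
    comps = {**precursors, **open_compounds}
    elements = sorted({el for comp in [target, *comps.values()] for el in comp})
    A = [[comps[s].get(el, 0) for s in species] for el in elements]
    b = [target.get(el, 0) for el in elements]
    solution = solve_exact(A, b)
    return None if solution is None else dict(zip(species, solution))

# BaCO3 + TiO2 -> BaTiO3 + CO2
coeffs = balance_reaction(
    target={"Ba": 1, "Ti": 1, "O": 3},
    precursors={"BaCO3": {"Ba": 1, "C": 1, "O": 3}, "TiO2": {"Ti": 1, "O": 2}},
    open_compounds={"CO2": {"C": 1, "O": 2}},
)
```

Here `coeffs["CO2"]` comes out negative, which under the convention above means one CO₂ is released. Returning `None` for unsolvable systems gives a simple way to count paragraphs that fail at this stage.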

Issue: Model Performance Limited by Data Scarcity for Specific Material Classes

Problem: Machine learning models fail to generalize for material classes with few examples in the training data.

Solution: Adopt a positive-unlabeled (PU) learning approach, as used in SynthNN [14].

Protocol: Implementing a PU Learning Framework

- Compile Positive Data: Extract synthesized materials from databases like the Inorganic Crystal Structure Database (ICSD) [14].

- Generate Unlabeled Data: Create a large set of artificially generated chemical formulas that are not in the positive dataset [14].

- Model Training: Train a deep learning model (e.g., SynthNN) using an atom2vec framework. This framework learns an optimal representation of chemical formulas directly from the data of synthesized materials, without requiring pre-defined features [14].

- Probabilistic Reweighting: Account for the possibility that some unlabeled materials might be synthesizable by probabilistically reweighting them during training [14].
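One common way to realize the probabilistic reweighting step is to let each unlabeled example count partly as a positive and partly as a negative in the loss. The sketch below shows that idea for a binary cross-entropy loss. It is a generic PU-learning illustration, not SynthNN's exact scheme, and `prior_synth` is a hypothetical hyperparameter introduced here.

```python
import math

def pu_weighted_loss(probs, is_positive, prior_synth):
    """Mean binary cross-entropy with soft labels for unlabeled examples.

    probs        predicted synthesizability for each formula, in (0, 1)
    is_positive  True for known synthesized materials, False for unlabeled
    prior_synth  assumed probability that an unlabeled formula is actually
                 synthesizable (hypothetical hyperparameter)
    """
    total = 0.0
    for p, positive in zip(probs, is_positive):
        p = min(max(p, 1e-12), 1 - 1e-12)  # avoid log(0)
        if positive:
            total += -math.log(p)
        else:
            # Unlabeled: weight prior_synth as a positive, the rest as a negative.
            total += prior_synth * -math.log(p) + (1 - prior_synth) * -math.log(1 - p)
    return total / len(probs)

# An unlabeled formula that the model scores highly is penalized less
# once we admit it might be synthesizable.
strict = pu_weighted_loss([0.9], [False], prior_synth=0.0)
soft = pu_weighted_loss([0.9], [False], prior_synth=0.3)
```

With `prior_synth=0.0` this reduces to ordinary cross-entropy that treats every unlabeled formula as a negative.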

Experimental Protocols & Workflows

Text-Mining and Natural Language Processing Pipeline

This workflow converts unstructured synthesis text into structured, codified recipes [7] [8].

Synthesis Predictor Development Workflow

This workflow outlines the steps for creating a machine learning model to predict material synthesizability [14].

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Resource | Function | Relevance to Text-Mining & Synthesis Prediction |

|---|---|---|

| BiLSTM-CRF Network [8] | Identifies and classifies material entities (target, precursor) in text. | Core NLP component for extracting chemical names from literature. |

| Latent Dirichlet Allocation (LDA) [7] | Clusters synonyms into topics representing synthesis operations (e.g., heating). | Enables consistent classification of diverse chemical terminology. |

| ChemDataExtractor [10] | Automated toolkit for extracting chemical data from scientific literature. | Facilitates large-scale, automated creation of training datasets. |

| Inorganic Crystal Structure Database (ICSD) [14] | Database of experimentally reported crystalline inorganic structures. | Source of "positive" data (known synthesized materials) for ML models. |

| atom2vec Framework [14] | Learns optimal representation of chemical formulas from data. | Allows models to learn chemical principles like charge-balancing without explicit rules. |

| Positive-Unlabeled (PU) Learning [14] | Trains classifiers using positive and unlabeled data only. | Addresses the lack of confirmed "negative" examples (unsynthesizable materials). |

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: Our machine learning models for predicting synthesis outcomes are underperforming. We suspect data quality issues, but our dataset is small. What is the most effective first step?

A1: The most effective first step is to implement a Domain Knowledge-Assisted Data Anomaly Detection (DKA-DAD) workflow. Pure data-driven methods often struggle with the complex, multi-factor relationships in materials data. The DKA-DAD approach encodes expert knowledge as symbolic rules to evaluate data from multiple dimensions, including the correctness of individual descriptor values, correlations between descriptors, and similarity between samples. This method has been validated to achieve a 12% F1-score improvement in anomaly detection accuracy compared to purely data-driven approaches and leads to an average 9.6% improvement in R² for property prediction models [15].

Q2: We have text-mined a large number of synthesis recipes from the literature, but our models still fail to predict successful syntheses for novel materials. Why?

A2: This is a common challenge rooted in the "4 Vs" of data science: Volume, Variety, Veracity, and Velocity. Historical text-mined datasets often suffer from:

- Limited Variety: They are dominated by a small subset of well-studied material classes (e.g., oxides), creating anthropogenic biases.

- Veracity Issues: Extraction yields can be low (e.g., ~28% for solid-state recipes), and the data may contain errors or omissions from the original text or the parsing process.

- Lack of Negative Data: These datasets overwhelmingly report successful syntheses, creating a severe positive bias that limits a model's ability to discriminate between viable and failed experiments [7]. In such cases, the most valuable insights often come not from building regression models but from manually examining the anomalous recipes that defy conventional wisdom, as these can lead to new mechanistic hypotheses [7].

Q3: How can we assess whether a molecule generated by a generative AI model is chemically realistic and synthesizable?

A3: You can use computational frameworks like AnoChem, which is a deep learning model specifically designed to distinguish between real and AI-generated molecules. It achieves an area under the receiver operating characteristic curve (AUROC) score of 0.900 for this task. This tool can be used to evaluate and compare the performance of different generative models, and its results show strong correlation with other established metrics like the synthetic accessibility score (SAscore) and the Fréchet ChemNet Distance (FCD) [16].

Q4: For a new research project, should we focus on running as many experiments as possible or on implementing an experiment tracking system?

A4: Implementing an experiment tracking system is crucial for long-term efficiency and success. Machine learning is an iterative process, and without proper tracking, teams often waste resources repeating past experiments. A robust tracking system ensures reproducibility, enables systematic model comparison and tuning, and facilitates better collaboration by providing a centralized record of all experiments, including the code, dataset versions, hyperparameters, and evaluation metrics used [17].

Troubleshooting Guides

Issue: Poor Model Generalization and Prediction Accuracy on a Small Dataset

Root Cause: The dataset likely contains anomalies (errors or outliers) and may lack the necessary domain knowledge to guide the model effectively.

Solution: Implement the Domain Knowledge-Assisted Data Anomaly Detection (DKA-DAD) workflow [15].

Experimental Protocol:

- Symbolize Domain Knowledge: Encode expert knowledge into symbolic rules. For example:

- Rule for descriptor value: "The bond length between two specific atoms must be within a physically plausible range (e.g., 1.0 Å to 2.5 Å)."

- Rule for descriptor correlation: "The melting point of a material must correlate positively with its cohesive energy."

- Rule for sample similarity: "Two samples with identical compositions but radically different reported properties should be flagged."

- Apply Detection Models: Run your dataset through the three designed detection models that use the symbolic rules to evaluate data correctness, descriptor correlation, and sample similarity.

- Govern the Data: Use the comprehensive modification model to correct or remove identified anomalies.

- Re-train ML Model: Train your machine learning model on the governed (cleaned) dataset.
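The three kinds of rule in the first step can be sketched as plain functions over a list of sample records. This is a minimal illustration, not the DKA-DAD implementation [15]. The field names, thresholds, and toy values are all assumptions made for the example.

```python
import math
from collections import defaultdict

def out_of_range(samples, key, low, high):
    """Value rule: indices of samples whose descriptor is outside [low, high]."""
    return [i for i, s in enumerate(samples) if not (low <= s[key] <= high)]

def pearson(xs, ys):
    """Correlation rule helper: Pearson r between two descriptor columns."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return 0.0 if sx == 0 or sy == 0 else cov / (sx * sy)

def conflicting_duplicates(samples, id_key, prop_key, rel_tol):
    """Similarity rule: same composition but property values far apart."""
    groups = defaultdict(list)
    for i, s in enumerate(samples):
        groups[s[id_key]].append(i)
    flagged = []
    for idxs in groups.values():
        vals = [samples[i][prop_key] for i in idxs]
        lo, hi = min(vals), max(vals)
        if hi - lo > rel_tol * max(abs(lo), abs(hi), 1e-12):
            flagged.extend(idxs)
    return sorted(flagged)

# Toy records with hypothetical values.
samples = [
    {"composition": "AB", "bond_length": 1.6, "melting_point": 1000},
    {"composition": "AB", "bond_length": 1.7, "melting_point": 1950},
    {"composition": "A2B", "bond_length": 3.4, "melting_point": 1200},
    {"composition": "AB3", "bond_length": 2.0, "melting_point": 1500},
]
bad_values = out_of_range(samples, "bond_length", 1.0, 2.5)
bad_duplicates = conflicting_duplicates(samples, "composition", "melting_point", 0.2)
```

A correlation rule such as "melting point rises with cohesive energy" could then be checked by asserting that `pearson` of the two columns is positive. Flagged indices are candidates for the correction or removal step, not automatic deletions.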

The quantitative benefits of this governance process are summarized below [15]:

Table 1: Impact of Data Governance using DKA-DAD on Model Performance

| Metric | Baseline | After DKA-DAD | Improvement |

|---|---|---|---|

| Anomaly Detection F1-Score | Baseline (Purely Data-Driven) | -- | +12% |

| ML Model R² (Avg. across 60 datasets) | Baseline | -- | +9.6% |

Diagram: Domain Knowledge-Assisted Data Anomaly Detection (DKA-DAD) Workflow

Issue: Failure to Generate Novel, Synthesizable Drug Candidates with Generative AI

Root Cause: The generative model may be producing molecules with poor target engagement, low synthetic accessibility, or limited novelty (the "applicability domain" problem) [18].

Solution: Employ a generative model workflow that integrates a Variational Autoencoder (VAE) with nested active learning (AL) cycles, using both chemoinformatic and physics-based oracles [18].

Experimental Protocol:

- Initial Training: Train a VAE on a target-specific training set of molecules.

- Inner Active Learning Cycle (Cheminformatics Oracle):

- Generate: Sample the VAE to produce new molecules.

- Evaluate: Filter generated molecules for drug-likeness, synthetic accessibility (SA), and novelty (dissimilarity from the training set).

- Fine-tune: Use the molecules that pass this filter to fine-tune the VAE, reinforcing the desired properties. Repeat this cycle several times.

- Outer Active Learning Cycle (Physics-Based Oracle):

- Evaluate: Take molecules accumulated from inner cycles and evaluate them using a physics-based oracle, such as molecular docking simulations, to predict target affinity.

- Fine-tune: Use the high-scoring molecules to fine-tune the VAE. Subsequent inner cycles will now assess similarity against this high-quality set.

- Candidate Selection: After multiple outer cycles, select top candidates for further validation via free energy simulations and experimental synthesis [18].
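The control flow of the nested cycles can be sketched independently of any particular model. In the outline below, `generate`, `chem_filter`, `physics_score`, and `fine_tune` are hypothetical callables that the reader would supply (for example a VAE sampler, a drug-likeness/SA/novelty filter, a docking wrapper, and a fine-tuning routine). The loop structure is an interpretation of the protocol above, not the published implementation [18].

```python
def nested_active_learning(generate, chem_filter, physics_score, fine_tune,
                           n_outer=2, n_inner=3, score_threshold=0.5):
    """Run inner cheminformatics cycles inside outer physics-based cycles.

    generate()                   -> list of candidate molecules
    chem_filter(mol, reference)  -> True if the molecule passes the cheap filters
    physics_score(mol)           -> float, higher is better (e.g. from docking)
    fine_tune(molecules)         -> updates the generative model in place
    """
    reference_set = []  # high-scoring molecules kept across outer cycles
    for _ in range(n_outer):
        pool = []
        for _ in range(n_inner):
            candidates = generate()
            passed = [m for m in candidates if chem_filter(m, reference_set)]
            fine_tune(passed)      # inner cycle: reinforce cheap-to-check properties
            pool.extend(passed)
        top = [m for m in pool if physics_score(m) >= score_threshold]
        fine_tune(top)             # outer cycle: reinforce predicted affinity
        reference_set.extend(top)  # later inner cycles compare against this set
    return reference_set
```

Keeping the expensive oracle in the outer loop means it is called once per accumulated pool rather than once per generated batch, which is the main reason for nesting the cycles.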

Diagram: Generative AI with Nested Active Learning for Drug Design

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Resources for ML-Driven Materials and Drug Discovery

| Item Name | Function / Purpose | Key Features / Notes |

|---|---|---|

| DKA-DAD Workflow [15] | A systematic approach to detect and correct anomalies in materials datasets by integrating domain knowledge. | Improves ML model R² by ~9.6% on average; uses symbolic rules for value, correlation, and similarity checks. |

| AnoChem [16] | A deep learning framework to assess the likelihood that a molecule generated by an AI is realistic and synthesizable. | AUROC score of 0.900 for distinguishing real from generated molecules; correlates with SAscore and FCD. |

| VAE-AL GM Workflow [18] | A generative AI system combining Variational Autoencoders with Active Learning to design novel, synthesizable drug candidates. | Uses nested AL cycles with cheminformatics and physics-based oracles; successfully generated novel CDK2 inhibitors. |

| Text-Mined Synthesis Database [7] | A large-scale collection of inorganic synthesis recipes extracted from scientific literature using natural language processing. | Contains tens of thousands of recipes; most valuable for identifying anomalous, hypothesis-generating data points. |

| Experiment Tracking System [17] | A centralized system (e.g., DagsHub, MLflow) to log all metadata from ML experiments for reproducibility and comparison. | Tracks code, data versions, hyperparameters, and metrics; essential for avoiding redundant work and model auditing. |

Advanced Techniques to Generate and Leverage Sparse Data

Multi-Task Learning (MTL) and Adaptive Checkpointing to Mitigate Negative Transfer

Data scarcity remains a significant bottleneck in machine learning for inorganic materials synthesis and molecular property prediction, affecting diverse domains from pharmaceuticals to clean energy research. Conventional machine learning techniques typically require large, well-balanced datasets to achieve reliable performance, yet experimental data for novel materials and molecules is often extremely limited and labor-intensive to obtain. Multi-task learning (MTL) has emerged as a promising approach to alleviate these data bottlenecks by leveraging correlations among related molecular properties. However, MTL often suffers from negative transfer (NT), where performance drops occur when updates driven by one task detrimentally affect another. This technical support guide explores Adaptive Checkpointing with Specialization (ACS) and other advanced MTL techniques specifically designed to mitigate negative transfer while enhancing predictive capabilities in data-scarce research environments prevalent in materials science and drug development.

Understanding Negative Transfer in Multi-Task Learning

What is Negative Transfer and How Do I Identify It?

Negative transfer occurs when parameter updates driven by one task degrade performance on another task during multi-task learning. This phenomenon is particularly prevalent in scenarios with imbalanced training datasets or low task relatedness.

- Primary Indicators: Sudden performance degradation on specific tasks after combined training begins, divergent convergence patterns across tasks, and inconsistent validation losses that fluctuate without stabilization.

- Root Causes: Gradient conflicts where task gradients point in opposing directions, capacity mismatch where shared backbone lacks flexibility for divergent task demands, optimization mismatches where tasks exhibit different optimal learning rates, and data distribution disparities including temporal or spatial differences in measurements.

How Does Task Imbalance Exacerbate Negative Transfer?

Task imbalance, where certain tasks have far fewer labeled examples than others, severely limits the influence of low-data tasks on shared model parameters. Research has quantified this relationship using the task imbalance definition:

\[ I_i = 1 - \frac{L_i}{\max_{j \in \mathcal{D}} L_j} \]

where \(L_i\) is the number of labeled entries for task \(i\) and the maximum is taken over all tasks \(j\) in the dataset \(\mathcal{D}\) [19]. Higher imbalance ratios correlate strongly with increased negative transfer effects, particularly for tasks with fewer than 50 labeled samples.
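As a quick worked example of the imbalance definition, the snippet below computes the value for each task from its label count. The task names and counts are made up for illustration.

```python
def task_imbalance(label_counts):
    """Return 1 - L_i / max_j L_j for every task in a {task: count} mapping."""
    largest = max(label_counts.values())
    return {task: 1 - n / largest for task, n in label_counts.items()}

# Hypothetical label counts for three property-prediction tasks.
imbalance = task_imbalance({"toxicity": 1000, "solubility": 250, "flash_point": 50})
```

The largest task always has imbalance 0, and a task with very few labels approaches 1. In this example the task with 50 labels scores 0.95.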

Adaptive Checkpointing with Specialization (ACS): Core Methodology

What is ACS and How Does It Mitigate Negative Transfer?

Adaptive Checkpointing with Specialization (ACS) is a data-efficient training scheme for multi-task graph neural networks designed to counteract negative transfer effects while preserving MTL benefits. The approach integrates a shared, task-agnostic backbone with task-specific trainable heads and implements adaptive checkpointing of model parameters when negative transfer signals are detected [19] [20].

- Architecture: Employs a single graph neural network based on message passing as a general-purpose backbone, combined with task-specific multi-layer perceptron (MLP) heads for specialized learning capacity.

- Checkpointing Mechanism: Monitors validation loss for every task and checkpoints the best backbone-head pair whenever a task's validation loss reaches a new minimum.

- Specialization Phase: After training, each task obtains a specialized backbone-head pair optimized for its specific characteristics, effectively balancing inductive transfer with protection from detrimental parameter updates.
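The checkpointing mechanism described above amounts to per-task bookkeeping: whenever a task's validation loss hits a new minimum, snapshot the current backbone together with that task's head. The sketch below shows only this bookkeeping, using plain dictionaries as stand-ins for model state. The class and method names are illustrative and not taken from the ACS repository [20].

```python
import copy

class PerTaskCheckpointer:
    """Keep the best (backbone, head) snapshot separately for each task."""

    def __init__(self, tasks):
        self.best_loss = {t: float("inf") for t in tasks}
        self.best_state = {t: None for t in tasks}

    def update(self, val_losses, backbone_state, head_states):
        """Snapshot state for every task whose validation loss improved.

        Returns the list of tasks that were checkpointed in this call.
        """
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_state[task] = (
                    copy.deepcopy(backbone_state),
                    copy.deepcopy(head_states[task]),
                )
                improved.append(task)
        return improved

# Toy run: "state" is just the epoch number at which it was saved.
ckpt = PerTaskCheckpointer(["tox", "sol"])
history = [
    {"tox": 0.90, "sol": 0.80},
    {"tox": 0.70, "sol": 0.85},  # shared update helped tox but hurt sol
    {"tox": 0.75, "sol": 0.60},
]
for epoch, losses in enumerate(history):
    ckpt.update(losses, {"epoch": epoch}, {"tox": {"epoch": epoch}, "sol": {"epoch": epoch}})
```

After training, each task is served by its own saved pair. In the toy run the two tasks end up with backbones from different epochs, which is how a task is shielded from later updates that hurt it.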

ACS Experimental Protocol and Implementation

Implementation Framework:

The official ACS code repository is available through GitHub, providing complete training and evaluation scripts [20].

Hyperparameter Configuration:

- Learning rate: 0.001 with Adam optimizer

- Batch size: 32-128 depending on dataset size

- Early stopping patience: 10-20 epochs

- Message passing steps: 3-5 depending on molecular complexity

- Hidden dimensions: 128-256 for backbone, 64-128 for task heads

Table: ACS Performance Comparison on Molecular Property Benchmarks (ROC-AUC)

| Dataset | Single-Task Learning | Conventional MTL | MTL with Global Checkpointing | ACS |

|---|---|---|---|---|

| ClinTox | 0.793 | 0.838 | 0.841 | 0.914 |

| SIDER | 0.845 | 0.862 | 0.868 | 0.881 |

| Tox21 | 0.821 | 0.849 | 0.853 | 0.866 |

Table: ACS Performance in Ultra-Low Data Regime (Sustainable Aviation Fuel Properties)

| Training Samples | Conventional MTL (RMSE) | ACS (RMSE) | Improvement |

|---|---|---|---|

| 29 | 0.482 | 0.381 | 20.9% |

| 58 | 0.395 | 0.324 | 17.9% |

| 116 | 0.331 | 0.285 | 13.9% |

ACS Workflow Visualization

Alternative MTL Approaches for Different Scenarios

When Should I Consider Alternative MTL Strategies?

While ACS excels in scenarios with significant task imbalance and negative transfer, other MTL approaches may be better suited for different research contexts:

PiKE (Positive gradient interaction-based K-task weights Estimator)

- Best For: Scenarios with predominantly positive task interactions and minimal gradient conflicts [21]

- Approach: Dynamically adjusts task contributions throughout training based on gradient magnitudes and variance

- Advantages: Minimal computational overhead, theoretical convergence guarantees, effective for large-scale language model pretraining

Model Merging as Adaptive Projective Gradient Descent

- Best For: Integrating multiple pre-trained expert models without original training data [22]

- Approach: Frames merging as constrained optimization, minimizing gap between merged and individual models while retaining shared knowledge

- Advantages: Data-free operation, preserves task-specific information, handles diverse architectures

Structure-Aware Transfer Learning

- Best For: Cross-property and cross-materials class prediction with crystal structure data [23]

- Approach: Leverages graph neural network-based architecture with deep transfer learning

- Advantages: Outperforms scratch models in ≈90% of cases, effective for extrapolation problems

Comparative Analysis of MTL Mitigation Strategies

Table: MTL Negative Transfer Mitigation Strategy Comparison

| Method | Key Mechanism | Data Requirements | Computational Overhead | Best Use Cases |

|---|---|---|---|---|

| ACS | Adaptive checkpointing with task specialization | Works with ultra-low data (29+ samples) | Moderate | Highly imbalanced tasks, molecular property prediction |

| PiKE | Dynamic data mixing based on gradient interactions | Medium to large datasets | Low | Positive task interactions, foundation model training |

| Model Merging | Projective gradient descent in shared subspace | Pre-trained models only | Low post-merging | Combining expert models, vision and NLP tasks |

| Structure-Aware TL | GNN-based feature extraction and fine-tuning | Source and target datasets | High initial pre-training | Cross-property materials prediction, crystal structures |

Troubleshooting Common MTL Implementation Issues

How Do I Resolve Persistent Negative Transfer Despite Using ACS?

Problem: Continued performance degradation on specific tasks after implementing ACS.

Solutions:

- Verify Task Relatedness: Analyze correlation between task loss patterns during initial training epochs. Consider separating unrelated tasks into different MTL groups.

- Adjust Architecture Capacity: Increase hidden dimensions in shared backbone or task-specific heads for complex task combinations.

- Optimize Checkpointing Frequency: Reduce checkpointing interval for rapidly fluctuating validation losses.

- Gradient Monitoring: Implement gradient conflict detection using cosine similarity between task gradients.
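Gradient conflict detection can be sketched with flattened gradient vectors for the shared parameters, one per task. The threshold of 0.0 used here (flag only opposing directions) is an assumption for illustration. It is exposed as a parameter so a stricter cut-off can be substituted.

```python
import math

def cosine_similarity(g1, g2):
    """Cosine of the angle between two flattened gradient vectors."""
    dot = sum(a * b for a, b in zip(g1, g2))
    n1 = math.sqrt(sum(a * a for a in g1))
    n2 = math.sqrt(sum(b * b for b in g2))
    return 0.0 if n1 == 0 or n2 == 0 else dot / (n1 * n2)

def conflicting_pairs(task_grads, threshold=0.0):
    """Return task pairs whose shared-parameter gradients fall below threshold."""
    tasks = sorted(task_grads)
    return [
        (a, b)
        for i, a in enumerate(tasks)
        for b in tasks[i + 1:]
        if cosine_similarity(task_grads[a], task_grads[b]) < threshold
    ]

# Hypothetical 2-parameter gradients for three tasks.
pairs = conflicting_pairs({"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [-1.0, 0.2]})
```

Logging these pairs over the first few epochs gives a data-driven basis for regrouping tasks before committing to a full training run.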

What Should I Do When Facing Extreme Data Scarcity (<30 Samples per Task)?

Problem: Insufficient data even for effective knowledge transfer in ACS.

Solutions:

- Leverage Transfer Learning: Utilize pre-trained models on large source datasets (e.g., Materials Project formation energy models) [23]

- Feature Extraction: Use pre-trained GNNs as feature extractors rather than fine-tuning entire architectures

- Data Augmentation: Implement symmetry-aware structure perturbations for materials data [24]

- Multi-Modal Approaches: Integrate structure-aware models with language-based information using frameworks like MatterChat [25]

Research Reagent Solutions: Essential Tools for MTL Experiments

Table: Essential Computational Tools for MTL in Materials Informatics

| Tool Name | Type | Primary Function | Implementation Resources |

|---|---|---|---|

| ACS Framework | Training scheme | Mitigates negative transfer in multi-task GNNs | GitHub: BasemEr/acs [20] |

| ALIGNN | GNN architecture | Structure-aware materials property prediction | Open-source package [23] |

| MatterChat | Multi-modal LLM | Integrates material structures with textual queries | Custom implementation [25] |

| CHGNet | Universal ML interatomic potential | Atomic-level embedding generation | Pre-trained models available [25] |

| Magpie Descriptors | Feature generation | Composition-based materials descriptors | Open-source implementation [26] |

Advanced Integration: Multi-Modal Approaches for Enhanced Prediction

How Can I Integrate MTL with Emerging Multi-Modal Architectures?

The integration of structure-aware models with large language models presents new opportunities for MTL in scientific domains. The MatterChat architecture demonstrates effective alignment of material structural data with textual inputs through:

- Material Processing Branch: CHGNet-based graph encoding for crystal structures

- Bridge Model: Transformer-based alignment with trainable query vectors

- Language Processing Branch: Mistral 7B LLM for processing textual prompts [25]

This approach enables simultaneous prediction of diverse properties (formation energy, bandgap, magnetic status) while supporting natural language queries. It thereby combines the benefits of MTL with human-AI interaction capabilities that are particularly valuable for drug development professionals and materials scientists.

Frequently Asked Questions

Q: Can ACS be applied to non-graph-based architectures like transformers?

A: While initially developed for graph neural networks, the core ACS methodology of adaptive checkpointing and specialization is architecture-agnostic. Implementation would require modification of the checkpointing mechanism to handle transformer-specific components.

Q: How do I determine optimal task grouping for MTL?

A: Current research suggests analyzing gradient conflicts during preliminary training, with cosine similarity between task gradients below 0.5 indicating potential negative transfer. Task grouping based on chemical intuition (e.g., grouping related thermodynamic properties) also proves effective.

Q: What validation protocols are essential for reliable MTL performance?

A: Implement strict temporal splits (evaluating on newer data than training) rather than random splits, as random splits often inflate performance estimates by 15-20% due to elevated structural similarity [19].

Q: How can I estimate computational requirements for large-scale MTL?

A: ACS introduces approximately 15-20% overhead compared to conventional MTL due to checkpointing operations. For large-scale materials discovery (≈1M+ candidates), consider distributed training frameworks like those used in GNoME with active learning [24].

Generative Adversarial Networks (GANs) for Synthetic Data Generation

Frequently Asked Questions (FAQs)

1. What are the most common failure modes when training a GAN?

The two most prevalent failure modes are Mode Collapse and Convergence Failure [27] [28]. Mode collapse occurs when the generator produces a limited variety of samples, failing to capture the full diversity of the training data. Convergence failure happens when the training process becomes unstable and fails to find a balance between the generator and discriminator, resulting in non-meaningful outputs [28] [29].

2. How can I tell if my GAN is experiencing mode collapse?

You can identify mode collapse by inspecting the samples generated by your model over time [28]. Key indicators include:

- Low Diversity: The generated samples look very similar or identical to each other, even when the input noise vector is changed [28] [29].

- Repeated Samples: The generator produces only a small set of plausible outputs repeatedly, instead of a wide variety [27].

3. My GAN losses are unstable. What does this mean?

Unstable losses, particularly where the discriminator loss drops to near zero and the generator loss increases or also falls to zero, often indicate Convergence Failure [28] [29]. This typically means one network has become too dominant. A rapidly vanishing discriminator loss can lead to vanishing gradients for the generator, preventing it from learning [27].

4. Why is training stability a major challenge for GANs?

GAN training is inherently unstable because it involves a dynamic, non-cooperative game between two networks [28]. The optimization problem changes with every update as both networks strive to outperform each other. Finding a Nash equilibrium (a state where neither player can reduce their cost unilaterally) in this high-dimensional, non-convex space is non-trivial, and no known algorithm guarantees it [28].

5. Can GANs be used to discover new inorganic materials or drug molecules?

Yes, GANs have shown significant promise in these fields. For example:

- Inorganic Materials: The MatGAN model can generate novel, chemically valid inorganic compositions by learning implicit rules from databases like ICSD, achieving an 84.5% validity rate for charge-neutral and electronegativity-balanced samples [30].

- Drug Discovery: Models like MedGAN can generate novel molecular structures with specific scaffolds (e.g., quinoline), with studies reporting up to 93% novelty and preservation of favorable drug-like properties [31].

Troubleshooting Guides

Issue 1: Mode Collapse

Problem: The generator produces limited variety in its outputs [27] [28].

Diagnosis: Visually check the generated samples. If the outputs lack diversity or are identical, mode collapse has occurred [29].

Solutions:

- Use an Advanced Loss Function: Implement Wasserstein GAN (WGAN) with a gradient penalty. This loss function provides more stable gradients even when the discriminator is well-trained, preventing the generator from getting stuck [27] [28] [31].

- Try Unrolled GANs: This approach updates the generator based on the feedback from several future steps of the discriminator, preventing it from over-optimizing for a single, temporarily weak discriminator state [27] [28].

- Adjust Model Architecture:

- Impair the Discriminator: Randomly assign incorrect labels to real images during discriminator training to prevent it from becoming too strong too quickly [28].

Issue 2: Convergence Failure

Problem: Training does not converge, and the generated samples are of very low quality or meaningless [28] [29].

Diagnosis: Monitor the loss curves. Key signs include the discriminator loss rapidly approaching zero and staying there, or the generator loss continuously increasing [28] [29].
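The loss-curve checks in this diagnosis can be automated with a simple heuristic over logged losses. The sketch below assumes a standard (non-Wasserstein) GAN loss, where a discriminator loss near zero is meaningful. The window size and the `near_zero` threshold are arbitrary illustrative defaults that would need tuning per project.

```python
def diagnose_gan_losses(d_losses, g_losses, window=100, near_zero=0.01):
    """Return a list of warning strings based on the most recent losses."""
    warnings = []
    if len(d_losses) < window or len(g_losses) < window:
        return warnings  # not enough history to judge
    recent_d = d_losses[-window:]
    recent_g = g_losses[-window:]
    # Sign 1: discriminator loss has stayed near zero for the whole window.
    if max(recent_d) < near_zero:
        warnings.append("discriminator loss stuck near zero")
    # Sign 2: generator loss is trending upward (second half vs first half).
    half = window // 2
    first_mean = sum(recent_g[:half]) / half
    second_mean = sum(recent_g[half:]) / (window - half)
    if second_mean > first_mean:
        warnings.append("generator loss increasing")
    return warnings
```

Comparing half-window means rather than requiring a strictly increasing sequence makes the second check tolerant of step-to-step noise.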

Solutions: The solution depends on which network is dominating the training:

If the Discriminator is too strong (most common):

- Add Regularization: Impair the discriminator by using techniques like dropout layers or by randomly assigning false labels to some real samples [27] [28].

- Weaken the Discriminator: Reduce the discriminator's capacity by making it less deep [28].

- Add Noise: Introduce noise to the inputs of the discriminator [27].

If the Generator is too strong:

Issue 3: Vanishing Gradients

Problem: The generator stops improving because the discriminator becomes too good, and the gradient passed back to the generator becomes negligible [27].

Diagnosis: The generator loss fails to decrease over time despite continued training.

Solutions:

- Wasserstein GAN (WGAN): This is the primary solution. The Wasserstein loss is designed to provide usable gradients even when the discriminator (often called the "critic" in WGAN) is trained to optimality [27] [30].

- Modified Loss Functions: Use alternative loss functions, such as the modified minimax loss from the original GAN paper, which can be more robust [27].
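For reference, the standard Wasserstein objectives can be written in a few lines. This is the generic textbook form operating on raw critic scores, not a transcription of any specific model's code. A full WGAN additionally needs a Lipschitz constraint on the critic (weight clipping or a gradient penalty), which is omitted here.

```python
def critic_loss(real_scores, fake_scores):
    """Critic minimizes E[D(fake)] - E[D(real)], i.e. it widens the score gap."""
    mean_real = sum(real_scores) / len(real_scores)
    mean_fake = sum(fake_scores) / len(fake_scores)
    return mean_fake - mean_real

def generator_loss(fake_scores):
    """Generator minimizes -E[D(fake)], i.e. it pushes critic scores up."""
    return -sum(fake_scores) / len(fake_scores)

# Critic scores are unbounded real numbers, not probabilities.
example_critic = critic_loss(real_scores=[2.0, 4.0], fake_scores=[1.0, 1.0])
example_generator = generator_loss(fake_scores=[1.0, 3.0])
```

Because the scores are not squashed through a sigmoid, the generator's gradient does not saturate when the critic separates real from generated samples well.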

Experimental Protocols & Data

Protocol 1: Generating Inorganic Materials with MatGAN

This protocol is based on the MatGAN framework for efficient sampling of inorganic chemical space [30].

1. Data Representation:

- Represent each inorganic material formula as an 8×85 matrix [30].

- Each column corresponds to one of the 85 stable elements, sorted by atomic number.

- Each column is a one-hot encoded vector representing the number of atoms (0 to 7) for that element.

2. Model Architecture (MatGAN):

- Base Model: Wasserstein GAN (WGAN) to mitigate gradient vanishing [30].

- Generator: Comprises one fully connected layer followed by seven deconvolution layers with batch normalization and ReLU activations. The output layer uses a Sigmoid activation [30].

- Discriminator (Critic): Comprises seven convolution layers with batch normalization and ReLU, followed by a fully connected layer [30].

3. Training:

- Loss Functions:

4. Validation:

- Check generated materials for chemical validity using rules like charge neutrality and electronegativity balance [30].
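The data representation in step 1 can be sketched as follows. The element list here is a short hypothetical subset used to keep the example readable, whereas the protocol uses 85 elements, which gives the 8×85 shape. Decoding takes the largest entry per column, so it also works on non-binary generator outputs.

```python
ELEMENTS = ["H", "Li", "O", "Ti", "Ba"]  # hypothetical subset; MatGAN uses 85 elements
MAX_COUNT = 7                            # atom counts 0..7 -> 8 rows

def encode_formula(composition, elements=ELEMENTS, max_count=MAX_COUNT):
    """Encode {element: count} as a (max_count + 1) x len(elements) one-hot matrix."""
    unknown = set(composition) - set(elements)
    if unknown:
        raise ValueError(f"elements not in vocabulary: {sorted(unknown)}")
    matrix = [[0] * len(elements) for _ in range(max_count + 1)]
    for col, element in enumerate(elements):
        count = composition.get(element, 0)
        if not 0 <= count <= max_count:
            raise ValueError(f"count for {element} must be in 0..{max_count}")
        matrix[count][col] = 1  # row index is the atom count
    return matrix

def decode_matrix(matrix, elements=ELEMENTS):
    """Recover {element: count} by taking the largest entry in each column."""
    composition = {}
    for col, element in enumerate(elements):
        count = max(range(len(matrix)), key=lambda row: matrix[row][col])
        if count > 0:
            composition[element] = count
    return composition

encoded = encode_formula({"Ba": 1, "Ti": 1, "O": 3})
```

Absent elements are encoded explicitly as a 1 in row 0, so every column sums to one.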

Table 1: Performance of MatGAN on Inorganic Material Generation [30]

| Metric | Performance |

|---|---|

| Novelty | 92.53% (when generating 2M samples) |

| Chemical Validity Rate | 84.5% |

Protocol 2: Optimized Molecular Generation with MedGAN

This protocol outlines the optimized MedGAN for generating novel drug-like molecules, specifically quinoline scaffolds [31].

1. Data Representation:

- Represent molecules as graphs.

- Use an adjacency tensor for bonds (edges) and a feature tensor for atoms (nodes), including features like atom type, chirality, and charge [31].

2. Model Architecture (MedGAN):

- Base Model: Wasserstein GAN with Gradient Penalty (WGAN-GP) combined with a Graph Convolutional Network (GCN) [31].

- Generator: Maps random noise to molecular graphs using GCN layers to learn structural relationships.

- Discriminator/Critic: Uses GCN layers to process molecular graphs and output a score for realism.

3. Optimized Hyperparameters [31]:

- Optimizer: RMSprop

- Learning Rate: 0.0001

- Latent Space Dimensions: 256

- Generator/Discriminator Units: 4,092 neurons

4. Validation Metrics:

- Validity: The percentage of generated molecular graphs that are chemically valid.

- Connectivity: The percentage of generated molecules with fully connected atoms.

- Novelty: The percentage of generated molecules not present in the training data.

- Uniqueness: The percentage of distinct molecules among all generated.
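Three of these metrics can be computed directly from lists of molecule identifiers, as in the sketch below. It assumes molecules are compared as canonical strings (for example canonical SMILES) and that validity is decided by a caller-supplied `is_valid` function. Both are assumptions of this example. Connectivity is omitted because it needs a graph representation.

```python
def generation_metrics(generated, training_set, is_valid):
    """Compute validity, uniqueness and novelty as fractions of all generated.

    generated     list of canonical molecule strings produced by the model
    training_set  set of canonical molecule strings used for training
    is_valid      callable returning True for chemically valid molecules
    """
    n = len(generated)
    return {
        "validity": sum(1 for m in generated if is_valid(m)) / n,
        "uniqueness": len(set(generated)) / n,
        "novelty": sum(1 for m in generated if m not in training_set) / n,
    }

# Toy identifiers stand in for real molecules; "C" is treated as invalid.
metrics = generation_metrics(
    generated=["A", "A", "B", "C", "D"],
    training_set={"B", "D"},
    is_valid=lambda m: m != "C",
)
```

Some studies instead report novelty and uniqueness only over the valid molecules, so the denominators should be stated whenever results from different papers are compared.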

Table 2: Performance of Optimized MedGAN for Quinoline Molecule Generation [31]

| Metric | Performance |

|---|---|

| Validity | 25% |

| Connectivity | 62% |

| Quinoline Scaffold | 92% |

| Novelty | 93% |

| Uniqueness | 95% |

| Total Novel Quinolines | 4,831 molecules |

Workflow Diagrams

GAN Training for Synthetic Data Generation

Diagnosing and Resolving Common GAN Failures

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Components for GANs in Material and Molecule Generation

| Item / Solution | Function / Role | Exemplar Use-Case |
|---|---|---|
| Wasserstein GAN (WGAN) | Replaces the standard GAN loss with the Wasserstein distance to stabilize training, mitigate mode collapse, and alleviate vanishing gradients. | Core training framework in both MatGAN [30] and MedGAN [31]. |
| Graph Convolutional Network (GCN) | Processes graph-structured data, learning representations based on node connections and features. Essential for handling molecular graphs. | Used in MedGAN's generator and discriminator to learn and evaluate molecular structures [31]. |
| Root Mean Square Propagation (RMSprop) | An optimization algorithm that adapts learning rates based on a moving average of squared gradients. Can offer better stability in complex tasks. | Chosen as the optimizer for MedGAN because it outperformed Adam in molecular graph generation [31]. |
| Convolutional & Deconvolutional Layers | Learn hierarchical spatial features from grid-like data (e.g., 2D matrix representations of materials). | Used in MatGAN's discriminator (convolution) and generator (deconvolution) to process material matrices [30]. |
| Adaptive Training Data | A strategy where the training dataset is updated with high-quality generated samples to promote exploration and avoid performance plateaus. | Inspired by genetic algorithms; used in drug discovery GANs to drastically increase the number of novel molecules produced [32]. |
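To make the WGAN entry in the table concrete, the following sketch shows the two loss terms in plain Python. It is an illustration of the general Wasserstein objective, not the MatGAN or MedGAN code. The weight-clipping helper follows the original WGAN formulation; whether [30] or [31] use clipping or another Lipschitz constraint is not stated here, so treat that part as an assumption.

```python
from typing import List, Sequence


def _mean(values: Sequence[float]) -> float:
    return sum(values) / len(values)


def critic_loss(real_scores: Sequence[float], fake_scores: Sequence[float]) -> float:
    """Loss minimised by the critic: mean score on fakes minus mean score on reals.

    Minimising this pushes real scores up and fake scores down, which
    maximises the critic's estimate of the Wasserstein distance.
    """
    return _mean(fake_scores) - _mean(real_scores)


def generator_loss(fake_scores: Sequence[float]) -> float:
    """Loss minimised by the generator: it wants the critic to score fakes highly."""
    return -_mean(fake_scores)


def clip_weights(weights: Sequence[float], c: float = 0.01) -> List[float]:
    """Clamp critic weights to [-c, c] (assumed Lipschitz constraint)."""
    return [max(-c, min(c, w)) for w in weights]


print(critic_loss([1.0, 3.0], [0.0, 1.0]))   # critic scores for one batch
print(generator_loss([0.0, 1.0]))
print(clip_weights([0.5, -0.5, 0.005]))
```

Unlike the standard GAN loss, the critic outputs an unbounded score rather than a probability, so there is no sigmoid or log term that can saturate.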

Leveraging Large Language Models (LLMs) for Automated Literature Extraction

Frequently Asked Questions (FAQs)

Q1: What are the primary advantages of using LLMs over traditional Named Entity Recognition (NER) models for data extraction from scientific literature?

LLMs like GPT-4 and Claude-3 offer superior contextual understanding and relationship mapping across longer text passages, an area where traditional NER models are limited [33]. They can perform complex information extraction with no examples (zero-shot) or just a few (few-shot), eliminating the need for large, labeled datasets and extensive model training [33]. Furthermore, a collaborative, multi-LLM workflow in which responses are cross-critiqued can significantly improve data extraction accuracy [34].

Q2: My LLM-extracted data contains inaccuracies or "hallucinations." How can I improve its reliability?

Implement a multi-model verification system. Research shows that when two different LLMs (e.g., GPT-4 and Claude-3) provide concordant (identical) answers for a data point, the accuracy is very high (e.g., 94%) [34]. For discordant answers, introduce a cross-critique step, where each LLM critiques the other's response. This process can resolve over 50% of disagreements and boost accuracy from ~0.45 to ~0.76 for these previously conflicting data points [34]. Additionally, a repeated questioning strategy can help reduce errors and hallucinations [33].

Q3: How can I efficiently manage the high computational cost of using powerful LLMs on large literature corpora?

To optimize costs, implement a dual-stage filtering pipeline before sending text to more expensive LLMs [33]. First, use a fast, property-specific heuristic filter to identify relevant paragraphs. Second, apply a NER filter to confirm the presence of all necessary entities (e.g., material, property, value, unit). This pre-processing drastically reduces the number of paragraphs sent for final, costly LLM inference, streamlining the entire extraction process [33].

Q4: Can LLMs be used to generate synthetic data to combat data scarcity in my research domain?

Yes, LLMs can be effectively used for data augmentation. In inorganic synthesis planning, LLMs were employed to generate 28,548 synthetic solid-state synthesis recipes. This LLM-generated data was then used to pre-train a model, which, after fine-tuning on real data, achieved significantly better performance (reducing prediction errors by up to 8.7%) compared to models trained solely on experimental data [35]. This demonstrates a viable strategy for mitigating data sparsity.

Q5: Which LLM is the best for automating systematic review tasks?

Performance can vary by specific task. A comparative study evaluating GPT-4, Claude-3, and Mixtral 8x7B found that while Claude-3 excelled in PICO (Population, Intervention, Comparison, Outcome) design, GPT-4 demonstrated superior performance in search strategy formulation, literature screening, and data extraction [36]. The best model for your project may depend on the most critical task in your workflow.

Troubleshooting Guides

Issue 1: Low Accuracy in Extracted Data

Problem: The data points extracted by the LLM are frequently incorrect or inconsistent with the source literature.

Solution:

- Step 1: Implement a Two-Reviewer LLM Workflow: Mimic the human systematic review process by using two different LLMs (e.g., GPT-4 and Claude-3) to extract the same data independently [34].

- Step 2: Identify Concordant and Discordant Responses: Label identical responses as "concordant" and differing responses as "discordant" [34].

- Step 3: Cross-Critique Discordant Responses: Feed the discordant responses back to the LLMs, asking each to critique the other's answer. This often resolves a majority of the disagreements [34].

- Step 4: Human Oversight for Residual Discordance: For any data points that remain discordant after cross-critique, flag them for manual review by a human expert.
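The four steps above can be sketched as a small reconciliation loop. This is an illustrative outline under stated assumptions, not the pipeline from [34]: the extractor and critic functions are hypothetical callables that you would wrap around your own LLM clients, and answers are compared after whitespace and case normalisation, which is a looser test than strict identity.

```python
from typing import Any, Callable, Dict, Iterable

# Hypothetical interfaces: wrap your own LLM calls to match these signatures.
Extractor = Callable[[str, str], str]        # (text, variable) -> answer
Critic = Callable[[str, str, str, str], str]  # (text, variable, own, other) -> revised answer


def normalise(answer: str) -> str:
    """Assumed comparison rule: ignore case and extra whitespace."""
    return " ".join(answer.lower().split())


def reconcile(
    text: str,
    variables: Iterable[str],
    extract_a: Extractor,
    extract_b: Extractor,
    critique_a: Critic,
    critique_b: Critic,
) -> Dict[str, Dict[str, Any]]:
    results: Dict[str, Dict[str, Any]] = {}
    for var in variables:
        # Step 1: two independent extractions.
        a, b = extract_a(text, var), extract_b(text, var)
        # Step 2: concordant answers are accepted as-is.
        if normalise(a) == normalise(b):
            results[var] = {"value": a, "status": "concordant"}
            continue
        # Step 3: each model sees the other's answer and may revise its own.
        a2 = critique_a(text, var, a, b)
        b2 = critique_b(text, var, b, a)
        if normalise(a2) == normalise(b2):
            results[var] = {"value": a2, "status": "resolved"}
        else:
            # Step 4: anything still discordant goes to a human expert.
            results[var] = {
                "value": None,
                "status": "needs_human_review",
                "candidates": (a2, b2),
            }
    return results
```

Keeping the status alongside each value makes it straightforward to report accuracy separately for concordant and resolved responses later on.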

Issue 2: Handling Massive Literature Corpora with Limited Budget

Problem: Processing millions of journal articles with a powerful LLM is prohibitively expensive.

Solution:

- Step 1: Corpus Pre-Screening: Narrow down the corpus using keyword searches on titles and abstracts (e.g., "poly*" for polymer research) to identify the most relevant documents [33].

- Step 2: Apply a Two-Stage Filtering Pipeline:

  - Heuristic Filter: Use simple, fast rule-based filters to scan paragraphs for mentions of target properties or keywords [33].

  - NER Filter: Apply a lightweight, specialized NER model (like MaterialsBERT for materials science) to paragraphs that pass the first filter. This confirms the presence of a complete data record (material, property, value, unit) [33].

- Step 3: Targeted LLM Inference: Only the paragraphs that pass both filters are sent to the LLM for final, precise information extraction and structuring [33]. This workflow minimizes costly LLM calls.
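A minimal sketch of the filtering stages described above follows. It is not the implementation from [33]. The NER stage is represented by a caller-supplied `tag_entities` function; `toy_tagger` is a hypothetical regex stand-in used only so the example runs without a trained model, and the keyword list and entity labels are illustrative assumptions.

```python
import re
from typing import Callable, Iterable, List, Set

REQUIRED_ENTITIES = {"material", "property", "value", "unit"}


def heuristic_filter(paragraph: str, keywords: Iterable[str]) -> bool:
    """Stage 1: cheap substring check for property keywords."""
    text = paragraph.lower()
    return any(keyword.lower() in text for keyword in keywords)


def ner_filter(paragraph: str, tag_entities: Callable[[str], Set[str]]) -> bool:
    """Stage 2: keep the paragraph only if a complete record is present."""
    return REQUIRED_ENTITIES <= tag_entities(paragraph)


def select_for_llm(
    paragraphs: Iterable[str],
    keywords: Iterable[str],
    tag_entities: Callable[[str], Set[str]],
) -> List[str]:
    """Return only the paragraphs worth sending to the expensive LLM."""
    keywords = list(keywords)
    return [
        p for p in paragraphs
        if heuristic_filter(p, keywords) and ner_filter(p, tag_entities)
    ]


def toy_tagger(paragraph: str) -> Set[str]:
    """Hypothetical stand-in for a NER model such as MaterialsBERT."""
    labels: Set[str] = set()
    if re.search(r"\b(polystyrene|PMMA)\b", paragraph, re.IGNORECASE):
        labels.add("material")
    if re.search(r"glass transition", paragraph, re.IGNORECASE):
        labels.add("property")
    if re.search(r"\d+(?:\.\d+)?\s*(?:°C|K)", paragraph):
        labels |= {"value", "unit"}
    return labels


paragraphs = [
    "The glass transition temperature of polystyrene was 100 °C.",
    "Glass transition behaviour is discussed in the next section.",
    "Samples were dried overnight under vacuum.",
]
print(select_for_llm(paragraphs, ["glass transition"], toy_tagger))
```

The ordering matters for cost: the substring check runs on every paragraph, the NER model only on those that pass it, and the LLM only on the final shortlist.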

Issue 3: Model Fails to Generalize for Novel Materials or Syntheses

Problem: The extraction or prediction model performs poorly on materials or synthesis pathways not well-represented in the training data.

Solution:

- Step 1: Leverage LLMs for Data Augmentation: Use the knowledge recall capabilities of state-of-the-art LLMs to generate plausible synthetic data for underrepresented classes. For example, prompt an LLM to generate synthesis recipes for target materials [35].

- Step 2: Create a Hybrid Dataset: Combine the original literature-mined data with the high-quality LLM-generated synthetic data [35].

- Step 3: Pre-train and Fine-tune: Pre-train your specialized model (e.g., a transformer-based synthesis predictor) on this enlarged, hybrid dataset. Subsequently, fine-tune it on the smaller set of real, experimental data to ground its predictions in reality [35]. In the cited study, this approach reduced prediction errors by up to 8.7% compared with training on experimental data alone [35].
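The pre-train-then-fine-tune pattern can be illustrated with a deliberately tiny model. The sketch below fits a one-variable linear regressor by gradient descent; it stands in for the much larger transformer in [35] only to show the two training phases. All data points, learning rates, and epoch counts are made up for illustration.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (input feature, target value)


def mse(data: List[Point], w: float, b: float) -> float:
    """Mean squared error of the line y = w*x + b on the data."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def gradient_descent(
    data: List[Point], w: float, b: float, lr: float, epochs: int
) -> Tuple[float, float]:
    """Full-batch gradient descent on the MSE, starting from (w, b)."""
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Illustrative toy data: a few "real" points and some noisier "synthetic" ones.
real: List[Point] = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
synthetic: List[Point] = [(0.5, 2.2), (1.5, 3.9), (3.0, 7.3)]

# Phase 1: pre-train on the hybrid dataset from a cold start.
w_pre, b_pre = gradient_descent(real + synthetic, 0.0, 0.0, lr=0.05, epochs=200)

# Phase 2: fine-tune on real data only, with a smaller learning rate.
w_ft, b_ft = gradient_descent(real, w_pre, b_pre, lr=0.01, epochs=100)

print("real-data MSE after pre-training:", mse(real, w_pre, b_pre))
print("real-data MSE after fine-tuning: ", mse(real, w_ft, b_ft))
```

The smaller learning rate in the second phase reflects a common design choice: the fine-tuning step should correct the bias introduced by synthetic data without discarding what was learned from it.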

Experimental Protocols & Data

Protocol 1: Two-LLM Collaborative Data Extraction for Systematic Reviews

This methodology is designed for high-accuracy data extraction, as used in living systematic reviews (LSRs) [34].

- Dataset Preparation: Split your literature dataset into a prompt development set and a held-out test set.

- Parallel LLM Extraction: Use two different LLMs (e.g., GPT-4-turbo and Claude-3-Opus) to extract the target variables from the same text.

- Response Categorization: For each variable, categorize the LLM responses as concordant (identical) or discordant (different).

- Cross-Critique: For each discordant response, prompt each LLM to critique the other model's answer. This often leads to a consensus.

- Validation: Calculate the accuracy of concordant responses and post-critique resolved responses against a human-verified gold standard.

Table 1: Performance Metrics of a Collaborative LLM Workflow for Data Extraction [34]

| Metric | Prompt Development Set | Held-Out Test Set |
|---|---|---|
| Concordant Responses | 96% (110/115 variables) | 87% (342/391 variables) |
| Accuracy of Concordant Responses | 0.99 | 0.94 |
| Accuracy of Discordant Responses | N/A | 0.41 (GPT-4), 0.50 (Claude-3) |
| Accuracy After Cross-Critique | N/A | 0.76 (for previously discordant responses) |
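As a rough worked example, the held-out figures in the table can be combined into a single blended accuracy. The calculation below is a back-of-the-envelope estimate, not a number reported in [34]: it assumes the 0.76 post-critique accuracy applies to all 49 previously discordant variables, and that without cross-critique one would simply keep GPT-4's answer for those variables.

```python
def concordance_rate(n_concordant: int, n_total: int) -> float:
    """Share of variables on which both LLMs gave the same answer."""
    return n_concordant / n_total


def blended_accuracy(
    n_concordant: int, n_total: int, acc_concordant: float, acc_discordant: float
) -> float:
    """Accuracy over all variables, weighting each group by its size."""
    n_discordant = n_total - n_concordant
    correct = n_concordant * acc_concordant + n_discordant * acc_discordant
    return correct / n_total


# Held-out test set values from the table above.
rate = concordance_rate(342, 391)
with_critique = blended_accuracy(342, 391, 0.94, 0.76)
gpt4_only = blended_accuracy(342, 391, 0.94, 0.41)

print(f"concordance rate:            {rate:.3f}")           # about 0.875
print(f"blended, after critique:     {with_critique:.3f}")  # about 0.917
print(f"blended, GPT-4 on discordant: {gpt4_only:.3f}")     # about 0.874
```

Under these assumptions the cross-critique step lifts overall accuracy by roughly four percentage points, even though it touches only about one in eight variables.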

Protocol 2: LLM-Generated Synthetic Data for Enhanced Synthesis Prediction

This protocol details using LLMs to overcome data scarcity in inorganic synthesis planning [35].

- Model Benchmarking: First, benchmark various off-the-shelf LLMs (e.g., GPT-4.1, Gemini 2.0 Flash, Llama 4) on synthesis tasks like precursor prediction and temperature forecasting using a held-out test set.

- Synthetic Data Generation: Prompt the best-performing LLM or an ensemble to generate a large number of synthetic reaction recipes for a wide range of target materials.

- Model Training with Hybrid Data:

  - Baseline: Train a specialized model (e.g., SyntMTE, a transformer) only on literature-mined data.

  - Enhanced Model: Pre-train the same model architecture on the combination of literature-mined and LLM-generated synthetic data. Then, fine-tune it on the literature-mined data.

- Performance Evaluation: Compare the prediction errors (e.g., Mean Absolute Error for temperatures) of the baseline and enhanced models on a test set of real synthesis data.
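The evaluation step reduces to computing a mean absolute error for each model and comparing the two. The helper below is a generic sketch; the temperatures and predictions are invented for illustration and are not taken from [35].

```python
from typing import Sequence


def mean_absolute_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Average absolute difference between measured and predicted values."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def relative_reduction(baseline_mae: float, enhanced_mae: float) -> float:
    """Fractional error reduction of the enhanced model over the baseline."""
    return (baseline_mae - enhanced_mae) / baseline_mae


# Hypothetical sintering temperatures in °C for three test reactions.
measured = [900.0, 1100.0, 1250.0]
baseline_pred = [1000.0, 1000.0, 1150.0]
enhanced_pred = [990.0, 1010.0, 1160.0]

baseline_mae = mean_absolute_error(measured, baseline_pred)
enhanced_mae = mean_absolute_error(measured, enhanced_pred)
print(baseline_mae, enhanced_mae, relative_reduction(baseline_mae, enhanced_mae))
```

Both models must be scored on the same held-out set of real syntheses; evaluating on synthetic recipes would measure agreement with the generating LLM rather than with experiment.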

Table 2: Impact of LLM-Generated Data on Synthesis Prediction Accuracy [35]

| Model | Training Data | Sintering Temp. MAE | Calcination Temp. MAE |
|---|---|---|---|
| LLM Ensemble (Direct) | N/A (Zero-shot) | ~126 °C | ~126 °C |
| SyntMTE (Baseline) | Literature-only | >73 °C | >98 °C |
| SyntMTE (Enhanced) | Literature + Synthetic LLM Data | 73 °C | 98 °C |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for an LLM-Based Literature Extraction Pipeline

| Item | Function/Best Use-Case |
|---|---|
| GPT-4 / GPT-4-turbo | Excels in data extraction, literature screening, and search strategy formulation. Ideal as a primary extractor in a multi-LLM setup [36] [34]. |
| Claude-3 (Opus) | Demonstrates superior performance in structured design tasks (e.g., defining PICO frameworks). Effective as a collaborative reviewer LLM [36] [34]. |
| Llama 2 / 3 | Open-source alternative. Can be fine-tuned for domain-specific tasks, offering more control and potentially lower long-term costs [33]. |
| MaterialsBERT / PolymerBERT | Specialized NER models. Perfect for the initial filtering stage to identify relevant text snippets containing material and property entities before LLM processing [33]. |
| Elasticsearch / Crossref API | Tools for building and querying a large corpus of scientific literature from various publishers [33]. |

Workflow Visualization

The following diagram illustrates the optimized, cost-effective workflow for extracting structured data from scientific literature using a hybrid NER and LLM approach.

Optimized LLM Literature Extraction Workflow

Building Machine-Readable Knowledge Graphs from Unstructured Text

Frequently Asked Questions (FAQs)

Q1: What are the most common data quality issues when building a knowledge graph from text, and how can I resolve them?

Extracting data from unstructured text often introduces several data quality challenges that must be resolved for a reliable knowledge graph [37].

- Syntactic Variations: Entities with minor grammatical or punctuation differences (e.g., "electrode.", "electrodes,", "Electrode") [37].

- Semantic Variations: Phrases with synonymous vocabulary (e.g., "Light-Harvesting Ability" vs. "Light-Harvesting Capability") [37].

- Non-ASCII Characters: Special characters like Greek letters or mathematical symbols that can disrupt processing [37].

- Duplicate Entities: The same real-world concept appears multiple times with different surface forms (e.g., "NH3" and "Ammonia") [37].

Resolution Workflow:

- Remove non-ASCII characters to maintain data uniformity [37].

- Cluster similar entities using string matching algorithms like Levenshtein edit distance with a high similarity threshold (e.g., 90-95%) [37].

- Deduplicate clusters by employing a Large Language Model (LLM) API to determine a canonical representation for each cluster (e.g., standardizing "electrode" and "electrodes" to "electrode") [37].

- Apply this cleaning process iteratively (e.g., 5 times) to ensure thorough standardization [37].
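A single pass of the resolution workflow above might look like the following sketch. It is an illustration under several assumptions, not the pipeline from [37]: normalisation also lowercases and strips surrounding punctuation, clustering is greedy against the first member of each cluster, and the canonical form is chosen by a simple frequency heuristic (`most_common_form`) that stands in for the LLM call described in the text.

```python
import string
from collections import Counter
from typing import Callable, Dict, Iterable, List


def normalise(raw: str) -> str:
    """Drop non-ASCII characters, surrounding punctuation, and case."""
    ascii_only = raw.encode("ascii", "ignore").decode("ascii")
    return ascii_only.strip(string.punctuation + string.whitespace).lower()


def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(previous[j] + 1, current[j - 1] + 1,
                               previous[j - 1] + cost))
        previous = current
    return previous[-1]


def similar(a: str, b: str, threshold_pct: int = 90) -> bool:
    """True if similarity (1 - distance / longest length) meets the threshold."""
    longest = max(len(a), len(b))
    if longest == 0:
        return True
    # Integer arithmetic avoids floating-point edge cases at the threshold.
    return 100 * (longest - levenshtein(a, b)) >= threshold_pct * longest


def cluster(names: Iterable[str], threshold_pct: int = 90) -> List[List[str]]:
    """Greedy clustering: join the first cluster whose seed is similar enough."""
    clusters: List[List[str]] = []
    for name in names:
        for group in clusters:
            if similar(name, group[0], threshold_pct):
                group.append(name)
                break
        else:
            clusters.append([name])
    return clusters


def most_common_form(group: List[str]) -> str:
    """Assumed stand-in for the LLM: most frequent form, then shortest."""
    counts = Counter(group)
    return min(counts, key=lambda form: (-counts[form], len(form), form))


def resolve(
    raw_entities: Iterable[str],
    canonicalise: Callable[[List[str]], str] = most_common_form,
    threshold_pct: int = 90,
) -> Dict[str, str]:
    """Map every raw surface form to a canonical entity name."""
    cleaned = {raw: normalise(raw) for raw in raw_entities}
    canonical: Dict[str, str] = {}
    for group in cluster(cleaned.values(), threshold_pct):
        chosen = canonicalise(group)
        for form in group:
            canonical[form] = chosen
    return {raw: canonical[form] for raw, form in cleaned.items()}


print(resolve(["electrode.", "electrodes,", "Electrode", "cathode"]))
```

String distance handles syntactic variants only. Pairs such as "NH3" and "Ammonia" share almost no characters, which is one reason the workflow delegates canonicalisation to an LLM and repeats the process over several iterations.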

Q2: My entity and relationship extractions are noisy. How can I improve their accuracy?

Noisy extractions are common when moving from prototype to production. Consider these steps:

- Use a Domain-Specific Model: A general-purpose Named Entity Recognition (NER) model may perform poorly on scientific text. Use a model pre-trained on a relevant corpus, such as MatBERT for materials science, which was trained on 5 million scientific papers [37].

- Leverage Semantic Patterns: For relationship extraction, use tools that allow you to define semantic patterns or "bundles" (e.g., Gene-Verb-Drug) to find specific associations rather than simple co-occurrence [38].

- Apply Context-Aware Models: For ambiguous relationships (e.g., does a drug treat or cause a headache?), train machine learning models on curated data to identify relationships within specific contexts [38].

Q3: How can I visually explore and analyze my knowledge graph effectively?

Choosing the right visualization technique is key to understanding your graph [39].

- Node-Link Diagrams: Best for exploring complex relationships and understanding the overall network structure, connectivity, and patterns like clusters [39].