Optimizing Hyperparameters for Materials Property Prediction: A Guide for Biomedical Researchers

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing hyperparameters in machine learning models for materials and molecular property prediction. Covering topics from foundational principles to advanced validation, it explores key challenges such as dataset redundancy and data scarcity, details cutting-edge methods from graph neural networks to automated optimization frameworks, and offers practical troubleshooting strategies. By synthesizing the latest research, this guide aims to equip scientists with the knowledge to build more accurate, reliable, and generalizable predictive models, thereby accelerating the discovery of new functional materials and therapeutic compounds.

Laying the Groundwork: Core Concepts and Challenges in Materials Informatics

The Critical Role of Hyperparameter Tuning in Model Accuracy and Generalization

Troubleshooting Guides

FAQ 1: My dataset for a new material's property is very small. How can I tune hyperparameters effectively without overfitting?

Issue: Data scarcity for specific material properties (e.g., mechanical properties like elastic modulus) makes standard hyperparameter tuning prone to overfitting and poor generalization.

Solution: Employ Transfer Learning (TL) from a data-rich source task.

- Methodology: Utilize a model pre-trained on a large, related dataset (e.g., formation energy) as a starting point for training on your small, target dataset (e.g., shear modulus) [1]. This approach leverages generalized features learned from the large dataset, acting as a regularizer to prevent overfitting on the small dataset [1].

- Protocol:

- Identify a Source Model: Select a pre-trained model from a data-rich property prediction task. The formation energy is a commonly used and effective source task [1].

- Model Adaptation: Replace the final output layer of the pre-trained model to match your target property (e.g., from predicting formation energy to predicting bulk modulus).

- Fine-Tuning: Continue training (fine-tune) the adapted model on your small, target dataset. Use a lower learning rate during this phase to avoid catastrophic forgetting of the previously learned features.
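The three protocol steps can be illustrated with a deliberately tiny, dependency-free sketch. It makes several assumptions. The "backbone" is a one-hidden-layer tanh network. The source and target "properties" are synthetic sine-shaped curves that stand in for formation energy and shear modulus. The learning rates are hand-picked. A real workflow would apply the same pattern to a pre-trained GNN in a deep learning framework.

```python
import math
import random

def forward(backbone, head, x):
    """Shared 'backbone' = one tanh hidden layer; 'head' = linear output layer."""
    hidden = [math.tanh(w * x + b) for w, b in backbone]
    return sum(h * v for h, v in zip(hidden, head)), hidden

def train(backbone, head, data, backbone_lr, head_lr, epochs):
    """Per-sample gradient descent on squared error, with separate learning rates per part."""
    for _ in range(epochs):
        for x, y in data:
            pred, hidden = forward(backbone, head, x)
            err = pred - y
            for j, (w, b) in enumerate(backbone):
                g = err * head[j] * (1.0 - hidden[j] ** 2)  # chain rule through tanh
                backbone[j] = (w - backbone_lr * g * x, b - backbone_lr * g)
            for j in range(len(head)):
                head[j] -= head_lr * err * hidden[j]

def mse(backbone, head, data):
    return sum((forward(backbone, head, x)[0] - y) ** 2 for x, y in data) / len(data)

random.seed(0)
n_hidden = 8
backbone = [(random.uniform(-2, 2), random.uniform(-1, 1)) for _ in range(n_hidden)]

# 1) Pre-train on a data-rich source task (hypothetical stand-in for formation energy).
source = [(i / 50.0, math.sin(3.0 * i / 50.0)) for i in range(-50, 51)]
source_head = [random.uniform(-0.5, 0.5) for _ in range(n_hidden)]
train(backbone, source_head, source, backbone_lr=0.05, head_lr=0.05, epochs=100)

# 2) Replace the output layer with a fresh, untrained head for the target property.
target_head = [0.0] * n_hidden

# 3) Fine-tune on a tiny target set (hypothetical stand-in for shear modulus),
#    using a much lower learning rate for the pre-trained backbone.
target = [(x, 0.5 * math.sin(3.0 * x) + 0.2) for x in (-0.9, -0.5, -0.1, 0.3, 0.7)]
loss_before = mse(backbone, target_head, target)
train(backbone, target_head, target, backbone_lr=0.005, head_lr=0.05, epochs=300)
loss_after = mse(backbone, target_head, target)
print(f"target MSE: {loss_before:.4f} with an untrained head -> {loss_after:.4f} after fine-tuning")
```

In PyTorch the same idea is usually expressed with optimizer parameter groups. The backbone parameters get a smaller `lr` than the newly added head.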

FAQ 2: The hyperparameter search space is large, and a full Grid Search is too computationally expensive for my large-scale materials screening. What are efficient alternatives?

Issue: Grid Search is computationally prohibitive for exploring large hyperparameter spaces, especially with complex models like Graph Neural Networks (GNNs) on massive materials databases.

Solution: Implement Bayesian Optimization for hyperparameter search.

- Methodology: Bayesian Optimization constructs a probabilistic model of the objective function (model performance) to intelligently select the most promising hyperparameters to evaluate next, significantly reducing the number of configurations needed [2] [3].

- Protocol:

- Define Search Space: Specify the hyperparameters and their ranges (e.g., learning rate: [1e-5, 1e-2], number of GNN layers: [3, 10]).

- Choose Surrogate Model: Select a model like Gaussian Process to approximate the objective function.

- Select Acquisition Function: Use a function like Expected Improvement to decide which hyperparameters to test next.

- Iterate: Repeatedly evaluate the model, update the surrogate, and select new hyperparameters until a performance threshold or computational budget is met. Studies show Bayesian Optimization can achieve higher performance with reduced computation time compared to Grid Search [2].
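The loop above can be sketched for a single hyperparameter without external libraries. This is a minimal illustration, not a production optimizer. It assumes a unit-amplitude RBF kernel with a hand-picked length scale and a discrete candidate grid over log10(learning rate). It also uses a hypothetical `validation_loss` function in place of a full train-and-validate run.

```python
import math
import random

def rbf(a, b, length_scale=0.5):
    """Squared-exponential kernel with unit amplitude (length scale is an assumed value)."""
    return math.exp(-0.5 * ((a - b) / length_scale) ** 2)

def cholesky(K):
    n = len(K)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(K[i][i] - s) if i == j else (K[i][j] - s) / L[j][j]
    return L

def solve_lower(L, b):
    """Forward substitution for L x = b."""
    x = []
    for i in range(len(b)):
        x.append((b[i] - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x

def solve_upper(L, b):
    """Back substitution for L^T x = b."""
    n = len(b)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (b[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def fit_gp(xs, ys, noise=1e-4):
    """Gaussian-process surrogate; returns a function giving posterior mean and std."""
    mean_y = sum(ys) / len(ys)
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)] for i, a in enumerate(xs)]
    L = cholesky(K)
    weights = solve_upper(L, solve_lower(L, [y - mean_y for y in ys]))

    def predict(x):
        k_star = [rbf(x, a) for a in xs]
        mu = mean_y + sum(k * w for k, w in zip(k_star, weights))
        v = solve_lower(L, k_star)
        return mu, math.sqrt(max(1.0 - sum(t * t for t in v), 1e-12))

    return predict

def expected_improvement(mu, sigma, best):
    """EI for minimisation: E[max(best - f(x), 0)] under the Gaussian posterior."""
    z = (best - mu) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (best - mu) * cdf + sigma * pdf

def validation_loss(log10_lr):
    """Hypothetical stand-in for 'train the model, return validation loss' (minimum at 10^-3.5)."""
    return (log10_lr + 3.5) ** 2 + 0.1

random.seed(0)
candidates = [-5.0 + 3.0 * i / 300 for i in range(301)]  # log10(learning rate) in [-5, -2]
xs = random.sample(candidates, 3)                        # a few random initial trials
ys = [validation_loss(x) for x in xs]
for _ in range(10):                                      # evaluation budget
    predict = fit_gp(xs, ys)
    best = min(ys)
    _, x_next = max((expected_improvement(*predict(c), best), c) for c in candidates if c not in xs)
    xs.append(x_next)
    ys.append(validation_loss(x_next))

best_log10_lr = xs[ys.index(min(ys))]
print(f"best learning rate found: {10 ** best_log10_lr:.2e} (validation loss {min(ys):.3f})")
```

Dedicated libraries (e.g., Optuna, Scikit-Optimize) handle multi-dimensional and mixed-type search spaces, which this sketch omits.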

FAQ 3: After tuning, my model's predictions on novel chemical compositions are poor. How can I diagnose if the issue is with hyperparameters or model architecture?

Issue: Poor generalization to new data can stem from inadequate hyperparameters or an insufficiently powerful model architecture that cannot capture complex material relationships.

Solution: Benchmark your model's performance against state-of-the-art architectures and their known effective hyperparameter ranges.

- Methodology: Compare your model's performance on a validation set with held-out compositions against advanced frameworks. For materials property prediction, architectures that explicitly model many-body interactions (e.g., up to four-body) often show superior performance [1].

- Protocol:

- Implement a Baseline: Train an advanced model, such as a Transformer-Graph framework like CrysCo that uses edge-gated attention and incorporates four-body interactions, using hyperparameters reported in the literature [1].

- Comparative Validation: Evaluate both your model and the baseline on the same validation set containing unseen compositions.

- Diagnosis: If the baseline model significantly outperforms yours, the issue likely lies with your model architecture's capacity to represent material structures. If performance is similar, the problem may reside in your hyperparameter tuning strategy or data quality.

FAQ 4: My tuned model is a "black box." How can I understand which features or hyperparameters most influence its predictions for my material design goals?

Issue: Lack of interpretability in complex, tuned models hinders physical insights and trust in the predictions for guiding material synthesis.

Solution: Integrate interpretability tools like SHapley Additive exPlanations (SHAP) into your workflow.

- Methodology: SHAP analysis quantifies the contribution of each input feature (e.g., composition, processing parameters) to a single prediction, providing model-agnostic interpretability [4] [5] [6].

- Protocol:

- Train Final Model: Train your model using the optimized hyperparameters on the full dataset.

- Compute SHAP Values: Use a SHAP library (e.g., the shap Python package) on your trained model and a representative sample of your data.

- Analyze Results: Generate summary plots to identify global feature importance and force plots to explain individual predictions. For instance, in concrete strength prediction, SHAP has revealed that the water-to-binder ratio and curing age are the most influential features [5].
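SHAP values are Shapley values from cooperative game theory. For a model with only a few features they can be computed exactly by enumerating every feature subset, which makes the idea concrete. The sketch below does this in plain Python under two assumptions. First, features absent from a subset are replaced by a fixed background value. Second, `strength_model` is a hypothetical fitted model, not one from the cited study. The shap package computes or approximates the same quantities far more efficiently for realistic feature counts.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, background):
    """Exact Shapley values for one sample; features absent from a coalition take background values."""
    n = len(x)

    def coalition_value(members):
        z = [x[i] if i in members else background[i] for i in range(n)]
        return predict(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        contribution = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                contribution += weight * (coalition_value(members | {i}) - coalition_value(members))
        phi.append(contribution)
    return phi

def strength_model(z):
    """Hypothetical fitted model for compressive strength, with one interaction term."""
    water_to_binder, curing_age_days, slag_fraction = z
    return (80.0 - 60.0 * water_to_binder + 0.15 * curing_age_days
            + 10.0 * slag_fraction + 0.1 * water_to_binder * curing_age_days)

background = [0.45, 28.0, 0.2]  # assumed reference point, e.g. training-set feature means
sample = [0.35, 90.0, 0.1]
phi = shapley_values(strength_model, sample, background)
for name, value in zip(["water_to_binder", "curing_age_days", "slag_fraction"], phi):
    print(f"{name:>16}: {value:+.2f}")
```

By construction the values sum to f(sample) - f(background). This gives a useful sanity check on any SHAP output.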

FAQ 5: I need to optimize for multiple material properties simultaneously (e.g., strength and conductivity). How does hyperparameter tuning change for multi-objective problems?

Issue: Single-model hyperparameter tuning is designed to optimize for one objective, whereas material design often requires balancing multiple, sometimes competing, properties.

Solution: Utilize multi-objective optimization frameworks.

- Methodology: Frameworks like MatSci-ML Studio incorporate multi-objective optimization engines that can search for hyperparameters and model configurations that yield the best compromise between several target properties [4].

- Protocol:

- Define Objectives: Clearly specify the multiple properties to be predicted and optimized (e.g., compressive strength and thermal conductivity).

- Set Up Multi-Objective Optimization: Use a tool that supports this, defining the objective space and any constraints.

- Pareto Front Analysis: The output will not be a single "best" model but a set of models representing the Pareto front—optimal trade-offs between the objectives. Researchers can then select a model from this front based on their specific priority balance.
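Identifying the Pareto front from a set of evaluated candidates is a short computation. In the sketch below the candidate names and validation scores are hypothetical, and both objectives are assumed to be "higher is better".

```python
def pareto_front(candidates):
    """Return the non-dominated (name, scores) pairs; every objective is maximised."""
    front = []
    for name, scores in candidates:
        dominated = any(
            all(o >= s for o, s in zip(other, scores)) and any(o > s for o, s in zip(other, scores))
            for _, other in candidates
        )
        if not dominated:
            front.append((name, scores))
    return front

# Hypothetical validation R^2 for (compressive strength, thermal conductivity).
models = [
    ("model_a", (0.82, 0.60)),
    ("model_b", (0.78, 0.71)),
    ("model_c", (0.75, 0.65)),  # dominated by model_b
    ("model_d", (0.70, 0.74)),
    ("model_e", (0.80, 0.58)),  # dominated by model_a
]
for name, scores in pareto_front(models):
    print(name, scores)
```

Choosing among model_a, model_b, and model_d then depends on how the two properties are prioritised.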

Experimental Protocols & Data

The table below summarizes the core hyperparameter optimization methods discussed, helping you choose the right strategy for your materials informatics project.

Table 1: Comparison of Hyperparameter Optimization Methods

| Method | Core Principle | Advantages | Disadvantages | Ideal Use Case in Materials Science |
|---|---|---|---|---|
| Grid Search [7] | Exhaustive search over a predefined set of values. | Simple to implement; guaranteed to find the best combination in the grid. | Computationally intractable for large search spaces or high-dimensional problems. | Small, well-understood hyperparameter spaces with few parameters. |
| Random Search [7] | Randomly samples hyperparameters from specified distributions. | Often more efficient than Grid Search; better at exploring large spaces. | Can miss optimal regions; no learning from past evaluations. | Initial exploration of a broad hyperparameter space with a limited budget. |
| Bayesian Optimization [2] [3] | Builds a probabilistic model to guide the search towards promising configurations. | High sample efficiency; effective for expensive-to-evaluate models. | Overhead of building the surrogate model; performance depends on the surrogate and acquisition function. | Tuning complex models (e.g., GNNs, Transformers) where each training run is computationally costly [2]. |
| Genetic Algorithm [7] | A metaheuristic inspired by natural selection, using operations like mutation and crossover. | Good for complex, non-differentiable search spaces; can escape local optima. | Can require many evaluations; computationally intensive. | Problems with categorical or conditional hyperparameters where gradient-based methods are unsuitable. |

The Scientist's Toolkit: Essential Software for Automated Workflows

Automating the machine learning pipeline is key to reproducible and efficient materials research. The following table lists essential tools mentioned in the literature.

Table 2: Key Research Reagent Solutions for Materials Informatics

| Tool / Framework | Type | Primary Function | Relevance to Hyperparameter Tuning |
|---|---|---|---|
| AutoGluon, TPOT, H2O.ai [8] | AutoML Library | Automates end-to-end ML pipeline, including model selection and hyperparameter tuning. | Reduces manual effort; provides strong baselines through advanced automated tuning. |
| MatSci-ML Studio [4] | GUI-based Toolkit | User-friendly platform for data management, preprocessing, model training, and interpretation. | Incorporates automated hyperparameter optimization via Optuna, making HPO accessible to non-programmers. |
| Optuna [4] | Hyperparameter Optimization Framework | A dedicated library for efficient and scalable hyperparameter search using Bayesian optimization. | Enables custom, scalable HPO experiments with state-of-the-art pruning algorithms. |
| ALIGNN, MEGNet [1] | Specialized ML Model | Graph Neural Network models designed for atomic systems and crystal structures. | Their performance is highly dependent on correct hyperparameters (e.g., number of layers, hidden dimensions), necessitating rigorous tuning. |

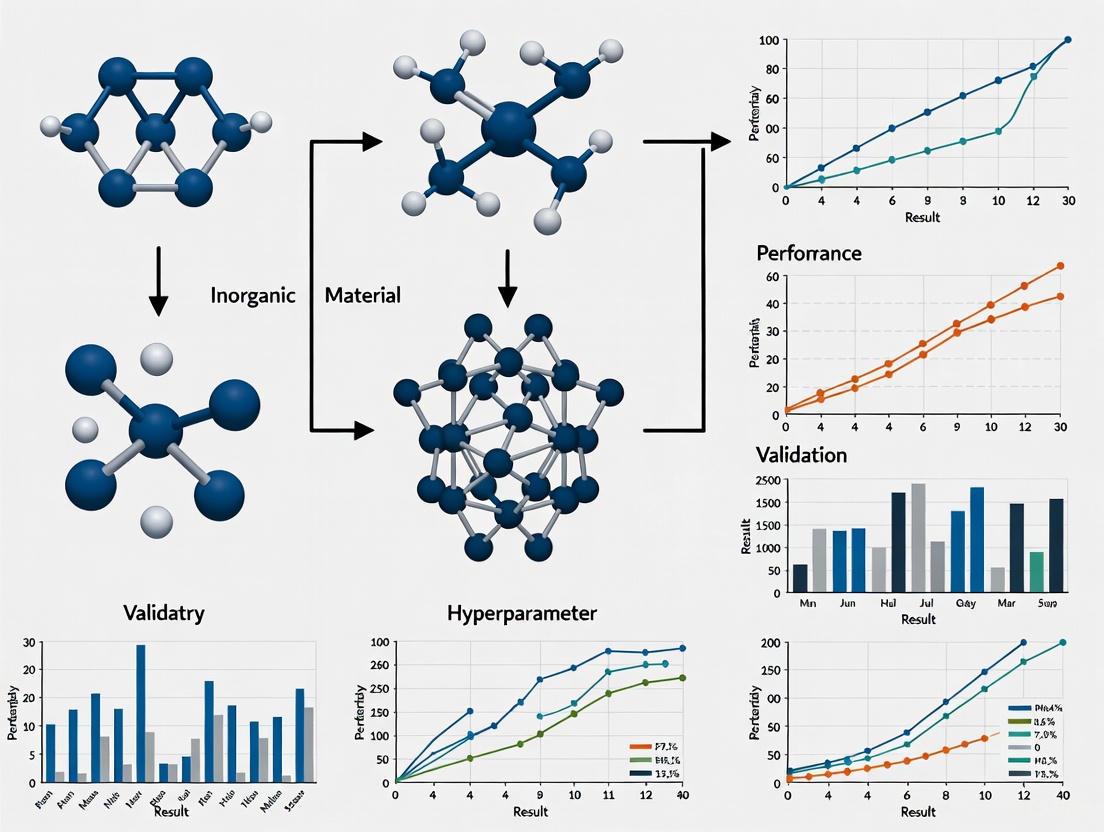

Workflow Visualizations

Hyperparameter Optimization Workflow for Materials Property Prediction

This diagram outlines a robust, iterative workflow for hyperparameter tuning, integrating solutions to common troubleshooting issues.

Transfer Learning Protocol for Data-Scarce Properties

This diagram details the transfer learning protocol, a key solution for dealing with small datasets for specific material properties.

Frequently Asked Questions

Q1: My model's predictions are unstable, with the loss value fluctuating wildly between training steps. What is the most likely cause and how can I fix it? A: This is a classic symptom of a learning rate that is set too high. The model's parameter updates are too large, causing it to repeatedly overshoot the minimum of the loss function [9]. To resolve this:

- Immediate Action: Reduce your learning rate by an order of magnitude (e.g., from 0.01 to 0.001) and resume training.

- Systematic Approach: Implement a learning rate schedule, such as time-based decay or exponential decay, which systematically reduces the learning rate over time to enable stable convergence [9].

- Use Adaptive Methods: Consider using optimizers with adaptive learning rates, like Adam or RMSProp, which adjust the rate for each parameter [9].
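The schedules named above, plus step decay, reduce to one-line formulas. The sketch below uses assumed decay constants. Frameworks ship equivalents, for example `torch.optim.lr_scheduler.ExponentialLR` and `StepLR` in PyTorch.

```python
import math

def time_based_decay(lr0, decay, epoch):
    """lr_t = lr0 / (1 + decay * t)"""
    return lr0 / (1.0 + decay * epoch)

def exponential_decay(lr0, k, epoch):
    """lr_t = lr0 * exp(-k * t)"""
    return lr0 * math.exp(-k * epoch)

def step_decay(lr0, drop, epochs_per_drop, epoch):
    """Multiply the rate by `drop` every `epochs_per_drop` epochs."""
    return lr0 * drop ** (epoch // epochs_per_drop)

for epoch in (0, 10, 20, 30):
    print(epoch,
          f"{time_based_decay(0.01, 0.1, epoch):.5f}",
          f"{exponential_decay(0.01, 0.1, epoch):.5f}",
          f"{step_decay(0.01, 0.5, 10, epoch):.5f}")
```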

Q2: When using a physics-informed loss function, the physics constraint (e.g., stress equilibrium) is not being satisfied, even though the data loss is low. What should I check? A: This indicates an imbalance in your loss weights. The weight assigned to the physics-based regularization (PBR) term is likely too low relative to the data loss term [10].

- Diagnosis: Manually adjust the loss weight (e.g., lambda_physics) for the PBR term upward. Research shows that independently fine-tuning this hyperparameter for each specific loss function and dataset is critical for performance [10].

- Advanced Tuning: Investigate methods like Relative Loss Balancing to dynamically adjust the weights during training, preventing one loss term from dominating the other [10].

Q3: For predicting materials properties, my model performs well on abundant data (e.g., formation energy) but poorly on data-scarce properties (e.g., elastic moduli). What architectural choices can help? A: This is a common challenge. The key is to use architectures and strategies designed for limited data:

- Transfer Learning: Initialize your model using a pre-trained foundation model on a data-rich source task (like formation energy prediction). Then, fine-tune it on your data-scarce target task. This leverages previously learned patterns [1] [11].

- Hybrid or Feature-Based Models: For very small datasets, use models that incorporate physically meaningful features (e.g., from matminer) instead of purely graph-based models. The MODNet framework, which uses feature selection and joint learning, has been shown to outperform graph networks on small datasets [12].

- Joint Learning: Train a single model to predict multiple properties simultaneously. This allows the model to learn shared representations, effectively increasing the data available for learning each individual property [12].

Q4: How can I efficiently find a good set of hyperparameters without exhaustive manual trial and error? A: Employ systematic Hyperparameter Optimization (HPO) algorithms instead of manual search [13] [14].

- For Low-Dimensional Spaces: Start with Grid Search, which exhaustively tries all combinations in a predefined set. Be warned that it can be computationally expensive [13].

- For Higher-Dimensional Spaces: Use Random Search or, more efficiently, Bayesian Optimization. Bayesian Optimization builds a probabilistic model to predict promising hyperparameters, leading to better results with fewer trials [13] [14].

- For Speed and Efficiency: Consider the Hyperband algorithm, which uses early-stopping to quickly discard poorly performing configurations. It has been shown to find high-performing models in a fraction of the time required by other methods [14].
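The early-stopping idea behind Hyperband comes from successive halving. Hyperband runs successive halving repeatedly, each time with a different trade-off between the number of configurations and the starting budget. The sketch below shows a single successive-halving bracket only. A hypothetical `toy_validation_loss` learning curve replaces real training.

```python
import math

def successive_halving(configs, evaluate, min_budget=1, eta=3):
    """One bracket: score all configs cheaply, keep the best 1/eta, multiply the budget by eta."""
    survivors, budget = list(configs), min_budget
    while len(survivors) > 1:
        ranked = sorted(survivors, key=lambda c: evaluate(c, budget))
        survivors = ranked[: max(1, len(survivors) // eta)]
        budget *= eta
    return survivors[0]

def toy_validation_loss(lr, epochs):
    """Hypothetical learning curve: loss decays with epochs toward a floor set by the learning rate."""
    floor = (math.log10(lr) + 3.0) ** 2 + 0.05
    return floor + 1.0 / epochs

learning_rates = [1e-5, 1e-4, 3e-4, 1e-3, 3e-3, 1e-2, 3e-2, 1e-1, 3e-1]
best_lr = successive_halving(learning_rates, toy_validation_loss)
print(f"selected learning rate: {best_lr:g}")
```

Optuna exposes these strategies as the `SuccessiveHalvingPruner` and `HyperbandPruner` classes in `optuna.pruners`.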

Troubleshooting Guides

Issue 1: Slow or Stalled Training

This issue manifests as the model taking an excessively long time to improve or the loss curve flattening prematurely.

- Possible Cause 1: Learning Rate Too Low

- Symptoms: Consistent, but very slow decrease in loss.

- Solution: Increase the learning rate. Use a Learning Rate Finder test: train the model for a few epochs while increasing the learning rate from a very low to a very high value. Plot the loss against the learning rate; the optimal rate is typically at the point of steepest descent [15].

- Possible Cause 2: Getting Stuck in Local Minima

- Symptoms: Loss plateaus at a suboptimal value.

- Solution: Introduce momentum into your optimizer. Momentum helps the optimization algorithm to navigate through flat regions and escape shallow local minima by accumulating velocity in the direction of persistent reduction [9].

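- Possible Cause 3: Inadequate Model Capacity

- Symptoms: Training and validation loss both plateau at high values (underfitting).

- Solution: Increase model capacity, for example by adding layers or enlarging hidden dimensions.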
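The learning rate range test and momentum described above can both be sketched in a few lines. The loss curves here are assumptions, not measurements. The range test uses a synthetic curve in place of mini-batch losses recorded during a real sweep, which would normally need smoothing. It picks the start of the steepest interval, one common heuristic. The momentum example uses a nearly flat quadratic valley where plain gradient descent crawls.

```python
import math

def lr_range_test_lrs(lr_min, lr_max, num_steps):
    """Geometrically increasing learning rates, one per mini-batch of the range test."""
    ratio = (lr_max / lr_min) ** (1.0 / (num_steps - 1))
    return [lr_min * ratio ** i for i in range(num_steps)]

def steepest_descent_lr(lrs, losses):
    """Learning rate at the start of the interval where loss falls fastest per decade of lr."""
    slopes = [(losses[i + 1] - losses[i]) / math.log10(lrs[i + 1] / lrs[i])
              for i in range(len(lrs) - 1)]
    return lrs[min(range(len(slopes)), key=slopes.__getitem__)]

def sgd(grad, w0, lr, steps, momentum=0.0):
    """Gradient descent; momentum > 0 adds classical (heavy-ball) momentum."""
    w, velocity = w0, 0.0
    for _ in range(steps):
        velocity = momentum * velocity - lr * grad(w)  # decaying sum of past gradient steps
        w += velocity
    return w

def hypothetical_range_test_loss(lr):
    """Assumed loss curve: sigmoid-shaped drop centred at lr = 10^-3.5, divergence near 10^-1.5."""
    u = math.log10(lr)
    return 2.0 - 1.5 / (1.0 + math.exp(-4.0 * (u + 3.5))) + math.exp(3.0 * (u + 1.5))

def shallow_valley_grad(w):
    """Gradient of the assumed nearly flat loss 0.005 * w**2."""
    return 0.01 * w

lrs = lr_range_test_lrs(1e-6, 1e-1, 101)
best_lr = steepest_descent_lr(lrs, [hypothetical_range_test_loss(lr) for lr in lrs])

w_plain = sgd(shallow_valley_grad, 10.0, lr=1.0, steps=100)
w_momentum = sgd(shallow_valley_grad, 10.0, lr=1.0, steps=100, momentum=0.9)
print(f"range test suggests lr ~ {best_lr:.1e}")
print(f"|w| after 100 steps: plain {abs(w_plain):.3f}, with momentum {abs(w_momentum):.3f}")
```

In PyTorch, momentum is a constructor argument, as in `torch.optim.SGD(params, lr=..., momentum=0.9)`.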

Issue 2: Poor Generalization (Overfitting)

The model performs well on training data but poorly on validation or test data.

- Possible Cause 1: Overly Complex Model for the Dataset Size

- Symptoms: A large gap between training and validation loss.

- Solution: Apply regularization techniques. Increase dropout rates or add L1/L2 regularization to the model's weights. If the dataset is very small, prefer feature-based models like MODNet over very deep GNNs [12].

- Possible Cause 2: Insufficient Training Data

- Symptoms: High variance in model performance.

- Solution: Leverage transfer learning. Use a pre-trained model from a large materials database (like the Materials Project) and fine-tune it on your specific, smaller dataset. Frameworks like MatGL provide such pre-trained models [11].

Issue 3: Unstable Training with Physics-Informed Loss

Training is chaotic or diverges when physics-based regularization terms are added to the loss function.

- Possible Cause: Improperly Balanced Loss Weights

- Symptoms: Wild oscillations in the total loss or the individual loss components.

- Solution: Carefully tune the loss weights. The data loss and physics loss terms may operate on different scales. Treat the weight of the PBR term (e.g., alpha in L_total = L_data + alpha * L_physics) as a critical hyperparameter. Studies show that tuning this for each specific case is essential for success [10].

Experimental Protocols for Hyperparameter Optimization

Protocol 1: Bayesian Optimization for Learning Rate and Batch Size

This protocol is designed to efficiently find the optimal combination of learning rate and batch size for a target model and dataset.

- Objective: To minimize the validation loss or maximize the validation accuracy.

- Hyperparameter Search Space:

- Learning Rate: Log-uniform distribution between \(10^{-5}\) and \(10^{-1}\).

- Batch Size: Choice from [16, 32, 64, 128, 256].

- Methodology:

- Define the Model: Fix the model architecture and other hyperparameters.

- Set Up Optimizer: Use a Bayesian optimization library (e.g., Optuna, Scikit-Optimize).

- Run Trials: For each trial (a set of hyperparameters), train the model for a fixed number of epochs.

- Evaluate: Record the validation loss/accuracy at the end of training.

- Iterate: The Bayesian algorithm uses past results to suggest more promising hyperparameters for the next trial.

- Conclusion: After a predetermined number of trials (e.g., 50), select the hyperparameters from the trial with the best validation performance [16] [14].

Table: Sample Results from a Bayesian Optimization Run for a Real Estate Price Prediction Model [16]

| Trial | Learning Rate | Batch Size | Validation RMSE | Validation R² |
|---|---|---|---|---|
| 1 | 0.0012 | 32 | 0.451 | 0.89 |
| 2 | 0.0008 | 64 | 0.432 | 0.90 |
| ... | ... | ... | ... | ... |
| Best | 0.0005 | 128 | 0.398 | 0.92 |

Protocol 2: Systematic Tuning of Physics-Informed Loss Weights

This protocol outlines a method for balancing multiple terms in a composite loss function, common in physics-informed deep learning.

- Objective: To find the loss weight alpha that yields a model satisfying both data fidelity and physical constraints.

- Hyperparameter Search Space:

- Loss Weight (alpha): Log-uniform distribution between \(10^{-3}\) and \(10^{3}\).

- Methodology:

- Define Loss Function: L_total = L_data + alpha * L_physics.

- Fix Other Parameters: Keep the model architecture, learning rate, and other hyperparameters constant.

- Grid Search: Train the model for a range of alpha values (e.g., [0.001, 0.01, 0.1, 1, 10, 100, 1000]).

- Evaluate: For each trained model, evaluate both the data error (e.g., MAE on a test set) and the physics error (e.g., the degree to which the stress equilibrium is violated).

- Select Optimal Value: Choose the value of alpha that provides the best trade-off, ensuring the physics error is sufficiently low without significantly degrading the data error [10].

Table: Example Evaluation of Loss Weight Tuning for a Stress Field Prediction Model (Illustrative Data)

| Loss Weight (alpha) | Data MAE (Test Set) | Physics Loss (Stress Equilibrium) |
|---|---|---|
| 0.001 | 0.05 | 0.45 |
| 0.01 | 0.06 | 0.21 |
| 0.1 | 0.07 | 0.09 |
| 1 | 0.08 | 0.03 |
| 10 | 0.11 | 0.02 |
| 100 | 0.15 | 0.02 |
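The final selection step can be made explicit. The sketch below applies one possible rule to the illustrative rows of the table above: pick the lowest data error among runs whose physics error is under a tolerance. The tolerance value is an assumption, and other trade-off criteria are equally valid.

```python
def total_loss(l_data, l_physics, alpha):
    """Composite training objective: L_total = L_data + alpha * L_physics."""
    return l_data + alpha * l_physics

def select_loss_weight(results, max_physics_error):
    """Return the alpha with the lowest data error among runs within the physics tolerance."""
    feasible = [r for r in results if r[2] <= max_physics_error]
    return min(feasible, key=lambda r: r[1])[0] if feasible else None

# (alpha, data MAE, physics loss) rows copied from the illustrative table above.
sweep = [(0.001, 0.05, 0.45), (0.01, 0.06, 0.21), (0.1, 0.07, 0.09),
         (1, 0.08, 0.03), (10, 0.11, 0.02), (100, 0.15, 0.02)]
print(select_loss_weight(sweep, max_physics_error=0.05))  # -> 1
```

Loosening the tolerance to 0.1 would select alpha = 0.1 instead. This shows how strongly the "best" weight depends on the chosen trade-off.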

Standard Hyperparameter Tuning Workflow

The following diagram illustrates a generalized, effective workflow for hyperparameter optimization, integrating the concepts and protocols discussed above.

The Scientist's Toolkit: Essential Research Reagents

This table lists key software tools, libraries, and datasets that form the essential "reagents" for modern research in materials property prediction and hyperparameter optimization.

Table: Key Resources for Hyperparameter Optimization in Materials Informatics

| Tool / Resource | Type | Function | Reference / Source |
|---|---|---|---|
| MatGL | Software Library | An open-source, "batteries-included" library for graph deep learning in materials science. Provides pre-trained models and potentials. | [11] |
| MODNet | Software Framework | A framework using feature selection and joint learning, particularly effective for small datasets. | [12] |
| Bayesian Optimization (Optuna) | Algorithm / Library | A smart hyperparameter tuning strategy that builds a probabilistic model to guide the search. | [14] |
| Hyperband | Algorithm | A hyperparameter optimization algorithm that uses early-stopping for dramatic speed-ups. | [14] |
| Learning Rate Schedules | Technique | Methods (e.g., step decay, exponential decay) to adjust the learning rate during training for better convergence. | [9] |
| Materials Project (MP) | Database | A large, open-source database of computed materials properties, often used as a source for training and benchmarking. | [1] [12] |
| Pymatgen | Software Library | A robust, open-source Python library for materials analysis, central to many materials informatics workflows. | [11] |

Troubleshooting Guides and FAQs

FAQ: Why does my model perform well in validation but fails to predict new, dissimilar materials?

This is a classic sign of data redundancy and overfitting. When a dataset contains many highly similar materials (a common result of historical "tinkering" in material design), a random train-test split can lead to over-optimistic performance estimates [17] [18]. Your model learns the over-represented patterns in the training set but lacks the ability to generalize to out-of-distribution (OOD) samples [19].

- Diagnosis: Check if your dataset has redundancy. Use structural or compositional similarity analysis (e.g., with the MD-HIT algorithm) to see if your test set contains materials very similar to those in your training set [17] [18].

- Solution: Implement a redundancy-controlled train-test split to ensure your test set contains materials that are sufficiently distinct from the training samples. This provides a more realistic evaluation of your model's true predictive capability [17].

FAQ: How can I train an accurate model when I only have a very small amount of data?

This challenge, known as data scarcity, is common when properties are expensive to measure (e.g., via DFT calculations or experiments) [20] [21]. Several strategies can help:

- Leverage Related Data (Transfer Learning): Use an "Ensemble of Experts" (EE) approach. This method uses models pre-trained on large datasets of different, but physically related properties. The knowledge from these "experts" is then used to make accurate predictions for your target property, even with very limited data [21].

- Multi-Task Learning (MTL): Train a single model to predict multiple properties at once. This allows the model to leverage correlations between tasks, improving performance on the primary task with scarce data [20].

- Advanced MTL Training: In cases of severe data imbalance between tasks, use specialized training schemes like Adaptive Checkpointing with Specialization (ACS). This method mitigates "negative transfer," where learning one task harms another, by checkpointing the best model parameters for each task during training [20].

- Synthetic Data: In extreme data-scarcity, frameworks like MatWheel can generate synthetic material data using conditional generative models to augment your small dataset [22].

FAQ: My model's training error is very low, but its test error is high. What is happening?

This is a clear indicator of overfitting. Your model has learned the training data—including its noise and irrelevant details—too well, compromising its ability to generalize to unseen data [23].

- Diagnosis: Always compare performance metrics on both training and test sets. A large gap between low training error and high test error signifies overfitting [23].

- Solution:

- Hyperparameter Tuning: Optimize parameters that control model complexity, such as regularization strength, learning rate, or tree depth. Advanced tuning methods like Bayesian Optimization (e.g., with Optuna) are more efficient than Grid or Random Search [24] [25] [26].

- Data Pruning: Remove redundant data from your training set. Research shows that up to 95% of data in large material datasets can be redundant. Using pruning algorithms or uncertainty-based active learning to build a smaller, more informative training set can improve robustness and training efficiency [19].

- Early Stopping: Halt the training process when the performance on a validation set stops improving.
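Patience-based early stopping, the most common variant, can be sketched as a scan over the validation curve. The `patience` and `min_delta` values and the example curve are assumptions. In Keras, `tf.keras.callbacks.EarlyStopping` exposes the same parameters and can restore the best weights (`restore_best_weights=True`).

```python
def early_stopping(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch); stop after `patience` epochs without an improvement > min_delta."""
    best_loss, best_epoch, epochs_without_improvement = float("inf"), -1, 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, epochs_without_improvement = loss, epoch, 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch
    return len(val_losses) - 1, best_epoch

# Hypothetical validation curve: improves until epoch 4, then the model starts to overfit.
curve = [1.00, 0.80, 0.65, 0.60, 0.58, 0.59, 0.61, 0.63, 0.66, 0.70, 0.75]
stop_epoch, best_epoch = early_stopping(curve, patience=3)
print(f"stop after epoch {stop_epoch}; restore the weights saved at epoch {best_epoch}")
```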

Quantitative Data on Data Challenges

Table 1: Impact of Data Redundancy on Model Performance

This table summarizes findings from a large-scale study on data redundancy, showing how much data can be removed without significantly harming in-distribution prediction performance [19].

| Material Property | Dataset | Machine Learning Model | % of Data Identified as Informative | Impact on ID Performance (vs. Full Model) |
|---|---|---|---|---|
| Formation Energy | JARVIS-18 | Random Forest (RF) | 13% | RMSE increase < 6% [19] |
| Formation Energy | Materials Project-18 | Random Forest (RF) | 17% | RMSE increase < 6% [19] |
| Formation Energy | JARVIS-18 | XGBoost (XGB) | 20-30% | RMSE increase 10-15% [19] |
| Formation Energy | JARVIS-18 | ALIGNN (GNN) | 55% | RMSE increase 15-45% [19] |

Table 2: Performance of Low-Data Regime Strategies

This table compares methods designed to operate effectively when labeled training data is scarce [20] [21].

| Method | Principle | Application Scenario | Reported Performance |
|---|---|---|---|
| Ensemble of Experts (EE) | Uses knowledge from models pre-trained on related properties. | Predicting glass transition temperature (Tg) and Flory-Huggins parameter with scarce data [21]. | Significantly outperforms standard ANNs under severe data scarcity conditions [21]. |
| Adaptive Checkpointing with Specialization (ACS) | A Multi-Task Learning scheme that avoids negative transfer. | Predicting molecular properties (e.g., toxicity) with task imbalance and few labels [20]. | Achieved accurate predictions with as few as 29 labeled samples; matched or surpassed state-of-the-art on MoleculeNet benchmarks [20]. |
| MatWheel | Generates synthetic material data using conditional generative models. | Material property prediction in extreme data-scarce scenarios [22]. | Achieved performance close to or exceeding that of models trained on real samples [22]. |

Experimental Protocols

Protocol 1: MD-HIT for Redundancy-Controlled Dataset Splitting

Purpose: To create training and test sets that minimize data redundancy, enabling a more realistic evaluation of a model's generalization power [17] [18].

Methodology:

- Representation: Represent each material in your dataset by its composition or crystal structure.

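- Similarity Calculation: Compute the pairwise similarity (or distance) between all materials using the chosen representation.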

- Clustering/Grouping: Use a clustering algorithm (e.g., hierarchical clustering) to group materials based on their pairwise similarity.

- Splitting: Instead of random splitting, ensure that all materials within a specific cluster are assigned to either the training set or the test set. This creates a test set with materials that are structurally or compositionally distinct from the training set.
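A simplified version of this cluster-then-split idea is sketched below. It is not the MD-HIT algorithm itself. It assumes an L1 distance between element-fraction vectors, single-linkage grouping under a hand-picked threshold, and a handful of example compositions.

```python
import random

def composition_distance(a, b):
    """L1 distance between element-fraction vectors (a simple stand-in for a chemistry-aware metric)."""
    return sum(abs(a.get(e, 0.0) - b.get(e, 0.0)) for e in set(a) | set(b))

def cluster_by_threshold(items, threshold):
    """Single-linkage grouping via union-find: items closer than `threshold` share a cluster."""
    parent = list(range(len(items)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    for i in range(len(items)):
        for j in range(i + 1, len(items)):
            if composition_distance(items[i], items[j]) < threshold:
                parent[find(i)] = find(j)
    clusters = {}
    for i in range(len(items)):
        clusters.setdefault(find(i), []).append(i)
    return list(clusters.values())

def group_split(items, threshold, test_fraction=0.2, seed=0):
    """Assign whole clusters to train or test so no near-duplicate group straddles the split."""
    clusters = cluster_by_threshold(items, threshold)
    random.Random(seed).shuffle(clusters)
    train, test = [], []
    for cluster in clusters:
        (test if len(test) < test_fraction * len(items) else train).extend(cluster)
    return train, test

materials = [
    {"Sr": 0.2, "Ti": 0.2, "O": 0.6},  # SrTiO3
    {"Ba": 0.2, "Ti": 0.2, "O": 0.6},  # BaTiO3
    {"Ca": 0.2, "Ti": 0.2, "O": 0.6},  # CaTiO3
    {"Na": 0.5, "Cl": 0.5},            # NaCl
    {"K": 0.5, "Cl": 0.5},             # KCl
    {"Si": 1.0},                       # Si
    {"Ga": 0.5, "As": 0.5},            # GaAs
]
train_idx, test_idx = group_split(materials, threshold=1.1, test_fraction=0.3)
print("train:", sorted(train_idx), "test:", sorted(test_idx))
```

Once cluster labels are available, scikit-learn's `GroupKFold` or `GroupShuffleSplit` can enforce the same constraint during cross-validation.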

Protocol 2: Pruning Algorithm for Building Informative Training Sets

Purpose: To iteratively remove redundant data from a large pool, creating a small but highly informative training subset [19].

Methodology:

- Initial Split: Randomly split the available data pool into two subsets, A and B.

- Model Training & Prediction: Train a model on subset A and use it to predict the properties of samples in subset B.

- Error Analysis & Pruning: Identify the samples in subset B that were predicted with low error (these are considered "easy" or redundant) and prune them.

- Iteration: Merge the remaining, high-error samples from B with A to form a new pool. Repeat the split, training, and pruning steps until the desired dataset size is reached or performance converges. The final pool is the pruned, informative training set.
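The pruning loop can be sketched as follows. The source does not fix the model or the pruning criterion, so this version makes its own assumptions: a 1-nearest-neighbour regressor, an absolute-error threshold, and synthetic one-dimensional data. It returns the merged pool after the last round.

```python
import random

def nn_predict(train, x):
    """1-nearest-neighbour regression, a deliberately simple stand-in for the real model."""
    return min(train, key=lambda sample: abs(sample[0] - x))[1]

def prune(pool, error_threshold, target_size, max_rounds=50, seed=0):
    """Iteratively drop samples that a random half of the pool already predicts well."""
    rng = random.Random(seed)
    pool = list(pool)
    for _ in range(max_rounds):
        if len(pool) <= target_size:
            break
        rng.shuffle(pool)                              # random split into A and B
        half = len(pool) // 2
        subset_a, subset_b = pool[:half], pool[half:]
        hard = [s for s in subset_b if abs(nn_predict(subset_a, s[0]) - s[1]) >= error_threshold]
        if len(hard) == len(subset_b):                 # nothing in B was easy: converged
            break
        pool = subset_a + hard                         # keep A plus the high-error part of B
    return pool

data = [(i / 199, (i / 199) ** 2) for i in range(200)]  # hypothetical (descriptor, property) pairs
pruned = prune(data, error_threshold=0.01, target_size=40)
mae = sum(abs(nn_predict(pruned, x) - y) for x, y in data) / len(data)
print(f"kept {len(pruned)} of {len(data)} samples; 1-NN MAE on the full pool: {mae:.4f}")
```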

Protocol 3: Adaptive Checkpointing with Specialization (ACS) for Multi-Task Learning

Purpose: To train a multi-task model that shares knowledge between tasks while protecting individual tasks from harmful interference (negative transfer), which is crucial when tasks have imbalanced data [20].

Methodology:

- Architecture: Use a shared graph neural network (GNN) backbone with task-specific multi-layer perceptron (MLP) heads.

- Training:

- Train the entire model (shared backbone + all task heads) on all available tasks.

- Continuously monitor the validation loss for each individual task throughout the training process.

- Checkpointing: For each task, independently save a checkpoint of the model (both the shared backbone and the task-specific head) whenever a new minimum validation loss is achieved for that task.

- Specialization: After training, you obtain a set of specialized models, each consisting of the best checkpointed backbone-head pair for its respective task.
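The checkpointing logic of ACS does not depend on the network itself, so it can be sketched with a fake training loop. In the example, the parameter dictionaries and per-task validation curves are hypothetical. In PyTorch the snapshots would typically be `copy.deepcopy(model.state_dict())`.

```python
import copy

def train_with_acs(params, train_step, evaluate, epochs):
    """Keep, per task, a snapshot of the shared backbone and that task's head at its best validation loss."""
    best_loss = {task: float("inf") for task in params["heads"]}
    specialized = {}
    for epoch in range(epochs):
        train_step(params, epoch)             # joint update of the backbone and all task heads
        val_losses = evaluate(params, epoch)  # dict: task name -> validation loss
        for task, loss in val_losses.items():
            if loss < best_loss[task]:        # new minimum for this task: checkpoint its pair
                best_loss[task] = loss
                specialized[task] = {
                    "epoch": epoch,
                    "backbone": copy.deepcopy(params["backbone"]),
                    "head": copy.deepcopy(params["heads"][task]),
                }
    return specialized

params = {"backbone": {"step": -1}, "heads": {"toxicity": {"step": -1}, "solubility": {"step": -1}}}

def fake_train_step(params, epoch):
    """Stand-in for one optimisation epoch: only records which epoch the weights come from."""
    params["backbone"]["step"] = epoch
    for head in params["heads"].values():
        head["step"] = epoch

curves = {"toxicity": [0.90, 0.70, 0.60, 0.65, 0.80],   # scarce task degrades after epoch 2
          "solubility": [1.00, 0.80, 0.60, 0.50, 0.45]}

def fake_evaluate(params, epoch):
    return {task: curve[epoch] for task, curve in curves.items()}

models = train_with_acs(params, fake_train_step, fake_evaluate, epochs=5)
for task, ckpt in models.items():
    print(task, "-> backbone-head pair from epoch", ckpt["epoch"])
```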

Workflow and Relationship Diagrams

Diagram 1: Material Model Evaluation with Redundancy Control

Diagram 2: Diagnosing and Mitigating Overfitting

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Material Property Prediction

This table lists key algorithms and software solutions mentioned in the research for addressing data challenges.

| Tool / Algorithm | Type | Primary Function | Key Application in Research |
|---|---|---|---|
| MD-HIT [17] [18] | Software Algorithm | Dataset redundancy reduction | Creates non-redundant train/test splits for realistic model evaluation. |
| Pruning Algorithm [19] | Data Selection Method | Identifies informative data subsets | Builds efficient training sets by removing up to 95% of redundant data. |
| ACS (Adaptive Checkpointing) [20] | MTL Training Scheme | Mitigates negative transfer in MTL | Enables accurate prediction with as few as 29 labeled samples per task. |
| Ensemble of Experts (EE) [21] | Transfer Learning Framework | Leverages knowledge from related tasks | Predicts complex properties (e.g., Tg) under severe data scarcity. |
| Bayesian Optimization (e.g., Optuna) [24] [26] | Hyperparameter Tuning Method | Efficiently searches hyperparameter space | Outperforms Grid/Random Search in speed and accuracy for model tuning. |

The Impact of Dataset Redundancy on Performance Estimation and Generalization

Frequently Asked Questions (FAQs)

FAQ 1: Why does my model perform well during validation but fails to discover new, promising materials? This is a classic sign of dataset redundancy and an overestimation of your model's true capabilities. High performance on a random test split often occurs because the test set contains materials very similar to those in the training set, a problem known as overfitting to redundant data. However, this high performance does not translate to out-of-distribution (OOD) samples, which are materials from new chemical families or structural classes not seen during training. In real-world materials discovery, the goal is often to find these novel OOD materials, where model performance tends to be significantly lower [17] [27].

FAQ 2: I have a large dataset; shouldn't that guarantee a better model? Not necessarily. A "bigger is better" mentality can be misleading. Studies have shown that many large materials datasets contain a significant amount of redundant data due to the historical approach of studying similar material types (e.g., many perovskite structures similar to SrTiO3). It has been demonstrated that up to 95% of data in some large materials datasets can be removed with little to no impact on in-distribution prediction performance. This redundant data does not help with—and can even worsen—performance on OOD samples [19] [28].

FAQ 3: How is dataset redundancy related to my hyperparameter optimization (HPO) process? Dataset redundancy can severely compromise your HPO. The goal of HPO is to find hyperparameters that maximize your model's generalization to new data. If your validation set is constructed from a random split of a redundant dataset, you are effectively optimizing your hyperparameters for interpolation within over-represented material clusters, not for generalization to new types of materials. This means the "optimal" hyperparameters you find may perform poorly in real-world discovery tasks [17] [29]. Using redundancy-controlled splits for HPO is crucial for finding models that are truly robust.

FAQ 4: What is the difference between in-distribution (ID) and out-of-distribution (OOD) performance?

- In-Distribution (ID) Performance: This is the model's performance on a test set where the samples are randomly drawn from the same dataset as the training data. In redundant datasets, this can lead to overly optimistic performance estimates [19].

- Out-of-Distribution (OOD) Performance: This evaluates the model on materials that are structurally or chemically distinct from those in the training set. This is a more rigorous and realistic measure of a model's prediction capability and generalization power, which is critical for materials discovery [17] [27].

Troubleshooting Guides

Problem: Over-optimistic performance estimates during model evaluation.

- Symptoms: High accuracy/R² (e.g., >0.95 R²) on random test splits, but the model fails when applied to new material families or external datasets.

- Root Cause: The standard random train-test split has led to information leakage because of high similarity between training and test samples [17].

- Solution: Implement redundancy-controlled dataset splitting.

- Step 1: Choose a similarity metric. For material data, this can be based on composition (e.g., chemical formula) or crystal structure (e.g., structure-based features) [17].

- Step 2: Apply a redundancy reduction algorithm like MD-HIT. This algorithm ensures that no material in your test set has a similarity above a defined threshold to any material in your training set (analogous to the sequence-identity cutoffs, e.g., 95%, used to reduce redundancy in protein datasets) [17].

- Step 3: Perform your model training and hyperparameter optimization using this redundancy-controlled split. The resulting performance metrics will better reflect your model's true generalization capability.
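As a rough illustration of Step 2, the sketch below keeps a sample only if its cosine similarity to every representative kept so far is at or below a threshold, and then splits the surviving representatives at random. It is a hypothetical greedy filter written for this guide, not the MD-HIT reference implementation; the function names, the use of cosine similarity on descriptor vectors, and the 0.95 default are all assumptions.

```python
import numpy as np


def greedy_redundancy_filter(features: np.ndarray, threshold: float = 0.95) -> np.ndarray:
    """Indices of a subset in which no two samples exceed `threshold` cosine similarity."""
    # L2-normalize rows so that a dot product equals cosine similarity
    norms = np.linalg.norm(features, axis=1, keepdims=True)
    unit = features / np.clip(norms, 1e-12, None)
    kept = []
    for i in range(len(unit)):
        # Keep sample i only if it is not too similar to any representative kept so far
        if not kept or np.max(unit[kept] @ unit[i]) <= threshold:
            kept.append(i)
    return np.array(kept, dtype=int)


def redundancy_controlled_split(features, threshold=0.95, test_fraction=0.2, seed=0):
    """Drop redundant samples, then split the remaining representatives at random."""
    rng = np.random.default_rng(seed)
    reps = rng.permutation(greedy_redundancy_filter(features, threshold))
    n_test = max(1, int(round(test_fraction * len(reps))))
    return reps[n_test:], reps[:n_test]  # (train_idx, test_idx) into the original array


if __name__ == "__main__":
    X = np.array([[1.0, 0.0], [0.99, 0.01], [0.0, 1.0], [0.7, 0.7]])
    # Sample 1 is nearly parallel to sample 0, so it is dropped as redundant
    print(greedy_redundancy_filter(X))
```

Because only representatives survive, every train/test pair is below the threshold by construction. The greedy pass depends on input order and scales with (samples × representatives), so shuffle or sort the data deliberately before filtering.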

Problem: Poor model generalization on out-of-distribution materials.

- Symptoms: Significant performance degradation when predicting properties for materials that are chemically or structurally distant from the training set.

- Root Cause: The training data is biased toward over-represented material types, and the model has not learned underlying physical principles that transfer well [19] [27].

- Solution: Employ a cluster-based train-test split or leverage uncertainty quantification.

- Step 1 (Cluster-based Splitting): Use a method like Leave-One-Cluster-Out Cross-Validation (LOCO CV). This involves:

- Clustering all materials in your dataset based on their features (e.g., using composition or structure descriptors).

- Iteratively leaving one entire cluster out as the test set and training on the remaining clusters.

- This forces the model to predict on truly distinct material families, providing a realistic assessment of its exploratory power [17].

- Step 2 (Uncertainty-based Active Learning): If you are actively acquiring new data, use uncertainty quantification (UQ). Train your model and use its predictive uncertainty to select the most informative samples for the next round of data acquisition (e.g., DFT calculations). This builds smaller, more informative datasets that improve model robustness and OOD performance [17] [19].
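The LOCO CV procedure from Step 1 can be sketched with scikit-learn as below. KMeans clustering on the descriptor matrix, a Random Forest model, and MAE as the metric are assumptions made for illustration. In practice the descriptors should be scaled before clustering, and the number of clusters is itself a choice that changes how "distant" each held-out family is.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import LeaveOneGroupOut


def loco_cv(X, y, n_clusters=5, seed=0):
    """Leave-One-Cluster-Out CV: return the test MAE for each held-out cluster."""
    # 1. Cluster materials in descriptor space (KMeans is an assumed choice)
    groups = KMeans(n_clusters=n_clusters, n_init=10, random_state=seed).fit_predict(X)
    scores = {}
    # 2. Hold out one entire cluster at a time and train on all the others
    for train_idx, test_idx in LeaveOneGroupOut().split(X, y, groups):
        model = RandomForestRegressor(n_estimators=100, random_state=seed)
        model.fit(X[train_idx], y[train_idx])
        held_out = int(groups[test_idx[0]])
        scores[held_out] = mean_absolute_error(y[test_idx], model.predict(X[test_idx]))
    return scores


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X_demo = rng.normal(size=(120, 4))  # synthetic descriptors
    y_demo = 2.0 * X_demo[:, 0] + rng.normal(scale=0.1, size=120)
    print(loco_cv(X_demo, y_demo, n_clusters=4))
```

Comparing the per-cluster errors with the error from a random split gives a direct view of how much a random split overstates extrapolation performance.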


Quantitative Impact of Data Redundancy

The tables below summarize key findings from recent studies on data redundancy in materials informatics.

Table 1: Impact of Training Set Pruning on In-Distribution Prediction Performance [19] [28]

This table shows that a large fraction of the training data can be removed at a relatively modest cost in accuracy on standard (in-distribution) test sets (5.7% to 30.8% higher RMSE in these examples), indicating high redundancy.

| Material Property | Dataset | Model | RMSE, 100% of Training Data | RMSE, 20% of Training Data | Relative Increase in RMSE |
|---|---|---|---|---|---|
| Formation Energy | MP18 | RF | 0.159 eV/atom | 0.168 eV/atom | +5.7% |
| Formation Energy | MP18 | XGB | 0.120 eV/atom | 0.140 eV/atom | +16.7% |
| Formation Energy | MP18 | ALIGNN | 0.065 eV/atom | 0.085 eV/atom | +30.8% |
| Band Gap | MP18 | RF | 0.613 eV | 0.738 eV | +20.4% |
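The last column is consistent with the relative change in RMSE when training on 20% instead of 100% of the data. For example, for ALIGNN:

$$
\Delta_{\mathrm{rel}} = \frac{\mathrm{RMSE}_{20\%} - \mathrm{RMSE}_{100\%}}{\mathrm{RMSE}_{100\%}} \times 100\%
= \frac{0.085 - 0.065}{0.065} \times 100\% \approx 30.8\%
$$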

Table 2: Out-of-Distribution vs. In-Distribution Performance of GNN Models [27]

This table illustrates the significant performance gap between ID and OOD evaluation, highlighting the generalization problem.

| Model | MatBench Task (ID) | OOD Task (Average) | Generalization Gap |
|---|---|---|---|
| coGN | Best on ID | Significant performance drop | Large |
| ALIGNN | High | More robust OOD performance | Smaller |
| CGCNN | High | More robust OOD performance | Smaller |

Experimental Protocols

Protocol 1: Evaluating Redundancy with the MD-HIT Algorithm

- Objective: To create training and test sets with controlled similarity, enabling a realistic evaluation of model generalization [17].

- Materials/Software: A materials dataset (e.g., from Materials Project), material descriptors (e.g., composition features, crystal structure graphs), MD-HIT code.

- Methodology:

- Feature Generation: Convert all materials in your dataset into a numerical representation (e.g., using Matminer features for composition or structural fingerprints).

- Similarity Calculation: Compute the pairwise similarity between all materials (e.g., using cosine similarity).

- MD-HIT Clustering: Apply the MD-HIT algorithm to group materials whose similarity exceeds a predefined threshold (e.g., 0.95).

- Dataset Splitting: Assign each cluster as a whole to either the training or the test set, so that no two highly similar materials end up on opposite sides of the split. This creates a redundancy-controlled split.

- Expected Outcome: A more reliable estimate of model performance on novel materials, typically resulting in a lower but more realistic performance metric on the test set compared to a random split [17].
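A minimal sketch of the clustering and splitting steps, assuming cosine similarity on precomputed descriptor vectors: any two materials above the threshold are linked, linked groups become clusters, and scikit-learn's GroupShuffleSplit assigns whole clusters to one side of the split. This single-linkage grouping is a stand-in chosen for clarity, not the MD-HIT algorithm itself, and the dense similarity matrix limits it to moderately sized datasets.

```python
import numpy as np
from scipy.sparse import csr_matrix
from scipy.sparse.csgraph import connected_components
from sklearn.model_selection import GroupShuffleSplit


def similarity_clusters(features, threshold=0.95):
    """Label materials so that any pair with cosine similarity > threshold shares a cluster."""
    unit = features / np.clip(np.linalg.norm(features, axis=1, keepdims=True), 1e-12, None)
    sim = unit @ unit.T                                     # dense pairwise cosine similarity
    adjacency = csr_matrix((sim > threshold).astype(np.int8))  # edge = "too similar to separate"
    _, labels = connected_components(adjacency, directed=False)
    return labels


def cluster_level_split(features, threshold=0.95, test_size=0.2, seed=0):
    """Assign whole clusters to train or test so no similar pair straddles the split."""
    groups = similarity_clusters(features, threshold)
    # Note: test_size here is a fraction of *clusters*, not of individual samples
    splitter = GroupShuffleSplit(n_splits=1, test_size=test_size, random_state=seed)
    train_idx, test_idx = next(splitter.split(features, groups=groups))
    return train_idx, test_idx, groups
```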

Protocol 2: Dataset Pruning for Efficient Hyperparameter Optimization

- Objective: To identify a small, informative subset of data for faster and more robust hyperparameter tuning [19].

- Materials/Software: A large materials dataset, a base ML model (e.g., Random Forest, GNN), a pruning algorithm.

- Methodology:

- Initial Split: Randomly split the dataset into a large pool (e.g., 90%) and a hold-out test set (10%).

- Iterative Pruning:

- Split the pool into subsets A and B.

- Train a model on A and predict on B.

- Identify and remove the samples in B that the model predicts accurately (low error), as these are considered "learnable" and potentially redundant.

- Merge the remaining, more challenging samples from B with A to form a new pool, and repeat the cycle for the desired number of iterations.

- HPO on Pruned Set: Use the final, smaller pruned dataset for computationally intensive hyperparameter optimization.

- Expected Outcome: A significant reduction in the computational cost of HPO while maintaining, or only slightly degrading, final model performance on in-distribution tasks [19].
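The iterative pruning loop can be sketched as below, assuming a Random Forest as the base model and a fixed fraction of the lowest-error samples in B dropped per iteration. Both choices, and the `prune_pool` helper itself, are hypothetical; the selection criterion and stopping rule in [19] may differ.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor


def prune_pool(X, y, n_iter=3, drop_fraction=0.5, seed=0):
    """Iteratively drop the samples that a model trained on the rest predicts best."""
    rng = np.random.default_rng(seed)
    pool = np.arange(len(y))
    for _ in range(n_iter):
        # Split the current pool into halves A and B
        perm = rng.permutation(pool)
        a, b = perm[: len(perm) // 2], perm[len(perm) // 2:]
        model = RandomForestRegressor(n_estimators=100, random_state=seed).fit(X[a], y[a])
        errors = np.abs(model.predict(X[b]) - y[b])
        # Drop the lowest-error ("learnable") fraction of B; keep the harder samples
        n_drop = int(drop_fraction * len(b))
        hard_b = b[np.argsort(errors)[n_drop:]]
        pool = np.concatenate([a, hard_b])  # merged pool for the next iteration
    return pool


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X_pool = rng.normal(size=(200, 3))
    y_pool = X_pool @ np.array([1.0, -2.0, 0.5]) + rng.normal(scale=0.1, size=200)
    print(len(prune_pool(X_pool, y_pool)))
```

The returned indices would then replace the full pool as the input to the HPO routine, while the 10% hold-out test set is left untouched for the final evaluation.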

Workflow Visualization

The following diagram illustrates the recommended workflow for managing dataset redundancy, from problem identification to solution.

Diagram 1: Troubleshooting workflow for issues arising from dataset redundancy.

The Scientist's Toolkit

Table 3: Essential Resources for Redundancy-Aware Materials Informatics

| Tool / Algorithm | Type | Primary Function | Relevance to Redundancy |
|---|---|---|---|
| MD-HIT [17] | Algorithm | Dataset splitting with similarity control | Creates non-redundant train/test splits to prevent overestimation. |
| Pruning Algorithm [19] | Algorithm | Identifies informative data subsets | Removes redundant data for efficient training and HPO. |
| ALIGNN [19] | Graph Neural Network | State-of-the-art materials property prediction | Used in benchmarks to demonstrate redundancy impact and OOD gaps. |
| LOCO CV [17] | Evaluation Method | Leave-One-Cluster-Out Cross-Validation | Rigorously tests model extrapolation capability to new material families. |
| Uncertainty Quantification (UQ) [17] | Method | Estimates model prediction uncertainty | Guides active learning to acquire non-redundant, informative data. |
| MatBench [27] | Benchmarking Suite | Standardized benchmarks for ML models | Provides tasks for evaluating ID and OOD performance. |

Troubleshooting Guides & FAQs

This section addresses common challenges researchers face when applying machine learning to materials property prediction.

Data-Related Issues

Problem: My dataset is too small for training an accurate Graph Neural Network (GNN). What can I do?

- Answer: Small datasets are a common challenge in materials science. A pathway to overcome this is Transfer Learning (TL) [30].

- Pre-training (PT): First, train a model (e.g., an ALIGNN network) on a large, general materials dataset, such as formation energies from the Open Quantum Materials Database (OQMD) or Materials Project. This helps the model learn fundamental chemical and structural patterns [30].

- Fine-tuning (FT): Then, take the pre-trained model and re-train (fine-tune) it on your small, specific target dataset. This process allows the model to leverage general knowledge and specialize with limited data [30].

- Multi-Property Pre-training (MPT): For even better generalization, pre-train a single model on multiple different material properties simultaneously before fine-tuning. This creates a more robust starting model, especially for out-of-domain predictions (e.g., fine-tuning on a 2D materials dataset after pre-training on 3D materials) [30].

Problem: How do I handle duplicate entries in my materials dataset?

- Answer: Duplicate compositions with different property values can skew your model. Here are common strategies [31]:

- Drop all but one at random: A simple approach if property values are not too dissimilar.

- Take the mean or median value: Useful for creating a single, representative data point.

- Distinguish between duplicates: The best approach is to add additional features that encode the reason for the difference, such as structural information (crystal system), synthesis conditions (temperature, pressure), or measurement method [31].
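The three strategies map directly onto pandas operations. The formulas, the `crystal_system` column, and the property values below are made-up toy data for illustration only.

```python
import pandas as pd

# Toy dataset with a duplicated composition (values are invented, not real measurements)
df = pd.DataFrame({
    "formula": ["TiO2", "TiO2", "TiO2", "ZnO", "GaN"],
    "crystal_system": ["tetragonal", "tetragonal", "orthorhombic", "hexagonal", "hexagonal"],
    "band_gap_eV": [3.0, 3.2, 3.4, 3.3, 3.4],
})

# Strategy 1: keep one entry per formula at random (shuffle rows, then drop duplicates)
one_random = df.sample(frac=1.0, random_state=0).drop_duplicates(subset="formula")

# Strategy 2: collapse duplicates to one mean (or .median()) value per formula
mean_per_formula = df.groupby("formula", as_index=False)["band_gap_eV"].mean()

# Strategy 3: keep entries distinct by adding a distinguishing feature to the key
distinguished = df.groupby(["formula", "crystal_system"], as_index=False)["band_gap_eV"].mean()

print(mean_per_formula)
print(distinguished)
```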

Model Performance & Optimization

Problem: My deep learning model's performance has plateaued, and I suspect suboptimal hyperparameters. How can I improve it systematically?

- Answer: Manually tuning hyperparameters is inefficient. Implement a systematic Hyperparameter Optimization (HPO) workflow [32]:

- Choose an HPO Algorithm: Avoid inefficient grid search. Instead, use modern methods like Hyperband (for computational efficiency) or Bayesian Optimization (for sample efficiency, especially with a low trial budget). A combination like Bayesian Optimization with Hyperband (BOHB) can also be effective [32].

- Select a Software Platform: Use libraries like KerasTuner (user-friendly) or Optuna (highly configurable) that allow parallel execution of HPO trials, drastically reducing optimization time [32].

- Optimize Comprehensively: Ensure you tune a wide range of hyperparameters, including structural ones (number of layers, neurons per layer) and algorithmic ones (learning rate, batch size, optimizer type) [32].

Problem: My model is too large and slow for deployment or further experimentation. How can I make it more efficient?

- Answer: Consider these model optimization techniques [33] [34]:

- Pruning: Identify and remove less important weights or neurons from the trained network. This simplifies the model without significantly impacting accuracy. You can use structured pruning (removing entire channels) or unstructured pruning (removing individual weights) [33] [34].

- Quantization: Reduce the numerical precision of the model's weights and activations, typically from 32-bit floating point to 16-bit floating point or 8-bit integers. This reduces the model's memory footprint and computational requirements, speeding up inference [33] [34].

- Knowledge Distillation: Train a smaller "student" model to mimic the behavior of a larger, more accurate "teacher" model. The student model learns from the teacher's output distributions, often achieving comparable performance with a fraction of the parameters [33].
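To make the first two ideas concrete, here is a framework-free NumPy sketch of unstructured magnitude pruning and symmetric int8 quantization of a single weight matrix. Production toolkits (for example, PyTorch's pruning and quantization utilities) handle whole models, calibration, and hardware kernels; the helper names here are hypothetical.

```python
import numpy as np


def magnitude_prune(weights: np.ndarray, sparsity: float) -> np.ndarray:
    """Unstructured pruning: zero out (at least) the `sparsity` fraction of smallest |w|."""
    k = int(sparsity * weights.size)
    if k == 0:
        return weights.copy()
    threshold = np.sort(np.abs(weights), axis=None)[k - 1]
    return np.where(np.abs(weights) > threshold, weights, 0.0)


def quantize_int8(weights: np.ndarray):
    """Symmetric per-tensor quantization: int8 values plus one float scale factor."""
    scale = max(float(np.max(np.abs(weights))), 1e-12) / 127.0
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q: np.ndarray, scale: float) -> np.ndarray:
    """Map int8 values back to approximate float32 weights."""
    return q.astype(np.float32) * scale


if __name__ == "__main__":
    w = np.array([[0.1, -0.5], [0.05, 2.0]], dtype=np.float32)
    print(magnitude_prune(w, 0.5))  # the two smallest-magnitude weights become 0
    q, s = quantize_int8(w)
    print(q, s, np.abs(dequantize(q, s) - w).max())
```

Stored as int8 plus one scale, the tensor takes a quarter of its float32 memory, and rounding bounds the per-weight reconstruction error by half the scale.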

Problem: My deep neural network is a "black box." How can I understand why it makes a specific prediction?

- Answer: Leverage Explainable AI (XAI) techniques to interpret your model [35].

- Post-hoc Explanations: Use methods that explain the model's decisions after it has been trained. For instance, saliency maps can highlight which parts of an input crystal structure or molecular graph were most influential for a given prediction [35].

- Feature Importance: Apply techniques that quantify the contribution of different input features (descriptors) to the model's overall predictions, helping you connect model behavior to materials science domain knowledge [35].
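As one example of the feature-importance approach, scikit-learn's permutation importance measures how much a fitted model's held-out score drops when a single descriptor column is shuffled. The data below are synthetic, with only the first two (hypothetically named) descriptors driving the target, so the expected ranking is known in advance.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

# Synthetic descriptors: only columns 0 and 1 actually drive the target
rng = np.random.default_rng(0)
X = rng.normal(size=(400, 5))
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + 0.1 * rng.normal(size=400)
feature_names = ["desc_0", "desc_1", "desc_2", "desc_3", "desc_4"]  # hypothetical names

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)
model = RandomForestRegressor(n_estimators=200, random_state=0).fit(X_tr, y_tr)

# Importance = mean drop in held-out R² when one feature's values are shuffled
result = permutation_importance(model, X_te, y_te, n_repeats=10, random_state=0)
for idx in result.importances_mean.argsort()[::-1]:
    print(f"{feature_names[idx]}: {result.importances_mean[idx]:.3f} "
          f"± {result.importances_std[idx]:.3f}")
```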

Algorithm Selection

Problem: Which machine learning algorithm should I start with for my composition-based property prediction task?

- Answer: The choice depends on your data size, computational resources, and need for interpretability [31].

- Random Forest: A safe and powerful starting point. It works well out-of-the-box, doesn't require feature scaling, and can learn complex non-linear relationships [31].

- Support Vector Machines (SVM): Can deliver excellent performance but requires careful hyperparameter tuning. Training can be slow for very large datasets (e.g., >10,000 samples) [31].

- Graph Neural Networks (GNNs): The best choice if you have atomic structure data and a sufficiently large dataset (typically >10,000 data points). They directly model the material as a graph (atoms as nodes, bonds as edges), leading to high accuracy but requiring more computational resources and tuning [36] [30].

- Linear Regression: A very fast and interpretable baseline model. However, its limited complexity often leads to underfitting on non-linear problems [31].
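A quick way to follow this advice is to benchmark a few baselines on the same cross-validation folds before investing in tuning. The sketch below uses a synthetic non-linear target and lightly chosen settings (assumptions for illustration). Note that the SVR is wrapped in a scaling pipeline, while the Random Forest is used on raw features.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

rng = np.random.default_rng(0)
X = rng.uniform(-2, 2, size=(300, 6))
y = np.sin(X[:, 0]) * X[:, 1] + X[:, 2] ** 2 + 0.05 * rng.normal(size=300)  # non-linear toy target

models = {
    "linear": LinearRegression(),
    "random_forest": RandomForestRegressor(n_estimators=300, random_state=0),  # no scaling needed
    "svr": make_pipeline(StandardScaler(), SVR(C=10.0, gamma="scale")),       # SVMs need scaling
}
cv = KFold(n_splits=5, shuffle=True, random_state=0)  # identical folds for every model
scores = {
    name: -cross_val_score(m, X, y, cv=cv, scoring="neg_mean_absolute_error").mean()
    for name, m in models.items()
}
for name, mae in sorted(scores.items(), key=lambda kv: kv[1]):
    print(f"{name:>14s}  MAE = {mae:.3f}")
```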

Table 1: Comparison of Common Optimization Algorithms

| Algorithm | Key Principles | Pros | Cons | Ideal Use Case |
|---|---|---|---|---|
| Gradient Descent [37] [38] | Iteratively moves parameters in the direction of the negative gradient to minimize the loss function. | Simple, guaranteed convergence (with right conditions). | Can be slow for large datasets; may get stuck in local minima. | Foundation for understanding optimization; not typically used directly for large-scale deep learning. |
| Stochastic Gradient Descent (SGD) [37] [38] | Uses a single data point (or a mini-batch) to compute the gradient per update. | Faster updates, less memory intensive, can escape local minima. | Noisy updates can cause convergence oscillations. | Training large-scale deep learning models. |
| Adam [38] | Combines ideas from Momentum and RMSprop. Uses adaptive learning rates for each parameter. | Fast convergence, handles noisy gradients well, requires little tuning. | Can sometimes generalize less well than SGD in some tasks. | Default choice for many deep learning applications, including materials property prediction [32]. |
| Genetic Algorithms [37] | Inspired by natural selection. Uses a population of solutions, selection, crossover, and mutation. | Good for complex, non-differentiable, or discrete search spaces. | Computationally expensive, can be slow to converge. | Hyperparameter optimization and neural architecture search. |
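To show how Adam combines momentum (the first-moment estimate `m`) with RMSprop-style scaling (the second-moment estimate `v`), here is a NumPy sketch of the standard Adam update next to plain gradient descent on a deliberately badly scaled quadratic. The test function and learning rates are assumptions. Because Adam normalizes each coordinate's step, one learning rate works for both directions, whereas gradient descent must keep its learning rate below 2/100 to stay stable along the steep direction.

```python
import numpy as np


def adam_minimize(grad, x0, lr=0.05, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    """Minimize a function given its gradient, using the Adam update rule."""
    x = np.asarray(x0, dtype=float).copy()
    m = np.zeros_like(x)  # first-moment (momentum) estimate
    v = np.zeros_like(x)  # second-moment (RMSprop-style) estimate
    for t in range(1, steps + 1):
        g = grad(x)
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g**2
        m_hat = m / (1 - beta1**t)  # bias correction for the zero initialization
        v_hat = v / (1 - beta2**t)
        x -= lr * m_hat / (np.sqrt(v_hat) + eps)  # per-parameter adaptive step
    return x


def gd_minimize(grad, x0, lr=0.015, steps=500):
    """Plain gradient descent with a fixed learning rate, for comparison."""
    x = np.asarray(x0, dtype=float).copy()
    for _ in range(steps):
        x -= lr * grad(x)
    return x


SCALES = np.array([100.0, 1.0])  # f(x) = 0.5 * (100 * x0**2 + x1**2), minimum at (0, 0)


def grad_f(x):
    return SCALES * x


if __name__ == "__main__":
    print("Adam:", adam_minimize(grad_f, [1.0, 1.0]))
    print("GD:  ", gd_minimize(grad_f, [1.0, 1.0]))  # lr=0.05 here would diverge along x0
```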

Table 2: Comparison of Hyperparameter Optimization (HPO) Methods

| Method | Description | Pros | Cons |
|---|---|---|---|
| Grid Search [34] | Exhaustively searches over a predefined set of hyperparameters. | Simple, guaranteed to find best combination in grid. | Computationally intractable for high-dimensional spaces (curse of dimensionality). |
| Random Search [37] [32] | Randomly samples hyperparameter combinations from predefined distributions. | More efficient than grid search; often finds good solutions faster. | May still waste resources evaluating poor hyperparameters; no learning from past trials. |
| Bayesian Optimization [32] | Builds a probabilistic model of the objective function to direct the search to promising hyperparameters. | Sample-efficient; requires fewer trials to find good hyperparameters. | Overhead of building the surrogate model; can be complex to implement. |
| Hyperband [32] | Accelerates random search through adaptive resource allocation and early-stopping of poorly performing trials. | High computational efficiency; fast identification of good configurations. | Less sample-efficient than Bayesian optimization on its own. |
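Hyperband builds on successive halving: evaluate many configurations on a small budget, keep the best 1/η fraction, and give the survivors η times more budget. The sketch below implements only that inner routine in plain Python (full Hyperband additionally runs several such brackets with different starting budgets). The toy loss function and the learning-rate search range are hypothetical.

```python
import math
import random


def successive_halving(configs, evaluate, min_budget=1, eta=3):
    """Return the surviving configuration after repeated 'evaluate, keep top 1/eta' rounds."""
    budget = min_budget
    survivors = list(configs)
    while len(survivors) > 1:
        losses = [evaluate(c, budget) for c in survivors]
        n_keep = max(1, len(survivors) // eta)
        # Rank by loss (index breaks ties) and keep the best n_keep configurations
        order = sorted(range(len(survivors)), key=lambda i: (losses[i], i))
        survivors = [survivors[i] for i in order[:n_keep]]
        budget *= eta  # survivors get eta times more resources in the next round
    return survivors[0]


def toy_evaluate(config, budget):
    """Hypothetical loss: best near lr = 1e-2, and every config improves with more budget."""
    return abs(math.log10(config["lr"]) + 2) + 1.0 / budget


random.seed(0)
configs = [{"lr": 10 ** random.uniform(-5, -1)} for _ in range(27)]
best = successive_halving(configs, toy_evaluate)
print(best)
```

With 27 configurations and η = 3, the rounds evaluate 27, 9, and 3 configurations at budgets 1, 3, and 9, so most of the compute goes to the few configurations that survive.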

Experimental Protocols

Protocol: Hyperparameter Optimization with KerasTuner

This protocol outlines a step-by-step methodology for optimizing deep neural networks using Hyperband in KerasTuner [32].

Define the Model-Building Function: Create a function that takes an `hp` (hyperparameters) object and returns a compiled Keras model. Inside this function, define the search space:

- Number of layers: `hp.Int('num_layers', 2, 10)`

- Units per layer: `hp.Int('units_' + str(i), min_value=32, max_value=512, step=32)`

- Learning rate: `hp.Float('lr', min_value=1e-5, max_value=1e-2, sampling='log')`

- Dropout rate: `hp.Float('dropout', 0.0, 0.5)`

- Choice of optimizer: `hp.Choice('optimizer', ['adam', 'rmsprop'])`

Instantiate the Tuner: Create a `Hyperband` tuner object, specifying the model-building function, the objective (e.g., `val_mean_absolute_error`), and the maximum number of epochs per trial.

Execute the Search: Run the tuner, providing the training and validation data. The tuner automatically manages the training and evaluation of many configurations and stops poorly performing ones early; trials run one after another by default, but KerasTuner also supports distributed tuning across multiple workers.

Retrieve Best Hyperparameters: After the search completes, get the optimal set of hyperparameters.

Train the Final Model: Build and train the final model using the best hyperparameters on the combined training and validation dataset, then evaluate on the held-out test set.

Protocol: Transfer Learning for Small Datasets

This protocol describes a pre-training and fine-tuning strategy to improve GNN performance on small target datasets [30].

Pre-training Phase:

- Data Acquisition: Obtain a large source dataset (e.g., DFT formation energies from the Materials Project, containing >100,000 entries).

- Model Selection: Choose a GNN architecture like ALIGNN or CGCNN.

- Initial Training: Train the model on this large source dataset until convergence. This model learns a general representation of atomic structures and their relationships to a fundamental property.

Fine-tuning Phase:

- Data Preparation: Prepare your smaller target dataset (e.g., a few hundred experimental band gaps or shear moduli).

- Model Initialization: Load the pre-trained model's weights as the starting point for your new model.

- Selective Re-training: Re-train the model on the target dataset. Strategies include:

- Strategy 1 (Full Fine-tuning): Re-train all layers of the network on the new data.

- Strategy 2 (Partial Fine-tuning): Freeze the weights of the initial layers (which capture general features) and only re-train the higher-level, task-specific layers. This can help prevent overfitting on very small datasets.

- Hyperparameter Adjustment: Use a lower learning rate for fine-tuning than was used for pre-training to avoid catastrophic forgetting of the pre-trained knowledge.
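The two fine-tuning strategies can be sketched in PyTorch as below. `PropertyNet` is a hypothetical stand-in for a GNN such as ALIGNN or CGCNN (a plain MLP encoder plus an output head), and the 1e-4 fine-tuning learning rate is an assumed value. With a real GNN you would freeze whichever modules correspond to its early layers.

```python
import copy

import torch
from torch import nn


class PropertyNet(nn.Module):
    """Hypothetical stand-in for a GNN: a shared encoder plus a task-specific head."""

    def __init__(self, n_in: int = 64, hidden: int = 128):
        super().__init__()
        self.encoder = nn.Sequential(
            nn.Linear(n_in, hidden), nn.ReLU(),   # "initial" layers: general features
            nn.Linear(hidden, hidden), nn.ReLU(),
        )
        self.head = nn.Linear(hidden, 1)          # task-specific output layer

    def forward(self, x):
        return self.head(self.encoder(x))


def prepare_for_finetuning(pretrained: nn.Module, freeze_encoder: bool, lr: float = 1e-4):
    """Copy a pre-trained model and build an optimizer for fine-tuning it."""
    model = copy.deepcopy(pretrained)                 # leave the pre-trained weights intact
    for p in model.encoder.parameters():
        p.requires_grad = not freeze_encoder          # Strategy 2: freeze the encoder
    trainable = [p for p in model.parameters() if p.requires_grad]
    optimizer = torch.optim.Adam(trainable, lr=lr)    # smaller LR than used for pre-training
    return model, optimizer


if __name__ == "__main__":
    pretrained = PropertyNet()  # in practice: trained to convergence on the large source task
    model, opt = prepare_for_finetuning(pretrained, freeze_encoder=True)
    x, y = torch.randn(8, 64), torch.randn(8, 1)      # placeholder target-task batch
    loss = nn.functional.mse_loss(model(x), y)
    opt.zero_grad()
    loss.backward()
    opt.step()
```

Setting `freeze_encoder=False` gives Strategy 1 (full fine-tuning) with the same low learning rate.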

Workflow & System Diagrams

HPO and TL Workflow

Model Optimization Techniques

Table 3: Key Computational Tools & Datasets

| Item Name | Type / Category | Function & Application |
|---|---|---|
| Matminer [31] | Python Library | A primary tool for data mining materials properties. Provides featurization methods to convert compositions and structures into machine-readable vectors, and access to several public datasets. |
| ALIGNN [30] | Model Architecture | A state-of-the-art Graph Neural Network (GNN) that uses atomic coordinates and bond angles to accurately predict a wide range of material properties from structural information. |
| KerasTuner [32] | HPO Software | A user-friendly, intuitive Python library for performing hyperparameter optimization. It integrates seamlessly with TensorFlow/Keras workflows and supports algorithms like Hyperband and Bayesian Optimization. |
| Optuna [32] | HPO Software | A more advanced, define-by-run optimization framework that is highly configurable. Ideal for complex search spaces and for implementing custom HPO algorithms like BOHB. |
| Materials Project [31] | Database | A widely used open database providing computed properties (e.g., formation energy, band gap) for over 100,000 inorganic crystals, essential for pre-training models. |
| JARVIS [30] | Database | The Joint Automated Repository for Various Integrated Simulations provides DFT-computed data for both 3D and 2D materials, useful for benchmarking and transfer learning. |

Advanced Methods and Practical Applications in Hyperparameter Optimization

Hyperparameter optimization is a critical step in developing robust machine learning models, especially in scientific fields like materials property prediction. Unlike model parameters learned during training, hyperparameters are configuration variables set before the learning process begins. Examples include the learning rate for a neural network or the number of trees in a Random Forest. Selecting the optimal set of hyperparameters can significantly enhance a model's predictive accuracy and generalizability.

This technical support guide provides a comparative analysis of three prominent optimization methods—Grid Search, Random Search, and Bayesian Optimization—framed within the context of materials science research. It includes troubleshooting guides and FAQs to help researchers efficiently navigate the hyperparameter tuning process.

The table below summarizes the core characteristics, advantages, and limitations of the three optimization methods.

| Optimization Method | Core Principle | Key Advantages | Key Limitations | Best-Suited Use Cases |
|---|---|---|---|---|
| Grid Search | Exhaustively searches over a predefined set of hyperparameter values [39]. | • Simple to implement and parallelize. • Guaranteed to find the best combination within the grid. | • Computationally expensive and slow [39]. • Suffers from the "curse of dimensionality". • Restricted to discrete, pre-specified ranges [39]. | Small, low-dimensional search spaces where an exhaustive search is feasible. |
| Random Search | Randomly samples hyperparameter combinations from specified distributions [39]. | • More efficient than Grid Search for high-dimensional spaces [39]. • Can explore a wider and continuous range of values. | • Can still be inefficient, as it evaluates every trial independently [39]. • No intelligence in sampling; may miss promising regions. | Problems with a moderate number of hyperparameters, where a broader exploration is needed. |
| Bayesian Optimization | Builds a probabilistic model of the objective function to guide the search toward promising hyperparameters [39]. | • Highly sample-efficient; converges faster with fewer trials [39]. • Balances exploration and exploitation intelligently [40]. | • Higher computational overhead per trial. • Can be more complex to set up. | Complex models with many hyperparameters and computationally expensive training cycles (e.g., large neural networks, ensemble models) [39]. |
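In scikit-learn, the first two methods correspond to `GridSearchCV` and `RandomizedSearchCV`. The sketch below gives both the same budget of nine candidates on synthetic data. The model and ranges are assumptions, and the Random Search draws `max_features` from a continuous distribution, which a grid cannot do. Bayesian Optimization is available through libraries such as Optuna rather than scikit-learn itself.

```python
from scipy.stats import loguniform, randint
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV

X, y = make_regression(n_samples=300, n_features=10, noise=5.0, random_state=0)
rf = RandomForestRegressor(random_state=0)

# Grid Search: every combination of the listed values (3 x 3 = 9 candidates)
grid = GridSearchCV(
    rf, {"n_estimators": [50, 100, 150], "max_depth": [4, 8, None]},
    cv=5, scoring="neg_mean_absolute_error",
).fit(X, y)

# Random Search: the same budget of 9 candidates, sampled from distributions
rand = RandomizedSearchCV(
    rf,
    {"n_estimators": randint(50, 150),
     "max_features": loguniform(0.1, 1.0),  # continuous: fraction of features per split
     "max_depth": [4, 8, None]},
    n_iter=9, cv=5, scoring="neg_mean_absolute_error", random_state=0,
).fit(X, y)

print("grid:  ", grid.best_params_, -grid.best_score_)
print("random:", rand.best_params_, -rand.best_score_)
```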

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My Bayesian Optimization with Optuna is converging too quickly on a suboptimal result. What could be wrong?

- Potential Cause: The sampler might be exploiting known good regions too aggressively and failing to explore the search space adequately.

- Solution: Experiment with different samplers. The default Tree-structured Parzen Estimator (TPE) is generally effective, but for complex spaces, try the Covariance Matrix Adaptation Evolution Strategy (CMA-ES), which is better at exploring and can escape local minima [41]. You can also try widening the initial ranges of your hyperparameters to force more exploration in early trials.

Q2: How can I save computational resources during hyperparameter optimization?

- Solution: Use Pruning. Frameworks like Optuna offer automated pruning to halt unpromising trials early [40] [41]. If your objective reports intermediate results (e.g., validation loss after each training epoch) and a trial is clearly worse than earlier trials at the same epoch, Optuna can automatically stop that trial, freeing up resources for more promising configurations. For example, Optuna's default MedianPruner stops a trial whose intermediate result is worse than the median of previous trials at the same step.

Q3: For materials property prediction, my model performs well on validation data but poorly on truly novel chemical compositions. How can I improve extrapolation?

- Solution: Investigate Meta-Learning and Extrapolative Training. An emerging approach is Extrapolative Episodic Training (E²T) [42]. This meta-learning technique involves training a model on a series of artificially created "extrapolation tasks." For example, the model is trained on data from one polymer class (the support set) and tested on a different polymer class (the query set). This forces the model to learn how to adapt to unseen material domains, thereby improving its extrapolative capabilities [42].
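The episode construction described above can be sketched as a sampler that draws a support set from one material domain and a query set from a different one. This is a hypothetical helper written for illustration, not the published E²T implementation. The domain labels (for example, polymer classes) are assumed to be given, and the meta-learner that consumes the episodes is omitted.

```python
import numpy as np


def extrapolative_episodes(domain_labels, n_episodes, support_size, query_size, seed=0):
    """Yield (support_idx, query_idx) pairs drawn from two *different* material domains."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(domain_labels)
    domains = np.unique(labels)
    for _ in range(n_episodes):
        # Pick two distinct domains: one to learn from, one to extrapolate to
        src, tgt = rng.choice(domains, size=2, replace=False)
        src_idx = np.flatnonzero(labels == src)
        tgt_idx = np.flatnonzero(labels == tgt)
        support = rng.choice(src_idx, size=min(support_size, len(src_idx)), replace=False)
        query = rng.choice(tgt_idx, size=min(query_size, len(tgt_idx)), replace=False)
        yield support, query


if __name__ == "__main__":
    labels = ["class_A"] * 10 + ["class_B"] * 8 + ["class_C"] * 6  # hypothetical domains
    for support, query in extrapolative_episodes(labels, n_episodes=3, support_size=4, query_size=3):
        print(support, query)
```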

Q4: My optimization process is taking too long. What are my options?

- Solution 1: Leverage Distributed Computing. Optuna supports distributed optimization with little to no changes to the code. You can run trials in parallel across multiple CPUs or machines to accelerate the search [43].

- Solution 2: Reduce Dimensionality. If your dataset has many input features, consider using Principal Component Analysis (PCA) before training the model. As demonstrated in research on predicting column load capacity, PCA can reduce multicollinearity and computational complexity without significantly sacrificing predictive performance [44].

Q5: After optimization, how can I understand which hyperparameters were most important?

- Solution: Use Hyperparameter Importance Analysis. Optuna provides functionality to compute the importance of each hyperparameter after a study is complete, using methods like fANOVA [40]. This analysis tells you which parameters had the greatest influence on your model's performance, providing valuable insights for future experiments and model design.

Experimental Protocol: A Case Study in Materials Science

The following protocol details a real-world experiment comparing Grid Search, Random Search, and Bayesian Optimization for predicting the peak axial load capacity (PALC) of steel-reinforced concrete-filled square steel tubular (SRCFSST) columns under high temperatures [44]. This serves as a template for a rigorous comparative analysis.

1. Objective

To evaluate the efficacy of three hyperparameter optimization techniques (Grid Search, Random Search, Bayesian Optimization) when applied to a PCA-XGBoost model for predicting a key mechanical property (PALC).

2. Materials and Dataset

- Dataset: 135 experimental instances from prior studies on SRCFSST columns [44].

- Input Features: Material properties and column dimensions.

- Target Variable: Peak Axial Load Capacity (PALC).

3. Methodology

- Data Preprocessing: Standardize all input features to have zero mean and unit variance.

- Dimensionality Reduction: Apply Principal Component Analysis (PCA), retaining components that explain 99% of the variance in the data [44].

- Model: XGBoost (Extreme Gradient Boosting), a powerful ensemble algorithm known for high performance in materials informatics [45] [44].

- Hyperparameter Tuning:

- Grid Search (GS): Perform an exhaustive search over all specified combinations of hyperparameters.

- Random Search (RS): Sample a specified number of random combinations from the hyperparameter distributions.

- Bayesian Optimization (BO): Use the Bayesian Optimization implementation in Optuna with the TPE sampler to intelligently search the hyperparameter space.

- Validation: Employ 5-fold cross-validation to ensure the robustness and generalizability of the results [44].

- Performance Metrics: Evaluate models using R-squared (R²), Root Mean Squared Error (RMSE), and Mean Absolute Error (MAE).

4. Key Results (Summary)

The Bayesian-Optimized PCA-XGB model achieved the highest predictive performance on the test dataset [44]:

- R²: 0.928

- MAE: 2.3%

- RMSE: 3.5%

Statistical tests (paired t-tests) confirmed that the Bayesian Optimization model's superiority over the other methods was significant (p < 0.05) [44].

Experimental Workflow for HPO Comparison

Bayesian Optimization Process with Optuna

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table lists key computational "reagents" and tools essential for conducting hyperparameter optimization studies in computational materials science.

| Tool / Solution | Function / Purpose | Relevant Context |
|---|---|---|
| Optuna | A define-by-run hyperparameter optimization framework that implements state-of-the-art algorithms like Bayesian Optimization [43]. | Ideal for efficiently tuning complex models; supports pruning and distributed computing [40] [43]. |
| Scikit-learn | A core machine learning library providing implementations of models, preprocessing tools, and simple Grid/Random Search [39]. | Foundation for building many ML pipelines and for conducting baseline optimization comparisons. |
| XGBoost | An optimized gradient boosting library known for high performance in tabular data tasks, including materials property prediction [45]. | Frequently used as a powerful predictive model whose performance is enhanced by effective hyperparameter tuning [44]. |
| Principal Component Analysis (PCA) | A dimensionality reduction technique that transforms features into a lower-dimensional space while retaining critical information [44]. | Used to reduce model complexity and computational burden before hyperparameter tuning [44]. |
| Tree-structured Parzen Estimator (TPE) | A Bayesian optimization algorithm that models "good" and "bad" parameter distributions to guide the search [41]. | The default sampler in Optuna; highly effective for a wide range of problems [40] [41]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the main advantages of using a hybrid Transformer-Graph model over a pure GNN or Transformer for materials property prediction?

Hybrid Transformer-Graph models combine the strengths of both architectures. Graph Neural Networks (GNNs) are exceptional at capturing local atomic structures and bonds within a material, effectively modeling many-body interactions (e.g., two-body bonds, three-body angles) [1]. Transformers, with their self-attention mechanism, excel at identifying global, long-range dependencies and contextual information across the entire structure [46]. By integrating them, the model can simultaneously learn from both local atomic environments and the global crystal structure, leading to more accurate predictions of complex properties like formation energy and elastic moduli [1]. This hybrid approach has been shown to outperform state-of-the-art pure models on several materials property regression tasks [1].

FAQ 2: My dataset for a target property (e.g., mechanical properties) is very small. How can transfer learning help?

Transfer learning is a powerful strategy to address data scarcity. The process involves two key steps [47] [48]:

- Pre-training: A model is first trained on a large, data-rich "source" task. In materials science, this is often the prediction of a fundamental property like formation energy (Eform) or total energy from a massive database like the Materials Project, which contains millions of calculations [48].

- Fine-tuning: The pre-trained model is then used as a starting point and further trained (fine-tuned) on the smaller, data-scarce "target" task, such as predicting bulk modulus.

This approach is effective because the model has already learned general, underlying representations of atomic structures and chemistry from the large dataset. This significantly reduces the amount of data needed for the target task to achieve high accuracy, acting as a form of regularization to prevent overfitting [1] [48]. Research has demonstrated that transfer learning can achieve chemical accuracy on a target property even when the fine-tuning dataset is limited to a few thousand data points [48].

FAQ 3: What are the common hyperparameter tuning pitfalls when working with these large, hybrid models?

Effective hyperparameter tuning is crucial for model performance. Common pitfalls include:

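- Overfitting the Validation Set: When an extensive hyperparameter search is performed on a single validation set, the selected configuration can become overly specialized to that set, so the validation score overstates performance on truly unseen data (the test set) [49]. Nested cross-validation, in which hyperparameters are tuned in an inner cross-validation loop and performance is measured on outer folds that are never used for tuning, provides an unbiased estimate of generalization performance [49].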

- Ignoring Computational Budget: Exhaustive methods like Grid Search can become computationally prohibitive for large models and hyperparameter spaces [49] [50]. It is essential to set a computational budget (e.g., a maximum number of trials) upfront.

- Inefficient Search Strategy: Grid Search is often inefficient in a high-dimensional hyperparameter space, because model performance typically depends heavily on only a few key parameters [49], while a grid spends most of its evaluations varying the unimportant ones. More efficient methods such as Random Search, Bayesian Optimization, or early-stopping-based algorithms like Hyperband can find good hyperparameters with far fewer evaluations [49] [50].
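All three pitfalls can be addressed together. The following NumPy sketch runs nested cross-validation in which the inner loop is a random search with a fixed trial budget (`n_trials`). Ridge regression and its penalty `alpha` are hypothetical stand-ins for a real model and its hyperparameters, and the data are synthetic.

```python
import numpy as np

def ridge_fit(X, y, alpha):
    """Closed-form ridge regression; alpha is the hyperparameter being tuned."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)

def mae(X, y, w):
    return float(np.mean(np.abs(X @ w - y)))

def inner_random_search(X, y, n_trials, k, rng):
    """Inner loop: random search over alpha with a fixed trial budget, scored by k-fold CV."""
    folds = np.array_split(rng.permutation(len(y)), k)
    best_alpha, best_score = None, np.inf
    for _ in range(n_trials):                      # n_trials is the computational budget
        alpha = 10 ** rng.uniform(-4, 2)           # log-uniform sample
        scores = []
        for i, val in enumerate(folds):
            tr = np.concatenate([f for j, f in enumerate(folds) if j != i])
            scores.append(mae(X[val], y[val], ridge_fit(X[tr], y[tr], alpha)))
        if np.mean(scores) < best_score:
            best_alpha, best_score = alpha, np.mean(scores)
    return best_alpha

def nested_cv(X, y, k_outer=5, k_inner=3, n_trials=20, seed=0):
    """Outer loop: each test fold is scored by a model tuned without ever seeing it."""
    rng = np.random.default_rng(seed)
    outer_scores = []
    for test in np.array_split(rng.permutation(len(y)), k_outer):
        train = np.setdiff1d(np.arange(len(y)), test)
        alpha = inner_random_search(X[train], y[train], n_trials, k_inner, rng)
        w = ridge_fit(X[train], y[train], alpha)
        outer_scores.append(mae(X[test], y[test], w))
    return float(np.mean(outer_scores)), float(np.std(outer_scores))

# Hypothetical data: 120 samples, 10 descriptors, linear target plus noise
data_rng = np.random.default_rng(1)
X = data_rng.normal(size=(120, 10))
y = X @ data_rng.normal(size=10) + 0.1 * data_rng.normal(size=120)
mean_mae, std_mae = nested_cv(X, y)
print(f"nested-CV MAE: {mean_mae:.3f} +/- {std_mae:.3f}")
```

The total cost is `k_outer * n_trials * k_inner` model fits, which makes the budget explicit before any training starts.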

FAQ 4: How do I decide between "full transfer" and "only regression head" fine-tuning?

The choice depends on the similarity between your source and target tasks and the size of your target dataset [48]:

- Only Regression Head (Feature Extraction): In this approach, the main body of the pre-trained network (the "backbone" or "embedding network") is frozen, and only the final regression or classification layers (the "head") are trained on the new data. This is faster and requires fewer resources. It is best when the target dataset is very small or when the features learned in the pre-training are highly generalizable to the new task [47] [48].

- Full Transfer (Fine-Tuning): Here, you unfreeze all or some of the pre-trained model's layers and continue training with the new data. This allows the model to adjust its previously learned features to the specifics of the new task. This method is typically more effective when the target dataset is relatively large and somewhat similar to the original pre-training data [47] [48]. Empirical results in materials informatics show that full transfer often leads to the lowest prediction errors [48].
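The practical difference between the two strategies is which parameters receive gradient updates. In the NumPy sketch below (a hypothetical two-layer network with synthetic data), `mode="head"` updates only the regression head, while `mode="full"` also back-propagates into the backbone. In PyTorch the same freezing is usually done by setting `requires_grad = False` on the backbone's parameters.

```python
import numpy as np

def forward(X, W1, w2):
    H = np.tanh(X @ W1)            # backbone ("embedding network") features
    return H, H @ w2               # linear regression head

def mse(X, y, W1, w2):
    return float(np.mean((forward(X, W1, w2)[1] - y) ** 2))

def fine_tune(X, y, W1, w2, mode, lr=0.01, steps=200):
    """mode='head': backbone frozen, only w2 is trained; mode='full': both are trained."""
    W1, w2 = W1.copy(), w2.copy()
    n = len(y)
    for _ in range(steps):
        H, pred = forward(X, W1, w2)
        err = pred - y
        grad_w2 = 2.0 / n * H.T @ err
        if mode == "full":
            # Back-propagate through the tanh layer (uses w2 before its update)
            grad_W1 = 2.0 / n * X.T @ (np.outer(err, w2) * (1.0 - H**2))
            W1 -= lr * grad_W1
        w2 -= lr * grad_w2
    return W1, w2

rng = np.random.default_rng(0)
W1_pre = rng.normal(scale=0.3, size=(8, 16))   # stand-in for pre-trained backbone weights
w2_new = rng.normal(scale=0.1, size=16)        # freshly initialised head for the new target
X = rng.normal(size=(50, 8))                   # small hypothetical target dataset
y = np.sin(X[:, 0]) + 0.5 * X[:, 1]

W1_head, w2_head = fine_tune(X, y, W1_pre, w2_new, mode="head")
W1_full, w2_full = fine_tune(X, y, W1_pre, w2_new, mode="full")
for name, (W1, w2) in {"head only": (W1_head, w2_head), "full": (W1_full, w2_full)}.items():
    print(f"{name}: MSE {mse(X, y, W1, w2):.3f}")
```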

Troubleshooting Guides

Issue 1: Model performance is poor after transfer learning.

- Potential Cause: Catastrophic Forgetting. The model may have lost the valuable general knowledge from the source task during fine-tuning.

- Solution:

- Use a lower learning rate for the fine-tuning phase to make smaller, more careful updates to the weights [47].

- Experiment with progressively unfreezing layers instead of the entire network at once. Start with the last few layers and gradually unfreeze earlier ones.

- Implement a learning rate schedule that warms up or adapts during training.
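The learning-rate and unfreezing remedies can be expressed as simple schedules. The helpers below are a sketch under assumed settings (linear warm-up followed by cosine decay, one additional block unfrozen per stage, and a fine-tuning rate one tenth of a hypothetical pre-training rate); the function names and numbers are illustrative, not taken from a specific framework.

```python
import math

def lr_at(epoch, base_lr, warmup_epochs, total_epochs):
    """Linear warm-up to base_lr, then cosine decay towards zero."""
    if epoch < warmup_epochs:
        return base_lr * (epoch + 1) / warmup_epochs
    progress = (epoch - warmup_epochs) / max(1, total_epochs - warmup_epochs)
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * progress))

def n_trainable_blocks(epoch, n_blocks, epochs_per_stage):
    """Progressive unfreezing, counted from the output end: the head (block 1) trains
    first, and one more block below it is unfrozen every epochs_per_stage epochs."""
    return min(n_blocks, 1 + epoch // epochs_per_stage)

pretrain_lr = 1e-3                  # hypothetical pre-training learning rate
base_lr = pretrain_lr / 10          # smaller steps for fine-tuning
for epoch in range(0, 12, 2):
    print(epoch, n_trainable_blocks(epoch, n_blocks=4, epochs_per_stage=3),
          f"{lr_at(epoch, base_lr, warmup_epochs=3, total_epochs=12):.2e}")
```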

Issue 2: Training is slow, and memory usage is too high.

- Potential Cause: The self-attention mechanism in the Transformer has a computational and memory cost that grows quadratically with sequence length (e.g., the number of atoms or tokens) [46].

- Solution:

- Reduce the batch size.

- Investigate and implement more efficient attention mechanisms, such as linear attention variants or sparse attention, which are active areas of research [46] [51].

- If using a hybrid model, analyze the architecture to ensure that the more efficient components (such as the GNN or a state-space model (SSM) block) handle the bulk of the processing, with attention used sparingly [51].
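A back-of-the-envelope calculation shows why attention dominates memory. The hypothetical function below counts only one layer's float32 attention-score tensor and ignores activations, gradients, and optimizer state, so it is a lower bound. It compares full attention with a fixed local window, used here as a simple stand-in for sparse attention.

```python
def attention_score_bytes(n_tokens, n_heads, batch_size, bytes_per_value=4, window=None):
    """Size of one layer's attention-score tensor (batch x heads x N x keys per query).

    Full attention: every token attends to all N tokens. With a local window, each
    token attends to at most `window` tokens, so memory grows linearly in N.
    """
    keys_per_query = n_tokens if window is None else min(window, n_tokens)
    return batch_size * n_heads * n_tokens * keys_per_query * bytes_per_value

# Hypothetical setting: 8 heads, batch of 32, float32 scores
for n_atoms in (100, 200, 400):
    full = attention_score_bytes(n_atoms, n_heads=8, batch_size=32)
    local = attention_score_bytes(n_atoms, n_heads=8, batch_size=32, window=32)
    print(f"N={n_atoms}: full {full / 1e6:.1f} MB, windowed {local / 1e6:.1f} MB")
```

Doubling the number of atoms quadruples the full-attention scores but only doubles the windowed ones, while halving the batch size halves both.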

Issue 3: The model fails to capture periodic boundary conditions in crystal structures.

- Potential Cause: Standard graph representations may not adequately encode the infinite repeating nature of crystal lattices.

- Solution: Ensure your graph construction method explicitly accounts for periodicity, for example by applying the minimum image convention (or, for larger cutoffs, enumerating all periodic images within the cutoff) when creating edges between atoms across unit cell boundaries. Some frameworks, like the one proposed by Hoffmann et al., use graph representations built on interatomic distances to capture these structural characteristics effectively [1].
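A minimal sketch of minimum-image edge construction is shown below. It assumes an orthorhombic cell whose shortest edge is more than twice the cutoff, so self-images and multiple images of the same pair can be ignored; the cell and coordinates are hypothetical. For triclinic cells or larger cutoffs, all periodic images within the cutoff must be enumerated; pymatgen's `Structure.get_all_neighbors` is one existing tool for this.

```python
import numpy as np

def periodic_edges(frac_coords, lattice, cutoff):
    """Directed edges (i, j, distance) under the minimum image convention.

    Assumes an orthorhombic cell whose shortest edge exceeds 2 * cutoff, so each
    pair of atoms has at most one periodic image within the cutoff.
    """
    frac = np.asarray(frac_coords, dtype=float)
    edges = []
    for i in range(len(frac)):
        for j in range(len(frac)):
            if i == j:
                continue
            d_frac = frac[j] - frac[i]
            d_frac -= np.round(d_frac)                        # shift to the nearest periodic image
            dist = float(np.linalg.norm(d_frac @ lattice))    # lattice rows = cell vectors
            if dist < cutoff:
                edges.append((i, j, dist))
    return edges

lattice = 4.0 * np.eye(3)                      # hypothetical 4 Å cubic cell
frac = [[0.05, 0.0, 0.0], [0.95, 0.0, 0.0]]    # 3.6 Å apart inside the cell
edges = periodic_edges(frac, lattice, cutoff=1.0)
print(edges)   # two directed edges, each of length ~0.4 Å across the cell boundary
```

A non-periodic neighbor search would miss this pair entirely, since the in-cell distance of 3.6 Å exceeds the cutoff.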

Experimental Protocols & Data

Detailed Methodology: Transfer Learning for Material Properties

The following workflow, as successfully implemented in recent studies [1] [48], details the steps for applying transfer learning to predict data-scarce material properties.

1. Data Preparation and Partitioning:

- Source Task Data: Obtain a large dataset of material structures and a common property. Example: Use the DCGAT database with ~1.8 million structures and their PBE-DFT formation energies (Eform) [48].

- Target Task Data: Prepare the smaller dataset for the property of interest. Example: A subset of ~10,000-50,000 structures with PBEsol-DFT calculated bulk modulus (K) or energy above the convex hull (Ehull) [1] [48].

- Partitioning: Split both source and target datasets into training, validation, and test sets (e.g., 80/10/10). It is critical that the test set for the target task is held out and never used during pre-training or the hyperparameter tuning of the fine-tuned model [49].

2. Model Pre-training:

- Initialize a hybrid Transformer-Graph model (e.g., CrysCo, which combines a Crystal GNN and a compositional Transformer) [1].

- Train the model on the large source task (e.g., predicting Eform on the 1.8M samples) until the validation loss converges.

3. Model Fine-Tuning:

- Take the pre-trained model and replace the final output layer (the "regression head") with a new one that predicts the target property (e.g., bulk modulus K).

- Strategy Selection:

- Option A (Only Regression Head): Freeze all weights of the pre-trained model and only train the new output layer.

- Option B (Full Transfer): Unfreeze all weights and train the entire model on the target task using a low learning rate (e.g., 1/10th of the original learning rate).

4. Hyperparameter Tuning & Evaluation:

- Use the target task's validation set to perform a limited hyperparameter search, focusing on the learning rate, number of epochs, and possibly the number of unfrozen layers.

- Finally, evaluate the best-performing model on the held-out test set to obtain an unbiased measure of generalization performance [48].

Diagram Title: Transfer Learning Workflow for Material Properties
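Steps 1 and 4 of the workflow can be sketched in plain Python: a seeded 80/10/10 split of the target dataset and a deliberately small fine-tuning grid over learning rate, epochs, and strategy. The grid values and the 10,000-sample size are hypothetical; each configuration would be scored on `val` only, and `test` is used once, for the final model.

```python
import itertools
import random

def split_indices(n, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle once with a fixed seed, then cut into train/validation/test index lists."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train, n_val = int(fractions[0] * n), int(fractions[1] * n)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

train, val, test = split_indices(10_000)       # e.g. a hypothetical 10k-structure target set

# Limited fine-tuning search (values hypothetical): learning rate relative to the
# pre-training rate, number of epochs, and strategy (Option A or B in step 3)
pretrain_lr = 1e-3
grid = [
    {"lr": pretrain_lr * f, "epochs": e, "strategy": s}
    for f, e, s in itertools.product((0.1, 0.3), (50, 100), ("head_only", "full"))
]
print(len(train), len(val), len(test), len(grid))
```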

Quantitative Performance of Transfer Learning Strategies

The table below summarizes the performance of different transfer learning strategies, measured by Mean Absolute Error (MAE), for predicting the energy above the convex hull (Ehull) using different density functionals. Data adapted from Hoffmann et al. [48].

| Target Functional | Training Strategy | MAE (meV/atom) | Relative Improvement vs. No Transfer |
|---|---|---|---|
| PBEsol | No Transfer | 26 | - |
| PBEsol | Only Regression Head | 22 | 15% |
| PBEsol | Full Transfer | 19 | 27% |
| SCAN | No Transfer | 31 | - |
| SCAN | Only Regression Head | 26 | 16% |
| SCAN | Full Transfer | 22 | 29% |
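The relative-improvement column is the percentage reduction in MAE with respect to the no-transfer baseline for the same functional. The short check below recomputes it from the MAE values in the table; the dictionary layout is just a convenience.

```python
def relative_improvement(baseline_mae, mae):
    """Percentage reduction in MAE relative to the no-transfer baseline."""
    return 100.0 * (baseline_mae - mae) / baseline_mae

mae_table = {   # MAE values (meV/atom) from the table above
    "PBEsol": {"No Transfer": 26, "Only Regression Head": 22, "Full Transfer": 19},
    "SCAN": {"No Transfer": 31, "Only Regression Head": 26, "Full Transfer": 22},
}
for functional, maes in mae_table.items():
    for strategy in ("Only Regression Head", "Full Transfer"):
        pct = relative_improvement(maes["No Transfer"], maes[strategy])
        print(f"{functional:<7} {strategy:<21} {pct:.0f}%")
```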

Hyperparameter Optimization Techniques Comparison

The table below compares common hyperparameter optimization (HPO) techniques, highlighting their suitability for tuning complex hybrid models [49] [50].

| Method | Key Principle | Pros | Cons | Best For |
|---|---|---|---|---|
| Grid Search | Exhaustive search over a specified subset of hyperparameters. | Simple; guaranteed to find the best combination within the grid. | Computationally expensive (curse of dimensionality); inefficient. | Small, low-dimensional hyperparameter spaces. |
| Random Search | Randomly samples a fixed number of hyperparameter combinations from a space. | More efficient than grid search; good for high-dimensional spaces. | May miss the optimal point; does not learn from past evaluations. | Initial, broad exploration of a large search space. |
| Bayesian Optimization | Builds a probabilistic surrogate model of the objective to select the most promising hyperparameters. | Highly efficient; converges to good parameters with fewer trials. | More complex to set up; sensitive to its own settings (e.g., choice of surrogate model and acquisition function). | Expensive model evaluations where trial efficiency is critical. |
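For the Bayesian Optimization row, a minimal NumPy sketch of the idea follows: a Gaussian-process surrogate with a fixed RBF kernel (length scale assumed to be 0.2) and the expected-improvement acquisition function, searching a single hyperparameter rescaled to [0, 1]. The quadratic objective is a hypothetical stand-in for an expensive validation run. Optuna's default TPE sampler is a different Bayesian approach that models densities of good and bad trials rather than fitting a GP.

```python
import math
import numpy as np

def rbf(a, b, length_scale=0.2):
    """Squared-exponential kernel matrix between two 1-D arrays of points."""
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / length_scale**2)

def gp_posterior(x_obs, y_obs, x_cand, noise=1e-6):
    """GP posterior mean and standard deviation at x_cand (targets standardised)."""
    y_mean = y_obs.mean()
    y_std = y_obs.std() or 1.0
    y_n = (y_obs - y_mean) / y_std
    K = rbf(x_obs, x_obs) + noise * np.eye(len(x_obs))
    K_s = rbf(x_obs, x_cand)
    mu = K_s.T @ np.linalg.solve(K, y_n)
    var = 1.0 - np.sum(K_s * np.linalg.solve(K, K_s), axis=0)
    sigma = np.sqrt(np.clip(var, 1e-12, None))
    return mu * y_std + y_mean, sigma * y_std

def expected_improvement(mu, sigma, best):
    """EI for minimisation: E[max(best - f(x), 0)] under the GP posterior."""
    z = (best - mu) / sigma
    cdf = 0.5 * (1.0 + np.array([math.erf(v / math.sqrt(2.0)) for v in z]))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (best - mu) * cdf + sigma * pdf

def objective(x):
    # Hypothetical stand-in for an expensive validation run; minimum at x = 0.3
    return (x - 0.3) ** 2

rng = np.random.default_rng(0)
candidates = np.linspace(0.0, 1.0, 101)
x_obs = rng.choice(candidates, size=3, replace=False)    # initial random trials
y_obs = objective(x_obs)
for _ in range(15):                                      # 15 model-guided trials
    mu, sigma = gp_posterior(x_obs, y_obs, candidates)
    x_next = candidates[np.argmax(expected_improvement(mu, sigma, y_obs.min()))]
    x_obs = np.append(x_obs, x_next)
    y_obs = np.append(y_obs, objective(x_next))

print(f"best x = {x_obs[np.argmin(y_obs)]:.2f} after {len(x_obs)} evaluations")
```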

The Scientist's Toolkit: Research Reagent Solutions

This table lists key computational "reagents" and tools essential for building and training Transformer-Graph hybrid models for materials informatics.

| Item / Tool Name | Function / Purpose | Example / Note |
|---|---|---|
| Crystal Graph Representation | Represents a crystal structure as a graph for GNN input (nodes = atoms, edges = bonds). | Includes periodicity; can be extended with 4-body interactions (dihedral angles) [1]. |
| Pre-trained Model Weights | Provides a parameter initialization for transfer learning, reducing data needs and training time. | Models pre-trained on large databases like Materials Project (PBE data) [48]. |
| Hyperparameter Optimization Library | Automates the search for optimal training and model parameters. | Libraries like Optuna, Hyperopt, or KerasTuner implement Bayesian Optimization, Hyperband, etc. [49] [50]. |
| Multi-fidelity Datasets | Provides data from different levels of accuracy (e.g., PBE vs. SCAN DFT) for effective transfer learning [48]. | Enables knowledge transfer from low-cost, high-volume data to high-cost, high-accuracy data. |
| Edge-Gated Attention (EGAT) | A GNN layer that updates node and edge features using attention, capturing complex atomic interactions [1]. | Used in CrysGNN to update bond angles and distances simultaneously. |
| Compositional Feature Embedder | Encodes the elemental composition of a material, often using attention mechanisms. | CoTAN network in the CrysCo framework [1]. |

Hyperparameter Tuning for Physics-Informed Neural Networks (PINNs) and Regularization

Troubleshooting Guides

Guide 1: PINN Converging to Physically Incorrect Solutions

Problem: My PINN model for solving an initial value problem is converging to a steady, non-zero solution that is not physically correct.

Diagnosis: This is a common issue where the PINN gets trapped in a local minimum of the physics loss corresponding to an unstable fixed point of the dynamical system. The physics loss (ℒ_f) can achieve a global optimum at these fixed points, regardless of their stability [52] [53].

Solution: Implement a stability-informed regularization scheme to reshape the loss landscape and penalize convergence to unstable fixed points [52].

Procedure:

- Add a Regularization Term: Modify your total loss function to include a new regularization term $R$: $\mathcal{L} = \mathcal{L}_{\mathrm{IC}} + \mathcal{L}_f + C\,R$ [53].

- Compute the Regularization Components: Calculate $R$ as the average over all $N_c$ collocation points $t_i$ of the product of two factors, $R = \frac{1}{N_c}\sum_{i=1}^{N_c} R_{\mathrm{SE}}(t_i)\,R_{\mathrm{LS}}(t_i)$:

  - Static Equilibrium Factor ($R_{\mathrm{SE}}$): Use a Gaussian kernel, $R_{\mathrm{SE}}(t) = \exp\left(-\lVert x'_\theta(t)\rVert^2 / \varepsilon\right)$, so that the regularization is active only near fixed points, where the time derivatives are close to zero [52] [53].

  - Local Stability Factor ($R_{\mathrm{LS}}$): For a first-order ODE system $x' = f(x)$, compute the Jacobian $J(x) = \partial f / \partial x$ at the candidate solution $x_\theta(t)$ and sum the positive real parts of its eigenvalues, $R_{\mathrm{LS}}(t) = \sum_{\lambda \in \sigma(J)} \max(\operatorname{Re}\lambda, 0)$ [52] [53]. This penalizes local instability.

- Apply a Decaying Coefficient: Use a linearly decaying coefficient $C$ to reduce the regularization's influence later in training, $C = \max\left(C_0\,\frac{\gamma - \mathrm{epoch}}{N_{\mathrm{epochs}}},\, 0\right)$, where $C_0$ scales the regularization weight and $\gamma$ determines the epoch at which the regularization phases out [53].

Expected Outcome: This method has shown substantial improvements, increasing success rates from 0% to 100% in systems like the pitchfork bifurcation and van der Pol oscillator [53].
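The regularizer can be evaluated on a simple system to check that it behaves as intended. The NumPy sketch below implements R (the mean of R_SE times R_LS over collocation points) and the decaying coefficient C for the pitchfork normal form x' = r x - x^3, which has an unstable fixed point at x = 0 for r > 0. It only scores fixed arrays: in an actual PINN, x_θ, its time derivative, and the Jacobian would be computed with automatic differentiation so that gradients of C·R reach the network weights. The value of ε, the parameter r, and the candidate trajectories are assumptions.

```python
import numpy as np

def stability_regularizer(x, dxdt, jacobian, eps=1e-2):
    """R = mean over collocation points of R_SE(t_i) * R_LS(t_i).

    x, dxdt: arrays of shape (N, d) holding x_theta(t_i) and x'_theta(t_i)
    jacobian: callable mapping a state of shape (d,) to df/dx of shape (d, d)
    """
    r_se = np.exp(-np.sum(dxdt**2, axis=1) / eps)            # ~1 only where x' ~ 0
    r_ls = np.array([np.maximum(np.linalg.eigvals(jacobian(xi)).real, 0.0).sum()
                     for xi in x])                             # sum of positive Re(lambda)
    return float(np.mean(r_se * r_ls))

def decay_coefficient(epoch, c0, gamma, n_epochs):
    """C = max(C0 * (gamma - epoch) / N_epochs, 0); reaches zero at epoch = gamma."""
    return max(c0 * (gamma - epoch) / n_epochs, 0.0)

R_PARAM = 1.0   # hypothetical pitchfork parameter r > 0

def pitchfork_jacobian(state):
    """Jacobian of f(x) = r*x - x**3 for a 1-D state."""
    return np.array([[R_PARAM - 3.0 * state[0] ** 2]])

n = 50
at_rest = np.zeros((n, 1))                                   # x'_theta = 0 everywhere
x_unstable = np.zeros((n, 1))                                # stuck at x = 0
x_stable = np.full((n, 1), np.sqrt(R_PARAM))                 # settled at x = sqrt(r)
print(stability_regularizer(x_unstable, at_rest, pitchfork_jacobian))  # 1.0
print(stability_regularizer(x_stable, at_rest, pitchfork_jacobian))    # 0.0
print([decay_coefficient(e, c0=1.0, gamma=500, n_epochs=1000) for e in (0, 250, 500, 750)])
# [0.5, 0.25, 0.0, 0.0]
```

Both candidate trajectories have zero physics residual for this ODE, so the physics loss alone cannot tell them apart; only the stability term penalizes the one resting at the unstable fixed point.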