Optimizing Deep Learning Parameters for Accurate Material Synthesizability Prediction in Drug Development

Abstract

Accelerating the discovery of synthesizable materials is a critical bottleneck in drug development. This article provides a comprehensive guide for researchers and scientists on optimizing deep learning models to predict material synthesizability accurately. We explore the foundational principles distinguishing synthesizability from thermodynamic stability, detail state-of-the-art methodologies including specialized large language models and graph neural networks, and present strategies for hyperparameter tuning and data handling. The guide also covers rigorous validation frameworks and comparative performance analysis against traditional methods, concluding with the transformative implications of these optimized models for streamlining the design of novel biomedical materials.

Understanding Synthesizability: The Core Challenge in Computational Material Discovery

Synthesizability FAQs for the Deep Learning Researcher

FAQ 1: Why is my deep learning model predicting thousands of thermodynamically stable materials, but experimental teams cannot synthesize them?

Thermodynamic stability, often assessed via a low energy above the convex hull from Density Functional Theory (DFT) calculations, is only one factor influencing synthesizability. A material's real-world formation is a kinetic, pathway-dependent process. Your model may be overlooking critical synthesis barriers [1].

- The Pathway Problem: Synthesizing a material is like crossing a mountain range; you need a viable path around the peaks, not just a straight line to the destination. A thermodynamically stable compound might have high energy barriers to its formation, so kinetically favored competing phases form instead [1].

- Beyond the Hull: Many metastable structures (with positive energy above hull) are successfully synthesized, while numerous stable structures remain unrealized. This demonstrates the limitation of using thermodynamic stability as a sole proxy for synthesizability [2].
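To make the "energy above hull" quantity concrete, here is a minimal sketch for a two-element A-B system. It assumes each phase is described only by its fraction of B and its formation energy per atom; the function names and the numeric entries are hypothetical and chosen for illustration, not taken from any database.

```python
from typing import Dict, List, Tuple

# (fraction of element B, formation energy in eV/atom)
Point = Tuple[float, float]


def _cross(o: Point, a: Point, b: Point) -> float:
    """2D cross product of vectors o->a and o->b."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(entries: List[Point]) -> List[Point]:
    """Lower convex hull of (composition, energy) points, left to right."""
    # Keep only the lowest-energy phase at each composition.
    lowest: Dict[float, float] = {}
    for x, e in entries:
        lowest[x] = min(e, lowest.get(x, e))
    hull: List[Point] = []
    for p in sorted(lowest.items()):
        # Drop the previous vertex while it lies on or above the new edge.
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def energy_above_hull(entries: List[Point], x: float, energy: float) -> float:
    """Energy of a phase relative to the hull at the same composition."""
    hull = lower_hull(entries)
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            hull_energy = e0 + (e1 - e0) * (x - x0) / (x1 - x0)
            return energy - hull_energy
    raise ValueError("composition outside the range covered by the entries")


# Illustrative entries: pure A, pure B, a stable AB phase, a metastable A3B phase.
entries = [(0.0, 0.0), (1.0, 0.0), (0.5, -1.0), (0.25, -0.4)]
e_hull_a3b = energy_above_hull(entries, 0.25, -0.4)
```

In this toy system the A3B phase has a negative formation energy yet sits above the hull, which is exactly the kind of metastable candidate that a hull-only filter would discard.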

FAQ 2: What are the key data-related challenges in training deep learning models for synthesizability prediction?

The primary challenge is the lack of large, high-quality, and balanced datasets for synthesis, which creates a fundamental bottleneck for model training [1].

- Data Scarcity and Bias: Unlike the ~200,000 entries in computational databases like the Materials Project, there is no equivalent comprehensive database for synthesis recipes and conditions, including failed attempts [1]. Furthermore, published literature is biased towards successful syntheses and commonly used "good enough" recipes, limiting the diversity of known pathways [1].

- The Positive-Unlabeled (PU) Problem: In synthesizability prediction, we have a set of known, synthesized materials (positives) and a vast set of hypothetical materials (unlabeled). The key challenge is that the unlabeled set contains both synthesizable and non-synthesizable materials, making it difficult to train a standard binary classifier [3] [2] [4].

FAQ 3: How can I integrate synthesizability directly into my generative deep learning pipeline for materials design?

There are two main approaches: using a synthesizability metric as an objective function, or using a synthesizability-constrained generative model [5].

- Post-hoc Filtering vs. Direct Optimization: You can use a trained synthesizability prediction model (a "synthesizability oracle") to filter the outputs of a generative model. However, with a sample-efficient generative model, it is possible to directly optimize for this synthesizability oracle within the generation loop, leading to better performance under constrained computational budgets [5].

- Moving Beyond Heuristics: Common synthesizability heuristics (e.g., based on molecular complexity) are often correlated with retrosynthesis model success for drug-like molecules. However, this correlation diminishes for other classes, like functional materials. Directly using a retrosynthesis model in the optimization loop can then provide a significant advantage [5].

Experimental Protocols & Methodologies

This section details key experimental and computational protocols cited in synthesizability research.

Protocol: Active Learning for Crystal Structure Discovery (GNoME-like Workflow)

This methodology uses scaled deep learning with active learning to discover stable crystals, expanding the known materials space [6].

Detailed Workflow:

- Initialization: Train an initial Graph Neural Network (GNN) model on existing stable crystal data (e.g., from the Materials Project).

- Candidate Generation:

- Structural Path: Generate diverse candidate structures using symmetry-aware partial substitutions (SAPS) and other modifications of known crystals.

- Compositional Path: Generate candidate chemical formulas using relaxed oxidation-state constraints.

- Model Filtration: Use the trained GNN ensemble to filter candidates by predicting their decomposition energy and uncertainty.

- DFT Verification: Perform DFT calculations with standardized settings on the filtered candidates to compute their relaxed energies and verify stability.

- Active Learning Loop: Incorporate the newly computed structures and energies into the training data for the next round of model training. Repeat candidate generation, model filtration, DFT verification, and retraining over multiple rounds to progressively improve model accuracy and discovery efficiency [6].
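The loop above can be sketched in a few lines. This is a toy illustration only: `toy_dft_energy` is a hypothetical stand-in for a DFT calculation, a bootstrap ensemble of nearest-neighbour predictors stands in for the GNN ensemble, and candidates are single numbers rather than crystal structures. Ranking by "mean minus standard deviation" is an assumed filtering rule, not necessarily the one used in [6].

```python
import random
from typing import List, Tuple

Sample = Tuple[float, float]  # (candidate descriptor, energy)


def toy_dft_energy(x: float) -> float:
    """Hypothetical stand-in for an expensive DFT calculation."""
    return (x - 0.7) ** 2 - 0.2


def fit_ensemble(data: List[Sample], n_models: int = 5, seed: int = 0) -> List[List[Sample]]:
    """Bootstrap ensemble; each member is a resampled copy of the data."""
    rng = random.Random(seed)
    return [[rng.choice(data) for _ in data] for _ in range(n_models)]


def predict(ensemble: List[List[Sample]], x: float) -> Tuple[float, float]:
    """Mean and spread of 1-nearest-neighbour predictions across members."""
    preds = [min(member, key=lambda d: abs(d[0] - x))[1] for member in ensemble]
    mean = sum(preds) / len(preds)
    var = sum((p - mean) ** 2 for p in preds) / len(preds)
    return mean, var ** 0.5


def lower_bound(ensemble: List[List[Sample]], x: float) -> float:
    """Optimistic energy estimate: low mean or high uncertainty ranks first."""
    mean, std = predict(ensemble, x)
    return mean - std


def active_learning(data: List[Sample], rounds: int = 3,
                    n_candidates: int = 50, n_verify: int = 5,
                    seed: int = 0) -> List[Sample]:
    rng = random.Random(seed)
    data = list(data)
    for r in range(rounds):
        ensemble = fit_ensemble(data, seed=seed + r)                   # (re)train
        candidates = [rng.random() for _ in range(n_candidates)]       # generate
        ranked = sorted(candidates, key=lambda x: lower_bound(ensemble, x))  # filter
        verified = [(x, toy_dft_energy(x)) for x in ranked[:n_verify]]  # verify
        data.extend(verified)                                           # grow the training set
    return data


initial = [(x, toy_dft_energy(x)) for x in (0.0, 0.5, 1.0)]
result = active_learning(initial)
```

Each round adds only the verified candidates to the training set, so the expensive oracle is called `rounds * n_verify` times in total regardless of how many candidates are generated.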

Protocol: A Synthesizability-Driven Crystal Structure Prediction (CSP) Framework

This protocol integrates symmetry and machine learning to efficiently locate synthesizable structures [3].

Detailed Workflow:

- Structure Derivation:

- Construct a database of prototype structures from experimentally synthesized crystals.

- Use group-subgroup transformation chains to systematically generate derivative candidate structures while retaining the spatial arrangements of real prototypes.

- Eliminate redundant, conjugate subgroups to ensure crystallographically unique candidates.

- Subspace Identification and Filtration:

- Classify the derived structures into distinct configuration subspaces labeled by their Wyckoff encodes.

- Use a machine-learning model to predict the probability of synthesizable structures existing within each subspace and filter out low-probability subspaces.

- Energetic and Synthesizability Evaluation:

- Perform structural relaxations on all candidates within the promising subspaces.

- Employ a fine-tuned, structure-based synthesizability evaluation model to identify low-energy, high-synthesizability candidates [3].

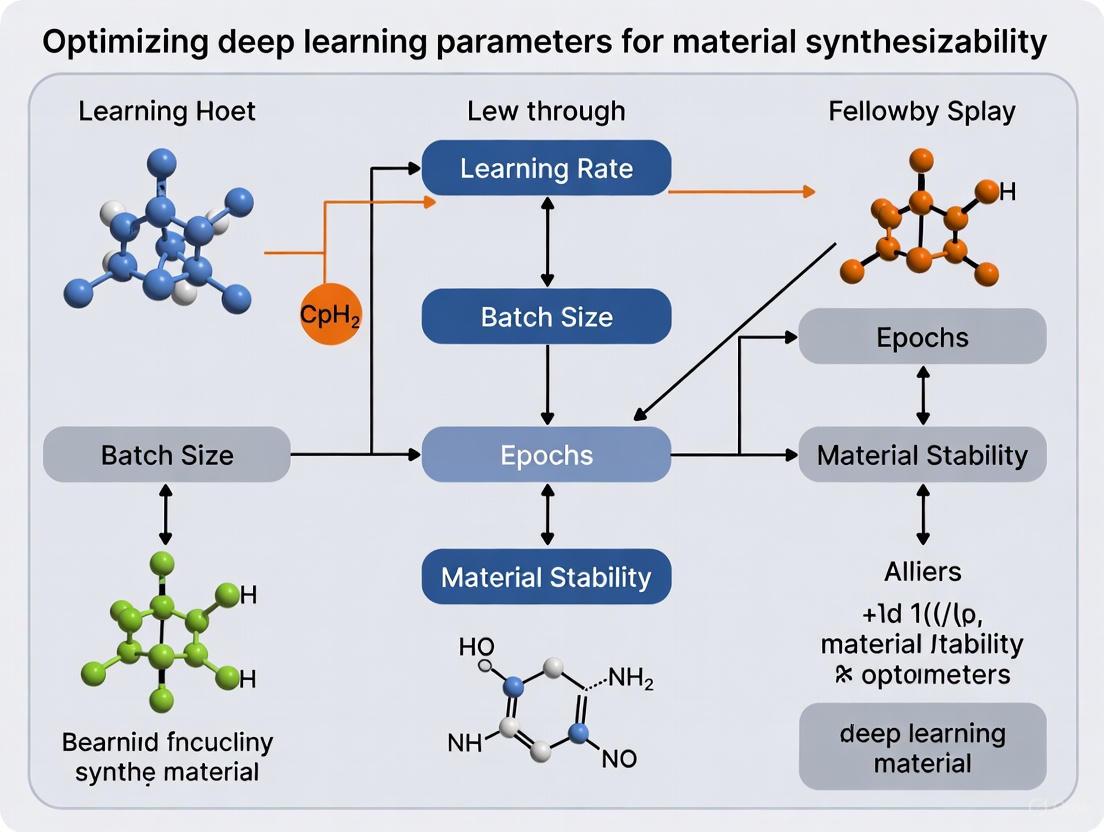

Workflow Visualization

Synthesizability-Driven CSP Framework

Deep Learning for Synthesizability Prediction

Table 1: Comparison of Synthesizability Assessment Methods

| Method | Core Principle | Key Metric(s) | Reported Accuracy/Performance | Key Advantages | Key Limitations |

|---|---|---|---|---|---|

| Thermodynamic Stability [1] [2] | Favors the most stable phase at equilibrium. | Energy above convex hull (DFT). | Not a direct measure of synthesizability. | Physically intuitive; widely computed. | Fails for many metastable and kinetically stabilized phases. |

| Retrosynthesis Models [5] | Predicts a viable synthetic pathway from commercial building blocks. | Solvability (Route Found/Not Found). | Varies by model and search constraints. | Provides an explicit, actionable synthesis plan. | Computationally expensive; inference cost can be prohibitive. |

| Synthesizability Heuristics [5] | Assesses molecular complexity based on group frequencies. | SA Score, SYBA, SC Score. | Correlated with solvability for drug-like molecules. | Very fast to compute. | Correlation breaks down for other molecule classes (e.g., materials); can overlook promising candidates. |

| LLM-based Prediction [2] | Fine-tuned language model predicts synthesizability from text-based crystal structure representation. | Accuracy, Precision, Recall. | Up to 98.6% accuracy on test data [2]. | High accuracy and generalization; can also predict methods and precursors. | Requires careful dataset curation and fine-tuning; "hallucination" risk. |

| PU-Learning Models [3] [4] | Trains a classifier using known synthesized (Positive) and hypothetical (Unlabeled) structures. | CLscore, Precision, Recall. | ~87.9% - 92.9% accuracy reported in prior works [2]. | Directly addresses the data constraint of the field. | Performance depends on the quality of the representation and PU-learning algorithm. |

Table 2: Key Research Reagent Solutions for Synthesizability Research

| Item / Resource | Function in Research | Example Use Case |

|---|---|---|

| DFT Codes (VASP, etc.) [6] | Provides first-principles calculation of formation energies and electronic structures to assess thermodynamic stability. | Calculating the energy above the convex hull for candidate materials in the GNoME pipeline [6]. |

| Retrosynthesis Platforms (AiZynthFinder, ASKCOS, IBM RXN) [5] | Predicts feasible synthetic routes and assesses synthesizability for organic molecules. | Used as an "oracle" in a generative molecular design loop to directly optimize for synthesizable candidates [5]. |

| Crystal Structure Databases (ICSD, Materials Project) [6] [2] | Serves as a source of confirmed synthesizable (positive) data for training machine learning models. | Curating a dataset of 70,120 synthesizable structures from ICSD to train the CSLLM framework [2]. |

| Graph Neural Networks (GNNs) [6] | Models the structure-property relationships of crystals for large-scale screening and prediction. | The GNoME framework uses GNNs to predict crystal stability at scale, enabling the discovery of millions of new structures [6]. |

| Large Language Models (LLMs e.g., GPT, LLaMA) [2] [4] | Fine-tuned to predict synthesizability, synthesis methods, and precursors from text-based crystal structure descriptions. | The CSLLM framework uses specialized LLMs to achieve 98.6% accuracy in synthesizability prediction and suggest synthetic routes [2]. |

| Positive-Unlabeled (PU) Learning Algorithms [3] [2] [4] | Enables training of classifiers from labeled positive data (synthesized) and unlabeled data (hypothetical). | Identifying 80,000 non-synthesizable structures from a pool of 1.4 million theoretical ones by selecting those with the lowest CLscore [2]. |

Frequently Asked Questions

Q1: Why does my deep learning model for synthesizability prediction perform well on the training set but fail on new, hypothetical compositions?

This is a classic sign of overfitting, often caused by a dataset that lacks diversity and is limited to known, synthesized materials [1]. The model has memorized the training data instead of learning generalizable rules.

- Solution: Incorporate synthetic data and domain randomization. Generate hypothetical, unsynthesized material compositions and treat them as negative examples or unlabeled data during training. Techniques like PU (Positive-Unlabeled) learning can probabilistically reweight these examples to account for the fact that some might be synthesizable [7]. Ensure your dataset includes variations in elemental combinations and stoichiometries beyond those found in existing databases.

Q2: Our lab has generated a large amount of synthesis data, including failed attempts. How can we best structure this data for a deep learning model?

Structuring this data correctly is crucial for teaching a model not just what works, but what doesn't.

- Solution: Create a structured schema for each experiment. Essential fields include:

- Precursors: List of chemical inputs and their quantities.

- Conditions: Time, temperature, atmosphere, and pressure [1].

- Output: The resulting phase (e.g., BiFeO₃, Bi₂Fe₄O₉ impurity).

- Label: A binary or multi-class label (e.g., "success," "failed-impurity," "failed-no-reaction").

For modeling, consider a tool like XGBoost, which efficiently handles structured, tabular data and includes built-in regularization to help prevent overfitting on high-dimensional data [8].
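One possible way to encode the schema above is a small dataclass with validation. The field names, units, and the example values below are assumptions made for illustration; they are not measured data and should be adapted to your lab's conventions.

```python
from dataclasses import dataclass
from typing import Dict, List

ALLOWED_LABELS = {"success", "failed-impurity", "failed-no-reaction"}


@dataclass
class SynthesisRecord:
    precursors: Dict[str, float]  # precursor formula -> amount in grams (assumed unit)
    temperature_c: float          # temperature in degrees Celsius
    time_h: float                 # dwell time in hours
    atmosphere: str               # e.g. "air", "argon"
    pressure_atm: float           # pressure in atmospheres
    output_phases: List[str]      # phases identified in the product
    label: str                    # one of ALLOWED_LABELS

    def __post_init__(self) -> None:
        # Reject records that a downstream model could not interpret.
        if self.label not in ALLOWED_LABELS:
            raise ValueError(f"unknown label: {self.label!r}")
        if not self.precursors:
            raise ValueError("at least one precursor is required")


def to_feature_row(record: SynthesisRecord) -> Dict[str, float]:
    """Flatten the numeric fields into one row for a tabular model."""
    return {
        "temperature_c": record.temperature_c,
        "time_h": record.time_h,
        "pressure_atm": record.pressure_atm,
        "n_precursors": float(len(record.precursors)),
        "n_output_phases": float(len(record.output_phases)),
    }


# Illustrative values only, not a real experiment.
record = SynthesisRecord(
    precursors={"Bi2O3": 1.0, "Fe2O3": 0.5},
    temperature_c=800.0,
    time_h=2.0,
    atmosphere="air",
    pressure_atm=1.0,
    output_phases=["BiFeO3", "Bi2Fe4O9"],
    label="failed-impurity",
)
row = to_feature_row(record)
```

Storing failed attempts with an explicit label, rather than dropping them, is what gives a classifier real negative examples to learn from.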

Q3: What is the most efficient way to tune our model's hyperparameters given our limited computational resources?

With large datasets and complex models, hyperparameter tuning can be computationally expensive.

- Solution: Avoid exhaustive Grid Search. Instead, use Random Search or, for greater efficiency, Bayesian Optimization [9]. Bayesian Optimization builds a probabilistic model of the objective function (like validation loss) and uses it to direct the search to the most promising hyperparameter combinations, significantly reducing the number of training runs needed to find an optimal configuration [9].
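As a baseline before moving to Bayesian Optimization, random search needs only a sampler and a loop. The sketch below assumes example ranges for three hyperparameters and uses a hypothetical `toy_objective` in place of a real training run; in practice the objective would train the model and return a validation score.

```python
import math
import random
from typing import Callable, Dict, Tuple

Config = Dict[str, float]


def sample_config(rng: random.Random) -> Config:
    """Draw one configuration; the ranges are illustrative assumptions."""
    return {
        "learning_rate": 10 ** rng.uniform(-5, -2),  # log-uniform sampling
        "batch_size": rng.choice([16, 32, 64]),
        "dropout": rng.uniform(0.2, 0.5),
    }


def random_search(objective: Callable[[Config], float],
                  n_trials: int, seed: int = 0) -> Tuple[Config, float]:
    """Return the best configuration and score seen over n_trials draws."""
    rng = random.Random(seed)
    best_config: Config = {}
    best_score = -math.inf
    for _ in range(n_trials):
        config = sample_config(rng)
        score = objective(config)
        if score > best_score:
            best_config, best_score = config, score
    return best_config, best_score


def toy_objective(config: Config) -> float:
    """Hypothetical validation score; stands in for a full training run."""
    lr_term = (math.log10(config["learning_rate"]) + 3.0) ** 2
    dropout_term = (config["dropout"] - 0.3) ** 2
    return -(lr_term + dropout_term)


best_config, best_score = random_search(toy_objective, n_trials=30)
```

Sampling the learning rate on a log scale matters here: a uniform draw between 1e-5 and 1e-2 would place almost all trials near the upper end of the range.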

Q4: We want to use a pretrained model on general chemical data and fine-tune it for our specific synthesizability prediction task. What is the best practice?

Fine-tuning allows you to leverage knowledge from a broader domain.

- Solution:

- Select a Model: Choose a model pretrained on a large corpus of chemical compositions or structures [8].

- Adjust Final Layers: Replace the model's final prediction layers with new ones tailored to your specific classification task (e.g., synthesizable/unsynthesizable) [8].

- Train with Low Learning Rate: Use a lower learning rate during fine-tuning to make small, precise adjustments to the weights without overwriting the valuable features the model has already learned [8]. Always use cross-validation during this process to ensure the model generalizes well.

Experimental Protocols for Key Scenarios

Protocol 1: Creating a Positive-Unlabeled (PU) Dataset for Synthesizability Classification

This methodology is for training a model to predict whether a hypothetical material is synthesizable.

- Positive Data Collection: Extract all known inorganic crystalline materials from the Inorganic Crystal Structure Database (ICSD). These are your confirmed "Positive" examples [7].

- Unlabeled Data Generation: Algorithmically generate a large number of hypothetical chemical compositions that are not present in the ICSD. This pool constitutes your "Unlabeled" data, which contains both synthesizable and unsynthesizable materials [7].

- Data Representation: Convert all chemical formulas into a numerical representation. The atom2vec method can be used, which learns an optimal vector representation for each atom directly from the distribution of the data [7].

- Model Training (PU Learning): Train a deep learning classifier (e.g., SynthNN) on the combined Positive and Unlabeled datasets. The loss function should be designed to account for the fact that the Unlabeled set contains hidden positive examples [7].
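To illustrate how hidden positives in the Unlabeled set can be accounted for, the sketch below uses the Elkan and Noto (2008) correction. This is a swapped-in, simpler technique than the loss used by SynthNN in [7]: it assumes that labeled positives are a random sample of all positives, and that a classifier has already been trained to separate labeled from unlabeled examples. The scores below are hypothetical classifier outputs.

```python
from typing import List


def estimate_label_frequency(scores_on_heldout_positives: List[float]) -> float:
    """Estimate c = P(labeled | synthesizable) as the mean classifier
    score on held-out known positives."""
    return sum(scores_on_heldout_positives) / len(scores_on_heldout_positives)


def corrected_probability(score: float, c: float) -> float:
    """Convert P(labeled | x) into an estimate of P(synthesizable | x)."""
    return min(1.0, score / c)


# Hypothetical scores from a labeled-vs-unlabeled classifier.
heldout_positive_scores = [0.6, 0.5, 0.7]
unlabeled_scores = {"candidate_a": 0.3, "candidate_b": 0.9, "candidate_c": 0.06}

c = estimate_label_frequency(heldout_positive_scores)
synthesizability = {
    name: corrected_probability(score, c)
    for name, score in unlabeled_scores.items()
}
# Rank unlabeled candidates from most to least likely synthesizable.
ranking = sorted(synthesizability, key=synthesizability.get, reverse=True)
```

The correction only rescales scores, so the ranking of candidates is unchanged; what changes is the interpretation of the score as a probability, which matters when choosing a decision threshold.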

Protocol 2: Hyperparameter Tuning via Bayesian Optimization

This protocol outlines a method for efficiently finding the best hyperparameters for a deep learning model.

- Define Search Space: Identify the hyperparameters to tune and their value ranges. Key hyperparameters for deep learning include:

- Learning Rate (e.g., log-uniform between 1e-5 and 1e-2)

- Batch Size (e.g., 16, 32, 64)

- Dropout Rate (e.g., uniform between 0.2 and 0.5)

- Number of Hidden Layers [9]

- Choose Objective Function: Define the metric to maximize (e.g., validation set accuracy or F1-score) [9].

- Initialize Optimization: Run a few random trials from the search space to gather initial performance data [9].

- Iterative Loop:

- The Bayesian optimization algorithm uses all previous results to build a surrogate model (e.g., a Gaussian Process) of the objective function.

- The algorithm then selects the next hyperparameter combination to evaluate by maximizing an acquisition function (e.g., Expected Improvement), which balances exploration of unknown areas and exploitation of known promising areas.

- Train the model with the proposed hyperparameters, record the performance, and update the surrogate model.

- Repeat until a performance plateau or computational budget is reached [9].
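The iterative loop above can be sketched for a single hyperparameter using only the standard library. This is a minimal illustration under several assumptions: a zero-mean Gaussian Process with a fixed RBF kernel, a fixed grid of candidates, fixed initial points instead of random trials, and a hypothetical `toy_objective` in place of real model training. Libraries such as Optuna handle the general case.

```python
import math
from typing import Callable, List, Tuple


def rbf(a: float, b: float, length: float = 0.2) -> float:
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def solve(A: List[List[float]], b: List[float]) -> List[float]:
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x


def gp_posterior(xs: List[float], ys: List[float], x_new: float,
                 noise: float = 1e-4) -> Tuple[float, float]:
    """Posterior mean and standard deviation of the surrogate at x_new."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [rbf(a, x_new) for a in xs]
    alpha = solve(K, ys)
    v = solve(K, k_star)
    mean = sum(k * a for k, a in zip(k_star, alpha))
    var = max(rbf(x_new, x_new) - sum(k * w for k, w in zip(k_star, v)), 1e-12)
    return mean, math.sqrt(var)


def expected_improvement(mean: float, std: float, best: float) -> float:
    """Expected Improvement acquisition for a maximization problem."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf


def bayesian_optimize(objective: Callable[[float], float], init_xs: List[float],
                      candidates: List[float], n_iter: int):
    xs = list(init_xs)
    ys = [objective(x) for x in xs]
    for _ in range(n_iter):
        best = max(ys)
        scores = [expected_improvement(*gp_posterior(xs, ys, c), best)
                  for c in candidates]
        x_next = candidates[scores.index(max(scores))]  # maximize acquisition
        xs.append(x_next)
        ys.append(objective(x_next))                    # "train" and record
    i = ys.index(max(ys))
    return xs[i], ys[i], list(zip(xs, ys))


def toy_objective(x: float) -> float:
    """Hypothetical validation score; x in [0, 1] maps to lr = 10**(-5 + 3x)."""
    return -(x - 0.6) ** 2


grid = [i / 40 for i in range(41)]
best_x, best_y, history = bayesian_optimize(toy_objective, [0.0, 0.5, 1.0], grid, n_iter=8)
```

The surrogate is refit from scratch for every candidate here, which keeps the code short but is wasteful; a practical implementation would factor the kernel matrix once per iteration.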

Research Reagent Solutions

The table below lists key computational "reagents" and tools essential for experiments in deep learning for synthesizability.

| Item | Function/Benefit |

|---|---|

| Unity/Unreal Engine | A photorealistic game engine used to develop simulators and generate high-quality, perfectly labeled synthetic data for training models, bypassing the need for physical experiments [10]. |

| OpenVINO Toolkit | Optimizes and deploys deep learning models for fast inference on Intel hardware, accelerating the screening of candidate materials [8]. |

| Optuna | An open-source framework for automated hyperparameter optimization. It efficiently searches the hyperparameter space using algorithms like Bayesian optimization, reducing manual tuning time [8]. |

| Atom2Vec | A material composition representation method that learns feature embeddings for atoms directly from data, without requiring pre-defined chemical knowledge, allowing the model to discover synthesizability principles on its own [7]. |

| WebAIM Contrast Checker | A tool to verify that the color contrast in all visualizations (e.g., charts, diagrams) meets accessibility standards (WCAG), ensuring clarity and readability for all researchers [11]. |

Workflow Visualization

Figure 1. High-level workflow for developing a synthesizability prediction model, integrating real and synthetic data sources.

Figure 2. Logic of using Positive and Unlabeled (PU) learning to overcome the lack of confirmed negative data.

Key Deep Learning Architectures for Materials Informatics

This technical support center provides troubleshooting guides and frequently asked questions (FAQs) for researchers employing deep learning architectures in materials informatics, with a special focus on optimizing parameters for material synthesizability research.

Frequently Asked Questions (FAQs)

1. What are the key deep learning architectures used for material property prediction? Several deep learning architectures have been developed to learn from different materials representations [12]:

- IRNet (Individual Residual Network): A very deep fully connected network that uses individual residual learning around each layer to successfully alleviate the vanishing gradient problem, enabling deeper learning on large materials datasets [12].

- Graph Neural Networks: Models like Crystal Graph Convolutional Neural Networks (CGCNN) learn material properties directly from the connection of atoms in the crystal, providing a universal representation of crystalline materials [12].

- SchNet: Uses continuous-filter convolutional layers to model quantum interactions in molecules for predicting total energy and interatomic forces [12].

- ElemNet: A deep neural network that automatically captures essential chemistry between elements from elemental fractions to predict formation enthalpy without domain-knowledge feature engineering [12].

- 3D Convolutional Neural Networks (3D-CNN): Some frameworks represent crystalline materials as color-coded three-dimensional images, from which a convolutional encoder learns latent structural and chemical features for tasks like synthesizability classification [13].

2. How can we predict whether a hypothetical material is synthesizable using deep learning? Predicting synthesizability is a major challenge. Deep learning approaches often treat this as a classification problem, but face the issue of limited data on non-synthesizable (negative) samples [13] [14]. Advanced frameworks address this by:

- Semi-Supervised Learning: The Teacher-Student Dual Neural Network (TSDNN) leverages a large amount of unlabeled data to improve performance. A teacher model provides pseudo-labels for unlabeled data, which a student model then learns from, significantly improving synthesizability prediction accuracy [14].

- Large Language Models (LLMs): The Crystal Synthesis LLM (CSLLM) framework uses specialized language models fine-tuned on a comprehensive dataset of synthesizable and non-synthesizable crystal structures, achieving state-of-the-art accuracy by representing crystal structures as text [15].

3. My deep learning model's performance is degrading as I make the network deeper. Why does this happen and how can I fix it? This is a classic symptom of the vanishing gradient problem, where gradients become exponentially small as they are backpropagated from the output layer to the initial layers, halting effective training [12].

- Solution: Implement residual learning. Architectures like IRNet introduce shortcut connections around each layer (or stack of layers). Instead of learning the full underlying mapping, each layer learns a residual mapping, which has been shown to make very deep networks easier to train and converge [12].

4. What are the essential steps for debugging a deep learning model in materials science? A systematic approach to debugging is crucial [16] [17]:

- Start Simple: Begin with a simple architecture (e.g., a fully connected network with one hidden layer) and a small, manageable dataset to establish a baseline and increase iteration speed [16].

- Overfit a Single Batch: Try to drive the training error on a single batch of data arbitrarily close to zero. Failure to do so can reveal issues like flipped signs in the loss function, numerical instability, or an incorrect data pipeline [16].

- Check Intermediate Outputs: Use debugging tools to track the outputs and gradients at each layer, similar to setting breakpoints in standard software debugging. This helps identify where a network might be failing [17].

- Compare to a Known Result: Reproduce the results of an official model implementation on a similar or benchmark dataset. Stepping through both codes line-by-line can help identify discrepancies [16].
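The "overfit a single batch" check can be tried on a deliberately tiny model before applying it to a real network. The sketch below assumes a one-feature logistic regression trained by plain gradient descent on four hand-made points; the data and hyperparameters are illustrative. It also checks that the initial loss matches chance level, which for balanced binary labels is ln 2.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def log_loss(w: float, b: float, xs: List[float], ys: List[int]) -> float:
    """Mean binary cross-entropy, with probabilities clipped for safety."""
    eps = 1e-12
    total = 0.0
    for x, y in zip(xs, ys):
        p = min(max(sigmoid(w * x + b), eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(xs)


def overfit_single_batch(xs: List[float], ys: List[int],
                         lr: float = 0.5, steps: int = 500) -> Tuple[float, float]:
    """Train on one fixed batch and return (initial loss, final loss)."""
    w, b = 0.0, 0.0
    initial = log_loss(w, b, xs, ys)
    n = len(xs)
    for _ in range(steps):
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return initial, log_loss(w, b, xs, ys)


# One small, linearly separable batch.
batch_x = [-2.0, -1.0, 1.0, 2.0]
batch_y = [0, 0, 1, 1]
initial_loss, final_loss = overfit_single_batch(batch_x, batch_y)
```

If the final loss on such a batch does not approach zero, the cause is almost always in the loss, the gradient, or the data pipeline rather than in the choice of hyperparameters.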

5. Do I need a massive labeled dataset to apply deep learning in materials informatics? Not necessarily. While deep learning often benefits from large datasets, several strategies work around data scarcity:

- Leveraging Unlabeled Data: Semi-supervised learning models like TSDNN effectively exploit the large amount of unlabeled data available in materials databases to boost performance when labeled data is limited [14].

- Transfer Learning: Pre-trained models, often on large datasets like the OQMD or Materials Project, can be fine-tuned for new tasks with smaller datasets [12] [18].

- Hybrid Models: Integrating physics-based simulations with data-driven learning can reduce the reliance on massive, purely data-driven training sets [18].

Troubleshooting Guide: Common Experimental Issues and Solutions

Issue 1: Model Performance is Poor or Unstable

| Symptoms | Possible Causes | Diagnostic Steps | Solutions |

|---|---|---|---|

| Training loss does not decrease, or validation loss oscillates/explodes [16] [17]. | - Incorrect weight initialization.- Learning rate too high or too low.- Vanishing/exploding gradients.- Numerical instability. | - Check initial loss matches expected chance performance [17].- Monitor gradient magnitudes across layers.- Check for inf or NaN values in tensors [16]. | - Use standard initialization schemes from frameworks.- Tune learning rate; use adaptive optimizers like Adam.- Use residual connections (e.g., IRNet) and ReLU activation [12] [17]. |

| Model overfits (low training error, high test error). | - Model too complex for data.- Insufficient training data.- No regularization. | - Compare training vs. validation loss curves. | - Apply L1/L2 weight regularization, Dropout, or Early Stopping [17].- Simplify the model architecture [16]. |

| Performance is worse than a known baseline or published result [16]. | - Implementation bugs (often silent).- Incorrect data pre-processing.- Hyperparameter choices. | - Overfit a single batch to catch bugs [16].- Line-by-line code comparison with a known correct implementation.- Verify input data normalization. | - Start with a simple, proven architecture and sensible hyperparameter defaults [16].- Build complicated data pipelines only after a simple version works [16]. |

Issue 2: Challenges Specific to Synthesizability Prediction

| Symptoms | Possible Causes | Diagnostic Steps | Solutions |

|---|---|---|---|

| Model fails to generalize to new, hypothetical materials. | - Severe bias in training data (mostly synthesizable samples) [14].- Model learns only from a narrow set of compositions/structures. | - Evaluate model on a balanced test set containing "crystal anomalies" [13].- Analyze performance across different crystal systems. | - Use semi-supervised learning (e.g., TSDNN) to leverage unlabeled data and mitigate bias [14].- Ensure training dataset is comprehensive and includes diverse crystal structures [15]. |

| Inability to predict synthesis routes or precursors. | - Model is only trained for binary classification (synthesizable/not). | - Review model capabilities and outputs. | - Employ a multi-task framework like CSLLM, which uses specialized models to predict synthesizability, synthetic methods, and suitable precursors [15]. |

Experimental Protocols for Key Studies

Protocol 1: Implementing an IRNet for Property Prediction

This protocol is based on the framework for enabling deeper learning on big materials data [12].

- Input Representation: Prepare your vector-based materials representation (e.g., composition-derived attributes, structure-derived attributes).

- Network Architecture:

- Construct a deep fully connected network (e.g., 10 to 48 layers).

- Implement an Individual Residual (IR) block. Each block should consist of a fully connected layer, followed by batch normalization and a ReLU activation function.

- Around each IR block, add a shortcut connection that adds the block's input to its output. This forces the block to learn a residual mapping.

- Training: Train the network on a large dataset (e.g., ~436k samples from OQMD) using a regression loss function like Mean Absolute Error (MAE).

- Validation: Compare the MAE of the deep IRNet to a plain deep neural network and traditional machine learning models (e.g., Random Forest) on a held-out test set.
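The effect of the shortcut connection can be seen in a forward pass without any deep learning framework. The sketch below is a simplification of the block described above: batch normalization is omitted, and all weights are set to zero to show the limiting case. The function names are hypothetical.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def relu(v: Vector) -> Vector:
    return [max(0.0, x) for x in v]


def dense(weights: Matrix, bias: Vector, v: Vector) -> Vector:
    """Fully connected layer: weights @ v + bias."""
    return [sum(w * x for w, x in zip(row, v)) + b for row, b in zip(weights, bias)]


def plain_block(weights: Matrix, bias: Vector, v: Vector) -> Vector:
    """Fully connected layer followed by ReLU (batch norm omitted)."""
    return relu(dense(weights, bias, v))


def residual_block(weights: Matrix, bias: Vector, v: Vector) -> Vector:
    """Same block with a shortcut that adds the input to the output."""
    return [o + x for o, x in zip(plain_block(weights, bias, v), v)]


def stack(block, n_layers: int, weights: Matrix, bias: Vector, v: Vector) -> Vector:
    """Apply the same block n_layers times."""
    for _ in range(n_layers):
        v = block(weights, bias, v)
    return v


dim = 3
zero_weights = [[0.0] * dim for _ in range(dim)]
zero_bias = [0.0] * dim
x = [1.0, -2.0, 0.5]

deep_residual = stack(residual_block, 48, zero_weights, zero_bias, x)
deep_plain = stack(plain_block, 48, zero_weights, zero_bias, x)
```

With zero weights the 48-layer residual stack returns its input unchanged, while the plain stack collapses to zeros. This is the intuition behind residual learning: each block only has to learn a correction to the identity, so the signal is preserved through depth by default.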

Protocol 2: Setting up a TSDNN for Synthesizability Classification

This protocol outlines the semi-supervised teacher-student approach for formation energy and synthesizability prediction [14].

- Data Preparation:

- Labeled Data (Limited): Gather a small set of known synthesizable (positive) materials.

- Unlabeled Data (Large): Gather a large pool of materials with unknown synthesizability status.

- PU-Learning Pre-processing: Use an iterative Positive-Unlabeled (PU) learning procedure to select the most likely negative samples from the unlabeled set. This creates an initial, noisy training set.

- Teacher-Student Training:

- Teacher Model: Train an initial model (e.g., a CGCNN) on the noisy labeled set produced by the PU-learning pre-processing step.

- Pseudo-labeling: Use the teacher model to predict labels (pseudo-labels) for the entire unlabeled dataset.

- Student Model: Train a second model (the student) on a combination of the original labeled data and the newly pseudo-labeled data.

- Iteration: The trained student model can then become the teacher for a new iteration, further refining the pseudo-labels and improving model performance.
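The teacher-student loop can be illustrated with a toy classifier. In the sketch below a one-dimensional nearest-centroid model stands in for the CGCNN, and each material is reduced to a single hypothetical descriptor value; the data are made up for illustration and the real TSDNN training procedure in [14] is more involved.

```python
from typing import List, Tuple

Labeled = Tuple[float, int]       # (descriptor, label) with 1 = synthesizable
Centroids = Tuple[float, float]   # (positive centroid, negative centroid)


def fit_centroids(samples: List[Labeled]) -> Centroids:
    """Toy model: the mean descriptor of each class."""
    pos = [x for x, y in samples if y == 1]
    neg = [x for x, y in samples if y == 0]
    return sum(pos) / len(pos), sum(neg) / len(neg)


def predict(model: Centroids, x: float) -> int:
    pos_c, neg_c = model
    return 1 if abs(x - pos_c) <= abs(x - neg_c) else 0


def teacher_student(labeled: List[Labeled], unlabeled: List[float],
                    iterations: int = 2) -> Centroids:
    model = fit_centroids(labeled)  # initial teacher
    for _ in range(iterations):
        # Teacher assigns pseudo-labels to every unlabeled sample.
        pseudo = [(x, predict(model, x)) for x in unlabeled]
        # Student trains on labeled plus pseudo-labeled data,
        # then serves as the teacher in the next iteration.
        model = fit_centroids(labeled + pseudo)
    return model


# Noisy labeled set (as produced by a PU pre-processing step) and unlabeled pool.
labeled = [(0.9, 1), (0.1, 0)]
unlabeled = [0.2, 0.3, 0.7, 0.8, 0.15, 0.85]
student = teacher_student(labeled, unlabeled)
```

Even in this toy setting the student's class centroids are estimated from eight points instead of two, which is the mechanism by which unlabeled data reduces variance when labels are scarce.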

The table below summarizes quantitative performance data for key deep learning models in materials informatics, particularly for stability and synthesizability prediction.

Table 1: Performance Comparison of Key Deep Learning Models in Materials Informatics

| Model Name | Architecture Type | Primary Application | Key Performance Metric | Reported Result |

|---|---|---|---|---|

| IRNet [12] | Very Deep Fully Connected Network with Individual Residual Learning | Formation Enthalpy Prediction | Mean Absolute Error (MAE) | 0.038 eV/atom (vs. 0.072 eV/atom for Random Forest) |

| CSLLM (Synthesizability LLM) [15] | Fine-tuned Large Language Model | Synthesizability Classification | Accuracy | 98.6% |

| Teacher-Student DNN (TSDNN) [14] | Semi-Supervised Dual Neural Network | Synthesizability Classification | True Positive Rate | 92.9% (vs. 87.9% for baseline PU learning) |

| Crystal Graph CNN (CGCNN) [12] | Graph Neural Network | Formation Energy Prediction | MAE (Regression) | Used as a baseline in TSDNN study [14] |

| 3D-CNN with Convolutional Encoder [13] | 3D Convolutional Neural Network | Synthesizability Classification | Classification Accuracy | Demonstrated accurate classification across broad crystal types |

Workflow Visualization: Deep Learning for Material Synthesizability

The following diagram illustrates a generalized workflow for applying deep learning to predict material synthesizability, integrating concepts from the cited architectures.

Table 2: Essential Data, Tools, and Models for Materials Informatics Experiments

| Resource Name | Type | Function / Application | Reference / Source |

|---|---|---|---|

| OQMD (Open Quantum Materials Database) | Data Repository | Source of DFT-computed formation energies and other properties for hundreds of thousands of materials; used for training property prediction models. | [12] |

| Materials Project (MP) | Data Repository | A vast database of computed material properties for inorganic compounds; used for training and benchmarking. | [12] [14] |

| ICSD (Inorganic Crystal Structure Database) | Data Repository | A critical source of experimentally synthesizable crystal structures used as positive examples for synthesizability models. | [15] [14] |

| CGCNN (Crystal Graph Convolutional Neural Network) | Software Model | A foundational graph neural network architecture that learns from crystal structures directly; often used as a building block or baseline. | [12] [14] |

| PU Learning Algorithm | Methodology | A semi-supervised technique to identify likely negative (non-synthesizable) samples from a pool of unlabeled data, crucial for creating training sets. | [14] |

| CIF (Crystallographic Information File) | Data Format | Standard text file format for representing crystal structure information; a common input for deep learning models. | [15] |

Why Traditional DFT and Convex-Hull Methods Fall Short

Frequently Asked Questions (FAQs)

1. Why can't DFT-calculated formation energy alone reliably predict if a material is synthesizable? Density Functional Theory (DFT) calculates a material's formation energy to determine its thermodynamic stability. A stable material is one that is unlikely to decompose into other, more stable phases. However, synthesizability is not governed by thermodynamics alone. A material might be thermodynamically stable but impossible or exceedingly difficult to synthesize under practical laboratory conditions due to kinetic barriers or the lack of a viable synthesis pathway. Furthermore, DFT fails to account for non-physical considerations such as reactant cost, equipment availability, and human-perceived importance of the final product. Consequently, using formation energy as a proxy for synthesizability captures only about 50% of synthesized inorganic crystalline materials [7].

2. What is the fundamental limitation of the convex-hull method in materials discovery? The convex-hull method identifies the most thermodynamically stable phases in a chemical system. A material is considered stable if its energy lies on or very near this convex hull. The primary limitation is that this is a screening method, not a generative one. It is fundamentally limited to exploring variations of already-known materials through substitutions and prototypes. This means it can only explore a tiny fraction (on the order of 10^6–10^7 materials) of the vast space of potentially stable inorganic compounds, which is why it has historically been ineffective at predicting stability for materials with more than four unique elements [6].

3. Besides stability, what other chemical factors influence synthesizability that traditional methods miss? Traditional methods like charge-balancing, which ensures a net neutral ionic charge, are often used as a synthesizability proxy. However, this approach is inflexible and fails to account for different bonding environments. For instance, among all known inorganic materials, only 37% are charge-balanced according to common oxidation states. Even among typically ionic compounds like binary cesium compounds, only 23% are charge-balanced. This indicates that predicting synthesizability requires learning complex, implicit chemical principles (charge-balancing tendencies, chemical family relationships, and ionicity) directly from data, which is beyond the scope of rule-based methods [7].
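
The rule-based charge-balancing proxy can be sketched in a few lines of Python. The oxidation-state table below is a small illustrative subset, and the rule that each element takes a single oxidation state is a simplifying assumption of this sketch rather than a definition from the cited study.

```python
from itertools import product

# Illustrative subset of common oxidation states (assumption for this sketch).
COMMON_OXIDATION_STATES = {
    "Na": [1],
    "Cl": [-1],
    "O": [-2],
    "Ti": [4],
    "Fe": [2, 3],
}


def is_charge_balanced(composition):
    """Return True if one oxidation state per element gives zero net charge.

    composition maps element symbol -> integer count in the formula unit.
    """
    elements = list(composition)
    choices = [COMMON_OXIDATION_STATES[el] for el in elements]
    return any(
        sum(state * composition[el] for el, state in zip(elements, states)) == 0
        for states in product(*choices)
    )


print(is_charge_balanced({"Na": 1, "Cl": 1}))  # NaCl
print(is_charge_balanced({"Fe": 2, "O": 3}))   # Fe2O3
print(is_charge_balanced({"Fe": 3, "O": 4}))   # Fe3O4
```

Under these assumptions NaCl and Fe2O3 pass, while Fe3O4 (magnetite, a well-known mixed-valence oxide) fails. This is the kind of rigidity that makes a hard-coded rule a weak synthesizability filter.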

4. How do data scarcity and generalization issues hinder traditional machine learning models for property prediction? Traditional machine learning (ML) and deep learning models for material properties require large, high-quality datasets for accurate predictions. Many important material properties have small datasets (a few thousand data points or fewer), leading models to suffer from high variance and overfitting. Without a clear relationship between molecular structure and properties, these data-driven models exhibit prediction errors that can misguide molecular screening. Furthermore, these models often struggle with extrapolation, performing poorly on material compositions or structures that are not represented in their training data, thus reducing the reliability of the design outcomes [19] [20].

Troubleshooting Guides

Issue 1: Low Success Rate in Discovering Novel, Stable Materials

Problem: Your computational screening pipeline, based on DFT convex-hull stability, is failing to identify a significant number of novel, stable candidates, especially in complex chemical spaces with more than four elements.

Diagnosis: This is a fundamental limitation of screening-based approaches. You are likely exploring a confined region of chemical space near known materials, missing the vast space of undiscovered, stable crystals.

Solution: Integrate a generative AI model into your discovery workflow.

- Recommended Tool: Use a generative model like MatterGen or GNoME.

- Mechanism: These models are trained on vast datasets of known crystals and learn the underlying rules of stable crystal structure. They can directly generate novel candidate structures across the periodic table.

- Protocol:

- Pretraining: The model (e.g., MatterGen) is pretrained on a large, diverse dataset of stable structures (e.g., ~600,000 from Materials Project and Alexandria) to learn general stability principles [21].

- Generation: The model generates candidate crystal structures. For example, GNoME has generated 2.2 million structures predicted to be stable, expanding the number of known stable materials by an order of magnitude [6].

- Fine-Tuning: The base model can be fine-tuned with adapter modules on a smaller dataset labeled with your target property (e.g., magnetic moment, band gap) to steer the generation toward materials with specific desired functionalities [21].

- Validation: Perform DFT calculations on the top-generated candidates to verify their stability and properties.

Issue 2: Poor Reliability of Property Predictions for Novel Material Classes

Problem: Your machine learning model's property predictions are inaccurate when applied to new types of materials not well-represented in the training data, leading to failed experimental validation.

Diagnosis: The model is likely performing poorly due to an out-of-distribution problem. Its predictions are unreliable because the new materials are too dissimilar from those it was trained on.

Solution: Implement a reliability quantification framework based on molecular similarity.

- Recommended Tool: Develop a model that uses a Molecular Similarity Coefficient (MSC) and an associated Reliability Index (R) [19].

- Mechanism: This approach measures how similar a target molecule is to the ones in the training dataset. Predictions for molecules with high similarity to the training set are deemed more reliable.

- Protocol:

- Similarity Calculation: For a target molecule, calculate its MSC relative to all molecules in the available property database.

- Tailored Training Set: Select the most similar molecules from the database to create a customized, small training set.

- Model Training & Prediction: Train your property prediction model (e.g., a Group Contribution method or Support Vector Regression) on this tailored set and make the prediction.

- Reliability Quantification: Calculate the Reliability Index based on the similarities of the molecules in the training set. A higher R-index indicates a more reliable prediction, helping you decide whether to trust the prediction or prioritize experimental validation [19].
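
The protocol above can be sketched as follows. This is only an illustration: Tanimoto similarity on functional-group sets stands in for the MSC, and the mean similarity of the selected neighbours stands in for the Reliability Index. Both are assumptions of this sketch, since the exact definitions in [19] are not reproduced here, and the small database is invented.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two feature sets."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def tailored_training_set(target, database, k=3):
    """Return the k most similar database entries as (name, similarity)."""
    scored = [(name, tanimoto(target, feats)) for name, feats in database.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


def reliability_index(neighbours):
    """Mean similarity of the tailored set (assumed stand-in for R)."""
    return sum(sim for _, sim in neighbours) / len(neighbours)


# Hypothetical property database keyed by functional-group sets.
database = {
    "ethanol": {"CH3", "CH2", "OH"},
    "methanol": {"CH3", "OH"},
    "ethane": {"CH3"},
    "acetic_acid": {"CH3", "COOH"},
    "benzene": {"ACH"},
}
target = {"CH3", "CH2", "OH"}  # e.g. a longer-chain primary alcohol

neighbours = tailored_training_set(target, database, k=3)
r_index = reliability_index(neighbours)
print(neighbours, round(r_index, 3))
```

A low index for a new target would be the signal to prioritize experimental validation over trusting the prediction.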

Issue 3: Inefficient Optimization of Material Synthesis Parameters

Problem: Experimentally optimizing synthesis parameters (e.g., temperature, humidity, duration) to produce a high-quality material is taking too long, requiring a year or more of manual trial-and-error.

Diagnosis: Relying solely on researcher intuition and manual experimentation creates a bottleneck in the materials development cycle.

Solution: Deploy an autonomous, AI-driven laboratory.

- Recommended Tool: Use a platform like AutoBot [22].

- Mechanism: An automated platform uses machine learning to direct robotic systems in synthesizing and characterizing materials. It runs an iterative learning loop where each experiment's results inform the choice of the next most informative set of parameters to test.

- Protocol:

- Automated Synthesis: A robotic system synthesizes samples (e.g., perovskite films), varying multiple parameters precisely.

- Automated Characterization: The platform immediately characterizes the samples using integrated techniques (e.g., UV-Vis and photoluminescence spectroscopy).

- Data Fusion and Scoring: Data from various characterization techniques are fused into a single score representing material quality.

- Machine Learning Decision: A machine learning algorithm analyzes the relationship between synthesis parameters and the quality score, then decides the next set of parameters to test to maximize information gain. This process allowed AutoBot to find optimal synthesis conditions by sampling just 1% of 5,000+ possible combinations in a few weeks, a task that would take a year manually [22].

Comparative Data: Traditional Methods vs. Modern AI Approaches

The table below summarizes key performance metrics that highlight why modern AI methods are surpassing traditional approaches.

Table 1: Quantitative Comparison of Material Discovery Methods

| Method | Key Metric | Performance | Primary Limitation |

|---|---|---|---|

| Charge-Balancing [7] | Precision in identifying synthesizable materials | Low (Only 37% of known materials are charge-balanced) | Inflexible; cannot account for metallic, covalent, or kinetically stabilized materials. |

| DFT + Convex-Hull Screening [7] [6] | Hit rate for synthesizable materials | ~50% | Misses kinetically stabilized phases and is limited to known chemical spaces. |

| GNoME (AI Screening) [6] | Hit rate for stable materials | >80% (with structure), >33% (composition only) | Requires robust generation of candidate structures. |

| SynthNN (AI Synthesizability) [7] | Precision in identifying synthesizable materials | 7x higher than DFT formation energy | Learns from existing data; may be biased by historical synthesis choices. |

| MatterGen (Generative AI) [21] | Percentage of generated structures that are Stable, Unique, and New (SUN) | More than 2x higher than previous generative models | Computational cost of training and fine-tuning. |

Experimental Protocols

Protocol 1: Training a Deep Learning Synthesizability Classifier (SynthNN)

This protocol outlines the procedure for developing a model that classifies materials as synthesizable based on composition alone [7].

- Data Curation: Compile a dataset of positive examples from the Inorganic Crystal Structure Database (ICSD), which contains experimentally synthesized crystalline materials.

- Data Augmentation: Artificially generate a large set of "unsynthesized" material compositions to serve as negative examples. A semi-supervised Positive-Unlabeled (PU) learning approach is used to account for the possibility that some of these generated materials could be synthesizable.

- Model Architecture: Employ a deep learning model (e.g., SynthNN) that uses an atom2vec-style embedding matrix. This allows the model to learn optimal chemical representations directly from the data without relying on pre-defined features like charge balance.

- Training: Train the neural network on the curated dataset. The model learns the complex chemical principles governing synthesizability from the distribution of all known synthesized materials.

- Validation: Benchmark the model's precision against baseline methods like random guessing and charge-balancing. The model should be evaluated using metrics like F1-score, accounting for the PU learning framework.
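
For the validation step, precision, recall, and F1-score can be computed directly from predicted and reference labels. The sketch below uses invented labels. Note that in a PU setting the "negative" labels are really unlabeled examples, so some apparent false positives may in fact be synthesizable and the measured precision is likely to understate the true value.

```python
def precision_recall_f1(y_true, y_pred):
    """Binary precision, recall, and F1 with 1 = synthesizable."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Invented labels: 1 = known synthesized, 0 = artificially generated.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 1, 0, 0, 0, 1]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(precision, recall, f1)
```

The same function can score the charge-balancing and random-guessing baselines on the same holdout set, so that all methods are compared on identical data.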

Protocol 2: Implementing a Transfer Learning Strategy for Small Datasets

This protocol is for improving property prediction accuracy when the target property has a limited dataset [20].

- Base Model Selection: Choose a powerful Graph Neural Network (GNN) architecture, such as the Atomistic Line Graph Neural Network (ALIGNN).

- Pre-Training (PT): Pre-train the GNN on a large, general source dataset from a materials database (e.g., formation energies from the Materials Project). This teaches the model fundamental chemistry and structural relationships.

- Fine-Tuning (FT): Take the pre-trained model and fine-tune it on your smaller, target dataset (e.g., piezoelectric modulus). The fine-tuning can involve retraining all or a subset of the model's layers with a low learning rate.

- Performance Evaluation: Compare the transfer-learned model's Mean Absolute Error (MAE) and R² score against a model trained from scratch on the small target dataset. The PT/FT model is expected to achieve lower errors, especially on very small datasets (e.g., N=100).
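
The comparison in the final step comes down to two metrics. A minimal sketch in plain Python is shown below; the target values and both sets of predictions are invented and serve only to show how the numbers are computed.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Invented holdout targets and predictions from two hypothetical models.
y_true = [1.0, 2.0, 3.0, 4.0]
pred_scratch = [1.5, 1.5, 3.5, 3.5]   # trained from scratch
pred_transfer = [1.1, 2.1, 2.9, 3.9]  # pre-trained, then fine-tuned

for label, pred in [("scratch", pred_scratch), ("PT/FT", pred_transfer)]:
    print(label, mean_absolute_error(y_true, pred), r2_score(y_true, pred))
```

Both models should be scored on the same held-out split; otherwise a difference in MAE may reflect the split rather than the training strategy.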

Workflow Visualization

The following diagram illustrates the core iterative workflow of a modern, AI-accelerated materials discovery platform, contrasting it with traditional methods.

The Scientist's Toolkit: Key Research Reagents & Computational Solutions

Table 2: Essential Tools for Modern Computational Materials Research

| Tool / Solution Name | Type | Primary Function |

|---|---|---|

| GNoME [6] | Graph Neural Network | Discovers stable crystals by predicting formation energy and stability at scale, enabling large-scale screening. |

| MatterGen [21] | Generative AI Model | Inverse design of novel, stable inorganic materials with targeted properties by generating candidate structures. |

| SynthNN [7] | Deep Learning Classifier | Predicts the synthesizability of a material from its chemical composition, learning from historical data. |

| AutoBot [22] | Autonomous Laboratory | Automates the synthesis, characterization, and AI-driven optimization of material synthesis parameters. |

| ALIGNN [20] | Graph Neural Network | Accurately predicts material properties from atomic structure; serves as a strong base model for transfer learning. |

| Molecular Similarity Framework [19] | Reliability Metric | Quantifies the confidence and reliability of a molecular property prediction based on similarity to training data. |

Architectures and Workflows: Implementing Deep Learning for Synthesizability Prediction

Specialized Large Language Models for Crystal Structures

Frequently Asked Questions

Q1: My LLM-generated crystal structures are chemically invalid. What should I check? This commonly occurs when the model's tokenization process misinterprets crystallographic information. Ensure your input representation uses standardized formats like CIF or the simplified "material string" developed for CSLLM, which integrates essential crystal information without redundancy [15]. For autoregressive models like CrystaLLM, verify that the tokenization vocabulary adequately covers all space groups and atomic symbols in your target structures [23]. Implement validity checks using machine learning interatomic potentials as a filtering step, which has been shown to validate 78.38% of generated structures as metastable [24].

Q2: How can I improve synthesizability prediction accuracy for hypothetical crystals? Traditional stability metrics like energy above hull (74.1% accuracy) and phonon stability (82.2% accuracy) underperform compared to specialized LLM approaches. The Crystal Synthesis LLM framework achieves 98.6% accuracy by using a balanced dataset of 70,120 synthesizable structures from ICSD and 80,000 non-synthesizable structures identified through positive-unlabeled learning [15]. For limited data scenarios, teacher-student dual neural networks (TSDNN) improve synthesizability prediction true positive rates from 87.9% to 92.9% while using 98% fewer parameters [14].

Q3: What are the computational requirements for fine-tuning crystal structure LLMs? Requirements vary significantly by approach. The MatLLMSearch framework utilizes pre-trained LLMs without additional fine-tuning, substantially reducing overhead [24]. For custom fine-tuning, CrystaLLM demonstrated effective performance with both 25-million and 200-million parameter models, with training duration spanning weeks to months depending on GPU resources [23]. For limited computational resources, consider leveraging existing APIs or focusing on smaller, specialized architectures.

Q4: How do I handle data imbalance in synthesizability training datasets? Address this through semi-supervised learning techniques. The TSDNN approach effectively exploits large amounts of unlabeled data through its teacher-student architecture [14]. For crystal anomaly detection, strategically select negative samples from unobserved structures of well-studied chemical compositions, ensuring balance between classes by restricting anomaly structures to match synthesized structure counts [13]. Positive-unlabeled learning algorithms can generate reliable negative samples from theoretical databases [15].

Q5: Can LLMs suggest synthetic methods and precursors for generated crystals? Yes, specialized frameworks like CSLLM include separate LLMs for synthesizability prediction (98.6% accuracy), method classification (91.0% accuracy), and precursor identification (80.2% success rate) [15]. These models are fine-tuned on comprehensive datasets encompassing synthesis literature and precursor relationships. For binary and ternary compounds, this approach successfully identifies solid-state synthesis precursors while calculating reaction energies and performing combinatorial analysis to suggest additional options.

Performance Comparison of Crystal Structure LLMs

| Model | Primary Function | Accuracy/Performance | Key Innovation |

|---|---|---|---|

| MatLLMSearch [24] | Crystal structure generation | 78.38% metastable rate (MLIP); 31.7% DFT-verified stability | Evolution-guided pre-trained LLMs without fine-tuning |

| CrystaLLM [23] | Autoregressive crystal generation | Correct CIF syntax; physically plausible structures | Direct CIF tokenization and generation |

| CSLLM [15] | Synthesizability & precursor prediction | 98.6% synthesizability accuracy; >90% method classification | Three specialized LLMs for synthesizability, methods, precursors |

| 3D CNN Synthesizability [13] | Synthesizability classification | Accurate across broad crystal structure types | 3D voxel image representation with convolutional encoder |

| Teacher-Student DNN [14] | Formation energy & synthesizability | 92.9% true positive rate (from 87.9% baseline) | Semi-supervised learning with dual-network architecture |

Experimental Protocols

Protocol 1: Training a Crystal Structure Generation LLM

Objective: Develop an LLM for generating valid crystal structures without extensive fine-tuning.

Materials and Setup:

- Pre-trained LLM with sufficient parameter capacity (e.g., 200M parameters)

- Crystallographic Information File (CIF) dataset (e.g., ~2.2 million files)

- Tokenization vocabulary covering atomic symbols, space groups, and numerical digits

- Evolutionary algorithm framework for guided generation

- Validation infrastructure (ML interatomic potentials, DFT)

Procedure:

- Data Preparation: Standardize CIF files, ensuring consistent formatting and removing disordered structures. Withhold 10,000 files for testing.

- Tokenization: Implement byte-level tokenization accommodating crystallographic notation, including special characters for symmetry operations.

- Model Architecture: Employ decoder-only Transformer architecture optimized for sequential token prediction.

- Training: Train autoregressively using next-token prediction on sequences of tokenized CIF content. Monitor cross-entropy loss on validation set.

- Generation: Implement conditional generation starting with cell composition or space group prompts. Use sampling techniques with temperature control for diversity.

- Validation: Validate generated structures using machine learning interatomic potentials (for metastability) and DFT calculations (for stability).

Troubleshooting: If generated structures show chemical implausibility, verify tokenization handles numerical precision adequately. For low stability rates, incorporate evolutionary guidance during generation to perform implicit crossover and mutation operations [24].
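
The "temperature control" mentioned in the generation step can be illustrated with a small, self-contained sketch. The four-token vocabulary and the logits are invented; a real model would produce logits over its full CIF vocabulary at every step.

```python
import math
import random


def softmax_with_temperature(logits, temperature=1.0):
    """Convert logits to probabilities; lower temperature sharpens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


def sample_token(vocab, logits, temperature, rng):
    """Draw one token according to the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]


vocab = ["Na", "Cl", "O", "Fe"]   # hypothetical next-token candidates
logits = [2.0, 1.0, 0.5, 0.1]     # hypothetical model outputs

sharp = softmax_with_temperature(logits, temperature=0.5)
flat = softmax_with_temperature(logits, temperature=2.0)
token = sample_token(vocab, logits, temperature=1.0, rng=random.Random(0))
print(sharp, flat, token)
```

Lower temperatures concentrate probability on the top-ranked token, which favours syntactically safe output. Higher temperatures spread it out, which increases diversity at the cost of more invalid structures to filter.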

Protocol 2: Synthesizability Prediction with Limited Labeled Data

Objective: Predict synthesizability of hypothetical crystals using semi-supervised learning.

Materials and Setup:

- Labeled synthesizable crystals from ICSD (~70,000 structures)

- Unlabeled theoretical structures from materials databases (~1.4 million structures)

- Teacher-student dual neural network architecture

- Graph neural network for structure representation

Procedure:

- Data Curation: Collect confirmed synthesizable crystals from ICSD, filtering by element count and disorder. Extract theoretical structures from Materials Project, OQMD, JARVIS, and Computational Materials Database.

- Initial Negative Sampling: Use pre-trained PU learning model to assign CLscores, selecting structures with scores <0.1 as negative examples.

- Representation Learning: Convert crystals to graph representations with nodes as atoms and edges as bonds, incorporating periodicity.

- Teacher Model Training: Train initial model on labeled positive and pseudo-negative samples using crystal graph convolutional neural network.

- Student Model Training: Use teacher predictions on unlabeled data to train student model with consistency regularization.

- Iterative Refinement: Repeat pseudo-labeling and training cycles, with each iteration refining negative sample selection.

- Evaluation: Assess on holdout set with known synthesizability status, using accuracy, precision, recall, and F1-score metrics.

Troubleshooting: If model shows bias toward synthesizable materials, adjust the negative selection threshold or incorporate active learning to identify ambiguous cases for expert labeling [14].
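
The negative-sampling step and the threshold adjustment suggested above can be sketched as a small helper. The structure identifiers and scores are invented, and taking the lowest-scoring candidates first when capping the set is an assumption of this sketch.

```python
def select_negatives(cl_scores, n_positive, threshold=0.1):
    """Pick pseudo-negative structures with CLscore below the threshold.

    At most n_positive structures are returned so the classes stay balanced;
    the lowest-scoring candidates are preferred (assumption of this sketch).
    """
    below = [(score, sid) for sid, score in cl_scores.items() if score < threshold]
    below.sort()
    return [sid for _, sid in below[:n_positive]]


# Hypothetical CLscores for unlabeled theoretical structures.
cl_scores = {
    "hyp-1": 0.02,
    "hyp-2": 0.50,
    "hyp-3": 0.09,
    "hyp-4": 0.95,
    "hyp-5": 0.01,
    "hyp-6": 0.10,
}

balanced = select_negatives(cl_scores, n_positive=2)
all_below = select_negatives(cl_scores, n_positive=10)
print(balanced, all_below)
```

Raising or lowering `threshold` changes how confident the pseudo-negative labels are, which is the first knob to turn when the classifier leans toward one class.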

Research Reagent Solutions

| Resource | Function | Application Example |

|---|---|---|

| Crystallography Open Database [13] | Source of synthesizable crystal structures | Training data for synthesizability classification |

| Materials Project Database [15] | Repository of theoretical crystal structures | Source of negative samples for synthesizability training |

| Machine Learning Interatomic Potentials [24] | Rapid validation of structural stability | Filter for LLM-generated crystal structures |

| Positive-Unlabeled Learning [15] | Identification of non-synthesizable examples | Handling data imbalance in synthesizability prediction |

| Evolutionary Search Algorithms [24] | Guided exploration of chemical space | Enhancing LLM generation with chemical validity |

| Text-based Crystal Representations [15] | Simplified structure encoding | Efficient fine-tuning of LLMs for materials tasks |

Workflow Visualization

Crystal Structure Discovery Workflow

CSLLM Multi-Task Prediction Architecture

Graph Neural Networks for Structure-Property Mapping

Core Concepts: GNNs for Materials Science

What is a Graph Neural Network (GNN) and why is it suitable for structure-property mapping? A Graph Neural Network (GNN) is a class of deep learning models designed to perform inference on data described by graphs. They are optimized to leverage the structure and properties of graphs, making them exceptionally suitable for structure-property mapping in domains like materials science and chemistry because many materials and molecules can be naturally represented as graphs, where atoms are nodes and chemical bonds are edges [25] [26]. Their core capability is relational learning—understanding connections between entities—which allows them to capture complex interactions within a material's structure that critically determine its macroscopic properties [27] [28].

How does the Message Passing framework work? Most modern GNNs used in materials science operate under the Message Passing Neural Network (MPNN) framework [26]. In this framework, each node in the graph gathers "messages" (feature vectors) from its neighboring nodes. This process typically involves three key steps [26]:

- Message (M): For each node, a message is computed from its neighbors' features.

- Aggregation (∑): These messages are aggregated (e.g., summed or averaged).

- Update (U): The node's own feature vector is updated based on the aggregated messages.

This "message passing" step is repeated multiple times, allowing each node to incorporate information from its broader context within the graph, ultimately building a rich representation that encodes both the node's properties and its structural environment [26].
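
A toy version of one message passing round is sketched below in plain Python. Real MPNNs use learned message and update functions; here the message is simply the neighbour's feature vector and the update is a fixed blend, both of which are simplifications chosen for illustration.

```python
def message_passing_step(features, neighbours, update_weight=0.5):
    """One round of message, sum-aggregation, and update on a small graph."""
    updated = {}
    for node, own in features.items():
        # Message: each neighbour sends its current feature vector.
        messages = [features[other] for other in neighbours[node]]
        # Aggregation: element-wise sum over all incoming messages.
        if messages:
            aggregated = [sum(values) for values in zip(*messages)]
        else:
            aggregated = [0.0] * len(own)
        # Update: blend the node's own features with the aggregate.
        updated[node] = [
            (1 - update_weight) * o + update_weight * a
            for o, a in zip(own, aggregated)
        ]
    return updated


# Path graph A - B - C with a one-dimensional feature on each node.
neighbours = {"A": ["B"], "B": ["A", "C"], "C": ["B"]}
features = {"A": [1.0], "B": [0.0], "C": [0.0]}

after_one = message_passing_step(features, neighbours)
after_two = message_passing_step(after_one, neighbours)
print(after_one, after_two)
```

After one round node C is unchanged because A is two hops away; after the second round A's signal has reached it. This is why the number of rounds sets how far structural information can travel.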

Troubleshooting Guide: Common GNN Performance Issues

Issue 1: Model Fails to Learn from the Data

| Observation | Potential Cause | Diagnostic Check | Remediation Strategy |

|---|---|---|---|

| Training loss does not decrease; model performance is no better than a simple baseline [16] | Implementation Bugs: Incorrect shapes, loss function, or data preprocessing [16]. | Overfit a single batch: Try to drive the training error on a very small batch of data (e.g., 2-4 examples) arbitrarily close to zero. Failure indicates a likely bug [16]. | Start with a simple, lightweight implementation (<200 lines). Use off-the-shelf, tested components where possible. Step through your model creation and inference with a debugger to check tensor shapes and data types [16]. |

| | Inadequate Model Complexity: The model is too simple for the task. | Compare your model's performance on a benchmark dataset to known results [16]. | If it underperforms on benchmarks, increase model complexity (e.g., more message passing layers, larger hidden dimensions) or try a more powerful architecture [27]. |

| | Poorly Chosen Hyperparameters: Default parameters may be unsuitable. | Perform a systematic hyperparameter search (e.g., grid search, random search) focusing on learning rate and layer depth [27]. | Use sensible defaults to start: ReLU activation, no regularization, and normalized input data [16]. Then, tune based on validation performance. |
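
A grid search over learning rate and layer depth, as suggested in the last row of the table, can be sketched as follows. The `toy_validation_loss` function is a hypothetical stand-in for "train the GNN with this configuration and return its validation loss", and the grid values are arbitrary.

```python
import math
from itertools import product


def grid_search(evaluate, grid):
    """Evaluate every combination in the grid and return the best one."""
    names = list(grid)
    best_config, best_score = None, float("inf")
    for values in product(*(grid[name] for name in names)):
        config = dict(zip(names, values))
        score = evaluate(config)
        if score < best_score:
            best_config, best_score = config, score
    return best_config, best_score


def toy_validation_loss(config):
    """Hypothetical stand-in for a full training run (minimum at 1e-3, 3 layers)."""
    lr_term = (math.log10(config["learning_rate"]) + 3) ** 2
    depth_term = (config["num_layers"] - 3) ** 2
    return lr_term + depth_term


grid = {
    "learning_rate": [1e-4, 1e-3, 1e-2],
    "num_layers": [2, 3, 4, 6],
}
best_config, best_score = grid_search(toy_validation_loss, grid)
print(best_config, best_score)
```

Spacing learning rates on a logarithmic scale, as in this grid, is a common choice because useful values often span several orders of magnitude. Random search over the same ranges is a drop-in alternative when the grid becomes too large.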

Issue 2: Model Overfits the Training Data

| Observation | Potential Cause | Diagnostic Check | Remediation Strategy |

|---|---|---|---|

| Validation loss starts to increase while training loss continues to decrease [16] | Limited Training Data: The dataset is too small to generalize. | Check the model's performance on a held-out test set that was not used during training. | Implement graph-specific regularization techniques such as graph dropout [27]. Use data augmentation methods specific to graph structures (e.g., graph perturbations) [27]. |

| | Excessive Model Complexity: The model has too many parameters. | Evaluate if performance improves when using a simpler architecture (e.g., fewer GNN layers). | Apply regularization (e.g., L2 regularization, dropout) and consider reducing model size or the number of message passing steps [27] [16]. |

| | Insufficient Regularization. | Monitor the gap between training and validation error; a large gap suggests overfitting. | Use cross-validation on graph-structured data to better estimate generalization performance [27]. |
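
The observation and the gap diagnostic in this table can be automated with a few lines of Python. The helper below finds the epoch after which validation loss stops improving; using that epoch as a stopping point (early stopping) is a common practice that the table itself does not list, and the loss curves are invented.

```python
def best_epoch_with_patience(val_losses, patience=2):
    """Return the epoch with the lowest validation loss seen before the
    loss fails to improve for `patience` consecutive epochs."""
    best_loss = float("inf")
    best_epoch = 0
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break
    return best_epoch


# Invented loss curves showing the overfitting pattern described above.
train_losses = [0.95, 0.70, 0.52, 0.40, 0.31, 0.24, 0.18]
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.61]

stop = best_epoch_with_patience(val_losses)
gap = val_losses[stop] - train_losses[stop]
print(f"best epoch: {stop}, train/validation gap there: {gap:.2f}")
```

Tracking the gap at the selected epoch across experiments gives a quick check on whether added regularization is actually narrowing it.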

Issue 3: Poor Generalization to New Graph Types or Sizes

| Observation | Potential Cause | Diagnostic Check | Remediation Strategy |

|---|---|---|---|

| Model performs well on some microstructures/molecules but poorly on others [28] | Architecture Selection: The chosen GNN variant lacks the necessary expressive power. | Test different GNN architectures (e.g., GCN, GAT, GIN) on your specific graph types [27]. | Graph Attention Networks (GAT) are often better for heterogeneous graphs with varying node importance. Graph Isomorphism Networks (GIN) can be more suitable for tasks requiring structural invariance [27]. |

| | Inadequate Feature Engineering: Raw node features lack meaningful structural information. | Inspect the learned node embeddings to see if they capture relevant distinctions. | Incorporate structural node features (e.g., positional encoding) and utilize multi-hop neighborhood information [27]. |

| | Over-smoothing: Node representations become indistinguishable after too many message passing layers [26]. | Check if performance degrades as you increase the number of GNN layers. | Reduce the number of message passing layers. Use skip connections to preserve information from earlier layers [26]. |

Issue 4: Computational Bottlenecks and Scalability

| Observation | Potential Cause | Diagnostic Check | Remediation Strategy |

|---|---|---|---|

| Training is slow or runs out of memory, especially with large graphs [27] | Inefficient Message Passing: Naive implementation for large, dense graphs. | Profile your code to identify the most time-consuming operations. | Use efficient sampling techniques like GraphSAGE (neighborhood sampling) or Cluster-GCN to train on subgraphs [27]. |

| | Large Graph Size. | Monitor GPU memory usage during training. | Leverage pre-trained graph embeddings and transfer learning to reduce the need for training from scratch on large datasets [27]. |

Frequently Asked Questions (FAQs)

1. What are the most common mistakes when first implementing a GNN? The most common pitfalls include [27]:

- Improper Graph Preprocessing: Neglecting to normalize node features or mishandling graph connectivity.

- Inadequate Hyperparameter Tuning: Using default parameters without validation, especially for learning rate and layer depth.

- Inappropriate Model Architecture Selection: Choosing a GNN variant that is not well-suited to the graph's characteristics.

- Neglecting Graph Representation Quality: Using raw features without considering structural or topological information.

2. How do I represent a polycrystalline material or a molecule as a graph for a GNN?

- For a molecule: Nodes represent atoms, and edges represent chemical bonds. Node features can include atom type, charge, etc., while edge features can include bond type and distance [26].

- For a polycrystalline material: Each grain is represented as a node. The node feature vector typically includes physical descriptors like grain size, orientation (e.g., Euler angles), and the number of neighboring grains. An adjacency matrix is built where a connection exists between two nodes if the corresponding grains are in physical contact [28].

3. My GNN's performance is unstable. What could be the cause?

Training instability in GNNs can be caused by factors like numerical instability (e.g., using exp or log operations without safeguards), vanishing/exploding gradients, or a high learning rate [16]. A good practice is to ensure input data is normalized and to use built-in functions from deep learning frameworks to avoid manual implementation of sensitive operations [16].
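
The exp/log hazard is easy to reproduce. The sketch below contrasts a naive log-sum-exp with the standard max-subtraction safeguard; deep learning frameworks ship equivalent built-in routines, which are generally preferable to hand-written versions like this one.

```python
import math


def naive_log_sum_exp(values):
    """Direct formula; overflows when any value is large."""
    return math.log(sum(math.exp(v) for v in values))


def stable_log_sum_exp(values):
    """Subtract the maximum first so every exponent is <= 0."""
    peak = max(values)
    return peak + math.log(sum(math.exp(v - peak) for v in values))


logits = [1000.0, 1000.0]

try:
    naive_log_sum_exp(logits)
    naive_failed = False
except OverflowError:
    naive_failed = True

stable_result = stable_log_sum_exp(logits)
print(naive_failed, stable_result)
```

Both functions agree on small inputs, so the safeguard costs nothing in accuracy. It only removes the overflow that would otherwise surface as NaN or infinite losses during training.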

4. How can I make my GNN model more interpretable for my research? Some GNNs, particularly those using attention mechanisms like GAT, can inherently provide some interpretability by revealing which neighbors a node attends to most strongly. Furthermore, techniques from explainable AI (XAI) can be applied to quantify the importance of each feature in each grain or atom to the final predicted property, helping to generate scientific insight [28].

Experimental Protocol: Building a GNN for Property Prediction

The following workflow outlines the key steps for developing a GNN model to predict properties from material structures.

GNN Modeling Workflow

1. Data Preparation & Graph Construction

- Input: Raw material data (e.g., CIF files for crystals, microstructure images, molecular SMILES strings) [15] [28].

- Action: Convert the structure into a graph.

- Nodes: Identify the fundamental entities (atoms, grains).

- Node Features: For each node, compute a feature vector. For a grain, this could be size, orientation (Euler angles), and number of neighbors [28]. For an atom, this could be atom type, valence, etc. [26].

- Edges: Define connections based on spatial proximity or chemical bonds.

- Edge Features (optional): Include information like bond type or distance between grain centroids [26].

- Output: A set of graphs G = (F, A), where F is the node feature matrix and A is the adjacency matrix [28].
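
As a minimal illustration of this step, the sketch below builds F and A for a single water molecule. One-hot atom types are used as node features purely for simplicity; real pipelines typically add richer descriptors and, for crystals, periodic neighbour lists.

```python
def build_graph(atoms, bonds):
    """Return (F, A, elements) for a molecule.

    atoms: list of element symbols, one per node.
    bonds: list of (i, j) index pairs for bonded atoms.
    F is a one-hot node feature matrix; A is a symmetric adjacency matrix.
    """
    elements = sorted(set(atoms))
    F = [[1.0 if atom == el else 0.0 for el in elements] for atom in atoms]
    n = len(atoms)
    A = [[0] * n for _ in range(n)]
    for i, j in bonds:
        A[i][j] = 1
        A[j][i] = 1  # undirected graph: store both directions
    return F, A, elements


# Water: one oxygen (node 0) bonded to two hydrogens (nodes 1 and 2).
F, A, elements = build_graph(["O", "H", "H"], [(0, 1), (0, 2)])
print(elements)
print(F)
print(A)
```

For a polycrystalline material the same function applies if grains are passed as nodes and grain contacts as edges, with the one-hot features replaced by physical descriptors.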

2. Model Architecture Selection & Implementation

- Choose a GNN variant: Select a base architecture like Graph Convolutional Network (GCN) [28], Graph Attention Network (GAT), or GraphSAGE based on your data and task [27].

- Implement the MPNN: The core of the model will consist of several message passing layers. The update function for a GCN layer, for example, can be expressed as [28]:

$$F^{(n+1)} = \sigma\left(\hat{D}^{-1/2}\,\hat{A}\,\hat{D}^{-1/2}\,F^{(n)}\,W^{(n)}\right)$$

where $\hat{A} = A + I$ (adds self-loops), $\hat{D}$ is the degree matrix of $\hat{A}$, $F^{(n)}$ is the node feature matrix at layer $n$, $W^{(n)}$ is a trainable weight matrix, and $\sigma$ is an activation function like ReLU [28].

- Add a Readout Function: After the message passing layers, aggregate the updated node features into a single graph-level representation using a permutation-invariant function like summing, averaging, or a more advanced learned aggregator [26].
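
The GCN update and a sum readout can be written out in plain Python to make the matrix operations explicit. This is a teaching sketch under stated assumptions: the weight matrix is fixed to the identity instead of being learned, and in practice the pre-implemented layers in a GNN library would be used.

```python
import math


def matmul(X, Y):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]


def gcn_layer(A, F, W):
    """One GCN layer: ReLU(D^-1/2 (A + I) D^-1/2 F W)."""
    n = len(A)
    # Add self-loops so each node keeps its own features.
    A_hat = [[A[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    degree = [sum(row) for row in A_hat]
    # Symmetric normalization by the square roots of the node degrees.
    norm = [
        [A_hat[i][j] / math.sqrt(degree[i] * degree[j]) for j in range(n)]
        for i in range(n)
    ]
    H = matmul(matmul(norm, F), W)
    return [[max(0.0, value) for value in row] for row in H]


def sum_readout(H):
    """Permutation-invariant graph-level vector: column-wise sum."""
    return [sum(column) for column in zip(*H)]


# Water molecule: node 0 is O, nodes 1 and 2 are H; features are [is_H, is_O].
A = [[0, 1, 1], [1, 0, 0], [1, 0, 0]]
F = [[0.0, 1.0], [1.0, 0.0], [1.0, 0.0]]
W = [[1.0, 0.0], [0.0, 1.0]]  # identity weights (assumption, not trained)

H = gcn_layer(A, F, W)
graph_vector = sum_readout(H)
print(H, graph_vector)
```

The two hydrogen nodes end up with identical rows, as expected for symmetric positions in the graph. After a single layer the oxygen row already mixes in information from both of its neighbours.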

3. Training, Evaluation, and Interpretation

- Training: Use a loss function appropriate for your task (e.g., Mean Squared Error for regression) and an optimizer like Adam [29].

- Evaluation: Rigorously evaluate the model on a held-out test set using relevant metrics (e.g., Mean Absolute Error, Accuracy). Compare its performance to simple baselines and known results to ensure it is learning meaningfully [16].

- Interpretation: Use built-in attention weights or post-hoc explanation methods to determine which parts of the input graph were most influential for the prediction, thereby connecting model output back to physical insight [28].

| Category | Item / Resource | Function / Purpose |

|---|---|---|

| Software & Libraries | PyTorch Geometric (PyG) / Deep Graph Library (DGL) | Specialized libraries that provide efficient, pre-implemented versions of common GNN layers and graph utilities [29]. |

| | TensorFlow / PyTorch | General-purpose deep learning frameworks that form the foundation for building and training custom GNN models [29]. |

| Datasets | Materials Project [15], Inorganic Crystal Structure Database (ICSD) [15], OQMD [15] | Large-scale databases containing crystal structures and computed properties, essential for training and benchmarking models for materials science [15]. |

| Model Architectures | Graph Convolutional Network (GCN) [28] | A foundational and widely used GNN architecture that performs a normalized aggregation of neighbor features [28]. |

| Model Architectures | Graph Attention Network (GAT) [27] | Uses attention mechanisms to assign different importance weights to different neighbors, beneficial for heterogeneous graphs [27]. |

| Model Architectures | Graph Isomorphism Network (GIN) [27] | A maximally powerful GNN in terms of distinguishing graph structures, often used for graph classification tasks [27]. |

| Feature Engineering | Positional Encoding | A technique to inject information about a node's position within the overall graph structure, which standard GNNs might otherwise fail to capture [27]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is an end-to-end predictive pipeline in materials science? An end-to-end predictive pipeline is a unified framework that uses machine learning to automate the entire process of materials discovery, from initial literature search and candidate material prediction to the recommendation of synthesis routes and precursors. This approach aims to significantly reduce the time and cost associated with traditional trial-and-error methods by leveraging large language models (LLMs) and graph neural networks (GNNs) [30] [15].

FAQ 2: How can deep learning models predict whether a theoretical crystal structure is synthesizable? Deep learning models can be trained on comprehensive datasets containing both synthesizable (e.g., from the Inorganic Crystal Structure Database) and non-synthesizable crystal structures. The model learns the complex patterns and features that distinguish synthesizable materials. For instance, the Crystal Synthesis Large Language Model (CSLLM) framework achieves 98.6% accuracy in predicting synthesizability by using a text representation of crystal structures fine-tuned on a balanced dataset of over 150,000 materials [15].

FAQ 3: My model's performance is worse than published results. What are the common causes? Common causes for poor model performance include:

- Implementation bugs that are often invisible and don't cause crashes.

- Suboptimal hyperparameter choices, as deep learning models are highly sensitive to parameters like learning rate.

- Data-related issues, such as insufficient training examples, noisy labels, imbalanced classes, or a mismatch between your data distribution and the test set [16].

A systematic troubleshooting strategy, starting with a simple model architecture and gradually increasing complexity, is recommended to isolate the issue [16].

FAQ 4: What should I do if my deep learning model fails to learn anything useful from my materials data? A critical first step is to overfit a single batch of data. This heuristic can catch a significant number of bugs. If the training error on a single, small batch cannot be driven close to zero, it indicates a fundamental problem such as an incorrect loss function, a flipped sign in the gradient, numerical instability, or issues in the data pipeline [16].
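The single-batch overfitting check can be sketched with a tiny logistic-regression model in plain Python. The model, the six-example batch, the learning rate, and the 0.1 loss threshold are all assumptions made for illustration; with a real network the same idea applies to one fixed mini-batch and the framework's own training loop.

```python
import math
import random

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def overfit_single_batch(batch_x, batch_y, steps=2000, lr=0.5, seed=0):
    """Train on one fixed batch only and return the loss after every step."""
    rng = random.Random(seed)
    n_feat = len(batch_x[0])
    w = [rng.uniform(-0.1, 0.1) for _ in range(n_feat)]
    b = 0.0
    losses = []
    for _ in range(steps):
        grad_w, grad_b, loss = [0.0] * n_feat, 0.0, 0.0
        for x, y in zip(batch_x, batch_y):
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            p = min(max(p, 1e-12), 1.0 - 1e-12)  # guard the logarithms
            loss -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
            err = p - y  # gradient of cross-entropy w.r.t. the logit
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        m = len(batch_x)
        w = [wi - lr * g / m for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / m
        losses.append(loss / m)
    return losses

# Illustrative, linearly separable batch: two features, binary labels.
batch_x = [[0.1, 0.9], [0.2, 0.8], [0.3, 0.7], [0.9, 0.2], [0.8, 0.1], [0.7, 0.3]]
batch_y = [1, 1, 1, 0, 0, 0]

losses = overfit_single_batch(batch_x, batch_y)
print(f"first loss={losses[0]:.3f}, last loss={losses[-1]:.3f}")
```

If the final loss stays close to the initial one, the loss function, gradient signs, or data pipeline are the first places to look [16].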

FAQ 5: How do I represent a crystal structure for a Large Language Model (LLM)? Since LLMs process text, crystal structures must be converted into an efficient text format. While CIF and POSCAR files are common, they can contain redundancies. The CSLLM framework introduced a "material string" representation, which integrates essential crystal information (lattice parameters, composition, atomic coordinates, symmetry) in a compact, reversible text format suitable for efficient LLM fine-tuning [15].

Troubleshooting Guides

Guide 1: Debugging Low Predictive Accuracy in Synthesizability Models

If your model for predicting material synthesizability is underperforming, follow this systematic debugging workflow:

Diagram 1: Workflow for debugging low model accuracy.

Procedure:

- Start Simple: Begin with a straightforward architecture, such as a fully-connected network or a simple GNN, and sensible hyperparameter defaults. This establishes a baseline and reduces the number of potential failure points [16].

- Interrogate Your Data:

  - Balance: Ensure your dataset has a balanced number of synthesizable and non-synthesizable examples. The CSLLM framework used 70,120 synthesizable structures from ICSD and 80,000 non-synthesizable structures identified via a positive-unlabeled learning model [15].

  - Quality: Manually explore and verify the labels and quality of your data. No model can compensate for fundamentally flawed data [31].

- Overfit a Single Batch: Try to overfit a very small batch of data (e.g., 5-10 examples). If the model cannot achieve near-zero training error, this signals a potential bug in the model implementation, loss function, or data preprocessing [16].

- Compare to a Known Result: Benchmark your model's performance against a published baseline or a known implementation on a similar dataset. This can help you identify if the issue is with your implementation or your specific data [16].
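The balance check from the procedure above can be automated in a few lines. The 0/1 label encoding and the 10-percentage-point tolerance are assumptions for illustration; the class counts are the CSLLM figures quoted above [15].

```python
from collections import Counter

def class_fractions(labels):
    """Return the fraction of examples belonging to each class."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}

def is_balanced(labels, tolerance=0.10):
    """True if every class is within `tolerance` of an equal share."""
    fractions = class_fractions(labels)
    ideal = 1.0 / len(fractions)
    return all(abs(f - ideal) <= tolerance for f in fractions.values())

# 1 = synthesizable, 0 = non-synthesizable (assumed encoding).
labels = [1] * 70_120 + [0] * 80_000

print(class_fractions(labels))
print(is_balanced(labels))
```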

Guide 2: Resolving Issues in End-to-End Pipeline Integration

An end-to-end pipeline involves multiple components. Failures can occur in the handoffs between them.

Diagram 2: Information flow between specialized agents in a pipeline.

Procedure:

- Verify Component Input/Output: Check that the output format of one agent (e.g., the `Literature Scouter` that extracts reaction conditions) is compatible with the input format expected by the next agent (e.g., the `Experiment Designer`) [30].

- Inspect Human-in-the-Loop Decision Points: Even in automated systems, human chemists are often essential for evaluating the correctness of an agent's responses and interconnecting different agents. Ensure these decision points are clearly defined and that the human operator has the necessary context [30].

- Check External Tool Integration: If agents use external tools (e.g., a Python interpreter for calculations or a database search API), confirm that these tools are functioning correctly and that the agent can parse their outputs [30].
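One way to make the input/output check concrete is to validate each handoff against an explicit schema before the next agent consumes it. The sketch below is hypothetical: the `ReactionConditions` record, its field names, and `validate_handoff` are invented for illustration and are not part of the LLM-RDF framework [30].

```python
from dataclasses import dataclass

@dataclass
class ReactionConditions:
    """Hypothetical record handed from one agent to the next."""
    substrate: str
    catalyst: str
    temperature_c: float
    solvent: str

# Expected type for each field of the hypothetical handoff payload.
REQUIRED_FIELDS = {
    "substrate": str,
    "catalyst": str,
    "temperature_c": (int, float),
    "solvent": str,
}

def validate_handoff(payload: dict) -> ReactionConditions:
    """Raise ValueError listing every missing or mistyped field."""
    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected):
            problems.append(f"wrong type for {name}: {type(payload[name]).__name__}")
    if problems:
        raise ValueError("; ".join(problems))
    return ReactionConditions(**{name: payload[name] for name in REQUIRED_FIELDS})

# Illustrative payload as an upstream agent might emit it.
upstream_output = {
    "substrate": "benzyl alcohol",
    "catalyst": "Cu/TEMPO",
    "temperature_c": 25,
    "solvent": "acetonitrile",
}
conditions = validate_handoff(upstream_output)
print(conditions)
```

Failing loudly at the boundary, with all problems listed at once, makes it easier to tell which agent produced the malformed output.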

Data & Performance Benchmarks

Table 1: Comparison of Synthesizability Prediction Methods

This table compares the performance of different computational methods for predicting whether a material can be synthesized.

| Prediction Method | Key Principle | Reported Accuracy/Performance | Primary Limitation |

|---|---|---|---|

| Thermodynamic Stability | Calculates energy above the convex hull via DFT [6] | 74.1% accuracy [15] | Many metastable materials are synthesizable; many stable materials are not [15] |

| Kinetic Stability | Analyses phonon spectra to assess dynamic stability [15] | 82.2% accuracy [15] | Computationally expensive; structures with imaginary frequencies can be synthesized [15] |

| PU Learning Model | Uses positive-unlabeled learning to score synthesizability (CLscore) [15] | ~87.9% accuracy for 3D crystals [15] | Accuracy is moderate and can be system-dependent [15] |

| Crystal Synthesis LLM (CSLLM) | LLM fine-tuned on a balanced dataset of 150k+ structures using a text representation [15] | 98.6% accuracy on test data [15] | Requires a large, high-quality, balanced dataset for training [15] |

Table 2: Key LLM-Based Agents for Chemical Synthesis Pipelines

Specialized AI agents can handle different tasks in an automated synthesis pipeline. The following table details agents from the LLM-RDF framework [30].

| LLM-Based Agent | Primary Function | Specific Task Example |

|---|---|---|

| Literature Scouter | Automated literature search and information extraction [30] | Searching databases for synthetic methods that use air to oxidize alcohols to aldehydes and summarizing reaction conditions [30] |

| Experiment Designer | Designs experiments and screens conditions [30] | Planning a high-throughput substrate scope study for a catalytic system [30] |

| Hardware Executor | Interfaces with automated laboratory hardware [30] | Executing the planned high-throughput screening on an automated experimental platform [30] |

| Spectrum Analyzer | Analyzes spectral data [30] | Interpreting gas chromatography (GC) results from reaction screening [30] |

| Result Interpreter | Interprets experimental results and suggests next steps [30] | Analyzing HTS data to identify successful conditions and guide optimization [30] |

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools

This table lists key resources used in developing and running advanced predictive pipelines for material synthesis.

| Item / Solution | Function / Purpose | Example / Specification |

|---|---|---|

| Inorganic Crystal Structure Database (ICSD) | A definitive database of experimentally synthesized crystal structures used as positive examples for training synthesizability models [15] | Source of ~70,000+ confirmed synthesizable crystal structures [15] |

| Positive-Unlabeled (PU) Learning Model | A machine learning technique to identify non-synthesizable structures from large databases of theoretical predictions, creating negative samples for training [15] | Used to generate CLscores; structures with a score < 0.1 are considered non-synthesizable [15] |

| Graph Neural Networks (GNNs) | A class of deep learning models that operate on graph structures, ideal for representing crystal structures where atoms are nodes and bonds are edges [6] | Used in GNoME for materials discovery; can predict formation energy and stability [6] |

| Material String Representation | A simplified text representation of a crystal structure that condenses information about lattice, composition, and atomic coordinates for efficient LLM processing [15] | An alternative to verbose CIF files; enables fine-tuning of LLMs on crystal structure data [15] |

| Large Language Model (LLM) | The base model (e.g., GPT-4, LLaMA) that is fine-tuned on scientific data to power specialized agents and predict synthesizability [30] [15] | Framework backbone for tasks like literature mining (LLM-RDF) and synthesizability prediction (CSLLM) [30] [15] |

Troubleshooting Guide

Q1: The model's synthesizability predictions are inaccurate for my novel, complex crystal structure. What could be wrong? A: This often stems from input data or representation issues. Follow this diagnostic protocol:

Step 1: Verify Material String Formatting Ensure your crystal structure's text representation (the "material string") is correctly generated. The format is:

`Space Group | a, b, c, α, β, γ | (AtomSite1-WyckoffSite1[WyckoffPosition1,x1,y1,z1]; AtomSite2-WyckoffSite2[WyckoffPosition2,x2,y2,z2]; ...)` [2]. Incorrect lattice parameters, atomic coordinates, or Wyckoff positions will lead to faulty feature extraction.

Step 2: Check Training Data Boundaries The CSLLM framework was trained on structures with a maximum of 40 atoms per unit cell and 7 different elements [2]. Predictions for structures exceeding these complexity limits may be unreliable. Simplify your input structure or consider alternative methods.

Step 3: Assess Data Pre-processing Confirm that your data cleaning pipeline is sound. For crystal structures drawn from databases, standardize formats, handle missing values, and smooth noisy values using methods like binning, regression, or clustering [32]. Inconsistent or noisy input data is a primary cause of performance degradation.
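Steps 1 and 2 can be partly automated. The sketch below assembles a string in the three-field layout quoted in Step 1 and checks the size limits from Step 2. It is an assumption-laden illustration: the function names are hypothetical, and how the CSLLM authors populate each placeholder (for example, the difference between the Wyckoff site and Wyckoff position tokens) is not specified here, so the example tokens are illustrative only.

```python
MAX_ATOMS = 40      # per unit cell, as stated in Step 2
MAX_ELEMENTS = 7    # distinct elements, as stated in Step 2

def build_material_string(space_group, lattice, sites):
    """Assemble 'SpaceGroup | a, b, c, alpha, beta, gamma | (site; site; ...)'.

    lattice: six numbers (a, b, c, alpha, beta, gamma)
    sites:   tuples (atom_site, wyckoff_site, wyckoff_position, x, y, z)
    """
    if len(lattice) != 6:
        raise ValueError("lattice needs six values: a, b, c, alpha, beta, gamma")
    lattice_part = ", ".join(f"{value:g}" for value in lattice)
    site_parts = [
        f"{atom}-{wsite}[{wpos},{x:g},{y:g},{z:g}]"
        for atom, wsite, wpos, x, y, z in sites
    ]
    return f"{space_group} | {lattice_part} | ({'; '.join(site_parts)})"

def within_training_limits(element_counts):
    """element_counts maps element symbol -> atoms of that element per unit cell."""
    n_atoms = sum(element_counts.values())
    return n_atoms <= MAX_ATOMS and len(element_counts) <= MAX_ELEMENTS

# Rock-salt NaCl used as a toy input; the site tokens are illustrative only.
material_string = build_material_string(
    225,
    (5.64, 5.64, 5.64, 90, 90, 90),
    [("Na", "4a", "a", 0, 0, 0), ("Cl", "4b", "b", 0.5, 0.5, 0.5)],
)
print(material_string)
print(within_training_limits({"Na": 4, "Cl": 4}))
```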

Q2: How can I improve the precursor prediction success rate beyond the reported 80.2%? A: The Precursors LLM can be enhanced with post-processing validation:

Step 1: Perform Reaction Energy Calculation Use the precursors suggested by the LLM to calculate the reaction energy via Density Functional Theory (DFT). A highly negative reaction energy (exothermic) generally corroborates the prediction [2].

Step 2: Conduct Combinatorial Analysis The LLM may suggest multiple potential precursors. Systematically evaluate different combinations of these suggested precursors to identify the mixture with the most favorable thermodynamic profile [2].

Step 3: Fine-tune on Domain-Specific Data If you have a proprietary dataset for your material class (e.g., metal-organic frameworks), perform additional fine-tuning of the pre-trained Precursors LLM on this specialized data to improve its domain-specific accuracy.
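The combinatorial analysis in Step 2 amounts to enumerating precursor sets and ranking them by reaction energy. The sketch below assumes a user-supplied `reaction_energy` callable (for example, a wrapper around your own DFT results); the precursor names `P1` to `P3` and the energies in the lookup table are placeholders, not real data.

```python
from itertools import combinations

def rank_precursor_sets(candidates, reaction_energy, set_size=2):
    """Score every precursor combination and sort from most to least negative.

    reaction_energy: callable taking a tuple of precursors and returning an
    energy (e.g., in eV/atom), or None if no balanced reaction exists.
    """
    scored = []
    for combo in combinations(candidates, set_size):
        energy = reaction_energy(combo)
        if energy is not None:
            scored.append((energy, combo))
    return sorted(scored, key=lambda item: item[0])

# Placeholder energies keyed by precursor set; replace with computed values.
PLACEHOLDER_ENERGIES = {
    frozenset({"P1", "P2"}): -0.8,
    frozenset({"P1", "P3"}): -0.2,
    frozenset({"P2", "P3"}): None,  # no balanced reaction available
}

def lookup_energy(combo):
    return PLACEHOLDER_ENERGIES.get(frozenset(combo))

ranked = rank_precursor_sets(["P1", "P2", "P3"], lookup_energy)
best_energy, best_set = ranked[0]
print(best_set, best_energy)
```

Keeping the energy calculation behind a callable lets the same ranking code work with cached DFT results, a surrogate model, or a database lookup.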

Q3: What are the common pitfalls when fine-tuning the CSLLM models on a custom dataset? A: The main challenges are dataset construction and model alignment:

Pitfall 1: Imbalanced Dataset The original Synthesizability LLM was trained on a balanced dataset of 70,120 synthesizable (from ICSD) and 80,000 non-synthesizable structures [2]. Ensure your custom dataset has a similar balance between positive and negative examples to avoid biasing the model.

Pitfall 2: Mislabeled Negative Samples "Non-synthesizable" structures must be carefully curated. The CSLLM framework used a pre-trained PU learning model to select theoretical structures with a low CLscore (<0.1) as reliable negative examples [2]. Using unvetted theoretical structures as negatives can introduce label noise and hurt performance.

Pitfall 3: Ignoring Domain Adaptation The base LLM possesses broad linguistic knowledge that is aligned with material-specific features during fine-tuning [2]. Do not skip the fine-tuning step or use an insufficient number of training epochs, as this can lead to persistent "hallucinations" and unreliable outputs.
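Pitfalls 1 and 2 can be addressed together when assembling a custom dataset. The sketch below keeps only theoretical structures whose CLscore is below the 0.1 threshold quoted above, then downsamples the larger class. Downsampling to exactly equal class sizes is an assumption of this sketch (the original dataset was only approximately balanced), and the structure identifiers and scores are placeholders.

```python
import random

CLSCORE_THRESHOLD = 0.1  # threshold quoted in Pitfall 2

def select_negatives(theoretical, threshold=CLSCORE_THRESHOLD):
    """Keep theoretical structures whose CLscore is below the threshold.

    theoretical: list of (structure_id, clscore) pairs.
    """
    return [sid for sid, score in theoretical if score < threshold]

def build_training_set(positives, theoretical, seed=0):
    """Return shuffled (structure_id, label) pairs with equal class sizes."""
    negatives = select_negatives(theoretical)
    rng = random.Random(seed)
    n = min(len(positives), len(negatives))
    data = [(sid, 1) for sid in rng.sample(positives, n)]
    data += [(sid, 0) for sid in rng.sample(negatives, n)]
    rng.shuffle(data)
    return data

# Placeholder identifiers and scores for illustration only.
positives = ["icsd-001", "icsd-002", "icsd-003"]
theoretical = [("theo-a", 0.02), ("theo-b", 0.45), ("theo-c", 0.09), ("theo-d", 0.10)]

dataset = build_training_set(positives, theoretical)
print(dataset)
```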

Frequently Asked Questions (FAQs)

Q1: What is the core innovation of the CSLLM framework? A: The CSLLM (Crystal Synthesis Large Language Models) framework is the first to use three specialized fine-tuned LLMs to address the challenge of crystal synthesizability holistically. It predicts 1) whether a 3D crystal structure is synthesizable, 2) the likely synthetic method (e.g., solid-state or solution), and 3) suitable chemical precursors, all within a single, integrated framework [2].