Optimizing Chemical Potential Ranges for Advanced Material Formation: Strategies for Researchers and Drug Developers


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing chemical potential ranges to control material formation. It explores the foundational role of the Potential Energy Surface (PES) in dictating molecular stability and reactivity. The review covers a spectrum of methodological approaches, from traditional force fields to modern machine learning potentials and statistical optimization techniques like Design of Experiments (DoE). It further addresses critical troubleshooting aspects for navigating complex energy landscapes and outlines robust validation frameworks to benchmark computational predictions against experimental data. By synthesizing insights from foundational concepts to cutting-edge applications, this work aims to equip scientists with the knowledge to accelerate the design of novel materials, including pharmaceuticals and energy storage compounds.

Understanding the Potential Energy Surface: The Foundation of Material Stability and Reactivity

The Concept of the Potential Energy Surface (PES) and the Global Minimum

Frequently Asked Questions (FAQs)

1. What is a Potential Energy Surface (PES), and why is it fundamental to my research? A Potential Energy Surface (PES) describes the potential energy of a system, such as a collection of atoms, as a function of its geometric parameters, typically the positions of the atoms [1] [2]. It is a multidimensional landscape where each point represents a specific molecular geometry and its associated energy. For a system with two degrees of freedom, this can be visualized as a terrain where the height corresponds to energy [1]. The PES is critical for theoretically exploring molecular properties, predicting stable shapes, and computing chemical reaction rates [1].

2. What does the "Global Minimum" represent on a PES? The global minimum (GM) is the geometry corresponding to the lowest point on the PES [3]. It represents the most stable configuration of a molecular or material system in terms of potential energy; at finite temperature, entropic contributions can change the stability ranking. Accurately locating the GM is essential for predicting properties like thermodynamic stability, reactivity, and biological activity [3].

3. My global optimization calculation is trapped in a local minimum. How can I escape? Entrapment in local minima is a common challenge. Effective strategies involve using global optimization (GO) methods that combine global exploration with local refinement [3]. Stochastic methods, such as Simulated Annealing or Genetic Algorithms, incorporate randomness to help the search escape local minima and sample the PES more broadly [3]. Ensuring your algorithm balances "exploration" of new regions with "exploitation" of promising low-energy areas is key.

4. How do I choose between stochastic and deterministic global optimization methods? The choice depends on your system and research goals.

- Stochastic Methods (e.g., Genetic Algorithms, Simulated Annealing) use randomness and are well-suited for exploring complex, high-dimensional energy landscapes with many local minima. They do not guarantee finding the GM but are powerful for broad sampling [3].

- Deterministic Methods rely on analytical information like energy gradients and follow defined, non-random paths. They can offer precise convergence but may be less effective for very complex landscapes and can be computationally expensive [3].

- Hybrid approaches that combine features of both are increasingly popular for enhancing performance [3].
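
As a rough illustration of how a stochastic method escapes a local minimum, the following minimal sketch runs Simulated Annealing on a toy one-dimensional double well. The energy function, the geometric cooling schedule, and all parameter values are assumptions chosen for illustration; they are not taken from [3].

```python
import math
import random


def energy(x):
    """Toy 1D 'PES': asymmetric double well, minima near x = -1 (global) and x = +1 (local)."""
    return (x * x - 1.0) ** 2 + 0.3 * x


def simulated_annealing(x0, t_start=2.0, t_end=0.01, n_steps=5000, max_move=0.5, seed=0):
    """Return the lowest-energy point visited during one annealing run."""
    rng = random.Random(seed)
    x, e = x0, energy(x0)
    best_x, best_e = x, e
    for k in range(n_steps):
        # Geometric cooling from t_start down to t_end (an assumed schedule).
        t = t_start * (t_end / t_start) ** (k / (n_steps - 1))
        x_new = x + rng.uniform(-max_move, max_move)
        e_new = energy(x_new)
        # Metropolis rule: downhill moves are always accepted; uphill moves
        # are accepted with probability exp(-dE / T), which shrinks as T drops.
        if e_new <= e or rng.random() < math.exp(-(e_new - e) / t):
            x, e = x_new, e_new
            if e < best_e:
                best_x, best_e = x, e
    return best_x, best_e


if __name__ == "__main__":
    x_best, e_best = simulated_annealing(x0=1.0)  # start in the higher-energy well
    print(f"best x = {x_best:.3f}, E = {e_best:.3f}")
```

The run starts in the higher-energy well on purpose: early, high-temperature steps correspond to exploration, while the late, low-temperature steps correspond to exploitation.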

5. What is the significance of a saddle point on the PES? Saddle points, specifically first-order saddle points, are critical points on the PES that represent transition states between local minima (e.g., reactants and products) [1] [3]. They are the highest energy point on the minimum energy path (MEP) and are characterized by a single imaginary vibrational frequency [3]. Identifying them is crucial for studying reaction mechanisms and kinetics.
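
An imaginary vibrational frequency corresponds to a negative eigenvalue of the (mass-weighted) Hessian, so stationary points can be classified by counting negative eigenvalues. The sketch below does this on a toy two-dimensional surface using a finite-difference Hessian. The surface, the step size `h`, and the tolerance `tol` are illustrative assumptions.

```python
import math


def toy_pes(x, y):
    """Toy 2D surface: minima at (+1, 0) and (-1, 0), first-order saddle at (0, 0)."""
    return (x * x - 1.0) ** 2 + y * y


def hessian_2d(f, x, y, h=1e-4):
    """Central finite-difference Hessian entries (f_xx, f_xy, f_yy)."""
    fxx = (f(x + h, y) - 2.0 * f(x, y) + f(x - h, y)) / h**2
    fyy = (f(x, y + h) - 2.0 * f(x, y) + f(x, y - h)) / h**2
    fxy = (f(x + h, y + h) - f(x + h, y - h) - f(x - h, y + h) + f(x - h, y - h)) / (4.0 * h**2)
    return fxx, fxy, fyy


def classify_stationary_point(f, x, y, tol=1e-3):
    """Classify a stationary point by the signs of its Hessian eigenvalues.

    Eigenvalues with magnitude below tol are treated as non-negative here,
    which is a simplification for this toy example.
    """
    a, b, c = hessian_2d(f, x, y)
    # Closed-form eigenvalues of the symmetric matrix [[a, b], [b, c]].
    mean = 0.5 * (a + c)
    radius = math.sqrt((0.5 * (a - c)) ** 2 + b * b)
    eigenvalues = (mean - radius, mean + radius)
    n_negative = sum(1 for v in eigenvalues if v < -tol)
    if n_negative == 0:
        return "minimum"
    if n_negative == 1:
        return "first-order saddle point"
    return "higher-order saddle point or maximum"


if __name__ == "__main__":
    print(classify_stationary_point(toy_pes, 1.0, 0.0))
    print(classify_stationary_point(toy_pes, 0.0, 0.0))
```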

Troubleshooting Common Experimental & Computational Issues

Issue 1: Failure to Locate the Global Minimum in Complex Systems

| Symptom | Potential Cause | Solution |
|---|---|---|
| The same local minimum is repeatedly found, even with different initial guesses. | The algorithm lacks sufficient exploration power and is trapped. | Switch from a purely local optimizer to a dedicated GO method. Implement a Basin Hopping algorithm, which transforms the PES into a set of inter-connected local minima, simplifying the landscape for more efficient global exploration [3]. |
| The number of located minima scales exponentially with system size, making the search intractable. | The high dimensionality and complexity of the PES. | Integrate machine learning (ML) techniques to guide the traditional GO search. ML can learn from previous evaluations to predict promising regions of the PES, significantly accelerating convergence [3]. |
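
The Basin Hopping idea in the first row can be sketched in a few lines: perturb the current structure, relax it to the nearest minimum, and accept or reject the new minimum with a Metropolis test. This is a minimal sketch on a toy one-dimensional landscape. The energy function, the gradient-descent local optimizer, and all parameter values are assumptions for illustration, not a production implementation.

```python
import math
import random


def energy(x):
    """Toy rugged 1D landscape: a shallow parabola decorated with many local minima."""
    return 0.05 * (x - 1.0) ** 2 + math.cos(3.0 * x)


def gradient(x):
    """Analytic derivative of energy(x)."""
    return 0.1 * (x - 1.0) - 3.0 * math.sin(3.0 * x)


def local_minimize(x, learning_rate=0.05, n_steps=500):
    """Plain gradient descent; stands in for a production local optimizer."""
    for _ in range(n_steps):
        x -= learning_rate * gradient(x)
    return x


def basin_hopping(x0, n_hops=200, max_hop=1.5, temperature=0.5, seed=0):
    """Return the lowest local minimum found over n_hops perturb-and-relax cycles."""
    rng = random.Random(seed)
    x = local_minimize(x0)
    e = energy(x)
    best_x, best_e = x, e
    for _ in range(n_hops):
        # Perturb the current minimum, then relax: every trial is itself a local minimum.
        x_trial = local_minimize(x + rng.uniform(-max_hop, max_hop))
        e_trial = energy(x_trial)
        # Metropolis acceptance applied to the energies of the minima.
        if e_trial <= e or rng.random() < math.exp(-(e_trial - e) / temperature):
            x, e = x_trial, e_trial
            if e < best_e:
                best_x, best_e = x, e
    return best_x, best_e


if __name__ == "__main__":
    print(basin_hopping(6.0))
```

Because only relaxed minima are ever compared, the search effectively moves on a staircase of basins rather than on the raw surface.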

Issue 2: Inefficient Sampling of the Potential Energy Surface

| Symptom | Potential Cause | Solution |
|---|---|---|
| The search spends too many computational resources on high-energy, uninteresting regions. | Inefficient sampling strategy. | Employ Parallel Tempering Molecular Dynamics (PTMD), which runs multiple simulations at different temperatures. Allowing exchanges between them improves sampling efficiency and helps overcome high energy barriers [3]. |
| The search misses important low-energy configurations. | The initial population of candidate structures lacks diversity. | Use a combination of random sampling and physically motivated perturbations to generate the initial candidate structures for the GO algorithm [3]. |
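
The exchange step at the heart of parallel tempering uses the standard replica-exchange Metropolis criterion, `min(1, exp[(beta_i - beta_j)(E_i - E_j)])`. The sketch below shows only that step. The function names and the reduced units (`k_b = 1`) are assumptions for illustration, and the dynamics that would run between exchange attempts are omitted.

```python
import math
import random


def swap_probability(e_i, e_j, t_i, t_j, k_b=1.0):
    """Acceptance probability for exchanging the configurations of
    replica i (energy e_i, temperature t_i) and replica j (e_j, t_j)."""
    beta_i = 1.0 / (k_b * t_i)
    beta_j = 1.0 / (k_b * t_j)
    delta = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if delta >= 0.0 else math.exp(delta)


def attempt_neighbor_swaps(energies, temperatures, rng):
    """Try one exchange between each pair of adjacent temperatures.

    Returns the energies re-ordered as if the configurations had been exchanged.
    """
    energies = list(energies)
    for i in range(len(energies) - 1):
        p = swap_probability(energies[i], energies[i + 1], temperatures[i], temperatures[i + 1])
        if rng.random() < p:
            energies[i], energies[i + 1] = energies[i + 1], energies[i]
    return energies


if __name__ == "__main__":
    # The cold replica holds the higher energy, so this swap is always accepted.
    print(swap_probability(-1.0, -2.0, t_i=1.0, t_j=2.0))
```

A swap that hands the lower-energy configuration to the colder replica is always accepted, which is how low-energy structures found at high temperature migrate down the temperature ladder.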

Detailed Experimental Protocols for Global Optimization

Protocol 1: Standard Workflow for Global Minimum Search

This is a typical two-step process combining global search and local refinement [3].

- Initial Population Generation: Create a diverse set of initial candidate structures using techniques like random sampling or heuristic design.

- Local Optimization: Each candidate structure is locally optimized to find the nearest stationary point on the PES.

- Redundancy Removal: Eliminate duplicate or symmetrically equivalent structures from the pool of candidates.

- Frequency Analysis: Perform vibrational frequency calculations on the remaining structures to confirm they are true local minima (all frequencies real).

- Identification of Putative GM: The structure with the lowest energy among the unique minima is designated as the putative global minimum.
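
The five steps above can be sketched on a toy one-dimensional PES. Everything here is an illustrative assumption: the energy function, the use of stratified random sampling for the initial population, gradient descent as the local optimizer, and positive curvature as a one-dimensional stand-in for "all frequencies real".

```python
import random


def energy(x):
    """Toy 1D PES with a global minimum near x = -1.04 and a local minimum near x = +0.96."""
    return (x * x - 1.0) ** 2 + 0.3 * x


def gradient(x):
    return 4.0 * x * (x * x - 1.0) + 0.3


def curvature(x):
    return 12.0 * x * x - 4.0


def local_optimize(x, learning_rate=0.01, n_steps=5000):
    """Plain gradient descent to the nearest stationary point."""
    for _ in range(n_steps):
        x -= learning_rate * gradient(x)
    return x


def find_putative_global_minimum(n_candidates=20, lo=-2.0, hi=2.0, tol=1e-4, seed=0):
    rng = random.Random(seed)
    width = (hi - lo) / n_candidates
    # 1. Initial population: one random point per bin (stratified random sampling).
    candidates = [lo + (i + rng.random()) * width for i in range(n_candidates)]
    # 2. Local optimization of every candidate.
    relaxed = [local_optimize(x) for x in candidates]
    # 3. Redundancy removal: keep one representative per distinct structure.
    unique = []
    for x in relaxed:
        if all(abs(x - u) > tol for u in unique):
            unique.append(x)
    # 4. "Frequency analysis": keep only points with positive curvature.
    minima = [x for x in unique if curvature(x) > 0.0]
    # 5. The lowest-energy surviving structure is the putative global minimum.
    return min(minima, key=energy), minima


if __name__ == "__main__":
    gm, minima = find_putative_global_minimum()
    print(f"{len(minima)} unique minima, putative GM at x = {gm:.4f}")
```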

Protocol 2: Exploring Reaction Pathways with Global Reaction Route Mapping (GRRM)

This single-ended method is designed to locate not only minima but also transition states for reaction pathway exploration [3].

- Starting Point: Begin from a local minimum or any point on the PES.

- Pathway Exploration: The algorithm follows the potential energy gradient to systematically locate adjacent transition states and minima.

- Network Mapping: Construct a network of reaction pathways connecting the various minima via the transition states.

- Global Landscape: This approach aims to build a comprehensive map of the low-energy regions of the PES, revealing the global reaction route.

Research Reagent Solutions: Computational Tools for PES Exploration

The following table details essential computational methods and their functions in exploring Potential Energy Surfaces.

| Research Reagent / Method | Function & Application |
|---|---|
| Genetic Algorithm (GA) | A population-based stochastic method that applies evolutionary principles (selection, crossover, mutation) to optimize structural populations over generations [3]. |
| Basin Hopping (BH) | A stochastic global optimization method that transforms the PES into a discrete set of local minima, simplifying the landscape for more efficient exploration [3]. |
| Simulated Annealing (SA) | A stochastic method that uses a temperature-cooling scheme to allow the system to escape local minima, analogous to the annealing process in metallurgy [3]. |
| Particle Swarm Optimization (PSO) | A population-based stochastic algorithm inspired by the collective motion of biological swarms (e.g., bird flocks) to search for optimal structures [3]. |
| Molecular Dynamics (MD) | A deterministic method that explores atomic motion by integrating Newton's equations of motion. It can be used for GO, especially when enhanced with techniques like Parallel Tempering [3]. |
| Stochastic Surface Walking (SSW) | A method that enables adaptive exploration of the PES through guided stochastic steps, facilitating transitions between local minima [3]. |
| Density Functional Theory (DFT) | A first-principles quantum mechanical method widely used to calculate the energy for a given atomic arrangement on the PES with a good balance of accuracy and cost [3]. |
| Auxiliary DFT (ADFT) | A low-scaling variant of Kohn-Sham DFT that is particularly suited for large, complex systems and provides stable analytic derivatives for efficient PES exploration [3]. |

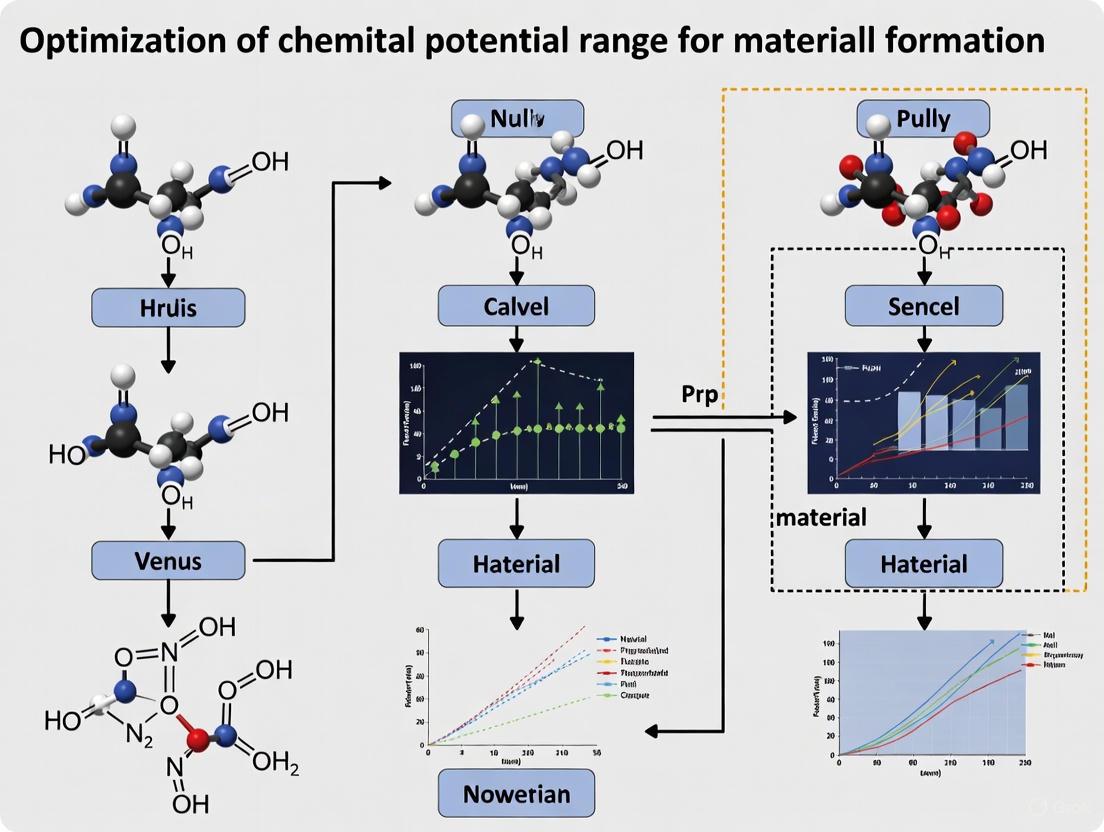

Visualization of Concepts and Workflows

[Diagrams not reproduced: PES Topography and Optimization; Global Optimization Method Selection]

Local Minima, Transition States, and Reaction Pathways

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: My geometry optimization keeps converging to a high-energy local minimum. How can I improve my search for the global minimum?

A1: High-energy convergence often indicates insufficient sampling of the potential energy surface (PES). Implement a global optimization (GO) strategy that combines stochastic and deterministic methods [3]. For molecular systems, consider using Basin Hopping (BH) or Parallel Tempering Molecular Dynamics (PTMD) to escape local minima [3]. For drug-like molecules, recent benchmarks show that the Sella optimizer with internal coordinates finds local minima with fewer imaginary frequencies compared to other methods [4].

Q2: How can I reliably distinguish between a true local minimum and a transition state after optimization?

A2: True local minima should exhibit zero imaginary frequencies in vibrational frequency analysis, while transition states display exactly one imaginary frequency [3]. Always perform frequency calculations to confirm the nature of stationary points. The Stochastic Surface Walking (SSW) method is particularly effective for systematically exploring both minima and transition states on the PES [3].

Q3: Which neural network potential (NNP) optimizer provides the best balance between convergence speed and reliability for molecular systems?

A3: Optimizer performance depends on your specific NNP and molecular system. Recent benchmarking studies indicate that Sella with internal coordinates achieves the fastest convergence (average 13.8-23.3 steps) while maintaining good reliability across multiple NNP architectures [4]. However, ASE/L-BFGS provides the most consistent success rates for completing optimizations across different NNPs [4].

Q4: What strategies can help map complex reaction pathways involving multiple intermediates and transition states?

A4: Implement the Global Reaction Route Mapping (GRRM) approach, which systematically locates all important minima and transition states around a starting structure [3]. Combine this with modern machine learning potentials like EMFF-2025, which can achieve DFT-level accuracy in mapping chemical space and structural evolution across temperatures [5].

Common Optimization Errors and Solutions

Table: Troubleshooting Common Optimization Problems

| Problem | Possible Causes | Solutions |
|---|---|---|
| Failure to converge | Noisy PES, poor step size, insufficient iterations | Switch to noise-tolerant optimizers (FIRE), increase maximum steps to 500, use internal coordinates [4] |
| Convergence to saddle points | Inadequate convergence criteria, missing frequency validation | Implement multiple convergence criteria (energy, gradient RMS, displacement), always perform frequency calculations [4] [3] |
| Inconsistent results across NNPs | Architecture-dependent optimizer performance | Test multiple optimizer-NNP combinations; L-BFGS generally shows good transferability [4] |
| High computational cost | Inefficient PES exploration, redundant calculations | Use transfer learning with pre-trained models (e.g., EMFF-2025), implement hybrid GO algorithms [5] [3] |
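
A combined convergence test along the lines of the second row might look like the following minimal sketch. The function name, the extra maximum-gradient check, and every threshold value are illustrative assumptions rather than recommended settings from [4] or [3].

```python
import math


def is_converged(delta_energy, gradient, displacement,
                 energy_tol=1e-6, grad_rms_tol=3e-4,
                 grad_max_tol=4.5e-4, disp_max_tol=1.8e-3):
    """Check several criteria at once; all must hold for the step to count as converged.

    gradient and displacement are flat lists of Cartesian components.
    The default thresholds are illustrative placeholders, not recommended values.
    """
    grad_rms = math.sqrt(sum(g * g for g in gradient) / len(gradient))
    checks = {
        "energy_change": abs(delta_energy) < energy_tol,
        "gradient_rms": grad_rms < grad_rms_tol,
        "gradient_max": max(abs(g) for g in gradient) < grad_max_tol,
        "displacement_max": max(abs(d) for d in displacement) < disp_max_tol,
    }
    return all(checks.values()), checks


if __name__ == "__main__":
    ok, checks = is_converged(1e-8, [1e-5, -2e-3, 3e-5], [1e-4, 0.0, -2e-4])
    print(ok, checks)  # the single large gradient component blocks convergence
```

Returning the individual checks alongside the overall verdict makes it easier to see which criterion is holding an optimization back. Passing all of them still does not prove the structure is a minimum, so a frequency calculation remains necessary.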

Experimental Protocols

Protocol 1: Global Minimum Search for Molecular Structures

Purpose: To locate the global minimum energy structure of a molecular system using a combined stochastic-deterministic approach.

Materials and Methods:

- Software: GRRM, SSW, or BH implementation [3]

- Computational Level: Neural Network Potential (EMFF-2025) or DFT [5]

- System Preparation: Generate initial population of candidate structures through random sampling or heuristic design [3]

Procedure:

- Initial Sampling: Generate 100-1000 initial structures using random sampling or physically motivated perturbations [3]

- Local Optimization: Refine each structure to the nearest local minimum using efficient local optimizers (L-BFGS or Sella) [4] [3]

- Redundancy Removal: Eliminate duplicate structures using symmetry and energy criteria [3]

- Frequency Validation: Confirm true minima through vibrational frequency analysis (0 imaginary frequencies) [3]

- Iterative Refinement: Apply stochastic steps (BH or SSW) to escape local minima and continue search [3]

- Convergence Check: Continue until no new lower-energy structures are found after multiple iterations [3]

Validation:

- Compare predicted properties (structure, mechanical properties) with experimental data [5]

- Verify consistency across multiple GO runs with different initial conditions [3]

Protocol 2: Reaction Pathway Mapping with Neural Network Potentials

Purpose: To map complete reaction pathways and identify key transition states using machine learning potentials.

Materials and Methods:

- NNP Model: EMFF-2025 for C, H, N, O systems or similar general potential [5]

- Optimizers: Sella with internal coordinates for transition states, L-BFGS for minima [4]

- Analysis: Principal Component Analysis (PCA) for chemical space visualization [5]

Procedure:

- Initial Structure Preparation: Select reactant and product structures from global minimum search [3]

- Transition State Search: Apply single-ended methods or SSW to locate first-order saddle points [3]

- Pathway Verification: Confirm reaction pathways through intrinsic reaction coordinate (IRC) calculations [3]

- NNP Molecular Dynamics: Perform MD simulations at relevant temperatures to observe decomposition mechanisms [5]

- Chemical Space Analysis: Use PCA and correlation heatmaps to visualize structural evolution and relationships [5]

Validation:

- Benchmark against DFT calculations for energy and force predictions (MAE < 0.1 eV/atom for energy, < 2 eV/Å for forces) [5]

- Verify decomposition mechanisms and kinetics against experimental data [5]

Research Reagent Solutions

Table: Essential Computational Tools for Reaction Pathway Analysis

| Tool Name | Type | Function | Key Features |
|---|---|---|---|
| EMFF-2025 | Neural Network Potential | Predicts structures, mechanical properties, decomposition characteristics | DFT-level accuracy for C,H,N,O systems; transfer learning capability [5] |
| Sella | Geometry Optimizer | Transition state and minimum optimization | Internal coordinates; efficient convergence; minimal imaginary frequencies [4] |
| GRRM | Global Reaction Route Mapper | Comprehensive pathway mapping | Locates all minima and transition states around starting structure [3] |
| geomeTRIC | Geometry Optimizer | Molecular structure optimization | Translation-Rotation Internal Coordinates (TRIC); L-BFGS with line search [4] |
| Basin Hopping | Global Optimization Algorithm | Global minimum search | Transforms PES into discrete minima; efficient for complex landscapes [3] |
| OMol25 eSEN | Neural Network Potential | High-accuracy energy predictions | Trained on Open Molecules 2025 dataset; good optimization performance [4] |

Method Performance Benchmarking

Table: Optimizer Performance Across Different Neural Network Potentials

| Optimizer | Success Rate (%) | Average Steps | Minima Found (%) | Imaginary Freq/Structure |
|---|---|---|---|---|
| ASE/L-BFGS | 88-100 | 99.9-120.0 | 64-84 | 0.16-0.35 |
| ASE/FIRE | 60-100 | 105.0-159.3 | 44-84 | 0.16-0.45 |
| Sella (internal) | 80-100 | 13.8-23.3 | 60-96 | 0-0.33 |
| geomeTRIC (tric) | 4-100 | 11-195.6 | 4-92 | Varies significantly |

Data compiled from benchmarks of OrbMol, OMol25 eSEN, AIMNet2, and Egret-1 NNPs [4]

Workflow Visualization

[Diagrams not reproduced: Molecular Optimization Pathway; Potential Energy Surface Features]

Challenges of High-Dimensional and Rugged Energy Landscapes

FAQs: Navigating Complex Energy Landscapes

FAQ 1: What are the primary computational challenges when searching for stable states on a high-dimensional energy landscape?

The main challenge is the exponential growth in the number of local minima and saddle points as the number of dimensions (or degrees of freedom) increases. Theoretical models suggest the number of minima scales as \( N_{\text{min}}(N) = \exp(\xi N) \), where \( \xi \) is a system-dependent constant and \( N \) relates to the system size [3]. This "combinatorial explosion" makes it practically impossible to exhaustively search the landscape. Furthermore, the energy surface develops a complex "spider's web" structure where low-free-energy regions occupy only a small fraction of the total space, causing uniform sampling methods to waste significant time in high-energy, irrelevant regions [6].
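
To get a feel for this scaling, the short sketch below evaluates the exponential law for a few system sizes. The value `xi = 0.5` is an arbitrary illustrative choice, not a fitted constant for any real system.

```python
import math


def estimated_minima(n, xi):
    """Number of local minima predicted by N_min(N) = exp(xi * N)."""
    return math.exp(xi * n)


if __name__ == "__main__":
    # xi = 0.5 is an arbitrary illustrative value, not a fitted constant.
    for n in (10, 20, 40):
        print(f"N = {n:2d}: about {estimated_minima(n, 0.5):.3g} minima")
```

With this assumed constant, doubling the system size from N = 20 to N = 40 multiplies the estimated number of minima by exp(10), roughly 22,000, which is why exhaustive enumeration quickly becomes hopeless.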

FAQ 2: My global optimization algorithm gets trapped in local minima. What strategies can help it escape?

Employing stochastic global optimization methods is a standard strategy to overcome this. These algorithms incorporate randomness, allowing them to jump over energy barriers that trap deterministic searches.

- Basin Hopping (BH): This method transforms the potential energy surface into a collection of interpenetrating staircases, simplifying the landscape to a set of local minima. It combines Monte Carlo steps with local minimization, enabling escapes from local minima [3].

- Simulated Annealing (SA): This technique uses a controlled, stochastic cooling schedule. By initially allowing the system to accept higher-energy configurations, it can cross barriers, and gradually reducing this probability encourages convergence to a low-energy state [3].

- Stochastic Activation–Relaxation Technique (START): This algorithm is designed explicitly for Free Energy Surfaces (FES). It combines saddle optimization to escape a minimum and minimum optimization to relax into a new basin, using noise from finite-time averaging to its advantage for a global search [6].

FAQ 3: How can I efficiently locate key transition states (saddle points) on a high-dimensional free energy surface?

The Stochastic Activation–Relaxation Technique (START) is an advanced method for this purpose. It locates "landmarks" – minima and saddle points – on a high-dimensional FES without requiring a prior analytical form of the surface. START operates "on-the-fly" by combining techniques from stochastic optimization and machine learning. It uses the forces and Hessians estimated from molecular dynamics or Monte Carlo simulations (which are inherently noisy) to drive the search for these critical points, making it highly efficient for navigating complex landscapes [6].

FAQ 4: Can machine learning assist in the exploration and prediction of material stability?

Yes. Machine learning and deep learning can substantially accelerate this kind of exploration. A prominent example is the Graph Networks for Materials Exploration (GNoME) framework. GNoME uses graph neural networks trained through large-scale active learning, starting from databases like the Materials Project. It predicts the stability of crystal structures with high accuracy and has been used to discover millions of new stable crystals, expanding the set of known stable materials by an order of magnitude. These models show emergent generalization, accurately predicting stability even for structures with five or more unique elements, which are notoriously difficult to explore [7].

FAQ 5: What is a suitable descriptor for a universal machine learning model predicting multiple material properties?

Electronic charge density is a powerful, physically grounded descriptor for a universal model. According to the Hohenberg-Kohn theorem, the ground-state wavefunction (and thus all electronic properties) is uniquely determined by the electronic charge density. Recent research has demonstrated that using the electronic charge density from first-principles calculations as the sole input to a deep learning model enables accurate prediction of eight different material properties. Furthermore, multi-task learning with this descriptor improves prediction accuracy for individual properties, showing excellent transferability [8].

Troubleshooting Common Experimental & Computational Issues

Symptom: Poor convergence or low hit rate in virtual screening for lead compound optimization.

- Potential Cause 1: The initial compound library lacks diversity or is too restricted by classical chemical intuition.

- Solution: Broaden the candidate generation process. Use methods like symmetry-aware partial substitutions (SAPS) and ab initio random structure searching (AIRSS) to create a more diverse set of candidate structures. Then, employ a robust machine learning model (e.g., a graph neural network) to filter these candidates before expensive DFT calculations, creating an active learning loop [7].

- Potential Cause 2: The molecular descriptor used does not capture sufficient spatial and topological information.

- Solution: Adopt a model that fuses multiple information types. For instance, the TSGNN model uses a dual-stream architecture. One stream processes topological information (atom connectivity) via a Graph Neural Network, while the other processes spatial information (atomic coordinates) via a Convolutional Neural Network. This ensures that molecules with the same topology but different spatial configurations—and thus different properties—are correctly distinguished [9].

Symptom: Inability to accurately rank the relative stability of predicted candidate structures.

- Potential Cause: The algorithm targets only the potential energy surface, ignoring entropic contributions that determine true thermodynamic stability at finite temperatures.

- Solution: Shift the focus from the potential energy surface to the free energy surface (FES). Use enhanced sampling techniques like metadynamics or parallel tempering to generate the FES. Algorithms like START can then be applied to locate minima and saddle points on this FES, and the relative free energies of these landmarks can be quantified, providing a thermodynamically valid ranking [6].
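
The effect of entropy on the ranking can be seen in a deliberately simplified sketch that uses F = E - TS with a temperature-independent entropy. This is a stand-in for a proper free energy surface obtained from enhanced sampling, and the two candidates and their numerical values are made up for illustration.

```python
def free_energy(energy, entropy, temperature):
    """F = E - T*S with a temperature-independent entropy (a strong simplification)."""
    return energy - temperature * entropy


# Hypothetical candidates: (name, energy in eV, entropy in eV/K). Values are made up.
CANDIDATES = [("A", 0.00, 1.0e-4), ("B", 0.05, 3.0e-4)]


def rank_by_free_energy(candidates, temperature):
    """Return candidate names sorted from most to least stable at the given temperature."""
    ordered = sorted(candidates, key=lambda c: free_energy(c[1], c[2], temperature))
    return [name for name, _, _ in ordered]


if __name__ == "__main__":
    for t in (0.0, 100.0, 300.0):
        print(t, rank_by_free_energy(CANDIDATES, t))
```

In this toy case structure A is favored on the potential energy surface, but B becomes more stable above the crossover temperature ΔE/ΔS = 250 K, so a ranking based on energy alone would be wrong at 300 K.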

Key Experimental Protocols & Workflows

Protocol 1: The GNoME Framework for Stable Crystal Discovery

This protocol outlines the workflow for large-scale, machine-learning-guided materials discovery [7].

- Candidate Generation: Generate a diverse pool of candidate crystal structures using two parallel frameworks:

  - Structural Framework: Modify existing crystals using an expanded set of substitutions, including Symmetry-Aware Partial Substitutions (SAPS).

  - Compositional Framework: Generate reduced chemical formulas with relaxed oxidation-state constraints.

- Model Filtration: Filter the massive candidate pool using a trained GNoME model.

  - For the structural framework, use volume-based test-time augmentation and deep ensembles for uncertainty quantification.

  - For the compositional framework, predict stability directly from the chemical formula.

- DFT Verification: Perform Density Functional Theory (DFT) calculations on the filtered candidates using standardized settings (e.g., with VASP) to verify stability and obtain accurate energies.

- Active Learning: Incorporate the newly calculated structures and their energies into the training dataset.

- Iterate: Retrain the GNoME model on the expanded dataset and repeat the process for multiple rounds to continuously improve model accuracy and discovery efficiency.

The following workflow diagram illustrates this iterative discovery process:

Protocol 2: Navigating Landmarks with the START Algorithm

This protocol details the procedure for locating minima and saddle points on a high-dimensional free energy surface [6].

- Define Collective Variables (CVs): Identify a set of coarse-grained variables \( s = (s_1, \ldots, s_n) \) that describe the slow, collective motions of the system.

- Initialize: Start from a point \( s_0 \) on the FES.

- Stochastic Optimization Loop: Iteratively perform the following two steps:

  - Escape Minimum (Saddle Search): Use an optimization method (e.g., based on the Activation–Relaxation Technique) to climb from the current minimum towards a first-order saddle point.

  - Relax to New Minimum: From the saddle point, perform a minimum optimization to relax into a new local minimum. The update for the CVs \( s \) can follow a rule like \( s_{k+1} = s_k + \delta s \, \frac{F(s_k)}{\|F(s_k)\|} \), where \( F(s_k) \) is the estimated mean force and \( \delta s \) is the step length.

- Landmark Cataloging: Record each newly found minimum and saddle point as a "landmark."

- Build Network Representation: Represent all landmarks and the connections (transition paths) between them as a graph.

- Compute Free Energies: Use enhanced sampling techniques, now seeded with the known landmark locations, to efficiently and quantitatively compute the relative free energies of the minima.
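
The relaxation update in the loop above, a fixed-length step along the normalized mean force, can be sketched as follows. This covers only the "relax to new minimum" step, not the saddle search or the full START algorithm of [6]. The analytic toy surface stands in for the noisy mean force that would come from MD or Monte Carlo sampling, and the step length and iteration count are assumed values.

```python
import math


def mean_force(s):
    """Toy mean force F(s) = -grad G(s) for G = (s1^2 - 1)^2 + s2^2.

    In a real application this would be a noisy estimate from MD or MC sampling.
    """
    s1, s2 = s
    return (-4.0 * s1 * (s1 * s1 - 1.0), -2.0 * s2)


def relax(s0, step=0.01, n_steps=500):
    """Iterate s_{k+1} = s_k + step * F(s_k) / ||F(s_k)||."""
    s = tuple(s0)
    for _ in range(n_steps):
        f = mean_force(s)
        norm = math.hypot(f[0], f[1])
        if norm == 0.0:  # exactly on a stationary point: no direction to follow
            break
        s = (s[0] + step * f[0] / norm, s[1] + step * f[1] / norm)
    return s


if __name__ == "__main__":
    print(relax((0.5, 0.5)))  # ends close to the minimum at (1, 0)
```

Because every step has the same length, the iterate does not converge exactly. It ends up hovering within about one step length of the minimum, which fits a setting where the force estimate is noisy anyway.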

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 1: Key Computational Tools and Their Functions in Energy Landscape Exploration

| Tool/Solution Name | Primary Function | Key Application in Research |
|---|---|---|
| Global Optimization Algorithms (Stochastic) [3] | Navigate complex Potential Energy Surfaces (PES) to find the global minimum. | Locating the most stable molecular conformations, crystal polymorphs, or cluster structures. |
| Enhanced Sampling Methods (e.g., Metadynamics, Parallel Tempering) [6] | Accelerate the exploration of Free Energy Surfaces (FES) by overcoming high energy barriers. | Calculating relative free energies between stable states and elucidating reaction pathways. |
| Graph Neural Networks (GNNs) [7] [9] | Model relationships in structured data, representing atoms as nodes and bonds as edges. | Predicting material properties and stability directly from atomic structure and composition. |
| Electronic Charge Density [8] | Serve as a universal descriptor for machine learning models. | Enabling accurate, multi-property prediction within a single, unified framework. |
| Density Functional Theory (DFT) [3] [7] | Perform first-principles quantum mechanical calculations to determine electronic structure. | Providing accurate ground-truth energies for validating predictions and training machine learning models. |
| Stochastic Activation–Relaxation Technique (START) [6] | Locate minima and saddle points on high-dimensional FES without an explicit function. | Mapping the key "landmarks" and connectivity of a complex free energy landscape. |

Table 2: Performance Comparison of Selected Methods for Landscape Navigation

| Method Category | Example Algorithm(s) | Key Performance Metrics | Application Context | Reference |
|---|---|---|---|---|
| Machine Learning / Deep Learning | GNoME (GNN) | Discovers 2.2 million stable crystals; hit rate >80% (with structure); prediction error 11 meV/atom | High-throughput discovery of inorganic crystal structures | [7] |
| Stochastic Global Optimization | Basin Hopping, Simulated Annealing | Effective for locating the global minimum on the PES; the number of minima to search grows exponentially with system size (\( \exp(\xi N) \)) | Molecular conformations, cluster structure prediction | [3] |
| Free Energy Surface Optimization | START | Locates landmarks (minima, saddles) on high-dimensional free energy surfaces (HDFES) | Biomolecular structure prediction, crystal polymorph ranking | [6] |
| Universal Property Prediction | MSA-3DCNN (on charge density) | Average R²: 0.66 (single-task); average R²: 0.78 (multi-task) | Predicting eight different ground-state material properties from one descriptor | [8] |

Chemical Potential Analysis as an Alternative to Traditional Methods

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of using chemical potential analysis over the traditional van't Hoff method?

The primary advantage is that chemical potential analysis decouples solid-state material properties from gas-phase contributions, which are convolved in the van't Hoff method. The traditional van't Hoff analysis, which uses oxygen partial pressure (pO₂), yields enthalpies (\( \Delta H_{\mathrm{vtH}} \)) and entropies (\( \Delta S_{\mathrm{vtH}} \)) that inherently include gas-phase terms. In contrast, the chemical potential method directly yields the solid-state reduction enthalpy (\( \delta H_r \)) and entropy (\( \delta S_r \)) through the relationship \( \Delta\mu_{\mathrm{O}} = \delta H_r - T\,\delta S_r \). This provides a more direct and transparent view of the material's intrinsic properties, facilitating better comparison with first-principles calculations and revealing temperature dependencies that contain important information about the defect mechanism [10].

Q2: In what specific research areas is chemical potential analysis particularly valuable?

This method is particularly valuable in:

- Solar Thermochemical Fuels (STCH): For designing and optimizing oxide working materials for water and CO₂ splitting cycles, as it helps clarify the thermodynamic properties governing reduction and oxidation steps [10].

- Molten Salt Research for Nuclear Technologies: For accurately predicting thermodynamic properties like melting points, solubilities, and redox potentials, which are critical for next-generation nuclear reactor designs and pyrochemical reprocessing [11].

- Energetic Materials (HEMs) Design: For understanding the decomposition mechanisms and stability of high-energy materials, where neural network potentials can predict behavior with DFT-level accuracy but at a much lower computational cost [5].

Q3: My machine learning interatomic potential (MLIP) simulations for chemical potentials have high statistical uncertainty. What could be wrong?

High uncertainty in MLIP-based chemical potential calculations, especially in molten salts, has been noted in the literature [11]. The issue can stem from the method used to compute chemical potentials in the liquid phase. Some studies have found that transforming an entire system of particles (e.g., from Lennard-Jones or ideal gas particles to interacting ions) provides more reliable and lower-variance results compared to methods that only insert a single ion pair into the liquid [11]. Ensuring your training data for the MLIP is robust and carefully validating your free energy methodology against DFT for smaller systems can also help mitigate this problem.

Q4: Which geometry optimizer should I use with a Neural Network Potential (NNP) for reliable structural relaxation?

The choice of optimizer significantly impacts the success rate, speed, and quality of optimizations. Performance is highly dependent on the specific NNP. Recent benchmarks on drug-like molecules show that:

- Sella (with internal coordinates) often provides an excellent balance, frequently achieving a high success rate and the lowest average number of steps to convergence [4].

- ASE's L-BFGS is generally a reliable and robust choice, often yielding a high number of successful optimizations across different NNPs [4].

- ASE's FIRE is also a good option but may result in more optimized structures that are saddle points (not true minima) compared to other methods [4].

It is crucial to test different optimizers with your specific NNP and system of interest.

Troubleshooting Guides

Issue: Inconsistent or Inaccurate Reduction Enthalpies/Entropies

Problem: When analyzing thermogravimetric analysis (TGA) data, the derived reduction enthalpies and entropies seem inconsistent, or do not align well with computational predictions.

Solution: Switch from a van't Hoff analysis to a chemical potential analysis.

Protocol:

- Data Conversion: Convert your measured oxygen partial pressures (pO₂) at different temperatures (T) and a constant oxygen deficiency (δ) into oxygen chemical potentials (ΔμO) using the formula:

ΔμO = (H°* - cpT) + kBT[ln(pO₂/p°) - (S°* - cp ln(T/T*))/kB]

where H°* and S°* are the standard enthalpy and entropy of O₂ at standard temperature T* and pressure p° [10].

- Linear Regression: Plot ΔμO against temperature (T) for a fixed δ.

- Extract Solid-State Properties: Perform a linear fit. According to the equation ΔμO = δHr - TδSr, the y-intercept is the differential reduction enthalpy (δHr), and the slope is the negative of the differential reduction entropy (-δSr) [10]. This directly gives you the solid-state properties, free from gas-phase convolutions.
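The linear-fit step can be sketched in a few lines. This is a minimal illustration, not the procedure of [10]: the function name `fit_reduction_thermo`, the units (eV per O atom and eV/K), and the synthetic data points are all assumptions chosen only to exercise the fit.

```python
from statistics import fmean

def fit_reduction_thermo(temps_K, dmu_O):
    """Fit dmu_O = dH_r - T * dS_r by ordinary least squares.

    Returns (dH_r, dS_r): the intercept and the negated slope.
    """
    t_mean, mu_mean = fmean(temps_K), fmean(dmu_O)
    sxx = sum((t - t_mean) ** 2 for t in temps_K)
    sxy = sum((t - t_mean) * (m - mu_mean) for t, m in zip(temps_K, dmu_O))
    slope = sxy / sxx
    intercept = mu_mean - slope * t_mean
    return intercept, -slope

# Synthetic, noise-free values at one fixed delta (assumed units: eV, eV/K).
# They are not measurements and carry no physical meaning.
temps_K = [1073.0, 1173.0, 1273.0, 1373.0]
dmu_O = [3.5 - 1.5e-3 * t for t in temps_K]

dH_r, dS_r = fit_reduction_thermo(temps_K, dmu_O)
print(f"dH_r = {dH_r:.3f} eV, dS_r = {dS_r:.2e} eV/K")
```

With real TGA data the fit would be repeated for each δ, and the scatter of the residuals gives a quick check on whether the linear model holds over the chosen temperature window.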

Issue: Molecular Optimizations with NNPs Fail to Converge or Find Incorrect Minima

Problem: Geometry optimizations using a Neural Network Potential (NNP) fail to converge within the step limit, or they converge to saddle points (indicated by imaginary frequencies) instead of true local minima.

Solution: Systematically evaluate and select the appropriate optimization algorithm and convergence settings.

Protocol:

- Optimizer Selection: Based on benchmark data [4], prioritize the following optimizers for testing:

- Sella (internal coordinates)

- ASE L-BFGS

- Convergence Criteria: Ensure convergence is not solely based on the maximum force component (fmax). If your software allows, enable additional criteria such as the root-mean-square (RMS) of the gradient and the maximum displacement. This improves the rigor of the convergence check [4].

- Post-Optimization Validation: Always perform a vibrational frequency calculation on the optimized structure.

- A true local minimum will have zero imaginary frequencies.

- The presence of imaginary frequencies indicates a saddle point, and the optimization should be restarted from a different initial geometry or with a different optimizer [4].
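A combined convergence check of the kind described above might look like the following sketch. The function name `convergence_report` and all three thresholds are hypothetical placeholders, not values from [4] or defaults of any optimizer; forces and displacements are assumed to be per-atom (x, y, z) tuples in consistent units.

```python
import math

def convergence_report(forces, displacements,
                       fmax_tol=0.01, rms_tol=0.005, disp_tol=0.002):
    """Check max force component, RMS gradient, and max displacement together.

    Thresholds are illustrative assumptions; tune them for your software.
    """
    comps = [c for vec in forces for c in vec]
    max_force = max(abs(c) for c in comps)
    rms_grad = math.sqrt(sum(c * c for c in comps) / len(comps))
    max_disp = max(abs(c) for vec in displacements for c in vec)
    checks = {
        "max_force": max_force <= fmax_tol,
        "rms_gradient": rms_grad <= rms_tol,
        "max_displacement": max_disp <= disp_tol,
    }
    return all(checks.values()), checks

small_step = [(0.001, 0.0, 0.0), (0.0, -0.0005, 0.0)]

# Case 1: every criterion is satisfied.
ok_1, checks_1 = convergence_report(
    [(0.004, 0.0, -0.003), (0.0, 0.002, -0.001)], small_step)

# Case 2: the largest component passes fmax, but the RMS gradient does not.
ok_2, checks_2 = convergence_report(
    [(0.009, 0.009, 0.009), (0.009, 0.009, 0.009)], small_step)

print(ok_1, checks_1)
print(ok_2, checks_2)
```

The second case shows why a single fmax test can be too permissive: many moderately sized force components can remain even when none of them individually exceeds the threshold.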

Supporting Data: The table below summarizes the performance of different optimizer-NNP combinations for optimizing 25 drug-like molecules, highlighting the variation in success rates.

Table 1: Benchmarking Optimizer and NNP Performance for Molecular Optimization [4]

| Optimizer | OrbMol | OMol25 eSEN | AIMNet2 | Egret-1 | GFN2-xTB |

|---|---|---|---|---|---|

| ASE/L-BFGS | 22 | 23 | 25 | 23 | 24 |

| ASE/FIRE | 20 | 20 | 25 | 20 | 15 |

| Sella | 15 | 24 | 25 | 15 | 25 |

| Sella (internal) | 20 | 25 | 25 | 22 | 25 |

| geomeTRIC (tric) | 1 | 20 | 14 | 1 | 25 |

Number of molecules (out of 25) successfully optimized within a maximum of 250 steps.

Issue: High Computational Cost of Ab Initio Chemical Potential Calculations

Problem: Calculating chemical potentials and free energies with ab initio molecular dynamics (AIMD) is prohibitively expensive for large systems or long time scales.

Solution: Use a Machine Learning Interatomic Potential (MLIP) trained on DFT data to accelerate simulations without sacrificing accuracy.

Protocol for Molten Salts (e.g., LiCl) [11]:

- Generate Training Data: Perform a set of DFT calculations (AIMD) on the system (e.g., solid and liquid LiCl) to collect a diverse set of atomic configurations, energies, and forces.

- Train an MLIP: Train a machine learning force field (e.g., a neural network potential) to reproduce the DFT energies, forces, and stresses. Validate the MLIP by ensuring it reproduces structural properties like the radial distribution function, g(r), against DFT and experiment.

- Compute Chemical Potentials: Use an alchemical transformation method within molecular dynamics simulations powered by the MLIP.

- For the liquid phase: Use thermodynamic integration to transmute LiCl ion pairs from non-interacting ideal gas particles into fully interacting ions. This can be done for a single pair or the entire system.

- For the solid phase: Use the Einstein crystal method as a reference state for thermodynamic integration.

- Predict Properties: Locate the melting point by finding the temperature where the chemical potentials of the solid and liquid phases cross. Compare your prediction (e.g., 880 ± 18 K for LiCl) to the experimental value (883 K) to validate the approach [11].
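The final step, locating the temperature at which the two chemical potentials cross, can be sketched as a sign-change search with linear interpolation. The function name `crossing_temperature` and the tabulated values are hypothetical; the numbers are synthetic straight lines built to cross at 900 K and are not LiCl results from [11].

```python
def crossing_temperature(temps_K, mu_solid, mu_liquid):
    """Return the temperature where mu_liquid - mu_solid changes sign.

    Uses linear interpolation between the two bracketing grid points.
    """
    diffs = [liq - sol for sol, liq in zip(mu_solid, mu_liquid)]
    for i in range(len(temps_K) - 1):
        d0, d1 = diffs[i], diffs[i + 1]
        if d0 == 0.0:
            return temps_K[i]
        if d0 * d1 < 0.0:
            t0, t1 = temps_K[i], temps_K[i + 1]
            return t0 + (t1 - t0) * d0 / (d0 - d1)
    if diffs[-1] == 0.0:
        return temps_K[-1]
    raise ValueError("chemical potentials do not cross on this temperature grid")

# Synthetic chemical potentials (assumed units: eV per formula unit).
temps_K = [800.0, 860.0, 920.0, 980.0]
mu_solid = [-4.000 - 0.0010 * t for t in temps_K]
mu_liquid = [-3.820 - 0.0012 * t for t in temps_K]

t_melt = crossing_temperature(temps_K, mu_solid, mu_liquid)
print(f"estimated melting point: {t_melt:.1f} K")
```

In practice each tabulated chemical potential carries a statistical error bar, so the crossing should be reported with an uncertainty, for example by repeating the interpolation on resampled data.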

Workflow Visualization

Chemical Potential Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Chemical Potential and Material Property Analysis

| Tool / Solution | Function / Description | Key Application in Research |

|---|---|---|

| Density Functional Theory (DFT) | A first-principles computational method for electronic structure calculations, providing accurate energies and forces. | Generates reference data for training machine learning potentials and serves as a benchmark for accuracy [5] [11]. |

| Neural Network Potentials (NNPs) | Machine-learning-based interatomic potentials trained on DFT data. Offer near-DFT accuracy at a fraction of the computational cost. | Enables large-scale molecular dynamics simulations for free energy and chemical potential calculations in complex materials [5] [11]. |

| Deep Potential (DP) | A specific and scalable framework for developing NNPs, known for robustness in reactive processes. | Used for simulating energetic materials and other complex systems to predict mechanical properties and decomposition mechanisms [5]. |

| Sella & geomeTRIC | Advanced geometry optimization libraries that often use internal coordinates for efficient structural relaxation. | Crucial for optimizing molecular structures to local minima using NNPs, a common step in computational workflows [4]. |

| Global Optimization Algorithms (e.g., GA, SA) | Algorithms designed to locate the global minimum on a complex potential energy surface, often combining stochastic search with local refinement. | Used for predicting the most stable chemical structures, such as molecular conformations, crystal polymorphs, and cluster geometries [3]. |

Computational and Statistical Methods for Navigating Chemical Space

Frequently Asked Questions (FAQs)

Q1: What are the fundamental differences between Genetic Algorithms (GAs) and Simulated Annealing (SA) for optimizing chemical systems?

Genetic Algorithms are population-based evolutionary algorithms that maintain and improve a set of candidate solutions through selection, crossover, and mutation operations. They are particularly effective for exploring complex, discrete search spaces common in molecular composition optimization [12] [13]. In contrast, Simulated Annealing is a single-solution method inspired by the metallurgical annealing process, which probabilistically accepts worse solutions to escape local optima using a temperature-controlled acceptance function [14] [15]. For chemical optimization problems, GAs typically find higher-quality solutions but require longer computation times, while SA converges faster but may settle for inferior local optima [12] [16].

Q2: How do I decide whether to use SA or a GA for my materials optimization problem?

The choice depends on your specific constraints regarding solution quality, computational resources, and problem structure. Use Genetic Algorithms when: you need the highest possible solution quality, your parameter space has strong epistatic interactions (where parameters strongly influence each other's effects), and you can afford longer runtimes [12] [13]. Choose Simulated Annealing when: you have limited computational resources, need faster results, are working with continuous parameters, or when your problem landscape is relatively smooth with correlated neighboring solutions [14] [15]. For discrete molecular composition problems with no meaningful gradient information, both methods outperform traditional gradient-based approaches [12] [17].

Q3: What are the critical hyperparameters I need to tune for each algorithm in chemical applications?

Table: Essential Hyperparameters for Chemical Optimization Algorithms

| Algorithm | Critical Hyperparameters | Chemical Optimization Considerations |

|---|---|---|

| Simulated Annealing | Initial temperature, Cooling schedule, Neighborhood structure, Markov chain length | Temperature should allow ~80% initial acceptance; cooling rate 0.8-0.99; neighborhood should maintain chemical feasibility [14] [18] |

| Genetic Algorithms | Population size, Crossover rate, Mutation rate, Selection pressure, Generation count | Population size 50-100; higher mutation for diversity; fitness-proportional selection maintains solution diversity [12] [13] |
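As a worked example of the Simulated Annealing guidance in the table, one common heuristic picks the initial temperature so that a move of average uphill cost is accepted with the target probability, and then counts how many exponential cooling steps are needed to reach a stopping temperature. The helper names and the sample ΔE values below are assumptions for illustration; the 80% target and the cooling factor come from the table and from the protocol values used elsewhere in this guide.

```python
import math

def initial_temperature(uphill_deltas, target_acceptance=0.8):
    """Temperature at which exp(-mean(dE) / T) equals the target acceptance."""
    mean_delta = sum(uphill_deltas) / len(uphill_deltas)
    return -mean_delta / math.log(target_acceptance)

def cooling_steps(t_start, t_end, alpha):
    """Number of multiplications by alpha needed to go from t_start to t_end."""
    return math.ceil(math.log(t_end / t_start) / math.log(alpha))

# Hypothetical uphill energy changes sampled from a few random trial moves.
sampled_deltas = [2.0, 4.0, 6.0]
t0 = initial_temperature(sampled_deltas)
print(f"T0 = {t0:.2f}")

# Exponential cooling from 1000 down to 0.001 with alpha = 0.92.
n_steps = cooling_steps(1000.0, 0.001, 0.92)
print("cooling steps:", n_steps)
```

This matches the acceptance probability for an average uphill move rather than the average acceptance over all moves, so treat the result as a starting point and verify the observed acceptance ratio in a short trial run.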

Q4: How can I prevent premature convergence to local optima when optimizing chemical reaction mechanisms?

For Simulated Annealing, ensure your initial temperature is sufficiently high to allow widespread exploration and use a cooling schedule that decreases temperature slowly enough to thoroughly explore each temperature level [14] [15]. For Genetic Algorithms, maintain population diversity through appropriate mutation rates (typically 0.01-0.1 per gene), implement fitness sharing or niching techniques, and periodically introduce new random individuals [13]. For chemical reaction optimization specifically, consider using multi-objective approaches that simultaneously optimize for multiple experimental datasets to constrain the solution space more effectively [17].

Q5: What are the best practices for representing chemical structures and reaction parameters in these algorithms?

Discrete chemical compositions (e.g., polymer units, catalyst components) are effectively represented as integer-coded strings or permutations where each position corresponds to a specific chemical building block [12]. Continuous reaction parameters (temperature, concentration, time) should be represented as real-valued parameters with appropriate bounds based on chemical feasibility [17]. For complex molecular optimization, consider hybrid representations that combine discrete selection of chemical units with continuous optimization of their proportions or reaction conditions [13].

Troubleshooting Guides

Problem: Algorithm Converges Too Quickly to Suboptimal Solutions

Symptoms: Your optimization consistently returns the same mediocre solution regardless of parameter adjustments, or fails to discover chemically novel candidates.

Diagnosis and Solutions:

For Simulated Annealing:

- Increase initial temperature to allow more random exploration in early iterations [14]

- Slow the cooling rate (use values >0.95 for exponential cooling) to spend more time at each temperature level [18]

- Diversify neighborhood generation by implementing multiple move types (swaps, perturbations, reconstructions) [15]

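For Genetic Algorithms:

- Maintain population diversity with an appropriate mutation rate (typically 0.01-0.1 per gene) [13]

- Implement fitness sharing or niching techniques [13]

- Periodically introduce new random individuals into the population [13]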

Algorithm Convergence Troubleshooting

Problem: Excessive Computation Time for Complex Chemical Systems

Symptoms: Single iterations take impractically long, preventing adequate exploration of the chemical space, or complete runs require days/weeks to converge.

Diagnosis and Solutions:

Optimize Fitness Evaluation:

- Cache expensive computations - Store and reuse previously evaluated chemical configurations [17]

- Use surrogate models - Implement approximate fitness functions for initial screening, with full evaluation only for promising candidates [13]

- Parallelize evaluations - Exploit population-based nature of GAs or multiple Markov chains in SA [15]
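Caching is the simplest of these three measures to put in place. The sketch below uses Python's standard `functools.lru_cache`; the `fitness` function is a hypothetical stand-in for an expensive simulation, and candidates are assumed to be encoded as tuples so that they are hashable.

```python
from functools import lru_cache

@lru_cache(maxsize=None)
def fitness(composition):
    """Placeholder for an expensive evaluation of one chemical configuration.

    `composition` must be hashable, so candidates are passed as tuples.
    """
    return sum((i + 1) * unit for i, unit in enumerate(composition))

# The third candidate repeats the first, so it is served from the cache.
candidates = [(3, 1, 4), (1, 5, 9), (3, 1, 4)]
scores = [fitness(c) for c in candidates]

info = fitness.cache_info()
print(scores, "computed:", info.misses, "reused:", info.hits)
```

Population-based searches revisit identical candidates often once they start to converge, which is when the cache pays off most. If the evaluation is stochastic, caching freezes the first sampled value, which may or may not be what you want.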

Algorithm-Specific Accelerations:

Table: Performance Optimization Strategies for Chemical Applications

| Bottleneck | SA-Specific Fixes | GA-Specific Fixes |

|---|---|---|

| Slow fitness evaluation | Use simplified physical models for initial screening | Evaluate individuals asynchronously; terminate poor performers early |

| Large parameter space | Focus moves on most promising degrees of freedom | Structured initialization using chemical knowledge to seed population |

| Many local optima | Restart with best solution when temperature drops below threshold | Island models with occasional migration between subpopulations |

Problem: Solutions Violate Chemical Constraints or Synthetic Feasibility

Symptoms: The algorithm suggests chemically impossible structures, unrealistic reaction conditions, or synthetically inaccessible molecules.

Diagnosis and Solutions:

Constraint Handling Strategies:

- Penalty functions - Add large penalty terms to fitness for constraint violations [14]

- Repair mechanisms - Transform invalid solutions into valid ones through chemical-knowledge-based rules [17]

- Feasibility-preserving operators - Design custom mutation and crossover that maintain chemical validity [13]
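The first two strategies can be illustrated with simple box constraints. In this sketch the bounds reuse the search-space limits listed in Protocol 1 of this guide; the function names, the penalty weight of 1000, and the example candidate are hypothetical, and clipping to the nearest bound is only one possible repair rule.

```python
BOUNDS = {
    "temperature_C": (25.0, 150.0),
    "concentration_M": (0.1, 2.0),
    "catalyst_mol_pct": (1.0, 20.0),
}

def violation(params):
    """Sum of bound violations, each scaled by the width of its allowed range."""
    total = 0.0
    for name, (lo, hi) in BOUNDS.items():
        value = params[name]
        total += max(0.0, lo - value, value - hi) / (hi - lo)
    return total

def penalized_fitness(raw_fitness, params, weight=1000.0):
    """Penalty approach: subtract a large term proportional to the violation."""
    return raw_fitness - weight * violation(params)

def repair(params):
    """Repair approach: clip every parameter back into its allowed range."""
    return {name: min(hi, max(lo, params[name])) for name, (lo, hi) in BOUNDS.items()}

# Hypothetical candidate whose temperature exceeds the upper limit.
candidate = {"temperature_C": 175.0, "concentration_M": 0.5, "catalyst_mol_pct": 5.0}
print(penalized_fitness(80.0, candidate))
print(repair(candidate))
```

Penalties keep infeasible candidates in the search but rank them low, while repair removes them entirely. Real chemical constraints such as valence rules or synthetic accessibility need domain-specific checks in place of these range tests.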

Domain-Specific Implementation:

- Incorporate chemical rules directly into neighborhood moves for SA (e.g., only generate valid molecular substitutions) [12]

- Use chemical-feasible initializations for GAs by seeding population with known valid structures [17] [13]

- Implement constraint-aware crossover that exchanges chemically compatible fragments [13]

Chemical Constraint Handling Methods

Experimental Protocols for Chemical Optimization

Protocol 1: Simulated Annealing for Reaction Condition Optimization

Objective: Optimize temperature, concentration, and catalyst loading for maximum yield in a complex organic synthesis.

Materials and Setup:

- Representation: Real-valued vector [temperature (°C), concentration (M), catalyst_load (mol%)]

- Search Space: Temperature: 25-150°C, Concentration: 0.1-2.0M, Catalyst: 1-20 mol%

- Fitness Function: Reaction yield (%) with penalty for byproduct formation

Procedure:

- Initialization: Set the initial annealing temperature to 1000 (a dimensionless control parameter, not the reaction temperature) and draw a random starting solution within the bounds

- Cooling Schedule: Use exponential cooling with α=0.92

- Neighborhood Generation: Gaussian perturbation with σ=2% of parameter range

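- Acceptance Probability: Standard Metropolis criterion P=exp(-ΔE/T), where ΔE is the loss in fitness of the candidate relative to the current solution and T is the annealing temperature; candidates that improve the fitness are always accepted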

- Termination: After 50 iterations without improvement or temperature < 0.001

Chemical Validation: Confirm top solutions with experimental testing; ensure thermal stability at suggested temperatures [18] [15]
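Protocol 1 can be sketched as follows. The parameter values (initial temperature, α, σ, termination rule) follow the protocol; everything else is an assumption: `toy_yield` is a made-up smooth surface standing in for measured yields, one move is attempted per temperature level, and out-of-range moves are clipped to the bounds.

```python
import math
import random

# Search space from the protocol: temperature (C), concentration (M), catalyst (mol%).
BOUNDS = [(25.0, 150.0), (0.1, 2.0), (1.0, 20.0)]

def toy_yield(x):
    """Hypothetical yield surface (%), peaked at 90 C, 1.0 M, 8 mol%."""
    t, c, cat = x
    return 95.0 * math.exp(-((t - 90.0) / 40.0) ** 2
                           - ((c - 1.0) / 0.8) ** 2
                           - ((cat - 8.0) / 6.0) ** 2)

def simulated_annealing(fitness, bounds, t0=1000.0, alpha=0.92,
                        sigma_frac=0.02, patience=50, t_min=1e-3, seed=0):
    rng = random.Random(seed)
    current = [rng.uniform(lo, hi) for lo, hi in bounds]
    f_cur = fitness(current)
    best, f_best = current[:], f_cur
    temp, stale = t0, 0
    while temp >= t_min and stale < patience:
        # Gaussian perturbation, sigma = 2% of each range, clipped to the bounds.
        cand = [min(hi, max(lo, x + rng.gauss(0.0, sigma_frac * (hi - lo))))
                for x, (lo, hi) in zip(current, bounds)]
        f_cand = fitness(cand)
        delta_e = f_cur - f_cand  # positive when the candidate is worse (maximizing)
        if delta_e <= 0.0 or rng.random() < math.exp(-delta_e / temp):
            current, f_cur = cand, f_cand
        if f_cur > f_best:
            best, f_best, stale = current[:], f_cur, 0
        else:
            stale += 1
        temp *= alpha  # exponential cooling
    return best, f_best

best_x, best_y = simulated_annealing(toy_yield, BOUNDS)
print([round(v, 2) for v in best_x], round(best_y, 1))
```

Because yields span at most 100 units, an initial temperature of 1000 accepts nearly every move at first, so the early phase behaves like a random walk. If that is too exploratory for your budget, choose the starting temperature from sampled ΔE values instead, as suggested by the ~80% initial acceptance guideline in the hyperparameter table.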

Protocol 2: Genetic Algorithm for Molecular Component Selection

Objective: Discover optimal polymer sequence from library of 50 molecular units for thermal conductivity enhancement.

Materials and Setup:

- Representation: Integer-coded sequence of molecular unit indices (length: 20 units)

- Search Space: Permutations with repetition from library of 50 available units

- Fitness Function: Thermal conductivity calculated using Green's function method [12]

Procedure:

- Initialization: Population of 100 random sequences

- Selection: Tournament selection with size 3

- Crossover: Single-point crossover with probability 0.8

- Mutation: Point mutation with probability 0.05 per gene

- Elitism: Preserve top 5 individuals each generation

- Termination: After 200 generations or convergence

Chemical Validation: Synthesize and test top 3 candidate sequences; verify chemical stability and processability [12] [16]
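A compact sketch of Protocol 2 is shown below. The operator settings follow the protocol; `toy_fitness` is a hypothetical stand-in for the Green's function conductance calculation (it simply rewards neighboring units with similar indices), and the convergence test is omitted so the run always lasts the full 200 generations.

```python
import random

def toy_fitness(seq):
    """Hypothetical surrogate: higher when adjacent unit indices are similar."""
    return -sum(abs(a - b) for a, b in zip(seq, seq[1:]))

def genetic_algorithm(fitness, n_units=50, length=20, pop_size=100,
                      generations=200, tournament=3, p_cross=0.8,
                      p_mut=0.05, n_elite=5, seed=0):
    rng = random.Random(seed)
    pop = [[rng.randrange(n_units) for _ in range(length)]
           for _ in range(pop_size)]

    def select(scored):
        # Tournament selection: best of `tournament` randomly drawn individuals.
        return max(rng.sample(scored, tournament), key=lambda p: p[0])[1]

    history = []
    for _ in range(generations):
        scored = sorted(((fitness(ind), ind) for ind in pop),
                        key=lambda p: p[0], reverse=True)
        history.append(scored[0][0])
        next_pop = [ind[:] for _, ind in scored[:n_elite]]  # elitism
        while len(next_pop) < pop_size:
            a, b = select(scored), select(scored)
            if rng.random() < p_cross:
                cut = rng.randrange(1, length)  # single-point crossover
                child = a[:cut] + b[cut:]
            else:
                child = a[:]
            # Point mutation: each gene is resampled with probability p_mut.
            child = [rng.randrange(n_units) if rng.random() < p_mut else g
                     for g in child]
            next_pop.append(child)
        pop = next_pop
    best = max(pop, key=fitness)
    return best, fitness(best), history

best_seq, best_fit, history = genetic_algorithm(toy_fitness)
print(best_seq, best_fit)
```

Elitism guarantees that the best fitness never decreases from one generation to the next, which makes the `history` list a convenient convergence trace. With an expensive fitness function, the evaluation inside the generation loop is the part to cache or parallelize.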

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Resources for Chemical Optimization

| Tool/Resource | Function/Purpose | Chemical Application Examples |

|---|---|---|

| Paddy Algorithm | Evolutionary optimization with density-based propagation [13] | Polymer design, experimental condition selection, molecular generation |

| Hyperopt | Bayesian optimization with Tree of Parzen Estimators [13] | Neural network hyperparameter tuning for chemical prediction models |

| EvoTorch | Evolutionary algorithms library with GPU support [13] | Large-scale molecular optimization, parallel fitness evaluation |

| Green's Function Method | Thermal conductance calculation for molecular structures [12] | Screening polymer sequences for thermal interface materials |

| Ax Platform | Bayesian optimization framework with adaptive experimentation [13] | Closed-loop optimization of chemical reaction conditions |

Performance Comparison in Chemical Domains

Table: Algorithm Performance in Material and Chemical Optimization Tasks

| Application Domain | Best Performing Algorithm | Key Performance Metrics | Considerations for Chemical Applications |

|---|---|---|---|

| Thermal conductivity of 1D chains | Genetic Algorithms [12] [16] | GA solutions 10-30% better thermal conductance; 2-3x longer computation time | GA better exploits structural building blocks; effective for discrete composition spaces |

| Chemical kinetics optimization | Genetic Algorithms [17] | More robust convergence; handles multi-objective constraints effectively | Multi-objective GA successfully incorporates PSR and flame data simultaneously |

| VLSI circuit design | Simulated Annealing [15] | Proven industrial-scale success for placement and routing | Fast convergence acceptable when good solutions sufficient; preferred under time constraints |

| Molecular generation | Mixed results [13] | Paddy (evolutionary) shows robust performance across diverse tasks | Newer evolutionary methods balance exploration/exploitation for chemical spaces |

| Vehicle routing with constraints | Simulated Annealing [15] | Effective for combinatorial problems with hard constraints | Adaptable to chemical logistics and supply chain optimization |

Deterministic Global Optimization and Hybrid Search Strategies

Frequently Asked Questions (FAQs)

Q1: My global optimization for a new dual-atom catalyst is consistently converging to local minima, missing the global optimum. How can I overcome these energy barriers?

A1: This is a common challenge when exploring complex potential energy surfaces (PES). We recommend extending the configuration space with additional degrees of freedom to circumvent barriers.

- Methodology: Implement a machine-learning-based method that introduces extra dimensions to the atomic configuration space. This includes variables for 1) chemical identities (allowing interpolation between elements), 2) the degree of atomic existence ("ghost" atoms), and 3) atomic positions in a higher-dimensional space (4-6 dimensions) [19].

- Workflow: A Gaussian process surrogate model, trained on Density Functional Theory (DFT) energies and forces, uses a vectorial fingerprint that incorporates these new variables. This allows the optimization to navigate a smoother, modified energy landscape, effectively bypassing barriers encountered in the conventional 3D space [19].

- Application: This technique has been successfully applied for the global optimization of clusters, periodic systems, and specific structures like a Fe-Co dual atom catalyst in nitrogen-doped graphene [19].

Q2: Our high-throughput experimentation (HTE) for reaction optimization is too slow. How can we more efficiently navigate large condition spaces to find optimal yields and selectivity?

A2: Traditional grid-based HTE can be inefficient. A machine learning-driven Bayesian optimization workflow is designed for this exact problem.

- Solution: Employ a scalable framework like "Minerva" for multi-objective Bayesian optimization integrated with automated HTE [20].

- Process: The workflow begins with quasi-random Sobol sampling to diversify initial data. A Gaussian Process (GP) regressor then models reaction outcomes. An acquisition function (e.g., q-NParEgo, TS-HVI) balances the exploration of new conditions with the exploitation of promising ones to select the next batch of experiments [20].

- Outcome: In a case study optimizing a Ni-catalyzed Suzuki reaction, this method identified conditions with 76% yield and 92% selectivity, outperforming chemist-designed HTE plates. It also accelerated pharmaceutical process development, identifying high-performing conditions (>95% yield/selectivity) in weeks instead of months [20].

Q3: When using hybrid search in my retrieval system, how do I choose the best parameters to balance lexical and semantic results?

A3: No single static parameter configuration works best for every query. A dynamic, machine-learning-driven approach is superior.

- Static Optimization: First, identify a baseline global configuration by evaluating parameter combinations (normalization technique, combination method, lexical/neural weights) against metrics like NDCG@10 [21]. A typical finding is L2 normalization, arithmetic mean combination, with a 0.4 lexical and 0.6 neural weight [21].

- Dynamic Optimization: For further gains, build a model that predicts the optimal parameters per query. This model uses features from the query itself and the initial results from both lexical and neural searches [21].

- Result: This model-based approach has been shown to improve over globally optimized parameters, achieving relative gains of +8.9% in DCG@10 and +7.4% in Precision@10 [21].
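The static configuration described above (L2 normalization, arithmetic mean, 0.4 lexical and 0.6 neural weight) can be sketched in plain Python. This is an illustration of the arithmetic only, not the API of any search engine; the function names, document IDs, and raw scores are hypothetical, and treating a document missing from one result list as scoring zero there is an assumption.

```python
import math

def l2_normalize(scores):
    """Divide each score by the L2 norm of the whole result list."""
    norm = math.sqrt(sum(s * s for s in scores.values()))
    return {doc: (s / norm if norm else 0.0) for doc, s in scores.items()}

def hybrid_rank(lexical, neural, w_lex=0.4, w_neu=0.6):
    """Weighted arithmetic mean of normalized lexical and neural scores."""
    lex, neu = l2_normalize(lexical), l2_normalize(neural)
    combined = {
        doc: (w_lex * lex.get(doc, 0.0) + w_neu * neu.get(doc, 0.0)) / (w_lex + w_neu)
        for doc in set(lex) | set(neu)
    }
    return sorted(combined.items(), key=lambda kv: kv[1], reverse=True)

# Hypothetical raw scores: BM25-like values and cosine similarities.
lexical_scores = {"doc_a": 12.0, "doc_b": 5.0}
neural_scores = {"doc_b": 0.9, "doc_c": 0.4}

ranked = hybrid_rank(lexical_scores, neural_scores)
for doc, score in ranked:
    print(doc, round(score, 3))
```

A per-query dynamic approach would keep this combination step and replace the fixed weights with values predicted by a model from query and result-set features.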

Q4: Which optimization algorithm should I choose for a complex, high-dimensional engineering problem where I am unsure if the landscape is unimodal or multimodal?

A4: Leverage modern hybrid metaheuristic algorithms designed to balance exploration and exploitation.

- The Challenge: The "No Free Lunch" theorem states no single algorithm is best for all problems. Unimodal landscapes benefit from strong exploitation, while multimodal landscapes require robust exploration to avoid local optima [22].

- Hybrid Solution: Algorithms like the DE/VS hybrid combine Differential Evolution (DE), which provides robust exploration, with Vortex Search (VS), which excels at exploitation [23]. Another example is BAGWO, which hybridizes the Beetle Antennae Search (BAS—good for multimodal functions) and the Grey Wolf Optimizer (GWO—good for unimodal functions) [22].

- Key Feature: These hybrids often use a hierarchical subpopulation structure and dynamic parameter adjustment to automatically balance the search strategy, leading to consistently superior performance across various benchmark functions and real-world problems [23] [22].

Troubleshooting Guides

Issue: Optimization Stagnation in Local Minima

Symptoms: The optimization algorithm converges repeatedly to the same sub-optimal solution, and the objective function shows no significant improvement over multiple iterations.

Diagnosis and Solutions:

| Step | Action | Technical Details |

|---|---|---|

| 1 | Verify with a known benchmark | Test your algorithm on a standard benchmark function (e.g., from CEC 2005/2017) to confirm it performs as expected in a controlled environment [22]. |

| 2 | Expand the configuration space | Introduce extra dimensions or "ghost" atoms to your material's representation. This allows the optimizer to circumvent energy barriers by traversing a smoother, modified potential energy surface [19]. |

| 3 | Switch to a hybrid algorithm | Implement a hybrid algorithm like DE/VS or BAGWO. These are specifically engineered to balance global exploration (searching new areas) and local exploitation (refining good solutions), preventing premature convergence [23] [22]. |

| 4 | Increase batch diversity | If using Bayesian optimization with HTE, adjust the acquisition function to favor more exploration (q-NParEgo is highly scalable for this). This ensures your experimental batch probes diverse regions of the reaction condition space [20]. |

Issue: Poor Search Result Relevance in Material Databases

Symptoms: Queries for material data or scientific documents return results that are lexically correct but semantically irrelevant, or vice-versa.

Diagnosis and Solutions:

| Step | Action | Technical Details |

|---|---|---|

| 1 | Implement Hybrid Search | Combine sparse (keyword-based, e.g., BM25) and dense (embedding-based, e.g., neural network) retrieval methods. This ensures both lexical matching and semantic understanding are utilized [24] [21]. |

| 2 | Optimize global parameters | Systematically tune parameters like normalization technique (L2, min-max), combination method (e.g., arithmetic mean), and weight balance between lexical and neural search. Use metrics like NDCG@10 to evaluate performance [21]. |

| 3 | Deploy dynamic prediction | For the highest performance, train a machine learning model to predict the optimal hybrid search parameters for each individual query based on its features and preliminary result sets [21]. |

Issue: High Experimental Noise in High-Throughput Screening

Symptoms: Results from parallel experiments are inconsistent, making it difficult for the optimization algorithm to discern clear trends.

Diagnosis and Solutions:

| Step | Action | Technical Details |

|---|---|---|

| 1 | Validate HTE platform | Ensure consistency in robotic liquid handling, temperature control across reaction wells, and analytical measurement calibration. |

| 2 | Use robust ML models | Select machine learning models like Gaussian Processes that naturally handle uncertainty. The "Minerva" framework has demonstrated robustness to chemical noise commonly found in real-world HTE data [20]. |

| 3 | Incorporate uncertainty guidance | Leverage the uncertainty predictions from the GP model within the Bayesian optimization loop. The acquisition function can then be weighted to also explore points with high uncertainty, which helps to reduce noise over time and clarify the true performance landscape [20]. |

Experimental Protocols & Workflows

Protocol 1: Machine Learning-Driven Global Structure Optimization

Purpose: To find the global minimum energy structure of a material system (e.g., a nanoparticle or catalyst) by circumventing local energy barriers [19].

Methodology Details:

- System Representation: Define the atomic system using a fingerprint that includes:

- Standard 3D spatial coordinates.

- ICE (Interpolation of Chemical Elements): Define groups of atoms that can interpolate between different chemical elements (e.g., between Al, Cu, and Ag). The variable \( q_{i,e} \) represents the degree to which atom \( i \) is element \( e \) [19].

- Ghost Atoms: Introduce additional "ghost" atoms with fractional existence (\( q_i \in [0,1] \)) to allow atoms to appear or disappear during optimization, facilitating barrier crossing [19].

- Hyperspace Coordinates: Embed the structure in a higher-dimensional space (4-6D) to create alternative pathways around barriers [19].

- Surrogate Model Training: Train a Gaussian Process model on a dataset of energies and forces calculated from DFT. The model learns the complex relationship between the extended structural fingerprint and the system's energy [19].

- Bayesian Search Loop: Use the trained model to predict energies and uncertainties for new candidate structures. An acquisition function guides the selection of the most promising structures for the next DFT calculation, iteratively refining the search towards the global minimum [19].

Protocol 2: Multi-Objective Chemical Reaction Optimization with HTE

Purpose: To efficiently identify reaction conditions that simultaneously optimize multiple objectives (e.g., yield, selectivity, cost) within a large, multidimensional search space [20].

Methodology Details:

- Define Condition Space: Enumerate all plausible reaction parameters (solvents, catalysts, ligands, temperatures, concentrations) as a discrete combinatorial set. Apply chemical knowledge filters to exclude unsafe or impractical combinations (e.g., temperature > solvent boiling point) [20].

- Initial Sampling: Use quasi-random Sobol sampling to select an initial batch of experiments (e.g., a 96-well plate). This ensures the initial data broadly covers the defined search space [20].

- ML Optimization Loop:

- Modeling: Train a Gaussian Process (GP) regressor on the collected experimental data to predict outcomes (yield, selectivity) and their uncertainties for all possible conditions.

- Selection: Use a scalable multi-objective acquisition function (e.g., q-NParEgo, TS-HVI) to select the next batch of experiments. This function balances exploring uncertain regions and exploiting conditions predicted to be high-performing.

- Experimentation & Iteration: Run the selected experiments on the HTE platform, add the new data to the training set, and repeat the loop until objectives are met or the experimental budget is exhausted [20].
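The first step of this protocol, enumerating a discrete condition space and filtering it with chemical knowledge, can be sketched with the standard library. This is not the Minerva implementation [20]: the catalyst labels and temperature grid are hypothetical, the boiling points are approximate values at atmospheric pressure, and the only filter shown is the "temperature above solvent boiling point" rule mentioned above.

```python
from itertools import product

# Approximate normal boiling points in degrees Celsius.
SOLVENT_BP_C = {"THF": 66.0, "toluene": 110.6, "DMF": 153.0}
CATALYSTS = ["cat_A", "cat_B"]          # hypothetical catalyst labels
TEMPERATURES_C = [40, 60, 80, 100, 120]

def build_condition_space():
    """Enumerate all combinations, dropping those above the solvent boiling point."""
    space = []
    for solvent, catalyst, temp in product(SOLVENT_BP_C, CATALYSTS, TEMPERATURES_C):
        if temp > SOLVENT_BP_C[solvent]:
            continue  # impractical at atmospheric pressure
        space.append({"solvent": solvent, "catalyst": catalyst, "temperature_C": temp})
    return space

condition_space = build_condition_space()
print(len(condition_space), "of", 3 * 2 * 5, "combinations remain")
```

The filtered list is what the initial sampling and the acquisition function would then draw from, so every proposed experiment is feasible by construction.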

Performance Data & Reagent Solutions

Table 1: Hybrid Search Optimization Performance Metrics

The following table summarizes the quantitative improvements achieved by optimizing hybrid search parameters, moving from a baseline to a globally optimized configuration, and finally to a dynamic, model-based approach [21].

| Metric | Baseline | Global Parameter Optimization | Relative Change (vs. Baseline) | Model-Based Dynamic Optimization | Relative Change (vs. Global) |

|---|---|---|---|---|---|

| DCG@10 | 8.82 | 9.30 | +5.4% | 10.13 | +8.9% |

| NDCG@10 | 0.23 | 0.25 | +8.7% | 0.27 | +8.0% |

| Precision@10 | 0.24 | 0.27 | +12.5% | 0.29 | +7.4% |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Optimization | Example / Technical Note |

|---|---|---|

| Air-Stable Nickel(0) Catalysts | Earth-abundant alternative to precious metal catalysts (e.g., Pd) for cross-coupling reactions. Enables safer, more scalable, and sustainable synthesis pipelines [25]. | Complexes developed by Keary M. Engle at Scripps Research. Bench-stable, activated under standard conditions, and effective for C-C and C-heteroatom bond formation [25]. |

| Multi-Enzyme Biocatalytic Cascade | Replaces long, multi-step synthetic routes with a single, efficient, aqueous-phase process. Dramatically reduces waste, isolations, and organic solvent use [25]. | Merck's 9-enzyme cascade for Islatravir production. Converts simple achiral feedstock to complex API in one stream, demonstrated on 100 kg scale [25]. |

| Phase-Change Materials (PCMs) | Serve as thermal energy storage mediums in thermal batteries. Their high heat capacity enables efficient heating/cooling systems for lab and plant facilities, aiding decarbonization [26]. | Paraffin wax, salt hydrates, fatty acids, polyethylene glycol. Used in thermal energy storage systems for air conditioning and industrial process heat [26]. |

| Vector Database (e.g., Pinecone, Weaviate) | Provides efficient storage, indexing, and querying of high-dimensional vectors (embeddings). Essential for implementing fast and scalable semantic/neural search in material science databases [24]. | Integrated with frameworks like LangChain to build hybrid retrieval systems that combine dense vector search with sparse keyword search [24]. |

Leveraging Neural Network Potentials for DFT-Level Accuracy at Lower Cost

Frequently Asked Questions (FAQs)

Q1: What is the typical accuracy I can expect from a modern Neural Network Potential compared to DFT? Modern, well-trained NNPs can achieve accuracy very close to their DFT training data. Quantitative benchmarks show that for energy predictions, the mean absolute error (MAE) can be predominantly within ± 0.1 eV/atom, and for atomic forces, the MAE can be within ± 2 eV/Å [5]. This makes them suitable for studying a wide range of physicochemical properties.

Q2: My research involves charged molecules or open-shell systems. Are there NNPs that can handle this? Yes, next-generation NNPs are being developed specifically to handle charged and open-shell systems. For instance, the AIMNet2 model is designed to be applicable to species in both neutral and charged states, using a method called Neural Charge Equilibration (NQE) to properly describe electronic structure in ionic or open-shell species [27].

Q3: How much data is needed to create a general NNP? Can I use a pre-trained model for my specific system? While training a general NNP from scratch requires large, diverse datasets (e.g., hundreds of thousands to millions of structures [28]), a powerful strategy is to use transfer learning. You can start with a pre-trained, general model (like EMFF-2025 or Egret-1) and fine-tune it for your specific chemical space with a minimal amount of new DFT data, saving significant computational time and cost [5].

Q4: For simulating large systems or long timescales, how do NNPs compare to traditional force fields in speed? NNPs provide a favorable balance. They are orders of magnitude faster than quantum mechanical methods like DFT, making large-scale molecular dynamics simulations feasible. However, they remain slower than conventional classical force fields. The key advantage is achieving near-DFT accuracy for processes where classical force fields are inadequate, such as chemical reactions [28].

Q5: What are the key limitations of current NNPs that I should consider for my project? The field is advancing rapidly, but current limitations include:

- Accuracy and Reliability: While highly accurate, NNPs may not yet universally achieve "chemical accuracy" (1 kcal/mol) for all properties and require validation for specific applications [28].

- Long-Range Interactions: Handling non-local electrostatic interactions efficiently remains a challenge, though methods to incorporate physics-based long-range terms are being actively developed [27].

- Generality: Many models are restricted to a subset of the periodic table and may not handle all spin states or charged systems, though this is improving [28].
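To compare the "chemical accuracy" threshold with error metrics quoted in eV, a unit conversion is useful. The sketch below uses standard conversion factors; the function and variable names are illustrative and not taken from any cited model. Note that chemical accuracy is usually stated per molecule, while NNP energy errors are often normalized per atom, so the two numbers are not directly interchangeable.

```python
# Convert the "chemical accuracy" threshold (1 kcal/mol) into eV.
# All names below are illustrative.

KJ_PER_KCAL = 4.184          # thermochemical calorie (exact by definition)
KJ_PER_MOL_PER_EV = 96.485   # 1 eV per particle expressed in kJ/mol (rounded)


def kcal_per_mol_to_ev(value_kcal_per_mol: float) -> float:
    """Convert an energy from kcal/mol to eV per molecule."""
    return value_kcal_per_mol * KJ_PER_KCAL / KJ_PER_MOL_PER_EV


chemical_accuracy_ev = kcal_per_mol_to_ev(1.0)
print(f"1 kcal/mol = {chemical_accuracy_ev:.4f} eV")
```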

Troubleshooting Guides

Issue 1: Poor Energy and Force Prediction on New Molecular Systems

Problem: Your NNP model, which performed well on its training data, shows significant errors when applied to a new type of molecule or material not represented in the original training set.

Solution: This is a classic case of limited model transferability. The recommended solution is to employ a transfer learning workflow.

Protocol: A Transfer Learning Strategy for System-Specific Refinement

- Identify a Pre-Trained Model: Start with a general pre-trained model that covers the elements in your system (e.g., EMFF-2025 for C, H, N, O-based energetic materials [5] or Egret-1 for bioorganic molecules [28]).

- Generate Targeted DFT Data: Perform a limited number of DFT calculations on representative configurations of your new system. This should include not just equilibrium structures but also non-equilibrium snapshots (e.g., from preliminary DFT-MD runs) to capture the relevant potential energy surface [5].

- Fine-Tune the Model: Use your new, small DFT dataset to further train (fine-tune) the pre-trained NNP. This process adjusts the model's parameters to specialize in your chemical space of interest without forgetting the general knowledge from its initial training.

- Validate: Rigorously benchmark the fine-tuned model's predictions against held-out DFT calculations for your system to ensure improved accuracy.
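The validation step can be sketched as a comparison of NNP predictions with held-out DFT references. This is a minimal example with made-up numbers; the function names are hypothetical, and it assumes energies in eV and forces in eV/Å.

```python
import numpy as np


def energy_mae_per_atom(e_pred, e_ref, n_atoms):
    """MAE of total energies, each normalized by its atom count (eV/atom)."""
    e_pred, e_ref, n_atoms = map(np.asarray, (e_pred, e_ref, n_atoms))
    return float(np.mean(np.abs(e_pred - e_ref) / n_atoms))


def force_mae(f_pred, f_ref):
    """MAE over all force components (eV/Å); inputs are lists of (N_i, 3) arrays."""
    diffs = [np.abs(np.asarray(p) - np.asarray(r)).ravel()
             for p, r in zip(f_pred, f_ref)]
    return float(np.mean(np.concatenate(diffs)))


# Toy held-out set: two structures with 2 and 3 atoms (illustrative values only).
e_ref = [-10.0, -15.0]
e_pred = [-10.2, -14.7]
n_atoms = [2, 3]
f_ref = [np.zeros((2, 3)), np.zeros((3, 3))]
f_pred = [np.full((2, 3), 0.1), np.full((3, 3), -0.2)]

e_mae = energy_mae_per_atom(e_pred, e_ref, n_atoms)
f_mae = force_mae(f_pred, f_ref)
print(f"Energy MAE: {e_mae:.3f} eV/atom, force MAE: {f_mae:.3f} eV/Å")
```

Computing the same metrics before and after fine-tuning, on the same held-out set, shows whether the refinement actually improved the model for the target system.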

The following diagram illustrates this iterative workflow:

Issue 2: Handling Electrostatic and Long-Range Interactions

Problem: Your NNP fails to accurately model properties that depend on long-range electrostatics, such as polarization or ion diffusion.

Solution: Ensure you are using an NNP architecture that explicitly accounts for long-range interactions, rather than relying solely on a short-range local atomic environment.

Protocol: Selecting and Applying a Long-Range Capable NNP

- Architecture Selection: Choose an NNP that integrates explicit physical terms for long-range forces. For example, the AIMNet2 model decomposes the total energy into local, dispersion, and Coulombic terms [27]:

$$U_{\text{Total}} = U_{\text{Local}} + U_{\text{Disp}} + U_{\text{Coul}}$$

- Model Workflow Understanding: Recognize that in such models, a short-range neural network potential ($U_{\text{Local}}$) is combined with physics-based corrections for dispersion ($U_{\text{Disp}}$, e.g., DFT-D3) and electrostatics ($U_{\text{Coul}}$), the latter often calculated from atom-centered partial charges [27].

- Input Requirements: Be aware that these models may require the entire system's connectivity or a larger cutoff for the long-range terms to function correctly, as opposed to a fixed short-range cutoff used for the local neural network part.
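The energy decomposition can be illustrated with a bare point-charge sum for the Coulomb term. This is a simplified sketch, not the AIMNet2 implementation: it assumes a non-periodic system, undamped pairwise interactions, and placeholder values for the local and dispersion energies. All function names are hypothetical.

```python
import numpy as np

COULOMB_EV_ANGSTROM = 14.399645  # e^2 / (4*pi*eps0) in eV*Å


def coulomb_energy(positions, charges):
    """Pairwise Coulomb energy (eV) of point charges; no cutoff, no periodicity."""
    positions = np.asarray(positions, dtype=float)
    charges = np.asarray(charges, dtype=float)
    i, j = np.triu_indices(len(charges), k=1)  # unique pairs with i < j
    r_ij = np.linalg.norm(positions[i] - positions[j], axis=1)
    return float(COULOMB_EV_ANGSTROM * np.sum(charges[i] * charges[j] / r_ij))


def total_energy(u_local, u_disp, positions, charges):
    """U_Total = U_Local + U_Disp + U_Coul, with U_Coul from partial charges."""
    return u_local + u_disp + coulomb_energy(positions, charges)


# Toy example: charges of +0.5 e and -0.5 e separated by 2 Å.
# u_local and u_disp are placeholders standing in for model outputs.
positions = [[0.0, 0.0, 0.0], [2.0, 0.0, 0.0]]
charges = [0.5, -0.5]
u_coul = coulomb_energy(positions, charges)
u_total = total_energy(u_local=-5.0, u_disp=-0.05,
                       positions=positions, charges=charges)
print(f"U_Coul = {u_coul:.4f} eV, U_Total = {u_total:.4f} eV")
```

Because the Coulomb sum runs over all pairs rather than a local neighborhood, it captures interactions beyond the cutoff of the local network, which is the motivation for the hybrid design.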

The diagram below outlines the architecture of a hybrid physics-ML model like AIMNet2:

Issue 3: High Computational Cost of Data Generation for Training

Problem: Generating a massive dataset of DFT calculations to train a robust NNP from scratch is prohibitively expensive.

Solution: Implement a data distillation or active learning strategy to maximize the informational value of each quantum chemistry calculation, minimizing the total number needed.

Protocol: Data Distillation for Efficient Training Set Construction

- Initial Sampling: Begin with an initial, diverse set of molecular configurations. This can be generated using classical molecular dynamics or semi-empirical methods (like GFN2-xTB [28]) to explore conformational space cheaply.

- Iterative Data Addition: Use an active learning loop, such as the DP-GEN framework [5]. The steps are:

  - Train an initial NNP on your current DFT dataset.

  - Run simulations with this NNP to explore new configurations.

  - Identify configurations where the model is uncertain (e.g., through committee models or high predicted variance).

  - Perform DFT calculations only on these most "informative" configurations.

  - Add them to the training set and retrain the model.

- Convergence: Repeat this process until the model's predictions stabilize and no new high-uncertainty regions are found, indicating adequate coverage of the chemical space relevant to your simulation.
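The uncertainty-based selection step of such a loop can be sketched with a committee of models. This is a generic illustration and not the DP-GEN API: the function names and the threshold values are assumptions. Configurations with a deviation above the upper threshold are skipped here on the assumption that they are likely unphysical.

```python
import numpy as np


def max_force_deviation(committee_forces):
    """Uncertainty of one configuration from a committee of models.

    committee_forces has shape (n_models, n_atoms, 3). The result is the
    largest per-atom standard deviation of the force vector across models.
    """
    f = np.asarray(committee_forces, dtype=float)
    mean_f = f.mean(axis=0)                        # (n_atoms, 3)
    sq_dev = np.sum((f - mean_f) ** 2, axis=2)     # (n_models, n_atoms)
    per_atom_std = np.sqrt(sq_dev.mean(axis=0))    # (n_atoms,)
    return float(per_atom_std.max())


def select_for_dft(configs_forces, lower, upper):
    """Indices of configurations whose deviation lies in [lower, upper)."""
    devs = [max_force_deviation(f) for f in configs_forces]
    return [i for i, d in enumerate(devs) if lower <= d < upper]


# Toy data: two committee members, one atom, three configurations.
agree = np.zeros((2, 1, 3))   # models agree exactly
mild = agree.copy()
mild[1, 0, 0] = 0.4           # moderate disagreement
wild = agree.copy()
wild[1, 0, 0] = 4.0           # extreme disagreement
configs = [agree, mild, wild]

selected = select_for_dft(configs, lower=0.1, upper=1.0)
print("Configurations to label with DFT:", selected)
```

When the selected list stays empty over successive exploration rounds, the convergence criterion described above is met.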

Performance Benchmarks of Modern NNPs

The following table summarizes the reported performance of several recent Neural Network Potentials, highlighting their target applications and accuracy.

Table 1: Benchmarking Modern Neural Network Potentials

| Model Name | Key Elements/Systems Covered | Reported Accuracy (vs. DFT) | Primary Application Context |

|---|---|---|---|

| EMFF-2025 [5] | C, H, N, O | Energy MAE: < 0.1 eV/atom; Force MAE: < 2 eV/Å | High-energy materials (HEMs); mechanical properties & decomposition mechanisms |

| Egret-1 [28] | H, C, N, O, F, P, S, Cl, Br, I | Equals or exceeds routine quantum-chemical methods (e.g., on torsion scans, conformer ranking) | Bioorganic molecules & main-group chemistry |

| AIMNet2 [27] | 14 elements, neutral & charged | Outperforms GFN2-xTB; on par with reference DFT for interaction energies, torsion profiles | Broad organic and elemental-organic molecules, including charged & open-shell systems |

| ANI-nr [5] | C, H, N, O | Excellent agreement with experiment & previous quantum studies | Condensed-phase organic reactions |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Model Resources for NNP Implementation

| Resource | Type | Primary Function | Reference/Source |

|---|---|---|---|

| Pre-trained NNP Models (EMFF-2025, Egret-1, AIMNet2) | Software Model | Provides a ready-to-use, general-purpose potential for specific element sets, eliminating initial training cost. | [5] [28] [27] |

| DP-GEN | Software Framework | An active learning platform for automating the data generation and training cycle of NNPs, implementing the "data distillation" protocol. | [5] |

| MACE Architecture | Software Architecture | A high-body-order equivariant message-passing neural network architecture that forms the basis for models like Egret-1, providing high accuracy. | [28] |

| Transfer Learning Strategy | Methodology | A technique to adapt a general pre-trained NNP to a specific system with minimal new data, solving transferability issues. | [5] |

| Hybrid Physics-ML Potential | Model Design | An NNP architecture (e.g., AIMNet2) that combines a local neural network energy with explicit physics-based long-range dispersion and electrostatic terms. | [27] |

Design of Experiments (DoE) for Efficient Multi-Factor Optimization

Troubleshooting Guides

Guide 1: Resolving Common DoE Preparation and Execution Errors

Problem: The process is unstable, leading to noisy and inconclusive results.

- Root Cause: Conducting a DoE on a process that is not in a state of statistical control, with special causes of variation (e.g., machine breakdowns, unstable settings) affecting the output [29].

- Solution:

- Use Statistical Process Control (SPC) charts to monitor the process before starting the DoE [29].

- Identify and eliminate special causes of variation.

- Perform a series of trial runs under constant conditions to establish and verify baseline stability and repeatability before introducing experimental factor changes [29].
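A baseline stability check of this kind can be sketched with an individuals control chart. The example below is a minimal illustration with made-up data and hypothetical function names. It uses the common moving-range estimate of sigma, which gives limits at the mean ± 2.66 times the average moving range.

```python
import numpy as np


def individuals_chart_limits(values):
    """Lower limit, center line, and upper limit for an individuals (I) chart.

    Sigma is estimated as MR_bar / 1.128, so 3-sigma limits are
    mean ± 2.66 * MR_bar.
    """
    x = np.asarray(values, dtype=float)
    mr_bar = np.mean(np.abs(np.diff(x)))   # average moving range
    center = x.mean()
    return center - 2.66 * mr_bar, center, center + 2.66 * mr_bar


def out_of_control(values):
    """Indices of points falling outside the control limits."""
    lcl, _, ucl = individuals_chart_limits(values)
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


# Trial runs under nominally constant conditions (illustrative values).
baseline = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0, 10.1, 9.9, 12.5, 10.0]
flagged = out_of_control(baseline)
print("Runs to investigate before starting the DoE:", flagged)
```

Any flagged run points to a possible special cause that should be understood and removed before factor changes are introduced.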

Problem: Uncontrolled input conditions are distorting the effects of the factors being tested.

- Root Cause: Inconsistent raw materials, different operators, or changing environmental conditions not accounted for in the experimental design [29].

- Solution:

- Secure a single, consistent batch of materials for the entire experiment [29].

- Keep all machine settings and parameters not being actively tested constant and document them [29].

- Use a single trained operator for all trials, or if impossible, use randomization or blocking (e.g., treating different days or shifts as blocks) to account for operator variability [29].
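Randomization and blocking can be sketched as a run plan in which every block (for example, each operator) runs the full set of factor combinations in its own random order. The factor names, levels, and function name below are illustrative assumptions.

```python
import itertools
import random


def randomized_blocked_runs(factors, blocks, seed=0):
    """Full factorial repeated in each block, run order shuffled within blocks."""
    rng = random.Random(seed)   # fixed seed so the plan is reproducible
    names = list(factors)
    combos = [dict(zip(names, levels))
              for levels in itertools.product(*factors.values())]
    plan = []
    for block in blocks:
        order = combos[:]       # each block gets every treatment combination
        rng.shuffle(order)
        plan.extend({"block": block, **run} for run in order)
    return plan


factors = {"temperature": [-1, 1], "concentration": [-1, 1]}  # coded levels
plan = randomized_blocked_runs(factors, blocks=["operator_A", "operator_B"])
for run_number, run in enumerate(plan, start=1):
    print(run_number, run)
```

Because each operator sees all combinations, operator-to-operator differences can be separated from factor effects in the analysis instead of being confounded with them.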

Problem: The measurement system is unreliable, making it impossible to detect real effects.

- Root Cause: Uncalibrated instruments, or a measurement system with poor repeatability and reproducibility [29].

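- Solution:

- Calibrate all instruments before starting the experiment.

- Assess the measurement system's repeatability and reproducibility (e.g., with a Gage R&R study) and confirm that its variation is small relative to the effects you expect to detect.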

Problem: Human errors during experimental trials lead to anomalous results.

- Root Cause: Lack of standardized procedures, checklists, or mistake-proofing for setting up and running each experimental trial [29].

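- Solution:

- Write a standardized procedure for setting up and running each trial.

- Use a checklist for every run, and apply mistake-proofing where practical.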

Guide 2: Addressing Challenges in Data Analysis and Model Interpretation

Problem: After a screening design, it is impossible to tell which factor or interaction is causing an effect.

- Root Cause: Aliasing in fractional factorial designs, where the effects of two or more factors or interactions are confounded and cannot be distinguished from one another [30].

- Solution:

- A Priori Knowledge: Use your existing process knowledge to determine which confounded effect is more likely to be significant.

- Sequential Experimentation: Use techniques like "folding" the design or adding "axial runs" to break the aliases and de-confound the effects in a subsequent experiment [31].

- Follow-up Design: If interactions are found to be important, transition to a higher-resolution design, such as a full factorial or a Response Surface Methodology (RSM) design, for the significant factors [31].
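Aliasing and its removal by fold-over can be demonstrated on the smallest case, a half-fraction of a three-factor, two-level design. This sketch assumes the generator C = AB and coded levels of -1 and +1; the variable names are illustrative.

```python
import itertools
import numpy as np

# Half-fraction 2^(3-1): A and B are set freely, C is generated as C = A*B.
base = np.array(list(itertools.product([-1, 1], repeat=2)))
A, B = base[:, 0], base[:, 1]
C = A * B
half_fraction = np.column_stack([A, B, C])

# The column for main effect C is identical to the A*B interaction column,
# so the two effects cannot be separated from these four runs.
c_aliased_with_ab = bool(np.array_equal(C, A * B))

# Fold-over: repeat the design with every sign reversed and combine the runs.
folded = np.vstack([half_fraction, -half_fraction])
Af, Bf, Cf = folded.T
c_aliased_after_fold = bool(np.array_equal(Cf, Af * Bf))
c_ab_correlation = float(np.dot(Cf, Af * Bf)) / len(Cf)

print("C aliased with AB in half-fraction:", c_aliased_with_ab)
print("C aliased with AB after fold-over:", c_aliased_after_fold)
print("Correlation between C and AB columns after fold-over:", c_ab_correlation)
```

In this small case the folded design contains all eight combinations of the full factorial, so the C and AB columns become orthogonal and both effects can be estimated.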

Problem: The model fails to find a clear optimum, or the predicted optimum does not perform as expected in validation runs.

- Root Cause: The experimental region may contain curvature that a simple two-level factorial design cannot model, because with only two levels per factor it can fit linear main-effect and interaction terms but no quadratic terms [32] [30].

- Solution:

- Check for Curvature: Incorporate center points into your factorial design. A significant difference between the data at the center point and the predictions from the linear model indicates curvature [30].

- Upgrade the Design: Move to a Response Surface Methodology (RSM) design, such as a Central Composite Design or a Box-Behnken Design. These designs include points that allow for the estimation of quadratic (squared) terms, which model curvature and can locate a precise optimum [32] [30] [33].
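The center-point check can be sketched as a comparison between the mean response at the factorial points and the mean at replicated center points. The example below is a minimal illustration with made-up data. It assumes that the replicated center points supply the estimate of pure error, and the function name is hypothetical.

```python
import numpy as np


def curvature_check(y_factorial, y_center):
    """Return (difference in means, t-like statistic) for a curvature test.

    A purely linear model predicts that the center-point mean equals the
    mean of the factorial points. The standard error uses the standard
    deviation of the replicated center points.
    """
    yf = np.asarray(y_factorial, dtype=float)
    yc = np.asarray(y_center, dtype=float)
    diff = yf.mean() - yc.mean()
    s = yc.std(ddof=1)                              # pure-error estimate
    se = s * np.sqrt(1.0 / len(yf) + 1.0 / len(yc))
    return float(diff), float(diff / se)


y_factorial = [60, 70, 72, 82]   # responses at the four corners of a 2^2 design
y_center = [80, 81, 79, 80]      # replicated center-point responses
diff, t_stat = curvature_check(y_factorial, y_center)
print(f"Mean difference: {diff:.2f}, t statistic: {t_stat:.2f}")
```

A statistic that is large in magnitude relative to a t distribution with (number of center points minus 1) degrees of freedom suggests curvature, which is the signal to move to a response surface design.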

Problem: The optimization seems like a compromise between multiple, conflicting responses (e.g., high yield and high selectivity).

- Root Cause: Trying to optimize multiple responses separately rather than simultaneously [32].

- Solution:

- Use the desirability function approach available in most statistical software [32].

- This method mathematically transforms each response into an individual desirability value (ranging from 0 to 1) and then combines them into a single composite desirability score.

- The software can then search for the factor settings that maximize this overall desirability, providing a true multi-response optimum [32].
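The desirability approach can be sketched with a "larger is better" transformation for each response and a geometric mean for the composite score. The response limits, candidate settings, and predicted values below are made up for illustration; statistical software typically offers additional desirability shapes and weights.

```python
import numpy as np


def desirability_larger_is_better(y, low, target, weight=1.0):
    """0 at or below `low`, 1 at or above `target`, power-law ramp in between."""
    d = (np.asarray(y, dtype=float) - low) / (target - low)
    return np.clip(d, 0.0, 1.0) ** weight


def composite_desirability(ds):
    """Geometric mean; zero if any individual desirability is zero."""
    ds = np.asarray(ds, dtype=float)
    return float(np.prod(ds) ** (1.0 / len(ds)))


# Predicted (yield %, selectivity %) at three hypothetical factor settings.
candidates = {
    "setting_1": (90, 60),
    "setting_2": (80, 80),
    "setting_3": (60, 95),
}

scores = {
    name: composite_desirability([
        desirability_larger_is_better(y_yield, low=50, target=100),
        desirability_larger_is_better(y_sel, low=50, target=100),
    ])
    for name, (y_yield, y_sel) in candidates.items()
}
best = max(scores, key=scores.get)
print(scores, "best:", best)
```

The geometric mean penalizes settings that perform well on one response but poorly on another, so a balanced setting can outrank one with the highest single response.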

Frequently Asked Questions (FAQs)

FAQ 1: When should I use a Screening Design versus an Optimization Design?

- Answer: The choice is sequential. Use a screening design (e.g., Fractional Factorial, Plackett-Burman) in the early stages when you have a large number of potential factors (e.g., 5 or more) and your goal is to efficiently identify the few critical ones [30] [31] [33]. Once the vital few factors are identified, use an optimization design (e.g., Response Surface Methodology) with those factors to model curvature and locate the precise optimal settings [30] [33].

FAQ 2: My One-Factor-at-a-Time (OFAT) optimization worked fine. Why should I switch to DoE?