Navigating the Energy Landscape: AI-Driven Strategies for Advanced Inorganic Materials Synthesis

Abstract

The synthesis of novel inorganic materials is fundamentally a matter of navigating complex, multi-dimensional energy landscapes that contain both stable ground states and valuable metastable phases. This article explores the transformative integration of artificial intelligence, computational modeling, and targeted experiments to map and traverse these landscapes efficiently. We detail foundational concepts, advanced methodologies like generative models and crystal structure prediction, and optimization frameworks that minimize traditional trial-and-error. It further covers validation techniques and comparative analyses of emerging materials for energy storage and electronics, providing researchers and scientists with a comprehensive roadmap to accelerate the design and discovery of next-generation functional materials.

Understanding the Energy Landscape: The Foundation of Inorganic Materials Discovery

Defining the Energy Landscape in Materials Science

The concept of an energy landscape is a powerful framework for understanding the stability and evolution of physical systems, from folding proteins to synthesizing inorganic materials. An energy landscape represents the energy of a system as a function of all its possible conformations or states [1]. It encodes the relative stabilities of different states—such as native, metastable intermediate, or unstable amorphous forms—and the energy barriers that separate them [1]. In the context of inorganic materials synthesis, comprehending this landscape is crucial for optimizing experimental conditions to navigate toward desired crystalline phases and away from kinetic traps.

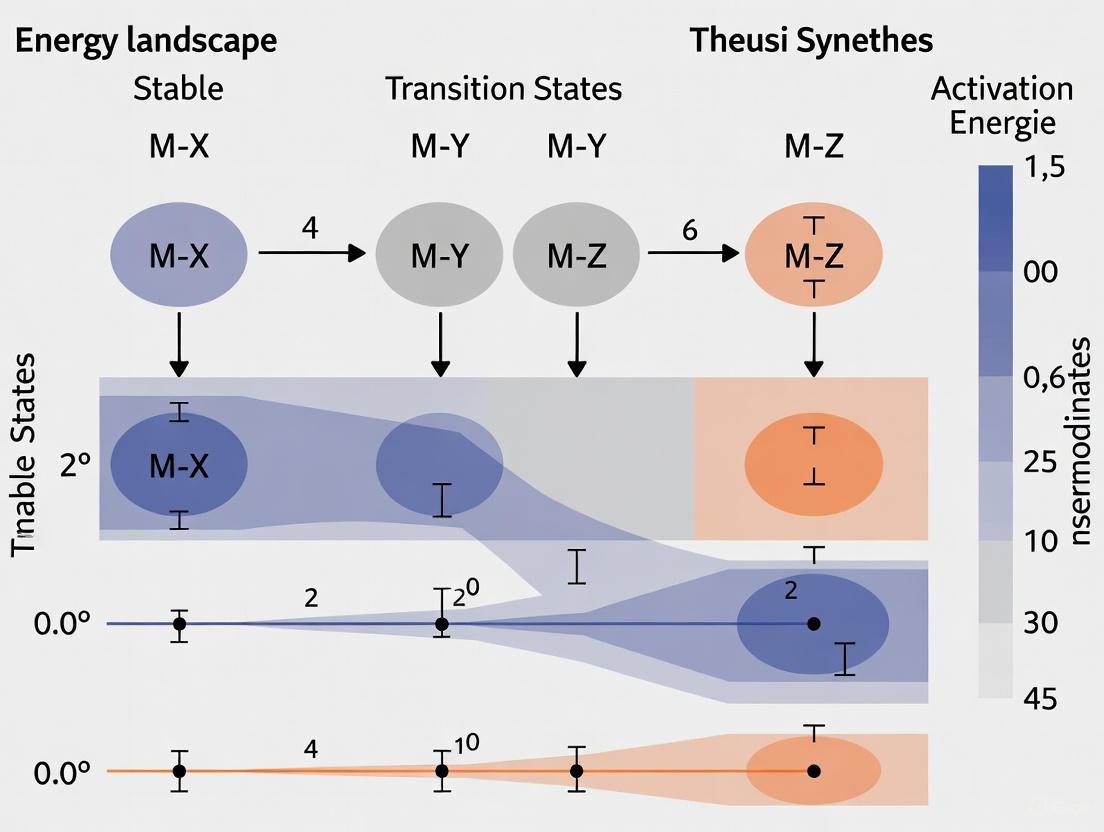

The landscape is often visualized as a rugged surface with multiple hills and valleys (Figure 1). The system's state is represented by a ball on this surface, tending to move downhill to minimize its energy but capable of crossing barriers to transition between stable basins [2]. The principles of statistical physics dictate that a state with low energy occurs with high probability, and transitions between states occur frequently if the energy barrier separating them is low [2]. This dynamic view allows scientists to comprehend synthesis outcomes as an ensemble of locally stable configurations that stochastically switch between one another based on their relative stability and the kinetics of transformation [2].
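The two statistical statements above (low energy means high probability, low barriers mean frequent transitions) can be made concrete with a minimal sketch. The three basin energies and the two barrier heights below are hypothetical values in units of k_B T, and the rate factor omits any prefactor, so only ratios are meaningful.

```python
import numpy as np

def boltzmann_populations(energies, kT=1.0):
    """Return equilibrium probabilities P_i proportional to exp(-E_i / kT)."""
    e = np.asarray(energies, dtype=float)
    w = np.exp(-(e - e.min()) / kT)  # shift by the minimum for numerical stability
    return w / w.sum()

def relative_rate(barrier, kT=1.0):
    """Arrhenius-type factor exp(-barrier / kT); the prefactor is deliberately omitted."""
    return float(np.exp(-barrier / kT))

# Hypothetical three-basin landscape (energies in units of kT).
energies = [0.0, 1.0, 3.0]
populations = boltzmann_populations(energies)
print("populations:", populations)

# A lower barrier gives a larger hopping factor than a higher one.
print("hopping factors:", relative_rate(2.0), relative_rate(5.0))
```

The deepest basin carries the largest population, and the 2 kT barrier is crossed far more often than the 5 kT barrier, which is the qualitative behavior described in the text.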

Table 1: Key Characteristics of Energy Landscapes

| Feature | Description | Implication for Materials Synthesis |
|---|---|---|
| Local Minima | Stable or metastable states where the system resides | Corresponds to specific material phases or polymorphs |
| Energy Barriers | Height differences between minima and saddle points | Determines transformation kinetics and reaction rates |
| Basin of Attraction | Region of the landscape that funnels into a specific minimum | Defines the range of experimental conditions yielding a specific phase |
| Global Minimum | The most thermodynamically stable state | The target equilibrium material phase |
| Landscape Roughness | Prevalence of small, local barriers | Impacts diffusion and nucleation processes |

Core Principles and Theoretical Foundations

The Funneled Landscape for Native States

A key principle in energy landscape theory is the concept of the "funnel." In a funneled energy landscape for a system like a protein or a growing crystal, high-energy, high-entropy disordered states at the top of the funnel can fold or assemble along various paths down to a low-energy, low-entropy native conformation [1]. Native folding is relatively efficient because the native state consists of a network of mutually supportive stabilizing contacts, making these pathways "minimally frustrated" [1]. In materials science, this parallels the journey from a disordered precursor or solution to a well-defined, stable crystalline material.

Kinetic Control and Trapping

In contrast to efficient native folding, materials synthesis often involves multiple competing conformations separated by substantial kinetic barriers [1]. The landscape for intermolecular aggregation and crystal growth can thus be much rougher. This roughness is experimentally supported by the observation that crystallization and phase transformation are complex, often involving many different intermediates and competing pathways [1]. Kinetic barriers arise from the competing effects of enthalpy and entropy during structural changes: structured states are generally enthalpically favored but entropically disfavored, and mismatches in the free-energy changes caused by enthalpy and entropy reduction result in barriers [1]. If a competing structure is thermodynamically more stable than the native fold (or, in synthesis, than the phase that forms first) under certain conditions, only the kinetic barriers prevent spontaneous conversion [1]. Modulating these kinetic barriers through additives, temperature, or other synthesis parameters offers a strategic approach to direct synthesis toward a specific material phase.

A Computational Workflow for Energy Landscape Analysis

Energy landscape analysis (ELA) allows researchers to construct quantitative landscapes from multivariate data. The following workflow, adapted from methods used for complex systems like the brain, provides a framework for analyzing materials systems [2].

Figure 1: A computational workflow for energy landscape analysis from multivariate data, illustrating the sequence from data input to the construction of a disconnectivity graph.

Data Acquisition and Binarization

The input data for this form of ELA are multivariate time series with N variables in discrete time, denoted by x(t) = (x₁(t), x₂(t), ..., x_N(t)), where t = 1, 2, ..., T and T is the number of observations [2]. In a materials context, these variables could represent signals from different characterization techniques (e.g., Raman spectra, XRD peak intensities) or the state of different local atomic environments during a molecular dynamics simulation. Each variable xᵢ(t) is then binarized into a spin state sᵢ(t) ∈ {-1, +1}, where +1 indicates a "high" state (e.g., high local order, active reaction coordinate) and -1 indicates a "low" state [2]. The system's binarized state at time t is called its activity pattern, s(t) = (s₁(t), s₂(t), ..., s_N(t)) [2].
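A minimal sketch of the binarization step follows. The threshold rule (each variable's own time average) is an assumption chosen for illustration, since the text does not fix one, and the input array is synthetic random data standing in for real characterization signals.

```python
import numpy as np

def binarize(x):
    """Map a (T, N) array to spins in {-1, +1} by thresholding each column at its mean."""
    x = np.asarray(x, dtype=float)
    thresholds = x.mean(axis=0)          # assumed threshold: per-variable time average
    return np.where(x >= thresholds, 1, -1)

rng = np.random.default_rng(0)
x = rng.normal(size=(200, 4))            # synthetic stand-in: T = 200 observations, N = 4 signals
s = binarize(x)                          # activity patterns s(t), one row per time step

# Count how often each distinct activity pattern occurs.
patterns, counts = np.unique(s, axis=0, return_counts=True)
for pattern, count in zip(patterns, counts):
    print(pattern, count)
```

Other thresholds (for example the median, or a physically motivated cutoff) would change which patterns are observed, which is why the binarization choice deserves care.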

Model Fitting and Energy Calculation

The core of the analysis is fitting a Pairwise Maximum Entropy Model (PMEM), also known as an Ising model or Boltzmann machine, to the distribution of observed activity patterns [2]. This model aims to find the probability distribution P(s) that has the same mean values and pairwise correlations as the empirical data while being as random as possible in all other aspects. The model's energy for a given state s is given by:

E(s) = −Σᵢ hᵢ sᵢ − Σ_{i<j} Jᵢⱼ sᵢ sⱼ

where hᵢ is the external field acting on variable i, and Jᵢⱼ is the coupling strength between variables i and j [2]. The probability of observing state s is then P(s) ∝ exp(-E(s)/k_B T), where k_B is Boltzmann's constant and T is temperature (not to be confused with the number of observations above). The parameters {hᵢ}, {Jᵢⱼ} are inferred from the binarized time series data using maximum likelihood estimation or other inference algorithms [2].
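For small N the maximum likelihood fit can be done by brute force, as in the sketch below. It enumerates all 2^N states, sets k_B T = 1 (so the temperature is absorbed into the parameters), stores J as a symmetric matrix with zero diagonal so that each pair is counted once, and uses plain gradient ascent. The function names, learning rate, and iteration count are illustrative choices, and the input spins are synthetic placeholders.

```python
import itertools
import numpy as np

def all_states(n):
    """All 2^n spin configurations as a (2^n, n) array of -1/+1."""
    return np.array(list(itertools.product([-1, 1], repeat=n)))

def energies(states, h, J):
    """E(s) = -sum_i h_i s_i - sum_{i<j} J_ij s_i s_j for symmetric J with zero diagonal."""
    return -states @ h - 0.5 * np.einsum("ki,ij,kj->k", states, J, states)

def fit_pmem(spins, n_iter=5000, lr=0.1):
    """Gradient ascent on the exact log-likelihood (feasible only for small N)."""
    spins = np.asarray(spins, dtype=float)
    n = spins.shape[1]
    states = all_states(n).astype(float)
    mean_data = spins.mean(axis=0)                 # empirical <s_i>
    corr_data = spins.T @ spins / len(spins)       # empirical <s_i s_j>
    h, J = np.zeros(n), np.zeros((n, n))
    for _ in range(n_iter):
        e = energies(states, h, J)
        p = np.exp(-(e - e.min()))                 # Boltzmann weights with kT = 1
        p /= p.sum()
        mean_model = p @ states
        corr_model = states.T @ (states * p[:, None])
        h += lr * (mean_data - mean_model)         # gradient with respect to h_i
        dJ = lr * (corr_data - corr_model)         # gradient with respect to J_ij
        np.fill_diagonal(dJ, 0.0)
        J += dJ
    return h, J

rng = np.random.default_rng(1)
spins = rng.choice([-1, 1], size=(500, 3))         # synthetic placeholder data
h, J = fit_pmem(spins)
print("h =", h)
print("J =", J)
```

At convergence the model reproduces the empirical means and pairwise correlations, which is the defining property of the pairwise maximum entropy model. Data in which a variable never changes state would make the parameters diverge, so such variables should be removed before fitting.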

Constructing the Disconnectivity Graph

Once the energy E(s) is known for all relevant states, a disconnectivity graph can be constructed (Figure 2). This graph is a tree that shows the relationships between the local minima [2]. Each leaf node represents a local minimum, and the branching points indicate the energy level that must be overcome to transition between different basins. The height of a branch point corresponds to the energy barrier between minima [2]. This graph provides a simplified, yet quantitative, map of the complex, high-dimensional energy landscape, revealing the stable states and the likely pathways for transitions between them.

Figure 2: A schematic disconnectivity graph. Each leaf node (α, β, γ) is a stable local minimum. The branching points show the energy barriers that must be crossed for transitions. The vertical axis represents energy.
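The two ingredients of a disconnectivity graph, the local minima and the energy level at which two basins merge, can be computed directly for a small Ising-type landscape. The sketch below assumes that neighboring states differ by a single spin flip and uses a hypothetical three-spin ferromagnet (all couplings equal to 1, no fields) as the example; the helper names are illustrative.

```python
import numpy as np

def spin_states(n):
    """Row k holds the spins of integer k: bit i set means s_i = +1."""
    idx = np.arange(2 ** n)
    return np.where((idx[:, None] >> np.arange(n)) & 1, 1, -1)

def ising_energy(states, h, J):
    """E(s) = -sum_i h_i s_i - sum_{i<j} J_ij s_i s_j for symmetric J with zero diagonal."""
    return -states @ h - 0.5 * np.einsum("ki,ij,kj->k", states, J, states)

def local_minima(E, n):
    """States whose energy is below that of all n single-spin-flip neighbours."""
    return [k for k in range(len(E))
            if all(E[k] < E[k ^ (1 << i)] for i in range(n))]

def threshold_energy(E, n, a, b):
    """Lowest energy level at which states a and b become connected by single flips."""
    parent = list(range(len(E)))

    def find(k):
        while parent[k] != k:
            parent[k] = parent[parent[k]]   # path compression
            k = parent[k]
        return k

    added = np.zeros(len(E), dtype=bool)
    for k in np.argsort(E):                 # flood the landscape from the bottom up
        k = int(k)
        added[k] = True
        for i in range(n):
            nb = k ^ (1 << i)
            if added[nb]:
                parent[find(k)] = find(nb)
        if added[a] and added[b] and find(a) == find(b):
            return float(E[k])
    raise ValueError("states are not connected")

n = 3
h = np.zeros(n)
J = np.ones((n, n)) - np.eye(n)             # hypothetical ferromagnetic couplings
E = ising_energy(spin_states(n), h, J)
minima = local_minima(E, n)
level = threshold_energy(E, n, minima[0], minima[-1])
print("minima:", minima, "barrier:", level - E[minima[0]])
```

In this toy case the two minima are the fully aligned states, and the branch point of the tree sits 4 energy units above them. Repeating the threshold calculation for every pair of minima gives the full set of branch heights needed to draw the graph.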

Table 2: Key Parameters for the Pairwise Maximum Entropy Model (Ising Model)

| Parameter | Symbol | Interpretation in Materials Science |
|---|---|---|
| Spin State | sᵢ ∈ {-1, +1} | Binary state of a local environment (e.g., ordered/disordered) |
| External Field | hᵢ | Intrinsic bias of variable i toward a high or low state |
| Coupling Strength | Jᵢⱼ | Energetic interaction or cooperative influence between variables i and j |
| Activity Pattern | s = (s₁, ..., s_N) | A microscopic configuration of the entire system |
| Energy | E(s) | Stability of a given configuration; lower energy states are more probable |

Experimental and Computational Methodologies

Single-Molecule Force Spectroscopy (SMFS)

While originally developed for protein folding, the principles of SMFS are translatable to the study of single polymers or molecular complexes relevant to materials science. In SMFS, the extension of a molecule is measured as its structure changes in response to a denaturing force applied to its ends [1]. This technique captures the statistical mechanics of structural fluctuations, allowing for the measurement of energy landscapes in great detail. Data from such experiments can be used to reconstruct the full energy profile, including critical features like barrier heights, positions, and diffusion coefficients [1]. This approach allows a quantitative comparison of native folding versus misfolding, highlighting fundamental differences in dynamics [1].

Iterative Landscape Reconstruction

A recent advanced methodology involves an iterative algorithm for optimizing free energy landscape reconstructions without requiring a priori knowledge of the landscape [3]. This approach (Figure 3) works by 1) taking experimental or simulated trajectory data; 2) reconstructing an 'approximate' energy landscape; 3) deriving optimal control protocols from low-dimensional differentiable simulations on this candidate landscape; 4) re-running the experiment or simulation using the updated protocol; and 5) iterating until convergence [3]. This method can yield substantially reduced variance and bias in free energy landscape reconstructions compared to naive approaches [3].

Figure 3: An iterative algorithm for improving energy landscape reconstructions without prior knowledge, using updated control protocols derived from candidate landscapes.

Challenges and Recommended Practices

A significant challenge in ELA is the proper determination of kinetic barriers. The barrier height, ΔG‡, is often inferred from the rate constant, k, using the relationship k = k₀ exp(-ΔG‡/k_B T) [1]. A common pitfall is incorrectly assuming a fixed prefactor k₀. A better framework is Kramers' theory for diffusive barrier crossing, which accounts for the intrachain diffusion coefficient and the local curvature of the landscape [1]. For the Ising-model-based ELA, it is recommended to keep the number of variables N on the order of 10 to ensure reliable model fitting with a manageable state space of 2^N configurations [2]. The binarization of continuous data must also be done carefully, as it can significantly impact the results.
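The sensitivity of the inferred barrier to the assumed prefactor is easy to quantify. The sketch below inverts the Arrhenius-type relation for several hypothetical prefactors and also evaluates the standard overdamped (high-friction) Kramers expression, in which the prefactor is built from the diffusion coefficient D and the curvatures of the well and the barrier top. The observed rate, the prefactor values, and the parameter names are illustrative assumptions, and energies are in units of k_B T.

```python
import math

def barrier_from_rate(k, k0, kT=1.0):
    """Invert k = k0 * exp(-dG / kT) for the barrier height dG."""
    return kT * math.log(k0 / k)

def kramers_rate(dG, D, kappa_well, kappa_barrier, kT=1.0):
    """Overdamped Kramers rate: k = D * sqrt(kw * kb) / (2 * pi * kT) * exp(-dG / kT)."""
    prefactor = D * math.sqrt(kappa_well * kappa_barrier) / (2.0 * math.pi * kT)
    return prefactor * math.exp(-dG / kT)

k_obs = 10.0                                 # hypothetical observed rate (1/s)
for k0 in (1e4, 1e6, 1e8):                   # hypothetical prefactors (1/s)
    print(f"k0 = {k0:.0e}  ->  barrier = {barrier_from_rate(k_obs, k0):.2f} kT")

# Kramers estimate for hypothetical landscape parameters.
print("Kramers rate:", kramers_rate(dG=5.0, D=1.0, kappa_well=4.0, kappa_barrier=4.0))
```

Each factor of 100 in the assumed prefactor shifts the inferred barrier by ln(100), about 4.6 k_B T, which is why a fixed, unverified k₀ can dominate the error in ΔG‡.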

The Scientist's Toolkit: Research Reagents and Computational Solutions

Table 3: Essential "Reagents" for Energy Landscape Research

| Tool / Reagent | Function | Example Application |
|---|---|---|
| Pairwise Maximum Entropy Model (Ising Model) | Infers effective interactions and fields from data to compute a probabilistic energy landscape. | Mapping state transitions in multivariate time series from simulations or experiments [2]. |
| Single-Molecule Force Spectroscopy (SMFS) | Applies mechanical force to directly probe the energy landscape of individual molecules. | Measuring unfolding/refolding pathways of polymers or molecular aggregates [1]. |
| Disconnectivity Graph Analysis | Visualizes the complex, high-dimensional landscape as a tree of minima and barriers. | Identifying stable polymorphs and the transition states between them [2]. |
| Kramers' Rate Theory | Provides a physically realistic framework for relating transition rates to energy barrier heights. | Quantifying kinetic barriers from observed transition frequencies [1]. |
| Iterative Control Protocols | Optimizes non-equilibrium driving protocols to improve the accuracy of landscape reconstruction. | Reducing variance and bias in free energy estimates from steered molecular dynamics [3]. |
| Diffusion Decomposition of Gaussian Approximation (DDGA) | A numerical framework for quantifying the energy landscape of high-dimensional stochastic oscillatory systems. | Analyzing cyclic processes in material transformations or chemical reactions [4]. |

The energy landscape paradigm provides a unifying language to describe the thermodynamics and kinetics of materials synthesis. By moving from a qualitative to a quantitative understanding of these landscapes—through techniques like the Ising model analysis, single-molecule spectroscopy, and iterative reconstruction—researchers can identify the critical intermediates and transition states that dictate synthesis outcomes. This deeper insight is invaluable for rationally designing synthesis protocols, anticipating and avoiding kinetic traps, and accelerating the discovery of novel functional materials. The integration of computational guidance and data-driven machine learning models with this physical framework holds the promise of creating an intelligent, closed-loop research paradigm, significantly increasing the success rate and efficiency of inorganic material synthesis [5].

The Critical Role of Metastable Phases for Advanced Applications

The pursuit of advanced functional materials has driven a paradigm shift from traditional thermodynamic equilibrium synthesis toward the strategic exploitation of metastable phases. These phases, characterized by higher Gibbs free energy than their stable counterparts yet persisting through kinetic constraints, are rapidly emerging as pivotal components in catalysis, energy storage, and biological systems [6]. Within the broader context of energy landscape research for inorganic materials synthesis, metastable phases represent accessible local minima that can be kinetically trapped during synthesis, thus avoiding the global free energy minimum of the stable phase [6] [7]. This approach enables researchers to preserve chemical simplicity while unlocking novel functionalities that are otherwise difficult to achieve through compositionally complex strategies such as extensive doping or multi-element alloying [6].

The fundamental distinction between thermodynamic metastability (kinetically trapped states) and dynamic metastability (states sustained only under non-equilibrium conditions) provides a crucial framework for understanding their behavior [6]. With advances in materials science, thermodynamically metastable phases now span from metals and compounds to frameworks and other crystalline polymers, while dynamically metastable systems include single-atom, high-entropy, and responsive framework materials [6]. The ability to navigate and control this complex energy landscape represents a frontier in inorganic materials synthesis, offering pathways to materials with unprecedented properties.

Theoretical Foundations: Energy Landscape and Phase Stability

Thermodynamic-Kinetic Adaptability

Metastable phases occupy a unique position in materials science, exhibiting what has been termed "thermodynamic-kinetic adaptability" [6]. This concept bridges the gap between experimental observations and theoretical predictions, describing how these materials can adapt to the driving forces of nucleation and growth rather than directly transforming into the corresponding stable crystal phase [6]. During catalytic reactions, the geometric and electronic structure of the metastable phase can adapt to the adsorption and desorption of foreign molecules, thereby optimizing reaction barriers and accelerating reaction kinetics [6].

The high Gibbs free energy and easily adjustable d-band center associated with metastable phases demonstrate exceptional reactivity across multiple catalytic domains [6]. This inherent adaptability stems from their position on the energy landscape—they possess sufficient stability to be synthesized and utilized, yet sufficient free energy to drive reactive processes that would be thermodynamically unfavorable for stable phases.

Landscape-Inversion Phase Transitions

A recently discovered phenomenon known as Landscape-Inversion Phase Transitions (LIPT) has broadened our fundamental understanding of phase-ordering kinetics [7]. In this non-standard scenario, the periodic energy landscape of a system can be inverted by changing a single parameter, leading to novel phase-ordering phenomena [7]. This inversion enables the spontaneous formation of metastable phases directly from an unstable equilibrium via asymmetric spinodal decomposition, where the system phase separates into two coexisting equilibrium phases of different relative stability [7].

In experimental realizations using 2D colloidal crystals, this process leads to a transient coexistence of stable and metastable phases, demonstrating that metastable domains can form spontaneously during the initial stages of phase separation [7]. This mechanism differs fundamentally from traditional nucleation pathways and provides new avenues for controlling domain sizes and lifetimes through external parameters such as magnetic fields [7].

Synthesis and Stabilization Methodologies

Controlled Synthesis Techniques

The controlled synthesis of metastable phases presents significant challenges due to their inherent thermodynamic instability relative to stable phases. Recent advances have developed sophisticated pathways that leverage parameters such as pressure, temperature, and chemical environments to achieve precise control over metastable phase formation [6].

Table 1: Synthesis Techniques for Metastable Phases

| Synthesis Method | Key Controlling Parameters | Resulting Phase Characteristics | Applications |
|---|---|---|---|
| Physical Vapor Deposition (PVD) | Surface diffusion distance, deposition rate | Metastable ceramic coatings (e.g., TiAlN) | Protective coatings, hard coatings [8] |
| High-Pressure Low-Temperature (HPLT) | Pressure (250-300 MPa), temperature (-25 to -15°C) | Ice I to Ice III phase transitions | Microbial destruction, food preservation [9] |
| Mechanochemical Synthesis | Milling intensity, duration | ZnSe and other semiconductor phases | Functional materials preparation [6] |
| Solvent-Free Protocols | Precursor salts, temperature cycling | Metal halide perovskites | Photodetectors, optoelectronics [6] |

A key challenge in synthesis involves addressing the limitations of conventional thermodynamic phase diagrams, which predict equilibrium phases but fail to account for non-equilibrium products formed under fluctuating temperature and pressure conditions [6] [8]. The CALPHAD (Calculation of Phase Diagrams) approach has been extended to address this challenge through modeling of atomic surface diffusion in processes like magnetron sputtering, enabling the calculation of metastable phase diagrams for PVD coating materials [8].

Stabilization Mechanisms

Stabilizing metastable phases against transformation to their stable counterparts requires strategic intervention at the atomic scale. The primary mechanisms include:

Atomic Migration Control: Managing atomic diffusion (atoms/ions moving across the lattice) and shear mechanisms to prevent reconstruction into stable phases [6].

Atomic Pinning: Introducing strategic dopants or defects that pin the atomic structure in the metastable configuration, effectively increasing the energy barrier for phase transformation [6].

Perturbation Engineering: Applying controlled perturbations to overcome energy barriers in metastable systems. For example, using 5% sodium chloride solution as a perturbation source can reduce the transition pressure of milk by 43 MPa and increase the transition temperature by 4.1°C, enabling phase transition at lower pressure and higher temperature [9].

Thermal instability remains a hallmark of metastable phases, so lowering their Gibbs free energy of formation is a fundamental requirement for obtaining high-purity metastable phase materials [6].

Characterization and Analytical Approaches

Phase Transition Monitoring

Advanced characterization techniques are essential for identifying and monitoring metastable phases during synthesis and application. High-resolution electron microscopy has proven particularly valuable for identifying materials reconstructions and revealing true active phases in catalytic reactions [6]. Experimental approaches for characterizing metastable phase transitions include:

- In-situ X-ray scattering for monitoring cubic to hexagonal transformations in materials like Ti₁₋ₓAlₓN [8]

- Radial distribution function (g(r)) analysis of colloidal crystals to distinguish different crystalline lattices through their characteristic peaks [7]

- Three-dimensional atom probe investigations of thin films like Ti-Al-N to characterize phase distribution at atomic scales [8]

Metastable Phase Diagram Modeling

The development of metastable phase diagrams represents a significant advancement beyond traditional equilibrium phase diagrams. These diagrams account for the non-equilibrium conditions prevalent in processes like PVD, where materials are generally far from equilibrium [8]. Recent modeling methodologies incorporate:

- CALPHAD approach combined with first-principles calculations

- High-throughput magnetron sputtering experiments to model atomic surface diffusion

- Simon-like models and polynomial formulas for fitting phase transition data with high reliability (R² values of 0.997 for ice I) [9]

These approaches enable the establishment of databases containing both stable and metastable phase diagrams, guiding the design of ceramic coating materials through the relationship between composition, processing, microstructure, and performance [8].
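The polynomial route to fitting a phase boundary, together with the R² figure of merit mentioned above, can be sketched with NumPy alone. The temperature and pressure values below are synthetic placeholders generated inside the script, not measurements from [9], and the quadratic degree is an arbitrary illustrative choice. A Simon-type fit would need a nonlinear least-squares routine and is not shown.

```python
import numpy as np

def r_squared(y, y_fit):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    ss_res = np.sum((y - y_fit) ** 2)
    ss_tot = np.sum((y - np.mean(y)) ** 2)
    return 1.0 - ss_res / ss_tot

# Synthetic placeholder data (NOT measurements): a smooth curve plus noise.
rng = np.random.default_rng(0)
temperature_c = np.linspace(-25.0, -15.0, 11)
u = temperature_c + 25.0
pressure_mpa = 300.0 - 5.0 * u + 0.1 * u ** 2 + rng.normal(scale=0.5, size=u.size)

# Least-squares quadratic fit P(T) and its goodness of fit.
coeffs = np.polyfit(temperature_c, pressure_mpa, deg=2)
pressure_fit = np.polyval(coeffs, temperature_c)
r2 = r_squared(pressure_mpa, pressure_fit)
print("coefficients:", coeffs)
print("R^2:", r2)
```

A high R² on the fitted points shows only that the chosen functional form describes the data it was fitted to; extrapolation beyond the measured pressure and temperature window needs separate validation.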

Application Domains and Performance Metrics

Catalysis and Energy Conversion

Metastable phase materials demonstrate exceptional performance across multiple catalytic domains due to their unique electronic structures and extraordinary physicochemical properties [6]. Their applications span:

- Photocatalysis: Enhanced light absorption and long-lived carrier excitation [6]

- Electrocatalysis: Stronger charge transfer effects and tunable d-band centers [6]

- Thermal Catalysis: Optimized desorption and activation energies [6]

- Cross Catalysis: Multiple forms of energy integration (photoelectrocatalysis, photothermal catalysis) [6]

A striking example is the anomalous Nernst effect observed at the crossover between Fermi-liquid and strange-metal behavior in metastable 2M-WS₂, a representative topological superconductor [6].

Functional Materials and Coatings

Metastable phases enable advanced functional materials with tailored properties:

- Specialty Ceramic Coatings: TiAlN coatings with enhanced hardness and age-hardening characteristics, with further property improvements through Ru addition [8]

- Thin Film Metallic Glasses: Zr-based metallic glass thin films for surgical blade coatings, significantly improving sharpness and durability [8]

- Transparent Conducting Oxides: GZO and AZO films with optimized electrical and optical properties for device applications [8]

- Accident-Tolerant Nuclear Materials: FeCrAl alloys developed as accident-tolerant fuel cladding materials for nuclear applications [8]

Microbial Destruction and Food Safety

High-Pressure Low-Temperature (HPLT) processing leveraging metastable phase transitions between ice I and ice III has demonstrated significant effectiveness in microbial destruction [9]. The phase transition from ice I to ice III produces approximately 18% volume change, generating substantial mechanical stress that facilitates microbial cell rupture [9].

Table 2: Microbial Destruction Efficacy via Metastable Phase Transition

| Processing Condition | Phase State | E. coli Inactivation (log reduction) | Key Characteristics |
|---|---|---|---|
| Before phase transition (250 MPa) | Metastable ice I | 1.11 log | Pre-transition inactivation |
| During phase transition (250-300 MPa) | Ice I → Ice III transition | Additional 1.26 log | Discontinuous, mutational destruction |
| Complete phase transition | Stable ice III | Maximum destruction | Segmentation behavior observed |

Phase transition microbial destruction is characterized by discontinuity, mutation, and segmentation when the phase transition pressure interval (250-300 MPa) is carefully refined [9]. This approach provides opportunities for commercial application of high-pressure-low-temperature technology for microbial destruction and quality enhancement [9].
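Log reductions add when treatments act in sequence, so the two tabulated contributions can be combined and converted to a surviving fraction. The short sketch below does this arithmetic using only the values 1.11 and 1.26 from the table; the function name is illustrative.

```python
def surviving_fraction(log_reduction):
    """Fraction of the initial population that survives a given log10 reduction."""
    return 10.0 ** (-log_reduction)

pre_transition = 1.11       # log reduction before the phase transition [9]
during_transition = 1.26    # additional log reduction during the transition [9]

total = pre_transition + during_transition
print(f"total log reduction: {total:.2f}")
print(f"surviving fraction:  {surviving_fraction(total):.4%}")
```

The combined 2.37 log reduction corresponds to roughly 0.4% of the initial E. coli population surviving, compared with about 7.8% after the pre-transition stage alone.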

Experimental Protocols and Methodologies

High-Pressure Low-Temperature Phase Transition Analysis

Objective: To locate phase transition points in the high-pressure, thermodynamically metastable state and evaluate their influence on microbial destruction characteristics [9].

Materials and Equipment:

- Special high-pressure cooling system (self-cooling unit placed inside conventional HP chamber)

- Whole milk (4% fat content) as model system

- Non-pathogenic Escherichia coli as microbial indicator

- Aqueous sodium chloride solutions (5%, 10%) as perturbation sources

- High-pressure equipment with pressure capability to 400 MPa

Procedure:

- Prepare T-shaped sample containers using rectangular wood blocks and empty centrifuge tubes (25 mm diameter × 100 mm height) placed within flexible tubes [9].

- Inoculate milk samples with E. coli culture to achieve appropriate initial microbial load.

- Load samples into high-pressure cooling system and subject to predetermined pressure-temperature conditions.

- Refine pressure range (250-300 MPa) to investigate metastable phase transition behavior.

- Apply perturbation using sodium chloride solutions to lower phase transition pressure.

- Monitor phase transitions and corresponding microbial destruction efficacy.

- Model phase transition data using Simon-like models and polynomial formulas.

Key Measurements:

- Phase transition pressure and temperature coordinates

- Microbial inactivation (log reduction) before, during, and after phase transition

- Perturbation effectiveness in reducing transition pressure

Colloidal Crystal Phase Transition Studies

Objective: To investigate asymmetric spinodal decomposition and spontaneous formation of metastable phases in 2D colloidal crystals [7].

Materials and Equipment:

- Paramagnetic colloidal particles for 2D crystal formation

- Striped magnetic substrate generating alternating magnetic fields

- Uniform external magnetic field source with rapid switching capability

- High-resolution imaging system for monitoring structural changes

Procedure:

- Arrange paramagnetic particles along parallel lines following domain walls of striped substrate.

- Apply uniform external magnetic field H > Hₛ to establish initial rhomboidal structure with angle α_b ≈ 7°.

- Rapidly quench system by switching external field to H = 0.

- Monitor dynamics of structural rearrangement via time evolution of radial distribution function g(r).

- Identify characteristic peaks corresponding to different crystalline structures.

- Document transient formation of metastable rectangular structure with α_m = 0°.

- Analyze phase coexistence duration and front propagation mechanisms.

Key Measurements:

- Time evolution of g(r) peaks characteristic of different structures

- Duration of transient phase coexistence

- Domain sizes and lifetimes of metastable phases under varying field conditions
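The g(r) measurement in the protocol above can be sketched for a single snapshot of particle positions. The code assumes a periodic rectangular box with the minimum-image convention and normalizes by the pair count expected for an ideal gas at the same density. The square-lattice test configuration, the box size, and the bin settings are illustrative assumptions, not parameters from [7].

```python
import numpy as np

def radial_distribution_2d(positions, box, r_max, n_bins=50):
    """g(r) for points in a periodic rectangular box (minimum-image convention).

    r_max should not exceed half of the shorter box side.
    """
    pos = np.asarray(positions, dtype=float)
    box = np.asarray(box, dtype=float)
    n = len(pos)
    delta = pos[:, None, :] - pos[None, :, :]
    delta -= box * np.round(delta / box)            # wrap to the nearest periodic image
    dist = np.sqrt((delta ** 2).sum(axis=-1))
    dist = dist[np.triu_indices(n, k=1)]            # count each pair once
    counts, edges = np.histogram(dist, bins=n_bins, range=(0.0, r_max))
    r = 0.5 * (edges[1:] + edges[:-1])              # bin centres
    shell_area = np.pi * (edges[1:] ** 2 - edges[:-1] ** 2)
    density = n / (box[0] * box[1])
    g = counts / (0.5 * n * density * shell_area)   # divide by ideal-gas pair count
    return r, g

# Illustrative snapshot: a 20 x 20 square lattice with unit spacing.
a = 1.0
grid = np.arange(20) * a
positions = np.array([(x, y) for x in grid for y in grid])
r, g = radial_distribution_2d(positions, box=(20 * a, 20 * a), r_max=3.0, n_bins=40)
print("peak positions:", r[g > 0])
```

For the square lattice the non-zero bins fall near r = 1, √2, 2, √5 and so on in units of the lattice spacing. Tracking how such peak positions shift in successive frames is one way to follow the rearrangement between crystalline structures over time.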

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for Metastable Phase Studies

| Material/Reagent | Function/Application | Specific Examples |
|---|---|---|
| Paramagnetic Colloidal Particles | Model systems for studying phase transition kinetics | 2D colloidal crystals for LIPT investigation [7] |
| Ti, Al, N Targets | PVD deposition of metastable ceramic coatings | TiAlN coatings with enhanced hardness [8] |
| Zr-based Alloy Targets | Thin film metallic glass deposition | ZrCuFeAlAg TFMG for dental applications [8] |
| GZO/AZO Precursors | Transparent conducting oxide films | Ga-doped ZnO, Al-doped ZnO for optoelectronics [8] |
| FeCrAl Alloy Components | Accident-tolerant nuclear materials | Fuel cladding development [8] |
| Sodium Chloride Solutions | Perturbation source for phase transition studies | 5%, 10% solutions for lowering transition pressure [9] |

Computational and AI-Enabled Discovery

The discovery of novel metastable phase materials presents considerable challenges due to the fundamental limitations of conventional thermodynamic phase diagrams [6]. These diagrams, traditionally employed to predict equilibrium phases, fail to account for the complex formation of non-equilibrium products under fluctuating conditions [6]. This limitation has stimulated the development of AI-assisted approaches:

Machine Learning Integration: Leveraging artificial intelligence technology to guide the discovery of new metastable phase materials and explore implications for catalytic development [6]. ML models can predict synthesis conditions and properties of metastable materials beyond the scope of traditional thermodynamic calculations.

High-Throughput Computational Screening: Combining first-principles calculations with combinatorial experiments to efficiently map metastable phase spaces and identify promising candidates for specific applications [8].

These computational approaches are transforming metastable materials design from empirical exploration to predictive science, significantly shortening development timelines and reducing research costs [6] [8].

Visualizing Metastable Phase Transitions

Metastable phase materials represent a transformative frontier in inorganic materials synthesis, offering pathways to enhanced functionality without compositional complexity. Their unique thermodynamic-kinetic adaptability enables exceptional performance across catalysis, energy storage, functional coatings, and safety applications [6]. The systematic understanding of synthesis pathways, stabilization mechanisms, and characterization techniques provides researchers with robust methodologies for harnessing these materials' potential.

Future developments in the field will likely focus on several key areas: (1) enhanced computational and AI-driven discovery of novel metastable phases beyond traditional thermodynamic predictions [6]; (2) advanced stabilization strategies enabling longer-lived metastable states under operational conditions; and (3) integration of metastable phase engineering into commercial applications from energy conversion to biomedical devices [8]. As control over the energy landscape of inorganic materials continues to refine, metastable phases will play an increasingly critical role in advancing technological capabilities across multiple disciplines.

The synthesis of novel inorganic materials is a cornerstone of technological advancement, pivotal for applications ranging from renewable energy to pharmaceuticals. However, this endeavor is perpetually challenged by three fundamental obstacles: the propensity of reactions to become kinetically trapped in undesired metastable states, the phenomenon of polymorphism wherein multiple distinct crystal structures can form from the same composition, and the sheer vastness of the inorganic compositional space. These challenges are elegantly unified under the framework of the energy landscape, a multidimensional map describing how the energy of a system depends on its structural configuration [10]. Navigating this landscape is the central task of materials synthesis. The goal is to identify pathways that lead to the global minimum energy structure—the most stable phase—while avoiding the numerous local minima that represent kinetic traps or less desirable polymorphs. This guide delves into the core principles and modern methodologies for overcoming these challenges, providing researchers with strategies to efficiently design and synthesize target inorganic materials.

The Energy Landscape Conceptual Framework

The energy landscape concept provides a powerful lens through which to view and understand the challenges of materials synthesis [10]. In this conceptualization, a material's energy (or enthalpy) is plotted against its structural degrees of freedom, such as unit cell parameters and atomic positions.

- Global and Local Minima: The lowest point in the deepest valley represents the global minimum energy structure, the thermodynamically stable phase. Other valleys correspond to local minima, which are metastable polymorphs or intermediate compounds.

- Saddle Points: The passes between valleys are saddle points, representing the transition states for structural transformations. The energy barriers between minima determine the kinetics of phase transformations.

- Landscape Topology: The typical energy landscape for a chemical system is not random; low-energy minima are often clustered in "funnels," which are small regions of structural similarity [10]. The shape of this landscape is influenced by external conditions such as pressure and temperature, which can stabilize or destabilize different minima.
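The features listed above can be made concrete with a toy one-dimensional landscape. The sketch below is illustrative only: the tilted double-well function `energy`, the grid resolution, and all variable names are assumptions, and in one dimension the "saddle point" reduces to the maximum separating the two wells.

```python
import numpy as np

def energy(x):
    """Toy 1D energy landscape: a tilted double well (arbitrary units)."""
    return x**4 - 2.0 * x**2 + 0.3 * x

def find_local_minima(es):
    """Return indices of grid points that lie below both neighbours."""
    interior = np.arange(1, len(es) - 1)
    mask = (es[interior] < es[interior - 1]) & (es[interior] < es[interior + 1])
    return interior[mask]

xs = np.linspace(-2.0, 2.0, 4001)
es = energy(xs)
minima = find_local_minima(es)

# Global minimum (stable phase) and the higher local minimum (metastable phase).
global_idx = minima[np.argmin(es[minima])]
local_idx = minima[np.argmax(es[minima])]

# In 1D the transition state is the highest point between the two wells.
lo, hi = sorted((global_idx, local_idx))
saddle_idx = lo + np.argmax(es[lo:hi + 1])

barrier_out_of_trap = es[saddle_idx] - es[local_idx]
barrier_out_of_global = es[saddle_idx] - es[global_idx]

print(f"global minimum at x = {xs[global_idx]:.3f}")
print(f"metastable minimum at x = {xs[local_idx]:.3f}")
print(f"barrier out of the metastable well: {barrier_out_of_trap:.3f}")
print(f"barrier out of the global well: {barrier_out_of_global:.3f}")
```

The barrier for leaving the metastable well is smaller than the barrier for leaving the global minimum, which is the asymmetry that makes a local minimum a trap only while thermal energy stays low relative to that barrier.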

Table 1: Key Features of an Energy Landscape and Their Synthesis Implications.

| Landscape Feature | Structural Meaning | Synthesis Implication |
|---|---|---|
| Global Minimum | The most thermodynamically stable crystal structure. | The target phase for applications requiring maximum stability. |
| Local Minimum | A metastable polymorph or by-product phase. | A kinetic trap that can prevent the reaction from reaching the target. |
| Saddle Point | The transition state between two structures. | Defines the energy barrier for a phase transformation; key for kinetics. |
| Funnel | A region of the landscape containing structurally similar minima. | Guides structure prediction efforts to promising areas of chemical space. |

Navigating Vast Compositional Space

The number of possible stoichiometric inorganic compounds formed from the first 103 elements is astronomically large, exceeding 10^12 for quaternary combinations alone [11]. Exhaustively screening this space with high-throughput experiments or first-principles computations is intractable. The following strategies have been developed to navigate this vastness.

Computational Screening and AI-Driven Discovery

Initial screening can be performed using computationally inexpensive filters to eliminate chemically implausible compositions. Applying principles of valency and electronegativity reduces the quaternary compositional space from over 10^12 to a more manageable 10^10 combinations [11]. Subsequent screening can use estimates of simple properties, such as band gaps, based solely on chemical composition to identify candidates for specific applications like photoelectrochemical water splitting [11].
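A minimal sketch of such a filter is shown here, assuming a small hand-written table of oxidation states and Pauling electronegativities. The function `passes_chemical_filters` and both lookup tables are hypothetical and cover only a handful of elements; this is not the SMACT API, only an illustration of the two rules named above.

```python
from itertools import product

# Illustrative subset only: common oxidation states and Pauling electronegativities.
OXIDATION_STATES = {
    "Li": (1,), "Ba": (2,), "Zn": (2,), "B": (3,), "P": (5, 3, -3), "O": (-2,),
}
ELECTRONEGATIVITY = {
    "Li": 0.98, "Ba": 0.89, "Zn": 1.65, "B": 2.04, "P": 2.19, "O": 3.44,
}

def passes_chemical_filters(composition):
    """Check charge neutrality and electronegativity ordering.

    composition maps element symbol -> stoichiometric count.
    Returns True if some assignment of oxidation states sums to zero charge
    and every cation is less electronegative than every anion.
    """
    elements = list(composition)
    for states in product(*(OXIDATION_STATES[el] for el in elements)):
        total_charge = sum(q * composition[el] for q, el in zip(states, elements))
        if total_charge != 0:
            continue
        cations = [ELECTRONEGATIVITY[el] for q, el in zip(states, elements) if q > 0]
        anions = [ELECTRONEGATIVITY[el] for q, el in zip(states, elements) if q < 0]
        if cations and anions and max(cations) < min(anions):
            return True
    return False

for formula, comp in [
    ("LiBaBO3", {"Li": 1, "Ba": 1, "B": 1, "O": 3}),
    ("LiZnPO4", {"Li": 1, "Zn": 1, "P": 1, "O": 4}),
    ("LiBaO3", {"Li": 1, "Ba": 1, "O": 3}),
]:
    print(formula, passes_chemical_filters(comp))
```

Because the check only enumerates a few integers per composition, it can be applied to very large candidate lists before any structure or energy calculation is attempted.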

Recent advances leverage generative artificial intelligence (AI) to accelerate discovery. Frameworks like MatAgent use large language models (LLMs) as a central reasoning engine to propose new material compositions iteratively [12]. This approach integrates external tools—including a materials knowledge base, the periodic table, and memory of past proposals—to guide the exploration toward user-defined target properties. A structure estimator (e.g., a diffusion model) then generates candidate crystal structures for the proposed compositions, and a property evaluator (e.g., a graph neural network) provides feedback, creating a closed-loop discovery system [12].

Predicting Synthesizability

A critical step in screening is predicting whether a hypothetical material is synthesizable. Charge-balancing is a common heuristic, but it is an inflexible constraint that fails to account for diverse bonding environments: only about 37% of known synthesized inorganic materials satisfy it [13].

Machine learning models trained directly on databases of known materials, such as the Inorganic Crystal Structure Database (ICSD), offer a more powerful alternative. SynthNN is a deep learning model that learns the optimal descriptors for synthesizability from the data itself, capturing complex factors beyond simple charge-balancing or thermodynamic stability [13]. In benchmarks, SynthNN identifies synthesizable materials with 7x higher precision than using DFT-calculated formation energies alone and has been shown to outperform human experts in discovery tasks [13].

Table 2: Computational Methods for Navigating Compositional Space.

| Method | Primary Function | Key Advantage | Example/Reference |
|---|---|---|---|
| Chemical Rule Filtering | Rapid elimination of implausible compositions. | Drastically reduces search space using simple rules (valency) [11]. | SMACT package [11] |
| High-Throughput DFT | Calculate stability and properties of candidates. | High accuracy for formation energy and electronic structure. | Materials Project [12] |
| Generative AI | Propose novel compositions with target properties. | Interpretable, iterative exploration guided by AI reasoning [12]. | MatAgent framework [12] |
| Synthesizability Prediction | Classify materials as synthesizable or not. | Learns complex, data-driven criteria beyond thermodynamics [13]. | SynthNN model [13] |

Overcoming Kinetic Traps

Kinetic traps are local minima on the energy landscape that consume reactants and sequester them in metastable states, preventing the formation of the target material. This is a prevalent issue in solid-state synthesis and self-assembly.

Thermodynamic Precursor Selection

A powerful strategy to avoid kinetic traps is the careful thermodynamic design of precursor materials. The principle is to choose precursors such that the reaction pathway to the target material has a high driving force and avoids low-energy, competing intermediates.

A robotic inorganic materials synthesis laboratory was used to validate this strategy for 35 quaternary oxides [14]. The key principles for precursor selection are:

- Two-Precursor Reactions: Reactions should ideally initiate between only two precursors to minimize simultaneous pairwise reactions that form by-products.

- High-Energy Precursors: Precursors should be relatively high in energy (unstable), maximizing the thermodynamic driving force for the final reaction step.

- Deepest Point on Hull: The target material should be the lowest-energy phase on the compositional line (isopleth) connecting the two precursors.

- Minimal Competing Phases: The reaction pathway should intersect as few other stable phases as possible.

- Large Inverse Hull Energy: If by-products are unavoidable, the target should be substantially lower in energy than its neighboring stable phases, ensuring a large driving force for its nucleation [14].
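The "deepest point" and "inverse hull energy" criteria can be evaluated numerically once phase energies along the precursor isopleth are known. The sketch below is a simplified illustration: the phase names and energies are invented, and the inverse hull energy is taken here to be the target's energy minus the hull built from all other phases at the target composition, which is one reading of the definition given above.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points (Andrew's monotone chain)."""
    hull = []
    for p in sorted(points):
        while len(hull) >= 2:
            (x1, e1), (x2, e2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - e1) - (e2 - e1) * (p[0] - x1)
            if cross <= 0:  # last hull point lies on or above the new segment
                hull.pop()
            else:
                break
        hull.append(p)
    return hull

def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return e1 + (e2 - e1) * (x - x1) / (x2 - x1)
    raise ValueError("x lies outside the hull")

def inverse_hull_energy(phases, target):
    """Target energy relative to the hull formed by all other phases."""
    x_t, e_t = phases[target]
    others = [pt for name, pt in phases.items() if name != target]
    return e_t - hull_energy(lower_hull(others), x_t)

# Hypothetical isopleth: x is the fraction of precursor B, energies in meV/atom
# relative to the unreacted precursor mixture.
phases = {
    "precursor_A": (0.0, 0.0),
    "competing_phase": (0.3, -50.0),
    "target": (0.5, -200.0),
    "precursor_B": (1.0, 0.0),
}

hull = lower_hull(phases.values())
deepest = min(hull, key=lambda pt: pt[1])
is_deepest = deepest == phases["target"]
inv_hull = inverse_hull_energy(phases, "target")
print(f"target is deepest hull point: {is_deepest}")
print(f"inverse hull energy: {inv_hull:.1f} meV/atom")
```

In practice the phase energies would come from a thermodynamic database rather than being typed in by hand; the geometry of the calculation stays the same.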

Table 3: Experimental Validation of Precursor Selection Principles. Adapted from data in [14].

| Target Material | Traditional Precursors | Designed Precursors | Experimental Outcome |
|---|---|---|---|
| LiBaBO₃ | Li₂CO₃, B₂O₃, BaO | LiBO₂, BaO | Traditional precursors failed to yield strong signals of the target. Designed precursors produced LiBaBO₃ with high phase purity. [14] |
| LiZnPO₄ | Li₂O, ZnO, P₂O₅ | LiPO₃, ZnO | The reaction LiPO₃ + ZnO provides a substantial driving force (ΔE) and places LiZnPO₄ at the deepest point on the convex hull, favoring its formation. [14] |

Hierarchical Assembly and Pathway Engineering

In self-assembly, kinetic traps can arise from the formation of malformed intermediates. Studies on the assembly of T=3 icosahedral capsids from DNA origami triangles have shown that hierarchical assembly pathways are more robust than egalitarian ones [15].

- Egalitarian Assembly: All binding sites have equal strengths. This often leads to slower assembly and a higher prevalence of kinetic traps.

- Hierarchical Assembly: Binding sites have unequal strengths, prompting the system to first form specific intermediates (e.g., dimers or pentamers) before completing the final structure. This pathway is faster and produces higher yields of the target structure across a wider range of affinity parameters [15].

This principle was demonstrated by tuning the binding affinities on the edges of triangular subunits. When the affinity for twofold (dimer) or fivefold (pentamer) interactions was stronger than the others, assembly followed a hierarchical pathway and was more efficient [15].

Protocol: Computational Analysis of Kinetic Barriers

Understanding kinetic barriers is crucial for processes like chemical recycling of polymers via ring-closing depolymerization (RCD). The following protocol outlines a high-throughput computational method to assess these barriers [16].

- Objective: To compute the enthalpic energy barriers for the ring-closing depolymerization of 6-membered aliphatic polycarbonates in different solvent environments.

- System Preparation: Model the depolymerization reaction using a short oligomer chain segment. Common monomers include a series of carbonates with varying substituents at the C2 carbon (e.g., 1a-g from the study [16]).

- Computational Method:

  - Employ semi-empirical methods like Density-Functional Tight-Binding (DFTB) for rapid screening. Validate trends with higher-level Density Functional Theory (DFT) calculations on a subset of systems.

  - For each carbonate and solvent system (e.g., acetonitrile, toluene, tetrahydrofuran), identify the key reaction steps: initial state, transition state (TS), and final cyclic carbonate state.

  - Optimize the geometries of all states and calculate the single-point energies. The enthalpic barrier is taken as the energy difference between the transition state and the initial state.

- Data Analysis: Compare absolute barrier heights and relative changes between solvents. Correlate barriers with solvent interaction energies and molecular volume of substituents to elucidate structure-activity relationships. Experimental validation is crucial to confirm that lower computed barriers correlate with higher observed depolymerization yields [16].
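The barrier bookkeeping in this protocol amounts to a subtraction per solvent followed by a comparison against a reference. The sketch below illustrates that step only; the single-point energies are invented placeholders (not values from [16]), and the names `energies` and `enthalpic_barrier` are hypothetical.

```python
HARTREE_TO_KJ_PER_MOL = 2625.4996  # unit conversion factor

# Hypothetical single-point energies (hartree) for one carbonate in three solvents.
energies = {
    "acetonitrile": {"initial": -100.0000, "ts": -99.9650, "final": -100.0050},
    "toluene": {"initial": -100.0000, "ts": -99.9600, "final": -100.0030},
    "tetrahydrofuran": {"initial": -100.0000, "ts": -99.9625, "final": -100.0040},
}

def enthalpic_barrier(states):
    """Barrier = E(transition state) - E(initial state), in kJ/mol."""
    return (states["ts"] - states["initial"]) * HARTREE_TO_KJ_PER_MOL

barriers = {solvent: enthalpic_barrier(s) for solvent, s in energies.items()}
reference = "toluene"  # arbitrary choice of reference solvent
relative = {solvent: b - barriers[reference] for solvent, b in barriers.items()}
lowest = min(barriers, key=barriers.get)

for solvent in barriers:
    print(f"{solvent:16s} barrier = {barriers[solvent]:7.2f} kJ/mol "
          f"(relative to {reference}: {relative[solvent]:+.2f})")
print("lowest barrier:", lowest)
```

Reporting both absolute and relative barriers mirrors the data-analysis step above; trends between solvents are usually more robust than absolute numbers from a semi-empirical method.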

Controlling Polymorphism

Polymorphism, the ability of a single composition to crystallize in multiple distinct structures, is a major challenge in materials science and pharmaceuticals. The target polymorph may be metastable, and its crystallization is often kinetically controlled.

Concentration-Driven Kinetic Trapping

Synthesis conditions can be manipulated to selectively isolate a metastable polymorph. A striking example is the synthesis of M₂(dobdc) metal-organic frameworks (MOFs), where simply increasing the reaction concentration kinetically traps a new photoluminescent framework, CORN-MOF-1, instead of the thermodynamic Mg₂(dobdc) phase [17].

- Protocol: High-Concentration Solvothermal Synthesis of CORN-MOF-1 (Mg) [17]

  - Reagents: H₄dobdc (linker), Mg(NO₃)₂·6H₂O (metal source), solvent mixture (DMF/EtOH/H₂O in 18:1:1 ratio).

  - Procedure:

    - In a sealed vessel, combine H₄dobdc and Mg(NO₃)₂·6H₂O (2.50 equiv. per linker) in the solvent mixture.

    - Use a high linker concentration (0.10 M to 1.50 M). Vigorous stirring (~700 rpm) is required to prevent inorganic impurities.

    - Heat the reaction mixture at 120 °C for 24 hours.

    - Upon cooling, collect the resulting solid via centrifugation or filtration. The product is CORN-MOF-1 (Mg), which can be confirmed by PXRD.

  - Key Insight: Under these high-concentration conditions, the reaction is kinetically driven toward CORN-MOF-1, a phase with partially protonated linkers and formate groups. This polymorph exhibits intense photoluminescence, a property distinct from the thermodynamic Mg₂(dobdc) phase [17].
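For planning a run at a chosen concentration, the reagent quantities follow from simple stoichiometry. The sketch below makes several assumptions that are not stated in the protocol: the linker concentration is taken relative to the total solvent volume, the 18:1:1 ratio is treated as volume/volume, and the helper `reagent_plan` is hypothetical. Molar masses are computed for C₈H₆O₆ (H₄dobdc) and Mg(NO₃)₂·6H₂O.

```python
MOLAR_MASS_G_PER_MOL = {
    "H4dobdc": 198.13,          # C8H6O6
    "Mg(NO3)2.6H2O": 256.41,
}

def reagent_plan(linker_molarity, total_volume_ml, mg_equiv=2.50,
                 solvent_ratio=(18, 1, 1)):
    """Masses (g) and solvent volumes (mL) for one solvothermal batch.

    Assumes concentration is defined on total solvent volume and that the
    DMF/EtOH/H2O ratio is by volume.
    """
    linker_mol = linker_molarity * total_volume_ml / 1000.0
    metal_mol = mg_equiv * linker_mol
    parts = sum(solvent_ratio)
    dmf_ml, etoh_ml, water_ml = (total_volume_ml * r / parts for r in solvent_ratio)
    return {
        "linker_g": linker_mol * MOLAR_MASS_G_PER_MOL["H4dobdc"],
        "metal_salt_g": metal_mol * MOLAR_MASS_G_PER_MOL["Mg(NO3)2.6H2O"],
        "DMF_mL": dmf_ml,
        "EtOH_mL": etoh_ml,
        "H2O_mL": water_ml,
    }

plan = reagent_plan(linker_molarity=0.10, total_volume_ml=20.0)
for name, value in plan.items():
    print(f"{name}: {value:.3f}")
```

Scanning `linker_molarity` across the 0.10 M to 1.50 M window with this helper gives the series of batches needed to probe where the kinetic product takes over from the thermodynamic phase.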

The Challenge of Predicting Crystallization Kinetics

While Crystal Structure Prediction (CSP) methods have improved, predicting which of the many computationally predicted polymorphs will actually form under a given set of experimental conditions remains difficult. This is largely due to the challenge of predicting crystallization kinetics [18]. The relative stability of polymorphs is determined by their Gibbs free energy, but the first phase to crystallize is often the one with the fastest nucleation kinetics, not necessarily the most stable one. For practical applications, such as in pharmaceuticals, a complete picture of a molecule's phase behavior, including unary and binary phase diagrams, is often necessary for formulation design and stability assessment [18].

The Scientist's Toolkit: Key Research Reagents and Solutions

Table 4: Essential Computational and Experimental Tools for Energy Landscape Navigation.

| Tool / Resource | Function / Description | Relevance to Synthesis Challenges |
|---|---|---|
| SMACT Package [11] | An open-source Python package for the computational screening of stoichiometric inorganic materials. | Navigating vast compositional space by filtering based on chemical rules (valency, electronegativity). |
| Robotic Synthesis Lab [14] | An automated platform for high-throughput and reproducible powder inorganic materials synthesis. | Enables large-scale experimental validation of synthesis hypotheses (e.g., precursor selection) with minimal human effort. |
| DFTB (Density-Functional Tight-Binding) [16] | A semi-empirical quantum mechanical method approximating DFT. | Allows for high-throughput computation of kinetic barriers (e.g., for depolymerization) that would be intractable with full DFT. |
| SynthNN Model [13] | A deep learning classification model that predicts the synthesizability of inorganic chemical formulas. | Filters hypothetical materials generated from screening or AI, increasing the likelihood that predicted materials are synthetically accessible. |
| DNA Origami Subunits [15] | Nanoscale, monodisperse colloids with programmable geometry and tunable, addressable bond strengths. | A model experimental platform for studying self-assembly pathways and engineering strategies (e.g., hierarchical assembly) to avoid kinetic traps. |
| MatAgent Framework [12] | An LLM-driven generative AI framework that iteratively proposes and refines material compositions. | Accelerates the exploration of compositional space for target properties by leveraging AI reasoning and external knowledge bases. |

From Ground-State to Non-Equilibrium Synthesis

The synthesis of inorganic materials is fundamentally a journey across a complex, high-dimensional energy landscape. Traditional solid-state synthesis strategies often target the most thermodynamically stable, ground-state phases. However, this approach can be kinetically trapped by low-energy, competing by-products, preventing the realization of desired target materials or novel non-equilibrium phases. Navigating this landscape requires a sophisticated understanding of both thermodynamic driving forces and kinetic pathways. This guide synthesizes contemporary principles and experimental protocols for designing synthesis routes that either efficiently reach the ground state or deliberately access valuable non-equilibrium states of matter. By framing synthesis within the context of its underlying energy landscape, researchers can make informed decisions to streamline the manufacturing of complex functional materials and accelerate the discovery and realization of new phases.

Core Principles: Thermodynamic and Kinetic Pathways

Designing Precursors for Efficient Ground-State Synthesis

For conventional solid-state synthesis, the selection of precursor materials is paramount. The primary objective is to identify precursor combinations that circumvent kinetically competitive by-products while maximizing the thermodynamic driving force for fast reaction kinetics. The following five principles provide a framework for selecting effective precursors from a multicomponent convex hull [14]:

- Initiate with Two Precursors: Reactions should, if possible, initiate between only two precursors to minimize the chances of simultaneous pairwise reactions between three or more, which often lead to undesired intermediate phases.

- Utilize High-Energy Precursors: Precursors should be relatively high in energy (unstable), as this maximizes the overall thermodynamic driving force and thereby accelerates the reaction kinetics toward the target phase.

- Target the Deepest Hull Point: The target material should be the deepest point in the reaction convex hull between the two precursors. This ensures the thermodynamic driving force for nucleating the target is greater than for any competing phases.

- Minimize Competing Phases: The composition slice formed between the two precursors should intersect as few other competing phases as possible, minimizing opportunities to form by-products.

- Prioritize Large Inverse Hull Energy: If by-products are unavoidable, the target phase should have a large "inverse hull energy", meaning it is substantially lower in energy than its neighboring stable phases. This provides selectivity even if intermediates form.

When ranking potential precursor pairs, principle 3 (deepest hull point) should be prioritized first, followed by principle 5 (large inverse hull energy), as these most directly govern the selectivity and success of the reaction [14].
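That ranking rule can be written as a sort key. The sketch below uses the LiZnPO₄ reaction and inverse hull energies reported in this guide's quantitative table from [14]; the `PrecursorSet` class, the use of `None` for "not the deepest point", and the final tie-break on reaction energy are illustrative assumptions rather than the published procedure.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

@dataclass
class PrecursorSet:
    precursors: Tuple[str, ...]
    reaction_energy: float                # meV/atom
    inverse_hull_energy: Optional[float]  # meV/atom; None if target is not deepest

candidates = [
    PrecursorSet(("Zn2P2O7", "Li2O"), -148.0, None),
    PrecursorSet(("Zn3(PO4)2", "Li3PO4"), -40.0, -40.0),
    PrecursorSet(("LiPO3", "ZnO"), -161.0, -121.0),
]

def rank_key(c):
    """Principle 3 first, then principle 5, then overall driving force."""
    is_deepest = c.inverse_hull_energy is not None
    return (
        0 if is_deepest else 1,                         # deepest hull point wins
        c.inverse_hull_energy if is_deepest else 0.0,   # more negative is better
        c.reaction_energy,                              # assumed tie-break
    )

ranked = sorted(candidates, key=rank_key)
for position, c in enumerate(ranked, start=1):
    print(position, " + ".join(c.precursors), c.reaction_energy, c.inverse_hull_energy)
```

With these inputs the LiPO₃ + ZnO pair ranks first, matching the designed precursor choice described for LiZnPO₄.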

Accessing Non-Equilibrium Topological Order

Moving beyond the ground state, time-periodic driving (Floquet systems) can induce novel non-equilibrium phases of matter with properties forbidden by equilibrium thermodynamics [19]. A paradigmatic example is the Floquet Kitaev model, a periodically driven version of Kitaev's honeycomb model that exhibits Floquet topological order (FTO). Key signatures of this non-equilibrium phase include [19]:

- Chiral Edge Modes with Zero Chern Number: The emergence of topologically protected chiral edge modes hosting non-Abelian Majorana modes, even when the Chern numbers of all bulk bands are zero. This is in sharp contrast to equilibrium settings, where a non-zero integer Chern number is requisite for chiral modes.

- Dynamical Anyon Transmutation: Unique to the Floquet setting is the presence of two distinct anyon types that transmute between each other, alternating with twice the period of the drive.

Quantum processors offer a powerful platform to realize and probe these highly entangled non-equilibrium phases, which are generically difficult to simulate classically [19].

Quantitative Data and Comparison

The following tables summarize key quantitative data from seminal studies in both ground-state and non-equilibrium synthesis.

Table 1: Quantitative Analysis of Synthesis Pathways for Selected Target Materials [14]

| Target Material | Precursor Set | Reaction Energy (meV/atom) | Inverse Hull Energy (meV/atom) | Experimental Phase Purity |
|---|---|---|---|---|
| LiBaBO₃ | Li₂CO₃, B₂O₃, BaO | -336 | -22 | Low / Non-detectable |
| LiBaBO₃ | LiBO₂, BaO | -192 | -153 | High |
| LiZnPO₄ | Zn₂P₂O₇, Li₂O | -148 | Not Deepest Point | Not Reported |
| LiZnPO₄ | Zn₃(PO₄)₂, Li₃PO₄ | -40 | -40 | Not Reported |
| LiZnPO₄ | LiPO₃, ZnO | -161 | -121 | High (Predicted) |

Table 2: Key Signatures and Parameters in Floquet Topological Order [19]

| System/Signature | Key Parameter | Equilibrium Requirement | Non-Equilibrium (Floquet) Realization |
|---|---|---|---|
| Chiral Majorana Edge Mode | Chern Number | Non-zero integer | Can exist with zero bulk Chern number |
| Bulk Topological Order | Anyon Statistics | Static anyon types | Distinct anyon types transmute with 2T period |
| Floquet Kitaev Model | Driving Strength (JT) | N/A | FTO phase exists near JT = 1 |

Experimental Protocols

Robotic Workflow for High-Throughput Solid-State Synthesis

A robotic inorganic materials synthesis laboratory automates the powder synthesis workflow, enabling high-throughput and reproducible testing of synthesis hypotheses. The detailed protocol for validating precursor principles is as follows [14]:

- Target Selection: Define a diverse set of target multi-component oxides (e.g., 35 quaternary Li-, Na-, and K-based oxides, phosphates, and borates).

- Precursor Preparation: The robotic system automates the weighing and handling of various powder precursors (e.g., 28 unique precursors spanning 27 elements).

- Ball Milling: Precursors are automatically transferred to ball mills for mechanical mixing to ensure homogeneity.

- Oven Firing: The mixed powders are loaded into furnaces and fired according to programmed temperature and time profiles.

- X-ray Characterization: The reaction products are automatically characterized using X-ray diffraction (XRD) to determine phase purity.

- Data Analysis: XRD patterns are analyzed to compare the success and purity of reactions using different precursor pairs.

This automated platform allowed for the execution of 224 distinct reactions by a single human experimentalist, providing a large-scale validation of thermodynamic precursor selection principles [14].

Probing Floquet Topological Order on a Quantum Processor

The experimental realization and characterization of the Floquet Kitaev model on a superconducting quantum processor involve the following key methodologies [19]:

A. Realizing the Floquet Kitaev Model:

- System: Spin-1/2 degrees of freedom arranged on the vertices of a honeycomb lattice with three types of bonds (X, Y, Z).

- Stroboscopic Evolution: The system evolves under the repeated application of the Floquet unitary $U_T = U_Z(JT)\,U_Y(JT)\,U_X(JT)$, where $U_\alpha(JT) = \exp\left(-i\frac{\pi}{4}\,JT \sum_{\langle j,k\rangle_\alpha} \alpha_j \alpha_k\right)$ and $\alpha \in \{X, Y, Z\}$.

- Implementation: The driving terms $U_\alpha$ are implemented on the quantum processor using sequences of single-qubit rotations and two-qubit C-PHASE gates. The dynamics are controlled by the coupling parameter J and the driving period T.
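As a numerical illustration of the Floquet unitary defined above, the sketch below builds it as a dense matrix for a single hexagon of six spins. The six-site geometry, the bond labelling in `BONDS`, and the function names are assumptions chosen to keep the matrices small; this is a classical toy check, not the gate-level circuit run on the processor. It relies on the identity exp(-iθP) = cos θ · I - i sin θ · P for any Pauli string P, and on the fact that bonds of the same type act on disjoint pairs and therefore commute.

```python
from functools import reduce
import numpy as np

N = 6  # one hexagonal plaquette
I2 = np.eye(2, dtype=complex)
PAULI = {
    "X": np.array([[0, 1], [1, 0]], dtype=complex),
    "Y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "Z": np.array([[1, 0], [0, -1]], dtype=complex),
}
# Assumed labelling: bond types alternate X, Y, Z around the ring.
BONDS = {"X": [(0, 1), (3, 4)], "Y": [(1, 2), (4, 5)], "Z": [(2, 3), (5, 0)]}

def pauli_string(ops):
    """Tensor product over N sites; ops maps site index -> 'X', 'Y' or 'Z'."""
    return reduce(np.kron, [PAULI[ops[s]] if s in ops else I2 for s in range(N)])

def bond_unitary(alpha, jt):
    """U_alpha(JT) = exp(-i (pi/4) JT * sum over alpha-bonds of alpha_j alpha_k)."""
    theta = (np.pi / 4.0) * jt
    identity = np.eye(2**N, dtype=complex)
    u = identity.copy()
    for j, k in BONDS[alpha]:
        term = pauli_string({j: alpha, k: alpha})
        u = u @ (np.cos(theta) * identity - 1j * np.sin(theta) * term)
    return u

def floquet_unitary(jt):
    """U_T = U_Z(JT) U_Y(JT) U_X(JT)."""
    return bond_unitary("Z", jt) @ bond_unitary("Y", jt) @ bond_unitary("X", jt)

U = floquet_unitary(1.0)
# Plaquette operator: each site carries the Pauli of its bond pointing out of the ring.
W = pauli_string({0: "Y", 1: "Z", 2: "X", 3: "Y", 4: "Z", 5: "X"})

print("unitary:", np.allclose(U.conj().T @ U, np.eye(2**N)))
print("plaquette flux conserved:", np.allclose(W @ U, U @ W))
```

The second check reflects why flux-free states are a natural starting point: the plaquette operator commutes with every bond term, so its eigenvalue is preserved under the drive.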

B. State Preparation and Measurement:

- Flux-Free State Preparation: A unitary circuit $U_{FF}$ prepares the system in a flux-free state $|\Psi_{FF}\rangle$, an eigenstate of every plaquette operator with eigenvalue $W_P = +1$. This is analogous to preparing a toric code ground state.

- Majorana Dynamics: The dynamics of emergent Majorana excitations are monitored by measuring the density operator of a complex fermion, $n_F(j,k) = (i\,\phi_{jk}\,c_j c_k + 1)/2$. This operator can be written as a Pauli string and measured directly.

- Interferometric Algorithm: An interferometric algorithm introduces and measures a bulk topological invariant, probing the dynamical transmutation of anyons for systems of up to 58 qubits.

Visualization of Synthesis Pathways and Principles

Precursor Selection Workflow

The following diagram illustrates the logical decision process for selecting optimal solid-state synthesis precursors based on thermodynamic principles.

Floquet Kitaev Model Experimental Cycle

This diagram outlines the core workflow for creating and characterizing a non-equilibrium topologically ordered state on a quantum processor.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagent Solutions for Inorganic Synthesis & Quantum Simulation

| Item / Solution | Function / Description | Application Context |
|---|---|---|
| High-Purity Binary Oxide Precursors | Base starting materials for solid-state reactions (e.g., ZnO, B₂O₃, Li₂O, P₂O₅). | Ground-State Synthesis [14] |
| Pre-synthesized Metastable Precursors | High-energy intermediates (e.g., LiBO₂, LiPO₃) used to bypass low-energy by-products and maximize driving force. | Ground-State Synthesis [14] |
| Superconducting Qubit Array | A two-dimensional lattice of artificial atoms serving as a programmable quantum magnet. | Non-Equilibrium Synthesis [19] |
| C-PHASE Gate | A fundamental two-qubit entangling gate used to construct the interacting terms of the model Hamiltonian. | Non-Equilibrium Synthesis [19] |
| Single-Qubit Rotation Gates | Quantum operations used to construct the driving terms $U_X$, $U_Y$, $U_Z$ of the Floquet unitary. | Non-Equilibrium Synthesis [19] |
| Quantum State Tomography Tools | Methods and algorithms to reconstruct the quantum state from measurements, enabling the imaging of edge modes and anyon dynamics. | Non-Equilibrium Synthesis [19] |

The Confluence of Composition Space and Structure Space

The discovery and synthesis of novel inorganic materials are fundamentally governed by the intricate relationship between composition space and structure space. This confluence defines the energy landscape that dictates thermodynamic stability and functional properties. This whitepaper details a quantitative framework and experimental methodologies for navigating this landscape, with a focus on generative artificial intelligence and high-throughput computational screening. By establishing baselines between traditional and generative approaches, we provide researchers with protocols for targeting materials with tailored electronic, catalytic, and magnetic functionalities.

Inorganic solid-state chemistry is a cornerstone of technological advancement, critical for developing materials with tailored electronic, magnetic, and optical properties [20]. The search for new functional materials, however, is constrained by the vastness of possible chemical compositions and their associated crystal structures. This complex relationship is described by two interconnected domains: composition space (the combinations of elemental species and their ratios) and structure space (the arrangement of atoms within a crystal lattice). Their confluence creates a high-dimensional energy landscape where minima correspond to thermodynamically stable compounds. Navigating this landscape efficiently is a central challenge in materials science. Recent advances, particularly from generative artificial intelligence (AI), offer promising pathways for targeted discovery by learning the underlying rules that connect composition to stable structure [21].

Quantitative Frameworks for Landscape Mapping

A critical step in mapping the energy landscape is the quantitative benchmarking of discovery methodologies. The performance of various generative techniques can be evaluated against established baseline methods.

Table 1: Benchmarking Performance of Material Discovery Methods Against Baselines [21]

| Method Category | Examples | Novelty Generation | Stability Rate | Ability to Target Properties (e.g., Band Gap) |
|---|---|---|---|---|
| Baseline Approaches | Random enumeration of charge-balanced prototypes; Data-driven ion exchange | Generates novel materials that often resemble known compounds | High | Limited |
| Generative AI Models | Diffusion Models; Variational Autoencoders (VAEs); Large Language Models (LLMs) | Excels at proposing novel structural frameworks | Improves with more training data | More effective when sufficient training data exists |

Table 2: Post-Generation Screening Metrics for Improved Success Rates [21]

| Screening Filter | Function | Impact on Success Rate | Computational Cost |
|---|---|---|---|
| Stability Filter | Assesses thermodynamic stability using pre-trained universal interatomic potentials | Substantial improvement | Low-cost |
| Property Filter | Screens for target properties like electronic band gap and bulk modulus | Substantial improvement | Low-cost |

Experimental and Computational Protocols

Baseline Methodology: Ion Exchange

Ion exchange is an established synthetic pathway for exploring nearby regions of the composition-structure landscape [21].

- Precursor Selection: Identify a known parent compound with a structure that can host different ions.

- Reaction Environment: Immerse the parent crystal in a molten salt or solution containing the guest ions to be exchanged (e.g., LiGdF₄ as a host for Yb³⁺/Er³⁺ doping [20]).

- Control Parameters: Carefully control temperature, reaction time, and ion concentration to drive the exchange.

- Characterization: Use X-ray diffraction (XRD) to confirm the preservation of the parent structure and techniques like energy-dispersive X-ray spectroscopy (EDS) to verify composition change.

Generative AI Workflow for Targeted Discovery

This protocol leverages machine learning for broader exploration [21].

- Data Curation: Assemble a high-quality training set of known inorganic crystals from databases like the Materials Project or the Inorganic Crystal Structure Database (ICSD).

- Model Training: Train a generative model (e.g., Diffusion, VAE, LLM) on the curated data to learn the probability distribution of stable compositions and structures.

- Sampling: Generate candidate materials by sampling from the trained model.

- Stability & Property Screening: Pass all generated candidates through low-cost, pre-trained machine learning filters to predict stability and key properties, weeding out unstable or non-functional proposals.

- Synthesis & Validation: Proceed with experimental synthesis, such as ultra-rapid anodization [20] or solid-state reaction, for the most promising candidates, followed by advanced characterization.
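The screening step of this workflow can be sketched as a pair of cheap filters applied to model outputs. Everything in the example is a placeholder: the `Candidate` fields stand in for predictions from pre-trained surrogate models, the formulas are generic labels, and the thresholds (0.1 eV/atom above the hull, a 1.0 to 3.0 eV band-gap window) are illustrative choices rather than values from [21].

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    formula: str
    e_above_hull: float  # eV/atom, predicted by a surrogate model
    band_gap: float      # eV, predicted by a surrogate model

def screen(candidates, max_e_above_hull=0.1, gap_window=(1.0, 3.0)):
    """Keep candidates that pass both the stability and the property filter."""
    low, high = gap_window
    kept = [
        c for c in candidates
        if c.e_above_hull <= max_e_above_hull and low <= c.band_gap <= high
    ]
    # Most stable proposals first, so synthesis effort goes to the best bets.
    return sorted(kept, key=lambda c: c.e_above_hull)

generated = [
    Candidate("A2BX4", e_above_hull=0.02, band_gap=1.8),
    Candidate("AB2X3", e_above_hull=0.35, band_gap=2.1),  # fails stability filter
    Candidate("A3BX5", e_above_hull=0.05, band_gap=0.2),  # fails property filter
    Candidate("ABX3", e_above_hull=0.00, band_gap=2.6),
]

shortlist = screen(generated)
print([c.formula for c in shortlist])
```

Applying the filters after generation keeps the generative model itself unconstrained while still concentrating experimental effort on candidates that are both plausible and useful.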

Diagram 1: Generative AI discovery workflow with feedback.

Advanced Characterization Protocol

For synthesized materials, detailed structural and property analysis is crucial [20].

- Structural Analysis: Use a combination of Neutron and Synchrotron Radiation Diffraction to solve and refine crystal structures, particularly for detecting light atoms and structural water [20].

- Morphological Analysis: Use Field-Emission Scanning Electron Microscopy (FESEM) to analyze crystal size, shape, and distribution. For nanocrystals, confirm crystallite size with Transmission Electron Microscopy (TEM) [20].

- Spectroscopic Analysis:

  - Up-Conversion Luminescence: Under 980 nm laser excitation, measure emission spectra to study energy transfer processes in doped materials (e.g., Yb→Er, Er→Gd) [20].

  - Electron Paramagnetic Resonance (EPR): Probe local coordination and magnetic interactions of paramagnetic ions like Gd³⁺ [20].

  - X-ray Photoelectron Spectroscopy (XPS): Identify elemental composition, chemical states, and the presence of oxygen vacancies [20].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagent Solutions for Inorganic Solid-State Synthesis

| Item | Function/Description | Example Use Case |
|---|---|---|
| Metal Salt Precursors | Source of cationic species in synthesis (e.g., nitrates, acetates). | Cobalt nitrate for Co₃O₄ synthesis via thermolysis [20]. |
| Dopant Ions | Elements introduced in small quantities to modify properties. | Yb³⁺/Er³⁺ for up-conversion luminescence in LiGdF₄ [20]. |
| Molten Salt Media | High-temperature solvent for ion exchange and crystal growth. | Used in the ion exchange baseline method [21]. |
| Anodization Electrolyte | Solution for electrochemical synthesis of oxide films. | With Ni additives to modify Co₃O₄ nanostructure morphology [20]. |
| Universal Interatomic Potentials | Pre-trained machine learning force fields for stability prediction. | Low-cost post-generation screening of candidate structures [21]. |

Visualization of the Confluence

The relationship between composition space, structure space, and the resulting energy landscape can be visualized as an interconnected network where generative models learn to navigate the probabilities of stability.

Diagram 2: Confluence of composition and structure defining the energy landscape.

AI and Computational Tools for Mapping and Traversing Synthesis Pathways

Generative AI Models for De Novo Materials Design

The design of new inorganic materials is a fundamental driver of technological advances in areas such as energy storage, catalysis, and carbon capture. [22] Traditional materials discovery has relied heavily on experimental trial-and-error and human intuition, limiting the number of candidates that can be practically tested. The conceptual framework of energy landscapes provides a powerful theoretical foundation for understanding and navigating the complex process of materials synthesis. [23] In this paradigm, a material's energy function possesses numerous local minima separated by barriers across a high-dimensional state space, graphically resembling a mountainous landscape. [23] These minima correspond to metastable chemical compounds, while the barriers represent kinetic obstacles to phase transformations.

The synthesis pathway a system follows on this landscape is determined by both thermodynamic driving forces and kinetic trapping phenomena. Preferential trapping occurs when a system's dynamics become confined to certain regions of the state space, representing metastable compounds of relevance for applications. [23] Guiding the dynamics of a system into desired regions requires optimally tuned control parameters. Without careful navigation, synthetic pathways can become kinetically trapped by low-energy intermediate by-products, consuming the thermodynamic driving force needed to reach the target material. [14] Generative artificial intelligence represents a transformative approach to navigating these complex energy landscapes, enabling the direct computational design of materials with target properties before experimental synthesis is ever attempted.
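Kinetic trapping can be illustrated with a toy one-dimensional landscape. The sketch below is not taken from the cited works: the tilted double-well function, the step size, and the starting points are all assumptions chosen only to show that a purely downhill relaxation ends in whichever basin it starts in, even when a deeper minimum exists elsewhere.

```python
def energy(x):
    """Toy 1D landscape (assumed form): tilted double well.

    The deeper minimum lies near x = -1 and a shallower, metastable
    minimum lies near x = +1; a barrier separates them near x = 0.
    """
    return x**4 - 2.0 * x**2 + 0.3 * x


def gradient(x):
    """Analytic derivative of the toy energy."""
    return 4.0 * x**3 - 4.0 * x + 0.3


def relax(x0, step=0.01, n_steps=5000):
    """Plain gradient descent: follows the local slope and cannot cross barriers."""
    x = x0
    for _ in range(n_steps):
        x -= step * gradient(x)
    return x


# Two hypothetical starting configurations on either side of the barrier.
x_left = relax(-0.5)   # relaxes into the deeper basin
x_right = relax(0.5)   # relaxes into the shallower, metastable basin

print(f"left basin:  x = {x_left:.3f}, E = {energy(x_left):.3f}")
print(f"right basin: x = {x_right:.3f}, E = {energy(x_right):.3f}")
```

The run started at x = 0.5 stays in the higher-energy basin. This is the one-dimensional analogue of a synthesis route that stalls at an intermediate instead of reaching the target phase.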

Generative AI Fundamentals for Materials Design

Generative AI models represent a paradigm shift from traditional screening-based approaches for materials discovery. Instead of filtering through existing databases of known compounds, these models directly generate novel crystal structures based on desired property constraints, a process known as inverse design. [22] [24] This capability is particularly valuable because known materials databases represent only a tiny fraction of the potentially stable inorganic compounds that could exist. [22]

Several architectural approaches have been developed for generative materials design:

- Diffusion models: These generate samples by reversing a fixed corruption process using a learned score network. [22] For materials, this involves gradually refining atom types, coordinates, and periodic lattice from noisy initial states.

- Transformer networks: Initially developed for natural language processing, these have been adapted to generate material designs from text prompts, enabling natural-language-driven design. [25]

- Adversarial networks: These employ a generator-discriminator framework to produce realistic material structures.
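To make the diffusion idea concrete, the following toy sketch reverses a Gaussian corruption process for a single scalar. It assumes the data distribution is a point at `mu`, so the score of the noised distribution is known in closed form. In a real generative model this analytic `toy_score` would be replaced by a learned network, and the noise schedule and step-size rule used here are illustrative choices, not those of any cited model.

```python
import math
import random


def toy_score(x, mu, sigma):
    """Score (gradient of log-density) of N(mu, sigma^2); stands in for a learned network."""
    return -(x - mu) / sigma**2


def reverse_diffusion(mu, sigmas, steps_per_level, rng):
    """Annealed Langevin sampling: start from broad noise and refine level by level."""
    x = rng.gauss(0.0, sigmas[0])  # sample from the noisy prior
    for sigma in sigmas:           # noise levels in decreasing order
        step = 0.1 * sigma**2      # assumed step-size rule
        for _ in range(steps_per_level):
            noise = rng.gauss(0.0, 1.0)
            x += step * toy_score(x, mu, sigma) + math.sqrt(2.0 * step) * noise
    return x


# Geometric noise schedule from 5.0 down to 0.01 (assumed values).
sigmas = [5.0 * (0.01 / 5.0) ** (i / 9) for i in range(10)]
sample = reverse_diffusion(mu=2.0, sigmas=sigmas, steps_per_level=50, rng=random.Random(0))
print(f"generated sample: {sample:.3f}")
```

The sample starts as pure noise and is pulled toward the data point as the noise level decreases, which is the same refinement principle applied to atom types, coordinates, and lattices in crystal generation.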

A key advancement in this field is MatterGen, a diffusion-based generative model specifically designed for inorganic materials across the periodic table. [22] [24] Its diffusion process is uniquely tailored to crystalline materials, respecting periodicity and symmetries that are fundamental to material systems. The model generates structures that are more than twice as likely to be new and stable compared to previous methods, and more than ten times closer to the local energy minimum. [22]

Methodologies and Experimental Protocols

Model Architecture and Training

MatterGen employs a specialized diffusion process that operates on three core components of a crystalline material: atom types (A), coordinates (X), and periodic lattice (L). [22] Each component has a custom corruption process with a physically motivated limiting noise distribution:

- Coordinate diffusion uses a wrapped Normal distribution that respects periodic boundary conditions and approaches a uniform distribution at the noisy limit.

- Lattice diffusion takes a symmetric form approaching a distribution whose mean is a cubic lattice with average atomic density from training data.

- Atom type diffusion occurs in categorical space where individual atoms are corrupted into a masked state.
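Two of the corruption processes listed above can be sketched in a few lines. This is a simplified illustration rather than MatterGen's implementation: the function names, the `MASK` token, and the use of a single noise scale and masking probability are assumptions, and the lattice process is omitted. Adding Gaussian noise to fractional coordinates and wrapping the result back into the unit cell is equivalent to sampling from a wrapped Normal distribution.

```python
import random

MASK = "[MASK]"  # hypothetical token for a corrupted atom type


def noise_fractional_coords(frac_coords, sigma, rng):
    """Perturb fractional coordinates and wrap them back into the unit cell.

    For large sigma the wrapped distribution approaches a uniform one.
    """
    return [[(c + rng.gauss(0.0, sigma)) % 1.0 for c in site] for site in frac_coords]


def mask_atom_types(atom_types, mask_prob, rng):
    """Independently replace each atom type by the masked state with probability mask_prob."""
    return [MASK if rng.random() < mask_prob else a for a in atom_types]


rng = random.Random(42)
coords = [[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]]   # toy two-atom cell
types = ["Na", "Cl"]

print(noise_fractional_coords(coords, sigma=0.1, rng=rng))
print(mask_atom_types(types, mask_prob=0.5, rng=rng))
```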

To reverse this corruption process, MatterGen learns a score network that outputs invariant scores for atom types and equivariant scores for coordinates and lattice, eliminating the need to learn symmetries from data. [22] For property-constrained generation, the model incorporates adapter modules that enable fine-tuning on labeled datasets. These tunable components are injected into each layer of the base model to alter outputs based on property labels, working in combination with classifier-free guidance to steer generation toward targets. [22]
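Classifier-free guidance is commonly written as a linear blend of an unconditional and a property-conditioned score. The sketch below shows that generic blend on plain Python lists. The function name and the `guidance_scale` parameter are illustrative, and the exact weighting used inside MatterGen is not specified in this article.

```python
def guided_score(score_uncond, score_cond, guidance_scale):
    """Blend unconditional and conditional scores component-wise.

    guidance_scale = 0 gives the unconditional score, 1 gives the
    conditional score, and values above 1 push further toward the condition.
    """
    return [u + guidance_scale * (c - u) for u, c in zip(score_uncond, score_cond)]


# Toy two-component scores (made-up numbers for illustration only).
unconditional = [0.0, 1.0]
conditional = [1.0, 3.0]
print(guided_score(unconditional, conditional, guidance_scale=2.0))
```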

The base model is trained on the Alex-MP-20 dataset, comprising 607,683 stable structures with up to 20 atoms recomputed from the Materials Project and Alexandria databases. [22] This large, diverse training set enables the model to learn the fundamental principles of stable crystal structure formation across the periodic table.

Structural Constraint Integration

For designing materials with exotic quantum properties, conventional generative models often struggle because they primarily optimize for stability rather than specific structural features. The SCIGEN (Structural Constraint Integration in GENerative model) approach addresses this limitation by enforcing geometric constraints during the generation process. [26]

SCIGEN is a computer code that ensures diffusion models adhere to user-defined structural rules at each iterative generation step, blocking generations that do not align with specified geometric patterns. [26] This enables the creation of materials with specific architectural features like Kagome or Lieb lattices that are associated with quantum phenomena such as spin liquids or flat bands. [26] The experimental protocol involves:

- Constraint definition: Specifying desired geometric patterns (e.g., Archimedean lattices)

- Constrained generation: Generating candidate materials that adhere to these patterns

- Stability screening: Filtering candidates for thermodynamic stability

- Property validation: Running detailed simulations to understand atomic behavior

- Experimental synthesis: Creating physical samples for validation
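The constrained-generation step can be pictured as re-imposing the geometric pattern after every denoising update. The sketch below is a schematic illustration and not SCIGEN's actual code: `apply_constraint`, `constrained_generation`, and the stand-in `toy_denoise_step` are hypothetical names, and the constraint is reduced to pinning the in-plane fractional coordinates of the first three atoms to the kagome sites of a hexagonal cell.

```python
# In-plane fractional coordinates of the three kagome sites in a hexagonal cell.
KAGOME_SITES = [(0.5, 0.0), (0.0, 0.5), (0.5, 0.5)]


def apply_constraint(frac_coords, constrained_sites):
    """Overwrite the (a, b) coordinates of the leading atoms with the target pattern."""
    out = [list(site) for site in frac_coords]
    for i, (a, b) in enumerate(constrained_sites):
        out[i][0], out[i][1] = a, b
    return out


def constrained_generation(initial_coords, denoise_step, n_steps, constrained_sites):
    """Alternate a denoising update with re-imposition of the constraint."""
    coords = initial_coords
    for t in reversed(range(n_steps)):
        coords = denoise_step(coords, t)                    # model update (stand-in here)
        coords = apply_constraint(coords, constrained_sites)  # enforce geometry
    return coords


def toy_denoise_step(coords, t):
    """Stand-in for a trained denoiser: nudges every coordinate toward 0.25."""
    return [[0.5 * c + 0.125 for c in site] for site in coords]


start = [[0.9, 0.1, 0.3], [0.2, 0.8, 0.3], [0.4, 0.4, 0.3], [0.7, 0.7, 0.7]]
for site in constrained_generation(start, toy_denoise_step, 5, KAGOME_SITES):
    print(site)
```

The first three atoms end exactly on the kagome sites regardless of what the denoiser proposes, while the unconstrained fourth atom is free to follow the model.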

This approach has successfully generated over 10 million material candidates with targeted geometric patterns, leading to the discovery and synthesis of previously unknown compounds with exotic magnetic properties. [26]

Text-to-Material Generation

A particularly innovative approach uses transformer neural networks to convert descriptive text directly into material designs. The CLIP-VQGAN framework enables this translation through an iterative process that combines a generator (VQGAN) and classifier (CLIP) that work in tandem to produce images matching text prompts. [25]

The experimental workflow involves:

- Text prompt input: Providing descriptive text (e.g., "a regular lattice of steel")

- Image generation: Iterative generation of 2D material designs that match the text

- 3D model construction: Converting resulting images into three-dimensional models

- Additive manufacturing: Physical realization via 3D printing

- Property validation: Mechanical testing and computational analysis
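The image-to-3D step is not detailed here, so the following is only one plausible minimal route, stated as an assumption: threshold the generated grayscale image into solid and void pixels, then extrude that mask along the build direction to obtain a voxel model. The function names, the threshold, and the layer count are hypothetical.

```python
def extrude(image, threshold=0.5, height=3):
    """Binarize a 2D grayscale image (values in [0, 1]) and stack it into voxels[z][y][x]."""
    mask = [[1 if px >= threshold else 0 for px in row] for row in image]
    return [[row[:] for row in mask] for _ in range(height)]


def solid_fraction(voxels):
    """Fraction of voxels that are solid, a simple proxy for relative density."""
    total = sum(len(row) for layer in voxels for row in layer)
    filled = sum(sum(row) for layer in voxels for row in layer)
    return filled / total if total else 0.0


# Toy 2x2 "generated image" with made-up intensities.
image = [[0.0, 0.9],
         [0.6, 0.2]]
voxels = extrude(image, threshold=0.5, height=2)
print(voxels, solid_fraction(voxels))
```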

This natural-language-driven approach creates a direct bridge between human conceptualization and computational material design, potentially revolutionizing how researchers interact with design tools. [25]

Performance Metrics and Experimental Validation

Quantitative Performance Assessment

Rigorous computational evaluation is essential for validating generative models. Standard assessment metrics include the percentage of generated materials that are stable, unique, and new (SUN), and the average root-mean-square deviation (RMSD) between generated structures and their DFT-relaxed forms. [22]
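The RMSD metric can be sketched as follows, under simplifying assumptions that a production evaluation would not make: atoms in the generated and relaxed structures are already matched one-to-one, coordinates are Cartesian in ångströms, and periodic images and lattice changes are ignored. The function name is illustrative.

```python
import math


def rmsd(generated, relaxed):
    """Root-mean-square displacement between matched atom positions (same units as input)."""
    if len(generated) != len(relaxed) or not generated:
        raise ValueError("structures must be non-empty and have the same number of atoms")
    squared = [
        sum((g - r) ** 2 for g, r in zip(g_site, r_site))
        for g_site, r_site in zip(generated, relaxed)
    ]
    return math.sqrt(sum(squared) / len(squared))


# Toy two-atom example: each atom moves 0.1 Å along z during relaxation.
generated = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
relaxed = [(0.0, 0.0, 0.1), (1.0, 0.0, -0.1)]
print(f"RMSD = {rmsd(generated, relaxed):.3f} Å")
```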

Table 1: Performance Comparison of Generative Models for Materials Design

| Model | Stability / SUN Rate | RMSD to DFT-Relaxed Structure | Property Constraints | Element Diversity |
|---|---|---|---|---|
| MatterGen | 75% of structures within 0.1 eV/atom of the convex hull | Below 0.076 Å | Chemistry, symmetry, mechanical, electronic, and magnetic properties | Across the periodic table |
| MatterGen-MP | 60% more SUN structures than previous SOTA | 50% lower than previous SOTA | Limited property tuning | Restricted training set |
| CDVAE (previous SOTA) | Baseline | Baseline | Mainly formation energy | Limited element sets |
| DiffCSP | Lower than MatterGen | Higher than MatterGen | Limited | Narrow subset |

The stability of generated materials is evaluated by calculating their energy above the convex hull defined by reference datasets, with structures within 0.1 eV per atom of the hull generally considered potentially synthesizable. [22] Uniqueness measures whether generated structures duplicate others from the same method, while novelty assesses whether they match known structures in extended reference databases. [22]
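The three criteria combine into a single SUN fraction. The sketch below assumes each candidate already carries a precomputed energy above hull (in eV/atom) and a hashable structure `fingerprint`. Both field names are hypothetical, and in practice duplicates and matches to known structures are identified with a structure-matching algorithm rather than simple string equality.

```python
def sun_fraction(candidates, known_fingerprints, hull_tolerance=0.1):
    """Fraction of candidates that are stable, unique, and new (SUN).

    stable: energy above hull within hull_tolerance (eV/atom)
    unique: fingerprint not produced earlier in the same batch
    new:    fingerprint absent from the reference set of known structures
    """
    seen = set()
    n_sun = 0
    for cand in candidates:
        fp = cand["fingerprint"]
        stable = cand["e_above_hull"] <= hull_tolerance
        unique = fp not in seen
        new = fp not in known_fingerprints
        seen.add(fp)
        if stable and unique and new:
            n_sun += 1
    return n_sun / len(candidates) if candidates else 0.0


# Made-up batch: one SUN structure, one duplicate, one known, one unstable.
batch = [
    {"fingerprint": "A", "e_above_hull": 0.02},
    {"fingerprint": "A", "e_above_hull": 0.02},
    {"fingerprint": "B", "e_above_hull": 0.05},
    {"fingerprint": "C", "e_above_hull": 0.40},
]
print(sun_fraction(batch, known_fingerprints={"B"}))
```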

Experimental Validation

Computational predictions require experimental validation to demonstrate real-world applicability. In one compelling case, MatterGen was used to design a novel material, TaCr₂O₆, conditioned on a target bulk modulus of 200 GPa. [24] The synthesis and measurement process included:

- Precursor selection: Choosing appropriate starting materials

- Solid-state reaction: Combining precursors under controlled conditions

- Structural characterization: Using X-ray diffraction to verify crystal structure

- Property measurement: Experimentally determining mechanical properties

The synthesized material's structure aligned with MatterGen's prediction, exhibiting a measured bulk modulus of 169 GPa compared to the 200 GPa design target. This corresponds to a relative error of about 15.5%, below 20%, which is considered remarkably close from an experimental perspective. [24] This successful validation demonstrates the potential for generative AI to significantly accelerate the design-synthesize-measure cycle for functional materials.
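The quoted error bound follows directly from the two bulk-modulus values. A short check, with an illustrative function name:

```python
def relative_error(measured, target):
    """Absolute deviation from the target, expressed as a fraction of the target."""
    return abs(measured - target) / abs(target)


# Values from the text: 169 GPa measured against a 200 GPa design target.
error = relative_error(169.0, 200.0)
print(f"relative error = {error:.1%}")
```

The deviation is 31 GPa out of 200 GPa, which is consistent with the "below 20%" figure.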

Table 2: Experimentally Validated Materials from Generative AI Models

| Generated Material | Target Property | Measured Property | Error | Synthesis Method |
|---|---|---|---|---|
| TaCr₂O₆ | Bulk modulus: 200 GPa | Bulk modulus: 169 GPa | <20% | Solid-state reaction |
| TiPdBi | Kagome lattice geometry | Magnetic properties confirmed | Aligned with predictions | Solid-state synthesis |
| TiPbSb | Specific geometric pattern | Exotic magnetic traits | Consistent with predictions | High-temperature synthesis |

Research Reagent Solutions

The experimental realization of AI-generated materials requires carefully selected precursors and synthesis conditions. The following reagents and instruments form the essential toolkit for validating generative AI predictions:

Table 3: Essential Research Reagents and Instruments for Materials Synthesis

| Reagent/Instrument | Function | Application Example |
|---|---|---|
| Binary oxide precursors | Starting materials for solid-state reactions | Synthesis of multicomponent oxides |
| Robotic synthesis laboratory | High-throughput, reproducible powder synthesis | Automated precursor prep, ball milling, oven firing |
| DFT simulation software | Calculating formation energies and properties | Evaluating stability above convex hull |
| High-performance computing | Running complex simulations | DFT relaxation of generated structures |
| X-ray diffractometer | Crystallographic characterization | Phase identification and structure verification |
| Ball milling equipment | Homogenizing precursor mixtures | Ensuring uniform reaction environments |
| Controlled atmosphere furnaces | High-temperature materials processing | Solid-state reactions under controlled conditions |

Workflow Visualization

The following diagrams illustrate key processes in generative AI-driven materials design.

Generative AI Materials Design Workflow

Energy Landscape Navigation for Synthesis

Generative AI models represent a fundamental shift in materials design methodology, moving beyond traditional screening approaches to actively create novel materials tailored to specific applications. By integrating with the energy landscape framework, these models provide a principled approach to navigating the complex thermodynamic and kinetic factors that govern materials synthesis.

The most significant advances will likely come from closing the loop between generative design, computational screening, and experimental synthesis. Integration with robotic laboratories enables high-throughput validation and continuous model improvement based on experimental feedback. [14] As these models evolve, they hold the potential to dramatically accelerate the discovery of materials for transformative technologies including energy storage, quantum computing, and carbon capture.

Crystal Structure Prediction (CSP) and Stability Calculations