Multimodal Data Parsing for Materials Informatics: Techniques, Applications, and Future Directions


Abstract

This article provides a comprehensive overview of multimodal data parsing and its transformative impact on materials informatics. It explores the foundational principles of processing heterogeneous data types—including spectral, microscopic, textual, and tabular information—to accelerate materials discovery and characterization. The content covers core methodologies like multimodal fusion and alignment, addresses practical challenges such as handling missing data and ensuring interoperability, and examines validation frameworks for assessing model performance. Designed for researchers, scientists, and drug development professionals, this review synthesizes current trends, highlights practical applications in biomedical research, and outlines a forward-looking perspective on integrating these techniques into intelligent, automated materials development pipelines.

Understanding Multimodal Data Parsing: Core Concepts and Material Science Imperatives

Defining Modality and Multimodal Parsing in Materials Science

Modern materials research generates complex, heterogeneous datasets that span multiple scales and data types, from atomic composition and processing parameters to macroscopic properties and performance characteristics. This inherent complexity necessitates a paradigm shift from single-modality analysis to multimodal learning, an approach that jointly analyzes these diverse data types—or modalities—to uncover deeper insights and overcome the limitations of data scarcity. In artificial intelligence (AI), a modality refers to a specific type or form of data representation and communication [1]. In the specific context of materials science, modalities encompass the diverse types of data generated throughout the material lifecycle, such as chemical composition, synthesis parameters, microstructural images from microscopy, spectral data, and mechanical property measurements [2] [3].

Multimodal parsing is the computational framework that enables the integration, alignment, and joint analysis of these disparate modalities. This process is crucial for modeling the complex, hierarchical relationships in material systems, often described by the processing-structure-properties-performance chain. By effectively parsing multimodal data, researchers can build more robust models that accelerate the discovery and design of novel materials, even when certain data types are incomplete—a common challenge in experimental materials science [2]. This technical guide explores the core concepts, methodologies, and applications of modality and multimodal parsing, providing a foundation for their implementation in advanced materials information research.

Core Concepts and Definitions

Modality in Context

In materials science, the concept of modality extends beyond simple data types to encompass the entire multi-scale characterization of a material. The following table categorizes common modalities encountered in materials research:

Table: Common Modalities in Materials Science Data

| Modality Category | Specific Examples | Typical Data Form |

|---|---|---|

| Composition & Processing | Chemical formula, synthesis parameters (e.g., temperature, flow rate) | Tabular data, numerical vectors [2] |

| Structure & Morphology | Crystal structure, micrographs (SEM, TEM), grain size distribution | Graph representations (for crystal structures), 2D images [2] [3] |

| Properties & Performance | Mechanical properties (e.g., yield strength, modulus), electronic properties, spectral data (XRD, FTIR) | Numerical vectors, line spectra (DOS), time-series data [2] [3] |

| Textual Descriptions | Scientific abstracts, machine-generated crystal descriptions (e.g., from Robocrystallographer) | Natural language text [3] |
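One way to make the modality categories above concrete is a record type in which every modality is optional, so that a missing modality is explicit rather than silently absent. The sketch below is illustrative only: the `MaterialRecord` class, its field names, and the example values are assumptions and are not taken from any of the cited frameworks.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MaterialRecord:
    """Hypothetical container for one material sample; every modality is optional."""
    sample_id: str
    processing: Optional[dict] = None       # tabular: synthesis or processing parameters
    micrograph_path: Optional[str] = None   # image: path to an SEM/TEM micrograph
    spectrum: Optional[list] = None         # spectral: intensity values
    description: Optional[str] = None       # text: natural-language description
    properties: dict = field(default_factory=dict)  # measured target properties

    def available_modalities(self) -> list:
        """Return the names of the modalities that are present for this sample."""
        names = ["processing", "micrograph_path", "spectrum", "description"]
        return [name for name in names if getattr(self, name) is not None]


# Illustrative values only: a sample with processing data but no micrograph.
record = MaterialRecord(
    sample_id="sample-001",
    processing={"flow_rate_ml_per_h": 1.0, "voltage_kv": 15.0},
    properties={"elastic_modulus_mpa": 120.0},
)
print(record.available_modalities())
```

Keeping absent modalities as `None` lets downstream code decide per sample which encoders can be applied.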

The Principle of Multimodal Parsing

Multimodal parsing is the computational engine that transforms a collection of individual modalities into a unified, knowledge-rich representation. It involves several key processes:

- Feature Extraction: Converting raw, high-dimensional data from each modality into meaningful, lower-dimensional feature vectors using specialized encoders (e.g., Convolutional Neural Networks for images, Graph Neural Networks for crystal structures) [2] [3].

- Cross-Modal Alignment: Mapping these extracted features from different modalities into a shared latent space where semantically similar material representations are close to one another, regardless of their original form [2] [3].

- Joint Representation Learning: Fusing the aligned features to create a comprehensive representation that captures the complex, non-linear interactions between different aspects of the material, such as how processing parameters influence microstructure [2].

This integrated approach allows models to reason about materials in a holistic manner, leading to improved performance on predictive tasks and enabling novel discovery workflows.

A Framework for Implementation: MatMCL

The MatMCL (Multimodal Contrastive Learning for Materials) framework provides a concrete architecture for implementing multimodal parsing, specifically designed to handle the challenges of real-world materials data, such as missing modalities [2].

MatMCL employs a structure-guided pre-training (SGPT) strategy, which uses a contrastive learning objective to align representations from different modalities. The core architecture consists of the following components [2]:

- Unimodal Encoders: Specialized neural networks that convert raw data from each modality into a feature vector. For example, a table encoder (e.g., an FT-Transformer or MLP) processes numerical processing parameters, while a vision encoder (e.g., a Vision Transformer or CNN) processes microstructural images from SEM [2].

- Multimodal Encoder: A model (e.g., a transformer with cross-attention) that takes features from multiple modalities and learns a fused, joint representation of the material system [2].

- Projection Head: A shared neural network that maps all representations—unimodal and multimodal—into a joint latent space where contrastive learning is performed [2].

- Downstream Task Modules: Prediction, generation, or retrieval heads that utilize the pre-trained encoders for specific applications like property prediction or microstructure generation [2].

The Experimental Workflow

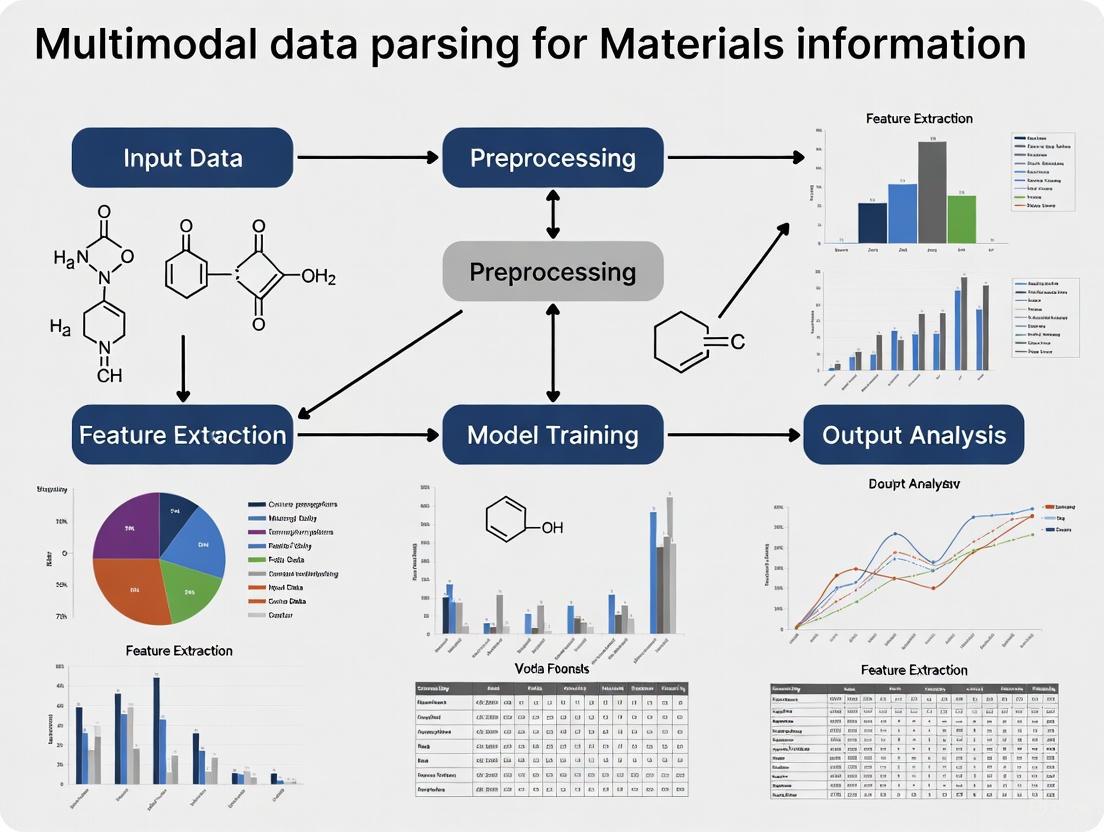

The following diagram illustrates the end-to-end experimental workflow of the MatMCL framework, from data preparation to downstream application:

Detailed Experimental Protocol: Structure-Guided Pre-Training

The SGPT phase is critical for teaching the model the fundamental relationships between modalities. The following protocol is adapted from the electrospun nanofiber case study [2]:

Objective: To learn a joint latent space where representations of the same material from different modalities (e.g., its processing parameters and its microstructure) are aligned closely together.

Input Data: A batch of $N$ material samples, each with:

- Processing conditions $\{\mathbf{x}_i^t\}_{i=1}^N$ (e.g., flow rate, voltage, concentration)

- Microstructural images $\{\mathbf{x}_i^v\}_{i=1}^N$ (e.g., SEM micrographs)

- Fused input $\{(\mathbf{x}_i^t, \mathbf{x}_i^v)\}_{i=1}^N$ for the multimodal anchor [2].

Procedure:

- Feature Encoding: For each sample $i$ in the batch, process each modality through its respective encoder to obtain feature vectors:

  - Table representation: $\mathbf{h}_i^t = f_t(\mathbf{x}_i^t)$

  - Vision representation: $\mathbf{h}_i^v = f_v(\mathbf{x}_i^v)$

  - Multimodal representation: $\mathbf{h}_i^m = f_m(\mathbf{x}_i^t, \mathbf{x}_i^v)$ [2]

- Projection: Map all feature vectors into the joint latent space using a shared projection network $g(\cdot)$:

  - $\mathbf{z}_i^t = g(\mathbf{h}_i^t)$, $\mathbf{z}_i^v = g(\mathbf{h}_i^v)$, $\mathbf{z}_i^m = g(\mathbf{h}_i^m)$ [2]

- Contrastive Learning: Use the fused representation $\mathbf{z}_i^m$ as an anchor. Define positive and negative pairs:

  - Positive pairs: $(\mathbf{z}_i^m, \mathbf{z}_i^t)$ and $(\mathbf{z}_i^m, \mathbf{z}_i^v)$ (different views of the same material).

  - Negative pairs: $(\mathbf{z}_i^m, \mathbf{z}_j^t)$ and $(\mathbf{z}_i^m, \mathbf{z}_j^v)$ for $i \neq j$ (representations from different materials) [2].

- Loss Calculation: Apply a contrastive loss function (e.g., NT-Xent) that minimizes the distance between positive pairs in the latent space while maximizing the distance between negative pairs. This jointly trains all encoders and the projector [2].

Output: Pre-trained and aligned encoders $f_t, f_v, f_m$ that can generate meaningful representations even when some modalities are missing during downstream task execution.
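The contrastive step of the protocol above can be sketched in NumPy. This is a minimal illustration and not the implementation from [2]: it uses a one-directional InfoNCE-style loss (closely related to the NT-Xent loss named above), random arrays stand in for the projected embeddings, and the function names and the temperature value of 0.1 are assumptions.

```python
import numpy as np


def l2_normalize(x):
    """Scale each row to unit length so dot products become cosine similarities."""
    return x / np.linalg.norm(x, axis=1, keepdims=True)


def info_nce(anchor, other, temperature=0.1):
    """Mean cross-entropy pulling anchor[i] toward other[i] and away from other[j != i]."""
    logits = l2_normalize(anchor) @ l2_normalize(other).T / temperature  # shape (N, N)
    logits = logits - logits.max(axis=1, keepdims=True)                  # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_prob))                                   # diagonal = positive pairs


def sgpt_loss(z_m, z_t, z_v, temperature=0.1):
    """Contrast the multimodal anchor z_m against table (z_t) and vision (z_v) projections."""
    return info_nce(z_m, z_t, temperature) + info_nce(z_m, z_v, temperature)


# Stand-in embeddings: 8 samples, 16 dimensions (arbitrary sizes for illustration).
rng = np.random.default_rng(0)
z_m = rng.normal(size=(8, 16))
aligned = sgpt_loss(z_m,
                    z_m + 0.01 * rng.normal(size=(8, 16)),
                    z_m + 0.01 * rng.normal(size=(8, 16)))
unaligned = sgpt_loss(z_m, rng.normal(size=(8, 16)), rng.normal(size=(8, 16)))
print(f"aligned loss {aligned:.4f}, unaligned loss {unaligned:.4f}")
```

When the unimodal projections nearly coincide with the anchor, the loss is close to zero. For unrelated embeddings, each term is on the order of $\log N$.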

The following diagram visualizes the flow of data and the contrastive learning process within the SGPT module:

Essential Research Reagents and Computational Tools

Implementing a multimodal parsing framework requires a suite of computational "reagents" and data sources. The following table details key components and their functions in the research workflow.

Table: Research Reagent Solutions for Multimodal Materials Informatics

| Category | Reagent / Tool | Function in the Workflow |

|---|---|---|

| Data Sources | Materials Project Database [3] | Provides curated, multi-property data on a vast number of crystalline structures, serving as a benchmark for training and validation. |

| | Self-Constructed Datasets (e.g., Electrospun Nanofibers [2]) | Provides specialized, experimentally obtained multimodal data (processing, SEM, mechanical properties) for specific material classes. |

| Encoders | Graph Neural Networks (e.g., PotNet [3]) | Acts as the crystal structure encoder, processing the graph representation of a crystal's atomic structure. |

| | Vision Transformers (ViT) / Convolutional Neural Networks (CNN) [2] | Acts as the vision encoder, learning rich features directly from raw microstructural images (e.g., SEM, TEM). |

| | FT-Transformer / Multilayer Perceptron (MLP) [2] | Acts as the table encoder, modeling the non-linear effects of numerical processing parameters and compositions. |

| Frameworks & Algorithms | Contrastive Learning (e.g., CLIP-inspired [2] [3]) | The core self-supervised algorithm for aligning different modalities in a shared latent space without explicit labels. |

| | Multi-stage Learning (MSL) [2] | Extends the pre-trained framework to guide complex design tasks, such as composite material design. |

| Validation & Analysis | Tensile Testing [2] | Provides ground-truth mechanical property data (e.g., fracture strength, elastic modulus) for model validation. |

| | Cross-Modal Retrieval Module [2] | Enables quantitative testing of model understanding by retrieving relevant information across different modalities. |

Performance and Quantitative Validation

Rigorous validation is needed to establish whether multimodal parsing actually improves on traditional single-modality approaches. In the case study on electrospun nanofibers, the MatMCL framework was evaluated on several downstream tasks [2].

Table: Performance Metrics for Downstream Tasks in Multimodal Parsing

| Downstream Task | Key Metric | Performance / Outcome |

|---|---|---|

| Mechanical Property Prediction | Prediction Accuracy (with missing structural data) | MatMCL showed improved prediction of mechanical properties (e.g., fracture strength, elastic modulus) even when microstructural image data was unavailable during inference, highlighting its robustness to incomplete data [2]. |

| Conditional Structure Generation | Quality of Generated Microstructures | The framework successfully generated realistic microstructures from a given set of processing parameters, demonstrating its understanding of processing-structure relationships [2]. |

| Cross-Modal Retrieval | Retrieval Accuracy | MatMCL enabled accurate retrieval of relevant processing conditions when queried with a microstructure image, and vice versa, indicating effective knowledge extraction across modalities [2]. |

| Material Discovery | Identification of Stable, Novel Candidates | The MultiMat framework, a related approach, demonstrated novel material discovery by screening for stable materials with desired properties through latent space similarity searches [3]. |
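Cross-modal retrieval and latent-space similarity search both reduce to ranking embeddings by similarity in the shared space. The following sketch shows that ranking step together with a recall@k score. The three-dimensional embeddings are toy values invented for illustration, and `retrieve` and `recall_at_k` are hypothetical helper names rather than functions from MatMCL or MultiMat.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def retrieve(query, gallery, k=1):
    """Return the indices of the k gallery embeddings most similar to the query."""
    ranked = sorted(range(len(gallery)), key=lambda i: cosine(query, gallery[i]), reverse=True)
    return ranked[:k]


def recall_at_k(queries, gallery, k=1):
    """Fraction of queries whose true match (same index) appears in the top k results."""
    hits = sum(i in retrieve(q, gallery, k) for i, q in enumerate(queries))
    return hits / len(queries)


# Toy embeddings: image_emb[i] and table_emb[i] describe the same hypothetical sample.
image_emb = [[1.0, 0.1, 0.0], [0.0, 1.0, 0.2], [0.1, 0.0, 1.0]]
table_emb = [[0.9, 0.0, 0.1], [0.1, 0.8, 0.0], [0.0, 0.2, 0.9]]

print(retrieve(image_emb[1], table_emb, k=2))      # processing conditions ranked for image 1
print(recall_at_k(image_emb, table_emb, k=1))
```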

The adoption of multimodal parsing represents a transformative advancement in computational materials science. By moving beyond single-modality analysis, frameworks like MatMCL and MultiMat provide a powerful methodology for modeling the complex, hierarchical relationships that define material behavior [2] [3]. The core strength of this approach lies in its ability to leverage the complementary nature of diverse data types, creating AI models that are not only more accurate but also more robust to the incomplete datasets typical of experimental research.

This paradigm enables novel scientific workflows, from predicting properties with missing data to generating new structures and discovering materials with targeted characteristics. As materials data continues to grow in volume and variety, the principles of defining modality and implementing effective multimodal parsing will become increasingly central to accelerating the design and discovery of next-generation materials.

The Critical Role of Parsing in Establishing Processing-Structure-Property Relationships

In materials science, the fundamental paradigm for designing new materials revolves around understanding the Processing-Structure-Property (PSP) relationships. Establishing these relationships requires integrating and interpreting diverse, complex data generated throughout the materials lifecycle. Parsing, the computational process of extracting structured information from raw, often heterogeneous data sources, serves as the critical foundation for this undertaking. Within the context of modern materials informatics, effective parsing enables the transformation of multimodal data into actionable knowledge, thereby accelerating the discovery and development of advanced materials [4] [5].

The challenge is particularly pronounced because materials data is inherently multimodal. It spans computational outputs from density functional theory (DFT) and molecular dynamics (MD) simulations [6], experimental characterizations from techniques like solid-state NMR [5], architectural drawings [7], and textual specifications. This section examines the parsing methodologies that underpin robust PSP relationships, within the broader context of multimodal data parsing for materials informatics.

Parsing Fundamentals for Multimodal Data

The core objective of parsing in materials science is to convert unstructured or semi-structured data into a structured, machine-readable format that can be integrated into predictive models. This process is the first and most critical step in the materials informatics pipeline, as the quality of the parsed data directly dictates the performance of downstream machine learning models [4] [8].

A sophisticated parsing workflow must handle several data modalities, each with its own unique structure and interpretation challenges. The following diagram illustrates a generalized parsing workflow for heterogeneous materials data, from raw input to structured knowledge.

Methodologies for Parsing Diverse Data Modalities

Parsing Computational Materials Data

Computational simulations like Density Functional Theory (DFT) and Molecular Dynamics (MD) generate complex, multi-step data. The FireWorks workflow software provides a structured approach to parsing this data by modeling computational workflows as Directed Acyclic Graphs (DAGs) [6]. Each computational job ("Firework") contains atomic tasks ("Firetasks") that execute sequentially, with dependencies explicitly defined. At the workflow's conclusion, an analysis Firetask parses all output files, extracts relevant properties, and generates a standardized JSON report or MongoDB document. This structured parsing transforms raw simulation outputs into a queryable database, directly linking computational processing conditions to predicted material structures and properties [6].
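The DAG idea can be illustrated without any workflow package. The sketch below deliberately does not use the FireWorks API: it relies only on Python's standard-library `graphlib`, and the step names, the `run_workflow` helper, and the numeric outputs are placeholders invented for illustration. It shows dependency-ordered execution followed by a final parsing step that emits a JSON report.

```python
import json
from graphlib import TopologicalSorter

# Hypothetical three-step workflow: each key lists the steps it depends on.
dependencies = {
    "relax_structure": set(),
    "static_energy": {"relax_structure"},
    "parse_outputs": {"relax_structure", "static_energy"},
}


def relax_structure(results):
    return {"lattice_a_angstrom": 4.05}       # placeholder, not a real calculation


def static_energy(results):
    return {"energy_ev_per_atom": -3.74}      # placeholder, not a real calculation


def parse_outputs(results):
    """Final analysis step: merge upstream outputs into one flat report."""
    report = {}
    for step in ("relax_structure", "static_energy"):
        report.update(results[step])
    return report


tasks = {
    "relax_structure": relax_structure,
    "static_energy": static_energy,
    "parse_outputs": parse_outputs,
}


def run_workflow(dependencies, tasks):
    """Execute tasks in an order that respects the dependency graph."""
    order = list(TopologicalSorter(dependencies).static_order())
    results = {}
    for name in order:
        results[name] = tasks[name](results)
    return order, results


order, results = run_workflow(dependencies, tasks)
report_json = json.dumps(results["parse_outputs"], sort_keys=True)
print(order)
print(report_json)
```

A real workflow manager adds job tracking, provenance, and database storage on top of this ordering logic.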

Parsing Spectral Data for Structure Analysis

Solid-state NMR (ssNMR) spectra provide critical information about domain structures in polymers but present parsing challenges due to broad, overlapping spectral peaks from domains with different molecular mobility. A developed parsing methodology uses Short-Time Fourier Transform (STFT) to decompose free-induction decay (FID) signals into time-frequency components [5]. The parsing workflow involves:

- Signal Transformation: Applying STFT to 1H-static ssNMR FIDs to obtain frequency, intensity, and T2 relaxation time.

- Component Separation: Fitting the time-frequency data to separate domain components based on distinct T2 relaxation times: Mobile (~0.96 ms), Intermediate (Mobile) (~0.55 ms), Intermediate (Rigid) (~0.32 ms), and Rigid (~0.11 ms).

- Ratio Calculation: Using 3D modeling and Bayesian optimization to calculate the volume ratios of each domain component, minimizing error between simulated and original data.

- Data Integration: The parsed domain ratios are integrated with meta-information (elements, functional groups, thermophysical properties) for subsequent analysis using self-organizing maps (SOM) and market basket analysis [5].
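The first two steps of this workflow can be sketched on a synthetic signal. The example below is a simplified illustration and not the method of [5]: it assumes two components at well-separated frequencies, whereas real ssNMR domains overlap, and it replaces the Bayesian-optimization fit with a log-linear fit. The sampling rate, amplitudes, and frequencies are invented; only the two T2 values are taken from the text above.

```python
import numpy as np


def stft_magnitude(signal, win_len, hop):
    """Hann-windowed short-time Fourier transform; returns frame start indices and |STFT|."""
    window = np.hanning(win_len)
    starts = np.arange(0, len(signal) - win_len + 1, hop)
    frames = np.stack([signal[s:s + win_len] * window for s in starts])
    return starts, np.abs(np.fft.rfft(frames, axis=1))


def estimate_t2(frame_times, magnitudes, floor=0.01):
    """Fit log|S| = c - t/T2 over frames whose magnitude exceeds a fraction of the peak."""
    keep = magnitudes > floor * magnitudes.max()
    slope, _ = np.polyfit(frame_times[keep], np.log(magnitudes[keep]), 1)
    return -1.0 / slope


fs = 1.0e6                                    # sampling rate in Hz (assumption)
t = np.arange(4096) / fs
components = [
    (0.6, 0.96e-3, 78125.0),                  # (amplitude, T2 in s, frequency in Hz), mobile-like
    (0.4, 0.11e-3, 234375.0),                 # rigid-like
]
fid = sum(a * np.exp(-t / t2) * np.cos(2 * np.pi * f * t) for a, t2, f in components)

win_len, hop = 256, 64
starts, mag = stft_magnitude(fid, win_len, hop)
frame_times = starts / fs

estimates = []
for amplitude, t2_true, freq in components:
    k = int(round(freq * win_len / fs))       # FFT bin that holds this component
    estimates.append(estimate_t2(frame_times, mag[:, k]))
    print(f"T2 true {t2_true * 1e3:.2f} ms, estimated {estimates[-1] * 1e3:.2f} ms")
```

Within one frequency bin, the STFT magnitude of an exponentially decaying component falls off as $e^{-t/T_2}$ from frame to frame, so the slope of its logarithm gives the relaxation time.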

Parsing Textual and Geometric Data

Technical documents contain crucial processing information in textual and tabular formats. Parsing this data employs a hybrid approach:

- Textual Parameter Extraction: Regular expressions and domain-specific terminology dictionaries are combined with a BiLSTM-CRF deep learning model to extract material parameters and properties from unstructured text [7]. This method reported a precision of 83.56% and a recall of 86.91% for parameter extraction.

- Geometric Data Parsing: For DXF drawing files, parsing involves vector element analysis, layer semantic analysis, and spatial topological relationship reconstruction. This enables structured extraction of key component geometry, achieving F1 scores of 98.1% for wall line recognition and 92.2% for column recognition [7].
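The rule-based part of the textual approach can be sketched with the standard library. The example below covers only the regular-expression and dictionary stage, not the BiLSTM-CRF model. The `TERMS` dictionary, the pattern, and the sample sentence are invented for illustration and are not taken from [7].

```python
import re

# Hypothetical terminology dictionary: surface form -> canonical parameter name.
TERMS = {
    "compressive strength": "compressive_strength",
    "elastic modulus": "elastic_modulus",
    "density": "density",
}

# Longest terms first so multi-word terms win over shorter overlapping ones.
alternation = "|".join(re.escape(t) for t in sorted(TERMS, key=len, reverse=True))
PATTERN = re.compile(
    r"(?P<term>" + alternation + r")"
    r"\D{0,20}?"                              # short non-numeric gap such as " of " or ": "
    r"(?P<value>\d+(?:\.\d+)?)\s*"
    r"(?P<unit>[A-Za-z][A-Za-z0-9/]*)",
    re.IGNORECASE,
)


def extract_parameters(text):
    """Return a list of {parameter, value, unit} records found in the text."""
    records = []
    for match in PATTERN.finditer(text):
        records.append({
            "parameter": TERMS[match.group("term").lower()],
            "value": float(match.group("value")),
            "unit": match.group("unit"),
        })
    return records


sample = ("The concrete has a compressive strength of 30 MPa and an elastic "
          "modulus of 32.5 GPa. Density: 2400 kg/m3.")
for record in extract_parameters(sample):
    print(record)
```

Patterns like this are brittle against paraphrase and unusual units, which is one reason to pair them with a learned sequence model.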

Table 1: Performance Metrics of Multimodal Data Parsing Methods

| Data Modality | Parsing Method | Key Performance Metric | Reported Value |

|---|---|---|---|

| Spectral Data (ssNMR) | STFT + Bayesian Optimization | T2 Relaxation Time Resolution | 4 distinct domains separated [5] |

| Textual Specifications | BiLSTM-CRF Model | Precision / Recall | 83.56% / 86.91% [7] |

| Architectural Drawings | Vector & Topology Analysis | F1 Score (Wall Lines) | 98.1% [7] |

| Architectural Drawings | Vector & Topology Analysis | F1 Score (Columns) | 92.2% [7] |

| Tabular Data | Multi-scale Sliding Window | Recall (Door/Window Params) | 95.0% [7] |
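For reference, the metrics in Table 1 are related by standard definitions, and an F1 score is the harmonic mean of precision and recall. The short sketch below computes these quantities; the function names are illustrative. Applied to the reported text-extraction precision and recall, it gives an F1 of about 85.2%, a value derived here rather than reported in [7].

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall, and F1 from true-positive, false-positive, false-negative counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return precision, recall, f1_score(precision, recall)


def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# F1 implied by the precision and recall reported for textual parameter extraction.
text_f1 = f1_score(0.8356, 0.8691)
print(f"{text_f1:.4f}")
```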

Establishing Processing-Structure-Property Relationships Through Parsed Data

Interpretable Deep Learning for Structure-Property Mapping

Once data is parsed into structured representations, it can be used to train models that map material structures to properties. The Self-Consistent Attention Neural Network (SCANN) architecture exemplifies this approach, using an attention mechanism to predict properties and interpret structure-property relationships [4]. SCANN operates by:

- Representing Local Structures: For each atom in a material structure, Voronoi tessellation identifies neighboring atoms. The geometrical influence of each neighbor is encoded as a vector based on Euclidean distance and Voronoi solid angle.

- Recursive Learning: A series of local attention layers recursively refine the representation of each atom's local environment by applying attention mechanisms to its neighbors' representations.

- Global Representation: A global attention layer combines these local representations into a complete material structure representation, quantitatively measuring the contribution (attention) of each local structure to the global property [4].

This architecture not only achieves prediction accuracy comparable to state-of-the-art models but also provides interpretability by identifying which local atomic environments most significantly influence specific properties like molecular orbital energies or formation energies.
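The global attention step can be illustrated with a minimal attention-pooling sketch. This is a simplified stand-in and not the SCANN implementation from [4]: the query vector is fixed here, whereas in a trained network it would be learned, and the two-dimensional local representations are toy values.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(local_reprs, query):
    """Weight each local representation by its scaled dot-product score against the query."""
    scale = math.sqrt(len(query))
    scores = [sum(q * h for q, h in zip(query, rep)) / scale for rep in local_reprs]
    weights = softmax(scores)
    dim = len(local_reprs[0])
    pooled = [sum(w * rep[d] for w, rep in zip(weights, local_reprs)) for d in range(dim)]
    return pooled, weights


# Toy local-environment vectors for three atoms and a fixed query (both assumptions).
local_reprs = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query = [2.0, 0.0]
pooled, weights = attention_pool(local_reprs, query)
print([round(w, 3) for w in weights])
```

The attention weights sum to one, so they can be read as the relative contribution of each local structure to the pooled representation. This is the basis for the interpretability described above.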

Integrated Analysis for Polymer Design

In polymer science, parsing enables the direct linking of processing conditions to domain structures and final properties. The domain ratios parsed from ssNMR data serve as structural descriptors. For instance, analysis reveals that poly(ε-caprolactone) (PCL) contains a high proportion (37.7%) of Mobile domains, while poly(3-hydroxybutyrate-co-3-hydroxyhexanoate) (PHBH) is dominated by Rigid domains (50.5%) [5]. These parsed structural metrics are then integrated with processing parameters and performance data using methods like self-organizing maps (SOM) and market basket analysis to uncover complex, non-linear PSP relationships that guide the design of polymers with tailored properties.

Table 2: Experimental Protocol for Parsing-Based PSP Relationship Analysis

| Protocol Step | Technical Specification | Purpose/Function |

|---|---|---|

| Data Acquisition | 1H-static ssNMR, DFT/MD simulations, textual specifications | Generate raw, multimodal data on material processing and characterization [5] [6] |

| Data Preprocessing | Vector cleanup, layer standardization, signal filtering | Remove noise, correct misalignments, and standardize data formats [7] [5] |

| Modality-Specific Parsing | STFT, BiLSTM-CRF, FireWorks DAGs, geometric feature analysis | Extract structured descriptors (domain ratios, formation energies, geometric parameters) [5] [7] [6] |

| Data Integration | JSON/MongoDB documentation, feature vector concatenation | Create unified representation of processing, structure, and property parameters [6] [5] |

| Relationship Modeling | SCANN, SOM, Market Basket Analysis, XGBoost | Identify and model complex, non-linear PSP relationships [4] [5] [8] |

| Validation | First-principles calculations, train-test-split validation | Confirm predictive accuracy and physical meaningfulness of parsed descriptors and models [4] |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for Parsing-Driven Materials Research

| Reagent / Tool | Function / Application | Technical Notes |

|---|---|---|

| FireWorks Workflow Software | Parsing and managing DFT/MD computational workflows as Directed Acyclic Graphs (DAGs) | Enables execution tracking, data provenance, and standardized output parsing into JSON/MongoDB [6] |

| STFT + Bayesian Optimization Package | Deconvoluting overlapping ssNMR spectra to resolve domain-specific T2 relaxation times | Critical for parsing domain mobility distribution in polymers; requires optimization to minimize fitting error [5] |

| BiLSTM-CRF Model | Named Entity Recognition (NER) for extracting material parameters from unstructured text | Pairs a BiLSTM sequence encoder with a conditional random field (CRF) output layer that models dependencies between adjacent labels; a domain dictionary enhances accuracy [7] |

| Vector & Topology Analysis Library | Parsing DXF files to extract component geometry and spatial relationships | Relies on layer semantic analysis and spatial topology reconstruction for automated 3D model generation [7] |

| SCANN Framework | Interpretable deep learning for structure-property prediction with attention mechanisms | Uses Voronoi tessellation for local structure definition; provides atomic-level insight into property determinants [4] |

| Self-Organizing Map (SOM) | Visualizing and clustering high-dimensional parsed material data | Reveals hidden patterns in the integrated space of processing parameters, structural descriptors, and properties [5] |

Parsing is the foundational enabler for establishing quantitative Processing-Structure-Property relationships in modern materials science. By transforming multimodal, heterogeneous data—from computational outputs and spectral signatures to textual descriptions and geometric layouts—into structured, interoperable descriptors, parsing bridges the gap between raw data and actionable knowledge. The methodologies and tools detailed in this technical guide, from interpretable deep learning architectures like SCANN to specialized parsing protocols for NMR and textual data, provide researchers with a reproducible framework to accelerate the design and discovery of next-generation materials. As materials data continues to grow in volume and complexity, the critical role of advanced parsing will only intensify, making it an indispensable component of the materials informatics paradigm.

In materials science and informatics, the systematic acquisition and analysis of data are fundamental to establishing the critical processing-structure-property-performance relationships that govern material behavior [9]. Modern research generates a plethora of data types, each capturing distinct aspects of material characteristics. This technical guide provides a comprehensive overview of four principal data modalities—spectral, microscopic, textual, and tabular—framed within the context of multimodal data parsing for accelerated materials discovery and development. The integration of these diverse data types through advanced artificial intelligence and machine learning methods is revolutionizing how researchers approach materials design, particularly in pharmaceutical development where material properties directly impact drug efficacy, stability, and delivery [10] [9].

Table 1: Core Material Data Modalities in Materials Informatics

| Data Modality | Primary Information Captured | Common Acquisition Techniques | Typical Data Structure |

|---|---|---|---|

| Spectral | Chemical composition, molecular structure, functional groups | UV-vis, NIR, IR, Raman, XRF, LIBS [11] | Hyperspectral cube (x, y, λ) [11] |

| Microscopic | Morphology, microstructure, spatial distribution | Optical microscopy, electron microscopy, scanning probe microscopy [12] | High-resolution 2D/3D image data [12] |

| Textual | Experimental observations, synthesis procedures, literature knowledge | Scientific publications, lab notebooks, technical manuals [13] | Unstructured or semi-structured text [14] |

| Tabular | Quantitative measurements, material properties, composition data | High-throughput experimentation, computational simulations [15] | Structured tables with rows and columns [15] |

Spectral Data

Fundamental Principles and Acquisition

Spectral data encompasses measurements of how materials interact with electromagnetic radiation across various wavelengths [16]. The foundational principle of spectroscopy involves studying light-matter interactions to obtain detailed information about reflectance, emission, or absorption properties [16]. Each material possesses a unique spectral signature—akin to a fingerprint—that enables identification based on chemical composition and physical characteristics [16]. Spectral sensors (spectrometers) capture and measure light reflected or emitted by objects in the form of reflectance spectra, which are typically presented as graphs of intensity versus wavelength [16].

Advanced spectroscopic imaging integrates spatial information with chemical or physical data, enabling comprehensive material characterization [11]. This is achieved through the creation of a hyperspectral data cube, where the X and Y axes represent spatial dimensions and the Z-axis represents spectral information across wavelengths [11]. The process involves systematically measuring spectra across sample surfaces, either through physical rastering, scanning optics with array detectors, or selective subsampling [11].

Experimental Methodology: Hyperspectral Data Cube Construction

Protocol Title: Construction of Hyperspectral Data Cubes for Material Characterization

Objective: To generate a three-dimensional hyperspectral data cube integrating spatial and spectral information for comprehensive material analysis.

Materials and Equipment:

- Illumination source covering relevant wavelength ranges

- Spectrometer with appropriate spectral range (UV-vis, NIR, IR, etc.)

- Positioning system for precise sample movement

- Data acquisition software

- Sample mounting apparatus

Procedure:

- Sample Preparation: Mount the target material securely to ensure stability during measurement.

- System Calibration: Perform wavelength and intensity calibration using standard reference materials.

- Data Acquisition:

- Illuminate the sample with a broadband light source

- Collect light interacting with the sample (reflected, emitted, or transmitted)

- Measure intensity (I) at each wavelength (λ) for an initial spatial point

- Systematically move the spectrometer across the sample surface using raster scanning

- Repeat spectral measurement for each spatial location on the XY-plane

- Data Cube Construction: Compile individual spectra into a stacked structure where:

- X and Y dimensions represent spatial coordinates

- Z dimension represents spectral wavelengths

- Each pixel contains complete spectral information for its spatial location

- Data Processing: Apply chemometrics and machine learning algorithms to extract chemical and physical parameters [11]

Technical Considerations: The specific wavelength range should be selected based on the application—UV (190-360 nm) for electronic transitions, visible (360-780 nm) for color analysis, NIR for overtone vibrations, and IR for molecular vibrations [11].
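The data cube construction step can be sketched with NumPy. The example below is illustrative only: the spectra are synthetic Gaussian peaks, and the grid size, wavelength range, and the `build_cube` helper are assumptions rather than part of the protocol in [11].

```python
import numpy as np


def build_cube(spectra_by_position, nx, ny):
    """Stack per-position spectra into an array of shape (ny, nx, n_wavelengths)."""
    n_bands = len(next(iter(spectra_by_position.values())))
    cube = np.full((ny, nx, n_bands), np.nan)   # NaN marks positions that were never measured
    for (x, y), spectrum in spectra_by_position.items():
        cube[y, x, :] = spectrum
    return cube


wavelengths = np.linspace(400.0, 1000.0, 61)    # nm, 10 nm steps (illustrative range)
nx, ny = 4, 3

# Synthetic raster scan: the peak position shifts with x, the intensity scales with y.
spectra = {
    (x, y): (1 + y) * np.exp(-((wavelengths - (500.0 + 50.0 * x)) / 40.0) ** 2)
    for y in range(ny)
    for x in range(nx)
}

cube = build_cube(spectra, nx, ny)
pixel_spectrum = cube[1, 2, :]                                       # full spectrum at x=2, y=1
band_image = cube[:, :, np.argmin(np.abs(wavelengths - 600.0))]      # spatial map at 600 nm
print(cube.shape, wavelengths[np.argmax(pixel_spectrum)])
```

Indexing along the last axis returns a single-wavelength image, while indexing the first two axes returns the full spectrum of one pixel. These are the two views that chemometric analysis typically starts from.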

Research Reagent Solutions for Spectral Analysis

Table 2: Essential Resources for Spectral Data Acquisition and Analysis

| Resource Category | Specific Examples | Function/Purpose |

|---|---|---|

| Spectral Sensors | Point spectrometers, Imaging spectrometers (hyperspectral cameras) [16] | Capture and measure light spectra from materials |

| Wavelength Ranges | VNIR (400-1000 nm), NIR (1000-1700 nm), SWIR (1000-2500 nm), MWIR (3000-5000 nm), LWIR (8000-12000 nm) [16] | Target specific molecular vibrations and transitions |

| Accessories | Integrating spheres, cosine correctors, cuvettes, fiber optics [17] | Enable specific measurement geometries and sample types |

| Analysis Software | Chemometrics packages, machine learning algorithms [11] | Extract meaningful information from complex spectral data |

Microscopic Data

Imaging Modalities and Applications

Microscopic data provides structural information across multiple scales, from atomic arrangements to microstructural features [12]. Modern microscopy techniques generate high-resolution images that reveal critical insights into material morphology, phase distribution, grain boundaries, and defect structures [12]. The primary challenge in contemporary microscopy is the conversion of "big visual data" into interpretable information, as automated microscopy systems can acquire thousands of images within hours, far exceeding human analysis capacity [12].

Key microscopy modalities include:

- Optical Microscopy: For microstructural analysis at micrometer resolution

- Electron Microscopy: For nanoscale and atomic-level resolution

- Scanning Probe Microscopy: For surface topography and properties

- Fluorescence Microscopy: For specific component identification in biological materials

Experimental Methodology: Grain Segmentation in Polycrystalline Materials

Protocol Title: Deep Learning-Based Segmentation of Grain Structures in Microscopic Images

Objective: To accurately segment grain boundaries and instances in polycrystalline materials using a transfer learning approach with synthetic data augmentation.

Materials and Equipment:

- Polycrystalline material samples (e.g., iron)

- Optical microscope with digital imaging capabilities

- Sample preparation equipment (polishing, etching)

- Computing hardware with GPU acceleration

- Monte Carlo Potts simulation software

- Image style transfer model (GAN-based)

Procedure:

- Real Data Acquisition:
  - Prepare material samples through polishing and etching
  - Capture optical images of polycrystalline structure (e.g., 136 serial sections at 2800×1600 resolution)
  - Manually annotate grain boundaries to create ground truth labels (2 semantic classes: grain and grain boundary)
  - Split data into training (100 images) and test (36 images) sets
  - Pre-process into 400×400 pixel patches for computational efficiency [18]

- Simulated Data Generation:
  - Establish 3D simulated model of polycrystalline materials using Monte Carlo Potts model
  - Generate 2D images by slicing simulated 3D image in normal direction
  - Extract boundary pixels of each grain to obtain simulated labels
  - Ensure geometric and topological consistency with real data [18]

- Synthetic Data Creation:
  - Train image style transfer model (GAN) using real dataset
  - Transform simulated label images into synthetic images incorporating real image features
  - Validate that synthetic images maintain label information while adopting realistic appearance [18]

- Model Training and Validation:
  - Train segmentation models (e.g., U-Net architecture) using combinations of real, simulated, and synthetic data
  - Evaluate performance on held-out test set using accuracy metrics
  - Demonstrate competitive performance with model trained on synthetic data plus 35% real data versus 100% real data [18]

Technical Considerations: This approach addresses the data scarcity problem in material microscopy by leveraging physical simulations and transfer learning, significantly reducing experimental burden while maintaining segmentation accuracy [18].
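Two of the data-handling steps in this protocol, cutting micrographs into 400×400 patches and deriving boundary labels from a grain-ID map, can be sketched with NumPy. This is a minimal illustration, not the pipeline from [18]: the function names are hypothetical, the image is assumed to be stored as (height, width) = (1600, 2800), and the boundary rule (a pixel is a boundary if its right or lower neighbour has a different grain ID) is one simple choice among several.

```python
import numpy as np

def tile_patches(image, patch=400):
    """Split a 2D image into non-overlapping patch x patch tiles (edges that do not fit are dropped)."""
    rows, cols = image.shape[0] // patch, image.shape[1] // patch
    trimmed = image[:rows * patch, :cols * patch]
    # Reshape to (rows, patch, cols, patch), then bring the two grid axes to the front
    tiles = trimmed.reshape(rows, patch, cols, patch).swapaxes(1, 2)
    return tiles.reshape(rows * cols, patch, patch)

def boundary_mask(grain_ids):
    """Mark pixels whose right or lower neighbour belongs to a different grain."""
    mask = np.zeros(grain_ids.shape, dtype=bool)
    mask[:, :-1] |= grain_ids[:, :-1] != grain_ids[:, 1:]
    mask[:-1, :] |= grain_ids[:-1, :] != grain_ids[1:, :]
    return mask

# Stand-in for one micrograph, assumed to be stored as (height, width)
image = np.arange(1600 * 2800, dtype=np.int64).reshape(1600, 2800)
patches = tile_patches(image)
print(patches.shape)  # (28, 400, 400)

# Toy grain-ID map (as a Potts-style simulation might produce): two grains split down the middle
grain_ids = np.array([[1, 1, 2, 2]] * 4)
print(boundary_mask(grain_ids).astype(int))
```

With these assumptions each 2800×1600 section yields 7 × 4 = 28 patches, so the 100 training sections would give 2,800 training patches.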

Research Reagent Solutions for Microscopic Analysis

Table 3: Essential Resources for Microscopic Data Acquisition and Analysis

| Resource Category | Specific Examples | Function/Purpose |

|---|---|---|

| Microscopy Platforms | Optical microscopes, Electron microscopes (SEM, TEM), Scanning probe microscopes [12] | High-resolution imaging at appropriate scales |

| Sample Preparation | Polishing equipment, Etching solutions, Coating systems | Prepare samples for optimal imaging quality |

| Analysis Software | ImageJ, Cell tracking algorithms, Deep learning frameworks (PyTorch, TensorFlow) [12] | Automated analysis of complex microscopic data |

| Simulation Tools | Monte Carlo Potts model, Phase-field simulations [18] | Generate synthetic data for training models |

Textual Data

Characteristics and Applications in Materials Science

Textual data in materials science encompasses a wide range of unstructured and semi-structured information, including scientific publications, experimental protocols, laboratory notebooks, technical manuals, and patent documents [13]. This modality captures critical contextual knowledge about synthesis procedures, experimental observations, material processing conditions, and research outcomes that may not be fully represented in structured data formats.

The primary challenge with textual materials lies in designing them for optimal usability by specific target audiences [13]. Effective text design must consider audience characteristics, purpose, and context of use—what works for expert researchers may not be suitable for cross-cultural applications or those with different linguistic, educational, or intellectual backgrounds [13].

Text Design and Management Methodologies

Protocol Title: Structured Approach to Technical Textual Material Design and Management

Objective: To create usable and effective textual materials tailored to specific audience needs and research purposes within materials science contexts.

Materials and Equipment:

- Content management systems

- Text analysis software

- Digital publishing platforms

- Cross-cultural review resources

Procedure:

- Audience Analysis:
  - Identify primary and secondary user groups
  - Assess linguistic capabilities, educational backgrounds, and technical expertise
  - Determine cultural considerations for international audiences

- Purpose Definition:
  - Clarify primary use cases (learning, reference, procedure execution)
  - Avoid purpose confusion that leads to usability compromises
  - Establish clear success metrics for textual effectiveness

- Content Structuring:
  - Select appropriate format based on purpose:
    - Integrated text-graphics for procedures
    - Vertical/horizontal division for balanced content
    - Variable illustration sizing for complex concepts
    - Sparse graphics with heavy text for expert users
  - Implement consistent organizational frameworks

- Usability Enhancement:
  - Incorporate learning principles: advanced organizers, concrete examples, self-check questions
  - Utilize formatting options: headings, bold/italic emphasis, quotes, URLs
  - Support multiple text value types: String (short text), Text (multi-line), Text with layout, Text with tags and layout [14]

- Validation and Iteration:
  - Conduct usability testing with target audience representatives
  - Gather feedback on clarity, effectiveness, and accessibility
  - Revise materials based on empirical observations

Technical Considerations: The four common formats for technical procedural information include: (1) completely integrated text and graphics, (2) vertically or horizontally divided frames, (3) variable-sized illustrations supporting text, and (4) sparse graphics with extensive text for familiar content [13].

Tabular Data

Structure and Standards in Materials Informatics

Tabular data represents structured information organized in rows and columns, forming the backbone of quantitative materials informatics [15]. This modality efficiently captures measured properties, compositional information, processing parameters, and performance characteristics in a format amenable to statistical analysis and machine learning applications.

The preferred format for tabular data in materials informatics is comma-separated values (CSV), which offers cross-platform compatibility and programmatic accessibility compared to proprietary spreadsheet formats [15]. The pandas library in Python has emerged as the standard tool for manipulating tabular data, providing powerful structures like DataFrames (for 2D heterogeneous data) and Series (for 1D homogeneous data) [15].

Methodologies for Tabular Data Management

Protocol Title: Best Practices for Tabular Data Structure and Analysis in Materials Research

Objective: To create, manage, and analyze tabular materials data following FAIR (Findable, Accessible, Interoperable, Reusable) principles for maximum research impact.

Materials and Equipment:

- Python programming environment with pandas library

- Data visualization tools (Matplotlib, Plotly, Seaborn)

- Version control system (Git)

- Data repositories for sharing and preservation

Procedure:

- Data Structure Design:
  - Define clear column names representing specific variables
  - Ensure consistent data types within columns
  - Include metadata describing units, measurement conditions, and uncertainties
  - Establish primary keys for data record uniqueness

- Data Creation and Import:
  - Use the pd.DataFrame() constructor with a dictionary of column_name: value_list pairs
  - Alternatively, create from 2D arrays with explicit column naming
  - For existing data, utilize pd.read_csv() with parameters:
    - sep=',' for comma separation (or other delimiters)
    - skiprows to exclude header comments
    - names for column renaming
    - nrows for large file handling

- Data Validation:
  - Verify data types and ranges for each column
  - Check for missing values and inconsistencies
  - Confirm unit consistency across related datasets
  - Validate against known physical constraints and relationships

- Exploratory Data Analysis:
  - Compute descriptive statistics (mean, median, standard deviation)
  - Generate visualizations (scatter plots, histograms, correlation matrices)
  - Identify outliers and anomalous patterns
  - Explore relationships between processing parameters and properties

- Data Export and Sharing:
  - Export to CSV for broad accessibility
  - Include comprehensive metadata documentation
  - Apply appropriate data licenses and citation information
  - Deposit in community-recognized repositories

Technical Considerations: Tabular data provides the foundation for establishing processing-structure-property-performance relationships in materials science [15]. Properly structured tables enable efficient implementation of machine learning algorithms for materials prediction and optimization [9].
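The creation, import, and validation steps of this protocol might look as follows in pandas. The sketch is illustrative only: the column names, the sample values, and the `validate` helper are hypothetical, and the CSV is supplied as an in-memory string so the example runs without external files.

```python
import io
import pandas as pd

# Hypothetical alloy records built from a dictionary of column_name: value_list pairs
df = pd.DataFrame({
    "sample_id": ["S1", "S2", "S3"],
    "anneal_temp_C": [450.0, 500.0, 550.0],
    "hardness_HV": [182.0, 176.5, 169.0],
})

# CSV text that starts with two comment lines and its own header row
raw = io.StringIO(
    "# instrument: hypothetical hardness tester\n"
    "# units: degC, HV\n"
    "id,temp,hv\n"
    "S1,450,182\n"
    "S2,500,\n"
    "S3,550,169\n"
    "S4,600,161\n"
)
imported = pd.read_csv(
    raw,
    sep=",",
    skiprows=3,   # skip the two comment lines and the original header row
    names=["sample_id", "anneal_temp_C", "hardness_HV"],  # supply descriptive column names
    nrows=3,      # read only the first three records
)

def validate(table):
    """Return a list of human-readable problems found in the table."""
    problems = []
    if table["sample_id"].duplicated().any():
        problems.append("duplicate sample_id")
    missing = table.isna().sum()
    for column, count in missing[missing > 0].items():
        problems.append(f"{count} missing value(s) in {column}")
    if (table["hardness_HV"].dropna() <= 0).any():
        problems.append("non-positive hardness")
    return problems

print(validate(df))
print(validate(imported))
print(imported.describe())
```

Encoding the unit in the column name (here `_C` and `_HV`) is one lightweight way to keep units attached to the data; a separate metadata file is an equally valid choice.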

Multimodal Data Integration Framework

The true power of materials informatics emerges from the integration of multiple data modalities into a unified analytical framework. Deep learning methods have demonstrated remarkable capabilities in processing and correlating heterogeneous data types, including atomistic, image-based, spectral, and textual information [9]. This multimodal approach enables the establishment of comprehensive structure-property relationships that would be difficult to discern from individual data sources alone.

Integrated Experimental Methodology

Protocol Title: Multimodal Data Parsing for Materials Property Prediction

Objective: To integrate spectral, microscopic, textual, and tabular data modalities for accelerated materials discovery and property prediction.

Materials and Equipment:

- Cross-modal data integration platform

- Deep learning framework (PyTorch, TensorFlow)

- High-performance computing resources

- Multimodal materials dataset

Procedure:

- Data Collection:
  - Acquire spectral data capturing chemical composition
  - Collect microscopic images revealing microstructure
  - Extract textual information from synthesis protocols and literature
  - Compile tabular data containing measured properties

- Data Preprocessing:
  - Normalize each modality to common scale and representation
  - Extract features using modality-specific encoders:
    - Convolutional Neural Networks (CNNs) for images
    - Recurrent Neural Networks (RNNs) or transformers for text
    - Spectral convolution networks for spectroscopic data
  - Create aligned multimodal representations

- Model Architecture Design:
  - Implement cross-modal attention mechanisms
  - Design fusion layers for integrated representation learning
  - Incorporate uncertainty quantification methods
  - Enable interpretability for scientific insight generation

- Training and Validation:
  - Utilize transfer learning to address data scarcity
  - Apply multi-task learning for related property prediction
  - Implement cross-validation strategies
  - Benchmark against physics-based models and experimental results

- Knowledge Extraction:
  - Identify dominant features influencing target properties
  - Discover new material design rules
  - Generate hypotheses for experimental validation
  - Update models with new data in continuous learning framework

Technical Considerations: Multimodal data integration faces challenges including data heterogeneity, varying scales, and different levels of uncertainty [9]. Deep learning approaches can help bridge these gaps through representation learning and cross-modal alignment, potentially uncovering previously unrecognized relationships in materials behavior [10] [9].
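As a rough illustration of the normalization and cross-modal attention steps, the following NumPy sketch standardizes two sets of token embeddings and lets spectral tokens attend over image-patch tokens. It is a simplification under stated assumptions: the random arrays stand in for encoder outputs, the token counts and embedding width are arbitrary, and no learned query/key/value projections are included, which a trained model would have.

```python
import numpy as np

def zscore(features):
    """Standardize each feature column to zero mean and unit variance."""
    std = features.std(axis=0)
    return (features - features.mean(axis=0)) / np.where(std == 0, 1.0, std)

def cross_attention(queries, keys, values):
    """Scaled dot-product attention: each query row attends over all key/value rows."""
    scores = queries @ keys.T / np.sqrt(queries.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)   # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)  # softmax over keys
    return weights @ values, weights

rng = np.random.default_rng(0)
# Stand-ins for encoder outputs on very different scales
spectral = zscore(rng.normal(loc=5.0, scale=3.0, size=(6, 8)))   # 6 spectral tokens
image = zscore(rng.normal(loc=-2.0, scale=0.5, size=(9, 8)))     # 9 image-patch tokens

# Spectral tokens query the image tokens; pooled results are concatenated into one vector
attended, weights = cross_attention(spectral, image, image)
fused = np.concatenate([spectral.mean(axis=0), attended.mean(axis=0)])
print(attended.shape, weights.shape, fused.shape)  # (6, 8) (6, 9) (16,)
```

The attention weights form a 6×9 matrix whose rows sum to one, which is also what makes them usable as a crude interpretability signal: each row shows which image regions a given spectral token drew on.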

The systematic characterization and integration of spectral, microscopic, textual, and tabular data modalities represents a transformative approach to materials research and development. Each modality offers unique insights—spectral data reveals chemical composition, microscopic data captures structural features, textual data provides contextual knowledge, and tabular data enables quantitative analysis. The emerging paradigm of multimodal data parsing, powered by advanced deep learning methods, is accelerating materials discovery by establishing comprehensive processing-structure-property relationships across diverse data types. For researchers in pharmaceutical development and materials science, mastering these data modalities and their integration is becoming increasingly essential for addressing complex challenges in drug formulation, delivery system design, and material performance optimization. As materials informatics continues to evolve, the development of standardized protocols, shared data resources, and interoperable analysis frameworks will further enhance our ability to extract meaningful insights from multimodal materials data.

The convergence of artificial intelligence (AI) and materials science has given rise to the field of materials informatics, which promises to significantly accelerate the discovery and design of novel materials [19]. However, the real-world application of AI in materials science faces three fundamental challenges: the scarcity of high-quality experimental data, the inherent heterogeneity of multimodal data, and the multiscale complexity of material systems [2]. These challenges are particularly acute when seeking to establish processing-structure-property-performance relationships, a core objective of materials research. This whitepaper details these challenges and presents advanced computational frameworks, including multimodal learning and transfer learning, which are designed to overcome these obstacles within the context of multimodal data parsing for materials informatics.

Data Scarcity in Materials Science

Data scarcity is a pervasive issue that substantially limits the predictive reliability of AI models in materials science. This scarcity primarily stems from the high cost and complexity of material synthesis and characterization, which naturally limits the volume of available data [2].

Strategies to Overcome Data Scarcity

Transfer Learning is a powerful technique for bridging sparse datasets. It uses information from one dataset to inform a model trained on another, while preserving the contextual differences between the underlying measurements. The table below summarizes three key transfer learning architectures and their effectiveness in different materials science contexts [20].

Table 1: Transfer Learning Architectures for Overcoming Data Scarcity

| Architecture Type | Description | Best-Suited Application |

|---|---|---|

| Multi-task | Simultaneously learns multiple related tasks, sharing representations between them. | Most improves classification performance (e.g., of color with band gaps). |

| Difference | Models the difference between data sources or fidelity levels. | Most accurate for multi-fidelity data (e.g., mixed DFT and experimental band gaps). |

| Explicit Latent Variable | Learns an explicit latent variable representing hidden contextual factors. | Most accurate for complex relationships; enables cancellation of errors in functions depending on multiple tasks (e.g., activation energies in NO reduction). |
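The idea behind the "Difference" row can be illustrated with a deliberately simple linear sketch: fit a model to plentiful low-fidelity data, then fit a second model only to the residual between that model and a handful of high-fidelity points. This is not the neural architecture from [20]; the synthetic data, the linear form, and the helper names are all assumptions chosen to keep the example short.

```python
import numpy as np

rng = np.random.default_rng(1)

def fit_line(x, y):
    """Least-squares fit of y = a*x + b; returns the coefficients (a, b)."""
    design = np.column_stack([x, np.ones_like(x)])
    coeffs, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coeffs

def predict(coeffs, x):
    return coeffs[0] * x + coeffs[1]

def true_hi(x):
    """Synthetic high-fidelity trend: the low-fidelity trend plus a systematic offset."""
    return 2.0 * x + 1.0 + (0.5 * x + 0.3)

# Plentiful low-fidelity values and only six high-fidelity ones
x_lo = rng.uniform(0, 4, 200)
y_lo = 2.0 * x_lo + 1.0 + rng.normal(0, 0.05, 200)
x_hi = rng.uniform(0, 4, 6)
y_hi = true_hi(x_hi) + rng.normal(0, 0.05, 6)

base = fit_line(x_lo, y_lo)                           # step 1: model the low-fidelity source
delta = fit_line(x_hi, y_hi - predict(base, x_hi))    # step 2: model only the difference

x_test = np.linspace(0, 4, 50)
mse_base = np.mean((predict(base, x_test) - true_hi(x_test)) ** 2)
mse_corrected = np.mean((predict(base, x_test) + predict(delta, x_test) - true_hi(x_test)) ** 2)
print(mse_corrected < mse_base)
```

The correction model only has to learn the (usually smoother) discrepancy between the two sources, which is why a few high-fidelity points can be enough.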

Multimodal Learning (MML) also serves as a potent remedy for data scarcity. By integrating multiple types of data (modalities), such as processing parameters and microstructural images, MML enhances the model's understanding of complex material systems and mitigates the limitations of small datasets [2].

Experimental Protocol: Leveraging Transfer Learning

- Data Preparation: Assemble the primary dataset (Dataset A) for the target property, which is small, and a larger, related secondary dataset (Dataset B).

- Model Pre-training: Train a base model on Dataset B. This process allows the model to learn general, transferable features and patterns.

- Model Fine-tuning: Use the weights from the pre-trained model to initialize a new model for the target task. Fine-tune this model on Dataset A. This allows the model to adapt its previously learned knowledge to the specific, data-scarce problem [20].
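The pre-train and fine-tune steps can be sketched on a toy linear problem. Everything here is an assumption made for illustration: the data are synthetic, the "model" is a weight vector rather than a neural network, pre-training uses a closed-form least-squares fit, and fine-tuning is a fixed small number of gradient steps.

```python
import numpy as np

rng = np.random.default_rng(0)
w_source = np.array([1.0, -2.0, 0.5, 3.0, -1.0])
w_target = w_source + np.array([0.1, -0.1, 0.05, 0.1, -0.05])   # related but not identical task

def make_data(n, w, noise=0.05):
    """Draw n samples of a noisy linear relationship with weights w."""
    X = rng.normal(size=(n, w.size))
    return X, X @ w + rng.normal(0, noise, n)

def gradient_descent(X, y, w_init, steps=20, lr=0.05):
    """A few steps of gradient descent on mean squared error, starting from w_init."""
    w = w_init.copy()
    for _ in range(steps):
        w -= (2.0 * lr / len(y)) * (X.T @ (X @ w - y))
    return w

X_b, y_b = make_data(500, w_source)        # Dataset B: large, related
X_a, y_a = make_data(8, w_target)          # Dataset A: small, target property
X_test, y_test = make_data(200, w_target)  # held-out target data

w_pre, *_ = np.linalg.lstsq(X_b, y_b, rcond=None)             # pre-training on Dataset B
w_finetuned = gradient_descent(X_a, y_a, w_pre)                # fine-tuning on Dataset A
w_scratch = gradient_descent(X_a, y_a, np.zeros_like(w_pre))   # baseline without pre-training

def mse(w):
    return np.mean((X_test @ w - y_test) ** 2)

print(mse(w_finetuned) < mse(w_scratch))
```

With the same small training budget on Dataset A, the model initialized from Dataset B starts close to the target solution, while the model initialized from zeros does not have enough data or steps to catch up.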

Data Heterogeneity

Data heterogeneity in materials informatics refers to the challenges of managing and processing data that varies dramatically in format, size, content, and structure, often distributed across multiple institutions [21]. This includes the model heterogeneity and data heterogeneity encountered when training complex AI models.

Manifestations of Data Heterogeneity

- Multimodal, Multi-institutional Data: Combinatorial materials science produces large, complex datasets from synthesis, processing, and characterization (e.g., X-ray diffraction). These datasets are often distributed and vary substantially in format and content, creating a significant data management and integration challenge [21].

- Model Heterogeneity in AI Training: In multimodal large language models (multimodal LLMs), different modules (e.g., modality encoders, LLM backbones, modality generators) vary dramatically in size and operator complexity. This heterogeneity introduces severe pipeline bubbles during training, leading to poor GPU utilization [22].

- Data Heterogeneity in AI Training: The intricate and unstructured nature of multimodal input data leads to training "stragglers," where some data batches take longer to process than others. This prolongs training duration and exacerbates pipeline bubbles [22].

Solutions for Data Heterogeneity

Technical Framework: The MMScale framework is designed to address heterogeneity in multimodal LLM training. Its core techniques are [22]:

- Adaptive Resource Allocation (for Model Heterogeneity): This technique tailors resource allocation and parallelism strategies separately for each model module (encoder, backbone, generator) to minimize pipeline bubbles.

- Data-Aware Reordering (for Data Heterogeneity): This involves strategically reordering training data at two levels—inter-microbatch and intra-microbatch—to evenly distribute computational load and minimize training delays.
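The load-balancing goal behind data-aware reordering can be illustrated with a classic greedy heuristic: sort samples by estimated cost and always place the next sample in the currently lightest microbatch. This is a generic longest-processing-time-first sketch, not MMScale's actual algorithm, and the per-sample costs are hypothetical.

```python
import heapq

def balance_microbatches(costs, num_microbatches):
    """Greedy longest-processing-time-first assignment of samples to microbatches.

    costs: estimated processing cost per sample (e.g. token or image-patch count).
    Returns a list of microbatches, each a list of sample indices.
    """
    heap = [(0, b) for b in range(num_microbatches)]   # (current load, microbatch index)
    heapq.heapify(heap)
    batches = [[] for _ in range(num_microbatches)]
    # Place the most expensive samples first, always into the lightest microbatch
    for idx in sorted(range(len(costs)), key=lambda i: costs[i], reverse=True):
        load, b = heapq.heappop(heap)
        batches[b].append(idx)
        heapq.heappush(heap, (load + costs[idx], b))
    return batches

costs = [900, 120, 150, 880, 100, 130, 860, 140]   # hypothetical per-sample costs
batches = balance_microbatches(costs, 3)
loads = [sum(costs[i] for i in batch) for batch in batches]
print(batches, loads)
```

Spreading the three expensive samples across different microbatches keeps any single microbatch from becoming a straggler, which is the effect the reordering technique aims for.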

Data Management Infrastructure: Developing standardized dashboards and data infrastructures is crucial for organizing, analyzing, and visualizing large "data lakes" composed of heterogeneous combinatorial datasets [21]. The guiding principle is to prioritize standardisation and the creation of FAIR (Findable, Accessible, Interoperable, and Reusable) data repositories [19].

Multiscale Complexity

Real-world material systems exhibit a hierarchical nature, characterized by multiple scales of information—from atomic composition and microstructure to macroscopic properties [2]. This multiscale complexity poses a significant challenge for AI models to accurately represent and integrate these correlated features.

A Framework for Multiscale, Multimodal Learning

The MatMCL framework is a versatile multimodal learning approach specifically designed to tackle multiscale complexity [2].

Table 2: Core Modules of the MatMCL Framework

| Module Name | Function | Key Benefit |

|---|---|---|

| Structure-Guided Pre-training (SGPT) | Aligns processing and structural modalities via a fused material representation using contrastive learning. | Enables robust property prediction even when structural information is missing. |

| Property Prediction | Predicts material properties from the aligned multimodal representations. | Improves prediction accuracy without requiring complete structural data. |

| Cross-Modal Retrieval | Allows for querying and extracting knowledge across different modalities. | Uncovers processing-structure-property relationships. |

| Conditional Structure Generation | Generates microstructures from given processing parameters. | Facilitates the inverse design of materials. |

Experimental Protocol: Structure-Guided Pre-training for Multiscale Learning

- Data Input: For a batch of N samples, input the processing conditions \( \{\mathbf{x}_{i}^{t}\}_{i=1}^{N} \) and microstructure images \( \{\mathbf{x}_{i}^{v}\}_{i=1}^{N} \).

- Modality Encoding:

  - Process the tabular processing parameters with a table encoder (e.g., an FT-Transformer or MLP) to get representations \( \{\mathbf{h}_{i}^{t}\}_{i=1}^{N} \).

  - Process the microstructure images with a vision encoder (e.g., a Vision Transformer or CNN) to get representations \( \{\mathbf{h}_{i}^{v}\}_{i=1}^{N} \).

- Multimodal Fusion: Fuse the processing and structure representations \( \{\mathbf{x}_{i}^{t}, \mathbf{x}_{i}^{v}\}_{i=1}^{N} \) using a multimodal encoder (e.g., a Transformer with cross-attention) to obtain fused embeddings \( \{\mathbf{h}_{i}^{m}\}_{i=1}^{N} \).

- Contrastive Alignment: Project all representations into a joint latent space using a shared projector. Use the fused representation \( \mathbf{z}_{i}^{m} \) as an anchor and align it with its corresponding unimodal representations \( \mathbf{z}_{i}^{t} \) and \( \mathbf{z}_{i}^{v} \) (positive pairs), while pushing away representations from other samples (negative pairs). This self-supervised step forces the model to learn the underlying correlations between processing conditions and microstructure [2].
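The contrastive alignment step can be sketched with an InfoNCE-style loss in NumPy, using the fused embedding as the anchor and the two unimodal embeddings as positives. The exact loss used in MatMCL [2] may differ (for example in symmetrization or temperature), so this should be read as one plausible instance; the random arrays stand in for projected embeddings, and the temperature of 0.1 is an assumption.

```python
import numpy as np

def l2_normalize(z):
    return z / np.linalg.norm(z, axis=1, keepdims=True)

def info_nce(anchor, positive, temperature=0.1):
    """InfoNCE loss: row i of `anchor` should match row i of `positive`; other rows act as negatives."""
    logits = l2_normalize(anchor) @ l2_normalize(positive).T / temperature
    logits = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_prob))

def alignment_loss(z_m, z_t, z_v, temperature=0.1):
    """Fused embedding as anchor, aligned with both unimodal embeddings."""
    return info_nce(z_m, z_t, temperature) + info_nce(z_m, z_v, temperature)

rng = np.random.default_rng(0)
z_t = rng.normal(size=(8, 16))                # stand-in for projected processing embeddings
z_v = z_t + 0.1 * rng.normal(size=(8, 16))    # stand-in for structure embeddings, correlated with z_t
z_m = 0.5 * (z_t + z_v)                       # stand-in for the fused embedding

aligned = alignment_loss(z_m, z_t, z_v)
shuffled = alignment_loss(z_m, np.roll(z_t, 1, axis=0), np.roll(z_v, 1, axis=0))
print(aligned < shuffled)
```

The loss is low when each fused embedding is closest to the unimodal embeddings of its own sample and rises sharply when the pairing is broken, which is the signal that drives the encoders toward a shared latent space.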

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key computational tools and resources essential for implementing the advanced frameworks discussed in this whitepaper.

Table 3: Key Research Reagents & Computational Solutions

| Tool/Resource Name | Type | Function/Purpose |

|---|---|---|

| MMScale [22] | Training Framework | An efficient and adaptive framework for training Multimodal LLMs that addresses model and data heterogeneity to achieve high efficiency on large-scale GPU clusters. |

| MatMCL [2] | Multimodal Learning Framework | A versatile MML framework that handles missing modalities and facilitates cross-modal interaction and transformation for multiscale material systems. |

| Multi-task/Difference/Explicit Latent Variable Architectures [20] | Transfer Learning Model | Specific neural network architectures designed to effectively perform transfer learning, overcoming data scarcity by leveraging information from related datasets. |

| FT-Transformer & Vision Transformer (ViT) [2] | Neural Network Architecture | Advanced encoder architectures used within MatMCL for modeling tabular data and image data, respectively, to capture complex, non-linear relationships. |

| StepCCL [22] | Computational Library | A custom collective communication library designed to hide communication overhead within computation, optimizing large-scale distributed training. |

| FAIR Data Repositories [19] | Data Infrastructure | Standardized data repositories adhering to the Findable, Accessible, Interoperable, and Reusable (FAIR) principles, which are critical for addressing data heterogeneity. |

The intertwined challenges of data scarcity, heterogeneity, and multiscale complexity represent significant but surmountable barriers in materials informatics. As detailed in this whitepaper, the strategic application of transfer learning, robust data management infrastructures, and advanced multimodal learning frameworks like MatMCL and MMScale provides a clear pathway forward. Progress will depend on the continued development of modular AI systems, the widespread adoption of standardized FAIR data practices, and sustained cross-disciplinary collaboration. By addressing these core challenges, the materials science community can unlock transformative advances in the discovery and design of novel functional materials.

The convergence of high-throughput experimentation (HTE) and the FAIR (Findable, Accessible, Interoperable, and Reusable) data principles represents a fundamental shift in materials science and pharmaceutical research. This transformation is driven by the need to accelerate discovery while ensuring the growing volumes of complex, multimodal data remain valuable for future reuse. HTE enables the rapid exploration of vast chemical and materials spaces through automated, parallelized experimentation, dramatically increasing the pace of data generation. However, this acceleration creates a critical bottleneck in data management, where the value of experimental outputs depends entirely on how well they can be integrated, analyzed, and reused. The FAIR principles provide a framework to overcome this bottleneck by ensuring data are machine-actionable and semantically rich, enabling both human understanding and computational analysis. Within the context of multimodal data parsing for materials informatics, this integration is essential for building comprehensive processing-structure-property-performance relationships that drive innovation in functional materials and drug development.

High-Throughput Experimentation: Methodologies and Quantitative Impact

High-Throughput Experimentation employs automated, parallelized platforms to efficiently explore experimental parameter spaces, drastically reducing the time and resources required for discovery and optimization. In pharmaceutical development, HTE has become indispensable for candidate screening and reaction optimization. A 20-year implementation journey at AstraZeneca demonstrates the profound impact of systematized HTE, where automation of powder dosing using systems like CHRONECT XPR enabled the screening of diverse solids—including transition metal complexes, organic starting materials, and inorganic additives—with high precision: deviations of <10% at sub-milligram masses and <1% at masses >50 mg [23]. This technological advancement reduced manual weighing time from 5-10 minutes per vial to under 30 minutes for an entire experiment, while simultaneously eliminating significant human errors associated with manual handling at small scales [23].

The experimental workflow for pharmaceutical HTE typically involves several standardized protocols. Library Validation Experiments (LVEs) screen building block chemical space against variables like catalyst type and solvent choice in 96-well array manifolds at milligram scales [23]. Reaction screening employs inert atmosphere gloveboxes and robotic liquid handling systems with resealable gaskets to prevent solvent evaporation [23]. In oncology discovery, implementation of integrated HTE workflows at AstraZeneca facilities increased average quarterly screen sizes from ~20-30 to ~50-85, while the number of conditions evaluated grew from <500 to approximately 2000 per quarter over a seven-quarter period [23].
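A screen of this kind is often laid out as a full-factorial design mapped onto plate wells. The sketch below shows one way to generate such a layout for a 96-well plate; the factor names and levels are placeholders rather than conditions from [23], and row-major well numbering (A1 to H12) is an assumption.

```python
from itertools import product
import string

def plate_layout(factors, rows=8, cols=12):
    """Map every combination of factor levels to a well ID (A1..H12) in row-major order."""
    names = list(factors)
    combos = list(product(*(factors[name] for name in names)))
    if len(combos) > rows * cols:
        raise ValueError(f"{len(combos)} conditions do not fit on a {rows * cols}-well plate")
    layout = {}
    for i, combo in enumerate(combos):
        well = f"{string.ascii_uppercase[i // cols]}{i % cols + 1}"
        layout[well] = dict(zip(names, combo))
    return layout

factors = {
    "catalyst": [f"cat_{i}" for i in range(1, 9)],          # 8 placeholder catalysts
    "solvent": ["solv_A", "solv_B", "solv_C", "solv_D"],    # 4 placeholder solvents
    "base": ["base_1", "base_2", "base_3"],                 # 3 placeholder bases
}
layout = plate_layout(factors)
print(len(layout), layout["A1"], layout["H12"])
```

Here 8 × 4 × 3 = 96 conditions fill the plate exactly; keeping the layout as a dictionary keyed by well ID makes it straightforward to join with analytical results later.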

In materials chemistry, flow chemistry has emerged as a powerful HTE tool that addresses limitations of traditional batch-based screening. Flow HTE enables investigation of continuous variables (temperature, pressure, reaction time) dynamically throughout experiments, providing wider process windows and improved safety profiles for hazardous chemistry [24]. The methodology is particularly valuable in photochemical reaction optimization, where flow reactors enable efficient photochemical processes by minimizing light path length and precisely controlling irradiation time, overcoming challenges of poor light penetration and non-uniform irradiation in batch systems [24]. Automated platforms integrate flow chemistry with inline/real-time process analytical technologies (PAT), creating efficient HTE workflows requiring less material and human intervention [24].

Table 1: Quantitative Impact of HTE Implementation in Pharmaceutical Research

| Metric | Pre-Automation Performance | Post-Automation Performance | Implementation Context |

|---|---|---|---|

| Weighing Time | 5-10 minutes per vial | <30 minutes for complete experiment | Solid dosing with CHRONECT XPR [23] |

| Weighing Accuracy | Significant human error at small scales | <10% deviation (sub-mg to low mg); <1% deviation (>50 mg) | Wide range of solid materials [23] |

| Quarterly Screen Size | ~20-30 screens per quarter | ~50-85 screens per quarter | AstraZeneca Oncology Discovery [23] |

| Conditions Evaluated | <500 per quarter | ~2000 per quarter | AstraZeneca Oncology Discovery (7 quarters) [23] |

| Photochemical Scale-up | Laboratory scale (grams) | 6.56 kg per day throughput | Photoredox fluorodecarboxylation [24] |

Diagram 1: High-Throughput Experimentation Workflow

FAIR Data Principles: Implementation Frameworks for Materials Science

The FAIR principles provide a structured framework for scientific data management, emphasizing machine-actionability to handle the increasing volume, complexity, and creation speed of research data [25]. FAIR represents four foundational pillars: Findability (easy location of data and metadata through persistent identifiers and rich descriptions), Accessibility (retrieval using standardized protocols with authentication where necessary), Interoperability (integration with other data through common languages and vocabularies), and Reusability (comprehensive description with clear provenance and licensing) [25]. These principles address critical challenges in modern materials research, where multimodal datasets from combinatorial materials science are often too large and complex for human reasoning alone, distributed across institutions, and variable in format, size, and content [21].
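One practical way to act on the four pillars is to check each dataset's metadata record for the fields that support them. The sketch below is purely illustrative: FAIR does not prescribe these specific field names, and the `REQUIRED_FIELDS` mapping, the record, and its values are hypothetical.

```python
# Hypothetical mapping from each FAIR pillar to metadata fields that support it
REQUIRED_FIELDS = {
    "findable": ["identifier", "title", "keywords"],
    "accessible": ["access_url", "access_protocol"],
    "interoperable": ["format", "vocabulary"],
    "reusable": ["license", "provenance"],
}

def fair_gaps(record):
    """Return {pillar: [missing fields]} for fields that are absent or empty."""
    gaps = {}
    for pillar, fields in REQUIRED_FIELDS.items():
        missing = [field for field in fields if not record.get(field)]
        if missing:
            gaps[pillar] = missing
    return gaps

record = {
    "identifier": "placeholder-persistent-id",     # placeholder, not a real identifier
    "title": "XRD patterns of a combinatorial thin-film library",
    "keywords": ["XRD", "combinatorial"],
    "access_url": "https://example.org/dataset",   # placeholder URL
    "access_protocol": "HTTPS",
    "format": "NeXus",
    "vocabulary": "",                               # empty: counts as missing
    "provenance": "measured on a hypothetical diffractometer",
}
print(fair_gaps(record))
```

A check like this only confirms that fields are present; whether their content is genuinely machine-actionable still depends on the community standards discussed in this section.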

Implementation of FAIR principles occurs through structured "FAIRification" processes. The NOMAD Laboratory platform exemplifies this approach through its schema-based Metainfo system, which preserves structural semantics of standardized data formats like NeXus and makes them interoperable across materials science domains [26]. Recent extensions to NeXus standards developed through FAIRmat collaboration include NXapm for atom probe microscopy, NXem for electron microscopy, and application definitions for various spectroscopy techniques, creating cross-domain standards for experimental materials data [26]. These standardization efforts follow transparent, community-driven processes where definitions are openly discussed and refined on GitHub before official adoption [26].

Best practices for FAIR implementation include incorporating FAIR considerations at project inception, utilizing domain-specific metadata standards, applying clear usage licenses, and engaging data stewards with specialized knowledge in data governance and lifecycle management [27]. The Datatractor framework addresses tool discoverability and inconsistent usage instructions that hinder FAIR implementation by providing a curated registry of data extraction tools with standardized, lightweight schema descriptions, enabling machine-actionable installation and use [28]. This approach addresses inefficiencies of tool reimplementation while offering both public-facing data extraction services and integration capabilities for research data management systems [28].

Table 2: FAIR Data Implementation Frameworks and Standards

| Framework/Standard | Primary Domain | Key Features | Implementation Examples |

|---|---|---|---|

| NOMAD Metainfo | Materials Science | Schema-based system for metadata; preserves structural semantics; enables cross-platform interoperability | Oasis for community data sharing; NeXus format integration [26] |

| NeXus Standard Extensions | Experimental Materials Science | NXapm (atom probe microscopy); NXem (electron microscopy); optical spectroscopy definitions | Community-driven development via GitHub; full NOMAD platform integration [26] |

| Datatractor | Chemical & Materials Sciences | Curated registry of extraction tools; standardized schema descriptions; machine-actionable installation | Public data extraction services; RDM system integration [28] |

| Perovskite JSON Schema | Hybrid Perovskite Materials | Standardized composition reporting; IUPAC-compliant descriptions; machine-readable representations | Hybrid Perovskite Ions Database; NOMAD API accessibility [26] |

Diagram 2: FAIR Data Principles Implementation Ecosystem

Multimodal Data Parsing: Bridging HTE and FAIR Data

Multimodal data parsing provides the critical technical bridge connecting high-throughput experimentation with FAIR data ecosystems, transforming heterogeneous experimental outputs into structured, interoperable information. In materials science, this involves integrating diverse data modalities including synthesis conditions, characterization results (e.g., XRD patterns), property measurements, and computational descriptors [21]. The parsing challenge is particularly acute for legacy building documentation in construction materials, where differences in design standards and drafting conventions across historical periods create diverse and complex representations that resist automated processing [7]. Similar challenges exist in materials informatics, where heterogeneous data formats and incomplete parameter information hinder the development of comprehensive materials databases.

Advanced parsing methodologies employ hybrid approaches combining rule-based and machine-learning techniques. For architectural and materials data, vector element parsing with layer semantic analysis enables structured extraction of key component geometry, while spatial topological relationship analysis improves modeling accuracy [7]. In text parsing, combining regular expressions, domain-specific terminology dictionaries, and BiLSTM-CRF deep learning models significantly improves extraction accuracy of unstructured parameters from scientific literature and experimental documentation [7]. For complex nested tables common in materials characterization data, multi-scale sliding windows with geometric feature analysis enable automatic detection and parameter extraction [7].
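
The rule-based half of such a hybrid text parser can be sketched in a few lines. The example below is a minimal illustration, not the method of [7]: the terminology dictionary, the unit list, and the `extract_parameters` helper are all assumptions made for this sketch, and the learned BiLSTM-CRF stage that would handle cases the rules miss is omitted.

```python
import re

# Hypothetical terminology dictionary: surface form -> canonical parameter name.
TERM_DICTIONARY = {
    "annealing temperature": "annealing_temperature",
    "flow rate": "flow_rate",
    "voltage": "voltage",
}

# A number followed by a unit. The unit list is illustrative, not exhaustive.
VALUE_PATTERN = re.compile(
    r"(?P<value>-?\d+(?:\.\d+)?)\s*(?P<unit>°C|K|kV|V|mL/h|wt%)"
)


def extract_parameters(sentence, max_gap=30):
    """Return (name, value, unit) triples found by the rule-based stage."""
    results = []
    for term, canonical in TERM_DICTIONARY.items():
        # Dictionary lookup: locate the term, ignoring case.
        found = re.search(re.escape(term), sentence, re.IGNORECASE)
        if found is None:
            continue
        # Regex stage: take the first value within max_gap characters after the term.
        window = sentence[found.end():found.end() + max_gap]
        match = VALUE_PATTERN.search(window)
        if match:
            results.append(
                (canonical, float(match.group("value")), match.group("unit"))
            )
    return results


sentence = (
    "The films were prepared at a flow rate of 0.5 mL/h and a voltage of 15 kV, "
    "with an annealing temperature of 450 °C."
)
for name, value, unit in extract_parameters(sentence):
    print(name, value, unit)
```

In a full hybrid system, sentences for which the rules return nothing, or return conflicting values, would be the natural candidates to pass to the learned sequence-labeling model.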

Experimental results demonstrate the effectiveness of these approaches. In architectural data parsing, F1 scores for wall line, wall, and column recognition reach 98.1%, 84.9%, and 92.2% respectively, while door and window recognition achieves 74.3% and 76.2% F1 scores [7]. For text parameter extraction, the PENet model achieves precision of 83.56% and recall of 86.91%, and table parameter extraction recalls for doors/windows and structure reach 95.0% and 96.7% respectively [7]. These parsing capabilities enable what is described as "Beyond 3D" multi-dimensional BIM integration, where drawings provide geometry and topology, text contributes materials and performance data, and tables supply identifiers and specifications [7].
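
Because the text figures are given as precision and recall while the drawing figures are given as F1, a short calculation helps put them on the same scale. The sketch below applies the standard harmonic-mean definition of F1 to the reported PENet values; the resulting figure is derived here and is not a number stated in [7].

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall, both given as fractions in [0, 1]."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Reported PENet precision and recall for text parameter extraction [7].
penet_f1 = f1_score(0.8356, 0.8691)
print(f"Implied F1: {penet_f1:.1%}")
```

Under this definition the implied F1 is roughly 85.2%, which places text extraction between the wall (84.9%) and column (92.2%) recognition scores.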

Integrated Workflow: From Experimentation to Data-Driven Discovery

The complete integration of HTE, multimodal parsing, and FAIR data management creates a powerful ecosystem for accelerated discovery. This workflow begins with automated experimental execution, where platforms like the CHRONECT XPR system handle powder dosing of diverse solid materials with minimal deviation from target masses [23]. In parallel, liquid handling systems prepare reagent solutions in multi-well plates within inert atmosphere gloveboxes. The experimental phase employs either batch-based approaches in 96- or 384-well plates or continuous flow systems that enable investigation of continuous variables like temperature, pressure, and reaction time [24].

Following experimental execution, multimodal data parsing extracts and standardizes parameters from heterogeneous sources. For photochemical reactions, this includes parsing reaction conditions, light intensity parameters, conversion metrics, and spectral data [24]. The parsed data then undergoes FAIRification through platforms like NOMAD, where it is enriched with standardized metadata using domain-specific schemas, assigned persistent identifiers, and registered in searchable resources [26]. This process employs application definitions like NXoptical_spectroscopy for optical spectroscopy data or NXmpes for photoemission spectroscopy, ensuring semantic interoperability across experimental techniques [26].
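
The enrichment step can be pictured as wrapping each parsed result in a metadata envelope. The following is a minimal sketch under stated assumptions: the field names, the `fairify` helper, and the measurement values are invented for illustration, and the UUID-based URN only stands in for a persistent identifier that a platform such as NOMAD would assign on registration. It does not use or imitate the NOMAD API.

```python
import json
import uuid
from datetime import datetime, timezone


def fairify(parsed, application_definition):
    """Wrap parsed data in a metadata envelope (illustrative field names)."""
    return {
        # Stand-in for a registered persistent identifier.
        "identifier": f"urn:uuid:{uuid.uuid4()}",
        # Name of the NeXus application definition the data is meant to follow.
        "application_definition": application_definition,
        "created": datetime.now(timezone.utc).isoformat(),
        "data": parsed,
    }


# Placeholder values for a parsed photochemical experiment, not real results.
parsed_run = {
    "light_intensity_mW_cm2": 40.0,
    "residence_time_min": 10.0,
    "conversion_percent": 87.5,
}
record = fairify(parsed_run, "NXoptical_spectroscopy")
print(json.dumps(record, indent=2))
```

Keeping the parsed payload separate from the envelope means the same parser output can be re-registered under a different schema without being rewritten.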

The resulting FAIR data ecosystem enables advanced data mining and machine learning applications. For perovskite materials research, a standardized JSON schema following IUPAC recommendations enables both human- and machine-readable descriptions of over 300 identified perovskite ions, capturing descriptors including composition, molecular formula, SMILES representation, IUPAC name, and CAS number [26]. Similar approaches for metal-organic frameworks (MOFs), electrospun PVDF piezoelectrics, and 3D printed mechanical metamaterials facilitate the mapping of complex structure-property-processing relationships [19]. The curated Hybrid Perovskite Ions Database, accessible via the NOMAD API, demonstrates how standardized data enables researchers worldwide to upload, share, and reuse consistent materials data in line with FAIR principles [26].
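
To make the idea of a machine-readable ion description concrete, the sketch below models a record with the descriptors listed above. It is an assumption-laden illustration: the class, its field names, and the completeness check are hypothetical and do not reproduce the published schema, and the example values for methylammonium are supplied here rather than taken from the database. The CAS number is deliberately left unset.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class IonRecord:
    """Descriptors named in the text; the field names are hypothetical."""
    name: str
    molecular_formula: str
    smiles: str
    iupac_name: str
    cas_number: Optional[str] = None  # treated as optional in this sketch


def missing_fields(record):
    """List required descriptors that are empty."""
    required = ("name", "molecular_formula", "smiles", "iupac_name")
    return [field for field in required if not getattr(record, field)]


ion = IonRecord(
    name="methylammonium",
    molecular_formula="CH6N+",
    smiles="C[NH3+]",
    iupac_name="methylazanium",
)
ion_json = json.dumps(asdict(ion), indent=2)
print(ion_json)
print("missing:", missing_fields(ion))
```

A record like this is readable by a person and trivially serialized for a machine, which is the dual purpose the schema is described as serving.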

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for HTE and FAIR Data Workflows

| Tool/Platform | Function | Application Context |
|---|---|---|
| CHRONECT XPR Workstation | Automated powder dispensing (1 mg to grams); handles free-flowing to electrostatic powders; compact footprint for glovebox integration | High-throughput screening of solid catalysts, reactants, and additives in pharmaceutical and materials synthesis [23] |
| NOMAD Laboratory Platform | FAIR data management, storage, and sharing; schema-based Metainfo system; NeXus standard integration | Materials science data repository enabling cross-platform interoperability and data reuse across computational and experimental domains [26] |
| Flow Photochemical Reactors | Enables photochemical HTE with controlled irradiation; minimized light path length; precise residence time control | Photoredox reaction screening and optimization, including flavin-catalyzed fluorodecarboxylation and cross-electrophile coupling [24] |
| Datatractor Framework | Curated registry of data extraction tools; standardized schema descriptions; machine-actionable installation | Metadata extraction from scientific literature and experimental documentation for chemical and materials sciences [28] |
| BiLSTM-CRF Models | Named Entity Recognition (NER) for textual data; domain-specific terminology integration; unstructured parameter extraction | Parsing architectural texts, material specifications, and experimental protocols for multimodal data integration [7] |
| Multi-well Plate Reactors | Parallel reaction screening (96/384-well); miniaturized reaction volumes (~300 μL); integrated mixing and cooling | Initial reaction condition screening, catalyst evaluation, and solvent optimization in pharmaceutical and materials chemistry [24] |

The integration of high-throughput experimentation with FAIR data principles through advanced multimodal parsing represents a paradigm shift in materials and pharmaceutical research. This synergy addresses both the acceleration of discovery and the long-term value preservation of experimental data, creating a foundation for sustainable, data-driven scientific progress. The transformation is evidenced by quantitative improvements in pharmaceutical screening throughput, precision gains in experimental execution, and robust frameworks for data interoperability across research communities.

Future developments will focus on enhancing semantic interoperability, advancing autonomous experimentation systems, and refining hybrid parsing models that combine rule-based and machine-learning approaches. Initiatives like FAIR 2.0 aim to extend the FAIR guiding principles to address semantic interoperability challenges more comprehensively, ensuring data and metadata are not only accessible but also meaningful across different systems and contexts [27]. Similarly, the development of FAIR Digital Objects (FDOs) seeks to standardize data representation, facilitating seamless data exchange and reuse globally [27]. In computational materials science, hybrid models combining the strengths of traditional neural network potentials with foundation model concepts show promise for improving predictive accuracy and computational efficiency [28]. As these technologies mature, the research community moves closer to fully autonomous discovery systems where HTE, multimodal parsing, and FAIR data management create a continuous cycle of hypothesis generation, experimental validation, and knowledge extraction.

Multimodal Parsing Techniques and Real-World Material Applications

In materials informatics research, the integration of heterogeneous data, from atomic-scale microscopy and spectral analysis to macroscopic mechanical properties and scientific literature, presents a significant computational challenge. Parsing this multimodal data effectively is crucial for accelerating the discovery and development of new materials and pharmaceuticals. Two competing artificial intelligence (AI) paradigms have emerged to address this complexity: the well-established modular pipeline and the increasingly prominent end-to-end vision-language model.

This section provides an in-depth technical comparison of these two approaches, framing them within the specific context of multimodal data parsing for materials science and drug development. It is structured to equip researchers and scientists with the knowledge to select and implement the optimal strategy for their specific research challenges, supported by quantitative data, detailed experimental protocols, and practical toolkits.

Defining the Approaches

Modular Pipelines

A modular pipeline decomposes a complex task, such as analyzing a material's structure-property relationship, into a sequence of discrete, specialized components or sub-tasks. Each component is designed, optimized, and validated independently, with the output of one module serving as the input for the next [29]. In a typical materials science workflow, this might involve a series of steps such as data ingestion, preprocessing, feature extraction, and predictive modeling.
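
The defining property, that each stage consumes the previous stage's output and can be tested on its own, can be shown with a small sketch. Everything below is hypothetical and chosen only for illustration: the stage functions, the reference values used for scaling, and the weights of the placeholder linear model are not drawn from any cited implementation.

```python
from functools import reduce


def ingest(raw):
    """Parse raw 'name=value' strings into a dict of floats."""
    return {key: float(value) for key, value in (item.split("=") for item in raw)}


def preprocess(params):
    """Scale each parameter by a hypothetical reference value."""
    reference = {"flow_rate": 1.0, "voltage": 20.0}
    return {key: value / reference[key] for key, value in params.items()}


def extract_features(scaled):
    """Order features deterministically by parameter name."""
    return [scaled[key] for key in sorted(scaled)]


def predict(features):
    """Placeholder linear model with made-up weights."""
    weights = [0.4, 0.6]
    return sum(w * x for w, x in zip(weights, features))


PIPELINE = [ingest, preprocess, extract_features, predict]


def run_pipeline(raw, stages=PIPELINE):
    """Feed the output of each stage into the next one."""
    return reduce(lambda data, stage: stage(data), stages, raw)


result = run_pipeline(["flow_rate=0.5", "voltage=15"])
print(round(result, 3))
```

Because the stages share only their input and output formats, any one of them can be replaced or unit-tested without touching the others, for example by calling `run_pipeline(raw, stages=PIPELINE[:2])` to inspect the preprocessed parameters.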

End-to-End Models

An end-to-end model seeks to directly map raw, multimodal inputs (e.g., a scanning electron microscopy image and a textual description of processing parameters) to a desired output (e.g., a prediction of tensile strength) using a single, unified model, most often a deep neural network [29]. This approach minimizes human intervention in intermediate stages, relying on the model's internal architecture to learn optimal representations and sub-tasks from the data.

Comparative Analysis: Performance and Practical Considerations

The choice between modular and end-to-end approaches involves trade-offs across multiple dimensions, including performance, resource requirements, and operational flexibility. The following table synthesizes a comparative analysis based on recent implementations and research.

Table 1: Comparative analysis of modular pipeline and end-to-end approaches

| Aspect | Modular Pipelines | End-to-End Models |
|---|---|---|
| Performance Metrics | High reliability in controlled tasks (e.g., 92.3% success rate for template-based DAG generation) [30]. Excels in precision-focused tasks like variant calling in genomics [31]. | Often leads in overall accuracy on complex tasks (e.g., state-of-the-art precision/recall) [29]. Superior on integrated reasoning benchmarks like MatVQA [32]. |
| Data Requirements | Can be effective with smaller, well-defined datasets for individual components. | Data-intensive; requires large amounts of high-quality, multimodal data for training [29]. |
| Computational Cost | Inference can be efficient; total cost depends on pipeline complexity. | Training is computationally expensive and often intractable; inference can also be costly [29]. |
| Explainability & Debugging | High; failures are easily diagnosable to specific components, facilitating correction [29]. | "Black box" nature makes it difficult to locate the source of errors or understand decisions [29]. |
| Flexibility & Updating | Updating a single component is straightforward; however, output/input format changes can require downstream revisions [29]. | Highly flexible; can be retrained for new tasks with new data, often without architectural changes [29]. |
| Development Effort | High; requires significant design choices and expertise to define components and interactions [29]. | Lower initial effort; avoids thorny component design problems but requires deep learning expertise [29]. |
| Optimization | Suboptimal; components are optimized independently, errors accumulate, and downstream info cannot inform upstream components [29]. | Optimal for the global task; the entire model is jointly optimized, allowing all parts to co-adapt [29]. |
| Risk Mitigation | Easier to validate and control individual components, reducing risks of biased or incorrect output [29]. | Higher risk of biased, incorrect, or offensive output derived directly from training data [29]. |
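
The error accumulation noted in the Optimization row can be illustrated with simple arithmetic. The sketch below assumes, purely for illustration, that stage failures are independent and that a sample is handled correctly only if every stage handles it correctly; the per-stage rates are hypothetical and real pipelines may violate both assumptions.

```python
def pipeline_success_rate(stage_rates):
    """Overall success rate when every stage must succeed independently."""
    overall = 1.0
    for rate in stage_rates:
        overall *= rate
    return overall


# Four hypothetical stages, each correct on 95% of its inputs.
overall = pipeline_success_rate([0.95] * 4)
print(f"{overall:.4f}")
```

Under these assumptions, four stages at 95% each give an overall rate of about 81%, which is why a pipeline of individually strong components can still trail a jointly optimized model on the end task.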

Experimental Protocols for Materials Research

To ground this comparison in practical research, below are detailed methodologies for implementing each approach in a scenario involving the prediction of a material's properties from its processing conditions and microstructure.

Protocol 1: Modular Pipeline for Structure-Property Prediction

This protocol is inspired by established data management and bioinformatics principles [33] [31].

Objective: To predict the mechanical properties of an electrospun nanofiber material based on processing parameters and SEM microstructural images, using a modular, reproducible pipeline.

Workflow Overview: The following diagram illustrates the sequence of discrete, containerized modules in this pipeline.

Methodology Details:

Data Ingestion & Curation:

- Inputs: A dataset containing processing parameters (e.g., flow rate, voltage, concentration) and corresponding SEM images [2].