Multi-Fidelity Machine Learning: Revolutionizing Computational Materials Design and Drug Development


Abstract

This comprehensive review explores the transformative potential of multi-fidelity machine learning (MFML) in computational materials design and drug discovery. MFML strategically integrates data of varying accuracy and computational cost—from fast approximate calculations to expensive high-fidelity simulations and experiments—to dramatically accelerate the discovery and optimization of new materials and therapeutic compounds. The article establishes foundational principles, surveys cutting-edge methodological frameworks including multi-fidelity Bayesian optimization and surrogate modeling, and provides practical troubleshooting guidance for real-world implementation. Through validation case studies and comparative analysis across materials science and biomedical domains, we demonstrate how MFML achieves superior computational efficiency and prediction accuracy compared to traditional single-fidelity approaches, offering a paradigm shift for researchers tackling complex design challenges under constrained resources.

Understanding Multi-Fidelity Learning: Core Principles and Data Sources for Materials Science

In computational materials design, fidelity refers to the level of detail, accuracy, and computational expense of a simulation or model. The fundamental challenge researchers face is the inherent trade-off: higher-fidelity methods provide greater accuracy and predictive power but require substantial computational resources, while lower-fidelity approaches offer computational efficiency at the cost of reduced accuracy and physical detail. Multi-fidelity learning strategically integrates data from multiple levels of this spectrum to accelerate materials discovery and design, leveraging inexpensive low-fidelity data to guide exploration while reserving high-fidelity computations for the most promising candidates [1].

This paradigm is particularly powerful in materials science, where exploring vast chemical spaces with first-principles calculations alone is computationally prohibitive. Multi-fidelity optimization (MFO) has thus emerged as an essential tool, systematically using low-fidelity information to reduce reliance on costly high-fidelity analysis while ensuring convergence to high-fidelity optimal designs [1]. This framework enables researchers to navigate complex design spaces more efficiently, balancing precision with practical computational constraints.

The Fidelity Spectrum in Materials Modeling

The concept of fidelity in computational materials science spans a continuous spectrum, but can be broadly categorized into distinct levels. Each level serves different purposes within the materials discovery and optimization pipeline.

Table: The Fidelity Spectrum in Computational Materials Design

| Fidelity Level | Typical Methods | Computational Cost | Accuracy | Primary Use Cases |
|---|---|---|---|---|
| Low-Fidelity | Force-field methods, Empirical potentials, Coarse-grained models | Low | Low to Medium | High-throughput screening, Early-stage exploration, Large-system dynamics |
| Medium-Fidelity | Tight-binding, Semi-empirical quantum chemistry, Classical molecular dynamics | Medium | Medium | Structure-property analysis, Pre-screening for high-fidelity methods |
| High-Fidelity | Density Functional Theory (DFT), Ab initio molecular dynamics | High | High | Accurate property prediction, Final validation, Electronic structure analysis |
| Very High-Fidelity | Coupled-cluster (CCSD(T)), Quantum Monte Carlo | Very High | Very High | Benchmarking, Training data for machine learning potentials |

Real-world materials optimization tasks are characterized by multiple challenges, including high levels of noise, multiple fidelities, multiple objectives, linear constraints, non-linear correlations, and failure regions [2]. Effective multi-fidelity strategies must account for these complexities, often employing surrogate modeling to create computationally efficient approximations that closely resemble real-world tasks within explored boundaries.

Quantitative Data on Fidelity Trade-offs

Understanding the concrete computational costs and accuracy metrics across the fidelity spectrum is crucial for effective research planning and resource allocation.

Table: Quantitative Comparison of Computational Methods for Materials Property Prediction

| Method | System Size (Atoms) | Time Scale | Accuracy (Formation Energy) | Relative Computational Cost |
|---|---|---|---|---|
| Classical Force Fields | 10,000 - 1,000,000 | Nanoseconds to microseconds | > 100 meV/atom | 1x (Reference) |
| Density Functional Theory | 100 - 1,000 | Picoseconds to nanoseconds | 10-100 meV/atom | 100-10,000x |
| Quantum Monte Carlo | 10 - 100 | Static calculations | < 10 meV/atom | 10,000-1,000,000x |
| M3GNet ML Potential [3] | 1,000 - 100,000 | Nanoseconds | ~25 meV/atom (vs. DFT) | 10-100x (vs. DFT) |
| CHGNet ML Potential [3] | 1,000 - 100,000 | Nanoseconds | ~28 meV/atom (vs. DFT) | 10-100x (vs. DFT) |

The emergence of machine learning interatomic potentials (MLIPs) has created a new category in the fidelity spectrum, offering near-DFT accuracy at a fraction of the computational cost [3]. Foundation potentials (FPs) with coverage across the periodic table demonstrate how graph neural networks (GNNs) can handle diverse chemistries and structures effectively, bridging the gap between low-fidelity empirical potentials and high-fidelity quantum mechanical methods [3].
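To make the cost trade-off concrete, the following minimal sketch estimates how many evaluations fit into a fixed compute budget. The cost values are illustrative picks from within the relative-cost ranges quoted above, and the function names (`affordable_evaluations`, `mixed_plan`) are hypothetical helpers, not part of any library.

```python
# Relative cost per evaluation, in units of one classical force-field run.
# ASSUMPTION: single illustrative values chosen from the ranges quoted above.
RELATIVE_COST = {
    "force_field": 1,
    "dft": 1_000,
    "qmc": 100_000,
}


def affordable_evaluations(budget: float, method: str) -> int:
    """Number of evaluations of `method` that fit into `budget` cost units."""
    return int(budget // RELATIVE_COST[method])


def mixed_plan(budget: float, hifi_fraction: float, lofi: str, hifi: str) -> dict:
    """Split a budget between one low- and one high-fidelity method."""
    hifi_budget = budget * hifi_fraction
    return {
        lofi: affordable_evaluations(budget - hifi_budget, lofi),
        hifi: affordable_evaluations(hifi_budget, hifi),
    }


plan = mixed_plan(budget=1_000_000, hifi_fraction=0.5, lofi="force_field", hifi="dft")
print(plan)
```

Under these assumed costs, half of the budget buys several hundred DFT calculations while the other half buys hundreds of thousands of force-field runs, which is the asymmetry multi-fidelity methods try to exploit.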

Multi-Fidelity Experimental Protocols

Protocol: Multi-Fidelity Optimization of Hard-Sphere Particle Packing

This protocol adapts the methodology from the materials science optimization benchmark dataset for hard-sphere packing simulations, which embodies characteristics typical of real-world materials optimization tasks [2].

Application: Optimizing particle packing fractions in hard-sphere systems with multiple fidelity levels and failure regions.

Materials and Input Parameters:

- Nine input parameters with linear constraints controlling particle size distributions and simulation conditions

- Two discrete fidelities (algorithm selection) each with continuous fidelity parameters

- Lubachevsky–Stillinger and force-biased algorithms for packing generation

Procedure:

- Low-Fidelity Screening Phase: Perform 1,000-5,000 random simulations using the faster Lubachevsky–Stillinger algorithm across the parameter space. Log all input parameters and resulting packing fractions.

- Failure Probability Mapping: Create a separate dataset mapping input parameter sets to estimated simulation failure probabilities. This accounts for heteroskedastic noise characteristic of real materials optimization.

- Surrogate Model Development: Train a multi-fidelity surrogate model incorporating both the low-fidelity data and failure probabilities. Use percentile ranks for groups of identical parameter sets to handle non-Gaussian noise.

- High-Fidelity Validation: Select 100-200 promising parameter configurations identified by the surrogate model for validation with the more accurate force-biased algorithm.

- Iterative Refinement: Update the surrogate model with high-fidelity results and refine the optimization. Focus computational resources on regions with optimal predicted packing fractions and low failure probability.

Expected Outcomes: This protocol typically identifies optimal packing parameters with 70-80% fewer high-fidelity evaluations compared to single-fidelity approaches, while accurately modeling failure regions and noise characteristics.
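The screening and validation phases of this protocol can be sketched in a few lines. This is a toy illustration under stated assumptions: the one-dimensional objective, the noise level, and the failure model are all made up, and the raw low-fidelity score is used for ranking in place of a trained surrogate model. None of the functions below come from the benchmark dataset in [2].

```python
import random


def low_fidelity_packing(x, rng):
    """Hypothetical cheap, noisy estimate of a packing fraction."""
    return 0.64 - (x - 0.60) ** 2 + rng.gauss(0.0, 0.02)


def high_fidelity_packing(x):
    """Hypothetical expensive, accurate evaluation."""
    return 0.64 - (x - 0.62) ** 2


def failure_probability(x):
    """Hypothetical failure model: simulations fail more often as x approaches 1."""
    return min(1.0, max(0.0, x) ** 4)


def screen_then_validate(n_low=2000, n_high=100, max_fail=0.3, seed=0):
    rng = random.Random(seed)
    # 1. Low-fidelity screening over random parameter values in [0, 1).
    candidates = [rng.random() for _ in range(n_low)]
    scored = [(low_fidelity_packing(x, rng), x) for x in candidates]
    # 2. Drop candidates whose estimated failure probability is too high.
    viable = [(s, x) for s, x in scored if failure_probability(x) <= max_fail]
    # 3. Promote the best-looking candidates to the expensive evaluation.
    viable.sort(reverse=True)
    promoted = [x for _, x in viable[:n_high]]
    # 4. High-fidelity validation of the promoted candidates only.
    validated = [(high_fidelity_packing(x), x) for x in promoted]
    best_score, best_x = max(validated)
    return best_x, best_score, len(promoted)


best_x, best_score, n_promoted = screen_then_validate()
print(f"best x = {best_x:.3f}, packing = {best_score:.4f}, HF runs = {n_promoted}")
```

In a fuller implementation, step 3 would rank candidates by a surrogate model's prediction and uncertainty, and the loop would repeat after each batch of high-fidelity results.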

Protocol: Multi-Fidelity Materials Property Prediction with MatGL

This protocol utilizes the Materials Graph Library (MatGL) for predicting materials properties across multiple fidelity levels [3].

Application: Predicting formation energies, band gaps, and other materials properties using graph neural networks with multi-fidelity data.

Materials and Input Parameters:

- Pymatgen Structure or Molecule objects representing atomic structures

- Graph converter for transforming atomic configurations into DGL graphs

- Cutoff radius (typically 4-6 Å) defining bonds between atoms

- Multi-fidelity labels (e.g., formation energies from different computational methods)

Procedure:

- Data Preparation: Create an MGLDataset containing structures with properties calculated at different fidelity levels (e.g., DFT, higher-level theory, experimental data). Include optional global state features (u) for handling multi-fidelity data.

- Graph Conversion: Use MatGL's graph converter to transform structures into directed or undirected graphs with nodes (atoms) and edges (bonds). The converter uses a cutoff radius to define connectivity.

- Model Selection: Choose an appropriate GNN architecture from MatGL:

  - M3GNet (Materials 3-body Graph Network): For universal interatomic potentials

  - MEGNet (MatErials Graph Network): For property predictions

  - CHGNet (Crystal Hamiltonian Graph Neural Network): For charge-informed interatomic potentials

- Multi-Fidelity Training: Utilize MatGL's training module with PyTorch Lightning. The model learns relationships across fidelity levels, using low-fidelity data to guide exploration and high-fidelity data for accuracy.

- Prediction and Validation: Use the trained model's predict_structure method for new materials. For interatomic potentials, use the Potential class wrapper to handle energy scaling and compute forces/stresses.

Expected Outcomes: Models trained with multi-fidelity data typically achieve 30-50% higher data efficiency compared to single-fidelity approaches while maintaining accuracy on high-fidelity predictions.
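The graph conversion step can be illustrated without any library. The sketch below is not MatGL's converter; it is a minimal stand-in that assumes a non-periodic cluster of atoms (no periodic images), connects atoms closer than a cutoff radius, and stores the fidelity label as a graph-level state. The function name `build_graph` and the example coordinates are hypothetical.

```python
import math
from itertools import combinations


def build_graph(positions, cutoff, fidelity):
    """Connect every pair of atoms within `cutoff` (in Å) with directed edges.

    ASSUMPTION: isolated cluster, so periodic images are ignored.
    The fidelity index is kept as a graph-level (global state) attribute.
    """
    edges = []
    for i, j in combinations(range(len(positions)), 2):
        distance = math.dist(positions[i], positions[j])
        if distance <= cutoff:
            edges.append((i, j, distance))  # bond i -> j
            edges.append((j, i, distance))  # bond j -> i
    return {"num_nodes": len(positions), "edges": edges, "state": fidelity}


# Three nearby atoms plus one atom far outside the cutoff (coordinates in Å).
positions = [
    (0.00, 0.00, 0.00),
    (0.96, 0.00, 0.00),
    (-0.24, 0.93, 0.00),
    (10.00, 0.00, 0.00),
]
graph = build_graph(positions, cutoff=5.0, fidelity=1)
print(graph["num_nodes"], len(graph["edges"]), graph["state"])
```

A production converter additionally has to handle lattice periodicity and element-type node features, which is why a library implementation is preferable in practice.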

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for Multi-Fidelity Materials Design

| Tool/Resource | Type | Primary Function | Application in Multi-Fidelity Research |
|---|---|---|---|
| MatGL (Materials Graph Library) [3] | Software Library | Graph deep learning for materials science | Provides implementations of GNN architectures (M3GNet, MEGNet) and pre-trained foundation potentials for multi-fidelity learning |
| Pymatgen [3] | Python Library | Materials analysis | Structure manipulation, analysis, and conversion to graph representations for MatGL |
| DGL (Deep Graph Library) [3] | Software Library | Graph neural network framework | Backend for efficient GNN training and inference, offering superior memory efficiency for large graphs |
| Hard-Sphere Packing Dataset [2] | Benchmark Data | Multi-fidelity optimization benchmark | Provides validated dataset with failure regions and noise characteristics for testing multi-fidelity methods |
| LAMMPS/ASE [3] | Simulation Interface | Atomistic simulations | Enables use of pre-trained potentials in molecular dynamics simulations across fidelity levels |
| Multi-Fidelity Surrogate Models [1] | Methodological Framework | Bridging fidelity levels | Gaussian processes and other surrogate models that integrate low and high-fidelity data for efficient optimization |

Advanced Multi-Fidelity Architectures in MatGL

The Materials Graph Library implements sophisticated multi-fidelity capabilities through its modular architecture. MatGL is organized around four core components: data pipeline, model architectures, model training, and simulation interfaces [3].

A key innovation in MatGL's approach to multi-fidelity learning is the inclusion of global state features (u) in architectures like MEGNet and M3GNet, which provide greater expressive power for handling multi-fidelity data [3]. This allows the models to incorporate fidelity level as an explicit input feature, enabling seamless learning across different accuracy levels.
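How a fidelity label can act as an explicit input is easiest to see in a stripped-down form. The sketch below is not MatGL code: it assumes a simple lookup table for the fidelity embedding and a linear readout in place of a graph network, with random untrained values standing in for learned parameters. The class and function names are hypothetical.

```python
import random


class FidelityEmbedding:
    """Lookup table mapping an integer fidelity index to a state vector.

    ASSUMPTION: entries are random here; in a trained model they would be
    learned parameters.
    """

    def __init__(self, num_fidelities, dim, seed=0):
        rng = random.Random(seed)
        self.table = [
            [rng.gauss(0.0, 0.1) for _ in range(dim)]
            for _ in range(num_fidelities)
        ]

    def __call__(self, fidelity):
        return self.table[fidelity]


def predict(pooled_features, state, weights):
    """Linear readout over the concatenation [pooled features, state]."""
    inputs = list(pooled_features) + list(state)
    return sum(w * v for w, v in zip(weights, inputs))


embed = FidelityEmbedding(num_fidelities=2, dim=3)
structure = [0.2, -0.4, 1.0]  # hypothetical pooled atom/bond features
weights = [1.0] * 6           # untrained readout weights

low = predict(structure, embed(0), weights)
high = predict(structure, embed(1), weights)
print(low, high)
```

The same structure yields a different prediction for each fidelity index, which is the mechanism that lets a single model serve several accuracy levels.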

MatGL further distinguishes between invariant and equivariant GNNs in their handling of symmetry constraints. Invariant GNNs use scalar features like bond distances and angles, ensuring predicted properties remain unchanged with respect to translation, rotation, and permutation. Equivariant GNNs properly handle the transformation of tensorial properties like forces and dipole moments with respect to rotations, allowing use of directional information from relative bond vectors [3]. This theoretical foundation enables more physically meaningful multi-fidelity learning across different property types.
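The distinction can be checked numerically on a single bond. The following small example, which uses only the standard library and an arbitrary rotation about the z axis, shows that a bond length is unchanged by rotation (invariant) while a bond vector rotates along with the structure (equivariant). The coordinates are arbitrary illustrative values.

```python
import math


def rotate_z(v, angle):
    """Rotate a 3D vector about the z axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    x, y, z = v
    return (c * x - s * y, s * x + c * y, z)


def bond_vector(a, b):
    """Relative vector pointing from atom a to atom b."""
    return tuple(bj - aj for aj, bj in zip(a, b))


a, b = (0.0, 0.0, 0.0), (1.0, 2.0, 0.5)
angle = math.pi / 3
ra, rb = rotate_z(a, angle), rotate_z(b, angle)

# Invariant feature: the bond length is the same before and after rotation.
length_invariant = math.isclose(math.dist(a, b), math.dist(ra, rb))

# Equivariant feature: the bond vector of the rotated structure equals the
# rotated bond vector of the original structure.
rotated_vector = rotate_z(bond_vector(a, b), angle)
vector_of_rotated = bond_vector(ra, rb)
vector_equivariant = all(
    math.isclose(p, q, abs_tol=1e-12)
    for p, q in zip(rotated_vector, vector_of_rotated)
)

print(length_invariant, vector_equivariant)
```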

The library's Potential class implements best practices for multi-fidelity interatomic potentials, including energy scaling using formation or cohesive energy references with elemental ground states or isolated atoms as zero references [3]. This normalization accounts for systematic differences between fidelity levels and ensures consistent property predictions across the materials space.
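A worked example may help show why referencing energies to elemental ground states matters across fidelity levels. The sketch below is not the Potential class; it is a plain formation-energy calculation, and every number in it (reference energies and total energies) is invented purely for illustration.

```python
from collections import Counter

# ASSUMPTION: made-up per-atom elemental reference energies (eV) for two
# fidelity levels; real values depend on the functional and settings used.
ELEMENT_REFS = {
    "low": {"Li": -1.90, "O": -4.90},
    "high": {"Li": -2.30, "O": -6.00},
}


def formation_energy_per_atom(total_energy, species, fidelity):
    """Subtract fidelity-specific elemental references, then normalize per atom."""
    refs = ELEMENT_REFS[fidelity]
    counts = Counter(species)
    reference = sum(n * refs[element] for element, n in counts.items())
    return (total_energy - reference) / len(species)


li2o = ["Li", "Li", "O"]
e_low = formation_energy_per_atom(-14.50, li2o, "low")    # made-up total energy
e_high = formation_energy_per_atom(-16.40, li2o, "high")  # made-up total energy
print(round(e_low, 4), round(e_high, 4))
```

In this constructed case the raw total energies differ by 1.9 eV between the two fidelities, yet the referenced formation energies coincide, because the offset sits entirely in the elemental references.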

Multi-fidelity methods represent a paradigm shift in computational materials design, transforming the traditional cost-accuracy trade-off from a limitation into an opportunity for strategic resource allocation. By intelligently leveraging relationships across the fidelity spectrum, researchers can dramatically accelerate materials discovery while maintaining the accuracy required for predictive design.

The protocols and frameworks presented here provide practical pathways for implementing multi-fidelity strategies in real-world materials research. As the field advances, key challenges remain in scalability, optimal fidelity management, and automating the selection of appropriate fidelity combinations for different materials classes. The continued development of benchmark datasets, open-source tools like MatGL, and standardized protocols will be crucial for advancing multi-fidelity learning from specialized technique to mainstream methodology in computational materials science.

In computational materials design and drug development, researchers routinely face a fundamental trade-off: high-fidelity (HiFi) data from experiments or sophisticated simulations are accurate but costly and time-consuming to produce, while low-fidelity (LoFi) data from approximations or simpler models are affordable but potentially less reliable [4] [5]. Multi-fidelity models (MFMs) address this challenge by providing a framework that strategically integrates data of varying cost and accuracy to maximize predictive power while minimizing overall resource expenditure [6]. These methods leverage the correlations between different data fidelities to build predictive models that can achieve accuracy comparable to those built exclusively on high-fidelity data, but at a fraction of the cost [7] [4].

The core principle of multi-fidelity modeling lies in its ability to fuse information from multiple sources. In materials science, this might involve combining high-throughput computational screening data with limited experimental validation [8] [6]. For drug development, this could mean integrating data from rapid in silico docking studies with costly in vitro assays [9]. By learning the relationships between these different data tiers, MFMs create a surrogate model that guides the efficient allocation of resources, ensuring that expensive high-fidelity evaluations are reserved for the most promising candidates [6] [4].

The Theoretical Framework of Multi-Fidelity Learning

Defining Fidelity in Scientific Contexts

In scientific applications, "fidelity" refers to the accuracy and reliability of a data source or model in representing the true system of interest. Fidelity exists on a spectrum [5]:

- Low-Fidelity Data (LoFi) originates from simplified models that use approximations to simulate a system rather than modeling it exhaustively. Examples include density functional theory (DFT) calculations with simpler exchange-correlation functionals in materials science [8] [10], or coarse-grained molecular dynamics simulations in drug discovery.

- High-Fidelity Data (HiFi) comes from sources that closely match the real-world operational context, such as experimental measurements in materials science [10] or clinical trial data in pharmaceutical development.

The fundamental challenge MFMs address is that HiFi data are expensive and scarce, while LoFi data are more abundant but potentially biased or noisy [8] [5]. Multi-fidelity methods overcome this by learning the complex relationships between different fidelity levels, effectively transferring information from low-cost sources to enhance predictions at the highest fidelity level [6] [4].

Mathematical Foundations and Relationship Learning

Multi-fidelity models typically employ a structured approach to relationship learning between fidelity levels. One prominent framework is the auto-regressive Gaussian process model [5], which formulates the relationship between successive fidelities as:

$$z_t(x) = \rho_{t-1}\, z_{t-1}(x) + \delta_t(x)$$

where z_t(x) represents the output at fidelity level t, ρ_(t-1) is a scaling constant that quantifies the correlation between fidelities t and t-1, and δ_t(x) is an independent Gaussian process representing the bias term [5]. This formulation allows the model to capture both the correlation between fidelity levels and the unique characteristics of each level.
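The roles of the scaling constant and the bias term can be illustrated with a deliberately simplified fit. The sketch below assumes paired low- and high-fidelity observations at the same inputs and replaces the Gaussian-process bias δ_t(x) with a single constant, so it reduces to ordinary least squares; the data and function names are hypothetical.

```python
def fit_rho_and_bias(z_low, z_high):
    """Least-squares fit of z_high ≈ rho * z_low + bias.

    ASSUMPTION: the bias term is a constant rather than a Gaussian process.
    """
    n = len(z_low)
    mean_low = sum(z_low) / n
    mean_high = sum(z_high) / n
    covariance = sum((l - mean_low) * (h - mean_high) for l, h in zip(z_low, z_high))
    variance = sum((l - mean_low) ** 2 for l in z_low)
    rho = covariance / variance
    bias = mean_high - rho * mean_low
    return rho, bias


def predict_high(z_low_value, rho, bias):
    """Map a new low-fidelity output to a high-fidelity estimate."""
    return rho * z_low_value + bias


# Synthetic paired data constructed as z_high = 2 * z_low + 0.5.
z_low = [1.0, 2.0, 3.0, 4.0]
z_high = [2.5, 4.5, 6.5, 8.5]

rho, bias = fit_rho_and_bias(z_low, z_high)
print(rho, bias, predict_high(10.0, rho, bias))
```

A full auto-regressive model would instead place a Gaussian process on the residuals, so that the correction varies with x and carries its own uncertainty.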

Alternative approaches include co-kriging methods [6] [4] and multi-fidelity neural networks [7], each with particular strengths depending on the data structure and application domain. The choice of relationship model depends on factors such as the number of fidelity levels, the nature of the correlation between them, and the computational budget available for model training.

Quantitative Performance Advantages of Multi-Fidelity Approaches

Computational Efficiency Gains

Research demonstrates that multi-fidelity approaches can significantly reduce computational costs while maintaining accuracy comparable to single-fidelity methods relying exclusively on high-fidelity data. The table below summarizes key performance metrics reported across various studies:

Table 1: Quantitative Performance of Multi-Fidelity Models Across Domains

| Application Domain | Cost Reduction | Accuracy Maintained | Reference |
|---|---|---|---|
| Materials Design Optimization | ~67% (3x faster) | Equivalent to single-fidelity BO | [6] |
| Composite Laminate Damage Analysis | Significant computational advantage | Without compromising accuracy | [7] |
| Molecular Discovery | High-scoring candidates at "a fraction of the budget" | While maintaining diversity | [9] |
| Band Gap Prediction | Improved performance with limited experimental data | Lower MAE compared to single-fidelity | [10] |

These efficiency gains stem from the strategic allocation of resources across the fidelity spectrum. Multi-fidelity Bayesian optimization, for instance, dynamically determines whether to evaluate a candidate using cheap low-fidelity assessments or expensive high-fidelity measurements, focusing resources only where they provide the most information value [6].

Addressing Data Scarcity and Quality Challenges

ML-accelerated materials discovery requires large amounts of high-fidelity data to reveal predictive structure-property relationships [8]. However, for many material properties of interest, the difficulty and high cost of data generation have resulted in a data landscape that is both sparsely populated and of dubious quality [8]. Multi-fidelity approaches help overcome these limitations through several mechanisms:

- Consensus across methods: Using agreement across different density functional theory functionals to improve prediction reliability [8]

- Transfer learning: Leveraging patterns learned from abundant low-fidelity data to inform high-fidelity predictions [10]

- Denoising techniques: Improving data quality through algorithms that identify and correct systematic errors in low-fidelity sources [10]

These approaches are particularly valuable when high-fidelity experimental data are limited, as is common in early-stage materials discovery and drug development programs [8] [9].
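As one possible reading of the consensus mechanism listed above, the sketch below flags a prediction as reliable when several functionals agree within a tolerance. The spread measure (population standard deviation), the tolerance, and the band gap values are all assumptions chosen for illustration, not the procedure of [8].

```python
from statistics import mean, pstdev


def consensus(predictions, tolerance):
    """Summarize agreement across methods.

    predictions: mapping from method name to predicted value.
    Returns (mean value, spread, whether the spread is within tolerance).
    """
    values = list(predictions.values())
    spread = pstdev(values)
    return mean(values), spread, spread <= tolerance


# Hypothetical band gaps (eV) for two materials from different functionals.
agreeing = {"PBE": 1.10, "SCAN": 1.25, "HSE": 1.30}
disagreeing = {"PBE": 0.20, "HSE": 1.40}

print(consensus(agreeing, tolerance=0.1))
print(consensus(disagreeing, tolerance=0.1))
```

Cases that fail the agreement check are natural candidates for a higher-fidelity calculation or an experiment.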

Application Protocols for Materials and Drug Discovery

Multi-Fidelity Bayesian Optimization for Materials Screening

Bayesian optimization provides a powerful framework for materials design when coupled with multi-fidelity data [6]. The following protocol outlines a standardized approach for implementing multi-fidelity Bayesian optimization in computational materials screening:

Table 2: Protocol for Multi-Fidelity Bayesian Optimization in Materials Screening

| Step | Procedure | Technical Specifications | Output |
|---|---|---|---|
| 1. Problem Formulation | Define design space and fidelity hierarchy | Identify 2+ fidelity levels (e.g., DFT functionals, experimental validation) | Fidelity cost structure and correlation assumptions |
| 2. Initial Sampling | Collect initial data across fidelities | Latin hypercube sampling with balanced distribution across fidelities | Initial training dataset D = {(x_i, f_i, c_i)} |
| 3. Multi-Fidelity Surrogate Modeling | Train multi-output Gaussian process | Implement auto-regressive correlation structure [5] | Surrogate model with uncertainty quantification |
| 4. Acquisition Function Optimization | Apply Targeted Variance Reduction (TVR) | Select (x, f) pair minimizing variance per unit cost at promising locations [6] | Next sample and fidelity level to evaluate |
| 5. Experimental Evaluation | Conduct measurement at selected fidelity | Follow standardized protocols for the fidelity level (e.g., DFT calculation parameters) | New observation y |
| 6. Model Update | Incorporate new data into surrogate | Update Gaussian process hyperparameters | Improved surrogate model |
| 7. Iteration | Repeat steps 4-6 until budget exhaustion | Monitor convergence via expected improvement | Final optimized material candidates |

This protocol replaces traditional "computational funnel" approaches with a dynamic, adaptive method that learns fidelity relationships on-the-fly rather than requiring pre-specified accuracy hierarchies [6]. Implementation requires specialized software libraries such as GPyTorch or BoTorch for the multi-fidelity Gaussian process modeling, coupled with domain-specific simulation tools for evaluation at each fidelity level.
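The acquisition step of the protocol (choosing both a candidate and a fidelity) can be sketched with a toy rule. This is not the TVR implementation from [6] and does not use BoTorch: it assumes an upper-confidence-bound score as a stand-in for the acquisition function, and it uses the standard result that observing a variable with correlation ρ to the target removes a fraction ρ² of the target's variance. All numbers and names are hypothetical.

```python
import math


def select_next(candidates, fidelities, kappa=2.0):
    """Pick the next candidate and the fidelity at which to evaluate it.

    candidates: {name: (predicted mean, predicted variance)} at the target fidelity.
    fidelities: {name: (correlation with target fidelity, cost per evaluation)}.
    """
    # Step 1: most promising candidate by an upper-confidence-bound score.
    def ucb(x):
        mean_value, variance = candidates[x]
        return mean_value + kappa * math.sqrt(variance)

    x_star = max(candidates, key=ucb)
    variance = candidates[x_star][1]

    # Step 2: fidelity with the largest expected variance reduction per unit cost.
    def reduction_per_cost(f):
        rho, cost = fidelities[f]
        return rho ** 2 * variance / cost

    f_star = max(fidelities, key=reduction_per_cost)
    return x_star, f_star


candidates = {"A": (1.0, 0.04), "B": (0.8, 0.25), "C": (0.5, 0.01)}
well_correlated = {"dft_pbe": (0.8, 1.0), "experiment": (1.0, 20.0)}
poorly_correlated = {"dft_pbe": (0.1, 1.0), "experiment": (1.0, 20.0)}

print(select_next(candidates, well_correlated))
print(select_next(candidates, poorly_correlated))
```

With a well-correlated cheap source the rule prefers the cheap evaluation; when the correlation is weak, the expensive measurement becomes the better use of budget despite its cost.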

Multi-Fidelity Active Learning with GFlowNets for Molecular Discovery

For molecular discovery tasks where the goal is to identify diverse, high-performing candidates, multi-fidelity active learning with GFlowNets provides an effective protocol [9]:

- State Space Definition: Define the discrete compositional space (e.g., molecular graphs, crystal structures) and available fidelity levels (e.g., computational docking, binding assays).

- GFlowNet Training: Train a generative flow network to sample candidates proportional to a reward function, initially using low-fidelity proxies.

- Multi-Fidelity Acquisition: Apply an acquisition policy that selects both the candidate and the fidelity level for evaluation, balancing exploration and exploitation across the fidelity spectrum.

- Active Learning Loop: Iteratively update the GFlowNet sampler and reward model based on newly acquired data, gradually shifting resources toward higher fidelities as promising regions are identified.

This approach has demonstrated the ability to discover high-scoring molecular candidates at a fraction of the budget of single-fidelity counterparts while maintaining diversity—a critical advantage over reinforcement learning-based alternatives [9].
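The overall loop can be sketched with a toy stand-in. The code below is not a GFlowNet: it only mimics the property that candidates are sampled in proportion to their current reward estimate, using weighted random sampling over a small enumerated space. The costs, the scoring functions, and the promotion threshold are hypothetical.

```python
import random

# ASSUMPTION: hypothetical per-evaluation costs for two fidelity levels.
COST = {"docking": 1.0, "assay": 20.0}


def true_score(candidate):
    """Toy ground truth, peaked at candidate 7 (purely illustrative)."""
    return 1.0 / (1.0 + abs(candidate - 7))


def docking(candidate, rng):
    """Cheap, noisy proxy; clipped so it can serve as a sampling weight."""
    return max(0.01, true_score(candidate) + rng.gauss(0.0, 0.1))


def assay(candidate):
    """Expensive, accurate evaluation."""
    return true_score(candidate)


def active_learning(candidates, budget, rounds=30, batch=5, promote_above=0.5, seed=0):
    rng = random.Random(seed)
    estimate = {c: 0.5 for c in candidates}  # flat initial reward
    low, high = {}, {}
    for _ in range(rounds):
        weights = [estimate[c] for c in candidates]
        # Stand-in for the generative sampler: draw in proportion to reward.
        for c in rng.choices(candidates, weights=weights, k=batch):
            if c not in low:
                if budget >= COST["docking"]:
                    low[c] = estimate[c] = docking(c, rng)
                    budget -= COST["docking"]
            elif c not in high and low[c] >= promote_above:
                if budget >= COST["assay"]:
                    high[c] = estimate[c] = assay(c)
                    budget -= COST["assay"]
    return low, high, budget


low, high, remaining = active_learning(list(range(15)), budget=100.0)
print(sorted(high), remaining)
```

Only candidates that first score well at low fidelity are ever sent to the expensive evaluation, which is how the loop shifts spending toward higher fidelity as promising regions emerge.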

Visualization of Multi-Fidelity Workflows

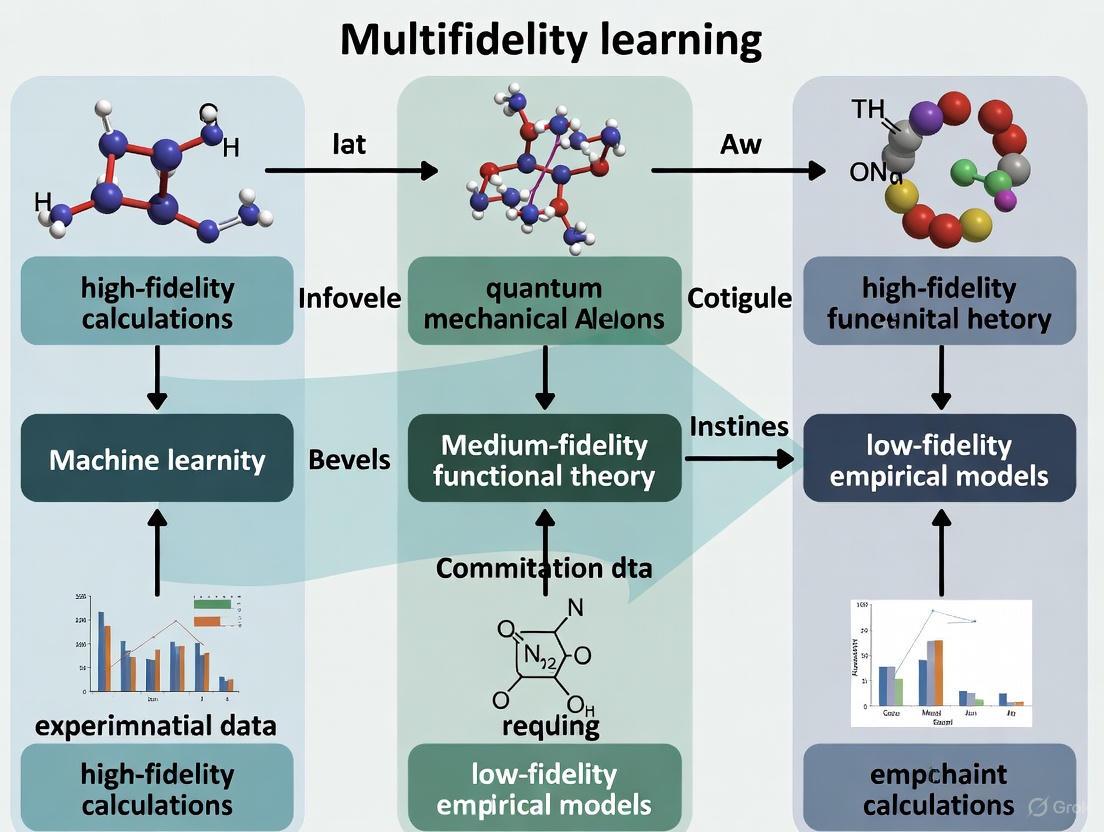

Multi-Fidelity Surrogate Modeling Workflow

The following diagram illustrates the complete workflow for constructing and applying multi-fidelity surrogate models in computational materials design:

Diagram 1: Multi-fidelity surrogate modeling workflow

Multi-Fidelity Bayesian Optimization Cycle

The iterative cycle of multi-fidelity Bayesian optimization demonstrates how information flows between different components to efficiently guide experimental design:

Diagram 2: Multi-fidelity Bayesian optimization cycle

Successful implementation of multi-fidelity modeling requires both computational tools and domain-specific resources. The following table outlines key components of the multi-fidelity research toolkit:

Table 3: Essential Research Reagents and Computational Resources for Multi-Fidelity Modeling

| Resource Category | Specific Tools/Platforms | Function in Multi-Fidelity Research |
|---|---|---|
| Multi-Fidelity Modeling Libraries | GPyTorch, Emukit, SMT | Implementation of multi-output Gaussian processes and other MF surrogate models |
| Optimization Frameworks | BoTorch, Dragonfly, OpenBox | Bayesian optimization with multi-fidelity capabilities |
| Materials Databases | Materials Project, Cambridge Structural Database (CSD) [8] | Sources of multi-fidelity materials data for training and validation |
| Electronic Structure Codes | VASP, Quantum ESPRESSO, Gaussian | Generation of computational fidelity data at different theory levels |
| Data Extraction Tools | ChemDataExtractor [8] | Automated extraction of experimental data from literature for high-fidelity training |
| Workflow Management | AiiDA, FireWorks | Automation of multi-fidelity simulation pipelines and data management |

These resources provide the foundation for building end-to-end multi-fidelity research pipelines, from data generation and collection through model development and validation.

Multi-fidelity modeling represents a paradigm shift in computational materials design and drug development, transforming the traditional trade-off between data cost and accuracy into a synergistic relationship. By strategically leveraging cheaper, lower-quality data to guide the targeted acquisition of expensive, high-quality measurements, these methods enable researchers to explore larger design spaces and identify optimal candidates with significantly reduced resources [6] [7].

As the field advances, several emerging trends are poised to further enhance the capabilities of multi-fidelity approaches. The integration of multi-fidelity active learning with advanced generative methods like GFlowNets shows particular promise for discovering diverse, high-performing candidates in molecular design spaces [9]. Similarly, denoising techniques that explicitly address systematic errors in low-fidelity data sources can improve the quality of information transfer across fidelity levels [10]. As these methodologies mature and become more accessible through standardized protocols and open-source tools, they will play an increasingly central role in accelerating scientific discovery across materials science and pharmaceutical development.

Multi-fidelity data, comprising information of varying cost, accuracy, and abundance, has emerged as a cornerstone of modern computational materials design [11] [4]. This paradigm recognizes the inherent trade-off between the computational expense of a method and the precision of its results. In materials science, this frequently manifests as large volumes of inexpensive, lower-fidelity data (e.g., from certain density functional theory calculations) complementing smaller, costlier sets of high-fidelity data (e.g., from advanced quantum methods or experiments) [11] [6]. The strategic integration of these diverse data streams through multi-fidelity machine learning models enables researchers to achieve predictive accuracy that would be prohibitively expensive using high-fidelity data alone [12]. This Application Note delineates the common sources of multi-fidelity data, provides structured protocols for its utilization, and visualizes the workflows essential for advancing computational materials design.

The generation of multi-fidelity data in materials research can be systematically categorized into three primary sources: data derived from different computational algorithms, data originating from varying hyperparameters within a single method, and the integration of experimental data with computational results.

Multi-Fidelity Data from Different Algorithms

The most prevalent source of multi-fidelity data stems from applying different computational methodologies to the same material system. These methods inherently possess varying levels of accuracy and associated computational cost.

Table 1: Multi-Fidelity Data from Different Computational Algorithms

| Fidelity Level | Computational Method | Typical Application | Characteristics & Accuracy |
|---|---|---|---|
| Low-Fidelity (LF) | Empirical Potentials [11] | Preliminary screening, large-scale molecular dynamics | Fast computation; limited transferability and accuracy. |
| Low/Medium-Fidelity | DFT with GGA functionals (e.g., PBE) [11] [12] | High-throughput calculation of electronic properties | Systematic errors (e.g., band gap underestimation of 30-100% [11]); good balance of speed/accuracy. |
| High-Fidelity (HF) | DFT with meta-GGA (e.g., SCAN) or hybrid functionals (e.g., HSE) [11] [12] | Accurate property prediction for validation | Improved description of bonds & electronic structure; 5-50x more costly than GGA. |
| Highest-Fidelity | Post-Hartree-Fock methods (e.g., CCSD(T)) or Experiments [6] [12] | Ground-truth validation and final candidate assessment | "Chemical accuracy" (<1 kcal/mol) or experimental truth; often prohibitively expensive for large datasets. |

A quintessential example is found in the Materials Project database, where band gaps for compounds are computed with different functionals. The number of data points calculated with the PBE functional vastly exceeds those from more accurate but costly methods like HSE or SCAN, naturally creating a multi-fidelity hierarchy [11]. In aerospace and mechanical engineering, analogous hierarchies are created using different Computational Fluid Dynamics (CFD) models or finite element models of varying complexity [11] [13].

Multi-Fidelity Data from Different Hyperparameters

Within a single computational method, fidelity can be modulated by adjusting hyperparameters that control the numerical accuracy and computational expense of the calculation.

Table 2: Multi-Fidelity Data from Varying Hyperparameters in DFT

| Hyperparameter | Low-Fidelity Setting | High-Fidelity Setting | Impact on Cost & Accuracy |
|---|---|---|---|
| Plane-Wave Cutoff Energy | Low (e.g., 400 eV) | High (e.g., 600 eV) | Higher cutoff improves basis set completeness and energy convergence, increasing compute time [11]. |
| k-point Mesh Density | Sparse (e.g., 2x2x2) | Dense (e.g., 8x8x8) | Denser k-point sampling better integrates the Brillouin zone, critical for metals and accurate forces [11]. |
| Geometry Convergence Criteria | Relaxed (e.g., 0.1 eV/Å) | Strict (e.g., 0.01 eV/Å) | Stricter criteria ensure atomic configurations are closer to true minima, requiring more ionic steps [11]. |
| Self-Consistency Convergence | Partial (e.g., 100 iterations) | Full (e.g., 600+ iterations) | Full self-consistent field convergence is necessary for accurate electron densities and derived properties [11]. |

Varying mesh sizes, time steps, or convergence criteria is a common way to generate paired low-fidelity and high-fidelity data from the same underlying physical model, providing a controlled approach for multi-fidelity learning [11] [4].
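One way to keep such settings explicit is to record each fidelity level as a small configuration object. The sketch below uses the example values from the table above; the class name, field names, and the k-point count helper are hypothetical and are not tied to the input format of any particular DFT code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DFTSettings:
    """Numerical settings that define one fidelity level of a DFT calculation."""

    cutoff_ev: float             # plane-wave cutoff energy in eV
    kpoints: tuple               # k-point mesh, e.g. (2, 2, 2)
    force_tol_ev_per_ang: float  # geometry convergence criterion in eV/Å
    max_scf_iterations: int      # cap on self-consistency iterations

    def kpoint_count(self) -> int:
        """Number of mesh points before any symmetry reduction."""
        nx, ny, nz = self.kpoints
        return nx * ny * nz


LOW_FIDELITY = DFTSettings(400.0, (2, 2, 2), 0.1, 100)
HIGH_FIDELITY = DFTSettings(600.0, (8, 8, 8), 0.01, 600)

ratio = HIGH_FIDELITY.kpoint_count() // LOW_FIDELITY.kpoint_count()
print(f"High-fidelity mesh has {ratio}x as many k-points as the low-fidelity mesh")
```

Storing the settings alongside each computed label makes it straightforward to assign a fidelity index later when assembling a multi-fidelity training set.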

Integration of Experimental and Computational Data

The ultimate multi-fidelity framework integrates computational data with experimental measurements. Experimental results are typically considered the highest-fidelity source but are often scarce and expensive. Computational data, while potentially bearing systematic errors, can be generated in large quantities to guide experimentation [6]. This fusion is powerfully applied in Bayesian optimization for materials discovery, where a multi-output Gaussian process dynamically learns the relationship between computational predictions and experimental results, thereby reducing the total number of expensive experiments required to find optimal materials [6] [14].

Protocols for Multi-Fidelity Model Implementation

Protocol 1: Multi-Fidelity Graph Neural Network for Interatomic Potentials

This protocol details the construction of a multi-fidelity machine learning interatomic potential (MLIP) using the M3GNet architecture, leveraging low-fidelity and high-fidelity Density Functional Theory (DFT) data [12].

The Scientist's Toolkit: Research Reagent Solutions

- Low-Fidelity Dataset (e.g., PBE/GGA): A large dataset (~100k+ structures) of energies and forces computed with a fast, semi-local functional like PBE. Function: Provides broad coverage of the chemical and configurational space.

- High-Fidelity Dataset (e.g., SCAN): A smaller, targeted dataset (~1-10k structures) of energies and forces computed with a more accurate functional like SCAN. Function: Provides a high-accuracy benchmark for refining the model.

- M3GNet Architecture: A graph neural network with a fidelity embedding feature. The fidelity level (e.g., 0 for LF, 1 for HF) is encoded as an integer and input as part of the model's global state [12].

- Sampling Strategy (e.g., DIRECT): A method for strategically selecting which structures from the low-fidelity dataset to recompute at high-fidelity to ensure robust coverage of the configuration space [12].

Procedure

- Data Curation: Assemble a low-fidelity dataset (e.g., PBE) and a smaller high-fidelity dataset (e.g., SCAN). Ensure a significant overlap in the structural types between the two sets.

- Training Set Assembly: Construct the multi-fidelity training set. A successful implementation used 80% of the available low-fidelity data combined with only 10% of the high-fidelity data (selected from structures within the 80% LF set) [12].

- Model Configuration: Modify the M3GNet input to accept a fidelity index. This index is embedded into a vector and used to update the graph's global state, allowing the network to learn fidelity-specific relationships [12].

- Model Training: Train the M3GNet model on the combined multi-fidelity dataset. The model will simultaneously learn from the abundant low-fidelity data and the accurate high-fidelity data, with the fidelity embedding guiding the correlation between fidelities.

- Validation & Testing: Reserve the high-fidelity data for the 20% of structures held out of training (so that there is no structural overlap with the training set) for validation and testing, benchmarking the model's performance on unseen high-fidelity data.

Expected Outcomes: A multi-fidelity M3GNet model trained with 10% high-fidelity SCAN data can achieve energy and force accuracies comparable to a single-fidelity model trained on 8 times the amount of SCAN data, demonstrating significant data efficiency [12].
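The training-set assembly described in the procedure above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the function name `assemble_split` is hypothetical, every structure is assumed to have a low-fidelity label, and the high-fidelity training subset is drawn uniformly at random as a placeholder for a coverage-aware sampler such as DIRECT.

```python
import numpy as np

def assemble_split(n_structures, lf_frac=0.8, hf_frac=0.1, seed=0):
    """Index arrays for a two-fidelity train/validation/test split."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_structures)

    n_lf = int(round(lf_frac * n_structures))
    lf_train = order[:n_lf]      # LF labels of these structures go into training
    held_out = order[n_lf:]      # structures that never appear in training

    # HF training structures are drawn from inside the LF training set.
    # Uniform random choice is a placeholder for a coverage-aware sampler.
    n_hf = int(round(hf_frac * n_structures))
    hf_train = rng.choice(lf_train, size=n_hf, replace=False)

    # Held-out structures are split equally into HF validation and test sets.
    half = len(held_out) // 2
    return {
        "lf_train": lf_train,
        "hf_train": hf_train,
        "hf_val": held_out[:half],
        "hf_test": held_out[half:],
    }

split = assemble_split(1000)
print({name: len(idx) for name, idx in split.items()})
```

Drawing the high-fidelity training structures from inside the low-fidelity training set gives the model paired labels for the same structures, which is what lets it relate the two fidelities.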

Protocol 2: Multi-Fidelity Bayesian Optimization for Materials Discovery

This protocol outlines the use of Multi-Fidelity Bayesian Optimization (MFBO) to iteratively guide experiments by leveraging cheaper computational or experimental proxies [6] [14].

Procedure

1. Problem Formulation: Define the objective (e.g., maximize the yield of a catalytic reaction). Identify the high-fidelity function, `f_HF(x)` (e.g., actual experimental yield), and at least one low-fidelity function, `f_LF(x)` (e.g., computational descriptor or bench-top NMR measurement), along with their respective costs [14].

2. Initial Design: Collect a small initial set of paired observations `{x_i, f_LF(x_i), f_HF(x_i)}` to seed the model.

3. Model Construction: Build a multi-output Gaussian Process (GP) surrogate model. This model learns a joint distribution over the functions, dynamically capturing the correlation between the low-fidelity and high-fidelity data sources [6].

4. Acquisition Function Optimization: Use a multi-fidelity acquisition function, such as Targeted Variance Reduction (TVR), to select the next sample point `x_next` and its fidelity level `z_next`. TVR chooses the `(x, z)` pair that minimizes the prediction variance at the most promising candidate point (from a standard acquisition function like Expected Improvement) per unit cost [6].

5. Iterative Loop:

   a. Evaluate the selected `f_z_next(x_next)` (e.g., run a cheap computation or a targeted experiment).

   b. Update the multi-output GP model with the new data.

   c. Repeat steps 4-5 until the experimental budget is exhausted.

Expected Outcomes: MFBO can reduce the overall optimization cost by a factor of three on average compared to single-fidelity Bayesian optimization that uses only high-fidelity data, by smartly allocating resources to cheaper fidelities [6].
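The fidelity-selection step can be illustrated with a small NumPy sketch. It is a simplified stand-in, not the implementation from [6]: it assumes a two-fidelity GP with an intrinsic coregionalization kernel `B[z, z'] * k(x, x')`, fixed hyperparameters, one-dimensional inputs, and a target point `x_target` that is supplied by hand instead of coming from an Expected Improvement step. The names `rbf`, `icm_kernel`, and `select_next` are hypothetical.

```python
import numpy as np

def rbf(a, b, length_scale=0.3):
    """Squared-exponential kernel between two 1-D input arrays."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)

def icm_kernel(x1, z1, x2, z2, B):
    """Two-fidelity covariance: B[z, z'] * k(x, x')."""
    return B[np.ix_(z1, z2)] * rbf(x1, x2)

def select_next(x_obs, z_obs, x_target, candidates, B, costs, noise=1e-4):
    """Return (score, x, z) maximizing variance reduction at the HF target per unit cost."""
    hf = np.array([1])
    xt = np.array([x_target])
    K = icm_kernel(x_obs, z_obs, x_obs, z_obs, B) + noise * np.eye(len(x_obs))
    k_t = icm_kernel(x_obs, z_obs, xt, hf, B)               # shape (n_obs, 1)
    best = (-np.inf, None, None)
    for z in (0, 1):
        zc = np.full(len(candidates), z)
        k_c = icm_kernel(x_obs, z_obs, candidates, zc, B)   # shape (n_obs, n_cand)
        solved = np.linalg.solve(K, k_c)
        # Posterior covariance between the HF target and each candidate at fidelity z.
        cov_tc = icm_kernel(xt, hf, candidates, zc, B)[0] - (k_t.T @ solved)[0]
        # Posterior variance of each candidate at fidelity z.
        var_c = B[z, z] - np.sum(k_c * solved, axis=0)
        # Expected drop in target variance after one noisy observation, per unit cost.
        score = cov_tc ** 2 / (var_c + noise) / costs[z]
        i = int(np.argmax(score))
        if score[i] > best[0]:
            best = (float(score[i]), float(candidates[i]), z)
    return best

x_obs = np.array([0.1, 0.9, 0.5])
z_obs = np.array([0, 0, 1])              # 0 = low fidelity, 1 = high fidelity
candidates = np.linspace(0.0, 1.0, 21)
B = np.array([[1.0, 0.9], [0.9, 1.0]])   # assumed inter-fidelity covariance
score, x_next, z_next = select_next(x_obs, z_obs, 0.3, candidates, B, costs=(1.0, 10.0))
print(x_next, z_next)
```

When the off-diagonal entries of `B` are zero, a low-fidelity observation carries no information about the high-fidelity target, so the rule falls back to high-fidelity evaluations regardless of cost. In a full loop the kernel hyperparameters and `B` would be refit after every new observation.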

Workflow Visualization

Multi-Fidelity Data Integration Workflow

The following diagram illustrates the logical flow of integrating multi-fidelity data from various sources into a unified machine learning model for predictive materials design.

[Diagram: Multi-Fidelity Modeling Workflow]

Multi-Fidelity Bayesian Optimization Loop

The following diagram details the iterative decision-making process of Multi-Fidelity Bayesian Optimization, which dynamically selects both the next point to evaluate and the fidelity level at which to evaluate it.

[Diagram: MF Bayesian Optimization Loop]

The strategic generation and integration of multi-fidelity data represent a paradigm shift in computational materials design. By understanding and leveraging common data sources—ranging from hierarchies of DFT algorithms and hyperparameters to the ultimate integration of computation and experiment—researchers can construct powerful, data-efficient models. The protocols and workflows detailed herein provide a concrete foundation for implementing multi-fidelity learning. As these methodologies mature, they promise to significantly accelerate the discovery and development of new materials by maximizing the informational return on every computational and experimental investment.

In computational materials design, a fundamental trade-off exists between the accuracy of a computational method and its associated computational cost. This gives rise to a natural hierarchy of fidelity, where methods range from fast, approximate empirical potentials to highly accurate but expensive experimental validations and high-level quantum mechanics calculations. Multifidelity learning (MFL) provides a powerful framework to systematically integrate data from these different levels, creating models that are both accurate and computationally efficient to execute [11]. This paradigm is transforming computational materials science by enabling researchers to leverage the vast amounts of existing low-fidelity data while strategically incorporating limited high-fidelity data to achieve predictive accuracy. The core principle involves learning the complex relationships between different levels of theory and experiment, thereby extracting maximum knowledge from each data source [6] [12]. This approach is particularly vital for properties like band gaps, which are notoriously challenging for standard density functional theory (DFT) functionals, and for developing reliable interatomic potentials where high-fidelity data is scarce [12] [11]. This Application Note details the protocols and tools for implementing this hierarchical fidelity framework to accelerate materials discovery and drug development.

The Fidelity Hierarchy in Computational Materials Science

The hierarchy of computational methods is characterized by increasing physical accuracy and computational expense. Understanding the role and limitations of each level is crucial for effective multifidelity integration.

Table 1: The Hierarchy of Computational and Experimental Methods

| Fidelity Level | Typical Methods | Key Characteristics | Primary Use Cases |
|---|---|---|---|
| Low-Fidelity | Empirical Potentials (e.g., for Cr₂O₃ [15]), DFT with GGA functionals (e.g., PBE) | Low computational cost; known systematic errors (e.g., band gap underestimation); large datasets available | High-throughput screening; molecular dynamics simulations of large systems |
| Medium-Fidelity | Meta-GGA functionals (e.g., SCAN), hybrid DFT | Improved accuracy for diverse bonding environments; higher computational cost (up to 10-100x PBE) | Training data for high-accuracy machine learning potentials; property prediction for complex systems |
| High-Fidelity | High-level quantum chemistry (e.g., CCSD(T)), RPA | Approaches "chemical accuracy"; computationally prohibitive for large systems or many configurations | Providing benchmark data for small systems; validating lower-fidelity methods |
| Experimental Validation | Synthesis & characterization (e.g., powder diffraction, elastic property measurement) | Ground-truth data; can be time-consuming and resource-intensive [16] | Final validation of computational predictions; integration into multifidelity models as the target fidelity [6] |

Quantitative Performance of Multifidelity Approaches

Multifidelity models have demonstrated significant gains in data efficiency and accuracy across multiple materials systems.

Table 2: Performance Benchmarks of Multifidelity Learning

| System Studied | Multifidelity Approach | Key Result | Reference |
|---|---|---|---|
| Silicon M3GNet IPs | M3GNet with fidelity embedding (RPBE/PBE + SCAN) | Achieved accuracy of a single-fidelity SCAN model using only 10% of the SCAN data (an 8x data efficiency improvement) [12] | Chen et al., 2025 [12] |
| Excitation Energies (QeMFi) | MFML with compute-time informed scaling (θ) | High accuracy achieved with only 2 target-fidelity (def2-TZVP) samples when leveraging many lower-fidelity samples [17] | Vinod & Zaspel, 2025 [17] |
| Polymer & Material Band Gaps | Multi-output Gaussian Process; Graph Networks | MAE improvement of 22-45% for band gap prediction by incorporating low-fidelity PBE data [6] [12] | Patra et al., 2022; Chen et al., 2022 [6] [12] |
| General Materials Properties | Multi-fidelity data learning (information fusion, Bayesian optimization) | Outperformed models using only high-fidelity data, especially effective with comprehensive correction strategies for noise [11] | Liu et al., 2023 [11] |

Figure 1: The Multifidelity Learning Workflow. This diagram illustrates the hierarchical relationships between different computational and experimental fidelities and the primary multifidelity learning strategies that connect them.

Detailed Protocols for Multifidelity Implementation

Protocol 1: Constructing a High-Fidelity Graph Learning Potential (M3GNet)

This protocol outlines the data-efficient construction of a high-fidelity M3GNet interatomic potential (IP) using a multi-fidelity dataset, as demonstrated for silicon and water [12].

1. Research Reagent Solutions

- Low-Fidelity Dataset: A large set of structures with energies and forces computed using a fast, semi-local DFT functional like PBE or RPBE.

- High-Fidelity Dataset: A smaller, strategically sampled set of structures with energies and forces computed using a high-accuracy functional like SCAN.

- Software: Access to the M3GNet architecture, which includes a global state feature for fidelity embedding.

- Sampling Tool: Implementation of a sampling algorithm like DIRECT (DImensionality-Reduced Encoded Clusters with sTratified sampling) for robust coverage of the configuration space.

2. Step-by-Step Procedure

- Dataset Curation: Compile your low-fidelity (PBE/RPBE) dataset. Identify a subset of structures for high-fidelity (SCAN) calculation. Ensure a significant overlap in structures between the two datasets to facilitate relationship learning.

- Fidelity Encoding: Encode the fidelity information as integers (e.g., 0 for low-fidelity, 1 for high-fidelity). This integer is embedded as a vector and fed into the global state feature of the M3GNet model.

- Data Partitioning: Split the data into training, validation, and test sets. A robust strategy is to use 80% of the low-fidelity data combined with 10% of the high-fidelity data (selected from structures within the 80% low-fidelity set) for training. The remaining 20% of SCAN data, from structures not in the training set, should be divided equally for validation and testing.

- Model Training: Train the M3GNet model on the combined multi-fidelity dataset. The model will automatically learn the complex functional relationship between the low- and high-fidelity potential energy surfaces through the fidelity embedding and global state feature.

- Validation and Testing: Evaluate the model's performance on the validation and test sets. Key metrics are the Mean Absolute Error (MAE) of energies and forces compared to the high-fidelity reference data. The model should achieve accuracy comparable to a single-fidelity model trained on a much larger high-fidelity dataset.

3. Troubleshooting and Notes

- Data Imbalance: The DIRECT sampling approach is crucial when the high-fidelity dataset is small, as it ensures optimal coverage of the configuration space.

- Architecture Requirement: The use of a global state feature, as in M3GNet, is key for effective fidelity embedding. Verify that your chosen model architecture supports this.
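The fidelity-encoding idea (an integer index mapped to a vector that enters the model as a global state) can be shown with a toy NumPy sketch. This is not the M3GNet API: the names `embedding_table`, `global_state`, and `attach_state`, the dimensions, and the choice to concatenate the state onto per-atom features are all illustrative assumptions, and in a real model the table would be a trainable parameter.

```python
import numpy as np

rng = np.random.default_rng(0)
N_FIDELITIES, STATE_DIM = 2, 4

# One row per fidelity level; random values stand in for learned weights.
embedding_table = rng.normal(size=(N_FIDELITIES, STATE_DIM))

def global_state(fidelity_index):
    """Look up the embedding vector for an integer fidelity label."""
    return embedding_table[fidelity_index]

def attach_state(atom_features, fidelity_index):
    """Append the fidelity vector to every atom's feature row."""
    state = global_state(fidelity_index)
    tiled = np.broadcast_to(state, (atom_features.shape[0], state.shape[0]))
    return np.concatenate([atom_features, tiled], axis=1)

atoms = rng.normal(size=(3, 5))      # 3 atoms with 5 features each (made-up sizes)
lf_input = attach_state(atoms, 0)    # same structure, labelled low fidelity
hf_input = attach_state(atoms, 1)    # same structure, labelled high fidelity
print(lf_input.shape, hf_input.shape)
```

The same structure yields different network inputs depending on the fidelity index, which is what allows one set of shared weights to represent both potential energy surfaces.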

Protocol 2: Multifidelity Machine Learning for Excitation Energies

This protocol describes the application of MFML for predicting vertical excitation energies using the QeMFi benchmark dataset, focusing on the impact of data scaling factors [17].

1. Research Reagent Solutions

- Dataset: The QeMFi dataset, which contains ~135,000 molecular geometries with excitation energies calculated at five DFT fidelities (STO-3G, 3-21G, 6-31G, def2-SVP, def2-TZVP) and associated compute times.

- Model: A Multifidelity Machine Learning model, such as the one based on Kernel Ridge Regression (KRR) or an optimized MFML (o-MFML) variant.

- Scaling Factors: Predefined scaling factors (γ) to determine the number of training samples at each fidelity, or compute-time informed factors (θ).

2. Step-by-Step Procedure

- Define Fidelity Hierarchy: Order your data fidelities from lowest (e.g., STO-3G) to highest (e.g., def2-TZVP), which is the target fidelity.

- Set Scaling Factor: Choose a scaling strategy.

  - Fixed Scaling (γ): Traditionally, a factor of 2 is used, meaning each lower fidelity uses twice as many training samples as the fidelity above it.

  - Time-Informed Scaling (θ): Calculate scaling factors based on the actual compute-time difference between fidelities, as provided in datasets like QeMFi. This directly optimizes for computational cost savings.

- Construct Training Sets: For a given number of target-fidelity samples (e.g., `n_target`), calculate the number of samples at each lower fidelity as `n_low = n_target * (γ)^d`, where `d` is the fidelity level's distance from the target.

- Train MFML Model: Systematically combine several Δ-ML-like models across more than two fidelities using the constructed training sets.

- Evaluate with Error Contours: Analyze the model's performance using error contours, which plot the model error against the number of training samples used at two different fidelities. This helps visualize the contribution of each fidelity to the overall accuracy.

3. Troubleshooting and Notes

- The Γ-Curve: For a fixed, small number of target-fidelity samples (e.g., 2), systematically increase the scaling factor γ. Plot the resulting model error against the total time-cost of generating the training data. This "Γ-curve" identifies the point of optimal cost-accuracy trade-off, often demonstrating that high accuracy is achievable with minimal high-fidelity data.
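The sample-count rule `n_low = n_target * (γ)^d` and the time-cost axis of the Γ-curve can be worked through in a short sketch. The function names and the per-sample compute times below are invented for illustration; real timings would come from the dataset itself.

```python
def samples_per_fidelity(n_target, gamma, n_fidelities):
    """Training-set sizes ordered from the lowest fidelity to the target fidelity."""
    # d is the distance from the target: n_fidelities - 1 for the lowest level, 0 for the target.
    return [n_target * gamma ** d for d in range(n_fidelities - 1, -1, -1)]

def total_cost(counts, seconds_per_sample):
    """Total time needed to generate the training data across all fidelities."""
    return sum(n * t for n, t in zip(counts, seconds_per_sample))

# Five fidelities, two target-fidelity samples, fixed scaling factor of 2.
counts = samples_per_fidelity(n_target=2, gamma=2, n_fidelities=5)

# Hypothetical compute times per sample, lowest fidelity first (not QeMFi values).
times = [1.0, 2.0, 4.0, 8.0, 16.0]
cost = total_cost(counts, times)
print(counts, cost)

# One point per gamma value: the x-axis of a cost-versus-error curve.
gamma_costs = {g: total_cost(samples_per_fidelity(2, g, 5), times) for g in (2, 3, 4)}
```

With γ = 2 and five fidelities, two target-fidelity samples imply 4, 8, 16, and 32 samples at successively lower fidelities. Pairing each entry of `gamma_costs` with the measured model error for that γ gives the points of the Γ-curve.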

Figure 2: Protocol for Multifidelity ML. This workflow outlines the key steps for implementing a Multifidelity Machine Learning study, from data organization to model evaluation.

Protocol for Experimental Validation and Integration

Computational predictions, especially those guiding resource-intensive synthesis, require robust experimental validation to verify their real-world applicability [16].

1. Research Reagent Solutions

- Public Experimental Databases: Utilize resources like the High Throughput Experimental Materials Database (HTE-MD), Materials Genome Initiative (MGI) data, PubChem, or OSCAR for initial comparisons.

- Collaboration with Experimentalists: For direct validation, partner with synthesis and characterization labs.

- Characterization Techniques: Powder X-ray diffraction (for crystal structure), spectroscopy (for electronic properties), and mechanical tests (for elastic properties).

2. Step-by-Step Procedure

- Prioritize Candidates: Use the multifidelity model to screen and identify the most promising candidate materials or molecules for a target property.

- Initial In-Silico Comparison: Compare the structure and predicted properties of novel candidates to existing, well-characterized molecules in public databases (e.g., PubChem) to assess novelty and plausibility [16].

- Experimental Synthesis: Collaborate with experimentalists to synthesize the top-predicted candidates.

- Materials Characterization: Characterize the synthesized materials using relevant techniques (e.g., powder diffraction for structure, UV/Vis for optical properties) to obtain ground-truth data [18].

- Model Refinement and Iteration: Integrate the experimental results as the highest-fidelity data into the multifidelity model. This feedback loop allows for dynamic model refinement and improves future prediction cycles [6].

3. Troubleshooting and Notes

- Synthesizability: Computational models may generate structures that are difficult or impossible to synthesize. Tools to quantify synthesizability can help filter candidates.

- Data Availability: The increasing availability of public experimental data makes initial validation more accessible than ever, even without a direct experimental collaboration [16].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational and Data Resources for Multifidelity Research

| Tool/Resource Name | Type | Function in Multifidelity Research |
|---|---|---|
| QeMFi Dataset [17] | Benchmark Data | Provides a standardized benchmark for excitation energies and compute times across 5 DFT fidelities for 135k geometries. |
| M3GNet Architecture [12] | Machine Learning Model | A graph neural network interatomic potential with a global state feature, enabling direct fidelity embedding for multi-fidelity learning. |
| MedeA Flowcharts [18] | Simulation Protocol Tool | A visual programming environment for designing and executing systematic computational materials science studies, such as structure solution from powder diffraction data. |
| Materials Project (MP) [11] | Computational Database | A primary source of low-fidelity (e.g., PBE) data; also contains higher-fidelity (e.g., HSE, SCAN) data for some properties, enabling multi-fidelity dataset construction. |
| Multi-output Gaussian Process [6] | Statistical Model | A Bayesian model that learns relationships between multiple fidelities simultaneously, used for information fusion and optimization. |
| DIRECT Sampling [12] | Sampling Algorithm | A strategy for selecting a representative subset of high-fidelity data points to ensure robust coverage of the configuration space when data is limited. |

In computational materials design, the quest for accurate property prediction is often constrained by a fundamental trade-off: the high computational cost of achieving high accuracy versus the affordability of lower-fidelity methods. This cost-accuracy trade-off inherently leads to the generation of multi-fidelity data, where data points exhibit varying levels of precision and systematic bias [11]. For instance, in predicting material properties like band gaps, results can range from highly accurate but scarce experimental measurements to abundant but approximate calculations from density functional theory (DFT) with different exchange-correlation functionals [10]. Understanding and characterizing the specific imperfections—namely, systematic errors and random noise—present at each fidelity level is paramount for developing robust multi-fidelity learning models. These models aim to leverage all available information efficiently, harnessing the volume of low-fidelity data while anchoring predictions to the accuracy of high-fidelity data [19] [4]. This document outlines a formal framework for characterizing these imperfections, providing application notes and detailed experimental protocols tailored for research in computational materials design.

Theoretical Framework: Error Decomposition in Multi-Fidelity Data

From a machine learning (ML) perspective, the total error in a dataset can be decomposed into bias, variance, and noise. In multi-fidelity modeling, the "noise" encompasses both the systematic errors (biases from the data-producer's standpoint) and random noise inherent in the data generation process [10].

- Systematic Errors: These are consistent, reproducible inaccuracies introduced by the approximations of a specific method. In computational materials science, a prime example is the systematic underestimation (30-100%) of band gaps by DFT calculations using local and semi-local functionals compared to experimental results [10] [11]. These errors are often deterministic and can be modeled, for instance, as a linear transformation between fidelities.

- Random Noise: This refers to non-deterministic fluctuations that can arise from various sources. In DFT, for example, random errors can stem from the initial trial charge density used in calculations or different convergence criteria during self-consistent field cycles [11]. Unlike systematic errors, random noise is unpredictable and must be treated statistically.

The following diagram illustrates the relationship between different data fidelities and the types of imperfections that must be characterized.

Figure 1: Multi-Fidelity Data Relationship and Error Sources. This diagram shows the typical hierarchy from low-fidelity (LF) to high-fidelity (HF) models and true experimental values. Both LF and HF data are subject to random noise, while systematic error is introduced by the approximations inherent in the computational models.

Quantitative Characterization of Imperfections

A critical step is to quantitatively assess the errors present in different data sources. The following tables summarize common metrics and provide an example from band gap prediction, a well-studied problem in materials informatics [10].

Table 1: Summary of Key Error Metrics for Characterizing Multi-Fidelity Data

| Metric | Formula | Application in Multi-Fidelity Context |
|---|---|---|
| Mean Error (ME) | \( \frac{1}{n}\sum_{i=1}^{n}(y_{\text{pred},i} - y_{\text{true},i}) \) | Measures average systematic bias of a model or fidelity level. A non-zero ME indicates a consistent over- or under-estimation. |
| Mean Absolute Error (MAE) | \( \frac{1}{n}\sum_{i=1}^{n}\lvert y_{\text{pred},i} - y_{\text{true},i} \rvert \) | Quantifies the average magnitude of total error, combining both systematic and random components. Useful for overall accuracy assessment. |
| Root Mean Square Error (RMSE) | \( \sqrt{\frac{1}{n}\sum_{i=1}^{n}(y_{\text{pred},i} - y_{\text{true},i})^2} \) | Similar to MAE but gives a higher weight to large errors. Sensitive to outliers. |
| Standard Deviation of Error | \( \sqrt{\frac{1}{n-1}\sum_{i=1}^{n}((y_{\text{pred},i} - y_{\text{true},i}) - \text{ME})^2} \) | Estimates the magnitude of random noise around the systematic bias. |
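As a quick check on how the four metrics in Table 1 relate to one another, they can be computed together with NumPy. The function name `error_metrics` and the three sample values are illustrative only.

```python
import numpy as np

def error_metrics(y_pred, y_true):
    """ME, MAE, RMSE, and sample standard deviation of the signed error."""
    err = np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float)
    return {
        "ME": err.mean(),                    # signed average: systematic bias
        "MAE": np.abs(err).mean(),           # average magnitude of total error
        "RMSE": np.sqrt((err ** 2).mean()),  # penalizes large errors more strongly
        "STD": err.std(ddof=1),              # spread around the bias (n - 1 denominator)
    }

# Toy values: the signed errors are -1, 0, and -2.
metrics = error_metrics([1.0, 2.0, 3.0], [2.0, 2.0, 5.0])
print(metrics)
```

For these toy values the ME is -1 while the MAE is +1: the signed metric keeps the direction of the bias, and the absolute metric only reports its size.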

Table 2: Example Error Analysis for Band Gap Predictions Using Different DFT Functionals [10]

| DFT Functional (Fidelity) | Mean Error (ME) vs. Exp. (eV) | Mean Absolute Error (MAE) vs. Exp. (eV) | Primary Nature of Imperfection |
|---|---|---|---|
| PBE (LF) | -0.34 | ~0.4-0.6 (est.) | Strong systematic underestimation |
| HSE (HF) | -0.09 | ~0.2-0.3 (est.) | Minor systematic error |
| SCAN (LF) | -0.68 | ~0.7-0.9 (est.) | Severe systematic underestimation |
| GLLB (LF) | +0.65 | ~0.7-0.9 (est.) | Systematic overestimation |

Experimental Protocols for Error Assessment

This section provides a detailed, step-by-step methodology for characterizing systematic errors and random noise in multi-fidelity datasets, using computational materials science as the primary context.

Protocol: Assessment of Systematic Errors and Random Noise

1. Objective: To quantitatively determine the systematic bias and random noise component for a given low-fidelity data source relative to a high-fidelity reference.

2. Research Reagent Solutions & Materials:

Table 3: Essential Materials and Computational Tools for Error Characterization

| Item | Function/Description | Example Tools/Databases |
|---|---|---|
| High-Fidelity Reference Data | Serves as the "ground truth" for quantifying errors in lower-fidelity data. | Experimental datasets (e.g., from ICSD); High-level ab initio calculations (e.g., CCSD(T)). |
| Low-Fidelity Data | The data whose imperfections are to be characterized. | DFT data (e.g., from PBE functional); Data from coarse-grid or partially converged simulations. |
| Statistical Software | For calculating error metrics and performing regression analysis. | Python (with Pandas, NumPy, SciPy), R, MATLAB. |
| Data Visualization Tools | For generating scatter plots, residual plots, and histograms to visually inspect errors. | Matplotlib, Seaborn, Gnuplot. |

3. Procedure:

1. Data Curation and Alignment:

   - Identify the intersection of materials/compounds for which both high-fidelity (HF) and low-fidelity (LF) data are available. This forms the dataset `LF ∩ HF`.

   - Ensure the data is correctly paired and units are consistent.

2. Initial Visualization and Linear Correlation Analysis:

   - Generate a scatter plot of LF values (`P_LF`) against HF values (`P_HF`).

   - Perform a linear regression (`P_HF = a * P_LF + b`) on the `LF ∩ HF` data to model the simplest form of systematic relationship.

   - A strong linear correlation suggests that a significant portion of the discrepancy is a systematic bias that can be corrected.

3. Quantification of Systematic Error:

   - Calculate the Mean Error (ME) for the LF data relative to the HF reference using the formula in Table 1. The ME is a direct measure of the average systematic bias.

   - Optional Scaling: Apply the scaling relation found in Step 2 (`P_scaled = a * P_LF + b`) to the raw LF data. Recalculate the ME of the scaled data. An ME approaching zero indicates a successful linear correction of the systematic bias [10].

4. Quantification of Random Noise:

   - Calculate the residuals: `Residual_i = P_LF,i - P_HF,i` (or `P_scaled,i - P_HF,i` if scaling was applied).

   - Compute the standard deviation of these residuals. This metric estimates the magnitude of the random noise that cannot be explained by a simple linear systematic bias.

5. Categorical Error Analysis:

   - Partition the `LF ∩ HF` dataset into meaningful categories (e.g., for band gaps: metals, small-gap semiconductors, wide-gap semiconductors).

   - Calculate the ME and MAE for each category separately. This reveals if the systematic error is consistent across different material classes or if it is category-dependent [10].
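The regression, scaling, residual, and per-category steps of this procedure can be sketched on synthetic data as follows. Everything numerical here is made up for illustration: the "reference" values, the slope and offset used to generate the low-fidelity values, the noise level, and the 3 eV category threshold. The function names `characterize` and `by_category` are hypothetical.

```python
import numpy as np

def characterize(p_lf, p_hf):
    """Raw bias, linear scaling P_HF ≈ a * P_LF + b, and residual noise estimate."""
    p_lf, p_hf = np.asarray(p_lf, dtype=float), np.asarray(p_hf, dtype=float)
    a, b = np.polyfit(p_lf, p_hf, deg=1)      # least-squares slope and intercept
    residuals = (a * p_lf + b) - p_hf         # what the linear correction cannot explain
    return {
        "raw_ME": np.mean(p_lf - p_hf),       # systematic bias before scaling
        "a": a,
        "b": b,
        "scaled_ME": residuals.mean(),        # close to zero after a linear correction
        "noise_std": residuals.std(ddof=1),   # random-noise estimate
    }

def by_category(p_lf, p_hf, labels):
    """ME and MAE of the raw LF data within each category label."""
    err = np.asarray(p_lf, dtype=float) - np.asarray(p_hf, dtype=float)
    labels = np.asarray(labels)
    return {
        str(label): {"ME": err[labels == label].mean(),
                     "MAE": np.abs(err[labels == label]).mean()}
        for label in np.unique(labels)
    }

# Synthetic paired data: LF underestimates HF and carries random noise.
rng = np.random.default_rng(0)
p_hf = rng.uniform(0.0, 6.0, size=200)                       # made-up reference values (eV)
p_lf = (p_hf - 0.5) / 1.3 + rng.normal(0.0, 0.1, size=200)   # made-up LF values (eV)

report = characterize(p_lf, p_hf)
labels = np.where(p_hf < 3.0, "small-gap", "wide-gap")       # illustrative threshold
per_class = by_category(p_lf, p_hf, labels)
print(report)
print(per_class)
```

Because the synthetic bias grows with the reference value, the per-category ME differs between the two classes, which is the kind of category dependence the final step is meant to reveal.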

The workflow for this protocol is summarized in the following diagram.

Figure 2: Workflow for Systematic Error and Random Noise Assessment. This protocol provides a step-by-step guide for characterizing imperfections in low-fidelity data relative to a high-fidelity standard.

Protocol: Multi-Fidelity Learning via Iterative Denoising

1. Objective: To leverage both low- and high-fidelity data to train a superior machine learning model by iteratively "denoising" the lower-fidelity labels.

2. Procedure:

- Model Initialization: Train an initial ML model (`M1`) exclusively on the available high-fidelity data.

- Prediction and Temporary Label Generation: Use model `M1` to predict the properties of all materials in the low-fidelity dataset. These predictions (`P_M1`) serve as temporary, "denoised" target labels for the LF data. The underlying assumption is that `M1`'s predictions, while trained on limited data, are less biased than the raw LF values.

- Expanded Model Training: Train a second ML model (`M2`) on a combined dataset that includes both the original HF data and the LF data with their newly assigned `P_M1` target labels.

- Iteration to Convergence: The process can be repeated: use model `M2` to generate new temporary labels for the LF data, then train a model `M3` on the HF data and the relabeled LF data. This continues until the performance on a validation set stabilizes [10] [11].

This approach has been shown to provide significant improvement over models trained only on high-fidelity data or models trained on naively combined multi-fidelity data [10].

The Scientist's Toolkit: Multi-Fidelity Data & Correction Strategies

To effectively work with multi-fidelity data, researchers should be familiar with the common sources of such data and the modeling strategies designed to exploit them.

Table 4: Multi-Fidelity Data Sources and Learning Strategies in Materials Science

| Category | Item / Method | Function / Key Principle |
|---|---|---|
| Common Sources of Multi-Fidelity Data | Different Algorithms (e.g., PBE vs. HSE DFT) | Provides data with different levels of physical approximation, leading to systematic accuracy differences [10] [11]. |
| | Different Hyperparameters (e.g., k-points, cut-off energy) | Using the same method with different computational settings generates data of varying cost and convergence quality [11]. |
| | Experimental vs. Computational Data | The classic high-cost/high-accuracy (experimental) vs. low-cost/lower-accuracy (computational) fidelity pairing [10]. |
| Multi-Fidelity Modeling Strategies | Iterative Denoising | Treats LF data as noisy labels and iteratively refines them using a model trained on HF data [10] [11]. |
| | Multi-Fidelity Surrogate Models (MFSM) | Builds a single surrogate model (e.g., Co-Kriging) that explicitly fuses data from multiple fidelities [19] [4] [20]. |
| | Multi-Fidelity Hierarchical Models (MFHM) | Uses different fidelities hierarchically (e.g., for adaptive sampling) without building an explicit fused surrogate architecture [4]. |
| | Scalar Correction (Additive/Multiplicative) | Applies a simple linear or multiplicative correction to LF data to align it with HF trends [10] [19]. |
| | Comprehensive Correction | A strategy that may combine elements of additive, multiplicative, and other corrections, often proving highly effective on noisy datasets [11]. |

The systematic characterization of errors across fidelity levels is not merely an academic exercise but a foundational step for efficient and accurate computational materials design. By rigorously applying the protocols outlined here—quantifying systematic biases with metrics like ME, estimating random noise via standard deviation, and employing advanced multi-fidelity learning strategies like iterative denoising—researchers can transform the challenge of imperfect, multi-source data into an opportunity. This structured approach to understanding and mitigating data imperfections ensures that maximum knowledge is extracted from every available data point, ultimately accelerating the discovery and optimization of new materials.

Multi-Fidelity Methodologies and Implementation Frameworks for Practical Applications

Multi-fidelity (MF) surrogate modeling has emerged as a crucial methodology in computational materials design, enabling researchers to make a strategic trade-off between simulation accuracy and computational cost. These models integrate data from multiple sources of varying fidelity—from fast, approximate calculations to slow, high-accuracy simulations—to construct predictive models that achieve high accuracy at a fraction of the computational expense of relying solely on high-fidelity data [21] [6]. The core premise underlying multi-fidelity approaches is that while low-fidelity (LF) models may be less accurate, they often capture essential trends and patterns that can be systematically corrected using limited high-fidelity (HF) data [22].

For materials science researchers facing computationally expensive design challenges, multi-fidelity methods offer a pathway to accelerate discovery and optimization cycles. Traditional approaches often rely on computational funnels that apply increasingly accurate methods to screen candidate materials, but these require upfront knowledge of method accuracy and fixed resource allocation [6]. In contrast, modern multi-fidelity surrogate models dynamically learn relationships between different data sources, adapting resource allocation based on evolving understanding of the design space [6].

This section examines three principal methodological approaches for multi-fidelity surrogate modeling: Co-Kriging, which extends Gaussian process regression to hierarchical data; Stochastic Radial Basis Functions (SRBF), which combine basis function approximations with noise handling capabilities; and Neural Network approaches, particularly deep learning architectures that can capture complex, nonlinear relationships between fidelities. Each method offers distinct advantages for specific scenarios in computational materials design, from navigating non-hierarchical data structures to handling high-dimensional parameter spaces and noisy simulations.

Methodological Approaches

Co-Kriging for Multi-Fidelity Modeling

Co-Kriging stands as one of the most established methodologies for multi-fidelity surrogate modeling, extending the Kriging approach to multiple data fidelities through an autoregressive framework. The foundational Kennedy-O'Hagan (KOH) autoregressive model assumes that high-fidelity responses can be modeled as a scaled version of low-fidelity responses plus a discrepancy term [21] [23]. This relationship is expressed as:

( y_H(\mathbf{x}) = \rho(\mathbf{x}) \, y_L(\mathbf{x}) + \delta(\mathbf{x}) )

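where ( \rho(\mathbf{x}) ) is the scale factor correlating the fidelities and ( \delta(\mathbf{x}) ) is the discrepancy function. In the original KOH formulation, ( \rho ) is commonly taken as a constant, while the low-fidelity response and the discrepancy are modeled as Gaussian processes [21].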
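The autoregressive structure can be illustrated with a minimal two-step sketch. It assumes a constant scale factor, fixed kernel hyperparameters, and toy analytic functions standing in for the LF and HF simulators; `f_high`, `f_low`, `gp_mean`, and the length scales are illustrative choices, not taken from the cited works. A production Co-Kriging model would estimate the hyperparameters and the scale factor jointly and would also return predictive variances.

```python
import numpy as np

def rbf_kernel(a, b, length_scale):
    """Squared-exponential kernel between two 1-D arrays of inputs."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)

def gp_mean(x_train, y_train, x_query, length_scale, jitter=1e-10):
    """Posterior mean of a zero-mean GP with fixed hyperparameters."""
    K = rbf_kernel(x_train, x_train, length_scale) + jitter * np.eye(len(x_train))
    weights = np.linalg.solve(K, y_train)
    return rbf_kernel(x_query, x_train, length_scale) @ weights

# Toy stand-ins for an expensive and a cheap simulator (illustrative only).
def f_high(x):
    return (6 * x - 2) ** 2 * np.sin(12 * x - 4)

def f_low(x):
    return 0.5 * f_high(x) + 10 * (x - 0.5) - 5

x_lf = np.linspace(0.0, 1.0, 11)         # many cheap LF samples
x_hf = np.array([0.0, 0.4, 0.6, 1.0])    # few expensive HF samples
y_lf, y_hf = f_low(x_lf), f_high(x_hf)

# Step 1: LF surrogate evaluated at the HF sites.
lf_at_hf = gp_mean(x_lf, y_lf, x_hf, length_scale=0.15)

# Step 2: constant scale factor rho (plus an offset) by least squares.
design = np.column_stack([lf_at_hf, np.ones_like(lf_at_hf)])
rho, offset = np.linalg.lstsq(design, y_hf, rcond=None)[0]

# Step 3: GP on the remaining discrepancy delta(x) at the HF sites.
delta_hf = y_hf - rho * lf_at_hf - offset

def predict_high(x):
    """Multi-fidelity mean: rho * LF surrogate + offset + discrepancy GP."""
    lf = gp_mean(x_lf, y_lf, x, length_scale=0.15)
    delta = gp_mean(x_hf, delta_hf, x, length_scale=0.5)
    return rho * lf + offset + delta
```

By construction the combined prediction reproduces the HF observations at the HF sites, while the LF surrogate supplies the shape of the response between them.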

A significant advancement in Co-Kriging addresses the challenge of non-hierarchical low-fidelity models, where multiple LF sources exist without clear fidelity ranking. Zhang et al. developed the NHLF-Co-Kriging method, which scales multiple LF models with different factors and ensembles them [21]. The discrepancy between the HF model and this ensemble is then modeled with a Gaussian process. To ensure the discrepancy function remains tractable, an optimization problem minimizes the second derivative of the discrepancy GP's predictions, resulting in more reasonable scale factor selection and improved accuracy under limited computational budgets [21].

For researchers implementing Co-Kriging, critical considerations include:

- Data hierarchy: Traditional Co-Kriging requires clearly hierarchical data, while newer variants like NHLF-Co-Kriging handle non-hierarchical scenarios [21].

- Computational complexity: Co-Kriging scales cubically with the total number of data points, which can become prohibitive for large datasets [6].

- Implementation framework: The recursive Co-Kriging formulation by Le Gratiet enables efficient cross-validation, while nonlinear extensions by Perdikaris capture more complex inter-fidelity relationships [21].

Table 1: Co-Kriging Method Variants and Their Applications

| Method Variant | Key Characteristics | Reported Applications | Advantages |
|---|---|---|---|
| Kennedy-O'Hagan (KOH) | Standard autoregressive formulation | Aerospace design, marine engineering | Strong theoretical foundation |
| Non-Hierarchical Co-Kriging (NHLF-Co-Kriging) | Handles multiple non-hierarchical LF models | Cases with varying fidelity levels across design space | Flexible scale factor estimation |
| Recursive Co-Kriging | Fast cross-validation procedure | General engineering optimization | Improved computational efficiency |
| Nonlinear Co-Kriging | Captures nonlinear fidelity relationships | Complex physical systems | Enhanced representation capability |

Stochastic Radial Basis Functions (SRBF)

Stochastic Radial Basis Functions (SRBF) provide an alternative multi-fidelity modeling approach that combines classical radial basis function approximation with statistical treatment of noisy evaluations. This method is particularly valuable for engineering design problems where computational simulations exhibit inherent numerical noise due to discretization errors, convergence tolerances, or other numerical approximations [22].

The SRBF approach formulates multi-fidelity prediction through hierarchical superposition. For ( N ) fidelity levels (with ( l=1 ) as highest fidelity), the prediction is constructed as:

( \hat{f}(\mathbf{x}) = \tilde{f}_N(\mathbf{x}) + \sum_{l=1}^{N-1} \tilde{\varepsilon}_l(\mathbf{x}) )

where ( \tilde{f}_N(\mathbf{x}) ) is the surrogate of the lowest-fidelity model, and ( \tilde{\varepsilon}_l(\mathbf{x}) ) are surrogate models of the errors between consecutive fidelity levels [22]. This recursive correction framework allows progressive refinement from the lowest to the highest fidelity.
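The superposition can be sketched in a few lines. The example below is a simplified illustration under stated assumptions: it uses a plain linear-kernel RBF interpolant in one dimension (the stochastic treatment and noise regression of SRBF are omitted), it computes each error against the current lower-level prediction (one possible convention), and the three simulators `f1`, `f2`, `f3` are hypothetical. Index 0 denotes the highest fidelity here, corresponding to level 1 in the text.

```python
import numpy as np

def fit_rbf(x, y):
    """Linear-kernel RBF interpolant s(q) = sum_j w_j * |q - x_j| for 1-D inputs."""
    x = np.asarray(x, dtype=float)
    distances = np.abs(x[:, None] - x[None, :])
    weights = np.linalg.solve(distances, np.asarray(y, dtype=float))
    return lambda q: np.abs(np.atleast_1d(q)[:, None] - x[None, :]) @ weights

def build_mf_surrogate(simulators, samples):
    """simulators[0] is the highest fidelity, simulators[-1] the lowest.

    samples[l] holds the training inputs evaluated at level l.
    """
    lowest_x = np.asarray(samples[-1], dtype=float)
    parts = [fit_rbf(lowest_x, simulators[-1](lowest_x))]   # lowest-fidelity surrogate

    def predict(q):
        # Sum of the lowest-fidelity surrogate and all error surrogates built so far.
        return sum(part(q) for part in parts)

    # Walk upward from the second-lowest to the highest fidelity level.
    for level in range(len(simulators) - 2, -1, -1):
        x = np.asarray(samples[level], dtype=float)
        error = simulators[level](x) - predict(x)   # error vs. current prediction
        parts.append(fit_rbf(x, error))
    return predict

# Three hypothetical fidelity levels of the same 1-D response.
def f1(x):
    return np.sin(2 * np.pi * x)        # highest fidelity

def f2(x):
    return f1(x) + 0.3 * x              # intermediate fidelity

def f3(x):
    return 0.8 * f1(x) + 0.5            # lowest fidelity

samples = [np.array([0.1, 0.5, 0.9]), np.linspace(0, 1, 5), np.linspace(0, 1, 9)]
predict = build_mf_surrogate([f1, f2, f3], samples)
```

Because each error surrogate interpolates the residual left by the levels below it, the final prediction matches the highest-fidelity data at its training points.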

A key advantage of SRBF methods is their integration with active learning strategies. In the approach presented by Serani et al., the method adaptively queries new training data by selecting both design points and fidelity levels based on a benefit-cost ratio [22]. The selection uses lower confidence bounding (LCB), which balances performance prediction and associated uncertainty to prioritize promising design regions while accounting for evaluation costs at different fidelities [22].
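A rough sketch of this kind of selection rule follows. The exact criterion used in [22] may differ; here the design point minimizes a lower confidence bound (prediction minus a multiple of the total uncertainty), and the fidelity is the one with the largest uncertainty-to-cost ratio at that point. The function name, the per-level uncertainty callables, and the weight `alpha` are all assumptions made for illustration.

```python
def select_next_sample(candidates, predict, uncertainties, costs, alpha=1.0):
    """Pick the next design point and the fidelity level to evaluate it at.

    uncertainties[l](x) is the prediction uncertainty attributed to level l
    and costs[l] its evaluation cost (index 0 = highest fidelity).
    """
    def lcb(x):
        total = sum(u(x) for u in uncertainties)
        return predict(x) - alpha * total   # minimization: lower is more promising

    x_next = min(candidates, key=lcb)
    # Benefit-cost ratio: uncertainty removed per unit of evaluation cost.
    ratios = [u(x_next) / c for u, c in zip(uncertainties, costs)]
    level = max(range(len(costs)), key=ratios.__getitem__)
    return x_next, level

# Toy usage: two fidelity levels, the LF level is more uncertain but 10x cheaper.
x_next, level = select_next_sample(
    candidates=[0.0, 0.5, 1.0],
    predict=lambda x: (x - 0.5) ** 2,
    uncertainties=[lambda x: 0.1, lambda x: 0.3],
    costs=[1.0, 0.1],
)
```

In this toy case the rule selects the candidate at 0.5 and the cheaper low-fidelity level (index 1), since that level offers the most uncertainty reduction per unit cost.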

Implementation considerations for SRBF include:

- Noise handling: SRBF incorporates least squares regression and in-the-loop optimization of hyperparameters to address noisy training data [22].

- Fidelity management: The method generalizes to arbitrary numbers of hierarchical fidelity levels, making it suitable for multi-grid resolution approaches common in computational fluid dynamics [22].

- Computational efficiency: By strategically allocating evaluations across fidelities, SRBF achieves comparable accuracy to high-fidelity-only models with significantly reduced computational burden [22].

Neural Network Approaches

Neural network architectures have emerged as powerful frameworks for multi-fidelity surrogate modeling, particularly for problems exhibiting strong nonlinearities, high dimensionality, or discontinuous responses. Unlike methods based on Gaussian processes, neural networks can capture complex mappings between fidelities without restrictive prior assumptions about their relationships [23].

The Multi-Fidelity Deep Neural Network (MFDNN) represents a significant advancement in this domain. In aerodynamic shape optimization, MFDNN models correlate configuration parameters with aerodynamic performance by "blending different fidelity information and adaptively learning their linear or nonlinear correlation without any prior assumption" [23]. The architecture typically employs a composite structure where lower-fidelity predictions inform higher-fidelity approximations through specialized connections or latent space representations.

For interatomic potential development in materials science, the M3GNet architecture incorporates fidelity information through a global state feature. The fidelity level (e.g., PBE vs. SCAN functional) is encoded as an integer and embedded as a vector input to the graph neural network [12]. This embedding automatically learns the complex functional relationship between different fidelities and their associated potential energy surfaces during training [12].
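The idea of a fidelity embedding can be illustrated independently of any particular library. The sketch below is not the M3GNet implementation or its API; `FIDELITY_INDEX`, `embedding_table`, and `attach_fidelity_state` are hypothetical names, and the embedding table is random rather than trained. It only shows how an integer fidelity code becomes a vector that accompanies the per-atom features of a structure.

```python
import numpy as np

rng = np.random.default_rng(0)
FIDELITY_INDEX = {"PBE": 0, "SCAN": 1}   # integer code per functional
EMBED_DIM = 4

# In a real model this table is a trainable parameter; here it is random.
embedding_table = rng.normal(size=(len(FIDELITY_INDEX), EMBED_DIM))

def attach_fidelity_state(atom_features, fidelity):
    """Append the fidelity embedding to every atom's feature vector."""
    state = embedding_table[FIDELITY_INDEX[fidelity]]            # shape (EMBED_DIM,)
    tiled = np.broadcast_to(state, (atom_features.shape[0], EMBED_DIM))
    return np.concatenate([atom_features, tiled], axis=1)

# The same structure (3 atoms, 5 features each) labeled with two fidelities.
atom_features = rng.normal(size=(3, 5))
pbe_input = attach_fidelity_state(atom_features, "PBE")
scan_input = attach_fidelity_state(atom_features, "SCAN")
```

The two inputs share the structural features and differ only in the appended state vector, which is what lets a single set of network weights serve both fidelities.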

Critical implementation aspects of neural network approaches include:

- Data efficiency: Multi-fidelity neural networks can achieve accuracy comparable to single-fidelity models trained on 8× more high-fidelity data [12].

- Architecture flexibility: Networks can be designed with various fusion strategies, including transfer-learning stacks, linear-nonlinear decompositions, or hybrid ensembles [24].

- Training strategies: Curriculum learning approaches that progress from coarse-resolution tasks to high-accuracy ones stabilize generalization and reduce HF query requirements [24].

Table 2: Neural Network Architectures for Multi-Fidelity Modeling

| Architecture | Fidelity Integration Method | Best-Suited Applications | Notable Capabilities |
|---|---|---|---|
| MFDNN | Composite network structure | Aerodynamic shape optimization, High-dimensional problems | Nonlinear correlation learning |
| M3GNet | Global state feature embedding | Interatomic potentials, Materials property prediction | Handling arbitrary chemistries |
| Cascaded Ensemble | Sequential fidelity refinement | Structural dynamics, Composite materials | Uncertainty quantification |
| Transfer-Learning Stacks | Progressive fine-tuning | Limited high-fidelity data scenarios | Leveraging pre-trained models |

Quantitative Comparison of Methods

Understanding the relative performance characteristics of different multi-fidelity approaches is essential for selecting appropriate methodologies for specific materials design challenges. The following table synthesizes quantitative findings from the literature regarding the accuracy and efficiency improvements afforded by various techniques.

Table 3: Performance Comparison of Multi-Fidelity Surrogate Modeling Approaches

| Method | Reported Accuracy Improvement | Computational Savings | Key Application Results |
|---|---|---|---|
| NHLF-Co-Kriging | More reasonable scale factor selection | Not explicitly quantified | Improved prediction accuracy under limited computational budget [21] |
| SRBF with Active Learning | Better prediction of design performance | Significant reduction in computational effort | 43% cost savings in gas turbine optimization [21]; Outperformed HF-only models under limited budget [22] |
| MFDNN | More accurate than Co-Kriging for nonlinear problems | Remarkable improvement in optimization efficiency | Successful aerodynamic optimization of RAE2822 airfoil and DLR-F4 wing-body [23] |
| Multi-Fidelity Bayesian Optimization | 22-45% MAE improvement in bandgap prediction | 3× reduction in optimization cost on average | Accelerated materials discovery across three design problems [6] |
| M3GNet with Fidelity Embedding | Comparable to single-fidelity with 8× less data | Requires only 10% high-fidelity data | Accurate silicon and water potential development [12] |

Application Notes for Materials Design

Multi-Fidelity Optimization Framework

The integration of multi-fidelity surrogate models into optimization frameworks presents distinctive advantages for computational materials design. A robust multi-fidelity optimization pipeline typically incorporates several key components: adaptive sampling strategies that balance exploration and exploitation, infilling methods that update databases across fidelities, and model management techniques that leverage inexpensive low-fidelity evaluations while preserving high-fidelity accuracy [23].

For aerodynamic shape optimization, MFDNN-based frameworks employ dual infilling strategies to enhance optimization effectiveness. The high-fidelity infilling strategy adds the current optimal solution from the surrogate model to the HF database to improve local accuracy, while the low-fidelity infilling strategy generates solutions distributed uniformly throughout the design space to avoid local optima and explore unknown regions [23]. This balanced approach enables efficient convergence to globally optimal designs.
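One iteration of such a dual infilling scheme might look like the following sketch. It makes several simplifying assumptions: a random search stands in for the surrogate optimizer, uniform random sampling stands in for whatever space-filling scheme is used in [23], and `dual_infill` and its arguments are hypothetical names.

```python
import random

def dual_infill(surrogate, bounds, hf_db, lf_db, hf_sim, lf_sim,
                n_lf=5, n_search=200, rng=None):
    """Add one HF sample at the surrogate optimum and n_lf uniform LF samples."""
    rng = rng or random.Random(0)

    def sample():
        return tuple(rng.uniform(lo, hi) for lo, hi in bounds)

    # HF infill: evaluate the current surrogate optimum with the expensive model.
    x_best = min((sample() for _ in range(n_search)), key=surrogate)
    hf_db.append((x_best, hf_sim(x_best)))

    # LF infill: spread cheap evaluations over the whole design space.
    for _ in range(n_lf):
        x = sample()
        lf_db.append((x, lf_sim(x)))
    return x_best

# Toy usage with hypothetical 2-D models.
bounds = [(0.0, 1.0), (-1.0, 1.0)]
hf_db, lf_db = [], []
x_best = dual_infill(
    surrogate=lambda x: (x[0] - 0.3) ** 2 + (x[1] + 0.2) ** 2,
    bounds=bounds, hf_db=hf_db, lf_db=lf_db,
    hf_sim=lambda x: (x[0] - 0.3) ** 2 + (x[1] + 0.2) ** 2,
    lf_sim=lambda x: (x[0] - 0.25) ** 2 + x[1] ** 2,
)
```

After each call the surrogate would be retrained on both databases before the next iteration.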

In Bayesian optimization contexts, the Targeted Variance Reduction (TVR) algorithm provides a systematic approach for multi-fidelity candidate selection. TVR first computes a standard acquisition function (e.g., Expected Improvement) on target-fidelity samples and identifies the point with the greatest acquisition score. It then selects the combination of input sample and fidelity that yields the largest reduction in the model's predictive variance at that point per unit cost [6]. This strategy dynamically balances information gain with evaluation expense throughout the optimization process.
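For a Gaussian process model, the variance reduction at a target point from one additional observation has a closed form: the squared covariance between target and candidate divided by the candidate's variance plus noise. The sketch below applies that identity to a TVR-style selection. It is a simplified reading of the algorithm, not the reference implementation from [6]; `tvr_select`, the toy covariance function, and the cost values are assumptions.

```python
import math

def tvr_select(candidates, fidelities, costs, acquisition, cov,
               target_fidelity, noise=1e-6):
    """Return the (x, fidelity) pair with the best variance reduction per cost."""
    # 1) Most promising point at the target fidelity.
    x_star = max(candidates, key=acquisition)
    target = (x_star, target_fidelity)

    # 2) Variance reduction at the target from observing each candidate pair.
    def gain_per_cost(pair):
        reduction = cov(target, pair) ** 2 / (cov(pair, pair) + noise)
        return reduction / costs[pair[1]]

    pairs = [(x, f) for x in candidates for f in fidelities]
    return max(pairs, key=gain_per_cost)

# Toy covariance: squared-exponential in x times a fixed inter-fidelity correlation.
CORRELATION = {("hf", "hf"): 1.0, ("lf", "lf"): 1.0, ("hf", "lf"): 0.9, ("lf", "hf"): 0.9}

def toy_cov(a, b):
    (xa, fa), (xb, fb) = a, b
    return CORRELATION[(fa, fb)] * math.exp(-0.5 * ((xa - xb) / 0.2) ** 2)

choice = tvr_select(
    candidates=[0.0, 0.25, 0.5, 0.75, 1.0],
    fidelities=["hf", "lf"],
    costs={"hf": 10.0, "lf": 1.0},
    acquisition=lambda x: -(x - 0.5) ** 2,
    cov=toy_cov,
    target_fidelity="hf",
)
```

With a tenfold cost difference and a strong inter-fidelity correlation, the cheap evaluation at the most promising point wins; with equal costs the same rule would choose the high-fidelity evaluation instead.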

Handling Non-Hierarchical Fidelity Data

A common challenge in practical materials design applications is the presence of multiple low-fidelity models without clear hierarchical relationships. These non-hierarchical scenarios arise when different simplification methods yield LF models with varying correlation to the HF model across the design space [21]. For example, in composite materials design, different physical approximations or numerical discretizations may produce LF models that each capture certain aspects of the high-fidelity response better in specific regions of the parameter space.

The NHLF-Co-Kriging method addresses this challenge by scaling multiple LF models with different factors and assembling them into an ensemble, with a separate discrepancy model correcting the ensemble's deviation from HF data [21]. The optimization process for determining scale factors minimizes the second derivative of the discrepancy function's predictions, promoting smoother corrections that are easier to model accurately [21].
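The smoothness criterion can be illustrated with a deliberately simplified stand-in. In [21] the optimization acts on the second derivative of the discrepancy GP's predictions; the sketch below instead penalizes second finite differences of the raw discrepancy at evenly spaced 1-D HF sites, which turns the scale factor search into a linear least-squares problem. The function name and the toy LF models are hypothetical.

```python
import numpy as np

def fit_scale_factors(y_hf, y_lf_matrix):
    """Scale factors that make the discrepancy as smooth as possible.

    y_hf: HF responses at n sorted, evenly spaced sites, shape (n,).
    y_lf_matrix: responses of m LF models at the same sites, shape (n, m).
    """
    n = len(y_hf)
    second_diff = np.diff(np.eye(n), n=2, axis=0)   # (n-2, n) finite-difference operator
    # Minimize || D2 (y_hf - Y_lf @ rho) ||^2 over rho.
    rho, *_ = np.linalg.lstsq(second_diff @ y_lf_matrix, second_diff @ y_hf, rcond=None)
    discrepancy = y_hf - y_lf_matrix @ rho
    return rho, discrepancy

# Toy check: the HF response is a scaled mix of two LF models plus a linear trend.
x = np.linspace(0.0, 1.0, 21)
lf_models = np.column_stack([np.sin(2 * np.pi * x), x ** 2])
y_hf = 1.5 * lf_models[:, 0] + 0.5 * lf_models[:, 1] + (2.0 + 3.0 * x)
rho, discrepancy = fit_scale_factors(y_hf, lf_models)
```

Because a linear trend has zero second difference, the fit recovers the two scale factors and leaves a perfectly smooth discrepancy, which would then be straightforward for a Gaussian process to model.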