Machine Learning vs Heuristics for Material Synthesizability: A Data-Driven Guide for Researchers

Abstract

Accelerating the discovery of novel functional materials and drug candidates is paramount, yet the practical challenge of synthesizability remains a major bottleneck. This article provides a comprehensive analysis for researchers and drug development professionals, contrasting traditional heuristic methods with emerging machine learning (ML) approaches for predicting and ensuring synthesizability. We explore the foundational principles of both paradigms, detail cutting-edge ML frameworks such as CSLLM, which reports 98.6% synthesizability-classification accuracy, and SynFormer, and examine practical strategies for optimizing model performance and integrating in-house resource constraints. Through comparative validation of benchmarks and success rates, we show how data-driven synthesizability prediction is narrowing the gap between computational design and experimental realization, paving the way for more efficient and successful discovery pipelines in biomedicine and materials science.

Defining the Synthesizability Challenge: From Chemical Intuition to Computational Prediction

The Critical Gap Between Thermodynamic Stability and Experimental Synthesizability

Computational materials design has been transformed by data-driven strategies and high-throughput screening, which enable the prediction of novel compounds with targeted functionalities. Generative artificial intelligence now facilitates exploration across chemical spaces comprising millions of known and hypothetical materials. However, this abundance of computational candidates presents a fundamental challenge: most theoretically predicted materials identified as thermodynamically stable are not experimentally synthesizable [1]. This gap between computational prediction and experimental realization is a significant bottleneck in materials discovery pipelines across diverse fields, including energy storage, catalysis, electronics, and drug development.

The intricate nature of materials synthesis introduces complex factors beyond thermodynamic equilibrium, often leading to cost-inefficient failures in materials design [2]. While thermodynamic stability—typically assessed through density functional theory (DFT) calculations of formation energy or energy above the convex hull—remains a valuable initial filter, it proves insufficient as a standalone predictor of synthesizability. Numerous structures with favorable formation energies have never been synthesized, while various metastable structures with less favorable formation energies are routinely synthesized and utilized [3]. This paradox highlights the multifaceted nature of synthesizability, which encompasses kinetic stabilization, precursor availability, reaction pathway complexity, and evolving synthetic methodologies.

This whitepaper examines the limitations of thermodynamic stability as a predictor of experimental synthesizability and explores emerging computational strategies to bridge this divide. As part of a broader comparison of machine learning and heuristic approaches, we provide researchers with a technical guide to current methodologies, quantitative performance comparisons, experimental protocols, and practical toolkits for synthesizability prediction in materials design workflows.

Quantitative Comparison of Synthesizability Prediction Methods

The table below summarizes the performance characteristics of major synthesizability prediction approaches, highlighting the evolving landscape from traditional heuristics to advanced machine learning models.

Table 1: Performance Comparison of Synthesizability Prediction Methods

| Method | Basis | Key Metrics | Advantages | Limitations |
|---|---|---|---|---|
| Formation Energy/Energy Above Hull [4] [3] | DFT-calculated thermodynamic stability | ~50% of synthesized materials captured [4] | Strong physical basis; widely available | Misses kinetically stabilized phases; poor precision (7× lower than SynthNN) [4] |
| Charge Balancing [4] | Heuristic based on common oxidation states | 37% of known compounds charge-balanced [4] | Computationally inexpensive; chemically intuitive | Inflexible; cannot capture metallic or covalent bonding, and even among highly ionic binary cesium compounds only 23% are charge-balanced [4] |
| SynthNN [4] | Deep learning on known compositions | 7× higher precision than DFT; 1.5× higher precision than the best human expert [4] | Learns chemical principles from data; composition-only input | Requires representative training data; black-box nature |
| Semi-Supervised Learning (PU Learning) [2] | Positive-unlabeled learning on stoichiometries | 83.4% recall; 83.6% precision [2] | Handles unlabeled data effectively | Complex training procedure |
| CSLLM Framework [3] | Fine-tuned large language models on crystal structures | 98.6% accuracy; surpasses thermodynamic (74.1%) and kinetic (82.2%) methods [3] | Exceptional generalization; predicts methods and precursors | Requires structure input; computational intensity |
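The charge-balancing heuristic in the table can be stated in a few lines of code. The following is a minimal sketch with two assumptions. First, `COMMON_OXIDATION_STATES` is a small, hypothetical hand-written table, not the one used in [4]. Second, every atom of a given element is assumed to take the same oxidation state. That second assumption also shows why the rule is inflexible: the mixed-valence oxide Fe₃O₄ fails the check.

```python
from itertools import product

# Hypothetical, abbreviated table of common oxidation states (illustration only;
# production screens use a much fuller table).
COMMON_OXIDATION_STATES = {
    "Li": (1,), "Na": (1,), "Cs": (1,), "Sr": (2,), "Ba": (2,),
    "Ti": (2, 3, 4), "Fe": (2, 3), "Co": (2, 3, 4), "V": (2, 3, 4, 5),
    "Cu": (1, 2), "O": (-2,), "S": (-2,), "Cl": (-1,), "Sb": (-3, 3, 5),
}


def is_charge_balanced(composition: dict[str, int]) -> bool:
    """Return True if some assignment of common oxidation states sums to zero.

    Simplification: all atoms of one element share a single oxidation state,
    so mixed-valence compounds such as Fe3O4 are reported as unbalanced.
    Raises KeyError for elements missing from the table.
    """
    elements = list(composition)
    choices = [COMMON_OXIDATION_STATES[el] for el in elements]
    # Try every combination of per-element oxidation states.
    for states in product(*choices):
        total = sum(q * composition[el] for q, el in zip(states, elements))
        if total == 0:
            return True
    return False
```

For example, `is_charge_balanced({"Sr": 1, "Ti": 1, "O": 3})` returns `True` (Ti⁴⁺). Under the single-state assumption, `is_charge_balanced({"Fe": 3, "O": 4})` returns `False`.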

Experimental Protocols for Synthesizability Prediction

Deep Learning Composition-Based Classification (SynthNN)

Objective: To predict synthesizability of inorganic chemical formulas without structural information using deep learning [4].

Materials and Data Preparation:

- Positive Samples: Extract known synthesized crystalline inorganic materials from Inorganic Crystal Structure Database (ICSD). Ensure data cleaning to remove duplicates and disordered structures [4].

- Negative Samples: Generate artificial unsynthesized materials through combinatorial composition generation. Acknowledge that some may be synthesizable but not yet reported (unlabeled data) [4].

- Data Representation: Utilize atom2vec representation, which learns optimal chemical formula representations directly from data distribution rather than relying on predefined descriptors [4].
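Generating the artificial (unlabeled) formulas and fixing their ratio to known materials (the `N_synth` hyperparameter mentioned below) can be sketched as follows. This sketch assumes uniform random sampling of element subsets and small integer stoichiometries. The helper names and limits (`generate_unlabeled`, `max_elements`, `max_count`) are hypothetical, and SynthNN's actual generation procedure may differ. The atom2vec featurization is not shown.

```python
import math
import random
from functools import reduce


def canonical(composition: dict[str, int]) -> tuple[tuple[str, int], ...]:
    """Reduce stoichiometry by its GCD and sort, so Li4O2 and Li2O compare equal."""
    g = reduce(math.gcd, composition.values())
    return tuple(sorted((el, n // g) for el, n in composition.items()))


def generate_unlabeled(known, elements, n_synth, max_elements=3, max_count=6, seed=0):
    """Sample n_synth * len(known) artificial formulas absent from `known`.

    The results are *unlabeled*, not confirmed negatives: some may be
    synthesizable but not yet reported.
    """
    rng = random.Random(seed)
    known_keys = {canonical(c) for c in known}
    target = n_synth * len(known)
    seen: set = set()
    samples = []
    while len(samples) < target:
        k = rng.randint(2, max_elements)            # number of distinct elements
        chosen = rng.sample(elements, k)
        comp = {el: rng.randint(1, max_count) for el in chosen}
        key = canonical(comp)
        if key in known_keys or key in seen:         # skip known or duplicate formulas
            continue
        seen.add(key)
        samples.append(dict(key))
    return samples
```

Reducing each formula to a canonical form before comparing it against the known set prevents trivially rescaled known materials (e.g., Li₄O₂ vs Li₂O) from leaking into the unlabeled pool.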

Model Architecture and Training:

- Implement a deep neural network with atom embedding matrices optimized alongside other parameters.

- Employ positive-unlabeled (PU) learning framework to handle incomplete labeling of negative examples.

- Use probabilistic reweighting of unlabeled examples according to likelihood of synthesizability.

- Set ratio of artificial to synthesized formulas (N_synth) as a hyperparameter.

- Validate using temporal splitting to assess predictive capability for novel discoveries [4].
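The protocol does not spell out how unlabeled examples are reweighted by their likelihood of being synthesizable. One standard PU-learning scheme is the Elkan-Noto weighting, sketched below. The source does not confirm that SynthNN uses exactly this formula. The sketch works as follows:

1. A classifier `g` is trained to separate labeled positives from unlabeled data.
2. `c = P(labeled | positive)` is estimated as the mean `g` score on held-out positives.
3. Each unlabeled example enters training twice: once as a positive with weight `w`, and once as a negative with weight `1 - w`.

```python
def estimate_label_frequency(g_scores_on_positives):
    """c = P(labeled | positive), estimated as the mean score on held-out positives."""
    return sum(g_scores_on_positives) / len(g_scores_on_positives)


def positive_weight(g_x, c):
    """Elkan-Noto estimate of P(positive | x, unlabeled), clipped to [0, 1]."""
    if g_x >= 1.0:
        return 1.0
    w = ((1.0 - c) / c) * (g_x / (1.0 - g_x))
    return min(max(w, 0.0), 1.0)


def weighted_training_set(labeled, unlabeled, g, c):
    """Return (example, label, weight) triples for a weighted classifier."""
    data = [(x, 1, 1.0) for x in labeled]          # known materials: weight 1
    for x in unlabeled:
        w = positive_weight(g(x), c)
        data.append((x, 1, w))                     # "might be synthesizable" copy
        data.append((x, 0, 1.0 - w))               # "probably not" copy
    return data
```

The weights can be passed to any learner that accepts per-sample weights. A neural network such as SynthNN would multiply its per-example loss by them.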

Validation:

- Conduct head-to-head comparison with human experts on material discovery tasks.

- Evaluate precision and computational efficiency compared to traditional methods [4].

Semi-Supervised Learning for Stoichiometry Synthesizability

Objective: To predict likelihood of synthesizing inorganic materials from elemental stoichiometries using positive-unlabeled learning [2].

Data Curation:

- Positive Data: Compile synthesized inorganic compositions from ICSD and literature sources.

- Unlabeled Data: Generate hypothetical compositions through element substitution and combinatorial approaches across quaternary systems (e.g., CuO-Fe₂O₃-V₂O₅) [2].

Model Implementation:

- Train semi-supervised classifier using positive and unlabeled examples.

- Apply model to construct continuous synthesizability phase maps for compositional systems.

- Use model to prioritize synthetic targets for experimental exploration [2].
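Building a continuous phase map requires two steps: enumerating mixtures of the end-member oxides and converting each mixture into an elemental composition that a stoichiometry-based model can score. The sketch below does both for a three-oxide system such as CuO-Fe₂O₃-V₂O₅. The function names are hypothetical, the formula parser handles only simple formulas without parentheses, and the trained classifier itself is not shown. As a check, 4 CuO + 0.5 Fe₂O₃ + 1.5 V₂O₅ yields the reported phase Cu₄FeV₃O₁₃.

```python
import re
from collections import defaultdict


def parse_formula(formula: str) -> dict[str, float]:
    """Parse simple formulas such as 'Fe2O3' (no parentheses or hydrates)."""
    counts = defaultdict(float)
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += float(number) if number else 1.0
    return dict(counts)


def mix_end_members(amounts: dict[str, float]) -> dict[str, float]:
    """Element counts of a mixture of end-member oxides given molar amounts."""
    total = defaultdict(float)
    for oxide, moles in amounts.items():
        for element, n in parse_formula(oxide).items():
            total[element] += moles * n
    return dict(total)


def ternary_grid(end_members, steps):
    """Yield mole-fraction mixtures on a regular grid over the composition triangle."""
    a, b, c = end_members
    for i in range(steps + 1):
        for j in range(steps + 1 - i):
            k = steps - i - j
            yield {a: i / steps, b: j / steps, c: k / steps}
```

A phase map is then a dictionary such as `{tuple(mix.values()): model(mix_end_members(mix)) for mix in ternary_grid(("CuO", "Fe2O3", "V2O5"), 20)}`, where `model` is a hypothetical trained synthesizability classifier. Top-scoring grid points become synthesis targets.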

Experimental Validation:

- Select top-ranking predicted synthesizable compositions for laboratory synthesis.

- For successful syntheses, characterize resulting phases through structural analysis (XRD, TEM).

- Report discovery of new phases (e.g., Cu₄FeV₃O₁₃) as validation of predictive capability [2].

Crystal Structure Synthesizability Prediction via Large Language Models

Objective: To predict synthesizability, synthetic methods, and precursors for 3D crystal structures using specialized large language models [3].

Dataset Construction:

- Positive Examples: Curate 70,120 synthesizable crystal structures from ICSD with ≤40 atoms and ≤7 elements, excluding disordered structures [3].

- Negative Examples: Screen 1,401,562 theoretical structures from multiple databases (MP, CMD, OQMD, JARVIS) using pre-trained PU learning model. Select 80,000 structures with lowest CLscore (<0.1) as non-synthesizable examples [3].
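The two filters above are simple selection rules. This sketch applies the stated thresholds (ordered structures, ≤40 atoms, ≤7 elements for positives; CLscore < 0.1 and at most 80,000 lowest-scoring entries for negatives). It assumes a hypothetical `Entry` record and precomputed CLscores. Computing the CLscore itself requires the pre-trained PU model, which is not shown.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Entry:                              # hypothetical record for one structure
    entry_id: str
    n_atoms: int                          # atoms in the unit cell
    elements: frozenset
    ordered: bool = True                  # False if any site is partially occupied
    clscore: Optional[float] = None       # PU-model synthesizability score, if computed


def select_positives(icsd_entries, max_atoms=40, max_elements=7):
    """Ordered ICSD structures within the size and chemistry limits."""
    return [e for e in icsd_entries
            if e.ordered and e.n_atoms <= max_atoms and len(e.elements) <= max_elements]


def select_negatives(theoretical_entries, threshold=0.1, n_max=80_000):
    """Lowest-CLscore theoretical structures below `threshold`, at most n_max."""
    scored = [e for e in theoretical_entries
              if e.clscore is not None and e.clscore < threshold]
    scored.sort(key=lambda e: e.clscore)
    return scored[:n_max]
```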

Text Representation Development:

- Create "material string" representation: SP | a, b, c, α, β, γ | (AS1-WS1[WP1...]) integrating space group, lattice parameters, atomic species, Wyckoff sites, and positions [3].

- Ensure reversible encoding of crystal structure information in concise text format.
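A reversible text encoding of the `SP | a, b, c, α, β, γ | (AS-WS[WP])` form can be sketched as a formatter plus a parser. The exact separators, and the choice of one representative coordinate per Wyckoff site, are assumptions made for illustration. They are not necessarily the precise CSLLM format. Rock-salt NaCl (space group 225, Na on 4a, Cl on 4b) serves as the example.

```python
Site = tuple[str, str, tuple[float, float, float]]  # (species, Wyckoff label, coords)


def to_material_string(space_group: int, lattice: tuple, sites: list) -> str:
    """Encode space group, six lattice parameters, and Wyckoff sites as text."""
    lattice_part = ", ".join(repr(float(v)) for v in lattice)
    site_part = "; ".join(
        f"({el}-{wy}[{','.join(repr(float(x)) for x in xyz)}])" for el, wy, xyz in sites
    )
    return f"{space_group} | {lattice_part} | {site_part}"


def from_material_string(text: str):
    """Invert to_material_string (round-trips because repr(float) is lossless)."""
    sg_part, lattice_part, site_part = text.split(" | ")
    lattice = tuple(float(v) for v in lattice_part.split(", "))
    sites = []
    for token in site_part.split("; "):
        species, rest = token[1:-1].split("-", 1)    # strip '(' and ')'
        wyckoff, coords = rest.split("[")
        xyz = tuple(float(v) for v in coords.rstrip("]").split(","))
        sites.append((species, wyckoff, xyz))
    return int(sg_part), lattice, sites
```

Encoding symmetry-distinct sites instead of every atom keeps the string short. This matters for token budgets when fine-tuning an LLM.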

Model Fine-Tuning:

- Develop Crystal Synthesis LLM (CSLLM) framework with three specialized models for synthesizability, methods, and precursors.

- Fine-tune LLMs on material string representations using constructed dataset.

- Evaluate generalizability on complex structures with large unit cells [3].

Precursor Prediction:

- For precursor identification, combine model predictions with reaction energy calculations and combinatorial analysis.

- Validate precursor predictions against known synthetic routes [3].

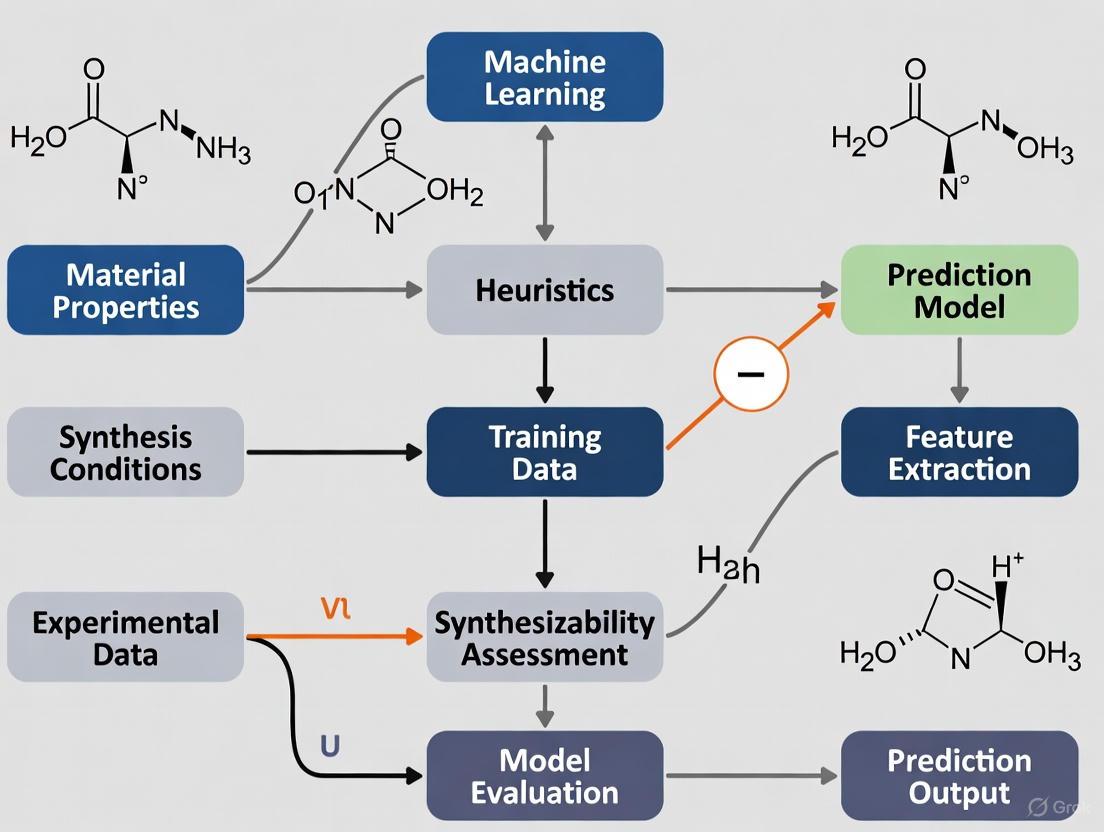

Workflow Visualization of Synthesizability Prediction Approaches

Synthesizability Prediction Workflow Comparison

Machine Learning Approaches for Synthesizability Prediction

Table 2: Computational Tools and Databases for Synthesizability Prediction

| Resource | Type | Primary Function | Application in Synthesizability |
|---|---|---|---|
| ICSD [2] [3] | Database | Repository of experimentally synthesized inorganic crystal structures | Source of positive examples for training; reference for known synthesizable materials |
| Materials Project [5] [3] | Database | DFT-calculated properties of known and hypothetical materials | Source of structural and thermodynamic data; candidate generation |
| OQMD [5] [6] | Database | Quantum mechanical calculations for materials | Stability network construction; historical discovery timeline analysis |
| MD-HIT [5] | Algorithm | Dataset redundancy control for materials | Creates non-redundant benchmark datasets; prevents performance overestimation |
| Atom2Vec [4] | Representation | Learned atomic representations from data | Composition featurization without predefined descriptors |
| CSLLM [3] | Framework | Specialized LLMs for crystal synthesis | End-to-end synthesizability, method, and precursor prediction |
| AiZynthFinder [7] | Tool | Retrosynthesis planning using reaction templates | Synthetic pathway assessment for molecular materials |

Discussion: Machine Learning vs. Heuristics in Synthesizability Research

The evolution from heuristic to data-driven approaches represents a paradigm shift in synthesizability prediction. Traditional heuristics like charge balancing are chemically intuitive and computationally efficient, but their predictive accuracy is limited. Only 37% of known synthesized inorganic materials are charge-balanced under common oxidation states, and only 23% of known binary cesium compounds [4]. Thermodynamic stability metrics, though physically grounded, similarly fail to account for the complex kinetic and practical factors governing experimental synthesis.

Machine learning approaches address these limitations by learning the implicit patterns of synthesizability directly from comprehensive databases of realized materials. SynthNN demonstrates this capability by autonomously learning chemical principles like charge balancing, chemical family relationships, and ionicity without explicit programming [4]. The exceptional performance of large language models like CSLLM (98.6% accuracy) further suggests that these models capture complex, multidimensional relationships between composition, structure, and synthesizability that elude simpler heuristic rules [3].

However, the machine learning paradigm introduces new challenges. The "black box" nature of complex models can obscure the chemical rationale behind predictions, potentially limiting researcher trust and utility for hypothesis generation. Training data limitations remain significant, particularly for negative examples (non-synthesizable materials), which are addressed through innovative approaches like positive-unlabeled learning [2] and historical network analysis [6]. Dataset redundancy issues, as addressed by MD-HIT, can lead to overoptimistic performance estimates if not properly controlled [5].

The most promising path forward appears to be hybrid approaches that combine the interpretability of heuristics with the predictive power of machine learning. Domain adaptation techniques show potential for improving out-of-distribution prediction performance, addressing a key limitation of current models [8]. Integration of retrosynthesis models directly into optimization loops represents another advancement, particularly for functional materials where traditional heuristics show diminished correlation with synthesizability [7] [9].

As synthesizability prediction continues to mature, the development of more robust metrics, standardized benchmarks, and integrated workflows will be essential for narrowing the divide between virtual screening and real-world materials realization. The convergence of large-scale data, advanced algorithms, and experimental validation promises to transform synthesizability from a persistent bottleneck into an enabling capability for accelerated materials discovery.

In the field of materials synthesizability research, heuristic methods provide interpretable, rule-based scores for prioritizing candidate compounds before costly experimental synthesis. These methods leverage foundational chemical principles—such as thermodynamic stability, structural similarity, and compositional rules—to estimate synthesis likelihood. As machine learning (ML) models emerge as powerful alternatives, understanding the capabilities, limitations, and underlying assumptions of these heuristics is critical for selecting appropriate prioritization strategies. This technical guide details prominent heuristic methods, their experimental validation protocols, and their role within a broader strategy integrating both heuristic and ML approaches for materials discovery [1] [10].

The accelerated discovery of novel functional materials through computational screening creates a critical bottleneck: predicting which hypothetical compounds are synthetically accessible. Synthesizability is a multi-faceted property influenced by thermodynamic, kinetic, and experimental factors. Heuristic methods, or rule-based scores, offer a transparent and computationally efficient first-pass filter for assessing synthesizability. They are derived from empirical observations and long-standing chemical principles, providing a benchmark against which more complex, data-driven ML models are often compared [11] [1].

This guide examines the dominant heuristic scores used in inorganic materials research, dissecting their formal definitions and, more importantly, their foundational assumptions. A clear understanding of these assumptions is necessary to contextualize their predictions and to frame their integration with modern ML approaches [10].

Core Heuristic Scores for Materials Synthesizability

The following rule-based scores are commonly employed in computational materials design pipelines to prioritize candidates for synthesis.

Table 1: Core Heuristic Scores for Synthesizability Assessment

| Heuristic Score | Formal Definition & Calculation | Primary Reference Data |
|---|---|---|
| Energy Above Hull (Eₕᵤₗₗ) | $E_{\text{hull}} = E_{\text{compound}} - E_{\text{phase diagram}}$, where $E_{\text{phase diagram}}$ is the energy of the convex hull at the compound's composition (both energies per atom). Calculated via a convex hull construction from first-principles total energies of a compound and all other competing phases in its compositional space [11]. | DFT-calculated formation energies from materials databases (e.g., Materials Project, OQMD) [11]. |
| Distance to Known Composition | $D = 1 - \max_i J_i$, where $J_i$ is the Jaccard index between the element set of the target composition and that of the $i$-th known composition in a reference database [1]. | Historical databases of experimentally synthesized compositions (e.g., ICSD). |
| Charge Neutrality | A binary check: is the nominal sum of cationic and anionic charges in the unit cell equal to zero? [1] | N/A (applied chemical principle). |
| Electronegativity Balance | Assessed via Pauling electronegativity differences to flag compositions likely to form covalent or ionic bonds, avoiding metallic glass formers [1]. | Tabulated elemental electronegativity values. |
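For a binary A-B system, the energy-above-hull definition reduces to a one-dimensional lower convex envelope. At any composition x, the hull value is the minimum over all chords between two phases that bracket x. The sketch below assumes formation energies per atom are already available, with composition given as the fraction of B. Multicomponent hulls are handled in practice by tools such as pymatgen's `PhaseDiagram.get_e_above_hull`.

```python
def hull_energy(x, phases):
    """Lower convex envelope of (composition, formation energy) points at x.

    In one dimension the envelope at x is the minimum over chords between
    two phases bracketing x; a phase lying exactly at x is a degenerate chord.
    """
    best = float("inf")
    for xi, ei in phases:
        for xj, ej in phases:
            if xi <= x <= xj:
                value = ei if xj == xi else ei + (ej - ei) * (x - xi) / (xj - xi)
                best = min(best, value)
    return best


def energy_above_hull(x, e_form, phases):
    """E_hull = E_compound - E_phase_diagram in eV/atom; 0 for phases on the hull."""
    return e_form - hull_energy(x, phases + [(x, e_form)])
```

With elemental end points at 0 eV/atom and a stable AB phase at x = 0.5 with -1.0 eV/atom, consider a hypothetical A₃B phase at x = 0.25 with -0.3 eV/atom. It lies about 0.2 eV/atom above the tie line joining A and AB, which sits at -0.5 eV/atom at that composition.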

Underlying Assumptions and Critical Analysis

Every heuristic operates on a set of simplifying assumptions, which define the boundaries of its predictive utility.

Table 2: Underlying Assumptions and Practical Limitations of Heuristic Scores

| Heuristic Score | Core Underlying Assumptions | Known Limitations & Failure Modes |
|---|---|---|
| Energy Above Hull (Eₕᵤₗₗ) | 1. Ground-State Proxy: Phase stability at zero temperature and pressure is a primary indicator of synthesizability. 2. Ignored Kinetics: Assumes that a thermodynamically stable compound will have a viable kinetic pathway to formation. 3. DFT Fidelity: Relies on the accuracy of density functional theory (DFT) energies, which can be inadequate for strongly correlated electron systems [11]. | Fails for metastable materials (e.g., diamond) that are kinetically stabilized. Provides no guidance on actual synthesis conditions such as precursors or temperature [11]. |
| Distance to Known Composition | 1. Historical Bias: Assumes the chemical space of previously synthesized materials is a reliable proxy for future synthesizability. 2. Element-Centric: Prioritizes elemental combinations over structural motifs, ignoring polymorphic possibilities [11]. | Perpetuates historical research biases, potentially overlooking novel compositions in unexplored regions of chemical space [11]. |
| Charge Neutrality & Electronegativity | 1. Simple Bonding Models: Assumes that simple ionic and covalent bonding models are sufficient to describe complex solid-state bonding. 2. No Quantitative Scale: These are often pass/fail filters without a graduated scale of synthesizability likelihood [1]. | Overly simplistic; many known materials exhibit complex bonding not captured by these simple rules (e.g., Zintl phases, metal-organic frameworks). |
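The historical-bias limitation of the distance-to-known-composition score is easy to see in code. The sketch below implements $D = 1 - \max_i J_i$ over element sets. The reference list is a tiny hypothetical stand-in for a database such as ICSD. A composition built from an element pair never reported together receives the maximum distance of 1.0, whatever its chemistry.

```python
def jaccard(a: frozenset, b: frozenset) -> float:
    """Jaccard index |A ∩ B| / |A ∪ B| between two element sets."""
    return len(a & b) / len(a | b)


def distance_to_known(target, known_element_sets) -> float:
    """D = 1 - max_i J_i; 0 means this exact element set was reported before."""
    target = frozenset(target)
    return 1.0 - max(jaccard(target, frozenset(k)) for k in known_element_sets)
```

For example, with known sets {Li, Co, O} and {Sr, Ti, O}, the target {Li, Ti, O} has a best Jaccard index of 2/4, giving D = 0.5.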

Experimental Validation Protocols

Validating any synthesizability prediction, whether from heuristics or ML, requires controlled experimental synthesis attempts. The following protocol outlines a standard methodology for such validation.

Precursor Selection and Reaction Planning

- Solid-State Synthesis: Select high-purity, solid precursors (typically oxides, carbonates). The selection is often guided by text-mined historical data, which can identify commonly used precursor combinations for target material classes [11].

- Reaction Balancing: Use software tools (e.g., via the Materials Project API) to balance the chemical reaction, including volatile species like O₂ or CO₂, to ensure mass and charge conservation [11].
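Reaction balancing reduces to finding the null space of an element-by-species matrix. The sketch below does this with exact fractions via Gauss-Jordan elimination. It is an illustrative stand-in for library utilities such as those in pymatgen. It assumes simple formulas without parentheses and a reaction with a unique balanced form. The example synthesis of LiCoO₂ from Li₂CO₃ and Co₃O₄ needs O₂ as a volatile reactant and releases CO₂.

```python
import re
from collections import defaultdict
from fractions import Fraction
from math import gcd, lcm


def parse_formula(formula):
    """Element counts for simple formulas like 'Li2CO3' (no parentheses)."""
    counts = defaultdict(int)
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return dict(counts)


def balance(reactants, products):
    """Smallest positive integer coefficients that conserve every element."""
    species = list(reactants) + list(products)
    comps = [parse_formula(s) for s in species]
    elements = sorted({el for comp in comps for el in comp})
    signs = [1] * len(reactants) + [-1] * len(products)
    # One row per element: sum_j sign_j * count(element, j) * coeff_j = 0
    m = [[Fraction(signs[j] * comps[j].get(el, 0)) for j in range(len(species))]
         for el in elements]
    n_rows, n_cols = len(m), len(species)
    pivots, r = [], 0
    for c in range(n_cols):  # Gauss-Jordan elimination to reduced row echelon form
        pr = next((i for i in range(r, n_rows) if m[i][c] != 0), None)
        if pr is None:
            continue
        m[r], m[pr] = m[pr], m[r]
        pivot = m[r][c]
        m[r] = [v / pivot for v in m[r]]
        for i in range(n_rows):
            if i != r and m[i][c] != 0:
                factor = m[i][c]
                m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == n_rows:
            break
    free = [c for c in range(n_cols) if c not in pivots]
    if len(free) != 1:
        raise ValueError("reaction has no unique balanced form")
    coeffs = [Fraction(0)] * n_cols
    coeffs[free[0]] = Fraction(1)          # set the free coefficient to 1
    for row, c in enumerate(pivots):
        coeffs[c] = -m[row][free[0]]
    scale = lcm(*(x.denominator for x in coeffs))
    ints = [int(x * scale) for x in coeffs]
    if any(x <= 0 for x in ints):
        raise ValueError("a species is on the wrong side of the reaction")
    divisor = gcd(*ints)
    return dict(zip(species, (x // divisor for x in ints)))
```

Here `balance(["Li2CO3", "Co3O4", "O2"], ["LiCoO2", "CO2"])` gives 6 Li₂CO₃ + 4 Co₃O₄ + O₂ → 12 LiCoO₂ + 6 CO₂. Using exact `Fraction` arithmetic avoids rounding the coefficients.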

Synthesis Workflow Execution

The experimental workflow for solid-state synthesis involves a sequence of material processing and analysis steps, as visualized below.

Diagram Title: Solid-State Synthesis Validation Workflow

Characterization and Success Criteria

- Primary Characterization: Powder X-ray Diffraction (XRD) is used to identify the crystalline phases present in the final product.

- Success Metric: Synthesis is deemed successful if the XRD pattern of the reaction product matches the reference pattern for the target compound as the major phase, with minimal impurities.

The Scientist's Toolkit: Research Reagent Solutions

The following reagents and materials are essential for the experimental validation of synthesizability predictions via solid-state synthesis.

Table 3: Essential Materials for Solid-State Synthesis Validation

| Reagent/Material | Function in Experiment | Technical Specification Examples |
|---|---|---|
| High-Purity Oxide/Carbonate Precursors | Source of cationic elements for the target material. | e.g., TiO₂ (99.99%), Li₂CO₃ (99.99%), SrCO₃ (99.9%). Purity is critical to avoid side reactions. |
| Grinding Media (Alumina/Zirconia) | For mechanical homogenization of precursor mixtures in ball milling. | Alumina (Al₂O₃) or zirconia (ZrO₂) milling balls, various diameters (e.g., 3-10 mm). |
| Organic Binder (e.g., PVA) | Temporary binder to aid in the formation of robust pellets. | Polyvinyl Alcohol (PVA) solution, ~2% wt/vol in water. |
| High-Temperature Furnace | Provides controlled atmosphere and temperature for solid-state reaction. | Tube furnace capable of >1200°C, with gas flow control (O₂, N₂, Ar). |
| Platinum or Alumina Crucibles | Inert containers to hold samples during high-temperature treatment. | Pt crucibles for oxidizing atmospheres; Al₂O₃ crucibles for general use. |
| X-Ray Diffractometer | Definitive characterization of synthesized crystal phases. | Powder XRD system with Cu Kα radiation, Bragg-Brentano geometry. |

Heuristics vs. Machine Learning: A Comparative Workflow

The emerging paradigm in predictive synthesis integrates the interpretability of heuristics with the pattern-recognition power of ML. The following diagram illustrates how these methods can be combined in a modern materials discovery pipeline.

Diagram Title: Integrated Heuristic and ML Screening Pipeline

Comparative Advantages and Integration

- Heuristic Strengths: Heuristics are computationally cheap, transparent, and excel at providing rapid, physically intuitive screening. They are highly effective for initial candidate set reduction from millions to thousands [1].

- ML Strengths and Data Challenges: Machine learning models, particularly foundation models, can learn complex, non-linear relationships from large text-mined and experimental datasets [10]. However, their performance is contingent on data quality and volume. As noted by Sun et al., historical synthesis data often suffers from limitations in "volume, variety, veracity, and velocity," which can constrain the predictive utility of ML models trained on it [11].

- Synergistic Integration: A combined pipeline uses heuristics for initial coarse-grained filtering. The resulting smaller, more tractable candidate set is then evaluated by a more computationally expensive ML model that can predict nuanced synthesis outcomes, such as precursor selection or heating profiles, which are beyond the scope of simple rules [11] [10]. This hybrid approach leverages the respective strengths of both methodologies.

The discovery of new functional materials is a cornerstone of technological advancement, from renewable energy solutions to next-generation electronics. For decades, computational materials discovery has relied on density functional theory (DFT) to predict material stability, typically using thermodynamic metrics like formation energy and energy above the convex hull. However, a significant bottleneck has emerged: many computationally designed materials, despite being thermodynamically stable, are not synthesizable in laboratory conditions [12] [11]. This creates a critical gap between theoretical predictions and experimental realization, limiting the practical impact of materials informatics.

The emerging paradigm seeks to address this challenge through machine learning (ML) approaches that learn synthesizability directly from complex, multi-modal data. Unlike traditional heuristics based solely on thermodynamic stability, these models incorporate diverse features including crystal structure, composition, and historical synthesis data. The fundamental shift is from "Is this material stable?" to "Can this material be synthesized?"—a question that depends on kinetic factors, precursor availability, and synthetic pathways that transcend simple thermodynamic considerations [3] [13]. This technical guide explores the core machine learning paradigms transforming synthesizability prediction, providing researchers with methodologies, experimental protocols, and computational tools to bridge the gap between in-silico design and real-world synthesis.

Core Machine Learning Paradigms for Synthesizability Prediction

Structural and Compositional Feature Integration

Early ML approaches to synthesizability prediction operated on limited feature sets, typically considering either composition or structure in isolation. Modern frameworks have demonstrated that integrating complementary signals from both domains significantly enhances predictive performance:

- Compositional encoders transform elemental stoichiometry into rich feature representations using fine-tuned transformer architectures [13]. These models capture elemental chemistry, precursor availability, and redox constraints based on historical synthesis data.

- Structural graph neural networks operate on crystal structure graphs, encoding local coordination environments, motif stability, and packing arrangements that influence synthetic accessibility [12] [13].

- Unified models employ dual-encoder architectures that process composition and structure simultaneously, with cross-attention mechanisms that learn interactions between elemental properties and structural motifs [13].

The rank-average ensemble method provides an effective strategy for combining predictions from multiple specialized models. This approach converts probabilities to ranks across candidates and computes an aggregate ranking, enhancing robustness across diverse chemical spaces [13].
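The rank-average step can be written in a few lines. This sketch assumes a few details that the source does not fix: ranks are normalized to (0, 1] so that 1.0 is the top candidate for a model, ties share their mean rank, and the per-model ranks are averaged with equal weight. Under this normalization, a threshold such as rank-average > 0.95 keeps only candidates that both models place near the top.

```python
def normalized_ranks(scores):
    """Map scores to ranks in (0, 1]; the highest score gets 1.0, ties share the mean rank."""
    n = len(scores)
    order = sorted(range(n), key=lambda i: scores[i])
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and scores[order[j + 1]] == scores[order[i]]:
            j += 1                              # extend the tie group
        mean_rank = (i + j) / 2 + 1             # 1-based mean position of the group
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank / n
        i = j + 1
    return ranks


def rank_average(*model_scores):
    """Average each candidate's normalized rank across models."""
    per_model = [normalized_ranks(s) for s in model_scores]
    n = len(model_scores[0])
    return [sum(r[i] for r in per_model) / len(per_model) for i in range(n)]
```

Ranking before averaging makes the ensemble insensitive to how well each model's probabilities are calibrated. Only the ordering of candidates within each model matters.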

Large Language Models for Crystallographic Information

The recent adaptation of large language models (LLMs) to crystallographic data represents a paradigm shift in synthesizability prediction. The Crystal Synthesis Large Language Models (CSLLM) framework demonstrates how domain-adapted LLMs can achieve remarkable accuracy:

- Textual representation of crystals: Specialized "material string" representations compress essential crystallographic information (space group, lattice parameters, Wyckoff positions) into tokenizable sequences [3].

- Multi-task specialization: CSLLM employs three specialized LLMs for synthesizability classification (98.6% accuracy), synthetic method prediction (91.0% accuracy), and precursor identification (80.2% success) [3].

- Domain-focused fine-tuning: By aligning LLMs' broad linguistic capabilities with materials-specific features, the models refine their attention mechanisms to reduce hallucinations and improve reliability [3].

This approach significantly outperforms traditional synthesizability screening based on thermodynamic stability (74.1% accuracy) and kinetic stability via phonon spectrum analysis (82.2% accuracy) [3].

Active Learning with Multi-Modal Feedback

The CRESt (Copilot for Real-world Experimental Scientists) platform exemplifies how active learning systems can accelerate materials discovery through multi-modal data integration:

- Beyond Bayesian optimization: Traditional Bayesian optimization operates in constrained design spaces. CRESt enhances this approach by incorporating literature knowledge, experimental results, microstructural images, and human feedback to redefine search spaces dynamically [14].

- Robotic high-throughput experimentation: Automated systems perform synthesis, characterization, and testing, with results fed back to update models in closed-loop cycles [14].

- Computer vision for reproducibility: Integrated cameras and vision-language models monitor experiments, detect issues, and suggest corrections, addressing the critical challenge of experimental reproducibility [14].

In one application, CRESt explored over 900 chemistries and conducted 3,500 electrochemical tests, discovering a multi-element catalyst with 9.3-fold improvement in power density per dollar over pure palladium [14].

Table 1: Performance Comparison of Major Synthesizability Prediction Approaches

| Method | Key Features | Accuracy/Performance | Limitations |
|---|---|---|---|
| Thermodynamic Stability | Energy above convex hull | 74.1% accuracy [3] | Overlooks kinetic factors and precursor availability |
| Structural GNNs | Crystal graph representations | 92.9% accuracy (teacher-student) [3] | Limited composition awareness |
| CSLLM Framework | Material strings, specialized LLMs | 98.6% accuracy [3] | Data curation challenges for rare compounds |
| Unified Composition-Structure | Rank-average ensemble | 7/16 successful syntheses [13] | Computational intensity |
| CRESt Active Learning | Multi-modal feedback, robotics | 9.3× improvement in power density per dollar [14] | Requires extensive instrumentation |

Experimental Protocols and Methodologies

Data Curation and Representation Strategies

Robust synthesizability prediction requires carefully curated datasets that balance synthesizable and non-synthesizable examples:

- Positive examples: Experimentally confirmed structures from databases like the Inorganic Crystal Structure Database (ICSD), filtered for ordered structures with limited element diversity (e.g., ≤7 elements, ≤40 atoms) [3].

- Negative examples: Theoretical structures with low synthesizability scores from PU learning models (CLscore <0.1), drawn from computational databases (Materials Project, OQMD, JARVIS) [3].

- Stratified splits: Ensure representative distribution across crystal systems (cubic, hexagonal, tetragonal, etc.) and compositional diversity [3].
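A stratified split can be sketched by grouping entries on a label such as the crystal system and taking the same test fraction from each group. The function name, the 20% test fraction, and the fixed seed are assumptions for illustration.

```python
import random
from collections import defaultdict


def stratified_split(items, key, test_frac=0.2, seed=0):
    """Split items so each stratum (e.g., crystal system) keeps about the same test fraction."""
    groups = defaultdict(list)
    for item in items:
        groups[key(item)].append(item)
    rng = random.Random(seed)
    train, test = [], []
    for label in sorted(groups):                 # fixed order for reproducibility
        members = list(groups[label])
        rng.shuffle(members)
        n_test = round(len(members) * test_frac)
        test.extend(members[:n_test])
        train.extend(members[n_test:])
    return train, test
```

Stratifying prevents a rare crystal system from landing entirely in the training or test set, which would distort the reported accuracy.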

For structural representation, the Wyckoff encode method efficiently captures symmetry information by representing structures based on their Wyckoff positions rather than atomic coordinates, enabling more effective sampling of promising configuration spaces [12].

Model Training and Validation Protocols

Standardized training protocols ensure reproducible model performance:

- Architecture specifications: For unified composition-structure models, implement separate encoders for composition (transformer-based) and structure (graph neural network), with MLP heads for synthesizability classification [13].

- Training regimen: Optimize using binary cross-entropy loss with early stopping based on validation AUPRC, employing large-scale computational resources (e.g., NVIDIA H200 clusters) [13].

- Validation metrics: Comprehensive assessment including accuracy, AUC-ROC, AUPRC, and generalization to complex structures with large unit cells [3].
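Early stopping on validation AUPRC needs two pieces: the metric itself and a patience rule. The sketch below estimates AUPRC as average precision, the mean precision at each positive when candidates are ranked by score. It ignores score ties, and scikit-learn's `average_precision_score` is a production alternative. The patience and `min_delta` values are hypothetical defaults.

```python
def average_precision(labels, scores):
    """AUPRC estimated as average precision over positives ranked by score."""
    ranked = sorted(zip(scores, labels), key=lambda p: p[0], reverse=True)
    n_pos = sum(labels)
    hits, total = 0, 0.0
    for k, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / k                    # precision at this positive
    return total / n_pos if n_pos else 0.0


class EarlyStopping:
    """Stop when validation AUPRC fails to improve for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("-inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_auprc):
        """Record one epoch's metric; return True when training should stop."""
        if val_auprc > self.best + self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_auprc, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

AUPRC is a sensible stopping criterion here because synthesizable examples are usually a minority of the candidate pool. Accuracy can look high even when the positive class is ranked poorly.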

The Construction Zone Python package provides methodology for generating complex nanoscale atomic structures, enabling systematic sampling of realistic nanomaterials for training and evaluation [15].

Experimental Validation Workflows

Experimental validation remains the ultimate test for synthesizability predictions:

- Precursor prediction: Apply models like Retro-Rank-In to generate ranked lists of viable solid-state precursors for target compounds [13].

- Parameter optimization: Use synthesis prediction models (e.g., SyntMTE) to determine calcination temperatures and reaction conditions [13].

- High-throughput synthesis: Execute proposed syntheses in automated laboratories with robotic liquid handling, carbothermal shock systems, and automated electrochemical workstations [14] [13].

- Characterization: Validate products through automated X-ray diffraction (XRD), electron microscopy, and performance testing [13].

In one implementation, this workflow successfully synthesized 7 of 16 target compounds predicted to be highly synthesizable, with the entire experimental process completed in just three days [13].

Workflow Visualizations

ML-Driven Crystal Structure Prediction

CSLLM Synthesis Prediction Framework

CRESt Active Learning Platform

Table 2: Essential Resources for Synthesizability Research

| Resource | Type | Function | Example Implementation |
|---|---|---|---|
| Construction Zone | Software Package | Algorithmic generation of complex nanoscale atomic structures | Python package for sampling realistic nanomaterials with defects and variations [15] |
| CRESt Platform | Integrated System | Robotic high-throughput materials testing with multi-modal feedback | Combines liquid-handling robots, carbothermal shock synthesis, automated electrochemistry [14] |
| CSLLM Framework | Model Architecture | Specialized LLMs for synthesizability, methods, and precursors | Three-LLM system using material string representation [3] |
| Wyckoff Encode | Algorithm | Symmetry-guided structure derivation and subspace classification | Method for efficient configuration space sampling [12] |
| Retro-Rank-In | Prediction Model | Precursor suggestion for solid-state synthesis | Ranked precursor recommendations based on literature mining [13] |
| SyntMTE | Prediction Model | Calcination temperature parameter prediction | Temperature optimization for target phase formation [13] |

Quantitative Performance Benchmarks

Table 3: Experimental Validation Results Across Studies

| Study/System | Candidates Screened | Synthesizability Criteria | Experimental Validation | Key Outcomes |
|---|---|---|---|---|
| Synthesizability-Driven CSP [12] | 554,054 from GNoME | Structure-based evaluation model | Reproduction of 13 known XSe structures | 92,310 structures filtered as highly synthesizable |
| Unified Composition-Structure [13] | 4.4 million computational structures | Rank-average > 0.95 | 16 targets experimentally characterized | 7 successfully synthesized, including 1 novel compound |
| CSLLM Framework [3] | 105,321 theoretical structures | Synthesizability LLM classification | N/A (computational study) | 45,632 synthesizable materials identified |
| CRESt Platform [14] | 900+ chemistries | Multi-modal active learning | 3,500 electrochemical tests | Catalyst with 9.3x power density improvement per dollar |
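Table 3 lists a rank-average cutoff of 0.95 as the screening criterion for the unified composition-structure model [13]. The sketch below shows one way such a rank average could be computed. It assumes that the composition-model and structure-model scores are each converted to percentile ranks over the candidate pool and then averaged. The function names and tie handling are illustrative assumptions, not the cited implementation.

```python
from typing import List, Sequence


def percentile_ranks(scores: Sequence[float]) -> List[float]:
    """Rank scores ascending and scale to (0, 1]; tied scores share their average rank."""
    n = len(scores)
    order = sorted(range(n), key=lambda i: scores[i])
    ranks = [0.0] * n
    start = 0
    while start < n:
        end = start
        # Extend the run of tied scores
        while end + 1 < n and scores[order[end + 1]] == scores[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # 1-based average rank of the tied run
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank / n
        start = end + 1
    return ranks


def rank_average(composition_scores: Sequence[float], structure_scores: Sequence[float]) -> List[float]:
    """Average the two models' percentile ranks so neither raw score scale dominates."""
    if len(composition_scores) != len(structure_scores):
        raise ValueError("both models must score the same candidate list")
    rc = percentile_ranks(composition_scores)
    rs = percentile_ranks(structure_scores)
    return [(a + b) / 2 for a, b in zip(rc, rs)]


def screen(composition_scores, structure_scores, threshold: float = 0.95) -> List[int]:
    """Indices of candidates whose rank average exceeds the threshold."""
    avg = rank_average(composition_scores, structure_scores)
    return [i for i, r in enumerate(avg) if r > threshold]
```

Averaging ranks rather than raw probabilities means that a candidate must sit near the top of both models' orderings to pass a 0.95 cutoff.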

The paradigm shift from heuristic stability rules to data-driven synthesizability prediction represents a transformative advancement in materials discovery. By leveraging complex multi-modal data—from crystal structures and compositions to historical synthesis recipes and experimental outcomes—machine learning models are increasingly capable of distinguishing theoretically plausible materials from experimentally accessible ones. The integration of large language models, active learning systems, and high-throughput experimentation creates a powerful framework for accelerating the translation of computational predictions to synthesized materials.

Future research directions include developing more sophisticated cross-modal architectures that better integrate compositional and structural information, improving few-shot learning capabilities for rare-element compounds, and creating more comprehensive synthesis route prediction systems that account for complex reaction pathways. As these methodologies mature, they promise to significantly compress the materials discovery timeline, enabling researchers to focus experimental resources on the most promising candidates and ultimately bridging the long-standing gap between computational design and laboratory realization.

Data Scarcity and the Positive-Unlabeled (PU) Learning Problem in Materials Science

The discovery of new functional materials is a cornerstone for addressing critical challenges in energy storage, catalysis, and electronic devices. However, a significant bottleneck persists: the majority of computationally designed materials are impractical to synthesize in the laboratory [16]. This challenge is compounded by a fundamental data scarcity problem in materials science. While databases of successfully synthesized materials exist, comprehensive data on failed synthesis attempts are rarely published or systematically collected [17] [4]. This lack of negative examples creates a fundamental obstacle for applying traditional supervised machine learning to predict material synthesizability.

The materials community has historically relied on chemical heuristics—traditional rules of thumb derived from chemical knowledge and intuition—to guide synthesis efforts. Rules such as Pauling's rules for ionic crystals or charge-balancing criteria have served as important screening tools [18] [17]. However, statistical evaluation has revealed significant limitations in these traditional approaches. For instance, more than half of the experimentally synthesized materials in the Materials Project database do not meet classical charge-balancing criteria [17], and only 37% of known inorganic materials are charge-balanced according to common oxidation states [4]. This performance gap has motivated the development of more sophisticated, data-driven approaches that can learn complex patterns beyond simplified chemical rules.

The PU Learning Paradigm: Learning from Positive and Unlabeled Data

Problem Formulation and Core Assumptions

Positive and Unlabeled (PU) learning is a semi-supervised machine learning framework designed specifically for scenarios where only positive examples (successfully synthesized materials) and unlabeled examples (materials with unknown synthesizability status) are available [19]. This formulation closely matches the data landscape in materials synthesis, where we have:

- Positive examples: Materials confirmed through experimental synthesis and recorded in databases like the Inorganic Crystal Structure Database (ICSD) or Materials Project.

- Unlabeled examples: Hypothetical materials generated through computational design, whose synthesizability remains unknown.

The core challenge in PU learning is that the unlabeled set contains a mixture of both synthesizable (positive) and unsynthesizable (negative) materials, without explicit labels to distinguish them. The objective is to train a classifier that can identify synthesizable candidates from the unlabeled pool by learning the hidden patterns characteristic of positive examples.

Key Methodological Approaches

Several PU learning strategies have been developed for materials synthesizability prediction:

Bagging SVM Approach: The original PU learning implementation for materials adapted the PU bagging scheme first formulated with Support Vector Machines (SVMs), in which different random subsets of the unlabeled data are temporarily labeled as negative [19]. In the materials implementation, a decision tree classifier is trained on these positive and pseudo-negative examples, and the process is repeated over many bootstrap samples to build a robust model. This approach identified 18 new potentially synthesizable MXenes [19].
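The following is a minimal sketch of PU bagging with decision trees, using scikit-learn's `DecisionTreeClassifier` as the base learner. It makes several assumptions: features are already numeric arrays, each bag draws as many pseudo-negatives as there are positives, and each unlabeled point is scored only by the trees that did not see it (out-of-bag averaging). These choices follow the general PU bagging recipe and are not confirmed details of [19].

```python
import numpy as np
from sklearn.tree import DecisionTreeClassifier


def pu_bagging_scores(X_pos: np.ndarray, X_unl: np.ndarray, n_bags: int = 50, random_state: int = 0) -> np.ndarray:
    """Score unlabeled samples by PU bagging with out-of-bag averaging.

    In each bag, a bootstrap sample of the unlabeled set (same size as the positive
    set) is temporarily labeled negative, a decision tree is fit on positives vs.
    these pseudo-negatives, and the tree scores the unlabeled points left out of the bag.
    """
    rng = np.random.default_rng(random_state)
    n_pos, n_unl = len(X_pos), len(X_unl)
    score_sum = np.zeros(n_unl)
    oob_count = np.zeros(n_unl)
    y_train = np.concatenate([np.ones(n_pos), np.zeros(n_pos)])  # 1 = positive, 0 = pseudo-negative
    for b in range(n_bags):
        bag = rng.choice(n_unl, size=n_pos, replace=True)
        tree = DecisionTreeClassifier(random_state=b)
        tree.fit(np.vstack([X_pos, X_unl[bag]]), y_train)
        oob = np.setdiff1d(np.arange(n_unl), bag)
        if oob.size:
            score_sum[oob] += tree.predict_proba(X_unl[oob])[:, 1]  # column 1 = class 1.0
            oob_count[oob] += 1
    # Points that were never out-of-bag get NaN rather than an invented score
    with np.errstate(invalid="ignore", divide="ignore"):
        return np.where(oob_count > 0, score_sum / oob_count, np.nan)
```

High scores flag unlabeled materials that trees repeatedly place among the known positives, even though a fraction of every pseudo-negative sample is actually positive.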

Risk Estimator Methods: Modern PU learning methods like unbiased PU (uPU) and non-negative PU (nnPU) utilize the prior probability of positive samples to constrain the learning process on unlabeled data [20]. These methods employ empirical risk estimators that account for the absence of true negative labels during training.
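The two risk estimators can be written compactly. Let π = P(y = +1) be the class prior, ℓ a loss, and g(x) the classifier output. uPU estimates the risk as π·E_P[ℓ(g, +1)] + E_U[ℓ(g, -1)] - π·E_P[ℓ(g, -1)]. nnPU clips the last two terms, which together estimate the negative-class risk, at zero, because a true risk cannot be negative. The sketch below evaluates both estimators for fixed outputs using the sigmoid loss. It assumes π is known, and it omits the full nnPU training procedure, which also modifies the gradient step when the clipped term goes negative.

```python
import numpy as np


def sigmoid_loss(z: np.ndarray, y: float) -> np.ndarray:
    """Sigmoid surrogate loss l(z, y) = 1 / (1 + exp(y * z))."""
    return 1.0 / (1.0 + np.exp(y * z))


def pu_risks(g_pos: np.ndarray, g_unl: np.ndarray, prior: float):
    """Return (uPU, nnPU) empirical risks.

    g_pos: outputs on labeled positives; g_unl: outputs on unlabeled data;
    prior: assumed class prior pi = P(y = +1).
    """
    risk_pos = prior * np.mean(sigmoid_loss(g_pos, +1.0))  # pi * E_P[l(g, +1)]
    # Negative-class risk estimated from unlabeled data minus the positives' contribution
    risk_neg = np.mean(sigmoid_loss(g_unl, -1.0)) - prior * np.mean(sigmoid_loss(g_pos, -1.0))
    upu = risk_pos + risk_neg               # can become negative when the model overfits
    nnpu = risk_pos + max(0.0, risk_neg)    # non-negative correction
    return float(upu), float(nnpu)


# A classifier that confidently separates labeled positives from all unlabeled data
upu, nnpu = pu_risks(np.array([10.0, 10.0]), np.array([-10.0, -10.0]), prior=0.5)
```

In this toy case, uPU reports a negative risk, which signals that the model is fitting hidden positives in the unlabeled set as negatives. This is the overfitting that nnPU's clipping is meant to prevent.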

Co-training Frameworks: SynCoTrain employs a dual-classifier co-training framework with two complementary graph convolutional neural networks: SchNet and ALIGNN [17]. These networks offer different "perspectives" on the data—ALIGNN encodes atomic bonds and angles (chemist's perspective), while SchNet uses continuous convolution filters suitable for atomic structures (physicist's perspective). The models iteratively exchange predictions to reduce individual biases and improve generalizability.
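The exchange step in co-training can be illustrated with a generic two-view loop. This is not SynCoTrain's actual procedure. Logistic regression on two hypothetical feature views stands in for SchNet and ALIGNN, each round treats unlabeled samples as negatives, and `top_k` (the number of samples promoted per round) is an assumed parameter.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression


def co_train_pu(view_a, view_b, pos_mask, n_rounds: int = 3, top_k: int = 5) -> np.ndarray:
    """Two-view PU co-training sketch (n_rounds must be >= 1).

    Each round, each view's classifier is fit on its current positives vs. the rest,
    and its most confident predictions among samples still unlabeled in the *other*
    view are promoted to positives for that other view.
    """
    views = {"a": np.asarray(view_a), "b": np.asarray(view_b)}
    start = np.asarray(pos_mask, dtype=bool)
    pos = {"a": start.copy(), "b": start.copy()}  # each view's current positive labels
    models = {}
    for _ in range(n_rounds):
        promotions = {}
        for name, other in (("a", "b"), ("b", "a")):
            if pos[name].all() or not pos[name].any():
                raise ValueError("each view needs both positive and unlabeled samples")
            clf = LogisticRegression(max_iter=1000)
            clf.fit(views[name], pos[name].astype(int))  # unlabeled treated as negative
            models[name] = clf
            proba = clf.predict_proba(views[name])[:, 1]
            candidates = np.flatnonzero(~pos[other])
            ranked = candidates[np.argsort(-proba[candidates], kind="stable")]
            promotions[other] = ranked[:top_k]  # passed to the other view
        for name, idx in promotions.items():
            pos[name][idx] = True
    # Final score: average of the two views' positive-class probabilities
    return 0.5 * (models["a"].predict_proba(views["a"])[:, 1]
                  + models["b"].predict_proba(views["b"])[:, 1])
```

Because each classifier only ever receives new positives chosen by the other view, the errors peculiar to one representation are less likely to reinforce themselves. This is the bias-reduction idea described above.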

Table 1: Comparison of PU Learning Methods for Materials Synthesizability Prediction

| Method | Core Approach | Key Features | Reported Performance |
|---|---|---|---|
| Basic PU Learning [19] | Bagging SVM with decision trees | Bootstrapping with random negative sampling | True Positive Rate: 0.91 over Materials Project database |
| SynCoTrain [17] | Dual-classifier co-training (SchNet + ALIGNN) | Reduces model bias, handles structure data | High recall on internal and leave-out test sets |
| SynthNN [4] | Deep learning with atom2vec embeddings | Composition-based (no structure required) | 7× higher precision than DFT formation energies |
| Stoichiometry Model [2] | Positive-unlabeled learning for compositions | Treats arbitrary elemental combinations | Recall: 83.4%, Precision: 83.6% for test set |

Experimental Protocols and Implementation

Data Preparation and Feature Engineering

Successful implementation of PU learning for materials requires careful data preparation:

Data Sources and Curation:

- Positive Data: Experimentally confirmed materials from ICSD [4] or Materials Project [19]

- Unlabeled Data: Hypothetical materials from computational generation or database entries without synthesis confirmation

- Feature Extraction: Using tools like Matminer to featurize compounds [19] or learned representations like atom2vec embeddings [4]

Material Representation:

- Composition-based: Using only chemical formula, enabling screening of hypothetical materials without known structures [4]

- Structure-based: Incorporating crystal structure information through graph representations [17]

- Elemental Features: Periodic table properties and chemistry-informed representations that build in the structure of the periodic table [21]
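Composition-based models need a fixed-length input computed from the chemical formula alone. SynthNN learns atom2vec embeddings [4]. A much simpler stand-in, shown here only to make the idea concrete, is the fractional composition vector. `parse_formula` and `composition_vector` are hypothetical helpers that handle flat formulas without parentheses or hydrate notation.

```python
import re
from collections import defaultdict
from typing import Dict, List

# One element symbol followed by an optional (possibly fractional) count
_TOKEN = re.compile(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?")


def parse_formula(formula: str) -> Dict[str, float]:
    """Parse a flat formula such as 'Cu4FeV3O13' into element counts."""
    counts: Dict[str, float] = defaultdict(float)
    pos = 0
    for match in _TOKEN.finditer(formula):
        if match.start() != pos:  # characters skipped means the formula is unsupported
            raise ValueError(f"unparseable formula: {formula!r}")
        element, amount = match.groups()
        counts[element] += float(amount) if amount else 1.0
        pos = match.end()
    if pos != len(formula):
        raise ValueError(f"unparseable formula: {formula!r}")
    return dict(counts)


def composition_vector(formula: str, elements: List[str]) -> List[float]:
    """Atomic fractions over a fixed element list (the model's input dimension)."""
    counts = parse_formula(formula)
    unknown = set(counts) - set(elements)
    if unknown:
        raise ValueError(f"elements outside the vocabulary: {sorted(unknown)}")
    total = sum(counts.values())
    return [counts.get(el, 0.0) / total for el in elements]
```

Such a vector needs no crystal structure, which is what allows composition-based screening of hypothetical materials. However, it cannot distinguish polymorphs of the same formula.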

Model Training and Evaluation

The training process must compensate for the absence of negative examples, typically through one of the PU strategies described above (bagging, risk estimation, or co-training).

Evaluation Strategies:

- Hold-out Validation: Using known synthesized materials as test positives

- Precision Estimation: Accounting for potential synthesizable materials in the unlabeled set [2]

- Ablation Studies: Comparing against traditional heuristics and thermodynamic proxies [4]
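Precision cannot be measured directly, because the unlabeled set contains hidden positives. One standard correction, which may differ from the one used in [2], comes from Elkan and Noto (2008). Suppose a classifier g(x) is trained to separate labeled from unlabeled examples. The mean of g over held-out positives then estimates c = P(labeled | positive), and each unlabeled example can be weighted by its probability of being positive. The sketch assumes calibrated scores and that positives are labeled completely at random.

```python
import numpy as np


def heldout_recall(scores_pos, threshold: float = 0.5) -> float:
    """True positive rate on held-out known (synthesized) materials."""
    return float(np.mean(np.asarray(scores_pos) >= threshold))


def label_frequency(scores_pos) -> float:
    """Elkan-Noto estimate of c = P(labeled | positive): mean score on held-out positives."""
    return float(np.mean(scores_pos))


def unlabeled_positive_weights(scores_unl, c: float) -> np.ndarray:
    """P(positive | x, unlabeled) = (1 - c) / c * g / (1 - g), clipped to [0, 1]."""
    g = np.clip(np.asarray(scores_unl, dtype=float), 1e-12, 1 - 1e-12)
    return np.clip((1 - c) / c * g / (1 - g), 0.0, 1.0)
```

Summing the weights gives an estimate of how many unlabeled candidates are actually synthesizable, which is the quantity needed to turn raw PU predictions into an estimated precision.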

Table 2: Performance Comparison of Synthesizability Prediction Methods

| Method | True Positive Rate | Precision | Key Advantage | Limitations |
|---|---|---|---|---|
| Charge-Balancing Heuristic [4] | 37% (share of known materials that are charge-balanced) | N/A | Chemically intuitive, fast | Misses many synthesizable materials |
| DFT Formation Energy [4] | ~50% | Low (varies) | Physics-based | Computationally expensive, ignores kinetics |
| PU Learning (General) [19] [2] | 83.4-91% | 83.6% (estimated) | Data-driven, accounts for multiple factors | Requires careful implementation |
| Human Experts [4] | Variable | Lower than SynthNN | Domain knowledge | Slow, inconsistent |

Data Resources:

- Materials Project Database: Contains over 131,000 inorganic compounds with computational data [19]

- ICSD (Inorganic Crystal Structure Database): Comprehensive repository of experimentally characterized inorganic crystal structures [4]

- pumml Repository: Open-source Python implementation of PU learning for materials [22]

Computational Tools:

- Matminer: Feature extraction and data mining tools for materials science [19]

- SchNetPack: Toolbox implementing SchNet, a neural network for atomistic systems that uses continuous-filter convolutions [17]

- ALIGNN: Atomistic Line Graph Neural Network that encodes bond and angle information [17]

Case Studies and Experimental Validation

MXene Discovery Using PU Learning

In one pioneering application, PU learning was used to predict synthesizable 2D MXenes [19]. The model was trained on positive examples of known MXenes and their 3D precursor MAX phases. The algorithm learned complex patterns related to atomic bonding, electron distribution, and structural arrangements. This approach identified 18 new potentially synthesizable MXenes [19], demonstrating the ability to capture synthesizability factors beyond simple thermodynamic stability.

Quaternary Oxide Exploration

Researchers applied PU learning to guide experimental exploration of the quaternary oxide system comprising CuO, Fe₂O₃, and V₂O₅ [2]. The model constructed a continuous synthesizability phase map that agreed well with available synthetic data. This guidance led to the discovery of a new phase, Cu₄FeV₃O₁₃, demonstrating the practical utility of PU learning in directing experimental resources toward promising compositional regions.

SynCoTrain for Oxide Crystals

The SynCoTrain framework specifically targeted oxide crystals, a well-studied material class with extensive experimental data [17]. By focusing on a single material family, the approach balanced dataset variability with computational efficiency while maintaining high prediction reliability. The co-training architecture proved particularly effective in mitigating model bias and improving generalizability to new compositions.

Integration with Traditional Knowledge and Future Outlook

Complementing Chemical Heuristics

Rather than replacing traditional chemical knowledge, PU learning approaches work in concert with established heuristics. As highlighted in [18], "heuristic and machine learning approaches are at their best when they work together." Machine learning models can internalize and extend traditional chemical intuition—for instance, SynthNN was found to learn the principles of charge-balancing, chemical family relationships, and ionicity from data alone, without explicit programming of these rules [4].

The relationship between traditional heuristics and machine learning is bidirectional. While classical chemical heuristics rely on limited datasets and human pattern recognition, machine learning leverages larger datasets to extract more complex patterns [18]. Furthermore, traditional chemical concepts commonly serve as features that enhance machine learning techniques, creating a synergistic relationship rather than a competitive one.

Technical Challenges and Future Directions

Despite promising results, several challenges remain in applying PU learning to materials synthesizability:

Data Quality and Representation:

- Handling materials with f-electrons, whose localized, strongly correlated behavior is difficult to describe with standard features and models [19]

- Developing better representations that capture kinetic and synthetic factors beyond thermodynamics

- Incorporating synthesis conditions and pathways into predictions

Methodological Improvements:

- Addressing the "overfitted risk estimation" problem where models fit too closely to the limited positive data [20]

- Developing better frameworks for extremely imbalanced scenarios common in materials science

- Creating more interpretable models that provide chemical insights alongside predictions

Experimental Validation:

- Closing the loop between prediction and experimental synthesis

- Reporting negative results to improve future datasets

- Developing autonomous laboratories for rapid experimental validation [16]

The challenge of data scarcity in materials science, particularly the absence of confirmed negative examples, has found a promising solution in Positive and Unlabeled learning. By reformulating synthesizability prediction as a PU learning problem, researchers have developed models that significantly outperform traditional heuristics and human experts in both accuracy and efficiency. These approaches successfully bridge the gap between computational materials design and experimental realization, learning complex patterns that encompass but extend beyond traditional chemical intuition.

As materials data continues to grow and PU methodologies advance, the integration of data-driven approaches with physical knowledge will be crucial. Future progress will likely come from hybrid approaches that combine the interpretability of traditional heuristics with the predictive power of machine learning, ultimately accelerating the discovery of novel materials to address pressing technological challenges.

Next-Generation Synthesizability Prediction: ML Frameworks and Real-World Applications

The prediction of crystal synthesizability represents a critical bottleneck in accelerating materials discovery. Traditional approaches reliant on thermodynamic and kinetic stability metrics, such as energy above the convex hull and phonon spectra, have significant limitations because they fail to capture the complex experimental factors governing synthesis. This section details the Crystal Synthesis Large Language Model (CSLLM) framework, which fine-tunes large language models to predict synthesizability, synthetic methods, and precursors for inorganic crystal structures. CSLLM achieves 98.6% accuracy in synthesizability classification, substantially outperforming traditional heuristic methods. By bridging the gap between computational materials design and experimental realization, the CSLLM framework establishes a new paradigm for machine learning-driven synthesizability prediction, moving beyond the constraints of rule-based stability screening.

The discovery of novel functional materials is often hampered by the challenge of synthesizability. Conventional materials design paradigms have heavily relied on density functional theory (DFT) to calculate thermodynamic stability, typically using the energy above the convex hull (Ehull) as a primary heuristic for synthesizability screening [23]. While materials with low or negative Ehull are generally more likely to be synthesizable, this correlation is imperfect. A significant population of metastable compounds (with Ehull > 0) are experimentally synthesizable, while many theoretically stable compounds remain elusive [23] [3]. This gap underscores the limitation of purely thermodynamic heuristics, which ignore critical experimental factors such as kinetics, precursor selection, and synthesis route.

Machine learning (ML) has emerged as a powerful tool to model this complex relationship. Early ML models, such as those using positive-unlabeled (PU) learning, demonstrated the capability to predict synthesizability from composition or structure with improved accuracy over stability metrics alone [2]. However, these models often exhibited moderate accuracy or were confined to specific material systems [3]. The advent of large language models (LLMs) presents a paradigm shift. Because of their scale and their ability to learn from text-based representations, LLMs can capture intricate patterns in materials data that simpler ML models and human-derived heuristics miss. The CSLLM framework represents the cutting edge of this approach, leveraging domain-specific fine-tuning to achieve unprecedented predictive performance.

The CSLLM Framework: Architecture and Core Components

The CSLLM framework addresses the synthesizability challenge by decomposing it into three distinct tasks, each handled by a specialized LLM [24] [3]:

- Synthesizability Prediction: Binary classification of a crystal structure as synthesizable or non-synthesizable.

- Synthesis Method Classification: Identifying the appropriate synthetic method (e.g., solid-state or solution).

- Precursor Identification: Recommending suitable chemical precursors for solid-state synthesis.

Data Curation and Text Representation

A key innovation underpinning CSLLM is the construction of a comprehensive and balanced dataset for training and evaluation.

- Positive Data: 70,120 synthesizable crystal structures were curated from the Inorganic Crystal Structure Database (ICSD), all confirmed by experiment [3].

- Negative Data: 80,000 non-synthesizable structures were identified by applying a pre-trained PU learning model to a pool of over 1.4 million theoretical structures from databases like the Materials Project and OQMD. Structures with a low CLscore (<0.1) were selected as negative examples [3].

To enable LLM processing, an efficient text representation for crystal structures, termed "material string," was developed. This format condenses essential crystallographic information—space group, lattice parameters, and unique atomic Wyckoff positions—into a concise, reversible string, avoiding the redundancy of full CIF files [3]. The material string provides a compact and information-rich input for model fine-tuning.
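The exact delimiters of the CSLLM material string are not reproduced here, so the format below is hypothetical. It consists of the space group number, the six lattice parameters, and one entry per symmetry-unique site, giving the element, Wyckoff label, and representative fractional coordinates. The space group's symmetry operations regenerate every equivalent atom, so listing only the unique sites keeps the string short while leaving it reversible.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Site:
    element: str                             # e.g. "Na"
    wyckoff: str                             # multiplicity + letter, e.g. "4a"
    frac_coords: Tuple[float, float, float]  # representative fractional coordinates


def _fmt(values) -> str:
    return " ".join(f"{v:.4f}" for v in values)


def to_material_string(space_group: int, lattice: Tuple[float, ...], sites: List[Site]) -> str:
    """Encode space group, (a, b, c, alpha, beta, gamma) and symmetry-unique sites."""
    site_part = "; ".join(f"{s.element}-{s.wyckoff}[{_fmt(s.frac_coords)}]" for s in sites)
    return f"{space_group} | {_fmt(lattice)} | {site_part}"


def from_material_string(text: str):
    """Invert to_material_string (exact up to the 4-decimal rounding used when encoding)."""
    sg_part, lattice_part, site_part = (part.strip() for part in text.split("|"))
    lattice = tuple(float(v) for v in lattice_part.split())
    sites = []
    for chunk in site_part.split(";"):
        head, coords = chunk.strip().rstrip("]").split("[")
        element, wyckoff = head.split("-")
        sites.append(Site(element, wyckoff, tuple(float(v) for v in coords.split())))
    return int(sg_part), lattice, sites


# Rock-salt NaCl (Fm-3m, No. 225): two unique sites describe all eight atoms of the cell
nacl_sites = [Site("Na", "4a", (0.0, 0.0, 0.0)), Site("Cl", "4b", (0.5, 0.5, 0.5))]
nacl = to_material_string(225, (5.64, 5.64, 5.64, 90.0, 90.0, 90.0), nacl_sites)
```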

Model Fine-Tuning and Performance

The core LLMs within CSLLM were fine-tuned on the constructed dataset using the material string representation. The performance of each model is summarized in Table 1.

Table 1: Performance Metrics of the CSLLM Framework Components

| CSLLM Component | Primary Task | Key Performance Metric | Reported Result |
|---|---|---|---|
| Synthesizability LLM | Binary Classification (Synthesizable/Non-synthesizable) | Accuracy | 98.6% [3] |
| | Comparison to Ehull threshold (≥0.1 eV/atom) | Accuracy gain over baseline | +24.5 percentage points (74.1% vs. 98.6%) [3] |
| | Comparison to phonon stability threshold (≥ -0.1 THz) | Accuracy gain over baseline | +16.4 percentage points (82.2% vs. 98.6%) [3] |
| Methods LLM | Multi-class Classification (Synthesis Route) | Classification Accuracy | 91.0% [3] |
| Precursors LLM | Precursor Recommendation (for Binary/Ternary Compounds) | Prediction Success Rate | 80.2% [3] |

The Synthesizability LLM's performance is particularly noteworthy. It not only achieves state-of-the-art accuracy but also demonstrates exceptional generalization, maintaining 97.9% accuracy when tested on complex structures with large unit cells that exceeded the complexity of its training data [3]. This performance stems from domain-focused fine-tuning, which aligns the model's broad linguistic capabilities with material-specific features, refining its attention mechanisms and reducing incorrect "hallucinations" [3].

Experimental Protocols and Workflow

The development and application of the CSLLM framework follow a structured experimental pipeline, from data preparation to final prediction.

Dataset Construction Protocol

- Data Sourcing: Download CIF files from ICSD and theoretical databases (MP, OQMD, JARVIS).

- Preprocessing: Filter structures to a maximum of 40 atoms and 7 distinct elements. Remove disordered structures.

- Labeling:

  - Assign positive labels (synthesizable) to all ICSD structures.

  - Calculate CLscore for all theoretical structures using a pre-trained PU learning model [3].

  - Assign negative labels (non-synthesizable) to theoretical structures with CLscore < 0.1.

- Data Conversion: Convert all curated CIF files into the material string text representation.

- Data Splitting: Partition the dataset into training, validation, and test sets, ensuring no data leakage between splits.
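The filtering and labeling steps can be sketched as follows. The sketch assumes that per-structure metadata (atom count, element count, ordering, CLscore) has already been extracted from the CIF files. To prevent leakage, it groups the split by reduced formula, so that polymorphs of one composition never straddle train and test. This is one assumed approach; the source does not state which method was used. `Entry`, `label`, and `split_name` are illustrative names.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, List, Optional

MAX_ATOMS = 40      # per-structure atom limit from the protocol
MAX_ELEMENTS = 7    # distinct-element limit from the protocol
NEG_CLSCORE = 0.1   # theoretical structures below this CLscore become negatives


@dataclass
class Entry:
    formula: str                     # reduced formula, used here to group the split
    source: str                      # "ICSD" or a theoretical database such as "MP"
    n_atoms: int
    n_elements: int
    ordered: bool                    # False for structures with partial occupancies
    clscore: Optional[float] = None  # PU-model score, needed only for theoretical entries


def label(entry: Entry) -> Optional[int]:
    """Return 1 (synthesizable), 0 (non-synthesizable), or None (excluded)."""
    if entry.n_atoms > MAX_ATOMS or entry.n_elements > MAX_ELEMENTS or not entry.ordered:
        return None
    if entry.source == "ICSD":
        return 1
    if entry.clscore is not None and entry.clscore < NEG_CLSCORE:
        return 0
    return None  # theoretical structure without a confident negative score


def split_name(formula: str, val_pct: int = 10, test_pct: int = 10) -> str:
    """Deterministic, formula-keyed split (hashlib is stable across runs, unlike hash())."""
    bucket = int(hashlib.md5(formula.encode()).hexdigest(), 16) % 100
    if bucket < test_pct:
        return "test"
    if bucket < test_pct + val_pct:
        return "val"
    return "train"


def build_dataset(entries: List[Entry]) -> Dict[str, List[tuple]]:
    splits: Dict[str, List[tuple]] = {"train": [], "val": [], "test": []}
    for e in entries:
        y = label(e)
        if y is not None:
            splits[split_name(e.formula)].append((e, y))
    return splits
```

Theoretical structures with intermediate CLscores are dropped rather than labeled. This mirrors the protocol's choice to keep only confident negatives.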

Model Training and Evaluation Protocol

- Model Selection: Choose a base, open-source LLM (e.g., a LLaMA variant) as the foundation for each specialized model [3].

- Fine-Tuning: Employ supervised fine-tuning on the training set using the material strings as input and the corresponding labels (synthesizability, method, precursors) as targets.

- Hyperparameter Tuning: Optimize learning rate, batch size, and number of epochs on the validation set.

- Performance Benchmarking: Evaluate the fine-tuned Synthesizability LLM against traditional methods by calculating the accuracy of Ehull and phonon stability thresholds on the same test set [3].
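The baseline comparison amounts to thresholding two physical descriptors and scoring the results against the same labels as the LLM. The sketch below reads the thresholds as follows: a structure with Ehull ≥ 0.1 eV/atom, or with a lowest phonon frequency below -0.1 THz, is classified as non-synthesizable. The function names and toy arrays are illustrative.

```python
import numpy as np


def accuracy(pred, truth) -> float:
    return float(np.mean(np.asarray(pred) == np.asarray(truth)))


def ehull_baseline(ehull_ev_per_atom, cutoff: float = 0.1) -> np.ndarray:
    """Predict synthesizable (1) when energy above hull is below the cutoff (eV/atom)."""
    return (np.asarray(ehull_ev_per_atom) < cutoff).astype(int)


def phonon_baseline(min_freq_thz, cutoff: float = -0.1) -> np.ndarray:
    """Predict synthesizable (1) when the lowest phonon frequency is at least the cutoff (THz)."""
    return (np.asarray(min_freq_thz) >= cutoff).astype(int)


# Toy test set: 1 = synthesizable, 0 = non-synthesizable
truth = np.array([1, 1, 0, 0])
ehull = np.array([0.0, 0.2, 0.05, 0.3])      # a metastable positive and a near-hull negative
min_freq = np.array([0.0, -0.05, -0.5, -1.0])
model_pred = np.array([1, 1, 0, 1])          # stand-in for the fine-tuned LLM's predictions
```

The toy values show the failure mode discussed earlier: a synthesized metastable phase above the hull cutoff and a near-hull compound that has never been made both defeat the Ehull rule.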

Application Workflow for Predicting New Materials

The operational workflow for using CSLLM to assess novel theoretical materials is depicted in the diagram below.

The implementation and application of frameworks like CSLLM rely on a suite of data, software, and computational resources.

Table 2: Key Research Reagent Solutions for LLM-Driven Synthesis Prediction

| Category | Item / Resource | Function / Description | Example Sources |
|---|---|---|---|
| Data Sources | Inorganic Crystal Structure Database (ICSD) | Provides experimentally synthesizable crystal structures as positive training data and ground truth [3]. | FIZ Karlsruhe |
| | Theoretical Materials Databases | Sources of non-synthesizable/theoretical structures for negative data and candidate screening [3]. | Materials Project, OQMD, JARVIS |
| Software & Models | Pre-trained PU Learning Model | Provides a CLscore to identify non-synthesizable structures from theoretical databases for dataset construction [3]. | Jang et al. |
| | Robocrystallographer | Generates deterministic, human-readable textual descriptions of crystal structures from CIF files for alternative LLM input [25]. | Materials Project |
| | CrystaLLM | An alternative LLM approach for generating plausible crystal structures, showcasing the versatility of text-based modeling [26]. | N/A |
| Infrastructure | Large Language Models (Base) | Foundational models that are fine-tuned on domain-specific data to create specialized predictors [3]. | LLaMA |
| | High-Performance Computing (HPC) | Provides the computational resources required for training and fine-tuning large language models. | Local clusters/Cloud platforms |

The CSLLM framework demonstrates a decisive shift from heuristic-based to ML-driven synthesizability prediction. By achieving 98.6% accuracy, it significantly surpasses the predictive power of traditional stability metrics, which are insufficient proxies for real-world synthesizability. The framework's ability to also recommend synthesis methods and precursors provides an integrated, practical tool for experimentalists. This marks a significant step toward closing the loop between computational materials design and experimental synthesis, accelerating the discovery of novel functional materials for applications from energy storage to drug development. Future work will focus on expanding the scope of precursors and synthesis conditions, further solidifying the role of LLMs as an indispensable tool in the materials scientist's arsenal.

The discovery of novel functional molecules is a central challenge in chemical science, crucial for advances in healthcare, energy, and sustainability [27]. However, the practical adoption of generative AI for molecular design has been significantly limited by a persistent problem: these models frequently propose molecules that are difficult or impossible to synthesize in the laboratory [28] [27]. This synthesizability gap represents a critical barrier to transforming computational designs into tangible discoveries. The scientific community has approached this challenge through two fundamentally distinct paradigms. The first relies on heuristic scoring functions—simplified, rule-based metrics that estimate synthetic accessibility based on molecular characteristics [29]. The second, more recent approach employs data-driven machine learning—sophisticated models that directly predict viable synthetic pathways using knowledge extracted from chemical reaction data [28] [27]. This technical guide examines SynFormer and SynthFormer, two transformative frameworks situated at the forefront of this methodological shift from heuristics to machine learning for ensuring molecular synthesizability.

Core Architectures and Approaches

SynFormer is a generative AI framework designed to navigate synthesizable chemical space. Its core innovation is that it is synthesis-centric: it generates synthetic pathways rather than just molecular structures, ensuring that every designed molecule is synthetically tractable by construction [28] [27]. The framework employs a scalable transformer architecture and incorporates a denoising diffusion module for building block selection from large commercial catalogs [28]. It operates on a synthesizable chemical space defined by purchasable building blocks and known chemical transformations, theoretically covering a space broader than the tens of billions of molecules in Enamine's REAL Space [27].

SynthFormer is a Transformer-based framework specifically focused on predicting the synthesizability of inorganic crystalline materials [30]. It combines Fourier-transformed crystal representations with positive-unlabeled learning and uncertainty calibration to guide experimental materials discovery [30]. While both models share the "Former" suffix indicating transformer architectures, they target distinct domains—SynFormer for organic small molecules via synthetic pathway generation, and SynthFormer for inorganic crystals via synthesizability prediction.

Key Technical Specifications

Table 1: Core Technical Specifications of SynFormer and SynthFormer

| Specification | SynFormer | SynthFormer |
|---|---|---|
| Primary Domain | Organic small molecules | Inorganic crystalline materials |
| Core Approach | Synthetic pathway generation | Synthesizability prediction |
| Architecture | Transformer with diffusion module | Transformer with Fourier representations |
| Synthesizability Enforcement | By construction (pathway-based) | Predictive scoring |
| Key Components | Reaction templates, building blocks, pathway notation | Fourier-transformed crystal representations, positive-unlabeled learning |
| Training Data | 115 reaction templates + 223,244 building blocks | Materials Project data (inferred) |
| Primary Output | Synthetic pathways | Synthesizability scores |

Methodology: Architectural Deep Dive

SynFormer's Pathway Generation Mechanism

SynFormer employs a sophisticated representation of synthetic pathways using a postfix notation system that linearizes synthetic pathways for autoregressive decoding [28] [27]. This representation uses four token types: [START], [END], [RXN] (reaction), and [BB] (building block). The model is built on a transformer architecture that processes these token sequences, with specialized components for handling different aspects of the generation process.

Figure 1: SynFormer's encoder-decoder architecture for pathway generation
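Postfix order suits autoregressive decoding because every reaction token appears after its reactants. A simple stack can therefore rebuild the synthesis tree from left to right. SynFormer's exact token payloads are not given here. The tuple format, template names, and reactant counts below are hypothetical, as is the requirement that a valid pathway reduce to a single product.

```python
from dataclasses import dataclass, field
from typing import List, Sequence, Tuple


@dataclass
class Node:
    label: str                                    # building-block ID or reaction-template ID
    children: List["Node"] = field(default_factory=list)


def decode_postfix(tokens: Sequence[Tuple]) -> Node:
    """Rebuild a synthesis tree from a postfix token sequence.

    Hypothetical token format: ("START",), ("BB", block_id),
    ("RXN", template_id, n_reactants), ("END",).
    """
    if len(tokens) < 2 or tokens[0][0] != "START" or tokens[-1][0] != "END":
        raise ValueError("sequence must be wrapped in START ... END")
    stack: List[Node] = []
    for tok in tokens[1:-1]:
        if tok[0] == "BB":
            stack.append(Node(tok[1]))               # building blocks are pushed as leaves
        elif tok[0] == "RXN":
            _, template, n_reactants = tok
            if n_reactants < 1 or len(stack) < n_reactants:
                raise ValueError(f"not enough reactants on the stack for {template}")
            reactants = stack[-n_reactants:]
            del stack[-n_reactants:]
            stack.append(Node(template, reactants))  # the product replaces its reactants
        else:
            raise ValueError(f"unexpected token {tok!r}")
    if len(stack) != 1:
        raise ValueError("pathway does not reduce to a single product")
    return stack[0]


def to_text(node: Node) -> str:
    """Render the tree as nested reaction(reactants) text."""
    if not node.children:
        return node.label
    return f"{node.label}({', '.join(to_text(c) for c in node.children)})"


tokens = [("START",), ("BB", "A"), ("BB", "B"), ("RXN", "amide_coupling", 2),
          ("BB", "C"), ("RXN", "suzuki", 2), ("END",)]
tree = decode_postfix(tokens)
```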

For building block selection from massive commercial catalogs, SynFormer incorporates a denoising diffusion module rather than a static classification head. This approach generates Morgan fingerprints which are then used to retrieve the nearest building blocks from available candidates, enabling generalization to unseen building blocks [27].
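The retrieval half of this step can be sketched independently of the diffusion model, which is not shown. The sketch assumes that binary fingerprints for the catalog have already been computed (for example with RDKit) and that the generated fingerprint is binarized at 0.5. It also uses Tanimoto similarity as the matching metric; whether SynFormer uses Tanimoto or another distance is an assumption here.

```python
import numpy as np


def nearest_building_blocks(generated: np.ndarray, catalog: np.ndarray, k: int = 5) -> np.ndarray:
    """Indices of the k catalog fingerprints most Tanimoto-similar to `generated`.

    generated: continuous vector of length D (e.g. a denoised fingerprint).
    catalog: boolean matrix of shape (N, D), one fingerprint per building block.
    """
    query = np.asarray(generated) >= 0.5
    catalog = np.asarray(catalog, dtype=bool)
    inter = np.logical_and(catalog, query).sum(axis=1)
    union = np.logical_or(catalog, query).sum(axis=1)
    sims = np.divide(inter, union, out=np.zeros(len(catalog)), where=union > 0)
    return np.argsort(-sims, kind="stable")[:k]


catalog = np.array([[1, 1, 0, 0], [0, 0, 1, 1], [1, 1, 1, 0]], dtype=bool)
top = nearest_building_blocks(np.array([0.9, 0.8, 0.1, 0.2]), catalog, k=2)
```

Because the model predicts a fingerprint rather than a catalog index, new building blocks can be added to `catalog` without retraining. This is the generalization property described above.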

Model Instantiations and Training

The SynFormer framework includes two primary instantiations [27]:

SynFormer-ED: An encoder-decoder model that generates synthetic pathways corresponding to a given input molecule for exact or approximate reconstruction.

SynFormer-D: A decoder-only model for generating synthetic pathways amenable to fine-tuning toward specific property goals.

Both models are trained on a simulated chemical space derived from a curated set of 115 reaction templates and 223,244 commercially available building blocks from Enamine's U.S. stock catalog, extending beyond Enamine's REAL Space [27]. Because both the building-block set and the template set can be modified before retraining, the framework can be adapted to other chemical domains.

Experimental Framework & Validation

Benchmarking Protocols and Metrics

Researchers have established comprehensive experimental protocols to validate synthesizable molecular design frameworks. The key benchmarking tasks include:

Retrosynthesis Planning: Evaluating the model's ability to reconstruct known synthetic pathways for molecules from standard databases like Enamine, ChEMBL, and ZINC250k [31]. The primary metric is success rate—the percentage of molecules for which the model can generate a valid synthetic pathway.

Goal-Directed Molecular Optimization: Assessing performance in optimizing specific chemical properties while maintaining synthesizability. This is typically measured by optimization score, which combines property improvement with synthesizability maintenance [31].

Synthesizable Analog Generation: Testing the model's capability to generate synthesizable analogs of query molecules for hit expansion in drug discovery [31]. Success is measured by structural diversity, synthesizability rate, and similarity to target properties.

Quantitative Performance Analysis

Table 2: Performance Comparison on Retrosynthesis Planning Tasks (Success Rate %)

| Method | Enamine | ChEMBL | ZINC250k |
|---|---|---|---|
| SynNet | 25.2 | 7.9 | 12.6 |
| SynFormer | 63.5 | 18.2 | 15.1 |
| ReaSyn | 76.8 | 21.9 | 41.2 |

Table 3: Performance on Goal-Directed Molecular Optimization (Optimization Score)

| Method | Optimization Score |
|---|---|
| DoG-Gen | 0.511 |
| SynNet | 0.545 |
| SynthesisNet | 0.608 |
| Graph GA-SF | 0.612 |
| Graph GA-ReaSyn | 0.638 |

These results show a substantial improvement of SynFormer over the earlier synthesizable molecule generation method SynNet, particularly on the Enamine dataset, where SynFormer reaches a 63.5% success rate versus SynNet's 25.2% [31]. This advantage is attributed to SynFormer's more comprehensive exploration of synthesizable chemical space and its effective pathway generation mechanism. ReaSyn, in turn, exceeds SynFormer on all three datasets, most markedly on ZINC250k (41.2% versus 15.1%).
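To put the retrosynthesis-planning gains on a common footing, the ratio of success rates per dataset can be computed directly from the values reported in Table 2. The dictionary simply transcribes that table; nothing else is assumed.

```python
# Success rates (%) transcribed from Table 2 (retrosynthesis planning).
success = {
    "SynNet":    {"Enamine": 25.2, "ChEMBL": 7.9,  "ZINC250k": 12.6},
    "SynFormer": {"Enamine": 63.5, "ChEMBL": 18.2, "ZINC250k": 15.1},
    "ReaSyn":    {"Enamine": 76.8, "ChEMBL": 21.9, "ZINC250k": 41.2},
}


def fold_change(new: str, old: str) -> dict[str, float]:
    """Ratio of success rates (new / old) for each benchmark dataset, rounded to 2 decimals."""
    return {ds: round(success[new][ds] / success[old][ds], 2) for ds in success[old]}


print(fold_change("SynFormer", "SynNet"))  # {'Enamine': 2.52, 'ChEMBL': 2.3, 'ZINC250k': 1.2}
print(fold_change("ReaSyn", "SynFormer"))  # {'Enamine': 1.21, 'ChEMBL': 1.2, 'ZINC250k': 2.73}
```

SynFormer's gain over SynNet is largest on Enamine (about 2.5x), plausibly the dataset closest to its Enamine-derived training space, and smallest on ZINC250k (about 1.2x). ReaSyn's largest gain over SynFormer (about 2.7x) is on ZINC250k.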

Table 4: Essential Research Reagents and Computational Resources

| Resource | Type | Function | Availability |
|---|---|---|---|
| Enamine Building Block Catalog | Chemical Database | Provides commercially available molecular building blocks | Upon request from Enamine |
| Reaction Template Set (115 templates) | Chemical Rules | Defines allowed chemical transformations for synthesis | Curated from REAL Space + augmentations |
| ChEMBL Database | Molecular Database | Benchmarking and validation dataset | Publicly available |
| ZINC250k Dataset | Molecular Database | Standard benchmark for molecular generation | Publicly available |
| RDKit | Software Library | Reaction execution and cheminformatics | Open source |
| Pre-trained Model Weights | AI Model | Pre-trained SynFormer models for inference | Available from GitHub repository |

Implementation and Hardware Requirements

The reported experiments [32] give the following practical guidance on hardware:

- Reported hardware: NVIDIA RTX 4090 GPU

- Processing time: from a few seconds up to 30 seconds per molecule on GPU, versus 30 seconds to 2 minutes on CPU

- Memory: sufficient RAM is critical, since running short can cause subprocesses to hang during pathway generation

The codebase and pre-trained models are publicly available through GitHub repositories, enabling researchers to build upon these frameworks for their own molecular design applications [32].

ML vs Heuristics: A Technical Examination

The Heuristic Paradigm: Limitations and Correlations

Traditional heuristic approaches to synthesizability assessment include metrics such as the Synthetic Accessibility (SA) score, SYnthetic Bayesian Accessibility (SYBA), and Synthetic Complexity (SC) score [29]. These methods are typically based on molecular complexity features or fragment frequencies in known databases. While these heuristics offer computational efficiency, they face fundamental limitations:

Heuristic scores assess molecular complexity rather than explicit synthesizability, and their correlation with actual synthetic feasibility varies significantly across chemical domains [29]. Research has shown that while heuristics can be well-correlated with retrosynthesis model solvability for "drug-like" molecules, this correlation diminishes substantially when moving to other classes of molecules, such as functional materials [29].
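One way to quantify how well a heuristic tracks retrosynthesis solvability within a domain is the ROC AUC of the score used as a predictor of the solver's outcome. This is a swapped-in illustration, not necessarily the statistic used in [29]; the SA scores and outcomes below are hypothetical toy values chosen to mimic one domain where the correlation holds and one where it breaks down. Because a lower SA score means easier synthesis, the score is negated before ranking.

```python
def roc_auc(scores: list[float], labels: list[int]) -> float:
    """Probability that a random positive outranks a random negative (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y]
    neg = [s for s, y in zip(scores, labels) if not y]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical SA scores (1 = easy, 10 = hard) and retrosynthesis outcomes (1 = solved).
domains = {
    "drug-like": ([2.1, 2.8, 3.0, 4.5, 5.2, 6.0], [1, 1, 1, 0, 0, 0]),
    "functional materials": ([2.5, 3.9, 4.1, 4.8, 5.5, 6.3], [0, 1, 0, 1, 1, 0]),
}

for name, (sa, solved) in domains.items():
    # Negate SA so that a higher value means "predicted easier to make".
    print(name, round(roc_auc([-s for s in sa], solved), 2))
# drug-like 1.0
# functional materials 0.44
```

An AUC near 1.0 means the heuristic ranks solvable molecules ahead of unsolvable ones; a value near or below 0.5, as in the toy materials set, means the heuristic carries little or even misleading information in that domain.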

The Machine Learning Paradigm: Advantages and Trade-offs

Machine learning approaches, particularly pathway-based generation frameworks like SynFormer, offer distinct advantages through their more direct modeling of chemical reality:

Explicit Synthesizability Enforcement: By generating synthetic pathways using known reaction templates and purchasable building blocks, SynFormer ensures synthesizability by construction rather than through post-hoc assessment [28] [27].

Domain Adaptability: ML models can maintain performance across diverse chemical domains where heuristic correlations break down, as demonstrated by SynFormer's application to both drug discovery and materials science [29] [27].

Discovery of Novel Chemical Space: ML approaches can identify promising molecules that heuristic filters would discard, because they can recognize molecules that are structurally complex yet still synthesizable [29].
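Synthesizability by construction can be illustrated with a small stack-based interpreter over a linearized pathway: building-block tokens push purchasable molecules onto a stack, and reaction tokens pop their reactants and push the product. Everything here is a hypothetical sketch: the postfix token format, the stock, and the template names are assumptions, and the string-building lambdas stand in for applying real reaction templates (for example SMARTS reactions in RDKit).

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Template:
    name: str
    arity: int                  # number of reactants the template consumes
    apply: Callable[..., str]   # toy stand-in for applying a reaction template


# Hypothetical purchasable stock and template set; strings stand in for molecules.
STOCK = {"amine_A", "acid_B", "halide_C"}
TEMPLATES = {
    "amide_coupling": Template("amide_coupling", 2, lambda a, b: f"amide({a},{b})"),
    "alkylation": Template("alkylation", 2, lambda a, b: f"alkyl({a},{b})"),
}


def execute_pathway(tokens: Sequence[str]) -> str:
    """Run a postfix pathway; raise ValueError if it leaves the allowed chemical space."""
    stack: list[str] = []
    for tok in tokens:
        if tok in STOCK:
            stack.append(tok)  # purchasable building block
        elif tok in TEMPLATES:
            t = TEMPLATES[tok]
            if len(stack) < t.arity:
                raise ValueError(f"{tok} needs {t.arity} reactants")
            reactants = stack[-t.arity:]
            del stack[-t.arity:]
            stack.append(t.apply(*reactants))  # intermediate or final product
        else:
            raise ValueError(f"{tok!r} is neither a stock building block nor a known template")
    if len(stack) != 1:
        raise ValueError("pathway must reduce to exactly one product")
    return stack[0]


print(execute_pathway(["amine_A", "acid_B", "amide_coupling", "halide_C", "alkylation"]))
# alkyl(amide(amine_A,acid_B),halide_C)
```

Any token outside the stock or template set raises an error, so every molecule the interpreter returns is reachable from purchasable building blocks through allowed reactions. Whether each step actually succeeds at the bench is a separate question that this check does not address.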

Figure 2: Methodology comparison between heuristic and ML-based approaches

However, ML approaches carry computational costs. A retrosynthesis model can take minutes per evaluation when applied post hoc, which makes it prohibitively expensive to call directly inside an optimization loop unless the generative model is sample-efficient enough, as Saturn is, to keep the number of calls manageable [29].

Hybrid Approaches and Future Directions

The most promising future direction lies in hybrid approaches that leverage the strengths of both paradigms. Recent work demonstrates that with sufficiently sample-efficient generative models, it becomes feasible to directly optimize for synthesizability using retrosynthesis models while maintaining computational practicality [29]. Furthermore, ML-guided building block filtering can enhance genetic algorithms like SynGA to achieve state-of-the-art performance in synthesizable molecular design [33].
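A simple form of the hybrid idea is a screen-then-verify cascade: a cheap score ranks all candidates, and the expensive retrosynthesis check is spent only on the top of the list. This is a generic pattern, not the procedure of [29] or [33]; `fast_score` and `expensive_check` are hypothetical stand-ins for a learned synthesizability score and a full synthesis-planning run.

```python
from typing import Callable, Sequence


def cascade_screen(
    candidates: Sequence[str],
    fast_score: Callable[[str], float],
    expensive_check: Callable[[str], bool],
    budget: int,
) -> tuple[list[str], int]:
    """Rank by a cheap score, then spend at most `budget` expensive calls on the top candidates.

    Returns (molecules confirmed by the expensive check, number of expensive calls made).
    """
    ranked = sorted(candidates, key=fast_score, reverse=True)
    confirmed: list[str] = []
    calls = 0
    for mol in ranked[:budget]:
        calls += 1
        if expensive_check(mol):
            confirmed.append(mol)
    return confirmed, calls


# Toy stand-ins: the "fast score" is a lookup table, the "retrosynthesis" check a fixed set.
fast = {"m1": 0.9, "m2": 0.2, "m3": 0.7, "m4": 0.8, "m5": 0.1}
solvable = {"m1", "m3", "m5"}
hits, n_calls = cascade_screen(list(fast), fast.__getitem__, solvable.__contains__, budget=3)
print(hits, n_calls)  # ['m1', 'm3'] 3
```

In the toy run, `m5` is solvable but never checked because the fast score ranks it last; that missed candidate is the price paid for capping the number of expensive calls.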

The evolution from heuristic scoring to machine learning approaches for synthesizable molecular design represents a significant paradigm shift in computational chemistry and materials science. Frameworks like SynFormer and SynthFormer exemplify how deep learning architectures can be specifically designed to address the critical challenge of synthesizability that has long impeded the practical application of generative molecular AI. By directly generating synthetic pathways rather than just molecular structures, these models bridge the gap between computational design and experimental realization. As the field advances, the integration of these approaches with high-throughput experimentation and autonomous discovery platforms will further accelerate the design-make-test cycle, ultimately enabling more efficient discovery of novel functional molecules for addressing pressing challenges across healthcare, energy, and sustainability.

Computer-Aided Synthesis Planning (CASP) has emerged as a transformative technology in molecular design, enabling the identification of viable synthetic routes for target molecules by recursively deconstructing them into commercially available building blocks [34]. However, a significant limitation hindering broader adoption is the substantial computational cost of full synthesis planning, where a single run can require "from minutes to several hours" depending on the selected retrosynthesis neural network [34]. This computational burden renders direct CASP integration impractical for most optimization-based de novo drug design methods, which typically require thousands of iterations to achieve convergence [34].
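The scale of the problem follows from simple arithmetic. Assuming 10,000 synthesis-planning calls in one optimization run (an illustrative figure consistent with "thousands of iterations"), and taking 1 second per call as an upper bound for a sub-second learned score, the serial wall-clock cost is:

```python
def planning_budget_hours(n_calls: int, seconds_per_call: float, n_workers: int = 1) -> float:
    """Wall-clock hours for n_calls evaluations split evenly across n_workers."""
    return n_calls * seconds_per_call / n_workers / 3600


N_CALLS = 10_000  # assumed number of oracle calls in one optimization run
for label, seconds in [
    ("learned score, 1 s/call", 1),
    ("full planning, 1 min/call", 60),
    ("full planning, 1 h/call", 3600),
]:
    print(f"{label}: {planning_budget_hours(N_CALLS, seconds):,.1f} h")
# learned score, 1 s/call: 2.8 h
# full planning, 1 min/call: 166.7 h
# full planning, 1 h/call: 10,000.0 h
```

Even at the low end of "minutes to several hours", full planning inside the loop costs about a week of serial compute, and at the high end more than a year, before any parallelization.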

CASP-based synthesizability scores address this limitation by providing fast, learned approximations of full synthesis planning outcomes [34]. These scores are machine learning models trained to predict the likelihood that a synthesis route can be found for a given molecule, or to estimate properties of potential synthesis routes, without performing the actual retrosynthetic analysis [34]. The learning task can be formulated either as a classification of synthesis planning outcomes or as a regression predicting route properties [34]. By capturing the relationship between molecular structure and synthesizability, these scores enable rapid virtual screening and synthesis-aware molecular generation, effectively bridging the gap between computational design and practical synthetic feasibility.
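The two learning formulations differ mainly in how planner outputs become training targets. The sketch assumes a hypothetical record type holding whether a route was found and the length of the best route; it is not the output format of any particular CASP tool. Dropping unsolved molecules from the regression set is one design choice; assigning them a penalty value is another.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PlanningResult:
    """Hypothetical summary of one synthesis-planning run."""
    smiles: str
    solved: bool
    n_steps: Optional[int]  # length of the best route; None when no route was found


def classification_targets(results: list[PlanningResult]) -> list[tuple[str, int]]:
    """Binary targets: 1 if the planner found a route, else 0."""
    return [(r.smiles, int(r.solved)) for r in results]


def regression_targets(results: list[PlanningResult]) -> list[tuple[str, float]]:
    """Route-property targets (here: step count), defined only for solved molecules."""
    return [(r.smiles, float(r.n_steps)) for r in results if r.solved and r.n_steps is not None]


results = [
    PlanningResult("CCO", True, 1),
    PlanningResult("c1ccccc1O", True, 2),
    PlanningResult("C1CC2CCC1C2", False, None),
]
print(classification_targets(results))  # [('CCO', 1), ('c1ccccc1O', 1), ('C1CC2CCC1C2', 0)]
print(regression_targets(results))      # [('CCO', 1.0), ('c1ccccc1O', 2.0)]
```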

The importance of these scores extends beyond mere synthesizability assessment. In resource-constrained environments such as academic laboratories or small biotech companies, the concept of "in-house synthesizability" – tailored to available building block collections – becomes more valuable than general synthesizability [34]. CASP-based scores can be adapted to this specific context, ensuring that generated molecules can be synthesized with locally available resources rather than assuming near-infinite building block availability [34].
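At the level of a single route, "in-house" means that every leaf of the route tree is a building block in the local inventory. The route structure and building-block names below are hypothetical. This only checks one existing route; deciding in-house solvability properly means rerunning synthesis planning with the restricted stock, as described in [34], since a different route may exist.

```python
from dataclasses import dataclass, field


@dataclass
class RouteNode:
    """A molecule in a synthesis route tree; leaves are starting building blocks."""
    smiles: str
    children: list["RouteNode"] = field(default_factory=list)


def leaf_building_blocks(node: RouteNode) -> set[str]:
    """Collect the starting materials (leaf nodes) of a route tree."""
    if not node.children:
        return {node.smiles}
    leaves: set[str] = set()
    for child in node.children:
        leaves |= leaf_building_blocks(child)
    return leaves


def route_is_in_house(route: RouteNode, inventory: set[str]) -> tuple[bool, set[str]]:
    """Return (True, set()) if every leaf is in stock, else (False, missing leaves)."""
    missing = leaf_building_blocks(route) - inventory
    return not missing, missing


# Hypothetical two-step route: target <- intermediate + BB-3; intermediate <- BB-1 + BB-2.
route = RouteNode("target", [
    RouteNode("intermediate", [RouteNode("BB-1"), RouteNode("BB-2")]),
    RouteNode("BB-3"),
])
print(route_is_in_house(route, {"BB-1", "BB-2", "BB-3"}))  # (True, set())
print(route_is_in_house(route, {"BB-1", "BB-3"}))          # (False, {'BB-2'})
```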

Core Methodological Approaches

Technical Foundations and Implementation Frameworks

CASP-based synthesizability scores are built upon two primary methodological foundations: classification-based and regression-based approaches. Classification formulations train models to predict the binary outcome of synthesis planning success – whether a viable route can be identified using available building blocks and reaction templates [34]. Regression formulations instead predict continuous properties of potential synthesis routes, such as step count, expected yield, or synthetic complexity [34] [35]. Both approaches rely on molecular representations that capture structural features relevant to synthetic feasibility, with extended connectivity fingerprints (ECFP) and MinHashed Atom Pair fingerprints (MAP4) being commonly employed [35].
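The classification formulation can be sketched in pure Python: fingerprint bits in, probability of planner success out. This is a simplified stand-in: logistic regression replaces the neural network classifiers described in the text, and the 8-bit fingerprints and labels are hypothetical toy data. In practice the bits would come from ECFP or MAP4 fingerprints with thousands of bits, and the labels from real synthesis-planning runs.

```python
import math
import random


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def to_vector(on_bits: set[int], n_bits: int) -> list[float]:
    """Expand a set of on-bit indices into a dense 0/1 feature vector."""
    return [1.0 if i in on_bits else 0.0 for i in range(n_bits)]


def train_logistic(X, y, epochs=300, lr=0.5, seed=0):
    """Fit weights and bias by stochastic gradient descent on the log loss."""
    rng = random.Random(seed)
    w, b = [0.0] * len(X[0]), 0.0
    order = list(range(len(X)))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            # Gradient of the log loss with respect to the logit is (p - y).
            err = sigmoid(b + sum(wj * xj for wj, xj in zip(w, X[i]))) - y[i]
            w = [wj - lr * err * xj for wj, xj in zip(w, X[i])]
            b -= lr * err
    return w, b


def predict_proba(w, b, x):
    """Predicted probability that synthesis planning succeeds."""
    return sigmoid(b + sum(wj * xj for wj, xj in zip(w, x)))


N_BITS = 8
# Toy fingerprints: bits 0-1 mark motifs the planner handled, bits 2-3 motifs it did not.
fps = [{0, 4}, {0, 1}, {1, 5}, {0, 6}, {2, 4}, {3, 5}, {2, 3}, {3, 7}]
solved = [1, 1, 1, 1, 0, 0, 0, 0]
X = [to_vector(fp, N_BITS) for fp in fps]
w, b = train_logistic(X, solved)
print(round(predict_proba(w, b, to_vector({1, 6}, N_BITS)), 3))
print(round(predict_proba(w, b, to_vector({2, 7}, N_BITS)), 3))
```

A regression variant would keep the same features but replace the logistic output and log loss with a linear output and squared error on a route property such as step count.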

Recent work has introduced specialized scoring functions tailored to specific aspects of synthesis planning. The Synthetic Potential Score (SPScore) developed by Liu et al. uses a multilayer perceptron trained on existing reaction corpora to evaluate the potential of enzymatic or organic reactions for synthesizing a molecule [35]. This approach employs a margin ranking loss rather than standard classification, encouraging the model to rank the more promising reaction type higher based on relative differences between organic and enzymatic synthesis scores [35]. The resulting scores range from 0 to 1 and can be interpreted as the likelihood that each reaction type (organic or enzymatic) offers a promising route to the molecule [35].
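The margin ranking loss has a standard form, loss = max(0, -y * (s1 - s2) + margin), where y = +1 when s1 should rank above s2 and y = -1 otherwise (the same definition as, for example, PyTorch's `MarginRankingLoss`). How [35] sets the margin and pairs the organic and enzymatic scores is an assumption here, and the scores below are hypothetical.

```python
def margin_ranking_loss(s1: float, s2: float, y: int, margin: float = 0.1) -> float:
    """max(0, -y * (s1 - s2) + margin); y = +1 if s1 should rank above s2, -1 otherwise."""
    if y not in (1, -1):
        raise ValueError("y must be +1 or -1")
    return max(0.0, -y * (s1 - s2) + margin)


def mean_loss(pairs: list[tuple[float, float, int]], margin: float = 0.1) -> float:
    """Average loss over (organic_score, enzymatic_score, y) triples."""
    return sum(margin_ranking_loss(o, e, y, margin) for o, e, y in pairs) / len(pairs)


# Hypothetical (organic score, enzymatic score, y) triples; y = +1 means the molecule is
# known to be made organically, so its organic score should be the higher one.
batch = [
    (0.80, 0.30, +1),  # correctly ranked by more than the margin: zero loss
    (0.40, 0.45, +1),  # wrong order: penalized by the gap plus the margin
    (0.20, 0.90, -1),  # enzymatic route preferred and ranked higher: zero loss
]
for o, e, y in batch:
    print(round(margin_ranking_loss(o, e, y), 3))  # 0.0, then 0.15, then 0.0
print(round(mean_loss(batch), 3))  # 0.05
```

Only the difference between the two scores enters the loss, so the model learns which reaction type is more promising for a molecule rather than an absolute label for each.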

For in-house synthesizability assessment, a rapidly retrainable scoring approach has demonstrated success in capturing synthesizability with limited building block resources [34]. This method requires only a well-chosen dataset of approximately 10,000 molecules for training, enabling quick adaptation to changes in building block inventory through iterative synthesis planning and model retraining [34]. The implementation typically involves molecular fingerprint representation coupled with neural network classifiers, balancing accuracy with computational efficiency.

Comparative Analysis of Scoring Approaches

Table 1: Comparison of CASP-Based Synthesizability Scoring Methods

| Method Type | Training Objective | Molecular Representation | Key Advantages | Limitations |
|---|---|---|---|---|
| Classification-Based | Predicts synthesis planning success/failure [34] | ECFP, MAP4, graph representations [35] | Directly models the binary decision needed for virtual screening | Does not provide route quality information |
| Regression-Based | Predicts route properties (step count, complexity) [34] [35] | ECFP, MAP4, structural descriptors [35] | Provides quantitative synthesis difficulty assessment | May not directly correlate with synthesizability |
| SPScore | Margin ranking loss for reaction type preference [35] | ECFP4, MAP4 with varying dimensions [35] | Unifies step-by-step and bypass synthesis strategies | Requires separate databases for different reaction types |
| In-House Synthesizability Score | Classification tailored to specific building blocks [34] | Fingerprint-based representations [34] | Adapts to local laboratory resources | Requires retraining for different building block sets |

Experimental Protocols and Validation

Workflow for In-House Synthesizability Scoring

A comprehensive protocol for developing and validating in-house synthesizability scores involves multiple stages, beginning with the establishment of a building block inventory. As demonstrated in recent work, this process starts with curating available building blocks – approximately 6,000 in-house compounds in a representative case study – followed by generating a training dataset of 10,000 molecules with known synthesis outcomes [34]. The synthesis planning toolkit AiZynthFinder is then deployed with the restricted building block set to determine solvability for each molecule in the training set [34].

The model training phase utilizes molecular fingerprints as input features, with the binary synthesis outcome (solvable/unsolvable) as the training target. A neural network classifier is trained to predict the probability of synthesizability, with performance validated against held-out test sets [34]. For optimal performance, the training dataset should encompass diverse chemical spaces, potentially derived from sources like Papyrus or ChEMBL [34]. The final model achieves rapid inference times (sub-second per molecule) while maintaining high accuracy in predicting synthesizability within the constrained building block environment [34].
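The workflow reduces to label, split, train, and evaluate, repeated whenever the inventory changes. In this dependency-free sketch, `planner` is a hypothetical stand-in for a synthesis-planning run (such as AiZynthFinder) restricted to the in-house stock, and a Tanimoto k-nearest-neighbour vote is swapped in for the neural network classifier described above; fingerprints are toy sets of on-bit indices.

```python
import random
from typing import Callable


def tanimoto(a: set[int], b: set[int]) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def knn_predict(train: list[tuple[set[int], int]], query: set[int], k: int = 3) -> int:
    """Majority vote among the k training fingerprints most similar to the query."""
    nearest = sorted(train, key=lambda item: tanimoto(query, item[0]), reverse=True)[:k]
    return int(sum(label for _, label in nearest) * 2 > len(nearest))


def build_in_house_score(fps, planner: Callable[[set[int]], bool], test_fraction=0.2, seed=0):
    """Label molecules with the planner, hold out a test split, return (scorer, test accuracy)."""
    data = [(fp, int(planner(fp))) for fp in fps]  # expensive step: one planning run each
    random.Random(seed).shuffle(data)
    n_test = max(1, int(len(data) * test_fraction))
    test, train = data[:n_test], data[n_test:]
    accuracy = sum(knn_predict(train, fp) == label for fp, label in test) / len(test)
    return (lambda fp: knn_predict(train, fp)), accuracy


def in_house_planner(fp: set[int]) -> bool:
    """Toy stand-in for a restricted-stock planner: 'solvable' if the molecule carries bit 0."""
    return 0 in fp


fps = [{0, 10 + i} for i in range(10)] + [{5, 30 + i} for i in range(10)]
score, acc = build_in_house_score(fps, in_house_planner)
print(acc, score({0, 99}), score({5, 98}))  # 1.0 1 0
```

When the building-block inventory changes, only the planner's stock changes; rerunning `build_in_house_score` relabels the molecules and retrains the scorer, mirroring the rapid retraining loop described in [34].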

Performance Benchmarking Methodologies

Rigorous benchmarking is essential for validating CASP-based scores against full synthesis planning. The standard evaluation protocol involves calculating solvability rates across diverse molecular datasets, comparing the performance between limited in-house building blocks and extensive commercial compound libraries [34]. Key metrics include success rate differentials and route length comparisons.

In a representative benchmark, synthesis planning with only 5,955 in-house building blocks solved approximately 60% of drug-like molecules, compared with about 70% using 17.4 million commercial building blocks: a relative decrease of only about 12% despite a roughly 3,000-fold reduction in available building blocks [34]. The main trade-off was longer synthesis routes, with in-house routes requiring on average two additional reaction steps [34].
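The headline figures can be checked from the rounded numbers in the text. With the rounded rates of 60% and 70%, the relative drop comes out near 14%, inside the -12% to -17% range in the table; the exact value reported in [34] depends on the unrounded rates.

```python
in_house_bbs, commercial_bbs = 5_955, 17_400_000
rate_in_house, rate_commercial = 60.0, 70.0  # approximate solvability rates (%)

fold_reduction = commercial_bbs / in_house_bbs
absolute_drop = rate_commercial - rate_in_house            # percentage points
relative_drop = 100 * absolute_drop / rate_commercial      # percent of the commercial rate

print(round(fold_reduction))     # 2922
print(absolute_drop)             # 10.0
print(round(relative_drop, 1))   # 14.3
```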

Table 2: Quantitative Performance of CASP-Based Scores in De Novo Molecular Design

| Evaluation Metric | In-House Building Blocks (~6,000) | Commercial Building Blocks (~17.4M) | Performance Gap |
|---|---|---|---|
| Solvability Rate | ~60% [34] | ~70% [34] | -12% to -17% |
| Average Route Length | 2 steps longer [34] | Baseline length [34] | +2 steps |
| Training Data Requirements | ~10,000 molecules [34] | Millions of molecules [34] | -90%+ |
| Inference Speed | Sub-second per molecule with the learned score [34] | Minutes to hours per molecule with full synthesis planning [34] | 100-1000x faster |