Machine Learning in Solid-State Synthesis: Predictive Models, Precursor Selection, and Clinical Translation

This article explores the transformative role of machine learning (ML) in predicting and optimizing solid-state synthesis, a critical process for developing new materials.

Abstract

This article explores the transformative role of machine learning (ML) in predicting and optimizing solid-state synthesis, a critical process for developing new materials. Aimed at researchers and drug development professionals, we first establish the fundamental challenges that make synthesis prediction a bottleneck. We then examine current ML methodologies, from text mining of literature data to advanced algorithms for precursor selection and reaction pathway optimization. A critical evaluation follows, comparing the performance of different models against traditional methods and addressing real-world troubleshooting and data quality issues. Finally, we validate these approaches against experimental results and discuss their implications for accelerating the discovery and development of novel biomedical materials, from drug formulations to clinical therapeutics.

The Solid-State Synthesis Bottleneck: Why Machine Learning is a Game-Changer

Solid-state synthesis is a fundamental method for creating novel materials, particularly inorganic compounds and ceramics. This high-temperature process involves the direct reaction of solid precursors to form a new material through the diffusion of atoms or ions. Unlike solution-based methods, solid-state reactions are particularly valuable for producing thermally stable phases and are central to the discovery of new functional materials, including high-temperature superconductors, ionic conductors, and magnetic materials [1].

The process typically involves meticulous weighing of precursor powders, grinding or milling to achieve homogeneity, and subsequent heating at elevated temperatures, often with intermediate regrinding steps to promote complete reaction. Despite its conceptual simplicity, predicting the outcome of a solid-state reaction remains a significant challenge due to the complex interplay of thermodynamic and kinetic factors [1].

Data Extraction and Curation for Synthesis Prediction

The foundation of any effective machine-learning model is high-quality, structured data. For solid-state synthesis, this involves the meticulous extraction of synthesis parameters from diverse sources, primarily scientific literature and patents.

Table 1: Data Types in Solid-State Synthesis Records

| Data Category | Description | Examples | Data Structure Type |
|---|---|---|---|
| Structured Data [2] | Data fitting a predefined schema (rows/columns). Easier to search and analyze. | Final heating temperature, number of heating steps, precursor identities. | Structured |
| Unstructured Data [2] | Data without a predefined model, making analysis more complex. | Scientific article text, lab notebook descriptions. | Unstructured |
| Semi-structured Data [2] | A blend of structured and unstructured types. | A patent document with structured metadata and unstructured text/images. | Semi-structured |

Advanced data extraction leverages multiple approaches:

- Named Entity Recognition (NER): Identifies and classifies key material names and synthesis terms within text [3].

- Multimodal Extraction: Combines text analysis with computer vision to parse information from both text and figures, such as reaction diagrams or spectra [3]. Tools like Plot2Spectra can extract data from spectroscopy plots, while DePlot can convert charts into structured tables for analysis [3].

- Human Curation: Manual data extraction by experts remains a gold standard for quality, especially for documents with complex formats that challenge automated systems. This process can identify and correct a significant number of outliers in text-mined datasets [1].

Machine Learning for Synthesizability Prediction

Machine learning (ML) offers a powerful, data-driven approach to predict the synthesizability of hypothetical materials, helping to overcome the limitations of traditional metrics like energy above the convex hull (Ehull), which does not account for kinetic barriers or synthesis conditions [1].

Positive-Unlabeled (PU) Learning Framework

A key challenge in applying ML to synthesis prediction is the lack of confirmed negative examples (failed attempts) in the literature. Positive-Unlabeled (PU) Learning is a semi-supervised technique designed for this scenario, where only positive (successfully synthesized) and unlabeled (unknown status) data are available [1].

Protocol: Implementing a PU Learning Model for Solid-State Synthesizability

- Objective: To train a classifier that can predict the likelihood of a hypothetical ternary oxide being synthesizable via solid-state reaction.

- Materials & Data:

- Positive Data: A set of known solid-state synthesized materials, e.g., from a human-curated dataset [1].

- Unlabeled Data: A set of hypothetical materials with unknown synthesis status.

- Feature Vectors: Numerical representations of each material's composition and structure.

- Procedure:

- Feature Generation: Compute a set of features for every material in the positive and unlabeled sets. These can include compositional descriptors, structural fingerprints, and thermodynamic stability metrics (e.g., Ehull).

- Model Training: Employ an inductive PU learning algorithm. The core principle involves treating the unlabeled set as a mixture of hidden positive and negative examples and iteratively refining the model to identify reliable negative examples from the unlabeled data.

- Validation: Use hold-out validation on the positive set or cross-validation to tune model hyperparameters. Since true negatives are unavailable, performance is often evaluated using the positive data and domain expert analysis of the top predictions.

- Prediction: Apply the trained model to a database of hypothetical compositions. The model outputs a probability or score for each material, indicating its likelihood of being synthesizable.
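The "identify reliable negatives, then refine" idea in the procedure above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the method of [1]: a nearest-centroid scorer is swapped in for a real classifier, features are plain 2-D vectors, and `two_step_pu`, `seed_fraction`, and `max_iter` are hypothetical names chosen for this example.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def centroid(rows: Sequence[Vector]) -> List[float]:
    """Component-wise mean of a non-empty list of feature vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def positive_score(x: Vector, pos_c: Vector, neg_c: Vector) -> float:
    """Score in [0, 1]; values near 1 mean x lies closer to the positive centroid."""
    d_pos, d_neg = math.dist(x, pos_c), math.dist(x, neg_c)
    return 0.5 if d_pos + d_neg == 0 else d_neg / (d_pos + d_neg)


def two_step_pu(
    positives: Sequence[Vector],
    unlabeled: Sequence[Vector],
    seed_fraction: float = 0.2,  # assumption: share of unlabeled data used as initial negatives
    max_iter: int = 10,
) -> Tuple[List[float], List[int]]:
    pos_c = centroid(positives)
    # Step 1: seed "reliable negatives" with the unlabeled points farthest from the positives.
    by_distance = sorted(
        range(len(unlabeled)),
        key=lambda i: math.dist(unlabeled[i], pos_c),
        reverse=True,
    )
    negatives = set(by_distance[: max(1, int(seed_fraction * len(unlabeled)))])
    # Step 2: refit on positives vs. reliable negatives and grow the negative set until stable.
    for _ in range(max_iter):
        neg_c = centroid([unlabeled[i] for i in negatives])
        grown = negatives | {
            i for i, x in enumerate(unlabeled) if positive_score(x, pos_c, neg_c) < 0.5
        }
        if grown == negatives:
            break
        negatives = grown
    neg_c = centroid([unlabeled[i] for i in negatives])
    scores = [positive_score(x, pos_c, neg_c) for x in unlabeled]
    return scores, sorted(negatives)


# Toy feature vectors, invented purely for illustration.
known_synthesized = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.2), (-0.1, 0.0)]
hypothetical = [(0.1, 0.1), (0.0, 0.2), (3.0, 3.1), (2.9, 3.0), (3.2, 2.8)]
scores, reliable_negatives = two_step_pu(known_synthesized, hypothetical)
```

In practice the centroid scorer would be replaced by a trained classifier over the compositional, structural, and thermodynamic features listed in the procedure; the loop structure stays the same.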

Table 2: Key Reagent Solutions for Solid-State Synthesis Research

| Research Reagent / Material | Function in Experimentation |
|---|---|
| Precursor Oxides/Carbonates | High-purity solid powders that serve as the starting materials for the reaction. |
| Mortar and Pestle / Ball Mill | Equipment used for the grinding and mixing of precursor powders to achieve homogeneity and increase surface area for reaction. |
| High-Temperature Furnace | Apparatus used to heat the mixed precursors to the required reaction temperature (often >1000°C) for a specified time. |
| Crucibles (e.g., Alumina, Platinum) | Chemically inert containers that hold the sample during high-temperature heating. |
| Controlled Atmosphere System | Provides an inert (e.g., Argon) or reactive (e.g., Oxygen) gas environment during heating to prevent undesired side reactions. |

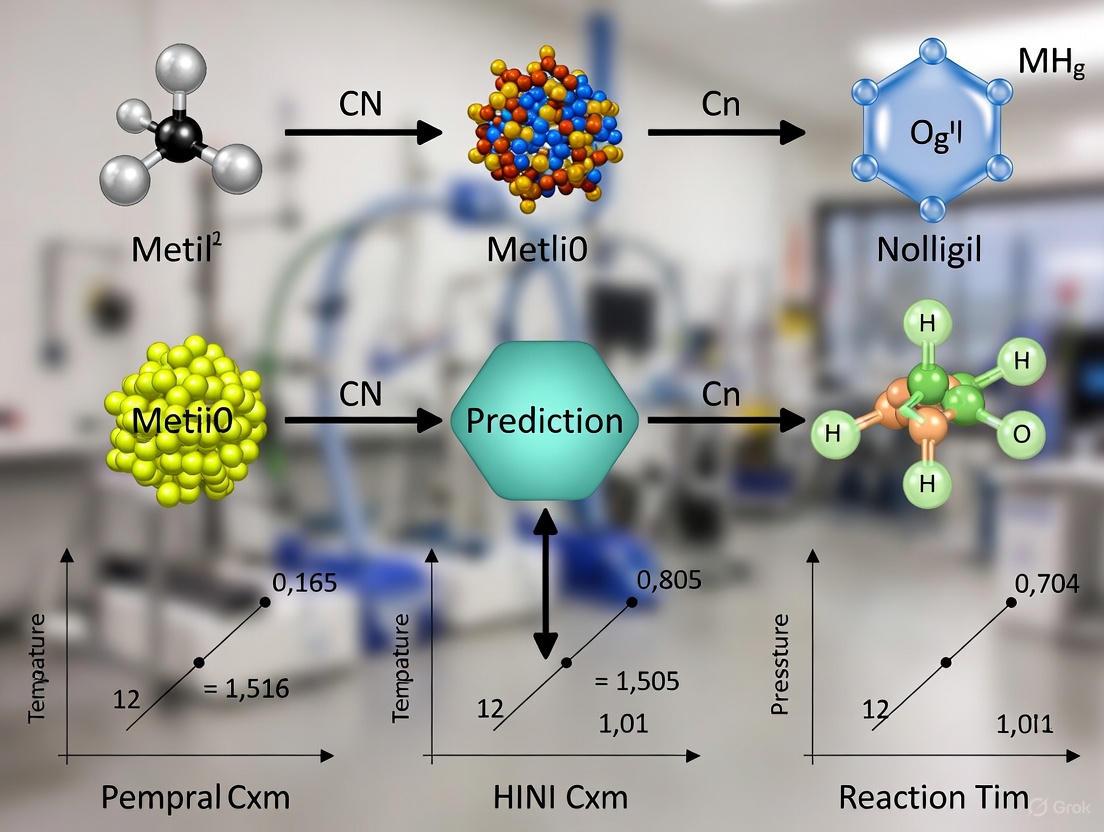

Workflow Diagram: ML-Guided Materials Discovery

The following diagram illustrates the integrated workflow of data extraction, machine learning model application, and experimental validation in solid-state materials discovery.

Diagram Title: ML-Guided Solid-State Discovery Workflow

Future Directions

The field is rapidly evolving with the emergence of foundation models—large-scale models pre-trained on broad data that can be adapted to various downstream tasks [3]. For materials discovery, these models can be fine-tuned for property prediction, synthesis planning, and molecular generation. Future progress will hinge on improving the quality and scale of synthesis data, developing more sophisticated multimodal extraction tools, and creating models that can better integrate the complex thermodynamics and kinetics of solid-state reactions.

In the field of machine learning (ML) for solid-state synthesis prediction, the energy above the convex hull (Ehull) has long been a cornerstone metric for assessing compound stability and predicting synthesizability. Derived from density functional theory (DFT) calculations, Ehull measures a compound's thermodynamic stability relative to its potential decomposition products. However, a growing body of research demonstrates that this traditional thermodynamic metric presents significant limitations when used as the sole predictor for experimental synthesizability, necessitating more sophisticated, multi-faceted approaches that integrate machine learning with diverse experimental data.

While materials with low or negative Ehull values are thermodynamically favored, this does not guarantee successful synthesis. A critical examination reveals that Ehull fails to account for kinetic barriers, synthesis pathway dependencies, entropic contributions at reaction temperatures, and the profound influence of specific experimental conditions. This application note details these limitations, provides quantitative comparisons of emerging methodologies, and outlines detailed experimental protocols for developing more robust, data-driven synthesizability predictions.
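To make the metric concrete, the sketch below computes Ehull for a two-element system by building the lower convex hull of formation energy versus composition. It is a simplified illustration: real workflows build hulls in higher-dimensional composition spaces from DFT energies, and the phase names and energies here are invented for the example.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (atomic fraction of element B, formation energy in eV/atom)


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull (Andrew's monotone chain), ordered by composition."""
    hull: List[Point] = []
    for p in sorted(set(points)):
        # Drop the last vertex while it lies on or above the line from hull[-2] to p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull: List[Point], x: float) -> float:
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x2 > x1 and x1 <= x <= x2:
            t = (x - x1) / (x2 - x1)
            return y1 + t * (y2 - y1)
    raise ValueError("composition lies outside the hull range")


def energy_above_hull(phases: Dict[str, Point], name: str) -> float:
    """Ehull of one phase relative to the hull built from all listed phases."""
    hull = lower_hull(list(phases.values()))
    x, energy = phases[name]
    return energy - hull_energy(hull, x)


# Hypothetical A-B system; the elements define zero formation energy.
phases = {
    "A": (0.0, 0.0),
    "A3B": (0.25, -0.10),
    "AB": (0.50, -0.40),
    "AB3": (0.75, -0.30),
    "B": (1.0, 0.0),
}
ehull_a3b = energy_above_hull(phases, "A3B")  # lies above the A-AB tie line
ehull_ab = energy_above_hull(phases, "AB")    # sits on the hull
```

In this toy system A3B lies above the tie line between A and AB, so it is thermodynamically unstable with respect to decomposition into those two phases. Nothing in the calculation encodes kinetics, temperature, or synthesis route, which is the limitation discussed above.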

Quantitative Analysis of Stability Metric Limitations

The following tables summarize key quantitative findings from recent studies that evaluate the predictive power of traditional and ML-enhanced stability metrics.

Table 1: Performance Comparison of Different Formation Energy and Stability Prediction Models [4]

| Model Type | MAE for ΔHf (eV/atom) | Stability Prediction Performance | Key Limitations |
|---|---|---|---|
| Baseline (ElFrac) | ~0.3 (estimated from parity plot) | Poor | Uses only stoichiometric fractions |
| Compositional ML (e.g., Magpie, ElemNet) | 0.08 - 0.12 | Poor on predicting compound stability | Cannot distinguish between structures of the same composition |
| Structural ML Model | Information Not Provided | Nonincremental improvement in stability detection | Requires known ground-state structure a priori |
| Density Functional Theory (DFT) | Benchmark (~0.1 eV/atom typical error) | Benefits from systematic error cancellation | Computationally expensive |

Table 2: Analysis of Solid-State Synthesizability for Ternary Oxides from Human-Curated Data [1]

| Material Category | Count in Dataset | Relationship with Ehull | Implications for Prediction |
|---|---|---|---|
| Solid-State Synthesized | 3,017 | Necessary but not sufficient condition | Many low-Ehull hypothetical materials remain unsynthesized |
| Non-Solid-State Synthesized | 595 | May have low Ehull | Synthesis is often route-dependent (e.g., hydrothermal) |
| Undetermined | 491 | Insufficient evidence | Highlights data quality challenges in text-mined datasets |
| Text-Mined Dataset Outliers | 156 out of 4,800 | N/A | Only 15% were correctly extracted, emphasizing data quality issues |

Experimental Protocols for Advanced Synthesizability Prediction

Protocol: Positive-Unlabeled (PU) Learning for Solid-State Synthesizability Prediction

Application: Predicting the synthesizability of hypothetical compounds when only positive (successful) and unlabeled synthesis data are available [1].

Step-by-Step Procedure:

- Data Curation: Assemble a reliable dataset of known synthesized materials. For ternary oxides, this can be done by:

- Downloading ternary oxide entries from the Materials Project database [1].

- Identifying entries with Inorganic Crystal Structure Database (ICSD) IDs as an initial proxy for synthesized materials [1].

- Performing manual data extraction from scientific literature using ICSD, Web of Science, and Google Scholar to verify synthesis method and conditions. Each compound is labeled as "solid-state synthesized," "non-solid-state synthesized," or "undetermined" based on explicit evidence [1].

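- Feature Calculation: Compute relevant features for each composition, such as compositional descriptors, structural fingerprints, and thermodynamic stability metrics (e.g., Ehull).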

- Model Training: Apply a PU learning algorithm (e.g., transductive bagging PU learning [1]) to the curated dataset. This technique treats the "solid-state synthesized" entries as positive examples and the remaining entries (including "non-solid-state synthesized" and "undetermined") as unlabeled.

- Prediction & Validation: Use the trained model to predict the synthesizability of hypothetical compositions. The model outputs a ranked list of candidates most likely to be synthesizable via solid-state reaction [1].
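A transductive bagging scheme of the kind named in the training step can be sketched as follows. Each round draws a bootstrap sample of unlabeled entries to act as provisional negatives, fits a scorer on positives versus that sample, and scores only the out-of-bag unlabeled entries; the final score is the average over rounds. This is an assumption-laden illustration rather than the implementation in [1]: the base learner is a simple nearest-centroid scorer, and `bagging_pu_scores`, `n_rounds`, and the toy vectors are hypothetical.

```python
import math
import random
from typing import List, Sequence

Vector = Sequence[float]


def centroid(rows: Sequence[Vector]) -> List[float]:
    """Component-wise mean of a non-empty list of feature vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def bagging_pu_scores(
    positives: Sequence[Vector],
    unlabeled: Sequence[Vector],
    n_rounds: int = 50,
    seed: int = 0,
) -> List[float]:
    """Average out-of-bag score per unlabeled entry; higher means more positive-like."""
    rng = random.Random(seed)
    pos_c = centroid(positives)
    totals = [0.0] * len(unlabeled)
    counts = [0] * len(unlabeled)
    for _ in range(n_rounds):
        # Bootstrap as many provisional negatives as there are positives (with replacement).
        bag = {rng.randrange(len(unlabeled)) for _ in range(len(positives))}
        neg_c = centroid([unlabeled[i] for i in bag])
        for i, x in enumerate(unlabeled):
            if i in bag:
                continue  # only out-of-bag entries are scored in this round
            d_pos, d_neg = math.dist(x, pos_c), math.dist(x, neg_c)
            totals[i] += 0.5 if d_pos + d_neg == 0 else d_neg / (d_pos + d_neg)
            counts[i] += 1
    # An entry that was never out-of-bag has no score; report NaN for it.
    return [t / c if c else float("nan") for t, c in zip(totals, counts)]


# Toy data: the first three unlabeled entries resemble the positives.
solid_state = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.2), (-0.1, 0.0)]
unlabeled = [
    (0.1, 0.1), (0.0, 0.2), (0.1, 0.0),
    (3.0, 3.1), (2.9, 3.0), (3.2, 2.8), (3.1, 3.0), (2.8, 3.2),
]
ranking = sorted(
    range(len(unlabeled)),
    key=lambda i: bagging_pu_scores(solid_state, unlabeled)[i],
    reverse=True,
)
```

Averaging over many bootstrap rounds dampens the effect of hidden positives that happen to be drawn as provisional negatives in any single round.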

Protocol: Multi-Metric Stability Screening for Porous Materials

Application: Integrated stability assessment for metal-organic frameworks (MOFs) and other complex porous materials prior to performance screening [5].

Step-by-Step Procedure:

- Initial Performance Screening: Shortlist candidate materials based on application-specific performance metrics (e.g., for CO₂ capture: CO₂ uptake ≥4 mmol/g and CO₂/N₂ selectivity ≥200) [5].

- Stability Metric Evaluation:

- Thermodynamic Stability: Evaluate using molecular dynamics (MD) simulations. Calculate the free energy (F) of the material and compare it to a benchmark of known experimental structures. Materials with a relative free energy (ΔLMF) exceeding a threshold (e.g., ~4.2 kJ/mol for MOFs) are deemed unstable [5].

- Mechanical Stability: Calculate elastic moduli (bulk, shear, Young's) via MD simulations at relevant temperatures. Note that low moduli may indicate flexibility rather than instability [5].

- Activation & Thermal Stability: Predict using machine learning models trained on experimental data [5].

- Integration: Overlay all stability metrics to identify materials that satisfy all stability criteria while maintaining high performance.
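The integration step amounts to intersecting several filters. The sketch below applies the example thresholds quoted above (CO₂ uptake ≥4 mmol/g, CO₂/N₂ selectivity ≥200, relative free energy ≤4.2 kJ/mol) to a list of candidates. The `Candidate` fields, the material names, and all numeric values are hypothetical; mechanical moduli are carried along but not used as a hard cut, since low moduli may indicate flexibility rather than instability.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    name: str
    co2_uptake_mmol_g: float       # performance metric
    co2_n2_selectivity: float      # performance metric
    rel_free_energy_kj_mol: float  # thermodynamic stability from MD
    bulk_modulus_gpa: float        # mechanical metric, reported but not filtered on
    activation_stable: bool        # ML-predicted label
    thermally_stable: bool         # ML-predicted label


def passes_all(
    c: Candidate,
    min_uptake: float = 4.0,
    min_selectivity: float = 200.0,
    max_rel_free_energy: float = 4.2,
) -> bool:
    """True only if performance and every hard stability criterion are met."""
    performance_ok = (
        c.co2_uptake_mmol_g >= min_uptake
        and c.co2_n2_selectivity >= min_selectivity
    )
    thermo_ok = c.rel_free_energy_kj_mol <= max_rel_free_energy
    return performance_ok and thermo_ok and c.activation_stable and c.thermally_stable


def shortlist(candidates: List[Candidate]) -> List[str]:
    return [c.name for c in candidates if passes_all(c)]


# Invented candidates for illustration only.
pool = [
    Candidate("MOF-A", 5.1, 250.0, 3.0, 12.0, True, True),
    Candidate("MOF-B", 6.0, 300.0, 5.5, 20.0, True, True),    # fails free-energy cut
    Candidate("MOF-C", 3.2, 400.0, 2.0, 15.0, True, True),    # fails uptake cut
    Candidate("MOF-D", 4.5, 220.0, 4.0, 1.5, True, False),    # fails thermal stability
]
selected = shortlist(pool)
```

Keeping each criterion as a separate boolean makes it easy to report why a candidate was rejected, which is often as useful as the shortlist itself.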

Protocol: ML-Directed Synthesis with Robotic Validation

Application: Closed-loop, high-throughput discovery of novel inorganic solids, particularly multielement catalysts [6].

Step-by-Step Procedure:

- Knowledge Base Construction: The system (e.g., CRESt platform) begins by creating representations of potential recipes based on a vast knowledge base of scientific literature and existing databases [6].

- Search Space Definition: Use principal component analysis (PCA) on the knowledge embedding space to define a reduced, efficient search space [6].

- Experiment Proposal: Employ Bayesian optimization (BO) within this reduced space to design the next experiment, suggesting specific chemical compositions and processing parameters [6].

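- Robotic Synthesis & Characterization: Execute the proposed recipe using automated systems such as liquid-handling robots, carbothermal shock synthesizers, and automated electrochemical workstations [6].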

- Data Integration & Learning: Feed the newly acquired multimodal data (text, images, performance metrics) and human feedback back into the system's knowledge base, often using a large language model (LLM) to refine the search space for the next iteration [6]. This creates a continuous feedback loop that rapidly optimizes materials towards a target property.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational and Experimental Resources for ML-Driven Synthesis Prediction

| Tool / Resource | Function / Application | Key Features / Notes |
|---|---|---|
| Human-Curated Synthesis Datasets [1] | Training and benchmarking for synthesizability prediction models | Higher quality than text-mined datasets; includes solid-state reaction conditions and precursor information. |
| Positive-Unlabeled (PU) Learning Algorithms [1] | Predicting synthesizability from incomplete data (only positive and unlabeled examples) | Addresses the lack of explicitly reported failed synthesis attempts in the literature. |
| Multimodal Active Learning Platforms (e.g., CRESt) [6] | Integrating diverse data types for experiment planning and optimization | Combines literature text, compositional data, microstructural images, and human feedback; interfaces with robotic equipment. |
| High-Throughput Robotic Systems [6] | Accelerated synthesis and characterization | Includes liquid-handling robots, carbothermal shock synthesizers, and automated electrochemical workstations. |
| Text-Mined Synthesis Datasets [1] | Large-scale data for training models on synthesis parameters | Can be noisy; require careful validation against human-curated data. |
| Stability Metric Suites [5] | Multi-faceted stability assessment for complex materials | Integrates thermodynamic, mechanical, thermal, and activation stability metrics. |

Application Note: Understanding the Data Scarcity Problem

In machine learning for solid-state synthesis prediction, the scarcity of failed experiment records creates a significant bottleneck for model reliability and generalizability. This application note details the core challenges and quantitative evidence of this data scarcity, framing it within the broader context of materials informatics.

Quantitative Evidence of Data Imbalance

Table 1: Documented Data Scarcity in Materials Synthesis Research

| Data Source / Study | Key Finding on Data Scarcity | Quantitative Impact |
|---|---|---|
| Human-curated Ternary Oxides Dataset [1] | Lack of failed synthesis attempts in literature | 0 failed reactions explicitly documented out of 4,103 ternary oxides analyzed |
| Text-mined Synthesis Data [1] | Low quality of automated data extraction | Overall accuracy of text-mined dataset: only 51% |
| ML-based Failure Identification [7] | Class imbalance in failure data | Improvement in F1 scores for scarce failure classes: >50% with generative augmentation |
| Positive-Unlabeled Learning [1] | Inability to evaluate false positives | Limited validation capability for compounds predicted synthesizable but failing in practice |

Impact on Predictive Modeling

The fundamental challenge in solid-state synthesis prediction lies in the incompleteness of available data. Research indicates that thermodynamic stability metrics like energy above hull (Ehull) are insufficient predictors of synthesizability, as they fail to account for kinetic barriers and experimental conditions [1]. This limitation is exacerbated by the absence of negative data (failed attempts), which are rarely published despite their critical value for understanding synthesis boundaries.

The data scarcity problem manifests in two primary dimensions:

- Volume of Negative Data: The systematic review of ternary oxides revealed a complete absence of explicitly documented synthesis failures in the literature [1]

- Quality of Positive Data: Even for successful syntheses, inconsistent reporting of experimental parameters (precursors, heating profiles, atmospheric conditions) limits their utility for ML training [1]

Protocol for Manual Data Curation and Failure Documentation

This protocol establishes standardized procedures for creating high-quality synthesis datasets through manual literature curation and experimental failure logging.

Materials and Reagents

Table 2: Research Reagent Solutions for Synthesis Data Curation

| Item / Resource | Function in Data Curation | Implementation Example |
|---|---|---|
| ICSD & Materials Project APIs | Provide initial crystallographic data for synthesized materials | Identify 6,811 ternary oxide entries with ICSD IDs as synthesis proxies [1] |
| Structured Literature Databases | Enable systematic literature searching | Web of Science, Google Scholar for comprehensive paper retrieval [1] |
| Domain Expert Curation | Manual verification of synthesis methods and parameters | Researcher with solid-state synthesis experience extracts reaction conditions [1] |
| Standardized Data Extraction Template | Consistent capture of synthesis parameters | Custom template recording heating temperature, atmosphere, precursors, grinding methods [1] |
| Quality Assessment Framework | Evaluate study reliability and data completeness | Critical appraisal using standardized checklists for methodological rigor [8] |

Experimental Procedure

Phase 1: Initial Data Collection

- Source Identification: Download ternary oxide entries from Materials Project database using pymatgen API [1]

- Synthesis Proxy Filtering: Identify entries with ICSD IDs as initial evidence of successful synthesis

- Composition Filtering: Remove entries containing non-metal elements and silicon to focus on relevant systems

- Dataset Establishment: Finalize candidate list (e.g., 4,103 ternary oxides from 1,233 chemical systems)
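The composition filter in Phase 1 can be illustrated with a small formula parser. This sketch assumes that "non-metal elements" means non-metals other than oxygen, and the exact exclusion set below (hydrogen, carbon, nitrogen, phosphorus, sulfur, selenium, halogens, noble gases, plus silicon) is an assumption made for this example rather than the list used in [1]. Downloading the entries themselves is not shown.

```python
import re
from typing import List, Set

# Assumed exclusion list: common non-metals (other than O) plus silicon.
EXCLUDED: Set[str] = {
    "H", "C", "N", "P", "S", "Se",
    "F", "Cl", "Br", "I",
    "He", "Ne", "Ar", "Kr", "Xe", "Rn",
    "Si",
}


def elements_in(formula: str) -> Set[str]:
    """Element symbols found in a formula string such as 'LiCoO2'."""
    return set(re.findall(r"[A-Z][a-z]?", formula))


def is_candidate_ternary_oxide(formula: str) -> bool:
    """Three elements, one of them oxygen, and no excluded element among the other two."""
    elements = elements_in(formula)
    if len(elements) != 3 or "O" not in elements:
        return False
    return not ((elements - {"O"}) & EXCLUDED)


def filter_formulas(formulas: List[str]) -> List[str]:
    return [f for f in formulas if is_candidate_ternary_oxide(f)]


kept = filter_formulas(["LiCoO2", "Li2CO3", "Zn2SiO4", "BaTiO3", "Fe2O3"])
```

Here the carbonate and the silicate are dropped by the exclusion set, and the binary oxide is dropped because it is not ternary.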

Phase 2: Literature Extraction Protocol

- Primary Source Examination: Review papers corresponding to ICSD IDs for synthesis details

- Systematic Literature Search:

- Query Web of Science with chemical formula (examine first 50 results sorted chronologically)

- Query Google Scholar (examine top 20 relevant results)

- Data Extraction:

- Record solid-state synthesis confirmation (binary label)

- Extract parameters: highest heating temperature, pressure, atmosphere, mixing/grinding conditions

- Note number of heating steps, cooling process, precursors, single-crystalline status

- Labeling Protocol:

- Solid-state synthesized: At least one record of successful solid-state synthesis

- Non-solid-state synthesized: Material synthesized but not via solid-state reactions

- Undetermined: Insufficient evidence for definitive classification
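The labeling rule above has a simple precedence: any record of solid-state synthesis wins, otherwise any other confirmed route, otherwise undetermined. A minimal sketch follows, assuming each reviewed paper has been reduced to one of three hypothetical evidence tags (`"solid-state"`, `"other-route"`, `"unclear"`); these tags are an illustration device, not part of the published protocol.

```python
from typing import Iterable


def label_compound(evidence: Iterable[str]) -> str:
    """Collapse per-paper evidence tags into one compound-level label."""
    tags = set(evidence)
    if "solid-state" in tags:
        # At least one record of successful solid-state synthesis.
        return "solid-state synthesized"
    if "other-route" in tags:
        # Synthesized, but only by routes other than solid-state reaction.
        return "non-solid-state synthesized"
    # No papers found, or none conclusive.
    return "undetermined"


labels = {
    "compound-1": label_compound(["unclear", "other-route", "solid-state"]),
    "compound-2": label_compound(["other-route", "other-route"]),
    "compound-3": label_compound([]),
}
```

Note that "non-solid-state synthesized" is not a confirmed negative: it only records that no solid-state route was found in the papers examined.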

Phase 3: Quality Assurance

- Validation Sampling: Randomly select 100 solid-state synthesized entries for verification [1]

- Cross-Referencing: Compare with text-mined datasets (e.g., Kononova et al.) for outlier detection

- Data Structuring: Organize final dataset with complete metadata and commentary on uncertain classifications

Protocol for Positive-Unlabeled Learning in Synthesis Prediction

Positive-Unlabeled (PU) learning provides a methodological framework for predicting synthesizability when only positive (successful) and unlabeled data are available.

Technical Specifications

Table 3: PU Learning Framework for Synthesis Prediction

| Component | Implementation | Rationale |
|---|---|---|
| Positive Data | Human-curated solid-state synthesized entries (3,017 compounds) | High-confidence successful syntheses from manual literature curation [1] |
| Unlabeled Data | Hypothetical compositions without confirmed synthesis records | Potentially unsynthesizable compounds or lacking documentation [1] |
| Feature Set | Compositional descriptors, thermodynamic stability (Ehull), structural fingerprints | Captures intrinsic materials properties influencing synthesizability [1] |
| PU Algorithm | Inductive PU learning with domain-specific transfer learning | Outperforms tolerance factor-based approaches and previous PU methods [1] |
| Validation | Retrospective testing on later-synthesized materials | Limited by inability to evaluate false positives without negative data [1] |

Experimental Procedure

Phase 1: Data Preprocessing

Feature Engineering:

- Calculate compositional features (elemental fractions, ionic radii, electronegativity)

- Compute thermodynamic stability metrics (Ehull from DFT calculations)

- Generate structural descriptors (coordination environments, symmetry features)

Data Partitioning:

- Positive Set (P): Confirmed solid-state synthesized materials (human-curated)

- Unlabeled Set (U): Hypothetical compositions without synthesis confirmation

Phase 2: Model Training

- Base Classifier Selection: Implement ensemble methods (Random Forest, XGBoost) as base classifiers

- PU Learning Framework: Apply inductive PU learning with class prior estimation

- Domain Adaptation: Incorporate transfer learning from related materials families

- Hyperparameter Optimization: Use Bayesian optimization for model tuning

Phase 3: Prediction and Evaluation

- Synthesizability Scoring: Generate probability scores for hypothetical compositions

- Candidate Prioritization: Rank materials by predicted synthesizability scores

- Validation Protocol:

- Retrospective validation on subsequently synthesized materials

- Experimental testing of high-probability candidates (where feasible)
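Because false positives cannot be scored without negative data, retrospective validation usually reduces to a recall-style question: of the materials synthesized after the training cutoff, how many did the model rank near the top? A minimal sketch, assuming scores are held in a dictionary keyed by hypothetical material identifiers:

```python
from typing import Dict, List, Set


def rank_candidates(scores: Dict[str, float]) -> List[str]:
    """Material identifiers ordered from highest to lowest predicted score."""
    return sorted(scores, key=scores.get, reverse=True)


def recall_at_k(ranked: List[str], later_synthesized: Set[str], k: int) -> float:
    """Fraction of later-synthesized materials that appear in the top k of the ranking."""
    if not later_synthesized:
        raise ValueError("need at least one later-synthesized material")
    top_k = set(ranked[:k])
    return len(top_k & later_synthesized) / len(later_synthesized)


# Invented scores and outcomes for illustration.
scores = {"mat-A": 0.9, "mat-B": 0.8, "mat-C": 0.4, "mat-D": 0.3, "mat-E": 0.1}
synthesized_later = {"mat-B", "mat-E"}
ranked = rank_candidates(scores)
recall_top2 = recall_at_k(ranked, synthesized_later, k=2)
```

A high recall at small k supports the ranking, but a low-ranked material that was never attempted says nothing either way, which is the validation limit noted in Table 3.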

Protocol for Generative Data Augmentation

Generative models address data scarcity by creating synthetic failure examples and balancing class-imbalanced datasets for improved ML performance.

Technical Specifications

Table 4: Generative Models for Data Augmentation

| Method | Application | Performance |
|---|---|---|
| Conditional GAN (cGAN) | Balance class-imbalanced failure datasets | Improves global accuracy by >5% in failure identification [7] |
| Conditional VAE (cVAE) | Generate synthetic failure samples | Improves F1 scores for scarce classes by >50% [7] |
| Reversible Data Generalization | Handle high-cardinality features in small datasets | Enhances utility and privacy in synthetic data generation [9] |
| Differential Privacy GAN | Privacy-preserving synthetic data generation | Maintains data utility while protecting sensitive information [9] |

Experimental Procedure

Phase 1: Data Preparation

- Failure Data Collection: Compile available failure records from laboratory notebooks and limited publications

- Class Imbalance Assessment: Quantify representation across different failure types and synthesis conditions

- Conditioning Variables: Identify key conditioning parameters (failure classes, SNR levels, maximum amplitude) [7]

Phase 2: Model Implementation

Architecture Selection:

- cGAN: Generator and discriminator conditioned on failure classes and synthesis parameters

- cVAE: Encoder-decoder framework with conditioning on experimental variables

- DP-GAN: GAN with differential privacy guarantees for sensitive data

Training Protocol:

- Train on available real data (successful and failed syntheses)

- Condition on relevant experimental parameters

- Implement reversible generalization for high-cardinality features [9]

Phase 3: Synthetic Data Generation and Validation

- Controlled Generation: Generate synthetic failure samples for under-represented classes

- Quality Assessment:

- Statistical similarity testing (distribution matching)

- Domain expert evaluation of synthetic samples

- Model Validation:

- Train ML models on augmented datasets

- Test on holdout datasets to measure performance improvement [7]
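The protocol does not name a specific test for the statistical similarity check. One common choice, used here purely as an illustration, is the two-sample Kolmogorov-Smirnov statistic: the largest gap between the empirical cumulative distributions of a real and a synthetic feature. The feature values below are invented.

```python
from typing import Sequence


def ks_statistic(real: Sequence[float], synthetic: Sequence[float]) -> float:
    """Two-sample KS statistic: max |F_real(x) - F_synthetic(x)| over all x."""
    a, b = sorted(real), sorted(synthetic)
    if not a or not b:
        raise ValueError("both samples must be non-empty")
    i = j = 0
    d = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        # Advance both empirical CDFs past every value <= x before comparing.
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        d = max(d, abs(i / len(a) - j / len(b)))
    return d


# Hypothetical values of one feature in real and generated failure records.
real_values = [1.0, 2.0, 3.0, 4.0]
close_synthetic = [1.0, 2.0, 3.0, 4.0]
shifted_synthetic = [3.0, 4.0, 5.0, 6.0]
d_close = ks_statistic(real_values, close_synthetic)
d_shifted = ks_statistic(real_values, shifted_synthetic)
```

A statistic near 0 means the two marginal distributions are hard to tell apart, while values approaching 1 flag a generator that has drifted from the real data. The check is per-feature, so it complements rather than replaces expert review of whole synthetic samples.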

In many scientific fields, obtaining completely labeled datasets for supervised machine learning is a significant challenge. This is particularly true in domains like materials science and drug development, where confirming the absence of a property (a "negative" example) can be as difficult and resource-intensive as confirming its presence. Positive-Unlabeled (PU) learning addresses this fundamental data limitation by providing methodologies for training accurate predictive models using only positive and unlabeled examples.

The core premise of PU learning is that while we have confirmed examples of a positive class (e.g., synthesizable materials, successful drug compounds), we lack reliably confirmed negative examples. The unlabeled data typically contains a mixture of both positive and negative instances, but without annotations to distinguish them. This scenario is ubiquitous in scientific research, where literature and databases predominantly report successful outcomes while omitting failed attempts. PU learning algorithms effectively leverage the available positive examples and the characteristics of the unlabeled set to construct classifiers that can identify new positive instances with high reliability [10] [1].

Theoretical Foundations of PU Learning

Problem Formulation and Key Assumptions

PU learning operates under two fundamental assumptions. First, labeled positive examples are drawn randomly from the overall positive population. This means the labeled positives should be representative of all positives in the data. Second, the unlabeled data is a mixture of both positive and negative examples, with no other hidden structure. The primary goal is to train a classifier that can accurately distinguish between positive and negative instances using only positively labeled examples and a set of unlabeled examples that contains hidden negatives.

Several technical approaches have been developed to address this challenge:

- Biased Learning Methods: Treat all unlabeled examples as negatives while accounting for the resulting label noise.

- Two-Step Techniques: Identify reliable negative examples from the unlabeled data before proceeding with semi-supervised learning.

- Class Prior Estimation: Estimate the proportion of positive examples in the unlabeled data to inform the learning process [10] [11].

The risk estimator for PU learning can be expressed as:

\[ R_{pu}(f) = \pi_p \, E_{X|Y=1}[l(f(X), 1)] + E_X[l(f(X), 0)] - \pi_p \, E_{X|Y=1}[l(f(X), 0)] \]

where \( \pi_p = P(Y=1) \) represents the class prior probability [11].
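The estimator can be evaluated directly from classifier outputs by replacing each expectation with a sample mean: positives supply the two conditional terms and the unlabeled set supplies the marginal term. The sketch below assumes scores in [0, 1] and a squared loss, both chosen for readability; the optional `non_negative` flag clamps the negative-class part at zero, a commonly used safeguard against the estimate going negative on finite samples. Function and argument names are hypothetical.

```python
from typing import Callable, Sequence


def squared_loss(score: float, label: int) -> float:
    return (score - label) ** 2


def pu_risk(
    pos_scores: Sequence[float],
    unl_scores: Sequence[float],
    prior: float,  # class prior pi_p = P(Y=1), assumed known or estimated separately
    loss: Callable[[float, int], float] = squared_loss,
    non_negative: bool = False,
) -> float:
    def mean(values):
        values = list(values)
        return sum(values) / len(values)

    pos_as_pos = mean(loss(s, 1) for s in pos_scores)  # E_{X|Y=1}[l(f(X), 1)]
    pos_as_neg = mean(loss(s, 0) for s in pos_scores)  # E_{X|Y=1}[l(f(X), 0)]
    unl_as_neg = mean(loss(s, 0) for s in unl_scores)  # E_X[l(f(X), 0)]
    negative_part = unl_as_neg - prior * pos_as_neg
    if non_negative:
        negative_part = max(0.0, negative_part)
    return prior * pos_as_pos + negative_part


# Toy classifier outputs, invented for illustration.
risk = pu_risk(pos_scores=[0.9, 0.8], unl_scores=[0.9, 0.2, 0.1, 0.1], prior=0.25)
```

For these numbers the three sample means are 0.025, 0.725, and 0.2175, so the risk evaluates to 0.25 × 0.025 + 0.2175 − 0.25 × 0.725 ≈ 0.0425.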

PU Learning in the Context of Few-Shot Learning

PU learning represents a specialized case within the broader field of Few-Shot Learning (FSL), which addresses model training with limited supervised information. As outlined in the FSL taxonomy, PU learning falls under the category of methods that utilize prior knowledge to augment training data, particularly through semi-supervised approaches that leverage unlabeled samples [10]. This positioning highlights how PU learning addresses the dual challenges of limited positive examples and incomplete labeling that frequently occur together in scientific domains.

Application to Solid-State Synthesis Prediction

The Materials Synthesizability Challenge

The prediction of solid-state synthesizability represents an ideal application for PU learning in materials science. High-throughput computational screening regularly identifies thousands of theoretically stable compounds with promising properties, but experimental validation through synthesis remains a critical bottleneck. Traditional thermodynamic stability metrics like energy above hull (Ehull) provide insufficient conditions for synthesizability, as kinetic barriers and reaction conditions play decisive roles [1].

Compounding this challenge, materials databases and scientific literature predominantly contain reports of successful synthesis outcomes (positive examples), while failed attempts rarely get documented (missing negative examples). This creates precisely the data environment where PU learning excels: confirmed positives alongside numerous unlabeled candidates whose synthesizability remains unknown [1].

Table 1: Data Characteristics in Solid-State Synthesis Prediction

| Data Type | Availability | Examples | Challenges |
|---|---|---|---|
| Positive Examples | Limited | Successfully synthesized compounds via solid-state reaction | May not represent all synthesizable materials |
| Negative Examples | Extremely scarce | Documented synthesis failures | Rarely published or systematically recorded |
| Unlabeled Examples | Abundant | Hypothetical compounds, compounds synthesized via other methods | Mixed population of synthesizable and non-synthesizable materials |

Case Study: Predicting Synthesizability of Ternary Oxides

A recent 2025 study demonstrates the practical application of PU learning to predict solid-state synthesizability of ternary oxides. Researchers constructed a human-curated dataset of 4,103 ternary oxides from the Materials Project database, with manual verification of synthesis status through literature review. This careful curation addressed quality issues present in automated text-mined datasets, which can have error rates as high as 49% [1].

The resulting dataset contained:

- 3,017 solid-state synthesized entries (positive examples)

- 595 non-solid-state synthesized entries

- 491 undetermined entries

After preprocessing, the researchers applied a PU learning framework to predict synthesizability of hypothetical compositions, ultimately identifying 134 out of 4,312 candidates as likely synthesizable [1] [12]. This approach successfully addressed the fundamental data constraint of missing negative examples that would render conventional supervised learning infeasible.

Experimental Protocols and Methodologies

Data Curation Protocol for Solid-State Synthesis

Objective: Create a high-quality dataset for PU learning applications in solid-state synthesizability prediction.

Materials and Data Sources:

- Ternary oxide entries from Materials Project database (version 2020-09-08)

- Inorganic Crystal Structure Database (ICSD) for synthesis verification

- Scientific literature via Web of Science and Google Scholar

Procedure:

- Initial Filtering: Download 21,698 ternary oxide entries from Materials Project. Identify 6,811 entries with ICSD IDs as potentially synthesized.

- Composition Filtering: Remove entries containing non-metal elements and silicon, resulting in 4,103 ternary oxides for manual curation.

- Literature Verification:

- Examine papers corresponding to ICSD IDs

- Review first 50 search results sorted chronologically in Web of Science

- Check top 20 relevant results in Google Scholar

- Data Extraction:

- Record solid-state synthesis status (confirmed/not confirmed/undetermined)

- Extract reaction conditions when available: highest heating temperature, pressure, atmosphere, grinding conditions, number of heating steps, cooling process, precursors

- Note crystalline status of product

- Quality Control:

- Cross-check ambiguous cases before assigning a label

- Document reasons for undetermined classifications

- Flag entries with conflicting literature evidence [1]

Expected Outcomes: A reliably labeled dataset with confirmed positive examples for solid-state synthesizability, suitable for PU learning implementation.
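One way to keep the extracted fields consistent during curation is a small record type. The sketch below is a hypothetical schema, not the one used in the cited study: the class name, field names, and validation rules are all assumptions chosen to mirror the Data Extraction and Quality Control steps above.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class SolidStateStatus(Enum):
    CONFIRMED = "confirmed"
    NOT_CONFIRMED = "not confirmed"
    UNDETERMINED = "undetermined"


@dataclass
class CurationRecord:
    """One manually curated entry (hypothetical field names)."""
    formula: str
    status: SolidStateStatus
    max_temperature_c: Optional[float] = None
    atmosphere: Optional[str] = None
    n_heating_steps: Optional[int] = None
    precursors: List[str] = field(default_factory=list)
    crystalline: Optional[bool] = None
    undetermined_reason: Optional[str] = None
    conflicting_evidence: bool = False

    def validate(self) -> List[str]:
        """Return a list of quality-control problems (empty if none)."""
        problems = []
        if self.status is SolidStateStatus.UNDETERMINED and not self.undetermined_reason:
            problems.append("undetermined entry needs a documented reason")
        if self.n_heating_steps is not None and self.n_heating_steps < 1:
            problems.append("number of heating steps must be at least 1")
        return problems


# "ABO3" is a placeholder formula, not a real dataset entry.
record = CurationRecord(formula="ABO3", status=SolidStateStatus.UNDETERMINED)
print(record.validate())
```

Running the validation on every record before training makes the "document reasons for undetermined classifications" rule enforceable rather than a convention.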

Implementation Protocol for PU Learning

Objective: Train and validate a PU learning model for synthesizability prediction.

Computational Resources:

- Standard scientific computing environment (Python/R)

- Machine learning libraries (scikit-learn, TensorFlow/PyTorch for deep learning variants)

- Sufficient RAM for feature matrices and model training

Procedure:

- Feature Engineering:

- Calculate compositional descriptors (elemental properties, stoichiometric ratios)

- Compute structural features (if available) from crystal structures

- Derive thermodynamic descriptors (formation energy, energy above hull)

- Include synthetic accessibility features (melting points of constituents)

- Model Selection and Training:

- Select appropriate PU learning algorithm (two-step methods often perform well)

- Implement class prior estimation

- Train initial classifier using positive and unlabeled data

- Identify reliable negative examples from unlabeled set

- Refine classifier using expanded labeled set

- Validation and Testing:

Troubleshooting Tips:

- Sensitivity to class prior estimation may require robustness analysis

- Feature selection can significantly impact model performance

- Consider ensemble approaches to stabilize predictions
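The two-step idea in the training procedure above can be illustrated with a deliberately simple, dependency-free sketch. It assumes a nearest-centroid model stands in for the real classifier, and `negative_fraction` is a hypothetical parameter controlling how many unlabeled points are treated as reliable negatives. Class prior estimation is omitted. This is not the implementation from the cited study.

```python
import math
from typing import Callable, List, Sequence

Vector = Sequence[float]


def centroid(rows: List[Vector]) -> List[float]:
    """Component-wise mean of a list of feature vectors."""
    return [sum(column) / len(rows) for column in zip(*rows)]


def two_step_pu(
    positives: List[Vector],
    unlabeled: List[Vector],
    negative_fraction: float = 0.3,
) -> Callable[[Vector], float]:
    """Return a scoring function; higher scores mean 'more like the positives'."""
    # Step 1: treat the unlabeled points farthest from the positive centroid
    # as reliable negatives.
    pos_center = centroid(positives)
    ranked = sorted(unlabeled, key=lambda x: math.dist(x, pos_center), reverse=True)
    n_negatives = max(1, int(len(ranked) * negative_fraction))
    neg_center = centroid(ranked[:n_negatives])

    # Step 2: an ordinary two-class model (here: nearest centroid) trained on
    # positives versus the reliable negatives.
    def score(x: Vector) -> float:
        d_pos = math.dist(x, pos_center)
        d_neg = math.dist(x, neg_center)
        if d_pos + d_neg == 0:
            return 0.5
        return d_neg / (d_pos + d_neg)

    return score


# Toy two-feature data (hypothetical values, not taken from the study).
positives = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.2)]
unlabeled = [(0.1, 0.1), (0.15, 0.05), (2.0, 2.1), (2.2, 1.9), (1.9, 2.0), (0.05, 0.15)]

score = two_step_pu(positives, unlabeled, negative_fraction=0.5)
for x in unlabeled:
    print(x, round(score(x), 2))
```

Unlabeled points that sit close to the confirmed positives receive scores near 1, while the distant cluster scores near 0. In practice the stand-in model would be replaced by a proper classifier, and the choice of `negative_fraction` is exactly the kind of setting that calls for the robustness analysis mentioned in the troubleshooting tips.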

Visualization of Workflows

PU Learning Conceptual Workflow

Solid-State Synthesis Prediction Implementation

Table 2: Essential Resources for PU Learning in Synthesis Prediction

| Resource | Function | Example Sources |

|---|---|---|

| Materials Databases | Provide candidate materials and basic properties | Materials Project, ICSD, OQMD |

| Literature Curation Tools | Enable manual verification of synthesis status | Web of Science, Google Scholar, Custom annotation platforms |

| Feature Calculation Software | Generate descriptors for machine learning | pymatgen, matminer, ChemML |

| PU Learning Algorithms | Implement core classification methods | Modified scikit-learn classifiers, Specialized PU learning libraries |

| Validation Frameworks | Assess model performance without true negatives | Rank-based metrics, Prospective validation protocols |

Performance Metrics and Benchmarking

Table 3: Performance Comparison of PU Learning Approaches in Materials Science

| Application Domain | Data Characteristics | PU Method | Key Performance Results |

|---|---|---|---|

| Solid-State Synthesizability (Ternary Oxides) | 3,017 positive examples, 4,312 unlabeled candidates | Two-step PU learning with class prior estimation | 134 predicted synthesizable candidates from hypothetical compositions [1] |

| General Perovskite Synthesizability | Mixed positive-unlabeled dataset | Domain-transfer PU learning | Outperformed tolerance factor-based approaches and previous PU implementations [1] |

| 2D MXene Synthesizability | Limited positive examples | Transductive bagging PU learning | Effective identification of synthesizable precursors and compounds [1] |

| Named Entity Recognition | Dictionary-based positive examples | Unbiased PU risk estimation | Superior to dictionary matching and other PU methods across multiple datasets [11] |

Positive-Unlabeled learning represents a powerful paradigm for addressing the data incompleteness problems that frequently arise in scientific domains. By systematically leveraging confirmed positive examples while accounting for the mixed nature of unlabeled data, PU learning enables predictive modeling in scenarios where traditional supervised learning would be impossible.

The application to solid-state synthesis prediction demonstrates how PU learning can accelerate materials discovery by prioritizing the most promising candidates for experimental validation. Similar opportunities exist across scientific domains, particularly in drug discovery, where confirmed active compounds are known but confirmed inactives may be scarce.

As research in this field advances, key future directions include:

- Development of more robust class prior estimation methods

- Integration with deep learning architectures for automated feature learning

- Adaptation to multi-task learning scenarios common in scientific applications

- Improved uncertainty quantification for model predictions

For researchers implementing PU learning, success depends critically on both methodological rigor and domain-specific knowledge. Careful data curation, appropriate feature engineering, and thoughtful validation strategies remain essential components of effective PU learning systems in scientific contexts.

Key Bottlenecks in Predictive Synthesis for Biomedical Materials

Predictive synthesis—the use of machine learning (ML) to design and create new biomedical materials—is transforming regenerative medicine, drug delivery, and diagnostic technologies. By leveraging large-scale computational models, researchers aim to inverse-design materials with tailored biological functions, moving from serendipitous discovery to rational design [13]. However, within the specific context of machine learning for solid-state synthesis prediction research, several critical bottlenecks impede progress. These challenges span data scarcity, model generalizability, synthesis planning, and experimental validation, creating significant friction in the pipeline from computational prediction to realized material [3].

This Application Note details the primary bottlenecks, provides structured quantitative data on their impact, and offers detailed, actionable protocols for researchers to diagnose and mitigate these issues in their own work. The focus is specifically on the intersection of ML-driven property prediction and the practical synthesis of solid-state biomedical materials such as bioceramics, metallic implants, and complex polymer composites.

Key Bottlenecks & Quantitative Analysis

The journey from a predicted material to a synthesized and characterized one is fraught with specific, quantifiable challenges. The table below summarizes the core bottlenecks, their manifestations, and their impact on the predictive synthesis pipeline.

Table 1: Key Bottlenecks in Predictive Synthesis of Biomedical Materials

| Bottleneck Category | Specific Challenge | Typical Impact on Research | Reported Quantitative Metric |

|---|---|---|---|

| Data Scarcity & Quality | Lack of large, standardized datasets for biomaterials [3]. | Limits model accuracy and generalizability. | Models often trained on <100-1000 examples for specific properties, versus >10^9 for general chemistry [3]. |

| Data Scarcity & Quality | High cost and time for high-fidelity experimental data (e.g., biocompatibility) [14]. | Increases risk of model prediction failure in lab. | Full biocompatibility and degradation profiling can take 6-18 months [15]. |

| Model Generalizability | "Activity cliffs" – small structural changes cause dramatic property shifts [3]. | Poor real-world performance despite high training accuracy. | Model performance can drop by >30% when applied to new material classes outside training distribution. |

| Model Generalizability | Over-reliance on 2D molecular representations (e.g., SMILES) [3]. | Failure to predict properties dependent on 3D conformation and solid-state structure. | Omission of 3D data is a primary source of error for 60% of solid-state property predictions [3]. |

| Synthesis Planning & Execution | Difficulty predicting synthesis pathways and parameters from structure [13]. | Prevents realization of computationally discovered materials. | >70% of predicted materials lack a known or feasible synthesis route [13]. |

| Synthesis Planning & Execution | Transferring lab-scale synthesis to manufacturable processes (GMP) [15]. | Barrier to clinical translation and commercial application. | Scale-up from lab to GMP production has a success rate of <15% for novel biomaterials [15]. |

| Validation & Integration | Closing the loop with high-throughput experimental validation [13]. | Slow feedback for model iteration and improvement. | Autonomous labs can reduce cycle time from prediction to validation from months to days [13]. |

Experimental Protocols for Bottleneck Mitigation

To address the bottlenecks identified in Table 1, the following protocols provide a structured methodology for researchers.

Protocol 1: A Multi-Modal Data Extraction and Curation Pipeline

Objective: To systematically build a high-quality, multi-modal dataset for biomaterial training, integrating both public data and proprietary experimental results, including "negative" data (failed syntheses) [13].

Materials:

- Computing Infrastructure: High-performance computing cluster with ≥ 1 TB storage.

- Software: Python 3.8+, Natural Language Processing (NLP) libraries (e.g., spaCy, Transformers), Computer Vision libraries (e.g., OpenCV, Vision Transformers) [3].

- Data Sources: Public biomaterial databases (e.g., PubChem, ZINC), internal lab notebooks, published literature, and patent documents [3].

Procedure:

- Textual Data Extraction: Implement a Named Entity Recognition (NER) model fine-tuned on biomaterial science literature to extract material compositions, synthesis conditions, and properties from text-based sources (e.g., PDFs of scientific papers) [3].

- Image Data Extraction: Employ a Vision Transformer model to convert figures and plots from literature into structured data. For instance, use a tool like Plot2Spectra to extract spectral data from chart images [3].

- Structured Data Integration: Map extracted data into a standardized schema using a pre-trained Large Language Model (LLM) for schema-based extraction to ensure consistency across different data modalities and sources [3].

- "Negative Data" Logging: Mandate the logging of all failed synthesis attempts and sub-optimal material properties in a structured format (e.g., a shared electronic lab notebook) with standardized metadata fields.

- Data Federation: Where data cannot be centralized due to privacy or size, implement a federated learning setup where model training occurs locally on each data source, and only model weights are aggregated [16].
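The aggregation step in the Data Federation item can be sketched as a sample-size-weighted average of per-site model weights, in the spirit of federated averaging. The function name and the flat list-of-floats weight format are assumptions made for brevity; real frameworks operate on full parameter tensors and add secure communication.

```python
from typing import List, Sequence


def federated_average(site_weights: List[Sequence[float]], site_sizes: List[int]) -> List[float]:
    """Average model weights across sites, weighting each site by its sample count."""
    if len(site_weights) != len(site_sizes):
        raise ValueError("one sample count is needed per site")
    total = sum(site_sizes)
    averaged = [0.0] * len(site_weights[0])
    for weights, size in zip(site_weights, site_sizes):
        for i, w in enumerate(weights):
            averaged[i] += w * size / total
    return averaged


# Two hypothetical sites: only weights and sample counts leave each site.
global_weights = federated_average(
    site_weights=[[1.0, 0.0], [3.0, 2.0]],
    site_sizes=[100, 300],
)
print(global_weights)
```

Because the second site holds three times as much data, its weights dominate the aggregate; the raw records themselves are never exchanged.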

Figure 1: Workflow for multi-modal biomaterials data curation.

Protocol 2: Developing a Transferable, 3D-Aware Property Prediction Model

Objective: To create a property prediction model for biomedical materials (e.g., biodegradation rate, protein adsorption) that is robust to "activity cliffs" and incorporates critical 3D structural information.

Materials:

- Reagent Solutions:

- Software: Machine Learning framework (e.g., PyTorch, TensorFlow), libraries for geometric deep learning (e.g., PyG, DGL), and molecular dynamics simulation software (e.g., GROMACS).

Procedure:

- Pre-training: Start with a foundation model (e.g., a Graph Neural Network or Transformer) pre-trained on a broad chemical corpus like ZINC or PubChem to learn general chemical representations [3].

- 3D Representation: For each molecule or material in the dataset, generate representative 3D conformations using molecular mechanics or density functional theory (DFT) calculations. Represent the material as a 3D graph where nodes are atoms and edges encode bond lengths and angles.

- Model Fine-tuning: Fine-tune the pre-trained model on a smaller, labeled dataset of biomedical materials. Use a multi-task learning objective to predict both the target property (e.g., degradation rate) and auxiliary properties (e.g., solubility, surface energy) to improve generalizability.

- Explainability Analysis: Apply Explainable AI (XAI) techniques, such as attention mechanism analysis or SHAP plots, to interpret which structural features the model deems most important for its predictions. This builds trust and provides scientific insight [13] [16].

- Validation: Rigorously test the model on a held-out test set composed of entirely new material classes to evaluate its performance against "activity cliffs."
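The 3D Representation step in the procedure above can be illustrated with a minimal distance-based graph builder. This is a sketch under simplifying assumptions: edges are assigned by a hypothetical distance `cutoff` rather than by chemical bonding rules, bond angles are not computed, and periodic boundary conditions (needed for crystals) are ignored. The coordinates are approximate toy values for a water-like molecule.

```python
import math
from itertools import combinations
from typing import Dict, List, Tuple

Atom = Tuple[str, Tuple[float, float, float]]  # (element, xyz position in angstrom)


def build_distance_graph(atoms: List[Atom], cutoff: float) -> Dict[str, list]:
    """Nodes are atoms; an edge (i, j, distance) joins atoms closer than the cutoff."""
    nodes = [element for element, _ in atoms]
    edges = []
    for (i, (_, pos_i)), (j, (_, pos_j)) in combinations(enumerate(atoms), 2):
        distance = math.dist(pos_i, pos_j)
        if distance <= cutoff:
            edges.append((i, j, round(distance, 3)))
    return {"nodes": nodes, "edges": edges}


atoms = [
    ("O", (0.00, 0.00, 0.00)),
    ("H", (0.96, 0.00, 0.00)),
    ("H", (-0.24, 0.93, 0.00)),
]
graph = build_distance_graph(atoms, cutoff=1.2)
print(graph["nodes"])
print(graph["edges"])
```

With a 1.2 Å cutoff the two O-H contacts become edges while the longer H-H distance does not, so the edge list carries the geometric information that a 2D string representation would lose.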

Figure 2: 3D-aware property prediction model architecture.

Protocol 3: Closing the Loop with Autonomous Validation

Objective: To establish a high-throughput experimental workflow that automatically validates ML-predicted materials, providing rapid feedback to iteratively improve the predictive models [13].

Materials:

- Robotics: Liquid handling robots, automated synthesis reactors (e.g., for polymer synthesis or sol-gel processes).

- Analytical Equipment: Automated in-line or at-line characterization tools (e.g., HPLC, plate readers for colorimetric assays, dynamic light scattering).

- Software: Laboratory Information Management System (LIMS), data analysis pipelines, and the central ML model from Protocol 2.

Procedure:

- Candidate Selection: The predictive model proposes a batch of candidate materials with high predicted performance for a target application (e.g., a polymer for controlled drug delivery).

- Automated Synthesis: Synthesis recipes are translated into instructions for automated robotic platforms to execute the material synthesis.

- In-line Characterization: The synthesized materials are automatically transferred to analytical equipment for key characterization (e.g., molecular weight, particle size, zeta potential).

- Data Feedback: The results from characterization are automatically fed back into the database created in Protocol 1. This includes both successful and failed syntheses.

- Model Retraining: The updated database, now enriched with new experimental data, is used to retrain and refine the predictive model, closing the loop and initiating the next cycle of discovery.
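The five steps above form a loop that can be sketched in a few lines. Everything here is a stand-in: `fake_measurement` replaces the robotic synthesis and characterization, a nearest-neighbor lookup replaces the predictive model from Protocol 2, and candidates are reduced to a single hypothetical composition variable.

```python
from typing import Callable, Dict, List


def fit_nearest_neighbor(database: Dict[float, float], default: float = 0.0) -> Callable[[float], float]:
    """'Retrain' the model: predict the measured value of the closest tested candidate."""
    def predict(x: float) -> float:
        if not database:
            return default
        closest = min(database, key=lambda tested: abs(tested - x))
        return database[closest]
    return predict


def closed_loop(
    candidates: List[float],
    measure: Callable[[float], float],
    batch_size: int,
    n_cycles: int,
) -> Dict[float, float]:
    database: Dict[float, float] = {}
    for _ in range(n_cycles):
        model = fit_nearest_neighbor(database)                # model retraining
        untested = [c for c in candidates if c not in database]
        batch = sorted(untested, key=model, reverse=True)[:batch_size]  # candidate selection
        for candidate in batch:
            database[candidate] = measure(candidate)          # synthesis, characterization, feedback
    return database


def fake_measurement(x: float) -> float:
    """Stand-in for an automated experiment; the optimum is placed at x = 0.7."""
    return -(x - 0.7) ** 2


candidates = [i / 10 for i in range(11)]
results = closed_loop(candidates, fake_measurement, batch_size=2, n_cycles=3)
best = max(results, key=results.get)
print(f"tested {len(results)} candidates; best so far: x = {best}")
```

This greedy loop only exploits the current model. A practical campaign would usually add an exploration term (for example, an uncertainty-based acquisition function) and would also record failed syntheses in the database, as the Data Feedback step requires.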

Table 2: Research Reagent Solutions for Predictive Synthesis

| Reagent / Tool | Type | Primary Function in Workflow |

|---|---|---|

| ZINC/ChEMBL Database | Data | Large-scale chemical datasets for foundational model pre-training [3]. |

| Named Entity Recognition (NER) Model | Software | Automates extraction of material names and properties from scientific text [3]. |

| Vision Transformer | Software | Extracts structured data (e.g., spectra) from images and figures in literature [3]. |

| Graph Neural Network (GNN) | Model | Learns from graph-based representations of molecules and materials, incorporating 3D structure [3]. |

| Federated Learning Framework | Software/Protocol | Enables model training across decentralized data sources without sharing raw data [16]. |

| Automated Synthesis Robot | Hardware | Executes high-throughput, reproducible synthesis of predicted material candidates [13]. |

| Explainable AI (XAI) Tools | Software | Provides insights into model predictions, building trust and guiding scientific intuition [13] [16]. |

From Data to Decisions: Machine Learning Methods for Synthesis Prediction

The rate of discovery for new solid-state materials is fundamentally constrained by the slow and resource-intensive process of experimental validation for the vast number of promising candidates generated by high-throughput computational screening [1]. While thermodynamic metrics like energy above hull (\(E_{\text{hull}}\)) provide a useful initial filter for hypothetical compounds, they are insufficient for predicting synthesizability as they do not account for kinetic barriers, entropic contributions, or the specific conditions required for successful solid-state reactions [1]. The majority of practical synthesis knowledge, including detailed protocols, parameters, and outcomes, resides within the unstructured text of millions of published scientific articles. Manually extracting this information is prohibitively time-consuming, creating a critical bottleneck. Text-mining (TM) and Natural Language Processing (NLP) technologies have therefore emerged as essential tools for the automated construction of large-scale, structured synthesis databases, thereby accelerating data-driven materials research and discovery [17] [18] [1].

NLP Pipelines for Synthesis Information Extraction

The transformation of unstructured scientific text into a structured, queryable database follows a multi-stage NLP pipeline. The approach has evolved from simple frequency-based methods to sophisticated deep-learning techniques [19].

Foundational NLP Concepts and Pipeline Stages

A standard NLP pipeline for materials science text involves several sequential processing steps [19]:

- Corpus Creation: The process begins with gathering a collection of texts, or a corpus, of scientific publications relevant to solid-state synthesis.

- Tokenization: Raw text is split into smaller units called tokens, which can be words, sub-words, or punctuation marks. Sentence segmentation is often the first step.

- Part-of-Speech (POS) Tagging: Each token is tagged with its grammatical role (e.g., noun, verb, adjective), which aids in understanding the sentence structure.

- Lemmatization: Words are reduced to their canonical base form, or lemma (e.g., "synthesized" and "synthesizing" both become "synthesize"). This is more advanced than simple stemming, as it uses vocabulary and morphological analysis to return a valid root word.

- Named Entity Recognition (NER): This is a critical step where the model identifies and classifies real-world entities mentioned in the text into predefined categories. For synthesis databases, key entities include Material Names, Properties, Synthesis Parameters (e.g., temperature, time, atmosphere), and Synthesis Actions (e.g., grind, heat, cool) [17] [19].

- Relationship Extraction: After identifying entities, the pipeline determines the specific relationships between them, for instance, linking a synthesis temperature value to the correct material.
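To make the stages above concrete, here is a toy, rule-based version of sentence segmentation, tokenization, lemmatization, and entity tagging using only regular expressions. It is a teaching sketch, not a substitute for a trained model: the lemma dictionary and action vocabulary are hypothetical, and POS tagging and relationship extraction are left out.

```python
import re
from typing import List, Tuple

# Hypothetical, hand-written lookup tables; a real pipeline would learn these.
LEMMAS = {"heated": "heat", "ground": "grind", "cooled": "cool", "sintered": "sinter"}
ACTIONS = {"heat", "grind", "cool", "sinter"}
NUMBER = r"\d+(?:\.\d+)?"


def split_sentences(text: str) -> List[str]:
    """Split after sentence-final punctuation followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def tokenize(sentence: str) -> List[str]:
    """Numbers, the unit '°C', alphanumeric words, or single punctuation marks."""
    return re.findall(NUMBER + r"|°C|[A-Za-z][A-Za-z0-9]*|[^\w\s]", sentence)


def lemmatize(token: str) -> str:
    return LEMMAS.get(token.lower(), token)


def tag_entities(tokens: List[str]) -> List[Tuple[str, str]]:
    entities = []
    for i, token in enumerate(tokens):
        lemma = lemmatize(token)
        if lemma in ACTIONS:
            entities.append(("SynthesisAction", lemma))
        elif re.fullmatch(NUMBER, token) and i + 1 < len(tokens) and tokens[i + 1] == "°C":
            entities.append(("Temperature", f"{token} °C"))
    return entities


text = "The powders were ground in a mortar. The mixture was heated at 850 °C for 12 h."
tagged = [tag_entities(tokenize(s)) for s in split_sentences(text)]
print(tagged)
```

The dictionary lookup maps "ground" to "grind" regardless of context, which would be wrong for the noun "ground"; that ambiguity is the reason real pipelines rely on POS tagging and contextual models.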

The Evolution of Language Models in Materials Science

The performance of NLP pipelines, particularly for NER, has been revolutionized by the development of advanced language models [17].

- Word Embeddings: Early models like Word2Vec and GloVe created static vector representations of words that captured semantic similarities. This allowed, for example, calculations of materials similarity to aid in discovery [17].

- Transformer Models: The introduction of the Transformer architecture, with its self-attention mechanism, enabled the development of contextualized embeddings, where a word's vector representation changes based on its surrounding context [17].

- Large Language Models (LLMs): Models such as BERT and GPT represent the current state-of-the-art. They are pre-trained on massive text corpora and can be adapted for specific domains like materials science through fine-tuning. This involves further training a general-purpose LLM on a specialized corpus of scientific literature, equipping it with the domain-specific knowledge needed to accurately understand and extract synthesis information [17]. Prompt engineering with cloud-based models like GPT offers an alternative, though less domain-specialized, approach to information extraction [17].

Table 1: Comparison of Text-Mined vs. Human-Curated Synthesis Data Quality

| Metric | Text-Mined Dataset (Kononova et al.) | Human-Curated Dataset (Chung et al.) |

|---|---|---|

| Scope | 31,782 solid-state reactions [1] | 4,103 ternary oxides [1] |

| Overall Accuracy | 51% [1] | ~100% (by definition of manual curation) |

| Outlier Analysis | 156 outliers identified in a 4,800-entry subset; only 15% were correctly extracted [1] | Used as the ground truth for validating text-mined data [1] |

| Primary Use Case | Large-scale trend analysis, training ML models with coarse descriptions [1] | Benchmarking, model training where high data fidelity is critical [1] |

Application Note: A Protocol for Building a Solid-State Synthesis Database

This protocol outlines the steps for creating a specialized database of solid-state synthesis parameters for ternary oxides, leveraging both automated text-mining and human validation to ensure high data quality.

Experimental Workflow

The following diagram illustrates the complete workflow from literature collection to the final, usable database.

Step-by-Step Protocol

Step 1: Data Collection and Preprocessing

- Literature Sourcing: Begin by compiling a list of target materials. For example, start with 21,698 ternary oxide entries from the Materials Project database, narrow these to the 6,811 entries that have Inorganic Crystal Structure Database (ICSD) IDs as an initial proxy for synthesized materials, and then remove entries containing non-metal elements and silicon to reach the 4,103 entries used for curation [1].

- Text Acquisition: Download the full-text PDFs of scientific papers associated with these materials using their ICSD IDs and searches on platforms like Web of Science and Google Scholar.

- Text Conversion: Convert the PDF files into plain text using a PDF text-extraction tool or, for scanned documents, optical character recognition (OCR) software. This step is crucial as it transforms the document into a machine-readable format [1].

Step 2: NLP Pipeline for Information Extraction

This core step processes the raw text to identify and structure key synthesis information. Implement the following stages, ideally using a fine-tuned language model like MatBERT [17].

- Named Entity Recognition (NER): Configure the NER model to identify and tag the following key entities in the text:

- Material: Chemical formulas and names (e.g., "BiFeO₃", "ternary oxide").

- Property: Reported material properties (e.g., "band gap", "dielectric constant").

- SynthesisAction: Verbs describing synthesis steps (e.g., "grind", "heat", "sinter", "cool").

- ParameterValue: Numerical values associated with synthesis (e.g., "850", "12").

- ParameterUnit: Units for the parameters (e.g., "°C", "hours").

- Atmosphere: Synthesis environment (e.g., "air", "O₂", "Argon").

- Relationship Extraction: Use dependency parsing and rule-based or model-based classifiers to link entities. For example, the model should associate the value "850" and the unit "°C" with the action "heat", and further link this entire cluster to the target "BiFeO₃" material [19].
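A simple rule-based baseline for the linking described above attaches each value-unit pair to the most recent preceding action and to the most recently mentioned material. This heuristic is an assumption standing in for dependency parsing or a trained relation classifier; the entity labels follow the tag set listed in this step, and the function name is hypothetical.

```python
from typing import Dict, List, Optional, Tuple

Entity = Tuple[str, str]  # (label, text), in the order found in the sentence


def link_parameters(entities: List[Entity]) -> List[Dict]:
    """Group value/unit pairs under the nearest preceding SynthesisAction."""
    steps: List[Dict] = []
    material: Optional[str] = None
    current: Optional[Dict] = None
    pending_value: Optional[str] = None
    for label, text in entities:
        if label == "Material":
            material = text
        elif label == "SynthesisAction":
            current = {"material": material, "action": text, "parameters": []}
            steps.append(current)
            pending_value = None
        elif label == "ParameterValue":
            pending_value = text
        elif label == "ParameterUnit" and pending_value is not None and current is not None:
            current["parameters"].append((pending_value, text))
            pending_value = None
    return steps


# Entities as an NER model might emit them for:
# "BiFeO3 was heated at 850 °C for 12 hours and then cooled."
entities = [
    ("Material", "BiFeO3"),
    ("SynthesisAction", "heat"),
    ("ParameterValue", "850"),
    ("ParameterUnit", "°C"),
    ("ParameterValue", "12"),
    ("ParameterUnit", "hours"),
    ("SynthesisAction", "cool"),
]
steps = link_parameters(entities)
print(steps)
```

The heuristic breaks on sentences where parameters precede the verb ("at 850 °C the sample was heated"), which is one reason a parser-based or model-based linker is recommended for production use.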

Step 3: Data Validation and Curation

- Human-in-the-Loop Validation: Manually review a statistically significant sample (e.g., 100+ randomly selected entries) of the extracted data to quantify accuracy and identify common error modes [1]. This step is critical, as purely automated extraction can have low overall accuracy (~51%) [1].

- Outlier Detection: Use the human-validated dataset to identify and flag outliers in the larger text-mined dataset. For instance, cross-reference heating temperatures against the melting points of precursor materials; a recorded temperature exceeding the melting point may indicate an extraction error [1].

- Data Labeling: For synthesizability prediction, label each material entry as "synthesized" or "not synthesized" based on the extracted evidence. The lack of reported failed syntheses is a known challenge, which can be addressed using Positive-Unlabeled (PU) learning techniques at the modeling stage [1].
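The melting-point cross-check in the Outlier Detection item can be sketched as below. The precursor names, melting points, and reaction entries are all hypothetical placeholders; the rule simply flags any entry whose recorded maximum temperature exceeds the lowest known precursor melting point.

```python
from typing import Dict, List


def flag_temperature_outliers(entries: List[dict], melting_points_c: Dict[str, float]) -> List[str]:
    """Return IDs of entries heated above the lowest known precursor melting point."""
    flagged = []
    for entry in entries:
        known = [melting_points_c[p] for p in entry["precursors"] if p in melting_points_c]
        if known and entry["max_temperature_c"] > min(known):
            flagged.append(entry["id"])
    return flagged


# Placeholder data: values are illustrative, not measured melting points.
melting_points_c = {"precursor_A": 820.0, "precursor_B": 1560.0}
entries = [
    {"id": "rxn-1", "precursors": ["precursor_A", "precursor_B"], "max_temperature_c": 800.0},
    {"id": "rxn-2", "precursors": ["precursor_A", "precursor_B"], "max_temperature_c": 8500.0},
    {"id": "rxn-3", "precursors": ["precursor_C"], "max_temperature_c": 900.0},
]
print(flag_temperature_outliers(entries, melting_points_c))
```

A flag is a prompt for manual review rather than proof of an error: here `rxn-2` looks like a misplaced digit, while `rxn-3` is skipped because no melting point is available for its precursor.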

Table 2: The Scientist's Toolkit: Essential Reagents for Synthesis Database Construction

| Tool/Resource | Type | Function in Protocol |

|---|---|---|

| Materials Project API | Database | Provides initial list of candidate materials and computed properties like \(E_{\text{hull}}\) for analysis [1]. |

| Inorganic Crystal Structure Database (ICSD) | Database | Source of peer-reviewed crystal structures and links to original literature for data extraction [1]. |

| Fine-tuned BERT (e.g., MatBERT) | Language Model | Pre-trained transformer model adapted for materials science, performing core NER tasks with high accuracy [17]. |

| pymatgen | Python Library | Aids in parsing crystallographic data and general materials analysis [1]. |

| Positive-Unlabeled (PU) Learning Algorithm | Machine Learning Model | Enables training of synthesizability predictors from datasets containing only confirmed positive examples and unlabeled data [1]. |

Data Integration and Machine Learning Application

The final, validated database serves as the foundation for predictive machine learning models. The relationship between the extracted data and the ML task can be visualized as a directed graph, illustrating the flow from raw input to synthesis prediction.

- Feature Engineering: The structured data from the database is used to create feature vectors for each material. These can include:

- Numerical Features: Maximum heating temperature, number of heating steps, dwell time.

- Categorical Features: Synthesis atmosphere, precursor types, mixing method.

- Calculated Features: Thermodynamic stability metrics (\(E_{\text{hull}}\)) from the Materials Project, elemental descriptors.

- Positive-Unlabeled Learning: Given the absence of explicitly reported failed experiments, PU learning is a powerful semi-supervised approach. The model is trained using the known synthesized materials as "Positives" and all non-synthesized/hypothetical materials as "Unlabeled" data. This allows the model to learn the characteristics of synthesizable materials and probabilistically identify other synthesizable candidates from the unlabeled set [1].

- Outcome: A trained model can screen thousands of hypothetical compositions, predicting their solid-state synthesizability and prioritizing the most promising candidates for experimental validation, thereby dramatically accelerating the discovery cycle [1].
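As an illustration of the feature engineering step above, the sketch below turns one database entry into a flat numeric vector by concatenating numerical values with a one-hot encoding of the atmosphere. The field names, the atmosphere vocabulary, and the example values are assumptions made for this example.

```python
from typing import Dict, List

# Hypothetical closed vocabulary for the categorical atmosphere feature.
ATMOSPHERES = ["air", "O2", "Ar"]


def featurize(entry: Dict) -> List[float]:
    """Numerical and calculated features followed by a one-hot atmosphere encoding."""
    numeric = [
        entry["max_temperature_c"],
        entry["n_heating_steps"],
        entry["dwell_time_h"],
        entry["e_hull_ev_per_atom"],
    ]
    one_hot = [1.0 if entry["atmosphere"] == name else 0.0 for name in ATMOSPHERES]
    return [float(value) for value in numeric] + one_hot


entry = {
    "max_temperature_c": 850,
    "n_heating_steps": 2,
    "dwell_time_h": 12,
    "e_hull_ev_per_atom": 0.02,
    "atmosphere": "air",
}
print(featurize(entry))
```

Synthesis-condition features exist only for materials with a reported recipe, so a model meant to score hypothetical compositions would have to rely on the calculated and compositional features alone.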

Positive-Unlabeled Learning Frameworks for Predicting Synthesizability

The discovery of new functional materials is a cornerstone of technological advancement, from developing new pharmaceuticals to creating sustainable energy solutions. While high-throughput computational methods have successfully identified millions of candidate materials with promising properties, a significant bottleneck remains: determining which of these theoretically predicted materials can be successfully synthesized in a laboratory. The challenge stems from the complex interplay of thermodynamic, kinetic, and experimental factors that influence synthesis outcomes, which cannot be fully captured by traditional stability metrics like formation energy or energy above the convex hull.

Positive-Unlabeled (PU) learning has emerged as a powerful machine learning framework to address this fundamental challenge in materials science. This approach is particularly well-suited to synthesizability prediction because while databases contain confirmed examples of synthesized materials (positive examples), comprehensive data on failed synthesis attempts (negative examples) are rarely published. PU learning algorithms operate effectively with only positive and unlabeled examples, making them ideally suited to bridge the gap between theoretical materials prediction and experimental realization.

Core Principles of PU Learning for Synthesizability

The Synthesizability Prediction Challenge

Traditional supervised learning requires both positive and negative examples to train classification models. However, in materials synthesis, negative examples (failed synthesis attempts) are systematically absent from most scientific literature and databases. This creates a fundamental limitation for conventional machine learning approaches. Researchers have attempted to circumvent this problem by treating unsynthesized materials as negative examples, but this introduces significant bias since many unsynthesized materials may actually be synthesizable under appropriate conditions.

PU learning addresses this data limitation by treating the synthesizability prediction problem as a semi-supervised learning task with two distinct classes:

- Positive (P): Materials confirmed to be synthesizable through experimental reports

- Unlabeled (U): Materials with unknown synthesizability status (may include both synthesizable and non-synthesizable materials)

The fundamental assumption in PU learning is that the unlabeled set contains both positive and negative examples, and the algorithm's task is to identify reliable negative examples from the unlabeled data during the training process.

Key PU Learning Strategies

Several specialized PU learning strategies have been developed specifically for synthesizability prediction:

Two-Step Techniques: These methods first identify reliable negative examples from the unlabeled data, then apply standard classification algorithms to the resulting positive and negative sets. This approach often employs iterative self-training to refine the negative set selection.

Biased Learning Methods: These techniques treat all unlabeled examples as noisy negative examples and assign corresponding weights to account for the potential mislabeling.

Dual-Classifier Frameworks: Advanced approaches like SynCoTrain employ two complementary graph convolutional neural networks (SchNet and ALIGNN) that iteratively exchange predictions to mitigate model bias and enhance generalizability [20]. This co-training strategy allows the classifiers to collaboratively refine their understanding of the unlabeled data.
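A classic way to correct a classifier trained with unlabeled examples treated as negatives is the label-frequency adjustment of Elkan and Noto. The sketch below assumes the labeled positives are a random sample of all positives, and it is not tied to any of the synthesizability studies cited here; the scores are made-up numbers standing in for the output of a positive-versus-unlabeled classifier.

```python
from typing import List


def estimate_label_frequency(scores_on_heldout_positives: List[float]) -> float:
    """Estimate c = P(labeled | positive) as the mean score on held-out labeled positives."""
    return sum(scores_on_heldout_positives) / len(scores_on_heldout_positives)


def corrected_probability(score: float, c: float) -> float:
    """Turn P(labeled | x) into an estimate of P(positive | x), clipped at 1."""
    return min(1.0, score / c)


# Hypothetical scores from a classifier trained on positive-vs-unlabeled labels.
heldout_positive_scores = [0.5, 0.7, 0.6]
c = estimate_label_frequency(heldout_positive_scores)

for raw in (0.3, 0.9):
    print(raw, "->", round(corrected_probability(raw, c), 2))
```

Dividing by the estimated label frequency raises every score, reflecting that an unlabeled material with a moderate raw score may still be quite likely to be synthesizable.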

Experimental Protocols and Implementation

Data Curation and Preprocessing

Protocol 1: Human-Curated Dataset Development

Objective: Create high-quality labeled datasets for PU learning model development and validation.

Procedure:

- Source Selection: Extract candidate materials from authoritative databases (e.g., Materials Project, ICSD). Focus on specific material classes (e.g., 4,103 ternary oxides) to ensure domain relevance [1].

- Literature Mining: Systematically examine primary research articles, prioritizing those with detailed experimental sections. Use both automated searches and manual curation to identify synthesis reports.

- Label Assignment: Categorize each material into:

- Solid-state synthesized: Explicit documentation of successful solid-state synthesis

- Non-solid-state synthesized: Synthesis achieved only through non-solid-state methods

- Undetermined: Insufficient evidence for definitive classification

- Metadata Extraction: Record critical synthesis parameters including highest heating temperature, pressure, atmosphere, grinding conditions, number of heating steps, and precursor information when available.

- Quality Validation: Implement random sampling and manual verification of labeled entries (e.g., 100 randomly chosen entries) to ensure dataset accuracy [1].

Considerations: Human-curated datasets, while labor-intensive, provide significantly higher quality than automated text-mining approaches, which may have accuracy rates as low as 51% for complex synthesis information [1].

Protocol 2: Large-Scale Dataset Construction for LLM Fine-Tuning

Objective: Develop comprehensive, balanced datasets for training specialized large language models.

Procedure:

- Positive Example Collection: Select 70,120 crystal structures from ICSD with ≤40 atoms and ≤7 different elements, excluding disordered structures [21].

- Negative Example Generation: Apply pre-trained PU learning models to calculate CLscores for 1,401,562 theoretical structures from multiple databases. Select structures with CLscore <0.1 as negative examples (80,000 structures) [21].

- Data Validation: Verify that 98.3% of positive examples have CLscores >0.1 to confirm appropriate threshold selection.

- Representation Development: Create efficient text representations (e.g., "material string") that integrate essential crystal information in a concise, reversible format for LLM processing.
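The thresholding steps in Protocol 2 can be sketched with two small helpers. The function names and the dictionary layout are hypothetical, and taking the lowest-scoring structures first when capping the negative set is an assumption of this example; the source only states that negatives have CLscore below 0.1.

```python
def select_negatives(clscores, threshold=0.1, max_count=None):
    """Return ids of structures with CLscore below threshold, lowest score first.

    clscores maps a structure id to its CLscore from a pre-trained PU model.
    """
    below = sorted((score, sid) for sid, score in clscores.items() if score < threshold)
    ids = [sid for _, sid in below]
    return ids if max_count is None else ids[:max_count]


def fraction_above(scores, threshold=0.1):
    """Share of scores strictly above the threshold (sanity check on positives)."""
    scores = list(scores)
    if not scores:
        raise ValueError("scores must not be empty")
    return sum(1 for s in scores if s > threshold) / len(scores)


if __name__ == "__main__":
    theoretical = {"t1": 0.05, "t2": 0.50, "t3": 0.01, "t4": 0.09}
    print(select_negatives(theoretical, max_count=2))
    print(fraction_above([0.9, 0.8, 0.05, 0.3]))
```

Running `fraction_above` on the positive set is the check described in the data validation step: a high fraction suggests the threshold rarely mislabels known synthesized structures as negatives.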

Model Architectures and Training

Protocol 3: SynCoTrain Dual-Classifier Implementation

Objective: Implement a robust PU learning framework for synthesizability prediction.

Procedure:

- Architecture Selection:

- Implement two complementary graph neural networks: SchNet and ALIGNN

- SchNet focuses on continuous-filter convolutional layers for atomistic systems

- ALIGNN incorporates atomic, bond, and bond-angle information by passing messages on both the atomistic graph and its line graph

- Co-Training Framework:

- Initialize both networks with different random weight initializations

- For each training iteration:

a. Each classifier makes predictions on unlabeled examples

b. Exchange high-confidence predictions between classifiers

c. Update training sets with newly labeled examples

d. Retrain both classifiers on expanded labeled sets

- PU Loss Function: Implement weighted binary cross-entropy loss that accounts for the unlabeled nature of the negative examples

- Validation: Evaluate performance on hold-out test sets and calculate standard metrics (accuracy, precision, recall, F1-score)
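One simple way to realize a weighted binary cross-entropy for PU data is shown below. This is a sketch under stated assumptions, not the loss used by SynCoTrain [20]: unlabeled examples are treated as provisional negatives and down-weighted by a hypothetical `unlabeled_weight` parameter.

```python
import math


def weighted_pu_bce(probs, is_positive, unlabeled_weight=0.5, eps=1e-12):
    """Weighted binary cross-entropy for positive-unlabeled data.

    probs        predicted probability of being synthesizable, per example
    is_positive  True for labeled positives, False for unlabeled examples
    Unlabeled examples count as negatives, but with a reduced weight because
    some of them are in fact synthesizable.
    """
    total, weight_sum = 0.0, 0.0
    for p, positive in zip(probs, is_positive):
        p = min(max(p, eps), 1.0 - eps)  # keep log() finite
        if positive:
            total += -math.log(p)
            weight_sum += 1.0
        else:
            total += unlabeled_weight * -math.log(1.0 - p)
            weight_sum += unlabeled_weight
    if weight_sum == 0.0:
        raise ValueError("no examples with non-zero weight")
    return total / weight_sum
```

Lowering `unlabeled_weight` softens the penalty for scoring an unlabeled structure as synthesizable, which is the behavior one wants when the "negative" set is known to be contaminated with positives.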

Technical Notes: The dual-classifier approach reduces model bias and improves generalizability by leveraging complementary representations of crystal structures [20].
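The co-training loop in Protocol 3 can be illustrated with two toy classifiers standing in for SchNet and ALIGNN. Everything here is an assumption made for the sketch: `CentroidClassifier` is a hypothetical one-feature model, each classifier sees a different feature as its "view", and unlabeled points are treated as provisional negatives until the other classifier promotes them.

```python
import math


class CentroidClassifier:
    """Toy stand-in for a structure model; it looks at a single feature (its view)."""

    def __init__(self, feature_index):
        self.i = feature_index
        self.mu_pos = 0.0
        self.mu_neg = 0.0

    def fit(self, X, y):
        pos = [x[self.i] for x, t in zip(X, y) if t == 1]
        neg = [x[self.i] for x, t in zip(X, y) if t == 0]
        self.mu_pos = sum(pos) / len(pos)
        # With an empty pool there are no provisional negatives; use a fixed offset.
        self.mu_neg = sum(neg) / len(neg) if neg else self.mu_pos - 1.0

    def predict_proba(self, X):
        probs = []
        for x in X:
            d_pos = abs(x[self.i] - self.mu_pos)
            d_neg = abs(x[self.i] - self.mu_neg)
            probs.append(1.0 / (1.0 + math.exp(4.0 * (d_pos - d_neg))))
        return probs


def co_train(clf_a, clf_b, positives, unlabeled, rounds=3, confidence=0.8):
    """Return the positive sets (labeled + pseudo-labeled) held by each classifier."""
    clfs = {"a": clf_a, "b": clf_b}
    pos = {k: list(positives) for k in clfs}
    pool = {k: list(unlabeled) for k in clfs}

    def retrain():
        # (d) fit each classifier on its positives vs. its remaining pool
        for k, clf in clfs.items():
            clf.fit(pos[k] + pool[k], [1] * len(pos[k]) + [0] * len(pool[k]))

    for _ in range(rounds):
        retrain()
        for src, dst in (("a", "b"), ("b", "a")):
            # (a) the source classifier scores the other classifier's pool
            probs = clfs[src].predict_proba(pool[dst])
            keep, moved = [], []
            for x, p in zip(pool[dst], probs):
                (moved if p >= confidence else keep).append(x)
            # (b, c) hand confident predictions to the other classifier
            pos[dst].extend(moved)
            pool[dst] = keep
    retrain()
    return pos["a"], pos["b"]
```

A usage example with two-feature points: `co_train(CentroidClassifier(0), CentroidClassifier(1), positives, unlabeled)`. Because each classifier only receives labels proposed by the other, an error pattern specific to one representation is less likely to reinforce itself.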

Protocol 4: Crystal Synthesis Large Language Model (CSLLM) Framework

Objective: Leverage advanced LLMs for comprehensive synthesis prediction.

Procedure:

- Model Selection: Choose foundation LLMs (e.g., LLaMA) with demonstrated performance on scientific tasks

- Task Specialization: Fine-tune three specialized models:

- Synthesizability LLM: Binary classification of synthesizability

- Method LLM: Classification of synthesis methods (solid-state vs. solution)

- Precursor LLM: Precursor identification for target materials

- Input Representation: Convert crystal structures to an optimized text format, the "material string": SP | a, b, c, α, β, γ | (AS1-WS1[WP1...])

- Fine-Tuning: Employ progressive fine-tuning with decreasing learning rates and specialized material science corpora

- Hallucination Mitigation: Implement constrained decoding and output validation against crystallographic databases

Performance: CSLLM achieves 98.6% synthesizability prediction accuracy, significantly outperforming traditional stability metrics (74.1% for energy above hull ≥0.1 eV/atom) [21].
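The output-validation idea in Protocol 4 can be sketched for the precursor task. This is an illustrative check only, not the validation used in CSLLM [21]: the function names are hypothetical, `known_compounds` stands in for a lookup against a crystallographic database, and the rule that every element of the target must appear in some precursor is an assumption of this example.

```python
import re


def elements(formula):
    """Set of element symbols appearing in a chemical formula string."""
    return set(re.findall(r"[A-Z][a-z]?", formula))


def validate_precursors(target, proposed, known_compounds):
    """Return a list of problems with a model-proposed precursor set.

    An empty list means the proposal passed both checks:
    1. every precursor is a known compound (guards against invented formulas);
    2. together, the precursors supply every element of the target.
    """
    problems = [f"unknown compound: {p}" for p in proposed if p not in known_compounds]
    covered = set().union(*(elements(p) for p in proposed))
    missing = elements(target) - covered
    if missing:
        problems.append("elements not supplied: " + ", ".join(sorted(missing)))
    return problems


if __name__ == "__main__":
    known = {"BaCO3", "TiO2"}
    print(validate_precursors("BaTiO3", ["BaCO3", "TiO2"], known))
    print(validate_precursors("BaTiO3", ["BaCO3"], known))
```

Precursors may contain elements that do not end up in the product (carbon in a carbonate, for example), so the check only requires coverage of the target's elements rather than an exact match.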

Performance Evaluation and Validation

Protocol 5: Model Validation and Benchmarking

Objective: Ensure robust performance evaluation and comparison with existing methods.

Procedure:

- Dataset Splitting: Implement stratified splitting to maintain class distribution across training, validation, and test sets

- Baseline Comparison: Compare against traditional methods:

- Energy above convex hull (multiple thresholds)

- Phonon stability analysis (imaginary frequency thresholds)

- Historical tolerance factors (for specific material classes)

- Cross-Validation: Employ k-fold cross-validation with different random seeds to assess stability

- Generalization Testing: Evaluate on structurally complex materials with large unit cells that exceed training data complexity

- Ablation Studies: Systematically remove model components to assess individual contribution to performance
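The stratified splitting step in Protocol 5 can be sketched in plain Python. The function name, the default 80/10/10 fractions, and the per-class rounding are assumptions of this example; libraries such as scikit-learn offer equivalent utilities.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.8, 0.1, 0.1), seed=0):
    """Split example indices into train/validation/test, class by class.

    Each class is shuffled and divided separately, so every subset keeps
    roughly the class proportions of the full dataset.
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        n_train = round(fractions[0] * len(idxs))
        n_val = round(fractions[1] * len(idxs))
        train += idxs[:n_train]
        val += idxs[n_train:n_train + n_val]
        test += idxs[n_train + n_val:]  # remainder goes to the test set
    return train, val, test
```

Repeating the split with different `seed` values gives the multiple runs needed to judge how stable a reported metric is.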

Metrics: Report standard classification metrics (accuracy, precision, recall, F1, AUC-ROC) with confidence intervals across multiple runs.
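The metric reporting described above can be sketched as follows. The helper names are hypothetical, AUC-ROC is omitted for brevity, and the interval uses a normal approximation (z = 1.96) across runs, which is an assumption that becomes rough when only a few runs are available.

```python
import math
import statistics


def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = synthesizable)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def mean_with_interval(values, z=1.96):
    """Mean of a metric over several runs with an approximate 95% interval."""
    mean = statistics.mean(values)
    if len(values) < 2:
        return mean, mean, mean
    half_width = z * statistics.stdev(values) / math.sqrt(len(values))
    return mean, mean - half_width, mean + half_width
```

In a PU setting the "negative" labels are uncertain, so recall on known positives is usually the most trustworthy of these numbers; precision and accuracy depend on how the negative set was constructed.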

Key Research Findings and Performance

Quantitative Performance Comparison

Table 1: Performance Comparison of Synthesizability Prediction Methods

| Method | Accuracy (%) | Dataset Size | Material Class | Key Advantage |
|---|---|---|---|---|
| CSLLM Framework [21] | 98.6 | 150,120 structures | General 3D crystals | Integrated synthesis method and precursor prediction |
| Traditional Ehull (≥0.1 eV/atom) [21] | 74.1 | N/A | General | Simple thermodynamic interpretation |
| Phonon Stability (≥ -0.1 THz) [21] | 82.2 | N/A | General | Kinetic stability assessment |
| Teacher-Student PU Learning [21] | 92.9 | ~300,000 structures | General 3D crystals | Scalable to large datasets |
| SynCoTrain Dual-Classifier [20] | High recall (exact % not specified) | Oxide crystals | Oxide materials | Mitigates model bias through co-training |
| Previous PU Learning [1] | >87.9 | 4,103 ternary oxides | Ternary oxides | Human-curated dataset quality |

Application Case Studies

Case Study 1: Ternary Oxide Discovery. A human-curated dataset of 4,103 ternary oxides was used to train a PU learning model that identified 134 out of 4,312 hypothetical compositions as likely synthesizable [1]. The curated data also exposed outliers in a text-mined dataset: only 15% of those outlier entries had been extracted correctly by the automated approach, highlighting the value of human-curated training data.

Case Study 2: Large-Scale Theoretical Screening. The CSLLM framework assessed 105,321 theoretical structures and identified 45,632 as synthesizable [21]. These candidates were further analyzed using graph neural networks to predict 23 key properties, demonstrating a comprehensive pipeline from synthesizability prediction to property assessment.

Case Study 3: Reproduction of Known Phases. A synthesizability-driven crystal structure prediction framework successfully reproduced 13 experimentally known XSe (X = Sc, Ti, Mn, Fe, Ni, Cu, Zn) structures and identified 92,310 potentially synthesizable structures from the 554,054 candidates predicted by GNoME [22].
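The three screens differ widely in how selective they are, which is easy to miss when only raw counts are quoted. The short calculation below uses the counts reported in the case studies; the variable and function names are illustrative. The resulting pass rates are roughly 3%, 43%, and 17%.

```python
# (selected as likely synthesizable, total candidates screened), from the case studies
screens = {
    "Ternary oxide PU model [1]": (134, 4312),
    "CSLLM theoretical screen [21]": (45632, 105321),
    "Synthesizability-driven CSP on GNoME [22]": (92310, 554054),
}


def hit_rate(selected, total):
    """Fraction of screened candidates flagged as likely synthesizable."""
    return selected / total


for name, (selected, total) in screens.items():
    print(f"{name}: {selected}/{total} = {hit_rate(selected, total):.1%}")
```

These rates are not directly comparable as measures of model quality, since the candidate pools were generated in different ways; they mainly indicate how much each screen narrows the experimental search space.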

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for PU Learning in Synthesizability Prediction

| Resource Category | Specific Tools/Solutions | Function/Purpose | Implementation Considerations |
|---|---|---|---|
| Data Sources | Materials Project [1] [21] [22], ICSD [1] [21], Computational Materials Database [21] | Provides crystallographic data and stability information for training | Automated APIs (e.g., pymatgen) facilitate data retrieval and preprocessing |
| Text-Mining Tools | Custom NLP pipelines [1], Robocrystallographer [21] | Extract synthesis information from literature; generate text descriptions of crystals | Accuracy varies (as low as 51% for complex synthesis data); human validation recommended |
| Representation Methods | Material string [21], CIF, POSCAR, Wyckoff encode [22] | Convert crystal structures to machine-readable formats | Material string provides compact, information-rich representation for LLMs |
| PU Learning Algorithms | SynCoTrain [20], CSLLM [21], Traditional PU learning [1] | Core classification frameworks with handling of unlabeled data | Dual-classifier approaches reduce bias; LLM-based methods offer high accuracy but require substantial resources |
| Validation Tools | Composition-based validation, experimental testing [22] | Verify model predictions and identify false positives | Essential for assessing real-world performance beyond test set metrics |

Workflow Integration and Decision Pathways

Integrated Synthesizability Prediction Workflow

The following diagram illustrates a comprehensive workflow for implementing PU learning in synthesizability prediction, integrating multiple approaches from data curation to experimental validation:

[Diagram: PU Learning for Synthesizability Prediction]

CSLLM Specialized Model Architecture

The Crystal Synthesis Large Language Model framework employs three specialized components for comprehensive synthesis prediction:

[Diagram: CSLLM Three-Component Architecture]

Positive-Unlabeled learning frameworks represent a transformative approach to one of the most persistent challenges in materials informatics: predicting which computationally designed materials can be successfully synthesized. The protocols outlined in this document provide researchers with comprehensive methodologies for implementing these advanced machine learning techniques, from data curation through model validation.

The exceptional performance of specialized frameworks like CSLLM (98.6% accuracy) and the robust co-training approach of SynCoTrain demonstrate that PU learning can significantly narrow the gap between theoretical materials prediction and experimental realization. As these methods continue to evolve and integrate with high-throughput experimental platforms, they promise to accelerate the discovery and development of novel functional materials across diverse applications, from pharmaceuticals to sustainable energy technologies.

The integration of human expertise through curated datasets remains a critical factor in model success, highlighting the continued importance of domain knowledge in an increasingly automated research landscape. By following the detailed protocols and leveraging the specialized tools outlined in this document, researchers can effectively incorporate PU learning into their materials discovery pipelines, potentially reducing both the time and cost associated with experimental materials development.

The discovery of new functional materials is a cornerstone of technological advancement, from renewable energy systems to next-generation electronics. While computational methods, particularly density functional theory (DFT), have successfully identified millions of candidate materials with promising properties, a significant bottleneck remains: predicting which theoretically conceived crystals can be successfully synthesized in a laboratory [21]. The CSLLM framework represents a transformative approach to this challenge, leveraging specialized large language models to accurately predict synthesizability, suggest synthetic methods, and identify suitable precursors for three-dimensional crystal structures [21].

The Crystal Synthesis Large Language Models framework comprises three specialized LLMs, each fine-tuned for a distinct aspect of the synthesis prediction pipeline. This modular architecture enables targeted, high-accuracy predictions across the entire synthesis planning workflow.

System Architecture and Components

- Synthesizability LLM: This model predicts whether an arbitrary 3D crystal structure is synthesizable. It serves as the initial filter, identifying viable candidate structures from vast theoretical databases.

- Method LLM: For structures deemed synthesizable, this model classifies the appropriate synthetic pathway, such as solid-state or solution-based methods.

- Precursor LLM: This model identifies suitable chemical precursors required for the synthesis of a given compound, a critical step in experimental planning.
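The way the three components chain together can be sketched as a small pipeline. This is a structural illustration only: the three callables are hypothetical stand-ins for the fine-tuned models, and `SynthesisPlan` and `plan_synthesis` are names invented for this example, not part of the CSLLM code.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Sequence


@dataclass
class SynthesisPlan:
    synthesizable: bool
    method: Optional[str] = None
    precursors: List[str] = field(default_factory=list)


def plan_synthesis(
    material_string: str,
    is_synthesizable: Callable[[str], bool],
    classify_method: Callable[[str], str],
    suggest_precursors: Callable[[str], Sequence[str]],
) -> SynthesisPlan:
    """Run the three models in sequence, stopping early for rejected structures."""
    if not is_synthesizable(material_string):
        # The first model acts as a filter; the later models are never called.
        return SynthesisPlan(synthesizable=False)
    method = classify_method(material_string)
    precursors = list(suggest_precursors(material_string))
    return SynthesisPlan(True, method, precursors)


if __name__ == "__main__":
    # Stub models that return fixed answers, for demonstration only.
    plan = plan_synthesis(
        "example structure text",
        is_synthesizable=lambda s: True,
        classify_method=lambda s: "solid-state",
        suggest_precursors=lambda s: ["BaCO3", "TiO2"],
    )
    print(plan)
```

Keeping the models behind plain callables means each one can be replaced or evaluated in isolation, which mirrors the modular design described above.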

Core Technical Innovation: Material String Representation

A key innovation enabling CSLLM's performance is the development of a specialized text representation for crystal structures, termed "material string." Traditional formats like CIF or POSCAR contain redundant information, and POSCAR in particular carries no explicit symmetry information. The material string overcomes these limitations by incorporating space group information, Wyckoff positions, and optimized structural data into a concise, LLM-friendly format [21]. This representation efficiently encodes essential crystal information, including lattice parameters, composition, and atomic coordinates, while eliminating redundancy, which makes it particularly suitable for fine-tuning LLMs.
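A compact symmetry-aware text encoding of this kind can be sketched as below. The layout here is an assumption made for illustration and is not the published material string specification: the field separators, the number formatting, and the content of the bracketed Wyckoff position (passed through verbatim by the caller) are all choices of this example.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass
class Site:
    symbol: str            # atomic symbol
    wyckoff_site: str      # Wyckoff site label, e.g. "4a"
    wyckoff_position: str  # position text, inserted verbatim


def to_material_string(space_group: int, lattice: Sequence[float], sites: Sequence[Site]) -> str:
    """Encode space group, cell parameters, and symmetry-unique sites as one line."""
    if len(lattice) != 6:
        raise ValueError("lattice must be (a, b, c, alpha, beta, gamma)")
    cell = ", ".join(f"{value:g}" for value in lattice)
    atoms = ", ".join(
        f"({s.symbol}-{s.wyckoff_site}[{s.wyckoff_position}])" for s in sites
    )
    return f"{space_group} | {cell} | {atoms}"


def split_material_string(text: str) -> Tuple[str, List[float], str]:
    """Recover the three top-level fields, showing that the encoding is reversible."""
    space_group, cell, atoms = (part.strip() for part in text.split(" | ", 2))
    return space_group, [float(v) for v in cell.split(",")], atoms


if __name__ == "__main__":
    # Rock salt NaCl: space group 225, Na on 4a and Cl on 4b; cell length is approximate.
    nacl = to_material_string(
        225,
        (5.64, 5.64, 5.64, 90, 90, 90),
        [Site("Na", "4a", "0,0,0"), Site("Cl", "4b", "0.5,0.5,0.5")],
    )
    print(nacl)
```

Listing only the symmetry-unique sites is what removes the redundancy: the eight atoms of the conventional NaCl cell collapse to two entries, and the space group plus Wyckoff labels are enough to regenerate the rest.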

Quantitative Performance Analysis

The CSLLM framework demonstrates exceptional accuracy across all three prediction tasks, significantly outperforming traditional stability-based screening methods.

Table 1: CSLLM Performance Metrics on Key Prediction Tasks

| Model Component | Accuracy | Dataset Size | Benchmark Comparison |
|---|---|---|---|
| Synthesizability LLM | 98.6% | 150,120 structures | Outperforms energy above hull (74.1%) and phonon stability (82.2%) |
| Method LLM | 91.0% | Not specified | Successfully classifies solid-state vs. solution methods |
| Precursor LLM | 80.2% | Not specified | Identifies precursors for binary and ternary compounds |

Beyond these metrics, the Synthesizability LLM demonstrates outstanding generalization capability, achieving 97.9% accuracy on complex experimental structures with considerably larger unit cells than those in its training data [21]. When applied to screen 105,321 theoretical structures, the framework successfully identified 45,632 as synthesizable [21].

Experimental Protocols

Dataset Curation and Model Training

The development of CSLLM relied on the construction of a comprehensive, balanced dataset of synthesizable and non-synthesizable crystal structures.