Machine Learning for Predicting Thermodynamic Stability: Accelerating Inorganic Compound Discovery

Abstract

Accurate prediction of thermodynamic stability is a critical bottleneck in the discovery of new inorganic compounds and materials for biomedical and energy applications. Traditional methods, such as density functional theory (DFT) calculations, are computationally expensive and time-consuming. This article explores the transformative role of machine learning (ML) in overcoming these challenges, detailing how ensemble models and electron configuration-based features can achieve high accuracy with remarkable sample efficiency. We examine foundational ML concepts, diverse methodological approaches including recent ensemble frameworks, strategies for troubleshooting model bias and optimizing performance, and rigorous validation techniques against first-principles calculations. The content is tailored for researchers, scientists, and drug development professionals seeking to leverage ML for accelerated materials design and development.

The Stability Prediction Challenge: Why Machine Learning is Revolutionizing Materials Discovery

Thermodynamic stability determines the synthesizability and functional utility of inorganic compounds in applications ranging from photovoltaics to catalysis. This technical guide delineates the core concepts of decomposition energy (ΔHₕ) and the convex hull construction, the definitive computational framework for assessing stability against competing phases. Advanced machine learning (ML) models now emulate these first-principles calculations, offering rapid screening of vast compositional spaces. This review details the theoretical underpinnings, computational methodologies, and data requirements for accurately predicting solid-state stability, contextualized within modern ML research for accelerated inorganic materials discovery.

The discovery of new inorganic materials is fundamentally constrained by the challenge of thermodynamic stability. With over 10¹² plausible quaternary compositions alone, experimental or computational characterization of all candidates is intractable [1] [2]. Density Functional Theory (DFT) enables stability assessment via decomposition enthalpies but remains computationally prohibitive for large-scale screening [3] [4].

Machine learning offers a promising alternative by learning the relationship between composition, structure, and stability from existing DFT databases [3] [5]. However, effective ML application requires precise definition of the target thermodynamic property. While formation energy (ΔHƒ) measures stability relative to elemental phases, the decomposition energy (ΔHₕ), derived from the convex hull, determines true thermodynamic stability against all competing compounds in a chemical space [6] [2]. This distinction is critical; models accurate for ΔHƒ often fail for stability classification due to the subtle energy differences involved [2].

This guide provides researchers with the theoretical and computational toolkit for stability prediction, focusing on the central role of ΔHₕ and its implementation in high-throughput and ML workflows.

Core Theoretical Concepts

Formation Energy vs. Decomposition Energy

The formation enthalpy (ΔHƒ) represents the enthalpy change when a compound forms from its constituent elements in their standard states:

ΔH_f = E_compound - Σ α_i E_i [6]

where E_compound is the total energy of the compound, α_i is the stoichiometric coefficient of element i, and E_i is the energy per atom of the elemental reference phase.
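As a minimal sketch of the formula above, the following Python function evaluates the formation enthalpy and normalizes it per atom. The function name and the energies for the hypothetical compound A₂B are illustrative assumptions, not DFT results.

```python
def formation_enthalpy_per_atom(total_energy, stoichiometry, elemental_energies):
    """Formation enthalpy per atom from the energy of one formula unit.

    total_energy       -- E_compound, energy of one formula unit (eV)
    stoichiometry      -- {element: alpha_i}, atoms of each element per formula unit
    elemental_energies -- {element: E_i}, energy per atom of the reference phase (eV)
    """
    # Sum over elements of alpha_i * E_i (the elemental reference energy).
    reference = sum(alpha * elemental_energies[el] for el, alpha in stoichiometry.items())
    n_atoms = sum(stoichiometry.values())
    return (total_energy - reference) / n_atoms


# Hypothetical numbers for a compound A2B (not real DFT energies).
dHf = formation_enthalpy_per_atom(
    total_energy=-14.0,
    stoichiometry={"A": 2, "B": 1},
    elemental_energies={"A": -3.0, "B": -5.0},
)
print(f"{dHf:.3f} eV/atom")
```

Dividing by the number of atoms makes values comparable across compounds with different formula-unit sizes, which is how databases typically report them.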

While foundational, ΔHƒ is rarely the relevant metric for stability. A compound competes thermodynamically with all other compounds in its chemical space, not just with the elements. The definitive stability metric is the decomposition enthalpy (ΔHₕ, written ΔH_d in the equation below), defined as the energy difference between the compound and the most stable combination of other phases at the same composition [6] [7]. For unstable compounds it coincides with the energy above the convex hull:

ΔH_d = E_compound - E_decomposition_phases [6]

A negative ΔHₕ indicates thermodynamic stability (the compound is on the convex hull), while a positive value indicates instability. The magnitude quantifies the driving force for decomposition when the value is positive, or the margin of stability against decomposition when it is negative.

The Convex Hull Construction

The convex hull is a geometric construction in energy-composition space that identifies the set of thermodynamically stable compounds. For a given composition, the hull represents the lowest possible energy achievable by any mixture of stable phases.

Visual Representation of a Binary Convex Hull: The diagram below illustrates the convex hull in a hypothetical A-B binary system, showing stable and unstable compounds.

Figure 1: Convex hull in a binary A-B system. Stable compounds A₂B and AB₃ lie on the hull. Unstable A₄B sits above it; its ΔHₕ is the vertical energy distance to the hull.
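The construction in Figure 1 can be sketched in a few lines of plain Python. This is an illustrative implementation under stated assumptions: a binary system, compositions given as the fraction of B, made-up formation energies, and a returned quantity that is the non-negative energy above the hull (zero for compounds on it) rather than the signed ΔHₕ. Production workflows would normally rely on an established hull implementation such as the one in Pymatgen.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points (Andrew's monotone chain)."""
    best = {}
    for x, e in points:                      # keep the lowest energy per composition
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        # Drop the previous vertex while it lies on or above the segment hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the range covered by the hull")


# Hypothetical formation energies (eV/atom); x is the atomic fraction of B.
phases = {
    "A":   (0.0, 0.0),
    "A4B": (0.2, -0.20),
    "A2B": (1 / 3, -0.60),
    "AB3": (0.75, -0.40),
    "B":   (1.0, 0.0),
}
hull = lower_hull(phases.values())
e_above_hull = {name: e - hull_energy(hull, x) for name, (x, e) in phases.items()}

for name, value in e_above_hull.items():
    print(f"{name}: {value:.3f} eV/atom above hull")
```

With these numbers A₂B and AB₃ lie on the hull, while A₄B sits above the tie-line between A and A₂B, matching the situation described in the figure caption.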

Types of Decomposition Reactions

Decomposition reactions fall into three distinct types, with critical implications for synthesis and computational accuracy [6].

Table 1: Classification and Prevalence of Decomposition Reaction Types

| Reaction Type | Description | Prevalence in Materials Project | Synthesis Considerations |
|---|---|---|---|
| Type 1 | Decomposition products are only elemental phases. (ΔHₕ = ΔHƒ) | ~3% (Mostly binaries) | Stability can be modulated by adjusting elemental chemical potentials. |
| Type 2 | Decomposition products are exclusively other compounds. | ~63% | Insensitive to adjustments in elemental chemical potentials. |
| Type 3 | Decomposition products are a mixture of compounds and elements. | ~34% | Thermodynamics can be modulated if an elemental participant's potential is adjusted. |

Analysis of 56,791 compounds in the Materials Project reveals Type 2 reactions are most prevalent, highlighting that stability is predominantly determined by competition between compounds, not elements [6]. This underscores why ΔHₕ, not ΔHƒ, is the correct stability metric.
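The classification in Table 1 reduces to a simple rule on the decomposition products. The sketch below is only illustrative: the function name and the generic phase labels are assumptions, and identifying the products themselves still requires a convex hull calculation.

```python
def decomposition_reaction_type(products, elements):
    """Classify a decomposition reaction as Type 1, 2 or 3 (see Table 1).

    products -- names of the phases the compound decomposes into
    elements -- set of names that denote elemental phases
    """
    if not products:
        raise ValueError("at least one decomposition product is required")
    is_element = [p in elements for p in products]
    if all(is_element):
        return 1          # only elemental phases
    if not any(is_element):
        return 2          # only other compounds
    return 3              # mixture of compounds and elements


elements = {"A", "B", "C"}
print(decomposition_reaction_type(["A", "B"], elements))      # elemental products
print(decomposition_reaction_type(["AB", "A2C"], elements))   # compound products
print(decomposition_reaction_type(["AB", "C"], elements))     # mixed products
```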

Computational Assessment of Stability

First-Principles Workflow with Density Functional Theory

DFT provides the foundational data for stability assessment in computational materials science. The standard protocol involves:

- Energy Calculation: Compute the total energy (E_total) for the target compound and all potential competing phases in the relevant chemical space(s) using DFT. Standardized input sets ensure consistency [7].

- Reference State Correction: Reference energies (E_i) for elements are crucial. Calculations for gaseous elements (e.g., O₂, N₂) are particularly sensitive to functional choice [6].

- Formation Energy Calculation: Calculate ΔHƒ for all compounds using the formation enthalpy formula given above under "Formation Energy vs. Decomposition Energy".

- Convex Hull Construction: Input all ΔHƒ values into a hull construction algorithm (e.g., as implemented in Pymatgen) to build the phase diagram [5].

- Stability Determination: For each compound, calculate ΔHₕ as its vertical energy distance to the convex hull. A value ≤ 0 meV/atom indicates thermodynamic stability.

The accuracy of ΔHₕ depends on the DFT functional. Studies comparing the GGA-PBE and meta-GGA-SCAN functionals found their performance is similar for predicting ΔHₕ (Mean Absolute Difference of 70 vs. 59 meV/atom), and both show significantly better agreement with experiment for Type 2 reactions (~35 meV/atom) [6].

Table 2: Key Computational Tools and Databases for Stability Prediction

| Resource Name | Type | Primary Function | Role in Stability Assessment |
|---|---|---|---|
| VASP | Software | First-principles quantum-mechanical calculation. | Computes the foundational E_total for compounds and elements. |
| Pymatgen | Python Library | Materials analysis. | Performs convex hull construction and calculates ΔHₕ from DFT energies. |
| Materials Project (MP) | Database | DFT-calculated properties for ~150,000 materials. | Provides pre-computed ΔHₕ values and decomposition pathways for known compounds. |
| Open Quantum Materials Database (OQMD) | Database | High-throughput DFT calculations. | Alternative source of formation energies and hull information for training ML models. |

Machine Learning for Stability Prediction

The high computational cost of DFT motivates ML models that can predict stability directly from composition or structure.

Model Architectures and Input Representations

ML models for stability use different input representations, each with trade-offs between information content and generality [3] [2].

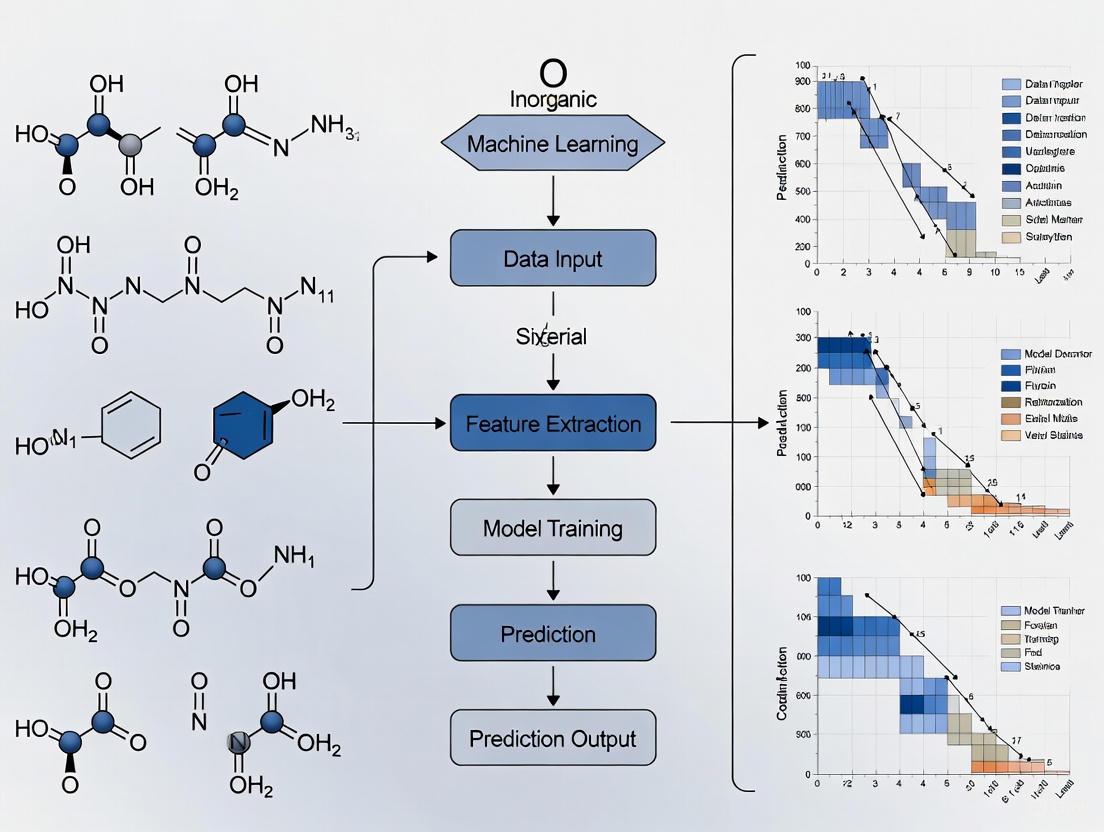

Figure 2: Machine learning frameworks for stability prediction use different input representations, from simple composition to atomic structure.

Performance and Limitations

ML model performance must be critically evaluated on stability prediction, not just formation energy.

Table 3: Performance of Machine Learning Models for Stability Prediction

| Model / Approach | Key Features | Reported Performance | Key Limitations |
|---|---|---|---|
| Compositional Models (e.g., Magpie, Roost) | Use only chemical formula; fast screening of new compositions. | High ΔHƒ accuracy (MAE ~0.04 eV/atom), but poor at identifying stable compounds [2]. | High false-positive rates for stability; cannot distinguish polymorphs. |
| Structure-Based GNNs (e.g., CGCNN) | Incorporate crystal structure; higher accuracy. | MAE ~0.04 eV/atom for total energy; can rank polymorph stability [1]. | Requires known crystal structure, which is unknown for new compositions. |
| Ensemble ECSG Model [3] | Combines models based on electron configuration, atomic properties, and interatomic interactions. | AUC = 0.988 for stability classification; high data efficiency (1/7 the data for similar performance). | Increased model complexity. |
| XGBoost for Perovskites [4] [5] | Uses elemental features; model interpretation via SHAP. | Accurate classification (F1=0.88) and regression (RMSE=28.5 meV/atom) for Ehull [5]. | Performance is material-class specific. |

A critical examination reveals that while compositional models can predict ΔHƒ with accuracy near DFT error, they perform poorly at stability classification (predicting ΔHₕ), often producing high false-positive rates [2]. The reason is that ΔHₕ depends on small energy differences between chemically similar compounds. DFT benefits from systematic error cancellation when such differences are taken, whereas the errors an ML model makes on individual formation energies do not cancel in the same way. Consequently, structure-based models or advanced ensembles are essential for reliable stability screening.

Experimental Protocols for ML-Based Discovery

The following workflow integrates ML with DFT validation for practical materials discovery, demonstrated for double perovskites [4] and generic compounds [3].

Data Curation and Feature Engineering

- Data Source: Extract training data from DFT databases (Materials Project, OQMD), including composition, structure (if available), and target variable (ΔHₕ).

- Stability Labeling: Classify compounds as "stable" (ΔHₕ ≤ 0 meV/atom or within a small threshold, e.g., 30 meV/atom) or "unstable."

- Feature Generation: For compositional models, generate features from elemental properties (e.g., electronegativity, ionic radii, valence) [5]. For the ECCNN model, encode the electron configuration of constituent elements into an input matrix [3].
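The stability labeling step above can be expressed as a one-line rule. The sketch below assumes ΔHₕ values in meV/atom; the function name is hypothetical and the 30 meV/atom tolerance is simply the example threshold mentioned in the list.

```python
def label_stability(delta_h_mev_per_atom, threshold_mev=0.0):
    """Return "stable" if the decomposition enthalpy is at or below the threshold."""
    return "stable" if delta_h_mev_per_atom <= threshold_mev else "unstable"


# Hypothetical decomposition enthalpies in meV/atom.
values = [-5.0, 12.0, 45.0]

strict = [label_stability(v) for v in values]                       # threshold of 0
tolerant = [label_stability(v, threshold_mev=30.0) for v in values]  # 30 meV/atom window
print(strict)
print(tolerant)
```

A non-zero threshold admits metastable compounds into the positive class, which changes the class balance and should be reported alongside any classification metric.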

Model Training and Validation

- Algorithm Selection: Test multiple algorithms (e.g., XGBoost, Roost, Graph Neural Networks). XGBoost often excels for tabular feature data [4] [5].

- Validation: Use k-fold cross-validation (e.g., 5-fold) to assess performance metrics: AUC (Area Under the ROC Curve) for classification; MAE (Mean Absolute Error) and RMSE (Root Mean Square Error) for ΔHₕ regression.

- External Test Set: Reserve a held-out set of known compounds or, ideally, newly synthesized materials to test real-world predictive power [4].
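The validation metrics named above are straightforward to compute by hand, which can help when checking library output. The following is a dependency-free sketch; the function names and the toy labels, scores, and energies are assumptions for illustration only.

```python
import math


def roc_auc(labels, scores):
    """AUC as the probability that a random positive outscores a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def k_fold_indices(n_samples, k=5):
    """Yield (train, test) index lists for k contiguous folds (shuffle the data first)."""
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        test = list(range(start, start + size))
        train = [i for i in range(n_samples) if i < start or i >= start + size]
        yield train, test
        start += size


# Toy classification scores (1 = stable) and regression targets in meV/atom.
auc = roc_auc([1, 1, 0, 0, 1, 0], [0.9, 0.8, 0.7, 0.3, 0.6, 0.2])
err_mae = mae([10.0, -20.0, 35.0], [15.0, -10.0, 30.0])
err_rmse = rmse([10.0, -20.0, 35.0], [15.0, -10.0, 30.0])
folds = list(k_fold_indices(10, k=5))
print(auc, err_mae, err_rmse, len(folds))
```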

Prediction and DFT Validation

- High-Throughput Screening: Apply the trained model to screen thousands of candidate compositions for predicted stability.

- First-Principles Validation: Perform definitive DFT calculations for top candidate materials to verify their ΔHₕ and place them on the convex hull. This step is crucial to mitigate ML false positives [3] [2].

- Iterative Refinement: Incorporate DFT-validated results back into the training set to improve model accuracy iteratively.
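The three steps above can be sketched as a single screening function. Everything here is a placeholder: `predict_proba` stands in for a trained ML model, `run_dft` for an actual first-principles calculation, and the candidate names and numbers are invented for illustration.

```python
def screen_and_validate(candidates, predict_proba, run_dft, top_k=2, stable_cutoff_mev=0.0):
    """Rank candidates by ML score, validate the top_k with DFT, return both views."""
    shortlist = sorted(candidates, key=predict_proba, reverse=True)[:top_k]
    # Only the shortlisted candidates incur the expensive calculation.
    validated = {c: run_dft(c) for c in shortlist}
    confirmed = [c for c, dh in validated.items() if dh <= stable_cutoff_mev]
    return validated, confirmed


# Hypothetical ML stability scores and DFT decomposition enthalpies (meV/atom).
ml_scores = {"A2B": 0.95, "AB3": 0.80, "A4B": 0.60, "AB": 0.10}
dft_results = {"A2B": -12.0, "AB3": 25.0, "A4B": 160.0, "AB": 300.0}

validated, confirmed = screen_and_validate(
    list(ml_scores), ml_scores.get, dft_results.get, top_k=3
)
print(confirmed)
```

In this toy run two of the three shortlisted candidates turn out to be false positives. The `validated` dictionary is exactly the data that the iterative refinement step would append to the training set before retraining.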

The decomposition energy (ΔHₕ), derived from the convex hull, is the definitive metric for thermodynamic stability. Successful machine-learning strategies must address the subtle energy differences that govern stability, moving beyond accurate formation energy prediction alone. Ensemble models integrating diverse chemical insights and structure-aware GNNs show superior performance but require careful validation against DFT. As ML methodologies mature, their integration with first-principles calculations and experimental data creates a robust, iterative pipeline for the discovery of novel, stable inorganic functional materials.

The pursuit of new materials with specific properties is a fundamental challenge in fields ranging from materials science to drug development. A central hurdle in this process is the accurate and efficient determination of a material's thermodynamic stability, a key indicator of whether a compound can be synthesized and persist under specific conditions. For decades, researchers have relied on two primary approaches: direct experimental investigation and computational modeling via Density Functional Theory (DFT). While these methods have paved the way for significant advancements, they are characterized by profound limitations in terms of time, resource consumption, and scalability. This article delineates the computational and practical costs of these traditional approaches, framing them within the context of modern research that leverages machine learning to predict the thermodynamic stability of inorganic compounds.

The Experimental Bottleneck in Materials Discovery

The process of experimental materials discovery is often likened to finding a needle in a haystack, a predicament arising from the extensive compositional space of materials [3].

- Low-Throughput Exploration: The number of compounds that can be feasibly synthesized and tested in a laboratory represents only a minute fraction of the total possible compositional space [3]. This severely limits the pace of discovery.

- Resource Intensity: Traditional experimental investigation is characterized by inefficiency, consuming substantial time, specialized equipment, and expert resources to establish the stability of a single compound or a small family of materials [3].

The experimental approach, while providing direct empirical evidence, acts as a bottleneck that constricts the exploration of novel chemical spaces, necessitating more efficient pre-screening methods.

The Computational Burden of Density Functional Theory (DFT)

As a computational alternative to experimentation, DFT has become a cornerstone of modern materials science. Its widespread use has enabled the creation of extensive materials databases like the Materials Project (MP) and Open Quantum Materials Database (OQMD) [3]. However, this capability comes at a significant cost.

Fundamental Workflow and Associated Costs

The determination of thermodynamic stability via DFT typically involves calculating the decomposition energy (ΔH_d), defined as the total energy difference between a given compound and its competing compounds in a specific chemical space. This requires constructing a convex hull using the formation energies of all pertinent materials within the same phase diagram [3]. The following workflow outlines the standard DFT-based stability assessment and its resource-intensive nature.

Diagram: DFT Thermodynamic Stability Workflow. This chart illustrates the multi-step process of determining thermodynamic stability using Density Functional Theory, highlighting the iterative, computationally expensive steps (red arrows) that contribute to its high cost [3] [8].

As the workflow shows, key computationally intensive steps include:

- Structural Relaxation: An iterative process of adjusting atomic coordinates and cell parameters until the ground-state configuration is found [8].

- Self-Consistent Field (SCF) Cycles: An internal iterative process within a single energy calculation to achieve a consistent electronic structure [8].

- Comprehensive Phase Space Sampling: Stability is not an intrinsic property but is relative. Assessing it requires energy calculations not just for the target compound, but for all other chemically related compounds that could potentially form, necessitating a vast number of simulations [3].

Quantitative Scaling and Resource Limitations

The computational cost of DFT methods scales severely with system size and desired accuracy, as detailed in the table below.

Table 1: Scaling and Resource Demands of Computational Methods

| Method | Computational Scaling | Typical System Size Limit (Atoms) | Key Limitation |
|---|---|---|---|
| Density Functional Theory (DFT) | O(N³) | ~100-1,000 atoms [9] | High cost for large/complex systems [3] |
| Coupled Cluster (CCSD(T)) ("Gold Standard") | O(N⁷) | ~10s of atoms | Prohibitively expensive for materials [10] |
| Full Configuration Interaction (FCI) | Exponential (Exact) | <20 atoms (small molecules) | Computationally intractable for most systems [11] |

The real-world implications of these scaling laws are stark. For example, a recent study noted that DFT calculations consume "substantial computation resources," leading to "low efficiency" in exploring new compounds [3]. The challenge is even more pronounced for high-accuracy methods. The FCI method, while exact, is intractable for all but the smallest systems due to its exponential scaling [11]. For a moderately sized organometallic catalyst with 10²⁴² electron configurations, an FCI calculation is simply not feasible [11].
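To make the polynomial scaling concrete, the short sketch below converts the exponents from the table into relative cost multipliers. It ignores prefactors and implementation details, so the numbers indicate growth rates only, not actual run times.

```python
def relative_cost(size_factor, exponent):
    """Cost multiplier when the system size grows by size_factor under O(N^exponent)."""
    return size_factor ** exponent


scaling = {"DFT, O(N^3)": 3, "CCSD(T), O(N^7)": 7}
multipliers = {
    name: (relative_cost(2, p), relative_cost(10, p)) for name, p in scaling.items()
}

for name, (double, tenfold) in multipliers.items():
    print(f"{name}: x{double} for 2x atoms, x{tenfold} for 10x atoms")
```

Doubling the system size multiplies the nominal DFT cost by 8 but the CCSD(T) cost by 128, which is why the latter is confined to systems of tens of atoms.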

Efforts to overcome these limitations by using simplified molecular models often undermine the accuracy required for practical catalyst design, as they fail to capture significant electronic and steric interactions present in real-world systems [11].

Case Studies in High-Performance Computing (HPC) Requirements

The extreme computational demands have pushed researchers towards record-breaking High-Performance Computing (HPC) efforts, which are not scalable for broad materials discovery.

- Large-Scale Dataset Generation: The creation of the OMat24 dataset, containing 118 million DFT calculations, required approximately 400 million core hours of computing time. This highlights the monumental resource investment needed to build comprehensive datasets for inorganic materials [8].

- Organometallic Catalyst Simulation: A recent project using the incremental FCI (iFCI) method to study a nickel-based catalyst required scaling to 2,200 workers on cloud computing instances. The calculation for a single state of one complex had a cumulative run time of almost six months, condensed to just over 5 hours on this massive cluster. This was noted as the largest organometallic catalyst system ever calculated at such accuracy [11].

- Biomolecular Drug Simulation: In drug discovery, achieving the first quantum simulation of biological systems at a biologically relevant scale (comprising hundreds of thousands of atoms) required the unprecedented "exascale" power of the Frontier supercomputer [9].

Table 2: Representative HPC Efforts for Quantum Chemistry Calculations

| Application | Method | HPC Scale | Reported Outcome |
|---|---|---|---|
| OMat24 Dataset Generation [8] | DFT | 400M+ core hours | 118M labeled structures for AI training |
| Organometallic Catalyst Design [11] | incremental FCI (iFCI) | 2,200 workers (c6i.4xlarge) | Largest organometallic catalyst calculation |
| Biomolecular Drug Simulation [9] | Quantum Mechanics | Exascale Supercomputer (Frontier) | First quantum-accurate simulation of drug behaviour |

These case studies demonstrate that while traditional computational methods are powerful, their application to industrially or biologically relevant problems demands a level of computing power that is inaccessible for most researchers and too costly for high-throughput screening of candidate materials.

The Scientist's Toolkit: Key Reagents for Computational Stability Prediction

The transition from purely physical methods to computational and data-driven approaches requires a new class of "research reagents." The table below details essential components in the modern computational toolkit for predicting thermodynamic stability.

Table 3: Essential Computational Tools and Resources

| Tool/Resource | Function | Example/Note |
|---|---|---|
| High-Performance Computing (HPC) | Provides the processing power for DFT and ab initio calculations. | Cloud clusters (AWS [11]), Exascale supercomputers (Frontier [9]). |
| Materials Databases | Serve as curated sources of training data for machine learning models. | Materials Project (MP) [3], Open Quantum Materials Database (OQMD) [3], Alexandria [8]. |
| Electronic Structure Codes | Software that performs the core quantum mechanical calculations. | Used for DFT, CCSD(T), and other post-Hartree-Fock methods [10]. |
| Machine Learning Frameworks | Enable the development and training of predictive models. | Used for models like ElemNet [3], Roost [3], and EquiformerV2 [8]. |
| Feature Representation | Transforms raw chemical composition into a numerical format for ML models. | Electron Configuration matrices [3], Magpie features (atomic statistics) [3], graph representations [3]. |

The limitations of traditional experimental and DFT-based approaches for determining thermodynamic stability are significant and well-documented. The experimental path is inherently slow and low-throughput, while the computational DFT path, though more scalable than pure experimentation, remains severely constrained by its resource intensity and poor algorithmic scaling. These bottlenecks fundamentally limit the ability of researchers to explore the vast landscape of possible inorganic compounds. It is within this context that machine learning emerges not merely as an incremental improvement, but as a transformative paradigm. By learning the complex relationships between composition, structure, and stability from existing data, ML models can make accurate stability predictions in a fraction of the time and at a minuscule fraction of the computational cost of DFT, thereby overcoming the primary limitations of the traditional approaches detailed in this article.

The discovery of new functional materials has long been characterized by painstaking experimental cycles and intuition-driven approaches, creating significant bottlenecks in technological advancement across energy storage, catalysis, and semiconductor design. The paradigm has now fundamentally shifted from these traditional methods toward a data-driven ecosystem where high-throughput computational screening and machine learning (ML) models work in concert to rapidly identify promising candidates. This transformation is particularly evident in the critical challenge of predicting thermodynamic stability of inorganic compounds, a fundamental property determining whether a material can be synthesized and persist under operational conditions. Traditional approaches, whether experimental investigation or density functional theory (DFT) calculations that establish convex hulls from the formation energies of compounds within a phase diagram, consume substantial time and resources [3].

The convergence of extensive materials databases—such as the Materials Project (MP) and Open Quantum Materials Database (OQMD)—with advanced ML algorithms has created an unprecedented opportunity to accelerate materials discovery. These databases provide the essential training data foundation for developing accurate predictive models that can evaluate thermodynamic stability orders of magnitude faster than conventional methods [3]. This whitepaper examines the core methodologies driving this paradigm shift, presents quantitative performance comparisons of leading approaches, details experimental protocols for model development and validation, and provides the essential toolkit for researchers implementing these advanced predictive frameworks in their own work, with particular emphasis on applications for drug development professionals and research scientists engaged in inorganic materials design.

Core Machine Learning Methodologies

Feature Representation for Materials

A critical foundation for any ML approach in materials science is how chemical compositions and structures are represented as features understandable to algorithms. Current methodologies span multiple conceptual frameworks:

Elemental Property Statistics (Magpie): This approach emphasizes statistical features derived from various elemental properties, including atomic number, atomic mass, and atomic radius. The statistical features encompass mean, mean absolute deviation, range, minimum, maximum, and mode, providing a broad representation of elemental diversity that facilitates accurate prediction of thermodynamic properties [3].

Graph-Based Representations (Roost): This methodology conceptualizes the chemical formula as a complete graph of elements, employing graph neural networks with attention mechanisms to learn relationships and message-passing processes among atoms, thereby effectively capturing critical interatomic interactions that govern thermodynamic stability [3].

Electron Configuration Encoding (ECCNN): This novel approach uses electron configuration information—delineating the distribution of electrons within an atom across energy levels—as fundamental input. This intrinsic atomic characteristic potentially introduces less inductive bias compared to manually crafted features and is conventionally utilized as input for first-principles calculations to determine crucial properties like ground-state energy [3].
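The elemental property statistics described for Magpie can be illustrated with a small function. This sketch assumes the common convention of weighting each element by its atomic fraction and taking the mode as the property of the most abundant element; the function name is hypothetical and only one property (atomic number) is shown.

```python
def magpie_style_statistics(composition, element_property):
    """Fraction-weighted statistics of one elemental property over a composition.

    composition      -- {element: number of atoms in the formula}
    element_property -- {element: property value}
    """
    total = sum(composition.values())
    fractions = {el: n / total for el, n in composition.items()}
    values = {el: element_property[el] for el in composition}

    mean = sum(fractions[el] * values[el] for el in composition)
    mean_abs_dev = sum(fractions[el] * abs(values[el] - mean) for el in composition)
    most_abundant = max(fractions, key=fractions.get)

    return {
        "mean": mean,
        "mean_abs_dev": mean_abs_dev,
        "range": max(values.values()) - min(values.values()),
        "min": min(values.values()),
        "max": max(values.values()),
        "mode": values[most_abundant],
    }


ATOMIC_NUMBER = {"Fe": 26, "O": 8}
features = magpie_style_statistics({"Fe": 2, "O": 3}, ATOMIC_NUMBER)
print(features)
```

Repeating this over many elemental properties and concatenating the results gives a fixed-length feature vector regardless of how many elements a formula contains.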

Model Architectures and Ensemble Strategies

Diverse model architectures have been developed to leverage these feature representations, each with distinct advantages:

Gradient-Boosted Decision Trees (XGBoost): This highly efficient and scalable ensemble method successively adds weak learners, each fitted to correct the errors of the preceding iterations, resulting in robust models with improved prediction accuracy. XGBoost has demonstrated strong performance in predicting materials properties such as Vickers hardness and oxidation temperature [12].

Convolutional Neural Networks (CNN): The Electron Configuration Convolutional Neural Network (ECCNN) processes electron configuration data through convolutional operations. The architecture typically includes input layers shaped to accommodate electron configuration matrices, followed by convolutional layers with multiple filters, batch normalization operations, pooling layers, and fully connected layers for final prediction [3].

Ensemble Framework with Stacked Generalization (ECSG): To mitigate limitations of individual models and harness synergistic effects that diminish inductive biases, researchers have developed ensemble frameworks that amalgamate models rooted in distinct knowledge domains. The ECSG framework integrates three foundational models—Magpie, Roost, and ECCNN—then uses their outputs to construct a meta-level model that produces the final prediction, significantly enhancing overall performance [3].
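Stacked generalization itself is independent of the specific base models. The dependency-free sketch below shows the mechanics on toy data: base learners produce out-of-fold predictions, and a logistic-regression meta-learner is trained on those predictions. The two single-feature base learners are deliberately simple stand-ins (assumptions for illustration) for Magpie, Roost, and ECCNN, and nothing here reproduces the published ECSG implementation.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


class OneFeatureModel:
    """Toy base learner: scores a sample by one feature relative to the class means."""

    def __init__(self, feature):
        self.feature = feature

    def fit(self, X, y):
        pos = [x[self.feature] for x, t in zip(X, y) if t == 1]
        neg = [x[self.feature] for x, t in zip(X, y) if t == 0]
        mean_pos, mean_neg = sum(pos) / len(pos), sum(neg) / len(neg)
        self.mid = (mean_pos + mean_neg) / 2
        self.sign = 1.0 if mean_pos >= mean_neg else -1.0
        return self

    def predict_proba(self, X):
        return [sigmoid(4.0 * self.sign * (x[self.feature] - self.mid)) for x in X]


class LogisticMeta:
    """Meta-learner: logistic regression fitted by stochastic gradient descent."""

    def fit(self, Z, y, lr=0.5, epochs=500):
        self.w, self.b = [0.0] * len(Z[0]), 0.0
        for _ in range(epochs):
            for z, t in zip(Z, y):
                err = self.predict_one(z) - t
                self.w = [w - lr * err * zi for w, zi in zip(self.w, z)]
                self.b -= lr * err
        return self

    def predict_one(self, z):
        return sigmoid(sum(w * zi for w, zi in zip(self.w, z)) + self.b)


def fit_stack(X, y, base_factories, k=3):
    n = len(X)
    oof = [[0.0] * len(base_factories) for _ in range(n)]  # out-of-fold predictions
    for fold in range(k):
        test = [i for i in range(n) if i % k == fold]
        train = [i for i in range(n) if i % k != fold]
        for j, make in enumerate(base_factories):
            model = make().fit([X[i] for i in train], [y[i] for i in train])
            for i, p in zip(test, model.predict_proba([X[i] for i in test])):
                oof[i][j] = p
    meta = LogisticMeta().fit(oof, y)                  # trained only on held-out scores
    bases = [make().fit(X, y) for make in base_factories]  # refit on all data
    return bases, meta


def predict_stack(bases, meta, X):
    columns = [b.predict_proba(X) for b in bases]
    return [meta.predict_one(list(z)) for z in zip(*columns)]


# Toy data: two made-up descriptors per compound, label 1 = stable.
X = [(2.0, 0.1), (0.2, 1.5), (1.8, 0.3), (0.4, 1.2), (2.2, 0.2), (0.1, 1.8)]
y = [1, 0, 1, 0, 1, 0]
bases, meta = fit_stack(X, y, [lambda: OneFeatureModel(0), lambda: OneFeatureModel(1)])
probabilities = predict_stack(bases, meta, X)
print([round(p, 2) for p in probabilities])
```

Training the meta-learner on out-of-fold predictions, rather than on predictions for data the base models have already seen, is what keeps the meta-level from simply inheriting the base models' overfitting.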

Generative Models for Inverse Design

Beyond predictive modeling, generative approaches represent the cutting edge of ML in materials science:

Diffusion Models (MatterGen): This advanced generative model creates stable, diverse inorganic materials across the periodic table by gradually refining atom types, coordinates, and periodic lattice through a learned diffusion process. The model can be fine-tuned to steer generation toward specific property constraints, enabling true inverse materials design where materials are generated to meet predefined characteristics [13].

Adapter Modules for Property Constraints: MatterGen incorporates adapter modules—tunable components injected into each layer of the base model—to alter outputs depending on given property labels. This approach enables fine-tuning even with small labeled datasets, overcoming a significant limitation in computational materials design where property data is often scarce [13].

Quantitative Performance Comparison

Model Performance Metrics

Table 1: Performance metrics of machine learning models for materials property prediction

| Model | Application | Performance Metrics | Data Efficiency | Key Advantages |
|---|---|---|---|---|
| ECSG (Ensemble) | Thermodynamic Stability Prediction | AUC: 0.988 [3] | 7x more efficient than existing models [3] | Mitigates inductive bias from multiple knowledge domains |
| MatterGen (Generative) | Novel Stable Material Generation | 75% of generated structures within 0.1 eV/atom of convex hull [13] | Trained on 607,683 structures from MP & Alexandria [13] | Generates previously unknown stable compounds |
| XGBoost | Vickers Hardness Prediction | R²: >0.8 for mechanical properties [12] | 1,225 HV values from 606 compounds [12] | Handles compositional and structural descriptors |
| XGBoost | Oxidation Temperature Prediction | R²: 0.82, RMSE: 75°C [12] | 348 compounds in training set [12] | Predicts complex temperature-dependent behavior |
| DiffCSP (Baseline) | Crystal Structure Prediction | <50% SUN materials [13] | Requires full training datasets | Benchmark for generative models |

Computational Efficiency Comparison

Table 2: Computational requirements and efficiency of different modeling approaches

| Method | Hardware Requirements | Time per Prediction | Stability Assessment Accuracy | Scalability to Large Databases |
|---|---|---|---|---|
| Density Functional Theory | High-performance computing clusters | Hours to days | High (reference standard) | Limited to 10³-10⁴ compounds [13] |
| ECSG Ensemble Model | Standard GPU (e.g., NVIDIA V100) | Milliseconds | AUC = 0.988 for stability classification [3] | High (10⁶+ compounds) |
| MatterGen Generation | High-memory GPU | Seconds per generated structure | 75% within 0.1 eV/atom of convex hull [13] | 10 million structures with 52% uniqueness [13] |
| XGBoost Models | CPU or GPU | Milliseconds | R² > 0.8 for property prediction [12] | High (10⁵+ compounds) |

Experimental Protocols and Methodologies

Ensemble Model Development Protocol

The development of robust ensemble models for thermodynamic stability prediction follows a systematic protocol:

Data Curation and Preprocessing: Extract stable and unstable compounds from reference databases (Materials Project, JARVIS). Apply rigorous cleaning procedures to discard entries with negative formation energies, chemically nonsensical properties, or incomplete information. Exclude compounds containing noble gases, hydrogen, technetium, and elements with atomic numbers above 83 (except uranium and thorium) [12].

Feature Generation: Compute diverse feature sets including (1) compositional features based on elemental properties; (2) structural features derived from CIF files using programs like AFLOW or pymatgen; and (3) electronic features capturing valence electron configurations. For the ECCNN component, encode electron configuration as an input matrix with dimensions 118 × 168 × 8 representing elements, electron shells, and orbital characteristics [3].

Model Training and Validation: Implement stacked generalization with k-fold cross-validation (typically k=5 or 10). Train base models (Magpie, Roost, ECCNN) independently, then use their predictions as input to a meta-learner (often logistic regression or gradient boosting) that produces final stability classifications. Employ leave-one-group-out cross-validation to assess generalizability to unseen chemical systems [3] [12].

Hyperparameter Optimization: Utilize GridSearchCV or Bayesian optimization to tune critical hyperparameters. For XGBoost models, optimize maximum depth of trees (range 3-7), learning rate (0.01-0.07), column subsampling rate per tree (0.6-0.9), minimum child weight (4-7), subsample ratio (0.6-0.9), and gamma regularization (0-0.1) [12].
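The search space above can be written down as a parameter grid. The sketch below uses the parameter names of XGBoost's scikit-learn interface and picks a few values inside each quoted range; the specific grid points are assumptions, not values reported in [12].

```python
from itertools import product

# Grid points chosen inside the ranges quoted in the text (illustrative only).
search_space = {
    "max_depth": [3, 5, 7],
    "learning_rate": [0.01, 0.04, 0.07],
    "colsample_bytree": [0.6, 0.9],
    "min_child_weight": [4, 7],
    "subsample": [0.6, 0.9],
    "gamma": [0.0, 0.1],
}


def iter_grid(space):
    """Yield one dict per combination of hyperparameter values."""
    names = list(space)
    for values in product(*(space[name] for name in names)):
        yield dict(zip(names, values))


grid = list(iter_grid(search_space))
print(len(grid), "configurations; first:", grid[0])
```

A dictionary of this shape could be passed as `param_grid` to scikit-learn's `GridSearchCV`. With 144 combinations and 5-fold cross-validation that is already 720 model fits, which is why Bayesian optimization becomes attractive as the grid grows.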

Figure: ML ensemble model development workflow.

Generative Model Training Protocol

For generative models like MatterGen, the training protocol involves specialized approaches:

Dataset Curation for Pretraining: Compile large and diverse datasets combining structures from multiple sources (Materials Project, Alexandria, ICSD). Apply filters for structures with up to 20 atoms and recompute energies using consistent DFT parameters to ensure data uniformity. The Alex-MP-20 dataset comprising 607,683 stable structures represents an exemplary curated dataset for this purpose [13].

Diffusion Process Configuration: Define customized corruption processes for each component of the crystal structure (atom types, coordinates, periodic lattice). For coordinate diffusion, use a wrapped Normal distribution that respects periodic boundary conditions and approaches a uniform distribution at the noisy limit. Scale noise magnitude according to cell size effects on fractional coordinate diffusion in Cartesian space [13].
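The coordinate corruption step can be illustrated with a small sketch. It assumes a cubic cell described by a single edge length and converts a Cartesian noise scale to fractional units by dividing by that length. Both are simplifications for illustration and not the exact scaling rule of [13].

```python
import random


def wrap(value):
    """Map a coordinate back into [0, 1) to respect periodic boundaries."""
    wrapped = value % 1.0
    return 0.0 if wrapped >= 1.0 else wrapped  # guard against float rounding to 1.0


def corrupt_fractional_coords(coords, sigma_cart, cell_length, rng):
    """Add Gaussian noise to fractional coordinates and wrap the result.

    sigma_cart is the noise scale in Angstrom; dividing by the cell length
    (an assumed cubic cell) gives the equivalent scale in fractional units.
    """
    sigma_frac = sigma_cart / cell_length
    return [
        tuple(wrap(c + rng.gauss(0.0, sigma_frac)) for c in atom)
        for atom in coords
    ]


rng = random.Random(0)
coords = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)]
slightly_noisy = corrupt_fractional_coords(coords, 0.05, 5.0, rng)

# With very large noise the wrapped distribution becomes close to uniform on [0, 1).
many = corrupt_fractional_coords([(0.25, 0.25, 0.25)] * 2000, 50.0, 5.0, rng)
mean_x = sum(atom[0] for atom in many) / len(many)
print(slightly_noisy, round(mean_x, 2))
```

Wrapping after adding noise is what makes the corrupted distribution a wrapped Normal, and the large-noise case shows why its limit carries no memory of the starting position.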

Symmetry-Aware Score Network: Implement a score network that outputs invariant scores for atom types and equivariant scores for coordinates and lattice, eliminating the need to learn symmetries from data directly. This approach significantly enhances generation efficiency and physical plausibility of outputs [13].

Fine-Tuning with Adapter Modules: For property-specific generation, inject adapter modules into each layer of the base model to alter outputs depending on given property labels. Combine fine-tuned models with classifier-free guidance to steer generation toward target property constraints. This enables generation of materials with specific chemical composition, symmetry, or target properties like magnetic density [13].
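Classifier-free guidance is commonly written as an interpolation or extrapolation between an unconditional and a conditional model output. The sketch below shows that generic form on plain lists of numbers; the parameterization used in [13] may differ, and `guided_score` is a hypothetical name.

```python
def guided_score(score_uncond, score_cond, guidance_scale):
    """Combine unconditional and conditional scores.

    guidance_scale = 0 returns the unconditional score, 1 returns the
    conditional score, and values above 1 push further toward the condition.
    """
    return [
        u + guidance_scale * (c - u)
        for u, c in zip(score_uncond, score_cond)
    ]


unconditional = [0.0, 1.0]
conditional = [1.0, 3.0]
for scale in (0.0, 1.0, 2.0):
    print(scale, guided_score(unconditional, conditional, scale))
```

Larger guidance scales typically trade sample diversity for closer adherence to the target property, so the scale is usually tuned per design task.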

Experimental Validation Protocol

Computational predictions require rigorous experimental validation to confirm real-world performance:

Synthesis of Predicted Materials: Select top candidates from generative model outputs or stability predictions for synthesis. For inorganic solids, employ standard solid-state synthesis protocols: mix stoichiometric amounts of precursor powders, pelletize, and react in sealed quartz tubes or controlled atmosphere furnaces at appropriate temperatures (often 800-1500°C depending on system) [12].

Characterization of Properties: Validate thermodynamic stability through structural characterization (X-ray diffraction to confirm phase purity), thermal analysis (differential scanning calorimetry to assess decomposition temperatures), and property-specific measurements (microindentation for hardness, thermogravimetric analysis for oxidation resistance) [12].

DFT Validation: Perform DFT calculations on predicted stable materials to verify their position relative to the convex hull. Treat materials whose energy lies within 0.1 eV/atom above the convex hull as potentially synthesizable. Compute the root mean square displacement (RMSD) between generated structures and their DFT-relaxed counterparts; values below 0.076 Å indicate high-quality predictions very close to local energy minima [13].

Figure: Experimental validation workflow.

Table 3: Essential databases and computational tools for ML-driven materials research

| Resource | Type | Key Function | Access |

|---|---|---|---|

| Materials Project (MP) | Database | Contains calculated properties of ~150,000 inorganic compounds; provides reference data for training ML models [3] [13] | Public web interface & API |

| Joint Automated Repository for Various Integrated Simulations (JARVIS) | Database | Includes DFT-computed properties, ML potentials, and experimental data; serves as a benchmark for model validation [3] | Public access |

| Open Quantum Materials Database (OQMD) | Database | Contains DFT-calculated formation energies for ~1,000,000 compounds; provides training data for stability prediction [3] | Academic access |

| Alexandria Database | Database | Expands structural diversity beyond MP with ~400,000 additional structures; enhances generative model training [13] | Available upon request |

| Inorganic Crystal Structure Database (ICSD) | Database | Provides experimentally determined crystal structures; serves as ground truth for validation [13] | Subscription required |

| AFLOW | Software Platform | Automates high-throughput DFT calculations and provides standardized descriptors for ML [12] | Public REST API |

| pymatgen | Python Library | Provides robust materials analysis features; enables structural feature generation and file processing [12] | Open source |

| XGBoost | ML Algorithm | Gradient boosting framework with high efficiency; predicts properties from compositional/structural features [12] | Open source |

| MatterGen | Generative Model | Diffusion-based model for inverse materials design; generates novel stable crystals with target properties [13] | Code available |

Feature Descriptor Tools

Effective feature representation is crucial for model performance:

Magpie Descriptor Set: This comprehensive feature set computes statistical properties (mean, variance, min, max, range) across 22 elemental attributes for any given composition, providing a rich representation without requiring structural information [3].

Smooth Overlap of Atomic Positions (SOAP): This descriptor provides a quantitative measure of similarity between local atomic environments, capturing essential chemical bonding information that correlates strongly with material properties [12].

Many-Body Tensor Representation (MBTR): This representation comprehensively describes structures by accounting for atomic distributions and their relationships, particularly valuable for capturing complex structural patterns in multicomponent systems [12].

Electron Configuration Matrix: For ECCNN models, electron configuration is encoded as a three-dimensional matrix (elements × electron shells × orbital characteristics), providing direct input for convolutional neural networks to learn stability-determining electronic patterns [3].
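To make the idea of an electron-configuration input concrete, the sketch below fills subshells in Madelung order and returns a fixed-length occupancy vector per element. It is a simplified stand-in: it ignores known exceptions to the filling rule (for example Cr and Cu) and does not reproduce the matrix layout used by ECCNN in [3].

```python
SUBSHELL_LETTERS = "spdf"
CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}


def madelung_order(max_n=7):
    """Subshells (n, l) sorted by n + l, then by n (the Madelung rule)."""
    subshells = [(n, l) for n in range(1, max_n + 1) for l in range(min(n, 4))]
    return sorted(subshells, key=lambda nl: (nl[0] + nl[1], nl[0]))


def electron_configuration(atomic_number):
    """Ground-state configuration from idealized filling, e.g. 8 -> 1s2 2s2 2p4."""
    remaining = atomic_number
    config = {}
    for n, l in madelung_order():
        if remaining == 0:
            break
        letter = SUBSHELL_LETTERS[l]
        filled = min(remaining, CAPACITY[letter])
        config[f"{n}{letter}"] = filled
        remaining -= filled
    return config


def occupancy_vector(atomic_number):
    """Fixed-length vector of subshell occupancies, one slot per subshell."""
    config = electron_configuration(atomic_number)
    return [config.get(f"{n}{SUBSHELL_LETTERS[l]}", 0) for n, l in madelung_order()]


print(electron_configuration(8))
print(electron_configuration(26))
```

Stacking such per-element vectors, weighted or arranged by composition, yields an array a convolutional network can consume; the exact arrangement is a modeling choice.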

The paradigm shift from high-throughput data generation to predictive ML models represents a fundamental transformation in materials discovery methodology. Ensemble approaches like ECSG that combine multiple knowledge domains have demonstrated remarkable performance in predicting thermodynamic stability, achieving AUC scores of 0.988 while requiring only one-seventh of the data used by previous models [3]. Meanwhile, generative frameworks like MatterGen have expanded the horizon beyond predictive screening to true inverse design, generating previously unknown stable materials with target properties [13].

The integration of these approaches creates a powerful materials discovery pipeline: generative models propose candidate structures, ensemble models rapidly evaluate their thermodynamic stability, and focused experimental validation confirms promising candidates. This workflow dramatically accelerates the discovery cycle for functional materials essential across technological domains—from oxidation-resistant hard materials for aerospace applications to novel semiconductor compositions for electronic devices [12].

As these methodologies continue to mature, several frontiers promise further advancement: improved explainability through frameworks like XpertAI that combine XAI methods with large language models to generate natural language explanations of structure-property relationships [14]; enhanced data utilization through techniques that leverage both computational and experimental data sources [15]; and increased accessibility through automated ML platforms that democratize advanced materials modeling capabilities [16]. Together, these developments are establishing a new paradigm where materials discovery is increasingly data-driven, predictive, and systematic, fundamentally accelerating innovation across science and technology.

The accelerated discovery and development of new inorganic compounds represent a critical challenge in advancing technologies across energy storage, electronics, and drug development. Central to this challenge is the accurate prediction of thermodynamic stability, which determines whether a compound can form and persist under given conditions. Traditional experimental approaches to determining stability through synthesis and characterization are notoriously time-consuming and resource-intensive, creating a bottleneck in materials innovation. The Materials Genome Initiative and similar frameworks worldwide have championed a paradigm shift toward computational methods, wherein high-throughput density functional theory (DFT) calculations generate massive datasets of material properties [17]. These curated databases provide the foundational data necessary for training machine learning (ML) models that can rapidly screen candidate materials.

This technical guide examines two pivotal resources in this ecosystem: the Materials Project (MP) and the Open Quantum Materials Database (OQMD). We detail their specific data contents, methodologies for accessing and processing stability data, and their practical application in building predictive ML models. By providing a structured comparison and explicit protocols, this document serves as a reference for researchers aiming to leverage these databases for efficient and accurate prediction of thermodynamic stability in inorganic compounds.

Database Fundamentals: MP and OQMD

Core Database Architectures and Data Contents

The Materials Project (MP) and the Open Quantum Materials Database (OQMD) are two of the most extensive repositories of DFT-calculated materials properties. Both databases systematically compute and organize thermodynamic and structural properties for hundreds of thousands of inorganic compounds, but they differ in specific content, calculation methodologies, and accessibility.

Table 1: Core Features of MP and OQMD

| Feature | Materials Project (MP) | Open Quantum Materials Database (OQMD) |

|---|---|---|

| Primary Data | Formation energies, band structures, elastic tensors, piezoelectric tensors, diffusion pathways, surface energies [18] | Formation energies, band gaps, structural prototypes, crystal structures, stability indicators [19] [20] |

| Stability Metric | Energy above hull (ΔEd), derived from convex hull construction [18] | Decomposition energy (ΔEd), reported as _oqmd_stability [20] |

| Data Accessibility | Web interface, REST API (requires user API key) [18] | Public SQL database dump, OPTIMADE API interface [17] [20] |

| Key Properties | Corrected formation energies, phase diagrams | Calculated formation energy (_oqmd_delta_e), band gap (_oqmd_band_gap) [20] |

| Entry Identification | Materials Project ID (e.g., mp-1234) | OQMD Entry ID (_oqmd_entry_id) and Calculation ID (_oqmd_calculation_id) [20] |

The OQMD contains DFT-calculated thermodynamic and structural properties for over 1.3 million materials, serving as a vast resource for training data [19]. Its data is accessible via an OPTIMADE API, which provides properties such as formation energy (_oqmd_delta_e) and stability (_oqmd_stability), where a value of zero indicates a computationally stable compound [20]. The MP, while similarly extensive, employs a mixing scheme for its calculated energies that combines results from different levels of theory (GGA, GGA+U, and r2SCAN) to improve accuracy [18]. This makes its formation energies and the derived "energy above hull" particularly reliable for stability assessments.

While MP and OQMD are primary sources for computed data, validating predictions against experimentally determined phase equilibria is crucial. The NIST Standard Reference Database 31 (Phase Equilibria Diagrams) provides an authoritative, critically evaluated collection of over 33,000 experimental phase diagrams for non-organic systems [21]. This database is indispensable for benchmarking the predictions of ML models against established experimental data. Furthermore, the NIST JANAF Thermochemical Tables offer rigorously evaluated thermochemical data, including temperature-dependent free energies, for over 47 elements and their compounds [22]. These and other resources, such as the Dortmund Data Bank (DDB) and DETHERM for thermophysical data, provide additional dimensions for model training and validation [22].

Foundational Theory and Data Acquisition

Thermodynamic Stability and the Convex Hull Method

The thermodynamic stability of a compound is quantitatively assessed by its energy of decomposition (ΔEd), also known as the "energy above the hull" [3]. This metric represents the energy penalty for a compound to decompose into a set of more stable, competing phases in its chemical system. The mathematical procedure to determine this is the construction of a convex hull in the energy-composition space [18].

For a multi-component system, the normalized formation energy per atom (ΔEf) is calculated for all known compounds. The convex hull is the lowest set of lines (in a binary system) or surfaces (in a ternary system) connecting the stable phases such that all other phases lie above this hull [18]. A compound lying directly on the convex hull is considered thermodynamically stable, meaning no combination of other phases has a lower total energy at that composition. The decomposition energy (ΔEd) for a metastable compound is its vertical distance from this hull, indicating the driving force for its decomposition into the stable phases defining the hull at that composition [18] [3]. This ΔEd is the key target variable for ML models predicting thermodynamic stability.
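For a binary system the construction reduces to a lower convex hull in the (composition, formation energy) plane. The sketch below works through a toy A-B system with made-up energies; the phase names and numbers are illustrative assumptions, and production work would rely on a tested implementation such as pymatgen's phase diagram tools.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points using a monotone-chain sweep."""
    best = {}
    for x, energy in points:  # keep only the lowest-energy phase per composition
        best[x] = min(energy, best.get(x, energy))
    hull = []
    for p in sorted(best.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:  # hull[-1] lies on or above the line hull[-2] -> p
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the hull range")


# Toy A-B system: x is the atomic fraction of B, energies in eV/atom.
phases = {"A": (0.0, 0.0), "A3B": (0.25, -0.10), "AB": (0.5, -0.40),
          "AB3": (0.75, -0.30), "B": (1.0, 0.0)}
hull = lower_hull(phases.values())
e_above_hull = {name: e - hull_energy(hull, x) for name, (x, e) in phases.items()}
print(e_above_hull)
```

Here AB and AB3 sit on the hull, while A3B lies 0.10 eV/atom above the tie-line between A and AB, which is its decomposition energy in this toy example.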

Figure 1: The convex hull method for determining thermodynamic stability from DFT-calculated formation energies.

Protocols for Data Extraction via API

Acquiring high-quality, consistent data from MP and OQMD is a critical first step in model development. Below are explicit protocols for accessing stability data from both databases.

Data Extraction from the Materials Project

The MP provides a REST API accessible through the mp-api Python client. The following code demonstrates how to retrieve entries for a chemical system and construct a phase diagram to calculate decomposition energies.

Code 1: Using the MP API and pymatgen to compute decomposition energies. The get_e_above_hull function returns the key stability metric, ΔE_d [18].
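A minimal sketch of such a retrieval is given here, assuming the `mp-api` and `pymatgen` packages are installed and a valid API key is available. The wrapper function names and the `MP_API_KEY` environment variable are illustrative choices, not part of either library.

```python
def fetch_entries(chemsys, api_key):
    """Download all computed entries in a chemical system, e.g. 'Li-Fe-O'."""
    from mp_api.client import MPRester  # third-party: pip install mp-api

    with MPRester(api_key) as mpr:
        return mpr.get_entries_in_chemsys(chemsys)


def energies_above_hull(entries):
    """Build the phase diagram and return the energy above hull per entry (eV/atom)."""
    from pymatgen.analysis.phase_diagram import PhaseDiagram  # third-party

    diagram = PhaseDiagram(entries)
    return {
        (entry.entry_id, entry.composition.reduced_formula):
            diagram.get_e_above_hull(entry)
        for entry in entries
    }


def stable_only(e_above_hull, tolerance=1e-8):
    """Keep entries that lie on the hull within a small numerical tolerance."""
    return {key: e for key, e in e_above_hull.items() if e <= tolerance}


# Example usage (requires network access and an API key):
#   import os
#   entries = fetch_entries("Li-Fe-O", os.environ["MP_API_KEY"])
#   hull_distances = energies_above_hull(entries)
#   print(stable_only(hull_distances))
```

The third-party imports are placed inside the functions so that the labeling helper can be reused offline, for instance on cached entries.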

For more advanced studies incorporating the higher-fidelity r2SCAN functional, MP requires local reapplication of its mixing scheme to ensure consistency across different chemical systems [18].

Data Extraction from the Open Quantum Materials Database

The OQMD can be accessed via its OPTIMADE API endpoint, which allows for flexible querying of its properties using a standardized filter language.

Code 2: Querying the OQMD OPTIMADE API for formation energies and stability data. The _oqmd_stability field directly provides the decomposition energy [20].
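A minimal sketch of such a query, using only the Python standard library, is shown here. The endpoint URL is an assumption and should be checked against the current OQMD documentation; the filter uses standard OPTIMADE syntax together with the `_oqmd_*` fields named in the text.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

OQMD_OPTIMADE_URL = "https://oqmd.org/optimade/structures"  # assumed endpoint


def build_query_url(elements, max_stability=None, page_limit=50):
    """Build an OPTIMADE query for structures containing exactly these elements."""
    quoted = ", ".join(f'"{el}"' for el in elements)
    filters = [f"elements HAS ALL {quoted}", f"nelements={len(elements)}"]
    if max_stability is not None:
        filters.append(f"_oqmd_stability<={max_stability}")
    params = {
        "filter": " AND ".join(filters),
        "response_fields": "_oqmd_entry_id,_oqmd_delta_e,_oqmd_stability,_oqmd_band_gap",
        "page_limit": page_limit,
    }
    return f"{OQMD_OPTIMADE_URL}?{urlencode(params)}"


def fetch_records(url):
    """Fetch one page of results and flatten each item's attributes."""
    with urlopen(url) as response:
        payload = json.load(response)
    return [
        {"id": item.get("id"), **item.get("attributes", {})}
        for item in payload.get("data", [])
    ]


url = build_query_url(["Al", "Fe"], max_stability=0)
print(url)
# records = fetch_records(url)  # requires network access
```

Results are paginated under OPTIMADE, so a full download would follow the `links.next` entry of each response until it is empty.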

Machine Learning for Stability Prediction

Feature Engineering and Model Architectures

Using composition-based features is highly effective for initial stability screening, as structural data is often unavailable for novel materials [3]. Key feature sets include:

- Elemental Property Statistics (Magpie): For a given composition, calculate the mean, standard deviation, range, and mode of elemental properties (e.g., atomic radius, electronegativity, valence) across its constituent elements [3].

- Roost Representations: Model the composition as a graph, where nodes represent elements and edges represent composition ratios, using a graph neural network to learn a representative feature vector [3].

- Electron Configuration (ECCNN): Encode the electron configuration of each element as a fixed matrix, then use convolutional neural networks to extract features that capture the complex, quantum-mechanical interactions underlying stability [3].

Advanced Ensemble Framework

State-of-the-art performance is achieved by combining diverse models into an ensemble to mitigate the inductive bias inherent in any single approach. The Electron Configuration models with Stacked Generalization (ECSG) framework employs stacked generalization, integrating three base models built on different principles: Magpie (elemental statistics), Roost (graph representation), and ECCNN (electron configuration) [3]. The predictions from these base models are used as input features to a meta-learner (e.g., a linear model or XGBoost) that produces the final stability classification. This approach has demonstrated an Area Under the Curve (AUC) score of 0.988 on stability prediction tasks and exhibits superior data efficiency, achieving high accuracy with a fraction of the training data required by other models [3].

Figure 2: The ECSG ensemble ML framework for stability prediction, combining multiple base models via a meta-learner [3].

Experimental Validation Workflow

Predictions of stable compounds from an ML model must be validated through more accurate DFT calculations. The following workflow outlines this process:

- High-Throughput ML Screening: Use the trained ensemble model to screen thousands of candidate compositions in a target chemical space (e.g., double perovskite oxides).

- Candidate Selection: Select the top candidates predicted to be stable (ΔEd = 0) and/or those with desirable auxiliary properties (e.g., a specific band gap).

- DFT Validation: Perform full DFT structural relaxation and energy calculation for each candidate using a code like VASP. Construct the precise convex hull for the candidate's chemical system using data from MP or OQMD to confirm its stability. This step verifies the ML prediction and provides a final, reliable stability assessment [3].

Table 2: The Scientist's Toolkit: Essential Resources for Stability Prediction Research

| Resource / Reagent | Type | Primary Function in Research |

|---|---|---|

| Materials Project API | Database & Tool | Primary source for accessing computed material properties and phase stability data via a programmable interface [18]. |

| OQMD OPTIMADE API | Database & Tool | Alternative source for querying a massive set of DFT-calculated formation energies and stability indicators [20]. |

| pymatgen | Software Library | Python library for materials analysis; essential for constructing phase diagrams and analyzing crystal structures [18]. |

| NIST SRD 31 | Database | Source of experimentally determined phase diagrams for validating computational predictions [21]. |

| VASP / Quantum ESPRESSO | Software | DFT codes used for first-principles validation of ML-predicted stable compounds. |

| Magpie / Roost Features | Feature Set | Engineered input features for machine learning models based on elemental properties and compositional graphs [3]. |

The Materials Project and the Open Quantum Materials Database provide the large-scale, high-quality datasets necessary to power modern machine-learning approaches for thermodynamic stability prediction. By following the protocols outlined for data extraction, leveraging the convex hull method for stability labeling, and implementing advanced ensemble models that minimize bias, researchers can dramatically accelerate the discovery of new, stable inorganic materials. This methodology, which integrates high-throughput computation with intelligent machine learning, represents a cornerstone of the materials genomics approach, enabling a more efficient and targeted path from conceptual design to synthesized material.

Architecting ML Models: From Feature Engineering to Ensemble Frameworks

The accurate prediction of thermodynamic stability is a cornerstone in the discovery and design of novel inorganic compounds. Machine learning (ML) has emerged as a powerful tool to accelerate this process, with the choice of input data strategy—composition-based or structure-based—being a fundamental decision that critically influences a model's predictive performance, applicability, and computational cost. Composition-based models utilize only the chemical formula of a compound, while structure-based models require additional information about the spatial arrangement of atoms within the crystal lattice.

This guide provides an in-depth technical analysis of these two paradigms within the context of predicting thermodynamic stability for inorganic compounds. We will explore their underlying principles, detailed methodologies, and comparative performance, equipping researchers and scientists with the knowledge to select and implement the most appropriate data strategy for their specific research objectives.

Core Concepts and Comparative Analysis

Composition-Based Models

Composition-based models predict material properties using only the chemical formula as input. A primary challenge is converting this simple formula into a meaningful, machine-readable representation. Since raw elemental proportions offer limited insight, a critical pre-processing step involves creating hand-crafted features based on domain knowledge [3]. For instance, the Magpie model calculates statistical features (mean, range, variance, etc.) from a suite of elemental properties like atomic number, mass, and radius [3]. This approach assumes that these statistical summaries capture essential trends influencing stability.

More advanced models seek to learn complex relationships directly from the composition. The Roost model, for example, represents a chemical formula as a complete graph where atoms are nodes. It employs a graph neural network with an attention mechanism to capture interatomic interactions, effectively learning a representation of the material's stability from the data itself [3]. Another novel approach is the Electron Configuration Convolutional Neural Network (ECCNN), which uses the electron configuration of constituent elements as its foundational input. This method aims to reduce inductive bias by leveraging an intrinsic atomic property that is central to quantum mechanical calculations of stability [3].

Structure-Based Models

Structure-based models incorporate detailed information about the periodic lattice and the precise coordinates of atoms within the unit cell. This provides a more complete description of the material, capturing geometric arrangements and bonding environments that are absent in a mere chemical formula. A material's structure is defined by its unit cell, comprising atom types (A), coordinates (X), and the periodic lattice (L) [13].

Generative models like MatterGen exemplify the use of structural data for inverse design. MatterGen is a diffusion model that generates new crystal structures by learning to reverse a corruption process applied to all three components (A, X, L) of the unit cell. It refines atom types, coordinates, and the lattice to produce stable, diverse inorganic materials across the periodic table [13]. The quality of a generated structure is often validated by performing Density Functional Theory (DFT) relaxations and calculating its energy above the convex hull, a key metric of thermodynamic stability [13].

Strategic Comparison

The choice between composition-based and structure-based strategies involves a trade-off between practicality and informational completeness.

Table 1: Comparison of Input Data Strategies

| Aspect | Composition-Based Models | Structure-Based Models |

|---|---|---|

| Required Input | Chemical formula only | Full 3D atomic structure (lattice + coordinates) |

| Primary Advantage | High speed, low cost; applicable for de novo design | Richer information capture; can model polymorphism |

| Primary Limitation | Cannot distinguish between different structural polymorphs | Structural data can be difficult or costly to obtain |

| Sample Efficiency | Can achieve high accuracy with relatively less data [3] | Typically requires large datasets of curated structures |

| Interpretability | Varies; can use feature importance (e.g., Magpie) | Often complex, "black-box" nature (e.g., diffusion models) |

| Ideal Use Case | High-throughput screening of compositional space | Inverse design and precise property prediction |

Composition-based models are highly efficient and are the only option when exploring new chemical spaces where structural data is unavailable. Structure-based models offer a more physically rigorous description but require data that is often scarce or computationally expensive to produce [3] [13].

Detailed Methodologies and Protocols

Implementing a Composition-Based Ensemble Model

The ECSG (Electron Configuration models with Stacked Generalization) framework demonstrates a state-of-the-art ensemble approach for stability prediction [3]. Its protocol involves building a super learner from three base models to mitigate the inductive bias inherent in any single model.

Base-Level Model Training:

- ECCNN Input Encoding: Encode the chemical formula into a 118 (elements) × 168 (features) × 8 (channels) matrix based on the electron configuration of the constituent elements. Process this matrix through two convolutional layers (64 filters, 5×5), followed by batch normalization, max pooling (2×2), and fully connected layers [3].

- Magpie Feature Extraction: For the same compositions, calculate statistical features (mean, variance, etc.) from a wide range of elemental properties. Train a gradient-boosted regression tree model (e.g., XGBoost) on these feature vectors [3].

- Roost Graph Processing: Represent the chemical formula as a graph. Train a graph neural network using a message-passing architecture with an attention mechanism to model interatomic interactions [3].

Meta-Level Model Stacking:

- Prediction Collection: Use the three trained base models to generate prediction vectors for a validation dataset.

- Train Super Learner: Use these prediction vectors as input features to train a meta-learner (e.g., a linear model or another XGBoost model) that produces the final, refined stability prediction [3].
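The two stacking steps can be sketched end to end with out-of-fold predictions. In this toy version three small logistic regressions, each seeing a different synthetic feature, stand in for Magpie, Roost, and ECCNN, and a fourth logistic regression plays the meta-learner. The data, model choices, and function names are all assumptions made for illustration.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-max(-30.0, min(30.0, z))))


def fit_logistic(X, y, lr=0.5, epochs=300):
    """Batch gradient descent for logistic regression; returns (weights, bias)."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for row, target in zip(X, y):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, row)) + b) - target
            grad_b += err
            for j, xj in enumerate(row):
                grad_w[j] += err * xj
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict_proba(model, X):
    w, b = model
    return [sigmoid(sum(wi * xi for wi, xi in zip(w, row)) + b) for row in X]


def out_of_fold_predictions(views, y, k=5):
    """views[m][i] is the feature row of sample i as seen by base model m."""
    n = len(y)
    meta = [[0.0] * len(views) for _ in range(n)]
    for fold in range(k):
        held_out = list(range(fold, n, k))
        held = set(held_out)
        train = [i for i in range(n) if i not in held]
        for m, view in enumerate(views):
            model = fit_logistic([view[i] for i in train], [y[i] for i in train])
            probs = predict_proba(model, [view[i] for i in held_out])
            for i, p in zip(held_out, probs):
                meta[i][m] = p  # prediction from a model that never saw sample i
    return meta


# Synthetic stable/unstable labels and three noisy single-feature "views".
rng = random.Random(0)
y = [i % 2 for i in range(100)]
views = [[[(2 * label - 1) + rng.gauss(0.0, 0.7)] for label in y] for _ in range(3)]

meta_features = out_of_fold_predictions(views, y)
meta_model = fit_logistic(meta_features, y, lr=1.0, epochs=500)
predicted = [int(p >= 0.5) for p in predict_proba(meta_model, meta_features)]
accuracy = sum(p == t for p, t in zip(predicted, y)) / len(y)  # in-sample, illustrative
print(f"meta-learner accuracy on out-of-fold features: {accuracy:.2f}")
```

Training the meta-learner on out-of-fold rather than in-sample base predictions keeps it from rewarding base models that simply memorized their training data.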

Figure 1: Workflow of the ECSG Ensemble Model

Protocol for a Structure-Based Generative Model

MatterGen provides a comprehensive protocol for the inverse design of stable inorganic materials using a diffusion-based, structure-based approach [13].

Model Pretraining:

- Data Curation: Assemble a large and diverse dataset of stable crystal structures, such as the "Alex-MP-20" dataset, which contains over 600,000 structures with up to 20 atoms from sources like the Materials Project and Alexandria databases [13].

- Define Diffusion Process: Tailor the diffusion process for crystalline materials by defining separate corruption processes for atom types (categorical masking), fractional coordinates (wrapped Normal distribution approaching uniformity), and the periodic lattice (noise approaching a cubic lattice with average density) [13].

- Train Score Network: Train a neural network to learn the reverse of the corruption process. This network must output invariant scores for atom types and equivariant scores for coordinates and the lattice to respect crystal symmetries [13].

Inverse Design via Fine-Tuning:

- Adapter Module Integration: For a specific design goal (e.g., target chemistry, symmetry, or magnetic property), inject tunable adapter modules into the pretrained model. Fine-tune the model on a smaller, property-specific dataset [13].

- Conditional Generation: Use classifier-free guidance during the generation process to steer the model towards producing structures that satisfy the target property constraints [13].

- DFT Validation: Perform DFT calculations on the generated structures to relax them to their local energy minimum and compute the energy above the convex hull (e.g., within 0.1 eV/atom) to validate thermodynamic stability [13].

Figure 2: MatterGen Inverse Design Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Predicting Thermodynamic Stability

| Resource Name | Type | Function & Application |

|---|---|---|

| Materials Project (MP) | Database | A primary source of computed structural and energetic data for hundreds of thousands of inorganic compounds, used for training and benchmarking [3] [13]. |

| JARVIS | Database | The Joint Automated Repository for Various Integrated Simulations provides data for benchmarking model performance on stability prediction tasks [3]. |

| Alexandria Database | Database | A large collection of computed crystal structures used to create diverse training sets for generative models like MatterGen [13]. |

| tmQM Dataset | Database | Provides quantum-mechanical properties for transition metal complexes, useful for modeling more complex inorganic systems [23]. |

| ChemDataExtractor | Software Toolkit | A natural language processing tool designed to automatically extract chemical information (e.g., properties, structures) from the scientific literature [23]. |

| RDKit | Software Library | An open-source cheminformatics toolkit used for processing molecular structures, calculating descriptors, and handling SDF files in analysis workflows [24]. |

| KNIME Analytics Platform | Low/No-Code Platform | A visual programming platform used to build and deploy automated workflows for chemical data analysis, grouping, and machine learning without extensive coding [25]. |

| CIME Explorer | Visualization Tool | An interactive, web-based system for visualizing model explanations and exploring the chemical space of compounds, aiding in model interpretation [24]. |

| DFT (e.g., VASP) | Computational Method | The computational standard for validating model predictions by calculating the precise energy and relaxed structure of a compound [3] [13]. |

The strategic selection between composition-based and structure-based input data is pivotal in machine learning for thermodynamic stability. Composition-based models offer a powerful, efficient tool for rapid screening and discovery within vast compositional spaces, especially when structural data is absent. In contrast, structure-based models provide a deeper, more physically accurate representation, enabling precise inverse design of novel materials with targeted properties. The emerging trend of ensemble methods and generative models highlights a future where these strategies are not mutually exclusive but are synergistically combined to push the boundaries of materials discovery. Researchers are encouraged to base their choice on the specific stage of their investigation, the availability of data, and the ultimate design goals of their project.

The discovery and development of new inorganic compounds are central to advancements in fields ranging from photovoltaics to catalysis. A critical property governing whether a material can be successfully synthesized is its thermodynamic stability, traditionally assessed through resource-intensive experimental methods or Density Functional Theory (DFT) calculations [26] [4]. Machine learning (ML) offers a powerful alternative, capable of rapidly screening thousands of candidate compounds by learning the complex relationships between a material's composition and its stability [3]. The performance of these ML models is profoundly dependent on feature engineering—the process of representing chemical compositions as meaningful numerical vectors that capture the underlying physical and electronic principles governing stability [3].

This whitepaper delineates three core feature engineering paradigms for predicting the thermodynamic stability of inorganic compounds: elemental properties, graph representations, and electron configurations. We frame this discussion within a broader thesis that the integration of these diverse, multi-scale feature sets through ensemble methods mitigates the inductive biases inherent in single-source models, leading to superior predictive accuracy, enhanced sample efficiency, and more reliable exploration of uncharted compositional spaces [3].

Core Feature Engineering Paradigms

Elemental Properties (Magpie)

The Magpie approach operationalizes the long-standing materials science practice of leveraging elemental properties to predict compound behavior. It transforms a chemical formula into a fixed-length feature vector by computing statistical moments across a suite of elemental attributes [3].

Feature Engineering Methodology: For a given compound, a list of elemental properties is gathered for each constituent element. Magpie then calculates six statistical quantities for each property across the elements in the compound: mean, mean absolute deviation, minimum, maximum, range, and mode [3]. This process converts a variable-length composition into a fixed-dimensional vector suitable for traditional machine learning algorithms.
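The featurization step above can be sketched in a few lines of Python. This is a minimal illustration rather than the Magpie implementation: the property table `ELEMENT_PROPS` is a hypothetical two-property stand-in with approximate Pauling electronegativities, the function name `magpie_like_features` is invented for this example, and the spread statistic is assumed to be a fraction-weighted mean absolute deviation.

```python
# Hypothetical mini property table (illustrative only, not the Magpie dataset).
ELEMENT_PROPS = {
    "Na": {"atomic_number": 11, "electronegativity": 0.93},
    "Cl": {"atomic_number": 17, "electronegativity": 3.16},
    "O": {"atomic_number": 8, "electronegativity": 3.44},
    "Ti": {"atomic_number": 22, "electronegativity": 1.54},
}


def magpie_like_features(composition, props=ELEMENT_PROPS):
    """Map {element: amount} to six statistics per elemental property."""
    total = sum(composition.values())
    fractions = {el: amt / total for el, amt in composition.items()}
    # Element with the largest atomic fraction (ties resolve to the first seen).
    most_abundant = max(fractions, key=fractions.get)
    features = {}
    for name in sorted(next(iter(props.values()))):
        values = {el: props[el][name] for el in fractions}
        mean = sum(fractions[el] * v for el, v in values.items())
        # Assumed spread statistic: fraction-weighted mean absolute deviation.
        dev = sum(fractions[el] * abs(v - mean) for el, v in values.items())
        lo, hi = min(values.values()), max(values.values())
        features[f"{name}_mean"] = mean
        features[f"{name}_dev"] = dev
        features[f"{name}_min"] = lo
        features[f"{name}_max"] = hi
        features[f"{name}_range"] = hi - lo
        features[f"{name}_mode"] = values[most_abundant]
    return features


# TiO2 and NaCl have different numbers of atoms but yield same-length vectors.
tio2 = magpie_like_features({"Ti": 1, "O": 2})
nacl = magpie_like_features({"Na": 1, "Cl": 1})
```

Whatever the stoichiometry, the output always has six entries per property, which is what lets a tabular learner consume compositions of different sizes.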

Experimental Implementation: In practice, a dataset of known compounds and their stability (often expressed as the decomposition energy, ΔHd, or the energy above the convex hull, E hull) is used [26]. The feature vectors for all compounds are constructed using the Magpie methodology and used to train a model, typically gradient-boosted decision trees (XGBoost), to predict stability [3].

Table 1: Key Elemental Property Categories for Stability Prediction

| Property Category | Specific Examples | Rationale in Stability Prediction |
|---|---|---|
| Atomic Structure | Atomic number, Atomic mass, Atomic radius | Defines the fundamental size and mass of constituents [3]. |
| Electronic Structure | Electronegativity, Electron affinity, Ionization energy | Determines the nature and strength of chemical bonds [26]. |
| Thermodynamic Properties | Melting point, Boiling point, Density | Correlates with cohesive energy and phase stability [3]. |

Graph Representations (Roost)

The Roost model introduces a more nuanced representation by framing a chemical formula as a fully-connected graph, where nodes represent atoms and edges represent the interactions or relationships between them [3]. This approach allows the model to learn complex, non-local interactions directly from data.

Representation Construction: The chemical formula is parsed into a set of nodes, with each node feature vector initialized with elemental properties. A complete graph is built by connecting every node (atom) to every other node. This structure permits message-passing between all atoms in the composition, regardless of their specific spatial arrangement [3].

Model Architecture and Workflow: Roost employs a Graph Neural Network (GNN) with an attention mechanism. The model operates through a series of message-passing steps where information from neighboring nodes is aggregated and used to update the state of each node. The attention mechanism allows the model to learn the relative importance of different atomic interactions. Finally, the updated node representations are pooled into a single graph-level representation for the final stability prediction [3].
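The message-passing and pooling steps can be illustrated with a small, schematic Python sketch. It is not the Roost architecture: attention scores here are plain dot products where a trained model would use learned networks, weighting by atomic fraction is an assumption, and the names `complete_graph_edges`, `message_passing_step`, and `pool` are invented for this example.

```python
import math
from itertools import permutations


def complete_graph_edges(n_nodes):
    """All ordered pairs (i, j) with i != j: every node sees every other node."""
    return list(permutations(range(n_nodes), 2))


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def message_passing_step(node_feats, fractions):
    """One attention-weighted update of every node over the complete graph."""
    n = len(node_feats)
    updated = []
    for i in range(n):
        neighbours = [j for j in range(n) if j != i]
        if not neighbours:  # single-element composition: nothing to aggregate
            updated.append(list(node_feats[i]))
            continue
        scores = [dot(node_feats[i], node_feats[j]) for j in neighbours]
        top = max(scores)  # subtract the maximum for a numerically stable softmax
        weights = [fractions[j] * math.exp(s - top)
                   for j, s in zip(neighbours, scores)]
        norm = sum(weights)
        alphas = [w / norm for w in weights]
        message = [sum(a * node_feats[j][k] for a, j in zip(alphas, neighbours))
                   for k in range(len(node_feats[i]))]
        # Residual update: keep the node's own state and add the message.
        updated.append([h + m for h, m in zip(node_feats[i], message)])
    return updated


def pool(node_feats, fractions):
    """Fraction-weighted mean of node states: one vector per composition."""
    dim = len(node_feats[0])
    return [sum(f * h[k] for f, h in zip(fractions, node_feats))
            for k in range(dim)]


# Toy two-element composition with 2-dimensional node features.
nodes = [[1.0, 0.0], [0.0, 1.0]]
fracs = [0.5, 0.5]
graph_vector = pool(message_passing_step(nodes, fracs), fracs)
```

Because the graph is complete, the number of directed edges grows as n(n-1) with the number of distinct elements, which stays small for typical compositions.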

Electron Configurations (ECCNN)

While previous models relied on hand-crafted features or interatomic interactions, the Electron Configuration Convolutional Neural Network (ECCNN) leverages the fundamental electron configuration of atoms as its primary input [3]. This intrinsic atomic characteristic is central to first-principles calculations and is postulated to introduce fewer inductive biases.

Input Representation Engineering: The core innovation is encoding a material's composition into a 2D matrix representing its collective electron configuration. The matrix dimensions are 118 (potential elements) × 168 (total orbitals across quantum shells). For each element present in the compound, its ground-state electron configuration is used to populate the corresponding row in the matrix. This creates a sparse, structured image-like representation of the material's electronic structure [3].
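A simplified version of this encoding is sketched below. It keeps the 118 element rows but, as an assumption made for brevity, uses one column per subshell for the first eight subshells instead of the 168-column layout, whose exact ordering is not specified here. The element table and the function `encode_composition` are illustrative names, not part of the published ECCNN code.

```python
SUBSHELLS = ["1s", "2s", "2p", "3s", "3p", "3d", "4s", "4p"]  # simplified columns
N_ELEMENTS = 118  # one row per element, indexed by atomic number - 1

# Atomic number and ground-state subshell occupancies for a few elements.
ELEMENTS = {
    "O": (8, {"1s": 2, "2s": 2, "2p": 4}),
    "Na": (11, {"1s": 2, "2s": 2, "2p": 6, "3s": 1}),
    "Cl": (17, {"1s": 2, "2s": 2, "2p": 6, "3s": 2, "3p": 5}),
    "Ti": (22, {"1s": 2, "2s": 2, "2p": 6, "3s": 2, "3p": 6, "3d": 2, "4s": 2}),
}


def encode_composition(symbols):
    """Return a sparse 118 x len(SUBSHELLS) occupancy matrix as nested lists."""
    matrix = [[0] * len(SUBSHELLS) for _ in range(N_ELEMENTS)]
    for symbol in symbols:
        atomic_number, config = ELEMENTS[symbol]
        row = matrix[atomic_number - 1]
        for col, subshell in enumerate(SUBSHELLS):
            row[col] = config.get(subshell, 0)
    return matrix


nacl_matrix = encode_composition(["Na", "Cl"])
# Only the rows of elements present in the compound are non-zero.
occupied_rows = [i for i, row in enumerate(nacl_matrix) if any(row)]
```

For NaCl only rows 10 and 16 (zero-based) are populated, which shows how sparse the input is for a typical compound.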

Network Architecture: The ECCNN model processes this matrix using two consecutive convolutional layers (each with 64 filters of size 5×5) to detect local patterns and hierarchical features in the electron configuration data. This is followed by batch normalization and a 2×2 max-pooling layer for stability and dimensionality reduction. The extracted features are then flattened and passed through fully connected layers to output the stability prediction [3].
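The size of the flattened feature vector follows from simple shape arithmetic, sketched below. Stride and padding are not stated above, so the defaults (stride 1, no padding) are assumptions, as is treating 118 × 168 as the spatial extent of the input; the helper names are invented for this example.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length of a convolution along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


def eccnn_feature_shape(height=118, width=168, filters=64, kernel=5,
                        pool=2, padding=0):
    """Shape after two kernel x kernel convolutions and one pool x pool max-pool."""
    h, w = height, width
    for _ in range(2):  # two consecutive convolutional layers
        h = conv_out(h, kernel, padding=padding)
        w = conv_out(w, kernel, padding=padding)
    # Batch normalization leaves the shape unchanged; pooling divides each axis.
    return h // pool, w // pool, filters


h, w, c = eccnn_feature_shape()             # no padding
flattened = h * w * c
same_padded = eccnn_feature_shape(padding=2)  # size-preserving convolutions
```

Without padding the feature map is 55 × 80 × 64 (281,600 values after flattening). With size-preserving padding it is 59 × 84 × 64, so the padding choice noticeably changes the width of the first fully connected layer.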

Table 2: Comparative Analysis of Feature Engineering Paradigms

| Feature Paradigm | Representation | Key Advantage | Potential Limitation |
|---|---|---|---|
| Elemental Properties (Magpie) | Fixed-size statistical vector [3]. | Computationally lightweight; highly interpretable [3]. | May miss complex, non-linear interactions between atoms [3]. |
| Graph Representations (Roost) | Fully-connected graph of atoms [3]. | Learns interatomic interactions without prior definition [3]. | Computationally intensive; "complete graph" assumption may not reflect true connectivity [3]. |
| Electron Configurations (ECCNN) | 2D orbital occupation matrix [3]. | Leverages fundamental quantum property; less biased [3]. | High-dimensional input; requires more data for training [3]. |

Integrated Framework and Performance

The Ensemble Super Learner: ECSG

Recognizing the complementary strengths of each feature paradigm, an ensemble framework based on Stacked Generalization (SG) was developed, designated as ECSG [3]. The framework operates on the thesis that integrating models rooted in distinct domains of knowledge—atomic properties (Magpie), interatomic interactions (Roost), and electronic structure (ECCNN)—creates a synergistic super learner that mitigates the limitations and biases of any single model [3].

In stacked generalization, the predictions of the base models become the input features of a second-level model, the meta-learner. The meta-learner is typically a simple, interpretable model such as logistic regression or a shallow decision tree. Trained on these predictions, it learns how to weight each base model's output according to its reliability in different regions of the compositional space. For instance, ECCNN might be more reliable for compounds involving transition metals with complex electronic structures, while Roost might excel in systems where coordination chemistry dominates [3].

Quantitative Performance Metrics

The ECSG framework has demonstrated strong performance in predicting compound stability. On benchmark data from the Joint Automated Repository for Various Integrated Simulations (JARVIS), the ensemble model achieved an Area Under the Curve (AUC) score of 0.988, indicating that it separates stable from unstable compounds almost perfectly across classification thresholds [3].
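To make the metric concrete, the sketch below computes AUC from its pairwise-ranking definition, assuming the reported figure refers to the area under the ROC curve. Under that reading, AUC is the fraction of (stable, unstable) pairs in which the stable compound receives the higher score. The function name and toy data are illustrative.

```python
def roc_auc(labels, scores):
    """ROC AUC as the probability that a positive outranks a negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))


# 1 = stable, 0 = unstable; scores are hypothetical model outputs.
toy_labels = [1, 1, 0, 0]
toy_scores = [0.9, 0.4, 0.5, 0.1]
toy_auc = roc_auc(toy_labels, toy_scores)  # 3 of 4 pairs ranked correctly
```

The quadratic loop is fine for illustration; on large test sets a rank-based formula or a library routine would be the usual choice.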

A particularly notable finding was the improvement in sample efficiency: the ECSG model matched the performance of existing state-of-the-art models using only one-seventh of the training data [3]. This efficiency is attributed to the multi-faceted feature representation, which gives the model a richer information base and so reduces the number of samples required for effective generalization.

Table 3: Key Performance Metrics of the ECSG Ensemble Model

| Performance Metric | Reported Result | Significance |
|---|---|---|
| Area Under the Curve (AUC) | 0.988 [3] | Indicates excellent model performance in classifying stable/unstable compounds. |
| Data Efficiency | Achieved equivalent performance with 1/7th the data [3]. | Reduces computational cost of data generation (DFT/experimental) for training. |
| Validation | High accuracy in identifying new 2D semiconductors and double perovskites via DFT [3]. | Demonstrates model's practical utility and reliability in discovering new materials. |

Experimental Protocols and Validation

Data Sourcing and Preprocessing

The foundation of any robust ML model is a high-quality, curated dataset. Protocols for training stability prediction models typically begin with data extraction from large computational materials databases such as the Materials Project (MP) or the Open Quantum Materials Database (OQMD) [3]. The target variable is usually the energy above the convex hull (E hull), a direct measure of thermodynamic stability where a lower value indicates a more stable compound [26] [4].
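To show what the target variable measures, the sketch below computes the energy above the hull for a hypothetical binary A-B system. Each phase is a point (x, E), where x is the atomic fraction of B and E is the formation energy per atom in eV. All phases and energies are invented for illustration, and real databases build the hull over the full multi-component composition space rather than along a single axis.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points via Andrew's monotone chain."""
    lowest = {}
    for x, e in points:  # keep only the lowest-energy phase at each composition
        lowest[x] = min(e, lowest.get(x, e))
    hull = []
    for p in sorted(lowest.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:  # middle point lies on or above the chord: discard
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("x lies outside the composition range of the hull")


def energy_above_hull(phases, x, energy):
    """Distance of a phase above the hull; 0 means it is on the hull."""
    return energy - hull_energy(lower_hull(phases), x)


# Hypothetical phases: A, A3B, AB, AB3, B.
phases = [(0.0, 0.0), (0.25, -0.3), (0.5, -1.0), (0.75, -0.8), (1.0, 0.0)]
e_hull_a3b = energy_above_hull(phases, 0.25, -0.3)  # above the A + AB tie line
e_hull_ab3 = energy_above_hull(phases, 0.75, -0.8)  # a hull vertex
```

In this toy system A3B sits 0.2 eV/atom above the line joining A and AB, so it would be predicted to decompose into those two phases, while AB3 lies on the hull.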

Feature preprocessing is critical. For Magpie features, min-max scaling is commonly applied to normalize every feature to the [0, 1] range, preventing features with large magnitudes from dominating the model [26]. For the ECCNN input, a custom encoding script maps each element's ground-state electron configuration onto the standardized 118 × 168 × 8 input array [3].
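Min-max scaling can be sketched as follows. The one design point worth showing is that the minima and maxima are fitted on the training rows only and then reused for validation and test rows, so no information leaks from held-out data. The function names and feature values are illustrative.

```python
def fit_min_max(rows):
    """Per-column minima and maxima, computed on the training rows only."""
    columns = list(zip(*rows))
    return [min(c) for c in columns], [max(c) for c in columns]


def transform_min_max(rows, mins, maxs):
    """Scale each column with x' = (x - min) / (max - min)."""
    return [
        [0.0 if hi == lo else (v - lo) / (hi - lo)  # constant column maps to 0
         for v, lo, hi in zip(row, mins, maxs)]
        for row in rows
    ]


train_rows = [[1.0, 10.0], [3.0, 30.0], [2.0, 20.0]]
mins, maxs = fit_min_max(train_rows)
train_scaled = transform_min_max(train_rows, mins, maxs)
# Held-out rows reuse the training statistics and may fall outside [0, 1].
test_scaled = transform_min_max([[4.0, 10.0]], mins, maxs)
```

A test value larger than anything seen in training scales to more than 1, which is expected behavior and not an error.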

Model Training and Evaluation Protocol

A standard protocol involves a train-validation-test split, often with an 80-10-10 ratio. K-fold cross-validation (e.g., 5 folds) is used on the training data to tune hyperparameters and to estimate performance more robustly than a single split allows, which reduces the risk of overfitting to one particular partition [4].
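The splitting protocol can be sketched with the standard library alone. The 80-10-10 ratio and five folds follow the text; the seed, the helper names, and running cross-validation on the training portion only are choices made for this illustration.

```python
import random


def train_val_test_split(n_samples, ratios=(0.8, 0.1, 0.1), seed=0):
    """Shuffle sample indices and cut them into train, validation, and test."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # seeded for reproducibility
    n_train = round(ratios[0] * n_samples)
    n_val = round(ratios[1] * n_samples)
    return (indices[:n_train],
            indices[n_train:n_train + n_val],
            indices[n_train + n_val:])


def k_fold(indices, k=5):
    """Yield (fit_indices, held_out_indices) for each of k folds."""
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        fit = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield fit, folds[i]


train_idx, val_idx, test_idx = train_val_test_split(100)
cv_folds = list(k_fold(train_idx, k=5))
```

Each training sample is held out exactly once across the five folds, so every hyperparameter setting is scored on data it was not fitted to.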

The ECSG framework requires a two-stage training process:

- Base Model Training: The Magpie (XGBoost), Roost (GNN), and ECCNN (CNN) models are trained independently on the same training dataset.

- Meta-Learner Training: The predictions of the three base models on the validation set are used as features to train the meta-learner.
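The second stage can be sketched as a small logistic regression fitted to base-model outputs. The probabilities below are hypothetical stand-ins for what trained Magpie, Roost, and ECCNN models might output on a validation set, and the gradient-descent routine is a minimal illustration rather than the ECSG implementation.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_meta_learner(base_preds, labels, lr=0.5, epochs=2000):
    """Logistic regression on base-model probabilities (batch gradient descent)."""
    n_models, n = len(base_preds[0]), len(labels)
    weights, bias = [0.0] * n_models, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_models, 0.0
        for x, y in zip(base_preds, labels):
            err = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for k in range(n_models):
                grad_w[k] += err * x[k]
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict_meta(base_pred, weights, bias):
    """Ensemble probability of stability for one compound."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, base_pred)) + bias)


# Columns: hypothetical P(stable) from the Magpie, Roost, and ECCNN base models.
val_preds = [[0.6, 0.8, 0.9], [0.4, 0.7, 0.8], [0.7, 0.3, 0.2],
             [0.5, 0.2, 0.1], [0.3, 0.9, 0.7], [0.6, 0.1, 0.3]]
val_labels = [1, 1, 0, 0, 1, 0]  # 1 = stable, 0 = unstable
meta_w, meta_b = fit_meta_learner(val_preds, val_labels)
ensemble_p = predict_meta([0.5, 0.85, 0.9], meta_w, meta_b)
```

In this toy data the first column carries little signal, so the fitted weights lean on the other two. The same mechanism lets a meta-learner down-weight a base model that is unreliable for a given dataset.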

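The final model is evaluated on the held-out test set. Classification of stable versus unstable compounds is scored with metrics such as AUC, accuracy, and F1-score, while regression of continuous targets such as E hull is scored with Root Mean Square Error (RMSE) [3] [4].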
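For reference, accuracy, F1-score, and RMSE can be written out directly from their definitions, as in the sketch below. The labels and energies are toy values.

```python
import math


def accuracy(y_true, y_pred):
    """Fraction of labels predicted correctly."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def f1_score(y_true, y_pred):
    """Harmonic mean of precision and recall for the positive (stable) class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def rmse(y_true, y_pred):
    """Root mean square error for a continuous target."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


labels_true = [1, 1, 0, 0, 1]
labels_pred = [1, 0, 0, 1, 1]
toy_accuracy = accuracy(labels_true, labels_pred)
toy_f1 = f1_score(labels_true, labels_pred)
toy_rmse = rmse([0.0, 0.1], [0.1, 0.3])  # energies in eV/atom
```

F1 is often more informative than accuracy for stability data, because stable compounds are usually a small minority of the candidates.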

Validation via First-Principles Calculations

To demonstrate practical utility, proposed stable compounds identified by the ML model are validated using Density Functional Theory (DFT) calculations [3]. This involves computing the formation energy of the new compound and all its potential decomposition products to determine its E hull definitively. Case studies on two-dimensional wide bandgap semiconductors and double perovskite oxides have confirmed the ECSG model's remarkable accuracy, with a high proportion of its predictions being validated by subsequent DFT analysis [3].

Visualization of the ECSG Workflow

Figure: Integrated workflow of the ECSG ensemble model, from feature input to final prediction (Graphviz DOT diagram, not included in this text).

The Scientist's Toolkit

Table 4: Essential Computational Reagents for Thermodynamic Stability Prediction

| Research Reagent / Tool | Type / Category | Primary Function in Research |
|---|---|---|
| Materials Project (MP) Database | Computational Database | Provides a vast repository of DFT-calculated material properties, including formation energies and E hull values, for model training [3]. |
| Density Functional Theory (DFT) | Computational Method | Serves as the computational ground truth for calculating target variables like E hull and validates ML model predictions [3] [4]. |
| JARVIS Database | Computational Database | Another key source of curated materials data, often used for benchmarking model performance [3]. |
| Shapley Additive Explanations (SHAP) | Model Interpretation Tool | Explains the output of ML models by quantifying the contribution of each input feature to the final prediction, aiding in scientific insight [26] [4]. |
| XGBoost Algorithm | Machine Learning Algorithm | A highly efficient and effective gradient boosting framework often used for models based on tabular feature data (e.g., Magpie) [3] [26]. |
| Graph Neural Network (GNN) | Machine Learning Architecture | The core learning algorithm for models like Roost that operate on graph-structured data representing chemical compositions [3]. |
| Convolutional Neural Network (CNN) | Machine Learning Architecture | The core learning algorithm for models like ECCNN that operate on image-like representations of electron configurations [3]. |

The accurate prediction of the thermodynamic stability of inorganic compounds represents a fundamental challenge in materials science, with profound implications for the discovery of new catalysts, energy storage materials, and pharmaceuticals. Traditional approaches, primarily based on density functional theory (DFT) calculations, provide accuracy at a prohibitive computational cost that severely limits high-throughput exploration. The emergence of machine learning (ML) offers a transformative pathway to accelerate this process by several orders of magnitude. This whitepaper provides an in-depth technical examination of three pivotal algorithmic paradigms—Neural Networks (NNs), Graph Neural Networks (GNNs), and Boosted Trees—within the specific context of predicting thermodynamic stability. We dissect their underlying mechanisms, present quantitative performance comparisons grounded in recent literature, and provide detailed experimental protocols for their application, framing this within a broader thesis that ensemble approaches and multi-fidelity physical knowledge integration are key to unlocking the next generation of materials informatics.

Algorithmic Fundamentals

Neural Networks for Composition-Based Learning

Standard and Physics-Informed Neural Networks operate directly on vectorized representations of materials composition or structure. Their strength lies in learning complex, non-linear mappings from feature space to target properties like formation energy or decomposition enthalpy (\(\Delta H_d\)), a key metric of thermodynamic stability [3].

A significant advancement is the move from "black-box" models to those that integrate physical constraints. The ThermoLearn architecture exemplifies this as a multi-output Physics-Informed Neural Network (PINN) [27]. It simultaneously predicts total energy (\(E\)), entropy (\(S\)), and Gibbs free energy (\(G\)) by explicitly embedding the thermodynamic relation \(G = E - TS\) directly into the loss function \(L\):

\[ L = w_1 \times \mathrm{MSE}_{E} + w_2 \times \mathrm{MSE}_{S} + w_3 \times \mathrm{MSE}_{\mathrm{Thermo}} \]