Machine Learning for Predicting Energy Above Convex Hull in Inorganic Materials: A Comprehensive Guide

Abstract

This article provides a comprehensive overview of how machine learning (ML) is revolutionizing the prediction of the energy above the convex hull, a key metric for assessing the thermodynamic stability of inorganic materials. Tailored for researchers and scientists, we explore the foundational principles behind this metric and its critical role in materials discovery. The content delves into a diverse range of ML methodologies, from ensemble models and graph neural networks to advanced interatomic potentials, highlighting their applications across various material classes like MXenes and perovskites. We address critical challenges such as model bias, data scarcity, and the misalignment between regression accuracy and classification performance, offering solutions for optimizing predictive workflows. Furthermore, the article presents rigorous validation frameworks and comparative analyses of state-of-the-art models, empowering researchers to select the most effective strategies for high-throughput screening and accelerate the development of novel functional materials.

The Convex Hull and Thermodynamic Stability: A Primer for Materials Discovery

The energy above convex hull (Eₕᵤₗₗ) serves as a fundamental metric in computational materials science for assessing the thermodynamic stability of inorganic crystalline compounds. It quantifies the energy difference between a given material and the most stable combination of other phases in its chemical space. A material with an Eₕᵤₗₗ of 0 eV/atom lies on the convex hull and is considered thermodynamically stable at 0 K, while a positive value indicates a tendency to decompose into more stable neighboring phases [1].

This metric has become indispensable for high-throughput screening in materials discovery, enabling researchers to prioritize candidate materials for synthesis. The integration of machine learning (ML) models with this stability metric is accelerating the inverse design of novel functional materials for applications in energy storage, catalysis, and carbon capture [2]. Accurate prediction of Eₕᵤₗₗ allows computational researchers to navigate the vast chemical space of potential inorganic compounds, which far exceeds the number of known synthesized materials [3].

Theoretical Foundation and Calculation

Fundamental Concepts

The convex hull is a geometrical construction in energy-composition space that represents the minimum energy "envelope" for all possible compositions in a chemical system. For a multi-element system, the convex hull identifies the set of phases that are thermodynamically stable against decomposition into any other combination of phases [1].

The calculation involves:

- Formation Energy (ΔHf): The energy required to form a compound from its elemental constituents, typically calculated using Density Functional Theory (DFT).

- Decomposition Energy (ΔHd): The energy difference between a compound and the most stable combination of other phases at the same overall composition.

The Eₕᵤₗₗ is effectively the vertical distance in energy from a compound's formation energy to this convex hull surface [1]. For stable compounds this value is zero by construction; for unstable compounds it is positive and equals the energy per atom that would be released on decomposition into the stable hull phases.

Mathematical Formulation

For a compound with composition AₓBᵧ, the formation energy per atom is calculated as:

ΔHf = [Eₜₒₜₐₗ(AₓBᵧ) - xμₐ⁰ - yμᵦ⁰] / (x+y)

Where Eₜₒₜₐₗ(AₓBᵧ) is the DFT total energy of the compound, and μₐ⁰ and μᵦ⁰ are the reference chemical potentials of elements A and B in their standard states [4].

The Eₕᵤₗₗ is then determined by comparing this formation energy to the convex hull constructed from all known phases in the A-B system. For example, if an unstable compound decomposes into a mixture of other phases, the Eₕᵤₗₗ can be calculated using the decomposition reaction stoichiometry [1]:

Eₕᵤₗₗ = E(compound) - Σᵢ cᵢE(decomposition productᵢ)

Where cᵢ represents the stoichiometric coefficients that balance the chemical reaction. This formulation generalizes to ternary, quaternary, and higher-order systems through multi-dimensional convex hull constructions [1].
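As a minimal sketch of the two formulas above, the following Python functions compute a formation energy per atom and an Eₕᵤₗₗ from a known decomposition reaction. The function names are illustrative, and every number (total energy, reference chemical potentials, product energies) is a hypothetical placeholder rather than a DFT result.

```python
def formation_energy_per_atom(e_total, counts, mu_ref):
    """Return [E_total - sum_i n_i * mu_i^0] / sum_i n_i in eV/atom."""
    n_atoms = sum(counts.values())
    e_ref = sum(n * mu_ref[el] for el, n in counts.items())
    return (e_total - e_ref) / n_atoms


def e_hull_from_decomposition(e_compound, products):
    """Return E(compound) - sum_i c_i * E(product_i).

    Energies are per atom; the c_i are atomic fractions and must sum to 1.
    """
    if abs(sum(c for c, _ in products) - 1.0) > 1e-9:
        raise ValueError("decomposition coefficients must sum to 1")
    return e_compound - sum(c * e for c, e in products)


# Hypothetical AB2 compound: total energy per formula unit and elemental
# reference chemical potentials, all in eV (placeholder values).
mu_ref = {"A": -3.0, "B": -5.0}
dhf_ab2 = formation_energy_per_atom(-14.5, {"A": 1, "B": 2}, mu_ref)

# Hypothetical decomposition into two hull phases, weighted by atomic fraction.
# Formation energies are used on both sides, so elemental references cancel.
e_hull_ab2 = e_hull_from_decomposition(dhf_ab2, [(0.5, -0.7), (0.5, -0.5)])
print(f"dHf = {dhf_ab2:.3f} eV/atom, E_hull = {e_hull_ab2:.3f} eV/atom")
```

With these placeholder numbers the compound has a negative formation energy yet still sits about 0.1 eV/atom above the hull, which illustrates why a negative ΔHf alone does not imply stability.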

Machine Learning Approaches for Prediction

Model Architectures and Performance

Table 1: Machine Learning Models for Energy and Stability Prediction

| Model Name | Architecture Type | Input Features | Prediction Target | Reported MAE (eV/atom) |
|---|---|---|---|---|
| CGCNN [4] | Crystal Graph Convolutional Neural Network | Crystal structure | Total Energy | 0.041 |
| iCGCNN [4] | Improved Crystal Graph CNN | Crystal structure | Formation Enthalpy | 0.03-0.04 |
| MEGNet [4] | Materials Graph Network | Crystal structure | Formation Enthalpy | 0.03-0.04 |
| ElemNet [3] | Deep Neural Network | Composition only | Formation Energy | ~0.08 |
| Roost [3] | Graph Neural Network | Composition only | Formation Energy | ~0.06 |
| MatterGen [2] | Diffusion Model | Composition, structure | Stable crystal generation | N/A |

Machine learning models for predicting formation energy and stability have evolved from composition-based models to structure-aware approaches. Early compositional models like Meredig, Magpie, and AutoMat used engineered features from stoichiometry alone, while newer architectures like graph neural networks (GNNs) incorporate structural information for improved accuracy [3].

The MatterGen model represents a significant advancement as a diffusion-based generative model that directly generates stable, diverse inorganic materials across the periodic table. This model introduces a diffusion process that generates crystal structures by gradually refining atom types, coordinates, and the periodic lattice, with generated structures being more than ten times closer to the local energy minimum compared to previous approaches [2].

Training Data Considerations

The performance of ML models for stability prediction heavily depends on training data composition. Models trained exclusively on ground-state structures from databases like the Materials Project often perform poorly on higher-energy hypothetical structures. A balanced dataset containing both stable and unstable structures is essential for accurate stability predictions [4].

Table 2: Data Requirements for ML Stability Models

| Data Aspect | Importance for Model Performance | Recommended Approach |
|---|---|---|
| Ground-state structures | Provides baseline for stable materials | Include diverse compositions from MP, OQMD |
| Higher-energy structures | Enables discrimination between stable/unstable | Generate via ionic substitution, random search |
| Structural diversity | Ensures transferability across chemical spaces | Include multiple polymorphs per composition |
| Elemental coverage | Enables prediction across periodic table | Curate dataset with up to 20 atoms per cell |
| Experimental validation | Confirms synthesizability | Include ICSD entries with synthesis reports |

Experimental Protocols and Workflows

Computational Determination of Eₕᵤₗₗ

Protocol: DFT-Based Convex Hull Construction

Reference Energy Calculation

- Perform DFT calculations for all elemental phases in their standard states

- Use consistent computational parameters (functional, pseudopotentials, k-point mesh)

- Apply corrections for known DFT limitations (e.g., van der Waals interactions)

Compound Energy Calculation

- Relax crystal structures to their ground state using DFT

- Calculate total energy for each compound in the chemical space of interest

- Compute formation energies relative to elemental references

Convex Hull Construction

- Represent each compound as a point in composition-energy space

- Apply convex hull algorithm to identify the lower energy envelope

- Calculate Eₕᵤₗₗ as the vertical distance to this hull for each compound

Validation

- Compare predicted stable compounds with experimental data

- Verify known stable phases lie on or near the hull

- Check for consistency across similar chemical systems
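The hull-construction step of this protocol can be sketched for the simplest case, a binary A-B system, using only the Python standard library. This is an illustrative sketch, not the routine used by any particular database: the phase names and formation energies are hypothetical, and ternary or higher-order systems need a general convex hull routine such as the phase diagram tools in pymatgen.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points (Andrew's monotone chain)."""
    best = {}
    for x, e in points:  # keep only the lowest-energy phase at each composition
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        # Pop the previous vertex while it lies on or above the line
        # from the vertex before it to the new point p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (x - x1) / (x2 - x1) * (y2 - y1)
    raise ValueError("composition outside the range spanned by the hull")


# Hypothetical phases: (fraction of B, formation energy in eV/atom).
phases = {
    "A": (0.0, 0.0),
    "A3B": (0.25, -0.10),
    "AB": (0.50, -0.40),
    "AB3": (0.75, -0.30),
    "B": (1.0, 0.0),
}
hull = lower_hull(phases.values())
e_above_hull = {name: e - hull_energy(hull, x) for name, (x, e) in phases.items()}
for name, value in e_above_hull.items():
    print(f"{name}: E_hull = {value:.3f} eV/atom")
```

In this toy system A3B has a negative formation energy but lies above the tie line between A and AB, so it is the only phase with a nonzero distance to the hull.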

ML-Enhanced Discovery Workflow

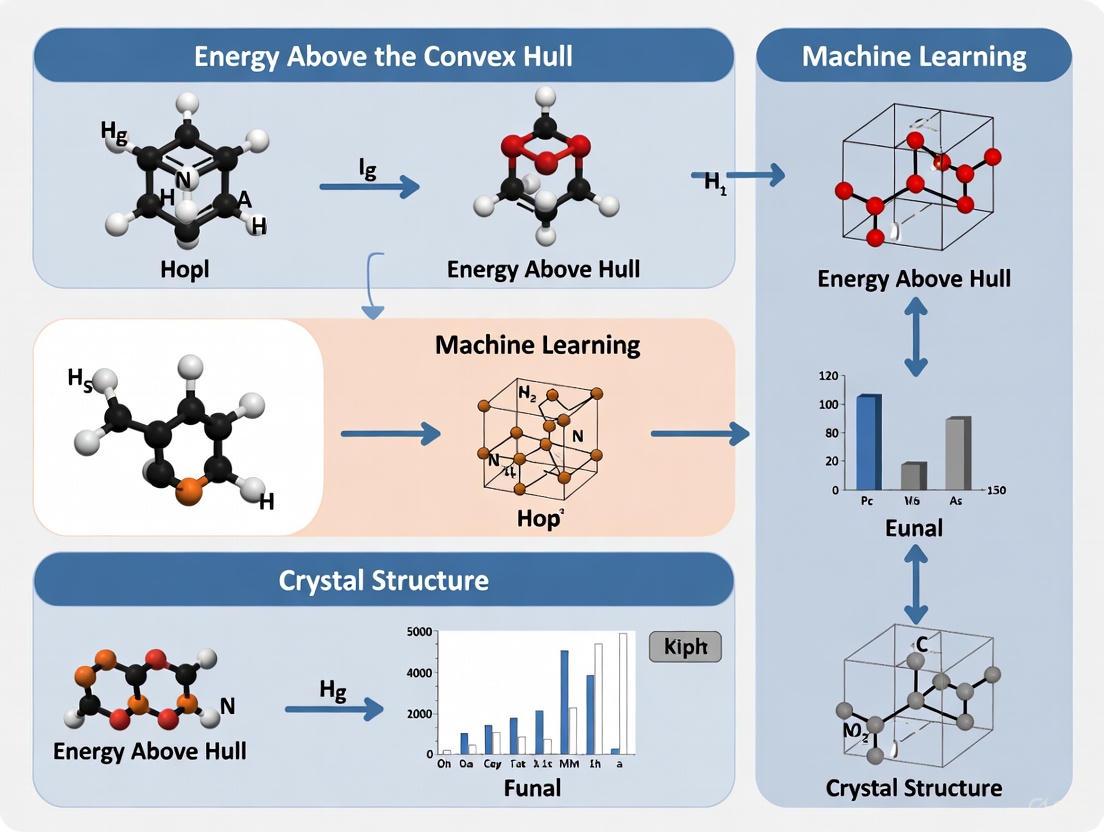

Diagram 1: ML-Driven Materials Discovery Workflow. This flowchart outlines the integrated computational-experimental pipeline for discovering stable materials, combining generative ML with DFT validation.

Research Reagent Solutions

Table 3: Essential Computational Tools for Stability Prediction

| Tool Category | Specific Solutions | Function in Research |
|---|---|---|
| Materials Databases | Materials Project [2] [5], Alexandria [2], OQMD [4], ICSD [6] | Provide reference data for stable and synthesized compounds |
| DFT Codes | VASP, Quantum ESPRESSO, CASTEP | Calculate accurate total energies for convex hull construction |
| ML Frameworks | PyTorch, TensorFlow, JAX | Enable development of custom stability prediction models |
| Structure Analysis | pymatgen [1], ASE, CIF processing tools | Handle crystal structure manipulation and analysis |
| Generative Models | MatterGen [2], CDVAE, DiffCSP | Directly generate novel stable crystal structures |
| Synthesizability Prediction | Composition-structure ensemble models [5] | Prioritize candidates with high probability of experimental synthesis |

Advanced Applications and Validation

Inverse Materials Design

The integration of Eₕᵤₗₗ prediction with generative models enables true inverse design of materials with target properties. MatterGen demonstrates this capability through adapter modules that allow fine-tuning toward desired chemical composition, symmetry, and properties including mechanical, electronic, and magnetic characteristics [2]. This approach successfully generates stable new materials that satisfy multiple property constraints simultaneously, such as high magnetic density and favorable supply-chain characteristics.

Experimental Validation

Computational stability predictions require experimental validation to assess real-world synthesizability. In one implementation of a synthesizability-guided pipeline, researchers applied a combined compositional and structural synthesizability score to screen 4.4 million computational structures, identifying several hundred highly synthesizable candidates [5]. Through subsequent synthesis experiments across 16 targets, they successfully synthesized 7 compounds, validating the computational predictions.

A critical consideration is that thermodynamic stability (low Eₕᵤₗₗ) alone does not guarantee synthesizability. Among candidates with low Eₕᵤₗₗ, vibrationally stable materials (those without imaginary phonon modes) are better candidates for experimental realization [6]. Machine learning classifiers trained on vibrational stability data can further refine candidate selection by identifying materials likely to be vibrationally stable.

Limitations and Future Directions

Current challenges in Eₕᵤₗₗ prediction include:

- Accuracy Gaps: Compositional ML models often perform poorly on stability predictions despite accurate formation energy predictions [3]

- Finite-Temperature Effects: Standard Eₕᵤₗₗ calculations neglect entropic and kinetic factors governing synthetic accessibility [5]

- Data Biases: Models trained primarily on ground-state structures may be unreliable for higher-energy configurations [4]

Future advancements will require improved integration of structural information, better handling of temperature effects, and more sophisticated models that directly learn stability rather than just formation energies. The development of foundational generative models for materials design represents a promising direction for addressing these challenges [2].
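The gap between regression accuracy and stability classification can be illustrated with a small, entirely hypothetical set of true and predicted Eₕᵤₗₗ values. The sketch below assumes that "stable" means Eₕᵤₗₗ at or below a small tolerance; the numbers are invented to make the point and do not come from any benchmark.

```python
def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def stable_classification(y_true, y_pred, tol=1e-6):
    """Precision, recall and F1 for the 'stable' class (E_hull <= tol)."""
    true_stable = [t <= tol for t in y_true]
    pred_stable = [p <= tol for p in y_pred]
    tp = sum(t and p for t, p in zip(true_stable, pred_stable))
    fp = sum(p and not t for t, p in zip(true_stable, pred_stable))
    fn = sum(t and not p for t, p in zip(true_stable, pred_stable))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical E_hull values in eV/atom; most candidates sit close to the hull.
e_hull_true = [0.00, 0.00, 0.01, 0.02, 0.03, 0.30]
e_hull_pred = [0.02, 0.03, 0.00, 0.00, 0.01, 0.28]

mae = mean_absolute_error(e_hull_true, e_hull_pred)
precision, recall, f1 = stable_classification(e_hull_true, e_hull_pred)
print(f"MAE = {mae * 1000:.0f} meV/atom, precision = {precision}, recall = {recall}, F1 = {f1}")
```

Here an MAE of 20 meV/atom, which looks competitive as a regression score, coexists with an F1 of zero for the stable class, because every error happens to cross the decision boundary at the hull.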

Why Stability Prediction is a 'Needle in a Haystack' Problem

The discovery of new, thermodynamically stable inorganic materials is a quintessential "needle in a haystack" problem [7]. This analogy stems from the vast, unexplored compositional space of possible inorganic compounds. While approximately 10^5 combinations have been tested experimentally and ~10^7 have been simulated through computational methods, the potential number of possible quaternary materials alone is estimated to be upwards of 10^10 [8]. The actual number of compounds that can be feasibly synthesized in a laboratory represents only a minute fraction of this total space [7]. This immense combinatorial challenge necessitates highly effective strategies to constrain the exploration space and winnow out materials that are difficult or impossible to synthesize, thereby significantly amplifying the efficiency of materials development.

Defining Thermodynamic Stability: The Energy Above Hull

In computational materials science, the thermodynamic stability of a material is typically assessed using the energy above the convex hull (E_hull), a key metric quantifying a compound's relative stability [1] [8].

- Conceptual Definition: The convex hull is a geometric construction in energy-composition space representing the minimum energy "envelope" for all compositions in a chemical system. The energy above hull is the vertical energy distance from a given compound to this hull [1].

- Thermodynamic Meaning: A compound with E_hull = 0 meV/atom is thermodynamically stable and will not decompose into other phases. A compound with E_hull > 0 is metastable or unstable and will tend to decompose into the phases defining the hull at that composition [1] [8]. The magnitude of E_hull indicates the degree of instability.

- Decomposition Pathway: The decomposition energy (ΔHd) is the energy difference between a compound and its most stable decomposition products. For example, for the oxynitride BaTaO₂N with decomposition products ⅔ Ba₄Ta₂O₉ + 7⁄₄₅ Ba(TaN₂)₂ + 8⁄₄₅ Ta₃N₅, where the coefficients are atomic fractions that sum to 1, E_hull is calculated using the normalized (eV/atom) energies of all compounds involved [1].
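The decomposition products quoted above contain only Ba, Ta, O and N, and the coefficients balance for a BaTaO₂N target when they are read as atomic fractions. The sketch below checks that balance exactly with Python's `fractions` module and then evaluates E_hull. The per-atom energies are hypothetical placeholders chosen for illustration, not values from reference [1].

```python
from fractions import Fraction as F

target = {"Ba": 1, "Ta": 1, "O": 2, "N": 1}
products = [
    (F(2, 3), {"Ba": 4, "Ta": 2, "O": 9}),    # Ba4Ta2O9
    (F(7, 45), {"Ba": 1, "Ta": 2, "N": 4}),   # Ba(TaN2)2
    (F(8, 45), {"Ta": 3, "N": 5}),            # Ta3N5
]


def atomic_fractions(formula):
    """Per-atom elemental fractions of a formula given as {element: count}."""
    n_atoms = sum(formula.values())
    return {el: F(count, n_atoms) for el, count in formula.items()}


# Elemental content of one atom's worth of the product mixture.
mixture = {}
for coeff, formula in products:
    for el, frac in atomic_fractions(formula).items():
        mixture[el] = mixture.get(el, 0) + coeff * frac

balanced = mixture == atomic_fractions(target)
print("coefficients conserve composition:", balanced)

# Hypothetical per-atom energies in eV/atom (placeholders, not DFT results).
e_target = -8.50
e_products = [-8.60, -8.40, -8.30]
e_hull_example = e_target - sum(float(c) * e for (c, _), e in zip(products, e_products))
print(f"E_hull = {e_hull_example:.4f} eV/atom")
```

Working with per-atom fractions rather than per-formula-unit coefficients is what lets the eV/atom energies be combined directly.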

Machine Learning Approaches for Stability Prediction

Machine learning offers promising avenues to accelerate stability prediction by accurately forecasting E_hull, bypassing more expensive computational methods. The table below summarizes the performance of various ML models documented in recent literature.

Table 1: Performance of Machine Learning Models for Stability-Related Property Prediction

| Model Name | Architecture/Type | Target Property | Key Performance Metrics | Reference / Dataset |
|---|---|---|---|---|
| ECSG | Ensemble (Stacked Generalization) | Thermodynamic Stability | AUC = 0.988; requires 1/7 data of existing models | [7] |
| Neural Network | Neural Network | Energy Above Hull (MXenes) | MAE: 0.03 eV (train), 0.08 eV (test) | C2DB [9] |
| Random Forest | Random Forest | Heat of Formation (MXenes) | MAE: 0.15 eV (train), 0.23 eV (test) | C2DB [9] |
| Universal Interatomic Potentials (UIPs) | Various | Crystal Stability | Top performer in prospective benchmarking | Matbench Discovery [8] |
| Text-based Transformer | Language Model (MatBERT) | Energy Above Hull & other properties | Outperforms graph neural networks on 4/5 properties | JARVIS [10] |

Key ML Model Architectures and Insights

- Ensemble and Multi-Framework Approaches: The ECSG framework mitigates inductive bias by integrating three models based on distinct knowledge domains: Magpie (atomic properties), Roost (interatomic interactions), and ECCNN (electron configuration) [7]. This synergy enhances overall performance and sample efficiency.

- The Rise of Universal Interatomic Potentials: Recent benchmarking efforts indicate that UIPs have advanced sufficiently to effectively and cheaply pre-screen thermodynamically stable hypothetical materials, outperforming other methodologies in both accuracy and robustness for discovery campaigns [8].

- Emerging Language-Based Models: Transformers fine-tuned on human-readable text descriptions of crystal structures demonstrate state-of-the-art performance in property prediction, including E_hull, while offering enhanced interpretability of the model's reasoning [10].

Experimental and Computational Protocols

Protocol 1: Ensemble ML for Stability Prediction (ECSG Framework)

Objective: To accurately predict thermodynamic stability of inorganic compounds using an ensemble machine learning framework.

Step-by-Step Procedure:

Data Acquisition and Preprocessing:

Feature Generation:

- For Magpie: Calculate statistical features (mean, deviation, range) for elemental properties like atomic number, radius, and electronegativity [7].

- For Roost: Represent the chemical formula as a graph of elements to model interatomic interactions [7].

- For ECCNN: Encode the electron configuration of constituent elements into a 118×168×8 matrix input for a Convolutional Neural Network [7].
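As a rough sketch of the Magpie-style statistics described above, the following code computes the composition-weighted mean, the mean absolute deviation and the range of two elemental properties. It is a simplified illustration, not the Magpie implementation: the element table is a tiny hand-entered subset (atomic number and Pauling electronegativity), and the formula parser is a hypothetical helper that does not handle parentheses.

```python
import re

# Small illustrative subset: atomic number Z and Pauling electronegativity chi.
ELEMENT_DATA = {
    "Li": {"Z": 3, "chi": 0.98},
    "O": {"Z": 8, "chi": 3.44},
    "Na": {"Z": 11, "chi": 0.93},
    "Cl": {"Z": 17, "chi": 3.16},
    "Ti": {"Z": 22, "chi": 1.54},
    "Ba": {"Z": 56, "chi": 0.89},
}


def parse_formula(formula):
    """Parse a flat formula such as 'BaTiO3' into {element: amount}."""
    counts = {}
    for el, num in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[el] = counts.get(el, 0.0) + (float(num) if num else 1.0)
    return counts


def magpie_style_features(formula, properties=("Z", "chi")):
    counts = parse_formula(formula)
    total = sum(counts.values())
    fractions = {el: n / total for el, n in counts.items()}
    features = {}
    for prop in properties:
        values = {el: ELEMENT_DATA[el][prop] for el in fractions}
        mean = sum(fractions[el] * v for el, v in values.items())
        features[f"{prop}_mean"] = mean
        features[f"{prop}_avg_dev"] = sum(
            fractions[el] * abs(v - mean) for el, v in values.items()
        )
        features[f"{prop}_range"] = max(values.values()) - min(values.values())
    return features


features = magpie_style_features("BaTiO3")
for name, value in features.items():
    print(f"{name}: {value:.3f}")
```

Because the features depend only on stoichiometry, two polymorphs of the same composition receive identical vectors, which is one reason composition-only models struggle to rank competing structures.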

Model Training and Stacking:

- Independently train the three base-level models (Magpie, Roost, ECCNN) on the training dataset.

- Use the predictions of these base models as input features for a meta-learner (super learner) that produces the final stability prediction [7].

- Apply cross-validation to prevent overfitting.
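The stacking step can be sketched in plain Python as follows. This is a toy illustration of stacked generalization under strong assumptions, not the ECSG code: the three base learners are simple nearest-centroid scorers standing in for Magpie, Roost and ECCNN, each "view" is a hypothetical feature column, the meta-learner is a small logistic regression, and the data are synthetic.

```python
import math
import random


def fit_centroid(X, y, cols):
    """Base learner: class centroids on a subset of feature columns."""
    cents = {}
    for label in (0, 1):
        rows = [[x[c] for c in cols] for x, t in zip(X, y) if t == label]
        cents[label] = [sum(col) / len(rows) for col in zip(*rows)]

    def predict(x):
        v = [x[c] for c in cols]
        d0, d1 = math.dist(v, cents[0]), math.dist(v, cents[1])
        return 1.0 / (1.0 + math.exp(d1 - d0))  # closer to class 1 -> score > 0.5

    return predict


def fit_logistic(Z, y, lr=1.0, epochs=1000):
    """Meta-learner: logistic regression trained by batch gradient descent."""
    w, b = [0.0] * len(Z[0]), 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * len(w), 0.0
        for z, t in zip(Z, y):
            p = 1.0 / (1.0 + math.exp(-(sum(wi * zi for wi, zi in zip(w, z)) + b)))
            for j, zj in enumerate(z):
                gw[j] += (p - t) * zj
            gb += p - t
        w = [wi - lr * g / len(y) for wi, g in zip(w, gw)]
        b -= lr * gb / len(y)
    return lambda z: 1.0 / (1.0 + math.exp(-(sum(wi * zi for wi, zi in zip(w, z)) + b)))


def fit_stack(X, y, views, k=5):
    """Train the meta-learner on out-of-fold base predictions, then refit bases."""
    n = len(X)
    oof = [[0.0] * len(views) for _ in range(n)]
    for fold in range(k):
        train = [i for i in range(n) if i % k != fold]
        held = [i for i in range(n) if i % k == fold]
        for j, cols in enumerate(views):
            model = fit_centroid([X[i] for i in train], [y[i] for i in train], cols)
            for i in held:
                oof[i][j] = model(X[i])
    meta = fit_logistic(oof, y)
    bases = [fit_centroid(X, y, cols) for cols in views]
    return lambda x: meta([base(x) for base in bases])


# Synthetic data: label 1 = "stable", three noisy features, one per view.
rng = random.Random(0)
labels = [i % 2 for i in range(300)]
data = [[rng.gauss(1.0 if t else -1.0, 1.0) for _ in range(3)] for t in labels]
stack = fit_stack(data[:200], labels[:200], views=[(0,), (1,), (2,)])
accuracy = sum((stack(x) > 0.5) == bool(t) for x, t in zip(data[200:], labels[200:])) / 100
print(f"held-out accuracy of the stacked model: {accuracy:.2f}")
```

Training the meta-learner on out-of-fold predictions, rather than on predictions for samples the base models have already seen, is the cross-validation step that keeps the stack from overfitting to overconfident base outputs.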

Validation:

- Validate model performance prospectively on newly generated hypothetical materials or unseen test sets from databases.

- Confirm top predictions using higher-fidelity methods like Density Functional Theory (DFT) calculations [7].

Protocol 2: Calculating Energy Above Hull via DFT

Objective: To determine the energy above hull of a target compound using first-principles calculations.

Step-by-Step Procedure:

Define the Chemical System: Identify all elements present in the target compound and its potential decomposition products (e.g., A-B-N-O for an oxynitride) [1].

Perform DFT Calculations:

- For the target compound and all known competing phases in the chemical system, perform structural relaxation and energy calculations using standardized DFT parameters (e.g., as implemented in VASP) [1] [8].

- Use consistent calculation settings (functional, potentials, convergence criteria) across all materials for comparable energies.

Construct the Convex Hull:

- For the defined chemical space, plot the formation energy (eV/atom) versus composition for all calculated compounds.

- The convex hull is the set of stable phases forming the lowest-energy envelope in this plot. Any phase lying on this hull has E_hull = 0 [1].

Calculate E_hull for Target Compound:

- The energy above hull is the energy difference per atom between the target compound and the corresponding point on the convex hull at the same composition.

- This can be calculated using the formula:

E_hull = E_target - (sum(fraction_i * E_decomposition_phase_i)), where the fractions ensure conservation of elemental concentrations [1].

Table 2: Essential Computational Tools and Databases for Stability Prediction Research

| Tool/Resource Name | Type | Primary Function in Stability Prediction | Access / Reference |
|---|---|---|---|
| Materials Project (MP) | Database | Repository of DFT-calculated material properties, including formation energies and pre-calculated convex hull data. | [7] [1] |
| JARVIS-DFT | Database | A repository similar to MP, used for training and benchmarking machine learning models. | [10] |
| pymatgen | Software Library | A Python library for materials analysis; includes functionalities for constructing phase diagrams and calculating energies above hull. | [1] |
| Density Functional Theory (DFT) | Computational Method | First-principles quantum mechanical method used to calculate the precise formation energy of a crystal structure, serving as the ground truth for ML models. | [7] [8] |
| Robocrystallographer | Software Library | Automatically generates human-readable text descriptions of crystal structures, which can be used as input for language-based ML models. | [10] |

Predicting the thermodynamic stability of inorganic materials remains a formidable "needle in a haystack" challenge due to the enormity of the chemical search space. However, integrated approaches that leverage ensemble machine learning, advanced universal interatomic potentials, and high-throughput DFT are progressively transforming this pursuit from one of serendipity to a more systematic and efficient engineering discipline. By employing the protocols and resources outlined in this document, researchers can better navigate this complex landscape and accelerate the discovery of novel, stable materials.

Density Functional Theory (DFT) has served as the cornerstone computational method for predicting material properties and energies in inorganic research for decades. Its significance in calculating the energy above convex hull—a crucial metric for assessing thermodynamic stability in materials discovery—cannot be overstated. However, the computational cost of achieving chemical accuracy with advanced DFT functionals presents a fundamental bottleneck in high-throughput materials screening. This limitation becomes particularly pronounced when researchers attempt to explore complex compositional spaces or systems containing transition metals and rare-earth elements, where strong electron correlations dominate the physical properties. The pursuit of accurate energy above convex hull predictions thus represents a critical challenge where traditional DFT approaches face inherent trade-offs between computational feasibility and physical accuracy, creating an ideal opportunity for machine learning (ML) interventions to transform the materials discovery pipeline.

The integration of machine learning into computational materials science represents a paradigm shift from first-principles calculation to data-driven prediction. By learning the complex mappings between material composition, structure, and properties from existing DFT databases, ML models can potentially achieve DFT-level accuracy at fractions of the computational cost. This application note examines the specific limitations of DFT in predicting formation energies and stability metrics, details the emerging ML approaches that circumvent these limitations, and provides practical protocols for researchers working at the intersection of computational chemistry and materials informatics, with particular emphasis on predicting energy above convex hull for inorganic compounds.

The Fundamental Limitations of DFT in Energy Prediction

Accuracy Variations Across Exchange-Correlation Functionals

The choice of exchange-correlation functional in DFT calculations fundamentally governs the accuracy of predicted properties, particularly formation energies and band gaps that directly impact energy above convex hull assessments. Local Density Approximation (LDA) functionals, while computationally efficient, suffer from systematic underestimation of band gaps and often inadequately describe electron correlation effects, leading to significant errors in formation energy predictions for complex inorganic systems [11]. The Generalized Gradient Approximation (GGA), particularly the widely-used PBE functional, introduces density gradient corrections that improve upon LDA but still typically underestimate band gaps and formation energies for many semiconductor and insulator systems [11].

More sophisticated approaches include hybrid functionals such as HSE06, which incorporate a portion of exact Hartree-Fock exchange mixed with DFT exchange-correlation. These functionals demonstrate markedly improved accuracy for band gaps and formation energies. For instance, in Zn₃V₂O₈, HSE06 predicts a band gap of 2.8 eV compared to PBE's 1.2 eV, bringing calculations much closer to experimental values [11]. For strongly correlated electron systems, particularly those containing transition metals and rare-earth elements, DFT+U and GW+DMFT methods introduce parameterized corrections for electron self-interaction errors and dynamic correlations, though at substantially increased computational cost that limits their application in high-throughput screening [11].

Table 1: Comparative Accuracy of DFT Functionals for Energy-Related Properties

| Functional | Band Gap Accuracy | Formation Energy Accuracy | Computational Cost | Ideal Use Cases |
|---|---|---|---|---|
| LDA | Severe underestimation | Moderate to poor | Low | Simple metals, preliminary screening |
| GGA (PBE) | Systematic underestimation | Moderate | Low to moderate | Wide range of materials, high-throughput studies |
| Hybrid (HSE06) | Good to excellent | Good to excellent | High | Accurate formation energies, band structure prediction |
| PBE+U | Variable improvement | Improved for correlated systems | Moderate to high | Transition metal oxides, f-electron systems |
| GW+DMFT | Excellent | Excellent | Very high | Strongly correlated systems, benchmark calculations |

Quantitative Impact on Energy Above Convex Hull Predictions

The energy above convex hull (ΔEₕ) measures the thermodynamic stability of a compound relative to its competing phases: a value of zero indicates a stable compound on the hull, and positive values indicate metastable or unstable structures. A negative value can only arise when a new compound is compared against a hull built without it, in which case the compound becomes a new hull vertex. Errors in DFT-predicted formation energies propagate directly into ΔEₕ calculations, potentially misclassifying material stability. For example, systematic studies have demonstrated that GGA-predicted formation energies for transition metal oxides can exhibit errors of 100-200 meV/atom compared to experimental values, leading to incorrect stability assignments for phases near the convex hull boundary. These uncertainties become particularly problematic when evaluating novel materials with small energy differences between polymorphs or when assessing decomposition pathways for battery electrode materials and catalysts.

The computational expense of high-accuracy functionals creates practical limitations for comprehensive materials exploration. While a single GGA calculation for a medium-sized unit cell (50-100 atoms) might require hours to days on high-performance computing resources, hybrid functional calculations can take weeks for the same system, effectively prohibiting their application across large chemical spaces. This accuracy-efficiency trade-off fundamentally limits the discovery throughput for stable inorganic materials using DFT alone.

Machine Learning Solutions for Energy Prediction

ML Approaches to Bypass DFT Limitations

Machine learning approaches circumvent the DFT bottleneck by learning the relationship between material descriptors and target properties from existing computational or experimental data. Graph neural networks (GNNs) have emerged as particularly powerful frameworks for materials property prediction, as they naturally operate on crystal structures without requiring manual feature engineering. These models learn to represent atoms and their local environments, then aggregate this information to predict system-level properties such as formation energies and band gaps.

Kernel-based methods and random forest models utilizing composition-based features have demonstrated remarkable success in predicting formation energies across diverse chemical spaces. These approaches benefit from architectural simplicity and lower data requirements compared to deep learning methods, making them particularly valuable for limited-data regimes. For energy above convex hull prediction specifically, multitask learning frameworks that simultaneously predict formation energy, band gap, and mechanical properties have shown improved generalization by leveraging correlations between material properties.

Recent advances incorporate physical constraints and symmetry awareness into ML models, ensuring that predictions obey fundamental conservation laws and crystal symmetry requirements. Physics-informed neural networks for materials science embed thermodynamic constraints—such as the requirement that element reference states have zero formation energy—directly into the model architecture, resulting in more physically consistent predictions and improved extrapolation to unseen chemical spaces.
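One simple way to build such a constraint into a model, sketched below, is to let the learned part predict a per-atom energy and to define the formation energy as that prediction minus the composition-weighted predictions for the pure elements. Elemental reference states then have zero formation energy by construction, whatever the learned function is. The "model" here is a hypothetical closed-form stand-in with placeholder parameters, not a trained network.

```python
CHI = {"Na": 0.93, "Cl": 3.16, "O": 3.44}      # Pauling electronegativities
E_ATOM = {"Na": -1.3, "Cl": -1.8, "O": -4.9}   # hypothetical per-atom energies (eV)


def toy_energy_model(fractions):
    """Hypothetical stand-in for a learned per-atom total-energy model."""
    els = sorted(fractions)
    linear = sum(fractions[el] * E_ATOM[el] for el in els)
    pair = sum(
        fractions[a] * fractions[b] * abs(CHI[a] - CHI[b])
        for i, a in enumerate(els)
        for b in els[i + 1:]
    )
    return linear - 0.8 * pair


def constrained_formation_energy(energy_model):
    """Wrap an energy model so pure elements get zero formation energy."""
    def predict(fractions):
        mixture = energy_model(fractions)
        references = sum(x * energy_model({el: 1.0}) for el, x in fractions.items())
        return mixture - references
    return predict


predict_dhf = constrained_formation_energy(toy_energy_model)
dhf_na = predict_dhf({"Na": 1.0})
dhf_nacl = predict_dhf({"Na": 0.5, "Cl": 0.5})
print(f"dHf(Na) = {dhf_na} eV/atom, dHf(NaCl) = {dhf_nacl:.3f} eV/atom")
```

The design choice is that the constraint lives in the wrapper rather than in the loss function, so it holds exactly for every element, including those poorly represented in the training data.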

Performance Benchmarks: ML vs. DFT

Table 2: Performance Comparison of ML Methods for Formation Energy Prediction

| ML Method | MAE (meV/atom) | Data Requirements | Speed vs DFT | Transferability |
|---|---|---|---|---|
| Composition-based RF | 80-120 | ~10⁴ compounds | 10⁴-10⁵× | Limited to trained elements |
| Structure-based GNN | 40-80 | ~10⁵ compounds | 10³-10⁴× | Good for isostructural compounds |
| Hybrid descriptor NN | 30-60 | ~10⁴ compounds | 10⁴-10⁵× | Moderate across crystal systems |
| Transfer learning + fine-tuning | 20-50 | ~10³ target compounds | 10³-10⁴× | Excellent with sufficient target data |

Modern ML models can achieve mean absolute errors (MAE) of 20-80 meV/atom for formation energy prediction compared to high-quality DFT references, approaching the disagreement between different DFT functionals themselves. This accuracy level proves sufficient for reliable stability assessment in most materials discovery applications, where the primary goal is identifying the most promising candidates for experimental synthesis. The computational speed advantage is dramatic—where DFT calculations require hours to days per compound, ML models can predict properties in milliseconds to seconds, enabling screening of millions of candidate materials in practical timeframes.

Integrated Protocols for ML-Augmented Energy Prediction

Workflow for High-Throughput Stability Assessment

An integrated workflow that combines DFT and ML for efficient prediction of energy above convex hull proceeds as follows. It begins with generating candidate materials through substitution, decoration, or random structure search. An ML model pre-screens these candidates by predicting formation energies and filtering out clearly unstable compounds (ΔEₕ > 50 meV/atom). Promising candidates proceed to DFT validation using hybrid functionals for accurate energy calculation, followed by convex hull construction using existing phase data. Finally, stable compounds are prioritized for experimental synthesis, and the newly calculated data feeds back into the materials database to improve future ML predictions.

Detailed Protocol: ML-Guided Materials Discovery

Protocol Title: ML-Augmented Prediction of Energy Above Convex Hull for Inorganic Compounds

Purpose: To efficiently identify thermodynamically stable inorganic materials by combining machine learning pre-screening with accurate DFT validation.

Materials and Computational Resources:

Table 3: Research Reagent Solutions for ML-DFT Workflows

| Resource Category | Specific Tools/Solutions | Function/Purpose |
|---|---|---|
| DFT Software | VASP, Quantum ESPRESSO, CASTEP | First-principles energy calculations for training data and validation |
| ML Frameworks | PyTorch, TensorFlow, JAX | Building and training deep learning models for property prediction |
| Materials Databases | Materials Project, OQMD, AFLOW | Source of training data and reference convex hull constructions |
| Structure Analysis | pymatgen, ASE, AFLOWpy | Crystal structure manipulation, feature extraction, and analysis |
| ML Model Architectures | CGCNN, MEGNet, SchNet | Graph neural networks specialized for crystal structure property prediction |
| High-Performance Computing | CPU/GPU clusters | Parallel computation for both DFT and ML model training |

Procedure:

Training Data Curation

- Collect formation energies and structures from reliable DFT databases (Materials Project, OQMD)

- Apply quality filters to ensure consistent computational parameters across the dataset

- Split data into training (80%), validation (10%), and test (10%) sets, ensuring no data leakage between chemically similar compounds
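One way to approximate the leakage-free split described above is to assign whole chemical systems, rather than individual compounds, to a split. The sketch below hashes the sorted element set of each formula so that, for example, every Fe-O compound lands in the same partition. The helper names are hypothetical, and the 80/10/10 proportions hold only approximately, in expectation over many chemical systems.

```python
import hashlib
import re


def chemical_system(formula):
    """Return the sorted element set of a formula, e.g. 'LiFePO4' -> 'Fe-Li-O-P'."""
    return "-".join(sorted(set(re.findall(r"[A-Z][a-z]?", formula))))


def assign_split(formula, val_frac=0.1, test_frac=0.1):
    """Deterministically map a formula's chemical system to train/val/test."""
    key = chemical_system(formula).encode()
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % 1000 / 1000
    if bucket < test_frac:
        return "test"
    if bucket < test_frac + val_frac:
        return "val"
    return "train"


formulas = ["FeO", "Fe2O3", "Fe3O4", "LiFePO4", "NaCl", "BaTiO3"]
splits = {f: assign_split(f) for f in formulas}
for formula, split in splits.items():
    print(f"{formula:8s} {chemical_system(formula):12s} -> {split}")
```

Hashing makes the assignment reproducible and independent of dataset order, so newly added compounds from an existing chemical system fall into the same partition as their relatives.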

Machine Learning Model Development

- For composition-based models: Generate features including stoichiometric attributes, elemental properties (electronegativity, atomic radius, etc.), and electronic structure descriptors

- For structure-based models: Implement graph representations where nodes represent atoms and edges represent bonds with attributes including distance, coordination, etc.

- Train model using appropriate loss functions (typically MAE or MSE) with regularization to prevent overfitting

- Validate model performance on test set and against holdout compounds from diverse chemical spaces

High-Throughput Screening

- Generate candidate materials using substitution patterns, random structure search, or generative models

- Apply trained ML model to predict formation energies for all candidates

- Convert predicted formation energies to ΔEₕ against the reference convex hull and retain candidates with predicted ΔEₕ < 50 meV/atom

DFT Validation Protocol

- Perform geometry optimization using PBE functional with appropriate U parameters for transition metals

- Calculate accurate formation energies using HSE06 functional on optimized structures

- Construct convex hull using existing phase data and new DFT calculations, ensuring that all energies entering the hull are computed at a consistent level of theory

- Identify truly stable compounds (ΔEₕ ≤ 0 meV/atom relative to the hull of previously known phases) and metastable compounds (0 < ΔEₕ < 50 meV/atom)
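The hull construction and stability labelling in this step can be illustrated for the simplest case, a binary A-B system, where the hull is the lower convex envelope of formation energy versus composition. The following is a minimal standard-library sketch under that assumption; the phase names and energies are invented for illustration, and the helper names `lower_hull` and `energy_above_hull` are hypothetical. Multicomponent systems need a general-purpose tool such as the `PhaseDiagram` class in pymatgen.

```python
def lower_hull(points):
    """Lower convex hull of (x, formation_energy) points, left to right."""
    # Keep only the lowest-energy phase at each composition.
    best = {}
    for x, energy in points:
        best[x] = min(energy, best.get(x, energy))

    hull = []
    for p in sorted(best.items()):
        # Drop the last vertex while it lies on or above the line hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def energy_above_hull(x, energy, hull):
    """Vertical distance (eV/atom) from a phase to the hull at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return energy - (y1 + (y2 - y1) * (x - x1) / (x2 - x1))
    raise ValueError("composition outside the hull range")


# Hypothetical phases: name -> (fraction of B, formation energy in eV/atom).
phases = {
    "A": (0.00, 0.00),
    "A3B": (0.25, -0.22),
    "AB": (0.50, -0.50),
    "AB3": (0.75, -0.10),
    "B": (1.00, 0.00),
}
hull = lower_hull(phases.values())

for name, (x, energy) in phases.items():
    e_hull = energy_above_hull(x, energy, hull)
    if e_hull < 1e-9:
        label = "stable"
    elif e_hull < 0.050:
        label = "metastable"
    else:
        label = "unstable"
    print(f"{name}: {1000 * e_hull:.0f} meV/atom above hull ({label})")
```

Because the hull here is built from all phases including the candidates, every value is non-negative; a negative ΔEₕ only arises when a new compound is compared against a hull built without it.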

Iterative Model Refinement

- Incorporate new DFT calculations into training database

- Fine-tune or retrain ML model with expanded dataset

- Re-evaluate model performance and repeat screening cycle

Expected Outcomes: This protocol typically identifies 70-90% of truly stable compounds while reducing the number of required DFT calculations by 1-2 orders of magnitude compared to exhaustive DFT screening.
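The two quantities quoted above (the fraction of truly stable compounds recovered and the reduction in DFT workload) can be measured on a benchmark set where DFT hull energies are already known for every candidate. The sketch below assumes such a benchmark; the function name `screening_metrics` and the example numbers are hypothetical and do not reproduce any reported result.

```python
def screening_metrics(predicted_ehull, dft_ehull, threshold=0.050, stable_tol=0.0):
    """Return (recall of stable compounds, DFT reduction factor).

    Both inputs are energies above hull in eV/atom, aligned by index.
    """
    # Candidates the ML pre-screen would forward to DFT.
    selected = {i for i, e in enumerate(predicted_ehull) if e < threshold}
    # Candidates that DFT labels as stable.
    stable = [i for i, e in enumerate(dft_ehull) if e <= stable_tol]

    recovered = [i for i in stable if i in selected]
    recall = len(recovered) / len(stable) if stable else float("nan")
    reduction = len(predicted_ehull) / len(selected) if selected else float("inf")
    return recall, reduction


# Hypothetical benchmark values (eV/atom).
predicted = [0.010, 0.200, 0.030, 0.080, 0.500, 0.040]
reference = [0.000, 0.150, 0.000, 0.000, 0.400, 0.020]

recall, reduction = screening_metrics(predicted, reference)
print(f"recall of stable compounds: {recall:.2f}")
print(f"DFT workload reduction: {reduction:.1f}x")
```

Lowering the threshold increases the reduction factor at the cost of recall, so the two numbers are best reported together.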

Troubleshooting:

- If ML model shows poor generalization: Increase diversity of training data, incorporate transfer learning from larger datasets, or implement ensemble methods

- If DFT calculations disagree significantly with ML predictions: Verify DFT convergence parameters, check for unusual bonding environments not represented in training data

- If convex hull construction yields unexpected stability: Verify reference phase energies, check for missing competing phases in the database

Case Study: Transition Metal Oxide Discovery

To illustrate the practical implementation of these methods, consider the discovery of novel transition metal oxides for energy storage applications. The strong electron correlations in many transition metal oxides present particular challenges for standard DFT functionals, while the vast compositional space (ternary and quaternary oxides) makes exhaustive experimental or computational exploration infeasible.

In this scenario, researchers initially trained a graph neural network on approximately 60,000 oxide compounds from the Materials Project database. The model achieved a mean absolute error of 43 meV/atom for formation energy prediction on a held-out test set. This model was then used to screen 150,000 hypothetical ternary oxide compositions generated through charge-balanced substitution patterns. The ML pre-screening identified 1,200 promising candidates with predicted ΔEₕ < 35 meV/atom, representing a 125-fold reduction in candidates requiring DFT validation.

Subsequent DFT calculations using the SCAN functional (which provides improved accuracy for correlated systems without the full cost of hybrid functionals) confirmed 48 truly stable compounds (ΔEₕ < 0) and 127 metastable compounds (0 < ΔEₕ < 35 meV/atom). Several of these newly identified stable compounds exhibited promising electronic properties for battery electrode applications, demonstrating the power of ML-guided discovery to identify novel functional materials with minimal computational resources.

The integration of machine learning with DFT calculations represents a transformative approach to overcoming the computational bottleneck in materials discovery. By leveraging ML models for rapid pre-screening and reserving expensive DFT calculations for final validation, researchers can explore chemical spaces orders of magnitude larger than possible with DFT alone. This synergistic approach is particularly valuable for predicting energy above convex hull—where accuracy demands sophisticated DFT functionals but practical discovery requires computational efficiency.

Future developments will likely focus on improving ML model accuracy for complex electronic systems, incorporating active learning strategies to optimally select compounds for DFT validation, and developing unified frameworks that seamlessly integrate ML predictions with high-fidelity computational methods. As materials databases continue to grow and ML architectures become increasingly sophisticated, the role of machine learning in computational materials science will expand from supplementary tool to central methodology, potentially enabling the comprehensive mapping of inorganic material stability across vast compositional spaces.

In the field of inorganic materials research, predicting thermodynamic stability through properties such as the energy above the convex hull is a fundamental step in discovering new, synthesizable compounds. Traditional methods relying solely on density functional theory (DFT) are computationally expensive, creating a bottleneck for high-throughput exploration. The integration of machine learning (ML) with large-scale DFT databases has emerged as a powerful paradigm to overcome this limitation. Several curated materials databases now serve as essential repositories of calculated properties, providing the structured data necessary for training accurate and efficient ML models. This Application Note details protocols for leveraging four foundational resources—Materials Project (MP), Open Quantum Materials Database (OQMD), AFLOW, and JARVIS—as primary data sources for ML projects aimed at predicting the energy above the convex hull and related stability metrics.

The foundational databases provide millions of DFT-calculated data points, each with distinct strengths in material classes, properties, and accessibility.

Table 1: Overview of Major Materials Databases for ML-Driven Stability Prediction

| Database | Primary Focus & Size | Key Stability/Relevant Properties | Notable Features for ML | Access Method |

|---|---|---|---|---|

| Materials Project (MP) [7] [12] | Inorganic crystals; 500,000+ compounds [13] | Formation energy, Energy above hull [7] | User-friendly REST API, extensive documentation | REST API, Web Interface |

| Open Quantum Materials Database (OQMD) [12] | Inorganic crystals & hypotheticals; ~300,000 calculations [12] | Formation energy, Energy above hull (validated against 1,670 exp. formations) [12] | Freely available full data dump; validated accuracy (MAE 0.096 eV/atom vs. exp.) [12] | Full download, Website |

| AFLOW [13] [14] | Inorganic materials; 3.5M+ materials [13] | Prototype-based crystal structures, thermodynamic data | Strong focus on crystallographic prototypes and high-throughput computation [14] | REST API |

| JARVIS-DFT [15] [16] | 3D/2D materials; ~40,000 bulk & ~1,000 2D materials [15] [16] | Formation energy, Exfoliation energy (for 2D), SLME [15] [16] | Specialization in 2D and low-dimensional materials; beyond-DFT methods (e.g., G0W0, HSE06) [15] [16] | JSON/API, Website |

Unified Workflow for ML-Based Stability Prediction

A generalized, database-agnostic workflow enables researchers to build robust ML models for stability prediction, encompassing data acquisition, featurization, model training, and validation.

Detailed Experimental and Computational Protocols

Data Acquisition and Pre-processing Protocol

Data Retrieval:

- Using APIs: For MP and AFLOW, use their respective REST APIs to query compounds based on elements, crystal systems, or calculated properties. The `requests` library in Python is commonly used.

- Bulk Download: For OQMD, the entire dataset is available for download, which is ideal for creating large, custom training sets without repeated API calls [12].

- Target Properties: Extract the `formation_energy_per_atom` and `energy_above_hull` (or their database-specific equivalents) as primary targets for stability prediction models.

Data Curation:

- Handling Discrepancies: Different databases may use different DFT parameter settings (pseudopotentials, functionals, U values). When merging data, note these differences as they can introduce systematic biases.

- Filtering: Remove entries with missing critical data (e.g., formation energy, crystal structure). Consider filtering by the number of sites per cell or the presence of partial occupancies to ensure data quality [12].
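The filtering rules above can be sketched as a simple pass over downloaded records. The record layout here is an assumption: the keys `structure`, `nsites`, and `has_partial_occupancy` are hypothetical placeholders, and real field names differ between databases. The 50-site limit is likewise only an example value.

```python
def curate(entries, max_sites=50):
    """Keep entries with complete data, ordered sites, and a manageable cell size."""
    kept = []
    for entry in entries:
        # A formation energy of exactly 0.0 is valid, so test against None.
        if entry.get("formation_energy_per_atom") is None:
            continue
        if not entry.get("structure"):
            continue
        if entry.get("has_partial_occupancy", False):
            continue
        if entry.get("nsites", 0) > max_sites:
            continue
        kept.append(entry)
    return kept


# Hypothetical records; structures are shown as placeholder strings.
entries = [
    {"id": "a", "formation_energy_per_atom": -1.2, "structure": "<structure a>", "nsites": 8},
    {"id": "b", "formation_energy_per_atom": None, "structure": "<structure b>", "nsites": 4},
    {"id": "c", "formation_energy_per_atom": -0.4, "structure": None, "nsites": 4},
    {"id": "d", "formation_energy_per_atom": -0.9, "structure": "<structure d>", "nsites": 120},
    {"id": "e", "formation_energy_per_atom": -0.7, "structure": "<structure e>", "nsites": 10,
     "has_partial_occupancy": True},
]

clean = curate(entries)
print([entry["id"] for entry in clean])
```

When data from several databases are merged, keeping a provenance field on each record makes it possible to check later for the systematic offsets mentioned above.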

Feature Generation Strategies for Stability Prediction

The choice of features is critical for model performance. Proven strategies include:

- Composition-Based Features (Magpie): This approach calculates statistical moments (mean, standard deviation, range, etc.) of fundamental elemental properties (e.g., atomic number, electronegativity, atomic radius) for a given chemical formula. It is lightweight and effective for a wide range of properties [7].

- Stoichiometry-Graph Features (Roost): This method represents a chemical formula as a dense weighted graph, where the constituent elements are nodes weighted by their stoichiometric fractions. A graph neural network with an attention mechanism then learns message-passing functions that capture interactions between elements relevant to stability, without requiring the crystal structure [7].

- Electron Configuration-Based Features (ECCNN): This novel approach uses the electron configurations of constituent atoms as direct input, typically encoded into a 2D matrix. Convolutional Neural Networks (CNNs) are then used to extract patterns, providing a model with potentially lower inductive bias by leveraging an intrinsic atomic property [7].
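As an illustration of the composition-based strategy, the sketch below computes fraction-weighted statistics of a single elemental property for a formula. It is a Magpie-style approximation rather than the Magpie implementation itself: the helper names are hypothetical, the parser handles only simple formulas without parentheses, and the property table is a small subset of Pauling electronegativities included for demonstration.

```python
import math
import re

# Pauling electronegativities for a few elements (illustrative subset).
ELECTRONEGATIVITY = {
    "Li": 0.98, "Na": 0.93, "O": 3.44, "Cl": 3.16,
    "Ti": 1.54, "Fe": 1.83, "Ba": 0.89,
}


def parse_formula(formula):
    """Parse a simple formula (no parentheses) into atomic fractions."""
    counts = {}
    for element, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[element] = counts.get(element, 0.0) + (float(amount) if amount else 1.0)
    total = sum(counts.values())
    return {element: n / total for element, n in counts.items()}


def composition_stats(formula, prop):
    """Fraction-weighted mean and standard deviation, plus range, of one property."""
    fractions = parse_formula(formula)
    values = [prop[element] for element in fractions]
    weights = [fractions[element] for element in fractions]
    mean = sum(w * v for w, v in zip(weights, values))
    variance = sum(w * (v - mean) ** 2 for w, v in zip(weights, values))
    return {
        "mean": mean,
        "std": math.sqrt(variance),
        "range": max(values) - min(values),
    }


stats = composition_stats("NaCl", ELECTRONEGATIVITY)
print(stats)
```

Repeating this for each elemental property and concatenating the results gives a fixed-length feature vector that can be passed to a tree-based or kernel model.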

Advanced ML Model Implementation: The ECSG Framework

The ECSG (Electron Configuration models with Stacked Generalization) framework demonstrates a state-of-the-art approach for stability classification (e.g., stable vs. unstable). It mitigates the bias of any single model by combining them [7].

Base-Level Models:

- ECCNN: Processes raw electron configuration matrices using convolutional layers to learn electronic structure patterns relevant to stability [7].

- Roost: A graph-based model that learns from stoichiometry alone, representing each composition as a weighted graph of its constituent elements, to predict formation energy [7].

- Magpie: A feature-based model using gradient-boosted regression trees (e.g., XGBoost) trained on elemental property statistics [7].

Meta-Learner: The predictions from these three base models are used as input features to a final "super learner" model (e.g., a linear model or another simple classifier), which learns the optimal way to combine them to produce a final, more accurate prediction of stability [7]. This ensemble method has achieved an Area Under the Curve (AUC) score of 0.988 on JARVIS data and shows high sample efficiency, requiring only one-seventh of the data to match the performance of other models [7].
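The stacking step can be sketched independently of the base models. In the example below the three columns stand in for stability probabilities from ECCNN, Roost, and Magpie; the numbers are invented, and the meta-learner is a plain logistic regression fitted by gradient descent, which is one possible choice of simple classifier rather than the configuration reported in [7]. A common practice, assumed here, is to train the meta-learner on out-of-fold base predictions so that it does not see outputs the base models produced on their own training data.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_meta_learner(base_preds, labels, lr=0.5, epochs=2000):
    """Logistic regression on base-model outputs, fitted by batch gradient descent."""
    n_models = len(base_preds[0])
    n_samples = len(labels)
    weights = [0.0] * n_models
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_models
        grad_b = 0.0
        for x, y in zip(base_preds, labels):
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for j in range(n_models):
                grad_w[j] += error * x[j] / n_samples
            grad_b += error / n_samples
        weights = [w - lr * g for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b
    return weights, bias


def predict_stable(weights, bias, x):
    """Combined probability that a compound is stable."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


# Hypothetical out-of-fold probabilities from three base models (columns).
base_preds = [
    [0.9, 0.8, 0.7], [0.8, 0.6, 0.9], [0.7, 0.9, 0.6], [0.6, 0.7, 0.8],
    [0.2, 0.3, 0.4], [0.3, 0.1, 0.2], [0.4, 0.2, 0.1], [0.1, 0.4, 0.3],
]
labels = [1, 1, 1, 1, 0, 0, 0, 0]  # 1 = stable, 0 = unstable

weights, bias = fit_meta_learner(base_preds, labels)
print(round(predict_stable(weights, bias, [0.85, 0.75, 0.80]), 3))
print(round(predict_stable(weights, bias, [0.15, 0.20, 0.25]), 3))
```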

Validation Protocol Using First-Principles Calculations

ML predictions, especially for novel stable compounds, must be validated.

- DFT Settings (JARVIS-DFT Protocol):

- Functional: Employ van der Waals functionals like vdW-DF-OptB88 for accurate geometric optimization, particularly important for layered or 2D materials [16].

- Convergence: Use a stringent force convergence criterion of < 0.001 eV/Å and an energy tolerance of 10⁻⁷ eV [16].

- k-points and Cut-off: Implement automatic k-point and plane-wave cut-off energy convergence protocols to ensure results are numerically accurate [16].

- Stability Assessment: Calculate the formation energy of the ML-predicted stable compound and all other competing phases in its chemical space to construct the convex hull and confirm that the compound's energy above hull is within a stable threshold (e.g., very close to or below 0 eV/atom) [7].

Case Studies in Applied Research

Case Study 1: Discovery of Novel 2D Wide Bandgap Semiconductors

- Objective: Identify previously unexplored, thermodynamically stable 2D semiconductors.

- Method: An ensemble ML model (ECSG) was trained on formation energies from JARVIS-DFT, which contains specialized data on 2D monolayers and exfoliation energies [7]. The model screened uncharted compositional spaces, and the top predictions for stable compounds were validated with DFT.

- Outcome: DFT calculations confirmed the stability of several new 2D materials, demonstrating the model's high precision and its utility in guiding targeted exploration of low-dimensional materials space [7].

Case Study 2: Predicting Stability of MXenes

- Objective: Predict the heat of formation and energy above convex hull for MXenes (Mₙ₊₁XₙTₓ).

- Method: A Random Forest model was trained on ~300 MXenes from the Computational 2D Materials Database (C2DB). Features included 12 physicochemical properties of the constituent and terminating atoms (e.g., electronegativity, atomic radius) [9].

- Outcome: The model achieved a Mean Absolute Error (MAE) of 0.23 eV for heat of formation on test data. Feature importance analysis confirmed that termination atom properties are critical for predicting MXene stability [9].

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational Tools and Resources

| Tool/Resource Name | Type | Primary Function in Workflow | Access/Reference |

|---|---|---|---|

| JARVIS-Tools | Software Python Library | Provides workflows for running and analyzing DFT calculations using JARVIS protocols [16]. | https://github.com/usnistgov/jarvis |

| pymatgen | Software Python Library | Core library for materials analysis; essential for parsing CIF files, manipulating structures, and interfacing with MP API. | https://pymatgen.org/ |

| qmpy | Software Python Framework | The backend framework of OQMD; useful for decentralized database management and analysis [12]. | http://oqmd.org/static/docs |

| AFLOW | Software Framework | A high-throughput framework for calculating the properties of alloys and intermetallics; source of crystallographic prototypes [14]. | http://aflow.org/ |

| Matbench | Benchmark Suite | A collection of ML tasks for testing and benchmarking model performance on materials data [13]. | https://matbench.materialsproject.org/ |

From Composition to Crystal Graph: A Survey of Machine Learning Architectures

Predicting the stability of inorganic crystalline materials is a cornerstone of accelerated materials discovery. The energy above the convex hull is a critical thermodynamic metric that quantifies the relative stability of a compound; a value near or below zero indicates a material is thermodynamically stable and likely synthesizable. Traditional density functional theory (DFT) calculations, while accurate, are computationally prohibitive for screening vast chemical spaces. Composition-based machine learning (ML) models offer a powerful alternative by predicting stability directly from a material's chemical formula, enabling rapid exploration of new inorganic compounds. These models leverage elemental features—physicochemical properties of constituent elements—within statistical learning algorithms to map compositions to target properties like formation energy and energy above hull. This Application Note details the protocols, data requirements, and reagent solutions for implementing such models, contextualized within a broader thesis on ML-driven stability prediction.

Quantitative Performance of Composition-Based Models

The performance of composition-based ML models in predicting formation energy and energy above hull varies significantly based on the algorithm, feature set, and material system. The following table synthesizes quantitative results from key studies to facilitate comparison and model selection.

Table 1: Performance Metrics of Selected Composition-Based ML Models for Stability Prediction

| Material System | ML Model | Key Features | Target Property | Performance (MAE unless noted) | Reference |

|---|---|---|---|---|---|

| Diverse Inorganic Solids | Support Vector Regression (SVR) | Elemental properties from composition | Formation Energy | Benchmark on 313,965 DFT calculations | [17] |

| 2D MXenes | Random Forest | 12 physicochemical properties of constituents | Heat of Formation | 0.23 eV (on test set) | [9] |

| 2D MXenes | Neural Network | 12 physicochemical properties of constituents | Heat of Formation | 0.21 eV (on test set) | [9] |

| 2D MXenes | Neural Network | 14 selected features | Energy Above Hull | 0.08 eV (on test set) | [9] |

| Diverse Crystals (Materials Project) | Deep Neural Network (ElemNet) | 86 elemental fractions | Formation Energy | Results on 153,229 data points | [18] |

| Diverse Crystals (Materials Project) | Deep Neural Network (Enhanced) | Elemental fractions + Space Group | Formation Energy | Superior performance vs. composition-only | [18] |

| AB Intermetallics | XGBoost | 133 compositional features (CAF) | Structure Type Classification | High F-1 Score | [19] |

Table 2: Key Feature Categories for Composition-Based Stability Prediction

| Feature Category | Example Descriptors | Physical Significance | Relevant Studies |

|---|---|---|---|

| Elemental Properties | Electronegativity, Atomic Radius, Valence, Ionization Energy | Determines bonding character and chemical reactivity [19] [9] | MXene stability [9], General inorganic solids [17] |

| Stoichiometric Attributes | Elemental fractions, Weighted averages (e.g., mean atomic mass) | Captures overall composition and molar ratios [18] | ElemNet model [18] |

| Structural Indicators | Crystal System, Space Group, Point Group (one-hot encoded) | Proxy for crystal polymorphs and phase stability [18] | Enhanced formation energy prediction [18] |

| Electronic Structure | Electron Affinity, Mendeleev Number | Related to periodic trends and electronic configuration [19] | Intermetallic compound classification [19] |

Experimental Protocols for Model Development and Validation

This section provides detailed, step-by-step methodologies for developing and validating composition-based models for energy above hull prediction, as cited in recent literature.

Protocol: SVR for Formation Energy and Convex Hull Targeting

This protocol is adapted from the methodology used to discover YAg₀.₆₅In₁.₃₅ by directing synthesis toward productive composition space [17].

Data Curation:

- Source: Compile a large dataset of inorganic compounds with known DFT-calculated formation energies. The foundational study utilized 313,965 high-throughput DFT calculations [17].

- Label: Use the formation energy per atom (eV/atom) as the primary training label.

Feature Engineering:

- Featurization: For each chemical composition, generate a feature vector using only elemental properties. Tools like Composition Analyzer/Featurizer (CAF) can automate this, generating over 100 compositional features [19].

- Descriptors: Calculate stoichiometric-weighted attributes such as average electronegativity, atomic radius, and valence electron count [17] [19].

Model Training and Validation:

- Algorithm: Implement a Support Vector Regression (SVR) model.

- Training: Train the SVR model to learn the mapping between the compositional feature vectors and the DFT-calculated formation energies.

- Validation: Perform standard train-test splitting and k-fold cross-validation to assess model generalizability and prevent overfitting.

Stability Prediction and Synthesis Targeting:

- Convex Hull Construction: Use the model's predicted formation energies to construct zero-kelvin convex hull diagrams for ternary or binary systems of interest (e.g., Y-Ag-In) [17].

- Target Identification: Identify compositions that lie on the convex hull (most stable) or within a small energy window above it (e.g., +50 meV/atom), as these are promising candidates for experimental synthesis [17].

- Experimental Validation: Perform solid-state synthesis on the top-predicted, previously unexplored compositions to validate the discovery of new, stable phases [17].

Protocol: Neural Network for MXene Stability Prediction

This protocol outlines the process for predicting heat of formation and energy above hull for 2D MXenes, achieving high accuracy with a neural network model [9].

Dataset Preparation:

- Source: Extract MXene data from the Computational 2D Materials Database (C2DB). A typical dataset may include ~300 entries for M₂X, M₃X₂, and M₄X₃ MXenes with various surface terminations (O, F, OH) [9].

- Targets: The labels are the DFT-calculated heat of formation and energy above the convex hull.

Feature Selection:

- Initial Feature Set: Create a set of ~14 physicochemical features for the M, X, and T (terminating) elements. These include atomic number, atomic mass, electronegativity, and Mendeleev number [9].

- Feature Reduction: Employ feature importance analysis (e.g., via Random Forest) to create reduced-order models. The referenced study found that models with only 7 or 4 key features retained an MAE of 0.21 eV for heat of formation prediction, easing transferability [9].

Model Implementation and Training:

- Architecture: Employ a fully connected Neural Network. The optimal architecture may be determined via hyperparameter tuning.

- Training: Use the Adam optimizer and a loss function like Mean Absolute Error (MAE). The model should be trained separately for heat of formation and energy above hull.

- Benchmarking: Compare the NN performance against other algorithms like Random Forest, which achieved a test MAE of 0.23 eV for heat of formation in the same study [9].

Protocol: Incorporating Symmetry for Improved Formation Energy Prediction

This protocol enhances a pure-composition model by integrating symmetry information to account for crystal polymorphs, significantly improving prediction accuracy [18].

Data Sourcing and Preprocessing:

- Source: Extract data from the Materials Project database, including chemical formula, formation energy, and symmetry classifications (crystal system, point group, space group).

- Cleaning: Remove extreme outliers, for instance, data points beyond ±7 standard deviations from the mean formation energy [18].

Advanced Featurization:

- Compositional Features: Generate a vector of 86 elemental fractions from the chemical formula.

- Symmetry Features: Convert the symmetry classification (e.g., space group) into a binary vector using one-hot encoding. This results in 7, 32, or 228 additional features for crystal system, point group, or space group, respectively [18].

- Feature Integration: Concatenate the elemental fraction vector and the one-hot encoded symmetry vector to form the complete input feature set.
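The featurization steps above amount to concatenating a composition vector with a one-hot symmetry vector. The sketch below shows this for the crystal-system case (7 extra features). The five-element `ELEMENT_ORDER` is a shortened stand-in for the 86-element vector described in the protocol, and the function names are hypothetical.

```python
CRYSTAL_SYSTEMS = [
    "triclinic", "monoclinic", "orthorhombic", "tetragonal",
    "trigonal", "hexagonal", "cubic",
]

# Shortened element list for illustration; the protocol uses 86 elements.
ELEMENT_ORDER = ["Li", "Na", "O", "Cl", "Ti"]


def one_hot(index, size):
    """Binary vector of length `size` with a single 1.0 at `index`."""
    vector = [0.0] * size
    vector[index] = 1.0
    return vector


def build_input(element_fractions, crystal_system, element_order=ELEMENT_ORDER):
    """Concatenate elemental fractions with a one-hot crystal-system vector."""
    composition = [element_fractions.get(element, 0.0) for element in element_order]
    symmetry = one_hot(CRYSTAL_SYSTEMS.index(crystal_system), len(CRYSTAL_SYSTEMS))
    return composition + symmetry


features = build_input({"Na": 0.5, "Cl": 0.5}, "cubic")
print(len(features), features)
```

Swapping the crystal-system list for point groups or space groups changes only the length of the one-hot block, which is why polymorphs of the same formula receive distinct inputs.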

Deep Learning Model Architecture:

- Design: Use a deep neural network with multiple hidden layers (e.g., 512, 512, 256, 128, 64, 32 neurons) with ReLU activation functions [18].

- Output: A single output neuron with a linear activation function for regression.

- Regularization: Implement early stopping with a patience of 10 epochs during training to avoid overfitting [18].
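The early-stopping rule can be expressed without reference to any particular deep learning framework. The sketch below takes a list of per-epoch validation losses and reports when training would stop; the function name and the `min_delta` parameter are illustrative assumptions, and the loss values are invented. A shorter patience of 3 is used in the example only to keep the list small.

```python
def early_stopping_epoch(val_losses, patience=10, min_delta=0.0):
    """Return (stop_epoch, best_epoch), both 0-indexed.

    Training stops once `patience` consecutive epochs pass without the
    validation loss improving on the best value by more than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    # Patience was never exhausted: training ran to the last epoch.
    return len(val_losses) - 1, best_epoch


# Hypothetical validation losses; the minimum is at epoch 2.
losses = [0.50, 0.40, 0.35, 0.36, 0.37, 0.38, 0.39]
stop_epoch, best_epoch = early_stopping_epoch(losses, patience=3)
print(stop_epoch, best_epoch)
```

In practice the model weights from `best_epoch` are restored after stopping, so the final model corresponds to the lowest validation loss rather than the last epoch.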

Workflow Visualization

The following diagram illustrates the logical workflow for developing and applying a composition-based model for energy above hull prediction, integrating the key steps from the protocols above.

Model Development and Application Workflow

The Scientist's Toolkit: Research Reagent Solutions

This table details the essential computational tools and data resources required for building composition-based stability prediction models.

Table 3: Essential Research Reagents for Composition-Based Stability Modeling

| Reagent / Resource | Type | Primary Function | Key Features / Notes |

|---|---|---|---|

| Materials Project (MP) | Database | Source of DFT-calculated formation energies, structures, and energies above hull for >150,000 materials [18] [2]. | Provides a standardized, vast dataset for training and benchmarking [18]. |

| Composition Analyzer/Featurizer (CAF) | Software Tool | Generates numerical compositional features from a list of chemical formulae [19]. | Open-source Python program; generates 133 human-interpretable compositional features [19]. |

| Matminer | Software Toolkit | A versatile open-source library for materials data mining. | Contains numerous featurization classes to generate thousands of descriptors from composition and structure [19]. |

| C2DB | Database | Specialized database for 2D materials properties, including MXenes [9]. | Essential for building models focused on 2D materials systems. |

| ElemNet Model | Algorithm/Architecture | Deep neural network that uses only elemental fractions as input [18]. | Demonstrates the power of deep learning even with simple input features [18]. |

| Support Vector Regression (SVR) | Algorithm | A robust statistical learning model for regression tasks. | Effectively applied to predict formation energy from compositional descriptors [17]. |

| Graph Neural Networks (GNNs) | Algorithm | Advanced ML architecture for structured data. | Can predict total energy and rank polymorph stability; requires a balanced dataset of ground-state and high-energy structures [4]. |

The accurate prediction of thermodynamic stability is a cornerstone of inorganic materials research, with the energy above convex hull serving as a critical metric for assessing compound stability. Traditional methods, particularly those based on density functional theory (DFT), while accurate, are computationally demanding and time-consuming [20] [21]. The emergence of graph neural networks (GNNs) has introduced a paradigm shift, enabling rapid and accurate property predictions by learning directly from the structural and compositional representation of materials [22]. This application note details the use of two powerful GNN architectures, Crystal Graph Convolutional Neural Network (CGCNN) and Representation Learning from Stoichiometry (Roost), for predicting formation energy and energy above convex hull, framing their use within a broader methodology for accelerating the discovery of novel inorganic materials.

GNN Architectures for Materials Science

Graph neural networks are uniquely suited for modeling atomic systems because they operate directly on a graph representation of a material's structure, where atoms are represented as nodes and the chemical bonds between them as edges [22]. This allows GNNs to learn from the fundamental interactions within a material.

Table 1: Key GNN Architectures for Material Property Prediction

| Architecture | Graph Representation | Key Features | Explicitly Encodes Angles? | Primary Input |

|---|---|---|---|---|

| CGCNN [23] | Crystal Graph (Atoms as nodes, bonds as edges) | Two-body atomic interactions, atomic feature vectors (e.g., electronegativity, group) [24] | No | Crystal Structure |

| Roost [23] [24] | Weighted graph (Elements as nodes, stoichiometry as edges) | Physics-driven, uses only chemical formula | No | Chemical Formula (Composition) |

| ALIGNN [25] | Atomistic Graph + Line Graph | Edge-gated graph convolution on both bond graph and angle-based line graph | Yes (via line graph) | Crystal Structure |

| Tripartite Interaction Model [23] | Crystal Graph with three-body terms | Explicitly incorporates atoms, bond lengths, and bond angles; updates edge vectors | Yes | Crystal Structure |

The CGCNN Framework

CGCNN transforms a crystal structure into a crystal graph. Each atom (node) is assigned a feature vector, and each bond (edge) is characterized by the interatomic distance [23]. The model then uses a convolutional operation to learn from the local atomic environment of each atom. The core update for an atom's feature vector $\nu_i$ can be summarized as learning from the superposition of its own features, its neighbors' features, and the features of the connecting bonds [23]. While powerful, standard CGCNN is limited to two-body interactions (bond lengths) and does not explicitly encode higher-order interactions like bond angles [23].
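For reference, the gated convolution from the original CGCNN formulation is commonly written as below. The symbols other than $\nu_i$ are not defined in the text above and are introduced here as assumptions: $u_{(i,j)_k}$ is the feature vector of the $k$-th bond between atoms $i$ and $j$, $\oplus$ denotes concatenation, $\odot$ element-wise multiplication, $\sigma$ a sigmoid gate, $g$ a nonlinear activation, and $W$, $b$ learned weights and biases at layer $t$.

```latex
\nu_i^{(t+1)} = \nu_i^{(t)}
  + \sum_{j,k} \sigma\!\left( z_{(i,j)_k}^{(t)} W_f^{(t)} + b_f^{(t)} \right)
    \odot g\!\left( z_{(i,j)_k}^{(t)} W_s^{(t)} + b_s^{(t)} \right),
\qquad
z_{(i,j)_k}^{(t)} = \nu_i^{(t)} \oplus \nu_j^{(t)} \oplus u_{(i,j)_k}
```

Every term depends only on pairs of atoms and the bond between them, which makes the two-body limitation explicit: no quantity in the sum involves three atoms at once.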

The Roost Framework

In contrast to structure-based models, Roost predicts material properties from chemical composition alone. It represents a material's formula as a weighted graph, where nodes are the constituent elements and edges represent their stoichiometric relationships [23] [24]. This composition-based approach allows for rapid screening of vast chemical spaces without requiring full structural information, making it exceptionally useful in the early stages of materials discovery.
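The graph that such a model operates on can be built from the formula alone. The sketch below constructs a dense graph with one node per element, weighted by its atomic fraction, and a directed edge between every ordered pair of distinct elements. It illustrates only the input representation; the function name is hypothetical, the parser handles only simple formulas without parentheses, and the message-passing and attention layers of Roost are not shown.

```python
import re
from itertools import permutations


def stoichiometry_graph(formula):
    """Return (nodes, edges) for a simple formula without parentheses.

    nodes: element -> fractional weight
    edges: directed pairs between all distinct elements (dense graph)
    """
    counts = {}
    for element, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[element] = counts.get(element, 0.0) + (float(amount) if amount else 1.0)
    total = sum(counts.values())
    nodes = {element: n / total for element, n in counts.items()}
    edges = list(permutations(nodes, 2))
    return nodes, edges


nodes, edges = stoichiometry_graph("BaTiO3")
print(nodes)
print(edges)
```

Because the representation ignores atomic positions, polymorphs of the same formula map to the same graph, which is the reason for restricting training data to one entry per composition.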

Experimental Protocols & Quantitative Performance

Benchmarking Performance on Formation Energy

Formation energy is a foundational property from which energy above convex hull is derived. Benchmark studies demonstrate the performance of various models.

Table 2: Benchmark Performance on Formation Energy Prediction (Mean Absolute Error, eV/atom)

| Model / Approach | Dataset | Performance (MAE) | Notes | Source |

|---|---|---|---|---|

| Tripartite Interaction CGCNN | Random Dataset | 0.048 eV/atom | Incorporates bond angles explicitly | [23] |

| ALIGNN | Multiple Benchmarks | Comparable or superior to other GNNs | Explicit angle inclusion via line graph | [25] |

| Voxel CNN (Image-based) | Materials Project | Comparable to state-of-the-art | Uses deep convolutional network on voxel images | [21] |

| Neural Network (for MXenes) | C2DB (Testing) | 0.21 eV/atom | Composition-based model with 12 features | [20] |

| Random Forest (for MXenes) | C2DB (Testing) | 0.23 eV/atom | Composition-based model with 12 features | [20] |

Protocol: Predicting Energy Above Convex Hull with a Composition-Based Model (Roost)

Application: High-throughput screening for thermodynamic stability of novel compositions.

Principle: This protocol uses only the chemical formula to predict the energy above convex hull, enabling rapid stability assessment of hypothetical compounds before determining their crystal structure.

Step-by-Step Methodology:

Data Collection and Curation:

- Source a dataset of known materials and their DFT-computed energy above convex hull values from databases like the Materials Project [21] or the Computational 2D Materials Database (C2DB) [20].

- Clean the data to ensure a one-to-one mapping between composition and property, for instance, by including only the most stable polymorph for each composition [24].

Feature Encoding (Input Preparation):

- For each element in the periodic table, choose an encoding scheme. While one-hot encoding is simple, studies show that physical encoding—using attributes like electronegativity, group number, covalent radius, and valence electrons—significantly improves model generalizability, especially on out-of-distribution (OOD) data and with small training sets [24].

- Encode the target material's composition using these elemental feature vectors.

Model Training and Validation:

- Implement the Roost model architecture, which constructs a graph for each composition and performs message passing to learn a material representation [24].

- Split the dataset into training, validation, and test sets. A typical split for a large dataset (>10,000 samples) is 80%/10%/10%.

- Train the model to minimize the loss (e.g., Mean Absolute Error) between the predicted and DFT-calculated energy above convex hull.

- Monitor performance on the validation set to avoid overfitting and tune hyperparameters.

Evaluation and OOD Testing:

- Evaluate the final model on the held-out test set.

- To rigorously test generalizability, create an Out-of-Distribution (OOD) test set. This can be done via the Element Removal (ER) method, where compositions containing a specific element are withheld from training, forcing the model to predict for compositions with unfamiliar elements [24].
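The Element Removal split described above reduces to a membership test on each formula. The sketch below is a minimal illustration; the function name and the dataset values are hypothetical placeholders rather than data from any of the cited studies.

```python
import re


def element_removal_split(dataset, held_out_element):
    """Split (formula, target) pairs by presence of one element.

    Compositions containing `held_out_element` form the OOD test set;
    all others remain available for training and validation.
    """
    train, ood_test = [], []
    for formula, target in dataset:
        elements = set(re.findall(r"[A-Z][a-z]?", formula))
        if held_out_element in elements:
            ood_test.append((formula, target))
        else:
            train.append((formula, target))
    return train, ood_test


# Placeholder targets (energy above hull, eV/atom) for illustration only.
dataset = [
    ("TiO2", 0.000), ("BaTiO3", 0.000), ("NaCl", 0.000),
    ("Fe2O3", 0.000), ("LiFeO2", 0.012),
]
train, ood_test = element_removal_split(dataset, "Ti")
print([formula for formula, _ in train])
print([formula for formula, _ in ood_test])
```

Repeating the split for several held-out elements and averaging the resulting errors gives a more stable estimate of out-of-distribution performance than a single element.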

Protocol: Predicting Formation Energy with a Structure-Based Model (CGCNN)

Application: Accurate formation energy prediction for compounds with known or proposed crystal structures.

Principle: This protocol leverages the full crystal structure to predict formation energy, which can then be used to construct a convex hull and compute the energy above convex hull.

Step-by-Step Methodology:

Crystal Graph Construction:

- For a given crystal structure (CIF file), define bonds by identifying the neighbors of each atom within a specified cutoff radius, typically capped at a maximum number of neighbors (e.g., the 12 nearest) [25].

- Create the crystal graph: atoms are nodes, and bonds are edges.

- Initialize node features using a physical encoding scheme (e.g., the 9 atomic properties used in the original CGCNN: group, period, electronegativity, etc.) [24].

- Initialize edge features using an expansion (e.g., Radial Basis Function) of the interatomic distance [25].
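The graph construction steps above can be sketched without external libraries. This is an illustrative version, not the CGCNN codebase: the function names, the brute-force periodic-image search, and the Gaussian grid parameters are assumptions chosen for the example.

```python
import itertools
import math


def neighbor_list(lattice, frac_coords, cutoff, max_neighbors=12, n_images=1):
    """Return, for each atom, up to `max_neighbors` (distance, j) pairs.

    `lattice` holds three lattice vectors (rows, in angstrom) and
    `frac_coords` the fractional coordinates. Periodic images are searched
    up to `n_images` cells in each direction, so `cutoff` must not exceed
    the range that this search covers.
    """
    def to_cartesian(frac):
        return [sum(frac[k] * lattice[k][d] for k in range(3)) for d in range(3)]

    shifts = list(itertools.product(range(-n_images, n_images + 1), repeat=3))
    neighbors = []
    for i, fi in enumerate(frac_coords):
        ri = to_cartesian(fi)
        found = []
        for j, fj in enumerate(frac_coords):
            for shift in shifts:
                if i == j and shift == (0, 0, 0):
                    continue  # skip the atom itself
                rj = to_cartesian([fj[k] + shift[k] for k in range(3)])
                dist = math.dist(ri, rj)
                if dist <= cutoff:
                    found.append((dist, j))
        found.sort()  # nearest neighbors first
        neighbors.append(found[:max_neighbors])
    return neighbors


def gaussian_expansion(distance, d_min=0.0, d_max=8.0, step=0.2):
    """Expand a distance on a grid of Gaussian radial basis functions."""
    n_centers = int(round((d_max - d_min) / step)) + 1
    centers = [d_min + k * step for k in range(n_centers)]
    return [math.exp(-((distance - c) ** 2) / step ** 2) for c in centers]


# Simple cubic cell (a = 3.0 angstrom) with one atom: six nearest neighbors.
cubic = [[3.0, 0.0, 0.0], [0.0, 3.0, 0.0], [0.0, 0.0, 3.0]]
graph = neighbor_list(cubic, [[0.0, 0.0, 0.0]], cutoff=3.5)
edge_features = [gaussian_expansion(dist) for dist, _ in graph[0]]
```

Each entry of `graph` lists the edges of one node, and each edge carries a smooth feature vector instead of a single raw distance, which gives the network a more expressive input for learning distance-dependent interactions.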

Model Training:

- Implement the CGCNN model, which performs convolutional operations on the crystal graph to update atom feature vectors based on their local environments [23].

- After multiple convolutional layers, pool the atom features into a global crystal feature vector.

- Pass this vector through fully connected layers to predict the formation energy.

- Train the model on a dataset of structures with DFT-computed formation energies (e.g., from Materials Project [21]).

Advanced Implementation (Incorporating Angular Information):

- Standard CGCNN does not explicitly model bond angles, which can limit accuracy. To overcome this, implement an advanced tripartite interaction model.

- Extend the convolutional update (Eq. 2 in [23]) to include a term that explicitly aggregates information from triplets of atoms \((i, j, l)\) and the two connecting edges \((k, k')\) that form a bond angle.

- The update for an atom's feature vector then includes contributions from both its direct neighbors and the angular relationships it participates in, leading to a more comprehensive description of the local atomic environment [23].
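The structure of such an update can be written schematically. The first line is the gated convolution of the original CGCNN; the second sum is one plausible form of the angular term, given here as an assumption rather than a reproduction of Eq. 2 in [23]. The primed weights \(\mathbf{W}'\) and biases \(\mathbf{b}'\) are hypothetical additional parameters, \(\sigma\) is a sigmoid gate, \(g\) a nonlinear activation, and \(\oplus\) denotes concatenation.

```latex
\begin{align}
\mathbf{v}_i^{(t+1)} = \mathbf{v}_i^{(t)}
  &+ \sum_{j,k} \sigma\!\left(\mathbf{z}_{(i,j)_k}^{(t)} \mathbf{W}_f^{(t)} + \mathbf{b}_f^{(t)}\right)
     \odot g\!\left(\mathbf{z}_{(i,j)_k}^{(t)} \mathbf{W}_s^{(t)} + \mathbf{b}_s^{(t)}\right) \nonumber \\
  &+ \sum_{j,l,k,k'} \sigma\!\left(\mathbf{z}_{(i,j,l)_{k,k'}}^{(t)} \mathbf{W}_f'^{(t)} + \mathbf{b}_f'^{(t)}\right)
     \odot g\!\left(\mathbf{z}_{(i,j,l)_{k,k'}}^{(t)} \mathbf{W}_s'^{(t)} + \mathbf{b}_s'^{(t)}\right), \\
\mathbf{z}_{(i,j)_k}^{(t)} &= \mathbf{v}_i^{(t)} \oplus \mathbf{v}_j^{(t)} \oplus \mathbf{u}_{(i,j)_k}, \\
\mathbf{z}_{(i,j,l)_{k,k'}}^{(t)} &= \mathbf{v}_i^{(t)} \oplus \mathbf{v}_j^{(t)} \oplus \mathbf{v}_l^{(t)}
  \oplus \mathbf{u}_{(i,j)_k} \oplus \mathbf{u}_{(i,l)_{k'}}.
\end{align}
```

The two-body sum depends only on pairwise distances through \(\mathbf{u}_{(i,j)_k}\), whereas the three-body sum sees both edges of a triplet at once, which is what allows the model to distinguish local environments that differ only in their bond angles.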

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Resources

| Item / Resource | Function / Description | Relevance to GNN Workflow |
|---|---|---|
| Materials Project Database | A repository of computed materials properties for ~150,000 inorganic compounds. | Primary source of training and validation data (formation energies, crystal structures) [21]. |
| JARVIS-DFT / C2DB | Databases containing DFT-computed properties for thousands of materials, including 2D systems like MXenes. | Critical for benchmarking and training models on specific material classes [25] [20]. |
| Physical Element Encodings | A set of elemental properties (e.g., electronegativity, atomic radius, group, period) used as node features. | Replaces simple one-hot encoding, drastically improving model generalization and OOD performance [24]. |
| ALIGNN / CGCNN Codebases | Open-source implementations of state-of-the-art GNN models, often available in repositories like GitHub. | Provides a starting point for model implementation, modification, and training [25]. |
| Out-of-Distribution (OOD) Test Sets | Curated datasets designed to test model generalizability beyond its training data distribution. | Essential for validating the real-world predictive power of a trained model on novel, unexplored materials [24]. |

Workflow and Architectural Visualizations

Figure: GNN prediction workflow, from crystal structure to property prediction.

Figure: Encoding atomic interactions in advanced GNNs that incorporate bond angles.

Graph neural networks like Roost and CGCNN provide powerful, complementary frameworks for accelerating the prediction of material stability. Roost enables the rapid screening of compositional space, while structure-based models like CGCNN and its advanced variants offer high accuracy for compounds with defined structures. The explicit inclusion of higher-order interactions, such as bond angles, and the use of physically informed element encodings are critical advancements that enhance predictive accuracy and model generalizability. Integrating these tools into a materials discovery workflow allows researchers to efficiently navigate the vast space of inorganic materials, prioritizing the most stable and promising candidates for further experimental synthesis and computational investigation.

The Electron Configuration models with Stacked Generalization (ECSG) framework represents a significant methodological advancement in the prediction of thermodynamic stability for inorganic compounds. This approach directly addresses a central challenge in materials informatics: the inductive biases inherent in machine learning models built upon singular domain knowledge or a single hypothesis about the property-composition relationship [7]. Training a model can be conceptualized as a search for ground truth within the model's parameter space. When models are constructed on idealized scenarios or a limited understanding of chemical mechanisms, the actual ground truth may lie outside this parameter space, leading to diminished predictive accuracy [7]. The ECSG framework mitigates this risk by amalgamating models rooted in distinct and complementary domains of knowledge—namely, interatomic interactions, atomic properties, and electron configuration—into a single, powerful super learner via stacked generalization [7].

Accurately predicting stability metrics, such as the energy above the convex hull (EH), is a critical prerequisite for the computational discovery of synthesizable inorganic materials. The convex hull, constructed from the formation energies of compounds within a phase diagram, identifies the most thermodynamically stable structures. A compound's stability is quantified by its decomposition energy \(\Delta H_d\), the energy difference between the compound and the most stable combination of competing phases on the convex hull [7]. While values of EH lower than 100 meV/atom are typically perceived as indicative of thermodynamic stability, this metric alone is insufficient; a material must also be vibrationally stable (possessing no imaginary phonon modes) to be synthesizable [6]. The ECSG framework provides a rapid, accurate filter for thermodynamic stability, enabling the efficient exploration of vast compositional spaces that would be intractable using purely first-principles methods like Density Functional Theory (DFT) [7].
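For a binary system, the hull construction and the resulting energy above the hull can be sketched in a few lines. This is a simplified illustration: the A-B phases and their formation energies are hypothetical, compositions are given as the atomic fraction of B, and production workflows would rely on a dedicated phase-diagram tool that handles multicomponent systems.

```python
STABILITY_THRESHOLD = 0.1  # eV/atom, the commonly used cutoff cited in the text


def lower_convex_hull(points):
    """Lower convex hull of (x, energy) points via the monotone chain method."""
    hull = []
    for p in sorted(set(points)):
        # Pop the last hull point while it lies on or above the segment
        # from hull[-2] to p (the turn is not counter-clockwise).
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the hull range")


def energy_above_hull(competing_phases, x, formation_energy):
    """Energy of a phase relative to the hull of the competing phases."""
    lowest = {}
    for xi, ei in competing_phases:  # keep the lowest-energy phase per composition
        lowest[xi] = min(ei, lowest.get(xi, ei))
    hull = lower_convex_hull(list(lowest.items()))
    return formation_energy - hull_energy(hull, x)


# Hypothetical A-B system: (fraction of B, formation energy in eV/atom).
phases = [(0.0, 0.0), (1.0, 0.0), (0.5, -0.40), (0.25, -0.15)]
e_hull_a3b = energy_above_hull(phases, 0.25, -0.15)   # above the A + AB tie line
e_hull_ab = energy_above_hull(phases, 0.5, -0.40)     # lies on the hull
is_within_threshold = e_hull_a3b < STABILITY_THRESHOLD
```

In this toy system the phase at x = 0.25 sits about 0.05 eV/atom above the tie line between A and AB, so it would pass the 0.1 eV/atom filter even though it is not on the hull itself.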

The ECSG framework employs a two-tiered architecture that integrates three base models operating on different physical principles. This design leverages stacked generalization, a robust ensemble technique where the predictions of multiple base models (level-0) are used as input features to train a meta-learner (level-1) that produces the final prediction [7]. The strength of this approach lies in the complementarity of its constituent models, which are selected to capture material characteristics across different scales, thereby reducing the collective inductive bias.
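The two-level idea can be illustrated with a small, dependency-free sketch. The choice of logistic regression as the meta-learner and the toy level-0 outputs are assumptions made for this example; they are not taken from the ECSG implementation.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_meta_learner(level0_preds, labels, lr=0.5, epochs=2000):
    """Fit logistic-regression weights on stacked base-model predictions.

    `level0_preds` is a list of rows, one per compound, each holding the
    base-model outputs for that compound (ideally out-of-fold predictions,
    so the meta-learner does not see leaked training labels).
    """
    n_features = len(level0_preds[0])
    weights = [0.0] * n_features
    bias = 0.0
    n = len(labels)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for row, y in zip(level0_preds, labels):
            error = sigmoid(sum(w * x for w, x in zip(weights, row)) + bias) - y
            for k, x in enumerate(row):
                grad_w[k] += error * x
            grad_b += error
        # Batch gradient-descent step on the mean log-loss gradient.
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict_meta(weights, bias, row):
    """Level-1 stability probability from one row of level-0 outputs."""
    return sigmoid(sum(w * x for w, x in zip(weights, row)) + bias)


# Hypothetical level-0 outputs from three base models, one row per compound.
level0 = [
    [0.9, 0.8, 0.7], [0.8, 0.9, 0.6], [0.7, 0.6, 0.9],
    [0.2, 0.3, 0.1], [0.1, 0.2, 0.4], [0.3, 0.1, 0.2],
]
stable = [1, 1, 1, 0, 0, 0]
weights, bias = fit_meta_learner(level0, stable)
p_new = predict_meta(weights, bias, [0.85, 0.90, 0.80])
```

The learned weights indicate how much the meta-learner trusts each base model, which is how stacking can down-weight a model whose inductive bias fits a given dataset poorly.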

Base-Level Model 1: Electron Configuration Convolutional Neural Network (ECCNN)

The ECCNN is a novel model introduced to address the limited consideration of electronic internal structure in existing stability predictors [7].

- Input Representation: The input is a matrix of dimensions 118 (elements) × 168 × 8, encoded from the electron configuration (EC) of the constituent materials. Electron configuration is an intrinsic atomic property that is fundamental to first-principles calculations and is posited to introduce fewer manual biases compared to hand-crafted features [7].

- Network Architecture: The input matrix is processed through two convolutional layers, each utilizing 64 filters with a 5×5 kernel size. The second convolution is followed by batch normalization and a 2×2 max-pooling operation. The resulting feature maps are flattened and passed through fully connected layers to generate a stability prediction [7].

- Rationale: By using electron configuration as a foundational input, ECCNN directly incorporates quantum mechanical information that is critically related to chemical bonding and stability.
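The tensor shapes implied by this architecture can be checked with simple arithmetic. The sketch below assumes a 118 × 168 spatial grid with 8 channels, stride 1, and no padding in the convolutions; none of these settings are stated in the text, so the resulting sizes are illustrative only.

```python
def conv2d_shape(height, width, kernel, stride=1, padding=0):
    """Spatial output size of a 2D convolution or pooling window."""
    out_h = (height + 2 * padding - kernel) // stride + 1
    out_w = (width + 2 * padding - kernel) // stride + 1
    return out_h, out_w


# Assumed layout: 118 x 168 spatial grid with 8 input channels.
h, w, channels = 118, 168, 8
h, w = conv2d_shape(h, w, kernel=5)             # first 5x5 convolution
channels = 64                                   # 64 filters
h, w = conv2d_shape(h, w, kernel=5)             # second 5x5 convolution (batch norm keeps the shape)
h, w = conv2d_shape(h, w, kernel=2, stride=2)   # 2x2 max pooling
flattened_size = h * w * channels               # input width of the first dense layer
```

Under these assumptions the flattened vector has 55 × 80 × 64 = 281,600 entries; with "same" padding or a different channel layout the number would change accordingly.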

Base-Level Model 2: Roost

The Roost model conceptualizes the chemical formula as a graph to model interatomic interactions [7].

- Representation: It represents a crystal's chemical formula as a complete graph, where nodes correspond to elements and edges represent the interactions between them [7].

- Architecture & Rationale: As a graph neural network, Roost employs message-passing with an attention mechanism to learn the complex relationships between constituent atoms. This allows the model to capture the effects of local chemical environments and bonding interactions that are crucial for determining thermodynamic stability [7].
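A minimal sketch of the composition graph is given below. The function name and the `self_loops` switch are illustrative assumptions; the attention-based message passing itself is not shown.

```python
import itertools


def composition_graph(composition, self_loops=False):
    """Build a complete graph over the elements of a chemical formula.

    Returns fractional node weights and a list of directed edges.
    """
    total = sum(composition.values())
    elements = sorted(composition)
    node_weights = {el: composition[el] / total for el in elements}
    edges = [
        (a, b)
        for a, b in itertools.product(elements, repeat=2)
        if self_loops or a != b
    ]
    return node_weights, edges


# BaTiO3: three element nodes, every node connected to every other node.
node_weights, edges = composition_graph({"Ba": 1, "Ti": 1, "O": 3})
```

The fractional weights let the attention mechanism account for stoichiometry, so that, for example, oxygen in BaTiO3 contributes more strongly to the pooled representation than barium or titanium.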

Base-Level Model 3: Magpie

The Magpie model relies on a suite of descriptive atomic properties to build a statistical profile of a material's composition [7].

- Input Features: It uses a wide array of elemental properties, such as atomic number, mass, radius, electronegativity, and valence. For each property, Magpie calculates summary statistics across the composition, including the mean, mean absolute deviation, range, minimum, maximum, and mode [7].

- Model & Rationale: These statistical features are used to train a model based on gradient-boosted regression trees (XGBoost). This approach provides a robust, domain-informed representation that captures the diversity of elemental characteristics within a compound [7].
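The feature construction can be sketched for a single elemental property. The helper name and the small atomic-number table are illustrative assumptions; the average deviation is used as the spread measure, and the mode is taken as the property value of the most abundant element.

```python
def composition_statistics(composition, property_table):
    """Fraction-weighted summary statistics of one elemental property."""
    total = sum(composition.values())
    fractions = {el: amt / total for el, amt in composition.items()}
    values = {el: property_table[el] for el in composition}

    mean = sum(fractions[el] * values[el] for el in composition)
    avg_dev = sum(fractions[el] * abs(values[el] - mean) for el in composition)
    minimum, maximum = min(values.values()), max(values.values())
    # Mode: property value of the most abundant element.
    mode = values[max(fractions, key=fractions.get)]
    return {
        "mean": mean,
        "avg_dev": avg_dev,
        "min": minimum,
        "max": maximum,
        "range": maximum - minimum,
        "mode": mode,
    }


ATOMIC_NUMBER = {"Ti": 22, "O": 8}
tio2_stats = composition_statistics({"Ti": 1, "O": 2}, ATOMIC_NUMBER)
```

Repeating this for every elemental property and concatenating the results yields a fixed-length feature vector that a tree-based model can consume directly, regardless of how many elements the compound contains.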

The following workflow diagram illustrates the integration of these three base models into the ECSG super learner:

Performance and Quantitative Validation

The ECSG framework has been rigorously validated against standard materials databases, demonstrating state-of-the-art performance in predicting thermodynamic stability.

When evaluated on data from the Joint Automated Repository for Various Integrated Simulations (JARVIS) database, the ECSG model achieved an Area Under the Curve (AUC) score of 0.988 in distinguishing stable from unstable compounds [7]. This high AUC indicates an excellent ability to rank stable compounds higher than unstable ones. A critical advantage of the ECSG model is its remarkable sample efficiency. It was reported to achieve performance equivalent to existing models using only one-seventh of the training data, a significant benefit in a field where acquiring labeled data via DFT is computationally expensive [7].
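The ranking interpretation of AUC can be made concrete with a short pairwise computation. The labels and scores below are toy values for illustration, and the quadratic-time loop is chosen for clarity rather than efficiency.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (stable, unstable) pairs ranked correctly.

    Ties in score count as half a correct pair.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one sample of each class")
    correct = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                correct += 1.0
            elif p == n:
                correct += 0.5
    return correct / (len(positives) * len(negatives))


# Toy example: 1 = stable, 0 = unstable; scores are predicted probabilities.
toy_labels = [1, 1, 1, 0, 0, 0]
toy_scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.1]
auc = roc_auc(toy_labels, toy_scores)
```

Here eight of the nine stable/unstable pairs are ordered correctly, giving an AUC of 8/9; a value of 0.988 means that almost every such pair is ranked in the right order.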

Comparison with Other Methodologies

The table below summarizes the performance of ECSG and other relevant machine learning approaches for predicting stability-related properties in materials science.

Table 1: Performance Comparison of ML Models for Stability and Energy Prediction

| Model / Study | Application Focus | Key Metric | Performance | Data Source |
|---|---|---|---|---|
| ECSG [7] | General inorganic compound stability | AUC | 0.988 | JARVIS |
| Random Forest [9] | MXene heat of formation | MAE (test) | 0.23 eV | C2DB |
| Neural Network [9] | MXene heat of formation | MAE (test) | 0.21 eV | C2DB |
| Neural Network [9] | MXene energy above convex hull | MAE (test) | 0.08 eV | C2DB |
| Graph Neural Network (GNN) [4] | Total energy of crystals | MAE (test) | ~0.04 eV/atom | NREL MatDB |
| MatterGen (Generative) [26] | Generating stable crystals | % Stable & New | >75% stable, 61% new | Alex-MP-ICSD |

The performance of ECSG is contextualized by studies on specific material classes like MXenes, where neural network models predicting energy above the convex hull reported an MAE of 0.08 eV on testing data [9]. Furthermore, the GNN model demonstrates that a balanced training dataset containing both ground-state and higher-energy structures is crucial for accurately ranking polymorphic structures by energy, achieving an MAE of 0.04 eV/atom [4]. The generative model MatterGen, which produces novel stable structures, provides a complementary benchmark, with over 75% of its generated structures falling below the 0.1 eV/atom stability threshold [26].

Case Studies and Experimental Validation

The practical utility of the ECSG framework was demonstrated through targeted exploration of new two-dimensional wide bandgap semiconductors and double perovskite oxides [7]. In these case studies, the model identified numerous novel, stable perovskite structures, and subsequent first-principles (DFT) calculations confirmed that it identified stable compounds with high accuracy [7]. This workflow, in which a fast ML filter such as ECSG narrows the search space before definitive DFT validation, represents a powerful paradigm for accelerating materials discovery.

Experimental Protocol for Stability Prediction

This protocol details the steps for implementing the ECSG framework to predict the thermodynamic stability of inorganic compounds, from data preparation to final validation.

Data Curation and Preprocessing

- Data Source Selection: Acquire a dataset of inorganic compounds with known stability labels (e.g., stable/unstable or decomposition energy). Public databases such as the Materials Project (MP), the Open Quantum Materials Database (OQMD), or JARVIS are suitable starting points [7] [4].

- Stability Labeling: The target variable is typically derived from the energy above the convex hull (EH). A common threshold is EH < 0.1 eV/atom to classify a compound as "stable," though this can be adjusted based on the application [6].

- Input Feature Generation:

- For ECCNN: Encode the chemical composition into an electron configuration matrix (118 × 168 × 8) as described in the original literature [7].