Machine Learning for Material Stability Prediction: From Composition to Clinical Application

Abstract

This article explores the transformative role of composition-based machine learning (ML) models in predicting material stability, a critical property for pharmaceutical development and advanced materials science. We first establish the foundational principles of material stability and the limitations of traditional high-throughput computational methods like Density Functional Theory (DFT). The article then details the methodological pipeline, from feature engineering to model selection, illustrated with case studies of successful ML-guided material discovery. A dedicated section addresses common challenges, including data scarcity and model interpretability, and presents optimization strategies. Finally, we provide a rigorous framework for validating and benchmarking model performance against traditional methods, highlighting the profound implications of these technologies for accelerating the discovery of stable biomaterials and drug formulations.

The Fundamentals of Material Stability and the ML Revolution

Defining Thermodynamic and Kinetic Stability in Materials and Pharmaceuticals

Within materials science and pharmaceutical development, the concepts of thermodynamic and kinetic stability provide the fundamental framework for predicting material longevity and functionality. Thermodynamic stability indicates the innate, equilibrium-driven state of a system, defining its lowest energy configuration and ultimate end-state. In contrast, kinetic stability describes the persistence of a non-equilibrium state, governed by the energy barriers that hinder transformation to more stable forms. For researchers developing composition-based machine learning (ML) models for material stability, recognizing this distinction is crucial: thermodynamic stability determines the final destination, while kinetic stability dictates the accessible pathway and timeframe. This application note delineates these concepts through structured protocols, quantitative benchmarks, and computational workflows, providing a standardized foundation for ML-driven stability prediction in both materials discovery and pharmaceutical formulation.

Core Conceptual Definitions

Thermodynamic Stability

Thermodynamic stability is an equilibrium property that defines the state of a material or molecule with the lowest Gibbs free energy under given conditions of temperature, pressure, and composition. A thermodynamically stable compound possesses no driving force to decompose into other phases or compounds, representing the global minimum on the energy landscape.

- Quantitative Descriptors: The most accurate quantitative descriptor is the distance to the convex hull of the phase diagram. This metric characterizes how readily a compound decomposes into different phases or compounds, even over infinite time [1]. The formation energy also provides a quantitative reflection of thermodynamic stability, though it is considered less accurate than the hull distance [1].

- In Pharmaceutical Context: For Active Pharmaceutical Ingredients (APIs), the most stable crystalline polymorph represents the thermodynamically favored form. Formulating with metastable polymorphs, amorphous material, or stabilized supersaturated solutions requires careful risk assessment to ensure stability throughout the product's shelf life, typically about three years [2].

Kinetic Stability

Kinetic stability describes the persistence of a metastable state due to energy barriers that slow down the transformation to the thermodynamic ground state. It is a time-dependent property, defining how long a material can resist change despite not being in its lowest energy configuration.

- Quantitative Descriptors: Kinetic stability is modeled using rate constants and the Arrhenius equation, which relates the reaction rate to temperature. The simplicity of a first-order kinetic model enhances reliability by reducing parameters and preventing overfitting, providing precise stability estimates even with limited data points [3] [4].

- In Pharmaceutical Context: Kinetic stability governs degradation processes like aggregation, hydrolysis, and oxidation. Predicting long-term stability (e.g., aggregate formation in biotherapeutics) from short-term accelerated data is possible using simplified kinetic models that identify the dominant degradation pathway [3].

Table 1: Comparative Features of Thermodynamic and Kinetic Stability

| Feature | Thermodynamic Stability | Kinetic Stability |
|---|---|---|
| Governing Principle | Global minimum Gibbs free energy [1] | Energy barriers and activation energy [3] |
| Time Dependency | Time-independent (equilibrium property) | Time-dependent (persistence over time) |
| Key Quantitative Metric | Distance to convex hull, Formation energy [1] | Rate constants, Arrhenius parameters [3] |
| Primary Role in ML | Target for stable material discovery [5] | Predicts synthesizability and shelf-life [3] |

Experimental Protocols for Stability Assessment

Protocol for Determining Thermodynamic Stability in Materials

Objective: To experimentally determine the thermodynamic stability of a novel inorganic crystal, such as a MAX phase or perovskite.

Principle: Combine synthesis with characterization and first-principles calculations to ascertain if the material is stable on the convex hull of its phase diagram.

Materials & Equipment:

- High-purity elemental powders (e.g., Ti, Sn, N for Ti₂SnN)

- Protective atmosphere furnace (e.g., Argon)

- X-ray Diffractometer (XRD)

- Differential Scanning Calorimetry (DSC)

- Computational resources for Density Functional Theory (DFT) calculations

Procedure:

- Synthesis: Weigh precursor powders according to the stoichiometry of the target compound (e.g., Ti₂SnN). Mix homogeneously and press into a pellet. React the pellet in a tube furnace at the target temperature (e.g., 750°C for Ti₂SnN) under an inert atmosphere for a specified duration [6].

- Phase Identification: Grind the synthesized pellet and characterize the powder using XRD. Match the diffraction pattern against known phases and the target compound to confirm successful synthesis and phase purity.

- Thermal Analysis: Use Differential Scanning Calorimetry (DSC) to measure the heat flow associated with phase transitions. A stable compound will show characteristic endothermic or exothermic events corresponding to its decomposition or transformation [7].

- DFT Validation: Calculate the formation energy and the distance to the convex hull using high-throughput DFT calculations. A material is considered thermodynamically stable if its energy above the convex hull is ≤ 0 eV/atom, meaning it does not decompose into other phases [1] [5].

Protocol for Kinetic Stability Modeling of Biologics

Objective: To predict the long-term kinetic stability of a protein therapeutic (e.g., an IgG1 monoclonal antibody) against aggregation.

Principle: Use accelerated stability data and a first-order kinetic model with the Arrhenius equation to extrapolate degradation rates to recommended storage conditions (2-8 °C).

Materials & Equipment:

- Formulated drug substance (sterile-filtered)

- Glass vials and seals

- Stability chambers (e.g., set at 5°C, 25°C, 40°C)

- Size Exclusion Chromatography (SEC)-HPLC system

Procedure:

- Study Design: Aseptically fill the formulated protein into glass vials. Incubate vials upright at a minimum of three elevated temperatures (e.g., 25°C, 30°C, 40°C) in addition to the recommended storage temperature (5°C) [3].

- Sampling and Analysis: At predefined intervals (pull points), remove samples from each temperature condition. Analyze them using SEC-HPLC to quantify the percentage of high-molecular-weight aggregates [3].

- Data Fitting: For each temperature, fit the aggregate growth data to a first-order kinetic model:

  dα/dt = k * (1 - α)

  where α is the fraction of aggregates and k is the rate constant.

- Arrhenius Extrapolation: Plot the natural logarithm of the rate constants (ln k) obtained from each temperature against the reciprocal of the absolute temperature (1/T). Fit these points to the Arrhenius equation:

  k = A * exp(-Ea/RT)

  where Ea is the activation energy, R is the gas constant, and T is the absolute temperature. Use the fitted line to extrapolate the rate constant (k) at the storage temperature (5°C) [3].

- Shelf-life Prediction: Using the extrapolated rate constant, predict the time required for aggregates to reach a critical threshold (e.g., 2%) at the storage condition.
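The fitting and extrapolation steps of this protocol can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure used in [3]: the data are noise-free and generated from hypothetical parameters (`A_TRUE`, `EA_TRUE`), the helper names are invented for this example, and the first-order model is integrated as α(t) = 1 - (1 - α₀)·exp(-k·t), so that -ln(1 - α) is linear in time.

```python
import math

R = 8.314  # gas constant, J/(mol*K)

def linear_fit(x, y):
    """Ordinary least-squares slope and intercept for y = slope*x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

def first_order_rate(times, alphas):
    """Fit k in d(alpha)/dt = k*(1 - alpha) from the slope of -ln(1 - alpha) vs t."""
    slope, _ = linear_fit(times, [-math.log(1.0 - a) for a in alphas])
    return slope

def arrhenius_fit(temps_k, rates):
    """Fit ln k = ln A - Ea/(R*T); returns (A, Ea)."""
    slope, intercept = linear_fit([1.0 / t for t in temps_k],
                                  [math.log(k) for k in rates])
    return math.exp(intercept), -slope * R

def time_to_threshold(k, alpha_crit, alpha0=0.0):
    """Time for the aggregate fraction to grow from alpha0 to alpha_crit."""
    return math.log((1.0 - alpha0) / (1.0 - alpha_crit)) / k

# Hypothetical parameters used only to generate synthetic accelerated data.
A_TRUE, EA_TRUE = 1.0e14, 100_000.0      # 1/day and J/mol, illustrative values
days = [0, 30, 60, 90]                   # pull points
temps_k = [298.15, 303.15, 313.15]       # 25, 30 and 40 degC

rates = []
for T in temps_k:
    k_true = A_TRUE * math.exp(-EA_TRUE / (R * T))
    alphas = [1.0 - math.exp(-k_true * t) for t in days]  # synthetic SEC readout
    rates.append(first_order_rate(days, alphas))

A_fit, Ea_fit = arrhenius_fit(temps_k, rates)
k_storage = A_fit * math.exp(-Ea_fit / (R * 278.15))      # extrapolate to 5 degC
shelf_life_days = time_to_threshold(k_storage, alpha_crit=0.02)
print(f"Ea = {Ea_fit / 1000:.1f} kJ/mol, time to 2% aggregates = {shelf_life_days:.0f} days")
```

With real SEC data the fit would carry measurement noise, so confidence intervals on k and Ea should be propagated into the shelf-life estimate before it is used for decisions.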

Computational and Machine Learning Approaches

Machine learning models have become powerful tools for predicting stability, effectively navigating the complex relationship between composition, structure, and properties.

ML for Thermodynamic Stability Prediction

The primary goal is to find compounds with high thermodynamic stability, with the distance to the convex hull being a key target [1].

- Input Features: Models use atomic properties (e.g., Mendeleev number, valence electron count), elemental compositions, and structural descriptors derived from methods like Voronoi tessellations [1] [6]. For example, valence electron count was identified as a critical factor for the stability of MAX phases [6].

- Model Performance: Ensemble methods like Extremely Randomized Trees (ERT) and Random Forests (RF) are popular. One benchmark on cubic perovskite systems showed ERT achieved a Mean Absolute Error (MAE) of 121 meV/atom [1]. A key challenge is the misalignment between regression and classification metrics; a model with low MAE can still have a high false-positive rate if predictions lie close to the stability boundary (0 eV/atom above hull) [5].
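The gap between regression and classification metrics is easy to reproduce on a toy example. The sketch below uses hypothetical energy-above-hull values (not taken from [5]) and the 0 eV/atom stability boundary mentioned above; the function names are illustrative.

```python
def mae(y_true, y_pred):
    """Mean absolute error between true and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def false_positive_rate(y_true, y_pred, threshold=0.0):
    """Fraction of truly unstable materials (E_hull > threshold) predicted as stable."""
    unstable = [(t, p) for t, p in zip(y_true, y_pred) if t > threshold]
    false_pos = sum(1 for _, p in unstable if p <= threshold)
    return false_pos / len(unstable)

# Hypothetical E_hull values in eV/atom; most candidates sit close to the boundary.
e_hull_true = [0.00, 0.02, 0.03, 0.05, 0.30]
e_hull_pred = [0.01, -0.01, -0.02, 0.02, 0.25]

print(f"MAE = {mae(e_hull_true, e_hull_pred):.3f} eV/atom")
print(f"False-positive rate = {false_positive_rate(e_hull_true, e_hull_pred):.2f}")
```

In this made-up data set the MAE is 34 meV/atom, yet half of the unstable candidates are flagged as stable because their small errors happen to cross the boundary.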

Table 2: Machine Learning Models for Stability Prediction

| ML Model | Application Context | Reported Performance |
|---|---|---|
| Extremely Randomized Trees (ERT) | Prediction of thermodynamic phase stability of perovskite oxides [1] | MAE: 121 meV/atom for cubic perovskites [1] |
| Random Forest (RF) | Structure-independent prediction of formation energy for ternary compounds [1] | MAE: 80 meV/atom on OQMD data [1] |
| Kernel Ridge Regression (KRR) | Prediction of formation energies for elpasolite crystals [1] | MAE: 0.1 eV/atom [1] |
| Gradient Boosting Tree (GBT) | Screening stable MAX phases [6] | Successfully guided discovery of Ti₂SnN [6] |
| Universal Interatomic Potentials (UIPs) | Pre-screening thermodynamically stable hypothetical materials [5] | Advanced sufficiently for effective and cheap pre-screening [5] |

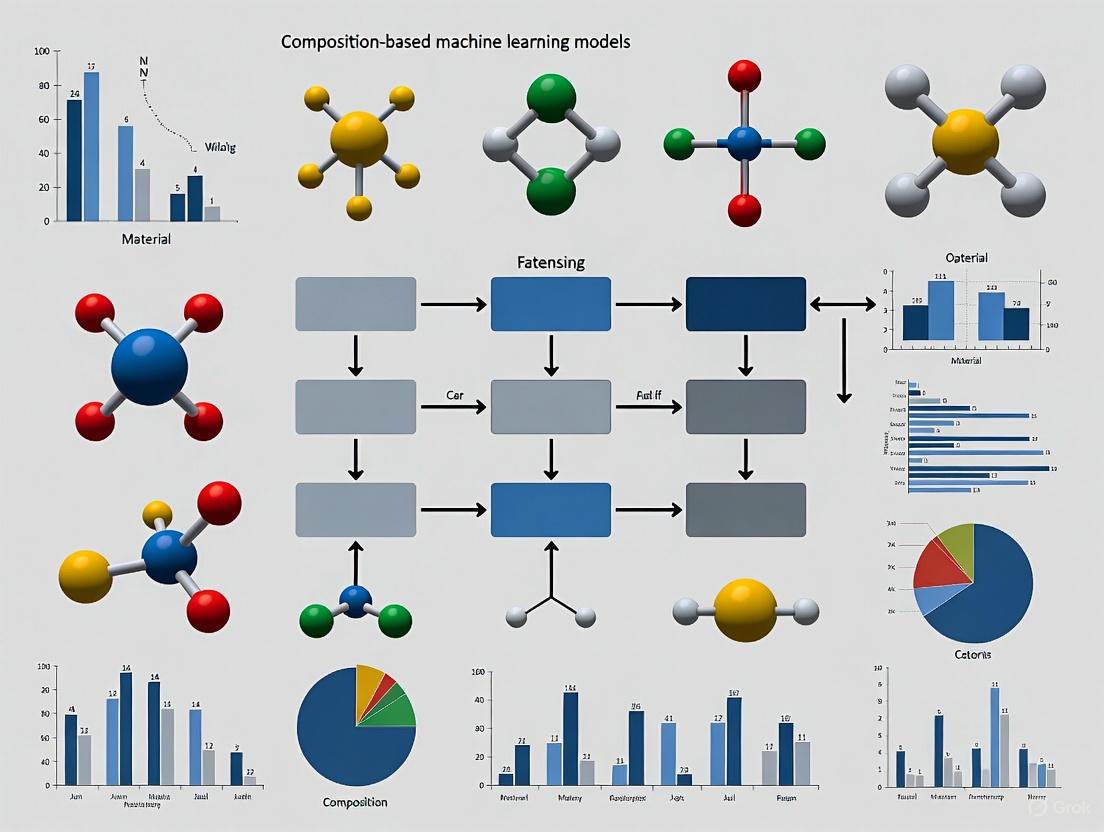

Workflow for ML-Guided Materials Discovery

The following diagram illustrates a prospective ML-driven pipeline for discovering novel stable materials, which simulates a real-world discovery campaign more accurately than retrospective benchmarks do [5].

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions and Materials

| Item | Function/Application | Protocol Context |
|---|---|---|
| High-Purity Elemental Powders (Ti, Sn, Al, etc.) | Precursors for solid-state synthesis of inorganic compounds (e.g., MAX phases) [6] | Thermodynamic Stability (Materials) |
| Formulated Drug Substance | The protein therapeutic (e.g., IgG, scFv) whose stability is under investigation [3] | Kinetic Stability (Biotherapeutics) |
| Hydrochloric Acid (HCl) 0.1-1 mol/L | Agent for acid-catalyzed hydrolysis stress testing in forced degradation studies [8] | Pharmaceutical Stability Toolkit (STABLE) |
| Sodium Hydroxide (NaOH) 0.1-1 mol/L | Agent for base-catalyzed hydrolysis stress testing in forced degradation studies [8] | Pharmaceutical Stability Toolkit (STABLE) |
| SEC Column (e.g., UHPLC protein BEH SEC) | Analytical separation to quantify monomeric protein and high-molecular-weight aggregates [3] | Kinetic Stability (Biotherapeutics) |
| DSC/TGA Instrumentation | Thermal analysis to measure phase transitions, melting points, and thermal decomposition [7] | Thermodynamic Stability (Materials) |

The interplay between thermodynamic and kinetic stability forms the cornerstone of rational design in both advanced materials and pharmaceuticals. Thermodynamic metrics like the distance to the convex hull define the ultimate stability landscape, while kinetic models predict the practical persistence of metastable states critical for processing and shelf-life. The integration of machine learning offers a transformative acceleration in navigating this landscape, from screening millions of hypothetical compounds to predicting degradation pathways. However, robust experimental protocols and standardized frameworks like STABLE remain indispensable for validating computational predictions and ensuring the reliable development of new materials and life-saving drugs. A holistic research strategy that leverages the strengths of computational power, machine learning efficiency, and rigorous experimental validation is key to future discoveries.

The discovery and development of novel functional materials are fundamental to technological breakthroughs across fields ranging from clean energy to information processing. For decades, density functional theory (DFT) and other first-principles calculation methods have served as cornerstone techniques in computational materials science, providing insights into material properties and stability with minimal empirical input [9]. These quantum mechanics-based approaches can accurately predict interactions between atomic nuclei and electrons, enabling researchers to obtain material properties from fundamental physics principles [9].

However, the tremendous computational cost of these methods presents a significant bottleneck for materials discovery, particularly when investigating large compositional spaces or complex systems. First-principles calculations require substantial computational resources and time, especially when dealing with large-scale systems or complex processes [9]. The resource intensity of these methods is demonstrated by their dominance of major supercomputing facilities, demanding up to 45% of core hours at the UK-based Archer2 Tier 1 supercomputer and over 70% allocation time in the materials science sector at the National Energy Research Scientific Computing Center [5].

This application note examines the specific limitations of traditional first-principles methods and documents emerging protocols that combine machine learning with targeted DFT calculations to accelerate materials stability research while maintaining accuracy.

Quantitative Analysis of Computational Costs

The high computational expense of first-principles methods manifests across multiple dimensions, from simple binary systems to complex multi-component materials. The tables below quantify these challenges across different material systems and calculation types.

Table 1: Computational Cost Comparison for Different Material Systems

| Material System | Number of Configurations | DFT Computation Time | Key Computational Challenge |
|---|---|---|---|
| σ Phase (Binary) | 1,342 configurations | ~59 hours per configuration [10] | Multiple non-equivalent crystallographic sites (2a, 4f, 8i1, 8i2, 8j) |
| σ Phase (Ternary) | 243 configurations per ternary system | Substantial CPU resources [10] | Configuration count grows as k^5 with k elements (3^5 = 243 configurations) |
| High-Entropy Alloys (HEAs) | >50,000 possible ordered structures | Days per structure [11] | Vast composition space with multiple principal elements |
| Mg-B-N Superconductors | 1,115,435 hypothetical materials | Prohibitive for exhaustive study [12] | Large configurational space requiring efficient screening |

Table 2: Specific Workflow Timings for σ Phase Analysis

| Computational Method | System Size | Error (MAE) | Relative Computational Time |
|---|---|---|---|
| Traditional DFT | 1,342 binary configurations | Reference | 100% |
| Machine Learning (MLP) | 1,177 ternary configurations | 34.871 meV/atom [10] | <41% [10] |
| High-Throughput Screening | 8,801 HEA compositions | Comparable to SQS [11] | Significantly faster [11] |

The root of these computational challenges lies in the scaling behavior of DFT calculations, which typically scale as O(N³) with the number of atoms [11]. For complex phases like the σ phase, which has a tetragonal crystal structure with 30 atoms distributed across five non-equivalent Wyckoff positions, the number of possible configurations grows steeply with the number of elements: assigning one of k elements to each of the five sites gives k^5 configurations (3^5 = 243 for a ternary system) [10]. This combinatorial explosion makes exhaustive first-principles studies practically infeasible for multi-component systems.
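The configuration count can be made concrete by enumerating site occupations directly. The sketch below assumes that each of the five Wyckoff sites is fully occupied by a single element, which is the counting behind the 3^5 = 243 figure; the element choice (Fe, Cr, Ni) and function names are illustrative only.

```python
from itertools import product

SIGMA_SITES = ("2a", "4f", "8i1", "8i2", "8j")  # five non-equivalent Wyckoff sites

def count_configurations(n_elements, n_sites=len(SIGMA_SITES)):
    """Number of ways to place one of n_elements on each site."""
    return n_elements ** n_sites

def enumerate_configurations(elements):
    """Yield every assignment of one element to each Wyckoff site."""
    for combo in product(elements, repeat=len(SIGMA_SITES)):
        yield dict(zip(SIGMA_SITES, combo))

ternary = list(enumerate_configurations(["Fe", "Cr", "Ni"]))  # illustrative system
counts = {k: count_configurations(k) for k in range(2, 6)}

print(len(ternary))  # 243 configurations for a ternary system
print(counts)        # growth from binary (k=2) to quinary (k=5) systems
```

Each enumerated configuration would need its own DFT relaxation in an exhaustive study, which is why the count per system, multiplied by the number of candidate systems, becomes the dominant cost.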

Machine Learning Solutions for Accelerated Discovery

Composition-Based Stability Prediction

ElemNet represents a breakthrough in composition-based materials stability prediction, using a 17-layer deep neural network to predict formation enthalpy from elemental composition alone [13]. This approach bypasses the need for structural information, which dramatically reduces computational requirements. The model was trained on 341,000 compounds from the Open Quantum Materials Database and achieves a mean absolute error of 0.042 eV/atom in cross-validation [13]. When applied to V–Cr–Ti alloys, ElemNet successfully predicted stability trends that aligned with experimental ductile-brittle transition temperature data while identifying promising composition regions that had been overlooked by conventional approaches [13].

Integrated ML-DFT Workflow for Enhanced Efficiency

The most effective protocols combine machine learning pre-screening with targeted DFT validation, creating an active learning loop that progressively improves both accuracy and efficiency. The Graph Networks for Materials Exploration (GNoME) framework exemplifies this approach, having discovered 2.2 million stable crystal structures—an order-of-magnitude expansion from previous knowledge [14].

This framework demonstrates how active learning creates a virtuous cycle where ML models become increasingly accurate as they process more DFT-validated data. Through six rounds of active learning, GNoME improved from less than 6% precision to over 80% for structure-based predictions and from less than 3% to 33% for composition-based predictions [14].

Experimental Protocols

Protocol 1: Composition-Based ML for Alloy Stability Prediction

This protocol enables rapid screening of alloy composition spaces using the ElemNet architecture.

Data Preparation

- Access formation energies from the Open Quantum Materials Database (OQMD)

- Format composition data as reduced chemical formulas

- Normalize elemental compositions to weight or atomic percentages
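As a rough illustration of the data-preparation step, the sketch below turns a chemical formula into a fixed-length vector of atomic fractions. The exact input layout used by ElemNet is not described here, so the vector format, the truncated `ELEMENTS` vocabulary, and the function names are assumptions made for this example; formulas with parentheses are not handled.

```python
import re

ELEMENTS = ["Ti", "V", "Cr", "Fe", "Ni"]  # truncated, illustrative vocabulary

def parse_formula(formula):
    """Parse a simple formula such as 'V6Cr3Ti' into {element: amount}."""
    amounts = {}
    for symbol, number in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        amounts[symbol] = amounts.get(symbol, 0.0) + (float(number) if number else 1.0)
    return amounts

def fraction_vector(formula, elements=ELEMENTS):
    """Return atomic fractions ordered like `elements` (zeros for absent elements)."""
    amounts = parse_formula(formula)
    unknown = set(amounts) - set(elements)
    if unknown:
        raise ValueError(f"elements not in vocabulary: {sorted(unknown)}")
    total = sum(amounts.values())
    return [amounts.get(el, 0.0) / total for el in elements]

vector = fraction_vector("V6Cr3Ti")
print(dict(zip(ELEMENTS, vector)))
```

Normalizing to fractions makes V6Cr3Ti and V60Cr30Ti10 map to the same input, which matches the use of reduced formulas in this protocol.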

Model Configuration

- Implement 17-layer fully connected deep neural network

- Configure with rectified linear unit (ReLU) activation functions

- Set hyperparameters: momentum=0.9, dropouts=0.7, 0.8, 0.9

- Use stochastic gradient descent optimization

Training and Validation

- Train on 341,000 compounds with known formation energies

- Validate using 10-fold cross-validation

- Target mean absolute error <0.05 eV/atom

Prediction and Analysis

- Input candidate alloy compositions (e.g., V–Cr–Ti combinations)

- Predict formation enthalpy (ΔHf) for each composition

- Calculate stability as -ΔHf

- Correlate with experimental properties (e.g., ductile-brittle transition temperature)

Protocol 2: Integrated ML-DFT for Crystal Structure Discovery

This protocol combines graph neural networks with DFT validation for accelerated materials discovery.

Candidate Generation

- Apply symmetry-aware partial substitutions (SAPS) to known crystals

- Generate diverse candidate structures through random structure search

- Create composition-based candidates using oxidation-state balancing with relaxed constraints

ML Pre-screening

- Implement graph neural networks (GNoME) for energy prediction

- Use volume-based test-time augmentation

- Apply uncertainty quantification through deep ensembles

- Filter candidates based on predicted stability (decomposition energy)

DFT Validation

- Perform DFT computations using Vienna Ab initio Simulation Package (VASP)

- Use standardized settings from Materials Project

- Calculate relaxed structures and energies

- Verify stability with respect to competing phases

Active Learning Loop

- Incorporate successful discoveries into training dataset

- Retrain ML models on expanded dataset

- Iterate through multiple rounds of discovery and learning
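The control flow of such a loop can be sketched with toy stand-ins. Nothing below reflects the GNoME implementation: `oracle_energy` is a hypothetical analytic function standing in for a DFT calculation, the surrogate is a nearest-neighbour lookup rather than a graph neural network, and candidates are plain numbers instead of crystal structures.

```python
import random

def oracle_energy(x):
    """Stand-in for DFT: hypothetical decomposition energy (eV/atom) of candidate x."""
    return (x - 0.3) ** 2 - 0.01

def surrogate_predict(x, train):
    """Toy surrogate model: reuse the energy of the nearest labelled candidate."""
    nearest = min(train, key=lambda pair: abs(pair[0] - x))
    return nearest[1]

def active_learning(pool, n_rounds=4, batch=5, seed=0):
    rng = random.Random(seed)
    pool = list(pool)
    rng.shuffle(pool)
    train = [(x, oracle_energy(x)) for x in pool[:batch]]  # initial labelled set
    pool = pool[batch:]
    hit_rates = []
    for _ in range(n_rounds):
        # 1. ML pre-screening: rank unlabelled candidates by predicted energy.
        pool.sort(key=lambda x: surrogate_predict(x, train))
        selected, pool = pool[:batch], pool[batch:]
        # 2. "DFT" validation of the shortlisted candidates.
        labelled = [(x, oracle_energy(x)) for x in selected]
        hit_rates.append(sum(e <= 0.0 for _, e in labelled) / batch)
        # 3. Retraining: here the surrogate simply gains more labelled points.
        train.extend(labelled)
    return train, hit_rates

candidates = [i / 100 for i in range(100)]
train, hit_rates = active_learning(candidates)
print(f"labelled set size: {len(train)}, hit rate per round: {hit_rates}")
```

The hit rate per round (fraction of validated candidates that turn out stable) is the quantity that a real campaign would track to judge whether retraining is paying off.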

Protocol 3: ML-Augmented σ Phase Analysis

This specialized protocol addresses the combinatorial challenge of σ phase formation enthalpy prediction.

Database Construction

- Perform high-throughput DFT calculations for binary σ phase configurations

- Focus on 45 binary systems (1,342 configurations)

- Calculate formation enthalpies and lattice parameters

Feature Engineering

- Incorporate element types at different Wyckoff positions

- Include atomic radius and number of valence electrons

- Encode crystal structure information

Model Training

- Implement Multi-Layer Perceptron (MLP) algorithm

- Train on binary σ phase data

- Target mean absolute error <35 meV/atom on validation set

Prediction and Validation

- Predict formation enthalpies for ternary configurations

- Validate on 8 ternary systems

- Deploy through Graphical User Interface (GUI) for community use

The Scientist's Toolkit: Essential Research Reagents

Table 3: Computational Tools and Resources for ML-Accelerated Materials Discovery

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| Open Quantum Materials Database (OQMD) | Database | Provides formation energies for 341,000+ compounds for model training | Public |
| Materials Project | Database | Offers standardized DFT data for known and predicted materials | Public |
| ElemNet | Algorithm | Deep neural network for composition-based property prediction | Open-source |
| GNoME | Framework | Graph neural networks for materials exploration with active learning | Research |
| Vienna Ab initio Simulation Package (VASP) | Software | Performs high-fidelity DFT calculations for validation | Licensed |
| Matbench Discovery | Benchmark | Evaluates ML model performance on materials discovery tasks | Public |

The high computational cost of traditional first-principles calculations has historically constrained the pace of materials discovery, particularly for complex multi-component systems. By implementing the protocols described in this application note—which combine machine learning pre-screening with targeted DFT validation—researchers can overcome these limitations and accelerate stability prediction by orders of magnitude. The integrated approach maintains the accuracy of quantum mechanical calculations while dramatically reducing computational costs, enabling efficient exploration of vast compositional spaces that were previously inaccessible to computational screening.

In computational materials science, accurately predicting the stability of a compound is a fundamental step toward discovering new, synthesizable materials. Two key metrics serve as primary indicators of thermodynamic stability: the Formation Energy and the Distance to the Convex Hull (often referred to as Energy Above Convex Hull). While related, these metrics provide distinct information. The Formation Energy (E_f) measures the energy released or absorbed when a compound is formed from its constituent elements in their standard states. A negative E_f indicates that the compound is stable with respect to its elements. The Distance to the Convex Hull (E_hull) is a more rigorous metric that quantifies the stability of a compound with respect to all other known and computationally predicted phases in its chemical system. It represents the energy difference between the compound and the point on the convex hull of formation energies for that compositional space; an E_hull of 0 eV/atom signifies that the material is thermodynamically stable. For materials discovery, a compound is typically considered potentially stable if it possesses a negative formation energy and a very small E_hull (often < 50 meV/atom, though thresholds can vary) [15] [5].

The rapid adoption of composition-based machine learning (ML) models addresses a critical bottleneck in high-throughput screening. While density functional theory (DFT) is the workhorse for calculating these stability metrics, it is computationally intensive and time-consuming, making the exploration of vast compositional spaces prohibitive. Machine learning models, trained on existing DFT databases, can predict E_f and E_hull orders of magnitude faster, acting as efficient pre-filters to identify the most promising candidate materials for subsequent DFT validation and experimental synthesis [5] [16]. This protocol focuses on the application of such models for stability prediction.

Quantitative Performance of ML Models

The following tables summarize the performance of various machine learning models in predicting formation energy and energy above the convex hull, as reported in recent literature. These quantitative benchmarks are essential for selecting the appropriate model for a materials discovery campaign.

Table 1: Performance of ML models in predicting Formation Energy (E_f).

| Material System | ML Model | Input Features | Test MAE (eV/atom) | Training Data Source |
|---|---|---|---|---|
| 2D MXenes [15] | Neural Network | 12 Physico-chemical properties | 0.21 | C2DB (300 entries) |
| 2D MXenes [15] | Random Forest | 12 Physico-chemical properties | 0.23 | C2DB (300 entries) |
| General Inorganic Crystals [13] | ElemNet (Deep Neural Network) | Elemental composition only | 0.042 (avg.) | OQMD (341,000 compounds) |
| V–Cr–Ti Alloys [13] | ElemNet (Deep Neural Network) | Elemental composition only | 0.015 | OQMD (Pretrained) |

Table 2: Performance of ML models in predicting Energy Above Convex Hull (E_hull).

| Material System | ML Model | Input Features | Test MAE (eV/atom) | Training Data Source |
|---|---|---|---|---|
| 2D MXenes [15] | Neural Network | 14 Physico-chemical properties | 0.08 | C2DB (300 entries) |
| General Inorganic Crystals [5] | Universal Interatomic Potentials | Crystal structure | ~0.08 (est. from Fig. 3) | Multiple (MP, AFLOW, OQMD) |
| General Inorganic Crystals [5] | Random Forests | Crystal structure | ~0.12 (est. from Fig. 3) | Multiple (MP, AFLOW, OQMD) |

Key Insights from Quantitative Data:

- Data Regime Impact: Model accuracy is highly dependent on the quantity and quality of training data. Graph-based models and universal interatomic potentials, which have access to structural information, generally outperform composition-based models, but this advantage becomes clear primarily with large datasets (>100,000 samples) [5] [16].

- Reduced-Order Models: For specific material families like MXenes, reduced-order models using only 4 or 7 key features can maintain accuracy (MAE ~0.21 eV for E_f) while improving computational efficiency and transferability [15].

- Beyond Regression Metrics: A critical best practice is to evaluate models on classification metrics (e.g., false-positive rate for stable materials) in addition to regression metrics like MAE. A model with a good MAE can still have a high false-positive rate if its errors are concentrated near the stability threshold of 0 eV/atom [5].

Experimental and Computational Protocols

Protocol 1: High-Throughput DFT Calculation of Stability Metrics

This protocol outlines the standard procedure for calculating formation energy and energy above the convex hull using DFT, which generates the ground-truth data for training ML models.

1. Structure Preparation and Relaxation:

- Input: Obtain the initial crystal structure for the target material.

- Geometry Optimization: Perform a full DFT relaxation of the atomic coordinates and lattice vectors until the forces on all atoms and the stress tensor components are below a predefined threshold (e.g., 0.01 eV/Å for forces). This yields the ground-state total energy, E_total.

2. Formation Energy (E_f) Calculation:

- Formula: E_f = E_total - ∑(n_i * E_i). Here, n_i is the number of atoms of element i in the compound, and E_i is the reference energy per atom of element i in its standard stable phase (e.g., bulk solid).

- Action: Calculate the E_f per atom for the target compound by dividing the result of the formula by the total number of atoms, ∑ n_i.

3. Convex Hull Construction:

- Data Collection: Gather the calculated E_f for the target compound and all other known and predicted compounds in the same chemical system (A-B-C...).

- Hull Calculation: For the given chemical system, plot the E_f per atom of all phases against composition. The convex hull is the set of line segments connecting the most stable phases at different compositions. Phases lying on these segments have E_hull = 0.

- Action: Construct the convex hull using computational tools available in materials databases like the Materials Project or AFLOW.

4. Energy Above Hull (E_hull) Calculation:

- Formula: E_hull = E_f(compound) - E_f(hull_point). The E_f(hull_point) is the energy of the point on the convex hull at the same composition as the target compound, obtained by a linear combination of the energies of the hull phases.

- Action: Calculate the E_hull for the target compound. A positive value indicates metastability or instability.
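Steps 2 to 4 can be illustrated for the simplest case, a binary A-B system, where the hull is a lower envelope of line segments. This is a minimal sketch with hypothetical energies and invented helper names; production work would normally rely on the phase-diagram tools of the databases mentioned above, which handle systems with more than two elements.

```python
def formation_energy_per_atom(e_total, counts, e_ref):
    """E_f per atom = (E_total - sum_i n_i * E_i) / sum_i n_i."""
    n_atoms = sum(counts.values())
    return (e_total - sum(n * e_ref[el] for el, n in counts.items())) / n_atoms

def lower_hull(points):
    """Lower convex hull of (x_B, E_f) points, returned left to right."""
    best = {}
    for x, e in points:                 # keep the lowest-energy phase per composition
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        # Drop the last vertex while it lies on or above the segment hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            if (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1) <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull

def hull_energy(x, hull):
    """Linear interpolation of the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the hull range")

# Hypothetical phases: name -> (fraction of B, E_f in eV/atom).
phases = {"A": (0.0, 0.0), "B": (1.0, 0.0),
          "A3B": (0.25, -0.10), "AB": (0.50, -0.40), "AB3": (0.75, -0.30)}
hull = lower_hull(phases.values())
e_hull = {name: e - hull_energy(x, hull) for name, (x, e) in phases.items()}
print(e_hull)

# Step 2 on its own, with made-up total and reference energies (eV).
print(formation_energy_per_atom(-10.0, {"A": 1, "B": 1}, {"A": -4.0, "B": -5.2}))
```

In this toy system A3B has a negative formation energy but still sits 0.10 eV/atom above the hull, because a mixture of A and AB is lower in energy at that composition. This is the reason E_hull is the stricter of the two metrics.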

Protocol 2: Composition-Based ML Prediction of Material Stability

This protocol describes the workflow for using a pre-trained composition-based ML model to predict stability metrics without performing DFT calculations.

1. Input Preparation:

- Input: The elemental composition of the target material (e.g., V₆₀Cr₃₀Ti₁₀).

- Feature Vectorization (if required): For models that do not automatically encode composition (unlike ElemNet), convert the composition into a feature vector. This may involve using properties of the constituent elements, such as atomic radius, electronegativity, valence electron numbers, etc. [15].
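A minimal sketch of the feature-vectorization step is shown below, assuming composition-weighted means and ranges of two elemental properties as descriptors. The choice of descriptors and the function name are illustrative and not taken from [15]; the property table lists Pauling electronegativities and valence electron counts for the three elements of the example alloy only.

```python
# Pauling electronegativity and valence electron count (s + d electrons).
ELEMENT_PROPS = {
    "Ti": {"electronegativity": 1.54, "valence_electrons": 4},
    "V":  {"electronegativity": 1.63, "valence_electrons": 5},
    "Cr": {"electronegativity": 1.66, "valence_electrons": 6},
}

def featurize(composition):
    """Map {element: amount} to composition-weighted mean and range of each property."""
    total = sum(composition.values())
    fractions = {el: amount / total for el, amount in composition.items()}
    features = {}
    for prop in ("electronegativity", "valence_electrons"):
        values = [ELEMENT_PROPS[el][prop] for el in fractions]
        features[f"mean_{prop}"] = sum(
            frac * ELEMENT_PROPS[el][prop] for el, frac in fractions.items()
        )
        features[f"range_{prop}"] = max(values) - min(values)
    return features

features = featurize({"V": 60, "Cr": 30, "Ti": 10})  # V60Cr30Ti10
print(features)
```

A fixed set of such statistics gives every composition a vector of the same length, regardless of how many elements it contains, which is what most tabular regressors require.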

2. Model Inference:

- Action: Feed the composition (or its feature vector) into the pre-trained ML model.

- Output: The model returns a prediction for the formation energy (E_f) and/or the energy above the convex hull (E_hull).

3. Stability Assessment:

- Decision: Classify the material based on the predicted values. A material is a candidate for being thermodynamically stable if it meets both criteria:

  - E_f < 0 eV/atom

  - E_hull ≈ 0 eV/atom (e.g., below 0.05 to 0.10 eV/atom, depending on the chosen threshold) [5].

4. Validation and Selection:

- Action: The materials predicted to be stable are shortlisted for subsequent, more accurate validation using high-fidelity DFT calculations, as described in Protocol 1.
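The stability assessment and shortlisting steps amount to a simple filter over model outputs. The sketch below uses hypothetical predictions and an adjustable E_hull cutoff; the data structure and the function name are assumptions made for illustration.

```python
def shortlist(predictions, e_hull_max=0.05):
    """Return candidates with E_f < 0 and E_hull < e_hull_max, most stable first."""
    keep = [
        (p["e_hull"], name)
        for name, p in predictions.items()
        if p["e_f"] < 0.0 and p["e_hull"] < e_hull_max
    ]
    return [name for _, name in sorted(keep)]

# Hypothetical model outputs in eV/atom.
predictions = {
    "cand_1": {"e_f": -0.45, "e_hull": 0.00},
    "cand_2": {"e_f": -0.10, "e_hull": 0.08},
    "cand_3": {"e_f": 0.05, "e_hull": 0.02},
    "cand_4": {"e_f": -0.30, "e_hull": 0.03},
}

print(shortlist(predictions))                   # strict cutoff
print(shortlist(predictions, e_hull_max=0.10))  # more permissive cutoff
```

Loosening the cutoff sends more candidates to DFT validation, trading computational cost against the risk of discarding a compound whose predicted E_hull is inflated by model error.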

Diagram 1: Composition-based ML stability prediction workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential computational tools and databases for ML-driven stability prediction.

| Tool/Resource Name | Type | Primary Function in Research | Relevance to Stability Metrics |
|---|---|---|---|
| Open Quantum Materials Database (OQMD) [13] | Database | Repository of DFT-calculated properties for hundreds of thousands of materials. | Serves as a primary source of training data (E_f, E_hull) for composition-based ML models like ElemNet. |
| Computational 2D Materials Database (C2DB) [15] | Database | Contains calculated properties for a wide range of two-dimensional materials. | Provides curated, high-quality datasets for training specialized ML models for 2D materials. |
| Materials Project (MP), AFLOW [5] | Database | Large-scale databases of computed material properties and crystal structures. | Used for training universal ML models and for constructing convex hulls for specific chemical systems. |
| ElemNet [13] | ML Model | A deep neural network that predicts formation energy from elemental composition alone. | A key "reagent" for rapid stability screening without requiring structural knowledge. |
| Random Forest / Neural Networks [15] | ML Algorithm | Versatile algorithms for regression tasks, capable of learning from physico-chemical features. | Used to build accurate predictive models for E_f and E_hull, with feature importance analysis. |
| Universal Interatomic Potentials (UIPs) [5] | ML Model | ML-based force fields trained on diverse DFT data that can predict energies and forces for arbitrary structures. | Emerging as state-of-the-art tools for pre-screening thermodynamic stability from unrelaxed crystal structures. |
| Matbench Discovery [5] | Benchmarking Framework | An evaluation framework for assessing the performance of ML energy models on a materials discovery task. | A critical "validation reagent" for comparing different models and selecting the best one for a discovery campaign. |

High-Throughput Screening (HTS) has long been a cornerstone of modern drug discovery and materials science, enabling the rapid experimental testing of thousands to millions of chemical compounds or materials. Traditionally, this process has been a numbers game, often reliant on simple, binary readouts and 2D cell cultures, which, while fast, can lack biological relevance and generate substantial waste [17]. A fundamental paradigm shift is now underway, moving HTS from a largely brute-force approach to a precise, intelligent, and predictive science. This transformation is being driven by the integration of machine learning (ML) and artificial intelligence (AI), which enhances every stage of the pipeline—from initial design to final data analysis [18] [19].

This shift is particularly impactful within the context of composition-based machine learning models for material stability research. The challenge of evaluating the thermodynamic stability of potential new materials from a vast chemical space is analogous to finding a drug candidate in a library of billions of molecules [5]. ML models, especially universal interatomic potentials (UIPs), are now adept at acting as ultra-fast pre-filters, accurately predicting crystal stability and drastically reducing the need for computationally expensive first-principles calculations like Density Functional Theory (DFT) [5]. This allows researchers to focus experimental and simulation efforts on the most promising candidates, thereby accelerating the entire discovery workflow.

The following table summarizes key quantitative trends and performance metrics that characterize the ML-driven transformation of HTS in drug and materials discovery.

Table 1: Impact Metrics of Machine Learning on Discovery Workflows

| Area of Impact | Traditional Approach | ML-Enhanced Approach | Key Performance Metrics |

|---|---|---|---|

| Virtual Screening | Molecular docking with empirical scoring functions [20]. | ML-based scoring (e.g., Gnina CNN, AGL-EAT-Score) and generative models conditioned on binding pockets [21]. | ML models show superior speed (orders of magnitude faster than DFT [5]) and improved accuracy in pose prediction and binding affinity estimation [21]. |

| Materials Stability Prediction | High-throughput DFT calculations, computationally intensive [5]. | ML as a pre-filter (e.g., UIPs, graph neural networks) [5]. | UIPs identified as top performers for pre-screening; benchmarks show alignment of classification metrics with discovery goals is critical [5]. |

| Toxicity & ADMET Profiling | Sequential, experimental in vitro assays [22]. | Predictive models (e.g., AttenhERG for cardiotoxicity, StreamChol for liver injury) [21]. | Models achieve high forecasting accuracy; tools enable early identification and redesign of compounds to reduce toxicity risks [21]. |

| Data Utilization | Manual processing, spreadsheet-based analysis [22]. | Automated FAIRification (Findable, Accessible, Interoperable, Reusable) workflows (e.g., ToxFAIRy) [22]. | Enables integration of multi-endpoint data (e.g., Tox5-score) for holistic hazard assessment and efficient machine-readable data reuse [22]. |

## Detailed Experimental Protocols

This section provides detailed methodologies for key experiments that exemplify the integration of ML into modern HTS workflows.

### Protocol 1: Structure-Based Virtual Screening Enhanced by Machine Learning

This protocol, adapted from a 2025 study on identifying natural tubulin inhibitors, details the use of ML to refine virtual screening hits [20].

1. Homology Modeling and Library Preparation

- Objective: Generate a reliable 3D structure of the target protein and prepare compound libraries.

- Steps:

- Retrieve the target protein sequence (e.g., βIII-tubulin, Uniprot ID: Q13509).

- Use a tool like Modeller to build a 3D homology model using a known crystal structure as a template (e.g., PDB: 1JFF) [20].

- Select the final model based on Discrete Optimized Protein Energy (DOPE) score and validate stereo-chemical quality with a Ramachandran plot (e.g., using PROCHECK) [20].

- Retrieve a library of natural compounds (e.g., ~90,000 from ZINC database) and convert structures into PDBQT format using Open-Babel [20].

2. Structure-Based Virtual Screening (SBVS)

- Objective: Identify top binding candidates from the library.

- Steps:

- Define the binding site (e.g., the 'Taxol site') on the target protein.

- Perform molecular docking for all library compounds using software like AutoDock Vina.

- Filter results based on binding energy and select the top 1,000 hits for further analysis [20].

3. Machine Learning Classification for Active Compounds

- Objective: Distinguish true active compounds from inactive ones using chemical descriptors.

- Steps:

- Prepare Training Data: Curate a dataset of known active and inactive compounds for the target. Generate decoys with similar physicochemical properties using the DUD-E server to balance the dataset [20].

- Calculate Molecular Descriptors: For both the training set and the 1,000 test hits, calculate molecular descriptors and fingerprints from their SMILES codes using a tool like PaDEL-Descriptor [20].

- Train and Validate ML Model: Apply a supervised ML classifier (e.g., Random Forest, Support Vector Machine). Use 5-fold cross-validation and evaluate performance using metrics like precision, recall, F-score, and AUC [20].

- Predict Actives: Use the trained model to predict and rank the activity of the 1,000 test compounds, narrowing the list to a final set of ~20 high-priority candidates [20].

4. Experimental Validation

- Objective: Confirm predicted activity through computational and wet-lab assays.

- Steps:

- Perform in-depth molecular docking and molecular dynamics (MD) simulations on the final candidates to assess binding stability and affinity [20].

- Analyze Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties in silico to evaluate drug-likeness [20].

- Send the most promising, well-characterized candidates for in vitro experimental validation.
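The evaluation and ranking in step 3 can be illustrated with a small, dependency-free sketch. The labels, scores, and compound names below are hypothetical; in the protocol the scores would come from the trained classifier, and a library such as Scikit-learn would normally compute these metrics across the cross-validation folds.

```python
def precision_recall_f1(y_true, y_pred):
    """Precision, recall and F1 for binary labels (1 = active, 0 = inactive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

def roc_auc(y_true, scores):
    """AUC as the chance that a random active outscores a random inactive (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def top_k(names, scores, k):
    """Names of the k highest-scoring compounds."""
    ranked = sorted(zip(names, scores), key=lambda pair: pair[1], reverse=True)
    return [name for name, _ in ranked[:k]]

# Hypothetical validation labels and predicted activity scores.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.2, 0.1, 0.7]
y_pred = [1 if s >= 0.5 else 0 for s in scores]
names = [f"cpd{i}" for i in range(len(scores))]

print(precision_recall_f1(y_true, y_pred), roc_auc(y_true, scores))
print(top_k(names, scores, 3))
```

AUC is threshold-independent, which makes it a useful complement to precision and recall when the final cut-off (here, the top ~20 compounds) is set by experimental capacity rather than by a fixed score.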

### Protocol 2: FAIRification and Multi-Endpoint Toxicity Scoring for Hazard Assessment

This protocol outlines an automated workflow for processing HTS data into a FAIR (Findable, Accessible, Interoperable, Reusable) format and deriving a comprehensive toxicity score, as demonstrated in a 2025 study on nanomaterials [22].

1. HTS Data Generation and Metadata Annotation

- Objective: Generate raw experimental data with comprehensive metadata.

- Steps:

- Conduct a panel of in vitro toxicity assays (e.g., cell viability, DNA damage, apoptosis) across multiple time points and concentrations.

- For materials like nanomaterials, quantify cell-delivered doses using metrics such as nominal concentration (μg/mL), mass per cell growth area (μg/cm²), or surface area per cell growth area (cm²/cm²) [22].

- Systematically record all metadata, including material properties, assay conditions, cell lines, and replicate information.

2. Data FAIRification and Preprocessing

- Objective: Convert raw data into a standardized, machine-readable format.

- Steps:

- Use a custom Python module (e.g., ToxFAIRy) or an Orange Data Mining workflow to read and combine experimental data [22].

- Annotate the data with the recorded metadata.

- Convert the dataset into a FAIR-compliant format, such as NeXus, which integrates all data and metadata into a single, structured file for reuse and sharing [22].

3. Calculation of the Integrated Tox5-Score

- Objective: Integrate multi-endpoint data into a single, comparable hazard value.

- Steps:

- For each dose-response curve, calculate key metrics: the first statistically significant effect (FSE), the area under the curve (AUC), and the maximum effect (Emax) [22].

- Scale and normalize these metrics from the different endpoints and time points to make them comparable.

- Compile the normalized metrics into an integrated Tox5-score, which provides a transparent, weighted overview of the overall hazard profile, visualized as a ToxPi (Toxicological Priority Index) chart [22].

4. Hazard Ranking and Grouping

- Objective: Use the computed scores for decision-making.

- Steps:

- Rank all tested agents (chemicals or materials) from most to least toxic based on their Tox5-score.

- Perform clustering analysis on the endpoint-specific scores to group materials with similar hazard profiles, enabling read-across and hypothesis generation about mechanisms of action [22].
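The scaling, integration, and ranking steps can be sketched as follows. This is a simplified stand-in and not the ToxFAIRy implementation: it assumes equal weights, min-max scaling across materials, and that a lower FSE dose indicates a higher hazard (so FSE is inverted). The material names and metric values are hypothetical.

```python
def min_max(values):
    """Scale values to [0, 1]; a constant column maps to 0."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

def integrated_scores(data, invert=("FSE",)):
    """data: {material: {metric: value}} -> {material: score in [0, 1]}.

    Metrics listed in `invert` are flipped so that a higher score always
    means a higher hazard (assumption: a low FSE dose is more hazardous).
    """
    materials = list(data)
    metrics = sorted({m for d in data.values() for m in d})
    totals = {mat: 0.0 for mat in materials}
    for metric in metrics:
        scaled = min_max([data[mat][metric] for mat in materials])
        for mat, s in zip(materials, scaled):
            totals[mat] += (1.0 - s) if metric in invert else s
    return {mat: totals[mat] / len(metrics) for mat in materials}

# Hypothetical dose-response metrics for three nanomaterials.
data = {
    "NM-A": {"FSE": 5.0, "AUC": 80.0, "Emax": 0.9},
    "NM-B": {"FSE": 50.0, "AUC": 20.0, "Emax": 0.3},
    "NM-C": {"FSE": 25.0, "AUC": 50.0, "Emax": 0.6},
}
scores = integrated_scores(data)
ranking = sorted(scores, key=scores.get, reverse=True)  # most to least hazardous
print(ranking, scores)
```

In a multi-endpoint setting the same scaling would be applied per endpoint and time point before aggregation, and the weights would be made explicit so that the resulting score stays transparent.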

## The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Resources for ML-Enhanced HTS Workflows

| Category | Item / Software | Function / Application |

|---|---|---|

| Computational Docking & Screening | AutoDock Vina / InstaDock [20] | Performs molecular docking for structure-based virtual screening. |

| Computational Docking & Screening | Gnina (v1.3) [21] | Uses convolutional neural networks (CNNs) for superior pose scoring and binding affinity prediction. |

| Machine Learning & Cheminformatics | PaDEL-Descriptor [20] | Calculates molecular descriptors and fingerprints from chemical structures for ML model training. |

| Machine Learning & Cheminformatics | Scikit-learn, TensorFlow, PyTorch [18] | Programmatic frameworks for building and training supervised and deep learning models. |

| Machine Learning & Cheminformatics | ChemProp [21] | A graph neural network (GNN) method specifically designed for molecular property prediction. |

| Data Management & Analysis | ToxFAIRy Python Module [22] | Automates the preprocessing and FAIRification of HTS data into standardized, reusable formats. |

| Data Management & Analysis | Orange Data Mining [22] | A visual programming platform with custom widgets for data analysis and model building. |

| Experimental Assays (In Vitro) | CellTiter-Glo Assay [22] | Measures cell viability via luminescence in HTS formats. |

| Experimental Assays (In Vitro) | Caspase-Glo 3/7 Assay [22] | Measures apoptosis activation via caspase activity. |

| Experimental Assays (In Vitro) | GammaH2AX Assay [22] | Detects DNA double-strand breaks, a key marker of genotoxicity. |

The integration of machine learning into High-Throughput Screening represents a true paradigm shift, moving the field from a high-volume, low-context process to an intelligent, predictive, and data-driven discipline. In drug discovery, ML enhances virtual screening, de-risks candidates by predicting ADMET properties early, and enables the generation of novel compounds [19] [21]. In materials science, particularly for stability research, ML models serve as powerful pre-filters that navigate vast compositional spaces with speed and increasing accuracy, ensuring that costly experimental and computational resources are allocated to the most promising leads [5]. The continued development of automated and FAIR data workflows ensures that the vast amounts of data generated can be fully leveraged, creating a virtuous cycle of learning and discovery. As these technologies mature, the future of HTS points toward increasingly adaptive, personalized, and autonomous discovery systems that will fundamentally accelerate the development of new therapeutics and advanced materials.

For researchers in materials science and drug development, predicting stability is a critical challenge that dictates the viability of new compounds and pharmaceutical products. Composition-based machine learning (ML) models offer a powerful strategy for navigating vast chemical spaces by using a material's chemical formula as the primary input, even in the absence of detailed structural data [23]. These models learn complex relationships between elemental composition and thermodynamic stability, enabling rapid in silico screening of novel materials with desired stability profiles. This application note details the essential terminology, validated experimental protocols, and key resources for implementing these predictive frameworks in research.

Core Terminology and Key Concepts

Stability: In the context of ML models, stability can refer to several properties. Thermodynamic stability is often represented by the decomposition energy (ΔHd), defined as the energy difference between a compound and its competing phases in a phase diagram [23]. For energetic materials (EMs), stability is frequently assessed via Bond Dissociation Energy (BDE) of the weakest "trigger bond" (e.g., X-NO₂), which correlates strongly with sensitivity and safety [24]. Model Stability refers to the reliability of a model's predictions, particularly the volatility of risk estimates when development data or modeling strategies change [25].
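To make the thermodynamic definition concrete, the sketch below computes the energy above the convex hull for a hypothetical binary A-B system, a quantity closely related to the decomposition energy described above. The phase names and formation energies are invented for illustration, the elemental end members are assumed to sit at E_f = 0, and production work would normally rely on an established phase-diagram tool rather than this hand-rolled hull.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points via Andrew's monotone chain."""
    hull = []
    for p in sorted(set(points)):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            # Drop the middle point if it lies on or above the line hull[-2] -> p.
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull

def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2 and x2 > x1:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the hull range")

def energy_above_hull(phases):
    """phases: {name: (fraction of B, formation energy in eV/atom)}."""
    points = [(0.0, 0.0), (1.0, 0.0)] + list(phases.values())  # elemental references
    hull = lower_hull(points)
    return {name: e - hull_energy(hull, x) for name, (x, e) in phases.items()}

# Hypothetical phases in an A-B system.
phases = {"A3B": (0.25, -0.30), "AB": (0.50, -0.50), "AB3": (0.75, -0.10)}
e_hull = energy_above_hull(phases)
print(e_hull)
```

In this example AB3 has a negative formation energy yet lies about 0.15 eV/atom above the hull, because a mixture of AB and elemental B is lower in energy. This is why a negative formation energy alone is not sufficient evidence of stability.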

Features and Descriptors: These are quantifiable characteristics of a material that serve as input variables (X) for ML models to predict a target property (Y) [26].

- Composition-Based Descriptors: Derived directly from the chemical formula. These can include simple elemental proportions, statistical summaries (mean, range, mode) of atomic properties (e.g., atomic radius, electronegativity) [23], or more complex representations like electron configuration (EC) matrices that capture the distribution of electrons within an atom [23].

- Hybrid Feature Representation: A strategy that couples local features (e.g., attributes of a target chemical bond) with global features representing the entire molecule's structure, so that the target property is characterized more completely [24].

- Descriptor Optimization: The process of down-selecting the most impactful descriptors from a large pool to build a robust and interpretable model, often using feature importance metrics [26].
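As an illustration of composition-based descriptors, the following sketch parses a simple formula and computes statistical summaries of two elemental properties. It is a toy version of this style of featurization: `ELEMENT_DATA` covers only a handful of elements (atomic numbers and Pauling electronegativities), the parser does not handle parentheses, and the function names are hypothetical.

```python
import re

ELEMENT_DATA = {  # symbol: (atomic number, Pauling electronegativity)
    "O": (8, 3.44), "Na": (11, 0.93), "Al": (13, 1.61),
    "Cl": (17, 3.16), "Ti": (22, 1.54), "Fe": (26, 1.83),
}

def parse_formula(formula):
    """Return {element: atomic fraction} for a simple formula such as 'Fe2O3'."""
    counts = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    total = sum(counts.values())
    return {el: n / total for el, n in counts.items()}

def composition_features(formula):
    """Fraction-weighted mean plus min, max, range and mode of each property."""
    fractions = parse_formula(formula)
    most_abundant = max(fractions, key=fractions.get)
    features = {}
    for idx, name in enumerate(("Z", "electronegativity")):
        values = {el: ELEMENT_DATA[el][idx] for el in fractions}
        features[f"{name}_mean"] = sum(fractions[el] * values[el] for el in fractions)
        features[f"{name}_min"] = min(values.values())
        features[f"{name}_max"] = max(values.values())
        features[f"{name}_range"] = max(values.values()) - min(values.values())
        features[f"{name}_mode"] = values[most_abundant]  # value of the most abundant element
    return features

feats = composition_features("Fe2O3")
print(feats)
```

The resulting dictionary has a fixed length regardless of how many elements the compound contains, which is what allows tabular models such as Random Forest or XGBoost to consume formulas of arbitrary complexity.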

Model Types: A variety of supervised ML algorithms are employed for stability prediction.

- Tree-Based Ensembles: Methods like Extreme Gradient Boosting (XGBoost) and Random Forest combine multiple decision trees to achieve high predictive accuracy. XGBoost has demonstrated top performance in predicting properties like detonation velocity and decomposition temperature [27] [24].

- Neural Networks: These include Multi-Layer Perceptrons (MLP) and Convolutional Neural Networks (CNN). The Electron Configuration CNN (ECCNN), for example, uses convolutional layers to process encoded electron configuration data [23].

- Ensemble Frameworks: Techniques like Stacked Generalization (SG) combine the predictions of multiple, diverse base models (e.g., Magpie, Roost, ECCNN) into a super learner to mitigate the inductive bias of any single model and enhance overall performance [23].

Quantitative Comparison of Models and Descriptors

Table 1: Performance Metrics of Selected ML Models for Stability Prediction

| Model Type | Application Context | Key Performance Metrics | Notable Strengths |

|---|---|---|---|

| XGBoost [27] [24] | HEDM properties (detonation velocity/pressure); EM BDE prediction | Best scoring metrics vs. other models; R² = 0.98, MAE = 8.8 kJ·mol⁻¹ for BDE [24] | High accuracy; handles complex, non-linear relationships |

| ECSG (Ensemble) [23] | Thermodynamic stability of inorganic compounds | AUC = 0.988; high sample efficiency (1/7 data for same performance) [23] | Mitigates model bias; leverages complementary knowledge |

| Convolutional Autoencoder [28] | Emulsion droplet stability from images | 91.7% classification accuracy for droplet break-up [28] | Discovers latent shape descriptors from image data |

Table 2: Commonly Used Feature Descriptors for Stability Prediction

| Descriptor Category | Specific Examples | Relevance to Stability |

|---|---|---|

| Electronic Structure [23] | Electron Configuration (EC) | An intrinsic atomic property crucial for understanding chemical reactivity and bonding. |

| Atomic Properties [23] [26] | Average reduction potential; Atomic radius statistics; Electronegativity | Related to corrosion resistance, bond strength, and phase formation energy. |

| Chemical Environment [26] | pH; Halide ion concentration | Critical environmental factors for predicting corrosion rate in alloys. |

| Bond-Specific & Global Molecular [24] | Local target bond features; Molecular weight; Nitrogen mass percent | Directly characterizes trigger bond strength and overall energetic character. |

Detailed Experimental Protocols

Protocol 1: Building an Ensemble Model for Compound Stability

This protocol outlines the methodology for developing a robust model to predict the thermodynamic stability of inorganic compounds, based on the ECSG framework [23].

Materials & Reagents:

- Data Source: A curated dataset of known compounds with calculated decomposition energies (ΔHd), such as from the Materials Project (MP) or JARVIS databases [23].

- Software: Python with the following libraries: Scikit-learn for general ML, XGBoost for gradient boosting, PyTorch for ECCNN, and a Roost implementation.

Step-by-Step Procedure:

1. Data Preparation & Encoding:

- Compile chemical formulas and corresponding stability labels (e.g., ΔHd, stable/unstable classification).

- Encode each formula into three distinct feature sets:

- Magpie Descriptors: Calculate mean, standard deviation, minimum, maximum, and mode for a suite of elemental properties (e.g., atomic number, atomic radius, electronegativity) for all elements in the compound [23].

- Roost Graph: Represent the chemical formula as a complete graph whose nodes are the constituent elements, weighted by their stoichiometric fractions, so that interactions between elements can be learned without requiring a crystal structure [23].

- ECCNN Input: Encode the compound's composition into a 118 (elements) × 168 (features) × 8 (channels) matrix based on the electron configuration of its constituent atoms [23].

2. Base Model Training:

- Independently train the three base models (Magpie, Roost, ECCNN) using their respective encoded inputs.

- Use k-fold cross-validation (e.g., 5-fold) to generate out-of-sample predictions for the entire training set from each model.

3. Stacked Generalization:

- Use the out-of-sample predictions from the three base models as input features for a meta-learner (e.g., a linear model or another XGBoost model).

- Train the meta-learner on these new features to learn the optimal way to combine the base model predictions.

4. Validation:

- Evaluate the final ensemble model's performance on a held-out test set using metrics like Area Under the Curve (AUC) for classification or Mean Absolute Error (MAE) for regression [23].
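The stacking logic in this protocol can be sketched without any ML library. In the toy example below, two univariate least-squares fits stand in for the base models (they are placeholders, not Magpie, Roost, or ECCNN), out-of-fold predictions are generated with a simple k-fold split, and a two-weight linear meta-learner is fitted by solving the normal equations. The data are synthetic and the function names are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = a*x + b; returns a predictor function."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    a = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx if sxx else 0.0
    b = my - a * mx
    return lambda x: a * x + b

def out_of_fold(xs, ys, k=4):
    """Predict every sample with a model trained on the other folds only."""
    preds = [0.0] * len(xs)
    for fold in range(k):
        train = [i for i in range(len(xs)) if i % k != fold]
        model = fit_line([xs[i] for i in train], [ys[i] for i in train])
        for i in range(fold, len(xs), k):  # indices belonging to this fold
            preds[i] = model(xs[i])
    return preds

def fit_meta(p1, p2, ys):
    """Least-squares weights for y ~ w1*p1 + w2*p2 (2x2 normal equations)."""
    a11 = sum(u * u for u in p1)
    a12 = sum(u * v for u, v in zip(p1, p2))
    a22 = sum(v * v for v in p2)
    b1 = sum(u * y for u, y in zip(p1, ys))
    b2 = sum(v * y for v, y in zip(p2, ys))
    det = a11 * a22 - a12 * a12
    return (b1 * a22 - b2 * a12) / det, (a11 * b2 - a12 * b1) / det

def mse(pred, ys):
    return sum((p - y) ** 2 for p, y in zip(pred, ys)) / len(ys)

# Synthetic data: each base model sees only one of the two informative features.
f1 = [(i % 5) - 2.0 for i in range(20)]
f2 = [(i // 5) - 1.5 for i in range(20)]
y = [2.0 * a + 3.0 * b for a, b in zip(f1, f2)]

p1, p2 = out_of_fold(f1, y), out_of_fold(f2, y)  # step 2: out-of-sample predictions
w1, w2 = fit_meta(p1, p2, y)                      # step 3: meta-learner
stacked = [w1 * u + w2 * v for u, v in zip(p1, p2)]
mse1, mse2, mse_stack = mse(p1, y), mse(p2, y), mse(stacked, y)
print(mse1, mse2, mse_stack)
```

Training the meta-learner on out-of-fold predictions, rather than on in-sample fits, prevents it from rewarding a base model that has merely memorized the training data. For brevity the errors here are computed on the same out-of-fold predictions; the protocol's final validation step calls for a separate held-out test set.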

Protocol 2: Predicting Bond Dissociation Energy with Hybrid Descriptors

This protocol describes a method for building a high-accuracy model to predict the BDE of trigger bonds in energetic molecules, which is critical for assessing their stability [24].

Materials & Reagents:

- Dataset: A specialized dataset of real, synthesized CHON-containing energetic molecules. For the referenced study, 778 molecules were collected from literature, and their BDEs were calculated at the B3LYP/6-31G level of theory [24].

- Software: Quantum mechanics (QM) software (e.g., Gaussian, ORCA) for BDE calculation; Python with RDKit for descriptor generation and XGBoost for modeling.

Step-by-Step Procedure:

1. Dataset Construction:

- Curate a set of energetic molecules and compute their BDEs using high-accuracy QM methods. This ensures a reliable and representative dataset, overcoming the scarcity of experimental BDE data for EMs [24].

2. Hybrid Feature Engineering:

- For each molecule, identify the weakest bond (e.g., C-NO₂, N-NO₂).

- Local Bond Features: Compute quantum mechanical or geometric descriptors specific to the trigger bond and its immediate atomic environment.

- Global Molecular Features: Calculate descriptors for the entire molecule, such as molecular weight, elemental counts, nitrogen/oxygen mass percent, and topological descriptors [24].

- Concatenate the local and global feature vectors to form a single, comprehensive hybrid descriptor for each molecule.

3. Data Augmentation with PADRE:

- To overcome data scarcity and improve model robustness, apply the Pairwise Difference Regression (PADRE) strategy.

- Generate new data points by creating feature vectors that are the differences between pairs of original feature vectors. The corresponding new labels are the differences between the original BDE values. This augments the dataset and can help reduce systematic errors [24].

4. Model Training and Validation:

- Train an XGBoost regressor on the augmented dataset using the hybrid descriptors.

- Validate the model using a rigorous train-test split or cross-validation, reporting metrics like R² and MAE to demonstrate predictive accuracy [24].
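The pairwise-difference augmentation described in this protocol can be sketched as below. This follows the description given here (difference features paired with difference labels) and is not taken from the referenced implementation; the feature vectors, BDE values, and the linear `diff_model` stand-in for a trained regressor are all hypothetical.

```python
from itertools import permutations

def pairwise_difference_augment(X, y):
    """Difference features x_i - x_j and labels y_i - y_j for all ordered pairs i != j."""
    X_aug, y_aug = [], []
    for i, j in permutations(range(len(X)), 2):
        X_aug.append([a - b for a, b in zip(X[i], X[j])])
        y_aug.append(y[i] - y[j])
    return X_aug, y_aug

def predict_from_differences(diff_model, x_new, X_train, y_train):
    """Estimate y(x_new) by averaging y_j + diff_model(x_new - x_j) over all anchors j."""
    estimates = [
        y_j + diff_model([a - b for a, b in zip(x_new, x_j)])
        for x_j, y_j in zip(X_train, y_train)
    ]
    return sum(estimates) / len(estimates)

# Hypothetical hybrid descriptors and BDE labels (kJ/mol), for illustration only.
X = [[1.0, 0.0], [2.0, 1.0], [4.0, 3.0]]
y = [250.0, 230.0, 190.0]

X_aug, y_aug = pairwise_difference_augment(X, y)  # 3 samples -> 6 ordered pairs

# Stand-in for a regressor trained on (X_aug, y_aug); here a known linear rule.
diff_model = lambda d: -20.0 * d[0]
pred = predict_from_differences(diff_model, [3.0, 2.0], X, y)
print(len(X_aug), pred)
```

Because n samples yield n(n - 1) ordered pairs, the augmented set grows quadratically, so for larger datasets it is common to subsample pairs. Averaging over several anchors at prediction time is what helps cancel systematic errors shared between samples.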

The Scientist's Toolkit

Table 3: Essential Research Reagents and Resources

| Tool / Resource | Type | Function in Stability Prediction |

|---|---|---|

| Materials Project (MP) / JARVIS Database [23] | Data Repository | Provides large-scale, computed thermodynamic data (e.g., formation energies) for training composition-based stability models. |

| B3LYP/6-31G Level Theory [24] | Computational Method | A widely used and reliable quantum mechanics method for calculating target properties like Bond Dissociation Energy (BDE) when experimental data is lacking. |

| Magpie Descriptor Set [23] | Feature Generator | Automates the creation of statistical features from elemental properties, providing a robust input for models predicting bulk material properties. |

| XGBoost Library [27] [24] | Software / Algorithm | An optimized implementation of gradient boosting, frequently a top-performing model for tabular data in materials science. |

| Pairwise Difference Regression (PADRE) [24] | Data Augmentation Strategy | Artificially expands small datasets and improves model generalization by training on differences between samples, reducing systematic error. |

Building and Applying ML Models for Stability Prediction

In composition-based machine learning (ML) for material stability research, valence electron count (VEC) has emerged as a fundamental compositional descriptor for predicting phase stability, etching behavior, and catalytic properties across diverse material systems. VEC represents the number of outer-shell electrons available for bonding and profoundly influences electronic structure, bonding characteristics, and consequent material stability. Recent studies have demonstrated VEC's critical role as a predictive feature in ML models targeting everything from topological material discovery to hydrogen evolution catalyst development.

For transition metal systems, VEC is defined as electrons residing outside a noble-gas core, encompassing both outer s-electrons and d-electrons in proximity to the Fermi level [29]. This descriptor exhibits strong correlation with phase selection rules in high-entropy alloys (HEAs), where VEC ≥ 8 generally stabilizes face-centered cubic (FCC) phases, while VEC < 6.87 favors body-centered cubic (BCC) structures [30]. Beyond metallic systems, valence electron engineering now guides the rational design of MXenes, MBenes, and single-atom catalysts through precise control of electron redistribution at interfaces [31] [32].

Theoretical Foundation and Significance

Quantum Mechanical Basis of VEC

The predictive power of VEC originates from its direct connection to quantum mechanical properties governing atomic bonding. Density functional theory (DFT) calculations reveal that VEC governs orbital hybridization patterns, charge transfer mechanisms, and bond stability [31]. In transition metal MAB phases (M = transition metal, A = group III/IV element, B = boron), the valence electron count of the transition metal directly regulates M−A and M−B bond stability through d-p orbital hybridization [31].

When hybridized states approach the Fermi level, M−A interactions weaken, facilitating selective etching of Al layers from M₂AlB₂ phases. Conversely, increased valence electrons in transition metals stabilize these bonds by shifting hybrid states to lower energies [31]. This electronic structure-dynamics framework enables precise prediction of vacancy formation energies and migration barriers critical for material stability assessment.

VEC in Material Stability Assessment

Valence electron count serves as a robust stability indicator across multiple material classes:

- High-entropy alloys: VEC thresholds dictate FCC/BCC phase stability boundaries [30]

- MAB phases: Lower VEC values correlate with preferential Al-layer removal and etching capabilities [31]

- Topological materials: VEC combined with symmetry indicators enables classification of trivial and nontrivial electronic phases [33]

- Catalytic materials: VEC governs adsorption energies of reaction intermediates through electron-sharing mechanisms [32]

Table 1: Valence Electron Count Thresholds for Phase Stability in High-Entropy Alloys

| Crystal Structure | VEC Range | Stability Region | Remarks |

|---|---|---|---|

| FCC | VEC ≥ 8 | High stability | Metallic bonding dominant |

| FCC + BCC | 6.87 ≤ VEC < 8 | Mixed phase region | Transition zone |

| BCC | VEC < 6.87 | High stability | Covalent bonding contributions |
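The thresholds in Table 1 translate directly into a rule-based classifier. The sketch below computes the composition-weighted VEC (and its variance, a simple measure of chemical heterogeneity) and applies the FCC/BCC boundaries. The `VALENCE_ELECTRONS` table covers only a few elements and the function names are hypothetical; the rule is an empirical guideline, not a guarantee of the observed phase.

```python
VALENCE_ELECTRONS = {  # electrons outside the noble-gas core
    "Al": 3, "Ti": 4, "Zr": 4, "Hf": 4, "V": 5, "Nb": 5, "Ta": 5,
    "Cr": 6, "Mn": 7, "Fe": 8, "Co": 9, "Ni": 10, "Cu": 11,
}

def vec_statistics(composition):
    """composition: {element: molar amount}. Returns (mean VEC, VEC variance)."""
    total = sum(composition.values())
    mean = sum(n * VALENCE_ELECTRONS[el] for el, n in composition.items()) / total
    var = sum(n * (VALENCE_ELECTRONS[el] - mean) ** 2
              for el, n in composition.items()) / total
    return mean, var

def predicted_phase(vec):
    """Apply the VEC thresholds from Table 1."""
    if vec >= 8.0:
        return "FCC"
    if vec >= 6.87:
        return "FCC + BCC"
    return "BCC"

alloys = {
    "CoCrFeMnNi": {"Co": 1, "Cr": 1, "Fe": 1, "Mn": 1, "Ni": 1},
    "AlCoCrFeNi": {"Al": 1, "Co": 1, "Cr": 1, "Fe": 1, "Ni": 1},
    "HfNbTaTiZr": {"Hf": 1, "Nb": 1, "Ta": 1, "Ti": 1, "Zr": 1},
}
results = {}
for name, comp in alloys.items():
    vec, var = vec_statistics(comp)
    results[name] = (vec, var, predicted_phase(vec))
print(results)
```

For the equiatomic CoCrFeMnNi alloy the mean VEC is exactly 8.0, which places it on the FCC side of the boundary, while the refractory HfNbTaTiZr alloy (VEC = 4.4) falls well inside the BCC region. Both the mean and the variance can be passed to an ML model as features alongside other descriptors.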

Computational Protocols for VEC Feature Engineering

First-Principles Calculation of VEC Parameters

Density Functional Theory Workflow for VEC Validation

Protocol 1: DFT Calculation of Valence Electron Characteristics

Initialization

- Build crystal structure from CIF files or material database entries

- Perform geometry optimization until forces < 0.01 eV/Å

- Confirm convergence of lattice parameters to < 0.1% variation

Electronic Structure Calculation

- Employ CASTEP or VASP with PBE-GGA functional [31]

- Use ultrasoft pseudopotentials with plane-wave cutoff ≥ 500 eV

- Implement k-point mesh with spacing ≤ 0.03 Å⁻¹

- Include spin-orbit coupling for heavy elements (Z > 40)

VEC Parameter Extraction

- Calculate total and projected density of states (DOS/PDOS)

- Perform Bader charge analysis for electron partitioning

- Integrate charge density within atomic basins

- Compute orbital-resolved electron counts (s, p, d, f)

Validation

- Compare computed VEC with nominal electron counts

- Verify charge conservation across unit cell

- Confirm band filling aligns with metallic/semiconducting behavior

Machine Learning Feature Engineering Pipeline

Compositional Descriptor Engineering Framework

Protocol 2: VEC Feature Engineering for Material Stability Prediction

Composition-Based Feature Generation

- Implement Composition Analyzer Featurizer (CAF) for elemental properties [34]

- Calculate weighted averages of atomic properties based on stoichiometry

- Generate 133 compositional features including electronegativity, atomic radius, and group number

Structure-Based Feature Enhancement

- Apply Structure Analyzer Featurizer (SAF) to CIF files [34]

- Extract 94 structural descriptors including symmetry operations and Wyckoff positions

- Compute space group-specific symmetry indicators

VEC-Specific Descriptor Engineering

Feature Selection and Optimization

- Apply recursive feature elimination with cross-validation

- Prioritize features with physical significance to stability prediction

- Validate descriptor robustness against overfitting

Table 2: Critical VEC-Derived Features for Material Stability Models

| Feature Name | Calculation Method | Physical Significance | Application Domain |

|---|---|---|---|

| Total VEC | Sum of group numbers | Fermi level position | Phase stability, HEAs |

| d-electron count | Transition metal d-electrons | Bond covalency strength | Catalysis, MBenes |

| p-electron fraction | p-electrons/total electrons | Orbital hybridization character | Glass formation, chalcogenide glasses (ChGs) |

| VEC variance | Statistical dispersion | Chemical heterogeneity | High-entropy materials |

| Valence electron fitting parameter | VTM + VO/OH = 12 rule [32] | Adsorption energy prediction | Electrocatalysis |

Case Studies and Experimental Validation

VEC-Guided MBene Synthesis from MAB Phases

Protocol 3: VEC-Controlled Etching of M₂AlB₂ Phases

The valence electron count directly governs Al vacancy thermodynamics and kinetics in M₂AlB₂ (M = Sc, Ti, V, Cr, Zr, Mo, Hf, W) phases [31]:

Sample Preparation

- Synthesize phase-pure M₂AlB₂ powders via solid-state reaction

- Characterize crystal structure using XRD (confirm orthorhombic structure)

- Verify composition using EDS spectroscopy

VEC-Dependent Etching Optimization

- Prepare etching solution (HF or HF/HCl mixtures)

- For low-VEC systems (VEC < 4): Use milder etching conditions (10% HF, 4h)

- For high-VEC systems (VEC > 5): Employ aggressive etching (50% HF, 12h)

- Maintain temperature at 25°C with continuous stirring

Etching Validation

- Monitor Al removal using ICP-OES at 30-minute intervals

- Characterize layered structure using TEM and SAED

- Confirm MBene formation using Raman spectroscopy

Key Results: Ti₂AlB₂ (VEC = 4) exhibits optimal etching behavior with Al vacancy formation energy of 0.85 eV and migration barrier of 1.2 eV, while Cr₂AlB₂ (VEC = 6) shows significantly higher formation energy (> 2.5 eV) requiring extended etching duration [31].

VEC-Driven Discovery of Topological Materials

The TXL Fusion framework integrates VEC with symmetry indicators for high-throughput identification of topological materials [33]:

Protocol 4: VEC-Enhanced Topological Material Classification

Dataset Curation

- Collect 38,184 materials from topological materials database

- Label phases as trivial (18,090), topological semimetals (13,985), or topological insulators (6,109)

Feature Engineering

- Calculate total VEC and electron count parity

- Compute orbital-wise electron contributions (d- and f-orbital participation)

- Extract space group symmetry and site symmetry indicators

Model Training

- Implement ensemble classifier with heuristic, numerical, and LLM embedding modules

- Train XGBoost classifier on concatenated feature representations

- Validate using 5-fold cross-validation with stratified sampling

Performance: The VEC-enhanced model achieved 92% accuracy in distinguishing topological phases, significantly outperforming symmetry-only approaches (74% accuracy) [33].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for VEC Feature Engineering

| Tool Name | Function | Application Context | Access Method |

|---|---|---|---|

| CASTEP | DFT electronic structure calculation | VEC parameterization from first principles | Commercial license |

| Composition Analyzer Featurizer (CAF) | Compositional descriptor generation | Automated feature engineering from chemical formulas | Open-source Python |

| Structure Analyzer Featurizer (SAF) | Structural descriptor extraction | Crystal structure-based feature generation | Open-source Python |

| Matminer | Materials data mining and featurization | High-throughput descriptor calculation | Open-source Python |

| Catalysis-hub | ΔGH adsorption energy database | Model training for catalytic stability | Public database |

| SciGlass | Glass property database | Refractive index modeling with VEC features | Subscription |

Valence electron count has established itself as a critical compositional descriptor for machine learning models predicting material stability across diverse chemical spaces. The protocols outlined herein provide researchers with robust methodologies for VEC feature engineering, from first-principles calculation to experimental validation. As ML approaches continue to evolve, integration of VEC with advanced structural descriptors and symmetry indicators will further enhance predictive accuracy for complex material systems, accelerating the discovery of novel stable materials for energy, catalytic, and quantum applications.

In the field of computational materials science, predicting material stability is a critical task for accelerating the discovery and development of new compounds. The complex, non-linear relationships between material composition, structure, and properties make machine learning (ML) particularly valuable for this application. This article provides a detailed overview of three key ML algorithms—Random Forests, Support Vector Machines, and Gradient Boosting—within the context of composition-based material stability research. We examine their fundamental principles, implementation protocols, and performance characteristics, supported by experimental data from recent studies. The growing adoption of ML in scientific domains underscores the need for standardized benchmarking tasks and metrics to ensure reliable comparisons across different methodologies [5]. By framing our discussion around material stability prediction, we aim to equip researchers with the practical knowledge needed to select, implement, and interpret these powerful algorithms in their investigative work.

Algorithm Fundamentals and Comparative Analysis

Random Forests

Random Forests (RF) are an ensemble learning method that constructs multiple decision trees during training. As a bagging-based technique, RF draws numerous bootstrap samples (subsets) from the training data and trains an independent predictive model on each one [36]. The ensemble prediction is the mean of the submodel predictions [36] (for classification, a majority vote is used instead), which improves stability and accuracy while reducing overfitting. RF is noted for robust performance on small datasets comprising mainly categorical variables and for handling class imbalance effectively [36]. Its resistance to outliers and modest hyperparameter-tuning requirements make it attractive for materials research applications.
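
The bootstrap-and-average idea can be illustrated with a small sketch. It is a simplified stand-in, assuming a single input feature and one-split trees (stumps); a full Random Forest also samples a random subset of features at each split. The data and the names `fit_stump` and `fit_bagged_ensemble` are hypothetical.

```python
import random
from statistics import mean

def fit_stump(xs, ys):
    """Least-squares one-split regressor on a single feature."""
    best = None
    for threshold in sorted(set(xs))[:-1]:
        left = [y for x, y in zip(xs, ys) if x <= threshold]
        right = [y for x, y in zip(xs, ys) if x > threshold]
        l_mean, r_mean = mean(left), mean(right)
        sse = sum((y - l_mean) ** 2 for y in left) + sum((y - r_mean) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, threshold, l_mean, r_mean)
    if best is None:  # only one distinct x value: predict a constant
        constant = mean(ys)
        return lambda x: constant
    _, threshold, l_mean, r_mean = best
    return lambda x: l_mean if x <= threshold else r_mean

def fit_bagged_ensemble(xs, ys, n_models=50, seed=0):
    """Train one stump per bootstrap sample and average their predictions."""
    rng = random.Random(seed)
    n = len(xs)
    models = []
    for _ in range(n_models):
        sample = [rng.randrange(n) for _ in range(n)]  # draw with replacement
        models.append(fit_stump([xs[i] for i in sample], [ys[i] for i in sample]))
    return lambda x: mean(m(x) for m in models)

# Hypothetical descriptor values and a step-like stability target.
xs = [0.1 * i for i in range(20)]
ys = [0.0 if x < 1.0 else 1.0 for x in xs]
predict = fit_bagged_ensemble(xs, ys)
```

Because every stump sees a slightly different resample, the averaged prediction is smoother near the decision boundary than any single tree.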

Support Vector Machines

Support Vector Machines (SVM) are supervised learning models used for classification and regression; variants of the method also support outlier detection. In their simplest form, SVMs construct a hyperplane or set of hyperplanes in a high-dimensional space. The fundamental principle is to find the optimal separating hyperplane, the one that maximizes the margin between classes in the feature space. For data that are not linearly separable, SVMs employ kernel functions to map the inputs into a higher-dimensional feature space where linear separation becomes possible. The Linear Kernel Support Vector Machine has proven effective in predicting the stability energy and structural correlations of selenium-based compounds, identifying key descriptors such as BertzCT, PEOE_VSA14, and χ1 [37].
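
For reference, the margin-maximization principle corresponds to the standard soft-margin optimization problem below. The notation is the usual textbook form and is not taken from the cited studies: labels are y_i ∈ {−1, +1}, φ is the kernel-induced feature map (the identity for a linear kernel), ξ_i are slack variables, and C is the regularization parameter listed in Table 2.

```latex
\min_{\mathbf{w},\, b,\, \boldsymbol{\xi}} \quad
\frac{1}{2}\lVert \mathbf{w} \rVert^{2} + C \sum_{i=1}^{n} \xi_i
\qquad \text{subject to} \qquad
y_i\left(\mathbf{w}^{\top}\phi(\mathbf{x}_i) + b\right) \ge 1 - \xi_i,
\quad \xi_i \ge 0 .
```

The margin width is 2/‖w‖, so minimizing ‖w‖² widens the margin, while a larger C penalizes margin violations more heavily.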

Gradient Boosting

Gradient Boosting is a boosting technique that builds models sequentially, with each new model trained to reduce the loss left by the models before it [38]. Unlike bagging, boosting is an iterative, dependence-based procedure in which weak learners are combined into a strong learner [36]. The algorithm iteratively adds decision trees fitted to the residual errors of the current ensemble, gradually improving predictive performance. Extreme Gradient Boosting (XGBoost) is an advanced implementation whose objective function combines a loss function with a regularization term, which helps control model complexity and prevent overfitting [38]. Ensemble methods based on gradient boosting have demonstrated excellent predictive performance in various materials stability applications, often outperforming traditional machine learning methods [39] [40].
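
The residual-fitting loop can be sketched as follows. This is a minimal illustration for squared-error loss with one-split trees on a single feature, written with the standard library only; it omits the regularization term that XGBoost adds, and the data and function names are hypothetical.

```python
from statistics import mean

def fit_stump(xs, targets):
    """Least-squares one-split regressor on a single feature."""
    best = None
    for threshold in sorted(set(xs))[:-1]:
        left = [t for x, t in zip(xs, targets) if x <= threshold]
        right = [t for x, t in zip(xs, targets) if x > threshold]
        l_mean, r_mean = mean(left), mean(right)
        sse = sum((t - l_mean) ** 2 for t in left) + sum((t - r_mean) ** 2 for t in right)
        if best is None or sse < best[0]:
            best = (sse, threshold, l_mean, r_mean)
    if best is None:  # only one distinct x value: predict a constant
        constant = mean(targets)
        return lambda x: constant
    _, threshold, l_mean, r_mean = best
    return lambda x: l_mean if x <= threshold else r_mean

def fit_gradient_boosting(xs, ys, n_rounds=100, learning_rate=0.1):
    """Add stumps one at a time, each fitted to the current residuals."""
    base = mean(ys)
    stumps = []
    preds = [base] * len(ys)
    for _ in range(n_rounds):
        # For squared error, the negative gradient is simply the residual.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * stump(x) for p, x in zip(preds, xs)]
    return lambda x: base + learning_rate * sum(s(x) for s in stumps)

# Hypothetical one-dimensional training data.
xs = [float(i) for i in range(10)]
ys = [x ** 2 for x in xs]
model = fit_gradient_boosting(xs, ys)

y_mean = mean(ys)
baseline_mse = mean((y - y_mean) ** 2 for y in ys)
boosted_mse = mean((y - model(x)) ** 2 for x, y in zip(xs, ys))
```

The learning rate shrinks each stump's contribution, which is why Table 2 pairs small learning rates with a large number of estimators.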

Table 1: Comparative Performance of Key Algorithms in Material Stability Prediction

| Algorithm | Prediction Task | Performance Metrics | Dataset Size | Reference |

|---|---|---|---|---|

| Random Forest | Rock slope stability | AUC: 0.95, Precision: 0.88 | 18 factors | [41] |

| SVM with Linear Kernel | Selenium compound stability | Identified key structural descriptors | 618 compounds | [37] |

| XGBoost | Superhydrophobic coating stability | Outperformed SVR and KNN | Experimental data | [40] |

| LightGBM with KOA | Slope stability | Accuracy: 0.91, AUC: 0.97 | 393 cases | [42] |

| Gradient Boosting Machine | Demolition waste prediction | Effective for small categorical datasets | 690 buildings | [36] |

Table 2: Typical Hyperparameter Settings for Stability Prediction Models

| Algorithm | Key Hyperparameters | Recommended Values | Optimization Methods | Reference |

|---|---|---|---|---|

| Random Forest | Number of trees, Maximum depth, Minimum samples split | 100-500 trees, Depth: 10-30, Min samples: 2-5 | Bayesian optimization, Grid search | [39] [43] |

| SVM | Kernel type, Regularization (C), Gamma | Linear/RBF, C: 0.1-10, Gamma: scale/auto | Kepler optimization algorithm | [37] [42] |

| Gradient Boosting | Learning rate, Number of estimators, Subsample | Learning rate: 0.01-0.1, Estimators: 100-500 | Bayesian optimization, Gaussian process | [39] [38] |

| LightGBM | Number of leaves, Learning rate, Feature fraction | Leaves: 31-127, Learning rate: 0.01-0.05 | Kepler optimization algorithm | [42] |
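
As a hedged illustration of how the Random Forest ranges in Table 2 translate into a grid search, the sketch below enumerates candidate configurations. The parameter names follow scikit-learn's naming convention, and the specific grid points within each range are assumptions chosen for illustration.

```python
from itertools import product

# Ranges come from Table 2; the grid points inside them are illustrative.
rf_grid = {
    "n_estimators": [100, 300, 500],
    "max_depth": [10, 20, 30],
    "min_samples_split": [2, 5],
}

def iter_grid(grid):
    """Yield one dict per combination of hyperparameter values."""
    names = list(grid)
    for values in product(*(grid[name] for name in names)):
        yield dict(zip(names, values))

candidates = list(iter_grid(rf_grid))
```

Each candidate would then be scored by cross-validation. Because the number of combinations grows multiplicatively with every added hyperparameter, Bayesian optimization is often preferred over exhaustive grids.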

Application Protocols for Material Stability Prediction

Data Preparation and Preprocessing

The foundation of any successful ML application in material stability prediction lies in rigorous data preparation. For composition-based stability models, the process typically begins with assembling a comprehensive dataset of known materials and their properties. Recent studies have used datasets ranging from 393 slope stability cases to 2250 dump slope stability records, with the larger datasets generally yielding more reliable models [39] [42]. The preprocessing pipeline should include several critical steps: handling missing values through imputation or removal, detecting and addressing outliers using statistical methods, normalizing or standardizing features to ensure consistent scaling, and performing feature selection to eliminate redundant or irrelevant descriptors [40]. Key input parameters depend on the domain: the geotechnical studies use cohesion (c), angle of internal friction (ϕ), unit weight (γ), and overall height (H), while compound-level studies rely on composition-based descriptors [39] [37]. The output is typically a stability metric such as the factor of safety (FOS) for slopes or the energy above the convex hull for compounds.
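
The first three preprocessing steps can be sketched for a single feature column as shown below. This is one possible set of choices (median imputation, clipping at 1.5 times the interquartile range, z-score standardization), not the procedure of any cited study; the cohesion values are invented for illustration, and feature selection is left out.

```python
from statistics import mean, median, pstdev, quantiles

def preprocess_column(values):
    """Impute missing entries, clip outliers, then standardize one feature column."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    filled = [fill if v is None else v for v in values]

    # Clip values lying more than 1.5 * IQR outside the quartiles.
    q1, _, q3 = quantiles(filled, n=4)
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    clipped = [min(max(v, low), high) for v in filled]

    # Standardize to zero mean and unit variance.
    mu, sigma = mean(clipped), pstdev(clipped)
    if sigma == 0:
        return [0.0 for _ in clipped]
    return [(v - mu) / sigma for v in clipped]

# Hypothetical cohesion measurements with one gap and one outlier.
cohesion_kpa = [12.0, 15.5, None, 14.0, 13.2, 95.0, 16.1, 12.8]
scaled = preprocess_column(cohesion_kpa)
```

In a real pipeline the imputation value, clipping bounds, mean, and standard deviation should be computed on the training split only and then reused on validation data to avoid leakage.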

Model Training and Validation

The model training phase requires careful attention to algorithm selection and validation strategy. For material stability prediction, researchers have successfully employed automated machine learning approaches like Lazy Predict AutoML to select the most appropriate algorithms for their specific datasets [39]. The training process should incorporate k-fold cross-validation (typically 5-fold or 10-fold) to ensure robust performance estimation, though Leave-One-Out Cross-Validation may be preferable for smaller datasets [36]. Hyperparameter optimization is crucial for maximizing model performance; recent studies have utilized Bayesian optimization with Gaussian processes, as well as specialized algorithms like the Kepler optimization algorithm, to identify optimal parameter configurations [39] [42]. For ensemble methods, the number of base estimators (trees) should be sufficiently large to ensure convergence while balancing computational efficiency. Regularization techniques should be employed to prevent overfitting, particularly for complex models trained on limited materials data.
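
The relationship between k-fold and Leave-One-Out Cross-Validation can be made concrete with a short sketch: LOOCV is simply k-fold with k equal to the number of samples. The nearest-neighbor regressor and the synthetic data below are placeholders chosen to keep the example self-contained.

```python
import random
from statistics import mean

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train, test) index lists for k roughly equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        train = [j for f in folds[:i] + folds[i + 1:] for j in f]
        yield train, folds[i]

def cross_val_mae(xs, ys, fit, k):
    """Mean absolute error over all held-out predictions."""
    errors = []
    for train, test in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        errors.extend(abs(model(xs[i]) - ys[i]) for i in test)
    return mean(errors)

def fit_nearest_neighbor(xs, ys):
    """1-nearest-neighbor regressor on a single feature (a stand-in model)."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]

# Synthetic data for illustration only.
xs = [0.5 * i for i in range(12)]
ys = [2.0 * x + 1.0 for x in xs]

mae_5fold = cross_val_mae(xs, ys, fit_nearest_neighbor, k=5)
mae_loocv = cross_val_mae(xs, ys, fit_nearest_neighbor, k=len(xs))  # LOOCV: k = n
```

LOOCV trains on nearly all of the data in every round, which suits small datasets, at the cost of fitting as many models as there are samples.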

Model Interpretation and Explainability

Beyond mere prediction accuracy, interpretability is crucial for scientific applications where understanding feature relationships drives fundamental insights. The SHapley Additive exPlanations (SHAP) method has emerged as a powerful technique for interpreting ML model outputs in material stability prediction [39] [42]. SHAP quantifies the contribution of each input feature to individual predictions, thereby providing both local and global interpretability. In slope stability applications, SHAP analysis has revealed that cohesion, internal friction angle, and slope angle are typically the most influential factors, with cohesion generally having the largest effect on model predictions [42]. Similarly, for selenium-based compounds, SHAP can identify critical structural descriptors such as BertzCT, PEOE_VSA14, and χ1 that govern stability [37]. This interpretability is essential for building trust in ML models and generating testable hypotheses for further experimental validation.
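
To show what a Shapley attribution computes, the sketch below evaluates exact Shapley values by enumerating every feature coalition. It does not use the SHAP library, which relies on faster approximations; the linear toy model, its coefficients, and the baseline values are assumptions made purely for illustration.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, instance, baseline):
    """Exact Shapley values; features outside a coalition take baseline values."""
    names = list(instance)
    n = len(names)

    def value(coalition):
        x = {m: (instance[m] if m in coalition else baseline[m]) for m in names}
        return predict(x)

    contributions = {}
    for name in names:
        others = [m for m in names if m != name]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                total += weight * (value(coalition | {name}) - value(coalition))
        contributions[name] = total
    return contributions

# Hypothetical surrogate for a factor-of-safety model (not from the cited studies).
def toy_model(x):
    return 0.04 * x["cohesion"] + 0.02 * x["friction_angle"] - 0.01 * x["slope_angle"]

instance = {"cohesion": 30.0, "friction_angle": 35.0, "slope_angle": 40.0}
baseline = {"cohesion": 20.0, "friction_angle": 30.0, "slope_angle": 30.0}
phi = shapley_values(toy_model, instance, baseline)
```

The attributions sum to the difference between the prediction for the instance and the prediction for the baseline, which is the additivity property that gives SHAP its name. Exact enumeration scales exponentially with the number of features, so it is practical only for small descriptor sets.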

Experimental Workflows

The experimental workflow for material stability prediction using ML follows a systematic process from data collection to model deployment. The diagram below illustrates the key stages in this workflow:

Diagram 1: Material Stability Prediction Workflow

Composition-Based Stability Screening Protocol

The protocol for screening material stability based on composition involves a multi-stage process that integrates computational and experimental approaches. The workflow begins with generating a virtual library of candidate compositions, which can include thousands to millions of potential materials [5] [6]. For each composition, relevant descriptors are computed, including structural, electronic, and thermodynamic features. These descriptors serve as input for trained ML models that perform initial stability screening, rapidly identifying promising candidates from the vast compositional space [6]. The top candidates identified through ML screening then undergo more rigorous first-principles calculations, typically using density functional theory, to verify their thermodynamic stability [5] [6]. Finally, the most promising candidates from computational screening are selected for experimental synthesis and validation, completing the discovery cycle. This integrated approach dramatically accelerates the materials discovery process by prioritizing experimental efforts on the most viable candidates.
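
The screening funnel described above can be sketched as a two-stage filter. Everything named here is hypothetical: the candidate labels, the lookup tables standing in for an ML model and a DFT calculation, and the 0.05 eV/atom tolerance are placeholders chosen only to make the control flow runnable.

```python
def screen(candidates, featurize, predict_e_hull, verify_with_dft, top_k, tolerance):
    """Rank by cheap ML estimate, then verify only the shortlist with DFT."""
    ranked = sorted(candidates, key=lambda c: predict_e_hull(featurize(c)))
    shortlist = ranked[:top_k]
    return [c for c in shortlist if verify_with_dft(c) <= tolerance]

# Placeholder energies above the convex hull (eV/atom); values are made up.
ml_estimates = {"cand_a": 0.02, "cand_b": 0.30, "cand_c": 0.04,
                "cand_d": 0.15, "cand_e": 0.01}
dft_values = {"cand_a": 0.03, "cand_b": 0.28, "cand_c": 0.09,
              "cand_d": 0.12, "cand_e": 0.00}
dft_calls = []  # records which candidates reached the expensive stage

def featurize(name):
    return {"name": name}  # stand-in for real descriptor computation

def predict_e_hull(features):
    return ml_estimates[features["name"]]  # stand-in for a trained ML model

def verify_with_dft(name):
    dft_calls.append(name)
    return dft_values[name]  # stand-in for a first-principles calculation

selected = screen(list(ml_estimates), featurize, predict_e_hull,
                  verify_with_dft, top_k=3, tolerance=0.05)
```

Only three of the five candidates reach the expensive verification stage here; with libraries of thousands to millions of compositions, the same pattern is what concentrates DFT and synthesis effort on the most promising candidates.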

Integrated ML-Physical Modeling Framework

For applications where purely data-driven models may lack sufficient accuracy or generalizability, an integrated framework combining ML with physical models offers a powerful alternative. This approach is particularly valuable in geotechnical stability applications, where researchers have successfully combined Random Forest models with physical models like GEOtop and Scoops3D [43]. In this framework, physical models first simulate fundamental processes (e.g., water infiltration and pore pressure distribution), generating comprehensive datasets that capture complex physical interactions [43]. ML models are then trained on these physically consistent datasets, learning the relationships between input parameters and stability outcomes. The trained ML models can subsequently make rapid predictions under new conditions, maintaining physical realism while running orders of magnitude faster than full physical simulations [43]. This hybrid approach is especially valuable for scenarios requiring rapid assessment, such as landslide early warning systems or high-throughput materials screening.
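
The two-stage pattern (simulate with a physical model, then train a fast surrogate on the simulated data) can be sketched in miniature. The example makes strong simplifying assumptions: the dry infinite-slope factor-of-safety formula stands in for GEOtop and Scoops3D, a 1-nearest-neighbor lookup stands in for the Random Forest, and the soil parameters are illustrative values.

```python
from math import radians, sin, cos, tan, sqrt

def infinite_slope_fos(cohesion_kpa, slope_deg, unit_weight=18.0,
                       depth_m=5.0, friction_deg=30.0):
    """Dry infinite-slope factor of safety (a stand-in for a full physical model)."""
    beta = radians(slope_deg)
    resisting_cohesion = cohesion_kpa / (unit_weight * depth_m * sin(beta) * cos(beta))
    resisting_friction = tan(radians(friction_deg)) / tan(beta)
    return resisting_cohesion + resisting_friction

# Stage 1: run the physical model over a grid of inputs to build training data.
training = [((c, b), infinite_slope_fos(c, b))
            for c in range(5, 55, 5)      # cohesion: 5 to 50 kPa
            for b in range(20, 50, 5)]    # slope angle: 20 to 45 degrees

# Stage 2: fit a surrogate on the simulated data (1-NN on range-scaled inputs).
def surrogate_fos(cohesion_kpa, slope_deg):
    def distance(point):
        c, b = point
        return sqrt(((c - cohesion_kpa) / 45.0) ** 2 + ((b - slope_deg) / 25.0) ** 2)
    nearest_inputs, nearest_fos = min(training, key=lambda item: distance(item[0]))
    return nearest_fos

physics_value = infinite_slope_fos(22.0, 33.0)
surrogate_value = surrogate_fos(22.0, 33.0)
```

The surrogate answers new queries by lookup rather than by rerunning the simulation. A denser simulation grid or a stronger learner would narrow the gap between the two values.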

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function | Application Example |

|---|---|---|

| SHAP (SHapley Additive exPlanations) | Model interpretation and feature importance analysis | Identifying cohesion as most critical factor in slope stability [39] [42] |

| Kepler Optimization Algorithm | Hyperparameter tuning for improved model performance | Optimizing LightGBM parameters for slope stability prediction [42] |

| GEOtop Model | Simulating hydrological processes in unsaturated soils | Predicting volumetric water content for slope stability analysis [43] |

| Scoops3D | 3D slope stability analysis using limit equilibrium methods | Calculating factor of safety for rotational failure surfaces [43] |

| Bayesian Optimization | Efficient hyperparameter tuning with Gaussian processes | Optimizing ensemble models for dump slope stability [39] |

| H2O AutoML | Automated machine learning for model selection and training | Identifying best-performing models for dump slope stability [39] |

Performance Benchmarking and Validation