LLMs for Materials Property Prediction: Transforming Discovery with AI

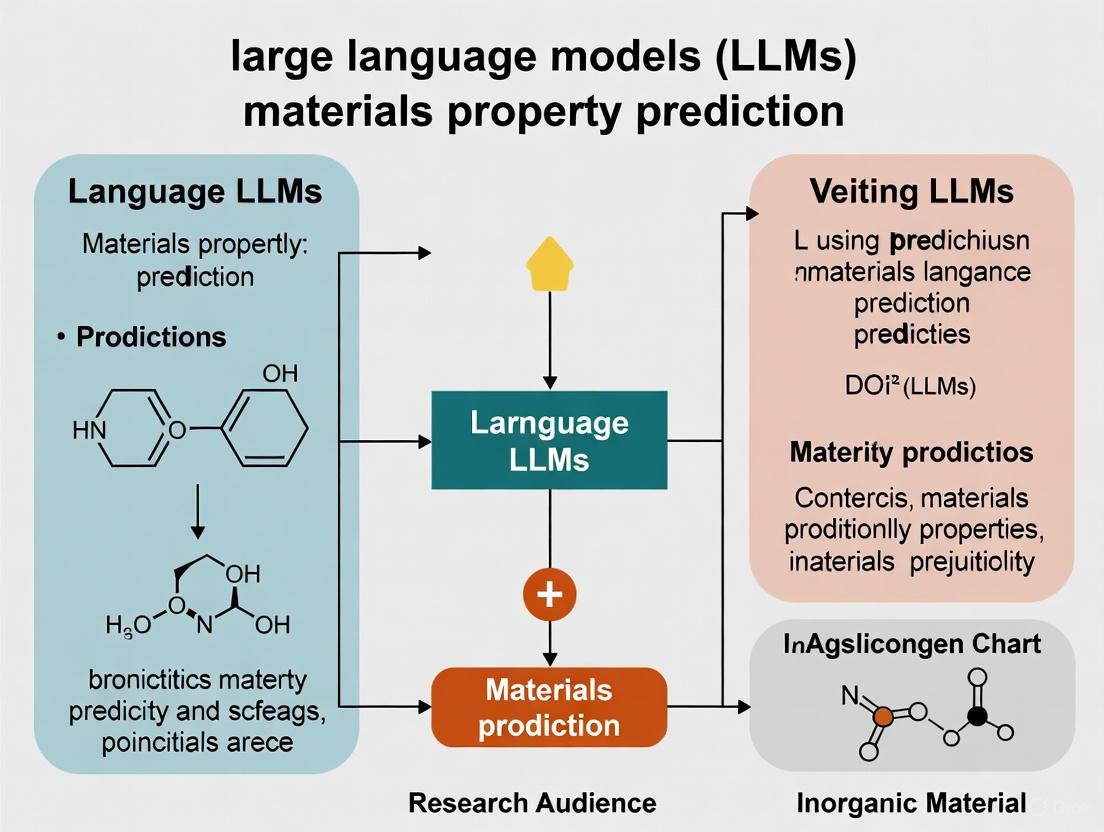

Large Language Models (LLMs) are revolutionizing materials property prediction by leveraging natural language descriptions of materials to achieve state-of-the-art accuracy.


Abstract

Large Language Models (LLMs) are revolutionizing materials property prediction by leveraging natural language descriptions of materials to achieve state-of-the-art accuracy. This article explores how LLMs outperform traditional graph-based models, enable rapid prototyping in low-data environments, and integrate as 'central brains' in automated research workflows. We detail foundational concepts, methodological advances like fine-tuning and novel material representations, and critical optimization techniques for robust performance. Finally, we present comprehensive validation studies benchmarking LLMs against established methods and discuss the profound implications of these AI-driven tools for accelerating the design of advanced materials and therapeutics.

The New Paradigm: How LLMs Decode Materials Science

The prediction of material properties represents a fundamental challenge in materials science and drug development. Traditional approaches, particularly those based on graph neural networks (GNNs), have demonstrated significant capabilities but face inherent limitations in modeling complex crystalline interactions and symmetry information. Recent advances have revealed an alternative paradigm: leveraging the general-purpose learning capabilities of large language models (LLMs) to predict crystal properties directly from text descriptions. This technical guide examines the core concepts, methodologies, and experimental frameworks underlying LLM-based property prediction, highlighting how transforming structural information into natural language can match or improve on the accuracy of graph-based models when predicting electronic, mechanical, and thermal properties of materials. The shift from structured data to textual representations addresses critical gaps in conventional approaches while introducing new considerations for robustness and extrapolation.

The application of large language models to materials property prediction represents a fundamental transformation in how computational approaches extract meaningful patterns from chemical and structural data. Where traditional machine learning methods operate on structured numerical descriptors or graph representations, LLM-based approaches leverage the rich, expressive power of natural language descriptions to capture nuanced material characteristics that often elude conventional formalisms [1].

This paradigm shift is particularly valuable in materials science research, where the complex interactions between atoms and molecules within crystal structures present significant modeling challenges. Graph neural network approaches, while valuable, struggle to efficiently encode crystal periodicity and incorporate critical symmetry information such as space groups and Wyckoff sites [1]. Surprisingly, predicting crystal properties from text descriptions remained understudied until recently, despite the rich information and expressiveness that text data offer [1].

The core insight driving LLM-based property prediction is that textual descriptions can encapsulate complex structural relationships in a format that aligns with the pretraining corpus of large language models. This alignment enables LLMs to transfer their general-purpose reasoning capabilities to the specific domain of materials science, often with fewer parameters than specialized domain-specific models [1].

Fundamental Concepts: Why Text Representations Work

Limitations of Traditional Approaches

Current methods for predicting crystal properties predominantly rely on modeling crystal structures using graph neural networks, where atoms are represented as nodes and bonds as edges [1]. Despite successive improvements through architectures like CGCNN, MEGNet, and ALIGNN, these approaches face persistent challenges in accurately modeling the complex interactions between atoms and molecules within a crystal [1]. Specifically, GNNs struggle with:

- Encoding periodicity: The repetitive arrangement of unit cells within a lattice presents representation challenges distinct from standard molecular graphs [1]

- Incorporating symmetry information: Critical atomic and molecular information, such as bond angles, and crystal symmetry information, such as space groups, proves difficult to integrate into GNN architectures [1]

- Expressiveness limitations: Graph representations may lack the expressiveness needed to convey complex and nuanced crystal information critical for accurate property prediction [1]

The Representational Advantage of Text

Textual descriptions of crystal structures overcome these limitations through several key advantages:

- Rich information encapsulation: Text can comprehensively describe complex structural relationships using natural language, capturing nuances that structured representations may miss [1]

- Straightforward information incorporation: Adding critical structural information to text descriptions is generally more straightforward compared to modifying graph architectures [1]

- Leveraging pre-trained knowledge: LLMs bring extensive scientific knowledge from their training corpus, enabling them to recognize patterns and relationships that may not be explicitly present in the structured training data [1]

Table 1: Comparison of Representation Approaches for Crystal Property Prediction

| Aspect | Graph-Based Approaches | Text-Based Approaches |
|---|---|---|
| Structural Encoding | Nodes (atoms) and edges (bonds) | Natural language descriptions |
| Symmetry Handling | Challenging to incorporate space group symmetry | Directly describable in text |
| Periodicity Representation | Inefficient encoding of crystal periodicity | Naturally described as repetitive arrangements |
| Information Density | Limited expressiveness | Rich, expressive descriptions |
| Implementation Complexity | Complex architectural modifications | Straightforward text augmentation |

Core Methodological Framework: The LLM-Prop Architecture

The LLM-Prop framework demonstrates how LLMs can be adapted for property prediction tasks through careful architectural design and preprocessing strategies [1]. The system leverages a pretrained encoder-decoder Transformer model (T5) but discards the decoder component, using only the encoder with an additional prediction layer for regression and classification tasks [1]. This design choice provides significant advantages:

- Parameter efficiency: Eliminating the decoder reduces total parameters by half, enabling training on longer sequences [1]

- Enhanced context utilization: Longer sequence handling allows incorporation of more comprehensive crystal information [1]

- Task flexibility: The architecture supports both regression and classification through appropriate output layers [1]

Text Preprocessing Pipeline

Effective text-based property prediction requires careful preprocessing of crystal descriptions to optimize information content while managing sequence length [1]:

- Stopword removal: Exclusion of common English stopwords while preserving digits and signs that may carry important crystal information [1]

- Numerical tokenization: Replacement of bond distances with a [NUM] token and bond angles with an [ANG] token to address LLMs' limitations in numerical reasoning while reducing sequence length [1]

- Alternative representations: Experimental approaches include complete removal of bond distances and angles to compress descriptions further [1]

- Classification token addition: Prepending a [CLS] token whose updated embedding is used for prediction, following established practices in encoder-based models [1]
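The preprocessing steps above can be sketched in a few lines of Python. This is a minimal illustration, not the LLM-Prop implementation: the short stopword set, the regular expressions for distances ("2.82 Å") and angles ("90°" or "90 degrees"), and the example sentence are all assumptions made for the sketch.

```python
import re

# Small illustrative stopword set (assumption); a real pipeline would likely
# use a fuller list of English stopwords.
STOPWORDS = {"the", "a", "an", "is", "are", "in", "of", "to", "and", "with", "all"}

# Assumed surface forms: angles look like "90°" or "90 degrees",
# bond distances look like "2.82 Å".
ANGLE_RE = re.compile(r"\d+(?:\.\d+)?\s*(?:°|degrees)")
DISTANCE_RE = re.compile(r"\d+(?:\.\d+)?\s*Å")


def preprocess(description: str) -> str:
    """Replace angles and distances with tokens, drop stopwords, prepend [CLS]."""
    text = ANGLE_RE.sub("[ANG]", description)
    text = DISTANCE_RE.sub("[NUM]", text)
    # Drop stopwords but keep every other token, including digits and signs.
    kept = [tok for tok in text.split() if tok.lower() not in STOPWORDS]
    return "[CLS] " + " ".join(kept)


# Illustrative description in the style of an auto-generated crystal summary.
example = "All Na-Cl bond lengths are 2.82 Å. The Cl-Na-Cl angles are 90°."
print(preprocess(example))
```

Replacing numbers before stopword removal keeps the regular expressions simple, since they can rely on the original spacing around units.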

Experimental Workflow

The experimental workflow for LLM-based property prediction follows a systematic process from data preparation to model evaluation:

Diagram 1: LLM Property Prediction Workflow

Quantitative Performance Analysis

Benchmark Results

LLM-Prop demonstrates competitive or superior performance compared to state-of-the-art GNN-based methods across multiple property prediction tasks [1]. The framework outperforms specialized domain-specific models despite having fewer parameters, highlighting the effectiveness of text-based representations.

Table 2: Performance Comparison of LLM-Prop vs. GNN-Based Methods [1]

| Property | Model | Performance | Advantage |
|---|---|---|---|
| Band Gap | LLM-Prop | ~8% improvement over GNNs | More accurate electronic property prediction |
| Band Gap Type | LLM-Prop | ~3% improvement in classification | Better direct/indirect band gap classification |
| Unit Cell Volume | LLM-Prop | ~65% improvement over GNNs | Superior structural property prediction |
| Formation Energy | LLM-Prop | Comparable performance | Equivalent accuracy with fewer parameters |
| Energy per Atom | LLM-Prop | Comparable performance | Maintains accuracy with text representations |

Robustness and Generalization

The performance of LLMs in materials property prediction must be evaluated not only on standard benchmarks but also under realistic and adversarial conditions [2]. Studies evaluating commercial and open-source LLMs have examined their robustness against various forms of "noise," ranging from realistic disturbances to intentionally adversarial manipulations [2].

Key findings include:

- Prompt sensitivity: LLM predictions can vary significantly with different phrasings of equivalent scientific concepts [2]

- Mode collapse behavior: When provided with dissimilar examples during few-shot in-context learning, LLMs may generate identical outputs despite varying inputs [2]

- Train/test mismatch: Counterintuitively, some adversarial perturbations like sentence shuffling can enhance predictive capability with truncated prompts [2]

- Out-of-distribution challenges: LLMs struggle to maintain predictive accuracy when input distributions shift, exhibiting poor generalization to OOD test data [2]
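To make one of these perturbations concrete, the sketch below shuffles the sentence order of a crystal description while leaving each sentence intact. It is only an illustration of the idea of sentence shuffling: the function names, the sentence-splitting rule, and the example description are assumptions, not the protocol used in [2].

```python
import random
import re


def split_sentences(description: str) -> list[str]:
    # Split on whitespace that follows a period, so decimals like "2.82" stay intact.
    return [s for s in re.split(r"(?<=\.)\s+", description.strip()) if s]


def shuffle_sentences(description: str, seed: int = 0) -> str:
    """Return the description with its sentences in a seeded random order."""
    sentences = split_sentences(description)
    random.Random(seed).shuffle(sentences)
    return " ".join(sentences)


# Illustrative description (assumption), not taken from a benchmark dataset.
original = (
    "NaCl crystallizes in the cubic Fm-3m space group. "
    "All Na-Cl bond lengths are 2.82 Å. "
    "Cl is bonded to six equivalent Na atoms."
)
perturbed = shuffle_sentences(original, seed=1)
print(perturbed)
```

Comparing a model's predictions on `original` and `perturbed` gives a simple robustness probe: the physical content is unchanged, so a large change in the prediction indicates sensitivity to surface form.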

Advanced Applications and Extensions

Knowledge Graph Integration

Beyond direct property prediction, LLMs enable the construction of materials property knowledge graphs that capture relationships between different properties based on scientific principles [3]. These graphs provide a powerful framework for understanding trade-offs and relationships between material characteristics.

The knowledge graph construction process involves:

- Entity extraction: Identifying material properties and their relationships from scientific texts [3]

- Relationship establishment: Connecting properties based on physical principles described in textbooks and literature [3]

- Application to prediction: Leveraging property relationships to estimate missing data and identify substitute properties [3]

Extrapolation to Out-of-Distribution Properties

Discovering high-performance materials requires identifying extremes with property values outside known distributions, making extrapolation to out-of-distribution (OOD) property values critical [4]. Recent work has adapted transductive approaches to OOD property prediction, achieving substantial improvements in extrapolation accuracy [4].

The bilinear transduction method improves OOD prediction by:

- Reparameterization: Learning how property values change as a function of material differences rather than predicting values directly from new materials [4]

- Analogical reasoning: Leveraging input-target relations in training and test sets to enable generalization beyond training target support [4]

- Performance gains: Demonstrating 1.8× improvement in extrapolative precision for materials and 1.5× for molecules, with up to 3× boost in recall of high-performing candidates [4]
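The reparameterization idea can be illustrated with a deliberately simplified toy. The sketch below is not the bilinear model from [4]: it uses a single scalar feature and a linear difference model, and every name and number in it is an assumption chosen for illustration. It shows only the core move, which is to learn how the property changes with the difference between two materials and then predict a new material relative to a known anchor.

```python
def fit_difference_slope(xs, ys):
    """Least-squares slope relating input differences to property differences."""
    num = den = 0.0
    for i in range(len(xs)):
        for j in range(len(xs)):
            dx, dy = xs[i] - xs[j], ys[i] - ys[j]
            num += dx * dy
            den += dx * dx
    return num / den


def predict_from_anchor(x_new, xs, ys, slope):
    """Predict y(x_new) as y(anchor) plus the learned change over the difference."""
    # Use the nearest training point as the anchor.
    k = min(range(len(xs)), key=lambda i: abs(xs[i] - x_new))
    return ys[k] + slope * (x_new - xs[k])


# Toy training data (assumption): property values lie in [1, 4].
train_x = [0.0, 0.25, 0.5, 0.75, 1.0]
train_y = [3.0 * x + 1.0 for x in train_x]

slope = fit_difference_slope(train_x, train_y)
# x = 2.0 lies outside the training inputs, and its target outside the training targets.
y_ood = predict_from_anchor(2.0, train_x, train_y, slope)
print(y_ood)
```

Because the model is trained on differences, the predicted value is not confined to the range of training targets, which is the property that makes this family of methods attractive for extrapolation.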

Diagram 2: OOD Prediction via Bilinear Transduction

Experimental Protocols and Implementation

Dataset Construction and Preparation

The TextEdge benchmark dataset provides crystal text descriptions with corresponding properties, enabling standardized evaluation of text-based prediction approaches [1]. Key considerations in dataset preparation include:

- Description generation: Using tools like Robocrystallographer to convert structural data (CIF files) into comprehensive text descriptions [1] [2]

- Property annotation: Associating descriptions with target properties from computational and experimental sources [1]

- Data partitioning: Implementing appropriate train/validation/test splits to evaluate generalization capability [1]
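A seeded random partition is the simplest way to realize the last step. The sketch below is a generic illustration; the 80/10/10 proportions, the function name, and the placeholder records are assumptions rather than the splits used by the benchmark in [1].

```python
import random


def split_dataset(records, val_frac=0.1, test_frac=0.1, seed=0):
    """Shuffle records with a fixed seed and cut them into train/val/test lists."""
    items = list(records)
    random.Random(seed).shuffle(items)
    n_test = int(len(items) * test_frac)
    n_val = int(len(items) * val_frac)
    test = items[:n_test]
    val = items[n_test:n_test + n_val]
    train = items[n_test + n_val:]
    return train, val, test


# Placeholder records: (description, property value) pairs.
records = [(f"description {i}", float(i)) for i in range(100)]
train, val, test = split_dataset(records)
print(len(train), len(val), len(test))
```

A random split like this measures in-distribution generalization only; assessing extrapolation requires a split that holds out a range of property values or a family of structures.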

Model Training Methodology

Successful implementation of LLM-based property prediction requires careful attention to training protocols:

- Encoder utilization: Leveraging only the encoder component of sequence-to-sequence models like T5 for regression tasks [1]

- Progressive evaluation: Using density functional theory (DFT) calculations during active learning cycles to assess predictive performance [5]

- Multi-fidelity data handling: Using optional global state features to manage data from different sources and quality levels [6]

Evaluation Metrics and Benchmarks

Comprehensive evaluation of LLM-based predictors requires multiple performance dimensions:

- Prediction accuracy: Standard metrics including mean absolute error (MAE) for regression tasks and accuracy for classification [1] [4]

- Extrapolation capability: Performance on out-of-distribution property values beyond the training range [4]

- Robustness: Resilience to textual perturbations and adversarial manipulations [2]

- Computational efficiency: Training and inference time compared to alternative approaches [1]
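For reference, the two accuracy metrics named above can be computed as follows. The numbers in the example are made up for illustration and do not come from any benchmark.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def accuracy(labels, predictions):
    """Fraction of predictions that match the labels exactly."""
    correct = sum(1 for t, p in zip(labels, predictions) if t == p)
    return correct / len(labels)


# Made-up band gap values (eV) and direct/indirect labels, for illustration only.
mae = mean_absolute_error([1.0, 2.0, 3.0], [1.5, 2.0, 2.0])
acc = accuracy([1, 0, 1, 1], [1, 0, 0, 1])
print(mae, acc)
```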

Table 3: Key Research Reagents and Computational Resources

| Resource | Type | Function | Application Context |
|---|---|---|---|
| TextEdge Dataset | Benchmark data | Provides crystal text descriptions with properties | Training and evaluation of text-based predictors [1] |
| MatGL Library | Software framework | Open-source graph deep learning library for materials science | Implementation and comparison of GNN baselines [6] |
| Robocrystallographer | Text generation tool | Generates textual descriptions of crystal structures | Creating input data for LLM-based prediction [1] [2] |
| GNoME Database | Materials database | Contains millions of predicted crystal structures | Source of novel materials for validation [5] |
| Bilinear Transduction | Algorithmic approach | Enables extrapolation to OOD property values | Predicting materials with exceptional properties [4] |
| Knowledge Graph Framework | Representation method | Captures relationships between material properties | Understanding property trade-offs and connections [3] |

Future Directions and Challenges

The application of LLMs to materials property prediction continues to evolve rapidly, with several promising research directions emerging:

- Architectural specialization: Developing LLM architectures specifically designed for scientific reasoning and numerical computation [1]

- Multi-modal integration: Combining textual descriptions with structural representations in unified models [6]

- Robustness enhancement: Addressing sensitivity to prompt variations and out-of-distribution inputs [2]

- Automated synthesis planning: Linking property predictions to experimental synthesis protocols [5]

- Foundation models for materials: Creating large-scale models pretrained on extensive materials science literature and data [3]

As LLM-based approaches mature, they hold the potential to fundamentally transform how researchers discover and design new materials, accelerating the development of next-generation technologies across energy, electronics, and healthcare applications.

Why Text? Overcoming the Limitations of Graph Neural Networks (GNNs)

Graph Neural Networks (GNNs) have emerged as a transformative paradigm in machine learning and artificial intelligence, particularly for modeling interconnected data prevalent in various scientific domains [7]. In materials science, GNNs have been widely adopted to represent crystal structures as graphs, where atoms serve as nodes and chemical bonds as edges, enabling the prediction of material properties from structural information [1]. This approach has demonstrated considerable success, with models like CGCNN, MEGNet, and ALIGNN progressively incorporating more complex structural features such as bond angles and crystal periodicity [1].

However, GNNs face fundamental limitations when applied to crystalline materials prediction. These models struggle to efficiently encode the periodicity inherent to crystals resulting from the repetitive arrangement of unit cells within a lattice [1]. Furthermore, incorporating critical symmetry information such as space groups and Wyckoff sites presents significant challenges for graph-based representations [1]. The complex process of accurately modeling all relevant interactions between atoms and molecules within a crystal structure remains a substantial obstacle for GNN-based approaches [1].

The recent integration of large language models (LLMs) into scientific domains offers a promising alternative pathway. By leveraging textual representations of crystal structures rather than graph-based ones, researchers have begun to overcome these limitations, achieving superior performance on various property prediction tasks [1]. This shift from graphical to textual representations forms the core of our exploration into why text-based approaches present a compelling alternative to traditional GNN methodologies in materials informatics.

Theoretical Limitations of Graph Neural Networks

Expressivity Boundaries and Architectural Constraints

The theoretical foundations of GNNs reveal significant constraints on their learning capabilities. While GNNs have been proven to be Turing universal under ideal conditions, this universality hinges on strong assumptions that rarely hold in practical applications [8]. The network must have powerful layers, sufficient depth and width, and each node requires discriminative attributes that uniquely identify it [8]. In practice, these conditions are seldom fully met, particularly for complex scientific applications like materials property prediction.

A critical limitation stems from the message-passing mechanism fundamental to most GNN architectures. In each layer, nodes update their states by aggregating messages from neighbors, but this process inherently limits the network's receptive field. The representation of any node in the GNN output is fundamentally restricted to its k-radius neighborhood, where k is the number of GNN layers [8]. Consequently, a network with depth smaller than the graph diameter cannot compute inherently global properties, creating a fundamental barrier for predicting material characteristics that depend on long-range interactions or global crystal symmetry.
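The receptive-field argument is easy to check on a small example. The sketch below tracks which nodes can influence a given node after k rounds of neighbor aggregation; the path graph and the function name are illustrative assumptions, and no actual features or weights are involved.

```python
def receptive_field(adjacency, node, num_layers):
    """Nodes whose information can reach `node` after `num_layers` message-passing rounds."""
    reached = {node}
    for _ in range(num_layers):
        # Each round extends the reachable set by one hop.
        reached |= {nbr for v in reached for nbr in adjacency[v]}
    return reached


# Path graph 0-1-2-3-4-5 (diameter 5), used here as a stand-in for a chain of atoms.
path = {i: [j for j in (i - 1, i + 1) if 0 <= j <= 5] for i in range(6)}

print(receptive_field(path, 0, 2))
print(receptive_field(path, 0, 5))
```

With two layers, node 0 sees only nodes 0 through 2; it needs as many layers as the graph diameter before the far end of the chain can affect its representation.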

The role of node anonymity further constrains GNN capabilities. Previous analyses have established that anonymous GNNs (where nodes lack unique identifiers) possess expressivity limited to the power of the Weisfeiler-Lehman (WL) graph isomorphism test [8]. This is particularly problematic for materials science applications, as the WL test cannot distinguish many graph properties relevant to material behavior. For instance, any two regular graphs with the same degree and the same number of nodes appear identical from the perspective of the WL test, regardless of their actual topological differences [8].
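A short color-refinement sketch makes this failure case concrete. It implements the standard 1-dimensional WL refinement on unlabeled graphs and compares a six-node ring with two disjoint triangles, both of which are 2-regular; the graphs and function name are chosen here purely for illustration.

```python
from collections import Counter


def wl_color_histogram(adjacency, rounds=3):
    """Run 1-WL color refinement and return the multiset of final node colors."""
    # Anonymous nodes: every node starts with the same color.
    colors = {v: () for v in adjacency}
    for _ in range(rounds):
        # New color = own color plus the sorted multiset of neighbor colors.
        colors = {
            v: (colors[v], tuple(sorted(colors[u] for u in adjacency[v])))
            for v in adjacency
        }
    return Counter(colors.values())


hexagon = {i: [(i - 1) % 6, (i + 1) % 6] for i in range(6)}
two_triangles = {0: [1, 2], 1: [0, 2], 2: [0, 1], 3: [4, 5], 4: [3, 5], 5: [3, 4]}
path = {i: [j for j in (i - 1, i + 1) if 0 <= j <= 5] for i in range(6)}

print(wl_color_histogram(hexagon) == wl_color_histogram(two_triangles))
print(wl_color_histogram(hexagon) == wl_color_histogram(path))
```

The ring and the pair of triangles receive identical color histograms even though one is connected and the other is not, while the path graph is separated because its end nodes have a different degree.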

Practical Capacity Limitations

Theoretical impossibility results establish that numerous graph problems cannot be solved by GNNs with sub-linear capacity relative to node count [8]. Specifically, no GNN can solve problems like cycle detection, shortest path approximation, or diameter estimation if its capacity (defined as the product of depth and width) is O(n^c) for c < 1, where n represents the number of nodes [8].

These limitations manifest practically in materials property prediction. GNNs struggle to capture complex periodic patterns and crystal symmetry information that significantly influence material properties [1]. The graph representation paradigm makes it "very complex to incorporate into GNNs critical atomic and molecular information such as bond angles and crystal symmetry information such as space groups" [1], creating a fundamental representational gap that impacts prediction accuracy.

Table 1: Theoretical Limitations of GNNs in Materials Property Prediction

| Limitation Category | Specific Challenge | Impact on Materials Prediction |
|---|---|---|
| Architectural Constraints | Limited receptive field from message-passing | Inability to capture long-range interactions in crystal structures |
| Expressivity Boundaries | Node anonymity and WL test equivalence | Failure to distinguish crystallographically distinct but topologically similar structures |
| Capacity Requirements | Super-linear need for global properties | Computational infeasibility for complex crystal systems |
| Representational Gaps | Difficulty encoding symmetry information | Reduced accuracy for symmetry-dependent properties |
| Periodicity Modeling | Challenges with repetitive unit cells | Inefficient encoding of crystal periodicity and lattice arrangements |

Textual Representations: A Paradigm Shift for Materials Informatics

The Representational Advantages of Text

Textual representations of crystal structures offer significant advantages over graph-based approaches for materials property prediction. Natural language provides inherent expressiveness capable of conveying complex and nuanced crystal information that proves challenging to encode in graphs [1]. Where GNNs struggle to explicitly represent symmetry elements and periodic patterns, textual descriptions can naturally articulate these concepts through structured language.

The information density of textual representations enables more efficient encoding of critical crystal characteristics. As demonstrated in the LLM-Prop framework, text descriptions can compress complex structural information while preserving essential elements for property prediction [1]. This compression allows models to process longer contextual sequences while maintaining computational efficiency; as the authors put it, "textual data contain rich information and are very expressive" [1].

Furthermore, textual representations facilitate knowledge transfer from scientific literature. By representing crystals as text, models can leverage the vast body of existing materials science knowledge encoded in research publications, textbooks, and databases [9]. This connection to human scientific communication creates opportunities for models to develop more intuitive understanding of material behavior based on established scientific principles rather than purely structural patterns.

Empirical Evidence: LLM-Prop Performance

Recent empirical evidence supports text-based approaches for crystal property prediction. The LLM-Prop framework, which fine-tunes an LLM on text descriptions of crystal structures, outperforms state-of-the-art GNN-based methods on several tasks and performs comparably on others [1].

Table 2: Performance Comparison: LLM-Prop vs. GNN-Based Approaches [1]

| Property | Model Type | Performance | Advantage |
|---|---|---|---|
| Band Gap Prediction | GNN-Based (ALIGNN) | Baseline | - |
| | LLM-Prop | ~8% improvement | Significant |
| Direct/Indirect Band Gap Classification | GNN-Based (ALIGNN) | Baseline | - |
| | LLM-Prop | ~3% improvement | Notable |
| Unit Cell Volume Prediction | GNN-Based (ALIGNN) | Baseline | - |
| | LLM-Prop | ~65% improvement | Substantial |
| Formation Energy per Atom | GNN-Based (ALIGNN) | Baseline | - |
| | LLM-Prop | Comparable performance | Competitive |
| Model Parameters | MatBERT (Domain-specific) | 3x more parameters | Less efficient |
| | LLM-Prop | Fewer parameters | More efficient |

These results suggest that text-based approaches are particularly effective for properties heavily influenced by symmetry and long-range order, such as unit cell volume, where LLM-Prop achieves an improvement of roughly 65% over GNN-based methods [1]. This gap is consistent with the difficulty GNNs have in capturing the crystallographic information needed for accurate property prediction.

Methodological Framework: Implementing Text-Based Prediction

The LLM-Prop Architecture

The LLM-Prop framework implements a sophisticated methodology for crystal property prediction using textual representations [1]. The architecture leverages the encoder component of a pre-trained T5 model, discarding the decoder to optimize for predictive tasks rather than generative ones [1]. This strategic modification reduces parameter count by approximately half, enabling training on longer sequences while maintaining computational efficiency.

The processing pipeline begins with textual preprocessing of crystal structure descriptions. This involves removing stopwords while preserving numerically significant information, replacing specific bond distances with a [NUM] token and bond angles with an [ANG] token, and prepending a [CLS] token to facilitate classification tasks [1]. This preprocessing strategy compresses the input while preserving semantically critical information, enabling the model to capture broader contextual understanding of the crystal structure.

The model then processes tokenized descriptions through the T5 encoder, which generates contextual representations used for property prediction through task-specific output layers [1]. For regression tasks, a linear layer transforms the [CLS] token representation into numerical predictions, while classification tasks employ sigmoid or softmax activations as appropriate [1].
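The output layers described here are small. The sketch below shows a linear regression head plus sigmoid and softmax activations in plain Python, applied to a placeholder [CLS] embedding; the three-dimensional embedding, weights, and bias are made-up values, and in the real model the embedding would come from the T5 encoder and the weights would be learned.

```python
import math


def linear_head(cls_embedding, weights, bias):
    """Map the [CLS] embedding to a single scalar (regression output or logit)."""
    return sum(w * h for w, h in zip(weights, cls_embedding)) + bias


def sigmoid(z):
    """Squash a logit into a probability for binary classification."""
    return 1.0 / (1.0 + math.exp(-z))


def softmax(logits):
    """Turn a list of logits into class probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Placeholder values (assumption): a real [CLS] embedding has hundreds of dimensions.
cls_embedding = [0.2, -0.1, 0.4]
weights, bias = [1.0, 2.0, 0.5], 0.1

regression_output = linear_head(cls_embedding, weights, bias)  # e.g. a band gap value
binary_probability = sigmoid(regression_output)                # e.g. P(direct band gap)
class_probabilities = softmax([0.5, 1.5, -1.0])
print(regression_output, binary_probability, class_probabilities)
```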

Textual Representation Strategies

Effective textual representation of crystal structures employs several strategic approaches to maximize predictive performance. The TextEdge dataset provides a benchmark containing comprehensive text descriptions of crystals with corresponding properties, enabling standardized evaluation of text-based prediction approaches [1].

Critical to the success of textual representations is the information compression strategy that preserves semantically meaningful content while reducing sequence length. By replacing specific numerical values with unified tokens ([NUM] for bond distances, [ANG] for bond angles), the model learns to focus on the structural relationships rather than precise values, often capturing more generalizable patterns [1]. This approach addresses known limitations of LLMs in numerical reasoning while maintaining essential structural information.

The domain adaptation of general-purpose LLMs to materials science represents another crucial methodological innovation. Rather than pre-training specialized models from scratch, which requires "millions of materials science articles" [1], LLM-Prop demonstrates that strategic fine-tuning of general-purpose models on curated crystal descriptions achieves state-of-the-art performance. This efficient transfer learning approach significantly reduces computational requirements while leveraging the broad linguistic capabilities of foundation models.

Experimental Protocols and Validation

Benchmarking Methodology

Comprehensive evaluation of text-based approaches requires rigorous benchmarking against established GNN baselines. The experimental protocol for LLM-Prop exemplifies this methodology, employing multiple datasets including the publicly released TextEdge benchmark to ensure reproducible comparison [1].

The validation framework assesses performance across diverse property types:

- Electronic properties (band gap, direct/indirect classification)

- Energetic properties (formation energy per atom, energy above hull)

- Structural properties (unit cell volume)

Each property category presents distinct challenges, with structural properties showing the most dramatic improvement with text-based approaches [1]. This differential performance across property types provides insights into which material characteristics benefit most from textual representation.

Comparative analysis includes both GNN-based state-of-the-art models (ALIGNN, MEGNet, CGCNN) and domain-specific language models (MatBERT) [1]. This breadth of baselines helps separate gains due to the text representation from gains due to architecture or parameter count.

Ablation Studies and Sensitivity Analysis

Rigorous ablation studies validate the contribution of individual components within the text-based prediction pipeline. The LLM-Prop framework systematically evaluates the impact of:

- Text preprocessing strategies: Comparing performance with and without stopword removal, number replacement, and special token inclusion [1]

- Input representation formats: Assessing descriptions with retained versus removed bond lengths and angles [1]

- Architectural variations: Contrasting encoder-only approaches with full encoder-decoder configurations [1]

These studies confirm that the strategic compression of numerical information (replacing specific values with [NUM] and [ANG] tokens) enhances rather than diminishes performance, likely by enabling the model to process longer contextual sequences [1]. Similarly, the removal of linguistically redundant stopwords improves predictive accuracy while reducing computational requirements.
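An ablation of this kind amounts to enumerating combinations of preprocessing choices and training one model per combination. The sketch below generates such a grid; the option names and values are hypothetical labels for the choices discussed above, not configuration keys from the LLM-Prop code.

```python
from itertools import product

# Hypothetical option names covering the preprocessing choices discussed above.
OPTIONS = {
    "remove_stopwords": (False, True),
    "numeric_tokens": ("keep", "replace", "remove"),
    "prepend_cls": (False, True),
}


def ablation_grid(options):
    """Return one configuration dict per combination of option values."""
    keys = list(options)
    return [dict(zip(keys, values)) for values in product(*(options[k] for k in keys))]


grid = ablation_grid(OPTIONS)
for config in grid[:3]:
    print(config)
print(len(grid), "configurations")
```

Each configuration would then be passed to the same training and evaluation routine, so that differences in validation error can be attributed to the preprocessing choice alone.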

Additional sensitivity analysis examines the model's performance across different crystal systems and material classes, identifying any systematic biases or limitations in the textual representation approach. This comprehensive validation ensures the robustness of the methodology across diverse materials chemistry spaces.

Implementing effective text-based property prediction requires careful selection of datasets, computational resources, and software tools. The following toolkit outlines essential components for researchers exploring this paradigm.

Table 3: Essential Research Reagents for Text-Based Materials Prediction

| Resource Category | Specific Tool/Dataset | Function and Application |
|---|---|---|
| Benchmark Datasets | TextEdge Dataset [1] | Provides crystal text descriptions with properties for standardized benchmarking |
| | QM9 Dataset [10] | Molecular properties benchmark for comparative validation |
| Computational Frameworks | Open MatSci ML Toolkit [9] | Standardizes graph-based materials learning workflows |
| | FORGE [9] | Provides scalable pretraining utilities across scientific domains |
| Model Architectures | T5 (Text-to-Text Transfer Transformer) [1] | Encoder-decoder foundation model adaptable for prediction tasks |
| | MatBERT [1] | Domain-specific BERT model for materials science applications |
| Preprocessing Tools | NLTK / spaCy [11] | Natural language processing libraries for text cleaning and tokenization |
| | Custom tokenizers [1] | Specialized tokenization for chemical and crystallographic terminology |
| Evaluation Metrics | RMSE / MAE [12] | Standard regression metrics for property prediction accuracy |
| | Classification accuracy [1] | Performance assessment for categorical predictions |

Implementation Workflow: From Crystal Structure to Property Prediction

The complete workflow for text-based crystal property prediction involves multiple stages from data preparation to model deployment. The following diagram illustrates this end-to-end process, highlighting critical decision points and methodological considerations.

Future Directions and Research Opportunities

The integration of text-based approaches with traditional GNN methodologies presents promising avenues for future research. Hybrid models that leverage both structural graph representations and textual descriptions could potentially capture complementary information, mitigating the limitations of either approach alone. Such architectures might employ cross-modal attention mechanisms to align structural and linguistic representations of crystal features.

Multimodal foundation models represent another significant opportunity for advancing materials property prediction. Recent surveys highlight growing interest in "multimodal and cross-domain models like nach0, MultiMat, and MatterChat [that] demonstrate reasoning over complex combinations of structural, textual, and spectral data" [9]. These approaches could unify diverse data modalities—structural graphs, textual descriptions, spectral signatures, and microscopic images—into a cohesive predictive framework.

The development of specialized scientific language models pre-trained on extensive materials science literature offers another promising direction. While current approaches successfully adapt general-purpose LLMs, domain-specific pre-training could enhance performance on nuanced materials concepts and relationships. Models like AtomGPT [9] and MoL-MoE [9] represent early explorations in this space, though they currently face challenges of "limited pre-training and downstream data, limited computational resources, [and] a lack of efficient strategies to use the available resources" [1].

Finally, LLM agentic systems present opportunities for autonomous materials discovery and characterization. Frameworks like HoneyComb, LLMatDesign, and ChatMOF [9] leverage LLMs as reasoning components that interact with computational and experimental environments, potentially accelerating the materials development cycle through automated hypothesis generation and validation.

The limitations of Graph Neural Networks in materials property prediction—particularly regarding symmetry encoding, periodicity representation, and global property capture—have created an opportunity for text-based approaches to demonstrate significant advantages. By leveraging the expressiveness of natural language and the powerful pattern recognition capabilities of large language models, frameworks like LLM-Prop achieve superior performance on critical prediction tasks, especially for properties dependent on crystallographic symmetry and long-range order.

The empirical evidence clearly indicates that textual representations can overcome fundamental limitations of graph-based approaches, particularly for complex crystalline materials. As the field progresses, the integration of textual and structural representations within multimodal frameworks promises to further advance materials informatics, potentially accelerating the discovery and development of novel materials with tailored properties for specific applications.

The integration of Large Language Models (LLMs) into materials science is revolutionizing the research paradigm for crystalline materials, a category that includes highly tunable porous systems like Metal-Organic Frameworks (MOFs) and other inorganic crystalline solids [13] [14]. Accurate prediction of material properties is fundamental to accelerating the discovery and development of new crystals, with impactful applications ranging from carbon capture and hydrogen storage to semiconductor electronics and drug delivery [15] [16] [17]. Traditional approaches, particularly those based on Graph Neural Networks (GNNs), have driven significant progress by modeling crystal structures as graphs of atoms and bonds [17]. However, these methods often struggle to efficiently encode critical crystallographic information such as periodicity, space group symmetry, and Wyckoff sites [1].

The advent of LLMs offers a transformative alternative. By leveraging the rich information and expressiveness of textual data, LLMs can learn complex structure-property relationships from scientific literature and text-based crystal descriptions, overcoming key limitations of graph-based representations [1] [13]. This whitepaper provides an in-depth technical guide on the application of LLMs for property prediction across crystalline materials, with a specific focus on the unique challenges and opportunities presented by MOFs. We summarize quantitative performance data, detail experimental methodologies, and visualize core workflows to equip researchers and scientists with the knowledge to leverage these powerful tools.

Core Methodologies and Experimental Protocols

Text-Based Property Prediction with LLM-Prop

A pioneering approach, LLM-Prop, demonstrates the efficacy of predicting crystal properties directly from their text descriptions [1]. Its methodology can be broken down into the following key stages:

- Dataset Curation (TextEdge): The model is trained and evaluated on a publicly benchmarked dataset containing text descriptions of crystal structures paired with their properties [1].

- Input Preprocessing: The crystal text descriptions undergo a multi-step preprocessing pipeline to enhance model performance:

- Stopword Removal: Publicly available English stopwords are removed, except for digits and signs that may carry critical crystal information [1].

- Numerical Tokenization: Bond distances and their units (e.g., "3.03 Å") are replaced with a [NUM] token. Bond angles and their units (e.g., "120 degrees") are replaced with an [ANG] token. This compresses the sequence length and helps the model generalize over numerical values [1].

- [CLS] Token Prepending: A [CLS] token is added to the start of the input sequence. The final embedding of this token is used as the aggregate sequence representation for downstream prediction tasks [1].

- Model Architecture and Fine-Tuning: LLM-Prop uses only the encoder of a pre-trained T5 model, a Transformer-based architecture [1]; the decoder is discarded entirely. A linear layer (followed by a sigmoid or softmax activation for classification tasks) is added on top of the encoder. Dropping the decoder roughly halves the parameter count relative to the full T5 model, which allows training on longer input sequences and makes regression more efficient [1].

- Training Objective: The model is fine-tuned for regression and classification tasks to predict target crystal properties such as band gap, formation energy, and unit cell volume.
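The preprocessing steps listed above can be sketched in a few lines of Python. This is a minimal illustration and not the LLM-Prop code: the stopword list is a small hypothetical stand-in, and the two regular expressions assume that distances are written with "Å" and angles with "degrees" or "°".

```python
import re

# Small illustrative stopword list (an assumption; not the list used by LLM-Prop).
STOPWORDS = {"the", "a", "an", "is", "are", "of", "and", "in", "to", "with", "all", "there"}

# A number followed by an angstrom unit, e.g. "3.03 Å".
DISTANCE_RE = re.compile(r"\d+(?:\.\d+)?\s*Å")
# A number followed by an angle unit, e.g. "120 degrees" or "90°".
ANGLE_RE = re.compile(r"\d+(?:\.\d+)?\s*(?:degrees|°)")


def preprocess(description: str) -> str:
    """Apply numerical tokenization, stopword removal, and [CLS] prepending."""
    # Replace value+unit pairs first so the units are consumed with the numbers.
    text = ANGLE_RE.sub("[ANG]", description)
    text = DISTANCE_RE.sub("[NUM]", text)
    # Drop stopwords; digits, signs, and the special tokens are left untouched.
    kept = [tok for tok in text.split() if tok.lower() not in STOPWORDS]
    # Prepend [CLS]; its final embedding acts as the sequence representation.
    return " ".join(["[CLS]"] + kept)


example = "All Na-Cl bond lengths are 3.03 Å and the Cl-Na-Cl angles are 120 degrees."
print(preprocess(example))
```

Replacing the numbers before filtering stopwords keeps each value and its unit together, so a single special token stands in for the whole expression.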

Multimodal Learning for MOFs with L2M3OF

Given the extreme complexity of MOF structures, a unimodal text-based approach can be limiting. The L2M3OF model introduces a multimodal framework specifically designed for MOFs [15]. Its experimental protocol involves:

- Multimodal Data Integration: L2M3OF processes three modalities jointly: structural information, textual knowledge, and domain knowledge [15].

- Structural Encoding: A pre-trained crystal encoder, equipped with a lightweight projection layer, compresses the structural information of the MOF (e.g., from a CIF file) into a sequence of tokens that can be aligned with the language model's token space [15].

- Model Architecture: The projected structural tokens are fed into a language model (Qwen2.5) alongside textual and knowledge-based tokens, enabling the model to perform joint reasoning across all modalities [15].

- Training and Evaluation: The model is trained and evaluated on a curated Structure-Property-Knowledge database (MOF-SPK), which contains over 100,000 MOF materials. It is benchmarked against leading closed-source LLMs on tasks including property prediction and application recommendation [15].
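The structural encoding and fusion steps can be pictured as a linear projection followed by concatenation. The sketch below is a toy illustration under stated assumptions: the dimensions, weights, and the `build_input_sequence` helper are hypothetical, and the actual L2M3OF projection layer and token ordering may differ.

```python
from typing import List

Vector = List[float]


def project(vec: Vector, weight: List[Vector], bias: Vector) -> Vector:
    """Linear map from the crystal-encoder dimension to the LM embedding dimension."""
    return [sum(w * x for w, x in zip(row, vec)) + b for row, b in zip(weight, bias)]


def build_input_sequence(struct_feats: List[Vector], text_embeds: List[Vector],
                         weight: List[Vector], bias: Vector) -> List[Vector]:
    """Project structural features, then place them ahead of the text embeddings."""
    struct_tokens = [project(v, weight, bias) for v in struct_feats]
    return struct_tokens + text_embeds


# Toy sizes (hypothetical): crystal-encoder dimension 3, language-model dimension 2.
W = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]
b = [0.0, 0.5]
struct_feats = [[1.0, 2.0, 3.0], [0.0, 1.0, 0.0]]   # stand-in crystal-encoder outputs
text_embeds = [[0.1, 0.2]]                          # stand-in text token embedding

sequence = build_input_sequence(struct_feats, text_embeds, W, b)
print(sequence)
```

Once the structural vectors share the language model's embedding dimension, the model can attend over structural and textual positions in one sequence.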

Performance Benchmarking

The following tables summarize the performance of LLM-based models against state-of-the-art GNNs and other benchmarks.

Table 1: Performance of LLM-Prop versus GNN-based models on key properties. Adapted from [1].

| Property | Model Type | Specific Model | Performance (vs. Baseline) |

|---|---|---|---|

| Band Gap Prediction | GNN-Based | ALIGNN (Baseline) | - |

| | LLM-Based | LLM-Prop | ~8% improvement |

| Band Gap Direct/Indirect Classification | GNN-Based | ALIGNN (Baseline) | - |

| | LLM-Based | LLM-Prop | ~3% improvement |

| Unit Cell Volume Prediction | GNN-Based | ALIGNN (Baseline) | - |

| | LLM-Based | LLM-Prop | ~65% improvement |

| Formation Energy per Atom | GNN-Based | ALIGNN (Baseline) | - |

| | LLM-Based | LLM-Prop | Comparable performance |

Table 2: A comparison of selected LLMs for crystalline materials. Synthesized from [1] [15] [13].

| Model Name | Target Material | Core Architecture | Input Modality | Key Tasks |

|---|---|---|---|---|

| LLM-Prop | Inorganic Crystals | T5 (Encoder-only) | Text | Property Prediction |

| L2M3OF | MOFs | Qwen2.5 + Crystal Encoder | Multimodal (Structure, Text, Knowledge) | Property Prediction, Knowledge Generation, Q&A |

| MatterChat | Inorganic Crystals | Mistral | Text | Property Prediction, Knowledge, Q&A |

| Chemeleon | Inorganic Crystals | BERT | Text, Structure | Property Prediction |

Workflow Visualization

The following diagrams illustrate the core workflows for text-based and multimodal LLM approaches in materials property prediction.

LLM-Prop Text-Based Prediction Workflow

L2M3OF Multimodal Framework for MOFs

Table 3: Essential datasets, models, and tools for LLM-based materials research.

| Item Name | Type | Function/Benefit |

|---|---|---|

| TextEdge Dataset | Dataset | A public benchmark containing crystal text descriptions with properties for training and evaluating LLMs [1]. |

| MOF-SPK Database | Dataset | A curated structure-property-knowledge database for over 100,000 MOFs, facilitating multimodal model training [15]. |

| Crystallographic Information File (CIF) | Data Format | Standardized text file format for encoding crystal structure information, including atomic coordinates and lattice parameters [15]. |

| Pre-trained T5 Model | Language Model | A versatile, pre-trained encoder-decoder model. Its encoder forms the backbone of LLM-Prop for property prediction [1]. |

| Pre-trained Crystal Encoder | Model Component | A model pre-trained on crystal structures to convert 3D structural data into a meaningful latent representation for multimodal fusion [15]. |

| MatBERT | Language Model | A domain-specific BERT model pre-trained on materials science text, serving as a performance benchmark [1]. |

The application of Large Language Models represents a paradigm shift in the property prediction of crystalline materials, from inorganic crystals to complex Metal-Organic Frameworks. While text-based models like LLM-Prop have demonstrated superior or comparable performance to state-of-the-art GNNs on several properties, the future lies in multimodal integration, as exemplified by L2M3OF for MOFs. These approaches successfully combine structural, textual, and knowledge-based information to achieve a more holistic "understanding" of materials, enabling not only accurate property prediction but also intelligent tasks like application recommendation and question-answering. As open-source models and benchmarks continue to mature, LLMs are poised to become indispensable AI assistants in the materials scientist's toolkit, dramatically accelerating the design and discovery of next-generation functional materials.

The integration of large language models (LLMs) into materials property prediction research represents a paradigm shift in computational materials science. Traditional machine learning (ML) approaches have demonstrated significant value in materials structural design, composition optimization, and autonomous experiments [13]. However, these methods face substantial challenges because experimental data is scarce and is often costly and time-consuming to generate [18]. AI technologies have changed how materials problems are studied, primarily by leveraging well-characterized datasets derived from the scientific literature [13]. This technical guide explores how NLP tools and LLMs are addressing the fundamental data scarcity challenge in materials informatics, enabling researchers to extract meaningful insights from sparse, heterogeneous datasets.

The data scarcity problem manifests in multiple dimensions within materials science. Experimental data generation remains expensive, while density functional theory (DFT) computations, though valuable, show significant discrepancies from experimental measurements [18]. Predictive models trained solely on experimental observations suffer from high prediction errors due to limited training data, creating a fundamental bottleneck in materials discovery pipelines [18]. This challenge is particularly acute in emerging research areas such as 2D material synthesis, where comprehensive datasets covering the full range of synthesis parameters remain underdeveloped [19].

NLP and LLMs: Bridging the Data Gap in Materials Science

The Evolution of Information Extraction

Natural language processing has emerged as a critical solution to the data extraction challenge in materials science. NLP dates back to the 1950s, was first applied to materials chemistry in 2011, and continues to shape materials informatics [13]. It makes the automatic construction of large-scale materials datasets possible, which gives data-driven materials research a complementary source of data [13]. The most common task is automatic extraction of materials information reported in the literature, including compounds and their properties, synthesis processes and parameters, alloy compositions and properties, and process routes [13].

The recent emergence of pre-trained models has opened a new era in NLP research and development. LLMs such as Generative Pre-trained Transformer (GPT), Falcon, and Bidirectional Encoder Representations from Transformers (BERT) have demonstrated general "intelligence" capabilities by combining large-scale data, deep neural networks, self- and semi-supervised learning, and powerful hardware [13]. The Transformer architecture, characterized by the attention mechanism, is the fundamental building block of these LLMs and has been applied to information extraction, code generation, and the automation of chemical research [13].

Technical Approaches to Data Scarcity

Table 1: Technical Strategies for Addressing Data Scarcity in Materials Informatics

| Strategy Level | Approach | Key Methodologies | Applications |

|---|---|---|---|

| Data Level | LLM-powered data imputation | Prompt engineering for missing value imputation; embedding models for feature homogenization | Graphene CVD synthesis parameter imputation [19] |

| | Data augmentation & extraction | Automated information extraction from literature; synthetic data generation | Mining substrates and synthesis conditions from publications [19] |

| Algorithm Level | Pretraining strategies | Self-supervised learning; fingerprint learning; multimodal learning | Structure-agnostic property prediction with Roost architecture [20] |

| | Transfer learning | Deep transfer learning from DFT to experimental data | Formation energy prediction surpassing DFT accuracy [18] |

| ML Strategy Level | Model frameworks | Transformer language models; graph neural networks; support vector machines | Property prediction from text descriptions [21] |

Recent work has shown how LLMs can improve machine learning performance on limited, heterogeneous datasets. For graphene chemical vapor deposition (CVD) synthesis, researchers compiled a sparse dataset from the existing literature, which brings problems such as mixed data quality, inconsistent formats, and variations in how experimental parameters are reported [19]. The LLM-based strategies used to address these problems include prompting modalities for imputing missing data points and LLM embeddings for encoding the complex nomenclature of substrates reported in CVD experiments [19].
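A prompt for imputing one missing value might be assembled as follows. This is only a sketch of the prompting idea: the field names, the wording of the instructions, and the `build_imputation_prompt` helper are hypothetical and are not taken from [19]. No LLM is called here; the function only builds the text that would be sent.

```python
from typing import Dict, Optional


def build_imputation_prompt(record: Dict[str, Optional[str]], target: str) -> str:
    """Format the known fields as context and ask for one missing value."""
    known = [f"- {key}: {value}" for key, value in record.items()
             if value is not None and key != target]
    return (
        "You are assisting with a graphene CVD synthesis dataset.\n"
        "Known parameters for one experiment:\n"
        + "\n".join(known)
        + f"\nEstimate a plausible value for '{target}'. "
        "Reply with the value only, or 'unknown' if it cannot be inferred."
    )


# Hypothetical record with one missing entry.
record = {"substrate": "Cu foil", "temperature_C": "1000", "pressure_torr": None}
prompt = build_imputation_prompt(record, "pressure_torr")
print(prompt)
```

Listing only the observed fields keeps the context unambiguous, and the explicit "unknown" option gives the model a way to decline rather than guess.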

Experimental Protocols and Methodologies

LLM-Powered Data Imputation and Enhancement

Protocol 1: LLM-Driven Data Imputation for Sparse Materials Datasets

Objective: To populate missing values in heterogeneous materials datasets using large language models, enabling improved machine learning performance on classification tasks.

Materials and Reagents:

- Dataset: Sparsely populated graphene CVD synthesis database compiled from multiple literature sources [19]

- LLM Instance: Pre-trained ChatGPT-4o-mini model [19]

- Comparative Method: Statistical K-nearest neighbors (KNN) imputation for benchmarking

- Embedding Models: OpenAI's embedding models for substrate featurization [19]

Methodology:

- Data Curation: Manually compile a heterogeneous dataset from literature sources through meticulous data mining, ensuring sufficient feature variability despite data scarcity [19]

- Prompt Engineering: Implement various prompt-engineering strategies to guide the LLM imputation task with specific instructions for different parameter types [19]

- Feature Homogenization: Apply LLM-based featurization to unify inconsistent substrate nomenclatures using embedding models, converting textual attributes to meaningful vector representations [19]

- Discretization: Transform continuous input feature space into discrete maps to enhance classification performance [19]

- Model Training: Train support vector machine classifiers using LLM-enhanced data for graphene layer classification tasks [19]

Validation: Compare imputation quality between LLM and KNN approaches by evaluating the diversity of generated distributions, richness of feature representation, and final model generalization performance [19]
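The KNN baseline used for comparison in Protocol 1 can be sketched in plain Python. This is a simplified version under stated assumptions: features are unscaled, distances use only the features observed in both rows, and the toy rows are invented for illustration. A production baseline would more likely use an existing library implementation.

```python
import math
from typing import List, Optional

Row = List[Optional[float]]


def distance(a: Row, b: Row) -> float:
    """Root-mean-square difference over the features observed in both rows."""
    shared = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
    if not shared:
        return math.inf
    return math.sqrt(sum((x - y) ** 2 for x, y in shared) / len(shared))


def knn_impute(rows: List[Row], k: int = 2) -> List[Row]:
    """Fill each missing entry with the mean of that feature over the k nearest rows."""
    filled = [list(r) for r in rows]
    for i, row in enumerate(rows):
        for j, value in enumerate(row):
            if value is not None:
                continue
            # Only rows that have feature j observed can donate a value.
            donors = [r for n, r in enumerate(rows) if n != i and r[j] is not None]
            donors.sort(key=lambda r: distance(row, r))
            nearest = donors[:k]
            if nearest:
                filled[i][j] = sum(r[j] for r in nearest) / len(nearest)
    return filled


# Toy rows: [temperature in °C, pressure in Torr]; the last pressure is missing.
rows = [[1000.0, 10.0], [1020.0, 12.0], [600.0, 760.0], [1010.0, None]]
imputed = knn_impute(rows, k=2)
print(imputed[3])
```

Because KNN averages nearby rows, its imputed values stay close to what is already in the dataset. That is the reason Protocol 1 compares the diversity of the LLM-generated and KNN-generated distributions.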

Transfer Learning for Enhanced Property Prediction

Protocol 2: Deep Transfer Learning from DFT to Experimental Data

Objective: To leverage large DFT-computed datasets and existing experimental observations to build predictive models that compute materials properties more accurately than DFT alone.

Materials and Reagents:

- DFT Datasets: Open Quantum Materials Database (OQMD), Materials Project (MP), Joint Automated Repository for Various Integrated Simulations (JARVIS) [18]

- Experimental Data: EXP dataset from "exp-formation-enthalpy" containing formation energy and materials structure information [18]

- Model Architecture: IRNet deep neural network model [18]

- Target Property: Formation energy prediction from materials structure and composition [18]

Methodology:

- Source Domain Pretraining: Train the IRNet model on a large DFT-computed dataset to learn a rich set of domain-specific features from materials structure and composition [18]

- Target Domain Fine-tuning: Fine-tune pretrained model parameters on available experimental observations containing formation energy and materials structure information [18]

- Feature Transfer: Leverage features learned from DFT computations to capture patterns in smaller but more accurate experimental observations [18]

- Performance Evaluation: Evaluate model on experimental hold-out test set and compare against DFT computation accuracy for the same compounds [18]

Validation Metrics: Mean absolute error (MAE) in eV/atom compared against ground-truth experimental measurements and benchmarked against pure DFT computation performance [18]
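The pretrain-then-fine-tune pattern in Protocol 2 can be illustrated with a deliberately tiny model. The sketch below is not IRNet: it fits a one-variable linear model to synthetic data in which the "DFT" labels carry a constant offset relative to the "experimental" labels. All numbers are invented for illustration.

```python
from typing import List, Tuple

Data = List[Tuple[float, float]]


def train(data: Data, w: float, b: float, lr: float, steps: int) -> Tuple[float, float]:
    """Full-batch gradient descent on mean squared error for y = w*x + b."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b


def mae(data: Data, w: float, b: float) -> float:
    """Mean absolute error of the linear model on a dataset."""
    return sum(abs(w * x + b - y) for x, y in data) / len(data)


# Synthetic stand-ins: "experiment" follows y = 2x + 0.1, "DFT" follows y = 2x + 0.3.
dft_data = [(i / 50, 2.0 * (i / 50) + 0.3) for i in range(51)]   # large source set
exp_train = [(0.1, 0.3), (0.5, 1.1), (0.9, 1.9)]                  # small target set
exp_test = [(0.2, 0.5), (0.4, 0.9), (0.6, 1.3), (0.8, 1.7)]       # hold-out set

w_pre, b_pre = train(dft_data, w=0.0, b=0.0, lr=0.1, steps=2000)  # source pretraining
w_ft, b_ft = train(exp_train, w_pre, b_pre, lr=0.1, steps=200)    # target fine-tuning

print(f"pretrained-only MAE: {mae(exp_test, w_pre, b_pre):.3f}")
print(f"fine-tuned MAE:      {mae(exp_test, w_ft, b_ft):.3f}")
```

The pretrained model inherits the systematic offset of its source labels. A short fine-tuning run on three target points removes most of it while keeping the slope learned from the larger set.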

Table 2: Performance Comparison of AI vs DFT for Formation Energy Prediction

| Method | Dataset | Mean Absolute Error (eV/atom) | Test Set Size | Key Advantage |

|---|---|---|---|---|

| AI with Transfer Learning | EXP (experimental) | 0.064 | 137 entries | Significantly outperforms DFT computations [18] |

| DFT Computations | Same experimental set | >0.076 | 137 entries | Serves as baseline comparison [18] |

| OQMD DFT | Experimental comparison | 0.108 | 1670 materials | Reference benchmark from literature [18] |

| Materials Project DFT | Experimental comparison | 0.133 | 1670 materials | Reference benchmark from literature [18] |

Structure-Agnostic Pretraining Strategies

Protocol 3: Self-Supervised Pretraining for Structure-Agnostic Prediction

Objective: To develop pretraining strategies that improve downstream material property prediction performance without requiring relaxed crystal structures.

Materials and Reagents:

- Model Architecture: Roost (Representation Learning from Stoichiometry) encoder [20]

- Pretraining Data: Unlabeled large dataset (432,314 data points from combined OQMD, Matbench, and MOF datasets) [20]

- Input Representation: Stoichiometric formulas with initial element representations from Matscholar embeddings [20]

- Finetuning Datasets: Matbench suite including Steel-yield-strength, JDFT2D, Phonons, Dielectric, and others [20]

Methodology:

- Self-Supervised Learning (SSL): Implement the Barlow Twins framework with random atom masking augmentation (10% node masking) to create different augmented views of the same crystalline material [20]

- Fingerprint Learning (FL): Predict Magpie fingerprint using Roost encoder to retain benefits of learnable framework while capturing fixed descriptor information [20]

- Multimodal Learning (MML): Leverage available characterized structure data to predict embeddings generated using pretrained CGCNN encoder from Crystal Twins framework [20]

- Message Passing Framework: Update node information using weighted attention pooling and multilayer perceptron for final property prediction [20]

Evaluation: Assess performance gains across multiple material property prediction tasks in Matbench suite, with particular focus on small dataset performance and data efficiency [20]
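The self-supervised step in Protocol 3 combines an augmentation (atom masking) with the Barlow Twins objective, which pushes the cross-correlation matrix of two embedded views toward the identity. The sketch below shows both pieces in plain Python. It is an illustration only: the `[MASK]` placeholder, the minimum of one masked atom, and the default weight `lam` are assumptions, and the embeddings are passed in directly instead of coming from a Roost encoder.

```python
import math
import random
from typing import List

Matrix = List[List[float]]


def mask_atoms(elements: List[str], frac: float, rng: random.Random) -> List[str]:
    """Replace a random fraction of element symbols with a [MASK] placeholder."""
    n_mask = max(1, round(frac * len(elements)))   # assumption: mask at least one atom
    masked = set(rng.sample(range(len(elements)), n_mask))
    return ["[MASK]" if i in masked else el for i, el in enumerate(elements)]


def standardize(z: Matrix) -> Matrix:
    """Zero-mean, unit-variance scaling per embedding dimension (assumes nonzero variance)."""
    n, d = len(z), len(z[0])
    means = [sum(row[j] for row in z) / n for j in range(d)]
    stds = [math.sqrt(sum((row[j] - means[j]) ** 2 for row in z) / n) for j in range(d)]
    return [[(row[j] - means[j]) / stds[j] for j in range(d)] for row in z]


def barlow_twins_loss(z_a: Matrix, z_b: Matrix, lam: float = 5e-3) -> float:
    """Penalize deviation of the views' cross-correlation matrix from the identity."""
    a, b = standardize(z_a), standardize(z_b)
    n, d = len(a), len(a[0])
    loss = 0.0
    for i in range(d):
        for j in range(d):
            c_ij = sum(a[k][i] * b[k][j] for k in range(n)) / n
            loss += (1 - c_ij) ** 2 if i == j else lam * c_ij ** 2
    return loss


rng = random.Random(0)
print(mask_atoms(["Li", "Fe", "P", "O", "O", "O", "O"], frac=0.1, rng=rng))

view = [[1.0, 1.0], [-1.0, 1.0], [1.0, -1.0], [-1.0, -1.0]]   # batch of 4, dimension 2
print(barlow_twins_loss(view, view))
```

The diagonal term rewards embeddings that are invariant to the masking, and the off-diagonal term discourages redundant embedding dimensions.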

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for LLM-Enhanced Materials Informatics

| Tool/Reagent | Type | Function | Application Example |

|---|---|---|---|

| ChatGPT-4o-mini | LLM Instance | Data imputation through prompt engineering; feature homogenization | Populating missing values in graphene CVD synthesis datasets [19] |

| Roost Encoder | Structure-Agnostic Model | Learnable framework for stoichiometry-based representation | Material property prediction without crystal structures [20] |

| IRNet | Deep Neural Network | Transfer learning architecture for property prediction | Formation energy prediction from structure and composition [18] |

| OpenAI Embedding Models | Text Embedding System | Converting textual attributes to vector representations | Substrate featurization for consistent nomenclature encoding [19] |

| Matbench Suite | Benchmarking Framework | Standardized evaluation of prediction models | Performance assessment across diverse material properties [20] |

| Barlow Twins Framework | Self-Supervised Learning | SSL pretraining without labeled data | Structure-agnostic representation learning [20] |

| Matscholar Embeddings | Material-Specific Word Vectors | Initial element representations for stoichiometric inputs | Feature initialization in Roost architecture [20] |

Results and Performance Analysis

The implementation of LLM-driven strategies for addressing data scarcity has demonstrated significant improvements in materials property prediction accuracy. In graphene synthesis classification tasks, LLM-enhanced approaches increased binary classification accuracy from 39% to 65% and ternary accuracy from 52% to 72% [19]. This substantial improvement highlights the value of LLM-based data imputation and feature homogenization in overcoming limitations of small, heterogeneous datasets.

For formation energy prediction, AI models that use transfer learning from DFT computations to experimental data achieved a mean absolute error of 0.064 eV/atom on an experimental hold-out test set, significantly outperforming the DFT computations themselves, which showed discrepancies of >0.076 eV/atom for the same compounds [18]. This result shows that a model can predict materials properties more accurately than the theoretical calculations used to pretrain it, which helps bridge the gap between computational and experimental materials science.

Structure-agnostic pretraining strategies have shown remarkable effectiveness in improving data efficiency, particularly for small datasets. The integration of self-supervised learning, fingerprint learning, and multimodal learning strategies with the Roost architecture resulted in significant performance gains across multiple material property prediction tasks within the Matbench suite [20]. These approaches successfully address the challenge of limited structural characterization availability while maintaining prediction accuracy.

Transformer language models utilizing text-based descriptions of materials have also demonstrated superior performance compared to graph neural networks in most cases [21]. These models outperform crystal graph networks in classifying four out of five analyzed properties when considering all available reference data, while also showing high accuracy in the ultra-small data limit [21]. The clarity of text-based representation and maturity of associated explainability methods make this approach particularly valuable for educational applications and improving trust among materials scientists.

From Theory to Practice: Implementing LLMs for Prediction

The evolution of transformer-based architectures has fundamentally reshaped the landscape of natural language processing (NLP) and its applications in scientific domains, particularly materials property prediction research. Among these architectures, encoder-only models like BERT (Bidirectional Encoder Representations from Transformers) and encoder-decoder models such as T5 (Text-to-Text Transfer Transformer) represent distinct paradigms with unique capabilities and limitations. Understanding these architectural differences is crucial for researchers and scientists seeking to leverage large language models (LLMs) for advanced materials informatics tasks, including crystal property prediction, polymer characterization, and autonomous materials discovery.

The fundamental distinction lies in their core design principles: encoder-only models specialize in understanding and analyzing input text through bidirectional context processing, while encoder-decoder models excel at transforming input sequences into output sequences through a unified text-to-text framework [22]. This technical divergence directly impacts their applicability, performance, and efficiency in materials science research, where both analytical understanding and generative capabilities are increasingly valuable.

Architectural Fundamentals

Encoder-Only Models: BERT

Bidirectional Encoder Representations from Transformers (BERT) employs an encoder-only transformer architecture specifically designed for deep bidirectional text understanding [23]. The model consists of four primary components: tokenizer, embedding layer, encoder stack, and task head. The embedding layer sums token, position, and segment embeddings to create initial token representations, which are then processed by a stack of transformer encoder blocks whose self-attention uses no causal masking [23].

BERT's architectural variants are characterized by two key parameters: L (number of layers) and H (hidden size). The standard configurations are BERT-Base (L = 12, H = 768, 110M parameters) and BERT-Large (L = 24, H = 1024, 340M parameters) [23]. The self-attention mechanism in BERT processes entire sequences simultaneously, enabling each token to attend to all other tokens in both directions, capturing rich contextual relationships essential for understanding complex materials science terminology and relationships.
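The quoted parameter counts follow from L and H once a few other sizes are fixed. The sketch below counts weights and biases under assumptions that are not stated in the text above: a 30,522-token WordPiece vocabulary, 512 positions, 2 segment types, a feed-forward width of 4H, and a pooler layer but no task head.

```python
def bert_param_count(layers: int, hidden: int, vocab: int = 30522,
                     max_pos: int = 512, segments: int = 2) -> int:
    """Count weights and biases in a BERT encoder with a pooler and no task head."""
    ffn = 4 * hidden                                    # feed-forward width is 4H
    layer_norm = 2 * hidden                             # scale and shift vectors
    embeddings = (vocab + max_pos + segments) * hidden + layer_norm
    attention = 4 * (hidden * hidden + hidden)          # Q, K, V, and output projections
    feed_forward = (hidden * ffn + ffn) + (ffn * hidden + hidden)
    per_layer = attention + layer_norm + feed_forward + layer_norm
    pooler = hidden * hidden + hidden
    return embeddings + layers * per_layer + pooler


print(f"BERT-Base:  {bert_param_count(12, 768):,}")
print(f"BERT-Large: {bert_param_count(24, 1024):,}")
```

Under these assumptions the totals come to roughly 109M and 335M, which are usually rounded to the 110M and 340M figures quoted for the two configurations.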

Encoder-Decoder Models: T5

The Text-to-Text Transfer Transformer (T5) implements a unified encoder-decoder architecture that frames every NLP task as a text-to-text problem [24]. This model converts all tasks—including translation, classification, and regression—into a consistent format where both input and output are text sequences. The encoder processes the input text bidirectionally, while the decoder generates output autoregressively using causal masking, attending to both the decoder's previous states and the full encoder output [25].
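The difference between bidirectional and causal attention comes down to a mask on the attention scores. The following single-head sketch in plain Python illustrates it. It omits the learned projections, multiple heads, and position biases of a real Transformer layer, so it should be read as a toy under those assumptions.

```python
import math
from typing import List

Matrix = List[List[float]]


def attention(q: Matrix, k: Matrix, v: Matrix, causal: bool = False) -> Matrix:
    """Scaled dot-product attention; with causal=True, position i ignores positions > i."""
    scale = math.sqrt(len(q[0]))
    out = []
    for i, q_i in enumerate(q):
        scores = [sum(a * b for a, b in zip(q_i, k_j)) / scale for k_j in k]
        if causal:
            scores = [s if j <= i else -math.inf for j, s in enumerate(scores)]
        peak = max(scores)                               # subtract max for stability
        weights = [math.exp(s - peak) for s in scores]
        total = sum(weights)
        out.append([sum(w * v_j[c] for w, v_j in zip(weights, v)) / total
                    for c in range(len(v[0]))])
    return out


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]         # three toy token vectors
encoder_out = attention(x, x, x)                  # bidirectional self-attention
decoder_out = attention(x, x, x, causal=True)     # causal self-attention
print(encoder_out[0], decoder_out[0])
```

Cross-attention in the decoder has the same form with `causal=False`, with queries taken from decoder states and keys and values taken from the encoder output.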

T5 uses relative position embeddings, implemented as learned scalar biases added to the attention logits, and is available in sizes from 60 million to 11 billion parameters [24]. The model is pre-trained with a span corruption objective: random contiguous spans of tokens are replaced with sentinel tokens, and the decoder learns to reconstruct the dropped spans. The text-to-text format is relevant to materials property prediction because crystal descriptions can be mapped to numerical property values or class labels through appropriate text formatting.
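Span corruption is easiest to see on a single sentence. In the sketch below the spans are passed in explicitly so the result is deterministic, whereas pre-training samples them at random. The `<extra_id_N>` sentinel naming follows the convention of common T5 tokenizers, and the `span_corrupt` helper is hypothetical.

```python
from typing import List, Tuple


def span_corrupt(tokens: List[str], spans: List[Tuple[int, int]]) -> Tuple[str, str]:
    """Replace each [start, end) span with a sentinel; the target lists the dropped spans."""
    inputs: List[str] = []
    targets: List[str] = []
    cursor = 0
    for sid, (start, end) in enumerate(sorted(spans)):
        sentinel = f"<extra_id_{sid}>"
        inputs += tokens[cursor:start] + [sentinel]     # keep text, mark the gap
        targets += [sentinel] + tokens[start:end]       # sentinel, then dropped tokens
        cursor = end
    inputs += tokens[cursor:]
    targets.append(f"<extra_id_{len(spans)}>")          # closing sentinel ends the target
    return " ".join(inputs), " ".join(targets)


tokens = "NaCl crystallizes in the cubic Fm-3m space group".split()
corrupted, target = span_corrupt(tokens, [(1, 3), (5, 6)])
print(corrupted)
print(target)
```

The encoder sees the corrupted sentence, and the decoder is trained to emit only the dropped spans, each introduced by its sentinel.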

Comparative Analysis

Table 1: Architectural Comparison Between BERT and T5

| Feature | BERT (Encoder-Only) | T5 (Encoder-Decoder) |

|---|---|---|

| Primary Objective | Masked Language Modeling, Next Sentence Prediction | Text-to-Text Transformation |

| Attention Mechanism | Bidirectional, Non-causal | Encoder: Bidirectional; Decoder: Causal with Cross-Attention |

| Task Handling | Requires task-specific heads | Unified text-to-text format with task prefixes |

| Pre-training Objectives | Masked Token Prediction (15% of tokens), Next Sentence Prediction | Span Corruption (Denoising) |

| Typical Output | Classification labels, Token predictions | Generated text sequences |

| Parameter Efficiency | Lower for sequence-to-sequence tasks | Higher for generative and transformation tasks |

| Materials Science Applications | Text classification, Named entity recognition, Relation extraction | Property prediction, Text summarization, Data transformation |

Applications in Materials Property Prediction

Encoder-Only Approaches

Encoder-only models have demonstrated significant utility in materials informatics, particularly for classification tasks and information extraction from scientific literature. Their bidirectional understanding enables deep semantic analysis of complex materials science terminology and relationships. In polymer informatics, BERT-style models have been fine-tuned to predict key thermal properties including glass transition temperature (Tg), melting temperature (Tm), and thermal decomposition temperature (Td) from polymer chemical representations [26].

The pretraining-finetuning paradigm of encoder-only models allows efficient transfer learning on limited materials science datasets. By leveraging knowledge gained from general domain pretraining, these models can adapt to specialized materials science tasks with relatively small labeled datasets, addressing the data scarcity challenges common in materials informatics [13].

Encoder-Decoder Innovations

The encoder-decoder framework has enabled groundbreaking approaches in materials property prediction, most notably through the LLM-Prop methodology [1]. This innovative approach leverages T5's encoder component exclusively for property prediction tasks, discarding the decoder to reduce parameter count and computational requirements while maintaining robust performance.

In crystal property prediction, LLM-Prop processes text descriptions of crystal structures through the T5 encoder, followed by regression or classification heads to predict physical and electronic properties [1]. This method has demonstrated state-of-the-art performance, outperforming graph neural network (GNN) approaches by approximately 8% on band gap prediction, 3% on band gap type classification, and 65% on unit cell volume prediction [1]. The approach successfully leverages the rich informational content and expressiveness of textual crystal descriptions, overcoming limitations of graph-based representations in capturing complex crystallographic symmetries and relationships.
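The prediction step described above, pooling the encoder output and applying a linear head, can be sketched without any deep learning library. The encoder states, weights, and the `predict` helper below are hypothetical stand-ins. In LLM-Prop the states would come from the fine-tuned T5 encoder and the head would be trained jointly with it.

```python
import math
from typing import List

Vector = List[float]


def linear(x: Vector, weight: List[Vector], bias: Vector) -> Vector:
    """Dense layer: one output per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weight, bias)]


def predict(encoder_states: List[Vector], weight: List[Vector], bias: Vector,
            task: str = "regression") -> float:
    """Pool the first ([CLS]) position and apply a single linear head."""
    pooled = encoder_states[0]                    # embedding at the [CLS] position
    logit = linear(pooled, weight, bias)[0]
    if task == "regression":
        return logit                              # e.g. a band gap value
    if task == "binary":
        return 1.0 / (1.0 + math.exp(-logit))     # e.g. probability of a direct gap
    raise ValueError(f"unknown task: {task}")


# Hypothetical encoder output: 3 token positions, hidden size 2.
states = [[0.5, -1.0], [9.0, 9.0], [-3.0, 2.0]]
W, b = [[2.0, 1.0]], [0.5]
print(predict(states, W, b, "regression"))
print(predict(states, W, b, "binary"))
```

Only the first position feeds the head, so the remaining token states affect the prediction solely through attention inside the encoder.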

Table 2: Performance Comparison of LLM-Prop vs. GNN Baselines on Crystal Property Prediction

| Property | LLM-Prop Performance | GNN Baseline Performance | Improvement |
|---|---|---|---|
| Band Gap Prediction | State-of-the-art | Previous SOTA (ALIGNN) | ~8% improvement |
| Band Gap Type Classification | State-of-the-art | Previous SOTA (ALIGNN) | ~3% improvement |
| Unit Cell Volume Prediction | State-of-the-art | Previous SOTA (ALIGNN) | ~65% improvement |
| Formation Energy/Atom | Comparable | Previous SOTA (ALIGNN) | Similar performance |
| Energy/Atom | Comparable | Previous SOTA (ALIGNN) | Similar performance |

Hybrid and Specialized Approaches

Recent advancements have explored hybrid methodologies that combine architectural strengths for enhanced materials informatics applications. The LLM-Prop framework exemplifies this trend by strategically utilizing only the encoder component of T5 for predictive tasks while incorporating specialized preprocessing techniques optimized for materials science data [1].

These approaches typically involve domain-specific tokenization, numerical representation handling, and sequence compression techniques. For instance, bond distances and angles in crystal descriptions may be replaced with special tokens ([NUM], [ANG]) to reduce sequence length and computational complexity while preserving critical structural information [1]. This preprocessing enables the model to capture longer-range dependencies in crystal descriptions, significantly enhancing predictive accuracy for complex material properties.

Experimental Protocols and Methodologies

LLM-Prop Framework Implementation

The LLM-Prop methodology adapts an encoder-decoder architecture into an experimental protocol for materials property prediction [1]. The implementation involves four key stages: data preprocessing, model adaptation, fine-tuning, and evaluation.

Data Preprocessing Protocol:

- Stopword Removal: Standard English stopwords are removed from crystal text descriptions while preserving numerical values and scientific notation

- Numerical Tokenization: Bond distances and angles are replaced with specialized tokens ([NUM], [ANG]) to compress sequence length and enhance numerical reasoning

- Sequence Formatting: A [CLS] token is prepended to input sequences for classification tasks, following established practices from encoder-only models

- Textual Representation: Crystal structures are converted to textual descriptions using tools like Robocrystallographer, capturing symmetry information, atomic arrangements, and bonding environments
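
The preprocessing steps listed above can be sketched in a few lines of Python. This is a minimal illustration rather than the LLM-Prop implementation: the stopword list is a tiny placeholder, the regular expressions assume that distances are written with an angstrom unit and angles with a degree sign or the word "degrees", and the function name `preprocess_description` is hypothetical.

```python
import re

# Tiny illustrative stopword list (assumption); a real pipeline would use a fuller one.
STOPWORDS = {"the", "a", "an", "is", "are", "of", "in", "to", "and", "with"}

# Angle: a number followed by a degree sign (U+00B0) or the word "degree(s)".
ANGLE_RE = re.compile(r"\d+(?:\.\d+)?\s*(?:\u00b0|degrees?\b)")
# Distance: a number followed by an angstrom symbol (U+00C5) or the word "angstrom(s)".
DISTANCE_RE = re.compile(r"\d+(?:\.\d+)?\s*(?:\u00c5|angstroms?\b)", re.IGNORECASE)


def preprocess_description(text: str) -> str:
    """Compress a crystal text description before tokenization."""
    # Replace angles and bond distances with placeholder tokens.
    text = ANGLE_RE.sub("[ANG]", text)
    text = DISTANCE_RE.sub("[NUM]", text)
    # Drop stopwords; all other tokens (site labels, counts) are kept as-is.
    kept = [word for word in text.split() if word.lower() not in STOPWORDS]
    # Prepend the [CLS] token to the compressed sequence.
    return "[CLS] " + " ".join(kept)


description = (
    "Ti(1) is bonded to six O(1) atoms. "
    "All Ti(1)-O(1) bond lengths are 1.95 \u00c5. "
    "The O-Ti-O angles are 90 degrees."
)
print(preprocess_description(description))
```

Replacing values before stopword removal keeps the unit attached to its number, so a bare count such as "six" is never mistaken for a distance.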

Model Adaptation Process:

- Encoder Isolation: The decoder component of T5 is discarded, reducing parameter count by approximately 50%

- Task-Specific Heads: Regression or classification layers are added atop the encoder outputs

- Sequence Length Optimization: Reduced parameter count enables processing of longer input sequences, capturing comprehensive crystal structure information

Benchmarking and Evaluation Frameworks

Robust evaluation methodologies are essential for assessing model performance in materials property prediction. Recent frameworks employ comprehensive benchmarking across multiple datasets and perturbation conditions to evaluate model robustness and generalization capability [2].

Standard Evaluation Protocol:

- Dataset Curation: Compilation of specialized datasets like TextEdge for crystal property prediction and MSE-MCQs for materials knowledge evaluation

- Perturbation Testing: Systematic introduction of realistic and adversarial perturbations to assess model robustness

- Comparative Analysis: Performance comparison against established baselines including GNNs and domain-adapted models

- Multi-scale Evaluation: Assessment across easy, medium, and hard question difficulties to probe reasoning capabilities

Experimental results demonstrate that encoder-decoder adaptations like LLM-Prop maintain robust performance under various textual perturbations, with some configurations even showing improved performance with truncated or shuffled input sequences [2]. This unexpected robustness highlights the potential of text-based approaches for materials property prediction compared to traditional graph-based methods.
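
A perturbation test of this kind can be sketched as follows. The code is an assumption-laden outline, not the benchmark's actual harness: `predict` stands for any hypothetical callable that maps a text description to a numeric property, and only two perturbations (truncation and sentence shuffling) are shown, with mean absolute error as the metric.

```python
import random
import re
from typing import Callable, Dict, Sequence


def truncate_words(text: str, max_words: int) -> str:
    """Keep only the first max_words whitespace-separated tokens."""
    return " ".join(text.split()[:max_words])


def shuffle_sentences(text: str, seed: int = 0) -> str:
    """Randomly reorder sentences; splits only where a period is followed by whitespace."""
    sentences = re.split(r"(?<=\.)\s+", text.strip())
    random.Random(seed).shuffle(sentences)
    return " ".join(sentences)


def mean_absolute_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def robustness_report(
    predict: Callable[[str], float],  # hypothetical model wrapper
    texts: Sequence[str],
    targets: Sequence[float],
    max_words: int = 64,
) -> Dict[str, float]:
    """Return the MAE of `predict` under each perturbation condition."""
    conditions: Dict[str, Callable[[str], str]] = {
        "clean": lambda t: t,
        "truncated": lambda t: truncate_words(t, max_words),
        "shuffled": shuffle_sentences,
    }
    return {
        name: mean_absolute_error(targets, [predict(perturb(t)) for t in texts])
        for name, perturb in conditions.items()
    }
```

Comparing the "clean" entry with the perturbed entries gives a quick view of how sensitive a model is to input ordering and length.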

Essential Research Components

Table 3: Key Research "Reagents" for LLM-Based Materials Property Prediction

| Component | Function | Examples/Specifications |
|---|---|---|
| Pre-trained Language Models | Foundation for transfer learning | T5-base, T5-large, BERT-base, BERT-large |
| Domain-Specific Datasets | Task-specific fine-tuning and evaluation | TextEdge (crystal descriptions), matbench_steels, polymer property datasets |
| Text Representation Tools | Conversion of structured data to text | Robocrystallographer, chemical formula parsers, structure descriptors |
| Computational Infrastructure | Model training and inference | TPU v3/v4, GPU clusters (A100/H100), high-performance computing resources |
| Specialized Tokenization | Domain-adapted text processing | Numerical tokenizers, chemical formula tokenizers, symmetry operation encoders |
| Evaluation Benchmarks | Performance assessment and comparison | Matbench, Materials Project APIs, custom validation splits |

Implementation Considerations

Successful implementation of encoder-only and encoder-decoder models for materials property prediction requires careful consideration of several technical factors. Learning rates for T5-based models typically need adjustment upward from standard defaults, with values between 1e-4 and 3e-4 generally providing optimal performance [24]. Sequence length optimization is crucial: longer inputs capture richer context, but the cost of self-attention grows quadratically with sequence length.
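
The quadratic scaling can be made concrete with a back-of-the-envelope estimate of the attention score matrix, which holds one entry per pair of tokens per head. The defaults below (12 heads, 4-byte floats, batch size 1) are illustrative assumptions, and the function name is hypothetical.

```python
def attention_score_bytes(
    seq_len: int,
    n_heads: int = 12,        # assumed head count
    batch_size: int = 1,
    bytes_per_value: int = 4,  # assumed 32-bit floats
) -> int:
    """Memory for the attention score matrices of one layer: seq_len**2 entries per head."""
    return batch_size * n_heads * seq_len * seq_len * bytes_per_value


# Doubling the sequence length quadruples the score-matrix footprint.
for length in (512, 1024, 2048):
    mib = attention_score_bytes(length) / 2**20
    print(f"seq_len={length}: {mib:.0f} MiB per layer")
```

This estimate covers only the score matrices of a single layer; activations, gradients, and optimizer state add to the total.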

The choice between encoder-only and encoder-decoder architectures involves fundamental trade-offs. Encoder-only models provide computational efficiency for classification and analysis tasks, while encoder-decoder frameworks offer greater flexibility for diverse task formulations and generative applications. In materials discovery pipelines, this architectural decision must align with the specific research objectives, data characteristics, and computational constraints.

Future Directions and Challenges

The integration of transformer architectures in materials informatics continues to evolve, with several emerging trends and persistent challenges. The development of domain-adapted pre-training approaches, combining general language understanding with materials science knowledge, represents a promising direction for enhancing model performance while reducing data requirements [13].

Key challenges include improving model interpretability for scientific applications, enhancing robustness to distribution shifts and adversarial perturbations, and developing efficient fine-tuning methodologies for low-data scenarios. The unique phenomenon of performance recovery from train/test mismatch observed in LLM-Prop and similar models suggests intriguing research directions for model distillation and efficiency optimization [2].

As materials science increasingly embraces autonomous research paradigms, the synergistic combination of encoder-only analysis capabilities and encoder-decoder generative capacities will likely play a pivotal role in accelerating materials discovery and development. The continued refinement of these architectural frameworks promises to enhance their utility across diverse materials research applications, from fundamental property prediction to automated experimental design and optimization.

The integration of Large Language Models (LLMs) into scientific research represents a paradigm shift, offering unprecedented capabilities for natural language processing and knowledge synthesis. However, their application to specialized domains such as materials science and engineering requires deliberate adaptation strategies to meet technical requirements often absent from general-purpose training [27]. The fundamental challenge lies in transforming models with broad capabilities into specialized tools capable of understanding domain-specific terminology, reasoning about complex material properties, and generating scientifically accurate predictions. This technical guide examines the landscape of fine-tuning strategies, evaluating their performance and robustness for materials science applications, with particular emphasis on their role in the broader context of materials property prediction research.

Current research indicates substantial performance gaps between general and adapted models. Benchmark studies reveal that while closed-source LLMs like Claude-3.5-Sonnet and GPT-4o achieve approximately 84% accuracy on materials science question-answering tasks, open-source models such as Llama3-70b and Phi3-14b top out at only ~56% and ~43% accuracy, respectively, without specialized adaptation [28]. This performance differential underscores the importance of targeted fine-tuning strategies for closing the capability gap, making open-source models viable for research applications in materials science and in related fields such as drug development, where molecular property prediction poses analogous challenges.

Foundational Fine-Tuning Approaches

Core Methodological Framework

Adapting general-purpose LLMs to materials science involves a progression of techniques that build upon pre-trained base models. The principal strategies form a methodological hierarchy, each addressing different aspects of domain specialization:

Continued Pre-Training (CPT): This foundational approach exposes the model to domain-specific corpora, introducing new knowledge within the target domain through further pre-training on materials science literature and datasets [27]. CPT helps the model develop fundamental understanding of domain-specific terminology, concepts, and relationships, effectively building a knowledge foundation for subsequent specialization.

Supervised Fine-Tuning (SFT): Following CPT, SFT refines model capabilities using curated datasets in question-answer or instruction-response formats [27]. This stage directly teaches the model to perform specific tasks such as property prediction, synthesis recommendation, or technical question answering. SFT utilizes labeled data to align model behavior with research applications.

Preference-Based Optimization: Advanced optimization strategies including Direct Preference Optimization (DPO) and Odds Ratio Preference Optimization (ORPO) further refine model outputs based on human or baseline preferences [27]. These methods align model behavior with domain-specific quality criteria without requiring explicit reward functions, making them particularly valuable for capturing nuanced scientific accuracy requirements.

Table 1: Core Fine-Tuning Strategies for Materials Science Applications

| Method | Primary Function | Data Requirements | Typical Outcomes |
|---|---|---|---|
| Continued Pre-Training (CPT) | Domain knowledge acquisition | Large-domain corpora (scientific literature) | Foundation for domain-specific reasoning |
| Supervised Fine-Tuning (SFT) | Task-specific skill development | Curated labeled datasets (Q&A, instructions) | Improved accuracy on targeted tasks |
| Direct Preference Optimization (DPO) | Output quality alignment | Preference pairs (chosen/rejected responses) | Enhanced response quality and accuracy |
| Odds Ratio Preference Optimization (ORPO) | Efficient preference integration | Single prompt with preferred output | Balanced performance across multiple criteria |
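
To show how preference pairs enter training without an explicit reward function, the per-example DPO objective can be written directly from sequence log-probabilities. This is a minimal sketch of the standard DPO loss, not code from [27]; the argument names and the value `beta=0.1` are illustrative assumptions.

```python
import math


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,  # assumed strength of the implicit KL constraint
) -> float:
    """Per-example DPO loss: -log(sigmoid(beta * (chosen log-ratio - rejected log-ratio)))."""
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(m)) equals softplus(-m); branch for numerical stability.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# The loss falls as the policy favors the chosen response more than the reference does.
print(dpo_loss(-4.0, -8.0, -5.0, -7.0))
```

When the policy matches the frozen reference model the margin is zero and the loss equals log 2, so any value below that indicates the policy has moved toward the preferred responses.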

Low-Rank Adaptation (LoRA) for Efficient Tuning

Low-Rank Adaptation (LoRA) has emerged as a particularly effective technique for parameter-efficient fine-tuning, especially valuable when compute is constrained [27]. Instead of updating all model parameters, LoRA freezes the pre-trained weights and injects trainable low-rank matrices into linear layers, dramatically reducing the number of parameters requiring optimization. This approach enables rapid adaptation with minimal storage overhead (a single LoRA adapter may be only 1-2% of the size of the base model) while maintaining performance comparable to full fine-tuning in many domain-specific applications.
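
The mechanism can be illustrated with a dependency-free sketch of a single adapted linear layer. It is a toy written with plain Python lists, not a production implementation; the class name `LoRALinear`, the scaling `alpha / rank`, and the initialization (small random A, zero B) follow the commonly described LoRA recipe and are assumptions here.

```python
import random
from typing import List

Matrix = List[List[float]]


def matvec(m: Matrix, x: List[float]) -> List[float]:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


def lora_param_fraction(d_in: int, d_out: int, rank: int) -> float:
    """Trainable LoRA parameters as a fraction of the frozen weight matrix."""
    return rank * (d_in + d_out) / (d_in * d_out)


class LoRALinear:
    """y = W x + (alpha / rank) * B (A x), with W frozen and only A, B trainable."""

    def __init__(self, weight: Matrix, rank: int, alpha: float = 16.0, seed: int = 0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # frozen pre-trained weights, shape (d_out, d_in)
        # A starts small and random, B starts at zero, so the initial update is zero.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def forward(self, x: List[float]) -> List[float]:
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]


# Per-layer overhead for an assumed 768x768 projection at rank 8.
print(f"{lora_param_fraction(768, 768, 8):.2%}")
```

Because B is initialized to zero, the adapted layer reproduces the base model exactly at the start of fine-tuning. The whole-model fraction is usually smaller than the per-layer figure, since adapters are typically attached to only a subset of layers.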

Advanced Architectures and Model Merging

The Model Merging Paradigm

Beyond sequential fine-tuning, model merging represents a transformative approach that combines multiple specialized models to create new capabilities. Research demonstrates that merging differently fine-tuned models generates nonlinear interactions between parameters, resulting in emergent functionalities that surpass the individual capabilities of parent models [27]. This process is not merely additive but can produce qualitatively new capabilities through strategic combination of specialized components.

The success of model merging depends critically on several factors:

- Parent Model Diversity: Merging models with complementary capabilities yields more significant emergent behaviors than combining similar models.

- Fine-Tuning Techniques: The specific adaptation strategies used for parent models influence merging outcomes.

- Architectural Considerations: Model scaling appears crucial – experiments with 1.7 billion parameter models showed limited emergence, suggesting minimum scale thresholds [27].

Spherical Linear Interpolation (SLERP) for Model Fusion

Spherical Linear Interpolation (SLERP) has proven particularly effective for model merging, outperforming simple linear interpolation (LERP) by preserving the geometric relationships in parameter space [27]. Originally developed for computer graphics, SLERP enables smooth interpolation between model states while maintaining the underlying structural integrity of parameter configurations. This geometric preservation avoids the high-loss regions often encountered with linear interpolation, leading to more stable and capable merged models.
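
The difference from linear interpolation is easiest to see on a pair of flattened weight vectors. The sketch below applies the standard SLERP formula to plain Python lists; treating a whole tensor as one flat vector and falling back to LERP for nearly parallel or antiparallel inputs are simplifying assumptions, not details taken from [27].

```python
import math
from typing import List


def lerp(w0: List[float], w1: List[float], t: float) -> List[float]:
    return [(1.0 - t) * a + t * b for a, b in zip(w0, w1)]


def slerp(w0: List[float], w1: List[float], t: float, eps: float = 1e-8) -> List[float]:
    """Spherical linear interpolation between two flattened weight vectors."""
    norm0 = math.sqrt(sum(a * a for a in w0))
    norm1 = math.sqrt(sum(b * b for b in w1))
    if norm0 < eps or norm1 < eps:
        return lerp(w0, w1, t)  # angle undefined for a zero vector
    cos_omega = sum(a * b for a, b in zip(w0, w1)) / (norm0 * norm1)
    cos_omega = max(-1.0, min(1.0, cos_omega))  # guard against rounding error
    omega = math.acos(cos_omega)
    sin_omega = math.sin(omega)
    if sin_omega < eps:
        return lerp(w0, w1, t)  # nearly parallel or antiparallel
    c0 = math.sin((1.0 - t) * omega) / sin_omega
    c1 = math.sin(t * omega) / sin_omega
    return [c0 * a + c1 * b for a, b in zip(w0, w1)]


# Midpoint between two orthogonal unit vectors: SLERP keeps unit norm, LERP shrinks it.
mid_slerp = slerp([1.0, 0.0], [0.0, 1.0], 0.5)
mid_lerp = lerp([1.0, 0.0], [0.0, 1.0], 0.5)
print(math.hypot(*mid_slerp), math.hypot(*mid_lerp))
```

In a real merge this function would be applied tensor by tensor across two checkpoints with identical architectures, with `t` controlling how much each parent contributes.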

Property Prediction: A Case Study in Specialization

LLM-Prop Architecture for Crystal Properties