Leveraging Maximum Delta-G Theory for Predictive Modeling of Solid-State Reactions in Pharmaceutical Development

Abstract

This article provides a comprehensive examination of maximum delta-G (ΔG) theory as a pivotal tool for predicting solid-state reaction outcomes in pharmaceutical research and development. It explores the foundational thermodynamic principles governing reaction spontaneity and stability, detailing modern computational and high-throughput methodologies for free energy calculation. The scope includes robust strategies for troubleshooting prediction inaccuracies and optimizing models, with a strong emphasis on rigorous validation through case studies and comparative performance analysis against experimental data. Tailored for researchers, scientists, and drug development professionals, this review synthesizes theoretical insights with practical applications to enhance the predictive design and stability assessment of solid drug forms, thereby accelerating robust formulation development.

Foundational Principles of Delta-G: Thermodynamics of Solid-State Stability and Spontaneity

The Gibbs free energy change, denoted as Delta-G (ΔG), is a fundamental thermodynamic potential that serves as the ultimate arbiter of reaction spontaneity and feasibility in chemical processes. Defined by the equation ΔG = ΔH - TΔS, it provides a quantitative measure of the useful work obtainable from a system at constant temperature and pressure. This in-depth technical guide explores the core principles of ΔG, its mathematical and physical interpretation, and its pivotal role in modern research, from predicting solid-state reaction outcomes to driving innovations in drug design and machine-learning-assisted material discovery. The integration of ΔG analysis with advanced experimental and computational protocols is creating a powerful framework for navigating chemical spaces and designing robust industrial processes, establishing it as a cornerstone of predictive materials science.

In thermodynamics, the Gibbs free energy (symbol G) is a state function that predicts the direction of chemical processes and defines the equilibrium condition for systems at constant pressure and temperature. The total Gibbs energy is defined as G = H - TS, where H is enthalpy, T is absolute temperature, and S is entropy [1] [2]. For chemical reactions, the change in Gibbs free energy, ΔG, is the most critical parameter and is given by the fundamental equation: ΔG = ΔH - TΔS [1] [3] [4].

The sign of ΔG directly determines the spontaneity of a process. A negative ΔG (an exergonic process) indicates a spontaneous reaction, while a positive ΔG (an endergonic process) signifies a non-spontaneous one that requires energy input [1] [3] [4]. The magnitude of ΔG corresponds to the maximum amount of non-pressure-volume work that can be harnessed from the reaction [1]. This concept was originally developed in the 1870s by the American scientist Josiah Willard Gibbs, who described it as "the greatest amount of mechanical work which can be obtained from a given quantity of a certain substance in a given initial state" [1].

Table 1: Interpretation of Gibbs Free Energy Change (ΔG)

| ΔG Value | Reaction Spontaneity | Technical Description |
|---|---|---|
| ΔG < 0 | Spontaneous | Exergonic process; proceeds in the forward direction as written. |
| ΔG > 0 | Non-spontaneous | Endergonic process; requires energy input to proceed forward. |
| ΔG = 0 | System at Equilibrium | No net change occurs; forward and reverse reaction rates are equal. |

Mathematical Formalism and Thermodynamic Foundations

The defining equation for the change in Gibbs free energy, ΔG = ΔH - TΔS, encapsulates the interplay between the system's enthalpy (ΔH) and entropy (ΔS) at a given temperature (T) [1] [3] [2]. The standard state Gibbs free energy change, ΔG°, is calculated when reactants and products are in their standard states (typically 1 M concentration for solutions, 1 atm pressure for gases) and is related to the equilibrium constant (K) by the equation: ΔG° = -RT ln K [3].

For conditions not in the standard state, the reaction quotient (Q) is used to calculate ΔG: ΔG = ΔG° + RT ln Q [3]. This relationship provides a direct link between thermodynamics and the reaction's position at equilibrium.
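As a rough numerical illustration of the two relations above, the following sketch converts an equilibrium constant into ΔG° and then evaluates ΔG at a non-standard reaction quotient. The function names and the example values of K and Q are illustrative assumptions, not data from the cited sources.

```python
import math

R = 8.314462618  # gas constant, J/(mol*K)

def delta_g_standard(K, T=298.15):
    """Standard free energy change (J/mol) from the equilibrium constant K."""
    return -R * T * math.log(K)

def delta_g(dg_standard, Q, T=298.15):
    """Free energy change (J/mol) at reaction quotient Q."""
    return dg_standard + R * T * math.log(Q)

# Hypothetical example: K = 1.0e3 at 298.15 K
dg0 = delta_g_standard(1.0e3)
print(f"dG0 = {dg0 / 1000:.2f} kJ/mol")

# When Q equals K the system is at equilibrium, so dG is zero (up to rounding)
print(f"dG at Q = K: {delta_g(dg0, 1.0e3):.2e} J/mol")

# When Q < K the forward reaction is still favorable (dG < 0)
print(f"dG at Q = 1: {delta_g(dg0, 1.0) / 1000:.2f} kJ/mol")
```

Setting Q = K recovers ΔG = 0, which is the equilibrium row of Table 1.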

The total differential of Gibbs free energy reveals its dependence on its natural variables, pressure (p) and temperature (T), and is fundamental for understanding open and reacting systems [1]: dG = Vdp - SdT + ΣμᵢdNᵢ where V is volume, S is entropy, μᵢ is the chemical potential of component i, and Nᵢ is the number of particles (or moles) of i [1]. This expression shows how G changes with pressure, temperature, and composition.

Predicting Reaction Feasibility and Spontaneity

The power of ΔG lies in its ability to predict the feasibility of a reaction under given conditions. The analysis of the signs of ΔH and ΔS leads to four possible scenarios for reaction spontaneity, summarized in the table below.

Table 2: Spontaneity Conditions Based on Enthalpy and Entropy Changes

| ΔH | ΔS | ΔG = ΔH - TΔS | Spontaneity Condition |
|---|---|---|---|
| Negative (Exothermic) | Positive | Always Negative | Spontaneous at all temperatures |
| Positive (Endothermic) | Negative | Always Positive | Non-spontaneous at all temperatures |
| Negative (Exothermic) | Negative | Negative at low T | Spontaneous at low temperatures |
| Positive (Endothermic) | Positive | Negative at high T | Spontaneous at high temperatures |

The following diagram illustrates the logical decision process for determining reaction spontaneity using Gibbs free energy.

Diagram 1: Logic of Reaction Spontaneity
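The decision logic summarized in Table 2 can also be written as a short function. This is a minimal sketch that assumes ΔH and ΔS are independent of temperature; the function names and example values are hypothetical.

```python
def classify_spontaneity(dH, dS):
    """Qualitative regime from the signs of dH (J/mol) and dS (J/(mol*K))."""
    if dH < 0 and dS > 0:
        return "spontaneous at all temperatures"
    if dH > 0 and dS < 0:
        return "non-spontaneous at all temperatures"
    if dH < 0 and dS < 0:
        return "spontaneous at low temperatures"
    if dH > 0 and dS > 0:
        return "spontaneous at high temperatures"
    return "undetermined (dH or dS is zero)"

def crossover_temperature(dH, dS):
    """Temperature (K) where dG = dH - T*dS changes sign, or None if it never does."""
    if dS == 0 or dH / dS <= 0:
        return None
    return dH / dS

# Hypothetical endothermic, entropy-driven process
print(classify_spontaneity(50_000.0, 100.0))
print(crossover_temperature(50_000.0, 100.0), "K")
```

The crossover temperature T = ΔH/ΔS only exists in the two mixed-sign rows of the table, where the enthalpy and entropy terms oppose each other.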

Experimental Protocols for Determining ΔG

Accurate experimental determination of thermodynamic parameters is crucial for feasibility analysis. The following section details key methodologies.

Calorimetric Methods for Direct Measurement

Isothermal Titration Calorimetry (ITC) is a primary technique for directly measuring the enthalpy change (ΔH) of a binding interaction in a single experiment [3]. The protocol involves:

- Sample Preparation: The ligand is loaded into a syringe, and the macromolecule solution is placed in the sample cell. Both solutions must be in matched buffers to prevent artifactual heat signals from dilution.

- Titration and Data Acquisition: The ligand is injected in a series of small aliquots into the macromolecule solution. The instrument measures the heat released or absorbed after each injection.

- Data Analysis: The integrated heat peaks are fitted to a binding model to extract the binding affinity (Kₐ), stoichiometry (n), and ΔH. The Gibbs free energy is then calculated using ΔG° = -RT ln Kₐ, and the entropy change is derived from ΔS = (ΔH - ΔG)/T [3].
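The final data-analysis step can be sketched as follows. The fitted values of Kₐ and ΔH used here are hypothetical placeholders, and the function name is an assumption made for illustration; the fitting of the heat peaks itself is not shown.

```python
import math

R = 8.314462618  # gas constant, J/(mol*K)

def binding_profile(Ka, dH, T=298.15):
    """Thermodynamic profile from a fitted association constant and enthalpy.

    Ka is in 1/M and dH in J/mol; returns dG (J/mol), dS (J/(mol*K)),
    and the entropic term -T*dS (J/mol).
    """
    dG = -R * T * math.log(Ka)
    dS = (dH - dG) / T
    return {"dG": dG, "dS": dS, "minus_TdS": -T * dS}

# Hypothetical fit result: Ka = 1e6 1/M, dH = -40 kJ/mol
profile = binding_profile(1.0e6, -40_000.0)
for name, value in profile.items():
    print(f"{name}: {value:.1f}")
```

Reporting -TΔS alongside ΔH makes it easy to see whether binding is driven mainly by enthalpy or by entropy.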

Determination via Equilibrium Constant Measurements

For reactions where direct calorimetry is not feasible, ΔG can be determined by measuring the equilibrium constant.

- Establishing Equilibrium: The reaction is allowed to reach equilibrium under constant temperature and pressure.

- Concentration Quantification: Analytical techniques such as UV-Vis spectroscopy, liquid chromatography-mass spectrometry (LC-MS) [5], or other methods are used to determine the equilibrium concentrations of reactants and products.

- Calculation: The equilibrium constant K is calculated from these concentrations, and the standard free energy change is derived from ΔG° = -RT ln K.

High-Throughput Experimentation (HTE) for Feasibility Screening

Modern materials discovery leverages HTE to rapidly explore chemical spaces and generate data for ΔG-related predictions [5].

- Automated Synthesis: An automated platform (e.g., a robotic liquid handling system) conducts thousands of distinct reactions at a micro-scale (e.g., 200–300 μL) [5].

- Reaction Analysis: The yield or conversion is determined using high-throughput analytics like uncalibrated UV absorbance in LC-MS [5].

- Data Modeling: The resulting extensive dataset on reaction outcomes is used to train machine learning models, such as Bayesian Neural Networks, to predict reaction feasibility with high accuracy (e.g., 89.48%) without explicitly calculating ΔG for every possible reaction [5].

The Scientist's Toolkit: Key Reagents and Materials

Table 3: Essential Research Reagents and Instruments for Thermodynamic Studies

| Item | Function/Description | Key Application |
|---|---|---|
| Isothermal Titration Calorimeter (ITC) | Measures heat changes upon binding, directly yielding ΔH, Kₐ, and ΔG. | Gold-standard for characterizing biomolecular interactions in drug design [3]. |
| High-Throughput Robotic Platform | Automates liquid handling and synthesis in microplates, enabling rapid exploration of chemical space. | Generation of large, unbiased datasets for reaction feasibility prediction [5]. |
| Liquid Chromatography-Mass Spectrometry (LC-MS) | Separates and quantifies reaction components; used to determine product yield and equilibrium concentrations. | Analysis of High-Throughput Experimentation (HTE) outcomes [5]. |
| Differential Scanning Calorimeter (DSC) | Measures heat capacity changes and thermal transitions (e.g., melting points) in a system. | Studying protein stability and folding in biopharmaceutical development [3]. |
| Standardized Chemical Substrates | Commercially available acids, amines, reagents, and bases with high purity. | Ensuring reproducibility and reliability in automated synthesis screens [5]. |

Computational and Machine Learning Approaches

Beyond experimental methods, computational strategies are vital for predicting ΔG, especially in early-stage design and screening.

Molecular Dynamics and Free Energy Perturbation

Classical molecular dynamics simulations, while computationally demanding, can provide reliable estimates of binding affinity [6]. These methods calculate the free energy difference between states by simulating the physical pathway connecting them.

Machine-Learning Scoring Functions

Machine learning (ML) offers a powerful alternative to quantum mechanical or classical force field methods for predicting ΔG for protein-ligand complexes [6]. The standard protocol involves:

- Dataset Curation: A training set is constructed from high-resolution crystallographic structures of protein-ligand complexes with experimentally determined ΔG values (e.g., from ITC) [6].

- Feature Engineering: Energy terms from classical scoring functions (e.g., AutoDock Vina) are used as descriptors for the ML model [6].

- Model Training and Validation: Supervised machine learning techniques (e.g., via scikit-learn) are applied to calibrate a novel scoring function. The model's predictive power for ΔG is then benchmarked against traditional scoring functions [6]. This approach can significantly improve prediction accuracy in virtual screening for drug discovery.

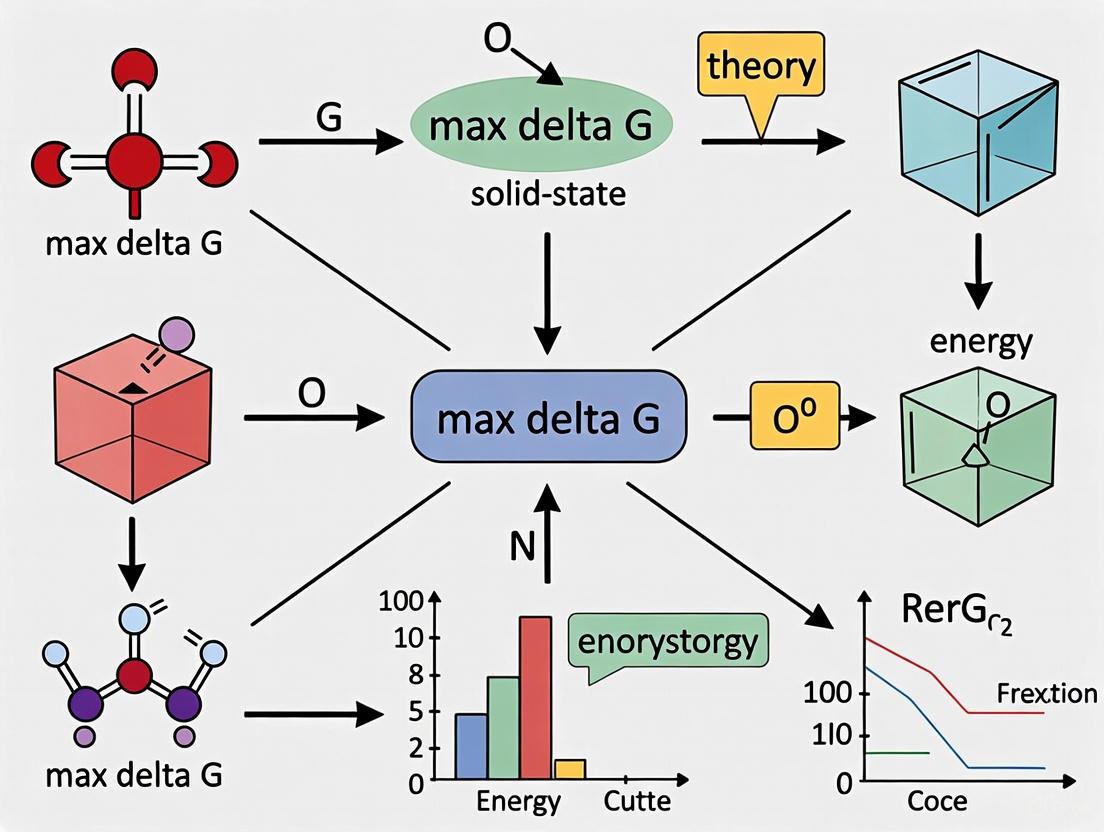

The workflow for integrating High-Throughput Experimentation with Bayesian machine learning to predict reaction feasibility and robustness is illustrated below.

Diagram 2: HTE and ML Workflow for Feasibility

Advanced Theoretical Concepts and Applications

The Role of ΔG in Electron Transfer Kinetics

In Marcus theory of electron transfer, the activation barrier ΔG* is related to the standard Gibbs free energy change ΔG° and the reorganization energy (λ) [7]. In the non-adiabatic limit (weak electronic coupling), the classical Marcus expression is: ΔG* = (λ + ΔG°)² / (4λ)

Recent research shows that in the adiabatic limit (strong electronic coupling), the kinetics are still observed to follow a Marcus-like expression, but with a reduced effective reorganization energy, λeff [7]: λeff = λ(1 - 2V/λ)² where V is the electronic coupling strength [7]. This finding reconciles discrepancies between theoretically computed and experimentally fitted reorganization energies and is critical for understanding processes like electrocatalysis.
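To make the effect of electronic coupling concrete, the sketch below evaluates the classical Marcus barrier and then repeats the calculation with the reduced reorganization energy. The λeff expression is taken exactly as quoted above, and the numerical inputs are hypothetical values chosen only for illustration.

```python
def marcus_barrier(dG0, lam):
    """Activation free energy dG* = (lam + dG0)^2 / (4*lam); all energies in the same unit."""
    return (lam + dG0) ** 2 / (4.0 * lam)

def effective_reorganization(lam, V):
    """Reduced reorganization energy lam_eff = lam * (1 - 2V/lam)^2, as quoted in the text."""
    return lam * (1.0 - 2.0 * V / lam) ** 2

# Hypothetical inputs in eV
lam, dG0, V = 1.0, -0.2, 0.1

barrier_weak = marcus_barrier(dG0, lam)
lam_eff = effective_reorganization(lam, V)
barrier_strong = marcus_barrier(dG0, lam_eff)

print(f"non-adiabatic barrier: {barrier_weak:.4f} eV")
print(f"effective lambda:      {lam_eff:.4f} eV")
print(f"adiabatic barrier:     {barrier_strong:.4f} eV")
```

For these inputs the barrier drops when λ is replaced by λeff, which is the qualitative trend the reduced reorganization energy is meant to capture.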

Thermodynamic Optimization in Drug Design

In rational drug design, a comprehensive thermodynamic profile is vital. The goal is to optimize the balance of enthalpic (ΔH) and entropic (ΔS) contributions to binding free energy (ΔG) [3]. Historically, drug optimization relied heavily on increasing hydrophobicity to gain favorable entropy (via solvent release), but this often led to poor solubility [3]. Modern approaches focus on enthalpic optimization—engineering specific, high-quality interactions like hydrogen bonds—which is more challenging but can lead to superior drug candidates with higher specificity and better physicochemical properties [3]. Tools like thermodynamic optimization plots and the enthalpic efficiency index provide practical guidance for this optimization process [3].

The Gibbs free energy change, ΔG, remains an indispensable metric for predicting the feasibility and directionality of chemical reactions, from simple molecular transformations to complex solid-state syntheses. Its definition, ΔG = ΔH - TΔS, provides a framework that incorporates both energy content and disorder. While the core principles are classical, the application of ΔG is being revolutionized by modern approaches. The integration of high-throughput experimentation, advanced calorimetry, and sophisticated machine-learning models is creating a new paradigm. This allows researchers to not only predict spontaneity but also to assess reaction robustness and navigate vast chemical spaces with unprecedented efficiency, solidifying the role of ΔG as a cornerstone of reaction feasibility in solid-state systems and beyond.

In solid-state synthesis, predicting and controlling reaction pathways remains a fundamental challenge. Unlike solution-based chemistry, where solvents facilitate molecular mobility, solid-state reactions are governed by the intricate interplay between thermodynamic driving forces and kinetic limitations. The "max-ΔG theory" has emerged as a powerful framework for predicting the initial phases formed during these reactions, suggesting that the first product to form is the one that yields the largest decrease in Gibbs free energy per atom, regardless of reactant stoichiometry. This principle operates under the premise that solid products form locally at particle interfaces without knowledge of the sample's overall composition. Understanding how enthalpy (ΔH) and entropy (ΔS) contributions collectively determine this Gibbs free energy (ΔG = ΔH - TΔS) is crucial for advancing predictive synthesis in materials science and pharmaceutical development.

Theoretical Foundation: The Interplay of ΔH and ΔS

The Gibbs Free Energy Equation

The Gibbs free energy represents the fundamental thermodynamic potential that determines the spontaneity of processes at constant temperature and pressure. The relationship is defined by:

ΔG = ΔH - TΔS

Where:

- ΔG is the change in Gibbs free energy

- ΔH is the change in enthalpy (heat content)

- T is the absolute temperature in Kelvin

- ΔS is the change in entropy (disorder)

For a process to be spontaneous, ΔG must be negative. The competition between the enthalpy term (ΔH) and the entropy term (TΔS) dictates reaction behavior under different conditions. [8]

Enthalpic Contributions (ΔH)

Enthalpy represents the heat exchange during a reaction and reflects changes in bond strengths and intermolecular interactions:

- Exothermic reactions (ΔH < 0) release energy, typically through the formation of stronger chemical bonds

- Endothermic reactions (ΔH > 0) consume energy, often associated with bond breaking

- In solid-state systems, ΔH includes contributions from lattice energies, interfacial energies, and the energy required to break existing bonds before new ones can form [9]

Entropic Contributions (ΔS)

Entropy quantifies the disorder or randomness in a system and is directly related to the number of accessible microstates: [10]

S = k ln Ω

Where k is Boltzmann's constant and Ω is the number of microstates. In chemical systems, entropy changes are influenced by:

- Physical states: Gases have higher entropy than liquids, which have higher entropy than solids [11] [10]

- Molecular complexity: More complex molecules with greater degrees of freedom contribute to higher entropy [11]

- Mixing entropy: The configurational entropy increases when different elements or molecules mix [12]

- Temperature dependence: Entropy becomes more significant at higher temperatures where the TΔS term dominates [11] [8]

Table 1: Standard Molar Entropies for Selected Substances at 298K

| Substance | State | S° (J/mol·K) |
|---|---|---|
| NH₃ | Gas | 192.5 |
| N₂ | Gas | 191.5 |
| H₂ | Gas | 130.6 |
| H₂O | Liquid | 70.0 |
| H₂O | Solid | 48.0 |
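The tabulated values can be combined into a standard reaction entropy. As a worked example, the sketch below uses the gas-phase entries for the reaction N₂ + 3H₂ → 2NH₃; the choice of reaction and the function name are assumptions made for illustration.

```python
# Standard molar entropies in J/(mol*K), copied from the table above
S_STANDARD = {"NH3": 192.5, "N2": 191.5, "H2": 130.6}

def reaction_entropy(products, reactants, table=S_STANDARD):
    """Standard reaction entropy: sum over products minus sum over reactants.

    products and reactants map species names to stoichiometric coefficients.
    """
    def side_total(side):
        return sum(coeff * table[species] for species, coeff in side.items())
    return side_total(products) - side_total(reactants)

dS = reaction_entropy({"NH3": 2}, {"N2": 1, "H2": 3})
print(f"dS = {dS:.1f} J/(mol*K)")
```

The result is negative, as expected when four moles of gas are converted into two.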

The Max-ΔG Theory in Solid-State Reactions

Fundamental Principles

The max-ΔG theory proposes that when two solid phases react, they initially form the product with the largest compositionally unconstrained thermodynamic driving force (ΔG per atom), irrespective of reactant stoichiometry. [9] This approach is justified by observations that solid products form locally at particle interfaces without knowledge of the sample's overall composition. The theory operates under these key premises:

- Localized reaction domains: Reactions initiate at interfaces where particle contacts occur

- Stoichiometry independence: The initial product formation is not constrained by global reactant ratios

- Thermodynamic dominance: When the driving force is sufficiently large, thermodynamic considerations override kinetic limitations

Quantitative Threshold for Thermodynamic Control

Recent experimental validation has quantified the conditions under which the max-ΔG theory applies. Through in situ characterization of 37 pairs of reactants, researchers have established that thermodynamic control governs initial product formation when the driving force to form one product exceeds that of all other competing phases by ≥60 meV/atom. [9]

Below this threshold, kinetic factors predominantly determine reaction outcomes. This quantitative framework divides solid-state reactions into two distinct regimes:

- Thermodynamic control regime: One product has significantly larger ΔG (≥60 meV/atom advantage)

- Kinetic control regime: Multiple competing phases have comparable driving forces

Analysis of Materials Project data reveals that approximately 15% of possible reactions (105,652 reactions) fall within the thermodynamic control regime, highlighting the significant predictive capability of this approach. [9]
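The selection rule and the 60 meV/atom threshold can be expressed as a small ranking routine. This is a minimal sketch: the candidate names and reaction energies are hypothetical placeholders, and in practice the per-atom ΔG values would come from a computed phase diagram.

```python
THRESHOLD_EV_PER_ATOM = 0.060  # 60 meV/atom margin quoted in the text

def predict_first_product(candidates, threshold=THRESHOLD_EV_PER_ATOM):
    """Pick the candidate with the most negative dG and classify the regime.

    candidates maps a product label to its reaction energy in eV/atom
    (negative means favorable). Returns (label, margin, regime).
    """
    ranked = sorted(candidates.items(), key=lambda item: item[1])
    best_label, best_dg = ranked[0]
    if len(ranked) == 1:
        return best_label, float("inf"), "thermodynamic"
    # Margin: how much more favorable the best product is than the runner-up
    margin = ranked[1][1] - best_dg
    regime = "thermodynamic" if margin >= threshold else "kinetic"
    return best_label, margin, regime

# Hypothetical driving forces in eV/atom
print(predict_first_product({"phase_A": -0.250, "phase_B": -0.150, "phase_C": -0.100}))
print(predict_first_product({"phase_A": -0.170, "phase_B": -0.150}))
```

In the kinetic regime the routine still returns the most favorable phase, but that label should be read as a candidate rather than a prediction.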

Nucleation Kinetics and Thermodynamic Competition

Classical nucleation theory provides the kinetic foundation for understanding why the max-ΔG theory succeeds under certain conditions. The nucleation rate (Q) is given by:

Q = A exp(-16πγ³/(3n²kBTΔG²)) [9] [13]

Where:

- A is a kinetic prefactor related to diffusion and thermal fluctuations

- γ is the interfacial energy

- n is the atomic density

- kB is Boltzmann's constant

- T is temperature

- ΔG is the bulk reaction energy

The exponential term varies by several orders of magnitude and tends to dominate the overall nucleation rate. When the driving force (ΔG) for one product significantly exceeds that of competitors, its nucleation rate becomes exponentially faster, ensuring thermodynamic control.
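The sensitivity of the exponential term can be checked numerically. The sketch below compares two competing products that differ only in ΔG, assuming for simplicity that they share the same prefactor A, interfacial energy γ, and atomic density n; all numerical inputs are hypothetical.

```python
import math

K_B = 1.380649e-23    # Boltzmann constant, J/K
EV = 1.602176634e-19  # joules per electronvolt

def nucleation_exponent(gamma, n, dG_ev_per_atom, T):
    """Exponent 16*pi*gamma^3 / (3 * n^2 * kB * T * dG^2) of the rate expression.

    gamma in J/m^2, n in atoms/m^3, dG in eV/atom, T in K.
    """
    dG = dG_ev_per_atom * EV
    return 16.0 * math.pi * gamma**3 / (3.0 * n**2 * K_B * T * dG**2)

def log10_rate_ratio(gamma, n, dG_1, dG_2, T):
    """log10 of (rate of product 1 / rate of product 2) for equal A, gamma, n."""
    diff = nucleation_exponent(gamma, n, dG_2, T) - nucleation_exponent(gamma, n, dG_1, T)
    return diff / math.log(10.0)

# Hypothetical inputs: products separated by 60 meV/atom in driving force
ratio = log10_rate_ratio(gamma=0.5, n=8.0e28, dG_1=-0.20, dG_2=-0.14, T=900.0)
print(f"log10(Q1/Q2) = {ratio:.1f}")
```

Because ΔG enters as an inverse square inside the exponent, a modest difference in driving force translates into many orders of magnitude in nucleation rate under these assumptions.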

Experimental Validation and Methodologies

In Situ Characterization Techniques

Experimental validation of thermodynamic control requires direct observation of initial phase formation. Advanced characterization methodologies include:

- In situ X-ray diffraction (XRD): Tracks phase evolution in real-time during heating

- Synchrotron radiation sources: Provide high resolution and rapid scanning capabilities

- Machine learning-guided XRD: Steers diffractometers toward features that facilitate identification of reaction intermediates [9]

Case Study: Li-Nb-O Chemical Space

Detailed investigation of the Li-Nb-O system demonstrates the transition between thermodynamic and kinetic control:

- Reactants: LiOH or Li₂CO₃ with Nb₂O₅

- Experimental conditions: Heating to 700°C at 10°C/min, held for 3 hours

- Key findings: LiOH reactions show strong thermodynamic preference for Li₃NbO₄ formation, while Li₂CO₃ reactions exhibit comparable driving forces for multiple phases, placing them in the kinetic control regime [9]

Table 2: Experimental Conditions for In Situ Solid-State Reaction Studies

| Parameter | Specification | Purpose |
|---|---|---|
| Temperature Range | Room temperature to 700°C | Cover typical solid-state reaction conditions |
| Heating Rate | 10°C/min | Standard heating profile |
| Hold Time | 3 hours at maximum temperature | Ensure sufficient reaction time |
| Atmosphere | Ambient or controlled | Prevent unwanted oxidation/reduction |
| XRD Scan Rate | 2 scans/minute (synchrotron) | Capture rapid phase evolution |
| Data Analysis | Rietveld refinement | Quantify phase fractions |

Computational Framework for Reaction Prediction

Thermodynamic Parameter Calculations

Predicting solid-state reaction pathways requires computation of key thermodynamic parameters:

- Enthalpy of mixing: ΔH_mix = Σ_{i<j} 4Hᵢⱼcᵢcⱼ [12]

- Entropy of mixing: ΔS_mix = -R Σᵢ cᵢ ln cᵢ [12]

- Atomic size mismatch: δr = [Σᵢ cᵢ(1 - rᵢ/r̄)²]^(1/2) × 100% [12]

Where cᵢ and cⱼ are atomic fractions, Hᵢⱼ is the enthalpy of mixing for the binary pair of elements i and j (the sum runs over distinct pairs), R is the gas constant, rᵢ is the atomic radius of element i, and r̄ = Σᵢ cᵢrᵢ is the composition-weighted average atomic radius.
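A minimal sketch of these three descriptors is given below. It assumes that the enthalpy sum runs over distinct element pairs (i < j) and that the average radius is the composition-weighted mean; the binary enthalpies and radii in the example are hypothetical placeholders rather than tabulated data.

```python
import math

R = 8.314462618  # gas constant, J/(mol*K)

def mixing_entropy(c):
    """Ideal configurational entropy of mixing, J/(mol*K)."""
    return -R * sum(ci * math.log(ci) for ci in c if ci > 0)

def mixing_enthalpy(c, H):
    """Enthalpy of mixing; H[(i, j)] is the binary value for the pair i < j."""
    n = len(c)
    return sum(4.0 * H[(i, j)] * c[i] * c[j]
               for i in range(n) for j in range(i + 1, n))

def size_mismatch(c, r):
    """Atomic size mismatch in percent, relative to the weighted mean radius."""
    r_bar = sum(ci * ri for ci, ri in zip(c, r))
    return 100.0 * math.sqrt(sum(ci * (1.0 - ri / r_bar) ** 2 for ci, ri in zip(c, r)))

# Hypothetical equimolar ternary system
c = [1 / 3, 1 / 3, 1 / 3]
H = {(0, 1): -5.0, (0, 2): 2.0, (1, 2): -1.0}  # kJ/mol, placeholder values
r = [1.25, 1.28, 1.43]                          # angstrom, placeholder values

print(f"dS_mix = {mixing_entropy(c):.3f} J/(mol*K)")
print(f"dH_mix = {mixing_enthalpy(c, H):.3f} kJ/mol")
print(f"delta_r = {size_mismatch(c, r):.2f} %")
```

For an equimolar system with N components the entropy reduces to R ln N, which provides a quick sanity check on the implementation.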

High-Throughput Computational Screening

Large-scale density functional theory (DFT) calculations, as implemented in the Materials Project, enable:

- Calculation of formation energies for thousands of potential compounds

- Construction of compositional phase diagrams to identify competing phases

- Determination of driving forces for all possible reaction products

- Identification of reactions falling within the thermodynamic control regime [9]

Research Reagent Solutions

Table 3: Essential Materials for Solid-State Reaction Studies

| Reagent/Material | Function | Application Example |
|---|---|---|
| LiOH·H₂O | Lithium source with high reactivity | Li-Nb-O system studies |
| Li₂CO₃ | Lithium source with moderate reactivity | Comparison of thermodynamic vs kinetic control |
| Nb₂O₅ | Niobium source | Ternary oxide formation |
| High-Purity Alumina Crucibles | Inert reaction containers | Prevent contamination during heating |
| Synchrotron Radiation | High-intensity X-ray source | In situ XRD with high temporal resolution |
| Rietveld Refinement Software | Quantitative phase analysis | Determine weight fractions of crystalline phases |

Visualization of Concepts and Workflows

Thermodynamic Versus Kinetic Control Regimes

Experimental Workflow for Pathway Determination

The max-ΔG theory provides a powerful predictive framework for solid-state reaction pathways when the thermodynamic driving force for one product exceeds competitors by ≥60 meV/atom. In this regime, enthalpy and entropy act as cooperative drivers rather than competing factors, with both contributing to the minimization of Gibbs free energy. The quantitative threshold established through systematic in situ studies enables researchers to identify approximately 15% of possible solid-state reactions that fall within thermodynamic control, offering significant opportunities for predictive synthesis planning. Future advances will require integrated computational and experimental approaches that account for both thermodynamic driving forces and kinetic factors, particularly for reactions where multiple competing phases have similar formation energies.

Nucleation, the initial step in the formation of a new thermodynamic phase, represents a fundamental process across materials science, chemistry, and pharmaceutical development. This process governs phenomena ranging from crystallization in metallic glasses to drug binding in biological systems. For decades, Classical Nucleation Theory (CNT) has served as the predominant theoretical framework for quantifying nucleation kinetics. However, CNT's quantitative predictions often diverge from experimental observations, particularly in complex solid-state systems and biological environments. These limitations have spurred the development of advanced computational approaches that explicitly map free energy landscapes and leverage modern statistical mechanics frameworks.

The core challenge in nucleation theory stems from the rare event nature of the process, where systems must overcome significant energy barriers to transition from metastable to stable states. This article traces the theoretical evolution from CNT's foundational principles to contemporary energy landscape methods, with particular emphasis on the max-ΔG theory for predicting solid-state reaction pathways. We examine how these frameworks operate within different thermodynamic regimes and present experimental protocols for validating their predictions across diverse material systems.

Classical Nucleation Theory: Foundations and Limitations

Theoretical Framework

Classical Nucleation Theory provides a quantitative model for predicting nucleation rates based on thermodynamic and kinetic considerations. The central equation of CNT expresses the steady-state nucleation rate (R) as:

R = N_S Z j exp(-ΔG*/(k_B T))

Where:

- ΔG* represents the free energy barrier for forming a critical nucleus

- N_S is the number of potential nucleation sites

- j represents the rate at which atoms attach to the nucleus

- Z is the Zeldovich factor, accounting for the probability that nuclei of critical size will continue to grow

- k_B is Boltzmann's constant

- T is absolute temperature [13]

The free energy barrier ΔG* derives from a balance between the volume free energy gain and surface energy cost, yielding for spherical nuclei:

ΔG* = 16πσ³/(3(Δg_v)²)

Where σ is the interfacial energy and Δg_v is the bulk free energy change per unit volume [13].
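The balance between the volume and surface terms can be verified numerically. The sketch below assumes the standard spherical-nucleus result ΔG* = 16πσ³/(3Δg_v²) with critical radius r* = 2σ/|Δg_v|, and uses hypothetical values of σ and Δg_v.

```python
import math

def cluster_free_energy(r, sigma, dg_v):
    """Free energy of a spherical cluster of radius r: volume term plus surface term."""
    return (4.0 / 3.0) * math.pi * r**3 * dg_v + 4.0 * math.pi * r**2 * sigma

def critical_radius(sigma, dg_v):
    """Radius at which the cluster free energy is maximal (m)."""
    return 2.0 * sigma / abs(dg_v)

def cnt_barrier(sigma, dg_v):
    """Barrier height 16*pi*sigma^3 / (3*dg_v^2) in joules."""
    return 16.0 * math.pi * sigma**3 / (3.0 * dg_v**2)

# Hypothetical inputs: sigma in J/m^2, dg_v in J/m^3 (negative = favorable)
sigma, dg_v = 0.1, -1.0e8

r_star = critical_radius(sigma, dg_v)
print(f"r* = {r_star * 1e9:.2f} nm")
print(f"barrier (closed form):  {cnt_barrier(sigma, dg_v):.3e} J")
print(f"barrier (evaluated r*): {cluster_free_energy(r_star, sigma, dg_v):.3e} J")
```

The two barrier values agree, confirming that the closed-form expression is simply the cluster free energy evaluated at the critical radius.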

CNT Parameters and Their Physical Significance

Table 1: Key Parameters in Classical Nucleation Theory

| Parameter | Symbol | Physical Meaning | Dependence |
|---|---|---|---|
| Free Energy Barrier | ΔG* | Energy required to form a critical nucleus | σ³/(Δg_v)² |
| Interfacial Energy | σ | Excess energy at phase boundary | System composition, temperature |
| Driving Force | Δg_v | Bulk free energy change per unit volume | Supercooling/supersaturation |
| Zeldovich Factor | Z | Probability nucleus grows versus dissolves | (ΔG*/(k_B T))^(1/2) |
| Kinetic Prefactor | j | Molecular attachment rate | Diffusion coefficient, molecular size |

Limitations in Solid-State Systems

Despite its widespread application, CNT faces significant challenges in quantitatively predicting nucleation behavior, particularly in solid-state systems where atomic mobility is limited. A strong assumption of CNT is that all thermally-induced stochastic fluctuations are possible regardless of how far their compositions deviate from the bulk alloy composition. However, in kinetically-constrained systems at lower temperatures, these stochastic clusters may not form within relevant experimental timescales [14]. This limitation has motivated the development of complementary models that better describe nucleation under diffusion-limited conditions.

Beyond CNT: Energy Landscape Approaches

Mapping the Energy Landscape

Energy landscape modeling represents a paradigm shift from CNT's continuum approach to an atomistic perspective. In this framework, the potential energy of a system is described as:

U = U(x₁, y₁, z₁, x₂, y₂, z₂, ..., x_N, y_N, z_N)

Where x, y, z represent the coordinates of each of the N atoms in a periodic box [15]. The energy landscape is constructed by mapping the relationship between local minima and first-order saddle points connecting these minima. This approach has proven particularly valuable for studying nucleation in glass-forming systems like barium disilicate, where it enables first-principles calculation of CNT parameters (interfacial free energy, kinetic barrier, and free energy difference) without empirical fitting [15].

Geometric Cluster Model

For solid-state nucleation at low temperatures where atomic mobility is limited, the geometric cluster model offers an alternative perspective. This approach considers that thermally-induced stochastic clusters cannot form within relevant experimental timescales. Instead, it treats the geometric clusters that statistically exist in any solution as nucleation precursors, presenting a model for their rate of "activation" [14]. This model has successfully predicted phase competition during crystallization of Al-Ni-Y metallic glasses and precipitate number density in Cu-Co and Fe-Cu alloys.

Workflow: From Classical to Modern Frameworks

The following diagram illustrates the conceptual evolution from CNT-based to energy landscape approaches:

Max-ΔG Theory: Thermodynamic Control in Solid-State Reactions

Theoretical Foundation

The max-ΔG theory represents a significant advancement in predicting outcomes of solid-state reactions. This principle states that when reaction energies are sufficiently large, the initial product formed between reactants will be the one that produces the largest decrease in Gibbs energy (ΔG), regardless of reactant stoichiometry. Predictions are made by computing ΔG for each possible reaction in a compositionally unconstrained manner and normalizing per atom of material formed [9]. This approach is justified by the observation that solid products form locally at particle interfaces without knowledge of the sample's overall composition.

The connection between max-ΔG theory and nucleation kinetics emerges from the CNT rate equation:

Q = A exp(-16πγ³/(3n²k_BTΔG²))

Here γ is the interfacial energy, n is the atomic density, and ΔG is the bulk reaction energy. The exponential term varies by orders of magnitude with changes in ΔG, overwhelmingly influencing the nucleation rate compared to the prefactor A [9].

Threshold for Thermodynamic Control

Recent research has quantified the conditions under which max-ΔG theory applies, establishing a threshold for thermodynamic control. Experimental studies across 37 pairs of reactants revealed that initial product formation can be predicted when the driving force for one product exceeds that of all competing phases by ≥60 meV/atom [9]. Below this threshold, kinetic factors predominantly determine the initial product, as multiple phases have comparable driving forces for formation.

Table 2: Experimental Validation of Max-ΔG Theory

| System | Number of Reactions | Prediction Accuracy (>60 meV/atom) | Key Experimental Method |
|---|---|---|---|
| Li-Mn-O | 11 | 91% | Synchrotron XRD |
| Li-Nb-O | 11 | 100% (with LiOH) | In situ XRD |
| Multi-component | 26 | 85% | ML-guided XRD |
| Overall | 37 | 89% | Combined techniques |

Large-scale analysis of Materials Project data indicates that approximately 15% of possible reactions (105,652 reactions) fall within this regime of thermodynamic control, highlighting the significant opportunity for predicting synthesis pathways from first principles [9].

Computational Methods for Free Energy Prediction

Alchemical Transformations

Alchemical transformation methods, including Free Energy Perturbation (FEP) and Thermodynamic Integration (TI), compute free energy differences through non-physical pathways. These methods employ a hybrid Hamiltonian defined as a linear interpolation between states A and B:

H(λ) = (1 - λ)H_A + λH_B

Where the coupling parameter λ ranges from 0 (state A) to 1 (state B) [16]. In drug discovery, these approaches are particularly valuable for calculating relative binding free energies between analogous compounds, forming the basis for lead optimization in pharmaceutical development.
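For Thermodynamic Integration, the free energy difference is the integral of the ensemble-averaged derivative ⟨∂H/∂λ⟩ over λ from 0 to 1; for a linear interpolation between the two end states this derivative is simply H_B - H_A. The sketch below applies the trapezoidal rule to per-window averages. The λ schedule and the averages are hypothetical values standing in for simulation output.

```python
def thermodynamic_integration(lambdas, dH_dlambda_means):
    """Trapezoidal estimate of dG = integral over lambda of <dH/dlambda>.

    lambdas must be sorted in increasing order and span the range of interest;
    dH_dlambda_means holds the ensemble average collected at each lambda window.
    """
    if len(lambdas) != len(dH_dlambda_means) or len(lambdas) < 2:
        raise ValueError("need at least two matching lambda windows")
    total = 0.0
    for k in range(len(lambdas) - 1):
        width = lambdas[k + 1] - lambdas[k]
        total += 0.5 * width * (dH_dlambda_means[k] + dH_dlambda_means[k + 1])
    return total

# Hypothetical window averages in kcal/mol
lambdas = [0.0, 0.25, 0.5, 0.75, 1.0]
means = [4.0, 2.0, 1.0, 0.5, 0.0]
print(f"dG estimate: {thermodynamic_integration(lambdas, means):.3f} kcal/mol")
```

The trapezoidal rule is the simplest choice; smoother quadrature or more λ windows may be needed where the integrand changes rapidly.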

Nonequilibrium Switching (NES)

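Nonequilibrium Switching represents a transformative approach that replaces slow equilibrium simulations with rapid, bidirectional transformations. NES leverages the statistical relationship (the Jarzynski equality):

\[ e^{-\Delta G / k_B T} = \left\langle e^{-W / k_B T} \right\rangle \]

where W is the work performed during nonequilibrium transformations, k_B is the Boltzmann constant, T is the absolute temperature, and the average runs over repeated switching trajectories [17]. This method achieves 5-10× higher throughput compared to traditional alchemical methods, enabling broader exploration of chemical space in drug discovery applications.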
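
The sketch below shows how a free energy difference can be recovered from nonequilibrium work values, assuming the statistical relationship intended here is the Jarzynski equality, ΔG = −kT·ln⟨exp(−W/kT)⟩. The function name `jarzynski_delta_g` and the synthetic Gaussian work distribution are illustrative assumptions. For Gaussian work with mean μ and standard deviation σ the exact result is μ − σ²/(2kT), which gives a reference value to compare against.

```python
import math
import random


def jarzynski_delta_g(work_values, kT=1.0):
    """Estimate dG = -kT * ln< exp(-W / kT) > from a list of work values.

    A log-sum-exp shift keeps the exponential average numerically stable.
    """
    scaled = [-w / kT for w in work_values]
    shift = max(scaled)
    log_mean = shift + math.log(
        sum(math.exp(s - shift) for s in scaled) / len(scaled)
    )
    return -kT * log_mean


# Synthetic forward-switching work values (hypothetical, in units of kT).
rng = random.Random(1)
mu, sigma = 2.0, 1.0
work = [rng.gauss(mu, sigma) for _ in range(100_000)]

estimate = jarzynski_delta_g(work)
exact = mu - sigma**2 / 2.0  # analytic result for Gaussian work, kT = 1
print(f"Jarzynski estimate: {estimate:.3f}, exact: {exact:.3f}")
```

The exponential average is dominated by rare low-work trajectories, which is one reason bidirectional protocols are commonly analyzed with estimators that combine forward and reverse work distributions; that refinement is not shown here.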

Path-Based Methods and Collective Variables

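Path-based methods compute free energy differences along defined reaction pathways, typically producing a Potential of Mean Force (PMF) along selected Collective Variables (CVs). Path Collective Variables (PCVs) represent a sophisticated approach to describing complex transformations:

\[ S(x) = \frac{\sum_{i=1}^{N} i\, e^{-\lambda \lVert x - x_i \rVert^2}}{\sum_{i=1}^{N} e^{-\lambda \lVert x - x_i \rVert^2}}, \qquad Z(x) = -\frac{1}{\lambda} \ln \sum_{i=1}^{N} e^{-\lambda \lVert x - x_i \rVert^2} \]

where the x_i are N reference configurations ordered along the pathway, λ is a smoothing parameter (unrelated to the alchemical coupling parameter), S(x) measures progression along the pathway, and Z(x) quantifies orthogonal deviations from it [16]. These variables enable studying large-scale conformational transitions and ligand binding to flexible targets.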
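
To make the two path variables concrete, the sketch below evaluates the commonly used definitions S(x) = Σ i·exp(−λd_i²)/Σ exp(−λd_i²) and Z(x) = −(1/λ)·ln Σ exp(−λd_i²) for a toy two-dimensional path. The function name `path_cvs`, the plain Euclidean distance (production codes typically use an aligned RMSD), and the reference frames are assumptions made for illustration.

```python
import math


def path_cvs(x, frames, lam):
    """Return (S, Z) for configuration x relative to ordered reference frames.

    S is the weighted frame index (1-based) and Z the distance-like
    deviation from the path; lam controls how sharply frames are weighted.
    """
    d2 = [sum((a - b) ** 2 for a, b in zip(x, frame)) for frame in frames]
    d2_min = min(d2)
    # Shift by the smallest distance so the exponentials never underflow to 0/0.
    weights = [math.exp(-lam * (d - d2_min)) for d in d2]
    norm = sum(weights)
    s = sum((i + 1) * w for i, w in enumerate(weights)) / norm
    z = d2_min - math.log(norm) / lam
    return s, z


# A straight toy path with three reference frames (hypothetical coordinates).
frames = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
print(path_cvs((1.0, 0.0), frames, lam=50.0))  # on the path, at the middle frame
print(path_cvs((1.0, 0.5), frames, lam=50.0))  # displaced perpendicular to the path
```

A point sitting on the second frame gives S close to 2 and Z close to 0, while a perpendicular displacement leaves S unchanged and raises Z to roughly the squared distance from the path.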

Computational Workflow Comparison

The diagram below illustrates key computational methods for free energy calculation:

Experimental Protocols and Research Tools

Validating Nucleation Theories

Experimental validation of nucleation theories and the max-ΔG framework requires sophisticated characterization techniques:

In Situ X-ray Diffraction (XRD) Protocol:

- Sample Preparation: Reactant powders are mixed in appropriate stoichiometries and prepared as thin layers to maximize signal quality and thermal uniformity.

- Thermal Treatment: Samples are heated to target temperatures (e.g., 700°C for Li-Nb-O systems) at controlled rates (typically 10°C/min) while collecting diffraction patterns.

- Data Collection: Using synchrotron radiation sources (e.g., ALS Beamline 12.2.2), collect XRD patterns at high frequency (2 scans/minute) to capture transient phase formation.

- Phase Identification: Analyze diffraction patterns to identify crystalline phases and determine the temporal sequence of appearance.

- Quantification: Apply Rietveld refinement to determine weight fractions of each phase as a function of temperature/time [9].

Machine Learning-Guided XRD:

- Implement active learning algorithms to steer the diffractometer toward features that facilitate intermediate identification.

- Use real-time pattern recognition to adjust measurement parameters based on emerging phases.

- Accumulate data across multiple reaction systems to build comprehensive validation datasets [9].

Computational Validation Methods

Energy Landscape Modeling Protocol:

- System Setup: Construct atomic models of supercooled liquids and crystalline phases (e.g., 768-atom systems for barium disilicate).

- Landscape Mapping: Identify local minima and saddle points using eigenvector-following methods or similar techniques.

- Parameter Calculation: Independently determine interfacial energy, kinetic barriers, and free energy differences from landscape topography.

- Rate Prediction: Compute nucleation rates using CNT expression with calculated parameters.

- Validation: Compare predicted rates and liquidus temperatures with experimental data [15].
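
The rate-prediction step can be sketched with the textbook CNT expressions for a spherical nucleus: barrier ΔG* = 16πγ³/(3ΔG_v²), critical radius r* = 2γ/|ΔG_v|, and rate J = J₀·exp(−ΔG*/k_BT). This is an assumption about which CNT form is meant; the kinetic barrier mentioned in the protocol is lumped into the prefactor J₀ here, and the function names and input values are hypothetical.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def cnt_barrier(gamma, dg_v):
    """Nucleation barrier (J) for interfacial energy gamma (J/m^2) and
    volumetric free energy difference dg_v (J/m^3, negative when the
    crystal is favoured)."""
    return 16.0 * math.pi * gamma**3 / (3.0 * dg_v**2)


def critical_radius(gamma, dg_v):
    """Radius (m) of the critical nucleus."""
    return 2.0 * gamma / abs(dg_v)


def cnt_rate(gamma, dg_v, temperature, prefactor):
    """Steady-state nucleation rate, in the units of the prefactor."""
    return prefactor * math.exp(-cnt_barrier(gamma, dg_v) / (K_B * temperature))


# Hypothetical inputs, for illustration only.
gamma, dg_v, temperature = 0.1, -1.0e9, 1000.0
print(f"barrier = {cnt_barrier(gamma, dg_v):.3e} J")
print(f"r*      = {critical_radius(gamma, dg_v):.3e} m")
print(f"J / J0  = {cnt_rate(gamma, dg_v, temperature, 1.0):.3e}")
```

Because γ enters the barrier cubed and the barrier sits inside an exponential, small errors in the interfacial energy change the predicted rate by orders of magnitude, which is why determining it independently from the energy landscape matters.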

Research Reagent Solutions

Table 3: Essential Materials and Computational Tools

| Category | Specific Item/Technique | Function/Application | Key References |
|---|---|---|---|
| Experimental Characterization | Synchrotron XRD | High-resolution, time-resolved phase identification | [9] |
| Experimental Characterization | Differential Scanning Calorimetry (DSC) | Thermal analysis for nucleation and growth studies | [15] |
| Computational Methods | Molecular Dynamics (MD) | Atomistic simulation of nucleation events | [15] |
| Computational Methods | Nonequilibrium Switching (NES) | High-throughput free energy calculations | [17] [16] |
| Computational Methods | Path Collective Variables (PCVs) | Defining reaction pathways for complex transformations | [16] |
| Data Resources | Materials Project Database | Thermodynamic data for max-ΔG predictions | [9] [18] |
| Data Resources | JARVIS Database | Training data for machine learning models | [18] |
| Software Tools | RelaxPy | Modeling glass relaxation behavior | [15] |
| Software Tools | KineticPy | Calculating long-time kinetics in energy landscapes | [15] |

The evolution from Classical Nucleation Theory to modern energy landscape methods represents a fundamental shift in how we conceptualize and predict phase transformations. While CNT established important foundational principles, its limitations in quantitative prediction—particularly for solid-state systems—have driven the development of more sophisticated frameworks. The max-ΔG theory provides a quantitative threshold for thermodynamic control in solid-state reactions, establishing that when one product's driving force exceeds competitors by ≥60 meV/atom, the initial phase formed becomes predictable from thermodynamics alone.

Looking forward, several emerging trends promise to further advance this field. Machine learning approaches are demonstrating remarkable efficiency in predicting compound stability, with ensemble methods achieving high accuracy using significantly less training data [18]. The integration of nonequilibrium methods with advanced collective variables enables both free energy prediction and mechanistic insight into binding pathways [16]. Furthermore, the growing availability of materials databases coupled with advanced sampling algorithms suggests a future where computational prediction increasingly guides experimental synthesis across materials science and pharmaceutical development.

As these theoretical frameworks continue to mature, their integration across scales—from electronic structure to macroscopic phase formation—will enable increasingly accurate prediction and control of material structure and properties. This progression from phenomenological models to first-principles prediction represents a fundamental transformation in our approach to materials design and synthesis planning.

The Critical Role of Maximum Delta-G in Predicting Polymorphic Transitions and Reaction Initiation

In the solid state, the Gibbs free energy (G) is a central thermodynamic potential that determines the stability and spontaneous evolution of a system. It is defined by the equation G = H - TS, where H is enthalpy, T is absolute temperature, and S is entropy [2] [1]. The change in Gibbs free energy, ΔG, during a reaction or phase transition provides a quantitative measure of the thermodynamic driving force: negative ΔG values indicate spontaneous processes, while positive values signify non-spontaneous ones that require external energy input [2]. For polymorphic systems—where the same chemical compound can exist in multiple crystalline forms—the relative stability of different polymorphs is determined by subtle differences in their Gibbs free energies. Research indicates that approximately half of all polymorph pairs are separated by less than 2 kJ mol⁻¹ in lattice energy, making accurate ΔG prediction critically important yet challenging [19]. The concept of maximum ΔG refers to the initial thermodynamic driving force available at the onset of a solid-state reaction or polymorphic transition, which plays a decisive role in determining whether a desired polymorph will successfully nucleate and grow or become trapped in metastable intermediate states.

Theoretical Framework of Maximum Delta-G Theory

The Maximum Delta-G Theory posits that the initial thermodynamic driving force, represented by the maximum available ΔG, governs the early stages of polymorphic transformations and solid-state reactions. This theory provides a framework for understanding why certain precursor combinations successfully form target phases while others form persistent intermediates that consume the available driving force. The fundamental equation ΔG = ΔH - TΔS encapsulates the competing energetic and entropic contributions that must be balanced to achieve successful polymorph formation [2] [1].

In practical terms, precursors selected for solid-state synthesis are initially ranked by their calculated thermodynamic driving force (ΔG) to form the target material, with the most negative values representing the largest driving forces [20]. However, reactions with the largest initial ΔG may not always be optimal, as they can also facilitate the formation of stable intermediate phases that consume the available energy and prevent the target material from forming [20]. The Maximum Delta-G Theory addresses this paradox by considering not just the initial driving force but also how it is partitioned throughout the reaction pathway. Effective precursor selection requires maintaining sufficient driving force (ΔG′) at the target-forming step, even after accounting for intermediate compound formation [20].
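
The trade-off described above can be illustrated with a short ranking sketch. It assumes that all energies refer to the same overall reaction and normalization, so that the driving force remaining at the target-forming step is ΔG′ = ΔG(total) − ΣΔG(intermediate steps). The function names, precursor-set labels, and energies are hypothetical and are not taken from ARROWS3 or its data.

```python
def remaining_driving_force(dg_total, dg_intermediates):
    """Driving force dG' left for the target-forming step.

    dg_total is the overall precursor-to-target reaction energy and
    dg_intermediates the energies released by intermediate-forming steps
    (all negative, same units and normalization, e.g. meV/atom).
    """
    return dg_total - sum(dg_intermediates)


def rank_precursor_sets(candidates):
    """Rank precursor sets by dG' (most negative first).

    candidates maps a label to (dg_total, [dg_intermediate, ...]).
    Returns (ordered_labels, scores).
    """
    scores = {
        label: remaining_driving_force(total, steps)
        for label, (total, steps) in candidates.items()
    }
    return sorted(scores, key=scores.get), scores


# Hypothetical energies in meV/atom, for illustration only.
candidates = {
    "set_A": (-300.0, [-260.0]),  # largest initial dG, but a very stable intermediate
    "set_B": (-220.0, [-60.0]),   # smaller initial dG, little lost to intermediates
}
order, scores = rank_precursor_sets(candidates)
print(order, scores)
```

In this toy case set_A has the larger initial driving force but retains only −40 meV/atom for the final step, so set_B, which retains −160 meV/atom, is ranked first.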

Table 1: Key Thermodynamic Parameters in Polymorphic Transitions

| Parameter | Symbol | Role in Polymorphic Transitions | Experimental Determination |
|---|---|---|---|
| Gibbs Free Energy | G | Determines overall thermodynamic stability of polymorphs | DFT calculations, melting data [21] [20] |
| Gibbs Free Energy Change | ΔG | Driving force for polymorphic transitions | ΔG = ΔH - TΔS [2] |
| Enthalpy Change | ΔH | Energy differences between polymorphs | Calorimetry, heats of fusion [21] |
| Entropy Change | ΔS | Disorder differences between polymorphs | Heat capacity measurements [21] |
| Transition Temperature | Tₜᵣₐₙₛ | Temperature where polymorph stabilities change | Extrapolation of ΔG to zero [21] |

Experimental Quantification of Delta-G in Polymorphic Systems

Determining Polymorph Stability Relationships

Accurately determining the Gibbs free energy differences between polymorphs is essential for predicting stability relationships and transition behavior. An established methodology involves deriving ΔG between two polymorphs from their melting data, including temperatures and heats of fusion [21]. This information enables extrapolation of ΔG across temperature ranges to estimate transition points where stability relationships reverse, distinguishing monotropic systems (one polymorph always more stable) from enantiotropic systems (stability dependent on temperature) [21]. For several systems examined, this melting data approach shows good agreement with traditional solubility methods [21].
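
A first-order version of the melting-data extrapolation can be sketched as follows. It assumes that heat-capacity differences between the solids and the melt are negligible, so that for each form G(liquid) − G(form) ≈ ΔH_fus·(1 − T/T_m); the published method may include further correction terms. The function names and the melting data are hypothetical.

```python
def delta_g_from_melting(temp, tm_1, dh_1, tm_2, dh_2):
    """Approximate G(form 2) - G(form 1) at temperature temp (K).

    tm_* are melting points (K) and dh_* heats of fusion (J/mol).
    A negative value means form 2 is the more stable form at temp.
    """
    return dh_1 * (1.0 - temp / tm_1) - dh_2 * (1.0 - temp / tm_2)


def transition_temperature(tm_1, dh_1, tm_2, dh_2):
    """Temperature (K) at which the extrapolated dG crosses zero, or None."""
    ds_1, ds_2 = dh_1 / tm_1, dh_2 / tm_2  # entropies of fusion
    if ds_1 == ds_2:
        return None
    return (dh_1 - dh_2) / (ds_1 - ds_2)


def classify_pair(tm_1, dh_1, tm_2, dh_2):
    """Label the pair enantiotropic if the crossing lies below both melting points."""
    t_trans = transition_temperature(tm_1, dh_1, tm_2, dh_2)
    if t_trans is not None and 0.0 < t_trans < min(tm_1, tm_2):
        return "enantiotropic", t_trans
    return "monotropic", t_trans


# Hypothetical melting data: form 1 melts higher but has the lower heat of fusion.
label, t_trans = classify_pair(450.0, 30000.0, 440.0, 32000.0)
print(label, round(t_trans, 1))
print(round(delta_g_from_melting(300.0, 450.0, 30000.0, 440.0, 32000.0), 1))
```

With these inputs the crossing falls near 330 K, below both melting points, so the pair is classified as enantiotropic, and the negative ΔG at 300 K indicates that the lower-melting form is the stable one at room temperature.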

The temperature dependence of polymorph stabilities arises from both phonon contributions to the vibrational partition function and phonon-driven thermal expansion [19]. Even in monotropic systems where one polymorph is always thermodynamically preferred, the magnitude of enthalpy and free energy differences between polymorphs depends on temperature. Computational approaches often employ fixed-cell optimizations that relax atomic positions while constraining lattice parameters to experimental room-temperature values, effectively capturing thermal expansion effects and associated phonon anharmonicity [19].

Computational Methods and Challenges

Computational prediction of polymorph stabilities presents significant challenges due to the exquisite sensitivity of results to methodological details. Density functional theory (DFT) methods, while widely used, can perform poorly for conformational polymorphs, with errors sometimes exceeding 5-10 kJ mol⁻¹—catastrophically large compared to the small energy differences characteristic of polymorphism [19]. These failures often stem from inaccurate intramolecular conformational energies and imperfect treatment of intermolecular interactions [19].

More advanced methods like fragment-based dispersion-corrected second-order Møller-Plesset perturbation theory (MP2D) have demonstrated improved performance for challenging conformational polymorph systems including o-acetamidobenzamide, ROY, and oxalyl dihydrazide [19]. Recent assessments of high-throughput Gibbs free energy predictions for crystalline solids reveal that while machine learning interatomic potentials (MLIPs) show promising performance, much of the calculated and experimental data for G still lack the accuracy and precision required for reliable thermodynamic modeling applications [22].

Table 2: Experimental Techniques for Delta-G Determination in Polymorphic Systems

| Technique | Measured Parameters | Polymorph Information Obtained | Limitations |
|---|---|---|---|
| Melting Calorimetry | Temperature and heat of fusion | ΔG between polymorphs, transition temperature [21] | Requires pure polymorph samples |
| Solution Calorimetry | Enthalpy of solution | Relative stability of polymorphs [21] | Solvent-polymorph interactions may complicate |
| X-ray Diffraction | Crystal structure, lattice parameters | Polymorph identification, phase transitions [20] | Does not directly measure energy |
| Harmonic Phonon Calculations | Vibrational frequencies | Temperature-dependent free energy [19] | Neglects anharmonic effects |
| Fixed-Cell Optimization | Atomic positions at fixed lattice parameters | Room-temperature free energy estimates [19] | Constrained lattice parameters |

Experimental Protocols for Studying Polymorphic Transitions

Tubulin GTP-Initiated Assembly Protocol

The mechanism of GTP-initiated microtubule assembly provides a biological paradigm for studying nucleotide-dependent polymorphic transitions. The experimental methodology involves several key steps [23]:

- Sample Preparation: Porcine tubulin is incubated with GDP or non-hydrolysable GTP analogs (GMPCPP, GTPγS) on ice to maintain nucleotide-bound states.

- Size Exclusion Chromatography: Dimeric fractions are isolated using gel filtration chromatography to obtain homogeneous samples.

- Cryo-EM Grid Preparation: Samples are applied to cryo-EM grids and vitrified using liquid ethane to preserve native structures.

- Data Collection and Processing: Cryo-EM datasets are collected for GDP-tubulin and GMPCPP-tubulin, followed by single-particle analysis and 3D reconstruction at resolutions of 3.5-3.9 Å.

- Structural Analysis: Atomic models are built for different nucleotide states, with structural comparisons revealing flexibility differences through Root-Mean-Square Deviation (RMSD) analysis.

- Molecular Dynamics Simulations: MD simulations complement structural data to elucidate the sequential straightening of curved tubulin heterodimers during assembly.

This protocol revealed that both GTP- and GDP-tubulin heterodimers adopt similar curved conformations with subtle flexibility differences, challenging earlier straight-versus-curved models and highlighting the role of GTP in reducing interdimer flexibility to stabilize lateral interactions [23].

Colloidal Heteroepitaxy for Polymorphic Transition Studies

Colloidal crystal systems enable direct observation of polymorphic transitions at single-particle resolution through the following methodology [24]:

- Substrate Fabrication: Thin colloidal crystal films are fabricated as substrates using convective assembly with polystyrene particles of specific sizes (e.g., 1100 nm).

- Epitaxial Growth: Smaller particles (e.g., 860 nm, approximately 0.78× substrate size) are introduced as the epitaxial phase with sodium polyacrylate polymer added to induce depletion attraction.

- Real-Time Microscopy: Polymorph formation and transitions are monitored using optical microscopy with single-particle resolution, distinguishing α-phase (3D islands) and β-phase (2D layers) by color intensity and orientation.

- Structural Analysis: The orientational order parameter θ₆ is analyzed to distinguish polymorphs based on their hexagonal symmetry orientation relative to the substrate.

- Solubility Mapping: Super-solubility curves for both polymorphs are determined by measuring particle number densities (ρ) at which nucleation occurs under varying polymer concentrations (Cₚ).

This approach revealed three types of polymorphic transitions leading to nonclassical behavior in nucleation, growth, and dissolution, with transition probabilities depending on metastable cluster stability rather than bulk phase stability [24].

Research Reagent Solutions for Polymorphic Studies

Table 3: Essential Research Reagents for Polymorphic Transition Studies

| Reagent/Chemical | Function in Polymorphic Studies | Example Application |
|---|---|---|
| GMPCPP/GTPγS | Non-hydrolysable GTP analogs | Study of GTP-initiated microtubule assembly without hydrolysis complications [23] |
| Sodium Polyacrylate | Depletion attraction inducer | Colloidal crystal formation and polymorph selection in heteroepitaxial growth [24] |
| Polystyrene Colloids | Model crystalline particles | Direct observation of polymorphic transitions at single-particle resolution [24] |
| CL-20 Crystals | Flexible molecular crystal model | Study of solid-solid polymorphic transitions involving complex conformational changes [25] |
| Y-Ba-Cu-O Precursors | Solid-state synthesis optimization | Testing ARROWS3 algorithm for precursor selection based on thermodynamic driving force [20] |

Visualization of Delta-G Theory and Polymorphic Pathways

The Polymorph Selection Pathways diagram illustrates the critical decision points in polymorph formation, showing how a high initial ΔG can lead to stable intermediate formation that consumes the available driving force, while a moderate ΔG may better maintain sufficient driving force (ΔG′) for target polymorph formation.

The ARROWS3 Algorithm Workflow diagram outlines the autonomous precursor selection process that actively learns from experimental outcomes to identify precursors that avoid highly stable intermediates, thereby retaining a larger thermodynamic driving force (ΔG′) for target material formation [20].

The critical role of maximum ΔG in predicting polymorphic transitions and reaction initiation represents a fundamental principle in solid-state chemistry and materials science. Experimental evidence from diverse systems—biological tubulin assembly, colloidal crystals, and molecular solids like CL-20—consistently demonstrates that the initial thermodynamic driving force governs polymorph selection pathways [23] [24] [25]. The ARROWS3 algorithm exemplifies how this principle can be operationalized for autonomous materials synthesis, outperforming black-box optimization by explicitly modeling intermediate formation and preserving driving force for target phases [20].

Future research directions should address several key challenges: improving the accuracy of Gibbs free energy predictions for conformational polymorphs beyond current DFT limitations [19], developing more sophisticated models that integrate kinetic factors with thermodynamic driving forces, and expanding autonomous platforms like ARROWS3 to encompass broader chemical spaces. As these methodologies mature, the Maximum Delta-G Theory promises to transform polymorph prediction from an empirical art to a quantitative science, enabling reliable design of solid forms with tailored properties for pharmaceutical, energy, and advanced manufacturing applications.

The prediction and control of phase transformations in complex materials under extreme conditions represent a central challenge in solid-state chemistry and materials science. These transformations are governed by the principles of thermodynamics, particularly the drive to minimize the Gibbs free energy (ΔG) of the system. In multi-stage phase transformations, materials navigate a complex energetic landscape through a series of intermediate states rather than transitioning directly from initial to final structure. Understanding these pathways is critical for advancing max delta G theory, which seeks to predict the thermodynamic stability and transformation behavior of solids under non-equilibrium conditions. The study of these phenomena has been hampered by traditional approaches that focus predominantly on initial and final states, neglecting the crucial role of intermediate phases that dictate the actual transformation pathway [26] [27].

Recent advances in experimental techniques and computational modeling have revealed that intermediate phases play a decisive role in determining whether a material will undergo a crystalline-to-crystalline transformation or become amorphous under irradiation. This whitepaper examines groundbreaking research on MAX phases—ternary layered carbides and nitrides—as a model system for elucidating these complex transformation mechanisms [26]. Through the integration of in situ characterization, theoretical modeling, and machine learning, researchers are developing predictive frameworks that can anticipate material behavior based on fundamental atomic properties, thereby creating a more robust foundation for max delta G theory in solid-state reaction prediction.

MAX Phases as Model Systems for Multi-Stage Transformations

MAX phases are a family of over 340 ternary layered carbides and nitrides with the general formula Mₙ₊₁AXₙ, where M is an early transition metal, A is an A-group element, and X is carbon or nitrogen. These "metallic ceramics" exhibit a unique combination of metallic and ceramic properties, making them promising candidates for applications in extreme environments such as nuclear reactors [26] [27]. Their complex layered structures and response to external stimuli like ion irradiation make them ideal model systems for studying multi-stage phase transformations.

Under irradiation, MAX phases typically undergo a transformation from an initial hexagonal structure (hex-phase) to an intermediate γ-phase with a hexagonal close-packed (hcp) structure, and then either to a face-centered cubic (fcc) structure or to an amorphous state [26]. Prior research had predominantly focused on the initial phases, with antisite defect formation energy in the initial structures considered a criterion for assessing irradiation resistance. However, this approach provided only limited predictive capability as it failed to account for the crucial role of the intermediate γ-phase in determining the ultimate transformation pathway [26] [27].

Table 1: Multi-Stage Transformation Pathways in M₂AlC MAX Phases

| Compound | Initial Structure | Intermediate Structure | Final Structure | Transformation Pathway |
|---|---|---|---|---|
| Cr₂AlC | Hexagonal (hex) | γ-phase (hcp) | Amorphous | hex → γ → amorphous |
| V₂AlC | Hexagonal (hex) | γ-phase (hcp) | Face-centered cubic (fcc) | hex → γ → fcc |
| Nb₂AlC | Hexagonal (hex) | γ-phase (hcp) | Face-centered cubic (fcc) | hex → γ → fcc |

Experimental Evidence of Distinct Transformation Pathways

In Situ Irradiation and TEM Methodology

The distinct transformation pathways of MAX phases were elucidated through sophisticated experimental protocols employing in situ ion irradiation coupled with real-time transmission electron microscopy (TEM). Researchers studied M₂AlC (M = Cr, V, Nb) systems at the Xiamen Multiple Ion Beam In-situ TEM Analysis Facility using an ion beam of 800 keV Kr²⁺ at room temperature [26] [27]. The experimental workflow encompassed several critical steps:

- Sample Preparation: High-quality MAX phase samples were synthesized and prepared for TEM analysis using standard techniques.

- In Situ Irradiation: Samples were subjected to controlled ion irradiation while inside the TEM column, with damage levels carefully quantified in displacements per atom (dpa).

- Real-Time Monitoring: Selected area electron diffraction (SAED) patterns were recorded continuously during irradiation to track structural evolution.

- High-Resolution Imaging: HRTEM micrographs were captured at specific damage levels to resolve atomic-scale structural details.

- Data Analysis: Diffraction patterns and images were analyzed to identify phase composition and transformation sequences.

This methodology enabled direct observation of the evolving atomic structure as a function of irradiation damage level, providing unprecedented insight into the complete multi-stage phase transformation pathway [26].

Composition-Dependent Transformation Behavior

The experimental results revealed striking compositional trends in irradiation-induced polymorphism. For all three materials (Cr₂AlC, V₂AlC, and Nb₂AlC), irradiation triggered the formation of intermediate γ-phases with hexagonal close-packed structure at relatively low damage levels (0.2-0.3 dpa), attributed to the accumulation of M-Al (M = Cr, V, Nb) antisite defects [26] [27].

However, the subsequent transformation pathways diverged significantly based on composition:

- Both V₂AlC and Nb₂AlC underwent the complete hex→γ→fcc phase transformation, with the γ-to-fcc transition occurring at a lower irradiation fluence in Nb₂AlC than in V₂AlC.

- In contrast, Cr₂AlC followed a hex→γ→amorphous pathway, with the intermediate γ-Cr₂AlC phase amorphizing starting from approximately 1.8 dpa without forming the fcc-phase [26].

High-resolution TEM (HRTEM) micrographs provided further evidence of these distinct behaviors. In Cr₂AlC irradiated at 1.8 dpa, researchers observed the coexistence of the γ-phase and amorphous phase nucleated inside the γ-phase, with no fcc-phase formation [26]. For V₂AlC at 13.8 dpa, a dual-phase region containing both γ-phase and fcc-phase with abundant stacking faults was observed, indicating an ongoing γ-to-fcc phase transformation [26] [27].

Theoretical Framework: Synchroshear Mechanism and Energetics

Atomic-Scale Transformation Mechanism

The divergence in transformation pathways between different MAX phase compositions can be explained through a synchroshear mechanism that operates at the atomic scale. Prior research had established that stacking faults (SFs) play a crucial role in triggering hcp-to-fcc or fcc-to-hcp phase transformations in MAX phases by altering stacking sequences [26]. In hexagonal MAX phases, SFs are typically produced by dissociation of perfect \(\frac{1}{3}\langle 11\bar{2}0\rangle(0001)\) dislocations along the (0001) basal planes according to the reaction:

\[ \frac{1}{3}\langle 11\bar{2}0\rangle \to \frac{1}{3}\langle 10\bar{1}0\rangle + \text{SF} + \frac{1}{3}\langle 01\bar{1}0\rangle \]

However, in complex materials like MAX phases with sublattices or ordered structures, the shearing mechanism differs from that in simple metals. The irradiation-induced γ-phase possesses an hcp structure with cation sublattice disorder and X atoms rearranged randomly across octahedral interstitial sites [26]. This complexity necessitates a coordinated motion of two Shockley partial dislocations—a mechanism termed "synchroshear"—consisting of two synchronous shears in different directions on adjacent atomic planes, similar to mechanisms observed in intermetallic Laves-phases [26] [27].

Energetics of Phase Transformations

The synchroshear mechanism was combined with ab initio calculations to determine the barrier energies along the minimum energy path (MEP) for the γ-to-fcc phase transformation in γ-M₂AlC (M = Cr, V, Nb) phases [26]. The computational methodology involved:

- Structure Generation: For each material, 36 samples of 2×2×1 supercells with different random atomic configurations were generated using the ATAT package based on the Special Quasirandom Structures (SQS) method.

- Path Calculation: A climbing image nudged elastic band (CINEB) approach was applied to search for the minimum energy path.

- Energetic Analysis: The barrier energies for the transformation were calculated and correlated with compositional factors.

These calculations revealed that structural distortion and bond covalency of the intermediate γ-phase determine the outcome of the transformation process [26]. The varying energy barriers for different compositions explain why some systems readily transform to the fcc structure while others become amorphous.
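
The energetic-analysis step can be sketched as a simple post-processing routine: given the image energies along each converged path, the forward barrier is the highest image energy minus the initial-state energy, and results are averaged over the random configurations. This is an assumed reading of the workflow, not the cited authors' script; the function names and the image energies below are hypothetical.

```python
from statistics import mean, stdev


def barrier_from_images(energies):
    """Forward barrier along one path: highest image energy minus the
    energy of the initial state (first image)."""
    return max(energies) - energies[0]


def summarize_barriers(paths):
    """Mean and sample standard deviation of the barriers over several
    paths, e.g. one path per random SQS configuration."""
    barriers = [barrier_from_images(path) for path in paths]
    spread = stdev(barriers) if len(barriers) > 1 else 0.0
    return mean(barriers), spread


# Hypothetical image energies (eV, relative to the initial gamma-phase state).
paths = [
    [0.0, 0.4, 0.9, 0.3, -0.2],
    [0.0, 0.5, 1.1, 0.2, -0.1],
]
avg, spread = summarize_barriers(paths)
print(f"mean barrier = {avg:.2f} eV, spread = {spread:.2f} eV")
```

Averaging over many configurations matters here because the γ-phase is disordered, so a single supercell gives only one sample of the barrier distribution.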

Table 2: Key Factors Influencing Transformation Pathways in MAX Phases

| Factor | Influence on Transformation Pathway | Experimental Evidence |
|---|---|---|
| Atomic Radii | Affects structural distortion in γ-phase | Smaller radii increase distortion energy |
| Electronegativity | Determines bond covalency in γ-phase | Higher electronegativity difference increases covalency |
| Stacking Fault Energy | Influences synchroshear mechanism | Lower SFE promotes amorphization over crystalline transformation |
| Cation Disorder | Alters potential energy landscape | Random site occupancy in γ-phase affects transformation barrier |

Integration with Max Delta G Theory and Predictive Frameworks

Thermodynamic Stability Predictions

The multi-stage transformation behavior of MAX phases must be understood within the broader context of max delta G theory and thermodynamic stability prediction. The Gibbs free energy (G) of a system determines its phase stability, with the driving force for transformations being the reduction of G. However, predicting G for solids remains challenging, particularly under non-equilibrium conditions [22]. Recent advances in machine learning interatomic potentials (MLIPs) and other computational approaches have shown promise in predicting G within the harmonic and quasi-harmonic approximations, though significant challenges remain in achieving the accuracy and precision required for reliable thermodynamic modeling [22].

The decomposition energy (ΔH_d), defined as the total energy difference between a given compound and competing compounds in a specific chemical space, serves as a key metric for thermodynamic stability [18]. Traditional approaches to determining compound stability through experimental investigation or density functional theory (DFT) calculations are characterized by inefficiency and high computational costs [18]. This has spurred the development of machine learning frameworks that can rapidly and cost-effectively predict compound stability.
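
One common way to evaluate a decomposition energy is to compare a compound's energy with the lower convex hull of its competing phases. The sketch below does this for a binary system only, which is an assumed simplification: real chemical spaces are multi-component and are normally handled with dedicated phase-diagram tools. The function names and the formation energies are hypothetical.

```python
def lower_hull(phases):
    """Lower convex hull of (x, energy_per_atom) points for a binary system,
    where x is the fraction of the second element."""
    best = {}
    for x, e in phases:  # keep only the lowest energy at each composition
        if x not in best or e < best[x]:
            best[x] = e
    hull = []
    for px, pe in sorted(best.items()):
        while len(hull) >= 2:
            (x1, e1), (x2, e2) = hull[-2], hull[-1]
            # Drop the last point if it lies on or above the chord to the new point.
            cross = (x2 - x1) * (pe - e1) - (e2 - e1) * (px - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append((px, pe))
    return hull


def hull_energy(hull, x):
    """Linearly interpolated hull energy at composition x."""
    for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return e1 + (e2 - e1) * (x - x1) / (x2 - x1)
    raise ValueError("composition lies outside the range of the hull")


def decomposition_energy(x, energy, competing):
    """Energy of a compound relative to the hull of its competing phases.
    Negative: stable against decomposition; positive: unstable."""
    return energy - hull_energy(lower_hull(competing), x)


# Hypothetical formation energies (eV/atom) of the competing phases.
competing = [(0.0, 0.0), (0.5, -1.0), (1.0, 0.0)]
print(decomposition_energy(0.25, -0.3, competing))  # above the hull
print(decomposition_energy(0.25, -0.7, competing))  # below the hull
```

The candidate compound is deliberately left out of the competing set, so a negative value means it would lower the hull if added.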

Machine Learning Approaches

Recent research has demonstrated the effectiveness of ensemble machine learning frameworks based on stacked generalization (SG) for predicting thermodynamic stability of inorganic compounds [18]. These approaches integrate models rooted in distinct domains of knowledge—such as electron configuration, atomic properties, and interatomic interactions—to mitigate individual model biases and enhance predictive performance.

The Electron Configuration models with Stacked Generalization (ECSG) framework achieves an Area Under the Curve score of 0.988 in predicting compound stability within the Joint Automated Repository for Various Integrated Simulations (JARVIS) database [18]. Notably, this framework demonstrates exceptional efficiency in sample utilization, requiring only one-seventh of the data used by existing models to achieve the same performance [18]. Such advances in stability prediction directly support the core objectives of max delta G theory by enabling more accurate forecasting of solid-state reaction outcomes.

Graph Theoretical Descriptors

Beyond traditional order parameters, graph theory (GT) offers a powerful mathematical toolbox for quantifying structural changes in complex phases over multiple length scales [28]. GT descriptors such as centrality measures and node-based fractal dimension (NFD) can identify complex phases combining molecular and nanoscale organization that are challenging to characterize with traditional methodologies [28]. These approaches are particularly valuable for capturing the simultaneous presence of order and disorder in transient states close to transition regions, providing enhanced capability for describing the complex pathways in multi-stage phase transformations.

Visualization of Multi-Stage Transformation Pathways

Transformation Pathways in MAX Phases: This diagram illustrates the divergent transformation pathways in MAX phases under irradiation, highlighting the critical role of the intermediate γ-phase and the synchroshear mechanism in determining the final structure.

Experimental Workflow for Phase Transformation Analysis

Experimental and Computational Workflow: This diagram outlines the integrated methodology combining in situ irradiation, TEM characterization, and computational analysis used to elucidate multi-stage transformation pathways in MAX phases.

Research Reagent Solutions and Essential Materials

Table 3: Key Research Materials and Computational Tools for Phase Transformation Studies

| Item | Function/Application | Specific Examples |
|---|---|---|
| MAX Phase Samples | Model systems for studying multi-stage transformations | M₂AlC (M = Cr, V, Nb) |
| In Situ TEM Facility | Real-time observation of irradiation-induced transformations | Xiamen Multiple Ion Beam In-situ TEM Analysis Facility |
| Ion Beam Source | Inducing controlled damage in materials | 800 keV Kr²⁺ ions |
| DFT Software | Calculating energy barriers and electronic structure | Density Functional Theory codes |
| Special Quasirandom Structures | Modeling disordered intermediate phases | ATAT package with SQS method |
| Machine Learning Frameworks | Predicting thermodynamic stability | ECSG framework with stacked generalization |
| Graph Theory Algorithms | Quantifying complex phase organization | Centrality measures, node-based fractal dimension |

The investigation of multi-stage phase transformations in MAX phases has revealed the critical importance of intermediate states in determining material evolution under extreme conditions. By combining in situ experimentation with theoretical modeling, researchers have established that structural distortion and bond covalency of the intermediate γ-phase dictate whether a material will follow a crystalline-to-crystalline transformation pathway or become amorphous [26]. This understanding enables the development of predictive rules based on fundamental atomic properties—atomic radii and electronegativity—that can forecast phase transformation behavior across the MAX phase family [26] [27].

These advances directly contribute to the refinement of max delta G theory by providing a more nuanced understanding of the energetic landscapes that govern solid-state transformations. The integration of machine learning approaches, particularly ensemble methods that combine diverse knowledge domains, offers promising avenues for enhancing the prediction of thermodynamic stability and transformation pathways [18]. Furthermore, graph theoretical descriptors provide powerful new tools for quantifying complex organizational patterns in transient states, capturing the simultaneous order and disorder that characterize phase transitions in complex materials [28].

As research in this field progresses, the integration of high-throughput computation, advanced in situ characterization, and machine learning will continue to expand our understanding of multi-stage transformation pathways. These insights will not only facilitate the design of materials with enhanced performance in extreme environments but also advance the fundamental theoretical frameworks that predict and explain solid-state reactions across diverse material systems.

Computational and Experimental Methods for Delta-G Determination in Solids

The accurate prediction of solid-state reaction outcomes, a core objective of max delta G theory, hinges on a precise understanding of thermodynamic stabilities. For DNA-guided synthesis and biomolecular engineering, this translates to a critical need for robust, large-scale experimental data on nucleic acid folding energetics. Traditional methods for determining DNA thermodynamics, such as UV melting and differential scanning calorimetry (DSC), are laborious and low-throughput, creating a significant data bottleneck that limits the parameterization and validation of predictive models [29]. This guide details two transformative high-throughput techniques—Array Melt and Toehold Exchange Energy Measurement (TEEM)—that are overcoming these limitations. By enabling the simultaneous measurement of thousands to millions of DNA sequences, these methods provide the extensive thermodynamic datasets required to refine nearest-neighbor models and develop advanced machine-learning approaches, thereby enhancing our ability to predict and control molecular behavior in complex reactions.

The Gibbs free energy change, ΔG, is the central thermodynamic quantity in these studies. It determines the spontaneity of a process such as DNA folding or a chemical reaction. The fundamental relationship is given by:

ΔG = ΔH - TΔS

where ΔH is the change in enthalpy, T is the absolute temperature in kelvin, and ΔS is the change in entropy [2] [1]. A negative ΔG indicates a process that is spontaneous at constant temperature and pressure. In the context of max delta G theory, which often seeks to predict the most stable products of a reaction, the accurate experimental determination of ΔG for intermediate and final states is paramount. These high-throughput methods provide a direct or indirect way to measure ΔG at scale, offering the empirical foundation needed to move beyond theoretical approximations.
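The relationship can be evaluated numerically as in the short sketch below. The ΔH and ΔS values are made-up illustrative numbers, not data from the cited studies, and the function name `gibbs_free_energy` is an assumption for this example. The sketch also assumes that ΔH and ΔS do not change with temperature.

```python
def gibbs_free_energy(delta_h_kcal, delta_s_cal_per_k, temp_celsius):
    """Return ΔG in kcal/mol from ΔH (kcal/mol) and ΔS (cal/(mol·K))."""
    temp_kelvin = temp_celsius + 273.15
    # Convert ΔS from cal to kcal so both terms share the same unit.
    return delta_h_kcal - temp_kelvin * delta_s_cal_per_k / 1000.0

# Hypothetical folding parameters (illustrative only).
delta_h = -40.0    # kcal/mol
delta_s = -110.0   # cal/(mol·K)

dg_37 = gibbs_free_energy(delta_h, delta_s, 37.0)
is_spontaneous = dg_37 < 0

# Temperature at which ΔG changes sign (ΔG = 0), in kelvin.
crossover_kelvin = delta_h * 1000.0 / delta_s
```

With these numbers ΔG at 37°C is negative, so the process is favorable at that temperature. It becomes unfavorable above the crossover temperature, where the entropic term outweighs the enthalpic term.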

High-Throughput Methodologies at a Glance

The following table summarizes the core characteristics of the two primary high-throughput techniques discussed in this guide.

Table 1: Comparison of High-Throughput DNA Thermodynamic Measurement Techniques

| Feature | Array Melt Method | TEEM (Toehold Exchange Energy Measurement) |
|---|---|---|
| Core Principle | Fluorescence de-quenching from temperature-dependent DNA hairpin unfolding on a flow cell [29]. | Competitive DNA strand displacement measured via fluorescence to directly determine ΔG° at each temperature [30]. |
| Throughput | Extremely High (Millions of melt curves from 27,732 sequence variants in one study) [29]. | High (Over 1,200 ΔG° values per plate) [30]. |
| Reported Precision (Standard Error) | Uncertainty in ΔG₃₇ ~0.1 kcal/mol for many variants [29]. | ~0.05 kcal/mol [30]. |
| Key Advantage | Unprecedented scale and direct observation of thermal melting. | Direct measurement of ΔG° across a wide temperature range (40+ °C) without extrapolation [30]. |
| Typical Application | Deriving improved thermodynamic parameters for DNA folding models [29]. | Precisely measuring free energy penalties of motifs like bulges and mismatches [30]. |

The Array Melt Technique

Detailed Experimental Protocol

The Array Melt protocol transforms a standard Illumina sequencing flow cell into a high-throughput thermodynamic sensor [29]. The process begins with the design and synthesis of a DNA oligonucleotide pool containing tens of thousands of unique hairpin sequences. These sequences are flanked by universal adapter sequences for amplification and loading onto a MiSeq flow cell. During the sequencing process, single DNA molecules are amplified into localized clusters, each containing approximately 1,000 copies of the same sequence.

The critical measurement phase involves the following steps:

- Probe Annealing: A Cy3-labeled fluorescent oligonucleotide is annealed to the 5' end of the hairpin library, and a Black Hole Quencher (BHQ)-labeled oligonucleotide is annealed to the 3' end. The binding sites for these probes are designed to have very high melting temperatures (~74°C), ensuring they remain bound throughout the experimental temperature ramp [29].

- Temperature Ramp and Imaging: The flow cell is subjected to a controlled temperature ramp from 20°C to 60°C. At low temperatures, the hairpin is folded, bringing the fluorophore and quencher into close proximity, which results in low fluorescence. As the temperature increases, the hairpin unfolds, separating the fluorophore and quencher and increasing the fluorescence intensity [29].

- Data Acquisition: Fluorescence images are captured at each temperature step. The physical location of each cluster on the flow cell is mapped to its specific sequence during an initial sequencing run, allowing the melt curve (fluorescence vs. temperature) to be tracked for millions of individual sequence clusters simultaneously [29].

- Data Processing and QC: Fluorescence signals are normalized and fitted to a two-state melt model. Variants that do not exhibit clear two-state behavior or melt outside the measurement range are filtered out. For quality-controlled data, the enthalpy (ΔH) and melting temperature (Tₘ) are extracted from the curve fit, and the Gibbs free energy at 37°C (ΔG₃₇) is calculated [29].
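The last step can be sketched as follows for a unimolecular hairpin, where ΔG = 0 at Tₘ and therefore ΔS = ΔH / Tₘ. This is a minimal sketch that assumes a temperature-independent ΔH (no heat-capacity term); the fitting procedure in [29] may differ. The fit values and function names are hypothetical.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol·K)

def to_kelvin(temp_celsius):
    return temp_celsius + 273.15

def delta_g_fold(delta_h, tm_celsius, temp_celsius):
    """Folding free energy (kcal/mol), assuming temperature-independent ΔH.

    At Tm, ΔG = 0, so ΔS = ΔH / Tm and ΔG(T) = ΔH * (1 - T / Tm).
    """
    return delta_h * (1.0 - to_kelvin(temp_celsius) / to_kelvin(tm_celsius))

def fraction_unfolded(delta_h, tm_celsius, temp_celsius):
    """Two-state population of the unfolded (high-fluorescence) state."""
    dg = delta_g_fold(delta_h, tm_celsius, temp_celsius)
    k_fold = math.exp(-dg / (R_KCAL * to_kelvin(temp_celsius)))
    return 1.0 / (1.0 + k_fold)

# Hypothetical fit results for one hairpin variant (not measured data).
delta_h_fit = -30.0  # kcal/mol
tm_fit = 50.0        # °C

dg_37 = delta_g_fold(delta_h_fit, tm_fit, 37.0)

# Idealized normalized melt curve over the 20-60 °C measurement window.
melt_curve = [(t, fraction_unfolded(delta_h_fit, tm_fit, t))
              for t in range(20, 61, 5)]
```

In this model the normalized fluorescence follows the unfolded fraction, which passes through 0.5 at Tₘ. A variant whose Tₘ lies far outside the 20°C to 60°C window would show an almost flat curve, which is consistent with the filtering of variants that melt outside the measurement range.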

Research Reagent Solutions

Table 2: Key Reagents for the Array Melt Protocol

| Reagent / Material | Function in the Experiment |
|---|---|
| Illumina MiSeq Flow Cell | A repurposed platform providing a structured surface for millions of parallel, sequence-mapped biochemical reactions [29]. |
| Custom DNA Oligo Pool | A synthesized library containing up to tens of thousands of unique DNA hairpin sequences to be investigated [29]. |
| Cy3-labeled Oligonucleotide | Fluorophore-labeled probe that anneals to a universal binding site; its emission is quenched when the hairpin is folded [29]. |
| BHQ-labeled Oligonucleotide | Quencher-labeled probe that anneals to a second universal binding site; absorbs the energy from the excited Cy3 dye via FRET when in close proximity [29]. |
| Two-State Model Fitting Algorithm | A computational quality control step that filters out melt curves that do not conform to a simple folded-unfolded transition, ensuring data reliability [29]. |

Array Melt Workflow Visualization

The following diagram illustrates the core workflow and signaling principle of the Array Melt technique.

Diagram 1: Array Melt workflow and signaling principle.

The Toehold Exchange Energy Measurement (TEEM) Technique

Detailed Experimental Protocol

TEEM is a solution-based method that infers DNA motif thermodynamics from the equilibrium of a competitive strand displacement reaction, bypassing the need for a thermal melt curve [30]. The system involves three DNA strands: a reporter strand (C, functionalized with a fluorophore) and two competing strands (X and P, where P is functionalized with a quencher). The sequences are designed so that the binding of X and P to C is mutually exclusive.

The experimental procedure is as follows:

- Sample Preparation: Three separate samples are prepared for each reaction condition:

  - Reaction Sample: Contains strands C, X, and P.

  - Max Signal Control: Contains C and X (all C is in the high-fluorescence CX state).

  - Min Signal Control: Contains C and P (all C is in the low-fluorescence CP state) [30].

- Fluorescence Measurement: The fluorescence of all three samples is measured at a single temperature or across a temperature gradient.

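- Yield Calculation: The reaction yield (Y), representing the fraction of C strands bound to X, is calculated using the formula Y = (F_reaction - F_min) / (F_max - F_min), where F_reaction, F_min, and F_max are the measured fluorescence values of the reaction sample, the min signal control, and the max signal control, respectively [30].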

- ΔG° Calculation: The equilibrium constant K_eq is derived from the yield and the known concentrations of X and P, with K_eq = ([CX][P]) / ([CP][X]). The standard free energy change is then calculated directly as ΔG° = -RT ln(K_eq), where R is the gas constant and T is the absolute temperature [30].

- Motif ΔΔG° Determination: To find the thermodynamic penalty of a motif (e.g., a bulge), ΔG° is measured for a reference reaction (without the motif) and a test reaction (with the motif). The difference, ΔΔG° = ΔG°_test - ΔG°_reference, gives the destabilizing energy of the motif [30]. The method's high precision (standard error ~0.05 kcal/mol) allows for the detection of subtle thermodynamic differences.
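The calculation chain from fluorescence to ΔΔG° can be sketched as below. This is a minimal sketch under two stated assumptions: the reaction is written as CP + X ⇌ CX + P, and every C strand is bound to either X or P, so free concentrations follow from mass balance. The fluorescence readings, concentrations, and function names are hypothetical and are not taken from [30].

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol·K)

def reaction_yield(f_reaction, f_min, f_max):
    """Fraction of C bound to X, from the three fluorescence readings."""
    return (f_reaction - f_min) / (f_max - f_min)

def equilibrium_constant(y, c_total, x_total, p_total):
    """K_eq = [CX][P] / ([CP][X]), assuming all C is bound to X or P."""
    cx = y * c_total
    cp = (1.0 - y) * c_total
    x_free = x_total - cx  # mass balance on X
    p_free = p_total - cp  # mass balance on P
    return (cx * p_free) / (cp * x_free)

def standard_free_energy(k_eq, temp_celsius):
    """ΔG° = -RT ln(K_eq), in kcal/mol."""
    return -R_KCAL * (temp_celsius + 273.15) * math.log(k_eq)

# Hypothetical readings (arbitrary fluorescence units) and concentrations
# (arbitrary but consistent units): C = 1, X = 2, P = 2.
y_ref = reaction_yield(850.0, 100.0, 1100.0)   # reference, no motif
y_test = reaction_yield(600.0, 100.0, 1100.0)  # test, with motif

k_ref = equilibrium_constant(y_ref, 1.0, 2.0, 2.0)
k_test = equilibrium_constant(y_test, 1.0, 2.0, 2.0)

dg_ref = standard_free_energy(k_ref, 25.0)
dg_test = standard_free_energy(k_test, 25.0)
ddg_motif = dg_test - dg_ref  # positive value means the motif destabilizes
```

Because the same formula is applied to both the reference and the test reaction, errors shared by both measurements largely cancel in the difference, which is one reason a differential quantity such as ΔΔG° can be reported with small uncertainty.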

Research Reagent Solutions

Table 3: Key Reagents for the TEEM Protocol

| Reagent / Material | Function in the Experiment |
|---|---|
| Fluorophore-labeled Strand (C) | The central reporter strand; its binding partners determine the system's fluorescence output [30]. |
| Quencher-labeled Strand (P) | A competitor strand that, when bound to C, quenches the fluorophore, yielding low fluorescence [30]. |
| Unlabeled Competitor Strand (X) | The strand of interest; its successful binding to C displaces P and restores high fluorescence [30]. |
| Phosphate Buffered Saline (PBS) | A standard buffer used to maintain a consistent ionic strength and pH for the reactions [30]. |
| Real-Time PCR Thermocycler | A precise instrument used to hold samples at specific temperatures or to perform temperature gradients for fluorescence measurement [30]. |

TEEM Reaction Visualization

The following diagram illustrates the competitive strand displacement mechanism and data processing in TEEM.

Diagram 2: TEEM reaction mechanism and data processing.

The advent of high-throughput techniques like Array Melt and TEEM marks a paradigm shift in the experimental investigation of biomolecular thermodynamics. By providing massive, high-quality datasets on DNA folding and stability, these methods directly address the data scarcity that has long constrained predictive models, including those central to max delta G theory. The ability to accurately measure ΔG for vast sequence libraries enables the derivation of refined thermodynamic parameters and the training of sophisticated machine-learning models, as demonstrated by the development of the "dna24" model and a graph neural network from Array Melt data [29]. For researchers in drug development and materials science, these tools provide an unprecedented capacity to rationally design oligonucleotide probes, primers, and DNA nanostructures with predictable behavior, thereby accelerating the translation of in silico predictions into functional realities.

Leveraging Machine Learning and Neural Networks for Enhanced Free Energy Predictions