Inverse Design of Stable Inorganic Materials: AI-Driven Discovery and Validation

Abstract

This article explores the transformative paradigm of inverse design for discovering stable inorganic materials, a stark departure from traditional trial-and-error methods. It details how artificial intelligence, including generative models, global optimization, and reinforcement learning, enables the direct generation of novel crystals with pre-defined stability criteria and functional properties. Covering foundational concepts, methodological advances, and solutions to key challenges, the content highlights validated industrial frameworks and successful experimental syntheses. Aimed at researchers and scientists, the article provides a comprehensive roadmap for leveraging these computational strategies to accelerate the development of next-generation materials for advanced technological applications.

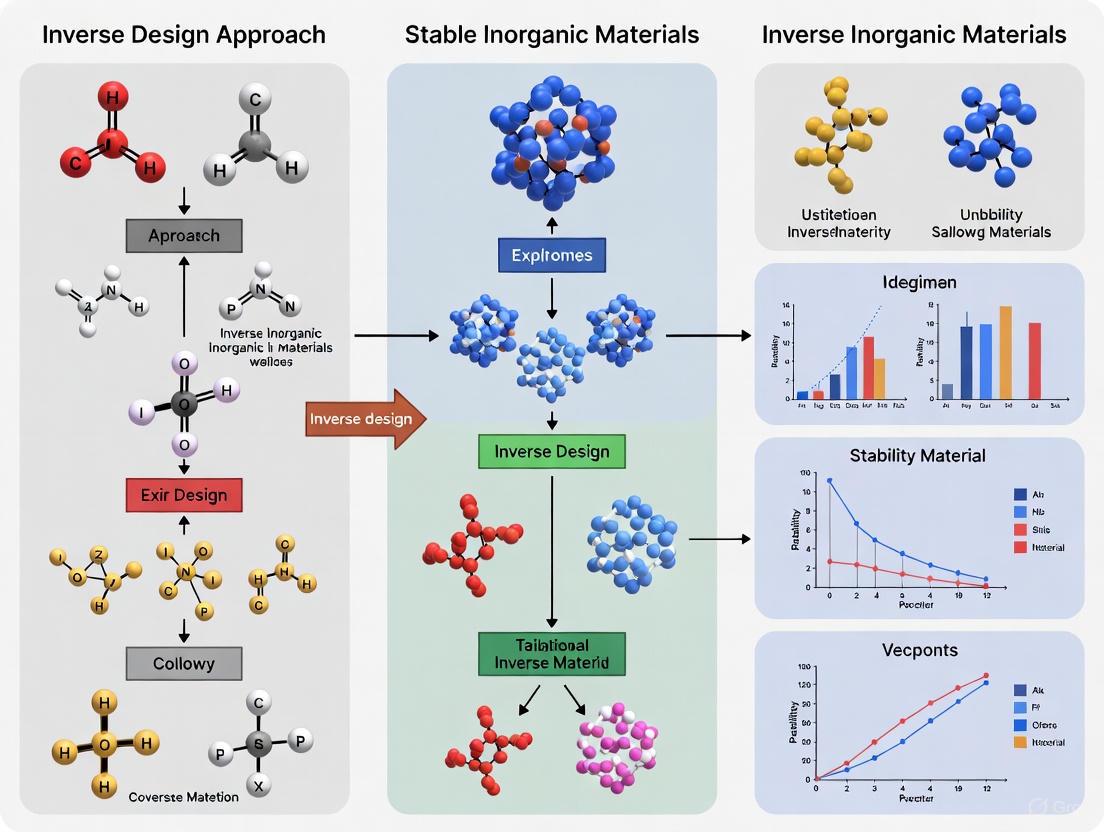

The Paradigm Shift from Trial-and-Error to Targeted Materials Discovery

Inverse design represents a paradigm shift in materials science, reversing the traditional discovery process by starting with desired properties and identifying optimal structures to achieve them. This approach is particularly transformative for stable inorganic materials research, where generative machine learning models now enable the targeted discovery of novel crystals with predefined chemical, mechanical, and electronic characteristics. This technical guide examines the core principles, methodologies, and implementations of inverse design, focusing on diffusion-based generative models that have demonstrated unprecedented capabilities in designing stable inorganic materials across the periodic table. We provide a comprehensive framework encompassing theoretical foundations, experimental validation protocols, and practical computational tools that are advancing the frontier of materials design.

The conventional materials discovery pipeline follows a forward design approach: researchers create a material, characterize its structure, measure its properties, and hopefully identify applications matching these properties. This empirical, trial-and-error process requires extensive experimentation, resulting in high costs and long development cycles [1]. Inverse design fundamentally reverses this workflow by beginning with desired target properties and systematically determining the atomic configurations and compositions needed to achieve them [2]. This data-driven approach leverages machine learning to analyze the complex mapping relationships between materials structures and their properties, enabling the direct generation of candidate materials optimized for specific functionalities [1].

Within inorganic materials research, inverse design addresses critical limitations of high-throughput screening methods. While computational screening of existing materials databases has accelerated discovery, it remains fundamentally constrained by the number of known materials, representing only a tiny fraction of potentially stable inorganic compounds [3]. Inverse design methods transcend these limitations by exploring previously uncharted regions of chemical space, generating truly novel materials architectures not present in existing databases [3] [4].

The application of inverse design to stable inorganic materials presents unique challenges and opportunities. Unlike organic molecules or amorphous systems, crystalline inorganic materials require consideration of periodicity, symmetry, and diverse coordination environments across the periodic table. Recent advances in generative artificial intelligence have now made it possible to address these challenges, producing stable, diverse inorganic materials that can be further optimized for targeted applications in energy storage, catalysis, and carbon capture [3].

Core Methodologies and Theoretical Framework

Key Approaches to Inverse Design

Inverse design methodologies can be categorized into three principal frameworks, each with distinct mechanisms and applications in materials science:

Exploration-based methods utilize algorithms such as genetic algorithms and evolutionary strategies to navigate the materials search space through iterative selection, mutation, and recombination of promising candidates [1] [4]. These approaches benefit from not requiring extensive pre-existing datasets but typically demand substantial computational resources for fitness evaluations through quantum mechanical calculations.

Model-based methods employ generative machine learning models, including diffusion models, variational autoencoders (VAEs), and generative adversarial networks (GANs), to learn the underlying probability distribution of materials structures [2] [1]. These trained models can then sample novel structures directly, with recent diffusion models demonstrating superior performance for generating high-quality material structures [3] [2].

Optimization-based methods formulate materials discovery as an optimization problem, using techniques such as Bayesian optimization to efficiently search the design space [1] [5]. These approaches are particularly valuable when property evaluations are computationally expensive, as they aim to minimize the number of evaluations needed to find optimal solutions.

Diffusion Models for Materials Generation

Diffusion models have emerged as particularly powerful generative frameworks for inverse design of inorganic materials. These models generate valid structures through a progressive denoising process, gradually refining random noise into coherent structures [3] [2]. The fundamental diffusion process involves two stages: a forward process that systematically adds noise to destroy data structure, and a reverse process that learns to recover data structure from noise.
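As a reference point, the two stages can be written in the generic Gaussian (DDPM-style) form below. This is the textbook formulation, assumed here purely for illustration: the symbols $x_t$, $\beta_t$, $\bar{\alpha}_t$, and $s_\theta$ do not come from the source, and crystal-specific models replace the plain Gaussian with periodic and categorical corruption processes.

```latex
% Forward (noising) process: closed-form marginal at step t
q(x_t \mid x_0) = \mathcal{N}\!\left(x_t;\ \sqrt{\bar{\alpha}_t}\,x_0,\ (1-\bar{\alpha}_t)\,I\right),
\qquad \bar{\alpha}_t = \prod_{s=1}^{t} (1-\beta_s)

% Reverse (denoising) process: a network s_\theta is trained to approximate the score
s_\theta(x_t, t) \approx \nabla_{x_t} \log q(x_t)
```

As $t$ grows, $\bar{\alpha}_t \to 0$ and $x_t$ approaches pure noise; sampling then runs the learned reverse process from noise back toward data.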

For crystalline materials, specialized diffusion processes must account for unique periodic structures and symmetries. MatterGen, a state-of-the-art diffusion model for inorganic materials, implements a customized diffusion process that generates crystal structures by gradually refining atom types, coordinates, and the periodic lattice [3]. Each component employs a physically motivated corruption process:

- Coordinate diffusion respects periodic boundary conditions using a wrapped Normal distribution and approaches a uniform distribution at the noisy limit, with adjustments for cell size effects on fractional coordinate diffusion [3].

- Lattice diffusion maintains symmetric form and approaches a distribution whose mean is a cubic lattice with average atomic density from training data [3].

- Atom type diffusion operates in categorical space where individual atoms are corrupted into a masked state [3].

To reverse this corruption process, MatterGen learns a score network that outputs invariant scores for atom types and equivariant scores for coordinates and lattice, effectively capturing the symmetries essential for crystalline materials [3].
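The coordinate and atom-type corruption steps described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not MatterGen's implementation: the function names, the `sigma` and `mask_prob` parameters, and the `MASK` token are hypothetical, and lattice diffusion and the cell-size adjustment are omitted.

```python
import random

MASK = "<MASK>"  # hypothetical token standing in for the masked atom-type state


def wrap(x):
    """Map a fractional coordinate into [0, 1)."""
    w = x % 1.0
    return 0.0 if w >= 1.0 else w  # guard against rounding up to exactly 1.0


def corrupt_coordinates(frac_coords, sigma, rng):
    """Add Gaussian noise to fractional coordinates, then wrap into the cell.

    Wrapping a Normal sample back into [0, 1) draws from a wrapped Normal
    centred on the original position; for large sigma the result approaches
    a uniform distribution over the cell.
    """
    return [
        tuple(wrap(x + rng.gauss(0.0, sigma)) for x in site)
        for site in frac_coords
    ]


def corrupt_atom_types(atom_types, mask_prob, rng):
    """Replace each atom type by the masked state with probability mask_prob."""
    return [MASK if rng.random() < mask_prob else a for a in atom_types]


rng = random.Random(0)  # fixed seed so the sketch is reproducible
coords = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)]
types = ["Na", "Cl"]

noisy_coords = corrupt_coordinates(coords, sigma=0.1, rng=rng)
fully_masked = corrupt_atom_types(types, mask_prob=1.0, rng=rng)
```

At `mask_prob=1.0` every atom type is masked, which corresponds to the fully noised limit of the categorical process; at `mask_prob=0.0` the types are returned unchanged.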

Conditional Generation for Property Targeting

A critical advancement in inverse design is the development of conditional generative models that can steer the generation process toward materials with specific target properties. MatterGen implements this capability through adapter modules that enable fine-tuning the base diffusion model on datasets with property labels [3]. These tunable components are injected into each layer of the base model to alter its output depending on given property constraints [3].

The fine-tuned model operates in combination with classifier-free guidance to enhance control over generation [3]. This approach enables the generation of materials satisfying multiple simultaneous constraints, including:

- Chemical composition constraints for designing materials with specific elemental constituents.

- Structural symmetry constraints targeting particular space groups or crystal systems.

- Electronic properties such as band gap, magnetic moment, or conductivity.

- Mechanical properties including modulus, hardness, or strength.

This conditional generation framework significantly expands the applicability of inverse design to a broad range of materials optimization problems beyond what was previously possible with unconditional generative models [3].
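Classifier-free guidance can be illustrated with the combination rule below. It assumes a common formulation in which the guided score is extrapolated from the unconditional score toward the conditional one; the function name, the `guidance_scale` parameter, and the toy score vectors are hypothetical and are not taken from the source.

```python
def guided_score(score_cond, score_uncond, guidance_scale):
    """Combine conditional and unconditional scores element-wise.

    guidance_scale = 0 gives the unconditional score, 1 gives the
    conditional score, and values above 1 push samples further toward
    the conditioning target.
    """
    return [
        u + guidance_scale * (c - u)
        for c, u in zip(score_cond, score_uncond)
    ]


# Toy score vectors for a single denoising step (illustrative values only).
s_cond = [0.8, -0.2, 0.1]
s_uncond = [0.5, 0.0, 0.1]

s_guided = guided_score(s_cond, s_uncond, guidance_scale=2.0)
```

In practice both scores come from the same network, evaluated once with the property label and once with the label dropped, so guidance adds one extra forward pass per denoising step.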

Comparative Analysis of Inverse Design Methods

Table 1: Comparison of Inverse Design Approaches for Materials Discovery

| Method Category | Key Examples | Advantages | Limitations | Suitable Applications |
|---|---|---|---|---|
| Exploration-based | Genetic algorithms, Evolutionary strategies | No requirement for large datasets; Conceptually simple | Computationally expensive fitness evaluations; Slow convergence | Composition search; Structure prediction with known building blocks |
| Model-based | Diffusion models (MatterGen, AMDEN), VAEs, GANs | Fast sampling after training; High-quality structures | Require large training datasets; Training computationally intensive | High-throughput generation of novel crystals; Multi-property optimization |
| Optimization-based | Bayesian optimization, Surrogate-based optimization | Sample-efficient; Handles expensive evaluations | Limited to continuous parameters; Struggles with high dimensions | Process parameter optimization; Composition-space navigation |

Implementation Framework: MatterGen for Stable Inorganic Materials

Model Architecture and Training Protocol

MatterGen implements a comprehensive diffusion framework specifically designed for crystalline materials. The model represents a crystalline material by its unit cell, comprising atom types (A), coordinates (X), and periodic lattice (L) [3]. The training process involves:

Dataset Curation: MatterGen's base model was trained on the Alex-MP-20 dataset, containing 607,683 stable structures with up to 20 atoms recomputed from the Materials Project and Alexandria datasets [3]. This diverse training set enables the model to learn the complex relationships between composition, structure, and stability across the periodic table.

Network Architecture: The score network employs an equivariant architecture that respects the symmetries of crystalline materials. It outputs invariant scores for atom types and equivariant scores for coordinates and lattice, ensuring generated structures adhere to physical constraints [3].

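Stability Optimization: The model is trained with a denoising objective on stable structures rather than with an explicit energy term, so it learns to generate structures that lie close to local energy minima and remain stable after DFT relaxation. This focus on stability is crucial for the discovery of synthesizable materials.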

Conditioning Mechanisms for Targeted Generation

MatterGen's conditioning system enables precise control over generated materials through several mechanisms:

- Chemical conditioning allows specification of desired elements or composition ranges, enabling the discovery of materials with specific constituent elements.

- Symmetry conditioning directs generation toward structures with target space groups, useful for designing materials with specific symmetry-dependent properties.

- Property conditioning enables optimization for electronic, mechanical, or magnetic properties through fine-tuning on property-labelled datasets.

The conditioning mechanism uses adapter modules that transform property constraints into latent representations that guide the generation process without compromising structural validity [3].

Validation and Evaluation Framework

Rigorous validation is essential for inverse design. MatterGen employs multiple evaluation metrics:

- Stability assessment measures whether generated structures have energy within 0.1 eV per atom above the convex hull defined by a reference dataset [3].

- Uniqueness evaluation ensures generated structures do not match other structures produced by the same method.

- Novelty assessment verifies that structures do not match existing materials in expanded databases containing over 850,000 known structures [3].

- Structural quality measures the root-mean-square deviation (RMSD) between generated structures and their DFT-relaxed counterparts, with high-quality generations showing RMSD below 0.076 Å [3].

This comprehensive validation framework ensures generated materials are not only novel but also synthetically plausible and stable.
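The structural-quality metric can be made concrete with a small RMSD calculation. The sketch below is a simplification under stated assumptions: both structures share the same cubic cell of edge `cell_length`, atoms are already matched by index, and the function name is hypothetical. Real evaluations rely on structure-matching tools and must also handle changes in the lattice during relaxation.

```python
import math


def periodic_rmsd(frac_a, frac_b, cell_length):
    """RMSD in Å between two index-matched structures in one cubic cell.

    Displacements are taken under the minimum-image convention, so an
    atom at fractional x = 0.99 is treated as 0.01 away from x = 0.0.
    """
    if len(frac_a) != len(frac_b) or not frac_a:
        raise ValueError("structures must be non-empty and the same size")
    total = 0.0
    for site_a, site_b in zip(frac_a, frac_b):
        for xa, xb in zip(site_a, site_b):
            d = xa - xb
            d -= round(d)  # shift to the nearest periodic image
            total += (d * cell_length) ** 2
    return math.sqrt(total / len(frac_a))


generated = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)]
relaxed = [(0.99, 0.0, 0.0), (0.5, 0.5, 0.51)]

rmsd = periodic_rmsd(generated, relaxed, cell_length=4.0)
```

With a 4.0 Å cell, each atom moves by 0.04 Å along one axis, giving an RMSD of about 0.04 Å, which falls under the 0.076 Å figure quoted above.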

Experimental Protocols and Workflows

Inverse Design Workflow for Stable Inorganic Materials

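In state-of-the-art frameworks such as MatterGen, the complete inverse design workflow runs from target specification through conditioned generation and DFT-based stability assessment to novelty evaluation and experimental validation.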

Diffusion Process for Crystalline Materials

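The core diffusion process for generating crystalline materials handles periodic structures explicitly: atom types, coordinates, and the lattice are each corrupted by a dedicated forward process and recovered jointly by the learned reverse process.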

Key Experimental Protocols

Model Training and Fine-tuning Protocol

Dataset Preparation:

- Curate diverse inorganic crystal structures from validated sources (Materials Project, Alexandria, ICSD)

- Apply filters for structure quality and stability (e.g., energy above hull < 0.1 eV/atom)

- Compute target properties using consistent DFT parameters

- Split data into training, validation, and test sets (typical ratio: 80/10/10)

Base Model Training:

- Initialize model with symmetry-equivariant architecture

- Train using denoising score matching objective

- Optimize with Adam optimizer, learning rate 10⁻⁴

- Validate on reconstruction accuracy and stability metrics

Conditional Fine-tuning:

- Freeze base model parameters

- Train adapter modules on property-labelled datasets

- Use classifier-free guidance to enhance property control

- Validate conditioning effectiveness through targeted generation

Structure Generation and Validation Protocol

Conditioned Generation:

- Specify target properties (composition, symmetry, electronic properties)

- Sample from conditional distribution using learned model

- Generate multiple candidates for each target (typically 100-1000)

- Apply post-processing for stoichiometry and charge balance

Stability Assessment:

- Perform DFT relaxation using consistent computational parameters

- Calculate energy above convex hull using reference datasets

- Filter out structures with energy above hull > 0.1 eV/atom

- Verify dynamic stability through phonon calculations where feasible
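The energy-above-hull step can be illustrated for a binary A–B system, where the convex hull reduces to a lower envelope in (composition, formation energy) space. The sketch below is a toy example under stated assumptions: the function names and all energies are hypothetical, formation energies are per atom, and `x` is the fraction of element B. Production workflows use dedicated phase-diagram tools and multi-component hulls.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points (Andrew's monotone chain)."""
    hull = []
    for p in sorted(set(points)):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:  # hull[-1] lies on or above the chord to p
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def energy_above_hull(x, energy, reference_phases):
    """Energy of a candidate relative to the hull of the reference phases."""
    best = {}  # keep only the lowest-energy reference at each composition
    for rx, re in reference_phases:
        best[rx] = min(re, best.get(rx, re))
    hull = lower_hull(list(best.items()))
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            hull_energy = y1 + (y2 - y1) * (x - x1) / (x2 - x1)
            return energy - hull_energy
    raise ValueError("composition outside the range of the reference phases")


# Hypothetical reference phases: (fraction of B, formation energy in eV/atom).
references = [(0.0, 0.0), (0.25, -0.10), (0.5, -0.40), (1.0, 0.0)]

e_hull_a = energy_above_hull(0.25, -0.15, references)  # candidate A3B
e_hull_b = energy_above_hull(0.75, -0.05, references)  # candidate AB3

kept = [e for e in (e_hull_a, e_hull_b) if e <= 0.1]
```

Here the hull runs through the pure elements and the AB phase at -0.40 eV/atom. The A3B candidate sits about 0.05 eV/atom above it and is kept, while the AB3 candidate sits about 0.15 eV/atom above it and is filtered out.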

Novelty and Diversity Evaluation:

- Compare generated structures to training data using structure matchers

- Assess uniqueness within generated set

- Measure diversity across chemical and structural spaces

- Verify synthesizability through prototype analysis and literature review
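Combining the stability, uniqueness, and novelty checks gives the stable, unique, and new (SUN) filter. The sketch below assumes each candidate already carries a DFT-derived energy above hull and a structure fingerprint; the `fingerprint` strings are hypothetical stand-ins for the output of a real structure matcher, and all identifiers and values are illustrative.

```python
def sun_filter(candidates, known_fingerprints, threshold=0.1):
    """Return candidates that are stable, unique, and new.

    stable: energy above hull at or below `threshold` (eV/atom)
    unique: first occurrence of its fingerprint within the generated set
    new:    fingerprint absent from the reference database
    """
    seen = set()
    selected = []
    for cand in candidates:
        fp = cand["fingerprint"]
        stable = cand["e_above_hull"] <= threshold
        unique = fp not in seen
        new = fp not in known_fingerprints
        seen.add(fp)
        if stable and unique and new:
            selected.append(cand)
    return selected


generated = [
    {"id": "gen-1", "fingerprint": "A2B-cubic", "e_above_hull": 0.02},
    {"id": "gen-2", "fingerprint": "A2B-cubic", "e_above_hull": 0.03},    # duplicate
    {"id": "gen-3", "fingerprint": "AB-rocksalt", "e_above_hull": 0.00},  # known
    {"id": "gen-4", "fingerprint": "AB3-layered", "e_above_hull": 0.25},  # unstable
    {"id": "gen-5", "fingerprint": "A3B-hex", "e_above_hull": 0.08},
]
known = {"AB-rocksalt"}

sun = sun_filter(generated, known)
sun_rate = len(sun) / len(generated)
```

Only `gen-1` and `gen-5` pass all three checks, so the SUN rate for this toy batch is 0.4. Note that this sketch counts a fingerprint as seen at its first occurrence regardless of stability, which is one of several reasonable conventions.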

Performance Metrics and Benchmarking

Table 2: Performance Comparison of Generative Models for Inorganic Materials

| Model | Stable, Unique & New (SUN) Materials | Average RMSD to DFT (Å) | Property Conditioning | Synthesis Validation |
|---|---|---|---|---|
| MatterGen (Base) | 75% stable (Alex-MP-ICSD hull) | <0.076 Å | No (base model) | Experimental validation of selected materials |
| MatterGen (Conditioned) | Varies by target (often higher than RSS) | Similar to base model | Yes (multiple properties) | One synthesized material within 20% of target property |
| CDVAE | ~30% lower than MatterGen | ~50% higher than MatterGen | Limited (mainly formation energy) | Not reported |
| DiffCSP | ~30% lower than MatterGen | ~50% higher than MatterGen | Limited | Not reported |
| AMDEN (Amorphous) | Not directly comparable | Not directly comparable | Yes | Not applicable |

Essential Research Tools and Infrastructure

Computational Frameworks and Databases

Successful implementation of inverse design requires robust computational infrastructure and curated materials databases:

Integrated Materials Platforms:

- JARVIS Infrastructure: A unified platform for multiscale, multimodal forward and inverse materials design integrating DFT, machine learning, force fields, and experimental data [4]. Key components include JARVIS-DFT (90,000+ materials), JARVIS-FF (force field database), and ALIGNN (property prediction models) [4].

- Materials Project: Extensive database of computed materials properties with open access to structure-property relationships [3].

- AFLOW and OQMD: Automated high-throughput computation frameworks with curated materials data [4].

Generative Modeling Frameworks:

- MatterGen: Diffusion-based generative model for stable inorganic materials across the periodic table [3].

- AMDEN: Diffusion framework for amorphous materials design addressing challenges in disordered systems [2].

- cG-SchNet: Conditional generative neural network for 3D molecular structures with specified chemical and structural properties [6].

Research Reagent Solutions for Computational Materials Design

Table 3: Essential Computational Tools for Inverse Design Implementation

| Tool Category | Specific Solutions | Function | Implementation Considerations |
|---|---|---|---|
| Structure Generators | MatterGen, CDVAE, DiffCSP | Generate novel crystal structures from noise or conditions | Requires pretrained models; MatterGen shows 2x higher success rate for stable materials |
| Property Predictors | ALIGNN, MEGNet, SchNet | Rapidly estimate properties of generated structures | Near-DFT accuracy at significantly lower computational cost |
| Validation Suites | pymatgen, ASE, VASP | Relax structures and compute accurate properties | DFT validation remains computationally expensive but essential |
| Materials Databases | JARVIS-DFT, Materials Project, OQMD | Provide training data and reference for stability assessment | Critical for training generative models and computing convex hull stability |
| Workflow Managers | JARVIS-Tools, AiiDA, FireWorks | Automate complex simulation workflows | Essential for reproducible high-throughput materials screening |

Applications and Experimental Validation

Case Study: Targeted Material Generation with MatterGen

In a comprehensive demonstration of inverse design capabilities, MatterGen was applied to generate materials with specific property profiles [3]. The experimental procedure included:

Multi-property Optimization: Generation of materials with high magnetic density and chemical composition with low supply-chain risk, demonstrating the ability to satisfy multiple simultaneous constraints [3].

Synthesis and Experimental Validation: One generated material was synthesized with measured property values within 20% of the target, providing crucial experimental validation of the inverse design approach [3].

Performance Benchmarking: Comparison with traditional methods including random structure search (RSS) and substitution-based approaches showed that fine-tuned MatterGen often generates more stable, unique, and new materials in target chemical systems [3].

Extension to Amorphous and Nanoscale Materials

While initially developed for crystalline systems, inverse design principles have been extended to more complex materials classes:

Amorphous Materials: AMDEN addresses the unique challenges of generating disordered materials, where the absence of periodicity and dependence on thermal history require specialized approaches [2]. The framework incorporates Hamiltonian Monte Carlo refinement to generate low-energy amorphous configurations [2].

Nanoscale Assemblies: Inverse design has been applied to DNA-programmed nanoparticle assemblies, creating hierarchical 3D organizations with targeted optical properties [7]. This approach demonstrates how local binding rules can be designed to achieve complex large-scale architectures.

Molecular Design: Conditional generative networks like cG-SchNet enable inverse design of 3D molecular structures with specified electronic properties, bridging the gap between molecular and materials design [6].

Inverse design represents a fundamental shift in materials research methodology, moving from serendipitous discovery to targeted creation of materials with predefined properties. The development of conditional generative models, particularly diffusion-based approaches like MatterGen, has dramatically advanced our ability to design stable inorganic materials with specific characteristics across the periodic table.

The integration of these computational methods with experimental validation creates a powerful feedback loop for accelerating materials discovery. As datasets expand and models become more sophisticated, inverse design will likely become an increasingly central approach in materials science, enabling the rapid development of materials for energy, electronics, and sustainability applications.

Future advancements will likely focus on improving the accuracy of property predictions, expanding to more complex materials systems, and enhancing integration with autonomous synthesis and characterization platforms. The continued development of comprehensive infrastructures like JARVIS that support both forward and inverse design will be crucial for realizing the full potential of this transformative approach to materials discovery.

The discovery and development of new functional materials are fundamental to technological progress across critical sectors, including energy storage, catalysis, and pharmaceutical development. For decades, this process has been dominated by traditional methodologies reliant on experimental trial-and-error and human intuition. This conventional paradigm, while responsible for historical breakthroughs, is a critical bottleneck: it is slow, resource-intensive, and limited by human cognitive capacity and the scope of known chemical space. The materials design challenge is one of immense scale; the estimated chemical space of potential inorganic compounds alone encompasses tens of billions of stable structures, far exceeding the capacity of any human-guided exploration [3] [8].

This article delineates the core deficiencies of traditional materials development and frames them within the emergent solution: the inverse design approach. Inverse design represents a paradigm shift, using generative artificial intelligence and computational models to design novel, stable inorganic materials with target properties from the outset, rather than relying on serendipitous discovery through screening of known candidates. Evidence from the forefront of materials informatics demonstrates that this new paradigm can generate stable, diverse inorganic materials at a rate and precision unattainable by traditional means, thereby overcoming the critical bottlenecks that have long constrained innovation [3].

Quantitative Analysis of Traditional Workflow Bottlenecks

The inefficiencies of the traditional materials development workflow are not merely anecdotal; they are quantifiable across multiple dimensions, from operational overhead in manufacturing to the low success rate of discovery. The data reveals a sector struggling with manual processes and a slow, screening-based approach to innovation.

Table 1: Quantitative Bottlenecks in Life Sciences Manufacturing and Materials Development

| Bottleneck Area | Key Metric | Impact / Finding |
|---|---|---|
| Production Line Changeovers [9] | 63% of pharma & medical device companies use paper-based processes. | Leads to substantial operational strain and delays. |
| Line Clearance Failures [9] | 70% of organizations experienced ≥1 failure in 12 months; 30% had ≥6 failures. | Causes major manufacturing disruptions and quality issues. |
| Changeover Time [9] | Simple changeovers: 30 mins–2 hrs; Complex changeovers: often >4 hrs. | Significant production downtime and reduced agility. |
| Primary Disruption Causes [9] | Human error, equipment setup issues, and missed items (cited by 4/5 respondents). | Highlights vulnerability of manual, human-reliant systems. |
| Generative Model Success [3] | MatterGen generates structures >10x closer to DFT local energy minimum than previous models. | Shows AI's potential to bypass instability issues of traditional discovery. |

The data from the life sciences industry, a key application area for advanced materials, underscores the profound impact of relying on legacy systems. These manual processes are a microcosm of the broader trial-and-error approach that defines traditional materials development, leading to excessive timelines and costs. One analysis of industrial challenges notes that development cycles often exceed a decade with expenditures surpassing ten million USD due to slow experimental iteration [8].

Core Deficiencies of the Traditional Methodology

The quantitative bottlenecks are symptoms of deeper, systemic deficiencies inherent in the traditional materials development framework.

The Screening-Based Discovery Limit

Traditional discovery, including high-throughput computational screening, is fundamentally limited to exploring the tiny fraction of known or easily derivable materials. The largest such explorations are on the order of 10^6–10^7 materials, which is only a minuscule fraction of the potentially stable inorganic compounds thought to exist [3]. This method cannot efficiently propose or explore truly novel chemical compositions or crystal structures outside of established databases, creating a severe innovation ceiling.

The Human Intuition Bottleneck

The process relies heavily on the experience and intuition of materials scientists to hypothesize new candidate materials. This human-dependent approach is ill-suited to navigating the vast, high-dimensional chemical space, where non-intuitive combinations may yield the most promising results. One study describes this reliance as "causing long iteration cycles" and as a "fundamental bottleneck for vertical industries" [8].

The Synthesis and Stability Gap

A significant problem in the field is the persistent gap between computationally predicted materials and their synthetic viability. Many theoretically promising materials predicted through traditional computational methods prove impossible or impractical to synthesize in the laboratory, or are unstable under real-world conditions [8]. This necessitates costly and time-consuming experimental validation cycles that often end in failure.

Inability to Handle Multi-Objective Constraints

Modern applications require materials to satisfy multiple, often competing, constraints simultaneously—not just high performance, but also low cost, minimal environmental impact, sustainability, and compliance with escalating regulations [8]. Traditional sequential development struggles with this complex, multi-faceted optimization, as it lacks the tools to navigate the trade-offs effectively from the design phase.

The Inverse Design Paradigm: A Path Forward

Inverse design addresses these deficiencies by flipping the discovery process on its head. Instead of screening existing candidates, it uses generative models to directly propose new, stable materials that are optimized for a set of pre-defined target properties and constraints.

Principal Workflow: Traditional vs. Inverse Design

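The traditional workflow is sequential and failure-prone, with candidates hypothesized, synthesized, and tested one after another. Inverse design instead integrates target definition, structure generation, and stability screening into a single generative loop.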

Validated Performance of Generative AI

Platforms like MatterGen and Aethorix v1.0 exemplify the inverse design paradigm. MatterGen is a diffusion-based generative model that creates stable, diverse inorganic materials across the periodic table [3]. Its performance quantitatively demonstrates the overcoming of traditional bottlenecks:

Table 2: Performance Benchmark of MatterGen vs. Prior Methods

| Performance Metric | MatterGen Result | Improvement Over Prior State-of-the-Art |
|---|---|---|
| Stability (within 0.1 eV/atom of convex hull) | 75% - 78% | More than doubles the percentage of stable, unique, and new (SUN) materials [3]. |
| Structural Relaxation (RMSD to DFT-relaxed structure) | 95% below 0.076 Å | Generated structures are more than ten times closer to the local energy minimum [3]. |
| Novelty & Diversity | 61% of generated structures are new. | Can generate diverse structures without significant saturation even at a scale of millions [3]. |

The Aethorix v1.0 platform operationalizes this capability into a full industrial workflow, integrating large language models for objective mining, generative models for zero-shot crystal design, and machine-learned interatomic potentials for rapid property prediction at ab initio accuracy. This closed-loop system is designed to replace labor-intensive trial-and-error with a semi-automatic discovery process, incorporating critical operational constraints from the outset [8].

Experimental Protocol for Generative Materials Design

The implementation of an inverse design framework requires a rigorous, multi-stage computational protocol. The following workflow details the key stages from target definition to candidate selection for synthesis.

Detailed Methodologies

- Input Definition and Constraint Specification: The process is guided by industry-provided specifications. This includes (i) the target chemical system and elemental constraints, (ii) operational environment parameters (temperature, pressure), and (iii) quantifiable target property values. Large language models (LLMs) can be employed to retrieve relevant literature and extract these key design parameters [8].

- AI-Driven Structure Generation: A diffusion-based generative model (e.g., MatterGen, Aethorix) is used for zero-shot discovery. The model represents a crystal structure by its unit cell: atom types (A), fractional coordinates (X), and periodic lattice (L), denoted as M = (A, X, L). The model generates novel candidates by iteratively denoising a random initial structure through a learned reverse diffusion process, explicitly trained on stable structures from databases like the Materials Project and Alexandria [3] [8].

- Stability Screening via Machine-Learned Interatomic Potentials (MLIPs): Generated candidate structures undergo large-scale computational pre-screening for thermodynamic stability. This is performed using MLIPs, which provide near-ab initio accuracy at a fraction of the computational cost of density functional theory (DFT). Structures are relaxed using algorithms like BFGS, and their energy above the convex hull is calculated to assess stability [8].

- Property Prediction and Optimization: Structures that pass stability screening advance to target property prediction. The same MLIP models are used to efficiently evaluate the performance metrics defined in the input stage, such as mechanical, electronic, or magnetic properties. This step identifies the candidates that meet the design criteria [8].

- Candidate Selection and Experimental Validation: The final shortlist of stable, high-performance candidates is selected for experimental validation. Synthesis and characterization are conducted to confirm predicted properties prior to any consideration of industrial deployment, thereby closing the loop [3] [8].

The Scientist's Toolkit: Research Reagent Solutions

The transition to an inverse design paradigm relies on a suite of computational tools and data resources that form the modern materials scientist's toolkit.

Table 3: Essential Computational Tools for AI-Driven Materials Discovery

| Tool / Resource | Type | Primary Function in Inverse Design |
|---|---|---|
| MatterGen [3] | Generative AI Model | A diffusion model for generating stable, diverse, and novel inorganic crystal structures across the periodic table. |
| Aethorix v1.0 [8] | Integrated Platform | Provides an end-to-end framework combining generative AI, property prediction, and stability screening for industrial R&D. |
| Machine-Learned Interatomic Potentials (MLIPs) [8] | Property Predictor | Enables rapid, high-fidelity assessment of structural stability and target properties for thousands of candidates. |
| Density Functional Theory (DFT) [8] | Computational Method | Serves as the high-fidelity reference for training MLIPs and the final benchmark for promising candidates. |
| Materials Project / Alexandria [3] | Materials Database | Curated datasets of known stable structures used as training data for the generative and predictive models. |

The critical bottleneck of traditional materials development is its fundamental reliance on slow, sequential, and human-limited processes for navigating an impossibly vast chemical space. The quantitative evidence is clear: from persistent manufacturing inefficiencies to the low success rates of discovery, the old paradigm is ill-suited for modern technological demands. The inverse design approach, powered by generative AI and high-throughput computational screening, represents a decisive breakthrough. By directly generating stable, novel materials tailored to specific application constraints, it transforms materials development from an artisanal craft into an engineered, data-driven science. This paradigm shift is poised to dramatically accelerate the discovery of next-generation materials for pharmaceuticals, energy, and beyond, finally overcoming the bottlenecks that have long stifled innovation.

The discovery of new inorganic materials is fundamental to technological advances in areas such as energy storage, catalysis, and carbon capture. Traditionally, this process has relied on experimental trial and error and human intuition, limiting the number of candidates that can be tested. The emerging paradigm of inverse design seeks to reverse this process by directly generating material structures that satisfy specific property constraints. However, a central challenge in this approach is ensuring that computationally proposed materials are both thermodynamically stable and synthetically viable. This technical guide explores the critical role of stability as a prerequisite within the context of a broader thesis on the inverse design of inorganic materials, providing researchers with a detailed overview of the concepts, data, and methodologies required to reliably identify promising candidates.

Core Concepts: Defining Stability and Synthesizability

Thermodynamic Stability

Thermodynamic stability is typically assessed through the decomposition energy (ΔHd), defined as the total energy difference between a given compound and its most stable competing phases in a chemical space. This is determined by constructing a convex hull using the formation energies of compounds within a phase diagram. A material is generally considered stable if its energy per atom, after relaxation via Density Functional Theory (DFT), is within a small threshold (e.g., 0.1 eV/atom) above the convex hull defined by a reference dataset [3] [10].
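To make the convex hull criterion concrete, the following minimal sketch computes the energy above the hull for a hypothetical binary A-B system. It assumes that composition is expressed as the atomic fraction x of element B, that energies are formation energies per atom, and that the reference set contains both elemental end points. All numbers are invented for illustration, and real workflows handle multicomponent systems with dedicated phase-diagram tools.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points, via Andrew's monotone chain."""
    # Keep only the lowest-energy entry at each composition.
    best = {}
    for x, e in points:
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        # Pop the last vertex while it lies on or above the line hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull

def energy_above_hull(x, e_form, reference):
    """Formation energy at composition x minus the hull energy at x (eV/atom)."""
    hull = lower_hull(reference)
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            e_hull = y1 + (y2 - y1) * (x - x1) / (x2 - x1)
            return e_form - e_hull
    raise ValueError("composition outside the reference range")

# Toy reference set: elements A (x=0) and B (x=1), plus two known compounds.
reference = [(0.0, 0.0), (0.25, -0.3), (0.5, -1.0), (1.0, 0.0)]

# Hypothetical candidate AB3 (x=0.75) with a formation energy of -0.45 eV/atom.
e_hull = energy_above_hull(0.75, -0.45, reference)
print(f"{e_hull:.3f} eV/atom above hull; stable at 0.1 threshold: {e_hull <= 0.1}")
```

A negative result would mean the candidate lies below the reference hull, that is, it would become a new hull vertex if added to the reference set.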

Synthetic Viability

Synthetic viability, or synthesizability, is a broader concept that extends beyond thermodynamic stability. It refers to whether a material is synthetically accessible through current laboratory capabilities, regardless of whether it has been synthesized yet. This is influenced by a complex array of factors, including kinetic stabilization, the availability of suitable reaction pathways, reactant costs, and available equipment [11]. Crucially, synthesizability cannot be predicted based on thermodynamic constraints alone [11].

The Limitation of Traditional Proxies

Charge balancing, a commonly used proxy for synthesizability, has been shown to be an inaccurate predictor. Remarkably, only 37% of all synthesized inorganic materials and 23% of known ionic binary cesium compounds are charge-balanced according to common oxidation states [11]. This poor performance highlights the need for more sophisticated, data-driven approaches to synthesizability assessment.
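The charge-balancing proxy itself is simple to state in code, which helps show why it misses real compounds. The sketch below assumes a tiny, hand-written table of common oxidation states (deliberately incomplete and not authoritative) and assigns one oxidation state per element; the function name is hypothetical.

```python
from itertools import product

# Small illustrative table of common oxidation states (an assumption for this
# sketch, not a complete or authoritative list).
COMMON_OXIDATION_STATES = {
    "Na": [1], "Cs": [1], "Cl": [-1], "O": [-2],
    "Fe": [2, 3], "Ti": [2, 3, 4], "Au": [1, 3],
}

def is_charge_balanced(composition):
    """True if some assignment of common oxidation states sums to zero.

    `composition` maps element symbol -> number of atoms in the formula unit.
    Each element is assigned a single oxidation state (no mixed valence).
    """
    elements = list(composition)
    choices = [COMMON_OXIDATION_STATES[el] for el in elements]
    for states in product(*choices):
        total = sum(q * composition[el] for q, el in zip(states, elements))
        if total == 0:
            return True
    return False

print(is_charge_balanced({"Na": 1, "Cl": 1}))   # True
print(is_charge_balanced({"Fe": 2, "O": 3}))    # True (Fe3+)
print(is_charge_balanced({"Cs": 1, "Au": 1}))   # False with this table
```

CsAu is a known compound in which gold carries a negative charge, so a check restricted to common oxidation states rejects it. This is the kind of case that limits charge balancing as a synthesizability filter.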

Quantitative Comparison of Stability and Synthesizability Models

The table below summarizes the performance and characteristics of contemporary computational models for predicting stability and synthesizability.

Table 1: Performance Metrics of Selected Stability and Synthesizability Models

| Model Name | Primary Task | Key Methodology | Reported Performance | Key Advantages |
|---|---|---|---|---|
| ECSG [10] | Thermodynamic Stability Prediction | Ensemble framework with stacked generalization, combining electron configuration, atomic properties, and interatomic interactions. | AUC: 0.988; Requires only 1/7 of the data to match the performance of existing models. | High accuracy and sample efficiency; reduces inductive bias by integrating diverse knowledge. |
| SynthNN [11] | Synthesizability Classification | Deep learning (SynthNN) using atom2vec representations, trained on ICSD data with a semi-supervised Positive-Unlabeled (PU) learning approach. | 7x higher precision than DFT-based formation energy; 1.5x higher precision than best human expert. | Learns chemistry from data (e.g., charge-balancing, ionicity); does not require structural input. |
| MatterGen [3] | Generative Inverse Design | Diffusion-based model generating atom types, coordinates, and lattice. Can be fine-tuned for properties. | >75% of generated structures stable (<0.1 eV/atom); 61% of generated structures are new. | Generates stable, diverse crystals across the periodic table; enables property-constrained design. |

Integrated Methodologies for Inverse Design

This section details the experimental and computational protocols that form the backbone of modern inverse design workflows.

Workflow for an Integrated Inverse Design Platform

The following diagram illustrates a closed-loop, data-driven inverse design paradigm, as implemented in platforms like Aethorix v1.0 [8].

Diagram 1: Integrated Inverse Design Workflow

Protocol for Data Extraction from Literature

Automated data extraction is crucial for building training datasets. The ChatExtract method provides a high-accuracy, zero-shot approach [12].

Workflow Stages:

- Data Preparation: Gather research papers, remove HTML/XML syntax, and divide text into sentences.

- Stage A - Initial Classification: A simple prompt is applied to every sentence to identify those containing relevant data (Value and Unit). Because relevant sentences are typically outnumbered by irrelevant ones by about 1:100, this stage sharply reduces the amount of text passed on to extraction.

- Stage B - Data Extraction:

  - Feature 1: The text passage for analysis is expanded to include the paper title, the preceding sentence, and the target sentence to capture the material name.

  - Feature 2: Sentences are split into single-valued and multi-valued extraction paths to improve accuracy.

  - Feature 3: Prompts explicitly allow for negative answers to discourage hallucination of non-existent data.

  - Feature 4: Uncertainty-inducing redundant prompts are used to encourage reanalysis and verification.

Performance: Using this protocol with advanced conversational LLMs like GPT-4 achieves precision and recall rates close to 90% for extracting materials data triplets (Material, Value, Unit) [12].
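The two-stage structure of the protocol can be sketched as follows. This is an illustrative outline only, not the ChatExtract implementation: `ask` is a hypothetical callable standing in for a conversational LLM, the prompts are simplified paraphrases, and the sketch covers Stage A plus Features 1 and 3 while omitting the multi-valued path (Feature 2) and the redundant follow-up prompts (Feature 4). The sample text is invented.

```python
import re

def split_sentences(text):
    """Naive sentence splitter; a real pipeline would use a proper tokenizer."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def extract(title, text, ask):
    """Two-stage extraction. `ask(prompt) -> str` is a hypothetical LLM call."""
    sentences = split_sentences(text)
    results = []
    for i, sentence in enumerate(sentences):
        # Stage A: cheap relevance filter applied to every sentence.
        answer = ask(f"Does this sentence give a value and unit? Yes or No.\n{sentence}")
        if not answer.strip().lower().startswith("yes"):
            continue
        # Stage B, Feature 1: expand the passage with title and preceding sentence.
        previous = sentences[i - 1] if i > 0 else ""
        passage = f"Title: {title}\n{previous}\n{sentence}"
        # Feature 3: explicitly allow a negative answer to discourage hallucination.
        reply = ask(
            "Give 'Material | Value | Unit' from the last sentence, "
            f"or 'None' if it is not stated.\n{passage}"
        )
        if reply.strip().lower() != "none":
            results.append(tuple(part.strip() for part in reply.split("|")))
    return results

def fake_llm(prompt):
    """Stand-in for a conversational LLM so the sketch runs offline."""
    if prompt.startswith("Does this sentence"):
        return "Yes" if "GPa" in prompt else "No"
    return "MgO | 160 | GPa"

text = "We studied MgO. Its bulk modulus is 160 GPa. Samples were annealed."
print(extract("Elastic properties of MgO", text, fake_llm))
```

Passing the model as a plain callable keeps the pipeline logic testable without network access; a real deployment would replace `fake_llm` with a client for the chosen LLM service.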

Protocol for Stability Prediction via Stacked Generalization

The ECSG framework mitigates inductive bias by combining multiple models [10].

Base-Level Model Training:

- ECCNN (Electron Configuration CNN): A newly developed model that uses electron configuration matrices as input, processed through convolutional layers.

- Magpie: Utilizes statistical features (mean, deviation, range) of elemental properties (e.g., atomic mass, radius) and uses gradient-boosted regression trees (XGBoost).

- Roost: Models the chemical formula as a graph, using a graph neural network with an attention mechanism to capture interatomic interactions.

Meta-Learner Training: The predictions from the three base models are used as features to train a final meta-learner model, which produces the super learner's (ECSG) stability prediction.

Validation: Model performance is validated using datasets from the Materials Project (MP) and JARVIS, with final stability confirmed by DFT calculations.
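The stacking step can be illustrated with a small, dependency-free sketch. It assumes the three base models have already produced stability probabilities for each compound (the numbers below are invented) and fits a logistic-regression meta-learner on those outputs by gradient descent. The choice of logistic regression, the function names, and the hyperparameters are assumptions made for illustration; the source does not specify the meta-learner used in ECSG.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_meta_learner(base_preds, labels, lr=0.5, epochs=2000):
    """Logistic regression on base-model outputs, fitted by batch gradient descent."""
    n_models = len(base_preds[0])
    weights, bias = [0.0] * n_models, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_models, 0.0
        for preds, y in zip(base_preds, labels):
            err = sigmoid(sum(w * p for w, p in zip(weights, preds)) + bias) - y
            grad_w = [g + err * p for g, p in zip(grad_w, preds)]
            grad_b += err
        weights = [w - lr * g / len(labels) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(labels)
    return weights, bias

def predict(weights, bias, preds):
    """Meta-learner probability that a compound is stable."""
    return sigmoid(sum(w * p for w, p in zip(weights, preds)) + bias)

# Each row: made-up probabilities from [ECCNN, Magpie, Roost] for one compound.
base_preds = [
    [0.9, 0.8, 0.7], [0.8, 0.6, 0.9], [0.7, 0.9, 0.6],   # labeled stable
    [0.2, 0.4, 0.1], [0.3, 0.1, 0.4], [0.1, 0.3, 0.2],   # labeled unstable
]
labels = [1, 1, 1, 0, 0, 0]

weights, bias = train_meta_learner(base_preds, labels)
print(predict(weights, bias, [0.85, 0.7, 0.8]) > 0.5)
```

In practice the meta-learner is trained on out-of-fold base predictions so that it does not simply learn to trust base models that have memorized the training set.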

This table details key computational and experimental "reagents" essential for conducting research in inverse design and stability prediction.

Table 2: Key Resources for Inverse Design and Stability Research

| Item/Resource | Function/Description | Example Sources/Tools |
|---|---|---|
| First-Principles Data | Provides high-fidelity data for training and benchmarking models. Calculates formation energies for convex hull construction. | Quantum Espresso [8], DFT Calculations [10] |
| Materials Databases | Source of known crystal structures and properties for training machine learning models and defining reference convex hulls. | Materials Project (MP) [3] [8], Inorganic Crystal Structure Database (ICSD) [11] [3], Alexandria [3], JARVIS [10] |
| Generative Models | Core engine for inverse design; generates novel, stable crystal structures from scratch based on constraints. | MatterGen [3] [8], CDVAE, DiffCSP [3] |
| Machine-Learned Interatomic Potentials (MLIPs) | Enables rapid, large-scale stability pre-screening and property prediction under operational conditions with near-DFT accuracy. | In-house developed MLIPs (e.g., in Aethorix) [8] |
| Stability & Synthesizability Predictors | Classifies generated candidates by their thermodynamic stability and likelihood of successful laboratory synthesis. | ECSG [10], SynthNN [11] |
| Large Language Models (LLMs) | Automates the extraction of design parameters and material data from scientific literature, reducing manual effort. | GPT-4 in ChatExtract [12], General conversational LLMs |
| Visualization & Analysis Tools | Used to sketch, view, and analyze molecular and crystal structures in 2D and 3D. | MolView [13], RCSB Chemical Sketch Tool [14] |

The integration of thermodynamic stability and synthetic viability as prerequisites is transforming the field of inorganic materials discovery. The advent of powerful generative models like MatterGen, combined with robust and efficient predictors for stability and synthesizability, is establishing a new, data-driven paradigm. This integrated approach, exemplified by platforms such as Aethorix v1.0, moves beyond traditional serendipitous discovery and high-throughput screening. By closing the loop from AI-driven design to experimental validation, it promises to significantly accelerate the development of novel, functional materials that meet the rigorous demands of modern technology and industry.

The discovery of novel inorganic materials is a cornerstone of technological advancement, powering innovations in areas from renewable energy and carbon capture to next-generation batteries and quantum computing. Traditionally, materials discovery has relied on experimental trial and error or computational screening of known materials, approaches that are fundamentally limited by human intuition and existing chemical knowledge. These methods struggle to navigate the vastness of chemical space, which is estimated to contain millions of potentially stable inorganic compounds that remain undiscovered. The emergence of artificial intelligence (AI) and machine learning (ML) has catalyzed a paradigm shift from this direct design approach to inverse design, where desired properties are specified as inputs and AI models generate candidate materials satisfying these constraints. This whitepaper examines how AI and ML are enabling researchers to navigate this expansive chemical space, with a specific focus on the inverse design of stable inorganic materials.

Inverse design represents a fundamental reorientation of the discovery process. Where traditional methods start with a material and compute its properties, inverse design begins with target properties and identifies materials that possess them. This approach is particularly valuable for addressing complex, multi-objective design challenges that involve balancing stability, synthesizability, and multiple functional properties. By learning the underlying distribution of known materials and their properties, AI models can extrapolate beyond existing datasets to propose entirely novel, high-performing compounds, with the potential to shorten the materials development cycle from years to weeks.

AI Methodologies for Inverse Design

Several distinct AI strategies have emerged for the inverse design of inorganic materials, each with unique strengths and applications. The primary methodologies are generative models, reinforcement learning, high-throughput virtual screening, and global optimization, with generative models showing particularly rapid advancement and capability.

Generative Models for Materials Design

Generative models learn the probability distribution of training data and can sample from this distribution to create novel, plausible material structures. Among these, diffusion models have recently demonstrated remarkable success in generating stable, diverse inorganic crystals.

MatterGen is a state-of-the-art diffusion model specifically designed for crystalline materials. It generates crystal structures through a process that gradually refines atom types, coordinates, and the periodic lattice. The model employs a custom diffusion process that respects the unique symmetries and periodic boundaries of crystals, with physically meaningful limiting noise distributions. MatterGen can be fine-tuned with adapter modules to steer generation toward materials with desired chemistry, symmetry, and properties such as magnetic density or mechanical characteristics [3]. In benchmark tests, structures generated by MatterGen were more than twice as likely to be new and stable compared to previous generative models, and more than ten times closer to their local energy minimum after density functional theory (DFT) relaxation [3].
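One ingredient of a periodicity-aware diffusion process is that noised fractional coordinates must wrap around the unit cell rather than drift outside it. The sketch below shows only that single idea, assuming Gaussian noise wrapped modulo 1 on fractional coordinates. It is not MatterGen's actual noise schedule or code, and the function names are hypothetical.

```python
import random

def noise_fractional_coords(frac_coords, sigma, rng):
    """Add Gaussian noise to fractional coordinates and wrap back into the unit cell."""
    noised = []
    for site in frac_coords:
        noised.append(tuple((x + rng.gauss(0.0, sigma)) % 1.0 for x in site))
    return noised

def periodic_distance(a, b):
    """Per-axis shortest separation between two fractional coordinates."""
    return tuple(min(abs(x - y), 1.0 - abs(x - y)) for x, y in zip(a, b))

rng = random.Random(0)                         # fixed seed for reproducibility
sites = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)]     # toy two-atom cell
print(noise_fractional_coords(sites, sigma=0.3, rng=rng))

# Sites near opposite faces of the cell are close once periodicity is respected.
print(periodic_distance((0.95, 0.5, 0.1), (0.05, 0.5, 0.9)))
```

Because the wrapped coordinates cover the cell uniformly as the noise level grows, the fully noised state carries no preferred atomic position, which is one sense in which a limiting noise distribution can be physically meaningful for crystals.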

Other notable generative approaches include the Crystal Diffusion Variational Autoencoder (CDVAE), which uses a score-based diffusion process in the decoder to generate realistic crystals, and conditional variants (Cond-CDVAE) that enable generation based on user-defined parameters like composition and pressure [15]. Graph Networks for Materials Exploration (GNoME) from Google DeepMind has demonstrated the remarkable scalability of such approaches, discovering 2.2 million new crystals, including 380,000 stable materials that could power future technologies [16].

Reinforcement Learning and Alternative Approaches

Reinforcement learning (RL) offers a distinct approach where an AI agent learns to generate materials through repeated interaction with an environment. In RL frameworks for materials design, an agent typically constructs a material composition step-by-step, receiving rewards based on how well the generated material satisfies target properties. Recent work has demonstrated deep RL models that successfully learn chemical guidelines such as negative formation energy, charge neutrality, and electronegativity balance while maintaining high chemical diversity and uniqueness [17]. These models can optimize for multiple objectives simultaneously, including both materials properties (band gap, formation energy, bulk modulus) and synthesis objectives (sintering and calcination temperatures) [17].
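A common way to combine several objectives into a single RL reward is a weighted sum of per-objective scores. The sketch below shows one such scalarization under stated assumptions: the scoring rule (linear decay with relative error), the property names, the targets, and the weights are all hypothetical and are not taken from the cited work.

```python
def reward(props, targets, weights):
    """Weighted average of per-objective scores in [0, 1]; higher is better.

    Each score is 1 when the predicted value hits its target and decays
    linearly to 0 as the relative error reaches 100%.
    """
    total = 0.0
    for name, target in targets.items():
        rel_err = abs(props[name] - target) / abs(target)
        total += weights[name] * max(0.0, 1.0 - rel_err)
    return total / sum(weights[name] for name in targets)

# Hypothetical targets: a property objective and a synthesis objective.
targets = {"band_gap_eV": 2.0, "sintering_temp_C": 1000.0}
weights = {"band_gap_eV": 2.0, "sintering_temp_C": 1.0}

# Predicted values for one generated composition (made-up numbers).
predicted = {"band_gap_eV": 1.5, "sintering_temp_C": 1200.0}
print(round(reward(predicted, targets, weights), 3))
```

The weights encode how the designer trades one objective against another; changing them shifts which compositions the agent is rewarded for without retraining the property predictors.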

High-throughput virtual screening (HTVS) represents a more established computational approach that uses automated techniques to evaluate large libraries of candidate materials for specific requirements. While traditionally limited to known materials or simple substitutions, HTVS has been enhanced by ML-based property predictors that enable rapid evaluation of hypothetical compounds. However, HTVS remains constrained by the initial scope of the screening library and offers less exploration capability than generative or RL methods [18].

Table 1: Comparison of AI Approaches for Inverse Materials Design

| Method | Key Principles | Advantages | Limitations |
|---|---|---|---|
| Generative Models (e.g., Diffusion) | Learns data distribution; generates new structures through probabilistic sampling | High diversity of outputs; can extrapolate beyond training data; enables property-conditional generation | Requires large training datasets; generated structures may require stability validation |
| Reinforcement Learning | Agent learns optimal generation policy through reward feedback | Naturally suited for multi-objective optimization; can incorporate complex constraints | Training can be unstable; requires careful reward function design |
| High-Throughput Virtual Screening | Rapid computational evaluation of large material libraries | Well-established; high interpretability; reliable for incremental discovery | Limited to pre-defined search spaces; less innovative than generative approaches |
| Global Optimization (e.g., Evolutionary Algorithms) | Iteratively improves candidate population using selection, mutation, crossover | Effective for complex optimization landscapes; no training data required | Computationally intensive; requires many property evaluations |

Performance Benchmarks and Quantitative Advances

The rapid progress in AI-driven materials design is clearly demonstrated through quantitative benchmarks comparing model performance across key metrics. These metrics include the rate of generating stable, unique, and new (SUN) materials; structural quality measured by root mean square displacement (RMSD) after DFT relaxation; and success rates in predicting known experimental structures.

Table 2: Performance Comparison of Leading Generative Models for Inorganic Materials

| Model | SUN Materials Rate | RMSD after Relaxation (Å) | Experimental Structure Prediction Accuracy | Training Data Size |
|---|---|---|---|---|
| MatterGen | >60% improvement over baselines | <0.076 for 95% of structures (nearly 10x smaller than the atomic radius of H) | N/A | 607,683 structures (Alex-MP-20) [3] |
| CDVAE | Baseline | Baseline | N/A | Standardized benchmark dataset [3] |
| Cond-CDVAE | N/A | <1.0 (average RMSD) | 59.3% for 3547 unseen structures (83.2% for <20 atoms) | 670,979 structures (MP60-CALYPSO) [15] |
| GNoME | 380,000 stable materials identified | N/A | 736 independently synthesized by external researchers | Materials Project + active learning [16] |

Recent advances show remarkable improvements in both the quality and stability of AI-generated materials. MatterGen generates structures that are exceptionally close to their DFT-relaxed configurations, with 95% of generated structures having RMSD below 0.076 Å – nearly an order of magnitude smaller than the atomic radius of hydrogen [3]. This indicates that the model produces materials very near their local energy minima, significantly reducing the computational cost of subsequent relaxation. Furthermore, MatterGen demonstrates impressive diversity, with 100% uniqueness when generating 1,000 structures and only dropping to 52% after generating 10 million structures, while 61% of generated structures are novel compared to existing databases [3].
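The SUN metric combines the three criteria discussed above into one fraction. The sketch below is a simplified illustration: it assumes each generated structure has already been reduced to a string fingerprint and a DFT energy above the hull, counts only the first occurrence of a repeated fingerprint as unique, and uses the 0.1 eV/atom threshold quoted in this article. Real evaluations compare structures geometrically with a structure matcher instead of by string equality.

```python
def sun_rate(generated, known_fingerprints, max_e_hull=0.1):
    """Fraction of generated structures that are stable, unique, and new.

    `generated` is a list of (fingerprint, e_above_hull) pairs. The fingerprint
    stands in for a structure matcher.
    """
    seen = set()
    count = 0
    for fingerprint, e_hull in generated:
        is_unique = fingerprint not in seen       # first occurrence in this batch
        seen.add(fingerprint)
        is_stable = e_hull <= max_e_hull          # within threshold of the hull
        is_new = fingerprint not in known_fingerprints
        if is_stable and is_unique and is_new:
            count += 1
    return count / len(generated)

# Toy batch with placeholder fingerprints and made-up energies (eV/atom).
generated = [("A", 0.02), ("A", 0.02), ("B", 0.30), ("C", 0.05), ("D", 0.00)]
known = {"D"}   # structures already present in the reference database
print(sun_rate(generated, known))   # 0.4
```

Only "A" (first occurrence) and "C" satisfy all three criteria here: the second "A" is a duplicate, "B" is unstable, and "D" is already known.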

The Cond-CDVAE model showcases exceptional capability in predicting experimental crystal structures, successfully identifying 59.3% of 3,547 unseen ambient-pressure experimental structures within 800 sampling attempts, with accuracy rising to 83.2% for structures with fewer than 20 atoms per unit cell [15]. These results meet or exceed the performance of conventional CSP methods based on global optimization, demonstrating the growing maturity of AI approaches for practical materials prediction.

Experimental Protocols and Validation

The ultimate validation of AI-designed materials comes through experimental synthesis and characterization. Recent studies have demonstrated successful laboratory realization of AI-predicted materials, confirming the practical utility of these approaches.

MatterGen Validation Protocol

The validation pipeline for MatterGen illustrates a comprehensive approach to evaluating AI-generated materials:

Generation: The model produces candidate structures based on target constraints (e.g., specific chemistry, symmetry, or properties).

Stability Screening: Generated structures are evaluated using DFT calculations to determine stability, typically measured by energy above the convex hull (Ehull). Materials with Ehull < 0.1 eV/atom are considered potentially stable.

Property Verification: Stable candidates undergo further DFT calculations to verify target functional properties (mechanical, electronic, magnetic).

Experimental Synthesis: Promising candidates are synthesized in the laboratory. In one case, researchers synthesized a MatterGen-generated material and measured its property to be within 20% of the target value [3].

This protocol ensures that AI-generated materials are not only computationally stable but also synthetically accessible and functionally viable.

Constrained Generation with SCIGEN

For designing materials with exotic quantum properties, researchers have developed SCIGEN (Structural Constraint Integration in GENerative model), a tool that enables popular diffusion models to generate materials following specific geometric design rules [19]. The experimental protocol involves:

Constraint Definition: Users specify desired geometric patterns (e.g., Kagome or Lieb lattices) associated with target quantum properties.

Constrained Generation: SCIGEN integrates these constraints into each step of the diffusion process, blocking generations that don't align with the structural rules.

Stability Screening: The constrained materials are screened for stability, typically retaining 5-10% of initially generated candidates.

Property Simulation: Stable candidates undergo detailed simulations to understand atomic-level behavior and emergent properties.

Synthesis and Characterization: Promising candidates are synthesized and experimentally characterized. Using this approach, researchers successfully synthesized two previously undiscovered compounds (TiPdBi and TiPbSb) with predicted magnetic properties [19].

Visualization of AI-Driven Materials Design Workflows

The following diagram illustrates the core workflow for AI-driven inverse design of inorganic materials, integrating key steps from data preparation through experimental validation:

The workflow demonstrates the iterative, closed-loop nature of modern AI-driven materials discovery, where experimental validation provides feedback to improve AI models.

Successful implementation of AI-driven inverse design requires both computational tools and data resources. The following table details essential components of the research infrastructure for AI-driven materials discovery:

Table 3: Essential Research Resources for AI-Driven Materials Design

| Resource Category | Specific Tools/Platforms | Function in Research | Access Information |
|---|---|---|---|
| Generative Models | MatterGen, CDVAE, GNoME, Aethorix | Generate novel crystal structures with target properties | MatterGen: Published code; GNoME: Predictions available to research community [3] [16] [8] |
| Materials Databases | Materials Project (MP), Inorganic Crystal Structure Database (ICSD), Cambridge Structural Database (CSD), MP60-CALYPSO | Provide training data and reference structures for validation | Open access with registration; MP60-CALYPSO contains 670,979 structures [15] |
| Property Predictors | Graph Neural Networks (GNNs), Crystal Graph Convolutional Neural Networks (CGCNN) | Rapid prediction of material properties without expensive DFT | Integrated in platforms like Materials Project [18] |
| Validation Tools | Density Functional Theory (DFT) codes (VASP, Quantum ESPRESSO), Machine-Learned Interatomic Potentials (MLIPs) | Validate stability and properties of AI-generated candidates | DFT: Academic licenses; MLIPs: Increasingly open-source [8] [20] |
| Constrained Generation | SCIGEN | Enforce geometric constraints for quantum materials | Methodology published in Nature Materials [19] |

AI and machine learning have fundamentally transformed our approach to navigating the vast chemical space of inorganic materials. The emergence of powerful generative models, reinforcement learning techniques, and enhanced screening methods has created a robust toolkit for inverse materials design. These approaches have demonstrated remarkable success in generating stable, novel materials with target properties, significantly accelerating the discovery timeline.

Looking forward, several key challenges and opportunities will shape the next generation of AI tools for materials science. Improving the synthesizability predictions for AI-generated materials remains crucial, as computational stability doesn't always translate to experimental accessibility. Developing AI models that incorporate kinetic and thermodynamic synthesis constraints will be essential for bridging this gap. Additionally, creating more interpretable and explainable AI models will build trust within the research community and provide deeper physical insights into structure-property relationships.

The integration of AI throughout the entire materials discovery pipeline – from initial design to synthesis optimization and characterization – promises to further accelerate progress. As these tools become more sophisticated and accessible, they have the potential to democratize materials innovation, enabling researchers to tackle pressing global challenges in energy, sustainability, and advanced technology through the design of novel functional materials.

The discovery and development of novel inorganic materials are fundamental to technological progress across sectors such as energy storage, catalysis, and electronics. However, industrial materials research and development (R&D) faces two critical and interconnected challenges: excessively long development cycles and increasingly stringent environmental constraints. Traditional materials discovery methodologies, which heavily rely on experimental trial-and-error and human intuition, typically require over a decade and expenditures surpassing ten million USD per material [8]. This protracted timeline creates a significant innovation bottleneck across numerous technology sectors. Concurrently, escalating environmental regulations impose multifaceted design constraints involving carbon footprint, toxicity, recyclability, and lifecycle sustainability, presenting additional complexities for materials designers [8]. This technical guide examines how inverse design approaches, particularly artificial intelligence (AI)-driven methodologies, are transforming this landscape by addressing these fundamental challenges within the broader context of stable inorganic materials research.

The Core Industrial Challenges in Context

The Problem of Long Development Cycles

Traditional materials discovery follows a sequential process of synthesis, characterization, and testing—a methodology that has remained largely unchanged for decades. This direct design approach is inherently time- and resource-intensive, creating a significant bottleneck for industrial innovation [18]. The reliance on human expertise and phenomenological scientific theories makes the process not only slow but also difficult to scale and reproduce [21]. As noted in recent industry surveys, development cycles often exceed a decade with costs surpassing ten million USD due to slow experimental iteration and dependence on expensive specialized labor [8]. Furthermore, a persistent gap exists between computationally predicted materials and their synthetic viability, with many theoretically promising materials proving impossible to synthesize in practice [8].

The Growing Impact of Environmental Constraints

Modern materials design must navigate an increasingly complex landscape of environmental considerations. Industrial processes face significant barriers in managing material waste, particularly in multi-component or composite systems [8]. Environmental regulations impose comprehensive design constraints encompassing carbon footprint, toxicity, recyclability, and complete lifecycle sustainability assessments [8]. These constraints add further dimensions to the already complex challenge of designing stable, functional inorganic materials, requiring researchers to balance performance objectives with environmental and supply chain considerations.

Inverse Design: A Paradigm Shift for Materials Research

Inverse design represents a fundamental shift in materials discovery methodology. Unlike traditional forward design approaches that begin with a material structure and then investigate its properties, inverse design starts with the desired properties and identifies materials that satisfy those constraints [18]. This paradigm leverages advanced computational methods to efficiently explore the vast chemical space toward target regions, dramatically accelerating the discovery process.

The development of inverse design has evolved through several distinct paradigms, as illustrated below:

Evolution of Materials Design Paradigms

AI-Driven Inverse Design Methodologies

Generative Models for Materials Discovery

Generative models represent one of the most promising approaches for inverse materials design. These probabilistic machine learning models generate new materials data from continuous vector spaces learned from prior knowledge on dataset distributions [18]. The key advantage of generative models is their ability to create previously unseen materials with target properties by learning the distribution patterns in existing materials databases [18].

Diffusion Models: MatterGen, a diffusion-based generative model, directly addresses stability challenges by generating stable, diverse inorganic materials across the periodic table [3]. The model employs a customized diffusion process that gradually refines atom types, coordinates, and periodic lattice structures, respecting the unique periodic structure and symmetries of crystalline materials [3]. This approach has demonstrated remarkable performance, with generated structures being more than twice as likely to be new and stable compared to previous methods, and more than ten times closer to local energy minima according to density functional theory (DFT) calculations [3].

Reinforcement Learning: Deep reinforcement learning (RL) approaches frame materials generation as a sequence generation task where agents learn to build chemical formulas element by element while maximizing rewards based on target properties [17]. These methods successfully learn chemical guidelines such as negative formation energy, charge neutrality, and electronegativity balance while maintaining high chemical diversity and uniqueness [17]. RL algorithms can generate novel compounds with both desirable materials properties (band gap, formation energy, bulk modulus) and synthesis objectives (low sintering and calcination temperatures) [17].

Industrial-Grade Implementation Platforms

The translation of these methodologies to industrial applications requires robust platforms that integrate multiple AI technologies. Aethorix v1.0 exemplifies this approach, implementing a closed-loop, data-driven inverse design paradigm to semi-automatically discover, design, and optimize unprecedented inorganic materials without experimental priors [8]. The platform integrates large language models for objective mining, diffusion-based generative models for zero-shot inorganic crystal design, and machine-learned interatomic potentials for rapid property prediction at ab initio accuracy [8].

The workflow of such industrial platforms typically follows a systematic process:

Industrial Inverse Design Workflow

Quantitative Performance of Inverse Design Methods

Stability and Novelty Metrics

Recent advances in generative models have demonstrated significant improvements in generating stable, unique, and new (SUN) materials. The table below summarizes key performance metrics for leading inverse design approaches:

Table 1: Performance Comparison of Inverse Design Methods

| Method | Type | Stability Rate | Novelty Rate | Distance to DFT Minimum | Key Advantages |
|---|---|---|---|---|---|
| MatterGen [3] | Diffusion Model | 78% stable (<0.1 eV/atom above convex hull) | 61% new structures | <0.076 Å RMSD | Broad conditioning abilities across periodic table |
| MatterGen-MP [3] | Diffusion Model | 60% more SUN structures than CDVAE/DiffCSP | N/A | 50% lower average RMSD | Effective even with smaller training datasets |
| RL Approaches [17] | Reinforcement Learning | Learns chemical stability rules | High chemical diversity & uniqueness | N/A | Multi-objective optimization for synthesis & properties |
| Aethorix v1.0 [8] | Integrated Platform | Synthetic viability screening | Zero-shot discovery without experimental priors | Ab initio accuracy with MLIPs | Incorporates operational constraints |

Multi-Objective Optimization Capabilities

A critical advantage of modern inverse design approaches is their ability to simultaneously optimize multiple properties, addressing both performance requirements and environmental constraints. Reinforcement learning methods, in particular, demonstrate this capability by generating compounds with both desirable materials properties (band gap, formation energy, bulk modulus, shear modulus) and synthesis objectives (low sintering and calcination temperatures) [17]. This multi-objective functionality is essential for addressing the complex trade-offs between material performance, synthesizability, and environmental impact in industrial applications.
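One common way to combine several objectives into a single reward is weighted-sum scalarization. The sketch below is illustrative only and is not the reward used in [17]: the `Target` class, the scoring function, the property names, and all numeric targets and weights are assumptions chosen for the example. It also treats each objective as a value to hit rather than a quantity to minimize.

```python
from dataclasses import dataclass


@dataclass
class Target:
    """One design objective: desired value, tolerance scale, and weight (all hypothetical)."""
    goal: float
    scale: float
    weight: float


def objective_score(value: float, target: Target) -> float:
    """Score in (0, 1]: 1.0 when the value equals the goal, decaying with distance."""
    return 1.0 / (1.0 + abs(value - target.goal) / target.scale)


def scalarized_reward(properties: dict, targets: dict) -> float:
    """Weighted average of per-objective scores (weighted-sum scalarization)."""
    total_weight = sum(t.weight for t in targets.values())
    weighted = sum(
        t.weight * objective_score(properties[name], t) for name, t in targets.items()
    )
    return weighted / total_weight


# Hypothetical targets; the values are chosen only for illustration.
TARGETS = {
    "band_gap_eV": Target(goal=2.0, scale=0.5, weight=1.0),
    "formation_energy_eV_per_atom": Target(goal=-2.0, scale=1.0, weight=1.0),
    "sintering_temp_C": Target(goal=800.0, scale=200.0, weight=0.5),
}

candidate = {
    "band_gap_eV": 2.0,
    "formation_energy_eV_per_atom": -2.0,
    "sintering_temp_C": 800.0,
}
perfect = scalarized_reward(candidate, TARGETS)
# Same candidate, but with a sintering temperature far from the target.
off_target = scalarized_reward({**candidate, "sintering_temp_C": 1200.0}, TARGETS)
```

A weighted sum is the simplest choice; it hides trade-offs inside the weights, so Pareto-based ranking is a frequent alternative when the objectives conflict strongly.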

Experimental Protocols and Validation

Validation Methodologies

Rigorous experimental validation is essential to confirm the predictions of inverse design models. The standard protocol involves several key stages:

Computational Stability Assessment: Generated structures first undergo DFT calculations to evaluate thermodynamic stability. Structures are typically considered stable if their energy per atom after relaxation is within 0.1 eV per atom above the convex hull defined by a reference dataset [3]. For example, in MatterGen validation, 1,024 generated structures were evaluated using this criterion, with 78% falling below the stability threshold [3].

Synthetic Validation: Promising computational candidates proceed to laboratory synthesis. As a proof of concept, one study synthesized a generated material and measured its property to be within 20% of the target value, validating the practical design capabilities of the inverse design approach [3].

Structure Matching: To assess novelty, generated structures are compared against existing materials databases using advanced matching algorithms. The ordered-disordered structure matcher accounts for compositional disorder effects when determining whether a generated structure is truly novel [3].
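The computational stability criterion described above can be sketched for the simplest case, a binary A-B system, where the convex hull reduces to a lower envelope over (composition, formation energy) points. This is a minimal illustration under stated assumptions: the function names and the reference and candidate energies are hypothetical, and real validations use multi-component hulls built from DFT reference datasets.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points for a binary system, sorted by x."""
    # Keep only the lowest energy at each composition.
    best = {}
    for x, e in points:
        if x not in best or e < best[x]:
            best[x] = e
    hull = []
    for p in sorted(best.items()):
        # Drop the last hull point while it lies on or above the line hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(x, hull):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return e1 + (e2 - e1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the hull range")


def energy_above_hull(x, energy, hull):
    """Energy of a candidate relative to the reference hull, in eV/atom."""
    return energy - hull_energy(x, hull)


# Hypothetical reference data: (fraction of B, formation energy in eV/atom).
reference = [(0.0, 0.0), (0.5, -1.0), (1.0, 0.0), (0.25, -0.2)]
hull = lower_hull(reference)

# Hypothetical generated candidates evaluated against the reference hull.
candidates = [(0.25, -0.45), (0.75, -0.2)]
THRESHOLD = 0.1  # eV/atom, the stability cutoff quoted in the text
above = [energy_above_hull(x, e, hull) for x, e in candidates]
stable_fraction = sum(a <= THRESHOLD for a in above) / len(above)
```

Here the first candidate sits about 0.05 eV/atom above the hull and counts as stable, while the second sits about 0.3 eV/atom above it and does not.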

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Inverse Materials Design

| Tool/Platform | Function | Application in Inverse Design |
|---|---|---|
| Density Functional Theory (DFT) [3] [18] | Electronic structure calculation | Gold-standard validation of stability and properties |
| Machine-Learned Interatomic Potentials (MLIPs) [8] | Rapid property prediction | High-throughput screening with ab initio accuracy |
| Diffusion Models [3] | Crystal structure generation | Generating novel, stable crystal structures from noise |
| Reinforcement Learning Agents [17] | Sequential decision making | Multi-objective optimization of composition and properties |
| Large Language Models (LLMs) [8] | Literature mining | Extracting design parameters and innovation objectives |

Inverse design represents a transformative approach to addressing the dual challenges of long development cycles and environmental constraints in industrial materials research. By leveraging generative AI, reinforcement learning, and integrated computational platforms, researchers can now rapidly discover novel inorganic materials tailored to specific application requirements while incorporating sustainability considerations directly into the design process. The quantitative results reported for these methods, including stability rates exceeding 75% for generated materials and a demonstrated experimental synthesis, point to substantially faster materials innovation. As these technologies mature and integrate more sophisticated environmental metrics, they have the potential to reshape materials development pipelines across numerous industries, accelerating innovation while enhancing sustainability.

AI Toolbox for Inverse Design: Generative Models, Optimization, and Workflows

The discovery of new inorganic materials is a cornerstone of technological advancement, pivotal for next-generation applications in energy storage, catalysis, and electronics. Traditional material discovery has long relied on experimental trial-and-error or computationally intensive ab initio simulations, processes that are often slow, costly, and ill-suited for navigating the vastness of chemical space. The emergence of the inverse design paradigm represents a fundamental shift. Instead of synthesizing a material and then characterizing its properties, inverse design starts with a set of desired properties and aims to identify a material that fulfills them. This approach is being revolutionized by generative artificial intelligence (AI) models, which learn the underlying probability distribution of existing material data to propose novel, chemically valid candidates.

Among the most prominent generative models for this task are Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Diffusion Models. Each of these architectures offers a distinct mechanism for generating new data and presents a unique set of trade-offs between reconstruction quality, latent space interpretability, and computational demands. Framed within the critical context of discovering stable inorganic materials, this review provides an in-depth technical analysis of these three generative model families, comparing their fundamental principles, applications in materials science, and experimental efficacy in inverse design workflows.

Core Architectural Principles and Trade-offs

Variational Autoencoders (VAEs)

VAEs are a class of generative models that learn a probabilistic mapping between a high-dimensional data space (e.g., material structures) and a low-dimensional latent space. The architecture consists of an encoder that compresses an input into a distribution (characterized by a mean and variance), and a decoder that reconstructs the data from a point sampled from this distribution [22] [23]. A key component of the VAE loss function is the Kullback-Leibler (KL) divergence, which regularizes the latent space to approximate a standard normal distribution. This creates a continuous and smooth latent space, enabling meaningful interpolation and exploration for inverse design [24] [25]. However, this information compression often results in generated samples that are blurry or lack high-frequency details, failing to capture intricate features of material microstructures [24] [23].
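For a diagonal Gaussian encoder, the KL term against a standard normal prior has a closed form, 0.5 · Σ(μ² + σ² − log σ² − 1). The sketch below computes it in plain Python; the function names, the squared-error reconstruction term, and the `beta` weight are illustrative assumptions rather than details taken from the cited works.

```python
import math


def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), summed over latent dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, log_var))


def vae_loss(x, x_recon, mu, log_var, beta=1.0):
    """Squared-error reconstruction term plus a beta-weighted KL regularizer."""
    recon = sum((a - b) ** 2 for a, b in zip(x, x_recon))
    return recon + beta * kl_to_standard_normal(mu, log_var)


# An encoder output that already matches the prior incurs no KL penalty.
kl_at_prior = kl_to_standard_normal([0.0, 0.0], [0.0, 0.0])
# Shifting one latent mean by 1 adds 0.5 to the KL term.
kl_shifted = kl_to_standard_normal([1.0, 0.0], [0.0, 0.0])
```

The KL term pulls every encoded distribution toward the same prior, which is what makes the latent space smooth enough to interpolate, and also part of why reconstructions lose fine detail.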

Generative Adversarial Networks (GANs)

GANs operate on an adversarial principle, pitting two neural networks against each other: a generator that creates synthetic data from random noise, and a discriminator that distinguishes between real (training) and generated samples [26] [27]. This adversarial training process, when successful, can yield outputs with high perceptual quality and sharpness. A significant challenge in training GANs is mode collapse, where the generator produces a limited diversity of samples, failing to capture the full breadth of the training data distribution [23]. Furthermore, GANs lack an inherent encoder and can be difficult and computationally expensive to train stably [26] [23].
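The adversarial objective can be written as two binary cross-entropy losses over the discriminator's output probabilities. The following is a minimal sketch, assuming the discriminator returns probabilities strictly between 0 and 1 and using the common non-saturating form of the generator loss; the function names are illustrative.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: push D(x) toward 1 on real data and toward 0 on fakes."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: push D(G(z)) toward 1."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# A discriminator that cannot tell real from fake outputs 0.5 everywhere.
confused = discriminator_loss([0.5, 0.5], [0.5, 0.5])
# The generator's loss falls as the discriminator is fooled more often.
weak_generator = generator_loss([0.1, 0.2])
strong_generator = generator_loss([0.8, 0.9])
```

Because each network's loss depends on the other's current state, neither loss is a reliable indicator of sample quality, which is one reason GAN training is hard to monitor and stabilize.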

Diffusion Models

Diffusion models generate data through an iterative, multi-step process. A forward diffusion process systematically adds Gaussian noise to the training data until it becomes pure noise. The core of the model is a learned reverse diffusion process (or denoising process) that gradually removes this noise to reconstruct the data [22] [23]. Trained to predict the noise added at each step, the model can start from random noise and generate novel, high-quality samples through a series of denoising steps. These models are renowned for producing high-fidelity and diverse samples [23] [25]. Their primary drawback is slow sample generation due to the requirement of hundreds or thousands of neural network evaluations [23].
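The forward noising step has a closed form in the standard DDPM formulation: x_t = sqrt(ᾱ_t) · x_0 + sqrt(1 − ᾱ_t) · ε, where ᾱ_t is the running product of (1 − β). The sketch below illustrates this generic Gaussian case only; the linear schedule, its endpoints, and the function names are assumptions, and crystal generators adapt the process to atom types, coordinates, and lattices.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Noise variances increasing linearly from beta_start to beta_end."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for all s up to t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0, t, alpha_bars, rng):
    """Sample x_t directly from x_0; returns (x_t, eps), where eps is the training target."""
    a_bar = alpha_bars[t]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * e for x, e in zip(x0, eps)]
    return x_t, eps


betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)
x0 = [0.1, 0.5, 0.9]  # toy data vector
x_early, _ = add_noise(x0, t=0, alpha_bars=alpha_bars, rng=random.Random(0))
x_late, eps_late = add_noise(x0, t=999, alpha_bars=alpha_bars, rng=random.Random(0))
```

At `t=0` the sample is nearly identical to `x0`, while at the final step almost no signal remains. A denoising network is trained to recover `eps` from `x_t` and `t`, and sampling runs that prediction in reverse for every step, which is the source of the slow generation noted above.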

Table 1: Comparative Analysis of Core Generative Model Architectures

| Feature | Variational Autoencoders (VAEs) | Generative Adversarial Networks (GANs) | Diffusion Models |
|---|---|---|---|
| Core Principle | Probabilistic encoding/decoding with KL divergence loss [23] | Adversarial training between generator and discriminator [23] | Iterative denoising reversal of a fixed noise process [23] |
| Training Stability | Stable, tractable likelihood loss [23] | Unstable, susceptible to mode collapse [23] | Stable, tractable likelihood loss [23] |
| Output Fidelity | Lower, often blurry outputs [24] [23] | High, sharp outputs [26] [23] | Very high, state-of-the-art quality [22] [23] |
| Sample Diversity | High, encouraged by likelihood loss [23] | Can be low due to mode collapse [23] | High, encouraged by likelihood loss [23] |
| Generation Speed | Fast, single forward pass [23] | Fast, single forward pass [23] | Slow, requires many iterative steps [23] |
| Latent Space | Continuous, smooth, and interpretable [24] | Less interpretable, no direct encoder [23] | High-dimensional (noise space), less compact [24] |

Application in Inverse Design of Inorganic Materials

The inverse design of stable inorganic materials involves using generative models to propose novel crystal structures or chemical compositions that are predicted to be thermodynamically stable and possess targeted functional properties.

VAEs excel in providing a low-dimensional, continuous latent space that is ideal for optimization algorithms. The compact representation allows for efficient navigation using techniques like genetic algorithms or Bayesian optimization to find regions corresponding to materials with desired properties [24]. For instance, the latent space of a VAE has been used to assess material similarity and evolve microstructures by performing vector operations [24]. However, the inherent blurriness of VAE-generated microstructures can lead to inaccuracies in property prediction and hinder the effectiveness of the inverse design cycle [24].

GANs have demonstrated proficiency in efficiently sampling the vast chemical composition space of inorganic materials. For example, a model dubbed MatGAN was trained on the Inorganic Crystal Structure Database (ICSD) and could generate millions of novel hypothetical compositions with a high percentage (84.5%) of them being chemically valid (charge-neutral and electronegativity-balanced), despite these rules not being explicitly programmed [27]. This demonstrates the model's ability to learn implicit chemical composition rules from data. GANs have also been applied in frameworks for the inverse design of sustainable food packaging materials, generating candidates based on target properties like barrier functionality and biodegradability [28].
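A chemical validity rate of this kind is typically computed by a post hoc check on each generated composition. The sketch below covers charge neutrality only and is not MatGAN's actual validation code: the oxidation-state table is deliberately tiny, the function names are illustrative, and the check assumes a single oxidation state per element, so mixed-valence compounds would be rejected. Electronegativity balance is not checked here.

```python
from itertools import product

# Deliberately incomplete table of common oxidation states, for illustration only.
OXIDATION_STATES = {
    "Li": [1],
    "Na": [1],
    "Fe": [2, 3],
    "Ti": [2, 3, 4],
    "O": [-2],
    "Cl": [-1],
}


def is_charge_neutral(composition):
    """True if some assignment of one oxidation state per element sums to zero."""
    elements = list(composition)
    for states in product(*(OXIDATION_STATES[el] for el in elements)):
        total = sum(state * composition[el] for state, el in zip(states, elements))
        if total == 0:
            return True
    return False


def validity_rate(compositions):
    """Fraction of compositions that pass the charge-neutrality check."""
    return sum(is_charge_neutral(c) for c in compositions) / len(compositions)


# Toy "generated" compositions: element -> number of atoms per formula unit.
samples = [
    {"Li": 1, "Cl": 1},
    {"Fe": 2, "O": 3},
    {"Na": 1, "O": 1},
    {"Ti": 1, "O": 2},
]
rate = validity_rate(samples)
```

Three of the four toy compositions pass, and only NaO has no neutral assignment. In a generative workflow the same check serves as an evaluation metric, since the model is never given the rule explicitly.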

Diffusion Models are currently at the forefront of high-quality crystal structure generation. Models like MatterGen are trained on large-scale unlabeled crystal datasets and can generate novel, theoretically stable inorganic structures across a wide range of elements [29]. Their ability to produce high-fidelity outputs makes them exceptionally well-suited for reconstructing complex material microstructures and generating candidates that are both novel and structurally sound [22] [24]. Furthermore, their iterative nature is well-adapted for conditional generation tasks, such as inpainting or property-constrained generation.

Table 2: Performance of Generative Models in Materials Science Tasks

| Model Type | Reported Metric | Value / Outcome | Context and Dataset |
|---|---|---|---|
| GAN (MatGAN) [27] | Chemical Validity Rate | 84.5% | Generation of charge-neutral & electronegativity-balanced compositions from ICSD data. |
| GAN (MatGAN) [27] | Novelty | 92.53% | 2 million generated samples were not in the training dataset. |
| Diffusion (MatInvent) [29] | Property Evaluation Reduction | Up to 378-fold | Inverse design of crystals with target properties vs. state-of-the-art. |
| VAE-CDGM (Hybrid) [24] | Reconstruction Quality | Superior to standalone VAE | Statistical descriptors (e.g., two-point correlation) on material microstructures. |
| GAN (for Packaging) [28] | Screening Acceleration | 20–100x | Compared to traditional high-fidelity simulations (e.g., DFT). |

Detailed Experimental Protocols

Protocol 1: Microstructure Reconstruction with a VAE-Guided Conditional Diffusion Model (VAE-CDGM)

This hybrid protocol addresses the trade-off between the efficient latent space of a VAE and the high output quality of a diffusion model [24].

VAE Training and Initial Reconstruction:

- Data Preparation: A dataset of 2D microstructure images (e.g., of random inclusions, metamaterials) is assembled, typically at resolutions like 128x128 pixels.

- Model Training: A VAE is trained on the microstructure dataset. The encoder learns to map input images to a low-dimensional latent vector, while the decoder learns to reconstruct the images.

- Initial Generation: The trained VAE decoder is used to generate initial, but often blurry, reconstructions of the microstructures from points in the latent space.

Conditional Diffusion Model Training:

- Conditioning Input: The blurred reconstructions from the VAE are used as the conditional input to a Denoising Diffusion Probabilistic Model (DDPM).

- Target Output: The original, high-quality microstructure images are used as the target for the diffusion model to learn.