Inverse Design of Materials Using Deep Generative Models: A Comprehensive Guide for Researchers

Abstract

This article provides a comprehensive overview of the rapidly evolving field of inverse materials design using deep generative models. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles, core methodologies—including Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Diffusion Models—and their practical applications in discovering novel semiconductors, catalysts, and van der Waals heterostructures. The content addresses critical challenges such as data scarcity, computational cost, and synthesizability, while offering troubleshooting guidance and a comparative analysis of model performance and validation frameworks. By synthesizing key insights from foundational concepts to real-world applications, this guide aims to equip practitioners with the knowledge to leverage these transformative AI tools for accelerating materials discovery in biomedical and clinical research.

Foundations of Inverse Design: From Trial-and-Error to AI-Driven Discovery

Inverse design represents a fundamental paradigm shift in materials discovery, moving away from traditional Edisonian (trial-and-error) approaches toward computational automation. This methodology inverts the traditional design process by defining desired performance metrics first, then using computational models to automatically identify material structures or device configurations that fulfill these specifications. Unlike conventional design, which progresses from structure to property, inverse design starts with the target property and works backward to identify optimal structures, often yielding non-intuitive designs that outperform those conceived by human designers [1]. This approach is increasingly enabled by deep generative models and gradient-based optimization techniques, allowing researchers to navigate complex, high-dimensional design spaces efficiently.

The core principle of inverse design involves formulating an objective function that quantifies desired performance, then employing optimization algorithms to find the design parameters that maximize this function. In photonics, this might involve maximizing light transmission between specific waveguide modes; in materials science, it could involve generating crystals with target electronic properties. The resulting designs often defy conventional wisdom, demonstrating superior performance through geometries that would be difficult to conceive through human intuition alone [2] [1].

Fundamental Methodologies and Computational Tools

The implementation of inverse design relies on sophisticated computational frameworks, primarily falling into two categories: gradient-based optimization and deep generative models. Gradient-based methods, such as those employing the adjoint method, are particularly powerful for problems with continuous parameters and known physics governed by differential equations. These methods compute gradients of an objective function with respect to thousands or millions of design parameters simultaneously using only two simulations: one forward and one adjoint simulation [1]. This makes them exceptionally efficient for optimizing photonic devices and aerodynamic components where physical laws are well-established.
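The "two simulations" claim can be made concrete with a small linear model. The NumPy sketch below rests on stated assumptions:

- A linear system A(p)x = b stands in for a discretized physics model. Frequency-domain Maxwell solvers, for example, are linear in the fields.
- The parameterization A(p) = A0 + diag(p) is hypothetical and chosen for simplicity.

One forward solve and one adjoint solve then yield the gradient with respect to every entry of p. A finite-difference check, which needs many more solves, confirms the result.

```python
import numpy as np


def objective_and_adjoint_gradient(p, A0, b, c):
    """f(p) = c^T x(p) with A(p) x = b and the assumed A(p) = A0 + diag(p).

    Returns f and df/dp from exactly two linear solves, independent of len(p).
    """
    A = A0 + np.diag(p)
    x = np.linalg.solve(A, b)       # forward "simulation"
    lam = np.linalg.solve(A.T, c)   # adjoint "simulation": A^T lam = df/dx = c
    f = c @ x
    # dA/dp_i = e_i e_i^T, so df/dp_i = -lam^T (dA/dp_i) x = -lam_i * x_i
    grad = -lam * x
    return f, grad


def finite_difference_gradient(p, A0, b, c, h=1e-6):
    """Central differences: 2 * len(p) objective evaluations, used only as a check."""
    grad = np.zeros_like(p)
    for i in range(p.size):
        step = np.zeros_like(p)
        step[i] = h
        f_plus, _ = objective_and_adjoint_gradient(p + step, A0, b, c)
        f_minus, _ = objective_and_adjoint_gradient(p - step, A0, b, c)
        grad[i] = (f_plus - f_minus) / (2 * h)
    return grad


rng = np.random.default_rng(0)
n = 50
A0 = 4.0 * np.eye(n) + 0.1 * rng.standard_normal((n, n))  # well-conditioned toy operator
p = rng.uniform(0.0, 1.0, n)                              # design parameters
b = rng.standard_normal(n)                                # source term
c = rng.standard_normal(n)                                # linear "monitor" weights

f, g_adjoint = objective_and_adjoint_gradient(p, A0, b, c)
g_fd = finite_difference_gradient(p, A0, b, c)
print("max |adjoint - finite difference| =", np.max(np.abs(g_adjoint - g_fd)))
```

The adjoint cost stays at two solves whether p has 50 entries or millions. The finite-difference cost grows linearly with len(p), which is why the adjoint approach dominates for pixelated photonic designs.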

Deep generative models offer a complementary approach, particularly valuable when the design space is discrete or the physical relationships are complex. Models such as Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and diffusion models learn to encode material representations into a continuous latent space. Through exploration and manipulation of this latent space, these models can generate novel material structures with targeted properties [3] [4]. For example, the Crystal Diffusion Variational Autoencoder (CDVAE) incorporates invariance neural networks to account for the permutation, translation, rotation, and periodicity of crystal structures, significantly enhancing generation capabilities for crystalline materials [4].

Table 1: Comparison of Major Inverse Design Methodologies

| Methodology | Key Mechanism | Primary Applications | Advantages | Limitations |
|---|---|---|---|---|
| Adjoint Method | Gradient computation using forward/adjoint simulations | Photonic devices, fluid dynamics, aerodynamics | Highly efficient for continuous parameters; Requires few simulations | Requires differentiable model; Physics must be well-defined |
| Variational Autoencoders (VAEs) | Encoder-decoder architecture learning latent representations | Crystal structure generation, molecular design | Continuous latent space enables interpolation; Stable training | May generate blurry or averaged structures |
| Generative Adversarial Networks (GANs) | Generator-discriminator competition producing realistic outputs | Semiconductor design, crystal generation | Produces sharp, realistic structures | Training instability; Mode collapse issues |
| Diffusion Models | Progressive denoising from noise to structure | Van der Waals heterostructures, molecule generation | High-quality generation; Training stability | Computationally intensive sampling process |

Application Notes: Inverse Design in Practice

Photonic Device Design

In photonics, inverse design has demonstrated remarkable success in creating compact, high-performance devices. A prime example is the mode converter designed using Tidy3D's inverse design capabilities. This integrated photonics component converts a fundamental waveguide mode to a higher-order mode through a rectangular region with pixelated permittivity, where each pixel's value is independently tunable between vacuum and a maximum permittivity value [2]. The objective function maximizes power conversion between input and output modes, with gradient-based optimization efficiently navigating the enormous design space comprising thousands of permittivity values. To ensure fabricable designs, the process incorporates smoothing and binarization filters that enforce smooth features and drive permittivity values toward either vacuum or the waveguide material [2].
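The smoothing and binarization filters can be illustrated with a common topology-optimization recipe:

1. A normalized conic (cone-shaped) smoothing filter.
2. A tanh projection that pushes filtered values toward 0 or 1.

This is a minimal NumPy sketch, not the Tidy3D implementation. The filter radius, projection strength `beta`, threshold `eta`, and the maximum permittivity `eps_max` are all assumed values.

```python
import numpy as np


def conic_filter(rho, radius_px):
    """Smooth a 2D density field with a normalized cone-shaped kernel (edge padding)."""
    r = int(np.ceil(radius_px))
    offsets = np.arange(-r, r + 1)
    di, dj = np.meshgrid(offsets, offsets, indexing="ij")
    kernel = np.maximum(0.0, radius_px - np.hypot(di, dj))
    kernel /= kernel.sum()
    padded = np.pad(rho, r, mode="edge")
    nx, ny = rho.shape
    out = np.zeros((nx, ny))
    for ia, da in enumerate(offsets):
        for ib, db in enumerate(offsets):
            out += kernel[ia, ib] * padded[r + da : r + da + nx, r + db : r + db + ny]
    return out


def tanh_projection(rho, beta, eta=0.5):
    """Push values in [0, 1] toward 0 or 1; larger beta gives a sharper (more binary) result."""
    num = np.tanh(beta * eta) + np.tanh(beta * (rho - eta))
    den = np.tanh(beta * eta) + np.tanh(beta * (1.0 - eta))
    return num / den


def params_to_permittivity(params, radius_px=3.0, beta=50.0, eps_max=4.0):
    """Map raw design parameters in [0, 1] to permittivity between vacuum (1) and eps_max (assumed)."""
    rho = tanh_projection(conic_filter(params, radius_px), beta)
    return 1.0 + (eps_max - 1.0) * rho


rng = np.random.default_rng(1)
params = rng.uniform(size=(50, 30))   # one value per design-region pixel
eps = params_to_permittivity(params)
print(eps.shape, eps.min(), eps.max())
```

Both steps are smooth functions of the parameters, so gradients pass through them during optimization. In practice `beta` is commonly increased over the course of the run, so that early iterations explore freely and later ones converge to a nearly binary design.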

Van der Waals Heterostructure Design

For two-dimensional materials, the ConditionCDVAE+ framework demonstrates inverse design of van der Waals (vdW) heterostructures. This model addresses the challenge of incorporating target property constraints by integrating a crystal diffusion variational autoencoder with a conditional guidance module that combines Low-rank Multimodal Fusion and Generative Adversarial Networks [4]. This approach maps properties and structures into a joint latent space, enabling generation of novel vdW heterostructures based on target optoelectronic properties. When evaluated on a dataset of Janus III-VI vdW heterostructures, 99.51% of the generated structures converged to energy minima in Density Functional Theory (DFT) calculations, supporting the physical viability of the generated structures [4].

Semiconductor Materials Discovery

An integrated inverse design framework for semiconductors combines composition generation (VGD-CG) with template-based structure prediction (TSP). The VGD-CG model incorporates conditional variational autoencoders, generative adversarial networks, and diffusion models to explore compositional spaces like N-Ga, Si-Ge, and V-Bi-O [5]. This approach successfully identified several potential semiconductor materials with target properties by leveraging decomposition enthalpies, synthesizability information, and band gaps as design constraints. The comparative analysis of VAE, GAN, and DM approaches provides insights into their respective strengths and limitations for inorganic materials design [5].

Table 2: Performance Metrics of Inverse Design Models in Materials Science

| Model | Application Domain | Key Performance Metrics | Results |
|---|---|---|---|
| ConditionCDVAE+ | Van der Waals heterostructures | Reconstruction RMSE, Match Rate, Ground-state Convergence | RMSE: 0.1842, Match Rate: 25.35%, Convergence: 99.51% [4] |
| CDVAE | General inorganic crystals | Validity, Coverage (COV), Property Distribution | >90% Validity, COV-R: 65.2%, COV-P: 59.8% [4] |
| Inverse Design Mode Converter | Photonic waveguides | Power Conversion Efficiency | Optimized design achieving target mode conversion [2] |
| VGD-CG with TSP | Semiconductor materials | Novel Stable Materials Identified | Several potential semiconductors discovered in N-Ga, Si-Ge, V-Bi-O spaces [5] |

Experimental Protocols

Protocol 1: Inverse Design of a Photonic Mode Converter

This protocol outlines the inverse design process for creating a photonic mode converter using gradient-based optimization [2].

Initial Setup and Parameter Definition:

- Define operational wavelength (e.g., 1.0 μm) and calculate corresponding frequency (freq0 = td.C_0 / wavelength).

- Set design region dimensions (e.g., lx = 5.0 μm, ly = 3.0 μm) and resolution (dl_design_region = 0.01 μm).

- Initialize design parameters as a random array with dimensions corresponding to the number of pixels in the design region (nx × ny).

Simulation Construction:

- Create static waveguide structure with specified permittivity (eps_wg) and width.

- Define a function make_input_structures that converts parameters to permittivity distributions using filtering and projection operations to ensure smooth, binarized features.

- Implement a function make_sim that constructs the simulation, including the design region, source, and monitors.

- Set up a ModeSource with the fundamental mode (mode_index_in = 0) and a ModeMonitor to measure conversion into the output mode (mode_index_out = 2).

Optimization Loop:

- Define objective function that runs simulation and returns transmission to target mode.

- Compute the gradient using the adjoint method (e.g., gradient = grad(f)(params)).

- Update parameters using a gradient-based optimizer (e.g., Adam, L-BFGS).

- Iterate until convergence or for a specified number of iterations.

- Apply final filtering and binarization to ensure fabricable design.
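The optimization loop itself can be sketched without a solver, using two stand-ins:

- A hypothetical smooth objective plays the role of the simulated mode-conversion efficiency.
- Its analytic gradient plays the role of the adjoint gradient.

Adam, one of the optimizers listed above, performs gradient ascent, and the parameters are clipped to [0, 1] after each step. The objective, grid size, and hyperparameters are assumptions for illustration.

```python
import numpy as np


def toy_objective_and_grad(p, target):
    """Hypothetical stand-in for transmission into the target mode (peaks at 1 when p == target)."""
    diff = p - target
    f = np.exp(-np.mean(diff ** 2))
    grad = f * (-2.0 * diff / p.size)   # analytic gradient; an adjoint solve would supply this
    return f, grad


def adam_maximize(f_and_grad, p0, lr=0.05, steps=200, beta1=0.9, beta2=0.999, eps=1e-8):
    """Gradient ascent with Adam, clipping parameters to the allowed range [0, 1]."""
    p = p0.copy()
    m = np.zeros_like(p)
    v = np.zeros_like(p)
    history = []
    for t in range(1, steps + 1):
        f, g = f_and_grad(p)
        history.append(f)
        m = beta1 * m + (1 - beta1) * g          # first-moment estimate
        v = beta2 * v + (1 - beta2) * g * g      # second-moment estimate
        m_hat = m / (1 - beta1 ** t)             # bias corrections
        v_hat = v / (1 - beta2 ** t)
        p = np.clip(p + lr * m_hat / (np.sqrt(v_hat) + eps), 0.0, 1.0)  # "+" because we maximize
    return p, history


rng = np.random.default_rng(2)
target = rng.uniform(size=(20, 15))   # unknown optimum of the toy objective
p0 = rng.uniform(size=(20, 15))       # random initial design, as in the setup step
p_opt, history = adam_maximize(lambda p: toy_objective_and_grad(p, target), p0)
print(f"objective: {history[0]:.3f} -> {history[-1]:.3f}")
```

Adam's per-parameter step normalization suits this setting. Adjoint gradients for individual pixels can differ by orders of magnitude, and normalizing keeps every pixel moving at a comparable rate.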

Validation:

- Perform full-wave simulation of final design to verify performance.

- Check manufacturing constraints compliance (feature sizes, permittivity extremes).

Protocol 2: Crystal Generation with ConditionCDVAE+

This protocol details the use of deep generative models for inverse design of crystalline materials, specifically van der Waals heterostructures [4].

Data Preparation:

- Curate dataset of crystal structures with associated properties (e.g., J2DH-8 dataset for vdW heterostructures).

- Preprocess structures: normalize lattice parameters, align orientations, and featurize atomic coordinates.

- Split data into training, validation, and test sets (e.g., 60:20:20 ratio).

Model Configuration:

- Implement ConditionCDVAE+ architecture with three modules:

  - VAE module with EquiformerV2-based encoder and decoder for SE(3)-equivariant processing.

  - Diffusion module for the denoising process.

  - Conditional guidance module integrating LMF and GAN for property-structure mapping.

- Set hyperparameters: latent space dimension, learning rate, batch size, diffusion steps.

Training Procedure:

- Pre-train VAE component to reconstruct crystal structures from the dataset.

- Train diffusion model on denoising task.

- Jointly train conditional guidance module to map target properties to latent representations.

- Validate reconstruction performance using StructureMatcher (match rate, RMSE).

Inverse Design Generation:

- Encode target properties into conditional latent vector.

- Sample from latent space under property constraints.

- Decode to generate candidate crystal structures.

- Filter valid structures based on geometric constraints (minimum atomic distances, charge neutrality).
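The geometric and compositional filters can be sketched in NumPy. The following are assumptions for illustration:

- the 0.5 Å minimum-distance threshold,
- the per-element oxidation-state lists,
- the CsCl-type test cell.

The distance search covers only the home cell and its 26 neighboring images. That is adequate for reasonably shaped cells but can miss the closest image in very skewed ones, where a library such as pymatgen is the safer choice.

```python
import numpy as np
from itertools import product


def min_interatomic_distance(frac_coords, lattice):
    """Smallest distance between any atom and any other atom or periodic image (units of lattice)."""
    frac = np.asarray(frac_coords, dtype=float)
    lat = np.asarray(lattice, dtype=float)              # rows are the lattice vectors a, b, c
    shifts = np.array(list(product((-1, 0, 1), repeat=3)), dtype=float)
    nonzero_shift = np.any(shifts != 0, axis=1)
    best = np.inf
    for i in range(len(frac)):
        for j in range(len(frac)):
            d = frac[j] - frac[i]
            d -= np.round(d)                             # minimum-image wrap in fractional space
            dists = np.linalg.norm((d + shifts) @ lat, axis=1)
            if i == j:
                dists = dists[nonzero_shift]            # an atom is not its own neighbor, but its images are
            best = min(best, dists.min())
    return best


def is_charge_balanced(species, allowed_states):
    """True if some choice of one oxidation state per element gives zero net charge."""
    elements = sorted(set(species))
    counts = [species.count(el) for el in elements]
    for states in product(*(allowed_states[el] for el in elements)):
        if sum(n * q for n, q in zip(counts, states)) == 0:
            return True
    return False


def is_valid(frac_coords, lattice, species, allowed_states, min_dist=0.5):
    """Combine the geometric (minimum distance, assumed 0.5 Å) and charge-neutrality checks."""
    return (min_interatomic_distance(frac_coords, lattice) >= min_dist
            and is_charge_balanced(species, allowed_states))


lattice = 4.1 * np.eye(3)                                # hypothetical cubic cell, 4.1 Å
states = {"Cs": [1], "Cl": [-1]}                         # hypothetical allowed oxidation states
good = is_valid([[0, 0, 0], [0.5, 0.5, 0.5]], lattice, ["Cs", "Cl"], states)
too_close = is_valid([[0.01, 0, 0], [0.99, 0, 0]], lattice, ["Cs", "Cl"], states)
print(good, too_close)
```

The second structure fails even though its fractional coordinates look far apart. Across the periodic boundary the two atoms are only 0.082 Å apart.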

Validation and Analysis:

- Assess generation quality using validity, coverage, and property distribution metrics.

- Perform DFT calculations to verify thermodynamic stability and property accuracy.

- Select top candidates for experimental synthesis consideration.

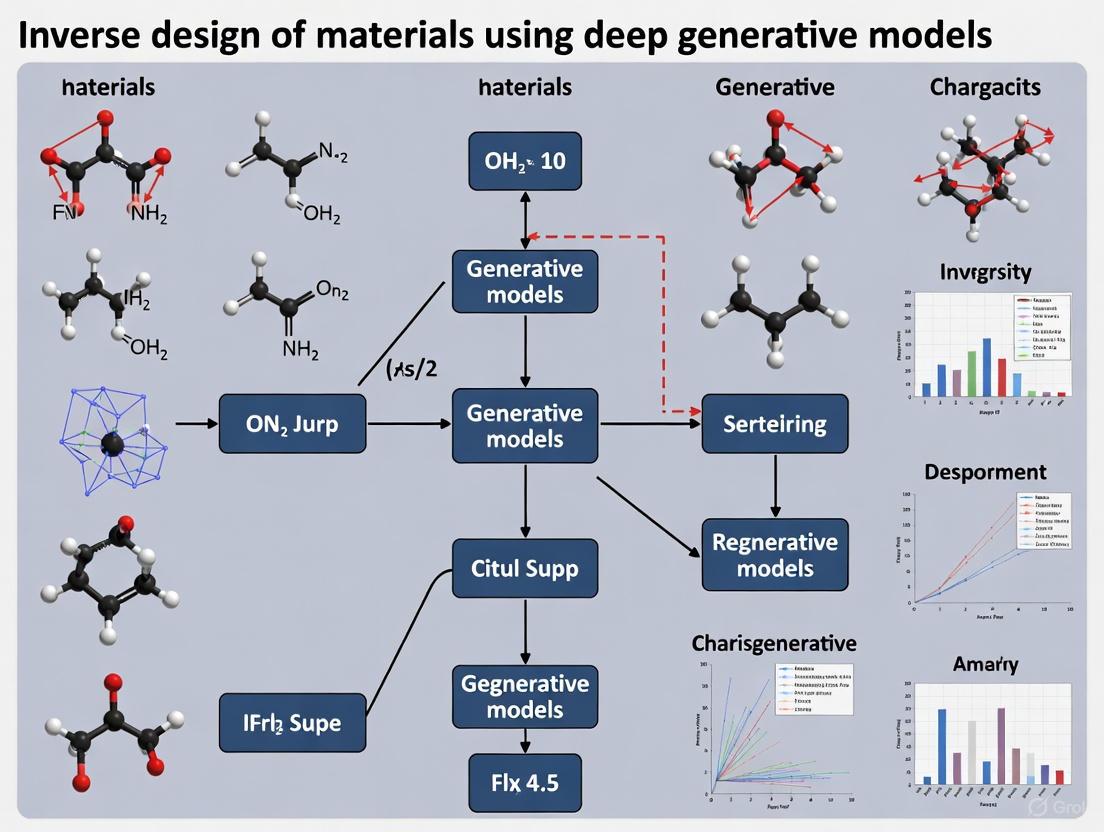

Visualization of Workflows

Figure: Inverse Design High-Level Workflow (Inverse Design vs Traditional Workflow).

Figure: Deep Generative Model Framework (Generative Models for Material Design).

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Inverse Design

| Tool/Category | Specific Examples | Function | Application Context |
|---|---|---|---|
| Simulation Engines | Tidy3D, DFT Codes (VASP, Quantum ESPRESSO) | Provides physical modeling and property calculation | Photonic device simulation; Material property prediction [2] [4] |
| Optimization Frameworks | TidyGrad, SciPy Optimize | Enables gradient computation and parameter optimization | Inverse design photonics; Structural optimization [1] |
| Generative Models | VAEs, GANs, Diffusion Models, ConditionCDVAE+ | Learns material representations and generates novel structures | Crystal structure generation; Molecular design [3] [4] [5] |
| Material Databases | Materials Project, J2DH-8, AFLOWLIB | Provides training data and validation benchmarks | Model training; Property prediction [4] |
| Analysis & Validation | pymatgen, StructureMatcher | Validates generated structures and compares to ground truth | Crystal structure analysis; Matching generated materials [4] |
| Active Learning Frameworks | pyiron, Bluesky, ChemOS | Manages autonomous experimentation loops | Closed-loop materials discovery [6] [7] |

Inverse design represents a transformative approach to materials discovery and device design, shifting from intuition-driven methods to computational automation. By combining gradient-based optimization with deep generative models, this paradigm enables exploration of design spaces far too large and high-dimensional to search manually. Integrating these computational approaches with experimental validation through active learning frameworks could substantially accelerate materials discovery, potentially shortening development timelines from decades to years or months. As these methodologies mature and become more accessible, they may help address urgent materials needs in energy, healthcare, and electronics through targeted, efficient design rather than serendipitous discovery.

The Role of Deep Generative Models in Learning Material Structure-Property Relationships

The inverse design of materials represents a paradigm shift from traditional, often serendipitous discovery methods toward a targeted approach where materials are designed from specific property requirements. Deep generative models (DGMs) are powering this revolution by learning the complex, high-dimensional relationships between material structures and their properties, enabling the generation of novel candidates that satisfy desired performance criteria [8]. This capability is critical across technological domains, from developing better battery electrodes and catalysts to designing advanced high-entropy alloys and composite materials [9] [10].

These models learn the underlying probability distribution P(x) of material structures and properties from existing data, creating a lower-dimensional latent space that captures the essential features governing material behavior [8] [10]. This latent space enables inverse design by allowing researchers to sample points corresponding to target properties and decode them into viable material structures, effectively inverting the traditional structure-to-property prediction pipeline [8].
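One simple way to "sample points corresponding to target properties" takes three steps:

1. Draw latent vectors from the prior.
2. Score them with a property predictor trained on the latent space.
3. Keep the closest matches for decoding.

The sketch below assumes a hypothetical linear property head standing in for a band-gap predictor. It shows only this screening variant; conditional models such as ConditionCDVAE+ and MatterGen instead build the target into the sampling process itself.

```python
import numpy as np


def screen_latents(property_head, target, n_samples, latent_dim, k, rng):
    """Sample z ~ N(0, I), predict a property for each sample, and keep the k closest to the target."""
    z = rng.standard_normal((n_samples, latent_dim))
    predicted = property_head(z)
    best = np.argsort(np.abs(predicted - target))[:k]
    return z[best], predicted[best]


rng = np.random.default_rng(7)
latent_dim = 16
w = rng.standard_normal(latent_dim)   # weights of the hypothetical linear property head


def band_gap_head(z):
    """Hypothetical surrogate mapping latent vectors to a band gap (eV)."""
    return 1.5 + 0.3 * (z @ w)


z_sel, gap_sel = screen_latents(band_gap_head, target=2.0, n_samples=5000,
                                latent_dim=latent_dim, k=10, rng=rng)
print(gap_sel)  # z_sel would then be passed to the trained decoder
```

This approach is only as reliable as the surrogate. Decoded candidates still need independent checks, such as DFT, before the predicted property can be trusted.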

Deep Generative Model Architectures for Materials Science

Several specialized deep generative architectures have been developed to handle the unique challenges of materials data, including periodicity in crystals, invariance to symmetry operations, and diverse representation formats.

Conditional Crystal Diffusion Variational Autoencoder (ConditionCDVAE+)

ConditionCDVAE+ enhances the Crystal Diffusion Variational Autoencoder (CDVAE) framework by incorporating SE(3)-equivariant graph neural networks (EquiformerV2) as encoder-decoder components, enabling robust handling of crystal symmetries [4]. The model integrates a conditional guidance module combining Low-rank Multimodal Fusion (LMF) and Generative Adversarial Networks (GAN) to map target properties and structures into a joint latent space for constrained generation [4].

Experimental Protocol: Van der Waals Heterostructure Generation

- Objective: Generate novel, stable van der Waals (vdW) heterostructures with target electronic properties.

- Training Data: Janus 2D III–VI van der Waals Heterostructures (J2DH-8) dataset containing 19,926 structures [4].

- Model Configuration:

  - Encoder: EquiformerV2 maps crystal graphs to latent distributions q(z|x).

  - Diffusion Module: Equivariant denoising network refines atom coordinates, lattice parameters, and atom types.

  - Conditioning: LMF fuses property constraints (e.g., bandgap, stability) into the latent space.

- Generation: Sampling from noise followed by equivariant denoising steps under property constraints [4].

- Validation: Density Functional Theory (DFT) calculations show that 99.51% of generated samples converge to energy minima [4].

MatterGen: Diffusion Model for Inorganic Crystals

MatterGen employs a diffusion process specifically designed for crystalline materials, separately corrupting and denoising atom types, coordinates, and periodic lattice parameters [11] [12]. Its architecture incorporates adapter modules for fine-tuning on property-labeled datasets, enabling generation under diverse constraints including chemistry, symmetry, and electronic properties [12].

Experimental Protocol: Property-Constrained Crystal Generation

- Objective: Generate novel, stable inorganic crystals with target properties (e.g., high bulk modulus, specific magnetism).

- Training Data: 607,683 stable structures from Materials Project and Alexandria databases [12].

- Diffusion Process:

  - Atom Corruption: Categorical diffusion with masking.

  - Coordinate Corruption: Wrapped normal distribution respecting periodic boundaries.

  - Lattice Corruption: Noise addition preserving symmetry.

- Conditioning: Fine-tuning with adapter modules and classifier-free guidance steers generation [12].

- Validation: DFT relaxation and property calculation; experimental synthesis for select candidates (e.g., TaCr₂O₆ with measured bulk modulus within 20% of target) [11] [12].
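The atom-type and coordinate corruption steps above can be sketched generically in NumPy. This is not MatterGen's code. The following are assumptions:

- the noise level,
- the integer MASK token,
- the number of periodic images kept in the wrapped-normal score,
- the guidance parameterization.

The score function is the quantity a denoising network learns to predict for coordinates. The last function is the standard classifier-free guidance blend of unconditional and conditional predictions.

```python
import numpy as np

MASK = -1  # hypothetical integer token for the absorbing "masked" atom type


def corrupt_atom_types(types, mask_prob, rng):
    """Absorbing-state corruption: each atom type is independently replaced by MASK with probability mask_prob."""
    types = np.asarray(types)
    return np.where(rng.random(types.shape) < mask_prob, MASK, types)


def corrupt_frac_coords(frac, sigma, rng):
    """Wrapped-normal corruption: add Gaussian noise to fractional coordinates, then wrap into the unit cell."""
    return np.mod(frac + sigma * rng.standard_normal(np.shape(frac)), 1.0)


def wrapped_normal_score(x_t, x_0, sigma, n_images=3):
    """d/dx_t log p(x_t | x_0) for a wrapped normal, summing over +/- n_images periodic images."""
    k = np.arange(-n_images, n_images + 1)
    d = (np.asarray(x_t) - np.asarray(x_0))[..., None] + k   # displacement to each image
    log_w = -d ** 2 / (2.0 * sigma ** 2)
    w = np.exp(log_w - log_w.max(axis=-1, keepdims=True))    # numerically stable softmax weights
    w /= w.sum(axis=-1, keepdims=True)
    return np.sum(w * (-d / sigma ** 2), axis=-1)


def classifier_free_guidance(pred_uncond, pred_cond, guidance_scale):
    """Scale 0 gives the unconditional prediction, 1 the conditional one, >1 extrapolates toward the condition."""
    return pred_uncond + guidance_scale * (pred_cond - pred_uncond)


rng = np.random.default_rng(3)
frac = rng.uniform(size=(8, 3))        # 8 atoms, fractional coordinates
types = rng.integers(1, 84, size=8)    # atomic numbers
noisy_frac = corrupt_frac_coords(frac, sigma=0.1, rng=rng)
noisy_types = corrupt_atom_types(types, mask_prob=0.5, rng=rng)
score = wrapped_normal_score(noisy_frac, frac, sigma=0.1)
print(noisy_types, score.shape)
```

Because the score sums over periodic images, an atom that crosses the cell boundary is pulled back along the short path through the boundary rather than across the whole cell.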

Conditional Generative Adversarial Networks (cGANs) for Composites and Alloys

cGANs learn to generate material structures through adversarial training between a generator and discriminator, with condition vectors enforcing property constraints [13] [10]. This approach has proven effective for designing composite microstructures and high-entropy alloys.

Experimental Protocol: Composite Microstructure Inverse Design

- Objective: Generate composite microstructures matching target full-range stress-strain curves.

- Training Data: Finite Element Analysis (FEA) simulations of hybrid composites with varying filler properties and distributions [13].

- Model Architecture: cGAN with Long Short-Term Memory (LSTM) networks to handle sequential stress-strain data.

  - Generator: Maps random noise and condition vector (stress-strain curve) to microstructure images.

  - Discriminator: Distinguishes between real and generated microstructures under the given conditions [13].

- Validation: FEA on generated microstructures; Fréchet Inception Distance (FID) scores quantify similarity (validation FID: 0.21) [13].
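FID compares the means and covariances of feature vectors extracted from real and generated samples; for images these are typically taken from an Inception network. The NumPy sketch below computes the underlying Fréchet distance between two Gaussians. The feature arrays are placeholders for whatever embedding is used. The matrix square root is computed through the symmetric form Σa^{1/2} Σb Σa^{1/2}, whose square root has the same trace as (Σa Σb)^{1/2}.

```python
import numpy as np


def _sqrtm_psd(m):
    """Square root of a symmetric positive semi-definite matrix via eigendecomposition."""
    vals, vecs = np.linalg.eigh((m + m.T) / 2.0)
    return (vecs * np.sqrt(np.clip(vals, 0.0, None))) @ vecs.T


def frechet_distance_gaussians(mu_a, cov_a, mu_b, cov_b):
    """||mu_a - mu_b||^2 + Tr(cov_a + cov_b - 2 (cov_a cov_b)^{1/2})."""
    mu_a, mu_b = np.atleast_1d(mu_a), np.atleast_1d(mu_b)
    cov_a, cov_b = np.atleast_2d(cov_a), np.atleast_2d(cov_b)
    root_a = _sqrtm_psd(cov_a)
    cross = _sqrtm_psd(root_a @ cov_b @ root_a)  # same trace as (cov_a cov_b)^{1/2}
    return float(np.sum((mu_a - mu_b) ** 2) + np.trace(cov_a) + np.trace(cov_b) - 2.0 * np.trace(cross))


def frechet_distance(feats_real, feats_gen):
    """FID-style score from two (n_samples, n_features) arrays of embedding vectors."""
    return frechet_distance_gaussians(
        feats_real.mean(axis=0), np.cov(feats_real, rowvar=False),
        feats_gen.mean(axis=0), np.cov(feats_gen, rowvar=False),
    )


rng = np.random.default_rng(4)
real = rng.standard_normal((2000, 16))           # placeholder embeddings of real microstructures
gen = rng.standard_normal((2000, 16)) + 0.3      # placeholder embeddings of generated ones
print(frechet_distance(real, gen), frechet_distance(real, real))
```

Lower is better, and identical feature sets score zero. Absolute values depend on the embedding, so scores are comparable only when computed with the same feature extractor.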

Performance Comparison of Deep Generative Models

Table 1: Quantitative Performance of Generative Models on Materials Design Tasks

| Model | Architecture | Material System | Stability Rate | Novelty Rate | Property Control | Key Metrics |
|---|---|---|---|---|---|---|
| ConditionCDVAE+ [4] | Conditional Diffusion VAE | 2D vdW Heterostructures | 99.51% (energy minima) | N/A | Electronic, Optical | RMSE: 0.1842 (reconstruction) |
| MatterGen [12] | Diffusion | Inorganic Crystals | 78% (<0.1 eV/atom hull) | 61% new structures | Chemistry, Symmetry, Mechanical, Electronic, Magnetic | SUN materials: >2× baseline; RMSD: <0.076Å |
| cGAN-LSTM [13] | Conditional GAN | Hybrid Composites | N/A | N/A | Full stress-strain curves | FID: 0.21-0.577 |
| CDVAE [4] | Diffusion VAE | General Crystals | ~75% (DFT-stable) | Moderate | Limited properties | Baseline for comparison |

Table 2: Data Requirements and Computational Resources

| Model | Training Data Size | Data Sources | Compute Requirements | Fine-tuning Capability |
|---|---|---|---|---|
| ConditionCDVAE+ | 19,926 structures [4] | J2DH-8 dataset [4] | High (equivariant networks) | Yes (property conditioning) |
| MatterGen | 607,683 structures [12] | Materials Project, Alexandria [12] | Very High (large-scale diffusion) | Yes (adapter modules) |
| cGAN-LSTM | FEA simulation data [13] | Synthetic (Abaqus) | Moderate | Limited |
| Foundation Models [14] | Millions of structures | Multi-database | Extremely High | Extensive fine-tuning |

Table 3: Key Computational Tools and Databases for Inverse Materials Design

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| Materials Project [14] [12] | Database | Crystal structures and computed properties | Public |
| Alexandria [12] | Database | Expanded inorganic crystal structures | Public |
| ALKEMIE [4] | Platform | High-throughput first-principles calculations | Research |
| pymatgen [4] | Software Library | Structural analysis and materials generation | Open-source |
| DFT Codes | Simulation | Quantum mechanical validation (VASP, Quantum ESPRESSO) | Academic/Commercial |
| StructureMatcher [4] | Algorithm | Crystal structure comparison and matching | Open-source |

Workflow Visualization

Figure: Inverse Design Workflow. The standard inverse design pipeline begins with property definition, proceeds through model training and conditional generation, and iterates based on validation results.

Figure: Conditional Generation. DGMs learn a joint latent space representation of structures and properties, enabling generation of novel structures when conditioned on target properties.

Future Directions and Challenges

While deep generative models have demonstrated remarkable capabilities for inverse materials design, several challenges remain. Data scarcity for specific material classes, computational costs of validation, and ensuring synthesizability of generated candidates represent active research areas [8]. Emerging approaches include physics-informed architectures that incorporate domain knowledge, multimodal models that integrate diverse data sources, and closed-loop discovery systems that combine generative AI with robotic experimentation [9] [14] [8].

The integration of foundation models pretrained on broad scientific data with specialized generative architectures promises to further accelerate materials discovery [14]. As these models mature, they will increasingly enable the targeted design of materials addressing critical challenges in sustainability, energy storage, and healthcare innovation.

The inverse design of materials represents a paradigm shift in materials science, moving away from traditional trial-and-error experimentation towards a targeted approach where materials are designed based on desired properties [8]. This process is facilitated by deep generative models, which learn the underlying probability distribution of existing materials data [8]. Once learned, these models can generate novel, chemically valid material structures by sampling from this distribution, effectively navigating the vast chemical space which is estimated to exceed 10^60 carbon-based molecules [8] [15]. The ability to perform inverse design allows researchers to specify target properties, such as a specific bandgap for semiconductors or high elasticity for polymers, and use the generative model to propose candidate structures that meet these criteria [8] [4].

Several generative model families have emerged as powerful tools for this task, primarily Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), Diffusion Models, and Generative Flow Networks (GFlowNets) [16] [8] [15]. Each family employs a distinct mechanism to learn and generate data, offering different trade-offs in generation quality, diversity, training stability, and computational requirements [16] [17]. Together they are accelerating scientific discovery, with the potential to shorten the decade-long, multimillion-dollar process of traditional materials discovery [15]. The following sections provide a detailed examination of each model family, their applications in materials science, and practical protocols for their implementation.

Variational Autoencoders (VAEs)

Core Principles and Architecture

Variational Autoencoders (VAEs) are generative models that learn a probabilistic latent space for data generation and representation [16] [8]. A VAE typically consists of two main components: an encoder and a decoder [8]. The encoder maps input data (e.g., a material structure) to a probability distribution in a latent space, usually a Gaussian defined by a mean μ and a standard deviation σ, rather than to a single point [18]. This is represented as q(z|x) = N(μ(x), σ(x)²). The decoder then reconstructs the data from samples z drawn from this latent distribution [8]. The model is trained by maximizing the Evidence Lower Bound, ELBO = E_q(z|x)[log p(x|z)] - KL(q(z|x) || p(z)), which balances reconstruction accuracy against the regularity of the latent space [8].
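Two pieces make this objective easy to compute: the reparameterization trick and the closed-form KL term. The NumPy sketch below shows both under these assumptions:

- The encoder outputs μ and log σ², a common parameterization.
- The prior p(z) is a standard normal.
- The reconstruction log-likelihood is passed in as a number, because it depends on the decoder and the data type.

In a real model these operations run inside an autodiff framework, so gradients flow through z back to μ and σ.

```python
import numpy as np


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, I); the randomness is isolated in eps."""
    return mu + np.exp(0.5 * log_var) * rng.standard_normal(np.shape(mu))


def kl_to_standard_normal(mu, log_var):
    """Closed-form KL(N(mu, diag(sigma^2)) || N(0, I)), summed over latent dimensions."""
    return 0.5 * np.sum(np.exp(log_var) + mu ** 2 - 1.0 - log_var, axis=-1)


def negative_elbo(recon_log_likelihood, mu, log_var, beta=1.0):
    """Loss to minimize: -E_q[log p(x|z)] + beta * KL(q(z|x) || p(z)); beta = 1 recovers the ELBO."""
    return -recon_log_likelihood + beta * kl_to_standard_normal(mu, log_var)


rng = np.random.default_rng(5)
mu = np.full(4, 2.0)
log_var = np.full(4, np.log(0.25))          # sigma = 0.5 in every latent dimension
z = np.stack([reparameterize(mu, log_var, rng) for _ in range(20000)])
print(z.mean(axis=0), z.std(axis=0))        # should be close to 2.0 and 0.5
print(negative_elbo(-35.0, mu, log_var, beta=0.5))
```

Predicting log σ² rather than σ keeps the variance positive without constraining the network's output. The `beta` argument corresponds to the β-weighted loss used in β-VAE variants.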

The key advantage of this probabilistic approach is its ability to handle uncertainty and create a continuous, structured latent space [16]. This allows for smooth interpolation between materials and the generation of novel structures by sampling from the latent distribution. VAEs are particularly useful in scenarios where training data is limited or of low quality, as they can fill in gaps using probabilistic reasoning [16]. For example, when processing medical images or analyzing molecular structures, VAEs can infer plausible features not explicitly present in the training data [16].
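Interpolating between two materials amounts to decoding points along a path between their latent codes. Below is a minimal sketch of spherical linear interpolation (slerp), a common choice for Gaussian latent spaces. A straight line between two high-dimensional samples passes through an atypically low-norm region near its midpoint, and slerp avoids that. The latent codes here are random placeholders, and the trained decoder that would turn each point back into a structure is not shown.

```python
import numpy as np


def slerp(z0, z1, t):
    """Spherical linear interpolation between latent vectors z0 and z1 at fraction t in [0, 1]."""
    cos_omega = np.dot(z0, z1) / (np.linalg.norm(z0) * np.linalg.norm(z1))
    omega = np.arccos(np.clip(cos_omega, -1.0, 1.0))
    if np.isclose(np.sin(omega), 0.0):
        return (1.0 - t) * z0 + t * z1          # (anti)parallel vectors: fall back to linear interpolation
    return (np.sin((1.0 - t) * omega) * z0 + np.sin(t * omega) * z1) / np.sin(omega)


def latent_path(z0, z1, n_steps=8):
    """Evenly spaced points from z0 to z1; each row would be passed to a trained decoder."""
    return np.stack([slerp(z0, z1, t) for t in np.linspace(0.0, 1.0, n_steps)])


rng = np.random.default_rng(6)
z_a = rng.standard_normal(32)                              # latent code of material A (placeholder)
z_b = rng.standard_normal(32)                              # latent code of material B (placeholder)
z_b *= np.linalg.norm(z_a) / np.linalg.norm(z_b)           # equal norms keep the whole path on one sphere
path = latent_path(z_a, z_b)
print(np.linalg.norm(path, axis=1))
```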

Applications in Materials Science

VAEs have been successfully applied across various materials domains. A prominent example is the Crystal Diffusion Variational Autoencoder (CDVAE), a framework designed for generating stable, periodic crystal structures [4]. CDVAE incorporates invariance neural networks to account for the fundamental symmetries of crystals, including permutation, translation, rotation, and periodicity, which are critical for generating physically realistic materials [4]. In a recent advancement, ConditionCDVAE+ was developed for the inverse design of van der Waals (vdW) heterostructures [4]. This model uses an SE(3)-equivariant graph neural network, EquiformerV2, as its encoder and decoder, enhancing its ability to capture angular and directional information in complex crystal structures [4].

Another significant application is in molecular design, where VAEs are trained on text-based representations of molecules, such as SMILES or SELFIES strings, to generate novel molecular structures with optimized properties [15]. The Generative Toolkit for Scientific Discovery (GT4SD) provides an open-source library that includes VAE-based models for such tasks, enabling researchers to generate hypotheses for new organic materials [15].

Experimental Protocol: Implementing a VAE for Molecular Generation

Objective: To train a VAE model for the de novo generation of drug-like molecules with targeted properties.

Dataset: A dataset of molecular structures (e.g., from PubChem) represented as SMILES or SELFIES strings [15].

Procedure:

- Data Preprocessing:

- Standardize molecular representations (e.g., canonicalize SMILES).

- Split the dataset into training, validation, and test sets (e.g., 80/10/10).

- Model Training:

  - Encoder: Implement a neural network (e.g., RNN, Transformer) that maps a SMILES string to the parameters (μ, log σ) of a Gaussian latent distribution q(z|x).

  - Decoder: Implement a network that takes a sample z from the latent distribution and reconstructs the SMILES string autoregressively.

  - Loss Function: Minimize the combined loss L(x) = L_reconstruction(x) + β * KL(q(z|x) || p(z)), where:

    - L_reconstruction is the cross-entropy loss between the input and reconstructed SMILES.

    - The KL divergence term ensures the learned distribution q(z|x) stays close to a prior p(z) (typically a standard normal distribution).

    - β is a hyperparameter controlling the weight of the KL term [17].

- Conditional Generation:

- For property-targeted generation, extend the VAE to a Conditional VAE (C-VAE) by feeding the target property (e.g., solubility) as an additional input to both the encoder and decoder.

- Validation:

Diagram 1: VAE architecture and workflow for molecular generation.

Research Reagent Solutions

| Reagent / Tool | Function in Research |
|---|---|
| GT4SD Library [15] | An open-source Python library providing pre-trained VAE models and training pipelines for molecular and material generation. |
| SMILES/SELFIES [15] | String-based representations of molecular structures; the standard text input for molecular VAEs. |
| pymatgen [4] | A Python library for materials analysis; used for processing and analyzing generated crystal structures. |
| ELBO Loss Function [8] | The variational lower bound objective function used to train VAEs, balancing reconstruction fidelity and latent space regularity. |

Generative Adversarial Networks (GANs)

Core Principles and Architecture

Generative Adversarial Networks (GANs) are based on a game-theoretic framework involving two neural networks: a generator (G) and a discriminator (D) [16] [17]. These two networks are trained simultaneously in an adversarial minimax game [17]. The generator learns to map random noise from a prior distribution to the data space, creating synthetic samples. The discriminator's role is to distinguish between real samples from the training data and fake samples produced by the generator [16] [20]. The training process can be summarized by the value function: min_G max_D V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))], where x is real data and z is the noise input [17].

Over time, the generator becomes increasingly adept at producing realistic data that can fool the discriminator, while the discriminator becomes a better critic [20]. A key advantage of GANs is their ability to produce outputs with sharp, fine-grained details, often resulting in higher perceptual quality compared to early VAEs [16] [18]. However, GAN training is notoriously challenging, suffering from issues like instability and mode collapse, where the generator fails to capture the full diversity of the training data [16] [17] [20].

Applications in Materials Science

In materials discovery, GANs are often used in a conditional setting (cGAN), where both the generator and discriminator receive additional information about desired properties [4] [21]. This allows for targeted inverse design. For instance, the AlloyGAN framework integrates large language models (LLMs) with conditional GANs for alloy discovery [21]. The LLM assists in mining and enriching text-based data, which is then used to condition the GAN, enabling the generation of novel alloy compositions with predicted thermodynamic properties that show less than 8% discrepancy from experimental values [21].

Another application is the CCDCGAN model, which incorporates constrained feedback to generate stable and synthesizable crystal structures [4]. Furthermore, GANs have been used in a hybrid approach within the ConditionCDVAE+ model, where a GAN-based module is employed to map properties and structures into a joint latent space, improving the conditional guidance for generating van der Waals heterostructures [4].

Experimental Protocol: Implementing a cGAN for Crystal Structure Generation

Objective: To train a conditional GAN for generating novel crystal structures conditioned on a target formation energy. Dataset: A curated dataset of crystal structures (e.g., from the Materials Project) with associated formation energies.

Procedure:

- Data Representation:

- Model Architecture:
  - Generator (G): A neural network (e.g., a Graph Neural Network) that takes a noise vector $z$ and the target property $y$ (formation energy) as input and outputs a generated crystal structure.
  - Discriminator (D): A network that takes either a real or a generated crystal structure along with the target property $y$ and outputs the probability that the structure is real.

- Training Loop:
  - Step 1 - Update D: Maximize $\mathbb{E}[\log D(x \mid y)] + \mathbb{E}[\log(1 - D(G(z \mid y) \mid y))]$.
    - Use a batch of real crystal-property pairs $(x, y)$.
    - Use a batch of generated crystals $G(z \mid y)$ conditioned on the same properties.
  - Step 2 - Update G: Maximize $\mathbb{E}[\log D(G(z \mid y) \mid y)]$ (or minimize $\mathbb{E}[\log(1 - D(G(z \mid y) \mid y))]$, as in the original minimax objective).
    - This encourages the generator to produce samples that the discriminator classifies as real.
  - Techniques like Gradient Penalty or Spectral Normalization are often applied to stabilize training [17].

- Validation:
  - Check the validity of generated crystals using tools like pymatgen to ensure minimum interatomic distances and charge neutrality [4].
  - Use a separate property predictor (e.g., a trained ML model) to verify that the generated structures exhibit the target formation energy.
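A minimal structural and compositional check can be sketched in NumPy: the minimum interatomic distance is computed with periodic images, and charge neutrality uses a hypothetical table with a single oxidation state per element. The 0.5 Å default matches the threshold quoted in the troubleshooting notes later in this guide; the function names are illustrative and are not a pymatgen API.

```python
import itertools
import numpy as np

# Hypothetical single oxidation state per element, for illustration only; a real
# check would consider every allowed oxidation state of each element.
OXIDATION_STATES = {"Na": 1, "Cl": -1, "Mg": 2, "O": -2}

def min_interatomic_distance(lattice, frac_coords):
    """Shortest distance (angstrom) between any two atoms, periodic images included.

    lattice: (3, 3) array whose rows are the lattice vectors
    frac_coords: (n, 3) fractional coordinates wrapped into [0, 1)
    Only the 27 nearest cells are searched, which suffices for short distances
    such as a 0.5 angstrom validity threshold.
    """
    lattice = np.asarray(lattice, dtype=float)
    frac = np.asarray(frac_coords, dtype=float)
    shifts = np.array(list(itertools.product([-1, 0, 1], repeat=3)), dtype=float)
    nonzero = np.any(shifts != 0, axis=1)
    best = np.inf
    for i in range(len(frac)):
        for j in range(len(frac)):
            # Cartesian displacements from atom i to every image of atom j
            disp = (frac[j] - frac[i] + shifts) @ lattice
            if i == j:
                disp = disp[nonzero]  # an atom is not its own neighbour in the home cell
            best = min(best, float(np.linalg.norm(disp, axis=1).min()))
    return best

def is_valid_crystal(lattice, frac_coords, species, min_dist=0.5):
    """Structural check (min distance > min_dist) plus a naive charge-neutrality check."""
    charge = sum(OXIDATION_STATES[s] for s in species)
    return min_interatomic_distance(lattice, frac_coords) > min_dist and charge == 0
```

The periodic search matters: two atoms at fractional x = 0 and x = 0.99 look far apart inside the cell but are only 1% of a lattice vector apart across the boundary.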

Diagram 2: Adversarial training loop of a conditional GAN (cGAN).

Research Reagent Solutions

| Reagent / Tool | Function in Research |
|---|---|
| Spectral Normalization [17] | A technique applied to the discriminator to enforce the Lipschitz constraint, significantly improving GAN training stability. |
| Wasserstein GAN (WGAN) [17] | A GAN variant using the Earth-Mover distance, which provides a more stable training process and meaningful loss metric. |
| Graph Neural Networks [4] | Used as the backbone for both generator and discriminator when the material data is represented as graphs (e.g., crystal graphs). |
| ALIGNN/CGCNN [4] | Pre-trained graph neural network models for material property prediction; can be used as a property validator for GAN outputs. |

Diffusion Models

Core Principles and Architecture

Diffusion Models have recently emerged as state-of-the-art generative models, particularly for high-fidelity image and audio synthesis [16] [18]. Their operation is based on a forward and reverse diffusion process [16] [17]. The forward process is a fixed Markov chain that gradually adds Gaussian noise to the input data over a series of steps, eventually transforming it into pure noise [20]. The reverse process, which is what the model learns, is a denoising procedure that iteratively recovers the data from noise [18].

The core of a diffusion model is a neural network (e.g., a U-Net) trained to predict the noise that was added at a given step in the forward process [17]. During generation, the model starts with a random noise pattern and applies this learned denoising process over multiple steps to produce a coherent output [20]. The primary strength of diffusion models lies in their training stability and their ability to produce highly diverse and accurate outputs [16]. A significant drawback, however, is their computational cost and slow inference speed, as generation requires hundreds or thousands of neural network evaluations [16] [17].
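The forward process has a closed form, so training never has to simulate the chain step by step. The sketch below assumes the linear schedule from the original DDPM paper ($\beta$ from $10^{-4}$ to $0.02$ over $T = 1000$ steps), which is not necessarily the setting used by the crystal models cited here, and treats the data as a plain array.

```python
import numpy as np

def linear_beta_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    # Linear variance schedule (the choice used in the original DDPM paper)
    return np.linspace(beta_start, beta_end, T)

def q_sample(x0, t, alpha_bar, rng):
    """Draw x_t ~ q(x_t | x_0) in closed form; t is a 1-based step index."""
    eps = rng.standard_normal(x0.shape)
    ab = alpha_bar[t - 1]
    x_t = np.sqrt(ab) * x0 + np.sqrt(1.0 - ab) * eps
    return x_t, eps

def noise_prediction_loss(eps, eps_pred):
    # Simplified DDPM objective: mean squared error between true and predicted noise
    return np.mean((eps - eps_pred) ** 2)

betas = linear_beta_schedule()
alpha_bar = np.cumprod(1.0 - betas)  # alpha_bar_t = prod_{s <= t} (1 - beta_s)
rng = np.random.default_rng(0)
x0 = rng.standard_normal((8, 3))  # toy data: 8 atoms x 3 coordinates
x_t, eps = q_sample(x0, t=500, alpha_bar=alpha_bar, rng=rng)
```

With this schedule $\bar{\alpha}_T$ is below $10^{-4}$, so $x_T$ is essentially pure Gaussian noise, which is what lets sampling start from $\mathcal{N}(0, I)$.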

Applications in Materials Science

Diffusion models are gaining traction in materials science for their robustness and quality. The Crystal Diffusion Variational Autoencoder (CDVAE) framework incorporates a diffusion module to generate the atomic coordinates of crystal structures [4]. Another model, DiffCSP, jointly generates lattice parameters and fractional coordinates with an equivariant diffusion model, explicitly accounting for the periodicity and symmetry of crystals [4] [21]. These models have demonstrated a high success rate, with DFT calculations confirming that 99.51% of generated samples converge to energy minima, indicating superior ground-state convergence [4].

Beyond inorganic crystals, diffusion models are also being applied to polymer design. For example, text-conditional diffusion models can be guided by natural language prompts (e.g., "a polymer with high glass transition temperature") to generate potential candidates, although this application is still maturing [16]. Their flexibility in conditioning makes them suitable for complex, multi-property optimization tasks.

Experimental Protocol: Implementing a Diffusion Model for Crystal Generation

Objective: To train a diffusion model for the unconditional generation of stable crystal structures. Dataset: A dataset of crystal structures (e.g., the MP-20 dataset, containing inorganic materials with at most 20 atoms per unit cell) [4].

Procedure:

- Data Preparation and Representation:
  - Represent each crystal as a tuple containing lattice parameters and atomic coordinates.
  - Normalize the data.

- Forward Diffusion Process (Fixed):
  - Define a noise schedule $\{\beta_1, \beta_2, \ldots, \beta_T\}$ that controls the amount of noise added at each step $t$.
  - For each training sample $x_0$, generate a noisy sample $x_t$ at a random timestep $t$ using $x_t = \sqrt{\bar{\alpha}_t}\, x_0 + \sqrt{1 - \bar{\alpha}_t}\, \epsilon$, where $\epsilon \sim \mathcal{N}(0, I)$ and $\bar{\alpha}_t = \prod_{s=1}^{t} (1 - \beta_s)$.

- Model Training:
  - A neural network (e.g., an Equivariant GNN) is trained to predict the noise $\epsilon$ given the noisy sample $x_t$ and the timestep $t$.
  - The loss function is typically the mean squared error between the true and predicted noise: $L = \lVert \epsilon - \epsilon_\theta(x_t, t) \rVert^2$.

- Sampling (Generation):
  - Start with a sample of pure noise, $x_T \sim \mathcal{N}(0, I)$.
  - Iteratively denoise from $t = T$ to $t = 1$, using the trained model to obtain $x_{t-1}$ at each step. A common sampling algorithm is DDPM [17].
  - The final output $x_0$ is the generated crystal structure.

- Validation:
  - Use the same validity and stability checks as for other crystal generators (e.g., minimum interatomic distance, charge neutrality).
  - Evaluate the coverage and diversity of the generated structures compared to the training set.
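The sampling step can be sketched as ancestral DDPM sampling. The noise predictor below is a placeholder that always returns zero (a trained equivariant network would take its place), the reverse-step variance $\sigma_t^2 = \beta_t$ is one of the choices from the DDPM paper, and the crystal is treated as a plain array, ignoring the lattice and periodicity handling that crystal-specific models add.

```python
import numpy as np

def ddpm_sample(eps_model, shape, betas, rng):
    """Ancestral DDPM sampling: start at x_T ~ N(0, I) and denoise down to x_0.

    eps_model(x_t, t) must return the predicted noise, same shape as x_t.
    The reverse-step variance is sigma_t^2 = beta_t.
    """
    alphas = 1.0 - betas
    alpha_bar = np.cumprod(alphas)
    x = rng.standard_normal(shape)  # x_T
    for t in range(len(betas), 0, -1):  # t = T, T-1, ..., 1
        i = t - 1  # 0-based index into the schedule arrays
        eps_hat = eps_model(x, t)
        # model mean: (x_t - beta_t / sqrt(1 - alpha_bar_t) * eps_hat) / sqrt(alpha_t)
        mean = (x - betas[i] / np.sqrt(1.0 - alpha_bar[i]) * eps_hat) / np.sqrt(alphas[i])
        noise = rng.standard_normal(shape) if t > 1 else np.zeros(shape)  # no noise at the last step
        x = mean + np.sqrt(betas[i]) * noise
    return x

def zero_noise_model(x, t):
    # Placeholder predictor; it only exercises the loop
    return np.zeros_like(x)

betas = np.linspace(1e-4, 0.02, 1000)
rng = np.random.default_rng(0)
x0 = ddpm_sample(zero_noise_model, shape=(8, 3), betas=betas, rng=rng)
```

Each sample costs one network call per step (1000 here), which is the inference-cost drawback noted above; DDIM-style samplers reduce it by skipping steps.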

Diagram 3: Forward and reverse processes of a diffusion model.

Research Reagent Solutions

| Reagent / Tool | Function in Research |
|---|---|
| DDPM/DDIM Samplers [17] | Algorithms for the reverse diffusion process; control the trade-off between generation quality and speed. |
| Equivariant Graph NNs [4] | Neural networks that respect the symmetries of 3D space (e.g., rotation equivariance); crucial for modeling physical atomic systems. |
| Noise Scheduler | Defines the variance schedule for adding noise in the forward process; is a key hyperparameter influencing model performance. |
| StructureMatcher (pymatgen) [4] | A tool for comparing crystal structures; used to evaluate the reconstruction and matching performance of generated crystals. |

Generative Flow Networks (GFlowNets)

Core Principles and Architecture

Generative Flow Networks (GFlowNets) are a relatively new family of generative models that frame the generation of composite objects (like molecules or crystals) as a sequential decision-making process [15]. Unlike models that generate an entire structure in one step, GFlowNets construct an object step-by-step, for example, by adding one atom or molecular substructure at a time [15]. The key idea behind GFlowNets is to learn a stochastic policy for this construction process such that the probability of generating a particular object x is proportional to a given reward function R(x) [15].

This makes GFlowNets particularly well-suited for scientific discovery, where the "reward" could be a material's property, such as its catalytic activity or stability [15]. The primary training objective is to match the flow in a directed acyclic graph (where states are partial objects and actions are construction steps) to the reward function [15]. A significant advantage of GFlowNets is their explicit focus on generating diverse candidates, as they are trained to sample in proportion to the reward, rather than only seeking a single high-reward solution [15]. This helps in exploring a wider region of the chemical space.
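One common way to enforce this flow matching is the Trajectory Balance objective, which for a complete trajectory $\tau = (s_0 \to \cdots \to s_n = x)$ is usually written as $\mathcal{L}_{TB}(\tau) = \left(\log Z_\theta + \sum_t \log P_F(s_{t+1} \mid s_t) - \log R(x) - \sum_t \log P_B(s_t \mid s_{t+1})\right)^2$, where $Z_\theta$ is a learned normalizing constant. The sketch below evaluates it on precomputed log-probabilities; in practice these come from the policy network and the loss is minimized by gradient descent.

```python
import numpy as np

def trajectory_balance_loss(log_Z, log_pf, log_pb, log_reward):
    """Trajectory Balance loss for one complete trajectory s_0 -> ... -> x.

    log_Z: learned log partition function (scalar)
    log_pf: log P_F(s_{t+1} | s_t) for each forward step
    log_pb: log P_B(s_t | s_{t+1}) for each step (all zeros when every object
            has a single construction path, i.e. a tree-shaped state space)
    log_reward: log R(x) of the terminal object
    """
    residual = log_Z + np.sum(log_pf) - log_reward - np.sum(log_pb)
    return residual ** 2

# Two forward steps with probability 0.5 each give P(x) = 0.25 = R(x) / Z for
# R(x) = 1 and Z = 4, so the loss is zero: sampling is proportional to reward.
loss = trajectory_balance_loss(np.log(4.0), np.log([0.5, 0.5]), np.zeros(2), 0.0)
```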

Applications in Materials Science

GFlowNets are rapidly gaining popularity in molecular and material design due to their sample efficiency and diversity. The Crystal-GFN model is a direct application for generating crystal structures [4]. Within the GT4SD library, GFlowNets are available as a model class for molecule generation, where they have been shown to produce a more diverse set of candidates compared to some traditional approaches [15]. Their amortized sampling (each candidate is produced in a single constructive pass through the learned policy, without the long Markov chains that MCMC-based samplers require) and their ability to balance exploitation (high reward) and exploration (diversity) make them a powerful tool for the initial stages of a discovery pipeline, where identifying a broad set of promising candidates is crucial.

Experimental Protocol: Implementing a GFlowNet for Molecular Generation

Objective: To train a GFlowNet for generating diverse molecules with high predicted solubility (ESOL). Dataset: A set of molecules with associated ESOL scores [15].

Procedure:

- Define the Generation Process:
  - Define the state space (e.g., a partial molecular graph) and action space (e.g., adding an atom or a predefined fragment).
  - Define a terminal state, which is a complete, valid molecule.

- Reward Function:
  - Define the reward $R(x)$ for a terminal state (complete molecule) $x$. This could be the predicted ESOL score from a surrogate model, possibly scaled and shifted to be positive.

- Model Architecture:
  - A neural network is used to parameterize the GFlowNet's policy. This network takes the current state (e.g., a graph) and outputs a probability distribution over possible next actions.

- Training:
  - The model is trained by sampling trajectories (sequences of states and actions) from its current policy.
  - The core training objective is to minimize a loss function that enforces a flow-consistency condition. One common loss is the Trajectory Balance (TB) loss, which ensures that the flow from the initial state to a terminal state along a trajectory is consistent with the reward.

- Sampling:
  - Once trained, molecules are generated by sampling actions from the learned policy, starting from the initial (empty) state until a terminal state is reached.

- Validation:
  - Evaluate the diversity of the generated molecules using Tanimoto similarity or other molecular diversity metrics.
  - Assess the property distribution of the generated set to verify that a high proportion of molecules have the desired ESOL score.
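Set diversity is often summarized as one minus the mean pairwise Tanimoto similarity of molecular fingerprints. The sketch represents each fingerprint as a set of "on" bit indices (for example, as returned by RDKit's `GetOnBits()` on a fingerprint bit vector); RDKit's `DataStructs.TanimotoSimilarity` computes the same similarity directly on bit vectors.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of 'on' fingerprint bits."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def internal_diversity(fingerprints):
    """1 - mean pairwise Tanimoto similarity over a generated set (0 for < 2 items)."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return 1.0 - sum(tanimoto(a, b) for a, b in pairs) / len(pairs)

# Two identical molecules plus one unrelated one: similarities 1, 0, 0 -> diversity 2/3
fps = [{1, 2, 3}, {1, 2, 3}, {4, 5}]
div = internal_diversity(fps)
```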

Diagram 4: Sequential decision-making process of a GFlowNet.

Research Reagent Solutions

| Reagent / Tool | Function in Research |
|---|---|
| Trajectory Balance Loss [15] | A key loss function for training GFlowNets, which provides stable and efficient learning of the generative policy. |
| Fragment Libraries | Pre-defined sets of molecular building blocks (fragments) used as the action space for constructing molecules in a chemically realistic way. |
| GT4SD (GFlowNet Module) [15] | Provides implementations of GFlowNets for molecular generation, integrated into a broader ecosystem of generative models. |
| Tanimoto Similarity [15] | A metric for quantifying the structural diversity of a set of generated molecules; used to evaluate GFlowNet output. |

Comparative Analysis and Performance Metrics

Quantitative Model Performance

The selection of an appropriate generative model depends heavily on the specific requirements of the inverse design task. The table below synthesizes quantitative performance data from various studies, particularly in the domain of crystal structure generation, to guide this decision.

Table 1: Quantitative performance comparison of generative models for materials design.

| Model | Task / Dataset | Key Performance Metrics | Notes |
|---|---|---|---|
| ConditionCDVAE+ (VAE+Diffusion) [4] | Crystal Reconstruction (J2DH-8 dataset) | Match Rate: 25.35%, RMSE: 0.1842 | Outperformed CDVAE (Match Rate: ~20.6%, RMSE: ~0.211) on the same dataset. |
| CDVAE (VAE+Diffusion) [4] | Crystal Generation (MP-20 dataset) | Validity: >90%, Property Distribution (Density): Wasserstein distance ~0.05 | Property metric measures similarity between generated and real data distributions. |
| DP-CDVAE (Diffusion) [4] | Crystal Generation | Ground-state Convergence: 99.51% of samples converged to energy minima in DFT calculations. | Indicates a very high rate of generating physically stable structures. |
| AlloyGAN (GAN) [21] | Metallic Glass Design | Property Prediction: Discrepancy < 8% from experimental values for thermodynamic properties. | Demonstrates accuracy in conditional generation for alloys. |
| VAE (GuacaMol) [15] | Molecular Generation | Capable of generating molecules with improved water solubility (ESOL) by >1 M/L. | Performance is benchmarked on standard molecular design tasks. |

Qualitative Comparison and Selection Guide

Beyond quantitative metrics, the choice of model is dictated by practical considerations such as data availability, computational budget, and desired output characteristics.

Table 2: Qualitative comparison and selection guide for generative model families.

| Aspect | VAEs | GANs | Diffusion Models | GFlowNets |
|---|---|---|---|---|
| Training Stability | Stable [17] | Unstable, prone to mode collapse [16] [20] | Stable and predictable [16] | Stable [15] |
| Output Quality | Can be blurry; may lack fine details [16] [17] | Very sharp and high perceptual quality [18] [20] | High quality and diversity [16] [18] | High validity for structured data [15] |
| Sample Diversity | Good | Can suffer from mode collapse [20] | Excellent [16] | Excellent, explicit diversity objective [15] |
| Inference Speed | Fast (single pass) | Very fast (single pass) [20] | Slow (multiple iterative steps) [16] [20] | Fast (sequential but single trajectory) |
| Data Efficiency | Works well with limited data [16] | Requires large, curated datasets [20] | Requires very large datasets [16] | Sample efficient [15] |
| Conditioning Strength | Good | Good (with cGAN) | Very strong and flexible [20] | Strong (reward is inherent condition) |
| Best Use Case | Limited data, probabilistic reasoning, initial exploration. | High-fidelity generation when data and compute are ample, and speed is critical. | State-of-the-art quality and diversity, complex conditioning. | Diverse candidate generation, especially for structured objects (molecules, crystals). |

The inverse design of materials is being profoundly transformed by deep generative models. VAEs, GANs, Diffusion Models, and GFlowNets each offer a unique set of strengths and trade-offs. VAEs provide a robust probabilistic framework, GANs excel at producing high-fidelity samples, Diffusion Models deliver state-of-the-art quality and diversity, and GFlowNets offer a principled approach to generating diverse, high-reward candidates. The emergence of hybrid models, such as ConditionCDVAE+ which combines a VAE with a diffusion process and GAN-based conditioning, highlights a trend towards leveraging the strengths of multiple architectures [4]. As the field progresses, the integration of these generative models with high-throughput computation, automated experimentation, and large language models for knowledge integration promises to further accelerate the discovery of next-generation materials for sustainability, healthcare, and energy applications [8] [21].

The inverse design of materials using deep generative models represents a paradigm shift in the discovery and development of novel functional materials. This approach aims to accelerate the design cycle by generating material structures with predefined target properties, moving beyond traditional trial-and-error methods. Central to the success of these models is the choice of materials representation, which fundamentally determines how structural and compositional information is encoded, processed, and generated. The representation format directly influences a model's ability to capture critical physical constraints, learn meaningful patterns, and produce valid, synthesizable materials. Within this context, three principal representation paradigms have emerged: graph-based, sequence-based, and voxel-based formats. This application note provides a detailed comparative analysis of these representations, offering experimental protocols, performance metrics, and practical guidance for researchers engaged in the inverse design of materials, with particular emphasis on van der Waals (vdW) heterostructures and molecular systems.

Representation Formats: Theoretical Foundations and Applications

Graph-Based Representations

Graph-based representations model a material as a set of nodes (atoms) connected by edges (bonds or interatomic interactions). This format naturally captures the topological connectivity and local coordination environments within a structure, making it particularly suited for describing crystalline materials and molecular systems. The explicit representation of relationships between constituents allows graph neural networks (GNNs) to learn from and generate structures by propagating information across connected nodes.
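A minimal sketch of building such a graph for a periodic crystal: atoms become nodes, and every pair within a cutoff radius, including periodic images, becomes an edge. The function name and the 27-cell image search are illustrative choices, not the implementation used by CDVAE or ConditionCDVAE+; libraries such as pymatgen provide production neighbour searches.

```python
import itertools
import numpy as np

def radius_graph(lattice, frac_coords, cutoff):
    """Edges (i, j, image_shift, distance) for all atom pairs within `cutoff`.

    lattice: (3, 3) array whose rows are lattice vectors (angstrom)
    frac_coords: (n, 3) fractional coordinates wrapped into [0, 1)
    Only the 27 neighbouring cells are searched, so the cutoff should not
    exceed the smallest perpendicular width of the cell.
    """
    lattice = np.asarray(lattice, dtype=float)
    frac = np.asarray(frac_coords, dtype=float)
    shifts = np.array(list(itertools.product([-1, 0, 1], repeat=3)))
    edges = []
    for i in range(len(frac)):
        for j in range(len(frac)):
            for shift in shifts:
                if i == j and not shift.any():
                    continue  # no self-loop inside the home cell
                d = np.linalg.norm((frac[j] + shift - frac[i]) @ lattice)
                if d <= cutoff:
                    edges.append((i, j, tuple(int(s) for s in shift), float(d)))
    return edges

# Simple cubic cell with one atom: each atom bonds to its 6 face-adjacent images.
edges = radius_graph(3.0 * np.eye(3), [[0.0, 0.0, 0.0]], cutoff=3.1)
```

Keeping the image shift on each edge is what lets a GNN distinguish a bond to an atom in the home cell from a bond to its periodic copy.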

Key Applications in Inverse Design: The Crystal Diffusion Variational Autoencoder (CDVAE) framework utilizes graph representations to generate physically stable inorganic crystal structures through a diffusion process combined with periodic invariant graph neural networks [4]. Recent advancements, such as ConditionCDVAE+, employ SE(3)-equivariant graph neural networks like EquiformerV2 as encoders and decoders to enhance generation quality by better capturing angular and directional information [4]. For cryo-EM data interpretation, graph-based representations effectively characterize atomic locations in proteins by correlating points of high density with atomic positions, achieving up to 99% residue coverage in high-resolution maps [22].

Voxel-Based Representations

Voxel-based representations discretize 3D space into a regular grid of volumetric pixels (voxels), where each voxel contains information about density or material presence. This format is particularly valuable for processing volumetric data from experimental techniques and for representing continuous density fields without explicit atomic positions.
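To illustrate the format, the sketch below places a periodic Gaussian at each atomic position on a regular grid, producing an image-like density that a 3D CNN could consume. The grid size and Gaussian width are assumptions, and this is not the exact encoding used by iMatGen or by any cryo-EM pipeline.

```python
import numpy as np

def voxelize(frac_coords, grid=32, sigma=0.05):
    """Periodic Gaussian density on a grid x grid x grid voxel lattice.

    frac_coords: (n, 3) fractional coordinates in [0, 1)
    sigma: Gaussian width in fractional units (an illustrative choice)
    """
    axis = (np.arange(grid) + 0.5) / grid  # voxel centres in fractional units
    gx, gy, gz = np.meshgrid(axis, axis, axis, indexing="ij")
    centres = np.stack([gx, gy, gz], axis=-1)  # (grid, grid, grid, 3)
    density = np.zeros((grid, grid, grid))
    for r in np.asarray(frac_coords, dtype=float):
        d = centres - r
        d -= np.round(d)  # minimum-image convention in fractional space
        density += np.exp(-np.sum(d ** 2, axis=-1) / (2 * sigma ** 2))
    return density

# One atom exactly at the centre of voxel (0, 0, 0)
rho = voxelize([[0.5 / 32, 0.5 / 32, 0.5 / 32]], grid=32)
```

The memory cost grows as grid³, which is the resolution limitation listed for voxel formats in the comparison table below.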

Key Applications in Inverse Design: In cryo-EM analysis, voxel grids are the native format for storing electron density maps, which can be processed using 3D convolutional neural networks (CNNs) for structure determination [22]. The neural cryo-EM map format represents an advanced voxel-based approach that uses a set of neural networks to parameterize cryo-EM maps, providing spatially continuous, differentiable data for density and gradient information [22]. For materials design, frameworks like iMatGen utilize 3D voxel representations with variational autoencoders to inversely design novel material structures [4]. In medical imaging, stacked custom CNNs process voxel-based morphometry (VBM) data from MRI scans for brain tumor classification, achieving 98% accuracy through adaptive median filtering and Canny edge detection preprocessing [23].

Sequence-Based Representations

Sequence-based representations encode material structures as linear sequences of symbols, typically using string notations such as SMILES (Simplified Molecular Input Line Entry System) for molecules or compound formulas for crystals. While less common for complex 3D structures in materials science, sequence representations offer compact encoding and compatibility with natural language processing models.
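Sequence models first split a SMILES string into tokens. The regular expression below is adapted from a widely used atom-level SMILES tokenizer (popularized by the Molecular Transformer work); treat it as a sketch rather than a full SMILES grammar, since it does not check chemical validity.

```python
import re

# Atom-level SMILES tokens: bracket atoms, two-letter halogens, organic-subset
# atoms, bonds, branches, ring-closure digits and %nn ring labels.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)

def tokenize_smiles(smiles):
    """Split a SMILES string into tokens, failing loudly on unmatched characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens

tokens = tokenize_smiles("CC(=O)Oc1ccccc1C(=O)O")  # aspirin
```

Treating "Cl" and "Br" as single tokens matters: a character-level split would turn chlorine into a carbon followed by an undefined "l".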

Table 1: Comparison of Materials Representation Formats

| Representation Format | Structural Encoding | Key Strengths | Primary Limitations | Exemplary Models |
|---|---|---|---|---|
| Graph-Based | Nodes (atoms) and edges (bonds) in a graph structure | Naturally captures topology and local environments; SE(3)-equivariance; High interpretability | Complex implementation; Computationally intensive for large systems | ConditionCDVAE+ [4], CDVAE [4], Graph Convolutional Networks [22] |
| Voxel-Based | 3D grid of density values or occupancy | Native format for many experimental techniques; Compatible with 3D CNNs; Simple structure | Discrete representation; Memory-intensive at high resolutions; Loss of continuous spatial information | Neural Cryo-EM Maps [22], iMatGen [4], Stacked Custom CNN [23] |
| Sequence-Based | Linear string of symbols (e.g., SMILES, formulas) | Compact representation; Compatibility with NLP models; Simple data structure | Limited 3D structural information; Challenges with periodicity and symmetry | FTCP (partially) [4] |

Quantitative Performance Comparison

Recent benchmarking studies provide quantitative insights into the performance of different representation formats, particularly for inverse design applications. The following table summarizes key performance metrics across representation types and model architectures.

Table 2: Quantitative Performance Metrics for Inverse Design Models

| Model | Representation Format | Dataset | Key Performance Metrics | Values |
|---|---|---|---|---|
| ConditionCDVAE+ [4] | Graph-Based | J2DH-8 (vdW Heterostructures) | Reconstruction Match Rate; Reconstruction RMSE; Ground-State Convergence | 25.35%; 0.1842; 99.51% |
| CDVAE [4] | Graph-Based | J2DH-8 (vdW Heterostructures) | Reconstruction Match Rate; Reconstruction RMSE | ~20.61%; ~0.2117 |
| Neural Cryo-EM Map [22] | Voxel-Based (Neural) | Experimental Cryo-EM Maps (115 maps) | Interpolation MAE; Residue Coverage (Atomic Resolution); Atomic Coverage (Atomic Resolution) | <0.01; >99%; 85% |
| Tri-linear Interpolation [22] | Voxel-Based (Traditional) | Experimental Cryo-EM Maps (115 maps) | Interpolation MAE; Residue Coverage (Lower Resolution) | 0.066-0.12; 84% |
| Stacked Custom CNN with VBM [23] | Voxel-Based | Brain MRI Images | Classification Accuracy | 98% |

Experimental Protocols

Protocol 1: Graph-Based Inverse Design of vdW Heterostructures

Purpose: To implement inverse design of van der Waals heterostructures using ConditionCDVAE+, a graph-based deep generative model.

Materials and Reagents:

- Computational Resources: High-performance computing cluster with GPU acceleration (NVIDIA V100 or equivalent recommended)

- Software Environment: Python 3.8+, PyTorch, PyTorch Geometric, pymatgen library

- Dataset: J2DH-8 dataset (19,926 two-dimensional Janus III-VI vdW heterostructures) [4]

Procedure:

Data Preprocessing:

- Load crystal structures from the J2DH-8 dataset.

- Convert each crystal structure to a graph representation with nodes as atoms and edges as bonds within a cutoff radius.

- Normalize node features (atomic numbers) and edge features (distances, vectors).

- Split dataset into training, validation, and test sets with a 6:2:2 ratio.

Model Configuration:

- Implement the ConditionCDVAE+ architecture with EquiformerV2 as the encoder-decoder.

- Configure the variational autoencoder (VAE) module with latent dimension of 256.

- Set up the diffusion module with 1000 denoising steps.

- Integrate the conditional guidance module using Low-rank Multimodal Fusion (LMF) and Generative Adversarial Networks (GAN) to map target properties to the latent space.

Training:

- Train the model for 1000 epochs with batch size of 64.

- Use Adam optimizer with learning rate of 0.001 and weight decay of 0.0001.

- Apply periodic evaluation on validation set to monitor reconstruction performance.

Generation and Validation:

- Sample latent vectors from the prior distribution.

- Decode sampled vectors to generate novel vdW heterostructures.

- Validate generated structures using StructureMatcher from pymatgen with parameters: stol=0.5, angle_tol=10, ltol=0.3.

- Perform Density Functional Theory (DFT) calculations to verify ground-state convergence.
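The reconstruction match rate and RMSE reported in the tables above are aggregates over per-structure comparisons. Assuming pymatgen's `StructureMatcher(stol=0.5, angle_tol=10, ltol=0.3).get_rms_dist(generated, reference)`, which returns an `(rms, max_dist)` tuple or `None` when no match is found, one would collect `result[0]` (or `None`) for each test structure and aggregate as sketched below; the helper name is hypothetical.

```python
import numpy as np

def reconstruction_metrics(rms_values):
    """Match rate and mean RMSE from per-structure matcher results.

    rms_values: one entry per test structure, either a float (normalized RMS
    displacement of a matched pair) or None when no match was found.
    """
    matched = [r for r in rms_values if r is not None]
    match_rate = len(matched) / len(rms_values)
    # RMSE is averaged over matched structures only
    rmse = float(np.mean(matched)) if matched else float("nan")
    return match_rate, rmse

rate, rmse = reconstruction_metrics([0.1, None, 0.3, None])
```

Because the RMSE only averages matched structures, a model can improve RMSE by matching fewer, easier structures; the two numbers should be read together.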

Troubleshooting:

- For invalid structures (minimum interatomic distance < 0.5 Å), adjust the latent space sampling or increase the weight of validity constraints during training.

- If generation diversity is low, increase the temperature parameter during sampling or adjust the GAN loss weights.

Protocol 2: Neural Cryo-EM Map Representation for Protein Structure Determination

Purpose: To create continuous, differentiable representations of cryo-EM maps using neural networks for improved protein structure interpretation.

Materials and Reagents:

- Data Source: Experimental cryo-EM maps from EMDB (Electron Microscopy Data Bank)

- Software: Python 3.7+, PyTorch, SIREN architecture implementation

- Reference Structures: Corresponding PDB-deposited structures for validation

Procedure:

Data Preparation:

- Download experimental cryo-EM maps in MRC format.

- Normalize voxel values to the range [0, 1].

- Extract spatial coordinates and corresponding density values.
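The preparation steps can be sketched as follows. Reading the MRC file is not shown (the `mrcfile` package is one option); the `voxel_size` and `origin` arguments stand in for values taken from the map header, and the axis order of the raw array should be checked against that header.

```python
import numpy as np

def map_to_training_pairs(density, voxel_size=1.0, origin=(0.0, 0.0, 0.0)):
    """Flatten a voxel map into (coordinate, density) pairs for a coordinate network.

    density: 3D array of map values indexed [i, j, k]
    voxel_size, origin: spatial calibration in angstrom (from the map header in practice)
    """
    d = np.asarray(density, dtype=float)
    lo, hi = d.min(), d.max()
    d = (d - lo) / (hi - lo) if hi > lo else np.zeros_like(d)  # min-max scale to [0, 1]
    idx = np.stack(np.meshgrid(*[np.arange(n) for n in d.shape], indexing="ij"), axis=-1)
    coords = idx.reshape(-1, 3) * voxel_size + np.asarray(origin, dtype=float)
    return coords, d.reshape(-1)  # both flattened in the same (C) order

coords, vals = map_to_training_pairs(np.arange(8.0).reshape(2, 2, 2), voxel_size=1.5)
```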

Neural Network Configuration:

- Implement SIREN (Sinusoidal Representation Networks) architecture with 5 hidden layers of 256 units each.

- Use periodic activation functions (sine) with frequency parameter ω₀=30.

- Initialize weights according to SIREN specifications.
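A NumPy sketch of the SIREN initialization and forward pass for the configuration above (5 hidden layers of 256 units, ω₀ = 30). The first layer draws weights from $U(-1/n_{in}, 1/n_{in})$ and later layers from $U(-\sqrt{6/n_{in}}/\omega_0, \sqrt{6/n_{in}}/\omega_0)$; the bias initialization is an assumption, and training with the MSE loss would require an autodiff framework such as PyTorch, which is not shown.

```python
import numpy as np

def init_siren(layer_sizes, omega_0=30.0, seed=0):
    """Weights for a SIREN mapping (x, y, z) -> density.

    layer_sizes: e.g. [3, 256, 256, 256, 256, 256, 1] for 5 hidden layers of 256 units.
    """
    rng = np.random.default_rng(seed)
    params = []
    for k, (n_in, n_out) in enumerate(zip(layer_sizes[:-1], layer_sizes[1:])):
        bound = 1.0 / n_in if k == 0 else np.sqrt(6.0 / n_in) / omega_0
        W = rng.uniform(-bound, bound, size=(n_in, n_out))
        b = rng.uniform(-1.0 / np.sqrt(n_in), 1.0 / np.sqrt(n_in), size=n_out)  # assumed bias init
        params.append((W, b))
    return params

def siren_forward(params, x, omega_0=30.0):
    """sin(omega_0 * (h W + b)) on every hidden layer, then a linear output layer."""
    h = np.asarray(x, dtype=float)
    for W, b in params[:-1]:
        h = np.sin(omega_0 * (h @ W + b))
    W, b = params[-1]
    return h @ W + b

params = init_siren([3] + [256] * 5 + [1])
out = siren_forward(params, np.random.default_rng(1).uniform(-1, 1, size=(10, 3)))
```

The scaled initialization keeps the pre-activations of every sine layer in a well-behaved range, which is why SIREN needs it rather than a standard default initialization. Because the network is a smooth function of (x, y, z), density gradients can be obtained by differentiating it, which is the property the neural map format relies on.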

Training:

- Train the network to map 3D coordinates to density values.

- Use mean squared error (MSE) loss between predicted and actual density values.

- Train for 50,000 iterations with batch size of 4096.

- Use Adam optimizer with learning rate of 0.0001.

Graph-Based Interpretation:

- Identify critical points in the neural representation by finding local maxima in the density field.

- Construct graph with nodes at critical points and edges based on spatial proximity.

- Map graph nodes to amino acid residues in the reference structure.

- Calculate coverage metrics (residue and atomic coverage) and accuracy (RMSD).
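On a sampled grid, the critical-point and graph-construction steps can be approximated by a discrete local-maximum search followed by distance-based edges. This is a stand-in for critical-point analysis of the continuous neural map; the density threshold and edge distance are illustrative parameters.

```python
import itertools
import numpy as np

def local_maxima(density, threshold=0.5):
    """Voxel indices whose value exceeds the threshold and all 26 neighbours."""
    d = np.asarray(density, dtype=float)
    padded = np.pad(d, 1, mode="constant", constant_values=-np.inf)
    is_max = d > threshold
    for off in itertools.product([-1, 0, 1], repeat=3):
        if off == (0, 0, 0):
            continue
        # neighbour values shifted by `off`, aligned with the original grid
        nb = padded[1 + off[0]: 1 + off[0] + d.shape[0],
                    1 + off[1]: 1 + off[1] + d.shape[1],
                    1 + off[2]: 1 + off[2] + d.shape[2]]
        is_max &= d > nb
    return np.argwhere(is_max)

def proximity_edges(points, max_dist):
    """Connect every pair of nodes closer than max_dist (index pairs i < j)."""
    p = np.asarray(points, dtype=float)
    dist = np.linalg.norm(p[:, None, :] - p[None, :, :], axis=-1)
    i, j = np.nonzero(np.triu(dist < max_dist, k=1))
    return list(zip(i.tolist(), j.tolist()))

grid = np.zeros((5, 5, 5))
grid[1, 1, 1], grid[3, 3, 3] = 1.0, 0.9
peaks = local_maxima(grid)  # voxel indices; multiply by the voxel size for angstrom
```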

Validation:

- Compare interpolation accuracy against tri-linear interpolation using Mean Absolute Error (MAE).

- Evaluate graph coverage by calculating the percentage of residue locations within a threshold distance (e.g., 2 Å) of graph nodes.

- Assess node placement accuracy using Root Mean Square Deviation (RMSD) from reference atomic positions.
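The coverage and accuracy metrics can be sketched as below, assuming each reference position is matched to its nearest graph node. This nearest-node assignment is a simplification; the published evaluation may match nodes to residues differently.

```python
import numpy as np

def coverage_and_rmsd(nodes, reference, threshold=2.0):
    """Fraction of reference positions with a graph node within `threshold` (angstrom),
    and the RMSD between each covered reference position and its nearest node."""
    nodes = np.asarray(nodes, dtype=float)
    ref = np.asarray(reference, dtype=float)
    dist = np.linalg.norm(ref[:, None, :] - nodes[None, :, :], axis=-1)
    nearest = dist.min(axis=1)  # distance from each reference position to its closest node
    covered = nearest <= threshold
    coverage = float(covered.mean())
    rmsd = float(np.sqrt(np.mean(nearest[covered] ** 2))) if covered.any() else float("nan")
    return coverage, rmsd

cov, rmsd = coverage_and_rmsd([[1.0, 0.0, 0.0]], [[0.0, 0.0, 0.0], [10.0, 0.0, 0.0]])
```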

Visualization and Workflow Diagrams

Diagram 1: Workflow for Materials Representation in Inverse Design

Diagram 2: Performance Characteristics of Representation Formats

Research Reagent Solutions

Table 3: Essential Computational Tools for Materials Representation Research

| Tool/Resource | Type | Primary Function | Representation Format |
|---|---|---|---|
| ConditionCDVAE+ [4] | Deep Generative Model | Inverse design of vdW heterostructures with conditional guidance | Graph-Based |
| CDVAE [4] | Deep Generative Model | Generation of physically stable crystal structures using diffusion | Graph-Based |
| Neural Cryo-EM Map [22] | Data Format | Continuous, differentiable representation of cryo-EM data | Voxel-Based (Neural) |
| EquiformerV2 [4] | Graph Neural Network | SE(3)-equivariant encoder-decoder for geometric learning | Graph-Based |
| SIREN [22] | Neural Network Architecture | Continuous representation of 3D data with periodic activations | Voxel-Based (Neural) |
| StructureMatcher [4] | Validation Tool | Comparison of crystal structure similarity | All Formats |
| pymatgen [4] | Materials Analysis | Python library for materials analysis | All Formats |
| ALIGNN [4] | Graph Neural Network | Predicting material properties from crystal structures | Graph-Based |

Inverse design represents a paradigm shift in materials science and drug discovery, moving from traditional, resource-intensive trial-and-error methods to a targeted approach that starts with desired properties and works backward to identify optimal structures [24] [25]. This methodology is made possible by deep generative models, which learn the complex, non-linear relationships connecting a material's structure to its properties [26]. At the heart of these models lies a powerful concept: the latent space.

The latent space is a compressed, low-dimensional mathematical representation where every point corresponds to a potential material structure [27]. Navigating this continuous space allows researchers to interpolate between known structures, explore entirely new regions, and systematically generate candidates with optimized, target properties [25]. This document provides detailed application notes and protocols for leveraging the latent space to accelerate the inverse design of functional materials and therapeutic molecules.
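Interpolating between two known structures amounts to walking a path between their latent vectors and decoding each point. The sketch shows linear and spherical interpolation; the 64-dimensional latent vectors are random placeholders, and the decoder that would turn each point into a structure is not shown.

```python
import numpy as np

def lerp(z0, z1, t):
    # Straight-line interpolation between two latent vectors
    return (1.0 - t) * z0 + t * z1

def slerp(z0, z1, t, eps=1e-8):
    """Spherical interpolation; for Gaussian latents it keeps intermediate points
    at a norm comparable to the endpoints instead of cutting toward the origin."""
    z0, z1 = np.asarray(z0, dtype=float), np.asarray(z1, dtype=float)
    cos = np.dot(z0, z1) / (np.linalg.norm(z0) * np.linalg.norm(z1))
    omega = np.arccos(np.clip(cos, -1.0, 1.0))
    if omega < eps:  # nearly parallel vectors: fall back to linear interpolation
        return lerp(z0, z1, t)
    return (np.sin((1 - t) * omega) * z0 + np.sin(t * omega) * z1) / np.sin(omega)

rng = np.random.default_rng(0)
z_a, z_b = rng.standard_normal(64), rng.standard_normal(64)  # placeholder latents
path = [slerp(z_a, z_b, t) for t in np.linspace(0.0, 1.0, 5)]  # decode each point in practice
```

High-dimensional Gaussian samples concentrate near a shell of radius about $\sqrt{d}$; linear paths pass through the lower-density interior, which is one reason spherical interpolation is often preferred.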

Theoretical Foundations and Key Concepts

The Role of Deep Generative Models

Deep generative models create the latent space and provide the mechanisms for its navigation. The primary model architectures include:

- Variational Autoencoders (VAEs): VAEs learn to compress input data (e.g., a molecular structure) into a latent vector sampled from a defined probability distribution, typically Gaussian [27]. The decoder then reconstructs the data from this vector. This architecture regularizes the latent space, making it continuous and allowing for smooth interpolation. A significant advancement is the disentangled VAE, where individual latent variables encode independent property factors, enabling precise property editing [27].

- Generative Adversarial Networks (GANs): GANs employ a generator that creates structures from latent vectors and a discriminator that distinguishes generated structures from real ones [27] [25]. Through this adversarial training, the generator learns to map latent points to realistic structures. However, training can be unstable and prone to "mode collapse" [25].

- Flow-based Models: Unlike VAEs and GANs, flow-based models learn an invertible, bijective mapping between the data distribution and the latent space [27]. This allows for exact log-likelihood evaluation and efficient sampling.
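The exact likelihood of a flow follows from the change-of-variables formula $\log p_X(x) = \log p_Z(f(x)) + \log \lvert \det \partial f / \partial x \rvert$. The sketch uses the simplest invertible map, an elementwise affine transform, purely to illustrate the formula; practical flows stack many learned layers (e.g., coupling layers) whose Jacobian determinants remain cheap to compute.

```python
import numpy as np

def standard_normal_logpdf(z):
    # log N(z; 0, I), summed over the last axis
    return -0.5 * np.sum(z ** 2 + np.log(2 * np.pi), axis=-1)

class AffineFlow:
    """z = f(x) = (x - shift) * exp(-log_scale): an invertible elementwise map."""

    def __init__(self, shift, log_scale):
        self.shift = np.asarray(shift, dtype=float)
        self.log_scale = np.asarray(log_scale, dtype=float)

    def forward(self, x):  # data -> latent
        return (x - self.shift) * np.exp(-self.log_scale)

    def inverse(self, z):  # latent -> data (used for sampling)
        return z * np.exp(self.log_scale) + self.shift

    def log_prob(self, x):
        # change of variables: log p(x) = log p_z(f(x)) + log |det df/dx|
        log_det = -np.sum(self.log_scale)  # Jacobian is diagonal with entries exp(-log_scale)
        return standard_normal_logpdf(self.forward(x)) + log_det

flow = AffineFlow(shift=[1.0, 2.0], log_scale=[0.0, np.log(2.0)])
x = np.array([[1.0, 2.0], [0.0, 0.0]])
logp = flow.log_prob(x)
```

This affine flow is equivalent to a diagonal Gaussian with mean `shift` and standard deviation `exp(log_scale)`, which makes the formula easy to check by hand.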

Representation of Chemical Structures

The choice of molecular representation fundamentally shapes the latent space and the generative process. The common representations are summarized in Table 1 below.

Table 1: Molecular Representations for Generative Models

| Representation Type | Description | Common Model Applications | Pros & Cons |
|---|---|---|---|
| Sequence-based (e.g., SMILES/SELFIES) | Represents molecules as strings of characters, analogous to a language [27]. | RNNs (LSTM, GRU), Transformer-based LLMs [27] [14]. | Pros: Compact, memory-efficient [27]. Cons: May generate invalid strings; 2D representation lacks 3D spatial information [14]. |
| Graph-based | Represents atoms as nodes and bonds as edges [27]. | Graph Neural Networks (GNNs), GraphINVENT [27] [28]. | Pros: Naturally captures molecular topology; generally high validity [27]. Cons: Higher computational complexity [27]. |
| 3D Structural | Encodes the 3D coordinates and conformations of molecules [27]. | Specialized GNNs, Equivariant Diffusion Models [27] [29]. | Pros: Critical for modeling real-world interactions (e.g., drug-target binding) [27]. Cons: Data is more challenging and costly to obtain [14]. |

Experimental Protocols and Workflows

General Workflow for Latent Space Navigation

The following diagram illustrates a generalized, iterative workflow for inverse design using a navigable latent space. This framework can be adapted to specific model architectures and design problems.

Diagram 1: Inverse design workflow using a navigable latent space.

Protocol 1: High-Throughput Virtual Screening with Active Learning

This protocol, inspired by the InvDesFlow-AL framework, is designed for discovering stable crystalline materials [30].

- Objective: To iteratively generate and identify materials with low formation energy and high thermodynamic stability.

- Materials & Data:
  - Initial Dataset: A starting set of known crystal structures (e.g., from the Materials Project).
  - Property Predictor: A machine learning model (e.g., a Gaussian Process or a Graph Neural Network) trained to predict formation energy (E_form) and energy above hull (E_hull) from structure.
  - Generator: A diffusion model or VAE trained on crystal structures [30].

- Procedure:
  1. Initial Generation: Use the generator to produce a large batch (e.g., 10,000) of candidate crystal structures.
  2. Property Prediction: Use the property predictor to evaluate E_form and E_hull for all candidates.
  3. Active Learning Selection:
     - Select the top N candidates (e.g., 1,000) with the lowest E_form/E_hull.
     - Select an additional M candidates (e.g., 100) that are diverse in composition or structure to encourage exploration.
  4. High-Fidelity Validation: Validate the selected N + M candidates using computationally expensive but accurate Density Functional Theory (DFT) calculations.
  5. Model Update: Add the DFT-validated structures and their accurate properties to the training data. Fine-tune the property predictor and, if necessary, the generator on this expanded dataset.
  6. Iteration: Repeat steps 1-5, gradually guiding the generative process toward regions of the latent space that correspond to increasingly stable materials [30].

- Output: A set of theoretically stable candidate materials, ready for experimental synthesis. This method has been reported to generate millions of materials with low E_hull [30].
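The active learning selection step can be sketched as follows. The N candidates with the lowest predicted energy are taken first, then M further candidates are added for diversity. Diversity is approximated here by greedy farthest-point sampling on a per-candidate descriptor vector. The descriptor, the Euclidean distance, and the function name `select_batch` are illustrative assumptions, not the InvDesFlow-AL implementation.

```python
import math
from typing import Sequence


def select_batch(
    predicted_energy: Sequence[float],
    descriptors: Sequence[Sequence[float]],
    n_top: int,
    m_diverse: int,
) -> list[int]:
    """Return indices of the n_top lowest-energy candidates plus m_diverse diverse ones.

    predicted_energy: surrogate prediction per candidate (e.g. E_hull in eV/atom).
    descriptors: fixed-length feature vector per candidate (hypothetical featurization).
    """
    order = sorted(range(len(predicted_energy)), key=lambda i: predicted_energy[i])
    selected = order[:n_top]  # exploitation: best predicted stability
    remaining = set(order[n_top:])

    def nearest_selected(r: int) -> float:
        return min(math.dist(descriptors[r], descriptors[s]) for s in selected)

    # Exploration via greedy farthest-point sampling: repeatedly add the candidate
    # whose nearest already-selected neighbour is farthest away in descriptor space.
    for _ in range(min(m_diverse, len(remaining))):
        if selected:
            best = max(remaining, key=nearest_selected)
        else:  # nothing selected yet: seed with the lowest-energy remaining candidate
            best = min(remaining, key=lambda r: predicted_energy[r])
        selected.append(best)
        remaining.remove(best)
    return selected


energies = [0.30, 0.01, 0.05, 0.50, 0.02]
features = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [5.0, 5.0], [0.2, 0.1]]
print(select_batch(energies, features, n_top=2, m_diverse=1))
```

The returned indices form the N + M batch sent to DFT. The diverse picks deliberately include structures the surrogate is not yet confident about, which keeps later iterations from collapsing onto one region of chemical space.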

Protocol 2: Goal-Directed Molecular Optimization with Reinforcement Learning (RL)

This protocol is tailored for drug discovery, aiming to optimize lead compounds for multiple properties simultaneously [27] [28].

- Objective: To generate novel, synthesizable molecules with high predicted activity on a target (on-target potency) and acceptable ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties.

- Materials & Data:
  - Generative Model: A model such as an RNN (e.g., CharRNN) or a VAE pre-trained on a large database of drug-like molecules (e.g., ZINC, ChEMBL) [27] [28].
  - Predictive Models: QSAR models (e.g., random forests) or other ML predictors for on-target activity, toxicity, and synthesizability.
  - RL Framework: A framework like REINVENT [28].

- Procedure:
  1. Pre-training: Train or obtain a generative model that produces valid molecules, establishing a prior over chemical space.
  2. Reward Function Definition: Formulate a composite reward function, R(molecule). For example: R = [Activity Prediction] + 0.5 * [Synthesizability Score] - [Toxicity Prediction].
  3. Fine-tuning with RL:
     - The generative model (agent) proposes new molecules (actions).
     - Each generated molecule is evaluated by the reward function (environment).
     - The model's parameters are updated using a policy gradient method to maximize the expected reward, shifting the generative distribution away from the prior and toward the desired property profile [28].
  4. Conditional Generation (alternative to step 3): Use the latent space of a VAE instead. Train a surrogate model to predict the reward from the latent vector z, use an optimizer (e.g., Bayesian optimization) to find the z that maximizes the predicted reward, and decode it to obtain the candidate molecule [25].

- Output: A set of novel molecular structures optimized for the specified multi-objective reward function.
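The RL fine-tuning step can be illustrated with a toy policy: a softmax distribution over a small, fixed pool of candidate molecules. The policy is updated with the plain REINFORCE policy-gradient estimator against the example composite reward R = activity + 0.5 * synthesizability - toxicity. The candidate names and predictor scores are invented placeholders standing in for QSAR models. REINVENT itself trains a recurrent generator with its own augmented-likelihood objective, so this sketch only shows how the sampling distribution drifts from the prior toward high-reward molecules.

```python
import math
import random

# Hypothetical predictor scores for a fixed candidate pool (placeholders for QSAR models).
CANDIDATES = {
    "mol_A": {"activity": 0.9, "synth": 0.8, "tox": 0.1},
    "mol_B": {"activity": 0.4, "synth": 0.9, "tox": 0.2},
    "mol_C": {"activity": 0.7, "synth": 0.2, "tox": 0.9},
}
NAMES = list(CANDIDATES)


def reward(name: str) -> float:
    """Composite reward R = activity + 0.5 * synthesizability - toxicity."""
    p = CANDIDATES[name]
    return p["activity"] + 0.5 * p["synth"] - p["tox"]


def softmax(logits: list[float]) -> list[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce(steps: int = 500, batch: int = 16, lr: float = 0.1, seed: int = 0) -> list[float]:
    """Train softmax logits with REINFORCE and a batch-mean baseline; return final policy."""
    rng = random.Random(seed)
    logits = [0.0] * len(NAMES)  # uniform starting "prior" over the pool
    for _ in range(steps):
        probs = softmax(logits)
        actions = rng.choices(range(len(NAMES)), weights=probs, k=batch)
        rewards = [reward(NAMES[a]) for a in actions]
        baseline = sum(rewards) / batch  # reduces gradient variance
        grad = [0.0] * len(NAMES)
        for a, r in zip(actions, rewards):
            for k in range(len(NAMES)):
                # d log pi(a) / d logit_k = 1[k == a] - pi(k)
                grad[k] += (r - baseline) * ((1.0 if k == a else 0.0) - probs[k])
        logits = [x + lr * g / batch for x, g in zip(logits, grad)]  # gradient ascent
    return softmax(logits)


policy = reinforce()
print({name: round(p, 3) for name, p in zip(NAMES, policy)})
```

Probability mass moves toward the candidate with the highest composite reward (mol_A here). In a real generator the same gradient flows through the token-level log-probabilities of each sampled SMILES string.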

Benchmarking and Performance Metrics

Evaluating the performance of generative models is crucial for selecting the right approach. A 2025 benchmarking study on polymer design compares several model families across standard generation metrics [28]. The key metrics and the relative ranking of each model are summarized in Table 2.

Table 2: Benchmarking Deep Generative Models for Polymer Design (adapted from [28])

| Model | Valid Polymers (f_v) | Unique Polymers (f_10k) | Fréchet ChemNet Distance (FCD) | Best-Suited Application |
|---|---|---|---|---|
| CharRNN | High | High | Low | Excellent performance on real polymer datasets; can be fine-tuned with RL [28]. |
| REINVENT | High | High | Low | Excellent for goal-directed design using reinforcement learning [28]. |
| GraphINVENT | High | High | Low | High performance on real polymer datasets [28]. |
| VAE | Moderate | Moderate | Moderate | More advantageous for generating hypothetical polymers, expanding known chemical spaces [28]. |
| AAE | Moderate | Moderate | Moderate | Similar to VAE, better for exploring hypothetical polymer spaces [28]. |
| ORGAN | Lower | Lower | Higher | Lower overall performance in benchmarked metrics [28]. |

Key to Metrics:

- Valid (f_v): Fraction of generated structures that are chemically plausible.

- Unique (f_10k): Fraction of unique structures in a sample of 10,000.

- FCD: Measures the distance between the distributions of generated and real molecules, computed from ChemNet activations; a lower value indicates closer agreement.
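The three metrics can be sketched in a few lines. Validity and uniqueness are simple fractions over generated samples. For the distance metric, FCD fits Gaussians to ChemNet activations of real and generated molecules and computes ||mu_1 - mu_2||^2 + Tr(Sigma_1 + Sigma_2 - 2(Sigma_1 Sigma_2)^(1/2)). The version below assumes diagonal covariances, for which the trace term reduces exactly to a per-feature sum of squared standard-deviation differences. Real FCD implementations use the full covariance. The activation vectors, the `is_valid` callback, and the function names are placeholders.

```python
from statistics import fmean, pstdev
from typing import Callable, Sequence


def validity(samples: Sequence[str], is_valid: Callable[[str], bool]) -> float:
    """f_v: fraction of generated samples accepted by the validity check."""
    return sum(1 for s in samples if is_valid(s)) / len(samples)


def uniqueness(samples: Sequence[str], k: int = 10_000) -> float:
    """f_10k-style metric: fraction of distinct strings among the first k samples."""
    head = list(samples[:k])
    return len(set(head)) / len(head)


def frechet_distance_diag(real: Sequence[Sequence[float]], gen: Sequence[Sequence[float]]) -> float:
    """Frechet distance between Gaussians fitted to two activation sets, assuming
    diagonal covariance: sum over features of (mu_r - mu_g)^2 + (sd_r - sd_g)^2."""
    total = 0.0
    for d in range(len(real[0])):
        r = [row[d] for row in real]
        g = [row[d] for row in gen]
        total += (fmean(r) - fmean(g)) ** 2 + (pstdev(r) - pstdev(g)) ** 2
    return total


generated = ["CCO", "CCO", "C1CC", "CCN"]
print(validity(generated, lambda s: "1" not in s))  # placeholder validity rule
print(uniqueness(generated))
print(frechet_distance_diag([[0.0, 1.0], [2.0, 3.0]], [[1.0, 1.0], [3.0, 3.0]]))
```

In practice the validity callback would parse each string with a cheminformatics toolkit, and uniqueness is often computed over the valid samples only.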

The Scientist's Toolkit

This section details essential "research reagents" – the datasets, software, and representations – required for effective inverse design research.

Table 3: Key Research Reagents and Resources

| Resource | Type | Function & Application |
|---|---|---|
| ZINC Database [27] | Small-Molecule Database | Provides nearly 2 billion purchasable, "drug-like" compounds for virtual screening and for pre-training generative models to learn chemical rules. |
| ChEMBL Database [27] | Bioactive Molecule Database | A manually curated database of ~1.5M bioactive molecules with experimental measurements, used for training models to generate molecules with specific biological properties. |
| PolyInfo Database [28] | Polymer Database | A key resource containing structural data for real polymers, used for training polymer-specific generative models. |
| SMILES/SELFIES [27] [14] | Molecular Representation | String-based representations that enable the use of NLP-based models (RNNs, Transformers) for molecule generation. |
| Graph Representations [27] | Molecular Representation | A direct representation of molecular topology (atoms = nodes, bonds = edges) used by Graph Neural Networks to generate molecules with high validity. |
| InvDesFlow-AL [30] | Software Framework | An active learning-based generative framework for inverse design of functional materials, reported to be effective in discovering stable crystals and superconductors. |
| REINVENT [28] | Software/Algorithm | A reinforcement learning framework for goal-directed molecular generation, optimizing compounds against a multi-parameter reward function. |

Core Methodologies and Real-World Applications in Materials Science

The discovery and development of new functional materials are crucial for technological progress in fields ranging from electronics to drug development. Inverse design, the process of generating material structures with predefined target properties, represents a paradigm shift from traditional, often serendipitous, discovery methods. Deep generative models have emerged as powerful tools for this challenge: they learn the underlying probability distribution of known crystal structures and can then sample novel, plausible candidates. This application note examines three foundational architectures in the context of inverse design of crystalline materials: Conditional Variational Autoencoders (C-VAEs), Generative Adversarial Networks (GANs), and the Crystal Diffusion Variational Autoencoder (CDVAE). We detail their operational principles, present quantitative performance comparisons, and outline standardized experimental protocols for their implementation and validation in materials informatics research.

Foundational Model Architectures

Variational Autoencoders (VAEs) and their Conditional Extensions

The Variational Autoencoder (VAE) is a generative model that combines dimensionality reduction with probabilistic modeling [31] [32]. Its architecture consists of two primary neural networks: an encoder that maps input data to a latent space, and a decoder that reconstructs data from this latent space. Unlike standard autoencoders, the VAE encoder outputs parameters defining a probability distribution (typically a Gaussian) in the latent space, from which a point is sampled and passed to the decoder [33] [32]. This stochastic process ensures the latent space becomes continuous and regular, allowing for smooth interpolation and meaningful generation of new samples.

The training objective of a VAE is to maximize the Evidence Lower Bound (ELBO). The ELBO combines a reconstruction term, which ensures the decoder can accurately reconstruct its input, with a Kullback-Leibler (KL) divergence penalty that regularizes the latent distribution towards a standard normal prior [31]. For inverse design, the standard VAE is extended to a Conditional VAE (C-VAE), where the generation process is conditioned on a target property or other descriptor (e.g., band gap, composition). This is achieved by feeding the condition vector to both the encoder and decoder, so that the model learns the conditional distribution \( p(\mathbf{x} \mid c) \) of structures given a property [34] [31].
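Consider a diagonal-Gaussian encoder q(z | x, c) = N(mu, diag(sigma^2)) with a standard normal prior. The KL term then has the closed form 0.5 * sum(mu^2 + sigma^2 - 1 - log sigma^2), and the latent sample passed to the decoder is drawn with the reparameterization trick. The sketch below computes the negative ELBO for one example. A squared-error reconstruction term stands in for a fixed-variance Gaussian decoder likelihood, up to scale and constants. The encoder and decoder are hypothetical toy callables, so only the loss arithmetic and the conditioning by concatenation are shown. The `beta` weight is an added assumption, and `beta = 1` recovers the standard objective.

```python
import math
import random
from typing import Callable, Sequence

Vector = list[float]
Encoder = Callable[[Vector], tuple[Vector, Vector]]  # input -> (mu, log_var)
Decoder = Callable[[Vector], Vector]                 # input -> reconstruction


def kl_to_standard_normal(mu: Sequence[float], log_var: Sequence[float]) -> float:
    """Closed-form KL( N(mu, diag(exp(log_var))) || N(0, I) )."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var))


def reparameterize(mu: Sequence[float], log_var: Sequence[float], rng: random.Random) -> Vector:
    """Sample z = mu + sigma * eps, eps ~ N(0, I); in an autodiff framework this
    keeps z differentiable with respect to mu and log_var."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def cvae_negative_elbo(x: Vector, c: Vector, encoder: Encoder, decoder: Decoder,
                       rng: random.Random, beta: float = 1.0) -> float:
    """Negative ELBO for one sample (reconstruction error + beta * KL)."""
    mu, log_var = encoder(x + c)  # condition the encoder on c by concatenation
    z = reparameterize(mu, log_var, rng)
    x_hat = decoder(z + c)        # condition the decoder on c as well
    recon = sum((a - b) ** 2 for a, b in zip(x, x_hat))
    return recon + beta * kl_to_standard_normal(mu, log_var)


def toy_encoder(inp: Vector) -> tuple[Vector, Vector]:
    """Hypothetical stand-in network: 2-D latent from the first two inputs."""
    return [0.1 * v for v in inp[:2]], [0.0, 0.0]


def toy_decoder(inp: Vector) -> Vector:
    """Hypothetical stand-in network: echoes the first two latent entries."""
    return inp[:2]


print(cvae_negative_elbo([0.2, 0.5], [1.0], toy_encoder, toy_decoder, random.Random(0)))
```

At generation time only the decoder is used. A z is sampled from the prior, concatenated with the target property vector c, and decoded.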

Generative Adversarial Networks (GANs)

Generative Adversarial Networks (GANs) employ a game-theoretic framework comprising two competing neural networks: a Generator (G) and a Discriminator (D) [33] [32]. The generator takes random noise as input and transforms it into synthetic data, aiming to produce realistic crystal structures. The discriminator receives both real data (from the training set) and fake data (from the generator) and attempts to distinguish between them. The two networks are trained simultaneously in an adversarial minimax game: the generator strives to fool the discriminator, while the discriminator aims to become a better critic [33]. This competition drives the generator to produce increasingly convincing outputs. Conditional GANs (cGANs) can be constructed for inverse design by feeding the target property condition as an additional input to both the generator and discriminator, guiding the generation towards structures that not only appear valid but also possess the desired characteristics [4].
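The minimax game reduces to two binary cross-entropy losses computed from discriminator outputs. The sketch below assumes the discriminator returns probabilities D(x) in (0, 1). It uses the common non-saturating generator loss -log D(G(z)) instead of minimizing log(1 - D(G(z))) directly, a standard substitution that gives stronger gradients early in training. For a cGAN the same losses apply, with the condition concatenated to the inputs of both networks (not shown). The function names are illustrative.

```python
import math
from typing import Sequence

EPS = 1e-12  # guards log(0)


def discriminator_loss(d_real: Sequence[float], d_fake: Sequence[float]) -> float:
    """-E[log D(x)] - E[log(1 - D(G(z)))]: D should output 1 on real, 0 on generated."""
    real_term = -sum(math.log(max(p, EPS)) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(max(1.0 - p, EPS)) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake: Sequence[float]) -> float:
    """Non-saturating loss -E[log D(G(z))]: G wants D to label its samples as real."""
    return -sum(math.log(max(p, EPS)) for p in d_fake) / len(d_fake)


# A discriminator that separates real (high scores) from generated (low scores) well:
print(discriminator_loss([0.9, 0.8], [0.2, 0.3]), generator_loss([0.2, 0.3]))
```

At the theoretical equilibrium the discriminator outputs 0.5 everywhere. Its loss is then 2 ln 2 and the generator loss is ln 2. Training alternates gradient steps on the two losses.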

Crystal Diffusion Variational Autoencoder (CDVAE)

The Crystal Diffusion Variational Autoencoder (CDVAE) is a sophisticated hybrid architecture specifically designed for the challenges of crystal structure generation [35] [4]. It integrates a VAE with a Denoising Diffusion Probabilistic Model (DDPM). The model consists of three core components:

- A VAE module that encodes crystal structures into a latent representation and decodes to predict fundamental lattice parameters and the number of atoms.

- A diffusion module that refines the generated atomic coordinates through an iterative denoising process.

- A property conditioning module (in conditional setups) that maps target properties into the joint latent space to guide the generation.

A key innovation of CDVAE and its variants is the use of E(3)-equivariant graph neural networks (e.g., EquiformerV2) as encoders and decoders [4]. This architectural choice ensures the model inherently respects the fundamental physical symmetries of crystal structures—including rotation, translation, permutation, and periodicity—leading to the generation of more physically realistic and stable materials [4].
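Periodicity is one detail that separates crystal diffusion from molecular diffusion. Atomic positions are fractional coordinates in [0, 1), so noise must wrap around the unit cell, and denoising targets must use the minimum-image displacement. The sketch below uses a variance-exploding, wrapped-normal noising step x_t = (x_0 + sigma_t * eps) mod 1 with a geometric noise schedule, similar to the treatment of fractional coordinates in periodic diffusion models such as DiffCSP. The schedule values and function names are assumptions. CDVAE-family models differ in their exact noise process and learn the reverse (denoising) direction with an equivariant network.

```python
import random

Coord = tuple[float, ...]


def sigma_schedule(num_steps: int, sigma_min: float = 0.005, sigma_max: float = 0.5) -> list[float]:
    """Geometric noise levels from sigma_min to sigma_max (values are illustrative)."""
    if num_steps < 2:
        raise ValueError("need at least two noise levels")
    ratio = (sigma_max / sigma_min) ** (1.0 / (num_steps - 1))
    return [sigma_min * ratio**t for t in range(num_steps)]


def wrap(v: float) -> float:
    """Map a fractional coordinate back into the unit cell [0, 1)."""
    r = v % 1.0
    return 0.0 if r >= 1.0 else r  # guard: tiny negatives can round to exactly 1.0


def noise_fractional(frac_coords: list[Coord], sigma: float, rng: random.Random) -> list[Coord]:
    """Wrapped-normal forward step x_t = (x_0 + sigma * eps) mod 1, per atom and axis."""
    return [tuple(wrap(x + sigma * rng.gauss(0.0, 1.0)) for x in atom) for atom in frac_coords]


def min_image_displacement(x_t: Coord, x_0: Coord) -> Coord:
    """Shortest periodic displacement from x_0 to x_t, each component in [-0.5, 0.5]."""
    return tuple((a - b) - round(a - b) for a, b in zip(x_t, x_0))


rng = random.Random(0)
x0 = [(0.00, 0.50, 0.99), (0.25, 0.25, 0.25)]  # toy two-atom cell
for sigma in sigma_schedule(4):
    xt = noise_fractional(x0, sigma, rng)
    print(round(sigma, 4), [min_image_displacement(a, b) for a, b in zip(xt, x0)])
```

An atom at 0.99 and one at 0.01 are only 0.02 apart across the cell boundary. Without the minimum-image correction, a denoiser would be asked to learn a spurious displacement of 0.98.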

Diagram 1: High-level workflow of the ConditionCDVAE+ architecture for inverse design.

Quantitative Performance Comparison

The performance of generative models for crystals is typically evaluated across several key metrics: the ability to accurately reconstruct crystal structures from a latent representation (Reconstruction), the quality and diversity of entirely new structures (Generation), and the success in generating structures that exhibit a desired target property (Inverse Design).

Table 1: Reconstruction Performance on Benchmark Datasets (Match Rate % and Normalized RMSE)

| Model | MP-20 Dataset | J2DH-8 Dataset | Carbon-24 Dataset | Perov-5 Dataset |
|---|---|---|---|---|
| FTCP | - | 24.10% / 0.2173 | - | - |
| CDVAE | 41.59% / 0.0352 | 20.61% / 0.2118 | 46.31% / 0.1494 | 97.52% / 0.0196 |
| DP-CDVAE | 32.42% / 0.0383 | - | 45.57% / 0.1513 | 90.04% / 0.0212 |
| DiffCSP | 43.15% / 0.0331 | - | - | - |
| ConditionCDVAE+ | 45.88% / 0.0325 | 25.35% / 0.1842 | - | - |

Note: Match Rate is the percentage of reconstructed structures deemed similar to ground-truth by the StructureMatcher algorithm. RMSE is the normalized root-mean-square distance of atomic positions. Data compiled from [35] [4].
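The two numbers in each table cell can be aggregated from per-structure comparison results as shown below. The sketch assumes each entry is either None, meaning no match under the StructureMatcher tolerances, or the normalized RMS distance of a matched pair. It averages RMSE over matched structures only, as in CDVAE-style evaluations. In pymatgen, such per-pair values can come from `StructureMatcher.get_rms_dist`, which returns an (rms, max_distance) tuple, or None when no match is found. The function name `reconstruction_metrics` is illustrative.

```python
from typing import Optional, Sequence


def reconstruction_metrics(rms_results: Sequence[Optional[float]]) -> tuple[float, Optional[float]]:
    """Return (match rate in %, mean normalized RMSE over matched structures)."""
    if not rms_results:
        raise ValueError("no structures to evaluate")
    matched = [r for r in rms_results if r is not None]
    match_rate = 100.0 * len(matched) / len(rms_results)
    mean_rmse = sum(matched) / len(matched) if matched else None  # undefined if nothing matched
    return match_rate, mean_rmse


print(reconstruction_metrics([0.02, None, 0.04, None]))
```

Because RMSE is averaged only over matches, a model can report a low RMSE with a poor match rate. The two columns should therefore be read together.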

Table 2: Crystal Generation Performance and Property Convergence