Improving Accuracy in Machine Learning Predictions of Thermodynamic Stability: A Guide for Biomedical Researchers


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on enhancing the accuracy of machine learning models for predicting thermodynamic stability—a critical property in drug design and materials science. It covers the foundational principles of stability prediction, explores advanced methodological frameworks like ensemble learning and feature engineering, details optimization techniques to overcome data and model biases, and establishes robust validation protocols. By synthesizing current advances and practical strategies, this resource aims to equip scientists with the knowledge to build more reliable predictive models, thereby accelerating the discovery of stable therapeutic compounds and materials.

Understanding Thermodynamic Stability and Its Critical Role in Drug Discovery

Frequently Asked Questions (FAQs)

1. What is the concrete definition of "Energy Above Hull (E_hull)"? The energy above hull, often denoted E_hull or ΔH_d (decomposition energy), is the energy difference between a compound and its most stable decomposition products on the convex hull. Geometrically, it is the vertical distance in energy from the compound's formation energy to the convex hull surface at that composition. A stable compound has an E_hull of 0 meV/atom, meaning it lies directly on the convex hull. A positive E_hull indicates that the compound is metastable or unstable: it is thermodynamically driven to decompose into a combination of more stable phases on the hull [1] [2] [3].

2. How is the convex hull constructed for multi-component systems like ternaries or quaternaries? The convex hull is a geometric construction in energy-composition space. For a system with N elements, the formation energy per atom of every known compound is plotted against the N-1 independent composition coordinates. The hull algorithm finds the smallest convex set containing all of these points, and the lower envelope of that set (made up of lines, planes, or hyperplanes connecting the stable phases) gives the lowest attainable energy at each composition. A phase is stable if it is a vertex of this lower convex envelope [2] [3].
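To make the construction concrete, here is a minimal pure-Python sketch for the simplest case, a binary A-B system, where the lower hull is a chain of line segments. The phase names and formation energies are invented for illustration, and the helper names (`lower_hull`, `hull_energy`) are hypothetical. Production work would normally rely on a library such as pymatgen, which also handles ternaries and beyond.

```python
def lower_hull(points):
    """Lower convex envelope of (x_B, energy_per_atom) points, left to right."""
    best = {}
    for x, e in points:  # keep only the lowest energy at each composition
        if x not in best or e < best[x]:
            best[x] = e
    hull = []
    for p in sorted(best.items()):
        # Drop the previous vertex while it lies on or above the chord to p.
        while len(hull) >= 2:
            (x1, e1), (x2, e2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - e1) - (e2 - e1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            t = (x - x1) / (x2 - x1)
            return e1 + t * (e2 - e1)
    raise ValueError("composition outside the range covered by the hull")


# Toy formation energies in eV/atom (illustrative values, not real data).
phases = {
    "A": (0.00, 0.00),
    "A3B": (0.25, -0.10),
    "AB": (0.50, -0.40),
    "AB3": (0.75, -0.30),
    "B": (1.00, 0.00),
}

hull = lower_hull(phases.values())
e_hull = {name: e - hull_energy(hull, x) for name, (x, e) in phases.items()}

for name, value in e_hull.items():
    print(f"{name}: E_hull = {value * 1000:.0f} meV/atom")
```

In this toy system A3B has a negative formation energy yet sits 100 meV/atom above the hull, because a mixture of A and AB is lower in energy at the same composition.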

3. My compound has a negative formation energy but a positive E_hull. Is it stable? A negative formation energy is necessary but not sufficient for thermodynamic stability. A compound with a positive E_hull, even with a negative formation energy, is thermodynamically unstable with respect to decomposition into other, more stable compounds in its chemical system. Its synthesis may be challenging, and it may degrade over time. However, many metastable materials (E_hull > 0) can still be synthesized under kinetic control [2] [3].

4. Can I use a single chemical reaction to confirm the stability of my novel compound? No. Determining thermodynamic stability requires comparing your compound against all competing phases in its chemical system, not just one presumed decomposition pathway. The convex hull automatically identifies the most stable set of decomposition products. Writing a single synthesis reaction (e.g., A₂B₂O₇ + 2NH₃ → 2ABO₂N + 3H₂O) and finding a negative reaction energy only shows that the reaction is likely spontaneous; it does not guarantee that your compound is the most stable product, as it could decompose into other, unconsidered phases [3].

Troubleshooting Guides

Issue 1: Inconsistent or Incorrect Energy Above Hull Values

Problem: You calculate an E_hull value that differs significantly from database values (e.g., Materials Project) or get unexpected results.

Solution:

- Normalize Energies per Atom: Ensure all formation energies (E_f) used in hull construction are normalized per atom, not per formula unit. The convex hull exists in energy-per-atom vs. composition space [3].

- Use a Consistent Reference Set: All energies for the chemical system must be calculated at the same level of theory (e.g., identical DFT functionals, pseudopotentials, and calculation parameters). Mixing data from different computational setups introduces errors [2].

- Include a Comprehensive Set of Competing Phases: The hull's accuracy depends on including all relevant stable phases in the chemical system. An incomplete set will yield an incorrect hull and misleading E_hull values. Use a robust database like the Materials Project as a starting point [2] [3].

- Do Not Manually Guess Decomposition Products: Rely on established convex hull algorithms (e.g., in pymatgen) to correctly identify the set of most stable decomposition products and their stoichiometric coefficients, which can be fractional [3].
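The per-atom normalization in the first bullet is a frequent source of mismatches, so a small sketch may help. It assumes simple formulas without brackets or fractional subscripts. The helper names and the energies are hypothetical placeholders.

```python
import re


def atoms_per_formula_unit(formula):
    """Count atoms in a simple formula such as 'Li2O' or 'BaTiO3' (no brackets)."""
    tokens = re.findall(r"([A-Z][a-z]?)(\d*)", formula)
    return sum(int(count) if count else 1 for _, count in tokens)


def energy_per_atom(energy_per_formula_unit, formula):
    """Convert an energy per formula unit (eV/f.u.) to eV/atom."""
    return energy_per_formula_unit / atoms_per_formula_unit(formula)


# Placeholder formation energies in eV per formula unit (not real data).
raw_energies = {"BaTiO3": -17.0, "Li2O": -6.0}

normalized = {f: energy_per_atom(e, f) for f, e in raw_energies.items()}
for formula, value in normalized.items():
    print(f"{formula}: {value:.2f} eV/atom")
```

Feeding the un-normalized values into a hull construction would make compounds with larger formula units look artificially stable.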

Issue 2: Integrating Machine Learning Predictions with DFT Validation

Problem: Your ML model predicts a compound is stable, but subsequent DFT calculations show it is unstable, or vice-versa.

Solution:

- Understand ML Model Limitations: Know your model's typical error. For example, a model with a root mean square error (RMSE) of 28.5 meV/atom may misclassify compounds whose E_hull lies within roughly that distance of the stability threshold [4]. Treat ML as a rapid screening tool, not a final arbiter.

- Check the Model's Training Data: Models trained only on ground-state structures (e.g., from the ICSD) can be biased and perform poorly on higher-energy hypothetical structures. Use models trained on a balanced dataset of both stable and unstable phases for stability prediction tasks [5].

- Standardize DFT Validation Protocol: When validating ML predictions with DFT, ensure your DFT calculations are performed using standardized settings consistent with the major databases (e.g., Materials Project's VASP input sets) to allow for a fair comparison and application of necessary energy corrections [2] [3].
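One way to act on the first point is to treat any prediction within the model's typical error of the stability cutoff as undecided and route it to DFT. The sketch below assumes a cutoff of 0 meV/atom and reuses the 28.5 meV/atom error quoted above. The `triage` function, the labels, and the candidate values are hypothetical.

```python
def triage(predicted_e_hull, model_error, stable_cutoff=0.0):
    """Label a predicted E_hull (meV/atom) relative to a stability cutoff."""
    if abs(predicted_e_hull - stable_cutoff) <= model_error:
        return "borderline"  # within the model's error band: needs DFT
    if predicted_e_hull < stable_cutoff:
        return "likely stable"
    return "likely unstable"


MODEL_ERROR = 28.5  # meV/atom, the typical model error quoted in the text

# Hypothetical ML predictions of E_hull in meV/atom.
predictions = {"cand_A": -40.0, "cand_B": 12.0, "cand_C": 95.0}

labels = {name: triage(value, MODEL_ERROR) for name, value in predictions.items()}
to_validate = [n for n, label in labels.items() if label != "likely unstable"]

print(labels)
print("Send to DFT:", to_validate)
```

The width of the band is a design choice: a wider band sends more candidates to DFT but reduces the chance of discarding a genuinely stable compound.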

Experimental Protocols & Data

Protocol 1: Constructing a Phase Diagram and Calculating E_hull Using Pymatgen

This protocol outlines the steps for building a phase diagram from computed energies to determine thermodynamic stability [2].

1. Gather Computed Entries:

- Collect ComputedEntry or ComputedStructureEntry objects for all known and candidate phases in the chemical system. These entries contain the computed energy and composition.

2. Construct the PhaseDiagram Object:

- Input the list of entries into pymatgen's PhaseDiagram class.

- The class automatically constructs the convex hull in the relevant composition space.

3. Analyze a Specific Phase:

- Use the PhaseDiagram.get_e_above_hull(entry) method for any entry to get its E_hull.

- Use PhaseDiagram.get_decomposition(entry.composition) to get the precise set of stable phases and their fractions that the compound would decompose into.

Example Code Snippet:

Protocol 2: An Ensemble Machine Learning Workflow for Stability Prediction

This protocol describes a modern ML approach to predict stability, mitigating bias by combining multiple models [1].

1. Feature Engineering:

- Generate input features from different domains of knowledge. The ECSG framework uses:

  - Electron Configuration (EC): A matrix representing the electron distribution of constituent atoms, processed by a Convolutional Neural Network (ECCNN).

  - Elemental Properties: Statistical features (mean, deviation, range) of atomic properties like radius and electronegativity (Magpie model).

  - Interatomic Interactions: Represent the composition as a graph to model atom-atom relationships (Roost model).

2. Model Training and Stacking:

- Train the three base models (ECCNN, Magpie, Roost) independently on formation energy or stability data.

- Use Stacked Generalization (SG): The predictions from these base models become the input features for a final "meta-learner" model (e.g., linear model) that produces the final, refined prediction.

3. Validation and Screening:

- Apply the trained ECSG model to screen vast compositional spaces for promising stable compounds.

- Validate the top candidates with high-fidelity DFT calculations to confirm stability.
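The stacking step can be illustrated without any deep-learning code. In the sketch below the three columns stand in for held-out predictions from three base models. The numbers are toy values, and the targets were deliberately built as a known blend (0.5, 0.3, 0.2) so the fitted weights can be checked. The linear meta-learner is fitted by ordinary least squares. This is an assumption for illustration and not necessarily how ECSG implements its meta-learner.

```python
def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def fit_meta_learner(base_preds, targets):
    """Least-squares weights (last entry is the intercept) over base predictions."""
    rows = [list(p) + [1.0] for p in base_preds]
    n = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(n)] for i in range(n)]
    xty = [sum(r[i] * y for r, y in zip(rows, targets)) for i in range(n)]
    return solve(xtx, xty)  # normal equations: (X^T X) w = X^T y


def predict(weights, preds):
    return sum(w * p for w, p in zip(weights, preds)) + weights[-1]


# Toy held-out predictions from three base models (columns) and toy targets.
base_preds = [
    (0.10, 0.20, 0.00),
    (0.50, 0.40, 0.70),
    (0.90, 1.00, 0.80),
    (0.30, 0.10, 0.40),
    (0.70, 0.90, 0.50),
]
targets = [0.11, 0.51, 0.91, 0.26, 0.72]

weights = fit_meta_learner(base_preds, targets)
print("meta-learner weights:", [round(w, 3) for w in weights])
print("stacked prediction:", round(predict(weights, (0.4, 0.5, 0.6)), 3))
```

In practice the meta-learner should be trained on out-of-fold predictions, so that it never sees base-model outputs for samples those models were fitted on.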

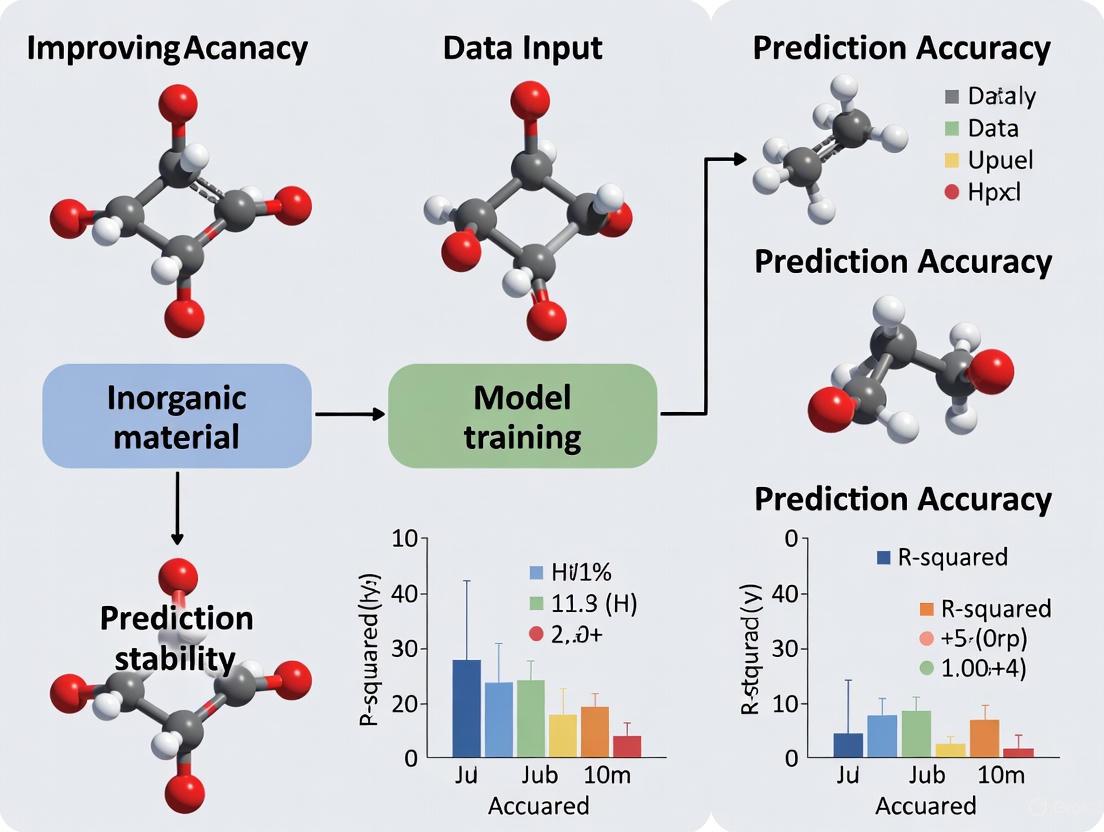

The workflow below illustrates this ensemble machine learning process for predicting thermodynamic stability.

The table below summarizes performance metrics of various machine learning models for predicting thermodynamic stability, as reported in the literature.

Table 1: Performance Metrics of ML Models for Predicting Thermodynamic Stability

| Material Class | ML Model | Key Metric | Performance | Reference / Notes |
|---|---|---|---|---|
| General Inorganic Compounds | ECSG (Ensemble) | AUC (Area Under Curve) | 0.988 | Electron Configuration + Stacked Generalization; High sample efficiency [1] |
| Perovskite Oxides | Kernel Ridge Regression | RMSE (Root Mean Square Error) | 28.5 ± 7.5 meV/atom | Prediction of Energy Above Hull (E_hull) [4] |
| Perovskite Oxides | Extra Trees Classifier | F1 Score | 0.88 (± 0.03) | Classification (Stable vs. Unstable) [4] |
| General Inorganic Crystals | Graph Neural Network (GNN) | MAE (Mean Absolute Error) | 0.041 eV/atom | Predicting DFT total energy, requires balanced training data [5] |
| Cubic Perovskites | Extra Trees Regression | MAE | 121 meV/atom | Large-scale benchmark on ~250k systems [6] |
| Conductive MOFs | Engineered Features + ML | R² (Coefficient of Determination) | 0.96 | For formation energy prediction [7] |

The Scientist's Toolkit

Table 2: Essential Computational Tools and Reagents for Stability Research

| Tool / Solution | Function / Description | Relevance to Experiment |
|---|---|---|
| Pymatgen | A robust, open-source Python library for materials analysis. | Provides core algorithms for constructing phase diagrams (PhaseDiagram class), calculating E_hull, and analyzing decomposition pathways [2]. |
| Materials Project (MP) API | A web API that provides programmatic access to the Materials Project database. | Used to fetch computed crystal structures and energetics for a vast range of materials, which serve as the foundational data for building phase diagrams and training ML models [2]. |
| VASP (Vienna Ab initio Simulation Package) | A widely used software for performing DFT calculations. | Generates the fundamental total energy data from first principles. This data is the "ground truth" for validating ML predictions and populating materials databases [4] [5]. |
| JARVIS/DFT, OQMD, NRELMatDB | Curated databases of DFT-calculated material properties. | Serve as critical sources of training data for machine learning models, containing thousands to millions of computed formation energies and crystal structures [1] [5]. |
| CGCNN/MEGNet/iCGCNN | Graph Neural Network (GNN) architectures for materials property prediction. | These models represent crystal structures as graphs to directly learn structure-property relationships, enabling accurate prediction of formation energies and total energies [5]. |
| Stacked Generalization (SG) | An ensemble machine learning technique. | Combines predictions from multiple base models (e.g., ECCNN, Magpie, Roost) to create a super-learner with reduced bias and improved predictive performance for stability [1]. |

Why Accurate Stability Prediction is a Bottleneck in Pharmaceutical Development

Frequently Asked Questions (FAQs)

Q1: Why is thermodynamic stability prediction so critical for new drug molecules? Over 90% of newly developed drug molecules face challenges with low solubility and bioavailability. Accurate thermodynamic stability prediction underpins the modeling and measurement needed to understand and design safe, stable pharmaceutical products and their production processes. It is the most important prerequisite for developing stable formulations and increasing production efficiency [8].

Q2: How can machine learning (ML) models accelerate stability prediction? ML models can process complex, multi-dimensional datasets to identify patterns that are difficult to discern with traditional methods. They act as powerful pre-filters, rapidly screening vast numbers of hypothetical materials or formulations to identify promising candidates for further, more resource-intensive testing. This can dramatically speed up discovery workflows, though they work best in conjunction with higher-fidelity methods like density functional theory (DFT) [9].

Q3: What are the key challenges when using ML for crystal stability prediction? Key challenges include a disconnect between common regression metrics and task-relevant classification metrics, the circular dependency created when models require relaxed structures from the calculations they are meant to accelerate, and the risk of high false-positive rates even for models with accurate regression performance. A successful framework must address prospective benchmarking, use relevant stability targets, and employ informative metrics [9].

Q4: What is the role of predictive stability in the context of new regulatory guidelines? Predictive stability based on computational modeling and risk-based approaches is gaining traction for prospectively assessing the long-term stability and shelf-life of products. New regulatory approaches, such as the draft ICH Q1 guideline, are expected to lead to increased use of stability modeling in clinical trials and market applications, which can help accelerate patient access to new medicines [10] [11].

Q5: What common issue occurs when a model shows good regression metrics but high false-positive rates? This is a known pitfall where a model may have a low mean absolute error (MAE) but still misclassify many unstable materials as stable. It happens when many predictions, although individually accurate, lie very close to the decision boundary (e.g., 0 eV per atom above the convex hull), so that small errors flip the stable/unstable label. Therefore, models should be evaluated based on classification performance and their ability to facilitate correct decision-making, not just regression accuracy [9].
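The gap between regression and classification quality is easy to reproduce on a handful of numbers. The sketch below uses invented E_hull values (meV/atom) and treats a material as stable when E_hull is at or below 0. The data and the helper names are hypothetical.

```python
def mean_absolute_error(true, pred):
    return sum(abs(t - p) for t, p in zip(true, pred)) / len(true)


def stability_metrics(true, pred, threshold=0.0):
    """Precision, recall and F1 for the 'stable' class (E_hull <= threshold)."""
    tp = sum(t <= threshold and p <= threshold for t, p in zip(true, pred))
    fp = sum(t > threshold and p <= threshold for t, p in zip(true, pred))
    fn = sum(t <= threshold and p > threshold for t, p in zip(true, pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Invented reference and predicted E_hull values in meV/atom.
true_e_hull = [0, 0, 5, 8, 12, 15, 60, 120]
pred_e_hull = [3, -4, -2, -5, -1, 10, 55, 130]

mae = mean_absolute_error(true_e_hull, pred_e_hull)
precision, recall, f1 = stability_metrics(true_e_hull, pred_e_hull)

print(f"MAE = {mae:.1f} meV/atom")
print(f"precision = {precision:.2f}, recall = {recall:.2f}, F1 = {f1:.2f}")
```

Here the MAE is only 7.5 meV/atom, yet three of the four materials flagged as stable are false positives, because most of the errors straddle the 0 meV/atom boundary.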

Troubleshooting Guides

Guide 1: Addressing Poor Predictive Performance in Solubility Models

This guide helps diagnose issues when machine learning models fail to accurately predict drug solubility in supercritical fluids, a key step in nanonization.

Problem: Model predictions do not align with experimental solubility measurements.

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Insufficient or Poor-Quality Data | Check the dataset size and look for missing values. Use algorithms like Isolation Forest to detect outliers [12]. | Clean data by removing outliers. Expand the dataset with more experimental measurements. |
| Incorrect Model or Hyperparameters | Compare performance of different algorithms (e.g., SVM, GWO-ADA-KNN) using metrics like R² and MSE [13] [12]. | Utilize ensemble methods (e.g., AdaBoost) and metaheuristic optimizers (e.g., Grey Wolf Optimizer) to tune hyperparameters [12]. |
| Inadequate Feature Representation | Analyze if input features (e.g., only temperature and pressure) fully capture the solubility physics [13]. | Incorporate additional relevant features, such as solvent density or molecular descriptors of the drug [12]. |

Guide 2: Managing High False Positive Rates in Crystal Stability Screening

This guide addresses the critical issue of ML models incorrectly classifying unstable crystals as stable, which wastes experimental resources.

Problem: A model with good regression accuracy (low MAE) has an unexpectedly high false-positive rate.

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Misaligned Evaluation Metrics | Evaluate the model using classification metrics (e.g., precision, recall) instead of, or in addition to, regression metrics (MAE, R²) [9]. | Shift the evaluation focus to classification performance based on the energy above the convex hull. Use metrics that prioritize correct stability classification [9]. |
| Lack of Uncertainty Quantification | Determine if the model provides uncertainty estimates for its predictions [9]. | Implement models that quantify prediction uncertainty. Use this uncertainty to flag borderline predictions for further scrutiny. |
| Data Distribution Shift | Check if the test data comes from a different chemical space than the training data [9]. | Use prospective benchmarking with test data generated from the intended discovery workflow to better simulate real-world performance [9]. |

Experimental Protocols & Data

Protocol 1: Predicting Drug Solubility in Supercritical CO₂ using SVM

This protocol outlines a methodology for using a Support Vector Machine (SVM) to predict the solubility of a drug, such as Lornoxicam, in supercritical carbon dioxide [13].

1. Objective: To build a predictive model correlating drug solubility (mole fraction) with process parameters (temperature and pressure).

2. Materials and Data Preparation:

- Data Collection: Obtain experimental data measuring drug solubility across a range of temperatures and pressures above the critical point of CO₂ (e.g., 308–338 K and 120–360 bar) [13].

- Data Pre-processing: The input features (X) are temperature (T) and pressure (P). The output (Y) is the drug solubility in mole fraction. Normalize the data if necessary.

3. Model Training:

- Algorithm Selection: Use a Support Vector Machine with a Radial Basis Function (RBF) kernel for regression. The RBF kernel enhances the model's ability to capture non-linear relationships.

- Training Process: The SVM algorithm is trained on the pre-processed dataset to find a function that maps the input features (T, P) to the output solubility with the greatest accuracy.

4. Model Validation:

- Validation Method: Use a portion of the data or cross-validation to test the trained model.

- Success Criteria: The model is considered successful when measured and predicted values agree closely, as indicated by a high coefficient of determination (R²) [13].
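The normalization step in the protocol matters more than its "if necessary" wording suggests, because an RBF kernel depends on Euclidean distance and pressure spans a much wider numeric range than temperature. The sketch below is not the SVM training itself. It only evaluates the RBF kernel, k(a, b) = exp(-γ‖a - b‖²), on raw and min-max-scaled (T, P) points. The γ values are arbitrary choices for illustration, and the helper names are hypothetical.

```python
import math

T_RANGE = (308.0, 338.0)  # K, range quoted in the protocol
P_RANGE = (120.0, 360.0)  # bar, range quoted in the protocol


def min_max_scale(value, low, high):
    return (value - low) / (high - low)


def scale(point):
    """Map a (T, P) point onto the unit square."""
    return (min_max_scale(point[0], *T_RANGE), min_max_scale(point[1], *P_RANGE))


def rbf_kernel(a, b, gamma):
    squared_distance = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-gamma * squared_distance)


base = (308.0, 120.0)
hotter = (338.0, 120.0)         # full temperature span, same pressure
higher_pressure = (308.0, 360.0)  # full pressure span, same temperature

# Raw features: the pressure change dominates the distance.
raw_hot = rbf_kernel(base, hotter, gamma=0.001)
raw_dense = rbf_kernel(base, higher_pressure, gamma=0.001)

# Scaled features: both full-range changes are treated alike.
scaled_hot = rbf_kernel(scale(base), scale(hotter), gamma=1.0)
scaled_dense = rbf_kernel(scale(base), scale(higher_pressure), gamma=1.0)

print(f"raw:    k(T change) = {raw_hot:.3g}, k(P change) = {raw_dense:.3g}")
print(f"scaled: k(T change) = {scaled_hot:.3g}, k(P change) = {scaled_dense:.3g}")
```

On raw inputs a full-range change in pressure looks far larger to the kernel than a full-range change in temperature, whereas after scaling the two are weighted equally.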

Protocol 2: Ensemble Learning for Paracetamol Solubility with Metaheuristic Optimization

This protocol describes a robust approach using ensemble learning and optimization algorithms to predict paracetamol solubility and solvent density [12].

1. Objective: To accurately predict the mole fraction of paracetamol and the density of supercritical CO₂ using ensemble models optimized with metaheuristic algorithms.

2. Materials and Data Preparation:

- Dataset: A dataset of 40 instances with features: Temperature (T), Pressure (P), and outputs: Mole Fraction (MF), Density (D) [12].

- Data Cleaning: Use the Isolation Forest algorithm to detect and remove outliers. This step is crucial for ensuring model robustness [12].

3. Model Building and Optimization:

- Base Model: Use K-Nearest Neighbor (KNN) regression as the base model.

- Ensemble Methods: Apply Bagging and AdaBoost ensemble techniques to improve the base model's performance and robustness.

- Hyperparameter Tuning: Optimize the ensemble models using metaheuristic algorithms such as the Grey Wolf Optimizer (GWO) [12].

4. Performance Evaluation:

- Metrics: Assess model performance using R-squared (R²), Mean Squared Error (MSE), and Average Absolute Relative Deviation (AARD%) [12].
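For reference, the three metrics can be computed as below. AARD% is taken here as 100/N times the sum of |predicted - measured| / |measured|, a common definition that is assumed rather than quoted from [12]. The input numbers are arbitrary and are not solubility data.

```python
def mean_squared_error(measured, predicted):
    n = len(measured)
    return sum((m - p) ** 2 for m, p in zip(measured, predicted)) / n


def r_squared(measured, predicted):
    mean = sum(measured) / len(measured)
    ss_res = sum((m - p) ** 2 for m, p in zip(measured, predicted))
    ss_tot = sum((m - mean) ** 2 for m in measured)
    return 1.0 - ss_res / ss_tot


def aard_percent(measured, predicted):
    """Average absolute relative deviation, in percent."""
    n = len(measured)
    return 100.0 / n * sum(abs((p - m) / m) for m, p in zip(measured, predicted))


# Arbitrary illustrative values.
measured = [1.0, 2.0, 4.0, 5.0]
predicted = [1.1, 1.9, 4.2, 4.8]

mse = mean_squared_error(measured, predicted)
r2 = r_squared(measured, predicted)
aard = aard_percent(measured, predicted)

print(f"MSE = {mse:.3f}, R² = {r2:.3f}, AARD = {aard:.1f}%")
```

AARD% is scale-free, which makes it useful when solubility values span several orders of magnitude, but it is undefined when a measured value is zero.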

The following table summarizes quantitative results from recent ML studies on pharmaceutical solubility, demonstrating the performance of different models:

Table 1: Performance Metrics of Machine Learning Models for Pharmaceutical Solubility Prediction

| Drug Compound | ML Model | Key Input Features | Performance Metrics | Reference |
|---|---|---|---|---|
| Paracetamol | GWO-ADA-KNN | Temperature, Pressure | R² = 0.98105 (Mole Fraction), R² = 0.96719 (Density) | [12] |
| Lornoxicam | SVM (RBF Kernel) | Temperature, Pressure | "Great agreement" with "acceptable regression coefficient" | [13] |
| General API Solubility | Random Forest | Temperature, Pressure | High accuracy and reliability reported | [12] |

The Scientist's Toolkit: Essential Research Reagents & Materials

This table lists key materials and computational tools used in advanced stability and solubility prediction research.

Table 2: Key Reagents and Materials for Stability and Solubility Experiments

| Item Name | Function / Application | Brief Explanation |
|---|---|---|
| Supercritical CO₂ | Solvent for nanonization | A green, safe solvent used in supercritical processing to produce nano-sized drug particles with enhanced solubility and bioavailability [13] [12]. |
| Amorphous Solid Dispersions (ASDs) | Formulation strategy | A formulation technique used to improve the solubility and bioavailability of poorly water-soluble drugs by dispersing them in a polymer matrix [14]. |
| Polymeric Carriers | Excipient in ASDs | Polymers (e.g., PVP, HPMC) used to create amorphous solid dispersions, inhibiting recrystallization and stabilizing the drug in its amorphous form [14]. |
| Machine Learning Platforms | In-silico prediction | Computational platforms using AI/ML to accurately predict drug-polymer interactions, physical stability, and solubility, reshaping formulation strategies [14]. |
| Universal Interatomic Potentials (UIPs) | Crystal stability prediction | A type of ML model trained on diverse datasets that can effectively pre-screen the thermodynamic stability of hypothetical crystalline materials with high accuracy [9]. |

Workflow and Relationship Diagrams

ML for Material Discovery Workflow

This diagram illustrates the prospective benchmarking workflow for evaluating machine learning models in a real-world materials discovery campaign [9].

Stability Prediction Challenge

This diagram outlines the core challenges and their relationships in achieving accurate stability predictions for pharmaceuticals [8] [9] [14].

Technical Support & Troubleshooting Hub

This technical support center addresses common challenges in thermodynamic stability research, providing targeted solutions that leverage machine learning (ML) to overcome the high costs and limitations of traditional Density Functional Theory (DFT) and experimental methods.

Frequently Asked Questions (FAQs)

1. How can we reduce our reliance on expensive DFT calculations for predicting new stable compounds? Solution: Implement ensemble machine learning models that use material composition as input.

- Detailed Protocol: The Electron Configuration models with Stacked Generalization (ECSG) framework demonstrates that composition-based ML models can achieve high accuracy in predicting thermodynamic stability, quantified by an Area Under the Curve (AUC) score of 0.988 [1]. This framework requires only one-seventh of the data used by existing models to achieve equivalent performance, drastically reducing the need for preliminary DFT screening [1].

- Workflow Integration: Use the model's output to identify the most promising candidate compositions before committing resources to DFT for final validation. This creates a highly efficient funnel, screening out unstable compounds computationally.

2. Our experimental screening for drug discovery is slow and has high attrition rates. How can we improve efficiency? Solution: Integrate AI and automation into the early hit-to-lead phase.

- Detailed Protocol: Adopt AI-guided platforms that compress the traditional design–make–test–analyze (DMTA) cycle. A 2025 study utilized deep graph networks to generate over 26,000 virtual analogs, leading to the development of sub-nanomolar inhibitors with a 4,500-fold potency improvement over initial hits. This process reduced discovery timelines from months to weeks [15].

- Workflow Integration: Deploy in silico screening tools (e.g., molecular docking, ADMET prediction) to triage large compound libraries before any wet-lab synthesis. This prioritizes candidates based on predicted efficacy and developability, freeing up laboratory resources for the validation of the most promising leads [15].

3. How can we obtain more physiologically relevant data on drug-target engagement without costly and lengthy in vivo studies? Solution: Utilize functional cellular assays that confirm mechanistic activity in a biologically relevant context.

- Detailed Protocol: Implement the Cellular Thermal Shift Assay (CETSA) to validate direct target engagement in intact cells and tissues. A 2024 study applied CETSA with mass spectrometry to quantitatively confirm dose- and temperature-dependent stabilization of a drug target (DPP9) in rat tissue, providing system-level validation that bridges the gap between biochemical potency and cellular efficacy [15].

- Workflow Integration: Incorporate CETSA as a decisive step between in silico prediction and in vivo testing. This provides high-quality, functionally relevant data for go/no-go decisions, reducing the risk of late-stage failures attributed to poor target engagement [15].

4. Our research involves optimizing complex thermodynamic cycle systems. How can we manage the numerous interacting variables efficiently? Solution: Apply machine learning techniques to model and optimize the entire system.

- Detailed Protocol: Frame the system design as a mixed-integer nonlinear programming problem. ML models (e.g., Artificial Neural Networks, Random Forest) can predict performance based on variables including working fluids, cycle configuration, operating parameters, and component design. This holistic approach achieves fast and reliable optimization of all variables simultaneously, which is infeasible with traditional experimental or physical modeling methods alone [16].

- Workflow Integration: Use ML models for rapid virtual prototyping and sensitivity analysis. This identifies the most critical parameters and optimal design spaces, guiding focused and cost-effective experimental campaigns [16].

Comparative Data Tables

Table 1: Comparison of Traditional Methods vs. Machine Learning Approaches

| Metric | Traditional DFT/Experimentation | ML-Accelerated Workflow |
|---|---|---|
| Typical Timeline | Months to years for discovery and preclinical work [17] | 18–24 months from target to Phase I trials [17] |
| Resource Intensity | High computation (DFT) or material/synthesis costs (Experimentation) [1] [16] | Lower; in silico screening prioritizes synthesis and testing [15] |
| Sample/Data Efficiency | Relies on large-scale calculations or library screens | Can achieve high accuracy with a fraction of the data (e.g., 1/7th for stability prediction) [1] |
| Primary Advantage | High accuracy and direct mechanistic insight for validated systems | Dramatically accelerated screening and expanded exploration of chemical/compositional space [15] [1] |

Table 2: Essential Research Reagent Solutions

| Reagent / Material | Function in Experimentation |
|---|---|
| CETSA (Cellular Thermal Shift Assay) | Validates direct drug-target engagement and mechanistic activity in physiologically relevant intact cells and native tissues, bridging the in vitro-in vivo gap [15]. |
| 3D Cell Culture / Organoids | Provides human-relevant, reproducible tissue models for screening efficacy and toxicity, improving predictive power and reducing reliance on animal models [18]. |
| Automated Liquid Handlers (e.g., Veya, firefly+) | Replaces manual pipetting to provide robust, consistent liquid handling for assays, increasing throughput and data reliability for model training [18]. |
| AI-Assisted Digital Lab Notebooks (e.g., Labguru) | Manages experimental data and metadata to ensure traceability and structure, creating high-quality, interconnected datasets necessary for effective AI/ML analysis [18]. |

Workflow Visualization

The following diagram illustrates a modern, ML-integrated workflow designed to overcome traditional hurdles.

ML-Driven Research Workflow: This workflow replaces traditional, resource-intensive screening with an efficient, closed-loop process. It begins with AI/ML conducting high-throughput in-silico screening of vast virtual libraries to output a prioritized shortlist [15] [1]. Researchers then perform targeted validation only on these top candidates using definitive but costly methods like DFT or functional assays (e.g., CETSA) [15] [1]. Crucially, the data generated from these validation experiments is systematically collected and fed back to retrain and refine the AI models, creating a continuous cycle of improving predictive accuracy and efficiency [18].

Frequently Asked Questions (FAQs)

Q1: My ML model has a low mean absolute error (MAE) on formation energy, but it still identifies many unstable materials as stable (high false positives). What is wrong? This common issue arises from a misalignment between standard regression metrics and the actual goal of stability classification. A model can have an excellent MAE while many of its predictions fall close to the stability decision boundary (0 eV/atom above the convex hull), where even a small error flips the predicted class. The predictions are then numerically accurate yet unreliable for deciding which materials to pursue. To fix this, prioritize classification metrics such as precision, recall, and F1-score over regression metrics like MAE or R² during model evaluation, and ensure your test set is prospectively designed to mimic a real discovery campaign [9].

Q2: What is the most critical step to improve the generalizability of my ML model for discovering new, stable materials? Robust feature engineering is paramount. Relying solely on compositional features is often insufficient. Integrate structural descriptors (e.g., from Voronoi tessellations) to capture atomic arrangements. One study on conductive metal-organic frameworks achieved an R² of 0.96 for formation energy prediction by creating hybrid feature sets (GD, M-GD, A-GD) that blend compositional and structural information [7] [6].

Q3: How can I perform stability predictions when labeled unstable data is scarce or unavailable? You can employ advanced techniques like Generative Adversarial Networks (GANs) trained only on stable data. The generator creates Out-Of-Distribution (OOD) samples representing unstable behavior. The discriminator learns to distinguish these from stable data, forming a robust decision boundary without needing real unstable examples. This approach has achieved 98.1% accuracy in smart grid stability prediction and is adaptable to materials science [19].

Q4: Why is it essential to look at both enthalpy (ΔH) and entropy (ΔS) instead of just binding affinity (ΔG) in drug stability? Because entropy-enthalpy compensation is a frequent phenomenon in molecular interactions. A modification that improves bonding (more negative ΔH) might rigidify the complex (more negative ΔS), yielding no net gain in ΔG. Relying only on ΔG can mask these opposing effects and obscure the true binding mode. A full thermodynamic profile (ΔG, ΔH, ΔS) is necessary for rational optimization [20].
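The compensation effect follows directly from ΔG = ΔH - TΔS. The short sketch below compares two hypothetical ligands at 300 K. All values are invented to illustrate the arithmetic and do not come from [20].

```python
def gibbs_free_energy(delta_h_kj, delta_s_j_per_k, temperature_k=300.0):
    """Return ΔG in kJ/mol from ΔH (kJ/mol) and ΔS (J/(mol·K))."""
    return delta_h_kj - temperature_k * delta_s_j_per_k / 1000.0


# Hypothetical binding thermodynamics for a parent ligand and a modified analog.
parent = {"delta_h": -40.0, "delta_s": 10.0}
modified = {"delta_h": -50.0, "delta_s": -20.0}  # stronger bonding, more rigid

dg_parent = gibbs_free_energy(parent["delta_h"], parent["delta_s"])
dg_modified = gibbs_free_energy(modified["delta_h"], modified["delta_s"])

print(f"parent:   ΔG = {dg_parent:.1f} kJ/mol")
print(f"modified: ΔG = {dg_modified:.1f} kJ/mol")
print(f"ΔΔH = {modified['delta_h'] - parent['delta_h']:.1f} kJ/mol, "
      f"ΔΔG = {dg_modified - dg_parent:.1f} kJ/mol")
```

A 10 kJ/mol gain in enthalpy shrinks to a 1 kJ/mol gain in free energy once the entropic penalty is included, which a ΔG-only measurement would report as a nearly neutral modification.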

Troubleshooting Guides

Issue: Poor Model Performance on New, Unseen Compositions

Symptoms:

- High error rates when predicting stability for materials outside the training set's chemical space.

- Successful predictions only for materials similar to those in the training data.

Diagnosis and Solutions:

Diagnose Data Fidelity:

- Problem: The training data from high-throughput DFT calculations may have inconsistencies or may not adequately represent the target chemical space.

- Solution: Implement a data validation pipeline. Use tools like the matbench-discovery Python package to assess dataset quality and ensure a realistic covariate shift between your training and test distributions [9].

Implement a Robust Benchmarking Framework:

- Problem: The model was evaluated on a retrospective, idealized test split, not a prospective one simulating a real discovery mission.

- Solution: Adopt an evaluation framework that uses a test set generated from the intended discovery workflow. This better indicates real-world performance. The framework should enforce that the test set is larger and chemically broader than the training set [9].

Expand Feature Descriptors:

- Problem: The model uses only basic compositional descriptors, lacking information about atomic structure.

- Solution: Integrate structural descriptors. For example, extract features from Voronoi tessellations of crystal structures. Research shows that while composition-based descriptors are sufficient for many cases, structural descriptors are critical for accurately identifying materials with large formation enthalpies [6].

Issue: High Computational Cost of Data Generation and Model Training

Symptoms:

- DFT calculations for generating training labels are consuming excessive resources.

- Training complex models like deep neural networks is slow and computationally expensive.

Diagnosis and Solutions:

Use ML as a Pre-filter:

- Problem: Running DFT on every candidate in a vast chemical space is computationally prohibitive.

- Solution: Deploy fast ML models as pre-screeners. They can rapidly narrow down millions of candidates to a shortlist of the most promising stable materials, which are then passed to higher-fidelity (but slower) DFT methods for validation. Universal interatomic potentials (UIPs) are particularly effective for this role [9].
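
The pre-filtering step can be sketched as follows, assuming a fast surrogate model has already produced a predicted energy above hull for each candidate. The `prefilter` function, the 0.05 eV/atom cutoff, and the candidate values are illustrative assumptions, not values from the cited work.

```python
def prefilter(predicted_ehull, cutoff=0.05, max_candidates=None):
    """Return candidate IDs whose predicted E_hull (eV/atom) is at or below
    the cutoff, ordered from most to least promising."""
    shortlist = [(e, cid) for cid, e in predicted_ehull.items() if e <= cutoff]
    shortlist.sort()
    ids = [cid for _, cid in shortlist]
    return ids if max_candidates is None else ids[:max_candidates]


# Hypothetical surrogate predictions (eV/atom) for four candidate compositions.
predictions = {"cand_a": 0.00, "cand_b": 0.12, "cand_c": 0.03, "cand_d": 0.40}

# Only the shortlisted candidates would be passed on to DFT validation.
dft_queue = prefilter(predictions, cutoff=0.05)
print(dft_queue)
```

A looser cutoff trades extra DFT cost for a lower risk of discarding a stable compound that the surrogate mispredicted.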

Choose the Right Model for the Data Regime:

- Problem: Using a complex, data-hungry model on a relatively small dataset.

- Solution: For datasets of moderate size (~20,000 samples), ensemble methods like Extremely Randomized Trees (ERT) have been shown to achieve low MAE (e.g., 121 meV/atom) and are less sensitive to hyperparameters. Reserve deep neural networks for very large datasets (>100,000 samples) where representation learning provides an advantage [9] [6].

Experimental Protocols & Methodologies

Protocol 1: Building a Robust ML Model for Crystal Stability Prediction

This protocol outlines the steps for constructing an ML model to predict thermodynamic stability, using the formation energy or the energy above the convex hull as the target property.

Data Collection:

- Source a large dataset of computed formation energies from a high-throughput database like the Materials Project (MP), Open Quantum Materials Database (OQMD), or AFLOW.

- Critical Step: Calculate the target variable, the "distance to the convex hull" (ΔEhull), for each material. This is a more accurate indicator of thermodynamic stability than formation energy alone [6].
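
For intuition, the distance to the convex hull can be computed by hand for a binary A-B system. The sketch below is a simplified illustration that assumes formation energies per atom referenced to the pure elements (so both end points sit at 0 eV/atom). The function names and the example energies are hypothetical, and multi-component systems need a general-purpose phase diagram tool rather than this two-dimensional shortcut.

```python
import numpy as np


def lower_hull(points):
    """Lower convex hull of 2-D points (Andrew's monotone chain)."""
    hull = []
    for p in sorted(points):
        # Drop the last vertex while it lies on or above the segment hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def energy_above_hull(x, e_form):
    """E_hull (eV/atom) for entries of a binary system.
    x: atomic fraction of B; e_form: formation energy per atom."""
    x = np.asarray(x, dtype=float)
    e_form = np.asarray(e_form, dtype=float)
    # Keep the lowest-energy entry per composition, plus the elemental end points.
    lowest = {0.0: 0.0, 1.0: 0.0}
    for xi, ei in zip(x, e_form):
        lowest[xi] = min(ei, lowest.get(xi, np.inf))
    hull_x, hull_e = zip(*lower_hull(list(lowest.items())))
    # Vertical distance from each entry to the hull at its own composition.
    return e_form - np.interp(x, hull_x, hull_e)


# Hypothetical compounds A3B, AB, AB3 with made-up formation energies.
ehull = energy_above_hull([0.25, 0.50, 0.75], [-0.10, -0.40, -0.30])
print(ehull)
```

In this toy system AB and AB3 lie on the hull, while A3B sits above the tie line between A and AB and is therefore predicted to decompose into those two phases.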

Feature Engineering:

- Generate a comprehensive set of features for each material:

- Compositional Features: Elemental properties (e.g., electronegativity, atomic radius) and their statistics (mean, range, mode) across the composition.

- Structural Features: Use methods like Voronoi tessellation to extract descriptors characterizing the local atomic environment and crystal structure [6].

- Create hybrid feature sets (e.g., GD, M-GD, A-GD) to provide the model with complementary information [7].
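
As a small illustration of the compositional features described above, the sketch below computes the fraction-weighted mean, the range, and the mode (taken here as the value for the most abundant element) of one elemental property. The `composition_stats` helper and the four-element lookup table are assumptions made for this example. A real pipeline would draw on a complete elemental property table and many more properties.

```python
# Pauling electronegativities for a few elements (illustrative subset only).
ELECTRONEGATIVITY = {"Na": 0.93, "Cl": 3.16, "Ti": 1.54, "O": 3.44}


def composition_stats(composition, prop):
    """Statistics of an elemental property over a composition.
    composition: dict mapping element symbol -> amount (normalized internally)."""
    total = sum(composition.values())
    fractions = {el: amount / total for el, amount in composition.items()}
    values = [prop[el] for el in fractions]
    mean = sum(fractions[el] * prop[el] for el in fractions)
    majority = max(fractions, key=fractions.get)  # most abundant element
    return {
        "mean": mean,
        "range": max(values) - min(values),
        "mode": prop[majority],
    }


features = composition_stats({"Ti": 1, "O": 2}, ELECTRONEGATIVITY)
print(features)
```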

Model Training and Benchmarking:

- Split data into training and a prospective test set designed to simulate a real discovery campaign [9].

- Benchmark various algorithms (Random Forests, Gradient Boosting, Neural Networks, etc.) using the matbench-discovery framework or similar.

- Focus on classification metrics (precision, recall, F1-score) for stability classification in addition to regression metrics (MAE, RMSE).
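
The reason to report classification metrics alongside MAE can be seen in a short sketch. It assumes a compound counts as stable when its energy above hull is at or below a threshold of 0 eV/atom. The `stability_metrics` helper and the example values are hypothetical.

```python
def stability_metrics(true_ehull, pred_ehull, threshold=0.0):
    """Precision, recall and F1 for 'stable' (E_hull <= threshold), plus MAE."""
    tp = fp = fn = 0
    for t, p in zip(true_ehull, pred_ehull):
        t_stable, p_stable = t <= threshold, p <= threshold
        tp += t_stable and p_stable
        fp += (not t_stable) and p_stable
        fn += t_stable and (not p_stable)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    mae = sum(abs(t - p) for t, p in zip(true_ehull, pred_ehull)) / len(true_ehull)
    return {"precision": precision, "recall": recall, "f1": f1, "mae": mae}


# Hypothetical DFT reference values and model predictions (eV/atom).
dft = [0.00, 0.00, 0.08, 0.15, 0.00]
ml = [-0.01, 0.03, -0.02, 0.20, 0.00]
metrics = stability_metrics(dft, ml)
print(metrics)
```

In this toy case the MAE is only 0.038 eV/atom, yet one of three stable compounds is missed and one unstable compound is flagged as stable, because small errors near the hull flip the class label.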

Validation:

- Apply the best-performing model to a set of novel, unscreened compositions.

- Validate the top ML predictions using DFT calculations.

Protocol 2: Thermodynamic Optimization in Drug Design

This protocol describes how to integrate thermodynamic measurements into the drug design process to optimize molecular interactions.

Isothermal Titration Calorimetry (ITC) Experiments:

- Perform ITC to measure the binding affinity (Ka) and the enthalpy change (ΔH) upon the drug candidate binding to its target.

- Calculate the Gibbs free energy (ΔG) and entropy (ΔS) using the equations: ΔG = -RT ln Ka and ΔG = ΔH - TΔS [20].
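
The two equations above can be applied directly to ITC output. The following sketch assumes Ka is expressed in M⁻¹ (relative to a 1 M standard state) and ΔH in kJ/mol. The function name and the example values are illustrative, not measured data.

```python
import math

R = 8.314462618  # gas constant in J/(mol*K)


def thermodynamic_profile(ka, delta_h, temperature=298.15):
    """Binding thermodynamics from ITC observables.
    ka: association constant (1/M); delta_h: enthalpy change (kJ/mol)."""
    delta_g = -R * temperature * math.log(ka) / 1000.0  # dG = -RT ln Ka, in kJ/mol
    t_delta_s = delta_h - delta_g                       # rearranged dG = dH - T*dS
    return {
        "dG": delta_g,
        "dH": delta_h,
        "TdS": t_delta_s,
        "dS": t_delta_s * 1000.0 / temperature,         # J/(mol*K)
    }


# Hypothetical candidate: Ka = 1e8 per molar, dH = -50 kJ/mol at 25 degrees C.
profile = thermodynamic_profile(1e8, -50.0)
print(profile)
```

For these inputs ΔG is about -45.7 kJ/mol and TΔS is about -4.3 kJ/mol, so binding is enthalpy-driven with a small entropic penalty.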

Construct a Thermodynamic Profile:

- For each drug candidate, create a profile containing ΔG, ΔH, and TΔS.

- Analyze the balance of forces. Is binding driven by enthalpy (favorable bonding) or entropy (e.g., hydrophobic effects)?

Energetic Optimization:

- Use tools like thermodynamic optimization plots and the enthalpic efficiency index to guide chemical modifications.

- Aim for enthalpic optimization—improving bonding interactions—rather than relying solely on increasing hydrophobicity, which can lead to poor solubility [20].

- Be aware of entropy-enthalpy compensation; a more negative ΔH may be offset by a more negative ΔS.

Performance Data and Model Comparison

The following tables summarize quantitative performance data for various ML models applied to stability prediction tasks, as reported in the literature.

Table 1: Performance of ML Models on Material Stability Prediction

| Material System | ML Model | Performance Metric | Result | Key Insight | Source |

|---|---|---|---|---|---|

| General Power System | Artificial Neural Network (ANN) | Accuracy | 96% | Demonstrates high accuracy achievable with ANN for stability tasks. | [21] |

| Cubic Perovskites | Extremely Randomized Trees (ERT) | MAE | 121 meV/atom | ERT performs well on moderate-sized datasets (~20k samples). | [6] |

| Conductive MOFs | Ensemble/Tree Models (with feature engineering) | R² (Formation Energy) | 0.96 | Proper feature engineering is critical for high prediction accuracy. | [7] |

| Elpasolite Crystals | Kernel Ridge Regression (KRR) | MAE | 0.1 eV/atom | KRR can be a strong model for specific crystal prototypes. | [6] |

| Ti-N System | Moment Tensor Potential (MTP) | RMSE (Test set) | 6.8 meV/atom | ML-based interatomic potentials can achieve DFT-level accuracy. | [22] |

Table 2: Classification Performance for Electronic Properties

| Property Predicted | Material System | ML Model | Performance Metric | Result | Key Insight | Source |

|---|---|---|---|---|---|---|

| Metallicity | Conductive MOFs | Extra Trees Classifier | Accuracy | 92% | ML can effectively predict electronic properties beyond stability. | [7] |

| Bandgap Classification | Conductive MOFs | Extra Trees Classifier | Accuracy | 82% | Highlights the utility of ML for multi-property screening. | [7] |

Workflow Visualization

ML for Material Stability Workflow

Drug Stability Optimization Protocol

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Datasets for ML-Driven Stability Research

| Tool/Reagent | Type | Primary Function | Application Note |

|---|---|---|---|

| Materials Project (MP) | Database | Source of pre-computed structural and energetic data for ~150,000 materials. | Essential for sourcing training data and calculating convex hull stability [9]. |

| Matbench Discovery | Python Package | Evaluation framework for benchmarking ML models on materials discovery tasks. | Provides standardized metrics and leaderboards to compare model performance fairly [9]. |

| Voronoi Tessellation | Structural Descriptor | Generates fingerprints describing the local atomic coordination environment. | Crucial for creating structural features that improve model generalizability [6]. |

| Isothermal Titration Calorimetry (ITC) | Instrument | Directly measures binding affinity (Ka) and enthalpy change (ΔH). | The "gold standard" for obtaining full thermodynamic parameters in drug binding studies [20]. |

| Universal Interatomic Potentials (UIPs) | ML Model | Fast, quantum-accurate force fields for energy and force prediction. | Excellent for pre-screening millions of hypothetical structures before DFT [9]. |

| Moment Tensor Potential (MTP) | ML Interatomic Potential | A class of MLIPs for modeling complex atomic interactions. | Achieves low errors (e.g., RMSE < 7 meV/atom) comparable to DFT, as shown in Ti-N systems [22]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the key difference in how the Materials Project and OQMD handle formation energies, and why does this matter for my ML model's accuracy?

The core difference lies in their energy correction schemes. The Materials Project employs the MaterialsProject2020Compatibility scheme, which applies post-DFT energy corrections to better align formation energies with experimental data. This includes refitted corrections for legacy species (e.g., oxygen, diatomic gases) and new corrections for elements like Br, I, Se, and Te [23]. In contrast, the OQMD uses a different chemical potential fitting procedure [24]. These methodological differences mean that the absolute formation energy for the same compound can vary between databases. For ML model accuracy, it is crucial to avoid mixing formation energy data from these sources without accounting for these systematic discrepancies, as it can introduce a significant bias. The mean absolute error (MAE) of these databases against experimental data is approximately 0.078-0.095 eV/atom [24].

FAQ 2: I found a material in the OQMD that is not in the Materials Project, or vice versa. How should I handle such missing data when building a training set?

This is a common occurrence due to the different curation criteria and calculation timelines of each database. The OQMD contains numerous hypothetical compounds based on decorations of common crystal prototypes, which may not be present in the MP [25]. Conversely, the MP regularly adds new content, such as materials from the GNoME project [23] [26]. For a comprehensive training set, you can merge data from both sources. However, it is critical to:

- Standardize the Data: Use the same input features (e.g., identical featurization methods) for materials from both databases.

- Account for Systematic Shifts: Be aware that your model might learn a different baseline for energies from each source. One strategy is to include a binary feature indicating the data source during training, which can help the model adjust for these systematic offsets.

FAQ 3: My ML model, trained on DFT formation energies from these databases, shows poor agreement with experimental stability data. What could be the cause?

This is a fundamental challenge arising from the DFT-experiment discrepancy. DFT calculations are performed at 0 K, while experimental formation energies are typically measured at room temperature. Although databases apply corrections to reduce this gap, an inherent error remains. As shown in research, the MAE between DFT databases and experimental data is around 0.1 eV/atom, which sets a lower bound on the error you can expect from a model trained solely on DFT data [24]. To improve accuracy, consider using deep transfer learning. This involves first pre-training a model on a large source of DFT data (e.g., the ~341,000 entries in the OQMD) and then fine-tuning it on a smaller set of experimental data. This approach has been shown to achieve an MAE of about 0.06 eV/atom against experiments, significantly outperforming models trained from scratch on either DFT or experimental data alone [24].

FAQ 4: The Materials Project database has multiple versions. How do version changes impact my existing models and analysis?

The Materials Project database is regularly updated, which can lead to changes in the stability of materials (i.e., a material previously classified as stable may be "bumped off" the convex hull in a newer version) [23] [26]. For example, the v2024.12.18 release changed the hierarchy for thermodynamic data presentation, which affected which formation energy is displayed for a material [23]. To ensure reproducibility, you must always record the specific database version used to train your model. When a new version is released, it is good practice to re-benchmark your model's performance on the updated data to assess its robustness and determine if retraining is necessary.

Troubleshooting Guides

Issue 1: Inconsistent Phase Stability Predictions

Problem: Your ML model predicts a material to be stable, but data from a DFT database (or a different model) indicates it is unstable, or vice versa.

Solution:

- Step 1: Verify the Reference Data. Check the "energy above hull" in the database. A value of 0 eV/atom indicates thermodynamic stability. Be aware that small positive values (e.g., 5-10 meV/atom) might be within the error margin of both the DFT calculation and your ML model.

- Step 2: Check for Database Updates. As outlined in FAQ #4, consult the MP database changelog [23] to see if the stability of the material in question has changed in a recent version. Your model might have been trained on an outdated snapshot.

- Step 3: Investigate Data Source. Confirm whether your training data was sourced from a single database or a mixture. As per FAQ #1, mixing data from MP and OQMD without correcting for their systematic energy offsets can lead to inconsistent predictions.

- Step 4: Analyze Feature Space. Check if the composition or structure of the mispredicted material falls outside the distribution of your training data (i.e., it is an out-of-distribution sample). Models are less reliable when extrapolating.
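
One simple way to carry out the feature-space check in Step 4 is a nearest-neighbour distance test. The sketch below is a heuristic under stated assumptions, not a method from the cited sources: features are standardized with training statistics, and a query is flagged when it is farther from its nearest training point than 95% of training points are from theirs. The `ood_flags` function and the synthetic data are hypothetical.

```python
import numpy as np


def ood_flags(train_features, query_features, quantile=0.95):
    """Flag queries whose nearest-training-neighbour distance exceeds the chosen
    quantile of the training set's own nearest-neighbour distances."""
    train = np.asarray(train_features, dtype=float)
    query = np.asarray(query_features, dtype=float)
    mean, std = train.mean(axis=0), train.std(axis=0) + 1e-12
    train_z, query_z = (train - mean) / std, (query - mean) / std

    # Pairwise distances within the training set, ignoring self-distances.
    d_train = np.linalg.norm(train_z[:, None, :] - train_z[None, :, :], axis=-1)
    np.fill_diagonal(d_train, np.inf)
    cutoff = np.quantile(d_train.min(axis=1), quantile)

    # Distance from each query to its closest training point.
    d_query = np.linalg.norm(query_z[:, None, :] - train_z[None, :, :], axis=-1)
    return d_query.min(axis=1) > cutoff


rng = np.random.default_rng(0)
train = rng.normal(size=(200, 3))                      # synthetic training descriptors
queries = np.array([[0.0, 0.0, 0.0], [8.0, 8.0, 8.0]])  # one typical, one far away
flags = ood_flags(train, queries)
print(flags)
```

The pairwise distance matrix grows quadratically with the training set size, so a larger dataset would need a spatial index or a subsample.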

Issue 2: Poor Generalization to New Chemical Spaces

Problem: Your model performs well on a test set from known chemical systems but fails to accurately predict formation energies for compositions with many (5+) unique elements.

Solution:

- Step 1: Leverage Advanced Models. Use state-of-the-art graph network models like GNoME, which have demonstrated emergent generalization to high-entropy systems with 5+ elements, a space where traditional models struggle [26].

- Step 2: Apply Transfer Learning. If your target chemical space has limited data, pre-train a model on a large, diverse dataset (like the OQMD or MP) and then fine-tune it on the smaller, specific dataset for your area of interest. This allows the model to learn general chemical principles before specializing [24].

- Step 3: Data Augmentation. Actively seek out databases that include hypothetical structures and high-entropy alloys to broaden the chemical diversity of your training data [25] [27].

Database Comparison and Key Metrics

Table 1: Key characteristics of the Materials Project and OQMD databases.

| Feature | Materials Project (MP) | Open Quantum Materials Database (OQMD) |

|---|---|---|

| Primary Focus | Experimentally known and computationally predicted stable materials [23] [26] | DFT calculations of ICSD compounds & vast hypothetical structures from prototype decorations [25] |

| Energy Correction | MaterialsProject2020Compatibility scheme [23] | Chemical potential fitting procedure [24] |

| Typical MAE vs. Experiments | ~0.078 eV/atom [24] | ~0.083 eV/atom [24] |

| Data Scale | > 48,000 stable materials (as of historical data); 381,000 new stable crystals discovered by GNoME [26] | ~300,000 DFT calculations (as of 2015); over 32,000 ICSD compounds [25] |

| Key Features for ML | Regular updates, r2SCAN data, battery electrode data, phonon data [23] | Large volume of hypothetical structures; entire database is freely available without restrictions [25] |

| Access | API and web interface [23] | Full database download; web interface [25] |

Table 2: Key quantitative comparisons between DFT databases and experimental data for formation energy (from a 2019 study) [24].

| Database | Mean Absolute Error (MAE) vs. Experiments (eV/atom) |

|---|---|

| OQMD | 0.083 |

| Materials Project | 0.078 |

| JARVIS | 0.095 |

| ML Model with Transfer Learning | ~0.06 |

Experimental Protocol: Leveraging Transfer Learning to Bridge the DFT-Experiment Gap

This protocol details the methodology for using deep transfer learning to predict experimental formation energies, achieving higher accuracy than models trained solely on DFT data [24].

Objective: To train a model that predicts experimental formation energies with an MAE of ~0.06 eV/atom by leveraging large DFT datasets and smaller experimental data.

Materials & Computational Tools:

- Source Data: A large DFT-computed database (e.g., OQMD with ~341,000 formation energies) for pre-training.

- Target Data: A smaller set of experimental formation energies (e.g., the SGTE SSUB database with 1,963 samples).

- Model Architecture: The ElemNet deep neural network architecture or a similar deep learning model.

- Software Framework: Python with deep learning libraries (e.g., TensorFlow, PyTorch).

Procedure:

Pre-training Phase:

- Train a deep neural network from scratch on the large OQMD dataset to predict DFT-computed formation energies from material composition.

- This step allows the model to learn a rich set of underlying features and chemical rules from a vast amount of data.

Transfer Learning / Fine-tuning Phase:

- Take the pre-trained model from the previous step. Do not initialize a new model with random weights.

- Replace the final output layer of the network to match the output of your new task (predicting experimental energy).

- Continue training (fine-tune) this model using the much smaller experimental dataset (e.g., 1,963 samples). Use a lower learning rate for this stage to avoid catastrophically forgetting the features learned during pre-training.
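
The pre-train and fine-tune sequence can be illustrated with a deliberately tiny NumPy network. This is a sketch of the idea only: it is not ElemNet, the "DFT" and "experimental" datasets are synthetic stand-ins, and the layer sizes, learning rates, and zero-initialized replacement output layer are assumptions chosen for the example.

```python
import numpy as np

rng = np.random.default_rng(0)


def init_layer(n_in, n_out):
    return rng.normal(0.0, 0.5, size=(n_in, n_out)), np.zeros(n_out)


def forward(params, x):
    (w1, b1), (w2, b2) = params
    hidden = np.tanh(x @ w1 + b1)
    return hidden, (hidden @ w2 + b2).ravel()


def mse(params, x, y):
    return float(np.mean((forward(params, x)[1] - y) ** 2))


def train(params, x, y, lr, epochs):
    """Full-batch gradient descent on mean squared error; updates arrays in place."""
    (w1, b1), (w2, b2) = params
    for _ in range(epochs):
        hidden, pred = forward(params, x)
        grad_out = 2.0 * (pred - y)[:, None] / len(y)          # dLoss/dPrediction
        grad_hidden = (grad_out @ w2.T) * (1.0 - hidden ** 2)  # back through tanh
        w2 -= lr * hidden.T @ grad_out
        b2 -= lr * grad_out.sum(axis=0)
        w1 -= lr * x.T @ grad_hidden
        b1 -= lr * grad_hidden.sum(axis=0)
    return params


def toy_energy(x):
    return np.sin(x @ np.array([1.0, -2.0, 0.5]))


# Synthetic stand-ins: a large source set and a small, systematically shifted target set.
x_dft = rng.uniform(-1, 1, size=(2000, 3))
y_dft = toy_energy(x_dft)
x_exp = rng.uniform(-1, 1, size=(40, 3))
y_exp = 0.9 * toy_energy(x_exp) + 0.1

# 1) Pre-train on the large source dataset.
params = [init_layer(3, 16), init_layer(16, 1)]
train(params, x_dft, y_dft, lr=0.02, epochs=1000)

# 2) Keep the pre-trained hidden layer, replace the output layer,
#    and fine-tune on the small target set with a lower learning rate.
params[1] = (np.zeros((16, 1)), np.zeros(1))
loss_before = mse(params, x_exp, y_exp)
train(params, x_exp, y_exp, lr=0.005, epochs=500)
loss_after = mse(params, x_exp, y_exp)
print(loss_before, loss_after)
```

In a deep learning framework the same pattern amounts to loading the pre-trained weights, swapping the final layer, and lowering the optimizer's learning rate before continuing training.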

Validation:

- Evaluate the final model on a held-out test set of experimental data. The performance (MAE) should be significantly better (~0.06 eV/atom) than a model trained from scratch only on the experimental data (~0.15 eV/atom MAE) [24].

Transfer Learning Workflow for Formation Energy

Table 3: Key computational tools and resources for working with materials databases and ML.

| Tool / Resource | Function | Relevance to Thermodynamic Stability ML |

|---|---|---|

| pymatgen | Python library for materials analysis [23] | Parsing crystal structures, calculating features, and applying MP's energy compatibility corrections. |

| Matminer | Open-source materials data mining toolkit [24] | Provides a wide array of featurization methods to convert materials compositions and structures into numerical descriptors for ML models. |

| ElemNet | Deep neural network architecture [24] | A specialized model for predicting material properties from only their chemical composition; effective for transfer learning. |

| GNoME Models | Graph neural networks for crystal stability [26] | State-of-the-art models that show exceptional generalization for predicting the stability of new crystals, including those with many elements. |

| ATAT (Alloy Theoretic Automated Toolkit) | Toolkit for cluster expansion and phase diagram calculation [28] | Useful for generating special quasirandom structures (SQS) and calculating phase stability for alloy systems. |

| VASP | First-principles DFT calculation package [25] [28] | The underlying computational engine used to generate the data in OQMD, MP, and others; can be used to verify model predictions or generate new data. |

Advanced ML Architectures and Feature Engineering for Enhanced Stability Prediction

Stacked Generalization, or Stacking, is an ensemble machine learning technique designed to improve predictive performance by combining multiple models. It reduces the inductive bias that can occur when relying on a single model or hypothesis by leveraging a diverse set of "base models" and intelligently aggregating their predictions using a "meta-model" [29] [1] [30].

In scientific fields like thermodynamic stability research, where models are often constructed based on specific domain knowledge or assumptions, stacking has proven highly effective. It mitigates bias and enhances the accuracy of predicting properties like decomposition energy, a key metric of thermodynamic stability [1].

How Stacked Generalization Works

The architecture of a stacking model involves two or more levels of learning [29] [31] [32]:

- Level-0 Models (Base-Models): These are the first models that directly learn from the original training data. A diverse range of models (e.g., decision trees, logistic regression, neural networks) is used to ensure they make different types of errors [29] [32].

- Level-1 Model (Meta-Model): This model learns how to best combine the predictions from the base models. It is trained on the predictions made by the base models on out-of-sample data [29] [30].

The following diagram illustrates this workflow and data flow:

Diagram 1: Stacking Workflow and Data Flow

The most common approach to preparing the training dataset for the meta-model is via k-fold cross-validation of the base models. The out-of-fold predictions are used as the basis for the training dataset for the meta-model, which prevents overfitting and provides a more honest measure of performance on unseen data [29] [30].

Key Experimental Protocols and Methodologies

Implementation in Thermodynamic Stability Research

A practical application of stacking in materials science involved predicting the thermodynamic stability of inorganic compounds. The researchers developed a framework named ECSG (Electron Configuration models with Stacked Generalization) that integrated three distinct base models to reduce inductive bias [1]:

- Magpie: Utilizes statistical features from elemental properties.

- Roost: Conceptualizes chemical formulas as graphs to model interatomic interactions.

- ECCNN (Electron Configuration Convolutional Neural Network): A novel model using electron configuration matrices as input.

The meta-model was trained to find the optimal combination of these base models, resulting in an Area Under the Curve (AUC) score of 0.988 on the JARVIS database. Notably, this model demonstrated high sample efficiency, requiring only one-seventh of the data used by existing models to achieve the same performance [1].

Super Learner Protocol

The "Super Learner" is a specific implementation of stacking that uses V-fold cross-validation to build the optimal weighted combination of predictions. The following steps outline a generalized protocol applicable to thermodynamic stability prediction [30]:

- Split the dataset into V folds of equal size.

- For each fold v = {1, ..., V}:

- Treat fold v as the validation set and the remaining V-1 folds as the training set.

- Fit each base model on the training set.

- Use each fitted model to predict outcomes for the validation set.

- Calculate the risk (e.g., mean squared error) for each algorithm on the validation set.

- Average the estimated risks across all V folds for each algorithm.

- Create the "level-one" data: the cross-validated predicted outcomes from all base models.

- Train the meta-model by regressing the actual outcome against the cross-validated predictions, often under non-negativity and summation constraints.

- Re-fit all base models on the entire original training set.

- Generate final predictions on new data by combining the predictions from the fully-trained base models using the trained meta-model.
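
The protocol above can be sketched in NumPy with two deliberately simple base learners. Everything here is illustrative: the data are synthetic, the base learners (`fit_mean`, `fit_linear`) are placeholders for real models, and the convex weights are found by a grid search, which is a simplification that only works neatly for two learners. A full implementation would use a constrained optimizer.

```python
import numpy as np

rng = np.random.default_rng(0)
x = rng.uniform(-1, 1, size=(120, 2))
y = 1.5 * x[:, 0] - x[:, 1] ** 2 + rng.normal(0, 0.1, size=120)


def fit_mean(x_train, y_train):
    mean = y_train.mean()
    return lambda x_new: np.full(len(x_new), mean)


def fit_linear(x_train, y_train):
    design = np.column_stack([x_train, np.ones(len(x_train))])
    coef, *_ = np.linalg.lstsq(design, y_train, rcond=None)
    return lambda x_new: np.column_stack([x_new, np.ones(len(x_new))]) @ coef


base_learners = [fit_mean, fit_linear]
V = 5
folds = np.array_split(rng.permutation(len(y)), V)  # V folds of equal size

# Level-one data: out-of-fold predictions from every base learner.
level_one = np.zeros((len(y), len(base_learners)))
for val_idx in folds:
    train_idx = np.setdiff1d(np.arange(len(y)), val_idx)
    for j, fit in enumerate(base_learners):
        level_one[val_idx, j] = fit(x[train_idx], y[train_idx])(x[val_idx])

# Cross-validated risk (MSE) per learner; equals the fold average for equal folds.
cv_risk = ((level_one - y[:, None]) ** 2).mean(axis=0)

# Meta-model: non-negative weights that sum to one, chosen by grid search.
grid = np.linspace(0.0, 1.0, 101)
risks = [np.mean((w * level_one[:, 0] + (1 - w) * level_one[:, 1] - y) ** 2) for w in grid]
best_w = grid[int(np.argmin(risks))]
weights = np.array([best_w, 1.0 - best_w])

# Re-fit the base learners on all data, then combine their predictions.
fitted = [fit(x, y) for fit in base_learners]


def super_learner(x_new):
    return sum(w * model(x_new) for w, model in zip(weights, fitted))


print(cv_risk, weights)
```

Because the grid includes the weights (1, 0) and (0, 1), the combined cross-validated risk can never be worse than that of the best single learner on the level-one data.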

Frequently Asked Questions (FAQs)

1. What types of models should I choose for my base learners? Choose a diverse range of models that make different assumptions about the prediction task. The strength of stacking comes from combining models with uncorrelated errors. For example, you might combine linear models, tree-based models, support vector machines, and neural networks. Using models trained on different feature representations (e.g., elemental properties, graph representations, and electron configurations) has been shown to be effective in materials science [1].

2. What is the simplest meta-model I can start with? Linear models are highly effective and commonly used as meta-models. Linear Regression for regression tasks and Logistic Regression for classification tasks are standard choices. Their simplicity provides a smooth interpretation of the predictions from the base models and helps prevent overfitting [29] [30].

3. How do I prevent data leakage when implementing stacking? The key is to ensure the meta-model is trained on predictions from data not seen by the base models during their training. Always use k-fold cross-validation to generate the "level-one" data for the meta-model. Using the same dataset to train both the base learners and the meta-learner without cross-validation will lead to overfitting and over-optimistic performance estimates [29] [33].

4. My stacked model is not performing better than my best base model. What could be wrong? This can happen for several reasons:

- Lack of Diversity: Your base models may be making similar errors. Ensure they are diverse in type and assumptions.

- Overfitting the Meta-Model: Your meta-model might be too complex. Try a simpler meta-model like linear regression.

- Insufficient Data: Stacking often requires a reasonable amount of data to effectively train both the base and meta-models. Check if your dataset size is adequate [32].

5. Can I use ensemble methods like Random Forest as a base learner? Yes, other ensemble algorithms can be used as base-models within a stacking framework. A diverse set of base learners, including complex ones like Random Forests or gradient boosting models, can contribute to a stronger stacked ensemble [29].

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential Computational Tools and Libraries for Implementing Stacked Generalization

| Tool/Library | Primary Function | Application in Research |

|---|---|---|

| Scikit-learn [29] | Provides StackingRegressor and StackingClassifier classes. | Offers a standard, production-ready implementation for Python users, simplifying the process of defining base models and a meta-model. |

| MLxtend [31] | Offers a StackingClassifier for rapid prototyping. | Useful for educational purposes and quick experiments with stacking ensembles. |

| XGBoost | An implementation of gradient boosting. | Often used as a powerful base model within a stacking ensemble due to its high predictive performance. |

| SuperLearner (R) [30] | An R package that formalizes the Super Learner algorithm. | Provides a rigorous implementation based on V-fold cross-validation, ideal for clinical and epidemiological research. |

| K-Fold Cross-Validation [29] [30] | A model validation technique. | Critical function: Used to generate the out-of-fold predictions for the "level-one" dataset, preventing data leakage. |
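
As a brief illustration of the scikit-learn route listed in the table, the sketch below builds a stacked regressor from three heterogeneous base models with a linear meta-model. The synthetic dataset stands in for featurized compounds and their decomposition energies, and the choice of base models and hyperparameters is an assumption made for this example.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor, StackingRegressor
from sklearn.linear_model import LinearRegression, Ridge
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsRegressor

# Synthetic regression data used as a placeholder for real descriptors and targets.
X, y = make_regression(n_samples=300, n_features=8, noise=10.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.25, random_state=0)

stack = StackingRegressor(
    estimators=[
        ("ridge", Ridge(alpha=1.0)),
        ("knn", KNeighborsRegressor(n_neighbors=5)),
        ("forest", RandomForestRegressor(n_estimators=50, random_state=0)),
    ],
    final_estimator=LinearRegression(),  # simple linear meta-model
    cv=5,  # out-of-fold predictions form the level-one training data
)
stack.fit(X_train, y_train)
r2 = stack.score(X_test, y_test)
print(round(r2, 3))
```

The `cv` argument is what guards against leakage here: the meta-model is fitted on cross-validated predictions, and the base models are then refitted on the full training set.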

Advanced Considerations and Best Practices

Performance and Error Metrics

The choice of loss function for training your meta-model is critical and should align with your research goal. The table below summarizes common metrics used in different scenarios.

Table 2: Common Objective Functions for Super Learner in Different Research Contexts

| Research Context | Objective Function | What It Optimizes | Example Use Case |

|---|---|---|---|

| Regression | L-2 Squared Error Loss (Y - Ŷ)² [30] | Minimizes Mean Squared Error (MSE). | Predicting continuous properties like decomposition energy (ΔHd) [1]. |

| Binary Classification | Rank Loss [30] | Maximizes the Area Under the ROC Curve (AUC). | Classifying compounds as stable or unstable. |

| Binary Classification | Negative Bernoulli Log-Likelihood [30] | Minimizes the binomial deviance (equivalently, maximizes the Bernoulli log-likelihood). | Predicting the probability of a binary outcome. |

Comparison with Other Ensemble Methods

Table 3: Comparison of Stacking with Other Popular Ensemble Techniques

| Feature | Stacking (SG) | Bagging (e.g., Random Forest) | Boosting (e.g., AdaBoost, XGBoost) |

|---|---|---|---|

| Core Principle | Combines different models via a meta-learner [33]. | Averages predictions from models trained on bootstrap samples [34]. | Sequentially builds models to correct errors of previous ones [33]. |

| Model Diversity | Heterogeneous (different algorithms) [33]. | Homogeneous (same algorithm) [33]. | Homogeneous (same algorithm) [33]. |

| Training Method | Parallel training of base models, then meta-model training [33]. | Parallel training of base models on random data subsets [34]. | Sequential training of base models [33]. |

| Primary Goal | Improve performance by leveraging unique strengths of different models and reducing model-specific bias [1]. | Reduce variance and overfitting [34]. | Reduce bias and create a strong learner from weak ones [33]. |

| Key Advantage | Can harness capabilities of a range of well-performing models, potentially capturing patterns any single model may miss [29]. | Highly effective with high-variance models like decision trees. Robust to outliers. | Often achieves very high accuracy and is effective on many problems. |

FAQs: Addressing Common Experimental Challenges

FAQ 1: What is the most significant source of error when building a feature set for thermodynamic stability prediction, and how can I mitigate it?

A significant source of error is inductive bias introduced by relying on a single type of domain knowledge or feature set. Models built solely on elemental compositions or specific atomic properties may miss crucial electronic-level information, leading to poor generalization on unseen data [1].

- Mitigation Strategy: Implement an ensemble framework based on stacked generalization. This approach combines models built on diverse knowledge domains (e.g., interatomic interactions, atomic properties, and electron configurations) to create a super learner that compensates for the weaknesses of any single model and provides more robust predictions [1].

FAQ 2: My model performs well on validation data but fails to predict the stability of new compounds accurately. What could be wrong?

This is a classic sign of poor model generalization, often resulting from a feature set that does not fully capture the factors governing thermodynamic stability.

- Troubleshooting Steps:

- Audit Your Features: Ensure your feature set includes information from multiple scales. The incorporation of Electron Configuration (EC) features, which are an intrinsic atomic property, can provide a more fundamental description of a material that is less reliant on idealized assumptions [1].

- Check for Data Leakage: Confirm that your validation data is not contaminated with information from your training set.

- Evaluate Sample Efficiency: Test if your model can achieve high accuracy with smaller datasets. A robust, well-generalized model often requires fewer data points to learn effectively. The ECSG framework, for example, has been shown to achieve comparable performance using only one-seventh of the data required by other models [1].

FAQ 3: Why should I use electron configurations as features instead of more traditional atomic descriptors?

Electron configurations describe the distribution of electrons in an atom's orbitals and are the fundamental basis for understanding chemical properties and bonding behavior [35] [36]. In the context of machine learning:

- Reduced Inductive Bias: Unlike hand-crafted features based on specific theories, EC is an intrinsic atomic property, potentially introducing fewer preconceived assumptions into the model [1].

- Foundation for Properties: Many atomic properties used in traditional feature sets (e.g., electronegativity, ionization energy) are themselves determined by the electron configuration. Using EC provides the model with a more foundational layer of data from which to derive complex relationships [35].

FAQ 4: How do I represent electron configuration data for use in a machine learning model, such as a Convolutional Neural Network (CNN)?

An effective method is to encode the EC data into a matrix format that a CNN can process.

- Methodology: For a given material's composition, create an input matrix where the rows correspond to elements (up to 118, for all possible elements) and the columns represent detailed electron orbital information (e.g., 168 features). This matrix can have multiple channels (e.g., 8) to capture different aspects of the electronic structure. This matrix is then fed into a CNN architecture for feature extraction and learning [1].
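
To make the matrix idea concrete, the sketch below encodes a composition as a single-channel array with one row per element. It is a heavily simplified toy and not the ECCNN input layout: only five orbitals and four elements are included, and scaling occupancies by atomic fraction is an assumption made here for illustration.

```python
import numpy as np

ORBITALS = ["1s", "2s", "2p", "3s", "3p"]
# Ground-state occupancies for a few light elements: (atomic number, electrons per orbital).
ELEMENTS = {
    "H": (1, [1, 0, 0, 0, 0]),
    "O": (8, [2, 2, 4, 0, 0]),
    "Na": (11, [2, 2, 6, 1, 0]),
    "Cl": (17, [2, 2, 6, 2, 5]),
}
MAX_Z = 118  # one row per possible element


def encode_composition(composition):
    """Return a (1, MAX_Z, n_orbitals) array: one channel, one row per element,
    with orbital occupancies scaled by the element's atomic fraction."""
    total = sum(composition.values())
    matrix = np.zeros((1, MAX_Z, len(ORBITALS)))
    for symbol, amount in composition.items():
        z, occupancy = ELEMENTS[symbol]
        matrix[0, z - 1] = np.array(occupancy) * amount / total
    return matrix


nacl = encode_composition({"Na": 1, "Cl": 1})
print(nacl.shape, nacl[0, 10], nacl[0, 16])
```

The leading channel axis is included so the array already has the image-like shape a convolutional layer expects. Additional channels could carry other views of the electronic structure.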

Experimental Protocols & Workflows

Protocol 1: Building an Ensemble Model with Stacked Generalization

This protocol outlines the methodology for creating a robust super learner, the Electron Configuration models with Stacked Generalization (ECSG), as presented in Nature Communications [1].

Objective: To accurately predict the thermodynamic stability of inorganic compounds by integrating multiple machine learning models based on diverse knowledge domains, thereby reducing inductive bias.

Key Reagent Solutions (Computational):

| Research Reagent Solution | Function in the Experiment |
|---|---|
| Materials Project (MP) / OQMD Database | Provides a large pool of validated data on compound energies and structures for training and testing machine learning models. |
| Magpie Model | A base learner that provides predictions based on statistical features of various elemental properties (e.g., atomic radius, mass) [1]. |
| Roost Model | A base learner that uses graph neural networks to model the chemical formula as a graph and capture interatomic interactions [1]. |
| ECCNN Model | A base learner, the Electron Configuration Convolutional Neural Network, which uses encoded electron configuration data as its input to capture electronic-level information [1]. |
| Stacked Generalization Meta-Learner | The algorithm (e.g., logistic regression) that learns to optimally combine the predictions of the three base models (Magpie, Roost, ECCNN) to produce the final, superior prediction [1]. |

Methodology:

- Data Collection: Acquire a dataset of inorganic compounds with known thermodynamic stability labels (e.g., stable/unstable) from a database like the Materials Project (MP) or JARVIS.

- Base Model Training: Independently train three distinct base models:

  - Train the Magpie model using statistical features of elemental properties.

  - Train the Roost model using the graph representation of chemical formulas.

  - Train the ECCNN model using the encoded electron configuration matrix as input.

- Prediction Generation: Use the trained base models to generate prediction scores on a hold-out validation dataset.

- Meta-Learner Training: Use the prediction scores from the three base models as new input features to train a meta-level model (the stacked generalizer).

- Validation: Evaluate the final ECSG model on a separate test set to measure its performance, for example, by its Area Under the Curve (AUC) score [1].
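The meta-learner step in this protocol can be sketched in a few lines. The example below is a minimal, standard-library illustration of stacked generalization with a logistic-regression combiner; it is not the ECSG implementation. The hold-out scores, the labels, and the function names are all made up for the example, and plain gradient descent stands in for whatever optimizer a real library would use.

```python
import math

# Hypothetical hold-out scores from three base models
# (columns: Magpie, Roost, ECCNN) with labels 1 = stable, 0 = unstable.
val_scores = [
    (0.9, 0.8, 0.7), (0.8, 0.6, 0.9), (0.7, 0.9, 0.6), (0.6, 0.7, 0.8),
    (0.2, 0.3, 0.1), (0.3, 0.1, 0.4), (0.4, 0.2, 0.3), (0.1, 0.4, 0.2),
]
val_labels = [1, 1, 1, 1, 0, 0, 0, 0]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, scores):
    """Combine base-model scores into a single stability probability."""
    return sigmoid(bias + sum(w * s for w, s in zip(weights, scores)))


def log_loss(weights, bias, X, y):
    """Mean binary cross-entropy, clipped to avoid log(0)."""
    eps = 1e-12
    total = 0.0
    for xi, yi in zip(X, y):
        p = min(max(predict(weights, bias, xi), eps), 1 - eps)
        total -= yi * math.log(p) + (1 - yi) * math.log(1 - p)
    return total / len(X)


def fit_meta_learner(X, y, lr=0.5, steps=2000):
    """Fit logistic regression on base-model scores by gradient descent."""
    weights, bias = [0.0] * len(X[0]), 0.0
    n = len(X)
    for _ in range(steps):
        errors = [predict(weights, bias, xi) - yi for xi, yi in zip(X, y)]
        grad_w = [sum(e * xi[j] for e, xi in zip(errors, X)) / n
                  for j in range(len(weights))]
        grad_b = sum(errors) / n
        weights = [w - lr * g for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b
    return weights, bias


initial_loss = log_loss([0.0, 0.0, 0.0], 0.0, val_scores, val_labels)
weights, bias = fit_meta_learner(val_scores, val_labels)
final_loss = log_loss(weights, bias, val_scores, val_labels)
p_high = predict(weights, bias, (0.9, 0.9, 0.9))  # all base models say "stable"
p_low = predict(weights, bias, (0.1, 0.1, 0.1))   # all base models say "unstable"
print(weights, bias, initial_loss, final_loss)
```

The key design point is that the meta-learner is fitted on predictions for compounds the base models did not train on. Fitting it on training-set predictions would reward whichever base model overfits most.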

The following workflow illustrates the ECSG framework architecture and data flow:

Protocol 2: Implementing an Electron Configuration Convolutional Neural Network (ECCNN)

This protocol details the setup for the ECCNN, a novel model that directly processes electron configuration data [1].

Objective: To construct a CNN that learns from the fundamental electron configuration of elements in a compound to predict its thermodynamic stability.

Methodology:

- Input Encoding: Encode the chemical composition of a compound into a 3D input matrix (118 × 168 × 8). This matrix represents the electron configuration information for all possible elements in the dataset.

- Architecture:

  - Convolutional Layers: Pass the input through two convolutional layers, each using 64 filters with a 5 × 5 kernel size to extract relevant features.

  - Batch Normalization: Apply batch normalization (BN) after the second convolutional layer to stabilize and accelerate the training process.

  - Pooling: Perform a 2 × 2 max pooling operation to reduce the dimensionality of the feature maps.

  - Fully Connected Layers: Flatten the resulting features into a one-dimensional vector and pass it through one or more fully connected (dense) layers to generate the final prediction [1].
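To see what these layers do to the tensor dimensions, the following sketch traces the shape of a 118 × 168 × 8 input through the stack described above. The text does not state the stride or padding, so the sketch assumes stride 1 and shows both common padding choices ("valid" and "same"). The helper names are hypothetical.

```python
def conv2d_shape(h, w, kernel, filters, padding="valid", stride=1):
    """Output shape (height, width, channels) of a 2D convolution."""
    if padding == "same":
        out_h = -(-h // stride)  # ceiling division
        out_w = -(-w // stride)
    else:  # "valid": no padding, the kernel must fit entirely inside
        out_h = (h - kernel) // stride + 1
        out_w = (w - kernel) // stride + 1
    return out_h, out_w, filters


def pool2d_shape(h, w, c, size=2):
    """Output shape of non-overlapping max pooling (stride equals size)."""
    return h // size, w // size, c


def trace_eccnn(padding):
    """List (layer name, shape) pairs for the layer order in this protocol."""
    h, w, c = 118, 168, 8
    trace = [("input", (h, w, c))]
    h, w, c = conv2d_shape(h, w, kernel=5, filters=64, padding=padding)
    trace.append(("conv1", (h, w, c)))
    h, w, c = conv2d_shape(h, w, kernel=5, filters=64, padding=padding)
    trace.append(("conv2", (h, w, c)))
    trace.append(("batch_norm", (h, w, c)))  # BN leaves the shape unchanged
    h, w, c = pool2d_shape(h, w, c, size=2)
    trace.append(("max_pool", (h, w, c)))
    trace.append(("flatten", (h * w * c,)))
    return trace


for padding in ("valid", "same"):
    print(padding)
    for name, shape in trace_eccnn(padding):
        print(f"  {name:<10} {shape}")
```

Under either assumption the flattened vector has several hundred thousand entries, so the first dense layer is likely to hold most of the model's parameters.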

The ECCNN model architecture for processing electron configuration data is shown below:

Data Presentation: Model Performance Metrics

The following table summarizes the high performance of the ensemble ECSG model as reported in its foundational research, providing a benchmark for expected outcomes [1].

Table 1: Performance Metrics of the ECSG Ensemble Model

| Metric | Score / Outcome | Evaluation Context |
|---|---|---|
| Area Under the Curve (AUC) | 0.988 | Predicting compound stability within the JARVIS database. |
| Sample Efficiency | 1/7 of the data required by existing models | To achieve performance equivalent to other state-of-the-art models. |
| Key Advantage | Mitigates inductive bias | By integrating models from diverse knowledge domains (Magpie, Roost, ECCNN). |
| Validation Method | First-principles calculations (DFT) | Used to confirm the model's accuracy in identifying new stable compounds. |
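For readers who want to compute the AUC metric on their own predictions, a minimal sketch follows. It uses the pairwise definition of AUC: the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one, with ties counted as one half. The labels and scores are invented for illustration, and the function name `roc_auc` is an assumption.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    This is O(n_pos * n_neg), which is fine for a sanity check but slow
    for large test sets.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one example of each class")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Made-up example: 1 = stable, 0 = unstable.
labels = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.6, 0.1]
auc = roc_auc(labels, scores)
print(auc)  # 0.75: three of the four stable/unstable pairs are ordered correctly
```

Because AUC depends only on the ranking of scores, it is unaffected by the decision threshold, which makes it a convenient metric when stable compounds are a small minority of the dataset.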

The Electron Configuration Convolutional Neural Network (ECCNN) is a specialized machine learning framework designed to predict the thermodynamic stability of inorganic compounds by using their fundamental electron configuration as input data. This approach addresses a significant challenge in materials science: the efficient discovery of new, stable compounds without relying on costly and time-consuming experimental methods or density functional theory (DFT) calculations [1].

Traditional models for predicting material properties often incorporate significant biases because they are built on specific domain knowledge or idealized scenarios. ECCNN mitigates this issue by using electron configuration—an intrinsic atomic property—as its foundational input, thereby reducing inductive bias. When integrated into an ensemble framework called ECSG (Electron Configuration models with Stacked Generalization), ECCNN has demonstrated exceptional performance, achieving an Area Under the Curve (AUC) score of 0.988 on the JARVIS database. Notably, this framework requires only one-seventh of the data used by existing models to achieve equivalent performance, showcasing remarkable sample efficiency [1].

Technical Architecture & Workflow

Input Encoding and Data Representation

The ECCNN model processes information based on the electron configuration of the elements within a material's composition.

- Input Matrix Structure: The input to ECCNN is a 3D tensor with the dimensions 118 (elements) × 168 (features) × 8 (channels) [1]. This tensor is encoded directly from the electron configurations of the elements in the compound.

- Conceptual Workflow: The process transforms raw compositional data into a stable/unstable prediction through a series of feature extraction and learning steps. The workflow can be visualized as follows:

Core Model Architecture

The ECCNN architecture is a convolutional neural network specifically designed to process the encoded electron configuration matrix [1].

- Convolutional Layers: The input matrix first undergoes two consecutive convolutional operations. Each convolution uses 64 filters with a kernel size of 5 × 5. These layers are responsible for detecting local, hierarchical patterns within the electron configuration data.

- Batch Normalization and Pooling: The second convolutional layer is followed by a Batch Normalization (BN) operation, which stabilizes and accelerates the training process. This is followed by a 2×2 max pooling layer, which reduces the spatial dimensions of the feature maps, aiding in computational efficiency and providing a degree of translational invariance.

- Output Head: The features extracted by the convolutional and pooling layers are flattened into a one-dimensional vector. This vector is passed through one or more fully connected (dense) layers, which produce the final output: a binary stability label when the model is trained as a classifier, or a decomposition energy when it is trained as a regressor.

The following diagram illustrates the architectural layers and data flow within the ECCNN model:

Key Research Reagent Solutions

The following table details the essential computational "reagents" and resources required to implement and train an ECCNN model for thermodynamic stability prediction.

| Resource Name | Type/Function | Key Details & Purpose in ECCNN |
|---|---|---|
| JARVIS/MP/OQMD Databases | Training Data | Extensive materials databases (e.g., Joint Automated Repository for Various Integrated Simulations, Materials Project) providing formation energies and decomposition energies for training and validation [1]. |
| Electron Configuration Data | Input Feature | Fundamental physical data describing the electron distribution of atoms; serves as the primary, low-bias input for the model [1]. |
| Convolutional Neural Network (CNN) | Core Algorithm | Specialized for processing structured grid-like data (e.g., the encoded electron matrix); excels at extracting spatial hierarchies of features [1]. |
| Stacked Generalization (SG) | Ensemble Framework | A meta-learning technique that combines ECCNN with other models (e.g., Roost, Magpie) to create a super learner, reducing individual model biases and enhancing overall accuracy [1]. |

Experimental Protocols & Performance

Key Experimental Methodology

The development and validation of ECCNN followed a rigorous experimental protocol [1]:

- Data Acquisition and Preprocessing: A large dataset of inorganic compounds and their corresponding thermodynamic stability data (e.g., decomposition energy, ΔHd) was sourced from public databases like JARVIS. The electron configuration for each compound was encoded into the standardized 118 × 168 × 8 input matrix.

- Model Training and Validation: The ECCNN model was trained on a subset of the data. Its architecture, featuring two convolutional layers with batch normalization and max pooling, was optimized to learn the mapping from electron configuration to thermodynamic stability.

- Ensemble Integration: The predictions from ECCNN were combined with those from other models (Magpie and Roost) using a stacked generalization framework. This created a "super learner" (ECSG) that leveraged the strengths of each individual model.

- Performance Benchmarking: The final ECSG model was tested on a held-out test set. Its performance was quantitatively evaluated using metrics like AUC and compared against existing state-of-the-art models to demonstrate its superiority in both accuracy and data efficiency.
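The data preparation and held-out evaluation steps can be sketched as follows. This is an illustrative outline only: the records are placeholders rather than real database entries, the zero tolerance on the energy above hull is an assumption (some studies use a small positive cutoff), and the function names are hypothetical.

```python
import random


def label_stability(e_hull_mev, tolerance_mev=0.0):
    """Return 1 (stable) if the energy above hull is within the tolerance."""
    return 1 if e_hull_mev <= tolerance_mev else 0


def stratified_split(records, test_fraction=0.2, seed=0):
    """Split records into train/test while preserving the class ratio."""
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    train, test = [], []
    for label in (0, 1):
        group = [r for r in records if r["label"] == label]
        rng.shuffle(group)
        n_test = round(len(group) * test_fraction)
        test.extend(group[:n_test])
        train.extend(group[n_test:])
    return train, test


# Placeholder records: energies above hull in meV/atom, invented for the demo.
e_hull_values = [0.0, 0.0, 0.0, 0.0, 12.5, 48.0, 103.2, 7.9, 250.0, 31.4]
records = [
    {"id": f"compound_{i}", "e_hull": e, "label": label_stability(e)}
    for i, e in enumerate(e_hull_values)
]

train, test = stratified_split(records, test_fraction=0.5, seed=0)
print(len(train), len(test))
```

Stratifying by label matters here because stable compounds are typically a minority class, and a purely random split can leave the test set with too few of them to give a meaningful AUC.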

Quantitative Performance Data

The ECCNN-based ensemble model demonstrates high performance as shown in the table below [1].

| Metric | ECCNN/ECSG Performance | Comparative Advantage |
|---|---|---|
| AUC (Area Under the Curve) | 0.988 | Higher accuracy in stability classification compared to existing models. |
| Sample Efficiency | Uses ~1/7 of the data | Achieves similar performance to other models using only a fraction of the training data. |
| Validation Method | First-principles calculations | Predictions were confirmed with high-accuracy computational methods, verifying model reliability. |

Troubleshooting Guides and FAQs

FAQ 1: What should I do if my ECCNN model fails to converge during training?

Answer: This is often related to data preprocessing or model configuration.

- Step 1: Verify Input Encoding. Double-check that your electron configuration matrix is correctly encoded. Ensure the dimensions (118 × 168 × 8) are exact and that the values are properly normalized. Misaligned input is a common cause of convergence failure.

- Step 2: Adjust Learning Rate. A learning rate that is too high can cause the model to overshoot optimal weights, while one that is too low can lead to extremely slow progress. Implement a learning rate scheduler to reduce the rate gradually during training.

- Step 3: Inspect Data Quality. Check your training dataset for excessive noise or incorrect labels. The quality of data from sources like the Materials Project is generally high, but inconsistencies can occur during your own data collection and labeling process.

- Step 4: Review Model Architecture. Confirm that the sequential order of layers (Convolution -> Convolution -> Batch Normalization -> Pooling) is correct. As a sanity check, try simplifying the model by reducing the number of filters or layers to see if it can learn a simpler task first.
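The learning rate scheduling mentioned in Step 2 can take several forms. One common choice is to cut the rate when the validation loss stops improving. The sketch below is a framework-agnostic version of that idea; the class name, default values, and the loss sequence are assumptions for illustration, and most deep learning frameworks provide their own equivalent that should be preferred in practice.

```python
class ReduceOnPlateau:
    """Multiply the learning rate by `factor` after the validation loss has
    failed to improve for more than `patience` consecutive epochs."""

    def __init__(self, lr, factor=0.5, patience=2, min_lr=1e-6):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.min_lr = min_lr
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss and return the current rate."""
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
            if self.bad_epochs > self.patience:
                self.lr = max(self.lr * self.factor, self.min_lr)
                self.bad_epochs = 0
        return self.lr


# Made-up validation losses: improvement stalls after the second epoch.
scheduler = ReduceOnPlateau(lr=0.001, factor=0.5, patience=2)
val_losses = [1.0, 0.9, 0.95, 0.96, 0.97, 0.8]
history = [scheduler.step(loss) for loss in val_losses]
print(history)  # the rate is halved once, at the fifth epoch
```

If the loss diverges from the first epochs rather than stalling, lowering the initial rate is usually a better first move than relying on the scheduler.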

FAQ 2: How can I improve the prediction accuracy of my ECCNN model for a specific class of materials?

Answer: Specializing the model requires fine-tuning and targeted data handling.

- Step 1: Perform Data Augmentation. Artificially increase the number of training examples for your specific material class. Techniques in computational materials science might include creating hypothetical, yet plausible, derivatives of existing compounds in your dataset to improve model robustness [37].

- Step 2: Utilize Transfer Learning. Take a pre-trained ECCNN model (trained on a large, general dataset like JARVIS) and fine-tune it on your smaller, specialized dataset. This allows the model to apply its general knowledge of electron configurations to your specific problem, often leading to better performance than training from scratch.

- Step 3: Employ Ensemble Learning. Follow the methodology in the original paper and integrate your ECCNN model into a stacked generalization framework with other models. For example, combine it with a model like Roost that captures interatomic interactions. This ensemble approach mitigates the bias of any single model and typically boosts overall accuracy [1].

FAQ 3: My model's predictions do not agree with subsequent DFT validation. What could be wrong?

Answer: Discrepancies between ML predictions and DFT results require a systematic diagnostic approach.