How Deep Learning Models Learn Chemical Principles for Synthesizability



Abstract

This article explores how deep learning models are trained to understand and predict the synthesizability of chemical compounds, a critical challenge in drug discovery and materials science. It covers the foundational concepts of molecular representation, from SMILES strings to graph-based and functional-group approaches. The piece delves into specific methodological architectures, including transformer-based models and autoencoders, and addresses key challenges like data scarcity and model interpretability. Finally, it provides a comparative analysis of modern synthesizability predictors and discusses the validation frameworks essential for translating computational predictions into real-world laboratory synthesis, offering a comprehensive guide for researchers and development professionals navigating this evolving field.

The Synthesizability Challenge: Why Deep Learning is Revolutionizing Molecular Design

Defining Synthesizability in Chemical and Materials Science

Synthesizability is a central, yet complex, concept in chemical and materials science. It refers to the likelihood that a proposed chemical structure or material can be successfully realized in the laboratory through known or feasible synthetic pathways. The challenge of accurate synthesizability prediction lies in its multi-factorial nature, which depends not only on thermodynamic stability but also on kinetic factors, available precursors, synthetic methods, and even the availability of laboratory equipment [1]. For decades, heuristic rules, such as charge-balancing for inorganic materials, served as crude proxies. However, the rise of deep learning is revolutionizing this field by providing data-driven models that learn the underlying chemical principles governing synthesis from vast experimental datasets [2]. This guide explores the core definition of synthesizability and the mechanisms through which deep learning models are learning to decode its principles, thereby accelerating the discovery of novel materials and molecules.

Core Concepts and Definitions

What is Synthesizability?

Within the context of modern research, synthesizability can be defined on a spectrum:

- General Synthesizability: A material or molecule is considered synthesizable if it is synthetically accessible through current capabilities, regardless of whether it has been reported yet. This contrasts with a "synthesized" material, which has already been realized and documented [2].

- In-House Synthesizability: This pragmatic definition is crucial for experimentalists. It constrains synthesizability to the use of a specific, limited set of readily available building blocks and resources within a particular laboratory, making it a key objective for practical de novo drug design [3].

- Synthesis-Centric Synthesizability: With the growth of make-on-demand chemical libraries, a molecule can be deemed synthesizable if a pathway to create it from purchasable building blocks via a series of reliable chemical transformations can be proposed [4].

Traditional Proxies and Their Limitations

Historically, synthesizability has been assessed using simple physical and heuristic principles, which deep learning models must now learn to transcend.

- Charge-Balancing: For inorganic crystalline materials, a common assumption is that synthesizable compounds should have a net neutral ionic charge. However, analysis of known materials reveals this to be an incomplete metric, with only 37% of synthesized inorganic materials in the Inorganic Crystal Structure Database (ICSD) being charge-balanced according to common oxidation states [2].

- Thermodynamic Stability: Density functional theory (DFT) calculations of formation energy and energy above the convex hull are widely used. These metrics assume that synthesizable materials will not have thermodynamically stable decomposition products. While valuable, this approach fails to account for kinetic stabilization and only captures approximately 50% of synthesized inorganic materials [2] [5].

- Kinetic Stability: Phonon spectrum analysis can assess kinetic stability by identifying imaginary frequencies that indicate structural instability. However, some materials with imaginary frequencies can still be synthesized, showing this is not a perfect predictor [5].
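To make the charge-balancing heuristic concrete, the following minimal sketch tests whether any assignment of one common oxidation state per element gives a neutral formula unit. The oxidation-state table is a small illustrative subset chosen for this example, not a complete or authoritative list, and the function name is hypothetical.

```python
from itertools import product

# Illustrative subset of common oxidation states (an assumption, not a complete table).
COMMON_OXIDATION_STATES = {
    "Na": [1],
    "Cl": [-1],
    "Fe": [2, 3],
    "O": [-2],
    "Ti": [4],
}


def is_charge_balanced(composition):
    """Return True if one oxidation state per element can sum to zero charge.

    composition maps an element symbol to its integer count in the formula unit.
    """
    elements = list(composition)
    state_options = [COMMON_OXIDATION_STATES[el] for el in elements]
    # Try every combination of one oxidation state per element.
    for states in product(*state_options):
        total = sum(state * composition[el] for el, state in zip(elements, states))
        if total == 0:
            return True
    return False


print(is_charge_balanced({"Na": 1, "Cl": 1}))  # NaCl
print(is_charge_balanced({"Fe": 2, "O": 3}))   # Fe2O3
print(is_charge_balanced({"Ti": 1, "O": 1}))   # TiO
```

Because the sketch assigns a single oxidation state to each element, mixed-valence compounds such as Fe3O4 are rejected, and under this restricted table TiO is rejected as well. This kind of inflexibility is the limitation of the heuristic described above.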

Table 1: Performance Comparison of Traditional Synthesizability Proxies

| Proxy Metric | Fundamental Principle | Key Limitation | Reported Accuracy/Coverage |
|---|---|---|---|
| Charge-Balancing | Net neutral ionic charge based on common oxidation states | Inflexible; cannot account for metallic, covalent, or complex bonding environments. | ~37% of known ICSD compounds are charge-balanced [2] |
| Thermodynamic Stability | Negative formation energy and low energy above convex hull | Does not account for kinetic stabilization; can miss metastable phases. | ~50% coverage of synthesized materials [2] |
| Kinetic Stability | Absence of imaginary frequencies in phonon spectrum | Materials with imaginary frequencies can be synthesized. | Limited quantitative accuracy reported [5] |

Deep Learning Approaches to Synthesizability

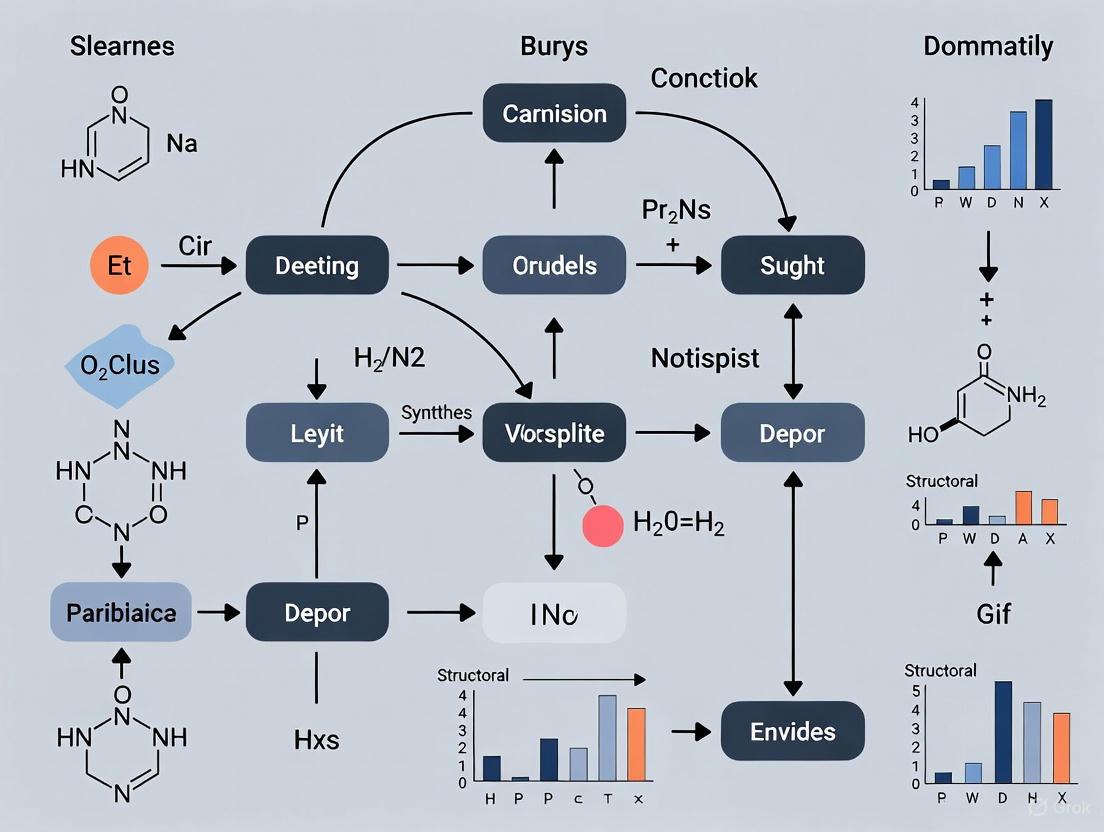

Deep learning models bypass the need for pre-defined rules by learning the complex, implicit "chemistry of synthesizability" directly from data. The following workflow illustrates the two primary paradigms in deep learning for synthesizability prediction.

Structure-Centric Models: Predicting 'If' a Material is Synthesizable

These models treat synthesizability as a classification or regression problem, predicting a likelihood score based on a material's composition or structure.

- SynthNN: A deep learning model that uses an atom2vec embedding matrix to represent chemical formulas. Trained on data from the ICSD and artificially generated unsynthesized materials, it learns an optimal representation for synthesizability without prior chemical knowledge. It has demonstrated 1.5x higher precision in discovering synthesizable materials compared to human experts and completes the task five orders of magnitude faster [2].

- Crystal Synthesis Large Language Models (CSLLM): This framework fine-tunes large language models on a text-based representation of crystal structures ("material string"). The synthesizability LLM achieves a state-of-the-art accuracy of 98.6% on testing data, significantly outperforming thermodynamic (74.1%) and kinetic (82.2%) stability metrics [5].

- Positive-Unlabeled (PU) Learning: A key technique to address the lack of confirmed "negative" examples (proven unsynthesizable materials). Models are trained on known synthesized materials (positives) and treat the rest of chemical space as unlabeled, then probabilistically reweight these unlabeled examples during learning [2] [5].

Synthesis-Centric Models: Generating 'How' to Synthesize a Molecule

These models ensure synthesizability by design, generating viable synthetic pathways rather than just scoring final structures.

- SynFormer: A generative AI framework that creates synthetic pathways for molecules using a transformer architecture and a diffusion module for building block selection. It defines the synthesizable chemical space as all molecules that can be formed from purchasable building blocks via up to five steps of known chemical transformations. This approach directly constrains the design process to synthetically tractable molecules [4].

- Computer-Aided Synthesis Planning (CASP)-based Scores: These models approximate the results of full synthesis planning. They are trained to predict the outcome of a CASP run (classification) or properties of the resulting synthesis route (regression), providing a fast proxy for full retrosynthesis analysis [3].

Table 2: Comparison of Deep Learning Models for Synthesizability

| Model Name | Model Type | Input | Key Output | Reported Performance |
|---|---|---|---|---|
| SynthNN [2] | Composition-based Classification | Chemical Formula | Synthesizability Probability | 7x higher precision than DFT formation energy; outperformed human experts. |
| CSLLM [5] | Fine-tuned Large Language Model | Crystal Structure (as text) | Synthesizability Classification | 98.6% accuracy on test set. |
| SynFormer [4] | Generative Transformer | Building Blocks & Templates | Synthetic Pathway | Enables navigable, synthesizable-by-design chemical space. |
| In-house CASP Score [3] | CASP-based Approximation | Molecular Structure | In-house Synthesizability Score | Enables rapid identification of molecules synthesizable from a limited building block set. |

How Deep Learning Models Learn Chemical Principles

A pivotal finding in this field is that deep learning models, even when provided only with compositional data or structural representations, can learn fundamental chemical principles without explicit programming. The experiments with SynthNN indicate that the model internalizes concepts such as charge-balancing, chemical family relationships, and ionicity from the distribution of known synthesized materials [2]. Similarly, the high accuracy of CSLLM suggests that through fine-tuning on a comprehensive dataset, the model's attention mechanisms align with material features critical to synthesizability, effectively learning the "language" of crystal synthesis [5]. This represents a shift from expert-defined rules to data-driven discovery of the complex, and potentially previously unknown, factors that make a material synthesizable.

Experimental Protocols and Methodologies

Protocol: Training a Structure-Centric Synthesizability Model (e.g., SynthNN)

Data Curation:

- Positive Examples: Extract experimentally synthesized materials from a database like the Inorganic Crystal Structure Database (ICSD). Filter for ordered, crystalline structures. Example: 70,120 structures with ≤40 atoms and ≤7 elements [5].

- Negative Examples: Employ a Positive-Unlabeled (PU) learning strategy. Generate a large set of hypothetical compositions or structures (e.g., from databases such as the Materials Project) and treat them as unlabeled. Use a pre-trained PU learning model to assign each one a CLscore, then select those with the lowest scores (e.g., CLscore < 0.1) as negative examples. This yields a balanced dataset [5].

Feature Representation:

- For composition-only models, use learned representations like atom2vec, where an embedding for each element is optimized during training [2].

- For crystal structure models, convert the structure into a concise text representation ("material string") that includes space group, lattice parameters, and atomic coordinates with Wyckoff positions to reduce redundancy [5].
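As a rough illustration of the composition-only route, the sketch below parses a simple formula and pools per-element embedding vectors into one fixed-length input vector. The fraction-weighted average is an assumption made for this example (the source does not specify how SynthNN combines element embeddings), the function names are hypothetical, and the random vectors stand in for values that would be optimized during training.

```python
import random
import re


def parse_formula(formula):
    """Parse a simple formula such as 'Fe2O3' into {'Fe': 2.0, 'O': 3.0}.

    Parentheses and hydrates are not supported in this sketch.
    """
    counts = {}
    for symbol, number in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(number) if number else 1.0)
    return counts


def embed_composition(formula, embeddings):
    """Return the atomic-fraction-weighted average of the element embeddings."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    dim = len(next(iter(embeddings.values())))
    vector = [0.0] * dim
    for symbol, count in counts.items():
        weight = count / total
        for i, value in enumerate(embeddings[symbol]):
            vector[i] += weight * value
    return vector


# Randomly initialised embeddings; in a real model these would be trainable parameters.
rng = random.Random(0)
embeddings = {el: [rng.uniform(-1, 1) for _ in range(4)] for el in ["Fe", "O", "Na", "Cl"]}
print(embed_composition("Fe2O3", embeddings))
```

The resulting vector has the same length for every formula, which is what allows a fully connected network to consume compositions with different numbers of elements.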

Model Training and Validation:

- Use a deep neural network architecture (e.g., fully connected networks for compositions, transformers for text representations).

- Implement a PU loss function that probabilistically re-weights unlabeled examples [2].

- Validate performance on a held-out test set of known synthesizable and non-synthesizable materials, benchmarking against traditional methods like formation energy and charge-balancing.

Protocol: Assessing In-House Synthesizability for Drug Discovery

- Define the In-House Building Block Library: Curate a list of all readily available chemical building blocks in the laboratory (e.g., ~6000 compounds) [3].

- Configure Synthesis Planning Software: Use a tool like AiZynthFinder, configured to use the in-house building block library [3].

- Run CASP and Generate Data: For a set of target molecules (e.g., from ChEMBL), run the synthesis planner. A molecule is considered "solved" if at least one valid synthetic route is found using only in-house blocks.

- Train a Predictive Score: Use the solved/unsolved outcomes of the CASP runs from the previous step to train a machine learning classifier (the in-house synthesizability score) that quickly predicts whether new molecules are synthesizable in-house.

- Integrate into De Novo Design: Use this score as an objective in a multi-objective generative model alongside property predictions (e.g., QSAR model) to generate candidate molecules that are both potent and synthesizable [3].
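The labeling rule used in this protocol (a target counts as "solved" if at least one route uses only in-house blocks) can be sketched as follows. The inventory, target names, and route format are hypothetical placeholders; this does not reproduce the output format or API of AiZynthFinder or any other planner.

```python
def is_solved(routes, inventory):
    """A target is 'solved' if at least one route uses only in-house building blocks.

    routes is a list of routes; each route is the list of its leaf building blocks.
    """
    return any(set(route) <= inventory for route in routes)


# Hypothetical in-house inventory and planner results.
inventory = {"bb_amine_1", "bb_acid_2", "bb_halide_3"}
planner_routes = {
    "target_A": [["bb_amine_1", "bb_acid_2"]],
    "target_B": [["bb_amine_1", "bb_boronate_9"], ["bb_halide_3", "bb_acid_7"]],
    "target_C": [],  # the planner found no route at all
}

# Binary labels that could serve as training targets for the synthesizability score.
labels = {target: int(is_solved(routes, inventory)) for target, routes in planner_routes.items()}
print(labels)  # {'target_A': 1, 'target_B': 0, 'target_C': 0}
```

Labels of this kind, paired with molecular features, are what the fast classifier is trained on, so that the expensive planner need not be run for every generated candidate.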

Table 3: Essential Resources for Synthesizability Research

| Resource Name | Type | Function in Research | Example/Reference |
|---|---|---|---|
| ICSD (Inorganic Crystal Structure Database) | Materials Database | Primary source of confirmed synthesizable (positive) inorganic crystalline materials for model training. | [2] [5] |
| Commercial Building Block Libraries (e.g., ZINC, Enamine) | Chemical Database | Defines the universe of possible starting materials for synthesis-centric models and CASP. | ZINC (17.4M compounds) [3] |
| In-House Building Block Inventory | Chemical Inventory | Defines the constrained synthesizable space for practical, resource-aware synthesizability prediction. | Led3 (~6000 compounds) [3] |
| Curated Reaction Template Sets | Knowledge Base | Encodes chemical knowledge about feasible transformations; the "grammar" for generating synthetic pathways. | 115 reaction templates used in SynFormer [4] |
| AiZynthFinder | Software Tool | Open-source toolkit for computer-aided synthesis planning used to generate training data and validate routes. | [3] |
| PU Learning Model (Pre-trained) | Algorithm | Provides a method to generate negative examples from unlabeled data, a critical step for structure-centric model training. | Model from Jang et al. used in [5] |

In the domain of chemical science, the reliance of deep learning (DL) on large-scale, labeled datasets presents a significant bottleneck. The process of discovering new, synthesizable materials and molecules is inherently constrained by the scarcity of experimentally validated data, a challenge often referred to as the "data scarcity" problem [6] [7]. This issue is particularly acute for supervised learning models, which require substantial amounts of labeled data to learn the complex relationships between a chemical structure and its properties, such as synthesizability or thermodynamic stability [6].

The core of the problem lies in the fact that while theoretical chemical space is nearly infinite, the subset of compounds that have been synthesized and characterized is exceptionally small. Data scarcity acts as the "biggest challenge" for Artificial Intelligence (AI) in these fields, threatening to restrict its growth and potential [7]. This whitepaper details the specific technical hurdles of data scarcity in synthesizability research, explores state-of-the-art methodological solutions, and provides a practical toolkit for researchers to navigate these challenges effectively.

Technical Hurdles in Synthesizability Research

Synthesizability research aims to predict whether a proposed inorganic crystalline material or a novel organic molecule can be successfully synthesized in a laboratory. Applying deep learning to this task faces several interconnected, data-related challenges.

The primary hurdle is the fundamental lack of negative data. While databases like the Inorganic Crystal Structure Database (ICSD) catalog successfully synthesized materials, unsuccessful synthesis attempts are rarely reported in the scientific literature [2]. This results in a dataset containing only "positive" examples (known synthesized materials) without confirmed "negative" examples (known unsynthesizable materials), creating a classic Positive-Unlabeled (PU) Learning scenario [2]. This lack of confirmed negative data makes it difficult for models to learn the boundaries between synthesizable and non-synthesizable compounds.

Furthermore, the available data is often imbalanced. In predictive maintenance, a field facing analogous issues, run-to-failure datasets may contain hundreds of thousands of observations of healthy system states and only a handful of failure events [8]. Similarly, in chemistry, the number of stable, synthesizable compounds is vastly outnumbered by the count of hypothetical, unstable ones. Models trained on such imbalanced data tend to be biased toward the majority class and may fail to identify rare but critical cases, such as a promising yet unconventional synthesizable molecule [8].

Finally, the process of manual data labeling is costly, time-consuming, and error-prone. It typically involves human annotators with vast domain knowledge, and in chemistry, this often requires expert scientists and expensive experimental work [6]. This manual bottleneck severely limits the pace at which large, high-quality labeled datasets can be created for training data-hungry deep learning models.

Table 1: Core Data Scarcity Challenges in Chemical Synthesizability Research

| Challenge | Description | Impact on Model Training |
|---|---|---|
| Lack of Negative Data | Only successfully synthesized ("positive") compounds are typically reported; unsynthesized or failed compounds ("negatives") are not [2]. | Prevents models from learning the distinguishing features of unsynthesizable materials, a problem framed as Positive-Unlabeled (PU) Learning. |
| Data Imbalance | The number of known, synthesizable compounds is vastly smaller than the number of hypothetical, non-synthesizable ones [8]. | Models become biased toward predicting "non-synthesizable," failing to identify novel, synthesizable candidates. |
| Cost of Labeling | Experimental validation of synthesizability requires expert knowledge, specialized equipment, and is time-consuming [6]. | Creates a fundamental bottleneck for expanding high-quality labeled datasets needed for supervised learning. |

Deep Learning Methodologies to Overcome Data Scarcity

To circumvent the data scarcity problem, researchers have developed sophisticated deep-learning methodologies that reduce dependency on large, labeled datasets.

Positive-Unlabeled and Semi-Supervised Learning

Semi-Supervised Learning (SSL) offers a powerful framework for leveraging a small amount of labeled data alongside a large pool of unlabeled data [9]. Techniques like pseudo-labeling, where the model itself generates labels for the unlabeled data, and consistency regularization, which enforces model predictions to be stable under small perturbations or augmentations of the input data, have been successfully refined for applications like medical image segmentation and species recognition in ecology [9].

A specific and highly relevant incarnation of SSL is Positive-Unlabeled (PU) Learning. The SynthNN model for predicting synthesizable inorganic materials is a prime example. Since definitive "unsynthesizable" examples are unavailable, SynthNN is trained on a database of known synthesized materials (positives) augmented with artificially generated unsynthesized materials [2]. To handle the uncertainty that some artificially generated materials might be synthesizable, SynthNN uses a PU learning approach that treats these unconfirmed examples as unlabeled data and probabilistically reweights them according to their likelihood of being synthesizable [2]. This allows the model to learn the chemistry of synthesizability directly from the distribution of known materials without relying on proxy metrics like charge-balancing alone.
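One simple way to express the reweighting idea is a weighted binary cross-entropy in which unlabeled examples act as down-weighted negatives. The sketch below is an illustration under that assumption and is not the exact loss used by SynthNN; the function name and the per-example weights are hypothetical.

```python
import math


def weighted_pu_loss(probs, is_positive, unlabeled_weights):
    """Weighted binary cross-entropy for positive-unlabeled data.

    probs: predicted synthesizability probabilities in (0, 1)
    is_positive: True for known synthesized materials, False for unlabeled ones
    unlabeled_weights: weight in [0, 1] for treating an unlabeled example as
        a negative (ignored for positives)
    """
    eps = 1e-12  # avoids log(0)
    total, weight_sum = 0.0, 0.0
    for p, positive, w in zip(probs, is_positive, unlabeled_weights):
        if positive:
            total += -math.log(p + eps)
            weight_sum += 1.0
        else:
            # A low weight means "this unlabeled example may well be synthesizable",
            # so a high predicted probability is penalised only lightly.
            total += -w * math.log(1.0 - p + eps)
            weight_sum += w
    return total / weight_sum if weight_sum > 0 else 0.0


probs = [0.9, 0.9]
labels = [True, False]
print(weighted_pu_loss(probs, labels, [1.0, 1.0]))  # unlabeled treated as a firm negative
print(weighted_pu_loss(probs, labels, [1.0, 0.1]))  # unlabeled treated as a doubtful negative
```

With the lower weight, the model is penalised far less for assigning a high probability to an unlabeled formula, which is the behaviour wanted when some artificially generated materials may in fact be synthesizable.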

Self-Supervised and Generative Approaches

Self-Supervised Learning (Self-SL) has become a cornerstone for scalable AI, particularly for pre-training models on vast amounts of unlabeled data [9]. In this paradigm, models are trained to solve a "pretext task" that does not require manual labels, such as predicting masked parts of the input data. For example, Meta's I-JEPA model learns abstract representations from unlabeled image data by predicting masked regions, enabling efficient downstream tasks with minimal labeled fine-tuning [9]. Applied to chemistry, the same approach allows models to learn robust, general-purpose representations of chemical space from massive unlabeled molecular databases before being fine-tuned for specific tasks like synthesizability prediction.

Generative AI provides another pathway, with models like Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs) being used to create synthetic data [8]. A study on predictive maintenance demonstrated that GANs could generate synthetic run-to-failure data with patterns similar to the original, scarce data, which, when used to train machine learning models, led to high accuracy [8]. In drug discovery, generative chemistry uses models like RNNs, VAEs, and GANs for the de novo design of molecular structures, opening a path to explore chemical space beyond known compounds [10] [11].

A cutting-edge advancement is the move from generating molecular structures to generating synthetic pathways. SynFormer is a generative framework that ensures every proposed molecule has a viable synthetic pathway by modeling the synthesis process itself, using readily available building blocks and known chemical transformations [4]. This synthesis-centric approach directly constrains the design process to synthesizable chemical space, addressing the core problem of intractable AI-generated molecules.

Advanced Techniques for Low-Data Regimes

Transfer Learning (TL) is a widely adopted strategy where a model pre-trained on a large, general dataset (e.g., a broad molecular database) is fine-tuned on a smaller, specific dataset for a targeted task [6]. This allows knowledge gained from a data-rich domain to be transferred to a data-poor one.

Active Learning optimizes the data labeling process by intelligently selecting the most informative data points for experimental validation. In drug discovery, Deep Batch Active Learning methods have been developed to select batches of molecules for testing based on their likelihood of improving model performance, leading to significant potential savings in the number of experiments needed [12]. These methods use model uncertainty and diversity metrics to maximize the information content of each experimental batch.
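A minimal sketch of the selection step, assuming a model that outputs a probability per candidate molecule, is shown below. It ranks candidates by predictive entropy only. This is plain uncertainty sampling rather than the batch methods cited above, which also account for diversity within the batch; the molecule IDs and probabilities are made up for illustration.

```python
import math


def binary_entropy(p):
    """Entropy of a Bernoulli prediction; highest at p = 0.5, zero at 0 or 1."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log(p) + (1.0 - p) * math.log(1.0 - p))


def select_batch(predictions, batch_size):
    """Return the IDs of the batch_size candidates with the most uncertain predictions."""
    ranked = sorted(predictions, key=lambda mol_id: binary_entropy(predictions[mol_id]), reverse=True)
    return ranked[:batch_size]


# Hypothetical model outputs for four unlabeled candidates.
predictions = {"mol_1": 0.97, "mol_2": 0.52, "mol_3": 0.10, "mol_4": 0.45}
print(select_batch(predictions, 2))  # ['mol_2', 'mol_4']
```

The two candidates closest to a probability of 0.5 are chosen for experimental testing, since labels for them are expected to change the model the most.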

Table 2: Deep Learning Solutions for Data Scarcity in Synthesizability Research

| Methodology | Core Principle | Key Example Models |
|---|---|---|
| Semi-Supervised (SSL) & Positive-Unlabeled (PU) Learning | Leverages a small set of labeled data and a large pool of unlabeled data; directly handles the lack of negative examples [9] [2]. | SynthNN [2], Pseudo-labeling, Consistency Regularization [9] |
| Self-Supervised Learning (Self-SL) | Pre-trains models using "pretext tasks" on unlabeled data to learn general representations before fine-tuning on labeled data [9]. | I-JEPA [9], GPT-4 & variants [9] |
| Generative AI & Synthetic Data | Generates new, realistic data to augment training sets or directly designs molecules constrained by synthesizability rules [10] [4] [8]. | GANs [8], VAEs [10] [11], SynFormer [4] |
| Transfer Learning | A model pre-trained on a large, source task is fine-tuned for a specific, data-scarce target task [6]. | Models pre-trained on ChEMBL/PDB, fine-tuned for specific targets |
| Active Learning | Iteratively selects the most valuable data points to label, optimizing the use of experimental resources [12]. | COVDROP, COVLAP [12] |

Experimental Protocols and Workflows

Protocol: Implementing a PU Learning Model for Synthesizability

The following protocol is based on the development of SynthNN for predicting synthesizable inorganic materials [2].

Data Acquisition and Curation:

- Positive Data: Compile a database of known, synthesized materials. The primary source for inorganic crystals is the Inorganic Crystal Structure Database (ICSD) [2].

- Unlabeled Data: Generate a large set of hypothetical, artificial chemical formulas that are not present in the positive dataset. This can be done through combinatorial generation or by perturbing known compositions.

Model Architecture and Training:

- Representation: Use a learned representation for chemical formulas, such as atom2vec, which learns an optimal atom embedding matrix directly from the distribution of synthesized materials alongside other network parameters [2]. This avoids reliance on pre-defined, hand-crafted features.

- PU Learning Formulation: Implement a PU learning algorithm that treats the artificially generated formulas as unlabeled data. A common approach is to class-weight these unlabeled examples according to their likelihood of being synthesizable, as done in SynthNN [2].

- Training: Train a deep learning classification model (e.g., a fully connected network) using the positive and (weighted) unlabeled data. The model learns to predict the probability that a given chemical formula is synthesizable.

Validation and Benchmarking:

- Benchmarking: Compare the model's performance against baseline methods, such as random guessing and traditional heuristics like the charge-balancing criteria [2].

- Expert Comparison: For a robust validation, conduct a head-to-head comparison against human experts. SynthNN, for instance, was shown to outperform all experts, achieving 1.5× higher precision and completing the task five orders of magnitude faster than the best human expert [2].

Protocol: Generative Molecular Design with Synthetic Pathways

This protocol outlines the workflow for using a generative framework like SynFormer to create synthesizable molecules [4].

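Define the Synthesizable Chemical Space:

- Building Blocks: Choose a set of purchasable building blocks, for example from a make-on-demand supplier catalog such as Enamine's [4].

- Reaction Templates: Curate a set of reliable reaction templates; SynFormer uses 115 [4].

- Pathway Length: Treat every molecule reachable from these building blocks in up to five reaction steps as part of the synthesizable space [4].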

Model Training and Pathway Generation:

- Architecture: Employ a scalable transformer architecture to represent synthetic pathways. Use a linear postfix notation to sequence tokens representing start, end, reactions ([RXN]), and building blocks ([BB]) [4].

- Building Block Selection: Incorporate a denoising diffusion model as a token head module to efficiently select suitable molecular building blocks from the large, multimodal space of available options [4].

- Training: Train the model (e.g., SynFormer-ED for reconstruction or SynFormer-D for goal-oriented generation) on synthetic pathways constructed from the defined building blocks and reaction templates.
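To show why postfix notation suits synthetic pathways, the sketch below replays a token sequence with a stack: building-block tokens are pushed, and each reaction token pops its reactants and pushes the product. The token tuples, reaction names, and arities are hypothetical, and products are represented as nested labels rather than real molecules; SynFormer's actual tokenization may differ.

```python
def evaluate_postfix_pathway(tokens, reaction_arity):
    """Replay a postfix pathway with a stack and return the final product label."""
    stack = []
    for kind, name in tokens:
        if kind == "START":
            continue
        if kind == "END":
            break
        if kind == "BB":
            stack.append(name)
        elif kind == "RXN":
            n = reaction_arity[name]
            if len(stack) < n:
                raise ValueError(f"reaction {name} needs {n} reactants")
            reactants = stack[-n:]
            del stack[-n:]
            # Stand-in for applying a reaction template to the reactants.
            stack.append(f"{name}({', '.join(reactants)})")
        else:
            raise ValueError(f"unknown token kind: {kind}")
    if len(stack) != 1:
        raise ValueError("pathway must reduce to exactly one product")
    return stack[0]


# Hypothetical two-step pathway: a coupling followed by a deprotection.
reaction_arity = {"amide_coupling": 2, "boc_deprotection": 1}
tokens = [
    ("START", None),
    ("BB", "acid_A"),
    ("BB", "amine_B"),
    ("RXN", "amide_coupling"),
    ("RXN", "boc_deprotection"),
    ("END", None),
]
print(evaluate_postfix_pathway(tokens, reaction_arity))
# boc_deprotection(amide_coupling(acid_A, amine_B))
```

Because every prefix of a well-formed postfix sequence leaves a valid stack of intermediates, a pathway can be generated token by token in a single left-to-right pass, which fits autoregressive decoding.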

Application for Molecular Discovery:

- Local Exploration: To generate analogs of a query molecule, input the molecule into the encoder (in SynFormer-ED). The model will generate synthetic pathways for molecules that are structurally similar and synthesizable [4].

- Global Exploration: To optimize for a specific property, fine-tune the decoder-only model (SynFormer-D) using a black-box property prediction oracle. The model will then generate synthetic pathways for novel molecules that maximize the target property while remaining synthesizable [4].

The following diagram illustrates the core logical relationship between the data scarcity problem and the suite of solutions discussed in this whitepaper, culminating in the generative pathway approach.

Table 3: Essential Resources for Deep Learning in Synthesizability Research

| Resource Category | Specific Examples & Functions | Key Applications |
|---|---|---|
| Chemical Databases | ICSD [2]: Source of synthesized inorganic crystal structures. PubChem [10]: Comprehensive database of chemical substances. ChEMBL [10] [4]: Database of bioactive molecules with drug-like properties. PDB [10]: Source for 3D structures of proteins and nucleic acids. | Provides "positive" data for training; source of molecular structures for pre-training and benchmarking. |
| Molecular Representations | SMILES [11]: String-based representation of molecular structure. Molecular Graphs [11]: Represents atoms as nodes and bonds as edges in a graph. atom2vec [2]: Learned representation that captures chemical properties from data. | Converts chemical structures into a numerical format that deep learning models can process. |
| Software & Tools | DeepChem [12]: Open-source toolkit for deep learning in drug discovery. GuacaMol & MOSES [11]: Benchmarking platforms for evaluating generative models. | Provides implementations of algorithms and standardized metrics for model evaluation and comparison. |
| Commercial Building Blocks | Enamine REAL Space [4]: A make-on-demand library of billions of synthesizable compounds. | Defines the space of readily accessible molecules for synthesis-centric generative models like SynFormer. |

The scarcity of labeled data is a defining challenge for applying deep learning to domain-specific problems like chemical synthesizability prediction. However, as detailed in this whitepaper, the field is moving beyond traditional supervised learning. Through innovative methodologies such as Positive-Unlabeled learning, Self-Supervised pre-training, and synthesis-centric generative AI, researchers can effectively learn chemical principles from limited data. The integration of these techniques, supported by active learning for optimal experimental design and robust benchmarking, provides a powerful framework for navigating the vastness of chemical space. This enables the reliable and efficient discovery of novel, synthesizable molecules and materials, ultimately accelerating progress in drug development and materials science.

The application of deep learning in molecular discovery represents a paradigm shift in fields such as drug development and materials science. A central challenge in this domain is the effective representation of molecular structures in a format that is both computationally tractable and chemically meaningful for machine learning models. The choice of molecular representation fundamentally determines a model's ability to learn underlying chemical principles, particularly the complex rules governing synthesizability, that is, whether a proposed molecule can realistically be synthesized in a laboratory. This technical guide provides a comprehensive examination of the three predominant molecular representation schemes: string-based (SMILES), fingerprint-based, and graph-based embeddings, with particular emphasis on their architectural implementation, comparative strengths, and limitations in the context of synthesizability research.

String-Based Representations: SMILES and Beyond

The Simplified Molecular-Input Line-Entry System (SMILES) is the most prevalent string-based representation, describing molecular structure using a sequence of characters that symbolically represent atoms, bonds, branches, and rings [13]. Despite its widespread adoption, SMILES exhibits significant limitations for deep learning applications. Its grammar is fragile: a single character-level error can render an entire string syntactically invalid and chemically meaningless [13]. Furthermore, a single molecule can be written as many different valid SMILES strings, so a model must also learn that distinct strings denote the same structure.
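The fragility can be seen with a deliberately partial syntax check, sketched below. It only verifies that parentheses and square brackets are balanced and that single-digit ring-closure labels are paired; it ignores two-digit `%nn` ring closures, valence rules, and aromaticity, so it is an illustration of the failure mode and not a SMILES validator. The function name is hypothetical.

```python
def basic_smiles_syntax_ok(smiles):
    """Partial check: balanced branches/brackets and paired single-digit ring closures."""
    depth = 0
    in_bracket = False
    open_rings = set()
    for ch in smiles:
        if in_bracket:
            # Digits inside [...] are isotopes, H counts, or charges, not ring closures.
            if ch == "]":
                in_bracket = False
            continue
        if ch == "[":
            in_bracket = True
        elif ch == "]":
            return False
        elif ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
        elif ch.isdigit():
            # A ring label opens a ring on first use and closes it on second use.
            open_rings ^= {ch}
    return depth == 0 and not in_bracket and not open_rings


print(basic_smiles_syntax_ok("c1ccccc1"))  # benzene
print(basic_smiles_syntax_ok("c1ccccc"))   # same string with the last character dropped
print(basic_smiles_syntax_ok("CC(=O)O"))   # acetic acid
print(basic_smiles_syntax_ok("CC(=O"))     # truncated branch
```

Dropping one character from the benzene string leaves a ring unclosed, so the whole string fails. The non-uniqueness issue is visible in the same notation: `OCC` and `C(O)C` both pass the check and both describe ethanol.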

Advanced String-Based Representations

Recent research has developed more robust alternatives to canonical SMILES:

- SELFIES (SELF-referencIng Embedded Strings): This representation guarantees 100% syntactic validity, as every SELFIES string corresponds to a valid molecular graph through a rigorously defined grammar [13]. The units in SELFIES are enclosed in square brackets, preventing invalid tokenizations [13].

- t-SMILES (tree-based SMILES): A fragment-based, multiscale molecular representation framework that describes molecules using SMILES-type strings obtained by performing a breadth-first search on a full binary tree formed from a fragmented molecular graph [14]. The t-SMILES framework introduces only two new symbols ("&" and "^") to encode multi-scale and hierarchical molecular topologies, significantly reducing the search space compared to atom-based techniques and providing fundamental insights into molecular recognition [14].

Table 1: Comparison of String-Based Molecular Representations

| Representation | Description | Validity Guarantee | Key Advantage | Key Limitation |
|---|---|---|---|---|
| SMILES | Linear string from depth-first traversal of molecular graph | No | Simple, human-readable syntax | Fragile grammar; single character error causes invalidity [13] |
| DeepSMILES | Modified SMILES with reduced long-term dependencies | No | Resolves some syntactic issues | Allows semantic violations (e.g., incorrect atom valences) [14] |
| SELFIES | Grammar-based string with guaranteed validity | Yes (100%) | Robustness for generation; no invalid outputs [13] | Less human-readable; complex tokenization [14] |
| t-SMILES | Fragment-based string from binary tree traversal | Yes (theoretical 100%) | Multi-scale topology learning; reduced search space [14] | Dependent on fragmentation algorithm choice [14] |

Molecular Fingerprints and Feature-Based Representations

Molecular fingerprints are fixed-length vector representations that encode chemical structures by capturing the presence or absence of specific substructural patterns. Unlike string-based representations, fingerprints provide a lossy but numerically stable encoding suitable for similarity searching and machine learning models [13].

Fingerprint Reconstruction and Applications

The conversion from molecular structure to fingerprint is traditionally considered a lossy process, but recent advances demonstrate that seemingly irreversible fingerprint-to-molecule conversion is feasible with high accuracy [13]. Transformer-based architectures have successfully reconstructed lossless molecular representations from various structural fingerprints, including extended connectivity (ECFP), topological torsion, and atom pairs [13]. This breakthrough addresses a major limitation of structural fingerprints that previously precluded their use in natural language processing models for chemistry.

Table 2: Major Structural Fingerprint Types and Characteristics

| Fingerprint Category | Examples | Encoding Mechanism | Sequence Length | Application in Deep Learning |
|---|---|---|---|---|
| Predefined Substructure | MACCS Keys | Presence of 166 predefined SMARTS patterns | Fixed (166 bits) | Binary classification; similarity search [13] |
| Path-Based | RDKit, Daylight | Hashed linear and branched subgraphs | Variable (hashed to fixed size) | General-purpose machine learning [13] |
| Circular | ECFP, FCFP | Circular atom environments up to radius x | Variable (typically hashed) | Structure-activity relationship modeling [13] |
| Atom Environment | Topological Torsion | Sequences of four bonded atoms | Variable | Local structure capture [13] |

Graph-Based Molecular Representations

Graph-based representations provide the most natural abstraction of molecular structure, where atoms correspond to nodes and bonds to edges. This approach preserves the inherent topology and connectivity of molecules, making it particularly valuable for capturing complex structural relationships.

Molecular Graph Construction

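The transformation of a SMILES string into a molecular graph involves several systematic steps: parsing the string into atoms and bonds, assigning a feature vector to each atom and bond, and storing the connectivity in a format that graph neural networks can consume. Using libraries such as RDKit and PyTorch Geometric, this conversion can be standardized for deep learning applications [15].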
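As a library-free illustration of the final step, the sketch below assumes the SMILES string has already been parsed into an atom list and a bond list (in practice a toolkit such as RDKit would do this) and assembles node features plus a bidirectional edge list in the coordinate layout commonly used by graph libraries. The vocabularies, the function names, and the dictionary keys `x`, `edge_index`, and `edge_attr` are illustrative assumptions, not the code from [15].

```python
ATOM_TYPES = ["C", "N", "O"]  # illustrative vocabulary, not exhaustive
BOND_TYPES = ["single", "double", "triple", "aromatic"]


def one_hot(value, choices):
    """Return a one-hot list; reject values outside the vocabulary."""
    if value not in choices:
        raise ValueError(f"unknown value: {value!r}")
    return [1.0 if value == c else 0.0 for c in choices]


def build_graph(atoms, bonds):
    """Assemble a graph from pre-parsed atoms and (i, j, bond_type) bonds.

    Each undirected bond is stored as two directed edges so that message
    passing can flow in both directions.
    """
    node_features = [one_hot(symbol, ATOM_TYPES) for symbol in atoms]
    sources, targets, edge_features = [], [], []
    for i, j, bond_type in bonds:
        feature = one_hot(bond_type, BOND_TYPES)
        for u, v in ((i, j), (j, i)):
            sources.append(u)
            targets.append(v)
            edge_features.append(feature)
    return {
        "x": node_features,
        "edge_index": [sources, targets],
        "edge_attr": edge_features,
    }


# Ethanol (CCO): heavy atoms only, two single bonds.
ethanol = build_graph(["C", "C", "O"], [(0, 1, "single"), (1, 2, "single")])
print(ethanol["edge_index"])
```

Richer atom features such as hybridization or formal charge would be appended to each node vector in the same way.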

The resulting graph structure contains comprehensive information about atom properties (via feature vectors) and bond characteristics, creating a complete computational representation of the molecule [15].

Hierarchical and Coarse-Grained Graph Representations

Recent innovations in graph-based representations include hierarchical approaches that integrate multiple levels of molecular detail. One promising framework employs functional-group-based coarse-graining, creating a dual-level graph representation [16]:

- Motif-level graph: Represents the molecule as a graph of functional groups, $\mathcal{G}^{\mathrm{f}}(M) = \left(\mathcal{V}^{\mathrm{f}}(M), \mathcal{E}^{\mathrm{f}}(M)\right)$, where nodes are chemical functional groups and edges represent their connectivity [16].

- Atom-level graph: The traditional atomic representation, $\mathcal{G}^{\mathrm{a}}(M) = \left(\mathcal{V}^{\mathrm{a}}(M), \mathcal{E}^{\mathrm{a}}(M)\right)$, containing detailed atomic information [16].

- Hierarchical mapping: A precise mapping from each functional group node to its corresponding atomic subgraph [16].

This coarse-grained approach substantially reduces the complexity of the design space while maintaining chemical meaningfulness, making it particularly valuable for data-scarce environments common in domain-specific molecular design problems [16].
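The relationship between the two levels can be sketched in a few lines. Assuming an atom-to-group assignment is already available (the decomposition itself is not shown, and the function name `coarse_grain` is hypothetical), the motif-level edges follow from the atom-level edges, and the hierarchical mapping is simply the inverse of the assignment.

```python
def coarse_grain(atom_edges, atom_to_group):
    """Derive motif-level edges and the group -> atoms mapping.

    atom_edges: iterable of (u, v) atom index pairs.
    atom_to_group: dict mapping each atom index to a functional-group id.
    Two groups are connected if any bond crosses between them.
    """
    group_edges = set()
    for u, v in atom_edges:
        gu, gv = atom_to_group[u], atom_to_group[v]
        if gu != gv:
            group_edges.add((min(gu, gv), max(gu, gv)))

    group_to_atoms = {}
    for atom, group in sorted(atom_to_group.items()):
        group_to_atoms.setdefault(group, []).append(atom)
    return sorted(group_edges), group_to_atoms


# Acetic acid, heavy atoms only: C0 (methyl), C1, O2 (=O), O3 (-OH).
edges = [(0, 1), (1, 2), (1, 3)]
assignment = {0: 0, 1: 1, 2: 1, 3: 1}  # group 0 = methyl, group 1 = carboxyl
motif_edges, mapping = coarse_grain(edges, assignment)
print(motif_edges, mapping)
```

Four atoms and three bonds collapse to two nodes and one edge, which is the reduction in design-space complexity the paragraph above refers to.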

Experimental Protocols and Methodologies

Protocol 1: Transformer-Based Fingerprint Reconstruction

Objective: To reconstruct lossless molecular representations (SMILES/SELFIES) from structural fingerprints using sequence-to-sequence models [13].

Data Preparation:

- Curate a dataset of diverse molecular structures (e.g., from ChEMBL or ZINC databases)

- Generate multiple fingerprint representations for each molecule (ECFP, topological torsion, atom pairs, etc.)

- Pair each fingerprint with its corresponding canonical SMILES and SELFIES representation

Model Architecture:

- Implement a Transformer model with multi-head attention mechanism

- Configure encoder to process fingerprint inputs and decoder to generate string outputs

- Utilize attention mechanisms to learn global dependencies between input and output sequences

Training Procedure:

- Employ cross-entropy loss between predicted and target tokens

- Use teacher forcing during training with a scheduled sampling ratio

- Validate reconstruction accuracy on held-out test sets

Evaluation Metrics:

- Reconstruction success rate (percentage of exactly matching SMILES/SELFIES)

- Chemical validity of reconstructed molecules (even if not exact matches)

- Tanimoto similarity between original and reconstructed molecular fingerprints
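Two of these metrics are simple enough to sketch directly. The snippet below assumes fingerprints are given as collections of on-bit indices and that both target and predicted SMILES have been canonicalized by the same tool, so that string equality is a fair test of identity; the function names are hypothetical.

```python
def exact_match_rate(targets, predictions):
    """Fraction of predictions identical to their targets.

    Assumes both lists hold canonical strings produced by the same tool.
    """
    if len(targets) != len(predictions):
        raise ValueError("targets and predictions must have equal length")
    matches = sum(t == p for t, p in zip(targets, predictions))
    return matches / len(targets)


def tanimoto(bits_a, bits_b):
    """Tanimoto (Jaccard) similarity between two sets of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = a | b
    if not union:  # two empty fingerprints are treated as identical
        return 1.0
    return len(a & b) / len(union)


print(exact_match_rate(["CCO", "c1ccccc1"], ["CCO", "C1CCCCC1"]))
print(tanimoto({1, 2, 3}, {2, 3, 4}))
```

Reporting Tanimoto similarity alongside the exact-match rate distinguishes near misses, where the reconstructed molecule shares most substructures with the original, from outright failures.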

Protocol 2: Coarse-Grained Graph Autoencoding

Objective: To learn invertible molecular embeddings through hierarchical graph representation [16].

Molecular Coarse-Graining:

- Deconstruct molecules into functional groups using a predefined vocabulary of ~100 common motifs

- Create motif graph $\mathcal{G}^{\mathrm{f}}(M)$ where nodes represent functional groups

- Establish connectivity edges between functional groups based on molecular structure

Model Architecture:

- Implement a hierarchical encoder with message-passing networks at both atom and motif levels

- Design a self-attention mechanism to capture long-range interactions between functional groups

- Construct a decoder network that maps latent embeddings back to molecular graphs

Training Procedure:

- Jointly optimize reconstruction loss (between input and output molecules) and property prediction loss

- Employ variational inference to regularize the latent space

- Use a limited dataset of labeled molecules (e.g., 600 labeled examples) with larger unlabeled set
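One way to read this training procedure is as a single objective with three terms, where the property term is skipped for unlabeled molecules. The sketch below assumes a diagonal Gaussian posterior with a standard-normal prior and a squared-error property loss; the weights `beta` and `prop_weight` and the function names are illustrative assumptions, not values or code from [16].

```python
import math


def kl_to_standard_normal(mu, log_var):
    """Closed-form KL( N(mu, diag(exp(log_var))) || N(0, I) )."""
    return 0.5 * sum(
        m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var)
    )


def joint_loss(recon_loss, mu, log_var, prop_pred=None, prop_true=None,
               beta=1.0, prop_weight=1.0):
    """Reconstruction + beta * KL, plus a property term when a label exists."""
    loss = recon_loss + beta * kl_to_standard_normal(mu, log_var)
    if prop_true is not None:  # labeled molecule
        loss += prop_weight * (prop_pred - prop_true) ** 2
    return loss


# Unlabeled molecule: only reconstruction and regularization contribute.
print(joint_loss(1.0, mu=[0.0, 0.0], log_var=[0.0, 0.0]))
# Labeled molecule: the property error is added on top.
print(joint_loss(1.0, mu=[0.0, 0.0], log_var=[0.0, 0.0],
                 prop_pred=2.0, prop_true=1.0))
```

Structuring the loss this way lets the large unlabeled set shape the latent space while the small labeled set aligns it with the target property.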

Evaluation Metrics:

- Reconstruction accuracy (exact match rate)

- Chemical validity of generated molecules

- Property prediction performance on target properties (e.g., glass transition temperature)

- Novelty and diversity of molecules generated from latent space interpolation

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software Tools and Libraries for Molecular Representation Research

| Tool/Library | Type | Primary Function | Application in Research |
|---|---|---|---|
| RDKit | Cheminformatics Library | SMILES parsing, fingerprint generation, molecular graph manipulation | Fundamental tool for all molecular representation conversion and feature extraction [17] [15] |
| PyTorch Geometric | Deep Learning Library | Graph neural network implementations specialized for molecular graphs | Building and training graph-based molecular models [15] |
| Transformer Architectures | Model Architecture | Sequence-to-sequence learning for SMILES/fingerprint translation | Fingerprint reconstruction, molecular generation [13] [18] |
| t-SMILES Framework | Representation Framework | Fragment-based molecular string representation | Multi-scale molecular generation and optimization [14] |
| SynFormer | Generative Framework | Synthetic pathway generation for synthesizable molecules | Ensures generated molecules have viable synthetic routes [4] |

Workflow Visualization

Molecular Representation Conversion Pipeline

[Diagram not reproduced: workflow for converting between different molecular representations and their applications in synthesizability research.]

Hierarchical Graph Representation Architecture

[Diagram not reproduced: architecture for coarse-grained molecular graph representation, integrating atom-level and motif-level information.]

The evolution of molecular representations from simple strings to sophisticated graph-based embeddings reflects the growing complexity of deep learning applications in chemical synthesis research. SMILES and fingerprint representations provide accessible entry points with well-established tooling, while graph-based approaches offer superior representation of molecular topology. The emerging frontier of hierarchical, coarse-grained representations successfully balances chemical intuition with computational efficiency, particularly for synthesizability prediction. As molecular design increasingly prioritizes synthetic feasibility, integration of synthesis pathway generation directly into representation models—exemplified by frameworks like SynFormer—represents the most promising direction for future research. The ideal molecular representation must not only accurately capture structural information but also encode the chemical knowledge necessary to distinguish between theoretically possible and practically synthesizable molecules.

The integration of deep learning into molecular discovery has revolutionized the ability to navigate vast chemical spaces, yet a significant challenge remains in ensuring that proposed molecules are synthetically feasible. This whitepaper examines how deep learning models learn and leverage fundamental chemical principles, with a specific focus on the critical role of functional groups and structural motifs. By analyzing advanced frameworks—including multi-channel learning, hierarchical message passing, and synthesis-aware generative models—we demonstrate that explicitly encoding hierarchical chemical knowledge enables models to better predict molecular properties, understand complex structure-activity relationships, and generate synthesizable candidates. The discussion is framed within synthesizability research, highlighting how learned representations of chemical hierarchies bridge the gap between predictive accuracy and practical synthetic feasibility, ultimately accelerating drug discovery and materials design.

The primary goal of computational molecular design is to identify novel compounds with target properties, but their practical impact is contingent upon synthesizability. Deep learning models initially struggled with this, often proposing structurally optimal but synthetically intractable molecules. This gap arises because synthesizability is a complex function of molecular hierarchy—from atomic connectivity to functional group compatibility and scaffold-based reactivity patterns.

Central to this discussion are functional groups—reactive clusters of atoms like hydroxyl or carbonyl groups—and structural motifs—broader patterns such as scaffolds or common molecular subgraphs. These elements form a natural hierarchy that governs chemical behavior. As [16] notes, "functional groups are local structures that underlie the key chemical properties of molecules," and their arrangement dictates reactivity, stability, and synthetic pathways. Deep learning models that learn this hierarchy can internalize rules of synthetic accessibility, moving beyond statistical correlation to capture causal chemical principles.

This technical guide explores how modern deep learning architectures explicitly represent and utilize these hierarchical components. We detail specific methodologies, experimental protocols, and performance outcomes, providing researchers with a framework for developing models that not only predict but also design within synthesizable chemical space.

Core Concepts: Functional Groups and Motifs as Hierarchical Design Elements

Defining the Chemical Hierarchy

In hierarchical chemistry, a molecule is decomposed into multiple representational levels:

- Atomic Level: Individual atoms and bonds, providing the finest structural granularity.

- Functional Group Level: Collections of atoms exhibiting characteristic chemical behavior (e.g., carboxylic acids, amines).

- Motif/Scaffold Level: Larger, often cyclic structural units that form a molecule's core framework.

- Molecular Level: The complete structure, integrating all lower-level components.

This hierarchy is not merely structural; it embodies a semantic organization where each level conveys distinct chemical information. As [19] explains, hierarchical modeling allows for the "capture [of] cross‐motif cooperative mechanisms, including hydrogen bonding, π–π stacking, and hydrophobic effects," which are often non-additive and context-dependent.

The Bridge to Synthesizability

Synthesizability is inherently a hierarchical problem. Retrosynthetic analysis decomposes target molecules into simpler precursors by breaking bonds at specific functional groups or around recognizable motifs. Deep learning models that operate natively at these hierarchical levels can learn the relationship between high-level structural patterns and feasible synthetic routes. For instance, [4] observes that models generating synthetic pathways—rather than just molecular structures—ensure that "designs are synthetically tractable" by construction, directly leveraging hierarchical chemical knowledge.

Table 1: Key Hierarchical Components and Their Roles in Molecular AI

| Hierarchical Level | Key Components | Role in Molecular Property Prediction | Role in Synthesizability Assessment |
|---|---|---|---|
| Atomic | Atoms, Bonds | Provides foundational topological information | Determines bond dissociation energies and basic reactivity |
| Functional Group | -OH, -NH₂, -COOH, etc. | Directly influences physicochemical properties (e.g., logP, polarity) | Dictates compatibility with specific reaction types and conditions |
| Motif/Scaffold | Benzene rings, piperidines, defined scaffolds | Defines core molecular shape and electronic environment | Serves as retrosynthetic starting point; influences strategic bond disconnections |
| Molecular | Complete 2D/3D structure | Determines emergent properties (e.g., bioactivity, toxicity) | Governs overall molecular complexity and synthetic step count |

Computational Frameworks for Hierarchical Molecular Learning

Multi-Channel Learning for Structural Hierarchies

The multi-channel learning framework introduced in [20] addresses context-dependent molecular properties by pre-training separate representation "channels" for different hierarchical views of a molecule:

- Global View (Molecule Distancing): Contrastive learning at the whole-molecule level.

- Partial View (Scaffold Distancing): Focuses on core scaffold structures, crucial for drug discovery.

- Local View (Context Prediction): Predicts masked functional groups and local atomic environments.

During fine-tuning, a prompt selection module dynamically aggregates these channel representations, making the final representation context-dependent for the downstream task. This approach demonstrates "competitive performance across various molecular property benchmarks and offers strong advantages in particularly challenging yet ubiquitous scenarios like activity cliffs" [20]. The explicit scaffold channel helps the model recognize when structurally similar molecules exhibit dramatically different biological activities, a key consideration in lead optimization.
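The aggregation step can be pictured as a weighted combination of the three channel embeddings, with the weights depending on the downstream task. The sketch below assumes the selection reduces to a softmax over per-channel scores; the actual module in [20] may be more elaborate, and the function names are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def aggregate_channels(channel_vectors, channel_scores):
    """Combine per-channel embeddings using softmax-normalized task scores."""
    weights = softmax(channel_scores)
    dim = len(channel_vectors[0])
    return [
        sum(w * vec[i] for w, vec in zip(weights, channel_vectors))
        for i in range(dim)
    ]


global_view, scaffold_view, local_view = [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]
# Equal scores reduce to a plain average of the three channels.
print(aggregate_channels([global_view, scaffold_view, local_view], [0.0, 0.0, 0.0]))
# A task that favors the scaffold channel pulls the result toward it.
print(aggregate_channels([global_view, scaffold_view, local_view], [0.0, 5.0, 0.0]))
```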

Hierarchical Graph Neural Networks

Graph-based approaches naturally represent molecular hierarchy. The Hierarchical Interaction Message Passing Network (HimNet) constructs a multi-level graph with atom nodes, motif nodes (identified via BRICS decomposition), and a global molecular node [19]. Its Hierarchical Interaction Message Passing Mechanism enables "interaction-aware representation learning across atomic, motif, and molecular levels via hierarchical attention-guided message passing" [19].

Table 2: Performance Comparison of Hierarchical Models on Molecular Property Prediction Benchmarks

| Model | Hierarchical Approach | BBBP (AUC) | Tox21 (AUC) | Clint (Accuracy) | Activity Cliff Robustness |
|---|---|---|---|---|---|
| HimNet [19] | Multi-level graph with interaction attention | 0.923 | 0.851 | 0.901 | High |
| Multi-Channel [20] | Prompt-guided multi-channel pre-training | 0.910 | 0.842 | N/R | Superior |
| Functional-Group CG [16] | Coarse-grained functional group representation | 0.901 | 0.831 | 0.885 | Moderate |
| Standard GCN | Atomic-level only | 0.872 | 0.812 | 0.842 | Low |

Synthesis-Centric Generative Models

Rather than treating synthesizability as a post-hoc filter, synthesis-centric models like SynFormer [4] and the framework in [21] generate synthetic pathways directly, ensuring inherent synthesizability. SynFormer uses a transformer architecture to generate synthetic pathways in postfix notation, employing tokens for reactions and building blocks. This approach "ensures that every generated molecule has a viable synthetic pathway" [4] by construction.
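Postfix notation means that building blocks are pushed onto a stack and each reaction token consumes the reactants sitting on top of it. The toy interpreter below illustrates that idea only: the token names, the reaction table, and the string-based "products" are all hypothetical stand-ins, not SynFormer's vocabulary or chemistry engine.

```python
def run_postfix_pathway(tokens, reactions):
    """Evaluate a postfix pathway with a stack.

    tokens: sequence of building-block names and reaction names.
    reactions: dict name -> (arity, function producing a product).
    Returns the single final product, or raises ValueError if the
    sequence is not a well-formed pathway.
    """
    stack = []
    for token in tokens:
        if token in reactions:
            arity, apply = reactions[token]
            if len(stack) < arity:
                raise ValueError(f"{token} needs {arity} reactant(s)")
            # Pop reactants and restore their original left-to-right order.
            reactants = [stack.pop() for _ in range(arity)][::-1]
            stack.append(apply(*reactants))
        else:
            stack.append(token)  # a building block
    if len(stack) != 1:
        raise ValueError("pathway must end with exactly one product")
    return stack[0]


# Hypothetical reaction templates that just record what was combined.
toy_reactions = {
    "R_amide": (2, lambda acid, amine: f"amide({acid},{amine})"),
    "R_reduce": (1, lambda mol: f"reduce({mol})"),
}
pathway = ["BB_acid", "BB_amine", "R_amide", "R_reduce"]
print(run_postfix_pathway(pathway, toy_reactions))
```

Because a product exists only if every reaction token finds its reactants, any sequence that evaluates successfully carries its own synthetic route, which is the sense in which synthesizability holds by construction.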

The Saturn model [21] demonstrates that with sufficient sample efficiency, retrosynthesis models can be directly incorporated into the optimization loop, moving beyond simplistic synthesizability heuristics. This is particularly valuable when exploring "other classes of molecules, such as functional materials, [where] current heuristics' correlations diminish" [21].

Experimental Protocols and Methodologies

Building Hierarchical Molecular Representations

Protocol: Constructing a Hierarchical Molecular Graph [19]

- Input Representation: Begin with SMILES string or molecular structure file.

- Atom-Level Graph Construction: Parse into atom nodes (with features: atom type, hybridization, formal charge) and bond edges (with features: bond type, conjugation).

- Motif Identification: Apply BRICS decomposition algorithm to identify functional groups and structural motifs.

- Multi-Level Graph Assembly:

  - Create motif nodes with features aggregated from constituent atoms

  - Establish atom-motif edges representing membership relationships

  - Create motif-motif edges based on molecular connectivity

  - Introduce global node connected to all motif nodes

- Feature Initialization: Use learned embeddings for atom and motif types, with positional encodings for graph topology.
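The assembly step of this protocol can be sketched without any chemistry library, assuming motif identification has already produced a motif id for each atom (BRICS itself is not implemented here). Mean pooling is used for the motif features as one plausible aggregation; the function name, the dictionary keys, and the `"global"` node label are illustrative assumptions rather than the HimNet implementation.

```python
def build_hierarchical_graph(atom_features, atom_edges, atom_to_motif):
    """Assemble atom, motif, and global levels into one structure.

    atom_features: list of feature vectors, one per atom.
    atom_edges: list of (u, v) atom index pairs.
    atom_to_motif: list giving the motif id of each atom.
    """
    motif_ids = sorted(set(atom_to_motif))
    members = {
        m: [a for a, owner in enumerate(atom_to_motif) if owner == m]
        for m in motif_ids
    }
    dim = len(atom_features[0])
    # Motif features: mean of the constituent atoms' features.
    motif_features = {
        m: [sum(atom_features[a][i] for a in atoms) / len(atoms) for i in range(dim)]
        for m, atoms in members.items()
    }
    # Membership edges link every atom to the motif that contains it.
    atom_motif_edges = list(enumerate(atom_to_motif))
    # Two motifs are linked when a bond crosses between them.
    motif_motif_edges = sorted({
        (min(atom_to_motif[u], atom_to_motif[v]), max(atom_to_motif[u], atom_to_motif[v]))
        for u, v in atom_edges
        if atom_to_motif[u] != atom_to_motif[v]
    })
    global_edges = [("global", m) for m in motif_ids]
    return {
        "motif_features": motif_features,
        "atom_motif_edges": atom_motif_edges,
        "motif_motif_edges": motif_motif_edges,
        "global_edges": global_edges,
    }


# Acetic acid heavy atoms with two-dimensional toy features [is_C, is_O].
graph = build_hierarchical_graph(
    atom_features=[[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]],
    atom_edges=[(0, 1), (1, 2), (1, 3)],
    atom_to_motif=[0, 1, 1, 1],
)
print(graph["motif_motif_edges"], graph["global_edges"])
```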

Training Multi-Channel Representation Learning Models

Protocol: Multi-Channel Pre-training [20]

- Channel Configuration: Implement three parallel channels for global, partial, and local views of molecular structure.

- Pre-training Tasks:

  - Molecule Distancing: Use triplet contrastive loss with adaptive margin based on structural similarity.

  - Scaffold Distancing: Generate scaffold-invariant perturbations as positive samples; push molecules with different scaffolds apart.

  - Context Prediction: Mask subgraphs and predict their composition from surrounding context.

- Prompt-Guided Readout: For each channel, use a dedicated prompt token to aggregate atom representations into molecule-level representations.

- Fine-tuning: Implement task-specific prompt selection to aggregate channel representations, potentially using property prediction loss for guidance.
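To illustrate the molecule-distancing task, the sketch below implements a triplet loss whose margin shrinks as the anchor and the negative become more structurally similar. The specific margin rule, `base_margin * (1 - similarity)`, is an assumption chosen for illustration; [20] may define the adaptive margin differently.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def adaptive_triplet_loss(anchor, positive, negative, similarity_an,
                          base_margin=1.0):
    """Triplet loss with a similarity-dependent margin.

    similarity_an: structural similarity in [0, 1] between anchor and
    negative (for example a Tanimoto score). Dissimilar negatives must be
    pushed further away than similar ones.
    """
    margin = base_margin * (1.0 - similarity_an)
    return max(
        0.0,
        euclidean(anchor, positive) - euclidean(anchor, negative) + margin,
    )


anchor, positive = [0.0, 0.0], [0.0, 0.0]
# A very dissimilar negative that sits too close is penalized.
print(adaptive_triplet_loss(anchor, positive, [0.0, 0.5], similarity_an=0.0))
# The same geometry is acceptable when the negative is structurally similar.
print(adaptive_triplet_loss(anchor, positive, [0.0, 0.5], similarity_an=1.0))
```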

Evaluating Synthesizability in Generative Design

Protocol: Direct Synthesizability Optimization [21]

- Model Selection: Choose a sample-efficient generative model (e.g., Saturn based on Mamba architecture).

- Retrosynthesis Integration: Incorporate retrosynthesis models (e.g., AiZynthFinder) as oracles in the optimization loop.

- Multi-Parameter Optimization: Define objective function combining:

  - Target molecular properties (e.g., binding affinity, solubility)

  - Synthesizability score from retrosynthesis model

- Constrained Optimization: Execute under limited oracle budget (e.g., 1000 evaluations) to simulate real-world constraints.

- Validation: Compare generated molecules against synthesizability heuristics (SA score, SC score) and expert evaluation.
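The interaction between the combined objective and the oracle budget can be sketched as follows. A fixed candidate list stands in for the generative sampling step, and dictionary lookups stand in for the property predictor and the retrosynthesis tool; the class, the function names, and the equal weighting are all illustrative assumptions rather than the Saturn implementation.

```python
class BudgetedOracle:
    """Wrap an expensive scoring function and count how often it is called."""

    def __init__(self, fn, budget):
        self.fn = fn
        self.budget = budget
        self.calls = 0

    def exhausted(self):
        return self.calls >= self.budget

    def __call__(self, molecule):
        if self.exhausted():
            raise RuntimeError("oracle budget exhausted")
        self.calls += 1
        return self.fn(molecule)


def combined_score(property_score, route_found, weight=0.5):
    """Blend a property score in [0, 1] with a binary route-found signal."""
    return (1.0 - weight) * property_score + weight * (1.0 if route_found else 0.0)


def optimize(candidates, property_fn, retro_oracle):
    """Score candidates until the retrosynthesis budget runs out."""
    best, best_score = None, float("-inf")
    for molecule in candidates:
        if retro_oracle.exhausted():
            break
        score = combined_score(property_fn(molecule), retro_oracle(molecule))
        if score > best_score:
            best, best_score = molecule, score
    return best, best_score


# Toy data: "A" has the better property score but no synthetic route.
properties = {"A": 0.9, "B": 0.6, "C": 0.95}
routes = {"A": False, "B": True, "C": True}
oracle = BudgetedOracle(routes.get, budget=2)
print(optimize(["A", "B", "C"], properties.get, oracle), oracle.calls)
```

With a budget of two calls, candidate "C" is never evaluated, which is why sample efficiency of the generator matters so much when the oracle is a full retrosynthesis search.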

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for Hierarchical Molecular Learning

| Tool/Category | Specific Examples | Function in Hierarchical Learning | Application Context |
|---|---|---|---|
| Molecular Representation | SMILES, Graph Representation, Functional Group Vocabulary | Provides standardized input formats; functional groups enable coarse-graining | All stages of model development and evaluation |
| Decomposition Algorithms | BRICS, Retrosynthetic Rules | Identifies meaningful motifs and scaffolds for hierarchical graph construction | Data preprocessing for hierarchical models |
| Deep Learning Architectures | GNNs, Transformers, Multi-Channel Encoders | Learns hierarchical representations through specialized network designs | Model implementation and training |
| Retrosynthesis Platforms | AiZynthFinder, ASKCOS, IBM RXN | Provides ground truth for synthesizability assessment and training data | Synthesizability-constrained generation |
| Synthesizability Metrics | SA Score, SYBA, SC Score, RA Score | Quantitative assessment of synthetic feasibility | Model evaluation and comparison |
| Molecular Databases | ZINC15, ChEMBL, Enamine REAL | Source of training data and building blocks for generative models | Dataset curation and model training |

Case Studies: Hierarchical Learning in Action

Overcoming Activity Cliffs with Multi-Channel Learning

Activity cliffs—where small structural changes cause dramatic potency changes—pose significant challenges in drug discovery. The multi-channel framework [20] demonstrates particular strength in these scenarios by explicitly representing scaffolds (partial view) and functional groups (local view). In benchmark evaluations, it maintained robust performance where other methods showed "substantial performance decline," suggesting better preservation of chemical knowledge during fine-tuning [20].

Functional Materials Design with Explicit Synthesizability

When moving beyond drug-like molecules to functional materials, the correlation between common synthesizability heuristics and actual synthetic feasibility diminishes. [21] shows that in these cases, "directly optimizing for retrosynthesis models can offer clear benefits." By incorporating retrosynthesis models directly in the optimization loop, their approach identified promising functional material candidates that would have been overlooked by heuristic filters alone.

Data-Efficient Polymer Design with Coarse-Graining

The functional-group coarse-graining approach [16] demonstrates how hierarchical representation enables effective learning from limited data. Using only 6,000 unlabeled and 600 labeled polymer monomers, their model achieved "over 92% accuracy in forecasting properties directly from SMILES strings," exceeding state-of-the-art methods. The coarse-grained representation served as a low-dimensional embedding that substantially reduced data requirements while maintaining chemical fidelity.

The integration of hierarchical chemical knowledge into deep learning models represents a paradigm shift in computational molecular design. By explicitly modeling functional groups, structural motifs, and their complex interactions, these approaches bridge the gap between predictive accuracy and synthetic feasibility.

Future research directions should focus on:

- Dynamic Hierarchies: Developing models that can learn context-dependent hierarchical decompositions rather than relying on fixed rules.

- Reaction-Centric Representations: Creating unified representations that simultaneously encode molecular structure and synthetic pathways.

- Multi-Step Planning: Extending beyond one-step retrosynthesis to multi-step synthetic planning within generative frameworks.

- Knowledge Integration: Developing methods to incorporate explicit chemical rules and constraints into deep learning architectures.

As [16] concludes, hierarchical approaches that focus on "coarse graining based on functional groups" remain "data-efficient, allowing robust design and analysis even under data-scarce conditions." This efficiency, combined with improved synthesizability awareness, positions hierarchical deep learning as a transformative technology for the next generation of molecular discovery.

The integration of deep learning into chemical research has transformed molecular design, yet a fundamental challenge persists: bridging the gap between model predictions and chemical intuition. This is particularly acute in synthesizability research, where accurately predicting whether a computer-generated molecule can be feasibly created in a laboratory is paramount for practical applications in drug discovery and materials science. Traditional deep learning models often function as "black boxes," providing predictions without revealing the underlying chemical rationale. This limitation hinders trust and adoption among chemists and limits the utility of these models for providing genuine scientific insights. Contemporary research has therefore increasingly focused on developing interpretable AI approaches that extract and visualize the chemical principles models learn from data. By moving beyond pure prediction to explainable reasoning, these methods aim to replicate and augment a chemist's intuition, identifying key structural features and patterns that dictate synthetic feasibility. This technical guide examines the architectures, methodologies, and visualization techniques that are making this transition from black box to chemical intuition possible, with a specific focus on synthesizability research.

Interpretability Techniques: Extracting Chemical Rationale

To transform a model's internal logic from an inscrutable set of parameters into comprehensible chemical principles, researchers employ several powerful interpretability techniques. These methods probe trained models to determine which features and patterns most strongly influence their predictions.

SHAP Analysis for Feature Importance

SHAP (SHapley Additive exPlanations) quantifies the contribution of each input feature to a model's final prediction, based on cooperative game theory. In the context of synthesizability, researchers apply SHAP to a trained model to identify which molecular descriptors or functional groups most significantly impact the predicted synthetic accessibility score. For instance, in predicting chemical hazard properties (a related chemical feasibility task), SHAP analysis identified key molecular descriptors such as MIC4, ATSC2i, ATS4i, and ETAdEpsilonC as critical determinants for properties like toxicity and reactivity [22]. This approach translates a model's complex calculations into a ranked list of chemically meaningful contributors.
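The game-theoretic definition behind SHAP can be computed exactly when the number of features is small. The sketch below enumerates all feature subsets and replaces "absent" features with a baseline value; practical SHAP libraries use approximations of this quantity, and the toy model and its three descriptors are purely hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values for one instance by subset enumeration.

    Features outside the coalition are set to their baseline value.
    Cost grows as 2**n, so this is only feasible for a handful of features.
    """
    n = len(x)

    def value(subset):
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return predict(z)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for k in range(n):
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                s = set(coalition)
                phi += weight * (value(s | {i}) - value(s))
        phis.append(phi)
    return phis


# Hypothetical score with an interaction between descriptors 0 and 2.
def toy_model(z):
    return 2.0 * z[0] + 3.0 * z[1] + z[0] * z[2]


phi = shapley_values(toy_model, x=[1.0, 1.0, 1.0], baseline=[0.0, 0.0, 0.0])
print(phi)
```

The attributions sum to the difference between the prediction and the baseline prediction, and the interaction term is split evenly between the two descriptors that take part in it.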

Individual Conditional Expectation (ICE) Plots

While SHAP values are often aggregated into global feature-importance rankings, ICE plots visualize the relationship between a specific molecular feature and the model's prediction for individual instances. ICE plots are particularly valuable for understanding how a model's response to a particular descriptor changes across its range, revealing non-linear relationships and interaction effects that might be obscured in aggregate analyses [22]. For example, an ICE plot could show how the predicted synthesizability score changes as the count of a specific functional group increases, allowing chemists to identify potential "tipping points" where synthetic complexity dramatically increases.
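Computing the curves behind an ICE plot requires nothing more than re-evaluating the model while sweeping one feature. The sketch below uses a hypothetical scoring function with a built-in tipping point to show what such curves look like numerically; plotting is left out, and the feature layout is an assumption.

```python
def ice_curves(predict, instances, feature_index, grid):
    """Return one prediction curve per instance.

    For each instance, the chosen feature is swept over `grid` while all
    other features keep their original values.
    """
    curves = []
    for x in instances:
        curve = []
        for value in grid:
            z = list(x)
            z[feature_index] = value
            curve.append(predict(z))
        curves.append(curve)
    return curves


# Hypothetical model: features are [ring_count, group_count]; the score
# drops sharply once the functional-group count exceeds 3.
def toy_score(z):
    penalty = 0.5 if z[1] > 3 else 0.0
    return 1.0 - 0.1 * z[0] - penalty


molecules = [[2, 0], [5, 0]]
curves = ice_curves(toy_score, molecules, feature_index=1, grid=[0, 2, 4])
for curve in curves:
    print([round(v, 2) for v in curve])
```

Each curve stays flat until the group count crosses the threshold and then drops, which is the kind of tipping point that an averaged partial-dependence curve could blur when instances respond differently.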

Attention Mechanisms in Chemical Language Models

Attention mechanisms, particularly self-attention in transformer architectures, automatically learn to weigh the importance of different parts of a molecular representation when making predictions. When processing a SMILES string or a molecular graph, the attention weights can be visualized to show which atoms, bonds, or functional groups the model "attends to" most strongly. This capability is exemplified in frameworks that integrate self-attention with functional-group coarse-graining, which capture intricate chemical interactions between molecular motifs [16]. The resulting attention maps provide an intuitive, human-interpretable visualization of the molecular substructures the model deems most relevant for predicting synthesizability.
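The weights that such visualizations display come from a softmax over scaled dot products between a query vector and the key vectors of the other tokens or nodes. The sketch below computes them for a single query in plain Python; the two-dimensional embeddings and the motif labels are made-up illustrations, not learned values.

```python
import math


def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over several keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical embeddings for three functional-group nodes.
labels = ["carboxyl", "methyl", "amine"]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
query = [1.0, 0.0]
weights = attention_weights(query, keys)
for label, w in sorted(zip(labels, weights), key=lambda pair: -pair[1]):
    print(f"{label}: {w:.3f}")
```

Attention weights show where the model looked, not necessarily why it made a prediction, so they are best read alongside attribution methods such as SHAP.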

Table 1: Key Interpretability Methods in Synthesizability Research

| Method | Technical Approach | Chemical Insight Provided | Representative Application |
|---|---|---|---|
| SHAP Analysis | Computes Shapley values from game theory to quantify feature contribution | Identifies which molecular descriptors most influence synthesizability scores | Ranking key molecular descriptors for toxicity and reactivity prediction [22] |
| ICE Plots | Plots model prediction as a function of a feature for individual instances | Reveals non-linear and interaction effects of specific molecular features | Visualizing how specific functional group counts affect predicted synthesis steps [22] |
| Attention Mechanisms | Learns and visualizes weights assigned to different parts of molecular structure | Highlights chemically significant substructures and functional groups | Identifying critical functional group interactions in polymer monomers [16] |
| Model Distillation | Trains simpler, interpretable models to approximate complex models | Creates transparent proxy models that maintain predictive performance | Extracting functional-group-based rules for synthesizability classification |

Model Architectures for Synthesizable Molecular Design

Different deep learning architectures offer distinct advantages and mechanisms for learning and applying chemical principles related to synthesizability. The table below compares the predominant architectures used in synthesizability research.

Table 2: Deep Learning Architectures for Synthesizability Prediction

| Architecture | Molecular Representation | Mechanism for Encoding Synthesizability | Performance Highlights |
|---|---|---|---|
| Chemical Language Models (CLMs) | SMILES strings | Learn syntactic and structural patterns from large corpora of known synthesizable compounds | DeepSA achieved 89.6% AUROC in discriminating hard-to-synthesize molecules [23] |
| Graph Neural Networks (GNNs) | Molecular graphs | Capture topological relationships and functional group interactions | GASA (Graph Attention-based model) showed remarkable performance in distinguishing synthetic accessibility [23] |
| Transformer-based Generative Models | SMILES or SELFIES strings | Generate synthetic pathways rather than just molecular structures | SynFormer ensures every generated molecule has a viable synthetic pathway [4] |
| Variational Autoencoders (VAEs) | Latent space representation | Enable Bayesian optimization in continuous chemical space | Combined with Bayesian optimization for efficient exploration of synthesizable chemical space [24] |

Synthesis-Centric vs. Structure-Centric Approaches

A critical architectural distinction in synthesizability research lies between structure-centric and synthesis-centric approaches. Structure-centric models (e.g., DeepSA, GASA) directly predict synthesizability scores from molecular structures by learning patterns from existing data on synthesizable and non-synthesizable compounds [23]. While effective for discrimination, these models provide limited insight into why a molecule is difficult to synthesize.

In contrast, synthesis-centric models (e.g., SynFormer) generate viable synthetic pathways rather than just molecular structures, ensuring synthetic tractability by construction [4]. These models "think" like chemists by planning retrosynthetic steps using known reaction templates and available building blocks. This approach embodies a more fundamental learning of chemical principles, as it must internalize knowledge of chemical reactivity, functional group compatibility, and synthetic strategy.

Experimental Protocols for Model Training and Validation

Rigorous experimental methodologies are essential for developing models that genuinely learn chemical principles rather than memorizing dataset artifacts. This section outlines standardized protocols for training and evaluating synthesizability models.

Dataset Curation and Preparation

The foundation of any effective synthesizability model is a high-quality, chemically diverse dataset. The standard protocol involves:

- Data Sourcing: Compile molecules from make-on-demand libraries (e.g., Enamine REAL Space) for "easy-to-synthesize" examples, and from virtual generative models or challenging natural products for "hard-to-synthesize" examples [23] [4].

- Synthesizability Labeling: Employ multi-step retrosynthetic analysis software (e.g., Retro*, AiZynthFinder) to assign labels. Molecules requiring ≤10 synthetic steps are typically labeled "easy-to-synthesize" (ES), while those requiring >10 steps or failing route prediction are labeled "hard-to-synthesize" (HS) [23].

- Feature Representation: Convert molecules to the representation the model expects:

  - SMILES Strings: Canonicalize SMILES with a cheminformatics toolkit such as RDKit [25].

  - Molecular Graphs: Represent atoms as nodes and bonds as edges, with features for atom type, hybridization, etc. [26].

  - Functional Group Graphs: Implement a coarse-grained representation in which nodes are functional groups and edges represent their connectivity [16].

- Data Splitting: Employ scaffold splitting to ensure structural diversity between training and test sets, preventing overoptimistic performance from memorizing similar structures.
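The labeling and splitting steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not the protocol of any cited tool: `label_from_route`, `scaffold_split`, and the `scaffold_of` callback are hypothetical names, and the "largest scaffold groups go to training first" rule is one common convention assumed here. In practice `scaffold_of` might return a Bemis-Murcko scaffold SMILES (for example via RDKit's `MurckoScaffold.MurckoScaffoldSmiles`), and the step count would come from a retrosynthesis planner.

```python
from collections import defaultdict
from typing import Callable, Dict, Hashable, List, Optional, Sequence, Tuple

MAX_EASY_STEPS = 10  # threshold from the labeling protocol: <=10 steps is "ES"


def label_from_route(n_steps: Optional[int]) -> str:
    """Return "ES" or "HS" from a retrosynthesis result.

    n_steps is the length of the predicted route, or None when the planner
    failed to find any route (treated as hard-to-synthesize).
    """
    if n_steps is not None and n_steps <= MAX_EASY_STEPS:
        return "ES"
    return "HS"


def scaffold_split(
    molecules: Sequence[str],
    scaffold_of: Callable[[str], Hashable],
    test_fraction: float = 0.2,
) -> Tuple[List[str], List[str]]:
    """Split molecules so that no scaffold appears in both train and test."""
    groups: Dict[Hashable, List[str]] = defaultdict(list)
    for mol in molecules:
        groups[scaffold_of(mol)].append(mol)

    train_budget = len(molecules) * (1.0 - test_fraction)
    train: List[str] = []
    test: List[str] = []
    # Assign whole scaffold groups, largest first (assumed convention).
    for members in sorted(groups.values(), key=len, reverse=True):
        if len(train) + len(members) <= train_budget:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Toy usage: the scaffold assignments below are made up for illustration.
toy_scaffolds = {"a1": "A", "a2": "A", "a3": "A", "b1": "B", "b2": "B", "c1": "C"}
train_set, test_set = scaffold_split(list(toy_scaffolds), toy_scaffolds.get, 0.5)
```

Because groups are assigned whole, the realized test fraction can differ from the requested one; that is the price of keeping every scaffold on one side of the split.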

Model Training Protocol

The training procedure must be carefully designed to encourage learning of fundamental chemical principles:

Architecture Configuration:

Pretraining Phase: Train on large-scale molecular databases (e.g., ChEMBL, ZINC) to learn general chemical patterns without specific synthesizability labels [25].

Fine-Tuning Phase: Transfer learn on the curated synthesizability dataset with the following hyperparameters:

- Batch size: 128-512 (depending on model complexity)

- Learning rate: 1e-4 to 1e-5 with cosine decay scheduling

- Optimization: AdamW optimizer with gradient clipping

- Regularization: Attention dropout (0.1-0.3) and weight decay [23]
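As a rough illustration of two of the hyperparameters listed above, the sketch below implements a cosine learning-rate decay from 1e-4 to 1e-5 and gradient clipping by global norm. The function names, the absence of warmup, and the clipping threshold used in the example are assumptions; the source does not specify them, and deep learning frameworks ship their own equivalents.

```python
import math
from typing import List, Sequence


def cosine_decay_lr(step: int, total_steps: int,
                    lr_max: float = 1e-4, lr_min: float = 1e-5) -> float:
    """Learning rate at `step`, annealed from lr_max to lr_min along a half cosine."""
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    # Clamp so that steps outside [0, total_steps] stay at the endpoints.
    progress = min(max(step, 0), total_steps) / total_steps
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))


def clip_by_global_norm(grads: Sequence[float], max_norm: float) -> List[float]:
    """Rescale a flat gradient vector so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]


# Example: learning rate at the start, midpoint, and end of 1,000 steps.
schedule = [cosine_decay_lr(s, 1000) for s in (0, 500, 1000)]
# Example: clip a gradient of norm 5 down to norm 1 (max_norm=1.0 is an assumption).
clipped = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
```

The schedule starts at the upper bound, passes through the midpoint of the two bounds halfway through training, and ends at the lower bound.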

Interpretability Integration: Incorporate attention visualization and SHAP value calculation directly into the training loop to monitor the development of chemically meaningful feature importance.

Validation and Benchmarking

Comprehensive validation ensures models learn genuine chemical principles:

Performance Metrics: Evaluate using standard classification metrics (Accuracy, Precision, Recall, F1-score, ROC-AUC) with emphasis on AUC for robust class-imbalance handling [23].

Chemical Validity Check: For generative models, assess the percentage of generated molecules that are chemically valid (e.g., proper valency, recognized functional groups) [25].

Retrosynthetic Validation: For the molecules predicted to be most synthesizable, confirm feasibility either through blind assessment by expert chemists or through computational retrosynthetic analysis [4].

Benchmarking: Compare against established synthesizability scores (SAscore, SCScore, RAscore, SYBA) on standardized test sets [23].
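To make the performance metrics above concrete, the following sketch computes ROC-AUC from its pairwise-ranking definition, along with precision, recall, and F1. It is a small reference implementation under stated assumptions: label 1 denotes the positive class (whether that is "ES" or "HS" is a dataset choice the source leaves open), and the quadratic-time AUC loop is chosen for readability rather than speed.

```python
from typing import Sequence, Tuple


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def precision_recall_f1(labels: Sequence[int],
                        preds: Sequence[int]) -> Tuple[float, float, float]:
    """Precision, recall, and F1 for the positive class (label 1)."""
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1


# Toy example with made-up scores for four molecules.
y_true = [1, 1, 0, 0]
y_score = [0.9, 0.4, 0.5, 0.1]
auc = roc_auc(y_true, y_score)
prf = precision_recall_f1(y_true, [1 if s >= 0.5 else 0 for s in y_score])
```

ROC-AUC is computed from the scores alone, whereas precision, recall, and F1 depend on the decision threshold (0.5 in this toy example).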

The Scientist's Toolkit: Essential Research Reagents

Implementing and experimenting with synthesizability models requires both computational and chemical resources. The table below details key components of the research toolkit.

Table 3: Essential Research Reagents for Synthesizability AI

| Tool/Resource | Type | Function | Example Implementations |
|---|---|---|---|
| Retrosynthetic Planning Software | Computational Tool | Generates synthetic pathways for labeling training data | Retro*, AiZynthFinder, Molecule.one [23] [4] |
| Molecular Building Blocks | Chemical Reagents | Purchasable starting materials for synthetic pathway generation | Enamine U.S. stock catalog (223,244 building blocks) [4] |
| Reaction Templates | Chemical Knowledge Base | Curated set of reliable chemical transformations for pathway generation | 115 reaction templates focusing on bi- and trimolecular couplings [4] |
| Molecular Descriptors | Feature Set | Quantifiable molecular features for model interpretation | MIC4, ATSC2i, ATS4i, ETAdEpsilonC [22] |
| Functional Group Vocabulary | Chemical Taxonomy | Standardized set of ~100 common functional groups for coarse-grained representation | Hierarchical mapping from functional groups to atomic subgraphs [16] |

Visualization Framework: From Data to Chemical Intuition

Effective visualization is crucial for translating model internals into chemically intuitive concepts. The following diagram illustrates the complete framework through which models transform raw data into actionable chemical intuition.

The transition from black-box models to chemically intuitive partners represents the next frontier in molecular AI. By leveraging interpretability techniques like SHAP analysis and attention mechanisms, and through architectures that explicitly encode chemical knowledge like functional group interactions and synthetic pathways, deep learning models are increasingly capable of not just predicting synthesizability but explaining their reasoning in chemically meaningful terms. The experimental protocols and visualization frameworks outlined in this guide provide a roadmap for developing and validating such interpretable models. As these approaches mature, they promise to augment chemical intuition with data-driven insights, accelerating the discovery of synthesizable functional molecules for drug development and materials science. Future research directions include developing more sophisticated attention mechanisms that can explain multi-step synthetic planning, creating standardized benchmarks for interpretability, and integrating real-world synthetic feedback to continuously refine model reasoning.

Architectures and Algorithms: How Models Encode Chemical Rules for Synthesis

The application of graph neural networks (GNNs), particularly message-passing neural networks (MPNNs), has catalyzed a paradigm shift in how computational models learn chemical principles for synthesizability research and drug development. Unlike traditional machine learning approaches that rely on manually engineered molecular descriptors, GNNs operate directly on the natural graph representation of molecules, where atoms constitute nodes and chemical bonds form edges [27]. This capability enables automated extraction of informative features that characterize molecular structure and properties, providing a powerful framework for predicting synthesizability and accelerating materials discovery.

Within the broader thesis of how deep learning models learn chemical principles, MPNNs offer a compelling architecture for capturing the fundamental rules governing atomic interactions and structural stability. By iteratively passing messages between connected atoms, these networks develop an internal representation that encodes both local chemical environments and global molecular topology [27] [28]. This review comprehensively examines the technical foundations, architectural variants, and practical applications of GNNs and MPNNs, with particular emphasis on their role in advancing synthesizability prediction and drug discovery.

Fundamental Principles of Molecular Graph Representation

Molecular Graphs as Structured Inputs

In computational chemistry, molecules are naturally represented as graphs, where atoms correspond to vertices and chemical bonds constitute edges. Formally, a molecular graph is defined as (G = (V, E)), where (V) represents the set of atoms (nodes) and (E) represents the set of chemical bonds (edges) connecting them [27]. This representation preserves the topological structure of molecules and provides a mathematical framework for computational analysis.

The concept of chemical graphs dates to 1874, predating even the modern term "graph" in graph theory [27]. This historical foundation underscores the natural alignment between molecular structures and graph-based computational approaches. For machine learning applications, each node (v \in V) is associated with a feature vector (h_v^0 \in \mathbb{R}^d) encoding atomic properties (e.g., element type, hybridization, formal charge), while each edge (e_{v,w} = (v, w) \in E) carries features (h_e^0 \in \mathbb{R}^c) representing bond characteristics (e.g., bond type, conjugation, stereochemistry) [27].
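A molecular graph with node and edge feature vectors can be sketched as a small data structure. The example below is illustrative only: the `MolGraph` class, the three-element vocabulary, and the choice of features (one-hot element plus formal charge for atoms, one-hot bond type for bonds) are assumptions made for brevity, and hydrogens are left implicit. It encodes the heavy-atom graph of ethanol (C-C-O).

```python
from dataclasses import dataclass, field
from typing import Dict, List, Sequence, Tuple

ELEMENTS = ["C", "N", "O"]                   # toy element vocabulary (assumption)
BOND_TYPES = ["single", "double", "triple"]  # toy bond vocabulary (assumption)


def one_hot(value: str, vocabulary: Sequence[str]) -> List[float]:
    """Encode a categorical value as a one-hot list over the vocabulary."""
    if value not in vocabulary:
        raise ValueError(f"{value!r} is not in the vocabulary")
    return [1.0 if value == item else 0.0 for item in vocabulary]


@dataclass
class MolGraph:
    # node_features[v] plays the role of h_v^0 (here d = 4).
    node_features: List[List[float]] = field(default_factory=list)
    # edge_features[(v, w)] plays the role of h_e^0 (here c = 3).
    edge_features: Dict[Tuple[int, int], List[float]] = field(default_factory=dict)

    def add_atom(self, element: str, formal_charge: int = 0) -> int:
        """Append an atom and return its node index."""
        self.node_features.append(one_hot(element, ELEMENTS) + [float(formal_charge)])
        return len(self.node_features) - 1

    def add_bond(self, v: int, w: int, bond_type: str) -> None:
        """Store the bond in both directions, since chemical bonds are undirected."""
        self.edge_features[(v, w)] = one_hot(bond_type, BOND_TYPES)
        self.edge_features[(w, v)] = one_hot(bond_type, BOND_TYPES)

    def neighbors(self, v: int) -> List[int]:
        """Return N(v), the indices of atoms bonded to v."""
        return sorted(w for (u, w) in self.edge_features if u == v)


ethanol = MolGraph()
c1 = ethanol.add_atom("C")
c2 = ethanol.add_atom("C")
o = ethanol.add_atom("O")
ethanol.add_bond(c1, c2, "single")
ethanol.add_bond(c2, o, "single")
```

Storing each bond under both `(v, w)` and `(w, v)` keeps neighbor lookup symmetric, which is what a message-passing layer needs.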

The Message-Passing Framework

Message-passing neural networks provide a unified framework for learning from graph-structured molecular data. The MPNN framework operates through three fundamental phases: message passing, node update, and readout [27] [28]. For (K) message-passing steps indexed by (t = 0, \ldots, K-1), the operations are defined as:

$$m_{v}^{t+1} = \sum_{w \in N(v)} M_{t}\left(h_{v}^{t}, h_{w}^{t}, e_{vw}\right)$$

$$h_{v}^{t+1} = U_{t}\left(h_{v}^{t}, m_{v}^{t+1}\right)$$

$$y = R\left(\left\{ h_{v}^{K} \mid v \in G \right\}\right)$$

where:

- (N(v)) denotes the neighbors of node (v)

- (M_t(\cdot)) is a learnable message function

- (U_t(\cdot)) is a learnable node update function

- (R(\cdot)) is a permutation-invariant readout function

- (y) is the final graph-level representation [27]

Table 1: Core Components of the Message-Passing Framework

| Component | Operation | Chemical Interpretation |
|---|---|---|
| Message Function ((M_t)) | Generates messages from neighbor states | Encodes local bond interactions and atomic environments |
| Update Function ((U_t)) | Combines current state with incoming messages | Updates atomic representation based on local chemical context |
| Readout Function ((R)) | Aggregates final node states | Produces molecular fingerprint for property prediction |

This iterative process allows information to propagate through the molecular structure, with each step extending the receptive field of each atom to its broader chemical environment. After (K) steps, each atom representation encodes structural information from its (K)-hop neighborhood [27].
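The message, update, and readout phases can be traced on a three-atom chain with a deliberately simple, non-learned instantiation. In this sketch the message function is fixed to (M_t = e_{vw} \cdot h_w^t), the update to (U_t = h_v^t + m_v^{t+1}), and the readout to an element-wise sum; these choices, the scalar edge weights, and the function names are assumptions made so the arithmetic can be followed by hand. In a real MPNN, (M_t) and (U_t) are learned networks.

```python
from typing import Dict, List, Tuple

Vector = List[float]


def message_passing(h0: List[Vector],
                    edges: Dict[Tuple[int, int], float],
                    num_steps: int) -> List[Vector]:
    """Run num_steps rounds of sum-aggregated message passing.

    edges maps a directed pair (v, w) to a scalar edge feature e_vw.
    """
    neighbors: Dict[int, List[Tuple[int, float]]] = {v: [] for v in range(len(h0))}
    for (v, w), e_vw in edges.items():
        neighbors[v].append((w, e_vw))

    h = [list(state) for state in h0]
    for _ in range(num_steps):
        new_h = []
        for v, h_v in enumerate(h):
            # m_v^{t+1} = sum over w in N(v) of M_t(h_v^t, h_w^t, e_vw),
            # with the toy choice M_t = e_vw * h_w^t.
            m_v = [0.0] * len(h_v)
            for w, e_vw in neighbors[v]:
                for i, x in enumerate(h[w]):
                    m_v[i] += e_vw * x
            # h_v^{t+1} = U_t(h_v^t, m_v^{t+1}), with the toy choice U_t = sum.
            new_h.append([a + b for a, b in zip(h_v, m_v)])
        h = new_h  # all atoms are updated synchronously from the step-t states
    return h


def readout(h: List[Vector]) -> Vector:
    """Permutation-invariant R: element-wise sum over all atom states."""
    return [sum(column) for column in zip(*h)]


# Chain 0 - 1 - 2; only atom 0 starts with a non-zero state.
chain_edges = {(0, 1): 1.0, (1, 0): 1.0, (1, 2): 1.0, (2, 1): 1.0}
initial = [[1.0], [0.0], [0.0]]
after_one = message_passing(initial, chain_edges, 1)   # [[1.0], [1.0], [0.0]]
after_two = message_passing(initial, chain_edges, 2)   # [[2.0], [2.0], [1.0]]
graph_vector = readout(after_two)                      # [5.0]
```

After one step, atom 2 is still zero because atom 0 lies two bonds away; after two steps the signal has reached it, which is the (K)-hop receptive field in miniature.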

Advanced Architectures and Methodological Innovations

Extensions to Basic MPNN Framework

Recent research has introduced several architectural enhancements to address limitations of basic MPNNs:

Attention Mechanisms: Attention-based MPNNs (AMPNN) replace simple summation in message aggregation with weighted combinations, allowing the model to dynamically prioritize more relevant neighbors [28]. The message function becomes (m_{v}^{t} = A_t(h_v^t, S_v^t)), where (S_v^t = \{(h_w^t, e_{vw}) \mid w \in N(v)\}) and (A_t) computes attention weights.

Edge Memory Networks: EMNN architectures incorporate dedicated edge representations that are updated throughout the message-passing process, explicitly modeling bond states in addition to atom states [28]. This is particularly valuable for capturing reaction chemistry and bond formation energetics relevant to synthesizability prediction.

Geometric GNNs: For 3D molecular structures, SE(3)-equivariant networks incorporate rotational and translational symmetry, enabling learning from molecular conformations without expert-crafted features [29]. These architectures have demonstrated particular strength in chirality-aware tasks, critical for pharmaceutical applications.
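The attention-based aggregation described under Attention Mechanisms can be illustrated with a softmax over neighbor scores. This is a sketch of the general idea rather than the AMPNN formulation from [28]: the scoring function `dot_score` (a dot product scaled by a scalar edge feature) is a hypothetical stand-in for the learned networks such models use, and normalizing the weights to sum to one is an assumption of this example.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]


def softmax(values: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def dot_score(h_v: Vector, h_w: Vector, e_vw: float) -> float:
    """Hypothetical relevance score: edge-scaled dot product of atom states."""
    return e_vw * sum(a * b for a, b in zip(h_v, h_w))


def attention_aggregate(
    h_v: Vector,
    neighbor_states: Sequence[Tuple[Vector, float]],
    score_fn: Callable[[Vector, Vector, float], float] = dot_score,
) -> Vector:
    """Return m_v as an attention-weighted combination of neighbor states.

    neighbor_states is the set S_v: pairs (h_w, e_vw) for each w in N(v).
    """
    if not neighbor_states:
        return [0.0] * len(h_v)
    scores = [score_fn(h_v, h_w, e_vw) for h_w, e_vw in neighbor_states]
    weights = softmax(scores)
    m_v = [0.0] * len(h_v)
    for weight, (h_w, _) in zip(weights, neighbor_states):
        for i, x in enumerate(h_w):
            m_v[i] += weight * x
    return m_v


# The central atom is more similar to its first neighbor, so that neighbor
# receives the larger attention weight.
center = [1.0, 0.0]
s_v = [([1.0, 0.0], 1.0), ([0.0, 1.0], 1.0)]
message = attention_aggregate(center, s_v)
```

With a constant scoring function the weights become uniform and the aggregation reduces to a plain mean over neighbors, which makes the contrast with unweighted aggregation easy to see.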

Multi-Modal and Multi-Level Fusion

The MLFGNN architecture addresses the limitation of capturing both local and global molecular structures by integrating Graph Attention Networks with a Graph Transformer [30]. This approach additionally incorporates molecular fingerprints as a complementary modality and introduces cross-representation attention to adaptively fuse information [30].

For imperfectly annotated data common in real-world drug discovery, the OmniMol framework formulates molecules and properties as a hypergraph, capturing three key relationships: among properties, molecule-to-property, and among molecules [29]. This approach integrates a task-routed mixture of experts (t-MoE) backbone to discern explainable correlations among properties and produce task-adaptive outputs.

Table 2: Performance Comparison of Advanced GNN Architectures

| Architecture | Key Innovation | Reported Advantages | Applications |
|---|---|---|---|
| MLFGNN [30] | Multi-level fusion of GAT and Graph Transformer | Reported to outperform prior state-of-the-art methods on classification and regression tasks | Molecular property prediction |
| OmniMol [29] | Hypergraph representation with t-MoE | State-of-the-art in 47/52 ADMET-P prediction tasks | Multi-task molecular representation |
| VAE-AL GM [31] | Variational autoencoder with active learning | Generates novel scaffolds with high predicted affinity | Target-specific molecule generation |

Experimental Protocols and Implementation

Benchmark Datasets and Evaluation Metrics

Standardized benchmarks are essential for evaluating GNN performance in molecular representation learning. Established datasets include:

- QM9: 134k stable small organic molecules with 19 geometric, energetic, electronic, and thermodynamic properties [29]

- Open Catalyst 2020 (OC20): Extensive dataset for catalyst simulations [29]

- PCQM4Mv2: PubChemQC project dataset for molecular property prediction [29]

- MoleculeNet: Curated benchmark containing multiple datasets for various molecular properties [28]