High-Throughput DFT for Thermodynamic Stability Screening: Accelerating Materials and Drug Discovery

Abstract

This article provides a comprehensive overview of high-throughput Density Functional Theory (DFT) calculations for thermodynamic stability screening, a transformative approach in materials science and drug development. It covers the foundational principles of thermodynamic stability and its critical role in predicting compound synthesizability and properties. The piece details established high-throughput DFT methodologies, workflow automation tools, and specific applications ranging from perovskite discovery to organic piezoelectric materials. It further addresses key challenges in computational screening, including accuracy versus efficiency trade-offs and bias mitigation strategies, and explores validation protocols that integrate experimental data and machine learning. Designed for researchers and development professionals, this guide serves as a strategic resource for leveraging computational power to accelerate the discovery and optimization of novel materials and pharmaceutical compounds.

The Fundamentals of Thermodynamic Stability and Its Role in Discovery

In high-throughput computational materials science, accurately assessing thermodynamic stability is a critical first step for predicting synthesizable compounds among vast numbers of candidates. This process primarily relies on two interconnected concepts: the formation energy of a compound and the construction of the convex hull within a phase diagram. The formation energy measures the energy released (or absorbed) when a compound forms from its constituent elements, while the convex hull defines the set of the most thermodynamically stable phases across compositions. Compounds lying on this hull are considered stable, while those above it are metastable or unstable. The vertical distance from a compound's formation energy to the hull, known as the *energy above hull* ($E_{hull}$), quantifies its relative instability and its propensity to decompose into more stable neighboring phases [1]. With the advent of extensive materials databases and advanced machine learning, the methods for determining these stability metrics are evolving rapidly, enabling efficient screening of novel inorganic compounds, metal-organic frameworks, and energetic materials [2] [3] [4].

Core Definitions and Quantitative Metrics

Formation Energy (Ef)

The formation energy is the energy change associated with forming a compound from its elemental constituents in their standard reference states. A negative formation energy indicates that the compound is stable with respect to its elements.

Calculation: For a compound $A_xB_y$, the formation energy per atom, $E_f$, is calculated as:

$$ E_f = \frac{E_{total}(A_xB_y) - x\mu_A - y\mu_B}{x+y} $$

where $E_{total}(A_xB_y)$ is the total energy of the compound, and $\mu_A$ and $\mu_B$ are the chemical potentials (energies per atom) of the elemental phases of A and B, respectively [1].
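The formula above can be sketched in Python for a composition with any number of elements. This is a minimal illustration, not a specific package's API: the function name, the element labels, and all energies are hypothetical placeholders, and each $\mu$ is assumed to be the per-atom energy of the elemental reference computed with the same DFT settings as the compound.

```python
def formation_energy_per_atom(total_energy, composition, mu):
    """Formation energy per atom (eV/atom), generalizing E_f for A_xB_y.

    total_energy: DFT total energy (eV) of a cell whose atom counts are `composition`.
    composition:  element -> number of atoms in that cell, e.g. {"A": 1, "B": 2}.
    mu:           element -> chemical potential (eV/atom) of its elemental reference.
    """
    n_atoms = sum(composition.values())
    if n_atoms <= 0:
        raise ValueError("composition must contain at least one atom")
    # Energy the same atoms would have in their elemental reference states.
    reference = sum(count * mu[element] for element, count in composition.items())
    return (total_energy - reference) / n_atoms


# Hypothetical AB2 cell: 1 A atom and 2 B atoms (x = 1, y = 2).
mu = {"A": -3.0, "B": -5.0}
e_f = formation_energy_per_atom(-15.6, {"A": 1, "B": 2}, mu)
print(f"E_f = {e_f:.3f} eV/atom")  # prints E_f = -0.867 eV/atom (negative: stable vs. A and B)
```

Because the result is normalized per atom, doubling the cell (for example `{"A": 2, "B": 4}` with twice the total energy) returns the same $E_f$.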

Energy Above Hull (Ehull)

The energy above hull is the energy difference between a compound's calculated formation energy and the corresponding point on the convex hull at the same composition. For a compound above the hull, it equals the energy released per atom when the compound decomposes into the stable phases that define the hull at that composition.

- Ehull = 0 meV/atom: The compound is thermodynamically stable.

- Ehull > 0 meV/atom: The compound is metastable or unstable, and the magnitude indicates the degree of instability. Small positive values (e.g., up to about 20-30 meV/atom) often correspond to metastable compounds that may still be synthesizable [1].

Table 1: Key Thermodynamic Stability Metrics

| Metric | Symbol | Definition | Stability Criterion |
|---|---|---|---|
| Formation Energy | $E_f$ | Energy to form a compound from its elemental references [1]. | $E_f < 0$ |
| Energy Above Hull | $E_{hull}$ | Vertical distance from a compound's $E_f$ to the convex hull [1]. | $E_{hull} = 0$ |
| Decomposition Energy | $\Delta H_d$ | Energy difference between a compound and its stable decomposition products [2]. | $\Delta H_d \le 0$ |

Computational Construction of the Convex Hull

The convex hull is a geometrical construction in energy-composition space that represents the set of the most stable phases.

Protocol for Convex Hull Construction:

- Data Collection: Gather the calculated formation energies ($E_f$) for all known and candidate compounds within a given chemical system (e.g., the A-B binary system).

- Plotting: Plot the formation energy per atom against composition for every compound.

- Geometrical Construction: Determine the lower envelope of points—the convex hull. In a binary system, this is the set of straight lines connecting the stable phases such that all other compounds lie on or above these lines.

- Stability Assignment: Any compound whose data point lies on this hull is deemed stable. Any compound above the hull is unstable with respect to decomposition into the stable phases defining the hull at that composition.

- Calculating Ehull: For a compound above the hull, its $E_{hull}$ is the vertical energy difference to the hull surface. The decomposition path is not always to a single phase but often to a combination of multiple phases in thermodynamic equilibrium [1].
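For a binary system, the protocol above reduces to a lower-envelope computation that fits in a few lines of plain Python. The sketch is illustrative only: compositions are assumed to be the atomic fraction of B, both pure elements are assumed present with $E_f = 0$, and all energies are hypothetical. For ternary and higher systems, pymatgen's `PhaseDiagram` is the practical tool.

```python
def lower_hull(points):
    """Lower convex envelope of (x_B, E_f) points, using Andrew's monotone chain."""
    best = {}
    for x, e in points:  # keep only the lowest energy at each composition
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        # Drop the previous vertex while it lies on or above the segment hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Hull formation energy at composition x, by linear interpolation."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition lies outside the hull")


def energies_above_hull(entries):
    """entries: name -> (x_B, E_f in eV/atom); both pure elements must be included."""
    hull = lower_hull(entries.values())
    # E_hull >= 0 by construction; max() only removes floating-point round-off.
    return {name: max(0.0, e - hull_energy(hull, x)) for name, (x, e) in entries.items()}


# Hypothetical A-B system; x_B is the atomic fraction of B.
entries = {
    "A": (0.0, 0.0), "B": (1.0, 0.0),
    "A3B": (0.25, -0.15), "AB": (0.5, -0.40), "AB3": (0.75, -0.25),
}
for name, e_hull in energies_above_hull(entries).items():
    print(f"{name:>4}: {1000 * e_hull:.1f} meV/atom")
```

With these numbers, A3B lies 50 meV/atom above the tie-line between A and AB, so it would decompose into a mixture of A and AB; every other entry lies on the hull.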

Example: For a quaternary compound ABO₂N, the decomposition reaction might be into a mixture of stable phases, such as:

$$ \text{ABO}_2\text{N} \rightarrow \frac{2}{3}\,\text{A}_4\text{B}_2\text{O}_9 + \frac{7}{45}\,\text{A}(\text{BN}_2)_2 + \frac{8}{45}\,\text{B}_3\text{N}_5 $$

Here the coefficients are fractions of atoms (they sum to 1), so the $E_{hull}$ is calculated from the normalized (eV/atom) energies of all involved phases, weighted according to the stoichiometry of this specific reaction [1].
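The coefficients in this reaction are fractions of atoms, which can be checked with exact rational arithmetic; $E_{hull}$ then follows as the compound's per-atom energy minus the weighted sum of the product energies. The per-atom formation energies below are hypothetical values chosen only to make the arithmetic concrete, and the helper name `atom_fractions` is illustrative.

```python
from fractions import Fraction


def atom_fractions(counts):
    """Element -> fraction of atoms, for a formula given as element -> count."""
    n_atoms = sum(counts.values())
    return {el: Fraction(c, n_atoms) for el, c in counts.items()}


target = atom_fractions({"A": 1, "B": 1, "O": 2, "N": 1})  # ABO2N
# phase -> (fraction of the compound's atoms ending up in that phase, formula counts)
products = {
    "A4B2O9": (Fraction(2, 3), {"A": 4, "B": 2, "O": 9}),
    "A(BN2)2": (Fraction(7, 45), {"A": 1, "B": 2, "N": 4}),
    "B3N5": (Fraction(8, 45), {"B": 3, "N": 5}),
}

# Per-atom mass balance: the weighted product compositions must reproduce ABO2N.
mixture = {}
for weight, counts in products.values():
    for el, frac in atom_fractions(counts).items():
        mixture[el] = mixture.get(el, 0) + weight * frac
balanced = mixture == target and sum(w for w, _ in products.values()) == 1

# Hypothetical formation energies in eV/atom.
e_f = {"ABO2N": -1.90, "A4B2O9": -2.40, "A(BN2)2": -1.20, "B3N5": -0.90}
e_hull = e_f["ABO2N"] - sum(float(w) * e_f[name] for name, (w, _) in products.items())
print(balanced, f"E_hull = {1000 * e_hull:.1f} meV/atom")  # True E_hull = 46.7 meV/atom
```

A negative result would mean the compound lies below the energy of this product mixture, in which case these phases are not its decomposition products.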

Diagram 1: Convex hull in a binary system (A-B). The unstable compound's E_hull is its energy distance to the hull.

Advanced Methods and High-Throughput Protocols

High-Throughput Screening Workflow

The integration of stability assessment into high-throughput screening is crucial for identifying viable materials.

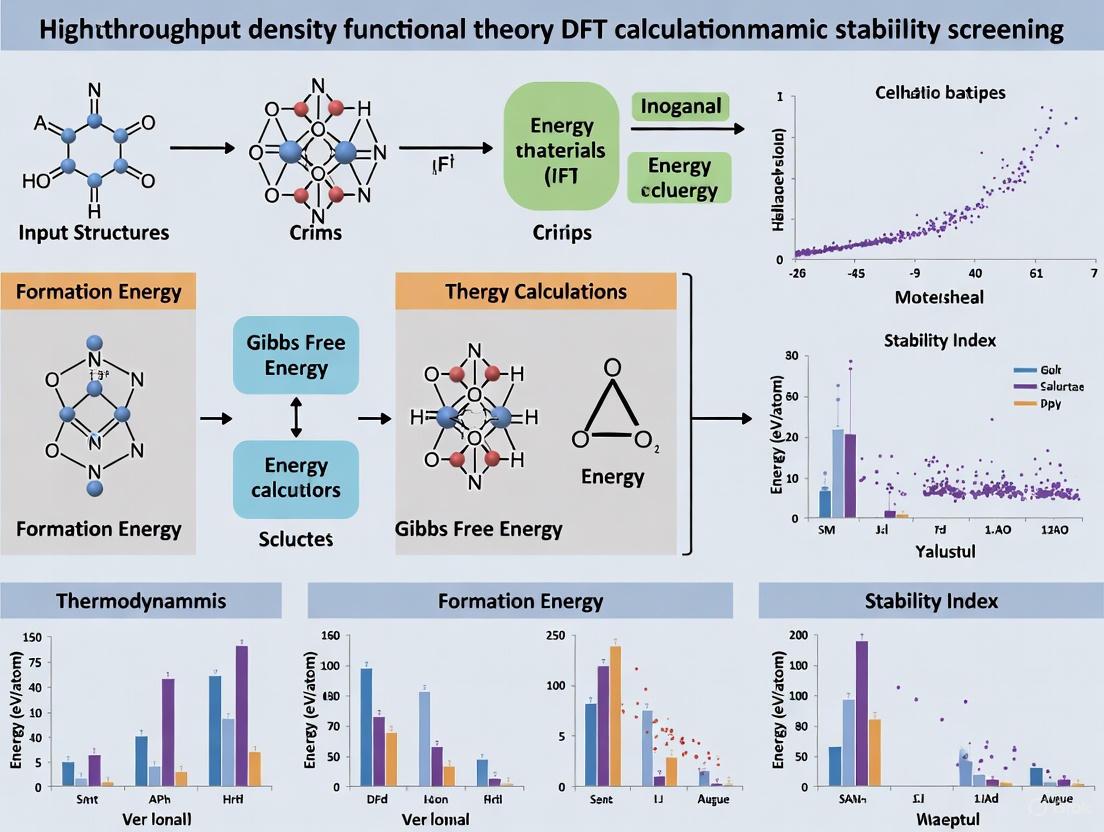

Diagram 2: High-throughput stability screening workflow.

Machine Learning and Enhanced Sampling Methods

Traditional Density Functional Theory (DFT) is computationally expensive. Emerging methods accelerate stability prediction:

- Machine Learning (ML) for Formation Energy: Advanced ML models can predict formation energies directly from composition or structure, bypassing costly DFT. State-of-the-art models include graph neural networks (e.g., ALIGNN) and image-based approaches using voxel representations of crystals, which reproduce DFT-computed formation energies with small errors at a fraction of the computational cost [5].

- Ensemble ML for Stability: Ensemble models like ECSG integrate multiple models based on different knowledge domains (elemental properties, graph representations of formulas, and electron configurations) to predict thermodynamic stability with high accuracy (AUC > 0.98) and superior data efficiency [2].

- Enhanced Molecular Dynamics (MD) for Thermal Stability: For applications like energetic materials, an optimized MD protocol using Neural Network Potentials (NNP), nanoparticle models, and low heating rates (e.g., 0.001 K/ps) can reliably predict decomposition temperatures, reducing errors from >400 K to ~80 K compared to experiments [4].

Table 2: Computational Methods for Stability Prediction

| Method | Core Principle | Key Advantage | Example/Performance |
|---|---|---|---|
| DFT + Convex Hull | First-principles energy calculation followed by geometrical construction [1]. | High fidelity; considered the benchmark. | Foundation of Materials Project database. |
| ML Formation Energy | Trains models on DFT databases to predict $E_f$ from structure or composition [5]. | Speed (orders of magnitude faster than DFT). | Voxel-based CNN achieves MAE competitive with graph models [5]. |
| Ensemble ML (ECSG) | Combines multiple models to reduce bias and improve accuracy [2]. | High accuracy and data efficiency. | AUC = 0.988 for stability classification [2]. |
| NNP-MD Protocol | Uses machine-learned interatomic potentials for realistic simulations of decomposition [4]. | Captures finite-temperature and kinetic effects. | Reduces T_d error to ~80 K for RDX [4]. |

The Scientist's Toolkit: Essential Computational Reagents

Table 3: Key Resources for Thermodynamic Stability Analysis

| Tool / Resource | Type | Function | Access/Reference |
|---|---|---|---|
| Materials Project (MP) | Database | Provides pre-calculated $E_f$, $E_{hull}$, and decomposition pathways for over 150,000 materials [1]. | https://materialsproject.org |
| AFLOW | Database | A large repository of computed material properties, including formation energies for convex hull construction [5]. | http://aflow.org |
| pymatgen | Software Library | A Python library for materials analysis that includes robust modules for constructing phase diagrams and calculating $E_{hull}$ [1]. | Open-source |
| Density Functional Tight Binding (DFTB) | Computational Method | A faster, approximate alternative to DFT for calculating formation energies and convex hulls, suitable for large systems [6]. | Integrated with codes like DFTB+ |
| Agentic Automation (DREAMS) | Framework | An LLM-agent framework that automates DFT simulation setup, execution, and error handling for high-fidelity property prediction [7]. | Prototype (academic) |

Application Notes and Protocols

Protocol 1: Calculating Energy Above Hull for a Novel Compound

Objective: To determine the thermodynamic stability of a newly modeled compound, "ABO₂N".

Materials: DFT calculation setup (e.g., VASP, Quantum ESPRESSO), computational resources, pymatgen library, access to the Materials Project API.

Procedure:

- Relaxation and Energy Calculation: Perform a full structural relaxation of ABO₂N using DFT to obtain its ground-state total energy.

- Energy Normalization: Normalize this total energy to eV per atom.

- Reference Element Energies: Obtain the energies per atom of the standard reference states for elements A, B, O, and N (e.g., the crystal structures of pure A, pure B, O_2 gas, and N_2 gas).

- Calculate Formation Energy (Ef): Use the formula given in the Formation Energy (Ef) section above to compute the formation energy of ABO₂N.

- Retrieve Relevant Phase Diagram: Use the Materials Project API or pymatgen to retrieve all existing compounds in the A-B-O-N chemical system and their formation energies.

- Construct the Convex Hull: Use pymatgen's `PhaseDiagram` class to construct the convex hull from the dataset.

- Query Ehull: Use the `PhaseDiagram.get_e_above_hull(compound_entry)` method, passing an entry for your calculated ABO₂N, to obtain its $E_{hull}$ directly [1].

Notes:

- Ensure consistency in DFT calculation parameters (functional, pseudopotentials, convergence settings) between your new compound and the data in the reference database.

- An $E_{hull}$ less than 20-30 meV/atom often suggests a potentially synthesizable metastable material.

Protocol 2: High-Throughput Screening with Integrated Stability Metrics

Objective: To screen a large database of hypothetical materials for application-specific properties while ensuring thermodynamic and mechanical stability.

Materials: A database of candidate structures (e.g., hypothetical MOFs), DFT or ML force fields, stability evaluation tools.

Procedure (Inspired by MOF Screening [3]):

- Initial Performance Screening: Screen the database for primary application performance metrics (e.g., CO_2 uptake and CO_2/N_2 selectivity for carbon capture). Shortlist the top performers.

- Assess Thermodynamic Stability: For each shortlisted candidate, evaluate thermodynamic stability. For MOFs, this can be done by calculating the candidate's free energy and comparing it with a baseline derived from known experimental MOFs. Candidates with free energies significantly above the baseline are filtered out [3].

- Evaluate Mechanical Stability: Perform molecular dynamics (MD) simulations at relevant temperatures to calculate the elastic constants and the derived bulk, shear, and Young's moduli. This assesses whether the framework can withstand synthesis and processing conditions [3].

- Predict Thermal and Activation Stability: Use pre-trained machine learning models or additional simulations to predict the thermal decomposition temperature and stability during activation (e.g., solvent removal) [3].

- Final Candidate Identification: Select the final list of candidates that simultaneously exhibit high application performance and pass all stability filters.
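The sequential filtering in this protocol can be sketched as a funnel in which each stage only sees the survivors of the previous one, so the more expensive stability evaluations run on a shrinking shortlist. The `Candidate` fields, their units, and every threshold below are hypothetical placeholders, not values from [3]; in practice each field would come from the simulation or ML model named in the corresponding step.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    co2_uptake: float          # hypothetical units, e.g. mmol/g
    selectivity: float         # CO2/N2 selectivity
    free_energy_excess: float  # free energy above the experimental-MOF baseline (hypothetical units)
    bulk_modulus: float        # GPa, e.g. derived from MD elastic constants
    decomposition_t: float     # K, e.g. from a pre-trained ML model


# (stage label, keep-predicate); thresholds are illustrative only.
STAGES = [
    ("performance", lambda c: c.co2_uptake >= 2.0 and c.selectivity >= 50),
    ("thermodynamic", lambda c: c.free_energy_excess <= 4.0),
    ("mechanical", lambda c: c.bulk_modulus >= 5.0),
    ("thermal", lambda c: c.decomposition_t >= 573.0),
]


def screen(candidates, stages=STAGES):
    """Apply the stages in order; return the survivors and the count after each stage."""
    survivors = list(candidates)
    log = []
    for label, keep in stages:
        survivors = [c for c in survivors if keep(c)]
        log.append((label, len(survivors)))
    return survivors, log


pool = [
    Candidate("MOF-1", 3.1, 120, 2.0, 12.0, 650),
    Candidate("MOF-2", 3.5, 200, 6.5, 15.0, 700),  # fails the thermodynamic filter
    Candidate("MOF-3", 1.2, 30, 1.0, 20.0, 800),   # fails the performance filter
    Candidate("MOF-4", 2.8, 90, 3.0, 2.0, 600),    # fails the mechanical filter
]
final, log = screen(pool)
print([c.name for c in final], log)
```

Keeping the stages as data rather than hard-coded `if` blocks makes it easy to reorder them or swap a simulation-based filter for an ML surrogate without touching `screen`.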

Why Stability Screening is a Critical First Step in Materials and Drug Design

Stability screening serves as the foundational gatekeeper in both materials science and pharmaceutical development, determining the ultimate feasibility and commercial viability of new compounds and drug candidates. This application note details the critical role of high-throughput stability assessment, with a specific focus on thermodynamic stability screening via Density Functional Theory (DFT) calculations. We provide comprehensive protocols for implementing these strategies, enabling researchers to identify promising candidates with the highest potential for success while efficiently allocating resources. By establishing stability as a primary filter in discovery pipelines, organizations can significantly reduce late-stage failures and accelerate the development of robust materials and therapeutics.

Stability is a fundamental quality attribute that dictates the practical utility of any engineered substance, be it a catalytic material or a pharmaceutical compound. Stability screening provides the first major checkpoint, ensuring that only candidates with sufficient longevity under relevant environmental conditions progress through costly development stages. In materials design, thermodynamic stability determines whether a synthesized material will maintain its structure and function under operational stresses. Similarly, in pharmaceuticals, chemical stability ensures that drug products maintain their safety, identity, strength, quality, and purity throughout their intended shelf-life [8] [9].

The consequences of inadequate stability screening are severe. For materials, unstable compounds can lead to catastrophic failure in applications such as fuel cells or catalysts. In pharmaceuticals, instability can result in reduced efficacy, harmful degradation products, product recalls, and most critically, potential harm to patients [8]. Comprehensive stability assessment is therefore not merely a regulatory hurdle but a fundamental scientific and economic imperative throughout the product lifecycle.

Theoretical Foundations: Thermodynamic Stability in Materials and Drug Design

Thermodynamic Basis of Stability

At its core, stability is governed by thermodynamics. The Gibbs free energy change (ΔG) determines whether a process is spontaneous at constant temperature and pressure: negative values indicate favorable, spontaneous processes [10]. For molecular interactions in drug design, ΔG determines binding affinity, while for materials it dictates phase stability. ΔG is related to the enthalpy change (ΔH) and entropy change (ΔS) by the fundamental equation:

ΔG = ΔH - TΔS [10]

This separation of ΔG into enthalpic and entropic components reveals critical insights into binding modes and stability mechanisms. Similar ΔG values can mask radically different ΔH and ΔS contributions, describing entirely different physical phenomena that have profound implications for further optimization [10].
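A short worked example makes the point about masked contributions concrete. The two sets of ΔH and ΔS values below are hypothetical, and T = 300 K is chosen only to keep the arithmetic round; ΔS is usually reported in J/(mol·K) and must be converted to kJ before it is combined with ΔH.

```python
def gibbs_free_energy(dh_kj, ds_j_per_k, temperature_k):
    """ΔG = ΔH - TΔS, returned in kJ/mol (ΔS converted from J/(mol·K))."""
    return dh_kj - temperature_k * ds_j_per_k / 1000.0


T = 300.0
# Hypothetical enthalpy-driven case: strong bonding, entropic penalty.
dg_enthalpic = gibbs_free_energy(-60.0, -100.0, T)
# Hypothetical entropy-driven case: weak bonding, favorable entropy (e.g. desolvation).
dg_entropic = gibbs_free_energy(-6.0, 80.0, T)
print(dg_enthalpic, dg_entropic)  # -30.0 -30.0 (kJ/mol)
```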

Computational Assessment of Thermodynamic Stability

In high-throughput materials design, thermodynamic stability is quantitatively assessed through the convex hull distance, which represents the energy difference between a compound and the most stable combination of other phases at that composition [11]. Compounds with convex hull distances ≤ 0 are considered thermodynamically stable, while those with positive values are metastable or unstable [11]. This approach enables rapid computational screening of thousands of candidate materials before any synthetic effort is undertaken.

Table 1: Key Thermodynamic Parameters for Stability Assessment

| Parameter | Definition | Significance in Stability Assessment | Experimental/Calculation Method |
|---|---|---|---|
| Gibbs Free Energy (ΔG) | Energy change determining process spontaneity | Negative values indicate thermodynamically favorable processes; determines binding affinity and phase stability | ITC, van't Hoff analysis, DFT calculations |
| Formation Energy (Hf) | Energy change when a compound forms from its constituent elements | More negative values indicate greater stability of the compound relative to its elements | DFT calculations [11] |
| Convex Hull Distance | Energy difference between a compound and the most stable phases at that composition | Values ≤ 0 indicate thermodynamic stability; positive values indicate instability [11] | DFT calculations with convex hull construction [11] |
| Enthalpy (ΔH) | Heat change during a process at constant pressure | Indicates net bond formation/breakage; negative values suggest stronger bonding | Calorimetry (ITC, DSC) [10] |
| Entropy (ΔS) | Measure of system disorder | Positive values often associated with hydrophobic interactions and desolvation | Calculated from ΔG and ΔH measurements [10] |

High-Throughput Stability Screening Protocols

Protocol 1: High-Throughput DFT Screening for Material Stability

This protocol outlines a computational workflow for assessing thermodynamic stability of materials, specifically ABO3 perovskites, using high-throughput Density Functional Theory (DFT) calculations [11].

Experimental Workflow

Materials and Computational Parameters

Table 2: Research Reagent Solutions for High-Throughput DFT Screening

| Component | Specification | Function/Role |
|---|---|---|
| DFT Software | Vienna Ab initio Simulation Package (VASP) [11] | Performs quantum mechanical calculations to determine electronic structure and total energy |
| Exchange-Correlation Functional | GGA-PBE [11] | Approximates quantum mechanical interactions between electrons |
| Pseudopotential Method | Projector-Augmented Wave (PAW) [11] | Efficiently handles electron-ion interactions |
| DFT+U Corrections | Applied to 3d transition metals and actinides [11] | Accounts for strong electron correlations in specific elements |
| Elemental References | Ground-state structures from OQMD [11] | Provides reference states for calculating formation energies |
| High-Throughput Framework | qmpy python package [11] | Automates workflow management and thermodynamic analysis |

Step-by-Step Procedure

Define Composition Space: Select 73 metallic and semi-metallic elements for A and B site substitution in ABO3 perovskites, generating 5,329 possible compositions [11].

Generate Crystal Structures: Create initial structures for ideal cubic perovskite configuration and three common distortions (rhombohedral, tetragonal, orthorhombic) [11].

Perform DFT Calculations: Execute high-throughput DFT calculations using parameters in Table 2. Apply Hubbard U corrections for 3d transition metals and actinides according to established values [11].

Calculate Formation Energy: For each compound, compute the formation energy $H_f^{ABO_3}$ using:

$$ H_f^{ABO_3} = E(ABO_3) - \mu_A - \mu_B - 3\mu_O $$

where $E(ABO_3)$ is the DFT total energy of the perovskite, and $\mu_A$, $\mu_B$, and $\mu_O$ are the chemical potentials of the constituent elements [11].

Construct Convex Hull: Build a convex hull using all known phases in the A-B-O ternary phase diagram from the Open Quantum Materials Database (OQMD), which contains approximately 470,000 compounds [11].

Calculate Stability Metric: Compute the convex hull distance $H_{stab}^{ABO_3}$:

$$ H_{stab}^{ABO_3} = H_f^{ABO_3} - H_f^{hull} $$

where $H_f^{hull}$ is the convex hull energy at the ABO3 composition [11].

Classify Stability: Designate compounds with a convex hull distance ≤ 0.025 eV per atom as stable; this threshold is approximately kT at room temperature (≈ 0.026 eV at 298 K) [11].
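The last three steps can be sketched as a single function. Two assumptions are made explicit here because the equations leave them implicit: $E(ABO_3)$ is taken per formula unit, so the result is divided by the five atoms in ABO₃ to obtain a per-atom value comparable with the 0.025 eV/atom threshold, and the hull energy at the ABO₃ composition is supplied as an input (in the workflow above it comes from the OQMD convex hull). The function name and all numbers are hypothetical.

```python
K_B = 8.617333262e-5  # Boltzmann constant, eV/K
N_COMPOSITIONS = 73 ** 2  # A and B each drawn from the same 73 elements -> 5,329


def perovskite_stability(e_abo3, mu_a, mu_b, mu_o, hf_hull, threshold=0.025):
    """Return (H_f, H_stab, is_stable), energies in eV/atom.

    e_abo3:  DFT total energy per ABO3 formula unit (eV).
    mu_*:    elemental chemical potentials (eV/atom).
    hf_hull: hull formation energy at the ABO3 composition (eV/atom).
    """
    hf = (e_abo3 - mu_a - mu_b - 3 * mu_o) / 5.0  # 5 atoms per ABO3 formula unit
    h_stab = hf - hf_hull
    return hf, h_stab, h_stab <= threshold


hf, h_stab, stable = perovskite_stability(-37.7, -2.0, -8.0, -4.9, hf_hull=-2.62)
print(f"kT at 298.15 K = {K_B * 298.15:.4f} eV")
print(f"H_f = {hf:.3f} eV/atom, H_stab = {h_stab:.3f} eV/atom, stable = {stable}")
```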

Protocol 2: Automated Solution Stability Assay for Drug Discovery

This protocol describes an automated high-performance liquid chromatography (HPLC)-based method for screening solution stability of drug candidates in discovery phases [12].

Experimental Workflow

Materials and Equipment

Table 3: Research Reagent Solutions for Automated Stability Screening

| Component | Specification | Function/Role |
|---|---|---|
| HPLC System | HPLC with temperature-controlled autosampler [12] | Automated sample preparation, injection, and separation |
| Detection Method | Mass spectrometry (LC-MS) or UV detection [12] | Detects and quantifies parent compound and degradation products |
| Stability Matrices | Buffers at various pH, biological assay media, physiological fluids [12] | Provides relevant environmental conditions for stability assessment |
| Incubation System | Temperature-controlled compartment [12] | Maintains precise temperature during stability study |
| Analytical Columns | Reversed-phase C18 or similar [12] | Separates analytes of interest |

Step-by-Step Procedure

Sample Preparation: Prepare compound solutions in relevant stability matrices including buffers at various pH, biological assay media, or simulated physiological fluids [12].

Automated Setup: Program temperature-controlled autosampler to deliver compounds to stability matrices, mix, and incubate at specified temperatures [12].

Time-Course Sampling: Configure system for automated injections at predetermined time points (e.g., 0, 1, 2, 4, 8, 24 hours) without operator intervention [12].

Chromatographic Analysis: Use HPLC or LC-MS with stability-indicating methods to separate and quantify parent compound and degradation products [12].

Data Analysis: Calculate the percentage of parent compound remaining at each time point and determine the degradation kinetics.

Stability Ranking: Rank compounds based on degradation half-life under each condition to identify candidates with optimal stability profiles.
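The data-analysis and ranking steps can be sketched as follows, assuming first-order (exponential) loss of parent compound, which is a common but not universal model; the function name and the synthetic time courses are hypothetical. The rate constant is the least-squares slope of ln(% remaining / 100) versus time, constrained through the origin because every series starts at 100%, and the half-life is t½ = ln 2 / k.

```python
import math


def first_order_fit(times_h, percent_remaining):
    """Fit ln(% remaining / 100) = -k t through the origin.

    Returns (k in 1/h, half-life in h). Requires all percentages > 0 and at
    least one nonzero time point.
    """
    ys = [math.log(p / 100.0) for p in percent_remaining]
    sxx = sum(t * t for t in times_h)
    sxy = sum(t * y for t, y in zip(times_h, ys))
    k = -sxy / sxx
    half_life = math.log(2) / k if k > 0 else math.inf  # no measurable loss -> infinite
    return k, half_life


times = [0, 1, 2, 4, 8, 24]  # h, matching the example sampling schedule
# Synthetic profiles generated from known rate constants (0.05/h and 0.005/h).
profiles = {
    "cmpd-1": [100 * math.exp(-0.05 * t) for t in times],
    "cmpd-2": [100 * math.exp(-0.005 * t) for t in times],
}
ranking = sorted(profiles, key=lambda c: first_order_fit(times, profiles[c])[1], reverse=True)
for name in ranking:
    k, t_half = first_order_fit(times, profiles[name])
    print(f"{name}: k = {k:.3f} 1/h, t1/2 = {t_half:.1f} h")
```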

Protocol 3: Phase-Appropriate Stability Testing for Pharmaceutical Development

This protocol outlines a phase-appropriate approach to stability testing throughout pharmaceutical development, from pre-clinical stages to commercial marketing [9].

Experimental Workflow

Materials and Equipment

Table 4: Research Reagent Solutions for Pharmaceutical Stability Testing

| Component | Specification | Function/Role |
|---|---|---|
| Stability Chambers | Temperature and humidity-controlled [8] | Maintains specified storage conditions (e.g., 25°C/60% RH, 40°C/75% RH) |
| Container-Closure System | Same as proposed marketed packaging [9] | Evaluates product-package interactions and protective properties |
| Analytical Methods | Stability-indicating HPLC, GC, spectroscopy [9] | Detects and quantifies changes in quality attributes over time |
| Reference Standards | Qualified working standards [13] | Ensures analytical method performance and data integrity |
| Data Loggers | Continuous temperature and humidity monitors [8] | Provides documentation of storage conditions |

Step-by-Step Procedure

Preclinical/Phase 1: Initiate an ongoing stability program using representative samples. Conduct studies under accelerated and long-term storage conditions to support clinical trial duration [9].

Forced Degradation Studies: Perform stress testing on the API under conditions including hydrolytic (acid/base), thermal, oxidative, and photolytic stress to identify potential degradation pathways and validate stability-indicating methods [9].

Phase 2 Studies: Expand stability testing to include formal protocols with defined tests, analytical procedures, acceptance criteria, and test intervals. Use stability data to support formulation development and container-closure selection [9].

Phase 3/Registration: Conduct formal ICH-compliant stability studies on three primary batches of the final formulation manufactured using the commercial process [9]. Implement long-term and accelerated storage conditions per ICH guidelines (e.g., 25°C/60% RH and 40°C/75% RH, as listed in Table 4).

Testing Intervals: Schedule testing appropriately (e.g., 0, 3, 6, 9, 12, 18, 24, 36 months for long-term studies) [8].

Data Analysis and Shelf-Life Determination: Analyze stability data using statistical methods including regression analysis and Arrhenius modeling to establish recommended storage conditions and expiration dating [8].
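The Arrhenius step can be sketched as a linear fit of ln k against 1/T using rate constants from accelerated conditions, followed by extrapolation to the long-term storage temperature. The sketch assumes first-order kinetics, a single temperature-independent activation energy, and a shelf-life limit of 90% of label claim (t90 = ln(100/90) / k); the function names and the synthetic rate constants are hypothetical.

```python
import math

R = 8.314462618  # gas constant, J/(mol·K)


def arrhenius_fit(temps_c, rate_constants):
    """Least-squares fit of ln k = ln A - Ea/(R T). Returns (Ea in J/mol, ln A)."""
    xs = [1.0 / (t + 273.15) for t in temps_c]
    ys = [math.log(k) for k in rate_constants]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return -slope * R, my - slope * mx


def t90(ea, ln_a, temp_c):
    """Time to reach 90% of label claim at temp_c, for first-order loss."""
    k = math.exp(ln_a - ea / (R * (temp_c + 273.15)))
    return math.log(100 / 90) / k


# Synthetic rate constants (1/month) at accelerated temperatures, generated
# from Ea = 85 kJ/mol and ln A = 28 so the fit can be checked.
temps = [40.0, 50.0, 60.0]
ks = [math.exp(28 - 85000 / (R * (t + 273.15))) for t in temps]
ea, ln_a = arrhenius_fit(temps, ks)
print(f"Ea = {ea / 1000:.1f} kJ/mol, t90 at 25 °C = {t90(ea, ln_a, 25.0):.1f} months")
```

Real stability data scatter around the fitted line, so in practice the regression residuals and confidence limits matter as much as the point estimate printed here.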

Applications and Significance

Materials Design Applications

High-throughput DFT stability screening has enabled rapid discovery of novel materials across multiple domains. In ABO3 perovskites, this approach identified 395 predicted stable compounds, many not yet experimentally reported, opening avenues for applications in solid oxide fuel cells, piezoelectricity, ferroelectricity, and thermochemical water splitting [11]. Similarly, machine learning models trained on DFT-calculated formation energies and convex hull distances have identified novel stable Ag-Pd-F catalyst materials for the formate oxidation reaction in direct formate fuel cells [14].

Pharmaceutical Development Applications

Stability screening in pharmaceutical development provides critical data for formulation optimization, container-closure selection, and establishment of shelf-life and storage conditions [9]. Automated solution stability assays enable rapid profiling of compound stability under physiological conditions, guiding structural modifications to improve metabolic stability [12]. The extensive stability data required for regulatory submissions ultimately ensures that marketed drug products maintain quality, safety, and efficacy throughout their shelf-life [8] [9].

Stability screening represents an indispensable first step in both materials and pharmaceutical design pipelines. The protocols detailed herein—from high-throughput DFT calculations for materials to automated solution stability assays and phase-appropriate pharmaceutical testing—provide robust frameworks for implementing these critical assessments. By integrating comprehensive stability screening early in development workflows, researchers can make informed decisions, mitigate downstream risks, and accelerate the development of viable materials and therapeutics. As high-throughput and computational methods continue to advance, stability screening will become increasingly predictive and efficient, further solidifying its role as the critical gatekeeper in materials and drug design.

In the field of high-throughput materials discovery, accurately assessing thermodynamic stability is a critical first step for identifying synthesizable compounds. Density Functional Theory (DFT) calculations enable the efficient screening of vast compositional spaces by computing key thermodynamic parameters. This application note details the core thermodynamic parameters—formation energy (ΔHf), decomposition energy (ΔHd), and stability criteria—within the context of high-throughput DFT screening, providing protocols and guidelines for researchers.

Defining the Key Thermodynamic Parameters

Formation Energy (ΔHf)

The formation energy (ΔHf) quantifies the energy released or absorbed when a compound forms from its constituent elements in their reference states at T=0 K. It is calculated according to Equation 1 [11]:

$$ H_f^{ABO_3} = E(ABO_3) - \mu_A - \mu_B - 3\mu_O $$

where:

- $E(ABO_3)$ is the total DFT energy of the compound.

- $\mu_A$, $\mu_B$, and $\mu_O$ are the chemical potentials of elements A, B, and oxygen, respectively.

For most elements, the chemical potentials are equal to the DFT total energies of their standard elemental reference states. Corrections are applied for certain elements where the T=0 K DFT ground state is not an adequate reference (e.g., diatomic gases like O₂, room-temperature liquids like Hg, and elements with structural phase transformations between 0 and 298 K) [11]. A negative ΔHf indicates that the compound is stable with respect to its elements.

Table 1: Key Quantitative Data on Formation and Decomposition Energies from High-Throughput Studies

| Property | Typical Value Range | Dataset Context | Significance | Citation |
|---|---|---|---|---|
| Formation Energy (ΔHf) | Mean ± AAD = -1.42 ± 0.95 eV/atom | Materials Project (85,014 compositions) | Quantifies stability relative to elements; wide energy range. | [15] |
| Decomposition Energy (ΔHd) | Mean ± AAD = 0.06 ± 0.12 eV/atom | Materials Project (85,014 compositions) | Subtle but critical for stability; positive = unstable. | [15] |
| Stability Threshold | < 0.025 eV/atom | ABO₃ Perovskites (5,329 compositions) | Approximate kT at room temperature; labels compounds as stable. | [11] |
| Number of Predicted Stable Perovskites | 395 compounds | ABO₃ Perovskites (5,329 compositions) | Demonstrates the power of high-throughput screening for discovery. | [11] |

Decomposition Energy (ΔHd) and Phase Stability

While formation energy measures stability against elements, the decomposition energy (ΔHd), or "energy above the convex hull," determines a compound's thermodynamic stability with respect to all other competing phases in its chemical space. It is defined as [15]:

$$ \Delta H_d = H_f^{compound} - H_f^{hull} $$

where $H_f^{compound}$ is the formation energy of the compound in question and $H_f^{hull}$ is the convex hull energy at that composition, derived from the lowest-energy combination of other phases.

The convex hull is constructed in formation energy-composition space, forming the lower envelope that connects the most stable phases. Any phase lying on this hull (ΔHd ≤ 0) is considered thermodynamically stable, while phases above the hull (ΔHd > 0) are unstable or metastable [15] [2]. The magnitude of ΔHd indicates the energy penalty for a compound's decomposition into more stable neighboring phases. As shown in Table 1, ΔHd typically spans a much smaller energy range than ΔHf (mean ± AAD of 0.06 ± 0.12 versus -1.42 ± 0.95 eV/atom), so energy errors of a given absolute size matter far more for ΔHd and for the resulting stability classification [15].
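The sign convention above (ΔHd ≤ 0 for stable phases) follows once the hull is built from the *competing* phases only, that is, with the compound under test removed. Stable compounds then receive a negative ΔHd measuring how far they lie below that competing hull, while unstable ones keep a positive value. The sketch assumes a binary A-B system with compositions given as the atomic fraction of B and hypothetical energies; it evaluates the competing hull by brute force over two-phase mixtures, which is sufficient in one composition dimension.

```python
def competing_hull_energy(x, phases):
    """Lowest E_f reachable at composition x by mixing at most two competing phases."""
    best = float("inf")
    for x1, e1 in phases:
        for x2, e2 in phases:
            if x1 == x2 == x:
                best = min(best, e1)  # a competing phase at exactly this composition
            elif x1 < x2 and x1 <= x <= x2:
                w = (x - x1) / (x2 - x1)  # lever rule: fraction of atoms in phase 2
                best = min(best, (1 - w) * e1 + w * e2)
    return best


def decomposition_energy(name, entries):
    """Delta H_d = H_f(compound) - H_f(hull of all other entries), in eV/atom."""
    x, e = entries[name]
    competing = [v for other, v in entries.items() if other != name]
    return e - competing_hull_energy(x, competing)


# Hypothetical A-B system; the pure elements stay in the competing set,
# so only the compounds are tested.
entries = {
    "A": (0.0, 0.0), "B": (1.0, 0.0),
    "A3B": (0.25, -0.15), "AB": (0.5, -0.40), "AB3": (0.75, -0.25),
}
for name in ("A3B", "AB", "AB3"):
    dhd = decomposition_energy(name, entries)
    print(f"{name}: dH_d = {dhd:+.3f} eV/atom -> {'stable' if dhd <= 0 else 'unstable'}")
```

For the unstable A3B, ΔHd coincides with its energy above the hull (+0.05 eV/atom); for AB and AB3 the negative values indicate how far each lies below the hull of its competitors, information that an energy above hull of zero does not carry.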

Stability Criteria and the "Stable" Label

In computational high-throughput screening, a compound is typically classified as thermodynamically stable if its ΔHd is negative or zero. In practice, a small positive tolerance is often applied to account for numerical uncertainties and nearly-stable compounds. For instance, a stability criterion of ΔHd < 0.025 eV/atom (approximately kT at room temperature) has been used to label ABO₃ perovskites as stable [11].

High-Throughput Workflow for Stability Assessment

The process for determining these parameters at a high-throughput scale involves a multi-step, automated workflow. The diagram below illustrates the logical sequence from initial setup to final stability classification.

Computational Protocols and Methodologies

DFT Calculation Parameters for Thermodynamic Properties

High-throughput DFT relies on standardized protocols to ensure consistency and accuracy across thousands of calculations. The following parameters are critical [11] [16].

Table 2: Essential DFT Setup Parameters for Thermodynamic Stability Screening

| Parameter | Typical Setting / Consideration | Function/Role in Calculation |
|---|---|---|
| Software | Vienna Ab initio Simulation Package (VASP) | Performs electronic structure calculation and geometry relaxation. |
| Pseudopotential | Projector-Augmented Wave (PAW) method | Represents core electrons and interaction with valence electrons. |
| Exchange-Correlation Functional | GGA-PBE (Perdew-Burke-Ernzerhof) | Approximates the quantum mechanical exchange-correlation energy. |
| DFT+U | Applied for 3d transition metals and actinides (e.g., U=3-4 eV) | Corrects self-interaction error for strongly correlated electrons. |
| k-point Sampling | Automated meshes (e.g., Monkhorst-Pack); protocols exist for precision/efficiency tradeoffs [17] | Samples the Brillouin zone for numerical integration. |
| Energy Cutoff | System-specific; tested for convergence (see Fig. S1 in [16]) | Determines the basis set size (plane-wave kinetic energy cutoff). |
| Energy Convergence Criterion | Tight settings (e.g., 10⁻⁵ eV per atom) | Ensures electronic self-consistency is achieved. |
| Force Convergence Criterion | e.g., 0.01 eV/Å | Determines when ionic relaxation is complete. |
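When VASP is the engine, the settings in Table 2 end up as INCAR tags. Below is a sketch of a hypothetical `render_incar` helper that writes them out; the ENCUT of 520 eV, Gaussian smearing, and the NSW limit are assumptions, not values taken from the cited protocols. Because VASP's EDIFF is a tolerance on the total energy of the whole cell, a per-atom criterion is scaled by the number of atoms, and a negative EDIFFG switches the relaxation stopping rule to a force threshold.

```python
def render_incar(n_atoms, encut=520, ediff_per_atom=1e-5, force_tol=0.01, use_u=False):
    """Return INCAR text for a full relaxation (illustrative defaults, not a standard)."""
    settings = {
        "PREC": "Accurate",
        "ENCUT": encut,                     # plane-wave cutoff (eV); converge per system
        "EDIFF": ediff_per_atom * n_atoms,  # electronic SCF tolerance for the whole cell (eV)
        "EDIFFG": -force_tol,               # negative: stop when all forces < force_tol (eV/Å)
        "IBRION": 2,                        # conjugate-gradient ionic relaxation
        "ISIF": 3,                          # relax positions, cell shape and volume
        "NSW": 99,                          # maximum number of ionic steps
        "ISMEAR": 0,                        # Gaussian smearing
        "SIGMA": 0.05,                      # smearing width (eV)
        "ISPIN": 2,                         # spin-polarized
        "LDAU": ".TRUE." if use_u else ".FALSE.",
    }
    lines = []
    for key, value in settings.items():
        text = f"{value:.3g}" if isinstance(value, float) else str(value)
        lines.append(f"{key} = {text}")
    return "\n".join(lines) + "\n"


print(render_incar(n_atoms=5))
```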

Standard solid-state protocols (SSSP) have been developed to optimize the selection of parameters like k-point sampling and smearing, balancing precision and computational efficiency for high-throughput workflows [17]. For charged defect calculations, which are related but distinct, advanced corrections or hybrid functionals are often necessary to address band gap errors [18].

Machine Learning for Accelerated Stability Prediction

Machine learning (ML) has emerged as a powerful tool to predict formation energies and stability, bypassing expensive DFT calculations for initial screening. However, a critical examination reveals that accurate prediction of ΔHf does not guarantee accurate prediction of ΔHd and stability [15]. This is because stability depends on the small energy differences between competing phases, where errors in ΔHf may not cancel out.
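A toy calculation shows why errors in ΔHf need not cancel in ΔHd. Assume, for illustration, that a compound's decomposition products and their atom-fraction weights w_i (which sum to 1) are fixed, so ΔHd = ΔHf(compound) - Σ w_i ΔHf(i). A shift applied equally to every phase then cancels exactly, but the same 0.05 eV/atom error on the compound alone moves ΔHd by the full amount and here flips its stability label. The energies are invented numbers, not results from the cited work.

```python
def dhd_from_products(dhf_compound, products):
    """dHd = dHf(compound) - sum(w_i * dHf_i) for fixed products [(w_i, dHf_i), ...]."""
    return dhf_compound - sum(w * dhf for w, dhf in products)


# Invented "true" formation energies (eV/atom) for a compound and its two products.
true_dhd = dhd_from_products(-1.00, [(0.5, -0.90), (0.5, -1.05)])

# Correlated error: every phase shifted by +0.05 eV/atom. Cancels because sum(w_i) == 1.
shifted_dhd = dhd_from_products(-0.95, [(0.5, -0.85), (0.5, -1.00)])

# Uncorrelated error: only the compound is off by +0.05 eV/atom.
lone_error_dhd = dhd_from_products(-0.95, [(0.5, -0.90), (0.5, -1.05)])

print(f"{true_dhd:+.3f} {shifted_dhd:+.3f} {lone_error_dhd:+.3f}")  # -0.025 -0.025 +0.025
```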

Ensemble models that combine different knowledge domains show improved performance. For example, the ECSG framework integrates models based on elemental properties (Magpie), interatomic interactions (Roost), and electron configuration (ECCNN), achieving an Area Under the Curve (AUC) score of 0.988 for stability classification and requiring only one-seventh of the data to match the performance of other models [2]. For composition-based models without structural information, important features for predicting ΔHf include anion electronegativity, electron affinity, and orbital properties [19].
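To illustrate mechanically how combining models and scoring by AUC work, here is a pure-Python sketch with two hypothetical base classifiers whose stability probabilities are simply averaged (soft voting). This is deliberately simpler than the combination rule used in ECSG, and the probabilities are made up; the point is only that models making different mistakes can rank compounds better together. AUC is computed as the probability that a randomly chosen stable compound scores above a randomly chosen unstable one.

```python
def roc_auc(labels, scores):
    """AUC = P(score of a random positive > score of a random negative); ties count 1/2."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def soft_vote(*model_probs):
    """Average the per-compound probabilities predicted by several models."""
    return [sum(ps) / len(ps) for ps in zip(*model_probs)]


labels = [1, 1, 0, 0]            # 1 = stable, 0 = unstable (made-up example)
model_a = [0.9, 0.3, 0.4, 0.2]   # hypothetical model built on one feature set
model_b = [0.5, 0.8, 0.6, 0.1]   # hypothetical model built on different features
ensemble = soft_vote(model_a, model_b)
print(roc_auc(labels, model_a), roc_auc(labels, model_b), roc_auc(labels, ensemble))  # 0.75 0.75 1.0
```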

Successful high-throughput screening relies on a suite of computational tools and databases.

Table 3: Key Resources for High-Throughput Thermodynamic Screening

| Tool / Resource | Type | Primary Function | Example/URL |
|---|---|---|---|
| VASP | Software | First-principles DFT code using PAW pseudopotentials. | https://www.vasp.at/ |
| Quantum ESPRESSO | Software | Open-source DFT code using plane waves and pseudopotentials. | https://www.quantum-espresso.org/ |
| AiiDA | Workflow Manager | Automates, manages, and reproduces computational workflows. | https://www.aiida.net/ |
| pymatgen | Materials API | Python library for analyzing, generating, and manipulating structures. | https://pymatgen.org/ |
| OQMD | Database | Open Quantum Materials Database; source of DFT-calculated formation energies and phase data. | http://oqmd.org |
| Materials Project | Database | Provides computed properties for over 85,000 materials, including formation energies. | https://materialsproject.org |
| SSSP | Protocol | Standard Solid-State Protocols for optimized pseudopotentials and calculation parameters. | https://www.sssp.eu |

The rigorous application of formation energy (ΔHf), decomposition energy (ΔHd), and well-defined stability criteria forms the foundation of high-throughput thermodynamic stability screening. While high-throughput DFT provides the primary data, integrated machine-learning models and robust computational protocols are enhancing the efficiency and scope of materials discovery. Adherence to standardized methods ensures reliable identification of stable, synthesizable materials, accelerating the development of new functional compounds for energy and electronic applications.

The Limitation of Traditional Methods and the Need for High-Throughput Approaches

The discovery and development of new functional materials and drug compounds are fundamental to technological and medical progress. Traditionally, this process has been dominated by experimental approaches characterized by sequential, labor-intensive testing of individual candidates. In computational materials science, conventional density functional theory (DFT) calculations, while powerful, are often too resource-intensive for exploring vast chemical spaces. The limitations of these traditional methods have become increasingly apparent, creating a pressing need for high-throughput (HT) approaches that can rapidly screen thousands to millions of compounds efficiently and systematically [20] [21].

Traditional screening methods in pharmaceutical research were limited to 20-50 compounds per week per laboratory, requiring large assay volumes (∼1 ml) and substantial amounts of each compound (5-10 mg) [20]. Similarly, in materials science, conventional DFT studies are often restricted to investigating a manageable subspace of potential materials due to prohibitive computational costs, creating a bottleneck for discovery [22]. This article details the specific limitations of traditional methodologies and presents structured protocols for implementing high-throughput alternatives, with a specific focus on high-throughput DFT for thermodynamic stability screening.

Quantitative Comparison: Traditional vs. High-Throughput Methods

The quantitative advantages of high-throughput screening over traditional methods are substantial, as detailed in Table 1.

Table 1: Performance Comparison of Traditional and High-Throughput Screening Methods

| Screening Parameter | Traditional Methods | High-Throughput Methods |
|---|---|---|
| Throughput (Compounds/Week) | 20-50 [20] | 1,000-100,000+ [20] [23] |
| Assay Volume | ∼1 ml [20] | 1-100 μL [20] [23] |
| Compound Consumption | 5-10 mg [20] | ∼1 μg [20] |
| Automation Level | Manual, single-tube processing [20] | Robotic, array-based (96- to 1536-well plates) [20] [23] |
| Data Integration | Limited, non-standardized | Centralized databases (e.g., OQMD) [11] [21] |

The advantages of high-throughput approaches are clearly demonstrated in specific research contexts. For example, a high-throughput DFT screening of single-metal and high-entropy MOF-74 structures identified Mn-MOF-74 as optimal for CO₂/N₂ separation and MnFeCoNiMgZn-MOF-74 for H₂ storage, a task that would be impractical through sequential study [24]. In another case, screening 4350 bimetallic alloy structures via HT-DFT identified eight promising Pd-substitute catalysts, with four experimentally confirmed and one (Ni₆₁Pt₃₉) showing a 9.5-fold enhancement in cost-normalized productivity [25].

Limitations of Traditional Workflows

Low Throughput and High Resource Consumption

The most significant limitation of traditional methods is their low throughput. The serial "one-at-a-time" paradigm for testing compounds or materials is inherently slow, creating a critical path in research and development timelines [20]. Furthermore, traditional experimental screening consumes large amounts of reagents and compounds (5-10 mg per test), making it expensive and often impractical when novel compounds are difficult to synthesize in large quantities [20]. In computational settings, conventional DFT calculations, while faster than experimentation, can require "several months... for computing a full energetics picture of even one metallic catalyst system" [25].

Limited Exploration of Chemical Space

The combinatorial nature of chemical and materials spaces is enormous. Relying on intuition or incremental modifications for candidate selection means that vast regions of potentially fruitful chemical space remain unexplored [22] [21]. This often results in suboptimal materials or drug candidates being selected for development. High-throughput computational screening has revealed that promising materials can be exceptionally rare; for instance, among ternary Heusler compounds, only 0.5% (135 of 27,000+) simultaneously met the criteria for high magnetic anisotropy and thermodynamic, dynamic, and magnetic stability [22].

Inefficient Data Management and Knowledge Transfer

Traditional research often lacks standardized data management protocols, leading to "data silos" where information is difficult to aggregate, analyze, and reuse across different projects [21]. The absence of a formal data flow strategy in conventional computational studies hinders the extraction of broader scientific trends and makes it challenging to assess the transferability and accuracy of computational methods across diverse chemical systems [21].

High-Throughput Screening Protocol for Thermodynamic Stability

This protocol outlines a standardized workflow for using high-throughput DFT calculations to screen materials for thermodynamic stability, a critical first step in identifying viable new compounds.

Protocol Workflow

The high-throughput screening process for thermodynamic stability involves a multi-stage workflow, illustrated in Figure 1.

Figure 1: High-Throughput DFT Screening Workflow. The process is a sequential pipeline from target definition to final candidate validation [21].

Detailed Experimental Procedures

Data Selection and Compound Management

- Objective: Define the target chemical space and generate a list of candidate structures for computation.

- Procedure:

- Define Search Space: Systematically enumerate possible compositions, such as all possible ABO₃ perovskites [11] or all conventional quaternary Heusler compounds [22].

- Select Structural Prototypes: For each composition, identify relevant crystal structures (e.g., cubic, tetragonal, rhombohedral for perovskites [11]).

- Generate Input Files: Automate the creation of all necessary computational input files (e.g., for VASP) with standardized parameters [21].

- Note: For stability screening, it is crucial to include not only the target compounds but also all competing phases (elements and other known compounds) to construct accurate phase diagrams [11].
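Because stability is judged against every competing phase, the workflow needs reference entries for all subsystems of each chemical system, not just the ternary itself. A small, hypothetical helper that enumerates them:

```python
from itertools import combinations


def chemical_subsystems(elements):
    """All non-empty subsets of a chemical system, e.g. Sr-Ti-O -> O, Sr, Ti, O-Sr, ..."""
    elements = sorted(set(elements))
    return [
        "-".join(combo)
        for size in range(1, len(elements) + 1)
        for combo in combinations(elements, size)
    ]


print(chemical_subsystems(["Sr", "Ti", "O"]))
# ['O', 'Sr', 'Ti', 'O-Sr', 'O-Ti', 'Sr-Ti', 'O-Sr-Ti']
```

Each subsystem (the elements, the binaries, and the ternary) then has to be populated with computed or database entries before the hull is built.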

High-Throughput Data Generation (DFT Calculations)

- Objective: Perform automated, consistent first-principles calculations to determine the total energy and optimized geometry for each candidate.

- Computational Parameters (Generalized, based on [24] [11] [21]):

- Software: Vienna Ab initio Simulation Package (VASP).

- Functional: Perdew-Burke-Ernzerhof (PBE) generalized gradient approximation (GGA).

- Van der Waals Correction: DFT-D3 dispersion correction with Becke-Johnson damping [24].

- Basis Set: Plane-wave basis set with projector-augmented wave (PAW) pseudopotentials.

- k-point Mesh: Automated mesh generation ensuring energy convergence (e.g., 500 k-points per reciprocal atom) [21].

- Convergence Criteria: Define strict thresholds for electronic (e.g., 10⁻⁵ eV) and ionic (e.g., 0.01 eV/Å) relaxation [21].

- Automation: Use job-management scripts to submit, monitor, and manage thousands of calculations in parallel on high-performance computing clusters.
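A "k-points per reciprocal atom" rule can be turned into a Monkhorst-Pack grid by choosing divisions roughly proportional to the reciprocal lattice lengths. The sketch below assumes a (nearly) orthogonal cell given by its three lattice lengths in Å and rounds divisions up so that k-points × atoms meets or exceeds the target; the helper name and the rounding choice are assumptions, not the procedure of any specific code.

```python
import math


def kpoint_grid(lattice_lengths, n_atoms, kppra=500):
    """Monkhorst-Pack divisions (n1, n2, n3) for an orthogonal cell.

    Divisions scale as 1/length, and n1*n2*n3*n_atoms >= kppra by construction.
    """
    a, b, c = lattice_lengths
    target_kpoints = kppra / n_atoms
    # n_i = scale / L_i gives n1*n2*n3 = scale**3 / (a*b*c) = target_kpoints.
    scale = (target_kpoints * a * b * c) ** (1.0 / 3.0)
    return tuple(max(1, math.ceil(scale / length)) for length in lattice_lengths)


grid = kpoint_grid((3.9, 3.9, 3.9), n_atoms=5)
print(grid, math.prod(grid) * 5)  # (5, 5, 5) 625
```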

Data Storage and Management

- Objective: Store calculated properties in a queryable, persistent database to facilitate analysis and sharing.

- Procedure:

- Database Schema: Design a relational database (e.g., MySQL, PostgreSQL) with tables for compositions, crystal structures, energies, and derived properties [21].

- Automated Parsing: Implement scripts to automatically parse the output of DFT calculations and populate the database.

- Public Databases: Consider contributing to or using public databases like the Open Quantum Materials Database (OQMD), which contains hundreds of thousands of calculated compounds [11].
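The schema described above can be prototyped with Python's built-in `sqlite3` module before moving to MySQL or PostgreSQL. The table and column names are hypothetical, `XYO3`/`XZO3` are placeholder formulas, the energies are invented, and parsing the DFT output itself is left out.

```python
import sqlite3

SCHEMA = """
CREATE TABLE compositions (
    id INTEGER PRIMARY KEY,
    formula TEXT UNIQUE NOT NULL,
    n_atoms INTEGER NOT NULL
);
CREATE TABLE structures (
    id INTEGER PRIMARY KEY,
    composition_id INTEGER NOT NULL REFERENCES compositions(id),
    prototype TEXT NOT NULL
);
CREATE TABLE energies (
    structure_id INTEGER PRIMARY KEY REFERENCES structures(id),
    total_energy_ev REAL NOT NULL,
    formation_energy_ev_atom REAL,
    hull_distance_ev_atom REAL
);
"""


def open_db(path=":memory:"):
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def store_result(conn, formula, n_atoms, prototype, e_total, h_f=None, e_hull=None):
    """Insert one parsed calculation; the composition row is shared across prototypes."""
    cur = conn.cursor()
    cur.execute("INSERT OR IGNORE INTO compositions (formula, n_atoms) VALUES (?, ?)",
                (formula, n_atoms))
    comp_id = cur.execute("SELECT id FROM compositions WHERE formula = ?",
                          (formula,)).fetchone()[0]
    cur.execute("INSERT INTO structures (composition_id, prototype) VALUES (?, ?)",
                (comp_id, prototype))
    cur.execute("INSERT INTO energies VALUES (?, ?, ?, ?)",
                (cur.lastrowid, e_total, h_f, e_hull))
    conn.commit()


def near_stable(conn, threshold=0.025):
    """(formula, prototype, hull distance) for entries below the threshold, lowest first."""
    return conn.execute(
        "SELECT c.formula, s.prototype, e.hull_distance_ev_atom "
        "FROM energies e "
        "JOIN structures s ON s.id = e.structure_id "
        "JOIN compositions c ON c.id = s.composition_id "
        "WHERE e.hull_distance_ev_atom < ? "
        "ORDER BY e.hull_distance_ev_atom",
        (threshold,),
    ).fetchall()


conn = open_db()
store_result(conn, "XYO3", 5, "cubic", -40.0, h_f=-2.5, e_hull=0.0)  # placeholder numbers
store_result(conn, "XZO3", 5, "cubic", -35.0, h_f=-1.9, e_hull=0.12)
print(near_stable(conn))  # [('XYO3', 'cubic', 0.0)]
```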

Data Analysis: Stability and Property Screening

- Objective: Identify the most stable and promising candidates from the calculated dataset.

- Key Calculations:

- Formation Energy ($H_f$): Calculate using $H_f(\mathrm{ABO_3}) = E(\mathrm{ABO_3}) - \mu_A - \mu_B - 3\mu_O$, where $E(\mathrm{ABO_3})$ is the total energy of the compound and the $\mu$ are the chemical potentials of the constituent elements [11].

- Distance to Convex Hull (ΔH): The energy difference between a compound and the most stable mixture of phases at the same composition. A stable compound has ΔH = 0; a near-stable compound has a small, positive value (e.g., < 0.025 eV/atom) [11] [22].

- Screening: Apply filters to select compounds that are stable or near-stable (ΔH < threshold) and that possess the target functional properties.
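The final filtering step is simple once hull distances and property data sit in one place. A sketch, assuming each candidate is a dict with hypothetical keys `e_hull` (eV/atom) and `band_gap` (eV), and using a band-gap window purely as an example of a target functional property:

```python
def screen(candidates, max_e_hull=0.025, gap_window=(1.0, 3.0)):
    """Keep stable/near-stable candidates whose band gap lies in gap_window, best first."""
    low, high = gap_window
    hits = [
        c for c in candidates
        if c["e_hull"] < max_e_hull and low <= c["band_gap"] <= high
    ]
    return sorted(hits, key=lambda c: c["e_hull"])


candidates = [  # placeholder entries, not computed data
    {"formula": "XYO3", "e_hull": 0.010, "band_gap": 2.1},
    {"formula": "XZO3", "e_hull": 0.000, "band_gap": 0.0},
    {"formula": "WYO3", "e_hull": 0.150, "band_gap": 1.8},
]
print([c["formula"] for c in screen(candidates)])  # ['XYO3']
```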

Experimental and Advanced Validation

- Objective: Validate the computational predictions with targeted experiments or higher-fidelity calculations.

- Procedure:

- Synthesis Attempt: Prioritize the top predicted stable candidates for experimental synthesis [11].

- Property Characterization: Experimentally measure the target properties (e.g., gas adsorption, catalytic activity, thermoelectric performance) [24] [25] [26].

- Higher-Level Theory: Validate key findings using more accurate (but computationally expensive) methods like hybrid DFT or quantum Monte Carlo [27].

Successful implementation of high-throughput screening relies on a suite of computational and physical resources, as detailed in Table 2.

Table 2: Key Research Reagent Solutions for High-Throughput Screening

| Tool / Resource | Function / Description | Example Use Case |
|---|---|---|
| VASP (Vienna Ab initio Simulation Package) [24] [11] | A widely used software package for performing DFT calculations. | Computing formation energies and electronic structures in HT screens. |
| Open Quantum Materials Database (OQMD) [11] | A public database containing DFT-calculated data for ~40,000 experimental and ~430,000 hypothetical compounds. | Provides reference energies for stability analysis (convex hull construction). |
| 96/384-Well Microplates [20] [23] | Standardized array formats for miniaturized and parallelized experimental assays. | Conducting thousands of biological or chemical tests in parallel. |
| Machine Learning Interatomic Potentials (MLIPs) [22] | ML models that accelerate structure optimization by orders of magnitude vs. DFT. | Rapidly relaxing crystal structures of thousands of candidates in an HT workflow. |
| Robotic Liquid Handling Systems [20] [23] | Automation workstations for precise, high-speed dispensing of assay reagents. | Enabling the preparation and testing of vast compound libraries. |
| qmpy Python Package [11] | An open-source tool for managing high-throughput calculations and thermodynamic analysis. | Automating workflow and analyzing phase stability via convex hull constructions. |

The limitations of traditional, sequential discovery methods are clear: they are slow, resource-intensive, and ill-suited for exploring the vastness of chemical space. High-throughput approaches, particularly those leveraging high-throughput DFT, represent a paradigm shift. By integrating automated computation, systematic data management, and machine learning, these protocols enable researchers to rapidly identify stable, functional materials with a precision and scale previously unimaginable. As these methodologies continue to mature and integrate with experimental validation, they promise to dramatically accelerate the pace of innovation across materials science and drug discovery.

Implementing High-Throughput DFT Workflows: From Theory to Practice

Core Components of a High-Throughput DFT Framework

High-throughput density functional theory (HT-DFT) has emerged as a transformative paradigm in computational materials science, enabling the rapid screening and discovery of novel materials with targeted properties. This approach leverages automated computational workflows to systematically evaluate thousands of materials, dramatically accelerating the materials design cycle that traditionally relied on resource-intensive trial-and-error experimentation [28]. In the specific context of thermodynamic stability screening—a critical prerequisite for experimental synthesis—HT-DFT provides invaluable predictions of phase stability, formation energies, and decomposition pathways, guiding researchers toward synthesizable materials candidates [29]. The framework's core components work in concert to manage the immense computational scale, ensure data consistency, and facilitate the extraction of scientifically meaningful trends from massive datasets, thereby establishing a robust foundation for data-driven materials innovation.

Core Component Architecture

A functional HT-DFT framework rests on several interconnected pillars, each addressing a distinct challenge in the high-throughput pipeline. The seamless integration of these components is what enables efficient, reliable, and scalable materials screening.

Workflow Automation & Management

This component manages the execution of complex computational tasks, from initial structure generation to final property extraction. It handles error recovery, job scheduling, and dependency management, which is crucial for maintaining throughput across thousands of calculations. Specialized software packages are essential for this automation, with several established options available:

- FireWorks & Atomate: A widely adopted combination for designing, managing, and executing workflows. Atomate provides a library of pre-defined workflows for common materials simulation tasks, built upon the FireWorks workflow engine [30].

- AiiDA: A powerful informatics platform that not only automates workflows but also tracks the provenance of every calculation, ensuring full reproducibility and transparency of the data generation process [30].

- qmpy: This package was used to automate high-throughput calculations and thermodynamic analysis for a comprehensive study on ABO₃ perovskites, demonstrating its application in large-scale stability screening [29].
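The core ideas behind these managers (run tasks in dependency order, retry failures, and skip work whose inputs failed) can be sketched with the standard-library `graphlib` module. This is a hypothetical toy, not the FireWorks or AiiDA API; real managers also persist state, diagnose errors, and fix inputs before resubmitting.

```python
from graphlib import TopologicalSorter


def run_workflow(tasks, deps, max_retries=2):
    """Run tasks (name -> callable) in dependency order; deps maps name -> prerequisites."""
    status = {}
    for name in TopologicalSorter(deps).static_order():
        if any(status.get(d) != "done" for d in deps.get(name, ())):
            status[name] = "skipped"  # an upstream task did not finish
            continue
        for _attempt in range(max_retries + 1):
            try:
                tasks[name]()
                status[name] = "done"
                break
            except Exception as exc:  # a real tool would diagnose and fix inputs here
                status[name] = f"failed: {exc}"
    return status


attempts = {"relax": 0}


def relax():
    attempts["relax"] += 1
    if attempts["relax"] == 1:
        raise RuntimeError("SCF not converged")  # fails once, then succeeds


tasks = {"relax": relax, "static": lambda: None, "analyze": lambda: None}
deps = {"static": {"relax"}, "analyze": {"static"}}
print(run_workflow(tasks, deps))  # {'relax': 'done', 'static': 'done', 'analyze': 'done'}
```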

Computational Infrastructure & DFT Codes

This component encompasses the core quantum mechanics engines that perform the actual energy and property calculations. The choice of DFT code and its configuration dictates the accuracy and computational cost of the simulations.

- DFT Codes: Common plane-wave basis set codes include VASP (Vienna Ab initio Simulation Package) and Quantum ESPRESSO. For instance, a massive HT-DFT study on 5,329 perovskites was conducted using VASP [29], while the nanoHUB resource provides a platform for running high-throughput calculations with Quantum ESPRESSO [31].

- Exchange-Correlation Functional: The approximation for the exchange-correlation functional is a critical choice. While the GGA-PBE functional is widely used in high-throughput studies [32] [29], its tendency to underestimate band gaps is well-documented. For more accurate electronic properties, hybrid functionals like HSE06 or meta-GGAs like HLE17 can be employed, though at a higher computational cost [32].

- Pseudopotentials: The projector-augmented wave (PAW) method is commonly used to describe electron-ion interactions [33] [29]. HT frameworks often integrate pseudopotential libraries to ensure consistent accuracy across the periodic table.

Data Management & Analysis

This component is responsible for storing, retrieving, and analyzing the vast volumes of structured data generated by HT-DFT calculations. Effective data management transforms raw calculation outputs into actionable knowledge.

- Databases and Platforms: Public databases such as the Materials Project and the Open Quantum Materials Database (OQMD) are central repositories for HT-DFT data [32] [31] [29]. They provide curated data and tools for analysis. The OQMD, for instance, was used to assess the thermodynamic stability of perovskites by constructing convex hulls from hundreds of thousands of compounds [29].

- Data Analysis Techniques: Dimensionality reduction techniques like Principal Component Analysis (PCA) can be employed to reveal latent structure in complex data, such as identifying correlations between bulk and surface electronic properties [34]. Furthermore, computed properties are often validated against existing experimental data to ensure predictive fidelity [29].

Validation & Uncertainty Quantification

This final component ensures the reliability and physical meaningfulness of the high-throughput predictions. It involves benchmarking computational results against experimental data or higher-level theories and assessing the impact of methodological choices.

- Stability and Convergence Testing: Protocols are established to test for the convergence of key parameters like plane-wave cutoff energy and k-point sampling [33].

- Functional Benchmarking: Studies systematically compare results from different DFT approximations (e.g., LDA, PBE, PBE+U, HSE06) to evaluate their performance for specific classes of materials, such as finding that PBE+U provided the best agreement with experimental data for zinc-blende CdS and CdSe [33].

- Experimental Cross-Validation: Predicted stable materials are compared against known experimental compounds to validate the computational framework. For example, a HT-DFT study curated a list of 223 experimentally synthesized perovskites to compare against its predictions [29].
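Functional benchmarking usually reduces to an error metric per method against a reference set, such as the mean absolute error (MAE) against experiment. A minimal sketch; the method names and numbers are placeholders, not the CdS/CdSe results cited above.

```python
def mean_absolute_error(predicted, reference):
    if len(predicted) != len(reference) or not reference:
        raise ValueError("need equal-length, non-empty lists")
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(reference)


def rank_methods(predictions, reference):
    """predictions: method -> values in the same order as reference. Lowest MAE first."""
    scores = {name: mean_absolute_error(vals, reference) for name, vals in predictions.items()}
    return sorted(scores.items(), key=lambda item: item[1])


reference = [1.0, 2.0, 3.0]        # e.g. measured values (placeholder)
predictions = {
    "method_A": [0.5, 1.5, 2.5],   # systematically low by 0.5
    "method_B": [1.1, 1.9, 3.1],   # scattered by 0.1
}
print(rank_methods(predictions, reference))
```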

Table 1: Key Software Tools for High-Throughput DFT Frameworks

| Tool Name | Primary Function | Application Example |
|---|---|---|
| FireWorks/Atomate [30] | Workflow Automation | Managing complex job dependencies in high-throughput screening. |
| AiiDA [30] | Workflow Automation & Data Provenance | Tracking the entire calculation history for reproducibility. |
| pymatgen [31] [30] | Materials Analysis | Parsing output files, analyzing structures, and generating input files. |
| qmpy [29] | Thermodynamic Analysis | Constructing convex hulls to assess phase stability. |
| VASP [29] | DFT Engine | Large-scale property calculation for thousands of compounds. |
| Quantum ESPRESSO [31] | DFT Engine | High-throughput simulations on platforms like nanoHUB. |

Experimental Protocol for Thermodynamic Stability Screening

The following protocol outlines a standardized methodology for using an HT-DFT framework to screen for thermodynamically stable materials, using the extensive study on ABO₃ perovskites as a benchmark [29].

Workflow Visualization

The logical flow of the high-throughput stability screening process is summarized in the diagram below.

Step-by-Step Methodology

Step 1: Define the Target Chemical Space

- Objective: Systematically define the set of chemical compositions to be screened.

- Procedure: For an ABO₃ perovskite screening, select all possible combinations of 73 metallic and semi-metallic elements on the A and B sites, resulting in 5,329 unique compositions [29]. For other systems, define the relevant structure types and compositional ranges.
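The count of 5,329 follows from taking ordered A/B pairs with repetition: 73 × 73 = 5,329. A sketch of the enumeration, with placeholder symbols standing in for the 73 elements (the actual list is not reproduced here):

```python
from itertools import product


def abo3_compositions(elements):
    """Every ordered (A, B) pair, including A == B, written as an ABO3 formula."""
    return [f"{a}{b}O3" for a, b in product(elements, repeat=2)]


print(abo3_compositions(["Sr", "Ti"]))  # ['SrSrO3', 'SrTiO3', 'TiSrO3', 'TiTiO3']
placeholder_elements = [f"E{i}" for i in range(73)]
print(len(abo3_compositions(placeholder_elements)))  # 5329
```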

Step 2: Generate Initial Crystal Structures

- Objective: Create initial structural models for DFT relaxation.

- Procedure:

- For each composition, create input files for the primary crystal structure of interest (e.g., the ideal cubic perovskite structure).

- Optionally, generate input files for common distorted variants (e.g., rhombohedral, tetragonal) for a subset of compositions to capture lower-energy phases [29].
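For the ideal cubic starting structure, a sketch that writes a VASP POSCAR in the conventional Pm-3m setting with A at the cube corner, B at the body center, and O at the face centers. The 3.9 Å lattice parameter is an assumed initial guess that the DFT relaxation then optimizes, the helper name is hypothetical, and distorted variants (which need larger cells) are not covered.

```python
def cubic_perovskite_poscar(a_site, b_site, a=3.9):
    """POSCAR text (VASP 5 format) for the ideal cubic ABO3 perovskite.

    A at the cube corner, B at the body centre, O at the face centres (space group
    Pm-3m). a = 3.9 Å is only an assumed starting guess for the relaxation.
    """
    sites = [
        (0.0, 0.0, 0.0),                                    # A
        (0.5, 0.5, 0.5),                                    # B
        (0.5, 0.5, 0.0), (0.5, 0.0, 0.5), (0.0, 0.5, 0.5),  # 3 x O
    ]
    lines = [f"{a_site}{b_site}O3 ideal cubic perovskite", "1.0"]
    lines += [f"{a:.6f} 0.000000 0.000000",
              f"0.000000 {a:.6f} 0.000000",
              f"0.000000 0.000000 {a:.6f}"]
    lines += [f"{a_site} {b_site} O", "1 1 3", "Direct"]
    lines += [" ".join(f"{x:.6f}" for x in site) for site in sites]
    return "\n".join(lines) + "\n"


print(cubic_perovskite_poscar("Sr", "Ti"))
```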

Step 3: Perform High-Throughput DFT Calculations

- Objective: Compute the total energy of each structure in its ground state.

- Computational Parameters:

- Code: Vienna Ab initio Simulation Package (VASP) [29].

- Functional: GGA-PBE [29].

- Hybrid Functional (optional): For more accurate electronic properties, a hybrid functional like HSE06 can be used, though it increases computational cost [32].

- DFT+U: Apply a Hubbard U correction for elements with localized d- or f-electrons (e.g., U = 3.1 eV for V, 3.5 eV for Cr) to improve the description of on-site electron correlations [29].

- Pseudopotentials: Use the projector-augmented wave (PAW) method [29].

- Convergence: Ensure convergence of total energy with respect to plane-wave cutoff energy and k-point sampling [33].
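The +U settings translate into VASP's LDAU tags, which list one value per species in POSCAR order. A sketch using only the two U values quoted above; the rest of the table is left for the user to fill in, only d-shell corrections (LDAUL = 2) are handled (f-electron species would need LDAUL = 3), and the helper name is hypothetical.

```python
U_VALUES_EV = {"V": 3.1, "Cr": 3.5}  # values quoted in the text; extend for your protocol


def ldau_tags(species):
    """VASP LDAU* tags (Dudarev scheme, d shell only) for species in POSCAR order."""
    u_values = [U_VALUES_EV.get(s, 0.0) for s in species]
    if not any(u_values):
        return {"LDAU": ".FALSE."}
    return {
        "LDAU": ".TRUE.",
        "LDAUTYPE": 2,                                            # Dudarev: only U - J enters
        "LDAUL": " ".join("2" if u else "-1" for u in u_values),  # 2 = d shell, -1 = no +U
        "LDAUU": " ".join(f"{u:g}" for u in u_values),
        "LDAUJ": " ".join("0" for _ in u_values),
    }


print(ldau_tags(["Sr", "V", "O"]))
```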

Step 4: Calculate Formation Energies

- Objective: Determine the energy of formation for each compound from its constituent elements.

- Procedure: Use the equation:

$H_f(\mathrm{ABO_3}) = E(\mathrm{ABO_3}) - \mu_A - \mu_B - 3\mu_O$, where $E(\mathrm{ABO_3})$ is the total energy from DFT and the $\mu$ are the chemical potentials of the elements, typically derived from the DFT total energies of their standard elemental phases [29].
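The equation gives the formation energy per formula unit; hull distances and stability thresholds are quoted in eV/atom, so the result is usually divided by the number of atoms (five for ABO₃). A direct transcription with placeholder energies, not real DFT numbers:

```python
def formation_energy_per_atom(e_total, counts, mu):
    """H_f per atom = (E_total - sum_el n_el * mu_el) / N_atoms.

    e_total: DFT total energy of the cell (eV); counts: element -> atoms in that cell;
    mu: element -> chemical potential (eV/atom), e.g. from elemental reference phases.
    """
    n_atoms = sum(counts.values())
    return (e_total - sum(n * mu[el] for el, n in counts.items())) / n_atoms


# Placeholder energies for one ABO3 formula unit (5 atoms).
h_f = formation_energy_per_atom(-40.0, {"A": 1, "B": 1, "O": 3},
                                {"A": -2.0, "B": -8.0, "O": -5.0})
print(h_f)  # -3.0
```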

Step 5: Construct Convex Hulls for Stability Assessment

- Objective: Identify which compounds are thermodynamically stable.

- Procedure:

- Gather formation energies for all known and calculated phases in the A-B-O ternary chemical space from a reference database (e.g., OQMD) [29].

- Construct the convex hull—the set of stable phases with the lowest formation energy at their composition.

- For each perovskite, calculate its hull distance (Ehull):

$E_{\mathrm{hull}} = H_f(\mathrm{perovskite}) - H_f^{\mathrm{hull}}(\mathrm{ABO_3})$, where $H_f^{\mathrm{hull}}(\mathrm{ABO_3})$ is the formation energy of the convex hull at the ABO₃ composition.

A compound is considered theoretically stable if E_hull ≤ 0 eV/atom. A small positive value (e.g., < 0.025 eV/atom, approximately the thermal energy at room temperature) may indicate a "nearly stable" compound that might be synthesizable under non-equilibrium conditions [29].
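Evaluating the hull formation energy at the ABO₃ composition amounts to a small linear program: find non-negative amounts of the competing phases that reproduce the target composition with the lowest total formation energy. A sketch using `scipy.optimize.linprog` with invented A-B-O phase energies; because the perovskite itself is left out of `competing`, the result follows the E_hull ≤ 0 convention above. In practice, tools such as qmpy or pymatgen's `PhaseDiagram` perform this analysis.

```python
import numpy as np
from scipy.optimize import linprog


def hull_energy_at(target, phases):
    """Lowest formation energy (eV/atom) reachable at composition `target`.

    target: atomic fractions, e.g. (0.2, 0.2, 0.6) for ABO3 in the A-B-O system.
    phases: [(fractions, dHf_per_atom), ...]; include the pure elements at 0 eV/atom.
    """
    fractions = np.array([f for f, _ in phases], dtype=float).T  # (n_elements, n_phases)
    energies = np.array([e for _, e in phases], dtype=float)
    # Minimise sum_i w_i * dHf_i subject to sum_i w_i * x_i = target and w_i >= 0.
    res = linprog(energies, A_eq=fractions, b_eq=np.asarray(target, dtype=float),
                  bounds=(0, None))
    if not res.success:
        raise ValueError(res.message)
    return res.fun


competing = [  # placeholder A-B-O phases: (x_A, x_B, x_O), dHf in eV/atom
    ((1, 0, 0), 0.0), ((0, 1, 0), 0.0), ((0, 0, 1), 0.0),
    ((0.5, 0, 0.5), -1.0),      # "AO"
    ((0, 1 / 3, 2 / 3), -1.2),  # "BO2"
]
target = (0.2, 0.2, 0.6)        # ABO3
e_hull = -1.2 - hull_energy_at(target, competing)
print(f"E_hull = {e_hull:.3f} eV/atom")  # E_hull = -0.080 eV/atom (stable)
```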

Step 6: Data Storage and Analysis

- Objective: Curate and disseminate results for further use.

- Procedure: Store relaxed structures, formation energies, hull distances, and other computed properties (band gaps, magnetic moments) in a structured, publicly accessible database. This data can be used for direct candidate selection or to train machine learning models [29].

Table 2: Key Calculated Properties for Thermodynamic Stability Screening

| Property | Calculation Method | Significance in Stability Screening |
|---|---|---|
| Formation Energy [29] | H_f = E_total - Σ μ_elements | Measures thermodynamic stability relative to elemental phases. |
| Hull Distance (E_hull) [29] | E_hull = H_f - H_f,hull | Definitive metric for thermodynamic stability. E_hull ≤ 0 indicates stability. |
| Oxygen Vacancy Formation Energy [29] | E_vac = E(A₂B₂O₅) + μ_O - 2E(ABO₃) | Key for assessing application-relevant stability under reducing conditions. |
| Band Gap [32] [29] | From electronic DOS calculation | Important for functional properties; sensitive to DFT functional choice. |
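The vacancy expression in the table compares two formula units of ABO₃ with one A₂B₂O₅ unit plus one oxygen atom placed in a reservoir at chemical potential μ_O. A direct transcription with placeholder energies; how μ_O is referenced (for example, to half the energy of an O₂ molecule, possibly with a correction) is an assumption left to the user. A positive value means that removing oxygen costs energy.

```python
def vacancy_formation_energy(e_a2b2o5, e_abo3, mu_o):
    """E_vac = E(A2B2O5) + mu_O - 2 E(ABO3): cost of removing one O from two formula units.

    Energies are DFT totals per formula unit (eV); mu_o is the oxygen chemical
    potential (eV/atom), whose reference is a modelling choice.
    """
    return e_a2b2o5 + mu_o - 2.0 * e_abo3


# Placeholder energies, not computed values.
e_vac = vacancy_formation_energy(e_a2b2o5=-74.0, e_abo3=-40.0, mu_o=-4.9)
print(f"E_vac = {e_vac:.2f} eV")  # E_vac = 1.10 eV
```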

In the context of HT-DFT, "research reagents" refer to the essential software, data, and computational resources required to build and operate the framework.

Table 3: Essential "Reagents" for a High-Throughput DFT Framework

| Reagent / Resource | Type | Function in the HT-DFT Workflow |
|---|---|---|
| Pymatgen [30] | Python Library | Provides core materials analysis capabilities, file input/output, and workflow management. |
| Materials Project API [31] [30] | Database & Tool | Allows query and retrieval of existing crystal structures and properties to define initial screening sets. |
| Standard Solid-State Pseudopotentials (SSSP) | Pseudopotential Library | A curated set of high-quality pseudopotentials ensuring consistency and accuracy across calculations. |
| Open Quantum Materials Database (OQMD) [29] | Database | Provides a vast repository of calculated phases essential for constructing convex hulls and assessing stability. |
| High-Performance Computing (HPC) Cluster | Infrastructure | Provides the massive parallel computing resources necessary to execute thousands of DFT calculations. |

Data Analysis and Technical Validation

The final stage of the HT-DFT protocol involves rigorous analysis and validation to ensure the predictive power of the results. This often involves techniques that manage the complexity of the generated data.

Data Analysis Workflow

The process of analyzing results, particularly for establishing structure-property relationships, can be visualized as a pipeline from raw data to predictive models.

A key example of an advanced analysis is mapping the surface density of states (DOS) from bulk properties. This involves:

- Dimensionality Reduction: Using Principal Component Analysis (PCA) to obtain compact, low-dimensional representations of both bulk and surface DOS [34].

- Latent Feature Alignment: Identifying a linear transformation that maps the latent features of the bulk DOS to those of the surface DOS. This transformation is learned from a small set of materials (e.g., CuNbS, CuTaS, CuVS) for which both bulk and surface calculations are available [34].

- Prediction: Applying the learned transformation to the bulk DOS of new, unseen compositions (e.g., CuCrS, CuMoS) to predict their surface DOS, thereby bypassing the need for computationally expensive surface calculations [34].
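A minimal NumPy sketch of the bulk-to-surface mapping idea, assuming every DOS is sampled on a common energy grid (rows are materials, columns are energy points). It fits PCA by SVD for each set, learns the linear latent-space map by least squares, and predicts a surface DOS from a new bulk DOS. The synthetic data are constructed so that the map is exactly linear; this is not the cited authors' implementation.

```python
import numpy as np


def pca_fit(X, k):
    """Mean and top-k principal directions (rows) of X via SVD."""
    mean = X.mean(axis=0)
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, vt[:k]


def to_latent(X, mean, comps):
    return (X - mean) @ comps.T


def from_latent(Z, mean, comps):
    return Z @ comps + mean


def fit_bulk_to_surface(bulk, surface, k_bulk, k_surf):
    """Learn a linear map between the two latent spaces from paired training DOS."""
    mb, cb = pca_fit(bulk, k_bulk)
    ms, cs = pca_fit(surface, k_surf)
    zb, zs = to_latent(bulk, mb, cb), to_latent(surface, ms, cs)
    W, *_ = np.linalg.lstsq(zb, zs, rcond=None)  # zs ≈ zb @ W

    def predict(new_bulk):
        return from_latent(to_latent(new_bulk, mb, cb) @ W, ms, cs)

    return predict


rng = np.random.default_rng(0)
basis = rng.normal(size=(2, 50))        # 2 hidden factors on a 50-point energy grid
mixing = rng.normal(size=(50, 40))      # synthetic bulk -> surface relation
bulk = rng.normal(size=(6, 2)) @ basis  # 6 "training" materials
surface = bulk @ mixing
predict = fit_bulk_to_surface(bulk, surface, k_bulk=2, k_surf=2)
new_bulk = rng.normal(size=(2, 2)) @ basis
print(np.abs(predict(new_bulk) - new_bulk @ mixing).max())  # close to zero here
```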

Technical Validation and Benchmarking

Validation is critical. For a stability screening study, this includes:

- Comparison with Experiment: Cross-referencing predicted stable compounds with known experimental materials. In the perovskite study, 395 compounds were predicted stable, with a significant number representing new predictions awaiting experimental confirmation [29].

- Functional Benchmarking: Systematically evaluating different DFT approximations. For instance, PBE is efficient but can severely underestimate band gaps relative to hybrid functionals such as HSE06, which affects the predicted electronic properties of stable compounds [32].

- Sensitivity Analysis: Testing the robustness of predictions to computational parameters, such as the choice of Hubbard U value or the effect of different structural distortions on the calculated formation energy [33] [29].
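One simple way to report such a sensitivity analysis is to flag compounds whose stable/unstable verdict changes between parameter settings. A sketch, assuming hull distances have already been computed for each setting; the helper name, the setting labels, and the numbers are placeholders.

```python
def label_sensitive(results, threshold=0.025):
    """results: compound -> {setting: e_hull in eV/atom}.

    Returns the compounds whose stable/unstable verdict depends on the setting.
    """
    return {
        compound: by_setting
        for compound, by_setting in results.items()
        if len({e_hull < threshold for e_hull in by_setting.values()}) > 1
    }


results = {  # placeholder hull distances for two choices of U (eV/atom)
    "XYO3": {"U=3 eV": 0.010, "U=4 eV": 0.040},
    "XZO3": {"U=3 eV": 0.000, "U=4 eV": 0.005},
}
print(sorted(label_sensitive(results)))  # ['XYO3']
```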

This structured approach to analysis and validation ensures that the high-throughput framework produces not just data, but reliable and actionable scientific insights.

High-Throughput Density Functional Theory (HT-DFT) has emerged as a transformative approach in computational materials science, enabling the systematic calculation of properties for thousands of materials in an automated fashion. This paradigm shift from traditional single-structure calculations to large-scale screening has been facilitated by the development of sophisticated software frameworks and extensive databases. These resources allow researchers to predict material properties, assess thermodynamic stability, and identify promising candidates for various applications before undertaking experimental synthesis. The core infrastructure enabling these efforts consists of automation tools like Pymatgen and AFLOW, alongside comprehensive databases such as the Open Quantum Materials Database (OQMD) and the Materials Project (MP). These platforms collectively provide researchers with standardized methods for generating structures, performing calculations, analyzing results, and accessing curated data, thereby accelerating materials discovery across diverse fields including energy storage, catalysis, and electronic materials.

The critical importance of these tools is underscored by their widespread adoption in the materials community. They have been used to screen over 80,000 inorganic compounds for applications ranging from Li-ion batteries to topological insulators [35]. Within the context of thermodynamic stability screening—a fundamental step in materials discovery—these tools provide robust methodologies for determining whether a proposed compound is stable against decomposition into competing phases. This article provides detailed application notes and protocols for leveraging these tools effectively in HT-DFT research, with particular emphasis on thermodynamic stability assessment.

Major HT-DFT Databases: Contents and Specializations

Table 1: Key Features of Major HT-DFT Databases

| Database | Primary Focus | Number of Entries | Key Properties | Access Method |
|---|---|---|---|---|
| OQMD (Open Quantum Materials Database) | DFT formation energies and stability assessment [36] | ~300,000 calculations [36] (ICSD compounds + hypothetical structures) | Formation energies, thermodynamic stability, oxygen vacancy energies [11] | Public download at oqmd.org/download [36] |
| Materials Project | Calculated properties of all known inorganic materials [35] | ~80,000 compounds screened as of 2013 [35] | Crystal structures, band gaps, elastic properties, electrochemical data [37] | RESTful API via Pymatgen [35] |
| AFLOW (Automatic Flow) | High-throughput calculation of crystal structure properties [35] | Not reported in the cited sources | Structural properties, stability, superconductors, topological insulators [35] | Online database access |

The Open Quantum Materials Database (OQMD) specializes in DFT formation energies and thermodynamic stability assessments, containing nearly 300,000 DFT calculations as of 2015 [36]. Its dataset includes both experimentally reported structures from the Inorganic Crystal Structure Database (ICSD) and hypothetical compounds generated through decoration of common crystal prototypes [36]. A notable specialized dataset within OQMD includes 5,329 ABO₃ perovskites with calculated formation energies, stability, and oxygen vacancy formation energies, highly relevant for applications like solid oxide fuel cells and water splitting [11].

The Materials Project aims to compute the properties of all known inorganic materials and make the data publicly available [35]. It provides a diverse range of calculated properties through a RESTful API that can be accessed via Pymatgen [35]. The project historically used the PBE GGA functional but has more recently transitioned to the r²SCAN meta-GGA functional, which generally improves thermodynamic accuracy and magnetic ordering in oxides, although it tends to overestimate on-site magnetic moments in elemental metals [37].

AFLOW (Automatic Flow) provides a software framework for the high-throughput calculation of crystal structure properties, with applications including binary alloys, superconductors, and topological insulators [35]. Current entry counts for AFLOW are not given here, but it is one of the three major HT-DFT databases alongside OQMD and the Materials Project.

Quantitative Comparison of Database Methodologies and Outputs

Table 2: Methodological Comparison and Reproducibility Across Major Databases

| Aspect | OQMD | Materials Project (Legacy) | Materials Project (Newer) |
|---|---|---|---|
| DFT Functional | GGA-PBE with DFT+U for specific elements [11] | PBE (GGA) and GGA+U [37] | r²SCAN meta-GGA [37] |
| Formation Energy Accuracy | MAE: 0.096 eV/atom vs. experiments [36] | Not specified | Improved thermodynamic accuracy [37] |
| Magnetic Properties | Not specified | Good for elemental metals [37] | Overestimates on-site ferromagnetic moments in elemental metals [37] |

Inter-database variance (across all databases): median relative absolute difference of 6% for formation energy [38].

A comparative analysis reveals both consistencies and variations across the major databases. Formation energies and volumes are generally more reproducible than band gaps and total magnetizations [38]: the median relative absolute difference between databases is approximately 6% for formation energy and 4% for volume, compared with 9% for band gaps and 8% for total magnetization [38]. Notably, these variances are comparable to the differences between DFT and experimental measurements, which highlights the importance of understanding methodological choices when using these databases.
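A minimal sketch of how such a cross-database comparison could be computed, assuming each database has already been reduced to a dictionary mapping a compound identifier to a property value. The exact normalization used in [38] is not given here; the sketch assumes the relative absolute difference is taken with respect to the mean magnitude of the two values. The helper names and the numbers are hypothetical.

```python
from statistics import median


def relative_abs_difference(x, y):
    """|x - y| divided by the mean magnitude of x and y (assumed normalization)."""
    scale = (abs(x) + abs(y)) / 2
    return abs(x - y) / scale if scale else 0.0


def median_relative_abs_difference(db1, db2):
    """Median relative absolute difference over compounds present in both databases."""
    shared = sorted(set(db1) & set(db2))
    if not shared:
        raise ValueError("the two databases share no compounds")
    return median(relative_abs_difference(db1[k], db2[k]) for k in shared)


# Hypothetical formation energies (eV/atom); only X1-X3 appear in both databases.
db_a = {"X1": -1.0, "X2": -2.0, "X3": -3.0}
db_b = {"X1": -1.1, "X2": -2.0, "X3": -3.3, "X4": -0.5}
print(f"median RAD: {median_relative_abs_difference(db_a, db_b):.1%}")
```

Restricting the comparison to shared compounds matters: entries present in only one database say nothing about reproducibility.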

Key sources of discrepancy include choices involving pseudopotentials, the DFT+U formalism, and elemental reference states [38]. The choice of exchange-correlation functional matters as well: the Materials Project's transition from PBE to r²SCAN has improved thermodynamic accuracy but tends to overestimate magnetic moments in elemental transition metals relative to experimental measurements [37]. These differences underscore the benefit of having multiple databases available, as they enable cross-validation and uncertainty quantification for high-throughput calculations.

Computational Protocols for Thermodynamic Stability Screening

Workflow for Thermodynamic Stability Assessment

The assessment of thermodynamic stability for new materials represents a fundamental application of HT-DFT databases. The following protocol outlines a standardized workflow for determining phase stability using the OQMD and Materials Project frameworks.

Protocol 1: Phase Stability Assessment via Convex Hull Construction

Structure Generation and Input Preparation

- Generate initial crystal structures for compounds of interest. Pymatgen provides powerful tools for structure generation via substitution based on data-mined rules [35].

- For perovskite systems (ABO₃), consider multiple structural distortions (rhombohedral, tetragonal, orthorhombic) beyond the ideal cubic structure, as distortions generally lower the energy [11].
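The prototype-decoration idea behind these steps can be sketched in plain Python, assuming the ideal cubic ABO₃ prototype with A at the cube corner, B at the body centre and O at the three face centres. This is only a stand-in for Pymatgen's data-mined substitution tools; the function names, the candidate element lists and the 3.9 Å starting lattice parameter are illustrative assumptions, and distorted variants would still need to be generated and relaxed separately.

```python
from itertools import product

# Ideal cubic ABO3 prototype in fractional coordinates:
# A on the cube corner, B at the body centre, O on the three face centres.
CUBIC_ABO3_PROTOTYPE = [
    ("A", (0.0, 0.0, 0.0)),
    ("B", (0.5, 0.5, 0.5)),
    ("O", (0.5, 0.5, 0.0)),
    ("O", (0.5, 0.0, 0.5)),
    ("O", (0.0, 0.5, 0.5)),
]


def decorate(a_element, b_element, lattice_param=3.9):
    """Fill the A and B sites of the prototype; returns (cubic lattice parameter, sites)."""
    mapping = {"A": a_element, "B": b_element, "O": "O"}
    sites = [(mapping[label], coords) for label, coords in CUBIC_ABO3_PROTOTYPE]
    return lattice_param, sites


def enumerate_candidates(a_elements, b_elements, lattice_param=3.9):
    """Decorate the prototype with every distinct A/B pair (hypothetical helper)."""
    return {
        f"{a}{b}O3": decorate(a, b, lattice_param)
        for a, b in product(a_elements, b_elements)
        if a != b
    }


candidates = enumerate_candidates(["Sr", "Ba", "La"], ["Ti", "Fe"])
print(sorted(candidates))
```

In a Pymatgen-based workflow the same species and fractional coordinates could be passed to a `Structure` object before writing DFT inputs.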

DFT Calculation Parameters

- Perform DFT calculations using consistent parameters across all compounds. The OQMD methodology uses:
  - Vienna Ab initio Simulation Package (VASP) [11] [36]
  - GGA-PBE exchange-correlation functional [11]
  - Projector-augmented wave (PAW) method [11]
  - DFT+U for 3d transition metals and actinides with element-specific U-values [11]
  - Plane-wave cutoff energy: 520 eV [11]
  - k-point density: 6,000 k-points per reciprocal atom (KPPRA) for structural relaxation [11]

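Converting the KPPRA target into an actual Monkhorst-Pack grid is a small calculation: the number of k-points times the number of atoms should be close to the target. The sketch below assumes a roughly orthogonal cell and floors the number of divisions, which is similar in spirit to Pymatgen's `Kpoints.automatic_density`; it is not the exact OQMD implementation, and the function name is hypothetical.

```python
import math


def kpoint_grid_from_kppra(lattice_lengths, n_atoms, kppra=6000):
    """Monkhorst-Pack divisions giving roughly `kppra` (k-points x atoms).

    Assumes a near-orthogonal cell, so divisions along each axis scale as 1/length.
    Flooring means the achieved density can fall somewhat below the target.
    """
    a, b, c = lattice_lengths
    kpoints_target = kppra / n_atoms
    # With this choice, (mult/a) * (mult/b) * (mult/c) equals kpoints_target.
    mult = (kpoints_target * a * b * c) ** (1 / 3)
    return tuple(max(1, math.floor(mult / length)) for length in (a, b, c))


print(kpoint_grid_from_kppra((3.9, 3.9, 3.9), n_atoms=5))   # -> (10, 10, 10)
print(kpoint_grid_from_kppra((7.8, 7.8, 7.8), n_atoms=40))  # -> (5, 5, 5)
```

Both grids correspond to 5,000 k-points × atoms after flooring, which illustrates why the same KPPRA keeps the sampling comparable between a primitive cell and a supercell.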

Formation Energy Calculation

- Calculate the formation energy \(H_f^{ABO_3}\) using \[ H_f^{ABO_3} = E(ABO_3) - \mu_A - \mu_B - 3\mu_O \] where \(E(ABO_3)\) is the total energy of the perovskite, and \(\mu_A\), \(\mu_B\), and \(\mu_O\) are the chemical potentials of the constituent elements [11].

- Use appropriate reference states for elements (standard states for most elements, with corrections applied for diatomic gases, room temperature liquids, and elements with structural phase transformations) [11].
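The formation-energy expression translates directly into code. In this sketch the chemical potentials are assumed to already include any reference-state corrections, the energies are illustrative numbers rather than real DFT results, and the result is also divided by the number of atoms in the formula unit, since stability thresholds such as 0.025 eV/atom are quoted per atom.

```python
def formation_energy(total_energy, counts, mu):
    """H_f = E(compound) - sum_i n_i * mu_i.

    total_energy: DFT total energy of one formula unit (eV).
    counts: atoms of each element per formula unit, e.g. {"A": 1, "B": 1, "O": 3}.
    mu: elemental chemical potentials (eV/atom), assumed to include any
        reference-state corrections.
    Returns (eV per formula unit, eV per atom).
    """
    h_f = total_energy - sum(n * mu[element] for element, n in counts.items())
    return h_f, h_f / sum(counts.values())


# Illustrative numbers only, not real DFT energies.
per_fu, per_atom = formation_energy(
    total_energy=-40.0,
    counts={"A": 1, "B": 1, "O": 3},
    mu={"A": -2.0, "B": -8.0, "O": -4.5},
)
print(f"H_f = {per_fu:.2f} eV/f.u. = {per_atom:.3f} eV/atom")
```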

Stability Assessment via Convex Hull Construction

- Construct the energy convex hull for the A-B-O ternary system using all known competing phases from the database [11].

- Calculate the convex hull distance \(H_{stab}^{ABO_3}\) as \[ H_{stab}^{ABO_3} = H_f^{ABO_3} - H_f^{hull} \] where \(H_f^{hull}\) is the convex hull energy at the ABO₃ composition [11].

- Classify compounds with a hull distance below 0.025 eV/atom (approximately kT at room temperature) as stable [11].
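The hull-distance step can be sketched for a single A-B-O ternary without any external library. This sketch swaps in a brute-force technique: every non-collinear triple of competing phases is tried, the per-atom weights that reproduce the target composition are found with Cramer's rule, and the lowest-energy valid mixture is taken as the hull energy. Production codes such as Pymatgen's `PhaseDiagram` (e.g. `get_e_above_hull`) or qmpy build the full hull instead. The candidate is assumed to be excluded from the competing phases, so a stable compound can have a negative distance; all phases and energies below are hypothetical.

```python
from itertools import combinations


def det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def hull_energy(target, phases, tol=1e-9):
    """Lowest formation energy (eV/atom) reachable by mixing `phases` at `target`.

    target: atomic fractions (x_A, x_B, x_O) summing to 1.
    phases: list of (fractions, formation energy per atom); must include A, B and O.
    """
    best = None
    for trio in combinations(phases, 3):
        # Row i = element i, column j = composition of phase j.
        m = [[trio[j][0][i] for j in range(3)] for i in range(3)]
        d = det3(m)
        if abs(d) < tol:
            continue  # collinear phases cannot span the ternary
        # Cramer's rule for the per-atom weights of the three phases.
        weights = [
            det3([[target[i] if j == k else m[i][j] for j in range(3)] for i in range(3)]) / d
            for k in range(3)
        ]
        if min(weights) < -tol:
            continue  # target lies outside this composition triangle
        energy = sum(w * e for w, (_, e) in zip(weights, trio))
        if best is None or energy < best:
            best = energy
    return best


def hull_distance(h_f, target, competing_phases):
    """H_stab = H_f - H_f^hull; negative if the compound lies below the competing phases."""
    return h_f - hull_energy(target, competing_phases)


def is_stable(h_stab, threshold=0.025):
    """Stability criterion quoted in the text (eV/atom)."""
    return h_stab < threshold


# Hypothetical A-B-O system: elements at 0 eV/atom plus two binary oxides.
phases = [
    ((1.0, 0.0, 0.0), 0.0),       # A
    ((0.0, 1.0, 0.0), 0.0),       # B
    ((0.0, 0.0, 1.0), 0.0),       # O
    ((0.5, 0.0, 0.5), -2.0),      # AO
    ((0.0, 1 / 3, 2 / 3), -3.0),  # BO2
]
h_stab = hull_distance(-2.7, (0.2, 0.2, 0.6), phases)  # ABO3 at -2.7 eV/atom
print(f"H_stab = {h_stab:.3f} eV/atom, stable: {is_stable(h_stab)}")
```

Here the lowest-energy competing mixture is AO + BO₂ (per-atom weights 0.4 and 0.6, hull energy -2.6 eV/atom), so the hypothetical ABO₃ sits 0.1 eV/atom below the hull and is classified as stable.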

Validation and Analysis

Specialized Protocol: Oxygen Vacancy Formation Energy Calculation

For energy materials such as perovskites in fuel cell applications, oxygen vacancy formation energy represents a critical property beyond basic thermodynamic stability. The following protocol details its calculation.

Protocol 2: Oxygen Vacancy Formation Energy in Perovskites

Defect Structure Generation

- Create a 2×2×2 supercell of the perovskite structure (A₈B₈O₂₄), or, for high-throughput screening, use a two-formula-unit cell that becomes the standardized 9-atom A₂B₂O₅ cell once the vacancy is introduced [11].

- Remove one oxygen atom to create the defective structure.
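Building the defect cell amounts to replicating the pristine sites and deleting one oxygen. The sketch below uses plain fractional coordinates and hypothetical helper names; Pymatgen's `Structure.make_supercell` and `Structure.remove_sites` would be the natural equivalents in a real workflow. In the ideal cubic 2×2×2 cell all O sites are symmetry-equivalent, so which one is removed does not matter; in distorted or elongated cells (such as the 2×1×1 cell) this no longer holds, and each inequivalent O site would need to be tested.

```python
from itertools import product

# Ideal cubic ABO3 cell in fractional coordinates (A corner, B body centre, O face centres).
UNIT_CELL = [
    ("A", (0.0, 0.0, 0.0)),
    ("B", (0.5, 0.5, 0.5)),
    ("O", (0.5, 0.5, 0.0)),
    ("O", (0.5, 0.0, 0.5)),
    ("O", (0.0, 0.5, 0.5)),
]


def make_supercell(sites, n=(2, 2, 2)):
    """Replicate fractional sites n1 x n2 x n3 times and rescale to the supercell."""
    out = []
    for i, j, k in product(range(n[0]), range(n[1]), range(n[2])):
        for element, (x, y, z) in sites:
            out.append((element, ((x + i) / n[0], (y + j) / n[1], (z + k) / n[2])))
    return out


def remove_one_oxygen(sites):
    """Delete the first O site to create a single oxygen vacancy."""
    idx = next(i for i, (element, _) in enumerate(sites) if element == "O")
    return sites[:idx] + sites[idx + 1:]


def composition(sites):
    """Count atoms of each element."""
    counts = {}
    for element, _ in sites:
        counts[element] = counts.get(element, 0) + 1
    return counts


print(composition(remove_one_oxygen(make_supercell(UNIT_CELL))))             # {'A': 8, 'B': 8, 'O': 23}
print(composition(remove_one_oxygen(make_supercell(UNIT_CELL, (2, 1, 1)))))  # {'A': 2, 'B': 2, 'O': 5}
```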

DFT Calculation of Defect System

- Perform a DFT relaxation of the defective A₂B₂O₅ structure using the same calculation parameters as for the pristine system.

- Ensure consistent k-point sampling and convergence criteria.

Energy Calculation

- Calculate the oxygen vacancy formation energy \(E_{v_O}\) using \[ E_{v_O} = E(A_2B_2O_5) + \mu_O - 2E(ABO_3) \] where \(E(A_2B_2O_5)\) is the total energy of the defective cell, \(E(ABO_3)\) is the total energy of the pristine perovskite per formula unit, and \(\mu_O\) is the chemical potential of oxygen [11].
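The vacancy-energy expression is a one-line calculation once the three energies are available. This sketch generalizes the factor of 2 to the number of formula units in the pristine cell, so the same function covers both the A₂B₂O₅ cell and a 2×2×2 supercell; the function name, the choice of \(\mu_O\) reference and the numbers are illustrative assumptions.

```python
def oxygen_vacancy_energy(e_defect, e_pristine_fu, mu_o, n_fu=2):
    """E_vO = E(defective cell) + mu_O - n_fu * E(ABO3).

    e_defect: total energy of the cell with one O removed (eV).
    e_pristine_fu: total energy of pristine ABO3 per formula unit (eV).
    mu_o: oxygen chemical potential (eV/atom).
    n_fu: formula units in the cell before the vacancy was made
          (2 for the A2B2O5 cell, 8 for a 2x2x2 A8B8O23 supercell).
    """
    return e_defect + mu_o - n_fu * e_pristine_fu


# Illustrative numbers only.
print(f"E_vO = {oxygen_vacancy_energy(-70.0, -40.0, -4.9):.2f} eV")
```

A positive value means that removing oxygen costs energy; larger values correspond to lattices that are harder to reduce.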

Analysis and Correlation

- Correlate oxygen vacancy formation energies with stability metrics and application-specific performance.

- For water-splitting applications, use both stability and oxygen vacancy formation energy as screening parameters [11].
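Using both descriptors together can be sketched as a simple filter. The 0.025 eV/atom stability cut-off comes from the text; the vacancy-energy window, the `Candidate` container and the candidate names are hypothetical placeholders, since appropriate bounds depend on the target application and are not given here.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    formula: str
    h_stab: float  # eV/atom, distance to the convex hull
    e_vo: float    # eV, oxygen vacancy formation energy


def screen(candidates, stab_max=0.025, evo_window=(2.5, 5.0)):
    """Return formulas that pass the stability threshold and fall in the E_vO window.

    evo_window is a placeholder chosen for illustration, not a value from [11].
    """
    lo, hi = evo_window
    return [c.formula for c in candidates
            if c.h_stab < stab_max and lo <= c.e_vo <= hi]


pool = [
    Candidate("cand-1", h_stab=0.00, e_vo=3.1),
    Candidate("cand-2", h_stab=0.12, e_vo=3.4),   # too far above the hull
    Candidate("cand-3", h_stab=-0.05, e_vo=6.2),  # outside the placeholder window
]
print(screen(pool))
```

Keeping the window as a parameter makes it easy to rerun the same screen with bounds suited to a different application.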

Essential Tools and Integration Strategies

The Scientist's Toolkit: Software and Databases

Table 3: Essential Software Tools and Databases for HT-DFT Research

| Tool/Database | Type | Primary Function | Key Applications in Stability Screening |
|---|---|---|---|
| Pymatgen | Python Library | Materials analysis, input generation, and post-processing [35] | Structure manipulation, phase diagram analysis, API access to Materials Project [35] |
| qmpy | Python Framework | High-throughput DFT calculations and database management [11] [36] | OQMD database management, thermodynamic analysis [36] |
| VASP | DFT Code | First-principles electronic structure calculations [11] | Energy calculations, structural relaxation, property prediction [11] |
| OQMD | Database | DFT formation energies and stability data [36] | Convex hull construction, stability assessment, new compound prediction [11] [36] |
| Materials Project API | Web API | Programmatic access to calculated materials data [35] | Retrieving reference data, comparative analysis, structure retrieval [35] |

Integration Workflow for Materials Screening

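Effective integration of these tools enables robust materials screening pipelines. In a typical HT-DFT stability workflow, Pymatgen generates candidate structures and DFT inputs, VASP performs the relaxations and energy calculations, qmpy or the Materials Project API supplies reference data for competing phases, and phase diagram analysis in Pymatgen or qmpy turns the resulting energies into convex-hull stability assessments.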

Best Practices and Uncertainty Management

Data Representation and Functional Considerations

When working with HT-DFT databases, researchers should adhere to several best practices to ensure robust and reproducible results:

Structure Representation: Be aware that databases may store structures in non-standard representations. Always convert to primitive or conventional cells using tools like Pymatgen's `to_conventional()` or `get_primitive_structure()` methods for consistent analysis [37].

Functional Differences: Understand the implications of different DFT functionals. While PBE (GGA) provides reasonable accuracy, r²SCAN (meta-GGA) generally improves thermodynamic accuracy and magnetic ordering in oxides but may overestimate magnetic moments in elemental metals [37].

Cross-Validation: Leverage multiple databases when possible to quantify uncertainty. The observed variances between databases (6% for formation energy, 9% for band gaps) are significant and should be considered when making stability predictions [38].

Stability Thresholds: Use appropriate energy thresholds for stability classification. The OQMD uses 0.025 eV/atom (approximately kT at room temperature) as a practical threshold for predicting synthesizable materials [11].

Uncertainty Quantification in HT-DFT

Recent research has highlighted the importance of quantifying uncertainty in high-throughput calculations. Key considerations include:

Phonon-Induced Uncertainty: Ab initio molecular dynamics (AIMD) calculations reveal significant uncertainties (standard deviation ≈ 0.16 V in overpotentials) due to phonon-induced energy fluctuations [39].

Experimental Comparison: The mean absolute error between DFT and experimental formation energies is 0.096 eV/atom in OQMD, but a significant fraction of this error may be attributed to experimental uncertainties, which show a mean absolute error of 0.082 eV/atom between different experimental measurements [36].

Methodological Consistency: Maintain consistent calculation parameters (pseudopotentials, k-point sampling, U-values) throughout a screening project to minimize systematic errors and ensure comparable results [38].

These protocols and best practices provide a foundation for robust thermodynamic stability screening using modern HT-DFT tools and databases. By leveraging these standardized approaches, researchers can efficiently identify promising new materials while properly accounting for computational uncertainties and methodological limitations.

The discovery of advanced functional materials is often limited by the vastness of chemical space and the resource-intensive nature of traditional experimental approaches. ABO₃ perovskites, characterized by their versatile oxide structure, exhibit remarkable properties for applications including solid oxide fuel cells, ferroelectrics, and thermochemical water splitting [11]. Their stability with respect to cation substitution makes them particularly promising, with many potential compounds awaiting discovery [11] [40]. This case study details a high-throughput density functional theory (HT-DFT) framework for computationally screening ABO₃ perovskites, focusing on two critical properties: thermodynamic stability and oxygen vacancy formation energy. The protocol demonstrates how integrated computational workflows can accelerate materials discovery by rapidly identifying promising candidates for further experimental investigation.

Key Quantitative Data from High-Throughput Screening

A high-throughput DFT screening of 5,329 ABO₃ perovskites, comprising both cubic and distorted structures, provides a robust dataset for analysis [11] [41]. The key quantitative results are summarized in the following tables.

Table 1: Summary of High-Throughput DFT Screening Results for ABO₃ Perovskites

| Property Category | Metric | Value | Description |
|---|---|---|---|
| Dataset Scope | Total Compounds Calculated | 5,329 | Includes cubic and distorted structures [11] |
| Thermodynamic Stability | Predicted Stable Compounds | 395 | Stability threshold: < 36 meV/atom [11] [42] |
| Thermodynamic Stability | Predicted Metastable Compounds | 13 | Stability range: 36-70 meV/atom [42] |
| Structural Analysis | Compositions with Multiple Distortions | 2,162 | Rhombohedral, tetragonal, and orthorhombic [11] |
| Experimental Validation | Experimentally Known Stable Perovskites | 223 | Curated from literature for comparison [11] |

Table 2: Key Property Definitions and Calculated Ranges

| Property | Definition/Calculation | Significance | Representative Range/Notes |
|---|---|---|---|
| Formation Energy (\(H_f\)) | \(H_f^{ABO_3} = E(ABO_3) - \mu_A - \mu_B - 3\mu_O\) [11] | Measures the stability of the compound relative to its elements | Negative values indicate exothermic formation [11] |