High-Throughput Computational Screening of Crystal Structures: Accelerating Drug Discovery and Materials Design

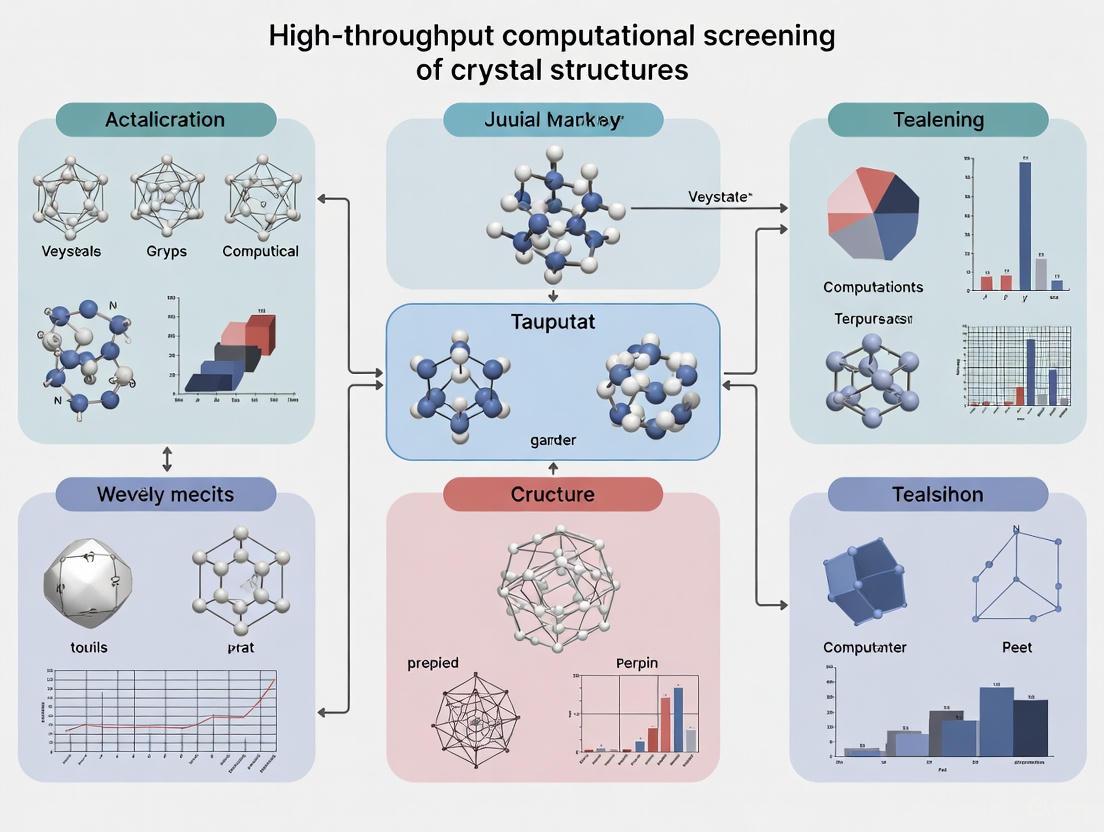

Abstract

This article provides a comprehensive overview of high-throughput computational screening (HTCS) for crystal structures, a transformative approach accelerating discovery in structural biology, drug development, and materials science. We explore the foundational principles of crystallization and the shift towards automated, data-driven pipelines. The scope covers core methodologies like molecular docking, dynamics simulations, and machine learning, alongside diverse applications from lead compound identification to porous material design. Critical discussions on troubleshooting experimental bottlenecks, optimizing screening protocols, and validating results through integrative computational and experimental strategies are included. This resource is tailored for researchers and professionals seeking to implement or understand HTCS to navigate complex chemical spaces efficiently and drive innovation in biomedical and clinical research.

The Foundation: Unraveling the Core Principles and Challenges of Structural Screening

In the era of high-throughput computational screening and structural genomics, the process of determining three-dimensional protein structures remains heavily constrained by a critical experimental step: the production of high-quality crystals. Despite significant advancements in X-ray sources, detector technologies, and structure solution algorithms, macromolecular crystallization continues to be the primary bottleneck in structural biology pipelines. Data from large-scale structural genomics efforts show that, of the purified, soluble proteins entered into TargetDB, only approximately 14% yield a crystal structure [1]. This attrition underscores the challenge crystallization presents, even when targets are pre-selected for expressibility and solubility.

The transition to high-throughput methodologies has systematized the crystallization process but has not fundamentally solved the underlying scientific challenge. As one analysis notes, "Getting crystals is still not a solved problem. High-throughput approaches can help when used skillfully; however, they still require human input in the detailed analysis and interpretation of results to be more successful" [1]. This application note examines the multifaceted nature of the crystallization bottleneck, provides quantitative assessments of current success rates, details experimental protocols for optimization, and visualizes the critical pathways where failures most commonly occur.

Quantitative Assessment of the Crystallization Bottleneck

The following table summarizes key quantitative metrics that highlight the crystallization bottleneck across structural biology pipelines:

Table 1: Quantitative Metrics of the Crystallization Bottleneck in Structural Biology

| Metric | Value | Context/Source |
|---|---|---|
| Overall success rate from purified soluble protein to structure | ~14% | Structural Genomics data [1] |
| Percentage of structural knowledge provided by X-ray crystallography | ~86% | Predominant structural biology technique [1] |
| Number of proteins resulting in structural depositions from PSI efforts | ~5,000 | From over 36,000 purified proteins [1] |
| Crystal size requirements for MicroED | 100-300 nm | Thickness in all dimensions to reduce multiple diffraction [2] |
| Crystal size for early microfocus beamlines | 1 × 1 × 3 µm | First high-resolution structure from microcrystal slurry [2] |
| Modern X-ray beamline size (VMXm) | 0.3 × 2.3 µm | Vertical × horizontal beam dimensions [2] |

The challenge extends beyond initial crystal formation to producing crystals of sufficient quality and size for diffraction studies. While microfocus beamlines and techniques like MicroED have pushed the size boundaries downward, they introduce new complexities in sample handling and data collection. The persistent gap between protein purification and structure determination underscores that the crystallization bottleneck remains a primary constraint in structural biology.

Technical Challenges in Crystal Formation and Optimization

The Multi-Parametric Optimization Problem

Identifying crystallization conditions represents a formidable multi-parametric problem that involves navigating a vast chemical and physical landscape [1]. The fundamental challenge lies in identifying the precise combination of parameters that will drive a protein solution to a state of supersaturation and then guide it along a pathway toward crystalline order rather than amorphous precipitation.

Experimental evidence indicates that subtle variations in chemical conditions can dramatically alter crystal morphology and quality. As one study observed, "fibrous, dendrite crystals abruptly change to plate morphology" with minimal adjustments in protein and cocktail concentrations [3]. This sensitivity to initial conditions makes crystallization optimization particularly challenging, as the phase space is too extensive for exhaustive exploration, even with high-throughput approaches.

The Enigma of Crystallization Pathways

Understanding how proteins crystallize remains a significant scientific challenge. Recent research employing kinetic small-angle scattering studies has revealed several nonclassical pathways for salt-induced protein crystallization [4]. These pathways include:

- Initial gel phases with metastable intermediates

- Continuous presence of protein assemblies

- Crystal growth directly from monomeric solutions

The application of complementary techniques (NSE, NBS, DLS, SANS, microscopy) has been essential for characterizing these distinct pathways [4]. This complexity means that crystallization cannot be approached as a single, uniform process but must be understood as a system-specific phenomenon with varying thermodynamic and kinetic parameters.

Methodologies for Crystallization Optimization

High-Throughput Screening and Optimization Strategies

The following table outlines key reagents and methodologies employed in crystallization optimization:

Table 2: Research Reagent Solutions for Crystallization Optimization

| Reagent/Method | Function/Purpose | Application Context |
|---|---|---|
| Sparse Matrix Screens | Survey historically successful chemical conditions | Initial screening [1] |
| Incomplete Factorial Designs | Statistically sample chemical parameter space | Broad coverage screening [1] |
| Additive Screening | Modify hit conditions with small molecules | Optimization [5] |
| Seeding | Introduce nucleation points to promote growth | Optimization of difficult targets [5] |
| Microbatch-under-oil | Containerize and retard dehydration | Small-volume batch crystallization [3] |
| Optimization Gradients | Systematically vary precipitant concentration | Fine screening [5] |
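
To illustrate what "statistically sampling chemical parameter space" means for the incomplete factorial designs listed in Table 2, the sketch below draws a small, level-balanced subset from a full factorial grid. It is a simplified stand-in rather than a published design algorithm: the factor names, the levels, and the choice of 12 conditions are assumptions made only for illustration.

```python
import itertools
import random
from collections import Counter

# Hypothetical factors and levels, chosen only for illustration.
FACTORS = {
    "precipitant": ["PEG 3350", "PEG 8000", "ammonium sulfate", "MPD"],
    "pH": [5.5, 6.5, 7.5, 8.5],
    "salt": ["none", "NaCl", "MgCl2"],
}


def full_factorial_size(factors):
    """Number of conditions in the exhaustive grid."""
    size = 1
    for levels in factors.values():
        size *= len(levels)
    return size


def balanced_sample(factors, n_conditions, seed=0):
    """Pick n_conditions so each level of each factor is used about equally often."""
    rng = random.Random(seed)
    columns = {}
    for name, levels in factors.items():
        # Cycle through the levels, then shuffle to decouple the factors.
        column = list(itertools.islice(itertools.cycle(levels), n_conditions))
        rng.shuffle(column)
        columns[name] = column
    return [{name: columns[name][i] for name in factors} for i in range(n_conditions)]


conditions = balanced_sample(FACTORS, n_conditions=12)
level_counts = Counter(c["precipitant"] for c in conditions)
print(full_factorial_size(FACTORS), "possible combinations;", len(conditions), "sampled")
```

This simple version balances each factor on its own; it does not guarantee that pairs of factors are balanced or that no condition repeats, which a real incomplete factorial design would also address.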

The Drop Volume Ratio/Temperature (DVR/T) method represents an efficient optimization approach that simultaneously samples temperature alongside the concentrations of protein and cocktail solutions [3]. This method uses exactly the same microbatch-under-oil crystallization protocol for both screening and optimization, improving reproducibility and eliminating complications when converting conditions between methods.

Advanced Techniques for Challenging Samples

For particularly challenging samples that produce only microcrystals, advanced techniques have been developed:

- Microcrystal Electron Diffraction (MicroED) uses a transmission electron microscope to collect datasets from crystals between 100-300 nm in thickness, enabling structure determination from sub-micrometre crystals [2].

- Serial crystallography approaches at X-ray free-electron lasers (XFELs) and synchrotrons allow data collection from microcrystal slurries, bypassing the need for large single crystals [2].

- In vacuo sample environments on advanced beamlines like VMXm improve the signal-to-noise ratio in X-ray diffraction experiments, enabling the use of submicrometre crystals [2].

Experimental Protocols for Crystallization Optimization

DVR/T (Drop Volume Ratio/Temperature) Optimization Protocol

Purpose: To efficiently optimize initial crystallization hits by simultaneously varying protein concentration, precipitant concentration, and temperature without reagent reformulation.

Materials and Reagents:

- Purified protein solution

- Initial hit crystallization cocktail

- Microbatch plates

- Paraffin oil

- Liquid handling robot (optional but recommended)

- Temperature-controlled incubators (4°C, 12°C, 18°C, 23°C)

Procedure:

- Prepare Protein and Cocktail Solutions: Use exactly the same solutions identified in initial screening experiments.

- Design Volume Ratios: Create an 8×8 matrix of protein-to-cocktail volume ratios, typically ranging from 2:1 to 1:2.

- Set Up Microbatch Experiments:

  - Dispense 10 µL of paraffin oil into each well of the microbatch plate.

  - Using liquid handling capabilities, combine protein and cocktail solutions according to the designed volume ratios directly under the oil.

  - Total drop volumes should not exceed 1000 nL for high-throughput approaches.

- Incubate at Multiple Temperatures: Replicate the entire plate setup at four different temperatures (4°C, 12°C, 18°C, 23°C).

- Monitor and Image: Regularly image drops using automated imaging systems over 2-8 weeks.

- Analyze Results: Identify conditions that produce single crystals with optimal morphology.

Technical Notes: The DVR/T method is particularly valuable because it "makes use of the same cocktails for screening and optimization. This prevents batch differences caused by reformulation" [3]. This approach samples temperature simultaneously with the concentrations of the protein and cocktail solutions, providing a multi-parametric optimization in a single experimental series.
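
The DVR/T layout described above can be sketched as a small plan generator. The four temperatures and the 2:1 to 1:2 ratio range come from the protocol. The 400 nL total drop volume, the use of eight ratio steps, and the geometric spacing between them are assumptions made for this sketch, not part of the published method.

```python
TEMPERATURES_C = [4, 12, 18, 23]  # from the protocol above
TOTAL_DROP_NL = 400               # assumed; the protocol only requires <= 1000 nL
N_RATIOS = 8                      # assumed number of ratio steps


def ratio_series(start=2.0, stop=0.5, n=N_RATIOS):
    """Protein:cocktail volume ratios spaced geometrically from 2:1 to 1:2."""
    step = (stop / start) ** (1 / (n - 1))
    return [start * step ** i for i in range(n)]


def drop_plan(total_nl=TOTAL_DROP_NL):
    """One entry per (temperature, ratio) with the volumes to dispense."""
    plan = []
    for temp in TEMPERATURES_C:
        for ratio in ratio_series():
            protein_nl = total_nl * ratio / (1 + ratio)
            cocktail_nl = total_nl - protein_nl
            plan.append({
                "temp_c": temp,
                "ratio": round(ratio, 3),
                "protein_nl": round(protein_nl, 1),
                "cocktail_nl": round(cocktail_nl, 1),
                # Fraction of each stock concentration present in the mixed drop.
                "protein_fraction": round(protein_nl / total_nl, 3),
                "cocktail_fraction": round(cocktail_nl / total_nl, 3),
            })
    return plan


plan = drop_plan()
for entry in plan[:3]:
    print(entry)
```

Because only the volume ratio changes, the protein and cocktail concentrations move in opposite directions across the series, which is how the method varies both without reformulating either stock.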

Microcrystal Handling Protocol for Advanced Beamlines

Purpose: To prepare microcrystals for data collection at microfocus beamlines or using MicroED.

Materials and Reagents:

- Microcrystal slurry (1-10 µm crystals)

- Glow-discharged carbon-coated grids

- Humidity-controlled environment

- Liquid ethane for vitrification

- Fine tips for handling (1-2 µm)

Procedure:

- Harvest Microcrystals: Gently concentrate microcrystals without mechanical damage.

- Grid Preparation: Pipette 2-3 µL of microcrystal slurry onto one side of a glow-discharged carbon-coated grid.

- Blotting: Carefully blot excess liquid in a humidity-controlled environment (≥80% RH) to prevent dehydration.

- Vitrification: Rapidly plunge the grid into liquid ethane for vitrification.

- Screening: Transfer to electron microscope (for MicroED) or synchrotron beamline for data collection.

Technical Notes: "Reducing excess liquid around crystals and matching the sample to the beam size results in reduced background, thereby improving data quality" [2]. For MicroED, crystal thickness must be restricted to between 100 and 300 nm in all dimensions to reduce multiple diffraction events.

Workflow Visualization of Structural Biology Pipeline

The following diagram illustrates the structural biology pipeline, highlighting key bottleneck points in red, optimization checkpoints in yellow, and successful outcomes in green:

Diagram 1: Structural biology pipeline with key bottlenecks.

Crystallization Pathway Complexity

The diagram below visualizes the multiple pathways proteins may take during crystallization, explaining why the process is difficult to control and predict:

Diagram 2: Multiple crystallization pathways and outcomes.

Despite significant technological advances, crystallization remains the central bottleneck in structural biology due to the fundamental complexity of protein crystallization pathways and the multi-parametric nature of the optimization problem. The continued development of microcrystal techniques, including MicroED and serial crystallography, provides alternative paths forward for challenging targets that resist conventional crystallization approaches.

Successful navigation of the crystallization bottleneck requires: (1) systematic application of high-throughput optimization methods like DVR/T; (2) implementation of advanced techniques for microcrystals when conventional approaches fail; and (3) deeper investigation into the fundamental principles governing protein crystallization pathways. As these methods continue to mature and integrate with computational approaches, they offer the potential to gradually transform crystallization from an empirical art to a more predictable engineering discipline, ultimately accelerating drug discovery and structural biology research.

The evolution of crystallization screening from manual methods to automated, high-throughput platforms represents a pivotal advancement in structural biology and drug discovery. X-ray crystallography, the source of over 86% of our structural biological knowledge, depends entirely on obtaining high-quality crystals, making this process a critical bottleneck in structural determination pipelines [1]. High-throughput crystallization has matured over the past decade through structural genomics initiatives, transforming what was once an "empirical art of rational trial and error" into a systematic, technology-driven process [6] [1]. This evolution addresses the fundamental challenge that despite massive efforts, crystallization success rates remain remarkably low, with only approximately 0.2% of initial screening experiments yielding crystals [6]. The integration of computational approaches and automation has significantly accelerated early-stage drug discovery by enabling researchers to explore vast chemical and biological spaces efficiently [7].

The Manual Crystallization Era

Historical Foundations and Basic Principles

The history of protein crystallization extends back over 150 years, with the first documented protein crystals observed in 1840 from earthworm blood evaporated between glass slides [8]. For early biochemists, crystallization served primarily as a purification method rather than a step toward structure determination. These pioneers worked with limited tools—without modern buffers, micropipettes, or refrigeration—relying instead on classical chemical purification techniques like ethanol extraction, salt precipitation, and pH manipulation [8]. The vapor diffusion method, particularly in the hanging drop format, emerged as the dominant manual technique and remains prevalent today due to its effectiveness in gradually achieving supersaturation [9].

Key Manual Techniques and Protocols

In manual hanging drop vapor diffusion, a small volume of protein sample (typically 1-2 μL) is combined with an equal volume of precipitant solution on a glass coverslip, which is then sealed over a reservoir containing a higher concentration of precipitant solution [9]. Through vapor equilibration, water slowly evaporates from the drop, increasing the concentration of both protein and precipitant until the system reaches equilibrium with the reservoir solution. This gradual concentration increase favors the formation of ordered crystals over amorphous precipitate [9]. The lipid cubic phase (LCP) method represents another sophisticated manual approach, particularly valuable for membrane proteins. This technique involves reconstituting the protein into a lipid matrix before dispensing nanoliter-volume boluses (as small as 50-200 nL) and overlaying them with precipitant solutions [10].
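
For the hanging-drop setup described above, the start and end concentrations follow from simple dilution arithmetic. The sketch below assumes an idealized case: only water moves through the vapor phase, the reservoir is large enough that its concentration does not change, and the drop equilibrates until its precipitant concentration matches the reservoir. The function name and the example values are illustrative.

```python
def vapor_diffusion_endpoint(protein_stock_mg_ml, reservoir_conc, protein_ul, reservoir_ul):
    """Idealized start and end state of a vapor-diffusion drop."""
    drop_ul = protein_ul + reservoir_ul
    # Mixing dilutes both the protein and the precipitant.
    protein_start = protein_stock_mg_ml * protein_ul / drop_ul
    precipitant_start = reservoir_conc * reservoir_ul / drop_ul
    # Water leaves the drop until its precipitant matches the reservoir.
    final_drop_ul = drop_ul * precipitant_start / reservoir_conc
    protein_end = protein_start * drop_ul / final_drop_ul
    return {
        "protein_start_mg_ml": protein_start,
        "precipitant_start": precipitant_start,
        "final_drop_ul": final_drop_ul,
        "protein_end_mg_ml": protein_end,
    }


# 1 uL of 10 mg/mL protein mixed with 1 uL of a 2.0 M precipitant reservoir solution.
result = vapor_diffusion_endpoint(10.0, 2.0, 1.0, 1.0)
print(result)
```

With equal volumes, the drop starts at half the stock protein and half the reservoir precipitant concentration, then shrinks to half its volume, so both concentrations roughly double on the way to equilibrium.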

Table: Key Manual Crystallization Methods and Their Characteristics

| Method | Key Features | Typical Volume Range | Primary Applications |
|---|---|---|---|
| Hanging Drop Vapor Diffusion | Gradual concentration via vapor equilibration | 1-10 μL | Soluble proteins, standard screening |
| Sitting Drop Vapor Diffusion | Similar to hanging drop but easier to automate | 1-10 μL | Soluble proteins, robotic setup |
| Lipid Cubic Phase (LCP) | Protein reconstituted in lipid matrix | 50-200 nL | Membrane proteins, difficult targets |
| Microbatch under Oil | Isolation from atmosphere under oil layer | 0.5-5 μL | Soluble proteins, screening |

The Shift to High-Throughput Automation

Technological Drivers and Capabilities

The transition to high-throughput automation was driven by several critical needs: reduced sample consumption, increased screening efficiency, and enhanced reproducibility. Automated systems revolutionized crystallization by enabling researchers to set up thousands of experiments with minimal protein sample, addressing the fundamental limitation of protein availability that often constrained manual approaches [1]. Early automation technologies emerged in the 1980s, with syringe pumps used to deliver reservoir and experiment drop solutions to specialized plates [1]. These pioneering systems introduced the key ingredients for successful automation: solution preparation, experiment setup, information tracking, and image analysis capabilities [1].

Modern automated platforms like the Crystal Gryphon can prepare a 96-condition screen at two different protein concentrations in under two minutes, dispensing nanoliter volumes with precision unattainable through manual pipetting [9]. This level of automation has made it feasible to rapidly screen thousands of chemical conditions while consuming minimal quantities of precious protein samples, dramatically increasing the probability of identifying initial crystallization hits.

Quantitative Impact of Automation

The statistical impact of high-throughput approaches is evident from large-scale structural genomics initiatives. Data from the Protein Structure Initiative (PSI) reveals that of approximately 45,000 soluble, purified targets processed, about 8,000 produced crystals, and ultimately only 5,000 resulted in crystal structures [6]. This translates to a crystallization success rate of approximately 18% at the target level, with only about 11% of targets ultimately yielding structures. At the individual experiment level, the success rate is even more stark, with only about 0.2% of the approximately 150,000 screening experiments producing crystal leads [6].

Table: Crystallization Success Rates in High-Throughput Environments

| Metric | Success Rate | Data Source | Context |
|---|---|---|---|
| Targets yielding crystals | ~18% (8K/45K) | PSI Structural Genomics | Soluble, purified proteins [6] |
| Targets yielding structures | ~11% (5K/45K) | PSI Structural Genomics | Soluble, purified proteins [6] |
| Individual experiments yielding crystals | ~0.2% (277/150K) | Hauptman-Woodward HTS Lab | 96 targets screened against 1536 conditions (about 150K experiments) [6] |
| Overall structural determination | 13% | PSI Data | Percentage of purified soluble proteins resulting in PDB deposits [1] |
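
The percentages in this table can be reproduced directly from the counts quoted in the text. The short calculation below uses only those rounded counts (45,000 targets, 8,000 crystallized, 5,000 structures, 277 leads from roughly 150,000 experiments), so its results are approximate in the same way the source figures are.

```python
def percent(successes, attempts):
    """Success rate as a percentage."""
    return 100.0 * successes / attempts


# Rounded counts as quoted in the text.
purified_targets = 45_000
crystallized_targets = 8_000
solved_structures = 5_000
screening_experiments = 150_000
crystal_leads = 277

crystal_rate = percent(crystallized_targets, purified_targets)
structure_rate = percent(solved_structures, purified_targets)
crystal_to_structure = percent(solved_structures, crystallized_targets)
lead_rate = percent(crystal_leads, screening_experiments)

print(f"targets yielding crystals:   {crystal_rate:.1f}%")
print(f"targets yielding structures: {structure_rate:.1f}%")
print(f"crystals reaching structure: {crystal_to_structure:.1f}%")
print(f"experiments yielding leads:  {lead_rate:.2f}%")
```

The third figure is not stated in the table but follows from the same counts: a little under two thirds of the targets that crystallize go on to give a structure.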

Experimental Protocols and Methodologies

Protein Sample Preparation Guidelines

Proper protein preparation is fundamental to successful crystallization regardless of methodological approach. Proteins should be highly pure (>90% homogeneity) and concentrated to 10-20 mg/mL for initial screening trials [9]. Sample handling requires care to maintain stability: proteins should be kept on ice when not refrigerated, should not be vortexed (to prevent denaturation), and should be centrifuged at 14,000 × g for 5-10 minutes at 4°C to remove precipitated protein and particulate matter before crystallization trials are set up [9]. Advanced formulation techniques like differential scanning fluorimetry (DSF) can identify optimal buffer conditions that stabilize the protein and enhance crystallization likelihood by measuring thermal stability shifts in different chemical environments [8].

Manual LCP Crystallization Protocol

The LCP crystallization method provides a specialized protocol for challenging targets, particularly membrane proteins:

- Reconstitution: Mix protein solution with lipid (typically monoolein) to form protein-laden LCP as described in Reconstitution of Protein in LCP protocol [10].

- Loading: Transfer the protein-laden LCP into a 10 μL gas-tight syringe affixed to a repetitive dispenser [10].

- Dispensing: Attach a short, flat-tipped needle (26 gauge) and deliver 200 nL LCP boluses to the center of each well in a glass sandwich plate, maintaining optimal needle height (200-300 μm above the surface) [10].

- Precipitant Addition: Add 1 μL of precipitant solution on top of each LCP bolus [10].

- Sealing: Cap groups of four wells with an 18 mm square glass coverslip, using a wooden toothpick to press around the wells for proper sealing [10].

- Incubation: Incubate the plate at constant temperature (20°C or higher for monoolein-based LCP), avoiding fluctuations that cause light-scattering droplets [10].

The entire process of manually setting up a 96-well LCP plate, including mixing protein and lipid, takes approximately one hour [10].
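
The material needs for one manual LCP plate follow from the volumes in the protocol above. The sketch below works them out for a 96-well plate, ignoring syringe dead volume and dispensing losses, which is an assumption a real setup would need to relax.

```python
import math

WELLS = 96
BOLUS_NL = 200                 # LCP bolus per well, from the protocol
PRECIPITANT_UL_PER_WELL = 1.0  # precipitant overlay per well
SYRINGE_UL = 10                # gas-tight syringe capacity
WELLS_PER_COVERSLIP = 4        # wells capped by one 18 mm coverslip

lcp_total_ul = WELLS * BOLUS_NL / 1000
boluses_per_fill = SYRINGE_UL * 1000 // BOLUS_NL
syringe_fills = math.ceil(WELLS / boluses_per_fill)
precipitant_total_ul = WELLS * PRECIPITANT_UL_PER_WELL
coverslips = WELLS // WELLS_PER_COVERSLIP

print(f"LCP needed: {lcp_total_ul} uL in {syringe_fills} syringe fills")
print(f"Precipitant needed: {precipitant_total_ul} uL, coverslips: {coverslips}")
```

Under these assumptions a single 10 μL syringe load does not cover a full plate, so the loading step has to be repeated at least once.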

Automated Crystallization Setup with Crystal Gryphon

The Crystal Gryphon automated system exemplifies modern high-throughput crystallization:

Materials Required:

- Deep well block with screen solutions (e.g., Greiner 1.0 mL MasterBlock)

- Empty crystallization plate (e.g., Art Robbins 2-well Intelliplate)

- Protein solution (80-100 μL at appropriate concentration) in a 200 μL PCR tube

- HD clear duct tape for sealing [9]

Setup Protocol:

- Ensure wash stations have adequate water supply and all tubing is properly connected.

- Power up the dispensing stage and pumps, then open the Crystal Gryphon software.

- Place empty crystallization plate in stage position 1.

- Position screen solution block (with sealing tape removed) in stage position 2.

- Place open protein solution tube in stage position 10.

- Load appropriate dispensing protocol (e.g., "2-drop Screen" for two protein concentrations).

- Verify protocol compatibility with plates and sufficient protein volume.

- Initiate dispensing with the "GO" button [9].

Typical 2-drop Screen Protocol:

- Wash 96-syringe head twice with 50 μL in wash station.

- Wash nano-needle with 3 cycles in solvent reservoir.

- Aspirate 55 μL of screen solution from deep well block.

- Pre-dispense 5 μL to compress bubbles.

- Nano-aspirate 85 μL protein from sample tube.

- Dispense 40 μL screen into crystallization plate reservoirs.

- Dispense 200 nL screen + 200 nL protein for first drop and 200 nL screen + 400 nL protein for second drop [9].
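
A quick volume budget shows how the listed steps fit together. The calculation below takes the volumes in the 2-drop protocol at face value for a 96-condition plate; treating whatever is left over as dead volume or excess is an interpretation, not something the protocol states.

```python
CONDITIONS = 96
PROTEIN_ASPIRATED_UL = 85.0
DROP_PROTEIN_NL = (200, 400)  # protein in drop 1 and drop 2
DROP_SCREEN_NL = (200, 200)   # screen solution in drop 1 and drop 2
SCREEN_ASPIRATED_UL = 55.0    # per syringe channel
PRE_DISPENSE_UL = 5.0
RESERVOIR_UL = 40.0

# Protein consumed by all drops on the plate, and what remains of the aspirate.
protein_used_ul = CONDITIONS * sum(DROP_PROTEIN_NL) / 1000
protein_left_ul = PROTEIN_ASPIRATED_UL - protein_used_ul

# Screen solution remaining in each channel after reservoir and drops.
screen_left_ul = (
    SCREEN_ASPIRATED_UL - PRE_DISPENSE_UL - RESERVOIR_UL - sum(DROP_SCREEN_NL) / 1000
)

print(f"protein used: {protein_used_ul:.1f} uL, left over: {protein_left_ul:.1f} uL")
print(f"screen left per channel: {screen_left_ul:.1f} uL")
```

The drops account for well under the 85 μL aspirated, which is consistent with the 80-100 μL of protein solution listed under the required materials.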

Integrated Workflows and Visualization

Evolution of Crystallization Screening Workflow

The following diagram illustrates the key stages in the evolution from manual to automated crystallization screening, highlighting the integrated computational and experimental approaches that define modern high-throughput structural biology:

Modern High-Throughput Crystallization Pipeline

Contemporary high-throughput crystallization integrates multiple automated processes into a seamless pipeline.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Key Research Reagent Solutions for High-Throughput Crystallization

| Reagent/Material | Function/Purpose | Examples/Specifications |
|---|---|---|
| Glass Sandwich Plates | Optimal optical properties for crystal detection in LCP | Paul Marienfeld GmbH cat# 0890003; Molecular Dimensions cat# MD11-55 [10] |
| Pre-greased 24-well Crystallization Trays | Manual vapor diffusion experiments | Hampton Research VDX plates with siliconized coverslips [9] |
| Gas-tight Syringes | Precise dispensing of viscous LCP mixtures | Hamilton 7653-01 (10 μL without needle) [10] |
| Repetitive Syringe Dispenser | Automated bolus delivery for LCP | Hamilton 83700 (modifiable for 70 nL delivery) [10] |
| Flat-tipped Needles | LCP bolus application without clogging | Hamilton 7804-03 (26 gauge, 0.375 inch) [10] |
| Sparse Matrix Screens | Initial condition screening | Commercial screens (e.g., Hampton Research Crystal Screen) [10] [1] |
| Precipitant Solutions | Drive crystallization through supersaturation | Ammonium sulfate, PEGs of various molecular weights [9] |
| Buffer Systems | Maintain protein stability and pH | HEPES, Tris, phosphate buffers at appropriate concentrations [9] |
| Additives/Ligands | Enhance crystallization of specific targets | Metal ions, cofactors, small molecule ligands [8] |

Integration with Computational Approaches

The next evolutionary stage in crystallization screening involves tight integration with computational methods. High-throughput computational screening (HTCS) leverages advanced algorithms, machine learning, and molecular simulations to efficiently explore vast chemical spaces, significantly accelerating early-stage drug discovery [7]. While initially developed for small molecule drug discovery, these approaches are increasingly applied to crystallization condition prediction.

Machine learning algorithms like Random Forest and CatBoost are being employed to predict molecular interactions and optimize conditions, though their application to protein crystallization specifically is still emerging [11]. These computational approaches benefit from incorporating multiple descriptor types: structural features (pore dimensions, surface area), molecular features (atomic types, bonding modes), and chemical features (binding affinities, thermodynamic parameters) [11]. The development of interpretable machine learning models allows researchers to identify which factors most significantly influence crystallization success, creating a feedback loop that continuously improves both experimental and computational screening strategies [11].

The evolution from manual to high-throughput crystallization screening has fundamentally transformed structural biology's capacity to tackle challenging molecular targets. This progression has been characterized by increasing automation, miniaturization of experiments, and integration of computational approaches. Where early crystallizers relied on artisanal techniques and serendipity, modern structural biologists employ systematic, technology-driven processes capable of screening thousands of conditions with minimal sample consumption.

The future of crystallization screening lies in deeper integration between experimental and computational paradigms. Machine learning algorithms will increasingly guide condition selection based on protein properties and historical success data [7] [11]. High-throughput computational screening approaches will enable virtual testing of crystallization conditions before wet lab experiments, optimizing resource allocation [7]. As these technologies mature, they promise to overcome the persistent challenge of crystallization that has long constrained structural biology, ultimately accelerating drug discovery and expanding our understanding of biological systems at molecular resolution.

In research on high-throughput computational screening of crystal structures, the experimental pipeline for producing diffraction-quality crystals is foundational. This pipeline transforms genetic information into structured, three-dimensional knowledge, enabling rational drug design. The high-throughput philosophy aims to accelerate this process through automation, parallelization, and data-driven iteration, yet the fundamental milestones remain critically important. Success hinges on meticulously optimizing each step, from gene sequence to X-ray diffraction experiment, to produce the high-quality crystals required for elucidating atomic-level structures. This application note details the key milestones and provides standardized protocols to establish a robust pipeline for generating diffraction-quality protein crystals.

The Crystallization Pipeline: Key Milestones and Success Rates

The journey from a gene of interest to a refined atomic model is a multi-stage process with significant attrition at each step. The following milestones represent the critical path in a structural biology pipeline.

Table 1: Key Milestones and Estimated Success Rates in the Crystallization Pipeline

| Pipeline Milestone | Key Activities | Estimated Success Rate | Cumulative Impact |
|---|---|---|---|
| 1. Cloning & Expression | Construct design, vector preparation, recombinant protein expression in a host system (e.g., E. coli, insect cells). | Highly variable; ~50% of soluble proteins may express adequately [1]. | Initial success determines feasibility for the entire project. |
| 2. Purification & Quality Control | Cell lysis, affinity/size-exclusion chromatography, buffer exchange. Assessment of purity, monodispersity, and stability. | ~13% of purified, soluble proteins progress to a deposited structure [1]. | The single largest bottleneck; purity (>95%) and homogeneity are paramount [12]. |
| 3. Crystallization | Initial screening using sparse matrix or statistical approaches, followed by optimization of hit conditions. | A significant limiting factor, with a high failure rate for novel proteins [1]. | Success is non-linear and often requires iterative cycles of optimization. |
| 4. Crystal Harvesting & Diffraction | Cryo-protection, crystal mounting, and X-ray diffraction data collection at synchrotron sources. | Not all crystals diffract; among those that do, resolution can vary widely. | The final experimental gate; defines the quality and resolution of structural data. |

The following workflow diagram visualizes this pipeline, integrating the continuous feedback loops essential for success.

Detailed Experimental Protocols for Key Milestones

Milestone 2: Protein Purification and Quality Assurance

A rigorous purification and quality control protocol is essential for generating protein samples capable of forming crystals.

Protocol: Size-Exclusion Chromatography (SEC) for Crystallization-Grade Protein

- Objective: To separate monodisperse, properly folded protein from aggregates and contaminants, while simultaneously transferring the protein into a crystallization-friendly buffer.

- Materials:

  - Purified protein sample (from affinity chromatography)

  - FPLC system

  - High-resolution SEC column (e.g., Superdex 200 Increase 10/300 GL)

  - SEC buffer: 25 mM HEPES pH 7.5, 150 mM NaCl, 5% (v/v) glycerol, 1 mM TCEP

- Method:

  - Equilibrate the SEC column with at least 1.5 column volumes of degassed SEC buffer at a recommended flow rate (e.g., 0.5 mL/min).

  - Concentrate the protein sample to a volume of no more than about 1% of the column volume (e.g., ≤ 250 µL for a 24 mL column) and centrifuge at 14,000 × g for 10 minutes to remove any precipitate.

  - Inject the supernatant onto the column using an automated sample loop or syringe.

  - Monitor the UV absorbance at 280 nm and collect the peak corresponding to the target protein's oligomeric state. Avoid collecting the leading or trailing edges of the peak.

  - Concentrate the collected fractions to the desired concentration for crystallization (typically 5-20 mg/mL, determined empirically).

  - Perform immediate quality control via SDS-PAGE (purity) and Dynamic Light Scattering (DLS) to confirm monodispersity. A polydispersity value (%Pd) below 20% is generally desirable for crystallization trials [12].
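
Two of the numbers in this protocol lend themselves to a quick check: the equilibration time and the post-run quality gate. The sketch below uses the 24 mL column volume, 1.5 column volumes, and 0.5 mL/min from the method, and the 20% polydispersity and 5-20 mg/mL figures as thresholds. Treating the typical concentration range as a warning condition is an assumption of this sketch, since the protocol says the concentration is determined empirically.

```python
COLUMN_VOLUME_ML = 24.0
EQUILIBRATION_CV = 1.5
FLOW_ML_PER_MIN = 0.5


def equilibration_minutes(column_ml=COLUMN_VOLUME_ML, n_cv=EQUILIBRATION_CV,
                          flow_ml_per_min=FLOW_ML_PER_MIN):
    """Minimum time needed to pass the equilibration volume through the column."""
    return column_ml * n_cv / flow_ml_per_min


def qc_warnings(percent_pd, conc_mg_ml, max_pd=20.0, conc_range=(5.0, 20.0)):
    """Return a list of reasons a sample may not be ready for crystallization trials."""
    warnings = []
    if percent_pd >= max_pd:
        warnings.append(f"polydispersity {percent_pd}% is not below {max_pd}%")
    low, high = conc_range
    if not low <= conc_mg_ml <= high:
        warnings.append(f"concentration {conc_mg_ml} mg/mL is outside {low}-{high} mg/mL")
    return warnings


print(equilibration_minutes(), "minutes of equilibration")
print(qc_warnings(percent_pd=12.0, conc_mg_ml=10.0))
print(qc_warnings(percent_pd=28.0, conc_mg_ml=3.0))
```

Returning a list of warnings rather than a single pass or fail flag keeps the reason for a rejection visible, which matters when the thresholds themselves are rules of thumb.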

Milestone 3: High-Throughput Crystallization Screening

Initial crystallization screening is a multi-parametric problem explored empirically using high-throughput methods [1].

Protocol: High-Throughput Sitting-Drop Vapor-Diffusion Screening

- Objective: To rapidly identify initial crystallization conditions for a purified protein sample by testing hundreds of chemical cocktails in a nanoliter-scale format.

- Materials:

- Crystallization robot (e.g., Mosquito, Dragonfly)

- 96-well sitting-drop crystallization plates

- Pre-formulated commercial sparse-matrix screens (e.g., JCSG, MemGold, PEG/Ion)

- Purified, concentrated protein sample

- Clear seal or tape

- Method:

- Plate Preparation: Centrifuge the crystallization plates briefly to ensure reservoir wells are empty and clear.

- Dispensing Reservoirs: Using the robot, dispense 50-100 µL of each screening cocktail into the corresponding reservoir wells.

- Setting up Drops: For each condition, the robot mixes:

- 100 nL of protein sample

- 100 nL of reservoir cocktail solution

The 200 nL combined drop is dispensed onto the crystallization plate's micro-bridge or sitting-drop post.

- Sealing: Seal the entire plate with a transparent, adhesive seal to prevent evaporation and allow for controlled vapor diffusion.

- Incubation: Place the plate in a vibration-free, temperature-controlled incubator (e.g., 4°C, 20°C). The choice of temperature is a variable in the screening process.

- Imaging: Use an automated imaging system to regularly photograph each drop (e.g., daily for the first week, weekly thereafter) to monitor for crystal growth, precipitation, or phase separation.
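Planning a screen mostly comes down to mapping conditions onto wells and checking how much protein the plate consumes. The sketch below assumes a standard 8 × 12 plate with one drop per condition and the 100 nL protein volume given above; the function names and the optional dead-volume argument are illustrative and do not correspond to any robot vendor's software.

```python
from string import ascii_uppercase

ROWS, COLS = 8, 12  # standard 96-well plate geometry


def plate_wells():
    """Well identifiers in row-major order: A1, A2, ..., H12."""
    return [f"{ascii_uppercase[r]}{c}" for r in range(ROWS) for c in range(1, COLS + 1)]


def assign_conditions(conditions):
    """Map screen conditions onto wells in plate order."""
    wells = plate_wells()
    if len(conditions) > len(wells):
        raise ValueError("more conditions than wells on one plate")
    return dict(zip(wells, conditions))


def protein_needed_ul(n_drops, protein_nl_per_drop=100.0, dead_volume_ul=0.0):
    """Total protein volume in microlitres for n_drops, plus an optional dead volume."""
    return n_drops * protein_nl_per_drop / 1000.0 + dead_volume_ul


if __name__ == "__main__":
    layout = assign_conditions([f"condition_{i + 1}" for i in range(96)])
    print(layout["A1"], layout["H12"])
    print(f"Protein for one plate: {protein_needed_ul(96):.1f} uL")
```

At 100 nL per drop, a full 96-condition plate uses under 10 µL of protein before dead volume, which is what makes nanoliter-scale screening practical for scarce samples.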

Table 2: The Scientist's Toolkit: Key Reagents for Crystallization

| Research Reagent / Material | Function in the Pipeline |

|---|---|

| Affinity Chromatography Resin | First purification step via a genetically encoded tag (e.g., His-tag, GST-tag), enabling rapid capture of the target protein from complex cell lysates. |

| Size-Exclusion Chromatography (SEC) Column | Critical polishing step to separate protein monomers/oligomers from aggregates, ensuring a homogeneous sample for crystallization [12]. |

| TCEP Reductant | A stable, odorless reducing agent that prevents oxidation of cysteine residues, maintaining protein stability over the long timescales of crystallization trials [12]. |

| Sparse-Matrix Screening Kits | Commercial suites of crystallization conditions (e.g., from Hampton Research, Jena Bioscience) that sample "chemical space" where proteins have historically crystallized, providing the initial leads [1]. |

| Polyethylene Glycol (PEG) | A versatile polymer precipitant that induces macromolecular crowding, reducing protein solubility and promoting crystal lattice formation [12]. |

Integrating Computational Screening and Crystal Quality Assessment

The modern structural genomics pipeline is augmented by computational tools that predict success and analyze outcomes.

Computational Pre-Screening of Constructs and Conditions

Before wet-lab experiments begin, computational tools can prioritize targets. AlphaFold3 models can guide construct design by identifying and eliminating flexible regions that hinder crystallization [12]. Furthermore, data mined from the Protein Data Bank (PDB) can be used to build predictive algorithms that suggest likely crystallization conditions for a target based on its sequence or properties, informing the choice of initial screens [12].
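One common way to flag candidate flexible regions in a predicted model is to look for runs of residues with low per-residue confidence (pLDDT). The sketch below assumes the pLDDT values have already been extracted into a list; the threshold of 50 and the minimum segment length of 5 are illustrative choices, not values taken from the cited work.

```python
def low_confidence_segments(plddt, threshold=50.0, min_len=5):
    """Return (start, end) residue ranges (1-based, inclusive) where pLDDT
    stays below `threshold` for at least `min_len` consecutive residues."""
    segments = []
    start = None
    for i, score in enumerate(plddt, start=1):
        if score < threshold:
            if start is None:
                start = i  # open a new low-confidence run
        else:
            if start is not None and i - start >= min_len:
                segments.append((start, i - 1))
            start = None
    # Close a run that extends to the C-terminus.
    if start is not None and len(plddt) - start + 1 >= min_len:
        segments.append((start, len(plddt)))
    return segments


if __name__ == "__main__":
    # Hypothetical profile: disordered termini around a confident core.
    profile = [30.0] * 6 + [90.0] * 10 + [40.0] * 3 + [95.0] * 5 + [35.0] * 7
    print(low_confidence_segments(profile))
```

Segments returned at the termini are natural candidates for truncation, whereas internal segments may call for loop deletion or replacement and usually deserve closer inspection.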

Deep-Learning for Predicting Diffraction Quality

A major bottleneck is identifying which crystals will yield high-resolution diffraction data. Traditional methods rely on experienced researchers visually inspecting crystals. An emerging solution uses deep learning to predict diffraction quality directly from optical images of the crystals.

Protocol: Deep-Learning Assessment of Crystal Diffraction Quality

- Objective: To classify protein crystals based on their predicted X-ray diffraction quality, allowing researchers to prioritize the best crystals for data collection.

- Materials:

- Automated imaging microscope

- Trained deep-learning model (e.g., based on ConvNeXt architecture with CBAM attention module [13])

- Curated dataset of crystal images paired with diffraction data

- Method:

- Image Acquisition: Capture high-resolution, brightfield images of all crystals in the crystallization plate.

- Pre-processing: The images are normalized and prepared for input into the neural network.

- Prediction: The trained model processes the crystal image and outputs a classification score (e.g., "High," "Medium," or "Low" quality) based on learned features correlated with diffraction performance.

- Prioritization: Crystals classified as "High" quality are harvested and mounted for X-ray diffraction experiments first, increasing the overall efficiency of beamtime use [13].
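The prediction and prioritization steps can be illustrated independently of any particular network. The sketch below assumes the trained model returns three raw scores (logits) per crystal in the order Low, Medium, High; the well names and logit values are made up, and the ranking rule (sort by probability of "High") is one reasonable choice rather than the published procedure.

```python
import math

LABELS = ("Low", "Medium", "High")


def softmax(logits):
    """Convert raw class scores to probabilities (numerically stable)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def prioritize(crystal_logits):
    """Rank crystals by predicted probability of the 'High' class.

    crystal_logits: dict mapping well ID -> (low, medium, high) logits.
    Returns a list of (well, predicted_label, p_high), best first.
    """
    ranked = []
    for well, logits in crystal_logits.items():
        probs = softmax(logits)
        label = LABELS[probs.index(max(probs))]
        ranked.append((well, label, probs[2]))
    ranked.sort(key=lambda item: item[2], reverse=True)
    return ranked


if __name__ == "__main__":
    demo = {"A1": (2.0, 0.5, 0.1), "B7": (0.1, 0.3, 3.0), "C3": (0.2, 1.5, 1.0)}
    for well, label, p_high in prioritize(demo):
        print(well, label, round(p_high, 3))
```

Ranking on the probability rather than the hard label keeps borderline "Medium" crystals in a sensible order when beamtime allows more mounts than there are "High" predictions.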

The following workflow integrates this computational assessment step into the traditional crystal handling process.

The path from protein expression to diffraction-quality crystals is defined by a series of interdependent milestones, where success is contingent upon rigorous optimization at each stage. The integration of high-throughput experimental methods—from automated purification and crystallization to robotic imaging—with advanced computational predictions for construct design and crystal quality assessment, creates a powerful, modern pipeline. This synergistic approach, which feeds experimental data back into computational models for continuous refinement, maximizes the probability of success. It systematically converts the formidable challenge of protein crystallization into a more manageable, data-driven process, thereby accelerating structural biology and structure-based drug discovery.

Application Notes

The exploration of chemical space is a fundamental challenge in modern drug discovery and materials science. The vastness of synthetically accessible compounds, estimated to exceed 70 billion in make-on-demand libraries and potentially 10^14 structures in virtual spaces, makes exhaustive screening computationally intractable [14] [15]. This document outlines application notes and protocols for employing sparse matrix and statistical sampling strategies to navigate this immense complexity efficiently, framed within high-throughput computational screening of crystal structures.

Sparse matrix approaches involve screening a small, strategically selected subset of conditions or compounds that represent the broader chemical diversity. When combined with machine learning (ML) and statistical sampling, these methods enable the rapid identification of promising candidates for further investigation, such as stable crystal structures or novel drug ligands [16] [14]. For instance, machine learning-guided docking has demonstrated the potential to reduce the computational cost of screening multi-billion-compound libraries by over 1,000-fold [14].

Key applications in the field include:

- Stable Crystal Discovery: ML models, particularly universal interatomic potentials (UIPs), act as highly accurate pre-filters for Density Functional Theory (DFT) calculations, efficiently identifying thermodynamically stable inorganic crystals from vast hypothetical spaces [16].

- Ligand Identification for Drug Discovery: Conformal prediction frameworks combined with classifiers like CatBoost can process ultralarge chemical libraries to identify top-scoring compounds for specific protein targets (e.g., G protein-coupled receptors) with high sensitivity (e.g., 0.87-0.88) [14].

- Chemical Space Visualization: Tools like MolCompass and infiniSee use parametric t-SNE and other algorithms to project high-dimensional chemical descriptor data onto 2D maps, enabling intuitive, cluster-based visual analysis and validation of QSAR/QSPR models [17] [15] [18].

The following sections detail the quantitative benchmarks, experimental protocols, and essential toolkits that underpin these advanced navigation strategies.

Performance Benchmarking Data

The table below summarizes key performance metrics for different chemical space navigation strategies, highlighting the trade-offs between computational cost and predictive accuracy.

Table 1: Performance Metrics of Chemical Space Navigation Strategies

| Strategy / Model | Library Size | Key Performance Metric | Result | Computational Efficiency |

|---|---|---|---|---|

| ML-Guided Docking (CatBoost) [14] | 3.5 billion compounds | Screening Efficiency (Reduction in docking) | >1,000-fold reduction | Docks only ~10% of library |

| ML-Guided Docking (CatBoost) [14] | 234 million compounds | Sensitivity | 0.87 - 0.88 | ~90% of virtual actives identified |

| Universal Interatomic Potentials (UIPs) [16] | ~10^5+ crystals | Pre-screening Accuracy | Surpasses other ML methodologies | Cheaper pre-screen for DFT |

| infiniSee Platform [15] | 10^14 molecules | Search Speed | Results in seconds to minutes on standard hardware | Enables real-time navigation of ultra-large spaces |

| Matbench Discovery Framework [16] | Vast inorganic crystals | False-Positive Rate | Accurate regressors can have high false-positive rates near decision boundaries | Emphasizes need for classification metrics |

Experimental Protocols

Protocol 1: Machine Learning-Guided Docking Screen

This protocol describes a workflow for virtual screening of multi-billion-scale compound libraries using a combination of machine learning and molecular docking [14].

- Library Preparation: Compile the virtual compound library (e.g., Enamine REAL space). Apply rule-of-four (molecular weight <400 Da, cLogP < 4) filtering to focus on drug-like compounds [14].

- Target Preparation: Prepare the 3D structure of the target protein (e.g., a G protein-coupled receptor). This includes adding hydrogen atoms, assigning protonation states, and defining the binding site [14].

- Initial Docking and Training Set Generation:

- Perform molecular docking for a randomly sampled subset of 1 million compounds from the large library.

- Rank the results by docking score and define an energy threshold, typically based on the top-scoring 1% of compounds, to classify them as "virtual actives" (minority class) versus "virtual inactives" (majority class) [14].

- Machine Learning Classifier Training:

- Encode the chemical structures of the 1-million-compound training set using molecular descriptors. Morgan2 fingerprints (the RDKit implementation of ECFP4) are recommended for their optimal balance of performance and computational cost [14].

- Train a classification algorithm, such as CatBoost, on these features using the binary labels (active/inactive) derived from the docking scores. Use 80% of the data for proper training and 20% for calibration [14].

- Conformal Prediction for Library Screening:

- Apply the trained classifier to the entire multi-billion-compound library within a Mondrian conformal prediction (CP) framework. This framework outputs normalized P values for each compound [14].

- Set a significance level (ε, e.g., 0.08 to 0.12) to control the error rate. The CP framework will then classify compounds into "virtual active," "virtual inactive," or "both" sets. The "virtual active" set is the drastically reduced candidate list for explicit docking [14].

- Final Docking and Experimental Validation:

- Perform full molecular docking on the much smaller "virtual active" set identified by the ML model.

- Select top-ranking compounds from this final docked list for experimental validation (e.g., synthesis and binding assays) [14].
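The conformal prediction step above can be sketched in a few lines. This is a simplified, pure-Python illustration of class-conditional (Mondrian) conformal classification, assuming the classifier outputs a probability of the "active" class and that nonconformity is defined as one minus the probability of the class in question. The calibration scores, the function names, and the extra "empty" outcome (no class passes the threshold, which standard conformal prediction permits) are illustrative and not taken from the cited workflow.

```python
def p_value(test_score, calibration_scores):
    """Fraction of calibration nonconformity scores at least as large as
    the test score, with the usual +1 smoothing."""
    n_at_least = sum(1 for s in calibration_scores if s >= test_score)
    return (n_at_least + 1) / (len(calibration_scores) + 1)


def conformal_label(prob_active, cal_active, cal_inactive, epsilon):
    """Assign a compound to a prediction set at significance level epsilon.

    cal_active:   nonconformity scores (1 - P(active)) of true actives
                  in the calibration set.
    cal_inactive: nonconformity scores (1 - P(inactive)) of true inactives
                  in the calibration set.
    """
    p_active = p_value(1.0 - prob_active, cal_active)
    p_inactive = p_value(prob_active, cal_inactive)  # 1 - P(inactive) = P(active)
    keep_active = p_active > epsilon
    keep_inactive = p_inactive > epsilon
    if keep_active and keep_inactive:
        return "both"
    if keep_active:
        return "virtual active"
    if keep_inactive:
        return "virtual inactive"
    return "empty"


if __name__ == "__main__":
    # Tiny hypothetical calibration sets; with 4 scores the smallest possible
    # p-value is 0.2, so epsilon is set to 0.25 here purely for illustration.
    cal = [0.1, 0.3, 0.5, 0.7]
    for prob in (0.95, 0.6, 0.05):
        print(prob, conformal_label(prob, cal, cal, epsilon=0.25))
```

Computing p-values separately per class is what makes the procedure Mondrian: the error guarantee holds for the rare "virtual active" class on its own rather than only on average across both classes.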

Protocol 2: Visual Validation of a QSAR/QSPR Model with MolCompass

This protocol uses the MolCompass framework for the visual validation of a QSAR/QSPR model, helping to identify model weaknesses and "model cliffs" [17].

- Model and Dataset Preparation:

- Train a QSAR/QSPR model (e.g., a binary classifier for a specific biological activity) on a training dataset.

- Prepare a validation set of compounds with known experimental outcomes and run the model to obtain predictions.

- Data Encoding and Projection:

- Encode all compounds (from both training and validation sets) into a high-dimensional space using chemical descriptors [17].

- Use a pre-trained parametric t-SNE model (the core of MolCompass) to project the high-dimensional descriptors of all compounds onto a deterministic 2D map. This projection groups structurally similar compounds together in clusters [17].

- Visualization and Error Mapping:

- Visualize the 2D chemical map using the MolCompass KNIME node, web tool, or Python package.

- Color the data points (compounds) based on the prediction error (e.g., the difference between the model's prediction and the experimental value) or the binary prediction correctness (correct/incorrect) [17].

- Analysis and Model Refinement:

- Identify regions or specific clusters on the map where the model systematically makes incorrect predictions. These are the "model cliffs" where chemical similarity does not correlate with predictive reliability [17].

- Use these visual insights to refine the model's Applicability Domain (AD) and prioritize areas for model improvement, such as acquiring more training data in the problematic chemical regions [17].
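The error-mapping idea can also be approximated numerically once 2D coordinates are available. The sketch below does not use the MolCompass API; it assumes each compound has already been projected to an (x, y) position and tagged as correctly or incorrectly predicted, then bins the map into square cells and reports cells with a high error rate. Cell size, minimum population, and the error cutoff are illustrative parameters.

```python
from collections import defaultdict


def error_rate_by_cell(points, cell_size=1.0):
    """Bin (x, y, correct) points into square cells.

    Returns {cell: (n_points, error_rate)} where cell is an (ix, iy) tuple.
    """
    counts = defaultdict(lambda: [0, 0])  # cell -> [n_points, n_errors]
    for x, y, correct in points:
        cell = (int(x // cell_size), int(y // cell_size))
        counts[cell][0] += 1
        if not correct:
            counts[cell][1] += 1
    return {cell: (n, errors / n) for cell, (n, errors) in counts.items()}


def problem_cells(points, cell_size=1.0, min_points=3, max_error=0.5):
    """Cells with enough compounds and an error rate above max_error."""
    rates = error_rate_by_cell(points, cell_size)
    return sorted(
        cell for cell, (n, rate) in rates.items()
        if n >= min_points and rate > max_error
    )


if __name__ == "__main__":
    demo = [
        (0.1, 0.2, True), (0.5, 0.5, True), (0.9, 0.3, True),
        (2.1, 2.2, False), (2.5, 2.7, False), (2.8, 2.1, True),
        (5.0, 5.0, False),
    ]
    print(problem_cells(demo))
```

The minimum-population filter keeps isolated mispredictions from being reported as problem regions, leaving only clusters where the model fails systematically.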

Workflow Diagram

Strategy Selection Diagram

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Platforms for Chemical Space Navigation

| Tool / Resource Name | Type | Primary Function | Key Features / Underlying Algorithm |

|---|---|---|---|

| Matbench Discovery [16] | Evaluation Framework | Benchmark ML models for predicting crystal stability | Provides standardized tasks, metrics, and a leaderboard; emphasizes prospective benchmarking. |

| MolCompass [17] | Software Framework | Visualize chemical space and validate QSAR/QSPR models | Parametric t-SNE core; available as KNIME node, web tool, and Python package (LCNC). |

| infiniSee [15] | Commercial Platform | Navigate ultra-large chemical spaces for drug discovery | Scaffold Hopper (FTrees), Analog Hunter (SpaceLight), Motif Matcher (SpaceMACS). |

| PubChem [19] | Public Database | Source of biological activity data (HTS results) for millions of compounds | Access via manual portal or programmatically (PUG-REST) for large datasets. |

| Enamine REAL Space [14] | Make-on-Demand Library | Source of billions of readily synthesizable compounds for virtual screening | Contains >70 billion molecules; used for machine learning-guided docking screens. |

| JBScreen JCSG++ [20] | Sparse Matrix Screen | Experimentally crystallize biological macromolecules | 96 pre-formulated conditions for initial high-throughput crystallization trials. |

| Conformal Prediction (CP) [14] | Statistical Framework | Provide valid, user-defined error control for ML predictions | Integral to ML-guided docking; ensures reliability of virtual active set selection. |

Methods in Action: Core Computational Techniques and Their Transformative Applications

The integration of molecular docking, molecular dynamics (MD), and machine learning (ML) has created a powerful, multi-scale computational toolkit for high-throughput screening in structural biology and drug discovery. This paradigm shift, driven by advances in artificial intelligence and computing power, allows researchers to move beyond static structural analysis to model the dynamic interplay between proteins and ligands with unprecedented speed and accuracy [21] [22]. These methodologies are no longer used in isolation; instead, they form an interconnected pipeline that accelerates the identification and optimization of therapeutic candidates by leveraging the unique strengths of each approach.

Molecular docking provides a static snapshot of potential binding modes, MD simulations capture the critical temporal evolution and stability of these complexes, and ML models extract predictive insights from vast, complex datasets generated by both experimental and computational methods [21] [23]. This integrated framework is particularly vital for high-throughput computational screening of crystal structures, enabling the efficient prioritization of lead compounds and the deciphering of complex molecular networks that underpin disease mechanisms [24]. This article details the application notes and experimental protocols for employing this integrated toolkit effectively.

Application Notes & Experimental Protocols

Molecular Docking for Binding Pose Prediction

Molecular docking computationally predicts the stable conformation of a protein-ligand complex. Modern approaches have evolved from traditional rigid-body methods to include flexible docking and, most recently, deep learning-based paradigms that can significantly accelerate the process [21].

Key Quantitative Metrics and Performance

Docking methods are typically evaluated on their ability to predict a ligand's binding pose accurately and in a physically plausible manner. Table 1 summarizes the performance of various state-of-the-art docking methods across key benchmarks, highlighting a trade-off between pose accuracy and physical validity [25].

Table 1: Performance Comparison of Molecular Docking Methods across Different Benchmark Datasets [25]

| Method Category | Method Name | Astex Diverse Set (RMSD ≤ 2 Å / PB-valid) | PoseBusters Benchmark (RMSD ≤ 2 Å / PB-valid) | DockGen (Novel Pockets) (RMSD ≤ 2 Å / PB-valid) |

|---|---|---|---|---|

| Traditional | Glide SP | ~70% / >94% | ~65% / >94% | ~60% / >94% |

| Generative Diffusion | SurfDock | 91.76% / 63.53% | 77.34% / 45.79% | 75.66% / 40.21% |

| Generative Diffusion | DiffBindFR (MDN) | 75.29% / ~70% | 50.93% / 47.20% | 30.69% / 47.09% |

| Regression-based | KarmaDock | <30% / <20% | <20% / <15% | <10% / <10% |

| Hybrid (AI Scoring) | Interformer | ~80% / ~85% | ~70% / ~80% | ~65% / ~75% |

Note: PB-valid refers to the percentage of predictions that pass all physical and chemical sanity checks in the PoseBusters toolkit [25].
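The "RMSD ≤ 2 Å" criterion used in the table is straightforward to compute once predicted and reference ligand coordinates share a frame. The following minimal sketch assumes both poses list the same heavy atoms in the same order and are already in the receptor's coordinate frame; it does no superposition and no symmetry correction, both of which benchmark implementations typically handle.

```python
import math


def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between two equally ordered coordinate lists."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum(
        (a - b) ** 2
        for atom_a, atom_b in zip(coords_a, coords_b)
        for a, b in zip(atom_a, atom_b)
    )
    return math.sqrt(squared / len(coords_a))


def success_rate(rmsd_values, cutoff=2.0):
    """Fraction of predictions with RMSD at or below the cutoff (in angstroms)."""
    return sum(1 for value in rmsd_values if value <= cutoff) / len(rmsd_values)


if __name__ == "__main__":
    reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
    predicted = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
    print("RMSD:", rmsd(reference, predicted))
    print("Success rate:", success_rate([0.5, 1.9, 2.0, 3.1]))
```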

Protocol: Deep Learning-Based Docking with DiffDock

Application Note: DiffDock is a generative diffusion model that excels in blind docking tasks, where the binding site is not predefined. It is particularly useful for rapidly generating accurate initial poses, though subsequent refinement with MD is recommended to ensure physical plausibility [21] [25].

Procedure:

- Input Preparation: Obtain the 3D structure of the target protein in PDB format. For the ligand, provide a 2D structure in SMILES format or a 3D structure file.

- Structure Preprocessing: Use a toolkit like Open Babel or RDKit to assign proper bond orders and protonation states to the ligand. Prepare the protein by adding hydrogen atoms and assigning partial charges.

- Pose Generation with DiffDock: Submit the prepared protein and ligand files to the DiffDock model. The model will iteratively denoise an initial random ligand pose to generate multiple candidate binding poses.

- Pose Ranking and Selection: DiffDock outputs a confidence score for each generated pose. Select the top-ranked poses for further analysis. As per benchmarking data, while pose accuracy (RMSD) may be high, it is critical to validate the physical plausibility of the selected poses [25].

- Validation: Cross-reference the predicted poses with known experimental data if available. Use a tool like PoseBusters to check for steric clashes, improper bond lengths/angles, and other physical inconsistencies [25].
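The ranking and validation steps can be combined into a simple triage routine. The sketch below is not the DiffDock or PoseBusters API: it assumes poses arrive as (confidence, ligand coordinates) pairs and uses a single minimum protein-ligand distance as a crude stand-in for a steric clash test. The 2.0 Å cutoff is an illustrative assumption; dedicated validation tools apply a much broader set of checks.

```python
import math


def min_distance(ligand_coords, protein_coords):
    """Smallest ligand-protein interatomic distance (same units as the input)."""
    return min(math.dist(a, b) for a in ligand_coords for b in protein_coords)


def triage_poses(poses, protein_coords, top_n=5, clash_cutoff=2.0):
    """Keep the top_n poses by confidence, then drop any that clash.

    poses: list of (confidence, ligand_coords) tuples.
    """
    best = sorted(poses, key=lambda pose: pose[0], reverse=True)[:top_n]
    return [
        pose for pose in best
        if min_distance(pose[1], protein_coords) >= clash_cutoff
    ]


if __name__ == "__main__":
    protein = [(0.0, 0.0, 0.0)]
    candidates = [
        (0.9, [(0.5, 0.0, 0.0)]),  # most confident, but overlaps the protein atom
        (0.7, [(3.0, 0.0, 0.0)]),
        (0.2, [(4.0, 0.0, 0.0)]),
    ]
    print(triage_poses(candidates, protein, top_n=2))
```

The demo shows the point made in the benchmarking discussion: the most confident pose is not necessarily physically plausible, so confidence ranking and validity checks should be applied together.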

Molecular Dynamics for Stability and Interaction Analysis

MD simulations model the physical movements of atoms and molecules over time, providing critical insights into the stability of docked complexes, conformational changes, and the free energy of binding.

Key Quantitative Properties from MD

MD simulations generate trajectories from which numerous properties can be extracted. Table 2 lists key MD-derived properties that are highly influential in predicting drug-relevant properties like solubility and, by extension, binding behavior [23].

Table 2: Key Molecular Dynamics-Derived Properties and Their Significance in Drug Discovery

| Property | Description | Significance in Drug Discovery |

|---|---|---|

| Root Mean Square Deviation (RMSD) | Measures the average displacement of atoms relative to a reference structure. | Quantifies the structural stability of the protein-ligand complex during simulation. |

| Solvent Accessible Surface Area (SASA) | The surface area of a molecule accessible to a solvent molecule. | Correlates with solvation energy and aqueous solubility; key for bioavailability [23]. |

| Coulombic Interaction Energy (Coulombic_t) | The electrostatic interaction energy between the ligand and its environment. | Measures the strength of polar interactions (e.g., hydrogen bonds, salt bridges) in binding. |

| Lennard-Jones Interaction Energy (LJ) | The van der Waals interaction energy between the ligand and its environment. | Measures the strength of non-polar, shape-complementarity interactions in binding. |

| Estimated Solvation Free Energy (DGSolv) | The free energy change associated with transferring a ligand from gas phase to solvent. | A critical component of the overall binding free energy prediction. |

| Average Solvation Shell (AvgShell) | The average number of solvent molecules in direct contact with the ligand. | Provides insight into the hydration state and desolvation penalty upon binding. |

Protocol: MD Simulation of a Protein-Ligand Complex

Application Note: This protocol uses GROMACS to simulate a docked protein-ligand complex, validating the stability of the docking pose and capturing dynamic interactions missed by static docking [24].

Procedure:

- System Setup:

- Obtain the 3D coordinates of the docked protein-ligand complex.

- Generate topology files for the protein using a force field such as CHARMM36. For the ligand, generate topology and parameters using a tool like acpype with GAFF2 parameters [24].

- Place the complex in a cubic box under periodic boundary conditions and solvate it with explicit water molecules (e.g., TIP3P model).

- Energy Minimization:

- Run an energy minimization (e.g., using the steepest descent algorithm for 50,000 steps) to remove any steric clashes introduced during system setup, until the maximum force is below a threshold (e.g., 1000 kJ/mol/nm) [24].

- System Equilibration:

- NVT Ensemble: Equilibrate the system for 100 ps at the target temperature (e.g., 310 K) using a thermostat (e.g., V-rescale), applying position restraints on the protein and ligand heavy atoms.

- NPT Ensemble: Further equilibrate the system for 100 ps at constant temperature (310 K) and pressure (1 bar) using a barostat (e.g., Parrinello-Rahman), again with position restraints [24].

- Production Simulation:

- Run an unrestrained MD simulation for a duration sufficient to observe the phenomena of interest (e.g., 50-100 ns for ligand stability). Use a 2 fs time step and save trajectory frames every 10 ps for analysis [24].

- Trajectory Analysis:

- Analyze the saved trajectory to calculate properties from Table 2 (RMSD, SASA, interaction energies, etc.) using GROMACS analysis tools. This data validates the docking pose and provides quantitative metrics on binding stability.
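The simulation lengths, time step, and output interval in this protocol translate directly into integer step counts in a GROMACS run-parameter file. The helper below is a small arithmetic sketch: the dictionary keys follow the .mdp option names `nsteps`, `dt`, and `nstxout-compressed`, while the function itself and its defaults are illustrative.

```python
def md_run_plan(length_ns, timestep_fs=2.0, save_every_ps=10.0):
    """Convert simulation length and output interval into step counts.

    Returns a dict with the number of integration steps, the time step in ps,
    the output interval in steps, and the number of saved frames (not counting
    the initial frame at step 0).
    """
    nsteps = round(length_ns * 1e6 / timestep_fs)             # 1 ns = 1e6 fs
    steps_per_frame = round(save_every_ps * 1e3 / timestep_fs)  # 1 ps = 1e3 fs
    return {
        "nsteps": nsteps,
        "dt": timestep_fs / 1000.0,
        "nstxout-compressed": steps_per_frame,
        "frames": nsteps // steps_per_frame,
    }


if __name__ == "__main__":
    print("100 ps equilibration:", md_run_plan(0.1))
    print("100 ns production:   ", md_run_plan(100.0))
```

For a 100 ns production run at 2 fs this gives 50 million steps and 10,000 saved frames, a useful sanity check on expected trajectory size before submitting the job.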

Machine Learning for Predictive Modeling and Scoring

ML algorithms learn complex patterns from large datasets to predict molecular properties, optimize scoring functions, and analyze high-dimensional data from docking and MD simulations.

Key Quantitative Performances of ML Models

ML models have been successfully applied to predict various physicochemical and biological properties. Table 3 shows the performance of ensemble ML models in predicting aqueous solubility using MD-derived properties, a critical factor in drug development [23].

Table 3: Performance of Ensemble Machine Learning Models for Predicting Aqueous Solubility (logS) using MD-Derived Properties [23]

| Machine Learning Algorithm | Test Set R² | Test Set RMSE |

|---|---|---|

| Gradient Boosting Regression (GBR) | 0.87 | 0.537 |

| XGBoost (XGB) | 0.85 | 0.561 |

| Extra Trees (EXT) | 0.84 | 0.579 |

| Random Forest (RF) | 0.83 | 0.589 |

Note: Features included logP and key MD-derived properties like SASA, Coulombic_t, LJ, DGSolv, RMSD, and AvgShell [23].
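For reference, the two metrics reported in the table can be computed as follows. This is a plain-Python sketch using the standard definitions of RMSE and the coefficient of determination; the logS values in the demo are made up and are not drawn from the cited study.

```python
import math


def rmse(y_true, y_pred):
    """Root-mean-square error between observed and predicted values."""
    n = len(y_true)
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


if __name__ == "__main__":
    observed = [-1.0, -2.0, -3.0, -4.0]   # hypothetical logS values
    predicted = [-1.5, -2.0, -3.0, -3.5]
    print("R2:", r_squared(observed, predicted))
    print("RMSE:", rmse(observed, predicted))
```

Because RMSE is expressed in logS units while R² is scale-free, reporting both, as the table does, indicates absolute error as well as the fraction of variance explained.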

Protocol: Building an ML Model for Binding Affinity Prediction

Application Note: This protocol outlines a multi-instance learning approach that uses multiple docking poses, rather than a single crystal structure, to predict protein-ligand binding affinity. This increases applicability for targets with limited structural data [26].

Procedure:

- Data Curation:

- Collect a dataset of protein-ligand complexes with experimentally measured binding affinities (e.g., from PDBbind).

- For each complex, generate multiple docking poses using a traditional or DL-based docking tool (see the deep learning-based docking protocol above).

- Feature Extraction:

- For each docking pose, extract structural and interaction features. This can include intermolecular distances, angles, interaction fingerprints, and energy terms.

- Model Training with Multi-Instance Learning:

- Represent each protein-ligand pair as a "bag" of instances, where each instance is a feature vector from one docking pose.

- Train a model (e.g., a graph neural network with an attention mechanism) that learns to weigh the contribution of each pose in the bag to predict the single binding affinity label for the entire complex [26].

- Model Validation:

- Validate the trained model on a held-out test set or through cross-validation. Compare its performance against models that use only crystal structures to demonstrate competitiveness [26].
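The central idea of the multi-instance step, weighting each pose's contribution with attention before predicting a single affinity, can be shown with a deliberately simplified model. The sketch below replaces the graph neural network with linear scoring in pure Python; `attn_weights`, `out_weights`, and `bias` stand in for learned parameters and are hypothetical, as are the feature vectors.

```python
import math


def attention_pool(bag, attn_weights):
    """Pool a bag of pose feature vectors into one vector.

    Each pose gets a scalar score (dot product with attn_weights); a softmax
    over the scores gives the attention paid to each pose.
    Returns (pooled_vector, attention_coefficients).
    """
    scores = [sum(w * x for w, x in zip(attn_weights, pose)) for pose in bag]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    alphas = [e / total for e in exps]
    dim = len(bag[0])
    pooled = [sum(a * pose[j] for a, pose in zip(alphas, bag)) for j in range(dim)]
    return pooled, alphas


def predict_affinity(bag, attn_weights, out_weights, bias=0.0):
    """Linear read-out on the attention-pooled representation."""
    pooled, _ = attention_pool(bag, attn_weights)
    return sum(w * x for w, x in zip(out_weights, pooled)) + bias


if __name__ == "__main__":
    poses = [[1.0, 0.0], [0.0, 1.0]]  # two poses, two features each
    pooled, alphas = attention_pool(poses, attn_weights=[2.0, 0.0])
    print("attention:", [round(a, 3) for a in alphas])
    print("prediction:", predict_affinity(poses, [2.0, 0.0], [2.0, 4.0], bias=1.0))
```

Because the attention coefficients sum to one, they can be inspected after training to see which poses the model relied on for a given complex.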

Integrated Workflow for High-Throughput Screening

The individual protocols for docking, MD, and ML are most powerful when combined into a cohesive workflow for high-throughput screening of crystal structures. The diagram below illustrates the logical flow and integration points between these methodologies.

Workflow for Integrated Computational Screening

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential software tools and databases that form the core "reagent solutions" for executing the protocols described in this article.

Table 4: Essential Research Reagents & Software Tools for the Computational Toolkit

| Item Name | Type | Function & Application Note |

|---|---|---|

| AutoDock Vina | Software Tool | Traditional, physics-based docking program widely used for its balance of speed and accuracy in pose prediction [25]. |

| DiffDock | Software Tool | Deep learning-based docking tool that uses diffusion models for high-accuracy blind pose generation [21] [25]. |

| GROMACS | Software Tool | High-performance molecular dynamics package used for simulating the Newtonian equations of motion for systems with hundreds to millions of particles [24] [23]. |

| PDBbind | Database | Curated database of protein-ligand complex structures and their experimentally measured binding affinities, essential for training and validating ML models [26]. |

| PoseBusters | Software Tool | Validation toolkit to check the physical plausibility and chemical sanity of docking predictions, crucial for filtering DL-generated poses [25]. |

| CHARMM36/GAFF2 | Parameter Set | Force fields providing parameters for proteins and small molecules, respectively, essential for energy calculations in MD simulations [24]. |

The synergistic integration of molecular docking, molecular dynamics, and machine learning, as detailed in these application notes and protocols, provides a robust and powerful framework for high-throughput computational screening. Docking offers rapid initial sampling, MD provides dynamic validation and deep mechanistic insight, and ML enables predictive modeling and the efficient distillation of complex data into actionable hypotheses. As these tools continue to evolve—especially with the rise of more physically accurate deep learning models and increasingly efficient simulation algorithms—their collective impact on accelerating drug discovery and deepening our understanding of molecular interactions in structural biology will only grow.

The escalating complexity and cost of drug discovery have necessitated the development of computational approaches that can efficiently identify and optimize lead compounds. Virtual screening has emerged as a cornerstone technology in this endeavor, enabling researchers to rapidly sift through billions of chemically accessible molecules to identify promising candidates for experimental validation [27]. The integration of artificial intelligence and machine learning with traditional physics-based docking methods has created a powerful paradigm shift, compressing screening timelines from months to days while dramatically improving hit rates [27] [28]. This acceleration is particularly crucial as chemical libraries have expanded to contain billions of make-on-demand compounds, presenting both unprecedented opportunities and significant computational challenges [28].

Framed within the broader context of high-throughput computational screening of crystal structures, modern virtual screening platforms must address critical challenges in binding pose prediction, affinity accuracy, and receptor flexibility modeling. Recent advances have demonstrated that hybrid approaches, which combine AI-guided selection with high-fidelity physics-based docking, can achieve remarkable success rates. For instance, the OpenVS platform has reported hit rates of 14-44% for unrelated targets, with the entire screening process completed in less than seven days [27]. These developments represent a fundamental transformation in early drug discovery, moving from traditional high-throughput experimental screening to intelligent, computation-driven candidate identification.

Quantitative Analysis of Virtual Screening Platforms

The performance of virtual screening methodologies can be quantitatively assessed through standardized benchmarks and real-world applications. The table below summarizes key performance metrics for leading virtual screening platforms and approaches, highlighting their respective advantages in addressing the challenges of modern drug discovery.

Table 1: Performance Comparison of Virtual Screening Platforms and Methods

| Platform/Method | Key Features | Performance Metrics | Application Examples |

|---|---|---|---|

| OpenVS with RosettaVS [27] | Open-source; AI-accelerated; models receptor flexibility; active learning | EF1% = 16.72 (CASF2016); 14-44% experimental hit rate; screening of billion-compound libraries in <7 days | KLHDC2 ubiquitin ligase (7 hits); NaV1.7 sodium channel (4 hits) |

| ML-Guided Docking [28] | Machine learning (CatBoost) pre-screening; conformal prediction | 1,000-fold reduction in computational cost; successful screening of 3.5 billion compounds | Identification of multi-target GPCR ligands |

| AI-Powered Quantum Chemistry [29] | Generative biology; machine learning; collaborative data environments | Measurable improvements in discovery timelines and hit-to-lead progression | Photocatalyst discovery; molecular design |

| DOS Pattern Similarity Screening [30] | Electronic structure similarity as descriptor for catalyst discovery | Identified Ni61Pt39 catalyst with 9.5-fold enhancement in cost-normalized productivity | Replacement of Pd in H2O2 direct synthesis |

The Enrichment Factor (EF), particularly at the top 1% of screened compounds (EF1%), is a crucial metric indicating a method's ability to identify true binders early in the ranking process. The superior EF1% of 16.72 achieved by RosettaGenFF-VS on the CASF2016 benchmark demonstrates its enhanced screening power compared to other state-of-the-art methods [27]. Furthermore, the translation of these computational advantages into experimental success is evidenced by the high hit rates (14% for KLHDC2 and 44% for NaV1.7) observed when moving from virtual screening to biochemical assays [27].
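As a concrete illustration of the metric, the enrichment factor at a given fraction is the hit rate among the top-ranked compounds divided by the hit rate in the whole library. The sketch below assumes lower docking scores are better by default and uses a synthetic ranking; it is a generic implementation of the definition rather than the benchmark's own evaluation script.

```python
def enrichment_factor(scores, is_active, fraction=0.01, lower_is_better=True):
    """Enrichment factor at the given top fraction of a ranked library.

    scores:    one docking score per compound.
    is_active: parallel list of booleans marking true binders.
    """
    n = len(scores)
    total_actives = sum(is_active)
    if total_actives == 0:
        raise ValueError("enrichment is undefined without any actives")
    n_top = max(1, int(round(n * fraction)))
    order = sorted(range(n), key=lambda i: scores[i], reverse=not lower_is_better)
    actives_in_top = sum(is_active[i] for i in order[:n_top])
    return (actives_in_top / n_top) / (total_actives / n)


if __name__ == "__main__":
    # Synthetic library of 1,000 compounds with 10 actives; 5 rank in the top 10.
    scores = list(range(1000))
    actives = [i < 5 or 500 <= i < 505 for i in range(1000)]
    print("EF1%:", enrichment_factor(scores, actives, fraction=0.01))
```

With a 1% cutoff the maximum attainable value is 100 when actives make up no more than 1% of the library, which gives a scale against which reported EF1% values can be read.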

Recent quantitative modeling of structure-based virtual screening performance reveals that observed experimental hit-rate curves can be accurately reproduced by a simple bivariate normal distribution model, where docking scores are interpreted as noisy predictors of binding free energy [31]. This model predicts that even slight improvements in scoring accuracy would substantially improve both hit rates and hit affinities, highlighting the critical importance of continued development in scoring functions as chemical libraries expand into the billions of compounds.
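The qualitative behavior of such a model can be explored with a short Monte Carlo simulation. The sketch below is not the fitted model from the cited work: it draws standardized "true affinity" and "docking score" values from a bivariate normal distribution with correlation `rho`, treats higher values as better, and counts how many of the top-scoring compounds exceed a hit threshold. The threshold, library size, and correlation values are illustrative assumptions.

```python
import math
import random


def simulated_hit_rate(rho, n_compounds=20000, top_fraction=0.01,
                       hit_threshold=2.0, seed=0):
    """Hit rate among the top-scoring compounds under a bivariate normal model.

    rho: correlation between the docking score and the true affinity
         (both expressed as z-scores, higher meaning stronger binding).
    """
    rng = random.Random(seed)
    pairs = []
    for _ in range(n_compounds):
        true_z = rng.gauss(0.0, 1.0)
        noise = rng.gauss(0.0, 1.0)
        # The score is a noisy linear readout of the true affinity.
        score_z = rho * true_z + math.sqrt(1.0 - rho ** 2) * noise
        pairs.append((score_z, true_z))
    pairs.sort(key=lambda pair: pair[0], reverse=True)
    n_top = max(1, int(n_compounds * top_fraction))
    hits = sum(1 for _, true_z in pairs[:n_top] if true_z > hit_threshold)
    return hits / n_top


if __name__ == "__main__":
    for rho in (0.3, 0.4, 0.5, 0.6):
        print(f"rho = {rho:.1f}: hit rate in top 1% = {simulated_hit_rate(rho):.2f}")
```

Running the loop for a few correlation values shows how the hit rate among top-ranked compounds responds to modest changes in scoring accuracy, which is the effect the model is used to quantify.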

Experimental Protocols for AI-Accelerated Virtual Screening

Protocol: RosettaVS for Ultra-Large Library Screening

The RosettaVS protocol represents a state-of-the-art approach for virtual screening of multi-billion compound libraries. The method is integrated into the OpenVS platform, which employs active learning to efficiently triage and select promising compounds for expensive docking calculations [27].

Required Reagents and Computational Resources:

- Target protein structure (experimental or predicted)

- Ultra-large chemical library (e.g., 3.5 billion compounds [28])

- High-performance computing cluster (3000 CPUs, GPUs such as RTX2080 or H100)

- RosettaVS software suite (open-source)

Methodology:

- Library Preparation: Format the chemical library for docking, ensuring standardized structures, charges, and protonation states.

- Active Learning Phase:
  - Dock a representative subset (e.g., 1 million compounds) to the target protein.
  - Train a target-specific classification algorithm (e.g., CatBoost [28]) to identify top-scoring compounds based on molecular features.
  - Use the conformal prediction framework to select compounds from the full multi-billion library for docking.

- Virtual Screening Express (VSX) Mode:
  - Perform rapid initial screening of selected compounds using a simplified protocol with fixed receptor side chains.
  - Utilize the improved RosettaGenFF-VS forcefield for scoring.

- Virtual Screening High-Precision (VSH) Mode:
  - Subject top hits from VSX to more accurate docking with full receptor flexibility (side chains and limited backbone movement).
  - Combine enthalpy calculations (ΔH) with entropy estimates (ΔS) for improved ranking [27].

- Hit Validation:
  - Select top-ranked compounds for experimental testing.
  - Validate binding through biochemical assays and structural methods (e.g., X-ray crystallography).

Machine learning pre-screening of this kind has been reported to reduce the computational cost of structure-based virtual screening by more than 1,000-fold compared with exhaustive docking, while maintaining high sensitivity for identifying true binders [28].
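
The two docking stages of the protocol can be pictured as a simple funnel. The sketch below is not the RosettaVS API: `fast_score` and `precise_terms` are hypothetical callables standing in for VSX and VSH calculations, and combining the terms as ΔG ≈ ΔH − TΔS is the textbook relation, assumed here for illustration; the actual weighting used in [27] may differ.

```python
from typing import Callable, List, Tuple


def two_stage_screen(
    compounds: List[str],
    fast_score: Callable[[str], float],
    precise_terms: Callable[[str], Tuple[float, float]],
    keep_fraction: float = 0.1,
    temperature_k: float = 298.15,
) -> List[Tuple[str, float]]:
    """Rank compounds with a cheap first pass and an expensive second pass.

    fast_score:     hypothetical VSX-like score (lower is better).
    precise_terms:  hypothetical VSH-like call returning (delta_h, delta_s)
                    in consistent units, e.g. kcal/mol and kcal/(mol*K).
    """
    if not compounds:
        return []

    # Stage 1: cheap scoring of every compound, keep only the best fraction.
    ranked_fast = sorted(compounds, key=fast_score)
    n_keep = max(1, int(len(ranked_fast) * keep_fraction))
    shortlist = ranked_fast[:n_keep]

    # Stage 2: expensive rescoring of the shortlist with an entropy correction.
    rescored = []
    for compound in shortlist:
        delta_h, delta_s = precise_terms(compound)
        delta_g = delta_h - temperature_k * delta_s
        rescored.append((compound, delta_g))

    # Most favorable (most negative) estimated free energy first.
    return sorted(rescored, key=lambda item: item[1])
```

The design point is that the expensive flexible-receptor calculation is only ever called on the shortlist, so its cost scales with `keep_fraction` rather than with the size of the library.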

Protocol: Machine Learning-Guided Docking Screen

This protocol leverages machine learning to rapidly traverse vast chemical spaces, enabling efficient screening of billions of compounds [28].

Methodology:

- Initial Docking and Model Training:
  - Perform molecular docking of 1 million randomly selected compounds from the full library.
  - Train a CatBoost classifier to distinguish between top-scoring and poor-scoring compounds based on molecular descriptors and fingerprints.

- Conformal Prediction:
  - Apply the trained classifier to the entire multi-billion compound library.
  - Use the conformal prediction framework to select compounds with high likelihood of being top-binders.

- Focused Docking:
  - Dock only the machine learning-selected subset (typically 1-5% of the full library).
  - Apply standard docking scoring functions to rank the final candidates.

- Experimental Validation:
  - Procure or synthesize top-ranked compounds.
  - Test binding affinity and functional activity in relevant biological assays.

This approach has been successfully applied to identify ligands for G protein-coupled receptors and to discover compounds with multi-target activity tailored for therapeutic effect [28].
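
The conformal prediction step in the methodology above can be sketched as follows. This is a simplified class-conditional (Mondrian) inductive conformal predictor written with the standard library only; it is an illustration under assumptions, not the implementation used in [28]. The callable `prob_top` is a hypothetical stand-in for any trained classifier that returns the probability that a compound is top-scoring (for example, a CatBoost model), and the calibration sets are docked compounds held out from training.

```python
from typing import Callable, List, Sequence, TypeVar

Compound = TypeVar("Compound")


def conformal_p_value(calibration_scores: Sequence[float], test_score: float) -> float:
    """Share of calibration nonconformity scores at least as large as the test score."""
    n_at_least = sum(1 for s in calibration_scores if s >= test_score)
    return (n_at_least + 1) / (len(calibration_scores) + 1)


def select_for_docking(
    library: Sequence[Compound],
    prob_top: Callable[[Compound], float],
    calibration_top: Sequence[Compound],
    calibration_rest: Sequence[Compound],
    significance: float = 0.2,
) -> List[Compound]:
    """Keep compounds whose conformal prediction set is exactly {top-scoring}."""
    # Nonconformity = 1 - predicted probability of the class being tested.
    cal_top = [1.0 - prob_top(c) for c in calibration_top]
    cal_rest = [prob_top(c) for c in calibration_rest]

    selected = []
    for compound in library:
        p = prob_top(compound)
        p_value_top = conformal_p_value(cal_top, 1.0 - p)
        p_value_rest = conformal_p_value(cal_rest, p)
        # A label stays in the prediction set when its p-value exceeds the
        # significance level; select when only "top-scoring" remains.
        if p_value_top > significance and p_value_rest <= significance:
            selected.append(compound)
    return selected
```

Lowering `significance` makes the selection more conservative about excluding the "poor-scoring" label, which shrinks the subset sent to docking; with very small calibration sets the smallest attainable p-value is 1/(n+1), so the significance level has to be chosen with the calibration set size in mind.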

Workflow Visualization: AI-Accelerated Virtual Screening

The following diagram illustrates the integrated workflow of the AI-accelerated virtual screening platform, highlighting the synergy between machine learning pre-screening and high-fidelity molecular docking.

Diagram 1: AI-Accelerated Virtual Screening Workflow. This workflow integrates machine learning pre-screening with high-fidelity docking to efficiently identify hits from ultra-large chemical libraries.

Successful implementation of virtual screening campaigns requires access to specialized computational tools, chemical libraries, and analytical resources. The following table details key components of the virtual screening toolkit.

Table 2: Essential Research Reagents and Resources for Virtual Screening

| Category | Resource | Function/Application | Examples/Sources |
|---|---|---|---|
| Software Platforms | OpenVS [27] | Open-source AI-accelerated virtual screening platform | Integrated RosettaVS, active learning |
| | RosettaVS [27] | Physics-based docking with receptor flexibility | VSX and VSH docking modes |
| | CDD Vault [29] | Collaborative data management for chemical and biological data | Activity, Registration, Assays, ELN modules |
| Chemical Libraries | Make-on-Demand Libraries [28] | Ultra-large enumerable chemical spaces | >75 billion readily accessible compounds [32] |
| | vIMS Library [32] | Targeted virtual library with drug-like compounds | ~800,000 compounds based on existing scaffolds |
| Computational Resources | Universal Model for Atoms (UMA) [33] | Machine learning interatomic potential for crystal structure prediction (CSP) | FastCSP workflow for crystal structure prediction |
| | High-Performance Computing | Parallel processing for docking billions of compounds | 3000-CPU cluster with GPUs (H100, RTX2080) |
| Analytical Tools | RDKit [32] | Cheminformatics for molecular representation and analysis | SMILES processing, fingerprint generation |
| | ChemicalToolbox [32] | Web server for cheminformatics analysis | Filtering, visualization, simulation |

The integration of these resources creates a powerful ecosystem for virtual screening. For instance, the combination of OpenVS for docking, CDD Vault for data management, and access to make-on-demand libraries creates an end-to-end pipeline from virtual compound selection to experimental data management [29] [27] [28]. Furthermore, the emergence of universal machine learning potentials like UMA enables accurate crystal structure prediction, which is critical for understanding solid-form properties of potential drug candidates [33] [34].

The field of virtual screening is rapidly evolving toward even greater integration of artificial intelligence and machine learning with traditional physics-based methods. Federated learning approaches are enabling secure multi-institutional collaborations by integrating diverse datasets without compromising data privacy [35]. Meanwhile, transfer learning and few-shot learning techniques are proving effective in scenarios with limited training data, leveraging pre-trained models to predict molecular properties, optimize lead compounds, and identify toxicity profiles [35]. These advances are particularly valuable for novel target classes with limited structural or ligand information.

The continuing expansion of accessible chemical space to hundreds of billions of compounds presents both opportunities and challenges for virtual screening. Future improvements will likely focus on enhancing scoring function accuracy, which quantitative models suggest would substantially improve both hit rates and hit affinities—potentially enabling equivalent performance with smaller libraries [31]. Additionally, the integration of crystal structure prediction workflows like FastCSP with virtual screening platforms will provide a more comprehensive understanding of solid-form properties early in the drug discovery process [33] [34].

In conclusion, virtual screening is advancing drug discovery by integrating computational and experimental approaches. AI-accelerated platforms can now screen billion-compound libraries in days rather than months, and improved scoring functions that model receptor flexibility and binding thermodynamics have increased the efficiency and success rates of lead identification and optimization. As these technologies mature and become more accessible to the broader research community, they are expected to enable more rapid discovery of therapeutics for diverse human diseases.

The field of materials science is undergoing a transformative shift, mirroring the evolution of structural biology, where high-throughput (HTP) methodologies are moving from specialized applications to central discovery tools. Structural genomics initiatives have demonstrated that parallel processing, automation, and rigorous data mining can systematically address complexity, determining thousands of protein structures by implementing automated pipelines from gene to structure [36]. This same paradigm is now accelerating the development of functional porous materials, such as Metal-Organic Frameworks (MOFs), for critical environmental applications including carbon dioxide (CO₂) and radioactive iodine capture.

The core challenge in materials discovery lies in the vastness of the hypothetical design space. Over 160,000 hypothetical MOFs have been proposed, making individual experimental testing impractical [37]. High-throughput computational screening (HTCS), coupled with machine learning (ML), has emerged as a powerful approach to navigate this complexity. By rapidly evaluating thousands of materials in silico, researchers can identify top-performing candidates for synthesis and testing, thereby closing the gap between theoretical design and practical application [37] [11]. These approaches provide the foundation for the application notes and protocols detailed in this document.

Core Principles of Porous Material Screening

The performance of porous materials in gas separation and capture is governed by key structural and chemical properties. Adsorption separation in materials like MOFs is influenced by mechanisms such as molecular sieving (size and shape exclusion), thermodynamic equilibrium, and kinetic effects [37]. Understanding the relationship between a material's structure and its adsorption properties is the first step in rational design.

Computational and data-driven studies have identified optimal ranges for these structural parameters to guide the screening of high-performance materials. The tables below summarize the optimal structural parameters for iodine (I₂) and carbon dioxide (CO₂) capture, derived from large-scale HTCS studies.

Table 1: Optimal Structural Parameters for Iodine Capture in MOFs under Humid Conditions, as Identified through HTCS [11].

| Structural Parameter | Optimal Range for I₂ Capture | Functional Rationale |
|---|---|---|
| Largest Cavity Diameter (LCD) | 4.0 – 7.8 Å | Balances reduced steric hindrance with sufficient adsorbent-adsorbate interaction. |
| Pore Limiting Diameter (PLD) | 3.34 – 7.0 Å | Must exceed the kinetic diameter of I₂ (3.34 Å) for accessibility. |
| Void Fraction (φ) | 0 – 0.17 | Low porosity favors I₂ over H₂O in competitive humid adsorption. |
| Density | ~0.9 g/cm³ | Maximizes adsorption sites before excessive steric hindrance occurs. |
| Surface Area | 0 – 540 m²/g | A moderate area is optimal, as very high surface areas can reduce selectivity in humid conditions. |
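
Ranges like these can serve as a coarse geometric pre-filter before more expensive simulations. The sketch below is one way to encode the ranges from the table; the `MOF` record type, its field names, and the example entries are hypothetical, and the approximate density optimum (~0.9 g/cm³) is deliberately left out because the table gives a single value rather than a range.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class MOF:
    """Hypothetical record of precomputed geometric descriptors."""
    name: str
    lcd_angstrom: float       # largest cavity diameter
    pld_angstrom: float       # pore limiting diameter
    void_fraction: float
    surface_area_m2_g: float


def passes_iodine_prescreen(mof: MOF) -> bool:
    """True when every descriptor lies inside the optimal range from the table."""
    return (
        4.0 <= mof.lcd_angstrom <= 7.8
        and 3.34 <= mof.pld_angstrom <= 7.0
        and 0.0 <= mof.void_fraction <= 0.17
        and 0.0 <= mof.surface_area_m2_g <= 540.0
    )


def prescreen(candidates: List[MOF]) -> List[MOF]:
    """Return the candidates that satisfy all geometric criteria."""
    return [mof for mof in candidates if passes_iodine_prescreen(mof)]


if __name__ == "__main__":
    # Made-up entries for illustration only.
    examples = [
        MOF("MOF-A", lcd_angstrom=6.0, pld_angstrom=4.5, void_fraction=0.10, surface_area_m2_g=300.0),
        MOF("MOF-B", lcd_angstrom=12.0, pld_angstrom=9.0, void_fraction=0.60, surface_area_m2_g=2500.0),
        MOF("MOF-C", lcd_angstrom=5.0, pld_angstrom=3.0, void_fraction=0.10, surface_area_m2_g=200.0),
    ]
    print([mof.name for mof in prescreen(examples)])
```

Here MOF-B fails on every criterion, and MOF-C fails only because its pore limiting diameter is below the kinetic diameter of I₂, so iodine could not enter the pores.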

Table 2: Key Chemical and Molecular Features Influencing MOF Performance, as Identified by Interpretable Machine Learning [11].

| Feature Category | Key Feature | Impact on Iodine Capture |
|---|---|---|
| Chemical Features | Henry's Coefficient for I₂ | One of the most crucial factors, indicating adsorption strength at low concentrations. |
| | Heat of Adsorption for I₂ | A primary factor for adsorption capacity and selectivity. |
| Molecular Features | Presence of Nitrogen Atoms | Key structural factor that enhances iodine adsorption. |
| | Presence of Six-Membered Rings | A key structural motif that improves performance. |
| | Presence of Oxygen Atoms | Secondary positive influence on adsorption. |
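
For reference, the two chemical descriptors in the table are commonly defined as follows. These are the standard textbook forms, given here on the assumption that they match the quantities used in [11]; the exact estimators in that study may differ. Here $q$ is the adsorbed amount, $p$ the pressure of the adsorbate, $T$ the temperature, and $R$ the gas constant.

$$
K_H = \lim_{p \to 0} \frac{q(p)}{p},
\qquad
Q_{st} = R\,T^{2} \left( \frac{\partial \ln p}{\partial T} \right)_{q}
$$

The Henry's coefficient $K_H$ is the initial slope of the adsorption isotherm, which is why it reflects adsorption strength at low concentrations, and the isosteric heat $Q_{st}$ measures how strongly the equilibrium pressure at fixed loading depends on temperature.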

Workflow: Integrated Computational & Experimental Screening

The modern materials discovery pipeline is an iterative cycle combining large-scale computation, AI, and automated experimentation. The following diagram illustrates this integrated workflow.

Workflow Description

The process begins with Database Curation, where a starting database of potential materials is assembled, such as the Computation-Ready, Experimental (CoRE) MOF database [11]. This is followed by High-Throughput Computational Screening, where molecular simulations (e.g., Grand Canonical Monte Carlo or Density Functional Theory) are used to calculate the adsorption performance of every material in the database for the target gas under specific conditions [37] [11]. The resulting data set is then used for Machine Learning Model Training and Prediction. This step builds a model that can predict material performance, often with high accuracy, and identifies the key structural and chemical features governing performance [11]. The ML model is used to Rank Candidates and perform feature importance analysis, providing interpretable design rules [11]. Finally, the most promising candidates are forwarded to Automated Laboratory Synthesis and Validation, where robotic systems synthesize and test the materials, generating high-quality experimental data to close the loop and refine the computational models [37].

Experimental Protocols

Protocol 1: High-Throughput Computational Screening of MOFs for Gas Adsorption

This protocol details the steps for performing HTCS of MOFs for gas adsorption capacity and selectivity, specifically for I₂ in humid air [11].

1. Research Reagent Solutions & Materials