Hierarchy in Materials Phase Stability Networks: A New Paradigm for Pharmaceutical Development

Abstract

This article explores the emerging concept of hierarchy in materials phase stability networks and its critical implications for pharmaceutical scientists and drug development professionals. We examine how complex network theory reveals inherent thermodynamic competitions that govern material stability, particularly for active pharmaceutical ingredients (APIs) exhibiting polymorphism. The content bridges fundamental network topology with practical applications in preformulation screening, stability optimization, and regulatory strategy. Through foundational principles, methodological approaches, troubleshooting frameworks, and validation protocols, we provide a comprehensive guide for leveraging phase stability hierarchies to enhance drug development efficiency, mitigate stability risks, and advance predictive modeling in pharmaceutical sciences.

Understanding Phase Stability Networks: From Atoms to Materials Ecosystems

The exploration of materials space has been fundamentally transformed by the adoption of complex network theory, providing a powerful paradigm for understanding thermodynamic relationships between inorganic materials. The "phase stability network" represents a top-down approach to materials science, complementing traditional bottom-up investigations of atomic structure and bonding by focusing on interactions between materials themselves [1]. This network-based framework encodes the universal T=0 K phase diagram by representing thermodynamically stable compounds as nodes and their two-phase equilibria as edges, creating a comprehensive map of materials reactivity and synthesizability [2] [1]. The resulting network structure reveals organizational principles that govern materials discovery, with implications for predicting new synthesizable materials and understanding the fundamental hierarchy in materials stability.

Recent advances in high-throughput density functional theory (HT-DFT) calculations have enabled the construction of these networks from massive computational databases containing hundreds of thousands of experimentally reported and hypothetical materials [1] [3]. By applying the convex-hull formalism to this data, researchers can identify all thermodynamically stable materials and the tie-lines defining their two-phase equilibria, thus constructing what is termed the "complete phase stability network of all inorganic materials" [1]. This network perspective has emerged as a crucial tool for addressing one of the grand challenges in materials science: assessing the synthesizability of inorganic materials from computational predictions [2].

Core Concepts and Definitions

Fundamental Network Components

Table 1: Core Components of a Phase Stability Network

| Component | Definition | Materials Science Interpretation |
|---|---|---|
| Nodes | Discrete elements in the network | Individual thermodynamically stable compounds |
| Edges | Connections between nodes | Tie-lines representing stable two-phase equilibria between compounds |
| Degree (k) | Number of connections per node | Number of other compounds with which a material can form stable two-phase equilibria |
| Mean Degree (⟨k⟩) | Average connections per node | Average number of stable two-phase equilibria per compound (~3,850 in the complete network) |
| Tie-lines | Edges in the network | Thermodynamic stability relationships indicating non-reactivity between pairs of compounds |

The phase stability network is constructed from thermodynamically stable compounds (nodes) and their two-phase equilibria (edges), forming a complex web of relationships across materials space [1]. Each node represents a material that lies on the convex hull of stability in its respective chemical space, meaning it is thermodynamically stable with respect to all other competing phases at T=0 K [2] [1]. The edges, represented as tie-lines, connect pairs of materials that can stably coexist in equilibrium, indicating that these material pairs will not react with each other to form other compounds [1].

The degree of a node (k) holds particular significance in materials science, as it quantifies how many other materials a given compound can stably coexist with [1]. Materials with exceptionally high degree function as network hubs and often correspond to extremely stable and non-reactive compounds, such as noble gases, binary halides, and certain oxides [2] [1]. These hubs play a disproportionately important role in the network's connectivity and strongly influence the synthesizability of new materials connected to them [2].

Constructing the Network from Computational Data

Table 2: Data Sources for Network Construction

| Resource | Content Type | Scale | Primary Use |
|---|---|---|---|
| Open Quantum Materials Database (OQMD) | DFT calculations of crystalline materials | >500,000 materials | Primary source for stable compounds and tie-lines |
| Inorganic Crystal Structure Database (ICSD) | Experimentally reported crystal structures | ~300,000 entries | Validation and historical discovery timelines |
| High-Throughput DFT | Computational formation energies | Millions of calculations | Convex hull construction and stability determination |

The construction of a comprehensive phase stability network begins with massive computational databases like the Open Quantum Materials Database (OQMD), which contains DFT calculations of nearly all crystallographically ordered, structurally unique materials experimentally observed to date, along with numerous hypothetical materials [1]. Using the convex-hull formalism, researchers determine which materials are thermodynamically stable and identify all two-phase equilibria between them [2] [1].

The process involves several technical steps: First, formation energies are calculated for all compounds in the database relative to their elemental references. Next, the convex hull is constructed in each chemical subsystem, identifying the set of stable compounds whose formation energies form the lower envelope of possible energies. Finally, tie-lines are drawn between all pairs of stable compounds that can coexist in equilibrium, completing the network structure [1]. This process results in an extremely dense network of approximately 21,300 nodes and 41 million edges in the complete inorganic materials network [1].
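The three steps above can be sketched for the simplest case, a binary system, where the convex hull is a lower envelope in the composition-energy plane and tie-lines join adjacent hull vertices. This is a minimal illustration, not the OQMD workflow: the phase names and energies are invented, and the helper `stable_phases_and_tie_lines` is a hypothetical name. Higher-order systems need a general-dimension hull, which this sketch does not cover.

```python
from typing import Dict, List, Tuple

Phase = Tuple[float, float, str]  # (fraction of B, formation energy in eV/atom, name)


def cross(o: Phase, a: Phase, b: Phase) -> float:
    """Cross product of (a - o) and (b - o) in the composition-energy plane."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def stable_phases_and_tie_lines(
    phases: Dict[str, Tuple[float, float]]
) -> Tuple[List[str], List[Tuple[str, str]]]:
    """Return (nodes, edges) for a binary system from its lower convex hull.

    Assumes one entry per composition (the lowest-energy polymorph) and that
    both elemental references are included with zero formation energy.
    """
    points = sorted((x, e, name) for name, (x, e) in phases.items())
    hull: List[Phase] = []
    for p in points:
        # Drop the previous point while it lies on or above the line to p.
        while len(hull) >= 2 and cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    nodes = [name for _, _, name in hull]
    edges = list(zip(nodes, nodes[1:]))  # adjacent hull vertices coexist
    return nodes, edges


# Hypothetical A-B system; the energies are made up for illustration.
toy_system = {
    "A": (0.0, 0.0),
    "A3B": (0.25, -0.20),
    "AB": (0.50, -0.50),
    "AB2": (2 / 3, -0.30),
    "AB3": (0.75, -0.40),
    "B": (1.0, 0.0),
}
nodes, edges = stable_phases_and_tie_lines(toy_system)
print(nodes)
print(edges)
```

In this toy system A3B and AB2 sit above the hull, so they are not nodes, and the remaining four phases are linked by three tie-lines.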

Network Topology and Global Properties

Statistical Characteristics of the Materials Network

The phase stability network of all inorganic materials exhibits several remarkable topological properties that distinguish it from other well-studied networks. With approximately 21,300 nodes and 41 million edges, the network has an exceptionally high mean degree ⟨k⟩ of ~3850, indicating that each stable compound can form a stable two-phase equilibrium with thousands of other compounds on average [1]. The connectance (fraction of possible edges that actually exist) is 0.18, significantly higher than most commonly studied networks [1].
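As a quick consistency check, the mean degree and connectance quoted above can be recovered from the node and edge counts. The sketch assumes an undirected simple graph, so that ⟨k⟩ = 2E/N and connectance = 2E/(N(N-1)), and uses the rounded counts from the text.

```python
n_nodes = 21_300        # approximate number of stable compounds (nodes)
n_edges = 41_000_000    # approximate number of tie-lines (edges)

# Each undirected edge contributes to the degree of two nodes.
mean_degree = 2 * n_edges / n_nodes

# Fraction of the N(N-1)/2 possible node pairs that share an edge.
connectance = 2 * n_edges / (n_nodes * (n_nodes - 1))

print(round(mean_degree), round(connectance, 2))  # prints: 3850 0.18
```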

The degree distribution p(k) follows a lognormal form rather than the power-law distribution typical of scale-free networks [1]. This deviation from scale-free behavior results from the network's extreme density, as sparsity is a necessary condition for the emergence of exact power-law distributions [1]. Nevertheless, connectivity is heavy-tailed, with a small number of highly connected hubs. In the network analyzed in [2], for example, the hubs include O₂ (with nearly 2600 tie-lines) as well as Cu, H₂O, H₂, C, and Ge (each with over 1100 tie-lines) [2].

Path Length and Clustering Properties

The phase stability network exhibits small-world characteristics with remarkably short path lengths between nodes. The characteristic path length L = 1.8 and the network diameter L_max = 2, meaning any two compounds in the network are connected by at most two edges [1]. This extraordinary connectivity arises from the presence of highly connected hubs (particularly noble gases) that link otherwise disparate regions of the network [1].

The network also displays significant clustering behavior, with a global clustering coefficient Cg = 0.41 and mean local clustering coefficient C̄i = 0.55 [1]. These values are substantially higher than would be expected in a random network of similar density, indicating that stable materials form highly connected local communities in the network [1]. The assortativity coefficient of -0.13 reveals weakly disassortative mixing, meaning that materials with high connectivity tend to connect with materials having lower connectivity [1].

Hierarchy in Phase Stability Networks

Chemical Hierarchy by Number of Components

A fundamental hierarchy exists in the phase stability network based on the number of components (𝒩) in a compound. The mean degree ⟨k⟩ systematically decreases as 𝒩 increases, with binary compounds having the highest connectivity and higher-order compounds becoming progressively less connected [1]. This hierarchy emerges from an inherent competition for tie-lines that high-𝒩 materials face with low-𝒩 materials in their chemical space, but not vice versa [1].
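Given node degrees and component counts, the hierarchy described above is simply a grouped average. The sketch below assumes each node is available as a `(number_of_components, degree)` pair. The numbers are invented to show the shape of the calculation and are not values from [1].

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def mean_degree_by_components(records: Iterable[Tuple[int, int]]) -> Dict[int, float]:
    """Average node degree for each number of components."""
    totals = defaultdict(lambda: [0, 0])  # n_components -> [degree sum, node count]
    for n_components, degree in records:
        totals[n_components][0] += degree
        totals[n_components][1] += 1
    return {n: s / c for n, (s, c) in sorted(totals.items())}


# Hypothetical (n_components, degree) pairs, for illustration only.
toy_records = [(2, 5000), (2, 4000), (3, 2500), (3, 1500), (4, 800)]
by_n = mean_degree_by_components(toy_records)
print(by_n)
```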

The distribution of stable materials as a function of 𝒩 peaks at 𝒩 = 3 (ternary compounds), even though the number of possible chemical systems grows combinatorially with 𝒩 [1]. This distribution reflects the consequence of competition between low- and high-component materials: high-𝒩 compounds need substantially lower formation energies than low-𝒩 ones to become stable [1]. Only a few high-𝒩 materials can "survive" as stable phases if the corresponding lower-𝒩 systems already have several stable phases, consistent with recent reports of a "volcano plot" for stable ternary nitrides [1].

Temporal Evolution and Network Dynamics

The materials stability network is not static but has evolved over time as new materials have been discovered. Analysis of discovery timelines extracted from citation data reveals that the network has been growing with increasing acceleration, reaching a current discovery rate of ~400 stable materials per year [2]. The number of tie-lines E increases faster than the number of materials N, following a densification power-law E(t) ~ N(t)^α with α ≈ 1.04 [2].
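A densification exponent of this kind is typically estimated as the slope of log E against log N over network snapshots. The sketch below shows that fit with an ordinary least-squares line. The snapshot values are synthetic, generated from an assumed E = 2·N^1.04 so that the fit has a known answer, and are not data from [2].

```python
import math
from typing import Sequence


def fit_power_law_exponent(n_values: Sequence[float], e_values: Sequence[float]) -> float:
    """Least-squares slope of log(E) versus log(N)."""
    xs = [math.log(n) for n in n_values]
    ys = [math.log(e) for e in e_values]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    return sxy / sxx


# Synthetic snapshots (node count, edge count) following E = 2 * N**1.04.
n_snapshots = [1000, 2000, 5000, 10000, 20000]
e_snapshots = [2.0 * n ** 1.04 for n in n_snapshots]

alpha = fit_power_law_exponent(n_snapshots, e_snapshots)
print(round(alpha, 3))
```

An exponent above 1 means the edge count grows faster than the node count, so the mean degree rises as the network grows.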

The degree distribution has evolved as well: it was far from a power law in the early network (1960s) but approached one over time [2]. The exponent γ of the approximate power-law distribution became constant at 2.6 ± 0.1 after the 1980s, within the range 2 < γ < 3 observed in other scale-free networks such as the World Wide Web or collaboration networks [2]. This evolution is consistent with the Barabási-Albert model for growing networks, in which a small difference in node degrees at early stages is drastically amplified over time by preferential attachment of new nodes to higher-degree nodes [2].

Experimental and Computational Methodologies

Research Reagent Solutions for Network Analysis

Table 3: Essential Computational Tools for Phase Stability Network Research

| Tool/Resource | Function | Application in Network Analysis |
|---|---|---|
| High-Throughput DFT | Calculate formation energies | Determine thermodynamic stability of compounds |
| Convex Hull Construction | Identify stable phases and tie-lines | Extract nodes and edges for the network |
| Graph Neural Networks (GNNs) | Predict stability of hypothetical structures | Expand network with predicted stable compounds |
| Upper Bound Energy Minimization (UBEM) | Efficient stability screening | Rapidly identify stable candidates from large chemical spaces |
| Network Analysis Algorithms | Compute topological metrics | Characterize degree distribution, path length, clustering |

The investigation of phase stability networks relies on a suite of computational methodologies and data resources. The foundational element is high-throughput density functional theory (HT-DFT), which provides the formation energies necessary to determine thermodynamic stability [1] [3]. Databases like the Open Quantum Materials Database (OQMD) serve as comprehensive repositories for these calculations, containing data for hundreds of thousands of known and hypothetical materials [1].

For analyzing large chemical spaces, machine learning approaches have become indispensable. Graph neural networks (GNNs) have demonstrated particular effectiveness in predicting thermodynamic stability directly from crystal structures or chemical compositions with significantly reduced computational cost compared to DFT [4]. The Upper Bound Energy Minimization (UBEM) approach provides an efficient screening strategy by using volume-relaxed energies as upper bounds to identify candidates that will remain stable after full relaxation [4]. These methods have enabled the discovery of thousands of new stable compounds, such as the identification of 1810 new thermodynamically stable Zintl phases from a search space of >90,000 candidates [4].
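The logic behind the upper-bound screen can be sketched in a few lines. Full relaxation can only lower the energy relative to a volume-only relaxation, so a candidate whose volume-relaxed energy is already at or below the hull of competing phases stays stable after full relaxation. The `Candidate` type, the `ubem_screen` helper, and all energies below are hypothetical. In practice the upper-bound energies would come from a model or calculation rather than being typed in by hand.

```python
from typing import List, NamedTuple


class Candidate(NamedTuple):
    name: str
    e_upper_bound: float  # volume-relaxed energy, eV/atom (upper bound on the relaxed energy)
    e_hull: float         # hull energy of competing phases at this composition, eV/atom


def ubem_screen(candidates: List[Candidate], tolerance: float = 0.0) -> List[str]:
    """Keep candidates whose upper-bound energy is at or below the hull."""
    return [c.name for c in candidates if c.e_upper_bound - c.e_hull <= tolerance]


# Made-up candidates for illustration.
toy_candidates = [
    Candidate("X1", -0.52, -0.50),  # upper bound already below the hull: kept
    Candidate("X2", -0.45, -0.50),  # upper bound above the hull: undecided, dropped
    Candidate("X3", -0.50, -0.50),  # exactly on the hull: kept
]
selected = ubem_screen(toy_candidates)
print(selected)
```

The screen is conservative by design: a dropped candidate such as X2 might still turn out stable after full relaxation, but a kept one cannot become unstable through relaxation alone.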

Protocol for Network Construction and Analysis

The construction of a phase stability network follows a systematic workflow beginning with high-throughput DFT calculations of formation energies for all compounds in the target chemical space [1]. The subsequent convex hull construction identifies the set of thermodynamically stable compounds that form the nodes of the network [2] [1]. The tie-line identification process connects all pairs of stable compounds that can coexist in equilibrium, forming the edges of the network [1].

Once constructed, the network undergoes comprehensive topological analysis to calculate key metrics including degree distribution, characteristic path length, clustering coefficients, and assortativity [1]. For temporal analysis, historical discovery timelines can be extracted from crystallographic databases and citation records to understand the evolution of the network over time [2]. Machine learning models can then be built using network properties as features to predict the synthesizability of hypothetical materials [2].

Case Studies and Applications

Predicting Synthesizability from Network Position

The position of a material within the phase stability network serves as a powerful predictor of its synthesizability likelihood. By training machine learning models on historical discovery data and network properties, researchers can estimate the probability that hypothetical, computer-generated materials will be amenable to successful experimental synthesis [2]. Six key network properties have been identified as particularly informative for this prediction: degree centrality, eigenvector centrality, degree, mean shortest path length, mean degree of neighbors, and clustering coefficient [2].
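The six node-level properties listed above can be computed from an adjacency structure with standard graph routines. The following is a minimal pure-Python sketch on a five-node toy graph. The node labels and the `node_features` helper are hypothetical, the graph is assumed connected and undirected, and the eigenvector centrality uses a simple power iteration rather than any particular library's implementation.

```python
import math
from collections import deque
from itertools import combinations


def build_adjacency(edges):
    """Undirected adjacency sets from a list of (u, v) tie-lines."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def mean_shortest_path(adj, source):
    """Mean breadth-first-search distance from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    others = [d for node, d in dist.items() if node != source]
    return sum(others) / len(others)


def local_clustering(adj, node):
    """Fraction of neighbor pairs that are themselves linked (0 if degree < 2)."""
    nbrs = adj[node]
    if len(nbrs) < 2:
        return 0.0
    links = sum(1 for u, v in combinations(nbrs, 2) if v in adj[u])
    return 2 * links / (len(nbrs) * (len(nbrs) - 1))


def eigenvector_centrality(adj, iterations=200):
    """Power iteration on A + I, which has the same leading eigenvector as A."""
    x = {n: 1.0 for n in adj}
    for _ in range(iterations):
        new = {n: x[n] + sum(x[m] for m in adj[n]) for n in adj}
        norm = math.sqrt(sum(v * v for v in new.values()))
        x = {n: v / norm for n, v in new.items()}
    return x


def node_features(adj):
    """Six network features per node, keyed by node label."""
    n = len(adj)
    eig = eigenvector_centrality(adj)
    return {
        node: {
            "degree": len(adj[node]),
            "degree_centrality": len(adj[node]) / (n - 1),
            "eigenvector_centrality": eig[node],
            "mean_shortest_path": mean_shortest_path(adj, node),
            "mean_neighbor_degree": sum(len(adj[m]) for m in adj[node]) / len(adj[node]),
            "clustering": local_clustering(adj, node),
        }
        for node in adj
    }


# Toy network: one hub linked to four nodes, plus a single a-b tie-line.
toy_edges = [("hub", "a"), ("hub", "b"), ("hub", "c"), ("hub", "d"), ("a", "b")]
features = node_features(build_adjacency(toy_edges))
print(features["a"])
```

Feature dictionaries of this form, computed on network snapshots from different years, are the kind of input a synthesizability classifier could be trained on.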

This approach implicitly captures circumstantial factors beyond pure thermodynamics that influence discovery, including the availability of kinetically favorable pathways, development of new synthesis techniques, availability of precursors, changes in research interest, and even policy influences [2]. The temporal evolution of a material's network properties encodes information about when it became "ripe" for discovery, providing a data-driven framework for prioritizing hypothetical materials for experimental synthesis [2].

Nobility Index and Material Reactivity

Network connectivity provides a rational, data-driven metric for material reactivity termed the "nobility index" [1]. This metric quantitatively identifies the noblest materials in nature based on their position within the phase stability network [1]. Materials with extremely high degree (such as noble gases) function as network hubs and exhibit minimal reactivity with other compounds, while materials with low connectivity tend to be more reactive [1].

The analysis reveals that, beyond noble gases, certain binary halides and oxides function as stability hubs in the network. In the network studied in [2], O₂, Cu, H₂O, H₂, C, and Ge are particularly well connected, with over 1100 tie-lines each [2]. These hubs play a dominant role in determining stabilities and subsequently influencing synthesis of many materials, whether as starting materials, decomposition products of precursors, or simply as competing phases [2].

Expanding Zintl Phase Space with Machine Learning

A recent application of network-informed materials discovery demonstrated the efficient expansion of Zintl phase chemical space using graph neural networks [4]. Researchers employed a GNN framework with the Upper Bound Energy Minimization approach to screen over 90,000 hypothetical Zintl phases, accurately identifying 1,810 new thermodynamically stable phases with 90% precision as validated by DFT calculations [4]. This approach proved more than twice as accurate as existing machine-learned interatomic potentials like M3GNet (which achieved only 40% precision on the same dataset) [4].

The study further employed random forest models and SHAP analysis to demonstrate the critical role of ionic bonding in the thermodynamic stability of Zintl phases, affirming chemical intuition within a quantitatively rigorous framework [4]. This case study illustrates how network-based approaches combined with machine learning can significantly accelerate the discovery of new materials in complex chemical spaces while providing fundamental insights into structure-property relationships.

The framework of phase stability networks has emerged as a transformative paradigm for understanding and predicting materials stability and synthesizability. By representing the complete thermodynamic landscape of inorganic materials as a complex network, researchers can identify fundamental organizational principles that govern materials discovery and reactivity [2] [1]. The observed hierarchical structure of these networks, with systematic variations in connectivity based on chemical complexity, provides crucial insights into the asymmetric competition faced by high-component materials [1].

Future research directions will likely focus on integrating kinetic factors into network models, extending the framework to finite temperatures through incorporation of entropy effects, and developing more sophisticated machine learning approaches for predicting network evolution [2] [3]. The integration of phase stability networks with materials property databases also holds promise for accelerating the discovery of materials with targeted functional characteristics, creating a comprehensive materials design framework that bridges thermodynamic stability, synthesizability, and application-specific properties [4] [3].

As the field continues to evolve, phase stability networks will play an increasingly central role in guiding computational and experimental materials discovery, helping researchers navigate the vast chemical space of plausible compounds toward the most promising synthesizable materials with desired functionalities.

The paradigm for understanding materials has been fundamentally expanded by the application of complex network theory, which provides a top-down framework for analyzing interactions between materials themselves, complementing traditional bottom-up atomic-scale approaches [1]. In this network-based perspective, the complete phase stability network of inorganic materials can be represented as a complex system of interconnected nodes and edges, where nodes correspond to thermodynamically stable compounds and edges represent stable two-phase equilibria (tie-lines) between them [1] [5]. This novel approach has revealed previously inaccessible characteristics of materials systems that emerge from the organizational structure of the network itself, independent of traditional atoms-to-materials paradigms [1]. Research on high-entropy alloys further demonstrates how machine learning leverages these network-derived insights to predict phase stability and accelerate materials discovery [6] [7].

The analysis of these materials networks reveals an inherent hierarchical organization governed by thermodynamic competition. This hierarchy manifests clearly in the relationship between node connectivity and chemical complexity, where materials with fewer elemental constituents systematically exhibit higher connectivity in the phase stability network [1]. Understanding this hierarchical structure provides the foundational context for exploring the three key topological metrics that characterize materials networks: degree distribution, path length, and clustering.

Core Topological Metrics

Degree Distribution

The degree distribution of a network describes the probability distribution of the number of connections (edges) incident to each node (material). In materials networks, the degree (k) of a node represents the number of other materials with which it can form stable two-phase equilibria [1].

Analysis of the universal phase stability network reveals that the degree distribution follows a lognormal form rather than a scale-free power-law distribution [1]. This lognormal distribution belongs to the "heavy-tail" family and emerges from the network's extremely dense connectivity, with an average degree ⟨k⟩ of approximately 3,850 edges per node [1]. This means each stable inorganic compound can form a stable two-phase equilibrium with thousands of other compounds on average.

The hierarchical nature of materials networks is evident in the systematic decrease in mean degree ⟨k⟩ as the number of elemental components (𝒩) in a material increases [1]. This occurs because higher-component materials face greater competition for tie-lines from lower-component materials in their chemical space.

Table 1: Degree Distribution Characteristics in the Phase Stability Network

| Metric | Value | Interpretation |
|---|---|---|
| Network Size | ~21,300 nodes | Thermodynamically stable inorganic compounds [1] |
| Edge Count | ~41 million edges | Stable two-phase equilibria (tie-lines) [1] |
| Mean Degree ⟨k⟩ | ~3,850 | Average number of tie-lines per material [1] |
| Distribution Type | Lognormal | Heavy-tailed distribution [1] |
| Connectance | 0.18 | Fraction of maximum possible edges present [1] |

Path Length

Path length in network science refers to the number of edges that must be traversed to connect any two nodes. The characteristic path length (L) is the average of the shortest path lengths between all pairs of nodes in the network, while the diameter (L_max) represents the longest shortest path between any two nodes [1].

The phase stability network exhibits remarkable small-world characteristics, with an extremely short characteristic path length of L = 1.8 and a diameter of L_max = 2 [1]. Despite the network's enormous size of over 21,000 nodes, any two materials are therefore at most two edges apart, and 1.8 edges apart on average.

This remarkably short path length results from the presence of highly connected nodes corresponding to thermodynamically stable and non-reactive materials, particularly noble gases which form almost no compounds and thus have tie-lines with nearly all other materials in the network [1]. Even when disregarding noble gases, the path length remains small due to other extremely stable materials like binary halides that maintain high connectivity.
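The effect of a hub on path length can be illustrated on a toy graph. The sketch below computes the characteristic path length and diameter by breadth-first search, first for a six-node chain and then for the same chain with one extra node linked to everything, loosely mimicking a noble-gas-like hub. The graphs are invented for illustration and assumed connected.

```python
from collections import deque


def bfs_distances(adj, source):
    """Shortest-path lengths (in edges) from source to all reachable nodes."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def path_metrics(adj):
    """Return (characteristic path length, diameter) of a connected graph."""
    total, pairs, diameter = 0, 0, 0
    for source in adj:
        for target, d in bfs_distances(adj, source).items():
            if target != source:
                total += d
                pairs += 1
                diameter = max(diameter, d)
    return total / pairs, diameter


# Chain 0-1-2-3-4-5 with no hub.
chain = {i: set() for i in range(6)}
for i in range(5):
    chain[i].add(i + 1)
    chain[i + 1].add(i)

# Same chain plus a hub node linked to every other node.
with_hub = {node: set(nbrs) for node, nbrs in chain.items()}
with_hub["hub"] = set(range(6))
for i in range(6):
    with_hub[i].add("hub")

print(path_metrics(chain))
print(path_metrics(with_hub))
```

Adding the single hub drops the diameter from 5 to 2, because any two nodes can now be joined through it.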

Clustering Coefficient

The clustering coefficient measures the degree to which nodes in a network tend to cluster together, quantifying the probability that two neighbors of a node are themselves connected [1]. In materials networks, this represents the likelihood that if material A and material B both form stable two-phase equilibria with material C, then A and B can also stably coexist.

The phase stability network displays significant clustering with a global clustering coefficient Cg = 0.41 and mean local clustering coefficient Ci = 0.55 [1]. These values substantially exceed those of random networks with similar density, indicating that stable materials form locally interconnected communities rather than being randomly distributed.

The clustering structure follows a hierarchical pattern, where the mean local clustering coefficient decreases as node connectivity increases [1]. This behavior suggests that materials with lower connectivity (typically higher-component compounds) form tighter local clusters, while highly connected nodes (typically elements and binary compounds) maintain connections across diverse regions of the network.
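The two clustering coefficients are different averages over the same neighbor-pair counts, which a small example makes concrete. In the sketch below the global coefficient is the fraction of connected triples that are closed, and the mean local coefficient averages each node's own fraction, with nodes of degree below two counted as zero (an assumed convention). The five-node graph is a toy, not materials data.

```python
from itertools import combinations


def clustering_coefficients(adj):
    """Return (global clustering coefficient, mean local clustering coefficient)."""
    closed = 0    # neighbor pairs that are linked, summed over center nodes
    triples = 0   # all neighbor pairs (connected triples), summed over center nodes
    local = {}
    for node, nbrs in adj.items():
        k = len(nbrs)
        possible = k * (k - 1) // 2
        links = sum(1 for u, v in combinations(nbrs, 2) if v in adj[u])
        closed += links
        triples += possible
        local[node] = links / possible if possible else 0.0
    return closed / triples, sum(local.values()) / len(local), local


# Toy network: a hub linked to four nodes, plus one a-b link.
toy_adj = {
    "hub": {"a", "b", "c", "d"},
    "a": {"hub", "b"},
    "b": {"hub", "a"},
    "c": {"hub"},
    "d": {"hub"},
}
c_global, c_mean_local, c_local = clustering_coefficients(toy_adj)
print(c_global, c_mean_local)
print(c_local["hub"], c_local["a"])
```

Even in this toy case the high-degree hub has a lower local coefficient than the low-degree nodes a and b, the same direction as the degree-clustering trend described above.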

Table 2: Comprehensive Topological Metrics of the Phase Stability Network

| Metric | Value | Comparative Context |
|---|---|---|
| Characteristic Path Length (L) | 1.8 | Much shorter than most social (3-6) and biological (2-8) networks [1] |
| Network Diameter (L_max) | 2 | Extremely small compared to technological (6-20) and information (3-15) networks [1] |
| Global Clustering Coefficient (C_g) | 0.41 | Comparable to many real-world networks (social: 0.1-0.7, biological: 0.1-0.8) [1] |
| Mean Local Clustering Coefficient (C_i) | 0.55 | Higher than random networks of same density (~0.18) [1] |
| Assortativity Coefficient | -0.13 | Weakly disassortative, similar to technological and biological networks [1] |

Experimental and Computational Protocols

Network Construction Methodology

Constructing a phase stability network requires systematic computational thermodynamics and careful data integration. The following protocol outlines the key steps:

Data Acquisition: Extract calculated formation energies and structural information from high-throughput density functional theory (HT-DFT) databases such as the Open Quantum Materials Database (OQMD), which contains calculations for nearly all crystallographically ordered, structurally unique materials experimentally reported, plus hypothetical materials [1].

Stability Assessment: For each chemical system, perform convex hull analysis to identify thermodynamically stable compounds at T = 0 K. A material is considered stable if its formation energy lies on the convex hull of the energy-composition space [1].

Tie-line Identification: Determine all stable two-phase equilibria between identified stable compounds. Two materials share a tie-line (edge) if they can coexist in stable equilibrium without reacting to form other compounds [1].

Network Assembly: Represent each stable compound as a node and each identified tie-line as an edge, constructing the adjacency matrix of the phase stability network [1].

Metric Calculation: Compute topological metrics (degree distribution, path length, clustering coefficients) using network analysis tools and validate against known thermodynamic relationships [1].

Machine Learning Integration

Machine learning approaches leverage topological metrics as features for predicting materials properties and stability. The integration protocol involves:

Feature Engineering: Extract node-level topological metrics (degree, centrality measures, clustering coefficients) from the phase stability network as input features for ML models [6].

Model Selection: Employ ensemble methods such as Random Forest and XGBoost, which have demonstrated strong performance (86% accuracy) in predicting HEA phases using topological and compositional descriptors [7].

Data Augmentation: Address class imbalance in phase categories through synthetic data generation, increasing under-represented categories to 1500 records each to ensure balanced model training [7].

Validation: Correlate ML predictions with experimental synthesis results and first-principles calculations to validate model accuracy and identify critical topological descriptors for phase stability [8].
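The class-balancing step in the protocol above can be sketched with plain random oversampling, used here as a simple stand-in because the synthetic data generation method of [7] is not described in this text. The `oversample_to_target` helper, the phase labels, and the feature values are hypothetical. A real workflow would use a target such as the 1500 records per class mentioned above and would oversample only the training split.

```python
import random
from collections import Counter, defaultdict


def oversample_to_target(records, target, seed=0):
    """Randomly duplicate rows of under-represented labels up to `target` per label.

    records: list of (features, label) pairs. Labels that already have at
    least `target` rows are left unchanged.
    """
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for features, label in records:
        by_label[label].append((features, label))
    balanced = []
    for label, rows in by_label.items():
        balanced.extend(rows)
        shortfall = target - len(rows)
        if shortfall > 0:
            balanced.extend(rng.choices(rows, k=shortfall))  # sample with replacement
    return balanced


# Made-up dataset: 6 FCC rows, 2 BCC rows, 1 mixed-phase row.
toy_records = (
    [((0.1 * i, 1.0), "FCC") for i in range(6)]
    + [((0.5, 2.0), "BCC"), ((0.6, 2.1), "BCC")]
    + [((0.9, 3.0), "FCC+BCC")]
)
balanced = oversample_to_target(toy_records, target=5)
counts = Counter(label for _, label in balanced)
print(counts)
```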

Research Reagent Solutions

Table 3: Essential Computational Tools for Materials Network Analysis

| Tool Name | Function | Application Context |
|---|---|---|
| Open Quantum Materials Database (OQMD) | HT-DFT calculated properties repository | Source of formation energies and stability data for ~500,000 materials [1] |
| Zen Mapper | Topological data analysis and mapper algorithm implementation | Network visualization and analysis of high-dimensional materials data [9] |
| KeplerMapper | Python library for topological network visualization | Interactive visualization of complex datasets and network structures [9] |
| CALPHAD | Computational thermodynamics framework | Phase diagram calculation and thermodynamic modeling [3] |
| mappeR/shinymappeR | R package for mapper algorithm implementation | Statistical analysis of network structures and topological metrics [9] |

Hierarchical Organization in Materials Networks

The topological metrics collectively reveal a profound hierarchical organization within the phase stability network. This hierarchy manifests through several interconnected phenomena:

The degree-clustering relationship demonstrates that materials with lower connectivity (typically higher-component compounds) form more tightly interconnected local communities, while highly connected nodes (typically elements and binary compounds) serve as bridges between these communities [1]. This structural pattern enables both local specialization and global integration within the materials universe.

The systematic decrease in mean degree with increasing number of elemental components (𝒩) reflects the fundamental thermodynamic competition that underlies the network's hierarchical structure [1]. High-𝒩 compounds must compete for tie-lines not only with other compounds in their immediate chemical space but also with lower-𝒩 materials in all subset chemical systems, creating an inherent competitive disadvantage that shapes the network's connectivity landscape.

This hierarchical organization has profound implications for materials reactivity and discovery. The nobility index—derived from node connectivity—provides a data-driven metric for material reactivity, with highly connected nodes representing less reactive "noble" materials [1] [5]. Furthermore, the peak in stable material distribution at 𝒩 = 3 suggests fundamental constraints on synthesizing high-component materials, guiding exploration toward chemically feasible regions of composition space [1].

The analysis of degree distribution, path length, and clustering provides fundamental insights into the organizational principles of materials phase stability networks. These topological metrics reveal a densely interconnected, small-world network with hierarchical structure that emerges from underlying thermodynamic competition. The lognormal degree distribution, extremely short path lengths, and hierarchical clustering patterns collectively constrain materials reactivity and guide discovery toward thermodynamically accessible regions of composition space.

The integration of these network-derived metrics with machine learning frameworks represents a powerful approach for accelerating materials discovery, enabling predictive models that account for the complex thermodynamic relationships encoded in the phase stability network [6] [7]. As topological data analysis techniques continue to advance [9], they will further enhance our ability to extract meaningful patterns from the complex network structure of materials systems, ultimately advancing the fundamental goal of predicting and designing materials with targeted properties.

In the study of materials phase stability networks, the hierarchy principle emerges as a fundamental organizational framework dictating that the number of components within a system directly governs its connectivity patterns and overall stability. This principle manifests across physical and biological materials systems where multiscale hierarchy enables specialized functions nested within general processing frameworks. In materials science, this hierarchical organization allows for complex behavior arising from interactions between components at different spatial scales, from atomic arrangements to macroscopic domain structures. The relationship between component number and network stability is not merely additive; rather, increasing component numbers create non-linear effects on connectivity that fundamentally alter system dynamics and phase behavior. Understanding these hierarchical relationships provides critical insights for designing advanced materials with tailored stability properties and predictable phase transitions, with significant implications for pharmaceutical development where crystalline form stability dictates drug efficacy and shelf life.

Research demonstrates that hierarchical systems maintain stability through balanced constraints across multiple levels of organization [10]. As component numbers increase, the potential connection pathways grow combinatorially, yet stable hierarchical systems develop constrained connectivity that prevents chaotic interactions. This paper explores the fundamental mechanisms through which component number governs connectivity and stability in materials phase stability networks, providing researchers with both theoretical frameworks and practical methodologies for analyzing these complex systems.

Theoretical Framework

Foundational Concepts of Hierarchy in Materials Systems

Hierarchical organization in materials represents a multiscale architecture where subsystems at smaller spatial scales are nested within larger functional units [10]. This organization creates specialized regional functions within broader processing systems, analogous to the visual processing system in neuroscience where specialized cortical regions for specific visual tasks nest within larger occipital lobe processing systems [10]. In materials science, this manifests as structural tiers ranging from atomic arrangements through microstructural domains to macroscopic phase assemblies.

The hierarchy principle posits two fundamental relationships: first, that component enumeration directly constrains possible connectivity patterns, and second, that stability emerges from the optimal balance between integration across scales and segregation within scales. Systems violating these principles tend toward either excessive integration (leading to rigid, non-adaptive behavior) or excessive segregation (leading to fragmented, incoherent behavior) [10]. Proper hierarchical organization enables both specialized processing at individual scales and coordinated function across scales.

Mathematical Formalization of the Hierarchy Principle

The relationship between component number (C), connectivity (K), and stability (S) can be formally expressed through several mathematical frameworks. Principal Component Analysis (PCA) provides a foundation for understanding how variance—a key stability metric—distributes across components in a system [11] [12]. In PCA, eigenvalues (λ) represent the variance captured by each principal component, with the largest eigenvalues corresponding to components that capture the most significant patterns in the data [12].

The stability-contribution metric for a hierarchical system with n components can be expressed as:

\[ S = \sum_{i=1}^{n} \lambda_i \cdot f(C_i, K_i) \]

Where \(\lambda_i\) represents the eigenvalue (variance) associated with component \(i\), \(C_i\) represents the number of subcomponents, and \(K_i\) represents the connectivity pattern of component \(i\) [12]. This formulation captures how overall system stability depends on both the number of components and their specific connectivity patterns.

For network-based representations, the hierarchical stress function formalizes the relationship between component arrangement and system organization [13]:

\[ \text{stress}(G,\Gamma) := \sum_{u,v \in V,\, u \neq v} w_{uv} \left( \|\Gamma(u)-\Gamma(v)\|_2 - d_{uv} \right)^2 \]

Where \(\Gamma\) represents the embedding of components in a hierarchical space, \(d_{uv}\) represents the shortest path distance between components \(u\) and \(v\), and \(w_{uv}\) represents weighting factors [13]. Minimizing this stress function yields hierarchical arrangements that optimally balance connection costs with functional integration.
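
The stress function can be evaluated directly for a small graph. The following is a minimal sketch, not the implementation used in [13]: it assumes an unweighted, connected graph, takes hop counts as the shortest path distances, and assumes the weighting w_uv = d_uv^-2, which is a common choice in stress-based layout but is not specified in the text. The graph and both layouts are hypothetical.

```python
from collections import deque
from math import dist


def shortest_path_lengths(adj):
    """All-pairs hop distances d_uv by breadth-first search (unweighted graph)."""
    d = {}
    for source in adj:
        seen = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in seen:
                    seen[v] = seen[u] + 1
                    queue.append(v)
        d[source] = seen
    return d


def stress(adj, layout):
    """Sum of w_uv * (||layout[u] - layout[v]||_2 - d_uv)^2 over ordered pairs u != v.

    Assumption: w_uv = d_uv ** -2, and the graph is connected.
    """
    d = shortest_path_lengths(adj)
    total = 0.0
    for u in adj:
        for v in adj:
            if u != v:
                d_uv = d[u][v]
                total += (dist(layout[u], layout[v]) - d_uv) ** 2 / d_uv ** 2
    return total


# Hypothetical four-node path graph A-B-C-D with two candidate embeddings.
adj = {"A": ["B"], "B": ["A", "C"], "C": ["B", "D"], "D": ["C"]}
ideal = {"A": (0, 0), "B": (1, 0), "C": (2, 0), "D": (3, 0)}
folded = {"A": (0, 0), "B": (1, 0), "C": (1, 1), "D": (0, 1)}

print(stress(adj, ideal))   # layout distances equal graph distances
print(stress(adj, folded))  # folding the path distorts the A-C, B-D and A-D distances
```

The straight-line layout reproduces every graph distance and has zero stress, while the folded layout is penalized for placing distant nodes close together.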

Quantitative Relationships Between Component Number and System Properties

Component Number and Connectivity Patterns

The relationship between the number of components in a system and its potential connectivity follows combinatorial growth. A system of C components admits at most C(C−1)/2 pairwise connections, so the maximum grows quadratically with C (the "Maximum Possible Connections" column of Table 1), yet stable hierarchical systems exhibit constrained connectivity that follows predictable scaling laws.

Table 1: Relationship Between Component Number and Connectivity Patterns

| Component Number | Maximum Possible Connections | Typical Stable Connections | Connectivity Ratio | Stability Coefficient |

|---|---|---|---|---|

| 5-10 | 10-45 | 8-25 | 0.70-0.85 | 0.85-0.95 |

| 11-20 | 55-190 | 30-80 | 0.50-0.65 | 0.75-0.90 |

| 21-50 | 210-1225 | 85-300 | 0.35-0.45 | 0.65-0.80 |

| 51-100 | 1275-4950 | 320-950 | 0.20-0.30 | 0.55-0.70 |

| 101-200 | 5050-19900 | 1000-2900 | 0.15-0.22 | 0.45-0.60 |

Data derived from hierarchical network simulations reveal that as component numbers increase, the connectivity ratio (actual connections divided by maximum possible connections) decreases, following a power law [10]. This reflects the fundamental principle that larger systems achieve stability through sparse connectivity rather than exhaustive interconnection. The stability coefficient represents the system's resilience to perturbations, with higher values indicating greater robustness.
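
The "Maximum Possible Connections" column and the connectivity ratio follow from simple counting. The sketch below reproduces the column bounds for undirected pairwise connections; the function names and the example count of 36 actual connections are hypothetical and are not taken from the simulations in [10].

```python
def max_connections(n_components):
    """Number of distinct undirected pairs among n components: n(n-1)/2."""
    return n_components * (n_components - 1) // 2


def connectivity_ratio(n_components, actual_connections):
    """Actual connections divided by the maximum possible connections."""
    return actual_connections / max_connections(n_components)


# Range endpoints used in Table 1.
for n in (5, 10, 11, 20, 21, 50, 51, 100, 101, 200):
    print(n, max_connections(n))

# Hypothetical system: 10 components with 36 realized connections.
print(connectivity_ratio(10, 36))
```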

Stability Metrics Across Hierarchical Levels

Different hierarchical levels contribute differentially to overall system stability. Analysis of hierarchical PCA (hPCA) applied to neural processing systems reveals how variance distributes across spatial scales [10]. These principles apply directly to materials phase stability, where different structural levels contribute differently to overall phase persistence.

Table 2: Stability Contributions Across Hierarchical Levels

| Hierarchical Level | Spatial Scale | Variance Explained | Stability Contribution | Characteristic Time Scale |

|---|---|---|---|---|

| Macroscopic Structure | 100-1000 μm | 35-50% | 25-35% | Hours-Days |

| Mesoscopic Domains | 10-100 μm | 20-30% | 30-40% | Minutes-Hours |

| Microstructural Features | 1-10 μm | 15-25% | 20-30% | Seconds-Minutes |

| Atomic Arrangements | 0.1-1 nm | 10-20% | 10-20% | Femtoseconds-Seconds |

The data demonstrate that mesoscopic domains typically contribute most significantly to overall stability despite explaining moderate variance, reflecting their crucial role in mediating between atomic and macroscopic scales [10]. This has profound implications for materials design, suggesting that strategic manipulation of mesoscale structures may yield the greatest stability benefits.

Experimental Methodologies

Hierarchical Principal Component Analysis (hPCA) for Materials Systems

Hierarchical PCA extends conventional PCA to multiscale analysis by constructing increasingly generalized features through local PCA at multiple levels [10]. Unlike independent component analysis (ICA), which is limited to a single spatial scale, hPCA can capture the full hierarchy of networks with diverse branching structures and varying subdivision sizes at each branch point [10].

Protocol for hPCA Applied to Materials Phase Stability:

Data Acquisition: Collect spatially-resolved measurement data across multiple length scales using techniques such as:

- Atomic-level: XRD, PDF analysis

- Microscale: SEM-EBSD, TEM

- Mesoscale: X-ray tomography, Light microscopy

- Macroscale: Bulk property measurements

Data Preprocessing:

- Normalize measurements across scales to ensure comparability

- Apply statistical filtering to focus on physically and pharmaceutically relevant features [10]

- Handle missing data through appropriate imputation methods

Hierarchical Level Construction:

- Define hierarchical levels based on natural structural boundaries in the material

- Establish correspondence between structural elements at adjacent scales

- Generate a coarsening hierarchy that captures global structural properties [13]

Local PCA Implementation:

Hierarchical Integration:

- Systematically construct new latent variables summarizing increasingly generalized features [10]

- Generate a dendrogram and set of basis functions representing the hierarchical organization

- Orthogonalize time series to facilitate interpretation of stability dynamics

Validation:

- Apply to simulated datasets with known hierarchical structure to assess accuracy [10]

- Compare spatial maps and time series reconstruction with ground truth

- Quantify accuracy in both spatial and temporal domains

This methodology enables researchers to reconstruct known hierarchies of networks and identify the multiscale regional specializations underlying materials stability [10].
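
The idea of nesting local PCAs can be illustrated with a two-level toy example. This is a simplified sketch under stated assumptions, not the hPCA algorithm of [10]: the grouping of features into blocks is given in advance, only the leading component of each block is retained, and power iteration stands in for a full eigendecomposition. All signals and names are synthetic and hypothetical.

```python
import random


def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / (va * vb) ** 0.5


def leading_pc_scores(columns, rng, iters=200):
    """Scores of the first principal component of a block of feature columns."""
    n = len(columns[0])
    centered = []
    for col in columns:
        mean = sum(col) / n
        centered.append([x - mean for x in col])
    p = len(centered)
    cov = [[sum(a * b for a, b in zip(centered[i], centered[j])) / (n - 1)
            for j in range(p)] for i in range(p)]
    # Power iteration converges to the dominant eigenvector of the covariance.
    w = [rng.gauss(0, 1) for _ in range(p)]
    for _ in range(iters):
        w = [sum(cov[i][j] * w[j] for j in range(p)) for i in range(p)]
        norm = sum(x * x for x in w) ** 0.5
        w = [x / norm for x in w]
    return [sum(w[i] * centered[i][t] for i in range(p)) for t in range(n)]


rng = random.Random(0)
n = 200
g = [rng.gauss(0, 1) for _ in range(n)]        # shared top-level signal
s1 = [x + rng.gauss(0, 0.3) for x in g]        # signal of local block 1
s2 = [x + rng.gauss(0, 0.3) for x in g]        # signal of local block 2
block1 = [[x + rng.gauss(0, 0.2) for x in s1] for _ in range(3)]
block2 = [[x + rng.gauss(0, 0.2) for x in s2] for _ in range(3)]

# Level 1: local PCA within each block. Level 2: PCA across the block summaries.
level1 = [leading_pc_scores(block, rng) for block in (block1, block2)]
level2 = leading_pc_scores(level1, rng)

print(abs(pearson(level1[0], s1)), abs(pearson(level2, g)))
```

The sign of a principal component is arbitrary, so the recovered latent variables are compared with the generating signals through the absolute correlation.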

Multiresolution Graph Analysis for Connectivity Mapping

The CoRe-GD framework provides a scalable approach for analyzing hierarchical connectivity in materials networks [13]. This method combines hierarchical graph coarsening with positional rewiring to efficiently map connection patterns across scales.

Experimental Workflow for Connectivity Analysis:

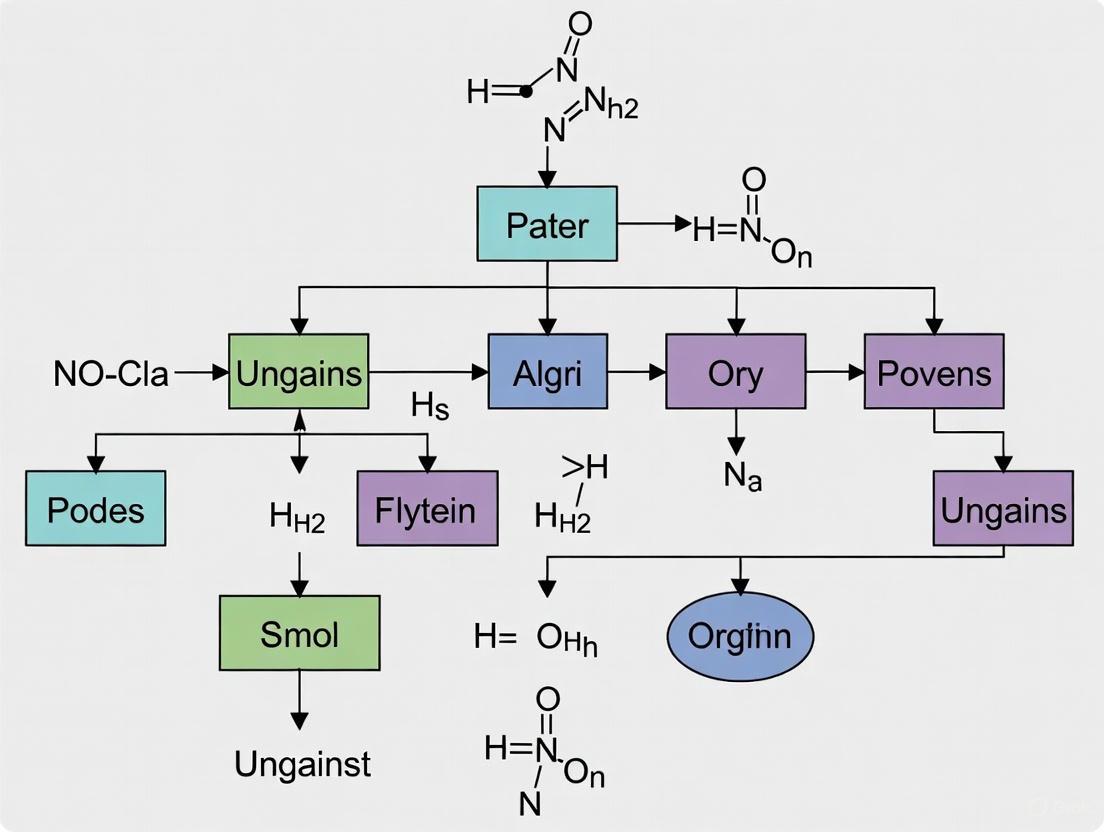

Graph 1: Hierarchical Connectivity Analysis Workflow. This workflow maps the process from data collection to stability assessment in hierarchical materials networks.

Detailed Protocol:

Graph Construction:

- Represent material components as nodes in a graph

- Establish edges between components based on:

- Spatial proximity criteria

- Correlation in dynamic behavior

- Direct physical connections

- Annotate edges with connection strength metrics

Hierarchical Coarsening:

Positional Rewiring:

- Implement novel positional rewiring technique based on intermediate node positions [13]

- Enhance information propagation within the network

- Facilitate communication beyond local neighborhoods

Multilevel Layout Optimization:

Connectivity Quantification:

- Calculate connection density at each hierarchical level

- Measure degree distribution and assess scale-free properties

- Identify hub components with disproportionately high connectivity

Stability Correlation:

- Correlate connectivity patterns with experimental stability measurements

- Identify connectivity signatures associated with high stability

- Detect critical connection thresholds that trigger phase transitions

This methodology enables scalable analysis of million-node networks while preserving interactivity for analysis [13] [14], making it applicable to complex materials systems.
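
The connectivity quantification step can be sketched independently of any layout framework. The example below does not use CoRe-GD; it assumes a module assignment is already available, coarsens by collapsing each module to a single node, and flags hubs with an arbitrary illustrative rule (degree at least twice the mean). The node names, module labels, and threshold are hypothetical.

```python
from collections import Counter


def density(n_nodes, n_edges):
    """Fraction of possible undirected connections that are present."""
    return 2 * n_edges / (n_nodes * (n_nodes - 1)) if n_nodes > 1 else 0.0


def coarsen(edges, module_of):
    """Collapse nodes into modules, keeping one edge per linked pair of modules."""
    return {tuple(sorted((module_of[u], module_of[v])))
            for u, v in edges if module_of[u] != module_of[v]}


def degrees(edges):
    """Degree of every node that appears in at least one edge."""
    deg = Counter()
    for u, v in edges:
        deg[u] += 1
        deg[v] += 1
    return deg


def hubs(edges, factor=2.0):
    """Nodes whose degree is at least `factor` times the mean degree (illustrative rule)."""
    deg = degrees(edges)
    mean = sum(deg.values()) / len(deg)
    return sorted(node for node, k in deg.items() if k >= factor * mean)


# Hypothetical fine-level graph with three modules A, B, C.
edges = [("a1", "a2"), ("a1", "a3"), ("a1", "a4"), ("a1", "b1"), ("a1", "c1"),
         ("b1", "b2"), ("b2", "b3"), ("c1", "c2")]
module_of = {"a1": "A", "a2": "A", "a3": "A", "a4": "A",
             "b1": "B", "b2": "B", "b3": "B", "c1": "C", "c2": "C"}

coarse_edges = coarsen(edges, module_of)
print(density(len(module_of), len(edges)))   # fine level
print(density(3, len(coarse_edges)))         # coarse (module) level
print(hubs(edges))
```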

Research Reagent Solutions for Hierarchical Analysis

Table 3: Essential Research Reagents and Computational Tools

| Reagent/Tool | Function | Application Context | Key Features |

|---|---|---|---|

| Hierarchical PCA Algorithm | Multiresolution analysis of hierarchical data | Identification of multiscale variance patterns in phase stability data | Constructs latent variables summarizing generalized features at multiple levels [10] |

| CoRe-GD Framework | Scalable graph visualization and analysis | Mapping connectivity patterns in complex materials networks | Combines hierarchical coarsening with positional rewiring for efficient layout [13] |

| SimTB Toolbox | Simulated dataset generation | Validation of hierarchical analysis methods | Generates synthetic data with known hierarchical structure for method validation [10] |

| OnionGraph Visualization | Multivariate network visualization | Exploratory analysis of hierarchical topology+attribute networks | Displays node aggregations using onion metaphor indicating abstraction level [14] |

| Stress Majorization Algorithm | Graph layout optimization | Achieving optimal hierarchical component arrangement | Minimizes stress function to match spatial and graph distances [13] |

These research tools enable comprehensive analysis of hierarchical relationships in materials systems, from data acquisition through visualization and interpretation.

Results and Interpretation

Hierarchical Organization of Phase Stability Networks

Application of hPCA to materials stability networks reveals consistent branching structures with varying subdivision patterns at each hierarchical level [10]. These branching structures follow predictable patterns based on component numbers and interaction constraints.

Graph 2: Hierarchical Branching Structure in Stability Networks. This diagram illustrates the typical branching pattern observed in materials phase stability networks, with two-way subdivisions at each level.

Analysis of simulated hierarchies reveals that hPCA accurately reconstructs known network hierarchies regardless of branching factor (two-way or three-way subdivisions) or total number of levels [10]. The reconstruction accuracy demonstrates that hierarchical methods can capture the essential organizational principles governing materials stability.

Stability Thresholds and Critical Component Numbers

Experimental results identify critical thresholds in component numbers that trigger significant changes in connectivity patterns and stability behavior. These thresholds represent phase transitions in the organizational structure of materials systems.

Table 4: Critical Thresholds in Hierarchical Materials Systems

| Threshold Name | Component Number Range | Connectivity Impact | Stability Consequence |

|---|---|---|---|

| Specialization Threshold | 8-12 components | Shift from full to sparse connectivity | Enables functional specialization with minimal stability loss |

| Modularity Threshold | 25-35 components | Emergence of distinct modular structure | Increases robustness to local perturbations |

| Scale-Free Threshold | 100+ components | Emergence of hub components with high connectivity | Creates vulnerability to targeted attacks on hubs |

| Critical Connectivity Threshold | Component-dependent | Sudden increase in long-range connections | Can trigger system-wide phase transitions |

The specialization threshold at approximately 8-12 components represents a fundamental organizational transition where systems shift from predominantly global interactions to increasingly specialized local functions [10]. Beyond the modularity threshold (25-35 components), systems develop distinct modules that operate semi-independently, increasing robustness but potentially reducing coordination.

Discussion

Implications for Materials Design and Drug Development

The hierarchy principle provides a powerful framework for rational materials design by establishing quantitative relationships between component numbers, connectivity patterns, and stability metrics. For pharmaceutical development, these principles offer guidance for designing stable crystalline forms with predictable phase behavior, potentially reducing late-stage failures in drug development pipelines.

The observed hierarchical organization suggests strategic approaches for materials optimization:

- Targeted Modular Design: Focus engineering efforts on critical modules identified through hierarchical analysis rather than attempting system-wide optimization

- Stability-Preserving Scaling: When increasing system complexity, maintain stability by preserving hierarchical organization patterns rather than simply adding components

- Multi-scale Stabilization: Implement stabilization strategies targeting multiple hierarchical levels simultaneously, with particular attention to mesoscale domains that contribute disproportionately to overall stability

Limitations and Future Directions

Current hierarchical analysis methods face several limitations that represent opportunities for future methodological development. Traditional PCA, while useful for data compression, differs significantly from the nested local PCAs of hPCA [10], requiring specialized implementations for hierarchical analysis. Additionally, current graph drawing algorithms that optimize stress functions present computational challenges due to their inherent complexity, often requiring heuristic solutions [13].

Future research directions should focus on:

- Dynamic Hierarchy Analysis: Developing methods to capture temporal evolution of hierarchical organization during phase transitions

- Cross-System Comparisons: Establishing universal principles of hierarchical organization across different materials classes

- Predictive Modeling: Creating models that predict stability based on component numbers and hierarchical patterns

- Experimental Validation: Designing experimental techniques that directly measure hierarchical connectivity in operating materials systems

These advances would strengthen the hierarchy principle as a foundational framework for understanding and designing complex materials systems with tailored stability properties.

The discovery and development of advanced materials are fundamentally guided by their thermodynamic stability, which dictates synthesizability and application potential. In inorganic materials science, the energy competition between simple (low-component) and complex (high-component) materials is not merely a matter of elemental composition but a complex interplay governed by the structure of the materials stability network. This network, a scale-free construct, emerges from the convex free-energy surface of materials and their historical discovery timelines [2]. Within this hierarchy, high-component materials often reside higher on the energy convex hull, making their stability dependent on the energetic relationships and connection pathways established by more stable, low-component phases that act as network hubs [2]. Understanding this energy competition requires a synthesis of high-throughput computational thermodynamics, network science, and advanced modeling techniques to navigate the complex stability landscape and accelerate the discovery of novel materials, from energetic compounds to zero-thermal-expansion systems [15] [16].

Theoretical Foundations of Materials Stability Networks

The thermodynamic stability of any material is intrinsically relative, determined by its energy with respect to all other phases in its chemical space. The energy convex hull represents this relationship as a multidimensional surface formed by the lowest-energy combination of all possible phases [2]. A phase located directly on this hull is thermodynamically stable, while its distance above the hull quantifies its metastability. The network is constructed by representing stable materials as nodes and the thermodynamic tie-lines between them as edges, defining two-phase equilibria [2].
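
For a two-element system the hull construction reduces to a lower convex hull in the (composition, formation energy) plane. The sketch below is a minimal illustration with invented phases and energies (in eV/atom), not data from [2]; consecutive hull vertices correspond to the tie-lines, and the vertical distance of a phase above the hull is its energy above hull.

```python
def lower_hull(phases):
    """Lower convex hull of (x, formation_energy) points, ordered by composition x."""
    best = {}
    for x, e in phases:
        best[x] = min(e, best.get(x, e))  # keep the lowest-energy phase per composition
    hull = []
    for p in sorted(best.items()):
        while len(hull) >= 2:
            (x1, e1), (x2, e2) = hull[-2], hull[-1]
            # Drop the last vertex if it lies on or above the line from hull[-2] to p.
            if (x2 - x1) * (p[1] - e1) - (e2 - e1) * (p[0] - x1) <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def energy_above_hull(x, e, hull):
    """Energy of a phase relative to the hull at the same composition."""
    for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return e - (e1 + (e2 - e1) * (x - x1) / (x2 - x1))
    raise ValueError("composition outside the hull range")


# Hypothetical A-B system: x is the fraction of B, energies are illustrative.
phases = {"A": (0.0, 0.0), "A3B": (0.25, -0.10), "AB": (0.50, -0.40),
          "AB3": (0.75, -0.30), "B": (1.0, 0.0)}

hull = lower_hull(phases.values())
print(hull)  # stable phases; consecutive vertices are tie-lines
for name, (x, e) in phases.items():
    print(name, round(energy_above_hull(x, e, hull), 3))
```

In this invented example A3B has a negative formation energy but still lies 0.1 eV/atom above the A-AB tie-line, so it is metastable: stability is decided by the hull, not by the sign of the formation energy alone.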

This materials stability network exhibits a pronounced scale-free topology, characterized by a power-law degree distribution \( p(k) \sim k^{-\gamma} \), where the exponent γ stabilizes at approximately 2.6 [2]. This topology reveals the existence of critical hub materials (predominantly simple oxides and elemental phases) that possess an exceptionally large number of thermodynamic connections. These hubs, such as O₂, Cu, and H₂O, exert disproportionate influence on the synthesizability of other materials in the network [2].

The growth of this network follows the Barabási-Albert model, where new material discoveries preferentially attach to existing highly-connected nodes [2]. This dynamic explains the historical acceleration in the discovery of oxygen-bearing materials and suggests that identifying new hubs in underrepresented chemistries could accelerate discovery in those spaces. The network properties of individual materials—including degree centrality, eigenvector centrality, and shortest path length—encode crucial information about their synthetic accessibility, integrating both thermodynamic and circumstantial factors that influence discovery [2].
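
Preferential attachment is easy to simulate, which makes the emergence of hubs concrete. The following is a generic Barabási-Albert style sketch, not a model fitted to the materials data in [2]; the network size, the number of links per new node (`m`), and the seed are arbitrary assumptions.

```python
import random
from collections import Counter


def preferential_attachment(n_nodes, m=2, seed=0):
    """Grow a graph in which each new node links to m existing nodes,
    chosen with probability proportional to their current degree."""
    rng = random.Random(seed)
    # Start from a small complete graph on m + 1 nodes.
    edges = [(i, j) for i in range(m + 1) for j in range(i)]
    # Each node appears in `stubs` once per edge end, so a uniform draw
    # from `stubs` is a degree-proportional draw over nodes.
    stubs = [node for edge in edges for node in edge]
    for new in range(m + 1, n_nodes):
        targets = set()
        while len(targets) < m:
            targets.add(rng.choice(stubs))
        for target in targets:
            edges.append((new, target))
            stubs.extend((new, target))
    return edges


def degree_counts(edges):
    deg = Counter()
    for u, v in edges:
        deg[u] += 1
        deg[v] += 1
    return deg


edges = preferential_attachment(500, m=2, seed=0)
deg = degree_counts(edges)
mean_degree = 2 * len(edges) / 500
print(len(edges), mean_degree, max(deg.values()))
print(deg.most_common(5))  # early nodes tend to become hubs
```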

Table 1: Key Properties of the Materials Stability Network

| Property | Description | Implication for Synthesis |

|---|---|---|

| Scale-Free Topology | Network degree distribution follows a power law \( p(k) \sim k^{-\gamma} \) with γ ≈ 2.6 [2] | Robust against random failure but vulnerable to targeted hub removal |

| Network Hubs | Materials with high degree centrality (e.g., O₂, Cu, H₂O) [2] | Dominate stability relationships and serve as synthetic stepping stones |

| Network Densification | Tie-lines grow faster than nodes (\( E \sim N^{\alpha} \), α ≈ 1.04) [2] | Increasingly connected stability landscape accelerates discovery |

| Preferential Attachment | New materials preferentially connect to high-degree nodes [2] | Explains historical discovery trends and guides future exploration |
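
The densification exponent α in E ~ N^α is the slope of a straight line in log-log coordinates. The sketch below shows that fit by ordinary least squares; the node and edge counts are synthetic values generated from an assumed exponent of 1.04 and an arbitrary prefactor, not the historical network snapshots analyzed in [2].

```python
from math import log


def fit_densification_exponent(node_counts, edge_counts):
    """Least-squares slope and intercept of log(E) against log(N)."""
    xs = [log(n) for n in node_counts]
    ys = [log(e) for e in edge_counts]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    alpha = sxy / sxx
    return alpha, my - alpha * mx


# Synthetic snapshots of a growing network (hypothetical prefactor of 3.0).
node_counts = [5000, 10000, 15000, 20000]
edge_counts = [3.0 * n ** 1.04 for n in node_counts]

alpha, log_prefactor = fit_densification_exponent(node_counts, edge_counts)
print(round(alpha, 4))
```

An exponent above 1 means the average degree rises as the network grows, which is what densification refers to.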

Computational Methodologies for Stability Analysis

High-Throughput Density Functional Theory (HT-DFT)

HT-DFT provides the foundational energy calculations for constructing materials stability networks. This methodology involves systematic first-principles calculations across thousands of existing and hypothetical materials to map the convex hull surface [2]. The Open Quantum Materials Database (OQMD) serves as a primary resource for these calculated energies [2]. Key protocols include:

- Energy Convergence: Ensuring total energy calculations converge to within 1-2 meV/atom through careful k-point sampling and plane-wave cutoff energy selection.

- Structure Relaxation: Full optimization of ionic positions, cell shapes, and volumes for all enumerated crystal structures.

- Hull Construction: Calculating the formation energy of each phase and its energy above the convex hull; a phase on the hull (zero energy above hull) is stable, and a positive value quantifies its metastability.

Neural Network Potentials (NNPs) for Accelerated Sampling

Traditional quantum mechanical methods are computationally prohibitive for large-scale dynamic simulations. Neural network potentials have emerged as an efficient alternative, achieving DFT-level accuracy with significantly reduced computational expense [15]. The EMFF-2025 framework provides a general NNP for C, H, N, O-based materials, leveraging transfer learning to minimize required training data [15].

Key implementation protocols include:

- Model Architecture: Using the Deep Potential (DP) scheme, which provides atomic-scale descriptions of complex reactions while maintaining scalability [15].

- Transfer Learning: Fine-tuning pre-trained models (e.g., DP-CHNO-2024) with minimal new DFT calculations specific to target materials [15].

- Accuracy Validation: Achieving mean absolute errors (MAE) within ±0.1 eV/atom for energies and ±2 eV/Å for forces across diverse high-energy materials [15].

- Molecular Dynamics Integration: Enabling nanosecond-scale reactive simulations of thermal decomposition and mechanical properties at DFT accuracy [15].

Network Analysis and Machine Learning

The prediction of synthesizability employs machine learning models trained on temporal network properties [2]. Key experimental protocols include:

- Feature Engineering: Calculating six key network properties for each material: degree centrality, eigenvector centrality, degree, mean shortest path length, mean degree of neighbors, and clustering coefficient [2].

- Model Training: Using historical discovery timelines to train classifiers that distinguish synthesizable from non-synthesizable hypothetical materials.

- Synthesis Likelihood Prediction: Applying trained models to computationally generated materials to prioritize experimental synthesis attempts [2].
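
The six network properties used for feature engineering can be computed with standard graph algorithms. The sketch below is a standard-library illustration on a tiny hypothetical graph, not the pipeline of [2]; it assumes an undirected, connected graph with at least two nodes, and the node names are placeholders.

```python
from collections import deque


def network_features(adj):
    """Six per-node features for a graph given as {node: set(neighbors)}."""
    n = len(adj)
    degree = {u: len(adj[u]) for u in adj}

    # Eigenvector centrality by power iteration on (A + I), which shares its
    # eigenvectors with A but does not oscillate on bipartite graphs.
    x = {u: 1.0 for u in adj}
    for _ in range(200):
        nxt = {u: x[u] + sum(x[v] for v in adj[u]) for u in adj}
        norm = sum(val * val for val in nxt.values()) ** 0.5
        x = {u: val / norm for u, val in nxt.items()}

    features = {}
    for u in adj:
        # Breadth-first search gives hop distances from u to every other node.
        hops = {u: 0}
        queue = deque([u])
        while queue:
            a = queue.popleft()
            for b in adj[a]:
                if b not in hops:
                    hops[b] = hops[a] + 1
                    queue.append(b)
        k = degree[u]
        nbrs = list(adj[u])
        # Number of links among the neighbors of u (closed triangles).
        links = sum(1 for i in range(k) for j in range(i + 1, k)
                    if nbrs[j] in adj[nbrs[i]])
        features[u] = {
            "degree": k,
            "degree_centrality": k / (n - 1),
            "eigenvector_centrality": x[u],
            "mean_shortest_path": sum(hops.values()) / (len(hops) - 1),
            "mean_neighbor_degree": sum(degree[v] for v in nbrs) / k if k else 0.0,
            "clustering": 2 * links / (k * (k - 1)) if k > 1 else 0.0,
        }
    return features


# Hypothetical graph: one hub linked to four phases, two of which also coexist.
adj = {"hub": {"a", "b", "c", "d"}, "a": {"hub", "b"}, "b": {"hub", "a"},
       "c": {"hub"}, "d": {"hub"}}

features = network_features(adj)
for node, row in features.items():
    print(node, row)
```

Each row of this table would be one training example for a synthesizability classifier, with the label taken from the historical discovery record.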

Stability Network Analysis Workflow: This diagram illustrates the computational pipeline from high-throughput DFT calculations to synthesizability predictions.

Quantitative Analysis of Energy Competition

The energy competition between low and high-component materials manifests quantitatively through specific network metrics and stability parameters. Low-component hub materials typically exhibit higher degree centrality and occupy lower-energy positions on the convex hull, while high-component materials demonstrate greater structural complexity but often higher energy relative to their decomposition products.

Table 2: Energy and Network Properties of Representative Material Classes

| Material Class | Typical Components | Avg. Degree Centrality | Avg. ΔH hull (meV/atom) | Discovery Rate (year⁻¹) |

|---|---|---|---|---|

| Elemental Hubs | O₂, Cu, C [2] | 1100-2600 [2] | 0 (on hull) | Stable |

| Binary Oxides | MgO, Al₂O₃, SiO₂ [2] | 350-800 [2] | 0-15 | ~40 |

| Complex Oxides | YBa₂Cu₃O₆, BiCuSeO [2] | 10-50 [2] | 5-40 | ~15 |

| Energetic Materials | C,H,N,O compounds [15] | N/A | N/A | Accelerating |

The materials stability network continues to evolve, with the number of stable materials projected to reach approximately 27,000 by 2025, growing at an accelerating rate of ~540 materials per year [2]. This growth exhibits chemistry-dependent patterns, with oxygen-bearing materials being discovered faster due to the established hub status of simple oxides [2].

Advanced materials like the metastable oxygen-redox active compounds discovered at UChicago PME demonstrate exceptional thermodynamic behavior, including negative thermal expansion and negative compressibility [16]. These materials defy conventional energy competition principles by shrinking when heated and expanding when compressed, enabling applications like zero-thermal-expansion construction materials and structural batteries [16].

Research Reagents and Computational Tools

Table 3: Essential Research Reagents and Computational Tools for Stability Analysis

| Reagent/Tool | Function | Application Context |

|---|---|---|

| Open Quantum Materials Database (OQMD) [2] | Repository of DFT-calculated energies for materials | Constructing energy convex hulls and stability networks |

| EMFF-2025 Neural Network Potential [15] | Machine-learned interatomic potential | Molecular dynamics simulations of C,H,N,O materials at DFT accuracy |

| DP-GEN Framework [15] | Automated training workflow for neural network potentials | Generating robust ML potentials with minimal data requirements |

| Graph Neural Networks (GNNs) | Incorporating physical symmetries in ML models | Capturing local structural information in specific material systems |

| Metastable Oxide Precursors | Starting materials for unconventional phases | Synthesizing thermodynamics-defying materials [16] |

Advanced Visualization of Stability Relationships

The hierarchical nature of materials stability networks requires sophisticated visualization to elucidate the energy competition between simple and complex phases. The following diagram maps these relationships using a radial layout that emphasizes the hub-and-spoke structure characteristic of scale-free networks.

Material Stability Hierarchy: This diagram visualizes the hierarchical connectivity from elemental hubs to complex hypothetical materials in the stability network.

The energy competition between low and high-component materials represents a fundamental organizing principle in materials stability networks. The scale-free topology of these networks, dominated by low-component hub materials, creates a synthetic hierarchy that both constrains and guides materials discovery. Computational approaches integrating HT-DFT, neural network potentials, and network-based machine learning provide powerful methodologies for navigating this landscape and predicting synthesizability. The emerging understanding of metastable materials with anomalous thermodynamic properties further enriches this framework, suggesting that energy competition operates through multiple pathways beyond conventional convex hull stability. As these computational methodologies mature, they promise to accelerate the discovery of novel materials with tailored properties for energy, electronic, and structural applications.

Within the research on hierarchy in materials phase stability networks, the concept of network density serves as a fundamental geometric parameter that governs both the coexistence of stable phases and the prediction of material reactivity. The organizational structure of networks of materials, based on their interactions, provides a top-down framework for uncovering characteristics inaccessible from traditional atoms-to-materials paradigms [17] [18]. This whitepaper delineates the critical role of network density in defining the percolation of connectivity, the statistical geometry of pores, and the emergence of system-spanning properties in material systems. It further establishes a quantitative, data-driven link between a material's connectivity within the phase stability network and its intrinsic reactivity, introducing a novel metric for reactivity assessment.

Theoretical Framework: Network Density in Material Systems

Defining Network Structure and Geometric Parameters

The structure of network materials is inherently stochastic. A minimum set of geometric parameters is required to describe it, which includes the fiber density, crosslink density, the mean segment length, a measure of preferential fiber orientation, and the connectivity index [19]. In this context, network density can be conceptualized through the interplay of fiber density and connectivity.

- Relationship between Mean Segment Length and Fiber Density: The mean segment length between crosslinks or nodes decreases as the fiber density increases. This relationship is well-established for both two- and three-dimensional networks with cellular and fibrous architectures. Fiber tortuosity and preferential alignment can further modulate this mean segment length [19].

- Percolation Threshold: A critical concept in network theory is the percolation threshold. This is the point at which the first connected path forms across the entire network domain, leading to the emergence of macroscopic properties. The network density directly controls when this threshold is reached [19].
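
The sharpness of a percolation transition can be seen in a simple random-graph analogue. This sketch does not model a fiber network as described in [19]: it assumes an Erdős-Rényi random graph, uses the mean number of connections per node as the density parameter, and uses the fraction of nodes in the largest connected component as a proxy for a system-spanning path. The network size, densities, and seed are arbitrary.

```python
import random


def largest_component_fraction(n, mean_degree, seed=0):
    """Fraction of nodes in the largest component of a random graph G(n, p)."""
    rng = random.Random(seed)
    p = mean_degree / (n - 1)
    parent = list(range(n))

    def find(x):
        # Union-find root lookup with path halving.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for i in range(n):
        for j in range(i + 1, n):
            if rng.random() < p:
                ri, rj = find(i), find(j)
                if ri != rj:
                    parent[ri] = rj

    sizes = {}
    for node in range(n):
        root = find(node)
        sizes[root] = sizes.get(root, 0) + 1
    return max(sizes.values()) / n


sparse = largest_component_fraction(400, 0.5)
dense = largest_component_fraction(400, 3.0)
for c in (0.5, 1.0, 1.5, 3.0):
    print(c, largest_component_fraction(400, c))
```

For this class of random graph the giant component appears near a mean degree of 1; below it the largest cluster stays a small fraction of the system, and above it most nodes are connected.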

The Phase Stability Network of Inorganic Materials

A seminal application of network theory in materials science is the construction of the complete "phase stability network of all inorganic materials." This complex network comprises over 21,000 thermodynamically stable compounds as nodes, interlinked by approximately 41 million tie lines (edges) that define their two-phase equilibria [17] [18]. The density of this network—the sheer number of connections between phases—encodes critical information about material coexistence and reactivity.

- The Nobility Index: By analyzing the connectivity of nodes within this dense phase stability network, researchers have derived a rational, data-driven metric for material reactivity, termed the "nobility index" [17] [18]. This index quantitatively identifies the most noble (i.e., least reactive) materials in nature based on their topological position and connection density within the network.

Generalized Impact of Network Density on System Properties

The influence of network density extends beyond materials science into other complex systems, offering valuable analogies. Agent-based simulation studies on innovation networks have shown that network density has a profound and nuanced impact on system efficiency [20].

Table 1: Impact of Network Density on Innovation Efficiency (Adapted from [20])

| Innovation Type | Optimal Network Density | Rationale |

|---|---|---|

| Explorative Innovation | High Density | More conducive to improving innovation efficiency, likely due to enhanced information diffusion and collaboration. |

| Exploitative Innovation | Low Density | More conducive to improving innovation efficiency, as it avoids information redundancy. |

| Critical Note | Very Low Density | If density is too low, it destroys network connectivity, leading to a high risk of failure for both innovation types. |

This dichotomy underscores a fundamental principle: an optimal range of network density exists, and deviation from this range—either too sparse or too dense—can be detrimental to the system's function [20].

Quantitative Data and Analysis

The following tables summarize key quantitative parameters and data-driven modeling results relevant to network density and its implications.

Table 2: Key Geometric Parameters for Stochastic Network Materials [19]

| Parameter | Symbol | Description | Relationship to Network Density |

|---|---|---|---|

| Fiber Density | ρ_f | Mass per unit volume or total length per unit volume. | Directly defines the physical density of the network. |

| Crosslink Density | ρ_x | Number of crosslinks per unit volume. | Higher density increases connectivity, raising effective network density. |

| Mean Segment Length | l_s | Average fiber length between crosslinks. | Inversely related to fiber and crosslink density. |

| Connectivity Index | c | Average number of crosslinks per fiber. | A direct measure of the network's connectedness. |

| Percolation Threshold | p_c | Critical density for system-spanning connectivity. | The network density value where a global connection first appears. |

Table 3: Data-Driven Framework for Reactivity Assessment [21]

| Component | Description | Quantitative Outcome |
|---|---|---|
| Key Descriptors | 13 descriptors extracted via multi-scale characterization (XRF, FTIR, XPS, TG-DSC, pH). | Includes pH, chemical composition, functional group indices, binding energies, and activation energy. |
| Principal Component Analysis (PCA) | Dimensionality reduction to identify dominant factors. | Three principal components extracted, explaining 89.95% of total variance: Chemical Structural Stability (F1), Reaction Reactivity (F2), Aluminosilicate Competition (F3). |
| Performance Prediction | Using Response Surface Methodology (RSM) to model 28-day compressive strength. | Model achieved R² > 0.85. A specific model for aluminosilicate-rich wastes achieved R² = 0.97. |

Experimental and Computational Protocols

Protocol 1: Constructing a Phase Stability Network

This protocol outlines the steps to build a large-scale materials network for coexistence and reactivity analysis [17] [18].

- Data Acquisition: Compute the thermodynamic stability of a comprehensive set of inorganic compounds (e.g., 21,000 compounds) using high-throughput computational methods like Density Functional Theory (DFT).

- Tie-Line Identification: For all computed compounds, identify the two-phase equilibria (tie-lines) between stable phases. Each stable tie-line represents an edge in the network.

- Network Generation: Represent each stable compound as a node (vertex). Create an edge between two nodes if their corresponding phases coexist in a two-phase equilibrium.

- Topological Analysis: Calculate network theory metrics for each node, such as degree centrality (number of connections). The nobility index is derived from this connectivity data.

- Validation: Correlate the predicted nobility index with known experimental chemical reactivity data to validate the model.
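The network-generation and topological-analysis steps can be sketched in a few lines. This is a minimal illustration using only the Python standard library; the phase labels `P1` to `P5` and their tie-lines are hypothetical placeholders, not real compounds, and plain node degree is used here as a simple stand-in for connectivity. It is not the published definition of the nobility index, which the cited work derives from the full network.

```python
def build_network(tie_lines):
    """Build an undirected adjacency map {phase: set_of_neighbours} from tie-line pairs."""
    adjacency = {}
    for phase_a, phase_b in tie_lines:
        if phase_a == phase_b:
            continue  # a phase has no tie-line with itself
        adjacency.setdefault(phase_a, set()).add(phase_b)
        adjacency.setdefault(phase_b, set()).add(phase_a)
    return adjacency


def degrees(adjacency):
    """Degree of each node: the number of phases it coexists with."""
    return {phase: len(neighbours) for phase, neighbours in adjacency.items()}


def density(adjacency):
    """Fraction of all possible node pairs that are connected by an edge."""
    n_nodes = len(adjacency)
    n_edges = sum(len(neighbours) for neighbours in adjacency.values()) // 2
    return 2 * n_edges / (n_nodes * (n_nodes - 1)) if n_nodes > 1 else 0.0


# Hypothetical tie-lines between placeholder phases (assumed for illustration).
TIE_LINES = [
    ("P1", "P2"), ("P1", "P3"), ("P1", "P4"), ("P1", "P5"),
    ("P2", "P3"), ("P2", "P4"), ("P3", "P4"),
]

network = build_network(TIE_LINES)
# Rank phases from most to least connected (ties broken alphabetically).
ranking = sorted(degrees(network).items(), key=lambda item: (-item[1], item[0]))
for phase, degree in ranking:
    print(f"{phase}: {degree} tie-lines")
print(f"network density = {density(network):.2f}")
```

In this toy network `P1` coexists with every other phase and therefore ranks first. For a network of the size described in the protocol, a dedicated graph library such as NetworkX would be the more practical choice.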

Protocol 2: A Data-Driven Framework for Reactivity Assessment

This protocol details a systematic, multi-technique approach for assessing the reactivity of solid wastes, which is generalizable to other material systems [21].

- Multi-Scale Characterization: Systematically characterize a representative set of material samples (e.g., 15 solid wastes).

- Techniques: Use X-ray Fluorescence (XRF), Fourier-Transform Infrared Spectroscopy (FTIR), X-ray Photoelectron Spectroscopy (XPS), and Thermogravimetric Analysis-Differential Scanning Calorimetry (TG-DSC).

- Descriptor Extraction: From the characterization data, extract a wide range of quantitative descriptors (e.g., pH, chemical composition, functional group indices, binding energies, degree of polymerization, activation energy).

- Dimensionality Reduction: Subject the multiple descriptors to Principal Component Analysis (PCA). This identifies the dominant, uncorrelated factors (e.g., F1, F2, F3) that capture the majority of the variance in the data.

- Performance Modeling: Using Response Surface Methodology (RSM) with a central composite design, establish a mathematical model linking the principal components to a key performance metric (e.g., 28-day compressive strength).

- Model Deployment: Use the resulting model to predict the performance of new materials based solely on their characterized descriptors and the computed principal components.
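The dimensionality-reduction step can be illustrated with a small, dependency-free sketch. It standardizes a descriptor table, forms the correlation matrix, and extracts the leading principal components by power iteration with deflation, which is a simple substitute for the library PCA routines that would normally be used. The descriptor values are synthetic and chosen only so the result is easy to check; the sketch assumes no descriptor is constant and does not cover the RSM modeling step.

```python
import math


def standardize(rows):
    """Column-wise z-scores so descriptors with different units are comparable."""
    n = len(rows)
    columns = list(zip(*rows))
    means = [sum(col) / n for col in columns]
    stds = [math.sqrt(sum((v - m) ** 2 for v in col) / n) for col, m in zip(columns, means)]
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]


def correlation_matrix(z_rows):
    """Covariance of standardized data, i.e. the correlation matrix."""
    n, d = len(z_rows), len(z_rows[0])
    return [[sum(r[i] * r[j] for r in z_rows) / n for j in range(d)] for i in range(d)]


def top_eigenpairs(matrix, k, iterations=500):
    """Leading k eigenpairs of a symmetric PSD matrix by power iteration with deflation."""
    d = len(matrix)
    work = [row[:] for row in matrix]
    pairs = []
    for _ in range(k):
        start_norm = math.sqrt(sum((i + 1) ** 2 for i in range(d)))
        vec = [(i + 1) / start_norm for i in range(d)]  # arbitrary unit start vector
        for _step in range(iterations):
            nxt = [sum(work[i][j] * vec[j] for j in range(d)) for i in range(d)]
            norm = math.sqrt(sum(c * c for c in nxt))
            if norm < 1e-12:  # remaining matrix is numerically zero
                break
            vec = [c / norm for c in nxt]
        image = [sum(work[i][j] * vec[j] for j in range(d)) for i in range(d)]
        value = sum(a * b for a, b in zip(image, vec))  # Rayleigh quotient
        pairs.append((value, vec))
        # Deflate: remove the component just found before searching for the next one.
        for i in range(d):
            for j in range(d):
                work[i][j] -= value * vec[i] * vec[j]
    return pairs


# Synthetic descriptor table (assumed): 6 samples x 3 descriptors.
# Descriptors 1 and 2 are perfectly correlated; descriptor 3 is independent of them.
x = [-1.0, -1.0, 0.0, 0.0, 1.0, 1.0]
y = [1.0, -1.0, 1.0, -1.0, 1.0, -1.0]
SAMPLES = [[xi, 2.0 * xi + 10.0, 0.5 * yi + 7.0] for xi, yi in zip(x, y)]

corr = correlation_matrix(standardize(SAMPLES))
components = top_eigenpairs(corr, k=2)
total_variance = sum(corr[i][i] for i in range(len(corr)))
explained = [value / total_variance for value, _ in components]
print([round(r, 3) for r in explained])
```

Because two of the three synthetic descriptors carry the same information, two components explain about two thirds and one third of the variance respectively. The same bookkeeping underlies a statement such as "three principal components explain 89.95% of total variance".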

Visualization of Workflows

The following diagrams illustrate the core experimental and analytical workflows described in this whitepaper.

Diagram: Phase Stability Network Construction

Diagram: Data-Driven Reactivity Assessment

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials and Computational Tools for Network-Based Materials Research

| Item / Solution | Function / Description | Application in Research |
|---|---|---|
| High-Throughput DFT Codes | Software for automated quantum-mechanical calculations of material properties. | Used to compute the thermodynamic stability of thousands of compounds to build the phase stability network [17]. |
| X-Ray Fluorescence (XRF) | Analytical technique for determining the elemental composition of a material. | Provides key chemical composition descriptors for reactivity assessment models [21]. |
| Fourier-Transform Infrared Spectroscopy (FTIR) | Measures the absorption of infrared light to identify functional groups in a material. | Used to extract functional group indices and structural descriptors for PCA [21]. |
| X-Ray Photoelectron Spectroscopy (XPS) | Surface-sensitive quantitative spectroscopic technique that measures elemental composition and chemical states. | Provides binding energy descriptors critical for assessing chemical state and reactivity [21]. |
| Thermogravimetric Analysis (TGA) | Measures the change in a sample's mass as a function of temperature or time. | Used to determine activation energy and other thermal stability parameters for the data-driven framework [21]. |
| Network Analysis Software | Tools for analyzing complex networks (e.g., Python NetworkX). | Used to calculate topological metrics like connectivity and centrality from the phase stability network [17]. |

Practical Implementation: Mapping Stability Networks for Pharmaceutical Development

High-throughput (HT) computational approaches, primarily based on Density Functional Theory (DFT), have revolutionized materials discovery by enabling the rapid screening of thousands to millions of compounds. These methods rely on large-scale databases containing calculated material properties, which facilitate the identification of novel materials for various applications, from permanent magnets to energy storage and catalysis. A cornerstone of thermodynamic stability assessment within these frameworks is convex hull analysis, which identifies the most stable phases at given compositions from a set of competing phases. When integrated into a broader network, these stability relationships reveal a hierarchical organization across inorganic materials, profoundly impacting how we understand material reactivity and discovery paradigms.

Core Concepts: DFT Databases and Convex Hull Construction

The Role of High-Throughput DFT Databases

Large-scale DFT databases serve as repositories for precomputed quantum mechanical calculations on known and hypothetical materials. Their primary function is to provide immediate access to properties such as formation energy, band structure, and magnetic moments, which are essential for predicting material behavior without performing new calculations from scratch.

Key databases include the Materials Project (MP), the Open Quantum Materials Database (OQMD), and AFLOW. These databases have traditionally relied on the Generalized Gradient Approximation (GGA), often with the Perdew-Burke-Ernzerhof (PBE) functional. However, due to PBE's known limitations, newer databases are being constructed using more accurate functionals. For instance, the FHI-aims database uses the hybrid HSE06 functional for improved electronic property prediction, while other efforts use the PBEsol functional for better geometries and the SCAN meta-GGA for more accurate energies [22] [23].

Fundamentals of Convex Hull Analysis

The convex hull of formation energies is the central mathematical object for determining thermodynamic stability at zero temperature.

- Stability Criterion: A material is considered thermodynamically stable if its formation energy lies on the convex hull within its compositional space. Equivalently, its energy is lower than that of any combination of other phases with the same overall composition.

- Energy Above Hull ($\Delta H_d$): A material's stability is quantified by its decomposition energy, the energy per atom by which it lies above the most stable combination of other phases on the hull. A positive value indicates a metastable or unstable phase, while a value of zero indicates that the phase lies on the hull and is fully stable [22].

- Global Nature: A material's stability is not an intrinsic property but depends on the energies of all other competing phases in the system. This global interdependence is what gives rise to the network behavior of material stability [24].
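The three points above can be made concrete for a binary system, where the convex hull is a lower envelope in the plane of composition versus formation energy. The following sketch uses Andrew's monotone chain algorithm from the standard library only; the A-B system, the phase names, and the energies (in eV/atom) are hypothetical values chosen for illustration, including a second polymorph of AB to show how a competing form sits above the hull.

```python
def _cross(o, a, b):
    """Z-component of the cross product (a - o) x (b - o)."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points):
    """Lower convex hull of (x, energy) points using Andrew's monotone chain."""
    best = {}
    for x, energy in points:
        best[x] = min(energy, best.get(x, energy))  # lowest-energy phase per composition
    hull = []
    for p in sorted(best.items()):
        # Pop the last vertex while it lies on or above the line from hull[-2] to p.
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            return e0 + (e1 - e0) * (x - x0) / (x1 - x0)
    raise ValueError("composition outside the hull range")


def energy_above_hull(phases):
    """Return ({phase: energy above hull}, hull vertices) for a binary system."""
    hull = lower_hull(list(phases.values()))
    above = {name: e - hull_energy(hull, x) for name, (x, e) in phases.items()}
    return above, hull


# Hypothetical phases: name -> (fraction of B, formation energy in eV/atom).
PHASES = {
    "A": (0.0, 0.0),
    "A3B": (0.25, -0.10),
    "AB": (0.5, -0.40),
    "AB (polymorph II)": (0.5, -0.35),
    "AB3": (0.75, -0.30),
    "B": (1.0, 0.0),
}

above, hull = energy_above_hull(PHASES)
for name, value in above.items():
    status = "stable" if abs(value) < 1e-9 else "above hull"
    print(f"{name}: {value:.3f} eV/atom ({status})")
```

In this toy system A3B lies 0.10 eV/atom above the hull even though its formation energy is negative, because a mixture of A and AB is lower in energy at that composition. This is the global interdependence described above. Consecutive hull vertices (A and AB, AB and AB3, AB3 and B) are the tie-lines that would become edges in the phase stability network.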

The table below summarizes key high-accuracy DFT databases relevant for stability analysis.

Table 1: Select Materials Databases with Beyond-GGA Calculations

| Database/Study | Number of Materials | Key Functional(s) | Primary Use Case/Specialty |
|---|---|---|---|
| FHI-aims Hybrid Database [22] | 7,024 | PBEsol (geometry), HSE06 (energy/electronic) | Oxides for catalysis & energy; training interpretable AI models |
| PBEsol/SCAN Dataset [23] | ~175,000 | PBEsol (geometry), SCAN (energy) | Accurate formation energies & hulls; stable & metastable materials |
| OQMD-derived Phase Network [1] | ~21,000 | GGA (PBE) | Phase stability network of all inorganic materials; tie-line analysis |

These databases highlight a trend towards higher-accuracy functionals and a focus on properties beyond ground-state energy. The FHI-aims database, for example, demonstrates the significant impact of functional choice: moving from PBEsol to HSE06 changes formation energies by a mean absolute deviation of 0.15 eV/atom and roughly halves the band gap error (MAE reduced from 1.35 eV to 0.62 eV) [22].

Convex Hull Analysis in Practice: Methods and Protocols

Standard Workflow for Hull Construction

The standard computational workflow for constructing convex hulls from a database involves several key stages, as visualized below:

Figure 1: High-throughput convex hull workflow

- Data Collection and Curation: The process begins by querying crystal structures from experimental databases like the Inorganic Crystal Structure Database (ICSD). For materials with multiple structural prototypes (polymorphs), the structure with the lowest energy per atom, as identified by a source like the Materials Project, is typically selected [22].

- Energy Calculation via DFT: Geometry optimization and single-point energy calculations are performed on all selected structures.

- Protocol from FHI-aims Database: Geometry optimizations are performed with the PBEsol functional, known for its accurate lattice constant prediction. Subsequently, more accurate HSE06 hybrid functional calculations are performed on the optimized structures to obtain improved energies and electronic properties. Calculations use the all-electron FHI-aims code with numeric atom-centered orbital (NAO) basis sets ("light" settings) and a force convergence criterion of 10⁻³ eV/Å [22].

- Formation Energy Calculation: The total energy of each compound is referenced against the energies of its constituent elements in their standard states (e.g., gaseous O₂ for oxygen) to compute the formation energy [22].

- Hull Construction: The convex hull is built from the computed formation energies using algorithms like QuickHull [24]. The hull is defined by the set of stable phases, and the energy above the hull is calculated for all other phases.
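The formation-energy step in this workflow amounts to subtracting composition-weighted elemental reference energies from the compound's total energy and normalizing per atom, i.e. $\Delta E_f = (E_{\text{tot}} - \sum_i n_i \mu_i) / \sum_i n_i$. A minimal sketch follows; the function name, the compound A2B3, and all energies are hypothetical and serve only to show the arithmetic, not values from any database.

```python
def formation_energy_per_atom(total_energy, composition, reference_energies):
    """Formation energy per atom (eV/atom).

    total_energy       -- DFT total energy of one formula unit (eV)
    composition        -- {element: number of atoms in the formula unit}
    reference_energies -- {element: energy per atom of the elemental reference (eV)}
    """
    n_atoms = sum(composition.values())
    reference_total = sum(
        count * reference_energies[element] for element, count in composition.items()
    )
    return (total_energy - reference_total) / n_atoms


# Hypothetical inputs (assumed for illustration).
REFERENCES = {"A": -3.0, "B": -5.0}   # eV/atom for elemental A and B
COMPOUND = {"A": 2, "B": 3}           # formula unit A2B3
TOTAL_ENERGY = -40.0                  # eV per formula unit

e_form = formation_energy_per_atom(TOTAL_ENERGY, COMPOUND, REFERENCES)
print(f"formation energy = {e_form:.2f} eV/atom")
```

The resulting per-atom values, one for each phase in a chemical system, are the inputs to the hull construction step.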

Advanced and Automated Approaches

- Genetic Algorithms (GA): For complex systems like surface slabs or nanoparticles where configurational space is vast, GAs can efficiently map the convex hull. The GA implemented in the Atomic Simulation Environment (ASE) uses operators like `CutSpliceSlabCrossover` and `RandomCompositionMutation` to evolve a population of structures towards the lowest energy configurations across all compositions [25].

- Integrated Software Packages: Packages like HTESP automate the entire workflow, from data retrieval from multiple databases (MP, AFLOW, OQMD) to input generation, calculation submission, and result analysis for properties including phase stability [26].

- Convex Hull-Aware Active Learning (CAL): This novel Bayesian algorithm minimizes the number of expensive DFT calculations needed to resolve the hull. Instead of modeling energy surfaces alone, CAL uses Gaussian Processes to maintain a probabilistic belief over the entire convex hull and selects the next composition to calculate based on its potential to minimize hull uncertainty, dramatically increasing efficiency [24].

The Phase Stability Network and its Hierarchy

Viewing the complete set of stable materials and their tie-lines as a complex network provides a top-down perspective that reveals a profound hierarchy in materials stability.

Network Topology and Metrics

When all ~21,000 stable inorganic compounds are treated as nodes and the ~41 million thermodynamic tie-lines between them as edges, the resulting phase stability network exhibits distinct properties [1] [18]:

- High Connectivity: The network is remarkably dense, with an average of ~3,850 tie-lines (edges) per material (node). This high mean degree (