Handling Data Skew for Robust Feature Distribution and Stability Prediction in Drug Development

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on managing data skew to ensure reliable feature distribution and stability predictions. It covers foundational concepts of data skew in scientific datasets, explores methodological applications of machine learning and accelerated predictive stability (ASAP) models, addresses common troubleshooting and optimization challenges, and establishes robust validation and benchmarking frameworks. By synthesizing techniques from data science and pharmaceutical stability testing, this resource aims to enhance the accuracy and reliability of predictive models in biomedical research and clinical development.

Understanding Data Skew and Stability Prediction Fundamentals in Pharmaceutical Research

Defining Data Skew: Core Concepts and Terminology

In data science and machine learning, "data skew" refers to an asymmetric or uneven distribution of data in a dataset. This imbalance can manifest in several forms, each with distinct characteristics and implications for research, particularly in sensitive fields like pharmaceutical development.

Statistical Data Skew: This occurs when the statistical distribution of a variable's values is not symmetrical around its mean. In a normal distribution (bell curve), the mean, median, and mode are approximately equal. Skewed data disrupts this balance.

- Right-Skewed (Positively Skewed): The distribution has a long tail extending to the right. The mean is typically greater than the median [1]. Real-world examples include personal income distributions (many people with moderate incomes, a few with very high incomes) and insurance claim amounts [1].

- Left-Skewed (Negatively Skewed): The distribution has a long tail extending to the left. The mean is typically less than the median [1]. An example is the age at death within a population, where most people die at an older age, creating a peak on the right [1].

Target Variable Skew (Class Imbalance): In machine learning, skew often refers to an imbalance in the distribution of the label or target variable [2]. This is a critical challenge in pharmaceutical research, where the event of interest (e.g., a patient responding to a drug, the presence of a rare disease) is often the minority class. A familiar example from outside pharma is credit card fraud detection: only a very small fraction of transactions are fraudulent, so the data is skewed toward non-fraudulent transactions [2].

Data Skew in Distributed Systems: In large-scale data processing, data skew refers to the uneven distribution of data across different partitions or nodes [3] [4]. This can cause severe performance bottlenecks, as the overall processing time is determined by the slowest task running on the most overloaded node [3].

The following table summarizes the core characteristics of statistical skewness.

| Skew Type | Tail Direction | Mean vs. Median | Real-World Example |
|---|---|---|---|
| Right (Positive) | Long tail on the right | Mean > Median | Distribution of personal income [1] |
| Left (Negative) | Long tail on the left | Mean < Median | Age at death in a population [1] |
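The mean-versus-median relationship in the table can be checked numerically. The sketch below is illustrative only: it assumes a log-normal sample as a stand-in for a right-skewed measurement and mirrors it to obtain a left-skewed one; neither dataset comes from the cited sources.

```python
import numpy as np
from scipy.stats import skew

rng = np.random.default_rng(0)

# Hypothetical right-skewed sample (log-normal), e.g. a biomarker concentration.
right = rng.lognormal(mean=0.0, sigma=1.0, size=10_000)
# Mirror it to obtain a left-skewed sample with the same shape.
left = right.max() - right

for name, values in [("right-skewed", right), ("left-skewed", left)]:
    print(f"{name}: mean={values.mean():.2f}, "
          f"median={np.median(values):.2f}, skewness={skew(values):.2f}")
```

In the right-skewed sample the long tail pulls the mean above the median; in the mirrored sample the order reverses.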

Troubleshooting Guides and FAQs

This section addresses specific, high-impact issues related to data skew that researchers and scientists encounter during experimental workflows.

FAQ 1: How does skewed data degrade the performance of my predictive model for drug efficacy?

Skewed data, particularly in the target variable or key features, can significantly impair a model's ability to learn and generalize, leading to misleading conclusions in drug discovery.

- Impact on Model Performance: Extreme values in the tail of a skewed distribution can act as outliers, reducing the accuracy of many statistical models, especially regression-based algorithms [5] [1]. The model's efforts to account for these rare, extreme values can degrade its predictive power for the more common, typical cases [5]. While some models like tree-based algorithms are more robust to outliers, skewed data can still limit the choice of viable models for an experiment [5] [1].

- Impact on Statistical Analysis: Many statistical methods assume that data is normally distributed. Skewed data violates this assumption, which can lead to invalid statistical inferences and reduce the reliability of p-values and confidence intervals derived from the data [1].

- Exacerbation of Data Scarcity Issues: In pharmaceuticals, the problem is often compounded. For instance, data on rare diseases or specific adverse drug reactions is inherently scarce and skewed. A model trained on such data may become biased towards the majority class (e.g., non-responders), failing to identify the critical minority class (e.g., responders or patients with a rare condition) [6]. This can directly impact patient safety and drug efficacy profiles.
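The majority-class bias described above is easy to miss when only overall accuracy is reported. The following minimal sketch uses an invented cohort (1,000 patients, 20 responders) and a degenerate "model" that always predicts the majority class; the numbers are assumptions chosen for illustration.

```python
import numpy as np

# Hypothetical cohort: 1,000 patients, only 20 responders (label 1).
y_true = np.array([1] * 20 + [0] * 980)
# A degenerate "model" that always predicts the majority class (non-responder).
y_pred = np.zeros_like(y_true)

accuracy = (y_pred == y_true).mean()
true_positives = np.sum((y_pred == 1) & (y_true == 1))
recall = true_positives / np.sum(y_true == 1)

print(f"accuracy = {accuracy:.2f}")           # 0.98
print(f"recall (responders) = {recall:.2f}")  # 0.00
```

The classifier looks excellent by accuracy while identifying none of the responders, which is why minority-class recall and precision should be reported alongside accuracy.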

FAQ 2: My distributed model training is slow, and I suspect data skew. How can I confirm and resolve this?

Slow training jobs in distributed computing environments (e.g., using Apache Spark or Hadoop) are a classic symptom of data skew, where a few partitions or nodes are overloaded with data [3] [4].

- Confirmation: Monitor the task execution within your cluster. If you observe that a small number of tasks are taking significantly longer to complete than the majority, and that these tasks are processing much larger volumes of data, data skew is the likely cause [3] [4].

- Resolution Strategies:

  - Data Salting: This technique involves adding a random value (a "salt") to the keys of skewed datasets before operations like join or groupBy. This breaks down large keysets into smaller, more uniformly distributed subsets, allowing for parallel processing [4].

  - Adaptive Query Execution: Modern distributed frameworks like Apache Spark can use runtime statistics to dynamically adjust query execution plans, helping to mitigate the impact of skew during operations like shuffles [4].

  - Custom Partitioning: Implement a custom partitioning strategy that is aware of the data's inherent skew and deliberately distributes it to balance the load across nodes [4].
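The effect of salting can be illustrated without a cluster. The sketch below is a plain-Python simulation, not Spark code: `partition_of` is a hypothetical hash partitioner (CRC32 stands in for the engine's hash function), and the key names and counts are invented.

```python
import random
import zlib
from collections import Counter

def partition_of(key: str, n_partitions: int) -> int:
    """Deterministic hash partitioner (CRC32 stands in for the engine's hash)."""
    return zlib.crc32(key.encode()) % n_partitions

def partition_loads(keys, n_partitions):
    """Count how many records land on each partition."""
    counts = Counter(partition_of(k, n_partitions) for k in keys)
    return [counts.get(p, 0) for p in range(n_partitions)]

random.seed(0)
N_PARTITIONS, N_SALTS = 8, 16

# Hypothetical skewed key column: one "hot" key dominates the dataset.
keys = ["compound_A"] * 9_000 + [f"compound_{i}" for i in range(1_000)]

# Salting: append a random suffix so the hot key spreads over several partitions.
salted = [f"{k}_{random.randrange(N_SALTS)}" for k in keys]

print("max load without salt:", max(partition_loads(keys, N_PARTITIONS)))
print("max load with salt:   ", max(partition_loads(salted, N_PARTITIONS)))
```

In a real join, the other side of the join would need to be replicated once per salt value so that salted keys still match, and aggregations would need a second pass that strips the salt and combines the partial results.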

FAQ 3: How can I handle skewed data without discarding valuable rare event information?

Discarding data, especially in domains like drug discovery where data is scarce and costly to obtain, is often not a viable option. Several techniques can normalize data distributions while preserving information.

- Data Transformation: Apply mathematical functions to make the skewed distribution more symmetrical. The table below compares common methods.

- Synthetic Data Generation: For target variable skew (class imbalance), techniques like SMOTE (Synthetic Minority Over-sampling Technique) or using Generative Adversarial Networks (GANs) can be used to generate realistic, synthetic examples of the minority class [5] [6]. This balances the dataset without replicating existing data points, as simple oversampling would. This is particularly promising for creating synthetic patient data for rare diseases to train more robust ML models [6] [7].

- Algorithmic Solutions: Use models that are inherently robust to skewed data and outliers, such as tree-based algorithms (e.g., Random Forests, Gradient Boosting Machines) [5]. Alternatively, models can be trained with cost-sensitive learning, where a higher penalty is assigned to misclassifying the rare minority class.

Comparison of Common Data Transformation Techniques

| Transformation | Formula | Use Case | Key Limitations |
|---|---|---|---|
| Log Transform | x' = log(x) | Right-skewed data with positive values [5] [8] | Cannot handle zero or negative values [5] |
| Square Root | x' = √x | Right-skewed data with positive values [5] | Applied only to positive values [5] |
| Box-Cox | x' = (x^λ - 1)/λ (if λ ≠ 0) | Right-skewed data with positive values; finds optimal λ [5] [8] | Requires all values to be positive [5] |
| Yeo-Johnson | (Similar to Box-Cox with modifications) | A more flexible variant for data with zero or negative values [1] | More computationally complex than basic transforms |
| Square Transform | x' = x² | Can be applied to left-skewed data [5] | Can intensify skew if applied incorrectly |
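To see how the transformations in the table compare on one feature, the sketch below applies them to a synthetic, strictly positive, right-skewed sample and reports the skewness after each. The log-normal data is an assumption made for illustration; `scipy.stats.boxcox` and `scipy.stats.yeojohnson` estimate λ by maximum likelihood when it is not supplied.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(42)
# Hypothetical right-skewed, strictly positive feature (e.g. an assay readout).
x = rng.lognormal(mean=1.0, sigma=0.8, size=5_000)

boxcox_x, boxcox_lambda = stats.boxcox(x)      # requires x > 0
yeojohnson_x, yj_lambda = stats.yeojohnson(x)  # also accepts x <= 0

results = {
    "original": x,
    "log": np.log(x),
    "square root": np.sqrt(x),
    "Box-Cox": boxcox_x,
    "Yeo-Johnson": yeojohnson_x,
}
skewness = {name: float(stats.skew(v)) for name, v in results.items()}
for name, value in skewness.items():
    print(f"{name:12s} skewness = {value:+.3f}")
```

For data containing zeros, `np.log1p` (log of 1 + x) is a common substitute for the plain log transform.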

Experimental Protocols for Detection and Mitigation

Protocol 1: Quantifying and Visualizing Feature Skew in a Dataset

Objective: To systematically identify and measure the degree of skew in numerical features within a pharmaceutical dataset (e.g., biomarker measurements, assay results).

Materials: Python environment with Pandas, NumPy, SciPy, and Seaborn/Matplotlib libraries.

Methodology:

- Data Loading: Load your dataset (e.g., from a CSV file).

- Skewness Calculation: For each numerical feature, calculate the skewness coefficient using a function like scipy.stats.skew() or pandas.DataFrame.skew(). A skewness value of 0 indicates perfect symmetry. A negative value indicates left-skew, and a positive value indicates right-skew. As a rule of thumb, absolute values greater than 0.5 suggest moderate skew, and greater than 1.0 indicate high skew.

- Visualization: Create distribution plots for features with high absolute skewness.

  - Use seaborn.histplot() to plot a histogram with a Kernel Density Estimate (KDE) curve overlaid.

  - Compare the distribution to a normal curve to visually assess the asymmetry and tail direction [8].

- Documentation: Record the skewness coefficients and plots for all critical features as part of the experiment's baseline data profile.
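The calculation and documentation steps of this protocol might be sketched as follows. The helper `skew_profile` and the column names are hypothetical; the thresholds are the rule-of-thumb values stated in the methodology, and the synthetic table stands in for a dataset loaded from CSV.

```python
import numpy as np
import pandas as pd

def skew_profile(df: pd.DataFrame) -> pd.DataFrame:
    """Return skewness per numeric column with a rule-of-thumb label."""
    skewness = df.select_dtypes(include="number").skew()
    labels = pd.cut(
        skewness.abs(),
        bins=[-np.inf, 0.5, 1.0, np.inf],
        labels=["approximately symmetric", "moderate", "high"],
    )
    profile = pd.DataFrame({"skewness": skewness, "level": labels})
    return profile.sort_values("skewness", key=np.abs, ascending=False)

# Hypothetical assay table; in practice this would come from pd.read_csv(...).
rng = np.random.default_rng(1)
df = pd.DataFrame({
    "biomarker_conc": rng.lognormal(0.0, 1.0, 2_000),  # strongly right-skewed
    "body_temp": rng.normal(36.8, 0.4, 2_000),         # roughly symmetric
    "sample_id": [f"S{i}" for i in range(2_000)],      # non-numeric, ignored
})
profile = skew_profile(df)
print(profile)
```

For the visualization step, each flagged column can then be passed to `seaborn.histplot(df[column], kde=True)`, and `profile` can be saved with the experiment's baseline records.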

Protocol 2: Mitigating Class Imbalance for Rare Disease Detection

Objective: To improve machine learning model performance in detecting a rare disease by rebalancing the training dataset.

Materials: Imbalanced dataset with patient records; Python with imbalanced-learn (imblearn) library; a classification algorithm (e.g., Logistic Regression, Random Forest).

Methodology:

- Baseline Model: Split the data into training and test sets. Train a chosen model on the original, imbalanced training set and evaluate its performance on the test set. Key metrics should include Precision, Recall, and F1-score for the minority (rare disease) class, not just overall accuracy.

- Apply SMOTE: Use the SMOTE class from the imblearn library on the training set only. SMOTE generates new synthetic examples of the minority class in the feature space [5] [6].

  - Critical: Do not apply SMOTE to the test set. The test set must contain only real, unseen data to provide a valid performance estimate.

- Retrain and Evaluate: Train an identical model on the SMOTE-resampled training data. Evaluate it on the original, untouched test set.

- Comparison: Compare the performance metrics, especially the recall and F1-score for the minority class, against the baseline model. A successful mitigation will show a significant improvement in these metrics.
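The protocol can be sketched end to end as below. To keep the example dependent only on NumPy and scikit-learn, it uses a simplified SMOTE-style interpolation (the hypothetical helper `smote_like`) rather than the imblearn implementation named above; in practice `imblearn.over_sampling.SMOTE(...).fit_resample(X_train, y_train)` would take its place. The dataset is synthetic.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import NearestNeighbors

def smote_like(X_min, n_new, k=5, seed=0):
    """Create n_new synthetic points by interpolating between a minority
    sample and one of its k nearest minority neighbours."""
    rng = np.random.default_rng(seed)
    nn = NearestNeighbors(n_neighbors=k + 1).fit(X_min)
    neighbours = nn.kneighbors(X_min, return_distance=False)[:, 1:]  # drop self
    base = rng.integers(0, len(X_min), size=n_new)
    chosen = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))
    return X_min[base] + gap * (X_min[chosen] - X_min[base])

# Hypothetical imbalanced data: about 5% "rare disease" cases (label 1).
X, y = make_classification(n_samples=4_000, n_features=10, n_informative=5,
                           weights=[0.95, 0.05], random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25,
                                          stratify=y, random_state=0)

baseline = LogisticRegression(max_iter=1_000).fit(X_tr, y_tr)

# Oversample the training set only; the test set stays untouched.
n_new = int((y_tr == 0).sum() - (y_tr == 1).sum())
X_syn = smote_like(X_tr[y_tr == 1], n_new)
X_bal = np.vstack([X_tr, X_syn])
y_bal = np.concatenate([y_tr, np.ones(n_new, dtype=y_tr.dtype)])
resampled = LogisticRegression(max_iter=1_000).fit(X_bal, y_bal)

recall_base = recall_score(y_te, baseline.predict(X_te))
recall_bal = recall_score(y_te, resampled.predict(X_te))
print(f"minority recall: baseline={recall_base:.2f}, resampled={recall_bal:.2f}")
```

Higher minority recall usually comes at the cost of some precision, so both should be compared before concluding that the mitigation helped.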

Workflow Visualization

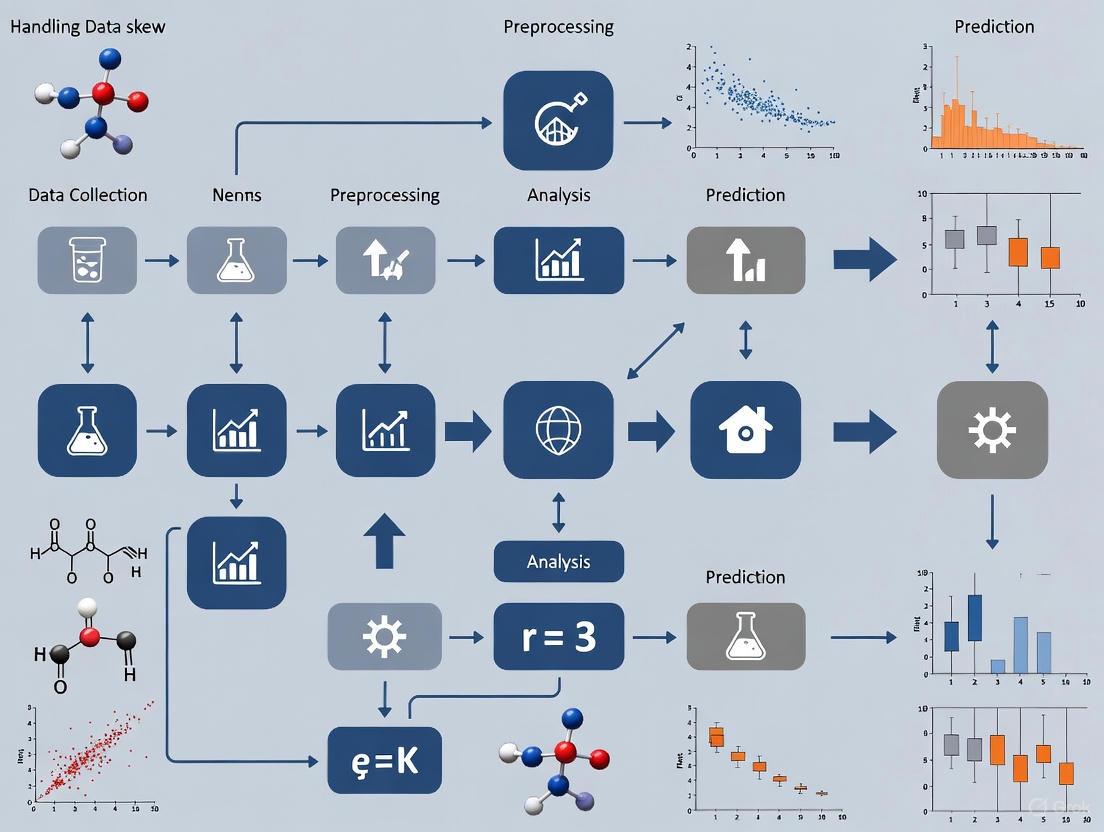

The following diagram illustrates a systematic workflow for diagnosing and treating data skew in a machine learning pipeline, integrating the concepts and protocols described above.

Diagnosis and Mitigation Workflow for Data Skew

The Scientist's Toolkit: Key Research Reagents and Solutions

This table details essential computational and methodological "reagents" for handling data skew in pharmaceutical AI research.

| Tool/Reagent | Function/Benefit | Application Context |
|---|---|---|
| Apache Spark | Distributed processing engine that supports skew mitigation through adaptive query execution and user-implemented techniques such as key salting [4]. | Pre-processing and model training on large-scale genomic or patient data. |
| Synthetic Data Generators (GANs) | Generates privacy-compliant, synthetic patient data to balance class distribution and mitigate bias, useful for rare disease research [6] [7]. | Augmenting training sets for rare event prediction where real data is limited. |
| Box-Cox Transform | A parameterized transformation that finds the optimal power transformation to best approximate a normal distribution [5] [8]. | Normalizing heavily right-skewed continuous features like biomarker concentrations. |
| SMOTE | An oversampling technique that creates synthetic examples for the minority class to rectify class imbalance [5] [6]. | Improving model sensitivity for detecting rare diseases or adverse drug reactions. |
| FAIR Data Principles | A framework (Findable, Accessible, Interoperable, Reusable) to ensure data quality and mitigate biases from flawed or outdated data [9]. | Foundational data governance to prevent skew issues at the source in drug discovery pipelines. |

The Critical Link Between Feature Distribution and Predictive Model Stability

Frequently Asked Questions

Q1: What is data skew and why is it a critical concern in predictive modeling for research?

Data skew refers to an asymmetry in the distribution of your data where the tail of the distribution is longer on one side [10]. This is a critical concern because it can fundamentally distort analytical insights and bias machine learning models toward the majority class or dominant range of values [10] [11]. In stable predictive models, we expect features to behave consistently; skewed features violate this assumption, leading to unreliable predictions and poor generalization on new data [11] [12].

Q2: How can I quickly check if my dataset has skewed features?

You can calculate the skewness value for each feature. A value close to zero indicates a symmetrical distribution, while significant positive or negative values indicate skew [10]. The table below provides a guideline for interpretation. Python libraries like Pandas (df['column'].skew()) and visualization tools like histograms with Kernel Density Estimate (KDE) plots are essential for this initial diagnostic [13].

Table: Interpreting Skewness Values

| Skewness Value | Interpretation | Impact on Model Stability |
|---|---|---|
| Between -0.5 and 0.5 | Approximately Symmetric | Low risk; model assumptions are likely met. |
| Between -1 and -0.5, or between 0.5 and 1 | Moderately Skewed | Moderate risk; warrants investigation. |
| Less than -1 or Greater than 1 | Highly Skewed | High risk; can significantly bias model outcomes and destabilize predictions. |
| Greater than 0 | Positive (Right) Skew | Most data is concentrated on the left with a long tail to the right [10]. |
| Less than 0 | Negative (Left) Skew | Most data is concentrated on the right with a long tail to the left [10]. |

Q3: My model is biased towards the majority class in a highly skewed clinical outcome variable. What can I do?

This is a common issue in medical datasets, such as those with a rare disease outcome. When the skewed outcome is continuous, transforming the target variable can linearize the relationship and make it easier for models to learn effectively [14]. For a positively skewed continuous target like 'Disease Progression Score', a log transformation is often the first step. Critical Note: If you transform your target variable before training, you must apply the inverse transformation to your final predictions to get them back on the original scale for interpretation [14].
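The transform-then-invert step can be sketched as follows. The data is synthetic and the linear model is an arbitrary choice for illustration; scikit-learn's `TransformedTargetRegressor` is shown as one way to make sure the inverse transformation is always applied to predictions.

```python
import numpy as np
from sklearn.compose import TransformedTargetRegressor
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)
# Hypothetical data: a positively skewed score that grows exponentially with X.
X = rng.normal(size=(500, 3))
y = np.exp(1.0 + X @ np.array([0.6, -0.4, 0.2]) + rng.normal(0, 0.1, 500))

# Manual route: fit on log(y), then invert the predictions with exp().
manual = LinearRegression().fit(X, np.log(y))
pred_manual = np.exp(manual.predict(X))

# Equivalent route: scikit-learn applies func before fitting and
# inverse_func after predicting, so the inverse step cannot be forgotten.
wrapped = TransformedTargetRegressor(
    regressor=LinearRegression(), func=np.log, inverse_func=np.exp
).fit(X, y)
pred_wrapped = wrapped.predict(X)

print(pred_wrapped[:3], y[:3])
```

Both routes return predictions on the original scale of the score. Error metrics should also be computed on that scale if they are to be interpreted clinically.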

Q4: Which scaling technique should I use for my skewed features to improve model convergence?

For skewed features, Robust Scaling is often the best choice because it uses the median and Interquartile Range (IQR) and is therefore resistant to outliers that are common in skewed data [15] [16]. Standardization (Z-score normalization) can also be used but is more sensitive to extreme values [15] [16]. Min-Max scaling is generally not recommended for skewed data as it is highly sensitive to outliers [15].

Table: Comparison of Feature Scaling Techniques on Skewed Data

| Technique | Formula | Best For Skewed Data? | Outlier Sensitivity |
|---|---|---|---|
| Robust Scaling | (Xᵢ - Median) / IQR | Yes | Low [15] |
| Standardization | (Xᵢ - Mean) / Std | Sometimes | Moderate [15] |
| Min-Max Scaling | (Xᵢ - Min) / (Max - Min) | No | High [15] |
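The difference in outlier sensitivity can be made concrete with a toy feature. In the sketch below, the 99 typical values and the single outlier of 1,000 are invented; the quantity of interest is how much of the scaled range remains for the typical values once the outlier has influenced each scaler.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler, RobustScaler, StandardScaler

# Hypothetical feature: 99 typical values plus one extreme outlier.
x = np.append(np.linspace(1.0, 10.0, 99), 1_000.0).reshape(-1, 1)

spread = {}
for scaler in (RobustScaler(), StandardScaler(), MinMaxScaler()):
    scaled = scaler.fit_transform(x)
    typical = scaled[:-1]  # the 99 non-outlier values
    # Range occupied by the typical values after scaling.
    spread[type(scaler).__name__] = float(typical.max() - typical.min())

for name, value in spread.items():
    print(f"{name:15s} range of typical values = {value:.3f}")
```

With Min-Max scaling the typical values are squeezed into less than 1% of the unit interval, whereas Robust Scaling keeps them well separated because the median and IQR barely react to the outlier.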

Q5: How do I fix high skewness in a feature before training a model?

Applying a power transformation is often the most effective approach. The choice depends on your data:

- For Positive Values with Positive Skew: Use the Log Transformation or Box-Cox Transformation [11] [13]. Box-Cox is more powerful as it finds the optimal parameter (λ) to maximize normality but requires strictly positive data [13].

- For Data with Zero or Negative Values: Use the Yeo-Johnson Transformation, which is flexible and handles both positive and negative values [11] [13].

- For a Guaranteed Normal Output: Use the Quantile Transformation, which maps your data to a specified distribution (e.g., normal) by force, effectively removing skewness [13].

Troubleshooting Guides

Problem: Model performance is poor, and feature importance shows bias towards high-magnitude features.

- Symptoms: Inaccurate predictions, unreliable insights, and the model fails to generalize on unseen data [11]. In distance-based algorithms like K-Nearest Neighbors (KNN) or K-Means, features with larger scales can overshadow others [16].

- Root Cause: The dataset contains features with significantly different scales and distributions. Skewed features can dominate the model's learning process, regardless of their true relevance [16].

- Solution:

- Diagnose: Calculate skewness for all numerical features and visualize their distributions.

- Transform: Apply a power transformation (e.g., Box-Cox, Yeo-Johnson) to highly skewed features to make their distributions more symmetrical [13].

- Scale: Use Robust Scaling or Standardization on all features to ensure they contribute equally to the model [15] [16].

- Re-train & Validate: Re-train the model on the transformed and scaled dataset and validate its stability on a hold-out test set.

Problem: Gradient-based models (e.g., Neural Networks, Linear Regression) are converging slowly or unstably.

- Symptoms: The training process is erratic, the loss function fluctuates widely, and the model takes a long time to converge to a minimum.

- Root Cause: Features are on vastly different scales. This causes gradients to update at different rates, making the optimization path inefficient and unstable [16].

- Solution:

- Standardize Features: Apply Standardization (Z-score normalization) to transform features to have a mean of 0 and a standard deviation of 1 [15] [16]. This is particularly crucial for algorithms like Linear Regression and Neural Networks [16].

- Monitor Training: Use learning curves to monitor the loss and validation metrics during training after standardization to confirm improved stability.

Experimental Protocol: Correcting for Data Skew

This protocol outlines a systematic approach to diagnose and correct feature skew to enhance predictive model stability.

1. Hypothesis: Correcting for skewness in feature distributions through appropriate transformations will improve model stability and predictive performance.

2. Experimental Workflow: The following diagram illustrates the key steps for diagnosing and treating data skew in a modeling pipeline.

3. Detailed Methodology:

- Step 1: Diagnose Skewness

  - Procedure: For each numerical feature, calculate the skewness coefficient using a function like pandas.DataFrame.skew(). Visually inspect distributions using histograms with KDE plots [13].

  - Decision Threshold: Typically, a skewness value greater than 0.5 or less than -0.5 warrants investigation, and values beyond 1 or -1 almost certainly require correction [10].

- Step 2: Apply Transformation

- Step 3: Scale Features

  - Procedure: After correcting skewness, scale the features. Use a RobustScaler if outliers are suspected, or a StandardScaler otherwise [15]. Always fit the scaler on the training data and use it to transform both training and test sets.

- Step 4: Validate Stability

  - Metrics: Compare the model's performance on validation/test sets before and after treatment. Look for improvements in metrics like R², Mean Absolute Error (MAE), and the stability of feature importance rankings across different data splits.
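Steps 2 to 4 might be wired together as in the sketch below. The data is synthetic (the outcome is constructed to depend on the logarithm of two right-skewed features), and the Yeo-Johnson transform and Ridge model are assumptions chosen for illustration, not choices prescribed by the protocol. Placing the transformer and scaler in a scikit-learn pipeline ensures they are fitted on the training split only.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PowerTransformer, RobustScaler

rng = np.random.default_rng(0)
# Hypothetical data: the outcome depends on the log of two right-skewed features.
X = rng.lognormal(mean=0.0, sigma=1.0, size=(1_000, 2))
y = np.log(X) @ np.array([2.0, -1.0]) + rng.normal(0, 0.3, 1_000)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)

untreated = Ridge().fit(X_tr, y_tr)

# Steps 2-3: Yeo-Johnson transform, then robust scaling. Wrapping them in a
# pipeline means both are fitted on the training data only.
treated = make_pipeline(
    PowerTransformer(method="yeo-johnson", standardize=False),
    RobustScaler(),
    Ridge(),
).fit(X_tr, y_tr)

# Step 4: compare held-out R^2 before and after treatment.
r2_untreated = untreated.score(X_te, y_te)
r2_treated = treated.score(X_te, y_te)
print(f"R^2 untreated={r2_untreated:.3f}, treated={r2_treated:.3f}")
```

Repeating the comparison over several random splits gives a rough view of how stable the improvement is.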

The Scientist's Toolkit

Table: Essential Research Reagents for Data Stability Experiments

| Reagent / Tool | Function / Explanation |
|---|---|
| Pandas Library | Foundational Python library for data manipulation and analysis; used to calculate descriptive statistics and handle dataframes. |
| Scikit-learn Preprocessing | Provides ready-to-use scalers (StandardScaler, RobustScaler) and transformers (PowerTransformer) for consistent data treatment [15] [13]. |
| SciPy Stats Library | Offers advanced statistical functions, including the boxcox and yeojohnson transformations for normality [13]. |
| Seaborn/Matplotlib | Visualization libraries used to plot feature distributions (histograms, KDE plots) before and after transformation to visually assess effectiveness [13]. |
| Robust Scaler | A scaling reagent that uses median and IQR, making it essential for pre-processing datasets with outliers or heavy skews [15]. |

FAQs on Batch Effects

Q1: What are batch effects and why are they a critical problem in biomedical data analysis?

Batch effects are technical variations introduced into high-throughput data due to factors unrelated to the study's biological objectives. These can arise from variations in experimental conditions over time, using different laboratories or machines, or employing different analysis pipelines [17]. They are critically important because they can introduce noise that dilutes true biological signals, reduce statistical power, and potentially lead to misleading, biased, or non-reproducible results [17]. In severe cases, batch effects have been identified as a paramount factor contributing to the reproducibility crisis in science, resulting in retracted articles, invalidated research findings, and significant economic losses [17].

Q2: At what stages of an experiment can batch effects be introduced?

Batch effects can emerge at virtually every step of a high-throughput study [17]. Common sources include:

- Study Design: Flawed or confounded design where samples are not collected randomly or are selected based on specific characteristics.

- Sample Preparation & Storage: Variations in protocols, such as different centrifugal forces during plasma separation or differences in storage temperature and duration.

- Data Generation: Using different machines, instruments, or reagent batches.

- Data Processing: Utilizing different bioinformatic pipelines for analysis.

Q3: What is the difference between a balanced and a confounded study design, and why does it matter for batch correction?

The ability to correct for batch effects depends heavily on the initial experimental design [18].

- In a balanced design, the phenotype classes of interest are equally distributed across the different batches. In this scenario, batch effects may be "averaged out" when comparing phenotypes.

- In a confounded design (or fully imbalanced design), the phenotype classes separate completely by batch. Here, the phenotype perfectly correlates with the batch, making it nearly impossible to distinguish whether observed differences are due to true biology or technical artifacts [18]. Correction in confounded designs is challenging and sometimes not possible.
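Whether a design is balanced or confounded can be read off the batch-by-phenotype contingency table. The helper `design_status` and the column names below are hypothetical, and the two sample sheets are invented minimal cases.

```python
import pandas as pd

def design_status(meta: pd.DataFrame, batch="batch", phenotype="phenotype") -> str:
    """Classify a design from the batch x phenotype contingency table."""
    table = pd.crosstab(meta[batch], meta[phenotype])
    # Confounded: every batch contains samples from a single phenotype only.
    if ((table > 0).sum(axis=1) == 1).all():
        return "confounded"
    # Balanced: every batch has the same phenotype counts.
    if (table.nunique(axis=0) == 1).all():
        return "balanced"
    return "partially imbalanced"

# Hypothetical sample sheets.
balanced = pd.DataFrame({
    "batch":     ["b1", "b1", "b2", "b2"],
    "phenotype": ["case", "control", "case", "control"],
})
confounded = pd.DataFrame({
    "batch":     ["b1", "b1", "b2", "b2"],
    "phenotype": ["case", "case", "control", "control"],
})
print(design_status(balanced))    # balanced
print(design_status(confounded))  # confounded
```

Running such a check on the sample sheet before data generation is inexpensive and shows early whether later batch correction is likely to be feasible.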

Q4: How can I evaluate the performance of a Batch Effect Correction Algorithm (BECA) for my dataset?

Simply trusting visualizations like PCA plots or a single metric can be misleading [19]. A robust evaluation involves:

- Downstream Sensitivity Analysis: Compare the outcomes (e.g., lists of differentially expressed features) obtained from analyzing batches individually versus after applying a BECA. A good BECA should help recover the "union" of true biological findings from the separate batches [19].

- Multiple Metrics: Use a variety of evaluation metrics. Be cautious of methods that rank BECAs by a single aggregated score, as a poor performance in one critical metric might be masked by good performance in others [19].

- Workflow Compatibility: Ensure the BECA is compatible with your entire data processing workflow (e.g., normalization, missing value imputation), as each step influences the next [19].

Troubleshooting Guide: Batch Effects

Problem: Clustering by batch in PCA plots.

- Potential Cause: Strong technical variation between processing groups is overshadowing biological signal.

- Solution:

  - Apply a batch effect correction algorithm like ComBat or limma's removeBatchEffect() [17] [18].

  - If using proteomic data with many missing values, consider tools like HarmonizR that correct without imputation [20].

  - Always check the design balance. If it is confounded, correction may not be feasible, and the data should be interpreted with extreme caution.
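As a rough intuition for what linear batch correction does, the sketch below removes purely additive batch shifts by per-batch mean-centering. This is a deliberately simplified stand-in, not ComBat and not limma's removeBatchEffect(); the helper `center_batches`, the gene names and the simulated data are all assumptions, and the approach is only reasonable when the design is balanced.

```python
import numpy as np
import pandas as pd

def center_batches(data: pd.DataFrame, batch: pd.Series) -> pd.DataFrame:
    """Remove additive batch shifts: subtract each batch's feature means and
    add back the overall feature means (samples in rows, features in columns)."""
    batch_means = data.groupby(batch).transform("mean")
    return data - batch_means + data.mean()

rng = np.random.default_rng(0)
# Hypothetical balanced design: 2 batches x 2 phenotypes x 10 samples each.
batch = pd.Series(np.repeat(["b1", "b2"], 20), name="batch")
phenotype = np.tile(np.repeat(["case", "control"], 10), 2)
data = pd.DataFrame(rng.normal(size=(40, 5)),
                    columns=[f"gene_{i}" for i in range(5)])
data.loc[phenotype == "case", "gene_0"] += 2.0  # true biological signal
data.loc[batch == "b2"] += 5.0                  # additive batch shift

corrected = center_batches(data, batch)
gap_before = data[batch == "b2"].mean() - data[batch == "b1"].mean()
gap_after = corrected[batch == "b2"].mean() - corrected[batch == "b1"].mean()
print(gap_before.round(2).tolist(), gap_after.round(2).tolist())
```

Because cases and controls are equally represented in both batches, centering removes the batch shift while leaving the case-control difference in gene_0 intact. In a confounded design the same operation would remove the biological signal along with the batch effect.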

- Potential Cause: Batch effects are confounding the biological interpretation, or "Batch Effect Associated Missing Values (BEAMs)" are skewing the analysis [21].

- Solution:

- Detect BEAMs: Identify features that are entirely missing in one batch but present in another.

- Handle BEAMs Carefully: Standard missing value imputation (MVI) methods perform poorly on BEAMs, often introducing artifacts [21]. Avoid using KNN, SVD, or Random Forest imputation directly on such data without considering batch structure.

- Use Batch-Aware Methods: Employ a batch-sensitized MVI strategy or a unified pipeline that handles both MVI and BEC appropriately [21].
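The detection step can be sketched with pandas. The helper `find_beams` and the small proteomics matrix are hypothetical; the rule implemented is the one stated above, namely a feature that is entirely missing in one batch but present in another.

```python
import numpy as np
import pandas as pd

def find_beams(data: pd.DataFrame, batch: pd.Series) -> list:
    """Return features that are entirely missing in at least one batch
    while being observed in at least one other batch."""
    observed = data.notna().groupby(batch).sum()  # batches x features counts
    missing_somewhere = (observed == 0).any(axis=0)
    present_somewhere = (observed > 0).any(axis=0)
    return list(observed.columns[missing_somewhere & present_somewhere])

# Hypothetical proteomics matrix (samples in rows, proteins in columns).
batch = pd.Series(["b1", "b1", "b2", "b2"], name="batch")
data = pd.DataFrame({
    "prot_A": [1.0, 1.2, 0.9, 1.1],        # complete
    "prot_B": [2.0, np.nan, 2.2, 2.1],     # ordinary missing value
    "prot_C": [np.nan, np.nan, 3.0, 3.1],  # absent from batch b1: a BEAM
})
print(find_beams(data, batch))  # ['prot_C']
```

Features flagged this way can be set aside for batch-aware handling instead of being passed to a generic imputer.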

Problem: A previously identified biomarker fails to validate in a new batch.

- Potential Cause: The original "biomarker" was actually an artifact of batch effects in the discovery dataset [17] [22].

- Solution:

- Re-analyze the original data using quality-aware batch correction methods to verify the signal is truly biological [22].

- In future studies, implement a study design that balances biological groups across batches from the outset.

- Use machine learning quality scores to assess and account for batch-related quality differences during analysis [22].

FAQs on Rare Events

Q1: What defines a "rare event" in biomedical data?

Rare events are incidents that stand out due to their infrequency, and their definition can be context-dependent [23]. In machine learning for biomedical data, this often refers to a significant class imbalance where the event of interest (e.g., a circulating tumor cell) is vastly outnumbered by other events (e.g., regular blood cells) [24] [23]. The "Curse of Rarity" (CoR) describes the challenge that these events provide limited information due to their scarcity, leading to issues in decision-making, modeling, and validation [23].

Q2: What are common approaches for detecting rare events in an unsupervised manner?

Unsupervised detection does not require prior knowledge of the rare event's signature. One effective approach uses a Denoising Autoencoder (DAE) [24].

- Principle: The DAE is trained to reconstruct clean data from a noisy version. It learns the distribution of common events very well. When a rare event is input, the reconstruction error is high because the event does not fit the common pattern. This reconstruction error serves as a metric for rarity [24].

- Workflow: The data (e.g., an image) is split into tiles. Each tile is noised and fed into the DAE. The reconstruction error is calculated for each tile, and tiles are ranked by this error, with the highest errors corresponding to the most rare (and potentially most interesting) events [24].

Troubleshooting Guide: Rare Events

Problem: A model fails to learn or identify rare biological events.

- Potential Cause: Extreme class imbalance, where the rare events are outnumbered by common events by several orders of magnitude (e.g., 1 in 1 million) [24].

- Solution:

- Data-Level Approaches: Use techniques like oversampling the rare class or undersampling the common class to create a more balanced training set [23].

- Algorithm-Level Approaches: Employ cost-sensitive learning, where a higher penalty is assigned to misclassifying the rare event, or use ensemble methods designed for imbalanced data [23].

- Anomaly Detection: Frame the problem as unsupervised anomaly detection using methods like the DAE-based RED algorithm to isolate rare events without pre-labeled data [24].
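Of the approaches listed, cost-sensitive learning is often the simplest to try first. The sketch below assumes a synthetic dataset with roughly 2% rare events and uses scikit-learn's `class_weight="balanced"` option as one concrete way to penalize rare-event errors more heavily; the model choice is illustrative.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split

# Hypothetical data with roughly 2% rare events (label 1).
X, y = make_classification(n_samples=5_000, n_features=10, n_informative=5,
                           weights=[0.98, 0.02], random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3,
                                          stratify=y, random_state=0)

plain = LogisticRegression(max_iter=1_000).fit(X_tr, y_tr)
# class_weight="balanced" scales each class's loss inversely to its frequency,
# so an error on a rare event costs more than an error on a common one.
weighted = LogisticRegression(max_iter=1_000,
                              class_weight="balanced").fit(X_tr, y_tr)

recall_plain = recall_score(y_te, plain.predict(X_te))
recall_weighted = recall_score(y_te, weighted.predict(X_te))
print(f"rare-event recall: plain={recall_plain:.2f}, weighted={recall_weighted:.2f}")
```

Unlike resampling, this leaves the training data unchanged; the trade-off is typically more false positives, which should be checked against the cost of follow-up testing.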

FAQs on Measurement Artifacts

Q1: How do missing values act as a measurement artifact, and what is special about BEAMs?

Missing Values (MVs) are a common artifact in high-dimensional biomedical data. They can be technically driven (e.g., below detection limit) or biologically driven (e.g., the analyte is absent) [21]. Batch Effect Associated Missing Values (BEAMs) are a specific, problematic type of MV where an entire feature (e.g., a protein or gene) is missing in one batch but present in others due to differences in platform coverage or sensitivity [21]. BEAMs present a substantial challenge because they create a perfect confounding between the batch and the missingness pattern.

Q2: How should I handle missing values in a multi-batch dataset?

The standard practice of performing Missing Value Imputation (MVI) first, followed by Batch Effect Correction (BEC), is flawed and can be detrimental when BEAMs are present [21].

- Recommended Strategy: The handling of MVs and BEC must be considered together. For standard MVs, a batch-sensitized imputation (imputing within batches) is recommended [21].

- For BEAMs: Specialized tools or cautious workflows are needed. Simply imputing values for a feature that is entirely missing in a batch can introduce false signals and artificial confidence [21]. Tools like HarmonizR, which performs batch correction on sub-matrices without imputing BEAMs, can be a more reliable approach for proteomic data [20].
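A batch-sensitized imputation that leaves BEAMs untouched might look like the sketch below. Median imputation is an assumption made here for simplicity (the source recommends imputing within batches but does not prescribe a method), and `impute_within_batches` and the data are hypothetical.

```python
import numpy as np
import pandas as pd

def impute_within_batches(data: pd.DataFrame, batch: pd.Series) -> pd.DataFrame:
    """Fill each missing value with the median of the same feature in the same
    batch. Features with no observations in a batch stay NaN."""
    batch_medians = data.groupby(batch).transform("median")
    return data.fillna(batch_medians)

batch = pd.Series(["b1", "b1", "b1", "b2", "b2", "b2"], name="batch")
data = pd.DataFrame({
    "prot_B": [2.0, np.nan, 4.0, 10.0, 12.0, np.nan],   # ordinary MVs
    "prot_C": [np.nan, np.nan, np.nan, 3.0, 3.2, 3.4],  # BEAM in batch b1
})
imputed = impute_within_batches(data, batch)
print(imputed)
```

Ordinary missing values are filled from their own batch, so no information leaks across batches, while the BEAM in prot_C remains missing and can be handed to a tool designed for it.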

Experimental Protocols

Protocol 1: Quality-Aware Batch Effect Detection and Correction in RNA-seq Data

This protocol uses a machine-learning-based quality score to detect and correct for batches without prior knowledge [22].

Quality Score Calculation:

- Input: FASTQ files.

- Tool: seqQscorer or similar.

- Method: Derive quality features from the files (either full files or a subset of 1 million reads to save time). Use a pre-trained classifier to predict a probability score (Plow) for each sample being of low quality [22].

Batch Detection:

- Statistically compare the distribution of Plow scores across suspected or documented batches (e.g., using Kruskal-Wallis test). A significant difference indicates that batch effects are correlated with sample quality [22].

Batch Correction:

- Use the Plow score as a covariate in a correction model (e.g., in the sva package) to remove the variation associated with quality differences [22].

- Evaluation: Compare PCA plots and clustering metrics (Gamma, Dunn1, WbRatio) before and after correction. The goal is for samples to cluster by biological group, not by batch or quality score [22].
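The batch detection step can be sketched with SciPy's Kruskal-Wallis test. The Plow values below are simulated from beta distributions purely for illustration; in the protocol they would be the classifier outputs for each sample, and the 0.05 threshold is a conventional assumption.

```python
import numpy as np
from scipy.stats import kruskal

rng = np.random.default_rng(0)
# Hypothetical Plow scores (probability of low quality) for three batches.
# In the protocol these would come from the quality classifier.
plow_by_batch = {
    "batch_1": rng.beta(2, 8, size=12),  # mostly good quality
    "batch_2": rng.beta(2, 8, size=12),
    "batch_3": rng.beta(6, 4, size=12),  # noticeably lower quality
}

statistic, p_value = kruskal(*plow_by_batch.values())
print(f"Kruskal-Wallis H = {statistic:.2f}, p = {p_value:.4f}")
if p_value < 0.05:
    print("Plow differs between batches: quality is associated with batch.")
```

A significant result supports including Plow as a covariate in the correction step; a non-significant one suggests that quality differences are not the main driver of any batch structure.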

Protocol 2: Unsupervised Rare Event Detection in Liquid Biopsy Images

This protocol details the use of a Denoising Autoencoder (DAE) to find rare cells in immunofluorescence images without prior labeling [24].

Image Tiling:

- Divide a large immunofluorescence image (e.g., with DAPI, Cytokeratin, Vimentin, CD45/CD31 channels) into smaller tiles of 32x32 pixels. This typically generates millions of tiles from a single image [24].

DAE Training:

- Architecture: A deep learning model with an encoder (compresses the input tile) and a decoder (reconstructs the tile).

- Training: Input noisy versions of the tiles. Train the DAE to output the clean, original tile. The model learns the distribution of common events (e.g., immune cells) present in the majority of tiles [24].

Rarity Scoring and Ranking:

- Input: Pass each clean tile through the trained DAE.

- Calculation: For each tile, compute the reconstruction error (e.g., mean squared error) between the DAE's output and the original input.

- Output: Rank all tiles based on this error. The tiles with the highest error are the most "rare" as the model could not reconstruct them well. These are candidate tiles containing biologically interesting, rare cells like CTCs [24].
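
The tiling and rarity-ranking steps can be sketched in NumPy. This is not the DAE of [24]: the trained autoencoder is replaced by a trivial placeholder that reconstructs every tile as the average tile, and the image is synthetic. The function names (`tile_image`, `rank_by_reconstruction_error`) are hypothetical; in practice the `reconstruct` argument would be the trained model's forward pass.

```python
import numpy as np

def tile_image(image, tile=32):
    """Split an (H, W, C) image into non-overlapping tile x tile patches."""
    h, w, c = image.shape
    rows, cols = h // tile, w // tile
    cropped = image[: rows * tile, : cols * tile]
    patches = cropped.reshape(rows, tile, cols, tile, c).swapaxes(1, 2)
    return patches.reshape(rows * cols, tile, tile, c)

def rank_by_reconstruction_error(tiles, reconstruct):
    """Return tile indices sorted from highest to lowest mean squared error."""
    errors = ((reconstruct(tiles) - tiles) ** 2).mean(axis=(1, 2, 3))
    return np.argsort(errors)[::-1], errors

# Synthetic 4-channel image with one bright "rare" spot (illustrative data only).
rng = np.random.default_rng(0)
image = rng.normal(0.1, 0.01, size=(128, 128, 4))
image[70:80, 40:50, 1] += 1.0  # lies in tile row 2, column 1, i.e. tile index 9

tiles = tile_image(image)

# Placeholder for a trained DAE: every tile is "reconstructed" as the average tile.
def mean_tile_model(batch):
    return np.broadcast_to(batch.mean(axis=0), batch.shape)

ranking, errors = rank_by_reconstruction_error(tiles, mean_tile_model)
print("Most unusual tiles:", ranking[:3])
```

Because the placeholder only knows the common background, the tile containing the bright spot receives the largest error and is ranked first, which mirrors the logic of the rarity score.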

Key Data Summaries

Table 1: Common Batch Effect Correction Algorithms (BECAs) and Their Characteristics

| Algorithm | Main Principle | Key Application Context | Pros | Cons |

|---|---|---|---|---|

| ComBat [17] [19] | Empirical Bayes framework to standardize mean and variance across batches. | Bulk omics data (e.g., transcriptomics, proteomics) with known batch factors. | Effective for known batches; can handle parametric and non-parametric data. | Assumes batch effects fit a linear model; requires features to be present in all batches for standard use. |

| limma's removeBatchEffect() [19] [18] | Linear model to remove batch-associated variation. | Balanced designs in bulk omics data. | Simple, fast, and effective for linear, additive effects. | Less effective for complex, non-linear batch effects. |

| HarmonizR [20] | Uses ComBat/limma on sub-matrices created by matrix dissection. | Proteomic data with extensive missing values (including BEAMs). | Does not require data imputation, preventing the introduction of imputation artifacts. | More computationally complex than standard ComBat. |

| SVA/RUV [19] | Identifies and adjusts for surrogate variables or unwanted variation. | When sources of batch effects are unknown or unrecorded. | Does not require prior knowledge of batch factors. | Risk of removing biological signal if surrogate variables are correlated with biology. |

Table 2: Rarity Levels by Event Frequency

| Rarity Level | Event Frequency | Description & Challenges |

|---|---|---|

| R1: Extremely Rare | 0 - 1% | The most challenging level. Events are exceptionally scarce, leading to the "Curse of Rarity" with very limited information for modeling. |

| R2: Very Rare | 1 - 5% | Very infrequent events. Standard ML models often ignore this class without specialized techniques (e.g., oversampling, cost-sensitive learning). |

| R3: Moderately Rare | 5 - 10% | Manageable imbalance. Ensemble methods and careful sampling can be effective. |

| R4: Frequently-Rare | > 10% | The least severe level of imbalance. Standard algorithms may perform adequately but can still benefit from imbalance-aware techniques. |

Visual Workflows and Pathways

Diagram 1: Batch Effect Assumption Model

Diagram Title: Theoretical Assumptions of Batch Effects

Diagram 2: Rare Event Detection Workflow

Diagram Title: Unsupervised Rare Event Detection Pipeline

Diagram 3: Impact of Missing Value Imputation on Batch Effects

Diagram Title: BEAMs Skew Downstream Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Managing Data Skew

| Tool / Resource | Function | Key Application Note |

|---|---|---|

| ComBat [17] [19] | Batch Effect Correction Algorithm | Best for known batches in balanced designs. Use non-parametric mode for non-Gaussian data. |

| HarmonizR [20] | Data Harmonization Tool | Essential for proteomic datasets with high rates of missing values; avoids error-prone imputation. |

| limma R Package [19] [18] | Linear Models for Microarray & RNA-seq Data | Its removeBatchEffect() function is a fast, standard choice for linear batch effect removal. |

| seqQscorer [22] | Machine Learning-Based Quality Assessment | Automatically evaluates NGS sample quality from FASTQ files; can be used to detect quality-associated batch effects. |

| Denoising Autoencoder (DAE) [24] | Unsupervised Rare Event Detection | Framework for isolating rare analytes (e.g., CTCs) in images without prior knowledge of their signature. |

| OpDEA [19] | Workflow Sensitivity Analysis | Evaluates how sensitive differential expression results are to the choice of BECA and other workflow steps. |

Stability testing is a critical component of pharmaceutical development, essential for understanding how the quality of a drug substance or product changes over time under various environmental conditions. The International Council for Harmonisation (ICH) has provided the global benchmark for these activities for decades. A significant evolution is underway with the new ICH Q1 Step 2 Draft Guideline, endorsed in April 2025, which consolidates previous guidelines (Q1A-F and Q5C) into a single, modernized framework [25] [26] [27].

This revised guideline emphasizes science- and risk-based approaches, aligning with modern Quality by Design principles and encouraging robust stability lifecycle management [26] [27]. For researchers, this shift is paramount. It moves stability testing from a box-ticking regulatory exercise to an integrated, data-driven process that requires sophisticated handling of complex data, including navigating the challenges of data skew and feature distribution stability in prediction models.

Frequently Asked Questions (FAQs)

1. What is the most significant change in the new ICH Q1 draft guideline? The most significant change is the consolidation of multiple previous guidelines into a single, unified document. The new draft is structured into 18 main sections and 3 annexes, replacing the fragmented Q1A-F and Q5C series. It introduces a more holistic framework, expands its scope to include emerging product types such as Advanced Therapy Medicinal Products (ATMPs), and provides new guidance on stability modeling and risk-based approaches [25] [26] [27].

2. How can I justify a reduced stability study design, like bracketing or matrixing? The new guideline, particularly in Annex 1, provides a clearer framework for designing reduced stability studies using bracketing and matrixing. Justification must be based on prior knowledge and robust risk assessment. For instance, bracketing (testing only the extremes of certain design factors) is acceptable when supported by data from development studies that demonstrate a clear understanding of the product's stability behavior [27].

3. My stability data is highly skewed and does not follow a normal distribution. How does this impact my shelf-life calculation? Skewed data directly challenges the traditional statistical models that often assume normality. The new guideline's Annex 2 on stability modeling acknowledges this by encouraging the use of more flexible statistical approaches. In such cases, you may need to:

- Explore Alternative Distributions: Utilize statistical models based on non-normal, or skew distributions, which offer increased flexibility for modeling asymmetric data [28] [29].

- Leverage Advanced Modeling: Employ modern machine learning techniques that can better handle distributional shifts and skewed data, ensuring more accurate and reliable shelf-life predictions [30].

4. What are the new requirements for stability studies on Advanced Therapy Medicinal Products (ATMPs)? Annex 3 of the new guideline is dedicated to ATMPs, such as cell and gene therapies. It addresses their unique stability challenges, which often include very short shelf-lives and high sensitivity. The guidance requires real-time stability assessments and considers the unique quality attributes of these complex products, though some stakeholders have noted that further detailed guidance may still be needed [25] [27].

Troubleshooting Guides

Problem 1: Handling Skewed and Multi-Modal Stability Data

Challenge: The distribution of your stability data (e.g., for a degradation product) is highly skewed or shows multiple peaks (multi-modal), violating the assumptions of standard statistical models used for shelf-life estimation [28].

Solutions:

- Utilize Flexible Statistical Distributions: Move beyond normal distribution assumptions. Consider using a Modified Generalized Skew (MGS) distribution, which can model asymmetric data with multiple modes by incorporating higher-order moments like skewness and kurtosis [28]. The density function for such a family is given by

$$f_Y(y; \alpha, \lambda) = \frac{2}{1 + \alpha\rho_4}\,(1 + \alpha y^4)\,h(y)\,G(\lambda y),$$

where $\alpha$ and $\lambda$ control the modes and skewness [28].

- Implement Skew-Probabilistic Neural Networks (SkewPNN): For a machine learning approach, use SkewPNN. It replaces the standard Gaussian kernel in a Probabilistic Neural Network with a skew-normal kernel function, providing the flexibility to model underlying class densities in imbalanced or non-symmetric data effectively [29].

- Adopt Exact Feature Distribution Matching (EFDM): If treating lighting or environmental conditions as a style factor, use EFDM as a loss objective. This aligns the feature distributions of your data with the target distribution across multiple moments—mean, variance, skewness, and kurtosis—leading to more robust predictions in complex, multi-illuminant scenarios [30].

Experimental Protocol: Fitting a Modified Generalized Skew Distribution

- Data Collection: Collect stability data for the critical quality attribute (CQA) of interest.

- Distribution Fitting: Fit the MGS distribution to your data by estimating parameters $\alpha$ (mode controller) and $\lambda$ (skewness controller).

- Model Selection: Compare the goodness-of-fit of the MGS distribution against the normal and standard skew-normal distributions using criteria like AIC or BIC.

- Moment Calculation: Calculate the first four moments of the fitted distribution using the provided formulas [28]:

- First moment (Mean): $\mu_1 = \dfrac{\rho_1 + \alpha\rho_5}{1 + \alpha\rho_4}$

- Second moment (Variance related): $\mu_2 = \dfrac{\rho_2 + \alpha\rho_6}{1 + \alpha\rho_4}$

- Third moment (Skewness related): $\mu_3 = \dfrac{\rho_3 + \alpha\rho_7}{1 + \alpha\rho_4}$

- Fourth moment (Kurtosis related): $\mu_4 = \dfrac{\rho_4 + \alpha\rho_8}{1 + \alpha\rho_4}$

where $\rho_r$ is the r-th moment of a base skew distribution.

- Shelf-life Estimation: Use the fitted and validated MGS model to estimate the shelf-life, ensuring the confidence intervals account for the data's specific distribution shape.
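
The density and moment formulas in this protocol can be checked numerically. The sketch below assumes that h and G are the standard normal density and distribution function, so the base skew distribution is the skew-normal; the source does not state this choice, and the parameter values are illustrative rather than fitted. It verifies that the density integrates to one and that the closed-form mean matches direct numerical integration.

```python
from functools import lru_cache

import numpy as np
from scipy.integrate import quad
from scipy.stats import norm

@lru_cache(maxsize=None)
def base_moment(r, lam):
    """r-th raw moment rho_r of the base skew density 2*h(y)*G(lam*y)."""
    integrand = lambda y: y**r * 2.0 * norm.pdf(y) * norm.cdf(lam * y)
    return quad(integrand, -np.inf, np.inf)[0]

def mgs_pdf(y, alpha, lam):
    """MGS density, with h and G assumed to be the standard normal pdf and cdf."""
    rho4 = base_moment(4, lam)
    return 2.0 / (1.0 + alpha * rho4) * (1.0 + alpha * y**4) * norm.pdf(y) * norm.cdf(lam * y)

def mgs_moment(r, alpha, lam):
    """mu_r = (rho_r + alpha * rho_{r+4}) / (1 + alpha * rho_4)."""
    rho4 = base_moment(4, lam)
    return (base_moment(r, lam) + alpha * base_moment(r + 4, lam)) / (1.0 + alpha * rho4)

alpha, lam = 0.5, 2.0  # illustrative values, not estimated from data

total_mass = quad(lambda y: mgs_pdf(y, alpha, lam), -np.inf, np.inf)[0]
mean_numeric = quad(lambda y: y * mgs_pdf(y, alpha, lam), -np.inf, np.inf)[0]
mean_formula = mgs_moment(1, alpha, lam)
print(total_mass, mean_numeric, mean_formula)
```

In a real fit, $\alpha$ and $\lambda$ would be estimated (for example by maximum likelihood) before these moments are evaluated.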

Problem 2: Adjusting to the New Guideline's Emphasis on Risk and Lifecycle Management

Challenge: Your existing stability protocols and Standard Operating Procedures (SOPs) are designed for the old, fragmented guidelines and are not aligned with the new emphasis on science- and risk-based lifecycle management.

Solutions:

- Gap Analysis: Conduct a thorough review of the entire draft guideline against your current stability procedures [25].

- Update Training and SOPs: Begin developing new training modules and updating internal SOPs to reflect the consolidated structure and new concepts, such as lifecycle stability management (Section 15) [25] [27].

- Engage Early with Regulators: Monitor communications from the FDA and EMA for evolving interpretations. Participate in industry forums to anticipate inspector expectations regarding the justification for risk-based decisions [25].

Structured Data Tables

Table 1: Comparison of Key Changes in ICH Q1 Guideline

| Aspect | Previous Guidelines (Q1A-F, Q5C) | New 2025 Draft Q1 Guideline |

|---|---|---|

| Structure | Multiple fragmented documents | Single, consolidated document (18 sections, 3 annexes) [25] [26] |

| Core Approach | Largely fixed and descriptive | Science- and risk-based, aligned with QbD [27] |

| Product Scope | Primarily synthetics and some biologics | Expanded to include ATMPs, novel excipients, drug-device combinations [27] |

| Statistical Modeling | Limited and vague guidance | New, clearer guidance in Annex 2 [25] |

| Lifecycle Management | Not explicitly addressed | Dedicated section (Section 15) on stability lifecycle management [27] |

| Reduced Designs | Addressed in Q1D | Refined and incorporated into Annex 1 with emphasis on risk-justification [27] |

Table 2: Research Reagent Solutions for Stability Studies

| Item | Function in Stability Prediction |

|---|---|

| Reference Standards | Essential for ensuring the reliability and consistency of analytical methods throughout the stability study. The new guideline provides clearer instructions on their stability testing and storage [25]. |

| Novel Excipients/Adjuvants | These can significantly impact product stability. The guideline now includes specific considerations for their evaluation due to their potential effect on drug product quality [26] [27]. |

| Validated Modeling Software | Critical for implementing the statistical modeling and predictive stability approaches encouraged in Annex 2 of the new guideline. Used for shelf-life prediction and extrapolation [25]. |

| Forced Degradation Samples | Samples deliberately degraded under extreme conditions (e.g., high heat, pH, oxidation) are key reagents for validating stability-indicating analytical methods during development studies [26] [27]. |

Workflow and Relationship Diagrams

Stability Study Design Workflow

Handling Skewed Data in Stability Prediction

Technical Support & Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: My AI model for predicting compound efficacy performs well in validation but fails in real-world testing. What could be wrong?

A: This is a classic symptom of data skew undermining model generalizability. The most likely cause is a covariate shift, where the statistical distribution of the input features (e.g., chemical structures, assay data) in your real-world data differs from the data used to train and validate the model [31]. For instance, your training data may overrepresent certain molecular scaffolds, causing the model to perform poorly on novel chemotypes encountered in production.

- Diagnosis Checklist:

- Compare the distributions of key molecular descriptors (e.g., molecular weight, logP, polar surface area) between your training set and the new, real-world data.

- Perform a Principal Component Analysis (PCA) to visualize if the new data clusters outside the domain of your training data.

- Audit your data collection process for sampling bias, where the training data was not representative of the entire chemical space of interest due to experimental priorities or technical limitations [32].

Q2: My dataset for a toxicity prediction model has very few positive (toxic) compounds. The model has high overall accuracy but misses all the toxicants. How can I fix this?

A: You are dealing with a class imbalance problem, a common form of data skew in drug discovery where inactive or safe compounds significantly outnumber active or toxic ones [32]. Models trained on such data become biased toward the majority class.

- Solution Pathway:

- Do not rely on accuracy alone. Use metrics like Precision, Recall (Sensitivity), and the F1-score, which are more informative for imbalanced datasets [33].

- Apply resampling techniques: Use Synthetic Minority Over-sampling Technique (SMOTE) to generate synthetic samples of the minority (toxic) class. This technique creates new data points by interpolating between existing minority class instances in feature space, helping to balance the dataset and refocus the model [32].

- Consider algorithmic solutions: Use models like Cost-Sensitive Classifiers that impose a higher penalty for misclassifying the minority class during training.

Q3: After deploying a model for high-throughput screening, the results are inconsistent with subsequent manual assays. The Z'-factor was acceptable. What should I investigate?

A: While a good Z'-factor indicates a robust assay window, it does not guarantee that the data distribution fed into your model is stable [34]. The issue may lie in technical bias introduced during data processing.

- Troubleshooting Steps:

- Verify data normalization: Ensure that the method used to normalize signals (e.g., using ratiometric data analysis in TR-FRET assays) is applied consistently and correctly. Small lot-to-lot variability in reagents can affect raw signals but should be corrected by proper ratiometric calculations [34].

- Check for instrumentation drift: Confirm that the instrument settings (e.g., laser power, detector gain) have not drifted over time, as this can introduce a subtle covariate shift.

- Audit the feature engineering pipeline: Ensure that the steps for calculating molecular features from raw data are identical between the development and deployment phases.

The following table summarizes quantitative findings from studies investigating data skew and model performance in biomedical contexts.

Table 1: Impact of Data Skew and Mitigation Strategies on Model Performance

| Study Context | Skew Type / Mitigation Method | Key Performance Finding | Citation |

|---|---|---|---|

| Sepsis Early Detection Model (First Affiliated Hospital of Zhengzhou University) | Integration of MLD with EHR to address data representativeness. | Model sensitivity: 87%; specificity: 89%, significantly outperforming traditional methods. | [35] |

| Ovarian Cancer Diagnostic Models | Comparative analysis of models on blood test data. | Best-performing model (Medina, Jamie E. et al.) achieved sensitivity of 0.91 and specificity of 0.96 on training set. | [35] |

| Polymer Material Property Prediction | Use of SMOTE to balance imbalanced data. | Application of SMOTE with XGBoost improved the prediction of mechanical properties in an imbalanced dataset. | [32] |

| Catalyst Design for Hydrogen Evolution | Use of SMOTE to address uneven data distribution. | SMOTE improved predictive performance of ML models for candidate screening. | [32] |

| General Model Assessment | Z'-factor for assay quality (not model quality). | Assays with Z'-factor > 0.5 are considered suitable for screening. A large assay window with high noise can have a lower Z'-factor than a small window with low noise. | [34] |
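
The Z'-factor entry in the table can be made concrete with a short calculation. The sketch uses the standard definition, Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|, computed from positive and negative control wells; the control readings are made up for illustration.

```python
import numpy as np

def z_prime(positive, negative):
    """Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    positive = np.asarray(positive, dtype=float)
    negative = np.asarray(negative, dtype=float)
    spread = positive.std(ddof=1) + negative.std(ddof=1)
    return 1.0 - 3.0 * spread / abs(positive.mean() - negative.mean())

# Made-up control readings: a wide but noisy window versus a narrow but tight one.
wide_noisy = z_prime([1000, 1300, 700, 1150, 850], [100, 300, 0, 250, 50])
narrow_tight = z_prime([200, 202, 198, 201, 199], [100, 101, 99, 102, 98])
print(f"wide/noisy: {wide_noisy:.2f}, narrow/tight: {narrow_tight:.2f}")
```

Here the assay with the larger signal window scores below the 0.5 threshold, while the smaller but tighter window passes it.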

Experimental Protocols

Protocol 1: Detecting Covariate Shift in a Compound Library

Objective: To determine whether new, externally sourced compounds fall outside the feature distribution of a model's training set.

Materials: Training dataset, new compound dataset, chemical descriptor calculation software (e.g., RDKit).

Methodology:

- Calculate Descriptors: For both training and new compound sets, calculate a standardized set of molecular descriptors (e.g., MW, logP, number of rotatable bonds, H-bond donors/acceptors).

- Standardize Data: Apply the same scaling (e.g., StandardScaler) fitted only on the training data to both sets.

- Dimensionality Reduction: Perform PCA on the scaled training data. Project the new data onto the same principal components.

- Visualization & Analysis: Create a scatter plot of the first two principal components (PC1 vs. PC2). Visually inspect if the new compounds cluster within the cloud of training data.

- Quantitative Measure: Calculate the Mahalanobis distance (or use a population stability index) between the training and new datasets in the principal component space. A large distance indicates a significant covariate shift. [31]
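
The methodology above can be sketched with scikit-learn. The descriptor tables are synthetic (four columns standing in for MW, logP, rotatable bonds, and H-bond donors, driven by a hypothetical shared "size" factor), and the 95th-percentile cutoff is an illustrative choice, not a value from [31]. In practice the descriptors would come from a tool such as RDKit.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
centre = np.array([350.0, 2.5, 5.0, 2.0])
loadings = np.array([[60.0, 0.8, 2.0, 0.3]])
noise_sd = np.array([15.0, 0.3, 0.5, 0.8])

def make_library(n, size_mean):
    """Synthetic descriptors driven by one latent 'molecular size' factor."""
    size = rng.normal(size_mean, 1.0, size=(n, 1))
    return centre + size @ loadings + rng.normal(0.0, noise_sd, size=(n, 4))

train = make_library(500, size_mean=0.0)
new = make_library(100, size_mean=4.0)  # larger, more lipophilic compounds

# Fit scaling and PCA on the training data only, then project both sets.
scaler = StandardScaler().fit(train)
pca = PCA(n_components=2).fit(scaler.transform(train))
train_pc = pca.transform(scaler.transform(train))
new_pc = pca.transform(scaler.transform(new))

def mahalanobis_to_reference(points, reference):
    """Mahalanobis distance of each point from the reference cloud."""
    diff = points - reference.mean(axis=0)
    inv_cov = np.linalg.inv(np.cov(reference, rowvar=False))
    return np.sqrt(np.einsum("ij,jk,ik->i", diff, inv_cov, diff))

train_dist = mahalanobis_to_reference(train_pc, train_pc)
new_dist = mahalanobis_to_reference(new_pc, train_pc)

# Share of new compounds lying beyond the 95th percentile of training distances.
threshold = np.percentile(train_dist, 95)
fraction_outside = float((new_dist > threshold).mean())
print(f"{fraction_outside:.0%} of new compounds fall outside the training domain")
```

A fraction close to 5% would suggest the new compounds resemble the training set; a much larger fraction points to covariate shift.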

Protocol 2: Mitigating Class Imbalance with SMOTE

Objective: To balance a dataset for a toxicity prediction model where toxic compounds are the minority class.

Materials: Imbalanced dataset, programming environment (e.g., Python) with imbalanced-learn library.

Methodology:

- Data Preprocessing: Split data into features (X) and target (y). Perform train-test split before applying SMOTE to avoid data leakage.

- Apply SMOTE: Apply the SMOTE algorithm exclusively to the training data. SMOTE generates synthetic minority class samples by:

- For each sample in the minority class, finding its k-nearest neighbors (typically k=5).

- Selecting one of these neighbors at random.

- Calculating a synthetic data point at a random point on the line segment connecting the original sample and its selected neighbor. [32]

- Model Training: Train your classifier (e.g., Random Forest, XGBoost) on the resampled, balanced training set.

- Validation: Evaluate the model on the untouched, imbalanced test set using metrics like AUC-PR and F1-score.
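
The three interpolation steps listed under "Apply SMOTE" can be sketched directly. This is a simplified illustration, not the imbalanced-learn implementation: `smote_sketch` is a hypothetical helper, the minority data are random, and in practice a maintained library implementation would normally be used.

```python
import numpy as np
from sklearn.neighbors import NearestNeighbors

def smote_sketch(X_min, n_new, k=5, seed=0):
    """Generate n_new synthetic minority samples by neighbour interpolation."""
    rng = np.random.default_rng(seed)
    # Ask for k + 1 neighbours because each point is its own nearest neighbour.
    nn = NearestNeighbors(n_neighbors=k + 1).fit(X_min)
    neighbours = nn.kneighbors(X_min, return_distance=False)[:, 1:]

    base = rng.integers(0, len(X_min), size=n_new)              # starting samples
    picked = neighbours[base, rng.integers(0, k, size=n_new)]   # one random neighbour each
    gap = rng.random((n_new, 1))                                # position along the segment
    return X_min[base] + gap * (X_min[picked] - X_min[base])

rng = np.random.default_rng(1)
X_minority = rng.normal(size=(20, 3))  # toy minority-class training features
X_synthetic = smote_sketch(X_minority, n_new=80)
print(X_synthetic.shape)
```

Every synthetic point lies on a segment between two real minority samples, so the method fills in the existing minority region without extrapolating beyond it.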

The workflow for this protocol is outlined below.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Managing Data Skew in Drug Discovery

| Item / Solution | Function / Description | Relevance to Skew & Generalizability |

|---|---|---|

| SMOTE & Variants (Borderline-SMOTE, SVM-SMOTE) | Algorithmic oversampling techniques to synthetically generate samples for the minority class. [32] | Directly addresses class imbalance, preventing model bias toward the majority class and improving prediction of rare outcomes (e.g., toxicity, high-efficacy). |

| Explainable AI (xAI) Tools (e.g., SHAP, LIME) | Provides post-hoc interpretations of model predictions, highlighting the most influential features. [36] | Uncovers hidden biases by revealing if models rely on spurious correlations. Increases trust and allows researchers to audit and refine models. |

| Federated Learning Frameworks | A distributed learning technique where models are trained across multiple decentralized data sources without sharing the raw data. [35] | Mitigates sample selection bias by leveraging more diverse datasets from different institutions, leading to more robust and generalizable models. |

| Z'-Factor Statistical Metric | A measure of the quality and robustness of an assay, incorporating both the assay dynamic range and the data variation. [34] | Ensures high-quality, reproducible input data. Poor assay quality (low Z'-factor) is a source of noise and bias that propagates through the ML pipeline. |

| Hash-Based Partitioning | A data management technique to ensure even distribution of data across computational partitions in distributed systems. [37] | Prevents technical data skew during large-scale processing, ensuring efficient model training and preventing bottlenecks that can distort analysis. |

Methodological Approaches: Data Resampling, ASAP, and ML for Stability Modeling

Frequently Asked Questions (FAQs)

Q1: Why is accuracy a misleading metric for imbalanced datasets, and what should I use instead? In imbalanced datasets, a model can achieve high accuracy by simply predicting the majority class for all instances. For example, in a dataset where 99% of transactions are non-fraudulent, a model that always predicts "non-fraudulent" will be 99% accurate but useless for identifying fraud [38] [39]. Instead, you should use metrics that provide a nuanced view of model performance, such as Precision, Recall, F1-score, and AUC-ROC [40] [39]. These metrics better capture the model's effectiveness at identifying the minority class.
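
The fraud example can be reproduced in a few lines with scikit-learn metrics. The labels below are constructed to match the 99:1 ratio in the text, and the "model" is a constant predictor.

```python
import numpy as np
from sklearn.metrics import accuracy_score, f1_score, precision_score, recall_score

# 1,000 transactions, 1% fraudulent (label 1).
y_true = np.array([1] * 10 + [0] * 990)
y_pred = np.zeros_like(y_true)  # always predicts "non-fraudulent"

accuracy = accuracy_score(y_true, y_pred)
recall = recall_score(y_true, y_pred, zero_division=0)
precision = precision_score(y_true, y_pred, zero_division=0)
f1 = f1_score(y_true, y_pred, zero_division=0)
print(f"accuracy={accuracy:.2f} precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Accuracy is 0.99, while recall and F1 for the fraud class are both zero, which is the failure that accuracy alone hides.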

Q2: When should I choose SMOTE over Random Undersampling for my experiment? The choice depends on your dataset size and the risk you want to mitigate.

- Use SMOTE when your overall dataset is not extremely large, and you want to avoid losing information. SMOTE is preferred as it generates new synthetic samples for the minority class, helping the model learn its characteristics without mere duplication [38] [41].

- Use Random Undersampling when you have a very large dataset (millions of rows) and computational efficiency is a concern. Be aware that randomly removing majority class instances can lead to a significant loss of valuable information [39].

Q3: My model is overfitting after applying SMOTE. What is the cause, and how can I resolve it? A common cause is that the standard SMOTE algorithm can generate noisy samples in the feature space or create too many synthetic instances in high-density regions of the minority class, leading the model to learn an overly specific pattern [38] [42]. Consider these solutions:

- Use Hybrid Techniques: Combine SMOTE with data cleaning methods like SMOTE + Tomek Links or SMOTE + ENN. These hybrids remove overlapping data points from both classes after oversampling, resulting in a clearer decision boundary and reduced overfitting [38].

- Try Advanced Variants: Implement improved algorithms like ISMOTE (Improved SMOTE), which expands the sample generation space to create more diverse and realistic synthetic samples, thereby better preserving the underlying data distribution [42].

Q4: How can I implement a basic SMOTE process in Python for a binary classification problem?

You can use the imblearn library to easily implement SMOTE. The following code snippet demonstrates the process [38]:

Remember, SMOTE should only be applied to your training set. Your test set should remain unchanged to properly evaluate model performance on the original data distribution [38].

Troubleshooting Guides

Problem: SMOTE Generates Noisy or Unrealistic Samples

Diagnosis: This occurs when synthetic samples are generated in regions that overlap with the majority class or do not conform to the true data manifold, confusing the classifier [42].

Solution:

- Switch to a Focused Oversampling Algorithm: Use Borderline-SMOTE, which only oversamples minority instances that are on the "borderline" (hard to classify) rather than all minority instances, reducing the generation of noise [42].

- Apply a Cleaning Hybrid Method: Use the SMOTETomek hybrid method from imblearn, which applies SMOTE first and then removes Tomek links (pairs of close instances from opposite classes) to clean the feature space [38].

Problem: Significant Loss of Information After Random Undersampling

Diagnosis: Randomly discarding majority class samples can remove instances that carry important patterns, leading to an under-trained model [39].

Solution:

- Use Informed Undersampling: Instead of random removal, use methods that aim to preserve important majority samples. Tomek Links can be used for undersampling by removing only the majority class instance from each Tomek pair, which are borderline points [39].

- Implement a Hybrid Approach: Combine undersampling with SMOTE. This balances the dataset while mitigating the downsides of each method used alone. The imblearn library provides built-in hybrid methods [38].

- Apply Ensemble Undersampling: Use EasyEnsemble or BalancedRandomForest classifiers. These algorithms create multiple subsets of the majority class and ensemble the results, ensuring that different majority class samples are considered across models and reducing information loss [40].

Problem: The Classifier Still Favors the Majority Class After Resampling

Diagnosis: The resampling process might not have been sufficient, or the model needs a direct incentive to pay more attention to the minority class.

Solution:

- Adjust Class Weights: Many machine learning algorithms (e.g., in scikit-learn) have a class_weight parameter. Setting this to 'balanced' automatically adjusts weights inversely proportional to class frequencies. This makes the model penalize misclassifications of the minority class more heavily [40].

- Tune the Resampling Ratio: SMOTE and undersampling don't always require a perfect 1:1 balance. Experiment with different sampling_strategy ratios (e.g., 0.5, 0.75) to find the optimal class distribution for your specific problem [43].

- Re-evaluate Your Metrics: Ensure you are not using accuracy. Confirm that improvements in Recall (ability to find all positive samples) and F1-score (balance between precision and recall) are being observed, even if overall accuracy decreases slightly [38] [39].
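
To see what the 'balanced' setting does, the weights can be computed explicitly. The sketch below uses scikit-learn's `compute_class_weight` on toy labels with a 9:1 imbalance and compares the result with the underlying formula, n_samples / (n_classes × count per class).

```python
import numpy as np
from sklearn.utils.class_weight import compute_class_weight

y = np.array([0] * 90 + [1] * 10)  # toy labels with a 9:1 imbalance
classes = np.unique(y)

# 'balanced' weights are inversely proportional to class frequencies.
weights = compute_class_weight(class_weight="balanced", classes=classes, y=y)
manual = len(y) / (len(classes) * np.bincount(y))
print(dict(zip(classes.tolist(), weights.round(3).tolist())))
```

With these labels, an error on the minority class counts nine times as much as an error on the majority class (5.0 versus about 0.556).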

Experimental Protocols & Data

Protocol 1: Benchmarking Resampling Techniques

This protocol outlines a standardized method for comparing the efficacy of different data-level solutions on a given imbalanced dataset [38] [42].

1. Data Preparation:

- Split the dataset into a fixed training set (e.g., 70%) and a test set (e.g., 30%). The test set must remain untouched and reflect the original, real-world class distribution.

- Preprocessing: Apply feature scaling and encoding based on the dataset's requirements.

2. Resampling Application (on Training Set Only):

- Apply the following techniques to the training data only:

- Baseline: No resampling.

- Random Undersampling: Reduce the majority class randomly.

- SMOTE: Generate synthetic minority class samples.

- ADASYN: A SMOTE variant that generates more samples for "hard-to-learn" minority instances [38].

- Hybrid Method (e.g., SMOTE + Tomek Links).

3. Model Training & Evaluation:

- Train the same classifier (e.g., Logistic Regression, Random Forest) on each resampled training set.

- Evaluate all models on the same, original test set.

- Record key performance metrics: Precision, Recall, F1-Score, and AUC-ROC.

4. Workflow Diagram: The following diagram visualizes the experimental workflow.

Protocol 2: Evaluating Feature Distribution Stability Post-Resampling

This protocol is crucial for thesis research focused on whether resampling distorts the original feature space and how that impacts model robustness [42] [44].

1. Dimensionality Reduction:

- Apply a technique like PCA (Principal Component Analysis) or t-SNE to the original training data (before resampling) to project it into 2 or 3 dimensions for visualization.

2. Comparative Visualization:

- Apply the same PCA/t-SNE transformation (fitted on the original data) to the resampled datasets (e.g., after SMOTE, after undersampling).

- Create 2D/3D scatter plots for the original and each resampled dataset. Visually inspect if the synthetic samples (from SMOTE) follow the natural cluster of the original minority class or if they create artificial, distorted patterns [42].

3. Quantitative Stability Metrics:

- Intra-class Distance: Calculate the average distance between minority class samples before and after resampling. A significant increase might indicate generation of noisy, spread-out samples.

- Inter-class Overlap: Measure the separation between majority and minority class distributions post-resampling. Increased overlap can signal a noisier dataset.
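
The two stability metrics can be sketched with SciPy distance functions. Several definitions are possible; here intra-class distance is the mean pairwise Euclidean distance, and inter-class overlap is approximated by the mean distance from each minority sample to its nearest majority sample (a smaller value means more overlap). Both definitions, the function names, and the deliberately noisy "resampled" data are assumptions for illustration.

```python
import numpy as np
from scipy.spatial.distance import cdist, pdist

def mean_intra_class_distance(X):
    """Average pairwise Euclidean distance within one class."""
    return pdist(X).mean()

def mean_nearest_other_class_distance(X_a, X_b):
    """Average distance from each sample in X_a to its nearest sample in X_b."""
    return cdist(X_a, X_b).min(axis=1).mean()

rng = np.random.default_rng(0)
majority = rng.normal(0.0, 1.0, size=(200, 2))
minority = rng.normal(3.0, 0.5, size=(20, 2))

# Stand-in for a poor resampler: original minority plus noisy, spread-out points.
synthetic = rng.normal(2.0, 1.5, size=(180, 2))
minority_resampled = np.vstack([minority, synthetic])

intra_before = mean_intra_class_distance(minority)
intra_after = mean_intra_class_distance(minority_resampled)
separation_before = mean_nearest_other_class_distance(minority, majority)
separation_after = mean_nearest_other_class_distance(minority_resampled, majority)
print(f"intra-class: {intra_before:.2f} -> {intra_after:.2f}")
print(f"separation:  {separation_before:.2f} -> {separation_after:.2f}")
```

A rise in intra-class distance together with a drop in separation is the pattern the protocol treats as a warning sign of distorted synthetic samples.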

Performance Comparison of Resampling Techniques

The table below summarizes quantitative findings from a study comparing various oversampling algorithms across multiple public datasets, using metrics critical for imbalanced data [42].

Table 1: Classifier Performance Improvement with Different Oversampling Techniques (Average Relative % Increase)

| Oversampling Technique | F1-Score | G-Mean | AUC-ROC |

|---|---|---|---|

| ISMOTE (Improved SMOTE) | +13.07% | +16.55% | +7.94% |

| Standard SMOTE | Base | Base | Base |

| ADASYN | Lower | Lower | Lower |

| Borderline-SMOTE | Lower | Lower | Lower |

Resampling Technique Selection Guide

The table below provides a strategic overview of when to use each technique based on dataset characteristics and research goals.

Table 2: Strategic Guide to Data-Level Solutions

| Technique | Ideal Use Case | Advantages | Disadvantages & Risks |

|---|---|---|---|

| Random Undersampling | Very large datasets; computational cost is a primary concern [39]. | Simple, fast; reduces computational load. | High risk of losing valuable data from the majority class [39]. |

| SMOTE | Small to medium-sized datasets; the goal is to avoid information loss [38] [42]. | Generates diverse synthetic data; avoids mere duplication. | Can generate noisy samples and cause overfitting in high-density regions [38] [42]. |

| Hybrid (SMOTE+ENN) | Datasets with significant class overlap; stability of the feature distribution is critical [38]. | Cleans data space; leads to well-defined class clusters. | Can be too aggressive, removing too many samples. |

| Algorithm-Level (Class Weights) | A quick first solution; when using algorithms that support it (e.g., SVM, Random Forest) [40]. | No change to the dataset; easy to implement. | May be less effective than data-level methods when complex, new data patterns are needed [43]. |

Table 3: Key Software Tools and Libraries for Imbalanced Data Research

| Tool / Library | Function | Application in Research |
|---|---|---|
| Imbalanced-Learn (imblearn) | A Python library providing a wide array of resampling techniques. | The primary tool for implementing SMOTE, its variants (ADASYN, Borderline-SMOTE), undersampling, and hybrid methods in a scikit-learn compatible framework [38] [39]. |
| Scikit-learn | A core library for machine learning in Python. | Used for data preprocessing, training baseline and comparative models, and calculating all essential evaluation metrics (F1, Precision, Recall, AUC-ROC) [39]. |
| SMOTE Variants (ISMOTE, G-SMOTE) | Advanced algorithms that improve upon the standard SMOTE data generation mechanism. | Critical for research aiming to enhance the quality and realism of synthetic samples, thereby improving feature distribution stability and model generalization [42]. |
| SHAP (SHapley Additive exPlanations) | A unified framework for interpreting model predictions. | Used post-training to explain which features drive predictions for minority class instances, adding a layer of interpretability to models trained on resampled data [45]. |

Troubleshooting Guides

G1: Poor Performance on Minority Class

Problem: Your model achieves high overall accuracy but fails to identify sick patients or rare events (the minority class).

Diagnosis: This is a classic symptom of class imbalance. The classifier is biased towards the majority class because it is penalized equally for all types of errors, making it easier to "ignore" the minority class.

Solution: Implement a cost-sensitive learning approach. Modify the algorithm's objective function to assign a higher misclassification cost for errors on the minority class. This forces the model to pay more attention to learning the characteristics of the minority class. Unlike resampling techniques, this method does not alter the original data distribution, preserving its integrity [46].
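A minimal sketch of the cost-sensitive idea, assuming a binary problem in which label 1 is the minority class. The function name `weighted_log_loss`, the `minority_weight` parameter, and the weight of 5.0 are illustrative choices, not values taken from [46].

```python
import math

def weighted_log_loss(y_true, p_pred, minority_weight=1.0, eps=1e-12):
    """Mean binary cross-entropy in which errors on minority samples
    (label 1) are multiplied by `minority_weight` (hypothetical name)."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        if y == 1:
            total += -minority_weight * math.log(p)
        else:
            total += -math.log(1.0 - p)
    return total / len(y_true)

# A model that "ignores" the minority class: low probability for every sample.
y_true = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
p_lazy = [0.1] * 10

plain = weighted_log_loss(y_true, p_lazy)                        # equal costs
costly = weighted_log_loss(y_true, p_lazy, minority_weight=5.0)  # minority errors cost 5x
print(f"unweighted loss: {plain:.3f}, cost-sensitive loss: {costly:.3f}")
```

With equal costs, ignoring the minority class is cheap; once minority errors are weighted more heavily, the same predictions incur a much larger loss, which is what pushes an optimizer away from that solution.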

G2: Inconsistent Results Across Different Datasets

Problem: A model that performs well on one imbalanced medical dataset (e.g., Pima Indians Diabetes) shows degraded performance on another (e.g., Cervical Cancer Risk Factors).

Diagnosis: The optimal algorithm and its parameters are often dataset-dependent. According to the "no-free-lunch" theorem, no single algorithm is superior for all problems [45].

Solution: Utilize Automated Machine Learning (AutoML) frameworks, such as H2O AutoML or Lazy Predict, for model selection. These tools automatically train and evaluate a wide range of models (e.g., Gradient Boosting, Extreme Gradient Boosting, Random Forest) and their ensembles, identifying the best-performing one for your specific dataset [45].

G3: Model is Difficult to Interpret

Problem: Your cost-sensitive model makes predictions, but you cannot understand which features it relies on, which is critical for medical diagnosis.

Diagnosis: Complex ensemble or neural network models can act as "black boxes."

Solution: Integrate model interpretation tools into your workflow. Use methods like SHapley Additive exPlanations (SHAP) to determine the importance and contribution of each input feature to the final prediction, providing crucial insight for researchers [45].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between cost-sensitive learning and data resampling?

Cost-sensitive learning addresses imbalance by making the algorithm itself skew-insensitive, typically by imposing a higher penalty for misclassifying minority class examples within its loss function. In contrast, resampling (like SMOTE) alters the original training data distribution by adding synthetic minority samples or removing majority samples. A key advantage of cost-sensitive learning is that it avoids potential overfitting or loss of information that can occur from manipulating the dataset [46].

Q2: For which types of algorithms can cost-sensitive learning be applied?

The cost-sensitive principle can be applied to a wide range of core machine learning algorithms. Research has demonstrated successful implementations by modifying the objective functions of Logistic Regression, Decision Trees, Extreme Gradient Boosting (XGBoost), and Random Forest models [46].

Q3: How do I know what cost weights to assign to each class?

There is no universal set of weights. The optimal cost ratio is typically determined through empirical experimentation, often using cross-validation on the training data. A common starting point is to set the cost for each class inversely proportional to its frequency in the training data, but these weights should be treated as hyperparameters to be tuned for optimal performance [46].
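The inverse-frequency starting point can be worked through on a small example. The sketch below uses the formula n_samples / (n_classes * class_count), which is the same rule scikit-learn applies for `class_weight='balanced'`. The helper name `inverse_frequency_weights` and the 90/10 label split are illustrative assumptions.

```python
from collections import Counter

def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}

# Illustrative labels: 90 majority (0) and 10 minority (1) samples.
labels = [0] * 90 + [1] * 10
weights = inverse_frequency_weights(labels)
print(weights)
```

Here the minority class receives a weight of 100 / (2 * 10) = 5.0 and the majority class 100 / (2 * 90), roughly 0.56. These values are only a starting point for the tuning described above.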

Q4: My dataset is not only imbalanced but also small. What should I do?

For small, imbalanced datasets, rigorous validation is crucial. Use stratified k-fold cross-validation to ensure that each fold preserves the class distribution of the overall dataset. This provides a more reliable estimate of model performance than a simple train-test split. Furthermore, consider leveraging ensemble methods or AutoML techniques that are effective even with limited data [45].
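A short sketch of stratified k-fold splitting with scikit-learn's `StratifiedKFold`. The labels are synthetic (40 majority, 10 minority) and the feature matrix is a placeholder; both are assumptions made purely for illustration.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

# Synthetic labels for illustration only: 40 majority (0) and 10 minority (1) samples.
y = np.array([0] * 40 + [1] * 10)
X = np.arange(len(y)).reshape(-1, 1)  # placeholder feature matrix

skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
fold_minority_counts = []
for train_idx, val_idx in skf.split(X, y):
    # Count minority samples that land in each validation fold.
    fold_minority_counts.append(int(y[val_idx].sum()))

print(fold_minority_counts)
```

Each validation fold receives the same share of minority samples as the full dataset, so no fold is left without any minority cases to evaluate on.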

Q5: How can I ensure my model's predictions are stable and reliable for clinical use?

Stability and reliability are achieved through a robust validation framework. This includes:

- Using multiple, independent medical datasets for validation [46].

- Reporting performance metrics specifically for the minority class (e.g., sensitivity, F1-score) in addition to overall accuracy.

- Performing statistical significance tests to compare different algorithmic approaches [46] [45].

- Interpreting model decisions with tools like SHAP to ensure they align with clinical knowledge [45].

Experimental Protocols & Data

Protocol: Validating a Cost-Sensitive Classifier on Medical Data

Objective: To compare the performance of a standard classifier against its cost-sensitive version on an imbalanced medical dataset.

Materials:

- Dataset: One of the benchmark medical datasets (e.g., Pima Indians Diabetes, Haberman Breast Cancer) [46].

- Algorithms: Standard and cost-sensitive versions of Logistic Regression, Decision Tree, and XGBoost.

- Evaluation Metrics: Accuracy, Sensitivity (Recall), Specificity, F1-Score.
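The evaluation metrics listed above can all be derived from the four confusion-matrix counts. The sketch below treats the minority (sick) class as the positive class; the function name and the patient counts are hypothetical.

```python
def minority_class_metrics(tp, fp, tn, fn):
    """Compute the protocol's metrics from confusion-matrix counts.

    The minority (sick) class is treated as the positive class.
    Assumes every denominator is non-zero.
    """
    sensitivity = tp / (tp + fn)   # recall on sick patients
    specificity = tn / (tn + fp)   # recall on healthy patients
    precision = tp / (tp + fp)
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "sensitivity": sensitivity,
        "specificity": specificity,
        "f1": 2 * precision * sensitivity / (precision + sensitivity),
    }

# Hypothetical counts for 100 sick and 900 healthy patients.
metrics = minority_class_metrics(tp=65, fp=27, tn=873, fn=35)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

In this made-up case accuracy is high because the healthy majority dominates the total, while sensitivity shows that about a third of sick patients are missed.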

Methodology:

- Data Preparation: Split the data into 70% training and 30% test sets, using stratification to maintain the imbalance ratio.

- Baseline Training: Train the standard (cost-insensitive) versions of the algorithms on the training set.

- Cost-Sensitive Training: Train the cost-sensitive versions. For example, set the `class_weight` parameter in Scikit-learn to 'balanced' or manually tune the cost matrix.

- Evaluation: Predict on the test set and calculate the evaluation metrics for both the overall model and the minority class.

- Comparison: Statistically compare the results to determine if the cost-sensitive approach yields a significant improvement in sensitivity without unduly compromising specificity.
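The methodology above can be sketched for Logistic Regression as follows. This is a simplified illustration, not the procedure from [46]: it uses a synthetic dataset from `make_classification` in place of a benchmark medical dataset, covers only one of the three algorithms, and omits the statistical comparison step.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for a medical dataset (roughly 10% minority class).
X, y = make_classification(n_samples=2000, n_features=10,
                           weights=[0.9, 0.1], random_state=0)

# Data preparation: stratified 70/30 split keeps the imbalance ratio in both sets.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=0)

results = {}
for name, class_weight in [("standard", None), ("cost-sensitive", "balanced")]:
    # class_weight=None is the baseline; 'balanced' reweights errors by class frequency.
    model = LogisticRegression(class_weight=class_weight, max_iter=1000)
    model.fit(X_train, y_train)
    y_pred = model.predict(X_test)
    results[name] = {
        "sensitivity": recall_score(y_test, y_pred, pos_label=1),
        "specificity": recall_score(y_test, y_pred, pos_label=0),
    }
    print(name, results[name])
```

The quantity of interest is the trade-off between the two rows: how much sensitivity is gained and how much specificity is given up when the class weights are switched on.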

Table 1: Example Performance Comparison on Chronic Kidney Disease Dataset (Illustrative Values)

| Algorithm | Overall Accuracy | Sensitivity (Sick Patients) | Specificity (Healthy Patients) | F1-Score (Minority Class) |
|---|---|---|---|---|
| Standard Logistic Regression | 92% | 65% | 97% | 0.70 |
| Cost-Sensitive Logistic Regression | 90% | 85% | 91% | 0.82 |
| Standard Decision Tree | 89% | 60% | 95% | 0.65 |
| Cost-Sensitive Decision Tree | 88% | 82% | 89% | 0.80 |
| Standard XGBoost | 94% | 75% | 98% | 0.78 |
| Cost-Sensitive XGBoost | 93% | 89% | 94% | 0.87 |

Note: Values are illustrative and based on experimental results from [46].

Table 2: Characteristics of Medical Datasets Used in Imbalanced Learning Research

| Dataset | Majority Class | Minority Class | Approximate Imbalance Ratio | Key Predictors |
|---|---|---|---|---|
| Pima Indians Diabetes | Healthy | Diabetic | 1.6:1 | Glucose, BMI, Age |
| Haberman Breast Cancer | Survived ≥5 years | Died <5 years | 2.7:1 | Age, Year of Operation, Nodes |
| Cervical Cancer Risk Factors | Low Risk | High Risk | 7.7:1 | Number of Pregnancies, STDs, Hormonal Contraceptives |
| Chronic Kidney Disease | Not Chronic Kidney Disease | Chronic Kidney Disease | 3.6:1 | Blood Pressure, Albumin, Blood Glucose |

Note: Compiled from information in [46].

Model Workflow and Interpretation

Workflow: Skew-Insensitive Modeling Pipeline

The following diagram illustrates a robust workflow for developing a predictive model on imbalanced data, integrating both cost-sensitive learning and model interpretation.

Interpretation: Feature Importance in Stability Prediction

After model training, tools like SHAP can be used to interpret which input parameters were most critical for the model's predictions, a technique also used in geotechnical stability prediction [45]. The diagram below visualizes this interpretation logic.

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools

| Item | Function/Brief Explanation | Example Use Case |
|---|---|---|
| Benchmark Medical Datasets | Publicly available datasets with inherent class imbalance, used for model validation and benchmarking. | Pima Indians Diabetes, Haberman Breast Cancer [46]. |
| Cost-Sensitive Algorithm Variants | Modified versions of standard ML algorithms (e.g., Logistic Regression, XGBoost) whose internal objective function penalizes minority class errors more heavily. | Directly addressing class imbalance without resampling [46]. |
| AutoML Frameworks | Tools that automate the process of model selection, training, and hyperparameter tuning, saving researcher time and identifying high-performing models. | H2O AutoML, Lazy Predict [45]. |
| Model Interpretation Libraries | Software libraries that provide post-hoc explanations for model predictions, ensuring transparency and building trust. | SHAP (SHapley Additive exPlanations) [45]. |
| Stratified Cross-Validation | A resampling technique that preserves the percentage of samples for each class in every training/validation fold, crucial for reliable performance estimation on imbalanced data. | Tuning hyperparameters on the Pima Indians Diabetes dataset. |
| Performance Metrics | Evaluation metrics that are robust to class imbalance, focusing on the minority class's prediction quality. | Sensitivity, F1-Score, Precision-Recall Curves [46]. |

Implementing Accelerated Stability Assessment Program (ASAP) for Predictive Shelf-Life

Core Concepts of ASAP

What is the fundamental principle behind ASAP, and how does it differ from traditional stability testing?

The Accelerated Stability Assessment Program (ASAP) is a science-based approach designed to predict the shelf-life of drug products accurately and rapidly. Its fundamental principle relies on the isoconversion paradigm and a humidity-corrected Arrhenius equation [47] [48].

Unlike traditional stability testing, where samples are stored at fixed conditions and time points to measure the amount of degradation, ASAP fixes the level of degradation (at the specification limit) and measures the time required to reach that level under various stressed conditions. This "time to fail" or isoconversion time is the key metric used for modeling [47] [49] [50]. This approach compensates for the complex, often non-linear kinetics commonly found in solid-state drug products [48].
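To make the "time to fail" idea concrete, the sketch below estimates an isoconversion time at a single storage condition by linear interpolation between the two measurements that bracket the specification limit. The interpolation scheme, the function name, and the data points are assumptions for illustration; dedicated ASAP software may estimate this time differently.

```python
def isoconversion_time(times_days, degradant_pct, spec_limit_pct):
    """Linearly interpolate the time at which degradation first reaches the limit.

    Assumes the first measurement is below the limit. Returns None if the
    limit is not reached within the measured window.
    """
    points = list(zip(times_days, degradant_pct))
    for (t0, d0), (t1, d1) in zip(points, points[1:]):
        if d0 < spec_limit_pct <= d1:
            return t0 + (spec_limit_pct - d0) * (t1 - t0) / (d1 - d0)
    return None

# Hypothetical degradant levels (% w/w) at one stressed condition.
times = [0, 3, 7, 14]
degradant = [0.00, 0.08, 0.18, 0.35]
t_iso = isoconversion_time(times, degradant, spec_limit_pct=0.20)
print(f"Estimated isoconversion time: {t_iso:.2f} days")
```

The output of one such calculation per storage condition is the set of isoconversion times that feeds the modeling step.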

How does the ASAP model account for humidity, and what is the 'B' factor?

For solid dosage forms, relative humidity (RH) is a critical factor affecting stability. ASAP accounts for it quantitatively with a moisture-corrected Arrhenius equation [47] [48]:

ln k = ln A - (Ea/RT) + B(RH)

Where:

- k is the degradation rate.

- A is the Arrhenius collision frequency.

- Ea is the activation energy.

- R is the gas constant.

- T is the temperature in Kelvin.

- B is the humidity sensitivity factor.

- RH is the equilibrium relative humidity (in %).

The B-value indicates the product's sensitivity to moisture. It typically ranges from 0 (low moisture sensitivity) to 0.10 (high moisture sensitivity). A high B-value means that a small increase in relative humidity will lead to a significant decrease in shelf-life [47] [48].
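The effect of the B-value can be illustrated numerically. The sketch below evaluates the equation at a fixed temperature for two humidity levels and reports the ratio of the degradation rates. The values of ln A and Ea are hypothetical, RH is assumed to be expressed in percent, and the function names are illustrative.

```python
import math

R = 8.314  # gas constant, J/(mol*K)

def ln_rate(ln_A, Ea, temp_K, rh_pct, B):
    """ln k = ln A - Ea/(R*T) + B*RH, with Ea in J/mol and RH in percent."""
    return ln_A - Ea / (R * temp_K) + B * rh_pct

def rate_ratio(B, rh_low, rh_high, ln_A=30.0, Ea=100_000.0, temp_K=298.15):
    """Factor by which k increases when RH rises at constant temperature.

    ln_A and Ea defaults are hypothetical; they cancel out of the ratio.
    """
    return math.exp(ln_rate(ln_A, Ea, temp_K, rh_high, B)
                    - ln_rate(ln_A, Ea, temp_K, rh_low, B))

for B in (0.00, 0.04, 0.10):
    print(f"B = {B:.2f}: k at 75% RH is {rate_ratio(B, 60, 75):.2f}x k at 60% RH")
```

Because the ln A and Ea terms cancel at constant temperature, the ratio reduces to exp(B x ΔRH). For B = 0.10 and a 15-point rise in RH, the rate increases roughly 4.5-fold, and the time to reach the specification limit shortens by about the same factor if it scales inversely with k.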

Experimental Protocols & Design

What is a typical ASAP protocol for a solid oral dosage form?

A typical ASAP study involves exposing the product, without primary packaging, to a range of controlled temperature and humidity conditions. The goal is to find the time it takes to reach the specification limit (isoconversion) at each condition [47] [50]. A standard screening protocol might look like this [48]:

Table 1: Example ASAP Screening Protocol for Solid Dosage Forms [48]

| Temperature (°C) | Relative Humidity (% RH) | Typical Time (Days) |
|---|---|---|
| 50 | 75 | 14 |
| 60 | 40 | 14 |
| 70 | 5 | 14 |
| 70 | 75 | 1 |
| 80 | 40 | 2 |

It is recommended that all analyses are executed simultaneously to minimize analytical variation [47].
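Once an isoconversion time is available for each condition, the three parameters of the humidity-corrected Arrhenius equation can be estimated by ordinary least squares, since the equation is linear in ln A, Ea, and B. The sketch below is a simplified illustration under several assumptions: the isoconversion times are synthesized from hypothetical "true" parameters rather than measured, the rate is taken as k = specification limit / isoconversion time, Ea is expressed in kJ/mol, and the 25 °C / 60% RH storage condition is chosen only as an example.

```python
import numpy as np

R_KJ = 8.314e-3  # gas constant in kJ/(mol*K)

# (temperature in deg C, % RH) pairs from the screening protocol table above.
conditions = [(50, 75), (60, 40), (70, 5), (70, 75), (80, 40)]

SPEC_LIMIT = 0.2  # assumed specification limit, % degradant
# Hypothetical parameters used only to synthesize example data.
TRUE_LN_A, TRUE_EA_KJ, TRUE_B = 35.0, 110.0, 0.03

def ln_k(ln_A, Ea_kJ, B, temp_C, rh_pct):
    """ln k = ln A - Ea/(R*T) + B*RH."""
    return ln_A - Ea_kJ / (R_KJ * (temp_C + 273.15)) + B * rh_pct

# Synthetic, noise-free isoconversion times (days) for each condition.
t_iso = np.array([SPEC_LIMIT / np.exp(ln_k(TRUE_LN_A, TRUE_EA_KJ, TRUE_B, T, rh))
                  for T, rh in conditions])

# Linear least squares: ln k = [1, -1/(R*T), RH] . [ln A, Ea, B]
temps_K = np.array([T + 273.15 for T, _ in conditions])
rh = np.array([h for _, h in conditions], dtype=float)
design = np.column_stack([np.ones_like(rh), -1.0 / (R_KJ * temps_K), rh])
ln_k_obs = np.log(SPEC_LIMIT / t_iso)
(ln_A_fit, Ea_fit, B_fit), *_ = np.linalg.lstsq(design, ln_k_obs, rcond=None)

# Extrapolate to an example long-term storage condition.
shelf_life_days = SPEC_LIMIT / np.exp(ln_k(ln_A_fit, Ea_fit, B_fit, 25, 60))
print(f"Ea = {Ea_fit:.1f} kJ/mol, B = {B_fit:.3f}, "
      f"predicted time to limit at 25 C / 60% RH: {shelf_life_days:.0f} days")
```

With real data the fit would not be exact, and the uncertainty of the extrapolated shelf-life should be estimated as well; this sketch shows only the point estimate.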

How do I design an ASAP study for a liquid formulation?