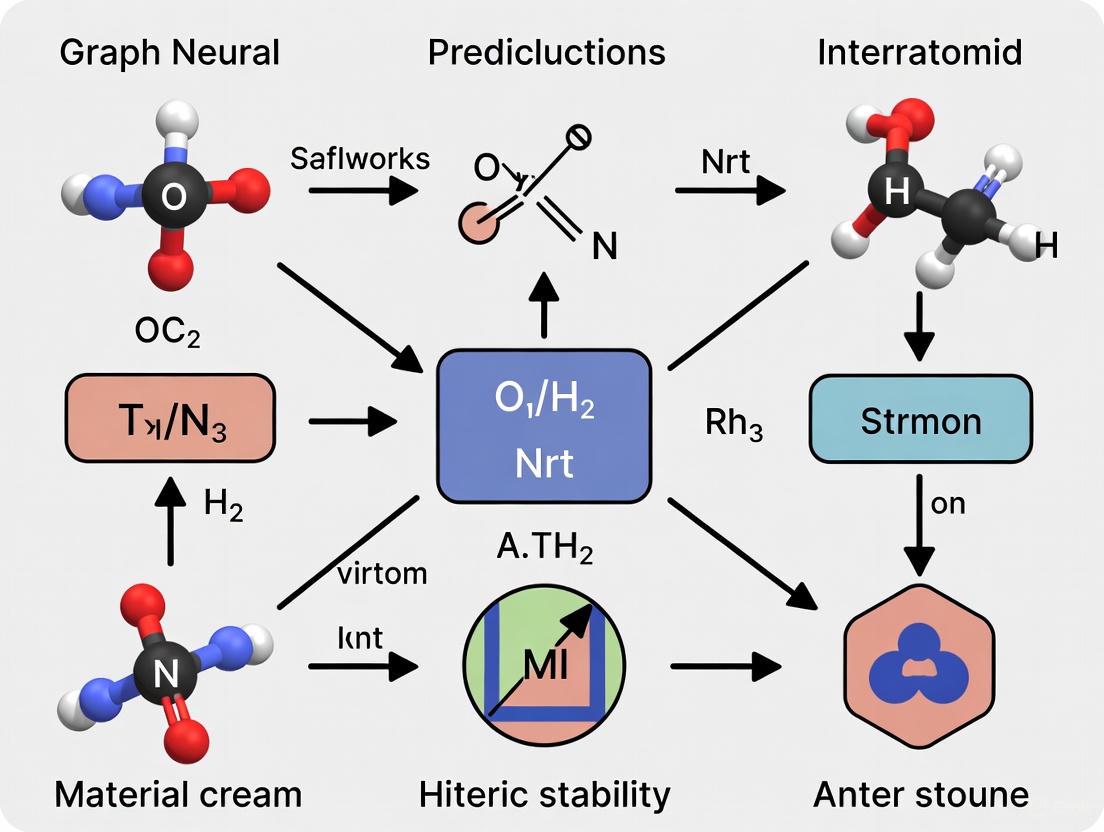

Graph Neural Networks for Interatomic Interactions: Advancing Stability Prediction in Drug Discovery and Materials Science

Abstract

This article explores the transformative role of Graph Neural Networks (GNNs) in predicting interatomic interactions and system stability, a critical challenge in computational chemistry and drug development. It covers the foundational principles of GNN architectures designed for molecular systems, details cutting-edge methodological advances and their applications in predicting drug-drug interactions and material properties, addresses key challenges in model stability and uncertainty quantification, and provides a comparative analysis of state-of-the-art models. Aimed at researchers and drug development professionals, this review synthesizes recent progress to guide the selection, application, and improvement of GNN-based models for robust and reliable atomic-scale simulations.

The Building Blocks: How GNNs Learn Interatomic Interactions and Molecular Representations

The accurate prediction of molecular properties and interatomic interactions is a cornerstone of modern computational chemistry and drug discovery. Graph Neural Networks (GNNs) have emerged as a powerful framework for this task, leveraging the inherent graph structure of molecules where atoms represent nodes and bonds represent edges. This paradigm allows GNNs to learn rich representations that capture complex chemical environments and interactions. The representation of molecular structures as graphs enables models to learn from large datasets and extrapolate to untrained geometries, providing remarkable predictive capabilities for properties ranging from quantum chemical energies to bioactivity and toxicity profiles [1].

This application note provides detailed protocols for representing molecular structures as graphs, with a specific focus on enabling stability prediction research through GNNs. We present standardized methodologies for graph construction, quantitative comparisons of representation schemes, and visualization techniques that facilitate model interpretability and deployment in real-world drug development pipelines.

Theoretical Foundations

Molecular Graph Theory

In molecular graph theory, a molecule is formally represented as a graph G = (V, E), where V is the set of vertices (atoms) and E is the set of edges (bonds). This representation preserves the topological connectivity of the molecule while abstracting away spatial coordinates, though geometric information can be incorporated as node and edge attributes. The graph structure naturally captures invariant molecular properties that are fundamental to chemical behavior and stability [1].

Graph neural networks operate directly on this structure through message-passing algorithms, where information is exchanged between connected nodes (atoms) across multiple layers. This enables the model to capture both local chemical environments and global molecular patterns. Recent theoretical work has shown that the message-passing mechanism in GNN interatomic potentials (GNN-IPs) allows them to capture non-local electrostatic interactions, explaining their remarkable extrapolation capability to untrained domains such as surfaces or amorphous configurations [1].

GNNs for Molecular Property Prediction

Multi-task learning (MTL) approaches have been developed to address data scarcity in molecular property prediction by leveraging correlations among related properties. However, imbalanced training datasets often degrade efficacy through negative transfer. Adaptive Checkpointing with Specialization (ACS) has been introduced as a training scheme for multi-task GNNs that mitigates detrimental inter-task interference while preserving MTL benefits [2]. ACS integrates a shared, task-agnostic backbone with task-specific trainable heads, adaptively checkpointing model parameters when negative transfer signals are detected. This approach has demonstrated practical utility in real-world scenarios, enabling accurate predictions with as few as 29 labeled samples for sustainable aviation fuel properties [2].

Application Notes

Molecular Graph Construction Protocol

Objective: Convert molecular structures into graph representations suitable for GNN processing.

Materials:

- Molecular structure files (SDF, MOL, XYZ, PDB formats)

- Python environment with RDKit or Open Babel

- NetworkX library for graph operations [3]

Procedure:

- Molecular Input: Load the molecular structure with a cheminformatics toolkit.

- Node Creation: For each atom in the molecule:
  - Create a node with a unique identifier.
  - Add atom features as node attributes:
    - Atom type (element)
    - Formal charge
    - Hybridization state
    - Degree of connectivity
    - Aromaticity

- Edge Creation: For each bond in the molecule:
  - Create an edge between the corresponding atom nodes.
  - Add bond features as edge attributes:
    - Bond type (single, double, triple, aromatic)
    - Conjugation
    - Stereochemistry

- Graph Validation: Verify connectivity and feature consistency.

- Graph Serialization: Export the graph in a compatible format (a GraphML file, or a DGL or PyG graph object).

Troubleshooting:

- Aromaticity perception errors: Use Kekulization before feature extraction

- Stereochemistry preservation: Verify chiral flag in source file

- Charge representation: Check for neutralization during file conversion
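The Node Creation and Edge Creation steps can be sketched without any library. This sketch assumes the per-atom and per-bond fields have already been read from a toolkit. In RDKit they would come from accessors such as `Atom.GetSymbol()`, `Atom.GetFormalCharge()`, `Atom.GetHybridization()`, `Atom.GetDegree()`, `Atom.GetIsAromatic()`, `Bond.GetBondType()`, and `Bond.GetIsConjugated()`. The vocabularies, the feature order, and the helper names `one_hot` and `build_graph` are illustrative assumptions. Stereochemistry is omitted for brevity.

```python
from typing import Dict, List, Tuple

# Hypothetical vocabularies; each one-hot vector gets an extra trailing "other" slot.
ELEMENTS = ["C", "N", "O", "F", "S", "Cl"]
HYBRIDIZATIONS = ["SP", "SP2", "SP3"]
BOND_TYPES = ["SINGLE", "DOUBLE", "TRIPLE", "AROMATIC"]


def one_hot(value: str, vocab: List[str]) -> List[int]:
    """One-hot encode `value`; unknown values map to the trailing 'other' slot."""
    vec = [0] * (len(vocab) + 1)
    vec[vocab.index(value) if value in vocab else len(vocab)] = 1
    return vec


def build_graph(atoms: List[Dict], bonds: List[Dict]):
    """Return node features, a directed edge list, and per-edge features.

    Each undirected bond is stored twice (i->j and j->i), the usual
    convention for message-passing libraries.
    """
    node_feats = []
    for atom in atoms:
        feats = one_hot(atom["element"], ELEMENTS)
        feats += one_hot(atom["hybridization"], HYBRIDIZATIONS)
        feats += [atom["formal_charge"], atom["degree"], int(atom["aromatic"])]
        node_feats.append(feats)

    edges: List[Tuple[int, int]] = []
    edge_feats = []
    for bond in bonds:
        feat = one_hot(bond["type"], BOND_TYPES) + [int(bond["conjugated"])]
        for src, dst in ((bond["begin"], bond["end"]), (bond["end"], bond["begin"])):
            edges.append((src, dst))
            edge_feats.append(feat)
    return node_feats, edges, edge_feats


# Ethanol heavy atoms (C-C-O) with implicit hydrogens; values written by hand.
atoms = [
    {"element": "C", "hybridization": "SP3", "formal_charge": 0, "degree": 1, "aromatic": False},
    {"element": "C", "hybridization": "SP3", "formal_charge": 0, "degree": 2, "aromatic": False},
    {"element": "O", "hybridization": "SP3", "formal_charge": 0, "degree": 1, "aromatic": False},
]
bonds = [
    {"begin": 0, "end": 1, "type": "SINGLE", "conjugated": False},
    {"begin": 1, "end": 2, "type": "SINGLE", "conjugated": False},
]
nodes, edges, edge_feats = build_graph(atoms, bonds)
print(len(nodes[0]), edges)  # 14 [(0, 1), (1, 0), (1, 2), (2, 1)]
```

Storing each bond in both directions matches how most message-passing code expects an undirected molecular graph. The trailing "other" slot keeps rare elements from raising errors at featurization time.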

Advanced Representation Schemes

Table 1: Molecular Graph Representation Schemes

| Scheme | Node Features | Edge Features | Spatial Encoding | Best Use Case |
|---|---|---|---|---|
| Basic Graph | Element, Degree | Bond type, Conjugation | None | 2D QSAR |
| 3D-Aware Graph | Element, Hybridization | Bond type, Distance | Atomic coordinates | Conformation-dependent properties |
| Quantum Graph | Element, Partial charge | Bond order, Bond length | Wavefunction overlap | Reactivity prediction |
| Multi-Task Graph | Extended feature set | Multiple bond descriptors | Various | Low-data regimes [2] |

Multi-Task Learning Implementation

Objective: Implement ACS for molecular property prediction in low-data regimes.

Background: ACS combines a shared GNN backbone with task-specific heads and uses adaptive checkpointing to mitigate negative transfer. Validation loss is monitored for each task. Whenever a task reaches a new validation-loss minimum, the current backbone-head pair for that task is checkpointed [2].

Procedure:

Architecture Setup:

- Initialize shared GNN backbone (message-passing network)

- Create task-specific MLP heads for each target property

- Configure independent optimization paths

Training Loop:

- Forward pass through shared backbone

- Task-specific processing through individual heads

- Loss computation with masking for missing labels

- Validation loss monitoring per task

Checkpointing:

- Track validation loss for each task independently

- Save backbone-head parameters when task-specific minimum is achieved

- Maintain best-performing specialized models for each task

Inference:

- Load specialized model for target property

- Generate predictions using task-optimized parameters

Validation: Benchmark against single-task learning and conventional MTL on molecular property benchmarks (ClinTox, SIDER, Tox21) [2].
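The checkpointing logic is the part of ACS that differs most from ordinary multi-task training, so a framework-agnostic sketch may help. It assumes model parameters can be deep-copied; plain dicts stand in for network weights here. The class `ACSCheckpointer`, the helper `masked_mse`, the task names, and the validation curves are all hypothetical. This is not the reference implementation from [2]. The masked loss is regression-style; a masked binary cross-entropy would be the analogous choice for the classification benchmarks.

```python
import copy
from typing import Dict, List, Optional, Tuple


def masked_mse(preds: List[float], labels: List[Optional[float]]) -> float:
    """MSE over labelled entries only; None marks a missing label (loss masking)."""
    pairs = [(p, y) for p, y in zip(preds, labels) if y is not None]
    if not pairs:
        return 0.0
    return sum((p - y) ** 2 for p, y in pairs) / len(pairs)


class ACSCheckpointer:
    """Keep, per task, the backbone+head snapshot with the lowest validation loss."""

    def __init__(self, tasks: List[str]):
        self.best_loss = {t: float("inf") for t in tasks}
        self.best_epoch: Dict[str, Optional[int]] = {t: None for t in tasks}
        self.snapshots: Dict[str, Tuple[dict, dict]] = {}

    def update(self, epoch: int, backbone: dict, heads: Dict[str, dict],
               val_losses: Dict[str, float]) -> List[str]:
        """Checkpoint every task whose validation loss reached a new minimum."""
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_epoch[task] = epoch
                # Deep copies so later training steps cannot mutate the checkpoint.
                self.snapshots[task] = (copy.deepcopy(backbone), copy.deepcopy(heads[task]))
                improved.append(task)
        return improved

    def load(self, task: str) -> Tuple[dict, dict]:
        """Return the specialized (backbone, head) pair used for inference on `task`."""
        return self.snapshots[task]


# Toy run: dicts stand in for network weights; validation curves are made up.
backbone = {"w": 0.0}
heads = {"toxicity": {"w": 0.0}, "solubility": {"w": 0.0}}
ckpt = ACSCheckpointer(list(heads))
val_curves = {"toxicity": [0.9, 0.7, 0.5, 0.4], "solubility": [0.8, 0.6, 0.65, 0.9]}
for epoch in range(4):
    backbone["w"] += 1.0      # placeholder for a shared-backbone update
    for head in heads.values():
        head["w"] += 0.5      # placeholder for task-head updates
    ckpt.update(epoch, backbone, heads, {t: c[epoch] for t, c in val_curves.items()})

print(ckpt.best_epoch)             # {'toxicity': 3, 'solubility': 1}
print(ckpt.load("solubility")[0])  # {'w': 2.0}
print(masked_mse([1.0, 2.0, 3.0], [1.0, None, 5.0]))  # 2.0
```

The made-up "solubility" curve turns upward after epoch 1, the kind of signal negative transfer can produce. Its specialized model therefore keeps the epoch-1 backbone, while "toxicity" keeps the latest one. This mirrors the Checkpointing and Inference steps above.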

Visualization Methods

Basic Molecular Graph Diagram

Figure 1: Basic molecular graph with atom and bond types

GNN Message Passing Diagram

Figure 2: GNN message passing between atoms

ACS Training Scheme Diagram

Figure 3: ACS architecture with shared backbone and task-specific heads
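For Figure 1-style diagrams, one option is to emit Graphviz DOT text directly from the atom and bond lists. The sketch below assumes a plain `(begin, end, order)` bond format and a hypothetical helper `to_dot`. It only writes DOT source; Graphviz can then render it, for example with `dot -Tpng molecule.dot -o molecule.png`.

```python
from typing import List, Tuple


def to_dot(labels: List[str], bonds: List[Tuple[int, int, int]], name: str = "molecule") -> str:
    """Render atoms as nodes and bonds as undirected edges in Graphviz DOT syntax.

    `bonds` holds (begin, end, order); bonds of order 2 or 3 get an edge label.
    """
    lines = [f"graph {name} {{", "  node [shape=circle];"]
    for idx, label in enumerate(labels):
        lines.append(f'  a{idx} [label="{label}"];')
    for begin, end, order in bonds:
        attr = f' [label="{order}"]' if order > 1 else ""
        lines.append(f"  a{begin} -- a{end}{attr};")
    lines.append("}")
    return "\n".join(lines)


# Carbonyl fragment C=O
dot = to_dot(["C", "O"], [(0, 1, 2)])
print(dot)
```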

Experimental Protocols

Benchmarking Protocol for Molecular Property Prediction

Objective: Evaluate GNN performance on standard molecular property prediction tasks.

Dataset Preparation:

- Source data from MoleculeNet benchmarks (ClinTox, SIDER, Tox21) [2]

- Apply Murcko scaffold splitting for realistic evaluation [2]

- Handle missing labels through loss masking [2]
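Murcko scaffold splitting places all molecules that share a scaffold in the same subset. The sketch below assumes the scaffold strings were computed beforehand, for instance with RDKit's `MurckoScaffold.MurckoScaffoldSmiles` in `rdkit.Chem.Scaffolds`; acyclic molecules typically yield an empty scaffold string. The largest-group-first assignment is one common convention, not necessarily the exact procedure used in [2], and the function name is illustrative.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def scaffold_split(scaffolds: List[str], frac_train: float = 0.8,
                   frac_valid: float = 0.1) -> Tuple[List[int], List[int], List[int]]:
    """Deterministic scaffold split: each scaffold group lands in exactly one subset.

    Groups are sorted largest-first and fill train, then validation, then test,
    so test molecules carry scaffolds never seen during training.
    """
    groups: Dict[str, List[int]] = defaultdict(list)
    for idx, scaf in enumerate(scaffolds):
        groups[scaf].append(idx)
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n = len(scaffolds)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test


scaffolds = ["c1ccccc1"] * 5 + ["C1CCCCC1"] * 3 + ["c1ccncc1", ""]
train, valid, test = scaffold_split(scaffolds)
print(train, valid, test)  # [0, 1, 2, 3, 4, 5, 6, 7] [8] [9]
```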

Model Configuration:

- GNN architecture: Message-passing network with 4-6 layers

- Hidden dimension: 128-300 units

- Readout function: Global mean pooling or set2set

- Task heads: 2-layer MLPs with task-specific dimensions

Training Procedure:

- Optimization: Adam optimizer with learning rate 0.001

- Batch size: 32-128 depending on dataset size

- Early stopping: Based on validation performance

- Evaluation metric: ROC-AUC for classification, RMSE for regression

Validation: Compare against state-of-the-art baselines including D-MPNN and other supervised methods [2].

Ultra-Low Data Regime Protocol

Objective: Assess performance with minimal labeled data.

Procedure:

- Create artificially limited datasets (29-100 samples) [2]

- Apply ACS training scheme with adaptive checkpointing

- Compare against single-task and conventional MTL baselines

- Evaluate on real-world low-data scenario (sustainable aviation fuels)

Metrics:

- Prediction accuracy relative to experimental values

- Data efficiency: Performance vs. training set size

- Negative transfer mitigation: Task imbalance robustness [2]

Data Presentation

Table 2: Quantitative Performance Comparison on Molecular Property Benchmarks [2]

| Method | ClinTox | SIDER | Tox21 | Average | Parameters |
|---|---|---|---|---|---|
| STL (Single-Task) | 0.823 | 0.635 | 0.758 | 0.739 | Task-specific |
| MTL (Multi-Task) | 0.845 | 0.642 | 0.769 | 0.752 | Shared |
| MTL-GLC | 0.847 | 0.645 | 0.772 | 0.755 | Shared |
| ACS (Proposed) | 0.876 | 0.649 | 0.775 | 0.767 | Shared + Specialized |

Table 3: Task Imbalance Analysis on ClinTox Dataset (Two Tasks) [2]

| Imbalance Level | STL | MTL | MTL-GLC | ACS |
|---|---|---|---|---|
| Balanced (I=0) | 0.823 | 0.845 | 0.847 | 0.876 |
| Moderate (I=0.3) | 0.801 | 0.832 | 0.838 | 0.861 |
| Severe (I=0.6) | 0.763 | 0.798 | 0.812 | 0.843 |
| Extreme (I=0.9) | 0.712 | 0.735 | 0.762 | 0.819 |

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function | Application Context |
|---|---|---|
| RDKit | Cheminformatics toolkit | Molecular graph construction and feature calculation |
| NetworkX | Graph analysis and visualization | Graph manipulation and algorithm implementation [3] |
| PyTorch Geometric | GNN library | Implementation of graph neural network architectures |
| DGL-LifeSci | Domain-specific GNN tools | Pre-built models for molecular property prediction |
| Graphviz | Graph visualization | Generation of publication-quality diagrams [4] |
| ACS Training Scheme | Multi-task learning | Mitigating negative transfer in low-data regimes [2] |
| Message-Passing GNN | Core architecture | Learning representations from molecular graphs [1] |
| Adaptive Checkpointing | Training optimization | Preserving task-specific knowledge in MTL [2] |

Graph Neural Networks (GNNs) have emerged as a transformative technology for modeling molecular systems, fundamentally shifting how researchers approach problems in drug discovery, materials science, and computational chemistry. The inherent graph structure of molecules—with atoms as nodes and bonds as edges—makes GNNs a natural fit for learning molecular representations. Early GNN models operated on simple graph structures with invariant features, but recent advances have incorporated geometric equivariance to account for the physical symmetries and 3D spatial relationships essential for accurate molecular property prediction.

The core learning pattern of most GNNs involves message passing, where each node aggregates feature information from its neighboring nodes to update its own representation. This process enables the network to capture complex molecular interactions and dependencies. However, traditional GNNs are trained under the assumption that training and test data are independent and identically distributed (i.i.d.). Their performance often degrades on the out-of-distribution (OOD) data met in real-world settings. This has driven the development of more robust architectures that can handle the distribution shifts commonly encountered in molecular systems.

Foundational GNN Architectures for Molecular Representation

Basic Architectural Framework

Most GNN architectures share a universal framework where each layer operates as a non-linear function of the form ( H^{(l+1)} = f(H^{(l)}, A) ), with ( H^{(0)} = X ) (node features) and ( H^{(L)} = Z ) (final node representations), where ( A ) represents the graph structure, typically as an adjacency matrix. The specific implementations differ primarily in how the function ( f(\cdot, \cdot) ) is designed and parameterized [5].

The Graph Convolutional Network (GCN) introduced one of the earliest and most influential propagation rules, using a layer-wise operation defined as ( f(H^{(l)}, A) = \sigma( \hat{D}^{-\frac{1}{2}}\hat{A}\hat{D}^{-\frac{1}{2}}H^{(l)}W^{(l)} ) ), where ( \hat{A} = A + I ) (adding self-loops), ( \hat{D} ) is the diagonal node degree matrix of ( \hat{A} ), and ( W^{(l)} ) is a layer-specific trainable weight matrix. This symmetric normalization ensures numerical stability while enabling effective feature propagation across graph neighborhoods [5].
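The GCN propagation rule can be checked by hand on a three-atom chain. This dependency-free sketch assumes ReLU for ( \sigma ) and uses plain nested lists as matrices. The names `matmul` and `gcn_layer` are illustrative.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Naive matrix product of nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def gcn_layer(adj: Matrix, h: Matrix, w: Matrix) -> Matrix:
    """One GCN layer: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]  # diagonal of D-hat (degrees including self-loops)
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    out = matmul(matmul(norm, h), w)
    return [[max(0.0, x) for x in row] for row in out]


# C-C-O chain: atom 1 is bonded to atoms 0 and 2.
adj = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
h = [[1.0], [1.0], [1.0]]  # one constant feature per atom
w = [[1.0]]                # identity weight, so the output exposes the normalization
out = gcn_layer(adj, h, w)
print(out)  # roughly [[0.908], [1.150], [0.908]]
```

With constant inputs, each output entry is just a row sum of the normalized adjacency. The central atom receives more total weight than the terminal atoms, but far less than the raw neighbor count would give.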

Advanced Message-Passing Variants

Beyond basic graph convolutions, several specialized architectures have emerged with enhanced representational capabilities:

- Graph Attention Networks (GAT): Assign differential attention weights to neighbors during aggregation, allowing models to focus on more relevant molecular substructures [6].

- Graph Isomorphism Networks (GIN): Use a sum aggregator combined with multi-layer perceptrons to maximize representational capacity. GIN is provably as expressive as the one-dimensional Weisfeiler-Lehman (1-WL) graph isomorphism test, the upper bound for standard message-passing GNNs [6].

- Message Passing Neural Networks (MPNNs): Provide a generalized framework that iteratively passes messages between neighboring nodes, with updates governed by learned neural network functions [6].

These foundational architectures excel at capturing topological relationships in molecular graphs but traditionally lack explicit mechanisms for incorporating 3D geometric information, which is crucial for accurately predicting many molecular properties.

The Critical Advancement: Geometric Equivariant GNNs

The Equivariance Principle in Molecular Systems

Geometric equivariance represents a paradigm shift in GNN design for molecular systems. While invariant models use features unchanged by transformations (e.g., bond lengths, angles), equivariant models maintain internal representations that transform consistently with input transformations. This property is essential for predicting molecular properties where directions matter, such as forces, dipole moments, and other vector-valued quantities [7] [8].

Formally, a function ( f: X \rightarrow Y ) is equivariant to a group ( G ) if for any transformation ( g \in G ), ( f(g \circ x) = g \circ f(x) ). In molecular systems, the relevant symmetry groups include SO(3) (rotations), SE(3) (rotations and translations), and E(3) (including reflections). Embedding these physical symmetries directly into network architectures—rather than applying constraints only to final outputs—has proven instrumental for achieving both data efficiency and prediction accuracy [8].
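The equivariance condition can be checked numerically. The sketch below is not a GNN. It uses a toy harmonic pair potential, chosen only because its forces are easy to derive, and applies one rotation about the z-axis plus a translation, an element of SE(3). The energy should be unchanged (invariance), while the forces should rotate with the input (equivariance; translations leave forces unchanged).

```python
import math
from typing import List, Tuple

Vec = Tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def norm(a: Vec) -> float:
    return math.sqrt(a[0] ** 2 + a[1] ** 2 + a[2] ** 2)


def energy_and_forces(pos: List[Vec], k: float = 1.0, r0: float = 1.0):
    """Toy model E = sum_{i<j} k/2 (r_ij - r0)^2 with forces F_i = -dE/dx_i."""
    n = len(pos)
    energy = 0.0
    forces = [[0.0, 0.0, 0.0] for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            d = sub(pos[i], pos[j])
            r = norm(d)
            energy += 0.5 * k * (r - r0) ** 2
            coeff = -k * (r - r0) / r  # scalar built only from invariants
            for c in range(3):
                forces[i][c] += coeff * d[c]
                forces[j][c] -= coeff * d[c]
    return energy, [tuple(f) for f in forces]


def rotate_z(v: Vec, theta: float) -> Vec:
    c, s = math.cos(theta), math.sin(theta)
    return (c * v[0] - s * v[1], s * v[0] + c * v[1], v[2])


pos = [(0.0, 0.0, 0.0), (1.2, 0.1, 0.0), (0.3, 0.9, 0.4)]
theta, shift = 0.7, (2.0, -1.0, 0.5)
moved = [tuple(a + b for a, b in zip(rotate_z(p, theta), shift)) for p in pos]

e0, f0 = energy_and_forces(pos)
e1, f1 = energy_and_forces(moved)
print(abs(e0 - e1) < 1e-9)  # True: energy is invariant
print(all(norm(sub(rotate_z(a, theta), b)) < 1e-9 for a, b in zip(f0, f1)))  # True: forces rotate
```

A rotation about one axis only probes a subgroup of SO(3). In practice, such tests are usually repeated with random rotations, and with a reflection when E(3) rather than SE(3) symmetry is claimed.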

Key Equivariant Architectures and Their Innovations

Table 1: Comparison of Key Equivariant GNN Architectures for Molecular Systems

| Architecture | Core Innovation | Representation Type | Key Advantages | Target Applications |
|---|---|---|---|---|
| E2GNN [7] | Scalar-vector dual representation | Scalar and vector features | Computational efficiency while maintaining equivariance | Interatomic potentials, force prediction |
| TEGNN [9] | Equivariant locally complete frames | Tensor information projection | Incorporates chemical bond constraints and higher-order tensors | Molecular dynamics prediction |
| NequIP [8] | Higher-order tensor representations | Spherical harmonics | State-of-the-art accuracy for complex systems | Interatomic potentials, material properties |
| MACE [8] | Atomic cluster expansion | Higher body-order features | High data efficiency and accuracy | Interatomic potentials |
| MagNet [8] | E(3)-equivariance for spins | Magnetic force vectors | Models magnetic materials with spin interactions | Magnetic force prediction |

E2GNN (Efficient Equivariant GNN) addresses the computational challenges of equivariant models by employing a scalar-vector dual representation rather than relying on computationally expensive higher-order representations. The model maintains separate scalar features ( \mathbf{x}_i ) and vector features ( \vec{\mathbf{x}}_i ) for each node, updated through specialized geometric operations that preserve equivariance. This approach achieves significant efficiency improvements while maintaining high accuracy for interatomic potential and force predictions [7].
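To make the scalar-vector idea concrete, here is a generic single message-passing step with separate scalar and vector channels. It is similar in spirit to scalar-vector models such as E2GNN or PaiNN but does not reproduce their published update equations. The distance filters ( e^{-d} ) and ( 1/(1+d) ), the 0.5 mixing coefficient, and the fully connected neighborhood are arbitrary stand-ins for learned components. The usage lines check that scalars stay invariant and vectors rotate when the input is rotated.

```python
import math
from typing import List, Tuple

Vec = Tuple[float, float, float]


def rotate_z(v: Vec, theta: float) -> Vec:
    c, s = math.cos(theta), math.sin(theta)
    return (c * v[0] - s * v[1], s * v[0] + c * v[1], v[2])


def scalar_vector_step(pos: List[Vec], s: List[float], v: List[Vec]):
    """One illustrative scalar-vector update on a fully connected graph.

    Scalars only see invariants (distances, vector norms). Vectors are built
    from relative positions or existing vectors scaled by invariant
    coefficients, which keeps them rotation-equivariant.
    """
    n = len(pos)
    new_s, new_v = [], []
    for i in range(n):
        ms = 0.0
        mv = [0.0, 0.0, 0.0]
        for j in range(n):
            if j == i:
                continue
            rij = [pos[j][c] - pos[i][c] for c in range(3)]
            d = math.sqrt(sum(x * x for x in rij))
            ms += math.exp(-d) * s[j]      # invariant distance filter
            gate = s[j] / (1.0 + d)        # invariant coefficient for the direction
            for c in range(3):
                mv[c] += gate * rij[c] / d + 0.5 * v[j][c]
        vnorm = math.sqrt(sum(x * x for x in v[i]))
        new_s.append(s[i] + ms + vnorm)   # vector norm feeds the scalar channel
        new_v.append(tuple(v[i][c] + mv[c] for c in range(3)))
    return new_s, new_v


pos = [(0.0, 0.0, 0.0), (1.0, 0.2, 0.0), (0.1, 1.1, 0.3)]
s = [1.0, 0.5, -0.3]
v = [(0.1, 0.0, 0.0), (0.0, 0.2, 0.0), (0.0, 0.0, 0.3)]
theta = 1.1
s1, v1 = scalar_vector_step(pos, s, v)
s2, v2 = scalar_vector_step([rotate_z(p, theta) for p in pos], s,
                            [rotate_z(x, theta) for x in v])
print(all(abs(a - b) < 1e-9 for a, b in zip(s1, s2)))  # True: scalars are invariant
print(all(max(abs(p - q) for p, q in zip(rotate_z(a, theta), b)) < 1e-9
          for a, b in zip(v1, v2)))                    # True: vectors rotate with the input
```

Only first-order (vector) quantities appear, which is where the efficiency argument comes from: no spherical-harmonic tensor products are required.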

TEGNN (Tensor Improved Equivariant GNN) extends equivariant architectures to incorporate more sophisticated tensor information (relative position, velocity, torsion angles) while explicitly modeling chemical bonding constraints through generalized coordinates. The model employs a scalarization block that projects geometric tensors onto equivariant local frames, converting them into SO(3)-invariant scalar coefficients for message passing. This innovation allows TEGNN to leverage rich geometric information without the computational complexity of high-dimensional equivariant function embeddings [9].

Experimental Protocols and Benchmarking

Standardized Evaluation Frameworks

Robust evaluation is essential for comparing GNN architectures across molecular tasks. Standardized benchmarks have emerged using established datasets and metrics:

Table 2: Key Benchmark Datasets for Molecular GNN Evaluation

| Dataset | Domain | Scale | Prediction Tasks | Key Metrics |
|---|---|---|---|---|
| QM9 [8] [10] | Small organic molecules | 134k molecules | Quantum mechanical properties | MAE, RMSE |
| MD17 [9] | Molecular dynamics | 3-4M configurations | Energy and forces | MAE, RMSE |
| ESOL [10] | Physical chemistry | 1,128 molecules | Water solubility | RMSE, R² |
| FreeSolv [10] | Physical chemistry | 642 molecules | Hydration free energy | RMSE, MAE |
| Lipophilicity [10] | Physical chemistry | 4,200 molecules | Octanol/water distribution coefficient | RMSE, MAE |

For regression tasks (energy, solubility, etc.), standard metrics include Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and correlation coefficients (R², Pearson's R). For classification tasks (toxicity, activity, etc.), common metrics include ROC-AUC, precision-recall curves (AUPRC), and F1-score [6] [10].
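For reference, the regression metrics and ROC-AUC listed above can be written in a few lines of plain Python. This sketch targets small evaluation sets. ROC-AUC is computed through its pairwise (Mann-Whitney) interpretation, which is O(P·N) and only suited to illustration. Library implementations such as scikit-learn's `roc_auc_score` are the usual choice in practice.

```python
import math
from typing import List


def mae(y: List[float], p: List[float]) -> float:
    return sum(abs(a - b) for a, b in zip(y, p)) / len(y)


def rmse(y: List[float], p: List[float]) -> float:
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y, p)) / len(y))


def r2(y: List[float], p: List[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y) / len(y)
    ss_res = sum((a - b) ** 2 for a, b in zip(y, p))
    ss_tot = sum((a - mean) ** 2 for a in y)
    return 1.0 - ss_res / ss_tot


def roc_auc(labels: List[int], scores: List[float]) -> float:
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for s, lab in zip(scores, labels) if lab == 1]
    neg = [s for s, lab in zip(scores, labels) if lab == 0]
    wins = sum(1.0 if sp > sn else 0.5 if sp == sn else 0.0 for sp in pos for sn in neg)
    return wins / (len(pos) * len(neg))


y, p = [1.0, 2.0, 3.0, 4.0], [1.5, 2.0, 2.5, 4.0]
print(mae(y, p))                                       # 0.25
print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))    # 0.75
```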

Implementation Protocol for Molecular Property Prediction

A standardized experimental protocol for molecular property prediction involves these key steps:

Data Preparation and Splitting

- Obtain molecular structures from sources like MoleculeNet or TUDatasets

- Convert molecules to graph representations with nodes (atoms) and edges (bonds)

- Implement dataset splitting (typically 80/10/10 train/validation/test) with scaffold splitting to assess generalization

Model Configuration

- For invariant GNNs (GCN, GAT, GIN): 3-6 message passing layers with hidden dimensions of 64-256

- For equivariant GNNs (E2GNN, TEGNN): Implement geometric features with scalar-vector dimensions of 64-128

- Use appropriate readout functions: global mean/max/sum pooling for graph-level predictions

Training Procedure

- Optimization: Adam optimizer with learning rate 0.001-0.0001

- Regularization: Dropout (0.1-0.5), weight decay, and early stopping

- Batch size: 32-128 depending on model complexity and memory constraints

- Loss function: Mean squared error for regression, cross-entropy for classification

Validation and Testing

- Perform hyperparameter optimization on validation set

- Report final performance on held-out test set with multiple random seeds

- Conduct ablation studies to isolate contribution of architectural components

Architectural Visualizations

Molecular GNN Architecture Pathways - This diagram illustrates the parallel processing pathways for invariant and equivariant GNN architectures in molecular systems, from input structures to application outputs.

Equivariant GNN Message Passing - This workflow details the key operations in equivariant GNNs, highlighting the separate processing pathways for scalar and vector features and their interactions.

Table 3: Essential Computational Tools for Molecular GNN Research

| Resource Category | Specific Tools & Libraries | Primary Function | Application Context |
|---|---|---|---|
| Deep Learning Frameworks | PyTorch, PyTorch Geometric, TensorFlow, JAX | Model implementation and training | General GNN development |
| Molecular Processing | RDKit, Open Babel, MDAnalysis | Molecular graph construction and featurization | Data preprocessing |
| Specialized GNN Libraries | DGL-LifeSci, MatterGen, e3nn | Domain-specific GNN implementations | Drug discovery, materials science |
| Benchmark Datasets | MoleculeNet, TUDatasets, OGB | Standardized evaluation datasets | Model benchmarking |
| Quantum Chemistry Data | QM9, MD17, ANI-1 | High-quality reference data for training | Interatomic potential development |

The evolution of GNN architectures for molecular systems has progressed from basic graph convolutional networks to sophisticated geometrically equivariant models that explicitly incorporate 3D structural information. This progression has enabled increasingly accurate predictions of molecular properties, energies, and forces at computational costs far below traditional quantum mechanical methods.

Future research directions include developing more data-efficient architectures that can learn from limited labeled data, improving out-of-distribution generalization through stable learning techniques [11], and creating more interpretable models that provide insights into molecular structure-property relationships. Additionally, the integration of large-scale pre-training approaches for molecular GNNs represents a promising avenue for developing foundation models in molecular sciences, potentially transforming the pace of discovery in drug development and materials design.

Message-passing mechanisms form the computational foundation of modern graph neural networks (GNNs) applied to molecular graphs, enabling the prediction of chemical properties, material behaviors, and bioactivities directly from structural information. In molecular contexts, where atoms naturally represent nodes and bonds represent edges, these mechanisms allow neural networks to learn from graph-structured data by iteratively exchanging information between connected entities [12]. Unlike traditional convolutional neural networks designed for grid-like data, message-passing GNNs specialize in handling the irregular connectivity patterns inherent to molecular systems, from simple organic compounds to complex crystalline structures [13] [12].

The significance of message-passing mechanisms extends beyond mere structural analysis to impactful applications in drug development and materials science. For molecular property prediction, message-passing neural networks (MPNNs) have demonstrated state-of-the-art performance in predicting quantum chemical properties, bioactivity, and physical-chemical characteristics without requiring hand-crafted feature engineering [14] [12]. This capability is particularly valuable in virtual screening and materials design, where accurate prediction of molecular behavior accelerates discovery while reducing experimental costs.

Theoretical Foundations of Message Passing

Core Mathematical Framework

Message passing in graph neural networks operates through three fundamental operations that transform node representations by aggregating information from local neighborhoods. For a molecular graph ( G = (V, E) ) with node features ( h_v ) and edge features ( e_{vw} ), the message-passing process at layer ( t ) can be formally described by the following equations [14] [12]:

[m_v^{(t+1)} = \sum_{w \in N(v)} M_t\left(h_v^{(t)}, h_w^{(t)}, e_{vw}\right)]

[h_v^{(t+1)} = U_t\left(h_v^{(t)}, m_v^{(t+1)}\right)]

[y = R\left(\{ h_v^{(K)} \mid v \in G \}\right)]

where:

- (M_t) is the message function that transforms neighbor information

- (U_t) is the update function that combines current node state with incoming messages

- (R) is the readout function that produces graph-level predictions

- (N(v)) denotes the neighbors of node (v)

- (K) is the total number of message-passing layers

This framework creates a powerful computational paradigm where each node progressively incorporates information from its extended neighborhood through multiple iterations, effectively capturing both local atomic environments and global molecular structure [15] [12].

Aggregation Functions and Their Properties

The choice of aggregation function significantly influences the expressive power and behavior of message-passing networks. Different functions offer distinct advantages for capturing various aspects of molecular structure:

Table 1: Comparison of Aggregation Functions in Message-Passing Networks

| Aggregation Function | Mathematical Expression | Key Advantages | Molecular Applications |
|---|---|---|---|
| Sum | ( m_v = \sum_{w \in N(v)} h_w ) | Retains neighborhood size and multiplicity | Counting specific substructures, molecular fingerprints |
| Mean | ( m_v = \frac{1}{\lvert N(v) \rvert} \sum_{w \in N(v)} h_w ) | Stable across neighborhoods of different sizes | Statistical properties, normalized features |
| Max | ( m_v = \max_{w \in N(v)} h_w ) (element-wise) | Identifies most salient features in neighborhood | Critical functional group detection |
| Attention-weighted | ( m_v = \sum_{w \in N(v)} \alpha_{vw} h_w ) | Adaptively weights neighbor importance | Complex bond interactions, protein-ligand binding |

The attention mechanism, particularly implemented through multi-head attention, has demonstrated significant advantages in molecular applications by allowing the model to focus on particularly relevant atomic interactions while suppressing noise from less important connections [16] [14].
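The difference between the sum, mean, and max rows of the aggregation table shows up on two neighborhoods that differ only in multiplicity. An example is an atom with one carbon neighbor versus one with two identical carbon neighbors. In this minimal sketch, the function name `aggregate` and the two-element one-hot features are illustrative. Only sum aggregation separates the two cases, which is the intuition behind GIN's choice of a sum aggregator.

```python
from typing import List


def aggregate(neighbors: List[List[float]], how: str) -> List[float]:
    """Element-wise sum, mean, or max over a list of neighbor feature vectors."""
    cols = list(zip(*neighbors))
    if how == "sum":
        return [sum(c) for c in cols]
    if how == "mean":
        return [sum(c) / len(c) for c in cols]
    if how == "max":
        return [max(c) for c in cols]
    raise ValueError(f"unknown aggregator: {how}")


carbon = [1.0, 0.0]                  # one-hot [C, O]
one, two = [carbon], [carbon, carbon]
for how in ("sum", "mean", "max"):
    print(how, aggregate(one, how), aggregate(two, how))
# sum [1.0, 0.0] [2.0, 0.0]
# mean [1.0, 0.0] [1.0, 0.0]
# max [1.0, 0.0] [1.0, 0.0]
```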

Figure 1: Message-Passing Architecture showing the flow of information from node and edge features through message functions, aggregation, and update functions to produce node and graph-level representations.

Implementation Protocols for Molecular Applications

Molecular Graph Construction

The first critical step in applying message-passing mechanisms to molecular systems is the construction of appropriate graph representations from chemical structure data:

Protocol 1: Molecular Graph Construction from SMILES

- Input Processing: Convert the SMILES string to a molecular structure using a cheminformatics library (RDKit or Open Babel)

- Node Representation: Generate atom features including:
  - Atom type (one-hot encoded)
  - Degree of connectivity
  - Formal charge
  - Hybridization state
  - Aromaticity flag
  - Atomic mass (normalized)

- Edge Representation: Generate bond features including:
  - Bond type (single, double, triple, aromatic)
  - Conjugation
  - Ring membership
  - Spatial distance (if 3D coordinates are available)

- Graph Assembly: Construct an adjacency matrix or adjacency list connecting atoms via bonds

For crystalline materials, additional considerations include periodic boundary conditions and longer-range interactions beyond covalent bonding, often addressed through multi-scale graph representations [16] [12].
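For periodic crystals, proximity edges are often built with a distance cutoff instead of, or in addition to, covalent bonds. The sketch below assumes an orthogonal cubic cell, fractional coordinates, and the minimum-image convention. The function name and cutoff are illustrative. Triclinic cells, or cutoffs longer than half the cell length, need a full search over periodic images instead.

```python
import itertools
import math
from typing import List, Tuple

Vec = Tuple[float, float, float]


def periodic_radius_graph(frac: List[Vec], box: float, cutoff: float) -> List[Tuple[int, int, float]]:
    """Directed edges (i, j, distance) for atom pairs within `cutoff` in a cubic cell.

    Uses the minimum-image convention, which is only valid when cutoff < box / 2.
    """
    if cutoff >= box / 2:
        raise ValueError("minimum image requires cutoff < box / 2")
    edges = []
    for i, j in itertools.permutations(range(len(frac)), 2):
        d = [frac[j][c] - frac[i][c] for c in range(3)]
        d = [x - round(x) for x in d]  # wrap each fractional offset into [-0.5, 0.5]
        dist = box * math.sqrt(sum(x * x for x in d))
        if dist <= cutoff:
            edges.append((i, j, dist))
    return edges


# Atoms 0 and 1 sit near opposite faces, so they are neighbors only through the boundary.
frac = [(0.05, 0.0, 0.0), (0.95, 0.0, 0.0), (0.5, 0.5, 0.5)]
edges = periodic_radius_graph(frac, box=10.0, cutoff=3.0)
print([(i, j, round(d, 3)) for i, j, d in edges])  # [(0, 1, 1.0), (1, 0, 1.0)]
```

Without the wrap step, atoms 0 and 1 would appear 9 units apart and the bond across the cell boundary would be lost.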

Message-Passing Neural Network Implementation

Protocol 2: MPNN Forward Pass Implementation

- Initialization:
  - Initialize atom embeddings ( h_v^{(0)} ) from feature vectors
  - Initialize bond embeddings ( e_{vw} ) from bond features
  - Set the number of message-passing steps ( K ) (typically 3-6 for molecules)

- Message-Passing Loop (for ( t = 0 ) to ( K-1 )):
  - For each atom ( v ) in the molecular graph:
    - Gather neighbor features ( \{ h_w^{(t)} \mid w \in N(v) \} )
    - Compute messages: ( m_{vw}^{(t)} = M_t(h_v^{(t)}, h_w^{(t)}, e_{vw}) )
    - Aggregate messages: ( m_v^{(t+1)} = \sum_{w \in N(v)} m_{vw}^{(t)} )
    - Update node state: ( h_v^{(t+1)} = U_t(h_v^{(t)}, m_v^{(t+1)}) )

- Readout Phase:
  - Apply graph-level pooling: ( h_G = R(\{ h_v^{(K)} \mid v \in G \}) )
  - Pass through an output network for prediction

The implementation typically employs learned neural networks for both message and update functions, with gated recurrent units (GRUs) or simple multi-layer perceptrons (MLPs) common choices for (U_t) [14] [12].
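Protocol 2 can be traced end to end with a dependency-free sketch. Three stand-ins replace learned components, and they and the helper names are assumptions for illustration:

- A bond-weighted neighbor state plays the role of ( M_t ).
- A `tanh` of the summed state and message plays ( U_t ), where a GRU or MLP would be learned in practice.
- A sum over atoms serves as ( R ).

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]


def message(h_v: Vector, h_w: Vector, e_vw: float) -> Vector:
    """Stand-in for M_t: neighbor state scaled by a scalar bond feature (h_v unused here)."""
    return [e_vw * x for x in h_w]


def update(h_v: Vector, m_v: Vector) -> Vector:
    """Stand-in for U_t: tanh of the summed state and aggregated message."""
    return [math.tanh(a + b) for a, b in zip(h_v, m_v)]


def mpnn_forward(h0: List[Vector], edges: Dict[Tuple[int, int], float], k: int) -> Vector:
    """Run K message-passing steps, then a sum readout over all atoms.

    `edges[(v, w)]` is the bond feature for the directed pair in which w sends to v.
    """
    h = [list(x) for x in h0]
    neighbors: Dict[int, List[int]] = {v: [] for v in range(len(h))}
    for (v, w) in edges:
        neighbors[v].append(w)
    for _ in range(k):
        new_h = []
        for v in range(len(h)):
            m_v = [0.0] * len(h[v])
            for w in neighbors[v]:
                msg = message(h[v], h[w], edges[(v, w)])
                m_v = [a + b for a, b in zip(m_v, msg)]  # sum aggregation
            new_h.append(update(h[v], m_v))
        h = new_h  # synchronous: every atom used states from step t
    return [sum(col) for col in zip(*h)]


h0 = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]  # one-hot [C, O] for C-C-O
edges = {(0, 1): 1.0, (1, 0): 1.0, (1, 2): 1.0, (2, 1): 1.0}
print(mpnn_forward(h0, edges, k=2))
```

Because aggregation and readout are both sums, renumbering the atoms leaves the graph-level output unchanged up to floating-point rounding. This permutation invariance is a property any readout ( R ) should have.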

Advanced Message-Passing Mechanisms

Attention-Based Message Passing

Advanced message-passing architectures incorporate attention mechanisms to dynamically weight the importance of different neighbors during aggregation:

[m_v^{(t+1)} = \sum_{w \in N(v)} \alpha_{vw}^{(t)} M_t\left(h_v^{(t)}, h_w^{(t)}, e_{vw}\right)]

where the attention weights ( \alpha_{vw}^{(t)} ) are computed as:

[\alpha_{vw}^{(t)} = \frac{\exp\left(\text{LeakyReLU}\left(a^T [W h_v^{(t)} \| W h_w^{(t)}]\right)\right)}{\sum_{k \in N(v)} \exp\left(\text{LeakyReLU}\left(a^T [W h_v^{(t)} \| W h_k^{(t)}]\right)\right)}]

Here ( W ) is a shared weight matrix, ( a ) is a learnable attention vector, and ( \| ) denotes concatenation.

This approach enables the model to focus on particularly relevant atomic interactions, such as those critical for binding affinity in drug-target interactions or catalytic activity in materials [16] [14]. Multi-head attention extends this concept by employing multiple independent attention mechanisms to capture different aspects of molecular interactions.
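The attention coefficients in the equation above can be computed directly. This sketch assumes a single head, a LeakyReLU negative slope of 0.2 (the value used in the original GAT paper), and plain lists for ( W ) and ( a ). It subtracts the maximum logit before exponentiating; this leaves the softmax unchanged but avoids overflow. The helper names are illustrative.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(w: Matrix, x: Vector) -> Vector:
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def leaky_relu(x: float, slope: float = 0.2) -> float:
    return x if x > 0 else slope * x


def attention_weights(h_v: Vector, neighbors: List[Vector], w: Matrix, a: Vector) -> List[float]:
    """alpha_vw = softmax over w in N(v) of LeakyReLU(a^T [W h_v || W h_w])."""
    wh_v = matvec(w, h_v)
    # List concatenation wh_v + W h_w implements [W h_v || W h_w].
    logits = [leaky_relu(sum(ai * zi for ai, zi in zip(a, wh_v + matvec(w, h_w))))
              for h_w in neighbors]
    top = max(logits)  # shift for a numerically stable softmax
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


w = [[1.0, 0.0], [0.0, 1.0]]  # identity projection W
a = [0.0, 0.0, 1.0, 0.0]      # scores each neighbor by the first component of W h_w
alphas = attention_weights([1.0, 0.0], [[1.0, 0.0], [0.0, 1.0], [2.0, 0.0]], w, a)
print([round(x, 3) for x in alphas])  # [0.245, 0.09, 0.665]
```

Multi-head attention would run several independent copies of this computation with different ( W ) and ( a ), then concatenate or average the resulting messages.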

Edge Memory and Dynamic Message Passing

Recent innovations in message passing include edge memory networks and dynamic message-passing mechanisms that adaptively modify information flow:

Edge Memory Networks maintain and update edge representations throughout the message-passing process, allowing richer information exchange beyond simple node features [14]. The enhanced message function becomes:

[m_v^{(t+1)} = \sum_{w \in N(v)} M_t\left(h_v^{(t)}, h_w^{(t)}, e_{vw}^{(t)}\right)]

[e_{vw}^{(t+1)} = E_t\left(e_{vw}^{(t)}, h_v^{(t)}, h_w^{(t)}\right)]

where (E_t) is an edge update function that refines edge features at each step.

Dynamic Message Passing introduces learnable pseudo-nodes and spatial relationships that evolve during processing, effectively creating adaptive communication pathways beyond the fixed molecular topology [17]. This approach addresses limitations of static graph structures by allowing information to flow along dynamically determined optimal paths.

Figure 2: Dynamic Message-Passing Framework showing how pseudo nodes and spatial relations create adaptive message pathways beyond initial molecular connectivity.

Experimental Protocols and Validation

Benchmark Datasets and Evaluation Metrics

Rigorous evaluation of message-passing mechanisms requires standardized datasets spanning diverse molecular properties:

Table 2: Molecular Graph Benchmark Datasets for Message-Passing Evaluation

| Dataset | Domain | Graphs | Task Type | Evaluation Metric | Key Challenge |
|---|---|---|---|---|---|
| QM9 | Quantum chemistry | 133,885 | Regression | MAE | Predicting quantum mechanical properties |
| MD17 | Molecular dynamics | 10+ molecules | Regression | Energy MAE, Force MAE | Molecular conformations |
| MoleculeNet | Various | Multiple | Classification/Regression | ROC-AUC, RMSE | Multi-task generalization |
| OGB | Various | Large-scale | Various | Dataset-specific | Scalability and transfer |
| TUDataset | Chemical & biological | Multiple | Classification | Accuracy | Domain-specific learning |

These datasets enable comprehensive benchmarking of message-passing architectures across different molecular complexity levels, from small organic molecules to complex drug-like compounds and materials [11] [12].

Stability and Generalization Protocols

A significant challenge in molecular graph networks is ensuring robust performance under distributional shifts (out-of-distribution, OOD). Recent research has introduced stable learning approaches specifically designed for GNNs:

Protocol 3: Stable-GNN Training for OOD Generalization

- Feature Decorrelation: Apply random Fourier features (RFF) to measure and decorrelate feature dependencies

- Sample Reweighting: Learn instance-specific weights that suppress spurious correlations

- Stable Regularization: Incorporate a decorrelation loss during training:

  $$\mathcal{L}_{\text{stable}} = \mathcal{L}_{\text{task}} + \lambda \sum_{i \neq j} \text{Corr}(h_i, h_j)^2$$

  where $\text{Corr}(h_i, h_j)$ measures the correlation between feature dimensions $i$ and $j$ of the learned representation and $\lambda$ sets the strength of the penalty [11]

- Cross-Domain Validation: Evaluate on deliberately constructed OOD splits to test generalization

This approach enhances model reliability for real-world applications where test molecules may differ systematically from training data due to selection biases or evolving chemical spaces [11].
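The decorrelation penalty from the Stable Regularization step can be sketched in NumPy as follows. This is a simplified illustration under stated assumptions: it uses plain Pearson correlations over a batch of representations and omits the random Fourier feature mapping and the learned sample weights that the full Stable-GNN procedure [11] combines with it. The function names are hypothetical.

```python
import numpy as np

def decorrelation_penalty(H, eps=1e-8):
    """Sum of squared Pearson correlations between distinct feature dimensions.

    H: (batch_size, num_features) matrix of learned representations.
    """
    Hc = H - H.mean(axis=0, keepdims=True)        # center each feature
    std = np.sqrt((Hc ** 2).mean(axis=0)) + eps    # per-feature standard deviation
    Z = Hc / std                                   # standardize
    corr = (Z.T @ Z) / H.shape[0]                  # correlation matrix
    off_diag = corr - np.diag(np.diag(corr))       # drop the i == j terms
    return float((off_diag ** 2).sum())

def stable_loss(task_loss, H, lam=0.1):
    """L_stable = L_task + lambda * sum_{i != j} Corr(h_i, h_j)^2 (simplified)."""
    return task_loss + lam * decorrelation_penalty(H)

rng = np.random.default_rng(0)
independent = rng.normal(size=(1000, 3))
x = rng.normal(size=(1000, 1))
# Second column is almost a copy of the first: a redundant, spuriously correlated feature.
redundant = np.hstack([x, 2 * x + 0.01 * rng.normal(size=(1000, 1)), rng.normal(size=(1000, 1))])
print(decorrelation_penalty(independent) < decorrelation_penalty(redundant))  # True
```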

Applications in Molecular Property Prediction

Multi-Component Gas Adsorption in MOFs

Message-passing networks have demonstrated exceptional performance in predicting complex materials behaviors such as gas adsorption in metal-organic frameworks (MOFs). The multi-scale graph representation enables modeling of interactions at different structural levels:

Protocol 4: Multi-Scale Crystal Graph Network for Adsorption Prediction

- Graph Construction: Create crystal graph with nodes as atoms and edges as bonds or proximity relations

- Multi-Scale Representation: Implement separate message-passing pathways for:

  - Covalent bonding interactions

  - Functional group patterns

  - Global crystalline structure

- Multi-Head Attention: Apply independent attention mechanisms at each scale to identify critical structural features

- Hierarchical Readout: Combine representations from different scales for final prediction

This approach has achieved state-of-the-art accuracy in predicting multi-component gas adsorption isotherms, significantly outperforming traditional descriptor-based methods and uniform graph architectures [16].
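The hierarchical readout in Protocol 4 can be illustrated with a small NumPy sketch. It assumes each structural scale already has its own node embeddings (from separate message-passing pathways), pools each scale with multi-head attention driven by learned query vectors, and concatenates the pooled vectors. The query-vector scoring scheme and all names are illustrative assumptions rather than the architecture of [16].

```python
import numpy as np

def softmax(x, axis=0):
    x = x - x.max(axis=axis, keepdims=True)  # numerical stability
    ex = np.exp(x)
    return ex / ex.sum(axis=axis, keepdims=True)

def attention_pool(H, queries):
    """Multi-head attention pooling of node features H (n, d) into one vector.

    queries: (num_heads, d) hypothetical learned query vectors, one per head.
    Each head scores every node, normalizes the scores over nodes with a
    softmax, and returns the attention-weighted average of node features.
    """
    scores = H @ queries.T                # (n, num_heads)
    weights = softmax(scores, axis=0)     # normalize over nodes, per head
    pooled = weights.T @ H                # (num_heads, d)
    return pooled.reshape(-1)             # concatenate heads

def hierarchical_readout(scale_embeddings, scale_queries):
    """Pool each structural scale independently, then concatenate."""
    return np.concatenate(
        [attention_pool(H, Q) for H, Q in zip(scale_embeddings, scale_queries)]
    )

rng = np.random.default_rng(1)
d, heads = 8, 2
# Hypothetical node embeddings from three pathways (bonding, functional groups, global)
scales = [rng.normal(size=(n, d)) for n in (30, 6, 4)]
queries = [rng.normal(size=(heads, d)) for _ in scales]
graph_vec = hierarchical_readout(scales, queries)
print(graph_vec.shape)  # (48,)
```

Because the softmax normalizes over nodes, reordering the atoms within any scale leaves the pooled vector unchanged, which is the permutation invariance a readout needs.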

Bioactivity and Physical-Chemical Property Prediction

In pharmaceutical applications, message-passing networks directly predict bioactivity and ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties from molecular structure:

Table 3: Message-Passing Applications in Drug Discovery

| Property Type | Prediction Task | Architecture Variant | Key Performance |
|---|---|---|---|
| Target affinity | IC50, Ki values | Attention MPNN | >0.9 ROC-AUC on benchmark sets |
| Toxicity | hERG, Ames toxicity | Edge Memory MPNN | Significant reduction in false negatives |
| Solubility | LogS regression | 3D-aware MPNN | RMSE <0.6 log units |
| Metabolic stability | CYP450 inhibition | Multi-task MPNN | 85% accuracy on clinical candidates |
| Permeability | P-gp substrate | Geometric MPNN | >0.85 precision in classification |

The capacity of message-passing networks to learn directly from molecular graphs eliminates the need for manual feature engineering while capturing subtle structure-property relationships that challenge traditional QSAR approaches [14].

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Research Tools and Resources for Message-Passing Implementation

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| RDKit | Cheminformatics library | Molecular graph construction from SMILES/SDF | Preprocessing pipeline for small molecules |
| PyTorch Geometric | Deep learning library | GNN implementation and training | Message-passing network development |
| DGL | Deep learning library | Scalable graph network operations | Large-scale molecular datasets |
| OGB | Benchmark suite | Standardized evaluation | Model comparison and validation |
| MoleculeNet | Benchmark dataset | Multi-task performance assessment | Method robustness testing |
| SAMSON | Molecular visualization | Structure and property visualization | Result interpretation and analysis |
| Crystal Graph Converter | Materials preprocessing | Periodic structure to graph conversion | Materials property prediction |

These tools collectively provide the essential infrastructure for implementing, training, and evaluating message-passing networks on molecular graphs, from initial data preparation to final model deployment [18] [11] [12].

Message-passing mechanisms provide a powerful framework for learning from molecular graphs, enabling accurate prediction of chemical, biological, and materials properties directly from structural information. The continued evolution of these mechanisms—through attention, edge memory, dynamic pathways, and stability enhancements—addresses key challenges in molecular machine learning while creating new opportunities for scientific discovery.

As these methods mature, their integration into automated discovery pipelines promises to accelerate progress across molecular sciences, from rational drug design to functional materials development. The protocols and implementations detailed in this work provide researchers with practical guidance for applying these advanced techniques to diverse molecular prediction tasks.

The accurate prediction of material stability and molecular properties represents a cornerstone of modern computational materials science and drug development. In this context, Graph Neural Networks (GNNs) have emerged as powerful tools for modeling atomic systems, representing molecules and materials as graphs where nodes correspond to atoms and edges represent interatomic interactions [12]. A critical advancement in this field has been the systematic embedding of fundamental physical symmetries—particularly rotational and translational invariance—directly into neural network architectures. These geometric deep learning approaches ensure that model predictions remain consistent regardless of arbitrary choices of coordinate systems, leading to more physically realistic predictions, enhanced data efficiency, and improved generalization across diverse chemical spaces [19] [20].

The significance of these symmetry-aware models extends across multiple domains, from accelerating the discovery of novel materials with tailored properties to predicting protein-ligand binding affinities in rational drug design [21]. This application note examines the theoretical foundations, implementation protocols, and practical applications of rotational and translational invariance in GNNs, providing researchers with actionable methodologies for leveraging these principles in stability prediction and interatomic interaction modeling.

Theoretical Foundations of Symmetry Principles

Mathematical Formalisms of Physical Symmetries

In molecular and materials systems, physical properties must exhibit well-defined transformation behaviors under rotations and translations of the coordinate system. Formally, a function $f: X \rightarrow Y$ is defined as equivariant with respect to a group $G$ that acts on $X$ and $Y$ if:

$$D_Y[g]\, f(x) = f\left(D_X[g]\, x\right) \quad \forall g \in G,\ \forall x \in X$$

where $D_X[g]$ and $D_Y[g]$ are the representations of the group element $g$ in the vector spaces $X$ and $Y$, respectively [19]. For interatomic potentials, we primarily concern ourselves with the E(3) symmetry group encompassing rotations, reflections, and translations in 3D space. When $D_Y[g]$ is the identity transformation, the function is considered invariant, a crucial property for predicting scalar values such as potential energy [19].
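A quick numerical check makes the invariance condition concrete. The sketch below uses a toy Lennard-Jones energy as a stand-in for a learned potential (an assumption for illustration), applies a random orthogonal transformation plus a translation to all atoms, and confirms that the energy is unchanged, i.e. the case where $D_Y[g]$ is the identity.

```python
import numpy as np

def pair_energy(pos, epsilon=1.0, sigma=1.0):
    """Toy Lennard-Jones energy; depends on positions only through distances."""
    diff = pos[:, None, :] - pos[None, :, :]
    r = np.linalg.norm(diff, axis=-1)
    iu = np.triu_indices(len(pos), k=1)   # count each pair once
    sr6 = (sigma / r[iu]) ** 6
    return float(np.sum(4 * epsilon * (sr6 ** 2 - sr6)))

def random_orthogonal(rng):
    """Random 3x3 orthogonal matrix (rotation or rotoreflection) via QR."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    return q * np.sign(np.diag(r))        # fix column signs

rng = np.random.default_rng(42)
pos = rng.uniform(0, 3, size=(5, 3))
Q = random_orthogonal(rng)
t = rng.normal(size=3)
moved = pos @ Q.T + t                     # x -> Qx + t for every atom
print(np.isclose(pair_energy(pos), pair_energy(moved)))  # True
```

A model that consumed raw Cartesian coordinates would generally fail this check unless it had learned the symmetry from augmented data.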

Traditional neural network architectures that operate on Cartesian coordinates do not inherently respect these symmetries, requiring extensive data augmentation and often failing to generalize to unseen orientations. In contrast, equivariant GNNs explicitly preserve transformation properties through specialized architectures that maintain geometric tensor representations throughout the network [19].

Architectural Implementation of Symmetry Principles

Several complementary architectural strategies have been developed to embed physical symmetries into deep learning models:

Irreducible Representations (irreps) and Spherical Harmonics: Advanced frameworks such as e3nn utilize irreducible representations of the O(3) symmetry group based on spherical harmonics to track how outputs vary under rotations [19] [21]. The Clebsch-Gordan tensor product combines these representations equivariantly, generalizing operations such as dot and cross products while maintaining symmetry properties [20].
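The Clebsch-Gordan decomposition is easiest to see for two vector ($l = 1$) features: since $1 \otimes 1 = 0 \oplus 1 \oplus 2$, their tensor product splits into an $l = 0$ part (the dot product), an $l = 1$ part (the cross product) and an $l = 2$ part (a symmetric traceless matrix with five independent entries). This NumPy sketch works in the Cartesian basis and omits normalization constants, whereas e3nn works in the spherical-harmonic basis; it checks that each part transforms as expected under a proper rotation.

```python
import numpy as np

def decompose_vector_product(u, v):
    """Split the outer product u v^T into l = 0, 1, 2 pieces (Cartesian basis)."""
    T = np.outer(u, v)
    scalar = np.trace(T)                        # l = 0: u . v
    vector = np.cross(u, v)                     # l = 1: encodes the antisymmetric part
    sym = 0.5 * (T + T.T)
    traceless = sym - scalar / 3.0 * np.eye(3)  # l = 2: symmetric traceless part
    return scalar, vector, traceless

def random_rotation(rng):
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))
    if np.linalg.det(q) < 0:                    # keep only proper rotations
        q[:, 0] = -q[:, 0]
    return q

rng = np.random.default_rng(7)
u, v = rng.normal(size=3), rng.normal(size=3)
R = random_rotation(rng)
s0, v0, t0 = decompose_vector_product(u, v)
s1, v1, t1 = decompose_vector_product(R @ u, R @ v)
print(np.isclose(s0, s1), np.allclose(R @ v0, v1), np.allclose(R @ t0 @ R.T, t1))
# True True True
```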

Message Passing with Geometric Tensors: Equivariant message-passing networks update node features comprising not only scalars but also vectors and higher-order geometric tensors. This approach preserves directional information while maintaining equivariance, allowing the network to leverage angular information critical for modeling interatomic forces [19].
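The following NumPy sketch illustrates the idea with a simplified, PaiNN-inspired message step; it is an assumption-laden illustration, not PaiNN's actual update. Each atom receives vector features built from unit vectors $\hat{r}_{ij}$ scaled by invariant weights (a hypothetical radial filter times neighbor scalars), and its scalar features are updated with vector norms. Rotating and translating the input rotates the vectors and leaves the scalars unchanged.

```python
import numpy as np

def radial_weight(r, cutoff=5.0):
    """Smooth, rotation-invariant filter of distance (hypothetical choice)."""
    return np.where(r < cutoff, np.exp(-r) * 0.5 * (np.cos(np.pi * r / cutoff) + 1), 0.0)

def vector_message_step(pos, s, cutoff=5.0):
    """One message step producing vector features V (n, 3) and updated scalars.

    Vectors are built only from relative position vectors multiplied by
    invariant weights, so V rotates with the input; scalars use |V| and stay invariant.
    """
    n = len(pos)
    V = np.zeros((n, 3))
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            rij = pos[j] - pos[i]
            r = np.linalg.norm(rij)
            V[i] += radial_weight(r, cutoff) * s[j] * rij / r
    s_new = s + np.linalg.norm(V, axis=1)  # invariant scalar update
    return s_new, V

rng = np.random.default_rng(3)
pos = rng.normal(size=(6, 3))
s = rng.normal(size=6)
q, r = np.linalg.qr(rng.normal(size=(3, 3)))
Rm = q * np.sign(np.diag(r))               # random orthogonal matrix
s_a, V_a = vector_message_step(pos, s)
s_b, V_b = vector_message_step(pos @ Rm.T + 1.5, s)
print(np.allclose(s_a, s_b), np.allclose(V_a @ Rm.T, V_b))  # True True
```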

Invariant Descriptor Engineering: Alternative approaches construct inherently invariant descriptors of atomic environments, such as Gaussian Overlap Matrix (GOM) fingerprints, which encode many-body interactions through orbital overlap matrices while guaranteeing rotational and translational invariance [22].

Table 1: Comparison of Architectural Approaches for Embedding Physical Symmetries

| Architectural Approach | Key Features | Representative Models | Advantages |
|---|---|---|---|
| Irreducible Representations | Spherical harmonics, Clebsch-Gordan tensor products | NequIP [19], MACE [23], SevenNet [20] | High expressiveness, rigorous symmetry preservation |
| Invariant Descriptors | Precomputed invariant representations of atomic environments | EOSnet [22], SOAP [22] | Simplified architecture, guaranteed invariance |
| Geometric Message Passing | Vector features, equivariant update rules | PaiNN [19], NewtonNet [19] | Balance of expressive power and computational efficiency |

Application Case Studies in Materials and Molecular Science

Interatomic Potential Development

The development of machine learning interatomic potentials (MLIPs) has been revolutionized by symmetry-aware architectures. The NequIP (Neural Equivariant Interatomic Potential) framework demonstrates the advantages of E(3)-equivariance, achieving state-of-the-art accuracy with significantly enhanced data efficiency [19]. In benchmark studies, NequIP outperformed existing models while using up to three orders of magnitude less training data, accurately reproducing structural and kinetic properties from ab initio molecular dynamics simulations [19].

Recent advancements continue to build upon these principles. The Facet architecture introduces computational optimizations to steerable GNNs, replacing resource-intensive multi-layer perceptrons with efficient splines for processing interatomic distances [20]. This innovation achieves performance comparable to leading approaches with significantly fewer parameters and less than 10% of the training computation, enabling faster iteration in potential development [20].
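The idea of replacing a distance MLP with a spline can be sketched as a radial filter whose trainable parameters are values at fixed knots, evaluated by interpolation. This is a hypothetical illustration using piecewise-linear interpolation (`np.interp`); it does not reproduce Facet's implementation, whose spline type and parameterization are not described here.

```python
import numpy as np

class LinearSplineRadial:
    """Hypothetical learnable radial filter: values at fixed knots, linear in between."""

    def __init__(self, cutoff=5.0, num_knots=16, rng=None):
        rng = rng or np.random.default_rng(0)
        self.knots = np.linspace(0.0, cutoff, num_knots)
        self.values = rng.normal(size=num_knots)  # the trainable parameters
        self.values[-1] = 0.0                     # filter vanishes at the cutoff

    def __call__(self, r):
        # Distances beyond the cutoff return 0.0 via the `right` argument.
        return np.interp(r, self.knots, self.values, right=0.0)

radial = LinearSplineRadial()
r = np.array([0.0, 2.5, 4.99, 6.0])
print(radial(r).shape, radial(np.array([6.0]))[0])  # (4,) 0.0
```

Evaluating the filter costs one interpolation per edge, and the parameter count equals the number of knots. A piecewise-linear spline has a discontinuous derivative, so an interatomic potential that differentiates energies to obtain forces would in practice need a smoother spline, such as a cubic one.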

Materials Property Prediction

For crystalline materials, symmetry-aware GNNs have demonstrated exceptional performance in predicting diverse materials properties. The EOSnet framework incorporates Gaussian Overlap Matrix fingerprints as node features, providing a compact, rotationally invariant representation of many-body interactions [22]. This approach has achieved a mean absolute error of 0.163 eV in band gap prediction, surpassing previous state-of-the-art models while maintaining computational efficiency [22].

Systematic benchmarking of universal MLIPs for elastic property prediction further validates the importance of symmetry principles. In evaluations across nearly 11,000 elastically stable materials, equivariant models including SevenNet and MACE demonstrated superior accuracy in predicting bulk modulus, shear modulus, and other mechanical properties, establishing their reliability for computational materials design [23].

Table 2: Performance Benchmarks of Symmetry-Aware Models Across Applications

| Model | Application Domain | Key Performance Metrics | Competitive Advantages |
|---|---|---|---|
| NequIP [19] | Interatomic Potentials | State-of-the-art accuracy with 100-1000x data efficiency | Remarkable data efficiency, faithful force prediction |
| EOSnet [22] | Materials Property Prediction | 0.163 eV MAE (band gap), 97.7% accuracy (metal/nonmetal) | Effective many-body interaction capture |
| SevenNet [23] | Elastic Property Prediction | Highest accuracy in uMLIP benchmark (11,000 materials) | Superior elastic constant prediction |
| EMFF-2025 [24] | Energetic Materials | MAE within ±0.1 eV/atom (energy), ±2 eV/Å (force) | Transfer learning capability for CHNO systems |
| InvarNet [25] | Molecular Property Prediction | 2.24x faster training vs. SphereNet, state-of-the-art R² on QM9 | Optimized processing, rotationally invariant loss |

Biomolecular Applications

In drug discovery, predicting protein-ligand binding affinity represents a critical challenge where rotational symmetry plays a crucial role. The PLAe methodology combines radial basis functions with e3nn networks to capture radial and angular dimensions of molecular features while maintaining rotational equivariance [21]. This approach demonstrates how symmetry principles can enhance prediction accuracy in complex biomolecular systems where binding interactions depend critically on three-dimensional spatial relationships [21].

Experimental Protocols and Methodologies

Implementation of Equivariant Graph Neural Networks

The following protocol outlines the key steps for implementing an E(3)-equivariant GNN for interatomic potential training, based on the NequIP framework [19]:

Step 1: Data Preparation and Representation

- Collect reference atomic structures and corresponding energies/forces from ab initio calculations (DFT, coupled-cluster)

- Represent atomic systems as graphs with:

  - Nodes: Atoms with chemical species attributes

  - Edges: Connections between atoms within a specified cutoff radius (typically 4-6 Å)

- Apply periodic boundary conditions for crystalline materials using periodic graph constructions [20]
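The graph construction in Step 1 can be sketched in NumPy as follows. The sketch assumes an orthorhombic cell and a cutoff smaller than half of each cell length, so the minimum-image convention finds every neighbor; general triclinic cells or larger cutoffs require enumerating periodic images, which neighbor-list utilities such as those in ASE's `ase.neighborlist` module handle. The function name is hypothetical.

```python
import numpy as np

def build_radius_graph(pos, cutoff, box=None):
    """Return directed edges (i, j) and distances for all pairs within `cutoff`.

    pos: (n, 3) Cartesian positions. box: optional (3,) orthorhombic cell lengths;
    if given, the minimum-image convention is applied (valid when cutoff < box/2).
    """
    diff = pos[None, :, :] - pos[:, None, :]       # diff[i, j] = r_j - r_i
    if box is not None:
        box = np.asarray(box, dtype=float)
        diff -= box * np.round(diff / box)         # wrap into the nearest image
    dist = np.linalg.norm(diff, axis=-1)
    mask = (dist < cutoff) & ~np.eye(len(pos), dtype=bool)
    src, dst = np.nonzero(mask)
    return np.stack([src, dst]), dist[src, dst]

# Two atoms near opposite faces of a 10 Å box are neighbors across the boundary.
pos = np.array([[0.5, 5.0, 5.0], [9.5, 5.0, 5.0], [5.0, 5.0, 5.0]])
edges, d = build_radius_graph(pos, cutoff=4.0, box=[10.0, 10.0, 10.0])
print(edges.T.tolist(), d.round(3).tolist())  # [[0, 1], [1, 0]] [1.0, 1.0]
```

Without the `box` argument the same two atoms are 9 Å apart and no edge is created, which is exactly the artifact periodic graph construction avoids.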

Step 2: Feature Initialization

- Initialize node features as geometric tensors comprising direct sums of irreducible representations of O(3)

- Encode edge information using spherical harmonics based on relative position vectors

- For hybrid approaches (e.g., EOSnet), compute invariant atomic environment descriptors (GOM fingerprints) as node features [22]
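Edge encoding in Step 2 can be sketched as an invariant radial part combined with an angular part. In this illustration the radial part is a sine-based Bessel basis $\sqrt{2/c}\,\sin(n\pi r/c)/r$ multiplied by a cosine cutoff, and the angular part is limited to $l \le 1$ real spherical harmonics, which are proportional to a constant and to the unit vector $\hat{r}$ (normalization and component ordering vary between libraries). Higher $l$ and the exact radial basis and cutoff envelope used in NequIP are not reproduced; these choices are assumptions.

```python
import numpy as np

def bessel_basis(r, cutoff=5.0, num_basis=8):
    """Sine-based radial basis sqrt(2/c) * sin(n*pi*r/c) / r, n = 1..num_basis."""
    n = np.arange(1, num_basis + 1)
    return np.sqrt(2.0 / cutoff) * np.sin(n * np.pi * r[:, None] / cutoff) / r[:, None]

def cosine_cutoff(r, cutoff=5.0):
    """Smoothly sends edge features to zero at the cutoff radius."""
    return np.where(r < cutoff, 0.5 * (np.cos(np.pi * r / cutoff) + 1.0), 0.0)

def encode_edges(rel_vec, cutoff=5.0, num_basis=8):
    """Return (radial, Y) for relative position vectors rel_vec of shape (E, 3).

    radial: (E, num_basis) invariant distance features with smooth cutoff.
    Y: (E, 4) unnormalized real spherical harmonics for l = 0 and l = 1;
    the l = 1 block is the unit vector, so it rotates with the edge.
    """
    r = np.linalg.norm(rel_vec, axis=1)
    radial = bessel_basis(r, cutoff, num_basis) * cosine_cutoff(r, cutoff)[:, None]
    unit = rel_vec / r[:, None]
    Y = np.concatenate([np.ones((len(r), 1)), unit], axis=1)
    return radial, Y

rel = np.array([[1.0, 0.0, 0.0], [0.0, 2.0, 2.0]])
radial, Y = encode_edges(rel)
print(radial.shape, Y.shape)  # (2, 8) (2, 4)
```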

Step 3: Equivariant Message Passing

- Implement message passing with E(3)-equivariant operations:

  - Apply Clebsch-Gordan tensor products to combine representations

  - Use spherical harmonics-based convolutions for neighborhood information aggregation

  - Employ equivariant nonlinearities for activation [19]

- Iterate message passing steps (typically 3-6 layers) to propagate information across atomic neighborhoods

Step 4: Invariant Readout and Property Prediction

- Extract invariant scalars from final geometric tensor features

- Pool node-level representations to system-level properties via permutation-invariant operations (sum, mean)

- Compute the potential energy as a sum of atomic contributions: $E_{\text{pot}} = \sum_{i \in N_{\text{atoms}}} E_{i,\text{atomic}}$

- Obtain atomic forces as negative gradients of the energy with respect to atomic positions, $\vec{F}_i = -\nabla_i E_{\text{pot}}$, ensuring energy conservation by construction [19]
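Step 4 can be checked numerically with a sketch in which a toy Morse pair potential stands in for the learned per-atom energies (an assumption: each pair energy is split equally between its two atoms, and the Morse parameters are hypothetical). The total energy is the sum of atomic contributions, forces are the analytic negative gradient, and central finite differences confirm them. In a real model the gradient would come from automatic differentiation rather than a hand-derived formula.

```python
import numpy as np

D, A, R0 = 1.0, 1.5, 1.2  # hypothetical Morse parameters

def morse(r):
    return D * (1.0 - np.exp(-A * (r - R0))) ** 2 - D   # morse(inf) == 0

def morse_deriv(r):
    e = np.exp(-A * (r - R0))
    return 2.0 * D * A * e * (1.0 - e)

def pair_distances(pos):
    diff = pos[:, None, :] - pos[None, :, :]            # diff[i, j] = x_i - x_j
    r = np.linalg.norm(diff, axis=-1)
    np.fill_diagonal(r, np.inf)                         # exclude self-interaction
    return diff, r

def atomic_energies(pos):
    """E_{i,atomic}: half of each pair energy is assigned to each atom."""
    _, r = pair_distances(pos)
    return 0.5 * morse(r).sum(axis=1)

def total_energy(pos):
    return float(atomic_energies(pos).sum())            # E_pot = sum_i E_i,atomic

def forces(pos):
    """F_i = -dE_pot/dx_i = -sum_j phi'(r_ij) (x_i - x_j) / r_ij."""
    diff, r = pair_distances(pos)
    return -np.sum(morse_deriv(r)[:, :, None] * diff / r[:, :, None], axis=1)

def finite_difference_forces(pos, h=1e-5):
    F = np.zeros_like(pos)
    for i in range(pos.shape[0]):
        for k in range(3):
            p_plus, p_minus = pos.copy(), pos.copy()
            p_plus[i, k] += h
            p_minus[i, k] -= h
            F[i, k] = -(total_energy(p_plus) - total_energy(p_minus)) / (2 * h)
    return F

rng = np.random.default_rng(5)
pos = rng.normal(scale=1.5, size=(4, 3))
print(np.allclose(forces(pos), finite_difference_forces(pos), atol=1e-6))  # True
```

Because the forces are derived from a single scalar energy, they also sum to zero for an isolated system, as translation invariance requires.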

Step 5: Optimization and Training

- Define a composite loss function incorporating energy, force, and stress components: $L = \lambda_E L_E + \lambda_F L_F + \lambda_S L_S$, where the weights $\lambda_E$, $\lambda_F$, and $\lambda_S$ balance the three terms

- Use rotationally invariant loss terms to enhance robustness against molecular rotations [25]

- Employ adaptive optimization algorithms (Adam, AdamW) with learning rate scheduling
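A minimal NumPy sketch of the composite loss follows. It assumes mean-squared-error terms, per-atom normalization of the energy error (common practice, not a requirement), stress in six-component Voigt form, and illustrative weight values; none of these settings come from the cited works.

```python
import numpy as np

def composite_loss(pred, ref, n_atoms, lam_e=1.0, lam_f=10.0, lam_s=0.1):
    """L = lam_E * L_E + lam_F * L_F + lam_S * L_S with mean-squared-error terms.

    pred/ref: dicts with 'energy' (B,), 'forces' (N_total, 3), 'stress' (B, 6).
    n_atoms: (B,) atom counts used to normalize energy errors per atom.
    Weight values are illustrative only.
    """
    loss_e = np.mean(((pred["energy"] - ref["energy"]) / n_atoms) ** 2)
    loss_f = np.mean((pred["forces"] - ref["forces"]) ** 2)
    loss_s = np.mean((pred["stress"] - ref["stress"]) ** 2)
    return lam_e * loss_e + lam_f * loss_f + lam_s * loss_s

n_atoms = np.array([2, 4])  # a batch of two structures with 2 and 4 atoms
ref = {"energy": np.zeros(2), "forces": np.zeros((6, 3)), "stress": np.zeros((2, 6))}
pred = {"energy": np.array([2.0, 4.0]), "forces": np.full((6, 3), 0.1), "stress": np.zeros((2, 6))}
loss = composite_loss(pred, ref, n_atoms)
print(round(float(loss), 6))  # 1.1
```

Here the per-atom energy error is 1 eV/atom for both structures and every force component is off by 0.1, so the energy term contributes 1.0 and the weighted force term 0.1.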

Transfer Learning Protocol for Specialized Applications

For applications with limited training data, such as specialized material systems, transfer learning from general-purpose potentials provides an effective strategy:

Pre-training Phase:

- Train a foundational model on diverse datasets (e.g., MPTrj [20], Materials Project [23]) encompassing broad chemical spaces

- Utilize large-scale computational resources for initial training, which may require many GPU-days [20]

Transfer Learning Phase:

- Initialize model weights from pre-trained checkpoint

- Fine-tune on target system data with reduced learning rate

- For EMFF-2025 approach, incorporate minimal new training data from DFT calculations specific to target materials [24]

- Validate transfer performance against held-out target system data and experimental measurements where available

Special Considerations for Energetic Materials:

- Focus on C, H, N, O element systems with complex decomposition pathways

- Ensure training data encompasses relevant temperature and pressure regimes

- Validate against mechanical properties and decomposition characteristics simultaneously [24]

Table 3: Essential Computational Tools for Symmetry-Aware GNN Implementation

| Tool/Resource | Function | Application Context |
|---|---|---|
| e3nn Library [19] [21] | Framework for E(3)-equivariant neural networks | Implementation of irreducible representations and spherical harmonics |
| MPTrj Dataset [20] | Large-scale training dataset for MLIPs | Pre-training of foundational potential models |
| Materials Project [23] | Database of calculated materials properties | Benchmarking and validation datasets |
| DP-GEN Framework [24] | Active learning pipeline for training data generation | Efficient construction of specialized training sets |
| GOM Fingerprint Generator [22] | Computation of Gaussian Overlap Matrix descriptors | Atomic environment representation for invariant models |
| QM9, MD17 Datasets [25] | Quantum chemical properties of molecules | Benchmarking molecular property prediction |

The systematic embedding of rotational and translational invariance into graph neural network architectures has fundamentally advanced the precision and efficiency of interatomic interaction modeling. Through equivariant operations based on irreducible representations and invariant descriptor engineering, these approaches achieve unprecedented data efficiency and physical consistency in predicting material stability, molecular properties, and interaction energies. The continued development of computationally efficient implementations, such as those demonstrated in the Facet architecture, promises to further accelerate materials discovery and drug development workflows. As benchmark studies across diverse chemical spaces continue to validate the superiority of symmetry-aware models, their adoption as standard tools in computational materials science and chemistry appears inevitable.

Graph Neural Networks (GNNs) have emerged as transformative tools for computational chemistry and materials science, enabling accurate predictions of interatomic interactions and system stability at a fraction of the computational cost of traditional quantum mechanical methods. These models learn fundamental chemical principles directly from data by representing atomic systems as graphs, where nodes correspond to atoms and edges represent interatomic interactions [26]. This representation allows GNNs to naturally capture complex quantum mechanical effects, including critical many-body interactions that are essential for predicting molecular properties and material stability with high fidelity [27] [22]. The capacity of GNNs to learn these fundamental principles positions them as powerful tools for accelerating drug discovery and materials design.

This application note examines the specific chemical principles learned by GNNs, focusing on their ability to capture interaction strengths and many-body effects. We provide a structured analysis of quantitative performance data across different architectural approaches, detailed experimental protocols for implementing and interpreting these models, and visualization tools to elucidate the learned interactions. By framing these capabilities within the context of interatomic interactions and stability prediction research, we aim to equip scientists with the practical knowledge needed to leverage GNNs in their computational workflows.

Theoretical Foundations: How GNNs Learn Chemical Interactions

GNNs learn chemical interactions through a message-passing framework that propagates information across the molecular graph. In this paradigm, node features represent atomic properties (e.g., element type, orbital configuration), while edge features encode pairwise relationships (e.g., interatomic distances, bond orders) [26]. During message passing, each atom gathers information from its local environment, progressively building representations that capture increasingly complex chemical environments with each layer [26] [22].

Learning Many-Body Interactions

Traditional machine learning potentials often struggle to capture many-body interactions beyond pairwise atomic relationships. GNNs address this limitation through several advanced architectural approaches:

Structural encodings explicitly incorporate angular information critical for three-body interactions. The AGT framework, for instance, integrates Spherical Bessel Function (SBF) angle encoding alongside atomic and edge encodings, significantly enhancing the model's capacity to represent geometric distortions and bond angle dependencies [28].
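Three-body information of this kind starts from the bond angles $\theta_{jik}$ at each central atom $i$. The sketch below enumerates neighbor pairs, computes the angles and expands each in a simple $\cos(n\theta)$ basis; this basis is a stand-in assumption, not the spherical Bessel encoding used in AGT, and the neighbor lists are supplied by hand.

```python
import numpy as np
from itertools import combinations

def triplet_angles(pos, neighbors):
    """Return (j, i, k, theta) for every pair of neighbors j, k of a central atom i."""
    out = []
    for i, nbrs in neighbors.items():
        for j, k in combinations(nbrs, 2):
            a, b = pos[j] - pos[i], pos[k] - pos[i]
            cos_t = np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))
            out.append((j, i, k, float(np.arccos(np.clip(cos_t, -1.0, 1.0)))))
    return out

def angle_features(theta, num_basis=4):
    """Simple stand-in basis: cos(n * theta) for n = 0..num_basis-1."""
    return np.cos(np.arange(num_basis) * theta)

# Water-like geometry: O at the origin, two H atoms 104.5 degrees apart
ang = np.deg2rad(104.5)
pos = np.array([[0.0, 0.0, 0.0], [0.96, 0.0, 0.0], [0.96 * np.cos(ang), 0.96 * np.sin(ang), 0.0]])
trips = triplet_angles(pos, {0: [1, 2]})
print(round(np.degrees(trips[0][3]), 1), angle_features(trips[0][3]).shape)  # 104.5 (4,)
```

Pairwise distances alone cannot distinguish a bent from a linear arrangement at fixed bond lengths without also using the H-H distance; the explicit angle makes this geometric information directly available to the network.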

Orbital overlap representations capture quantum mechanical effects through mathematical constructs such as Gaussian Overlap Matrix (GOM) fingerprints. In EOSnet, these fingerprints are derived from the eigenvalues of overlap matrices between Gaussian-type orbitals centered on atoms, providing a rotationally invariant representation of many-body atomic environments without requiring explicit angular terms [22].

Fragmentation approaches combine GNNs with physical principles like the Many-Body Expansion (MBE) theory. The FBGNN-MBE method partitions large systems into fragments, uses first-principles quantum mechanical methods for single-fragment energies, and deploys GNNs to learn the complex many-fragment interactions, creating a manageable framework for large functional materials [27].
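A toy worked example shows what the learned part of such a scheme must capture. Below, a hypothetical energy function for three fragments contains one-, two- and three-body terms; a many-body expansion truncated at second order, $E \approx \sum_i E_i + \sum_{i<j} (E_{ij} - E_i - E_j)$, recovers every one- and two-body contribution, and the remaining error is exactly the three-body term. In FBGNN-MBE the fragment energies come from first-principles calculations and the many-fragment interactions are learned by a GNN [27]; the numbers here are invented for illustration.

```python
from itertools import combinations

# Hypothetical toy energies for three fragments: one-, two-, and three-body parts.
E1 = {0: -1.0, 1: -2.0, 2: -1.5}
E2 = {(0, 1): -0.30, (0, 2): -0.10, (1, 2): -0.20}
E3 = {(0, 1, 2): 0.05}

def energy(frags):
    """'Exact' energy of any subset of fragments under the toy model."""
    frags = tuple(sorted(frags))
    total = sum(E1[i] for i in frags)
    total += sum(E2[p] for p in combinations(frags, 2))
    total += sum(E3[t] for t in combinations(frags, 3))
    return total

def mbe2(frags):
    """Second-order many-body expansion: sum E_i + sum (E_ij - E_i - E_j)."""
    one = sum(energy([i]) for i in frags)
    two = sum(energy([i, j]) - energy([i]) - energy([j]) for i, j in combinations(frags, 2))
    return one + two

full = energy([0, 1, 2])
approx = mbe2([0, 1, 2])
print(round(full, 6), round(approx, 6), round(full - approx, 6))  # -5.05 -5.1 0.05
```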

Quantitative Performance Analysis

Table 1: Performance Comparison of GNN Architectures on Material Property Prediction

| GNN Architecture | Key Innovation | Target Property | Performance Metrics | Reference |
|---|---|---|---|---|
| EOSnet | Gaussian Overlap Matrix node features | Band gap prediction | MAE = 0.163 eV | [22] |
| EOSnet | Gaussian Overlap Matrix node features | Metal/nonmetal classification | Accuracy = 97.7% | [22] |
| AGT | Angle encoding + MPNN-Transformer | Adsorption energy (OC20-Ni dataset) | MAE = 0.54 eV | [28] |
| KA-GNN | Fourier-based Kolmogorov-Arnold Networks | Molecular property prediction | Superior accuracy & computational efficiency vs conventional GNNs | [29] |
| FBGNN-MBE | Integration with many-body expansion theory | Potential energy surfaces | Reproduces FD-PES with manageable accuracy/complexity | [27] |
| Universal MLIPs (eSEN) | Cross-dimensional transferability | Energy/forces across dimensionalities | Energy error < 10 meV/atom across 0D-3D systems | [30] |

Table 2: Analysis of Many-Body Interaction Capabilities in GNN Architectures

| Architecture Category | Representative Models | Mechanism for Many-Body Interactions | Interpretability Features |
|---|---|---|---|
| Geometrically Enhanced | DimeNet, GemNet, ALIGNN, M3GNet | Incorporate angular and directional information between atoms | Varies by implementation |
| Equivariant Networks | e3nn, NequIP, MACE, eSEN | Use spherical harmonics and tensor products | Limited inherent interpretability |
| Orbital Overlap-Based | EOSnet | Gaussian Overlap Matrix fingerprints as node features | Direct physical interpretation of orbital interactions |
| Transformer Hybrids | AGT, Graph Transformers | Self-attention mechanisms with geometric encodings | Attention weights indicate important interactions |
| Explainable AI Enhanced | GNN-LRP models | Layer-wise Relevance Propagation for decomposition | Identifies n-body contributions to predictions |

Experimental Protocols

Protocol: Implementing an EOSnet Architecture for Electronic Property Prediction

Purpose: To predict electronic properties of materials using orbital overlap information.

Materials and Software:

- Python 3.8+

- PyTorch or TensorFlow

- Deep Graph Library (DGL) or PyTorch Geometric

- Atomic simulation environment (ASE)

- Crystallography data for target materials

Procedure:

Graph Construction:

- Represent crystal structure as a graph with atoms as nodes.

- Define edges between atoms within a specified cutoff radius (typically 5-8 Å).

- Initialize node features using atomic properties (atomic number, valence electrons, etc.).

GOM Fingerprint Calculation:

- For each atom, identify all neighbors within the cutoff sphere.

- Compute the Gaussian Overlap Matrix elements using the formula $$[O_m]_{i+l,\,j+l'} = f_c(r_{im})\,\langle \phi_{il} \mid \phi_{jl'} \rangle\, f_c(r_{jm})$$ where $f_c(r) = \left(1 - \frac{r^2}{r_{\text{cut}}^2}\right)^n$ is the smooth cutoff function, $r_{im}$ is the distance between atom $i$ and the central atom $m$, and $\phi_{il}$ are Gaussian-type orbitals.

- Calculate eigenvalues of the GOM for each atom to use as node features [22].
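The fingerprint calculation can be sketched in NumPy under strong simplifying assumptions: one s-type Gaussian per atom with a hypothetical exponent $\alpha$, so the orbital indices $l, l'$ collapse and the overlap of two normalized s Gaussians has the closed form $\left(\frac{2\sqrt{\alpha_i\alpha_j}}{\alpha_i+\alpha_j}\right)^{3/2}\exp\left(-\frac{\alpha_i\alpha_j}{\alpha_i+\alpha_j}r_{ij}^2\right)$. The cutoff is $f_c(r) = (1 - r^2/r_{\text{cut}}^2)^n$, and the sorted eigenvalues are padded to a fixed length (also an assumption). The full GOM formula allows several orbitals per atom; this sketch keeps only one and is not EOSnet's implementation.

```python
import numpy as np

def s_overlap(r, alpha_i, alpha_j):
    """Overlap of two normalized s-type Gaussians separated by distance r."""
    p = alpha_i + alpha_j
    return (2.0 * np.sqrt(alpha_i * alpha_j) / p) ** 1.5 * np.exp(-alpha_i * alpha_j / p * r ** 2)

def gom_fingerprint(pos, m, alpha, r_cut=6.0, n=3, size=8):
    """Sorted eigenvalues of the cutoff-weighted s-orbital overlap matrix around atom m.

    pos: (N, 3) positions; alpha: (N,) Gaussian exponents (hypothetical values).
    """
    r_m = np.linalg.norm(pos - pos[m], axis=1)
    idx = np.nonzero(r_m < r_cut)[0]                  # atoms in the cutoff sphere (incl. m)
    fc = (1.0 - r_m[idx] ** 2 / r_cut ** 2) ** n      # f_c(r_im)
    rij = np.linalg.norm(pos[idx, None, :] - pos[None, idx, :], axis=-1)
    S = s_overlap(rij, alpha[idx, None], alpha[None, idx])
    O = fc[:, None] * S * fc[None, :]                 # [O_m]_ij = f_c(r_im) S_ij f_c(r_jm)
    eig = np.sort(np.linalg.eigvalsh(O))[::-1]        # descending eigenvalues
    out = np.zeros(size)
    out[: min(size, len(eig))] = eig[:size]           # pad/truncate to a fixed length
    return out

rng = np.random.default_rng(11)
pos = rng.uniform(0, 4, size=(6, 3))
alpha = np.full(6, 0.5)
q, r = np.linalg.qr(rng.normal(size=(3, 3)))
Q = q * np.sign(np.diag(r))                           # random orthogonal matrix
fp = gom_fingerprint(pos, 0, alpha)
fp_rot = gom_fingerprint(pos @ Q.T + 2.0, 0, alpha)
print(np.allclose(fp, fp_rot))  # True
```

Eigenvalues of the overlap matrix depend only on interatomic distances, so the fingerprint is rotationally and translationally invariant without any explicit angular terms.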

Network Architecture:

- Implement graph convolutional layers with message passing.

- Update node embeddings by aggregating information from neighbors.

- Use multiple convolutional layers (typically 3-6) to capture increasingly complex environments.

Training Configuration:

- Use Mean Absolute Error (MAE) or Mean Squared Error (MSE) as loss function.

- Employ Adam optimizer with learning rate 0.001-0.0001.

- Implement early stopping based on validation set performance.

Validation:

- Compare predictions with DFT-calculated band gaps.

- Evaluate generalization across different material classes.

Protocol: Interpreting Many-Body Contributions with GNN-LRP

Purpose: To decompose GNN predictions into n-body interaction contributions.

Materials and Software:

- Trained GNN potential

- GNN-LRP implementation

- Molecular dynamics trajectories or structural datasets

Procedure:

Model Inference:

- Run the trained GNN on the target molecular configuration to obtain the total energy prediction.

Relevance Propagation:

- Apply Layer-wise Relevance Propagation (LRP) rules backward through the network.

- Decompose the energy output into contributions from sequences of graph edges ("walks").

n-Body Aggregation:

- Aggregate relevance scores of all walks associated with specific subgraphs.

- Calculate the n-body relevance contribution for each interacting group of atoms.

- Sort contributions by absolute value to identify the most relevant interactions [31].
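The aggregation step can be sketched in plain Python. It assumes walk relevances have already been produced by a GNN-LRP implementation (the numbers below are hypothetical) and groups walks by the set of distinct atoms they visit, so a walk that stays on one atom counts toward a 1-body term and a walk visiting two atoms toward a 2-body term.

```python
from collections import defaultdict

def aggregate_nbody(walk_relevance):
    """Sum walk relevances by the set of distinct atoms each walk visits.

    walk_relevance: dict mapping a walk (tuple of atom indices) to its relevance.
    Returns (atoms, n, relevance) tuples sorted by |relevance|, largest first.
    """
    totals = defaultdict(float)
    for walk, rel in walk_relevance.items():
        totals[frozenset(walk)] += rel
    ranked = sorted(totals.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return [(tuple(sorted(s)), len(s), r) for s, r in ranked]

# Hypothetical relevances for short walks over a 3-atom system
walks = {(0, 0): -1.20, (0, 1): -0.40, (1, 0): -0.35, (1, 2): 0.10, (2, 1): 0.05, (0, 2): 0.02}
for atoms, n, r in aggregate_nbody(walks):
    print(atoms, f"{n}-body", round(r, 2))
# (0,) 1-body -1.2
# (0, 1) 2-body -0.75
# (1, 2) 2-body 0.15
# (0, 2) 2-body 0.02
```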

Physical Validation:

- Compare identified relevant interactions with known physical principles.

- Verify that dominant contributions align with chemical intuition (e.g., strong covalent bonds, important non-covalent interactions).

Protocol: Training a KA-GNN for Molecular Property Prediction

Purpose: To leverage Kolmogorov-Arnold Networks for enhanced molecular property prediction.

Materials and Software:

- Molecular datasets (e.g., QM9, MD17)

- KA-GNN implementation

- RDKit for molecular processing

Procedure:

Data Preparation:

- Convert molecular structures to graph representations.

- Node features: atomic number, hybridization state, formal charge.

- Edge features: bond type, bond length, topological distance.

Fourier-KAN Layer Implementation:

- Replace standard MLP transformations with Fourier-based KAN modules.

- Use Fourier series as learnable activation functions on edges: $$f(x) = \sum_{k=1}^{K} \left( a_k \cos(kx) + b_k \sin(kx) \right)$$ where $a_k$ and $b_k$ are learnable coefficients and $K$ is the number of frequencies.

- Implement in node embedding, message passing, and readout components [29].
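A Fourier-based KAN layer of this form can be sketched in NumPy. Each input-output pair gets its own univariate function $\phi(x) = \sum_{k=1}^{K} (a_k \cos(kx) + b_k \sin(kx))$, and the layer sums these over inputs. The initialization scale, the bias term and the class name are illustrative assumptions, not the KA-GNN reference implementation [29].

```python
import numpy as np

class FourierKANLayer:
    """Maps d_in -> d_out with y_j = sum_i sum_k [a_jik cos(k x_i) + b_jik sin(k x_i)] + c_j.

    Each input-output pair (i, j) has its own learnable univariate function
    phi_ji(x) = sum_k a_jik cos(k x) + b_jik sin(k x); the layer sums them over i.
    """

    def __init__(self, d_in, d_out, num_freqs=4, rng=None):
        rng = rng or np.random.default_rng(0)
        scale = 1.0 / np.sqrt(d_in * num_freqs)   # illustrative init scale
        self.k = np.arange(1, num_freqs + 1)      # frequencies 1..K
        self.a = rng.normal(scale=scale, size=(d_out, d_in, num_freqs))
        self.b = rng.normal(scale=scale, size=(d_out, d_in, num_freqs))
        self.c = np.zeros(d_out)

    def __call__(self, x):
        # x: (batch, d_in) -> kx: (batch, d_in, num_freqs)
        kx = x[:, :, None] * self.k
        cos_part = np.einsum("bik,oik->bo", np.cos(kx), self.a)
        sin_part = np.einsum("bik,oik->bo", np.sin(kx), self.b)
        return cos_part + sin_part + self.c

layer = FourierKANLayer(d_in=5, d_out=3)
h = np.random.default_rng(1).normal(size=(10, 5))  # e.g., 10 atom embeddings
print(layer(h).shape)  # (10, 3)
```

Unlike an MLP, the nonlinearity here is itself learned: inspecting `a` and `b` shows which frequencies each input-output function relies on.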

Model Variants:

- KA-GCN: Integrate KAN modules into Graph Convolutional Networks.

- KA-GAT: Incorporate KAN modules into Graph Attention Networks.

Training and Interpretation:

- Train on target molecular properties (e.g., energy, solubility, toxicity).

- Analyze learned Fourier coefficients to identify important frequency components.

- Visualize attention weights to highlight chemically meaningful substructures.

Visualization of GNN Architectures and Workflows

Figure: GNN Workflow for Interatomic Interactions.

Figure: Many-Body Interaction Capture in GNNs.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Datasets for GNN Implementation

| Resource Category | Specific Tools/Datasets | Application Purpose | Key Features |
|---|---|---|---|
| GNN Frameworks | PyTorch Geometric, Deep Graph Library | Model Implementation | Pre-built GNN layers, molecular graph utilities |
| Materials Datasets | Materials Project, OQMD, JARVIS | Training & Benchmarking | DFT-calculated material properties |
| Molecular Datasets | QM9, MD17, ANI, OC20 | Training & Benchmarking | Diverse molecular conformations & properties |
| Quantum Chemistry | ORCA, Gaussian, PySCF | Reference Calculations | Generate training data & validate predictions |
| Analysis & Visualization | ASE, OVITO, VMD | Structure Analysis | Process atomic structures & visualize results |
| Specialized GNN Models | MACE, NequIP, CHGNet, Allegro | Transferable Potentials | Pretrained universal machine learning interatomic potentials |
| Explainability Tools | GNN-LRP, Captum | Model Interpretation | Decompose predictions into atomic contributions |

GNNs have demonstrated remarkable capability in learning fundamental chemical principles, particularly interaction strengths and many-body effects critical for accurate stability prediction. Through specialized architectural features—including angular encodings, orbital overlap representations, and integration with fragment-based quantum mechanics—these models capture complex quantum mechanical interactions that elude simpler machine learning approaches. The experimental protocols and visualization tools presented in this application note provide researchers with practical methodologies for implementing and interpreting these advanced networks. As GNN architectures continue to evolve, their capacity to learn and represent intricate chemical interactions will further bridge the gap between computational efficiency and quantum mechanical accuracy, accelerating discovery across materials science and drug development.

Advanced Architectures and Real-World Applications: From Drug Discovery to Materials Design

The accurate prediction of molecular properties and interatomic interactions represents a cornerstone of modern computational chemistry and materials science, with profound implications for drug discovery and materials design. Graph Neural Networks (GNNs) have emerged as powerful frameworks for these tasks by naturally modeling atomic systems as graphs, where atoms constitute nodes and chemical bonds form edges. Recent architectural innovations have significantly enhanced the capabilities of these models, improving their accuracy, computational efficiency, and physical faithfulness. This article explores three groundbreaking developments: Kolmogorov-Arnold Graph Neural Networks (KA-GNNs), which leverage mathematical representation theory; Moment Graph Neural Networks (MGNN), which utilize moment representations for universal potentials; and the emerging class of universal neural network interatomic potentials that demonstrate remarkable transferability across diverse chemical spaces. These architectures are pushing the boundaries of what's possible in molecular dynamics simulations, property prediction, and rational material design.

KA-GNN: Kolmogorov-Arnold Graph Neural Networks

Theoretical Foundation and Architecture

KA-GNNs represent a significant architectural innovation that integrates the mathematical foundations of the Kolmogorov-Arnold representation theorem into graph neural networks. The Kolmogorov-Arnold theorem states that any multivariate continuous function can be represented as a finite composition of continuous functions of a single variable and the binary operation of addition [32]. Inspired by this theorem, KA-GNNs replace traditional multilayer perceptron (MLP) components with Kolmogorov-Arnold network (KAN) modules that feature learnable activation functions on edges rather than fixed activations on nodes [29].
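For reference, the classical form of the theorem for a continuous function $f$ on $[0,1]^n$ is shown below, where $\Phi_q$ and $\phi_{q,p}$ are continuous functions of one variable; KAN layers generalize this two-level structure to arbitrary widths and depths with learnable univariate functions.

$$f(x_1, \dots, x_n) = \sum_{q=0}^{2n} \Phi_q\left( \sum_{p=1}^{n} \phi_{q,p}(x_p) \right)$$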

The KA-GNN framework systematically integrates Fourier-based KAN modules across all fundamental components of GNNs: node embedding initialization, message passing between atoms, and graph-level readout for property prediction [29]. A key innovation lies in its use of Fourier series as basis functions for the univariate activation functions, replacing the B-splines used in earlier KAN implementations. This Fourier-based approach enables effective capture of both low-frequency and high-frequency structural patterns in molecular graphs, enhancing the model's expressiveness for representing complex quantum mechanical relationships [29].
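The Fourier parameterization can be sketched as a single NumPy layer. This is a minimal illustration, not the KA-GNN reference implementation: the class name `FourierKANLayer`, the fan-in scaling of the initial coefficients, and the use of harmonics k = 1..K without a constant term are assumptions. Each input-output connection (i to j) carries its own function `phi_ji(x) = sum_k a_jik cos(kx) + b_jik sin(kx)`, and each output sums these functions over its inputs.

```python
import numpy as np


class FourierKANLayer:
    """Sketch of a Fourier-based KAN layer (hypothetical, not the KA-GNN code).

    Connection i -> j carries phi_ji(x) = sum_k a[j,i,k] cos(k x) + b[j,i,k] sin(k x),
    and output j is sum_i phi_ji(x_i) + bias_j.
    """

    def __init__(self, in_dim, out_dim, num_harmonics=10, init_std=0.1, seed=0):
        rng = np.random.default_rng(seed)
        # Assumed init: small-variance normal, scaled by fan-in so outputs stay O(1).
        scale = init_std / np.sqrt(in_dim * num_harmonics)
        self.a = rng.normal(0.0, scale, size=(out_dim, in_dim, num_harmonics))
        self.b = rng.normal(0.0, scale, size=(out_dim, in_dim, num_harmonics))
        self.bias = np.zeros(out_dim)
        self.k = np.arange(1, num_harmonics + 1)  # harmonic orders 1..K

    def __call__(self, x):
        # x: (batch, in_dim) -> kx: (batch, in_dim, K)
        kx = x[..., None] * self.k
        # Sum over inputs i and harmonics k for every output j.
        y = np.einsum("bik,jik->bj", np.cos(kx), self.a)
        y += np.einsum("bik,jik->bj", np.sin(kx), self.b)
        return y + self.bias
```

For example, `FourierKANLayer(16, 32, num_harmonics=12)` maps a batch of 16-dimensional atom features to 32 dimensions. Because sine and cosine are bounded, the layer output stays bounded for any input; in exchange, each feature is implicitly treated as periodic, which is one reason input scaling matters in this kind of layer.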

Table 1: Core Components of KA-GNN Architecture

| Component | Traditional GNN Approach | KA-GNN Innovation | Benefit |
|---|---|---|---|
| Node Embedding | Atomic features processed through MLP with fixed activations | KAN layer with learnable Fourier-based functions | Data-driven atomic representation incorporating local chemical context |
| Message Passing | Weighted sum of neighbor features with fixed nonlinearity | Composition of learnable univariate functions on edges | Enhanced feature interaction modeling during information propagation |
| Readout Function | Global pooling followed by MLP for graph-level prediction | KAN-based readout with Fourier representations | More expressive graph-level representation for property prediction |
| Basis Functions | Not applicable | Fourier series replacing B-splines | Better capture of periodic and oscillatory patterns in molecular data |

Implementation Variants and Performance

Two principal variants of KA-GNN have been developed: KA-Graph Convolutional Network (KA-GCN) and KA-Graph Attention Network (KA-GAT). In KA-GCN, node embeddings are initialized by processing concatenated atomic features and neighboring bond features through a KAN layer, effectively encoding both atomic identity and local chemical environment. The message-passing layers follow the GCN scheme but employ residual KANs instead of traditional MLPs for feature updates [29]. KA-GAT extends this approach by incorporating edge embeddings initialized with KAN layers, with attention mechanisms operating on these enriched representations [29].
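A single KA-GCN-style message-passing step can be sketched as standard GCN aggregation followed by a residual Fourier-KAN update, `h_new = h + KAN(A_norm @ h)`. This is a simplified, hypothetical reading of the description above: the function names, the symmetric normalization `D^-1/2 (A + I) D^-1/2`, and the omission of bond features and bias terms are assumptions rather than details taken from [29].

```python
import numpy as np


def fourier_kan(x, a, b):
    """Fourier KAN map: y_j = sum_i sum_k a[j,i,k] cos(k x_i) + b[j,i,k] sin(k x_i)."""
    k = np.arange(1, a.shape[-1] + 1)
    kx = x[..., None] * k  # (nodes, in_dim, K)
    return np.einsum("nik,jik->nj", np.cos(kx), a) + np.einsum("nik,jik->nj", np.sin(kx), b)


def normalized_adjacency(adj):
    """GCN propagation matrix D^-1/2 (A + I) D^-1/2 (self-loops added)."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def ka_gcn_layer(h, adj, a, b):
    """One hypothetical KA-GCN step: aggregate neighbours, then add a KAN update.

    h: (nodes, dim) atom features; a, b: (dim, dim, K) Fourier coefficients.
    The residual connection requires the KAN output width to equal the input width.
    """
    messages = normalized_adjacency(adj) @ h
    return h + fourier_kan(messages, a, b)


# Usage on a three-atom path graph (for example a C-C-O backbone).
rng = np.random.default_rng(0)
adj = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
h = rng.normal(size=(3, 4))
a = rng.normal(scale=0.05, size=(4, 4, 3))
b = rng.normal(scale=0.05, size=(4, 4, 3))
h_next = ka_gcn_layer(h, adj, a, b)
```

Because aggregation uses only the adjacency matrix and the KAN acts on each node independently, the step is permutation-equivariant: relabeling the atoms simply relabels the output rows.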

Experimental evaluations across seven molecular benchmark datasets demonstrate that KA-GNN variants consistently outperform conventional GNNs in both prediction accuracy and computational efficiency [29]. The architecture exhibits particular strength in capturing complex quantum chemical relationships while maintaining parameter efficiency. Additionally, KA-GNNs offer enhanced interpretability, often highlighting chemically meaningful substructures that contribute significantly to predicted properties, thereby providing valuable insights for domain experts [29].

MGNN: Moment Graph Neural Network for Universal Potentials

Architectural Principles and Formulation

The Moment Graph Neural Network (MGNN) is an approach to constructing universal interatomic potentials that captures the spatial relationships and symmetries inherent in molecular systems. MGNN propagates information between atoms using moments, mathematical quantities that encode the spatial relationships between atoms within a molecule [33]. The architecture is inspired by Moment Tensor Potentials, which come with theoretical guarantees that they can approximate any regular function satisfying the required physical symmetries [33].
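In Moment Tensor Potentials, the moment of rank nu for atom i is `M_nu(i) = sum_j f(r_ij) r_ij (x) ... (x) r_ij` (nu outer products of the displacement vector), and full contractions of these tensors yield rotation-invariant descriptors. The sketch below computes rank-0, rank-1, and rank-2 moments and a few such contractions. The radial weight `radial`, the cutoff, and the particular set of invariants are illustrative assumptions; MGNN learns its radial functions and combines moments inside message passing rather than using fixed descriptors.

```python
import numpy as np


def radial(r, cutoff=5.0):
    """Hypothetical smooth radial weight f(r) that vanishes at the cutoff."""
    return np.where(r < cutoff, (cutoff - r) ** 2, 0.0)


def moment_invariants(center, neighbors, cutoff=5.0):
    """Rotation-invariant scalars built from MTP-style moment tensors of one atom.

    M0 = sum_j f(r_ij), M1 = sum_j f(r_ij) r_ij, M2 = sum_j f(r_ij) r_ij (x) r_ij.
    """
    rij = neighbors - center                       # (n_neighbors, 3) displacements
    r = np.linalg.norm(rij, axis=1)
    w = radial(r, cutoff)
    m0 = w.sum()                                   # rank-0 moment (scalar)
    m1 = (w[:, None] * rij).sum(axis=0)            # rank-1 moment (vector)
    m2 = np.einsum("j,ja,jb->ab", w, rij, rij)     # rank-2 moment (matrix)
    # Full contractions of the tensors do not change under rotations.
    return np.array([m0, m1 @ m1, np.trace(m2), np.sum(m2 * m2), m1 @ m2 @ m1])
```

Rotating the neighborhood about the center atom leaves all five numbers unchanged, which is the symmetry property that moment-based models build in by construction.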

In the MGNN framework, molecular systems are represented as graphs where edges connect atoms within a defined cutoff distance. The model processes information through triplets of atoms (center atom i and neighbors j, k), where triplet messages update edge representations, which subsequently update node features [33]. This hierarchical information flow—from triplets to edges to nodes—enables the model to capture increasingly complex atomic environments while maintaining physical consistency.
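The indexing behind this triplet-to-edge-to-node flow can be sketched as follows: directed edges connect atoms closer than the cutoff, and each triplet pairs two distinct neighbors j and k of a center atom i together with the angle j-i-k. The function names are hypothetical, and the sketch covers only how triplets are enumerated, not MGNN's learned updates; the O(n^2) distance matrix is fine for small molecules but would be replaced by a cell list for large systems.

```python
import numpy as np


def build_edges(positions, cutoff):
    """Directed edges (i, j) for every pair of distinct atoms closer than the cutoff."""
    diff = positions[None, :, :] - positions[:, None, :]   # diff[i, j] = r_j - r_i
    dist = np.linalg.norm(diff, axis=-1)
    mask = (dist < cutoff) & ~np.eye(len(positions), dtype=bool)
    i, j = np.nonzero(mask)
    return i, j


def build_triplets(positions, cutoff):
    """Triplets (i, j, k), j != k both neighbours of centre i, plus the angle j-i-k."""
    i_idx, j_idx = build_edges(positions, cutoff)
    triplets, angles = [], []
    for center in np.unique(i_idx):
        nbrs = j_idx[i_idx == center]
        for j in nbrs:
            for k in nbrs:
                if j == k:
                    continue
                u = positions[j] - positions[center]
                v = positions[k] - positions[center]
                cos_t = u @ v / (np.linalg.norm(u) * np.linalg.norm(v))
                triplets.append((center, j, k))
                angles.append(np.arccos(np.clip(cos_t, -1.0, 1.0)))
    return np.array(triplets, dtype=int).reshape(-1, 3), np.array(angles)
```

For a water-like geometry (O-H = 0.96 Å, H-O-H = 104.5°) with a 1.2 Å cutoff, the H-H distance of about 1.52 Å falls outside the cutoff, so there are four directed O-H edges and two triplets, both centered on oxygen and carrying the 104.5° angle.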

A distinctive feature of MGNN is its use of Chebyshev polynomials to encode interatomic distance information, diverging from the more commonly employed Bessel and Gaussian radial basis functions in other GNN architectures [33]. This mathematical choice contributes to the model's efficiency and accuracy in capturing spatial relationships.
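A Chebyshev distance expansion can be sketched with NumPy's `chebvander`, which evaluates T_0 through T_n at each point. The linear map from [0, cutoff] to [-1, 1], the cosine cutoff envelope, and the function name `chebyshev_rbf` are assumptions for illustration; MGNN's exact scaling and envelope may differ.

```python
import numpy as np
from numpy.polynomial import chebyshev


def chebyshev_rbf(r, cutoff=5.0, num_basis=8):
    """Expand distances in Chebyshev polynomials T_0..T_{n-1}, damped by a smooth cutoff.

    Assumption: r in [0, cutoff] is mapped linearly to x in [-1, 1].
    """
    r = np.clip(np.asarray(r, dtype=float), 0.0, cutoff)
    x = 2.0 * r / cutoff - 1.0
    basis = chebyshev.chebvander(x, num_basis - 1)                 # (..., num_basis)
    envelope = 0.5 * (np.cos(np.pi * r / cutoff) + 1.0)            # 1 at r=0, 0 at cutoff
    return basis * envelope[..., None]
```

Chebyshev polynomials are bounded on [-1, 1] and can be evaluated by a simple recurrence, so the features stay well scaled across the whole cutoff range; the envelope makes every feature go smoothly to zero at the cutoff, which keeps energies and forces continuous as atoms enter or leave a neighborhood.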

Performance and Applications

MGNN has demonstrated state-of-the-art performance across multiple benchmark datasets, including QM9, revised MD17, and MD17-ethanol [33]. Its robustness extends to diverse systems: it has been applied to the flexible drug-like molecule 3BPA, 25-element high-entropy alloys, and amorphous electrolytes [33]. In molecular dynamics simulations, MGNN closely reproduces ab initio results at a much lower computational cost, which makes long-timescale simulations of complex systems practically feasible.

The architecture's versatility is evidenced by its capability to predict diverse molecular properties including scalar quantities (e.g., formation energy), vectorial properties (e.g., forces, dipole moments), and tensorial properties (e.g., polarizabilities) [33]. This comprehensive predictive capability enables accurate simulation of molecular spectra and other complex physicochemical phenomena, positioning MGNN as a valuable tool for computational chemistry and materials science.

Diagram 1: MGNN Architecture showing information flow through interaction blocks with triplet moment interactions

Universal Neural Network Interatomic Potentials

Extrapolation Capabilities and Design Principles

Universal neural network interatomic potentials are a transformative advance in computational materials science, promising accurate, transferable force fields that apply across diverse chemical spaces. A key breakthrough is their extrapolation capability: these models, often trained primarily on crystalline structures, can generalize to untrained domains such as surfaces, amorphous configurations, and defect environments [1].

Research into the theoretical foundations of this extrapolation behavior reveals that GNN interatomic potentials can capture non-local electrostatic interactions through their message-passing algorithms [1]. Models such as SevenNet and MACE demonstrate the ability to learn the exact functional form of Coulomb interactions, contributing significantly to their transferability across different chemical environments. This capacity to learn fundamental physical interactions, combined with the embedding nature of GNNs, provides a compelling explanation for their extrapolation capabilities [1].
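The functional form in question is the pairwise Coulomb energy `E = k * sum_{i<j} q_i q_j / r_ij`. The sketch below evaluates it for point charges in a non-periodic system, with `k` expressed in eV·Å per elementary charge squared. It shows the target that a message-passing model would have to reproduce; it is not how SevenNet or MACE compute energies, and periodic systems would need an Ewald-type summation instead of this direct sum.

```python
import numpy as np

# e^2 / (4 pi eps0) expressed in eV * Angstrom per (elementary charge)^2
COULOMB_EV_ANGSTROM = 14.399645


def coulomb_energy(charges, positions):
    """Bare pairwise Coulomb energy in eV: k * sum_{i<j} q_i q_j / r_ij (no periodic images).

    charges: (n,) in units of e; positions: (n, 3) in Angstrom.
    """
    q = np.asarray(charges, dtype=float)
    pos = np.asarray(positions, dtype=float)
    r = np.linalg.norm(pos[:, None, :] - pos[None, :, :], axis=-1)
    i, j = np.triu_indices(len(q), k=1)          # each unordered pair counted once
    return COULOMB_EV_ANGSTROM * np.sum(q[i] * q[j] / r[i, j])
```

A +1/-1 pair 1 Å apart gives about -14.4 eV, and doubling the separation halves the magnitude. This slow 1/r decay is what a model with a finite cutoff can only represent by passing information over several message-passing steps.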

Recent architectural innovations have further enhanced these universal potentials. TeaNet (Tensor Embedded Atom Network) incorporates Euclidean tensors, vectors, and scalars to represent angular interactions through graph convolution, enabling accurate modeling of diverse chemical systems involving the first 18 elements of the periodic table [34]. Similarly, E2GNN (Efficient Equivariant Graph Neural Network) employs a scalar-vector dual representation to encode equivariant features, maintaining rotational symmetry while achieving computational efficiency [7].
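The idea behind a scalar-vector dual representation can be sketched with a generic, PaiNN-style update: scalar channels are built only from invariant quantities (features and distances), and vector channels are built only from invariant weights multiplied by vectors (unit bond vectors and neighbor vectors), so they rotate with the structure without any higher-order tensors. The function `scalar_vector_update`, the exponential distance filter, and the weight vectors `w_s`, `w_v` are hypothetical and do not reproduce E2GNN's published update rules.

```python
import numpy as np


def scalar_vector_update(s, v, positions, cutoff, w_s, w_v):
    """One generic scalar-vector message-passing step (hypothetical, PaiNN-like).

    s: (n, f) rotation-invariant scalar features
    v: (n, 3, f) rotation-equivariant vector features
    w_s, w_v: (f,) hypothetical learned weights
    """
    n = len(positions)
    s_new, v_new = s.copy(), v.copy()
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            rij = positions[j] - positions[i]
            d = np.linalg.norm(rij)
            if d >= cutoff:
                continue
            phi = s[j] * np.exp(-d)          # invariant filter from scalars and distance
            s_new[i] += w_s * phi
            # Invariant weights times vectors: equivariant by construction.
            v_new[i] += w_v * (phi[None, :] * (rij / d)[:, None] + v[j])
    # Scalars also receive the rotation-invariant norms of the updated vectors.
    s_new += np.linalg.norm(v_new, axis=1)
    return s_new, v_new
```

Rotating the input positions and vector features by a matrix R leaves the scalar outputs unchanged and rotates the vector outputs by the same R; this is the symmetry that the dual representation preserves at the cost of only first-order (vector) features.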

Applications and Performance Benchmarks

Universal interatomic potentials have demonstrated impressive performance across a wide spectrum of material systems. TeaNet shows robust performance for diverse structures including C-H molecules, metals, amorphous SiO₂, and water, achieving energy mean absolute errors of 19 meV/atom [34]. E2GNN consistently outperforms representative baselines in accuracy and efficiency across catalysts, molecules, and organic isomers, enabling ab initio accuracy in molecular dynamics simulations of solid, liquid, and gas systems [7].

Table 2: Comparison of Universal GNN Interatomic Potentials

| Model | Architectural Approach | Key Innovations | Reported Performance | Applicable Systems |
|---|---|---|---|---|
| MGNN [33] | Moment-based message passing | Chebyshev polynomials for distances, triplet interactions | State-of-the-art on QM9, MD17 | Molecules, alloys, amorphous materials |
| TeaNet [34] | Tensor embedding with ResNet | Euclidean tensors for angular information, 16-layer depth | 19 meV/atom MAE on randomized configurations | First 18 elements (H to Ar) |
| E2GNN [7] | Scalar-vector dual representation | Efficient equivariance without higher-order tensors | Outperforms baselines on diverse datasets | Catalysts, organic isomers, molecules |
| Magnetic MLIPs [35] | Spin-polarized machine learning potentials | Combination of DFT accuracy with empirical potential efficiency | Accurate for magnetic systems | Magnetic materials, alloys |

The development of magnetic machine-learning interatomic potentials (magnetic MLIPs) further extends the universality paradigm to magnetic materials, combining the computational efficiency of empirical potentials with the accuracy of spin-polarized density functional theory calculations [35]. This specialization addresses the unique challenges of modeling magnetic interactions while maintaining the transferability principles of universal potentials.

Experimental Protocols and Methodologies

Benchmarking Procedures for Molecular Property Prediction

Rigorous evaluation of GNN architectures requires standardized benchmarking across diverse datasets. For molecular property prediction, models are typically evaluated on established datasets such as QM9 [33], MD17 [33], FreeSolv [36], and CombiSolv-Exp [36]. The standard protocol splits each dataset into training, validation, and test sets (typically 80/10/10), so that molecular structures and target properties are represented comparably in every split.
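A seeded random 80/10/10 split can be sketched in a few lines. The function name `random_split` is hypothetical. A random split is the simplest way to approximate representative distributions, but it does not guarantee them for small or skewed datasets, where stratified or structure-aware splits are sometimes preferred.

```python
import numpy as np


def random_split(n_samples, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle sample indices with a fixed seed and cut them into train/val/test."""
    if not np.isclose(sum(fractions), 1.0):
        raise ValueError("fractions must sum to 1")
    rng = np.random.default_rng(seed)
    idx = rng.permutation(n_samples)
    n_train = int(round(fractions[0] * n_samples))
    n_val = int(round(fractions[1] * n_samples))
    # The test set takes the remainder so every index is used exactly once.
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]
```

Fixing the seed makes the split reproducible, and repeating the whole protocol with several seeds is what allows the variance across runs to be reported.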

Training procedures generally employ the Adam optimizer with an initial learning rate of 0.001, which is reduced upon validation loss plateau. Early stopping is implemented to prevent overfitting, with training terminated after a specified number of epochs without validation improvement. For property prediction tasks, models are evaluated using mean absolute error (MAE) and root mean square error (RMSE) metrics, with statistical significance testing across multiple random seeds [29] [33].
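The learning-rate schedule, early-stopping rule, and metrics described above can be sketched framework-independently. The class `PlateauScheduler` and its defaults (halving the rate after 10 stagnant epochs, stopping after 30) are illustrative assumptions; in PyTorch the rate reduction is provided by `torch.optim.lr_scheduler.ReduceLROnPlateau`.

```python
import numpy as np


class PlateauScheduler:
    """Reduce-on-plateau learning rate plus early stopping (hypothetical helper)."""

    def __init__(self, lr=1e-3, factor=0.5, patience=10, stop_patience=30, min_lr=1e-6):
        self.lr, self.factor, self.min_lr = lr, factor, min_lr
        self.patience, self.stop_patience = patience, stop_patience
        self.best = np.inf
        self.epochs_since_best = 0      # drives early stopping
        self.epochs_since_change = 0    # drives learning-rate reduction

    def step(self, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best:
            self.best = val_loss
            self.epochs_since_best = 0
            self.epochs_since_change = 0
        else:
            self.epochs_since_best += 1
            self.epochs_since_change += 1
            if self.epochs_since_change >= self.patience:
                self.lr = max(self.lr * self.factor, self.min_lr)
                self.epochs_since_change = 0
        return self.epochs_since_best >= self.stop_patience


def mae(y_true, y_pred):
    """Mean absolute error."""
    return float(np.mean(np.abs(np.asarray(y_true) - np.asarray(y_pred))))


def rmse(y_true, y_pred):
    """Root mean square error."""
    return float(np.sqrt(np.mean((np.asarray(y_true) - np.asarray(y_pred)) ** 2)))


def summarize_over_seeds(scores):
    """Mean and sample standard deviation of one metric across random seeds."""
    scores = np.asarray(scores, dtype=float)
    return float(scores.mean()), float(scores.std(ddof=1))
```

Because RMSE squares the errors, it weights outliers more heavily than MAE; reporting both, as the mean and spread over seeds, shows whether a model's advantage is systematic or driven by a few molecules.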

For KA-GNN implementations, the Fourier-based KAN layers require specific initialization of the harmonic coefficients, typically sampled from a normal distribution with small variance. The number of harmonics is a key hyperparameter, with values between 10 and 20 often giving the best performance across diverse molecular tasks [29].

Molecular Dynamics Simulation with GNN Potentials

When deploying GNN interatomic potentials for molecular dynamics simulations, specific protocols ensure physical consistency and numerical stability. The workflow begins with model training on diverse reference configurations, typically including crystals, surfaces, molecular dimers, and deformed structures to ensure adequate sampling of the potential energy surface [33] [34].

For MD simulations, forces are computed as the negative gradient of the predicted energy with respect to atomic coordinates, typically implemented through automatic differentiation. Integration of Newton's equations of motion employs standard algorithms such as Velocity Verlet with timesteps of 0.5-1.0 fs, constrained by the stability requirements of the potential [33]. Simulations are typically conducted in the NVT or NPT ensemble using appropriate thermostats and barostats, with production runs preceded by equilibration phases.
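The integration scheme can be sketched with a harmonic toy potential standing in for a trained GNN. The functions are hypothetical, units are arbitrary, and no thermostat is applied (an NVE run). In a real workflow the forces would come from automatic differentiation of the model energy (for example `torch.autograd.grad` or `jax.grad`); the central-difference helper shows the same F = -dE/dx relation and is a common way to check such forces.

```python
import numpy as np


def harmonic_energy(x, k=1.0):
    """Toy stand-in for a GNN energy model: E = 0.5 * k * sum(x^2)."""
    return 0.5 * k * np.sum(x ** 2)


def harmonic_forces(x, k=1.0):
    """Forces as the negative gradient of the energy, F = -dE/dx (analytic here)."""
    return -k * x


def numerical_forces(energy_fn, x, h=1e-5):
    """Central-difference estimate of -dE/dx, useful for checking model forces."""
    f = np.zeros_like(x)
    for idx in np.ndindex(x.shape):
        dx = np.zeros_like(x)
        dx[idx] = h
        f[idx] = -(energy_fn(x + dx) - energy_fn(x - dx)) / (2 * h)
    return f


def velocity_verlet(x, v, mass, dt, n_steps, force_fn):
    """Integrate Newton's equations with velocity Verlet (NVE, no thermostat)."""
    f = force_fn(x)
    traj = [x.copy()]
    for _ in range(n_steps):
        v = v + 0.5 * dt * f / mass      # half-step velocity update
        x = x + dt * v                   # full-step position update
        f = force_fn(x)                  # forces at the new positions
        v = v + 0.5 * dt * f / mass      # second half-step velocity update
        traj.append(x.copy())
    return x, v, np.array(traj)
```

Monitoring total energy over a short NVE run is a simple stability check before switching on a thermostat: with a well-behaved potential and a suitable timestep, velocity Verlet keeps the energy error small and bounded, while drift usually signals a timestep that is too large or a rough potential energy surface.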

Validation against ab initio MD trajectories provides the ultimate test of potential reliability, comparing structural properties (radial distribution functions), dynamical properties (diffusion coefficients), and thermodynamic quantities [33] [7]. For universal potentials, additional validation on unseen element combinations or crystal structures confirms extrapolation capability [34].
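Two of these validation quantities can be sketched directly: the radial distribution function g(r) for one frame in a cubic periodic box, and a diffusion coefficient from the Einstein relation MSD(t) = 6Dt in three dimensions. The function names are hypothetical. The sketch assumes a cubic box with `r_max` at most half the box length and unwrapped coordinates for the MSD; production analyses would also average g(r) over frames and the MSD over time origins, and fit only the linear regime.

```python
import numpy as np


def radial_distribution(positions, box_length, r_max, n_bins):
    """g(r) for one frame in a cubic periodic box (minimum image; r_max <= box/2)."""
    n = len(positions)
    diff = positions[:, None, :] - positions[None, :, :]
    diff -= box_length * np.round(diff / box_length)          # minimum-image convention
    i, j = np.triu_indices(n, k=1)
    dist = np.linalg.norm(diff[i, j], axis=-1)
    counts, edges = np.histogram(dist, bins=n_bins, range=(0.0, r_max))
    shell_vol = 4.0 / 3.0 * np.pi * (edges[1:] ** 3 - edges[:-1] ** 3)
    density = n / box_length ** 3
    # Each unordered pair was counted once; the factor 2 gives per-atom neighbour counts.
    g = 2.0 * counts / (n * density * shell_vol)
    centers = 0.5 * (edges[1:] + edges[:-1])
    return centers, g


def diffusion_coefficient(traj, dt):
    """Einstein relation in 3D, MSD(t) = 6 D t, fitted by least squares.

    traj: (n_frames, n_atoms, 3) unwrapped positions; dt: time between frames.
    """
    disp = traj - traj[0]                                      # displacement from frame 0
    msd = np.mean(np.sum(disp ** 2, axis=-1), axis=1)          # average over atoms
    t = np.arange(len(traj)) * dt
    slope = np.polyfit(t, msd, 1)[0]
    return slope / 6.0
```

Comparing these curves between GNN-driven and ab initio trajectories tests different things: g(r) probes the local structure the potential stabilizes, while D is sensitive to barriers and therefore to regions of the potential energy surface that may be sparsely represented in training data.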

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Resources for GNN Implementation

| Resource Category | Specific Tools/Datasets | Purpose and Utility | Access Information |
|---|---|---|---|
| Benchmark Datasets | QM9, MD17, FreeSolv, CombiSolv-Exp | Standardized evaluation of model performance | Publicly available from academic sources |
| Molecular Processing | RDKit [36] | Conversion of SMILES to graph structures, feature computation | Open-source cheminformatics toolkit |