Generative AI in Materials Science: A Systematic Review of Performance Metrics and Real-World Impact


Abstract

This systematic review synthesizes the current landscape of performance metrics for generative artificial intelligence (GenAI) in materials science. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive analysis spanning from the foundational architectures of generative models to their practical application in discovering novel materials like catalysts, semiconductors, and polymers. The review methodologically examines key metrics for stability, novelty, and property prediction accuracy, while also addressing critical challenges such as data scarcity, model interpretability, and computational costs. It further evaluates validation protocols, including computational benchmarks and experimental synthesis, and offers a comparative analysis of leading models. By consolidating performance criteria and identifying future directions, this review serves as a critical resource for the effective development and deployment of generative AI in accelerating materials discovery for biomedical and clinical applications.

The Building Blocks: Core Generative AI Architectures and Evolving Performance Metrics in Materials Informatics

Generative Artificial Intelligence (AI) represents a transformative class of machine learning models capable of creating novel data that mirrors the underlying patterns of its training data. Unlike discriminative models that predict labels or categories, generative models learn the intrinsic probability distribution of the data, enabling them to synthesize entirely new, realistic samples [1]. In the high-stakes fields of materials science and drug development, this capability is catalyzing a paradigm shift from traditional, often serendipitous, discovery processes toward inverse design—where researchers define desired material properties and deploy AI to identify candidate structures that meet those specifications [2].

The systematic review of these models' performance is critical for directing future research and resource allocation. The global market for generative AI in materials science, valued at an estimated $1.2 billion in 2024 and projected to reach $13.6 billion by 2033, reflects the immense commercial and scientific potential of these technologies [3]. This growth is primarily driven by escalating demand from industries such as aerospace, pharmaceuticals, and energy for novel materials with unprecedented performance characteristics [2]. North America currently dominates this market, contributing nearly 47% of its growth, bolstered by a mature ecosystem integrating academia, government research, and commercial sectors [2] [3].

Within this expansive field, four families of generative models have emerged as particularly influential: Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), Diffusion Models, and Transformers. Each operates on distinct architectural principles and mathematical foundations, leading to unique performance trade-offs in accuracy, diversity, computational cost, and stability. This guide provides an objective, data-driven comparison of these models, framing their performance within the rigorous context of materials science and drug discovery research. It synthesizes quantitative performance data, detailed experimental protocols, and emerging trends to offer researchers a foundational resource for navigating the rapidly evolving landscape of generative AI.

Core Architectures: A Comparative Framework

Generative models share the common goal of synthesizing novel data but differ fundamentally in their approach to learning and representing data distributions. The following section delineates the core architectures, operational mechanisms, and inherent strengths and weaknesses of VAEs, GANs, Diffusion Models, and Transformers.

Variational Autoencoders (VAEs)

Architecture and Workflow: VAEs are probabilistic generative models based on an encoder-decoder architecture. The encoder network maps input data to a probability distribution in a latent (hidden) space, typically characterized by a mean and standard deviation. The decoder network then samples from this distribution to reconstruct the input data or generate new samples [4] [1]. The training process involves minimizing two loss functions: the reconstruction loss, which ensures the output resembles the input, and the KL divergence loss, which regularizes the latent space to resemble a predefined prior distribution, like a standard Gaussian [1] [5].
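The two loss terms described above can be sketched in a few lines. This is a minimal illustration rather than any specific model's implementation: it assumes a diagonal Gaussian posterior parameterised by a mean and a log-variance, a standard Gaussian prior, and mean squared error as the reconstruction loss. The function names and the `beta` weighting are illustrative assumptions.

```python
import math

def gaussian_kl(mu, log_var):
    """KL divergence from N(mu, diag(exp(log_var))) to N(0, I), summed over latent dims."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))

def mse_reconstruction(x, x_hat):
    """Mean squared error between an input vector and its reconstruction (assumed loss)."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)

def vae_loss(x, x_hat, mu, log_var, beta=1.0):
    """Reconstruction loss plus a beta-weighted KL regulariser (beta is an assumption)."""
    return mse_reconstruction(x, x_hat) + beta * gaussian_kl(mu, log_var)
```

When the encoder output already equals the prior (zero mean, unit variance), the KL term vanishes and only the reconstruction error remains.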

Key Characteristics and Limitations: A principal advantage of VAEs is their probabilistic nature and stable training process. The structured, continuous latent space they learn facilitates smooth interpolation and meaningful data exploration [1] [6]. However, a well-documented limitation is that VAE-generated samples, particularly images, can often appear blurry, as the pixel-wise reconstruction loss may fail to capture fine-grained textural details [4] [6]. Furthermore, their probabilistic approach can sometimes lead to an over-emphasis on covering the data distribution at the expense of generating highly precise outputs [4].

Generative Adversarial Networks (GANs)

Architecture and Workflow: GANs employ an adversarial framework comprising two competing neural networks: a Generator and a Discriminator. The generator creates synthetic data from random noise, while the discriminator evaluates its authenticity by distinguishing it from real training data [4] [5]. This setup forms a two-player minimax game: the generator strives to produce data indistinguishable from real data, while the discriminator improves its detection capabilities. Through iterative training, the generator learns to produce increasingly realistic samples [5] [6].
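For reference, the two-player minimax game is commonly written as the following value function, where the symbols are the standard ones from the original GAN formulation rather than notation defined in this review: \(G\) is the generator, \(D\) the discriminator, \(p_{\text{data}}\) the data distribution, and \(p_z\) the noise prior.

```latex
\min_{G}\,\max_{D}\; V(D, G)
  = \mathbb{E}_{x \sim p_{\text{data}}}\bigl[\log D(x)\bigr]
  + \mathbb{E}_{z \sim p_{z}}\bigl[\log\bigl(1 - D(G(z))\bigr)\bigr]
```

The discriminator maximises \(V\) by assigning high probability to real samples and low probability to generated ones, while the generator minimises it by making \(D(G(z))\) approach 1.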

Key Characteristics and Limitations: GANs are renowned for their ability to generate high-fidelity, sharp, and detailed samples, often surpassing VAEs in perceptual quality [4] [7]. The primary challenge with GANs is their unstable training dynamics. The adversarial process can be sensitive to hyperparameters and is prone to mode collapse, a situation where the generator produces a limited diversity of samples [5] [6]. They also typically require substantial computational resources and longer training times compared to VAEs [4].

Diffusion Models

Architecture and Workflow: Diffusion models generate data through a progressive noising and denoising process. The forward process systematically adds Gaussian noise to training data over many steps until it becomes pure noise. The reverse process trains a neural network to gradually denoise, starting from random noise, to reconstruct a coherent data sample [4] [7]. Models like DALL-E and Stable Diffusion operate on this principle, often conducting the reverse process in a lower-dimensional latent space for efficiency [7].
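The forward noising process can be sketched as follows. This is a minimal illustration that assumes the widely used variance-preserving formulation with a linear noise schedule; the schedule endpoints, function names, and the use of plain Python lists are assumptions for the example, not details taken from the cited studies.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, linearly spaced (an assumed schedule)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """alpha_bar_t: running product of (1 - beta_s) for all s up to t."""
    out, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        out.append(running)
    return out

def noise_sample(x0, t, alpha_bars, rng):
    """Draw x_t given x_0 in closed form: sqrt(a) * x0 + sqrt(1 - a) * noise."""
    a = alpha_bars[t]
    return [math.sqrt(a) * v + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for v in x0]

alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
x_noisy = noise_sample([1.0, 2.0, 3.0], t=500, alpha_bars=alpha_bars, rng=random.Random(0))
```

Because `alpha_bars` shrinks toward zero, late steps are dominated by the Gaussian term, which is the "pure noise" endpoint the reverse process starts from.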

Key Characteristics and Limitations: Diffusion models have set new benchmarks for output quality and diversity in image generation, rivaling or even exceeding GAN performance in some cases [4] [7]. Their training is generally more stable than GANs. However, this comes at the cost of computational intensity; the iterative denoising process can require hundreds or thousands of steps, leading to significantly slower inference times [4] [6]. While highly accurate, they can sometimes overlook fine details or generate anatomically implausible features [4].

Transformers

Architecture and Workflow: Originally designed for natural language processing, Transformers have become foundational for generative tasks across multiple data types. Their core innovation is the self-attention mechanism, which weighs the importance of different parts of the input data (e.g., words in a sentence or patches of an image) when generating an output [4]. In generative settings, models like GPT-4 are trained autoregressively, predicting the next token in a sequence based on all previous tokens [4] [1].
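A simplified sketch of self-attention is shown below. It assumes the standard scaled dot-product form and, for brevity, omits the learned query, key, and value projections and the multiple heads used in real Transformers. The `causal` flag illustrates the masking that lets an autoregressive model attend only to earlier tokens. All names are illustrative.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values, causal=False):
    """Scaled dot-product attention over lists of equal-length vectors.

    With causal=True, position i may only attend to positions j <= i,
    which is the masking used for autoregressive next-token prediction.
    """
    scale = math.sqrt(len(keys[0]))
    outputs, weights = [], []
    for i, q in enumerate(queries):
        scores = [
            float("-inf") if causal and j > i
            else sum(a * b for a, b in zip(q, k)) / scale
            for j, k in enumerate(keys)
        ]
        row = softmax(scores)  # attention weights for position i
        weights.append(row)
        outputs.append([
            sum(w * v[d] for w, v in zip(row, values))
            for d in range(len(values[0]))
        ])
    return outputs, weights
```

Each row of `weights` sums to one, so every output vector is a weighted average of the value vectors, with the weights expressing how relevant each input position is to the current one.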

Key Characteristics and Limitations: Transformers excel at capturing long-range dependencies and contextual relationships within data, making them exceptionally versatile for text, code, and even image generation [4] [1]. Their primary drawback is their massive appetite for data and computational resources during both training and inference. Furthermore, their "black-box" nature results in low model explainability, making it difficult to trace which training data influenced specific outputs [4].

Table 1: Comparative Overview of Core Generative Model Architectures

| Feature | VAEs | GANs | Diffusion Models | Transformers |

|---|---|---|---|---|

| Core Principle | Probabilistic encoding/decoding | Adversarial training (Generator vs. Discriminator) | Iterative noising and denoising | Self-attention mechanism for context weighting |

| Training Stability | High & stable [1] [5] | Low & often unstable [4] [6] | Moderate & more stable than GANs [4] | High, but requires massive resources [4] |

| Output Quality | Can be blurry; lower fidelity [4] [6] | High-fidelity, sharp, detailed [4] [7] | State-of-the-art, highly realistic [4] [7] | High-quality, contextually coherent [4] |

| Inference Speed | Fast | Fast | Slow (iterative process) | Variable, can be slow for long sequences |

| Primary Challenge | Blurry outputs, oversimplification | Mode collapse, training instability | High computational cost, slow generation | High resource demands, low explainability [4] |

| Key Materials Science Application | Anomaly detection, initial molecular screening [1] | High-resolution material image synthesis [7] | De novo molecular & crystal structure design [8] | Predicting synthesis pathways, analyzing research literature [9] |

Performance Metrics and Experimental Protocols in Materials Science

Evaluating generative models for scientific applications requires a multi-faceted approach that integrates quantitative metrics with domain-expert validation. Standard image quality metrics alone are often insufficient for capturing the scientific plausibility and utility of generated materials data [7].

Key Performance Metrics

- Structural Coherence and Fidelity: Metrics like the Structural Similarity Index Measure (SSIM) and Fréchet Inception Distance (FID) are commonly used. SSIM assesses the perceptual similarity between generated and real images, while FID measures the distance between feature distributions of real and generated datasets, with lower scores indicating higher quality [7].

- Semantic Alignment: For conditional generation tasks (e.g., creating a material from a text description), CLIPScore evaluates how well the generated image aligns with the text prompt by measuring the similarity between their embeddings in a shared space [7].

- Diversity and Mode Coverage: Metrics like Learned Perceptual Image Patch Similarity (LPIPS) gauge the diversity of generated samples, which is critical for ensuring a model explores a wide chemical space and avoids mode collapse [7].

- Scientific Validity: This is the ultimate metric and often requires domain-expert validation. Researchers assess whether AI-generated molecular structures are chemically valid, synthetically accessible, and possess physically plausible properties [7] [8]. This can involve computational checks for valency and stability, as well as physical synthesis and testing.
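To make the FID description concrete, the standard definition is given below. It assumes that features of real and generated samples (conventionally taken from an Inception network) are each summarised by a Gaussian with mean \(\mu\) and covariance \(\Sigma\); the subscripts \(r\) and \(g\) for real and generated data are notation introduced here.

```latex
\mathrm{FID}
  = \lVert \mu_r - \mu_g \rVert_2^{2}
  + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2} \right)
```

The score is zero when the two feature distributions have identical means and covariances, which is why lower values indicate generated data that is statistically closer to the real data.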

A Standard Experimental Protocol for Molecular Discovery

The following workflow, synthesized from recent literature, outlines a standard protocol for using generative AI in molecular and materials discovery [10] [8] [9]:

- Problem Formulation and Data Curation: Define the target material properties (e.g., high conductivity, specific bandgap, binding affinity for a protein). Assemble a high-quality dataset of known molecules or materials with associated property data. Data scarcity and quality remain a significant challenge in this field [2] [3].

- Model Training and Conditional Generation: Train a generative model (e.g., a Diffusion Model or GAN) on the collected dataset. To steer the generation toward desired properties, models are often trained conditionally, where the target property is provided as an additional input [2] [8].

- Virtual Screening and Optimization: The trained model generates a large library of candidate structures. These candidates are then screened virtually using predictive models (e.g., for toxicity, solubility, or binding energy) to filter out implausible options. Reinforcement learning can further optimize the shortlisted candidates [8].

- Multi-Scale Validation:

- Computational Validation: Use first-principles simulations (e.g., Density Functional Theory) to verify the stability, electronic properties, and other quantum mechanical characteristics of the proposed materials [9].

- Synthesis and Experimental Testing: The most promising candidates are synthesized in the laboratory, and their properties are characterized using techniques like X-ray diffraction, spectroscopy, and performance testing in devices (e.g., batteries or sensors) [10].

- Closed-Loop Learning: Experimental results are fed back into the training dataset, refining the model in an iterative, closed-loop system. An emerging trend is the integration of generative AI platforms with robotic automation to create fully autonomous discovery systems [2].
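The protocol above can be summarised as a short control loop. The sketch below is schematic only: `train`, `generate`, `screen`, and `validate` are hypothetical callables standing in for model training, candidate generation, virtual screening, and computational or experimental validation, and the dataset is assumed to be a list of (candidate, measured property) pairs.

```python
def closed_loop_discovery(dataset, train, generate, screen, validate,
                          rounds=3, batch=100):
    """Iterate train -> generate -> screen -> validate, feeding results back.

    All four callables are hypothetical placeholders supplied by the caller.
    Returns the enlarged dataset and the number of validated candidates per round.
    """
    history = []
    for _ in range(rounds):
        model = train(dataset)                                # model training
        candidates = generate(model, batch)                   # conditional generation
        shortlisted = [c for c in candidates if screen(c)]    # virtual screening
        results = [(c, validate(c)) for c in shortlisted]     # multi-scale validation
        dataset = dataset + results                           # closed-loop feedback
        history.append(len(results))
    return dataset, history
```

Keeping each stage behind a plain callable makes it straightforward to swap a DFT calculation for a laboratory measurement, or a human reviewer for an automated filter, without changing the loop itself.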

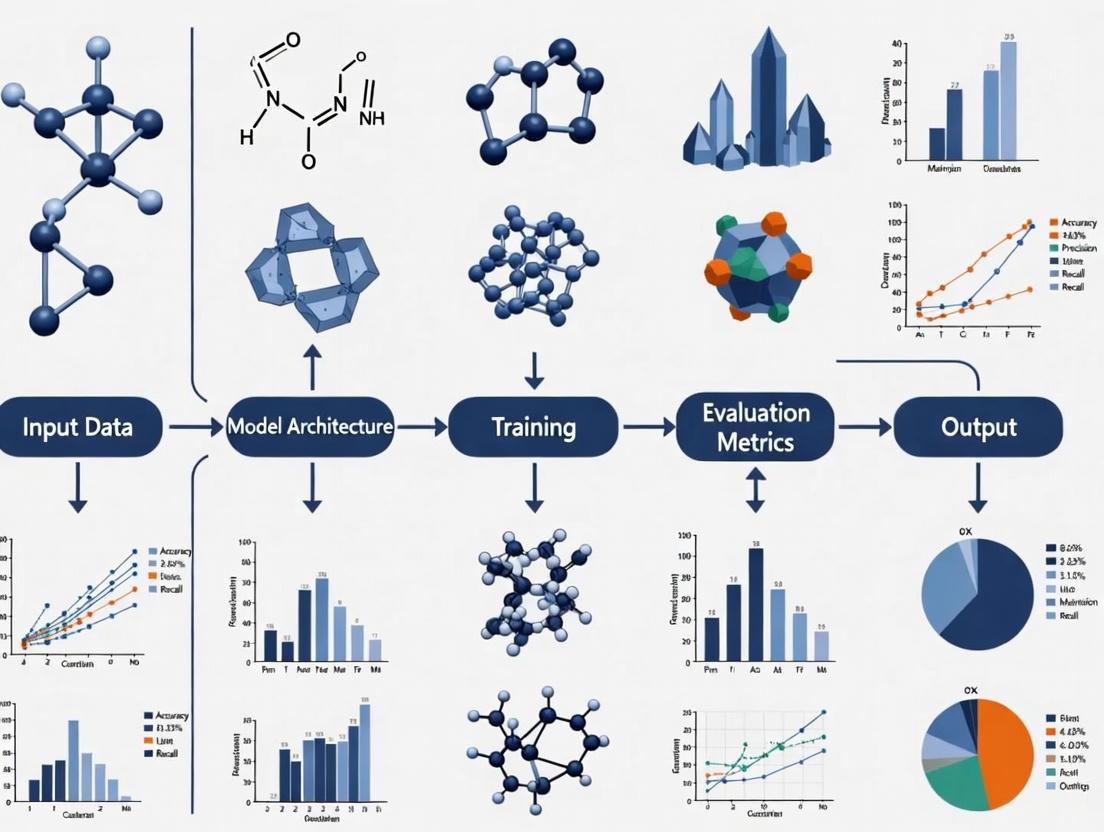

The diagram below illustrates this iterative workflow for AI-driven material discovery.

Quantitative Performance Analysis

Objective data is crucial for comparing the real-world efficacy of generative models. The following tables consolidate performance metrics and application data from recent studies and market analyses.

Table 2: Market Adoption and Application Focus (2024). Data sourced from market analysis reports [2] [3].

| Application Segment | Market Share (%) | Dominant Model Types | Primary Use Case |

|---|---|---|---|

| Materials Discovery & Design | 41.4% | GANs, Diffusion Models [2] | Inverse design of novel atomic structures. |

| Pharmaceuticals & Chemicals | 25.2% | Transformers, Diffusion Models [10] [3] | De novo molecular design & drug candidate screening. |

| Predictive Modeling & Simulation | Not Specified | Transformers, VAEs | Predicting material properties and behaviors. |

Table 3: Experimental Performance in Scientific Image Generation. Based on a comparative study of generative architectures on domain-specific datasets [7].

| Model Architecture | Perceptual Quality (FID ↓) | Structural Coherence (SSIM ↑) | Expert Assessment |

|---|---|---|---|

| GANs (e.g., StyleGAN) | Best | High | High structural coherence and perceptual quality. |

| Diffusion Models (e.g., DALL-E 2) | Excellent | Medium | High realism but may struggle with scientific accuracy. |

| VAEs | Good | Medium | Softer, sometimes blurry outputs. |

Key Insights from Clinical Translation: The most compelling performance metric is the successful transition of AI-designed molecules into clinical trials. As of 2024, at least 15 AI-developed drug candidates have entered various clinical trial stages [10]. Examples include:

- REC-2282: A small molecule pan-HDAC inhibitor for neurofibromatosis, developed by Recursion and now in Phase 2/3 trials [10].

- BEN-8744: A small molecule PDE10 inhibitor for ulcerative colitis, developed by BenevolentAI and in Phase 1 trials [10].

This clinical progress is consistent with estimates that generative AI can reduce the time and cost of drug discovery by 25-50% [10].

The effective application of generative AI in materials science relies on a suite of computational "reagents" and platforms.

Table 4: Essential Research Reagents and Tools

| Tool / Resource | Function | Example Uses in Research |

|---|---|---|

| Generative Models (VAE, GAN, Diffusion, Transformer) | Core engine for generating novel molecular structures and material configurations. | De novo drug design, crystal structure prediction, polymer generation. |

| AlphaFold Protein Structure Database | Provides predicted 3D structures of proteins, which are critical for structure-based drug design. | Understanding protein-based drug targets and enabling molecular docking studies [10]. |

| Knowledge Distillation | A technique to compress large, complex models into smaller, faster versions without significant performance loss. | Creating efficient models for rapid molecular screening on limited computational hardware [9]. |

| Physics-Informed Generative AI | Embeds physical laws and constraints (e.g., symmetry, energy conservation) directly into the AI's learning process. | Ensuring generated crystal structures are not just statistically likely but chemically realistic and stable [9]. |

| Cloud-Based AI Platforms | Provides scalable computing power and pre-built AI environments for running complex model trainings and inferences. | Hosting generative AI software for collaborative, resource-efficient material discovery [3]. |

| Generalist Materials Intelligence | Emerging class of AI powered by Large Language Models (LLMs) that can reason across data types and interact with scientific text. | Functioning as an autonomous research agent to develop hypotheses, design experiments, and verify results [9]. |

The systematic review of generative AI performance in materials science reveals a diverse and rapidly maturing ecosystem. The choice of model is not a matter of identifying a single "best" option, but rather of selecting the most appropriate tool based on the specific research objective, constrained by resources and required output quality.

Diffusion Models and Transformers are currently at the forefront of de novo design tasks, setting benchmarks for the quality and diversity of generated molecules and materials, as evidenced by their leading role in materials discovery and the progression of AI-designed drugs into clinical trials [2] [10] [8]. However, their high computational cost can be prohibitive. GANs remain powerful for tasks demanding high perceptual fidelity, such as generating realistic scientific images, though their practical application may be hampered by training instability [7]. VAEs offer a stable and efficient alternative, particularly valuable for initial screening, anomaly detection, and in scenarios with limited data or computational budget [4] [1].

The future direction of the field lies not only in improving standalone models but also in their smarter integration and application. Key trends include the move toward physics-informed models that respect scientific constraints [9], the use of knowledge distillation to enhance efficiency [9], and the development of closed-loop, autonomous discovery systems that integrate AI with robotic experimentation [2]. For researchers and drug development professionals, success will increasingly depend on a nuanced understanding of these trade-offs and a strategic approach to leveraging the unique strengths of each generative architecture to accelerate the journey from conceptual design to validated material.

The advent of generative artificial intelligence (AI) has ushered in a new paradigm for the discovery and design of novel functional materials. Unlike traditional high-throughput screening methods, which are limited to searching existing databases, generative models can proactively design candidate materials with targeted properties, dramatically accelerating the exploration of vast chemical spaces [11] [12]. However, the effectiveness of these generative models hinges on robust and meaningful performance metrics to evaluate the quality of their outputs. Within materials science, three key metrics have emerged as fundamental for assessing generative model performance: stability, which determines a material's synthesizability; novelty, which measures its distinction from known structures; and diversity, which gauges the variety of generated candidates [13] [14]. This guide provides a systematic comparison of how state-of-the-art generative models perform against these critical benchmarks, detailing experimental protocols and offering a toolkit for researchers engaged in AI-driven materials discovery.

Quantitative Performance Comparison of Generative Models

A comparative analysis of leading generative models reveals significant differences in their ability to produce stable, novel, and diverse materials. The following tables summarize key quantitative findings from recent studies and benchmarking efforts.

Table 1: Comparative performance of generative models for inorganic crystals on stability and novelty metrics. SUN denotes Stable, Unique, and New materials. Data is adapted from benchmarking studies in the field [13].

| Model | SUN Rate (%) | Average RMSD to DFT Relaxed (Å) | Novelty Rate (%) | Uniqueness Rate (%) |

|---|---|---|---|---|

| MatterGen (Base Model) | 75.0 | < 0.076 | 61.0 | 52.0 (at 10M samples) |

| MatterGen-MP | ~60% higher than CDVAE/DiffCSP | ~50% lower than CDVAE/DiffCSP | Information Missing | Information Missing |

| CDVAE | Information Missing | Information Missing | Information Missing | Information Missing |

| DiffCSP | Information Missing | Information Missing | Information Missing | Information Missing |

Table 2: Performance of generative models in human-in-the-loop discovery workflows. "Ed" refers to predicted decomposition enthalpy [15].

| Generated Material | Space Group | Predicted Ed (eV/atom) | Experimentally Synthesized? |

|---|---|---|---|

| LiZn2Pt | Fm-3m | -0.146 | Yes |

| NiPt2Ga | Fm-3m | -0.007 | Yes |

| BaH8Pt | I4/mmm | -0.173 | No |

| NaZn2Pd | Information Missing | -0.014 | No (Unsuccessful) |

Detailed Experimental Protocols for Key Metrics

To ensure reproducible and comparable results, researchers follow standardized computational and experimental protocols for evaluating generative models.

Stability Assessment via DFT Computation

The gold standard for assessing the stability of a computationally generated material is to compute its energy relative to a convex hull of known stable phases using Density Functional Theory (DFT) [13] [15].

- Structure Relaxation: The generated crystal structure is first relaxed to its nearest local energy minimum using DFT calculations. This process adjusts atomic positions and cell parameters.

- Energy Calculation: The formation energy of the relaxed structure is calculated.

- Energy Above Hull Determination: The calculated energy is compared to the convex hull constructed from all known competing phases in its chemical system. The "energy above hull" is the energy difference per atom between the candidate material and the most stable combination of other phases at the same composition. A material is typically considered stable if this value is below 0.1 eV/atom [13].

- Decomposition Enthalpy: Some workflows use decomposition enthalpy, which can be negative for stable compounds, providing a more nuanced stability metric for training machine learning predictors [15].
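The energy-above-hull step can be illustrated with a simplified two-element example. The sketch below assumes a binary system in which each phase is a pair (fraction of the second element, formation energy per atom in eV) and the pure elements sit at zero energy. Real workflows handle multi-component systems with dedicated phase-diagram tooling, so the function names and data here are purely illustrative.

```python
def _cross(o, a, b):
    """Z-component of the cross product of vectors o->a and o->b."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def lower_hull(phases):
    """Lower convex hull of (composition, energy) points, sorted by composition."""
    best = {}
    for x, energy in phases:          # keep only the lowest energy per composition
        if x not in best or energy < best[x]:
            best[x] = energy
    hull = []
    for point in sorted(best.items()):
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], point) <= 0:
            hull.pop()                # previous point lies on or above the new edge
        hull.append(point)
    return hull

def hull_energy(hull, x):
    """Energy of the most stable phase mixture at composition x (linear interpolation)."""
    for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return e1 + (e2 - e1) * (x - x1) / (x2 - x1)
    raise ValueError("composition lies outside the hull's range")

def energy_above_hull(candidate, known_phases):
    """Candidate energy minus the hull of competing phases at the same composition."""
    x, energy = candidate
    return energy - hull_energy(lower_hull(known_phases), x)

known = [(0.0, 0.0), (0.25, -0.1), (0.5, -0.4), (1.0, 0.0)]
e_hull = energy_above_hull((0.25, -0.15), known)
is_stable = e_hull < 0.1  # threshold quoted in the text above
```

Because the hull is built only from the competing phases, a candidate lying below it yields a negative value, which loosely mirrors the sign convention described for decomposition enthalpy.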

Novelty and Uniqueness Evaluation with Structure Matching

Evaluating whether a generated material is new and distinct involves comparing it against existing databases and other generated samples.

- Discrete Evaluation (Traditional): The most common method uses a structure matcher, such as the one in the pymatgen library, which returns a Boolean value (True/False) indicating whether two structures are equivalent based on tolerances for cell parameters and atomic positions [14]. Novelty is then calculated as the percentage of generated structures not found in a training database (e.g., Materials Project, ICSD), while uniqueness is the percentage of non-identical structures within the generated set itself [13].

- Continuous Evaluation (Emerging): Recent research highlights limitations in discrete metrics, including their inability to quantify the degree of similarity. New continuous metrics are being proposed, such as:

- Compositional Distance (d_magpie): The Euclidean distance between Magpie fingerprints, which are vectors of 145 stoichiometric and elemental attributes [14].

- Structural Distance (d_amd): The distance between Average Minimum Distance (AMD) vectors, which are structural fingerprints invariant to the choice of unit cell [14].

These continuous metrics provide a more nuanced and reliable evaluation of generative models.
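The discrete and continuous evaluations described in this section can be sketched as follows. Here `is_match` is a hypothetical stand-in for any Boolean structure comparison (for example, a wrapper around pymatgen's `StructureMatcher.fit`), and the fingerprints are assumed to be plain lists of numbers. The toy strings in the usage lines are placeholders, not real structure objects.

```python
import math

def uniqueness_rate(generated, is_match):
    """Fraction of generated structures that do not duplicate an earlier one."""
    unique = []
    for structure in generated:
        if not any(is_match(structure, seen) for seen in unique):
            unique.append(structure)
    return len(unique) / len(generated)

def novelty_rate(generated, reference, is_match):
    """Fraction of generated structures with no match in the reference database."""
    novel = [s for s in generated if not any(is_match(s, r) for r in reference)]
    return len(novel) / len(generated)

def euclidean_distance(fp_a, fp_b):
    """Continuous dissimilarity between two equal-length fingerprint vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(fp_a, fp_b)))

# Toy usage with string labels and exact equality as the matcher (illustrative only).
generated = ["NaCl", "NaCl", "LiZn2Pt", "MgO"]
reference = ["NaCl", "MgO"]
same = lambda a, b: a == b
uniq = uniqueness_rate(generated, same)
nov = novelty_rate(generated, reference, same)
```

The Boolean rates collapse every comparison to match or no match, whereas the distance function reports how far apart two candidates are, which is the gap the continuous metrics aim to fill.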

Experimental Validation Protocol

The ultimate validation of a generative model is the successful synthesis and property verification of a proposed material [11] [15].

- Candidate Down-Selection: Generated materials predicted to be stable computationally are filtered based on domain expertise, considering factors like feasible oxidation states and synthesizability.

- Synthesis: The down-selected candidates are synthesized in the lab using standard solid-state or solution-based methods.

- Structure Verification: The crystallographic structure of the synthesized material is determined using X-ray Powder Diffraction (XRD). The experimental pattern is compared against the pattern simulated from the generative model's proposed structure, often using Rietveld refinement [15].

- Property Measurement: Target properties (e.g., bulk modulus) are experimentally measured and compared to the design target used to condition the generative model. A close match (e.g., within 20% for bulk modulus) validates the model's inverse design capability [11].
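The property check in the last step amounts to a relative-error comparison. A minimal sketch is shown below, assuming the 20% tolerance quoted above as the default; the function name and the numbers in the usage line are illustrative, not reported measurements.

```python
def within_tolerance(measured, target, rel_tol=0.20):
    """True if the measured value deviates from the target by at most rel_tol (relative)."""
    return abs(measured - target) <= rel_tol * abs(target)

# Illustrative values only: a measured 170 against a design target of 200 (same units).
matches_target = within_tolerance(170.0, 200.0)
```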

Workflow Visualization

The following diagram illustrates the typical closed-loop workflow for generative materials design, integrating stability assessment, novelty checks, and experimental validation.

Figure: AI-Driven Materials Discovery Workflow

Successful implementation of generative materials design relies on a suite of computational and experimental tools.

Table 3: Key resources for generative materials science research.

| Tool / Resource | Type | Primary Function |

|---|---|---|

| MatterGen | Generative AI Model | A diffusion model for directly generating novel, stable inorganic materials with targeted property constraints [11] [13]. |

| Materials Project (MP) | Database | A core open-access database of computed crystal structures and properties used for training and benchmarking models [11] [13]. |

| Inorganic Crystal Structure Database (ICSD) | Database | A comprehensive database of experimentally determined crystal structures, crucial for evaluating the novelty of generated materials [13]. |

| Density Functional Theory (DFT) | Computational Method | The foundational quantum-mechanical method for calculating material properties and verifying stability via energy-above-hull analysis [13] [15]. |

| pymatgen | Software Library | A Python library for materials analysis, featuring essential tools like StructureMatcher for evaluating novelty and uniqueness [14]. |

| X-ray Powder Diffraction (XRD) | Experimental Technique | The primary method for experimentally verifying the crystal structure of a synthesized material against the model's prediction [15]. |

The systematic evaluation of generative AI models using stability, novelty, and diversity metrics is paramount for advancing the field of computational materials discovery. Benchmarking studies show that modern diffusion models like MatterGen can significantly outperform earlier approaches, generating materials that are not only stable and novel but also address targeted property constraints [11] [13]. The emergence of continuous metrics for novelty and diversity promises more nuanced model assessments, moving beyond simple binary checks [14]. Furthermore, successful experimental validation, as demonstrated by the synthesis of predicted materials like LiZn2Pt and NiPt2Ga, provides the most compelling evidence for the real-world impact of this technology [15]. As these tools mature, the integration of robust performance metrics into a closed-loop "flywheel" of generation, simulation, and experimental feedback will be crucial for realizing the full potential of generative AI in creating the next generation of functional materials.

The Shift from Discriminative to Generative Models in Materials Discovery

The field of materials science is undergoing a fundamental transformation in its approach to discovery, moving from a discriminative paradigm that classifies and predicts properties of known materials to a generative paradigm that creates entirely novel materials with targeted characteristics. This shift represents a critical evolution in the application of artificial intelligence within materials research, enabling the inverse design of materials—where researchers begin with desired properties and then identify or create materials that exhibit them [16]. Where discriminative models excel at learning the boundary between existing classes of materials, generative models learn the underlying probability distribution of the data itself, allowing them to propose previously unconsidered atomic structures and compositions [16] [17].

This transition is driven by the recognition that the traditional trial-and-error approach to materials discovery is ill-suited to exploring the vastness of chemical space, which is estimated to exceed 10^60 carbon-based molecules alone [16]. The timeline from material conception to deployment has historically spanned decades, hindering innovation in critical areas such as renewable energy, healthcare, and electronics [16]. Generative models address this bottleneck by leveraging advanced machine learning to navigate complex structural and functional requirements, dramatically accelerating the discovery process for next-generation materials [16].

Fundamental Model Comparisons: Discriminative vs. Generative Approaches

Core Philosophical and Mathematical Differences

Discriminative and generative models employ fundamentally different learning approaches and mathematical frameworks, which leads to their distinct capabilities in materials science applications.

Discriminative models, also known as conditional models, focus on modeling the conditional probability \( P(y \mid x) \), that is, the probability of a particular output or property \( y \) given an input material structure \( x \) [18] [17]. These models excel at learning the decision boundaries that separate different classes of materials or predict specific properties based on existing data. They directly learn the mapping from inputs to outputs without attempting to understand how the data is generated [17] [19]. The majority of discriminative models are used for supervised learning tasks, where they separate data points into different classes by learning boundaries using probability estimates and maximum likelihood [19].

Generative models take a fundamentally different approach by learning the underlying probability distribution \( P(x) \) of the data itself [16]. These models aim to understand how the actual data is structured and embedded into the feature space, rather than merely learning the boundaries between classes [19]. Mathematically, generative classifiers typically assume a functional form for the prior probability \( P(Y) \) and the likelihood \( P(X \mid Y) \), estimate these parameters from the data, and then use Bayes' theorem to calculate the posterior probability \( P(Y \mid X) \) [19]. This approach allows generative models to create new data instances that resemble those in the original training dataset [17].
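Written out, the Bayes' theorem step that turns the estimated prior and likelihood into a posterior is shown below. The sum in the denominator assumes a discrete set of classes \(y'\), which is an assumption made here for concreteness.

```latex
P(Y \mid X) = \frac{P(X \mid Y)\, P(Y)}{P(X)},
\qquad
P(X) = \sum_{y'} P(X \mid y')\, P(y')
```

A discriminative model estimates the left-hand side directly, whereas a generative classifier estimates the two factors in the numerator and obtains the posterior as a by-product.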

Table 1: Fundamental Differences Between Discriminative and Generative Models

| Characteristic | Discriminative Models | Generative Models |

|---|---|---|

| Probability Modeled | Conditional probability \( P(y \mid x) \) | Joint probability \( P(x, y) \) and data distribution \( P(x) \) |

| Learning Focus | Decision boundaries between classes | Underlying data distribution and structure |

| Approach | "Learn the differences" between categories | "Learn everything" about the data distribution |

| Primary Applications | Classification, regression, prediction | Data generation, anomaly detection, inverse design |

| Mathematical Foundation | Direct estimation of ( P(y \mid x) ) | Estimation of ( P(x) ) and ( P(x \mid y) ) via Bayes' theorem |

| Data Requirements | Labeled data for supervised learning | Can utilize both labeled and unlabeled data |

Capability Comparison and Use Cases

The different philosophical approaches of discriminative and generative models lead to distinct capabilities and applications in materials science research.

Discriminative models excel in tasks requiring precise predictions and classifications based on existing data. In materials science, this includes applications such as predicting material properties based on structural characteristics, classifying materials into specific categories, and detecting anomalies in material behavior [17]. These models are particularly valuable when researchers need to quickly assess the potential properties of a material without conducting expensive experimental characterization or computational simulations [16]. Their strength lies in their efficiency and typically faster training times compared to generative models, making them well-suited for tasks where the primary goal is accurate prediction rather than novel discovery [17] [19].

Generative models unlock fundamentally new capabilities in materials discovery, particularly in the domain of inverse design. Rather than simply predicting properties of existing materials, generative models can propose entirely new atomic structures with desired characteristics [16]. This capability is transformative for fields where specific material properties are needed but the chemical or structural space to achieve them is vast and poorly understood. Generative models have demonstrated success in designing new catalysts, semiconductors, polymers, and crystals by exploring chemical spaces beyond human intuition [16]. A critical feature of these models is their use of a latent space—a lower-dimensional representation of the structure-properties relationship that enables the inverse design strategy [16].

Table 2: Application-Based Comparison in Materials Science

| Application Area | Discriminative Model Performance | Generative Model Performance |

|---|---|---|

| Property Prediction | Excellent at predicting specific properties from structure | Can infer properties but less direct than discriminative |

| Material Classification | Highly effective at categorizing materials into classes | Less directly suited for pure classification tasks |

| Novel Material Discovery | Limited to variations of known materials | Exceptional at creating truly novel structures |

| Inverse Design | Not applicable | Transformative capability to design from properties |

| Data Augmentation | Cannot generate new training data | Can create synthetic materials to expand datasets |

| Stability Assessment | Effective at predicting stability of proposed structures | Can optimize for stability during generation |

Experimental Protocols and Methodologies

Reinforcement Learning Fine-Tuning for Generative Models

A significant advancement in generative models for materials discovery is the application of reinforcement fine-tuning, as demonstrated by the CrystalFormer-RL approach [18]. This methodology bridges the strengths of both discriminative and generative models by using discriminative models to guide and improve generative models through reward signals.

The objective function optimized in this approach is: [ \mathcal{L} = \mathbb{E}_{x \sim p_{\theta}(x)} \left[ r(x) - \tau \ln \frac{p_{\theta}(x)}{p_{\text{base}}(x)} \right] ] where ( x ) represents crystalline materials sampled from a policy network ( p_{\theta}(x) ), ( r(x) ) is the reward function that awards preferred materials with high returns, and ( \tau ) is the regularization coefficient controlling proximity to the base model ( p_{\text{base}}(x) ) [18]. In expectation, the second term is ( \tau ) times the Kullback-Leibler (KL) divergence between the policy distribution and the base model, ensuring that the optimized policy does not deviate too drastically from the base generative model while still maximizing the expected reward [18].

In practice, this reinforcement fine-tuning approach can utilize discriminative models such as machine learning interatomic potentials (MLIP) and property prediction models as reward functions [18]. For example, rewards can be based on properties such as energy above the convex hull (indicating stability) or specific material property figures of merit [18]. This methodology has been shown to enhance the stability of generated crystals and enable the discovery of materials with conflicting property requirements, such as substantial dielectric constant and band gap simultaneously [18].
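The trade-off between reward and proximity to the base model can be illustrated on a toy problem. The sketch below assumes a discrete space of four hypothetical candidates, so the KL-regularized objective can be evaluated exactly rather than by sampling. It uses the standard result that such an objective is maximized by a policy proportional to the base distribution times exp(r/τ). The reward values are made up, and nothing here reproduces the CrystalFormer-RL implementation.

```python
import numpy as np

def objective(p_theta, p_base, reward, tau):
    """Exact value of E_{x~p_theta}[ r(x) - tau * ln(p_theta(x) / p_base(x)) ]."""
    kl = np.sum(p_theta * np.log(p_theta / p_base))
    return float(np.sum(p_theta * reward) - tau * kl)

def optimal_policy(p_base, reward, tau):
    """Closed-form maximizer: p*(x) proportional to p_base(x) * exp(r(x) / tau)."""
    w = p_base * np.exp((reward - reward.max()) / tau)  # shift for numerical stability
    return w / w.sum()

# Hypothetical toy setup: four candidate structures
p_base = np.array([0.4, 0.3, 0.2, 0.1])   # base generative model
reward = np.array([0.0, 0.2, 1.0, 0.5])   # made-up rewards (e.g., a rescaled stability score)
tau = 0.5                                  # regularization strength

p_star = optimal_policy(p_base, reward, tau)
j_base = objective(p_base, p_base, reward, tau)  # KL term is zero here
j_star = objective(p_star, p_base, reward, tau)
```

A smaller `tau` lets the policy concentrate on the highest-reward candidates, while a larger `tau` keeps it close to `p_base`.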

Structural Constraint Integration in Generative Models

The SCIGEN (Structural Constraint Integration in GENerative model) approach represents another methodological advancement for generative models in materials science [20]. This technique addresses the challenge of steering generative models toward creating materials with specific structural features known to give rise to desirable quantum properties.

The experimental protocol involves:

Constraint Definition: Users define specific geometric structural rules for the generative model to follow, such as Kagome lattices, Lieb lattices, or Archimedean lattices, which are known to host exotic quantum phenomena [20].

Constrained Generation: The SCIGEN computer code ensures diffusion models adhere to these user-defined constraints at each iterative generation step, blocking generations that don't align with the structural rules [20].

High-Throughput Screening: The constrained model generates millions of candidate materials, which are then screened for stability using computational methods [20].

Property Simulation: A subset of stable candidates undergoes detailed simulation using supercomputing resources to understand how the materials' underlying atoms behave and predict properties such as magnetism [20].

Experimental Validation: Promising candidates are synthesized and experimentally characterized to validate the model's predictions [20].

This approach has successfully generated over 10 million material candidates with Archimedean lattices, with one million surviving stability screening. From a smaller sample of 26,000 materials, simulations revealed magnetism in 41% of structures, leading to the successful synthesis of two previously undiscovered compounds, TiPdBi and TiPbSb [20].
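The constrained-generation step of the protocol above can be sketched as an iterative loop in which the constrained atoms are reset to their prescribed positions after every update. This is only an illustration of the general idea: the "denoiser" below is random noise standing in for a trained diffusion model, the kagome site coordinates assume a hexagonal cell with sites at (1/2, 0, 0), (0, 1/2, 0), and (1/2, 1/2, 0), and the actual SCIGEN code may enforce constraints differently.

```python
import numpy as np

# Fractional coordinates of the three kagome sites (assumed hexagonal cell)
KAGOME_SITES = np.array([[0.5, 0.0, 0.0],
                         [0.0, 0.5, 0.0],
                         [0.5, 0.5, 0.0]])

def toy_denoise_step(coords, rng, noise_scale):
    """Stand-in for a learned reverse-diffusion update (NOT a trained model)."""
    return (coords + noise_scale * rng.normal(size=coords.shape)) % 1.0

def constrained_generate(n_atoms, n_steps, rng):
    """Iteratively refine coordinates while pinning the first atoms to the lattice."""
    coords = rng.random((n_atoms, 3))
    constrained = np.arange(len(KAGOME_SITES))
    for step in range(n_steps):
        noise_scale = 0.1 * (1.0 - step / n_steps)   # noise shrinks over time
        coords = toy_denoise_step(coords, rng, noise_scale)
        coords[constrained] = KAGOME_SITES           # re-impose the constraint every step
    return coords

rng = np.random.default_rng(42)
structure = constrained_generate(n_atoms=6, n_steps=50, rng=rng)
```

Only the unconstrained atoms are free to move, so every generated structure contains the target lattice by construction.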

Benchmarking Frameworks for Generative Models

The evaluation of generative models for materials discovery has been formalized through benchmarking frameworks such as Dismai-Bench (Disordered Materials & Interfaces Benchmark) [21]. This benchmark addresses the challenge of properly assessing generative model performance beyond heuristic metrics such as charge neutrality.

The benchmarking protocol involves:

Dataset Selection: Using specialized datasets of complex materials, including disordered alloys, interfaces, and amorphous silicon with 256-264 atoms per structure, which represent more challenging generation tasks than small, periodic crystals [21].

Model Training: Independently training generative models on each dataset using standardized procedures to ensure fair comparison.

Evaluation Metrics: Performing direct structural comparisons between training and generated structures to assess model performance. This is possible because the material system of each training dataset is fixed, allowing for meaningful comparisons [21].

Architecture Comparison: Testing different model architectures, such as graph diffusion models and coordinate-based U-Net diffusion models, to understand the impact of architectural choices on generation quality [21].

This benchmarking approach has revealed that graph-based models significantly outperform U-Net models due to their higher expressive power, particularly for complex disordered structures [21]. The insights from such systematic benchmarking guide the development of more effective generative models for materials discovery.
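One simple way to compare generated structures directly with training structures is to compare their distributions of interatomic distances. The sketch below is an assumption-laden illustration, not the Dismai-Bench metric set: it ignores periodic boundary conditions, uses random point clouds in place of real structures, and measures agreement with the total variation distance between normalized pair-distance histograms.

```python
import numpy as np

def pair_distance_histogram(positions, bins, r_max):
    """Normalized histogram of all unique pairwise distances (no periodic images)."""
    diffs = positions[:, None, :] - positions[None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)
    upper = np.triu_indices(len(positions), k=1)
    hist, _ = np.histogram(dists[upper], bins=bins, range=(0.0, r_max))
    total = hist.sum()
    return hist / total if total > 0 else hist.astype(float)

def histogram_distance(h1, h2):
    """Total variation distance: 0 means identical, 1 means disjoint."""
    return 0.5 * float(np.abs(h1 - h2).sum())

# Hypothetical point clouds standing in for atomic positions (angstroms)
rng = np.random.default_rng(0)
train = rng.random((64, 3)) * 10.0
generated_good = train + rng.normal(scale=0.05, size=train.shape)  # small perturbation
generated_bad = rng.random((64, 3)) * 2.0                          # collapsed structure

h_train = pair_distance_histogram(train, bins=40, r_max=10.0)
d_good = histogram_distance(h_train, pair_distance_histogram(generated_good, 40, 10.0))
d_bad = histogram_distance(h_train, pair_distance_histogram(generated_bad, 40, 10.0))
```

A lower distance indicates that the generated structure reproduces the short- and medium-range order of the training data more faithfully.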

Performance Metrics and Experimental Data

Quantitative Performance Comparison

The shift from discriminative to generative models can be assessed against several performance criteria relevant to materials discovery objectives. The table below summarizes these comparisons, most of which remain qualitative, based on experimental implementations documented in the literature.

Table 3: Quantitative Performance Metrics for Materials Discovery Models

| Metric | Discriminative Models | Generative Models | Generative with RL Fine-Tuning |

|---|---|---|---|

| Novel Stable Materials Generated | Not applicable | Varies by model and dataset | Enhanced stability of generated crystals [18] |

| Success Rate for Target Properties | High for prediction on known materials | Moderate for direct generation | Successfully discovers crystals with conflicting properties [18] |

| Computational Cost | Lower training and inference costs | Higher training costs, especially for complex structures | Additional cost for reward computation during fine-tuning |

| Data Efficiency | Requires labeled data for training | Can leverage unlabeled data through unsupervised learning | Transfers knowledge from discriminative models |

| Exploration Capability | Limited to interpolating between known materials | Can extrapolate to novel regions of chemical space | Targeted exploration guided by reward signals |

| Experimental Validation Success | High accuracy for property prediction | Emerging experimental synthesis results, e.g., two novel compounds (TiPdBi, TiPbSb) synthesized via SCIGEN [20] | No experimental synthesis reported in the sources reviewed here |

Market Adoption and Impact Assessment

The growing adoption of generative AI in materials science is reflected in market analysis data, providing another lens through which to assess the impact of this paradigm shift. One analysis values the generative AI in material science market at approximately USD 1.2 billion in 2024 and projects growth to USD 13.6 billion by 2033 at a compound annual growth rate (CAGR) of 30.9% [3]. Another analysis projects growth from USD 1.1 billion in 2024 to USD 11.7 billion by 2034 at a CAGR of 26.4% [22].
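The two projections can be cross-checked with the standard compound-growth formula, end = start × (1 + CAGR)^years. The short sketch below assumes 2024 is the base year in both reports, which the sources summarized here do not state explicitly.

```python
def project_value(start_value, cagr, years):
    """Compound a starting value forward at a constant annual growth rate."""
    return start_value * (1.0 + cagr) ** years

def implied_cagr(start_value, end_value, years):
    """Growth rate implied by a start value, an end value, and a horizon."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

# Values in USD billions, taken from the two market analyses cited above
proj_2033 = project_value(1.2, 0.309, 2033 - 2024)
proj_2034 = project_value(1.1, 0.264, 2034 - 2024)
cagr_a = implied_cagr(1.2, 13.6, 2033 - 2024)
cagr_b = implied_cagr(1.1, 11.7, 2034 - 2024)
```

Under this assumption the compounded values come out at roughly USD 13.5 billion and USD 11.5 billion, close to but not identical with the reported end values. The small gaps are consistent with rounding or with a different base year in the original reports.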

This significant market growth is particularly concentrated in the materials discovery and design segment, which captured more than 40% of the market share in 2024 [22] [3]. This dominance reflects the transformative impact of generative models on the initial phase of material development, where the identification of new materials can disrupt various industries, including pharmaceuticals, energy, and consumer electronics [22].

Regionally, North America has captured a dominant position in the generative AI in material science market, accounting for more than 36% of the market share in 2024 [22]. This leadership is attributed to a mature ecosystem integrating academia, government research, and commercial sectors, with unparalleled access to venture capital and AI talent [22] [2].

Successful implementation of generative approaches in materials discovery requires both computational resources and experimental capabilities for validation. The following table details key components of the research infrastructure supporting this paradigm shift.

Table 4: Essential Research Reagents and Computational Resources

| Resource Category | Specific Examples | Function in Materials Discovery |

|---|---|---|

| Generative Models | CrystalFormer [18], DiffCSP [20], GANs [17], VAEs [17] | Generate novel material structures with desired properties through inverse design |

| Discriminative Models | Machine Learning Interatomic Potentials (MLIP) [18], Property Prediction Models [18] | Provide reward signals for reinforcement fine-tuning and validate generated materials |

| Benchmarking Datasets | Dismai-Bench [21] | Standardized evaluation of generative model performance on complex material systems |

| Structural Constraint Tools | SCIGEN [20] | Steer generative models to create materials with specific geometric patterns associated with target properties |

| High-Performance Computing | Oak Ridge National Laboratory supercomputers [20] | Enable detailed simulations of generated materials' atomic behavior and properties |

| Experimental Synthesis Facilities | Materials synthesis labs [20] | Validate AI-generated material candidates through actual synthesis and characterization |

The shift from discriminative to generative models in materials discovery represents a fundamental transformation in how researchers approach the design and development of new materials. Rather than viewing this as a complete replacement of one paradigm by another, the most promising path forward appears to be a synergistic integration of both approaches, as demonstrated by reinforcement fine-tuning methodologies [18]. Generative models provide the creative capacity to explore vast chemical spaces and propose novel structures, while discriminative models offer the critical assessment needed to guide this exploration toward practically useful and synthesizable materials.

This synergistic relationship is further enhanced by the development of specialized tools such as SCIGEN, which enables researchers to incorporate domain knowledge about structure-property relationships directly into the generation process [20]. By steering generative models toward specific geometric patterns known to give rise to desirable quantum properties, these approaches combine the exploratory power of generative AI with the curated knowledge of materials science experts.

As the field continues to evolve, the integration of generative AI with experimental workflows through multimodal models, physics-informed architectures, and closed-loop discovery systems promises to further accelerate materials discovery [16]. The remarkable market growth projected for generative AI in materials science—with estimates of USD 11.7-13.6 billion by 2033-2034—reflects the significant confidence in this technological transition and its potential to revolutionize how we discover and develop the materials needed for future technological advancements [22] [3].

The application of generative artificial intelligence (AI) in materials science represents a paradigm shift, accelerating the discovery and development of novel materials. The Generative AI in Material Science Market, projected to grow at a compound annual growth rate (CAGR) of 26.4% to USD 11.7 billion by 2034, is a testament to this transformation [22]. This rapid growth is primarily fueled by the technology's capacity to drastically shorten development cycles and reduce costs associated with physical experiments [22]. However, the performance and reliability of these AI models are fundamentally dependent on the quality, scale, and structure of the foundational datasets upon which they are trained and benchmarked. Within this context, established computational databases and emerging AI-driven platforms serve as the essential bedrock for innovation.

This guide provides an objective comparison of two such critical resources: The Materials Project, a pioneering, calculation-based database, and Alexandria, a platform emblematic of the next generation of generative AI-driven material discovery. Understanding their distinct data architectures, methodological approaches, and performance characteristics is crucial for researchers and development professionals aiming to navigate this evolving landscape. The core value proposition of these platforms lies in their ability to provide large-scale, consistent data that enables high-throughput screening and predictive modeling across vast chemical spaces [23].

Quantitative Comparison of Platforms and Market Context

The generative AI market in material science is segmented by function, deployment, and application, with "Materials Discovery and Design" being the dominant segment, accounting for over 40% of the market share [2] [3]. This segment leverages deep learning architectures, including generative adversarial networks and diffusion models, to explore chemical space and propose novel atomic structures through inverse design [2]. The table below summarizes the key market segments and their distributions.

Table 1: Generative AI in Material Science Market Segmentation (2024)

| Segment Type | Segment Name | Market Share / Key Metric | Primary Driver |

|---|---|---|---|

| Type/Function | Materials Discovery and Design [2] [3] | >40% revenue share [2] | Inverse design of novel atomic structures [2] |

| Type/Function | Predictive Modeling and Simulation [2] | Significant growth segment [3] | Accurate prediction of material properties and behavior [3] |

| Deployment | Cloud-Based [3] | 45.6% revenue share [3] | Accessibility, collaboration, and computational power [3] |

| Application | Aerospace & Defense [22] | >30% revenue share [22] | Need for lightweight, high-performance materials [22] |

| Application | Pharmaceuticals & Chemicals [3] | 25.2% market share [3] | Discovery of new molecules and drug delivery systems [3] |

| Region | North America [2] [22] [3] | 36%-46.9% market share [2] [22] [3] | Concentration of AI talent, venture capital, and tech firms [2] |

North America, particularly the United States, is the unequivocal leader in this market, contributing nearly half of its global growth. This dominance is underpinned by a mature ecosystem integrating academia, government research, and a vibrant commercial sector with unparalleled access to venture capital and AI talent [2].

Table 2: High-Level Platform Comparison: The Materials Project vs. Alexandria

| Feature | The Materials Project | Alexandria |

|---|---|---|

| Core Data Source | First-principles calculations (Density Functional Theory) [24] | Generative AI models; specific data sources not detailed in the cited sources |

| Primary Methodology | High-throughput computational materials science [24] | AI-driven material design and discovery |

| Key Output | Energetic, electronic, and elastic properties of known & predicted crystals [24] | Novel material designs & optimized structures |

| Data Scale | Massive, consistent dataset across the periodic table [23] | AI-explored chemical space beyond human conception [2] |

| Industry Application | Foundational screening for batteries, semiconductors, etc. [24] | Tailored material solutions for specific industry needs [22] |

Experimental Protocols and Methodologies

The Materials Project Workflow and Validation

The Materials Project employs a rigorous, high-throughput computational pipeline to generate its core dataset.

- Data Generation Protocol: The primary method is Density Functional Theory (DFT) using the PBE functional. Calculations are performed on known crystal structures from experimental databases and theoretically predicted polymorphs [24]. Each individual calculation is assigned a unique, permanent task_id [24].

- Data Aggregation Protocol: The aggregated data presented on material detail pages is derived from multiple individual calculations (task_ids). A unique material_id (mp-id) is assigned to each distinct material polymorph, ensuring a consistent reference point even as new calculations are added to the database [24].

- Performance Validation & Error Analysis: The accuracy of the data is benchmarked against known experimental values where possible, with detailed discussions of systematic errors provided in peer-reviewed publications [24]. Key systematic errors include:

- Lattice Parameters: A typical overestimation of 1-3% due to the PBE functional underbinding materials [24] [23].

- Band Gaps: A systematic underestimation, as PBE is known to poorly describe electronic excited states [24].

- Elastic Constants: Predictions require validation against experimental data, as their accuracy can vary [23].

- Ongoing Methodological Evolution: The platform is transitioning to newer functionals like r2SCAN, which are expected to significantly improve the accuracy of formation enthalpies and lattice parameters, thereby enhancing predictive fidelity [24] [23].

Generative AI Workflow in Material Discovery

Platforms like Alexandria represent a different, AI-centric paradigm. The following diagram illustrates a generalized workflow for generative AI in material discovery.

Diagram 1: Generative AI Material Discovery Workflow

The generative AI process inverts the traditional research approach. It begins with researchers defining a set of target properties, such as high conductivity or specific tensile strength. Generative models, like Generative Adversarial Networks (GANs) or diffusion models, then explore a vast chemical space to propose novel atomic structures or molecules that meet these criteria [2]. These candidates undergo virtual screening and computational validation (e.g., via DFT simulations) to shortlist the most promising leads before they are passed to experimental synthesis and testing, creating a closed-loop, autonomous discovery system [2] [3].

Performance Benchmarking and Key Differentiators

Data Accuracy and Systematic Performance

A critical aspect of benchmarking is understanding the inherent accuracy and limitations of the data.

Table 3: Data Accuracy and Systematic Performance Benchmarks

| Performance Metric | The Materials Project (PBE Functional) | Generative AI Platforms (e.g., Alexandria) |

|---|---|---|

| Lattice Parameter Accuracy | Systematic overestimation of 1-3% [24] [23] | Accuracy is model and training-data dependent |

| Band Gap Accuracy | Systematic underestimation (PBE known limitation) [24] | Aims for higher accuracy but relies on foundational DFT data |

| Throughput & Scale | High-throughput screening of hundreds of thousands of materials [23] | Exploration of "virtually infinite" chemical space [2] |

| Primary Value | Large-scale, consistent data with systematic (and often correctable) errors [23] | Acceleration of discovery for novel, application-specific materials [22] |

For The Materials Project, the true value lies not in the absolute accuracy for a single material, but in the fact that the entire dataset is generated consistently, allowing for reliable large-scale comparisons and trend identification across chemical space [23]. The systematic nature of the errors means that predictions, even when numerically inaccurate, can still be used for effective screening and ranking of materials [24].
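The claim that systematically biased values remain useful for ranking can be checked with a small numerical experiment. The sketch below assumes a uniform 2% overestimation (within the 1-3% range quoted above) plus a small material-specific scatter. The lattice parameters are illustrative numbers only, not values retrieved from The Materials Project.

```python
import numpy as np

# Illustrative "reference" lattice parameters in angstroms (hypothetical inputs)
true_a = np.array([3.52, 4.05, 5.43, 5.65, 3.16])

# Assumed systematic 2% overestimation plus 0.2% random scatter
rng = np.random.default_rng(1)
computed_a = true_a * 1.02 * (1.0 + rng.normal(scale=0.002, size=true_a.size))

rank_true = np.argsort(true_a)          # ordering by reference value
rank_computed = np.argsort(computed_a)  # ordering by biased value
relative_error = (computed_a - true_a) / true_a
```

Because the bias is nearly the same for every entry, the ordering of the materials is unchanged even though each individual value is off by about 2%. The ranking would only break down if the material-specific scatter became comparable to the differences between candidates.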

Key Challenges and Limitations

Both types of platforms face significant challenges that impact their performance and utility.

- Data Quality and Scarcity: This is a primary constraint for generative AI. The development of robust models requires massive, high-quality datasets, which are often scarce, inconsistently documented, or scattered across institutions [2] [22] [3].

- Computational Cost: High-fidelity DFT calculations are computationally expensive, limiting the scope of methods that can be applied at scale. Generative AI models, particularly those involving complex simulations, also demand extensive computational resources, creating a barrier to entry for some organizations [24] [22].

- Physical Fidelity: The Materials Project's use of generalized functionals (PBE) leads to known inaccuracies, such as poor description of van der Waals forces in layered crystals [24]. Generative AI models can inherit and even amplify the biases and inaccuracies present in their training data.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The effective use of these platforms requires a suite of digital and computational "research reagents." The table below details key solutions and their functions in computational and AI-driven materials research.

Table 4: Key Research Reagent Solutions for AI-Driven Materials Science

| Solution / Resource | Function / Purpose | Relevance to Platforms |

|---|---|---|

| High-Throughput DFT Codes | Performs the foundational quantum mechanical calculations that generate energetic and electronic structure data. | The Materials Project [24] |

| Generative AI Models (GANs, VAEs, Diffusion) | Core engines for proposing novel material structures based on target properties (inverse design). | Alexandria & similar AI platforms [2] [3] |

| Cloud Computing Infrastructure | Provides on-demand, scalable computational power necessary for running large-scale AI training and complex simulations. | Essential for cloud-based deployment of generative AI [3] |

| Application Programming Interfaces (APIs) | Allows for programmatic access to database information, enabling automated data retrieval and integration into custom workflows. | The Materials Project, Alexandria [24] |

| Structure File Formats (CIF, POSCAR) | Standardized files for representing crystal structures, enabling data transfer between different simulation and AI software. | Universal (exportable from The Materials Project) [24] |

| Robotic Automation Systems | Integrates with AI platforms to physically execute synthesis and characterization, creating closed-loop discovery systems. | Emerging trend for AI platforms [2] |

The Materials Project and Alexandria represent complementary yet distinct paradigms in materials informatics. The Materials Project serves as a foundational, high-consistency database built on high-throughput quantum mechanics, invaluable for large-scale screening and trend analysis, albeit with known systematic errors. In contrast, platforms like Alexandria embody the generative AI approach, focusing on the accelerated discovery and inverse design of novel materials by exploring chemical spaces intractable to human intuition or simulation-only methods.

The future trajectory of this field points toward greater integration. Foundational datasets like those from The Materials Project are crucial for training and validating the next generation of generative AI models. Meanwhile, the predictive power and design capabilities of AI will guide more focused and efficient use of computational resources. As both computational methodologies and AI algorithms continue to advance—driven by increased investment and a focus on sustainable material solutions—the synergy between these foundational benchmarks and generative tools will undoubtedly accelerate the pace of innovation across pharmaceuticals, energy storage, electronics, and aerospace.

From Code to Crystal: Methodologies and Real-World Applications of Generative AI

The discovery of advanced materials is a cornerstone of technological progress, traditionally relying on iterative, resource-intensive experimental cycles. This conventional "forward" paradigm begins with a material, whose properties are then studied and incrementally modified. A transformative shift is now underway towards inverse design, which starts with a set of desired property targets and aims to computationally generate material structures or compositions that meet them [25]. This paradigm is particularly powerful for designing materials with highly specialized functions, such as high-temperature shape memory alloys for aerospace actuators or efficient catalysts for clean energy technologies [26] [27].

Artificial intelligence (AI), especially generative models, serves as the engine for this inverse design approach. By learning the complex, non-linear relationships between a material's composition, processing, structure, and its resulting properties, these models can navigate the vast design space of possible materials more efficiently than human intuition or traditional high-throughput screening alone [27] [13]. This guide provides a systematic comparison of the performance of mainstream AI-driven inverse design methodologies, evaluating their experimental protocols, quantitative results, and practical utility for scientific research.

Comparative Performance of Inverse Design Methodologies

The table below summarizes the core architectures, performance, and experimental validation of leading inverse design approaches, providing a basis for objective comparison.

Table 1: Performance Comparison of AI-Driven Inverse Design Methods

| Methodology & Model Name | Core Architecture | Key Performance Metrics | Material System & Target Properties | Experimental Validation |

|---|---|---|---|---|

| MatterGen [13] | Diffusion Model | 78% of generated structures stable (<0.1 eV/atom from convex hull); 61% are novel structures; >10x closer to DFT energy minimum vs. prior models. | Inorganic crystals across the periodic table; Chemical system, symmetry, mechanical/electronic/magnetic properties. | One generated material synthesized; measured property within 20% of target. |

| CRESt [28] | Multimodal LMM + Bayesian Optimization | Discovered a catalyst with 9.3x improvement in power density per dollar over pure Pd; 3,500 electrochemical tests conducted. | Fuel cell catalyst; High power density, low cost. | Electrode material synthesized and tested in a working fuel cell; record power density achieved. |

| GAN Inversion [26] | GAN + Latent Space Optimization | Designed a NiTi-based SMA with a high transformation temperature (404 °C) and large mechanical work output (9.9 J/cm³). | Shape Memory Alloys (SMAs); Transformation temperature, mechanical work output. | Five generated alloys were synthesized and characterized; properties matched predictions. |

| SVAE for Molten Salts [25] | Supervised Variational Autoencoder (SVAE) | Predictive DNN for density achieved R²=0.997, MAE=0.038 g/cm³ on test set. | Molten salt mixtures; Mass density at a specific temperature. | Predicted densities of new computer-generated compositions validated via ab initio molecular dynamics (AIMD). |

| LLM as Optimizer [29] | Fine-tuned Large Language Model (WizardMath-7B) | Generational Distance (GD) of 1.21, significantly outperforming a standard Bayesian Optimization (BO) baseline (GD=15.03). | General constrained multi-objective regression; Formulations for resins, polymers, paints. | Computational benchmark against established Bayesian Optimization frameworks (qEHVI). |
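The Generational Distance (GD) reported in the last row measures how far a set of obtained solutions lies from a reference Pareto front, with lower values being better. Definitions vary slightly between libraries. The sketch below assumes the variant that averages the Euclidean distance from each obtained point to its nearest reference point, and the two-objective fronts are made up for illustration. It is not the evaluation code used in [29].

```python
import numpy as np

def generational_distance(approx_front, reference_front):
    """Mean Euclidean distance from each obtained point to its nearest reference point."""
    a = np.asarray(approx_front, dtype=float)
    r = np.asarray(reference_front, dtype=float)
    d = np.linalg.norm(a[:, None, :] - r[None, :, :], axis=-1)  # all pairwise distances
    return float(d.min(axis=1).mean())

# Hypothetical two-objective fronts (both objectives minimized)
reference = np.array([[0.0, 1.0], [0.5, 0.5], [1.0, 0.0]])
close = np.array([[0.0, 1.1], [0.5, 0.6], [1.0, 0.1]])  # each point 0.1 from the front
far = np.array([[2.0, 2.0], [3.0, 1.0]])

gd_close = generational_distance(close, reference)
gd_far = generational_distance(far, reference)
```

Here `gd_close` evaluates to 0.1 and `gd_far` is much larger, mirroring the kind of gap reported between the fine-tuned LLM and the Bayesian optimization baseline.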

Key Performance Insights

- Stability and Novelty: A primary metric for generative models in materials science is their ability to propose new materials that are also stable. MatterGen sets a high bar, with more than double the percentage of stable, unique, and new (SUN) materials compared to earlier models like CDVAE and DiffCSP [13].

- Multi-Objective Optimization: Real-world materials design often requires balancing multiple, competing property targets. Frameworks like CRESt and the GAN inversion for SMAs demonstrate the capacity to handle this complexity, optimizing for performance and cost, or temperature and work output simultaneously [28] [26].

- Beyond Composition: The most advanced systems, such as CRESt, go beyond generating chemical formulas. They integrate processing parameters and leverage diverse data sources (literature, experimental results, images) to plan and even conduct real experiments, closing the loop between computation and validation [28].
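The stable, unique, and new (SUN) criterion from the first insight above can be expressed as a simple counting rule. The sketch below assumes each candidate carries a precomputed energy above the convex hull and a canonical string fingerprint. Real pipelines typically decide uniqueness and novelty with a structure-matching algorithm rather than string comparison. The 0.1 eV/atom threshold follows the stability criterion quoted in the table, and all candidates are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Candidate:
    fingerprint: str      # hypothetical canonical structure identifier
    e_above_hull: float   # energy above the convex hull, eV/atom

def sun_rate(candidates, known_fingerprints, stability_threshold=0.1):
    """Fraction of candidates that are stable, unique within the batch, and new."""
    seen = set()
    sun_count = 0
    for c in candidates:
        is_stable = c.e_above_hull < stability_threshold
        is_unique = c.fingerprint not in seen          # not generated earlier in this batch
        is_new = c.fingerprint not in known_fingerprints  # absent from the reference set
        seen.add(c.fingerprint)
        if is_stable and is_unique and is_new:
            sun_count += 1
    return sun_count / len(candidates) if candidates else 0.0

generated = [
    Candidate("A2B-1", 0.02),   # stable, unique, new
    Candidate("A2B-1", 0.02),   # duplicate of the first candidate
    Candidate("AB3-7", 0.35),   # unstable
    Candidate("ABO3-2", 0.00),  # stable but already known
    Candidate("A3C-4", 0.08),   # stable, unique, new
]
known = {"ABO3-2"}
rate = sun_rate(generated, known)
```

For this batch two of the five candidates qualify, so `rate` is 0.4. Reporting the three conditions jointly prevents a model from scoring well by repeating known stable structures.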

Experimental Protocols and Workflows

A critical factor in selecting an inverse design method is its underlying workflow. The following diagram illustrates the two dominant paradigms: the targeted generation workflow, and the integrated robotic experimentation workflow.

Detailed Methodological Breakdown

Targeted Generation via Latent Space Optimization

This protocol, exemplified by the GAN inversion for shape memory alloys and the SVAE for molten salts, is a purely computational approach for proposing candidate materials [26] [25].

Model Training:

- A generative model (e.g., GAN, VAE) is trained on a dataset of known material compositions and/or structures to learn their underlying distribution.

- A separate, but connected, surrogate predictor (e.g., a deep neural network) is trained to map material designs to their properties.

Inverse Design Loop:

- A target property (e.g., a transformation temperature of 400°C) is defined.

- An initial random vector in the model's latent space is sampled.

- The generator produces a candidate material from this vector.

- The surrogate predictor estimates the candidate's properties.

- A loss function, quantifying the difference between the predicted and target properties, is computed.

- Gradient-based optimization is used to iteratively update the latent vector to minimize this loss. The loop continues until the loss is sufficiently small, at which point the final candidate material is output.

Experimental Validation: The top-ranked generated candidates are then synthesized and characterized in the laboratory to confirm the model's predictions [26].
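
The inverse design loop described above can be sketched in a few lines. This is a toy illustration, not the implementation from [26] or [25]: the generator and surrogate are small fixed linear maps (`W_GEN`, `W_SUR`, `B_SUR` are invented values), so the gradient can be written by hand. With real GANs or VAEs the same loop would use an automatic differentiation framework.

```python
import numpy as np

# Toy stand-ins (assumed values): 2-D latent space -> 3 composition features -> 1 property
W_GEN = np.array([[1.0, 0.0], [0.5, 1.0], [0.0, -1.0]])  # "generator" weights
W_SUR = np.array([100.0, 200.0, 50.0])                   # "surrogate" weights
B_SUR = 300.0                                            # surrogate bias (deg C)

def generate(z):
    """Map a latent vector to a candidate design."""
    return W_GEN @ z

def predict(x):
    """Surrogate estimate of the property for design x."""
    return float(W_SUR @ x + B_SUR)

def invert(target, z0, lr=4e-6, tol=1e-8, max_steps=200):
    """Gradient descent on the latent vector to minimise (prediction - target)^2."""
    z = np.array(z0, dtype=float)
    for _ in range(max_steps):
        residual = predict(generate(z)) - target
        if residual ** 2 < tol:  # loss is sufficiently small
            break
        # Chain rule: d(loss)/dz = 2 * residual * W_GEN^T W_SUR
        z -= lr * 2.0 * residual * (W_GEN.T @ W_SUR)
    return z, generate(z)

rng = np.random.default_rng(0)
z_start = rng.standard_normal(2)  # initial random latent vector
z_opt, candidate = invert(target=400.0, z0=z_start)
predicted = predict(candidate)
```

Only the latent vector is updated; the generator and surrogate stay frozen, which is what distinguishes latent space optimization from retraining the model. The step size here was chosen to suit the toy weights and would need tuning for any real model.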

Autonomous Robotic Experimentation

The CRESt platform demonstrates a more integrated protocol that directly connects AI-driven decision-making to physical experimentation [28].

Goal Setting: A researcher provides a high-level goal in natural language, such as "find a catalyst that maximizes power density while minimizing precious metal content."

AI-Driven Experimentation Loop:

- The system's large multimodal model, incorporating literature knowledge and experimental data, plans a batch of experiments.

- Robotic equipment, including liquid-handling robots and carbothermal shock synthesizers, executes the synthesis based on the AI's recipes.

- Automated characterization equipment, such as electron microscopes and X-ray diffractometers, analyzes the synthesized materials.

- An automated electrochemical workstation tests the performance of the new materials.

Analysis and Iteration:

- The results from synthesis, characterization, and testing are fed back to the AI model.

- The model uses this multimodal feedback to update its hypotheses and plan the next, better-targeted round of experiments. This closed-loop cycle continues until the material goal is achieved.
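
The plan, execute, measure, and update cycle can be illustrated with a heavily simplified simulation. Everything in this sketch is hypothetical: `run_experiment` replaces robotic synthesis and electrochemical testing with a made-up response function, and `plan_batch` replaces CRESt's multimodal planner with a random local search around the best recipe found so far. It shows only the control flow of a closed loop, not the platform's actual decision-making.

```python
import numpy as np

def run_experiment(recipe):
    """Hypothetical stand-in for synthesis plus testing; returns a scalar score."""
    precious, dopant = recipe
    power = 1.0 - (precious - 0.3) ** 2 - (dopant - 0.6) ** 2  # made-up response
    return power - 0.5 * precious  # reward power, penalise precious-metal content

def plan_batch(best_recipe, rng, batch_size=8, step=0.1):
    """Hypothetical planner: propose recipes near the current best one."""
    proposals = best_recipe + step * rng.standard_normal((batch_size, 2))
    return np.clip(proposals, 0.0, 1.0)  # keep fractions within [0, 1]

def closed_loop(n_rounds=20, seed=0):
    rng = np.random.default_rng(seed)
    best_recipe = np.array([0.5, 0.5])
    best_score = run_experiment(best_recipe)
    history = [best_score]
    for _ in range(n_rounds):
        batch = plan_batch(best_recipe, rng)            # plan
        scores = [run_experiment(r) for r in batch]     # execute and measure
        i = int(np.argmax(scores))
        if scores[i] > best_score:                      # feed results back
            best_recipe, best_score = batch[i], scores[i]
        history.append(best_score)
    return best_recipe, best_score, history

best_recipe, best_score, history = closed_loop()
```

Because the best result is retained between rounds, the score history never decreases. A real system would replace the random proposals with a model-driven planner such as Bayesian optimization.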

The Scientist's Toolkit: Essential Research Reagents & Solutions

For researchers aiming to implement or validate inverse design workflows, the following tools and "reagents" are fundamental.

Table 2: Key Research Reagents and Computational Tools for Inverse Design

| Category / Item | Function in Inverse Design Workflow | Specific Examples / Notes |
|---|---|---|
| Generative Models | Core engine for proposing new material candidates. | Variational Autoencoders (VAE): Priors for property-conditioned latent spaces [27] [25]. Generative Adversarial Networks (GAN): High-fidelity generation; used with inversion for targeted design [26]. Diffusion Models: High-quality, stable crystal generation (e.g., MatterGen) [13]. |
| Optimization Algorithms | Navigate the design space to find candidates meeting targets. | Bayesian Optimization (BO): Data-efficient for black-box functions [28] [27]. Latent Space Optimization: Gradient-based search in a generative model's latent space [26]. Evolutionary Algorithms: Population-based global search. |
| Surrogate Predictors | Fast, approximate property prediction for high-throughput screening. | Deep Neural Networks (DNN) [25]. Graph Neural Networks (GNNs): Capture geometric features of atomistic structures [27]. Machine Learning Force Fields: Near-DFT accuracy at lower cost [30] [13]. |
| Validation & Synthesis | Physical and computational verification of generated materials. | Robotic Platforms: For high-throughput synthesis (e.g., liquid-handling, carbothermal shock) [28]. Ab Initio Molecular Dynamics (AIMD): Computational validation of properties like density [25]. Density Functional Theory (DFT): The gold standard for calculating stability and electronic properties [13]. |
| Data & Representations | Structured language for describing materials to AI models. | Material Databases: Materials Project, Alexandria, ICSD for training data [13]. Elemental Feature Vectors: E.g., molar mass, electronegativity, radii [25]. Crystal Structure Representations: E.g., atom coordinates, periodic lattice, space group [13]. |

The field of AI-driven inverse design is rapidly maturing, moving from proof of concept to a demonstrably powerful tool for accelerating functional materials discovery. As benchmarked in this guide, methods like diffusion models (MatterGen), GAN inversion, and multimodal systems (CRESt) can generate novel, stable materials that meet complex, multi-objective property targets, with validation moving from in silico prediction to physical synthesis and measurement. The choice of methodology depends heavily on the research problem: foundational crystal generation across the periodic table, precise optimization of a known alloy system, or full automation of the discovery process itself. The continued development and integration of these tools, coupled with growing and more diverse datasets, promise to further solidify inverse design as an indispensable component of modern materials science research.

The discovery of novel materials with targeted properties is a critical driver of technological advancement in fields ranging from energy storage to carbon capture. Traditionally, this process has relied on either costly experimental trial-and-error or computational screening of known materials databases, methods fundamentally limited to exploring only a tiny fraction of potentially stable inorganic compounds [11] [13]. Generative artificial intelligence (AI) represents a paradigm shift, enabling direct generation of novel materials conditioned on desired properties, an approach known as inverse design [12] [31]. Among these emerging tools, MatterGen (Microsoft) has demonstrated state-of-the-art performance in generating stable, diverse inorganic materials across the periodic table [13] [32]. This case study evaluates MatterGen's performance against other generative and screening methods for designing high-bulk-modulus materials and battery components, providing a systematic analysis of experimental data and methodologies relevant to materials science researchers.

Performance Benchmarking: Quantitative Comparative Analysis

MatterGen's capabilities are demonstrated through comprehensive benchmarking against prior state-of-the-art generative models and traditional screening methods. The table below summarizes key performance indicators across multiple dimensions.

Table 1: Overall performance comparison between MatterGen and baseline methods

| Metric | MatterGen | CDVAE (Previous SOTA) | DiffCSP | Screening-Based Methods |
|---|---|---|---|---|
| Stable, Unique & New (SUN) Materials Rate | >2× higher than CDVAE [13] | Baseline | Not specified | Saturates due to database exhaustion [11] |
| Distance to DFT Local Minimum (RMSD) | >10× closer to local minimum [13] | Baseline | Not specified | Not applicable |
| Structure Relaxation RMSD | 95% of structures <0.076 Å [13] | Not specified | Not specified | Not applicable |
| Success Rate for 5-Element Systems | Outperforms substitution & random structure search [31] | Not specified | Not specified | Limited by known combinations |
| Novelty Rate (vs. Alex-MP-ICSD) | 61% new structures [13] | Not specified | Not specified | 0% (limited to known materials) |

Performance on High-Bulk-Modulus Materials

The capability to generate materials with specific mechanical properties, particularly high bulk modulus (resistance to compression), serves as a key benchmark. The following table compares the performance of different approaches in generating novel materials with bulk modulus exceeding specified thresholds.

Table 2: Performance comparison for high-bulk-modulus materials generation

| Method | Property Target | Generation Success Rate | Experimental Validation | Remarks |
|---|---|---|---|---|
| MatterGen | Bulk modulus >400 GPa | Continues to generate novel candidates without saturation [11] | Not specified for >400 GPa | Explores unknown material space [11] |
| MatterGen | Bulk modulus = 200 GPa | Generated 8,000+ candidates; 4 selected for manual inspection [32] | TaCr₂O₆ synthesized: measured 169 GPa (<20% error from 200 GPa target) [11] [32] | Structure matched prediction; compositional disorder observed [11] |
| Screening (Traditional) | Bulk modulus >400 GPa | Saturates due to exhausting known candidates [11] | Not applicable | Limited to known materials databases [11] |
| Con-CDVAE with Active Learning | Bulk modulus = 350 GPa | Successfully generated target structures through iterative active learning [33] | Not specified | Requires multi-stage screening and iterative refinement [33] |

Methodological Deep Dive: Experimental Protocols and Workflows

MatterGen Architecture and Training Methodology

MatterGen employs a diffusion model specifically engineered for crystalline materials, operating directly on the 3D atomic coordinates, atom types, and periodic lattice of crystal structures [11] [13]. Unlike image diffusion models that add Gaussian noise, MatterGen implements customized corruption processes for each material component: atom types are corrupted in categorical space toward a masked state, coordinates use a periodic wrapped Normal distribution approaching uniformity, and lattice parameters diffuse toward a symmetric form [13]. The model learns to reverse this process through a score network that respects crystal symmetries [13].
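
A minimal sketch of two of these corruption processes may help fix the idea. It is not MatterGen's code: the `MASK` token value, the function names, and the single-step noising (rather than a full noise schedule) are simplifying assumptions, and the lattice corruption is omitted. Adding Gaussian noise to fractional coordinates and wrapping the result back into the unit cell yields a sample from a wrapped Normal distribution; replacing atom types with a mask token at a given probability mimics diffusion toward an absorbing masked state.

```python
import numpy as np

MASK = -1  # hypothetical token id for the masked atom type

def corrupt_coords(frac_coords, sigma, rng):
    """Add Gaussian noise to fractional coordinates and wrap into the unit cell."""
    noise = sigma * rng.standard_normal(frac_coords.shape)
    return (frac_coords + noise) % 1.0  # periodic wrapping

def corrupt_atom_types(atom_types, mask_prob, rng):
    """Independently replace each atom type with MASK with probability mask_prob."""
    masked = rng.random(atom_types.shape) < mask_prob
    return np.where(masked, MASK, atom_types)

rng = np.random.default_rng(0)
coords = np.array([[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]])  # two atoms, fractional coordinates
types = np.array([73, 24])                              # atomic numbers (Ta, Cr)

noisy_coords = corrupt_coords(coords, sigma=0.2, rng=rng)
fully_masked = corrupt_atom_types(types, mask_prob=1.0, rng=rng)
untouched = corrupt_atom_types(types, mask_prob=0.0, rng=rng)
```

As `sigma` grows, the wrapped coordinates approach a uniform distribution over the cell, and as `mask_prob` approaches 1 every atom type is masked, which matches the limiting states described above.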

Training follows a two-stage process. First, the base model is pretrained on approximately 608,000 stable structures from the Materials Project and Alexandria databases (Alex-MP-20) to learn general principles of stable crystal formation [11] [13]. Second, adapter modules are added and fine-tuned on smaller labeled datasets to enable property-guided generation [13] [32]. These adapters are tunable components injected into each layer of the base model, altering its output based on property labels [13]. During generation, classifier-free guidance steers the sampling process toward user-specified constraints such as chemical composition, symmetry, or target property values [13] [31].
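
Classifier-free guidance combines a conditional and an unconditional prediction from the same network at each sampling step. The sketch below uses one common formulation, in which the guided score extrapolates from the unconditional score toward the conditional one. The function name and the example vectors are illustrative, and the exact parameterisation and guidance strength used in MatterGen are not taken from the source.

```python
import numpy as np

def guided_score(score_uncond, score_cond, guidance_scale):
    """Extrapolate from the unconditional score toward the conditional score."""
    return score_uncond + guidance_scale * (score_cond - score_uncond)

# Made-up score vectors for a single sampling step
s_uncond = np.array([0.0, 1.0])
s_cond = np.array([1.0, 1.0])

no_guidance = guided_score(s_uncond, s_cond, guidance_scale=0.0)
plain_cond = guided_score(s_uncond, s_cond, guidance_scale=1.0)
amplified = guided_score(s_uncond, s_cond, guidance_scale=2.0)
```

A scale of 0 recovers unconditional sampling and a scale of 1 recovers plain conditional sampling. Values above 1 push samples more strongly toward the property constraint, typically at some cost in diversity. Other papers write the same idea as (1 + w)·s_cond − w·s_uncond, which corresponds to a scale of 1 + w here.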

Validation and Experimental Synthesis Protocol

The validation of AI-generated materials follows a rigorous multi-stage workflow, as demonstrated with the high-bulk-modulus material TaCr₂O₆ [11] [32]:

- Conditional Generation: MatterGen generates candidate structures conditioned on a target bulk modulus of 200 GPa, producing over 8,000 potential candidates [32].

- Automated Filtering: Candidates undergo automated filtering to eliminate structures present in training databases and those predicted to be unstable [32].

- DFT Verification: Promising candidates are validated using Density Functional Theory (DFT) calculations to confirm stability and property predictions [11] [31].

- Manual Selection: Researchers manually select the most promising candidates (e.g., 4 structures) for experimental synthesis [32].

- Laboratory Synthesis: Collaborating experimental partners (e.g., Shenzhen Institutes of Advanced Technology) synthesize the selected materials [11] [34].

- Property Measurement: Experimental measurements (e.g., bulk modulus via nanoindentation or similar techniques) compare actual properties to target values [11].

For TaCr₂O₆, the synthesized material's structure aligned closely with MatterGen's prediction, though with compositional disorder between the Ta and Cr atoms. The experimentally measured bulk modulus of 169 GPa is 31 GPa below the 200 GPa target, a relative error of about 16% (under 20%), which is considered reasonably close from an experimental perspective [11] [34].
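
The size of that gap depends on which value is taken as the reference. A quick check using only the two numbers quoted above (the variable names are illustrative):

```python
target_gpa = 200.0    # design target for the bulk modulus
measured_gpa = 169.0  # experimentally measured bulk modulus

abs_error = abs(measured_gpa - target_gpa)  # 31 GPa
rel_to_target = abs_error / target_gpa      # error relative to the design target
rel_to_measured = abs_error / measured_gpa  # error relative to the measurement

print(f"absolute error: {abs_error:.0f} GPa")
print(f"relative to target: {rel_to_target:.1%}")
print(f"relative to measurement: {rel_to_measured:.1%}")
```

Relative to the target the deviation is 15.5%, and relative to the measured value it is about 18.3%. Both figures fall below 20%.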

Figure 1: MatterGen material design and validation workflow.

Alternative Workflow: Active Learning with Con-CDVAE

An alternative approach to inverse design combines conditional generative models with active learning frameworks. Research documented in "Active Learning for Conditional Inverse Design with Crystal Generation and Foundation Atomic Models" employs Con-CDVAE as the conditional generator and integrates it with foundation atomic models like MACE-MP-0 for high-throughput property screening [33].

The active learning cycle proceeds as follows:

- Initial Training: Con-CDVAE is trained on a curated dataset (e.g., 5,296 metallic structures from Materials Project with bulk modulus values) [33].

- Candidate Generation: The model generates candidate crystal structures conditioned on a target property (e.g., bulk modulus of 350 GPa) [33].

- Multi-Stage Screening: A three-stage screening process filters candidates using:

- Dataset Augmentation: Successfully validated candidates are added to the training dataset [33].

- Iterative Refinement: The generative model is retrained on the enriched dataset, improving its performance in subsequent active learning cycles [33].

This framework demonstrates that Con-CDVAE can progressively improve its accuracy in generating crystals with target properties through iterative fine-tuning, particularly valuable for exploring sparsely labeled data regions [33].
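
The train, generate, screen, augment, and retrain cycle can be illustrated with a deliberately simple toy. None of it reflects the actual Con-CDVAE or MACE-MP-0 code: candidates are represented only by a scalar bulk modulus, "training" just records the labelled value closest to the target, the "generator" samples around that value, and "screening" is a tolerance check standing in for the multi-stage filter. All function names and numbers are assumptions chosen to show how augmenting the dataset moves the generator toward a sparsely covered target.

```python
import numpy as np

def train(dataset, target):
    """Toy 'training': remember the labelled value closest to the target."""
    return dataset[np.argmin(np.abs(dataset - target))]

def generate(anchor, n, rng, spread=30.0):
    """Toy conditional generator: sample property values around the anchor."""
    return anchor + spread * rng.standard_normal(n)

def screen(candidates, target, tolerance=50.0):
    """Toy screening: keep candidates within the tolerance of the target."""
    return candidates[np.abs(candidates - target) <= tolerance]

def active_learning(dataset, target, n_cycles=5, n_candidates=200, seed=0):
    rng = np.random.default_rng(seed)
    gaps = []  # distance between the model's anchor and the target, per cycle
    for _ in range(n_cycles):
        anchor = train(dataset, target)                 # (re)train on current data
        gaps.append(abs(anchor - target))
        accepted = screen(generate(anchor, n_candidates, rng), target)
        dataset = np.concatenate([dataset, accepted])   # dataset augmentation
    return dataset, gaps

# Initial labels cover 50-250 GPa, so the 350 GPa target lies outside the data
initial = np.linspace(50.0, 250.0, 21)
final_dataset, gaps = active_learning(initial, target=350.0)
```

Because the dataset only grows, the gap between the best labelled example and the target cannot increase from one cycle to the next, which is the basic mechanism by which active learning improves coverage of sparsely labelled regions.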

Table 3: Key research reagents and computational tools for AI-driven materials discovery

| Tool/Resource | Type | Primary Function | Application in Case Study |
|---|---|---|---|
| MatterGen | Generative AI Model | Direct generation of novel crystal structures conditioned on property constraints [11] [13] | Core generator for high-bulk-modulus materials and battery components [11] [31] |
| Materials Project Database | Materials Database | Repository of computed properties for known inorganic materials [13] [32] | Source, together with Alexandria, of training data (≈608k structures in Alex-MP-20) for base model [11] [13] |
| Density Functional Theory (DFT) | Computational Method | Ab initio calculation of material properties and stability [33] [35] | Gold-standard validation of generated structures' stability and properties [11] [13] |
| Foundation Atomic Models (FAMs) | Machine Learning Potentials | Machine-learned force fields for rapid property prediction [33] | High-throughput screening in active learning frameworks (e.g., MACE-MP-0) [33] |