From Text to Synthesis: How Machine Learning is Decoding Scientific Recipes for Materials and Drug Development

Abstract

This article explores the transformative role of text mining and machine learning in extracting and utilizing synthesis recipes from scientific literature. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive overview from foundational concepts to advanced applications. It covers the evolution from manual curation to Large Language Model (LLM)-based automation, details practical methodologies for building knowledge bases, addresses common challenges like data quality and legal barriers, and evaluates the performance and validation of these AI-driven systems. The insights offered are crucial for accelerating data-driven discovery in materials science and pharmaceutical research.

The Foundation of Synthesis Intelligence: From Manual Curation to AI-Driven Data Extraction

The Urgent Need for Predictive Synthesis in Materials and Drug Discovery

Synthesis is the critical bottleneck in the discovery pipeline for novel materials and pharmaceuticals. While computational methods have matured to enable rapid design of candidate compounds, the lack of reliable, predictable synthesis pathways severely impedes their realization. This article examines how text mining of synthesis recipes and machine learning are being used to overcome this challenge. By converting unstructured experimental descriptions in the scientific literature into structured, machine-readable formats, researchers can train models to predict viable synthesis routes, accelerating the transition from digital design to physical reality.

The Synthesis Bottleneck in Materials Discovery

The materials discovery pipeline has undergone significant transformation through computational advances. High-throughput ab initio calculations can rapidly screen thousands of potential materials for target properties, leading to an abundance of computationally predicted candidates. However, synthesizability remains a major consideration, with conventional stability metrics like convex-hull analysis providing no practical guidance on actual synthesis parameters such as precursor selection, reaction temperatures, or processing times [1].

This synthesis bottleneck is particularly acute in solid-state materials chemistry, where reactions often involve complex kinetic pathways and non-equilibrium intermediates. The challenge extends beyond merely identifying thermodynamically stable compounds to determining the experimental conditions that will yield phase-pure materials with desired morphologies and properties. Without predictive synthesis capabilities, computationally discovered materials remain theoretical constructs rather than functional realities [1].

Technical Foundations: From Text to Predictive Models

Text-Mining Synthesis Recipes from Literature

The scientific literature contains vast amounts of synthesis knowledge accumulated over decades, but this information exists in unstructured formats that resist automated analysis. Recent advances in natural language processing (NLP) have enabled the extraction of structured synthesis recipes from scientific publications through multi-step pipelines [2] [1].

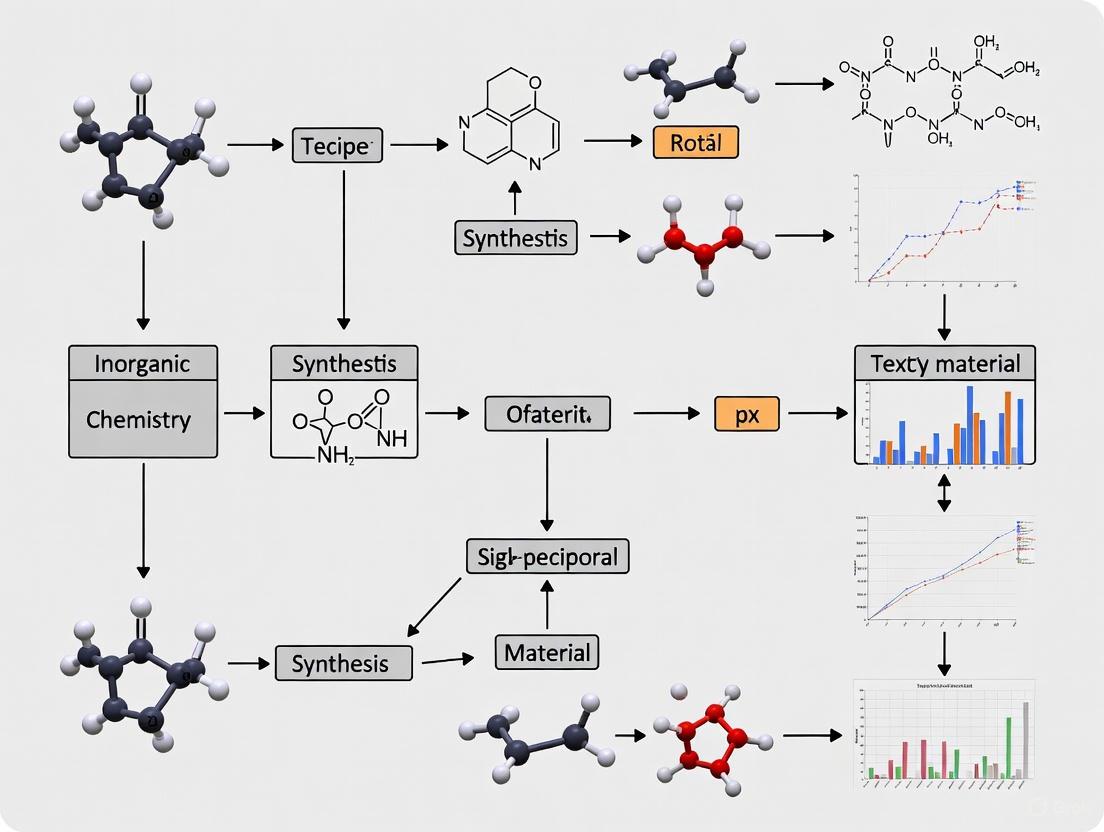

Diagram 1: Text-Mining Synthesis Pipeline illustrates the automated extraction of structured synthesis data from scientific literature.

The foundational work in this domain includes the creation of datasets such as the text-mined dataset of inorganic materials synthesis recipes, which comprises 19,488 synthesis entries retrieved from 53,538 solid-state synthesis paragraphs [2]. Similar efforts have yielded 31,782 solid-state synthesis recipes and 35,675 solution-based synthesis recipes mined from literature [1].

Table 1: Key Text-Mined Synthesis Databases

| Database Scope | Number of Recipes | Source Paragraphs | Extraction Yield | Key Applications |
|---|---|---|---|---|
| Solid-State Synthesis | 31,782 | 53,538 | 28% | Predictive synthesis models, anomaly detection |
| Solution-Based Synthesis | 35,675 | Not specified | Not specified | Solution chemistry optimization |
| General Inorganic Materials | 19,488 | 53,538 | Not specified | Synthesis route prediction, reaction balancing |

The text-mining process employs sophisticated NLP techniques including BiLSTM-CRF (Bidirectional Long Short-Term Memory with Conditional Random Field) networks for material entity recognition, which achieves an accuracy of approximately 85-90% in identifying targets, precursors, and other materials [1]. For synthesis operation extraction, latent Dirichlet allocation (LDA) clusters keywords into topics corresponding to specific materials synthesis operations, enabling the classification of sentence tokens into categories such as mixing, heating, drying, shaping, and quenching [1].
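The CRF layer matters because it scores whole tag sequences, so it can rule out invalid sequences that a token-by-token classifier would produce. A minimal sketch of CRF decoding (the Viterbi algorithm) in plain Python follows; the B-MAT/I-MAT/O tag set, the emission scores standing in for BiLSTM outputs, and the transition penalty are hypothetical values for illustration, not parameters from [1].

```python
from typing import Dict, List, Tuple

TAGS = ["O", "B-MAT", "I-MAT"]  # hypothetical BIO tag set for material mentions

def viterbi(emissions: List[Dict[str, float]],
            transitions: Dict[Tuple[str, str], float]) -> List[str]:
    """Highest-scoring tag sequence under emission + transition scores (log space)."""
    best = [dict(emissions[0])]          # best[t][tag]: top score of a path ending in tag
    back: List[Dict[str, str]] = [{}]    # back[t][tag]: previous tag on that best path
    for t in range(1, len(emissions)):
        best.append({})
        back.append({})
        for cur in TAGS:
            prev, score = max(
                ((p, best[t - 1][p] + transitions[(p, cur)]) for p in TAGS),
                key=lambda item: item[1],
            )
            best[t][cur] = score + emissions[t][cur]
            back[t][cur] = prev
    # Start from the best final tag and follow the back-pointers
    path = [max(best[-1], key=best[-1].get)]
    for t in range(len(emissions) - 1, 0, -1):
        path.append(back[t][path[-1]])
    return path[::-1]

# Hypothetical scores: O -> I-MAT is penalized (an entity cannot start with an inside tag)
transitions = {(p, c): 0.0 for p in TAGS for c in TAGS}
transitions[("O", "I-MAT")] = -10.0

tokens = ["mixed", "lithium", "carbonate"]
emissions = [  # stand-ins for per-token BiLSTM scores
    {"O": 2.0, "B-MAT": 0.0, "I-MAT": 0.0},
    {"O": 1.0, "B-MAT": 0.8, "I-MAT": 0.0},
    {"O": 0.0, "B-MAT": 0.5, "I-MAT": 1.5},
]
print(list(zip(tokens, viterbi(emissions, transitions))))
```

Read token by token, these emission scores would give O, O, I-MAT, which is invalid because an inside tag cannot follow O; with the transition penalty the decoder returns O, B-MAT, I-MAT. Start-of-sentence transitions are omitted here for brevity.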

Machine Learning Frameworks for Synthesis Prediction

Once structured synthesis data is available, various machine learning approaches can be applied to build predictive models. The ME-AI (Materials Expert-Artificial Intelligence) framework represents an advanced approach that combines expert intuition with machine learning to uncover quantitative descriptors predictive of material properties [3].

This framework employs a Dirichlet-based Gaussian-process model with a chemistry-aware kernel trained on curated, measurement-based data. In one implementation, ME-AI analyzed 879 square-net compounds described using 12 experimental features, successfully reproducing established expert rules for spotting topological semimetals while revealing hypervalency as a decisive chemical lever in these systems [3].

Experimental Protocols and Methodologies

ME-AI Workflow for Predictive Materials Discovery

The ME-AI framework implements a systematic workflow for leveraging expert knowledge in machine learning models:

Expert Data Curation: A materials expert (ME) curates a refined dataset with experimentally accessible primary features chosen based on intuition from literature, ab initio calculations, or chemical logic [3].

Primary Feature Selection: The model utilizes atomistic and structural features including electron affinity, electronegativity, valence electron count, and structural parameters like characteristic crystallographic distances [3].

Expert Labeling: Materials are labeled through multiple approaches: direct band structure comparison when available (56% of cases), chemical logic for alloys (38% of cases), and stoichiometric relationship analysis for novel compounds (6% of cases) [3].

Model Training: A Gaussian process model with specialized kernels learns the relationship between primary features and target properties, discovering emergent descriptors that articulate expert insight [3].

Validation and Transfer Testing: The model is validated on held-out data and tested for transferability to related material systems beyond the training domain [3].
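To make the model-training step concrete, the sketch below fits a Gaussian process with a plain RBF kernel in NumPy and returns the posterior mean and variance at new points. It is a generic stand-in under stated assumptions: it treats hypothetical ±1 labels as regression targets on two made-up features, whereas ME-AI uses a Dirichlet-based Gaussian-process model with a chemistry-aware kernel [3] that this code does not reproduce.

```python
import numpy as np

def rbf_kernel(a: np.ndarray, b: np.ndarray, length_scale: float = 1.0) -> np.ndarray:
    """Squared-exponential kernel k(x, x') = exp(-||x - x'||^2 / (2 l^2))."""
    sq_dist = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)

def gp_posterior(x_train, y_train, x_test, length_scale=1.0, noise=1e-2):
    """Posterior mean and variance of a zero-mean GP at the test points."""
    k_train = rbf_kernel(x_train, x_train, length_scale) + noise * np.eye(len(x_train))
    k_cross = rbf_kernel(x_test, x_train, length_scale)
    chol = np.linalg.cholesky(k_train)  # K + noise * I = L L^T
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    mean = k_cross @ alpha
    v = np.linalg.solve(chol, k_cross.T)
    var = 1.0 - np.sum(v**2, axis=0)  # prior variance k(x, x) = 1 for this kernel
    return mean, var

# Hypothetical, pre-scaled features (e.g. a distance ratio and an electronegativity
# difference) with labels +1 / -1 treated as regression targets
x_train = np.array([[0.0, 0.0], [0.2, 0.1], [2.0, 2.0], [2.1, 1.9]])
y_train = np.array([1.0, 1.0, -1.0, -1.0])
x_test = np.array([[0.1, 0.05], [2.05, 2.0]])

mean, var = gp_posterior(x_train, y_train, x_test)
print(np.sign(mean), var)
```

The sign of the posterior mean serves as a crude class call and the variance as its uncertainty, which grows for test points far from the training data.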

Table 2: Primary Features in ME-AI Framework

| Feature Category | Specific Features | Role in Prediction | Measurement Basis |
|---|---|---|---|
| Atomistic Features | Electron affinity, Electronegativity, Valence electron count | Capture chemical bonding tendencies | Tabulated values for elements |
| Structural Features | Square-net distance (dsq), Out-of-plane nearest neighbor distance (dnn) | Quantify structural motifs | Crystallographic measurements |
| Composite Features | Maximum/minimum values across elements, Square-net element features | Incorporate multi-element effects | Calculated from composition |
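Composite features of the kind listed in the last row are simple aggregates over a compound's elements. A minimal sketch for electronegativity, assuming standard Pauling values for a few elements and hypothetical feature names (`en_max`, `en_min`, `en_mean`); ZrSiS is used only as a familiar square-net example, not as a claim about the ME-AI feature set.

```python
from typing import Dict

# Pauling electronegativities (standard tabulated values) for a few elements
PAULING_EN = {"Zr": 1.33, "Si": 1.90, "S": 2.58, "Li": 0.98, "Fe": 1.83, "P": 2.19, "O": 3.44}

def composite_en_features(composition: Dict[str, float]) -> Dict[str, float]:
    """Max, min and stoichiometry-weighted mean electronegativity (hypothetical feature names)."""
    values = [PAULING_EN[el] for el in composition]
    total = sum(composition.values())
    weighted_mean = sum(PAULING_EN[el] * n for el, n in composition.items()) / total
    return {"en_max": max(values), "en_min": min(values), "en_mean": round(weighted_mean, 3)}

print(composite_en_features({"Zr": 1, "Si": 1, "S": 1}))
# {'en_max': 2.58, 'en_min': 1.33, 'en_mean': 1.937}
```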

Synthesis Route Prediction Methodology

For predicting synthesis routes of novel materials, the following methodological approach has been developed:

Similarity Analysis: Identify known materials with similar chemical composition or crystal structure to the target material [2] [1].

Precursor Selection: Apply machine learning models to recommend precursor compounds based on decomposition energies, reactivity, and historical usage patterns [1].

Condition Optimization: Predict optimal synthesis parameters (temperature, time, atmosphere) through regression models trained on text-mined data [2].

Reaction Balancing: Automatically balance chemical equations including volatile byproducts using computational stoichiometry algorithms [2].

Anomaly Detection: Identify unusual synthesis recipes that defy conventional wisdom, which may reveal novel synthetic mechanisms [1].
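Anomaly detection can start from something as simple as flagging recipes whose reported heating temperature lies far from the typical value for comparable syntheses. A minimal sketch using a median-absolute-deviation (robust z-score) rule; the recipe IDs, temperatures, and the 3.5 threshold are hypothetical, and [1] does not prescribe this particular test.

```python
from statistics import median
from typing import Dict, List

def flag_anomalous_temperatures(temps: Dict[str, float], threshold: float = 3.5) -> List[str]:
    """Return recipe IDs whose temperature has a robust (MAD-based) z-score above threshold."""
    values = list(temps.values())
    center = median(values)
    mad = median([abs(v - center) for v in values])
    if mad == 0:
        return []  # no spread among recipes, so nothing stands out
    # 0.6745 rescales the MAD so scores are comparable to ordinary z-scores
    return [rid for rid, v in temps.items() if abs(0.6745 * (v - center) / mad) > threshold]

# Hypothetical heating temperatures (°C) for recipes of one target family
temps = {"r1": 900, "r2": 950, "r3": 1000, "r4": 925, "r5": 975, "r6": 400}
print(flag_anomalous_temperatures(temps))  # ['r6']
```

A flagged recipe could be an extraction error or, as the anomalous-recipe analysis in [1] suggests, a genuinely unusual route worth a closer look.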

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Predictive Synthesis Research

| Tool/Resource | Function | Application Context |
|---|---|---|
| MatNexus Software Suite | Automated collection, processing, and analysis of scientific text | Extracting synthesis insights from materials science literature [4] |
| BiLSTM-CRF Networks | Material entity recognition from synthesis paragraphs | Identifying targets, precursors, and other materials in text [1] |
| Latent Dirichlet Allocation (LDA) | Topic modeling for synthesis operations | Clustering keywords into categories like mixing, heating, drying [1] |
| Text-Mined Synthesis Databases | Structured recipe collections for ML training | Predictive model development and synthesis route planning [2] |
| Gaussian Process Models | Descriptor discovery with uncertainty quantification | Identifying key features governing material properties [3] |
| Inorganic Crystal Structure Database (ICSD) | Crystallographic reference data | Structural feature calculation and material classification [3] |

Critical Challenges and Limitations

Despite promising advances, significant challenges remain in the development of robust predictive synthesis capabilities:

Data Quality and Coverage Issues

Text-mined synthesis datasets face fundamental limitations characterized by the "4 Vs" of data science:

Volume: While thousands of recipes have been extracted, this represents only a fraction of known synthesis knowledge, with extraction yields around 28% for solid-state synthesis paragraphs [1].

Variety: The datasets exhibit significant bias toward commonly studied material systems, with limited coverage of novel or unconventional compositions [1].

Veracity: Extraction errors propagate through the pipeline, with material identification accuracy around 85-90% and lower accuracy for parameter extraction [1].

Velocity: The static nature of historical datasets limits their utility for predicting synthesis of truly novel materials [1].

Integration of Physical Knowledge

Purely data-driven approaches often lack the physical interpretability needed for scientific acceptance. Hybrid approaches that incorporate domain knowledge and theoretical principles show greater promise. The ME-AI framework demonstrates how expert intuition can be formalized into machine-learning models, creating interpretable descriptors rather than black-box predictors [3].

Diagram 2: Predictive Synthesis Cycle shows the integration of physical knowledge with data-driven approaches in an iterative refinement loop.

Future Directions and Emerging Solutions

Autonomous Laboratories and Closed-Loop Discovery

The integration of predictive synthesis with autonomous experimentation represents a promising direction for addressing current limitations. AI-supported synthesis planning combined with robotic experimentation platforms enables real-time feedback and adaptive optimization of synthesis parameters [5]. These systems can explore synthetic parameter spaces more efficiently than human researchers, while simultaneously generating high-quality, standardized data for improving predictive models.

Explainable AI for Enhanced Interpretability

Future predictive synthesis platforms will increasingly incorporate explainable AI techniques to improve model transparency and physical interpretability [5]. By articulating the reasoning behind synthesis recommendations, these systems can build trust with experimental researchers and provide genuine scientific insights rather than merely empirical predictions.

Generative Models for Novel Synthesis Design

Beyond predicting synthesis routes for known materials, generative AI models are being developed to propose entirely new synthesis pathways and conditions for target materials [5]. These approaches leverage patterns learned from text-mined synthesis databases while incorporating physicochemical constraints to ensure feasibility.

Predictive synthesis stands as the critical gateway to realizing the promise of computational materials and drug discovery. While significant challenges remain in data quality, model generalizability, and experimental validation, the integration of text-mined synthesis knowledge with machine learning frameworks offers a viable path forward. The ME-AI approach demonstrates how expert intuition can be formalized into quantitative descriptors, while large-scale text-mining efforts provide the foundational data needed for predictive modeling. As these technologies mature through improved NLP capabilities, enhanced data infrastructure, and autonomous validation platforms, predictive synthesis will transform from a limiting bottleneck into a powerful accelerator of molecular and materials innovation.

In the context of accelerated materials discovery, the inability to predict how to synthesize a computationally designed material is an urgent bottleneck [1]. While high-throughput computations can rapidly identify promising new compounds, these predictions offer no guidance on the practical steps needed to create them in the laboratory. A materials synthesis recipe serves as this crucial bridge, containing the structured knowledge required to turn a design into a real material [2] [6]. Within the broader thesis of using text mining to build machine-learning models for synthesis, a precise definition of its core components is foundational. This technical guide defines the synthesis recipe through its three fundamental pillars (targets, precursors, and operations) and details the methodologies for converting unstructured text from scientific literature into a structured, machine-actionable format [1] [2].

Core Components of a Synthesis Recipe

A synthesis recipe is a structured representation of the experimental procedure required to create a target material. Its three essential components are defined below.

Target Material

The target material is the desired end-product of the synthesis procedure [1] [2]. In a synthesis paragraph, it is the compound whose formation the experimental protocol is designed to achieve. Accurately identifying the target is complicated by the varied representations of inorganic materials in text, which can include solid-solutions (e.g., AxB1−xC2−δ), common abbreviations (e.g., PZT for Pb(Zr0.5Ti0.5)O3), and notations for dopants [1].
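A minimal material-parser sketch illustrates two of these cases: expanding a known abbreviation and turning a formula with parentheses and fractional amounts into element counts. The abbreviation table holds only the PZT example from the text and is otherwise hypothetical; solid-solution notations such as AxB1−xC2−δ would need their variables substituted first, which this sketch does not attempt.

```python
import re
from collections import defaultdict
from typing import Dict

# Hypothetical abbreviation table; only the PZT entry comes from the text above
ABBREVIATIONS = {"PZT": "Pb(Zr0.5Ti0.5)O3"}

# An element symbol or a parenthesis, optionally followed by an integer or decimal amount
TOKEN = re.compile(r"([A-Z][a-z]?|\(|\))(\d+(?:\.\d+)?)?")

def parse_formula(text: str) -> Dict[str, float]:
    """Map a formula (or known abbreviation) to element amounts; handles nested parentheses."""
    formula = ABBREVIATIONS.get(text, text)
    stack = [defaultdict(float)]  # one counter per open parenthesis level
    for symbol, amount in TOKEN.findall(formula):
        n = float(amount) if amount else 1.0
        if symbol == "(":
            stack.append(defaultdict(float))
        elif symbol == ")":
            group = stack.pop()
            for element, count in group.items():
                stack[-1][element] += count * n  # apply the group multiplier
        else:
            stack[-1][symbol] += n
    return dict(stack[0])

print(parse_formula("PZT"))        # {'Pb': 1.0, 'Zr': 0.5, 'Ti': 0.5, 'O': 3.0}
print(parse_formula("Ca3(PO4)2"))  # {'Ca': 3.0, 'P': 2.0, 'O': 8.0}
```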

Precursors

Precursors are the starting compounds that participate in the chemical reaction to form the target material [1] [2]. The selection of precursors is a critical and non-trivial step in synthesis design. A single element in the target can often be introduced by multiple different precursor compounds (e.g., carbonates, nitrates, or oxides), and the choice among them is not random. Statistical analysis of text-mined data reveals strong dependencies in the selection of precursor pairs for different elements, influenced by factors such as co-solubility or common application in specific processing routes [6].
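The simplest form of that statistical analysis is counting how often two precursors appear together in one recipe. A minimal sketch over a hypothetical list of precursor sets (not data from [6]); a real analysis would also normalize by how common each precursor is on its own.

```python
from collections import Counter
from itertools import combinations
from typing import Iterable, List

def precursor_pair_counts(recipes: Iterable[List[str]]) -> Counter:
    """Count unordered precursor pairs that co-occur within the same recipe."""
    counts: Counter = Counter()
    for precursors in recipes:
        # sorted(set(...)) makes ("A", "B") and ("B", "A") the same key
        for pair in combinations(sorted(set(precursors)), 2):
            counts[pair] += 1
    return counts

recipes = [  # hypothetical precursor sets
    ["Li2CO3", "Fe2O3", "NH4H2PO4"],
    ["Li2CO3", "Co3O4"],
    ["LiNO3", "Fe(NO3)3"],
    ["Li2CO3", "Fe2O3", "NH4H2PO4"],
]
counts = precursor_pair_counts(recipes)
print(counts.most_common(3))
```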

Synthesis Operations

Synthesis operations are the actions performed on the precursors to facilitate the formation of the target material. In solid-state synthesis, the main operations, as classified by text-mining pipelines, are mixing, heating, drying, shaping, and quenching [1] [2]. Each operation is associated with specific parameters—such as time, temperature, and atmosphere for a heating step—that are essential for reproducing the synthesis [2]. A single operation can be described by numerous synonyms in the literature (e.g., 'calcined', 'fired', 'heated'), which must be clustered into a standardized set of actions [1].
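For illustration, the clustering of verbs into standardized operations can be approximated with a hand-written synonym table. This is a deliberate simplification: the pipelines cited here learn the categories (topic modeling in [1], a token classifier in [2]) rather than using a fixed lookup, and the table below is hypothetical.

```python
from typing import List, Tuple

# Hypothetical synonym table: verb prefixes mapped to standardized operation labels
OPERATION_STEMS = {
    "MIXING": ("mix", "grind", "ground", "mill"),
    "HEATING": ("calcin", "fire", "heat", "sinter", "anneal"),
    "DRYING": ("dry", "dri"),
    "SHAPING": ("press",),
    "QUENCHING": ("quench",),
}

def label_operations(sentence: str) -> List[Tuple[str, str]]:
    """Return (token, operation) pairs for tokens that start with a known stem."""
    labeled = []
    for token in sentence.lower().replace(",", " ").replace(".", " ").split():
        for operation, stems in OPERATION_STEMS.items():
            if token.startswith(stems):  # str.startswith accepts a tuple of prefixes
                labeled.append((token, operation))
                break
    return labeled

print(label_operations("The powders were ground, pressed and calcined at 900 °C."))
# [('ground', 'MIXING'), ('pressed', 'SHAPING'), ('calcined', 'HEATING')]
```

Prefix matching is brittle (the "fire" stem would also match "fireproof"), which is one reason the cited pipelines learn this mapping from data.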

The Synthesis Reaction

The balanced chemical reaction is a derived representation that connects the precursors and the target, often requiring the inclusion of volatile "open" compounds like O2, CO2, or N2 to conserve mass and elements [2]. This balanced equation enables the computation of reaction energetics using data from resources like the Materials Project, providing a thermodynamic perspective on the synthesis [1].
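Balancing can be framed as finding a positive vector in the null space of an element-by-species matrix. A minimal sketch with exact fractions, assuming the open compounds (CO2, NH3, H2O, O2) are supplied by hand rather than inferred as in [2], and assuming the species define a unique reaction up to scale (a one-dimensional null space). The LiFePO4 example reuses the precursors Li2CO3, Fe2O3, and NH4H2PO4.

```python
from fractions import Fraction
from math import gcd, lcm
from typing import Dict, List

def balance(species: List[Dict[str, int]], n_reactants: int) -> List[int]:
    """Smallest positive integer coefficients for reactants -> products.

    Assumes the element-by-species matrix has exactly one free variable.
    """
    elements = sorted({el for comp in species for el in comp})
    # Rows = elements, columns = species; product columns are negated
    m = [[Fraction(comp.get(el, 0) * (1 if j < n_reactants else -1))
          for j, comp in enumerate(species)] for el in elements]
    n_cols, pivots, row = len(species), [], 0
    for col in range(n_cols):  # Gauss-Jordan elimination to reduced row echelon form
        pivot = next((r for r in range(row, len(m)) if m[r][col] != 0), None)
        if pivot is None:
            continue
        m[row], m[pivot] = m[pivot], m[row]
        lead = m[row][col]
        m[row] = [v / lead for v in m[row]]
        for r in range(len(m)):
            if r != row and m[r][col] != 0:
                factor = m[r][col]
                m[r] = [a - factor * b for a, b in zip(m[r], m[row])]
        pivots.append(col)
        row += 1
    free = [c for c in range(n_cols) if c not in pivots]
    if len(free) != 1:
        raise ValueError("species do not define a unique reaction")
    x = [Fraction(0)] * n_cols
    x[free[0]] = Fraction(1)
    for r, col in enumerate(pivots):
        x[col] = -m[r][free[0]]  # pivot variable = -(coefficient of the free variable)
    scale = lcm(*(v.denominator for v in x))
    coeffs = [int(v * scale) for v in x]
    if all(c < 0 for c in coeffs):
        coeffs = [-c for c in coeffs]
    if any(c <= 0 for c in coeffs):
        raise ValueError("no all-positive solution with these species")
    g = gcd(*coeffs)
    return [c // g for c in coeffs]

# Compositions as element -> count; the open compounds are chosen by hand (assumption)
species = [
    {"Li": 2, "C": 1, "O": 3},           # Li2CO3
    {"Fe": 2, "O": 3},                   # Fe2O3
    {"N": 1, "H": 6, "P": 1, "O": 4},    # NH4H2PO4
    {"Li": 1, "Fe": 1, "P": 1, "O": 4},  # LiFePO4 (target)
    {"C": 1, "O": 2},                    # CO2
    {"N": 1, "H": 3},                    # NH3
    {"H": 2, "O": 1},                    # H2O
    {"O": 2},                            # O2
]
print(balance(species, n_reactants=3))  # [2, 2, 4, 4, 2, 4, 6, 1]
```

The result reads 2 Li2CO3 + 2 Fe2O3 + 4 NH4H2PO4 → 4 LiFePO4 + 2 CO2 + 4 NH3 + 6 H2O + O2; the released O2 accounts for iron going from Fe(III) in Fe2O3 to Fe(II) in LiFePO4.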

Table 1: Core Components of a Synthesis Recipe

| Component | Definition | Examples | Extraction Challenge |
|---|---|---|---|
| Target Material | The desired final compound [1] [2] | LiFePO4, ZrO2, a metastable polymorph | Diverse text representations (formulas, abbreviations, solid-solutions) [1] |
| Precursors | The starting ingredients that react to form the target [1] [2] | Li2CO3, Fe2O3, NH4H2PO4 (for LiFePO4) | Identifying material role (precursor vs. target vs. grinding medium); Precursor co-selection dependencies [1] [6] |
| Operations | The physical actions and steps performed [1] [2] | Mixing (grinding), Heating (calcination), Quenching | Synonym clustering ('calcined', 'fired', 'heated'); Parameter association (time, atmosphere) [1] |

Text-Mining Synthesis Recipes from Literature

The process of converting unstructured text from scientific papers into codified recipes involves a multi-step natural language processing (NLP) pipeline.

Data Procurement and Preprocessing

The first step involves procuring full-text journal articles from major scientific publishers (e.g., Springer, Wiley, Elsevier, RSC) with appropriate permissions [2]. To simplify parsing, this process is typically restricted to papers published after the year 2000 that are available in HTML or XML format, as opposed to scanned PDFs [1] [2]. A web-scraping engine is used to download the content, which is then stored in a document-oriented database [2].
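Working from HTML rather than scanned PDFs means paragraph boundaries come directly from the markup. A minimal sketch using Python's standard-library `html.parser` that keeps the text of `<p>` elements from an article page; the sample markup is hypothetical, and real publisher pages need publisher-specific rules for locating the experimental section.

```python
from html.parser import HTMLParser
from typing import List

class ParagraphExtractor(HTMLParser):
    """Collect whitespace-normalized text of every <p> element."""

    def __init__(self) -> None:
        super().__init__()
        self.paragraphs: List[str] = []
        self._in_paragraph = False
        self._buffer: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "p":
            self._in_paragraph = True
            self._buffer = []

    def handle_endtag(self, tag):
        if tag == "p" and self._in_paragraph:
            text = " ".join("".join(self._buffer).split())
            if text:
                self.paragraphs.append(text)
            self._in_paragraph = False

    def handle_data(self, data):
        if self._in_paragraph:
            self._buffer.append(data)  # includes text inside <sub>, <i>, etc.

# Hypothetical article fragment
html = ("<h2>Experimental</h2>"
        "<p>Li<sub>2</sub>CO<sub>3</sub> and Fe<sub>2</sub>O<sub>3</sub> were mixed.</p>"
        "<p>The mixture was calcined at 700 °C.</p>")
extractor = ParagraphExtractor()
extractor.feed(html)
extractor.close()
print(extractor.paragraphs)
```

Flattening the `<sub>` tags is why formulas arrive as plain strings such as Li2CO3, which the material parser later has to interpret.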

Paragraph Classification

Given that synthesis descriptions can be located in different sections of a paper depending on the publisher, a key step is to identify the paragraphs that describe a synthesis procedure. A two-step classification approach is used:

- Unsupervised Topic Modeling: Latent Dirichlet allocation (LDA) is used to cluster common keywords from experimental paragraphs into "topics," generating a probabilistic topic assignment for each paragraph [2].

- Supervised Classification: A random forest classifier is then trained on a manually annotated set of paragraphs to classify the synthesis methodology as solid-state, hydrothermal, sol-gel, or "none of the above" [2].
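The supervised stage can be illustrated with any text classifier trained on labeled paragraphs. The sketch below swaps in a multinomial naive Bayes model with add-one smoothing, written in plain Python so it runs without dependencies; it is not the LDA-plus-random-forest setup of [2], and the training snippets are hypothetical.

```python
import math
import re
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9-]+", text.lower())

def train(examples: List[Tuple[str, str]]):
    """Count documents per label and words per label for multinomial naive Bayes."""
    doc_counts = Counter(label for _, label in examples)
    word_counts: Dict[str, Counter] = defaultdict(Counter)
    for text, label in examples:
        word_counts[label].update(tokenize(text))
    vocab = {w for counts in word_counts.values() for w in counts}
    return doc_counts, word_counts, vocab

def predict(model, text: str) -> str:
    doc_counts, word_counts, vocab = model
    n_docs = sum(doc_counts.values())
    scores = {}
    for label, count in doc_counts.items():
        total = sum(word_counts[label].values())
        score = math.log(count / n_docs)  # log prior
        for w in tokenize(text):
            # Add-one (Laplace) smoothing keeps unseen words from zeroing out a class
            score += math.log((word_counts[label][w] + 1) / (total + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)

# Hypothetical labeled paragraphs (real training sets are far larger)
examples = [
    ("The precursors were ground and calcined at 900 C in air", "solid-state"),
    ("Powders were mixed, pelletized and sintered at 1200 C", "solid-state"),
    ("The solution was sealed in a Teflon-lined autoclave at 180 C", "hydrothermal"),
    ("Nitrates were dissolved in water and heated in an autoclave", "hydrothermal"),
    ("Band structures were computed with density functional theory", "none of the above"),
]
model = train(examples)
print(predict(model, "The mixture was ground and sintered in air"))
print(predict(model, "Precursors were dissolved in water and sealed in an autoclave"))
```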

Information Extraction

This is the most technically complex phase, where specific entities are extracted from the classified synthesis paragraphs.

Material Entity Recognition and Role Labeling: A Bi-directional Long Short-Term Memory neural network with a Conditional Random Field layer (BiLSTM-CRF) is employed [1] [2]. This model first identifies all material entities in a paragraph. Then each material is replaced with a `<MAT>` tag, and the context is analyzed by a second neural network to classify its role as TARGET, PRECURSOR, or OTHER (e.g., reaction media, atmosphere) [1]. The model is trained on hundreds of manually annotated paragraphs [2].

Synthesis Operation Extraction: A combination of a neural network and sentence dependency tree analysis identifies key synthesis steps [2]. The neural network classifies sentence tokens into operation categories (MIXING, HEATING, etc.) [1] [2]. The dependency tree is then used to refine the classification, for instance, by differentiating between "solution mixing" and "liquid grinding" [2]. Parameters for each operation (e.g., temperature, time) are extracted using regular expressions and keyword searches [2].
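Regular-expression parameter extraction can be sketched as follows. The patterns cover only a few common phrasings (°C or K temperatures, hour or minute durations, a short list of atmospheres) and are assumptions for illustration, not the rules used in [2].

```python
import re
from typing import Dict, List

# Hypothetical patterns covering a few common phrasings only
TEMPERATURE = re.compile(r"(\d+(?:\.\d+)?)\s*(°C|K)\b")
DURATION = re.compile(r"(\d+(?:\.\d+)?)\s*(h|hours?|min|minutes?)\b")
ATMOSPHERE = re.compile(r"\b(air|argon|nitrogen|oxygen|vacuum|Ar|N2|O2)\b", re.IGNORECASE)

def extract_parameters(sentence: str) -> Dict[str, List[str]]:
    """Pull temperature, duration and atmosphere mentions out of one sentence."""
    return {
        "temperature": [f"{value} {unit}" for value, unit in TEMPERATURE.findall(sentence)],
        "time": [f"{value} {unit}" for value, unit in DURATION.findall(sentence)],
        "atmosphere": [gas.lower() for gas in ATMOSPHERE.findall(sentence)],
    }

print(extract_parameters("The pellets were calcined at 900 °C for 12 h in air."))
# {'temperature': ['900 °C'], 'time': ['12 h'], 'atmosphere': ['air']}
```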

Recipe Compilation and Reaction Balancing

The final step assembles the extracted information into a unified "codified recipe" in a structured data format like JSON [1] [2]. A material parser converts the string for each material into a standardized chemical formula. Finally, a system of linear equations is solved to balance the chemical reaction between the precursors and the target, inferring and including any necessary volatile "open" compounds to satisfy element conservation [2].
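A codified recipe can be held in a few plain data classes and serialized to JSON. The field names and nesting below are a hypothetical schema, not the published format of the datasets in [1] or [2]; the DOI and the heating conditions are placeholders, while the precursors and balanced reaction follow the LiFePO4 example used in this section.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List

@dataclass
class Operation:
    op_type: str                 # standardized label, e.g. "HEATING"
    token: str                   # verb as it appears in the paper
    conditions: Dict[str, str] = field(default_factory=dict)

@dataclass
class CodifiedRecipe:            # hypothetical schema
    doi: str
    target: str
    precursors: List[str]
    operations: List[Operation]
    reaction: str

recipe = CodifiedRecipe(
    doi="10.xxxx/placeholder",  # hypothetical identifier
    target="LiFePO4",
    precursors=["Li2CO3", "Fe2O3", "NH4H2PO4"],
    operations=[
        Operation("MIXING", "ground"),
        Operation("HEATING", "calcined", {"temperature": "700 °C", "time": "10 h"}),  # placeholder values
    ],
    reaction="2 Li2CO3 + 2 Fe2O3 + 4 NH4H2PO4 -> 4 LiFePO4 + 2 CO2 + 4 NH3 + 6 H2O + O2",
)
# asdict() converts the nested Operation objects into plain dicts
print(json.dumps(asdict(recipe), indent=2, ensure_ascii=False))
```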

Diagram: Text-Mining Synthesis Recipes Pipeline

Quantitative Scope of Text-Mined Datasets

Large-scale text-mining efforts have produced substantial datasets that capture decades of heuristic synthesis knowledge. The table below summarizes the quantitative findings from two key studies that mined solid-state and solution-based synthesis recipes.

Table 2: Scale of Text-Mined Synthesis Data from Literature

| Metric | Solid-State Synthesis [2] | Solution-Based Synthesis [1] | Overall Context [1] |
|---|---|---|---|
| Total Papers Processed | Not Specified | Not Specified | 4,204,170 |
| Paragraphs Analyzed | 53,538 (classified as solid-state) [2] | Not Specified | 6,218,136 (in experimental sections) |
| Total Synthesis Paragraphs | — | — | 188,198 (inorganic) |
| Final Recipes with Balanced Reactions | 19,488 [2] | 35,675 [1] | ~31,782 (solid-state) & 35,675 (solution-based) |
| Overall Extraction Yield | — | — | 28% (of solid-state paragraphs) [1] |

The "extraction yield" of 28% for solid-state synthesis paragraphs highlights the significant technical challenges in the process, with failures arising from issues in any step of the pipeline, such as inability to parse a material or to balance a reaction [1].
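The 28% figure follows from the solid-state counts this article reports for the same pipeline from [1], 15,144 balanced reactions out of 53,538 classified paragraphs:

```python
balanced_reactions = 15_144      # solid-state recipes with balanced reactions [1]
solid_state_paragraphs = 53_538  # paragraphs classified as solid-state synthesis [1]

yield_fraction = balanced_reactions / solid_state_paragraphs
print(f"extraction yield: {yield_fraction:.1%}")  # extraction yield: 28.3%
print(f"paragraphs without a balanced reaction: {solid_state_paragraphs - balanced_reactions:,}")
# paragraphs without a balanced reaction: 38,394
```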

Machine Learning Applications and Experimental Validation

Structured recipe data enables various machine learning approaches to predictive synthesis. One application is precursor recommendation, where the goal is to suggest likely precursor sets for a novel target material.

Precursor Recommendation Methodology

A proven strategy involves a three-step pipeline that mimics a chemist's literature-based approach [6]:

- Materials Encoding: An encoding neural network learns a vector representation (embedding) for a target material based on its composition. This is achieved via a self-supervised learning task called Masked Precursor Completion (MPC), where the model learns to predict masked parts of a precursor set, thereby capturing the correlations between targets and their precursors, as well as dependencies among different precursors [6].

- Similarity Query: For a new target material, its encoding is used to query a knowledge base of past successful syntheses to find the most similar known material [6].

- Recipe Completion: The precursor set from the most similar reference material is adopted. If this set does not contain all the necessary elements for the new target, a conditional prediction model adds the missing precursors [6].
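The query-and-complete logic can be sketched independently of the encoder. Below, the target embeddings are small hand-written vectors standing in for the learned MPC encodings, and a fallback table that supplies one precursor per missing element replaces the conditional prediction model of [6]; the knowledge base, vectors, and target are hypothetical.

```python
import math
from typing import Dict, List, Set

# Element content of a few precursors used in the example
PRECURSOR_ELEMENTS: Dict[str, Set[str]] = {
    "Li2CO3": {"Li", "C", "O"},
    "Co3O4": {"Co", "O"},
    "Fe2O3": {"Fe", "O"},
    "NH4H2PO4": {"N", "H", "P", "O"},
    "MnO2": {"Mn", "O"},
}

def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def recommend(target_vec: List[float], target_elements: Set[str],
              knowledge_base: List[dict], fallback: Dict[str, str]) -> List[str]:
    # 1. Similarity query: reuse the precursor set of the nearest known target
    reference = max(knowledge_base, key=lambda entry: cosine(target_vec, entry["embedding"]))
    precursors = list(reference["precursors"])
    # 2. Recipe completion: add a precursor for each target element still missing
    covered = set().union(*(PRECURSOR_ELEMENTS[p] for p in precursors))
    for element in sorted(target_elements - covered):
        precursors.append(fallback[element])  # stand-in for the conditional model
    return precursors

knowledge_base = [  # hypothetical embeddings
    {"target": "LiCoO2", "embedding": [0.9, 0.1, 0.0], "precursors": ["Li2CO3", "Co3O4"]},
    {"target": "LiFePO4", "embedding": [0.2, 0.9, 0.3], "precursors": ["Li2CO3", "Fe2O3", "NH4H2PO4"]},
]
fallback = {"Mn": "MnO2", "Fe": "Fe2O3"}
# Hypothetical new target, e.g. LiCo0.5Mn0.5O2
print(recommend([0.85, 0.15, 0.1], {"Li", "Co", "Mn", "O"}, knowledge_base, fallback))
# ['Li2CO3', 'Co3O4', 'MnO2']
```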

Diagram: ML Pipeline for Precursor Recommendation

Experimental Protocol and Performance

In a large-scale historical validation, this pipeline was trained on a knowledge base of 29,900 text-mined solid-state synthesis reactions [6]. When tasked with recommending five precursor sets for each of 2,654 unseen test targets, the strategy achieved a success rate of at least 82% [6]. This indicates that data-driven methods can capture and repurpose human synthesis heuristics.

Beyond recommendation systems, text-mined data has also proven valuable for hypothesis generation. The analysis of anomalous recipes—those that defy conventional synthesis intuition—has led to new mechanistic insights into solid-state reactions, which were subsequently validated through targeted experiments [1].

The integration of these models with automated laboratories represents the cutting edge of the field. Systems like AutoBot combine synthesis robotics, characterization tools, and machine learning in a closed loop [7]. In one demonstration, AutoBot optimized the fabrication of metal halide perovskite films by varying four synthesis parameters (timing, temperature, duration, humidity). Its AI algorithms identified the most informative experiments to run, needing to sample only 1% of over 5,000 possible parameter combinations to find the optimal "sweet spot," a process that compressed a year of manual work into a few weeks [7].

Table 3: Key Research "Reagent Solutions" in AI-Driven Synthesis

| Item / Tool | Function / Role | Application Example |
|---|---|---|
| Text-Mined Recipe Database | Structured knowledge base of historical synthesis procedures; training data for ML models [1] [2] [6] | Precursor recommendation; analysis of synthesis trends and anomalies [1] [6] |
| BiLSTM-CRF Model | Natural language processing model for identifying material entities and their roles in text [1] [2] | Core component of the information extraction pipeline for building recipe databases [1] |
| PrecursorSelector Encoding | Self-supervised neural network for creating material representations based on synthesis context [6] | Enables similarity search and precursor recommendation for novel target materials [6] |
| AutoBot / Autonomous Lab | Integrated platform combining robotics, characterization, and ML for closed-loop experimentation [7] | High-throughput optimization of synthesis parameters (e.g., for metal halide perovskites) [7] |

The ability to predict and execute the synthesis of novel materials is a critical final step in computationally accelerated materials discovery [1]. For decades, the scientific knowledge required for this task—detailed synthesis recipes—remained locked within the unstructured text of millions of published papers. This created a significant bottleneck: while high-throughput computations could design new materials, the lack of a fundamental theory for synthesis meant experts had to manually curate and interpret literature to devise synthesis routes [2] [1]. The process of extracting this knowledge has undergone a profound transformation, evolving from reliance on manual, expert-driven curation to the emergence of sophisticated, automated text-mining pipelines. This evolution, framed within the broader pursuit of enabling machine-learning-driven synthesis prediction, represents a fundamental shift in how researchers leverage the vast repository of historical scientific knowledge [2] [8] [1].

This transition is not merely a change in efficiency; it is a redefinition of what is possible. Manual curation, though valuable, is inherently limited in scale and susceptible to human bias. Automated pipelines, powered by natural language processing (NLP) and machine learning (ML), can process millions of documents to create large-scale datasets, uncovering hidden patterns and anomalies that might escape human notice [1]. This guide provides a technical examination of this evolution, detailing the core methodologies, quantitative comparisons, and essential tools that define the modern, automated approach to text-mining synthesis recipes for machine learning research.

The Manual Paradigm: Expert-Driven Curation

Before the advent of large-scale automation, the extraction of synthesis information was a manual process. Researchers would painstakingly read individual papers, often within a narrow domain, to compile datasets of synthesis recipes. This approach was characterized by:

- Limited Data Volume: Studies were typically restricted to a few dozen or hundred papers, such as the manual annotation of 834 solid-state synthesis paragraphs used to train early models [1] or the creation of datasets for specific oxide systems [2].

- Human Interpretation: Experts identified target materials, precursors, and synthesis operations based on their domain knowledge, navigating the complex and idiosyncratic ways chemists describe procedures (e.g., "calcined," "fired," "heated") [1].

- Direct Knowledge Transfer: The primary value was the direct transfer of expert knowledge from the literature to a specific application, without the intermediate step of creating a large, general-purpose dataset.

While this approach could yield high-quality, curated data for focused studies, its scale was insufficient for training data-hungry machine learning models. The resulting datasets often reflected the historical biases and exploration patterns of the materials science community, limiting their generality for predicting the synthesis of truly novel materials [1].

Table 1: Characteristics of Manual vs. Automated Approaches to Synthesis Data Extraction

| Feature | Manual Curation | Automated Pipelines |
|---|---|---|
| Data Volume | Dozens to hundreds of papers [1] | Millions of papers, yielding tens of thousands of recipes [2] [1] |
| Primary Actor | Human expert | NLP & ML models |
| Key Strength | High accuracy in narrow domains; handles complexity well | Unprecedented scale and speed |
| Key Limitation | Low throughput; human bias; not scalable | Technical extraction errors; inherits historical data bias [1] |
| Typical Output | Focused datasets for specific material systems [2] | Large-scale, structured databases (e.g., JSON) of codified recipes [2] |

The Rise of Automated Pipelines

The limitations of manual curation spurred the development of automated, end-to-end pipelines designed to convert unstructured scientific text into structured, machine-readable synthesis data. The core objective of these pipelines is to identify a synthesis paragraph, extract the relevant entities and operations, and compile them into a standardized "codified recipe" [2].

The following diagram illustrates the generalized logical workflow of such an automated text-mining pipeline, from raw data acquisition to the generation of a structured synthesis database.

Diagram 1: Automated Text-Mining Pipeline for Synthesis Recipes

Pipeline Components and Detailed Methodologies

1. Information Retrieval and Paragraph Classification

The first step involves procuring full-text scientific papers from publishers, often limited to post-2000 HTML/XML content for easier parsing [1]. A critical subsequent task is identifying which paragraphs describe a synthesis procedure. Modern approaches use a two-step classification process [2]:

- Unsupervised Topic Modeling: Algorithms like Latent Dirichlet Allocation (LDA) cluster common keywords in experimental paragraphs into "topics" (e.g., heating, mixing), generating a probabilistic topic assignment for each paragraph [1].

- Supervised Classification: A classifier, such as a Random Forest (RF) model, is then trained on a set of annotated paragraphs (e.g., 1,000 per label for solid-state, hydrothermal, etc.) to finalize the classification. Recent studies have achieved F1-scores as high as 0.977 using transformers like SciBERT in a Positive-Unlabeled (PU) Learning framework [8].
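The F1-score quoted above is the harmonic mean of precision and recall on the positive (synthesis-paragraph) class. A minimal computation from confusion counts; the counts are hypothetical and unrelated to the result reported in [8].

```python
def f1_score(tp: int, fp: int, fn: int) -> float:
    """F1 = 2PR / (P + R), from true positives, false positives and false negatives."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Hypothetical evaluation: 95 synthesis paragraphs found, 3 false alarms, 2 missed
print(round(f1_score(tp=95, fp=3, fn=2), 3))  # 0.974
```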

2. Material Entity Recognition (MER) and Role Labeling

Extracting and correctly labeling materials is a complex NLP challenge. The same compound (e.g., TiO₂) can be a target, a precursor, or a grinding medium [1]. The pipelines described here use a Bi-Directional Long Short-Term Memory Neural Network with a Conditional Random Field layer (BiLSTM-CRF) [2] [1].

- Process: The model first identifies all material entities in a paragraph. Then each material is replaced with a generic `<MAT>` tag, and the context is analyzed to classify it as TARGET, PRECURSOR, or OTHER (e.g., atmosphere, reaction media). For example, from the sentence "a spinel-type cathode material `<MAT>` was prepared from high-purity precursors `<MAT>`, `<MAT>` and `<MAT>`...", the model learns to assign the labels correctly [1].

- Training: This requires a large, manually annotated dataset, such as the 834 solid-state synthesis paragraphs used by Huo et al. [1]. The model's word embeddings are often pre-trained on a corpus of synthesis paragraphs (e.g., ~33,000) to better understand the domain language [2].
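The masking step that precedes role classification can be sketched as a replacement over the recognized entity spans. In this sketch the spans are located by plain string search as a stand-in for BiLSTM-CRF output, and the sentence is a hypothetical example in the spirit of the one above.

```python
from typing import List, Tuple

def mask_materials(sentence: str, spans: List[Tuple[int, int]]) -> Tuple[str, List[str]]:
    """Replace each (start, end) material span with <MAT>; return masked text and materials."""
    pieces: List[str] = []
    found: List[str] = []
    cursor = 0
    for start, end in sorted(spans):
        pieces.append(sentence[cursor:start])
        pieces.append("<MAT>")
        found.append(sentence[start:end])
        cursor = end
    pieces.append(sentence[cursor:])
    return "".join(pieces), found

sentence = "LiMn2O4 was prepared from Li2CO3 and MnO2."
# Spans stand in for entity-recognition output; here they come from string search
spans = [(sentence.index(m), sentence.index(m) + len(m)) for m in ("LiMn2O4", "Li2CO3", "MnO2")]
masked, found = mask_materials(sentence, spans)
print(masked)  # <MAT> was prepared from <MAT> and <MAT>.
print(found)   # ['LiMn2O4', 'Li2CO3', 'MnO2']
```

The role classifier then sees only the masked context, so it has to infer TARGET or PRECURSOR from phrasing such as "was prepared from" rather than from the formulas themselves.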

3. Synthesis Operation and Condition Extraction

This step identifies the actions performed during synthesis. A neural network classifies sentence tokens into categories like MIXING, HEATING, DRYING, or NOT OPERATION [2]. To improve accuracy, this is combined with syntactic dependency parsing using libraries like spaCy [2] [9]. For example, a MIXING operation can be subclassified as SOLUTION MIXING if its dependency tree contains words like 'dissolve' or 'ethanol' [2].

- Parameter Extraction: For each operation, associated parameters (time, temperature, atmosphere) are extracted using regular expressions and keyword searches within the same sentence [2].
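A minimal sketch of regex-based parameter extraction, assuming temperatures are written in °C and durations in hours or minutes. The patterns, the atmosphere vocabulary, and the conversion of durations to hours are illustrative choices, not the expressions used in [2].

```python
import re

TEMPERATURE = re.compile(r"(\d+(?:\.\d+)?)\s*°\s*C")
DURATION = re.compile(r"(\d+(?:\.\d+)?)\s*(hours?|h|minutes?|min)\b")
# Assumed atmosphere vocabulary (case-sensitive, so "Ar" does not match ordinary words).
ATMOSPHERE = re.compile(r"\b(air|argon|Ar|nitrogen|N2|oxygen|O2|vacuum)\b")
# Divide a duration by this value to express it in hours.
UNIT_DIVISOR = {"h": 1, "hour": 1, "hours": 1, "min": 60, "minute": 60, "minutes": 60}


def extract_parameters(sentence):
    return {
        "temperatures_C": [float(v) for v in TEMPERATURE.findall(sentence)],
        "durations_h": [float(v) / UNIT_DIVISOR[u] for v, u in DURATION.findall(sentence)],
        "atmospheres": ATMOSPHERE.findall(sentence),
    }


print(extract_parameters(
    "The mixture was calcined at 800 °C for 12 h in air "
    "and then annealed at 650°C for 30 min under Ar."
))
# {'temperatures_C': [800.0, 650.0], 'durations_h': [12.0, 0.5], 'atmospheres': ['air', 'Ar']}
```

Restricting the search to one sentence, as the pipeline does, is what lets each value be attached to the operation mentioned in that sentence.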

4. Recipe Compilation and Reaction Balancing

The final stage compiles all extracted information into a structured format (e.g., JSON). A "Material Parser" converts material strings into chemical formulas. Balanced chemical reactions are then derived by solving a system of linear equations that conserves each element, often including inferred "open" compounds such as O₂ or CO₂ [2].
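The balancing step amounts to finding a null-space vector of an element-by-species matrix. The sketch below assumes simple formulas without parentheses or hydrate notation, and it takes the "open" compound (here CO₂) as an explicit product instead of inferring it. It uses exact rational arithmetic (`fractions.Fraction`) so the coefficients come out as exact integers.

```python
import re
from fractions import Fraction
from math import gcd, lcm  # multi-argument gcd/lcm need Python 3.9+


def parse_formula(formula):
    """Count atoms in a simple formula without parentheses, e.g. 'Li2CO3'."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*(?:\.\d+)?)", formula):
        counts[element] = counts.get(element, 0) + (Fraction(number) if number else 1)
    return counts


def balance(reactants, products):
    """Return the smallest positive integer coefficients that conserve every element."""
    species = reactants + products
    parsed = [parse_formula(s) for s in species]
    elements = sorted({e for p in parsed for e in p})
    # One row per element, one column per species; products get a negative sign,
    # so a balanced reaction is a coefficient vector x with A x = 0.
    rows = [[Fraction(p.get(e, 0)) * (1 if j < len(reactants) else -1)
             for j, p in enumerate(parsed)] for e in elements]

    # Gauss-Jordan elimination to reduced row echelon form.
    pivots, r = [], 0
    for c in range(len(species)):
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        pivot_value = rows[r][c]
        rows[r] = [v / pivot_value for v in rows[r]]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        pivots.append(c)
        r += 1
        if r == len(rows):
            break

    free = [c for c in range(len(species)) if c not in pivots]
    if len(free) != 1:
        raise ValueError("expected exactly one free coefficient (a unique balance)")
    x = [Fraction(0)] * len(species)
    x[free[0]] = Fraction(1)
    for row_index, c in enumerate(pivots):
        x[c] = -rows[row_index][free[0]]

    # Scale the rational solution to the smallest integer vector.
    scale = lcm(*(v.denominator for v in x))
    coefficients = [int(v * scale) for v in x]
    divisor = gcd(*coefficients)
    coefficients = [v // divisor for v in coefficients]
    if any(v <= 0 for v in coefficients):
        raise ValueError("no balance with every species on its stated side")
    return coefficients


print(balance(["Li2CO3", "TiO2"], ["Li4Ti5O12", "CO2"]))
# [2, 5, 1, 2]  ->  2 Li2CO3 + 5 TiO2 -> Li4Ti5O12 + 2 CO2
```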

Table 2: Performance Metrics of an Automated Text-Mining Pipeline for Solid-State Synthesis

| Pipeline Stage | Method | Training Data | Output & Yield |
|---|---|---|---|
| Paragraph Classification | Random Forest / SciBERT | 1,000 annotated paragraphs per label [2] [8] | 53,538 solid-state paragraphs from 4.2M papers [1] |
| Material Entity Recognition | BiLSTM-CRF | 834 annotated paragraphs [1] | Precursor and target materials identified |
| Operation Extraction | Neural Network + Dependency Parsing | 100 paragraphs (664 sentences) [2] | 6 operation categories (Mixing, Heating, etc.) |
| Overall Pipeline | Integrated NLP Pipeline | - | 15,144 balanced chemical reactions (28% yield from 53,538 paragraphs) [1] |

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" and resources essential for building and working with automated text-mining pipelines for synthesis recipes.

Table 3: Essential Research Reagents for Text-Mining Synthesis Data

| Item Name | Type | Function / Application |
|---|---|---|
| BiLSTM-CRF Model | Software Model | Identifies and classifies material entities (target, precursor) in text based on sentence context [2] [1]. |
| Word2Vec Embeddings | Data Structure | Provides vector representations of words trained on synthesis corpora, used for feature generation in operation classification [2]. |
| spaCy Library | Software Library | Performs grammatical dependency parsing to understand sentence structure and relate operations to their conditions [2] [9]. |
| Text-Mined Recipe Dataset | Database | Structured dataset (e.g., in JSON) of synthesis recipes; used for training ML models or analyzing synthesis trends [2]. |
| Annotated Training Corpus | Dataset | Manually labeled set of synthesis paragraphs; essential for training and validating supervised MER and operation models [1]. |
| Latent Dirichlet Allocation (LDA) | Algorithm | Performs unsupervised topic modeling to cluster keywords and identify common synthesis operations from text corpora [1]. |

Critical Reflection and Future Directions

While automated pipelines have achieved remarkable scale, critical reflections urge a re-evaluation of their utility for predictive synthesis. Sun et al. (2025) argue that text-mined synthesis datasets often fail to satisfy the "4 Vs" of data science: Volume, Variety, Veracity, and Velocity [1]. The volume of data, while large, is sparse relative to the immense combinatorial space of possible synthesis reactions. The variety is limited by historical research trends, meaning the data is biased toward well-studied material families. Veracity is compromised by both technical extraction errors and the inherent noisiness of reported scientific procedures. Finally, the velocity of data updates is slow, as it is tied to the pace of scientific publishing [1].

These limitations mean that ML models trained on such data may simply learn to replicate past human preferences rather than uncover novel physical insights for synthesizing new materials [1]. However, these datasets provide immense value in a different capacity: the identification of anomalous recipes. Recipes that defy conventional wisdom are rare and thus have little influence on a regression model, but their manual examination can lead to new scientific hypotheses. This was demonstrated when anomalous recipes text-mined by Sun et al. led to a new mechanistic hypothesis for solid-state reaction kinetics, which was later validated experimentally [1].
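One simple, assumed way to surface candidates for manual review is a robust outlier score on a single extracted parameter. The sketch below applies the modified z-score (median absolute deviation, with the conventional 0.6745 constant and 3.5 cutoff) to hypothetical heating temperatures; it is not necessarily how the anomalous recipes in [1] were identified. In practice the comparison should be made within a comparable group, such as one target family, otherwise ordinary chemistry differences would be flagged as anomalies.

```python
from statistics import median


def modified_z_scores(values):
    """Robust z-scores based on the median absolute deviation (MAD)."""
    center = median(values)
    mad = median([abs(v - center) for v in values])
    if mad == 0:
        return [0.0] * len(values)  # no spread: nothing can be called an outlier
    return [0.6745 * (v - center) / mad for v in values]


def flag_anomalies(recipes, key="temperature_C", threshold=3.5):
    scores = modified_z_scores([r[key] for r in recipes])
    return [r for r, z in zip(recipes, scores) if abs(z) > threshold]


recipes = [  # hypothetical heating temperatures for one target family
    {"id": "r1", "temperature_C": 900},
    {"id": "r2", "temperature_C": 950},
    {"id": "r3", "temperature_C": 1000},
    {"id": "r4", "temperature_C": 920},
    {"id": "r5", "temperature_C": 980},
    {"id": "r6", "temperature_C": 1010},
    {"id": "r7", "temperature_C": 300},
]
print([r["id"] for r in flag_anomalies(recipes)])  # ['r7']
```

The median-based score is used instead of a mean and standard deviation because a single extreme value would otherwise inflate the spread and partly hide itself.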

The future of the field likely lies in a hybrid approach. Automated pipelines are indispensable for processing the overwhelming volume of literature and surfacing rare but insightful data points. The role of the human expert then evolves from manual curator to interpreter of machine-generated insights, using domain knowledge to validate, contextualize, and build novel hypotheses upon the foundation laid by automated systems. This synergy, rather than a wholesale replacement of manual curation by automation, is the most promising route to accelerating machine-learning-driven materials synthesis.

In the domain of data-driven scientific research, the ability to extract meaningful information from vast volumes of unstructured text is paramount. For researchers aiming to build machine learning models that predict synthesis pathways, this begins with converting unstructured scientific text into structured, machine-readable data. Named Entity Recognition (NER) and Topic Modeling represent two fundamental Natural Language Processing (NLP) techniques that power this conversion. This whitepaper provides an in-depth technical examination of these core NLP tasks, framed within the specific context of text-mining synthesis recipes to accelerate machine learning research in fields ranging from materials science to drug development.

Theoretical Foundations

Named Entity Recognition (NER)

Named Entity Recognition (NER), also called entity chunking or entity extraction, is an NLP task that locates named entities in text and classifies them into predefined categories such as person, organization, location, date, monetary value, and more [10]. The primary goal of NER is to transform unstructured text into structured information by locating and categorizing atomic elements, enabling downstream systems to better understand, search, and analyze language data [11].

In the context of text-mining synthesis recipes, NER moves beyond generic categories to identify domain-specific entities. For example, in a solid-state synthesis paragraph, a specialized NER system would detect precursor materials, target compounds, synthesis operations, and processing parameters [1]. A sentence such as "Li2CO3 and TiO2 were mixed, calcined at 800°C for 12 hours, and ground to obtain Li4Ti5O12" would be processed to identify "Li2CO3" and "TiO2" as precursors, "Li4Ti5O12" as the target material, "mixed," "calcined," and "ground" as operations, and "800°C" and "12 hours" as processing parameters [12].
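Sequence-labeling NER models are usually trained on token-level tags. The sketch below converts phrase-level annotations of the example sentence into BIO tags (B- begins an entity, I- continues it, O is outside any entity). The tokenizer pattern and the label names are assumptions for illustration.

```python
import re

# Assumed tokenizer: a number with "°C" stays one token; otherwise words or single symbols.
TOKEN = re.compile(r"\d+(?:\.\d+)?\s?°C|\w+|[^\w\s]")


def tokenize(text):
    return TOKEN.findall(text)


def bio_tags(tokens, annotations):
    """Tag the first still-unlabeled occurrence of each annotated phrase with B-/I- labels."""
    tags = ["O"] * len(tokens)
    for phrase, label in annotations:
        span = tokenize(phrase)
        for start in range(len(tokens) - len(span) + 1):
            end = start + len(span)
            if tokens[start:end] == span and all(t == "O" for t in tags[start:end]):
                tags[start] = "B-" + label
                for i in range(start + 1, end):
                    tags[i] = "I-" + label
                break
    return tags


sentence = ("Li2CO3 and TiO2 were mixed, calcined at 800°C for 12 hours, "
            "and ground to obtain Li4Ti5O12")
annotations = [
    ("Li2CO3", "PRECURSOR"), ("TiO2", "PRECURSOR"), ("Li4Ti5O12", "TARGET"),
    ("mixed", "OPERATION"), ("calcined", "OPERATION"), ("ground", "OPERATION"),
    ("800°C", "PARAMETER"), ("12 hours", "PARAMETER"),
]
tokens = tokenize(sentence)
for token, tag in zip(tokens, bio_tags(tokens, annotations)):
    print(f"{token}\t{tag}")
```

Multi-token entities such as "12 hours" become B-PARAMETER followed by I-PARAMETER, which is the boundary information a CRF layer is designed to model.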

Topic Modeling

Topic modeling is an unsupervised NLP technique designed to automatically discover hidden thematic structures—or "topics"—within a large collection of documents [13]. Unlike classification, it does not require pre-defined labels. Topic models operate on two fundamental assumptions: first, that each document in a collection is represented as a mixture of various topics, and second, that each topic is characterized by a distribution over words [13].

In materials synthesis, topic modeling can cluster keywords into topics corresponding to specific experimental steps [12]. For instance, Latent Dirichlet Allocation (LDA) might identify a "heating" topic characterized by words such as °C, h, min, air, annealed, samples, atmosphere, heat, treatment, annealing, furnace, and temperatures [1]. This allows researchers to automatically categorize synthesis paragraphs by their primary experimental methods (e.g., solid-state, hydrothermal, sol-gel) and reconstruct flowcharts of synthesis procedures [12].

Technical Approaches and Methodologies

NER Techniques and Evolution

NER methodologies have evolved significantly from early rule-based systems to modern deep learning approaches. Each paradigm offers distinct advantages and limitations for scientific text mining.

Table 1: Comparison of NER Technical Approaches

| Approach | Key Characteristics | Advantages | Limitations |
|---|---|---|---|
| Rule-Based | Predefined patterns, dictionaries, regular expressions [14] | Simple, interpretable, requires no training data [11] | Poor generalization, brittle to variations [10] |
| Machine Learning | Statistical models (CRF, SVM) trained on annotated data [10] | Adaptable, learns contextual patterns [15] | Requires extensive feature engineering [15] |
| Deep Learning | Neural networks (BiLSTM, Transformers, BERT) [10] | Automatic feature learning, handles complex context [15] | Computationally intensive, requires large datasets [10] |
| Hybrid | Combines rule-based and machine learning methods [10] | Leverages strengths of both approaches [10] | Increased implementation complexity [11] |

Modern NER systems for scientific text increasingly rely on deep learning architectures. Bidirectional Long Short-Term Memory networks with Conditional Random Fields (BiLSTM-CRF) effectively model sequence dependencies, making them particularly suitable for identifying entity boundaries in scientific text [15] [1]. More recently, transformer-based models like BERT (Bidirectional Encoder Representations from Transformers) have demonstrated state-of-the-art performance by using self-attention mechanisms to weigh the importance of different words in a sentence, enabling better understanding of contextual nuances [10] [15].
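In a BiLSTM-CRF, the BiLSTM produces a score for every (token, label) pair and the CRF layer adds transition scores between consecutive labels; decoding then picks the highest-scoring label sequence with the Viterbi algorithm. The sketch below shows only that decoding step, with hand-picked emission and transition scores (hypothetical numbers, not a trained model) chosen so that per-token argmax yields the invalid sequence O followed by I-MAT, while Viterbi does not.

```python
LABELS = ["O", "B-MAT", "I-MAT"]
NEG = -10.0  # large penalty standing in for a forbidden transition

# Hypothetical scores; a trained BiLSTM-CRF would learn these.
start = [0.0, 0.0, NEG]                  # a sequence may not start with I-MAT
transitions = [                          # transitions[previous][current]
    [0.0, 0.0, NEG],                     # O -> I-MAT is forbidden
    [0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0],
]
emissions = [                            # one row per token: scores for O, B-MAT, I-MAT
    [2.0, 0.0, 0.0],
    [0.0, 1.0, 1.5],
    [1.0, 0.0, 0.0],
]


def viterbi(emissions, transitions, start):
    """Return the highest-scoring label path and its total score."""
    n = len(start)
    score = [start[j] + emissions[0][j] for j in range(n)]
    backpointers = []
    for emission in emissions[1:]:
        new_score, pointers = [], []
        for j in range(n):
            best = max(range(n), key=lambda i: score[i] + transitions[i][j])
            new_score.append(score[best] + transitions[best][j] + emission[j])
            pointers.append(best)
        score = new_score
        backpointers.append(pointers)
    last = max(range(n), key=lambda j: score[j])
    path = [last]
    for pointers in reversed(backpointers):
        path.append(pointers[path[-1]])
    return path[::-1], score[last]


greedy = [max(range(len(LABELS)), key=lambda j: row[j]) for row in emissions]
path, best_score = viterbi(emissions, transitions, start)
print([LABELS[i] for i in greedy])            # ['O', 'I-MAT', 'O'] (invalid: O -> I-MAT)
print([LABELS[i] for i in path], best_score)  # ['O', 'B-MAT', 'O'] 4.0
```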

Topic Modeling Techniques

Topic modeling has similarly evolved from algebraic methods to neural approaches that better capture semantic meaning.

Table 2: Evolution of Topic Modeling Techniques

| Technique | Category | Key Principles | Applications in Synthesis Text-Mining |
|---|---|---|---|
| LSA (Latent Semantic Analysis) | Algebraic | Matrix factorization (SVD) of term-document matrix [13] | Baseline topic discovery in scientific corpora [13] |
| LDA (Latent Dirichlet Allocation) | Probabilistic | Assumes documents are mixtures of topics with Dirichlet priors [16] | Clustering synthesis keywords into experimental steps [12] |
| NMF (Non-Negative Matrix Factorization) | Algebraic | Parts-based representation with non-negativity constraints [13] | Alternative to LDA for document clustering [13] |
| Neural Topic Models | Neural | Combine traditional topic models with deep learning [13] | Enhanced topic coherence through embeddings [13] |
| BERTopic | Transformer-based | Uses BERT embeddings and clustering (HDBSCAN) [16] | Handling short or noisy text in scientific abstracts [16] |

The standard LDA algorithm assumes a generative process where each document is modeled as a probability distribution over topics, and each topic is a probability distribution over words [16]. The model has three main hyperparameters: α (controlling document-topic density), β (controlling topic-word density), and K (the number of topics) [16]. In practice, the optimal number of topics K is often determined using metrics like perplexity or topic coherence [13].
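For reference, the standard (smoothed) LDA generative process and the held-out perplexity used for model selection can be written as follows, where D is the number of documents, N_d the number of tokens in document d, z_{d,n} the topic assigned to token n of document d, and w_{d,n} the observed word; lower perplexity on held-out documents is better. In scikit-learn's `LatentDirichletAllocation`, α, β, and K correspond to the `doc_topic_prior`, `topic_word_prior`, and `n_components` parameters, and a `perplexity` method is provided.

```latex
\begin{aligned}
\phi_k &\sim \mathrm{Dirichlet}(\beta), & k &= 1,\dots,K \\
\theta_d &\sim \mathrm{Dirichlet}(\alpha), & d &= 1,\dots,D \\
z_{d,n} &\sim \mathrm{Categorical}(\theta_d), & n &= 1,\dots,N_d \\
w_{d,n} &\sim \mathrm{Categorical}(\phi_{z_{d,n}}) \\[4pt]
\mathrm{perplexity}(D_{\mathrm{test}}) &= \exp\!\left(-\frac{\sum_{d \in D_{\mathrm{test}}} \log p(\mathbf{w}_d)}{\sum_{d \in D_{\mathrm{test}}} N_d}\right)
\end{aligned}
```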

Experimental Protocols for Text-Mining Synthesis Recipes

Integrated Pipeline for Synthesis Information Extraction

The extraction of synthesis information from scientific literature requires a multi-step NLP pipeline that combines both NER and topic modeling. The following diagram illustrates this integrated workflow:

Detailed Protocol: Text-Mining Solid-State Synthesis Recipes

Based on published large-scale efforts to extract synthesis information from materials science literature, the following protocol provides a reproducible methodology for researchers [12] [1]:

Step 1: Literature Procurement and Preprocessing

- Obtain full-text permissions from scientific publishers (e.g., Springer, Wiley, Elsevier, RSC)

- Filter for papers published after 2000 with machine-readable HTML/XML formats (avoid scanned PDFs)

- Extract paragraphs from experimental sections using pattern matching

- Apply standard NLP preprocessing: tokenization, lowercasing, special character removal, lemmatization
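A minimal sketch of Step 1, assuming the HTML/XML has already been parsed into (heading, body) pairs; the heading pattern is hypothetical. It departs from the protocol in one deliberate way: formula-like tokens are not lowercased, because a blanket lowercase would make "CO" (carbon monoxide) indistinguishable from "Co" (cobalt). Lemmatization is omitted here; a library such as spaCy would supply it.

```python
import re

# Hypothetical pattern for headings of experimental sections.
EXPERIMENTAL_HEADING = re.compile(r"experimental|synthesis|preparation|methods", re.IGNORECASE)
# Tokens: a number with "°C", a plain number, or an alphanumeric word; other symbols are dropped.
TOKEN = re.compile(r"\d+(?:\.\d+)?\s?°C|\d+(?:\.\d+)?|[A-Za-z][A-Za-z0-9]*")
FORMULA = re.compile(r"(?:[A-Z][a-z]?\d*)+")


def experimental_paragraphs(sections):
    """Yield non-empty paragraphs from sections whose heading looks experimental."""
    for heading, body in sections:
        if EXPERIMENTAL_HEADING.search(heading):
            for paragraph in body.split("\n\n"):
                if paragraph.strip():
                    yield paragraph.strip()


def is_formula(token):
    # Crude heuristic: element-like units containing a digit or at least two capitals.
    return bool(FORMULA.fullmatch(token)) and (
        any(ch.isdigit() for ch in token) or sum(ch.isupper() for ch in token) >= 2
    )


def preprocess(paragraph):
    tokens = TOKEN.findall(paragraph)
    # Lowercase ordinary words; keep formulas and numeric tokens as written.
    return [t if is_formula(t) or t[0].isdigit() else t.lower() for t in tokens]


sections = [  # hypothetical (heading, body) pairs from a parsed HTML/XML article
    ("1. Introduction", "Lithium titanate anodes are widely studied."),
    ("2. Experimental", "Li2CO3 and TiO2 were mixed.\n\nThe powder was Calcined at 800 °C."),
]
for paragraph in experimental_paragraphs(sections):
    print(preprocess(paragraph))
# ['Li2CO3', 'and', 'TiO2', 'were', 'mixed']
# ['the', 'powder', 'was', 'calcined', 'at', '800 °C']
```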

Step 2: Entity Recognition for Synthesis Components

- Implement a BiLSTM-CRF model architecture for sequence labeling [1]

- Replace all chemical compounds with <MAT> placeholders to handle diverse representations

- Manually annotate a training set of ~800 synthesis paragraphs with entity labels (target, precursor, operation, parameter) [1]

- Train the model to classify each <MAT> instance based on sentence context clues

- For example: "a spinel-type cathode material <MAT> was prepared from high-purity precursors <MAT>, <MAT> and <MAT>, at 700°C for 24 h" should identify the first <MAT> as target and subsequent ones as precursors [1]

Step 3: Topic Modeling for Synthesis Operations

- Apply Latent Dirichlet Allocation (LDA) to cluster keywords into synthesis operation topics [12]

- Manually label tokens in ~100 synthesis paragraphs across 6 categories: mixing, heating, drying, shaping, quenching, or not operation [1]

- Extract parameter values (times, temperatures, atmospheres) associated with each operation type

- Construct Markov chain representations to model procedural flowcharts
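The Markov-chain step can be sketched as transition probabilities estimated from counts of consecutive operations, with START and END states added to each sequence. The operation sequences below are hypothetical, and following the most probable transition from START is only one simple way to read a typical flowchart off the chain.

```python
from collections import Counter, defaultdict


def transition_probabilities(sequences):
    """Estimate P(next operation | current operation) from observed sequences."""
    counts = defaultdict(Counter)
    for operations in sequences:
        states = ["START", *operations, "END"]
        for current, following in zip(states, states[1:]):
            counts[current][following] += 1
    return {
        state: {nxt: n / sum(c.values()) for nxt, n in c.items()}
        for state, c in counts.items()
    }


def most_likely_path(probs, max_steps=20):
    """Follow the highest-probability transition from START until END."""
    path = ["START"]
    while path[-1] != "END" and len(path) <= max_steps:
        options = probs.get(path[-1])
        if not options:
            break
        path.append(max(options, key=options.get))
    return path


sequences = [  # hypothetical per-paragraph operation sequences
    ["MIXING", "HEATING"],
    ["MIXING", "DRYING", "HEATING"],
    ["MIXING", "HEATING", "QUENCHING"],
]
probs = transition_probabilities(sequences)
print(probs["MIXING"])          # {'HEATING': 0.6666666666666666, 'DRYING': 0.3333333333333333}
print(most_likely_path(probs))  # ['START', 'MIXING', 'HEATING', 'END']
```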

Step 4: Recipe Compilation and Validation

- Combine extracted entities and topics into structured JSON recipe format [1]

- Balance chemical reactions by including volatile atmospheric gases (O₂, N₂, CO₂) where needed

- Compute reaction energetics using DFT-calculated bulk energies when possible

- Validate extraction pipeline on randomly sampled paragraphs (expect ~70% success rate for complete extraction) [1]
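A minimal sketch of a compiled recipe serialized to JSON. The field names and nesting are an assumed schema for illustration, not the format published with [1]; the values reuse the Li₄Ti₅O₁₂ example sentence from earlier in this article.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Operation:
    kind: str                                       # e.g. "MIXING", "HEATING"
    conditions: dict = field(default_factory=dict)  # parameter name -> value


@dataclass
class Recipe:
    target: str
    precursors: list
    operations: list
    reaction: str


recipe = Recipe(
    target="Li4Ti5O12",
    precursors=["Li2CO3", "TiO2"],
    operations=[
        Operation("MIXING"),
        Operation("HEATING", {"temperature_C": 800, "duration_h": 12}),
    ],
    reaction="2 Li2CO3 + 5 TiO2 -> Li4Ti5O12 + 2 CO2",
)

# asdict() also converts the nested Operation dataclasses into plain dicts.
print(json.dumps(asdict(recipe), indent=2))
```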

Research Reagent Solutions

The following table details essential computational "reagents" required for implementing the described text-mining pipeline:

Table 3: Essential Research Reagents for Synthesis Text-Mining

| Tool/Library | Type | Primary Function | Application in Protocol |
|---|---|---|---|
| spaCy [10] [14] | NLP Library | Production-ready NLP with pre-trained models | Text preprocessing, tokenization, and entity recognition |
| BiLSTM-CRF [15] [1] | Neural Architecture | Sequence labeling for entity recognition | Identifying targets, precursors, and parameters in text |
| Gensim | Topic Modeling | LDA and other topic modeling algorithms | Clustering keywords into synthesis operations |
| Transformers (Hugging Face) [15] | NLP Library | Pre-trained transformer models (BERT, SciBERT) | Domain-specific entity recognition when fine-tuned |
| Scikit-learn | Machine Learning | General ML utilities and algorithms | Feature extraction, model evaluation, and auxiliary tasks |
| Custom Annotation Tools [14] | Data Preparation | Create labeled datasets for NER | Manual annotation of synthesis entities and operations |

Applications in Drug Discovery and Materials Research

The integration of NER and topic modeling has enabled significant advances in data-driven research domains:

In drug discovery, AI-powered language models that incorporate NER are transforming treatment development by analyzing vast scientific literature [17]. These systems can identify potential drug targets, predict drug interactions, and facilitate drug repurposing strategies by extracting structured information from unstructured biomedical text [18] [17]. For COVID-19 treatment development, for instance, NER has been instrumental in identifying existing drugs that might be repurposed by extracting entity relationships from virology literature [17].

In materials science, the application of this integrated NLP approach has yielded tangible research outcomes. One large-scale effort text-mined 31,782 solid-state synthesis recipes and 35,675 solution-based synthesis recipes from the literature [1]. While regression models trained on this data showed limited predictive utility for novel synthesis, the analysis revealed anomalous recipes that defied conventional intuition. Manual examination of these outliers led to new mechanistic hypotheses about solid-state reaction kinetics, which were subsequently validated through targeted experiments [1].

Challenges and Future Directions

Despite considerable advances, significant challenges remain in applying NER and topic modeling to scientific text-mining:

Data Quality and Availability: Text-mined synthesis datasets often fail to satisfy the "4 Vs" of data science: volume, variety, veracity, and velocity [1]. Extraction pipelines may have yields as low as 28%, meaning only a fraction of identified synthesis paragraphs produce balanced chemical reactions [1].

Domain Adaptation and Ambiguity: General-purpose NER models struggle with scientific terminology and context-dependent meanings. For example, "TiO2" may be a target material in one context and a precursor in another, while "ZrO2" might be a precursor or a grinding medium [1]. Similarly, topic models trained on general text fail to capture domain-specific semantic relationships.

Emerging Solutions: Future progress will likely come from several promising directions. Transfer learning with domain-specific pre-trained models (e.g., SciBERT) reduces the need for extensive labeled data [15]. Hybrid approaches that combine neural methods with symbolic reasoning show promise for handling compositional materials formulas [1]. The integration of large language models (LLMs) offers potential for few-shot learning and better contextual understanding [16], while multimodal models that combine text with structural chemical information could enable more accurate knowledge extraction [13].

Named Entity Recognition and Topic Modeling represent foundational NLP technologies that enable the transformation of unstructured scientific text into structured, machine-actionable knowledge. When strategically integrated within a comprehensive text-mining pipeline, these techniques empower researchers to construct large-scale datasets of synthesis recipes that can fuel machine learning approaches to predictive materials design and drug discovery. While challenges remain in domain adaptation, data quality, and contextual understanding, ongoing advances in deep learning and language models continue to enhance their capabilities. For researchers in materials science and pharmaceutical development, mastery of these core NLP tasks provides a critical competitive advantage in the increasingly data-driven landscape of scientific discovery.

The integration of artificial intelligence into materials science and drug development represents one of the most promising technological frontiers of our time. The 2025 Gartner Hype Cycle for Artificial Intelligence reveals a critical inflection point: generative AI has entered the "Trough of Disillusionment," while foundational enablers like AI-ready data and AI engineering are gaining prominence [19]. This shift signals a broader industry transition from experimental curiosity to practical, scalable deployment—a pattern acutely relevant to researchers attempting to leverage text-mined synthesis data for machine learning applications.

In the specific context of materials informatics, this hype cycle manifests through early excitement about text-mining scientific literature for synthesis recipes, followed by challenges in transforming this data into predictive models. Between 2016 and 2019, significant efforts were made to text-mine tens of thousands of solid-state and solution-based synthesis recipes from published literature, creating datasets intended to train machine learning models for predictive materials synthesis [1]. These initiatives followed the classic hype cycle pattern, beginning with a technology trigger (the availability of NLP methods and materials literature), reaching a peak of inflated expectations (that these datasets would enable predictive synthesis of novel materials), and subsequently encountering limitations that led to a period of disillusionment.

This technical guide examines the concrete strategies and methodologies that enable researchers to navigate beyond the trough of disillusionment toward sustainable value creation. By focusing on the specific application of text-mining synthesis recipes, we provide a roadmap for transforming promising AI technologies into practical research tools that accelerate materials discovery and development.

The Current AI Landscape: A Hype Cycle Analysis

Key AI Technologies and Their Position in the Hype Cycle

The Gartner Hype Cycle provides a valuable framework for understanding the maturity and adoption trajectory of emerging AI technologies. For researchers in materials science and drug development, this framework offers strategic guidance for investment decisions and technology prioritization. The table below summarizes the positioning of key AI technologies relevant to text-mining and materials informatics research based on the 2025 Hype Cycle analysis [19] [20] [21].

Table 1: Positioning of AI Technologies in the 2025 Hype Cycle Relevant to Materials Informatics

| Technology | Hype Cycle Position | Maturity Level | Relevance to Text-Mining Research |
|---|---|---|---|
| Generative AI | Trough of Disillusionment | Early mainstream | Automated literature analysis, synthesis paragraph generation |
| AI-Ready Data | Peak of Inflated Expectations | Emerging | Foundation for quality training datasets from text-mined sources |
| AI Agents | Peak of Inflated Expectations | Emerging | Autonomous research assistants for literature analysis |
| Foundation Models | Trough of Disillusionment | Adolescent | Domain-specific LLMs for materials science literature |
| Synthetic Data | Trough of Disillusionment | Emerging | Augmenting limited experimental data from literature |
| AI-Native Software Engineering | Innovation Trigger | Embryonic | Next-generation research software development |
| ModelOps | Slope of Enlightenment | Adolescent | Lifecycle management of ML models for synthesis prediction |
| AI Engineering | Slope of Enlightenment | Adolescent | Disciplined approach to production AI systems |

The Reality of Text-Mined Data for Synthesis Prediction

The application of AI to materials synthesis prediction provides a compelling case study of navigating the hype cycle. Initial efforts to text-mine synthesis recipes from scientific literature between 2016 and 2019 yielded substantial datasets (31,782 solid-state synthesis recipes and 35,675 solution-based synthesis recipes), creating expectations that these would enable predictive synthesis of novel materials [1]. However, these datasets encountered significant challenges related to the "4 Vs" of data science:

- Volume: While containing thousands of recipes, the data remained sparse for many material systems and synthesis approaches [1].

- Variety: The datasets captured limited diversity in synthesis techniques, reflecting historical research preferences rather than comprehensive methodological coverage [1].

- Veracity: Extraction inaccuracies, ambiguous reporting in source literature, and inconsistent terminology affected data quality [1] [22].

- Velocity: The static nature of the historical data limited adaptability to emerging synthesis approaches and novel material systems [1].

These limitations highlighted the gap between initial expectations and practical reality, representing a classic "trough of disillusionment" experience for the research community. Organizations that failed to anticipate these challenges often abandoned their efforts, while those adopting strategic approaches found alternative paths to value creation.

Foundational Enablers: Building Sustainable AI Capabilities

AI-Ready Data for Materials Informatics

The concept of "AI-ready data" has reached the Peak of Inflated Expectations in the 2025 Hype Cycle, reflecting both its critical importance and the challenges of practical implementation [19]. For text-mining applications, AI-ready data refers to datasets possessing sufficient quality, completeness, relevance, and ethical soundness for specific AI use cases. Current research indicates that 57% of organizations estimate their data is not AI-ready [19], creating a significant barrier to effective AI implementation in materials research.

In the context of text-mined synthesis recipes, achieving AI-ready status requires addressing several critical challenges:

- Ambiguity Resolution: Natural language ambiguity presents significant challenges, where words or phrases can have multiple interpretations depending on context. For example, in materials synthesis literature, "TiO2" might represent a target material in nanoparticle synthesis or a precursor for ternary oxides like Li4Ti5O12 [1]. Implementing context analysis algorithms that use surrounding text to determine meaning is essential for accurate data extraction [23].

- Data Quality and Noise: Text data often contains noise in the form of spelling errors, typos, and non-standard abbreviations [24]. In materials literature, this is compounded by domain-specific terminology and variations in reporting standards. Techniques such as spell-checking, regular expressions, and token standardization are essential for cleaning and normalizing text data before analysis [24] [23].

- Language Complexity and Evolution: The technical nature of synthesis descriptions includes specialized jargon, abbreviations, and evolving terminology that challenge standard NLP approaches [23] [1]. Continuous model training and adaptation to new language trends are necessary to maintain extraction accuracy [23].
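A minimal normalization sketch covering a few common variants: Unicode subscript digits in formulas and different spellings of degrees Celsius. The list of variants is an assumption and far from exhaustive; a real corpus needs rules derived from its own error patterns.

```python
import re

# Map Unicode subscript digits (as in "TiO₂") to ASCII digits.
SUBSCRIPT_DIGITS = str.maketrans("₀₁₂₃₄₅₆₇₈₉", "0123456789")
# Assumed spellings of degrees Celsius after a number: "°C", "° C", "deg C", "deg. C", "oC".
CELSIUS = re.compile(r"(\d)\s*(?:°\s*C|deg\.?\s*C|oC)\b")


def normalize_text(text):
    text = text.translate(SUBSCRIPT_DIGITS)
    text = text.replace("\u2103", "°C")       # the single-character "℃" sign
    text = CELSIUS.sub(r"\1 °C", text)        # unify to "<number> °C"
    return re.sub(r"\s+", " ", text).strip()  # collapse runs of whitespace


print(normalize_text("TiO₂  was heated at 800 ℃ for 2 h"))  # TiO2 was heated at 800 °C for 2 h
print(normalize_text("calcined at 900oC and 700 deg. C"))   # calcined at 900 °C and 700 °C
```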

Table 2: Solutions for Creating AI-Ready Data from Text-Mined Synthesis Recipes

| Challenge | Technical Solution | Implementation Example |
|---|---|---|
| Entity Recognition | BiLSTM-CRF models with custom annotation | Identifying targets/precursors by replacing compounds with <MAT> placeholders |
| Operation Classification | Latent Dirichlet Allocation (LDA) for topic modeling | Clustering synthesis operations (mixing, heating, drying) from keyword patterns [1] |
| Data Volume | Distributed computing (Apache Spark, Hadoop) | Parallel processing of millions of research papers across computing clusters [24] [23] |
| Multilingual Processing | Multilingual NLP libraries (spaCy) | Processing scientific literature in multiple languages with context-aware translation [24] [25] |
| Relationship Extraction | Markov chain representations | Reconstructing synthesis flowcharts from extracted operation sequences [1] |

AI Engineering and ModelOps: Scaling Research Applications

As generative AI enters the Trough of Disillusionment, Gartner identifies AI Engineering and ModelOps as critical disciplines for scaling AI applications along the Slope of Enlightenment [19]. For research organizations working with text-mined synthesis data, these practices provide the framework for transitioning from experimental models to production-ready prediction systems.

AI Engineering establishes the foundational discipline for enterprise delivery of AI solutions at scale, emphasizing reliability, robustness, and consistent value creation [20]. In the context of materials informatics, this translates to:

- Implementing version control for both models and training data

- Establishing automated testing pipelines for model validation

- Creating monitoring systems for model performance drift

- Developing standardized interfaces for model deployment

ModelOps focuses on the end-to-end governance and lifecycle management of AI models, addressing the critical gap between model development and production deployment [19]. For synthesis prediction systems, effective ModelOps includes:

- Standardized processes for model validation against new literature

- Automated pipelines for retraining models with newly published synthesis methods

- Governance frameworks ensuring model compliance with research standards

- Monitoring systems tracking prediction accuracy against experimental results

The integration of these disciplines enables research organizations to maintain and scale AI systems that leverage text-mined synthesis data, ultimately accelerating the transition from disillusionment to practical productivity.

Experimental Protocols: Methodologies for Text-Mining Synthesis Data

Natural Language Processing Pipeline for Synthesis Extraction

The extraction of structured synthesis recipes from unstructured scientific literature requires a sophisticated NLP pipeline. The following workflow, derived from published text-mining efforts in materials science [1], provides a validated methodology for this process:

Table 3: Detailed Protocol for NLP Pipeline for Synthesis Recipe Extraction

| Processing Stage | Technical Approach | Tools/Libraries | Output |
|---|---|---|---|
| Literature Procurement | Bulk download with publisher permissions | Custom scripts with API access | Full-text papers in HTML/XML format |
| Synthesis Paragraph Identification | Probabilistic classification using keywords | Keyword matching with domain dictionaries | Paragraphs containing synthesis descriptions |
| Material Entity Recognition | Bi-directional LSTM with CRF layer | TensorFlow/PyTorch with custom annotation | Labeled targets, precursors, reaction media |
| Operation Extraction | Latent Dirichlet Allocation (LDA) | Gensim with manual sentence labeling | Classified operations (mixing, heating, etc.) |
| Parameter Association | Pattern matching with unit recognition | Regular expressions with context rules | Parameter-value pairs (temperature, time, etc.) |
| Recipe Compilation | JSON schema with balanced reactions | Custom Python scripts with stoichiometry | Structured synthesis recipes with balanced equations |

The following diagram illustrates the complete text-mining workflow for extracting structured synthesis recipes from scientific literature:

Machine Learning Model Development for Synthesis Prediction

Once structured synthesis data has been extracted, the development of predictive models follows a rigorous experimental protocol. Based on published methodologies [1], this process involves:

Feature Engineering:

- Composition-based descriptors from precursor and target materials

- Processing parameters (temperature, time, atmosphere) as continuous variables

- Synthesis operations encoded as categorical variables

- Contextual features from the synthesis paragraph
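A minimal feature-construction sketch, assuming each recipe has already been reduced to a target formula, a heating temperature and duration, and a list of operation labels. Element fractions stand in for richer composition-based descriptors, the record layout is hypothetical, and contextual paragraph features are omitted.

```python
import re


def composition_fractions(formula, elements):
    """Atomic fraction of each element in `elements` (simple formulas, no parentheses)."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*(?:\.\d+)?)", formula):
        counts[element] = counts.get(element, 0.0) + (float(number) if number else 1.0)
    total = sum(counts.values())
    # Elements outside the chosen vocabulary are silently ignored in this sketch.
    return [counts.get(element, 0.0) / total for element in elements]


def featurize(recipe, elements, operation_vocabulary):
    composition = composition_fractions(recipe["target"], elements)
    conditions = [float(recipe["temperature_C"]), float(recipe["duration_h"])]
    present = set(recipe["operations"])
    operations = [1.0 if op in present else 0.0 for op in operation_vocabulary]
    return composition + conditions + operations


recipe = {  # hypothetical extracted record
    "target": "Li4Ti5O12",
    "temperature_C": 800,
    "duration_h": 12,
    "operations": ["MIXING", "HEATING"],
}
features = featurize(recipe, ["Li", "O", "Ti"], ["MIXING", "HEATING", "DRYING"])
print(len(features))  # 8: three element fractions, two conditions, three operation flags
```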

Model Architecture Selection:

- Random forest and gradient boosting for structured feature sets

- Recurrent neural networks for sequential operation data

- Graph neural networks for reaction pathway representation

- Transformer-based architectures for multimodal data integration

Validation Framework:

- Temporal splitting to evaluate predictive performance for novel materials

- Composition-based splitting to assess generalization to new chemical systems

- Cross-validation with multiple random seeds to ensure statistical significance

- Experimental validation through collaboration with synthesis laboratories
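The first two splitting strategies can be sketched as follows. Grouping by chemical system (the sorted set of elements in the target) keeps every system entirely in either the training or the test set, which is a stricter test of generalization than a random split. The record fields and the held-out system are hypothetical.

```python
import re


def chemical_system(formula):
    """Sorted, hyphen-joined element set, e.g. 'Li4Ti5O12' -> 'Li-O-Ti'."""
    return "-".join(sorted(set(re.findall(r"[A-Z][a-z]?", formula))))


def temporal_split(recipes, cutoff_year):
    """Train on recipes published before the cutoff, test on later ones."""
    train = [r for r in recipes if r["year"] < cutoff_year]
    test = [r for r in recipes if r["year"] >= cutoff_year]
    return train, test


def system_split(recipes, held_out_systems):
    """Hold out entire chemical systems so none appears in both splits."""
    train = [r for r in recipes if chemical_system(r["target"]) not in held_out_systems]
    test = [r for r in recipes if chemical_system(r["target"]) in held_out_systems]
    return train, test


recipes = [  # hypothetical records
    {"target": "Li4Ti5O12", "year": 2005},
    {"target": "LiMn2O4", "year": 2012},
    {"target": "LiCoO2", "year": 2018},
    {"target": "Li4Ti5O12", "year": 2019},
]
train, test = temporal_split(recipes, cutoff_year=2015)
print(len(train), len(test))  # 2 2
train, test = system_split(recipes, held_out_systems={"Li-O-Ti"})
print([r["target"] for r in test])  # ['Li4Ti5O12', 'Li4Ti5O12']
```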

This methodology acknowledges the limitations of historical data while maximizing its utility for guiding novel synthesis efforts.

The Scientist's Toolkit: Research Reagent Solutions

Implementing effective text-mining and AI workflows requires a carefully selected toolkit of software frameworks, libraries, and platforms. The following table details essential "research reagents" for overcoming hype cycle challenges in materials informatics:

Table 4: Essential Research Reagent Solutions for Text-Mining and AI Workflows

| Tool Category | Specific Solutions | Function | Application in Synthesis Research |
|---|---|---|---|
| NLP Libraries | spaCy, NLTK, AllenNLP | Text preprocessing, tokenization, entity recognition | Extraction of materials, operations, and parameters from literature [24] |
| Deep Learning Frameworks | TensorFlow, PyTorch, Hugging Face | Model development for NLP tasks | Building custom models for synthesis paragraph analysis [1] |
| Topic Modeling | Gensim, Scikit-learn | Clustering of synthesis operations | Identifying patterns in synthesis methodologies [24] [1] |
| Distributed Computing | Apache Spark, Dask, Hadoop | Processing large text corpora | Analyzing millions of research papers efficiently [24] [23] |
| Chemistry-Aware NLP | Custom BiLSTM-CRF models | Domain-specific entity recognition | Accurate identification of materials and their roles [1] |
| Workflow Orchestration | Apache Airflow, MLflow | Pipeline management and experiment tracking | Managing end-to-end text-mining workflows [20] |
| Data Visualization | Matplotlib, Plotly, Streamlit | Exploration of extracted synthesis data | Identifying patterns and anomalies in synthesis recipes [1] |

Strategic Navigation: From Disillusionment to Practical Value

Leveraging Anomalies and Edge Cases

The transition from disillusionment to practical value often comes from rethinking initial assumptions about data utility. In the case of text-mined synthesis data, researchers discovered that the most valuable insights frequently came not from the bulk patterns in the data, but from the anomalous recipes that defied conventional synthesis intuition [1]. These outliers, which would typically be considered noise in standard machine learning approaches, instead provided the foundation for new mechanistic hypotheses about solid-state reaction kinetics and precursor selection [1].
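The source does not say how anomalous recipes were identified. One illustrative option is to flag outlying values of a single processing parameter with a robust modified z-score based on the median absolute deviation (MAD). The temperature list below is invented, and the 3.5 cut-off is a common convention rather than a value taken from [1].

```python
from statistics import median


def flag_outliers(values, threshold=3.5):
    """Return indices whose modified z-score exceeds the threshold.

    Modified z-score = 0.6745 * (x - median) / MAD, where MAD is the median
    absolute deviation from the median.
    """
    med = median(values)
    mad = median(abs(x - med) for x in values)
    if mad == 0:
        # Degenerate case: most values are identical; flag any that differ.
        return [i for i, x in enumerate(values) if x != med]
    return [i for i, x in enumerate(values) if abs(0.6745 * (x - med) / mad) > threshold]


# Hypothetical calcination temperatures (°C) text-mined for one target material.
temps = [700, 720, 710, 690, 1400]
print(flag_outliers(temps))  # → [4]
```

Because the median and MAD are barely affected by a few extreme values, the unusual recipe stands out instead of inflating the spread used to judge it.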

This approach represents a strategic navigation of the hype cycle—acknowledging the limitations of initial expectations while discovering alternative pathways to value creation. The following diagram illustrates this strategic navigation process:

Implementation Roadmap for Research Organizations

For research organizations navigating the AI hype cycle in materials and drug development, a structured implementation approach accelerates progress toward practical value:

Phase 1: Foundation Building (Months 1-6)

- Conduct inventory of existing data assets and their AI-readiness

- Establish cross-functional teams combining domain and AI expertise

- Implement pilot text-mining projects focused on specific material classes

- Develop data standards and annotation protocols for synthesis information

Phase 2: Capability Development (Months 7-18)

- Scale successful pilot projects to broader literature corpora

- Establish ModelOps practices for lifecycle management of predictive models

- Develop validation frameworks connecting predictions to experimental verification

- Create anomaly detection systems to identify unusual synthesis patterns

Phase 3: Value Realization (Months 19-36)

- Integrate prediction systems with experimental planning workflows

- Establish continuous learning systems incorporating new publications

- Develop explainable AI approaches to build researcher trust

- Expand to multimodal data integration (combining text with experimental data)

This roadmap emphasizes incremental progress with regular value checkpoints, avoiding the overcommitment that often characterizes the peak of inflated expectations while maintaining momentum through the trough of disillusionment.

The journey through the AI hype cycle in materials and drug development research follows a predictable but navigable path. The 2025 landscape, with generative AI in the Trough of Disillusionment and foundational enablers like AI-ready data and AI Engineering gaining prominence, reflects a necessary maturation toward practical, scalable applications [19] [20].

For researchers focused on text-mining synthesis recipes, this transition requires shifting from a mindset of AI as a standalone solution to AI as an augmentative technology. The most successful implementations leverage text-mined data not as a complete source of truth for predictive synthesis, but as a catalyst for novel hypotheses and an augmentation of human expertise [1]. This approach embraces the strategic navigation of the hype cycle, recognizing that practical value emerges not by avoiding the trough of disillusionment, but by traversing it with clear-eyed understanding of both capabilities and limitations.

As AI technologies continue to evolve, research organizations that build disciplined approaches to data quality, model operationalization, and human-AI collaboration will be positioned to accelerate discovery while avoiding the cyclical disappointments of hype-driven investments. The future belongs not to those who expect AI to replace scientific intuition, but to those who strategically integrate it as a powerful augmentative tool in the research workflow.

Building Your Text-Mining Pipeline: Methods and Real-World Applications

The rapid expansion of scientific literature presents a formidable challenge for researchers seeking to consolidate experimental knowledge. This is particularly acute in fields like materials science and drug development, where synthesis protocols—the detailed recipes for creating new compounds—are buried in unstructured text. The vision of using machine learning (ML) to predict synthesis pathways for novel materials hinges on the ability to extract and structure this information at scale [1]. This whitepaper delineates a comprehensive, end-to-end protocol for transforming unstructured scientific papers into structured, machine-actionable synthesis recipes, thereby creating the foundational datasets required for data-driven discovery.

The journey from a published paper to a generated recipe is a multi-stage pipeline involving sequential data processing and modeling tasks. The following diagram illustrates the high-level workflow and the logical relationships between its core components.

Stage 1: Paper Collection & Procurement

The initial stage involves assembling a comprehensive digital library of relevant scientific literature.

Core Methodology: Automated scripts are used to procure full-text articles from scientific publishers via Application Programming Interfaces (APIs) or through direct agreements with publishers [1] [26]. For instance, one prominent study downloaded papers from publishers including Springer, Wiley, Elsevier, the Royal Society of Chemistry, and the American Chemical Society [1]. A focused study on battery recipes used the ScienceDirect RESTful API to gather papers [26].

Key Considerations:

- Format Selection: Preference is given to modern HTML or XML formats for easier parsing. Scanned PDFs, particularly from pre-2000 publications, are often excluded due to the high error rate in text extraction [1].

- Open Access: To circumvent copyright restrictions and enable legal redistribution of the extracted dataset, some pipelines, like the one for the Open Materials Guide (OMG), exclusively use open-access publications [27].

- Search Strategy: Domain-specific search queries are constructed in collaboration with subject matter experts. For a battery recipe knowledge base, the query `("LiFePO4" OR "lithium iron phosphate") AND ("battery")` was used, yielding 5,885 initial papers [26]. The OMG dataset leveraged 60 expert-recommended search terms to retrieve 28,685 open-access articles from a pool of 400,000 search results [27].
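The search-query pattern and the format and licensing filters above can be sketched as follows. No publisher API is called. The record fields (`id`, `format`, `open_access`) are hypothetical placeholders for whatever metadata a given API returns.

```python
def build_query(synonym_groups):
    """OR together the synonyms within a group, then AND the groups together."""
    clauses = ["(" + " OR ".join(f'"{term}"' for term in group) + ")" for group in synonym_groups]
    return " AND ".join(clauses)


def keep_record(record, open_access_only=False):
    """Apply format and licensing filters to one (hypothetical) metadata record."""
    if record["format"].lower() not in {"html", "xml"}:
        return False  # e.g. scanned PDFs are skipped
    if open_access_only and not record["open_access"]:
        return False
    return True


query = build_query([["LiFePO4", "lithium iron phosphate"], ["battery"]])
print(query)  # → ("LiFePO4" OR "lithium iron phosphate") AND ("battery")

records = [
    {"id": "paper-a", "format": "XML", "open_access": True},
    {"id": "paper-b", "format": "PDF", "open_access": True},
    {"id": "paper-c", "format": "HTML", "open_access": False},
]
print([r["id"] for r in records if keep_record(r, open_access_only=True)])
```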

Table 1: Representative Data Collection Statistics from Various Studies

| Study Focus | Initial Paper Pool | Final Relevant Papers or Recipes | Primary Source |
|---|---|---|---|
| Solid-State Synthesis | 4,204,170 papers scraped | 31,782 recipes extracted | [1] |
| Battery Recipes (LiFePO4) | 5,885 papers from API query | 2,174 relevant papers | [26] |
| Open Materials Guide (OMG) | 400,000 search results | 17,667 high-quality recipes from 28,685 articles | [27] |

Stage 2: Paper Selection & Filtering

The initially collected corpus contains many irrelevant documents. This stage refines the pool to papers that genuinely contain synthesis protocols.

Core Methodology: This is typically framed as a binary text classification problem. A machine learning model is trained to distinguish between relevant and irrelevant papers based on their abstract and/or title [26].

Experimental Protocol:

- Manual Annotation: A subset of the corpus (e.g., 1,000 papers) is manually labeled by domain experts to create a gold-standard training set [26].

- Feature Engineering: Text from abstracts is converted into numerical features, often using methods like Term Frequency-Inverse Document Frequency (TF-IDF) [26].

- Model Training and Evaluation: A classifier is trained and optimized using cross-validation. One study compared five different models and found an eXtreme Gradient Boosting (XGB) classifier achieved the highest F1-score of 85.19% for this task [26].

- Application: The best-performing model is applied to the entire unlabeled corpus to identify the final set of relevant papers.
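The following is a dependency-free sketch of the TF-IDF classification step. Note that it swaps the model: the cited study found XGBoost performed best, whereas this sketch uses a simple nearest-centroid classifier. The classifier compares each document by cosine similarity to each class's mean TF-IDF vector, so the sketch runs on the standard library alone. The four abstracts are invented toy data.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def fit_idf(token_lists):
    """Smoothed inverse document frequency: log((1 + n) / (1 + df)) + 1."""
    n = len(token_lists)
    df = Counter()
    for tokens in token_lists:
        df.update(set(tokens))
    return {term: math.log((1 + n) / (1 + d)) + 1.0 for term, d in df.items()}


def tfidf(tokens, idf):
    """L2-normalised TF-IDF vector as a sparse dict; unseen terms are dropped."""
    vec = {t: c * idf[t] for t, c in Counter(tokens).items() if t in idf}
    norm = math.sqrt(sum(v * v for v in vec.values())) or 1.0
    return {t: v / norm for t, v in vec.items()}


def fit_centroids(vectors, labels):
    """Summed TF-IDF vector per class, normalised to unit length."""
    sums = {}
    for vec, y in zip(vectors, labels):
        acc = sums.setdefault(y, Counter())
        for t, v in vec.items():
            acc[t] += v
    centroids = {}
    for y, acc in sums.items():
        norm = math.sqrt(sum(v * v for v in acc.values())) or 1.0
        centroids[y] = {t: v / norm for t, v in acc.items()}
    return centroids


def predict(vec, centroids):
    """Assign the class whose centroid has the highest cosine similarity."""
    return max(centroids, key=lambda y: sum(v * centroids[y].get(t, 0.0) for t, v in vec.items()))


def f1_score(y_true, y_pred, positive=1):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p == positive)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p != positive)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


abstracts = [
    "LiFePO4 cathode was synthesized by solid state reaction and calcined at 700 C",
    "The precursors were ball milled and sintered under argon to obtain LiFePO4",
    "We review policy implications of battery recycling markets",
    "Economic analysis of lithium supply chains and market prices",
]
labels = [1, 1, 0, 0]  # 1 = abstract of a paper containing a synthesis protocol
tokens = [tokenize(a) for a in abstracts]
idf = fit_idf(tokens)
centroids = fit_centroids([tfidf(t, idf) for t in tokens], labels)
print(predict(tfidf(tokenize("LiFePO4 powder was calcined and sintered under argon"), idf), centroids))  # → 1
```

In practice, scikit-learn's `TfidfVectorizer` would replace these hand-written pieces. It would be paired with a stronger classifier, tuned by cross-validated search.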

Stage 3: Paragraph Preparation & Topic Modeling

Once relevant papers are identified, the next step is to locate the specific paragraphs that describe the synthesis and assembly procedures.

Core Methodology: Topic modeling, an unsupervised learning technique, is applied to all paragraphs of a paper to identify clusters of text related to experimental methods.

Experimental Protocol:

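- Preprocessing: Paragraphs that are too short (e.g., fewer than 200 characters) are filtered out, as they likely contain titles, captions, or incomplete information [26].

- Model Comparison: Candidate unsupervised topic-modeling algorithms, such as Latent Dirichlet Allocation (LDA), are compared on the filtered paragraphs.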

- Topic Selection: Researchers analyze the generated topics and their keyword distributions to identify those corresponding to "synthesis" and "cell assembly" procedures. For battery recipes, LDA was selected for its ability to produce a manageable number of 25 distinct and interpretable topics, from which two were identified as target topics [26].
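The sketch below pairs the length filter with a minimal collapsed Gibbs sampler for LDA. It is written in plain Python for illustration only; libraries such as Gensim or scikit-learn provide production LDA implementations. The hyperparameters are assumptions. So is the keyword-overlap `select_topics` helper, a hypothetical stand-in for the expert inspection of topic keywords described above.

```python
import random
import re
from collections import Counter


def filter_paragraphs(paragraphs, min_chars=200):
    """Drop short paragraphs (titles, captions, fragments)."""
    return [p for p in paragraphs if len(p) >= min_chars]


def lda_gibbs(docs, n_topics, alpha=0.1, beta=0.01, iters=200, seed=0):
    """Collapsed Gibbs sampling for LDA; docs is a list of token lists."""
    rng = random.Random(seed)
    vocab_size = len({w for doc in docs for w in doc})
    topics = range(n_topics)
    doc_topic = [[0] * n_topics for _ in docs]
    topic_word = [Counter() for _ in topics]
    topic_total = [0] * n_topics
    z = []  # current topic assignment of every token
    for d, doc in enumerate(docs):
        z.append([rng.randrange(n_topics) for _ in doc])
        for w, k in zip(doc, z[d]):
            doc_topic[d][k] += 1
            topic_word[k][w] += 1
            topic_total[k] += 1
    for _ in range(iters):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                k = z[d][i]
                # Remove the token's current assignment from the counts.
                doc_topic[d][k] -= 1
                topic_word[k][w] -= 1
                topic_total[k] -= 1
                # Full conditional p(z = t | all other assignments).
                weights = [
                    (doc_topic[d][t] + alpha) * (topic_word[t][w] + beta)
                    / (topic_total[t] + vocab_size * beta)
                    for t in topics
                ]
                k = rng.choices(topics, weights=weights)[0]
                z[d][i] = k
                doc_topic[d][k] += 1
                topic_word[k][w] += 1
                topic_total[k] += 1
    return topic_word, doc_topic


def top_words(topic_word, n=5):
    return [[w for w, c in counts.most_common(n) if c > 0] for counts in topic_word]


def select_topics(tops, seed_terms):
    """Indices of topics whose top words overlap a set of target keywords."""
    return [k for k, words in enumerate(tops) if seed_terms & set(words)]


paragraphs = [
    "The precursors were mixed and calcined under argon at 700 C.",
    "Fig. 1",
    "The cathode slurry with binder was cast and a coin cell was assembled.",
]
# min_chars is lowered from 200 here so that the toy sentences pass the filter.
docs = [re.findall(r"[a-z]+", p.lower()) for p in filter_paragraphs(paragraphs, min_chars=20)]
topic_word, doc_topic = lda_gibbs(docs, n_topics=2, iters=100)
print(top_words(topic_word, n=4))
```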

Stage 4: Information Extraction via Named Entity Recognition (NER)

This is the core technical stage where structured information is extracted from the unstructured synthesis paragraphs.

Core Methodology: Named Entity Recognition (NER) models, often based on deep learning, are trained to identify and classify key entities within the text into predefined categories such as precursors, temperatures, and equipment [26] [27].

Experimental Protocol:

- Entity Definition: A schema of relevant entities is defined. The battery recipe study defined 30 entities, including `precursor`, `active_material`, `binder`, `atmosphere`, and `temperature` [26].

- Model Training and Evaluation:

  - Sequence-Labeling Models: Models such as BiLSTM-CRF (Bidirectional Long Short-Term Memory with a Conditional Random Field layer) have been used. In this approach, chemical compounds are first replaced with a generic `<MAT>` tag to simplify context learning [1].

  - Transformer Models: More recent approaches fine-tune pre-trained language models (e.g., BERT) on a manually annotated dataset. The battery recipe study achieved F1-scores of 88.18% (synthesis) and 94.61% (assembly) with this method [26].

  - Large Language Models (LLMs): LLMs such as GPT-4 can be applied to this extraction task through few-shot prompting or fine-tuning. The OMG dataset used GPT-4o in a multi-stage process to segment article text into five key components, achieving high expert-rated correctness and coherence [27].

- Quality Verification: A panel of domain experts manually reviews a sample of extracted recipes, scoring them on criteria like completeness, correctness, and coherence to ensure data quality [27].
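Two small pieces of an NER pipeline can be illustrated without a trained model:

- masking formula-like tokens with a generic `<MAT>` tag before context learning;
- decoding BIO tag sequences into entity spans. BIO is the usual output format of BiLSTM-CRF and fine-tuned transformer taggers.

The regular expression is a rough heuristic assumed for this sketch, not the tokenizer used in [1]. It only catches tokens that contain a digit, so `LiFePO4` is masked but `NaCl` is not. The tag sequence in the test is hand-written, standing in for a model's output.

```python
import re

# Heuristic for inorganic formulas: two or more element-like groups with optional
# stoichiometry, and at least one digit in the token (so 'XRD' or 'In' are skipped).
FORMULA = re.compile(r"\b(?=\w*\d)(?:[A-Z][a-z]?\d*(?:\.\d+)?){2,}\b")


def mask_materials(text, tag="<MAT>"):
    """Replace formula-like tokens with a generic tag; also return the originals."""
    return FORMULA.sub(tag, text), FORMULA.findall(text)


def decode_bio(tokens, tags):
    """Turn BIO tags (B-x, I-x, O) into (label, text) entity spans."""
    entities, label, span = [], None, []
    for tok, tag in zip(tokens, tags):
        if tag.startswith("B-") or (tag.startswith("I-") and tag[2:] != label):
            if label:
                entities.append((label, " ".join(span)))
            label, span = tag[2:], [tok]
        elif tag.startswith("I-"):
            span.append(tok)
        else:  # "O" closes any open entity
            if label:
                entities.append((label, " ".join(span)))
            label, span = None, []
    if label:
        entities.append((label, " ".join(span)))
    return entities


masked, found = mask_materials("LiFePO4 was synthesized from Li2CO3 and FeC2O4 at 700 °C under Ar.")
print(masked, found)
```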

Table 2: Performance of Different Information Extraction Methods

| Extraction Method | Reported Performance or Training Data | Key Advantages / Applications |
|---|---|---|
| BiLSTM-CRF | Trained on 834 annotated paragraphs [1] | Effective for identifying material roles (target/precursor) in context. |
| Fine-tuned Transformer | F1-scores of 88.18% (synthesis) and 94.61% (assembly) on 30 entities [26] | High accuracy for extracting a wide range of entities. |
| LLM (GPT-4o) | Expert scores: ~4.7/5 for Correctness & Coherence [27] | Flexible, scalable extraction; capable of segmenting complex text. |

Stage 5: Structured Recipe Generation

The final stage involves compiling the extracted entities into a coherent, structured recipe format suitable for database storage and machine learning.

Core Methodology: The extracted sequences of entities and actions are formalized into a structured data format like JSON, which encapsulates the complete end-to-end protocol [1] [26].

Experimental Protocol:

- Data Structuring: Information is organized into logical sections. The OMG dataset, for example, structures data into a summary (X), raw materials (Y_M), equipment (Y_E), procedural steps (Y_P), and characterization methods (Y_C) [27].

- Sequence Generation: Entities from the synthesis and assembly paragraphs are linked to create a continuous process flow. The T2BR protocol generated 2,840 sequences for cathode material synthesis and 2,511 for cell assembly, which were then combined into 165 end-to-end battery recipes [26].

- Database Construction: The structured recipes are compiled into a database, which can support flexible retrieval and data-driven analysis, such as identifying trends in precursor-method associations [26].
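The sketch below shows a structured recipe record mirroring the five OMG sections, plus one way synthesis and assembly sequences might be linked. These are hypothetical choices for illustration, not the published OMG or T2BR schemas:

- the field names and the `Step` type;
- the rule of joining sequences when the synthesized target equals the cell's active material.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class Step:
    action: str
    parameters: Dict[str, str] = field(default_factory=dict)


@dataclass
class Recipe:
    summary: str                 # X
    raw_materials: List[str]     # Y_M
    equipment: List[str]         # Y_E
    procedure: List[Step]        # Y_P, in execution order
    characterization: List[str]  # Y_C

    def to_json(self):
        # asdict recursively converts the nested Step objects to plain dicts.
        return json.dumps(asdict(self), indent=2)


def link_sequences(synthesis_seqs, assembly_seqs):
    """Join a synthesis sequence to an assembly sequence when the synthesized
    target is the cell's active material (hypothetical linking rule)."""
    linked = []
    for syn in synthesis_seqs:
        for asm in assembly_seqs:
            if syn["target"] == asm["active_material"]:
                linked.append({"target": syn["target"], "steps": syn["steps"] + asm["steps"]})
    return linked


recipe = Recipe(
    summary="Solid-state synthesis of LiFePO4 cathode powder",
    raw_materials=["Li2CO3", "FeC2O4", "NH4H2PO4"],
    equipment=["ball mill", "tube furnace"],
    procedure=[
        Step("mix", {"duration": "4 h"}),
        Step("calcine", {"temperature": "700 °C", "atmosphere": "Ar"}),
    ],
    characterization=["XRD", "SEM"],
)
print(recipe.to_json())
```

Storing each recipe as a JSON document keeps the sections queryable. For example, a database can retrieve all recipes whose procedure contains a calcination step under argon.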

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key computational tools and data solutions that form the essential "reagents" for building a text-mining pipeline for synthesis recipes.