From Simulation to Synthesis: A Roadmap for the Experimental Realization of Theoretically Predicted Materials

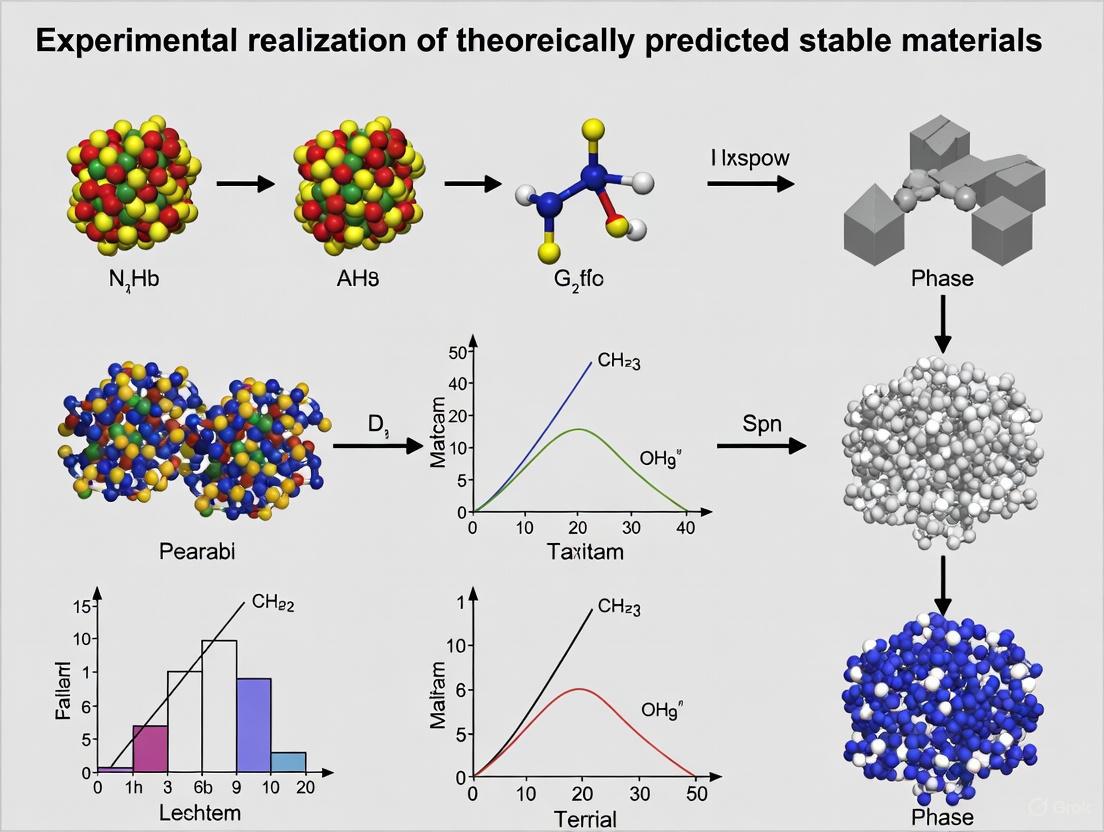

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on translating computationally predicted stable materials into experimentally validated realities. It covers the foundational principles of material prediction, advanced synthesis and characterization methodologies, strategies for troubleshooting common optimization challenges, and rigorous validation frameworks essential for biomedical and clinical application. By synthesizing insights from data-driven materials science and real-world evidence paradigms, this resource aims to bridge the critical gap between theoretical design and practical realization in advanced material development.

The Computational Blueprint: Foundations for Predicting Novel Stable Materials

The Paradigm Shift to Data-Driven Materials Discovery

The field of materials science is undergoing a profound transformation, shifting from traditional trial-and-error approaches to sophisticated data-driven methodologies. This paradigm shift leverages artificial intelligence (AI), high-throughput computation, and automated experimentation to dramatically accelerate the discovery and development of novel materials. Where traditional methods might require decades to bring a new material from concept to realization, data-driven approaches can compress this timeline to mere months, unlocking unprecedented opportunities across clean energy, electronics, and medicine [1]. This transition is particularly transformative for the experimental realization of theoretically predicted stable materials, where the synergy between prediction and validation creates a powerful discovery engine. This guide compares the performance of traditional and data-driven approaches, providing researchers with a comprehensive framework for navigating this new landscape.

Comparative Analysis: Traditional vs. Data-Driven Discovery

The following table quantitatively compares the key performance metrics of traditional materials discovery against modern data-driven approaches.

Table 1: Performance Comparison of Discovery Methodologies

| Performance Metric | Traditional Approach | Data-Driven Approach | Acceleration Factor | Experimental Validation |
|---|---|---|---|---|
| Discovery Throughput | ~10-100 stable crystals/year (historically) [2] | 2.2 million stable crystals discovered computationally; 736 experimentally realized [2] | >10,000x | GNoME framework active learning |
| Typical Development Cycle | Decades [1] | Months [1] | ~20x | Multiple case studies (e.g., MPEAs, OER catalysts) |
| Experiment Optimization Efficiency | Baseline (Random Acquisition) | Up to 20x faster for specific goals [3] | Up to 20x | Benchmarking on OER catalyst datasets [3] |
| Data Acquisition Efficiency | Low (Static protocols) | >10x more data points [4] | >10x | Self-driving labs with dynamic flow experiments [4] |
| Success Rate (Hit Rate) | ~1% with simple substitutions [2] | >80% with structure; ~33% with composition only [2] | >30x | GNoME models via scaled deep learning |
| Exploration of High-Order Composition Spaces | Limited by chemical intuition | Efficient discovery of 5+ unique element crystals [2] | New capability | Emergent generalization of GNoME models |

Experimental Protocols in Data-Driven Discovery

Sequential Learning for Catalyst Discovery

Objective: To accelerate the discovery of high-activity oxygen evolution reaction (OER) catalysts by iteratively updating a machine learning model to guide experiments [3].

Methodology:

- Dataset Construction: A discrete library of 2121 unique metal oxide compositions is synthesized via inkjet printing of elemental precursors, covering all possible unary, binary, ternary, and quaternary combinations from a set of six elements at 10 at% intervals [3].

- Calcination & Aging: The printed library is calcined at 400°C for 10 hours to convert the precursors to metal oxides, followed by accelerated aging of the catalysts via parallel operation for 2 hours [3].

- Electrochemical Characterization: A scanning droplet cell characterizes each sample in pH 13 electrolyte, measuring the OER overpotential at 3 mA cm⁻². The negative of this overpotential is used as the figure of merit (FOM) for catalytic activity [3].

- Sequential Learning Loop: A machine learning model (e.g., Random Forest, Gaussian Process) is trained on the accumulated data. An acquisition function uses the model's predictions to select the next most promising compositions for experimental synthesis and testing, iteratively updating the model with new results [3].
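The library size and the loop above can be sketched in a few lines of Python. This is a minimal illustration, not the protocol from [3]: the element labels are omitted, `toy_fom` is a hypothetical stand-in for the measured figure of merit, and a nearest-neighbour surrogate with a distance bonus is swapped in for the Random Forest or Gaussian Process models named in the text. The enumeration does reproduce the 2121 compositions quoted above (6 unary + 135 binary + 720 ternary + 1260 quaternary).

```python
import itertools
import math
import random


def enumerate_compositions(n_elements=6, steps=10, max_components=4):
    """All compositions on a 10 at% grid with 1 to 4 non-zero elements.

    Each composition is a tuple of integers (units of 10 at%) summing to `steps`.
    """
    comps = []
    for k in range(1, max_components + 1):
        for subset in itertools.combinations(range(n_elements), k):
            # k-1 cut points in 1..steps-1 split `steps` into k positive parts.
            for cuts in itertools.combinations(range(1, steps), k - 1):
                bounds = (0,) + cuts + (steps,)
                comp = [0] * n_elements
                for idx, lo, hi in zip(subset, bounds, bounds[1:]):
                    comp[idx] = hi - lo
                comps.append(tuple(comp))
    return comps


def toy_fom(comp):
    """Hypothetical FOM (negative overpotential, V); NOT experimental data."""
    target = (3, 0, 4, 0, 3, 0)  # arbitrary "best" composition for the toy landscape
    d2 = sum((c - t) ** 2 for c, t in zip(comp, target))
    return -0.30 - 0.002 * d2


def sequential_learning(candidates, measure, n_init=10, n_iter=40, kappa=0.01, seed=0):
    """Greedy loop: measure, update the surrogate, pick the next candidate."""
    rng = random.Random(seed)
    measured = {}
    # Surrogate state: for each candidate, (distance to nearest measured point, its FOM).
    nearest = {c: (math.inf, 0.0) for c in candidates}

    def record(comp):
        fom = measure(comp)  # stands in for synthesis + electrochemical test
        measured[comp] = fom
        for c in candidates:
            d = math.dist(c, comp)
            if d < nearest[c][0]:
                nearest[c] = (d, fom)

    for comp in rng.sample(candidates, n_init):  # random initial batch
        record(comp)
    for _ in range(n_iter):
        pool = [c for c in candidates if c not in measured]
        # Acquisition: predicted FOM plus a bonus for being far from known data.
        record(max(pool, key=lambda c: nearest[c][1] + kappa * nearest[c][0]))
    return measured


compositions = enumerate_compositions()
measured = sequential_learning(compositions, toy_fom)
best = max(measured, key=measured.get)
print(len(compositions), "candidates;", len(measured), "measured; best so far:", best)
```

The `kappa` weight (an assumed parameter) trades exploitation of high predicted FOM against exploration of unmeasured regions; in a real campaign the surrogate, the acquisition function, and the batch size would all need to be chosen and benchmarked against random acquisition.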

Autonomous Discovery in Self-Driving Labs

Objective: To achieve fully autonomous, high-speed discovery and optimization of inorganic materials, such as colloidal quantum dots [4].

Methodology:

- Dynamic Flow Reactor: Chemical precursors are continuously varied through a microfluidic system, unlike traditional steady-state experiments that test one condition at a time [4].

- Real-Time In Situ Characterization: A suite of sensors monitors the reaction and material properties continuously as the conditions change, capturing a data point every half-second and generating a "movie" of the reaction instead of a "snapshot" [4].

- Closed-Loop Operation: A machine learning algorithm processes the real-time data stream to predict the optimal set of reaction conditions (e.g., temperature, concentration, flow rate) needed to achieve the target material properties. The system automatically adjusts the reactor parameters to these new conditions without human intervention [4].

- Data Intensification: This process generates at least an order of magnitude more high-quality data than state-of-the-art self-driving labs using steady-state methods, dramatically improving the speed and efficiency of the AI-guided discovery process [4].

Workflow Visualization

The following diagram illustrates the core iterative loop that powers modern, AI-accelerated materials discovery platforms.

AI-Driven Materials Discovery Workflow

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Resources for Data-Driven Materials Research

| Tool / Solution | Function & Application | Key Features |
|---|---|---|
| Graph Networks for Materials Exploration (GNoME) | A deep learning model for predicting crystal structure stability [2]. | Scales with data/compute; Achieves 11 meV/atom prediction error; Enables discovery of millions of stable crystals. |
| Sequential Learning (SL) Algorithms | Guides experiments by iteratively updating models with new data [3]. | Can accelerate discovery by up to 20x; Includes Random Forest, Gaussian Process, and query-by-committee variants. |
| Explainable AI (XAI) / SHAP Analysis | Interprets AI model predictions to provide scientific insights [5]. | Moves beyond "black box" models; Reveals how elements influence properties in MPEAs. |
| Self-Driving Laboratory | Robotic platform combining AI, automation, and synthesis [4]. | Uses dynamic flow for data intensification; Reduces time, cost, and chemical waste by orders of magnitude. |
| High-Throughput Experimentation (HTE) | Rapidly synthesizes and characterizes large libraries of materials [3]. | e.g., Inkjet printing of 2121 unique compositions; Scanning droplet cell for electrochemical characterization. |
| Inverse Design Framework | Predicts stable materials directly from a target property or composition space [6]. | Couples predictive calculations with combinatorial synthesis; Identifies "missing" stable materials. |

Key Properties for Stability Prediction in Biomaterials

The development of new biomaterials has traditionally relied on a "trial-and-error" approach, involving numerous experiments that consume significant resources including manpower, time, materials, and finances [7]. This methodology presents particular challenges in predicting biomaterial stability—a critical property determining how a material will perform in biological environments over time. Stability encompasses not only structural integrity but also chemical consistency, degradation profiles, and biological performance within physiological systems.

The paradigm is shifting with the emergence of computationally driven experimental science, where theoretical prediction and experimental validation operate synergistically [8]. This approach is exemplified by research that applies Inverse Design to screen chemical systems, successfully predicting and experimentally realizing previously missing stable materials [8]. For researchers, scientists, and drug development professionals, understanding the key properties for stability prediction and the methodologies for their assessment is fundamental to accelerating the translation of biomaterials from concept to clinical application. This guide provides a comparative analysis of the predictive frameworks, experimental methodologies, and reagent solutions advancing this field.

Theoretical Frameworks for Predicting Biomaterial Stability

Theoretical frameworks for stability prediction leverage computational power to identify promising biomaterial candidates before synthesis, guiding experimental efforts toward the most viable options.

Artificial Intelligence and Machine Learning Models

Artificial Intelligence (AI), particularly through Machine Learning (ML) and its subcategory Deep Learning (DL), has demonstrated significant potential in biomaterials science [7]. These systems emulate human cognition through advanced algorithms, processing complex reasoning with minimal human intervention. In stability prediction, ML models excel at recognizing complex patterns in material structure-property relationships that are often non-intuitive to human researchers.

- Supervised Learning relies on labelled datasets, where the model learns a mapping function from input (e.g., material composition, processing parameters) to output (e.g., degradation rate, mechanical integrity over time). This approach is particularly valuable for predictive tasks such as forecasting the long-term structural stability of a polymeric implant based on its initial properties [7].

- Unsupervised Learning works with unlabelled data to discover hidden patterns or clusters, potentially identifying novel material groupings with similar stability profiles without predefined categories [7].

- Reinforcement Learning follows a computational trial-and-error strategy, where algorithms learn sequential decision-making to optimize for desired outcomes, such as maximizing a scaffold's functional lifespan while minimizing inflammatory response [7].

A key advantage of the ML approach is its ability to address both forward problems (predicting properties from structure) and inverse problems (identifying structures that yield desired properties), providing powerful flexibility in biomaterial design [7].
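The forward/inverse distinction can be made concrete with a toy example. In this sketch, `forward_model` is a hypothetical closed-form stand-in for a trained regressor (its functional form, inputs, and units are assumptions for illustration only), and the inverse problem is solved by brute-force search over a grid of candidate designs.

```python
def forward_model(crosslink_density, hydrophilicity):
    """Forward problem: design parameters -> predicted functional lifetime (weeks).

    Hypothetical formula standing in for a trained ML model; both inputs lie in [0, 1].
    """
    return 12.0 * crosslink_density / (1.0 + 3.0 * hydrophilicity)


def inverse_design(target_weeks, grid_steps=21, tolerance=0.25):
    """Inverse problem: find designs whose predicted lifetime is near the target."""
    hits = []
    for i in range(grid_steps):
        for j in range(grid_steps):
            x = i / (grid_steps - 1)  # cross-link density on a regular grid
            h = j / (grid_steps - 1)  # hydrophilicity on a regular grid
            predicted = forward_model(x, h)
            if abs(predicted - target_weeks) <= tolerance:
                hits.append((x, h, predicted))
    return hits


candidates = inverse_design(target_weeks=6.0)
print(len(candidates), "candidate designs, e.g.", candidates[0])
```

The inverse problem generally has many solutions, which is why the search returns a list: further criteria such as processability or biological response are needed to choose among them.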

The Inverse Design Approach

The Inverse Design methodology systematically screens chemical spaces to identify theoretically stable materials that may have been overlooked. This approach was successfully applied to V-IX-IV group ternary ABX materials, where high-throughput computational screening revealed nine previously missing stable compounds. Subsequent combinatorial experiments synthesized TaCoSn and discovered TaCo₂Sn, the first two reported ternaries in this chemical system [8]. This demonstrates how computationally driven experimental chemistry can fill gaps in material databases with rationally designed, stable compounds.

Evidence-Based Biomaterials Research

Evidence-based biomaterials research (EBBR) is an emerging methodology that applies evidence-based approaches, represented by systematic reviews and meta-analysis, to generate scientific evidence from existing research data [9]. For stability prediction, EBBR can synthesize data from numerous studies to establish robust correlations between material properties and in vivo performance, creating a foundational evidence base that informs both computational models and experimental hypotheses.

Table 1: Comparison of Theoretical Frameworks for Biomaterial Stability Prediction

| Framework | Primary Function | Data Requirements | Key Advantages | Common Applications |
|---|---|---|---|---|
| Supervised Machine Learning | Predicts stability properties from input features | Large, labelled datasets | High prediction accuracy for known material classes | Degradation rate prediction, Mechanical property forecasting |
| Unsupervised Machine Learning | Discovers hidden patterns and groups in material data | Unlabelled datasets | Identifies novel material groupings without predefined categories | Material classification, Anomaly detection in stability data |
| Inverse Design | Identifies material compositions that meet specific stability criteria | Chemical rules, Energetic calculations | Systematically explores chemical space for overlooked stable materials | Discovering new stable compounds, Ternary and quaternary material systems |
| Evidence-Based Synthesis | Generates scientific evidence from aggregated research data | Multiple published studies | Provides validated, comprehensive evidence for decision-making | Correlating in vitro and in vivo stability, Establishing structure-property relationships |

Experimental Methodologies for Stability Validation

Theoretical predictions require rigorous experimental validation to confirm stability under biologically relevant conditions. Several advanced methodologies provide this critical bridge between computation and application.

Biomaterials for Organoid Culture and Stability Assessment

Liver organoid models represent a sophisticated experimental system for assessing biomaterial functionality and stability in complex biological environments. Traditional organoid culture relies on tumor-derived extracellular matrices like Matrigel, which poses challenges due to its xenogeneic nature and variable composition, complicating stability assessment [10]. Newer biomaterials-guided approaches utilize defined hydrogel systems that support liver organoid development while offering more consistent, reproducible platforms for evaluating material stability in physiologically relevant contexts [10]. These systems allow researchers to monitor how biomaterials maintain their structural integrity and functional properties while supporting specialized tissue development.

The experimental workflow for biomaterial stability assessment in organoid culture typically involves: (1) biomaterial synthesis and characterization; (2) organoid seeding and culture; (3) longitudinal monitoring of material properties and degradation; (4) assessment of functional outcomes; and (5) computational correlation of prediction with experimental results.

Diagram 1: Experimental workflow for biomaterial stability assessment in organoid culture models, integrating computational prediction with experimental validation.

Standardized Biocompatibility and Biosafety Evaluation

For translational biomaterials, stability assessment must be integrated into the regulatory pathway. According to the roadmap of biomaterials translation, non-clinical evaluation includes bench performance tests, biocompatibility, and biosafety evaluations per ISO 10993 standards, complemented by pre-clinical animal studies [9]. These standardized protocols provide critical data on how biomaterials maintain their stability and performance under physiological conditions, with biocompatibility defined as "the ability of a material to perform with an appropriate host response in a specific application" [9].

Table 2: Comparative Methodologies for Experimental Stability Validation

| Methodology | Key Measurements | Timeframe | Biological Relevance | Regulatory Application |
|---|---|---|---|---|
| In Vitro Degradation Studies | Mass loss, Molecular weight reduction, Breakdown product analysis | Days to months | Medium (Controlled environment) | Early-stage screening, ISO 10993 compliance |
| Organoid Culture Models | Material-tissue interaction, Functional maintenance, Structural integrity | Weeks | High (Complex tissue environment) | Pre-clinical functionality assessment |
| Pre-clinical Animal Studies | Host response, Degradation in vivo, Systemic effects | Months to years | Very High (Whole organism physiology) | Design validation, Regulatory submission |
| Real-World Evidence (RWE) Collection | Long-term performance, Rare failure modes, Population-specific outcomes | Years | Highest (Actual clinical use) | Post-market surveillance, Product refinement |

Comparative Analysis of Stability Prediction Approaches

Different computational approaches offer varying strengths for predicting specific aspects of biomaterial stability. The selection of an appropriate methodology depends on the specific stability property of interest, available data resources, and the stage of development.

Diagram 2: Logical relationships in stability prediction using machine learning, showing how input data feeds into algorithms that predict specific stability aspects before experimental validation.

Table 3: Property-Specific Prediction Performance of Computational Approaches

| Stability Property | Most Effective Predictive Method | Typical Prediction Accuracy Range | Key Influencing Factors | Validation Methods |
|---|---|---|---|---|
| Structural Integrity (Mechanical) | Supervised ML (Regression models) | 75-92% | Polymer crystallinity, Cross-linking density, Composite interfaces | Tensile testing, Compression testing, Fatigue analysis |
| Degradation Profile | Deep Neural Networks | 80-90% | Hydrophilicity/hydrophobicity, Chemical bond stability, Enzyme susceptibility | Mass loss measurement, GPC analysis, SEM surface characterization |
| Surface Stability | Random Forest Classifiers | 70-85% | Surface energy, Protein adsorption tendency, Oxidation resistance | Contact angle measurement, XPS, AFM |
| Biological Response | Ensemble ML Methods | 65-80% | Surface topography, Chemical functionality, Degradation products | Cell viability assays, Cytokine secretion profiling, Histological analysis |

Essential Research Reagent Solutions for Stability Studies

Cutting-edge research in biomaterial stability prediction and validation relies on specialized reagent solutions and research tools. The following table details key resources essential for conducting rigorous stability assessment experiments.

Table 4: Essential Research Reagent Solutions for Biomaterial Stability Studies

| Reagent/Tool | Function in Stability Research | Key Applications | Considerations |
|---|---|---|---|
| Defined Hydrogel Systems | Provides reproducible 3D matrix for stability assessment under biological conditions | Liver organoid culture [10], Tissue engineering scaffolds | Replaces variable tumor-derived matrices; enables controlled composition |
| High-Throughput Screening Platforms | Enables rapid experimental validation of computationally predicted stable materials | Ternary material synthesis [8], Composition-property mapping | Accelerates validation phase; reduces resource consumption |
| Computational Material Databases | Provides training data for AI/ML stability prediction models | Inverse design [8], Property prediction [7] | Data quality and completeness directly impact prediction accuracy |
| ISO 10993 Testing Kits | Standardized assessment of biological safety and stability | Biocompatibility evaluation [9], Regulatory submission | Essential for translational research; follows quality management systems |
| 3D Bioprinting Systems | Fabricates complex structures with precise architectural control | Customized implant production [7], Tissue-specific scaffolds | Enables creation of structures matching computational designs |

The field of biomaterial stability prediction is undergoing a transformative shift from empirical trial-and-error toward integrated computational-experimental methodologies. Approaches combining AI-driven prediction with rigorous experimental validation in advanced model systems like organoids represent the frontier of efficient biomaterial development [10] [7]. The successful application of Inverse Design to discover missing stable materials demonstrates the power of this synergistic approach [8].

Future advancements will likely focus on improving prediction accuracy through larger, more standardized datasets and refining experimental models to better capture the complexity of biological environments. The emerging methodology of evidence-based biomaterials research will play a crucial role in synthesizing collective knowledge into validated principles for stability prediction [9]. For researchers and drug development professionals, mastering these integrated approaches is essential for accelerating the development of safe, effective, and stable biomaterials that address unmet clinical needs.

Navigating Materials Databases and Open Science Infrastructures

The field of materials science is undergoing a profound transformation, moving from artisanal-scale discovery to industrial-scale science powered by artificial intelligence and data-centric approaches [11]. This revolution is fundamentally constrained by a critical bottleneck: the challenge of experimentally realizing theoretically predicted stable materials. While computational models like DeepMind's GNoME have demonstrated the ability to predict millions of stable crystal structures, the ultimate validation occurs not in silico but in the laboratory, where synthesis conditions, processing parameters, and environmental factors determine real-world viability [11]. This comparison guide examines the databases and open science infrastructures that enable researchers to navigate this critical transition from prediction to experimental realization, with a specific focus on their capabilities for handling experimental data, stability predictions, and collaborative research workflows essential for validating computationally discovered materials.

Comparative Analysis of Major Platforms and Consortia

The ecosystem of materials databases and infrastructures can be broadly categorized into computational repositories, experimental data platforms, and open science consortia. Each plays a distinct role in the research pipeline for experimentally realizing predicted stable materials.

Table 1: Comparison of Major Materials Data Platforms and Their Experimental Capabilities

| Platform/Consortium | Primary Focus | Experimental Data Integration | Stability Assessment Features | Key Advantages | Notable Limitations |
|---|---|---|---|---|---|
| Cambridge Structural Database (CSD) | Experimental crystal structures of organics, metal-organic frameworks, and transition metal complexes [12] | High: Contains over 500,000 X-ray structures with associated experimental conditions [12] | Limited to structural stability information from crystallographic data | Largest source of experimental structural data; tmQM dataset provides DFT properties for ~86,000 transition metal complexes [12] | Does not systematically include failed synthesis attempts or stability under operational conditions |
| CoRE MOF 2019 ASR | Experimentally studied metal-organic frameworks with refined structures [12] | High: Nearly 10,000 experimentally characterized MOFs with mining of associated literature properties [12] | Includes thermal stability (Td), solvent removal stability, and water/acid/base stability data from literature mining [12] | Curated experimental structures with associated stability labels; enables ML model training for stability prediction [12] | Potential structural errors in earlier versions; stability label extraction challenged by inconsistent reporting conventions [12] |
| Canadian Open Neuroscience Platform (CONP) | Open neuroscience collaboration with distributed governance [13] | Intermediate: Focuses on sharing intermediate research resources including data, code, and materials | Governance model emphasizes attribution norms for shared resources rather than specific stability metrics | Distributed governance facilitates resource sharing while navigating established authorship and evaluation norms [13] | Domain-specific to neuroscience; limited direct materials stability focus |
| The Cancer Genome Atlas (TCGA) | Layered governance model for cancer research data [13] | High: Comprehensive multi-omics and clinical data with standardized processing | Not focused on materials stability; primarily biological system stability | Layered governance with centralized quality control enables large-scale data reuse [13] | Not applicable to materials science domain |

Table 2: Quantitative Data Extraction and Stability Assessment Capabilities

| Platform/Dataset | Extracted Stability Metrics | Data Volume | Extraction Methodology | Experimental Validation |
|---|---|---|---|---|
| MOF Thermal Stability | Decomposition temperature (Td) | ~3,000 Td values [12] | NLP detection of TGA plots + digitization with tangent intersection method [12] | Varied reporting conventions (onset vs. complete crystallinity loss) [12] |
| MOF Water Stability | Binary stability labels in aqueous environments | 1,092 MOFs with stability labels [12] | Sentiment analysis of textual descriptions; transfer learning from solvent removal data [12] | Limited by publication bias toward stable materials [12] |
| MOF Gas Adsorption | Single-component gas uptake isotherms | 948 isotherms from 192 MOFs (acetylene, ethylene, ethane) [12] | Pattern matching + NLP for detection; WebPlotDigitizer for semi-manual digitization [12] | Challenged by non-standard pressure ranges and units across studies [12] |
| Perovskite Environmental Stability | Degradation kinetics under environmental stressors | 206 MAPI thin-film samples [14] | Color change monitoring via camera; sparse regression to identify governing differential equations [14] | Follows Verhulst logistic function analogous to self-propagating reactions [14] |

Experimental Protocols and Methodologies

Scientific Machine Learning for Discovering Governing Equations from Experimental Data

Scientific ML offers a different way to analyze experimental materials stability data. A methodology published in npj Computational Materials shows how to extract governing differential equations directly from experimental degradation data [14]:

Objective: To uncover the underlying differential equation governing methylammonium lead iodide (MAPI) perovskite degradation under environmental stressors (temperature, humidity, light) using sparse regression algorithms [14].

Experimental Setup:

- Sample Preparation: 206 thin-film MAPI samples prepared under controlled variance conditions (108 low-variance, 98 high-variance samples) [14]

- Environmental Stressors: Samples subjected to 0.15 ± 0.01 Sun illumination, 20 ± 5% relative humidity, and temperatures ranging from 35 to 85°C [14]

- Degradation Monitoring: Camera-based color change tracking over time, focusing on red color values (0-255 scale) due to their correlation with formation of the yellow PbI₂ degradation product [14]

Sparse Regression Protocol (PDE-FIND Algorithm):

- Library Construction: Build candidate function library containing polynomials of U up to order 5, sine/cosine functions, polynomials of time, temperature terms, and adjusted negative exponent of 1/T [14]

- Differential Calculation: Compute derivatives using finite difference or polynomial interpolation methods [14]

- Sparse Regression: Apply sequential threshold ridge regression to identify parsimonious differential equation describing the system dynamics [14]

- Robustness Validation: Test identified equations against experimental variance and Gaussian noise to establish application limits [14]
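The protocol above can be illustrated on synthetic data. The sketch below is a simplified, dependency-free version of sequential thresholded ridge regression, not the implementation used in [14]: the candidate library is cut down to `[1, U, U^2, U^3]`, the data come from an assumed logistic curve with `r = K = 1`, and the ridge weight and threshold are arbitrary choices. Production code would normally use a linear algebra library and normalize the library columns.

```python
import math


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][k] * x[k] for k in range(i + 1, n))) / M[i][i]
    return x


def ridge(theta, y, cols, lam):
    """Ridge fit restricted to the active library columns `cols`."""
    A = [[sum(row[i] * row[j] for row in theta) + (lam if i == j else 0.0)
          for j in cols] for i in cols]
    b = [sum(row[i] * yi for row, yi in zip(theta, y)) for i in cols]
    return solve(A, b)


def stridge(theta, y, lam=1e-6, tol=0.1, max_iter=10):
    """Sequential thresholded ridge: fit, drop small coefficients, refit."""
    n_terms = len(theta[0])
    active = list(range(n_terms))
    weights = [0.0] * n_terms
    for _ in range(max_iter):
        coef = ridge(theta, y, active, lam)
        weights = [0.0] * n_terms
        for i, c in zip(active, coef):
            weights[i] = c
        keep = [i for i in active if abs(weights[i]) >= tol]
        if not keep:
            return [0.0] * n_terms
        if keep == active:
            break
        active = keep
    return weights


# Synthetic "degradation" signal: logistic curve with r = 1, K = 1, U(0) = 0.05.
h = 0.05
t = [i * h for i in range(201)]
U = [1.0 / (1.0 + 19.0 * math.exp(-ti)) for ti in t]

# Differential calculation: central finite differences on interior points.
dUdt = [(U[i + 1] - U[i - 1]) / (2 * h) for i in range(1, len(U) - 1)]

# Library construction: columns 1, U, U^2, U^3 evaluated at the same points.
theta = [[1.0, u, u ** 2, u ** 3] for u in U[1:-1]]

weights = stridge(theta, dUdt)
print(dict(zip(["1", "U", "U^2", "U^3"], weights)))
```

On this noise-free input the surviving terms should be `U` and `U^2`, with coefficients close to +1 and -1, which is the logistic form. Adding Gaussian noise to `U` before differentiation is a simple way to probe the robustness step, since finite differences amplify noise quickly.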

Key Finding: The environmental degradation of MAPI across 35-85°C is minimally described by a second-order polynomial corresponding to the Verhulst logistic function, indicating reaction kinetics analogous to self-propagating reactions [14].
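For reference, the link between a second-order polynomial right-hand side and the Verhulst logistic function can be written out explicitly. The symbols below are assumptions introduced for illustration: U is the monitored degradation signal, a and b are the fitted coefficients (a > 0, b < 0), r is the growth rate, K is the saturation value, and U_0 is the initial signal.

```latex
\frac{dU}{dt} = aU + bU^{2} = rU\left(1 - \frac{U}{K}\right),
\qquad r = a, \quad K = -\frac{a}{b}

U(t) = \frac{K}{1 + \dfrac{K - U_{0}}{U_{0}}\, e^{-rt}}
```

The rate is proportional both to the amount already degraded (U) and to the amount remaining (1 - U/K), which is the signature of a self-propagating process: slow onset, rapid middle stage, and saturation.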

Literature-Based Data Extraction for Stability Modeling

For materials classes where high-throughput experimentation is challenging, literature-based data extraction provides an alternative approach for building stability prediction models [12]:

Named Entity Recognition Challenge: Overcoming the mismatch between material names and chemical structures, particularly for materials like MOFs without one-to-one naming conventions [12].

Stability Data Extraction Workflow:

- Corpus Curation: Start with structurally validated datasets (e.g., CoRE MOF 2019 ASR) with associated digital object identifiers [12]

- Natural Language Processing: Apply sentiment analysis to identify solvent removal stability descriptions; implement pattern matching to detect thermogravimetric analysis mentions [12]

- Image Digitization: Extract numerical data from published TGA plots using tools like WebPlotDigitizer with standardized tangent intersection methods [12]

- Label Harmonization: Address inconsistent reporting conventions (e.g., onset temperature vs. complete crystallinity loss) through uniform digitization protocols [12]
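The tangent intersection step can be sketched as follows. This is one common reading of the method, offered as an assumption rather than the exact protocol of [12]: fit a straight line to the pre-decomposition baseline, take the tangent at the steepest point of the mass-loss step, and report the temperature where the two lines cross. The TGA trace here is synthetic (a sigmoidal 40% mass loss centered at 400°C), and the baseline window is a hypothetical user choice.

```python
import math


def linear_fit(xs, ys):
    """Ordinary least-squares line; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def onset_temperature(temps, mass, baseline_window):
    """Tangent-intersection onset temperature from a digitized TGA trace."""
    lo, hi = baseline_window
    base = [(t, m) for t, m in zip(temps, mass) if lo <= t <= hi]
    s0, b0 = linear_fit([p[0] for p in base], [p[1] for p in base])

    def slope_at(i):
        # Central difference estimate of dm/dT at point i.
        return (mass[i + 1] - mass[i - 1]) / (temps[i + 1] - temps[i - 1])

    # Steepest mass loss = most negative slope.
    steepest = min(range(1, len(temps) - 1), key=slope_at)
    s1 = slope_at(steepest)
    b1 = mass[steepest] - s1 * temps[steepest]

    # Intersection of baseline (s0*T + b0) and inflection tangent (s1*T + b1).
    return (b1 - b0) / (s0 - s1)


# Synthetic trace: mass % versus temperature in degrees C (illustrative only).
temps = list(range(100, 601))
mass = [100.0 - 40.0 / (1.0 + math.exp(-(T - 400) / 10.0)) for T in temps]

td = onset_temperature(temps, mass, baseline_window=(100, 200))
print(f"onset decomposition temperature: {td:.1f} C")
```

For this curve the onset lands near 380°C, about 20°C below the inflection point. That gap is the kind of discrepancy that arises when one study reports onset and another reports the midpoint or complete loss, which is why applying a single digitization rule to every plot matters for label harmonization.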

Visualization of Research Workflows

Scientific ML for Experimental Materials Discovery

Literature Mining for Materials Stability Data

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Research Reagents and Computational Tools for Materials Stability Research

| Reagent/Tool | Function | Application Context | Key Features |
|---|---|---|---|
| Methylammonium Lead Iodide (MAPI) | Model perovskite material for stability studies [14] | Environmental degradation kinetics under thermal, humidity, and light stress [14] | Correlated color change with device performance; multiple documented decomposition pathways [14] |
| WebPlotDigitizer | Semi-manual digitization of published plots and figures [12] | Extraction of numerical data from literature TGA traces and gas adsorption isotherms [12] | Enables conversion of graphical data to numerical values for meta-analysis and ML training [12] |
| ChemDataExtractor | Named entity recognition and data extraction from scientific literature [12] | Automated mining of materials properties from published manuscripts [12] | Bypasses challenges of manual data extraction; uses heuristics for property identification [12] |
| PDE-FIND Algorithm | Sparse regression for governing equation identification [14] | Discovery of differential equations from experimental degradation data [14] | Identifies parsimonious mathematical descriptions of complex materials behavior patterns [14] |
| High-Throughput Environmental Chambers | Controlled application of multiple environmental stressors [14] | Accelerated aging tests for materials stability assessment [14] | Precise control of temperature (35-85°C), humidity (20±5%), and illumination (0.15±0.01 Sun) [14] |

The navigation of materials databases and open science infrastructures requires careful alignment with specific research objectives in experimental realization of predicted stable materials. For high-throughput experimental validation of computationally discovered materials, platforms with robust experimental data integration like the Cambridge Structural Database and CoRE MOF provide essential structural and stability benchmarks. For mechanistic understanding of degradation processes, scientific machine learning approaches applied to controlled experimental data offer pathways to discover fundamental governing equations. For maximizing resource efficiency, open science consortia with appropriate governance models enable sharing of intermediate research resources while addressing attribution concerns. The emerging paradigm of industrial-scale materials discovery depends on strategic integration across these platforms, leveraging their complementary strengths to accelerate the transition from computational prediction to experimentally realized stable materials.

Theoretical Models for Predicting Synthesis Pathways

Accelerating the discovery of novel materials depends on the ability to predict viable synthesis pathways computationally. As part of the broader effort to experimentally realize theoretically predicted stable materials, this section compares the performance of leading theoretical frameworks. These models span applications from organic small molecules to solid-state materials and pharmaceuticals. Each uses a distinct methodology to navigate chemical reaction space, prioritize routes, and bridge the gap between computational prediction and experimental validation.

Comparative Analysis of Theoretical Models

The table below summarizes the core characteristics, performance, and experimental validation of four prominent models for predicting synthesis pathways.

Table 1: Comparative Overview of Synthesis Pathway Prediction Models

| Model / Approach Name | Core Methodology | Reported Performance / Validation | Experimental Protocol for Validation |

|---|---|---|---|

| Similarity Metric for Synthetic Routes [15] | Calculates route similarity based on formed bonds and atom grouping using atom-mapping. | 0.97 similarity score between AI-proposed and experimental route for benzimidazole; aligns with chemist intuition. | Compared AI-predicted routes from AiZynthFinder to subsequent experimental routes for drug discovery molecules. |

| Vector-Based Route Assessment [16] | Represents molecular structures as 2D coordinates from similarity and complexity to create route vectors. | Used to compare CASP performance of AiZynthFinder on 100k ChEMBL targets; quantifies route efficiency. | Analysis of 640k literature syntheses (2000-2020); vectors for reactions grouped by type follow logical patterns. |

| LLM-Guided Pathway Exploration (ARplorer) [17] | Integrates QM and rule-based methods, underpinned by Large Language Model (LLM)-assisted chemical logic. | Effectively explored multi-step reactions (cycloaddition, Mannich-type, Pt-catalyzed); accelerated PES searching. | Case studies: Quantum mechanical calculations (GFN2-xTB/Gaussian 09) identified intermediates and transition states; pathways validated by IRC analysis. |

| Graph-Based Reaction Network [18] | Constructs chemical reaction networks from thermochemical data; uses pathfinding algorithms to suggest pathways. | Predicted complex pathways for YMnO₃, Fe₂SiS₄, etc., comparable to literature; suggested routes for unsynthesized MgMo₃(PO₄)₃O. | Network built from Materials Project data; pathway costs based on reaction free energies; validation against reported experimental syntheses. |

Detailed Model Methodologies and Experimental Protocols

Similarity Metric for Synthetic Routes

This method provides a continuous similarity score (0-1) for comparing two synthetic routes to the same molecule. The score is the geometric mean of an atom similarity (Satom) and a bond similarity (Sbond) [15].

- Atom Similarity Calculation: For each molecule in a route, a set of atom-mapping numbers present in the target compound is defined. The overlap between molecules from two different routes is calculated, and the total Satom is derived by summing the maximum overlaps and normalizing by the total number of molecules [15].

- Bond Similarity Calculation: Each reaction in a route is defined as a set of bonds formed that are present in the target compound. The bond overlap is computed as a normalized intersection of all such bond sets between two routes [15].

- Experimental Protocol: The algorithm requires atom-to-atom mapping for every reaction in the routes, performed using tools like rxnmapper. The atom-mapping from the final reaction is propagated backward through the synthesis. The target compound is excluded from the Satom calculation [15].
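
The scoring scheme described above can be sketched in a few lines of Python. This is a minimal illustration, not the reference implementation from [15]: the function names, the toy atom-map sets, and the choice to normalize each overlap by the larger of the two sets are assumptions made here for clarity. The exact normalization should be taken from the original publication.

```python
from math import sqrt

def overlap(a, b):
    """Intersection size normalized by the larger set (assumed normalization)."""
    if not a or not b:
        return 0.0
    return len(a & b) / max(len(a), len(b))

def atom_similarity(mols_a, mols_b):
    """Sum each molecule's best overlap with the other route, then divide by
    the total number of molecules in both routes (target compound excluded)."""
    best_a = sum(max(overlap(m, n) for n in mols_b) for m in mols_a)
    best_b = sum(max(overlap(n, m) for m in mols_a) for n in mols_b)
    return (best_a + best_b) / (len(mols_a) + len(mols_b))

def bond_similarity(rxns_a, rxns_b):
    """Count reactions (sets of formed bonds) shared by both routes and
    normalize by the longer route (assumed normalization)."""
    shared = set(rxns_a) & set(rxns_b)
    return len(shared) / max(len(rxns_a), len(rxns_b))

def route_similarity(mols_a, rxns_a, mols_b, rxns_b):
    """Geometric mean of the atom and bond similarities."""
    return sqrt(atom_similarity(mols_a, mols_b) * bond_similarity(rxns_a, rxns_b))

# Hypothetical toy routes to a five-atom target. Each molecule is the set of
# target atom-map numbers it carries; each reaction is the set of target
# bonds it forms, written as (atom, atom) pairs.
MOLS_A = [frozenset({1, 2, 3}), frozenset({4, 5})]
MOLS_B = [frozenset({1, 2}), frozenset({3, 4, 5})]
RXNS_A = [frozenset({(3, 4)}), frozenset({(1, 2)})]
RXNS_B = [frozenset({(3, 4)}), frozenset({(2, 3)})]

score = route_similarity(MOLS_A, RXNS_A, MOLS_B, RXNS_B)
print(f"route similarity = {score:.3f}")
```

Because the two components are combined as a geometric mean, a route pair that shares no bond-forming steps scores zero regardless of how similar its intermediates are.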

Vector-Based Assessment of Synthetic Routes

This approach assesses routes by representing the progression of molecular structures through a two-dimensional space defined by similarity and complexity [16].

- Molecular Coordinate System: The method uses two descriptors:

  - Similarity (S): Structural commonality to the target, calculated via Morgan fingerprints or Maximum Common Edge Subgraph (MCES).

  - Complexity (C): A path-based metric (CM*) serving as a surrogate for synthetic ease, cost, and waste.

- Route Vectorization: Each synthetic transformation is a vector from reactant to product in this S-C space. A complete route is a sequence of these vectors from the starting material to the target [16].

- Experimental Protocol:

  - Data Collection: Compile a dataset of known synthetic routes and reactions from literature sources.

  - Descriptor Calculation: For each intermediate and target in a route, compute the similarity and complexity values.

  - Vector Construction & Analysis: Plot the route pathway, analyze vector directions and magnitudes to assess step efficiency, and compare route trajectories [16].
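
The vector construction step can be illustrated with a short sketch. The (S, C) coordinates below are hypothetical placeholders; in practice they would come from fingerprint or MCES similarity and the CM* complexity metric described in [16]. The "directness" ratio at the end is an assumed summary statistic added here for illustration and is not taken from the source.

```python
from math import atan2, degrees, hypot

# Hypothetical (name, similarity, complexity) coordinates for a three-step route.
ROUTE = [
    ("starting material", 0.20, 0.30),
    ("intermediate 1", 0.45, 0.50),
    ("intermediate 2", 0.40, 0.80),
    ("target", 1.00, 1.00),
]

def route_vectors(route):
    """Return one (dS, dC, magnitude, angle_deg) tuple per synthetic step."""
    vectors = []
    for (_, s0, c0), (_, s1, c1) in zip(route, route[1:]):
        d_s, d_c = s1 - s0, c1 - c0
        vectors.append((d_s, d_c, hypot(d_s, d_c), degrees(atan2(d_c, d_s))))
    return vectors

vectors = route_vectors(ROUTE)
for (name, _, _), (d_s, d_c, mag, angle) in zip(ROUTE[1:], vectors):
    note = "" if d_s > 0 else "  (similarity to target decreases)"
    print(f"-> {name}: dS={d_s:+.2f}, dC={d_c:+.2f}, |v|={mag:.2f}, angle={angle:.0f} deg{note}")

# Assumed summary metric: net displacement divided by total path length.
# A value of 1 means every step points straight at the target.
net = hypot(ROUTE[-1][1] - ROUTE[0][1], ROUTE[-1][2] - ROUTE[0][2])
directness = net / sum(v[2] for v in vectors)
print(f"directness = {directness:.2f}")
```

Steps whose vectors point away from the target in S (as the second step does here) are candidates for closer inspection, for example protecting-group manipulations that add complexity without structural progress.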

LLM-Guided Automated Pathway Exploration (ARplorer)

ARplorer is a hybrid program that automates the exploration of reaction pathways on potential energy surfaces (PES) by combining quantum mechanics with rule-based biases derived from chemical literature [17].

- Workflow:

  - Active Site Identification: Identify potential reactive sites and bond-breaking locations in the input structure.

  - Structure Optimization & TS Search: Iteratively optimize molecular structures and search for transition states using active-learning sampling.

  - Pathway Confirmation: Perform Intrinsic Reaction Coordinate (IRC) analysis to confirm the reaction pathway connecting reactants and products.

- LLM-Guided Chemical Logic: A key innovation is using a Large Language Model to generate both general chemical logic from literature and system-specific rules based on the functional groups present in the reaction system. This logic helps filter unlikely reaction pathways, increasing search efficiency [17].

- Experimental/Computational Protocol: The program is flexible but typically combines semi-empirical methods (GFN2-xTB) for rapid PES generation with more accurate density functional theory (DFT) calculations for final energy evaluations. The workflow is compatible with quantum chemistry software like Gaussian 09 [17].

Graph-Based Reaction Network for Solid-State Materials

This model predicts inorganic solid-state synthesis pathways by treating thermodynamic phase space as a navigable graph network [18].

- Network Construction:

  - Node Definition: Nodes represent specific combinations of solid phases (e.g., precursor mixtures).

  - Edge Definition: Directed edges represent possible chemical reactions between these phases.

  - Edge Costing: The cost of a reaction edge is a function of its thermodynamic driving force (e.g., reaction free energy normalized per atom).

- Pathway Prediction: Pathfinding algorithms (e.g., Dijkstra's) are applied to the network to find the lowest-cost pathways from a set of precursors to a target material. Linear combinations of short paths are used to generate multi-step reaction sequences [18].

- Experimental Protocol:

  - Data Source: Acquire thermochemical data (formation energies, free energies) for thousands of compounds from databases like the Materials Project.

  - Network Generation: Include all stable phases and metastable phases up to a defined energy threshold (e.g., +30 meV/atom above the convex hull).

  - Pathfinding & Validation: Compute the k-shortest paths to target products and validate predicted pathways against known experimental syntheses from the literature [18].
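
A minimal sketch of the network idea follows, using only the Python standard library. All phase names, energies, and reactions are hypothetical, and the softplus cost with its `scale` parameter is an assumed stand-in for the cost function used in [18]. The sketch finds a single lowest-cost path with Dijkstra's algorithm; the k-shortest-path search and the combination of paths into multi-step sequences are not shown.

```python
import heapq
from math import exp, log

E_HULL_CUTOFF = 0.030  # eV/atom, the +30 meV/atom threshold quoted above

# Hypothetical phases: node name -> energy above the convex hull (eV/atom).
PHASES = {"precursors": 0.0, "I1": 0.0, "I2": 0.020, "I3": 0.045, "target": 0.0}

# Hypothetical reactions: (reactant node, product node, reaction energy in eV/atom).
REACTIONS = [
    ("precursors", "I1", -0.30),
    ("I1", "target", -0.10),
    ("precursors", "I2", -0.05),
    ("I2", "target", -0.02),
    ("precursors", "I3", -0.40),
    ("I3", "target", -0.40),
    ("precursors", "target", 0.05),
]

def edge_cost(delta_g, scale=0.05):
    """Softplus of the scaled reaction energy (assumed form). It is always
    positive, as Dijkstra requires, and small for strongly downhill steps."""
    return log(1.0 + exp(delta_g / scale))

def build_graph(phases, reactions, cutoff):
    """Keep only phases within the energy cutoff and the reactions between them."""
    allowed = {name for name, e_hull in phases.items() if e_hull <= cutoff}
    graph = {name: [] for name in allowed}
    for src, dst, delta_g in reactions:
        if src in allowed and dst in allowed:
            graph[src].append((dst, edge_cost(delta_g)))
    return graph

def dijkstra(graph, start, goal):
    """Return (total cost, node list) for the lowest-cost path."""
    queue = [(0.0, start, [start])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for nxt, weight in graph[node]:
            if nxt not in visited:
                heapq.heappush(queue, (cost + weight, nxt, path + [nxt]))
    return float("inf"), []

graph = build_graph(PHASES, REACTIONS, E_HULL_CUTOFF)
cost, path = dijkstra(graph, "precursors", "target")
print(" -> ".join(path), f"(cost {cost:.3f})")
```

In this toy network the route through I3 would be cheapest on driving force alone, but I3 lies above the metastability cutoff and is never added to the graph, so the search settles on the route through I1.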

Visualizing Model Workflows

The diagram below illustrates the core operational workflow of the LLM-guided ARplorer program.

LLM-Guided Pathway Exploration

The diagram below illustrates the graph-based approach to predicting solid-state synthesis routes.

Graph-Based Solid-State Synthesis Prediction

The Scientist's Toolkit: Essential Research Reagents and Solutions

The table below lists key computational tools, data sources, and software used in the development and application of the featured models.

Table 2: Key Research Reagents and Computational Tools

| Item Name | Function / Application |

|---|---|

| AiZynthFinder [15] | A retrosynthesis planning tool used to generate AI-predicted synthetic routes for comparison and validation. |

| rxnmapper [15] | A tool for assigning atom-to-atom mapping in chemical reactions, essential for calculating route similarity metrics. |

| Materials Project Database [18] | An extensive database of computed material properties, used as the primary source of thermochemical data for constructing solid-state reaction networks. |

| RDKit [16] | An open-source cheminformatics toolkit used for generating molecular fingerprints and calculating molecular similarity/complexity metrics. |

| Gaussian 09 & GFN2-xTB [17] | Quantum chemistry software packages used for accurate energy calculations and rapid potential energy surface exploration, respectively. |

| Large Language Models (LLMs) [17] | Used to mine chemical literature and generate system-specific chemical logic and reaction rules to guide automated pathway exploration. |

From Virtual to Physical: Synthesis and Characterization Methodologies for Real-World Application

The discovery and development of novel functional materials have long been driven by theoretical predictions of stable structures with exceptional properties. However, a critical gap often exists between computational predictions and experimental realization, particularly for metastable materials synthesized through kinetically controlled pathways. Advanced synthesis techniques, notably additive manufacturing (AM), are now bridging this divide by providing the precise control needed to realize theoretically predicted materials [19] [20]. The emergence of a synthesizability-driven crystal structure prediction (CSP) framework represents a paradigm shift in materials discovery, integrating symmetry-guided structure derivation with machine learning models to identify subspaces likely to yield synthesizable structures [19]. This approach filtered 92,310 potentially synthesizable structures from 554,054 candidates in the GNoME database, demonstrating the power of combining computational prediction with experimental feasibility assessment.

Simultaneously, additive manufacturing has evolved from a rapid prototyping tool to a sophisticated manufacturing platform capable of creating complex geometries with precise material architectures that were previously impossible to fabricate. The experimental realization of two-dimensional copper boride using a novel synthesis technique exemplifies this progress, confirming long-standing theoretical predictions about this class of materials [21]. This breakthrough, achieved by depositing atomic boron onto copper surfaces at elevated temperatures, provides a blueprint for creating additional 2D metal borides with exceptional electrical conductivity, tunable magnetism, and remarkable strength. These advancements collectively highlight the growing synergy between computational materials prediction and advanced synthesis techniques, enabling researchers to systematically explore previously inaccessible regions of materials space.

Comparative Analysis of Advanced Synthesis Techniques

Performance Benchmarking of Additive Manufacturing Technologies

Table 1: Comparative performance of additive manufacturing techniques for experimental materials realization

| Technique | Key Materials | Achievable Resolution | Key Advantages | Limitations & Challenges |

|---|---|---|---|---|

| Laser Powder Bed Fusion (L-PBF) | AlSi10Mg, Ti6Al4V, Ti1Fe, Ni-based superalloys [22] [23] | 20-100 μm layer thickness [24] | High strength outputs (e.g., Ti6Al4V UTS >1 GPa); Complex geometries [22] | Anisotropic properties; Residual stress; Limited build volumes [22] [24] |

| Directed Energy Deposition (DED) | Nickel-aluminum bronze (from recycled chips) [22] | 100-500 μm layer thickness [22] | Multi-material capability; Large part production; Material recycling (e.g., 775 MPa tensile strength from recycled NAB) [22] | Lower resolution; Significant post-processing often required [22] |

| Fused Filament Fabrication (FFF) | Carbon-fiber-infused PLA, PETG, nylon [22] | 100-300 μm layer thickness [24] | Low cost; Material versatility; Accessibility | Anisotropy; Nozzle wear with composites; Limited high-temperature capability [22] |

| Vat Photopolymerization | Polycarbonate, polyamide 12 [23] | 10-50 μm layer thickness [24] | High resolution; Smooth surface finish | Brittle outputs; Limited functional materials; Post-curing required [24] |

Table 2: Emerging non-AM synthesis techniques for predicted materials

| Technique | Key Materials | Synthesized Structures | Unique Capabilities | Experimental Challenges |

|---|---|---|---|---|

| Atomic Layer Deposition | 2D Copper Boride [21] | Atomically thin metal borides | Creates previously inaccessible 2D materials; Strong interfacial chemical control | Precise temperature control required; Limited to specific substrate combinations [21] |

| Microgravity Crystallization | Protein crystals (e.g., Keytruda, insulin) [25] | More uniform pharmaceutical crystals | Larger, more defect-free crystals; Improved drug formulations | Extreme cost; Limited experimental access; Small batch sizes [25] |

| Machine-Learning-Guided Synthesis | Hf-X-O (X = Ti, V, Mn) systems [19] | 92,310 synthesizable candidates from 554,054 predictions | Identifies synthesizable metastable phases; Bridges computational/experimental divide | Limited material validation; Complex workflow integration [19] |

Quantitative Benchmarking Data for AM Processes

Table 3: Experimental mechanical properties of additively manufactured materials versus conventional processing

| Material | Synthesis Method | Tensile Strength (MPa) | Yield Strength (MPa) | Elongation at Break (%) | Notable Characteristics |

|---|---|---|---|---|---|

| Recycled NAB | DED (from chips) [22] | 775 | 455 | 12.6 | Good properties in vertical direction; Some impurities from recycling [22] |

| Ti6Al4V | L-PBF [22] | >1000 (typical) | Not specified | Good ductility (when defect-free) | High strength but microstructures not ideal for AM [22] |

| Ti1Fe | L-PBF (in-situ alloyed) [22] | Similar to Ti6Al4V | Similar to Ti6Al4V | Similar to Ti6Al4V | Simplified chemistry; AM-compatible microstructure [22] |

| AlSi10Mg | L-PBF [22] | Varies with orientation | Varies with orientation | Varies with orientation | Work hardening predictable via Hollomon/Voce models [22] |

Experimental Protocols for Advanced Synthesis

Protocol 1: Synthesis of 2D Copper Boride via Atomic Layer Deposition

The recent experimental realization of 2D copper boride exemplifies the synthesis of theoretically predicted stable materials [21]. This protocol requires precise control over interfacial reactions:

Substrate Preparation: Begin with high-purity copper foil (≥99.99%). Clean the substrate using argon plasma etching for 10 minutes at 100W power to remove surface oxides and contaminants.

Reactor Setup: Load the copper substrate into an ultra-high vacuum (UHV) deposition chamber with a base pressure of ≤1×10⁻⁸ Torr. The system should be equipped with a boron effusion cell capable of temperatures up to 1800°C and substrate heating capability up to 600°C.

Boron Deposition: With the copper substrate maintained at 450-500°C, thermally evaporate high-purity boron (99.999%) from the effusion cell operated at 1500°C. The deposition rate should be carefully controlled at 0.1-0.3 monolayers per minute, monitored via reflection high-energy electron diffraction (RHEED).

Reaction and Formation: Allow the deposition to continue for 30-60 minutes. The strong chemical interactions between boron and copper at the optimized substrate temperature facilitate the self-assembly of 2D copper boride rather than pure borophene.

Characterization: Confirm successful synthesis using in-situ scanning tunneling microscopy (STM) and atomic-resolution spectroscopic measurements. Key indicators include a well-ordered atomic lattice with characteristics distinct from either pure copper or boron [21].

This methodology provides a blueprint for creating additional 2D metal borides by pairing boron with other metal substrates, significantly accelerating the experimental realization of theoretically predicted 2D materials.

Protocol 2: Laser Powder Bed Fusion for In-Situ Alloyed Titanium

The production of in-situ alloyed Ti1Fe as an alternative to Ti6Al4V demonstrates AM's capability to create materials with tailored microstructures [22]:

Powder Preparation: Create a homogeneous powder mixture of fine titanium (≤45μm) and iron (≤20μm) particles using turbulent mixing for 30 minutes. The composition should target Ti1Fe (approximately 1.5-2.5 wt% iron).

Process Parameter Optimization: Utilize higher energy densities than typical for Ti6Al4V. Specific parameters include laser power of 250-300W, scan speed of 800-1200 mm/s, hatch spacing of 80μm, and layer thickness of 30μm.
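
To compare parameter sets like these, a commonly used heuristic is the volumetric energy density, E = P / (v · h · t), where P is laser power, v scan speed, h hatch spacing, and t layer thickness. The source does not state which energy-density definition it uses, so the formula and the function name below are assumptions; the numbers are the ends of the ranges quoted above.

```python
def volumetric_energy_density(power_w, speed_mm_s, hatch_mm, layer_mm):
    """Volumetric energy density in J/mm^3: E = P / (v * h * t)."""
    return power_w / (speed_mm_s * hatch_mm * layer_mm)

HATCH_MM = 0.080  # 80 um hatch spacing
LAYER_MM = 0.030  # 30 um layer thickness

# Lowest-energy corner of the window: minimum power, maximum scan speed.
ved_low = volumetric_energy_density(250, 1200, HATCH_MM, LAYER_MM)
# Highest-energy corner of the window: maximum power, minimum scan speed.
ved_high = volumetric_energy_density(300, 800, HATCH_MM, LAYER_MM)

print(f"energy density window: {ved_low:.1f} to {ved_high:.1f} J/mm^3")
```

Energy density is only a first-order screening quantity. Two parameter sets with the same value can produce different melt-pool behavior, so it complements rather than replaces density and microstructure measurements.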

In-Situ Alloying: The L-PBF process melts the titanium and iron particles simultaneously, creating a melt pool where alloying occurs through Marangoni convection and diffusion. The high cooling rates (10³-10⁶ K/s) result in non-equilibrium microstructures.

Microstructural Control: Apply specific thermal management strategies including baseplate heating to 200°C and interlayer dwell times to control residual stress and phase formation.

Validation: Characterize resulting microstructure using scanning electron microscopy (SEM) with energy-dispersive X-ray spectroscopy (EDS) to confirm homogeneous iron distribution and the presence of desired phases.

This approach demonstrates how AM can create alternative alloy systems with microstructures specifically optimized for the unique thermal conditions of additive processes, rather than accepting suboptimal microstructures from conventionally-designed alloys [22].

Protocol 3: Machine-Learning-Assisted Prediction of Synthesizable Structures

The synthesizability-driven crystal structure prediction framework bridges theoretical prediction and experimental realization [19]:

Initial Structure Generation: Generate candidate structures using symmetry-guided structure derivation, focusing on compositions of interest (e.g., Hf-X-O systems).

Synthesizability Screening: Apply a Wyckoff encode-based machine learning model to identify subspaces likely to yield highly synthesizable structures. This model should be fine-tuned using recently synthesized structures to enhance predictive accuracy.

Energetic Evaluation: Perform ab initio calculations (e.g., density functional theory) on the filtered candidates to assess thermodynamic stability and electronic properties.

Experimental Validation: Select top candidates (e.g., three HfV₂O₇ structures predicted with high synthesizability) for laboratory synthesis using conventional solid-state or solution-based methods.

Iterative Refinement: Use experimental results to refine the machine learning model, creating a positive feedback loop that improves prediction accuracy over time.

This protocol successfully identified 92,310 potentially synthesizable structures from 554,054 theoretical predictions, demonstrating its power in bridging the gap between computational materials discovery and experimental realization [19].

Research Reagent Solutions for Advanced Materials Synthesis

Table 4: Essential research reagents and materials for advanced synthesis techniques

| Reagent/Material | Function in Synthesis | Application Examples | Key Considerations |

|---|---|---|---|

| High-Purity Metal Powders (Ti, AlSi10Mg, Ni625/718) [22] [23] | Feedstock for powder-bed AM processes | L-PBF of aerospace components; Biomedical implants [22] [24] | Particle size distribution (15-45μm); Sphericity; Flowability; Oxide content [23] |

| Photopolymer Resins | Vat photopolymerization feedstock | Microfluidic devices; Biomedical models [23] [24] | Viscosity; Curing wavelength; Biocompatibility; Mechanical properties post-curing [24] |

| Composite Filaments (Carbon-fiber PLA, PETG, Nylon) [22] | FFF process feedstock | Drone components; Lightweight structures [22] | Fiber content; Nozzle wear resistance; Layer adhesion; Storage stability |

| Atomic Boron Source [21] | Precursor for 2D metal boride synthesis | Experimental realization of 2D copper boride [21] | Purity (>99.999%); Evaporation temperature; Deposition rate control |

| Protein Crystallization Reagents [25] | Microgravity pharmaceutical research | Improved Keytruda formulation; Insulin studies [25] | Ground control comparisons; Stability during launch/return; Purity requirements |

Visualization of Synthesis Workflows and Relationships

Synthesizability Prediction Framework

Synthesizability Prediction Workflow: This diagram illustrates the machine-learning-assisted framework for predicting synthesizable crystal structures, which successfully identified 92,310 potentially synthesizable candidates from 554,054 theoretical predictions [19].

Additive Manufacturing Synthesis Pathway

AM Synthesis Pathway: This workflow outlines the pathway for creating materials with controlled microstructures through additive manufacturing, highlighting the capability for in-situ alloying to create materials with AM-optimized properties [22].

The integration of advanced synthesis techniques with computational materials prediction is fundamentally transforming materials research and development. Additive manufacturing provides unprecedented control over material architecture and composition, enabling the experimental realization of structures that were previously only theoretical concepts. The emergence of machine-learning-assisted synthesizability prediction represents a complementary approach that systematically bridges the gap between computational prediction and experimental realization [19]. These convergent technological pathways are accelerating the discovery and development of novel functional materials with tailored properties for applications ranging from energy storage and computing to biomedical implants and drug development.

The benchmarking data presented in this review enables researchers to select appropriate synthesis techniques based on material requirements, structural complexity, and property targets. As these advanced synthesis methods continue to mature and become more accessible, they will undoubtedly unlock new opportunities for realizing theoretically predicted materials with exceptional properties and functionalities. The experimental realization of 2D copper boride and the development of synthesizability-driven prediction frameworks exemplify the remarkable progress already achieved and point toward an exciting future where the transition from theoretical prediction to experimental realization becomes increasingly systematic and efficient.

In the field of materials science research, particularly in the experimental realization of theoretically predicted stable materials, characterization techniques form the cornerstone of validation and analysis. As research progresses from computational prediction to synthesized material, techniques such as Scanning Electron Microscopy (SEM), X-ray Diffraction (XRD), and Thermal Analysis provide the critical data necessary to confirm structure, morphology, and properties. These methods enable researchers to bridge the gap between theoretical models and physical reality, offering insights into crystalline structure, surface morphology, thermal stability, and compositional integrity. This guide provides a comprehensive comparison of these essential characterization methods, detailing their operational principles, applications, experimental protocols, and synergistic use in materials research.

The effective characterization of materials requires an understanding of the complementary information provided by different techniques. The following workflow illustrates how SEM, XRD, and thermal analysis integrate into a comprehensive materials validation strategy:

Scanning Electron Microscopy (SEM)

Principles and Applications

Scanning Electron Microscopy (SEM) operates by scanning a focused electron beam across a sample surface and detecting signals generated by electron-matter interactions. The primary electrons interact with atoms in the sample, producing various signals including secondary electrons (SE), back-scattered electrons (BSE), and characteristic X-rays that reveal information about surface topography, composition, and crystalline structure [26].

In research applications, SEM provides materials scientists with:

- Surface morphology and texture at nanoscale resolutions

- Elemental composition through energy-dispersive X-ray spectroscopy (EDS)

- Phase distribution in composite materials via backscattered electron imaging

- Crystallographic information through electron backscatter diffraction (EBSD)

The technique finds particular utility in observing morphological evolution under various stimuli. For instance, in situ SEM enables real-time observation of materials transformation under thermal, mechanical, or electrical stimuli, providing direct visualization of processes like carbon nanotube compression deformation [26].

Experimental Protocols

Sample Preparation:

- Conductive materials: Mount directly on SEM stub with conductive tape or paste

- Non-conductive materials: Require sputter-coating with gold, platinum, or carbon to prevent charging

- Biological samples: Typically require fixation, dehydration, and critical point drying

- Nanoparticles: Disperse on conductive substrate and dry under inert atmosphere

Data Acquisition Parameters:

- Acceleration voltage: Typically 1-30 kV (adjusted based on sample properties and required resolution)

- Working distance: Optimized for depth of field and signal strength (usually 5-15 mm)

- Detector selection: Secondary electron detector for topography; backscattered detector for compositional contrast

- Magnification: Selected based on feature size of interest

X-ray Diffraction (XRD)

Principles and Applications

X-ray Diffraction operates on the principle of Bragg's Law, where X-rays scattered by crystal planes interfere constructively when path length differences equal integer multiples of the wavelength [27]. This technique provides comprehensive information about crystalline structure through analysis of diffraction peak positions, intensities, and widths.

XRD delivers multiple critical characterization capabilities:

- Phase identification through comparison with reference databases (e.g., PDF database)

- Crystal structure determination including space group and atomic positions

- Crystallite size calculation using the Scherrer equation, D = Kλ/(β cos θ)

- Lattice parameter determination and strain analysis through peak position shifts

- Crystallinity quantification and amorphous content assessment

- Texture and preferred orientation analysis [27] [28]
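
Two of the calculations listed above, d-spacing from Bragg's law (nλ = 2d sin θ) and crystallite size from the Scherrer equation, can be sketched as follows. The peak position and width are hypothetical example values, the shape factor K = 0.9 is a common default rather than a value from the source, and instrumental broadening is assumed to have been subtracted from the peak width already.

```python
from math import cos, radians, sin

CU_K_ALPHA_A = 1.5406  # Cu K-alpha wavelength in angstroms

def bragg_d_spacing(two_theta_deg, wavelength_a=CU_K_ALPHA_A, order=1):
    """Interplanar spacing in angstroms from Bragg's law: n*lambda = 2*d*sin(theta)."""
    theta = radians(two_theta_deg / 2.0)
    return order * wavelength_a / (2.0 * sin(theta))

def scherrer_size_nm(two_theta_deg, fwhm_deg, wavelength_a=CU_K_ALPHA_A, k=0.9):
    """Crystallite size in nm from D = K*lambda / (beta*cos(theta)).
    beta is the peak FWHM on the 2-theta axis, converted to radians."""
    theta = radians(two_theta_deg / 2.0)
    beta = radians(fwhm_deg)
    return k * wavelength_a / (beta * cos(theta)) / 10.0  # angstrom -> nm

# Hypothetical peak: position 28.44 deg 2-theta, FWHM 0.20 deg.
d_spacing = bragg_d_spacing(28.44)
size_nm = scherrer_size_nm(28.44, 0.20)
print(f"d = {d_spacing:.3f} A, crystallite size = {size_nm:.0f} nm")
```

The Scherrer estimate is a lower bound on crystallite size when microstrain also contributes to the peak width, which is why the interpretation table in this section lists both causes of broadening together.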

In situ XRD extends these capabilities to dynamic processes, enabling real-time monitoring of structural evolution during battery cycling, catalytic reactions, or phase transitions under controlled temperature and atmosphere [26].

Experimental Protocols

Sample Preparation:

- Powder samples: Grind to fine powder (typically <10 μm), avoid preferred orientation

- Flat specimens: Ensure smooth surface aligned with sample holder plane

- Capillary mounting: For preferred orientation minimization in transmission mode

- Thin films: May require grazing incidence geometry for surface structure analysis

Data Collection Parameters:

- X-ray source: Cu Kα (λ=1.5406 Å) most common; Cr or Mo for special applications

- Voltage/current: Typically 40 kV/40 mA for laboratory instruments

- Scan range: 5-90° 2θ for most materials; adjusted based on expected phases

- Step size: 0.01-0.02° for high-quality data; larger steps for rapid screening

- Counting time: 1-5 seconds per step depending on required signal-to-noise ratio

Quantitative Analysis Methods:

- Reference intensity ratio (RIR) method for simple mixtures

- Rietveld refinement for complete structure quantification

- Whole pattern fitting for crystalline phase distribution

Table 1: XRD Data Interpretation Guide

| Observation | Possible Interpretation | Research Significance |

|---|---|---|

| No diffraction peaks | Non-crystalline/amorphous material | Confirmation of glassy state or lack of long-range order |

| Peak broadening | Small crystallite size (<100 nm) or microstrain | Nanomaterial confirmation, defect analysis |

| Peak position shifts | Lattice expansion/compression, solid solution formation | Dopant incorporation, strain engineering |

| Preferred orientation | Non-random crystal alignment | Processing condition effects, anisotropic properties |

| Extra peaks | Secondary phases, impurities | Synthesis optimization, phase purity assessment |

Thermal Analysis Techniques

Principles and Applications

Thermal analysis encompasses a suite of techniques that measure material properties as functions of temperature, providing critical information about thermal stability, phase transitions, and compositional characteristics [29] [30]. The principal methods include:

Thermogravimetric Analysis (TGA): Measures mass changes as temperature varies, indicating processes like decomposition, oxidation, dehydration, or sublimation [30].

Differential Scanning Calorimetry (DSC): Quantifies heat flow differences between sample and reference, detecting endothermic/exothermic processes including melting, crystallization, glass transitions, and curing reactions [29].

Thermomechanical Analysis (TMA): Monitors dimensional changes under mechanical stress, determining coefficients of thermal expansion, softening points, and viscoelastic properties [29].

These techniques find diverse applications in materials characterization:

- Thermal stability assessment for polymers and organic compounds

- Decomposition kinetics through multi-heating rate experiments

- Compositional analysis of complex mixtures and composites

- Phase transition identification and quantification

- Glass transition temperature determination in amorphous materials

Experimental Protocols

Sample Preparation:

- Mass: 5-20 mg typical for TGA/DSC; adjusted based on expected transitions

- Form: Powder, film, or small solid piece with good thermal contact

- Reference: Empty pan for TGA; matched inert material for DSC

- Atmosphere: Inert (N₂, Ar) for stability; oxidative (air, O₂) for oxidation studies

Temperature Program Design:

- Heating rates: 5-20°C/min standard; varied for kinetic studies

- Temperature range: Room temperature to 600-800°C for organic materials; higher for inorganics

- Isothermal segments: For time-dependent process investigation

- Gas switching: For studying oxidative stability or reaction mechanisms

Data Interpretation:

- TGA: Weight loss steps assigned to specific decomposition processes

- DSC: Peak integration for transition enthalpies; step changes for glass transitions

- TMA: Slope changes for expansion coefficients; dimensional shifts for transitions
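
The TGA and DSC interpretation steps above reduce to simple arithmetic on the recorded curves. The sketch below uses hypothetical data points and assumes the DSC heat flow is already baseline-corrected and mass-normalized (W/g); function names are illustrative.

```python
def nearest_index(values, target):
    """Index of the recorded point closest to the requested value."""
    return min(range(len(values)), key=lambda i: abs(values[i] - target))

def tga_step_loss_percent(temps_c, masses_mg, t_start, t_end):
    """Mass lost between two temperatures, as a percentage of the initial mass."""
    i, j = nearest_index(temps_c, t_start), nearest_index(temps_c, t_end)
    return 100.0 * (masses_mg[i] - masses_mg[j]) / masses_mg[0]

def dsc_peak_enthalpy(temps_c, heat_flow_w_g, heating_rate_c_min):
    """Trapezoidal integral of heat flow over time, in J/g.
    The temperature axis is converted to time using the heating rate."""
    seconds_per_degree = 60.0 / heating_rate_c_min
    area = 0.0
    for k in range(1, len(temps_c)):
        dt = (temps_c[k] - temps_c[k - 1]) * seconds_per_degree
        area += 0.5 * (heat_flow_w_g[k] + heat_flow_w_g[k - 1]) * dt
    return area

# Hypothetical TGA trace: one decomposition step between 200 and 400 C.
TGA_T = [25, 100, 200, 300, 400, 500]
TGA_M = [10.0, 9.5, 9.5, 7.0, 6.9, 6.9]
step_loss = tga_step_loss_percent(TGA_T, TGA_M, 200, 400)

# Hypothetical DSC peak: triangular, 2 W/g high, recorded at 10 C/min.
DSC_T = [140, 150, 160, 170, 180]
DSC_HF = [0.0, 0.0, 2.0, 0.0, 0.0]
enthalpy = dsc_peak_enthalpy(DSC_T, DSC_HF, heating_rate_c_min=10)

print(f"TGA step loss: {step_loss:.1f} %, DSC enthalpy: {enthalpy:.0f} J/g")
```

Integrating over time rather than temperature is what makes the enthalpy independent of the chosen heating rate, which matters when comparing runs from the multi-heating-rate kinetic studies mentioned earlier.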

Table 2: Thermal Analysis Applications in Material Characterization

| Technique | Measured Parameter | Information Obtained | Typical Research Application |
|---|---|---|---|
| TGA | Mass change | Thermal stability, composition, decomposition temperatures | Polymer degradation studies, filler content determination |
| DSC | Heat flow | Melting point, crystallization, glass transition, cure kinetics | Phase behavior analysis, polymorph identification |
| TMA | Dimension change | Expansion coefficients, softening temperature, viscoelasticity | Thin film stress analysis, composite interface studies |
| DTA | Temperature difference | Phase transitions, reaction temperatures | Ceramic sintering optimization, mineral identification |

Comparative Technique Analysis

Capability Comparison

The three characterization techniques provide complementary information critical for comprehensive materials analysis. The following table summarizes their distinct capabilities and typical applications:

Table 3: Technique Comparison for Material Characterization

| Parameter | SEM | XRD | Thermal Analysis |
|---|---|---|---|
| Primary Information | Surface morphology, elemental composition | Crystal structure, phase identification | Thermal stability, phase transitions, composition |
| Spatial Resolution | 1 nm to 1 μm | 10 nm to 100 μm (crystallite size) | Bulk measurement (mg quantities) |
| Depth Resolution | 1 nm to 1 μm | Surface to bulk (μm to mm) | Bulk measurement |
| Sample Environment | Vacuum typical; variable pressure available | Ambient, controlled atmosphere, in situ cells | Precise temperature control, various atmospheres |
| Quantitative Capability | Elemental composition via EDS | High (crystal structure, phase percentages) | High (mass changes, enthalpy, expansion coefficients) |
| Material Removal | Generally non-destructive | Non-destructive | Destructive (sample consumed) |
| Analysis Time | Minutes to hours | 30 min to several hours | 30 min to several hours |
| Key Limitations | Conductive coating often required, vacuum compatibility | Limited to crystalline materials, peak overlap issues | Bulk measurement, complex data interpretation for mixtures |

Synergistic Applications in Materials Research

The integration of multiple characterization techniques provides comprehensive insights that surpass the capabilities of individual methods. This synergistic approach proves particularly valuable in validating theoretically predicted materials:

Case Study 1: Functional Nanomaterial Development. Research on β-Ga₂O₃ nanomaterials exemplifies technique integration, where XRD confirmed crystal structure and phase purity, SEM revealed nanorod morphology and size distribution, and photoluminescence spectroscopy correlated optical properties with structural features [31].

Case Study 2: Adsorbent Material Optimization. In developing lanthanum-modified phosphate tailings ceramsite for wastewater treatment, researchers employed XRD to identify LaPO₄ formation, SEM to visualize the surface morphology changes that created a dense nanomesh membrane, and XPS to confirm chemical state modifications. Together, these results explained the enhanced phosphorus removal mechanism [32].

Case Study 3: Battery Material Evolution. In situ XRD tracks real-time structural changes in electrode materials during battery operation, while post-cycling SEM analysis reveals morphological degradation, and thermal analysis assesses stability of cycled materials for safety evaluation [26].

Essential Research Reagents and Materials

Successful materials characterization requires specific consumables and standards to ensure data quality and reproducibility:

Table 4: Essential Materials for Material Characterization Experiments

| Item | Function/Purpose | Application Notes |
|---|---|---|
| Conductive Tapes/Cements | Sample mounting for SEM | Carbon tapes preferred for EDS analysis; silver paste for high conductivity needs |
| Sputter Coating Materials | Surface conductivity for non-conductive samples | Gold/palladium for high-resolution imaging; carbon for EDS analysis |
| Standard Reference Materials | Instrument calibration | Silicon powder for XRD line position; pure metals for SEM calibration |
| Sample Holders/Crucibles | Containment during analysis | Aluminum pans for DSC below 600°C; alumina for TGA to 1600°C |
| Calibration Standards | Quantitative analysis validation | NIST traceable standards for composition, temperature, and mass changes |
| Polishing Materials | Surface preparation | Diamond suspensions for metallographic sample preparation |

SEM, XRD, and thermal analysis represent three pillars of materials characterization, each providing distinct yet complementary information essential for validating theoretically predicted materials. SEM offers nanoscale visualization of surface morphology and elemental distribution, XRD delivers precise structural information about crystalline phases and orientation, and thermal analysis provides critical data on stability, transitions, and composition. Applied together, these techniques enable comprehensive material validation and form an essential toolkit for researchers moving from computational prediction to experimental realization. As materials systems grow more complex, the integrated interpretation of data from these techniques becomes increasingly important for advancing materials innovation across scientific and industrial domains.

High-Throughput Experimental (HTE) Platforms for Rapid Validation

High-Throughput Experimentation (HTE) has emerged as a transformative approach for accelerating the discovery and optimization of new materials and pharmaceutical compounds. By enabling the parallel execution of hundreds to thousands of experiments, HTE platforms provide the rapid validation capabilities essential for bridging theoretical predictions and practical realization in research. These systems are particularly valuable for experimentally realizing theoretically predicted stable materials, where they let researchers test computational predictions efficiently across multidimensional parameter spaces. Modern HTE integrates advanced automation, data analytics, and machine learning to navigate complex experimental landscapes, reducing development timelines from months to weeks. It also generates comprehensive datasets that capture both successful and failed experiments, which is crucial information for training robust AI models [33] [34] [35].

The evolution of HTE has been driven by limitations of traditional one-factor-at-a-time (OFAT) approaches, which often miss optimal conditions in high-dimensional spaces. As noted in a recent Nature Communications article, "HTE platforms, utilising miniaturised reaction scales and automated robotic tools, enable highly parallel execution of numerous reactions," making them "more cost- and time-efficient than traditional techniques relying solely on chemical intuition" [33]. This efficiency is particularly critical in pharmaceutical process development, where rapid optimization of multiple objectives (yield, selectivity, cost, safety) is required under demanding timelines.

Comparative Analysis of HTE Platforms

Table 1: Comparison of Representative HTE Platforms

| Platform Name | Type | Key Features | Primary Applications | Data Handling | Accessibility |
|---|---|---|---|---|---|
| Virscidian Analytical Studio | Commercial Software | Automated plate design, chemical database integration, vendor-neutral data processing | Reaction optimization, solubility screens, reaction monitoring | Seamless metadata flow, LC/MS data processing | Commercial license |
| Minerva ML Framework | ML-Driven Workflow | Bayesian optimization, handles large parallel batches (96-well), high-dimensional search spaces | Reaction optimization, pharmaceutical process development | SURF format, open-source code repository | Free, open-source |
| phactor | Academic Software | Rapid experiment design, liquid handling robot integration, machine-readable data output | Reaction discovery, direct-to-biology experiments, library synthesis | Standardized machine-readable format | Free academic use |
| HTE OS | Open-Source Workflow | Google Sheets integration, Spotfire analytics, chemical identifier translation | Reaction planning, execution, and analysis | Centralized sheet communication | Free, open-source |
| Swiss Cat+ RDI | FAIR Data Infrastructure | Semantic modeling (RDF), Kubernetes/Argo Workflows, Matryoshka files | Automated synthesis, multi-stage analytics, autonomous experimentation | FAIR principles, ontology-driven | Institutional implementation |

Performance Metrics and Experimental Validation

Table 2: Performance Comparison of HTE Platforms Based on Documented Applications

| Platform | Reported Performance | Experimental Scale | Key Advantages | Validation Method |
|---|---|---|---|---|
| Minerva ML | Identified conditions with >95% yield/selectivity for API syntheses; reduced optimization from 6 months to 4 weeks | 96-well HTE; 88,000 condition space | Handles high-dimensional spaces, batch constraints, and reaction noise | Successful scale-up to improved process conditions |
| Virscidian AS-Experiment Builder | Used by 80% of top 10 largest pharma companies; >50% of Fortune 500 pharma customers | 96-well plates and custom configurations | Vendor neutrality, template saving for iterations, streamlined visualization | Customer adoption metrics |
| phactor | Enabled discovery of low micromolar SARS-CoV-2 main protease inhibitor; multiple reaction discoveries | 24- to 1,536-well plates | Rapid design-analysis cycle (ideation to results), minimal organizational load | Successful reaction discovery and optimization cases |
| HTE OS | Supports practitioners from experiment submission to results presentation | Not specified | Free, open-source, leverages familiar tools (Google Sheets) | Functional workflow description |
| Radiochemistry HTE | Achieved reliable quantification of 96 reactions; trends translated to 10× larger scale | 96-well blocks at 2.5 μmol scale | Uses commercial equipment; adapts to short-lived isotopes | Validation at larger scales |

HTE Workflow Architecture and Data Management

Standardized Experimental Workflow

The following diagram illustrates the core HTE workflow implemented across modern platforms, from experimental design through data analysis:

This workflow highlights the continuous cycle of modern HTE, where data storage feeds back into experimental design. As demonstrated in the Swiss Cat+ infrastructure, "each experimental step [is] captured in a structured, machine-interpretable format, forming a scalable, and interoperable data backbone" [34]. The integration of machine learning, particularly Bayesian optimization, creates a closed-loop system where experimental results directly inform subsequent experimental designs, maximizing the information gain from each iteration [33].
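To make the closed-loop idea concrete, the sketch below runs a toy design-measure-update cycle in plain Python. It is a deliberate simplification and not the implementation of any platform discussed here: it uses a one-dimensional input, a small hand-written Gaussian process, and a single-objective upper-confidence-bound rule with naive top-k batch selection in place of the multi-objective batch acquisition functions cited in the text. The `simulated_yield` function is a hypothetical stand-in for a real plate measurement.

```python
import math


def rbf(a, b, length_scale=0.2):
    """Squared-exponential kernel with unit prior variance."""
    return math.exp(-0.5 * ((a - b) / length_scale) ** 2)


def cholesky(matrix):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(matrix)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                lower[i][j] = math.sqrt(matrix[i][i] - s)
            else:
                lower[i][j] = (matrix[i][j] - s) / lower[j][j]
    return lower


def solve_lower(lower, rhs):
    """Forward substitution for L y = rhs."""
    y = []
    for i, row in enumerate(lower):
        y.append((rhs[i] - sum(row[k] * y[k] for k in range(i))) / row[i])
    return y


def solve_upper(lower, rhs):
    """Back substitution for L^T x = rhs."""
    n = len(lower)
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(lower[k][i] * x[k] for k in range(i + 1, n))
        x[i] = (rhs[i] - s) / lower[i][i]
    return x


def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Posterior (mean, standard deviation) of a constant-mean GP at each query point."""
    mean_y = sum(y_train) / len(y_train)
    gram = [
        [rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(x_train)]
        for i, a in enumerate(x_train)
    ]
    lower = cholesky(gram)
    alpha = solve_upper(lower, solve_lower(lower, [y - mean_y for y in y_train]))
    results = []
    for xq in x_query:
        k_star = [rbf(xq, a) for a in x_train]
        mean = mean_y + sum(k * a for k, a in zip(k_star, alpha))
        v = solve_lower(lower, k_star)
        variance = max(rbf(xq, xq) - sum(vi * vi for vi in v), 0.0)
        results.append((mean, math.sqrt(variance)))
    return results


def simulated_yield(x):
    """Hypothetical stand-in for running one experiment at normalized condition x."""
    return math.exp(-((x - 0.7) ** 2) / 0.02)


def closed_loop(candidates, initial, rounds, batch_size=2, kappa=2.0):
    """Alternate between fitting the surrogate and measuring the top-UCB candidates."""
    observed = {x: simulated_yield(x) for x in initial}
    for _ in range(rounds):
        untested = [x for x in candidates if x not in observed]
        if not untested:
            break
        xs = list(observed)
        posterior = gp_posterior(xs, [observed[x] for x in xs], untested)
        ranked = sorted(
            zip(untested, posterior),
            key=lambda item: item[1][0] + kappa * item[1][1],
            reverse=True,
        )
        for x, _ in ranked[:batch_size]:
            observed[x] = simulated_yield(x)
    return observed
```

The `kappa` parameter controls the balance the text describes: larger values favor untested regions with high predicted uncertainty, while smaller values concentrate experiments near the best conditions found so far.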

Data Infrastructure and FAIR Principles

Modern HTE platforms increasingly adopt FAIR (Findable, Accessible, Interoperable, Reusable) data principles to ensure research data integrity and utility. The Swiss Cat+ Research Data Infrastructure (RDI) exemplifies this approach, transforming "experimental metadata into validated Resource Description Framework (RDF) graphs using an ontology-driven semantic model" [34]. This infrastructure captures each experimental step in a structured format, ensuring data completeness and traceability by systematically recording both successful and failed experiments. Such comprehensive data collection is essential for creating "bias-resilient datasets essential for robust AI model development" [34].

Similar approaches are seen in community resources like CatTestHub, which provides "a standardized open-access database for catalytic benchmarking" with unique identifiers that enhance data traceability following FAIR principles [36]. These standardized data formats enable seamless sharing between research groups and computational pipelines, creating a foundation for collaborative materials development.
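As a rough illustration of what recording an experiment as RDF can look like, the sketch below serializes one experiment record, including a failed one, as N-Triples lines. It does not reproduce the Swiss Cat+ ontology or the CatTestHub schema: the `https://example.org/hte/` namespace, the field names, and the record itself are all hypothetical, and every value is written as a plain string literal for simplicity.

```python
BASE = "https://example.org/hte/"  # hypothetical namespace, not a real ontology
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"


def literal(value):
    """Format a value as an N-Triples string literal, escaping backslashes and quotes."""
    text = str(value).replace("\\", "\\\\").replace('"', '\\"')
    return f'"{text}"'


def experiment_to_ntriples(record):
    """Turn a flat experiment record (dict with an 'id' key) into N-Triples lines."""
    subject = f"<{BASE}experiment/{record['id']}>"
    lines = [f"{subject} <{RDF_TYPE}> <{BASE}Experiment> ."]
    for key, value in sorted(record.items()):
        if key == "id":
            continue
        lines.append(f"{subject} <{BASE}{key}> {literal(value)} .")
    return lines


# A failed experiment is recorded with the same structure as a successful one.
failed_run = {
    "id": "plate7-A1",
    "solvent": "THF",
    "yield_percent": 0.0,
    "succeeded": False,
}
for line in experiment_to_ntriples(failed_run):
    print(line)
```

A production pipeline would normally use typed literals and a published vocabulary so that graphs from different groups can be merged and validated; the point here is only that failures receive a first-class record rather than being dropped.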

Experimental Protocols and Methodologies

Machine Learning-Driven Reaction Optimization

The Minerva framework demonstrates a sophisticated protocol for ML-guided reaction optimization:

Experimental Design: Define a discrete combinatorial set of plausible reaction conditions guided by domain knowledge and practical constraints, automatically filtering impractical conditions (e.g., temperatures exceeding solvent boiling points) [33].

Initial Sampling: Employ algorithmic quasi-random Sobol sampling to select initial experiments, maximizing reaction space coverage to increase the likelihood of discovering optimal regions [33].

ML Model Training: Train Gaussian Process (GP) regressors on experimental data to predict reaction outcomes and their uncertainties for all possible conditions [33].

Acquisition Function: Apply scalable multi-objective acquisition functions (q-NParEgo, TS-HVI, q-NEHVI) to balance exploration of unknown regions with exploitation of promising areas, selecting the next batch of experiments [33].

Iterative Refinement: Repeat the process through multiple iterations, with chemists integrating evolving insights with domain expertise to fine-tune the exploration-exploitation balance [33].

This protocol was successfully applied to a nickel-catalyzed Suzuki reaction optimization, exploring a search space of 88,000 possible conditions where "traditional chemist-designed HTE plates failed to find successful reaction conditions" [33].
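The first two steps of the protocol, building a filtered discrete condition set and drawing a space-filling initial batch, can be sketched as follows. This is not the Minerva code. The solvents, ligands, bases, and temperatures are hypothetical placeholders, and because Sobol sequences require tabulated direction numbers, the sketch substitutes a Halton sequence, a different low-discrepancy sequence that serves the same coverage purpose in a few lines of standard-library Python.

```python
from itertools import product

# Hypothetical parameter levels; none of these values come from the cited study.
SOLVENT_BOILING_POINT_C = {"THF": 66.0, "toluene": 110.6, "DMF": 153.0}
LIGANDS = ["L1", "L2", "L3", "L4"]
BASES = ["K3PO4", "Cs2CO3"]
TEMPERATURES_C = [40.0, 60.0, 80.0, 100.0, 120.0]


def is_practical(solvent, temperature_c):
    """Reject conditions whose temperature exceeds the solvent boiling point."""
    return temperature_c <= SOLVENT_BOILING_POINT_C[solvent]


def build_condition_space():
    """Enumerate every combination, then keep only the practical ones."""
    return [
        (solvent, ligand, base, temp)
        for solvent, ligand, base, temp in product(
            SOLVENT_BOILING_POINT_C, LIGANDS, BASES, TEMPERATURES_C
        )
        if is_practical(solvent, temp)
    ]


def radical_inverse(index, base):
    """Van der Corput radical inverse of a positive integer in the given base."""
    result, fraction = 0.0, 1.0 / base
    while index > 0:
        result += (index % base) * fraction
        index //= base
        fraction /= base
    return result


def quasi_random_batch(batch_size, max_index=10000):
    """Select distinct practical conditions by mapping Halton points onto the levels."""
    axes = [list(SOLVENT_BOILING_POINT_C), LIGANDS, BASES, TEMPERATURES_C]
    primes = [2, 3, 5, 7]  # one coprime base per dimension
    chosen, seen = [], set()
    for index in range(1, max_index):
        if len(chosen) == batch_size:
            break
        point = tuple(
            axis[min(int(radical_inverse(index, p) * len(axis)), len(axis) - 1)]
            for axis, p in zip(axes, primes)
        )
        if point in seen or not is_practical(point[0], point[3]):
            continue  # skip duplicates and filtered-out conditions
        seen.add(point)
        chosen.append(point)
    return chosen
```

Calling `quasi_random_batch(24)` yields a first plate of 24 distinct conditions spread across all four parameters; in the full protocol, the measured outcomes of that plate would then be used to train the surrogate model described in the third step.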

Radiochemistry HTE Workflow

A specialized HTE protocol for radiochemistry addresses the unique challenges of working with short-lived isotopes: