From Code to Lab: A Guide to Experimental Validation of Computationally Discovered Materials

Abstract

This article provides a comprehensive framework for the experimental validation of computationally discovered materials, a critical bottleneck in modern materials science. Tailored for researchers and scientists, it explores the foundational partnership between computation and experiment, details cutting-edge methodologies from high-throughput screening to AI-driven automation, and addresses pervasive challenges in synthesis reproducibility and data integration. By presenting real-world case studies and comparative analyses of validation frameworks, this guide aims to equip professionals with the strategies needed to successfully transition virtual predictions into tangible, high-performance materials for advanced applications, from energy storage to biomedical devices.

The New Paradigm: How Computation is Redefining Materials Discovery

A profound transformation is reshaping the scientific landscape, fundamentally inverting the traditional discovery process. The established model of hypothesis-driven experimentation, often reliant on resource-intensive trial-and-error, is increasingly being supplanted by a predictions-led research paradigm. This inversion is most evident in fields like materials science and drug development, where researchers now leverage advanced computational models to predict promising candidates with desired properties before any physical experiment is conducted. This approach is underpinned by the integration of machine learning (ML), high-throughput computation, and active learning strategies, which together guide and optimize experimental validation, dramatically accelerating the path to discovery [1] [2].

The core of this shift lies in the ability of machine learning models to analyze vast datasets and uncover complex relationships between chemical composition, structure, and material properties. First-principles methods such as density functional theory (DFT) are accurate but computationally expensive and slow; ML models trained on existing data can provide rapid, preliminary assessments, so that only the most promising candidates proceed to costly calculations and detailed experimental analysis [2]. The gain is more than incremental: in one large-scale effort, ML-guided screening expanded the number of known stable crystals by almost an order of magnitude, opening chemical spaces that were previously intractable [3].
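The pre-screening idea described above can be sketched as a two-stage funnel: a cheap surrogate scores every candidate, and only the top fraction is passed to an expensive evaluator. This is a minimal illustration under stated assumptions; `cheap_score` and `expensive_eval` are hypothetical placeholders (for an ML stability model and for DFT or a lab measurement), not any specific published model.

```python
from typing import Callable, List, Sequence, Tuple


def screening_funnel(
    candidates: Sequence[str],
    cheap_score: Callable[[str], float],
    expensive_eval: Callable[[str], float],
    keep_fraction: float = 0.01,
) -> List[Tuple[str, float]]:
    """Score every candidate with a fast surrogate, then run the costly evaluation on the best few.

    cheap_score: hypothetical ML surrogate, lower = more promising (e.g. predicted decomposition energy).
    expensive_eval: hypothetical stand-in for DFT or a laboratory measurement.
    """
    if not 0 < keep_fraction <= 1:
        raise ValueError("keep_fraction must be in (0, 1]")
    ranked = sorted(candidates, key=cheap_score)       # most promising first
    n_keep = max(1, int(len(ranked) * keep_fraction))  # always evaluate at least one candidate
    # Only the shortlisted candidates incur the expensive evaluation cost.
    return [(c, expensive_eval(c)) for c in ranked[:n_keep]]
```

The `keep_fraction` parameter sets the trade-off: a smaller value saves compute but raises the risk of discarding good candidates that the surrogate mis-ranks.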

Comparative Analysis: Traditional vs. Predictions-Led Workflows

The following table summarizes the fundamental differences between the traditional and modern, predictions-led research methodologies.

Table 1: A comparison of traditional trial-and-error and predictions-led research frameworks.

| Aspect | Traditional Trial-and-Error Research | Predictions-Led Research |
|---|---|---|
| Primary Workflow | Hypothesis → Experimentation → Analysis → Discovery | Data → ML Prediction → Targeted Experimentation → Validation & Discovery |
| Key Drivers | Chemical intuition, literature, serendipity | Graph Neural Networks (GNNs), Generative Models, High-Throughput Screening [3] [2] |
| Exploration Efficiency | Low; narrow focus based on existing knowledge | High; broad, unbiased exploration of vast chemical spaces [3] |
| Resource Consumption | High (time, cost, materials) for extensive lab work | Lower; computationally pre-screened candidates reduce failed experiments [2] |
| Typical Discovery Rate | Slow, with high risk of dead ends | Accelerated; models can identify millions of stable candidates [3] |
| Role of Experimentation | Primary tool for discovery and validation | Final validation step for computationally predicted candidates |

| Role of Experimentation | Primary tool for discovery and validation | Final validation step for computationally predicted candidates |

This shift from trial-and-error to prediction-led research creates a powerful data flywheel. As predictions are validated through calculations and experiments, the results feed back into the computational models, refining their accuracy and guiding the next cycle of discovery in an iterative process of active learning [1] [3].
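The feedback loop described above (predict, select, validate, feed results back) can be sketched in a few lines. This is a toy illustration, not the surrogate used by InvDesFlow-AL or GNoME: it assumes a one-dimensional candidate pool, a hypothetical `oracle` standing in for DFT or an experiment, and a deliberately crude surrogate whose prediction is the nearest validated label and whose uncertainty is the distance to that point.

```python
from typing import Callable, Dict, List


def active_learning_loop(
    pool: List[float],
    oracle: Callable[[float], float],
    n_init: int = 3,
    n_rounds: int = 5,
    batch_size: int = 2,
    beta: float = 1.0,
) -> Dict[float, float]:
    """Predict -> select -> validate -> retrain, using a deliberately crude surrogate.

    Surrogate (an assumption for illustration): the prediction at x is the label of the
    nearest validated point and the uncertainty is the distance to that point.
    """
    step = max(1, len(pool) // n_init)
    labeled: Dict[float, float] = {x: oracle(x) for x in pool[::step][:n_init]}
    for _ in range(n_rounds):
        unlabeled = [x for x in pool if x not in labeled]
        if not unlabeled:
            break

        def acquisition(x: float) -> float:
            nearest = min(labeled, key=lambda xl: abs(xl - x))
            # Upper-confidence-style score: predicted value plus an exploration bonus.
            return labeled[nearest] + beta * abs(nearest - x)

        batch = sorted(unlabeled, key=acquisition, reverse=True)[:batch_size]
        for x in batch:
            labeled[x] = oracle(x)  # validation results flow back into the training data
    return labeled
```

Raising `beta` favors exploring poorly sampled regions; lowering it concentrates the validation budget near the current best candidates.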

Experimental Validation of Computationally Discovered Materials

The true test of any predictive model is its experimental validation. The following case studies demonstrate how computationally discovered materials are confirmed through rigorous experimental protocols, bridging the digital-physical divide.

Case Study 1: Discovery of Novel Superconductors

The InvDesFlow-AL framework, an active learning-based generative model, was designed for the inverse design of functional materials, including high-temperature superconductors [1].

Experimental Protocol: The validation of computationally discovered superconductors follows a multi-stage protocol:

- Inverse Design & Prediction: A generative model produces new crystal structures that are predicted to meet target performance constraints, such as high superconducting transition temperatures (T_c) [1].

- Stability Validation: The thermodynamic stability of predicted crystals is assessed via density functional theory (DFT) calculations on fully relaxed structures (e.g., residual atomic forces below 10⁻⁴ eV/Å), checking that their formation energy is low [1].

- Property Verification: For superconductors, key properties like the electron-phonon coupling and T_c are calculated using higher-fidelity methods beyond standard DFT.

- Synthesis & Characterization: Successful candidates are synthesized in the lab (e.g., under high pressure for hydrides) and characterized using techniques like X-ray diffraction to confirm crystal structure and electrical transport measurements to verify superconductivity.
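The property-verification step turns computed electron-phonon quantities into a T_c estimate. One widely used route (the source does not say that InvDesFlow-AL uses this exact formula) is the Allen-Dynes-modified McMillan equation, T_c = (ω_log / 1.2) · exp[-1.04(1 + λ) / (λ - μ*(1 + 0.62λ))], where λ is the electron-phonon coupling constant, ω_log the logarithmic average phonon frequency and μ* the Coulomb pseudopotential. A minimal sketch, assuming ω_log is already expressed in kelvin and treating μ* = 0.1 as an assumed default:

```python
import math


def allen_dynes_tc(lam: float, omega_log_k: float, mu_star: float = 0.1) -> float:
    """Allen-Dynes-modified McMillan estimate of T_c in kelvin.

    lam          electron-phonon coupling constant (lambda)
    omega_log_k  logarithmic average phonon frequency, expressed in kelvin
    mu_star      Coulomb pseudopotential (values around 0.10-0.13 are a common empirical choice)
    The strong-coupling correction factors f1 and f2 of the full Allen-Dynes formula are omitted.
    """
    denom = lam - mu_star * (1.0 + 0.62 * lam)
    if denom <= 0:
        # Convention used here: coupling too weak to overcome Coulomb repulsion -> no T_c.
        return 0.0
    return (omega_log_k / 1.2) * math.exp(-1.04 * (1.0 + lam) / denom)
```

For λ = 1.0 and ω_log = 1000 K this gives roughly 70 K. For strongly coupled materials such as hydrides, the omitted correction factors or a full Eliashberg treatment may change the estimate noticeably, so treat the output as a screening value.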

Validation Outcome: Using this protocol, InvDesFlow-AL identified Li₂AuH₆ as a conventional BCS superconductor with a predicted transition temperature of 140 K at ambient pressure. The framework also identified several other materials predicted to have transition temperatures exceeding the theoretical McMillan limit and lying within the liquid-nitrogen temperature range (above 77 K) [1].

Case Study 2: Scaling Deep Learning for Stable Crystal Discovery

The GNoME (Graph Networks for Materials Exploration) project from Google DeepMind showcases the power of scale in ML-driven discovery [3].

Experimental Protocol:

- Candidate Generation: Diverse candidate crystal structures are generated using methods like symmetry-aware partial substitutions (SAPS) and random structure search.

- ML Filtration: Graph Neural Network (GNN) models predict the stability (decomposition energy) of these candidates.

- DFT Verification: The energy of filtered candidates is computed using DFT with standardized settings, verifying model predictions.

- Iterative Active Learning: The results from DFT are fed back to train more robust models in the next round.

Validation Outcome: This process led to the discovery of 2.2 million new crystal structures that are stable with respect to previous datasets. Of these, 381,000 lie on the updated convex hull of stable materials, expanding the number of known stable crystals by almost an order of magnitude. The final GNoME models reached a precision (hit rate) above 80% when predicting stability from structural information [3].
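"Stable with respect to previous datasets" and "on the convex hull" both rest on the energy above the hull, and the hit rate is the precision of the model's stability calls. A minimal sketch for a binary A-B system only, assuming formation energies (eV/atom) are already computed; production workflows handle multicomponent hulls with dedicated tools (for example pymatgen's `PhaseDiagram`). All energies in the usage below are hypothetical.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (fraction of element B, formation energy in eV/atom)


def _cross(o: Point, a: Point, b: Point) -> float:
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull (Andrew's monotone chain), keeping the lowest energy per composition."""
    lowest: Dict[float, float] = {}
    for x, e in points:
        lowest[x] = min(e, lowest.get(x, e))
    hull: List[Point] = []
    for p in sorted(lowest.items()):
        # Drop the previous vertex while it lies on or above the chord to the new point.
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(hull: List[Point], x: float) -> float:
    """Linearly interpolate the hull energy at composition x."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            return e0 + (e1 - e0) * (x - x0) / (x1 - x0)
    raise ValueError("composition outside the hull's range")


def energy_above_hull(known: List[Point], candidate: Point) -> float:
    """Positive: candidate lies above the hull of known phases; negative: it would extend the hull."""
    hull = lower_hull(known + [(0.0, 0.0), (1.0, 0.0)])  # pure elements as zero references
    return candidate[1] - hull_energy(hull, candidate[0])


def hit_rate(predicted_stable: List[bool], confirmed_stable: List[bool]) -> float:
    """Precision: share of model-flagged candidates that DFT confirms as stable."""
    flagged = sum(predicted_stable)
    confirmed = sum(p and c for p, c in zip(predicted_stable, confirmed_stable))
    return confirmed / flagged if flagged else 0.0
```

With one known compound at (0.5, -0.4), a candidate at (0.25, -0.1) sits 0.1 eV/atom above the hull, while a candidate at (0.5, -0.5) has a negative value and would become a new hull vertex.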

Case Study 3: Neural Network Prediction for Engineering Systems

Beyond materials science, the paradigm is validated in engineering applications. One study developed a soft sensor and neural network model to predict natural ventilation (NV) airflow rates in buildings [4].

Experimental Protocol:

- Data Collection: Data (indoor/outdoor temperatures, window openings, wind speed/direction) were collected over months from a building management system (BMS).

- Soft Sensor Validation: A soft sensor based on a thermal zone sensible heat balance was validated against CO₂ decay measurements, achieving an average error of 27%.

- ANN Model Training & Validation: An Artificial Neural Network (ANN), structured as a multi-layer perceptron (MLP), was trained on soft sensor data. Its predictions were then directly validated against CO₂ decay measurements.
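Both airflow measurements in this protocol rest on simple balances. A minimal sketch under stated assumptions: the heat-balance function assumes the ventilation share of the zone's sensible heat flow has already been isolated (the study's full heat balance contains more terms) and uses assumed air properties (ρ ≈ 1.2 kg/m³, c_p ≈ 1005 J/(kg·K)); the CO₂ function applies the standard tracer-gas decay relation C(t) - C_out = (C₀ - C_out)·exp(-n·t). The 0.5 K cutoff is an arbitrary illustrative guard, not a value from the study.

```python
import math

RHO_AIR = 1.2    # kg/m^3, assumed air density
CP_AIR = 1005.0  # J/(kg*K), assumed specific heat of air


def airflow_from_heat_balance(q_vent_w: float, t_in_c: float, t_out_c: float) -> float:
    """Volumetric airflow (m^3/s) implied by the sensible heat carried away by ventilation.

    Simplification: q_vent_w is the sensible heat (W) removed by outdoor air, already
    separated from the other terms of the zone heat balance.
    """
    dt = t_in_c - t_out_c
    if abs(dt) < 0.5:
        raise ValueError("indoor-outdoor temperature difference too small for a stable estimate")
    return q_vent_w / (RHO_AIR * CP_AIR * dt)


def airflow_from_co2_decay(c0_ppm: float, ct_ppm: float, c_out_ppm: float,
                           hours: float, room_volume_m3: float) -> float:
    """Tracer-gas decay method; returns airflow in m^3/h."""
    n_ach = math.log((c0_ppm - c_out_ppm) / (ct_ppm - c_out_ppm)) / hours  # air changes per hour
    return n_ach * room_volume_m3
```

The heat-balance estimate becomes unreliable when indoor and outdoor temperatures converge, which is one reason an independent reference such as CO₂ decay is useful for validation.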

Validation Outcome: The ANN model predicted NV airflow rates with a Mean Absolute Percentage Error (MAPE) of ~30%, demonstrating moderate accuracy and providing a cost-effective alternative to complex CFD simulations [4].
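MAPE averages the absolute error relative to each measured value, MAPE = (100/N) · Σ |(y_i - ŷ_i) / y_i|. A minimal sketch; skipping zero-valued measurements is our assumption, since the metric is undefined there and inflates quickly for very small airflows.

```python
from typing import Sequence


def mape(measured: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean absolute percentage error, in percent, skipping zero-valued measurements."""
    if len(measured) != len(predicted):
        raise ValueError("inputs must have equal length")
    terms = [abs((m - p) / m) for m, p in zip(measured, predicted) if m != 0]
    if not terms:
        raise ValueError("no non-zero measurements")
    return 100.0 * sum(terms) / len(terms)
```

For example, predicting 130 and 140 m³/h against measured 100 and 200 m³/h gives a MAPE of 30%.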

The Scientist's Toolkit: Essential Research Reagents & Solutions

The predictions-led research paradigm relies on a suite of computational and experimental tools. The table below details key resources essential for conducting such research.

Table 2: Key research reagents, tools, and resources for predictions-led discovery and validation.

| Tool/Resource | Function/Brief Explanation | Example Applications |
|---|---|---|
| Graph Neural Networks (GNNs) | ML models that operate on graph-structured data, ideal for representing atomic structures and predicting material properties [3]. | Predicting crystal stability and formation energy [3]. |
| Generative Models (GANs, VAEs, Diffusion) | AI models that generate novel, valid material structures that meet specific target property constraints (inverse design) [1] [2]. | Designing new superconductors and functional materials with tailored properties [1]. |
| Density Functional Theory (DFT) | A computational quantum mechanical method used to investigate the electronic structure of many-body systems, providing high-fidelity validation of stability and properties [1] [3]. | Final validation of predicted material stability and energy calculations [1]. |
| Active Learning Frameworks | Iterative workflows where ML models select the most informative data points for calculation, optimizing the learning process [1]. | Guiding the discovery process towards desired performance characteristics efficiently [1]. |
| High-Throughput Computing | Automated, large-scale computational screening of material candidates using either DFT or fast ML force fields [5]. | Rapidly screening millions of candidate structures for stability [3]. |
| Vienna Ab initio Simulation Package (VASP) | A popular software package for performing DFT calculations [1]. | Performing structural relaxation and energy calculations for crystals [1]. |
| PyTorch/TensorFlow | Open-source libraries used for building and training deep learning models [1]. | Developing and training custom GNNs and other ML models for material property prediction [1]. |

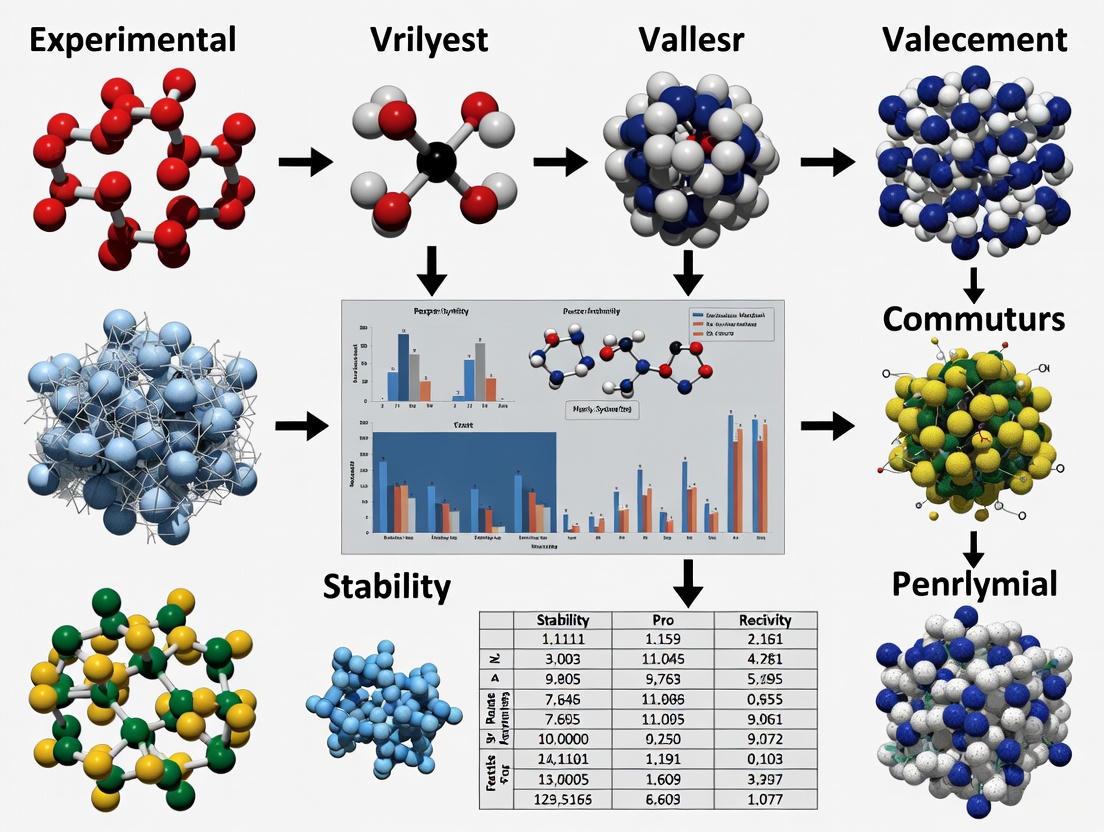

Workflow Visualization: The Predictions-Led Discovery Pipeline

The following diagram illustrates the integrated, cyclical workflow that characterizes modern predictions-led research, from initial data aggregation to final experimental validation.

The inversion from trial-and-error to predictions-led research marks a pivotal advancement in science and engineering. The comparative data and validation case studies presented in this guide indicate that this paradigm can enhance efficiency, reduce costs, and open previously inaccessible regions of discovery space. As machine learning models continue to improve through scaling laws and active learning, and as automated robotic laboratories become more prevalent, the cycle of prediction and validation is likely to accelerate further [3] [2].

The future of discovery lies in the tight integration of computation and experiment, creating a continuous, self-improving loop. This synergy is transforming the role of researchers, empowering them to move from being manual explorers of the scientific unknown to strategic architects who design and guide intelligent systems towards groundbreaking discoveries. This is not the end of experimentation, but its elevation, ensuring that every experiment counts.

The field of materials science is undergoing a profound transformation, moving from a paradigm reliant on serendipity and iterative experimentation to one driven by computational prediction and data-driven discovery. This shift is powered by the convergence of three key technologies: High-Performance Computing (HPC), Artificial Intelligence (AI), and expansive, FAIR (Findable, Accessible, Interoperable, and Reusable) databases. HPC provides the unprecedented computational power required to simulate complex material properties and train sophisticated AI models. AI algorithms, in turn, can navigate vast combinatorial spaces to identify promising new materials and optimize experimental designs. Underpinning this synergy are the growing materials databases that feed AI models with the high-quality data necessary for accurate predictions. This guide objectively compares the leading computational products and platforms enabling this new era of materials research, with a specific focus on their application in the experimental validation of computationally discovered materials.

Quantitative evidence underscores the power of this convergence. A large-scale study analyzing over five million scientific publications found that research combining AI and HPC was up to three times more likely to introduce novel concepts and five times more likely to be among the top 1% of most-cited papers compared to conventional research [6]. In disciplines like Biochemistry, Genetics, and Molecular Biology, nearly 5% of AI+HPC papers reached this elite citation status [6]. These correlations suggest that the combination acts as an engine for breakthrough science rather than a source of merely incremental gains.

Quantitative Comparison of HPC-AI Solutions and Databases

Selecting the right infrastructure is critical for the demanding workflow of computational materials discovery. The following tables provide a detailed, data-driven comparison of leading HPC-AI platforms and database management systems, highlighting their performance in key areas relevant to materials research.

Table 1: Comparative Analysis of Leading AI-HPC Solutions for Materials Research (2025)

| Solution | Best For | Key Hardware & Features | Performance & Scalability | Pricing & Cost Considerations |
|---|---|---|---|---|
| NVIDIA DGX Cloud [7] | Large-scale AI training, Generative AI | Multi-node H100/A100 GPU clusters, NVIDIA AI Enterprise suite | Industry-leading GPU acceleration, seamless scalability for AI training | Custom pricing; expensive for small businesses |
| Microsoft Azure HPC + AI [7] | Enterprise hybrid environments | InfiniBand clusters, native PyTorch/TensorFlow, Azure Machine Learning | Strong hybrid cloud support, enterprise-grade security | Starts ~$0.50/hr; costs can scale quickly with usage |
| AWS ParallelCluster [7] | Flexible AI research | Elastic Fabric Adapter (EFA) for low latency, auto-scaling, AWS SageMaker | High flexibility, tight AWS AI ecosystem integration | Pay-per-use; potential hidden costs in storage/networking |
| Google Cloud TPU v5p [7] | Machine/Deep Learning research | Cloud TPU v5p accelerators, AI-optimized VMs, Vertex AI integration | Best-in-class TPU performance for ML training and inference | Starts ~$8/TPU hour; less ideal for non-ML HPC workloads |
| HPE Cray EX [8] [7] | National labs, exascale R&D | Exascale architecture, Slingshot interconnect, liquid cooling | Extreme power for largest AI models, energy-efficient design | Very high custom cost; impractical for small-to-medium entities |
| IBM Spectrum LSF & Watsonx [7] | Regulated industries (e.g., healthcare) | AI workload scheduling, integration with Watsonx for AI governance | Strong governance, compliance, and hybrid deployment | Enterprise licensing; steeper learning curve |

Table 2: Database Management Systems for Materials Data (2025)

| System | Type | Key Features for Materials Science | Performance Highlights | Best Suited For |
|---|---|---|---|---|
| PostgreSQL [9] | Relational (RDBMS) | Extensible (e.g., PostGIS, TimescaleDB), native JSONB, parallel queries | High performance for complex queries, open-source | SaaS platforms, analytics, cloud-native apps |
| MongoDB Atlas [9] | NoSQL (Document) | Document model, aggregation pipeline, vector search for GenAI | Real-time replication and sharding | Agile development, IoT, handling diverse data forms |
| Amazon Aurora [9] | Relational (Cloud) | MySQL/PostgreSQL compatible, auto-scaling, multi-AZ replication | Up to 5x faster than standard MySQL, millisecond latency | Cloud-first businesses, global data replication |
| Snowflake [9] | Cloud Data Warehouse | Unistore (transactional/analytical), near-infinite compute scalability, Snowpark for Python/SQL | Elastic compute separates storage and compute | Analytics, data lakes, GenAI integration on cloud data |
| IBM Db2 [9] | Relational | BLU Acceleration for in-memory, native ML integration | High-speed in-memory querying | Financial services, enterprise-grade security |

Experimental Protocols for Validating Computationally Discovered Materials

The ultimate test of any computational prediction is experimental validation. The following section details specific methodologies and workflows that have successfully bridged the digital-physical divide.

Case Study 1: Autonomous Discovery with the CRESt Platform

The Copilot for Real-world Experimental Scientists (CRESt) platform, developed by MIT researchers, is a landmark example of a closed-loop system for materials discovery and validation [10]. Its workflow integrates multimodal AI and robotic experimentation.

- Experimental Objective: To discover a high-performance, low-cost multielement catalyst for direct formate fuel cells [10].

- Computational & AI Methodology:

  - Multimodal Knowledge Integration: The system's active learning models were guided not only by experimental data but also by information extracted from scientific literature, chemical compositions, and microstructural images [10].

  - Hypothesis Generation: AI performed a principal component analysis in a "knowledge embedding space" to define a reduced search space, which was then explored using Bayesian optimization to design new material recipes involving up to 20 precursor molecules [10].

  - Robotic Synthesis & Testing: A liquid-handling robot and a carbothermal shock system synthesized the proposed material chemistries. An automated electrochemical workstation then conducted performance testing [10].

- Validation & Results: Over three months, CRESt explored over 900 chemistries and conducted 3,500 electrochemical tests. It discovered an eight-element catalyst that delivered a record power density for a working direct formate fuel cell while containing just one-fourth the precious metals of previous benchmarks. This represented a 9.3-fold improvement in power density per dollar over pure palladium [10].
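CRESt's hypothesis-generation step reduces a knowledge embedding with principal component analysis and then searches the reduced space with Bayesian optimization. The sketch below shows that generic pattern, not CRESt's implementation: the embeddings, the objective (standing in for one robotic synthesis and electrochemical test), the RBF Gaussian-process surrogate, and the expected-improvement acquisition are all assumptions chosen for illustration.

```python
import math

import numpy as np


def pca_reduce(X: np.ndarray, k: int) -> np.ndarray:
    """Project the rows of X onto their top-k principal components (via SVD)."""
    Xc = X - X.mean(axis=0)
    _, _, vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ vt[:k].T


def rbf_kernel(A: np.ndarray, B: np.ndarray, length: float) -> np.ndarray:
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq_dist / length**2)


def gp_posterior(X_train, y_train, X_query, length=1.0, jitter=1e-4):
    """Posterior mean and std of a zero-mean GP fitted to standardized targets."""
    y_mean = float(y_train.mean())
    y_std = float(y_train.std()) or 1.0
    y = (y_train - y_mean) / y_std
    K = rbf_kernel(X_train, X_train, length) + jitter * np.eye(len(X_train))  # jitter for stability
    K_s = rbf_kernel(X_query, X_train, length)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    v = np.linalg.solve(L, K_s.T)
    var = np.clip(1.0 - (v**2).sum(axis=0), 1e-12, None)
    return y_mean + y_std * (K_s @ alpha), y_std * np.sqrt(var)


def expected_improvement(mu, sigma, best):
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf


def bayes_opt(Z, objective, n_init=3, n_iter=10, seed=0):
    """Maximize objective(i) over the rows of Z, evaluating one candidate per iteration."""
    rng = np.random.default_rng(seed)
    chosen = [int(i) for i in rng.choice(len(Z), size=n_init, replace=False)]
    scores = [objective(i) for i in chosen]
    for _ in range(n_iter):
        remaining = [i for i in range(len(Z)) if i not in chosen]
        if not remaining:
            break
        mu, sd = gp_posterior(Z[chosen], np.array(scores), Z[remaining])
        nxt = remaining[int(np.argmax(expected_improvement(mu, sd, max(scores))))]
        chosen.append(nxt)
        scores.append(objective(nxt))  # stands in for one synthesis + test cycle
    best = int(np.argmax(scores))
    return chosen[best], scores[best]
```

Searching a few principal components instead of the full embedding keeps the Gaussian process well conditioned with only a handful of experiments, which is the practical motivation for the reduction step.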

The diagram below illustrates the continuous, closed-loop workflow of the CRESt platform.

Case Study 2: Developing Machine Learning Surrogate Models at Argonne National Laboratory

Researchers at Argonne National Laboratory demonstrated a protocol for creating and validating machine learning surrogate models to bypass prohibitively expensive simulations, with a focus on calculating material "stopping power" [11].

- Experimental Objective: To create a fast, accurate ML surrogate model for Time-dependent Density Functional Theory (TD-DFT) calculations of stopping power [11].

- Computational & AI Methodology:

  - Data Collection & Curation: Raw TD-DFT data was retrieved from the Materials Data Facility (MDF), a scalable repository for materials science data [11].

  - Data Processing & Representation: Data was processed on the ALCF Cooley system using the Parsl parallel scripting library. A critical step was "representation," where atomic structure data was translated into a finite-length vector of key variables correlating with the force on a projectile [11].

  - Model Training & Selection: Using Jupyter notebooks on ALCF's JupyterHub, researchers trained and compared algorithms (linear models and neural networks). The best model was selected based on highest prediction accuracy, speed, and differentiability, validated via cross-validation and a hold-out test set [11].

- Validation & Results: The project successfully created a surrogate model that could interactively and accurately predict stopping power, extending the original results to model direction dependence in aluminum. The workflow was streamlined using the Globus platform for data search, transfer, and authentication, demonstrating a scalable pipeline for surrogate model development [11].
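The model-selection step (compare candidate models by k-fold cross-validation, then report error once on an untouched hold-out set) can be sketched with NumPy. This is an illustration under stated assumptions: the feature vectors stand in for the study's structure representation, and two ridge-regression feature maps (`"linear"` and `"quadratic"`, hypothetical names) stand in for the linear and neural-network models the researchers compared.

```python
import numpy as np


def _with_bias(F: np.ndarray) -> np.ndarray:
    return np.hstack([F, np.ones((len(F), 1))])


def ridge_fit(F: np.ndarray, y: np.ndarray, lam: float = 1e-3) -> np.ndarray:
    """Closed-form ridge regression (the intercept is lightly penalized too, for brevity)."""
    A = _with_bias(F)
    return np.linalg.solve(A.T @ A + lam * np.eye(A.shape[1]), A.T @ y)


def rmse(w: np.ndarray, F: np.ndarray, y: np.ndarray) -> float:
    return float(np.sqrt(np.mean((_with_bias(F) @ w - y) ** 2)))


def kfold_rmse(X, y, featurize, k=5, seed=0) -> float:
    folds = np.array_split(np.random.default_rng(seed).permutation(len(X)), k)
    errors = []
    for i, val in enumerate(folds):
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        w = ridge_fit(featurize(X[train]), y[train])
        errors.append(rmse(w, featurize(X[val]), y[val]))
    return float(np.mean(errors))


def select_and_test(X, y, candidates, holdout_frac=0.2, seed=0):
    idx = np.random.default_rng(seed).permutation(len(X))
    n_test = int(len(X) * holdout_frac)
    test, dev = idx[:n_test], idx[n_test:]
    # Model selection uses only the development split; the hold-out set is touched once.
    cv = {name: kfold_rmse(X[dev], y[dev], f, seed=seed) for name, f in candidates.items()}
    best = min(cv, key=cv.get)
    w = ridge_fit(candidates[best](X[dev]), y[dev])
    return best, cv, rmse(w, candidates[best](X[test]), y[test])


CANDIDATES = {
    "linear": lambda X: X,
    "quadratic": lambda X: np.hstack([X, X**2]),
}
```

Keeping the hold-out split out of model selection is what makes the final test error an honest estimate; reusing it during selection would bias the reported accuracy downward.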

The diagram below outlines this data-driven surrogate model development workflow.

The Scientist's Toolkit: Essential Research Reagent Solutions

Beyond the major platforms, successful computational and experimental workflows rely on a suite of essential "research reagents" – the software, data, and infrastructure that enable modern materials science.

Table 3: Essential Tools for Computational Materials Discovery

| Tool / Resource | Category | Function in the Research Workflow |
|---|---|---|
| Globus Platform [11] | Data Infrastructure | Simplifies secure, reliable data movement, sharing, and identity management across distributed computing resources and storage systems. |
| Materials Data Facility (MDF) [11] | Data Repository | A scalable, community-focused repository for publishing, preserving, discovering, and sharing materials science data of all sizes. |
| Parsl [11] | Parallel Programming | A Python library for parallel scripting, enabling researchers to easily parallelize computational workflows on HPC and cloud systems. |
| DataPerf [12] | AI Benchmarking | A benchmark suite for data-centric AI development, shifting focus from model refinement to dataset quality improvement. |
| Flash Attention [12] | AI Algorithm Optimization | A fast and memory-efficient GPU implementation of the attention mechanism, crucial for speeding up transformer model training. |
| SAM 2 (Segment Anything Model 2) [13] | Computer Vision | A state-of-the-art AI model for image and video segmentation, with applications in analyzing microstructural images from microscopy. |

The confluence of HPC, AI, and databases is no longer a futuristic concept but the operational backbone of modern materials science. The citation data and case studies above indicate that research strategically integrating these three drivers tends to achieve higher impact and shortens the path from hypothesis to validated discovery. The trend points toward increasingly tightly integrated systems, such as the CRESt platform, in which AI not only suggests candidates but also plans and learns from experiments run on robotic hardware, all fed by continuously growing, FAIR-compliant databases. For researchers, the critical task is to assemble a toolkit from the available best-in-class solutions, balancing raw performance with data accessibility and workflow integration to tackle the next generation of materials challenges.

The modern workflow from virtual screening to lab synthesis represents a paradigm shift in materials and drug discovery, moving from sequential, isolated steps to a highly integrated, data-driven pipeline. This convergence of computational prediction and experimental validation is crucial for reducing attrition rates and accelerating the development of novel materials and therapeutics. By leveraging artificial intelligence (AI), high-throughput automation, and cross-disciplinary frameworks, researchers can now navigate vast chemical spaces with unprecedented efficiency and precision. This guide objectively compares the performance of various computational and experimental approaches at each stage of the discovery workflow, supported by quantitative benchmarking data and experimental validation metrics. The integrated pipeline aligns with the broader thesis that experimental validation is not merely a final verification step but an essential component that actively informs and refines computational predictions, thereby creating a virtuous cycle of discovery and optimization [14] [15].

Core Workflow Stages and Performance Comparison

The journey from in silico prediction to tangible material or drug candidate involves several critical stages, each with distinct methodologies and performance metrics. The workflow is fundamentally iterative, where experimental outcomes continuously refine computational models.

Stage 1: Virtual Screening and Structure-Based Design

Objective: To computationally identify and prioritize candidate molecules or materials with a high probability of possessing desired properties from vast virtual libraries.

Performance Comparison: The efficacy of virtual screening is highly dependent on the chosen docking tools and the incorporation of machine learning-based re-scoring. Benchmarking studies against specific protein targets provide clear performance differentials.

Table 1: Performance Benchmarking of Docking and ML Re-scoring Tools for PfDHFR Variants

| Docking Tool | ML Re-scoring Function | Target Variant | Performance Metric (EF 1%) | Key Finding |
|---|---|---|---|---|
| PLANTS | CNN-Score | Wild-Type (WT) PfDHFR | 28 | Demonstrated the best enrichment for the WT variant [16] |
| FRED | CNN-Score | Quadruple-Mutant (Q) PfDHFR | 31 | Achieved the best enrichment against the resistant variant [16] |
| AutoDock Vina | RF-Score-VS v2 / CNN-Score | WT & Q PfDHFR | Improved to better-than-random | Re-scoring significantly improved performance from worse-than-random [16] |

Supporting Experimental Data: The use of multi-state modeling (MSM) for kinases, which accounts for different conformational states (e.g., DFG-in, DFG-out), has been shown to enhance virtual screening outcomes. In benchmarks, an MSM approach for AlphaFold2-generated kinase structures consistently outperformed standard AlphaFold2 and AlphaFold3 models in pose prediction accuracy and, crucially, in identifying diverse hit compounds during virtual screening [17]. This is particularly valuable for overcoming the structural bias in experimental databases toward certain states (e.g., 87% of human kinase structures are DFG-in) and for discovering inhibitors for resistant variants [17].

Stage 2: AI-Driven Optimization and Lead Development

Objective: To rapidly optimize prioritized hits into leads with improved potency, selectivity, and developability profiles.

Performance Comparison: This stage has been dramatically accelerated by AI and high-throughput experimentation (HTE). Traditional hit-to-lead (H2L) cycles that took months can now be compressed into weeks.

Table 2: Comparison of Traditional vs. AI-Accelerated Optimization

| Method | Timeline | Key Output | Representative Result |
|---|---|---|---|
| Traditional Medicinal Chemistry | Months | Incremental potency improvement | N/A |
| AI-Guided Scaffold Enumeration & HTE | Weeks | Significant potency and selectivity gains | Sub-nanomolar MAGL inhibitors with >4,500-fold potency improvement over initial hits [18] |
| Explainable AI (SHAP Analysis) | N/A | Interpretable structure-property relationships | Design of Multiple Principal Element Alloys (MPEAs) with superior mechanical strength [19] |

Supporting Experimental Data: The power of a data-driven framework is exemplified in the design of novel metallic materials. Researchers at Virginia Tech used explainable AI (SHAP analysis) to understand how different elements influence the properties of multiple principal element alloys (MPEAs). This approach not only predicted promising new alloys but also provided scientific insights that transform the traditional "trial-and-error" design process into a predictive one [19].
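SHAP attributions are Shapley values: each feature's contribution is its average marginal effect on the prediction over all coalitions of the other features. The SHAP library typically estimates these efficiently; for a handful of features they can be computed exactly by enumeration, as in this minimal sketch. The "alloy strength" model and the element fractions in the usage below are hypothetical, and replacing absent features with a baseline value is one of several possible conventions.

```python
import math
from itertools import combinations
from typing import Callable, List, Sequence, Set


def exact_shapley(model: Callable[[Sequence[float]], float],
                  x: Sequence[float], baseline: Sequence[float]) -> List[float]:
    """Exact Shapley values; features outside a coalition take their baseline value."""
    n = len(x)

    def value(coalition: Set[int]) -> float:
        return model([x[j] if j in coalition else baseline[j] for j in range(n)])

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of this size.
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi
```

For a toy model 100·c₀ + 50·c₁ + 200·c₀·c₂ evaluated at c = (0.2, 0.3, 0.5) against an all-zero baseline, the attributions are 30, 15 and 10: the interaction term is split evenly between features 0 and 2, and the values sum to the change in prediction. The enumeration cost grows as 2ⁿ, which is why approximations are used for larger feature sets.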

Stage 3: Experimental Synthesis and Autonomous Validation

Objective: To synthesize, characterize, and validate the top-predicted candidates in the laboratory.

Performance Comparison: Autonomous laboratories represent the pinnacle of integration, bridging the gap between computational screening speed and experimental realization.

Table 3: Synthesis Success Rates of Autonomous vs. Traditional Methods

| Synthesis Approach | Targets Attempted | Success Rate | Key Enabling Factors |
|---|---|---|---|
| Traditional (Human-Guided) | N/A | N/A (slow and resource-intensive) | Human intuition and manual experimentation |
| A-Lab (Autonomous) | 58 novel compounds | 71% (41 compounds) | Robotics, literature-data ML, and active learning (ARROWS3) [15] |

Supporting Experimental Data: The A-Lab, an autonomous laboratory for solid-state synthesis, successfully realized 41 of 58 target novel compounds over 17 days. Its success was driven by a workflow that integrated robotics with computational screening (Materials Project), ML-based recipe generation from historical literature, and active learning. When initial recipes failed, the active learning algorithm (ARROWS3) used observed reaction data and thermodynamic driving forces to propose improved synthesis routes, successfully optimizing six targets that had zero initial yield [15]. This demonstrates a closed-loop workflow where experimental outcomes directly inform and refine subsequent computational planning.

Stage 4: Pre-Clinical and Clinical Toxicity Prediction

Objective: To identify compounds with a high risk of toxicity or clinical trial failure as early as possible.

Performance Comparison: While not a laboratory synthesis step, predicting clinical outcomes is a critical validation of a candidate's translational potential. Traditional drug-likeness rules are conservative and limited in their predictive power for clinical toxicity.

Table 4: Comparison of Clinical Toxicity Prediction Methods

| Prediction Method | Features Used | Performance (AUC) | True Negative Rate (TNR) |
|---|---|---|---|
| Lipinski's Rule of 5 | Molecular structure (4 rules) | N/A | 27% [20] [21] |
| Veber's Rule | Molecular structure | N/A | 92% (but overly conservative) [20] [21] |
| PrOCTOR Score | Molecular structure + Target properties (e.g., expression, connectivity) | 0.8263 | 74.1% [20] [21] |

Supporting Experimental Data: The data-driven PrOCTOR model integrates a compound's structural properties with its target's biological features (e.g., tissue expression levels, network connectivity). This "moneyball" approach significantly outperforms traditional rules in distinguishing FDA-approved drugs from those that failed clinical trials for toxicity (FTT), providing a more robust, data-driven strategy to de-risk the pipeline before costly clinical trials begin [20] [21].
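The two metrics in Table 4 can be computed directly from scores and labels. AUC is the probability that a randomly chosen positive is scored above a randomly chosen negative; TNR is TN / (TN + FP). A minimal sketch; which class counts as "positive" follows each study's own convention, so here label 1 simply marks the class the score or rule is meant to flag (an assumption).

```python
from typing import Sequence


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Probability that a random positive scores above a random negative (ties count 1/2)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes are required")
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def true_negative_rate(flagged: Sequence[bool], labels: Sequence[int]) -> float:
    """TN / (TN + FP): share of actual negatives that the rule or model does not flag."""
    tn = sum(1 for f, y in zip(flagged, labels) if y == 0 and not f)
    fp = sum(1 for f, y in zip(flagged, labels) if y == 0 and f)
    if tn + fp == 0:
        raise ValueError("no negatives in labels")
    return tn / (tn + fp)
```

A binary rule such as the Rule of 5 yields a single operating point (one TNR), whereas a continuous score such as PrOCTOR can be summarized across all thresholds by its AUC, which is why the table reports AUC only for the latter.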

Essential Workflow Visualization

The following diagram synthesizes the core stages of the integrated discovery workflow, highlighting the continuous feedback loop between computation and experiment.

Detailed Experimental Protocols

Protocol: Structure-Based Virtual Screening with ML Re-scoring

This protocol is adapted from benchmarking studies on Plasmodium falciparum Dihydrofolate Reductase (PfDHFR) [16].

Protein Preparation:

- Obtain the target protein structure from the PDB (e.g., 6A2M for WT PfDHFR).

- Using software like OpenEye's "Make Receptor," remove water molecules, unnecessary ions, and redundant chains.

- Add and optimize hydrogen atoms. Save the prepared structure in the required format for docking (e.g., PDB, OEBinary).
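The cleanup steps above can also be scripted directly on PDB text records. A minimal sketch, assuming the fixed-column PDB format (residue name in columns 18-20, chain ID in column 22); which chains to keep and which residue names to drop (here waters named HOH or WAT) are choices left to the user, and hydrogen addition is left to a dedicated tool such as the one named above.

```python
from typing import Iterable, List, Set

WATER_NAMES = {"HOH", "WAT"}


def clean_pdb_lines(lines: Iterable[str], keep_chains: Set[str],
                    drop_residues: Set[str] = WATER_NAMES) -> List[str]:
    """Keep ATOM/HETATM records from selected chains, minus unwanted residues."""
    kept = []
    for line in lines:
        record = line[:6].strip()
        if record in ("ATOM", "HETATM"):
            res_name = line[17:20].strip()  # columns 18-20
            chain_id = line[21:22]          # column 22
            if chain_id not in keep_chains or res_name in drop_residues:
                continue
        elif record in ("ANISOU", "CONECT"):
            # These can reference removed atoms; dropping them is a simplification.
            continue
        kept.append(line)
    return kept
```

Ions can be removed the same way by adding their residue names (for example `"NA"` or `"CL"`) to `drop_residues`, after checking that none of them is functionally required in the binding site.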

Ligand/Compound Library Preparation:

- Prepare a library of known actives and decoys (e.g., from DEKOIS 2.0).

- Generate multiple low-energy conformers for each molecule using a tool like Omega.

- Convert the final structures to the appropriate file formats for docking (e.g., PDBQT for AutoDock Vina, MOL2 for PLANTS).

Molecular Docking:

- Define the docking grid box centered on the protein's active site with dimensions sufficient to cover all relevant residues.

- Perform docking using one or more tools (e.g., AutoDock Vina, FRED, PLANTS) using their default search parameters and scoring functions.
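The grid-box definition can be derived from active-site coordinates. A minimal sketch, assuming the active-site atom coordinates (Å) have already been selected and using a 5 Å padding as an illustrative default; the output keys follow AutoDock Vina's `center_x`/`size_x` style configuration options, while FRED and PLANTS define the binding site in their own formats.

```python
from typing import Dict, Sequence, Tuple

Coord = Tuple[float, float, float]


def docking_box(site_atoms: Sequence[Coord], padding: float = 5.0) -> Dict[str, float]:
    """Axis-aligned box around active-site atoms, padded on every side (Å)."""
    if not site_atoms:
        raise ValueError("no active-site atoms given")
    box: Dict[str, float] = {}
    for axis, name in enumerate("xyz"):
        values = [atom[axis] for atom in site_atoms]
        lo, hi = min(values), max(values)
        box[f"center_{name}"] = (lo + hi) / 2.0
        box[f"size_{name}"] = (hi - lo) + 2.0 * padding
    return box


def to_vina_config(box: Dict[str, float]) -> str:
    """Render the box as key = value lines for a Vina-style config file."""
    return "\n".join(f"{key} = {value:.3f}" for key, value in box.items())
```

Padding trades search coverage against efficiency: too tight a box can clip ligand poses that extend beyond the selected residues, while an oversized box dilutes the search.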

Machine Learning Re-scoring:

- Extract the top poses generated by each docking tool.

- Re-score these poses using pre-trained machine learning scoring functions (ML-SFs) such as CNN-Score or RF-Score-VS v2.

- Re-rank the screened compounds based on the ML-SF scores.
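Because each docking run returns several poses per compound, re-ranking needs a rule for collapsing pose-level scores to one score per compound. The sketch below keeps each compound's best-scoring pose and sorts the library by that score. The function name and the "best pose wins" rule are assumptions for illustration; whether a higher or lower score is better depends on the ML-SF used, so it is left as a parameter.

```python
from typing import Dict, Iterable, List, Tuple


def rerank_by_ml_score(
    pose_scores: Iterable[Tuple[str, float]], higher_is_better: bool = True
) -> List[Tuple[str, float]]:
    """Collapse per-pose ML-SF scores to one score per compound, then rank.

    pose_scores: (compound_id, score) pairs, one per re-scored pose.
    Each compound keeps its best-scoring pose.
    """
    best: Dict[str, float] = {}
    pick = max if higher_is_better else min
    for cid, score in pose_scores:
        best[cid] = pick(best[cid], score) if cid in best else score
    # Best compounds first, according to the scoring convention.
    return sorted(best.items(), key=lambda kv: kv[1], reverse=higher_is_better)
```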

Performance Evaluation:

- Assess screening performance using metrics such as the enrichment factor at 1% (EF 1%), the area under the semi-logarithmic ROC curve (pROC-AUC), and chemotype enrichment plots, which together evaluate the ability to retrieve diverse, high-affinity actives.
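The enrichment factor compares the hit rate among the top-ranked fraction of the library with the hit rate expected from random selection: EF = (actives in top n / n) / (total actives / library size). A minimal sketch, assuming higher scores rank first and that the top subset size is rounded up (one common convention; others truncate):

```python
import math
from typing import Sequence


def enrichment_factor(scores: Sequence[float], is_active: Sequence[bool],
                      fraction: float = 0.01) -> float:
    """EF at `fraction` of the ranked library (higher score = ranked first)."""
    if len(scores) != len(is_active):
        raise ValueError("scores and labels must have the same length")
    total_actives = sum(is_active)
    if total_actives == 0:
        raise ValueError("library contains no actives")
    n_total = len(scores)
    # Round first to absorb floating-point noise, then round up so the
    # top subset is never empty.
    n_top = max(1, math.ceil(round(fraction * n_total, 9)))
    order = sorted(range(n_total), key=lambda i: scores[i], reverse=True)
    hits = sum(1 for i in order[:n_top] if is_active[i])
    return (hits / n_top) / (total_actives / n_total)
```

A perfect ranking of 10 actives in a 1,000-compound library gives EF 1% = 100, the maximum possible at that cutoff; random ranking gives values near 1.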

Protocol: Autonomous Synthesis and Characterization (A-Lab Protocol)

This protocol outlines the autonomous workflow for synthesizing novel inorganic powders, as demonstrated by the A-Lab [15].

Target Selection and Recipe Proposals:

- Select target materials predicted to be stable by large-scale ab initio databases (e.g., Materials Project).

- Generate initial synthesis recipes using natural language processing (NLP) models trained on historical literature data. These models propose precursors based on chemical similarity to known materials.

- Propose synthesis temperatures using a second ML model trained on heating data from the literature.

Robotic Synthesis Execution:

- A robotic station dispenses and mixes the calculated masses of precursor powders.

- The mixture is transferred into an alumina crucible.

- A robotic arm loads the crucible into a box furnace for heating according to the proposed temperature profile.
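The "calculated masses" follow from the balanced reaction and molar masses. The sketch below assumes the balanced reaction is already known and uses a small hard-coded table of standard atomic weights covering only the example elements. The BaCO₃ + TiO₂ → BaTiO₃ + CO₂ reaction is purely illustrative and is not one of the A-Lab targets; the formula parser also ignores parentheses and hydrates.

```python
import re
from typing import Dict

# Standard atomic weights (g/mol), limited to the elements in the example.
ATOMIC_WEIGHTS = {"Ba": 137.327, "Ti": 47.867, "C": 12.011, "O": 15.999}


def molar_mass(formula: str) -> float:
    """Molar mass of a simple formula without parentheses, e.g. 'BaCO3'."""
    total = 0.0
    for symbol, count in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        total += ATOMIC_WEIGHTS[symbol] * (float(count) if count else 1.0)
    return total


def precursor_masses(target: str, target_mass_g: float,
                     precursors: Dict[str, float]) -> Dict[str, float]:
    """Grams of each precursor needed to make `target_mass_g` of target.

    `precursors` maps each precursor formula to its stoichiometric
    coefficient per formula unit of target in the balanced reaction.
    """
    n_target = target_mass_g / molar_mass(target)  # moles of product
    return {p: coeff * n_target * molar_mass(p) for p, coeff in precursors.items()}
```

For 1 g of BaTiO₃, `precursor_masses("BaTiO3", 1.0, {"BaCO3": 1, "TiO2": 1})` gives roughly 0.85 g of BaCO₃ and 0.34 g of TiO₂.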

Automated Sample Characterization:

- After cooling, the sample is robotically transferred to a station where it is ground into a fine powder.

- The powder is characterized by X-ray diffraction (XRD).

Automated Data Analysis and Active Learning:

- The XRD pattern is analyzed by probabilistic ML models to identify phases and their weight fractions via automated Rietveld refinement.

- If the target yield is below a threshold (e.g., <50%), an active learning algorithm (ARROWS3) is triggered.

- This algorithm uses the observed reaction products and thermodynamic data from the Materials Project to propose new, optimized synthesis recipes (e.g., by avoiding intermediates with low driving force to form the target).

- The loop (robotic synthesis, automated characterization, and automated analysis) continues until the target is synthesized or all recipe options are exhausted.
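The control flow of this loop can be outlined in a few lines. This is a simplified sketch inspired by the description above, not the ARROWS3 algorithm itself. The `run_experiment` callback (standing in for robotic synthesis, XRD, and refinement) and the rule "skip later recipes that contain a precursor pair already observed to form an unproductive intermediate" are illustrative assumptions.

```python
from itertools import combinations
from typing import Callable, FrozenSet, Iterable, List, Optional, Set, Tuple

Recipe = Tuple[FrozenSet[str], int]  # (precursor formulas, temperature in °C)
Pair = FrozenSet[str]


def closed_loop(
    recipes: Iterable[Recipe],
    run_experiment: Callable[[Recipe], Tuple[float, Set[Pair]]],
    threshold: float = 0.5,  # e.g. a 50% target weight fraction
) -> Tuple[Optional[Recipe], List[Tuple[Recipe, float]]]:
    """Try recipes in ranked order until the target fraction reaches `threshold`.

    run_experiment(recipe) returns (target weight fraction from refinement,
    precursor pairs observed to form low-driving-force intermediates).
    """
    blocked: Set[Pair] = set()
    history: List[Tuple[Recipe, float]] = []
    for recipe in recipes:
        precursors, _temperature = recipe
        pairs = {frozenset(p) for p in combinations(sorted(precursors), 2)}
        if pairs & blocked:
            continue  # expected to stall at a known intermediate
        fraction, bad_pairs = run_experiment(recipe)
        history.append((recipe, fraction))
        if fraction >= threshold:
            return recipe, history
        blocked |= bad_pairs
    return None, history  # all recipe options exhausted
```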

The Scientist's Toolkit: Key Research Reagent Solutions

Table 5: Essential Tools and Reagents for the Integrated Workflow

| Tool/Reagent Category | Specific Examples | Function in the Workflow | Context of Use |
|---|---|---|---|
| Computational Screening & AI | AutoDock Vina, FRED, PLANTS, CNN-Score, RF-Score-VS v2, PrOCTOR, AlphaFold2/3 (with MSM) | Predicts binding affinity, generates novel molecular structures, and estimates toxicity or stability. | Virtual screening, lead optimization, and de-risking candidates [16] [17] [20]. |
| Precursor & Compound Libraries | Commercially Available Building Blocks, DEKOIS 2.0 Benchmark Sets, Enamine, MCULE | Provides the chemical starting points for virtual screening and experimental synthesis. | Initial stages of discovery for both drugs and materials [16] [14]. |
| Automation & Robotics | Automated Powder Dispensing Systems, Robotic Arms (A-Lab), Box Furnaces with Auto-loading | Enables high-throughput and reproducible execution of synthesis and sample preparation. | Accelerated synthesis and characterization in autonomous laboratories [15]. |
| Analytical & Characterization | X-ray Diffraction (XRD), Automated Rietveld Refinement, Cellular Thermal Shift Assay (CETSA), High-Resolution Mass Spectrometry | Characterizes synthesis products, confirms crystal structure, and validates target engagement in a physiologically relevant context. | Critical for experimental validation of synthesized materials and drug candidates [15] [18]. |
| Data Analysis & Active Learning | SHAP Analysis, ARROWS3 Algorithm, Bayesian Optimization | Interprets AI model decisions and uses experimental data to propose the next best experiment. | Closes the loop between computation and experiment, guiding optimization [19] [15]. |

The Validation Imperative in Discovery

In the modern research pipeline, the path from computational prediction to real-world application is paved with experimental validation. This step confirms that a theoretically promising target or material is directly involved in the intended biological process or possesses the predicted physical properties, establishing its true potential [22] [23]. In drug discovery, a failure to rigorously validate a target at an early stage is strongly linked to costly failures in late-stage clinical trials [22] [23]. Similarly, in materials science, computational screening identifies candidates, but only experimental measurement can confirm their real-world performance [24]. Validation is thus the critical, non-trivial bridge between digital hypotheses and tangible breakthroughs.

Experimental Validation in Drug Discovery

In drug development, target validation is the process that confirms whether modulating a specific biological entity (like a protein or gene) offers a potential therapeutic benefit [22]. It provides the crucial proof that the target is not merely correlated with a disease, but is causally involved in its mechanism.

Key Experimental Methodologies

A multi-faceted approach is employed to validate drug targets, combining cellular, genetic, and in vivo techniques [22] [23].

- Cell-Based Assays: These involve cultivating cells in a controlled environment to observe their response to drug compounds. A prominent example is the Cellular Thermal Shift Assay (CETSA), which measures drug-target engagement inside cells by quantifying how a drug interaction alters the protein's thermal stability [22].

- Genetic Manipulation: Techniques like RNA interference (RNAi) and gene knockouts are used to suppress or deactivate a target gene. The subsequent analysis of the resulting phenotype (e.g., changes in cellular fitness or proliferation) helps confirm the target's role in the disease pathway [22].

- In Vivo Validation: Mouse models, including tumor cell line xenografts, are a widely used system for confirming that a compound can engage and modulate its target within a complex living organism [22].

- Quantitative Polymerase Chain Reaction (qPCR): This technique is used to examine the expression profiles of specific genes, providing crucial insights into how drug treatments affect gene expression levels and the downstream signaling pathways of the presumed target [22].
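Two of the readouts above reduce to simple calculations. A CETSA thermal shift is the difference in apparent melting temperature (Tm) between drug-treated and vehicle-treated samples, and qPCR fold changes are often computed with the 2^-ΔΔCt method. The sketch below estimates Tm by linear interpolation at the 50% soluble fraction, which is a simplification (sigmoid curve fitting is more common); the ΔΔCt formula assumes roughly 100% amplification efficiency for both the target and reference genes. All function names are illustrative.

```python
from typing import Sequence


def melting_temperature(temps: Sequence[float], soluble_fraction: Sequence[float]) -> float:
    """Temperature where the normalised soluble fraction first drops to 0.5.

    Linear interpolation between neighbouring points; assumes temps are
    sorted ascending and the curve is normalised to 1.0 at low temperature.
    """
    points = list(zip(temps, soluble_fraction))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= 0.5 >= f1 and f0 != f1:
            return t0 + (f0 - 0.5) * (t1 - t0) / (f0 - f1)
    raise ValueError("curve never crosses 0.5")


def delta_tm(temps: Sequence[float], vehicle: Sequence[float],
             treated: Sequence[float]) -> float:
    """Thermal shift; positive values mean the compound stabilised the protein."""
    return melting_temperature(temps, treated) - melting_temperature(temps, vehicle)


def fold_change_ddct(ct_target_treated: float, ct_ref_treated: float,
                     ct_target_control: float, ct_ref_control: float) -> float:
    """Relative expression of the target gene by the 2^-ΔΔCt method."""
    d_ct_treated = ct_target_treated - ct_ref_treated
    d_ct_control = ct_target_control - ct_ref_control
    return 2.0 ** -(d_ct_treated - d_ct_control)
```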

Comparative Analysis of Validation Techniques

The table below summarizes the core methodologies, highlighting their applications and limitations to guide researchers in selecting the appropriate tools.

Table: Comparison of Key Experimental Validation Techniques in Drug Discovery

| Technique | Primary Application | Key Advantages | Inherent Challenges / Limitations |
|---|---|---|---|
| Cellular Assays (e.g., CETSA) [22] | Measuring drug-target engagement & protein stability in a cellular environment. | Preserves the native cellular environment; allows for high-throughput screening. | Results may not fully translate to the complexity of a whole organism. |
| Genetic Manipulation (e.g., RNAi, Knockouts) [22] [23] | Establishing causal relationship between target & disease phenotype. | Powerful for demonstrating target necessity and function. | Risk of off-target effects; compensatory mechanisms may obscure results. |
| In Vivo Models (e.g., Mouse Xenografts) [22] | Confirming target impact & therapeutic effect in a whole living system. | Provides critical data on efficacy, pharmacokinetics, and toxicity in a whole organism. | Time-consuming, costly, and animal models may not perfectly mirror human physiology. |
| Quantitative PCR (qPCR) [22] | Monitoring downstream gene expression & signaling pathway changes. | Highly sensitive and quantitative; widely accessible technology. | Shows correlation but not direct binding; downstream effects can be complex. |
| Thermal Proteome Profiling (TPP) [23] | Proteome-wide identification of drug-target engagement. | Unbiased, system-wide view of interactions directly in cells or tissues. | Computationally intensive; requires sophisticated mass spectrometry infrastructure. |

Diagram: The Multi-Modal Workflow of Target Validation in Drug Discovery

Case Study: Validating a Computationally Discovered Material

The principles of computational discovery and experimental validation extend beyond biology into materials science. A 2025 study exemplifies this process, where high-throughput ab initio calculations were used to screen for high-refractive-index dielectric materials suitable for visible-range photonics [24].

From Virtual Screening to Measured Properties

The research team performed density functional theory (DFT) calculations on 1693 unary and binary materials, identifying 338 semiconductors for further analysis [24]. Their screening highlighted hafnium disulfide (HfS₂), an anisotropic van der Waals material, as a super-Mossian candidate predicted to exhibit a high in-plane refractive index (above 3) and low optical losses across the visible spectrum [24].
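"Super-Mossian" refers to Moss's empirical relation between refractive index and band gap, commonly quoted as n⁴·E_g ≈ 95 eV: most semiconductors fall near this trend, and a material whose index clearly exceeds it is unusually optically dense for its gap. A minimal sketch of that comparison; the 95 eV constant is the commonly quoted form of the rule, and the 2 eV gap in the usage note is an assumed round number for illustration, not a value from [24].

```python
MOSS_CONSTANT_EV = 95.0  # empirical constant in Moss's relation n**4 * Eg ≈ 95 eV


def moss_index(band_gap_ev: float) -> float:
    """Refractive index expected from Moss's rule for a given band gap (eV)."""
    if band_gap_ev <= 0:
        raise ValueError("band gap must be positive")
    return (MOSS_CONSTANT_EV / band_gap_ev) ** 0.25


def is_super_mossian(n: float, band_gap_ev: float) -> bool:
    """True if the refractive index exceeds the Moss-rule estimate for that gap."""
    return n > moss_index(band_gap_ev)
```

With an assumed 2 eV gap, Moss's rule gives n ≈ 2.63, so an in-plane index above 3 would sit well above the trend.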

Experimental Validation Protocol:

- Imaging Ellipsometry: The complex refractive index tensor of exfoliated HfS₂ was measured using imaging ellipsometry. This step was critical for confirming the low losses and high refractive index in the visible range predicted by the BSE+ calculations [24].

- Nanofabrication and Stability Management: A fabrication process was developed to create HfS₂ nanodisks. Researchers discovered that HfS₂ is chemically unstable under ambient conditions. This challenge was mitigated by storing the material in oxygen-free environments or encapsulating it in hexagonal boron nitride (hBN) or polymethyl methacrylate (PMMA) [24].

- Optical Characterization: The final step involved demonstrating that the fabricated HfS₂ nanodisks could support optical Mie resonances, thereby validating its predicted potential for nanoscale photonic applications [24].

Quantitative Validation of Predicted Properties

The experimental data confirmed the computational predictions, as shown in the comparison below.

Table: Computational Predictions vs. Experimental Validation for HfS₂ [24]

| Property | Computational Prediction (BSE+) | Experimental Measurement | Application Significance |
|---|---|---|---|
| In-Plane Refractive Index (n) | > 3 across the visible spectrum | Confirmed (e.g., ~3.1 at 600 nm) | Enables better focusing efficiency for metalenses and higher quality factor for optical resonators. |
| Optical Losses / Extinction Coefficient (k) | Values below 0.1 for wavelengths > 550 nm | Confirmed | Ensures low absorption and high transparency, which is crucial for efficient light manipulation. |
| Material Stability | Not explicitly predicted | Unstable under ambient air; requires encapsulation | Highlighted a critical, non-trivial challenge for practical application that was only revealed through experiment. |
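The extinction coefficient k translates directly into how far light travels before being absorbed: the intensity absorption coefficient is α = 4πk/λ, and the 1/e intensity depth is 1/α. A short sketch of that conversion (function names are illustrative):

```python
import math


def absorption_coefficient(k: float, wavelength_nm: float) -> float:
    """Intensity absorption coefficient alpha = 4*pi*k / lambda, in 1/nm."""
    return 4 * math.pi * k / wavelength_nm


def penetration_depth_nm(k: float, wavelength_nm: float) -> float:
    """Depth over which transmitted intensity falls to 1/e, in nm."""
    return 1.0 / absorption_coefficient(k, wavelength_nm)
```

Even at the upper bound of k = 0.1, light at 600 nm travels roughly 477 nm before its intensity falls to 1/e, so absorption stays modest in structures much thinner than that.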

Diagram: The Validation Loop for HfS₂, Confirming Predictions and Revealing New Challenges

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key reagents and materials essential for the experimental validation techniques discussed in this guide.

Table: Essential Research Reagents and Materials for Validation Experiments

| Reagent / Material | Function in Validation | Example Application Context |
|---|---|---|
| Cell Lines [22] | Provide a controlled cellular environment for testing drug-target engagement and phenotypic response. | Used in cell-based assays (e.g., CETSA) and to create xenograft models for in vivo studies. |
| siRNA/shRNA Libraries [22] | Selectively silence or knock down the expression of specific target genes to study the resulting phenotypic consequences. | A key tool for genetic validation via RNA interference (RNAi). |
| Mouse Xenograft Models [22] [23] | Provide an in vivo system to validate target modulation and therapeutic efficacy in a complex, living organism. | Commonly used for in vivo validation of cancer drug targets. |
| Chemical Probes [22] | Designed to bind specifically to desired proteins, enabling their retrieval and identification from complex biological mixtures. | Used in chemical proteomics for proteome-wide target identification. |
| Antibodies | Detect and quantify specific proteins, their post-translational modifications, and changes in expression levels in various assay formats. | Used in Western blotting, immunofluorescence, and ELISA to monitor downstream signaling pathways. |
| qPCR Reagents [22] | Enable precise quantification of gene expression levels through fluorescent detection. | Used to analyze how drug treatments affect the expression of target genes and downstream pathway components. |
| Encapsulation Materials (hBN, PMMA) [24] | Protect air-sensitive materials (e.g., HfS₂) from degradation during storage and experimentation, enabling accurate property measurement. | Critical for handling and validating the properties of unstable van der Waals materials. |
| Mass Spectrometry Systems [22] [23] | Identify and quantify proteins, drug metabolites, and protein-drug interactions with high precision and proteome-wide coverage. | Central to techniques like Thermal Proteome Profiling (TPP) and activity-based protein profiling (ABPP). |

Building the Bridge: Methodologies for High-Throughput Prediction and Automated Validation

The discovery of novel materials has long been a cornerstone of technological advancement, traditionally relying on resource-intensive trial-and-error experimental approaches. Computational screening has emerged as a powerful alternative, enabling researchers to rapidly evaluate thousands of material candidates in silico before committing to laboratory synthesis. At the forefront of this revolution stands Density Functional Theory (DFT), a quantum mechanical method that has become the workhorse for predicting electronic, structural, and thermodynamic properties of materials with sufficient accuracy for initial screening purposes. The fundamental premise of computational screening involves leveraging first-principles calculations to establish quantitative structure-property relationships, which can then be used to identify promising candidate materials for specific applications.

This guide objectively compares the current state of computational screening methodologies, with particular emphasis on how traditional DFT-based approaches stack up against emerging machine learning (ML) techniques and multi-scale frameworks. We ground this analysis in the crucial context of experimental validation, the ultimate benchmark for any computational prediction. Recent studies have demonstrated that while DFT continues to offer valuable insights, its limitations in accuracy and computational cost have spurred the development of hybrid approaches that combine the strengths of quantum mechanical and machine learning methods.

Comparative Analysis of Computational Screening Methodologies

Table 1: Key Methodologies for Computational Materials Screening

| Methodology | Computational Cost | Accuracy Range | Typical System Size | Key Applications | Experimental Validation Success Rate |
|---|---|---|---|---|---|
| Traditional DFT | High (Hours to days) | Moderate to High (Variable with functional) | 10-1000 atoms | Catalytic activity, formation energies, electronic properties | ~70-80% for qualitative trends; ~50-60% for quantitative predictions |
| Neural Network Potentials (NNPs) | Medium (Minutes to hours) | Near-DFT (When properly trained) | 1000-100,000 atoms | Reactive chemistry, molecular dynamics, mechanical properties | ~85-95% for properties within training domain |
| Foundation Models/LLMs | Low (Seconds to minutes) | Moderate (Limited by training data) | Virtually unlimited | Initial screening, synthesis planning, molecular generation | ~60-70% (Rapidly improving with model size) |
| Multi-scale Frameworks (e.g., JARVIS) | Variable (Integrated approach) | Variable across scales | Multi-scale (Atoms to devices) | High-throughput screening across material classes | ~80-90% for integrated workflows |

Table 2: Performance Benchmarks for Different Screening Approaches

| Methodology | Representative Tool/Platform | Energy MAE (eV/atom) | Force MAE (eV/Å) | Speedup vs. DFT | Key Limitations |
|---|---|---|---|---|---|
| Traditional DFT | VASP, Quantum ESPRESSO | N/A (Reference) | N/A (Reference) | 1x | System size limitations, functional choice dependence |
| Neural Network Potentials | EMFF-2025 [25] | <0.1 [25] | <2.0 [25] | 100-1000x [25] | Training data requirements, transferability concerns |
| Agentic DFT Systems | DREAMS [26] | ~0.05-0.15 (vs. experiment) | Not specified | ~5x (vs. manual DFT) | Limited to DFT accuracy ceiling |
| High-Throughput DFT | JARVIS-DFT, AFLOW, Materials Project [27] | Functional-dependent | Functional-dependent | 10-100x (Workflow automation) | Database coverage gaps, functional transferability |

The quantitative comparison reveals a clear trade-off between computational efficiency and accuracy across methodologies. Traditional DFT remains invaluable for its first-principles foundation, which requires no empirical parameters, but its significant computational cost limits accessible system sizes and time scales. The EMFF-2025 neural network potential demonstrates remarkable efficiency, achieving a 100-1000x speedup over DFT while maintaining near-DFT accuracy for high-energy materials composed of C, H, N, and O [25]. This represents a significant advance for high-throughput screening of complex materials.

Emerging agentic systems like DREAMS address a different part of the screening pipeline: automating the expertise-intensive process of DFT parameter selection and convergence testing. By achieving average errors below 1% relative to human DFT experts on benchmark systems, such frameworks show that human intervention can be reduced without sacrificing accuracy [26]. This approach is particularly valuable for standardizing screening protocols across research groups and ensuring reproducibility.

Experimental Protocols and Validation Methodologies

DFT-Guided Catalyst Screening with Experimental Validation

A representative experimental study demonstrates the integrated computational-experimental approach for screening polyester synthesis catalysts [28]. The protocol exemplifies how DFT calculations can guide experimental design and subsequently be validated through materials synthesis and characterization.

Table 3: Experimental Validation Protocol for DFT-Predicted Catalysts

| Stage | Protocol Description | Characterization Techniques | Validation Metrics |
|---|---|---|---|
| Computational Screening | HOMO/LUMO calculations via DFT; Frontier molecular orbital theory analysis | Computational: Electron cloud density visualization, orbital energy quantification | LUMO energy correlation with catalytic activity |
| Materials Synthesis | PET synthesis using top-ranked catalysts from computational screening; Polycondensation reaction monitoring | Process: Reaction time, temperature, pressure tracking | Polymerization kinetics, catalyst efficiency |
| Materials Characterization | Optical properties measurement; Thermal analysis; Structural characterization | Spectrophotometry (transmittance, luminosity); DSC (crystallinity); Chromaticity measurements | Transmittance (91.43%), luminosity (92.82%), crystallinity (~24%) |
| Performance Validation | Comparison of catalyst performance against industrial standards | Side product analysis, color measurement, processing window assessment | Reduction in yellowness, improved optical clarity vs. antimony catalysts |

The detailed experimental workflow began with DFT calculations on seven metal-based catalysts, focusing on their highest occupied molecular orbital (HOMO) and lowest unoccupied molecular orbital (LUMO) energies [28]. The computational screening identified that catalysts with lower LUMO energy levels significantly promote nucleophilic attack during polycondensation, exhibiting superior catalytic efficiency. This theoretical insight guided the development of a composite catalyst comprising cobalt(II) acetate tetrahydrate and germanium(IV) oxide in a 40:60 ratio.

Experimental validation confirmed the DFT predictions, with the composite catalyst yielding PET films with exceptional transmittance (91.43%) and luminosity (92.82%) [28]. The study established a quantitative correlation between computed LUMO energies and experimental polycondensation times, demonstrating how computational screening can rationally guide materials design beyond traditional trial-and-error approaches. This end-to-end pipeline from computation to experimental validation exemplifies the power of integrated approaches in materials discovery.
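A correlation like the one between computed LUMO energies and measured polycondensation times is typically quantified with a Pearson coefficient and a least-squares line. A minimal sketch, assuming `x` holds the DFT LUMO energies (eV) for each catalyst and `y` the corresponding measured times; the study's actual data are not reproduced here, and with only a handful of catalysts the coefficient should be read with caution.

```python
from math import sqrt
from typing import Sequence, Tuple


def pearson_and_fit(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float, float]:
    """Return (Pearson r, slope, intercept) for the least-squares line y ≈ slope*x + intercept."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least three paired values")
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    r = sxy / sqrt(sxx * syy)
    slope = sxy / sxx
    return r, slope, my - slope * mx
```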

Neural Network Potential Validation Protocol

The validation of machine learning potentials like EMFF-2025 follows a rigorous protocol to ensure transferability and accuracy [25]. The methodology involves:

Training Data Curation: Transfer learning from pre-trained models (e.g., DP-CHNO-2024) with minimal additional data from DFT calculations using the Deep Potential generator (DP-GEN) framework [25].

Accuracy Assessment: Comparison of predicted energies and forces against DFT reference calculations, with errors predominantly within ±0.1 eV/atom for energies and ±2 eV/Å for forces [25].

Property Prediction: Prediction of crystal structures, mechanical properties, and decomposition characteristics for 20 high-energy materials (HEMs).

Experimental Benchmarking: Validation against experimental crystal structures, mechanical properties, and thermal decomposition behaviors [25].
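The accuracy-assessment step above comes down to comparing predicted and reference values structure by structure. A minimal sketch, assuming total energies are normalised by atom count and forces are compared component by component; the function names and input layouts are illustrative, not the EMFF-2025 tooling.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def per_atom_errors(e_pred: Sequence[float], e_ref: Sequence[float],
                    n_atoms: Sequence[int]) -> List[float]:
    """Signed energy errors per structure, normalised to eV/atom."""
    return [(p - r) / n for p, r, n in zip(e_pred, e_ref, n_atoms)]


def mae(errors: Sequence[float]) -> float:
    """Mean absolute error."""
    return sum(abs(e) for e in errors) / len(errors)


def share_within(errors: Sequence[float], tol: float) -> float:
    """Fraction of errors with magnitude <= tol (e.g. 0.1 eV/atom)."""
    return sum(abs(e) <= tol for e in errors) / len(errors)


def force_mae(f_pred: Sequence[Vec3], f_ref: Sequence[Vec3]) -> float:
    """MAE over every Cartesian force component, in eV/Å."""
    errs = [p - r for fp, fr in zip(f_pred, f_ref) for p, r in zip(fp, fr)]
    return mae(errs)
```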

The surprising finding that most HEMs follow similar high-temperature decomposition mechanisms, which challenges the conventional view of material-specific behavior, demonstrates how NNPs can uncover fundamental insights that might remain hidden with traditional methods [25].

Diagram: Computational Screening Workflow

Essential Research Toolkit for Computational Screening

Table 4: Essential Research Reagents and Computational Tools

| Tool/Category | Specific Examples | Function/Role | Access Method |
|---|---|---|---|
| DFT Codes | VASP, Quantum ESPRESSO [29], Gaussian [30] | First-principles property calculation | Licensed (VASP, Gaussian); open source (Quantum ESPRESSO) |
| Machine Learning Potentials | EMFF-2025 [25], ALIGNN-FF [27] | Near-DFT accuracy at lower computational cost | Open source, published parameters |
| High-Throughput Platforms | JARVIS [27], AFLOW, Materials Project [29] | Automated workflow management, database generation | Web applications, Python APIs |
| Multi-scale Frameworks | MISPR [30], DREAMS [26] | Integrated quantum-classical simulations, automated convergence | Open source, specialized implementations |
| Experimental Validation Suites | JARVIS-Exp [27], MDPropTools [30] | Experimental data comparison, property analysis | Open source, custom implementations |

The computational screening ecosystem has evolved into a sophisticated infrastructure with specialized tools for each stage of the discovery pipeline. High-throughput DFT platforms like JARVIS integrate diverse theoretical and experimental approaches, providing "multimodal, multiscale, forward, and inverse materials design" capabilities [27]. These platforms distinguish themselves by offering true integration of first-principles calculations, machine learning models, and experimental datasets within a unified framework.

Multi-scale frameworks such as MISPR address the critical challenge of automating complex hierarchical simulations through modular DFT and classical molecular dynamics workflows [30]. These infrastructures automatically handle error correction, data provenance, and workflow management, significantly reducing the expertise barrier for running sophisticated computational screenings.

Emerging agentic systems like DREAMS represent the cutting edge, utilizing hierarchical multi-agent frameworks to automate the traditionally expertise-intensive process of DFT simulation setup and convergence testing [26]. By combining a central Large Language Model planner with domain-specific agents for structure generation, convergence testing, and error handling, such systems approach "L3-level automation—autonomous exploration of a defined design space" [26].

Diagram: Experimental Validation Process

The field of computational materials screening is rapidly evolving toward increasingly automated and integrated approaches. Foundation models pretrained on broad materials data are showing promise for property prediction and molecular generation, though they currently face limitations due to their predominant training on 2D molecular representations rather than 3D structural information [31]. The next generation of these models will likely incorporate geometric deep learning to better capture structure-property relationships.

The integration of multi-agent systems like DREAMS with high-throughput platforms such as JARVIS points toward a future where computational screening requires minimal human intervention for routine tasks [26] [27]. These systems will potentially enable researchers to focus on higher-level scientific questions rather than technical computational details. However, this automation must be balanced with rigorous validation protocols to ensure the physical accuracy of predictions.

The most significant trend is the growing emphasis on closing the loop between computational prediction and experimental validation. As demonstrated in the PET catalyst study [28], successful screening pipelines increasingly integrate computational guidance with experimental validation from the outset, creating virtuous cycles where experimental results inform improved computational models. This tight integration represents the most promising path forward for accelerating materials discovery while ensuring practical relevance.

In conclusion, while DFT remains the foundational method for computational screening, its future lies not in isolation but as part of integrated multi-scale workflows that combine the accuracy of first-principles methods with the speed of machine learning and the validation of experimental characterization. Researchers who strategically leverage these complementary approaches will be best positioned to accelerate materials discovery for applications ranging from energy storage to advanced electronics and beyond.

The integration of artificial intelligence (AI) into scientific research has catalyzed a paradigm shift, particularly in the validation of computationally discovered materials. Validation is changing from a linear, hypothesis-driven endeavor into an iterative, data-driven cycle in which machine learning (ML) models both predict novel candidates and guide their experimental confirmation. Within this framework, the "AI Assistant" emerges as a critical tool, streamlining the path from in silico prediction to a tangible, validated material. This guide provides a structured comparison of methodologies and tools essential for constructing such AI-assisted workflows, with a focus on generating robust, reproducible, and experimentally grounded insights for researchers in materials science and drug development.

Performance Benchmarking: A Comparative Analysis of ML Tools

Selecting the appropriate machine learning tool is critical for the success of AI-driven discovery projects. The following tables offer a comparative overview of popular frameworks and models based on key performance metrics and functional characteristics, guiding researchers toward informed choices.

Table 1: Comparative Performance of ML Tools for Material Property Prediction

| Tool / Framework | Primary Application | Key Metrics (Typical Range) | Notable Features | Considerations |
|---|---|---|---|---|
| DeepChem [32] | Drug Discovery, Quantum Chemistry, Materials Science | R²: ~0.65-0.95 [32]; AUC-ROC: ~0.8-0.98 [32] | Specialized metrics (BEDROC); Integrated TensorBoard; Validation callbacks [32] | Steeper learning curve; Domain-specific |
| Chemprop (GNN) [33] | Small Molecule Property Prediction | MAE: Lower than LightGBM in specific tasks [33]; Recall@Precision: Statistically significant gains [33] | Message-passing neural networks for molecular graphs; High interpretability for molecular features [33] | Computationally intensive; Requires structured molecular data |
| LightGBM [33] | General Purpose & Tabular Data | MAE: Can be higher than GNNs [33]; Training Speed: Very Fast [33] | High efficiency on tabular data; Low computational requirements [33] | May underperform on complex molecular relationships |
| Polaris Hub Protocol [33] | Method Comparison & Benchmarking | N/A (Provides statistical rigor) | Implements 5x5 repeated CV; Tukey HSD test; Guidelines for practical significance [33] | A benchmarking protocol, not a modeling tool |

Table 2: Performance of AI-Generated Material Candidates in Validation

| Material/Drug Candidate | Discovery/AI Platform | Experimental Validation Result | Key Metric | Stage |
|---|---|---|---|---|
| Rentosertib (ISM001-055) [34] | Generative AI Platform (Pharma.AI) | FVC mean increase of 98.4 mL (vs. placebo decrease of 20.3 mL) in IPF patients [34] | Lung Function (FVC) | Phase IIa Clinical Trial [34] |
| TNIK Inhibitor [34] | AI-driven Target Discovery | Dose-dependent reduction in COL1A1, MMP10; Increase in IL-10 [34] | Serum Biomarkers | Preclinical/Clinical [34] |
| AI-Discovered Molecules (Various) [35] | Generative Pre-trained Models | 32.2% higher success rate vs. random screening [35] | Compound Generation Success | Early Discovery [35] |
| Structure Material Models [36] | Symbolic Regression & Deep Learning | Development of 2-3 high-performance metal materials; Engineering pilot validation [36] | Material Performance (PPA) | R&D and Pilot [36] |

Essential Experimental Protocols for Robust Validation

Adhering to statistically sound experimental protocols is fundamental to ensuring that performance comparisons are meaningful and replicable. The following methodologies are considered best practice in the field.

Protocol for Model Performance Comparison

For comparing the predictive performance of different ML models on a fixed dataset, a rigorous resampling protocol is recommended to obtain reliable performance estimates [33].

- Data Splitting: Implement a 5x5 repeated cross-validation (CV) scheme. This involves randomly splitting the dataset into 5 folds. The model is trained on 4 folds and validated on the 1 held-out fold. This process is repeated 5 times so that each fold serves as the validation set once. The entire 5-fold CV procedure is then repeated 5 times with different random splits, resulting in 25 performance estimates per model. This provides a robust sampling distribution of performance [33].

- Statistical Testing: To compare the performance distributions of multiple models, use Repeated Measures ANOVA followed by a post-hoc Tukey Honest Significant Difference (HSD) test. The ANOVA determines if there are any statistically significant differences between the means of the models. The Tukey HSD test then performs all pairwise comparisons between models, controlling for the family-wise error rate that increases with multiple comparisons [33].

- Assessing Practical Significance: A result can be statistically significant but not meaningful in a real-world context. Therefore, always report effect sizes (e.g., Cohen's d for standardized mean differences) and contextualize performance metrics using domain-specific knowledge. For instance, a small reduction in Mean Absolute Error (MAE) might be statistically significant with a large sample size but have no impact on the downstream decision to synthesize a compound [33].
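The resampling and effect-size steps above can be sketched in plain Python. The generator below yields the 25 train/test splits of a 5x5 repeated cross-validation, and `cohens_d` computes the pooled-standard-deviation form of Cohen's d between two models' fold-wise scores. Both helpers are illustrative assumptions rather than the Polaris implementation. The repeated-measures ANOVA and Tukey HSD steps are better delegated to an established statistics package; statsmodels, for example, provides `AnovaRM` and `pairwise_tukeyhsd`.

```python
import random
from statistics import mean, stdev
from typing import Iterator, List, Sequence, Tuple


def repeated_kfold(n_samples: int, n_splits: int = 5, n_repeats: int = 5,
                   seed: int = 0) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_idx, test_idx) for n_repeats independently shuffled k-fold CVs."""
    rng = random.Random(seed)
    indices = list(range(n_samples))
    for _ in range(n_repeats):
        rng.shuffle(indices)
        # Striding over the shuffled indices gives near-equal folds.
        folds = [indices[i::n_splits] for i in range(n_splits)]
        for k in range(n_splits):
            test = folds[k]
            train = [i for j, fold in enumerate(folds) if j != k for i in fold]
            yield train, test


def cohens_d(a: Sequence[float], b: Sequence[float]) -> float:
    """Difference in means expressed in units of the pooled standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * stdev(a) ** 2 + (nb - 1) * stdev(b) ** 2) / (na + nb - 2)
    return (mean(a) - mean(b)) / pooled_var ** 0.5
```

In use, each model is trained on `train` and scored on `test` for every split, giving 25 scores per model; those score lists feed both the ANOVA/Tukey comparison and `cohens_d`.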

Protocol for Validating AI-Discovered Candidates

When moving from a trained model to the experimental validation of a specific AI-predicted candidate (e.g., a new material composition or drug molecule), the workflow requires integrating computational and experimental efforts.

Diagram 1: AI-Driven Material Discovery and Validation Workflow

The diagram above outlines the core iterative cycle for validating AI-discovered candidates. The critical stages involve:

- In Silico Validation: Before any physical experiment, top-ranked candidates undergo further computational scrutiny. This includes molecular dynamics (MD) simulations using AI-enhanced force fields, which can reportedly run about 100 times faster than direct density functional theory (DFT) calculations while maintaining DFT-level accuracy [35], and predictive modeling of key properties such as Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET). Multi-layer perceptron (MLP) models can now exceed 85% accuracy in predicting ADMET properties, substantially reducing the risk of late-stage failure [35].

- Experimental Validation and Biomarker Analysis: Successful in silico candidates proceed to synthesis and in vitro testing. The protocol must include a plan for exploratory biomarker analysis to confirm the hypothesized mechanism of action. For example, in the validation of the AI-discovered drug Rentosertib, researchers analyzed serum samples and observed a dose-dependent decrease in pro-fibrotic proteins (COL1A1, MMP10) and an increase in anti-inflammatory markers (IL-10), thereby providing biological validation for the AI-predicted target (TNIK) [34].

- Model Feedback and Iteration: The experimentally measured properties of the synthesized candidates are fed back into the AI model's training dataset. This active learning loop allows the model to refine its predictions, improving the success rate of subsequent design cycles [35].
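The feedback loop described in these steps can be sketched as a pool-based active-learning cycle. This is a minimal sketch under stated assumptions, not the method of [35]: it uses NumPy only, a bootstrap ensemble of ridge models as the surrogate, and an upper-confidence-bound rule to choose the next candidate. `run_experiment` is a hypothetical placeholder for synthesis and characterization, and the candidate descriptors are random numbers.

```python
import numpy as np

rng = np.random.default_rng(0)


def run_experiment(x):
    """Hypothetical stand-in for synthesising and measuring candidates (higher is better)."""
    return -np.sum((x - 0.6) ** 2, axis=1) + rng.normal(scale=0.01, size=len(x))


def featurize(x):
    """Quadratic features so a linear surrogate can represent a single optimum."""
    return np.hstack([np.ones((len(x), 1)), x, x ** 2])


def fit_ensemble(X, y, n_models=10, alpha=1e-3):
    """Bootstrap ensemble of ridge models; disagreement between members ~ uncertainty."""
    F = featurize(X)
    weights = []
    for _ in range(n_models):
        idx = rng.integers(0, len(X), size=len(X))  # resample labelled data with replacement
        A, b = F[idx], y[idx]
        weights.append(np.linalg.solve(A.T @ A + alpha * np.eye(F.shape[1]), A.T @ b))
    return np.array(weights)


def predict(weights, X):
    preds = featurize(X) @ weights.T  # shape (n_candidates, n_models)
    return preds.mean(axis=1), preds.std(axis=1)


pool = rng.uniform(0.0, 1.0, size=(500, 2))  # hypothetical candidate descriptors
labelled = [int(i) for i in rng.choice(len(pool), size=8, replace=False)]
measured = list(run_experiment(pool[labelled]))
initial_best = max(measured)

for _ in range(10):  # ten design-make-test cycles
    weights = fit_ensemble(pool[labelled], np.array(measured))
    mu, sigma = predict(weights, pool)
    acquisition = mu + 2.0 * sigma  # upper confidence bound
    acquisition[labelled] = -np.inf  # never re-test a measured candidate
    pick = int(np.argmax(acquisition))
    labelled.append(pick)
    measured.append(run_experiment(pool[[pick]])[0])  # feed the result back into training

print(f"best measured: {initial_best:.3f} -> {max(measured):.3f}")
```

Raising the `2.0` multiplier favours exploring uncertain regions of the candidate pool; lowering it favours exploiting the surrogate's current best predictions.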

The Scientist's Toolkit: Essential Research Reagents and Solutions

A successful AI-assisted research pipeline relies on a combination of computational tools and data resources. The following table details key components of this modern toolkit.

Table 3: Key Research Reagent Solutions for AI-Assisted Discovery

| Item / Resource | Function | Application Example | Key Features |
|---|---|---|---|
| DeepChem Framework [32] | Open-source framework for deep learning on molecular data. | Training and monitoring predictive models for material toxicity or solubility [32]. | Provides specialized metrics (BEDROC), validation callbacks, and TensorBoard integration for real-time performance tracking [32]. |
| Polaris Method Comparison [33] | Open-source code protocols for statistically rigorous ML benchmarking. | Comparing the performance of a new GNN architecture against existing QSAR models on a proprietary dataset [33]. | Implements 5x5 repeated CV, statistical tests (Tukey HSD), and effect size calculations to ensure robust comparisons [33]. |
| AI-Generated Hypotheses [37] | "Scientist Agent" for automated literature review and hypothesis generation. | Automatically scanning published research to propose novel material combinations or biological targets [37]. | Capable of knowledge extraction, causal reasoning, and multi-agent collaboration to generate testable scientific hypotheses [37]. |
| Scientific Data Toolchain [37] | Integrated system for data collection, cleaning, and dataset creation. | Building a high-quality, labeled dataset of crystal structures and their electronic properties for model training [37]. | Enables efficient data acquisition, standardization, and the creation of large-scale (>100k entries) datasets for specific scientific domains [37]. |
| Validation Datasets | Curated experimental data used for model testing and benchmarking. | Serving as a ground-truth standard to evaluate a new model's prediction of band gaps in perovskites. | High-quality, low-noise data with standardized formats; often include temporal or structural splits to test generalizability [33]. |

Navigating Implementation: From Theory to Practical Workflow

Implementing an AI-assisted pipeline requires careful consideration of the entire workflow, from data ingestion to final validation. The following diagram and explanation detail this integrated process.

Diagram 2: End-to-End AI-Assisted Research Pipeline

The final implementation involves connecting all components into a cohesive system. The process begins with ingesting multi-modal data, such as molecular structures, spectral data, and high-throughput assay results [37]. This raw data is processed through a data toolchain responsible for cleaning, annotation, and structuring, which is critical for building high-quality training datasets [37]. The clean data is then used to train and, just as importantly, to rigorously benchmark multiple ML models using protocols like 5x5 repeated cross-validation to select the best performer [33]. The chosen model then generates and ranks new candidate materials or molecules. The most promising of these undergo experimental validation, where the results are not merely an endpoint but are fed back into both the data toolchain and the model training process. This creates a powerful feedback loop, continuously improving the AI's predictive capability and accelerating the discovery cycle [35].
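A tiny piece of such a data toolchain is sketched below: normalizing chemical formulas so that duplicates written in different element orders are recognized, dropping unlabeled entries, and averaging repeated measurements. This is an illustrative sketch assuming plain-Python records of `(formula, value)`. `canonical_formula` and `clean_records` are hypothetical helpers, not part of any toolchain cited in [37]; the parser ignores parentheses and hydrates, and the numeric values are placeholders rather than measurements.

```python
import re
from collections import defaultdict
from statistics import mean

# Element symbol followed by an optional integer or decimal count.
FORMULA_TOKEN = re.compile(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?")


def canonical_formula(formula):
    """Reduce a simple formula such as 'WMoNb24O66' to an element-sorted canonical form."""
    counts = defaultdict(float)
    for element, amount in FORMULA_TOKEN.findall(formula):
        counts[element] += float(amount) if amount else 1.0
    return "".join(el if counts[el] == 1 else f"{el}{counts[el]:g}" for el in sorted(counts))


def clean_records(records):
    """Drop records with missing values, merge duplicates by canonical formula, average them."""
    grouped = defaultdict(list)
    for formula, value in records:
        if value is None:
            continue  # unlabelled entries cannot be used for supervised training
        grouped[canonical_formula(formula)].append(value)
    return {formula: mean(values) for formula, values in sorted(grouped.items())}


# Placeholder values, not measured properties.
raw = [("MoWNb24O66", 1.0), ("WMoNb24O66", 2.0), ("Mg2MnO4", None), ("Mg2MnO4", 3.0)]
print(clean_records(raw))  # {'Mg2MnO4': 3.0, 'MoNb24O66W': 1.5}
```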

The discovery of next-generation battery materials is pivotal for advancing energy storage technologies. Traditional experimental approaches, often characterized by time-consuming synthesis and testing, are increasingly being supplemented by computational methods that can rapidly identify promising candidates. Among these, high-throughput screening using Density Functional Theory (DFT) has emerged as a powerful tool for accelerating this discovery process. This case study examines the paradigm of integrated computational and experimental workflows, focusing on the accelerated discovery of novel materials for lithium-ion batteries (LIBs) and aqueous zinc-ion batteries (AZIBs). The central thesis is that high-throughput DFT screening, when coupled with targeted experimental validation, constitutes a robust framework for identifying high-performance battery materials with enhanced efficiency. This approach dramatically expands the explorable chemical space, guides synthesis toward the most viable candidates, and provides atomistic insights into material properties, thereby de-risking and informing the experimental pipeline [5] [38].

High-Throughput DFT Screening: Methodologies and Workflows

The foundational principle of high-throughput materials discovery is the systematic and automated computation of properties for a vast number of candidate materials. DFT serves as the workhorse for these calculations due to its favorable balance between accuracy and computational cost, enabling the prediction of key properties prior to synthesis.

Core DFT Calculations and Screening Criteria

The screening process typically involves several stages of property evaluation. Initially, structural stability is assessed from DFT energies; for instance, in a study on Wadsley-Roth niobates, compounds with a decomposition enthalpy (ΔHd, the energy relative to the convex hull of competing phases) below 22 meV/atom were considered potentially (meta)stable [39]. Subsequently, electrochemical properties critical for battery operation are computed. These include ionic diffusion pathways and migration energy barriers, which identify materials with fast ion transport, as well as the open-circuit voltage, which is used to ensure compatibility with common electrolytes [39] [38]. For example, MoWNb₂₄O₆₆, one of the newly discovered materials, exhibited a peak lithium diffusivity of 1.0×10⁻¹⁶ m²/s [39].
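Stability thresholds such as the 22 meV/atom cut-off are applied to a phase's energy relative to the convex hull of competing phases. The sketch below computes that quantity for a hypothetical binary A-B system from a lower convex hull over formation energies. The energies are invented for illustration, and real multicomponent screens would normally rely on a dedicated tool such as pymatgen's phase-diagram module rather than this hand-rolled geometry.

```python
THRESHOLD_MEV = 22.0  # (meta)stability cut-off quoted for the WR-niobate screen [39]


def lower_hull(points):
    """Lower convex hull of (x, E_f) points via Andrew's monotone chain."""
    hull = []
    for p in sorted(points):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            # Remove hull[-1] if it lies on or above the segment hull[-2] -> p.
            if (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1) <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def e_above_hull(x, e_form, hull):
    """Energy (eV/atom) of a phase relative to the hull at the same composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2 and x2 > x1:
            return e_form - (y1 + (y2 - y1) * (x - x1) / (x2 - x1))
    raise ValueError("composition outside the hull range")


# Hypothetical binary A-B system: x = fraction of B, E_f in eV/atom.
candidates = {"A3B": (0.25, -0.10), "AB": (0.50, -0.30), "AB3": (0.75, -0.14)}
end_members = [(0.0, 0.0), (1.0, 0.0)]  # elemental references have E_f = 0
hull = lower_hull(end_members + list(candidates.values()))
e_hull_mev = {name: 1000 * e_above_hull(x, e, hull) for name, (x, e) in candidates.items()}
survivors = sorted(name for name, de in e_hull_mev.items() if de < THRESHOLD_MEV)
print(survivors)  # ['AB', 'AB3']
```

Here A3B lies about 50 meV/atom above the hull and is discarded, while AB (on the hull) and AB3 (about 10 meV/atom above it) pass the filter.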

Workflow Automation and Data-Driven Discovery

The screening process is structured as a multi-stage funnel, visually summarized in Figure 1. The workflow begins with the definition of a vast chemical space, often generated through elemental substitutions into known crystal prototypes [39]. This is followed by sequential DFT-based filters for stability, electrochemistry, and kinetics, ultimately yielding a handful of top candidates for experimental validation. Recent advancements are introducing greater automation into this pipeline. Frameworks like the DFT-based Research Engine for Agentic Materials Screening (DREAMS) leverage hierarchical multi-agent systems to automate complex tasks such as atomistic structure generation, DFT convergence testing, and error handling, thereby significantly reducing the reliance on human expertise and intervention [40].
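The funnel logic itself is simple: each stage keeps only the candidates that pass its criterion, and cheaper checks run first so that expensive calculations (e.g., migration barriers) are only performed on survivors. The sketch below is a hypothetical illustration, not the DREAMS interface [40]. Apart from the 22 meV/atom stability cut-off quoted for [39], the thresholds and candidate values are made up.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Candidate:
    formula: str
    e_hull_mev: float  # energy above hull, meV/atom
    voltage_v: float  # average voltage vs. Li/Li+
    barrier_ev: float  # migration barrier, eV


Stage = Tuple[str, Callable[[Candidate], bool]]


def run_funnel(pool: List[Candidate], stages: List[Stage]):
    """Apply filters in order and log how many candidates survive each stage.

    In a real pipeline each stage's DFT property would only be computed for
    candidates that survived the previous stages.
    """
    survivors = list(pool)
    log = [("initial", len(survivors))]
    for name, keep in stages:
        survivors = [c for c in survivors if keep(c)]
        log.append((name, len(survivors)))
    return survivors, log


# Hypothetical thresholds, ordered from cheapest to most expensive check.
stages = [
    ("stability", lambda c: c.e_hull_mev < 22.0),
    ("voltage", lambda c: 1.0 <= c.voltage_v <= 2.5),
    ("kinetics", lambda c: c.barrier_ev < 0.5),
]
pool = [
    Candidate("X1", 5.0, 1.5, 0.30),
    Candidate("X2", 40.0, 1.6, 0.25),
    Candidate("X3", 10.0, 0.2, 0.30),
    Candidate("X4", 0.0, 1.4, 0.80),
]
survivors, log = run_funnel(pool, stages)
print(log)  # [('initial', 4), ('stability', 3), ('voltage', 2), ('kinetics', 1)]
```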

The following diagram illustrates the typical high-throughput screening workflow, from initial candidate generation to final experimental validation.

Figure 1: High-throughput DFT screening and experimental validation workflow.

Case Study 1: Discovery of Wadsley-Roth Niobates for Lithium-Ion Batteries

Computational Screening Protocol

A landmark study demonstrates the power of this approach for discovering novel Wadsley-Roth (WR) niobate anode materials for LIBs [39]. The WR family is known for its open crystal structure, which enables rapid Li⁺ diffusion and good electronic conductivity. To expand beyond the limited number of known WR structures, researchers employed a high-throughput strategy involving single- and double-site substitution into 10 known WR-niobate prototypes using 48 elements across the periodic table. This generated 3,283 potential compositions. DFT calculations were then used to evaluate the thermodynamic stability of each composition via its decomposition enthalpy relative to competing phases. This screening identified 1,301 potentially stable compositions, dramatically expanding the family of candidate WR materials and enabling the identification of structure-property relationships [39].
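The combinatorial expansion behind such a study can be sketched as enumerating single- and double-site substitutions on a prototype. This toy version assumes a prototype is just a mapping from hypothetical site labels to host elements; it ignores site multiplicities, oxidation states and charge balance, so it does not reproduce the 3,283 compositions of [39].

```python
from itertools import combinations, product


def enumerate_substitutions(prototype, elements):
    """Return all single- and double-site substituted variants of a prototype.

    prototype maps a (hypothetical) site label to its host element; each variant
    is returned as a sorted tuple of (site, element) pairs so duplicates collapse.
    """
    sites = sorted(prototype)
    variants = set()
    # Single-site substitutions: replace one site with a different element.
    for site, el in product(sites, elements):
        if el != prototype[site]:
            variants.add(tuple(sorted({**prototype, site: el}.items())))
    # Double-site substitutions: replace two distinct sites simultaneously.
    for (s1, s2), (e1, e2) in product(combinations(sites, 2), product(elements, repeat=2)):
        if e1 != prototype[s1] and e2 != prototype[s2]:
            variants.add(tuple(sorted({**prototype, s1: e1, s2: e2}.items())))
    return variants


prototype = {"M1": "Nb", "M2": "W"}  # toy two-site prototype, not a real WR cell
variants = enumerate_substitutions(prototype, ["Mo", "Ti", "Nb", "W"])
print(len(variants))  # 3 + 3 single-site and 3 x 3 double-site variants -> 15
```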

Experimental Validation and Performance

From the computationally stable candidates, MoWNb₂₄O₆₆ was selected for experimental synthesis and validation. X-ray diffraction (XRD) confirmed the successful formation of the predicted crystal structure. Electrochemical testing revealed outstanding performance, with the material achieving a specific capacity of 225 mAh/g at a 5C rate, indicating excellent rate capability. Furthermore, the experimentally measured lithium diffusivity showed a peak value of 1.0×10⁻¹⁶ m²/s at 1.45 V vs. Li/Li⁺, confirming the predicted fast ionic transport. This performance exceeded that of Nb₁₆W₅O₅₅, a benchmark WR compound, thereby validating the computational prediction and demonstrating the success of the integrated approach [39].
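As a rough consistency check (an order-of-magnitude estimate that is not part of [39]), the characteristic distance Li⁺ can diffuse during a 5C charge follows from the scaling L ~ √(Dt), assuming the quoted peak diffusivity held throughout and ignoring geometric prefactors:

$$
t_{5C} = \frac{3600\ \text{s}}{5} = 720\ \text{s}, \qquad
L \sim \sqrt{D\,t_{5C}} = \sqrt{\left(1.0\times10^{-16}\ \text{m}^2\,\text{s}^{-1}\right)\left(720\ \text{s}\right)} \approx 2.7\times10^{-7}\ \text{m} \approx 270\ \text{nm}
$$

The estimate only indicates the length scale the quoted diffusivity corresponds to at this rate; comparing it with the particle size of the synthesized powder would be the natural next check.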

Case Study 2: Discovery of Spinel Cathodes for Aqueous Zinc-Ion Batteries

High-Throughput Screening Strategy

A complementary case study focuses on the discovery of spinel cathode materials for safer and lower-cost AZIBs [38]. The research team initiated the process with a massive initial pool of 12,047 Mn/Zn-O based materials. A multi-stage DFT screening funnel was applied: First, structures were examined for their basic suitability as electrodes. Subsequent rounds of screening calculated more intensive properties, including band structures, open-circuit voltage, volume expansion rate, and the ionic diffusion coefficient/energy barrier for Zn²⁺ ions. This rigorous computational workflow narrowed the vast candidate pool down to just five promising spinel materials for experimental consideration [38].
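The open-circuit voltage and volume-expansion criteria in this funnel reduce to simple expressions once DFT total energies and relaxed cell volumes are available. The sketch below uses the standard average-voltage formula for inserting n ions of charge z (z = 2 for Zn²⁺). The energies and volumes are hypothetical placeholders, and the screen in [38] may use different conventions (e.g., energies per formula unit or a different metal reference).

```python
def average_voltage(e_inserted, e_host, n_ions, e_metal_per_atom, z):
    """Average insertion voltage (V vs. the metal/metal-ion reference) from DFT energies in eV.

    V = -[E(A_n Host) - E(Host) - n * E(A, metal)] / (n * z)
    """
    return -(e_inserted - e_host - n_ions * e_metal_per_atom) / (n_ions * z)


def volume_expansion_pct(v_host, v_inserted):
    """Relative volume change on ion insertion, in percent."""
    return 100.0 * (v_inserted - v_host) / v_host


# Hypothetical energies (eV) and cell volumes (Å^3) for one Zn2+ inserted per cell.
v_avg = average_voltage(e_inserted=-104.0, e_host=-100.0, n_ions=1, e_metal_per_atom=-1.0, z=2)
dv = volume_expansion_pct(v_host=200.0, v_inserted=206.0)
print(f"{v_avg:.2f} V vs. Zn/Zn2+, volume change {dv:.1f}%")  # 1.50 V vs. Zn/Zn2+, volume change 3.0%
```

Dividing by z is what distinguishes a divalent Zn²⁺ host from a monovalent Li⁺ one: the same reaction energy yields half the voltage when each inserted ion carries two electrons.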

Experimental Synthesis and Electrochemical Performance

From the shortlist, Mg₂MnO₄ was synthesized and characterized. Its performance as a cathode was evaluated in a custom AZIB cell. The results aligned closely with computational predictions; the material exhibited excellent cycling stability, which was attributed to the theoretically predicted low volume expansion. Moreover, it displayed high reversible capacity and exceptional rate performance, even at high current densities. This case underscores how high-throughput DFT screening can effectively prioritize candidates with balanced properties, such as adequate capacity, good ionic conductivity, and structural resilience, which are all critical for practical battery applications [38].

Comparative Analysis of Discovered Materials

The table below provides a quantitative comparison of the key performance metrics for the materials discovered in the featured case studies, alongside a known benchmark material for context.

Table 1: Performance Comparison of Battery Materials Discovered via High-Throughput Screening

| Material | Battery System | Role | Specific Capacity | Rate Performance | Key Metric (Ion Diffusivity/Stability) | Reference |
|---|---|---|---|---|---|---|
| MoWNb₂₄O₆₆ | Lithium-ion | Anode | 225 mAh/g (at 5C) | Excellent (capacity measured at 5C) | Peak Li⁺ diffusivity: 1.0×10⁻¹⁶ m²/s (at 1.45 V vs. Li/Li⁺) | [39] |
| Mg₂MnO₄ | Aqueous zinc-ion | Cathode | High reversible capacity | Excellent at high current density | Low volume expansion | [38] |
| Nb₁₆W₅O₅₅ (Benchmark) | Lithium-ion | Anode | (Lower than MoWNb₂₄O₆₆) | (Lower than MoWNb₂₄O₆₆) | (Lower Li⁺ diffusivity) | [39] |

Essential Research Toolkit for High-Throughput Screening

The implementation of a high-throughput DFT screening pipeline relies on a suite of computational and experimental tools. The following table details key "research reagents" and their functions in this domain.

Table 2: Essential Tools for High-Throughput Computational Materials Discovery

| Tool Category / 'Reagent' | Specific Examples | Function in the Discovery Workflow |
|---|---|---|
| Computational Codes | VASP (Vienna Ab-initio Simulation Package) | Performs DFT calculations to determine total energy, electronic structure, and material properties. [41] [42] |
| Automation & Workflow | DREAMS Framework, Atomic Simulation Environment (ASE) | Automates complex simulation tasks, manages calculations, and facilitates data flow between different codes. [40] [43] |
| Data Analysis & Machine Learning | Artificial Neural Networks (ANN), AGNI fingerprints | Accelerates property prediction, identifies patterns in large datasets, and builds surrogate models for faster screening. [44] [42] |