Foundation Models for Materials Synthesis: Accelerating Discovery from Prediction to Production

Abstract

This article explores the transformative role of foundation models in materials synthesis planning, a critical bottleneck in materials science and drug development. Aimed at researchers and scientists, it provides a comprehensive examination of how these large-scale AI models, trained on broad data, are enabling rapid property prediction, inverse design, and autonomous experimentation. The scope ranges from foundational concepts and methodological applications to troubleshooting current limitations and validating model performance against traditional methods. By synthesizing the latest research and real-world case studies, this content serves as a strategic guide for integrating AI-driven synthesis planning into advanced research workflows to bridge the gap between computational discovery and scalable manufacturing.

What Are Foundation Models and Why Are They Revolutionizing Materials Science?

Foundation models represent a paradigm shift in artificial intelligence, defined as models "trained on broad data (generally using self-supervision at scale) that can be adapted (e.g., fine-tuned) to a wide range of downstream tasks" [1]. These models have evolved from early expert systems relying on hand-crafted symbolic representations to modern deep learning approaches that automatically learn data-driven representations [1]. The advent of the transformer architecture in 2017 and subsequent generative pretrained transformer (GPT) models demonstrated the power of creating generalized representations through self-supervised training on massive data corpora [1]. This technological evolution has particularly impacted scientific domains such as materials discovery and drug development, where foundation models are now being applied to complex tasks including property prediction, synthesis planning, and molecular generation [1].

In scientific contexts, adaptation after initial pre-training typically involves two stages: fine-tuning on specialized scientific datasets to perform domain-specific tasks, followed by an optional alignment step in which model outputs are refined to match researcher preferences, such as generating chemically valid structures with improved synthesizability [1]. Philosophically, the approach echoes the earlier era of hand-designed features, with the crucial distinction that representations are learned from enormous volumes of data rather than engineered manually [1].

Current Applications in Materials and Drug Discovery

Property Prediction and Molecular Generation

Foundation models are revolutionizing property prediction in materials science, traditionally dominated by either highly approximate initial screening methods or prohibitively expensive physics-based simulations [1]. These models enable powerful predictive capabilities based on transferable core components, paving the way for truly data-driven inverse design approaches [1]. Most current models operate on 2D molecular representations such as SMILES or SELFIES, though this approach necessarily omits crucial 3D conformational information [1]. The dominance of 2D representations stems primarily from the significant disparity in dataset availability, with foundation models trained on datasets like ZINC and ChEMBL containing approximately 10^9 molecules—a scale not readily available for 3D structural data [1].
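To make the string-based 2D representations concrete, the following minimal sketch splits a SMILES string into chemically meaningful tokens before it would be passed to a sequence model. The regular expression is an illustrative pattern covering common SMILES syntax, not the tokenizer of any model cited here, and `tokenize_smiles` is a hypothetical helper name.

```python
import re

# Illustrative (not exhaustive) SMILES token pattern: bracket atoms,
# two-letter halogens, organic-subset atoms, aromatic atoms,
# two-digit ring closures, single ring digits, bonds and branches.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]|Br|Cl|[BCNOSPFI]|[bcnosp]|%\d{2}|\d|[=#\-\+\\/\(\)\.:~@\*\$])"
)

def tokenize_smiles(smiles: str) -> list:
    """Split a SMILES string into tokens; fail loudly on unknown characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"Unrecognized characters in SMILES: {smiles!r}")
    return tokens

# Aspirin written as SMILES; every character here is a single-character token.
aspirin_tokens = tokenize_smiles("CC(=O)Oc1ccccc1C(=O)O")

# Two-letter elements and bracket atoms are kept together as single tokens.
halide_tokens = tokenize_smiles("ClCCBr")
salt_tokens = tokenize_smiles("[Na+].[Cl-]")
```

Note that nothing in this token sequence encodes a 3D conformation, which is the information loss the paragraph above describes.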

Encoder-only models based on the BERT architecture currently dominate the literature for property prediction tasks, although GPT-style architectures are becoming increasingly prevalent [1]. For inorganic solids like crystals, property prediction models typically leverage 3D structures through graph-based or primitive cell feature representations, representing an exception to the 2D-dominant paradigm [1]. The reuse of both core models and architectural components exemplifies a key strength of the foundation model approach, though this raises important questions about novelty in scientific discovery when models are trained predominantly on existing knowledge [1].

Scientific Data Extraction and Synthesis Planning

The extraction of structured scientific information from unstructured documents represents a critical application of foundation models in materials research. Advanced data-extraction models must efficiently parse materials information from diverse sources including scientific reports, patents, and presentations [1]. Traditional approaches focusing primarily on text are insufficient for materials science, where significant information is embedded in tables, images, and molecular structures [1]. Modern extraction pipelines therefore employ multimodal strategies combining textual and visual information to construct comprehensive datasets that accurately reflect materials science complexities [1].

Data extraction foundation models typically address two interconnected problems: identifying materials themselves through named entity recognition (NER) approaches, and associating described properties with these materials [1]. Recent advances in LLMs have significantly improved the accuracy of property extraction and association tasks, particularly through schema-based extraction methods [1]. Specialized algorithms like Plot2Spectra demonstrate how modular approaches can extract data points from spectroscopy plots in scientific literature, enabling large-scale analysis of material properties otherwise inaccessible to text-based models [1]. Similarly, DePlot converts visual representations like plots and charts into structured tabular data for reasoning by large language models [1].
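As a rough illustration of schema-based extraction, the sketch below validates LLM output against a fixed record schema and drops entries that do not conform. No model is called: `example_output` is a hand-written mock of what an LLM might return, and the schema fields (`material`, `property_name`, `value`, `unit`) are assumptions chosen for illustration rather than a schema from the cited work.

```python
import json
from dataclasses import dataclass

@dataclass(frozen=True)
class PropertyRecord:
    """Hypothetical target schema linking one material to one property."""
    material: str
    property_name: str
    value: float
    unit: str

REQUIRED_FIELDS = ("material", "property_name", "value", "unit")

def parse_records(llm_json: str) -> list:
    """Keep only entries that contain every field and a numeric value."""
    records = []
    for item in json.loads(llm_json):
        if not isinstance(item, dict):
            continue
        if any(field not in item for field in REQUIRED_FIELDS):
            continue  # incomplete extraction, e.g. property without a value
        try:
            value = float(item["value"])
        except (TypeError, ValueError):
            continue  # non-numeric value such as "unknown"
        records.append(
            PropertyRecord(
                material=str(item["material"]),
                property_name=str(item["property_name"]),
                value=value,
                unit=str(item["unit"]),
            )
        )
    return records

# Mock LLM output (hand-written for this example, not real extraction results).
example_output = """[
  {"material": "TiO2", "property_name": "band gap", "value": 3.2, "unit": "eV"},
  {"material": "ZnO", "property_name": "band gap", "value": "unknown", "unit": "eV"},
  {"material": "GaN", "property_name": "band gap"}
]"""

records = parse_records(example_output)
```

Rejecting malformed entries at this stage keeps the association between material and property explicit, which is the second of the two problems described above.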

Quantitative Analysis of Foundation Models

Table 1: Comparison of Leading Open-Source Foundation Models for Scientific Research

| Model | Developer | Architecture | Parameters | Context Length | Core Research Strength |
|---|---|---|---|---|---|
| DeepSeek-R1 | deepseek-ai | Reasoning Model (MoE) | 671B | 164K tokens | Premier mathematical reasoning, complex scientific problems |
| Qwen3-235B-A22B | Qwen3 | Reasoning Model (MoE) | 235B total, 22B active | Not specified | Dual-mode academic flexibility, multilingual collaboration |
| GLM-4.1V-9B-Thinking | THUDM | Vision-Language Model | 9B | 4K resolution images | Multimodal research excellence, STEM problem-solving |

Table 2: Data Extraction Tools and Techniques for Materials Science

| Tool/Method | Modality | Primary Function | Application in Materials Discovery |
|---|---|---|---|
| Named Entity Recognition (NER) | Text | Identify materials entities | Extract material names from literature |
| Vision Transformers | Images | Identify molecular structures | Extract structures from patent images |
| Plot2Spectra | Plots/Charts | Extract spectral data points | Large-scale analysis of material properties |
| DePlot | Visualizations | Convert plots to tabular data | Enable reasoning about graphical data |
| SPIRES | Text | Extract structured data | Create knowledge bases from publications |

Experimental Protocols and Methodologies

Protocol 1: Fine-Tuning Foundation Models for Property Prediction

Objective: Adapt a pre-trained foundation model to predict specific material properties (e.g., band gap, solubility, catalytic activity) from molecular representations.

Materials and Setup:

- Hardware: High-performance computing cluster with multiple GPUs (minimum 4× A100 80GB)

- Software: Python 3.9+, PyTorch 2.0+, Hugging Face Transformers library

- Data: Curated dataset of labeled molecular structures (SMILES/SELFIES) with target properties

Procedure:

- Data Preprocessing: Augment the training data through SMILES enumeration (randomized, non-canonical atom orderings of the same molecule), then convert each string to SELFIES, a representation in which every token sequence decodes to a valid molecule.

- Model Initialization: Load pre-trained weights from a chemical foundation model (e.g., trained on ZINC or PubChem). Initialize classification/regression head with random weights.

- Hyperparameter Configuration: Set batch size to 32, learning rate to 5e-5 with linear decay, and weight decay to 0.01. Use AdamW optimizer with warmup for first 5% of training steps.

- Training Loop: For each epoch, compute property prediction loss using mean squared error for regression or cross-entropy for classification. Validate every 500 steps on held-out validation set.

- Evaluation: Assess model performance on test set using metrics relevant to target application (RMSE, MAE, ROC-AUC). Perform chemical sanity checks on predictions.

Troubleshooting: If the model fails to converge, reduce the learning rate or increase the batch size. If it overfits, use early stopping or increase the dropout probability.
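The learning-rate settings in the hyperparameter step (peak rate 5e-5, warmup over the first 5% of steps, then linear decay) can be sketched as a plain function. This is an illustrative stand-in written without any deep learning library; `linear_warmup_decay` is a hypothetical name, and in a Hugging Face workflow a helper such as `get_linear_schedule_with_warmup` would normally play this role.

```python
def linear_warmup_decay(step: int, total_steps: int,
                        base_lr: float = 5e-5,
                        warmup_frac: float = 0.05) -> float:
    """Learning rate at a given optimizer step.

    Rises linearly to base_lr during warmup, then decays linearly to zero
    at total_steps. Defaults follow the values stated in Protocol 1.
    """
    warmup_steps = max(1, int(total_steps * warmup_frac))
    if step < warmup_steps:
        # Linear ramp; reaches base_lr on the last warmup step.
        return base_lr * (step + 1) / warmup_steps
    decay_steps = max(1, total_steps - warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / decay_steps

# Example: inspect the schedule for a hypothetical 1,000-step run.
schedule = [linear_warmup_decay(s, total_steps=1000) for s in range(1001)]
peak_step = max(range(len(schedule)), key=schedule.__getitem__)
```

Lowering `base_lr` in this function corresponds to the first troubleshooting suggestion above when training fails to converge.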

Protocol 2: Multimodal Data Extraction from Scientific Literature

Objective: Extract structured materials information from heterogeneous scientific documents containing text, tables, and figures.

Materials and Setup:

- Hardware: Workstation with high-resolution display and GPU support

- Software: PDF parsing libraries (e.g., Camelot, PyMuPDF), computer vision models (Vision Transformers), named entity recognition pipeline

- Data: Collection of scientific papers and patents in PDF format

Procedure:

- Document Processing: Convert PDF documents to standardized format while preserving layout information. Separate document into text, table, and image streams.

- Text Processing: Apply named entity recognition (NER) with domain-specific model to identify material names, properties, and synthesis conditions. Extract relationships using dependency parsing.

- Table Extraction: Identify table structures using computer vision approaches. Parse table content and associate header information with data cells.

- Image Analysis: Process figures containing chemical structures using Vision Transformers. Convert identified structures to machine-readable formats (SMILES, InChI).

- Data Integration: Fuse information from all modalities using schema-based alignment. Resolve conflicts through confidence scoring and cross-verification.

- Validation: Manually review extractions from sample documents. Compute precision, recall, and F1-score against expert annotations.

Quality Control: Implement human-in-the-loop validation for critical extractions. Maintain version control for extracted datasets.
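The validation step compares extracted records with expert annotations. A minimal sketch of that comparison, treating each extraction as a (material, property) pair and using exact matching, is shown below; the example pairs are made up for illustration, and real pipelines may need fuzzier matching of names and units.

```python
def precision_recall_f1(predicted: set, gold: set) -> tuple:
    """Exact-match precision, recall, and F1 between two sets of records."""
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0.0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Illustrative data only: pipeline output versus expert annotations.
extracted = {
    ("TiO2", "band gap"),
    ("ZnO", "band gap"),
    ("GaN", "melting point"),   # not in the annotations: false positive
}
annotated = {
    ("TiO2", "band gap"),
    ("ZnO", "band gap"),
    ("GaN", "band gap"),        # missed by the pipeline: false negative
    ("SiC", "hardness"),        # missed by the pipeline: false negative
}

precision, recall, f1 = precision_recall_f1(extracted, annotated)
```

Here two of three extractions are correct (precision 2/3) and two of four annotated records are recovered (recall 1/2), giving an F1 of 4/7.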

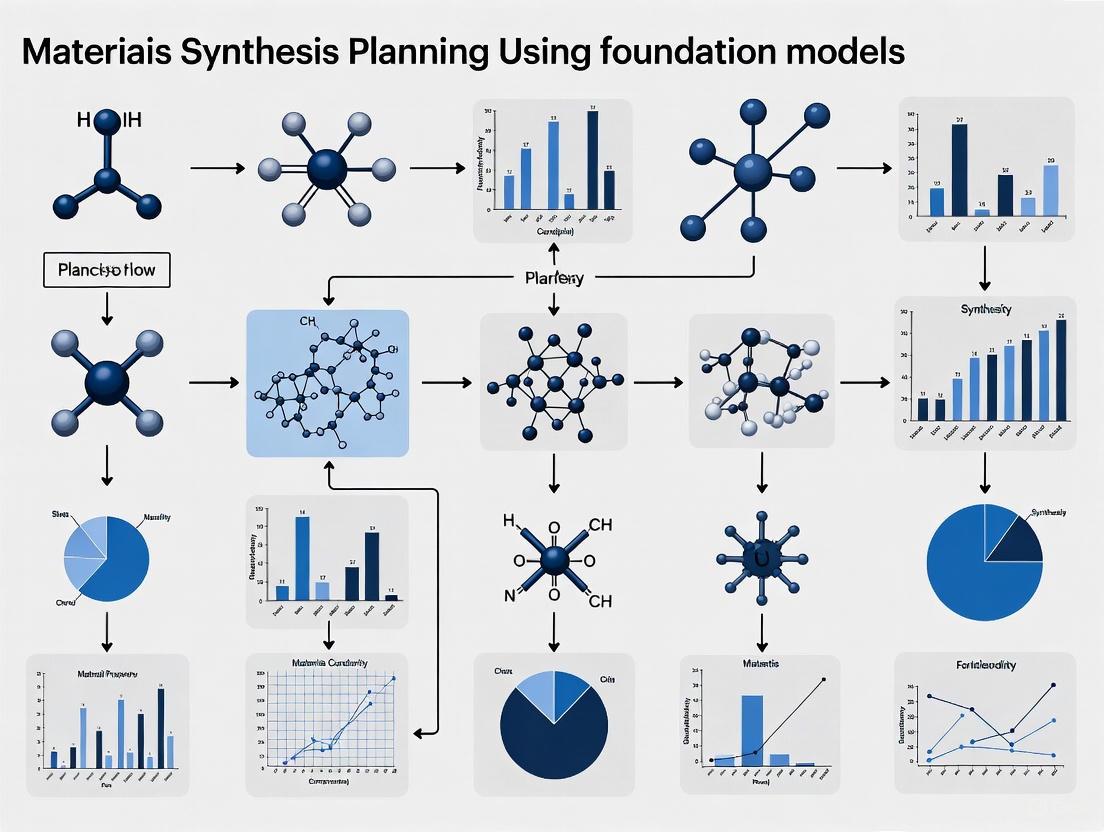

Visualization of Workflows

Diagram 1: Materials Discovery Workflow

Diagram 2: Foundation Model Training

Research Reagent Solutions

Table 3: Essential Computational Research Reagents for Foundation Model Applications

| Reagent/Tool | Type | Function | Example Applications |
|---|---|---|---|
| SMILES/SELFIES Representations | Molecular Encoding | Convert chemical structures to text | Model input for molecular generation |
| ZINC Database | Chemical Database | Source of ~10^9 compounds for pre-training | Training data for chemical foundation models |
| ChEMBL Database | Bioactivity Database | Curated bioactivity data | Fine-tuning for drug discovery applications |
| PubChem | Chemical Repository | Comprehensive chemical information | Data source for property prediction tasks |
| Crystal Graph Convolutional Networks | Geometric Deep Learning | Handle 3D crystal structures | Property prediction for inorganic materials |
| Vision Transformers | Computer Vision Architecture | Process molecular structure images | Extract compounds from patent documents |
| Named Entity Recognition Models | NLP Tool | Identify scientific entities | Extract materials data from literature |

The field of artificial intelligence in science is undergoing a fundamental transformation, moving from narrowly focused, task-specific models toward versatile, general-purpose foundation models. This paradigm shift represents a critical evolution in how researchers approach scientific discovery, particularly in complex domains like materials science. Traditional machine learning approaches in materials research have typically relied on models trained for specific predictive tasks—such as forecasting a particular material property or optimizing a single synthesis parameter. While these models have demonstrated value, they operate in isolation, lacking the broad, contextual understanding necessary for true scientific innovation [1] [2].

Foundation models, characterized by their training on broad data at scale and adaptability to a wide range of downstream tasks, are redefining this landscape [1] [3]. These models, built on architectures like the transformer, leverage self-supervised pre-training on enormous datasets to develop fundamental representations of scientific knowledge. This approach decouples representation learning from specific downstream tasks, enabling researchers to fine-tune a single, powerful base model for numerous applications with minimal additional training [1]. In materials synthesis planning—a domain requiring the integration of diverse knowledge spanning chemistry, physics, and engineering—this shift enables more holistic, efficient, and innovative approaches to designing and discovering new materials.

The Emerging Architecture of Scientific AI

Defining the Foundation Model Paradigm

The core distinction between traditional task-specific models and foundation models lies in their architecture, training methodology, and application potential. Foundation models for science are defined by several key characteristics: they are pre-trained on extensive and diverse datasets using self-supervision, exhibit scaling laws where performance improves with increased model size and data, and can be adapted to numerous downstream tasks through fine-tuning [3]. This stands in stark contrast to earlier approaches that required training separate models for each specific prediction task.

In materials science, these models typically employ encoder-decoder architectures that learn meaningful representations in a latent space, which can then be conditioned to generate outputs with desired properties [1]. The encoder component focuses on understanding and representing input data—such as chemical structures or synthesis protocols—while the decoder generates new outputs by predicting one token at a time based on the input and previously generated tokens [1]. This architectural separation enables both sophisticated understanding of complex material representations and generative capabilities for novel material design.

Table 1: Comparison of AI Model Paradigms in Materials Science

| Characteristic | Task-Specific Models | Foundation Models |
|---|---|---|
| Training Data | Limited, labeled datasets for specific tasks | Large-scale, diverse, often unlabeled data |
| Architecture | Specialized for single tasks | Flexible encoder-decoder transformers |
| Knowledge Transfer | Limited between domains | Strong cross-domain transfer capabilities |
| Computational Requirements | Lower per task, but cumulative cost high | High initial cost, lower fine-tuning cost |
| Applications | Single property prediction, specific optimizations | Multi-task: property prediction, synthesis planning, molecular generation |

Quantitative Evidence of the Paradigm Shift

Recent research demonstrates the tangible benefits of foundation models across various scientific domains. In materials informatics, foundation models have been applied to property prediction, synthesis planning, and molecular generation, showing remarkable improvements in efficiency and accuracy compared to traditional approaches [1]. For instance, models trained on large chemical databases like ZINC and ChEMBL—containing approximately 10^9 molecules—have achieved unprecedented performance in predicting complex material properties [1]. This data scale is crucial for capturing the intricate dependencies in materials science, where minute structural details can profoundly influence properties—a phenomenon known as an "activity cliff" [1].

The shift is further evidenced by emerging scaling laws in scientific AI, where model performance improves predictably with increased model size, training data, and computational resources [3]. This mirrors the trajectory that transformed natural language processing, suggesting a similar revolution may be underway for scientific AI. As these models scale, they begin to exhibit emergent capabilities—solving tasks that appeared impossible at smaller scales—thereby unlocking new possibilities for materials discovery and synthesis planning [3].

Application Notes: Foundation Models for Materials Synthesis Planning

Protocol: Implementing Constrained Generation for Quantum Materials

The integration of foundation models with domain-specific constraints represents a cutting-edge application in materials synthesis planning. The following protocol, adapted from recent research on SCIGEN (Structural Constraint Integration in GENerative model), enables the generation of novel materials with specific quantum properties [4].

Purpose: To generate candidate materials with exotic quantum properties (e.g., quantum spin liquids) by enforcing geometric constraints during the generative process.

Principles: Certain atomic structures (e.g., Kagome, Lieb, and Archimedean lattices) are more likely to exhibit exotic quantum properties. Traditional generative models optimized for stability often miss these promising candidates. SCIGEN addresses this by integrating structural constraints directly into the generation process [4].

Table 2: Research Reagent Solutions for AI-Driven Materials Discovery

| Research Reagent | Function in Experimental Workflow |
|---|---|
| DiffCSP Model | Base generative AI model for crystal structure prediction |
| SCIGEN Code | Computer code that enforces geometric constraints during generation |
| Archimedean Lattice Patterns | Design rules (2D lattice tilings) that give rise to quantum phenomena |
| High-Throughput Simulation | Screens generated candidates for stability and properties |
| Synthesis Lab Equipment | Validates AI predictions through physical material creation |

Procedure:

- Model Selection: Begin with a pre-trained diffusion model for crystal structure prediction (e.g., DiffCSP).

- Constraint Definition: Define the target geometric pattern (e.g., specific Archimedean lattices) known to produce desired quantum phenomena.

- Constraint Integration: Apply SCIGEN to integrate these constraints at each step of the generative process, blocking generations that don't align with structural rules.

- Candidate Generation: Generate material structures (SCIGEN enabled generation of over 10 million candidates with Archimedean lattices).

- Stability Screening: Apply stability filters (approximately 10% of generated materials typically pass stability screening).

- Property Simulation: Use high-performance computing (e.g., Oak Ridge National Laboratory supercomputers) to simulate quantum properties (41% of screened structures exhibited magnetism in the SCIGEN study).

- Experimental Validation: Synthesize top candidates (e.g., TiPdBi and TiPbSb) and validate properties experimentally [4].
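The idea of enforcing a geometric constraint at every generation step can be illustrated with a toy loop. The sketch below is not the SCIGEN or DiffCSP code: `toy_denoise_step` is a stand-in for a learned denoiser, the structure is reduced to 2D fractional coordinates, and the noise schedule is an arbitrary assumption. It only shows the control flow in which a subset of atoms is repeatedly reset to a target motif (here the three kagome sites of a hexagonal cell) while the remaining atoms are left to the generative process.

```python
import random

# Fractional coordinates of the three kagome sites in a 2D hexagonal cell.
KAGOME_SITES = [(0.5, 0.0), (0.0, 0.5), (0.5, 0.5)]

def jitter(point, noise_scale, rng):
    """Perturb a fractional coordinate and wrap it back into the unit cell."""
    x, y = point
    return ((x + rng.gauss(0.0, noise_scale)) % 1.0,
            (y + rng.gauss(0.0, noise_scale)) % 1.0)

def toy_denoise_step(coords, noise_scale, rng):
    """Placeholder for a learned denoiser (assumption: pure jitter)."""
    return [jitter(p, noise_scale, rng) for p in coords]

def generate_with_constraint(n_free_atoms, n_steps=50, seed=0):
    rng = random.Random(seed)
    n_fixed = len(KAGOME_SITES)
    # Start from random positions for constrained and free atoms alike.
    coords = [(rng.random(), rng.random())
              for _ in range(n_fixed + n_free_atoms)]
    for step in range(n_steps):
        # Noise level anneals linearly to zero at the final step.
        noise_scale = 0.1 * (1.0 - (step + 1) / n_steps)
        coords = toy_denoise_step(coords, noise_scale, rng)
        # Constraint step: overwrite the constrained atoms with the target
        # motif, noised to the current level so both parts stay consistent.
        for i, site in enumerate(KAGOME_SITES):
            coords[i] = jitter(site, noise_scale, rng)
    return coords

structure = generate_with_constraint(n_free_atoms=4)
```

Because the final step uses zero noise, the first three atoms end exactly on the kagome sites, while the free atoms end wherever the (here trivial) denoiser leaves them. In the workflow described above, candidates produced this way would then pass to stability screening and property simulation.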

Protocol: Multi-Modal Data Extraction for Synthesis Planning

Foundation models can overcome a critical bottleneck in materials discovery: extracting synthesis knowledge from diverse scientific literature. This protocol outlines an approach for building comprehensive synthesis databases.

Purpose: To extract materials synthesis information from multimodal scientific documents (text, tables, images) to create structured databases for training synthesis planning models.

Principles: Significant synthesis information exists in non-text elements, particularly tables, molecular images, and spectroscopy plots. Traditional natural language processing approaches miss this critical data. Multi-modal foundation models can integrate textual and visual information to construct comprehensive synthesis databases [1].

Procedure:

- Document Processing: Convert heterogeneous documents (journal articles, patents) into standardized digital formats.

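- Multi-Modal Entity Recognition: Identify materials and related entities in each modality, for example with NER models for text, Vision Transformers for molecular structure images, and tools such as Plot2Spectra or DePlot for plots [1]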

- Property Association: Use schema-based extraction with LLMs to associate identified materials with their properties and synthesis conditions [1]

- Knowledge Graph Construction: Integrate extracted entities and relationships into a structured knowledge graph

- Model Fine-Tuning: Use the constructed database to fine-tune foundation models for synthesis planning tasks
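The knowledge graph construction step can be sketched as a small in-memory triple store. The class name `SynthesisGraph`, the relation names, and the placeholder entities (`material_A`, `precursor_X`, and so on) are all hypothetical; a production system would more likely use a dedicated graph database.

```python
from collections import defaultdict

class SynthesisGraph:
    """Minimal triple store mapping (subject, relation) to a set of objects."""

    def __init__(self):
        self._edges = defaultdict(set)

    def add(self, subject, relation, obj):
        """Insert one extracted (subject, relation, object) triple."""
        self._edges[(subject, relation)].add(obj)

    def objects(self, subject, relation):
        """All objects linked to a subject by a relation, sorted."""
        return sorted(self._edges.get((subject, relation), ()))

    def subjects(self, relation, obj):
        """All subjects linked to an object by a relation, sorted."""
        return sorted(
            s for (s, r), objs in self._edges.items()
            if r == relation and obj in objs
        )

# Placeholder triples standing in for extracted synthesis information.
graph = SynthesisGraph()
for triple in [
    ("material_A", "synthesized_via", "sol-gel"),
    ("material_A", "uses_precursor", "precursor_X"),
    ("material_B", "synthesized_via", "sol-gel"),
    ("material_B", "uses_precursor", "precursor_Y"),
]:
    graph.add(*triple)

sol_gel_materials = graph.subjects("synthesized_via", "sol-gel")
precursors_of_a = graph.objects("material_A", "uses_precursor")
```

Queries like these are one way the structured database could be turned into training examples for the fine-tuning step.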

Implementation Framework: From Theory to Practice

Workflow for Materials Synthesis Planning

The complete workflow for AI-driven materials synthesis planning integrates multiple components, from data extraction through experimental validation. The systematic approach ensures that foundation models are effectively leveraged throughout the discovery pipeline.

Future Directions and Strategic Implications

The paradigm shift toward foundation models in science carries profound implications for research institutions, funding agencies, and the private sector. Governments worldwide are recognizing this transformation—the UK has identified materials science as one of five priority areas for AI-driven scientific advancement and has committed substantial funding (£137 million) to accelerate progress in this domain [5]. Similar initiatives are emerging globally, reflecting the strategic importance of AI leadership for scientific and economic competitiveness.

Looking ahead, several key developments will shape the evolution of foundation models for materials science:

- Autonomous Laboratories: The integration of AI with robotic synthesis and characterization platforms will enable fully autonomous discovery cycles [5]

- Specialized vs. General Models: While foundation models offer broad capabilities, targeted, task-specific models will continue to play important roles, particularly in regulated environments or highly specialized domains [6] [7]

- Data Infrastructure: The development of comprehensive, multi-modal databases will be crucial for training next-generation models [1] [2]

- Benchmarking Standards: Community-wide efforts like MLIP Arena are emerging to establish fairness and transparency in benchmarking machine learning interatomic potentials [8]

This paradigm shift from task-specific models to general-purpose AI is more than a technical advancement; it constitutes a fundamental transformation in the scientific method itself. By leveraging foundation models for materials synthesis planning, researchers can navigate the complex landscape of material design with unprecedented speed and insight, potentially compressing a materials development timeline that has historically spanned decades into years or even months [4] [2]. As these technologies mature, they promise to unlock new frontiers in materials science, from sustainable energy solutions to advanced quantum materials, fundamentally reshaping our approach to scientific discovery.

The Transformer architecture, introduced by Vaswani et al., has become a foundational technology not only in natural language processing (NLP) but also in scientific domains such as drug discovery and materials science [9] [10]. Its core innovation, the self-attention mechanism, enables the model to weigh the importance of all parts of the input sequence when processing information, thereby effectively capturing complex, long-range dependencies [11]. This capability is particularly valuable for modeling intricate relationships in scientific data, such as molecular structures and synthesis pathways.
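The self-attention computation itself is compact. The sketch below implements scaled dot-product attention in plain Python for readability; it deliberately omits the learned query, key, and value projections and the multi-head structure of a real Transformer layer, so the input vectors are used directly as queries, keys, and values.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        row = softmax(scores)
        weights.append(row)
        # Output is the attention-weighted average of the value vectors.
        outputs.append([
            sum(w * v[c] for w, v in zip(row, values))
            for c in range(len(values[0]))
        ])
    return outputs, weights

# Three toy token embeddings attending to one another.
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(embeddings, embeddings, embeddings)
```

Each row of `weights` sums to one and spans every position in the sequence, which is how a token can draw on distant parts of a molecular string or synthesis description.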

In practice, the original Transformer architecture is most commonly adapted into three distinct variants, each optimized for different types of tasks: encoder-only models, decoder-only models, and encoder-decoder models [12]. Encoder-only models are designed for tasks requiring deep bidirectional understanding of the input, such as classification or entity recognition. Decoder-only models are specialized for autoregressive generation tasks, predicting subsequent elements in a sequence. Encoder-decoder models combine these strengths for sequence-to-sequence transformation tasks, making them ideal for applications like translation or summarization [12]. The selection among these architectures is crucial and depends on the specific requirements of the scientific problem, such as whether the task necessitates comprehensive input analysis, generative capability, or complex input-to-output transformation.

Encoder-Only Models

Core Architecture and Mechanics

Encoder-only models, such as BERT and RoBERTa, utilize the encoder stack of the original Transformer to build a deep, bidirectional understanding of input data [13] [14] [12]. The self-attention mechanism allows each token in the input sequence to interact with all other tokens, enabling the model to capture the full contextual meaning of each element based on its entire surroundings [14]. This architecture outputs a series of contextual embeddings that encapsulate the nuanced understanding of the input, making them highly suitable for analysis tasks [14].

These models are pretrained using self-supervised objectives that involve reconstructing corrupted input. A common pretraining method is Masked Language Modeling (MLM), where random tokens in the input sequence are masked, and the model is trained to predict the original tokens based on the surrounding context [13] [12]. This forces the model to develop a robust, bidirectional representation of the language or data structure. Another pretraining task used in models like BERT is Next Sentence Prediction (NSP), which helps the model understand relationships between different data segments [13].
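A simplified view of how MLM training examples are built is sketched below. Each token is independently selected with probability 0.15, the rate used in the original BERT recipe, and replaced by `[MASK]`; the labels record the original token at masked positions only. BERT additionally replaces some selected tokens with random tokens or leaves them unchanged, which this sketch omits. The function name `mask_tokens` is illustrative.

```python
import random

def mask_tokens(tokens, mask_prob=0.15, mask_token="[MASK]", seed=None):
    """Return (masked_tokens, labels) for masked language modeling.

    labels[i] holds the original token where masking occurred and None
    elsewhere, so the loss is computed only on masked positions.
    """
    rng = random.Random(seed)
    masked, labels = [], []
    for token in tokens:
        if rng.random() < mask_prob:
            masked.append(mask_token)
            labels.append(token)
        else:
            masked.append(token)
            labels.append(None)
    return masked, labels

# Example input: a tokenized SMILES string (aspirin), one token per character.
smiles_tokens = list("CC(=O)Oc1ccccc1C(=O)O")
masked_tokens, labels = mask_tokens(smiles_tokens, seed=0)
```

The model sees `masked_tokens` and is trained to recover the non-`None` entries of `labels`, using context on both sides of each masked position.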

Applications in Materials Science and Drug Discovery

Encoder-only models excel in scientific tasks that require classification, prediction, or extraction of information from complex structured data. Their ability to provide rich, contextual representations makes them particularly useful in biochemistry and materials informatics.

- Small Molecule and Polymer Property Prediction: Models like ChemBERTa and TransPolymer demonstrate the application of encoder-only architectures in predicting molecular properties [15]. TransPolymer, for instance, uses a chemically-aware tokenizer to convert polymer structures (e.g., SMILES strings of repeating units and descriptors like degree of polymerization) into sequences. The model, pretrained on large unlabeled polymer datasets via MLM, is then fine-tuned to accurately predict properties such as electrolyte conductivity, band gap, and dielectric constant [15].

- Drug-Target Interaction and Virtual Screening: Encoder-only models are effectively employed in drug discovery for tasks like identifying potential drug targets and virtual screening. They can encode molecular structures of compounds and proteins to predict binding affinities or biological activity, significantly accelerating the early stages of drug development [9].

Experimental Protocol: Fine-Tuning an Encoder Model for Polymer Property Prediction

Objective: To adapt a pre-trained encoder-only model (e.g., a RoBERTa-like architecture) for predicting a specific polymer property, such as glass transition temperature (Tg).

Materials and Reagents:

- Hardware: A computing server with a GPU (e.g., NVIDIA A100 or V100) for accelerated training.

- Software: Python 3.8+, PyTorch or TensorFlow, Hugging Face Transformers library, and scientific computing libraries (NumPy, Pandas, Scikit-learn).

- Dataset: A curated dataset of polymer SMILES strings and their corresponding measured Tg values. The dataset should be split into training, validation, and test sets (e.g., 80/10/10).

Procedure:

Data Preprocessing and Tokenization:

- Represent each polymer sample as a string incorporating the SMILES of its repeating unit and relevant experimental descriptors (e.g., "Tg: <value> [MASK] *<Polymer_SMILES>*").

- Use a specialized, chemically-aware tokenizer (e.g., the tokenizer from the pre-trained TransPolymer model) to convert these strings into token IDs and generate an attention mask [15].

- Normalize the numerical Tg values for the regression task.

Model Setup:

- Load a pre-trained encoder-only model (e.g., roberta-base or a dedicated scientific model).

- Add a custom regression head on top of the model's [CLS] token output. This is typically a dropout layer followed by a linear layer that maps the hidden dimension to a single output value.

Training Loop:

- Use a Mean Squared Error (MSE) loss function.

- Select an optimizer (e.g., AdamW) with a learning rate between 1e-5 and 5e-5.

- Train the model for a fixed number of epochs (e.g., 20), evaluating on the validation set after each epoch.

- Implement early stopping if the validation loss does not improve for a pre-defined number of epochs.

Evaluation:

- Evaluate the final model on the held-out test set.

- Report standard regression metrics: Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and Coefficient of Determination (R²).
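Two parts of this protocol are easy to get subtly wrong: target normalization and metric reporting. The sketch below shows one reasonable way to handle both in plain Python. It assumes normalization statistics are computed on the training split only and that predictions are mapped back to physical units before metrics are computed; the Tg values are made-up placeholders, not measurements.

```python
import math

def fit_standardizer(train_values):
    """Mean and standard deviation of the training targets."""
    mean = sum(train_values) / len(train_values)
    variance = sum((v - mean) ** 2 for v in train_values) / len(train_values)
    std = math.sqrt(variance) or 1.0  # avoid division by zero
    return mean, std

def standardize(values, mean, std):
    return [(v - mean) / std for v in values]

def unstandardize(values, mean, std):
    return [v * std + mean for v in values]

def regression_metrics(y_true, y_pred):
    """MAE, RMSE, and R^2 in the original units of y_true."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "mae": sum(abs(e) for e in errors) / n,
        "rmse": math.sqrt(ss_res / n),
        "r2": 1.0 - ss_res / ss_tot if ss_tot else float("nan"),
    }

# Placeholder Tg values in kelvin (illustrative only).
train_tg = [350.0, 400.0, 450.0]
mean, std = fit_standardizer(train_tg)
scaled_targets = standardize(train_tg, mean, std)

# Pretend the model predicted these values in normalized space.
scaled_predictions = standardize([360.0, 390.0, 450.0], mean, std)
predictions = unstandardize(scaled_predictions, mean, std)
metrics = regression_metrics(train_tg, predictions)
```

Reporting MAE and RMSE in kelvin rather than in normalized units keeps the numbers comparable across datasets and papers.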

Diagram 1: Encoder Model Fine-Tuning Workflow for Polymer Property Prediction.

Decoder-Only Models

Core Architecture and Mechanics

Decoder-only models, such as the GPT family and LLaMA, form the backbone of most modern Large Language Models (LLMs) [16] [12]. These models utilize only the decoder stack of the original Transformer and are characterized by their use of causal (masked) self-attention [16]. This mechanism ensures that when processing a token, the model can only attend to previous tokens in the sequence, preventing information "leakage" from the future. This autoregressive property is ideal for generative tasks, as the model predicts the next token based on all preceding tokens [16].
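Causal self-attention differs from the bidirectional case only in which positions each token may attend to. The following sketch builds the lower-triangular mask and applies it before the softmax by setting disallowed scores to negative infinity; it is a plain-Python illustration, not an excerpt from any particular model implementation.

```python
import math

def causal_mask(length):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(length)] for i in range(length)]

def masked_softmax(scores, allowed):
    """Softmax in which disallowed positions receive exactly zero weight."""
    masked = [s if ok else float("-inf") for s, ok in zip(scores, allowed)]
    peak = max(masked)  # finite, because each token can attend to itself
    exps = [math.exp(s - peak) for s in masked]
    total = sum(exps)
    return [e / total for e in exps]

# With equal raw scores, each token spreads its attention uniformly over
# itself and the tokens before it, and gives zero weight to later tokens.
mask = causal_mask(4)
attention_rows = [masked_softmax([0.0] * 4, row) for row in mask]
```

The first row attends only to itself and the last row attends to all four positions, which is the "no leakage from the future" property described above.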

The pretraining of decoder-only models is typically based on a next-token prediction objective [12]. The model is trained on vast amounts of unlabeled text data to predict the next token in a sequence given all previous tokens. This process encourages the model to learn a powerful, general-purpose representation of the language or data domain. Modern LLMs are often further refined through a process of instruction tuning, which fine-tunes the pre-trained model to follow instructions and generate helpful, safe, and aligned responses [12].

Applications in Materials Science and Drug Discovery

The powerful generative and in-context learning capabilities of decoder-only models open up novel research pathways in scientific domains.

- De Novo Molecular Design: Decoder-only models can generate novel, valid molecular structures (e.g., in SMILES format) by learning the statistical patterns and "grammar" of chemical languages [11]. This allows for the de novo design of molecules or polymers with desired properties, such as high conductivity for polymer electrolytes or specific band gaps for organic photovoltaics [15].

- Predicting Reaction Outcomes and Retrosynthesis: While often a sequence-to-sequence task, decoder models can be adapted to predict the products of a chemical reaction given the reactants and conditions, framing it as a conditional generation task [11].

- Scientific Assistant and Knowledge Retrieval: Large, instruction-tuned decoder models can act as scientific assistants, answering questions about materials or drugs, summarizing research literature, and providing reasoning based on their internalized knowledge [12].

Experimental Protocol: Using a Decoder Model for De Novo Polymer Design

Objective: To leverage a pre-trained decoder-only LLM for the generative design of novel polymer SMILES strings.

Materials and Reagents:

- Hardware: GPU-equipped server (requirements can be high for large models).

- Software: Python, PyTorch/TensorFlow, Transformers library, RDKit (for chemical validation).

- Model: A pre-trained decoder model, which could be a general-purpose LLM (e.g., LLaMA, GPT-2) or a model pre-trained on a large corpus of chemical structures (e.g., SMILES).

- Data: A large dataset of polymer SMILES for optional further pre-training or fine-tuning.

Procedure:

Prompt Engineering and Context Setting:

- The core of this method is to provide the model with a prompt that defines the task. For example:

"GENERATE POLYMER SMILES: The polymer should have a high dielectric constant and a glass transition temperature above 100°C. POLYMER: *"

Text Generation Loop:

- The prompt is tokenized and fed into the model.

- The model operates autoregressively: it calculates the probability distribution for the next token, a token is sampled from this distribution (using methods like top-k or nucleus sampling), and this token is appended to the sequence to form the new input for the next step.

- This loop continues until an end-of-sequence token is generated or a maximum length is reached.
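
The generation loop described above can be sketched in plain Python. This is a toy illustration under stated assumptions, not the API of any specific library: `next_logits` is a hypothetical stand-in for the model's forward pass, tokens are plain integers, and the filter functions implement top-k and nucleus (top-p) truncation directly on a probability list.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Convert raw logits into a probability distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def _renormalize(probs, keep):
    """Zero out every index not in `keep` and rescale the rest to sum to 1."""
    keep_set = set(keep)
    mass = sum(probs[i] for i in keep_set)
    return [probs[i] / mass if i in keep_set else 0.0 for i in range(len(probs))]


def top_k_filter(probs, k):
    """Keep only the k most probable tokens."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return _renormalize(probs, order[:k])


def nucleus_filter(probs, p):
    """Keep the smallest set of top tokens whose cumulative probability reaches p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    keep, cumulative = [], 0.0
    for i in order:
        keep.append(i)
        cumulative += probs[i]
        if cumulative >= p:
            break
    return _renormalize(probs, keep)


def generate(next_logits, prompt, eos, max_len=20, k=None, p=None, seed=0):
    """Autoregressive sampling: sample a token, append it, repeat.

    `next_logits(sequence)` is a hypothetical callable returning one logit
    per vocabulary entry for the next position.
    """
    rng = random.Random(seed)
    sequence = list(prompt)
    while len(sequence) < max_len:
        probs = softmax(next_logits(sequence))
        if k is not None:
            probs = top_k_filter(probs, k)
        if p is not None:
            probs = nucleus_filter(probs, p)
        token = rng.choices(range(len(probs)), weights=probs)[0]
        sequence.append(token)
        if token == eos:          # stop at the end-of-sequence token
            break
    return sequence
```

Setting `k=1` reduces the loop to greedy decoding, which is useful for reproducible debugging; larger `k` or a `p` below 1 trades determinism for diversity in the generated strings.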

Validation and Filtering:

- The generated SMILES strings are parsed using a cheminformatics toolkit like RDKit to check for syntactic and semantic validity.

- Valid SMILES can be further filtered or prioritized using a property predictor (like the fine-tuned encoder model from Section 2.3) or other rule-based criteria to ensure they meet the initial design goals.
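
A minimal sketch of the validation and filtering step follows. The screening function takes the SMILES parser as an argument so the logic can be exercised without a cheminformatics install; `rdkit_parser` shows how RDKit's `Chem.MolFromSmiles` (which returns `None` for strings it cannot parse) would be plugged in. The function names and the optional `predict` callable are illustrative assumptions, and the property predictor itself is not shown.

```python
from typing import Callable, Iterable, List, Optional


def rdkit_parser() -> Callable[[str], Optional[object]]:
    """Return RDKit's SMILES parser (imported lazily; requires RDKit)."""
    from rdkit import Chem
    return Chem.MolFromSmiles


def screen(
    candidates: Iterable[str],
    parse: Callable[[str], Optional[object]],
    predict: Optional[Callable[[str], float]] = None,
) -> List[str]:
    """Drop blank, duplicate, and unparsable SMILES; optionally rank the rest.

    `parse` must return None for invalid strings.
    `predict` is a hypothetical property predictor; higher scores rank first.
    """
    seen, valid = set(), []
    for smiles in candidates:
        smiles = smiles.strip()
        if not smiles or smiles in seen:
            continue
        seen.add(smiles)
        if parse(smiles) is not None:
            valid.append(smiles)
    if predict is not None:
        valid.sort(key=predict, reverse=True)
    return valid
```

With RDKit installed, a call such as `screen(generated, rdkit_parser())` would apply the real parser; note that a string that parses is only syntactically and valence-wise acceptable, so synthesizability and property targets still need separate checks.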

Diagram 2: Decoder Model Workflow for De Novo Polymer Design.

Encoder-Decoder Models

Core Architecture and Mechanics

Encoder-decoder models, also known as sequence-to-sequence models, employ both components of the Transformer architecture [12]. The encoder processes the input sequence bidirectionally, creating a rich, contextualized representation. The decoder then uses this representation, along with its own autoregressive generation mechanism (using causal self-attention), to generate the output sequence one token at a time [12] [11]. An important component is the encoder-decoder attention layer in the decoder, which allows it to focus on relevant parts of the input sequence during each step of generation [11].

Pretraining for these models often involves reconstruction tasks. For instance, the T5 model is pre-trained by replacing random spans of text with sentinel tokens (one per dropped span) and tasking the model to predict the dropped-out spans [12]. BART, another popular model, is trained by corrupting a document with noising functions (like token masking and sentence permutation) and learning to reconstruct the original [12].
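
To make the span-corruption objective concrete, the sketch below builds one T5-style training pair from a token list, with each dropped span replaced by its own sentinel token. The span positions are passed in explicitly to keep the example deterministic (in pre-training they are sampled at random), and the function name is an illustrative assumption.

```python
def span_corrupt(tokens, spans):
    """Build a T5-style (input, target) pair.

    tokens: list of string tokens.
    spans:  sorted, non-overlapping (start, end) index pairs to drop,
            with `end` exclusive.
    """
    corrupted, target = [], []
    cursor = 0
    for index, (start, end) in enumerate(spans):
        sentinel = f"<extra_id_{index}>"
        corrupted.extend(tokens[cursor:start])   # text kept before the span
        corrupted.append(sentinel)               # span replaced by one sentinel
        target.append(sentinel)                  # target announces the span...
        target.extend(tokens[start:end])         # ...then spells out its content
        cursor = end
    corrupted.extend(tokens[cursor:])
    target.append(f"<extra_id_{len(spans)}>")    # closing sentinel ends the target
    return corrupted, target


if __name__ == "__main__":
    words = "Thank you for inviting me to your party last week".split()
    source, target = span_corrupt(words, [(2, 4), (8, 9)])
    print(" ".join(source))
    print(" ".join(target))
```

The same construction applies to SMILES or reaction strings once they are tokenized; only the tokenizer changes.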

Applications in Materials Science and Drug Discovery

This architecture is naturally suited for tasks that involve transforming one representation into another, which is common in scientific workflows.

- Retrosynthetic Planning: This is a quintessential sequence-to-sequence problem in chemistry. The model takes a target molecule (as a SMILES string) as input and generates a sequence representing the reactants and reagents needed for its synthesis, effectively working backward [11].

- Reaction Prediction: Predicting the products of a chemical reaction given the reactants and conditions can be framed as a translation task from "reactants + reagents" to "products" [11].

- Cross-Modal Translation: For example, translating between different molecular representations (e.g., from SMILES to a descriptive IUPAC name) or generating a synthesis procedure from a target molecule string.

Experimental Protocol: Retrosynthesis Planning with an Encoder-Decoder Model

Objective: To use a pre-trained encoder-decoder model to predict reactant molecules for a given target product molecule.

Materials and Reagents:

- Hardware: GPU server.

- Software: Python, PyTorch/TensorFlow, Transformers library, RDKit.

- Model: A sequence-to-sequence model pre-trained for chemical tasks, such as a T5 or BART model fine-tuned on reaction data.

- Data: A dataset of chemical reactions, such as the USPTO dataset, where each sample is a pair (product SMILES, reactants SMILES).

Procedure:

Input Preparation:

- The target product molecule is converted into a canonical SMILES string.

- The input is formatted as a string, often with a task prefix (e.g., "retrosynthesis: [TARGET_SMILES]").

Inference:

- The input string is encoded by the encoder component.

- The decoder starts with a beginning-of-sequence token and, conditioned on the encoder's output, generates the output sequence token-by-token in an autoregressive fashion.

- The generation continues until an end-of-sequence token is produced. The output is a string representing the predicted reactants and reagents.

Post-processing and Validation:

- The output string is parsed to extract the predicted reactant SMILES.

- The proposed reactants are validated chemically using RDKit to ensure they are valid molecules and that the proposed reaction is chemically plausible.
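
The input-preparation and post-processing steps can be sketched as two small helpers. This assumes the common convention (used in USPTO-derived datasets) that multiple reactants are written as one string separated by `.`; the prefix constant, function names, and the `is_valid` callable are illustrative assumptions. In practice `is_valid` would wrap a real parser such as RDKit's `Chem.MolFromSmiles`.

```python
from typing import Callable, List, Optional

TASK_PREFIX = "retrosynthesis: "  # assumed prefix; must match the fine-tuning data


def build_input(product_smiles: str) -> str:
    """Format the (already canonicalized) target SMILES as a model input."""
    return TASK_PREFIX + product_smiles.strip()


def parse_reactants(
    output: str, is_valid: Callable[[str], bool]
) -> Optional[List[str]]:
    """Split a generated string into reactant SMILES.

    Returns None if the output is empty or any component fails validation,
    so that malformed predictions can be discarded as a whole.
    """
    parts = [part.strip() for part in output.strip().split(".")]
    parts = [part for part in parts if part]
    if not parts or not all(is_valid(part) for part in parts):
        return None
    return parts
```

Rejecting the whole prediction when one component is invalid is a deliberately conservative choice: a partially valid reactant set is rarely useful for route planning. Checking that the reaction itself is plausible (for example, atom balance against the product) requires additional tooling beyond this sketch.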

Comparative Analysis and Architectural Selection

The table below provides a structured comparison of the three core architectures to guide model selection for scientific applications.

Table 1: Comparative Analysis of Transformer Architectures for Scientific Applications

| Feature | Encoder-Only (e.g., BERT, RoBERTa) | Decoder-Only (e.g., GPT, LLaMA) | Encoder-Decoder (e.g., T5, BART) |

|---|---|---|---|

| Core Mechanism | Bidirectional self-attention [12] | Causal (masked) self-attention [16] [12] | Encoder: Bidirectional attention. Decoder: Causal attention [12] |

| Primary Pretraining Task | Masked Language Modeling (MLM), Next Sentence Prediction (NSP) [13] [12] | Next Token Prediction [12] | Span corruption / Text infilling (e.g., T5) [12] |

| Typical Output | Contextual embeddings for each input token, or a pooled [CLS] embedding [13] [14] | A continuation of the input sequence (autoregressive) [16] | A newly generated sequence based on the input [12] |

| Key Scientific Applications | Property prediction, virtual screening, named entity recognition from literature [9] [15] | De novo molecular design, scientific Q&A, knowledge reasoning [11] [15] | Retrosynthesis planning, reaction prediction, cross-modal translation [11] |

| Computational Complexity | O(n²) for sequence length n [10] | O(n²) for sequence length n [16] | O(n² + m² + nm) for input length n and output length m, where the nm term comes from encoder-decoder attention [10] |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Resources for Transformer-Based Research

| Tool/Resource | Type | Primary Function | Relevance to Materials/Drug Discovery |

|---|---|---|---|

| Hugging Face Transformers | Software Library | Provides APIs and tools to download, train, and use thousands of pre-trained Transformer models [13] [12] | Drastically reduces the barrier to applying state-of-the-art models to scientific problems. |

| SMILES | Data Representation | A string-based notation system for representing molecular structures [11] [15] | The "language" for representing molecules as input to Transformer models. |

| RDKit | Software Library | Cheminformatics and machine learning tools for working with molecular data. | Used for validating generated SMILES, calculating molecular descriptors, and filtering results. |

| PyTorch / TensorFlow | Deep Learning Framework | Open-source libraries for building and training neural networks. | The foundational infrastructure for implementing, modifying, and training model architectures. |

| Graph Neural Networks (GNNs) | Model Architecture | Neural networks that operate directly on graph-structured data. | Often used in conjunction with Transformers (e.g., TxGNN [17]) to incorporate explicit topological knowledge from medical or molecular graphs. |

The integration of artificial intelligence into materials science is transforming traditional research paradigms. A significant challenge in applying supervised learning to experimental data is the scarcity of labeled datasets, as manual annotation by domain experts is both time-consuming and costly. This article details how self-supervised learning (SSL) provides a powerful framework to overcome this data bottleneck. By enabling models to learn meaningful representations directly from vast quantities of unlabeled data, SSL establishes a foundational pre-training step that significantly improves downstream task performance with minimal labeled examples. We present application notes and protocols for implementing SSL in the context of materials science, with a specific focus on particle segmentation in Scanning Electron Microscopy (SEM), and situate its utility within the broader objective of materials synthesis planning aided by foundation models.

The development of foundation models for materials science promises to accelerate the discovery and synthesis of novel materials. However, the "data challenge" remains a substantial obstacle. Supervised machine learning approaches require large, meticulously labeled datasets, which are often impractical to acquire in experimental disciplines. For instance, in particle sample analysis, manually annotating thousands of SEM images for segmentation is a prohibitively time-intensive process [18].

Self-supervised learning emerges as a critical solution to this impasse. SSL methods are designed to extract knowledge from raw, unlabeled data by defining a pretext task that the model solves using only the inherent structure of the data itself. This process generates rich, general-purpose feature representations that can be efficiently fine-tuned for specific downstream tasks—such as semantic segmentation, denoising, or classification—with remarkably few labeled examples [19]. This paradigm is particularly well-suited for materials science, where unlabeled data from instruments like SEMs are abundant, but labeled sets are not.

Leveraging SSL for pre-training is a decisive step towards building powerful foundation models for materials science. These models, pre-trained on diverse, multi-modal data, can form the core of autonomous analysis pipelines, ultimately feeding critical structural and property information into synthesis planning systems [20] [21].

Application Notes: SSL for SEM Particle Segmentation

The following application notes are derived from a benchmark study that curated a dataset of 25,000 SEM images to evaluate SSL techniques for particle detection [18].

Key Experimental Findings

The study demonstrated that SSL pre-training consistently enhances model performance across various experimental conditions. The table below summarizes the key quantitative results, highlighting the effectiveness of the ConvNeXtV2 architecture.

Table 1: Performance summary of self-supervised learning models for particle segmentation in SEM images.

| Model Architecture | Primary Downstream Task | Key Performance Metric | Result | Comparative Advantage |

|---|---|---|---|---|

| ConvNeXtV2 (Varying sizes) | Particle Detection & Segmentation | Relative Error Reduction | Up to 34% reduction | Outperformed other established SSL methods across different length scales [18]. |

| ConvNeXtV2 (Varying sizes) | Particle Detection & Segmentation | Data Efficiency | High performance maintained | An ablation study showed robust performance even with variations in dataset size, providing guidance on model selection for resource-limited settings [18]. |

| SSL-pretrained Model (General) | Multiple: Semantic Segmentation, Denoising, Super-resolution | Convergence & Performance | Faster convergence, higher accuracy | Lower-complexity fine-tuned models outperformed more complex models trained from random initialization [19]. |

The Scientist's Toolkit: Research Reagent Solutions

The following table lists essential computational components and their functions for implementing SSL in an SEM analysis workflow.

Table 2: Essential components for implementing self-supervised learning in SEM image analysis.

| Item / Component | Function in the SSL Workflow |

|---|---|

| Unlabeled SEM Image Dataset | The foundational "reagent"; a large collection of raw, unannotated images from Scanning Electron Microscopes used for pre-training [18]. |

| ConvNeXtV2 Architecture | A modern convolutional neural network backbone used to learn powerful feature representations from the unlabeled images during pre-training and fine-tuning [18]. |

| Pretext Task Framework | The specific self-supervised algorithm (e.g., contrastive learning, masked autoencoding) that creates a learning signal from unlabeled data [19]. |

| Curated Labeled Subset | A small, expert-annotated dataset used for fine-tuning the pre-trained model on specific tasks like particle segmentation [18]. |

Experimental Protocols

This section provides a detailed methodology for the primary experiments cited in the application notes, specifically the framework for evaluating SSL techniques on SEM images.

Protocol: SSL Pre-training and Fine-tuning for Particle Segmentation

Objective: To train and evaluate a model for segmenting particles in SEM images using self-supervised pre-training on unlabeled data followed by supervised fine-tuning on a small labeled dataset.

Materials and Software

- Dataset: A minimum of 25,000 unlabeled SEM images of particle samples for pre-training. A separate, curated set of several hundred to a few thousand labeled images for fine-tuning [18].

- Computing Environment: High-performance computing cluster with multiple GPUs (e.g., NVIDIA A100 or V100).

- Software Frameworks: Python 3.8+, PyTorch or TensorFlow, and libraries for scientific computing (NumPy, SciPy).

- Model Architecture: ConvNeXtV2 model implementation [18].

Procedure

Data Preprocessing (Pre-training Phase):

- Collect a large volume of unlabeled SEM images.

- Apply a series of stochastic augmentations to each image to create a positive pair. Standard augmentations include:

- Random cropping and resizing.

- Color jitter (adjust brightness, contrast, saturation, hue).

- Gaussian blur.

- Random grayscale conversion.

- Normalize the pixel values of all images.

Self-Supervised Pre-training:

- Initialize a ConvNeXtV2 model with random weights.

- The model is trained to solve the predefined pretext task. For example, in a contrastive learning framework like SimCLR, the objective is to maximize the agreement between the representations of the two augmented views of the same image while minimizing agreement with views from other images in the same batch.

- Train the model for a large number of epochs (e.g., 500-1000) on the unlabeled dataset using an optimizer like AdamW or LAMB.
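
For the contrastive example mentioned above, the objective can be written out as a short plain-Python sketch of the normalized temperature-scaled cross-entropy (NT-Xent) loss used in SimCLR. It operates on small lists of embedding vectors for clarity; a real training run would compute the same quantity on GPU tensors. The function names, the temperature default, and the toy embeddings are illustrative assumptions.

```python
import math


def cosine(u, v):
    """Cosine similarity between two vectors given as lists of floats."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nt_xent(views_a, views_b, temperature=0.5):
    """NT-Xent loss for a batch of paired views.

    views_a[i] and views_b[i] are embeddings of two augmentations of image i.
    Every other embedding in the batch acts as a negative.
    """
    z = list(views_a) + list(views_b)
    n = len(views_a)
    total = 0.0
    for i in range(2 * n):
        positive = (i + n) % (2 * n)              # index of the paired view
        logits = [
            cosine(z[i], z[k]) / temperature
            for k in range(2 * n)
            if k != i                             # exclude self-similarity
        ]
        log_denominator = math.log(sum(math.exp(x) for x in logits))
        total += log_denominator - cosine(z[i], z[positive]) / temperature
    return total / (2 * n)


if __name__ == "__main__":
    anchors = [[1.0, 0.0], [0.0, 1.0]]
    aligned = [[1.0, 0.1], [0.1, 1.0]]            # views that agree with anchors
    print(nt_xent(anchors, aligned))
```

The loss falls as paired views move closer together relative to the other images in the batch, which is the "maximize agreement" behaviour described in the procedure.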

Data Preparation (Fine-tuning Phase):

- Utilize the smaller, expert-labeled dataset where each pixel in an SEM image is annotated as either belonging to a particle or the background.

- Split this labeled data into training, validation, and test sets (e.g., 70/15/15).

Supervised Fine-tuning for Segmentation:

- Replace the pre-training head (e.g., the projection network) of the pre-trained model with a new segmentation head, typically a convolutional decoder.

- Initialize the encoder weights with those obtained from the pre-training phase.

- Train the entire model (or sometimes just the decoder) on the labeled training set. The objective is now a standard supervised segmentation task, using a loss function like Dice loss or Cross-Entropy.

- Use the validation set for hyperparameter tuning and to select the best model checkpoint.

Model Evaluation:

- Evaluate the final model on the held-out test set.

- Report standard segmentation metrics such as Intersection over Union (IoU), Dice coefficient, and pixel-level accuracy.

- Compare the performance against a model of the same architecture trained from scratch on the limited labeled dataset to quantify the benefit of SSL pre-training.
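
The segmentation metrics named above follow directly from pixel-level confusion counts. The sketch below computes them for binary masks stored as nested lists (1 for particle, 0 for background); the function names are illustrative, and the convention of returning 1.0 when both masks are empty is an assumption that should be matched to whatever evaluation code is being compared against.

```python
def confusion_counts(pred, truth):
    """Count true/false positives and negatives over two binary masks."""
    tp = fp = fn = tn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                tp += 1
            elif p:
                fp += 1
            elif t:
                fn += 1
            else:
                tn += 1
    return tp, fp, fn, tn


def segmentation_metrics(pred, truth):
    """Return IoU, Dice coefficient, and pixel accuracy for binary masks."""
    tp, fp, fn, tn = confusion_counts(pred, truth)
    union = tp + fp + fn
    # Assumed convention: two empty masks count as a perfect match.
    iou = tp / union if union else 1.0
    dice = 2 * tp / (2 * tp + fp + fn) if union else 1.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {"iou": iou, "dice": dice, "pixel_accuracy": accuracy}


if __name__ == "__main__":
    predicted = [[1, 1], [0, 0]]
    annotated = [[1, 0], [0, 0]]
    print(segmentation_metrics(predicted, annotated))
```

Pixel accuracy can look high on images dominated by background, so IoU and Dice are usually the more informative numbers when comparing the pre-trained model against the from-scratch baseline.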

Troubleshooting

- Model Collapse during Pre-training: If the model fails to learn diverse representations, adjust the strength of the data augmentations and review the contrastive loss formulation.

- Poor Fine-tuning Results: Ensure that the pre-training and fine-tuning data come from a similar distribution. If the labeled set is too small, consider freezing the encoder layers during the initial stages of fine-tuning.

Visualizing the SSL Workflow for Materials Science

The following diagram illustrates the end-to-end process of self-supervised pre-training and its application to downstream tasks in materials analysis.

Diagram 1: SSL workflow for materials analysis.

Integration with Materials Synthesis Planning

The utility of SSL extends beyond image analysis to the core challenge of synthesis planning. Foundation models for synthesis, such as the LLM-driven framework for quantum dots [20] or the DiffSyn model for zeolites [21], rely on high-quality, structured data. SSL plays a pivotal role in populating these models with accurate information.

For example, an SSL model pre-trained on millions of unlabeled SEM images can be fine-tuned to automatically characterize the morphology, size distribution, and crystallinity of a synthesized powder. This quantitative data regarding synthesis outcome is a critical feedback loop for planning models. By automating the analysis of experimental outcomes, SSL-powered tools accelerate the validation of proposed synthesis routes and enrich the datasets needed to train more accurate and robust synthesis foundation models. This creates a virtuous cycle: better data from SSL-enhanced analysis leads to better synthesis predictions, which in turn guides more efficient experiments [18] [21].

The "Valley of Death" in materials science represents the critical gap between laboratory research discoveries and their successful translation into commercially viable applications. Traditional materials development has been characterized by a "trial-and-error" approach that often consumes 10-15 years and substantial resources to bring a new material from discovery to market implementation [22] [23]. This extended timeline presents significant challenges for industries ranging from pharmaceuticals and energy to electronics and aerospace, where rapid innovation is essential for maintaining competitive advantage. The integration of artificial intelligence, particularly foundation models, is fundamentally transforming this paradigm by accelerating the entire materials development pipeline from initial discovery through synthesis optimization and scale-up.

Foundation models are demonstrating remarkable capabilities in bridging this innovation valley by addressing core challenges in materials synthesis planning. These AI systems leverage retrieval-augmented generation (RAG), multi-agent reasoning, and human-in-the-loop collaboration to shorten development timelines that have traditionally run to a decade or more [24] [25]. The emergence of specialized AI platforms capable of natural language interaction, automated experiment design, and real-time optimization is creating a new research ecosystem where human expertise is amplified rather than replaced. This application note examines the specific protocols, workflows, and reagent solutions that are enabling this shift in materials development, with particular emphasis on their implementation within research environments focused on synthesis planning.

AI Foundation Models for Materials Research

Capabilities and Performance Metrics

Table 1: Foundation Model Capabilities in Materials Research

| Model/Platform | Primary Function | Key Performance Metrics | Application Examples |

|---|---|---|---|

| Chemma (Shanghai Jiao Tong University) | Organic synthesis planning and optimization | 72.2% Top-1 accuracy in single-step retrosynthesis (USPTO-50k); 67% isolated yield in unreported N-heterocyclic cross-coupling achieved in 15 experiments [26] | Suzuki-Miyaura cross-coupling reaction optimization; ligand and solvent screening |

| MatPilot (National University of Defense Technology) | AI materials scientist with human-machine collaboration | Automated experimental platforms reducing manual intervention by >70%; improved consistency and precision in material preparation, sintering, and characterization [24] | Ceramic materials research via solid-state sintering automation; knowledge graph construction from scientific literature |

| GNoME (Google DeepMind) | Crystalline material discovery | Prediction of 2.2 million new crystal structures with ~380,000 deemed stable; 736 structures experimentally validated [27] | Novel stable crystal structure identification for electronics and energy applications |

| Panshi (磐石; Chinese Academy of Sciences) | Scientific foundation model for multi-modal data | Enabled non-specialist team to complete high-entropy alloy (HEA) catalyst design with guidance from domain experts [28] | Cross-disciplinary material design; integration of domain knowledge with AI reasoning |
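
Top-k accuracy figures for single-step retrosynthesis, such as the Top-1 value in the table, are conventionally computed as the fraction of test reactions whose reference reactant set appears among the model's k highest-ranked predictions. The sketch below shows one way to compute this; it assumes exact string matching after an optional canonicalization step and order-independent comparison of `.`-separated reactants. The exact protocol used in the cited work is not described here, and the function names are illustrative.

```python
def normalize(smiles, canonicalize=lambda s: s):
    """Canonicalize each component and sort, so reactant order is ignored."""
    return ".".join(sorted(canonicalize(part) for part in smiles.split(".")))


def top_k_accuracy(predictions, references, k=1, canonicalize=lambda s: s):
    """Fraction of references found among the first k ranked predictions.

    predictions: one ranked list of candidate strings per test reaction.
    references:  one ground-truth reactant string per test reaction.
    canonicalize: optional callable mapping a SMILES to canonical form
                  (for example, a wrapper around a cheminformatics toolkit).
    """
    hits = 0
    for ranked, reference in zip(predictions, references):
        target = normalize(reference, canonicalize)
        if any(normalize(c, canonicalize) == target for c in ranked[:k]):
            hits += 1
    return hits / len(references)


if __name__ == "__main__":
    # Toy predictions and references (assumed values for illustration only).
    preds = [["CCO.CC(=O)O", "CCO"], ["c1ccccc1", "CCN"], ["CC"]]
    refs = ["CC(=O)O.CCO", "CCN", "CCC"]
    print(top_k_accuracy(preds, refs, k=1), top_k_accuracy(preds, refs, k=2))
```

Because the same molecule can be written as many different SMILES strings, skipping canonicalization tends to understate accuracy; supplying a real canonicalizer is therefore important when comparing against published numbers.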

Technical Architectures for Synthesis Planning

Foundation models for materials science employ sophisticated architectures that integrate domain-specific knowledge with general reasoning capabilities. The Chemma model exemplifies this approach by treating chemical reactions as natural language tasks, enabling the model to learn structural patterns and relationships from SMILES sequences and reaction data [26]. This architecture allows the model to perform multiple critical functions within the synthesis planning workflow, including forward reaction prediction, retrosynthetic analysis, condition recommendation, and yield prediction without requiring quantum chemistry calculations.

The MatPilot system demonstrates an alternative approach centered on human-machine collaboration through a multi-agent framework [24]. Its architecture comprises two core modules: a cognitive module for information processing, data analysis, and decision-making, and an execution module responsible for operating automated experimental platforms. This dual-module design enables continuous iteration between hypothesis generation and experimental validation, creating a closed-loop system for materials development. The cognitive module employs specialized agents for exploration (divergent thinking), evaluation (feasibility analysis), and integration (coordinating diverse perspectives), which work in concert with human researchers to generate innovative research directions and practical experimental protocols.

Experimental Protocols for AI-Driven Materials Development

Protocol 1: Human-AI Collaborative Synthesis Planning

Purpose: To establish a standardized methodology for integrating foundation models into organic synthesis planning through natural language interaction and iterative experimental validation.

Materials and Equipment:

- Foundation model access (e.g., Chemma platform, MatPilot system)

- Automated synthesis workstation with liquid handling capabilities

- Analytical instrumentation (HPLC, NMR, MS)

- Solvent and reagent libraries

- Ligand and catalyst collections

Procedure:

- Reaction Definition: Input target molecule SMILES notation or structural drawing into foundation model interface with specific performance requirements (e.g., yield thresholds, selectivity criteria).

- Retrosynthetic Analysis: Model generates multiple retrosynthetic pathways with associated confidence scores and recommended reaction conditions.

- Route Evaluation: Human experts review proposed pathways based on feasibility, cost, safety, and available resources, selecting optimal route for experimental validation.

- Condition Optimization: Model recommends specific reaction conditions including catalyst/ligand combinations, solvents, temperatures, and concentrations based on similar transformations in its training corpus.

- Experimental Execution: Conduct small-scale reactions using automated synthesis platforms with real-time monitoring capabilities.

- Data Feedback: Input experimental results (yields, selectivity, purity) back into model for continuous learning and refinement.

- Iterative Refinement: Model adjusts recommendations based on experimental outcomes, focusing search space on promising regions of chemical parameter space.

Validation Metrics:

- Yield improvement over traditional methods

- Reduction in required optimization cycles

- Success rate in predicting viable synthetic routes

- Time and resource savings compared to conventional approaches

Table 2: Performance Benchmarks for AI-Driven Synthesis Planning

| Metric | Traditional Approach | AI-Augmented Approach | Improvement |

|---|---|---|---|

| Synthetic route identification time | 2-4 weeks literature review | <24 hours model inference | 85-95% reduction [26] |

| Experimental optimization cycles | 50-100 iterations | 10-15 iterations | 70-80% reduction [26] |

| Success rate for novel reactions | 25-40% initial success | 65-75% initial success | 40-50% improvement [29] |

| Material cost per optimization | $5,000-15,000 | $1,000-3,000 | 70-80% reduction [22] |

Protocol 2: Autonomous Materials Discovery and Optimization

Purpose: To implement a closed-loop materials development system combining AI-driven design with automated experimental validation for accelerated discovery of novel materials.

Materials and Equipment:

- High-throughput synthesis platform (e.g., automated pipetting, robotic arms)

- Multi-modal characterization tools (XRD, SEM, spectroscopy)

- Computational resources for simulation and modeling

- Raw material libraries with diverse chemical compositions

- AI platform with multi-objective optimization capabilities

Procedure:

- Design Space Definition: Specify target material properties and constraints (e.g., thermal stability, conductivity, mechanical strength).

- Generative Design: Foundation model proposes novel material compositions or structures predicted to meet target specifications.

- Synthesis Planning: AI system develops optimized synthesis protocols for proposed materials, including precursor preparation, reaction conditions, and processing parameters.

- Automated Synthesis: Robotic platforms execute synthesis protocols with minimal human intervention, ensuring reproducibility and precise control.

- High-Throughput Characterization: Synthesized materials undergo automated structural and functional characterization to determine key properties.

- Data Integration: Experimental results are fed back into AI model to refine predictions and update design rules.

- Active Learning: Model identifies most informative experiments to perform next, maximizing knowledge gain while minimizing experimental effort.

- Lead Identification: Promising candidates advance to validation and scale-up studies.
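
The feedback portion of this procedure (run an experiment, integrate the result, choose the next experiment) can be sketched as a simple loop. This is a toy illustration under strong assumptions rather than the method of any platform named in this note: the surrogate is a nearest-neighbour lookup, the acquisition rule adds a distance-based exploration bonus, and `simulated_yield` is a hypothetical stand-in for a real synthesis and characterization run.

```python
import math
import random


def simulated_yield(point):
    """Hypothetical experiment: a smooth response peaked at (0.5, 0.75)."""
    temperature, concentration = point
    return 100 * math.exp(-((temperature - 0.5) ** 2 + (concentration - 0.75) ** 2) / 0.1)


def run_closed_loop(candidates, run_experiment, n_initial=3, n_rounds=12,
                    explore=0.5, seed=0):
    """Iteratively pick and run experiments from a finite candidate list.

    candidates: list of tuples of normalized process parameters.
    run_experiment: callable returning the measured objective for a candidate.
    """
    rng = random.Random(seed)
    # Seed the loop with a few randomly chosen experiments.
    measured = {c: run_experiment(c) for c in rng.sample(candidates, n_initial)}

    for _ in range(n_rounds):
        untested = [c for c in candidates if c not in measured]
        if not untested:
            break

        def score(candidate):
            # Surrogate: value of the nearest measured point, plus a bonus
            # that grows with distance to encourage exploration.
            nearest = min(measured, key=lambda m: math.dist(candidate, m))
            return measured[nearest] + explore * math.dist(candidate, nearest)

        chosen = max(untested, key=score)
        measured[chosen] = run_experiment(chosen)   # data integration step

    best = max(measured, key=measured.get)
    return best, measured


if __name__ == "__main__":
    grid = [0.0, 0.25, 0.5, 0.75, 1.0]
    space = [(t, c) for t in grid for c in grid]
    best, history = run_closed_loop(space, simulated_yield)
    print(best, round(history[best], 1), len(history))
```

In a production system the nearest-neighbour surrogate would typically be replaced by a learned model with uncertainty estimates, and `run_experiment` would dispatch to the automated synthesis and characterization hardware.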

Validation Metrics:

- Number of novel materials discovered per unit time

- Accuracy of property predictions

- Reproducibility of synthesis protocols

- Performance against application-specific benchmarks

Figure 1: Autonomous Materials Discovery Workflow - This diagram illustrates the closed-loop system for AI-driven materials discovery, integrating computational design with automated experimental validation.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for AI-Driven Materials Development

| Reagent/Category | Function | Example Applications | AI Integration |

|---|---|---|---|

| Polymer & Resin Libraries | Base materials for composite development | High-performance resins for aerospace applications; membrane materials for separation technologies | AI screening of structure-property relationships to identify candidates with optimal thermal, mechanical, and processing characteristics [22] |

| High-Entropy Alloy Precursors | Metallic material systems with tailored properties | Catalyst design; corrosion-resistant coatings; high-strength structural materials | AI-driven composition optimization to navigate complex multi-element phase spaces and predict stable configurations [28] |

| Ligand & Catalyst Libraries | Reaction acceleration and selectivity control | Cross-coupling reactions; asymmetric synthesis; polymerization catalysts | Foundation model recommendation of optimal catalyst/ligand combinations for specific transformations based on chemical similarity and electronic parameters [26] |

| Solvent & Additive Collections | Reaction medium and performance modifiers | Optimization of reaction kinetics and selectivity; material processing and formulation | AI-guided solvent selection based on computational descriptors (polarity, hydrogen bonding, coordination strength) to maximize yield and purity [29] |

| Characterization Standards | Reference materials for analytical calibration | Quantitative analysis; instrument validation; method development | Automated quality control through AI-powered analysis of spectral data and comparison to reference standards [24] |

Implementation Framework and Workflow Integration

Protocol 3: Knowledge Extraction and Literature-Based Discovery

Purpose: To systematically extract and structure knowledge from scientific literature for training foundation models and guiding experimental programs.

Materials and Equipment:

- Scientific literature corpus (patents, journals, technical reports)

- Natural language processing pipelines

- Knowledge graph construction tools

- Domain-specific ontologies and taxonomies

Procedure:

- Corpus Assembly: Collect comprehensive set of domain-relevant scientific documents from databases and repositories.

- Information Extraction: Deploy NLP models to identify and extract key entities (materials, synthesis methods, properties, applications) and their relationships.

- Knowledge Distillation: Condense complex scientific information into core concepts and relationships suitable for model training.

- Graph Construction: Build structured knowledge graphs representing materials, processing methods, and performance attributes.

- Quality Validation: Expert review of extracted knowledge for accuracy and completeness.

- Model Integration: Incorporate structured knowledge into foundation models through fine-tuning or retrieval-augmented generation.

- Continuous Updating: Establish pipelines for incorporating new publications to maintain knowledge currency.
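
The graph-construction and model-integration steps can be illustrated with a very small in-memory knowledge graph built from (subject, relation, object) triples. This is a sketch only: the class, the relation names, the document identifiers, and the example facts are hypothetical placeholders, and the information-extraction step that would produce the triples is not shown.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Minimal triple store that keeps the source document of each fact."""

    def __init__(self):
        # (subject, relation) -> set of (object, source_id)
        self._edges = defaultdict(set)

    def add(self, subject, relation, obj, source):
        """Record one extracted fact together with its provenance."""
        self._edges[(subject, relation)].add((obj, source))

    def query(self, subject, relation):
        """Return the distinct objects linked to a subject by a relation."""
        return sorted({obj for obj, _ in self._edges.get((subject, relation), ())})

    def sources(self, subject, relation, obj):
        """Return the documents that support a specific fact."""
        facts = self._edges.get((subject, relation), ())
        return sorted(src for found, src in facts if found == obj)

    def as_context(self, subject):
        """Render all facts about a subject as plain text for a model prompt."""
        lines = []
        for (subj, relation), facts in sorted(self._edges.items()):
            if subj == subject:
                for obj in sorted({o for o, _ in facts}):
                    lines.append(f"{subj} {relation} {obj}")
        return "\n".join(lines)


if __name__ == "__main__":
    graph = KnowledgeGraph()
    # Hypothetical extracted triples with placeholder document ids.
    graph.add("BaTiO3", "synthesized_by", "solid-state sintering", "doc-001")
    graph.add("BaTiO3", "synthesized_by", "solid-state sintering", "doc-002")
    graph.add("BaTiO3", "has_property", "ferroelectric", "doc-001")
    print(graph.as_context("BaTiO3"))
```

Keeping the source identifier on every edge supports the expert review step, since a reviewer can trace each fact back to the documents it came from, and the text rendering gives one simple route to retrieval-augmented prompting.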

Validation Metrics:

- Precision and recall of information extraction

- Coverage of domain knowledge

- Utility in guiding successful experiments

- Reduction in literature review time

Figure 2: Knowledge Extraction and Structuring Pipeline - This workflow demonstrates the process of transforming unstructured scientific literature into structured knowledge for foundation model training.

Case Studies and Performance Validation

Case Study: High-Performance Polymer Development

The application of AI-driven approaches to polymer development demonstrates significant acceleration across the entire research-to-application pipeline. Researchers at East China University of Science and Technology developed an AI platform that has reduced screening experiments by 90% while identifying novel polymer compositions with enhanced thermal and mechanical properties [22]. Traditional methods required hundreds of experiments to optimize the balance between heat resistance, mechanical strength, and processability, whereas the AI platform achieved comparable results with dramatically reduced experimental effort.

Implementation Protocol:

- Database Construction: Compiled 2.6 million polymer property data points and 1.4 million chemical reaction records

- Model Training: Developed specialized AI models to identify structure-property relationships beyond human intuition

- Virtual Screening: AI platform screened thousands of potential monomer combinations in silico

- Experimental Validation: Top candidates synthesized and characterized, confirming predicted properties

- Application Testing: Successful deployment in aerospace components with performance exceeding conventional materials

Results: The AI-designed high-temperature polysilylacetylene imide resin demonstrated superior processing characteristics and thermal resistance compared to traditional polyimides, with verification in aerospace applications [22]. This approach compressed a development timeline that traditionally required 5-7 years into approximately 18 months, effectively bridging the valley of death through computational acceleration.

Case Study: Organic Molecule Synthesis Optimization

The Chemma model developed by Shanghai Jiao Tong University exemplifies how foundation models can accelerate reaction optimization and condition screening [26]. In one demonstration, the model was applied to an unreported N-heterocyclic cross-coupling reaction, where it identified optimal reaction conditions in only 15 experiments, achieving a 67% isolated yield.

Implementation Protocol:

- Reaction Specification: Defined target transformation and performance criteria

- Condition Generation: Model proposed initial set of reaction conditions based on chemical similarity and learned patterns

- Experimental Execution: Reactions performed using automated synthesis platforms

- Data Integration: Results fed back to model for continuous learning

- Active Learning: Model refined recommendations based on accumulated data

- Validation: Optimal conditions confirmed through repetition and scale-up
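
The propose, run, and update cycle in the protocol above can be sketched as a small closed loop. This is a toy illustration under stated assumptions, not the Chemma method: the search space, the `run_experiment` stand-in (a made-up additive yield function), and the additive-means surrogate in `predict` are all hypothetical.

```python
import itertools

# Hypothetical discrete search space; all level names are placeholders.
SPACE = {
    "ligand": ["L1", "L2", "L3", "L4"],
    "base": ["B1", "B2", "B3"],
    "temp_c": [60, 80, 100],
}


def run_experiment(cond):
    """Stand-in for a real reaction: returns a toy 'yield' in percent."""
    ligand_effect = {"L1": 10, "L2": 25, "L3": 40, "L4": 15}[cond["ligand"]]
    base_effect = {"B1": 5, "B2": 20, "B3": 10}[cond["base"]]
    temp_effect = {60: 0, 80: 10, 100: 4}[cond["temp_c"]]
    return ligand_effect + base_effect + temp_effect


def all_conditions():
    keys = list(SPACE)
    for values in itertools.product(*(SPACE[k] for k in keys)):
        yield dict(zip(keys, values))


def predict(cond, history):
    """Additive surrogate: sum, over factors, of the mean observed yield at that level."""
    overall = sum(y for _, y in history) / len(history)
    score = 0.0
    for key, level in cond.items():
        observed = [y for c, y in history if c[key] == level]
        # A level never tested so far falls back to the overall mean.
        score += sum(observed) / len(observed) if observed else overall
    return score


def optimize(budget=15, batch_size=5):
    candidates = list(all_conditions())
    # Seed round: spread the first batch evenly over the enumerated space.
    step = len(candidates) // batch_size
    batch = candidates[::step][:batch_size]
    history = []
    while batch and len(history) < budget:
        for cond in batch:                      # experimental execution
            history.append((cond, run_experiment(cond)))
        tested = [c for c, _ in history]        # data integration
        untested = [c for c in candidates if c not in tested]
        # Refinement: rank untested conditions by the updated surrogate.
        untested.sort(key=lambda c: predict(c, history), reverse=True)
        batch = untested[: min(batch_size, budget - len(history))]
    best = max(history, key=lambda item: item[1])
    return best, history


best, history = optimize(budget=15, batch_size=5)
```

In this toy setting the loop tests 15 of the 36 possible conditions. A real campaign would replace `run_experiment` with an automated synthesis platform and `predict` with the model's own recommendations, and would repeat the best condition to confirm it.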

Results: The AI-driven approach reduced the number of required experiments by approximately 70% compared to traditional optimization methods while achieving commercially viable yields [26]. This demonstrates the powerful role foundation models can play in accelerating process development, a critical bottleneck in the translation of new molecular entities to practical applications.

The integration of foundation models into materials synthesis planning represents a paradigm shift in how we approach the "Valley of Death" in materials development. The case studies presented in this application note report reductions of roughly 70-80% in development time or experiment count: the polymer program compressed a 5-7 year timeline into approximately 18 months, and the Chemma-guided optimization required approximately 70% fewer experiments than traditional methods [22] [26]. The key to successful implementation lies in establishing robust workflows that integrate computational prediction with experimental validation, so that each round of experimental results improves the next round of predictions.

Looking forward, the field is evolving toward increasingly autonomous research systems where AI not only recommends experiments but also plans and executes them through robotic platforms [29] [24]. The emergence of specialized foundation models trained on scientific data rather than general corpora will further enhance predictive accuracy and practical utility. As these technologies mature, we anticipate a fundamental restructuring of materials research workflows, with AI systems serving as collaborative partners that augment human creativity with computational scale and precision. This collaborative human-AI research paradigm promises to significantly compress the innovation timeline, transforming the "Valley of Death" into a manageable transition that can be navigated with unprecedented speed and efficiency.

How Foundation Models Plan and Optimize Materials Synthesis

Within the paradigm of foundation models for materials discovery, the representation of chemical structures is a fundamental prerequisite. The conversion of molecular entities into machine-readable formats enables the application of advanced artificial intelligence to tasks such as property prediction, synthesis planning, and generative molecular design [1]. Foundation models, trained on broad data and adaptable to a wide range of downstream tasks, rely heavily on the quality and expressiveness of their input data [1]. The choice of representation—whether string-based notations like SMILES and SELFIES, or graph-based structures—directly influences a model's ability to learn accurate structure-property relationships and generate valid, novel materials [30]. This document provides detailed application notes and experimental protocols for employing these key molecular representations in the context of materials synthesis planning research.

Molecular Representation Modalities: A Comparative Analysis

The selection of a molecular representation imposes specific inductive biases on machine learning models. The following table summarizes the core characteristics, advantages, and limitations of the primary modalities used in chemical foundation models.

Table 1: Comparison of Primary Molecular Representation Modalities

| Representation | Data Structure | Key Advantages | Inherent Limitations | Common Downstream Tasks |
|---|---|---|---|---|
| SMILES [31] | String (1D) | Human-readable; Simple syntax; Wide adoption in databases. | Can generate invalid strings; Ambiguity in representing isomers. | Property Prediction, Chemical Language Modeling. |
| SELFIES [31] [32] | String (1D) | 100% syntactic validity; Robustness in generative models. | Less human-readable; Relatively newer, with fewer pre-trained models. | Generative Molecular Design, Robust Inverse Design. |
| Molecular Graph [33] [30] | Graph (2D/3D) | Explicitly encodes topology; Naturally captures connectivity and functional groups. | Requires specialized model architectures (e.g., GNNs). | Quantum Property Prediction, Interaction Modeling. |
| Quantum-Informed Graph (e.g., SIMG) [33] | Graph (3D+) | Incorporates orbital interactions and stereoelectronic effects; High physical fidelity. | Computationally expensive to generate for large molecules. | Accurate Spectroscopy Prediction, Catalysis Design. |
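
To make the "can generate invalid strings" limitation of SMILES concrete, the sketch below flags two common syntax failures in generated strings: unbalanced branch parentheses and ring-bond labels that are opened but never closed. The function name `smiles_syntax_problems` is an assumption, and the check is deliberately partial: it does not verify valence, aromaticity, or element symbols, for which a cheminformatics toolkit would be needed.

```python
def smiles_syntax_problems(smiles):
    """Return a list of simple syntax problems found (empty list = none found)."""
    problems = []
    depth = 0            # current branch nesting depth
    open_rings = set()   # ring-bond labels opened but not yet closed
    in_bracket = False   # inside a bracket atom such as [13CH4] or [NH4+]
    i = 0
    while i < len(smiles):
        ch = smiles[i]
        if in_bracket:
            # Digits inside brackets are isotopes, H counts, or charges, not ring bonds.
            if ch == "]":
                in_bracket = False
        elif ch == "[":
            in_bracket = True
        elif ch == "(":
            depth += 1
        elif ch == ")":
            if depth == 0:
                problems.append("unmatched ')'")
            else:
                depth -= 1
        elif ch == "%":
            label = smiles[i + 1 : i + 3]   # two-digit ring label, e.g. %12
            if len(label) == 2 and label.isdigit():
                open_rings ^= {label}       # toggle: first use opens, second closes
                i += 2
            else:
                problems.append("malformed '%' ring label")
        elif ch.isdigit():
            open_rings ^= {ch}
        i += 1
    if in_bracket:
        problems.append("unclosed '['")
    if depth > 0:
        problems.append("unclosed '('")
    if open_rings:
        problems.append("unclosed ring bond(s): " + ", ".join(sorted(open_rings)))
    return problems


benzene_ok = smiles_syntax_problems("c1ccccc1")       # well-formed ring
benzene_broken = smiles_syntax_problems("c1ccccc")    # ring label 1 never closed
acid_broken = smiles_syntax_problems("CC(=O")         # branch never closed
```

A generative model that emits SMILES character by character can produce strings like the two broken examples, which is the failure mode that SELFIES is designed to avoid.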

Quantitative performance comparisons between these representations are essential for informed selection. The table below summarizes benchmark results from tokenization and property prediction studies.

Table 2: Quantitative Performance Benchmarks of Molecular Representations

| Representation | Tokenizer / Model | Dataset(s) | Performance Metric (ROC-AUC) | Key Finding |
|---|---|---|---|---|
| SMILES [31] | Atom Pair Encoding (APE) | HIV, Tox21, BBBP | ~0.820 (Average) | APE with SMILES outperformed BPE by preserving chemical context. |
| SELFIES [31] | Byte Pair Encoding (BPE) | HIV, Tox21, BBBP | ~0.800 (Average) | Robust against mutations, but performance lagged behind SMILES+APE. |
| Multi-View (SMILES, SELFIES, Graph) [34] | MoL-MoE (k=4 experts) | Multiple MoleculeNet | State-of-the-Art | Integration of multiple representations yields superior and robust performance. |
| Stereoelectronics-Infused Molecular Graph (SIMG) [33] | Custom GNN | Quantum Chemical | High Accuracy with Limited Data | Explicit quantum-chemical information enables high performance with small datasets. |
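
The ROC-AUC metric used in Table 2 is the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counted as one half. A minimal pure-Python sketch of that definition follows; the labels and scores are made-up toy values, not data from the benchmarks above.

```python
def roc_auc(labels, scores):
    """ROC-AUC via pairwise comparison of positive and negative scores."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("ROC-AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0      # positive ranked above negative
            elif p == n:
                wins += 0.5      # tie counts as half
    return wins / (len(positives) * len(negatives))


# Toy binary labels (1 = active) and model scores, for illustration only.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.1]
auc = roc_auc(labels, scores)
```

In this toy case 8 of the 9 positive-negative pairs are ordered correctly, so the AUC is 8/9. The pairwise loop is quadratic in dataset size; for large benchmarks a rank-based implementation from a standard library is the usual choice.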

Application Notes and Experimental Protocols

Protocol 1: Tokenization of String-Based Representations for Chemical Language Models

Objective: To convert SMILES or SELFIES strings into sub-word tokens suitable for training or fine-tuning transformer-based foundation models (e.g., BERT architectures) for tasks such as property classification.

Materials and Reagents:

- Hardware: Standard workstation with a GPU (e.g., NVIDIA series with ≥8GB VRAM).

- Software: Python 3.8+, PyTorch or TensorFlow, Hugging Face Transformers library, Tokenizers library.

- Data: A dataset of molecular structures in SMILES or SELFIES format (e.g., from PubChem or ZINC [1]).

Procedure:

- Data Preprocessing: Standardize the molecular strings (e.g., canonicalize SMILES) and split the dataset into training, validation, and test sets (e.g., 80/10/10).

- Tokenizer Selection and Training:

  - Byte Pair Encoding (BPE): Utilize a standard BPE tokenizer (e.g., from the Hugging Face library) to learn a vocabulary from the training corpus of strings [31].

  - Atom Pair Encoding (APE): Implement the APE tokenizer, which is designed to keep chemical entities like atoms and functional groups intact, thus preserving chemical contextual relationships [31].

- Model Training/Fine-tuning: Employ a transformer encoder (e.g., BERT) with the trained tokenizer. Use Masked Language Modeling (MLM) for pre-training or directly fine-tune on a downstream task using a task-specific head.

- Evaluation: Benchmark the model on the held-out test set using domain-relevant metrics such as ROC-AUC for classification tasks.
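
The tokenizer step of this protocol can be illustrated without any external library. The sketch below first splits SMILES into atom-level tokens with a regular expression, so that multi-character atoms such as `Cl`, `Br`, and bracket atoms are never broken apart, and then learns BPE-style merges on top of those tokens. It is a simplified teaching example, not the APE algorithm of [31] and not the Hugging Face implementation; the regular expression covers only common SMILES symbols, and the five-molecule corpus is a toy.

```python
import re
from collections import Counter

# Atom-level pattern: bracket atoms, two-letter halogens, two-digit ring labels,
# single-letter atoms, ring digits, then bond/branch symbols (a simplification).
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[()=#\-+/\\.:@*~$]")


def tokenize(smiles):
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in {smiles!r}")
    return tokens


def merge_pair(seq, pair):
    """Replace each non-overlapping occurrence of `pair` with one merged token."""
    merged, i = [], 0
    while i < len(seq):
        if i + 1 < len(seq) and (seq[i], seq[i + 1]) == pair:
            merged.append(seq[i] + seq[i + 1])
            i += 2
        else:
            merged.append(seq[i])
            i += 1
    return merged


def learn_bpe_merges(corpus, num_merges):
    """Greedily merge the most frequent adjacent token pair, num_merges times."""
    sequences = [tokenize(s) for s in corpus]
    merges = []
    for _ in range(num_merges):
        pair_counts = Counter()
        for seq in sequences:
            pair_counts.update(zip(seq, seq[1:]))
        if not pair_counts:
            break
        best_pair, count = pair_counts.most_common(1)[0]
        if count < 2:            # nothing left that repeats
            break
        merges.append(best_pair)
        sequences = [merge_pair(seq, best_pair) for seq in sequences]
    return merges


def encode(smiles, merges):
    seq = tokenize(smiles)
    for pair in merges:          # apply merges in the order they were learned
        seq = merge_pair(seq, pair)
    return seq


corpus = ["CC(=O)O", "CC(=O)Cl", "CC(=O)N", "c1ccccc1", "c1ccccc1Br"]
merges = learn_bpe_merges(corpus, num_merges=5)
encoded = encode("CC(=O)Cl", merges)
```

Note that plain frequency-based merging can fuse an atom with a neighboring parenthesis or bond symbol, which is the kind of chemically arbitrary token that motivates chemistry-aware schemes such as APE.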

Protocol 2: Implementing a Multi-View Mixture-of-Experts (MoL-MoE) Framework

Objective: To integrate multiple molecular representations (SMILES, SELFIES, molecular graphs) into a single predictive model for enhanced accuracy and robustness in property prediction [34].

Materials and Reagents:

- Hardware: High-performance computing node with multiple GPUs.

- Software: Deep learning framework (PyTorch/TensorFlow), libraries for graph neural networks (e.g., PyTorch Geometric), MoE implementations.

- Data: Curated molecular datasets with associated properties (e.g., from MoleculeNet).

Procedure:

- Multi-Modal Data Preparation: For each molecule in the dataset, generate the three input modalities:

  - A canonical SMILES string.

  - Its corresponding SELFIES string.

  - A molecular graph object with nodes (atoms) and edges (bonds).

- Expert Network Construction: Create three separate groups of expert networks. Each group contains several "expert" sub-networks specialized in processing one of the three modalities (e.g., Transformers for SMILES/SELFIES, GNNs for graphs).

- Gating Network and Routing: Implement a gating network that takes a fused representation of the inputs and dynamically routes data to the top-k most relevant experts (e.g., k=4) from across all modalities [34].

- End-to-End Training: Train the entire MoL-MoE model, including the gating network and all experts, in an end-to-end manner on the target property prediction task.

- Analysis: Examine the gating network's routing patterns to understand which representations are prioritized for specific chemical tasks.
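
The routing step of this protocol, selecting the top-k experts and combining their outputs, can be sketched in a few lines. This is a generic top-k mixture-of-experts gate under stated assumptions, not the MoL-MoE implementation of [34]: in a real model the gate logits and expert outputs come from trained networks, whereas here they are hard-coded placeholder numbers, and the layout of four experts per modality is assumed for illustration.

```python
import math


def top_k_gate(logits, k):
    """Keep the k largest gate logits and softmax over them; other experts get no weight."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)
    exps = {i: math.exp(logits[i] - peak) for i in top}   # subtract peak for stability
    total = sum(exps.values())
    return {i: value / total for i, value in exps.items()}


def moe_predict(expert_outputs, gate_logits, k=4):
    """Weighted sum of the selected experts' scalar outputs."""
    weights = top_k_gate(gate_logits, k)
    prediction = sum(w * expert_outputs[i] for i, w in weights.items())
    return prediction, weights


# Assumed layout: experts 0-3 read SMILES, 4-7 read SELFIES, 8-11 read graphs.
modality = ["smiles"] * 4 + ["selfies"] * 4 + ["graph"] * 4

# Placeholder numbers standing in for one molecule's gate logits and expert outputs.
gate_logits = [0.2, 1.5, -0.3, 0.0, 0.9, -1.0, 0.1, 0.4, 2.0, 1.1, -0.5, 0.3]
expert_outputs = [0.40, 0.55, 0.10, 0.30, 0.60, 0.20, 0.35, 0.45, 0.70, 0.65, 0.25, 0.50]

prediction, weights = moe_predict(expert_outputs, gate_logits, k=4)

# Routing analysis: which modalities did the gate pick for this molecule?
routed_modalities = sorted(modality[i] for i in weights)
```

Aggregating `routed_modalities` over a whole dataset is one simple way to carry out the analysis step, since it shows how often each representation is selected for a given task.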

Protocol 3: Generating and Utilizing Quantum-Informed Molecular Graphs (SIMGs)

Objective: To augment standard molecular graphs with quantum-chemical orbital interaction data for highly accurate prediction of complex molecular properties and behaviors, even with limited data [33].

Materials and Reagents:

- Hardware: Compute cluster with CPUs and GPUs.

- Software: Quantum chemistry software (e.g., ORCA, Gaussian), Python with cheminformatics (RDKit) and deep learning libraries.

- Data: A set of target molecules and their equilibrium 3D geometries.

Procedure:

- Base Graph Generation: Generate a standard 2D molecular graph for each molecule, with atoms as nodes and bonds as edges.

- Quantum Chemical Calculation: For a subset of small molecules, perform ab initio quantum calculations to compute stereoelectronic effects, including natural bond orbitals (NBOs) and their interactions.

- SIMG Construction: Create a Stereoelectronics-Infused Molecular Graph (SIMG) by augmenting the base graph with additional nodes and edges representing key orbitals and their interactions [33].

- Training a Predictive Generator: Train a fast, surrogate machine learning model (e.g., a GNN) on the small-molecule set to predict the SIMG representation from the standard molecular graph. This model can then be applied to generate SIMGs for large molecules (e.g., peptides) where direct quantum calculation is intractable.

- Property Prediction: Use the generated SIMGs to train specialized GNNs for high-fidelity property prediction tasks in catalysis or spectroscopy.
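
The SIMG construction step can be pictured as adding orbital nodes and interaction edges to an ordinary atom-bond graph. The sketch below uses plain dictionaries and a hypothetical schema (node kinds `atom`, `bond_orbital`, `lone_pair`; edge types `bond`, `belongs_to`, `orbital_interaction`); it is not the data format of [33]. The methanol example and its two donor-acceptor pairs are hand-written placeholders, whereas in the protocol they would come from NBO analysis or from the trained surrogate generator.

```python
def build_base_graph(atoms, bonds):
    """Standard molecular graph: atoms as nodes, bonds as edges."""
    nodes = {f"a{i}": {"kind": "atom", "element": el} for i, el in enumerate(atoms)}
    edges = [(f"a{i}", f"a{j}", "bond") for i, j in bonds]
    return {"nodes": nodes, "edges": edges}


def augment_with_orbitals(graph, bonds, lone_pairs, interactions):
    """Return a new graph with orbital nodes and interaction edges added."""
    nodes = dict(graph["nodes"])   # copy so the base graph is left untouched
    edges = list(graph["edges"])
    for i, j in bonds:
        orbital = f"bd_{i}_{j}"    # one bonding-orbital node per bond
        nodes[orbital] = {"kind": "bond_orbital"}
        edges.append((orbital, f"a{i}", "belongs_to"))
        edges.append((orbital, f"a{j}", "belongs_to"))
    for atom, count in lone_pairs.items():
        for n in range(count):
            orbital = f"lp_{atom}_{n}"
            nodes[orbital] = {"kind": "lone_pair"}
            edges.append((orbital, f"a{atom}", "belongs_to"))
    for donor, acceptor in interactions:
        if donor not in nodes or acceptor not in nodes:
            raise KeyError(f"unknown orbital in interaction: {donor} -> {acceptor}")
        edges.append((donor, acceptor, "orbital_interaction"))
    return {"nodes": nodes, "edges": edges}


# Methanol, CH3OH: atom 0 = C, atom 1 = O, atom 2 = H on O, atoms 3-5 = H on C.
atoms = ["C", "O", "H", "H", "H", "H"]
bonds = [(0, 1), (1, 2), (0, 3), (0, 4), (0, 5)]
lone_pairs = {1: 2}   # two lone pairs on oxygen

# Placeholder donor -> acceptor pairs, not computed values.
interactions = [("lp_1_0", "bd_0_3"), ("lp_1_1", "bd_0_4")]

base = build_base_graph(atoms, bonds)
simg = augment_with_orbitals(base, bonds, lone_pairs, interactions)
```

A fuller representation would also attach numerical features to the orbital nodes and interaction edges (for example occupancies or interaction energies), which is what a downstream GNN would consume.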

Table 3: Key Resources for Molecular Representation Research

| Resource Name | Type | Function in Research | Access / Reference |
|---|---|---|---|
| ZINC/ChEMBL [1] | Database | Provides large-scale, structured molecular data for pre-training chemical foundation models. | Publicly available databases. |
| Atom Pair Encoding (APE) [31] | Algorithm | A tokenization method for chemical strings that preserves chemical integrity, enhancing model accuracy. | Implementation required as per literature. |
| OmniMol Framework [35] | Software Framework | A hypergraph-based MRL framework for imperfectly annotated data, capturing property correlations. | GitHub repository. |
| TopoLearn Model [30] | Analytical Model | Predicts ML model performance based on the topological features of molecular representation space. | Open access model provided. |
| Embedded One-Hot Encoding (eOHE) [36] | Encoding Method | Reduces computational resource usage (memory, disk) by up to 80% compared to standard one-hot encoding. | Method described in literature. |
| Web Application for SIMG [33] | Tool | Makes quantum-informed molecular graphs (SIMGs) accessible and interpretable for chemists. | Available via associated web portal. |

The integration of foundation models—large-scale, pre-trained artificial intelligence systems—is revolutionizing the approach to materials discovery and chemical synthesis. These models, trained on broad data, can be adapted to a wide range of downstream tasks, offering unprecedented capabilities in predicting material properties and chemical reaction outcomes [1]. This acceleration is critical for reducing the cost and time associated with traditional experimental methods, particularly in fields like drug development and heterogeneous catalysis [37] [38]. This Application Note details the practical implementation of these models, providing structured data, validated experimental protocols, and essential toolkits for researchers.

Quantitative Performance of Foundation Models