Foundation Models for Materials Discovery: Current State, AI Applications, and Future Directions in Biomedical Research

Abstract

Foundation models, a class of AI trained on broad data and adaptable to diverse tasks, are revolutionizing materials discovery. This article explores their current state and future trajectory, specifically for researchers and drug development professionals. We first establish the foundational principles of these models, including transformer architectures and self-supervised learning. The review then details methodological advances in property prediction, generative design, and synthesis planning, highlighting tools like GNoME and SCIGEN that enable the discovery of novel quantum materials and stable crystals. We critically address key challenges in data quality, model generalizability, and computational efficiency, presenting optimization strategies such as knowledge distillation and physics-informed AI. Finally, we examine validation frameworks, performance benchmarks against traditional methods, and the emerging role of large language model agents as autonomous research assistants. The conclusion synthesizes how these integrated capabilities are poised to accelerate the development of advanced materials for drug delivery, diagnostics, and therapeutics.

What Are Foundation Models? Core Concepts Reshaping Materials Science

Foundation models represent a transformative paradigm in artificial intelligence. They are defined as models trained on broad data, typically using self-supervision at scale, that can be adapted to a wide range of downstream tasks [1] [2]. These models have fundamentally altered the AI landscape by decoupling the data-hungry process of learning general-purpose representations from the task-specific adaptation phase [1]. While large language models (LLMs) constitute the most prominent category of foundation models, the conceptual framework extends well beyond text to other data modalities, including images, molecular structures, and scientific data [1] [3].

The architectural cornerstone of modern foundation models is the transformer architecture, introduced in 2017, which utilizes a self-attention mechanism that allows models to "pay attention to" different tokens at different moments, calculating relationships and dependencies between tokens regardless of their positional distance [4]. This innovation enabled the parallelization and scaling necessary for training on unprecedented volumes of data, facilitating the emergence of models with billions or trillions of parameters [4]. Foundation models are typically pre-trained using self-supervised learning on vast, unlabeled datasets, then adapted to specific tasks through techniques such as fine-tuning or prompting, making them exceptionally versatile and data-efficient for specialized applications [1] [2].

Core Architectural Principles and Training Methodologies

Transformer Architecture and Self-Attention Mechanism

The transformer architecture serves as the fundamental building block for most contemporary foundation models. Its core innovation lies in the self-attention mechanism, which processes sequences of tokens by projecting each token into three distinct vectors: queries, keys, and values [4]. The model computes alignment scores as the similarity between queries and keys, then uses these scores to create weighted combinations of value vectors, allowing it to dynamically focus on relevant context while ignoring less important tokens [4]. This architecture enables foundation models to capture complex patterns, long-range dependencies, and contextual relationships within their training data, whether that data consists of natural language, molecular structures, or scientific measurements [1] [3].

Training Pipeline: From Pre-training to Specialization

Foundation models undergo a multi-stage training pipeline that begins with pre-training on massive, diverse datasets. During this phase, models learn general representations through self-supervised objectives, such as predicting the next token in a sequence or reconstructing masked portions of input [1] [4]. Following pre-training, models typically undergo specialization through several fine-tuning approaches:

Table: Foundation Model Fine-Tuning Methodologies

| Method | Purpose | Process | Applications |
|---|---|---|---|
| Supervised Fine-Tuning | Adapt general models to specific tasks | Updates model weights using smaller, labeled datasets | Domain-specific customization (e.g., legal, medical) [4] |
| Reinforcement Learning from Human Feedback (RLHF) | Align model outputs with human preferences | Humans rank outputs; model trained to prefer higher-ranked responses | Reducing harmful outputs, improving usefulness [1] [4] |
| Instruction Tuning | Improve ability to follow human instructions | Trains on task examples resembling user requests | Enhancing response to prompts and instructions [4] |
| Reasoning Fine-Tuning | Develop multi-step reasoning capabilities | Trains models to break problems into smaller steps | Complex problem-solving, scientific reasoning [4] |

The complete training workflow encompasses data collection from diverse sources, tokenization, model pre-training, and subsequent specialization phases.

From LLMs to Scientific AI: Expanding the Paradigm

Evolution Beyond Language Applications

While the public discourse often equates foundation models with LLMs, the paradigm has expanded significantly beyond natural language processing. The defining characteristic of foundation models is not their architecture but their applicability to diverse downstream tasks [2]. This versatility has enabled their adoption across scientific domains, where they process specialized data modalities including molecular structures, crystal formations, spectral data, and scientific literature [1] [3].

In scientific contexts, foundation models demonstrate particular value in integrating multiple data modalities, each offering complementary perspectives on the same underlying phenomenon [3]. For instance, a material's properties can be represented through its crystal structure, density of states, charge density, and textual descriptions, with multimodal foundation models learning aligned representations across these modalities to develop more robust and generalizable understanding [3]. This multimodal approach enables novel scientific applications including accurate property prediction, materials discovery through latent space exploration, and interpretation of emergent features that may provide novel scientific insights [3].

Foundation Models for Materials Discovery

The application of foundation models to materials discovery represents a particularly advanced implementation of scientific AI. These models address core challenges in computational materials science, where the vast combinatorial space of possible materials makes exhaustive calculation computationally infeasible [3]. By learning rich representations from existing materials data, foundation models can dramatically accelerate property prediction and materials screening [1] [3].

Table: Foundation Model Applications in Materials Science

| Application Domain | Traditional Approach | Foundation Model Approach | Key Benefits |
|---|---|---|---|
| Property Prediction | Quantum simulations (computationally expensive) or approximate QSPR methods | Transfer learning from pre-trained models; multi-modal prediction [1] [3] | Significant speedup; state-of-the-art accuracy [3] |
| Materials Discovery | Sequential experimentation and calculation | Generative design; latent space exploration and screening [1] [3] | Rapid exploration of chemical space; novel material identification [3] |
| Synthesis Planning | Expert knowledge; literature search | Extraction and reasoning from scientific literature and patents [1] | Accelerated synthesis route identification; knowledge integration |
| Data Extraction | Manual curation; traditional NER | Multimodal extraction from text, tables, and images [1] | Scalable knowledge base construction; relationship association |

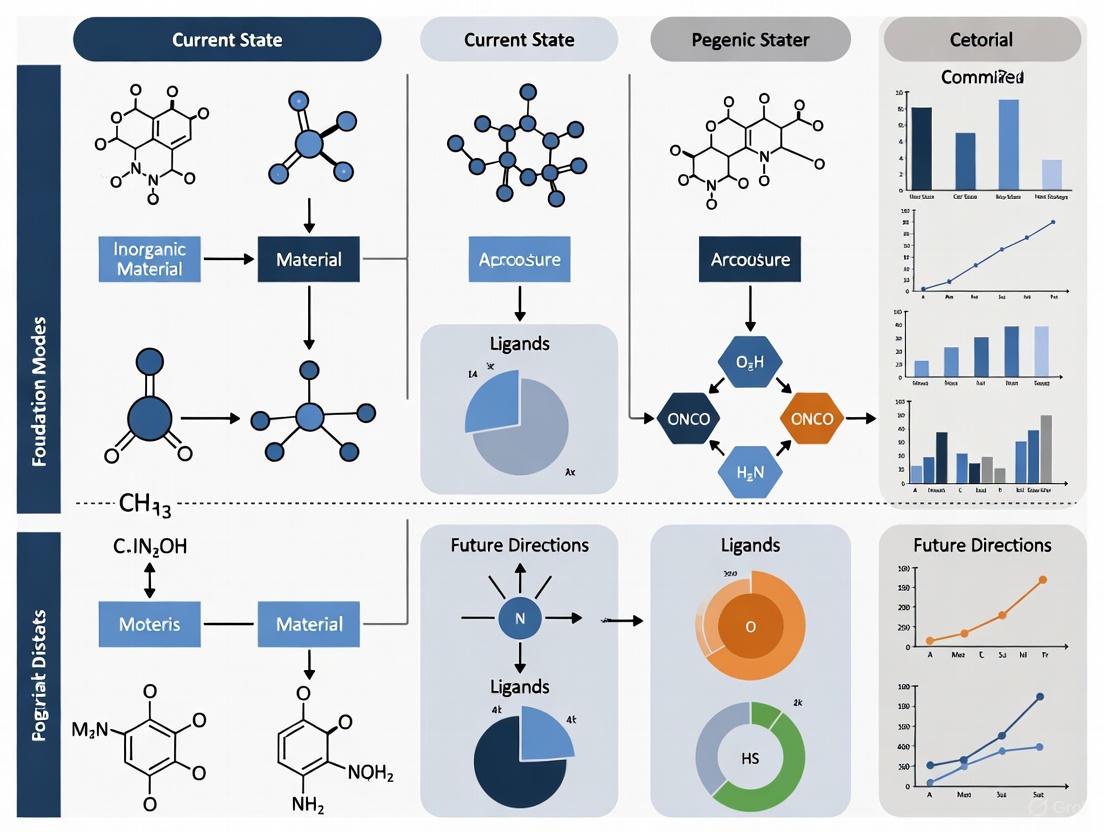

The multimodal framework for materials science integrates diverse data representations, aligning them in a shared latent space to enable various downstream applications.

Experimental Protocols and Implementation Frameworks

Multimodal Pre-training for Materials Science

The MultiMat framework demonstrates a sophisticated implementation of foundation models for materials science, employing contrastive learning across multiple modalities to create aligned representations [3]. The experimental protocol involves:

Data Collection and Modalities: The framework utilizes four complementary modalities for each material from databases such as the Materials Project: (i) crystal structure (C), represented as atomic coordinates and lattice vectors; (ii) density of states (DOS) as a function of energy; (iii) charge density as a function of position; and (iv) textual descriptions of crystals generated by tools like Robocrystallographer [3].

Encoder Architectures: Each modality is processed by a specialized encoder: crystal structures use PotNet (a graph neural network); DOS uses a transformer architecture; charge density uses a 3D convolutional neural network; and text descriptions use a pre-trained language model such as MatBERT [3].

Training Objective: The model learns through a contrastive alignment loss that brings representations of different modalities for the same material closer in the shared latent space while pushing apart representations of different materials, following principles adapted from CLIP (Contrastive Language-Image Pre-training) but extended to multiple modalities [3].

Implementation Details: Training occurs in two phases: (1) self-supervised multimodal pre-training on large-scale materials data, followed by (2) fine-tuning for specific downstream tasks such as property prediction or generative design [3].
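
The contrastive alignment objective described above can be sketched in a few lines of NumPy. This is a minimal illustration of a CLIP-style symmetric loss, not the MultiMat implementation: the function names, the temperature value, and the choice to sum the loss over every pair of modalities are assumptions made for the example.

```python
import numpy as np

def log_softmax(x, axis):
    # Numerically stable log-softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))

def clip_style_loss(za, zb, temperature=0.1):
    """Symmetric contrastive loss for a batch of paired embeddings.

    Row i of `za` and row i of `zb` are assumed to describe the same material.
    """
    za = za / np.linalg.norm(za, axis=1, keepdims=True)
    zb = zb / np.linalg.norm(zb, axis=1, keepdims=True)
    logits = za @ zb.T / temperature  # (batch, batch) cosine similarities
    # Matching pairs sit on the diagonal; every other entry is a negative.
    loss_ab = -np.mean(np.diag(log_softmax(logits, axis=1)))
    loss_ba = -np.mean(np.diag(log_softmax(logits, axis=0)))
    return 0.5 * (loss_ab + loss_ba)

def multimodal_loss(embeddings, temperature=0.1):
    """Sum the pairwise loss over all modality pairs (an assumed aggregation)."""
    names = sorted(embeddings)
    return sum(
        clip_style_loss(embeddings[a], embeddings[b], temperature)
        for i, a in enumerate(names)
        for b in names[i + 1:]
    )

# Toy batch: four materials, three modalities with perfectly aligned embeddings.
aligned = {"crystal": np.eye(4), "dos": np.eye(4), "text": np.eye(4)}
print(multimodal_loss(aligned))
```

Aligned embeddings give a loss close to zero, while pairing each material with another material's embedding drives the loss up, which is the signal that pulls matching modalities together in the shared latent space.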

Successful implementation of foundation models for scientific applications requires specific computational resources and datasets, which function as essential "research reagents" in this domain:

Table: Essential Research Reagents for Scientific Foundation Models

| Resource Category | Specific Examples | Function and Application |
|---|---|---|
| Materials Databases | Materials Project [3], PubChem [1], ZINC [1], ChEMBL [1] | Provide structured data for training and evaluation; source of material structures and properties |
| Molecular Representations | SMILES [1], SELFIES [1], Crystal Graph Representations [3] | Standardized encodings of molecular and crystal structures for model input |
| Model Architectures | Transformer variants [1], Graph Neural Networks (GNNs) [3], Encoder-Decoder frameworks [1] | Neural network backbones for processing different data modalities |
| Specialized Encoders | PotNet (for crystals) [3], MatBERT (for materials text) [3], Vision Transformers (for images) [1] | Domain-specific model components adapted for scientific data |
| Training Infrastructure | GPU clusters [2], Experiment tracking tools (e.g., Neptune) [2], Distributed training frameworks | Computational resources necessary for training at scale |

Future Directions and Challenges

The development of foundation models for scientific applications faces several important challenges and opportunities. Data quality and diversity remain significant concerns, because materials exhibit intricate dependencies in which minute structural details can profoundly influence properties, a phenomenon known as an "activity cliff" [1]. Current models, which are predominantly trained on 2D molecular representations, must evolve to incorporate 3D structural information and temporal dynamics [1]. Additionally, interpretability remains a crucial challenge, as scientific applications require not just accurate predictions but also understandable relationships that can guide hypothesis formation and theoretical development [3].

Future research directions likely include: development of more sophisticated multimodal frameworks capable of handling an arbitrary number of modalities [3]; improved integration of physical principles and constraints into model architectures; more efficient training and adaptation methods that reduce computational requirements [2]; and enhanced collaboration between AI systems and human scientists throughout the scientific method [5]. As these models become more sophisticated and widely adopted, they hold the potential to significantly accelerate scientific discovery across materials science, chemistry, biology, and related disciplines [1] [5] [3].

The trajectory suggests a future where foundation models serve not merely as predictive tools but as collaborative partners in scientific discovery—capable of generating novel hypotheses, designing experiments, and interpreting complex results in the context of existing scientific knowledge [5]. This partnership between human intuition and machine intelligence may ultimately unlock new paradigms for scientific exploration and technological innovation.

The transformer architecture has emerged as a foundational pillar in the ongoing revolution of artificial intelligence, enabling the development of powerful foundation models that are reshaping the landscape of scientific discovery. Originally designed for natural language processing, this architecture's core mechanism—self-attention—has proven exceptionally capable of modeling complex, long-range dependencies in scientific data. In the domain of materials discovery, transformer-based models are now accelerating the entire research pipeline, from property prediction and molecular generation to the planning of synthesis routes and the operation of autonomous laboratories. This technical guide explores the current state and future directions of transformer-powered foundation models, detailing their architectures, methodologies, and transformative impact on the pace of scientific innovation [1] [6].

The field of AI in science is undergoing a significant paradigm shift, moving from task-specific, hand-crafted models to general-purpose foundation models. These models are characterized by pre-training on broad data at scale, typically using self-supervision, and subsequent adaptation (e.g., fine-tuning) to a wide range of downstream tasks [1]. The transformer architecture serves as the computational engine for this shift, providing the necessary architectural framework for models to learn rich, transferable representations from vast and diverse scientific datasets.

In materials science, this transition is particularly impactful. The intricate dependencies in materials, where minute structural details can profoundly influence macroscopic properties (a phenomenon known as an "activity cliff"), demand models capable of capturing complex, non-local relationships. Transformer-based foundation models are uniquely positioned to address this challenge, enabling researchers to navigate the immense design space of potential materials—estimated to contain between 10^60 and 10^100 molecules—with unprecedented efficiency [1] [7].

Core Architectural Principles of the Transformer

The transformer architecture, introduced by Vaswani et al. in 2017, departs from the sequential processing of earlier recurrent models in favor of a mechanism that processes all elements of a sequence simultaneously and weighs their relative importance.

The Self-Attention Mechanism

The cornerstone of the transformer is the self-attention mechanism, which allows the model to contextualize each element of an input sequence by assessing its relationship to all other elements. For a given sequence, self-attention computes a weighted sum of value vectors for each element, where the weights are determined by the compatibility between its query vector and the key vectors of all other elements. This process can be expressed as:

Attention(Q, K, V) = softmax(QK^T / √d_k)V

Where:

- Q (Query) is the matrix of query vectors, one row for each element whose context is being computed.

- K (Key) is the matrix of key vectors against which each query is compared.

- V (Value) is the matrix of value vectors that are weighted and summed.

- d_k is the dimensionality of the key vectors; dividing by √d_k keeps the dot products from growing with the vector dimension.

This mechanism enables the model to capture dependencies regardless of their distance in the sequence. Because every pair of elements is connected directly, information does not have to pass through the long recurrent paths along which gradients tend to vanish in earlier recurrent architectures, and the computation can be parallelized across the sequence [7] [6].
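
As a concrete illustration of the attention formula above, the following NumPy sketch computes single-head scaled dot-product attention for a toy sequence. The projection matrices `W_q`, `W_k`, `W_v` and all dimensions are arbitrary values chosen for the example, and multi-head projections, masking, and learned parameters are omitted.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row-wise maximum before exponentiating for numerical stability.
    shifted = x - x.max(axis=axis, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """Return (output, weights) for softmax(Q K^T / sqrt(d_k)) V."""
    d_k = K.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)     # (n_queries, n_keys) compatibility scores
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
n_tokens, d_model, d_k = 5, 8, 4
X = rng.normal(size=(n_tokens, d_model))  # toy token embeddings
W_q, W_k, W_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))

out, attn = scaled_dot_product_attention(X @ W_q, X @ W_k, X @ W_v)
print(out.shape)          # one d_k-dimensional output vector per token
print(attn.sum(axis=-1))  # every row of attention weights sums to 1
```

Each row of `attn` shows how strongly one token draws on every other token, independent of how far apart they sit in the sequence.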

Encoder-Decoder Architecture and Modalities

While the original transformer combined encoding and decoding components, the field has since seen a productive decoupling into specialized architectures:

- Encoder-only models focus on comprehending and generating meaningful representations of input data. These are often used for property prediction and classification tasks, drawing inspiration from models like BERT (Bidirectional Encoder Representations from Transformers) [1].

- Decoder-only models are specialized for generating novel outputs sequentially, predicting one token at a time based on given input and previously generated tokens. These are ideal for generative tasks such as designing new molecular structures [1].

- Encoder-decoder models maintain the original structure and are suited for sequence-to-sequence tasks like translating a desired set of material properties into a molecular structure that fulfills them.
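
One practical difference between these variants is the attention mask. The sketch below, written under simplifying assumptions (uniform scores, a single head, hypothetical function names), contrasts the bidirectional attention typical of encoder-only models with the causal attention that lets decoder-only models generate one token at a time.

```python
import numpy as np

def attention_weights(scores, causal=False):
    """Row-wise softmax over attention scores, optionally with a causal mask."""
    scores = np.array(scores, dtype=float)
    if causal:
        n = scores.shape[0]
        # Entries above the diagonal correspond to future tokens; block them.
        future = np.triu(np.ones((n, n), dtype=bool), k=1)
        scores = np.where(future, -np.inf, scores)
    scores = scores - scores.max(axis=-1, keepdims=True)
    w = np.exp(scores)
    return w / w.sum(axis=-1, keepdims=True)

scores = np.zeros((4, 4))  # uniform scores, for illustration only
bidirectional = attention_weights(scores)     # encoder-style: every token sees all tokens
causal = attention_weights(scores, causal=True)  # decoder-style: tokens see only the past
print(bidirectional)
print(causal)
```

With the causal mask, the first token attends only to itself and later tokens spread their attention over earlier positions, which is what makes left-to-right generation of a SMILES string or an atom sequence possible.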

Transformer Applications in Materials Discovery

The adaptability of the transformer architecture has enabled its application across diverse data modalities and tasks in materials science. The following table summarizes the principal application areas and their key characteristics.

Table 1: Key Application Areas of Transformers in Materials Discovery

| Application Area | Key Function | Architecture Type | Data Modalities |
|---|---|---|---|
| Property Prediction [1] [8] | Predicting material properties from structure | Primarily Encoder-only | Molecular graphs, SMILES, 3D crystal structures |
| Molecular Generation [1] [7] | De novo design of novel molecular structures | Primarily Decoder-only | SMILES, SELFIES, Molecular graphs |
| Many-Body Property Prediction [8] | Predicting excited-state quantum properties | Custom Transformer | Mean-field wavefunctions, DFT orbitals |
| Synthesis Planning [1] [9] | Proposing viable synthesis routes and parameters | Encoder-Decoder | Chemical reactions, process conditions |
| Data Extraction [1] | Extracting materials data from scientific literature | Multimodal Transformer | Text, tables, images, molecular structures |

Property Prediction

Traditional quantum mechanical methods for property prediction, such as Density Functional Theory (DFT), are highly accurate but computationally prohibitive for screening large chemical spaces. Transformer-based models offer a powerful alternative. Encoder-only models pre-trained on large molecular datasets can be fine-tuned to predict specific properties with accuracy approaching that of ab initio methods but at a fraction of the computational cost [1] [9].

A significant challenge in this domain is moving beyond 2D molecular representations (e.g., SMILES, SELFIES) to incorporate 3D structural information, which is critical for accurately modeling many material properties. While datasets for 3D structures are currently smaller, transformer architectures are being adapted to handle graph-based and volumetric representations that encode spatial relationships [1].

Molecular Generation and Inverse Design

Decoder-only transformer architectures have revolutionized molecular generation by enabling inverse design—the process of generating candidate structures that satisfy a set of desired properties. Early approaches used string-based representations like SMILES, treating molecular generation as a language modeling task. However, these methods often struggled with ensuring chemical validity [7].

A more robust approach is to use graph-based transformers, which operate directly on the molecular graph, iteratively adding atoms and bonds. This method inherently respects chemical validity and facilitates the incorporation of structural constraints. The GraphXForm model is a leading example of this paradigm, employing a decoder-only graph transformer that is pre-trained on existing compounds and then fine-tuned for specific design objectives using reinforcement learning [7].

Modeling Complex Quantum Interactions

The prediction of excited-state properties governed by quantum many-body interactions represents one of the most computationally challenging tasks in materials science. Methods like GW and Bethe-Salpeter Equation (BSE) formalisms are considered gold standards but scale as poorly as O(N^4) to O(N^6) with system size, making them intractable for high-throughput screening [8].
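
To make the scaling argument concrete, the short calculation below shows how a cost that grows as a power of the system size responds when the system is doubled or made ten times larger. Here C(N) denotes an idealized cost with prefactors ignored, and the exponent p stands for the quoted range of 4 to 6; both symbols are introduced only for this illustration.

```latex
\[
C(N) \propto N^{p}
\quad\Longrightarrow\quad
\frac{C(2N)}{C(N)} = 2^{p},
\qquad
\frac{C(10N)}{C(N)} = 10^{p}
\]
\[
p = 4:\; 2^{4} = 16,\quad 10^{4} = 10\,000;
\qquad
p = 6:\; 2^{6} = 64,\quad 10^{6} = 1\,000\,000
\]
```

Doubling the system size therefore raises the idealized cost by a factor of 16 to 64, and a tenfold increase raises it by four to six orders of magnitude, which is why these methods are hard to apply in high-throughput settings.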

The MBFormer model addresses this by learning a mapping from ground-state, mean-field wavefunctions (obtained from DFT) to the results of many-body calculations. Its symmetry-aware, grid-free transformer architecture uses attention mechanisms to capture the complex, non-local, energy-dependent correlations that define many-body interactions. This approach achieves high accuracy (MAE of 0.16-0.20 eV for quasiparticle and exciton energies) while reducing computational cost by orders of magnitude, serving as a foundation model for excited-state materials physics [8].

Experimental Protocols and Methodologies

Case Study: GraphXForm for Molecular Design

GraphXForm formulates molecular design as a sequential graph-building task, ensuring inherent chemical validity [7].

1. Problem Formulation:

- Representation: Molecules are represented as hydrogen-suppressed graphs \( G = (V, E) \), where nodes \( v_i \in V \) are atoms and edges \( e_{ij} \in E \) are bonds.

- Objective: Learn a policy network \( \pi \) that sequentially selects actions \( a_t \) (add atom, add bond, terminate) to construct a graph maximizing a given property function \( R(G) \).

2. Model Architecture (GraphXForm):

- A decoder-only graph transformer takes the current molecular graph as input.

- The model computes node embeddings using a combination of learned atom type embeddings and a positional encoding based on the graph distance from a root node.

- A stack of transformer decoder layers, using self-attention over the node embeddings, produces a final context-aware representation for each node.

- The output consists of three probability distributions guiding the generation:

- Focus Node: Which existing node to connect a new atom to.

- New Atom Type: The chemical element of the new atom.

- Bond Type: The type of bond (single, double, triple) to form between the focus node and the new atom.

3. Training Protocol:

- Pre-training: The model is first trained on a large dataset of existing molecules (e.g., ZINC, ChEMBL) to learn general chemical principles and syntax.

- Fine-tuning: For specific design tasks, the pre-trained model is fine-tuned using a combination of the deep cross-entropy method and self-improvement learning.

- The model generates a batch of candidate molecules.

- Candidates are evaluated using the objective function \( R(G) \).

- The model is updated to increase the probability of generating the top-performing candidates.

- This process iterates, allowing the policy to progressively improve for the target objective.
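
The generate, evaluate, and update loop in the fine-tuning steps above can be sketched with a toy policy. The example below is not GraphXForm: it replaces the graph transformer with independent categorical distributions over atom types at a fixed number of positions, uses a hypothetical reward that counts nitrogen atoms as a stand-in for the objective, and applies a plain cross-entropy-method update with assumed hyperparameters (`batch_size`, `top_k`, `lr`).

```python
import numpy as np

ATOMS = ["C", "N", "O"]

def sample_batch(probs, batch_size, rng):
    """Sample atom-type indices from per-position categorical policies."""
    cols = [rng.choice(probs.shape[1], size=batch_size, p=p_t) for p_t in probs]
    return np.stack(cols, axis=1)  # (batch, length)

def reward(seq):
    """Hypothetical objective: count nitrogen atoms in the candidate."""
    return int(np.sum(seq == ATOMS.index("N")))

def cem_step(probs, rng, batch_size=64, top_k=8, lr=0.5):
    """Sample candidates, keep the top performers, and move the policy toward them."""
    batch = sample_batch(probs, batch_size, rng)
    scores = np.array([reward(s) for s in batch])
    elite = batch[np.argsort(scores)[-top_k:]]
    # Empirical frequency of each atom type at each position among the elites.
    freq = np.stack(
        [(elite == a).mean(axis=0) for a in range(probs.shape[1])], axis=1
    )
    new_probs = (1 - lr) * probs + lr * freq
    return new_probs / new_probs.sum(axis=1, keepdims=True), scores.mean()

rng = np.random.default_rng(0)
probs = np.full((6, len(ATOMS)), 1 / len(ATOMS))  # uniform starting policy
history = []
for _ in range(20):
    probs, mean_score = cem_step(probs, rng)
    history.append(mean_score)

print(history[0], history[-1])
```

The mean reward of sampled candidates rises over the iterations as probability mass shifts toward the atom type favored by the reward, mirroring how the policy is progressively improved for the target objective.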

Table 2: Key Research Reagents and Computational Tools for Transformer Experiments

| Tool / Resource | Type | Primary Function | Example Datasets |
|---|---|---|---|
| GraphXForm [7] | Graph Transformer | Generative molecular design | GuacaMol, ZINC |
| MBFormer [8] | Scientific Transformer | Predicting many-body properties | C2DB (721 2D materials) |
| BMFM [10] | Multi-modal Foundation Model | Multi-task drug discovery | >1B molecules, protein data |
| ZINC/ChEMBL [1] | Chemical Database | Pre-training and benchmarking | ~10^9 molecules each |
| GuacaMol [7] | Benchmarking Suite | Evaluating generative models | Various goal-directed tasks |

Case Study: MBFormer for Many-Body Prediction

MBFormer provides an end-to-end pipeline for predicting excited-state properties from ground-state calculations [8].

1. Problem Setup:

- Input: A set of \( N \) mean-field Kohn-Sham (KS) states from DFT, \( \{ \phi_{nk}, \epsilon_{nk} \} \), where \( \phi_{nk} \) is the Bloch wavefunction and \( \epsilon_{nk} \) is its energy for band \( n \) and momentum \( k \).

- Output: Target many-body properties, such as the GW quasiparticle Hamiltonian \( H^{GW} \) or the BSE exciton Hamiltonian \( H^{BSE} \).

2. Tokenization and Embedding:

- Each KS state \( |i\rangle = \phi_{nk} \) is treated as a token.

- A state embedding \( h_i^0 \) is created by concatenating and projecting:

- Orbital features: Band index, k-point coordinates, energy.

- Symmetry features: Irreducible representation labels.

- Environmental descriptor: A learned function of the electron density.

3. Model Architecture (MBFormer):

- The embedded sequence of KS states \( [h_1^0, \ldots, h_N^0] \) is passed through a transformer encoder with multiple self-attention layers.

- The attention mechanism is critical, as it allows the model to learn the complex, energy-dependent correlations between different mean-field states that constitute the many-body interaction.

- For a specific task (e.g., predicting a quasiparticle energy), a task-specific query token is introduced. Cross-attention between this query and the encoded KS states produces the final prediction.
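
The embedding and readout steps above can be sketched as follows. This is a hedged toy version, not the MBFormer code: the feature sizes, the random weights, and the names `embed_states` and `cross_attention_readout` are assumptions, and the stack of self-attention encoder layers between embedding and readout is skipped for brevity.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)

def embed_states(orbital, symmetry, environment, W_embed):
    """Concatenate the per-state feature blocks and project to the model width."""
    features = np.concatenate([orbital, symmetry, environment], axis=-1)
    return features @ W_embed

def cross_attention_readout(query, states, W_k, W_v, w_out):
    """Pool the state tokens with one task query and map to a scalar prediction."""
    keys, values = states @ W_k, states @ W_v
    weights = softmax(query @ keys.T / np.sqrt(keys.shape[-1]))  # (n_states,)
    pooled = weights @ values
    return float(pooled @ w_out), weights

rng = np.random.default_rng(0)
n_states, d_model = 6, 16
orbital = rng.normal(size=(n_states, 5))            # band index, 3 k-point coords, energy
symmetry = np.eye(3)[rng.integers(0, 3, n_states)]  # one-hot irreducible-representation label
environment = rng.normal(size=(n_states, 2))        # placeholder density descriptor

W_embed = 0.1 * rng.normal(size=(10, d_model))
h0 = embed_states(orbital, symmetry, environment, W_embed)

query = rng.normal(size=d_model)  # task-specific query token
W_k = rng.normal(size=(d_model, d_model))
W_v = rng.normal(size=(d_model, d_model))
w_out = rng.normal(size=d_model)
prediction, weights = cross_attention_readout(query, h0, W_k, W_v, w_out)
print(prediction, weights.round(3))
```

Because the readout is a weighted sum over tokens, the prediction does not depend on the order in which the KS states are listed, which suits a set of states that has no natural sequence order.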

Performance and Quantitative Results

The effectiveness of transformer-based models is demonstrated by their state-of-the-art performance on established benchmarks and real-world scientific tasks.

Table 3: Quantitative Performance of Selected Transformer Models

| Model | Task | Dataset / Benchmark | Key Metric | Performance |
|---|---|---|---|---|
| GraphXForm [7] | Drug Design | GuacaMol | Benchmark Score | Outperformed state-of-the-art molecular design approaches |
| GraphXForm [7] | Solvent Design | Liquid-Liquid Extraction | Separation Factor | Outperformed Graph GA, REINVENT-Transformer |
| MBFormer [8] | Quasiparticle Energy | C2DB (721 2D materials) | Mean Absolute Error | 0.16 eV (R² = 0.97) |
| MBFormer [8] | Exciton Energy | C2DB (721 2D materials) | Mean Absolute Error | 0.20 eV |
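
For readers less familiar with the two regression metrics reported for MBFormer, the sketch below shows how mean absolute error and the coefficient of determination (R²) are computed. The energies used here are made-up numbers for illustration and are not taken from any of the cited studies.

```python
import numpy as np

def mean_absolute_error(y_true, y_pred):
    """Average absolute deviation between reference and predicted values."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.mean(np.abs(y_true - y_pred)))

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

# Illustrative reference and predicted energies in eV (made-up values).
reference = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]
print(mean_absolute_error(reference, predicted))  # approximately 0.15
print(r_squared(reference, predicted))            # approximately 0.98
```

MAE is expressed in the units of the target (here eV), while R² is unitless and measures the fraction of variance in the reference values that the predictions explain.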

Future Directions and Challenges

Despite rapid progress, several challenges and opportunities for development remain in the application of transformer architectures to materials discovery.

Data Quality and Modalities: A primary limitation is the reliance on 2D molecular representations in many models due to the scarcity of large, high-quality 3D datasets. Future work will focus on developing multimodal transformers that seamlessly integrate information from text, images, tables, and 3D structural data [1] [9]. Furthermore, the curation of datasets that include "negative" experiments (unsuccessful syntheses or failed candidates) is crucial for improving model robustness [9].

Interpretability and Explainable AI: The "black box" nature of complex transformer models remains a significant barrier to their widespread adoption by domain scientists. Developing methods for explainable AI (XAI) is essential to build trust and provide genuine scientific insight, moving beyond predictions to understanding the physical or chemical rationale behind them [9].

Integration with Autonomous Systems: Transformers are becoming the computational brains of Self-Driving Labs (SDLs). The next evolutionary step is to transition from isolated, lab-centric SDLs to shared, community-driven platforms. A notable initiative in this direction is the effort to create open, cloud-based portals that couple science-ready large language models with data streams from experiments and simulations, thereby democratizing access to advanced materials discovery [11].

Generalization and Safety: Ensuring that foundation models generalize well across diverse chemical spaces and do not generate potentially hazardous or unstable materials is an ongoing concern. This necessitates the development of robust benchmarking frameworks, improved alignment techniques, and ethical guidelines for the responsible deployment of AI in science [6].

The transformer architecture, with its powerful self-attention mechanism, has proven to be far more than a tool for natural language processing. It has become the fundamental engine driving a new era of AI for science, particularly in the field of materials discovery. By enabling the development of versatile and powerful foundation models, transformers are accelerating the entire research pipeline—from data extraction and property prediction to the generative design of novel materials and the autonomous execution of experiments. As research addresses current challenges in data, interpretability, and integration, transformer-based models are poised to further deepen their role as indispensable collaborators in the scientific process, dramatically accelerating the journey from conceptual design to functional material.

Self-Supervised Pre-training, Fine-Tuning, and Alignment

Within the paradigm of foundation models for materials discovery, self-supervised pre-training, fine-tuning, and alignment form a critical pipeline for developing capable and reliable artificial intelligence systems. These methodologies enable the creation of models that learn from vast quantities of unlabeled data and can subsequently be adapted to specialized downstream tasks with limited labeled examples, effectively addressing the data-scarcity challenges prevalent in materials science [1]. Foundation models (models trained on broad data using self-supervision at scale that can be adapted to a wide range of downstream tasks) are increasingly being applied to materials discovery for tasks ranging from property prediction to synthesis planning [1] [12]. Their core value lies in their ability to develop transferable representations that capture fundamental relationships in materials science, which can then be efficiently specialized for specific predictive tasks.

The significance of this pipeline is particularly evident in the context of materials property prediction, a cornerstone capability that enables rapid virtual screening of novel materials and accelerates the discovery cycle [1] [9]. Traditionally, accurate property prediction required expensive density functional theory (DFT) calculations or experimental measurements, creating a fundamental bottleneck in materials development. The foundation model approach, utilizing self-supervised pre-training followed by fine-tuning, offers a path toward accurate, data-efficient predictors that can generalize across diverse chemical spaces [13] [14]. Furthermore, alignment ensures that model outputs conform to physical laws and experimental constraints, a critical consideration for deploying these systems in real-world discovery pipelines where physical admissibility is non-negotiable [15].

Self-Supervised Pre-training Strategies

Self-supervised pre-training enables models to learn fundamental representations of materials without the need for expensive labeled data. By creating supervisory signals from the data itself, self-supervised learning (SSL) methods allow models to capture essential chemical and structural patterns that facilitate strong performance on downstream tasks with limited labels [14].

Core Methodologies and Experimental Protocols

Several SSL strategies have been developed specifically for materials science applications, leveraging the natural graph representations of crystalline structures and their compositional information:

Barlow Twins Framework: This approach creates two different augmentations from the same crystalline material and makes encoder representations for these augmentations as similar as possible [14]. The core methodology involves:

- Input: Stoichiometric formula or crystal structure

- Augmentation: Random atom masking (10% of nodes in the formula graph)

- Encoder: ROOST or graph neural network architecture

- Loss Function: Drives the cross-correlation matrix between the embeddings of the two augmented views toward the identity matrix

- Objective: Learn representations that are invariant to trivial variations while capturing essential material characteristics
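The loss described above can be sketched in a few lines. This is a minimal, dependency-free illustration and not the implementation used in [14]: the function names are hypothetical, embeddings are plain lists with one row per material, and the off-diagonal weight is an illustrative value. The objective sums (1 - C_ii)^2 over the diagonal of the cross-correlation matrix C and adds a weighted sum of C_ij^2 over the off-diagonal entries.

```python
import math


def standardize_columns(z):
    """Normalize each embedding dimension to zero mean and unit variance over the batch."""
    n, d = len(z), len(z[0])
    out = [[0.0] * d for _ in range(n)]
    for j in range(d):
        col = [row[j] for row in z]
        mean = sum(col) / n
        std = math.sqrt(sum((v - mean) ** 2 for v in col) / n) or 1.0  # guard constant columns
        for i in range(n):
            out[i][j] = (z[i][j] - mean) / std
    return out


def barlow_twins_loss(z_a, z_b, off_diag_weight=5e-3):
    """Barlow Twins objective for two batches of embeddings of the same materials.

    z_a, z_b: embeddings of the two augmented views, shape (batch, dim).
    off_diag_weight: illustrative trade-off value (an assumption, not from the source).
    """
    n, d = len(z_a), len(z_a[0])
    za, zb = standardize_columns(z_a), standardize_columns(z_b)
    loss = 0.0
    for i in range(d):
        for j in range(d):
            c_ij = sum(za[k][i] * zb[k][j] for k in range(n)) / n  # cross-correlation entry
            if i == j:
                loss += (1.0 - c_ij) ** 2            # invariance: diagonal pushed toward 1
            else:
                loss += off_diag_weight * c_ij ** 2  # redundancy reduction: off-diagonal toward 0
    return loss
```

When both views produce identical, decorrelated embeddings the loss is zero; embeddings whose dimensions are swapped between views are penalized on both terms.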

Element Shuffling: A novel SSL method based on shuffling atoms while ensuring that processed structures contain only elements present in the original structure [16]. This approach:

- Prevents easily detectable atom replacements that could hinder effective learning

- Maintains chemical consistency while creating learning signals

- Has demonstrated accuracy improvements of up to 0.366 eV during fine-tuning compared to state-of-the-art methods

- Achieves approximately 12% improvement in energy prediction accuracy compared to supervised-only training
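A minimal sketch of the shuffling idea, assuming the augmentation is a permutation of element labels across the sites of one structure. The exact procedure and pretext target in [16] may differ, and `make_shuffled_example` is a hypothetical helper. Because only a permutation is applied, the augmented structure cannot contain an element that was absent from the original.

```python
import random


def make_shuffled_example(species, seed=0):
    """Permute element labels across sites and report which sites changed.

    species: list of element symbols, one per atomic site.
    Returns (shuffled_species, changed_site_indices). The changed indices could
    serve as a pretext label (an assumption made for illustration).
    """
    rng = random.Random(seed)
    shuffled = list(species)
    rng.shuffle(shuffled)  # permutation only: element counts are preserved
    changed = [i for i, (old, new) in enumerate(zip(species, shuffled)) if old != new]
    return shuffled, changed


# Example: a perovskite-like site list
original = ["Sr", "Ti", "O", "O", "O"]
augmented, changed_sites = make_shuffled_example(original, seed=1)
```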

Multimodal Learning: This strategy leverages available characterized structure data to predict embeddings generated using pretrained structure-based encoders, effectively transferring structural knowledge to structure-agnostic models [14]. The protocol involves:

- Using a pretrained CGCNN encoder from the Crystal Twins framework to generate structural embeddings

- Training a structure-agnostic Roost encoder to predict these structural embeddings

- Enabling the model to learn structural information without explicit structural inputs

Table 1: Comparison of Self-Supervised Pre-training Strategies for Materials Property Prediction

| Strategy | Core Mechanism | Input Data | Key Advantage | Reported Improvement |
|---|---|---|---|---|
| Barlow Twins | Representation invariance via augmentation | Stoichiometry | Leverages compositional information only | Significant gains on small datasets [14] |
| Element Shuffling | Atom rearrangement within elemental constraints | Crystal structure | Maintains chemical consistency | 0.366 eV accuracy gain vs state-of-the-art [16] |
| Multimodal Learning | Cross-modal embedding prediction | Stoichiometry + structure | Transfers structural knowledge | Enhanced data efficiency [14] |
| Supervised Pretraining | Surrogate labels from available classes | Various representations | Leverages limited labeled data effectively | 2-6.67% MAE improvement [13] |

Implementation Workflow

Figure: Self-supervised pre-training workflow. Two augmented views of the input data are processed through encoders with shared weights, and the resulting representations are optimized using an SSL objective function.

Fine-Tuning Strategies for Domain Adaptation

Fine-tuning represents the crucial adaptation phase where a pre-trained foundation model is specialized for specific materials property prediction tasks. This process leverages the general representations learned during pre-training and refines them for targeted applications with limited labeled data.

Fine-Tuning Methodologies

The fine-tuning process typically involves several key considerations and strategies tailored to materials informatics:

Progressive Fine-Tuning: This approach involves gradually adapting the pre-trained model to the target task by first fine-tuning on a related larger dataset before specializing to the specific property prediction task. This strategy has been shown to improve stability and final performance, particularly for small datasets [14].

Multi-Task Fine-Tuning: Simultaneously fine-tuning on multiple related property prediction tasks can regularize the model and improve generalization by leveraging shared representations across tasks. This approach mimics the multi-task learning paradigm but builds upon pre-trained representations [17].

Parameter-Efficient Fine-Tuning: Techniques such as adapter modules, LoRA (Low-Rank Adaptation), or partial parameter freezing can achieve strong performance while requiring updates to only a small subset of model parameters. This is particularly valuable in materials science where computational resources may be constrained [17].
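To make the parameter savings of low-rank adaptation concrete, the sketch below wraps a frozen weight matrix W with a trainable update (alpha / r) * B * A, where A has shape (r, d_in) and B has shape (d_out, r). It is a plain-Python illustration with hypothetical names (`LoRALinear`, `matvec`), not the API of any fine-tuning library. B is zero-initialized so the adapted layer starts out identical to the pretrained one.

```python
import random


def matvec(matrix, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in matrix]


class LoRALinear:
    """Frozen weight W plus a trainable low-rank update (alpha / rank) * B @ A."""

    def __init__(self, weight, rank=2, alpha=4.0, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # pretrained weights, kept frozen
        # Trainable factors: A is small-random, B is zero so the update starts at zero
        self.a = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.b = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.b, matvec(self.a, x))
        return [y + self.scale * u for y, u in zip(base, update)]

    def trainable_parameters(self):
        return len(self.a) * len(self.a[0]) + len(self.b) * len(self.b[0])
```

For an 8 x 8 layer with rank 2, only 32 of the 64 + 32 stored values are trainable; the saving grows quickly with layer width because the trainable count scales with r * (d_in + d_out) rather than d_in * d_out.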

Table 2: Fine-Tuning Frameworks and Their Applications in Materials Science

| Framework | Supported Models | Key Features | Target Applications |
|---|---|---|---|
| MatterTune [17] | ORB, MatterSim, JMP, EquformerV2 | Modular design, distributed fine-tuning, broad task support | Materials informatics and simulation workflows |
| Roost Fine-Tuning [14] | Roost encoder | Structure-agnostic prediction, message-passing architecture | Property prediction from stoichiometry alone |
| Graph Neural Networks [18] | GNoME architecture | Active learning integration, uncertainty quantification | Stability prediction and materials discovery |

Experimental Protocols for Fine-Tuning

Successful fine-tuning for materials property prediction requires careful experimental design:

Data Preparation:

- Collect labeled dataset for target property (e.g., formation energy, band gap, mechanical properties)

- Perform train/validation/test splits considering material composition similarity to avoid data leakage

- Standardize input representations (SMILES, SELFIES, CIF files, or compositional formulas)
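One way to respect composition similarity when splitting is to keep every formula from the same chemical system on the same side of the split. The sketch below is an illustrative assumption rather than a protocol taken from the cited works: `chemical_system` and `grouped_split` are hypothetical helpers, the element parsing ignores parentheses and charges, and groups are assigned deterministically (a real pipeline would usually randomize the group order).

```python
import re
from collections import defaultdict


def chemical_system(formula):
    """Sorted element set of a formula, e.g. 'LiFePO4' -> 'Fe-Li-O-P'."""
    return "-".join(sorted(set(re.findall(r"[A-Z][a-z]?", formula))))


def grouped_split(formulas, test_fraction=0.2):
    """Split indices so that no chemical system appears in both train and test."""
    groups = defaultdict(list)
    for idx, formula in enumerate(formulas):
        groups[chemical_system(formula)].append(idx)

    train, test = [], []
    target = test_fraction * len(formulas)
    # Assign whole groups at a time; small groups fill the test set first
    for system in sorted(groups, key=lambda s: (len(groups[s]), s)):
        (test if len(test) < target else train).extend(groups[system])
    return train, test
```

A random row-level split would place `Fe2O3` in training and `FeO` in test, which tends to overstate how well a model generalizes to unseen chemistries.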

Model Configuration:

- Initialize with pre-trained weights from self-supervised pre-training phase

- Replace task-specific head with appropriate output layer for target property (regression, classification)

- Set optimization hyperparameters (typically lower learning rate than pre-training)

Training Procedure:

- Monitor validation performance to avoid overfitting

- Employ early stopping based on validation loss

- Consider gradual unfreezing of layers for progressive adaptation
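The monitoring and early-stopping steps above can be sketched as a small training loop. This is a framework-agnostic illustration: `train_step` and `validate` are hypothetical callables supplied by the user, and checkpointing of the best weights is left out for brevity.

```python
def train_with_early_stopping(train_step, validate, max_epochs=100, patience=5):
    """Run training until validation loss stops improving for `patience` epochs.

    train_step(epoch): performs one epoch of fine-tuning (hypothetical callable).
    validate(epoch): returns the validation loss after that epoch (hypothetical callable).
    Returns (best_epoch, best_validation_loss).
    """
    best_loss, best_epoch, epochs_since_best = float("inf"), -1, 0
    for epoch in range(max_epochs):
        train_step(epoch)
        val_loss = validate(epoch)
        if val_loss < best_loss:
            # New best: this is where model weights would normally be checkpointed
            best_loss, best_epoch, epochs_since_best = val_loss, epoch, 0
        else:
            epochs_since_best += 1
            if epochs_since_best >= patience:
                break  # validation loss has stalled; stop to limit overfitting
    return best_epoch, best_loss
```

With small labeled datasets, where fine-tuning gains are reported to be largest, a short patience helps keep the pre-trained representations from being overwritten by noise.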

The fine-tuning process typically demonstrates the most significant improvements for small datasets, with reported gains of 2-6.67% in mean absolute error for various material property predictions [13]. For structure-agnostic approaches, fine-tuning enables accurate property prediction from stoichiometry alone, achieving performance competitive with structure-based methods [14].

Alignment for Physically Admissible Predictions

Alignment ensures that model outputs conform to physical laws and experimental constraints, representing a critical final step in developing trustworthy materials AI systems. Unlike general AI alignment focused on human values, materials alignment emphasizes physical admissibility, numerical accuracy, and scientific consistency [15] [19].

Physics-Aware Alignment Techniques

Physics-Aware Rejection Sampling (PaRS)

PaRS is a domain-tailored approach that couples rejection sampling with task-native, continuous error metrics derived from wet-lab experiments [15]. The methodology addresses two key challenges in materials discovery: high combinatorial design space and physically grounded outputs. The core protocol involves:

Sequential Trace Generation: For each device recipe, sequentially generate candidate reasoning traces using a teacher model (e.g., Qwen3-235B)

Physics-Aware Acceptance Gates: Evaluate traces based on:

- Consistency with fundamental physics principles

- Numerical closeness to experimental targets

- Adherence to conservation laws and constitutive relations

Efficient Halting: Stop sampling early when further candidates show negligible variance or improvement, controlling computational cost

Student Model Training: Fine-tune a smaller student model (e.g., Qwen3-32B) on the accepted high-quality traces

This approach has demonstrated improvements in accuracy, calibration, and reduced physics-violation rates compared to baselines using binary correctness or learned reward signals [15].
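The protocol above can be sketched as a sequential sampling loop with two acceptance gates and an early-halting rule. This is a simplified illustration under stated assumptions, not the procedure from [15]: `generate_trace` stands in for a teacher-model call, `is_physical` for the physics checks, relative error for the task-native metric, and the halting rule (stop after `patience` admissible candidates without meaningful improvement) is one plausible reading of "negligible improvement".

```python
def pars_select(generate_trace, target, is_physical, tolerance=0.05,
                max_samples=8, min_gain=1e-3, patience=2):
    """Collect teacher traces that are physically admissible and close to the target.

    generate_trace(attempt): hypothetical teacher call returning {"prediction": float, ...}.
    target: experimentally measured value for this recipe.
    is_physical(trace): hypothetical check of physical constraints.
    """
    accepted, best_error, stalled = [], float("inf"), 0
    for attempt in range(max_samples):
        trace = generate_trace(attempt)
        if not is_physical(trace):          # gate 1: physical admissibility
            continue
        error = abs(trace["prediction"] - target) / abs(target)  # continuous error metric
        if error <= tolerance:              # gate 2: numerical closeness to experiment
            accepted.append(trace)
        if best_error - error > min_gain:
            best_error, stalled = error, 0
        else:
            stalled += 1
            if stalled >= patience:         # efficient halting: further samples are not helping
                break
    return accepted
```

The accepted traces would then form the fine-tuning set for the student model. Using a continuous error rather than a binary correct/incorrect label lets the gate distinguish a near miss from a gross error.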

Conformal Alignment

Conformal Alignment provides statistical guarantees for model outputs, ensuring that on average, a prescribed fraction of selected outputs meet specified alignment criteria [19]. The experimental protocol involves:

Reference Data Collection: Assemble a set of reference data with ground-truth alignment status

Alignment Predictor Training: Train a model to predict alignment scores using features such as:

- Uncertainty estimates

- Physical constraint violations

- Consistency with known principles

Threshold Determination: Compute a data-dependent threshold that certifies outputs as trustworthy

Selection: Deploy the alignment predictor to select new units whose predicted alignment scores surpass the threshold

This framework provides formal guarantees regardless of the foundation model or data distribution, making it particularly valuable for high-stakes materials applications [19].
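A simplified sketch of the selection step, written in the spirit of conformal selection: calibration units known to be misaligned act as a reference, each new unit receives a conformal p-value from its predicted alignment score, and a Benjamini-Hochberg step-up rule turns those p-values into a data-dependent cutoff. The exact procedure in [19] may differ in its details; the function name and inputs here are assumptions for illustration.

```python
def conformal_select(cal_scores, cal_aligned, test_scores, alpha=0.1):
    """Select test units whose predicted alignment scores are certifiably high.

    cal_scores: predicted alignment scores on held-out reference data.
    cal_aligned: ground-truth alignment status (True/False) for the same units.
    test_scores: predicted alignment scores for new, unlabeled outputs.
    alpha: target fraction of selected outputs allowed to be misaligned.
    Returns the sorted indices of selected test units.
    """
    # Misaligned calibration units form the reference ("null") distribution
    null_scores = [s for s, ok in zip(cal_scores, cal_aligned) if not ok]
    n = len(cal_scores)
    p_values = [(1 + sum(s >= t for s in null_scores)) / (n + 1) for t in test_scores]

    # Benjamini-Hochberg step-up: largest rank k with p_(k) <= alpha * k / m
    m = len(test_scores)
    order = sorted(range(m), key=lambda j: p_values[j])
    k = 0
    for rank, j in enumerate(order, start=1):
        if p_values[j] <= alpha * rank / m:
            k = rank
    return sorted(order[:k])
```

The guarantee comes from the calibration data rather than from the predictor itself, so a weak alignment predictor leads to fewer selections, not to invalid ones.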

Implementation Framework

Figure: Physics-aware Rejection Sampling (PaRS) workflow. Candidate reasoning traces from a teacher model are filtered through physics-aware gates before fine-tuning a student model.

The Scientist's Toolkit: Research Reagent Solutions

The experimental implementation of self-supervised pre-training, fine-tuning, and alignment for materials discovery relies on several key computational frameworks and data resources. The following table details these essential components and their functions in the research pipeline.

Table 3: Essential Research Resources for Materials Foundation Models

| Resource/Platform | Type | Primary Function | Key Features |
|---|---|---|---|
| MatterTune [17] | Fine-tuning platform | Integrated framework for fine-tuning atomistic foundation models | Modular design, support for multiple models (ORB, MatterSim, JMP), distributed training |
| Roost [14] | Structure-agnostic encoder | Property prediction from stoichiometry alone | Message-passing framework, weighted graph construction, attention pooling |
| GNoME [18] | Graph neural network | Materials discovery and stability prediction | Active learning integration, scalable architecture, uncertainty quantification |
| Matbench [14] | Benchmarking suite | Standardized evaluation of material property prediction | Diverse tasks, standardized splits, community benchmarks |
| Physics-aware Rejection Sampling [15] | Alignment method | Ensures physical admissibility of model outputs | Physics-aware gates, efficient halting, trace selection |
| Barlow Twins [14] | SSL framework | Self-supervised representation learning | Invariance learning, cross-correlation objective, augmentation strategies |

The integration of self-supervised pre-training, fine-tuning, and alignment represents a paradigm shift in computational materials discovery. Current research directions focus on enhancing the physical grounding of models, improving data efficiency, and developing more robust alignment techniques [15] [1]. Future work will likely address several key challenges:

Multimodal Foundation Models: Developing models that can seamlessly integrate information from text, crystal structures, spectroscopic data, and experimental synthesis parameters [1] [9]

Uncertainty Quantification: Enhancing model calibration and uncertainty estimation to support reliable deployment in autonomous discovery systems [15] [18]

Explainable AI: Improving model interpretability to provide scientific insights alongside predictions, fostering trust within the materials science community [9]

Automated Workflows: Tightening the integration between AI prediction and experimental validation through autonomous laboratories and real-time feedback systems [9]

As these methodologies mature, the combination of self-supervised pre-training, targeted fine-tuning, and rigorous alignment will continue to transform materials discovery, enabling more efficient, physically consistent, and generalizable AI systems that accelerate the design of novel materials with tailored functionalities.

In the emerging paradigm of foundation models for materials discovery, data representation has become a fundamental cornerstone that critically influences model performance, generalizability, and physical consistency. Foundation models—defined as models "trained on broad data (generally using self-supervision at scale) that can be adapted to a wide range of downstream tasks"—are catalyzing a transformative shift in materials science by enabling scalable, general-purpose, and multimodal AI systems for scientific discovery [1] [20]. Unlike traditional machine learning models, which are typically narrow in scope and require task-specific engineering, foundation models offer cross-domain generalization and exhibit emergent capabilities, with their versatility being especially well-suited to materials science where research challenges span diverse data types and scales [20].

The evolution of data representations in materials AI mirrors the broader trajectory from traditional artificial intelligence to advanced AI, moving from heuristic models and empirical data toward generative AI that leverages advanced machine learning frameworks to predict material properties, design structures, and synthesize new materials [21]. This progression has been marked by significant milestones in representation languages for molecular structures and increasingly sophisticated graph-based encodings for crystalline materials, each offering distinct advantages for capturing the complex structure-property relationships that underpin materials functionality. The strategic selection and implementation of these representations is now a critical determinant of success in deploying foundation models for accelerated materials discovery, particularly as the field addresses persistent challenges in generalizability, interpretability, and data scarcity [20] [22].

Molecular Structure Representations: SMILES and SELFIES

SMILES (Simplified Molecular Input Line Entry System)

The SMILES representation has emerged as a foundational text-based encoding system for molecular structures, serving as a bridge between chemical structures and natural language processing techniques that underpin many foundation models. SMILES represents molecular structures using ASCII strings that encode atomic constituents, bonding patterns, branching, and cyclic structures through a specific grammar of characters and symbols [23]. This textual representation enables the application of powerful natural language processing architectures, particularly transformer-based models, to chemical discovery problems.

The integration of SMILES within foundation models is exemplified by recent large-scale efforts to develop chemical foundation models for battery materials discovery. Researchers at the University of Michigan leveraged SMILES representations to train foundation models on the Polaris supercomputer, enabling the prediction of key electrolyte properties such as conductivity, melting point, boiling point, and flammability [23]. This approach has been further enhanced through the development of SMIRK, a novel tool that improves how models process these structures, enabling learning from billions of molecules with greater precision and consistency [23].

SELFIES (Self-Referencing Embedded Strings)

SELFIES represents an advanced evolution of string-based molecular representations designed specifically to address a critical limitation of SMILES: the generation of invalid molecular structures. SELFIES employs a rigorous grammar that guarantees 100% validity of generated structures, making it particularly valuable for generative tasks in materials discovery [1]. This representation has gained traction in foundation models for molecular design where structural validity is paramount for practical application.

The current materials informatics landscape shows a predominance of models trained on 2D representations such as SMILES or SELFIES, though this approach introduces limitations by omitting critical 3D conformational information [1]. This limitation is partially addressed in specialized domains, particularly for inorganic solids and crystals, where property prediction models typically leverage 3D structural information through graph-based or primitive cell feature representations [1].

Table 1: Comparison of Molecular Structure Representations in Materials AI

| Representation | Format Type | Key Advantages | Limitations | Primary Applications |
|---|---|---|---|---|
| SMILES | Text string | Simple ASCII representation, compatible with NLP models, human-readable | May generate invalid structures, lacks 3D information | Property prediction, virtual screening, foundation model pretraining |
| SELFIES | Text string | Guarantees 100% valid molecular structures | Still primarily 2D representation | Generative molecular design, inverse materials design |
| 3D Graph Representations | Graph structure | Captures spatial relationships, quantum mechanical properties | Computationally intensive, limited training data | High-accuracy property prediction, quantum mechanical calculations |

Experimental Implementation and Methodologies

The practical implementation of SMILES and SELFIES within foundation models follows established computational workflows that transform raw chemical structures into model-ready representations. For SMILES-based foundation models, the standard protocol involves:

Data Collection and Curation: Large-scale molecular databases such as PubChem, ZINC, and ChEMBL provide billions of known molecular structures for pretraining [1]. These databases offer structured information on materials but are often limited by licensing restrictions, dataset size, and biased data sourcing.

SMILES Canonicalization: Molecular structures are converted to canonical SMILES representations using standardized algorithms that ensure consistent encoding of identical molecules regardless of input orientation.

Tokenization: SMILES strings are segmented into tokens compatible with transformer architectures using specialized chemical tokenizers that understand SMILES syntax and preserve meaningful chemical subunits.

Model Architecture Selection: Encoder-only transformer architectures (based on BERT) are typically employed for property prediction tasks, while decoder-only architectures (GPT-based) are used for generative molecular design [1].

Pretraining and Fine-tuning: Models undergo self-supervised pretraining on large unlabeled molecular datasets followed by task-specific fine-tuning on smaller labeled datasets for target properties.
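The tokenization step in the protocol above can be illustrated with a small regular-expression tokenizer that keeps bracket atoms and two-letter elements intact instead of splitting them into characters. This is a simplified sketch covering common SMILES syntax only; it is not the SMIRK tool mentioned earlier, and `tokenize_smiles` is a hypothetical helper.

```python
import re

SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]"            # bracket atoms such as [NH4+] or [C@@H]
    r"|Br|Cl"                # two-letter organic-subset atoms (tried before single letters)
    r"|[BCNOPSFI]"           # one-letter organic-subset atoms
    r"|[bcnops]"             # aromatic atoms
    r"|%\d{2}"               # two-digit ring-closure labels
    r"|\d"                   # single-digit ring-closure labels
    r"|[=#$:/\\\-+().~*@]"   # bonds, branches, disconnections, and other symbols
)


def tokenize_smiles(smiles):
    """Split a SMILES string into chemically meaningful tokens."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        # Some characters were skipped by the pattern, so the input is outside this sketch's scope
        raise ValueError(f"Unrecognized characters in SMILES: {smiles!r}")
    return tokens


print(tokenize_smiles("[Na+].[Cl-]"))   # ['[Na+]', '.', '[Cl-]']
print(tokenize_smiles("CCl"))           # ['C', 'Cl']
```

Keeping `Cl` and `[Na+]` as single tokens means the model never has to learn that `C` followed by `l` is chlorine, which is one reason chemistry-aware tokenizers are preferred over character-level splitting.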

For generative applications, SELFIES implementations typically employ constrained generation algorithms that ensure structural validity throughout the sampling process, enabling efficient exploration of chemical space while maintaining chemical plausibility.

Crystallographic Graph Representations

Fundamentals of Crystallographic Encoding

Crystallographic graph representations have emerged as a powerful framework for encoding inorganic crystalline materials, capturing the fundamental periodicity and bonding environments that dictate material properties. Unlike molecular representations that describe discrete entities, crystallographic graphs represent infinite periodic structures through graph networks where nodes correspond to atoms and edges represent bonded interactions or spatial proximities within the crystal lattice [20]. This representation naturally captures the symmetry constraints and periodicity that are fundamental to crystalline materials.

Advanced implementations of crystallographic graph representations incorporate key symmetry elements including rotation, reflection, inversion, and translation operations that define crystal systems. The representation of crystalline materials in foundation models has been pioneered by systems such as GNoME (Graph Networks for Materials Exploration), which discovered over 2.2 million new stable materials by combining graph neural networks with active-learning-driven density functional theory validation [20]. Similarly, MatterSim employs graph-based representations to create a zero-shot machine-learned interatomic potential trained on 17 million DFT-labeled structures, enabling universal simulation across all elements and a wide range of temperatures and pressures [20].

Advanced Graph Architectures for Crystalline Materials

The development of specialized graph neural network architectures has been instrumental in advancing crystallographic representation learning. These architectures include:

Graph Transformer Networks: Employ attention mechanisms to capture long-range interactions in crystalline materials, overcoming limitations of traditional graph convolutional networks that primarily model local environments [20].

Equivariant Graph Neural Networks: Explicitly incorporate symmetry constraints through equivariance to rotation and translation operations, ensuring that physical predictions remain consistent across reference frames [20].

Multiscale Graph Representations: Capture hierarchical structural information from unit cell configurations to mesoscale morphological features, enabling modeling of properties that emerge across length scales [22].
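The equivariance property mentioned for equivariant graph neural networks can be checked numerically: rotating the input structure and then predicting forces must give the same result as predicting forces and then rotating them. In the sketch below a hand-written harmonic pair potential stands in for a learned model (an assumption made purely for illustration), and the helper names are hypothetical.

```python
import math


def pair_forces(positions, k=1.0, r0=1.5):
    """Forces from a harmonic pair potential E = sum over pairs of 0.5 * k * (r_ij - r0)^2."""
    forces = [[0.0, 0.0, 0.0] for _ in positions]
    for i, ri in enumerate(positions):
        for j, rj in enumerate(positions):
            if i == j:
                continue
            delta = [a - b for a, b in zip(ri, rj)]
            dist = math.sqrt(sum(d * d for d in delta))
            magnitude = -k * (dist - r0) / dist  # -dE/dr projected along the unit vector
            for axis in range(3):
                forces[i][axis] += magnitude * delta[axis]
    return forces


def rotate_z(vectors, angle):
    """Rotate a list of 3D vectors about the z axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c * x - s * y, s * x + c * y, z] for x, y, z in vectors]


positions = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 2.0, 0.5]]
rotate_then_predict = pair_forces(rotate_z(positions, 0.7))
predict_then_rotate = rotate_z(pair_forces(positions), 0.7)
max_gap = max(abs(a - b)
              for fa, fb in zip(rotate_then_predict, predict_then_rotate)
              for a, b in zip(fa, fb))
print(max_gap < 1e-9)  # True: the force prediction is rotation-equivariant
```

A model without this property would need to learn the same physics separately for every orientation, which is what data augmentation approximates and what equivariant architectures build in by construction.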

These architectures have demonstrated remarkable success in property prediction tasks for crystalline materials, accurately forecasting electronic, mechanical, and thermal properties from structural information alone. The representation has proven particularly valuable for high-throughput virtual screening of novel materials, enabling rapid assessment of hypothetical compounds before resource-intensive experimental synthesis or computational validation.

Table 2: Crystallographic Graph Representation Methods in Materials Foundation Models

| Representation Method | Structural Elements Encoded | Symmetry Handling | Notable Implementations | Performance Characteristics |
|---|---|---|---|---|
| Crystal Graph Convolutional Networks | Atoms (nodes), Bonds (edges) | Data augmentation | MatDeepLearn, CGCNN | High accuracy for formation energy prediction, moderate computational cost |
| Graph Transformer Networks | Atoms, bonds, periodic images | Attention mechanisms | CrystalFormer, MACE-MP-0 | Superior for long-range interactions, higher memory requirements |
| Equivariant Graph Networks | Atoms, directional bonds, angular information | Built-in rotational equivariance | MACE, NequIP | State-of-the-art force and energy prediction, computationally intensive |
| Multiscale Graph Representations | Atomic structure, grain boundaries, defects | Hierarchical symmetry preservation | MultiMat, ATLANTIC | Captures emergent properties, complex architecture |

Experimental Protocols for Crystallographic Graph Implementation

The implementation of crystallographic graph representations within foundation models follows rigorous computational workflows:

Crystal Structure Preprocessing:

- Input: Crystallographic Information Files (CIF) containing unit cell parameters, atomic coordinates, and space group symmetry

- Symmetry Analysis: Identification of crystallographic symmetry operations using tools like spglib

- Primitive Cell Reduction: Conversion to smallest repeating unit while preserving symmetry

Graph Construction:

- Node Features: Atomic number, oxidation state, atomic position, magnetic moment

- Edge Definition: Distance-based cutoff (typically 5-8 Å) or Voronoi tessellation

- Edge Features: Bond distance, direction vector, periodic boundary conditions
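The distance-cutoff edge definition with periodic boundary conditions can be sketched as follows. This is a brute-force illustration with a hypothetical helper name: it only searches the 27 neighboring cell images, so it assumes the cutoff does not exceed the shortest cell dimension. The 5-8 Å cutoffs quoted above would need more images for small cells, and established crystal-structure libraries use more efficient neighbor searches.

```python
import itertools
import math


def build_periodic_edges(lattice, frac_coords, cutoff):
    """Return (i, j, image, distance) for all atom pairs within `cutoff` angstroms.

    lattice: three lattice vectors as rows, in angstroms.
    frac_coords: fractional coordinates of the atoms in the unit cell.
    image: integer cell offset of atom j, which encodes the periodic boundary crossing.
    """
    def to_cartesian(frac):
        return [sum(frac[k] * lattice[k][axis] for k in range(3)) for axis in range(3)]

    edges = []
    for i, fi in enumerate(frac_coords):
        ri = to_cartesian(fi)
        for j, fj in enumerate(frac_coords):
            for image in itertools.product((-1, 0, 1), repeat=3):
                if i == j and image == (0, 0, 0):
                    continue  # skip the atom's interaction with itself in the home cell
                rj = to_cartesian([fj[k] + image[k] for k in range(3)])
                dist = math.dist(ri, rj)
                if dist <= cutoff:
                    edges.append((i, j, image, dist))
    return edges


# Simple cubic cell (a = 3 angstroms, one atom): six nearest neighbors, all periodic images
cubic = [[3.0, 0.0, 0.0], [0.0, 3.0, 0.0], [0.0, 0.0, 3.0]]
print(len(build_periodic_edges(cubic, [[0.0, 0.0, 0.0]], cutoff=3.5)))  # 6
```

Storing the image offset alongside each edge is what lets a graph network distinguish an atom's bond to a neighbor in the same cell from its bond to that neighbor's periodic copy.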

Graph Neural Network Architecture:

- Message Passing: 3-6 layers of graph convolution operations

- Pooling: Crystal-level pooling through mean/sum aggregation or symmetry-aware pooling

- Readout: Multi-layer perceptron for property prediction

Training Protocol:

- Pretraining: Self-supervised tasks like masked atom prediction or contrastive learning on unlabeled crystal structures

- Fine-tuning: Supervised learning on targeted material properties using datasets like the Materials Project or OQMD

- Validation: Crystallographic hold-out strategies ensuring no similar structures across splits

Recent advances have demonstrated the effectiveness of this approach, with models like MACE-MP-0 achieving state-of-the-art accuracy for periodic systems while preserving equivariant inductive biases essential for physical consistency [20].

Integration in Foundation Models and Workflow Automation

Unified Multimodal Representation Frameworks

The integration of diverse representation modalities within unified foundation models represents a frontier in materials AI research. Modern frameworks aim to combine SMILES, SELFIES, and crystallographic graphs with complementary data types including textual descriptions, experimental spectra, and synthetic procedures [20]. This multimodal approach enables more robust and generalizable models that can leverage complementary information sources.

Notable implementations include nach0, which unifies natural and chemical language processing to perform tasks like molecule generation, retrosynthesis, and question answering [20]. Similarly, MultiMat integrates multiple representation modalities to enable cross-domain learning from literature, structures, and properties [20]. These systems demonstrate the growing trend toward foundation models that can reason across traditionally siloed representation formats, creating more comprehensive understanding of materials behavior.

LLM Agents and Autonomous Workflows

Large Language Model (LLM) agents are emerging as powerful orchestrators of materials discovery workflows, leveraging multiple representation formats to plan and execute complex experimental sequences [20]. These systems utilize LLMs as core reasoning components that interact with external environments, including simulation tools, robotic synthesis platforms, and characterization instruments.

Representative implementations include:

- HoneyComb: Extends LLM capabilities in the materials science domain through specialized tools and APIs [20]

- ChatMOF: Autonomous framework for predicting and generating metal-organic frameworks [20]

- MatAgent: LLM-based agentic system for property prediction, hypothesis generation, and experimental data analysis [20]

- A-Lab: Integrates surrogate models and robotic synthesis to optimize experimental discovery through closed-loop automation [20]

These agentic systems represent a paradigm shift from static representation learning toward dynamic, interactive AI systems that can actively participate in the materials discovery process.

Table 3: Essential Computational Tools and Resources for Materials AI Implementation

| Tool/Resource | Type | Primary Function | Representation Formats Supported | Access Method |
|---|---|---|---|---|
| Open MatSci ML Toolkit | Software library | Standardizing graph-based materials learning workflows | Crystallographic graphs, molecular graphs | Open source [20] |
| FORGE | Pretraining utilities | Scalable pretraining across scientific domains | Multimodal (text, graphs, images) | Open source [20] |
| GT4SD | Generative framework | Materials generation and design | SMILES, SELFIES, crystallographic graphs | Open source [20] |
| ALCF Supercomputers | Computing infrastructure | Large-scale foundation model training | All representations | INCITE program access [23] |
| Materials Project | Database | Crystallographic structures and properties | CIF, crystallographic graphs | Web API [1] |
| PubChem | Database | Molecular compounds and properties | SMILES, SELFIES | Web interface/API [1] |
| ChEMBL | Database | Bioactive molecules | SMILES, molecular descriptors | Web interface/API [1] |
| ZINC | Database | Commercially available compounds | SMILES, 3D coordinates | Download [1] |

Future Directions and Research Challenges

The evolution of data representations in materials foundation models faces several significant challenges that define the research frontier. A primary limitation concerns the dimensionality gap between commonly used 2D representations (SMILES, SELFIES) and the 3D structural reality that governs material behavior and properties [1]. This discrepancy is particularly problematic for properties dependent on conformational flexibility, stereochemistry, or supramolecular assembly. Future research directions focus on developing unified 3D-aware representations that maintain computational efficiency while capturing essential spatial information.

A second critical challenge involves data scarcity and imbalance, particularly for crystallographic systems where experimental data remains sparse relative to the vastness of possible compositional and structural combinations [20] [22]. Transfer learning approaches that leverage knowledge from data-rich domains (e.g., small molecules) to data-poor domains (e.g., complex crystals) represent a promising direction, as do data augmentation strategies that explicitly incorporate physical constraints and symmetry operations.

The integration of physical principles directly into representation learning frameworks represents a third frontier, moving beyond pattern recognition in existing data toward physically consistent extrapolation to novel materials classes [22]. Approaches including physics-informed neural networks, equivariant representations that respect fundamental symmetries, and hybrid models that combine machine learning with first-principles simulations are gaining traction as strategies to enhance model interpretability and physical consistency.

Finally, the development of standardized evaluation benchmarks specific to materials foundation models remains an ongoing need, enabling rigorous comparison of representation strategies across diverse materials classes and property domains [20]. Community-wide efforts to establish these benchmarks will accelerate progress toward more effective, reliable, and trustworthy AI-driven materials discovery.

As the field advances, the optimal representation strategy will likely involve context-dependent selection from a portfolio of approaches, with simpler representations like SMILES enabling rapid screening of vast chemical spaces, while more sophisticated crystallographic graphs support high-fidelity modeling of selected candidates. The emergence of multimodal foundation models capable of reasoning across multiple representation formats promises to leverage the complementary strengths of each approach, ultimately accelerating the discovery and design of novel materials with tailored properties and functions.

The exponential growth of scientific literature presents a critical bottleneck in materials discovery and drug development. Valuable experimental data on material properties, synthesis protocols, and performance metrics are locked within multimodal formats—including text, tables, and figures—across millions of research articles and patents. Foundation models, trained on broad data using self-supervision and adaptable to diverse downstream tasks, are poised to overcome this data extraction challenge and accelerate the materials discovery pipeline [1]. This technical guide examines the current state and future directions of automated information extraction from multimodal scientific literature, providing researchers with methodologies and tools to harness this transformative capability.

The Multimodal Data Landscape in Materials Science

Scientific literature presents unique extraction challenges due to its complex integration of data modalities. In materials science, critical information is distributed across textual descriptions, molecular structures in images, numerical data in tables, and experimental results in charts and spectra [1]. This multimodality creates significant hurdles for traditional text-based extraction systems, as key relationships often exist only through connections between these different data representations.

Specialized benchmarks like MatViX have emerged to address this complexity, comprising 324 full-length research articles and 1,688 complex structured JSON files curated by domain experts [24]. These resources provide standardized frameworks for developing and evaluating multimodal extraction systems capable of processing complete document context.

The nanoMINER system exemplifies the capabilities required, processing entire research articles to extract structured data on nanomaterial properties, surface characteristics, and catalytic activities with high precision [25]. Such systems must handle cases where small structural or compositional details strongly influence material properties, a phenomenon known in cheminformatics as an "activity cliff" [1].

Table 1: Key Challenges in Multimodal Data Extraction from Scientific Literature

| Challenge Category | Specific Limitations | Impact on Research |
|---|---|---|
| Data Modality Integration | Information fragmented across text, tables, and figures [1] | Incomplete data extraction and loss of critical experimental context |
| Cross-Document Inconsistencies | Varied terminologies, measurement units, and presentation styles [26] | Difficulties in standardizing information for comparative analysis |
| Domain-Specific Complexity | Complex chemical nomenclature and cross-domain terminology [25] | Limited accuracy of general-purpose NLP models for scientific content |
| Scalability Limitations | Exponential literature growth outpacing manual processing [25] | Inefficient and time-consuming data curation processes |

Foundation Models and Advanced Extraction Architectures

Foundation models represent a paradigm shift in how machines understand scientific literature. These models, trained through self-supervision on massive datasets, learn transferable representations that can be adapted to specialized downstream tasks with minimal fine-tuning [1]. The transformer architecture, introduced in 2017, forms the basis for these advancements, enabling models to process complex relationships in scientific data through self-attention mechanisms [1].

Architectural Approaches

Two primary architectural paradigms dominate the current landscape:

Encoder-only models (e.g., SciBERT) focus on understanding and representing input data, generating meaningful representations ideal for classification and named entity recognition tasks [26]. These models excel at identifying key entities and relationships within text but lack strong generative capabilities.

Decoder-only models (e.g., GPT series) specialize in generating new outputs by predicting sequences, making them suitable for tasks requiring structured output generation or content creation [1]. These models demonstrate remarkable flexibility in following extraction instructions and producing standardized formats.

The emerging multi-agent approach, exemplified by nanoMINER, combines specialized models orchestrated by a central coordinator [25]. This architecture leverages the strengths of different foundation models while maintaining task focus and improving overall extraction quality through modular error handling.
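The coordinator pattern described above can be sketched in a few lines. This is a minimal illustration, not nanoMINER's implementation: the function `coordinate`, the agent signature, and both toy agents are hypothetical stand-ins for the fine-tuned NER model and the vision model, and the matching rule in the toy NER agent is deliberately trivial.

```python
from typing import Callable, Dict, List

# Hypothetical agent signature: one input item in, a list of extracted records out.
Agent = Callable[[str], List[dict]]


def toy_ner_agent(text: str) -> List[dict]:
    # Stand-in for a fine-tuned NER model: flags tokens ending in "O4" as materials.
    return [{"field": "material", "value": tok} for tok in text.split() if tok.endswith("O4")]


def toy_vision_agent(image_id: str) -> List[dict]:
    # Stand-in for a vision model: labels every image as a plot.
    return [{"field": "figure_type", "value": "plot", "image": image_id}]


def coordinate(inputs: Dict[str, List[str]], agents: Dict[str, Agent]) -> List[dict]:
    """Route each item to the agent registered for its modality and merge the results."""
    merged = []
    for modality, items in inputs.items():
        agent = agents[modality]
        for item in items:
            for record in agent(item):
                # Tag each record with its modality so later steps can cross-check sources.
                merged.append({**record, "modality": modality})
    return merged


agents = {"text": toy_ner_agent, "image": toy_vision_agent}
records = coordinate(
    {"text": ["Fe3O4 nanoparticles were tested"], "image": ["fig1.png"]},
    agents,
)
```

Keeping the agents behind a common signature means a component can be swapped (for example, a different NER model) without touching the coordinator, which is the modularity benefit the multi-agent design aims for.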

Specialized Extraction Techniques

Different data modalities require specialized extraction techniques:

Textual Data: Named Entity Recognition (NER) and Relation Extraction (RE) identify key materials concepts, properties, and their relationships. Fine-tuned models like Mistral-7B and Llama-3-8B have shown strong performance in extracting nanomaterial parameters from scientific text [25].

Visual Data: Computer vision models, including Vision Transformers and YOLO, extract molecular structures from images and detect figures, tables, and schematics in documents [1] [25]. Specialized tools like DePlot convert visual representations into structured tabular data [1].

Multimodal Integration: Systems like Plot2Spectra demonstrate how specialized algorithms can extract data points from spectroscopy plots, enabling large-scale analysis of material properties inaccessible to text-only models [1].

Table 2: Performance Metrics of Advanced Extraction Systems in Materials Science

| Extraction System | Primary Architecture | Data Modalities | Reported Precision | Key Applications |
|---|---|---|---|---|
| nanoMINER [25] | Multi-agent (GPT-4o) | Text, images, plots | 0.96-0.98 (kinetic parameters) | Nanomaterial characterization, nanozyme activity |
| SciDaSynth [26] | RAG with GPT-4 | Text, tables, figures | High qualitative accuracy | Cross-domain scientific data synthesis |
| MatViX Benchmark [24] | Vision-Language Models | Full articles, JSON | Significant improvement potential | General materials science extraction |
| Eunomia Agent [25] | GPT-4 | Text | Demonstrated capability | MOF materials and properties |
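For readers unfamiliar with the metric in the "Reported Precision" column, precision is the fraction of extracted items that are correct: TP / (TP + FP). The sketch below shows one simple way to compute it by exact matching against a gold-standard set. The matching rule and all values are invented for illustration; the cited systems may define a match differently (for example, with numeric tolerances).

```python
def precision(extracted: set, gold: set) -> float:
    """Fraction of extracted items that also appear in the gold standard."""
    if not extracted:
        return 0.0
    true_positives = len(extracted & gold)
    return true_positives / len(extracted)


# Toy (material, parameter, value) triples; the numbers are made up.
gold = {("Fe3O4", "Km", 0.15), ("Fe3O4", "Vmax", 3.4), ("CeO2", "Km", 0.42)}
extracted = {
    ("Fe3O4", "Km", 0.15),   # correct
    ("Fe3O4", "Vmax", 3.4),  # correct
    ("CeO2", "Km", 0.24),    # wrong value (digits transposed)
    ("CeO2", "Vmax", 1.1),   # not in the gold standard
}
score = precision(extracted, gold)  # 2 correct out of 4 extracted
```

Precision alone says nothing about what the system missed, so it is usually read alongside recall when comparing extraction systems.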

Experimental Protocols for Multimodal Extraction

Implementing an effective multimodal extraction pipeline requires careful orchestration of specialized components. The following protocols are derived from recent systems with reported efficacy in materials science applications.

Multi-Agent Extraction Methodology

The nanoMINER system exemplifies a robust approach to end-to-end document processing [25]:

1. PDF Processing and Data Unbundling

- Input: Scientific articles in PDF format

- Toolset: Specialized PDF parsers (e.g., GROBID, PaperMage) extract text, images, and plots

- Text Segmentation: Article text is strategically divided into 2048-token chunks for efficient processing

- Image Processing: YOLO model detects and classifies visual elements (figures, tables, schemes)

2. Multi-Agent Orchestration

- Main Agent: ReAct agent based on GPT-4o coordinates the workflow, performs function-calling, and merges information

- NER Agent: Fine-tuned Mistral-7B or Llama-3-8B models extract critical parameters from text

- Vision Agent: GPT-4o analyzes graphical images and non-standard tables, linking visual data with textual descriptions

3. Information Aggregation and Structured Output

- The Main Agent aggregates information from NER and Vision agents

- Cross-validation between textual and visual data resolves discrepancies

- Final output is formatted into structured data (JSON, CSV) with material compositions, surface modifiers, reaction conditions, and catalytic properties

This protocol achieved precision of up to 0.98 for kinetic parameters (Km, Vmax) and essential features (Cmin, Cmax) in nanozyme data, demonstrating its effectiveness for complex scientific extraction tasks [25].
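The Text Segmentation step in the protocol above can be illustrated with a short sketch. It assumes the text has already been tokenized; whitespace splitting stands in here for the model's real tokenizer, and `chunk_tokens` is a hypothetical helper name. The source does not say whether chunks overlap, so this version uses none.

```python
from typing import List


def chunk_tokens(tokens: List[str], chunk_size: int = 2048) -> List[List[str]]:
    """Split a token list into consecutive, non-overlapping chunks of at most chunk_size."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    return [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]


# Whitespace tokens are only a stand-in; a real pipeline would use the model's tokenizer.
tokens = ("word " * 5000).split()
chunks = chunk_tokens(tokens)
chunk_lengths = [len(c) for c in chunks]  # two full chunks plus a shorter remainder
```

A fixed chunk size keeps each request within the model's context budget, at the cost of occasionally splitting a sentence across two chunks.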

Diagram 1: Multi-Agent Extraction Architecture

Interactive Structured Data Synthesis

The SciDaSynth framework provides an alternative approach emphasizing human-AI collaboration [26]:

1. Query Interpretation and Retrieval

- User defines specific data requirements through natural language queries

- Retrieval-Augmented Generation (RAG) framework dynamically retrieves up-to-date, domain-specific information

- Multimodal retrieval integrates relevant information from text, tables, and figures across multiple documents

2. Table Generation and Standardization

- LLMs interpret retrieved information and generate structured tabular output

- Automatic standardization addresses terminology and unit inconsistencies across documents

- Source linking maintains connections between extracted data and original literature

3. Interactive Validation and Refinement

- Multi-faceted visual summaries highlight variations and inconsistencies across qualitative and quantitative data

- Semantic grouping enables flexible data organization based on content similarities

- Iterative refinement through follow-up queries applied to specific data groups

In user studies with nutrition and NLP researchers, this protocol significantly reduced the time needed to build structured tables while maintaining high data quality [26].
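The standardization and source-linking steps in the protocol above can be sketched together. This is an illustrative example only, not SciDaSynth's implementation: the `Measurement` class, the conversion table, the choice of millimolar as the target unit, and the sample values are all assumptions.

```python
from dataclasses import dataclass

# Conversion factors to millimolar (mM); the accepted unit spellings are an assumption.
TO_MILLIMOLAR = {"M": 1000.0, "mM": 1.0, "uM": 1e-3, "µM": 1e-3, "nM": 1e-6}


@dataclass(frozen=True)
class Measurement:
    value: float
    unit: str
    source: str  # pointer back to the originating document and location


def to_millimolar(m: Measurement) -> Measurement:
    """Convert a concentration to mM while preserving its source link."""
    try:
        factor = TO_MILLIMOLAR[m.unit]
    except KeyError:
        raise ValueError(f"unknown unit {m.unit!r} in {m.source}") from None
    return Measurement(m.value * factor, "mM", m.source)


# Invented rows standing in for values pulled from two different papers.
rows = [
    Measurement(0.5, "mM", "paper_A, Table 2"),
    Measurement(250.0, "uM", "paper_B, Fig. 3"),
]
standardized = [to_millimolar(r) for r in rows]
```

Carrying the `source` field through every transformation means a reviewer can trace any standardized value back to where it was reported, and unknown units fail loudly instead of being silently passed through.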

Implementing effective multimodal extraction requires a curated set of tools and resources. The following table summarizes essential components for building scientific data extraction pipelines.

Table 3: Essential Tools for Multimodal Scientific Data Extraction

| Tool Category | Representative Solutions | Primary Function | Application Context |
|---|---|---|---|
| Foundation Models | GPT-4o, Mistral-7B, Llama-3-8B [25] | Core understanding and generation capabilities | General-purpose text processing and reasoning |
| Vision-Language Models | GPT-4V, DePlot [1] [24] | Cross-modal understanding of figures and plots | Extracting data from visual representations |
| PDF Processing | PaperMage, GROBID, Adobe Extract API [26] | Unbundling PDF documents into constituent elements | Initial document processing and segmentation |
| Computer Vision | YOLO Models, Vision Transformers [25] | Detection and analysis of visual elements | Identifying figures, tables, and molecular structures |
| Specialized Benchmarks | MatViX, SciDaSynth [24] [26] | Evaluation standards and test datasets | System validation and performance measurement |
| Workflow Orchestration | ReAct Agent Framework [25] | Multi-agent coordination and task management | Complex pipeline implementation |

Implementation Framework and Best Practices

Successful implementation of multimodal extraction systems requires careful attention to several critical factors that influence performance and reliability.

Data Quality Assurance

Establish robust validation mechanisms to ensure extracted data accuracy:

- Source Linking: Maintain clear connections between extracted data points and their source documents to enable verification [26]

- Cross-Modal Validation: Implement consistency checks between data extracted from different modalities (text vs. figures) [25]

- Expert Review: Incorporate domain expert feedback loops for continuous system improvement and error correction [26]
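A cross-modal consistency check of the kind listed above might look like the following sketch. The function name `cross_validate`, the 5% relative tolerance, and the sample values are assumptions chosen for illustration; an appropriate tolerance depends on how precisely values can be read from figures.

```python
def cross_validate(text_value: float, figure_value: float, rel_tol: float = 0.05) -> dict:
    """Compare a value reported in text with the same quantity read from a figure."""
    scale = max(abs(text_value), abs(figure_value))
    rel_diff = 0.0 if scale == 0 else abs(text_value - figure_value) / scale
    return {
        "relative_difference": rel_diff,
        "consistent": rel_diff <= rel_tol,
    }


# Invented values: a Km stated in the text versus one digitized from a plot.
agreeing = cross_validate(0.15, 0.152)
conflicting = cross_validate(0.15, 0.30)
```

Records flagged as inconsistent are natural candidates for the expert review loop rather than for automatic resolution.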

System Architecture Considerations

Design extraction pipelines with modularity and extensibility in mind:

- Specialized Agents: Decompose complex extraction tasks into smaller subtasks handled by fine-tuned components [25]

- Tool Integration: Leverage specialized algorithms (e.g., Plot2Spectra) for domain-specific extraction tasks rather than relying solely on general-purpose models [1]

- Error Handling: Implement graceful failure recovery for problematic document sections or unrecognized content types
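Graceful failure recovery can be as simple as isolating each document section so that one bad section does not abort the whole article. The sketch below is a minimal illustration under that assumption; `extract_with_recovery` and `toy_extractor` are hypothetical names, and the toy extractor merely counts words.

```python
from typing import Callable, Dict, List, Tuple


def extract_with_recovery(
    sections: Dict[str, str],
    extractor: Callable[[str], List[dict]],
) -> Tuple[List[dict], List[dict]]:
    """Run the extractor on each section, recording failures instead of stopping."""
    records, failures = [], []
    for name, content in sections.items():
        try:
            records.extend(extractor(content))
        except Exception as exc:
            # Keep enough context to revisit the section later or route it to a human.
            failures.append({"section": name, "error": str(exc)})
    return records, failures


def toy_extractor(content: str) -> List[dict]:
    # Stand-in extractor: rejects empty input, otherwise reports a word count.
    if not content.strip():
        raise ValueError("empty section")
    return [{"n_words": len(content.split())}]


records, failures = extract_with_recovery(
    {"abstract": "Fe3O4 nanozymes show peroxidase activity", "figure_2": "   "},
    toy_extractor,
)
```

Returning the failure list alongside the records makes the gaps explicit, so downstream users know which parts of a document were not processed.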

Diagram 2: Implementation Framework Components

The field of multimodal information extraction from scientific literature is rapidly evolving, with several promising directions emerging. Future systems will likely feature enhanced cross-modal reasoning capabilities, enabling more sophisticated understanding of the relationships between textual descriptions and visual representations [1]. Improved domain adaptation techniques will make these tools more accessible across specialized subfields of materials science and drug development.

The integration of foundation models with knowledge graphs represents another promising direction, creating structured representations of scientific knowledge that can be queried and updated automatically as new literature emerges [1]. As these systems mature, they will increasingly move from extraction tools to active discovery partners, identifying patterns and relationships across the scientific literature that may elude human researchers.

Addressing the data challenge in multimodal scientific literature requires a thoughtful combination of state-of-the-art foundation models, specialized extraction tools, and human expertise. By implementing the protocols and frameworks described in this guide, researchers and drug development professionals can significantly accelerate their data curation processes, enabling more comprehensive and systematic approaches to materials discovery and development.

From Prediction to Creation: AI Methodologies for Property Prediction and Generative Design

The field of materials discovery is undergoing a transformative shift with the adoption of foundation models—AI systems trained on broad data that can be adapted to a wide range of downstream tasks [1]. Among these, encoder-based models have emerged as particularly powerful tools for property prediction, a core task in accelerated materials design [1]. These models learn contextualized representations of input data through self-supervised pre-training on large unlabeled corpora, typically followed by fine-tuning on specific downstream tasks [27]. This approach has demonstrated remarkable success in predicting diverse molecular and material properties, from quantum mechanical characteristics to physiological activity [27].

Encoder-based models fundamentally differ from traditional machine learning approaches in their ability to learn transferable representations without exhaustive labeled datasets [1]. By processing structured representations of materials—such as Simplified Molecular-Input Line-Entry System (SMILES) strings, molecular graphs, or crystallographic data—these models capture complex patterns and relationships that enable accurate property forecasting [27] [1]. The resulting representations form a latent space that organizes materials based on chemically relevant features, often separating compounds according to properties like electron-donating effects and HOMO energy levels [27]. This structural organization enables not only accurate prediction but also meaningful chemical reasoning with minimal supervision [27].

Core Architectural Principles of Encoder-Based Models

Input Representations and Tokenization