Forward Screening vs. Inverse Design: A Comparative Guide for Modern Drug Discovery

Abstract

This article provides a comprehensive comparison of forward screening and inverse design methodologies for researchers and drug development professionals. It explores the foundational principles of both approaches, from hypothesis-generating genetic screens to goal-oriented computational design. The scope covers key applications across diverse fields, including functional genomics and AI-driven material discovery, and delves into the specific challenges and optimization strategies for each method. By synthesizing the strengths, limitations, and complementary potential of these paradigms, this review aims to guide the selection and implementation of efficient strategies for target identification and therapeutic development.

Core Principles: From Phenotype-Based Discovery to Target-Oriented Design

In the pursuit of mapping genotype-phenotype relationships, two fundamentally distinct methodological philosophies have emerged: forward screening and inverse design. Forward screening, a classical yet evolving approach, begins with an observed phenotype and works backward to identify the genetic factors responsible [1]. This hypothesis-generating strategy is well suited to uncovering novel biological mechanisms because it makes no prior assumptions about which genes are important. In contrast, inverse design, whose counterpart in genetics is the reverse genetic screen, starts with a known gene or pathway and asks what phenotypes result from its perturbation, serving as a hypothesis-testing framework [1]. This guide compares the two methodologies, focusing on the workflow, applications, and recent technological advancements in forward screening that have reinforced its role in functional genomics and drug discovery.

The core distinction lies in their starting points. Forward genetic screens have been compared to fishing, in which scientists cast a wide net without knowing exactly what they will catch, while reverse genetic screens resemble gambling, concentrating resources on a single gene in the hope that it produces an interesting phenotype [1]. This unbiased nature of forward screening has led to seminal discoveries across model organisms, establishing it as a powerful tool for gene discovery.

Core Principles and Workflow of Forward Screening

Conceptual Foundation and Key Characteristics

Forward screening operates on the principle that random mutagenesis followed by systematic phenotypic analysis can reveal genes essential for specific biological processes. This approach requires no prior hypotheses about which genes might be involved, allowing for truly novel discoveries [1]. The methodology is particularly valuable for investigating complex biological phenomena where the genetic basis is poorly understood, such as behavior, development, and disease mechanisms.

The key advantage of this unbiased approach is its capacity to identify previously unknown genetic regulators. For instance, forward genetics in mice revealed TLR-4 as the sensor of lipopolysaccharide and Foxp3 as a transcription factor essential for regulatory T-cell development—discoveries that might not have been made through hypothesis-driven approaches [2]. The methodology continues to evolve with technological advancements, maintaining its relevance in modern functional genomics.

The Six-Step Forward Screening Workflow

The standard forward screening pipeline involves a systematic process from mutagenesis to gene identification:

- Assay Design: Develop a specific, quantitative assay to measure the phenotype of interest [1]. This requires thorough characterization of the wild-type phenotype and establishing parameters to distinguish abnormal variants.

- Mutagenesis: Introduce random mutations into the genome using chemical mutagens (e.g., ENU), irradiation, or insertional mutagens (transposons) [1] [2].

- Phenotypic Screening: Systematically examine mutant populations for individuals displaying aberrant phenotypes [1].

- Complementation Analysis: Cross mutants with similar phenotypes to determine if mutations occur in the same or different genes [1].

- Gene Mapping: Identify the chromosomal location of the mutation through linkage analysis or, in the case of insertional mutagenesis, sequence flanking regions [1].

- Gene Cloning: Isolate and clone the DNA encoding the mutated gene for further functional characterization [1].
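The complementation step lends itself to a small illustration. The sketch below groups mutants into complementation groups with a union-find structure, under the simplifying assumption that every mutation is recessive and that a cross which still shows the mutant phenotype (failure to complement) means both mutations lie in the same gene. The mutant names and cross results are hypothetical.

```python
from collections import defaultdict


def complementation_groups(mutants, fails_to_complement):
    """Group mutants into complementation groups with union-find.

    fails_to_complement: iterable of (a, b) pairs whose cross still shows
    the mutant phenotype, taken here to mean both mutations hit one gene.
    """
    parent = {m: m for m in mutants}

    def find(m):
        # Follow parent links to the group representative, halving the path.
        while parent[m] != m:
            parent[m] = parent[parent[m]]
            m = parent[m]
        return m

    for a, b in fails_to_complement:
        parent[find(a)] = find(b)

    groups = defaultdict(list)
    for m in mutants:
        groups[find(m)].append(m)
    return sorted(sorted(g) for g in groups.values())


# Hypothetical cross results for five mutants with a similar phenotype.
mutants = ["m1", "m2", "m3", "m4", "m5"]
crosses = [("m1", "m2"), ("m2", "m4")]
groups = complementation_groups(mutants, crosses)
print(groups)
```

Each resulting group is a candidate gene to carry into the mapping step; real screens also have to handle dominant alleles and non-allelic non-complementation, which this sketch ignores.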

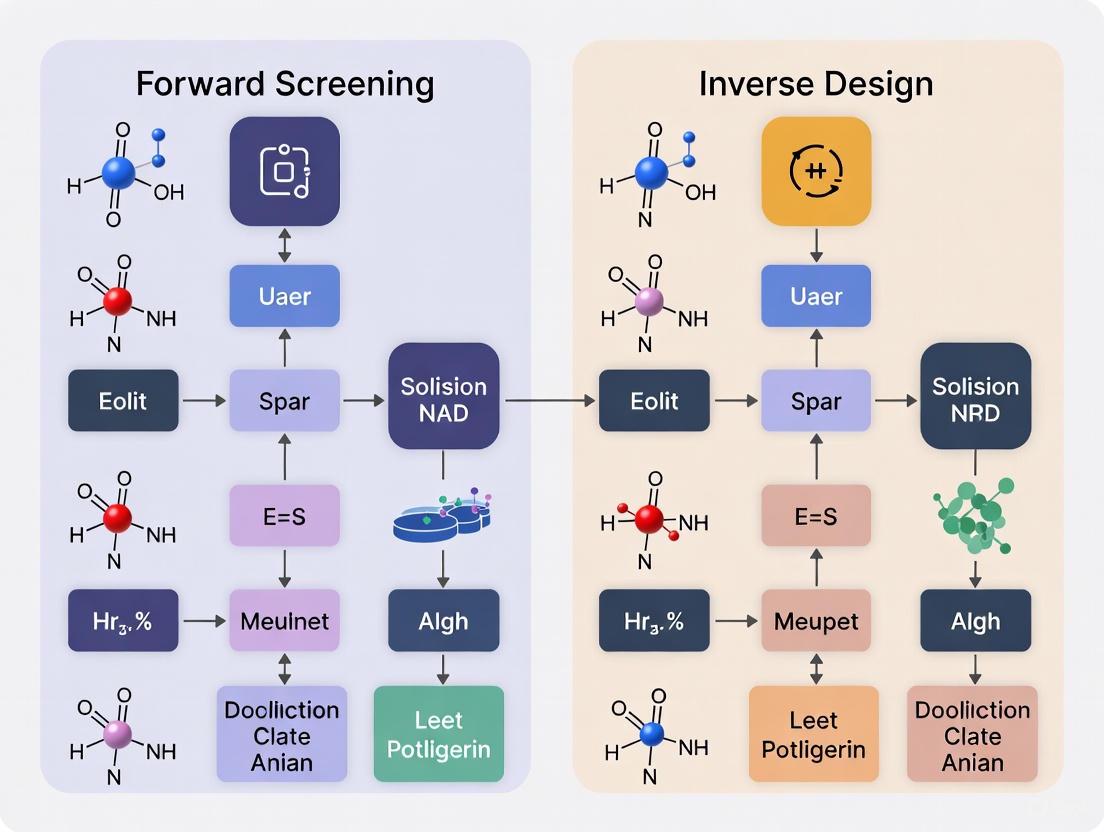

The following diagram illustrates this workflow, highlighting the hypothesis-generating nature of the process:

Methodological Comparison: Forward Screening vs. Inverse Design

The distinction between forward and inverse approaches extends beyond genetics into broader scientific methodology. The table below compares their fundamental characteristics:

Table 1: Core Methodological Differences Between Forward Screening and Inverse Design

| Characteristic | Forward Screening | Inverse Design |
|---|---|---|
| Starting Point | Phenotype of interest [1] | Known gene or pathway [1] |
| Philosophy | Hypothesis-generating [1] | Hypothesis-testing [1] |
| Throughput | Tests thousands of genes simultaneously [1] | Focuses on a single gene or pathway [1] |
| Primary Strength | Unbiased discovery of novel genes [2] | Targeted investigation of gene function |
| Key Limitation | Resource-intensive identification of causal mutations [1] | Limited to known biology; may miss novel interactions |
| Analogy | Fishing: uncertain what will be caught [1] | Gambling: focused investment on one gene [1] |

Advanced Forward Screening Technologies and Quantitative Benchmarking

High-Content Phenotypic Screening with Compression

Recent innovations have dramatically enhanced the scale and resolution of forward screening. Compressed screening (CS) methodologies now enable pooling of exogenous perturbations (e.g., small molecules, protein ligands) followed by computational deconvolution, significantly increasing throughput [3]. This approach reduces sample number, cost, and labor by testing perturbations in pools rather than individually.

In a benchmark study comparing conventional versus compressed screening using a 316-compound FDA drug repurposing library and Cell Painting readout, researchers demonstrated that CS could identify compounds with large effects even at high compression levels [3]. The study employed a regression-based computational framework to deconvolve individual perturbation effects from pooled experiments, validating that top compressed hits drove conserved phenotypic responses when tested individually [3].

Table 2: Performance Benchmarking of Conventional vs. Compressed Screening

| Screening Parameter | Conventional Screening | Compressed Screening (P-fold) |
|---|---|---|
| Sample Number | 2,088 wells (316 compounds + controls) [3] | Reduced by factor of P (3-80 tested) [3] |
| Phenotypic Features | 886 morphological attributes [3] | Same feature set deconvolved [3] |
| Hit Identification | Direct measurement of individual effects [3] | Regression-based inference from pools [3] |
| Key Finding | Identified 8 phenotypic clusters [3] | Consistently identified largest ground-truth effects [3] |

Single-Cell Perturbation Screening (Perturb-seq)

The integration of single-cell RNA sequencing with CRISPR-based screening has revolutionized forward screening by enabling information-rich genotype-phenotype mapping at unprecedented resolution [4]. Perturb-seq measures the transcriptional effects of genetic perturbations across thousands of individual cells, capturing complex cellular responses and heterogeneous effects.

In a landmark genome-scale Perturb-seq study targeting all expressed genes with CRISPRi across >2.5 million human cells, researchers generated a multidimensional portrait of gene and cellular function [4]. This approach successfully predicted functions for poorly characterized genes, uncovering new regulators of ribosome biogenesis (CCDC86, ZNF236, SPATA5L1), transcription (C7orf26), and mitochondrial respiration (TMEM242) [4]. The following diagram illustrates the Perturb-seq workflow:

Experimental Protocols for Key Forward Screening Modalities

Protocol: Forward Genetic Screen in Model Organisms

Application: Identification of genes essential for a specific phenotype (e.g., axon guidance, behavior) in flies, worms, or zebrafish [1].

Procedure:

- Mutagenesis: Treat animals with chemical mutagens (e.g., ENU at 90 mg/kg for mice) [2], irradiation, or transposons to induce random mutations.

- Breeding Scheme: Establish mutant lines through systematic crossing (e.g., cross mutagenized G0 males to females, then intercross G1 offspring) [2].

- Phenotypic Assessment: Screen thousands of individuals using the predefined assay. For example, in axon guidance studies, examine specific axons for mistargeting across thousands of individuals [1].

- Genetic Mapping: For chemical/irradiation mutants, perform linkage analysis to map chromosomal location. For transposon mutants, sequence flanking regions using transposon-specific primers [1].

- Validation: Clone the gene and confirm causality through rescue experiments or independent mutants.

Protocol: Compressed Phenotypic Screening with High-Content Readouts

Application: High-throughput screening of biochemical perturbations (small molecules, proteins) in complex models with limited biomass [3].

Procedure:

- Pool Design: Combine N perturbations into unique pools of size P, ensuring each perturbation appears in R distinct pools [3].

- Experimental Setup: Apply pooled perturbations to biological system (e.g., patient-derived organoids, PBMCs).

- High-Content Readout: Acquire phenotypic data using scRNA-seq, high-content imaging (Cell Painting), or other multimodal assays [3].

- Computational Deconvolution: Apply regularized linear regression with permutation testing to infer individual perturbation effects from pooled measurements [3].

- Hit Prioritization: Rank perturbations by effect size and validate top candidates in individual follow-up experiments.
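The pooling and deconvolution steps above can be sketched in a few lines. This is a minimal illustration and not the framework from [3]: it assumes pooled effects add linearly, uses a simple row/column grid as the pool design (so N = 9, P = 3, R = 2), fits a ridge regression as one possible form of regularized linear regression, and omits the permutation test. The function names, the penalty value, and the simulated readout are all assumptions.

```python
import numpy as np


def grid_pool_design(n_rows, n_cols):
    """Design matrix for row/column pooling of n_rows * n_cols perturbations.

    Each perturbation sits in exactly R = 2 pools (its row and its column).
    Returns an array of shape (n_rows + n_cols, n_rows * n_cols).
    """
    n = n_rows * n_cols
    design = np.zeros((n_rows + n_cols, n))
    for idx in range(n):
        r, c = divmod(idx, n_cols)
        design[r, idx] = 1.0           # membership in the row pool
        design[n_rows + c, idx] = 1.0  # membership in the column pool
    return design


def ridge_deconvolve(design, pooled_readout, penalty=0.1):
    """Ridge estimate of per-perturbation effects from pooled readouts."""
    n = design.shape[1]
    gram = design.T @ design + penalty * np.eye(n)
    return np.linalg.solve(gram, design.T @ pooled_readout)


# Simulated screen: 9 perturbations measured in 6 pools, one true hit.
design = grid_pool_design(3, 3)
true_effects = np.zeros(9)
true_effects[5] = 5.0
rng = np.random.default_rng(0)
noise = rng.normal(0.0, 0.05, size=design.shape[0])
pooled = design @ true_effects + noise

estimates = ridge_deconvolve(design, pooled)
top_hit = int(np.argmax(np.abs(estimates)))
print(top_hit, np.round(estimates, 2))
```

Because there are fewer pools than perturbations, the estimates are shrunken and partly shared with perturbations that were co-pooled with the hit, which is why top-ranked candidates are re-tested individually in the final step.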

The Scientist's Toolkit: Essential Research Reagents and Databases

Table 3: Key Research Reagent Solutions for Forward Screening

| Reagent/Database | Function | Application Context |
|---|---|---|
| ENU (N-ethyl-N-nitrosourea) | Chemical mutagen that induces point mutations [2] | Mouse forward genetic screens [2] |
| Cell Painting Assay | Multiplexed fluorescent imaging for morphological profiling [3] | High-content phenotypic screening [3] |
| CRISPR Perturbation Libraries | Pooled sgRNA collections for gene knockout/activation [4] | Perturb-seq and genetic screens [4] |
| PerturBase Database | Curated repository of single-cell perturbation data [5] | Querying and analyzing perturbation effects across studies [5] |
| GEARS (Gene Expression Aware of Regulatory Structure) | Graph neural network for predicting perturbation outcomes [6] | In silico prediction of gene perturbation effects [6] |

Forward screening remains an indispensable methodology in the functional genomics toolkit, particularly when investigating biological processes with unknown genetic determinants. Its hypothesis-generating nature complements targeted inverse design approaches, providing a strategic advantage for discovery-phase research. Recent advancements in compressed screening, single-cell technologies, and computational deconvolution have significantly enhanced the scale, resolution, and efficiency of forward screening approaches.

When designing functional genomics studies, researchers should consider forward screening when pursuing novel gene discovery, investigating complex phenotypes with likely polygenic basis, or when prior knowledge of relevant pathways is limited. Conversely, inverse design approaches are more appropriate for focused hypothesis testing, pathway validation, or when resources are constrained. The integration of both methodologies within a comprehensive research program—using forward screening for unbiased discovery and inverse design for mechanistic validation—represents the most powerful strategy for elucidating genotype-phenotype relationships in complex biological systems.

In the pursuit of innovation across materials science, chemistry, and drug discovery, researchers have traditionally relied on forward screening approaches. This conventional methodology involves creating a vast library of candidate structures, synthesizing or simulating them, testing their properties, and then attempting to identify those that best match desired criteria. While forward screening has yielded significant successes, it faces fundamental limitations in efficiently navigating enormous design spaces, often making the process computationally expensive and time-consuming [7].

Inverse design represents a paradigm shift from this traditional structure-to-property approach. Instead of screening existing candidates, inverse design begins with the desired target property or function and works backward to identify optimal structures that achieve this goal [7]. This goal-oriented framework leverages advanced computational techniques, particularly machine learning and generative models, to explore design spaces more intelligently and efficiently. By reframing the discovery process from property-to-structure, inverse design enables researchers to focus computational resources on promising regions of chemical or material space, potentially accelerating the development of novel solutions with tailored characteristics [7].

Core Principles of Inverse Design

Fundamental Framework and Key Differentiators

Inverse design establishes a fundamentally different workflow from traditional screening methods. Where forward screening follows a "trial-and-error" methodology, inverse design implements a systematic goal-oriented approach that reverses the typical discovery pipeline. This core framework consists of several key stages: first, precisely defining the target properties or functions; second, employing computational models to explore the design space in reverse; and third, generating candidate solutions optimized for the specific target [7].

The table below contrasts the fundamental characteristics of forward screening versus inverse design approaches:

Table 1: Fundamental comparison between forward screening and inverse design methodologies

| Aspect | Forward Screening | Inverse Design |
|---|---|---|
| Directionality | Structure → Property | Property → Structure |
| Search Strategy | Explore then filter | Generate then validate |
| Design Space | Limited to known or pre-enumerated structures | Potentially infinite, including novel configurations |
| Computational Load | High for exhaustive screening | Focused on promising regions |
| Primary Technologies | High-throughput simulation, database mining | Generative models, optimization algorithms |
| Innovation Potential | Incremental improvements | Novel discoveries |

Enabling Technologies and Methodological Variations

The practical implementation of inverse design relies heavily on advanced computational frameworks, with deep generative models emerging as particularly powerful tools. These models learn the underlying patterns and relationships in existing material or molecular databases, then generate novel candidates with desired properties [7]. Common architectural variations include generative adversarial networks (GANs), variational autoencoders (VAEs), and recurrent neural networks, each with distinct strengths for different design challenges [7].

Beyond fully generative approaches, inverse design also incorporates optimization-based methods that combine machine learning forward predictors with search algorithms. For instance, researchers have successfully integrated residual network-based shape prediction models with both gradient descent and evolutionary algorithms to design 4D-printed active composites [8]. This hybrid approach uses machine learning for rapid property prediction while optimization algorithms efficiently navigate the complex design space to identify solutions meeting target specifications.

Comparative Analysis: Quantitative Performance Metrics

Efficiency and Efficacy Across Domains

The performance advantages of inverse design become evident when examining quantitative metrics across various applications. In materials science, inverse design has demonstrated remarkable efficiency in exploring vast design spaces that would be prohibitively expensive to investigate through forward screening alone. For example, in designing active plates for 4D printing, the design space for a 15×15×2 voxel configuration reaches approximately 3×10¹³⁵ possible material distributions—a space effectively navigable only through inverse design methodologies [8].
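The size of that design space can be checked with a short calculation. The sketch below assumes each of the 15×15×2 voxels is assigned one of two materials; the two-material assumption is not stated in the text above, but it reproduces the quoted order of magnitude.

```python
import math

voxels = 15 * 15 * 2         # 450 voxels in the plate
materials_per_voxel = 2      # assumption: each voxel is one of two materials
n_designs = materials_per_voxel ** voxels

# Express the exact integer in scientific notation.
exponent = math.floor(math.log10(n_designs))
mantissa = n_designs / 10 ** exponent
print(f"{mantissa:.1f}e{exponent}")
```

Under this assumption the count is 2⁴⁵⁰, roughly 2.9×10¹³⁵, consistent with the approximately 3×10¹³⁵ figure cited from [8].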

Table 2: Performance comparison of inverse design versus forward screening across domains

| Application Domain | Forward Screening Performance | Inverse Design Performance | Key Metric |
|---|---|---|---|
| 4D-Printed Active Composites | Limited to small design spaces | Effective even for 3×10¹³⁵ design space [8] | Design space complexity |
| Molecular Optoelectronics | Brute-force screening computationally impossible [9] | Iterative generation of molecules with a target HOMO-LUMO gap (HLG) [9] | Computational feasibility |
| Vanadyl Catalyst Design | Limited by pre-defined chemical space | High validity (64.7%), uniqueness (89.6%) [10] | Generation metrics |
| Polymer Design | Trial-and-error or prediction-screening strategies | 100% chemically valid structures [11] | Structural validity |
| High-Tc Superconductors | DFT calculations computationally expensive | ALIGNN models faster than first-principles [12] | Computational speed |

Accuracy and Validation Metrics

While efficiency is a significant advantage, the ultimate value of inverse design depends on its ability to produce accurate, valid solutions. Experimental validations across multiple domains have demonstrated that inverse design can achieve high accuracy while generating novel configurations. For hierarchical architectures, a recurrent neural network-based forward prediction model achieved over 99% accuracy in predicting strain fields, enabling effective inverse optimization [13]. Similarly, in polymer design, recent advances have achieved 100% chemically valid structures through group SELFIES methods with PolyTAO generators, addressing a longstanding bottleneck in the field [11].

The accuracy of inverse design approaches is further validated through experimental verification. For bi-material 4D-printed facial shells, fabricated structures closely matched target facial features with minimal deviation between simulations and experiments [14]. In molecular design, generated vanadyl-based catalyst ligands demonstrated high synthetic accessibility scores, supporting their practical feasibility [10].

Experimental Protocols and Workflows

Molecular Design Implementation

The inverse design workflow for molecular discovery typically follows an iterative process that combines property prediction, generative design, and validation. The following diagram illustrates a comprehensive molecular inverse design workflow:

[Figure: Molecular design workflow]

This workflow implements a closed-loop design process that continuously improves through iteration. As described in studies of molecular optoelectronic properties, the process begins with defining electronic structure targets such as HOMO-LUMO gaps [9]. Initial molecular datasets (e.g., GDB-9) provide starting points for training surrogate models that predict properties from molecular structures [9]. These surrogate models, typically graph convolutional neural networks, learn from quantum chemical calculations (DFT or DFTB methods) to rapidly predict properties without expensive simulations [9].

The generative component then creates novel molecular structures using masked language models or other generative architectures [9]. Each generation of candidates is evaluated using the surrogate model, with promising structures added to the training database for model refinement in subsequent iterations. This iterative retraining addresses the "generalization error" that can occur when generated molecules diverge structurally from the initial training set [9]. Finally, top candidates undergo experimental validation, completing the inverse design cycle.

Materials and 4D Printing Implementation

In materials science and 4D printing, inverse design workflows incorporate specialized simulation and fabrication steps. The following diagram illustrates a typical inverse design process for active composites:

[Figure: Materials design workflow]

This workflow specifically addresses the challenge of designing active composites (ACs) that morph into target 3D shapes when stimulated [8]. The process begins with creating a dataset of possible material distributions and their corresponding shape changes using finite element simulations that model the thermal expansion behavior of composite materials [8]. This dataset trains a machine learning model (typically a residual network) to predict deformed shapes from material distributions [8].

The inverse design phase employs optimization algorithms—either gradient-based methods using automatic differentiation or evolutionary algorithms—to find material distributions that minimize the difference between predicted and target shapes [8]. For complex shapes, studies have demonstrated that combining evolutionary algorithms with normal distance-based loss functions achieves superior results [8]. The optimized designs are then fabricated using multimaterial 3D printing, with experimental validation confirming the shape-morphing behavior.

Research Reagent Solutions and Essential Materials

Successful implementation of inverse design requires specialized computational tools and materials. The following table details key resources across different application domains:

Table 3: Essential research reagents and computational tools for inverse design implementation

| Category | Specific Tool/Material | Function in Inverse Design | Example Application |
|---|---|---|---|
| Computational Frameworks | Graph Convolutional Neural Networks | Molecular property prediction | HOMO-LUMO gap prediction [9] |
| Computational Frameworks | Residual Networks (ResNet) | Shape prediction for composites | 4D-printed active plates [8] |
| Computational Frameworks | Variational Autoencoders (VAE) | Crystal structure generation | Inorganic materials design [7] |
| Generative Models | Masked Language Models (MLM) | Molecular structure generation | Organic molecule design [9] |
| Generative Models | Generative Adversarial Networks (GAN) | Novel material generation | Porous crystalline materials [7] |
| Generative Models | Crystal Diffusion VAE | Crystal structure generation | Superconductor design [12] |
| Simulation Tools | Density Functional Theory (DFT) | Electronic structure calculation | Molecular property computation [9] |
| Simulation Tools | Finite Element Analysis (FEA) | Mechanical deformation simulation | Shape-morphing prediction [8] |
| Simulation Tools | Density-functional Tight-binding (DFTB) | Approximate quantum chemistry | High-throughput property data [9] |
| Materials Systems | Polylactic Acid (PLA)/Shape Memory Polymers | Active composite fabrication | 4D-printed facial shells [14] |
| Materials Systems | Arylfluorosulfates | Latent electrophiles for targeting | Inverse drug discovery [15] |
| Materials Systems | Vanadyl-based complexes (VOSO₄, VO(OiPr)₃, VO(acac)₂) | Modular catalyst scaffolds | Epoxidation catalyst design [10] |

Domain-Specific Applications and Case Studies

Pharmaceutical and Ligand Design

In pharmaceutical research, inverse design has enabled innovative approaches to drug discovery. The "Inverse Drug Discovery" strategy exemplifies this paradigm, where researchers start with small molecules of intermediate complexity harboring latent electrophiles and identify proteins they react with in cells or cell lysates [15]. This approach reverses the conventional drug discovery process by being agnostic to the cellular proteins targeted, instead identifying the proteins after compound exposure [15].

This methodology has been successfully applied using arylfluorosulfates as latent electrophiles. These compounds remain essentially unreactive toward most of the proteome but form covalent conjugates with specific proteins that present the correct constellation of functional groups to activate the sulfur-fluoride exchange reaction [15]. Through this inverse approach, researchers have identified and validated covalent ligands for 11 different human proteins, including non-enzymes such as hormone carriers and small-molecule carrier proteins [15].

Functional Materials Design

Inverse design has produced significant advances in functional materials development, particularly for electronic and energy applications. Research on high-Tc superconductors demonstrates a comprehensive multi-step workflow combining forward and inverse approaches [12]. This methodology begins with BCS-inspired pre-screening of materials databases, followed by DFT-based electron-phonon coupling calculations to establish superconducting properties [12].

The inverse design component employs crystal diffusion variational autoencoders (CDVAE) to generate thousands of new superconductors with high chemical and structural diversity [12]. These generated structures are then screened using deep learning models (ALIGNN) to identify candidates that are stable with high Tc values, with top candidates verified through DFT calculations [12]. This hybrid approach demonstrates how inverse design can expand beyond known chemical spaces to discover novel materials with tailored electronic properties.

The comparative analysis between forward screening and inverse design reveals distinct advantages and appropriate applications for each methodology. Forward screening remains valuable when exploring limited design spaces or when comprehensive property data for training models is unavailable. However, for challenges requiring navigation of vast design spaces or discovery of truly novel configurations, inverse design offers superior efficiency and innovation potential.

Successful implementation of inverse design requires careful consideration of several factors: sufficient training data quality and diversity, appropriate model selection for the specific design challenge, and robust validation protocols to ensure generated solutions meet both performance and practical constraints. As computational power increases and algorithms evolve, inverse design is poised to become increasingly central to discovery workflows across scientific disciplines, potentially transforming how researchers approach the design of molecules, materials, and pharmaceuticals.

The integration of inverse design with emerging technologies like automated synthesis and high-throughput experimentation further enhances its potential, creating closed-loop discovery systems that can rapidly translate computational designs into physical realities [7] [14]. This convergence suggests that the future of scientific discovery lies not in choosing between forward and inverse approaches, but in strategically combining them to leverage their complementary strengths.

The methodology for discovering and designing new biological interventions has undergone a profound transformation. This evolution has moved from high-throughput physical screening of genetic and pharmacological libraries to sophisticated computational design methodologies that predict outcomes in silico. Traditionally, forward genetic and pharmacological screens involved experimentally perturbing a system—for instance, with gene knockouts or small molecules—and observing the outcomes, such as changes in gene expression or cell phenotype. While powerful, these methods are often resource-intensive and low-throughput relative to the vast complexity of biological systems. The emergence of computational forward prediction and inverse design represents a paradigm shift, enabling researchers to move from observing outcomes to intelligently designing inputs to achieve a desired result. This guide objectively compares the performance and experimental protocols of these evolving methodologies, framing the analysis within the critical comparison of forward screening versus inverse design.

The Legacy of Forward Screening

Forward screening is a discovery-oriented approach where a system is perturbed, and the resulting changes are measured to identify candidates of interest, such as novel drug targets or key genetic regulators.

Core Principles and Experimental Protocols

In a classic forward pharmacological screen, a library of small molecules is applied to a biological system (e.g., a cell line), and a phenotypic or molecular readout (e.g., cell viability, gene expression) is measured. Similarly, forward genetic screens using technologies like CRISPR-Cas9 systematically knock out genes to identify those that influence a specific biological pathway or disease state.

A key experimental protocol for a modern forward expression screen is outlined in the PEREGGRN benchmarking study [16]:

- Perturbation Introduction: A genetic perturbation (e.g., knockout, knockdown, or overexpression) is introduced to a cell population. For CRISPR screens, this involves transducing cells with a lentiviral library of single-guide RNAs (sgRNAs).

- Transcriptome Profiling: Post-perturbation, the transcriptome-wide gene expression profiles are measured using RNA-seq (RNA sequencing).

- Differential Expression Analysis: The expression levels of genes in the perturbed sample are compared to control samples (e.g., non-targeting sgRNAs or wild-type cells) to calculate log fold changes.

- Hit Identification: Genes or compounds that produce a statistically significant and biologically relevant change in the expression signature of interest are identified as "hits."
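The differential expression and hit-calling steps can be illustrated with a minimal sketch. It assumes already-normalized expression values, adds a pseudocount to avoid division by zero, and calls hits with a fixed fold-change threshold in place of a proper statistical test. The gene names, values, and threshold are hypothetical.

```python
import math


def log2_fold_change(perturbed, control, pseudocount=1.0):
    """Per-gene log2 fold change of mean expression, perturbed vs. control."""
    lfc = {}
    for gene in control:
        mean_p = sum(perturbed[gene]) / len(perturbed[gene])
        mean_c = sum(control[gene]) / len(control[gene])
        lfc[gene] = math.log2((mean_p + pseudocount) / (mean_c + pseudocount))
    return lfc


def call_hits(lfc, threshold=1.0):
    """Genes whose absolute log2 fold change meets the threshold."""
    return sorted(g for g, v in lfc.items() if abs(v) >= threshold)


# Hypothetical normalized expression, two replicates per condition.
control = {"GENE_A": [99, 101], "GENE_B": [50, 48], "GENE_C": [7, 7]}
perturbed = {"GENE_A": [24, 24], "GENE_B": [49, 51], "GENE_C": [31, 31]}

lfc = log2_fold_change(perturbed, control)
hits = call_hits(lfc)
print(hits)
```

In practice the threshold would be paired with a significance test across replicates, since a fold change alone says nothing about reproducibility.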

Performance and Limitations

Large-scale benchmarking reveals both the power and limitations of forward screening. Studies show that in overexpression experiments, the expected increase in the targeted transcript's expression occurs in 73% to over 92% of cases, confirming the technical success of the perturbations [16]. However, the transcriptome-wide effect sizes are often small and not strongly correlated with the effect on the targeted transcript itself [16]. A major limitation is the challenge of replication; correlation in log fold change between replicates can be variable, and some large-scale datasets lack sufficient replication, potentially affecting the reliability of the identified hits [16].

The Rise of Computational Design

Computational methodologies address the bottlenecks of physical screens by using models to predict system behavior, bifurcating into two complementary approaches: forward prediction and inverse design.

Forward Prediction

Forward prediction uses computational models to simulate the outcome of a given perturbation. It essentially automates the "screening" process in silico.

Experimental Protocol for Expression Forecasting (GGRN Framework) [16]:

- Model Training: A machine learning model (e.g., a supervised regression algorithm) is trained on a dataset of known perturbation-expression pairs. The model learns to predict the expression of every gene based on the expression of candidate regulators (e.g., Transcription Factors).

- Data Handling: To avoid trivial predictions, samples where a gene is directly perturbed are omitted when training the model to predict that specific gene's expression.

- Baseline Matching: Predictions can be made from a steady-state expression vector or, more effectively, by predicting the change in expression from a matched control sample.

- Iterative Forecasting: For multi-step predictions, the model's output for one time point can be fed back as input for the next, simulating a dynamic response.
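The protocol steps above can be illustrated with a minimal NumPy sketch. It is not the GGRN implementation: the ridge regression, the function names `fit_gene_models` and `forecast`, and the choice to exclude a gene's own expression from its predictors are assumptions made for illustration only.

```python
import numpy as np

def fit_gene_models(X, perturbed, regulators, ridge=1e-3):
    """Fit one ridge regression per gene from candidate-regulator expression.

    X          : (n_samples, n_genes) expression matrix
    perturbed  : per-sample index of the directly perturbed gene (-1 for controls)
    regulators : column indices of candidate regulators (e.g., TFs)
    Returns W with shape (n_regulators + 1, n_genes); the last row is the intercept.
    """
    X = np.asarray(X, dtype=float)
    perturbed = np.asarray(perturbed)
    regulators = list(regulators)
    n_samples, n_genes = X.shape
    n_feat = len(regulators) + 1
    feats = np.hstack([X[:, regulators], np.ones((n_samples, 1))])
    W = np.zeros((n_feat, n_genes))
    for g in range(n_genes):
        # Data handling: omit samples in which gene g itself was perturbed.
        rows = perturbed != g
        # Assumption: a regulator is not allowed to predict itself.
        cols = [j for j, r in enumerate(regulators) if r != g] + [n_feat - 1]
        A, y = feats[rows][:, cols], X[rows, g]
        penalty = ridge * np.eye(len(cols))
        penalty[-1, -1] = 0.0  # leave the intercept unpenalized
        W[cols, g] = np.linalg.solve(A.T @ A + penalty, A.T @ y)
    return W

def forecast(x0, W, regulators, target, level, steps=1):
    """Clamp gene `target` to `level` and feed predictions back for `steps` rounds."""
    x = np.asarray(x0, dtype=float).copy()
    for _ in range(steps):
        x[target] = level
        x = np.append(x[list(regulators)], 1.0) @ W
    x[target] = level
    return x
```

For baseline matching, the predicted change would be `forecast(control, W, regulators, target, level) - control`, where `control` is the matched control sample's expression vector.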

Performance Data: Benchmarks across 11 large-scale perturbation datasets show that it is uncommon for expression forecasting methods to outperform simple baselines, highlighting the difficulty of the task [16]. Performance is highly dependent on the choice of evaluation metric (e.g., Mean Squared Error, Spearman correlation, classification accuracy on cell type), and no single metric is universally best [16].
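To make the metric dependence concrete, the sketch below scores a hypothetical forecast against a "no change" baseline using two of the metrics named above. The numbers are invented toy values, and the all-zeros baseline is an assumption; the source does not specify which simple baselines were used.

```python
import numpy as np

def mse(y_true, y_pred):
    """Mean squared error between observed and predicted log fold changes."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean((y_true - y_pred) ** 2))

def spearman(a, b):
    """Spearman correlation via rank transform (assumes no tied values)."""
    ranks_a = np.argsort(np.argsort(a))
    ranks_b = np.argsort(np.argsort(b))
    return float(np.corrcoef(ranks_a, ranks_b)[0, 1])

# Toy log fold changes for five genes (illustrative values only).
observed = np.array([1.2, -0.4, 0.1, 0.8, -1.0])
model = np.array([0.3, -0.1, 0.2, 0.1, -0.2])
baseline = np.zeros_like(observed)  # assumed baseline: predict no change

print("MSE model:", mse(observed, model))
print("MSE baseline:", mse(observed, baseline))
print("Spearman model:", spearman(observed, model))
```

Spearman correlation is undefined for a constant prediction such as the all-zeros baseline, which is one practical reason a model can look strong on one metric while being hard to compare on another.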

Inverse Design

Inverse design flips the problem: it starts with a desired outcome (e.g., a target gene expression profile or a specific 3D shape) and computes the perturbation or input configuration needed to achieve it. This is a much harder problem but offers the potential for direct, intelligent design.

Experimental Protocol for Inverse Design in 4D Printing [8]:

- Define Target: A target 3D shape or surface is defined mathematically.

- Forward Model Utilization: A pre-trained, high-accuracy forward prediction model (e.g., a Residual Network trained on Finite Element Analysis data) is used to rapidly evaluate candidate designs.

- Optimization Loop: An optimization algorithm, such as gradient descent (GD) or an evolutionary algorithm (EA), iteratively adjusts the material distribution to reduce the mismatch between the predicted and target shapes. Two variants are used:

- ML-GD: Uses automatic differentiation to compute exact gradients for efficient search.

- ML-EA: A gradient-free method that explores a vast design space by combining the ML forward model with an evolutionary search strategy.

- Validation: The optimized design is validated through simulation and physical experimentation (e.g., 4D printing of the structure).
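The gradient-free branch of this loop can be sketched as follows. This is a toy illustration, not the published ML-EA: the forward model is a fixed random linear map standing in for the trained network, the loss is a plain mean squared error, and `evolve_design` with its parameters is a hypothetical name.

```python
import numpy as np

def evolve_design(forward, target, n_bits, pop_size=40, generations=100,
                  mut_rate=0.05, seed=0):
    """Elitist evolutionary search over binary designs (1 = active, 0 = passive)."""
    rng = np.random.default_rng(seed)
    pop = rng.integers(0, 2, size=(pop_size, n_bits))

    def loss(m):
        return float(np.mean((forward(m) - target) ** 2))

    n_elite = max(1, pop_size // 4)
    history = []
    for _ in range(generations):
        scores = np.array([loss(m) for m in pop])
        order = np.argsort(scores)
        history.append(float(scores[order[0]]))
        elite = pop[order[:n_elite]]  # best designs survive unchanged
        parents = elite[rng.integers(0, n_elite, size=pop_size - n_elite)]
        flips = rng.random(parents.shape) < mut_rate  # bit-flip mutation
        children = np.where(flips, 1 - parents, parents)
        pop = np.vstack([elite, children])
    scores = np.array([loss(m) for m in pop])
    history.append(float(scores.min()))
    return pop[int(np.argmin(scores))], history

# Toy stand-in for a trained forward model: a fixed random linear map.
rng = np.random.default_rng(1)
A = rng.normal(size=(6, 16))
true_design = rng.integers(0, 2, size=16)
best, history = evolve_design(lambda m: A @ m, A @ true_design, n_bits=16)
print("initial best loss:", history[0], "final best loss:", history[-1])
```

Because the elite designs are carried over unchanged, the best loss never increases from one generation to the next. The search only becomes practical at scale when each forward evaluation is cheap, which is the role the ML forward model plays in the protocol.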

Performance Data: In 4D printing, a Recurrent Neural Network (RNN) forward model can achieve over 99% accuracy in predicting physical properties and performance [13]. For inverse design of active plates, the ML-EA approach can efficiently navigate a design space of ~3 × 10¹³⁵ possible configurations, which is impossible for traditional Finite Element-EA methods [8]. In drug target prediction, machine learning-based reverse screening can rank the correct protein target highest among 2,069 possibilities for more than 51% of external test molecules, demonstrating powerful enrichment for drug repurposing and polypharmacology [17].

Comparative Analysis: Performance and Data

The table below summarizes a quantitative comparison of key metrics across these methodologies.

Table 1: Quantitative Comparison of Screening and Design Methodologies

| Methodology | Typical Throughput | Key Performance Metrics | Experimental / Computational Cost | Primary Application |
|---|---|---|---|---|
| Forward Pharmacological Screen | Hundreds of thousands of compounds | Hit rate (e.g., 0.01-1%); Validation rate | Very high (compound libraries, assays) | Phenotypic drug discovery |
| Forward Genetic Screen (CRISPR) | Whole genome (~20,000 genes) | % of targeted genes with expected effect (e.g., 73-92%) [16] | High (library construction, sequencing) | Target identification & validation |
| Computational Forward Prediction (Expression) | Virtually unlimited in silico | Varies; often fails to outperform simple baselines [16] | Moderate (model training data collection) | In-silico perturbation screening |
| Computational Inverse Design (4D Printing) | Explores >10¹³⁵ designs [8] | Forward model accuracy (>99%) [13]; Target shape matching | High (computational power for optimization) | Programmable material design |
| Reverse Screening (Target Prediction) | Millions of molecules in silico | Rank 1 target prediction accuracy (~51%) [17] | Low (once model is trained) | Drug repurposing & polypharmacology |

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions

| Item | Function in Research |
|---|---|
| CRISPR sgRNA Library | A pooled library of single-guide RNAs for systematically knocking out genes in a forward genetic screen. |
| Small Molecule Compound Library | A curated collection of chemical compounds used in forward pharmacological screens to identify bioactive molecules. |
| Shape Memory Polymer (SMP) | A "smart" material used in 4D printing that changes shape in response to stimuli (e.g., heat), enabling the physical validation of inverse designs [14]. |
| Polylactic Acid (PLA) | A common biodegradable polymer used as a passive material in multi-material 4D printing to create complex shape-morphing structures [14]. |
| ChEMBL / Reaxys Database | High-quality, curated public databases of bioactive molecules and their properties, used to train and benchmark computational target prediction models [17]. |

Visualizing Workflows

The following diagrams illustrate the logical relationships and fundamental workflows of the discussed methodologies.

Forward vs. Inverse Workflow

Expression Forecasting Engine

In contemporary scientific research, particularly in fields like drug discovery and materials science, two fundamentally distinct approaches have emerged: Unbiased Discovery and Specified Engineering. Unbiased Discovery refers to hypothesis-free approaches that use computational tools to identify key patterns, pathways, or candidates from large datasets without a priori assumptions. In contrast, Specified Engineering employs targeted, hypothesis-driven approaches to design solutions that meet precisely defined criteria or properties. These methodologies align with the broader research paradigms of forward screening (testing multiple candidates against desired properties) and inverse design (directly generating candidates based on target properties) [18]. This guide provides an objective comparison of these approaches, focusing on their performance, experimental protocols, and applications in biomedical and materials research.

Core Conceptual Framework and Workflows

The operational workflows for Unbiased Discovery and Specified Engineering fundamentally differ in their sequencing of key steps, particularly regarding when hypotheses are formed and how candidates are selected or created.

Logical Workflow Diagram

Pathway Analysis Diagram

Performance Comparison: Quantitative Analysis

Pathway Discovery Performance Metrics

Table 1: Performance Comparison of Pathway Analysis Tools in Unbiased Discovery [19]

| Method Type | Tool Name | Median Rank of Correct Pathway | Precision@10 (P@10) | Average Precision@10 (AP@10) |
|---|---|---|---|---|
| Ensemble Methods | PET (Pathway Ensemble Tool) | 1-8 | 76% | 69% |
| Ensemble Methods | decoupler | 1-8 | 76% | 69% |
| Ensemble Methods | piano | 1-8 | 76% | 69% |
| Individual Methods | ora | 7-14 | 45% | - |
| Individual Methods | GSEA | 7-14 | 54% | - |
| Individual Methods | Enrichr | 7-14 | 45% | - |

Inverse Design Performance in Materials Science

Table 2: Performance Comparison of Design Paradigms in Materials Science [8]

| Design Paradigm | Application Domain | Success Rate | Computational Efficiency | Design Space Size |
|---|---|---|---|---|
| Forward Screening | Refractory High-Entropy Alloys | Conventional | Lower | Limited |
| Inverse Design | Refractory High-Entropy Alloys | Enhanced | Higher | 3 × 10¹³⁵ possible distributions |
| ML-Gradient Descent | 4D-Printed Active Plates | High for regular shapes | High | 2⁴⁵⁰ possible configurations |
| ML-Evolutionary Algorithm | 4D-Printed Active Plates | High for irregular shapes | Medium | 2⁴⁵⁰ possible configurations |

Experimental Protocols and Methodologies

Benchmarking Protocol for Unbiased Discovery Tools

The Benchmark platform for evaluating pathway discovery tools comprises three critical components [19]:

Input Genesets (IGS) Preparation: Genesets are derived from high-throughput sequencing experiments, including:

- Transcription factor (TF) bound genes from ChIP-seq

- RNA binding protein (RBP) targets from eCLIP-seq

- Differentially expressed genes from knockdown experiments (gKD)

Target Genesets (TGS) Curation: Established biological pathways from curated databases (KEGG, Gene Ontology) are used as reference.

Evaluation Metrics Calculation:

- For each IGS, the rank of the correct TGS is determined

- Precision@10 (P@10): Frequency of correct pathway in top 10 results

- Average Precision@10 (AP@10): Mean of precision scores at positions 1-10

- Statistical validation using Wilcoxon signed-rank test for significance
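The rank-based metrics above can be computed directly from the rank of the correct target geneset for each input geneset. The sketch below is one possible reading of the definitions, not the Benchmark platform's code: it assumes exactly one correct pathway per input geneset, so that average precision reduces to the reciprocal rank when the pathway falls in the top 10. The example ranks are invented.

```python
from statistics import median

def precision_at_k(ranks, k=10):
    """Fraction of input genesets whose correct pathway is ranked in the top k."""
    return sum(r <= k for r in ranks) / len(ranks)

def average_precision_at_k(ranks, k=10):
    """Mean of 1/rank for hits within the top k, and 0 otherwise.

    Assumption: one correct pathway per input geneset.
    """
    return sum(1.0 / r if r <= k else 0.0 for r in ranks) / len(ranks)

# Hypothetical ranks of the correct pathway for five input genesets.
ranks = [1, 3, 12, 2, 10]
print("median rank:", median(ranks))
print("P@10:", precision_at_k(ranks))
print("AP@10:", average_precision_at_k(ranks))
```

Under this reading, AP@10 rewards tools that place the correct pathway near the top of the list, whereas P@10 treats rank 1 and rank 10 identically.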

Inverse Design Protocol for Specified Engineering

The machine learning-enabled inverse design protocol for active materials involves [8]:

Problem Formulation:

- Material distribution encoded as 3D binary array M (active=1, passive=0)

- Target shape represented by coordinates S of voxel mesh points

- Design space of 2^(Nx×Ny×Nz) possible configurations
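As a quick arithmetic check of the design space formula, the snippet below evaluates 2^(Nx×Ny×Nz) for a 15 × 15 × 2 voxel plate, the plate size quoted elsewhere in this guide. The variable names are illustrative.

```python
from math import log10

nx, ny, nz = 15, 15, 2           # voxel counts along each axis
n_voxels = nx * ny * nz          # each voxel is either active (1) or passive (0)
n_configs = 2 ** n_voxels        # exact integer; Python handles big ints natively

exponent = int(log10(n_configs))
mantissa = n_configs / 10 ** exponent
print(f"{n_voxels} voxels -> about {mantissa:.2f} x 10^{exponent} configurations")
```

With 450 binary voxels, the count comes out at roughly 3 × 10¹³⁵, which is why exhaustive enumeration is not an option and a fast forward model is needed inside the optimization loop.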

Forward Prediction Model Development:

- Deep residual network (ResNet) trained on FE simulation data

- Data augmentation using symmetry operations

- Boundary condition optimization

Inverse Optimization Methods:

- ML-Gradient Descent (ML-GD): Uses automatic differentiation for exact gradient computation

- ML-Evolutionary Algorithm (ML-EA): Employs population-based search with normal distance-based loss function

- Global-subdomain strategy for efficient large-space exploration
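The gradient-based branch can be sketched on a toy problem. This is an assumption-laden illustration rather than the published ML-GD: the binary design is relaxed to probabilities through a sigmoid, a fixed linear map `A` stands in for the trained ResNet, and the gradient is written out by hand where the source method uses automatic differentiation.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def gradient_descent_design(A, target, steps=500, lr=0.1):
    """Minimize mean((A @ p - target)^2) over p = sigmoid(theta), then binarize."""
    theta = np.zeros(A.shape[1])            # p starts at 0.5 for every voxel
    losses = []
    for _ in range(steps):
        p = sigmoid(theta)
        resid = A @ p - target
        losses.append(float(np.mean(resid ** 2)))
        grad_p = 2.0 * A.T @ resid / resid.size   # d(loss)/dp
        theta -= lr * grad_p * p * (1.0 - p)       # chain rule through the sigmoid
    design = (sigmoid(theta) > 0.5).astype(int)    # 1 = active, 0 = passive
    return design, losses

# Toy example: the forward model is the identity, so the target is the design itself.
A = np.eye(4)
target = np.array([1.0, 0.0, 1.0, 0.0])
design, losses = gradient_descent_design(A, target)
print(design, losses[0], losses[-1])
```

The continuous relaxation is what makes gradients available; the final thresholding step can reintroduce error, which is one reason a gradient-free search remains useful for harder targets.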

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Computational Tools [19] [20] [8]

| Category | Item/Reagent | Function/Application | Key Features |
|---|---|---|---|
| Computational Tools | Pathway Ensemble Tool (PET) | Unbiased pathway discovery from omics data | Ensemble method combining multiple algorithms |
| Computational Tools | Benchmark Platform | Evaluation of pathway analysis tools | ENCODE-derived experimental datasets |
| Computational Tools | decoupler, piano, egsea | Pathway enrichment analysis | Alternative ensemble methods |
| Computational Tools | ResNet-based ML Model | Forward shape prediction for active materials | Handles complex material-structure mapping |
| Computational Tools | VoxelMorph | Deep learning-based image registration | Probabilistic deformation fields for atlas generation |
| Experimental Datasets | ENCODE Datasets | Source of validated genesets for benchmarking | ~1000 high-throughput sequencing experiments |
| Experimental Assays | RNA-sequencing (RNA-seq) | Transcriptomic profiling for pathway analysis | Identifies differentially expressed genes |
| Experimental Assays | ChIP-seq | Transcription factor binding site identification | Maps protein-DNA interactions |
| Experimental Assays | eCLIP-seq | RNA binding protein target identification | Maps protein-RNA interactions |
| Validation Methods | In vitro cell growth assays | Therapeutic candidate validation | Measures drug efficacy in cell models |
| Validation Methods | In vivo xenograft models | Therapeutic candidate validation | Measures drug efficacy in animal models |

Applications and Case Studies

Unbiased Discovery in Cancer Research

The Pathway Ensemble Tool (PET) has been successfully deployed to identify prognostic pathways across 12 cancer types [19]. Key applications include:

Biomarker Discovery: Genes within PET-identified prognostic pathways serve as reliable biomarkers for clinical outcomes, outperforming existing biomarkers in dividing patients into highly resilient and highly vulnerable categories.

Therapeutic Target Identification: Normalizing the activity of prognostic pathways through drug repurposing offers therapeutic opportunities. For example, the top predicted repurposed drug for bladder cancer (CCT068127, a CDK2/9 inhibitor) significantly repressed cancer growth in vitro and in vivo.

Validation Framework: Findings were confirmed in independent cancer datasets and showed consistency with established aggressive molecular subtypes, demonstrating the robustness of the unbiased discovery approach.

Specified Engineering in Advanced Materials

The inverse design paradigm has demonstrated remarkable success in materials science applications [8]:

4D-Printed Active Plates: ML-enabled inverse design achieved optimized material distributions for complex target shapes that were previously intractable with conventional forward screening approaches.

Large Design Space Navigation: The approach successfully handled design spaces of up to 3 × 10¹³⁵ possible configurations (for 15 × 15 × 2 voxel plates), demonstrating scalability beyond human design capacity.

Multi-Algorithm Optimization: Both ML-Gradient Descent and ML-Evolutionary Algorithm approaches showed complementary strengths, with the former excelling in efficiency for regular shapes and the latter achieving superior performance for irregular target geometries.

Comparative Analysis: Strengths and Limitations

Performance Under Experimental Conditions

Table 4: Comprehensive Comparison of Paradigm Performance [19] [18] [8]

| Performance Metric | Unbiased Discovery | Specified Engineering |
|---|---|---|
| Hypothesis Dependency | Hypothesis-free; discovers unexpected relationships | Requires predefined targets and constraints |
| Design Space Exploration | Comprehensive but can be limited by reference databases | Can navigate extremely large spaces (10¹³⁵+) efficiently |
| Computational Efficiency | Moderate; depends on dataset size and algorithm complexity | High once trained; rapid candidate generation |
| Experimental Validation Rate | 52-76% for top pathway identification | High success for well-defined property targets |
| Resistance to Biological Noise | High (PET demonstrated robustness to variations) | Varies with model architecture and training data |
| Clinical/Biological Relevance | High; directly links to disease mechanisms and biomarkers | High for materials; emerging for biological applications |
| Implementation Complexity | Moderate; requires benchmarking and ensemble methods | High; demands specialized ML expertise and validation |
| Interpretability | Moderate; requires pathway expertise for interpretation | Can be low for complex deep learning models |

The comparative analysis reveals that Unbiased Discovery and Specified Engineering represent complementary rather than competing paradigms. Unbiased Discovery excels in situations where the underlying mechanisms are poorly understood or when seeking novel, unexpected relationships in complex biological systems. Specified Engineering demonstrates superior performance when navigating vast design spaces to achieve precisely defined objectives, particularly in materials science and engineering applications.

The integration of both approaches represents the most promising future direction. For instance, unbiased discovery can identify critical pathways in disease mechanisms, while inverse design can then generate therapeutic candidates targeting those specific pathways. This synergistic approach leverages the strengths of both paradigms while mitigating their individual limitations, potentially accelerating the development of novel therapies and advanced materials.

The ongoing development of more accurate benchmarking platforms, enhanced machine learning architectures, and improved experimental validation frameworks will continue to bridge the gap between these paradigms, enabling more efficient and effective scientific discovery across multiple disciplines.

Methodologies in Action: CRISPR Screens, AI Models, and Real-World Applications

Forward genetics is a powerful approach for identifying the genetic basis of phenotypes, traditionally linking observed traits to their underlying mutations through methods like linkage analysis and genome-wide association studies [21]. The advent of Clustered Regularly Interspaced Short Palindromic Repeats (CRISPR) and its associated Cas9 nuclease has revolutionized this field, providing researchers with an unprecedented ability to perform systematic, genome-wide functional screens [21] [22]. Unlike traditional reverse genetics approaches that study phenotypes by engineering specific, predetermined genetic changes, forward genetics takes an unbiased approach to discover which genes are involved in biological processes or disease states [21]. CRISPR/Cas9 systems excel in this domain because they can generate comprehensive libraries of mutations at known genomic locations, enabling high-throughput screening to identify genes influencing specific cellular phenotypes [21].

The fundamental components of the CRISPR/Cas9 system include a guide RNA (gRNA) containing a ~20-nucleotide spacer sequence that defines the genomic target, and the Cas9 nuclease that creates double-strand breaks in DNA [23]. This system can be programmed to target virtually any genomic locus by simply redesigning the gRNA sequence, making it exceptionally suited for scalable screening applications [24] [23]. CRISPR/Cas9 has largely surpassed earlier technologies like RNA interference (RNAi) and transcription activator-like effector nucleases (TALENs) for functional genomics due to its higher specificity, greater efficiency, and ability to generate permanent, complete gene knockouts rather than temporary knockdowns [24] [22].

This guide provides a comprehensive comparison of CRISPR/Cas9-based loss-of-function and gain-of-function screening methodologies, detailing their experimental protocols, performance characteristics, and applications in modern drug discovery and functional genomics research.

Comparative Analysis of Screening Approaches

Loss-of-Function vs. Gain-of-Function Systems

Table 1: Comparison of CRISPR/Cas9 Loss-of-Function and Gain-of-Function Screening Systems

| Feature | Loss-of-Function (Knockout) | Gain-of-Function (Activation) |
|---|---|---|
| Mechanism | Double-strand breaks induce frameshift mutations via NHEJ repair [23] | dCas9 fused to transcriptional activators targets gene promoters [25] |
| Cas9 Type | Wild-type Cas9 nuclease [23] | Catalytically dead Cas9 (dCas9) [25] |
| Primary Application | Identifying essential genes, drug targets, and resistance mechanisms [24] | Studying gene overexpression effects, activating silenced pathways [25] |
| Editing Efficiency | Can reach nearly 100% in optimized systems [25] | Highly variable; up to 90% protein reduction in some systems [25] |
| Multiplexing Capacity | High (2-7 loci with Cas9, higher with Cas12a) [23] | Moderate to high (dependent on activator system) [25] |
| Key Limitations | Off-target effects, dependency on NHEJ repair [23] | Potential for incomplete activation, positional effects [25] |

Performance Metrics and Experimental Data

Table 2: Quantitative Performance Comparison of CRISPR Screening Approaches

| Parameter | CRISPR/Cas9 Knockout | CRISPRa | RNAi Screening |
|---|---|---|---|
| Gene Perturbation | Permanent DNA-level knockout [24] | Transcriptional activation [25] | Transient mRNA knockdown [24] |
| Editing Efficiency | 90-100% in optimized pear systems [25] | Demonstrated in pear calli [25] | Variable, often incomplete [24] |
| Off-Target Effects | Reduced with high-fidelity Cas9 variants [23] | Minimal with careful gRNA design [25] | Common due to seed-based off-targeting [24] |
| Phenotypic Strength | Strong, penetrant phenotypes [24] | Dependent on activation system efficiency [25] | Weaker, transient phenotypes [24] |
| Screening Duration | Long-term analysis possible due to permanent editing [24] | Medium to long-term | Limited by transient nature [24] |
| Library Size | Genome-wide coverage feasible [24] | Targeted or genome-wide [25] | Genome-wide coverage feasible [24] |

Experimental Protocols for CRISPR Screening

Core Workflow for Pooled CRISPR Screens

The most common approach for genome-wide CRISPR screening involves pooled lentiviral libraries where a complex mixture of sgRNAs is delivered to a population of Cas9-expressing cells [24]. The fundamental steps include:

Library Design and Construction: Genome-wide sgRNA libraries typically contain 3-10 guides per gene, with each guide designed to minimize off-target effects while maximizing on-target efficiency [24]. Libraries are cloned into lentiviral vectors for efficient delivery.

Viral Production and Transduction: Lentiviral particles are produced in HEK293T cells and titrated to achieve optimal multiplicity of infection (MOI ~0.3) to ensure most cells receive a single sgRNA [24].

Selection and Phenotype Induction: Transduced cells are selected with antibiotics, then subjected to the experimental condition of interest (e.g., drug treatment, viral infection, or other selective pressures) [24].

Genomic DNA Extraction and Sequencing: After selection, genomic DNA is extracted from surviving cells, sgRNA sequences are amplified by PCR, and next-generation sequencing quantifies sgRNA abundance [24].

Bioinformatic Analysis: Enriched or depleted sgRNAs are identified by comparing their abundance before and after selection, with statistical packages such as MAGeCK or BAGEL used to call significant hits [24].
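Two quantitative points in this workflow lend themselves to a short sketch: why a low MOI favors single-guide cells, and how guide abundances are compared before and after selection. The code assumes Poisson-distributed integrations and uses a simple median of per-guide log2 fold changes; it is a simplified stand-in for dedicated hit-calling packages, not a reimplementation of any of them, and all counts are invented.

```python
import math
import numpy as np

def single_guide_fraction(moi):
    """Among transduced cells, fraction with exactly one integration (Poisson model)."""
    p0 = math.exp(-moi)       # probability of zero integrations
    p1 = moi * p0             # probability of exactly one integration
    return p1 / (1.0 - p0)

def guide_log2fc(before, after, pseudocount=1.0):
    """Per-guide log2 fold change after scaling each sample to counts per million."""
    before = np.asarray(before, dtype=float)
    after = np.asarray(after, dtype=float)
    b = before / before.sum() * 1e6
    a = after / after.sum() * 1e6
    return np.log2((a + pseudocount) / (b + pseudocount))

def gene_scores(lfc, genes):
    """Median log2 fold change across the guides targeting each gene."""
    return {g: float(np.median([x for x, gg in zip(lfc, genes) if gg == g]))
            for g in sorted(set(genes))}

print("single-guide fraction at MOI 0.3:", round(single_guide_fraction(0.3), 3))

# Hypothetical read counts for four guides targeting two genes.
genes = ["GENE_A", "GENE_A", "GENE_B", "GENE_B"]
lfc = guide_log2fc(before=[100, 100, 100, 100], after=[200, 200, 50, 50])
scores = gene_scores(lfc, genes)
print(scores)
```

Under the Poisson assumption, an MOI near 0.3 leaves most cells untransduced but keeps the large majority of transduced cells at a single sgRNA, which is what allows each phenotype to be attributed to one perturbation.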

Arrayed Screening Methodology

Arrayed CRISPR screens offer an alternative format where each sgRNA is delivered separately in multiwell plates, enabling more complex phenotypic readouts [24] [22]. The key protocol differences include:

- Library Format: Individual sgRNAs or gene-targeting sets are arrayed in 96-, 384-, or 1536-well plates [24].

- Delivery Method: Transfection or transduction is performed well-by-well, often using automated liquid handling systems [24].

- Phenotypic Assays: Compatible with high-content imaging, transcriptomics, and other multiparametric readouts since each well contains a genetically uniform population [24].

- Data Analysis: No sequencing required for deconvolution; phenotypes are directly linked to each targeted gene based on well position [24].

Specialized Methodologies: CRISPRa Implementation

For gain-of-function screening, the CRISPR activation (CRISPRa) system employs a deactivated Cas9 (dCas9) fused to transcriptional activation domains like VP64, p65, or HSF1 [25]. The experimental protocol varies in several key aspects:

- gRNA Design: sgRNAs must target promoter regions (typically within -200 to +50 bp relative to transcription start site) rather than coding sequences [25].

- Delivery Considerations: The larger size of dCas9-activator fusions may require optimized delivery systems, with newer compact activators improving compatibility with viral vectors [25].

- Validation: Hits require confirmation through orthogonal methods like RT-qPCR to verify transcriptional activation of target genes [25].
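The promoter-window rule for CRISPRa guides can be expressed as a small strand-aware filter. The function name, coordinate convention (single genomic position per guide), and example coordinates are hypothetical; the default window of -200 to +50 bp follows the range given above.

```python
def in_crispra_window(guide_pos, tss, strand, upstream=200, downstream=50):
    """Return True if a guide lies within [-upstream, +downstream] bp of the TSS.

    Offsets are measured in the direction of transcription, so for a gene on
    the minus strand, "upstream" corresponds to larger genomic coordinates.
    """
    if strand not in ("+", "-"):
        raise ValueError("strand must be '+' or '-'")
    offset = guide_pos - tss if strand == "+" else tss - guide_pos
    return -upstream <= offset <= downstream

# Hypothetical guides around a TSS at genomic position 1000.
candidates = [(900, "+"), (1100, "+"), (1100, "-"), (700, "+")]
for pos, strand in candidates:
    print(pos, strand, in_crispra_window(pos, tss=1000, strand=strand))
```

In practice the optimal window depends on the activator system and the annotation used for the transcription start site, so the bounds are exposed as parameters rather than fixed.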

A notable example demonstrated successful implementation of the CRISPR-Act3.0 system in pear calli, achieving multiplexed gene activation in a previously recalcitrant species [25]. This third-generation CRISPRa system showed potent activation capability, successfully engineering the anthocyanin biosynthesis pathway through targeted gene upregulation [25].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for CRISPR Screening

| Reagent Category | Specific Examples | Function and Application |
|---|---|---|
| Cas9 Variants | SpCas9, eSpCas9(1.1), SpCas9-HF1, HypaCas9 [23] | DNA cleavage; high-fidelity variants reduce off-target effects [23] |
| Cas9 Orthologs | Cas12a (Cpf1), Cas12b [25] | Alternative nucleases with different PAM requirements for expanded targeting [25] |
| Activation Systems | dCas9-VP64, CRISPR-Act3.0 [25] | Transcriptional activation for gain-of-function studies [25] |
| Delivery Vehicles | Lentiviral vectors, lipid nanoparticles (LNPs) [26] [24] | Efficient intracellular delivery of CRISPR components [26] |
| gRNA Libraries | Genome-wide knockout (GeCKO), CRISPRa libraries [24] | Pre-designed sgRNA sets for specific screening applications [24] |
| Detection Tools | High-throughput sequencers, flow cytometers, high-content imagers [24] | Phenotypic assessment and hit identification [24] |

Signaling Pathways and Experimental Workflows

The following diagrams visualize key experimental workflows and system architectures for CRISPR-based forward screening approaches.

CRISPR Screening Workflow

CRISPR System Architectures

Emerging Applications and Future Directions

CRISPR screening technologies continue to evolve with emerging applications across biomedical research. In cancer research, genome-wide CRISPR screens have identified novel tumor suppressor genes and oncogenes, with elegant Cas9-expressing mouse models enabling in vivo forward genetic screens to discover cancer drivers and modifiers of therapy response [21] [27]. The technology has proven particularly valuable for studying therapy resistance mechanisms, with screens identifying genes that confer resistance or sensitivity to chemotherapeutic agents, targeted therapies, and immunotherapies [27] [28].

Recent advances include the integration of artificial intelligence to predict CRISPR screen outcomes, potentially reducing the need for costly experimental screens [29]. The 2025 Ashby Prize Hackathon demonstrated that large language models can help predict which genes are likely to be hits in functional screens, enabling researchers to prioritize experiments [29]. Additionally, improved delivery systems such as lipid nanoparticles (LNPs) have facilitated in vivo delivery of CRISPR components, with clinical trials of LNP-delivered CRISPR therapies showing promising results for hereditary transthyretin amyloidosis (hATTR) and hereditary angioedema [26].

The field is also advancing toward more complex phenotypic readouts. Rather than simple viability assays, researchers are implementing high-content imaging, single-cell RNA sequencing, and spatial transcriptomics to capture multidimensional effects of genetic perturbations [24] [28]. These technological improvements continue to solidify CRISPR/Cas9's position as the premier tool for forward genetic screening in the modern research landscape.

The discovery of new materials and drugs has traditionally been dominated by forward screening approaches, which involve computationally or experimentally testing vast libraries of candidate molecules against desired properties. This "trial-and-error" methodology, while systematic, explores chemical space inefficiently and constitutes a significant bottleneck in research and development pipelines. Inverse design represents a fundamental paradigm shift by reversing this process: it starts with the desired properties and uses computational models to generate candidate structures that meet those specifications. This approach, often called "generative inverse design," is dramatically more efficient than traditional methods [11]. By leveraging deep generative models, researchers can navigate an effectively unbounded chemical space on-demand, generating novel polymers, drug candidates, and other molecules with predefined characteristics, thereby accelerating the translation of discoveries into practical applications and lowering development costs [11] [30].

This guide provides a comparative analysis of the primary deep generative models acting as inverse design engines: Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), Diffusion Models, and Transformer-based architectures. It is structured for researchers and professionals, offering objective performance data, detailed experimental protocols, and essential toolkits to inform methodology selection within a research context that critically evaluates forward versus inverse design strategies.

Comparative Analysis of Deep Generative Models

The following sections dissect the core architectures, strengths, and weaknesses of the leading generative models used in inverse design.

Generative Adversarial Networks (GANs)

- Core Architecture: GANs operate on a competitive game-like dynamic between two neural networks: a Generator that creates synthetic data and a Discriminator that evaluates its authenticity against real data [31]. This adversarial training process pushes the generator to produce increasingly realistic outputs.

- Strengths: GANs are renowned for generating crisp, sharp images and are capable of extremely fast inference after training, as creation requires only a single forward pass [31]. This makes them suitable for real-time applications and tasks requiring high visual fidelity.

- Weaknesses in Inverse Design: Training GANs can be unstable and prone to "mode collapse," where the generator produces limited diversity [31]. Furthermore, they offer limited flexibility for conditioning on complex constraints, such as detailed text prompts specifying multiple target properties, which is a significant drawback for precise inverse design [31].

Variational Autoencoders (VAEs)

- Core Architecture: VAEs are latent-variable models that learn to encode input data into a compressed, lower-dimensional latent space that follows a known probability distribution (like a Gaussian) [32]. They then decode points from this space back into the original data domain, enabling the generation of new, similar data.

- Strengths: VAEs provide a stable and tractable training process compared to GANs. The structured latent space allows for smooth interpolation between molecules and offers a degree of interpretability for exploring chemical space [32] [30].

- Weaknesses in Inverse Design: Generated outputs from early VAEs often tend to be blurrier or less detailed than those from GANs or Diffusion Models, which can limit their effectiveness for generating complex molecular structures with high precision [32].

Diffusion Models

- Core Architecture: Diffusion models are defined by two processes: a forward process that gradually adds noise to the data until only noise remains, and a reverse process in which a neural network is trained to remove that noise step by step, thereby reconstructing data from noise [32] [31].

- Strengths: These models have achieved state-of-the-art results in output diversity and quality. They offer high flexibility and can be easily guided or "conditioned" by text, sketches, or other data types to align generated outputs with specific goals [31]. Their training is also generally more stable than that of GANs.

- Weaknesses in Inverse Design: The primary drawback is slower inference speed, as generating a sample requires multiple (often dozens or hundreds) denoising steps. This process is computationally heavy during both training and inference, though optimizations like Latent Diffusion are mitigating this [31].
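The noising half of a diffusion model has a closed form that is easy to show in a few lines. The sketch below uses the standard Gaussian formulation with a linear noise schedule (1e-4 to 0.02 over 1000 steps), a common choice that is assumed here rather than taken from the cited studies; the vector `x0` is a stand-in for any molecular or image representation, and the trained denoising network is not included.

```python
import numpy as np

def linear_beta_schedule(T, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances; the endpoints are a commonly used default."""
    return np.linspace(beta_start, beta_end, T)

def q_sample(x0, t, alpha_bar, rng):
    """Draw x_t directly from x_0: sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps."""
    eps = rng.normal(size=x0.shape)
    xt = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return xt, eps  # eps is what a denoising network would be trained to predict

T = 1000
betas = linear_beta_schedule(T)
alpha_bar = np.cumprod(1.0 - betas)   # fraction of signal variance left at step t

rng = np.random.default_rng(0)
x0 = np.ones(8)                        # stand-in for a data sample
x_early, _ = q_sample(x0, 10, alpha_bar, rng)
x_late, _ = q_sample(x0, T - 1, alpha_bar, rng)
print("signal kept at t=10:", alpha_bar[10], "at t=T-1:", alpha_bar[-1])
```

Generation runs this process in reverse, one learned denoising step at a time, which is the source of the slow inference noted above.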

Transformer-based Models

- Core Architecture: Originally developed for natural language processing (NLP), Transformers use a self-attention mechanism to weigh the importance of different parts of the input data, such as tokens in a text string or, in chemistry, atoms and bonds in a SMILES string [30].

- Strengths: Transformers excel at capturing long-range dependencies in sequential data, making them powerful for generating valid molecular strings (SMILES) and predicting complex chemical properties. Models like GPT and T5 can be effectively adapted for molecular generation [30].

- Weaknesses in Inverse Design: The computational cost of self-attention scales quadratically with sequence length, which can be prohibitive for very long sequences or large models [30]. New architectures like selective state space models (e.g., Mamba) are emerging to address this limitation [30].
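The quadratic scaling follows from the shape of the attention score matrix, which a single-head sketch makes visible. The weights here are random placeholders rather than a trained model, and the sequence of 12 tokens stands in for an embedded SMILES string; only the mechanics of scaled dot-product self-attention are shown.

```python
import numpy as np

def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention over a token sequence X (n, d)."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])          # (n, n): one score per token pair
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)  # row-wise softmax
    return weights @ V, weights

rng = np.random.default_rng(0)
n_tokens, d_model = 12, 8                # e.g., 12 embedded SMILES tokens
X = rng.normal(size=(n_tokens, d_model))
Wq, Wk, Wv = (rng.normal(size=(d_model, d_model)) for _ in range(3))
out, weights = self_attention(X, Wq, Wk, Wv)
print(out.shape, weights.shape)
```

The `weights` matrix has one entry per pair of tokens, so doubling the sequence length quadruples both its memory footprint and the work needed to fill it.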

Table 1: High-Level Comparison of Generative Model Architectures for Inverse Design.

| Aspect | GANs | VAEs | Diffusion Models | Transformers |
|---|---|---|---|---|
| Core Principle | Adversarial competition | Probabilistic latent space | Iterative denoising | Self-attention on sequences |
| Training Stability | Unstable, prone to collapse [31] | Stable and tractable [32] | Stable and predictable [31] | Generally stable |
| Output Quality | High sharpness, less diversity [31] | Can be blurrier, lower detail [32] | High diversity, strong alignment [31] | High validity for sequential data [30] |
| Conditioning Flexibility | Limited [31] | Moderate | Highly flexible (text, image, etc.) [31] | High, via sequence conditioning [30] |
| Inference Speed | Very fast (single pass) [31] | Fast (single pass) | Slow (iterative process) [31] | Fast (autoregressive) |
| Key Challenge in Inverse Design | Mode collapse, hard to control | Generating high-fidelity details | Computational cost at inference | Scalability to long sequences |

Performance and Experimental Data

Independent evaluations and real-world applications provide the most meaningful metrics for comparing these models.

Quantitative Performance Benchmarks

Recent studies have systematically evaluated these models on standardized tasks. In scientific image generation, which shares challenges with molecular generation (e.g., requiring accuracy and adherence to physical laws), GANs such as StyleGAN produced images with high perceptual quality and structural coherence [32]. Diffusion-based models such as DALL-E 2 delivered higher realism and closer semantic alignment with text prompts, though they sometimes struggled with scientific accuracy [32]. Critically, these evaluations showed that standard quantitative metrics such as FID (Fréchet Inception Distance) and SSIM (Structural Similarity Index Measure) can fail to capture scientific relevance, which makes domain-expert validation necessary for any inverse design application [32].

In de novo molecular generation, Transformer-based models have demonstrated top performance. For instance, MolGPT, a model based on the GPT architecture, outperformed earlier models including CharRNN, VAEs, and adversarial autoencoders (AAEs) in generating drug-like molecules [30]. Modifications to the core Transformer, such as Rotary Position Embedding (RoPE) and GEGLU activation functions, have further improved both its handling of long-range dependencies and its training stability [30].
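
For readers unfamiliar with the two modifications named above, the sketch below shows the standard formulations of RoPE and GEGLU in plain Python. It is a generic illustration, not the implementation from [30]; the vectors `q` and `k` and the chosen positions are arbitrary example values.

```python
import math


def rope(vec, position, base=10000.0):
    """Rotary Position Embedding: rotate each pair (vec[i], vec[i+1])
    by the angle position * base**(-i / d)."""
    d = len(vec)
    if d % 2:
        raise ValueError("RoPE needs an even embedding dimension")
    rotated = []
    for i in range(0, d, 2):
        theta = position * base ** (-i / d)
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        rotated.extend([x * c - y * s, x * s + y * c])
    return rotated


def gelu(x):
    """Exact GELU: x times the standard normal CDF evaluated at x."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def geglu(gate, value):
    """GEGLU: GELU of one linear projection gates a second projection.
    `gate` and `value` are assumed to be those two projections."""
    return [gelu(g) * v for g, v in zip(gate, value)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


q = [0.3, -1.2, 0.8, 0.5]
k = [1.0, 0.4, -0.7, 0.2]

# Same relative offset (4 positions), very different absolute positions.
score_near = dot(rope(q, 3), rope(k, 7))
score_far = dot(rope(q, 103), rope(k, 107))
print(score_near, score_far)  # the two scores should agree up to rounding
```

Because the attention score after rotation depends only on the offset between the two positions, the model sees relative rather than absolute position, which is the usual explanation for why RoPE helps with long-range dependencies.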

Table 2: Summary of Comparative Model Performance from Recent Studies.

| Study / Model | Task | Key Comparative Finding | Metric / Outcome |
|---|---|---|---|
| Scientific Image Generation Review [32] | Image Synthesis | GANs (StyleGAN) produce high structural coherence; Diffusion Models (DALL-E 2) offer superior semantic alignment. | Expert-driven qualitative assessment and metrics (FID, SSIM). Highlights metric limitations. |
| MolGPT & T5MolGe [30] | Conditional Molecular Generation | Transformer architectures (GPT, T5) outperform CharRNN, VAEs, AAE, and LatentGAN. | Generation of valid, novel, and unique molecules; successful optimization of specific drug targets (e.g., mutant EGFR). |
| Polymer Generative Model [11] | Polymer Inverse Design | Integration of Group SELFIES with a generative model (PolyTAO) achieved 100% chemical validity. | Generated polymers showed <10% deviation from target dielectric constants in first-principles validation. |
| Mamba Model [30] | Molecular Generation | Selective state space model (Mamba) matches or beats Transformers in language modeling with linear scaling. | Evaluated for performance in molecular generation tasks as a promising alternative to Transformers. |
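
The "valid, novel, and unique" criteria in Table 2 are usually reported as three ratios. The sketch below computes them under commonly used definitions, which may differ in detail from those in [30]. The `crude_validity_check` function is a deliberately simple placeholder (balanced parentheses and paired ring-closure digits); a real evaluation would parse each string with a cheminformatics toolkit and compare canonical forms. The example strings are hypothetical.

```python
from collections import Counter


def crude_validity_check(smiles):
    """Placeholder validity test (assumption): parentheses must balance and
    every ring-closure digit must appear an even number of times.
    Does not handle digits inside bracket atoms."""
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    if depth != 0 or not smiles:
        return False
    digit_counts = Counter(ch for ch in smiles if ch.isdigit())
    return all(count % 2 == 0 for count in digit_counts.values())


def generation_metrics(generated, training_set, is_valid=crude_validity_check):
    """Validity, uniqueness, and novelty as fractions between 0 and 1."""
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


# Hypothetical model output and training data.
generated = ["CCO", "CCO", "c1ccccc1", "C1CC", "CC(=O)O", "CC(C"]
training = ["CCO"]
metrics = generation_metrics(generated, training)
print(metrics)
```

Reporting all three together matters because each can be gamed on its own: a model that repeats one training molecule scores perfectly on validity while failing on uniqueness and novelty.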

Case Study: Inverse Design of Polyimides

An inverse design engine was demonstrated through the generation of novel polyimides with target dielectric constants [11]. The methodology integrated a robust molecular representation (Group SELFIES) with a state-of-the-art polymer generator (PolyTAO) and a task-agnostic training strategy that combines physics-informed heuristics with reinforcement learning [11].

- Experimental Protocol:

- Conditional Generation: The model was conditioned on the target property (dielectric constant) and specific chemical motifs or classes (e.g., polyimide backbone).

- Model Training: The generator was trained using a combination of supervised learning on existing data and reinforcement learning to maximize reward from a property predictor, ensuring good performance even with limited data.

- Validation: Thirty generated polyimide structures were rigorously validated using first-principles calculations (e.g., Density Functional Theory) to compute their actual dielectric constants.

- Result: The generated polymers deviated by less than 10% from their target dielectric values, demonstrating the model's capability for controlled, on-demand design [11]. The end-to-end pipeline is presented as ready for integration with self-driving laboratories and industrial synthesis.
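
The validation criterion in this case study amounts to a relative-deviation check between the requested and the computed property. A minimal sketch follows; the candidate names and dielectric values are invented for illustration and are not data from [11].

```python
def relative_deviation(computed, target):
    """Absolute deviation from the target, as a fraction of the target."""
    return abs(computed - target) / abs(target)


def validate_candidates(results, tolerance=0.10):
    """Flag each (name, target, computed) triple as within tolerance or not."""
    report = []
    for name, target, computed in results:
        deviation = relative_deviation(computed, target)
        report.append((name, deviation, deviation < tolerance))
    return report


# Hypothetical values: (candidate, target dielectric constant, computed value).
results = [
    ("PI-candidate-A", 3.0, 3.2),
    ("PI-candidate-B", 2.5, 2.9),
]
for name, deviation, passed in validate_candidates(results):
    status = "within" if passed else "outside"
    print(f"{name}: {deviation:.1%} deviation, {status} tolerance")
```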

Case Study: Targeting Drug-Resistant Mutations in NSCLC

Research on targeting the L858R/T790M/C797S-mutant EGFR in non-small cell lung cancer (NSCLC) highlights the practical application of inverse design in drug discovery [30]. Traditional screening is challenged by the vastness of chemical space and the specificity required to overcome drug resistance.

- Experimental Protocol:

- Model Selection & Modification: Several models were screened, including modified GPT architectures (GPT-RoPE, GPT-Deep, GPT-GEGLU) and a novel T5-based encoder-decoder model (T5MolGe) designed for better conditional generation [30].

- Conditional Training: Models were trained to generate molecules conditioned on being tyrosine kinase inhibitors, effectively limiting the search to a relevant region of chemical space.

- Transfer Learning: A transfer learning strategy was employed to overcome the bottleneck of small, specialized datasets typical in AI-aided drug discovery [30].

- Result: The T5-based model (T5MolGe), which learns the mapping between conditional properties and SMILES sequences via a complete encoder-decoder architecture, was selected as the optimal approach for this conditional generation task, demonstrating the importance of model architecture for specific inverse design challenges [30].

The Scientist's Toolkit: Key Reagents and Computational Tools

Table 3: Essential Resources for Implementing Inverse Design Workflows.

| Resource / Tool | Type | Function in Inverse Design |
|---|---|---|
| Group SELFIES [11] | Molecular Representation | A robust string-based representation for molecules and polymers that guarantees 100% chemical validity upon generation, overcoming a key bottleneck. |
| SPICE Netlist [33] | Simulation Input | A text file describing an electronic circuit for use in simulation; an LLM can generate such netlists to design analog accelerators for AI hardware. |
| TCAD (Technology Computer-Aided Design) [33] | Simulation Software | Uses computer simulations to develop and optimize semiconductor processes and devices; generates data for machine learning models. |
| PolyTAO [11] | Generative Model | A state-of-the-art polymer generator that can be integrated with Group SELFIES for valid and controllable polymer design. |
| T5MolGe [30] | Generative Model | A Transformer-based (T5) model using an encoder-decoder architecture for conditional molecular generation, learning the relationship between properties and structures. |
| SPINS Platform [34] | Design Software | A platform from Stanford that makes inverse photonic design a practical tool, lowering the barrier for industry adoption. |

Workflow and Signaling Pathways

The following diagram illustrates a generalized, iterative workflow for inverse design, highlighting the role of the generative model and the critical validation feedback loop.

Diagram Title: Iterative Inverse Design Workflow with Model Feedback.

Logical Workflow Explanation

- Define Target Properties: The process begins with researchers specifying the desired functional characteristics, such as a specific dielectric constant [11], binding affinity, or solubility.

- Generative Model: The core inverse design engine (e.g., a Diffusion Model or Transformer) takes the target properties as input and generates a pool of candidate molecular structures [11] [30].

- Property Prediction: A fast, often ML-based, property predictor screens the generated candidates to estimate their performance, filtering out poor candidates before costly simulation or experimentation [11] [33].

- Validation Check: The top-performing candidates are rigorously validated using high-fidelity methods, such as first-principles calculations [11] or TCAD simulations [33]. This step is critical for assessing real-world performance.

- Feedback Loop: The results from the validation step are used to update the generative model, often via reinforcement learning [11] or fine-tuning. This creates a closed-loop system that improves its performance with each iteration, progressively learning to generate more optimal designs.
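
The five steps above can be sketched as a toy closed loop. This is a schematic under strong simplifying assumptions, not the pipeline from [11] or [33]: a design is a single number, the "generative model" is a Gaussian sampler whose mean and spread are updated by feedback, and both `surrogate_property` and `high_fidelity_property` are hypothetical stand-ins for an ML predictor and a first-principles calculation.

```python
import math
import random


def high_fidelity_property(x):
    """Stand-in for an expensive validation step (hypothetical response)."""
    return 2.0 * x + 1.0


def surrogate_property(x):
    """Cheap, slightly biased predictor used for pre-screening (hypothetical)."""
    return 2.0 * x + 1.0 + 0.1 * math.sin(5.0 * x)


def inverse_design_loop(target, rounds=10, pool_size=50, top_k=5, seed=0):
    rng = random.Random(seed)
    mean, spread = 0.0, 1.0  # state of the toy generator
    best_x, best_error = None, float("inf")
    history = []
    for _ in range(rounds):
        # Generative model: propose a pool of candidate designs.
        candidates = [rng.gauss(mean, spread) for _ in range(pool_size)]
        # Property prediction: keep only the most promising candidates.
        shortlist = sorted(
            candidates, key=lambda x: abs(surrogate_property(x) - target)
        )[:top_k]
        # Validation check: evaluate the shortlist with the costly method.
        for x in shortlist:
            error = abs(high_fidelity_property(x) - target)
            if error < best_error:
                best_x, best_error = x, error
        # Feedback loop: recentre and narrow the generator.
        mean = best_x
        spread = max(0.7 * spread, 0.05)
        history.append(best_error)
    return best_x, history


best_x, history = inverse_design_loop(target=3.0)
print(f"best design x = {best_x:.3f}, validated error = {history[-1]:.4f}")
```

The design choice worth noting is that only `top_k` candidates per round reach the expensive validator. The surrogate absorbs most of the evaluation load, and its bias is tolerable because the final ranking always comes from the high-fidelity result.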

The evidence from current research indicates that inverse design, powered by deep generative models, is not merely an incremental improvement but a transformative methodology that fundamentally reorients the discovery process. While forward screening will remain a valuable tool for validation and exploration in specific contexts, inverse design offers a more direct, efficient, and intelligent path to creating novel materials and molecules.

As of 2025, Diffusion Models and Transformers are leading in versatility and output quality for many inverse design tasks, particularly where complex conditioning is required [32] [30] [31]. However, the optimal choice of model is highly task-dependent. GANs retain value for high-speed generation, while VAEs offer a stable and interpretable approach. The future likely lies not in a single winner-takes-all architecture, but in hybrid models that combine the strengths of these approaches, and in the tighter integration of these engines with automated experimental and synthetic pipelines for fully autonomous discovery [11] [31] [33].

The identification of a drug's cellular target is a pivotal step in the drug discovery process. Two fundamentally different paradigms dominate this field: forward screening and inverse design. Forward genetic screening interrogates the entire genome in an entirely unbiased fashion to identify genes and pathways related to a drug's mechanism of action [35]. In contrast, inverse design approaches start with a desired molecular outcome and work backwards to design compounds or identify targets that achieve this goal, increasingly leveraging generative machine learning models [10] [36]. This guide provides an objective comparison of these methodologies, their experimental protocols, performance characteristics, and practical implementation requirements to aid researchers in selecting the optimal approach for their drug target identification projects.

Methodology Comparison: Principles and Applications

Table 1: Fundamental Characteristics of Forward Screening and Inverse Design Approaches

| Characteristic | Forward Genetic Screening | Inverse Design |
|---|---|---|
| Basic Principle | Unbiased genome interrogation through phenotypic selection [35] | Target-first approach using computational design [15] [36] |
| Primary Application | Drug-target deconvolution and pathway mapping [35] [37] | Rational design of ligands for specific protein targets [10] |
| Typical Output | Direct target identification and resistance mechanisms [35] [38] | Optimized small molecules with predicted binding characteristics [10] |
| Key Advantage | Unbiased discovery in physiological cellular environments [37] | Focused exploration of chemical space with desired properties [36] |
| Resolution Capability | Amino acid-level target mapping [35] | Atomic-level interaction prediction [10] |

Forward Genetic Screening Approaches

Forward genetic screening employs phenotypic selection in model organisms to systematically identify drug targets without prior assumptions about mechanism. In cancer research, engineered defective DNA mismatch repair (dMMR) systems in mammalian cells create forward genetics platforms where compound-resistant alleles emerge in drug-resistant clones, directly revealing drug targets [38]. Chemical mutagenesis-based screens induce single nucleotide changes that can generate amino acid substitutions perturbing drug-target interactions, resulting in drug resistance that reveals the direct target when sequenced [35].
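
Once resistant clones have been sequenced, a common first analysis is to look for genes that are mutated in several independent clones, since recurrence is unlikely for passenger mutations. The sketch below illustrates that idea only; the gene names, amino acid changes, and the `min_clones` threshold are hypothetical, and it is not the analysis pipeline of [35] or [38].

```python
from collections import defaultdict


def nominate_targets(clone_mutations, background_genes=frozenset(), min_clones=2):
    """Rank genes by the number of independent resistant clones carrying a
    coding mutation in them, ignoring genes also mutated in untreated controls."""
    gene_to_clones = defaultdict(set)
    for clone, mutations in clone_mutations.items():
        for gene, _change in mutations:
            if gene not in background_genes:
                gene_to_clones[gene].add(clone)
    ranked = [
        (gene, len(clones))
        for gene, clones in gene_to_clones.items()
        if len(clones) >= min_clones
    ]
    # Most recurrent first; ties broken alphabetically for stable output.
    return sorted(ranked, key=lambda item: (-item[1], item[0]))


# Hypothetical variant calls: clone -> list of (gene, amino acid change).
clone_mutations = {
    "clone_1": [("GENE_A", "A100V"), ("GENE_X", "A12V")],
    "clone_2": [("GENE_A", "G205S"), ("GENE_Y", "G45D")],
    "clone_3": [("GENE_A", "A100V"), ("GENE_X", "R88H")],
    "clone_4": [("GENE_Z", "L10P")],
}
candidates = nominate_targets(clone_mutations, background_genes={"GENE_X"})
print(candidates)
```

Recurrence only nominates a candidate. Confirming that a substitution actually perturbs the drug-target interaction still requires follow-up validation.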

Inverse Design Methodologies

Inverse design represents a paradigm shift from traditional screening. The "Inverse Drug Discovery" strategy matches organic compounds of intermediate complexity harboring weak, activatable electrophiles with the proteins they react with in cells or cell lysates [15]. This approach is agnostic to the cellular proteins targeted and uses affinity chromatography-mass spectrometry to identify reacting proteins [15]. Modern implementations leverage deep learning workflows that combine density-functional tight-binding methods for property data generation with graph convolutional neural network surrogate models for rapid property predictions [9].

Experimental Protocols and Workflows

Forward Genetic Screening Protocol

Table 2: Key Experimental Steps in Forward Genetic Screening

| Step | Method | Purpose | Key Parameters |
|---|---|---|---|
| 1. Mutagenesis | Chemical mutagenesis (alkylating reagents) or CRISPR/Cas9-engineered dMMR [35] [38] | Induce genetic variations | EMS concentration: 0.1-0.5%; Exposure time: 1-2 hours |
| 2. Selection | Drug treatment at appropriate concentrations [37] | Select for resistant clones | IC50-IC90 concentrations; 5-14 day selection |
| 3. Target Identification | Next-generation sequencing of resistant clones [35] | Identify causative mutations | 30-50x whole genome sequencing coverage |
| 4. Validation | Gene dosage assays (HIP, HOP, MSP) [37] | Confirm target identification | Competitive growth assays; statistical significance |
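
Two of the parameters in Table 2 lend themselves to quick planning calculations. The sketch below assumes a Hill dose-response model for converting a measured IC50 into other inhibition levels, and uses the standard relation coverage = reads × read length / genome size for sequencing depth. The IC50, Hill slope, genome size, and read length in the example are placeholder values, not figures from [35] or [37].

```python
import math


def concentration_for_inhibition(ic50, fraction, hill_slope=1.0):
    """Concentration giving the requested fractional inhibition under a
    Hill model: f = C**h / (IC50**h + C**h)."""
    if not 0.0 < fraction < 1.0:
        raise ValueError("fraction must be strictly between 0 and 1")
    return ic50 * (fraction / (1.0 - fraction)) ** (1.0 / hill_slope)


def reads_for_coverage(genome_size_bp, coverage, read_length_bp):
    """Number of reads needed so that reads * read_length / genome_size
    reaches the requested mean coverage."""
    return math.ceil(coverage * genome_size_bp / read_length_bp)


# Placeholder inputs: IC50 of 1.0 (arbitrary units), Hill slope of 1,
# a 3.1 Gb genome, and 150 bp reads.
ic90 = concentration_for_inhibition(ic50=1.0, fraction=0.90)
reads_30x = reads_for_coverage(3_100_000_000, coverage=30, read_length_bp=150)
reads_50x = reads_for_coverage(3_100_000_000, coverage=50, read_length_bp=150)
print(f"IC90 is about {ic90:.1f} x IC50")
print(f"30x needs {reads_30x:,} reads; 50x needs {reads_50x:,} reads")
```

With a Hill slope of 1 the IC90 sits roughly ninefold above the IC50, so the selection window in the table spans about one order of magnitude; steeper slopes narrow it.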

Detailed Forward Screening Workflow: