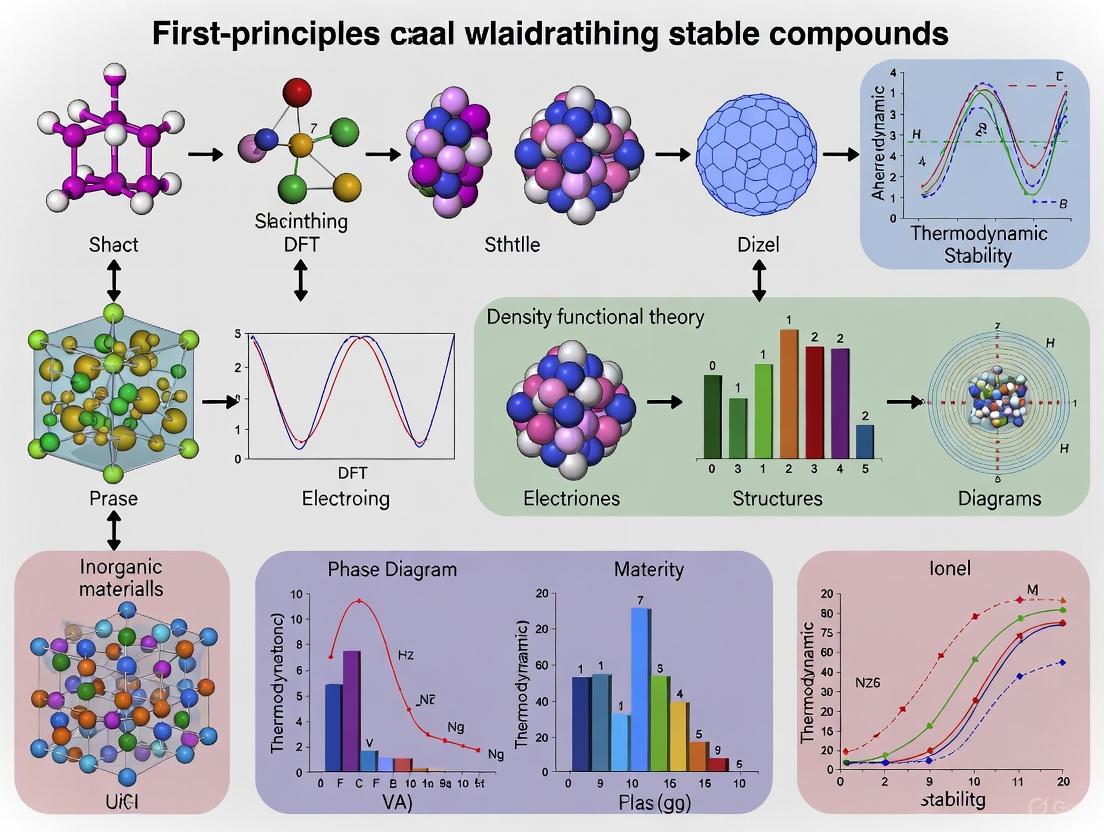

First-Principles Calculations: Validating Stable Compounds for Advanced Materials and Drug Development

Abstract

This article provides a comprehensive overview of how first-principles calculations, rooted in density functional theory (DFT), are revolutionizing the validation of stable compounds in materials science and drug development. It explores the fundamental physics underlying these methods, details computational workflows for assessing thermodynamic and mechanical stability, and presents real-world applications from hydrogen storage materials to pharmaceutical polymorph prediction. The content also addresses best practices for overcoming computational challenges and highlights rigorous validation studies that demonstrate the growing power of in silico methods to de-risk experimental discovery and accelerate the development of new materials and therapeutics.

The Physics Behind the Predictions: First-Principles Fundamentals for Stability Analysis

First-principles thinking represents a fundamental approach to problem-solving that breaks down complex systems into their most basic, foundational elements. In computational materials science and drug discovery, this philosophy has been realized through first-principles calculations, primarily based on density functional theory (DFT), which enables the prediction of material properties from quantum mechanical principles without empirical parameters. This paradigm has revolutionized materials discovery and validation, allowing researchers to explore stable compounds and their properties computationally before experimental synthesis. The rigorous framework provided by first-principles thinking has been particularly transformative in fields requiring high-precision predictions, from thermoelectric materials to pharmaceutical development, where accurate property prediction directly impacts technological applications and clinical success rates.

Core Computational Protocols in First-Principles Calculations

Standard Solid-State Protocols (SSSP) for High-Throughput Simulations

Advancements in high-throughput materials simulation have necessitated the development of automated protocols to balance numerical precision with computational efficiency. The Standard Solid-State Protocols (SSSP) provide a rigorous methodology for assessing the quality of self-consistent DFT calculations across a wide range of crystalline materials [1]. These protocols establish criteria to reliably estimate average errors on total energies, forces, and other properties as a function of desired computational efficiency while consistently controlling key numerical parameters.

The foundational challenge in these simulations involves managing the interplay between k-point sampling and smearing techniques, particularly for metallic systems where the occupation function becomes discontinuous at the Fermi surface. Smearing techniques effectively smooth out this discontinuity by replacing the discontinuous occupation function with a smooth, differentiable one, enabling exponential convergence of integrals with respect to the number of k-points [1]. This process is physically interpreted as adding a fictitious electronic temperature (σ) to the system, which broadens electronic occupations and introduces an entropic term to the total free energy.
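The effect of smearing on the occupation function can be sketched in a few lines. The following is a minimal illustration, not taken from the SSSP reference: it compares the discontinuous step function with Fermi-Dirac and Gaussian smearing, and the function names and numerical values are assumptions chosen for the example.

```python
import math

def step_occupation(energy, fermi_level):
    """Unsmeared occupation: discontinuous at the Fermi level."""
    return 1.0 if energy < fermi_level else 0.0

def fermi_dirac_occupation(energy, fermi_level, sigma):
    """Fermi-Dirac occupation; sigma plays the role of an electronic temperature."""
    x = (energy - fermi_level) / sigma
    # Guard against overflow in exp() far from the Fermi level.
    if x > 50.0:
        return 0.0
    if x < -50.0:
        return 1.0
    return 1.0 / (math.exp(x) + 1.0)

def gaussian_occupation(energy, fermi_level, sigma):
    """Gaussian smearing: a step function broadened by a Gaussian of width sigma."""
    return 0.5 * math.erfc((energy - fermi_level) / sigma)

if __name__ == "__main__":
    fermi_level = 0.0  # Ry, illustrative value
    sigma = 0.02       # Ry, illustrative smearing width
    for energy in (-0.10, -0.02, 0.0, 0.02, 0.10):
        print(
            f"{energy:+.2f} Ry",
            step_occupation(energy, fermi_level),
            round(fermi_dirac_occupation(energy, fermi_level, sigma), 4),
            round(gaussian_occupation(energy, fermi_level, sigma), 4),
        )
```

Both smeared functions pass through 0.5 at the Fermi level and vary smoothly across it, which is what allows Brillouin-zone integrals to converge with fewer k-points.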

Table 1: Standard Solid-State Protocol (SSSP) Parameters for DFT Calculations

| Parameter Category | Specific Parameter | Precision-Optimized Setting | Efficiency-Optimized Setting |
|---|---|---|---|
| k-point Sampling | Metallic Systems | Dense uniform mesh (e.g., 24×24×24) | Coarser mesh (e.g., 12×12×12) with optimized smearing |
| k-point Sampling | Semiconductor/Insulator | Moderate mesh (e.g., 16×16×16) | Basic mesh (e.g., 8×8×8) |
| Smearing Techniques | Smearing Type | Methfessel-Paxton or Gaussian | Marzari-Vanderbilt cold smearing |
| Smearing Techniques | Smearing Width (σ) | Small value (0.01-0.02 Ry) | Larger value (0.05-0.10 Ry) |
| Pseudopotentials | Plane-wave Cutoff | High cutoff (e.g., 100 Ry) | Moderate cutoff (e.g., 60-80 Ry) |
| Pseudopotentials | Pseudopotential Type | Norm-conserving | Ultrasoft |

Automated Workflows for Hubbard Parameter Calculation

For systems with strongly correlated electrons, particularly those containing transition metals or rare-earth elements, standard DFT functionals often fail to adequately describe localized d and f states. The aiida-hubbard framework provides an automated, flexible approach to self-consistently calculate Hubbard U and V parameters from first principles [2]. This workflow leverages density-functional perturbation theory (DFPT) to efficiently parallelize computations using multiple concurrent primitive cell calculations.

The DFT+U+V approach adds corrective terms to the base DFT exchange-correlation functional:

\[ E_{\text{DFT}+U+V} = E_{\text{DFT}} + E_{U+V} \]

where \(E_{U+V}\) contains both on-site terms (penalizing fractional orbital occupation) and inter-site terms (stabilizing states between atoms) [2]. The self-consistent determination of these parameters ensures mutual consistency between ionic and electronic ground states, significantly improving the accuracy of electronic structure properties, particularly for redox materials and compounds with complex transition metal chemistry.
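To see why the on-site term penalizes fractional occupation, consider a minimal sketch of the commonly used simplified form \(E_U = \tfrac{U}{2}\sum_i n_i(1 - n_i)\), written for the eigenvalues \(n_i\) of the occupation matrix of a single site and spin channel. This form and the function name are assumptions for illustration; the inter-site V term is omitted, and the code is not the aiida-hubbard implementation.

```python
def onsite_hubbard_energy(u_ev, occupations):
    """Simplified on-site Hubbard penalty, E_U = (U/2) * sum_i n_i * (1 - n_i).

    `occupations` are eigenvalues of the occupation matrix for one site and
    one spin channel (assumed to lie in [0, 1]); the result is in eV.
    """
    for n in occupations:
        if not 0.0 <= n <= 1.0:
            raise ValueError("occupation eigenvalues must lie in [0, 1]")
    return 0.5 * u_ev * sum(n * (1.0 - n) for n in occupations)

if __name__ == "__main__":
    u = 4.0  # eV, illustrative value
    print(onsite_hubbard_energy(u, [1.0, 1.0, 0.0]))  # integer occupations: no penalty
    print(onsite_hubbard_energy(u, [0.5, 0.5, 0.5]))  # fractional occupations: penalized
```

The penalty vanishes for fully occupied or empty orbitals and is largest at half filling, which drives the localized d and f states toward integer occupation.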

Table 2: Experimentally Determined Hubbard Parameters for Selected Elements

| Element | Oxidation State | Coordination Environment | U (eV) | V (eV) |
|---|---|---|---|---|
| Fe | +2 | Octahedral | 3.5-4.5 | 0.2-0.8 |
| Fe | +3 | Octahedral | 4.0-5.0 | 0.3-0.9 |
| Mn | +2 | Tetrahedral | 3.0-4.5 | 0.2-0.7 |
| Mn | +3 | Octahedral | 4.0-6.0 | 0.3-1.0 |
| Li | +1 | Various | 1.5-3.0 | 0.1-0.5 |

Application Notes: Validating Stable Compounds

Protocol for Stability Assessment of Heusler Compounds

Heusler compounds represent a fascinating class of intermetallics with diverse functional properties. The following protocol outlines a comprehensive approach for validating the stability of full-Heusler compounds, using Ac₂MgGa as a case study [3]:

1. Structural Optimization

- Begin with the experimental or predicted crystal structure (e.g., from the Materials Project database).

- Perform full structural relaxation using the BFGS algorithm, allowing both lattice parameters and atomic positions to relax simultaneously.

- Employ the Perdew-Burke-Ernzerhof (PBE) functional or similar GGA functional.

- Use ultrasoft or projector-augmented wave (PAW) pseudopotentials.

- Apply a plane-wave energy cutoff of 60-100 Ry and charge density cutoff of 600-1000 Ry.

- Converge atomic forces to below 0.001 eV/Å with stress threshold of 0.05 GPa.

2. Mechanical Stability Assessment

- Compute the full elastic tensor using finite strain theory.

- Verify stability criteria for cubic crystals: C₁₁ > 0, C₁₁ - C₁₂ > 0, C₁₁ + 2C₁₂ > 0, C₄₄ > 0.

- Calculate derived mechanical properties: bulk modulus (B), shear modulus (G), Pugh's ratio (B/G), and Vickers hardness.

- For Ac₂MgGa, analysis reveals ductile behavior (B/G > 1.75) and elastic anisotropy [3].
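The mechanical stability checks above can be sketched as follows. This is a minimal illustration that assumes the Voigt-Reuss-Hill averaging scheme for the shear modulus of a cubic crystal; the function name and the elastic constants in the usage example are hypothetical and are not values from [3].

```python
def cubic_elastic_analysis(c11, c12, c44):
    """Born stability check and derived moduli for a cubic crystal (inputs in GPa)."""
    stable = c11 > 0 and c44 > 0 and (c11 - c12) > 0 and (c11 + 2 * c12) > 0
    if not stable:
        return {"stable": False}

    bulk = (c11 + 2 * c12) / 3.0                      # Voigt and Reuss bounds coincide for cubic
    g_voigt = (c11 - c12 + 3 * c44) / 5.0             # upper bound on the shear modulus
    g_reuss = 5 * (c11 - c12) * c44 / (4 * c44 + 3 * (c11 - c12))  # lower bound
    shear = 0.5 * (g_voigt + g_reuss)                 # Hill average
    pugh = bulk / shear
    return {
        "stable": True,
        "bulk_modulus": bulk,
        "shear_modulus": shear,
        "pugh_ratio": pugh,
        "ductile": pugh > 1.75,                       # Pugh's empirical criterion
        "zener_anisotropy": 2 * c44 / (c11 - c12),    # equals 1 for an isotropic crystal
    }

if __name__ == "__main__":
    # Hypothetical elastic constants in GPa, for illustration only.
    print(cubic_elastic_analysis(c11=100.0, c12=50.0, c44=50.0))
```

A Zener anisotropy factor different from 1 signals elastic anisotropy, which complements the ductility classification given by Pugh's ratio.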

3. Dynamical Stability Assessment

- Perform phonon calculations across the entire Brillouin zone.

- Confirm absence of imaginary frequencies in the phonon dispersion spectrum.

- For metallic systems, employ density-functional perturbation theory or finite displacement methods with appropriate smearing techniques.
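Once phonon frequencies are available, the check for imaginary modes reduces to a simple scan. The sketch below assumes the common convention in which imaginary frequencies are reported as negative numbers, and it allows a small tolerance for numerical noise in the acoustic branches near the zone center. The function names, tolerance, and data are hypothetical.

```python
def lowest_phonon_frequency(frequencies_by_qpoint):
    """Return the smallest frequency over all q-points and branches."""
    return min(freq for branches in frequencies_by_qpoint for freq in branches)

def is_dynamically_stable(frequencies_by_qpoint, tolerance=0.05):
    """True if no mode is more negative (imaginary) than -tolerance.

    Frequencies and tolerance share the same unit (e.g., THz).
    """
    return lowest_phonon_frequency(frequencies_by_qpoint) >= -tolerance

if __name__ == "__main__":
    # Hypothetical frequencies in THz: one list of branches per q-point.
    stable_case = [[-0.01, 0.0, 0.0, 5.2], [1.1, 1.4, 2.9, 5.6]]
    unstable_case = [[0.0, 0.0, 0.0, 5.2], [-1.3, 1.4, 2.9, 5.6]]
    print(is_dynamically_stable(stable_case))    # noise-level negative value is tolerated
    print(is_dynamically_stable(unstable_case))  # a genuine soft mode is flagged
```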

4. Thermodynamic Stability Assessment

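- Calculate the formation energy \(\Delta H_f = E_{\text{total}} - \sum_i n_i E_i\), where \(E_{\text{total}}\) is the total energy of the compound, \(n_i\) is the number of atoms of element \(i\) in the cell, and \(E_i\) is the energy per atom of element \(i\) in its standard state.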

- Determine phase stability against decomposition to competing phases by constructing the convex hull.

- For Ac₂MgGa, the compound demonstrates thermodynamic stability with negative formation energy [3].
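The formation energy and convex hull steps can be sketched for a binary system as follows. This is a simplified illustration under stated assumptions: the hull is built in two dimensions (composition fraction versus formation energy per atom), the point list includes both elemental end members, and all names and numbers are hypothetical. Production workflows would typically rely on an established phase-diagram tool instead.

```python
def formation_energy_per_atom(e_total, composition, elemental_energies):
    """(E_total - sum_i n_i * E_i) / N for a cell with atom counts in `composition`."""
    n_atoms = sum(composition.values())
    reference = sum(n * elemental_energies[el] for el, n in composition.items())
    return (e_total - reference) / n_atoms

def lower_hull(points):
    """Lower convex hull of (x, e_form) points via the monotone chain algorithm."""
    lowest = {}
    for x, e in points:  # keep only the lowest-energy phase at each composition
        if x not in lowest or e < lowest[x]:
            lowest[x] = e
    hull = []
    for p in sorted(lowest.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:  # hull[-1] lies on or above the segment hull[-2] -> p
                hull.pop()
            else:
                break
        hull.append(p)
    return hull

def energy_above_hull(x, e_form, hull):
    """Vertical distance from (x, e_form) to the hull; zero means on the hull."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return e_form - (y1 + (y2 - y1) * (x - x1) / (x2 - x1))
    raise ValueError("composition lies outside the hull range")

if __name__ == "__main__":
    # Hypothetical A-B system: (fraction of B, formation energy in eV/atom).
    phases = [(0.0, 0.0), (0.25, -0.1), (0.5, -0.4), (0.75, -0.3), (1.0, 0.0)]
    hull = lower_hull(phases)
    for x, e in phases:
        print(x, round(energy_above_hull(x, e, hull), 3))
```

A negative formation energy is necessary but not sufficient: in this example the phase at x = 0.25 has a negative formation energy yet lies 0.1 eV/atom above the hull, so it would decompose into the neighboring stable phases.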

Machine Learning-Accelerated Discovery of Zintl Phases

The exploration of complex chemical spaces, such as Zintl phases, benefits from integrating machine learning with first-principles validation. A recent study screened over 90,000 hypothetical Zintl phases using graph neural networks (GNNs) and the Upper Bound Energy Minimization (UBEM) approach [4].

The UBEM strategy employs a scale-invariant GNN model to predict DFT volume-relaxed energies using unrelaxed crystal structures as input. Because a volume-only relaxation cannot lower the energy below that of a full relaxation, the volume-relaxed energy is an upper bound on the fully relaxed energy: if the volume-relaxed structure is already thermodynamically stable, the fully relaxed structure must be stable as well [4]. With this protocol, the study identified 1,810 new thermodynamically stable Zintl phases, achieving 90% precision when the predictions were validated by DFT calculations.
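The upper-bound argument can be written out explicitly. In the sketch below, the symbols \(E_{\text{vol}}\), \(E_{\text{full}}\), and \(E_{\text{hull}}\) are notation introduced here for the volume-relaxed energy, the fully relaxed energy, and the convex hull energy at the same composition; the hull of competing phases is assumed to be held fixed.

```latex
\[
E_{\text{full}} \le E_{\text{vol}}
\quad\Longrightarrow\quad
E_{\text{full}} - E_{\text{hull}} \;\le\; E_{\text{vol}} - E_{\text{hull}}
\]
\[
\text{so that}\quad E_{\text{vol}} - E_{\text{hull}} \le 0
\quad\Longrightarrow\quad
E_{\text{full}} - E_{\text{hull}} \le 0 .
\]
```

The converse does not hold: a structure that looks unstable after volume-only relaxation may still become stable after full relaxation, so the screen is conservative and can miss stable phases but should not overstate stability.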

Experimental Protocols for Property Validation

Electronic Structure Analysis Protocol

Computational Details:

- Employ DFT calculations with Quantum ESPRESSO or VASP simulation packages.

- Use PBE-GGA functional for structural properties, with HSE06 or PBE0 hybrid functionals for accurate band gaps.

- Apply Monkhorst-Pack k-point grid of 8×8×8 or denser for self-consistent field calculations.

- Implement Methfessel-Paxton or Gaussian smearing for metallic systems with width of 0.01-0.05 Ry.

- For transition metal compounds, include DFT+U corrections with self-consistently determined Hubbard parameters [2].

Electronic Property Analysis:

- Calculate band structure along high-symmetry paths in the Brillouin zone.

- Compute density of states (DOS) and projected DOS (pDOS) to identify orbital contributions.

- For Ac₂MgGa, calculations confirm metallic character with no band gap opening and significant density of states at the Fermi level [3].

- Analyze electron localization function (ELF) to characterize bonding nature.

Optical Property Calculation Protocol

Dielectric Function Computation:

- Use norm-conserving pseudopotentials for improved accuracy in optical properties.

- Compute the complex dielectric function ε(ω) = ε₁(ω) + iε₂(ω) within the random phase approximation.

- Calculate frequency-dependent optical constants: refractive index n(ω), extinction coefficient k(ω), absorption coefficient α(ω), reflectivity R(ω), and energy loss function L(ω).

- For FeₓZr₁₋ₓO₂ solid solutions, optical analysis reveals strong band-gap narrowing from 3.41 eV (x=0) to ≈0.02 eV (x=0.50) with large absorption coefficients (α ≈ 10⁵ cm⁻¹ at higher x) [5].
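The optical constants listed above follow from the real and imaginary parts of the dielectric function through standard textbook relations. The sketch below evaluates them at a single photon energy; the function name and input values are hypothetical, and the code is not tied to any particular DFT package or to the results in [5].

```python
import math

HBAR_C_EV_CM = 1.973269804e-5  # hbar * c in eV*cm

def optical_constants(eps1, eps2, photon_energy_ev):
    """Derive n, k, R, alpha, and L from eps = eps1 + i*eps2 at one photon energy."""
    modulus = math.hypot(eps1, eps2)
    if modulus == 0.0:
        raise ValueError("dielectric function must be non-zero")
    n = math.sqrt(max(modulus + eps1, 0.0) / 2.0)          # refractive index
    k = math.sqrt(max(modulus - eps1, 0.0) / 2.0)          # extinction coefficient
    reflectivity = ((n - 1) ** 2 + k ** 2) / ((n + 1) ** 2 + k ** 2)  # normal incidence
    absorption = 2.0 * photon_energy_ev * k / HBAR_C_EV_CM  # alpha = 2*omega*k/c, in cm^-1
    loss = eps2 / (eps1 ** 2 + eps2 ** 2)                   # energy loss function
    return {"n": n, "k": k, "R": reflectivity, "alpha_cm-1": absorption, "L": loss}

if __name__ == "__main__":
    # Hypothetical dielectric function values at a photon energy of 2 eV.
    print(optical_constants(eps1=5.0, eps2=3.0, photon_energy_ev=2.0))
```

With an extinction coefficient of order unity at visible photon energies, the absorption coefficient evaluates to roughly 10⁵ cm⁻¹, which is the magnitude quoted above.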

Optical Anisotropy Assessment:

- For non-cubic systems, compute dielectric tensor components separately.

- Analyze birefringence and dichroism from polarization-dependent response.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for First-Principles Materials Validation

| Tool Name | Type | Primary Function | Application Example |

|---|---|---|---|

| Quantum ESPRESSO | DFT Code | Plane-wave pseudopotential DFT calculations | Structural relaxation, electronic structure, phonons [1] [5] |

| VASP | DFT Code | Plane-wave PAW method DFT calculations | Charge density, electrostatic potential simulations [6] |

| AiiDA | Workflow Manager | Automated workflow management and data provenance | High-throughput calculations, parameter optimization [1] [2] |

| aiida-hubbard | Specialized Plugin | Self-consistent calculation of Hubbard parameters | DFT+U calculations for transition metal compounds [2] |

| SSSP | Pseudopotential Library | Curated collection of extensively tested pseudopotentials | High-precision and high-throughput materials modeling [1] |

| HP Code | Perturbation Tool | DFPT calculation of Hubbard parameters | Linear response approach for U/V parameter determination [2] |

| UBEM-GNN | Machine Learning Model | Prediction of volume-relaxed energies from unrelaxed structures | High-throughput screening of Zintl phases [4] |

| pymatgen/ASE | Materials API | Input generation, output parsing, and structure analysis | Workflow integration and materials analysis [1] |

Advanced Applications in Drug Discovery and Development

The first-principles approach has extended beyond materials science into pharmaceutical development, particularly through the emergence of Large Quantitative Models (LQMs). These models combine physics-based simulations with AI to predict molecular behavior with unprecedented accuracy [7]. Unlike large language models trained on textual data, LQMs are grounded in first principles of physics, chemistry, and biology, allowing them to simulate fundamental molecular interactions and create new knowledge through billions of in silico simulations.

In pharmaceutical applications, first-principles thinking enables:

- Prediction of drug exposure and metabolism: Modeling how compounds are absorbed, distributed, metabolized, and excreted across human organs.

- Organ-level safety and toxicity assessment: Evaluating compound safety profiles for major organs, beginning with liver toxicity prediction.

- FDA risk-benefit analysis: Contextualizing predicted safety and efficacy profiles to estimate regulatory evaluation outcomes [8].

These approaches target toxicity-related failures, which account for 25% of clinical trial failures and 30% of post-market withdrawals [8]. By predicting human safety risks early in discovery, first-principles methods aim to reduce the 90% clinical failure rate reported for traditional drug development.

Turning first-principles thinking from a philosophical concept into a computational practice has produced a robust framework for validating stable compounds in both materials science and pharmaceutical development. The protocols outlined in this document, from standardized solid-state calculations to machine-learning-accelerated discovery, give researchers concrete methodologies for rigorous materials validation. As computational power increases and algorithms become more sophisticated, combining first-principles calculations with experimental validation will continue to accelerate the discovery and development of novel materials and therapeutics.

Density Functional Theory (DFT) stands as a cornerstone of modern computational materials science and chemistry, providing a practical framework for solving the many-body Schrödinger equation. The fundamental theorem of Hohenberg and Kohn establishes that all ground-state properties of a system of interacting electrons are uniquely determined by its electron density ρ(r), thereby reducing the complex many-body wavefunction problem to a manageable functional of the electron density [9]. This theoretical breakthrough forms the basis for first-principles calculations that predict material properties without empirical parameters, enabling researchers to validate stable compounds computationally before synthesis.

The Kohn-Sham equations, which form the working heart of DFT, introduce a fictitious system of non-interacting electrons that produce the same density as the true interacting system [10]. This approach separates computationally tractable components from the challenging exchange-correlation functional, which must be approximated. The energy expression in Kohn-Sham DFT is given by \(E_{\text{KS}} = V + \langle hP \rangle + \tfrac{1}{2}\langle P J(P) \rangle + E_X[\rho] + E_C[\rho]\), where \(V\) is the nuclear repulsion energy, \(P\) is the density matrix, \(\langle hP \rangle\) is the one-electron (kinetic plus potential) energy, \(\tfrac{1}{2}\langle P J(P) \rangle\) is the classical Coulomb repulsion of the electrons, and \(E_X[\rho]\) and \(E_C[\rho]\) represent the exchange and correlation functionals, respectively [10]. The accuracy of DFT calculations thus hinges on the quality of these approximate functionals, which has driven continuous methodological development across multiple rungs of Jacob's ladder of approximations [9].

Theoretical Foundations: From Schrödinger Equation to Practical DFT

The Fundamental Theorems and Kohn-Sham Equations

The theoretical foundation of DFT rests on two seminal theorems proved by Hohenberg and Kohn. The first theorem establishes a one-to-one mapping between the external potential acting on a system and its ground-state electron density. The second theorem provides a variational principle, stating that the universal functional F[ρ] delivers the ground-state energy when evaluated at the correct ground-state density. This universal functional F[ρ] captures all electronic contributions to the total energy and has been described as a "holy grail" for chemistry, physics, and materials science [9].

The practical implementation of DFT through the Kohn-Sham approach reformulates the problem by introducing a reference system of non-interacting electrons described by single-particle orbitals. The Kohn-Sham Hamiltonian incorporates the exchange-correlation potential, which must be approximated in practice. The electron density is constructed from the occupied Kohn-Sham orbitals \(\psi_i(\mathbf{r})\) as \(\rho(\mathbf{r}) = \sum_i |\psi_i(\mathbf{r})|^2\), which can be expanded using a basis set representation as \(\rho(\mathbf{r}) = \sum_{\mu\nu} D_{\mu\nu}\,\chi_\mu(\mathbf{r})\,\chi_\nu(\mathbf{r})\), where \(D_{\mu\nu}\) are density matrix elements and \(\chi_\mu(\mathbf{r})\) are basis functions [9].

Advancements in Exchange-Correlation Functionals

The development of increasingly sophisticated exchange-correlation functionals represents a central theme in DFT research. These approximations are often organized in the hierarchy of "Jacob's ladder," progressing from local density approximations to meta-generalized gradient approximations and hybrid functionals [9]. Recent breakthroughs include the SCAN (strongly constrained and appropriately normed) meta-GGA functional, which satisfies 17 exact physical constraints and has demonstrated remarkable accuracy for water simulations [11]. The regularized-restored SCAN functional (r2SCAN) addresses convergence issues while maintaining adherence to physical constraints [11].

Table 1: Classification of Density Functional Approximations

| Functional Rung | Ingredients | Examples | Computational Cost | Typical Applications |

|---|---|---|---|---|

| Local Density Approximation (LDA) | Local density ρ(r) | SVWN | Low | Homogeneous electron gas, simple metals |

| Generalized Gradient Approximation (GGA) | ρ(r), ∇ρ(r) | PBE, BLYP | Low-medium | General purpose solid-state calculations |

| Meta-GGA | ρ(r), ∇ρ(r), τ(r) | SCAN, r2SCAN | Medium | Strongly bonded systems, molecular crystals |

| Hybrid | ρ(r), ∇ρ(r), exact exchange | B3LYP, PBE0 | High | Molecular systems, quantum chemistry |

| Double Hybrid | ρ(r), ∇ρ(r), exact exchange, MP2 correlation | B2PLYP | Very High | High-accuracy thermochemistry |

Machine learning approaches to constructing density functionals represent a promising frontier. Recent work has developed "pure, non-local, and transferable" machine-learned density functionals that retain mean-field computational cost while achieving coupled-cluster quality results for certain systems [9]. These Kernel Density Functional Approximations (KDFA) employ a rotationally invariant density representation based on density-fitting basis functions, bypassing limitations of conventional semi-local functionals for electron correlation [9].

Computational Methodologies and Protocols

DFT-1/2 and Pseudopotential Projector Shift Methods

The DFT-1/2 method provides a semi-empirical approach to correcting self-interaction errors in local and semi-local functionals for extended systems. This method defines an atomic self-energy potential that cancels electron-hole self-interaction energy by calculating the difference between the potential of a neutral atom and that of a charged ion with a fraction of its charge removed [12]. The total self-energy potential is summed over atomic sites and added to the DFT Hamiltonian, significantly improving band gap predictions for semiconductors and insulators.

A practical protocol for implementing the DFT-1/2 correction in semiconductor band structure calculations involves:

- System Preparation: Build the crystal structure, preferably using a crystal database such as that available in QuantumATK [12]

- Calculator Setup: Select an exchange-correlation functional (LDA or GGA/PBE) and appropriate basis set

- DFT-1/2 Activation: Enable the DFT-1/2 correction, typically applying it only to anionic species while leaving cationic species uncorrected [12]

- k-point Sampling: Use a sufficiently dense k-point grid (e.g., 9×9×9 for zincblende structures) [12]

- Band Structure Calculation: Compute the electronic band structure using the modified Hamiltonian

The pseudopotential projector shift (PPS) method offers an alternative approach by applying empirical shifts to nonlocal projectors in pseudopotentials according to \(\hat{V}_{\text{nl}} \rightarrow \hat{V}_{\text{nl}} + \sum_l |p_l\rangle\,\alpha_l\,\langle p_l|\), where \(\alpha_l\) are empirical parameters dependent on the orbital angular momentum quantum number \(l\) [12]. This method does not increase computational cost and remains suitable for geometry optimization, unlike DFT-1/2.

Table 2: Default DFT-1/2 Parameters for Selected Semiconductors

| Material | Element Corrected | Fractional Charge | Cutoff Radius (Bohr) | Predicted Band Gap (eV) | Experimental Band Gap (eV) |

|---|---|---|---|---|---|

| InP | P | [0.5, 0.0] | 4.0 | 1.46 | 1.34 [13] |

| GaAs | As | [0.3, 0.0] | 4.0 | 1.55 | 1.52 [13] |

| GaP | P | [0.5, 0.0] | 4.0 | 2.39 | 2.35 [13] |

| Si | Si | [0.5, 0.0] | 3.5 | 1.17 | 1.17 [13] |
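The agreement between predicted and experimental gaps in the table can be quantified with a short calculation. The sketch below uses only the values listed in the table above; the function names are introduced here for illustration.

```python
# (predicted, experimental) band gaps in eV, copied from the table above.
BAND_GAPS_EV = {
    "InP": (1.46, 1.34),
    "GaAs": (1.55, 1.52),
    "GaP": (2.39, 2.35),
    "Si": (1.17, 1.17),
}

def signed_errors(band_gaps):
    """Predicted minus experimental band gap for each material, in eV."""
    return {name: predicted - measured for name, (predicted, measured) in band_gaps.items()}

def mean_absolute_error(errors):
    """Average magnitude of the errors, in eV."""
    return sum(abs(err) for err in errors.values()) / len(errors)

if __name__ == "__main__":
    errors = signed_errors(BAND_GAPS_EV)
    for name, err in errors.items():
        print(f"{name}: {err:+.2f} eV")
    print(f"MAE: {mean_absolute_error(errors):.4f} eV")
```

For these four materials the largest deviation is 0.12 eV (InP), and the mean absolute error is below 0.05 eV.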

Density-Corrected DFT for Aqueous and Biomolecular Systems

Density-corrected DFT (DC-DFT) provides a rigorous framework for separating errors in self-consistent DFT calculations into density-driven and functional-driven components. The simplest practical implementation is HF-DFT, where density functionals are evaluated on Hartree-Fock densities and orbitals rather than self-consistent DFT densities [11]. This approach significantly improves accuracy for systems prone to density-driven errors, including water clusters, reaction barriers, and anions.
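The error decomposition behind DC-DFT can be stated compactly. In the sketch below, the notation is introduced here for illustration: \(E[\rho]\) is the exact functional evaluated on the exact density \(\rho\), while \(\tilde{E}\) and \(\tilde{\rho}\) are the approximate functional and its self-consistent density.

```latex
\[
\Delta E \;=\; \tilde{E}[\tilde{\rho}] - E[\rho]
\;=\; \underbrace{\tilde{E}[\rho] - E[\rho]}_{\Delta E_F\ \text{(functional-driven)}}
\;+\; \underbrace{\tilde{E}[\tilde{\rho}] - \tilde{E}[\rho]}_{\Delta E_D\ \text{(density-driven)}}
\]
\[
E^{\text{HF-DFT}} \;=\; \tilde{E}[\rho_{\text{HF}}]
\]
```

HF-DFT replaces the self-consistent density with the Hartree-Fock density, which is intended to reduce the density-driven term in cases where the self-consistent DFT density is poor.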

A protocol for applying DC-DFT to aqueous systems and biomolecules:

- Reference Density Calculation: Perform a Hartree-Fock calculation to obtain the electron density

- Functional Evaluation: Compute the DFT energy using the HF density instead of the self-consistent density

- Dispersion Correction: Carefully parameterize dispersion corrections (e.g., D4) following DC-DFT principles to maintain accuracy for water while capturing noncovalent interactions [11]

- Validation: Benchmark against high-level reference data for water clusters and noncovalent complexes

The recently developed HF-r2SCAN-DC4 method demonstrates the power of this approach, combining HF densities, the r2SCAN functional, and a specially parameterized D4 dispersion correction to achieve chemical accuracy for pure water while capturing vital noncovalent interactions in biomolecules [11].

Diagram 1: DFT Computational Workflow. This diagram illustrates the logical relationship between the fundamental Schrödinger equation and practical DFT calculations, highlighting the central role of the exchange-correlation functional.

Application Notes: Validating Stable Compounds

Semiconductor Materials Design

DFT methods enable high-throughput screening of semiconductor compounds by predicting key properties including band gaps, formation energies, and defect thermodynamics. The DFT-1/2 and DFT-PPS methods specifically address the systematic band gap underestimation problem in standard DFT, providing accuracy comparable to more expensive GW calculations at significantly lower computational cost [12].

For compound validation, the following protocol is recommended:

- Structure Optimization: Relax crystal structures using PBE or SCAN functionals with appropriate dispersion corrections

- Band Structure Calculation: Compute electronic band structures using DFT-1/2 or PPS methods for accurate band gaps

- Stability Assessment: Calculate formation energies and phase stability against competing compounds

- Defect Analysis: Identify and characterize intrinsic defects that may impact material performance

- Property Prediction: Compute optical absorption, effective masses, and transport properties

Application to III-V semiconductors demonstrates the effectiveness of these approaches, with PBE-1/2 predicting band gaps within 0.1-0.2 eV of experimental values for materials like InP, GaAs, and GaP [12].

Biomolecular Systems and Aqueous Solutions

Accurate modeling of biomolecules in aqueous environments requires capturing both strong hydrogen bonding and weak dispersion interactions. The HF-r2SCAN-DC4 method represents a significant advancement, achieving mean absolute errors below 0.5 kcal/mol for water cluster energies and stacking interactions in nucleobases [11].

A protocol for validating stable compounds in aqueous solution:

- Conformational Sampling: Generate low-energy conformers using molecular dynamics or Monte Carlo methods

- Interaction Energy Calculation: Compute binding energies using DC-DFT methods with appropriate dispersion corrections

- Solvation Effects: Incorporate implicit or explicit solvation models

- Spectroscopic Properties: Predict NMR chemical shifts and vibrational frequencies for comparison with experiment

- Relative Stability: Determine the most stable hydration states or complexation modes

This approach has been successfully applied to study stacking interactions in cytosine dimers, where HF-r2SCAN-DC4 correctly describes dispersion-dominated interactions that are significantly underestimated by HF-SCAN [11].

Diagram 2: Compound Validation Workflow. This diagram outlines the iterative process for validating stable compounds using DFT methods, from initial structure modeling to experimental validation.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for DFT-Based Compound Validation

| Tool/Resource | Type | Function | Application Context |

|---|---|---|---|

| QuantumATK | Software Platform | Atomistic simulation with DFT, semi-empirical, and force field methods | Semiconductor materials design, electronic device modeling [14] |

| Gaussian 16 | Quantum Chemistry Package | Molecular DFT calculations with extensive functional library | Molecular systems, spectroscopy, reaction mechanisms [10] |

| SCAN/r2SCAN | Meta-GGA Functional | Strongly constrained meta-GGA with high accuracy for diverse bonding | Phase diagrams of water, material properties prediction [11] |

| DFT-1/2 | Band Gap Correction | Self-energy correction for improved band structures | Semiconductor band gaps, optical properties [12] |

| DFT-PPS | Pseudopotential Method | Projector shifts for accurate lattice constants and band gaps | Silicon and germanium systems, lattice matching [12] |

| D4 Dispersion | Empirical Correction | Geometry-dependent dispersion correction with charge dependence | Noncovalent interactions, biomolecular systems [11] |

| SG15 | Pseudopotential Set | Optimized norm-conserving pseudopotentials | Solid-state calculations, particularly with PPS method [12] |

The continuing evolution of density functional theory methodologies enhances our capability to validate stable compounds through first-principles calculations. Machine-learned density functionals, density-corrected approaches, and specialized methods for addressing specific limitations like band gap underestimation collectively push the boundaries of predictive materials design. As these computational protocols become increasingly integrated with high-throughput screening and machine learning approaches, the role of DFT in accelerating the discovery and validation of novel compounds will continue to expand across materials science, chemistry, and drug development.

The integration of DFT with experimental validation creates a powerful feedback loop for method development. As new experimental data becomes available for complex systems—from heterogeneous catalysts to biomolecular assemblies—theoretical methods must evolve to address emerging challenges. The next generation of density functionals will likely incorporate more sophisticated machine-learning components while maintaining physical constraints, further bridging the gap between computational efficiency and chemical accuracy in first-principles compound validation.

Theoretical Foundations of Stability Metrics

In the computational design and validation of new compounds, predicting thermodynamic stability is paramount. Two fundamental metrics used to assess this stability are the cohesive energy and the formation enthalpy. These quantities, derived from first-principles calculations based on density functional theory (DFT), provide a rigorous means to evaluate whether a proposed compound is likely to form and persist under given conditions. The cohesive energy of an ionic solid is defined as the energy required to decompose the solid into its constituent independent gaseous atoms at 0 K. In contrast, the lattice energy is the energy required to decompose the solid into its constituent independent gaseous ions at 0 K [15]. These energies can be converted into enthalpies at a given temperature by adding the small energies corresponding to the integration of the heat capacity of each constituent.

First-principles calculations are uniquely suited for this task as they are based on quantum mechanics and do not rely on empirical parameters or experimental data for fitting. They start directly from the established science of electronic structure, requiring only the atomic numbers of the constituent atoms [16]. This ab initio approach allows researchers to probe the stability of compounds that have not yet been synthesized, guiding experimental efforts towards promising candidates.

First-Principles Methodology

Density Functional Theory (DFT) Framework

First-principles calculations, particularly those employing DFT, serve as the cornerstone for computing material properties from quantum mechanical principles. DFT simplifies the many-body Schrödinger equation by using the electron density as the fundamental variable instead of the complex many-electron wavefunction. The total energy within the Kohn-Sham DFT framework, the most widely used method, is expressed as a functional of the electron density [17]. The accuracy of a DFT calculation critically depends on the choice of the exchange-correlation functional, which accounts for quantum interactions between electrons.

The primary types of functionals include:

- LDA (Local Density Approximation): Uses only the local electron density. It is applicable to simple metals like alkali metals but often overbinds molecules and solids.

- GGA (Generalized Gradient Approximation): Incorporates both the electron density and its gradient, offering improved accuracy. The Perdew-Burke-Ernzerhof (PBE) functional is a well-known GGA functional and is a current standard for many solid-state calculations [17] [18].

- Hybrid Functionals: Mix a portion of exact Hartree-Fock exchange with GGA exchange and correlation. Functionals like B3LYP and PBE0 offer higher accuracy for band gaps and are frequently used in quantum chemical calculations [17] [18].

- VDW/DFT-D: Explicitly account for van der Waals (dispersion) forces, which are crucial for describing intermolecular interactions in molecular crystals and layered materials [17].

Computational Workflow

A typical first-principles study of stability involves a well-defined sequence of steps to ensure self-consistency and accuracy. The following workflow, applicable to software like CASTEP and SIESTA, outlines the core protocol:

Protocol 1: Total Energy Calculation Workflow.

- Initial Structure Definition: Obtain the crystal structure (atomic positions and lattice vectors) of the compound of interest from crystallographic databases or preliminary calculations.

- Geometry Optimization: Relax both the atomic positions and the cell parameters until the Hellmann-Feynman forces on atoms and the internal stresses are minimized below a predefined threshold (e.g., maximum force < 0.03 eV/Å, maximum stress < 0.05 GPa) [18] [19]. This finds the ground-state structure.

- Self-Consistent Field (SCF) Calculation: Perform a single-point energy calculation on the optimized geometry. The Kohn-Sham equations are solved iteratively until the input and output electron densities are consistent, yielding the total energy of the compound [17].

Calculating Cohesive Energy

Definition and Protocol

The cohesive energy \(E_{coh}\) is the energy gained when isolated atoms are brought together to form the solid. A large negative value indicates a strongly bound, stable solid. For a compound \(A_xB_y\), it is defined as the total energy of the solid minus the sum of the energies of its individual, isolated gaseous atoms, all calculated at 0 K [15].

\[E_{coh}(A_xB_y) = E_{total}(A_xB_y) - [xE_{atom}(A) + yE_{atom}(B)]\]

Protocol 2: Calculation of Cohesive Energy for a Binary Compound.

- Calculate the total energy of the bulk crystal, \(E_{total}(A_xB_y)\), using the workflow in Protocol 1.

- Calculate the energy of a single, isolated atom of type A, \(E_{atom}(A)\), in a large simulation box to prevent interaction with its periodic images.

- Repeat step 2 for atom type B, \(E_{atom}(B)\).

- Apply the formula above to compute \(E_{coh}\). The result is typically reported in eV/atom or eV/formula unit.
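Once the total energies are available, Protocol 2 reduces to a single subtraction. The sketch below illustrates that bookkeeping under stated assumptions: the function name `cohesive_energy` and every numerical value are hypothetical placeholders, not results from [15] or [19].

```python
def cohesive_energy(e_total, atom_energies, counts, per_atom=True):
    """E_coh = E_total(AxBy) - sum_i n_i * E_atom(i), all energies in eV.

    e_total       : total energy of one formula unit of the solid
    atom_energies : isolated-atom energy for each element, e.g. {"A": ..., "B": ...}
    counts        : stoichiometric coefficients, e.g. {"A": x, "B": y}
    """
    if set(atom_energies) != set(counts):
        raise ValueError("atom_energies and counts must list the same elements")
    isolated = sum(counts[el] * atom_energies[el] for el in counts)
    e_coh = e_total - isolated
    n_atoms = sum(counts.values())
    return e_coh / n_atoms if per_atom else e_coh


# Hypothetical A2B3 compound; the energies are illustrative only.
e_coh = cohesive_energy(
    e_total=-20.0,
    atom_energies={"A": -1.0, "B": -2.0},
    counts={"A": 2, "B": 3},
)
print(f"E_coh = {e_coh:.3f} eV/atom")
```

With this sign convention a negative result means the solid is bound relative to the isolated atoms, matching the definition above.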

Data and Applications

Cohesive energy is a universal metric of bond strength. For example, first-principles studies of Fe–W–C ternary carbides (Fe₂W₂C, Fe₃W₃C, etc.) calculate cohesive energies to confirm their intrinsic stability, which is crucial for their application as wear-resistant coatings [19]. The calculated cohesive energies, along with other stability metrics, for these compounds are summarized in the table below.

Table 1: Calculated Stability Metrics for Fe–W–C Ternary Carbides [19].

| Compound | Cohesive Energy, \(E_{coh}\) (eV/atom) | Formation Enthalpy, \(\Delta H_{form}\) (eV/atom) | Bulk Modulus (GPa) | Remarks |

|---|---|---|---|---|

| Fe₂W₂C | - | - | 324.9 | Thermodynamically stable |

| Fe₃W₃C | - | - | 326.0 | Thermodynamically stable |

| Fe₆W₆C | - | - | 326.1 | Thermodynamically stable |

| Fe₂₁W₂C₆ | - | - | 336.1 | Thermodynamically stable |

Note: The source [19] confirms the thermodynamic stability of these compounds (\(\Delta H_{form} < 0\)) but does not provide the specific numerical values of \(E_{coh}\) and \(\Delta H_{form}\) in its abstract and excerpt.

Calculating Formation Enthalpy

Definition and Protocol

While cohesive energy measures total binding, the formation enthalpy \(\Delta H_{form}\) specifically assesses thermodynamic stability with respect to the elemental phases. It is the enthalpy change when a compound is formed from its constituent elements in their standard reference states (e.g., bulk solid, diatomic gas). A negative formation enthalpy signifies that the compound is more stable than the separated elements.

For a compound \(A_xB_y\), the formation enthalpy at 0 K is approximated as:

\[\Delta H_{form}(A_xB_y) \approx E_{total}(A_xB_y) - [xE_{bulk}(A) + yE_{bulk}(B)]\]

where \(E_{bulk}(A)\) and \(E_{bulk}(B)\) are the total energies per atom of the standard reference solid phases of elements A and B.

Protocol 3: Calculation of Formation Enthalpy for a Binary Compound.

- Calculate the total energy of the bulk crystal, \(E_{total}(A_xB_y)\), using Protocol 1.

- Calculate the total energy per atom of the standard reference solid for element A (e.g., body-centered cubic for Fe, hexagonal close-packed for Mg).

- Repeat step 2 for element B.

- Apply the formula above to compute \(\Delta H_{form}\). The result is typically reported in eV/atom or eV/formula unit.
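Protocol 3 uses the same arithmetic as the cohesive-energy calculation, with bulk reference phases in place of isolated atoms. The following sketch assumes a hypothetical helper `formation_enthalpy` and made-up energies; it illustrates the sign convention only and reproduces no values from [19].

```python
def formation_enthalpy(e_total, bulk_energies_per_atom, counts):
    """Return (dH per formula unit, dH per atom) in eV at 0 K.

    dH_form ~= E_total(AxBy) - sum_i n_i * E_bulk(i),
    where E_bulk(i) is the energy PER ATOM of the reference solid of element i.
    """
    if set(bulk_energies_per_atom) != set(counts):
        raise ValueError("reference energies and counts must list the same elements")
    reference = sum(counts[el] * bulk_energies_per_atom[el] for el in counts)
    dh_fu = e_total - reference
    return dh_fu, dh_fu / sum(counts.values())


# Hypothetical A2B3 compound; all numbers are placeholders.
dh_fu, dh_atom = formation_enthalpy(
    e_total=-20.0,
    bulk_energies_per_atom={"A": -3.0, "B": -4.0},
    counts={"A": 2, "B": 3},
)
stable_vs_elements = dh_atom < 0.0  # negative: favoured over the separated elements
print(f"dH_form = {dh_atom:.3f} eV/atom, stable vs. elements: {stable_vs_elements}")
```

A negative value only establishes stability against the elemental phases; competition with other compounds in the same chemical system is a separate question.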

Data and Applications

Formation enthalpy is indispensable for predicting phase stability. In the study of Fe–W–C carbides, the negative formation enthalpies calculated for Fe₂W₂C, Fe₃W₃C, Fe₆W₆C, and Fe₂₁W₂C₆ confirm that these phases are thermodynamically stable and likely to form, unlike compounds with positive formation enthalpies [19]. This allows researchers to pinpoint promising candidate materials from a vast chemical space, such as in the search for new high-hardness coatings or thermoelectric materials.

The Scientist's Toolkit: Essential Research Reagents and Software

Successful first-principles calculations rely on a suite of sophisticated software tools and computational "reagents." The table below details key resources for performing these studies.

Table 2: Key Software and Computational "Reagents" for First-Principles Stability Analysis.

| Name | Type / Category | Primary Function in Stability Analysis |

|---|---|---|

| CASTEP [18] [19] | Plane-wave DFT Code | Calculates total energy of crystals; used for geometry optimization and property prediction under pressure. |

| VASP | Plane-wave DFT Code | Widely used code for electronic structure calculations and quantum-mechanical molecular dynamics. |

| SIESTA [17] | DFT Code (Local-orbital) | Uses localized basis sets for efficient calculation of large systems (100-1000 atoms), ideal for interfaces and molecules on surfaces. |

| QUANTUM ESPRESSO [17] | Plane-wave DFT Code | An integrated suite of open-source codes for electronic-structure calculations and materials modeling. |

| PBE Functional [17] [18] | Exchange-Correlation Functional (GGA) | A standard functional for general-purpose solid-state calculations; provides good lattice parameters and energies. |

| PBE0 Functional [18] | Exchange-Correlation Functional (Hybrid) | Used for more accurate prediction of electronic band gaps, which can be critical for understanding stability in semiconductors. |

| Ultrasoft Pseudopotential [18] [19] | Pseudopotential | Allows the use of a lower plane-wave energy cutoff, making calculations of elements with strong potentials (e.g., O, Fe) more efficient. |

| Norm-Conserving Pseudopotential [18] | Pseudopotential | More accurate and transferable than ultrasoft potentials, often used for calculating precise electronic properties and vibrational spectra. |

Advanced Protocols and Validation

Pressure-Dependent Studies

Stability is not absolute and can change with environmental conditions such as pressure. First-principles calculations can predict the resulting phase transitions. The workflow involves geometry optimizations at a series of fixed hydrostatic pressures, with both cell parameters and atomic positions relaxed at each pressure, as demonstrated in studies of LiBO₂ [18]. Compressibilities can then be fitted to the resulting theoretical cell parameters using software such as PASCal [18].
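As a rough illustration of the last step, the linear compressibility along one axis, \(K_l = -\partial \ln l / \partial P\), can be estimated from a straight-line fit of \(\ln l\) against pressure. The sketch below is a simplified stand-in for the more complete principal-axis fitting that dedicated tools perform; the function name and the synthetic lattice parameters are assumptions made for illustration.

```python
import math


def linear_compressibility(pressures_gpa, lengths):
    """Estimate K = -d(ln l)/dP in TPa^-1 from a least-squares line through (P, ln l)."""
    n = len(pressures_gpa)
    if n < 2 or n != len(lengths):
        raise ValueError("need at least two (pressure, length) pairs of equal count")
    y = [math.log(length) for length in lengths]
    p_mean = sum(pressures_gpa) / n
    y_mean = sum(y) / n
    sxx = sum((p - p_mean) ** 2 for p in pressures_gpa)
    sxy = sum((p - p_mean) * (yi - y_mean) for p, yi in zip(pressures_gpa, y))
    slope = sxy / sxx            # d(ln l)/dP in GPa^-1
    return -1000.0 * slope       # convert GPa^-1 to TPa^-1


# Synthetic lattice parameters (Angstrom) generated with K = 2 TPa^-1; not data from [18].
pressures = [0.0, 1.0, 2.0, 3.0]
a_values = [5.0 * math.exp(-0.002 * p) for p in pressures]
k_a = linear_compressibility(pressures, a_values)
print(f"K_a = {k_a:.2f} TPa^-1")
```

A linear fit is adequate only over a narrow pressure range; over wider ranges the compressibility itself varies with pressure.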

Validation of Results

To ensure the reliability of calculated stability metrics, several validation checks must be performed:

- Convergence Tests: Key computational parameters, such as the plane-wave kinetic energy cutoff and the k-point mesh density for Brillouin zone sampling, must be tested to ensure total energies are converged to within a desired tolerance (e.g., 1 meV/atom). For example, one study used a kinetic energy cutoff of 300 eV together with a dense k-point mesh [18].

- Mechanical Stability: The calculated elastic constants should satisfy the Born-Huang stability criteria for the crystal's symmetry. For cubic crystals, this requires \(C_{11} > 0\), \(C_{44} > 0\), \(C_{11} > |C_{12}|\), and \(C_{11} + 2C_{12} > 0\) [19].

- Comparison with Experiment: Whenever possible, calculated structural parameters (lattice constants) and stability should be compared with available experimental data to benchmark the computational approach. As noted in one study, "The calculated lattice parameters are in good agreement with the experimental and theoretical results" [19].
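The convergence test in the first item can be automated once total energies have been collected for a series of cutoffs or k-point meshes. The sketch below picks the cheapest setting whose energy, and that of every finer setting, lies within a tolerance of the most converged value. The selection rule, function name, and energies are assumptions for illustration, not a procedure taken from [18].

```python
def converged_setting(settings, energies_per_atom, tol=1e-3):
    """Return the first setting that is converged to within tol (eV/atom).

    settings and energies_per_atom must be ordered from coarsest to finest.
    The finest calculation serves as the reference, so it can never be
    returned itself; None means the series should be extended.
    """
    if len(settings) != len(energies_per_atom) or len(settings) < 2:
        raise ValueError("need matching lists with at least two entries")
    reference = energies_per_atom[-1]
    for i, setting in enumerate(settings[:-1]):
        if all(abs(e - reference) < tol for e in energies_per_atom[i:]):
            return setting
    return None


# Hypothetical cutoff scan (eV) and total energies (eV/atom).
cutoffs = [200, 300, 400, 500, 600]
energies = [-5.1000, -5.1300, -5.1342, -5.1349, -5.1350]
chosen = converged_setting(cutoffs, energies, tol=1e-3)  # 1 meV/atom
print("converged cutoff:", chosen)
```

The same function applies to k-point meshes if the settings are ordered by increasing mesh density.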

The calculation of cohesive energy and formation enthalpy through first-principles methods provides a powerful, predictive framework for validating stable compounds. These metrics, grounded in quantum mechanics, enable researchers to navigate complex material spaces and identify promising candidates for synthesis, thereby accelerating the discovery of new materials for applications ranging from drug development to advanced engineering. By adhering to the detailed protocols for total energy calculation, using appropriate software and functionals, and rigorously validating results, scientists can confidently employ these key stability metrics in their research.

The pursuit of new functional materials, driven by computational design, requires robust validation of their predicted stability before experimental synthesis can be attempted. Within the broader context of first-principles calculations for stable compound research, establishing mechanical integrity is a critical first step. Elastic stability criteria provide the foundational theory and practical methodology for assessing whether a proposed crystal structure can physically exist under finite stress. These criteria determine if a material is mechanically stable by evaluating its response to small deformations, directly calculated from first-principles. This application note details the protocols for applying these criteria, using contemporary computational studies on advanced alloys and perovskites as illustrative examples [20] [21] [22].

Theoretical Foundation: The Born-Huang Criteria

The mechanical stability of a crystal is governed by its elastic stiffness tensor \(C_{ij}\). The Born-Huang criteria specify the necessary and sufficient conditions on the \(C_{ij}\) components for a lattice to be stable against any small deformation [21]. The specific constraints depend on the crystal system, as detailed below.

Table 1: Elastic Stability Conditions for Major Crystal Systems

| Crystal System | Independent Elastic Constants | Stability Criteria |

|---|---|---|

| Cubic | C11, C12, C44 | \(C_{11} > 0\), \(C_{44} > 0\), \(C_{11} > \lvert C_{12} \rvert\), \(C_{11} + 2C_{12} > 0\) |

| Tetragonal | C11, C12, C13, C16, C33, C44, C66 | \(C_{11} > 0\), \(C_{33} > 0\), \(C_{44} > 0\), \(C_{66} > 0\), \(C_{11} - C_{12} > 0\), \(C_{11} + C_{33} - 2C_{13} > 0\), \(2C_{11} + C_{33} + 2C_{12} + 4C_{13} > 0\) |

| Hexagonal | C11, C12, C13, C33, C44 | \(C_{11} > 0\), \(C_{33} > 0\), \(C_{44} > 0\), \(C_{11} - C_{12} > 0\), \((C_{11} + C_{12})C_{33} - 2C_{13}^2 > 0\) |

These criteria ensure that the crystal's strain energy remains positive for any small deformation, a fundamental requirement for stability. For instance, the cubic phase of In3Sc was confirmed to be mechanically stable at zero pressure by examining its elastic constants against these exact criteria [21].
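The inequalities in the table translate directly into a programmatic check. The sketch below encodes them as listed; the function name `born_stable` and the dictionary layout are illustrative assumptions, and the cubic constants are the In3Sc values quoted from [21] later in this document.

```python
def born_stable(system, c):
    """Check the stability inequalities from the table for one crystal system.

    c maps names such as "C11" to elastic constants in GPa.
    """
    if system == "cubic":
        conditions = [
            c["C11"] > 0,
            c["C44"] > 0,
            c["C11"] > abs(c["C12"]),
            c["C11"] + 2 * c["C12"] > 0,
        ]
    elif system == "tetragonal":
        conditions = [
            c["C11"] > 0, c["C33"] > 0, c["C44"] > 0, c["C66"] > 0,
            c["C11"] - c["C12"] > 0,
            c["C11"] + c["C33"] - 2 * c["C13"] > 0,
            2 * c["C11"] + c["C33"] + 2 * c["C12"] + 4 * c["C13"] > 0,
        ]
    elif system == "hexagonal":
        conditions = [
            c["C11"] > 0, c["C33"] > 0, c["C44"] > 0,
            c["C11"] - c["C12"] > 0,
            (c["C11"] + c["C12"]) * c["C33"] - 2 * c["C13"] ** 2 > 0,
        ]
    else:
        raise ValueError(f"no criteria implemented for {system!r}")
    return all(conditions)


in3sc = {"C11": 118.0, "C12": 44.0, "C44": 42.0}      # GPa, values quoted from [21]
print("In3Sc stable:", born_stable("cubic", in3sc))

soft_shear = {"C11": 118.0, "C12": 44.0, "C44": -5.0}  # hypothetical unstable case
print("negative C44 stable:", born_stable("cubic", soft_shear))
```

Returning a single boolean keeps the check simple; reporting which inequality failed would be a natural extension when diagnosing an unstable phase.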

Computational Protocols

Workflow for Elastic Stability Validation

The following diagram outlines the standard workflow for a first-principles elastic stability assessment, illustrating the logical progression from initial structure setup to the final stability determination.

Detailed Experimental Methodology

This section provides a step-by-step protocol for the key computational experiments, drawing from established methodologies in recent literature [20] [21] [23].

Protocol 1: Structural Optimization and Ground-State Energy Calculation

Objective: To determine the equilibrium lattice constants and the minimum-energy configuration of the crystal structure.

- Software Setup: Employ a Density Functional Theory (DFT) code such as VASP (Vienna Ab initio Simulation Package) [21], CASTEP [20], or WIEN2k [20] [22].

- Exchange-Correlation Functional: Select the Perdew-Burke-Ernzerhof (PBE) parameterization of the Generalized Gradient Approximation (GGA) [20] [21].

- Pseudopotentials: Use the Projector-Augmented Wave (PAW) [21] or Ultrasoft [20] pseudopotentials to model ion-electron interactions.

- Calculation Parameters:

- Set a plane-wave cutoff energy (e.g., 520 eV for VASP [21], 700 eV for CASTEP [20]).

- Select a k-point mesh for Brillouin zone integration (e.g., 20×20×20 for cubic structures [21], 15×15×15 for WIEN2k [20]) using the Monkhorst-Pack scheme.

- Set energy and force convergence criteria to high precision (e.g., energy change < 10⁻⁵ eV/atom, residual forces < 0.01 eV/Å [20]).

- Execution: Optimize all atomic positions and lattice parameters by minimizing the Hellmann-Feynman forces and stress tensor components. Fit the resulting energy-volume data to an equation of state (e.g., Murnaghan [21]) to obtain the equilibrium lattice constant \(a_0\), bulk modulus \(B_0\), and its pressure derivative \(B'\).
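For reference, the Murnaghan energy-volume relation used in the final fitting step can be written as a small function. The sketch below only evaluates the model; in practice it would be passed to a nonlinear least-squares routine together with the computed energy-volume points. The parameter values are hypothetical and the unit choices (eV, Å³) are assumptions.

```python
EV_PER_A3_TO_GPA = 160.2176634  # 1 eV/A^3 expressed in GPa


def murnaghan_energy(v, e0, v0, b0, b0_prime):
    """Murnaghan equation of state E(V).

    e0, v0   : equilibrium energy (eV) and volume (A^3)
    b0       : bulk modulus in eV/A^3
    b0_prime : pressure derivative of the bulk modulus (dimensionless)
    """
    return (
        e0
        + b0 * v / b0_prime * ((v0 / v) ** b0_prime / (b0_prime - 1.0) + 1.0)
        - b0 * v0 / (b0_prime - 1.0)
    )


# Hypothetical parameters, for illustration only.
E0, V0, B0, B0P = -30.0, 60.0, 0.5, 4.0
volumes = [V0 * s for s in (0.94, 0.97, 1.00, 1.03, 1.06)]
curve = [murnaghan_energy(v, E0, V0, B0, B0P) for v in volumes]
print(f"B0 = {B0 * EV_PER_A3_TO_GPA:.1f} GPa")
```

The curve has its minimum at `v0`, and its curvature there satisfies \(V_0 \, d^2E/dV^2 = B_0\), which is how the bulk modulus is recovered from the fit.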

Protocol 2: Elastic Constant Calculation

Objective: To compute the full elastic constant tensor (Cij) for the optimized crystal structure.

- Method Selection: Choose one of two primary methods:

- Density Functional Perturbation Theory (DFPT): A highly efficient method implemented in codes like VASP that calculates the linear response of the system to small, symmetry-preserving strains [21].

- Finite Displacement Method: Apply a set of small finite strains of both signs, covering normal (diagonal) and shear components, to the lattice, and calculate the resulting stress tensor from DFT. The elastic constants are derived from the linear relationship between the applied strain and the computed stress.

- Calculation Setup: In VASP, this involves setting `IBRION=6` or `IBRION=7` for the stress-strain approach or using the `LElastic` tag. In CASTEP, the elastic constants module is used post-optimization [20].

- Validation: Ensure the resulting elastic tensor satisfies the required symmetry conditions for the crystal system. The number of independent constants should match those listed in Table 1.
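The stress-strain route amounts to fitting a straight line through the origin for each strain pattern. The sketch below shows this for a cubic crystal in Voigt notation, assuming that stresses are reported with the convention \(\sigma = C\varepsilon\) (some codes use the opposite sign) and that shear strain is the engineering strain. The data are synthetic and are generated from known constants purely to demonstrate the fit.

```python
def slope_through_origin(strains, stresses):
    """Least-squares slope of stress = C * strain with no intercept (GPa)."""
    numerator = sum(e * s for e, s in zip(strains, stresses))
    denominator = sum(e * e for e in strains)
    if denominator == 0.0:
        raise ValueError("need at least one non-zero strain")
    return numerator / denominator


# Applied strain amplitudes, both signs, small enough to stay in the linear regime.
strains = [-0.010, -0.005, 0.005, 0.010]

# Synthetic "DFT" stresses (GPa) built from assumed cubic constants.
C11_REF, C12_REF, C44_REF = 118.0, 44.0, 42.0
sigma_xx = [C11_REF * e for e in strains]   # response to e_xx along x
sigma_yy = [C12_REF * e for e in strains]   # response to e_xx along y
sigma_yz = [C44_REF * e for e in strains]   # response to engineering shear gamma_yz

c11 = slope_through_origin(strains, sigma_xx)
c12 = slope_through_origin(strains, sigma_yy)
c44 = slope_through_origin(strains, sigma_yz)
print(f"C11={c11:.1f}  C12={c12:.1f}  C44={c44:.1f} GPa")
```

Using strains of both signs cancels the leading anharmonic contribution in the fit, which is why symmetric strain sets are preferred.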

Protocol 3: Stability Validation and Mechanical Property Derivation

Objective: To apply the Born-Huang criteria and derive key mechanical properties.

- Stability Check: Programmatically check the computed Cij values against the inequalities for the specific crystal system (see Table 1). If all conditions are satisfied, the phase is mechanically stable.

- Property Calculation: From the stable Cij tensor, calculate aggregate mechanical properties:

- Bulk Modulus (B): Represents resistance to uniform compression. For cubic crystals, \(B = (C_{11} + 2C_{12})/3\).

- Shear Modulus (G): Represents resistance to shear deformation. Calculate using the Voigt-Reuss-Hill approximation.

- Young's Modulus (E): \(E = 9BG/(3B + G)\), indicates material stiffness.

- Pugh's Ratio (B/G): A common indicator of ductility (B/G > ~1.75 suggests ductile behavior) [22].

- Poisson's Ratio (ν): \(\nu = (3B - 2G)/(2(3B + G))\).

- Elastic Anisotropy: Measures the direction dependence of elastic properties.
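For a cubic crystal, the properties listed above follow from three elastic constants. The sketch below applies the standard cubic Voigt and Reuss shear bounds and their Hill average, then evaluates the formulas given in the list. The function name is hypothetical, the Zener ratio is used here as one common anisotropy measure (an assumption, since the text does not name one), and the input constants are the In3Sc values quoted from [21].

```python
def cubic_mechanical_properties(c11, c12, c44):
    """Aggregate moduli (GPa) of a cubic crystal via the Voigt-Reuss-Hill scheme."""
    bulk = (c11 + 2.0 * c12) / 3.0                      # Voigt and Reuss bounds coincide
    g_voigt = (c11 - c12 + 3.0 * c44) / 5.0
    g_reuss = 5.0 * (c11 - c12) * c44 / (4.0 * c44 + 3.0 * (c11 - c12))
    shear = 0.5 * (g_voigt + g_reuss)                   # Hill average
    return {
        "B": bulk,
        "G_voigt": g_voigt,
        "G_reuss": g_reuss,
        "G": shear,
        "E": 9.0 * bulk * shear / (3.0 * bulk + shear),
        "nu": (3.0 * bulk - 2.0 * shear) / (2.0 * (3.0 * bulk + shear)),
        "pugh": bulk / shear,
        "zener": 2.0 * c44 / (c11 - c12),               # equals 1 for an isotropic crystal
    }


props = cubic_mechanical_properties(118.0, 44.0, 42.0)  # GPa, constants quoted from [21]
for name, value in props.items():
    print(f"{name:8s} {value:8.3f}")
```

Only stable tensors should be passed in: the Reuss expression divides by a combination of constants that the Born-Huang criteria guarantee to be positive.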

Data Presentation and Analysis

The following table synthesizes key results from recent first-principles studies, demonstrating the application of these protocols across different material classes.

Table 2: Computed Elastic Properties and Stability of Selected Materials from First-Principles Studies

| Material | Crystal System | Elastic Constants (GPa) | Stability Outcome | Key Derived Properties | Reference |

|---|---|---|---|---|---|

| In3Sc | Cubic (\(Pm\bar{3}m\)) | C11=118, C12=44, C44=42 | Stable (All criteria met) | B=69 GPa, G=... | [21] |

| XRbCl3 (X=Ca, Ba) | Cubic | Calculated via IRelast package | Stable & Ductile (B/G confirms) | Anisotropic, ν = ... | [22] |

| (MoNbReTaV)5Si3 | Tetragonal (D8m) | Calculated via first-principles | Stable | "Favorable mechanical properties" | [23] |

| LiBeZ (Z=P, As) | Cubic (F-43m) | Calculated using CASTEP | Stable | Chemically stable structure | [20] |

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Computational Tools and Their Functions in Stability Research

| Tool / "Reagent" | Type | Primary Function in Analysis |

|---|---|---|

| VASP | Software Package | Performs DFT calculations for structural optimization, energy, and elastic constant computation [21]. |

| WIEN2k | Software Package | Full-potential LAPW method for highly accurate electronic structure and optical property calculation [20] [22]. |

| CASTEP | Software Package | Plane-wave pseudopotential DFT code for studying structural, mechanical, and vibrational properties [20]. |

| Phonopy | Software Package | Calculates phonon dispersion relations to confirm dynamical stability [21]. |

| GGA-PBE Functional | Computational Parameter | Approximates the quantum mechanical exchange-correlation energy; standard for geometric and elastic property prediction [20] [21]. |

| TB-mBJ Potential | Computational Parameter | Advanced potential that provides more accurate electronic band gaps compared to standard GGA-PBE [20] [22]. |

| Projector-Augmented Wave (PAW) | Computational Method | Manages electron-ion interactions, offering a good balance between accuracy and computational cost [21]. |

| Special Quasirandom Structure (SQS) | Computational Method | Models disordered alloys (e.g., high-entropy silicides) for first-principles study [23]. |

The Density of States (DOS) is a fundamental concept in solid-state physics and quantum chemistry that describes the number of electronically allowed quantum states at each energy level [24]. Formally defined as D(E) = N(E)/V, it represents the number of states per unit energy range per unit volume [24]. In practical terms, DOS provides a simplified, energy-focused representation of electronic structure that serves as a powerful alternative to complex band structure diagrams [25].

While band structure plots electronic energy levels against wave vectors in k-space, DOS compresses this information into a plot of state density versus energy, retaining crucial information about band gaps, Fermi level position, and state density while omitting k-space specifics [25]. This compressed representation makes DOS particularly valuable for rapid assessment of material properties including conductivity, band gaps, and bonding characteristics.

Projected Density of States (PDOS) extends this analysis by decomposing the total DOS into contributions from specific atoms, atomic orbitals (s, p, d, f), or molecular fragments [25]. This projection enables researchers to identify which atomic components dominate at specific energy ranges, providing atomic-level insights into electronic properties and chemical bonding [25].

Theoretical Foundation and Significance

Fundamental DOS Principles

The DOS formalism varies significantly with system dimensionality, reflecting how quantum confinement affects available states [24]:

Dimensionality Effects on DOS:

| Dimensionality | DOS Formulation | Key Characteristics |

|---|---|---|

| 1D Systems | D₁ᴅ(E) ∝ 1/√E | Diverges at band edges |

| 2D Systems | D₂ᴅ(E) = constant | Step-function behavior |

| 3D Systems | D₃ᴅ(E) ∝ √E | Square-root energy dependence (for parabolic bands) |

These dimensional effects crucially influence electron behavior in nanomaterials, quantum wells, and bulk materials [24].
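For free electrons with a parabolic dispersion \(E = \hbar^2 k^2 / 2m\), the scalings in the table follow from counting states in k-space. The expressions below are the standard textbook results per unit length, area, and volume, with a spin degeneracy of 2 included. They describe an idealized model assumed here for illustration and are not taken from [24].

```latex
\[
D_{1D}(E) = \frac{1}{\pi\hbar}\sqrt{\frac{2m}{E}}, \qquad
D_{2D}(E) = \frac{m}{\pi\hbar^{2}}, \qquad
D_{3D}(E) = \frac{1}{2\pi^{2}}\left(\frac{2m}{\hbar^{2}}\right)^{3/2}\sqrt{E}
\]
```

In each case \(E\) is measured from the band edge, which is where the 1D expression diverges and the 2D expression steps from zero to its constant value.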

DOS vs. Band Structure: Comparative Analysis

DOS provides a distinct analytical perspective compared to traditional band structure plots [25]:

Information Retained in DOS:

- Band gap presence and size

- Fermi level position

- Density of available states

- General conductivity assessment

Information Lost in DOS:

- k-space specific details

- Direct vs. indirect band gap distinction

- Effective carrier masses

- Band curvature information

The strategic choice between DOS and band structure analysis depends on research objectives: DOS excels in rapid property screening and orbital contribution analysis, while band structure remains essential for detailed carrier transport studies [25].

Computational Protocols

First-Principles Workflow for DOS/PDOS Calculation

The following diagram illustrates the comprehensive computational workflow for DOS/PDOS analysis:

Detailed Methodological Specifications

Structure Optimization Protocol:

- Software Implementation: CASTEP package with ultrasoft pseudopotential plane-wave (PPPW) method [26]

- Exchange-Correlation Functional: Generalized Gradient Approximation (GGA) with Perdew-Burke-Ernzerhof (PBE) parameterization [27] [26]

- Convergence Parameters: Total energy convergence threshold of 1.0 × 10⁻⁵ eV/atom with residual forces < 0.03 eV/Å [26]

- k-Point Sampling: 8 × 8 × 8 Monkhorst-Pack grid for Brillouin zone integration [26]

- Basis Set Quality: Plane-wave cutoff energy of 330 eV [26]

DOS-Specific Calculations:

- Energy Range: Typically -20 eV to +20 eV relative to Fermi level

- Smearing Method: Gaussian broadening with 0.1-0.2 eV width

- Orbital Projection: Atom-centered orbital decomposition for PDOS

- Symmetry Considerations: Exploit point group symmetry to reduce computational cost [24]
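The smearing step can be illustrated with a few lines of code: each eigenvalue is replaced by a normalized Gaussian and the contributions are summed on an energy grid. The sketch assumes that the quoted width is the Gaussian standard deviation and uses hypothetical eigenvalues given relative to the Fermi level; it is not the implementation used by any particular DFT code.

```python
import math


def gaussian_dos(eigenvalues, energies, sigma=0.1, weights=None):
    """DOS(E) = sum_i w_i * g(E - e_i), with g a unit-area Gaussian of width sigma (eV)."""
    if weights is None:
        weights = [1.0] * len(eigenvalues)
    norm = 1.0 / (sigma * math.sqrt(2.0 * math.pi))
    dos = []
    for energy in energies:
        total = 0.0
        for e_i, w in zip(eigenvalues, weights):
            total += w * norm * math.exp(-0.5 * ((energy - e_i) / sigma) ** 2)
        dos.append(total)
    return dos


# Hypothetical eigenvalues in eV relative to E_F.
eigs = [-2.0, -1.5, 0.5, 1.0]
grid = [-5.0 + 0.01 * i for i in range(1001)]   # -5 eV to +5 eV
dos = gaussian_dos(eigs, grid, sigma=0.1)

# Trapezoidal integral of the DOS recovers the number of states.
n_states = sum(
    0.5 * (dos[i] + dos[i + 1]) * (grid[i + 1] - grid[i]) for i in range(len(grid) - 1)
)
print(f"integrated states: {n_states:.4f}")
```

For a PDOS, the weights would be the projections of each state onto the chosen atom or orbital, optionally multiplied by k-point weights.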

Research Reagent Solutions: Computational Tools

Essential Computational Resources:

| Tool Category | Specific Examples | Function & Application |

|---|---|---|

| DFT Software Packages | WIEN2k, CASTEP, VASP | Electronic structure calculation using FP-LAPW and pseudopotential methods [27] [26] |

| Exchange-Correlation Functionals | GGA-PBE, LDA, hybrid functionals | Approximate quantum mechanical exchange-correlation energy [27] [26] |

| Basis Sets | Plane-waves, localized orbitals, augmented plane-waves | Represent electronic wavefunctions in calculations [27] [26] |

| Pseudopotentials | Ultrasoft, norm-conserving, PAW | Replace core electrons to reduce computational cost [26] |

| Visualization Tools | VESTA, XCrySDen, VMD | Analyze and visualize crystal structures and electronic properties |

Analytical Framework: DOS Interpretation for Bonding Analysis

Bonding Characterization via PDOS

The core analytical approach for bonding analysis involves examining PDOS overlaps between adjacent atoms. When the PDOS projections of two spatially proximate atoms show significant overlap within the same energy range, this indicates bonding interaction and orbital hybridization [25].

Critical Interpretation Guidelines:

- Spatial Proximity Requirement: PDOS overlaps only indicate bonding when atoms are spatially close; distant overlaps lack physical significance [25]

- Orbital Specificity: Analyze specific orbital contributions (s, p, d, f) to determine hybridization character

- Energy Alignment: Relative energy positioning of bonding and anti-bonding states determines bond strength

- Peak Intensity Correlation: Stronger PDOS peak overlaps typically indicate stronger orbital hybridization

Quantitative Bonding Descriptors

Key Analytical Parameters from DOS/PDOS:

| Descriptor | Extraction Method | Bonding Significance |

|---|---|---|

| d-Band Center | First moment of d-projected PDOS | Catalytic activity predictor for transition metals [25] |

| Band Gap | Energy range with zero DOS between valence and conduction bands | Conductivity classification (metal, semiconductor, insulator) [24] |

| Orbital Projection Ratios | Relative integrals of s, p, d PDOS contributions | Hybridization character and bond type identification |

| Fermi Level Position | Energy where occupation probability is 0.5 | Oxidation state and chemical potential assessment |

| PDOS Overlap Integral | \(\int \mathrm{PDOS}_A(E)\,\mathrm{PDOS}_B(E)\,dE\) | Quantitative bond strength estimation |
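Two of the descriptors in the table are simple integrals over tabulated PDOS curves. The sketch below evaluates the d-band center as the first moment of a d-projected PDOS and the overlap integral of two projections, both with the trapezoidal rule. The function names and the triangular model curves are hypothetical and serve only to show the arithmetic.

```python
def trapezoid(y, x):
    """Trapezoidal integral of tabulated y(x)."""
    return sum(0.5 * (y[i] + y[i + 1]) * (x[i + 1] - x[i]) for i in range(len(x) - 1))


def band_center(energies, pdos):
    """First moment of a PDOS: integral(E * PDOS) / integral(PDOS)."""
    weighted = [e * d for e, d in zip(energies, pdos)]
    return trapezoid(weighted, energies) / trapezoid(pdos, energies)


def pdos_overlap(energies, pdos_a, pdos_b):
    """Overlap integral of two PDOS curves on a shared energy grid."""
    product = [a * b for a, b in zip(pdos_a, pdos_b)]
    return trapezoid(product, energies)


# Model curves on a grid from -6 eV to 0 eV relative to E_F (illustrative only).
grid = [-6.0 + 0.5 * i for i in range(13)]
pdos_d = [max(0.0, 1.0 - abs(e + 2.0) / 2.0) for e in grid]   # triangle centred at -2 eV
pdos_p = [max(0.0, 1.0 - abs(e + 5.0)) for e in grid]         # triangle centred at -5 eV

eps_d = band_center(grid, pdos_d)
print(f"d-band center: {eps_d:.2f} eV")
print(f"d-p overlap:   {pdos_overlap(grid, pdos_d, pdos_p):.3f}")
print(f"d-d overlap:   {pdos_overlap(grid, pdos_d, pdos_d):.3f}")
```

In line with the spatial-proximity guideline above, an overlap value is only meaningful for atoms that are actually neighbours in the structure.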

Application Case Studies

Case Study 1: Doping-Induced Band Engineering in TiO₂

Experimental Objective: Engineer band gap reduction in TiO₂ through nitrogen (N) and fluorine (F) co-doping for enhanced visible-light photocatalytic activity [25].

Methodology:

- System: Undoped TiO₂ versus N/F-doped TiO₂

- Calculation Parameters: GGA-PBE functional, 400 eV cutoff, 6×6×6 k-point mesh

- Analysis Focus: PDOS comparison of O-2p, Ti-3d, N-2p, and F-2p orbitals

Quantitative Results:

| System | Band Gap (eV) | Valence Band Edge | Conduction Band Edge | New States |

|---|---|---|---|---|

| Pristine TiO₂ | 3.0 | O-2p dominant | Ti-3d dominant | None |

| N/F-doped TiO₂ | 2.5 | O-2p + N-2p hybrid | Ti-3d dominant | N-2p states above O-2p VB |

Bonding Interpretation: N-doping introduces occupied N-2p states above the O-2p valence band maximum, creating band gap narrowing through orbital hybridization while maintaining structural stability [25].

Case Study 2: Adsorption Bonding at Metal Surfaces

Experimental Objective: Quantify hydroxyl (OH) group adsorption strength on transition metal surfaces (Ni, Ir, Ta) for catalytic applications [25].

Methodology:

- Systems: OH adsorption configurations on Ni(111), Ir(111), Ta(110) surfaces

- Calculation Parameters: GGA-RPBE, slab models with 15 Å vacuum, dipole corrections

- Analysis Focus: PDOS overlaps between metal-d and O-2p orbitals

Quantitative Bonding Analysis:

| Metal Surface | Adsorption Energy (eV) | PDOS Overlap Region | Bond Strength Classification |

|---|---|---|---|

| Ni(111) | -1.2 | -5.5 to -3.0 eV | Strong chemisorption |

| Ir(111) | -0.9 | -5.0 to -2.8 eV | Moderate chemisorption |

| Ta(110) | -0.4 | -6.0 to -4.5 eV | Weak physisorption |

Bonding Interpretation: Stronger PDOS overlaps at higher energies (closer to Fermi level) correlate with stronger adsorption bonds, enabling predictive catalyst design [25].

Case Study 3: d-Band Center Theory in Transition Metal Catalysis

Experimental Objective: Establish quantitative relationship between d-band center position and catalytic activity for hydrogen evolution reaction [25].

Methodology:

- Systems: Pt(111), Pd(111), Cu(111) surfaces

- Calculation Parameters: GGA-PBE, 450 eV cutoff, 15×15×1 k-point mesh

- Analysis Focus: d-projected PDOS and d-band center (ε_d) calculation

Quantitative Electronic Descriptors:

| Catalyst | d-Band Center (eV relative to E_F) | H Adsorption Energy (eV) | Experimental Activity |

|---|---|---|---|

| Pt(111) | -2.1 | -0.3 | Excellent |

| Pd(111) | -1.8 | -0.5 | Good |

| Cu(111) | -3.5 | -0.1 | Poor |

Bonding Interpretation: d-band centers closer to Fermi level enable stronger adsorbate interactions through enhanced orbital hybridization, establishing the d-band center as a predictive descriptor for catalytic activity [25].

Advanced Analytical Framework

Integrated DOS Interpretation Workflow

The relationship between computational results and physical interpretation follows a systematic analytical process:

Troubleshooting and Validation Framework

Common Computational Artifacts and Solutions:

| Issue | Identification | Resolution Strategy |

|---|---|---|

| Underestimated Band Gaps | GGA-PBE typical error | Use hybrid functionals (HSE06) or GW approximation [27] |

| PDOS Sum Mismatch | Total PDOS < DOS | Check projection methodology; use normalized projections [25] |

| k-Point Convergence | DOS variations with k-grid | Perform k-point convergence testing (6×6×6 minimum) [26] |

| Symmetry Over-exploitation | Missed defect states | Use primitive cells for defective systems [24] |

Experimental Validation Protocols:

- Photoemission Spectroscopy: Direct experimental DOS measurement via XPS/UPS

- Optical Spectroscopy: Band gap validation through UV-Vis absorption edges

- Transport Measurements: Electrical conductivity correlation with DOS at E_F

- Catalytic Testing: Activity correlation with d-band center predictions

DOS and PDOS analyses provide powerful, computationally accessible methods for probing bonding characteristics in materials design and catalyst development. The protocols outlined herein enable researchers to extract quantitative bonding descriptors from first-principles calculations, creating predictive frameworks for material properties.

Future methodological developments will likely focus on AI-enhanced PDOS analysis, machine learning for rapid projection calculations, and increased integration with real-time spectroscopy validation. These advances will further establish DOS analysis as an indispensable tool in the computational materials discovery pipeline, particularly for validating stable compounds in advanced materials research.

Computational Workflows in Action: From Theory to Practical Application

Hierarchical Workflow for Crystal Structure Prediction (CSP)

Crystal polymorphism, the ability of a single chemical compound to exist in multiple crystalline forms, is a critical phenomenon with profound implications for the pharmaceutical, agrochemical, and materials industries [28]. Different polymorphs can exhibit significantly different physical and chemical properties, including solubility, stability, dissolution rate, and bioavailability, directly impacting drug efficacy, safety, and manufacturability [28] [29]. The appearance of a previously unknown, more stable polymorph late in drug development can jeopardize formulation stability and even lead to market recalls, as famously occurred with ritonavir [28].

Experimental polymorph screening alone can be expensive, time-consuming, and sometimes fails to identify all low-energy polymorphs due to the inability to exhaust all possible crystallization conditions [28]. Computational Crystal Structure Prediction (CSP) has emerged as a powerful technique to complement experiments by identifying thermodynamically accessible solid forms in silico [28] [30]. A CSP workflow aims to predict all plausible crystal packings for a given molecule and establish their relative thermodynamic stability, thereby de-risking downstream processing and accelerating clinical formulation design [28] [31].

The central challenge of CSP lies in its dual requirement for extensive exploration of a vast conformational and crystallographic space and highly accurate energy evaluations to resolve fine energy differences (often just a few kJ/mol) between polymorphs [29] [30]. No single computational model can simultaneously fulfill both the need for speed in sampling and the demand for accuracy in ranking. To address this, the field has widely adopted a hierarchical workflow that employs a sequence of models of increasing accuracy and computational cost [28] [29] [30]. This document details the components, protocols, and applications of this hierarchical workflow for CSP, framing it within broader research on validating stable compounds via first-principles calculations.

The hierarchical CSP workflow is designed to efficiently navigate the complex energy landscape of molecular crystals. It strategically combines rapid but approximate methods for broad sampling with expensive, high-fidelity methods for final ranking. The workflow typically progresses through three main stages: 1) Initial Structure Generation and Screening, 2) Intermediate Refinement and Ranking, and 3) Final Stability Evaluation [28] [29] [30].

The following diagram illustrates the logical flow and the models employed at each stage of this hierarchical approach.

Workflow Stage 1: Initial Structure Generation and Screening

The primary goal of this stage is to generate a vast and diverse set of plausible initial crystal structures and perform a coarse-grained filtering to reduce the candidate pool for more expensive calculations.

Experimental Protocols

- Molecular Conformer Generation: The process begins with generating low-energy molecular conformers. This is typically done using quantum chemical methods like Density Functional Theory (DFT) with a functional such as B3LYP and a basis set like 6-311G, incorporating dispersion corrections (e.g., D3) [32]. The resulting optimized gas-phase conformer(s) serve as the rigid or semi-flexible building blocks for crystal packing.

- Systematic and Random Packing Search: This step explores how the molecular building blocks arrange themselves in a crystal lattice.

- Systematic Search: Algorithms like the one reported by Schrödinger systematically divide the crystal packing parameter space into subspaces based on space group symmetries and search them consecutively [28]. Common space groups for organic crystals (e.g., P2₁/c, P-1, P2₁2₁2₁) are prioritized.

- Random Search: Tools like Genarris 3.0 use random structure generation across a broad set of compatible space groups [29]. Initial structures may undergo compression using a regularized hard-sphere potential to achieve realistic close-packing.

- Initial Relaxation and Filtering: The thousands to millions of generated trial structures are then subjected to a rapid initial relaxation. This is often performed using:

  - Classical Force Fields (FF): Efficient but approximate empirical potentials [30].

  - Machine Learning Force Fields (MLFF): Potentials like the Universal Model for Atoms (UMA) can accelerate this step significantly, relaxing structures in about 15 seconds each on a modern GPU [29].

  - Hybrid Ab Initio/Empirical Force-Field (HAIEFF) Models: These partition the lattice energy into ab initio intramolecular and electrostatic terms, and an empirical repulsion-dispersion term [30].

Following relaxation, duplicate structures are removed using tools like Pymatgen's StructureMatcher [29].
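The deduplication step can be illustrated with a deliberately simplified sketch. It does not use Pymatgen's StructureMatcher, which compares actual geometries; instead it assumes a crude, hypothetical fingerprint (relaxed lattice energy plus density) and treats two candidates as duplicates when both values agree within a tolerance. The `Candidate` class, the tolerances, and the sample values are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    energy_kj_mol: float   # relaxed lattice energy per molecule (hypothetical values)
    density_g_cm3: float   # crystal density after relaxation (hypothetical values)


def deduplicate(candidates, e_tol=0.1, rho_tol=0.01):
    """Greedy duplicate removal on an (energy, density) fingerprint.

    A candidate is kept only if no already-kept structure matches it in
    BOTH energy and density within the given tolerances. This is a crude
    stand-in for a real geometric comparison.
    """
    kept = []
    # Visit lowest-energy structures first so each group keeps its best member.
    for cand in sorted(candidates, key=lambda c: c.energy_kj_mol):
        is_duplicate = any(
            abs(cand.energy_kj_mol - k.energy_kj_mol) <= e_tol
            and abs(cand.density_g_cm3 - k.density_g_cm3) <= rho_tol
            for k in kept
        )
        if not is_duplicate:
            kept.append(cand)
    return kept


pool = [
    Candidate("trial_001", -120.40, 1.352),
    Candidate("trial_002", -120.45, 1.355),  # near-identical to trial_001
    Candidate("trial_003", -118.10, 1.301),
    Candidate("trial_004", -120.42, 1.420),  # similar energy, different packing
]
unique = deduplicate(pool)
print([c.name for c in unique])
```

In this toy pool, trial_001 is dropped as a duplicate of the slightly lower-energy trial_002, while trial_004 survives because its density differs. In a production workflow, the fingerprint test would be replaced by a proper structure comparison.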

Key Reagents and Computational Tools

Table 1: Essential Research Solutions for Workflow Stage 1

| Item Name | Function/Description | Example Tools/Methods |
|---|---|---|
| Quantum Chemistry Code | Optimizes gas-phase molecular conformers and calculates electronic properties. | Gaussian, ORCA |
| Systematic Packing Sampler | Methodically explores crystal packing in defined space groups. | Schrödinger's CSP platform [28] |
| Random Structure Generator | Generates a diverse set of random crystal packing arrangements. | Genarris 3.0 [29] |
| Classical Force Field | Provides fast, approximate energy evaluation and geometry relaxation. | Transferable (e.g., GAFF) or tailor-made FFs [30] |
| Structure Deduplication Tool | Identifies and removes redundant crystal structures from the candidate pool. | Pymatgen's StructureMatcher [29] |

Workflow Stage 2: Intermediate Refinement and Ranking

This stage refines the shortlisted candidates from Stage 1 using more accurate energy models to create a reliable preliminary ranking of polymorph stability.

Experimental Protocols

- Geometry Optimization with MLFFs: Candidate structures are fully relaxed using general-purpose or system-specific machine learning force fields (MLFFs), also referred to as machine learning interatomic potentials (MLIPs). These models, such as the Universal Model for Atoms (UMA) or MACE, are trained on large datasets of DFT calculations (e.g., the OMC25 dataset) and offer near-DFT accuracy at a fraction of the cost [29] [32]. This step is critical for refining atomic coordinates and lattice parameters.

- Energy Re-ranking with MLFFs: The lattice energies of the optimized structures are computed using the same high-fidelity MLFF. The relative energies are used to generate a more accurate stability ranking than was possible in Stage 1.

- Clustering and Deduplication: The refined energy landscape often contains many structures that are geometrically very similar (e.g., with a root-mean-square deviation (RMSD) of less than 1.2 Å for a cluster of 15 molecules) but represent different local minima [28]. Clustering algorithms are applied to group these similar structures, typically selecting the lowest-energy representative from each cluster. This step mitigates the "over-prediction" problem and simplifies the landscape [28].

- Energy Windowing: A common practice is to retain only those structures whose lattice energy lies within a predefined window (e.g., 5-10 kJ/mol) above the global minimum found at this stage, forming the final potential energy landscape for high-fidelity validation [29].
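The clustering and energy-windowing steps above can be sketched as a small filter. This is a minimal illustration, assuming that cluster labels have already been assigned by some external structure-similarity tool; the function name `select_landscape`, the tuple layout, and the sample energies are hypothetical.

```python
def select_landscape(structures, window_kj_mol=10.0):
    """Reduce a refined candidate list to a compact energy landscape.

    structures: list of (name, cluster_id, lattice_energy_kj_mol) tuples,
    where cluster_id is assumed to come from a prior clustering step.
    Returns (name, relative_energy) pairs, lowest energy first.
    """
    # Step 1: keep only the lowest-energy representative of each cluster.
    best = {}
    for name, cluster_id, energy in structures:
        if cluster_id not in best or energy < best[cluster_id][1]:
            best[cluster_id] = (name, energy)

    # Step 2: keep representatives within the window above the global minimum.
    e_min = min(energy for _, energy in best.values())
    ranked = sorted(best.values(), key=lambda item: item[1])
    return [
        (name, round(energy - e_min, 3))
        for name, energy in ranked
        if energy - e_min <= window_kj_mol
    ]


refined = [
    ("A1", 0, -150.2), ("A2", 0, -149.8),   # same cluster, A1 is lower
    ("B1", 1, -147.0),
    ("C1", 2, -138.5), ("C2", 2, -139.9),   # cluster 2 lies outside a 10 kJ/mol window
]
landscape = select_landscape(refined, window_kj_mol=10.0)
print(landscape)
```

Selecting cluster representatives before applying the window ensures the global minimum is defined on the deduplicated landscape, so the window is measured from a structure that is actually retained.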

Workflow Stage 3: Final Stability Evaluation

The objective of the final stage is to provide a definitive assessment of the relative stability of the top-ranked candidate polymorphs using the most accurate methods available.

Experimental Protocols

- Final Geometry Optimization and Ranking with DFT-D: The shortlisted structures (typically the top 10-50) undergo further geometry optimization and final energy ranking using periodic Density Functional Theory with dispersion corrections (DFT-D). Functionals like the strongly constrained and appropriately normed (SCAN) meta-GGA, particularly r²SCAN-D3, are often used for their superior accuracy for molecular crystals [28]. This step is considered the "gold standard" for final energy ranking in CSP, though it is computationally prohibitive for thousands of structures.

- Free Energy Calculations: Lattice energy calculations at 0 K are insufficient to model finite-temperature behavior. To account for thermodynamic effects, free energy contributions are computed for the most stable candidates. This involves:

  - Lattice Dynamics: Calculating vibrational frequencies within the harmonic or quasi-harmonic approximation to obtain the Helmholtz free energy [28] [29].

  - Molecular Dynamics (MD): Using methods like free energy perturbation (FEP+) or thermodynamic integration to compute thermodynamic solubility and relative stability at room temperature [31].
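To show why 0 K lattice energies alone can mislead, the sketch below re-ranks two hypothetical polymorphs using the standard harmonic expression for the vibrational Helmholtz free energy, summed over a toy list of mode frequencies. The frequencies, lattice energies, and function names are illustrative assumptions; a real calculation would sum over a properly sampled Brillouin zone.

```python
import math

H = 6.62607015e-34   # Planck constant, J s
KB = 1.380649e-23    # Boltzmann constant, J/K
NA = 6.02214076e23   # Avogadro constant, 1/mol


def a_vib_kj_mol(freqs_thz, temperature_k):
    """Harmonic vibrational Helmholtz free energy in kJ/mol.

    freqs_thz must contain only positive (real) mode frequencies in THz.
    Each mode contributes its zero-point energy plus a thermal term.
    """
    total_j = 0.0
    for nu in freqs_thz:
        quantum = H * nu * 1e12          # h*nu in joules
        total_j += 0.5 * quantum         # zero-point contribution
        if temperature_k > 0.0:
            kt = KB * temperature_k
            total_j += kt * math.log1p(-math.exp(-quantum / kt))
    return total_j * NA / 1000.0


def rank_by_free_energy(forms, temperature_k):
    """forms: list of (name, lattice_energy_kj_mol, freqs_thz). Most stable first."""
    scored = [
        (energy + a_vib_kj_mol(freqs, temperature_k), name)
        for name, energy, freqs in forms
    ]
    return [name for _, name in sorted(scored)]


# Hypothetical polymorphs: form_I has the lower lattice energy but stiffer modes.
forms = [
    ("form_I", -100.0, [2.0, 3.0, 4.0]),
    ("form_II", -98.0, [1.0, 1.5, 2.0]),
]
print(rank_by_free_energy(forms, 0.0))
print(rank_by_free_energy(forms, 300.0))
```

With these made-up numbers, form_I is ranked first at 0 K, while the softer modes of form_II give it the lower free energy at 300 K. This illustrates how vibrational contributions can invert a lattice-energy ranking.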

Key Reagents and Computational Tools

Table 2: Essential Research Solutions for Workflow Stages 2 and 3

| Item Name | Function/Description | Example Tools/Methods |
|---|---|---|
| Machine Learning Force Field (MLFF) | High-accuracy, lower-cost alternative to DFT for geometry optimization and energy ranking. | Universal Model for Atoms (UMA) [29], MACE [32] |
| Periodic DFT Code | Provides final, high-fidelity geometry optimization and energy evaluation. | VASP, Quantum ESPRESSO, CP2K |
| Dispersion Correction | Accounts for van der Waals interactions, critical for molecular crystals. | D3, D4 [32] |
| Free Energy Calculation Method | Computes temperature-dependent stability and solubility. | Free Energy Perturbation (FEP+), Lattice Dynamics [28] [31] |

Performance and Validation

Large-scale validations demonstrate the reliability and predictive power of the hierarchical CSP workflow. The following table summarizes key performance metrics from recent large-scale studies.

Table 3: Performance Metrics of Hierarchical CSP Workflows from Large-Scale Validations

| Study Focus | Dataset Size | Key Performance Metric | Result | Source |
|---|---|---|---|---|
| Pharmaceutical CSP | 66 drug-like molecules (137 polymorphs) | Success rate in reproducing known experimental structures | All 137 known polymorphs were sampled and ranked in the top 10 candidates | [28] |
| Rigid Molecule CSP | ~1,000 small, rigid organic molecules | Success rate in locating experimental structures | 99.4% of observed experimental structures were found on the predicted landscape | [32] |
| FastCSP (MLFF-based) | 28 mostly rigid molecules | Ranking of experimental structures | Known experimental structures were ranked within the top 10 candidates and within 5 kJ/mol of the global minimum | [29] |
| Pharmaceutical CSP | 33 molecules with one known form | Ranking of known structure after clustering | For 26/33 molecules, the known structure was ranked #1 or #2 | [28] |

These studies confirm that a well-executed hierarchical workflow can consistently reproduce experimentally observed crystal structures and provide a reliable assessment of their thermodynamic stability. Furthermore, CSP often predicts novel low-energy polymorphs not yet discovered by experiment, highlighting its power to de-risk late-appearing polymorphs in drug development [28].

The Scientist's Toolkit: Essential Research Reagents

This section consolidates the critical computational tools and methods referenced in the protocols.

Table 4: The CSP Scientist's Toolkit

| Toolkit Item | Category | Brief Description & Function |
|---|---|---|
| Genarris 3.0 | Software | An open-source package for random generation of crystal structures, often used for initial candidate sampling [29]. |
| GLEE | Software | Global Lattice Energy Explorer; uses quasi-random sampling for CSP landscape exploration [32]. |
| Universal Model for Atoms (UMA) | ML Model | A universal machine learning interatomic potential for fast and accurate relaxation and ranking of crystal structures [29]. |
| r²SCAN-D3 Functional | Computational Method | A meta-GGA density functional with dispersion corrections, used for final, high-accuracy DFT ranking [28]. |
| Hybrid Ab Initio/Empirical FF (HAIEFF) | Energy Model | A lattice energy model combining ab initio intramolecular/electrostatic terms with an empirical repulsion-dispersion term [30]. |
| Pymatgen | Software Library | A robust Python library for materials analysis, including utilities for structure comparison and deduplication [29]. |
| Free Energy Perturbation (FEP+) | Computational Method | A physics-based method for computing relative free energies, used for predicting thermodynamic solubility [31]. |

Validating the thermodynamic stability of compounds is a critical step in materials design and drug development, ensuring that proposed structures are viable for synthesis and application. First-principles computational methods, particularly density functional theory (DFT), provide a powerful framework for this validation by enabling the prediction of material properties from quantum mechanical calculations without empirical parameters [33]. Within this framework, phonon calculations are indispensable, as they assess dynamic stability by determining whether a structure will remain stable against atomic vibrations at finite temperatures [34]. Furthermore, the phonon spectrum serves as the foundation for computing key thermodynamic properties, such as vibrational free energy and heat capacity, which are essential for constructing temperature-dependent phase diagrams and understanding material behavior under operational conditions [34] [35]. This document outlines integrated application notes and protocols for employing these computational techniques within a broader research context, providing practical guidance for researchers and scientists.

Core Theoretical Concepts

The Role of Phonons in Thermodynamic Stability

Phonons, the quantized lattice vibrations in a crystalline material, directly influence a multitude of physical properties, including thermal conductivity, mechanical behavior, and phase stability [36]. The calculation of a material's phonon band structure is the primary method for validating its dynamic stability. A structure is considered dynamically stable if all its phonon frequencies across the Brillouin zone are real (i.e., no imaginary frequencies). The presence of significant imaginary frequencies indicates that the lattice is unstable and will undergo a phase transition or reconstruction [37].
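The stability criterion above reduces to a simple check on the computed spectrum. The sketch below assumes the common convention, used by several phonon codes, of reporting imaginary frequencies as negative numbers. The function name and the tolerance value are hypothetical; the tolerance only serves to ignore small numerical noise, such as slightly negative acoustic modes near the zone center.

```python
def is_dynamically_stable(frequencies_thz, tolerance_thz=0.05):
    """Check a phonon spectrum for imaginary modes.

    frequencies_thz: list of per-q-point lists of mode frequencies in THz,
    with imaginary modes encoded as negative values (assumed convention).
    Returns (stable, most_negative_frequency).
    """
    most_negative = min(f for bands in frequencies_thz for f in bands)
    return most_negative >= -tolerance_thz, most_negative


# Hypothetical spectra sampled at two q-points each.
noisy_but_stable = [[-0.01, 0.0, 0.02, 3.1], [1.2, 1.5, 2.8, 3.4]]
soft_mode = [[0.0, 0.0, 0.0, 3.0], [-1.8, 0.9, 2.2, 3.3]]

print(is_dynamically_stable(noisy_but_stable))
print(is_dynamically_stable(soft_mode))
```

The appropriate tolerance depends on the code, supercell size, and convergence settings, so it should be treated as a tunable parameter rather than a fixed threshold.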

Beyond dynamic stability, phonon spectra are the fundamental input for determining a system's vibrational entropy and other thermodynamic potentials. The quasi-harmonic approximation, which extends the harmonic model by accounting for volume-dependent phonon frequencies, enables the calculation of the Helmholtz free energy, A(V, T), as a function of volume and temperature. This is crucial for predicting properties like thermal expansion and the stability of different polymorphs at varying temperatures [37]. The vibrational contribution to the Helmholtz free energy, A_vib, is calculated from the phonon density of states and directly impacts the total free energy of a system, thereby influencing phase stability [35].
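For reference, the quantities named above can be written out in the standard textbook form. The notation is an assumption chosen to match the surrounding text: g(ω; V) denotes the phonon density of states at volume V, E_total(V) the static DFT energy, and p the external pressure.

```latex
% Harmonic vibrational free energy from the phonon density of states
A_{\mathrm{vib}}(V, T) = \int_0^{\infty} g(\omega; V)
  \left[ \frac{\hbar\omega}{2}
  + k_B T \ln\!\left( 1 - e^{-\hbar\omega / k_B T} \right) \right] d\omega

% Total Helmholtz free energy in the quasi-harmonic approximation
A(V, T) = E_{\mathrm{total}}(V) + A_{\mathrm{vib}}(V, T)

% Gibbs free energy and equilibrium volume at pressure p
G(T, p) = \min_{V} \left[ A(V, T) + pV \right]
```

Minimizing over V at each temperature yields the equilibrium volume V(T), from which thermal expansion follows. Comparing G(T, p) between polymorphs gives their relative stability as a function of temperature.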

First-Principles Foundation

The accuracy of phonon and stability calculations hinges on high-quality first-principles computations. DFT is the most widely used method for obtaining the ground-state energies and electronic structures that serve as the input for lattice dynamics [33] [36]. The formalism for predicting macroscopic thermodynamic information from a series of quantum mechanical energy calculations is well-established, enabling the practical prediction of phase diagrams [33]. For instance, these methods can be used to compute the formation energies of point defects, such as vacancies, and to analyze how their stability and charge transition levels evolve with temperature by incorporating phonon contributions to the free energy [38].

Table 1: Key Thermodynamic Properties Derived from Phonon Calculations

| Property | Computational Formula/Description | Significance in Stability Validation |
|---|---|---|
| Phonon Dispersion | Phonon frequency ω as a function of wave vector q. | Identifies dynamical stability; imaginary frequencies indicate instability. |
| Helmholtz Free Energy | A(V, T) = E_total(V) + A_vib(V, T), where E_total is the internal energy from DFT. | Determines the stable phase at a given temperature and volume [37]. |
| Vibrational Entropy | Derived from the phonon density of states. | Favors phases with softer vibrational modes at higher temperatures. |
| Formation Free Energy | G_form(T) = H_form - T*S_vib for defects or compounds. | Assesses the concentration of defects or stability of compounds vs. reservoirs [38]. |

Computational Methods and Protocols

This section details the primary computational methodologies for performing phonon and stability calculations.

Finite-Displacement Method

The finite-displacement method is a widely used technique for calculating phonon properties from first principles [36].

Experimental Protocol:

- Structure Relaxation: Begin with a fully optimized crystal structure using DFT. The relaxation must account for both atomic positions and lattice vectors until the forces on atoms and the stress on the unit cell are minimized (e.g., forces < 0.001 eV/Å) [37].

- Supercell Construction: Build a supercell of the primitive cell large enough to capture the relevant atomic interactions. A larger supercell is required for materials with long-range forces.