Feature Selection Engineering for Thermodynamic Stability Models: A Guide for Drug Development

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying feature selection engineering to build robust and predictive models for thermodynamic stability. It covers the foundational principles of binding thermodynamics and its critical role in drug design, explores a suite of feature selection methodologies from filter to embedded methods, addresses common challenges like data bias and entropy-enthalpy compensation, and presents real-world validation case studies from materials science and drug discovery. The goal is to equip scientists with practical strategies to enhance model accuracy, interpretability, and efficiency, thereby accelerating the discovery of stable and effective therapeutic compounds.

The Pillars of Prediction: Understanding Thermodynamic Stability and Feature Relevance

Why Thermodynamic Stability is a Cornerstone of Drug Design

In rational drug design, achieving high binding affinity between a drug candidate and its biological target has historically been the primary focus. However, this approach provides an incomplete picture of molecular interactions, as similar binding affinities can mask radically different underlying thermodynamics. Thermodynamic stability—the balance of energetic forces driving binding interactions—provides essential information for understanding and optimizing these molecular interactions [1]. A comprehensive thermodynamic evaluation is vital early in the drug development process to speed development toward an optimal energetic interaction profile while retaining good pharmacological properties [1]. The most effective drug design platforms integrate structural, thermodynamic, and biological information to create a complete picture of drug-target interactions.

The optimization of thermodynamic parameters represents a sophisticated approach to drug development that goes beyond simple affinity measurements. Thermodynamic characterization reveals the balance between enthalpic (bond-forming) and entropic (disorder-related) forces, providing crucial insights for guiding molecular optimization [1]. This is particularly important given the phenomenon of entropy-enthalpy compensation, where designed modifications producing favorable effects on enthalpy often cause compensatory unfavorable effects on entropy, or vice versa, yielding little net improvement in binding affinity [1]. Understanding these trade-offs is essential for efficient drug optimization.

Computational Approaches: Modeling and Machine Learning

Key Thermodynamic Concepts and Relationships

Table 1: Fundamental Thermodynamic Parameters in Drug Design

| Parameter | Symbol | Interpretation | Significance in Drug Design |
|---|---|---|---|
| Gibbs Free Energy | ΔG | Overall spontaneity of binding | Determines binding affinity; negative values favor spontaneous binding |
| Enthalpy | ΔH | Heat changes from bond formation/breakage | Favorable (negative) values indicate strong specific interactions |
| Entropy | ΔS | Changes in system disorder | Favorable (positive) values often associated with hydrophobic interactions |
| Heat Capacity | ΔCp | Temperature dependence of ΔH | Indicator of binding mechanisms and conformational changes |

The fundamental relationship governing these parameters is defined by the equation: ΔG = ΔH - TΔS, where T is the absolute temperature [1]. The free energy (ΔG) determines the binding affinity, with negative values indicating spontaneous binding. However, this single parameter obscures the distinct contributions of enthalpy (ΔH) from bond formation and entropy (ΔS) from changes in disorder [1]. Understanding this balance is crucial because different combinations of ΔH and ΔS can yield the same ΔG but represent entirely different binding modes with implications for selectivity and optimization strategies.
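
To make the last point concrete, the following minimal sketch evaluates ΔG = ΔH - TΔS for two hypothetical ligands, one enthalpy-driven and one entropy-driven. The numerical values, function names, and the choice of 298.15 K are illustrative assumptions, not data from the cited studies.

```python
import math

R = 8.314462618e-3  # gas constant in kJ/(mol*K)


def gibbs_free_energy(delta_h, delta_s, temperature=298.15):
    """Return dG = dH - T*dS in kJ/mol (dH in kJ/mol, dS in kJ/(mol*K))."""
    return delta_h - temperature * delta_s


def dissociation_constant(delta_g, temperature=298.15):
    """Return Kd in mol/L from a binding free energy, using Kd = exp(dG / RT)."""
    return math.exp(delta_g / (R * temperature))


# Two hypothetical ligands (illustrative numbers, not measured data).
# Ligand A: strongly exothermic binding that pays an entropic penalty.
enthalpy_driven = gibbs_free_energy(delta_h=-60.0, delta_s=-0.0671)
# Ligand B: weakly exothermic binding with a large entropic gain.
entropy_driven = gibbs_free_energy(delta_h=-10.0, delta_s=0.1006)

# Both profiles land at roughly -40 kJ/mol, i.e. a similar affinity,
# even though the underlying driving forces are very different.
print(round(enthalpy_driven, 2), round(entropy_driven, 2))
print(dissociation_constant(enthalpy_driven))
```

An affinity measurement alone cannot tell these two ligands apart, which is why the ΔH and ΔS terms are worth keeping as separate quantities when profiling compounds.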

Machine Learning in Thermodynamic Modeling

Machine learning has emerged as a powerful tool for predicting thermodynamic properties of complex systems, overcoming limitations of traditional theoretical models [2]. ML algorithms can learn complex relationships between molecular structures and their thermodynamic behavior from large datasets, enabling accurate predictions without extensive experimental measurements. This capability is particularly valuable in pharmaceutical development where experimental determination of properties like solubility can be time-consuming and costly [3].

Several ML approaches have demonstrated success in thermodynamic modeling:

- Gaussian Process Regression (GPR) has shown superior performance in predicting drug solubility and activity coefficients, achieving R² scores of 0.9950 on test data in recent studies [4].

- Random Forest and Ensemble Methods effectively predict formation energies and thermodynamic stability of materials, with one study reporting mean absolute errors of 121 meV/atom for cubic perovskite systems [5].

- Deep Neural Networks can learn complex patterns from molecular descriptors and quantum chemical calculations to predict solubility and other key properties [2] [4].

These ML methods utilize various molecular descriptors, including elemental properties, structural features from Voronoi tessellations, and quantum chemical calculations to build predictive models [5]. The integration of ML with high-throughput molecular simulations has been particularly fruitful, generating massive datasets that far exceed the scale of classical experimental methods [2].

Integrated Molecular Modeling and Pocket Detection

Structure-based drug design relies heavily on identifying and characterizing binding sites on protein surfaces. Methods like AlphaSpace utilize fragment-centric topographical mapping to analyze concave regions on biomolecular surfaces, which is crucial for targeting protein-protein interactions (PPIs) [6]. This approach clusters alpha-spheres placed at vertices of Voronoi diagrams to represent binding pockets, providing insights for lead optimization and ligand screening [6].

Deep learning methods are increasingly applied to binding site detection. DeepSurf, a 3D-convolutional neural network, has demonstrated superior performance at identifying druggable sites on diverse datasets of apo and holo structures [6]. Similarly, MaSIF (Molecular Surface Interaction Fingerprinting) uses surface patches characterized by chemical and geometric fingerprints to predict protein-protein and ligand interaction sites [6]. These computational approaches enable researchers to identify potential binding pockets and assess their ligandability before experimental verification.

Experimental Protocols and Stability Assessment

Stability Testing Methodologies

Table 2: Standardized Stability Testing Protocols for Pharmaceuticals

| Test Type | Conditions | Purpose | Duration |
|---|---|---|---|
| Real-time Stability | Recommended storage conditions | Establish shelf life under normal conditions | Up to product expiry date |
| Accelerated Testing | Elevated temperature/humidity | Predict stability over shorter timeframes | 3-6 months |
| Forced Degradation | Extreme stress conditions | Identify degradation pathways and products | Hours to weeks |
| Photostability | Controlled light exposure | Assess light sensitivity | 24-48 hours |

Experimental stability testing is critical in drug development to ensure quality, safety, and efficacy of active pharmaceutical ingredients (APIs) [7]. The STABLE (Stability Toolkit for the Appraisal of Bio/Pharmaceuticals' Level of Endurance) framework provides a standardized approach for evaluating API stability across five key stress conditions: oxidative, thermal, acid-catalyzed hydrolysis, base-catalyzed hydrolysis, and photostability [7]. This toolkit uses a color-coded scoring system to quantify and compare stability, facilitating consistent assessments across different APIs.

Forced degradation testing intentionally exposes drug products to extreme conditions to assess their stability under stress and understand degradation pathways [7]. Common stress factors include acid/base-catalyzed hydrolysis, thermal degradation, photolysis, and oxidation. Typically, degradation between 5% and 20% is considered acceptable for stability studies and validation of stability-indicating assay methods (SIAMs) [7].

Thermal Shift Assays and Proteome Profiling

Thermal shift proteomic assays represent advanced experimental approaches for probing drug-protein interactions. Mass spectrometry-based thermal proteome profiling is predominantly used in characterization of drug-protein interactions to identify target and off-target binding [8]. This method involves measuring protein thermal stability changes in the presence of ligands, providing insights into binding mechanisms and specificity.

Method development in thermal shift assays has focused on improving sensitivity and accuracy of detecting protein-small molecule and protein-protein interactions [8]. Optimization strategies prioritize increased independent biological replicates over the number of evaluated temperatures, enhancing statistical reliability of results. These experimental advances enable comprehensive characterization of drug-target engagement in complex biological systems.

Research Reagent Solutions for Thermodynamic Studies

Table 3: Essential Research Reagents for Thermodynamic Stability Assessment

| Reagent/Category | Function in Experiments | Application Context |
|---|---|---|
| Supercritical CO₂ | Solvent for particle size reduction | Enhances drug solubility and bioavailability [3] |
| HCl/NaOH Solutions (0.1-1 mol/L) | Acid/base stress testing | Forced degradation studies for hydrolytic stability [7] |
| Hydrogen Peroxide Solutions | Oxidative stress testing | Evaluating oxidative degradation pathways [7] |
| Controlled Light Chambers | Photostability testing | Assessing drug sensitivity to light exposure [7] |
| Thermal Stability Chambers | Accelerated stability testing | Predicting shelf life under elevated temperatures [7] |
| DMSO/Solvent Systems | Solubilization vehicles | Maintaining drug solubility during experimental assays [3] |

Troubleshooting Guides and FAQs

FAQ 1: Why do my compound modifications show improved binding in structural models but no actual affinity enhancement?

This common issue typically results from entropy-enthalpy compensation [1]. When you introduce modifications to increase specific bonding (improving enthalpy), you may inadvertently restrict molecular flexibility or increase ordering in the binding complex (worsening entropy). The net result is little to no change in overall binding affinity (ΔG) despite apparent structural improvements.

Troubleshooting Steps:

- Perform full thermodynamic profiling using isothermal titration calorimetry (ITC) to separate ΔH and ΔS contributions

- Analyze water-mediated bonding networks in the binding site - displaced water molecules can contribute significantly to entropy

- Consider introducing flexibility in other regions of the molecule to compensate for increased rigidity at the binding site

- Use molecular dynamics simulations to assess conformational entropy changes

FAQ 2: How can I accurately predict API solubility during early development stages?

Low solubility affects >90% of newly developed drug molecules, making accurate prediction crucial [9] [10]. Traditional methods are often insufficient for complex API-polymer systems.

Solution Approaches:

- Employ machine learning models like Gaussian Process Regression (GPR), which has demonstrated R² scores of 0.9950 for solubility prediction [4]

- Utilize supercritical CO₂ technology with thermodynamic modeling (e.g., Bian model with AARD% of 8.11) for solubility enhancement [3]

- Implement the Z-score method for outlier detection in your dataset to improve model reliability [4]

- Combine experimental data with computational predictions using activity coefficient models like PC-SAFT [4]
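
The Z-score outlier step mentioned above can be sketched with the Python standard library. This is a minimal illustration under stated assumptions: the function name, the example solubility values, and the threshold of 2.5 are hypothetical and are not taken from reference [4].

```python
from statistics import mean, stdev


def zscore_filter(values, threshold=3.0):
    """Split values into (kept, outliers) using |z| > threshold.

    z is computed with the sample mean and sample standard deviation.
    """
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        # All values identical: nothing can be flagged.
        return list(values), []
    kept, outliers = [], []
    for v in values:
        z = (v - mu) / sigma
        (outliers if abs(z) > threshold else kept).append(v)
    return kept, outliers


# Hypothetical solubility measurements (arbitrary units) with one suspect point.
solubility = [1.02, 0.98, 1.05, 0.97, 1.01, 0.99, 1.03, 1.00, 0.96, 5.00]
kept, outliers = zscore_filter(solubility, threshold=2.5)
print(outliers)
```

One design caveat: with the sample standard deviation, the largest possible Z-score in a set of n points is (n - 1)/√n, so the common cutoff of 3 cannot flag anything in a set of ten or fewer points. For small datasets a lower threshold or a robust alternative may be more appropriate.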

FAQ 3: What strategies can address thermodynamic instability in protein-protein interaction inhibitors?

Targeting PPIs presents unique challenges due to typically large, shallow interfaces. Conventional small molecules often lack sufficient binding energy.

Optimization Strategies:

- Use fragment-centric pocket detection tools like AlphaSpace to identify untargeted binding pockets [6]

- Employ minimal protein mimetics guided by pocket analysis - this approach has demonstrated ∼10-fold improvements in binding affinity [6]

- Incorporate noncanonical amino acids to access untargeted binding regions - this has successfully reversed binding affinity losses from truncation [6]

- Target secondary binding sites identified through topographic mapping to enhance overall binding energy [6]

FAQ 4: How can I balance enthalpic and entropic optimization in lead compounds?

Traditional drug design often over-relies on hydrophobic decoration for entropic gains, leading to solubility limitations and suboptimal physicochemical properties [1].

Balanced Optimization Approach:

- Perform early thermodynamic profiling to establish a baseline enthalpy-entropy balance

- Prioritize enthalpic optimization through precise atomic interactions; although more challenging, it provides better specificity

- Monitor lipophilic efficiency metrics to avoid excessive hydrophobicity while maintaining potency

- Use thermodynamic optimization plots to visualize the evolution of energetic contributions during optimization

- Use the enthalpic efficiency index as a key parameter for compound prioritization [1]

FAQ 5: What experimental and computational methods best integrate for comprehensive stability assessment?

A robust stability assessment requires both experimental and computational approaches.

Integrated Methodology:

- Computational Phase:

- Experimental Phase:

- Iterative Optimization:

  - Use experimental results to refine computational models

  - Apply machine learning for pattern recognition in degradation data

  - Continuously update predictive models with experimental findings

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental relationship between ΔG, ΔH, and ΔS, and how do they collectively determine reaction spontaneity?

The Gibbs free energy change (ΔG) is defined by the equation ΔG = ΔH - TΔS, where ΔH is the change in enthalpy, ΔS is the change in entropy, and T is the absolute temperature in Kelvin [11] [12] [13]. This relationship is the cornerstone for predicting the direction of chemical and biological processes. The sign of ΔG provides a definitive indicator of spontaneity for a reaction occurring at constant temperature and pressure [11] [12].

- ΔG < 0: The reaction proceeds spontaneously [12].

- ΔG > 0: The reaction is non-spontaneous and will not occur without an input of energy [12].

- ΔG = 0: The system is at equilibrium, with no net change [12].

FAQ 2: How can two reactions with the same ΔG have different underlying thermodynamic drivers, and why is this distinction important in drug design?

A single ΔG value can result from very different combinations of ΔH and ΔS. This is closely tied to entropy-enthalpy compensation, in which a favorable change in one term is offset by an unfavorable change in the other [1] [14]. The distinction is critical because different thermodynamic profiles indicate different binding modes and molecular interactions [1].

- Enthalpy-Driven Binding (ΔH dominant): Often associated with specific, directional interactions like hydrogen bonding and van der Waals forces. This profile is increasingly sought in drug design as it may lead to higher selectivity and better physicochemical properties [1] [14].

- Entropy-Driven Binding (TΔS dominant): Often associated with the release of ordered water molecules from hydrophobic surfaces (hydrophobic effect) and an increase in disorder. While this can be engineered to gain affinity, over-reliance on hydrophobicity can lead to poor drug solubility [1].

FAQ 3: My reaction is thermodynamically spontaneous (ΔG < 0), but in practice, it does not proceed at a measurable rate. What is the likely explanation?

A negative ΔG indicates that a reaction is thermodynamically favored, but it provides no information about the kinetics, or the speed, of the reaction [14]. A reaction may be spontaneous but face a significant activation energy barrier that prevents it from proceeding at an observable rate under given conditions. This is a key distinction: thermodynamics tells you "if" a reaction can happen, while kinetics tells you "how fast" it will happen. Resolving this requires investigating the reaction pathway and potentially using a catalyst.

FAQ 4: In the context of feature selection for thermodynamic stability models, what do ΔH and ΔS represent at the molecular level?

When building models to predict thermodynamic stability, ΔH and ΔS are composite features representing the net energy changes from all underlying molecular interactions.

- ΔH (Enthalpy Change): Represents the heat change of the system, reflecting the net making and breaking of non-covalent bonds (e.g., hydrogen bonds, electrostatic interactions, van der Waals forces) between the molecules and with the solvent [1] [15]. A negative ΔH (exothermic) typically favors spontaneity.

- ΔS (Entropy Change): Represents the change in molecular disorder. A positive ΔS (increase in disorder) favors spontaneity and often arises from the release of structured solvent molecules or an increase in conformational freedom [1] [15].

Troubleshooting Common Experimental Issues

Problem: Discrepancy between calculated and measured ΔG values.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Non-standard Conditions | Calculate the reaction quotient (Q) and use ΔG = ΔG° + RT ln Q [1]. | Ensure concentrations of reactants and products are accounted for, as ΔG° only applies to standard states. |
| Significant Heat Capacity Change (ΔCp) | Measure ΔH at multiple temperatures. A systematic change in ΔH with temperature indicates a non-zero ΔCp, which is the slope of ΔH versus T [1] [15]. | Use extended equations that incorporate ΔCp for accurate calculation of ΔH(T) and ΔS(T) [1]. |
| Coupled Processes | Use controls to check for unexpected protonation events or solvent interactions. | Deconvolute the observed heat changes (e.g., from ITC) to isolate the binding energetics of interest [1]. |
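
The first two corrections in the table can be sketched as short functions. This is an illustration under stated assumptions: ΔCp is treated as constant over the temperature range (which gives ΔH(T) = ΔH(T_ref) + ΔCp·(T - T_ref) and ΔS(T) = ΔS(T_ref) + ΔCp·ln(T/T_ref)), and the function names, reference temperature, and units are choices made here rather than conventions from the cited sources.

```python
import math

R = 8.314462618e-3  # gas constant in kJ/(mol*K)


def delta_g_nonstandard(delta_g0, q, temperature=298.15):
    """dG = dG0 + RT ln Q, with energies in kJ/mol and Q dimensionless."""
    return delta_g0 + R * temperature * math.log(q)


def delta_h_at(delta_h_ref, delta_cp, temperature, t_ref=298.15):
    """dH(T) assuming a temperature-independent dCp (kJ/(mol*K))."""
    return delta_h_ref + delta_cp * (temperature - t_ref)


def delta_s_at(delta_s_ref, delta_cp, temperature, t_ref=298.15):
    """dS(T) assuming a temperature-independent dCp (kJ/(mol*K))."""
    return delta_s_ref + delta_cp * math.log(temperature / t_ref)


# At Q = 1 the correction vanishes and dG equals dG0.
print(delta_g_nonstandard(-40.0, 1.0))
# A hypothetical dCp of -1.0 kJ/(mol*K) shifts dH by -10 kJ/mol over 10 K.
print(delta_h_at(-50.0, -1.0, 308.15))
```

If ΔCp itself varies with temperature, these closed forms no longer hold and the integrals have to be evaluated with the measured ΔCp(T).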

Problem: High variability in entropy (ΔS) measurements for biomolecular interactions.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Solvent Isotope Effects | Compare experiments conducted in H₂O versus ²H₂O (D₂O) [15]. | Use a consistent solvent system and account for isotopic effects in interpretation. |
| Inaccurate ΔH Measurement | Verify calorimeter calibration and baseline stability. | Use direct measurement methods like Isothermal Titration Calorimetry (ITC) instead of van't Hoff analysis where possible, as the latter can be skewed by a non-zero ΔCp [1]. |
| Conformational Flexibility | Employ structural techniques (e.g., X-ray crystallography, NMR) to assess flexibility. | Recognize that the restriction of conformational freedom upon binding leads to a negative ΔS, which is a fundamental component of the interaction [14]. |

Experimental Protocols

Protocol for Determining Thermodynamic Parameters via Isothermal Titration Calorimetry (ITC)

Principle: ITC directly measures the heat released or absorbed during a biomolecular binding event. A single experiment yields ΔH, the binding constant (Kₐ), and the stoichiometry (n) from the fit; ΔG and ΔS are then calculated from these values [1] [14].

Methodology:

- Sample Preparation:

  - Purify both the ligand and the target macromolecule (e.g., protein, DNA) to homogeneity.

  - Dialyze both molecules into an identical buffer to avoid heat effects from buffer mismatch.

  - Degas all solutions to prevent bubble formation in the calorimeter cell.

- Instrument Setup:

  - Load the macromolecule solution into the sample cell.

  - Load the ligand solution into the syringe.

  - Set the experimental temperature, stirring speed, and the number of injections.

- Data Acquisition:

  - The instrument performs a series of automated injections of the ligand into the macromolecule solution.

  - After each injection, the instrument measures the heat required to maintain the sample cell at the same temperature as the reference cell.

- Data Analysis:

  - The raw heat pulses are integrated to produce a binding isotherm (heat vs. molar ratio).

  - Non-linear regression of the isotherm is used to fit the model and determine:

    - The binding constant (Kₐ), from which ΔG is calculated using ΔG = -RT ln Kₐ [1].

    - The enthalpy change (ΔH), directly measured.

    - The stoichiometry (n) of the interaction.

  - The entropy change (ΔS) is calculated using the fundamental equation ΔG = ΔH - TΔS [1] [14].
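
The final arithmetic of the data analysis step can be sketched as follows, assuming the fit has already produced Kₐ and ΔH. The function name, the returned dictionary keys, and the example values are hypothetical; the curve fitting itself is normally done by the instrument software and is not shown.

```python
import math

R = 8.314462618e-3  # gas constant in kJ/(mol*K)


def itc_thermodynamics(k_a, delta_h, temperature=298.15):
    """Derive dG and dS from a fitted association constant and measured dH.

    k_a is in 1/M, delta_h in kJ/mol; dS is returned in kJ/(mol*K).
    """
    delta_g = -R * temperature * math.log(k_a)   # dG = -RT ln Ka
    delta_s = (delta_h - delta_g) / temperature  # from dG = dH - T*dS
    return {
        "delta_g": delta_g,
        "delta_h": delta_h,
        "delta_s": delta_s,
        "minus_t_delta_s": -temperature * delta_s,
    }


# Hypothetical fit result: Ka = 1e7 1/M and dH = -50 kJ/mol at 25 degrees C.
result = itc_thermodynamics(k_a=1e7, delta_h=-50.0)
print(result)
```

Reporting -TΔS next to ΔH makes the two contributions directly comparable in the same units, which is convenient when tracking a compound series.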

Protocol for Van't Hoff Analysis

Principle: The equilibrium constant (K) is measured at different temperatures, and the van't Hoff plot is used to derive the thermodynamic parameters [1].

Methodology:

- Equilibrium Constant Determination:

  - Determine the binding affinity (Kd or Ka) for the interaction at a minimum of five different temperatures using a technique such as surface plasmon resonance (SPR) or fluorescence anisotropy.

- Data Plotting:

  - Create a van't Hoff plot by graphing ln(Kₐ) vs. 1/T.

- Parameter Calculation:

  - The slope of the linear fit is equal to -ΔH/R, allowing calculation of ΔH.

  - The y-intercept is equal to ΔS/R, allowing calculation of ΔS.

  - ΔG can then be calculated at any temperature using the standard equation [1].

  - Note: This method assumes ΔCp is zero. Non-linearity in the van't Hoff plot indicates a significant ΔCp, requiring more complex analysis [1].
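
The plotting and parameter calculation steps amount to an ordinary least-squares line through ln(Kₐ) versus 1/T. The sketch below is a minimal illustration under the same ΔCp = 0 assumption; the function name is hypothetical and the input data are synthetic values generated from a known ΔH and ΔS, not measurements.

```python
import math

R = 8.314462618e-3  # gas constant in kJ/(mol*K)


def vant_hoff_fit(temperatures, k_values):
    """Fit ln K = -dH/(R*T) + dS/R and return (dH, dS).

    Temperatures in K; dH in kJ/mol and dS in kJ/(mol*K). Assumes dCp = 0.
    """
    if len(temperatures) != len(k_values) or len(temperatures) < 2:
        raise ValueError("need at least two matching (T, K) pairs")
    x = [1.0 / t for t in temperatures]
    y = [math.log(k) for k in k_values]
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    slope = sxy / sxx                    # equals -dH/R
    intercept = y_mean - slope * x_mean  # equals dS/R
    return -slope * R, intercept * R


# Synthetic data generated from dH = -50 kJ/mol and dS = -0.05 kJ/(mol*K).
temps = [283.15, 288.15, 293.15, 298.15, 303.15]
k_obs = [math.exp(50.0 / (R * t) - 0.05 / R) for t in temps]
delta_h, delta_s = vant_hoff_fit(temps, k_obs)
print(delta_h, delta_s)
```

With real data, inspecting the residuals of this fit is a quick way to spot the curvature that signals a non-zero ΔCp.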

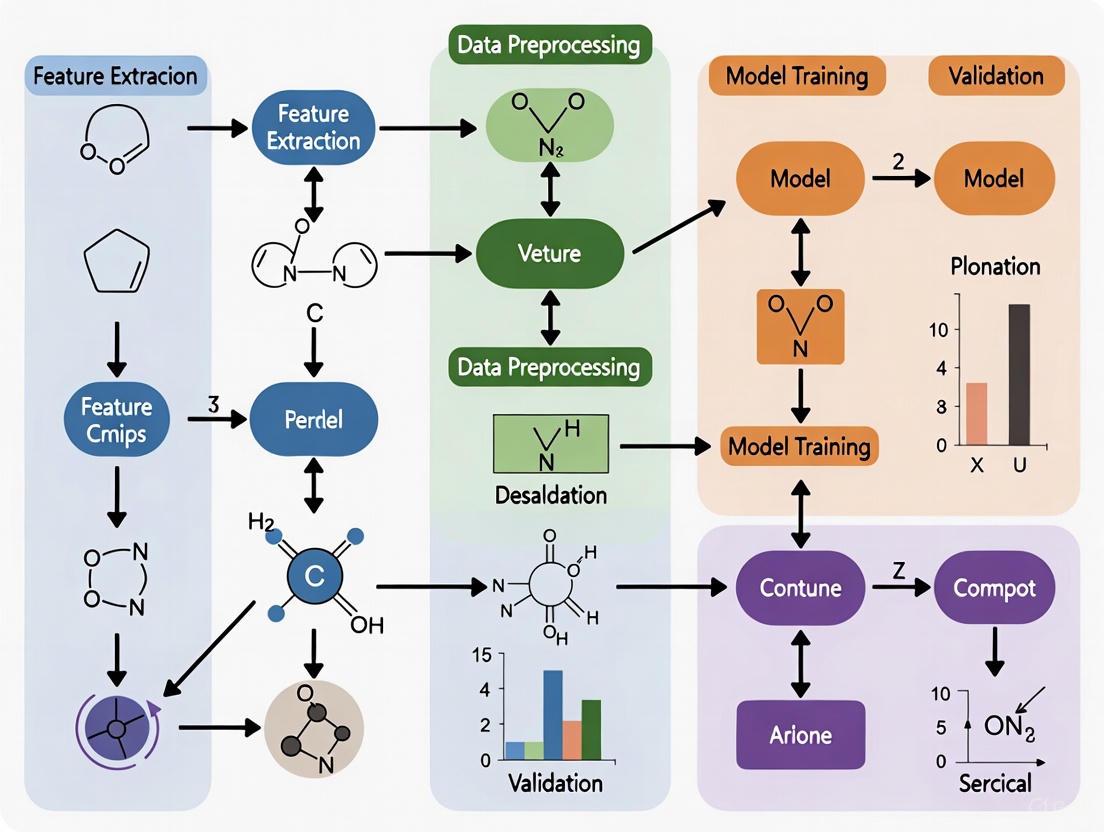

Workflow and Relationship Visualizations

Diagram 1: Experimental workflow for determining thermodynamic parameters.

Diagram 2: Logical relationship between ΔG, ΔH, and TΔS.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Thermodynamic Experiments |
|---|---|
| Isothermal Titration Calorimeter (ITC) | The primary instrument for directly measuring the heat change of a binding interaction, allowing simultaneous determination of Kₐ, ΔH, and n [1] [14]. |
| Surface Plasmon Resonance (SPR) Instrument | An optical biosensor used for label-free, real-time measurement of binding kinetics (kon, koff) and equilibrium constants (Kd) at multiple temperatures for van't Hoff analysis [14]. |
| High-Precision Dialysis System | Critical for preparing samples for ITC by ensuring the ligand and macromolecule are in identical buffer conditions, thus minimizing artifactual heat signals from buffer mismatch. |
| Stable, Inert Buffer Systems | Provide a consistent chemical environment. Phosphate buffers are often preferred over Tris for calorimetry because they have a smaller protonation enthalpy [14]. |
| Differential Scanning Calorimeter (DSC) | Used to study the thermal denaturation of biomolecules (e.g., protein unfolding), providing information on melting temperature (Tm) and the enthalpy and heat capacity changes associated with the transition [1]. |

The Critical Role of Feature Selection in Model Generalization and Performance

Technical Support: Troubleshooting Guides and FAQs

This section addresses common challenges researchers face when building machine learning models for predicting material properties, such as thermodynamic stability.

FAQ 1: My model achieves high accuracy on training data but performs poorly on unseen validation data. What is the cause and how can I fix it?

- Problem: This is a classic sign of overfitting, where the model learns noise and irrelevant patterns from the training data instead of the underlying generalizable relationship.

- Solution: Implement rigorous feature selection to reduce dimensionality and remove irrelevant or redundant features.

- Actionable Protocol: Apply a Minimum Redundancy Maximum Relevance (mRMR) filter. This algorithm selects features that are highly correlated with the target property (maximum relevance) while being minimally correlated with each other (minimum redundancy) [16]. This prevents the model from being overwhelmed by multiple features conveying the same information.
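
A greedy mRMR pass can be sketched in a few lines. This is a simplified variant under stated assumptions: absolute Pearson correlation stands in for mutual information as the relevance and redundancy measure, the score is relevance minus mean redundancy, and the feature names and data are hypothetical. It is not the implementation used in reference [16].

```python
import math


def pearson(a, b):
    """Pearson correlation of two equal-length sequences (0.0 if constant)."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    if va == 0 or vb == 0:
        return 0.0
    return cov / math.sqrt(va * vb)


def mrmr_select(features, target, k):
    """Greedily pick k feature names: high relevance, low redundancy.

    features maps a name to its column of values.
    """
    relevance = {name: abs(pearson(col, target)) for name, col in features.items()}
    selected = []
    remaining = set(features)

    def score(name):
        if not selected:
            return relevance[name]
        redundancy = sum(
            abs(pearson(features[name], features[s])) for s in selected
        ) / len(selected)
        return relevance[name] - redundancy

    while remaining and len(selected) < k:
        best = max(sorted(remaining), key=score)  # sorted for determinism
        selected.append(best)
        remaining.remove(best)
    return selected


# Toy data: f1_near_copy carries almost the same information as f1.
features = {
    "f1": [1, 2, 3, 4, 5, 6, 8, 7],
    "f1_near_copy": [1, 2, 3, 4, 5, 6, 7, 8],
    "f2": [1, -1, 1, -1, 1, -1, 1, -1],
}
target = [3, 0, 5, 2, 7, 4, 9, 6]
chosen = mrmr_select(features, target, k=2)
print(chosen)
```

In this toy case the near-duplicate is skipped in favor of the less relevant but complementary feature, which is the behavior the minimum-redundancy term is meant to produce.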

FAQ 2: My dataset is limited to a few hundred samples, but I have hundreds of potential features. Can I still build a reliable model?

- Problem: This is a typical "curse of dimensionality" scenario. With limited data and many features, models struggle to separate signal from noise, but a reliable model is still achievable if the feature set is reduced first.

- Solution: Prioritize feature selection methods that are effective for small datasets.

- Actionable Protocol: Use a relevance-redundancy (RR) ranking based on Normalized Mutual Information (NMI) [17]. This method is non-parametric and can capture non-linear relationships, making it superior to simple correlation for complex materials data. Start with the feature having the highest NMI with the target. Then, iteratively add the feature with the highest RR score:

`RR(f) = NMI(f, y) / [max(NMI(f, f_s))^p + c]`, where `y` is the target, `f_s` is an already-selected feature, and `p` and `c` are hyperparameters [17].

FAQ 3: How can I ensure that my feature selection is robust and not dependent on a random data split?

- Problem: Feature selection can be unstable; small changes in the training data can lead to different selected feature sets.

- Solution: Focus on feature selection stability.

- Actionable Protocol: Employ techniques like stability selection, or define stability using concepts like Lyapunov stability from dynamic systems [18]. Stability selection runs the feature selection algorithm on multiple random subsets of your data and retains only the features that are consistently selected across a high percentage of these subsets. This creates a more reliable and robust feature set.
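
The subsampling idea can be sketched generically. In this minimal illustration the selector is supplied by the caller as a function, so any method can be plugged in; the function name, the default of 100 rounds, the 50% subsample size, and the 0.8 retention threshold are all assumptions chosen for the example rather than values from reference [18].

```python
import random
from collections import Counter


def stability_selection(n_samples, select_fn, n_rounds=100,
                        sample_fraction=0.5, threshold=0.8, seed=0):
    """Return (stable_features, selection_frequencies).

    select_fn receives a list of row indices and returns the feature names
    it would select when trained on those rows only.
    """
    rng = random.Random(seed)
    subset_size = max(1, int(sample_fraction * n_samples))
    counts = Counter()
    for _ in range(n_rounds):
        rows = rng.sample(range(n_samples), subset_size)
        counts.update(set(select_fn(rows)))  # count each feature once per round
    frequencies = {name: c / n_rounds for name, c in counts.items()}
    stable = sorted(name for name, f in frequencies.items() if f >= threshold)
    return stable, frequencies


def toy_selector(rows):
    """Hypothetical selector: 'robust' is always chosen, 'fragile' only
    when one particular sample happens to be in the subset."""
    chosen = ["robust"]
    if 0 in rows:
        chosen.append("fragile")
    return chosen


stable, freqs = stability_selection(n_samples=40, select_fn=toy_selector)
print(stable, freqs)
```

The frequency table is often as informative as the final set, since features hovering near the threshold are the ones most sensitive to the data split.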

FAQ 4: I need my model's predictions to be interpretable to gain physical insights. What feature selection approach should I use?

- Problem: Some complex models, like deep neural networks, are "black boxes."

- Solution: Use feature selection and interpretation methods that provide insight into feature importance.

- Actionable Protocol: After model training, use SHAP (SHapley Additive exPlanations) analysis [16]. SHAP quantifies the contribution of each feature to an individual prediction. By analyzing SHAP values across your dataset, you can identify which features (e.g., elemental electronegativity, energy per atom) are the primary drivers of your model's predictions for thermodynamic stability, thereby revealing the underlying physics.
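
To show what a SHAP value represents, the sketch below computes exact Shapley values for a tiny model by averaging each feature's marginal contribution over all feature orderings, relative to a single baseline input. It does not use the `shap` package, which relies on faster approximations and background datasets; the function name, the linear toy model, and the feature names are hypothetical.

```python
from itertools import permutations
from math import factorial


def exact_shapley(predict, x, baseline):
    """Exact Shapley values for one prediction.

    predict takes a dict of feature values; x and baseline are dicts with
    the same keys. Cost grows factorially, so use only a handful of features.
    """
    names = list(x)
    totals = {name: 0.0 for name in names}
    for order in permutations(names):
        current = dict(baseline)
        previous = predict(current)
        for name in order:
            current[name] = x[name]           # switch this feature "on"
            updated = predict(current)
            totals[name] += updated - previous
            previous = updated
    n_orders = factorial(len(names))
    return {name: total / n_orders for name, total in totals.items()}


# Hypothetical stability model that is linear in three descriptors.
weights = {"electronegativity": 2.0, "energy_per_atom": -3.0, "radius": 0.5}


def model(f):
    return sum(weights[k] * f[k] for k in weights)


x = {"electronegativity": 1.0, "energy_per_atom": 2.0, "radius": 4.0}
baseline = {"electronegativity": 0.0, "energy_per_atom": 1.0, "radius": 0.0}
phi = exact_shapley(model, x, baseline)
print(phi)
```

For a linear model each value reduces to the weight times the feature's deviation from the baseline, and the values always sum to the difference between the prediction and the baseline prediction. That additivity is what makes the per-feature attributions easy to interpret.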

Quantitative Performance Data

The table below summarizes the performance of various machine learning models that utilized feature selection for predicting material properties, demonstrating its impact on accuracy and data efficiency.

Table 1: Impact of Feature Selection on Model Performance for Materials Property Prediction

| Model Name | Primary Feature Selection Method | Target Property | Key Performance Metric | Result & Advantage |
|---|---|---|---|---|
| MODNet [17] | Relevance-Redundancy (RR) using Normalized Mutual Information | Vibrational Entropy, Formation Energy | Mean Absolute Error (MAE) | Achieved MAE of 0.009 meV/K/atom for entropy; outperforms graph networks on small datasets. |
| ECSG [19] | Ensemble of models (Magpie, Roost, ECCNN) with stacked generalization | Thermodynamic Stability (Decomposition Energy) | Area Under the Curve (AUC) | Achieved AUC of 0.988; required only 1/7th of the data to match performance of existing models. |
| Elastic Properties Predictor [16] | mRMR and SHAP analysis | Bulk & Shear Modulus | Model Accuracy & Interpretability | Identified "energy per atom" as most critical feature; enabled accurate predictions with traditional ML models. |
| Ensemble of Decision Trees (ERT) [5] | Elemental properties and position in periodic table | Thermodynamic Phase Stability (Perovskites) | Mean Absolute Error (MAE) | Achieved MAE of 121 meV/atom on a large dataset of cubic perovskites. |

Detailed Experimental Protocol: MODNet Feature Selection

This protocol details the feature selection methodology used in the MODNet framework, which is highly effective for limited datasets in materials science [17].

Objective: To select an optimal subset of descriptors for predicting a target material property (e.g., formation energy, vibrational entropy) from an initial large pool of features.

Workflow Overview:

Materials and Inputs:

- Input Data: Crystallographic Information Files (CIFs) or other structure representations for your material dataset.

- Featurization Library: The `matminer` package in Python, which provides a vast library of pre-defined physical, chemical, and structural descriptors [17].

- Initial Feature Pool (`F`): A vector of all features generated by `matminer` for your dataset (can number in the hundreds).

Step-by-Step Procedure:

1. Featurization: Use `matminer` to convert the raw crystal structures into a numerical feature matrix. This includes elemental properties (e.g., atomic mass, electronegativity), structural properties (e.g., space group), and site-specific features [17].

2. Initialization: Create an empty set, `F_S`, which will hold the selected features.

3. First Feature Selection: Calculate the Normalized Mutual Information (NMI) between every feature in the pool `F` and the target variable `y`. Select the feature with the highest `NMI(f, y)` and add it to `F_S`.

4. Iterative Selection: For each subsequent feature, calculate a Relevance-Redundancy (RR) score for every feature `f` still in `F`: `RR(f) = NMI(f, y) / [ max(NMI(f, f_s))^p + c ]` for all `f_s` in `F_S`.

   - NMI(f, y): Relevance of the feature to the target.

   - max(NMI(f, f_s)): Maximum redundancy between the candidate feature and any already-selected feature.

   - p, c: Hyperparameters that balance the trade-off between relevance and redundancy. The MODNet study used dynamic parameters: `p = max(0.1, 4.5 - n^0.4)` and `c = 10^-6 * n^3`, where `n` is the number of features already in `F_S` [17].

5. Add Best Feature: Add the feature with the highest `RR(f)` score to `F_S` and remove it from `F`.

6. Termination: Repeat steps 4 and 5 until a pre-defined number of features is selected. This number can be optimized by evaluating model performance on a validation set at different feature set sizes.

7. Model Training: Use the final selected feature subset `F_S` to train a feedforward neural network (or other ML model) for property prediction.
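
The selection loop described in this procedure can be sketched as follows. To keep the example self-contained, the NMI values are passed in as precomputed tables rather than estimated from data, so the NMI estimator itself is out of scope here. The function name, the input layout, and the toy numbers are assumptions for illustration; this is not the MODNet source code.

```python
def rr_select(relevance, redundancy, n_select):
    """Greedy relevance-redundancy selection.

    relevance:  dict feature -> NMI(feature, target)
    redundancy: dict frozenset({f, g}) -> NMI(f, g) for every feature pair
    """
    if n_select <= 0 or not relevance:
        return []
    pool = set(relevance)
    # First pick: the single most relevant feature.
    first = max(sorted(pool), key=lambda f: relevance[f])
    selected = [first]
    pool.remove(first)

    while pool and len(selected) < n_select:
        n = len(selected)               # features already selected
        p = max(0.1, 4.5 - n ** 0.4)    # dynamic exponent from the text above
        c = 1e-6 * n ** 3               # dynamic offset from the text above

        def rr(f):
            max_red = max(redundancy[frozenset((f, s))] for s in selected)
            return relevance[f] / (max_red ** p + c)

        best = max(sorted(pool), key=rr)
        selected.append(best)
        pool.remove(best)
    return selected


# Toy NMI tables (hypothetical values): a_copy is nearly redundant with a.
relevance = {"a": 0.90, "a_copy": 0.85, "b": 0.40}
redundancy = {
    frozenset(("a", "a_copy")): 0.95,
    frozenset(("a", "b")): 0.10,
    frozenset(("a_copy", "b")): 0.10,
}
ranking = rr_select(relevance, redundancy, n_select=3)
print(ranking)
```

Because `p` starts large and shrinks as `n` grows, redundancy is penalized heavily for the first few picks and progressively less later on, while the growing `c` term damps the score of late additions.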

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Feature Selection in Materials Informatics

| Tool / Solution | Type | Primary Function | Relevance to Thermodynamic Stability |
|---|---|---|---|
| matminer [17] [16] | Software Library | Feature extraction from crystal structures and molecules. | Provides a standardized set of physically meaningful descriptors (e.g., elemental statistics, structural symmetry) that are foundational for predicting formation energy and stability. |
| SHAP (SHapley Additive exPlanations) [16] | Analysis Library | Post-hoc model interpretability and feature importance analysis. | Identifies which atomic or structural properties (e.g., energy per atom, valence electron concentration) most strongly influence the model's stability predictions, revealing underlying physics. |
| mRMR Algorithm [16] | Feature Selection Algorithm | Selects features based on maximum relevance and minimum redundancy. | Efficiently reduces a large feature space (e.g., from matminer) to a compact set of non-redundant, high-impact features, crucial for avoiding overfitting in stability models. |
| Normalized Mutual Information (NMI) [17] | Statistical Measure | Quantifies linear and non-linear dependence between variables. | Used in custom feature selection workflows (e.g., MODNet) to robustly assess the relevance of features to decomposition energy and redundancy among features. |
| Stacked Generalization (Ensemble) [19] | Modeling Framework | Combines predictions from multiple base models to improve accuracy. | Mitigates the inductive bias of any single model (e.g., composition-based vs. structure-based) by combining them, leading to more robust stability predictions across diverse chemical spaces. |

Frequently Asked Questions (FAQs)

Q1: What are the most critical high-value features for predicting the thermodynamic stability of inorganic compounds? The most critical features depend on the material class, but several key categories have been identified. For perovskite oxides, elemental properties like the third ionization energy of the B-site element and the electron affinity of the X-site ion are significantly negatively correlated with stability (lower energy above the convex hull, Ehull) [20]. For a broad range of inorganic compounds, models that incorporate intrinsic electron configuration information demonstrate remarkable predictive accuracy by directly capturing the electronic structure that governs bonding and stability [19]. Features derived from elemental property statistics (mean, deviation, range) and those that model interatomic interactions within a crystal graph are also highly valuable [19].

Q2: My machine learning model for stability prediction is suffering from high error. What could be wrong?

High error can stem from several sources in the feature engineering pipeline. First, check for insufficient or biased features. Relying on a single domain of knowledge (e.g., only elemental fractions) introduces inductive bias; a framework that combines features from atomic properties, interatomic interactions, and electron configurations can mitigate this [19]. Second, improper data preprocessing can be a cause. Ensure you scale your features (e.g., using MinMaxScaler) to a consistent range like [0, 1] to promote equitable weight distribution and faster convergence [20]. Finally, always perform feature correlation analysis to remove redundant or irrelevant descriptors, which can improve model performance and generalization [20] [21].

Q3: How can I validate that my model's predictions are reliable for discovering new, stable materials? A robust validation protocol involves multiple steps. Initially, use standard metrics like Area Under the Curve (AUC) and Root Mean Square Error (RMSE) on a held-out test set; state-of-the-art models can achieve an AUC of 0.988 for stability classification [19]. More importantly, perform external validation by applying your trained model to explore a new compositional space (e.g., for double perovskite oxides) and then validate the top candidate materials using first-principles calculations (DFT). The model's predictions are considered reliable if the DFT-calculated stability confirms the predictions, which has been demonstrated in recent studies [19].

Q4: What is the practical advantage of using a complex ensemble model over a simpler one? The primary advantage is higher accuracy and reduced bias. A simple model built on a single hypothesis or a narrow feature set may be unable to represent the ground truth at all, because the true relationship can lie outside the space of functions the model can express. An ensemble framework based on stacked generalization amalgamates models rooted in distinct domains of knowledge (e.g., atomic statistics, graph networks, and electron configuration), creating a "super learner" that diminishes individual model biases and harnesses synergistic effects [19]. Furthermore, such models can exhibit exceptional sample efficiency, potentially achieving the same accuracy as existing models with only a fraction (e.g., one-seventh) of the training data [19].

Troubleshooting Guides

Issue: Poor Model Generalization on New Compounds

Problem: Your trained model performs well on the test set but makes inaccurate stability predictions for new compounds outside the original dataset.

Solution: Follow this systematic troubleshooting guide to identify and resolve the issue.

Step 1: Diagnose Feature Scope and Representation

- Action: Verify that your feature set comprehensively captures the physical and chemical properties governing stability. Using only elemental fractions is often insufficient [19].

- Checklist:

- Have you incorporated features from multiple domains (atomic, interactive, electronic)?

- For perovskites, have you included critical features like the third ionization energy of the B-site element and electron affinity of the X-site ion? [20]

- Are you using a robust representation for electron configuration, such as the matrix encoding used in the ECCNN model? [19]

Step 2: Analyze and Preprocess Training Data

- Action: Inspect your dataset for outliers and skewed distributions that could bias the model.

- Protocol:

- Visualize Data: Create box plots and density maps of your feature data. Identify and remove obvious outliers, as was done for descriptors like "crystal length" and "sphericity of the B atom" in perovskite studies [20].

- Scale Features: Apply a scaling method like MinMaxScaler to normalize all features to a [0, 1] interval. This mitigates disparities in feature scales and stabilizes model training [20].
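A minimal sketch of the scaling step with scikit-learn's `MinMaxScaler` follows. The descriptor values are made up for illustration. Fitting the scaler on the training data only and reusing it for new compounds is an assumption about good practice, not a step stated in [20].

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler

# Hypothetical descriptor matrix: rows are compounds, columns are features.
X_train = np.array([[1.2, 300.0],
                    [0.8, 150.0],
                    [1.6, 450.0]])
X_new = np.array([[1.0, 600.0]])

scaler = MinMaxScaler(feature_range=(0, 1))
X_train_scaled = scaler.fit_transform(X_train)  # learn min/max from training data only
X_new_scaled = scaler.transform(X_new)          # reuse the same min/max for new compounds

print(X_train_scaled)
print(X_new_scaled)
```

Note that a new compound whose descriptor falls outside the training range is mapped outside [0, 1], which can itself be a useful flag that the model is being asked to extrapolate.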

Step 3: Implement Advanced Feature Selection

- Action: Reduce feature dimensionality to avoid overfitting and improve model focus.

- Protocol:

- Correlation Analysis: Use the Pearson correlation coefficient to evaluate the relationship between each feature and the target stability (Ehull) [20].

- Select Top Features: Employ feature selection methods (e.g., stability selection, recursive feature elimination) to identify the most predictive features. Research on perovskite oxides found that the top 70 features were sufficient for optimal model performance without overfitting [21].
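The correlation analysis in Step 3 can be sketched as a simple ranking by absolute Pearson coefficient. The helper name `rank_by_pearson`, the feature names, and the numbers are hypothetical, and the sketch assumes no feature column is constant (a constant column would make the coefficient undefined).

```python
import numpy as np

def rank_by_pearson(X, y, names):
    """Rank features by |Pearson r| with the target, strongest first."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    Xc = X - X.mean(axis=0)          # center each feature column
    yc = y - y.mean()                # center the target
    denom = np.sqrt((Xc ** 2).sum(axis=0) * (yc ** 2).sum())
    r = (Xc * yc[:, None]).sum(axis=0) / denom
    order = np.argsort(-np.abs(r))   # sort by absolute correlation
    return [(names[i], float(r[i])) for i in order]

# Toy data: the first column tracks the target, the second is uncorrelated.
X_toy = [[1.0, 1.0], [2.0, -1.0], [3.0, -1.0], [4.0, 1.0]]
ehull_toy = [2.0, 4.0, 6.0, 8.0]
ranked = rank_by_pearson(X_toy, ehull_toy, ["ionization_energy", "noise"])
print(ranked)
```

Because Pearson's coefficient only captures linear dependence, a low score here does not prove a feature is irrelevant, so it is reasonable to pair this ranking with one of the model-based selection methods named above.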

Issue: Integrating Electron Configuration Features

Problem: You want to incorporate electron configuration (EC) data into your model but are unsure how to represent it effectively as an input feature.

Solution: Implement an encoding and modeling strategy tailored for EC information.

Step 1: Encode the Electron Configuration

- Action: Transform the EC of each element in a compound into a structured, machine-readable format.

- Protocol: The ECCNN model represents the input as a matrix with dimensions of 118 (elements) × 168 (energy levels/electron counts) × 8. This matrix comprehensively encodes the electron distribution across energy levels for all elements in the periodic table present in the material [19].

Step 2: Choose an Appropriate Model Architecture

- Action: Select a model capable of processing the spatial structure of the encoded EC matrix.

- Protocol: A Convolutional Neural Network (CNN) is well-suited for this task. The workflow for the ECCNN model is as follows [19]:

- Input Layer: Takes the encoded EC matrix.

- Convolutional Layers: Processes the matrix through two convolutional operations, each with 64 filters of size 5x5, to extract relevant spatial hierarchies.

- Batch Normalization & Pooling: Applies Batch Normalization (BN) after the second convolution for stable training, followed by a 2x2 max pooling layer for dimensionality reduction.

- Fully Connected Layers: The extracted features are flattened into a one-dimensional vector and passed through fully connected layers to generate the final stability prediction.

Diagram: ECCNN Model Workflow. This workflow illustrates the processing of electron configuration data through convolutional and fully connected layers to predict stability.
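To get a feel for the size of the tensors involved, the layer shapes can be worked out by hand. The arithmetic below rests on assumptions that are not stated in the source: the 118 × 168 axes are treated as the spatial dimensions with 8 input channels, both convolutions use stride 1 and no padding, and the pooling layer uses stride 2. With different padding or stride choices the numbers change.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length of one spatial axis after a convolution or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1

height, width, filters = 118, 168, 64

# Two 5x5 convolutions (assumed: stride 1, no padding).
for _ in range(2):
    height, width = conv_out(height, 5), conv_out(width, 5)

# One 2x2 max pooling layer (assumed: stride 2).
height, width = conv_out(height, 2, stride=2), conv_out(width, 2, stride=2)

flattened = height * width * filters
print(height, width, flattened)  # 55 80 281600
```

Under these assumptions the first fully connected layer would receive a vector of 281,600 values, which illustrates why pooling and a compact dense head matter for keeping the parameter count manageable.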

Experimental Protocols & Data

Quantitative Performance of Stability Prediction Models

Table 1: Performance metrics of machine learning models for thermodynamic stability prediction across different material classes.

| Material Class | Model/Algorithm | Key Performance Metric | Value | Key High-Value Features Identified | Source |

|---|---|---|---|---|---|

| Broad Inorganic Compounds | ECSG (Ensemble with Stacked Generalization) | AUC (Area Under the Curve) | 0.988 | Electron Configuration, Interatomic Interactions, Elemental Statistics | [19] |

| Organic-Inorganic Hybrid Perovskites | LightGBM Regression | Low prediction error, high accuracy | N/R (Not Reported) | Third Ionization Energy of B-site, Electron Affinity of X-site | [20] |

| Perovskite Oxides | Kernel Ridge Regression | RMSE (Root Mean Square Error) | 28.5 ± 7.5 meV/atom | Top 70 selected from 791 elemental property features | [21] |

| Perovskite Oxides | Extra Trees Classifier | Prediction Accuracy | 0.93 (± 0.02) | Top 70 selected from 791 elemental property features | [21] |

| 2D Conductive MOFs | Ensemble Learning | R² (Coefficient of Determination) | 0.96 | Integrated Compositional & Structural Descriptors (GD, M-GD, A-GD) | [22] |

| Ti-N System | Moment Tensor Potential (MTP) | RMSE (Formation Energy) | 6.8 meV/atom (testing) | Atomic environment descriptors (local moments) | [23] |

Table 2: Essential research reagents and computational tools for feature engineering and stability prediction.

| Name/Item | Function/Brief Explanation | Example Context |

|---|---|---|

| MinMaxScaler | A data preprocessing tool that normalizes features to a fixed range, typically [0, 1], to ensure stable model training and fair feature weighting. | Used to scale features for predicting stability of organic-inorganic hybrid perovskites [20]. |

| Electron Configuration Encoder | Transforms the electron configuration of elements in a compound into a numerical matrix suitable for machine learning models like CNNs. | Core component of the ECCNN model, creating a 118x168x8 input matrix [19]. |

| Pearson Correlation Coefficient | A statistical measure used in feature selection to evaluate the linear correlation between a feature and the target variable (e.g., Ehull). | Applied to identify features most relevant to the thermodynamic stability of perovskites [20] [21]. |

| Stacked Generalization (SG) | An ensemble technique that combines the predictions of multiple base models (from different knowledge domains) using a meta-learner to improve accuracy. | The foundation of the ECSG framework, which integrates Magpie, Roost, and ECCNN models [19]. |

| Convex Hull Analysis | A computational method to calculate the energy above the convex hull (Ehull), which is a direct measure of a compound's thermodynamic phase stability. | Used to generate stability labels (Ehull) for training machine learning models in DFT-based studies [19] [21]. |

Detailed Methodologies for Key Experiments

Protocol 1: Building an Ensemble Model with Stacked Generalization for Stability Prediction

This protocol is based on the ECSG framework that integrates multiple base-level models [19].

Base Model Selection and Training:

- Select or develop three base models rooted in distinct domains of knowledge to ensure complementarity.

- Model A (Atomic Statistics): Use a model like Magpie, which calculates statistical features (mean, deviation, range, etc.) from a suite of elemental properties (atomic number, mass, radius, etc.). This model is typically trained with gradient-boosted regression trees (XGBoost) [19].

- Model B (Interatomic Interactions): Use a model like Roost, which represents the chemical formula as a graph. It employs graph neural networks with an attention mechanism to capture the message-passing and relationships between atoms [19].

- Model C (Electron Configuration): Develop an ECCNN model. Encode the compound's electron configuration into a matrix. Process it through two convolutional layers (each with 64 filters of size 5x5), followed by batch normalization, max pooling (2x2), and fully connected layers [19].

Meta-Model Training:

- Use the predictions from the three trained base models (Magpie, Roost, ECCNN) as input features for a new dataset.

- Train a meta-level model (the "super learner") on these new features to produce the final, integrated stability prediction. This step leverages the strengths of each base model and mitigates their individual biases [19].
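The two-level structure of Protocol 1 can be illustrated with scikit-learn's `StackingClassifier`. This is only a structural sketch: the three base estimators are generic stand-ins, not Magpie, Roost, or ECCNN, the data are synthetic, and all base models here see the same feature matrix, whereas the ECSG base models each use their own input representation [19].

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in for a stable/unstable classification dataset.
X, y = make_classification(n_samples=300, n_features=10, n_informative=5,
                           random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0)

# Stand-ins for three base models with different inductive biases.
base_models = [
    ("gbt", GradientBoostingClassifier(n_estimators=50, random_state=0)),
    ("knn", KNeighborsClassifier(n_neighbors=5)),
    ("tree", DecisionTreeClassifier(max_depth=4, random_state=0)),
]

# The meta-learner is trained on out-of-fold base-model predictions (cv=5),
# so it never sees predictions made on data the base models were fit on.
stack = StackingClassifier(
    estimators=base_models,
    final_estimator=LogisticRegression(),
    cv=5,
    stack_method="predict_proba",
)
stack.fit(X_train, y_train)
test_accuracy = stack.score(X_test, y_test)
print(f"held-out accuracy: {test_accuracy:.3f}")
```

Using out-of-fold predictions for the meta-level training set is the key design choice: feeding the meta-learner in-sample predictions would let it reward whichever base model overfits most.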

Protocol 2: Feature Engineering and Selection for Perovskite Stability

This protocol outlines the process for identifying high-value features for perovskite oxides, as detailed in [21].

Initial Feature Generation:

- For a given perovskite composition (e.g., ABO₃), gather a wide range of elemental properties for the constituent elements from periodic table data.

- Generate a large set of initial features (e.g., 791) by creating statistical combinations and representations of these elemental properties.

Feature Selection:

- Apply multiple feature selection methods, such as stability selection, recursive feature elimination (RFE), and univariate feature selection.

- Evaluate the cross-validation score (e.g., F1 score for classification) against the number of features used.

- Select the optimal number of features that provides the best performance without overfitting. For perovskite oxides, this was found to be the top 70 features [21].
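The idea of tracking the cross-validation score against the number of features can be sketched as a simple sweep. The example below uses univariate selection (`SelectKBest` with the ANOVA F-test) and an Extra Trees classifier on synthetic data. The candidate sizes and the dataset are illustrative assumptions and do not reproduce the 791-feature study in [21].

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

# Synthetic stand-in: 40 candidate features, only a few informative.
X, y = make_classification(n_samples=200, n_features=40, n_informative=6,
                           n_redundant=4, random_state=0)

cv_f1 = {}
for k in (2, 5, 10, 20, 40):
    # Selection sits inside the pipeline so it is refit on each CV fold,
    # which avoids leaking validation data into the feature ranking.
    pipe = make_pipeline(
        SelectKBest(score_func=f_classif, k=k),
        ExtraTreesClassifier(n_estimators=50, random_state=0),
    )
    cv_f1[k] = cross_val_score(pipe, X, y, cv=5, scoring="f1").mean()

best_k = max(cv_f1, key=cv_f1.get)
print(cv_f1, "best k:", best_k)
```

In practice one would often prefer the smallest feature count whose score is within noise of the maximum rather than the strict argmax.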

Model Training and Validation:

- Train the chosen machine learning model (e.g., Kernel Ridge Regression for regression, Extra Trees for classification) using the selected feature set.

- Validate the model rigorously using leave-out cross-validation and by predicting the stability of compounds not present in the training set.

A Practical Toolkit: Implementing Feature Selection for Stability Modeling

Frequently Asked Questions

1. What are filter methods and why should I use them for thermodynamic stability prediction?

Filter methods are feature selection techniques that use statistical tests to evaluate and select the most relevant features from your dataset before training a machine learning model. They are "model-agnostic," meaning the selection is based purely on the data's inherent properties and not tied to a specific learning algorithm [24] [25]. For researchers building thermodynamic stability models, this offers key advantages:

- Speed and Efficiency: These methods are computationally fast, making them ideal for the initial screening of a large number of material descriptors (features) [24] [26].

- Overfitting Reduction: By removing irrelevant or redundant features, you simplify the model, which helps it generalize better to new, unseen perovskite candidates [25] [27].

- Interpretability: Understanding which features (e.g., atomic radii, orbital energies) are most statistically relevant to stability provides valuable physical insights [24] [28].

2. How do I choose the correct statistical test for my data?

The choice of statistical measure depends entirely on the data types of your input features (e.g., ionic radius, coordination number) and your target variable (e.g., stability energy, a categorical stable/unstable label). The following table serves as a quick guide [29] [27]:

Table 1: Choosing a Statistical Test for Feature Selection

| Input Data Type | Target Variable Type | Problem Type | Recommended Statistical Test(s) |

|---|---|---|---|

| Numerical | Numerical | Regression | Pearson's Correlation Coefficient (linear), Spearman's Rank Correlation (monotonic, possibly nonlinear) [29] |

| Numerical | Categorical | Classification | ANOVA F-test (linear), Kendall's rank coefficient (nonlinear) [29] |

| Categorical | Categorical | Classification | Chi-Squared test, Mutual Information [24] [29] |

| Categorical | Numerical | Regression | ANOVA, Kendall's rank coefficient (use tests for "Numerical Input, Categorical Output" in reverse) [29] |

3. I've selected features with a filter method. How do I know if the selection was successful?

Evaluating your feature selection is a critical step. The success can be measured by assessing both the quality of the reduced dataset and the performance of your final model [24]:

- Performance Metrics: Train your model (e.g., a gradient boosting regressor) on the selected features and compare its performance on a hold-out test set against a model trained on all features. Look for improved or comparable accuracy (e.g., higher R² score for regression) with a significantly smaller feature set [30] [28].

- Model Robustness: A successful feature selection leads to reduced overfitting. This means the performance gap between training and validation/test sets should narrow.

- Information Retention: The selected subset should retain the most critical information. You can evaluate this by checking the explained variance or using mutual information between the selected features and the target [24].

4. What are common pitfalls when using filter methods?

- Ignoring Feature Interactions: Since most filter methods evaluate features individually, they might miss features that are only predictive when combined with others [24] [29]. For example, the stability of a perovskite might depend on a specific ratio of ionic radii, not just the radii themselves.

- Selecting Redundant Features: The method might select multiple features that are highly correlated with each other, as they all score highly against the target. This can introduce multicollinearity without adding new information [29].

- Relying Solely on One Method: No single filter method is universally best. A feature dismissed by one test might be important for a non-linear relationship captured by another [30].

Experimental Protocol: Implementing a Filter Method for Stability Modeling

This protocol outlines the steps for using filter methods to select features for a thermodynamic stability model, as demonstrated in research on hybrid organic-inorganic perovskites (HOIPs) [28].

Objective: To identify the most relevant material descriptors for predicting the thermodynamic stability of HOIPs using a univariate filter method.

Materials and Dataset

- Dataset: A curated dataset of known perovskites with calculated relative energies (quantifying thermodynamic stability) and a range of compositional, structural, and electronic features [28].

- Software: Python with standard data science libraries (e.g., pandas, numpy, scikit-learn).

Table 2: Key Research Reagents & Computational Tools

| Item / Software | Function in the Experiment |

|---|---|

| scikit-learn Library | Provides built-in functions (e.g., SelectKBest, f_classif, mutual_info_regression) to perform statistical tests and feature selection [29]. |

| Pearson's Correlation | A filter method used to measure linear relationships between continuous features and a continuous target (e.g., relative energy) [29]. |

| Recursive Feature Elimination (RFE) | A wrapper method often used in conjunction with filter methods for further refinement, as seen in HOIP studies [28]. |

| Gradient Boosting Model | A powerful ML algorithm used to validate the selected features by training on the filtered subset and evaluating predictive performance (R² score) [28]. |

Methodology

- Data Preprocessing: Clean the dataset. Handle missing values, normalize, or standardize numerical features to ensure statistical tests are not biased by different scales.

- Define Target and Features: Clearly specify the target variable (e.g., relative energy for regression, stable/unstable label for classification) and the pool of input features.

- Apply Statistical Test: Based on the data types (refer to Table 1), choose an appropriate statistical test. For a regression problem with numerical features and target, you might use Pearson's correlation to score each feature.

- Rank and Select Features: Rank all features based on their statistical scores (e.g., p-value, correlation coefficient). Use a method like SelectKBest from scikit-learn to retain the top k features, or SelectPercentile to keep the top n% of features [24] [29].

- Validate Selection: Train your final machine learning model (e.g., a gradient boosting regressor [28]) using only the selected features. Use cross-validation to compare its performance against a baseline model that uses all features. A successful selection will show comparable or improved performance with far fewer features.
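The methodology above might look as follows for a regression target. This is a sketch on synthetic data rather than the HOIP dataset from [28]. It uses `f_regression`, the univariate F-test derived from Pearson's correlation, and the choice of k = 5 is arbitrary.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.feature_selection import SelectKBest, f_regression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

# Synthetic stand-in: 30 descriptors, 5 of which drive the target.
X, y = make_regression(n_samples=200, n_features=30, n_informative=5,
                       noise=5.0, random_state=0)

# Baseline: gradient boosting on all features.
baseline = GradientBoostingRegressor(n_estimators=100, random_state=0)
baseline_r2 = cross_val_score(baseline, X, y, cv=5, scoring="r2").mean()

# Filtered: keep the top-k features by univariate F-test, then fit the model.
filtered = make_pipeline(
    SelectKBest(score_func=f_regression, k=5),
    GradientBoostingRegressor(n_estimators=100, random_state=0),
)
filtered_r2 = cross_val_score(filtered, X, y, cv=5, scoring="r2").mean()

# Refit on all data to inspect which feature indices were retained.
filtered.fit(X, y)
kept = filtered.named_steps["selectkbest"].get_support(indices=True)
print(f"R2 all features: {baseline_r2:.3f}, R2 top-5: {filtered_r2:.3f}")
print("kept feature indices:", kept)
```

Swapping `f_regression` for `mutual_info_regression` is a one-line change if non-linear dependence is suspected.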

The workflow below visualizes this process.

Wrapper methods are a category of feature selection techniques that employ a specific machine learning model to evaluate and select the optimal subset of features. Unlike other methods that assess features independently, wrapper methods use the model's performance as the guiding metric for the search. This approach is particularly valuable in research domains like thermodynamic stability modeling and drug-target affinity (DTA) prediction, where identifying a compact, high-performing feature set is crucial for both model accuracy and interpretability [31] [32].

The primary advantage of wrapper methods is their ability to account for complex feature interactions and dependencies, often leading to superior predictive performance compared to simpler filter methods [33] [32]. However, this performance comes at a cost: wrapper methods are typically computationally intensive and carry a higher risk of overfitting, as they involve repeatedly training and evaluating a model on different feature subsets [26] [34].

Frequently Asked Questions (FAQs)

Q1: Why would I choose a wrapper method over a faster filter method for my thermodynamic stability model? You should consider a wrapper method when model performance is the critical objective and you have sufficient computational resources. Wrapper methods can capture complex, non-linear interactions between features—such as those between elemental properties in a compound—that simple correlation-based filter methods might miss [33] [32]. This often results in a feature subset that is more finely tuned to your specific predictive algorithm.

Q2: What is the main computational challenge associated with wrapper methods? The main challenge is the combinatorial explosion of possible feature subsets. Evaluating all possible combinations is computationally infeasible for high-dimensional data. This is why greedy search strategies, which make a series of locally optimal choices, are commonly employed as a practical compromise [34] [32].

Q3: How can I prevent overfitting when using a wrapper method? Robust validation is key. Using cross-validation (CV) within the search process, rather than a single train-test split, provides a more reliable estimate of model performance on unseen data. Techniques like Recursive Feature Elimination with Cross-Validation (RFECV) are explicitly designed for this purpose [35]. Furthermore, holding out a completely separate test set for final evaluation is essential to ensure the selected features generalize well.

Q4: Are there ways to reduce the high computational cost of wrapper methods? Yes, two common strategies are:

- Hybrid Approaches: Combine a filter method for a quick preliminary feature reduction with a wrapper method for fine-grained selection. This significantly narrows the search space for the wrapper [35] [33].

- Greedy Search Strategies: Algorithms like Sequential Forward Selection (SFS) or Sequential Backward Selection (SBS) are less computationally intensive than evaluating all possible subsets, though they may not find the global optimum [32].

Troubleshooting Common Experimental Issues

| Problem | Root Cause | Proposed Solution |

|---|---|---|

| High Variance in Model Performance | The selected feature subset is overfitted to the specific random partitions of the training/validation data. | Implement Recursive Feature Elimination with Cross-Validation (RFECV). RFECV uses cross-validation scores to determine the optimal number of features, making the selection process more robust and stable [35]. |

| Unacceptable Training Time | The search space of feature combinations is too large, often due to a high number of initial features. | Adopt a hybrid feature selection framework. First, use a fast filter method (e.g., Random Forest importance scores) to eliminate clearly irrelevant features. Then, apply the wrapper method on the reduced feature set to refine the selection [33]. |

| Model Performance Decreased After Feature Selection | The greedy search strategy converged to a local optimum, or important interacting features were prematurely removed. | Replace plain Sequential Forward Selection with Sequential Floating Forward Selection (SFFS), which allows backtracking. After each addition, SFFS can drop previously selected features that no longer help, and dropped features remain eligible to be re-added if they become important later, so earlier greedy choices can be revised [32]. |

| Selected Features Lack Interpretability or Domain Relevance | The wrapper method is purely performance-driven and may select features that are spurious or difficult to interpret. | Incorporate domain knowledge into the process. Use the wrapper result as a starting point, then manually review and refine the subset based on scientific plausibility. Alternatively, use SHAP (SHapley Additive exPlanations) values to interpret the selected model's feature contributions [31]. |

Key Experimental Protocols & Workflows

Protocol: Recursive Feature Elimination with Cross-Validation (RFECV)

RFECV is a powerful wrapper-style method that is highly effective for high-dimensional data. It was successfully applied in thermal preference prediction models to identify a compact set of seven key features, improving the model's F1-score [35].

Detailed Methodology:

- Train Model: Train the chosen estimator (e.g., Random Forest, SVM) on the entire set of features.

- Rank Features: Obtain a feature importance score (e.g., Gini importance for Random Forest, coefficients for linear models).

- Prune Weakest: Remove the feature(s) with the lowest importance score(s).

- Cross-Validate: Retrain and evaluate the model performance with the remaining features using cross-validation.

- Iterate: Repeat steps 1-4 until the minimum allowed number of features (e.g., one) is reached.

- Select Optimal Subset: The optimal number of features is determined by the subset that achieved the highest average cross-validation score. This subset is selected for the final model [35].
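scikit-learn packages this loop as `RFECV`. The sketch below runs it on synthetic data with a Random Forest estimator. The dataset, the F1 scoring choice, and the hyperparameters are illustrative assumptions and are unrelated to the thermal preference study in [35].

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFECV
from sklearn.model_selection import StratifiedKFold

# Synthetic stand-in: 15 candidate features, 4 informative.
X, y = make_classification(n_samples=200, n_features=15, n_informative=4,
                           n_redundant=2, random_state=0)

selector = RFECV(
    estimator=RandomForestClassifier(n_estimators=50, random_state=0),
    step=1,                          # prune one feature per iteration
    cv=StratifiedKFold(n_splits=5),  # cross-validate every subset size
    scoring="f1",
    min_features_to_select=1,
)
selector.fit(X, y)

n_kept = selector.n_features_        # subset size with the best mean CV score
mask = selector.support_             # boolean mask of retained features
print("optimal number of features:", n_kept)
print("feature ranking (1 = kept):", selector.ranking_)
```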

Protocol: Two-Stage Hybrid Feature Selection

This protocol leverages the strengths of both filter and wrapper methods to balance efficiency and effectiveness. A study on classification problems used Random Forest for initial filtering, followed by an Improved Genetic Algorithm for wrapper-based selection, resulting in significant performance improvements [33].

Detailed Methodology:

- Stage 1 (Filter-based Pre-filtering):

- Train a Random Forest model on the high-dimensional dataset.

- Calculate and rank all features based on their Variable Importance Measure (VIM) scores, which reflect their contribution to reducing node impurity across all trees [33].

- Eliminate all features with VIM scores below a defined threshold (e.g., bottom 50%), creating a reduced feature subset.

- Stage 2 (Wrapper-based Refinement):

- Use a search algorithm (e.g., Genetic Algorithm, Sequential Selection) to find the optimal subset from the pre-filtered features.

- The learning algorithm's performance (e.g., classification accuracy) is used as the fitness function to guide the search.

- The output is the final, optimal feature subset.
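A compact sketch of the two-stage idea is shown below. Stage 1 follows the protocol (Random Forest importance, keep the top 50%). For Stage 2, scikit-learn's `SequentialFeatureSelector` is swapped in as the wrapper because scikit-learn does not ship a genetic algorithm. It is a greedy stand-in, not the Improved Genetic Algorithm used in [33]. The data and the target subset size of five are assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.linear_model import LogisticRegression

X, y = make_classification(n_samples=200, n_features=30, n_informative=5,
                           n_redundant=3, random_state=0)

# Stage 1: rank by Random Forest importance and keep the top 50%.
forest = RandomForestClassifier(n_estimators=100, random_state=0).fit(X, y)
order = np.argsort(forest.feature_importances_)[::-1]
stage1_idx = order[: X.shape[1] // 2]

# Stage 2: wrapper search on the reduced set, guided by CV accuracy.
sfs = SequentialFeatureSelector(
    LogisticRegression(max_iter=1000),
    n_features_to_select=5,
    direction="forward",
    scoring="accuracy",
    cv=5,
)
sfs.fit(X[:, stage1_idx], y)

# Map the wrapper's mask back to the original column indices.
final_idx = stage1_idx[sfs.get_support()]
print("stage 1 kept:", len(stage1_idx), "features")
print("final subset (original indices):", sorted(final_idx.tolist()))
```

Halving the candidate pool before the wrapper runs roughly halves the number of model fits per search step, which is where the efficiency gain of the hybrid approach comes from.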

Workflow Visualization: Generic Wrapper Method Logic

The following diagram illustrates the core iterative logic shared by most wrapper-based feature selection methods.

The Scientist's Toolkit: Research Reagents & Algorithms

This section details key computational "reagents" essential for implementing wrapper methods in a research environment.

| Item Name | Function/Brief Explanation | Example Use Case |

|---|---|---|

| Random Forest (RF) | An ensemble learning method that provides robust feature importance scores (VIM), useful for initial filtering or as the core estimator in RFECV [35] [33]. | Pre-filtering features based on Gini importance before applying a more computationally expensive wrapper [33]. |

| Recursive Feature Elimination with CV (RFECV) | A wrapper method that recursively removes features and uses cross-validation to determine the optimal feature set size, minimizing overfitting [35]. | Identifying a minimal set of key environmental and personal features for thermal preference prediction models [35]. |

| XGBoost / LightGBM | Advanced gradient boosting frameworks that inherently rank feature importance. They can be used for filtering or as high-performance estimators within wrapper methods [31]. | Processing self-associated and adjacent-associated features in Drug-Target Affinity (DTA) prediction to enhance model robustness [31]. |

| Sequential Forward Selection (SFS) | A greedy search wrapper that starts with no features and adds them one by one, selecting the feature that most improves model performance at each step [32]. | Building a feature subset for a compound stability model when the number of initial features is moderately large. |

| Genetic Algorithm (GA) | An evolutionary search algorithm that explores feature subsets based on a "fitness" function (model performance), effective at avoiding local optima [33]. | Global search for the optimal feature subset in a high-dimensional dataset after an initial filter has reduced the search space [33]. |

| SHAP (SHapley Additive exPlanations) | A unified measure of feature importance that explains the output of any machine learning model, aiding in the interpretation of the final selected feature set [31]. | Post-hoc analysis and validation of the features selected by a wrapper method to ensure they align with domain knowledge in drug discovery [31]. |

Fundamental Concepts: Your Technical FAQ

Q1: What are embedded feature selection methods and how do they differ from other techniques? Embedded methods perform feature selection during the model training process itself, integrating the selection into the learning algorithm. This contrasts with filter methods (which use statistical measures independent of the model) and wrapper methods (which use a separate search process with a predictive model). Embedded methods combine the advantages of both: they consider feature interactions like wrapper methods while maintaining the computational efficiency of filter methods [36] [37] [38].

Q2: Why should I use embedded methods for building thermodynamic stability models? Embedded methods offer several critical advantages for research applications like thermodynamic stability prediction:

- Built-in Efficiency: They perform feature selection and model training in a single step, eliminating the need for separate selection processes [36].

- Reduced Overfitting: By removing irrelevant features, they create more robust models that generalize better to new data [38].

- Model-Specific Optimization: They select features specifically tailored to the algorithm being trained [38].

- Computational Advantage: They are faster than wrapper methods while typically being more accurate than filter methods [36].

Q3: Which embedded methods are most relevant for high-dimensional experimental data? For high-dimensional data common in materials science and drug discovery, two approaches are particularly effective:

- LASSO (L1 Regularization): Excellent for linear models where feature coefficients can be shrunk to zero, effectively removing them from the model [36] [37].

- Tree-Based Algorithms: Including Random Forests and Gradient Boosting machines, which provide native feature importance measures based on how much each feature reduces impurity across all trees [37] [38].

Q4: My LASSO model removes all features when I increase regularization. How do I fix this? This indicates your regularization parameter (alpha or λ) is too high. The solution is systematic hyperparameter tuning:

- Use cross-validation to find the optimal alpha value that maximizes model performance without excessive feature removal.

- Start with a low alpha value and gradually increase while monitoring both model performance (e.g., MSE) and the number of retained features.

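- For scikit-learn implementations, the SelectFromModel class with LogisticRegression(C=0.5, penalty='l1') provides a practical approach for classification tasks [37]. Here C is the inverse of regularization strength, so a smaller C removes more features, and the L1 penalty requires a compatible solver such as liblinear.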
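The tuning steps above can be sketched as an alpha sweep followed by cross-validated selection. The synthetic data and the alpha grid are assumptions chosen for illustration; substitute your own descriptor matrix and a grid suited to the scale of your target.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso, LassoCV
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for stability descriptors (assumption: 30 features, 5 informative)
X, y = make_regression(n_samples=200, n_features=30, n_informative=5,
                       noise=5.0, random_state=0)
X = StandardScaler().fit_transform(X)

# Sweep alpha from weak to strong regularization and count surviving features
alphas = np.logspace(-3, 3, 7)
n_kept = []
for alpha in alphas:
    model = Lasso(alpha=alpha, max_iter=10000).fit(X, y)
    n_kept.append(int(np.sum(model.coef_ != 0)))
    print(f"alpha={alpha:g}: {n_kept[-1]} features retained")

# Let 5-fold cross-validation choose alpha instead of picking it by hand
cv_model = LassoCV(cv=5, random_state=0, max_iter=10000).fit(X, y)
n_kept_cv = int(np.sum(cv_model.coef_ != 0))
print(f"CV-selected alpha={cv_model.alpha_:.4g}, {n_kept_cv} features retained")
```

If the largest alpha in the sweep retains zero features, as it does here, that reproduces the symptom described in the question; the cross-validated alpha should sit well below that point.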

Q5: How reliable are feature importance scores from tree-based models with correlated features? Feature importance in tree-based models can be misleading with correlated features because the importance may be distributed among correlated variables. To address this:

- Combine embedded methods with Recursive Feature Elimination (RFE), which retrains the model after removing the least important features.

- If a correlated feature is removed, the importance of remaining correlated features typically increases in subsequent iterations, providing a more accurate assessment [37].

- Consider using permutation importance as a complementary approach, which measures feature importance by randomizing each feature and observing the performance drop [39].
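The effect of correlated features can be seen in a small experiment. The sketch below is an illustration under stated assumptions: two synthetic features (`x0`, `x1`) are noisy copies of one latent variable, `x2` is pure noise, and the manual drop-and-refit step stands in for a single RFE iteration.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
n = 500
latent = rng.normal(size=n)
x0 = latent + 0.1 * rng.normal(size=n)   # correlated pair: both track the latent variable
x1 = latent + 0.1 * rng.normal(size=n)
x2 = rng.normal(size=n)                  # irrelevant feature
X = np.column_stack([x0, x1, x2])
y = 3.0 * latent + 0.1 * rng.normal(size=n)

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# Impurity-based importance is shared between the two correlated features
full = RandomForestRegressor(n_estimators=200, random_state=0).fit(X_train, y_train)
print("Impurity importance, all features:  ", full.feature_importances_.round(3))

# Permutation importance on held-out data as a complementary view
perm = permutation_importance(full, X_test, y_test, n_repeats=10, random_state=0)
print("Permutation importance (test set):  ", perm.importances_mean.round(3))

# Drop x1 and refit: the importance of x0 rises because it no longer shares credit
reduced = RandomForestRegressor(n_estimators=200, random_state=0)
reduced.fit(X_train[:, [0, 2]], y_train)
print("Impurity importance after dropping x1:", reduced.feature_importances_.round(3))
```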

Experimental Protocols & Implementation

Protocol: Feature Selection with LASSO for Stability Prediction

This protocol implements LASSO regularization to identify key descriptors for thermodynamic stability models, particularly relevant for inorganic compound discovery [19].
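The source does not list the individual steps, so the following is one plausible sketch of such a protocol rather than the procedure used in [19]: standardize the descriptors, fit a cross-validated LASSO, and keep the features with nonzero coefficients. The synthetic data and the `descriptor_i` names are hypothetical placeholders.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import LassoCV
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical data: 30 descriptors, continuous stability target
X, y = make_regression(n_samples=300, n_features=30, n_informative=6,
                       noise=10.0, random_state=42)
feature_names = np.array([f"descriptor_{i}" for i in range(X.shape[1])])
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42)

# Step 1: scale (L1 penalties are scale-sensitive); Step 2: fit LASSO with CV-tuned alpha
pipeline = Pipeline([
    ("scale", StandardScaler()),
    ("select", SelectFromModel(LassoCV(cv=5, random_state=42, max_iter=10000))),
])
pipeline.fit(X_train, y_train)

# Step 3: read off the retained descriptors
mask = pipeline.named_steps["select"].get_support()
lasso = pipeline.named_steps["select"].estimator_
print(f"alpha chosen by CV: {lasso.alpha_:.4g}")
print(f"{int(mask.sum())} of {len(mask)} descriptors kept:", feature_names[mask])

# Step 4: check held-out performance and produce the reduced matrix
test_r2 = lasso.score(pipeline.named_steps["scale"].transform(X_test), y_test)
X_test_reduced = pipeline.transform(X_test)
print(f"held-out R^2: {test_r2:.3f}, reduced shape: {X_test_reduced.shape}")
```

Fitting the scaler and the selector inside one pipeline keeps test-set statistics out of the selection step.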

Protocol: Tree-Based Feature Importance for Compound Screening

This methodology leverages ensemble tree models to rank feature importance for high-throughput screening of stable compounds [37] [38].
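As with the LASSO protocol, the detailed steps are not given in the source. A minimal sketch, assuming a binary stable/unstable label and synthetic descriptors, might rank features by impurity-based importance, keep those above the median, and confirm that the reduced model still discriminates well.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectFromModel
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

# Hypothetical screening set: 30 descriptors, binary stability label
X, y = make_classification(n_samples=500, n_features=30, n_informative=8,
                           n_redundant=4, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

# Fit the forest and rank features by impurity-based importance
forest = RandomForestClassifier(n_estimators=100, max_depth=10, random_state=0)
forest.fit(X_train, y_train)
ranking = np.argsort(forest.feature_importances_)[::-1]
print("Top 5 feature indices:", ranking[:5])

# Keep features whose importance is at or above the median importance
selector = SelectFromModel(forest, prefit=True, threshold="median")
X_train_sel = selector.transform(X_train)
X_test_sel = selector.transform(X_test)

# Retrain on the reduced set and evaluate on held-out data
reduced = RandomForestClassifier(n_estimators=100, max_depth=10, random_state=0)
reduced.fit(X_train_sel, y_train)
auc = roc_auc_score(y_test, reduced.predict_proba(X_test_sel)[:, 1])
print(f"{X_train_sel.shape[1]} features kept, test AUC = {auc:.3f}")
```

The median threshold is an arbitrary illustrative choice; a fixed `max_features` or a cross-validated cutoff may suit a real screening campaign better.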

Workflow Visualization

[Figure: Embedded Methods Selection Process]

[Figure: Thermodynamic Stability Modeling Pipeline]

Research Reagent Solutions & Materials

Table 1: Essential Computational Tools for Embedded Feature Selection

| Tool/Resource | Function | Implementation Example |
|---|---|---|
| scikit-learn SelectFromModel | Meta-transformer for selecting features based on importance weights | from sklearn.feature_selection import SelectFromModel |
| Lasso Regression (L1) | Linear regression with L1 penalty for sparse feature selection | Lasso(alpha=0.1, random_state=42) |
| Logistic Regression (L1) | Classification with L1 penalty for feature selection | LogisticRegression(penalty='l1', solver='liblinear', C=0.5) |
| Random Forest Classifier | Ensemble method providing impurity-based feature importance | RandomForestClassifier(n_estimators=100) |
| StandardScaler | Standardizes features by removing mean and scaling to unit variance | StandardScaler().fit(X_train) |
| Matplotlib | Visualization of feature importance rankings | plt.barh(features, importances) |
| Materials Project Database | Source of compositional and stability data for training | API access to formation energies and structures |

Table 2: Performance Comparison of Embedded Methods for Stability Prediction

| Method | Key Parameters | Features Selected | AUC Score | Computational Cost |
|---|---|---|---|---|
| LASSO (L1) | alpha=0.01 | 14 of 30 | 0.945 | Low |
| Random Forest | n_estimators=100, max_depth=10 | 8 of 30 | 0.962 | Medium |
| ElasticNet | alpha=0.01, l1_ratio=0.5 | 16 of 30 | 0.951 | Low |
| Ensemble ECSG | Stacked generalization of multiple models | 22 of 30 | 0.988 [19] | High |

Advanced Troubleshooting Guide

Q6: How do I handle different data types (continuous, categorical) in embedded methods?

- For LASSO implementations, ensure all features are numerically encoded. Use one-hot encoding for categorical variables but be aware this expands the feature space.

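- Tree-based models can split on both continuous and categorical variables in principle, but many implementations, including scikit-learn's, still require categorical variables to be numerically encoded before training.

- When using impurity-based feature importance from tree models, compare scores across data types with caution: these scores tend to favor continuous and high-cardinality features over binary or low-cardinality ones.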

Q7: What metrics should I use to evaluate if my feature selection improved the model? Beyond standard accuracy metrics, consider:

- AUC-ROC: Particularly important for imbalanced datasets common in materials discovery.

- Feature Set Stability: Measure how consistent the selected features are across different data samples.

- Computational Efficiency: Track training and inference time reduction with the reduced feature set.

- Model Interpretability: Assess whether the selected features align with domain knowledge in thermodynamics.
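Feature set stability can be quantified in several ways; one simple option, assumed here for illustration, is the mean pairwise Jaccard similarity between the feature subsets selected on bootstrap resamples. The data, the LASSO selector, and `alpha=1.0` are placeholders.

```python
from itertools import combinations

import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso
from sklearn.utils import resample

X, y = make_regression(n_samples=200, n_features=30, n_informative=5,
                       noise=5.0, random_state=0)

def selected_features(X, y, alpha=1.0):
    """Return the indices of features with nonzero LASSO coefficients."""
    coef = Lasso(alpha=alpha, max_iter=10000).fit(X, y).coef_
    return frozenset(np.flatnonzero(coef).tolist())

def jaccard(a, b):
    """Jaccard similarity of two sets; two empty sets count as identical."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

# Repeat the selection on 10 bootstrap resamples of the data
subsets = []
for seed in range(10):
    X_boot, y_boot = resample(X, y, random_state=seed)
    subsets.append(selected_features(X_boot, y_boot))

# Average similarity over all pairs: 1.0 means identical subsets every time
scores = [jaccard(a, b) for a, b in combinations(subsets, 2)]
stability = float(np.mean(scores))
print(f"Mean pairwise Jaccard stability: {stability:.3f}")
```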

Q8: My embedded method selects different features each time I run it. Is this normal? Some variability is expected, particularly when:

- Using algorithms with inherent randomness (e.g., Random Forests with different random states).

- Working with highly correlated features where multiple subsets may provide similar performance.

- Having small sample sizes where bootstrap sampling creates significant variation.

Solutions: Increase the sample size if possible, use a fixed random seed for reproducibility, and consider running the selection process multiple times to identify consistently selected features. For critical applications, recursive feature elimination with cross-validation provides more stable results [37].

FAQ: Troubleshooting Ensemble Models for Thermodynamic Stability

FAQ 1: My ensemble model for predicting compound stability is overfitting, showing high performance on training data but poor generalization to new chemical spaces. What steps can I take?

- Problem Diagnosis: This often occurs when base models are too complex or when the meta-learner is trained on the same data as the base models without proper separation.

- Solution:

  - Implement Cross-Validation for Stacking: Ensure that the predictions from your base models used to train the meta-learner are generated from out-of-fold samples. This prevents the meta-learner from learning the noise already seen by the base models. Use techniques like k-fold cross-validation during the base model prediction phase [40].

  - Promote Model Diversity: The strength of ensemble methods lies in combining diverse, uncorrelated models. Intentionally select base models that rely on different domain knowledge or algorithmic approaches. For example, the ECSG framework successfully combined a graph neural network (Roost), a model based on elemental properties (Magpie), and a novel Electron Configuration Convolutional Neural Network (ECCNN) [19].

  - Regularize the Meta-Learner: Apply regularization techniques (e.g., L1 or L2) to the meta-learning algorithm itself to prevent it from over-relying on any single base model and to keep the weights generalizable.
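scikit-learn's `StackingClassifier` covers all three remedies in one object: it builds the meta-features from out-of-fold predictions (`cv=5`), accepts heterogeneous base models, and lets the meta-learner carry its own regularization. The base models below are generic stand-ins chosen for this sketch, not the Roost, Magpie, and ECCNN models from [19].

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import (GradientBoostingClassifier, RandomForestClassifier,
                              StackingClassifier)
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=600, n_features=20, n_informative=8,
                           random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

# Diverse base learners: bagged trees, boosted trees, distance-based
base_models = [
    ("forest", RandomForestClassifier(n_estimators=100, random_state=0)),
    ("boosting", GradientBoostingClassifier(random_state=0)),
    ("knn", make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=15))),
]

# cv=5: the meta-learner only ever sees out-of-fold base predictions.
# LogisticRegression applies an L2 penalty by default; C=0.5 strengthens it.
stack = StackingClassifier(
    estimators=base_models,
    final_estimator=LogisticRegression(C=0.5),
    cv=5,
    stack_method="predict_proba",
)
stack.fit(X_train, y_train)

auc = roc_auc_score(y_test, stack.predict_proba(X_test)[:, 1])
print(f"Stacked ensemble test AUC: {auc:.3f}")
```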

FAQ 2: I am working with a limited dataset of experimentally measured thermodynamic stability. How can I build a robust ensemble model that is sample-efficient?

- Problem Diagnosis: Many complex models, like deep neural networks, require large amounts of data. With limited data, these models cannot learn effectively.

- Solution:

  - Prioritize Sample-Efficient Base Models: Choose or design base models that are known to perform well with smaller datasets. Research has shown that ensembles based on electron configuration can achieve state-of-the-art performance using only a fraction of the data required by other models; in one case, as little as one-seventh [19].

  - Leverage Feature Reduction: Before building the ensemble, use feature selection methods to reduce dimensionality and noise. This helps models learn the underlying patterns more efficiently with fewer samples.

  - Utilize Simple Meta-Learners: A simpler meta-learner, such as linear regression or logistic regression, is less prone to overfitting on a small dataset than a complex one. The key is the diversity and quality of the base predictions fed into it.

FAQ 3: My ensemble model's performance has plateaued. How can I further reduce bias and improve predictive accuracy for new compound stability?

- Problem Diagnosis: The current set of base models may have correlated errors or may be missing a key perspective on the data.

- Solution:

  - Incorporate Physicochemically Meaningful Features: Integrate base models or features that capture fundamental physical principles. The introduction of an electron configuration-based model (ECCNN) provided a new, less biased data perspective that complemented traditional atomic property and interatomic interaction models, leading to a more robust super learner [19].

  - Analyze Residual Errors: Examine the cases where your ensemble fails. If a specific type of compound (e.g., certain perovskite structures) is consistently mispredicted, consider developing a specialized base model or feature set to address that weakness.

  - Explore Advanced Stacking Techniques: Instead of using a single meta-learner, you can create a hierarchy of meta-learners. Furthermore, ensure you are using a heterogeneous set of base learning algorithms (e.g., decision trees, support vector machines, neural networks) to maximize diversity [40] [41].

Experimental Protocol: Implementing a Stacked Generalization Framework

The following protocol outlines the process for building a stacked ensemble model, based on the ECSG framework, for predicting thermodynamic stability [19].

Objective: To create a robust predictive model for the decomposition energy (∆Hd) of inorganic compounds by combining multiple, diverse machine learning models via stacked generalization.

Materials & Computational Tools:

- Dataset: A curated dataset of inorganic compounds with known thermodynamic stability labels (e.g., stable/unstable) or continuous ∆Hd values, sourced from databases like the Materials Project (MP) or JARVIS [19].

- Computing Environment: Python programming language with key libraries: scikit-learn for base models and meta-learning, PyTorch or TensorFlow for neural network-based base models (e.g., ECCNN), and XGBoost for gradient-boosted trees.

- Feature Sets: Prepared input features for different base models, including elemental fractions, Magpie features, graph representations of crystals, and encoded electron configuration matrices.

Procedure:

Data Preparation and Splitting:

- Partition the dataset into a Training Set (e.g., 80%) and a hold-out Test Set (e.g., 20%). The Test Set will be used for the final model evaluation and must not be used during training or validation of the base models or meta-learner.

Base Model Training (Level-0 Models):

- Train multiple, diverse base models on the Training Set. The following table summarizes three complementary models used in the ECSG framework [19]:

| Base Model | Input Features | Algorithm | Key Domain Knowledge |
|---|---|---|---|
| Magpie [19] | Statistical features (mean, deviation, range) of elemental properties (e.g., atomic radius, electronegativity). | Gradient-Boosted Regression Trees (XGBoost) | Atomic-scale properties and their statistical variations across a compound. |
| Roost [19] | Chemical formula represented as a graph of atoms (nodes) and bonds (edges). | Graph Neural Network (GNN) with Attention | Interatomic interactions and relational structure within a crystal. |
| ECCNN [19] | Matrix encoding the electron configuration (energy levels, electron counts) of constituent elements. | Convolutional Neural Network (CNN) | Fundamental electronic structure, which is the basis for quantum mechanical calculations. |

Generate Cross-Validated Predictions for Meta-Features:

- To create the training dataset for the meta-learner, perform k-fold cross-validation (e.g., k=5 or k=10) on the original Training Set for each base model.

- For each fold, train a base model on the k-1 training folds and use it to generate predictions on the validation fold. After completing all folds, you will have a full set of out-of-sample predictions for the entire Training Set.

- These predictions, often called "meta-features," are the inputs for the meta-learner. This process is visualized in the workflow diagram below.

Train the Meta-Learner (Level-1 Model):

- Use the cross-validated predictions from all base models as the new feature matrix.

- Train a meta-learner on this new matrix, with the original target values (stability labels or ∆Hd) as the labels.

- Common choices for meta-learners include linear models, logistic regression, or simple neural networks. The ECSG framework uses a super learner trained via stacked generalization [19].

Final Model Evaluation:

- Train each base model on the entire original Training Set.

- Use these fully-trained base models to make predictions on the hold-out Test Set.

- Feed these test set predictions into the trained meta-learner to generate the final ensemble predictions.

- Evaluate the final performance using the hold-out Test Set with metrics like Area Under the Curve (AUC) for classification or Root Mean Square Error (RMSE) for regression.
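The procedure above can be sketched end to end with scikit-learn. This is an illustration of the stacking mechanics only: the three base models are lightweight stand-ins (assumptions) for Magpie, Roost, and ECCNN, which require their own feature pipelines and deep learning frameworks, and the data are synthetic.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import (StratifiedKFold, cross_val_predict,
                                     train_test_split)
from sklearn.neighbors import KNeighborsClassifier

# Data preparation and splitting: 80% training, 20% hold-out test
X, y = make_classification(n_samples=600, n_features=20, n_informative=8,
                           random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

# Level-0 models (stand-ins for the ECSG base models)
base_models = {
    "boosting": GradientBoostingClassifier(random_state=0),
    "forest": RandomForestClassifier(n_estimators=100, random_state=0),
    "knn": KNeighborsClassifier(n_neighbors=15),
}

# Out-of-fold predictions on the training set become the meta-features
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
meta_train = np.column_stack([
    cross_val_predict(model, X_train, y_train, cv=cv,
                      method="predict_proba")[:, 1]
    for model in base_models.values()
])

# Level-1 model: a simple meta-learner trained on the meta-features
meta_learner = LogisticRegression().fit(meta_train, y_train)

# Refit each base model on the full training set, then predict the test set
meta_test = np.column_stack([
    model.fit(X_train, y_train).predict_proba(X_test)[:, 1]
    for model in base_models.values()
])

# Final evaluation on the hold-out test set
ensemble_auc = roc_auc_score(y_test, meta_learner.predict_proba(meta_test)[:, 1])
print(f"Ensemble test AUC: {ensemble_auc:.3f}")
for name, column in zip(base_models, meta_test.T):
    print(f"  {name:9s} test AUC: {roc_auc_score(y_test, column):.3f}")
```

`cross_val_predict` clones each model per fold, so the meta-features never come from a model that saw the row it is predicting; the test set is touched only in the last two steps.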

The workflow for this stacked generalization process is as follows:

Diagram 1: Stacked Generalization Workflow. This shows the process of using k-fold cross-validation to create meta-features from base models for training the meta-learner without data leakage.

Table 1: Quantitative Performance of the ECSG Ensemble Model vs. Base Models [19]

| Model | AUC (Stability Prediction) | Key Advantage / Note |
|---|---|---|
| ECSG (Ensemble) | 0.988 | Achieved highest accuracy by combining strengths and reducing individual model bias. |
| ECCNN (Base Model) | Not Reported | Introduced electron configuration features, requiring only 1/7 of data to match other models' performance. |