Extracting Synthesis Procedures with Natural Language Processing: A Guide for Drug Development and Biomedical Research

Abstract

This article provides a comprehensive overview of Natural Language Processing (NLP) methodologies for the automated extraction of synthesis procedures from unstructured text. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of NLP, details specific techniques like Named Entity Recognition and Relation Extraction for identifying chemical entities and processes, and addresses common challenges such as data sparsity and ambiguity. The content further guides the evaluation and validation of NLP models in biomedical contexts, covering performance metrics, comparative analysis of tools, and strategies for integration into existing research pipelines to accelerate discovery and development.

The Foundation of NLP in Scientific Text Mining: Unlocking Chemical Synthesis Data

The Role of NLP in Processing Unstructured Scientific Text

The application of Natural Language Processing (NLP) and Large Language Models (LLMs) to unstructured scientific text represents a paradigm shift in the acceleration of materials and drug discovery research. These technologies enable the automatic construction of large-scale materials datasets from published literature, which traditionally required time-consuming manual curation [1]. This document details practical methodologies for implementing NLP-driven information extraction systems, specifically targeting the retrieval of synthesis procedures, compositions, and properties from scientific documents. The protocols outlined herein are designed for researchers and professionals aiming to integrate these advanced computational tools into their research workflows, thereby enhancing the efficiency and scope of data-driven scientific discovery.

The overwhelming majority of materials and chemical knowledge is documented within peer-reviewed scientific literature. Manually collecting and organizing this data from publications and laboratory experiments is a recognized bottleneck that severely limits the efficiency of large-scale data accumulation [1]. Automated information extraction has thus become a necessity for modern research.

NLP, a subfield of artificial intelligence, provides the technological foundation for this automation. Its development has evolved from handcrafted rules in the 1950s to machine learning in the late 1980s, and more recently to deep learning and transformer-based models that underpin today's LLMs [1]. In scientific contexts, the primary tasks involve Named Entity Recognition (NER) for identifying key terms and Relationship Extraction for understanding how these terms are connected [2]. The emergence of LLMs like GPT, Falcon, and BERT has further advanced these capabilities, offering unprecedented general "intelligence" for processing complex scientific text [1].

Core NLP Methodologies for Scientific Text

Foundational NLP Concepts

- Word Embeddings: These are dense, low-dimensional vector representations of words (e.g., Word2Vec, GloVe) that preserve contextual word similarity, allowing models to understand linguistic meaning and semantic relationships [1].

- Attention Mechanism: The core operation of the Transformer architecture, this mechanism allows the model to weigh the importance of different words in a sequence when processing information, which is crucial for capturing long-range dependencies in scientific text [1].

- Named Entity Recognition (NER): A critical NLP task that involves identifying and classifying key information entities—such as material names, properties, and synthesis parameters—within unstructured text. This can be achieved through ontology-based tagging or machine learning models [2].

- Knowledge Graphs (KGs): These structured representations integrate extracted entities and their relationships, facilitating data manipulation, extraction, and the discovery of new connections within scientific domains [2].
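To make the attention idea above concrete, here is a minimal sketch of scaled dot-product attention for a single query vector, written with the standard library only. The 2-dimensional vectors are hypothetical toy values, not real token embeddings, and production models apply this over learned projections and many heads.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    dim = len(query)
    # Similarity of the query to every key, scaled by sqrt(dimension).
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in keys]
    weights = softmax(scores)
    # Output is the attention-weighted sum of the value vectors.
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]
    return weights, output

# Hypothetical toy vectors standing in for three token representations.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
weights, output = attention([1.0, 1.0], keys, values)
```

The third key is the closest match to the query, so it receives the largest weight; the weights always sum to one.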

From Traditional NLP to Large Language Models

Traditional NLP pipelines for information extraction relied on custom-built models trained on domain-specific, annotated datasets. The advent of LLMs has introduced more flexible approaches:

- In-context Learning: Enables models to perform tasks with no examples (zero-shot) or just a few examples (few-shot), drastically reducing the need for large, labeled datasets and allowing for rapid domain adaptation [3].

- Prompt Engineering: The practice of skillfully crafting input instructions to guide the model's text generation. A well-designed prompt is essential for obtaining high-quality, relevant, and inventive outputs from LLMs [1].

Application Protocols

This section provides a detailed, step-by-step guide for implementing an NLP system to extract synthesis information from scientific literature.

Protocol 1: LLM-Based Information Extraction for Synthesis Data

Objective: To automatically extract structured synthesis procedures and parameters from scientific PDF documents using a pre-trained Large Language Model.

Table 1: Key Research Reagents & Computational Tools

| Item Name | Function/Description | Example/Note |
|---|---|---|
| Pre-trained LLM | Core engine for natural language understanding and generation. | Models such as Qwen 2.5 72B, Llama 3.3 70B, or Gemini 1.5 Flash [3]. |
| Scientific Corpus | Domain-specific collection of text data for processing. | A set of PDFs from target conferences/journals (e.g., BPM conferences) [3]. |
| Prompt Template | Structured input instruction to guide the LLM's extraction task. | Contains context, instruction, and few-shot examples (see Table 2) [3]. |
| Knowledge Graph | Structured data model to store and link extracted entities. | For integrating extracted synthesis data into a findable, accessible, interoperable, and reusable (FAIR) format [3]. |

Methodology:

- Document Preprocessing: Convert PDF documents into plain text. Clean the text to remove non-content elements like page headers and footers.

- Prompt Construction: Develop a prompt that includes the following elements [3]:

  - System Message/Instruction: Define the AI's role and the task (e.g., "You are an expert materials scientist extracting synthesis data...").

  - Context: Provide the full text of the preprocessed scientific paper.

  - Query/Task Definition: Pose specific, predefined questions (e.g., "What is the precursor material?", "What is the sintering temperature?").

  - Few-Shot Examples (Optional but Recommended): Include 1-3 examples of a document snippet paired with the ideal, manually crafted answer for each query. This aligns the model with the desired output style and format [3].

- Model Execution: Submit the constructed prompt to the LLM via its API or local inference endpoint.

- Output Parsing: Receive the model's response, which should include the extracted information based on the queries. The output can be structured in JSON or a similar format for easy integration into databases.

- Validation & Integration: Manually validate a subset of the extractions against the original text to assess accuracy. Integrate the validated, structured data into a database or knowledge graph.

Table 2: Example Prompt Structure for Synthesis Extraction

| Prompt Component | Example Content |
|---|---|
| Instruction | "Extract all materials synthesis information from the provided text. Format your answer as a JSON object." |
| Document Text | "The powder was sintered at 1450°C for 4 hours in an air atmosphere..." |
| Query/Extraction Target | "Extract the sintering temperature, duration, and atmosphere." |
| Few-Shot Example (Input) | "The sample was annealed at 800°C for 2h." |
| Few-Shot Example (Output) | {"annealing_temperature": "800", "annealing_duration": "2", "atmosphere": null} |
| Expected Model Output | {"sintering_temperature": "1450", "sintering_duration": "4", "atmosphere": "air"} |
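The prompt components in Table 2 can be assembled and the reply parsed with a few lines of Python. This is a minimal sketch, not a reference implementation: `build_prompt` and `parse_response` are hypothetical helper names, the prompt layout is one possible choice, and the model call is replaced by a hard-coded stand-in reply so that nothing here depends on a particular API.

```python
import json

def build_prompt(instruction, examples, document_text, query):
    """Join instruction, few-shot examples, document text and query into one prompt."""
    parts = [instruction, ""]
    for snippet, answer in examples:
        parts.append(f"Text: {snippet}")
        parts.append(f"Answer: {json.dumps(answer)}")
        parts.append("")
    parts.append(f"Text: {document_text}")
    parts.append(f"Task: {query}")
    parts.append("Answer:")
    return "\n".join(parts)

def parse_response(raw):
    """Return the JSON object embedded in a model reply, or None if none parses."""
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end <= start:
        return None
    try:
        return json.loads(raw[start:end + 1])
    except json.JSONDecodeError:
        return None

examples = [(
    "The sample was annealed at 800°C for 2h.",
    {"annealing_temperature": "800", "annealing_duration": "2", "atmosphere": None},
)]
prompt = build_prompt(
    "Extract all materials synthesis information from the provided text. "
    "Format your answer as a JSON object.",
    examples,
    "The powder was sintered at 1450°C for 4 hours in an air atmosphere...",
    "Extract the sintering temperature, duration, and atmosphere.",
)

# Stand-in for a real model reply; no API call is made in this sketch.
mock_reply = 'Sure. {"sintering_temperature": "1450", "sintering_duration": "4", "atmosphere": "air"}'
parsed = parse_response(mock_reply)
```

Returning `None` on a malformed reply, rather than raising, lets a batch job log the failure and move on to the next document.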

Protocol 2: Fine-Tuning an LLM for Domain-Specific Extraction

Objective: To specialize a general-purpose LLM for highly accurate extraction of synthesis information in a specific sub-field (e.g., solid-state chemistry or polymer science).

Methodology:

- Dataset Curation: Create a high-quality dataset of scientific text snippets paired with corresponding, manually annotated structured data for synthesis parameters. This dataset should contain several hundred to a few thousand examples.

- Model Selection: Choose a suitable open-source base LLM (e.g., Llama 3 or Qwen 2.5).

- Fine-Tuning Setup:

  - Parameter-Efficient Methods: Utilize techniques like LoRA (Low-Rank Adaptation) to fine-tune the model efficiently, reducing computational cost and time.

  - Training Configuration: Set hyperparameters (learning rate, batch size) and train the model on the curated dataset.

- Evaluation: Benchmark the fine-tuned model's performance against the base model and zero-shot/few-shot approaches on a held-out test set. Metrics should include precision, recall, and F1-score for the entities of interest.
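A short calculation shows why LoRA reduces fine-tuning cost. LoRA freezes a weight matrix of shape d_out x d_in and trains two low-rank factors of shapes d_out x r and r x d_in, so the trainable parameter count per adapted matrix is r * (d_in + d_out). The layer size and rank below are illustrative assumptions, not values taken from any specific model.

```python
def full_params(d_in, d_out):
    """Parameters updated when fine-tuning the whole weight matrix."""
    return d_in * d_out

def lora_trainable_params(d_in, d_out, rank):
    """Parameters in the two low-rank factors that LoRA trains instead."""
    return rank * (d_in + d_out)

# Hypothetical projection layer: 4096 x 4096 with LoRA rank 8.
full = full_params(4096, 4096)
lora = lora_trainable_params(4096, 4096, rank=8)
reduction = full // lora
```

For this hypothetical layer the adapter trains 65,536 parameters instead of 16,777,216, a 256-fold reduction for that matrix.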

Technical Specifications & Validation

Performance Metrics for Information Extraction

The following metrics are essential for quantitatively evaluating the performance of an NLP-based information extraction system.

Table 3: Quantitative Performance Metrics for NLP Systems

| Metric | Definition | Target Benchmark |
|---|---|---|
| Precision | The percentage of extracted entities that are correct. | >90% for critical data (e.g., chemical formulas, temperatures) [1]. |
| Recall | The percentage of all correct entities in the text that were successfully extracted. | >85% to ensure comprehensive data gathering [1]. |
| F1-Score | The harmonic mean of precision and recall. | >0.87, indicating a good balance [3]. |
| Domain Adaptation Speed | The effort required to adapt a model to a new scientific sub-domain. | Minimal data (1-3 examples per entity type for few-shot learning) [3]. |
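The first three metrics in Table 3 can be computed directly from the set of extracted entities and a manually annotated gold set. The sketch below assumes exact matching on (text, label) pairs, which is one common convention; the example entities are invented for illustration.

```python
def precision_recall_f1(predicted, gold):
    """Exact-match precision, recall and F1 over sets of (text, label) pairs."""
    predicted, gold = set(predicted), set(gold)
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Hypothetical annotations for one sentence.
gold = {("1450", "TEMPERATURE"), ("4 hours", "TIME"), ("air", "ATMOSPHERE"), ("powder", "MATERIAL")}
predicted = {("1450", "TEMPERATURE"), ("4 hours", "TIME"), ("air", "ATMOSPHERE")}
precision, recall, f1 = precision_recall_f1(predicted, gold)
```

Here every extracted entity is correct (precision 1.0) but one gold entity was missed (recall 0.75), which is the kind of imbalance the F1 score is meant to expose.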

Comparative Analysis of LLM Performance

Different LLMs offer varying trade-offs between accuracy, cost, and speed. The selection of a model should be guided by the specific requirements of the project.

Table 4: Technical Evaluation of LLMs for Scientific IE

| LLM Model | Key Features | Performance Notes |
|---|---|---|
| Gemini 1.5 Flash | Optimized for speed, large context window. | Efficient for processing full papers; suitable for rapid prototyping [3]. |
| Llama 3.3 70B | Open-source, strong general performance. | High accuracy on complex reasoning tasks; requires significant computational resources [3]. |
| Qwen 2.5 72B | Open-source, multilingual capabilities. | Competitive performance with proprietary models; good for specialized domains [3]. |

The Scientist's Toolkit

A successful implementation relies on a suite of computational tools and resources.

Table 5: Essential Tools for NLP-Driven Scientific Research

| Tool Category | Example Tools | Application in Research |
|---|---|---|
| LLM Access & APIs | Google AI Studio (Gemini), OpenRouter (for various models), OpenAI API | Provides direct access to powerful pre-trained models for inference. |
| Open-Source Platforms | Open Research Knowledge Graph (ORKG), Semantic Scholar, Elicit | Platforms for structuring, sharing, and discovering scientific knowledge [3]. |
| Development Frameworks | Gradio (for demo UIs), Hugging Face Transformers, LangChain | Accelerates the development and deployment of NLP applications and user interfaces [3]. |

The application of Natural Language Processing (NLP) is transforming chemical and pharmaceutical research. These technologies enable the automated mining of scientific literature and patent repositories to identify and structure complex chemical synthesis information. This process converts unstructured textual descriptions of experimental procedures into standardized, machine-readable data, accelerating the drug discovery pipeline. The integration of AI, particularly NLP and machine learning, is recognized for its potential to drastically shorten early-stage research and development timelines, compressing discovery processes that traditionally took years into months or even weeks [4] [5]. The following sections detail the core NLP concepts and provide actionable protocols for implementing these techniques in a research setting focused on extracting synthesis knowledge.

Foundational NLP Concepts and Their Research Applications

The journey from raw text to meaningful chemical insight involves a sequence of NLP tasks. Each concept plays a distinct role in deciphering the language used to describe synthesis procedures.

Tokenization is the initial and fundamental step of segmenting a continuous string of text into smaller units called tokens, which are typically words, subwords, or punctuation. In the context of chemical literature, specialized tokenizers are required to correctly handle complex chemical nomenclature (e.g., "1-(2-chloroethyl)-3-cyclohexyl-1-nitrosourea"), units of measurement ("mmol", "°C"), and numerical expressions ("stirred for 2 h").
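As an illustration of why chemical text needs its own tokenization rules, the following minimal sketch splits on whitespace, keeps a systematic chemical name as one token, and separates a fused number and unit such as "60°C". The rules are simplifying assumptions for this example only; they do not handle parenthesised quantities or every unit, and dedicated chemical tokenizers cover far more cases.

```python
import re

TRAILING_PUNCT = ".,;:"
# A number immediately followed by a unit, e.g. "60°C" or "5mmol".
NUMBER_UNIT = re.compile(r"^(\d+(?:\.\d+)?)(°C|[A-Za-zµ%]+)$")

def tokenize(text):
    """Whitespace tokenizer that keeps chemical names whole and splits number+unit."""
    tokens = []
    for chunk in text.split():
        # Peel sentence punctuation off the end and keep it as separate tokens.
        trailing = []
        while len(chunk) > 1 and chunk[-1] in TRAILING_PUNCT:
            trailing.append(chunk[-1])
            chunk = chunk[:-1]
        match = NUMBER_UNIT.match(chunk)
        if match:
            tokens.extend(match.groups())  # "60°C" becomes "60", "°C"
        else:
            tokens.append(chunk)           # hyphens and brackets inside names are untouched
        tokens.extend(reversed(trailing))
    return tokens

tokens = tokenize(
    "1-(2-chloroethyl)-3-cyclohexyl-1-nitrosourea was stirred at 60°C for 2 h."
)
```

A general-purpose tokenizer that splits on hyphens and parentheses would break the compound name into many fragments, which is the failure this sketch avoids.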

Part-of-Speech (POS) Tagging involves assigning grammatical labels to each token, such as noun, verb, or adjective. For synthesis extraction, POS tagging helps identify key entities and actions. Verbs like "stirred", "heated", and "added" often signify actions in a synthesis protocol, while nouns frequently correspond to chemical compounds ("acetone"), apparatus ("round-bottom flask"), or quantities ("2.5 grams").

Named Entity Recognition (NER) is critical for information extraction, as it identifies and classifies tokens into predefined categories. For synthesis procedures, a custom NER model must be trained to recognize domain-specific entities, including:

- CHEMICAL: Names of compounds, solvents, and reagents.

- QUANTITY: Numerical values and units.

- APPARATUS: Laboratory equipment.

- REACTION: Specific chemical processes.

- CONDITION: Parameters like temperature and time.

Syntactic Parsing analyzes the grammatical structure of a sentence to establish relationships between words. This helps in understanding the roles of different entities in a sentence; for example, determining the subject performing an action (the chemist), the action itself (the verb), and the object being acted upon (a specific chemical). A dependency parse can link a quantity to its corresponding chemical, even if they are separated by several words in the sentence.

Semantic Role Labeling (SRL) takes syntactic analysis further by identifying the semantic roles of sentence constituents, such as "Who did what to whom, when, where, and how?" In a phrase like "The mixture was then slowly added to ice water," SRL would label "the mixture" as the Theme (what was added), "added" as the Predicate (the action), and "ice water" as the Goal (where it was added). The adverb "slowly" might be labeled as Manner.

Semantic Understanding & Relationship Extraction moves beyond sentence structure to capture the actual meaning and relationships between extracted entities. This involves linking entities to form triples, such as (Compound-A, reactsWith, Compound-B) or (Reaction, hasTemperature, 75°C). This final step is what ultimately transforms disconnected text into a structured, executable synthesis protocol, forming a knowledge graph of chemical procedures.

Table 1: Core NLP Concepts and Their Functions in Synthesis Extraction

| NLP Concept | Primary Function | Application Example in Synthesis Text |
|---|---|---|
| Tokenization | Text segmentation into units | Separates "1-(2-chloroethyl)" into manageable tokens |
| POS Tagging | Grammatical labeling | Tags "stirred" as a verb (action) and "flask" as a noun (apparatus) |
| Named Entity Recognition (NER) | Identification and classification of key terms | Labels "THF" as CHEMICAL and "60°C" as CONDITION |
| Syntactic Parsing | Uncovering grammatical relationships | Links "0.5 g" to "catalyst" as a modifying phrase |
| Semantic Role Labeling (SRL) | Identifying semantic roles | Identifies "over 30 minutes" as the Duration of the action "add" |
| Relationship Extraction | Establishing connections between entities | Creates a triple: (Precursor, yields, Product) |

Quantitative Data on NLP Model Performance

The effectiveness of an NLP pipeline is measured by standard information retrieval metrics. When evaluating models for tasks like Named Entity Recognition (NER) in chemical texts, the following metrics are most relevant. Precision indicates how many of the extracted entities are correct, minimizing false positives. Recall measures how many of the total correct entities in the text were actually found by the model, minimizing false negatives. The F1 Score is the harmonic mean of precision and recall, providing a single balanced metric for model performance.

Table 2: Performance Metrics for NLP Tasks in Chemical Literature Analysis

| NLP Task | Typical Metric | Reported Performance Range | Key Challenges in Chemical Domain |
|---|---|---|---|
| Chemical NER | F1 Score | 85-92% [5] | Variation in nomenclature (IUPAC, common names, abbreviations) |
| Syntactic Parsing | Attachment Score | >90% | Parsing long, complex sentences with multiple clauses |
| Relation Extraction | F1 Score | 75-88% | Long-range dependencies between entities in a paragraph |
| Semantic Role Labeling | F1 Score | 80-85% | Identifying implicit arguments and instrument roles |

Experimental Protocol: Building a Custom NER Model for Synthesis Procedures

This protocol provides a step-by-step methodology for creating a Named Entity Recognition model tailored to extract key information from chemical synthesis descriptions.

Materials and Data Preparation

1. Data Collection:

- Source Documents: Gather a corpus of text containing chemical synthesis procedures. Suitable sources include:

  - Patents: USPTO, EPO, and Google Patents.

  - Scientific Journals: Journal of the American Chemical Society, Organic Process Research & Development.

  - Electronic Lab Notebooks (if available internally).

- Volume: Aim for a minimum of 500-1000 unique synthesis paragraphs to ensure robust model training. Data volume is critical; high-throughput data generation strategies are becoming central to AI-driven discovery, as they provide the foundational material for training accurate models [6].

2. Data Annotation:

- Define Entity Labels: Establish a clear, consistent annotation schema. Core labels should include CHEMICAL, QUANTITY, UNIT, APPARATUS, TEMPERATURE, TIME, and REACTION_VERB.

- Annotation Tool: Utilize specialized software such as BRAT, Prodigy, or Doccano.

- Guideline Development: Create detailed annotation guidelines with examples and edge cases (e.g., how to annotate "ice water" as both APPARATUS and CONDITION).

- Quality Assurance: Have multiple annotators label the same subset of data and measure inter-annotator agreement (e.g., Cohen's Kappa) to ensure consistency. Resolve discrepancies through consensus.
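The inter-annotator agreement check mentioned above can be computed without extra dependencies. This sketch implements Cohen's kappa for two annotators who labeled the same tokens; the label sequences are invented for illustration, and "O" marks a token outside any entity.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators' labels over the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty item list")
    n = len(labels_a)
    # Fraction of items on which the annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical token-level labels from two annotators.
annotator_1 = ["CHEMICAL", "CHEMICAL", "TIME", "O", "O", "TEMPERATURE"]
annotator_2 = ["CHEMICAL", "O", "TIME", "O", "O", "TEMPERATURE"]
kappa = cohens_kappa(annotator_1, annotator_2)
```

The annotators agree on five of six tokens, but kappa is lower than 5/6 because some of that agreement would occur by chance.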

Model Training and Evaluation

1. Model Selection and Training:

- Base Architecture: Start with a pre-trained transformer-based language model like SciBERT or ChemBERTa, which are already familiar with scientific and chemical vocabulary.

- Framework: Use a deep learning framework such as Hugging Face's transformers library or spaCy's transformer pipeline.

- Hyperparameters: Typical starting points are a batch size of 16 or 32, a learning rate of 2e-5 to 5e-5, and training for 3-5 epochs. Monitor loss to avoid overfitting.

2. Model Evaluation:

- Dataset Splitting: Split the annotated data into training (70-80%), validation (10-15%), and test (10-15%) sets.

- Metrics: Calculate precision, recall, and F1 score for each entity class on the held-out test set. This provides a realistic measure of model performance on unseen data.

- Error Analysis: Manually inspect examples where the model made errors (false positives and false negatives) to identify patterns and potential areas for improvement in either the model or the annotation guidelines.
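The dataset split described in the evaluation steps above could be sketched as follows. The 80/10/10 fractions sit inside the stated ranges, and the fixed seed is an assumption added here so that the split is reproducible; the paragraph identifiers are placeholders.

```python
import random

def split_dataset(examples, train_frac=0.8, val_frac=0.1, seed=13):
    """Shuffle once with a fixed seed, then cut into train/validation/test lists."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # whatever remains after train and validation
    return train, val, test

# Placeholder identifiers standing in for annotated synthesis paragraphs.
paragraphs = [f"paragraph-{i}" for i in range(1000)]
train, val, test = split_dataset(paragraphs)
```

If several paragraphs come from the same patent or article, splitting by source document rather than by paragraph may give a more honest test score, since near-duplicate text would otherwise appear on both sides of the split.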

The Scientist's Toolkit: Research Reagent Solutions

The following tools and libraries are essential for implementing the NLP protocols described in this document.

Table 3: Essential Software Tools for NLP-based Synthesis Extraction

| Tool Name | Type/Language | Primary Function | Application in Protocol |
|---|---|---|---|
| spaCy | Python Library | Industrial-strength NLP for tokenization, POS, NER, parsing | Preprocessing text and building rapid prototyping pipelines |
| Hugging Face Transformers | Python Library | Access to thousands of pre-trained models (BERT, SciBERT) | Core model for custom NER and relationship extraction tasks |
| Prodigy | Commercial Tool | Active learning-powered annotation system | Efficiently creating high-quality annotated datasets |
| BRAT | Web-based Tool | Rapid annotation for structured text | Collaborative annotation of synthesis texts with custom schema |
| Scikit-learn | Python Library | Machine learning evaluation and utilities | Calculating precision, recall, F1-score, and other metrics |
| pandas | Python Library | Data manipulation and analysis | Handling and processing tabular data, including annotated corpora |

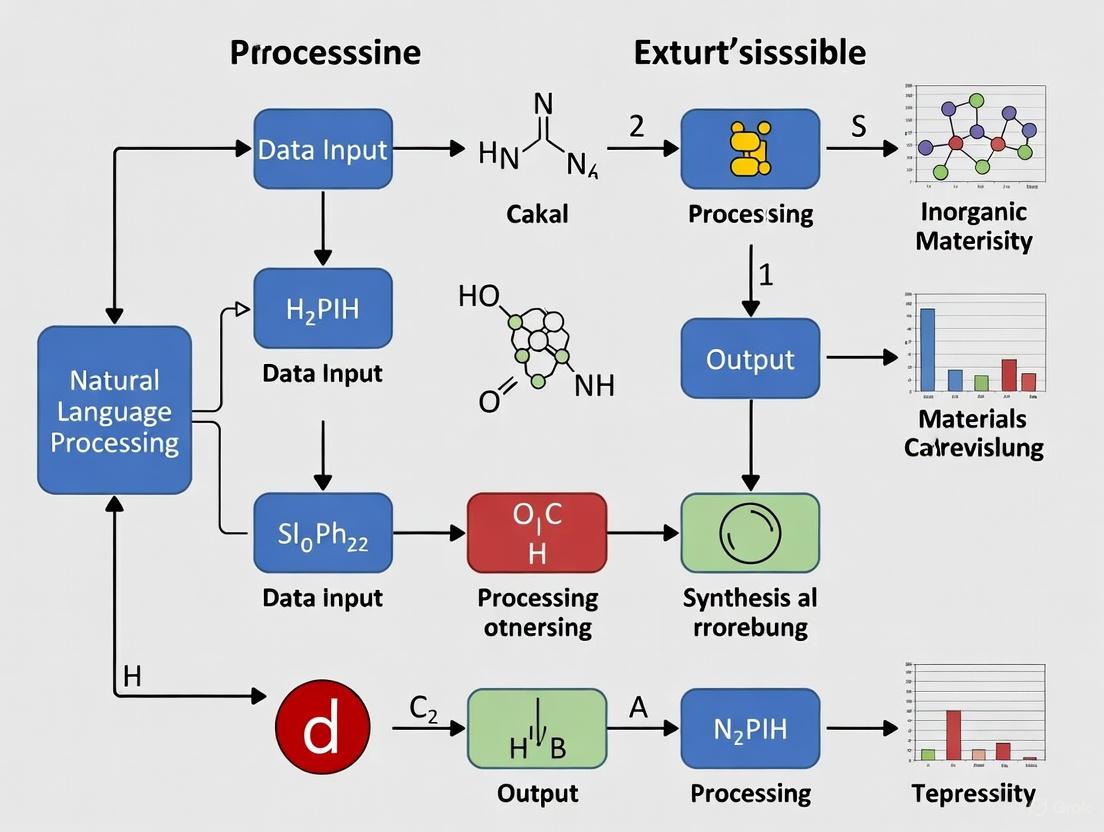

Workflow Visualization

The following diagram illustrates the complete NLP pipeline for extracting structured synthesis information from unstructured text, from raw input to final knowledge graph.

Semantic Understanding and Knowledge Graph Construction

The ultimate objective of the NLP pipeline is to achieve a level of semantic understanding that allows for the construction of a structured knowledge base. The output of the Semantic Role Labeling and Relationship Extraction stages provides a set of formalized relationships between the entities identified by the NER model.

These relationships can be represented as subject-predicate-object triples. For example, the sentence "The reaction mixture was heated to 80°C for 2 hours" might yield the triples (ReactionMixture, hasTemperature, 80°C) and (ReactionMixture, hasDuration, 2 hours). A series of such triples extracted from a full synthesis paragraph forms a rich, interconnected knowledge graph.
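The example sentence above can be turned into triples with a few regular expressions. This is a deliberately simple rule-based sketch: the subject is passed in as a fixed assumption rather than resolved by a parser, only Celsius temperatures and hour/minute durations are recognized, and a trained relation extraction model would replace these patterns in practice.

```python
import re

TEMPERATURE = re.compile(r"(\d+(?:\.\d+)?)\s*°C")
DURATION = re.compile(r"(\d+(?:\.\d+)?)\s*(hours?|h|minutes?|min)\b")

def extract_triples(sentence, subject="ReactionMixture"):
    """Return (subject, predicate, object) triples for temperatures and durations."""
    triples = []
    for match in TEMPERATURE.finditer(sentence):
        triples.append((subject, "hasTemperature", f"{match.group(1)}°C"))
    for match in DURATION.finditer(sentence):
        triples.append((subject, "hasDuration", f"{match.group(1)} {match.group(2)}"))
    return triples

triples = extract_triples("The reaction mixture was heated to 80°C for 2 hours")
```

Each triple maps directly onto one edge in a graph database, with the subject and object as nodes and the predicate as the relationship type.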

This graph-structured data is the final output of the extraction process. It can be stored in a graph database, used to populate a structured reaction database, or even fed into AI-driven drug discovery platforms to suggest novel synthesis pathways or optimize existing ones [4] [6]. This transformation from unstructured text to actionable, structured knowledge is the core contribution of NLP to the field of synthesis procedure research.

The vast majority of chemical and materials knowledge resides in unstructured text within patents, research papers, and laboratory notebooks. Natural Language Processing (NLP), powered by large language models (LLMs), is revolutionizing the extraction and structuring of this information into machine-readable formats, thereby accelerating materials discovery and development. These technologies enable the automated generation of structured datasets, action graphs, and knowledge graphs from textual descriptions of experimental procedures, making synthesis data findable, accessible, interoperable, and reusable (FAIR) [7] [1]. The application of these tools is particularly impactful in the development of Self-Driving Labs (SDLs) and Materials Acceleration Platforms (MAPs), where they provide an intuitive interface for generating automated, executable workflows from natural language input [7]. This document outlines practical protocols and applications for leveraging NLP in the extraction of synthesis procedures from diverse textual sources.

Synthesis information is predominantly found in three types of documents, each with distinct characteristics and utilities for NLP-driven extraction.

- Patent Literature: Patents are a rich source of experimentally verified procedures. The writing style is often similar to experimental sections in scientific articles, particularly in organic chemistry [7]. For instance, the "Chemical reactions from US patents (1976-Sep2016)" dataset contains over 1.5 million experimental descriptions, which can be automatically extracted and annotated [7]. Patents are valuable for training NLP models due to their volume and structured claim language.

- Research Papers: Peer-reviewed journal articles represent a core repository of validated scientific knowledge. The abstracts and experimental sections of over 100,000 papers have been used to construct large-scale knowledge graphs [8]. NLP can parse these sections to identify key entities like materials, synthesis conditions, and resulting properties.

- Electronic Laboratory Notebooks (ELNs): ELNs provide digital platforms for recording experimental data and findings. They are emerging as a critical real-time data source for NLP, which can automate information extraction, support data analysis, and facilitate knowledge discovery directly within the research workflow [9].

The table below summarizes the scale and application of data sources used in contemporary NLP studies for synthesis information extraction.

Table 1: Quantitative Overview of Data Sources for NLP in Synthesis Extraction

| Data Source | Example Scale in NLP Studies | Primary NLP Application | Key Characteristics |
|---|---|---|---|
| Patent Literature | 1,573,734 experimental procedures from US patents [7] | Training datasets for action graph generation [7] | Standardized language, large volume, includes detailed procedures |
| Research Papers | Over 100,000 articles on framework materials (MOFs, COFs, HOFs) [8] | Construction of large-scale knowledge graphs (2.53M nodes, 4.01M relationships) [8] | Peer-reviewed, includes abstracts & full-text, rich in entity relationships |
| News & Opinion Columns | 422 AI-related news columns for public value analysis [10] | Supplementary data for assessing societal impact of R&D [10] | Reflects societal perspectives and broader impacts |

NLP Methods and Experimental Protocols

Core NLP Tasks for Information Extraction

The transformation of unstructured text into structured knowledge involves several key NLP tasks:

- Named Entity Recognition (NER): Identifies and extracts specific entities within text. In clinical notes these are medical conditions, medications, and procedures [11]; in synthesis text they are materials, chemicals, and equipment [1].

- Relationship Extraction: Identifies the relationships between entities, for example, linking a specific temperature to a reaction step or a property to a material [11] [8].

- Temporal Information Extraction: Crucial for understanding sequences and timelines in synthesis procedures, such as reaction times and the order of steps [11].

- Text Summarization: Condenses lengthy experimental descriptions into concise overviews, retaining essential details for analysis [11].

Protocol 1: Generating Action Graphs from Experimental Procedures

This protocol details the process of converting a textual experimental procedure into an executable action graph, suitable for autonomous laboratory systems [7].

Methodology:

- Data Collection and Preprocessing: Gather a large corpus of experimental procedures, such as the "Chemical reactions from US patents" dataset [7].

- Text Annotation: Annotate the procedures using either a rule-based system (e.g., ChemicalTagger for part-of-speech tagging) or a large language model (e.g., Llama-3.1-8B-Instruct via in-context learning). This step identifies ActionPhrases, Molecules, and associated Quantities [7].

- Graph Generation: Use a Python script or a fine-tuned transformer model to parse the annotated text and combine the entities into a structured action graph. Exclude procedures with only a single action phrase [7].

- Model Training (Optional): For a custom solution, fine-tune a pre-trained encoder-decoder transformer model (e.g., a "surrogate" LLM) on the annotated dataset to create a model that can directly generate action graphs from natural language input [7].
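The graph generation step above can be illustrated with a small data structure. The source does not specify the action graph format, so this sketch assumes the simplest case, a linear chain of actions in text order; `ActionNode` and `build_action_graph` are hypothetical names, and the annotated steps are invented input of the kind an annotation stage might produce.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class ActionNode:
    """One annotated action phrase with its molecules and quantities."""
    action: str
    molecules: List[str] = field(default_factory=list)
    quantities: List[str] = field(default_factory=list)
    next_index: Optional[int] = None  # index of the following action, if any

def build_action_graph(annotated_steps):
    """Chain annotated steps in order; skip procedures with a single action phrase."""
    if len(annotated_steps) < 2:
        return None  # mirrors the exclusion rule stated in the protocol
    nodes = [ActionNode(**step) for step in annotated_steps]
    for i in range(len(nodes) - 1):
        nodes[i].next_index = i + 1
    return nodes

# Hypothetical output of the annotation step for a three-action procedure.
steps = [
    {"action": "add", "molecules": ["THF"], "quantities": ["10 mL"]},
    {"action": "stir", "molecules": [], "quantities": ["2 h"]},
    {"action": "filter", "molecules": [], "quantities": []},
]
graph = build_action_graph(steps)
```

Real procedures often branch, for example when two solutions are prepared separately and then combined, so a production representation would likely need a list of successors per node rather than a single index.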

Protocol 2: Constructing a Knowledge Graph from Scientific Literature

This protocol describes the construction of a large-scale knowledge graph from scientific paper abstracts, enabling enhanced data retrieval and question-answering [8].

Methodology:

- Literature Retrieval: Collect relevant journal articles from databases like Web of Science using targeted search queries. Export abstracts and publication details (DOI, authors, journal) into text files [8].

- Information Extraction with LLMs: Use a large language model (e.g., Qwen2-72B) with a customized prompt to convert the abstract text into a structured JSON format. The LLM identifies key entities (nodes) and the relationships (edges) between them [8].

- Graph Database Population: Import the structured JSON files and publication metadata into a graph database (e.g., Neo4j) using Cypher queries. Establish relationships between the extracted knowledge and its source publication [8].

- Integration with LLMs (RAG): Implement a Retrieval-Augmented Generation (RAG) system where user questions are converted into Cypher queries to retrieve relevant subgraphs from the knowledge graph. The retrieved data is then used to ground the responses of an LLM, significantly improving answer accuracy [8].
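The graph database population step can be sketched as a function that turns the LLM's JSON output into parameterized Cypher `MERGE` statements. The JSON schema (`edges` with `source`, `relation`, `target`), the node labels `Entity` and `Publication`, the `REPORTED_IN` relationship, and the DOI value are all assumptions made for this illustration; the source does not give its schema. The code only builds query strings and parameter dictionaries and does not connect to a database.

```python
import json
import re

# Cypher cannot take a relationship type as a query parameter, so the type is
# validated against a strict pattern before being placed into the query text.
RELATION_NAME = re.compile(r"^[A-Z][A-Z0-9_]*$")

def edges_to_cypher(record, doi):
    """Build (query, params) pairs that merge each edge and link it to its paper."""
    statements = []
    for edge in record["edges"]:
        relation = edge["relation"]
        if not RELATION_NAME.match(relation):
            raise ValueError(f"unsafe relationship type: {relation!r}")
        query = (
            "MERGE (s:Entity {name: $source}) "
            "MERGE (t:Entity {name: $target}) "
            f"MERGE (s)-[:{relation}]->(t) "
            "MERGE (p:Publication {doi: $doi}) "
            "MERGE (s)-[:REPORTED_IN]->(p) "
            "MERGE (t)-[:REPORTED_IN]->(p)"
        )
        params = {"source": edge["source"], "target": edge["target"], "doi": doi}
        statements.append((query, params))
    return statements

# Hypothetical LLM output for one abstract, with a placeholder DOI.
llm_output = '{"edges": [{"source": "MOF-5", "relation": "HAS_LINKER", "target": "terephthalic acid"}]}'
statements = edges_to_cypher(json.loads(llm_output), doi="10.0000/example")
```

Using `MERGE` rather than `CREATE` means that an entity mentioned in many abstracts ends up as a single node, which is what allows the graph to connect findings across publications.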

Performance Metrics of NLP Models

The performance of NLP models can be evaluated using standard metrics. The following table summarizes the performance of a model trained for information extraction in the materials science domain.

Table 2: Performance Metrics for an LLM in Knowledge Graph Construction

| Metric | Value | Description |
|---|---|---|
| True Positive (TP) Rate | 98% | Accurate and comprehensive information extraction from abstracts [8] |
| False Negative (FN) Rate | 2% | Inaccurate or incomplete information extraction [8] |
| F1 Score | 0.9898 | Harmonic mean of precision and recall [8] |
| QA Accuracy with KG (RAG) | 91.67% | Accuracy of a Qwen2 model augmented with a knowledge graph on a specialized question-answering task [8] |

The Scientist's Toolkit: Research Reagent Solutions

This section lists essential software tools and resources that function as "research reagents" for implementing NLP-based synthesis extraction protocols.

Table 3: Key Software Tools and Resources for NLP-Driven Synthesis Extraction

| Tool / Resource | Function | Application Note |
|---|---|---|
| ChemicalTagger | A rule-based system for part-of-speech (POS) tagging and named entity recognition (NER) in chemical experimental text [7]. | Used for the initial annotation of patent literature to create training data for action graph generation [7]. |
| Transformer-based LLMs (e.g., Llama, Qwen2) | Large language models capable of understanding and generating text. They can be used for in-context learning or fine-tuned for specific tasks like entity and relationship extraction [7] [8]. | Qwen2-72B was used to parse over 100,000 abstracts into structured JSON for knowledge graph construction [8]. |
| Neo4j | A graph database management system used to store, query, and visualize knowledge graphs [8]. | Serves as the backend for the constructed knowledge graph, enabling complex queries about material properties and synthesis [8]. |
| Node Editor | A graphical user interface component that represents workflows as interconnected nodes. | Provides a user-friendly way to visualize and modify automatically generated action graphs before they are compiled into executable code for a Self-Driving Lab [7]. |

The Challenge of Domain-Specific Terminology in Chemistry and Pharma

The application of Natural Language Processing (NLP) for extracting chemical synthesis procedures faces a fundamental challenge: the specialized lexicons and complex semantic relationships inherent to chemical and pharmaceutical domains. General-purpose language models often fail to capture the precise meaning of domain-specific terminology, leading to inaccuracies in information extraction. Domain-specific terminology in chemistry and pharma includes complex chemical nomenclature, standardized operations (e.g., "reflux," "extract"), and specialized equipment, which are not typically encountered in general text corpora [12] [13]. This terminology gap creates significant barriers to accurate automated extraction of synthesis procedures from scientific literature and patents, which are predominantly written in unstructured prose [14] [15].

The D-A-R-C-P (Document-Assay-Result-Chemical-Protein) concept exemplifies the complexity of relationships that must be captured to effectively connect chemistry to pharmacology [13]. Each element in this chain presents terminology challenges, from resolving chemical names to standardizing pharmacological activity measurements. Effective NLP solutions must address these challenges through specialized approaches, including domain-adapted models and carefully engineered protocols.

NLP Approaches for Domain-Specific Terminology

Specialized Language Models

Domain-specific large language models (LLMs) represent a promising approach to addressing terminology challenges. These models are pre-trained on extensive scientific corpora, enabling them to develop specialized understanding of chemical and pharmacological language:

PharmaGPT: A suite of domain-specialized LLMs with 13 billion and 70 billion parameters, trained on a comprehensive corpus of bio-pharmaceutical and chemical literature. Evaluations show that PharmaGPT surpasses existing general models on domain-specific benchmarks such as NAPLEX, in some cases with just one-tenth of the parameters of general-purpose models [16].

ChemLM: A transformer-based language model that conceptualizes chemical compounds as sentences composed of distinct chemical "words" using SMILES (Simplified Molecular-Input Line-Entry System) representations. ChemLM employs a three-stage training process: self-supervised pretraining, domain-specific pretraining, and supervised fine-tuning for molecular property prediction [17].

Domain-Adapted Embeddings: Specialized word embedding models like ChemFastText demonstrate enhanced performance for chemical synonym analysis and relationship extraction compared to general embeddings. These models capture semantic relationships between chemical terms that are not apparent in general language models [18].

Table 1: Performance Comparison of Domain-Specific NLP Models

| Model | Architecture | Training Data | Key Advantages | Domain Applications |

|---|---|---|---|---|

| PharmaGPT | Transformer-based LLM | Bio-pharmaceutical and chemical corpus | Superior performance on NAPLEX benchmarks; multilingual capability | Drug discovery, pharmacological data extraction |

| ChemLM | Transformer with SMILES tokenization | 10 million ZINC compounds + domain-specific data | Effective transfer learning; identifies potent pathoblockers | Molecular property prediction, chemical compound analysis |

| ChemFastText | Word embeddings | "Fe, Cu, synthesis" specialized corpus | Enhanced chemical specificity; better synonym analysis | Chemical reagent identification, similarity analysis |

Information Extraction Pipelines

Effective extraction of synthesis procedures requires specialized NLP pipelines that combine multiple techniques:

Named Entity Recognition (NER): Identification of chemical compounds, reagents, and synthesis parameters within unstructured text. Machine learning-based NER has largely replaced rule-based approaches due to better handling of terminology variation [19].

Relation Extraction: Determining semantic relationships between identified entities, such as associating a chemical with a specific reaction step or parameter [15].

Structured Action Sequencing: Converting procedural descriptions into structured, executable synthesis actions. Advanced approaches use sequence-to-sequence models based on transformer architecture to translate experimental procedures into action sequences [14].

Experimental Protocols for Terminology-Focused NLP

Protocol: Training Domain-Specific Word Embeddings

Objective: Develop specialized word embeddings that accurately capture chemical terminology relationships.

Materials:

- Scientific corpus (patents, journal articles) in target domain

- Computational resources for model training

- Evaluation datasets with chemical similarity tasks

Methodology:

- Corpus Compilation: Assemble a specialized corpus focused on the target domain (e.g., "Fe, Cu, synthesis" for nanoparticle synthesis) [18].

- Preprocessing: Apply text cleaning, tokenization, and chemical name standardization.

- Model Training: Train embedding models (e.g., Word2Vec, GloVe) using domain-specific parameters.

- Evaluation:

- Calculate average cosine similarity for chemical term pairs

- Visualize embedding relationships using t-SNE (t-distributed stochastic neighbor embedding)

- Conduct synonym analysis and analogy reasoning analysis

- Validation: Compare performance against general embeddings on domain-specific tasks.

Expected Outcomes: Domain-specific embeddings should show stronger correlation between chemically similar terms and improved performance on chemical reasoning tasks compared to general embeddings [18].
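The cosine-similarity check in the evaluation step can be sketched as follows. This is a minimal illustration, not the evaluation code of the cited work: the three-dimensional vectors are hand-written toy values (an assumption), whereas real vectors would come from the trained embedding model, and the helper names are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


def average_pair_similarity(
    embeddings: Dict[str, List[float]], pairs: List[Tuple[str, str]]
) -> float:
    """Average cosine similarity over a list of term pairs."""
    sims = [cosine_similarity(embeddings[a], embeddings[b]) for a, b in pairs]
    return sum(sims) / len(sims)


# Toy, hand-written vectors (assumption): real ones come from the trained model.
toy_embeddings = {
    "FeCl3": [0.9, 0.1, 0.0],
    "iron(III) chloride": [0.8, 0.2, 0.1],
    "reflux": [0.0, 0.1, 0.9],
}

synonym_pairs = [("FeCl3", "iron(III) chloride")]
unrelated_pairs = [("FeCl3", "reflux"), ("iron(III) chloride", "reflux")]

print("synonyms :", round(average_pair_similarity(toy_embeddings, synonym_pairs), 3))
print("unrelated:", round(average_pair_similarity(toy_embeddings, unrelated_pairs), 3))
```

A domain-specific embedding would be expected to score the synonym pairs clearly higher than the unrelated pairs; the same two averages computed with a general-purpose embedding give the comparison baseline.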

Protocol: Converting Synthesis Procedures to Structured Actions

Objective: Accurately convert unstructured experimental procedures into structured synthesis action sequences.

Materials:

- Experimental procedures from patents or scientific literature

- Annotation schema (e.g., OSPAR format)

- Computational resources for model training/inference

Methodology:

- Action Schema Definition: Define a set of synthesis actions with predefined properties covering common operations in organic synthesis [14].

- Data Annotation: Manually annotate experimental procedures with action sequences and entities.

- Model Selection: Implement a sequence-to-sequence model based on transformer architecture.

- Training Approach:

- Pretrain on large-scale automatically generated data using rule-based NLP

- Fine-tune on manually annotated samples

- Evaluation Metrics:

- Perfect match rate for action sequences

- Partial match rates (90%, 75%)

- Recall and precision for action extraction

Expected Outcomes: The model should produce a perfect action-sequence match for more than 60% of sentences, and at least a 75% match for more than 82% of sentences, in the test set [14].

Table 2: Performance Metrics for Synthesis Action Extraction

| Evaluation Metric | Performance | Interpretation | Application Significance |

|---|---|---|---|

| Perfect Match (100%) | 60.8% of sentences | Exact correspondence between predicted and reference action sequences | Enables fully automated procedure extraction without human verification |

| High Match (90%) | 71.3% of sentences | Minor discrepancies that don't affect reproducible synthesis | Suitable for automated synthesis with minimal human oversight |

| Partial Match (75%) | 82.4% of sentences | Core actions correctly identified with some parameter errors | Useful for procedure analysis and data mining applications |
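The match-rate metrics in the table can be illustrated with a short sketch. It assumes that the similarity between a predicted and a reference action sequence is a normalized Levenshtein similarity over action names; that definition, the toy sequences, and the function names are assumptions for illustration, and the cited work may compute the score differently (for example, over the full action strings including parameters).

```python
from typing import List, Sequence


def edit_distance(a: Sequence[str], b: Sequence[str]) -> int:
    """Levenshtein distance between two sequences of action names."""
    previous = list(range(len(b) + 1))
    for i, item_a in enumerate(a, start=1):
        current = [i]
        for j, item_b in enumerate(b, start=1):
            cost = 0 if item_a == item_b else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution
        previous = current
    return previous[-1]


def similarity(pred: Sequence[str], ref: Sequence[str]) -> float:
    """Normalized similarity in [0, 1]; 1.0 means identical sequences."""
    longest = max(len(pred), len(ref))
    if longest == 0:
        return 1.0
    return 1.0 - edit_distance(pred, ref) / longest


def match_rate(preds: List[List[str]], refs: List[List[str]], threshold: float) -> float:
    """Fraction of sentences whose similarity reaches the threshold."""
    hits = sum(similarity(p, r) >= threshold for p, r in zip(preds, refs))
    return hits / len(refs)


# Toy reference and predicted action sequences (hypothetical data).
refs = [["Add", "Stir", "Filter"],
        ["Add", "Heat", "Cool", "Extract"],
        ["Dissolve", "Add", "Stir", "Concentrate"]]
preds = [["Add", "Stir", "Filter"],
         ["Add", "Heat", "Extract"],
         ["Dissolve", "Stir", "Wash", "Concentrate"]]

for threshold in (1.0, 0.9, 0.75):
    print(f"match rate at {threshold:.0%}: {match_rate(preds, refs, threshold):.2f}")
```

Because the thresholds are nested, the rate can only stay equal or grow as the threshold is relaxed, which is the pattern seen in the reported 100%, 90%, and 75% figures.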

Implementation Workflow

The following diagram illustrates the complete workflow for addressing domain-specific terminology challenges in chemical synthesis extraction:

[Figure: NLP Terminology Processing Workflow]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Chemistry NLP Research

| Resource | Type | Function | Application Context |

|---|---|---|---|

| PharmaGPT | Domain-Specific LLM | Provides chemical and pharmacological language understanding | Drug discovery information extraction, pharmacological data curation |

| ChemBERTa | Chemical Language Model | Pre-trained transformer for chemical text | Chemical entity recognition, relationship extraction in literature |

| ChemicalTagger | Rule-Based NLP Tool | Extracts chemical reaction information from text | Initial parsing of experimental procedures for structured data extraction |

| IBM RXN for Chemistry | Transformer Model | Converts experimental procedures to synthesis actions | Automated synthesis planning and procedure extraction |

| OSPAR Format | Annotation Schema | Standardized format for organic synthesis procedures | Human-in-the-loop review and correction of automated extractions |

| χDL (Chemical Description Language) | Structured Representation | Executable synthesis description language | Robotic synthesis automation and procedure standardization |

| SciBERT | Scientific Language Model | Pre-trained on scientific literature | General scientific text processing with chemistry applications |

Discussion and Future Directions

The challenge of domain-specific terminology in chemistry and pharma remains significant, but current NLP approaches show promising results. The development of domain-adapted language models, specialized embedding techniques, and structured action extraction methods has substantially improved our ability to automatically process chemical synthesis information.

Future directions should focus on several key areas:

- Multimodal Approaches: Integrating textual information with chemical structures and spectroscopic data

- Cross-Domain Transfer: Leveraging knowledge from related scientific domains while maintaining domain specificity

- Human-in-the-Loop Systems: Developing frameworks that combine automated extraction with expert validation, as exemplified by the dual-system approach that uses both rule-based and generative large language model (GLLM) methods [15]

- Real-World Validation: Testing extracted procedures through automated synthesis platforms to verify accuracy and completeness

As these technologies mature, they promise to significantly accelerate drug development and materials discovery by making the vast chemical knowledge contained in scientific literature more accessible and actionable.

NLP Techniques and Tools for Extracting Chemical Entities and Processes

Named Entity Recognition (NER) and Relation Extraction (RE) are foundational technologies in natural language processing (NLP) that enable the transformation of unstructured text into structured, actionable data. NER is a natural language processing technique that identifies and classifies key information in text into predefined categories such as person names, organizations, locations, and domain-specific terms [20]. Relation Extraction builds upon this foundation by identifying semantic relationships between entities, such as extracting (subject, relation, object) triples that are fundamental to knowledge graph construction [21]. In the context of pharmaceutical research and synthesis procedures extraction, these technologies enable automated mining of critical information from scientific literature, patents, and laboratory reports, thereby accelerating drug discovery and development processes.

The integration of NER and RE creates a powerful pipeline for information extraction: NER first identifies the relevant entities (e.g., chemical compounds, proteins, diseases), and RE then determines how these entities interact (e.g., drug X inhibits protein Y, compound A treats disease B). This end-to-end capability is particularly valuable for synthesizing knowledge across the vast and rapidly growing body of biomedical literature, enabling researchers to quickly identify relevant synthesis procedures, potential drug candidates, and established biochemical pathways without manual review of thousands of documents.

Named Entity Recognition: Techniques and Applications

Technical Approaches to NER

Named Entity Recognition has evolved through multiple technological paradigms, each with distinct advantages for extracting synthesis information from scientific text. Rule-based systems utilize predefined patterns, capitalization rules, and dictionaries to identify entities, making them interpretable and precise in specific contexts but limited in adaptability to new terminologies [20] [22]. Machine learning-based approaches, including Conditional Random Fields (CRF) and Support Vector Machines (SVM), train statistical models on annotated corpora to recognize entities with greater flexibility [20]. Deep learning models, particularly Bidirectional LSTMs and Transformer-based architectures like BERT, automatically learn contextual representations and have demonstrated state-of-the-art performance by capturing complex linguistic patterns [20] [23].

Recent advancements have introduced reasoning-based paradigms that shift NER from implicit pattern matching to explicit, verifiable reasoning processes. The ReasoningNER framework, for instance, employs a three-stage approach: Chain-of-Thought (CoT) generation that creates reasoning traces for entity identification, CoT tuning that optimizes the model to generate rationales before final answers, and reasoning enhancement that refines the process using comprehensive reward signals [24]. This approach has demonstrated impressive cognitive capability, particularly in zero-shot settings where it outperformed GPT-4 by 12.3% in F1 score [24].

Domain-Specific NER in Pharmaceutical Research

In pharmaceutical contexts, NER systems must recognize specialized entity types beyond the standard categories. Essential entity types for synthesis procedures research include:

- Chemical Compounds: IUPAC names, common drug names, chemical formulas

- Proteins and Biomarkers: Protein names, gene codes, receptor types

- Diseases and Conditions: Medical terminology, syndrome names, pathological states

- Laboratory Techniques: Extraction methods, purification processes, analytical techniques

- Experimental Parameters: Temperatures, concentrations, time durations, yields

- Safety Information: Toxicity levels, hazard statements, precautionary measures

Domain adaptation techniques are crucial for effective NER in pharmaceutical contexts. Approaches include fine-tuning general language models (e.g., BERT) on biomedical corpora, utilizing domain-specific pre-trained models (e.g., BioBERT, ClinicalBERT), and implementing hybrid frameworks that integrate symbolic ontologies (e.g., ChEBI, PubChem) with deep learning to enhance interpretability and domain awareness [22]. These strategies address the challenge of specialized terminologies and low-resource environments where labeled data is scarce.

Relation Extraction: Advanced Methodologies

Evolution of Relation Extraction Techniques

Relation Extraction has traditionally been framed as a classification problem where models predict discrete relationship labels between entity pairs based on contextual analysis [21]. Standard approaches include supervised learning with mid-sized pre-trained models like BART and BERT, which require substantial fine-tuning to generalize across domains [21]. Recent work has revealed limitations in this classification-based paradigm, particularly its lack of semantic expressiveness for fine-grained relation understanding and insufficient utilization of structural constraints like entity types and positional cues [25].

The emerging Retrieval over Classification (ROC) framework reformulates RE as a retrieval task driven by relation semantics [25]. This approach integrates entity type and positional information through multimodal encoding, expands relation labels into natural language descriptions using large language models, and aligns entity-relation pairs via semantic similarity-based contrastive learning [25]. This paradigm shift has demonstrated state-of-the-art performance on benchmark datasets while exhibiting stronger robustness and interpretability compared to traditional classification-based methods [25].

For cross-domain applications in pharmaceutical research, the R1-RE framework introduces reinforcement learning with verifiable reward (RLVR) to enhance reasoning capabilities [21]. Inspired by human annotation workflows where annotators iteratively compare target sentences against guidelines, this method reconceptualizes RE as a reasoning task grounded in annotation guidelines. The framework employs Group Relative Policy Optimization (GRPO) to generate multiple candidate outputs, with rewards calculated by comparing outputs against gold standards [21]. This approach has achieved approximately 70% out-of-domain accuracy, comparable to leading proprietary models like GPT-4o [21].
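The group-based reward comparison at the heart of GRPO can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the R1-RE implementation: the reward here is a plain exact-match check against the gold label, the relation labels are hypothetical, and the advantage is the reward standardized within the group using the population standard deviation plus a small epsilon.

```python
import statistics
from typing import List


def verifiable_reward(predicted: str, gold: str) -> float:
    """1.0 when the predicted relation label matches the gold label, else 0.0."""
    return 1.0 if predicted.strip().lower() == gold.strip().lower() else 0.0


def group_relative_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """Standardize each reward against the mean and spread of its own group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


# One group of candidate outputs sampled for the same input sentence (toy data).
gold_label = "USES_CATALYST"
candidates = ["USES_CATALYST", "REACTS_WITH", "uses_catalyst", "no_relation"]

rewards = [verifiable_reward(c, gold_label) for c in candidates]
advantages = group_relative_advantages(rewards)

for candidate, adv in zip(candidates, advantages):
    print(f"{candidate:15s} advantage={adv:+.2f}")
```

Candidates that beat the group average receive a positive advantage and the rest a negative one, so no separate value model is needed; when every candidate earns the same reward, all advantages are zero and the group contributes no learning signal.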

Multimodal Relation Extraction

Recent advancements extend RE to multimodal scenarios, integrating textual and visual information from scientific documents. This is particularly valuable for pharmaceutical research where synthesis procedures are often described through both textual descriptions and graphical representations in patents and journal articles. Multimodal RE approaches fuse features from different modalities to identify relationships between entities that may be expressed differently across text and images [25]. For extraction of synthesis procedures, this enables more comprehensive understanding of experimental setups that combine textual descriptions with chemical structures, reaction diagrams, and procedural flowcharts.

Quantitative Performance Comparison

Table 1: Performance Comparison of NER Approaches Across Domains

| Model Type | Architecture | Domain | Precision | Recall | F1-Score | Data Requirements |

|---|---|---|---|---|---|---|

| Encoder-based (Flat NER) | BioBERT | Clinical Reports | 0.87-0.88 | 0.86-0.87 | 0.87-0.88 | 2,013 reports [23] |

| Encoder-based (Nested NER) | Multi-task Learning | Clinical Reports | 0.84-0.85 | 0.83-0.85 | 0.84-0.85 | 2,013 reports [23] |

| LLM-based (Instruction) | Various LLMs | Clinical Reports | 0.80-0.85 | 0.10-0.18 | 0.18-0.30 | 413 reports [23] |

| ReasoningNER | CoT + GRPO | General Domain | - | - | 12.3% improvement over GPT-4 | Limited examples [24] |

| GPT-NER | Sequence Transformation | General Domain | - | - | Comparable to supervised; significant few-shot advantage | Limited data scenarios [26] |

Table 2: Relation Extraction Performance Benchmarks

| Framework | Model Size | Dataset | In-Domain Accuracy | Out-of-Domain Accuracy | Key Innovation |

|---|---|---|---|---|---|

| Traditional Supervised | BART-base | SemEval-2010 | 82.5% | 58.3% | Standard fine-tuning [21] |

| Few-shot Learning | GPT-4o | SemEval-2010 | 72.1% | 65.4% | In-context learning [21] |

| R1-RE | 7B Parameters | SemEval-2010 | 81.7% | ~70% | RLVR framework [21] |

| ROC Framework | Multimodal | MNRE | SOTA | SOTA | Retrieval-over-classification [25] |

Experimental Protocols for Pharmaceutical Text Mining

Protocol 1: Domain-Specific NER Implementation

Objective: Extract chemical compounds, synthesis methods, and experimental parameters from pharmaceutical literature.

Materials:

- Text corpus (scientific papers, patents, laboratory notes)

- Annotation guidelines defining entity types

- Computational resources (GPU recommended for deep learning models)

Methodology:

- Data Preparation: Collect and preprocess text documents relevant to synthesis procedures. Segment documents into sentences or paragraphs using sentence boundary detection.

- Schema Definition: Define entity types specific to pharmaceutical synthesis (e.g., chemical compounds, catalysts, temperatures, yields, purification methods).

- Annotation: Manually annotate a subset of documents following consistent guidelines. Implement quality control through inter-annotator agreement measurements.

- Model Selection: Choose an appropriate model architecture based on data availability and domain specificity:

- For limited labeled data: Utilize pre-trained models like BioBERT or ClinicalBERT with domain-specific vocabulary.

- For extremely low-resource scenarios: Implement few-shot approaches like GPT-NER or reasoning-based models.

- Training: Fine-tune selected model on annotated data using standard NLP training protocols. Optimize hyperparameters through cross-validation.

- Evaluation: Assess performance using standard metrics (precision, recall, F1-score) on held-out test sets. Conduct error analysis to identify systematic challenges.

Validation: Compare extracted entities against manually curated gold standards. Calculate inter-annotator agreement between model outputs and expert annotations.
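The evaluation step can be made concrete with a small entity-level scoring sketch. It assumes a strict criterion in which a predicted entity counts as correct only if both its character span and its label match a gold entity exactly; relaxed (overlap-based) matching is a common alternative. The offsets and labels below are toy values.

```python
from typing import Dict, Set, Tuple

Entity = Tuple[int, int, str]  # (start offset, end offset, label)


def precision_recall_f1(predicted: Set[Entity], gold: Set[Entity]) -> Dict[str, float]:
    """Strict entity-level precision, recall, and F1."""
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall > 0 else 0.0)
    return {"precision": precision, "recall": recall, "f1": f1}


# Toy gold-standard and predicted annotations for one document.
gold = {(0, 7, "CHEMICAL"), (20, 25, "TEMPERATURE"), (30, 33, "TIME"), (40, 43, "YIELD")}
predicted = {(0, 7, "CHEMICAL"), (20, 25, "TEMPERATURE"), (30, 33, "YIELD")}

scores = precision_recall_f1(predicted, gold)
print({name: round(value, 3) for name, value in scores.items()})
```

In this toy case the third prediction has the right span but the wrong label, so it counts as both a false positive and a missed gold entity; inspecting such cases is a natural starting point for the error analysis mentioned above.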

Protocol 2: Cross-Domain Relation Extraction for Synthesis Procedures

Objective: Identify relationships between entities in synthesis descriptions (e.g., "compound X reacts with catalyst Y at temperature Z").

Materials:

- Text documents with entity annotations

- Relation type definitions

- Computational framework for relation extraction

Methodology:

- Relation Schema Design: Define relationship types relevant to synthesis procedures (e.g., REACTS_WITH, USES_CATALYST, AT_TEMPERATURE, YIELDS).

- Data Annotation: Annotate relationships between previously identified entities. For each entity pair, label relationship type or mark as no_relation.

- Model Implementation:

- For classification-based approach: Implement context encoder (e.g., BERT) with relation classification head.

- For retrieval-based approach: Implement ROC framework with relation semantics alignment.

- For cross-domain robustness: Implement R1-RE with reinforcement learning and verification rewards.

- Training: Optimize model parameters using annotated data. For R1-RE, follow GRPO protocol with group-based advantage calculation.

- Evaluation: Assess using precision, recall, F1-score for relation types. Evaluate cross-domain performance by testing on unseen synthesis procedure types.

Validation: Manually verify extracted relationships for accuracy and completeness. Compare with knowledge bases like PubChem Reactions for chemical reaction relationships.
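The schema-design and annotation steps of this protocol can be sketched as plain data structures. The relation names follow the examples given in the schema step, written as Python identifiers; the entity labels, the example entities, and the helper function are hypothetical. Every ordered entity pair without an annotated relation is labeled no_relation, as the annotation step describes.

```python
from dataclasses import dataclass
from enum import Enum
from itertools import permutations
from typing import Dict, List, Tuple


class RelationType(Enum):
    REACTS_WITH = "REACTS_WITH"
    USES_CATALYST = "USES_CATALYST"
    AT_TEMPERATURE = "AT_TEMPERATURE"
    YIELDS = "YIELDS"
    NO_RELATION = "no_relation"


@dataclass(frozen=True)
class Entity:
    text: str
    label: str


Pair = Tuple[Entity, Entity]


def build_training_examples(
    entities: List[Entity], annotated: Dict[Pair, RelationType]
) -> List[Tuple[Entity, Entity, RelationType]]:
    """Label every ordered entity pair, defaulting to NO_RELATION."""
    examples = []
    for head, tail in permutations(entities, 2):
        relation = annotated.get((head, tail), RelationType.NO_RELATION)
        examples.append((head, tail, relation))
    return examples


# Toy sentence: "Compound X reacts in the presence of catalyst Y at 80 °C."
compound = Entity("compound X", "CHEMICAL")
catalyst = Entity("catalyst Y", "CATALYST")
temperature = Entity("80 °C", "TEMPERATURE")

annotations = {
    (compound, catalyst): RelationType.USES_CATALYST,
    (compound, temperature): RelationType.AT_TEMPERATURE,
}

examples = build_training_examples([compound, catalyst, temperature], annotations)
for head, tail, relation in examples:
    print(f"{head.text} -> {tail.text}: {relation.value}")
```

Enumerating ordered pairs makes the direction of each relation explicit, at the cost of many no_relation examples; in practice the negative class is often down-sampled before training a classification-based model.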

Visualization of Core Workflows

[Figure: NER Reasoning Paradigm]

[Figure: Relation Extraction as Retrieval]

[Figure: Integrated NER and RE Pipeline]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for NER and RE Implementation

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| SpaCy | NLP Library | Production-ready NER implementation | General text processing with support for custom entity types [20] |

| BERT/BioBERT | Language Model | Contextual word representations | Domain-specific entity recognition when fine-tuned [20] [22] |

| ReasoningNER | Framework | Reasoning-based entity extraction | Low-resource and zero-shot scenarios [24] |

| R1-RE | RE Framework | Cross-domain relation extraction | Robust relationship mining across synthesis types [21] |

| ROC Framework | RE System | Multimodal relation extraction | Integrating text and diagram information from patents [25] |

| BRAT | Annotation Tool | Manual annotation of entities and relations | Creating gold-standard datasets for evaluation [20] |

| Prodigy | Annotation System | Active learning-based labeling | Efficient dataset creation with model-in-the-loop [20] |

| UMLS/SNOMED CT | Knowledge Base | Biomedical terminology reference | Domain-specific entity normalization [22] |

| PubChem | Chemical Database | Chemical compound information | Validation of extracted chemical entities [22] |

The exponential growth of scientific literature presents a formidable challenge for researchers in drug development and materials science. Manually extracting synthesis procedures from vast collections of research papers is time-consuming and prone to human error. Natural Language Processing (NLP) offers a powerful solution by automating the extraction of structured information from unstructured text. This application note provides detailed protocols for implementing three modern NLP libraries—SparkNLP, SciSpacy, and Hugging Face Transformers—specifically tailored for extracting synthesis procedure information from scientific literature. These libraries represent the cutting edge in NLP capabilities, from scalable distributed processing (SparkNLP) to domain-specific biomedical models (SciSpacy) and state-of-the-art transformer architectures (Hugging Face).

Library Comparison and Selection Framework

Quantitative Performance Metrics

Table 1: Comparative Analysis of NLP Libraries for Scientific Text Processing

| Feature | SparkNLP | SciSpacy | Hugging Face |

|---|---|---|---|

| Primary Strength | Scalable big data processing | Biomedical domain specificity | State-of-the-art transformer models |

| Processing Speed | 2.87 samples/sec (inference) [27] | Fast training (2 min/epoch) [28] | Variable (depends on model size) |

| Accuracy (F1) | High for NER tasks [29] | 0.97 accuracy on vascular text classification [28] | Superior for complex extraction tasks [30] |

| Domain-Specific Pre-trained Models | 14,500+ models [27] | en_core_sci_md, en_core_sci_scibert [28] | Bio-clinicalBERT, BioMedBERT [28] [31] |

| Multilingual Support | 200+ languages [32] | Limited to trained domains | Extensive via model hub |

| Hardware Requirements | Cluster recommended [29] | CPU/GPU single node | GPU accelerated for large models |

| Learning Curve | Steep (requires Spark knowledge) [32] | Moderate (Python familiarity) [32] | Variable (simple to complex) |

Library Selection Guidelines

Choose SparkNLP for large-scale document processing across distributed computing environments, particularly when handling millions of documents [29]. Select SciSpacy for specialized biomedical entity recognition and concept extraction where domain terminology accuracy is crucial [28] [33]. Implement Hugging Face transformers for complex relationship extraction and classification tasks requiring state-of-the-art accuracy, especially when fine-tuning on custom datasets is necessary [30] [31].

Experimental Protocols

Protocol 1: Chemical Entity and Synthesis Relation Extraction Using SciSpacy

Objective: Extract chemical entities, reaction conditions, and yield information from scientific abstracts using SciSpacy's domain-specific models.

Materials and Reagents:

- Scientific texts or research papers in PDF or text format

- Python 3.8+ environment

- SciSpacy library (pip install scispacy)

- Domain-specific model (en_core_sci_scibert or en_core_sci_md)

- Prodigy annotation software (optional, for custom model training) [28]

Methodology:

Data Preparation:

- Convert PDF documents to plain text using libraries like PyMuPDF or pdfplumber

- Clean text to remove formatting artifacts and non-content elements

- Segment documents into relevant sections (abstract, methods, results)

Model Initialization:
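A minimal sketch of this step, assuming spaCy and SciSpacy are installed and that the en_core_sci_md model package has been installed separately (SciSpacy models are distributed as their own packages). The helper names are hypothetical, and the loader returns None instead of failing when the library or model is unavailable.

```python
from typing import List, Tuple


def load_scispacy_pipeline(model_name: str = "en_core_sci_md"):
    """Load a SciSpacy model; return None if spaCy or the model is missing."""
    try:
        import spacy  # imported lazily so the sketch degrades gracefully
        return spacy.load(model_name)
    except (ImportError, OSError):
        # ImportError: spaCy is not installed; OSError: the model cannot be found.
        return None


def extract_entities(nlp, text: str) -> List[Tuple[str, str]]:
    """Return (entity text, entity label) pairs, or an empty list without a model."""
    if nlp is None:
        return []
    doc = nlp(text)
    return [(ent.text, ent.label_) for ent in doc.ents]


if __name__ == "__main__":
    nlp = load_scispacy_pipeline("en_core_sci_md")
    sample = "Sodium borohydride was added to the solution at 0 °C."
    print(extract_entities(nlp, sample))
```

Loading the model once and reusing the resulting pipeline object for all documents avoids repeated start-up cost; en_core_sci_scibert can be substituted by name when a transformer-based pipeline is preferred.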

Entity Recognition and Relation Extraction:

- Process text through the spaCy pipeline to obtain parsed documents

- Extract named entities including chemicals, conditions, and numerical values

- Implement rule-based patterns to identify relationships between entities

- Apply dependency parsing to identify syntactic relationships indicating synthesis procedures
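The rule-based pattern step can be illustrated with regular expressions for three common condition types. This is a deliberately small sketch using only the Python standard library; the patterns and the example sentence are illustrative assumptions and would need to be extended (other units, ranges, "room temperature", and so on) for real corpora.

```python
import re
from typing import Dict, List

# Illustrative patterns (assumptions), not an exhaustive grammar of conditions.
CONDITION_PATTERNS = {
    "TEMPERATURE": re.compile(r"-?\d+(?:\.\d+)?\s*°\s*C\b"),
    "TIME": re.compile(r"\d+(?:\.\d+)?\s*(?:h|hours?|min|minutes?)\b"),
    "YIELD": re.compile(r"\d+(?:\.\d+)?\s*%\s*yield", re.IGNORECASE),
}


def extract_conditions(sentence: str) -> Dict[str, List[str]]:
    """Return the matched text for each condition type found in the sentence."""
    found: Dict[str, List[str]] = {}
    for label, pattern in CONDITION_PATTERNS.items():
        matches = [m.group(0) for m in pattern.finditer(sentence)]
        if matches:
            found[label] = matches
    return found


sentence = "The mixture was stirred at 80 °C for 12 h to give the product in 85% yield."
print(extract_conditions(sentence))
```

Matches from such patterns can then be attached to the nearest chemical entity or action verb using the dependency parse, which is where the syntactic analysis in the last step comes in.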

Validation and Evaluation:

- Manually annotate a gold standard dataset of 100-200 documents [28]

- Calculate precision, recall, and F1-score against human annotations

- Use cross-validation to ensure model robustness

Expected Outcomes: This protocol typically achieves F1-scores of 0.85-0.92 for chemical entity recognition and 0.75-0.85 for relation extraction when validated on annotated corpora of synthesis procedures [28].

Protocol 2: Large-Scale Document Processing with SparkNLP

Objective: Implement a scalable pipeline for processing millions of research documents to extract synthesis procedures using SparkNLP.

Materials and Reagents:

- Apache Spark cluster (Azure Databricks, AWS EMR, or local cluster)

- SparkNLP library (pip install spark-nlp)

- Document storage system (HDFS, S3, or similar)

- High-performance computing resources (CPU/GPU clusters) [29]

Methodology:

Pipeline Configuration:

Distributed Processing:

- Load documents from distributed storage as Spark DataFrame

- Apply the NLP pipeline to all documents in parallel

- Extract entities and relationships using SparkNLP's pretrained models

- Store results in structured format for further analysis

Performance Optimization:

- Utilize partitioning strategies to balance workload across cluster nodes

- Implement caching for frequently accessed data

- Monitor resource utilization and adjust cluster configuration accordingly

Expected Outcomes: SparkNLP can process large document collections at scale, with benchmarks showing processing speeds of 2.87 samples/second on standard hardware [27]. The distributed architecture enables linear scaling with cluster size, making it feasible to process millions of documents in practical timeframes.
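A back-of-the-envelope estimate helps translate the quoted throughput into cluster sizing. The sketch below assumes that one document corresponds to one sample, that the 2.87 samples/second figure applies per worker, and that scaling is ideally linear with no scheduling or I/O overhead; real clusters will fall short of this, so the numbers are lower bounds on processing time.

```python
def estimated_hours(num_documents: int, samples_per_second: float, num_workers: int = 1) -> float:
    """Idealized wall-clock hours, assuming perfectly linear scaling across workers."""
    if samples_per_second <= 0 or num_workers < 1:
        raise ValueError("throughput must be positive and num_workers at least 1")
    seconds = num_documents / (samples_per_second * num_workers)
    return seconds / 3600.0


THROUGHPUT = 2.87  # samples per second, the benchmark figure quoted above

for workers in (1, 8, 32):
    hours = estimated_hours(1_000_000, THROUGHPUT, workers)
    print(f"{workers:>2} worker(s): {hours:6.1f} h per million documents")
```

Under these assumptions a single worker needs roughly four days per million documents, which is why distributed execution matters for literature-scale corpora.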

Protocol 3: Fine-tuning Transformer Models for Synthesis Extraction

Objective: Fine-tune domain-specific BERT models (BioMedBERT, Bio-clinicalBERT) for accurate extraction of synthesis procedures from scientific literature.

Materials and Reagents:

- Hugging Face Transformers library (pip install transformers)

- Domain-specific pretrained model (BioMedBERT, Bio-clinicalBERT, SciBERT) [31]

- GPU-enabled environment for training

- Annotated dataset of synthesis procedures

- Weights & Biases or TensorBoard for experiment tracking

Methodology:

Data Preparation and Annotation:

- Collect relevant scientific papers containing synthesis procedures

- Annotate entities using BIO (Beginning, Inside, Outside) tagging scheme

- Define entity types: CHEMICAL, QUANTITY, TEMPERATURE, TIME, YIELD, etc.

- Split data into training (80%), validation (10%), and test (10%) sets [28]
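The BIO tagging and data-splitting steps above can be sketched with the standard library alone. The token-level span format, the example sentence, and the fixed random seed are assumptions for illustration; annotation tools typically export character offsets that must first be aligned to tokens.

```python
import random
from typing import List, Sequence, Tuple

Span = Tuple[int, int, str]  # (first token index, end token index exclusive, entity type)


def spans_to_bio(tokens: Sequence[str], spans: Sequence[Span]) -> List[str]:
    """Convert token-level entity spans into one BIO tag per token."""
    tags = ["O"] * len(tokens)
    for start, end, label in spans:
        tags[start] = f"B-{label}"
        for i in range(start + 1, end):
            tags[i] = f"I-{label}"
    return tags


def split_dataset(examples: Sequence, seed: int = 13,
                  train_frac: float = 0.8, val_frac: float = 0.1):
    """Shuffle reproducibly and split into train, validation, and test portions."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])


tokens = ["Sodium", "borohydride", "was", "added", "at", "0", "°C", "for", "2", "h", "."]
spans = [(0, 2, "CHEMICAL"), (5, 7, "TEMPERATURE"), (8, 10, "TIME")]

for token, tag in zip(tokens, spans_to_bio(tokens, spans)):
    print(f"{token:12s} {tag}")

train, val, test = split_dataset(range(100))
print(len(train), len(val), len(test))
```

Splitting at the document level rather than the sentence level is usually the safer choice, since sentences from the same paper in both training and test sets can inflate the reported scores.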

Model Fine-tuning:

Evaluation and Deployment:

- Evaluate model performance on held-out test set

- Calculate precision, recall, and F1-score for each entity type

- Deploy the fine-tuned model using Hugging Face pipelines or ONNX runtime for production use

Expected Outcomes: Fine-tuned transformer models typically achieve F1-scores of 0.90-0.95 on entity recognition tasks in scientific domains, significantly outperforming general-purpose models [31]. The BioMedBERT model fine-tuned for clinical trial classification demonstrated sensitivity of 0.94-0.96 and specificity of 0.90-0.99 across different trial design categories [31].

Workflow Visualization

[Figure: Synthesis Procedure Extraction Pipeline]

[Figure: Library Selection Decision Framework]

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagents and Computational Resources for NLP Implementation

| Resource | Type | Function | Example Specifications |

|---|---|---|---|

| SparkNLP Library | Software Library | Distributed NLP processing on Spark clusters | Version 6.2+, with 14,500+ pretrained models [27] [34] |

| SciSpacy Models | Domain-Specific Models | Biomedical and scientific text processing | en_core_sci_md, en_core_sci_scibert [28] |

| Hugging Face Transformers | Model Repository | Access to state-of-the-art transformer models | BioMedBERT, Bio-clinicalBERT, SciBERT [28] [31] |

| Prodigy Annotation Tool | Data Annotation Software | Manual annotation of training data | Explosion AI Prodigy for active learning [28] |

| MIMIC-IV Dataset | Clinical Text Corpus | Benchmark dataset for evaluation | 331,794 de-identified discharge summaries [28] |

| PubMed API | Literature Database | Access to biomedical literature | Programmatic access to 30+ million citations [33] |

| GPU Computing Resources | Hardware | Accelerated model training | NVIDIA Tesla V100 or A100 for transformer training |

| Azure Databricks/Spark Cluster | Computing Platform | Distributed processing environment | Apache Spark with optimized ML runtime [29] |

Performance Benchmarking

Quantitative Results Comparison

Table 3: Performance Metrics Across NLP Libraries on Scientific Tasks

| Task | SparkNLP | SciSpacy | Hugging Face (Fine-tuned) |
|---|---|---|---|
| Named Entity Recognition (F1) | 0.89 [29] | 0.91 [28] | 0.94 [31] |
| Document Classification (Accuracy) | 0.93 [29] | 0.97 [28] | 0.98 [28] |
| Training Time (Relative) | Fast (distributed) [27] | Very Fast (2 min/epoch) [28] | Slow (requires fine-tuning) |
| Inference Speed (samples/sec) | 2.87 [27] | 15.3 (estimated) | 5.2 (varies by model size) |
| Multi-language Support | 200+ languages [32] | Limited | Extensive via model hub |
| Hardware Requirements | Spark cluster [29] | Single node CPU/GPU | GPU recommended |

Modern NLP libraries (SparkNLP, SciSpacy, and Hugging Face Transformers) give researchers powerful tools for extracting synthesis procedures from scientific literature at scale. SparkNLP excels at distributed processing of large document collections, SciSpacy offers strong performance on domain-specific scientific text at low training cost, and Hugging Face provides the highest accuracy through fine-tuned transformer models. The protocols and benchmarks presented in this application note provide a foundation for selecting and implementing an appropriate NLP solution based on data volume, domain specificity, and processing complexity. As these libraries continue to evolve, their integration into scientific workflow systems will increasingly accelerate the extraction and synthesis of knowledge from the rapidly expanding scientific literature.

The vast majority of chemical knowledge, including complex synthesis procedures, is recorded as unstructured text in scientific literature. Natural Language Processing (NLP) aims to make this wealth of information machine-readable, thereby accelerating materials discovery and automated synthesis. Pre-trained language models (PLMs) like BioBERT, SciBERT, and ChemBERTa have become foundational tools for this task. These domain-specific models, built on transformer architectures like BERT, are pre-trained on large corpora of scientific text, allowing them to understand the complex syntax and specialized vocabulary of chemistry far more effectively than general-purpose models. [1] [35]

Framed within a broader thesis on extracting synthesis procedures, this document provides detailed application notes and protocols for employing these models. The content is structured to enable researchers, scientists, and drug development professionals to implement these advanced NLP techniques for automating data extraction from chemical literature, thereby supporting the development of self-driving labs and large-scale, structured synthesis databases. [7] [36]

Model Performance and Quantitative Comparison

Evaluations across various chemical and biomedical NLP tasks consistently demonstrate the superiority of domain-specific models. The following table summarizes key performance metrics from recent studies, highlighting the strengths of each model.

Table 1: Performance Comparison of Pre-trained Models on Domain-Specific Tasks

| Model | Task | Dataset | Key Metric | Score | Outcome vs. General BERT |
|---|---|---|---|---|---|
| BioBERT [37] [38] | Relation Extraction (Gene-Disease, Chemical-Disease) | BC5CDR, ChemDisGene | F1 Score | Superior Performance | Outperforms general BERT |
| BioBERT [38] | Named Entity Recognition (Ophthalmic Meds) | Ophthalmology Notes | Macro F1 | 0.875 | Best among BERT models |
| SciBERT [39] | Relation Extraction | Biomedical Text | F1 Score | Strong Performance | Better than general BERT |
| ChemBERTa [35] | Molecular Property Prediction | MoleculeNet | ROC-AUC | Competitive | Tailored for chemical language |
| Domain-Specific PLMs [40] | Scientific Text Classification | Web of Science (WoS) | Accuracy | Consistent Improvement | Outperform BERT-base |

A critical insight from recent research is that while incorporating external knowledge (e.g., entity descriptions, knowledge graphs) can boost the performance of smaller PLMs, its benefits become marginal for larger, modern PLMs like BioLinkBERT after comprehensive hyperparameter optimization. This suggests that larger models implicitly encode much of this contextual information during pre-training. [37]

Detailed Experimental Protocols

Protocol 1: Fine-tuning for Synthesis Procedure Classification

This protocol outlines the process of adapting a pre-trained model to classify paragraphs from scientific articles as containing synthesis information or not, a crucial first step in information extraction pipelines. [36]

1. Objective: To fine-tune SciBERT to accurately identify paragraphs describing synthesis procedures.

2. Materials & Data Preparation:

- Dataset: A collection of scientific articles (e.g., from PubMed or patent databases) with annotated synthesis paragraphs.

- Pre-processing: Clean text by converting to lowercase and removing non-ASCII characters. Combine the title, abstract, and keywords as input features.

- Tokenization: Use the SciBERT tokenizer to convert text into sub-word tokens compatible with the model's vocabulary.

3. Model Configuration:

- Base Model: SciBERT (scivocab) [40]

- Hyperparameters:

  - Learning Rate: Dynamic learning rate scheduling (e.g., 2e-5 to 5e-5)

  - Batch Size: 16 or 32, depending on GPU memory

  - Epochs: Utilize early stopping to prevent overfitting.

4. Fine-tuning Procedure:

- Split the annotated dataset into training, validation, and test sets (e.g., 80/10/10).

- Load the pre-trained SciBERT model.

- Add a custom classification layer on top of the [CLS] token output.

- Train the model on the training set, monitoring loss and accuracy on the validation set.

- Stop training when validation performance plateaus.

5. Evaluation: Evaluate the final model on the held-out test set. An F1 score above 0.90 is achievable, as demonstrated in similar extraction tasks [36].
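The data split and the stopping rule in the fine-tuning procedure can be sketched independently of any particular training framework. The example below is a minimal illustration in plain Python; the helper names `split_dataset` and `EarlyStopping`, the patience value, and the validation scores are assumptions made for the example, and the actual SciBERT training step is deliberately left out.

```python
import random


def split_dataset(examples, seed=13, ratios=(0.8, 0.1, 0.1)):
    """Shuffle reproducibly and split into train/validation/test lists."""
    items = list(examples)
    random.Random(seed).shuffle(items)
    n_train = int(len(items) * ratios[0])
    n_val = int(len(items) * ratios[1])
    return items[:n_train], items[n_train:n_train + n_val], items[n_train + n_val:]


class EarlyStopping:
    """Signal a stop once the validation metric has not improved for `patience` epochs."""

    def __init__(self, patience=2, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("-inf")
        self.bad_epochs = 0

    def step(self, metric):
        if metric > self.best + self.min_delta:
            self.best = metric
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Placeholder data: (paragraph text, label) with 1 = synthesis paragraph.
examples = [(f"paragraph {i}", i % 2) for i in range(100)]
train, val, test = split_dataset(examples)

stopper = EarlyStopping(patience=2)
val_f1_per_epoch = [0.81, 0.88, 0.90, 0.90, 0.89, 0.91]  # made-up values
stopped_at = None
for epoch, f1 in enumerate(val_f1_per_epoch, start=1):
    # A real loop would train for one epoch here and compute f1 on `val`.
    if stopper.step(f1):
        stopped_at = epoch
        break
```

With a patience of 2, the loop stops at the second consecutive epoch without improvement. A larger patience trades extra training time for robustness against noisy validation scores.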

Protocol 2: Sequence-Aware Entity and Relation Extraction for Synthesis Codification

This protocol describes using a model like BioBERT or a powerful LLM like GPT-4, guided by domain experts, to extract detailed synthesis parameters and their relationships, forming a structured knowledge graph. [36]

1. Objective: To extract synthesis actions, precursors, conditions, and their sequence-aware relations from an identified synthesis paragraph.

2. Materials & Data Preparation:

- Input: A paragraph classified as containing a synthesis procedure.

- Annotation Schema: Develop a FAIR-compliant schema defining entities (e.g., `Action`, `Precursor`, `Quantity`, `Temperature`) and relations (e.g., `has_quantity`, `has_temperature`).

3. Model Configuration & Prompting (LLM Approach):

- Model: GPT-4 via API.

- Prompt Design: Craft a detailed prompt with:

  - Role: "You are an expert chemist extracting synthesis information..."

  - Instruction: Step-by-step commands to identify entities and relations.

  - Output Format: A strict JSON schema or directed graph structure.

  - Few-shot Examples: Provide 2-3 annotated examples within the prompt.

4. Extraction Procedure:

- Feed the prepared prompt and the synthesis paragraph to the model.

- Parse the model's output to generate a structured, sequence-aware directed graph of the synthesis.

5. Evaluation and Validation:

- Expert chemists should manually validate a subset of the extracted data.

- Calculate precision, recall, and F1 score for entity and relation extraction. This approach has achieved F1 scores of 0.96 and 0.94 for entities and relations, respectively [36].
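The prompt assembly and output parsing steps can be illustrated without calling any API. The sketch below is a hedged example: the JSON layout, the helper names `build_prompt` and `to_sequence_graph`, and the `uses_precursor` and `next` relation names are assumptions introduced here (only `has_quantity` and `has_temperature` come from the schema above), and the model response is a hand-written stand-in rather than real GPT-4 output. For brevity, quantities are attached to the action node, which a production schema might instead attach to the precursor.

```python
import json

# Hypothetical output-format instruction; a real schema would be stricter.
SCHEMA_HINT = (
    'Return JSON of the form {"steps": [{"action": "...", "precursors": ["..."], '
    '"quantity": "... or null", "temperature": "... or null"}]}, '
    "listing steps in procedural order."
)


def build_prompt(paragraph, few_shot_examples):
    """Assemble role, instruction, output format, and few-shot examples."""
    parts = [
        "You are an expert chemist extracting synthesis information.",
        "Identify each synthesis action with its precursors and conditions.",
        SCHEMA_HINT,
    ]
    for text, answer in few_shot_examples:
        parts.append(f"Example paragraph: {text}\nExample output: {json.dumps(answer)}")
    parts.append(f"Paragraph: {paragraph}\nOutput:")
    return "\n\n".join(parts)


def to_sequence_graph(raw_output):
    """Turn the model's JSON into nodes and (source, relation, target) edges."""
    steps = json.loads(raw_output)["steps"]
    nodes, edges = {}, []
    for i, step in enumerate(steps):
        action_id = f"a{i}"
        nodes[action_id] = {"type": "Action", "text": step["action"]}
        for j, name in enumerate(step.get("precursors") or []):
            node_id = f"a{i}_p{j}"
            nodes[node_id] = {"type": "Precursor", "text": name}
            edges.append((action_id, "uses_precursor", node_id))
        for field, node_type in (("quantity", "Quantity"), ("temperature", "Temperature")):
            if step.get(field):
                node_id = f"a{i}_{field}"
                nodes[node_id] = {"type": node_type, "text": step[field]}
                edges.append((action_id, f"has_{field}", node_id))
        if i > 0:
            # "next" edges preserve the order of actions in the procedure.
            edges.append((f"a{i - 1}", "next", action_id))
    return nodes, edges


# Hand-written stand-in for a model response (not real model output).
mock_response = json.dumps({"steps": [
    {"action": "dissolve", "precursors": ["zinc acetate"], "quantity": "2 mmol", "temperature": None},
    {"action": "heat", "precursors": [], "quantity": None, "temperature": "353 K"},
]})
prompt = build_prompt("Zinc acetate (2 mmol) was dissolved and heated to 353 K.", [])
nodes, edges = to_sequence_graph(mock_response)
```

In practice the parsing step should also handle malformed JSON, for example by catching `json.JSONDecodeError` and re-prompting, since model output is not guaranteed to follow the requested format.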

Workflow Visualization

The following diagram illustrates the end-to-end logical workflow for extracting structured synthesis data from unstructured text, integrating the protocols described above.

Synthesis Extraction Pipeline

Table 2: Key Resources for NLP-Driven Synthesis Extraction Research

| Resource Name | Type | Function/Benefit | Reference/Link |
|---|---|---|---|
| SciBERT | Pre-trained Model | Optimized for biomedical & scientific text; ideal for initial text classification. | [39] [40] |
| BioBERT | Pre-trained Model | Pre-trained on PubMed abstracts & PMC articles; excels in biomedical NER and RE. | [37] [38] |
| ChemBERTa | Pre-trained Model | Specialized for chemical language (e.g., SMILES); useful for molecular property tasks. | [35] |
| KV-PLM | Unified Pre-trained Model | Bridges molecule structures (SMILES) and biomedical text for comprehensive understanding. | [39] |
| HuggingFace Transformers | Software Library | Provides pre-trained models and pipelines for easy fine-tuning and inference. | [35] |
| ChemicalTagger | Rule-based Annotation Tool | Uses grammar-based patterns to tag chemical entities and actions in text. | [7] |
| Web of Science (WoS) Dataset | Benchmark Dataset | Large-scale dataset for training and evaluating scientific text classification models. | [40] |
| Doccano | Text Annotation Tool | Open-source tool for manually annotating text for NER and relation extraction tasks. | [36] |

The vast majority of knowledge regarding materials and chemical synthesis is encapsulated within unstructured text in millions of scientific publications. Manually extracting and codifying this information is prohibitively time-consuming, creating a significant bottleneck for data-driven materials discovery and design [1] [41]. Natural Language Processing (NLP) presents a solution by enabling the automated construction of large-scale, structured datasets from scientific literature. This document details the application notes and protocols for building an NLP pipeline to transform raw text describing synthesis procedures into structured, machine-actionable data, a core component for accelerating research in materials science and drug development [41].

An NLP pipeline is a sequence of interconnected processing stages that systematically converts raw text into a structured format suitable for analysis and modeling [42]. In the context of synthesis extraction, this involves a series of steps from data acquisition to the final deployment of a functioning system. The pipeline is often non-linear, requiring iteration and refinement at various stages [42]. The following workflow diagram illustrates the primary stages and their relationships.

Detailed Protocols for Pipeline Construction

Data Acquisition and Text Cleaning

The initial stage involves gathering a robust and relevant corpus of scientific text from which synthesis information will be extracted.

Protocol 1: Content Acquisition and Assembly

- Objective: To programmatically collect a large number of scientific papers from publisher websites and convert them into plain text format.

- Methods:

- Web Scraping: Employ a customized web-scraper (e.g., Borges, as used in prior work) to download materials-relevant papers in HTML/XML format from publishers like Wiley, Elsevier, and the Royal Society of Chemistry [41]. Focus on papers published after the year 2000 to minimize errors from optical character recognition of image-based PDFs [41].

- Format Conversion: Use a dedicated parser toolkit (e.g., LimeSoup) to convert articles from HTML/XML into raw text, accounting for the specific format standards of different publishers and journals [41].

- Data Storage: Store the full text and metadata (e.g., journal name, article title, abstract, authors) in a database such as MongoDB for efficient retrieval and management [41].

- Data Augmentation: If the acquired dataset is insufficient, employ techniques such as synonym replacement, back translation, or bigram flipping to artificially expand the training data [42] [43].
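Two of the augmentation techniques named above, synonym replacement and bigram flipping, can be sketched in a few lines. This is a minimal illustration only: the `SYNONYMS` table and the function names are hypothetical, and a real pipeline would need a curated vocabulary that leaves chemical names, quantities, and units untouched, because altering those changes the meaning of a procedure.

```python
import random

# Hypothetical synonym table covering process verbs only.
SYNONYMS = {
    "heated": ["warmed"],
    "stirred": ["agitated"],
    "added": ["introduced"],
}


def synonym_replace(tokens, rng, prob=0.5):
    """Replace tokens that have a listed synonym with probability `prob`."""
    out = []
    for tok in tokens:
        options = SYNONYMS.get(tok.lower())
        if options and rng.random() < prob:
            out.append(rng.choice(options))
        else:
            out.append(tok)
    return out


def bigram_flip(tokens, rng):
    """Swap one randomly chosen pair of adjacent tokens."""
    out = list(tokens)
    if len(out) < 2:
        return out
    i = rng.randrange(len(out) - 1)
    out[i], out[i + 1] = out[i + 1], out[i]
    return out


rng = random.Random(0)
tokens = ["The", "mixture", "was", "heated", "overnight"]
replaced = synonym_replace(tokens, rng, prob=1.0)
flipped = bigram_flip(tokens, rng)
```

Bigram flipping can reorder steps or conditions, so augmented samples are best restricted to classification training data and kept out of sequence-sensitive extraction tasks.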

Protocol 2: Text Cleaning and Preprocessing

- Objective: To normalize and clean the raw text, removing irrelevant elements and preparing it for deeper analysis.

- Methods:

- Basic Cleaning: Remove HTML tags, URLs, and email addresses using regular expressions. Convert emojis to textual representations or remove them [42] [43].

- Unicode Normalization: Handle special characters, symbols, and non-Latin scripts by converting them to a consistent, machine-readable representation (e.g., UTF-8 encoding with a single Unicode normalization form such as NFC or NFKC) [43].

- Text Preprocessing:

- Tokenization: Segment text into sentences and then into individual words or tokens [42] [44].

- Lowercasing: Convert all characters to lowercase to ensure uniformity [42] [43].

- Stop Word Removal: Filter out high-frequency, low-meaning words (e.g., "the," "and") [43].

- Stemming/Lemmatization: Reduce words to their root form (e.g., "heated" becomes "heat") to decrease feature space dimensionality [42] [43].
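The cleaning and preprocessing steps above can be sketched with the Python standard library alone. In the example below, the regular expressions, the stop-word subset, and the function names are illustrative assumptions, not a reference implementation. Stemming and lemmatization are omitted because they typically rely on an external library. Lowercasing is kept optional because case can distinguish chemical symbols (for example, Co versus CO).

```python
import re
import unicodedata

TAG_RE = re.compile(r"<[^>]+>")
URL_RE = re.compile(r"https?://\S+|www\.\S+")
EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")
# Illustrative subset; real stop-word lists are much longer.
STOP_WORDS = {"the", "and", "a", "an", "of", "was", "to", "in", "at", "for", "or"}


def clean_text(raw):
    """Normalize Unicode, then strip HTML tags, URLs, and email addresses."""
    text = unicodedata.normalize("NFKC", raw)  # e.g. no-break space -> space
    for pattern in (TAG_RE, URL_RE, EMAIL_RE):
        text = pattern.sub(" ", text)
    return re.sub(r"\s+", " ", text).strip()


def preprocess(text, lowercase=True):
    """Split into sentences, tokenize, optionally lowercase, drop stop words."""
    sentences = re.split(r"(?<=[.!?])\s+", text)
    result = []
    for sentence in sentences:
        tokens = re.findall(r"[A-Za-z0-9]+", sentence)
        if lowercase:
            tokens = [t.lower() for t in tokens]
        tokens = [t for t in tokens if t.lower() not in STOP_WORDS]
        if tokens:
            result.append(tokens)
    return result


raw = ("<p>The mixture was stirred at 353\u00a0K for 2 h.</p> "
       "See https://example.org/si or mail a.b@lab.org")
cleaned = clean_text(raw)
tokens = preprocess(cleaned)
```

The naive sentence splitter breaks on every period followed by whitespace, so abbreviations such as "e.g." or "Fig." would be split incorrectly; a trained sentence segmenter is usually preferable for scientific text.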

Feature Engineering and Modeling

This phase focuses on converting the cleaned text into numerical representations and applying machine learning models to identify and classify relevant entities and actions.

Protocol 3: Synthesis Paragraph Classification

- Objective: To identify paragraphs within scientific papers that contain descriptions of solution-based synthesis procedures.

- Methods:

- Model Selection: Utilize a Bidirectional Encoder Representations from Transformers (BERT) model, pre-trained on a large corpus of materials science literature [1] [41].