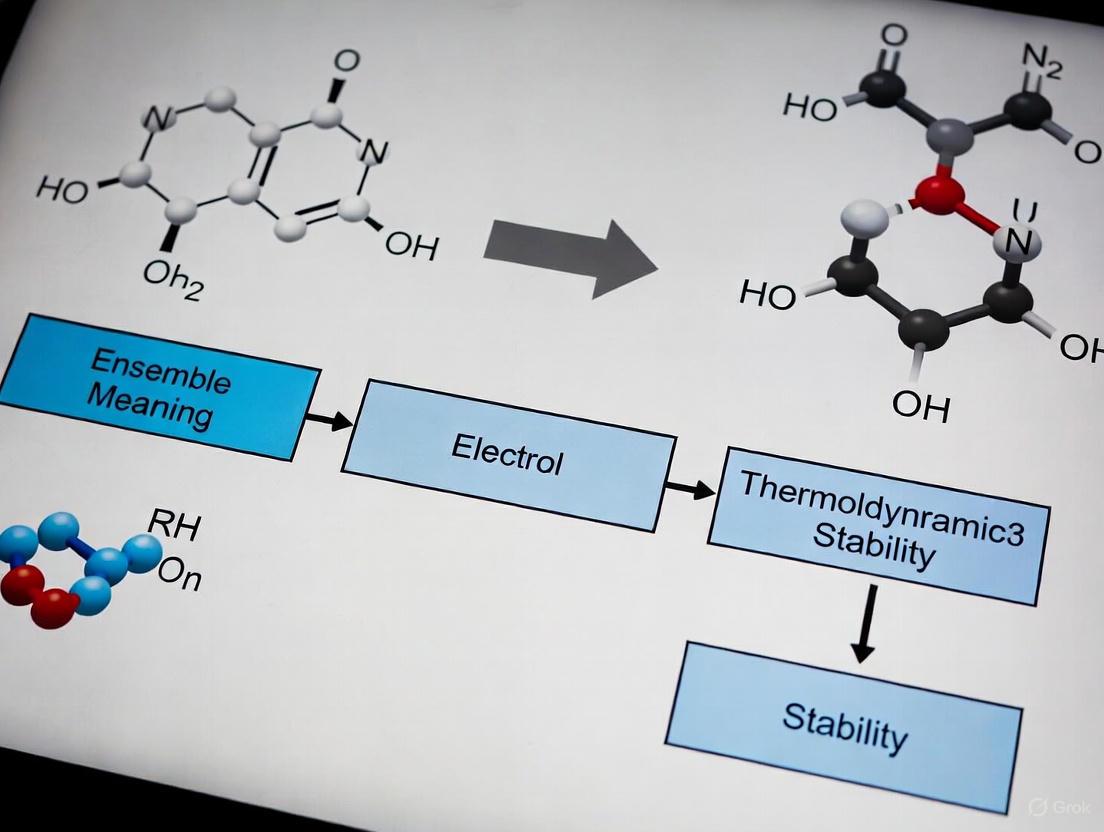

Ensemble Machine Learning with Electron Configuration: A New Paradigm for Predicting Thermodynamic Stability in Materials and Drug Discovery


Abstract

Predicting thermodynamic stability is a critical yet resource-intensive challenge in materials science and drug development. This article explores a cutting-edge approach that integrates ensemble machine learning with fundamental electron configuration data to accurately and efficiently forecast stability. We cover the foundational principles of using electron configurations as low-bias model inputs, detail the methodology of stack generalization frameworks like ECSG that combine diverse knowledge domains, and address troubleshooting for data-scarce scenarios. The discussion includes rigorous validation against DFT calculations, demonstrating superior performance with significantly less data. For researchers and drug development professionals, this synergy of AI and quantum mechanics offers a powerful tool to accelerate the discovery of stable inorganic compounds and bioactive molecules.

The Quantum Mechanical Basis: Why Electron Configuration is Key to Stability Prediction

Fundamental Principles of Thermodynamic Stability

Thermodynamic stability describes the state in which a material or compound sits at the global minimum of free energy under given conditions, indicating its inherent resistance to decomposition or transformation. In materials science, this is quantitatively assessed by the decomposition energy (ΔHd), the energy difference between a compound and its competing phases in a phase diagram [1]. For proteins, stability is commonly defined by the folding free energy (ΔGfold), the free energy difference between the folded native state and the unfolded denatured state [2]. These quantitative metrics provide the foundation for predicting behavior, guiding synthesis, and ensuring functional performance across scientific and industrial applications.
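For a binary system, the decomposition-energy idea can be illustrated with a minimal sketch that measures how far each compound's formation energy lies above the lower convex hull of formation energy versus composition. The compositions and energies in the usage line are hypothetical placeholders, and the hull construction (Andrew's monotone chain) is a generic choice, not the procedure of [1]; pymatgen's `PhaseDiagram` class, with its `get_e_above_hull` method, handles multicomponent systems.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (fraction of element B, formation energy in eV/atom)


def _cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull (Andrew's monotone chain), always including pure A and pure B."""
    pts = sorted(set(points) | {(0.0, 0.0), (1.0, 0.0)})
    hull: List[Point] = []
    for p in pts:
        # Drop the last vertex while it does not make a convex (left) turn.
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(hull: List[Point], x: float) -> float:
    """Linearly interpolate the hull energy at composition x (0 <= x <= 1)."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            return e0 + (x - x0) / (x1 - x0) * (e1 - e0)
    raise ValueError(f"composition {x} outside [0, 1]")


def energy_above_hull(points: List[Point]) -> List[float]:
    """Energy above the hull (eV/atom) for each point; 0 means the phase is on the hull."""
    hull = lower_hull(points)
    return [e - hull_energy(hull, x) for x, e in points]
```

For example, `energy_above_hull([(0.25, -0.1), (0.5, -0.5), (0.75, -0.2)])` gives approximately `[0.15, 0.0, 0.05]` eV/atom, so only the x = 0.5 phase lies on the hull. On-hull phases always return zero in this sketch; a signed decomposition energy for them would require rebuilding the hull without the compound in question.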

Metastability represents a crucial intermediate state in which a system possesses kinetic durability but not absolute thermodynamic stability. Diamond is a classic example: it is metastable relative to graphite under ambient conditions, yet it persists indefinitely because the kinetic barrier to transformation is high [3]. Recent research has identified engineered materials whose metastable states show reversed ("flipped") thermodynamic responses, such as negative thermal expansion and negative compressibility, expanding their potential applications [3].

Thermodynamic Stability in Materials Science: Applications and Protocols

Advanced Materials with Tailored Stability

Recent research has reported materials that exhibit negative thermal expansion (shrinking when heated) and negative compressibility (expanding under compression) in metastable states [3]. These properties, which run counter to conventional thermodynamic behavior, offer new ways to control how a material responds to its environment. Potential applications include:

- Zero-thermal-expansion materials for construction, eliminating thermal deformation [3]

- Structural batteries where aircraft fuselages double as energy storage components [3]

- Self-resetting EV batteries that can be restored to original performance through voltage activation [3]

Protocol: Assessing Material Stability via DFT Calculations

Objective: Determine thermodynamic stability of inorganic compound surfaces using density functional theory (DFT).

Materials and Computational Tools:

- DFT simulation software (VASP, Quantum ESPRESSO)

- Crystal structure databases

- Thermodynamic modeling environment

Methodology:

- Surface Model Construction: Create slab models of the crystal surface with various atomic terminations. For Bi₂WO₆ (001), five different surface terminations were evaluated [4].

- Energy Calculation: Compute the total energy of each slab model with DFT, which serves as the approximation to its Gibbs free energy (G_slab), using appropriate functionals (GGA-PBE for geometry optimization, HSE06 for electronic properties) [4].

- Chemical Potential Definition: Establish chemical potentials (μ) for constituent elements under specific environmental conditions, including temperature and oxygen partial pressure [4].

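- Surface Energy Determination: Calculate the surface Gibbs free energy as $\gamma = \frac{1}{2A}\left[G_{\text{slab}} - \sum_i n_i \mu_i\right]$, where $A$ is the surface area, $G_{\text{slab}}$ is the slab free energy, $n_i$ is the number of atoms of element $i$ in the slab, and $\mu_i$ is its chemical potential. The factor of 1/2 accounts for the two equivalent surfaces of a symmetric slab [4].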

- Phase Diagram Construction: Plot surface energies as functions of chemical potentials to identify stable terminations under specific conditions [4].

Interpretation: The termination with lowest surface energy under given environmental conditions represents the thermodynamically preferred structure, guiding material design for specific applications.

Figure 1: Computational workflow for determining material surface stability using DFT and thermodynamic calculations.
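The surface-energy formula and the "lowest γ wins" rule from this protocol can be sketched as below. Everything here is illustrative: the two terminations, their slab energies, atom counts, chemical potentials, and the area are hypothetical values, and holding μ_M fixed while varying μ_O is a simplification of the full chemical-potential constraints used in studies such as [4].

```python
from typing import Dict, Tuple

Slab = Tuple[float, Dict[str, int]]  # (G_slab in eV, atom counts per element)


def surface_energy(g_slab: float, counts: Dict[str, int], mu: Dict[str, float], area: float) -> float:
    """gamma = (1 / 2A) * (G_slab - sum_i n_i * mu_i) for a symmetric, two-sided slab."""
    return (g_slab - sum(n * mu[el] for el, n in counts.items())) / (2.0 * area)


def most_stable_termination(slabs: Dict[str, Slab], mu: Dict[str, float], area: float) -> Tuple[str, float]:
    """Return (name, gamma) of the termination with the lowest surface energy."""
    energies = {name: surface_energy(g, counts, mu, area) for name, (g, counts) in slabs.items()}
    best = min(energies, key=energies.get)
    return best, energies[best]


if __name__ == "__main__":
    slabs = {  # hypothetical terminations of an oxide MO_x
        "M-terminated": (-80.0, {"M": 10, "O": 14}),
        "O-terminated": (-90.0, {"M": 10, "O": 16}),
    }
    for dmu_o in (0.0, -1.5):  # O-rich and O-poor limits (hypothetical)
        mu = {"M": -3.0, "O": -4.0 + dmu_o}
        print(dmu_o, most_stable_termination(slabs, mu, area=10.0))
```

With these numbers the O-terminated slab is preferred at Δμ_O = 0 and the M-terminated slab at Δμ_O = -1.5. The two curves cross at Δμ_O = -1, where both give γ = 1.0 in the sketch's (hypothetical) units. Sweeping Δμ_O over a fine grid in this way yields the surface phase diagram described in the protocol.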

Ensemble Machine Learning for Stability Prediction

The ECSG (Electron Configuration models with Stacked Generalization) framework integrates three complementary models to predict inorganic compound stability with superior accuracy (AUC = 0.988) and data efficiency [1]:

- ECCNN (Electron Configuration CNN): Processes fundamental electron configuration data using convolutional neural networks

- Magpie: Utilizes statistical features of elemental properties

- Roost: Employs graph neural networks to model interatomic interactions

This ensemble approach mitigates individual model biases and demonstrates exceptional sample efficiency, achieving comparable performance with only one-seventh the data required by conventional models [1].

Thermodynamic Stability in Pharmaceutical Development: Applications and Protocols

Pharmaceutical Stability Challenges

Over 90% of newly developed active pharmaceutical ingredients (APIs) face challenges with low solubility and bioavailability, making thermodynamic research crucial for product development [5]. Stability directly affects drug safety, efficacy, and shelf life. It is equally central to protein biology: most disease-associated human single-nucleotide polymorphisms destabilize protein structure [2].

Protocol: Comprehensive Pharmaceutical Stability Assessment

Objective: Evaluate API stability under various stress conditions using the STABLE framework.

Materials:

- API reference standard

- Stress agents: HCl, NaOH, H₂O₂, organic solvents

- Controlled environment chambers (thermal, photostability)

- HPLC/UPLC with validated stability-indicating methods

Methodology:

- Sample Preparation: Prepare API solutions at multiple concentrations (typically 0.1-1 mg/mL) in appropriate solvents.

- Stress Application:

  - Acid Hydrolysis: Expose to 0.1-1 M HCl at 25-80°C for 24 hours [6]

  - Base Hydrolysis: Treat with 0.1-1 M NaOH at 25-80°C for 24 hours [6]

  - Oxidative Stress: Incubate with 0.1-3% H₂O₂ at room temperature for 24 hours [6]

  - Thermal Stress: Subject solid and solution states to 40-80°C for specified durations [6]

  - Photostability: Expose to calibrated UV/visible light (ICH Q1B conditions) [6]

- Neutralization: Quench reactions at appropriate timepoints using acid/base or antioxidants.

- Analysis: Employ chromatographic (HPLC/UPLC) techniques to quantify residual API and degradation products.

- Scoring: Apply the STABLE scoring system to categorize stability across all conditions.

Interpretation: Degradation ≤10% under harsh conditions indicates high stability, while >20% degradation suggests significant instability requiring formulation intervention [6].

Figure 2: Pharmaceutical stability assessment workflow using the STABLE framework to evaluate multiple stress conditions.
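The quantitative cut-offs in the interpretation step can be sketched as a small helper. It assumes degradation is estimated from the ratio of stressed to unstressed API peak areas (which presumes a linear detector response and identical sample preparation), and it labels the 10-20% band "intermediate", a category the text does not define. It is not an implementation of the STABLE scoring system, whose scoring rubric is not reproduced here.

```python
def percent_degradation(control_area: float, stressed_area: float) -> float:
    """Percent loss of API estimated from stressed vs. unstressed HPLC peak areas."""
    if control_area <= 0:
        raise ValueError("control peak area must be positive")
    return 100.0 * (1.0 - stressed_area / control_area)


def stability_category(degradation_pct: float) -> str:
    """Map % degradation onto the 10% and 20% cut-offs quoted in the text."""
    if degradation_pct <= 10.0:
        return "high stability"
    if degradation_pct > 20.0:
        return "significant instability"
    return "intermediate"  # assumed label: the 10-20% band is not defined in the text


if __name__ == "__main__":
    # Hypothetical peak areas (arbitrary units) for each stress condition.
    control = 1000.0
    stressed = {"acid": 950.0, "base": 840.0, "oxidative": 700.0}
    for condition, area in stressed.items():
        pct = percent_degradation(control, area)
        print(f"{condition}: {pct:.1f}% -> {stability_category(pct)}")
```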

Computational Protein Stability Analysis

Quantitative analysis reveals that computational stability predictors often favor mutations that increase stability at the expense of solubility, and mutations predicted to stabilize are experimentally near neutral on average [7]. The Matthews correlation coefficient (MCC) provides a more reliable performance metric than classification accuracy for evaluating stability prediction tools [7]. Combining multiple mutations significantly improves prospects for achieving stabilization targets [7].
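To see why MCC is preferred, the sketch below compares it with accuracy on a hypothetical, imbalanced set of 100 mutations, 10 of which are truly stabilizing. A predictor that labels every mutation as non-stabilizing scores 90% accuracy but an MCC of 0, because it never identifies a positive. Returning 0.0 when a confusion-matrix marginal is empty is a common convention, assumed here.

```python
import math


def accuracy(tp: int, tn: int, fp: int, fn: int) -> float:
    """Fraction of all predictions that are correct."""
    return (tp + tn) / (tp + tn + fp + fn)


def mcc(tp: int, tn: int, fp: int, fn: int) -> float:
    """Matthews correlation coefficient; 0.0 when any row or column of the matrix is empty."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom


if __name__ == "__main__":
    # Trivial "never stabilizing" predictor on 10 positives and 90 negatives.
    print(accuracy(0, 90, 0, 10), mcc(0, 90, 0, 10))
```

MCC ranges from -1 (perfectly inverted) through 0 (no better than chance) to +1 (perfect), and it uses all four confusion-matrix cells, which is why it is not fooled by class imbalance the way accuracy is.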

Table 1: Machine Learning Approaches for Thermodynamic Stability Prediction

| Method | Basis | Key Features | Performance | Applications |
|---|---|---|---|---|
| ECSG Framework [1] | Ensemble learning with stacked generalization | Combines ECCNN, Magpie, Roost; reduces inductive bias | AUC = 0.988; matches existing models using one-seventh of the data | Discovering 2D wide-bandgap semiconductors, double perovskite oxides |
| ECCNN [1] | Electron configuration | Uses intrinsic atomic characteristics; convolutional neural networks | High accuracy with minimal features | Fundamental property-stability relationships |
| Roost [1] | Graph neural networks | Models the chemical formula as a complete graph of its elements; attention mechanism | Captures interatomic interactions | Complex multi-element compounds |
| Magpie [1] | Elemental property statistics | Uses atomic number, mass, radius statistics; gradient-boosted trees | Broad feature coverage | High-throughput screening |

Table 2: Pharmaceutical Stability Scoring System (STABLE Framework) [6]

| Stress Condition | Experimental Parameters | Stability Scoring Criteria | High Stability Examples |
|---|---|---|---|
| Acid Hydrolysis | 0.1-1 M HCl; 24h; 25-80°C | ≤10% degradation under harsh conditions (>5M HCl, 24h reflux) | Maximum score for exceptional acid resistance |
| Base Hydrolysis | 0.1-1 M NaOH; 24h; 25-80°C | ≤10% degradation under harsh conditions (>5M NaOH, 24h reflux) | Maximum score for exceptional base resistance |
| Oxidative Stress | 0.1-3% H₂O₂; 24h; RT | Minimal degradation under aggressive conditions | Compounds with oxidation-resistant functional groups |
| Thermal Stress | 40-80°C; solid/solution states | Maintains integrity at elevated temperatures | Thermally stable molecular structures |
| Photostability | ICH Q1B conditions | Resists UV/visible light degradation | Compounds without chromophores |

Essential Research Reagents and Computational Tools

Table 3: Scientist's Toolkit for Thermodynamic Stability Research

| Tool/Reagent | Function | Application Context |
|---|---|---|
| DFT Software (VASP, Quantum ESPRESSO) | First-principles energy calculations | Materials surface stability, electronic properties [4] |
| STABLE Framework | Standardized pharmaceutical stability scoring | Comprehensive API stability profiling [6] |
| HPLC/UPLC with PDA/MS detection | Separation and quantification of degradation products | Pharmaceutical stress testing [6] |
| Controlled Environment Chambers | Precise temperature and humidity regulation | Accelerated stability studies [6] |
| ECSG Machine Learning Framework | Ensemble prediction of compound stability | High-throughput materials discovery [1] |
| Calibrated Light Sources | Controlled photostress exposure | Pharmaceutical photostability testing [6] |
| Denaturants (Urea, Guanidinium HCl) | Protein unfolding agents | Thermodynamic stability measurements [2] |

The discovery of new materials with tailored properties is a cornerstone of advancement in fields ranging from drug development to renewable energy. For decades, Density Functional Theory (DFT) has served as a primary computational tool for predicting material properties and thermodynamic stability from first principles. However, its computational cost and inherent theoretical limitations constrain its effectiveness for high-throughput screening of large compositional spaces [1]. Concurrently, machine learning (ML) has emerged as a faster alternative, but its success is often hampered by inductive biases introduced through model architectures and hand-crafted features, which can limit generalizability [1]. This raises a central methodological question: how can material stability be predicted both reliably and efficiently? In the context of ongoing research on ensemble machine learning that uses electron configuration to predict thermodynamic stability, this analysis examines the specific limitations of traditional DFT and single-model ML approaches. It then explores how emerging ensemble methods, which integrate knowledge from multiple physical scales, offer a path toward more robust and efficient predictive frameworks.

The Limitations of Density Functional Theory (DFT)

DFT is a quantum mechanical modelling method used to investigate the electronic structure of many-body systems, primarily their ground state [8]. Its versatility has made it immensely popular in physics, chemistry, and materials science. The core strength of DFT lies in its theoretical foundation: the Hohenberg-Kohn theorems establish that all properties of a many-electron system are uniquely determined by its ground-state electron density. This reduces the problem of 3N spatial coordinates (for N electrons) to just three coordinates, drastically lowering the computational cost compared to traditional ab initio methods like Hartree-Fock that deal directly with the many-electron wavefunction [8].

Despite being an exact theory in principle, the practical application of DFT relies on approximations for the exchange-correlation functional. It is these Density Functional Approximations (DFAs), not DFT itself, that are the source of most failures [9].

Table 1: Common Failures of Density Functional Approximations (DFAs)

| Failure Type | Description | Underlying Cause |
|---|---|---|
| Intermolecular Interactions | Poor description of van der Waals forces (dispersion) and hydrogen bonding [8]. | Incomplete treatment of non-local electron correlation effects. |
| Strongly Correlated Systems | Inaccurate results for systems with localized d- or f-electrons (e.g., many transition metal oxides) [9]. | Standard DFAs struggle with near-degeneracies and static correlation. |
| Charge Transfer Excitations | Severe underestimation of energies for excitations where electron density shifts significantly in space [8]. | Incorrect long-range behavior of the exchange potential. |
| Band Gaps | Systematic underestimation of the band gap in semiconductors and insulators [8]. | Self-interaction error and the missing derivative discontinuity in approximate exchange-correlation potentials. |
| Reaction Barriers | Tendency to underestimate activation energies for chemical reactions [9]. | Inaccurate description of the exchange-correlation energy along the reaction path. |

A significant weakness of the DFA approach is that it is not systematically improvable. Unlike wavefunction-based methods like Coupled-Cluster, where a clear hierarchy (e.g., CCSD, CCSD(T)) exists to approach the exact solution, there is no guaranteed path for DFAs; a "higher-rung" functional on Jacob's Ladder is not certain to yield a more accurate answer for a given system [9]. Furthermore, DFT calculations consume substantial computational resources, creating a bottleneck for the rapid exploration of vast compositional spaces, such as those found in high-entropy alloys or novel perovskite compounds [1].

The Challenge of Inductive Bias in Machine Learning Models

Machine learning presents a promising avenue for circumventing the high computational cost of DFT. By learning patterns from existing DFT or experimental databases, ML models can predict thermodynamic stability orders of magnitude faster. A key step in developing these models is feature engineering, where a material's composition or structure is converted into a numerical representation. However, this process often introduces strong inductive biases—inherent assumptions that guide the learning algorithm toward specific solutions.

Inductive bias in ML for materials science often stems from the domain knowledge used to create input features. While necessary, these assumptions have limited applicability and can result in poor generalization if they do not fully capture the underlying physics [1]. The following table summarizes common biases and their potential impacts.

Table 2: Sources and Impacts of Inductive Bias in ML for Materials Stability

| Source of Bias | Description | Potential Impact on Model |
|---|---|---|
| Feature Selection | Using hand-crafted features based on specific elemental properties (e.g., Magpie features like atomic radius, electronegativity) [1]. | Model performance is capped by the informational content of the selected features; may miss crucial electronic-level information. |
| Architectural Assumptions | Imposing structural priors, e.g., modeling a crystal as a complete graph of interacting atoms (as in Roost) [1]. | May incorrectly model weak or non-existent interactions, leading to over-smoothing or inaccurate relationship learning. |
| Data Scarcity & Imbalance | Training sets are often biased toward common or easily synthesizable materials, with a scarcity of stable compounds [10]. | Models become adept at identifying unstable compounds but perform poorly at predicting the rare, stable ones, which are often the target of discovery [10]. |

For example, a model like ElemNet, which uses only elemental compositions, introduces a large inductive bias by assuming material properties are solely determined by elemental fractions, ignoring the intricate effects of electron configuration and interatomic interactions [1]. This can limit its predictive accuracy and generalizability to new, unexplored regions of chemical space.

Ensemble ML and Electron Configuration: A Path Forward

To mitigate the limitations of both DFAs and single-biased ML models, ensemble methods that integrate diverse physical knowledge offer a compelling solution. The core idea is to combine models grounded in different domain knowledge—such as interatomic interactions, atomic properties, and electron configuration—into a single, more robust framework. This approach, known as stacked generalization, creates a "super learner" that compensates for the individual biases of its base models [1].

A key advancement is the direct incorporation of electron configuration (EC) as an intrinsic material representation. Unlike hand-crafted features, electron configuration describes the fundamental distribution of electrons in an atom's energy levels, which is the very basis for quantum mechanical calculations of ground-state energy in DFT [8] [11]. Using EC as an input feature provides the model with a more foundational physical description, potentially reducing spurious inductive biases.

The Electron Configuration models with Stacked Generalization (ECSG) framework is one such approach; the protocol below outlines how it combines knowledge from different physical scales.

Experimental Protocol: Building the ECSG Ensemble Model

Objective: To train an ensemble model for predicting the thermodynamic stability of inorganic compounds, achieving high accuracy with minimal data.

Materials and Reagents (Computational):

- Data Source: The Joint Automated Repository for Various Integrated Simulations (JARVIS) database, or similar (e.g., Materials Project, OQMD) [1].

- Representation: Chemical formulas of inorganic compounds and their corresponding decomposition energies (ΔH_d).

- Software: Python with deep learning libraries (e.g., PyTorch, TensorFlow) and materials informatics tools (pymatgen).

Methodology:

Data Curation and Preprocessing:

- Collect formation energies and calculate the decomposition energy (ΔH_d) relative to the convex hull to determine stability labels (stable/unstable) [1].

- Split the dataset into training and test sets while avoiding data leakage: cluster compounds by structural similarity and keep all members of a cluster within a single split [10].
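A minimal sketch of a leakage-free split follows. It uses the chemical system (the sorted set of elements) as a hypothetical, deliberately crude stand-in for the structural-similarity clustering described above, then assigns whole clusters to either the training or the test set so that no cluster straddles the split.

```python
import random
import re
from collections import defaultdict
from typing import Dict, List, Tuple


def chemical_system(formula: str) -> str:
    """Hypothetical cluster key: sorted element set, e.g. 'Fe2O3' -> 'Fe-O'."""
    return "-".join(sorted(set(re.findall(r"[A-Z][a-z]?", formula))))


def group_split(formulas: List[str], test_fraction: float = 0.2, seed: int = 0) -> Tuple[List[str], List[str]]:
    """Assign whole clusters to train or test so no cluster appears in both."""
    clusters: Dict[str, List[str]] = defaultdict(list)
    for formula in formulas:
        clusters[chemical_system(formula)].append(formula)
    keys = sorted(clusters)
    random.Random(seed).shuffle(keys)  # reproducible cluster order
    train: List[str] = []
    test: List[str] = []
    target = test_fraction * len(formulas)
    for key in keys:
        # Fill the test set cluster by cluster until it reaches the target size.
        (test if len(test) < target else train).extend(clusters[key])
    return train, test
```

Because assignment happens at the cluster level, the realized test fraction can overshoot the target by up to one cluster; a real pipeline would substitute a proper structural-similarity clustering for `chemical_system`.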

Base-Level Model Training (Heterogeneous Knowledge Integration):

- Train ECCNN (Electron Configuration Convolutional Neural Network):

  - Input Encoding: Convert the chemical formula into a 118 (elements) × 168 (orbital blocks) × 8 (occupancy bits) tensor that encodes the electron occupancy of each element in the compound [1].

  - Architecture: Use two convolutional layers (64 filters, 5×5 kernel) for feature extraction, followed by batch normalization, max-pooling, and fully connected layers.

- Train Roost (Representation Learning from Stoichiometry):

  - Input Encoding: Represent the chemical formula as a complete graph whose nodes are the constituent elements, weighted by their stoichiometric fractions; no crystal structure or explicit bonds are required.

  - Architecture: Employ a graph neural network with message-passing and attention mechanisms to capture interactions between elements [1].

- Train Magpie (Materials-Agnostic Platform for Informatics and Exploration):

  - Input Encoding: Calculate a set of statistical features (mean, range, mode, etc.) from a list of elemental properties (e.g., atomic number, radius, electronegativity) for the compound [1].

  - Architecture: Train a Gradient-Boosted Regression Tree (XGBoost) model on these feature vectors.

Stacked Generalization (Meta-Learning):

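- Use out-of-fold predictions of the three base models (ECCNN, Roost, Magpie), generated by k-fold cross-validation on the training set, as input features for a meta-learner (e.g., a linear model or another shallow neural network). Predictions made on the same data a base model was trained on would leak information and inflate the meta-learner's apparent accuracy.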

- Train this meta-learner to produce the final, refined stability prediction [1].

Validation and Testing:

- Evaluate the ensemble model on the held-out test set using metrics such as Area Under the Curve (AUC), precision, and recall. The ECSG model has been shown to achieve an AUC of 0.988 [1].

- Validate computational predictions with targeted DFT calculations on newly predicted stable compounds to confirm their stability on the convex hull [1].
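The evaluation metrics named in this step can be sketched in a few lines. AUC is computed here through its rank interpretation: the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one, counting ties as one half. This pairwise loop is quadratic in the number of samples, so `sklearn.metrics.roc_auc_score` is the practical choice for large test sets. The 0.5 decision threshold for precision and recall is an assumption.

```python
from typing import Sequence, Tuple


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as P(score of a random positive > score of a random negative), ties = 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def precision_recall(labels: Sequence[int], scores: Sequence[float], threshold: float = 0.5) -> Tuple[float, float]:
    """Precision and recall for the 'stable' class at a fixed score threshold."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall
```

For labels `[1, 1, 0, 0]` and scores `[0.9, 0.4, 0.5, 0.1]`, three of the four positive/negative pairs are ordered correctly, so the AUC is 0.75, while precision and recall at 0.5 are both 0.5.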

Research Reagent Solutions

Table 3: Essential Computational Tools for Ensemble ML in Materials Stability

| Item Name | Function/Description | Relevance to Research |
|---|---|---|
| JARVIS/MP/OQMD Databases | Large-scale repositories of DFT-calculated material properties. | Provide the essential training data (formation energies, band structures) for supervised learning models [1]. |
| Electron Configuration Encoder | Algorithm to convert a chemical formula into a structured matrix representing orbital occupation. | Provides a foundational, low-bias input representation for ML models, directly related to quantum mechanical states [1]. |
| Graph Neural Network (GNN) | A neural network architecture that operates on graph-structured data. | Captures interactions between atoms; structure-based GNNs also encode local coordination environments, while Roost applies message passing to a complete graph of the elements in a formula [1]. |
| Stacked Generalization Framework | A meta-learning algorithm that combines multiple base models. | Mitigates individual model bias and leverages synergistic effects between different physical representations to boost predictive performance [1]. |

The limitations of traditional DFT and single-model ML are significant but not insurmountable. DFAs, while powerful, fail systematically for certain classes of materials and are computationally expensive for vast compositional searches. Standard ML models, in turn, are often hindered by inductive biases introduced through their design and feature sets. The path forward, as evidenced by recent research, lies in ensemble frameworks like ECSG that strategically integrate diverse physical knowledge—from atomic statistics and graph-based interactions to the fundamental principles of electron configuration. By doing so, these hybrid models achieve a remarkable balance: they retain the high speed of ML while significantly improving accuracy, robustness, and data efficiency. This paradigm shift from relying on a single, biased method to employing a committee of expert models is crucial for accelerating the reliable discovery of new, thermodynamically stable materials for advanced applications.

The discovery and development of new functional materials are often hindered by the vastness of compositional space. Traditional methods for assessing key properties, such as thermodynamic stability, through experimentation or density functional theory (DFT) calculations are resource-intensive and slow [1]. Machine learning (ML) offers a promising alternative, yet many models incorporate significant inductive bias by relying on specific domain knowledge or idealized assumptions about material composition and structure, which can limit their predictive accuracy and generalizability [1].

Electron configuration (EC)—the distribution of electrons in atomic orbitals—represents a fundamental, intrinsic property of elements. It forms the physical basis for chemical bonding and reactivity. Using EC as a primary input for machine learning models minimizes the need for manually crafted, theory-laden features, thereby reducing inductive bias [1]. This approach allows models to learn the underlying physical relationships directly from the foundational principles of quantum mechanics. When integrated into ensemble learning frameworks, EC-based models can achieve remarkable predictive accuracy and data efficiency, accelerating the exploration of new materials with desired properties [1] [12].

Theoretical Foundation & Rationale

Electron Configuration as a Low-Bias Descriptor

In computational materials science, a "descriptor" is a numerical representation of a material that serves as input for a model. Many traditional descriptors for inorganic compounds are derived from elemental properties (e.g., atomic radius, electronegativity) or structural features, which inherently embed specific hypotheses about property-structure relationships [1] [12].

Electron configuration provides a more foundational description. It specifies how an atom's electrons are distributed across energy levels and subshells, which is crucial for understanding chemical properties and reaction dynamics [1] [13]. The electron configuration of an atomic species, whether neutral or ionic, reveals the shapes and energies of its occupied orbitals, which directly influence bonding ability, magnetism, and other chemical properties [13].

By using the raw electron configuration as a descriptor, researchers bypass many assumptions required by other models. For instance, models that rely solely on elemental composition fractions cannot handle new elements absent from their training data, and graph-based models may impose specific but incomplete relationship paradigms between atoms in a unit cell [1]. EC serves as an intrinsic characteristic that introduces fewer such inductive biases, allowing the ML algorithm to discover patterns that might be obscured by pre-selected feature sets [1].

Quantum Mechanical Basis

The electron configuration is directly derived from the solutions to the Schrödinger equation for atoms and is described by four quantum numbers [13]:

- Principal Quantum Number (n): Indicates the shell or energy level, defining the overall energy and size of the orbital (n = 1, 2, 3...).

- Orbital Angular Momentum Quantum Number (l): Indicates the subshell and shape of the atomic orbital (l = 0, 1, ..., n − 1; l = 0 for s, 1 for p, 2 for d, 3 for f...).

- Magnetic Quantum Number (mₗ): Specifies the orientation of the orbital in space (mₗ = -l, ..., +l).

- Spin Magnetic Quantum Number (mₛ): Describes the electron's intrinsic spin (mₛ = +1/2 or −1/2).
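Enumerating the quantum numbers makes the link to shell capacity explicit. Because the Pauli exclusion principle allows at most one electron per (n, l, mₗ, mₛ) state, counting the allowed combinations reproduces the familiar 2(2l + 1) electrons per subshell and 2n² per shell. The sketch below is a plain illustration, not part of any cited model.

```python
from fractions import Fraction
from typing import List, Tuple

State = Tuple[int, int, int, Fraction]  # (n, l, m_l, m_s)


def allowed_states(n: int) -> List[State]:
    """All (n, l, m_l, m_s) combinations permitted for principal quantum number n."""
    states: List[State] = []
    for l in range(n):                      # l = 0 .. n-1
        for m_l in range(-l, l + 1):        # m_l = -l .. +l
            for m_s in (Fraction(1, 2), Fraction(-1, 2)):
                states.append((n, l, m_l, m_s))
    return states


if __name__ == "__main__":
    for n in (1, 2, 3, 4):
        print(n, len(allowed_states(n)))   # shell capacity 2 * n**2
```

For n = 3, the d subshell (l = 2) alone contributes 5 × 2 = 10 states, which is why a d subshell holds at most ten electrons.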

This quantum mechanical foundation makes EC a natural input for predicting properties that originate from electronic interactions, forming the basis for first-principles calculations like DFT [1] [13].

Implementing EC-Based Machine Learning

Data Acquisition and Preprocessing

The first step involves building a comprehensive dataset from established materials databases. Key resources include:

- Materials Project (MP)

- Open Quantum Materials Database (OQMD)

- JARVIS Database

These databases provide formation energies, decomposition energies (ΔHd), and structural information for thousands of computed compounds, serving as ground truth for training stability prediction models [1].

Protocol: Encoding Electron Configuration for ML Input

A critical step is transforming the elemental composition of a compound into a numerical matrix representation based on electron configuration.

- Elemental Breakdown: Parse the chemical formula of a compound to identify the constituent elements and their proportions.

- EC Matrix Construction: For each element in the periodic table (typically Z=1 to 118), create a comprehensive binary vector representing its electron occupancy across orbitals. The ECCNN model, for example, uses an input matrix of dimensions 118 × 168 × 8, representing 118 elements, 168 orbital blocks, and 8 bits per block for fine-grained electron occupancy information [1].

- Compositional Aggregation: For a given compound, aggregate the EC matrices of its constituent elements, weighted by their stoichiometric proportions, to form a final input representation that encapsulates the overall electronic structure of the material.
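The three encoding steps can be sketched with a deliberately simplified representation: one occupancy value per subshell (1s through 7p), filled by the Aufbau (Madelung) rule and averaged over the compound with stoichiometric weights. This is not the 118 × 168 × 8 ECCNN tensor, whose exact layout is not reproduced here. The sketch also ignores known filling exceptions (for example Cr and Cu), parses only formulas without parentheses, and uses a partial, illustrative element table.

```python
import re
from collections import defaultdict
from typing import Dict, List

# Subshells (n, l) in Madelung order: increasing n + l, ties broken by lower n.
# Keeping n + l <= 8 covers every element up to Z = 118 (1s ... 7p).
SUBSHELLS = sorted(
    ((n, l) for n in range(1, 8) for l in range(min(n, 4)) if n + l <= 8),
    key=lambda nl: (nl[0] + nl[1], nl[0]),
)
LABELS = [f"{n}{'spdf'[l]}" for n, l in SUBSHELLS]

# Partial, illustrative symbol -> Z table; a real encoder would cover all 118 elements.
ATOMIC_NUMBERS = {"H": 1, "Li": 3, "C": 6, "N": 7, "O": 8, "F": 9,
                  "Na": 11, "Mg": 12, "Cl": 17, "Ca": 20, "Fe": 26}


def element_occupancy(z: int) -> List[int]:
    """Electrons per subshell by the Aufbau rule (filling exceptions ignored)."""
    occupancy, remaining = [], z
    for _, l in SUBSHELLS:
        filled = min(2 * (2 * l + 1), remaining)
        occupancy.append(filled)
        remaining -= filled
    return occupancy


def parse_formula(formula: str) -> Dict[str, float]:
    """Parse simple formulas without parentheses, e.g. 'Fe2O3' -> {'Fe': 2.0, 'O': 3.0}."""
    counts: Dict[str, float] = defaultdict(float)
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] += float(amount) if amount else 1.0
    return dict(counts)


def composition_vector(formula: str) -> List[float]:
    """Stoichiometry-weighted average subshell occupancy of the compound."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    vector = [0.0] * len(SUBSHELLS)
    for symbol, amount in counts.items():
        for i, occ in enumerate(element_occupancy(ATOMIC_NUMBERS[symbol])):
            vector[i] += (amount / total) * occ
    return vector
```

For Fe₂O₃ the Fe fraction is 0.4 and the O fraction 0.6, so the 3d entry is 0.4 × 6 = 2.4 and the 2p entry is 0.4 × 6 + 0.6 × 4 = 4.8.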

Table 1: Key Research Reagent Solutions (Computational Tools & Databases)

| Item Name | Function/Application | Key Features |
|---|---|---|
| Materials Project (MP) Database | Repository of computed materials properties for training and validation. | Provides formation energies, band structures, and other DFT-calculated properties for over 100,000 materials [1]. |
| JARVIS Database | Source of datasets for benchmarking model performance. | Includes thermodynamic stability data for inorganic compounds [1]. |
| Magpie Descriptor Tool | Generates statistical features from elemental properties. | Calculates mean, deviation, range, and other statistics for atomic properties, serving as a baseline or ensemble model [1]. |
| matminer | Open-source toolkit for materials data mining. | Provides a platform for feature extraction and generating descriptors for inorganic compounds [12]. |

Model Architectures and Workflows

Different neural network architectures can be employed to process the encoded electron configuration information.

Protocol: Building an Electron Configuration Convolutional Neural Network (ECCNN)

The ECCNN is designed to learn hierarchical features from the EC matrix [1].

- Input Layer: Accepts the encoded EC matrix (e.g., 118 × 168 × 8).

- Convolutional Layers: Typically, two convolutional operations with 64 filters of size 5×5 are used. These layers detect local patterns and correlations between different orbitals and elements.

- Batch Normalization (BN): Inserted after convolutional layers to stabilize and accelerate training.

- Pooling Layer: A 2×2 max pooling layer follows to reduce dimensionality and introduce translational invariance.

- Fully Connected Layers: The extracted features are flattened and passed through one or more dense layers to map the learned features to the final prediction (e.g., decomposition energy or stability class).

Diagram 1: ECCNN model workflow for stability prediction.
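The layer list in this protocol fixes the size of the first fully connected layer, which can be checked with simple shape arithmetic. This sketch assumes the 118 × 168 grid is treated as the spatial dimensions with the 8 occupancy bits as input channels, stride-1 convolutions, and a stride-2 pooling window. The padding used by ECCNN is not stated here, so both "valid" (no padding) and "same" (padding of 2) cases are computed. Batch normalization does not change tensor shapes.

```python
def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Output length along one axis for a convolution or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1


def eccnn_flat_size(height: int = 118, width: int = 168, filters: int = 64,
                    kernel: int = 5, padding: int = 0) -> int:
    """Flattened feature count after conv -> conv -> 2x2 max-pool (assumed layout)."""
    h, w = height, width
    for _ in range(2):  # two 5x5 convolutions, stride 1
        h, w = conv_out(h, kernel, padding=padding), conv_out(w, kernel, padding=padding)
    h, w = conv_out(h, 2, stride=2), conv_out(w, 2, stride=2)  # 2x2 max pooling
    return h * w * filters


if __name__ == "__main__":
    print("valid padding:", eccnn_flat_size())
    print("same padding:", eccnn_flat_size(padding=2))
```

Without padding, the feature map shrinks to 110 × 160 before pooling and to 55 × 80 × 64 = 281,600 values after it; with padding of 2 it becomes 59 × 84 × 64 = 317,184.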

Ensemble Framework: Stacked Generalization

To further mitigate bias and enhance robustness, EC-based models can be integrated into an ensemble framework. The Stacked Generalization (SG) technique combines models rooted in diverse knowledge domains, allowing them to complement each other [1].

Protocol: Constructing an Ensemble with Stacked Generalization

The Electron Configuration models with Stacked Generalization (ECSG) framework integrates multiple base models [1].

Base-Level Model Selection: Choose three models that operate on different principles:

- ECCNN: Leverages intrinsic electron configuration information [1].

- Roost: Represents the chemical formula as a graph of interacting atoms, using message-passing graph neural networks to capture interatomic interactions [1].

- Magpie: Utilizes statistical features (mean, deviation, range, etc.) computed from a wide array of elemental properties (e.g., atomic number, mass, radius) [1].
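A Magpie-style featurization for a single elemental property can be sketched as follows. The six statistics (fraction-weighted mean, average deviation, minimum, maximum, range, and mode, taken here as the property of the most abundant element) follow the commonly described Magpie recipe, but treat these exact definitions as assumptions. matminer's `ElementProperty.from_preset("magpie")` featurizer provides a maintained implementation. The Pauling electronegativities are included only for illustration.

```python
from typing import Dict

# Pauling electronegativities for a few elements, for illustration only.
PAULING_EN = {"Fe": 1.83, "O": 3.44, "Na": 0.93, "Cl": 3.16}


def magpie_style_stats(amounts: Dict[str, float], prop: Dict[str, float]) -> Dict[str, float]:
    """Fraction-weighted statistics of one elemental property over a composition."""
    total = sum(amounts.values())
    frac = {el: amt / total for el, amt in amounts.items()}
    values = [prop[el] for el in frac]
    mean = sum(frac[el] * prop[el] for el in frac)
    return {
        "mean": mean,
        "avg_deviation": sum(frac[el] * abs(prop[el] - mean) for el in frac),
        "minimum": min(values),
        "maximum": max(values),
        "range": max(values) - min(values),
        "mode": prop[max(frac, key=frac.get)],  # property of the most abundant element
    }


if __name__ == "__main__":
    print(magpie_style_stats({"Fe": 2, "O": 3}, PAULING_EN))
```

Repeating this for each elemental property in a list (atomic number, mass, radius, and so on) and concatenating the results gives the fixed-length vector that a gradient-boosted tree model can consume.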

Base-Level Training: Train each of these base models independently on the same training dataset.

Meta-Level Dataset Creation: Use the predictions from the trained base models on a validation set (or via cross-validation) as input features for a new "meta-level" dataset. The true target values are retained as labels.

Meta-Learner Training: Train a relatively simple model (the "meta-learner" or "super learner") on this new dataset. This model learns to optimally combine the predictions of the base models to produce a final, more accurate, and robust prediction [1].

Diagram 2: Stacked generalization ensemble framework.
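The four steps above can be sketched with NumPy using K-fold out-of-fold predictions as the meta-level dataset. The sketch assumes a regression-style target (for example, decomposition energy) and uses ordinary least squares as the meta-learner; ECSG's actual meta-learner may differ. `stack`, `fit_linear` and `fit_mean` are hypothetical names, and the two toy base learners only stand in for Magpie, Roost and ECCNN.

```python
import numpy as np


def fit_linear(X, y):
    """Toy base learner: least-squares linear model with intercept."""
    A = np.column_stack([np.ones(len(X)), X])
    w, *_ = np.linalg.lstsq(A, y, rcond=None)
    return lambda Xn: np.column_stack([np.ones(len(Xn)), Xn]) @ w


def fit_mean(X, y):
    """Toy base learner: always predicts the training mean."""
    m = float(np.mean(y))
    return lambda Xn: np.full(len(Xn), m)


def stack(X, y, base_fits, k=5, seed=0):
    """Stacked generalization with K-fold out-of-fold (meta-level) predictions.

    base_fits: functions fit(X, y) -> predict(X), one per base model.
    Returns predict(X_new) for the stacked ensemble.
    """
    n = len(y)
    folds = np.array_split(np.random.default_rng(seed).permutation(n), k)
    level_one = np.zeros((n, len(base_fits)))
    for val_idx in folds:
        train_idx = np.setdiff1d(np.arange(n), val_idx)
        for j, fit in enumerate(base_fits):
            # each row is predicted by a model that never saw that row
            level_one[val_idx, j] = fit(X[train_idx], y[train_idx])(X[val_idx])
    # meta-learner: ordinary least squares with intercept (an assumed, simple choice)
    Z = np.column_stack([np.ones(n), level_one])
    coef, *_ = np.linalg.lstsq(Z, y, rcond=None)
    # refit every base model on all data for deployment
    full = [fit(X, y) for fit in base_fits]

    def predict(X_new):
        cols = [np.ones(len(X_new))] + [p(X_new) for p in full]
        return np.column_stack(cols) @ coef

    return predict
```

Using out-of-fold rather than in-sample predictions matters: an in-sample base prediction would look better than it really is, and the meta-learner would over-trust it.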

Performance and Validation

The performance of EC-based models and their ensembles has been rigorously validated against standard benchmarks and first-principles calculations.

Table 2: Quantitative Performance of EC-Based ML Models

| Model / Framework | Application / Dataset | Key Performance Metrics | Comparative Advantage |
|---|---|---|---|
| ECSG (Ensemble) [1] | Thermodynamic stability prediction (JARVIS database) | AUC: 0.988 | Achieved same performance as existing models using only 1/7 of the data; successfully identified new 2D semiconductors and double perovskites validated by DFT. |
| ECCNN (Base Model) [1] | Thermodynamic stability prediction | High predictive accuracy within ensemble. | Reduces inductive bias by using fundamental EC input. |
| EC-based ANN [12] | Boiling Point (BP) prediction (537 compounds) | R²: 0.88, MAE: 222.65°C | Covers 87.5% of elements in periodic table; models complex electronic interactions. |
| EC-based ANN [12] | Melting Point (MP) prediction (1647 compounds) | R²: 0.89, MAE: 170.39°C | Covers 98% of elements (102/104); demonstrates wide applicability. |

Protocol: Validation via First-Principles Calculations

Predictions of new stable materials made by ML models must be rigorously validated.

- Candidate Identification: Use the trained ECSG model to screen a large, unexplored compositional space and identify candidate compounds predicted to be thermodynamically stable.

- DFT Calculation Setup: Perform DFT calculations on the top candidates. This typically involves:

  - Structure Generation: Proposing plausible crystal structures for the composition.

  - Geometry Optimization: Relaxing the atomic positions and cell parameters to find the ground-state energy.

  - Stability Assessment: Calculating the decomposition energy (ΔHd) to determine whether the compound lies on the convex hull of stable phases [1] [4].

- Result Comparison: Compare the DFT-calculated stability with the ML model's prediction. A high confirmation rate indicates that the model is accurate and reliable enough to guide materials discovery [1].
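For a binary A-B system, the stability assessment step reduces to a one-dimensional convex hull. The plain-Python sketch below computes ΔHd as the formation energy of a compound minus the energy of the lower convex hull built from its competing phases (the pure elements at 0 eV/atom plus the other known A-B phases), so that ΔHd ≤ 0 marks a stable compound. It assumes per-atom formation energies have already been obtained from DFT; the function names are hypothetical, and multicomponent systems need a general hull code instead.

```python
def lower_hull(points):
    """Lower convex hull (monotone chain) of (x, energy) points, returned left to right."""
    hull = []
    for p in sorted(set(points)):
        # drop the last vertex while it lies on or above the segment from hull[-2] to p
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            if (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1) <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("x lies outside the hull")


def decomposition_energy(x, e_form, competing):
    """ΔHd of a compound at B-fraction x (eV/atom) relative to its competing phases.

    competing: (x, E_f) pairs for the other A-B phases with 0 < x < 1; the pure
    elements are added at E_f = 0. ΔHd <= 0 means stable under the labelling rule.
    """
    hull = lower_hull([(0.0, 0.0), (1.0, 0.0)] + list(competing))
    return e_form - hull_energy(hull, x)
```

For example, with one competing phase at x = 0.5 and E_f = −0.4 eV/atom, the hull lies at −0.2 eV/atom for x = 0.25, so a compound there with E_f = −0.3 eV/atom has ΔHd = −0.1 eV/atom and is predicted stable.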

Application Notes

Case Study: Exploring Double Perovskite Oxides

The ECSG framework was applied to navigate the unexplored composition space of double perovskite oxides (A₂BB'O₆). The ensemble model screened numerous potential compositions and identified several promising candidates predicted to be thermodynamically stable. Subsequent DFT calculations confirmed the model's high accuracy: a large majority of the compounds predicted to be stable were confirmed as stable [1]. This demonstrates the framework's utility in rapidly pinpointing likely stable, and therefore promising, synthesis targets in complex chemical spaces.

Integration with Other Workflows

The electron configuration descriptor is highly versatile. Beyond standalone stability prediction, it can be integrated into broader materials design workflows:

- Functional Property Prediction: EC-based models can be used to predict not just stability, but also electronic structure properties, which are critical for applications in photocatalysis and electronics [4].

- Guiding Synthesis: By quickly identifying stable compounds, these models can prioritize targets for experimental synthesis, saving time and resources [14].

- Multi-Objective Optimization: EC descriptors can be used in models that simultaneously optimize for stability, high specific capacitance (for supercapacitor electrodes), and other performance metrics, aiding in the rational design of advanced functional materials [14].

Electron configuration stands as a fundamental, low-bias descriptor that taps directly into the quantum mechanical origins of material behavior. Its implementation within neural network architectures like ECCNN, and particularly its integration into ensemble frameworks like ECSG, provides a powerful and data-efficient paradigm for accelerating materials discovery. This approach mitigates the inductive biases prevalent in models reliant on manually curated features, leading to superior predictive accuracy for thermodynamic stability and other properties. The successful validation of ML-predicted compounds via first-principles calculations underscores the maturity of this methodology, establishing electron configuration as a cornerstone for next-generation, physics-informed machine learning in materials science and drug development.

Ensemble learning has emerged as a cornerstone technique in machine learning, with demonstrated efficacy in improving predictive performance and robustness. Its core idea is simple: combining multiple individual models often yields predictions that are more accurate and robust than those of any single constituent model [15]. This approach is particularly valuable in scientific domains like materials informatics, where accurately predicting complex properties such as thermodynamic stability is paramount yet hampered by limited data and by biases inherent in individual modeling approaches.

Within the specific context of predicting thermodynamic stability of inorganic compounds, ensemble models address a critical limitation of single-model approaches: the introduction of inductive biases through domain-specific assumptions [1]. Most existing models are constructed based on particular facets of domain knowledge, which can restrict their applicability and generalizability. Ensemble frameworks, particularly those utilizing stacked generalization, amalgamate models rooted in distinct knowledge domains—such as electron configuration, atomic properties, and interatomic interactions—to create a super learner that mitigates these individual biases and harnesses synergistic effects [1]. This review details the application notes and experimental protocols for implementing such ensemble approaches, with a specific focus on their capacity to mitigate bias and improve generalization in electron configuration-based thermodynamic stability research.

Application Notes: Ensemble Frameworks in Practice

Core Ensemble Architectures for Stability Prediction

The implementation of ensemble methods for thermodynamic stability prediction leverages several distinct architectural paradigms, each with unique mechanisms for improving model performance.

Stacked Generalization (ECSG framework): This approach integrates multiple base models with a meta-learner that learns how best to combine their predictions. For stability prediction, this involves training diverse base models such as Magpie (leveraging atomic property statistics), Roost (modeling interatomic interactions via graph neural networks), and ECCNN (utilizing raw electron configuration data) [1]. The predictions from these models then serve as input features for a meta-model (often a linear classifier or simple neural network) that learns the weighting scheme used to produce final stability classifications. The method recognizes that different models excel under different conditions, and a learned combination exploits these complementary strengths more effectively than fixed combination rules such as simple averaging [15] [16].

Bagging (Bootstrap Aggregating): This technique reduces variance by creating multiple versions of the training dataset through bootstrap sampling—randomly selecting observations with replacement—and training a separate model on each version [15] [16]. The predictions are then aggregated, typically through averaging for regression tasks or majority voting for classification problems. Random Forests represent the most prominent application of bagging in materials informatics, combining bagging with random feature selection to force diversity among constituent decision trees [15].
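A compact NumPy illustration of bagging for a binary stable/unstable label: each base learner is a one-feature threshold classifier (a "decision stump") fitted on a bootstrap sample, and predictions are combined by majority vote. The stump learner and the function names are illustrative assumptions; a Random Forest additionally samples a random subset of features at each split of full decision trees.

```python
import numpy as np


def fit_stump(X, y):
    """Choose the feature, threshold and polarity with the lowest training error (labels 0/1)."""
    best = (np.inf, 0, 0.0, 1)
    for j in range(X.shape[1]):
        for t in np.unique(X[:, j]):
            above = (X[:, j] > t).astype(int)
            for polarity in (1, 0):
                pred = above if polarity else 1 - above
                err = np.mean(pred != y)
                if err < best[0]:
                    best = (err, j, t, polarity)
    _, j, t, polarity = best

    def predict(Xn):
        above = (Xn[:, j] > t).astype(int)
        return above if polarity else 1 - above

    return predict


def bagging(X, y, n_models=25, seed=0):
    rng = np.random.default_rng(seed)
    n = len(y)
    models = []
    for _ in range(n_models):
        idx = rng.integers(0, n, size=n)  # bootstrap sample: n rows drawn with replacement
        models.append(fit_stump(X[idx], y[idx]))

    def predict(Xn):
        votes = np.mean([m(Xn) for m in models], axis=0)  # fraction of models voting "1"
        return (votes >= 0.5).astype(int)  # majority vote (ties go to class 1)

    return predict
```

Each stump trained on a different bootstrap sample places its threshold slightly differently; the vote smooths out these individual fluctuations, which is the variance reduction described above.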

Boosting: This sequential approach builds models iteratively, with each new model focusing on correcting errors made by previous ones [15] [16]. Gradient Boosting Machines frame this process as optimizing a loss function through gradient descent in function space, with implementations like XGBoost and LightGBM offering sophisticated handling of the tabular data structures common in materials property datasets [15].
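For squared-error loss, the negative gradient with respect to the current prediction is simply the residual, so gradient boosting amounts to repeatedly fitting a small tree to the current residuals and adding a shrunken copy of it. The NumPy sketch below uses regression stumps and a fixed learning rate; it is a teaching example under those assumptions, not how XGBoost or LightGBM are implemented (they add refinements such as second-order gradient information, explicit regularization and histogram-based split finding).

```python
import numpy as np


def fit_regression_stump(X, r):
    """Least-squares stump: one split, predicting the mean residual on each side."""
    best = None
    for j in range(X.shape[1]):
        for t in np.unique(X[:, j])[:-1]:  # skip the maximum so both sides are non-empty
            left = X[:, j] <= t
            lv, rv = r[left].mean(), r[~left].mean()
            sse = ((r[left] - lv) ** 2).sum() + ((r[~left] - rv) ** 2).sum()
            if best is None or sse < best[0]:
                best = (sse, j, t, lv, rv)
    _, j, t, lv, rv = best
    return lambda Xn: np.where(Xn[:, j] <= t, lv, rv)


def gradient_boost(X, y, n_rounds=100, lr=0.1):
    f0 = float(np.mean(y))  # initial constant model
    pred = np.full(len(y), f0)
    stumps = []
    for _ in range(n_rounds):
        residual = y - pred  # negative gradient of 0.5 * (y - f)^2
        stump = fit_regression_stump(X, residual)
        pred = pred + lr * stump(X)  # shrunken step in function space
        stumps.append(stump)
    return lambda Xn: f0 + lr * sum(s(Xn) for s in stumps)
```

The learning rate `lr` trades the number of rounds against overfitting: smaller steps need more rounds but usually generalize better.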

Table 1: Quantitative Performance of Ensemble Methods for Thermodynamic Stability Prediction

| Ensemble Method | AUC Score | Data Efficiency | Key Advantages |
|---|---|---|---|
| ECSG (Stacking) | 0.988 [1] | Matches existing models' performance with 1/7 of the training data [1] | Mitigates inductive bias from single knowledge domains |
| Random Forest (Bagging) | Varies by implementation | Moderate improvement | Robust to noisy features; handles mixed data types |
| Gradient Boosting (Boosting) | Typically 0.92-0.96 | High efficiency with appropriate regularization | Strong accuracy on complex non-linear relationships |

Bias Mitigation Mechanisms

Ensemble methods provide powerful mechanisms for addressing various forms of bias that plague single-model approaches in computational materials science.

Inductive Bias Reduction: Single-model architectures often incorporate strong assumptions about the structure of materials data, such as Roost's assumption that all atoms in a unit cell have strong interactions [1]. By integrating multiple models with divergent assumptions, ensemble frameworks create a more balanced representation that prevents any single biased perspective from dominating predictions [1].

Representation Bias Mitigation: Models trained exclusively on specific types of features (e.g., only atomic properties) may develop blind spots for compounds where electron configuration plays a more decisive role in stability. The ECSG framework addresses this by incorporating the Electron Configuration Convolutional Neural Network (ECCNN), which uses raw electron configuration data as input—an intrinsic atomic characteristic that introduces fewer manual biases compared to hand-crafted features [1].

Algorithmic Bias Compensation: Different learning algorithms have distinct failure modes; decision trees may struggle with smooth boundaries, while neural networks might overfit to sparse regions of feature space. Ensembles leverage the "wisdom of crowds" effect, where uncorrelated errors from diverse models tend to cancel out, resulting in more robust overall predictions [15]. This statistical foundation explains why ensembles typically demonstrate superior generalization to unseen compositional spaces [17].
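The "wisdom of crowds" argument can be made quantitative for the simplest ensemble, an unweighted average. Assuming k base models whose errors εᵢ each have variance σ² and a common pairwise correlation ρ, the error variance of the averaged prediction is:

$$
\operatorname{Var}\!\left(\frac{1}{k}\sum_{i=1}^{k}\varepsilon_i\right)
= \frac{1}{k^2}\Big(k\sigma^2 + k(k-1)\rho\sigma^2\Big)
= \rho\sigma^2 + \frac{1-\rho}{k}\,\sigma^2
$$

With k = 3 uncorrelated models (ρ = 0) the error variance falls to σ²/3, whereas with ρ = 0.5 it falls only to 2σ²/3. The gain therefore depends on how different the models' errors are, which is the rationale for drawing base models from different knowledge domains.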

Experimental Protocols

Protocol 1: Implementing the ECSG Framework for Stability Prediction

The following protocol details the procedure for replicating the Electron Configuration models with Stacked Generalization (ECSG) approach for predicting thermodynamic stability of inorganic compounds [1].

Research Reagent Solutions

Table 2: Essential Computational Resources and Tools

| Resource/Tool | Function | Specifications/Alternatives |
|---|---|---|
| JARVIS / MP / OQMD Databases | Source of formation energies and stability labels | Materials Project (MP), Open Quantum Materials Database (OQMD) |
| Electron Configuration Encoder | Transforms composition to EC matrix | Custom Python implementation (118×168×8 matrix) [1] |
| Magpie Feature Set | Atomic property statistics | Mean, variance, mode, etc. of atomic properties [1] |
| Roost Model | Message-passing neural network | Graph attention for interatomic interactions [1] |
| ECCNN Architecture | Electron configuration processor | Two convolutional layers (64 filters, 5×5), BN, max pooling [1] |
| Meta-Learner | Stacking model | Logistic regression or simple neural network |

Procedure:

Data Preparation and Preprocessing

- Source: Acquire training data from databases such as the Joint Automated Repository for Various Integrated Simulations (JARVIS), Materials Project (MP), or Open Quantum Materials Database (OQMD) containing formation energies and decomposition energies (ΔHd) for inorganic compounds [1].

- Labeling: Binary stability labels are derived from the decomposition energy, with ΔHd > 0 indicating instability and ΔHd ≤ 0 indicating stability [1].

- Splitting: Partition data into training (70%), validation (15%), and test (15%) sets using stratified sampling to preserve the proportion of stable and unstable compounds in each set.
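The labeling and splitting steps can be sketched as follows. The sketch assumes ΔHd values in a NumPy array and treats "stable" as class 1; stratification is done per class by hand so that only NumPy is needed (scikit-learn's `train_test_split` with its `stratify` argument is a common alternative). Function names are hypothetical.

```python
import numpy as np


def stability_labels(delta_hd):
    """1 = stable (ΔHd <= 0), 0 = unstable (ΔHd > 0)."""
    return (np.asarray(delta_hd) <= 0).astype(int)


def stratified_split(y, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return train/validation/test index arrays that keep each class's share roughly constant."""
    rng = np.random.default_rng(seed)
    splits = [[], [], []]
    for cls in np.unique(y):
        idx = rng.permutation(np.flatnonzero(y == cls))
        n_train = int(round(fractions[0] * len(idx)))
        n_val = int(round(fractions[1] * len(idx)))
        # split this class's shuffled indices into train / validation / test portions
        for bucket, part in zip(splits, np.split(idx, [n_train, n_train + n_val])):
            bucket.extend(part.tolist())
    return [np.array(sorted(bucket)) for bucket in splits]
```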

Base Model Training

- Magpie Implementation: Compute statistical features (mean, mean absolute deviation, range, minimum, maximum, mode) of elemental properties such as atomic number, mass, and radius. Train a gradient-boosted regression tree model (e.g., XGBoost) on these feature vectors [1] [15].

- Roost Implementation: Represent each chemical formula as a complete graph with elements as nodes (Roost operates on composition, not on crystal structure). Implement a graph neural network with attention mechanisms to capture interatomic interactions. Train using the formation energy as the target [1].

- ECCNN Implementation:

  - Input Encoding: Encode each compound's composition as a 118×168×8 matrix representing the electron configurations of constituent elements [1].

  - Architecture: Process through two convolutional layers (64 filters of size 5×5), followed by batch normalization and 2×2 max pooling. Flatten features and pass through fully connected layers for final prediction [1].

  - Training: Use the Adam optimizer with a cross-entropy loss for stability classification.
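Magpie-style descriptors are fraction-weighted statistics of elemental properties. The sketch below computes the six statistics listed above for a single composition. It assumes a two-element toy property table (atomic number and standard atomic mass for Fe and O) and interprets "mode" as the property value of the most abundant element; the full Magpie feature set uses many more properties and is available, for example, through matminer's `ElementProperty` featurizer. Names are hypothetical.

```python
import numpy as np

# Toy property table (atomic number, standard atomic mass in u); real Magpie sets are far larger
PROPS = {
    "Fe": {"Z": 26, "mass": 55.845},
    "O": {"Z": 8, "mass": 15.999},
}


def magpie_style_features(amounts, props=PROPS):
    """Fraction-weighted statistics of each elemental property for one composition.

    amounts: dict element -> stoichiometric amount (normalized to fractions here)
    """
    elems = list(amounts)
    w = np.array([amounts[e] for e in elems], dtype=float)
    w = w / w.sum()
    feats = {}
    for prop in next(iter(props.values())):
        v = np.array([props[e][prop] for e in elems], dtype=float)
        mean = float(w @ v)
        feats[f"{prop}_mean"] = mean
        feats[f"{prop}_avg_dev"] = float(w @ np.abs(v - mean))  # weighted mean absolute deviation
        feats[f"{prop}_min"] = float(v.min())
        feats[f"{prop}_max"] = float(v.max())
        feats[f"{prop}_range"] = float(v.max() - v.min())
        feats[f"{prop}_mode"] = float(v[np.argmax(w)])  # value of the most abundant element
    return feats
```

For Fe₂O₃ (fractions 0.4 and 0.6) the mean atomic number is 0.4 × 26 + 0.6 × 8 = 15.2, and the mode is oxygen's value, 8.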

Stacked Generalization Implementation

- Prediction Collection: Generate out-of-fold predictions from each base model (Magpie, Roost, ECCNN) via K-fold cross-validation on the training set.

- Meta-Feature Construction: Use these predictions as input features for the meta-learner.

- Meta-Learner Training: Train a logistic regression model or a simple neural network on these meta-features to learn optimal combination weights [1].

- Validation: Assess the stacked model's performance on the validation set using the Area Under the ROC Curve (AUC).
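AUC has a useful rank interpretation: it is the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one. The NumPy sketch below computes it directly from that definition (ties count one half), assuming class 1 = stable. It is O(n_pos × n_neg) and meant for illustration; `sklearn.metrics.roc_auc_score` is the usual library route.

```python
import numpy as np


def roc_auc(y_true, scores):
    """AUC as P(score of a random positive > score of a random negative); ties count 1/2."""
    y_true = np.asarray(y_true).astype(bool)
    scores = np.asarray(scores, dtype=float)
    pos, neg = scores[y_true], scores[~y_true]
    # compare every positive with every negative
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return (greater + 0.5 * ties) / (len(pos) * len(neg))
```

For labels `[0, 0, 1, 1]` and scores `[0.1, 0.4, 0.35, 0.8]`, three of the four positive-negative pairs are ordered correctly, giving an AUC of 0.75.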

Model Evaluation

- Testing: Evaluate the final ECSG model on the held-out test set.

- Comparative Analysis: Compare performance against individual base models and traditional ensemble methods (bagging, boosting).

- Efficiency Assessment: Measure data efficiency by training on progressively smaller subsets and comparing performance degradation against single models.

Protocol 2: Bias Assessment and Mitigation Validation

This protocol provides a systematic approach for quantifying and mitigating biases in thermodynamic stability predictors, extending beyond standard performance metrics.

Research Reagent Solutions

- Bias Assessment Frameworks: PROBAST (Prediction model Risk Of Bias ASsessment Tool) or similar structured tools [18].

- Fairness Metrics: Demographic parity, equalized odds, equal opportunity differences [18].

- Compositional Subgroup Analysis: Tools for identifying underrepresented element combinations in training data.

Procedure:

Bias Identification

- Feature Representation Analysis: Audit training data for representation disparities across different regions of compositional space (e.g., oxides vs. sulfides, transition-metal-rich vs. transition-metal-poor compounds).

- Performance Disparity Assessment: Evaluate model performance (accuracy, AUC) separately for different compositional subgroups to identify systematic performance gaps.

- Temporal Bias Check: Assess training-serving skew by comparing model performance on historical vs. newly synthesized compounds [18].
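The performance-disparity check can be sketched by computing metrics per compositional subgroup and reporting the largest gap between subgroups. The sketch assumes binary labels (1 = stable) and one subgroup tag per compound (for example "oxide" or "sulfide"); the positive-rate and true-positive-rate gaps are the materials analogues of demographic-parity and equal-opportunity differences. Names are hypothetical.

```python
import numpy as np


def subgroup_report(y_true, y_pred, groups):
    """Per-subgroup metrics (labels: 1 = stable) and the largest gap between subgroups."""
    y_true, y_pred, groups = (np.asarray(a) for a in (y_true, y_pred, groups))
    report = {}
    for g in np.unique(groups):
        m = groups == g
        pos = m & (y_true == 1)
        report[str(g)] = {
            "n": int(m.sum()),
            "accuracy": float(np.mean(y_pred[m] == y_true[m])),
            # share predicted stable: a demographic-parity-style quantity
            "positive_rate": float(np.mean(y_pred[m] == 1)),
            # recall on truly stable compounds: an equal-opportunity-style quantity
            "tpr": float(np.mean(y_pred[pos] == 1)) if pos.any() else float("nan"),
        }
    gaps = {
        key: float(np.nanmax([r[key] for r in report.values()])
                   - np.nanmin([r[key] for r in report.values()]))
        for key in ("accuracy", "positive_rate", "tpr")
    }
    return report, gaps
```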

Bias Mitigation Implementation

- Diverse Ensemble Construction: Intentionally select base models with complementary inductive biases (atomic-scale, electronic structure, structural) to create natural compensation mechanisms [1].

- Reweighting Strategies: Apply instance weighting during meta-learner training to increase influence of predictions from specialized models for particular compositional subgroups.

- Adversarial Debiasing: Incorporate adversarial components during base model training to penalize representations that allow prediction of protected attributes (e.g., element group).
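One simple reweighting scheme, assumed here for illustration, gives every compositional subgroup the same total weight by weighting each compound inversely to the size of its subgroup. The resulting array can be passed as per-sample weights to learners that accept them; the function name is hypothetical.

```python
import numpy as np


def inverse_frequency_weights(groups):
    """Weight each sample by N / (G * n_g) so every subgroup carries equal total weight."""
    groups = np.asarray(groups)
    labels, inverse, counts = np.unique(groups, return_inverse=True, return_counts=True)
    # N samples, G subgroups, n_g members in the sample's subgroup; mean weight is 1
    return len(groups) / (len(labels) * counts[inverse.ravel()])
```

With six oxides and two sulfides, each oxide receives weight 8 / (2 × 6) ≈ 0.67 and each sulfide 8 / (2 × 2) = 2, so both subgroups contribute a total weight of 4.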

Validation and Iteration

- Generalization Testing: Evaluate ensemble performance on truly external datasets containing novel compound classes absent from training data.

- Ablation Studies: Systematically remove individual base models from the ensemble to quantify their contribution to bias reduction.

- Expert Validation: Compare ensemble predictions with first-principles DFT calculations for borderline cases to verify physicochemical plausibility [1].

Protocol 3: High-Dimensional Composition Space Exploration

This protocol leverages the improved generalization of ensemble models for navigating unexplored compositional spaces in search of novel stable compounds.

Research Reagent Solutions

- Composition Space Sampling: Tools for generating candidate compositions within specified constraints.

- First-Principles Validation: DFT calculation infrastructure for computational validation.

- Uncertainty Quantification: Methods for estimating prediction confidence in ensemble models.

Procedure:

Candidate Generation

- Combinatorial Sampling: Systematically generate candidate compositions within target spaces (e.g., double perovskite oxides, 2D wide bandgap semiconductors) [1].

- Feature Encoding: Encode generated compositions using the same procedures as training (ECCNN matrices, Magpie features, Roost graphs).
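Combinatorial sampling for double perovskite oxides can be sketched as enumerating A₂BB'O₆ formulas and keeping only charge-neutral ones. The sketch assumes a single fixed oxidation state per element (a simplification, since many transition metals have several) and an O²⁻ anion; the cation lists in the example are illustrative, not the search space used in [1], and the function name is hypothetical.

```python
from itertools import combinations


def double_perovskite_candidates(a_ox, b_ox, anion_charge=-2):
    """Enumerate charge-neutral A2BB'O6 formulas.

    a_ox, b_ox: dict element -> assumed oxidation state for the A and B sites.
    """
    out = []
    for a, qa in a_ox.items():
        # unordered pairs of distinct B-site cations
        for b1, b2 in combinations(sorted(b_ox), 2):
            if 2 * qa + b_ox[b1] + b_ox[b2] + 6 * anion_charge == 0:
                out.append(f"{a}2{b1}{b2}O6")
    return out
```

For example, with Ba²⁺ or Sr²⁺ on the A site and Fe³⁺, Mo⁵⁺, Nb⁵⁺ and Ti⁴⁺ on the B sites, only the Fe/Mo and Fe/Nb pairs satisfy 2(+2) + q(B) + q(B') = +12, giving four candidates (Ba₂FeMoO₆, Ba₂FeNbO₆, Sr₂FeMoO₆, Sr₂FeNbO₆).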

Ensemble Screening

- Parallel Prediction: Process candidates through the trained ECSG ensemble to obtain stability predictions.

- Consensus Scoring: Apply meta-learner to combine base model predictions into final stability scores.

- Uncertainty Estimation: Calculate prediction variance across base models as a measure of confidence.

Validation and Discovery

- Priority Ranking: Rank candidates by stability score and prediction confidence.

- First-Principles Verification: Perform DFT calculations for top candidates to verify thermodynamic stability [1].

- Iterative Refinement: Incorporate newly verified stable compounds into training data to improve model performance in targeted regions of composition space.
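The screening and ranking steps can be sketched as follows: the consensus score is a weighted combination of base-model probabilities (a fixed weight vector stands in for the fitted meta-learner), and the variance across base models serves as a rough confidence measure. The variance threshold `max_var`, `top_k` and the function name are hypothetical, illustrative choices.

```python
import numpy as np


def screen(base_probs, meta_weights, top_k=10, max_var=0.02):
    """Consensus score, disagreement and ranking for candidate compositions.

    base_probs: (n_candidates, n_models) P(stable) from each base model
    meta_weights: (n_models,) fixed weights standing in for a fitted meta-learner
    """
    base_probs = np.asarray(base_probs, dtype=float)
    score = base_probs @ np.asarray(meta_weights, dtype=float)  # consensus stability score
    var = base_probs.var(axis=1)  # spread across base models: higher means less confident
    confident = np.flatnonzero(var <= max_var)
    # rank the confident candidates by descending score
    order = confident[np.argsort(-score[confident], kind="stable")]
    return order[:top_k], score, var
```

Candidates on which the base models disagree strongly are set aside rather than ranked; they can be sent to DFT separately if exploring uncertain regions is itself a goal.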

Building the Framework: Architecting Ensemble Models for Real-World Applications

Stacked generalization, also known as stacking, is an advanced ensemble machine learning method that combines multiple predictive models through a meta-learner to minimize generalization error and enhance prediction accuracy. The technique operates by deducing the biases of various generalizers (base-level models) with respect to a provided learning set [19]. This approach has demonstrated remarkable success across diverse scientific domains, from predicting the thermodynamic stability of inorganic compounds using electron configuration data to forecasting psychosocial maladjustment in medical patients and estimating drug concentrations for precision dosing [1] [20] [21]. The fundamental principle underpinning stacked generalization is its ability to integrate models originating from distinct knowledge domains or algorithmic families, thereby creating a synergistic super-learner that outperforms any individual constituent model [1].

The Electron Configuration models with Stacked Generalization (ECSG) framework represents a cutting-edge implementation of this approach specifically designed for materials science applications. By leveraging ensemble machine learning based on electron configuration, ECSG achieves exceptional accuracy in predicting thermodynamic stability while requiring only one-seventh of the data used by existing models to achieve comparable performance [1]. This remarkable sample efficiency, coupled with an Area Under the Curve (AUC) score of 0.988 as validated against the Joint Automated Repository for Various Integrated Simulations (JARVIS) database, positions ECSG as a transformative methodology for accelerating materials discovery and optimization [1].

Theoretical Foundations of Stacked Generalization

Conceptual Framework and Mathematical Underpinnings

Stacked generalization functions through a two-tiered architecture: a base level comprising multiple heterogeneous learning algorithms, and a meta-level that learns how to optimally combine the base-level predictions [19]. Formally, given a set of base learners L₁, L₂, …, Lₖ and a meta-learner M, stacking generates the final prediction through the following process. First, each base learner Lᵢ is trained on the available data. Next, cross-validated predictions from each base learner are obtained, forming a new dataset where the features are the predictions of the base learners and the target remains the original outcome variable. Finally, the meta-learner M is trained on this new dataset to produce the final output [19].
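In symbols, let v(i) denote the cross-validation fold containing observation i, and let the superscript (−v) mark a learner fitted without fold v. The meta-level features, the meta-learner fit and the final prediction are then:

$$
\begin{aligned}
z_i &= \big(\hat L_1^{(-v(i))}(x_i),\ \ldots,\ \hat L_k^{(-v(i))}(x_i)\big), \qquad i = 1, \ldots, n,\\
\hat M &= \arg\min_{M} \sum_{i=1}^{n} \ell\big(y_i,\ M(z_i)\big),\\
\hat y(x) &= \hat M\big(\hat L_1(x),\ \ldots,\ \hat L_k(x)\big),
\end{aligned}
$$

where \(\hat L_j\) without a superscript is L_j refitted on all n observations and ℓ is the chosen loss, for example squared error or log-loss.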

The ECSG framework implements this approach using V-fold cross-validation to build the optimal weighted combination of predictions from a library of candidate algorithms [19]. Optimality is defined by a user-specified objective function, such as minimizing mean squared error or maximizing the area under the receiver operating characteristic curve. Under regularity conditions, theoretical (oracle) results show that in large samples the resulting combination performs asymptotically at least as well as the best individual predictor included in the ensemble [19]. This mathematical foundation provides robustness against the limitations of any single modeling approach, which is particularly valuable when exploring complex composition-property relationships in materials science where mechanistic understanding may be incomplete [1].

Advantages Over Conventional Ensemble Methods

Stacked generalization offers distinct advantages over simpler ensemble techniques such as bagging or boosting. While homogeneous ensemble methods combine multiple instances of the same algorithm type, stacking strategically integrates fundamentally different modeling approaches, capturing complementary aspects of the underlying patterns in the data [20]. This heterogeneity is crucial for managing noisy and imbalanced datasets where single-classifier models often struggle with overfitting [21]. Additionally, the weighted combination approach of stacking is more nuanced than the winner-takes-all method of selecting a single best performer, often resulting in superior generalization to unseen data [21].

A non-negativity constraint on the meta-level coefficients is frequently applied in stacking (non-negative least squares), often together with rescaling the coefficients to sum to 1; this enhances model stability and interpretability while maintaining performance [19]. The resulting convex combination, motivated by both theoretical results and practical considerations, reduces the risk of overfitting at the meta-level and ensures that the ensemble prediction is a consensus weighting of the constituent models rather than an arbitrary linear combination that might extrapolate poorly [19].
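A minimal sketch of such a constrained meta-learner, assuming SciPy is available: `scipy.optimize.nnls` solves least squares with non-negative coefficients, and the result is then rescaled to sum to 1 (the rescaling is one common convention, not part of NNLS itself). The input is the matrix of cross-validated base-model predictions; the function name is hypothetical.

```python
import numpy as np
from scipy.optimize import nnls


def convex_stacking_weights(level_one, y):
    """Non-negative least squares on meta-level predictions, rescaled to sum to 1.

    level_one: (n_samples, n_models) cross-validated base-model predictions
    y: (n_samples,) observed targets
    """
    w, _ = nnls(np.asarray(level_one, dtype=float), np.asarray(y, dtype=float))  # w >= 0
    total = w.sum()
    if total == 0:
        # degenerate case: no model helps, fall back to equal weights
        return np.full(level_one.shape[1], 1.0 / level_one.shape[1])
    return w / total  # convex combination: non-negative weights summing to 1
```

A model whose cross-validated predictions add nothing receives weight zero instead of a negative coefficient, which keeps the ensemble an interpretable blend of its members.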

ECSG Framework Architecture

Base-Level Model Specifications

The ECSG framework integrates three distinct base-level models, each rooted in different domains of knowledge to ensure complementarity and minimize inductive bias [1]. This multi-perspective approach enables the capture of material properties and interactions across different scales, from atomic-level electron configurations to macroscopic statistical patterns.

Table 1: Base-Level Models in the ECSG Framework

| Model Name | Domain Knowledge | Algorithmic Approach | Input Representation |
|---|---|---|---|
| Magpie | Atomic properties & statistics | Gradient-boosted regression trees (XGBoost) | Statistical features (mean, deviation, range, etc.) of elemental properties |
| Roost | Interatomic interactions | Graph neural networks with attention mechanism | Chemical formula represented as a complete graph of elements |
| ECCNN | Electron configuration | Convolutional Neural Network | Electron configuration matrix (118×168×8) encoding electron distributions |
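The attention mechanism in the Roost row can be illustrated with a single message-passing step over the complete graph of elements. In the sketch below the softmax over neighbours is weighted by each element's molar fraction, so stoichiometry, not just element identity, shapes the messages. This is a simplified NumPy sketch under stated assumptions (one attention head, a linear attention score, self-pairs included, a residual update, and hand-set `a` and `V` in place of learned weights), not the reference Roost implementation; the function name is hypothetical.

```python
import numpy as np


def fraction_weighted_attention(h, frac, a, V):
    """One message-passing step over a complete graph of elements.

    h: (n, d) element embeddings; frac: (n,) molar fractions
    a: (2d,) attention vector; V: (d, d) message weights (normally learned)
    """
    n, d = h.shape
    # pair logits e_ij = a . [h_i, h_j] for every ordered pair, self-pairs included
    e = (h @ a[:d])[:, None] + (h @ a[d:])[None, :]
    logits = e - e.max(axis=1, keepdims=True)  # subtract row max for numerical stability
    # fraction-weighted softmax: alpha_ij proportional to frac_j * exp(e_ij)
    unnorm = frac[None, :] * np.exp(logits)
    alpha = unnorm / unnorm.sum(axis=1, keepdims=True)
    messages = alpha @ (h @ V)  # weighted sum of transformed neighbour embeddings
    return h + messages  # residual update
```

With all-zero attention scores the weights reduce to the molar fractions themselves, so a 1:3 composition mixes neighbour messages in a 1:3 ratio.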

The Electron Configuration Convolutional Neural Network (ECCNN) is a new model designed to address existing models' limited use of information about the internal electronic structure of atoms [1]. Unlike manually crafted features, electron configuration serves as an intrinsic atomic characteristic that introduces minimal inductive bias while providing fundamental information about chemical properties and reaction dynamics [1]. The ECCNN architecture processes its input through two convolutional operations, each with 64 filters of size 5×5, followed by batch normalization and 2×2 max pooling before final fully connected layers for prediction [1].

Meta-Learner Integration and Optimization

The meta-learner in ECSG synthesizes predictions from the three base models using stacked generalization to produce the final stability classification. This approach employs V-fold cross-validation to generate out-of-sample predictions from each base model, which then serve as input features for training the meta-learner [19]. The specific implementation details of the ECSG meta-learner are adapted from established stacking methodologies that have demonstrated successful application across multiple scientific domains [19] [21].

In operational terms, the stacking process in ECSG follows a structured workflow that can be visualized as follows:

ECSG Stacking Workflow: This diagram illustrates the flow of information through the ECSG framework, from input data through base model processing to meta-learner integration and final prediction.

Experimental Protocols and Implementation

Data Preparation and Feature Engineering

The ECSG framework primarily utilizes composition-based data, requiring specialized processing of chemical formula information before model input [1]. The data extraction pipeline involves:

- Elemental Proportion Calculation: Determining the stoichiometric ratios of each element within the compound [1].

- Feature Representation: Transforming elemental proportions into model-specific input representations:

  - For Magpie: Calculating statistical features (mean, mean absolute deviation, range, minimum, maximum, mode) across various elemental properties including atomic number, mass, and radius [1].

  - For Roost: Representing the chemical formula as a complete graph in which elements serve as nodes and every pair of nodes is connected by an edge [1].

  - For ECCNN: Encoding materials into a 118×168×8 matrix based on their electron configurations, delineating electron distribution across energy levels [1].

This multi-faceted input representation strategy ensures that diverse aspects of material composition are captured, enabling the framework to leverage complementary information across different physical scales and theoretical perspectives [1].
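The elemental-proportion step and the idea of an electron-configuration input can be sketched as follows. This is deliberately not the 118 × 168 × 8 encoding of [1], whose exact layout is not given here. It is a simplified, hypothetical 118 × 22 matrix in which each row is an element (ordered by atomic number), each column a subshell in Madelung filling order, and each entry the element's molar fraction times its electron count in that subshell. Occupancies follow the idealized Aufbau rule, so known exceptions such as Cr and Cu are not reproduced, and the formula parser handles only flat formulas without parentheses.

```python
import re
import numpy as np

SYMBOLS = ("H He Li Be B C N O F Ne Na Mg Al Si P S Cl Ar K Ca Sc Ti V Cr Mn Fe Co Ni "
           "Cu Zn Ga Ge As Se Br Kr Rb Sr Y Zr Nb Mo Tc Ru Rh Pd Ag Cd In Sn Sb Te I Xe "
           "Cs Ba La Ce Pr Nd Pm Sm Eu Gd Tb Dy Ho Er Tm Yb Lu Hf Ta W Re Os Ir Pt Au Hg "
           "Tl Pb Bi Po At Rn Fr Ra Ac Th Pa U Np Pu Am Cm Bk Cf Es Fm Md No Lr Rf Db Sg "
           "Bh Hs Mt Ds Rg Cn Nh Fl Mc Lv Ts Og").split()
Z_OF = {s: i + 1 for i, s in enumerate(SYMBOLS)}

# Subshells (n, l) with l <= 3, sorted in Madelung order: by n + l, then by n
SUBSHELLS = sorted(((n, l) for n in range(1, 8) for l in range(min(n, 4))),
                   key=lambda s: (s[0] + s[1], s[0]))


def parse_formula(formula):
    """'Fe2O3' -> {'Fe': 0.4, 'O': 0.6}; flat formulas only (no parentheses or hydrates)."""
    counts = {}
    for sym, num in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[sym] = counts.get(sym, 0.0) + (float(num) if num else 1.0)
    total = sum(counts.values())
    return {s: c / total for s, c in counts.items()}


def occupancy(z):
    """Idealized Aufbau subshell occupancies for atomic number z."""
    occ, remaining = [], z
    for n, l in SUBSHELLS:
        take = min(2 * (2 * l + 1), remaining)  # subshell capacity is 2(2l + 1)
        occ.append(take)
        remaining -= take
    return np.array(occ, dtype=float)


def encode_composition(formula):
    """Hypothetical 118 x 22 encoding: row = element, column = subshell, value = fraction * electrons."""
    mat = np.zeros((len(SYMBOLS), len(SUBSHELLS)))
    for sym, frac in parse_formula(formula).items():
        mat[Z_OF[sym] - 1] = frac * occupancy(Z_OF[sym])
    return mat
```

For Fe₂O₃, the oxygen row (Z = 8) holds 0.6 × (2, 2, 4) in the 1s, 2s and 2p columns, and the whole matrix sums to the average number of electrons per atom, 0.4 × 26 + 0.6 × 8 = 15.2.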

Model Training and Validation Protocol

The implementation of ECSG follows a rigorous training and validation protocol adapted from established stacked generalization methodologies [19]:

- Data Partitioning: Split the dataset into K mutually exclusive and exhaustive folds (typically K=5 or K=10) [19].

- Base Model Training: For each fold k ∈ {1,...,K}:

  - Designate fold k as the validation set and remaining folds as the training set.

  - Fit each base algorithm (Magpie, Roost, ECCNN) on the training set.

  - Use the trained models to predict outcomes for the validation set.

  - For each algorithm, estimate performance using an appropriate metric (e.g., mean squared error for regression, AUC for classification) [19].

- Meta-Learner Construction:

  - Average performance metrics across all folds for each algorithm.

  - Combine cross-validated predictions from all base models to form the "level-one" dataset.

  - Train the meta-learner on the level-one data, typically using constrained regression (non-negative coefficients summing to 1) to determine optimal combination weights [19].

- Final Model Generation:

  - Re-fit all base models on the complete dataset.

  - Generate predictions from these fully-trained base models.

  - Combine these predictions using the weights learned by the meta-learner to produce the final ECSG model [19].

This protocol gives robust performance estimates and reduces the risk of overfitting at the meta-level, because every base-model prediction used to train the meta-learner comes from a model that did not see that observation during fitting [19].

Performance Evaluation Metrics

The ECSG framework employs comprehensive evaluation metrics to assess predictive performance across multiple dimensions:

Table 2: Performance Metrics for Stability Prediction

| Metric | ECSG Performance | Comparative Advantage | Application Context |
|---|---|---|---|
| Area Under Curve (AUC) | 0.988 [1] | Superior discriminative ability | Binary classification (stable/unstable) |
| Sample Efficiency | Needs 1/7 of the data used by existing models for comparable performance [1] | Reduced computational resource needs | Data-scarce environments |
| First-Principles Validation | Remarkable accuracy [1] | Independent verification by DFT calculations | Real-world materials discovery |

These metrics demonstrate the compelling advantages of the ECSG approach, particularly its exceptional data efficiency which enables accurate predictions with substantially smaller training datasets compared to conventional methods [1].

Research Reagent Solutions

Implementing the ECSG framework requires specific computational tools and data resources that constitute the essential "research reagents" for reproducibility and extension of the methodology.

Table 3: Essential Research Reagents for ECSG Implementation

| Reagent Category | Specific Instances | Function/Purpose | Access Method |
|---|---|---|---|
| Computational Libraries | XGBoost, PyTorch/TensorFlow, Graph Neural Network libraries | Implement base models and meta-learning | Open-source platforms |
| Materials Databases | Materials Project (MP), Open Quantum Materials Database (OQMD), JARVIS | Provide training data and benchmark comparisons | Publicly accessible databases |
| Validation Tools | Density Functional Theory (DFT) codes (VASP, Quantum ESPRESSO) | First-principles validation of predictions [1] | Paid license (VASP); open source (Quantum ESPRESSO) |
| Electron Configuration Encoder | Custom matrix transformation (118×168×8) | Convert composition to ECCNN input format [1] | Custom reimplementation following [1] |

These computational reagents represent the essential infrastructure for implementing the ECSG framework, with particular importance placed on the materials databases for training and the DFT validation tools for confirming predictions [1].

Application Notes for Materials Research

Case Study: Two-Dimensional Wide Bandgap Semiconductors

The ECSG framework has been successfully applied to navigate unexplored composition spaces, including the discovery of new two-dimensional wide bandgap semiconductors [1]. The implementation protocol for this application involves:

- Problem Formulation: Define the target material class (2D wide bandgap semiconductors) and desired electronic properties.

- Composition Space Sampling: Systematically generate candidate compositions within the defined chemical space.

- High-Throughput Screening: Apply the pre-trained ECSG model to rapidly evaluate thermodynamic stability across the composition space.

- Candidate Selection: Identify promising compounds with predicted high stability and appropriate electronic properties.

- Computational Validation: Confirm stability and properties through first-principles calculations; the reported results indicate "remarkable accuracy" in correctly identifying stable compounds [1].

This workflow demonstrates the practical utility of ECSG in accelerating materials discovery by prioritizing synthesis efforts toward the most promising candidates, substantially reducing the experimental resources required for exploration of new material systems [1].

Implementation Considerations for Different Material Classes

The ECSG framework exhibits versatility across diverse material systems, with implementation nuances for different classes:

Materials Discovery Pipeline: This workflow illustrates the generalized materials discovery process enhanced by the ECSG framework's stability predictions.

For perovskite materials, particularly lead-free variants being explored for next-generation applications, ECSG provides critical stability assessment capabilities [22]. The framework's ability to predict thermodynamic stability from composition alone is especially valuable for these systems, where stability represents a major bottleneck for practical implementation [22]. Similar advantages extend to other material classes including double perovskite oxides, which have been successfully investigated using the ECSG methodology [1].

The ECSG framework represents a significant advancement in computational materials science, integrating ensemble machine learning with electron configuration theory to predict thermodynamic stability with high accuracy (an AUC of 0.988 on the JARVIS database) and high sample efficiency. By leveraging stacked generalization across complementary modeling approaches, ECSG mitigates the inductive biases inherent in single-perspective models while providing predictions validated against first-principles calculations [1]. The framework's demonstrated success in identifying novel two-dimensional wide bandgap semiconductors and double perovskite oxides underscores its practical utility in accelerating materials discovery [1].

Future developments will likely focus on expanding the framework's applicability to dynamic stability assessment under non-equilibrium conditions, integrating kinetic factors alongside thermodynamic stability, and extending the approach to predict functional properties beyond stability. As materials databases continue to grow and computational methods evolve, the ECSG methodology provides an adaptable foundation for next-generation materials informatics, positioning stacked generalization as a cornerstone technique in the digital transformation of materials research and development.

The discovery and development of new materials with specific properties represent a significant challenge in materials science, primarily due to the vast, unexplored compositional space of potential compounds [1]. Conventional methods for determining key properties, such as thermodynamic stability, rely on inefficient experimental investigation or computationally intensive density functional theory (DFT) calculations [1]. Machine learning (ML) offers a promising avenue for expediting this discovery process, providing significant advantages in time and resource efficiency [1].

However, many existing ML models are constructed based on specific, idealized domain knowledge, which can introduce large inductive biases and limit their predictive performance and generalizability [1]. For instance, models that assume material performance is determined solely by elemental composition may overlook critical structural or electronic factors [1]. This application note details a robust ensemble framework that integrates diverse knowledge sources—from classical Magpie features to the relational graph-based approach of Roost—to mitigate these limitations. This methodology is contextualized within a broader research thesis on using ensemble machine learning, anchored by electron configuration data, for predicting thermodynamic stability.

Background and Core Concepts

The Challenge of Isolated Models

Training a machine learning model can be likened to a search for ground truth within the model's parameter space [1]. When a model is built on a single hypothesis or a narrow set of features, the ground truth may lie outside its searchable parameter space, leading to suboptimal accuracy [1]. This is particularly true in materials science, where the relationship between composition, structure, and properties is complex and not fully understood [1].

Our ensemble framework, termed Electron Configuration Stacked Generalization (ECSG), synergistically combines three distinct knowledge paradigms to create a more comprehensive and accurate super learner [1] [23]. The three base models are:

- Magpie: This model emphasizes statistical features derived from diverse elemental properties (e.g., atomic number, mass, radius, electronegativity). It uses statistical summaries (mean, deviation, range, etc.) across the elements in a compound and is typically implemented with gradient-boosted regression trees (XGBoost) [1]. It operates on a coarse, elemental-property level.

- Roost (Representation Learning from Stoichiometry): This model conceptualizes a chemical formula as a graph, where atoms are nodes and their interactions are edges. It employs graph neural networks (GNNs) with an attention mechanism to capture complex interatomic interactions and message-passing processes that are critical for thermodynamic stability [1].

- ECCNN (Electron Configuration Convolutional Neural Network): Developed to address the limited treatment of internal electronic structure in existing models, this model uses electron configuration as its foundational input [1]. Electron configuration is an intrinsic atomic property that describes the distribution of electrons within an atom; it provides crucial information for understanding chemical properties and reaction dynamics while introducing minimal inductive bias [1].
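To make the Magpie-style representation concrete, the sketch below computes fraction-weighted statistics of a single elemental property (Pauling electronegativity) for a composition. It is written in the spirit of Magpie features, not as the Matminer implementation: the tiny property table, the simple formula parser (no parentheses or hydrates) and the chosen statistics (minimum, maximum, range, mean, average deviation; Magpie also uses the mode) are assumptions for illustration.

```python
import re

# Small illustrative lookup of Pauling electronegativities (not a full property table).
PAULING_EN = {"Fe": 1.83, "O": 3.44, "Ga": 1.81, "N": 3.04}


def parse_formula(formula):
    """Return atomic fractions for a simple formula like 'Fe2O3' (no parentheses)."""
    amounts = {}
    for el, num in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        amounts[el] = amounts.get(el, 0.0) + (float(num) if num else 1.0)
    total = sum(amounts.values())
    return {el: amt / total for el, amt in amounts.items()}


def magpie_style_stats(formula, prop=PAULING_EN):
    """Fraction-weighted summary statistics of one elemental property."""
    fracs = parse_formula(formula)
    values = {el: prop[el] for el in fracs}
    mean = sum(fracs[el] * values[el] for el in fracs)
    # Average deviation: fraction-weighted mean absolute distance from the mean.
    avg_dev = sum(fracs[el] * abs(values[el] - mean) for el in fracs)
    lo, hi = min(values.values()), max(values.values())
    return {"minimum": lo, "maximum": hi, "range": hi - lo, "mean": mean, "avg_dev": avg_dev}


print(magpie_style_stats("Fe2O3"))
```

Repeating this over many elemental properties yields the fixed-length vector that a gradient-boosted tree model such as XGBoost can consume; Roost and ECCNN, by contrast, learn their representations from the stoichiometry graph and the electron configuration respectively.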

Methodology and Experimental Protocols

The ECSG Ensemble Framework

The core of our approach is the stacked generalization technique [1]. The framework does not simply average the predictions of the base models but uses them as inputs to a meta-learner. The experimental protocol is as follows:

- Base Model Training: The three foundational models (Magpie, Roost, ECCNN) are trained independently on the same dataset. Each model learns to predict thermodynamic stability from its unique perspective.

- Prediction Generation: The trained base models are used to generate predictions on a hold-out validation set or via cross-validation.

- Meta-Model Training: The predictions from the base models are used as input features for a meta-level model (the super learner), which is trained to produce the final, refined prediction [1].

- Validation: The integrated ECSG model is validated against benchmark datasets, such as those from the Joint Automated Repository for Various Integrated Simulations (JARVIS) or Materials Project (MP), to evaluate its performance metrics, including Area Under the Curve (AUC) [1] [23].
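The four steps above can be sketched with scikit-learn. This is a minimal illustration of stacked generalization on synthetic data, assuming three generic classifiers as hypothetical stand-ins for Magpie, Roost and ECCNN and a logistic-regression meta-learner; the actual ECSG base models use different input representations, and its meta-learner is not specified here.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_predict, train_test_split
from sklearn.neighbors import KNeighborsClassifier

# Synthetic stand-in for a labelled stability dataset (1 = stable, 0 = unstable).
X, y = make_classification(n_samples=600, n_features=20, n_informative=8, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Hypothetical stand-ins for the three base learners (same features here for brevity).
base_models = [
    GradientBoostingClassifier(random_state=0),
    RandomForestClassifier(n_estimators=200, random_state=0),
    KNeighborsClassifier(n_neighbors=15),
]

# Prediction generation: out-of-fold probabilities, so no base model scores rows it trained on.
oof_features = np.column_stack([
    cross_val_predict(m, X_train, y_train, cv=5, method="predict_proba")[:, 1]
    for m in base_models
])

# Base models are then refit on the full training split to score unseen data.
test_features = np.column_stack([
    m.fit(X_train, y_train).predict_proba(X_test)[:, 1] for m in base_models
])

# Meta-model training: the super learner combines the base-model probabilities.
meta_learner = LogisticRegression().fit(oof_features, y_train)
stacked_auc = roc_auc_score(y_test, meta_learner.predict_proba(test_features)[:, 1])
print(f"Stacked AUC on held-out split: {stacked_auc:.3f}")
```

Using out-of-fold predictions is the key design choice: training the meta-learner on in-sample base predictions would reward whichever base model overfits most. scikit-learn's `StackingClassifier` packages the same pattern.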

This framework effectively mitigates the limitations of individual models by harnessing a synergy that diminishes inductive biases [1].

Protocol for Composition-Based Stability Prediction

This protocol is used when the crystal structure of a compound is unknown, and prediction must be based solely on its chemical formula.

- Input Data Preparation: Provide a CSV file containing at least two columns: `material-id` (a unique identifier) and `composition` (the chemical formula, e.g., `Fe2O3`) [23].

- Feature Processing:

  - Option A (Runtime Processing): The ECSG code processes the CSV file and generates features for each model at runtime [23].

  - Option B (Preprocessed Features): For large datasets, features can be precomputed and saved using the provided `feature.py` script to reduce computation time during cross-validation [23].

- Model Execution:

  - Software Environment: Install the ECSG package in a Python 3.8.0 environment with PyTorch 1.13.0 and the required dependencies (e.g., `pymatgen`, `matminer`). Specific wheels are provided for `torch-scatter` [23].

  - Prediction Command: Run `python predict.py --path your_data.csv` to generate stability predictions. Results are saved in `results/meta/[name]_predict_results.csv`, with the stability outcome in the `target` column [23].
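Preparing the input file for this protocol can be sketched with the standard library. The function below writes the two columns named above, `material-id` and `composition`; the `write_ecsg_input` helper and its `cand-0000` ID scheme are hypothetical choices for illustration, and the printed command simply mirrors the one given in the protocol.

```python
import csv
import tempfile
from pathlib import Path


def write_ecsg_input(compositions, path, prefix="cand"):
    """Write a CSV with the two required columns: material-id and composition."""
    path = Path(path)
    with path.open("w", newline="") as fh:
        writer = csv.writer(fh)
        writer.writerow(["material-id", "composition"])
        for i, comp in enumerate(compositions):
            # Hypothetical zero-padded identifiers; any unique string would do.
            writer.writerow([f"{prefix}-{i:04d}", comp])
    return path


out_dir = Path(tempfile.mkdtemp())
csv_path = write_ecsg_input(["Fe2O3", "GaN", "ZnO"], out_dir / "your_data.csv")
print(csv_path.read_text())
print(f"python predict.py --path {csv_path}")
```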

Protocol for Structure-Enhanced Stability Prediction

For higher accuracy when crystal structure information is available, the ECSG framework can be extended to incorporate structural data.

- Input Data Preparation:

  - Prepare a folder containing CIF (Crystallographic Information File) files for the materials to be predicted.

  - Within this folder, include an `id_prop.csv` file listing the IDs of the CIF files.

  - Ensure an `atom_init.json` file is present for atom embedding [23].

- Model Execution:
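The input-folder preparation described above can be sketched as follows. The helper `prepare_structure_folder` is hypothetical: it derives IDs from CIF file names, writes them to `id_prop.csv`, and checks for `atom_init.json`. CGCNN-style loaders typically expect a second (target) column in `id_prop.csv`; whether the ECSG code requires one is an assumption to verify against the repository, so the sketch exposes an optional placeholder value.

```python
import tempfile
from pathlib import Path


def prepare_structure_folder(folder, placeholder_target=None):
    """List CIF IDs in id_prop.csv and report whether atom_init.json is present."""
    folder = Path(folder)
    cif_ids = sorted(p.stem for p in folder.glob("*.cif"))
    if placeholder_target is None:
        lines = [f"{cid}\n" for cid in cif_ids]
    else:
        # Assumed CGCNN-style two-column layout: ID, dummy target value.
        lines = [f"{cid},{placeholder_target}\n" for cid in cif_ids]
    (folder / "id_prop.csv").write_text("".join(lines))
    has_embedding = (folder / "atom_init.json").is_file()
    return cif_ids, has_embedding


# Usage with a throwaway folder and empty placeholder files.
demo = Path(tempfile.mkdtemp())
for name in ("mp-0001.cif", "mp-0002.cif", "atom_init.json"):
    (demo / name).write_text("")
ids, ok = prepare_structure_folder(demo)
print(ids, ok)
```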

Performance and Validation

Experimental results have validated the efficacy of the ECSG framework. The model achieved an Area Under the Curve (AUC) score of 0.988 in predicting compound stability within the JARVIS database, demonstrating high predictive accuracy [1] [23].

A key advantage of ECSG is its remarkable sample efficiency. The model attained performance equivalent to existing models using only one-seventh of the training data, which dramatically reduces the computational resources required for training [1].

The model's versatility was further demonstrated through practical case studies, where it facilitated the exploration of new two-dimensional wide bandgap semiconductors and double perovskite oxides. Subsequent validation using first-principles calculations confirmed the model's high reliability in correctly identifying stable compounds [1].

Table 1: Quantitative Performance Summary of the ECSG Framework

| Metric | Performance | Context & Comparison |
|---|---|---|
| Predictive Accuracy (AUC) | 0.988 | Achieved on the JARVIS database for thermodynamic stability prediction [1]. |
| Data Efficiency | Uses ~1/7 of the data | Requires only one-seventh of the data used by existing models to achieve the same performance [1]. |
| Validation Method | First-Principles Calculations | Applied to discovered compounds (e.g., 2D semiconductors, double perovskite oxides), confirming high reliability [1]. |

The ECSG Workflow Visualization

The following diagram illustrates the complete workflow of the ECSG framework, from data input to the final ensemble prediction.

To implement the ECSG framework and reproduce the experiments, the following software and data resources are essential.

Table 2: Key Research Reagent Solutions for ECSG Implementation

| Item Name | Type | Function & Application | Source / Example |
|---|---|---|---|
| Magpie Feature Set | Software Library / Feature Generator | Generates a vector of statistical summaries (mean, deviation, range, etc.) from a list of elemental properties for a given chemical composition [1]. | Matminer library [1]. |
| Roost Model | Graph Neural Network Model | Represents a chemical formula as a graph and uses message passing with attention to model interatomic interactions for property prediction [1]. | Original Roost implementation [1]. |
| ECCNN Model | Convolutional Neural Network Model | Uses electron configuration matrices as input to capture intrinsic electronic structure information with minimal manual feature engineering [1]. | ECSG GitHub repository [23]. |
| JARVIS/MP Databases | Data Repository | Provides large-scale, curated datasets of inorganic materials with computed properties (e.g., formation energy, stability) for training and benchmarking ML models [1]. | JARVIS Database; Materials Project (MP) [1]. |
| Pymatgen | Software Library | A robust, open-source Python library for materials analysis, used for parsing CIF files, handling compositions, and core materials algorithms [23]. | Pymatgen Python package [23]. |

The integration of diverse knowledge sources—from the classical elemental statistics of Magpie to the advanced relational learning of Roost GNNs—within the ECSG ensemble framework represents a significant advancement in the machine learning-based prediction of materials properties. By effectively mitigating the inductive biases inherent in single-hypothesis models, this approach achieves superior predictive accuracy and exceptional data efficiency. The provided application notes and detailed protocols equip researchers and scientists with the tools to apply this powerful framework to their own investigations, accelerating the discovery of novel, thermodynamically stable materials for applications ranging from drug development to advanced semiconductors.

The accurate prediction of thermodynamic stability is a cornerstone of materials discovery and drug development. Traditional methods, whether experimental or computational, are often resource-intensive, creating a bottleneck in the exploration of novel chemical spaces. Machine learning (ML) offers a promising alternative; however, many models introduce significant inductive bias by relying on idealized assumptions or limited domain knowledge. The Electron Configuration Convolutional Neural Network (ECCNN) addresses this gap by using the fundamental electron configuration (EC) of atoms as a primary input feature. This approach is designed to minimize manual feature engineering, thereby reducing bias and capturing intrinsic atomic properties that are critically important for stability. Integrated within a stacked generalization framework, ECCNN contributes to a robust super learner for predicting compound decomposition energy (ΔHd), achieving state-of-the-art performance with remarkable sample efficiency [1].

This application note details the architecture of ECCNN and provides a comprehensive protocol for encoding chemical compositions into its required input format, serving as an essential guide for researchers and scientists.
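As a rough illustration of turning a chemical formula into an electron-configuration matrix, the sketch below fills subshells in Madelung (Aufbau) order and places each element's occupancies, scaled by its atomic fraction, in row Z-1 of a 118 × 19 array. This is a simplified, hypothetical encoding: it ignores ground-state exceptions such as Cr and Cu, supports only formulas without parentheses, and is not the 118×168×8 ECCNN input layout described in [1].

```python
import re

import numpy as np

# Subshells in Madelung (Aufbau) filling order, enough to hold Z = 1..118.
AUFBAU_ORDER = ["1s", "2s", "2p", "3s", "3p", "4s", "3d", "4p", "5s", "4d",
                "5p", "6s", "4f", "5d", "6p", "7s", "5f", "6d", "7p"]
CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}
SYMBOLS = """H He Li Be B C N O F Ne Na Mg Al Si P S Cl Ar K Ca Sc Ti V Cr Mn Fe
Co Ni Cu Zn Ga Ge As Se Br Kr Rb Sr Y Zr Nb Mo Tc Ru Rh Pd Ag Cd In Sn Sb Te I
Xe Cs Ba La Ce Pr Nd Pm Sm Eu Gd Tb Dy Ho Er Tm Yb Lu Hf Ta W Re Os Ir Pt Au Hg
Tl Pb Bi Po At Rn Fr Ra Ac Th Pa U Np Pu Am Cm Bk Cf Es Fm Md No Lr Rf Db Sg Bh
Hs Mt Ds Rg Cn Nh Fl Mc Lv Ts Og""".split()
Z_OF = {sym: z for z, sym in enumerate(SYMBOLS, start=1)}


def electron_configuration(z):
    """Idealised occupancy of each subshell in AUFBAU_ORDER (no exceptions handled)."""
    if not 1 <= z <= 118:
        raise ValueError(f"atomic number out of range: {z}")
    occupancies, remaining = [], z
    for subshell in AUFBAU_ORDER:
        n_e = min(remaining, CAPACITY[subshell[-1]])
        occupancies.append(n_e)
        remaining -= n_e
    return occupancies


def encode_composition(formula):
    """Return a 118 x 19 matrix; row Z-1 holds occupancies times the atomic fraction."""
    amounts = {}
    for sym, num in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        amounts[sym] = amounts.get(sym, 0.0) + (float(num) if num else 1.0)
    total = sum(amounts.values())
    matrix = np.zeros((len(SYMBOLS), len(AUFBAU_ORDER)))
    for sym, amt in amounts.items():
        z = Z_OF[sym]
        matrix[z - 1] = (amt / total) * np.array(electron_configuration(z))
    return matrix


m = encode_composition("Fe2O3")
print(m.shape, m.sum())
```

Because the rows are indexed by atomic number, every composition maps to a fixed-shape array, which is what allows a convolutional network to consume arbitrary formulas without hand-crafted descriptors.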

ECCNN Architecture and Workflow