Ensemble Machine Learning for Thermodynamic Stability Prediction: A Comparative Guide for Materials and Pharmaceutical Research


Abstract

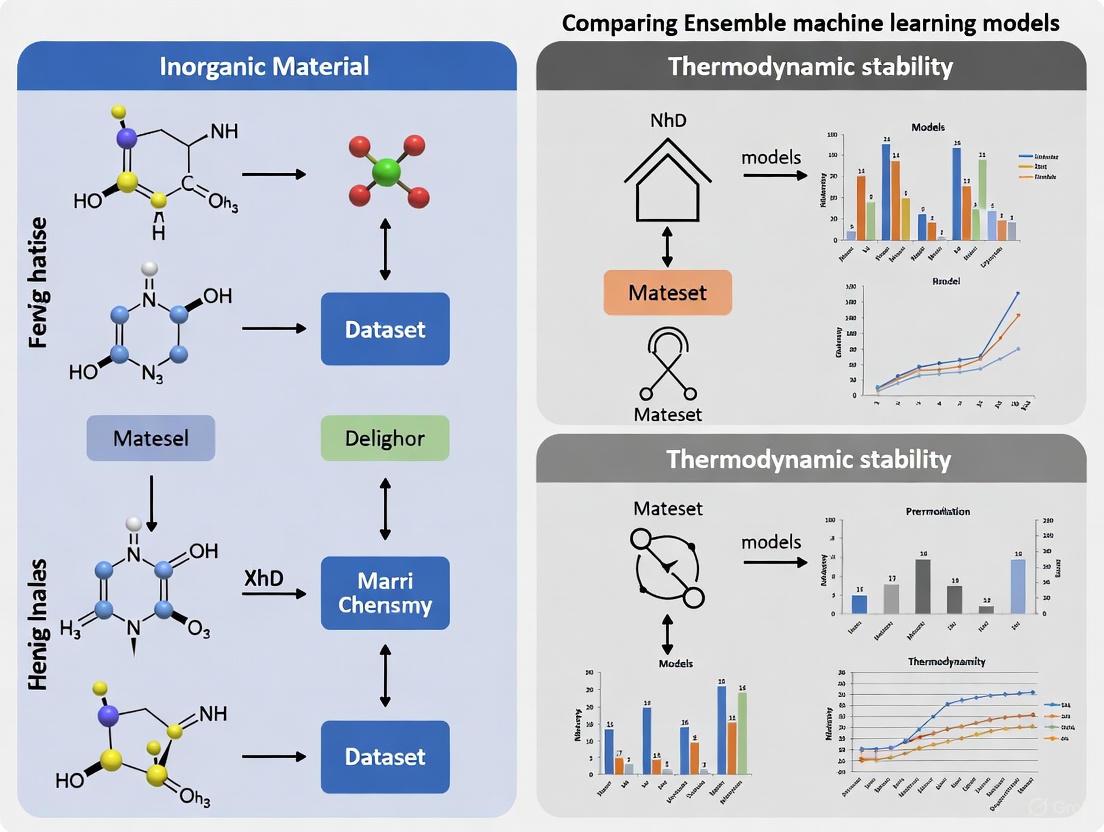

Predicting thermodynamic stability is a fundamental challenge in materials science and pharmaceutical development. This article provides a comprehensive comparison of ensemble machine learning models designed to accurately and efficiently determine the stability of compounds, from inorganic crystals to active pharmaceutical ingredients. We explore the foundational principles of these models, detail cutting-edge methodologies and their diverse applications, address critical troubleshooting and optimization strategies, and present a rigorous validation of model performance across various domains. Aimed at researchers and drug development professionals, this review synthesizes key insights to guide the selection and implementation of ensemble models, highlighting their transformative potential in accelerating the discovery of stable materials and drug formulations.

The Foundation of Stability: Why Ensemble Machine Learning is Revolutionizing Thermodynamic Prediction

Thermodynamic stability serves as a fundamental property that dictates the practical viability of substances across scientific and industrial domains. In materials science, it determines a compound's synthesizability and resistance to degradation under operating conditions, while in pharmaceuticals, it governs active pharmaceutical ingredient (API) solubility, shelf life, and bioavailability. This universal challenge has traditionally been addressed through resource-intensive experimental methods and computational approaches like density functional theory (DFT). However, a paradigm shift is underway with the emergence of ensemble machine learning (ML) models that integrate multiple algorithms and knowledge domains to achieve unprecedented predictive accuracy. This guide provides a comparative analysis of how these advanced computational frameworks are revolutionizing stability research across disciplines, offering researchers objective performance data and methodological insights to navigate this rapidly evolving landscape.

Ensemble Machine Learning Frameworks: A Comparative Analysis

Ensemble machine learning combines multiple models to enhance predictive performance and robustness beyond the capabilities of any single algorithm. In thermodynamic stability prediction, this approach effectively addresses limitations arising from limited data and inherent biases in individual models. The following analysis compares representative ensemble frameworks from materials science and pharmaceutical research.

Table 1: Comparative Analysis of Ensemble ML Frameworks for Stability Prediction

| Aspect | ECSG Framework (Materials Science) | Optimized Ensemble (Pharmaceutical Applications) |
|---|---|---|
| Primary Research Focus | Predicting thermodynamic stability of inorganic compounds [1] | Estimating drug solubility in supercritical CO₂ [2] |
| Constituent Models | Magpie, Roost, ECCNN [1] | XGBR, LGBR, CATr [2] |
| Integration Method | Stacked generalization [1] | Hybrid ensemble facilitated by bio-inspired optimization algorithms (APO, HOA) [2] |
| Key Performance Metrics | AUC = 0.988; High sample efficiency (1/7 data required) [1] | R² = 0.9920, RMSE = 0.08878 [2] |
| Domain Knowledge Integration | Electron configuration, atomic properties, interatomic interactions [1] | Temperature, pressure, molecular weight, melting point [2] |
| Interpretability Approach | Model-agnostic interpretation [1] | SHAP and FAST sensitivity analysis [2] |
| Uncertainty Quantification | Implicit through ensemble diversity [1] | Prediction intervals via bootstrapping [2] |
| Experimental Validation | Identification of stable compounds confirmed by DFT calculations [1] | Experimental solubility measurements for four drugs [2] |

The ECSG framework exemplifies the knowledge-amalgamation approach, integrating models rooted in distinct domains: electron configuration (ECCNN), elemental properties (Magpie), and interatomic interactions (Roost) [1]. This diversity mitigates the inductive biases that affect single-hypothesis models, which is particularly valuable when exploring uncharted compositional spaces where prior mechanistic understanding is limited. The framework is also sample-efficient: it matches the performance of existing models with only one-seventh of the training data, which makes it well suited to materials discovery, where experimental data are scarce [1].

In pharmaceutical applications, the optimized ensemble employing XGBR, LGBR, and CATr regressors demonstrates remarkable accuracy in predicting drug solubility in supercritical CO₂, a critical parameter for pharmaceutical processing [2]. The integration of bio-inspired optimization algorithms (APO and HOA) fine-tunes model parameters to capture complex non-linear solubility behaviors that traditional semi-empirical methods struggle to represent. This approach specifically addresses pharmaceutical engineering needs where predicting solubility under varying thermodynamic conditions (temperature and pressure) directly impacts process design and efficiency.

Experimental Protocols and Methodologies

Materials Stability Assessment Protocol

The experimental validation of computational stability predictions in materials science relies on a rigorous protocol centered on the energy above the convex hull ($E_{\text{hull}}$) as a key thermodynamic metric [3] [4]. The following workflow outlines the standard methodology:

Standard Experimental Workflow for Materials Stability

Dataset Curation: Large-scale datasets of computed formation energies serve as the foundation for ML model training. For example, studies on actinide compounds utilize datasets containing 62,204 DFT-calculated energies sourced from databases like the Open Quantum Materials Database (OQMD) [5]. Similarly, research on halide double perovskites employs datasets with 469 A₂B′BX₆ double perovskites with DFT-calculated $E_{\text{hull}}$ values [3].

Feature Engineering: Models typically employ 145-200 features derived from elemental properties without structural information, making them applicable to materials composed of any number of elements [5]. These may include electron configuration attributes, atomic radii, electronegativity, and valence electron counts, often processed using statistical measures (mean, variance, range) across compound constituents [1] [3].

Stability Determination: The energy above the convex hull ($E_{\text{hull}}$) serves as the primary stability metric, representing the energy difference between a compound and the most stable combination of competing phases at the same composition [3] [4]. Compounds with $E_{\text{hull}} \le 0$ are considered thermodynamically stable, while those with $E_{\text{hull}} > 0$ are metastable or unstable [4].

Experimental Synthesis & Validation: Predicted stable compounds proceed to synthesis attempts, with resulting materials characterized using X-ray diffraction (XRD) to confirm crystal structure and phase purity [3]. Additional experimental validation may include differential scanning calorimetry (DSC) for thermal stability assessment and long-term environmental testing for degradation resistance.
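To make the stability criterion concrete, the sketch below computes the energy above the convex hull for a hypothetical binary A-B system in pure Python. It is a minimal illustration, not the procedure used in the cited studies: the function names and all formation energies are invented for the example, and production work would normally rely on a dedicated phase-diagram library.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (fraction of B, formation energy in eV/atom)


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull of (composition, energy) points (monotone chain)."""
    # Keep only the lowest-energy entry at each composition.
    best: Dict[float, float] = {}
    for x, e in points:
        best[x] = min(e, best.get(x, e))
    hull: List[Point] = []
    for p in sorted(best.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:  # hull[-1] is on or above the line hull[-2] -> p
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(x: float, hull: List[Point]) -> float:
    """Energy of the most stable phase mixture at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (x - x1) / (x2 - x1) * (y2 - y1)
    raise ValueError("composition lies outside the hull range")


def e_above_hull(x: float, energy: float, entries: List[Point]) -> float:
    """E_hull of a phase at composition x, given all known entries."""
    return energy - hull_energy(x, lower_hull(entries + [(x, energy)]))


# Hypothetical formation energies (eV/atom); pure A and B define zero.
entries = [(0.0, 0.0), (0.5, -0.40), (0.75, -0.25), (1.0, 0.0)]
candidate = (0.25, -0.15)  # hypothetical A3B phase
print(f"E_hull = {e_above_hull(*candidate, entries):.3f} eV/atom")
```

In this toy system the candidate lies above the tie-line between pure A and AB, so it would be classified as metastable or unstable, while the entry at 75% B sits on the hull.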

Pharmaceutical Stability and Solubility Assessment

In pharmaceutical applications, stability assessment encompasses both chemical stability under various stress conditions and solubility profiling for process optimization.

Solubility Measurement in Supercritical CO₂: The experimental determination of drug solubility in supercritical CO₂ follows a gravimetric approach using specialized high-pressure systems [6]. The standard methodology involves:

- System Preparation: A high-pressure equilibrium vessel with internal volume of 200 mL, capable of withstanding pressures up to 40 MPa and temperatures up to 423 K, is calibrated for pressure and temperature sensors [6].

- Sample Preparation: Exactly 2000 mg of API powder is compressed into tablets of uniform diameter (approximately 5 mm) to ensure consistent volume and placed in a basket within the vessel [6].

- CO₂ Introduction: High-purity CO₂ is gradually introduced using a high-precision pump, increasing pressure by 0.1 MPa increments until reaching target pressure (typically 10-30 MPa), maintained within ±0.01 MPa [6].

- Equilibration: The system operates with continuous stirring at 250 rpm for 300 minutes at controlled temperature (308-338 K) to reach solubility equilibrium [6].

- Sampling and Analysis: After equilibration, the system is depressurized, and the undissolved drug is measured gravimetrically using an analytical balance with 0.01 mg sensitivity. Solubility is calculated as mole fraction and mass fraction [6].
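The final calculation step can be sketched as follows. This is an illustrative reconstruction under stated assumptions, not the exact data reduction in [6]: it assumes the dissolved mass equals the tablet mass loss, that the CO₂ mass is the vessel volume times a CO₂ density supplied by the user (for example from an equation of state), and that the tablet volume is negligible. The function name, the drug molar mass, the final mass, and the density in the example call are all hypothetical.

```python
M_CO2 = 44.01  # molar mass of CO2 in g/mol


def solubility_from_mass_loss(
    m_initial_mg: float,
    m_final_mg: float,
    drug_molar_mass: float,        # g/mol
    co2_density_g_per_ml: float,   # assumed known at the test T and P
    vessel_volume_ml: float = 200.0,
) -> tuple:
    """Return (mole fraction, mass fraction) of drug in the CO2 phase."""
    dissolved_g = (m_initial_mg - m_final_mg) / 1000.0
    if dissolved_g < 0:
        raise ValueError("final mass exceeds initial mass")
    co2_g = co2_density_g_per_ml * vessel_volume_ml
    n_drug = dissolved_g / drug_molar_mass
    n_co2 = co2_g / M_CO2
    mole_fraction = n_drug / (n_drug + n_co2)
    mass_fraction = dissolved_g / (dissolved_g + co2_g)
    return mole_fraction, mass_fraction


# Hypothetical run: 2000 mg tablet, 1990 mg recovered, 300 g/mol drug,
# CO2 density of 0.8 g/mL in the 200 mL vessel.
y, w = solubility_from_mass_loss(2000.0, 1990.0, 300.0, 0.8)
print(f"mole fraction = {y:.3e}, mass fraction = {w:.3e}")
```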

Forced Degradation Studies: Pharmaceutical stability under stress conditions follows standardized protocols assessed by tools like the Stability Toolkit for the Appraisal of Bio/Pharmaceuticals' Level of Endurance (STABLE) [7]. This framework evaluates five key stress conditions:

- Acid-Catalyzed Hydrolysis: Exposure to 0.1-1 M HCl at elevated temperatures (25-70°C) for 1-24 hours, followed by neutralization before analysis [7].

- Base-Catalyzed Hydrolysis: Treatment with 0.1-1 M NaOH or KOH under similar conditions as acid hydrolysis [7].

- Oxidative Stability: Subjection to oxidants like hydrogen peroxide (0.1-3%) or metal ions at room temperature for 24 hours [7].

- Thermal Stability: Storage at elevated temperatures (40-70°C) for extended periods [7].

- Photostability: Exposure to UV and visible light following ICH guidelines [7].

Degradation of 5-20% is generally considered acceptable for stability studies and validation of stability-indicating analytical methods [7].

Performance Metrics and Data Comparison

The predictive performance of ensemble ML models for thermodynamic stability is quantitatively assessed through standardized metrics across both materials and pharmaceutical domains.

Table 2: Quantitative Performance Metrics of Ensemble ML Models

| Application Domain | Model Architecture | Key Performance Metrics | Experimental Validation |
|---|---|---|---|
| Inorganic Compounds [1] | ECSG (Stacked Generalization) | AUC: 0.988, High sample efficiency | DFT confirmation of stable compounds |
| Actinide Compounds [5] | RF + NN Ensemble | R²: 0.92 (RF), 0.90 (NN) | Phase diagram prediction for nuclear fuels |
| Halide Double Perovskites [3] | XGBoost | RMSE: ~28.5 meV/atom, R²: 0.89, Accuracy: 0.93, F1: 0.88 | 22 new compounds with experimental validation |
| Drug Solubility in SC-CO₂ [2] | XGBR + LGBR + CATr (HOA optimized) | R²: 0.9920, RMSE: 0.08878 | Experimental solubility for 4 drugs (110 samples) |
| Sumatriptan Solubility [6] | PC-SAFT Equation of State | AARD: 11.75%, R_adj: 0.988 | Experimental measurements (308-338 K, 10-30 MPa) |

The consistency of high performance across diverse material systems and pharmaceutical applications demonstrates the robustness of the ensemble approach. In materials science, the ECSG framework achieves exceptional accuracy (AUC = 0.988) in predicting stability of inorganic compounds while requiring only one-seventh of the data used by existing models to achieve comparable performance [1]. For halide double perovskites, the XGBoost model delivers strong regression (R² = 0.89) and classification (accuracy = 0.93) performance, successfully predicting the stability of 22 new experimental compounds [3].

In pharmaceutical applications, the optimized ensemble for drug solubility achieves near-perfect fit (R² = 0.9920) to experimental data, significantly outperforming traditional semi-empirical models like Chrastil and Bartle, which typically show higher error rates [2] [6]. The PC-SAFT equation of state demonstrates superior performance for sumatriptan solubility modeling compared to Peng-Robinson and Soave-Redlich-Kwong equations [6].
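For readers who want to reproduce the regression metrics quoted above on their own data, the following minimal sketch implements R², RMSE, and the average absolute relative deviation (AARD) using their standard definitions. The sample values are invented purely to exercise the functions and do not come from any cited study.

```python
import math
from typing import Sequence


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root-mean-square error."""
    n = len(y_true)
    return math.sqrt(sum((p - t) ** 2 for t, p in zip(y_true, y_pred)) / n)


def r2_score(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def aard_percent(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Average absolute relative deviation, in percent."""
    n = len(y_true)
    return 100.0 / n * sum(abs(p - t) / abs(t) for t, p in zip(y_true, y_pred))


# Invented values, for illustration only.
measured = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]
print(r2_score(measured, predicted), rmse(measured, predicted),
      aard_percent(measured, predicted))
```

Note that AARD is undefined when any measured value is zero, so it suits strictly positive quantities such as solubility.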

Table 3: Essential Research Resources for Thermodynamic Stability Studies

| Resource Category | Specific Tools & Databases | Primary Function | Domain Application |
|---|---|---|---|
| Computational Databases | OQMD [5], Materials Project [4], JARVIS [1] | Source of DFT-calculated formation energies for training ML models | Materials Science |
| Machine Learning Algorithms | XGBoost [2] [3], LightGBM [2], CatBoost [2], Random Forest [5] | Core predictive algorithms for stability and solubility | Cross-domain |
| Interpretability Frameworks | SHAP [2] [3] | Model interpretation and feature importance analysis | Cross-domain |
| Experimental Validation Systems | High-pressure solubility systems [6] | Experimental measurement of drug solubility in supercritical CO₂ | Pharmaceuticals |
| Stability Assessment Tools | STABLE toolkit [7] | Standardized evaluation of API stability under stress conditions | Pharmaceuticals |
| Phase Diagram Construction | pymatgen PhaseDiagram class [4] | Computational construction of phase diagrams from DFT energies | Materials Science |

The comparative analysis presented in this guide demonstrates that ensemble machine learning frameworks consistently outperform single-model approaches across both materials science and pharmaceutical domains. The ECSG framework's multi-knowledge integration and the optimized pharmaceutical ensemble's bio-inspired optimization represent complementary strategies addressing domain-specific challenges. As these methodologies continue to evolve, their increasing integration with experimental validation and high-throughput computational screening promises to accelerate the discovery of stable materials and optimize pharmaceutical formulations. The standardized protocols, performance metrics, and research tools outlined here provide a foundation for researchers to implement these advanced approaches in their thermodynamic stability investigations, ultimately contributing to more efficient and predictive stability assessment across scientific disciplines.

In the fields of materials science and drug development, accurately predicting key properties like thermodynamic stability and electronic band structure is fundamental to innovation. For decades, researchers have relied on two foundational pillars: experimental approaches and computational modeling, primarily Density Functional Theory (DFT). While powerful, both methods possess inherent limitations. Experiments can be time-consuming and expensive, while DFT, a workhorse for calculating electronic structures, is known for systematic errors, such as the underestimation of band gaps [8] [9]. This guide provides a comparative analysis of these traditional methods and introduces ensemble machine learning (ML) as a synergistic approach that leverages the strengths of both to overcome their individual constraints, particularly in thermodynamic stability research.

Direct Comparison: Traditional Methods vs. Ensemble Machine Learning

The table below summarizes the core limitations of experimental and DFT-based approaches, and contrasts them with the emerging capabilities of ensemble machine learning.

| Method | Key Limitations | Typical Performance Metrics | Impact on Thermodynamic Stability Research |
|---|---|---|---|
| Experimental Approaches | High resource cost: Time-consuming, expensive, and requires specialized equipment [10] [1]. Data scarcity: Limited availability of high-quality, standardized data for many compounds [11]. Indirect measurements: Optical band gaps differ from fundamental (electronic) band gaps due to excitonic effects, complicating direct comparison with theory [12]. | Establishing a convex hull for stability requires experimental formation energies for all relevant compounds in a phase diagram [1]. Corrosion studies use metrics like corrosion current density (i_corr) and polarization resistance (R_p) from electrochemical tests [13]. | Severely restricts the pace of exploration for new stable compounds and the comprehensive understanding of material behavior under realistic conditions. |
| Density Functional Theory (DFT) | Systematic errors: Standard functionals (e.g., GGA-PBE) underestimate band gaps [8] [9] [14]. Computational cost: High-accuracy functionals (HSE06, SCAN) and methods like DFT+U are computationally expensive, hindering high-throughput screening [1] [8] [9]. Functional dependence: Results are sensitive to the choice of exchange-correlation functional and Hubbard U parameters [9] [15]. | Band gap error: PBE/GGA MAE ~1.184 eV vs. HSE06 MAE ~0.687 eV against experimental values [8]. DFT+U with optimized parameters can achieve close alignment with experimental lattice constants and band gaps [9]. | Inaccurate prediction of formation energies and decomposition energies (ΔH_d) can lead to misclassification of a compound's stability on the convex hull. |
| Ensemble Machine Learning (ML) | Data dependency: Model performance relies on the quality and size of underlying DFT/experimental training data [1]. Interpretability: "Black box" nature can make it difficult to extract physical or chemical insights without further analysis [1] [8]. | Stability Prediction: AUC (Area Under the Curve) of 0.988 for classifying stable compounds [1] [16]. Band Gap Prediction: MAE of 0.289 eV for experimental band gaps using transfer learning from DFT data [8]. | Dramatically accelerates the discovery of new compounds by accurately predicting thermodynamic stability at a fraction of the computational cost of DFT [1]. |

Detailed Experimental and Computational Protocols

Experimental Protocol for Corrosion Behavior Analysis

This protocol, used for studies like those on micro-alloyed steel in 3.5% NaCl solution, exemplifies the detailed work required to gather experimental data [13].

- 1. Sample Preparation: Fabricate or acquire the material of interest (e.g., API-grade steels micro-alloyed with Cr, Mo, V). Perform thermal processing (e.g., water quenching) to alter microstructures.

- 2. Microstructural Examination: Use Electron Backscatter Diffraction (EBSD) and Field-Emission Scanning Electron Microscopy (FE-SEM) to analyze grain morphology and precipitate distribution (e.g., M₇C₃ carbides).

- 3. Electrochemical Testing:

  - Immersion: Immerse samples in an electrolyte (e.g., 3.5 wt.% NaCl solution) for a set duration (e.g., 14 days).

  - Linear Polarization Resistance (LPR): Measure corrosion current density (i_corr) to assess corrosion kinetics.

  - Electrochemical Impedance Spectroscopy (EIS): Obtain Nyquist plots to determine polarization resistance (R_p), indicating corrosion resistance.

  - Potentiostatic Polarization: Apply a low anodic potential to monitor current density changes, evaluating anodic dissolution behavior.

- 4. Weight Loss Measurement: Measure sample weight before and after immersion to determine corrosion rate (in mm/year).

- 5. Surface Analysis: Use techniques like X-ray Diffraction (XRD) to identify corrosion products and oxide phases (e.g., α-(Fe,Cr)OOH).
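The weight-loss step converts a mass change into a corrosion rate in mm/year. The sketch below uses the standard mass-loss relation (the form given in ASTM G1/G31, with constant 8.76 × 10⁴ for mm/year); whether [13] used exactly this expression is an assumption, and the specimen values in the example are hypothetical.

```python
K_MM_PER_YEAR = 8.76e4  # unit constant for W in g, A in cm^2, T in h, D in g/cm^3


def corrosion_rate_mm_per_year(
    mass_loss_g: float,
    area_cm2: float,
    exposure_h: float,
    density_g_cm3: float,
) -> float:
    """Uniform corrosion rate from weight loss: CR = K * W / (A * T * D)."""
    if min(area_cm2, exposure_h, density_g_cm3) <= 0:
        raise ValueError("area, exposure time and density must be positive")
    return K_MM_PER_YEAR * mass_loss_g / (area_cm2 * exposure_h * density_g_cm3)


# Hypothetical steel coupon: 0.05 g lost over 14 days (336 h),
# 10 cm^2 exposed area, density 7.85 g/cm^3.
rate = corrosion_rate_mm_per_year(0.05, 10.0, 14 * 24, 7.85)
print(f"corrosion rate = {rate:.3f} mm/year")
```

The relation assumes uniform attack over the exposed area, so it understates damage when corrosion is localized (for example, pitting).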

Computational Protocol for DFT+U Calculations

This methodology is employed to enhance the predictive accuracy of DFT for materials like metal oxides [9].

- 1. System Selection: Identify the material and its structure (e.g., rutile TiO₂, c-CeO₂) from a database like the Materials Project.

- 2. Software and Functional: Use a DFT package like VASP. Select a standard functional (e.g., GGA-PBE) and plan for the +U correction.

- 3. Hubbard U Parameterization: Systematically perform calculations scanning a grid of integer values for U_d/f (for metal d/f orbitals) and U_p (for oxygen p orbitals). For example, test pairs from (0 eV, 0 eV) to (12 eV, 12 eV).

- 4. Property Calculation: For each (U_p, U_d/f) pair, compute the electronic band structure and lattice parameters.

- 5. Benchmarking: Compare the calculated band gaps and lattice constants against known experimental values to identify the optimal (U_p, U_d/f) pair that minimizes deviation (e.g., (8 eV, 8 eV) for rutile TiO₂).

Workflow Diagram: Integrating Methods for Stability Prediction

The following diagram illustrates how ensemble machine learning integrates with and bridges the gaps between traditional DFT and experimental approaches.

The Scientist's Toolkit: Key Research Reagents and Materials

This table lists essential computational and experimental "reagents" central to the featured methodologies.

| Item / Solution | Function / Role in Research |
|---|---|
| GGA-PBE Functional | A standard approximation in DFT for the exchange-correlation energy; computationally efficient but known to underestimate band gaps [8] [9]. |
| HSE06 Hybrid Functional | A more accurate, higher-cost DFT functional that mixes exact Hartree-Fock exchange to reduce band gap underestimation error [8] [15]. |
| Hubbard U Parameter | A corrective energy term in DFT+U applied to localized electron orbitals (e.g., 3d, 4f) to better describe strongly correlated materials [9]. |
| 3.5 wt.% NaCl Solution | A standard aqueous electrolyte used in electrochemical experiments to simulate a corrosive seawater environment for materials testing [13]. |
| Ensemble ML Framework (ECSG) | A machine learning architecture that combines multiple base models (e.g., Magpie, Roost, ECCNN) via stacked generalization to improve predictive accuracy and reduce bias [1] [16]. |
| Projector Augmented-Wave (PAW) Method | A pseudopotential technique used in DFT calculations (e.g., in VASP) to model core and valence electron interactions efficiently [9]. |

The limitations of traditional DFT and experimental methods are significant but not insurmountable. The integration of these approaches with ensemble machine learning creates a powerful, synergistic pipeline. Ensemble models, like the ECSG framework, can learn from the vast data generated by high-throughput DFT while being benchmarked and refined against critical experimental results [1]. This hybrid strategy mitigates the computational cost and systematic errors of DFT, while also overcoming the resource-intensive and data-scarce nature of pure experimentation. For researchers in thermodynamics and drug development, this represents a paradigm shift towards more efficient, accurate, and predictive materials discovery.

Ensemble learning is a powerful machine learning paradigm that combines the predictions from multiple models, known as base learners or weak learners, to produce a single, more accurate, and robust predictive model. [17] The core principle is that by aggregating the outputs of several models, the ensemble can mitigate individual model errors, leading to better overall performance than any single constituent model could achieve. This approach is particularly valuable in complex research domains, such as predicting the thermodynamic stability of inorganic compounds, where model accuracy and reliability are paramount. [16]

Fundamentally, ensemble methods work by training multiple models and then combining their predictions. The success of an ensemble hinges on the diversity of its base models; if different models make different types of errors, they can cancel out each other's weaknesses when combined. [17] Ensemble learning primarily addresses the bias-variance trade-off in machine learning. A high-bias model is too simple and underfits the data, while a high-variance model is too complex and overfits the noise in the data. Ensemble techniques are designed to reduce either variance or bias, resulting in a model that generalizes better to unseen data. [18]
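The role of diversity can be quantified with a standard result: if M models each have error variance σ² and every pair of errors has correlation ρ, the error variance of their average is ρσ² + (1 − ρ)σ²/M. The sketch below states that formula and checks it by simulation; the function names and the chosen values of σ², ρ, and M are illustrative assumptions, not figures from the cited references.

```python
import numpy as np


def ensemble_error_variance(sigma2: float, rho: float, m: int) -> float:
    """Variance of the mean of m equally correlated model errors."""
    return rho * sigma2 + (1.0 - rho) * sigma2 / m


def simulated_error_variance(sigma2: float, rho: float, m: int,
                             n_trials: int = 200_000, seed: int = 0) -> float:
    """Monte Carlo estimate of the same quantity."""
    rng = np.random.default_rng(seed)
    # Covariance matrix: sigma2 on the diagonal, rho * sigma2 elsewhere.
    cov = sigma2 * (rho * np.ones((m, m)) + (1.0 - rho) * np.eye(m))
    errors = rng.multivariate_normal(np.zeros(m), cov, size=n_trials)
    return float(errors.mean(axis=1).var())


for rho in (0.0, 0.3, 0.9):
    analytic = ensemble_error_variance(1.0, rho, 10)
    simulated = simulated_error_variance(1.0, rho, 10)
    print(f"rho={rho}: analytic={analytic:.3f}, simulated={simulated:.3f}")
```

With uncorrelated errors the variance falls as 1/M, but highly correlated models gain almost nothing from averaging, which is why ensembles benefit from base learners that err in different ways.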

The three most prominent ensemble techniques are Bagging (Bootstrap Aggregating), Boosting, and Stacking (Stacked Generalization). Bagging and Boosting typically use homogeneous base models (the same type of algorithm), while Stacking specializes in combining heterogeneous models (different types of algorithms). [17] [18] The following sections provide a detailed exploration of these core methods, their comparative performance, and their practical application in scientific research.

Core Ensemble Methods: Bagging, Boosting, and Stacking

Bagging (Bootstrap Aggregating)

Bagging is a parallel ensemble method designed primarily to reduce variance and prevent overfitting, especially in models that are prone to high variance, such as decision trees. [18] The process operates in two key stages:

- Bootstrapping: Multiple subsets, or "bootstrapped samples," are created from the original training dataset by randomly sampling data points with replacement. This means each subset may contain duplicate data points, and some original points may be omitted. [18]

- Aggregation: A base model, typically a decision tree, is trained independently on each of these bootstrapped samples. The final prediction of the ensemble is formed by aggregating the predictions of all individual models. For regression tasks, this is done by averaging the predictions. For classification, majority voting (taking the mode) is used. [19] [18]

A leading example of bagging is the Random Forest algorithm. It extends the basic bagging concept by introducing additional randomness not only in the data samples but also in the features used for splitting tree nodes, further enhancing model diversity and robustness. [20] [19]
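The two bagging stages can be sketched in a few lines with scikit-learn decision trees. This is a hand-rolled illustration on synthetic data, not a replacement for library implementations such as `BaggingRegressor` or `RandomForestRegressor`; the helper names and the noisy sine dataset are assumptions made for the example.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor


def fit_bagged_trees(X, y, n_estimators=50, seed=0):
    """Train one fully grown tree per bootstrap sample."""
    rng = np.random.default_rng(seed)
    n = len(X)
    models = []
    for _ in range(n_estimators):
        idx = rng.integers(0, n, size=n)  # bootstrapping: sample with replacement
        tree = DecisionTreeRegressor(random_state=int(rng.integers(1_000_000)))
        models.append(tree.fit(X[idx], y[idx]))
    return models


def predict_bagged(models, X):
    """Aggregation for regression: average the individual predictions."""
    return np.mean([m.predict(X) for m in models], axis=0)


# Synthetic data: noisy sine curve for training, noise-free curve for testing.
rng = np.random.default_rng(42)
X_train = rng.uniform(0, 6, size=(300, 1))
y_train = np.sin(X_train[:, 0]) + rng.normal(0, 0.3, size=300)
X_test = np.linspace(0, 6, 200).reshape(-1, 1)
y_test = np.sin(X_test[:, 0])

single_tree = DecisionTreeRegressor(random_state=0).fit(X_train, y_train)
bagged = fit_bagged_trees(X_train, y_train)

mse_single = float(np.mean((single_tree.predict(X_test) - y_test) ** 2))
mse_bagged = float(np.mean((predict_bagged(bagged, X_test) - y_test) ** 2))
print(f"single tree MSE: {mse_single:.4f}, bagged MSE: {mse_bagged:.4f}")
```

A single unpruned tree memorizes the noise in its training sample; averaging many such trees smooths out that noise, which is the variance reduction described above.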

Boosting

Boosting is a sequential ensemble technique that focuses on reducing bias by combining multiple weak learners to form a single strong learner. [18] Unlike bagging, boosting trains models one after the other, with each subsequent model aiming to correct the errors made by its predecessors. The general workflow is:

- Sequential Training: Models are trained in sequence. The first model is trained on the entire dataset.

- Weight Adjustment: In AdaBoost-style boosting, the training data points that were misclassified are assigned higher weights after each iteration. This forces the next model to pay more attention to these difficult-to-predict instances. [20] [18]

- Model Combination: The final prediction is a weighted sum (for regression) or a weighted majority vote (for classification) of the predictions from all sequential models. [19]

Popular boosting algorithms include AdaBoost (Adaptive Boosting), which adjusts instance weights, and Gradient Boosting, which builds each new model to fit the residual errors of the previous ones. XGBoost (Extreme Gradient Boosting) is an optimized implementation of gradient boosting that often yields state-of-the-art results in competitions. [20] [19] [18]
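The residual-fitting idea behind gradient boosting can be illustrated with a short sketch for squared-error regression. It is a simplified teaching version under stated assumptions (shallow scikit-learn trees, a fixed learning rate, synthetic data) and omits the regularization and second-order terms that libraries such as XGBoost add; the function names are invented for the example.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor


def fit_gradient_boosting(X, y, n_rounds=100, learning_rate=0.1, max_depth=2):
    """Sequentially fit shallow trees to the residuals of the current ensemble."""
    base = float(np.mean(y))                # initial constant prediction
    pred = np.full(len(y), base)
    trees = []
    for _ in range(n_rounds):
        residual = y - pred                 # errors left by earlier rounds
        tree = DecisionTreeRegressor(max_depth=max_depth).fit(X, residual)
        pred = pred + learning_rate * tree.predict(X)
        trees.append(tree)
    return base, trees


def predict_gradient_boosting(base, trees, X, learning_rate=0.1):
    """Final prediction: constant plus the scaled sum of all tree outputs."""
    return base + learning_rate * sum(t.predict(X) for t in trees)


# Synthetic regression problem.
rng = np.random.default_rng(0)
X = rng.uniform(0, 6, size=(300, 1))
y = np.sin(X[:, 0]) + rng.normal(0, 0.1, size=300)

base, trees = fit_gradient_boosting(X, y)
mse_constant = float(np.mean((y - base) ** 2))
mse_boosted = float(np.mean((y - predict_gradient_boosting(base, trees, X)) ** 2))
print(f"constant model MSE: {mse_constant:.4f}, boosted MSE: {mse_boosted:.4f}")
```

Each weak learner here is a depth-2 tree that underfits on its own; the sequence of small corrections is what drives the bias down.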

Stacking (Stacked Generalization)

Stacking is a more advanced ensemble technique that combines multiple different base models (e.g., a decision tree, a support vector machine, and a neural network) using a meta-model (also called a blender). The goal is to leverage the unique strengths of diverse algorithms to capture a wider range of patterns in the data. [21] [22] [18] Its architecture is structured in two layers:

- Base Models (Level-0): Several different models are trained on the original training data. These are the "first-level" models. [21]

- Meta-Model (Level-1): The predictions from the base models are used as input features to train a new model. This meta-model learns the optimal way to combine the base models' predictions. [21] [22]

To prevent information leakage and overfitting, the training of the meta-model typically uses predictions made by the base models on a validation set (or through cross-validation) that was not used in their training, ensuring the meta-model learns from generalized patterns. [21] [22] A key advantage of stacking is its flexibility; it can integrate virtually any machine learning model and has been successfully applied in cutting-edge research, such as predicting material stability using a framework based on electron configuration. [16]
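As a minimal sketch of this architecture, scikit-learn's `StackingClassifier` trains the meta-model on cross-validated base-model predictions when `cv` is set. The synthetic dataset stands in for a stable/unstable classification task, and the particular base learners chosen here are assumptions for illustration; they are not the Magpie, Roost, and ECCNN models used in [16].

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier, StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.svm import SVC

# Synthetic binary data (1 = "stable", 0 = "unstable" in this toy setting).
X, y = make_classification(n_samples=600, n_features=20, n_informative=5,
                           random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y)

# Level-0: heterogeneous base models with different inductive biases.
base_models = [
    ("rf", RandomForestClassifier(n_estimators=100, random_state=0)),
    ("knn", KNeighborsClassifier(n_neighbors=5)),
    ("svc", SVC(random_state=0)),
]

# Level-1: the meta-model is fit on out-of-fold predictions (cv=5).
stack = StackingClassifier(estimators=base_models,
                           final_estimator=LogisticRegression(),
                           cv=5)
stack.fit(X_train, y_train)
accuracy = stack.score(X_test, y_test)
print(f"stacked test accuracy: {accuracy:.3f}")
```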

The diagram below illustrates the structured, two-layer workflow of a stacking ensemble.

Comparative Analysis of Ensemble Techniques

Performance and Computational Cost

The choice between Bagging and Boosting involves a fundamental trade-off between predictive performance and computational resource consumption. A 2025 study provides a quantitative comparison of these two methods across datasets of varying complexity, measured at different levels of ensemble complexity (number of base learners). [23]

Table 1: Performance (Accuracy) Comparison of Bagging vs. Boosting [23]

| Ensemble Complexity (Number of Base Learners) | Bagging Performance (MNIST) | Boosting Performance (MNIST) | Bagging Performance (CIFAR-100) | Boosting Performance (CIFAR-100) |
|---|---|---|---|---|
| 20 | 0.932 | 0.930 | 0.682 | 0.685 |
| 50 | 0.933 | 0.948 | 0.683 | 0.701 |
| 100 | 0.933 | 0.957 | 0.684 | 0.712 |
| 200 | 0.933 | 0.961 | 0.684 | 0.719 |

Table 2: Computational Time Cost Comparison of Bagging vs. Boosting (Ensemble Complexity = 200) [23]

| Dataset | Bagging Computational Time | Boosting Computational Time | Relative Cost (Boosting/Bagging) |
|---|---|---|---|
| MNIST | 1x (Baseline) | ~14x | ~14 times higher |
| CIFAR-100 | 1x (Baseline) | ~12x | ~12 times higher |

The data reveals distinct patterns:

- Bagging shows rapid performance improvement that quickly plateaus. Increasing the number of base learners beyond a certain point yields diminishing returns, as seen with MNIST and CIFAR-100 performance stabilizing. [23]

- Boosting demonstrates continuous performance gains with increased complexity, often achieving higher final accuracy. However, it can eventually show signs of overfitting, and its performance follows an inverted-U curve on some datasets. [23]

- Computational Cost for Boosting is significantly higher than for Bagging due to its sequential nature. As shown in Table 2, Boosting can be over an order of magnitude slower, making Bagging a more cost-efficient choice when computational resources or time are constrained. [23]

Qualitative Comparison and Guidelines

Beyond quantitative metrics, the three ensemble methods have distinct characteristics, advantages, and limitations.

Table 3: Qualitative Comparison of Bagging, Boosting, and Stacking

| Feature | Bagging | Boosting | Stacking |
|---|---|---|---|
| Primary Goal | Reduce variance, prevent overfitting [18] | Reduce bias, create a strong learner from weak ones [18] | Leverage strengths of diverse models via a meta-learner [21] [18] |
| Training Method | Parallel training of homogeneous models on bootstrapped data [18] | Sequential training, focusing on misclassified instances from previous models [20] [18] | Two-stage: parallel training of heterogeneous base models, then training a meta-model on their predictions [21] [22] |
| Advantages | Highly parallelizable, robust to overfitting, simple to implement [18] | Often achieves higher accuracy, effective at reducing bias [20] [23] | Can capture a wider range of patterns, often leads to superior performance [21] [16] |
| Disadvantages | Performance can plateau; less interpretable [23] | Prone to overfitting on noisy data, high computational cost, sensitive to outliers [23] [18] | Complex to implement and train, slow training time, requires careful setup to avoid data leakage [21] [22] |
| Best Suited For | High-variance models (e.g., deep decision trees), resource-constrained environments [23] [18] | Applications where maximizing predictive accuracy is critical and sufficient resources are available [23] | Complex problems where diverse model perspectives are beneficial, and ample data is available [21] [16] |

Decision Guidelines:

- Choose Bagging when you need a reliable, cost-efficient model and are primarily concerned with controlling overfitting. [23]

- Choose Boosting when the primary goal is to maximize predictive accuracy and computational resources/time are not major constraints. [23]

- Choose Stacking when you have the computational resources and expertise to manage a complex setup and believe that combining fundamentally different algorithms will yield a performance benefit that single-method ensembles cannot achieve. [21] [16]

Experimental Protocols and Applications in Scientific Research

Detailed Protocol for Implementing Stacking

Implementing a stacking ensemble requires a systematic approach to ensure robustness and prevent overfitting. The following protocol, which can be implemented with libraries such as Python's scikit-learn, outlines the key steps: [21]

- Data Preparation and Splitting: Split the dataset into a training set and a hold-out test set. The test set will be used for the final evaluation and must not be used during any model training or meta-model training phases. [21]

- Base Model Training: Train a diverse set of base models (Level-0) on the training data. Diversity is crucial; select algorithms with different inductive biases (e.g., K-Nearest Neighbors, Naive Bayes, Decision Trees, Support Vector Machines). [21] [22]

- Generation of Predictions for Meta-Model:

  - Use k-fold cross-validation on the training set for each base model. For each fold, train the model on (k-1) folds and generate predictions on the remaining validation fold. This produces out-of-fold predictions for the entire training set without data leakage. [21]

  - Alternatively, hold out a separate validation set from the training data. Train all base models on the reduced training set and use them to generate predictions on this validation set. [22]

- Meta-Model Training: The collected predictions from the base models (e.g., the out-of-fold predictions) form a new feature matrix. The original target values corresponding to these predictions are used to train the meta-model (Level-1) on this new dataset. [21] [22]

- Final Evaluation and Inference:

  - To make a prediction on new data, the base models first generate their predictions.

  - These predictions are then fed as features to the trained meta-model, which produces the final ensemble prediction. [21] [22]

  - The entire stacking pipeline's performance is evaluated on the hold-out test set that was set aside in the first step (Data Preparation and Splitting).
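The protocol above can be sketched end to end with scikit-learn. This is a minimal illustration under stated assumptions, not the cited authors' code: the data are synthetic, the three base models and their hyperparameters are arbitrary, and refitting the base models on the full training set before inference is a common choice that the protocol does not spell out.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_predict, train_test_split
from sklearn.naive_bayes import GaussianNB
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in dataset (assumption).
X, y = make_classification(
    n_samples=600, n_features=15, n_informative=8, random_state=42
)

# Data preparation: the test set is untouched until the final evaluation.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=42
)

# Base models (Level-0) with different inductive biases.
base_models = [
    KNeighborsClassifier(n_neighbors=9),
    GaussianNB(),
    DecisionTreeClassifier(max_depth=6, random_state=42),
]

# Out-of-fold probabilities: each training row is predicted by a model
# that never saw that row during fitting, which avoids leakage.
oof = np.column_stack([
    cross_val_predict(m, X_train, y_train, cv=5, method="predict_proba")[:, 1]
    for m in base_models
])

# Meta-model (Level-1) trained on the out-of-fold predictions.
meta_model = LogisticRegression().fit(oof, y_train)

# Refit each base model on the full training set for inference.
for m in base_models:
    m.fit(X_train, y_train)

# Inference: base predictions become the meta-model's input features.
test_features = np.column_stack(
    [m.predict_proba(X_test)[:, 1] for m in base_models]
)
test_auc = roc_auc_score(y_test, meta_model.predict_proba(test_features)[:, 1])
print(f"Hold-out AUC of the stacked ensemble: {test_auc:.3f}")
```

scikit-learn's `StackingClassifier` wraps the same procedure in a single estimator; the manual version is shown here because it makes the leakage-avoiding step explicit.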

Case Study: Ensemble Learning for Thermodynamic Stability Prediction

The application of ensemble learning in materials science showcases its power in accelerating scientific discovery. A 2024 study demonstrated this by developing a machine learning framework to predict the thermodynamic stability of inorganic compounds. [16]

- Objective: To accurately and efficiently predict compound stability, a task traditionally reliant on time-consuming experimental and computational methods like density functional theory (DFT). [16]

- Ensemble Methodology: The researchers employed a stacking approach. The foundation was a base model built using features derived from electron configuration. This model was then enhanced through stacking with two other models that were based on different domain knowledge, creating a robust and knowledge-agnostic framework. [16]

- Results and Impact: The stacked ensemble achieved an Area Under the Curve (AUC) score of 0.988. A key finding was its data efficiency: the model required only one-seventh of the data used by existing models to achieve equivalent performance. First-principles calculations further validated the model's predictions, confirming its high accuracy in identifying stable compounds, including new two-dimensional wide bandgap semiconductors and double perovskite oxides. [16]

This case study underscores how stacking can integrate diverse information sources (e.g., different physical descriptors) to create a highly accurate and efficient predictive tool for complex scientific problems.

Research Reagent Solutions

The following table details key computational tools and conceptual components essential for implementing ensemble methods in a research environment, as illustrated in the cited experiments.

Table 4: Essential Research Reagents and Tools for Ensemble Experiments

| Item Name | Type / Category | Function in Ensemble Research |
|---|---|---|
| Scikit-learn | Software Library | Provides implementations for base models (KNN, Decision Trees, etc.) and meta-models (Logistic Regression). Facilitates data splitting, cross-validation, and evaluation. [21] |
| Random Forest | Bagging Algorithm | Serves as a high-performance, ready-to-use bagging ensemble for benchmarking or as a base model in stacking. [20] [19] |
| XGBoost | Boosting Algorithm | An optimized gradient boosting implementation often used for its high accuracy as a base model or standalone. [20] [18] |
| Cross-Validation | Methodological Protocol | Critical for generating out-of-fold predictions in stacking to train the meta-model without data leakage. [21] |
| Electron Configuration Descriptors | Data Feature Set | Used as foundational input features for base models in material science applications, capturing essential elemental properties. [16] |
| Meta-Model (e.g., Linear Model) | Ensemble Component | The higher-level model that learns the optimal combination of base model predictions in a stacking ensemble. [21] [22] |

Ensemble learning represents a significant advancement in machine learning methodology, offering powerful techniques to enhance predictive accuracy and model robustness. Bagging provides a robust, parallelizable approach to control variance, Boosting delivers high accuracy through sequential correction of errors at a higher computational cost, and Stacking offers a flexible framework to harness the collective power of diverse algorithms.

The experimental data and case studies confirm that the choice of ensemble method is not one-size-fits-all but should be guided by specific project constraints, including the complexity of the dataset, computational resources, and the paramount objective—be it cost efficiency, maximum accuracy, or leveraging diverse model perspectives. As demonstrated in thermodynamic stability research, the strategic application of these ensemble techniques, particularly stacking, can dramatically accelerate discovery and improve predictive efficiency in scientific domains.

Ensemble machine learning models are revolutionizing the prediction of material properties, offering a powerful strategy to overcome the limitations of single-model approaches. By integrating diverse base models, these ensembles mitigate inductive bias—the tendency of a model to prefer one solution over others due to its built-in assumptions or the specific domain knowledge used to train it. In the context of thermodynamic stability research, this translates to more robust, generalizable, and accurate predictions, which are crucial for accelerating the discovery of new inorganic compounds, semiconductors, and metal-organic frameworks. This guide objectively compares the performance of ensemble models against alternative methods, providing the experimental data and protocols needed for informed adoption.

The Problem: Inductive Bias in Materials Science

In materials informatics, a model's inductive bias can significantly skew results. Common sources include:

- Architectural Bias: A model's structure imposes inherent assumptions. For instance, Graph Neural Networks might assume all atoms in a unit cell interact strongly, while Convolutional Neural Networks prioritize local spatial relationships [1] [24].

- Feature Bias: This arises from the choice of input descriptors. Relying solely on elemental composition statistics overlooks crucial information about electron configuration and interatomic interactions [1].

- Data Bias: Models trained on specific types of compounds may fail to generalize to unexplored compositional spaces [25].

When a model's built-in biases do not align with the underlying physics of the problem, its predictive performance and generalizability diminish. Ensemble learning directly addresses this by combining models with different, complementary biases.

Mechanisms of Ensemble Models

Ensemble techniques mitigate bias through several core mechanisms:

- Stacked Generalization (Stacking): This method uses a meta-learner to optimally combine the predictions of diverse base models. The base models, each with different inductive biases, learn from the data first. The meta-learner then learns how to best blend these predictions, effectively correcting for the individual biases of the base models and forming a more robust super-learner [1].

- Complementary Knowledge Integration: Ensembles integrate insights from different physical scales or theoretical perspectives. For example, a powerful ensemble might combine a model based on atomic properties (Magpie), another on interatomic interactions (Roost), and a third on fundamental electron configurations (ECCNN) [1].

- Variance Reduction: By averaging the outputs of multiple models, ensembles smooth out the overreactions or specific errors that any single model might make, leading to more stable and reliable predictions on new data [26] [27].
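The variance-reduction mechanism can be checked numerically. The sketch below is an idealized illustration: it assumes each model's error is independent Gaussian noise with the same spread, and the target value and noise level are hypothetical. Under that assumption, averaging N models divides the prediction variance by N. Real base models make correlated errors, so the reduction in practice is smaller.

```python
import numpy as np

rng = np.random.default_rng(0)

true_value = -1.25   # hypothetical formation energy in eV/atom
noise_sd = 0.2       # hypothetical per-model error spread
n_models = 10
n_trials = 20_000

# Each row is one trial; each column is one model's noisy prediction.
predictions = true_value + rng.normal(
    0.0, noise_sd, size=(n_trials, n_models)
)

# Variance of a single model versus variance of the ensemble average.
single_var = predictions[:, 0].var()
ensemble_var = predictions.mean(axis=1).var()

print(f"single-model variance : {single_var:.4f}")
print(f"ensemble variance     : {ensemble_var:.4f}")
print(f"expected (sd^2 / N)   : {noise_sd**2 / n_models:.4f}")
```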

Experimental Comparison & Performance Data

Results reported in the cited studies show ensemble models performing strongly in predicting thermodynamic stability and related properties; the tables below summarize the reported metrics.

Table 1: Performance Comparison of ML Models in Thermodynamic Stability Prediction

| Model / Framework | AUC Score | Key Performance Metric | Data Efficiency | Reference / Application |
|---|---|---|---|---|
| ECSG (Ensemble) | 0.988 | Area Under the Curve | Requires only 1/7 of the data to match other models' performance | Predicting stability of inorganic compounds [1] [16] |
| ElemNet (Single Model) | Lower than ECSG (implied) | Area Under the Curve | Standard data requirement | Baseline for stability prediction [1] |
| Ensemble Extra Trees | R² = 0.96 | Coefficient of Determination (Formation Energy) | High | Predicting stability of 2D Conductive MOFs [28] |
| Ensemble Neural Networks | Superior MSE, MSLE, SMAPE | Multiple Error Metrics | High | Fatigue life prediction (for comparison) [26] |

Table 2: Ensemble Model Performance on Electronic Property Classification

| Model / Framework | Bandgap Classification Accuracy | Metallicity Prediction Accuracy | Application |
|---|---|---|---|
| Extra Tree Classifier (Ensemble) | 82% | 92% | 2D Conductive Metal-Organic Frameworks (EC-MOFs) [28] |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for implementation, the following section details the core experimental protocols from the cited studies.

Protocol: Electron Configuration Model with Stacked Generalization (ECSG)

This protocol outlines the methodology for the high-performing ECSG ensemble used for inorganic compound stability [1].

- Objective: To accurately predict the thermodynamic stability of inorganic compounds by integrating multiple models to reduce inductive bias.

- Base-Level Models:

- ECCNN (Electron Configuration CNN): A novel model that uses electron configuration matrices as input, processed through convolutional layers to capture intrinsic atomic properties.

- Roost: A model representing the chemical formula as a graph, using message-passing and attention mechanisms to capture interatomic interactions.

- Magpie: A model using statistical features (mean, deviation, range) of elemental properties (e.g., atomic radius, electronegativity), trained with gradient-boosted trees.

- Meta-Level Model: The predictions from the three base models are used as input features for a final meta-learner, which is trained to produce the final stability prediction.

- Dataset: Training and validation were performed using stability data from the Joint Automated Repository for Various Integrated Simulations (JARVIS) database.

- Evaluation Metric: Area Under the Receiver Operating Characteristic Curve (AUC).
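To make the Magpie-style descriptors in this protocol concrete, the sketch below computes the fraction-weighted mean, average deviation, and range of one elemental property for a composition. It is a simplified illustration, not the Magpie implementation: the helper `composition_stats` is hypothetical, only Pauling electronegativity is tabulated, and a real feature set covers many more properties and statistics.

```python
# Pauling electronegativities for a handful of elements.
ELECTRONEGATIVITY = {"Na": 0.93, "Cl": 3.16, "Ti": 1.54, "O": 3.44}


def composition_stats(composition, prop_table):
    """Return mean, average deviation, and range of one elemental property.

    `composition` maps element symbols to amounts, e.g. {"Ti": 1, "O": 2}.
    Mean and average deviation are weighted by atomic fraction.
    """
    total = sum(composition.values())
    fractions = {el: n / total for el, n in composition.items()}
    values = [prop_table[el] for el in composition]

    mean = sum(fractions[el] * prop_table[el] for el in composition)
    avg_dev = sum(
        fractions[el] * abs(prop_table[el] - mean) for el in composition
    )
    return {
        "mean": mean,
        "avg_dev": avg_dev,
        "range": max(values) - min(values),
    }


features = composition_stats({"Ti": 1, "O": 2}, ELECTRONEGATIVITY)
for name, value in features.items():
    print(f"electronegativity {name}: {value:.3f}")
```

Repeating this for each tabulated property yields a fixed-length feature vector per composition, which is the kind of input a gradient-boosted tree model can consume directly.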

Protocol: Ensemble Learning for 2D Conductive MOFs

This protocol describes the approach for predicting the stability and electronic properties of metal-organic frameworks [28].

- Objective: To predict the formation energy (stability) and electronic properties (e.g., metallicity, bandgap) of 2D electrically conductive MOFs.

- Feature Engineering:

- GD, M-GD, A-GD Features: Different feature sets were constructed by integrating compositional features from generic statistical reduction methods with structural descriptors curated from an EC-MOF database.

- Ensemble Models:

- Ensemble Extra Trees: Used for both regression (formation energy) and classification (metallicity, bandgap) tasks.

- Other Benchmarked Models: Linear and other tree-based models were tested for performance comparison.

- Dataset: 536 monolayer systems from the EC-MOF database, split into 90% for training and 10% for testing.

- Evaluation Metrics: Coefficient of Determination (R²) for formation energy; Accuracy for classification tasks.
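The regression part of this protocol can be sketched as follows. The example assumes scikit-learn and substitutes synthetic data from `make_regression` for the EC-MOF features and formation energies, which are not reproduced here; only the sample count (536) and the 90/10 split come from the protocol, and the hyperparameters are arbitrary.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import ExtraTreesRegressor
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for 536 systems with 20 descriptors each (assumption).
X, y = make_regression(
    n_samples=536, n_features=20, n_informative=10, noise=5.0, random_state=0
)

# 90% training, 10% testing, as in the protocol.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.1, random_state=0
)

# Extra Trees: an ensemble of randomized trees whose outputs are averaged.
model = ExtraTreesRegressor(n_estimators=200, random_state=0)
model.fit(X_train, y_train)

r2 = r2_score(y_test, model.predict(X_test))
print(f"train size = {len(X_train)}, test size = {len(X_test)}")
print(f"R^2 on the held-out 10% = {r2:.3f}")
```

With only about 54 test samples, a single split gives a noisy R² estimate, so repeating the split with several seeds is a reasonable precaution.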

The Scientist's Toolkit: Research Reagent Solutions

The following table catalogs key computational "reagents" essential for conducting ensemble machine learning research in computational materials science.

Table 3: Essential Research Reagents for Ensemble ML in Materials Science

| Research Reagent | Function & Application | Specific Examples |
|---|---|---|
| Materials Databases | Provides labeled data for training and validation of ML models. Contains calculated or experimental properties of known compounds. | Materials Project (MP), Open Quantum Materials Database (OQMD), JARVIS, EC-MOF Database [1] [28] |
| Feature Representation Tools | Transforms raw chemical compositions or structures into numerical descriptors that ML models can process. | Magpie feature sets (elemental statistics), Electron Configuration (EC) encoders, structural descriptors [1] [28] |
| Base Model Algorithms | Serves as the diverse building blocks of an ensemble, each providing a unique perspective on the data. | Gradient Boosted Trees (e.g., XGBoost), Graph Neural Networks (e.g., Roost), Convolutional Neural Networks (e.g., ECCNN) [1] |
| Ensemble Frameworks | Provides the architecture and algorithms for combining base models into a single, more powerful predictor. | Stacked Generalization (Stacking), Boosting, Bagging [1] [26] [27] |
| Validation & Benchmarking Suites | Enables rigorous evaluation of model performance, generalization, and robustness, free from data shortcuts. | Shortcut Hull Learning (SHL), Shortcut-Free Evaluation Framework (SFEF) [25] |

The experimental evidence is clear: ensemble machine learning models offer a significant advantage in mitigating inductive bias and improving generalization in thermodynamic stability research. The ECSG framework's high AUC score and remarkable data efficiency, alongside the high accuracy of ensemble methods in predicting MOF properties, establish a new benchmark for the field.

Future research will likely focus on developing even more sophisticated ensemble architectures, further refining feature engineering to capture deeper physical insights, and creating comprehensive, bias-free benchmarking datasets. By adopting ensemble methods, researchers and developers can build more reliable and robust predictive models, substantially accelerating the discovery and design of novel materials.

High-throughput density functional theory (HT-DFT) has revolutionized materials science by generating extensive datasets that enable machine learning (ML) applications. Among these, the Materials Project (MP) and the Open Quantum Materials Database (OQMD) have emerged as foundational resources for training predictive models in thermodynamic stability research. These databases provide calculated properties for hundreds of thousands of inorganic compounds, serving as the essential fuel for data-driven materials discovery [29] [30]. The paradigm of leveraging these extensive datasets allows researchers to perform high-throughput screening of new materials at unprecedented scales, significantly accelerating the discovery cycle of compounds with desired properties [30].

For ensemble machine learning models focused on thermodynamic stability, the integration of diverse data sources presents both opportunities and challenges. While these databases share common goals of accelerating materials discovery, they exhibit differences in calculation methodologies, data processing techniques, and compositional focus that introduce important considerations for model training [31] [32]. Understanding these distinctions is crucial for researchers aiming to build robust, generalizable models that can accurately predict compound stability across diverse chemical spaces.

Database Comparative Analysis: MP vs. OQMD

Table 1: Key characteristics of Materials Project and OQMD databases

| Characteristic | Materials Project (MP) | Open Quantum Materials Database (OQMD) |
|---|---|---|
| Database Size | Extensive (part of LeMat-Bulk's 6.7M entries) [30] | ~300,000 DFT calculations [29] |
| Primary Focus | Oxides and battery materials [30] | ICSD compounds and hypothetical structures [29] |
| Formation Energy Accuracy | Part of cross-database variance study [31] | MAE of 0.096 eV/atom vs. experiment [29] |
| Data Access | Freely available, CC-BY-4.0 license [30] | Fully available without restrictions [29] |
| Hypothetical Structures | Limited | Extensive (~259,511 entries) [29] |

The OQMD distinguishes itself by containing nearly 300,000 DFT total energy calculations of compounds from the Inorganic Crystal Structure Database (ICSD) and decorations of commonly occurring crystal structures [29]. As of its 2015 publication, it included 32,559 calculated ICSD compounds and 259,511 hypothetical compounds based on prototype structure decorations, making it particularly valuable for predicting new stable compounds [29]. The database reports an apparent mean absolute error of 0.096 eV/atom between DFT predictions and experimental formation energies, though notably, a significant fraction of this error may be attributed to experimental uncertainties themselves, which show a mean absolute error of 0.082 eV/atom between different experimental measurements [29].

The Materials Project, while similarly extensive, shows particular strengths in specific material classes. Analysis has revealed that MP has a stronger focus on oxides and battery materials, which introduces specific compositional biases that researchers must consider when building generalizable models [30]. This specialization can be advantageous for targeted applications but may require compensation through data integration when building broader stability prediction models.

Table 2: Property reproducibility across HT-DFT databases

| Property | Variance Between Databases | Reproducibility Assessment |
|---|---|---|
| Formation Energy | 0.105 eV/atom (MRAD of 6%) [31] | High |
| Volume | 0.65 ų/atom (MRAD of 4%) [31] | High |
| Band Gap | 0.21 eV (MRAD of 9%) [31] | Moderate |
| Total Magnetization | 0.15 μB/formula unit (MRAD of 8%) [31] | Moderate |
| Metallic Classification | Disagreement in up to 7% of records [31] | Variable |
| Magnetic Classification | Disagreement in up to 15% of records [31] | Variable |

A comparative analysis of AFLOW, Materials Project, and OQMD reveals that formation energies and volumes are reproduced relatively well across databases, whereas electronic properties such as band gaps and magnetic properties show larger variances [31] [32]. MRAD in Table 2 denotes the median relative absolute difference between databases. These discrepancies stem from differences in pseudopotential choices, DFT+U formalisms, and elemental reference states used across the databases [31]. For thermodynamic stability predictions, the higher consistency in formation energies is favorable, though researchers should remain aware of the potential variances when integrating multiple data sources.

Experimental Protocols for Database Utilization

Data Sourcing and Integration Methodologies

The foundational step in leveraging MP and OQMD for ensemble ML models involves careful data sourcing and integration. Recent initiatives like LeMaterial provide valuable frameworks for this process, having developed pipelines that unify, clean, and standardize data from both MP and OQMD [30]. Their protocol involves:

- Data Collection and Merging: Simultaneous extraction from MP, OQMD, and other databases, preserving multiple DFT functionals (PBE, PBESol, SCAN) where available [30].

- Data Cleaning: Identification and removal of data points with non-compatible calculations or missing critical information [30].

- Standardization: Uniform formatting of fields across databases using the OPTiMaDe standard to ensure consistency [30].

- Material Fingerprinting: Application of a hashing function to assign unique identifiers to each material, enabling duplicate removal and cross-database matching [30].
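The fingerprinting step can be illustrated with a toy hashing scheme. The source does not describe LeMaterial's actual hashing function, so everything below is an assumption for illustration: the `fingerprint` helper is hypothetical and keys each entry on its reduced composition plus space-group number, which is far cruder than a production fingerprint.

```python
import hashlib
from functools import reduce
from math import gcd


def fingerprint(composition, spacegroup):
    """Hash a reduced formula plus space-group number (hypothetical scheme)."""
    # Reduce the formula so that Ti2O4 and TiO2 map to the same key.
    divisor = reduce(gcd, composition.values())
    reduced = sorted((el, n // divisor) for el, n in composition.items())
    key = "".join(f"{el}{n}" for el, n in reduced) + f"|sg{spacegroup}"
    return hashlib.sha256(key.encode("utf-8")).hexdigest()[:16]


# Toy records standing in for entries pulled from two databases.
records = [
    {"source": "MP", "composition": {"Ti": 2, "O": 4}, "spacegroup": 136},
    {"source": "OQMD", "composition": {"O": 2, "Ti": 1}, "spacegroup": 136},
    {"source": "OQMD", "composition": {"Ti": 1, "O": 2}, "spacegroup": 141},
]

# Group sources by fingerprint: shared keys mark cross-database duplicates.
unique = {}
for rec in records:
    key = fingerprint(rec["composition"], rec["spacegroup"])
    unique.setdefault(key, []).append(rec["source"])

for key, sources in unique.items():
    print(key, sources)
```

Here the two space-group-136 entries collapse into one material, while the space-group-141 polymorph keeps its own identifier.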

Ensemble Model Training Framework

The ECSG (Electron Configuration Model with Stacked Generalization) framework demonstrates an effective methodology for leveraging diverse data sources in ensemble models for stability prediction [33]. This approach employs a stacked generalization technique that combines multiple base models trained on different feature representations:

- Feature Diversification: Implementation of three distinct feature domains to minimize inductive bias - Magpie (atomic properties), Roost (interatomic interactions), and ECCNN (electron configurations) [33].

- Stacked Generalization: Training of a meta-learner that combines the predictions of base models to improve overall accuracy and robustness [33].

- Stability Metric Prediction: Focus on decomposition energy (ΔHd) as the primary stability metric, derived from convex hull analysis of formation energies [33].
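To show how a decomposition energy follows from a convex hull of formation energies, the sketch below works through a hypothetical binary A-B system in plain Python. All compositions and energies are made up for illustration. The sign convention used here is an assumption of the sketch: ΔHd is the candidate's formation energy minus the hull energy of the competing phases at the same composition, so a negative value means the candidate is stable against decomposition. The code also assumes each competing phase has a distinct composition.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points (Andrew's monotone chain)."""
    hull = []
    for p in sorted(points):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:      # counter-clockwise turn: hull stays convex
                break
            hull.pop()         # last point lies on or above the new edge
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the hull range")


def decomposition_energy(candidate, competing):
    """Candidate energy relative to the hull of competing phases."""
    x, energy = candidate
    return energy - hull_energy(lower_hull(competing), x)


# (fraction of B, formation energy in eV/atom); all values hypothetical.
competing = [(0.0, 0.0), (0.5, -0.60), (0.75, -0.10), (1.0, 0.0)]

dhd_stable = decomposition_energy((0.25, -0.40), competing)
dhd_unstable = decomposition_energy((0.75, -0.20), competing)
print(f"candidate at x=0.25: dHd = {dhd_stable:+.2f} eV/atom")
print(f"candidate at x=0.75: dHd = {dhd_unstable:+.2f} eV/atom")
```

The phase at x = 0.75 with energy -0.10 eV/atom is dropped from the hull because it lies above the line joining its neighbors, so it does not affect either result.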

Experimental validation of this approach has demonstrated exceptional performance, achieving an Area Under the Curve (AUC) score of 0.988 in predicting compound stability within the JARVIS database, along with remarkable data efficiency requiring only one-seventh of the data used by existing models to achieve comparable performance [33].

Ensemble Modeling Approaches for Stability Prediction

The Stacked Generalization Framework

Ensemble methods have shown particular promise in addressing the limitations of individual models trained on specific data representations. The ECSG framework exemplifies this approach by combining three distinct models, each rooted in different domain knowledge [33]:

- Magpie: Utilizes statistical features derived from elemental properties (atomic number, mass, radius, etc.) and employs gradient-boosted regression trees (XGBoost) for predictions [33].

- Roost: Conceptualizes the chemical formula as a complete graph of elements, employing graph neural networks with attention mechanisms to capture interatomic interactions [33].

- ECCNN (Electron Configuration Convolutional Neural Network): Leverages electron configuration information through convolutional neural networks, providing insight into electronic structure effects on stability [33].

This multi-faceted approach effectively mitigates the inductive biases inherent in each individual model, resulting in enhanced predictive performance for thermodynamic stability [33]. By training on diverse feature representations derived from the same underlying MP and OQMD data, the ensemble captures complementary aspects of the structure-property relationships governing material stability.

Table 3: Ensemble model components for stability prediction

| Model Component | Knowledge Domain | Architecture | Strengths |
|---|---|---|---|
| Magpie | Atomic properties & statistics | Gradient Boosted Regression Trees (XGBoost) | Captures elemental diversity trends |
| Roost | Interatomic interactions & graph relationships | Graph Neural Network with attention | Learns compositional relationships |
| ECCNN | Electron configurations & quantum structure | Convolutional Neural Network | Incorporates electronic structure effects |

Addressing Dataset Biases through Integration

A significant advantage of integrating MP and OQMD in ensemble modeling is the mitigation of individual database biases. Materials Project's noted focus on oxides and battery materials (evident in its enrichment of Li, O, P elements) can be balanced by OQMD's broader coverage of ICSD compounds and hypothetical structures [29] [30]. This balanced training data results in models with improved generalizability across diverse chemical spaces.

The LeMaterial initiative demonstrates the value of this integrated approach, creating a unified resource of 6.7 million entries with consistent properties by combining MP, OQMD, and other sources [30]. Their work highlights how such integration enables exploration of extended phase diagrams with finer resolution of material stability across compositional spaces, directly benefiting thermodynamic stability prediction tasks [30].

Performance Evaluation and Experimental Data

Stability Prediction Accuracy

Experimental validation of ensemble models trained on integrated MP and OQMD data demonstrates significant advantages in stability prediction accuracy. The ECSG framework achieves an AUC of 0.988 in predicting compound stability, substantially outperforming individual models [33]. This high performance underscores the value of combining diverse data sources with ensemble techniques that mitigate individual model biases.

Additionally, models trained on integrated data exhibit remarkable sample efficiency, achieving comparable accuracy with only one-seventh of the training data required by existing models [33]. This efficiency is particularly valuable in materials science applications where data acquisition—whether computational or experimental—remains resource-intensive.

Novel Compound Prediction

Beyond accuracy metrics on test sets, integrated MP-OQMD ensemble models have demonstrated practical utility in predicting novel stable compounds. Case studies applying these models to explore new two-dimensional wide bandgap semiconductors and double perovskite oxides have successfully identified promising candidates, with subsequent DFT validation confirming remarkable accuracy in correctly identifying stable compounds [33].

The OQMD's extensive collection of hypothetical structures (over 259,000 entries) provides particularly valuable training data for this application, having enabled the prediction of approximately 3,200 new compounds that had not been experimentally characterized [29]. When combined with MP's data through ensemble approaches, this enables powerful discovery pipelines for novel materials.

Essential Research Reagent Solutions

Table 4: Key computational tools for database integration and ensemble modeling

| Tool/Resource | Function | Application Context |
|---|---|---|
| LeMat-Bulk Dataset | Unified, standardized dataset integrating MP and OQMD | Training data for ensemble models |
| Material Fingerprinting | Unique identification and deduplication of materials | Cross-database matching and novelty detection |
| pymatgen | Materials analysis library | Structure manipulation and property analysis |
| Crystal Toolkit | Visualization framework | Phase diagram exploration and data interpretation |
| ECCNN | Electron configuration-based neural network | Ensemble model component for stability prediction |
| Stacked Generalization | Ensemble learning technique | Combining multiple models for improved accuracy |

The integration of Materials Project and OQMD provides a powerful foundation for ensemble machine learning models targeting thermodynamic stability prediction. While each database has distinct characteristics and strengths—with MP offering specialized coverage of functional materials and OQMD providing extensive hypothetical structures—their combined use enables more robust and generalizable models. The systematic integration of these diverse data sources, coupled with ensemble techniques that leverage complementary feature representations, addresses key challenges in materials informatics including dataset biases, model generalizability, and prediction uncertainty.

Experimental results demonstrate that this integrated approach achieves superior performance in stability prediction, with applications spanning from novel compound discovery to the exploration of specialized material classes like perovskites and two-dimensional semiconductors. As the field progresses, ongoing initiatives like LeMaterial that focus on standardization and harmonization of materials data will further enhance the utility of these foundational databases, accelerating the discovery of new materials with tailored properties.

Model Architectures in Action: Building and Applying Ensemble Frameworks

Accurately predicting the thermodynamic stability of inorganic compounds is a fundamental challenge in materials science, governing the synthesizability of new materials and their potential for degradation under specific conditions [5]. Traditional methods, primarily based on Density Functional Theory (DFT), are computationally expensive and time-consuming, creating a bottleneck in the discovery pipeline [1]. Machine learning (ML) offers a promising avenue for expediting this discovery, providing significant advantages in time and resource efficiency [1] [16]. However, many existing ML models are constructed on specific domain knowledge or idealized scenarios, which can introduce large inductive biases and limit their predictive performance and generalizability [1].

To overcome these limitations, the ECSG (Electron Configuration Model with Stacked Generalization) framework was proposed as an ensemble machine learning framework designed specifically for predicting thermodynamic stability. Its core innovation is the use of stacked generalization to combine models rooted in distinct and complementary domains of knowledge [1] [34]. This approach mitigates the biases inherent in single models and improves overall predictive performance. This guide provides a detailed architectural blueprint of the ECSG framework, objectively compares its performance against other models, and delineates the experimental protocols for its validation.

Framework Anatomy: Deconstructing the ECSG Architecture

The ECSG framework is a super learner built using stacked generalization. Its architecture is designed to integrate diverse hypotheses about the factors governing material stability.

The Stacked Generalization Paradigm

Stacked generalization operates on a two-level (or meta-learning) principle [35]:

- Base-Level Models: Several different models are trained on the original data. Their predictions are used as input features for the next level.

- Meta-Model: A separate model is trained to learn how to best combine the predictions from the base-level models to produce the final, more accurate output.

In ECSG, the meta-model learns how much weight to give each constituent model's prediction, thereby reducing reliance on any single, potentially biased, assumption [1].

Core Constituent Models and Their Knowledge Domains

The strength of ECSG stems from the deliberate selection of base models that capture material properties at different physical scales, ensuring complementarity [1]. The table below details these core components.

Table: The Base-Level Models within the ECSG Framework

| Model Name | Underlying Knowledge Domain | Core Input Features | Algorithm / Architecture | Role in the Ensemble |
|---|---|---|---|---|
| ECCNN (Electron Configuration Convolutional Neural Network) [1] | Quantum Mechanical / Electronic Structure | Electron configuration (EC) of constituent elements, encoded as a matrix. | Convolutional Neural Network (CNN) | Provides foundational information on chemical properties and reaction dynamics from first principles. |
| Roost [1] | Atomistic / Structural | Chemical formula represented as a graph of elements. | Graph Neural Network with attention mechanism | Captures complex interatomic interactions through message passing between the elements of a compound. |
| Magpie [1] | Classical / Empirical | Statistical features (mean, deviation, range) of various elemental properties (e.g., atomic mass, radius). | Gradient-Boosted Regression Trees (XGBoost) | Offers a broad, statistics-based view of material diversity using well-established elemental descriptors. |

The meta-learner that integrates the predictions of these three base models is a logistic regression classifier, which assigns optimal weights to each model's output to make the final stability classification [1].
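As a minimal sketch of this final step, the snippet below shows how a logistic-regression meta-learner could turn three base-model probabilities into a single stability probability: a weighted sum followed by a sigmoid. The function name `meta_predict`, the weights, the bias, and the input probabilities are hypothetical placeholders for illustration, not coefficients or outputs reported in [1].

```python
import math

def meta_predict(base_probs, weights, bias):
    """Combine base-model probabilities with a logistic-regression meta-learner."""
    if len(base_probs) != len(weights):
        raise ValueError("one weight is needed per base model")
    z = bias + sum(w * p for w, p in zip(weights, base_probs))
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical coefficients (NOT taken from the ECSG paper).
# Order of entries: ECCNN, Roost, Magpie.
WEIGHTS = [2.5, 2.0, 1.5]
BIAS = -3.0

# Hypothetical base-model outputs for one candidate composition.
p_stable = meta_predict([0.92, 0.85, 0.60], WEIGHTS, BIAS)
label = "stable" if p_stable >= 0.5 else "unstable"
print(f"P(stable) = {p_stable:.3f} -> {label}")
```

In a trained ensemble the weights and bias would be fitted on held-out base-model predictions rather than set by hand.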

Workflow Visualization

The following diagram illustrates the integrated workflow of the ECSG framework, from input to final prediction.

Performance Benchmark: ECSG Versus Alternative Models

The ECSG framework has been rigorously tested against other machine learning models, demonstrating superior performance in both accuracy and data efficiency.

Quantitative Performance Comparison

Experimental results on datasets from materials databases like the Joint Automated Repository for Various Integrated Simulations (JARVIS) validate the efficacy of the ECSG approach [1].

Table: Performance Comparison of Stability Prediction Models

| Model / Framework | Primary Input Type | Key Performance Metric (AUC) | Data Efficiency (Relative to ElemNet) | Notable Strengths and Weaknesses |
|---|---|---|---|---|
| ECSG (Ensemble) [1] | Composition (Multi-domain) | 0.988 | 7x (Uses 1/7 of the data) | Strengths: High accuracy, robust, sample-efficient. Weakness: More complex architecture. |
| ECCNN [1] | Composition (Electron Config.) | 0.978 (Base model) | Information Not Available | Strength: Leverages fundamental quantum properties. Weakness: Single-domain knowledge. |
| Roost [1] | Composition (Graph) | 0.974 (Base model) | Information Not Available | Strength: Captures interatomic interactions. Weakness: Assumes strong graph connectivity. |
| Magpie [1] | Composition (Elemental Stats) | 0.952 (Base model) | Information Not Available | Strength: Simple, interpretable features. Weakness: Lacks quantum and structural insight. |
| ElemNet [1] | Composition (Element Fractions) | ~0.988 (with full data) | 1x (Baseline) | Strength: Deep learning on raw compositions. Weakness: High data requirement; inductive bias. |
| RF/NN for Actinides [5] | Composition (145 Features) | High Accuracy (Reported) | Information Not Available | Strength: Effective for specialized systems. Weakness: Limited to trained feature set. |

AUC: Area Under the Receiver Operating Characteristic Curve.
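To make the metric concrete, the following sketch computes AUC from its probabilistic definition: the fraction of (stable, unstable) pairs in which the stable compound receives the higher score, with ties counted as one half. The function name `roc_auc` and the four-sample data set are illustrative assumptions; production code would normally rely on an established library routine instead.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a random positive outranks a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative sample")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))

# Toy example: 1 = stable, 0 = unstable; scores are predicted P(stable).
labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
auc = roc_auc(labels, scores)
print(auc)  # 0.75: three of the four positive/negative pairs are ranked correctly
```

The pairwise loop is quadratic in the number of samples, which is acceptable for illustration but not for database-scale evaluation.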

Case Study Validation with First-Principles Calculations

The practical utility of ECSG was demonstrated through case studies exploring new two-dimensional wide bandgap semiconductors and double perovskite oxides [1]. After ECSG identified promising stable compounds, researchers validated these predictions using first-principles calculations (DFT). The results showed remarkable accuracy, confirming that ECSG can reliably navigate unexplored composition spaces and correctly identify stable compounds, thereby accelerating the discovery of new functional materials [1] [34].

Experimental Protocols: Methodology for Reproduction

For researchers to reproduce and implement the ECSG framework, a clear understanding of the experimental setup and data handling is essential.

Data Sourcing and Preprocessing

- Data Sources: The model can be trained on large materials databases such as the Materials Project (MP) and the Open Quantum Materials Database (OQMD), which provide DFT-calculated formation energies and stability labels for thousands of inorganic compounds [1] [5].

- Input Representation:

  - Composition-based: The primary input is the chemical formula. For ECSG, this is processed into three distinct feature sets corresponding to ECCNN, Roost, and Magpie [1] [34].

  - Structure-based (Optional): A variant of ECSG can incorporate structural information from CIF (Crystallographic Information File) files for improved accuracy when crystal structures are known [34].

- Target Variable: The thermodynamic stability is typically represented by the decomposition energy (ΔHd), which is derived from the energy above the convex hull. A compound is classified as stable if it lies on the convex hull (ΔHd = 0) and unstable otherwise [1].
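The convex-hull construction behind this target can be sketched for the simplest case, a binary A-B system. The code below builds the lower convex hull of formation energy versus composition and reports how far a phase sits above it. All function names and formation energies are hypothetical illustrations; real workflows would take DFT energies from MP or OQMD and handle multi-component systems with a dedicated phase-diagram tool.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points (Andrew's monotone chain)."""
    lowest = {}
    for x, e in points:
        lowest[x] = min(e, lowest.get(x, e))  # keep the lowest-energy phase per composition
    hull = []
    for p in sorted(lowest.items()):
        # Drop the last vertex while it lies on or above the line hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull

def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("x lies outside the composition range of the hull")

def energy_above_hull(phases, x, e_form):
    """Distance (eV/atom) of a phase above the hull that includes the phase itself."""
    hull = lower_hull(list(phases) + [(x, e_form)])
    return e_form - hull_energy(hull, x)

# Hypothetical formation energies (eV/atom); x is the atomic fraction of B.
# The pure elements define the zero of formation energy.
phases = [(0.0, 0.0), (0.25, -0.10), (0.5, -0.40), (0.75, -0.30), (1.0, 0.0)]

for x, e in phases:
    gap = energy_above_hull(phases, x, e)
    status = "stable" if gap < 1e-9 else "unstable"
    print(f"x = {x:.2f}: {gap:.3f} eV/atom above hull -> {status}")
```

In this toy system the phase at x = 0.25 lies 0.10 eV/atom above the tie line between pure A and the x = 0.5 compound, so it would be labeled unstable, while the other phases lie on the hull.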

Model Training and Evaluation

- Training Protocol: The framework is trained using a cross-validation approach. The base models are first trained independently. Their predictions on out-of-fold validation data are then used to train the meta-learner (logistic regression), preventing information leakage [1].

- Evaluation Metrics: The primary metric for evaluating classification performance is the Area Under the Curve (AUC). Additional metrics like accuracy, precision, and recall are also used. For regression tasks (predicting formation energy), metrics like Root Mean Square Error (RMSE) and R² are common [5] [36].

- Computational Requirements: Reproducing the ECSG framework requires significant computational resources. The official GitHub repository recommends a system with 128 GB RAM, 40 CPU processors, and a 24 GB GPU, running a Linux operating system [34].
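The out-of-fold idea in the training protocol can be illustrated with a deliberately extreme toy model. The sketch below is not the ECSG training code: the helper names, the compound identifiers, and the labels are all hypothetical, and the "base model" simply memorises its training set so that any leakage becomes obvious.

```python
def k_fold_indices(n_samples, k):
    """Split range(n_samples) into k contiguous folds of near-equal size."""
    folds, start = [], 0
    for i in range(k):
        size = n_samples // k + (1 if i < n_samples % k else 0)
        folds.append(list(range(start, start + size)))
        start += size
    return folds

def out_of_fold_predictions(X, y, fit, predict, k=5):
    """Predict every sample with a model that never saw it during training."""
    oof = [None] * len(X)
    for fold in k_fold_indices(len(X), k):
        held_out = set(fold)
        train_idx = [i for i in range(len(X)) if i not in held_out]
        model = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        for i in fold:
            oof[i] = predict(model, X[i])
    return oof

# Toy base model: returns the stored label for a seen input, 0.5 otherwise.
def fit_lookup(X_train, y_train):
    return dict(zip(X_train, y_train))

def predict_lookup(model, x):
    return float(model.get(x, 0.5))

compounds = [f"compound_{i}" for i in range(6)]  # placeholder identifiers
labels = [1, 1, 0, 1, 0, 1]                       # hypothetical stability labels

oof = out_of_fold_predictions(compounds, labels, fit_lookup, predict_lookup, k=3)
in_sample = [predict_lookup(fit_lookup(compounds, labels), c) for c in compounds]
print(oof)        # all 0.5: no label reached the meta-features
print(in_sample)  # reproduces the labels exactly, i.e. leakage
```

A meta-learner trained on the in-sample column would learn to trust a model that has only memorised its inputs; the out-of-fold column avoids this. Real pipelines would also shuffle or stratify the folds, which is omitted here for brevity.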

The Scientist's Toolkit: Essential Research Reagents

The following table details the key "research reagents" — datasets, software, and computational tools — essential for working with the ECSG framework or similar ensemble models in thermodynamic stability prediction.

Table: Essential Research Reagents for Ensemble Stability Prediction

| Item Name | Type | Function / Application | Source / Availability |
|---|---|---|---|
| Materials Project (MP) Database [1] | Dataset | Provides a vast repository of DFT-calculated material properties, used for training and benchmarking ML models. | https://materialsproject.org/ |
| Open Quantum Materials Database (OQMD) [5] | Dataset | A high-throughput database containing calculated formation energies for a wide range of compounds, including actinides. | https://www.oqmd.org/ |
| ECSG Code & Pre-trained Models [34] | Software | The official implementation of the ECSG framework, including scripts for training, prediction, and pre-trained models for immediate use. | https://github.com/Haozou-csu/ECSG |
| Vienna Ab Initio Simulation Package (VASP) [36] | Software | A widely used software package for performing first-principles DFT calculations, essential for validating ML predictions and generating training data. | Commercial License |
| PyTorch [34] | Software | An open-source machine learning library; serves as the foundational deep learning framework for building and training models like ECCNN and Roost. | https://pytorch.org/ |
| Moment Tensor Potential (MTP) [36] | Software/Model | A class of machine-learning interatomic potentials used for accurate molecular dynamics simulations, representing an alternative ML approach to direct stability prediction. | Integrated in MLIP packages |

The ECSG framework represents a significant architectural advancement in the machine-learning-based prediction of thermodynamic stability. By strategically employing stacked generalization to integrate complementary models based on electron configuration, interatomic interactions, and empirical elemental properties, ECSG achieves a level of accuracy and data efficiency that surpasses single-model alternatives. Its validated performance in discovering new semiconductors and perovskite oxides underscores its potential as a powerful tool for researchers and scientists aiming to accelerate the design and discovery of novel inorganic compounds. While its ensemble structure is more complex, the substantial gains in predictive power and robustness make ECSG a compelling benchmark in the field of ensemble machine learning for materials science.

The accurate prediction of thermodynamic stability is a cornerstone of materials science and drug development, directly influencing the synthesizability and operational degradation of new compounds and therapeutic agents. Traditional methods, primarily based on density functional theory (DFT), are computationally intensive, creating a significant bottleneck for high-throughput discovery. Machine learning (ML) offers a promising alternative, yet the performance of these models depends strongly on the features used to represent materials. Feature engineering, the process of creating informative descriptors from raw data, has emerged as a critical step. An ensemble approach that strategically integrates features from different physical scales, namely electron configuration (EC), atomic properties, and interatomic interactions, has been demonstrated to mitigate model bias and achieve state-of-the-art predictive performance [1]. This guide provides a comparative analysis of this integrated feature engineering strategy against models using single-domain knowledge.

Comparative Analysis of Feature Engineering Approaches

The performance of machine learning models in predicting thermodynamic stability varies significantly based on the feature sets and algorithms employed. The table below summarizes quantitative data from recent studies, highlighting the superiority of ensemble methods that integrate multiple feature types.

Table 1: Performance comparison of machine learning models for thermodynamic stability prediction.

| Material Class | Model / Feature Set | Key Feature Types | Performance Metrics | Reference / Source |
|---|---|---|---|---|
| General Inorganic Compounds | ECSG (Ensemble of ECCNN, Magpie, Roost) | Electron Configuration, Atomic Properties, Interatomic Interactions | AUC: 0.988; Achieved same performance with 1/7 the data required by other models [1]. | [1] |
| General Inorganic Compounds | ECCNN (Base model in ECSG) | Electron Configuration | High accuracy, but specific metrics superseded by the ECSG ensemble [1]. | [1] |
| General Inorganic Compounds | Magpie (Base model in ECSG) | Atomic Properties (statistical features) | High accuracy, but specific metrics superseded by the ECSG ensemble [1]. | [1] |
| General Inorganic Compounds | Roost (Base model in ECSG) | Interatomic Interactions (graph-based) | High accuracy, but specific metrics superseded by the ECSG ensemble [1]. | [1] |
| Actinide Compounds | Random Forest (RF) & Neural Network (NN) Ensemble | Compositional Features (145 elemental properties) | R²: ~0.96; MSE: ~0.06 eV/atom (approaching DFT error) [5]. | [5] |
| 2D Conductive MOFs | Stacking Ensemble Model (e.g., Extra Trees) | Compositional & Structural Descriptors (GD, M-GD, A-GD) | R²: 0.96 (Formation Energy); 92% Accuracy (Metallicity Prediction) [37] [28]. | [37] [28] |

Experimental Protocols for Ensemble Model Validation

Data Sourcing and Preprocessing

The development of robust ensemble models relies on large, high-quality datasets of calculated formation energies. Standard protocols involve sourcing data from established computational databases:

- The Open Quantum Materials Database (OQMD): A high-throughput database containing DFT-calculated formation energies and crystallographic parameters for hundreds of thousands of compounds, commonly used for training models on actinides and other inorganic materials [5].

- The Materials Project (MP): Another extensive database providing computed properties of known and predicted materials, used for training general-purpose models [1].

- JARVIS Database: Used for benchmarking model performance on inorganic compounds [1].

- EC-MOF Database: A specialized database for 2D layered electrically conductive metal-organic frameworks, containing 536 monolayer systems [37] [28].

Data preprocessing typically involves cleaning the dataset, handling missing values, and encoding the chemical compositions into feature vectors. For ensemble models like ECSG, the dataset is then partitioned into training, validation, and test subsets; a 90:10 split between training and test data is commonly reported [28].
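A 90:10 hold-out split of this kind can be sketched in a few lines. The function name, the fixed seed, and the placeholder records below are assumptions for illustration; they do not reproduce the exact splitting code of any cited study.

```python
import random

def train_test_split(items, test_fraction=0.10, seed=0):
    """Shuffle a copy of `items` and hold out `test_fraction` of it for testing."""
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must lie strictly between 0 and 1")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

records = [f"compound_{i}" for i in range(1000)]  # placeholder identifiers
train, test = train_test_split(records)
print(len(train), len(test))  # 900 100
```

Fixing the seed keeps the test set identical across runs, so that different models are compared on the same held-out compounds.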

Feature Engineering and Ensemble Training

The core of the integrated approach lies in generating complementary feature sets. The following workflow details the methodology for constructing the ECSG ensemble model.

Figure 1: ECSG ensemble model workflow for stability prediction.

Multi-Scale Feature Generation:

- Electron Configuration (EC) Features: The ECCNN model encodes the electron configuration of each element in a compound into a matrix representation (e.g., 118×168×8), which serves as input to a Convolutional Neural Network (CNN). This captures the fundamental electronic structure that governs chemical bonding [1].

- Atomic Property Features: The Magpie model calculates statistical features (mean, range, mode, etc.) from a wide array of elemental properties like atomic number, mass, radius, and electronegativity. These features represent the average chemical environment [1].

- Interatomic Interaction Features: The Roost model represents a chemical formula as a graph, where atoms are nodes and interactions are edges. A graph neural network with an attention mechanism learns the complex message-passing between atoms, capturing local bonding environments [1].
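Of the three feature sets above, the statistical (Magpie-style) descriptors are the easiest to illustrate. The sketch below computes the fraction-weighted mean, the mean absolute deviation, and the range of one elemental property for a flat chemical formula. It is a simplified stand-in rather than the Magpie implementation: the four-element table, the function names, and the restriction to bracket-free formulas are assumptions made for brevity.

```python
import re

# Small illustrative property table: standard atomic masses (u) and Pauling
# electronegativities. Magpie itself draws on many more elemental properties.
ELEMENT_PROPS = {
    "Na": {"mass": 22.990, "electronegativity": 0.93},
    "Mg": {"mass": 24.305, "electronegativity": 1.31},
    "Cl": {"mass": 35.45, "electronegativity": 3.16},
    "O": {"mass": 15.999, "electronegativity": 3.44},
}

def parse_formula(formula):
    """Parse a flat formula such as 'MgCl2' into atomic fractions (no brackets)."""
    counts = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    total = sum(counts.values())
    return {el: n / total for el, n in counts.items()}

def composition_features(formula, prop):
    """Fraction-weighted mean, mean absolute deviation, and range of one property."""
    fractions = parse_formula(formula)
    values = {el: ELEMENT_PROPS[el][prop] for el in fractions}
    mean = sum(fractions[el] * values[el] for el in fractions)
    avg_dev = sum(fractions[el] * abs(values[el] - mean) for el in fractions)
    return {
        "mean": mean,
        "avg_dev": avg_dev,
        "range": max(values.values()) - min(values.values()),
    }

feats = composition_features("MgCl2", "electronegativity")
print({name: round(value, 3) for name, value in feats.items()})
```

Repeating this for every property in the table and concatenating the results yields a fixed-length vector for any composition, which is what allows a tree-based model to be trained on formulas of different sizes.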

Base Model Training: The three feature sets are used to train three distinct base models (ECCNN, Magpie, and Roost) on the same target data, either stable/unstable labels or formation energies.

Stacked Generalization (Ensemble): The predictions from the three base models are used as input features for a meta-learner (e.g., a linear model or another ML algorithm). This meta-learner is trained to optimally combine the base predictions, effectively learning the strengths of each feature type and producing a final, more accurate, and robust prediction [1].
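To show what training such a meta-learner involves, the sketch below fits a logistic regression to base-model predictions by plain batch gradient descent. It assumes only two base models and a hand-made table of out-of-fold predictions; the data, learning rate, and epoch count are hypothetical, and a library optimiser would normally replace the explicit loop.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fit_meta_learner(meta_X, y, lr=0.5, epochs=2000):
    """Fit logistic regression on base-model predictions by batch gradient descent."""
    n_models = len(meta_X[0])
    weights, bias = [0.0] * n_models, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_models, 0.0
        for row, target in zip(meta_X, y):
            error = sigmoid(bias + sum(w * p for w, p in zip(weights, row))) - target
            grad_b += error
            for j, p in enumerate(row):
                grad_w[j] += error * p
        weights = [w - lr * g / len(y) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(y)
    return weights, bias

# Hypothetical out-of-fold predictions from two base models.
# Model A tracks the label; model B gives the same outputs in both classes.
meta_X = [[0.9, 0.6], [0.8, 0.4], [0.9, 0.4], [0.8, 0.6],
          [0.1, 0.6], [0.2, 0.4], [0.1, 0.4], [0.2, 0.6]]
y = [1, 1, 1, 1, 0, 0, 0, 0]

weights, bias = fit_meta_learner(meta_X, y)
final = [sigmoid(bias + sum(w * p for w, p in zip(weights, row))) for row in meta_X]
print([round(w, 2) for w in weights], round(bias, 2))
print([round(p, 2) for p in final])
```

In this toy setting the informative model ends up with the larger weight, which is the behaviour the stacking step relies on: base models whose predictions track the label contribute more to the final output.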

Validation and Benchmarking

Trained models are rigorously validated against held-out test sets. Key performance metrics include: