Energy Above the Convex Hull (E_hull): A Comprehensive Guide to Stability, Prediction, and AI-Driven Design of Inorganic Materials

Abstract

This article provides a comprehensive overview of the energy above the convex hull (E_hull), a critical metric for assessing the thermodynamic stability of inorganic materials. Tailored for researchers and scientists, we explore the foundational principles of E_hull, detail cutting-edge computational and AI-driven methodologies for its prediction and application in inverse design, address common troubleshooting and optimization challenges, and present rigorous validation frameworks for model comparison. By synthesizing the latest advancements, including generative models like MatterGen and large-scale datasets such as OMat24, this guide serves as an essential resource for accelerating the discovery of stable, novel materials for technological applications.

What is Energy Above the Convex Hull? The Fundamental Metric for Material Stability

The energy above the convex hull (Ehull) serves as a fundamental metric in computational materials science for assessing the thermodynamic stability of a compound relative to other phases in its chemical space. This whitepaper provides an in-depth examination of Ehull, detailing its theoretical foundation in convex hull constructions, computational methodologies for its determination, and its critical applications in predicting materials synthesizability and stability. By integrating principles from density functional theory, phase diagram analysis, and recent machine learning approaches, this guide establishes a comprehensive framework for researchers to utilize E_hull in accelerating the discovery and development of inorganic materials, with specific relevance to energy storage and catalytic applications.

In inorganic materials research, the energy above the convex hull (E_hull) represents a crucial thermodynamic parameter that quantifies a compound's stability relative to competing phases in composition space. Also denoted as Ehull, this metric is defined as the energy difference between a target compound and the corresponding point on the convex hull at the same composition [1]. Geometrically, it is the vertical distance (in energy) from a phase's formation energy to the minimum-energy "envelope" formed by the most stable phases in a chemical system [1].

The convex hull itself is the smallest convex set that contains all points in a given dataset, representing the minimum-energy "envelope" in energy-composition space [2]. In thermodynamic terms, phases lying precisely on this hull (Ehull = 0) are considered thermodynamically stable at 0 K, while those above it (Ehull > 0) are either metastable or unstable [3]. The magnitude of E_hull indicates the degree of thermodynamic instability, with higher values suggesting greater propensity for decomposition into more stable neighboring phases [1].

This metric has become indispensable for high-throughput computational materials screening, particularly in assessing the synthesizability of predicted materials. Its calculation and interpretation provide critical insights for researchers exploring novel inorganic compounds, battery materials, and functional ceramics.

Theoretical Foundation

Convex Hull Construction in Composition Space

The thermodynamic convex hull is constructed in normalized energy-composition space, where the energy per atom (typically in eV/atom) is plotted against chemical composition [1]. For a multi-element system, the composition space has N-1 dimensions for N elements. The hull is formed by connecting the lowest-energy phases at their respective compositions such that no other phases lie below these connecting lines (in 2D), planes (in 3D), or hyperplanes (in higher dimensions) [2].

Table: Convex Hull Dimensionality Across Chemical Systems

| System Type | Composition Dimensions | Hull Geometry | Example |
|---|---|---|---|
| Binary | 1D | Line segments | AxB1-x |
| Ternary | 2D | Triangles | AxByCz |
| Quaternary | 3D | Tetrahedra | AxByCzDw |
| N-element | (N-1)D | Convex polytopes | Complex mixtures |

The construction follows the principle of convex combinations, where any point on the hull represents a mixture of the stable phases at the vertices of that hull segment that has the lowest possible energy for that overall composition [2]. Phases on the convex hull are stable against decomposition into any other combination of phases, while those above the hull will have a thermodynamic driving force to decompose into the phases on the hull at that composition.
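For a binary system, the construction described above reduces to finding the lower convex hull of points in the (composition, energy) plane. The sketch below is a minimal pure-Python illustration using a monotone-chain scan; the A-B phases and their energies are hypothetical values chosen only to show the mechanics, and the function names are assumptions of this example rather than part of any library.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (fraction of B, formation energy in eV/atom)


def _cross(o: Point, a: Point, b: Point) -> float:
    """Cross product of (a - o) and (b - o); > 0 means a left (convex) turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points: List[Point]) -> List[Point]:
    """Vertices of the lower convex hull, ordered by composition."""
    lowest: Dict[float, float] = {}
    for x, energy in points:  # keep only the lowest-energy phase per composition
        if x not in lowest or energy < lowest[x]:
            lowest[x] = energy
    hull: List[Point] = []
    for p in sorted(lowest.items()):
        # Drop the previous vertex while it lies on or above the chord to p.
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def e_above_hull(point: Point, hull: List[Point]) -> float:
    """Vertical distance (eV/atom) from a phase to the interpolated hull."""
    x, energy = point
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            hull_energy = e0 + (x - x0) / (x1 - x0) * (e1 - e0)
            return energy - hull_energy
    raise ValueError("composition lies outside the hull range")


# Hypothetical A-B system; energies are illustrative, not computed values.
phases = {"A": (0.0, 0.0), "A3B": (0.25, -0.4), "AB": (0.5, -1.0),
          "AB3": (0.75, -0.6), "B": (1.0, 0.0)}
hull = lower_hull(list(phases.values()))
for name, point in phases.items():
    print(f"{name}: E_hull = {e_above_hull(point, hull):.3f} eV/atom")
```

In this toy system A3B sits 0.1 eV/atom above the tie line between A and AB, so it is the only phase off the hull; the other four phases are hull vertices.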

Mathematical Definition of E_hull

For a compound C with formation energy per atom E_f(C), the energy above hull is calculated as:

E_hull(C) = E_f(C) - E_f^hull(x_C)

where x_C is the composition of C and E_f^hull(x_C) is the formation energy of the point on the convex hull at that same composition [1].

For a compound that decomposes into multiple stable phases, the decomposition reaction can be represented as:

C → ΣaiPi

where Pi are the stable product phases and ai are their stoichiometric coefficients normalized such that the total composition is conserved. The E_hull is then the energy change per atom for this decomposition reaction [1].
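One way to write this compactly is as a constrained minimization over mixtures of competing phases. The form below is a sketch under the assumption that all energies are per atom, that the coefficients a_i are atomic fractions, and that the bold x symbols (introduced here for illustration) denote composition vectors of atomic fractions:

```latex
E_{\text{hull}}(C) \;=\; E_f(C) \;-\; \min_{\{a_i\}} \sum_i a_i \, E_f(P_i)
\quad \text{subject to} \quad
\sum_i a_i \, \mathbf{x}_{P_i} = \mathbf{x}_C, \qquad
\sum_i a_i = 1, \qquad a_i \ge 0 .
```

The minimizing set of phases P_i with nonzero a_i is the decomposition reaction, and the minimized sum is the hull energy at the composition of C.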

As a concrete example, for BaTaNO2, the decomposition is:

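BaTaNO2 → 2/3 Ba₄Ta₂O₉ + 7/45 Ba(TaN₂)₂ + 8/45 Ta₃N₅

Here the coefficients are the atomic fractions of each product phase (they sum to 1: 30/45 + 7/45 + 8/45), not moles of formula units. E_hull is the normalized (eV/atom) energy of BaTaNO2 minus the fraction-weighted sum of the normalized energies of these three phases [1]. Weighting by atomic fraction is what conserves the elemental composition in normalized composition space.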
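The coefficients in the decomposition above can be checked with exact arithmetic. The sketch below reads each coefficient as the fraction of atoms contributed by that phase (an interpretation assumed here) and confirms that the mixture reproduces the 1:1:1:2 composition of BaTaNO2; the helper name is hypothetical.

```python
from fractions import Fraction as F

# Atom counts per formula unit for each decomposition product.
products = {
    "Ba4Ta2O9": {"Ba": 4, "Ta": 2, "O": 9},
    "Ba(TaN2)2": {"Ba": 1, "Ta": 2, "N": 4},
    "Ta3N5": {"Ta": 3, "N": 5},
}
# Coefficients from the decomposition reaction, read as atomic fractions.
weights = {"Ba4Ta2O9": F(2, 3), "Ba(TaN2)2": F(7, 45), "Ta3N5": F(8, 45)}


def mixed_composition(phases, phase_weights):
    """Atomic fraction of each element in the weighted mixture of phases."""
    total = {}
    for name, weight in phase_weights.items():
        atoms_per_formula = sum(phases[name].values())
        for element, count in phases[name].items():
            share = weight * F(count, atoms_per_formula)
            total[element] = total.get(element, 0) + share
    return total


mix = mixed_composition(products, weights)
print({element: str(value) for element, value in mix.items()})
```

The mixture contains Ba, Ta, and N at 1/5 each and O at 2/5, which is exactly the atomic composition of BaTaNO2 (5 atoms per formula unit).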

Computational Methodologies

Density Functional Theory Calculations

The accurate calculation of E_hull relies on high-quality density functional theory (DFT) computations to determine formation energies. The standard methodology involves:

- Structural Relaxation: Full optimization of cell parameters and atomic positions for all compounds under consideration

- Energy Calculations: Single-point energy calculations after convergence

- Reference States: Calculation of elemental reference states in their standard forms

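- Formation Energy: Computation using Ef = (Etotal - ΣniEi) / Σni, where Etotal is the total energy of the compound, ni is the number of atoms of element i in the cell, and Ei is the energy per atom of element i in its reference state; dividing by the total atom count Σni gives the per-atom value used in the hull construction [4]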
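The last step of the list above can be sketched in a few lines. The function name, the A2B compound, and all energies are hypothetical placeholders, not DFT results; the only assumption is that reference energies are given per atom.

```python
from typing import Dict


def formation_energy_per_atom(
    total_energy: float,
    composition: Dict[str, int],
    reference_energies: Dict[str, float],
) -> float:
    """Formation energy in eV/atom from a total energy and elemental references.

    total_energy       -- energy of the cell in eV
    composition        -- number of atoms of each element in that cell
    reference_energies -- energy per atom of each element's reference phase
    """
    n_atoms = sum(composition.values())
    reference = sum(n * reference_energies[el] for el, n in composition.items())
    return (total_energy - reference) / n_atoms


# Hypothetical A2B cell: numbers chosen only to make the arithmetic easy to follow.
e_f = formation_energy_per_atom(
    total_energy=-15.0,
    composition={"A": 2, "B": 1},
    reference_energies={"A": -2.0, "B": -5.0},
)
print(e_f)  # (-15.0 - (-9.0)) / 3
```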

For consistency, particularly when comparing with databases like the Materials Project, specific calculation parameters must be standardized, including exchange-correlation functionals, pseudopotentials, energy cutoffs, and k-point meshes [1].

Table: Standard DFT Parameters for E_hull Calculations

| Parameter | Typical Setting | Importance for E_hull |
|---|---|---|
| Functional | PBE (GGA) | Affects absolute formation energies |
| Pseudopotentials | PAW | Consistent elemental references |
| Energy cutoff | 520 eV | Convergence of total energies |
| k-point density | 25-50 per Å⁻³ | Brillouin zone sampling |
| Convergence | < 1 meV/atom | Precision for small E_hull values |

Convex Hull Algorithm Implementation

The computational construction of convex hulls employs geometric algorithms to determine the minimum-energy envelope:

- Input Preparation: Collection of formation energies for all known compounds in the chemical system

- Hull Calculation: Application of convex hull algorithms (e.g., Quickhull) to identify the stable phases [2]

- Distance Calculation: Determination of vertical distance to hull for unstable phases

- Decomposition Analysis: Identification of the precise combination of stable phases that define the hull at each composition

For high-dimensional systems (ternary and beyond), specialized algorithms like Qhull are employed to efficiently compute the convex hull in N-1 dimensional composition space [5]. These algorithms typically have time complexity of O(n log n) for 2D and O(n^⌊d/2⌋) for higher dimensions, where n is the number of phases and d is the dimensionality [2].

Machine Learning Approaches

Recent advances have incorporated machine learning to predict E_hull, bypassing expensive DFT calculations for initial screening:

- Feature Selection: Physicochemical properties of constituent elements (electronegativity, atomic radius, valence electron count) [4]

- Model Architectures: Neural networks and random forest regression trained on existing databases [4]

- Performance: State-of-the-art models achieve mean absolute errors of 0.08-0.23 eV on test sets for MXenes [4]

These approaches enable rapid screening of vast compositional spaces, directing synthetic efforts toward promising regions with low predicted E_hull values [6].

Interpretation and Applications

Stability Assessment and Synthesizability

E_hull provides a quantitative measure of thermodynamic stability with direct implications for materials synthesizability:

- E_hull = 0 meV/atom: The compound is thermodynamically stable at 0 K and likely synthesizable [3]

- 0 < E_hull ≤ 50 meV/atom: The compound is metastable and may be synthesizable under kinetic control [6]

- E_hull > 50 meV/atom: The compound is unstable and unlikely to be synthesizable under standard conditions

For example, BaTaNO2 with Ehull = 32 meV/atom is metastable but has been successfully synthesized, demonstrating that phases with small positive Ehull values can be experimentally accessible [1]. This reflects the role of kinetic factors in actual synthesis conditions.
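The three bands above translate directly into a screening rule. The sketch below is one possible encoding; the function name and the small numerical tolerance are assumptions of this example, and the 50 meV/atom cutoff is a heuristic taken from the list above rather than a physical limit.

```python
def classify_stability(
    e_hull_mev: float,
    metastable_max: float = 50.0,
    tol: float = 1e-6,
) -> str:
    """Map an energy above hull (meV/atom) onto the three bands listed above."""
    if e_hull_mev < -tol:
        raise ValueError("energy above hull cannot be negative")
    if e_hull_mev <= tol:
        return "stable"
    if e_hull_mev <= metastable_max:
        return "metastable"
    return "unstable"


for value in (0.0, 32.0, 80.0):
    print(value, classify_stability(value))
```

The 32 meV/atom case corresponds to the BaTaNO2 value quoted above and lands in the metastable band.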

Precursor Selection in Solid-State Synthesis

E_hull analysis guides synthetic strategies by identifying optimal precursor pathways:

- Reaction Energy Maximization: Selecting high-energy (unstable) precursors maximizes thermodynamic driving force

- Byproduct Avoidance: Choosing precursor pairs whose compositional slice intersects minimal competing phases

- Inverse Hull Energy: Preferring reactions where the target has large energy difference from competing phases [7]

For LiBaBO3 synthesis, traditional precursors (Li2CO3, B2O3, BaO) form low-energy intermediates, leaving minimal driving force for target formation (ΔE = -22 meV/atom). Using LiBO2 + BaO retains substantial driving force (ΔE = -192 meV/atom) and yields higher phase purity [7].

Materials Discovery and Screening

Large-scale computational screening employing E_hull has accelerated materials discovery:

- High-Throughput DFT: Projects like the Materials Project have computed E_hull for over 100,000 compounds

- Composition Space Mapping: Identification of unexplored yet stable regions in phase diagrams [6]

- Novel Compound Prediction: Successful discovery of previously unknown compounds, such as YAg0.65In1.35, through ML-directed synthesis targeting compositions on or near the convex hull [6]

This approach minimizes experimental trial-and-error by focusing efforts on compositions with high probability of stability.

Experimental Protocols and Validation

Robotic Synthesis and High-Throughput Validation

Robotic laboratories enable large-scale experimental validation of E_hull predictions:

- Automated Synthesis: Robotic systems handle powder preparation, ball milling, and furnace heating

- High-Throughput Characterization: Automated X-ray diffraction for phase identification

- Phase Purity Assessment: Quantitative comparison of diffraction patterns to identify optimal synthesis conditions [7]

In a recent validation study, robotic synthesis of 35 target quaternary oxides demonstrated that precursors selected through Ehull analysis frequently yielded higher phase purity than traditional approaches [7]. This large-scale experimental verification (224 reactions spanning 27 elements) provides strong support for Ehull as a predictive metric for synthesizability.

Table: Computational and Experimental Resources for E_hull Research

| Resource | Type | Function | Access |
|---|---|---|---|
| Materials Project | Database | E_hull values for known compounds | Public |
| Qhull | Algorithm | Convex hull computation | Open source |
| VASP | Software | DFT energy calculations | Commercial |
| pymatgen | Library | Materials analysis | Open source |
| C2DB | Database | 2D materials properties | Public |
| Atomate2 | Workflow | Automated DFT calculations | Open source |

Current Challenges and Future Directions

Despite its utility, E_hull has several limitations that represent active research areas:

- Temperature Effects: Standard E_hull calculations assume 0 K; incorporating finite-temperature effects through phonon calculations remains computationally demanding

- Kinetic Factors: E_hull addresses thermodynamic stability but cannot predict kinetic barriers to phase formation or decomposition

- Disorder and Configurational Entropy: Accurate treatment of disordered systems requires special approaches beyond standard convex hull constructions

- Multicomponent Systems: Computational cost increases exponentially with system dimensionality

Promising directions include the integration of machine learning for rapid E_hull estimation [4], high-throughput experimental validation through robotic labs [7], and the development of dynamic convex hull data structures to efficiently handle expanding materials databases [2].

The energy above the convex hull represents a fundamental bridge between computational thermodynamics and experimental materials synthesis. By providing a quantitative measure of relative stability, Ehull enables researchers to prioritize compounds for synthesis, design efficient reaction pathways, and understand decomposition mechanisms. As computational methods advance through machine learning and high-throughput frameworks, and experimental validation scales through robotic laboratories, Ehull will continue to play a central role in accelerating the discovery and development of novel inorganic materials for energy applications, catalysis, and beyond. The integration of E_hull analysis into materials research workflows represents a cornerstone of modern, data-driven materials science.

The energy above the convex hull (Ehull) has long served as a foundational metric in computational materials science for assessing thermodynamic stability. This technical guide examines the critical role of Ehull in predicting material synthesizability and practical viability within inorganic materials research. While Ehull provides an essential first-principles filter for identifying potentially stable compounds, recent advances reveal its limitations when used in isolation. We explore how integrating Ehull with emerging machine learning approaches for synthesizability prediction and thermodynamic strategies for precursor selection creates a more robust framework for materials discovery. Experimental validations across multiple studies demonstrate that this integrated approach successfully bridges the gap between computational prediction and experimental realization, accelerating the development of functional materials for energy, catalysis, and beyond.

In computational materials science, the energy above the convex hull (Ehull) serves as a fundamental metric for assessing thermodynamic stability. Calculated through density functional theory (DFT), Ehull represents the energy difference between a compound and a linear combination of the most stable competing phases on the convex hull of formation energies in a given chemical space [8]. A material with Ehull = 0 eV/atom is thermodynamically stable, while a positive value marks a phase as metastable or, when the value is sufficiently large, unstable.

The relationship between Ehull and synthesizability stems from basic thermodynamic principles: materials with lower Ehull values possess greater thermodynamic driving forces for formation from their constituent elements or precursors. This relationship has made E_hull a cornerstone screening parameter in high-throughput computational materials discovery. However, thermodynamic stability alone cannot guarantee experimental synthesizability, as kinetic barriers, precursor selection, and reaction pathways play equally critical roles [9] [10].

The limitations of relying exclusively on Ehull have become increasingly apparent as materials databases have expanded. For instance, the Materials Project lists 21 SiO₂ structures within 0.01 eV of the convex hull, yet the commonly synthesized cristobalite phase is not among them [9]. Similarly, numerous structures with favorable formation energies remain unsynthesized, while various metastable structures with less favorable Ehull values have been successfully synthesized [11]. These observations have spurred the development of complementary approaches that augment traditional E_hull analysis with synthesizability metrics and synthesis pathway planning.

Computational Framework and Methodologies

Convex Hull Construction and E_hull Calculation

The construction of a convex hull begins with the calculation of formation energies for all known compounds in a chemical space. DFT serves as the computational workhorse for these energy calculations, though the specific functional choices and computational parameters can significantly impact results [8]. The convex hull represents the lower convex envelope of formation energies across compositions, with stable phases residing on this hull and metastable phases lying above it.

Table 1: Key Metrics for Stability and Synthesizability Assessment

| Metric | Definition | Typical Range | Interpretation |
|---|---|---|---|
| E_hull | Energy above convex hull | 0 eV/atom (stable) to >0.1 eV/atom (metastable) | Thermodynamic stability relative to competing phases |
| CLscore | Machine-learned synthesizability score [11] | 0-1 (higher = more synthesizable) | Probability of successful experimental synthesis |
| Inverse Hull Energy | Energy below neighboring stable phases [7] | Varies by system | Selectivity of target phase against competing by-products |
| Reaction Energy | ΔE of synthesis reaction from precursors | Typically negative (eV/atom) | Thermodynamic driving force for specific synthesis pathway |

The calculation of Ehull involves determining the minimum energy difference between a compound and any linear combination of other compounds on the convex hull that would yield the same composition. This computation becomes increasingly complex in multicomponent systems, where each added element adds a dimension to the composition space and the number of competing phases and hull facets grows rapidly. Despite this complexity, Ehull remains widely used due to its physical interpretability and computational tractability compared to finite-temperature thermodynamic calculations or kinetic modeling.

Beyond Thermodynamics: Machine Learning for Synthesizability

Recent approaches have integrated Ehull with machine learning models that capture additional factors influencing synthesizability. The Crystal Synthesis Large Language Models (CSLLM) framework demonstrates this paradigm, achieving 98.6% accuracy in predicting synthesizability by combining structural and compositional features beyond thermodynamic stability [11]. Similarly, Prein et al. developed a unified synthesizability score that integrates compositional and structural descriptors through ensemble modeling, significantly outperforming Ehull-based screening alone [9].

These models address fundamental limitations of Ehull-centric approaches. While Ehull effectively captures thermodynamic stability at zero Kelvin, it overlooks finite-temperature effects, entropic contributions, and kinetic factors that govern experimental synthetic accessibility [9]. Furthermore, E_hull provides no guidance on actual synthesis parameters such as precursor selection, reaction temperatures, or processing times [10].

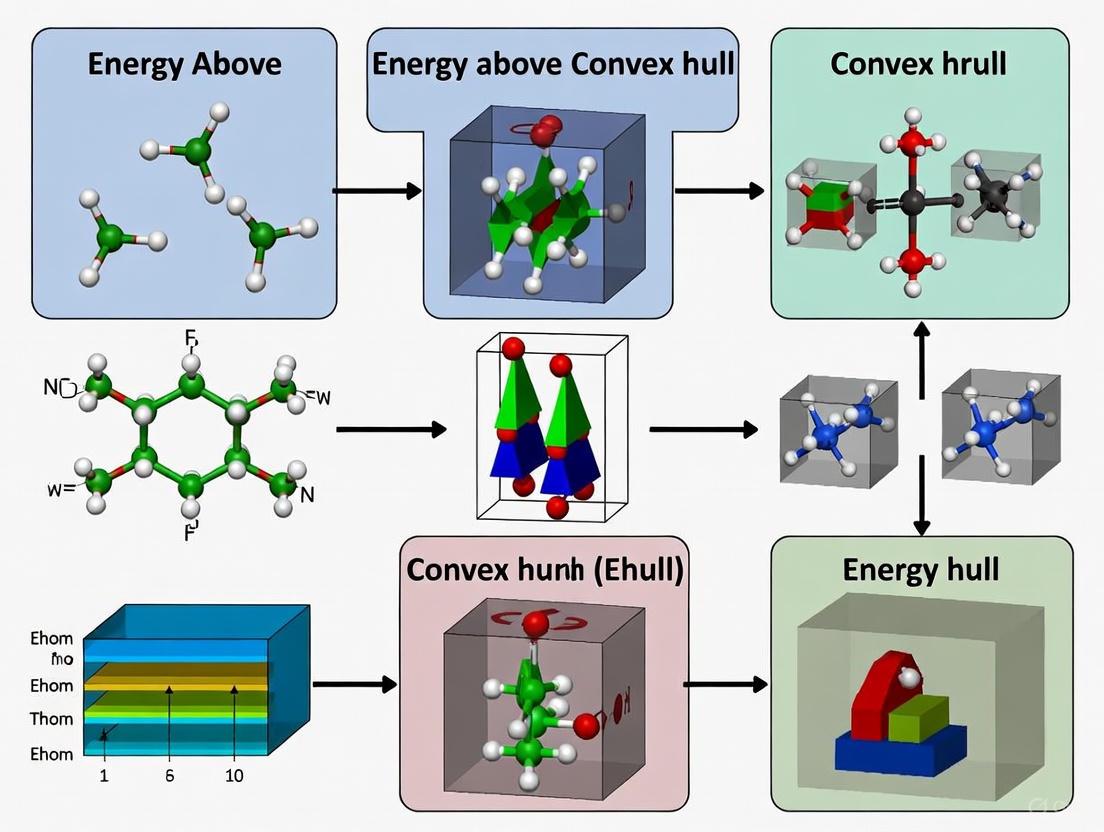

The following diagram illustrates how E_hull integrates into a modern synthesizability-guided discovery pipeline:

Experimental Validation: From Prediction to Synthesis

Synthesizability-Guided Discovery Pipeline

A recent large-scale validation of synthesizability prediction demonstrated an integrated approach combining Ehull screening with machine learning models. The pipeline began with 4.4 million candidate structures from major materials databases (Materials Project, GNoME, Alexandria) [9]. Initial Ehull screening identified 1.3 million potentially stable structures (E_hull ≤ 0.1 eV/atom), consistent with conventional stability criteria.

The key innovation emerged in subsequent steps, where researchers applied a unified synthesizability model integrating both compositional and structural descriptors. This model employed two encoders: a compositional transformer (MTEncoder) fine-tuned for synthesizability prediction and a graph neural network (JMP model) processing crystal structure graphs [9]. Predictions from both models were combined using a rank-average ensemble (Borda fusion) to prioritize candidates with high synthesizability scores.
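The rank-average step can be illustrated generically. The sketch below is not the authors' implementation; it is a plain rank average over two score dictionaries, with hypothetical candidate names and scores, and it does not treat ties specially.

```python
from typing import Dict, List


def ranks(scores: Dict[str, float]) -> Dict[str, int]:
    """Rank candidates by descending score; rank 1 is the most synthesizable."""
    ordered = sorted(scores, key=lambda name: scores[name], reverse=True)
    return {name: position for position, name in enumerate(ordered, start=1)}


def rank_average(*model_scores: Dict[str, float]) -> List[str]:
    """Order candidates by their mean rank across all models (best first)."""
    per_model = [ranks(scores) for scores in model_scores]
    candidates = list(per_model[0])
    mean_rank = {
        name: sum(model[name] for model in per_model) / len(per_model)
        for name in candidates
    }
    return sorted(candidates, key=lambda name: mean_rank[name])


# Hypothetical scores from a composition model and a structure model.
composition_scores = {"cand_a": 0.9, "cand_b": 0.6, "cand_c": 0.2}
structure_scores = {"cand_a": 0.5, "cand_b": 0.8, "cand_c": 0.7}

priority = rank_average(composition_scores, structure_scores)
print(priority)
```

Averaging ranks rather than raw scores means the two models do not need to be calibrated on the same scale: here cand_b wins with a mean rank of 1.5 even though cand_a has the single highest score.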

This approach identified approximately 500 highly synthesizable candidates from the initial pool. Subsequent retrosynthetic planning employed precursor-suggestion models (Retro-Rank-In) and synthesis condition prediction (SyntMTE) trained on literature-mined solid-state synthesis data [9]. Experimental synthesis of 16 selected targets yielded 7 successfully characterized materials matching the target structures, including one novel compound and one previously unreported phase. The entire process from prediction to characterization required only three days, demonstrating the efficiency gains possible through integrated stability and synthesizability assessment.

Thermodynamic Strategies for Precursor Selection

Beyond identifying synthesizable materials, E_hull analysis informs precursor selection to enhance reaction kinetics and phase purity. A robotic synthesis study of 35 quaternary oxides established principles for navigating high-dimensional phase diagrams using convex hull analysis [7]. The strategy focuses on identifying precursor compositions that circumvent low-energy competing by-products while maximizing reaction energy to drive fast phase transformation kinetics.

Table 2: Thermodynamic Principles for Effective Precursor Selection [7]

| Principle | Description | Role in Synthesis |
|---|---|---|
| Two-Precursor Initiation | Reactions should begin between only two precursors | Minimizes simultaneous pairwise reactions forming kinetic traps |
| High-Energy Precursors | Selection of relatively unstable precursors | Maximizes thermodynamic driving force and reaction kinetics |
| Deepest Hull Point | Target should be lowest energy in reaction hull | Ensures greater driving force for target than competing phases |
| Minimal Competing Phases | Few competing phases along reaction path | Reduces opportunity for by-product formation |
| Large Inverse Hull Energy | Target substantially lower than neighbors | Enhances selectivity against potential impurities |

The application of these principles is illustrated in the synthesis of LiBaBO₃. Traditional precursors (Li₂CO₃, B₂O₃, BaO) exhibit a large overall reaction energy (ΔE = -336 meV/atom) but form low-energy ternary intermediates that consume most of the driving force [7]. Alternatively, using pre-synthesized LiBO₂ as a precursor with BaO provides a direct reaction pathway with substantial retained energy (ΔE = -192 meV/atom) and higher phase purity. This approach demonstrates how E_hull analysis extended to reaction pathways enables more efficient synthesis of target materials.
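Reaction energies of this kind are differences of atom-weighted phase energies. The sketch below shows the bookkeeping for a two-precursor reaction; the phase labels P1, P2, and T and all energies are hypothetical placeholders (the 4 + 2 → 6 atom counts merely mirror a reaction of the LiBO₂ + BaO → LiBaBO₃ shape), so the output is not a value from [7].

```python
from typing import Dict, Tuple

# phase name -> (formula units in the reaction, atoms per formula unit, energy in eV/atom)
Side = Dict[str, Tuple[float, int, float]]


def _atoms_and_energy(side: Side) -> Tuple[float, float]:
    """Total atom count and total energy (eV) for one side of the reaction."""
    atoms = sum(n * size for n, size, _ in side.values())
    energy = sum(n * size * e for n, size, e in side.values())
    return atoms, energy


def reaction_energy_per_atom(reactants: Side, products: Side) -> float:
    """Energy change of the reaction in eV/atom (negative = downhill)."""
    atoms_in, energy_in = _atoms_and_energy(reactants)
    atoms_out, energy_out = _atoms_and_energy(products)
    if abs(atoms_in - atoms_out) > 1e-9:
        raise ValueError("reaction is not balanced in total atom count")
    return (energy_out - energy_in) / atoms_out


# Hypothetical precursors P1 (4 atoms) and P2 (2 atoms) forming target T (6 atoms).
reactants = {"P1": (1, 4, -2.00), "P2": (1, 2, -2.50)}
products = {"T": (1, 6, -2.40)}

delta_e = reaction_energy_per_atom(reactants, products)
print(f"{1000 * delta_e:.0f} meV/atom")
```

Applying the same function to each candidate precursor set, and to each possible intermediate step, is what allows the retained driving force of different routes to be compared.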

Workflow for Experimental Synthesis and Characterization

The experimental validation of predicted materials follows a systematic workflow implemented in automated materials synthesis platforms:

- Precursor Preparation: Stoichiometric quantities of precursors are determined through balanced chemical reactions, often including volatile atmospheric gases (O₂, N₂, CO₂) for proper redox balancing [10].

- Mechanical Processing: Powder precursors undergo ball milling to ensure intimate mixing and reactant contact, critical for solid-state reaction kinetics.

- Thermal Treatment: Calcination occurs at predicted temperatures (from models like SyntMTE) with appropriate atmospheric control and dwelling times.

- Phase Characterization: X-ray diffraction (XRD) provides rapid phase identification and purity assessment through comparison with simulated patterns of target structures.

- Property Validation: Successful synthesis leads to measurement of functional properties (electrochemical, catalytic, electronic) to confirm predicted performance.

Robotic laboratories have dramatically accelerated this workflow, enabling a single experimentalist to perform hundreds of synthesis reactions with high reproducibility [7]. This automation facilitates large-scale hypothesis testing and provides robust validation of synthesizability predictions.

Case Studies and Applications

Successful Discovery of Functional Materials

Integrated E_hull and synthesizability screening has enabled the discovery of novel functional materials across multiple domains. In a study targeting low-work-function perovskite oxides for catalysis and energy applications, machine learning identified 27 stable candidates from an initial pool of 23,822 compositions [12]. Subsequent synthesis and characterization confirmed two promising compounds: Ba₂TiWO₈, which exhibited catalytic activity for NH₃ synthesis and decomposition, and Ba₂FeMoO₆, which demonstrated exceptional cycling stability as a Li-ion battery electrode.

The MatterGen generative model represents another advanced application, generating stable, diverse inorganic materials across the periodic table [13]. This diffusion-based model produces structures with 78% falling below the 0.1 eV/atom E_hull threshold, while 61% represent new materials not present in existing databases. As a proof of concept, one generated material was successfully synthesized with measured properties within 20% of the target values [13].

Limitations and Complementary Approaches

Despite these successes, important limitations persist in Ehull-centric approaches. A critical examination of machine-learned formation energies revealed that accurate prediction of formation energy does not guarantee accurate stability classification [8]. While formation energies can be predicted with low mean absolute error, the subtle energy differences governing stability (typically 0.06±0.12 eV/atom) require exceptional precision for reliable hull placement.
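The gap between regression error and classification quality is easy to reproduce on a toy example. The sketch below scores a set of predictions with both mean absolute error and the F1 score for the stable class; the four E_hull pairs are hypothetical values, and treating E_hull <= 0 as "stable" is an assumption of this example.

```python
from typing import Sequence


def stability_f1(
    true_e_hull: Sequence[float],
    pred_e_hull: Sequence[float],
    threshold: float = 0.0,
) -> float:
    """F1 score for the 'stable' class, where stable means E_hull <= threshold."""
    tp = fp = fn = 0
    for true, pred in zip(true_e_hull, pred_e_hull):
        is_stable, called_stable = true <= threshold, pred <= threshold
        tp += is_stable and called_stable
        fp += (not is_stable) and called_stable
        fn += is_stable and (not called_stable)
    denominator = 2 * tp + fp + fn
    return 2 * tp / denominator if denominator else 0.0


def mean_absolute_error(true: Sequence[float], pred: Sequence[float]) -> float:
    """Average absolute deviation between reference and predicted values."""
    return sum(abs(t - p) for t, p in zip(true, pred)) / len(true)


# Hypothetical reference and predicted E_hull values in eV/atom.
reference = [0.00, 0.00, 0.04, 0.12]
predicted = [0.00, 0.02, 0.00, 0.10]

print("MAE:", round(mean_absolute_error(reference, predicted), 3), "eV/atom")
print("F1 :", stability_f1(reference, predicted))
```

Here the predictions are off by only 0.02 eV/atom on average, yet one stable phase is missed and one metastable phase is called stable, so the F1 score is just 0.5.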

Text-mining studies of synthesis recipes further highlight the complexity of synthesizability prediction. Analysis of 31,782 solid-state synthesis recipes revealed significant challenges in data quality, including limitations in volume, variety, veracity, and velocity [10]. These limitations arise from anthropological biases in how chemists have historically explored synthesis spaces, with conventional intuition sometimes impeding rather than enabling novel discoveries.

The most valuable insights often emerged from anomalous recipes that defied conventional wisdom, suggesting alternative reaction mechanisms and precursor selection strategies [10]. This observation underscores the importance of complementing E_hull analysis with kinetic considerations, precursor chemistry, and reaction pathway engineering to fully address the synthesizability challenge.

Table 3: Key Research Reagent Solutions and Computational Tools

| Resource | Function | Application Context |
|---|---|---|
| DFT Software (VASP, Quantum ESPRESSO) | First-principles energy calculations | E_hull determination, reaction energy computation |
| Materials Databases (MP, ICSD, OQMD) | Repository of crystal structures and properties | Training data for ML models, convex hull construction |
| Robotic Synthesis Platforms | Automated powder processing and heat treatment | High-throughput experimental validation |
| X-ray Diffractometers | Phase identification and structure verification | Characterization of synthesis products |
| CSLLM Framework | Synthesizability and precursor prediction [11] | ML-guided synthesis planning |
| MatterGen | Generative design of crystal structures [13] | Inverse materials design with property constraints |
| SyntMTE & Retro-Rank-In | Synthesis condition and precursor prediction [9] | Retrosynthetic planning for solid-state reactions |

The energy above the convex hull remains an essential metric in computational materials science, providing a physically grounded assessment of thermodynamic stability. However, the journey from predicted stability to synthesized material requires integrating E_hull with complementary approaches that address kinetic and synthetic accessibility. Machine learning models trained on both compositional and structural features now demonstrate remarkable accuracy in predicting synthesizability, exceeding 98% in some frameworks [11].

The most successful materials discovery pipelines combine E_hull screening with synthesizability prediction, retrosynthetic planning, and automated experimental validation. This integrated approach has demonstrated concrete successes, realizing novel functional materials with targeted properties. Future advances will likely focus on improving finite-temperature stability predictions, incorporating kinetic barriers explicitly into synthesizability models, and developing more sophisticated precursor selection algorithms that consider both thermodynamics and transport phenomena.

As these methodologies mature, the role of E_hull will evolve from a standalone filter to one component in a multifaceted synthesizability assessment. This integrated perspective promises to accelerate the discovery and realization of novel materials, bridging the gap between computational prediction and experimental synthesis to address pressing technological challenges in energy, catalysis, and beyond.

In the field of inorganic materials research, the energy above the convex hull (E_hull) serves as a fundamental metric for assessing thermodynamic stability. A material's E_hull represents its energy distance to the convex hull of thermodynamic stability, a hypersurface in materials space whose vertices are the most stable compounds. A low E_hull (typically < 0.1 eV/atom) indicates stability against decomposition into other phases and higher likelihood of successful synthesis [14]. The accurate prediction of this property is therefore a critical bottleneck in the discovery of new functional materials.

The rise of large-scale computational databases and machine learning (ML) has dramatically accelerated the exploration of material space. This guide provides an in-depth technical examination of three pivotal resources (Materials Project, Alexandria, and OMat24) for conducting robust stability analysis. We detail their unique data characteristics, provide protocols for their use, and demonstrate how they can be integrated into a modern materials discovery workflow focused on E_hull prediction.

Database Comparative Analysis

The landscape of materials databases has expanded significantly, offering researchers various data types and scales. The table below summarizes the core attributes of the three primary resources for stability analysis.

Table 1: Key Databases for Inorganic Materials Stability Analysis

| Database | Primary Data Type & Scale | Computational Method | Key Features for Stability Analysis | Access & License |
|---|---|---|---|---|
| Materials Project (MP) [15] | Curated properties & structures (~155,000 entries) [14] | DFT (PBE, GGA+U, r2SCAN) [15] | Pre-computed E_hull & phase diagrams; extensive API for programmatic querying; is_stable and energy_above_hull fields [15] | REST API (free key required) [15] |
| Alexandria [16] | Massive computed structures (>4.4 million 3D compounds) [14] | DFT (PBE, PBEsol, SCAN) [16] | Massive scale of candidate structures; convex hull data files available for download; includes disordered ICSD structures [13] | Creative Commons Attribution 4.0 [16] |
| OMat24 (Open Materials 2024) [17] [18] | ~118 million DFT single-point calculations & ML models | DFT (PBE+U); EquiformerV2 neural network potential (NNP) | ML models approaching DFT accuracy for formation energy; state-of-the-art F1 score (>0.9) for stability classification [17]; fast, SCF-free property prediction [18] | Creative Commons 4.0 (data); Permissive OS license (models) [17] |

Database-Specific Technical Protocols

Stability Queries with the Materials Project API

The Materials Project (MP) provides a Python client (MPRester) for direct querying of stability data. The following code demonstrates how to search for stable materials and retrieve their (E_{\text{hull}}) [15].
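A minimal sketch of such a query is given here, assuming the `mp-api` package is installed and a valid API key is available. The `MPRester.materials.summary.search` call with `chemsys`, `is_stable`, `energy_above_hull`, and `fields` arguments reflects the current client; older client versions expose the same search under `mpr.summary`. The helper names `fetch_stability` and `rank_by_hull`, the default cutoff, and the placeholder records are illustrative and not part of the source.

```python
from typing import Iterable, List, Tuple

Record = Tuple[str, str, float]  # (material_id, formula, E_hull in eV/atom)


def fetch_stability(api_key: str, chemsys: str, stable_only: bool = True,
                    max_ehull: float = 0.1) -> List[Record]:
    """Query MP summary documents for one chemical system (needs network access)."""
    # Imported lazily so the offline helper below works without mp-api installed.
    from mp_api.client import MPRester

    filters = {"chemsys": chemsys}  # e.g. "Li-Fe-O"
    if stable_only:
        filters["is_stable"] = True  # only phases on the hull
    else:
        filters["energy_above_hull"] = (0.0, max_ehull)  # (min, max) in eV/atom

    with MPRester(api_key) as mpr:
        docs = mpr.materials.summary.search(
            fields=["material_id", "formula_pretty", "energy_above_hull"],
            **filters,
        )
    return [(str(d.material_id), d.formula_pretty, d.energy_above_hull) for d in docs]


def rank_by_hull(records: Iterable[Record]) -> List[Record]:
    """Order records from most to least stable (ascending E_hull)."""
    return sorted(records, key=lambda r: r[2])


# Offline demonstration with placeholder records (not real MP data).
demo = [("id-1", "A2B", 0.042), ("id-2", "AB", 0.0), ("id-3", "AB3", 0.087)]
for material_id, formula, ehull in rank_by_hull(demo):
    print(f"{material_id}  {formula:4s}  {1000 * ehull:5.1f} meV/atom")
```

With a real key, `fetch_stability(key, "Li-Fe-O", stable_only=False)` would return near-hull entries that can be passed straight to `rank_by_hull`.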

High-Throughput Screening with Alexandria

Alexandria's immense dataset is ideal for large-scale stability screening. The workflow often involves using its structures with a reliable property predictor, such as a universal interatomic potential (UIP), to calculate formation energies and subsequently compute (E_{\text{hull}}) [14]. The general workflow is as follows:

- Data Retrieval: Download the desired subset of crystal structures (e.g., the 3D bulk materials dataset) from the Alexandria portal [16].

- Property Prediction: Use a trained ML model (like those from OMat24 or other UIPs) to rapidly predict the formation energy for each structure. This step is computationally efficient compared to running DFT.

- Hull Construction: For a given chemical system, gather all known and predicted compounds, then construct the convex hull using their formation energies to determine each compound's (E_{\text{hull}}).
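The hull construction step can be sketched for the simplest case, a binary A-B system, using only the standard library. This is an illustration under stated assumptions rather than the procedure used by any of the databases: the phase names and formation energies are made up, the elemental end members are placed at zero formation energy, and production workflows would typically rely on a phase diagram library that handles systems with more components.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (atomic fraction of B, formation energy in eV/atom)


def _cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull via Andrew's monotone chain, ordered by composition."""
    lowest: Dict[float, float] = {}
    for x, e in points:  # keep only the lowest-energy phase at each composition
        lowest[x] = min(e, lowest.get(x, e))
    hull: List[Point] = []
    for p in sorted(lowest.items()):
        # Drop the previous vertex if it lies on or above the chord to p.
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(hull: List[Point], x: float) -> float:
    """Linearly interpolate the hull energy at composition x."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            return e0 + (e1 - e0) * (x - x0) / (x1 - x0)
    raise ValueError("composition outside the range covered by the hull")


def energy_above_hull(phases: Dict[str, Point]) -> Dict[str, float]:
    """E_hull for every phase; elemental references sit at zero formation energy."""
    points = list(phases.values()) + [(0.0, 0.0), (1.0, 0.0)]
    hull = lower_hull(points)
    return {name: e - hull_energy(hull, x) for name, (x, e) in phases.items()}


# Hypothetical formation energies (eV/atom) for an A-B system.
phases = {
    "A3B": (0.25, -0.30),
    "AB": (0.50, -0.50),
    "AB (polymorph)": (0.50, -0.45),
    "AB3": (0.75, -0.20),
}
e_hull = energy_above_hull(phases)
for name, value in e_hull.items():
    print(f"{name:15s} E_hull = {1000 * value:6.1f} meV/atom")
```

In this toy system A3B and AB lie on the hull, while AB3 sits above the tie line between AB and pure B and would be predicted to decompose into those two phases.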

Machine-Learning Accelerated Workflows with OMat24

The OMat24 release provides pre-trained models that can predict DFT-level energies and forces orders of magnitude faster than DFT [18]. This enables high-throughput stability screening without performing costly electronic structure calculations.

The OMat24 authors demonstrated that their models achieve an F1 score above 0.9 for classifying thermodynamic stability, closely matching the accuracy of the underlying PBE functional while being vastly faster [17].

Integrated Workflow for Stability Analysis

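Combining the strengths of these resources creates a powerful pipeline for materials discovery. In a prospective stability screening workflow, candidate structures are taken from a large repository such as Alexandria, an ML model such as those released with OMat24 pre-screens them by predicted formation energy, (E_{\text{hull}}) is computed against the known competing phases, and the most promising candidates are passed to DFT for final validation.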

This workflow addresses key benchmarking challenges [19] by using a realistic discovery pipeline (prospective benchmarking), employing the correct stability target ((E_{\text{hull}})), and leveraging ML for scalable pre-screening. The final DFT step ensures high-fidelity validation, as ML models, while highly accurate, are ultimately approximations of DFT [18].

The Scientist's Toolkit

Table 2: Essential Tools and Resources for Stability Analysis

| Tool/Resource | Type | Primary Function in Stability Analysis |

|---|---|---|

| MPRester [15] | Python Client | Programmatic access to query and retrieve pre-computed (E_{\text{hull}}) and structures from the Materials Project. |

| EquiformerV2 [17] | Neural Network Architecture | The core model architecture for OMat24, achieving state-of-the-art accuracy in predicting formation energies and forces. |

| Universal Interatomic Potentials (UIPs) [19] | ML Model | A class of ML force fields trained on diverse data; shown to be highly effective for pre-screening thermodynamic stability. |

| Convex Hull Analysis | Algorithm | The computational method to determine the phase diagram and calculate the (E_{\text{hull}}) for any given compound from its formation energy. |

| Pymatgen | Python Library | A comprehensive library for materials analysis, essential for manipulating crystal structures and parsing database outputs. |

The synergistic use of the Materials Project, Alexandria, and OMat24 represents a paradigm shift in how researchers can approach stability analysis in inorganic materials. Materials Project offers a curated source of validated stability data, Alexandria provides an unprecedented scale of candidate structures, and OMat24 delivers the ML tools for rapid, accurate property prediction. By following the technical protocols and integrated workflow outlined in this guide, researchers can construct efficient, high-throughput discovery pipelines to identify novel stable materials with targeted properties, significantly accelerating the development of next-generation technologies.

In the field of inorganic materials research, the energy above the convex hull (Ehull) has become a cornerstone metric for predicting synthesizability. Derived from high-throughput density functional theory (DFT) calculations, this parameter measures a compound's thermodynamic stability relative to competing phases on a phase diagram [20]. A material on the convex hull (Ehull = 0 meV/atom) is considered thermodynamically stable, while those with Ehull > 0 are metastable or unstable, with values exceeding 200 meV/atom generally indicating very low synthesizability potential [20]. However, this purely thermodynamic perspective presents an incomplete picture of material stability, as it essentially represents a 0 K ground-state property that neglects vibrational contributions to the free energy [21].

The critical shortcoming of relying exclusively on Ehull emerges from the phenomenon of vibrational instability, where materials possessing favorable Ehull values nevertheless exhibit imaginary phonon modes in their vibrational dispersion spectra [22]. These imaginary frequencies indicate that the structure does not reside at a minimum on its potential energy surface and is dynamically unstable, meaning atomic vibrations would cause the structure to distort or collapse over time [22]. Consequently, a material can be thermodynamically stable according to convex hull analysis yet remain vibrationally unstable and therefore unsynthesizable.

Table 1: Examples of Vibrationally Unstable Materials with Low Ehull Values

| Material | MP ID | Ehull (meV/atom) | Vibrational Status |

|---|---|---|---|

| LiZnPS₄ | mp-11175 | 0 | Unstable |

| SiC | mp-11713 | 3 | Unstable |

| Ca₃PN | mp-11824 | 0 | Unstable |

This article introduces vibrational stability as an essential complementary filter for materials synthesizability assessment. By integrating vibrational analysis with traditional convex hull methods, researchers can achieve a more comprehensive and accurate prediction of which computationally predicted materials are likely to be experimentally realizable.

Theoretical Foundations: From Thermodynamic to Vibrational Stability

The Convex Hull and Energy Above Hull

The convex hull in materials science represents the minimum energy "envelope" in energy-composition space, constructed from the most stable phases across different chemical compositions [1]. The energy above hull for a specific compound is the vertical energy distance to this lower envelope, representing the decomposition energy required for the compound to break down into a combination of more stable neighboring phases on the hull [1]. This decomposition energy (Ed) can be calculated using the normalized (eV/atom) energies of the identified decomposition products [1]. For instance, BaTaNO₂ (mp-1221508) has decomposition products of ²⁄₃ Ba₄Ta₂O₉ + ⁷⁄₄₅ Ba(TaN₂)₂ + ⁸⁄₄₅ Ta₃N₅, and its Ehull is calculated as:

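$$E_{\text{hull}} = E_{\text{BaTaNO}_2} - \left( \tfrac{2}{3} E_{\text{Ba}_4\text{Ta}_2\text{O}_9} + \tfrac{7}{45} E_{\text{Ba(TaN}_2)_2} + \tfrac{8}{45} E_{\text{Ta}_3\text{N}_5} \right)$$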

where all energies are normalized per atom [1].
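Because the energies are normalized per atom, the coefficients 2/3, 7/45, and 8/45 are the fractions of atoms that end up in each product, and the products must together reproduce the atomic fractions of BaTaNO₂. The sketch below checks that balance with exact rational arithmetic and then evaluates the decomposition energy. The per-atom energies passed to `e_hull` are hypothetical placeholders, not values from the Materials Project.

```python
from fractions import Fraction as F
from typing import Dict, List, Tuple

Formula = Dict[str, int]

target: Formula = {"Ba": 1, "Ta": 1, "N": 1, "O": 2}  # BaTaNO2
products: List[Tuple[F, Formula]] = [
    (F(2, 3), {"Ba": 4, "Ta": 2, "O": 9}),    # Ba4Ta2O9
    (F(7, 45), {"Ba": 1, "Ta": 2, "N": 4}),   # Ba(TaN2)2
    (F(8, 45), {"Ta": 3, "N": 5}),            # Ta3N5
]


def atomic_fractions(formula: Formula) -> Dict[str, F]:
    """Fraction of atoms of each element in one formula unit."""
    n_atoms = sum(formula.values())
    return {el: F(count, n_atoms) for el, count in formula.items()}


def mixture_fractions(mixture: List[Tuple[F, Formula]]) -> Dict[str, F]:
    """Atomic fractions of a mixture weighted by per-atom phase fractions."""
    total: Dict[str, F] = {}
    for weight, formula in mixture:
        for el, x in atomic_fractions(formula).items():
            total[el] = total.get(el, F(0)) + weight * x
    return total


def e_hull(e_target: float, e_products: List[float]) -> float:
    """Per-atom energy of the target minus that of the decomposition mixture."""
    return e_target - sum(float(w) * e for (w, _), e in zip(products, e_products))


# The phase fractions sum to one and conserve the composition of BaTaNO2.
balanced = (sum(w for w, _ in products) == 1
            and mixture_fractions(products) == atomic_fractions(target))
print("stoichiometry balanced:", balanced)

# Hypothetical per-atom energies in eV/atom, for illustration only.
print("E_hull (eV/atom):", e_hull(-2.00, [-2.10, -1.90, -1.80]))
```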

Vibrational Stability and the Potential Energy Surface

While Ehull assesses thermodynamic stability, vibrational stability evaluates dynamic behavior by examining the curvature of the potential energy surface at the material's equilibrium geometry [22]. A vibrationally stable material exhibits exclusively real phonon frequencies across all wave vectors in the Brillouin zone, confirming that the structure resides at a local minimum on the potential energy surface [22]. In contrast, imaginary phonon frequencies (often reported as negative values in computational outputs) indicate vibrational instability, signifying that some atomic displacements would lower the system's energy, leading to structural distortion or collapse [22].

The connection between these concepts becomes apparent when considering the thermodynamic stability of a material at finite temperatures, which requires incorporating vibrational contributions through the Gibbs free energy:

$$\Delta G(T) = \Delta H + \Delta F_{\text{vib}} - T\Delta S_{\text{mix}}$$

where $\Delta H$ represents the formation enthalpy (related to Ehull), $\Delta F_{\text{vib}}$ is the vibrational free energy difference, and $T\Delta S_{\text{mix}}$ accounts for configurational entropy contributions [21]. The vibrational term $\Delta F_{\text{vib}} = \Delta E_{\text{ZPE}} - T\Delta S_{\text{vib}}$ includes both zero-point energy and vibrational entropy, computed from the phonon density of states [21].
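To make the vibrational term concrete, the sketch below evaluates the standard harmonic-approximation free energy, in which each phonon mode of energy ħω contributes ħω/2 + k_B·T·ln(1 − exp(−ħω/(k_B·T))). This expression is textbook harmonic-oscillator statistical mechanics rather than something quoted from the source, and the three mode energies are hypothetical. A real calculation would sum over the full phonon density of states for the compound and for its competing phases before taking the difference.

```python
import math
from typing import Sequence

K_B = 8.617333262e-5  # Boltzmann constant in eV/K


def zero_point_energy(mode_energies_ev: Sequence[float]) -> float:
    """Sum of hbar*omega/2 over all modes, in eV."""
    return 0.5 * sum(mode_energies_ev)


def vibrational_free_energy(mode_energies_ev: Sequence[float], temperature: float) -> float:
    """Harmonic vibrational free energy in eV at the given temperature in K."""
    if any(e <= 0 for e in mode_energies_ev):
        # Imaginary or zero-frequency modes have no harmonic free energy.
        raise ValueError("all mode energies must be positive")
    free_energy = zero_point_energy(mode_energies_ev)
    if temperature > 0:
        kt = K_B * temperature
        # Thermal term k_B T ln(1 - exp(-hbar*omega / k_B T)) is always negative.
        free_energy += sum(kt * math.log1p(-math.exp(-e / kt)) for e in mode_energies_ev)
    return free_energy


# Hypothetical phonon mode energies in eV (10, 25 and 60 meV).
modes = [0.010, 0.025, 0.060]
for t in (0.0, 100.0, 300.0):
    print(f"T = {t:5.0f} K   F_vib = {1000 * vibrational_free_energy(modes, t):7.2f} meV")
```

The guard against non-positive mode energies mirrors the physical point made above: a structure with imaginary modes has no well-defined harmonic free energy at that geometry.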

Computational Assessment of Vibrational Stability

First-Principles Phonon Calculations

The primary methodology for determining vibrational stability is the first-principles phonon calculation. The finite displacement method applies small atomic displacements in a supercell to compute the force constant matrix, which determines vibrational frequencies across the Brillouin zone [21]. These calculations typically employ DFT with numerical parameters carefully converged for accurate force predictions.
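The finite displacement idea can be illustrated on a toy model where the answer is known analytically: a periodic one-dimensional chain of identical atoms joined by nearest-neighbour springs. The sketch displaces one atom at a time, differentiates the forces numerically to obtain force constants, and Fourier-transforms them into the dynamical matrix. The spring model stands in for DFT forces, and all function names and parameter values are assumptions made for illustration. A negative spring constant is used to mimic a dynamically unstable structure.

```python
import cmath
import math
from typing import List


def chain_forces(u: List[float], k: float) -> List[float]:
    """Forces on a periodic 1D chain with nearest-neighbour springs of stiffness k."""
    n = len(u)
    return [k * (u[(i + 1) % n] - u[i]) - k * (u[i] - u[i - 1]) for i in range(n)]


def force_constant_row(n: int, k: float, delta: float = 1e-3) -> List[float]:
    """Phi[0][j] = -dF_0/du_j from central finite differences of the forces."""
    row = []
    for j in range(n):
        plus = [0.0] * n
        minus = [0.0] * n
        plus[j], minus[j] = delta, -delta
        row.append(-(chain_forces(plus, k)[0] - chain_forces(minus, k)[0]) / (2 * delta))
    return row


def omega_squared(q: float, phi_row: List[float], mass: float = 1.0, a: float = 1.0) -> float:
    """Dynamical-matrix eigenvalue at wave vector q (one atom per cell, so D is 1x1)."""
    n = len(phi_row)
    d_q = 0j
    for j, phi in enumerate(phi_row):
        shift = j if j <= n // 2 else j - n  # minimum-image cell offset
        d_q += phi * cmath.exp(1j * q * shift * a)
    return d_q.real / mass


def signed_frequency(w2: float) -> float:
    """Report imaginary modes as negative numbers, as phonon codes commonly do."""
    return math.copysign(math.sqrt(abs(w2)), w2)


def is_dynamically_stable(k: float, n: int = 8, n_q: int = 33, tol: float = 1e-8) -> bool:
    """True if no sampled wave vector has a negative squared frequency."""
    row = force_constant_row(n, k)
    qs = [-math.pi + 2 * math.pi * i / (n_q - 1) for i in range(n_q)]
    return all(omega_squared(q, row) >= -tol for q in qs)


print("k = +1:", is_dynamically_stable(1.0))
print("k = -1:", is_dynamically_stable(-1.0))
```

For this chain the recovered dispersion matches the analytic result ω² = (2k/m)(1 − cos qa), which makes the example easy to check before moving to real force calculations.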

Machine Learning for High-Throughput Screening

Given the computational expense of phonon calculations, machine learning (ML) classifiers have been developed to predict vibrational stability directly from structural features. A random forest model trained on ~3100 materials achieved an average F1 score of 0.63 for the unstable class with a mean AUC of 0.73 [22]. When only predictions with a confidence of at least 0.65 were retained, the F1 score rose to 0.70 while still covering approximately 65% of the data points [22].

Table 2: Machine Learning Classifier Performance for Vibrational Stability Prediction

| Metric | Stable Class | Unstable Class | Overall |

|---|---|---|---|

| Precision | 0.83 | 0.60 | - |

| Recall | 0.87 | 0.68 | - |

| F1-Score | 0.85 | 0.63 | - |

| AUC | - | - | 0.73 |
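The relationship between the tabulated precision, recall, and F1 values, and the effect of keeping only confident predictions, can be sketched as follows. The way confidence is defined here (the probability of the winning class) is an assumption, since the source does not spell it out, and the probabilities and labels are toy data chosen only to show the trade-off between F1 score and coverage.

```python
from typing import List, Sequence, Tuple


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def confident_predictions(p_unstable: Sequence[float], is_unstable: Sequence[bool],
                          threshold: float) -> Tuple[List[Tuple[bool, bool]], float]:
    """Keep samples whose winning-class probability is at least `threshold`.

    Returns (predicted, true) pairs for the kept samples and the coverage.
    """
    kept = [(p >= 0.5, y) for p, y in zip(p_unstable, is_unstable)
            if max(p, 1 - p) >= threshold]
    return kept, len(kept) / len(p_unstable)


def f1_unstable(pairs: Sequence[Tuple[bool, bool]]) -> float:
    """F1 score for the unstable (positive) class."""
    tp = sum(1 for pred, truth in pairs if pred and truth)
    fp = sum(1 for pred, truth in pairs if pred and not truth)
    fn = sum(1 for pred, truth in pairs if not pred and truth)
    if tp == 0:
        return 0.0
    return f1_score(tp / (tp + fp), tp / (tp + fn))


# Stable-class row of the table: precision 0.83 and recall 0.87.
print(f"F1 (stable class) = {f1_score(0.83, 0.87):.3f}")

# Toy predicted probabilities of instability and true labels.
probs = [0.9, 0.8, 0.55, 0.4, 0.3, 0.1, 0.7, 0.45]
labels = [True, True, False, True, False, False, True, False]
for threshold in (0.5, 0.65):
    pairs, coverage = confident_predictions(probs, labels, threshold)
    print(f"threshold {threshold}: F1 = {f1_unstable(pairs):.2f}, coverage = {coverage:.3f}")
```

Applied to the rounded unstable-class precision and recall, the same formula gives roughly 0.64; the reported 0.63 is an average over folds, so a small difference of this kind is expected.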

Feature importance analysis revealed that BACD (Bond Angle and Coordination Distribution) and ROSA (Radial and Orbital Structure Analysis) descriptors were most significant for predicting vibrational stability, followed by space group (SG) features [22]. Specific descriptors like std_average_anionic_radius and metals_fraction appeared consistently important across all training folds [22].

Integrated Workflow for Synthesizability Prediction

Combined Stability Assessment Protocol

The integrated workflow for synthesizability assessment sequentially applies thermodynamic and vibrational stability filters. Materials first undergo Ehull screening, with those passing this initial filter (typically Ehull < 50-100 meV/atom) proceeding to vibrational stability analysis [22]. This hierarchical approach efficiently eliminates unpromising candidates while conserving computational resources for the more expensive phonon calculations on the most thermodynamically favorable materials.
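A sketch of this hierarchical filter is given below. The `Candidate` class, the 50 meV/atom cutoff, the tolerance for small negative frequencies, and the lookup table standing in for a phonon calculation are all hypothetical. The point of the example is the ordering: the expensive vibrational check runs only for candidates that survive the cheap Ehull filter.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional


@dataclass
class Candidate:
    name: str
    e_hull_mev: float                        # energy above hull in meV/atom
    min_phonon_thz: Optional[float] = None   # filled in by the expensive step


def screen(candidates: Iterable[Candidate],
           lowest_phonon_frequency: Callable[[Candidate], float],
           e_hull_cutoff: float = 50.0,
           imaginary_tolerance: float = -0.1) -> List[Candidate]:
    """Apply the thermodynamic filter first, then the vibrational filter."""
    survivors = []
    for cand in candidates:
        if cand.e_hull_mev > e_hull_cutoff:
            continue  # rejected cheaply; no phonon calculation is spent on it
        cand.min_phonon_thz = lowest_phonon_frequency(cand)
        # Imaginary modes are reported as negative frequencies; small negative
        # values near the zone centre are treated here as numerical noise.
        if cand.min_phonon_thz >= imaginary_tolerance:
            survivors.append(cand)
    return survivors


# Hypothetical candidates and a lookup table standing in for a phonon run.
pool = [Candidate("X1", 0.0), Candidate("X2", 12.0),
        Candidate("X3", 180.0), Candidate("X4", 35.0)]
fake_phonons = {"X1": -2.4, "X2": 0.0, "X4": 1.3}
passed = screen(pool, lambda c: fake_phonons[c.name])
print([c.name for c in passed])
```

Here X1 sits on the hull but is rejected for its imaginary mode, which is exactly the case the combined protocol is meant to catch.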

Experimental Validation and Case Studies

Large-scale experimental validation demonstrates that this combined approach significantly improves synthesizability predictions. In assessments of ~3100 materials, approximately 15-21% exhibited vibrational instability despite favorable Ehull values [22]. This substantial fraction highlights the critical limitation of relying solely on convex hull analysis for synthesizability assessment.

Robotic inorganic materials synthesis laboratories provide platforms for high-throughput validation of these computational predictions. In one study encompassing 35 target quaternary oxides with chemistries relevant to battery applications, precursors selected using thermodynamic strategies that considered competing by-products frequently yielded higher phase purity than traditional precursors [7]. This experimental validation confirms that synthesis outcomes depend critically on both thermodynamic driving forces and kinetic pathways, which are influenced by vibrational stability.

Table 3: Computational and Experimental Resources for Stability Assessment

| Resource | Type | Function | Application Context |

|---|---|---|---|

| VASP | Software | DFT calculations for total energies and forces | Ehull computation and phonon calculations [21] |

| Phonopy | Software | Phonon analysis from force constants | Vibrational spectra and stability assessment [21] |

| Pymatgen | Library | Phase diagram analysis and Ehull calculation | Convex hull construction [1] [21] |

| Materials Project | Database | Experimental and calculated material properties | Reference energies for Ehull calculations [20] |

| Finite Displacement Method | Computational Method | Force constant matrix calculation | Phonon dispersion relationships [21] |

| Machine Learning Classifier | Predictive Model | Vibrational stability from structural features | High-throughput screening [22] |

The integration of vibrational stability assessment with traditional convex hull analysis represents a significant advancement in materials synthesizability prediction. By addressing both thermodynamic and dynamic stability considerations, researchers can more accurately identify computationally predicted materials with genuine potential for experimental realization. This combined approach is particularly valuable for guiding high-throughput synthesis efforts in complex chemical spaces, such as multicomponent oxides for energy applications [7].

Future developments will likely focus on improving the efficiency and accuracy of vibrational stability predictions through enhanced machine learning models trained on expanded datasets. As these methodologies mature, integration of vibrational stability filters into major materials databases will provide researchers with readily accessible synthesizability metrics, ultimately accelerating the discovery and realization of novel functional materials.

From Calculation to Creation: AI, Machine Learning, and Inverse Design for Stable Materials

The energy above the convex hull (Ehull) serves as a fundamental metric for assessing thermodynamic stability in inorganic materials research. This whitepaper delineates the established high-throughput Density Functional Theory (DFT) workflow for determining Ehull, a methodology that underpins modern computational materials discovery. We detail the core computational protocols, data handling procedures, and benchmarking standards that enable the rapid screening of material stability across vast compositional spaces. The document further contextualizes the enduring role of these DFT-based approaches amidst emerging machine-learning methodologies, framing E_hull determination as a critical component in the pipeline for predicting synthesizable materials, from next-generation superconductors to functional perovskites for energy applications.

In the paradigm of data-driven materials science, the energy above the convex hull (Ehull) has emerged as a foundational descriptor for a material's thermodynamic stability. It quantifies the energy difference, in eV/atom, between a given compound and the most stable combination of other phases at the same composition, as defined by the convex hull of formation energies in the relevant chemical space [23]. A low Ehull value indicates that a material is thermodynamically stable or metastable, making it a primary filter in high-throughput virtual screening campaigns. This metric is indispensable for transforming vast databases of computationally predicted compounds into credible candidates for experimental synthesis, thereby accelerating the discovery of novel materials for technologies ranging from photovoltaics and catalysis to superconductors [24] [25].

High-Throughput DFT (HT-DFT) constitutes the traditional and most rigorous backbone for the large-scale calculation of E_hull. While machine learning models are increasingly used for rapid stability prediction [24] [26], HT-DFT remains the benchmark for accuracy, providing the reliable formation energy data required to construct the convex hull itself. The workflow involves the systematic and automated application of DFT calculations to thousands of material structures, followed by sophisticated thermodynamic analysis. This guide provides an in-depth examination of this core workflow, its associated protocols, and its critical role within a broader materials discovery ecosystem that now includes generative models [13] and synthesizability predictors [27].

Core Workflow for High-Throughput E_hull Determination

The determination of E_hull for a material involves a multi-stage computational process that runs from initial structure selection to the final stability assessment. The stages are described below.

Detailed Workflow Stages

Stage 1: Structure Selection and Preparation

The process begins with curating a comprehensive set of crystal structures for analysis. Sources include experimental databases like the Inorganic Crystal Structure Database (ICSD) [28] [23] and repositories of hypothetical structures from generative models or prototype decorations [13] [23]. Data cleaning is often necessary, using machine learning to correct missing or incorrect lattice parameters and space group information to ensure high-fidelity input structures [28].

Stage 2: High-Throughput DFT Calculation

Each curated structure undergoes a DFT calculation to determine its ground-state total energy (Etot). These calculations are automated using workflow managers like the qmpy Python package [23]. Standard practice employs the Vienna Ab initio Simulation Package (VASP) with the projector-augmented wave (PAW) method and the PBE generalized gradient approximation (GGA) for the exchange-correlation functional [23]. For systems with strong electron correlations (e.g., containing d- or f-electrons), the DFT+U formalism is applied with element-specific U values to better describe on-site Coulomb interactions [28] [23].

Stage 3: Formation Energy Calculation

The formation energy ($H_f$) for a compound is calculated from its DFT total energy. For a perovskite with formula ABO₃, the formation energy per atom is given by:

$$H_f^{\text{ABO}_3} = \frac{E(\text{ABO}_3) - \mu_A - \mu_B - 3\mu_O}{N_{\text{at}}}$$

where $E(\text{ABO}_3)$ is the DFT total energy of the compound, $\mu_i$ are the chemical potentials of the constituent elements referenced to their standard states, and $N_{\text{at}}$ is the number of atoms in the unit cell [23].
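The formation energy expression generalizes to any composition by subtracting each element's chemical potential once per atom in the cell. The sketch below implements that bookkeeping. The total energy and chemical potentials are hypothetical round numbers chosen for easy checking, not DFT results, and the function name is illustrative.

```python
from typing import Dict


def formation_energy_per_atom(total_energy: float,
                              composition: Dict[str, int],
                              chemical_potentials: Dict[str, float]) -> float:
    """Formation energy in eV/atom for a cell with the given atom counts.

    total_energy: DFT total energy of the cell in eV.
    composition: number of atoms of each element in that cell.
    chemical_potentials: reference energy per atom of each element in eV.
    """
    n_atoms = sum(composition.values())
    reference = sum(n * chemical_potentials[el] for el, n in composition.items())
    return (total_energy - reference) / n_atoms


# One formula unit of a generic ABO3 perovskite (5 atoms), hypothetical energies.
abo3 = {"A": 1, "B": 1, "O": 3}
mu = {"A": -2.0, "B": -8.0, "O": -5.0}
h_f = formation_energy_per_atom(-40.0, abo3, mu)
print(f"H_f = {h_f:.2f} eV/atom")
```

The same function handles supercells without change, because both the total energy and the reference sum scale with the number of formula units.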

Stage 4: Construct Global Convex Hull & Calculate Ehull

A global convex hull is constructed from the formation energies of all known and calculated phases in the chemical space of interest. The energy above the convex hull (Ehull) for a specific compound is then defined as:

$$E_{\text{hull}} = H_f^{\text{compound}} - H_f^{\text{hull}}$$

where $H_f^{\text{hull}}$ is the formation energy of the convex hull at that compound's composition [23]. This value represents the compound's thermodynamic instability relative to decomposition into other phases.

Quantitative Benchmarks and Stability Thresholds

The tabulated data below summarizes key quantitative benchmarks and typical E_hull thresholds used for stability classification in high-throughput studies.

Table 1: Experimentally Validated E_hull Thresholds for Stability Prediction

| Material System | Stability Threshold (meV/atom) | Prediction Accuracy | Context and Validation |

|---|---|---|---|

| ABO₃ Perovskites [23] | < 25 meV/atom | 395 predicted stable compounds | Matches ~kT at room temperature; used to identify novel, synthesizable perovskites. |

| General Inorganic Crystals [24] | < 40 meV/atom | N/A | Common heuristic for thermodynamic stability at room temperature in high-throughput screening. |

| Generative Model Output (MatterGen) [13] | < 100 meV/atom | 75% of generated structures | Benchmark for success of inverse design; lower thresholds (e.g., 40 meV) yield fewer candidates. |

Table 2: Performance of Machine Learning Models for E_hull Prediction

| ML Model Type | Dataset Size | Target Property | Prediction Performance (R²) | Key Application |

|---|---|---|---|---|

| Multi-output GBR [24] | 2,480 ABO₃ Perovskites | E_hull & Bandgap | 0.938 for E_hull | Simultaneous prediction of stability and electronic properties for photovoltaics screening. |

| Graph Neural Networks [26] | >5 Million Structures | Multiple Properties | Improves with data size | Leverages large datasets (Alexandria) for accurate property prediction, including stability. |

Successful implementation of a high-throughput DFT workflow relies on a suite of specialized software tools, databases, and computational resources.

Table 3: Essential Resources for High-Throughput DFT and E_hull Analysis

| Resource Name | Type | Primary Function in Workflow | Reference/Link |

|---|---|---|---|

| VASP | Software | Performs the core DFT energy calculations. | [29] [23] |

| qmpy Python Package | Software | Manages high-throughput workflow, automates calculations, and performs thermodynamic analysis. | [23] |

| Materials Project | Database | Provides pre-calculated E_hull and formation energies for over 144,000 materials for validation and hull construction. | [24] [13] |

| OQMD (Open Quantum Materials Database) | Database | Source of ~470,000 phases (experimental and hypothetical) used as references for convex hull construction. | [23] |

| ICSD (Inorganic Crystal Structure Database) | Database | Source of experimentally reported crystal structures used as initial inputs and for validation. | [27] [28] [23] |

| Alexandria | Database | Large dataset of >5 million DFT calculations used for training machine learning models. | [26] |

Interplay with Advanced Predictive Models

The traditional HT-DFT workflow is not isolated but synergistically integrates with modern computational approaches. The reliable Ehull data generated by HT-DFT serves as the foundational training set for machine learning models that predict stability directly from composition or structure [24] [26]. For instance, multi-output gradient boosting regression (GBR) models can predict Ehull with high accuracy (R² = 0.938), dramatically accelerating the initial screening process [24].

Furthermore, Ehull is a critical filter for the outputs of generative models like MatterGen, which design novel crystal structures from scratch. The stability of these generated materials is ultimately validated by comparing their DFT-calculated Ehull to established thresholds [13]. This creates a powerful, multi-tiered discovery pipeline: generative models propose candidates, ML models pre-screen them rapidly, and HT-DFT provides the definitive stability assessment via E_hull before experimental synthesis is attempted [27].

High-throughput DFT workflows remain the indispensable backbone for the accurate and reliable determination of Ehull, a property central to judging the thermodynamic viability of new inorganic materials. As detailed in this guide, the process—encompassing automated DFT computation, formation energy derivation, and convex hull construction—provides the quantitative rigor required for serious materials discovery efforts. While emerging machine learning and generative models enhance the speed and scope of exploration, their development and validation are deeply rooted in the data produced by these traditional HT-DFT methods. The continued refinement of these workflows, coupled with the growth of extensive DFT databases, ensures that Ehull will maintain its role as a cornerstone metric in the computational design and development of next-generation functional materials.

The prediction of material properties, particularly stability metrics like the energy above the convex hull (Ehull), is crucial for accelerating the discovery of novel inorganic materials. This whitepaper provides an in-depth technical examination of two dominant machine learning architectures—Graph Neural Networks (GNNs) and Transformers—for predicting Ehull and related thermodynamic properties. We synthesize current methodologies, benchmark quantitative performance from recent studies, and detail experimental protocols for implementing these predictors. By framing this discussion within the context of inorganic materials research, we aim to equip scientists with the knowledge to select, implement, and optimize these powerful tools for high-throughput virtual screening and materials design.

The energy above the convex hull (Ehull) is a fundamental metric in computational materials science that quantifies the thermodynamic stability of a compound relative to other phases in its chemical space. A material with an Ehull of zero is thermodynamically stable, while a positive value indicates a metastable or unstable compound that may decompose into more stable phases [1]. Accurate prediction of Ehull is therefore a critical first step in identifying synthesizable materials.

Traditional methods for calculating Ehull rely on Density Functional Theory (DFT), which provides high accuracy but at a prohibitive computational cost for screening vast compositional spaces. Machine learning (ML) models have emerged as a powerful alternative, capable of predicting Ehull and other properties at a fraction of the computational expense. This guide focuses on the two most promising ML architectures for this task: Graph Neural Networks (GNNs), which natively operate on atomic structures, and Transformer architectures, which have shown remarkable success in sequence and pattern recognition tasks.

Theoretical Foundations: Energy Above the Convex Hull

The convex hull, in a materials context, is a geometric construction in energy-composition space. It represents the set of the most thermodynamically stable phases for all possible compositions in a given chemical system. For a compound with composition X, its Ehull is the vertical energy difference (often in meV/atom) between its formation energy and the convex hull at that exact composition [1].

- Calculation Geometry: The convex hull is constructed from the formation energies of all known phases in the system. For a multi-component system (e.g., ternary, quaternary), the hull becomes a hyper-surface in N-dimensional space. The decomposition of a target compound is not necessarily into phases of the same composition, but into a combination of the most stable neighboring phases that conserve the total stoichiometry. For example, a specific compound might decompose into a mixture like 2/3 of Phase A + 7/45 of Phase B + 8/45 of Phase C [1].

- Practical Significance: Ehull provides a direct measure of synthesizability. Materials with low Ehull (e.g., < 50 meV/atom) are often synthesizable as metastable phases, while those with high values are unlikely to form. ML models learn the complex, non-linear relationships between a material's composition, structure, and its resulting Ehull, enabling rapid stability assessment without explicit DFT calculation for every candidate.

Machine Learning Architectures for Property Prediction

Graph Neural Networks (GNNs)

GNNs have become a cornerstone of modern materials informatics because they operate directly on the most natural representation of a molecule or crystal: a graph.

Core Principles and Message Passing Framework

In a GNN, a material's structure is represented as a graph ( G = (V, E) ), where atoms are nodes ((v \in V)) and chemical bonds are edges ((e_{v,w} = (v, w) \in E)). Each node and edge is associated with feature vectors (e.g., atom type, electronegativity, coordination number, bond type) [30]. The powerful "message passing" paradigm is the engine of most GNNs designed for materials. In this framework, node embeddings are updated through iterative steps where nodes receive and aggregate "messages" from their neighboring nodes, effectively capturing the local chemical environment [30]. This process can be summarized in three key steps [30]:

- Message Passing: For each node, a message is computed from its neighbors.

- Node Update: Each node's feature vector is updated based on the aggregated messages it received.

- Readout: After several message-passing steps, a graph-level representation (embedding) is generated by pooling the updated node features, which is then used for property prediction (e.g., Ehull regression).

This architecture allows GNNs to learn rich, hierarchical representations of materials that are inherently invariant to translation, rotation, and atom indexing.
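The three steps can be sketched in plain Python on a three-atom toy graph. This is a deliberately minimal illustration and not a reproduction of any published architecture: the weights are fixed arbitrary numbers rather than trained parameters, the two-component node features are invented, and a sum over neighbours followed by a tanh update is only one of many possible message and update functions. The final check shows the invariance to atom indexing mentioned above.

```python
import math
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]

# Arbitrary fixed weights standing in for trained parameters.
W_SELF: Matrix = [[0.5, -0.2], [0.1, 0.3]]
W_NBR: Matrix = [[0.2, 0.1], [-0.1, 0.4]]
W_OUT: Vector = [1.0, -0.5]


def matvec(w: Matrix, v: Vector) -> Vector:
    return [sum(wij * vj for wij, vj in zip(row, v)) for row in w]


def message_passing_step(h: Dict[str, Vector],
                         neighbors: Dict[str, List[str]]) -> Dict[str, Vector]:
    new_h = {}
    for node, h_node in h.items():
        # 1) Message passing: sum the feature vectors of neighbouring atoms.
        message = [sum(h[nb][k] for nb in neighbors[node]) for k in range(len(h_node))]
        # 2) Node update: combine the node's own state with the aggregated message.
        own, agg = matvec(W_SELF, h_node), matvec(W_NBR, message)
        new_h[node] = [math.tanh(x + y) for x, y in zip(own, agg)]
    return new_h


def readout(h: Dict[str, Vector]) -> float:
    # 3) Readout: mean-pool the node states, then apply a linear output head.
    pooled = [sum(vec[k] for vec in h.values()) / len(h) for k in range(len(W_OUT))]
    return sum(w * p for w, p in zip(W_OUT, pooled))


def predict(h: Dict[str, Vector], neighbors: Dict[str, List[str]], steps: int = 2) -> float:
    for _ in range(steps):
        h = message_passing_step(h, neighbors)
    return readout(h)


# Toy graph: one metal atom bonded to two oxygen atoms (invented features).
features = {"M": [1.0, 0.0], "O1": [0.0, 1.0], "O2": [0.0, 1.0]}
bonds = {"M": ["O1", "O2"], "O1": ["M"], "O2": ["M"]}

# The same graph with atoms listed in a different order.
features_perm = {"O2": [0.0, 1.0], "M": [1.0, 0.0], "O1": [0.0, 1.0]}
bonds_perm = {"O2": ["M"], "M": ["O2", "O1"], "O1": ["M"]}

y = predict(features, bonds)
y_perm = predict(features_perm, bonds_perm)
print("invariant to atom ordering:", math.isclose(y, y_perm, abs_tol=1e-12))
```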

Application to Ehull Prediction

GNNs are particularly well-suited for predicting properties like Ehull that depend on the detailed local atomic coordination and long-range interactions within a crystal structure. By processing the atomic graph, a GNN can learn how specific structural motifs—such as polyhedral connectivity or the presence of certain functional groups—correlate with thermodynamic stability.

Transformer Architectures

While renowned for natural language processing, Transformers are increasingly applied to scientific problems due to their powerful attention mechanisms.

Core Principles and Attention Mechanisms

The Transformer's key innovation is the self-attention mechanism, which allows the model to weigh the importance of different parts of the input sequence when computing representations. In the context of materials:

- Tokenization: A material must first be converted into a sequence of "tokens." This can be done in several ways, such as using atomic symbols and their relative positions, or using patches of atomic environments [31].

- Attention: The model then calculates attention scores between all pairs of tokens, identifying which parts of the material structure are most relevant for predicting the target property. For instance, it might learn that specific long-range interactions between metal atoms are critical for determining stability.
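A single-head scaled dot-product self-attention layer, the core operation described above, can be written in a few lines of plain Python. The token embeddings and the identity projection matrices below are hypothetical choices for illustration; a trained model would learn both the embeddings and separate query, key, and value projections.

```python
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def matvec(w: Matrix, v: Vector) -> Vector:
    return [dot(row, v) for row in w]


def softmax(scores: Vector) -> Vector:
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: List[Vector], w_q: Matrix, w_k: Matrix,
                   w_v: Matrix) -> Tuple[List[Vector], Matrix]:
    """Return the attended token representations and the attention weights."""
    queries = [matvec(w_q, t) for t in tokens]
    keys = [matvec(w_k, t) for t in tokens]
    values = [matvec(w_v, t) for t in tokens]
    scale = math.sqrt(len(keys[0]))
    # Row i holds how strongly token i attends to every token in the sequence.
    weights = [softmax([dot(q, k) / scale for k in keys]) for q in queries]
    outputs = [[sum(w * v[c] for w, v in zip(row, values)) for c in range(len(values[0]))]
               for row in weights]
    return outputs, weights


# Hypothetical 2-D embeddings for a three-token sequence such as "Ba Ti O".
tokens = [[1.0, 0.2], [0.8, 0.9], [0.1, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
outputs, weights = self_attention(tokens, identity, identity, identity)
for name, row in zip(["Ba", "Ti", "O"], weights):
    print(name, [round(w, 3) for w in row])
```

Each row of the weight matrix sums to one, so every output vector is a weighted average of the value vectors of all tokens, which is what lets the model relate distant parts of a composition or structure in a single layer.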

Studies have shown that in many benchmark tasks, simpler Transformer models with effective tokenization and normalization (e.g., Z-score normalization) can outperform more complex architectures, highlighting the importance of robust foundational components over sheer architectural complexity [31].

Application to Ehull Prediction

Transformers can be applied to predict Ehull by treating the material's composition or structure as a sequence. The model can learn complex, non-local relationships across the entire composition that influence stability. For example, in a high-entropy alloy system, the attention mechanism could potentially identify how the configuration of five different metal elements across lattice sites affects the overall formation energy.

Quantitative Performance Benchmarking

The following tables summarize the performance of various ML models reported in recent literature for predicting properties related to material stability.

Table 1: Performance of ML Models in Predicting Stability Metrics of MXenes [4]

| Target Property | Model Type | Features Used | MAE (Training) | MAE (Testing) |

|---|---|---|---|---|

| Heat of Formation | Random Forest | 12 physicochemical features | 0.15 eV | 0.23 eV |

| Heat of Formation | Neural Network | 12 physicochemical features | 0.18 eV | 0.21 eV |

| Energy Above Hull | Neural Network | 14 physicochemical features | 0.03 eV | 0.08 eV |

Table 2: Comparison of GNNs and CNNs for Composite Property Prediction [32]

| Model Architecture | Task | Accuracy | Parameter Count | Key Advantage |

|---|---|---|---|---|

| Graph Neural Network (GNN) | Homogenization of elastic & fracture properties | >99% | ~160x fewer than CNN | High accuracy with minimal parameters; handles unstructured data. |

| Convolutional Neural Network (CNN) | Homogenization of elastic & fracture properties | Lower than GNN | Baseline | Struggles with representing complex microstructures efficiently. |

The data indicates that both carefully designed neural networks and GNNs can achieve high accuracy in predicting stability-related properties. The choice of model often depends on the input data representation (feature vectors vs. atomic graphs) and the desired balance between accuracy and computational efficiency.

Experimental Protocols and Methodologies

Protocol 1: Predicting Ehull using Physicochemical Features

This protocol is based on the methodology used to predict the heat of formation and Ehull for MXenes, as detailed in [4].

- Data Curation: Source a dataset of known materials with calculated Ehull values. Public databases like the Computational 2D Materials Database (C2DB) [4] or the Materials Project are ideal starting points. The dataset used in [4] contained 300 MXene entries.

- Feature Engineering: Compute a comprehensive set of physicochemical features for each material. This typically includes:
  - Elemental properties of constituent atoms (e.g., electronegativity, atomic radius, electron affinity).
  - Stoichiometric attributes.
  - Properties of surface termination atoms (highlighted as particularly important in [4]).

- Model Training and Selection:
  - Split the data into training and testing sets (e.g., 80/20).
  - Train multiple model types, such as Random Forest and Neural Networks, using the feature set.
  - Employ feature importance analysis (e.g., provided by Random Forest) to identify the most critical descriptors and potentially build reduced-order models.

- Validation: Validate the final model's performance on the held-out test set using metrics like Mean Absolute Error (MAE) and R² score.
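The split and validation steps of this protocol can be sketched in plain Python as follows. The model itself is deliberately left out; the helper names mirror common library conventions but are defined here from scratch, and the 300-row dataset is a stand-in, not the data from [4].

```python
import random

def train_test_split(rows, test_fraction=0.2, seed=0):
    """Shuffle a copy of the rows and hold out a fraction for testing."""
    rng = random.Random(seed)
    shuffled = rows[:]
    rng.shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

def mean_absolute_error(y_true, y_pred):
    """Average absolute deviation between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Stand-in dataset: 300 row indices, split 80/20.
rows = list(range(300))
train_rows, test_rows = train_test_split(rows)
```

A model that always predicts the mean of the test targets scores R² = 0, which gives a useful floor when comparing Random Forest and neural network results.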

Protocol 2: Predicting Properties using Graph Neural Networks

This protocol outlines the process for using GNNs for material property prediction, as applied in [32] and generalized in [30].

- Graph Representation:
  - Input: Crystal Structure Information (CIF files).
  - Graph Construction: Convert the crystal structure into a graph. Nodes represent atoms, with features such as atom type, charge, and spin. Edges represent bonds or atom-pair interactions within a specified cutoff radius, with features potentially including bond length and type.

- Model Implementation:
  - Architecture: Implement a Message Passing Neural Network (MPNN). Each layer performs message passing, aggregation, and node update operations.
  - Normalization: For problems with high contrast in material properties (e.g., vastly different elastic moduli), implement normalization techniques, such as the mean-field method (MFM) used in [32], to stabilize training.

- Training Loop:
  - Use a mean-squared-error loss between predicted and true Ehull values.
  - Utilize the Adam optimizer and employ techniques like learning rate scheduling and early stopping.

- Readout and Prediction: After the final message-passing layer, a global pooling operation (e.g., mean, sum, or a learned weighted sum) generates a fixed-size graph embedding. This embedding is passed through a fully connected network to produce the final Ehull prediction.
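To make the graph construction, message passing, and readout steps concrete, here is a deliberately simplified sketch. It ignores periodic images, uses a parameter-free sum update instead of learned message and update functions, and works on plain lists; all function names and the toy coordinates are assumptions for illustration.

```python
import math

def build_edges(positions, cutoff):
    """Connect every ordered atom pair closer than `cutoff` (no periodic images)."""
    edges = []
    for i, a in enumerate(positions):
        for j, b in enumerate(positions):
            if i != j and math.dist(a, b) <= cutoff:
                edges.append((i, j))
    return edges

def message_passing_step(node_feats, edges):
    """One round: sum incoming neighbour features, then add them to each node."""
    dim = len(node_feats[0])
    aggregated = [[0.0] * dim for _ in node_feats]
    for src, dst in edges:
        for k in range(dim):
            aggregated[dst][k] += node_feats[src][k]
    return [[h + m for h, m in zip(feats, agg)]
            for feats, agg in zip(node_feats, aggregated)]

def mean_readout(node_feats):
    """Global mean pooling: a fixed-size embedding for any number of atoms."""
    n = len(node_feats)
    return [sum(column) / n for column in zip(*node_feats)]

# Toy example: three atoms on a line, one scalar feature each.
positions = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (4.0, 0.0, 0.0)]
features = [[1.0], [2.0], [3.0]]
edges = build_edges(positions, cutoff=2.0)
updated = message_passing_step(features, edges)
embedding = mean_readout(updated)
```

In a trained MPNN the sum and addition would be replaced by learned functions, and the pooled embedding would feed a fully connected network that outputs Ehull.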

Visualization of Workflows and Architectures

GNN Message Passing for Crystal Graphs

(GNN Workflow)

Material Tokenization for Transformers

(Transformer Material Analysis)

Table 3: Key Computational Tools and Datasets for ML-Driven Materials Research

| Tool / Resource Name | Type | Primary Function | Relevance to Ehull Prediction |
|---|---|---|---|
| C2DB [4] | Database | Repository of computed properties for 2D materials. | Provides curated training data (formation energy, Ehull) for 2D materials like MXenes. |
| Materials Project [1] | Database | Extensive database of DFT-calculated properties for inorganic compounds. | The primary source for Ehull data and reference phases for convex hull construction for a vast range of materials. |
| pymatgen [1] | Software Library | Python library for materials analysis. | Contains robust tools for parsing CIF files, generating composition-based features, constructing phase diagrams, and calculating Ehull from DFT energies. |
| mp-api [1] | Software Library | Python interface to the Materials Project REST API. | Allows for programmatic retrieval of materials data for building custom datasets. |
| CHGNet [1] | Machine Learning Model | A pretrained GNN for atomistic modeling. | Provides fast approximations to DFT energies, from which formation energies and Ehull can be estimated for new structures without running expensive DFT calculations; useful for data augmentation. |

The integration of GNNs and Transformers into the materials science workflow represents a paradigm shift in how researchers discover and design new stable materials. GNNs offer an intuitive and powerful method for learning from atomic structures directly, while Transformers provide a flexible framework for capturing complex, long-range dependencies within material representations.

For the specific task of predicting the energy above the convex hull, current approaches draw on both families of models. The choice between them often depends on data availability and representation: GNNs are generally preferred when full structural information is available, while feature-based Transformers or other neural networks can be highly effective when working primarily with compositional data. As these fields mature, we anticipate a convergence of architectures, leading to models that combine the geometric reasoning of GNNs with the representational capacity of Transformers, further accelerating the discovery of next-generation inorganic materials.

The discovery of novel inorganic materials with tailored properties is a cornerstone of technological advancement in fields such as energy storage, catalysis, and carbon capture. Traditional methods, reliant on experimental trial-and-error or computational screening of known databases, are fundamentally limited by their inability to efficiently explore the vast space of potential, unknown crystalline compounds. This whitepaper details how MatterGen, a foundational generative model, represents a paradigm shift in inorganic materials design. We frame its capabilities within the critical context of thermodynamic stability, as measured by the energy above the convex hull (E_hull), a key metric for predicting synthesizability. MatterGen directly generates novel, stable crystal structures conditioned on desired property constraints, dramatically accelerating the inverse design process. This guide provides an in-depth technical examination of MatterGen's diffusion-based architecture, its performance benchmarks against established methods, and detailed protocols for its application in designing viable inorganic materials.

The design of functional materials is essential for driving technological breakthroughs, from developing cheaper batteries for grid-level energy storage to designing adsorbents for carbon capture [33]. Historically, materials discovery has been a slow process, guided by human intuition and costly experimentation. While computational screening of large materials databases has accelerated this process, it remains constrained to the finite number of known compounds, which is only a "tiny fraction of the number of potentially stable inorganic compounds" [13].

A critical hurdle in proposing new materials is ensuring their thermodynamic stability, which is commonly assessed with the energy above the convex hull (Ehull). Ehull is the energy difference, per atom, between a material and the most stable combination of competing phases at the same composition in a reference phase diagram. A material with an Ehull of 0 eV/atom lies on the convex hull and is considered thermodynamically stable, while those with positive values are metastable or unstable [1]. Proposing new materials with low Ehull is therefore a primary objective, but traditional screening methods cannot access the vast space of unknown, stable crystals.
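For a binary A-B system the definition reduces to a one-dimensional construction, which the following sketch works through. It assumes formation energies per atom referenced to the pure elements and uses made-up numbers; the function names are hypothetical, and multi-component systems require a higher-dimensional hull, for which a dedicated phase diagram tool is the practical choice.

```python
def lower_hull(points):
    """Lower convex hull of (x_B, formation_energy) points, left to right."""
    hull = []
    for p in sorted(points):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            # Drop the last hull point if it lies on or above the chord to p.
            if (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1) <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull

def hull_energy(points, x):
    """Energy of the most stable phase mixture at composition x."""
    best = {}
    for xi, energy in points:  # keep only the lowest energy per composition
        best[xi] = min(energy, best.get(xi, energy))
    hull = lower_hull(best.items())
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition lies outside the range of reference phases")

def energy_above_hull(points, x, energy):
    """Ehull in the same units as the input energies (here eV/atom)."""
    return energy - hull_energy(points, x)

# Illustrative phases: (fraction of B, formation energy in eV/atom).
phases = [(0.0, 0.0), (0.25, -0.10), (0.5, -0.40), (0.75, -0.30), (1.0, 0.0)]
ehull_a3b = energy_above_hull(phases, 0.25, -0.10)
ehull_ab = energy_above_hull(phases, 0.5, -0.40)
```

Here the phase at x = 0.5 sits on the hull, while the phase at x = 0.25 lies above the tie line between pure A and the x = 0.5 phase and would be expected to decompose into those two.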

Generative AI offers a solution through inverse design—directly generating candidate materials that satisfy target property constraints. However, prior generative models have struggled with low success rates in proposing stable crystals or could only satisfy a narrow set of constraints [13]. MatterGen addresses these limitations, establishing a new paradigm for creating stable, diverse inorganic materials across the periodic table.

MatterGen is a diffusion model specifically tailored for the generative design of crystalline materials. Diffusion models learn to generate data by reversing a fixed corruption process. MatterGen defines a crystalline material by its unit cell, comprising atom types (A), coordinates (X), and a periodic lattice (L) [13].

The Diffusion Process for Crystalline Materials

Unlike image diffusion, which uses Gaussian noise, MatterGen employs a customized corruption process that respects the unique geometry and symmetries of crystals:

- Atom Type Diffusion: Atom types are corrupted in a categorical space, where individual atoms are gradually transitioned to a masked state [13].

- Coordinate Diffusion: A wrapped Normal distribution respects periodic boundaries, with noise scaled to account for cell size effects in Cartesian space. The process approaches a uniform distribution at the noisy limit [13].

- Lattice Diffusion: The lattice is diffused in a symmetric form, approaching a distribution centered on a cubic lattice with an average atomic density derived from training data [13].
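Two of these corruption steps can be illustrated with a short sketch: masking atom types with some probability, and adding Gaussian noise to fractional coordinates before wrapping them back into the unit cell, which yields samples from a wrapped Normal distribution. The function names, the mask token, and the fixed noise level are assumptions; MatterGen's actual noise schedules and cell-size scaling are not reproduced here.

```python
import random

MASK = "[MASK]"  # hypothetical placeholder for the masked atom-type state

def corrupt_atom_types(atom_types, mask_prob, rng):
    """Replace each atom type with the mask token with probability mask_prob."""
    return [MASK if rng.random() < mask_prob else a for a in atom_types]

def corrupt_frac_coords(frac_coords, sigma, rng):
    """Add Gaussian noise to fractional coordinates and wrap into the unit cell."""
    return [tuple((c + rng.gauss(0.0, sigma)) % 1.0 for c in xyz)
            for xyz in frac_coords]

rng = random.Random(0)
atoms = ["Na", "Cl"]
coords = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)]

fully_masked = corrupt_atom_types(atoms, mask_prob=1.0, rng=rng)
untouched = corrupt_atom_types(atoms, mask_prob=0.0, rng=rng)
noisy_coords = corrupt_frac_coords(coords, sigma=0.1, rng=rng)
```

As the noise level grows, the wrapped coordinates approach a uniform distribution over the cell, which matches the noisy limit described for coordinate diffusion.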

To reverse this process, MatterGen uses a learned score network that outputs invariant scores for atom types and equivariant scores for coordinates and the lattice, inherently respecting the necessary symmetries without needing to learn them from data [13].

Property Conditioning via Adapter Modules

A key innovation of MatterGen is its ability to steer generation toward materials with desired properties. This is achieved through adapter modules—tunable components injected into each layer of the base model that alter its output based on a given property label [13]. This approach allows for efficient fine-tuning on relatively small labeled datasets. The fine-tuned model is used with classifier-free guidance to steer the generation toward target constraints, such as [13] [34]:

- Chemical composition

- Crystallographic symmetry (Space Group)

- Electronic properties (e.g., DFT band gap)

- Mechanical properties (e.g., bulk modulus from an ML predictor)

- Magnetic properties (e.g., DFT magnetic density)

- Thermodynamic stability (e.g., energy above hull)
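Classifier-free guidance is commonly written as an interpolation or extrapolation between an unconditional and a conditional score. The sketch below shows that common form only; the function name, the list-based score representation, and the guidance scale are illustrative assumptions, and MatterGen's implementation may differ in detail.

```python
def guided_score(score_uncond, score_cond, guidance_scale):
    """Combine scores as uncond + scale * (cond - uncond), element by element."""
    return [u + guidance_scale * (c - u)
            for u, c in zip(score_uncond, score_cond)]

score_uncond = [0.0, 1.0]  # made-up score without a property label
score_cond = [1.0, 3.0]    # made-up score with the target property label

no_guidance = guided_score(score_uncond, score_cond, 0.0)
plain_conditional = guided_score(score_uncond, score_cond, 1.0)
amplified = guided_score(score_uncond, score_cond, 2.0)
```

A scale of 0 recovers the unconditional model, 1 recovers the conditional one, and values above 1 push samples further toward the target property at some cost in diversity.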

The following diagram illustrates the complete generation and conditioning workflow.

Quantitative Performance: MatterGen vs. State-of-the-Art

The performance of MatterGen was rigorously benchmarked against previous state-of-the-art generative models, including CDVAE and DiffCSP. Metrics focused on the likelihood of generating stable, unique, and new (SUN) materials and the geometric quality of the proposed structures.

Table 1: Benchmarking MatterGen against prior generative models. Performance metrics are based on generating 1,000 samples from each model, evaluated using Density Functional Theory (DFT) [13].

| Model | % Stable, Unique, & New (SUN) | Average RMSD to DFT (Å) | % Stable (E_hull < 0.1 eV/atom) | % Novel |
|---|---|---|---|---|
| MatterGen (Alex-MP-20) | 38.6% | 0.021 | 74.4% | 62.0% |
| MatterGen (MP-20 only) | 22.3% | 0.110 | 42.2% | 75.4% |
| DiffCSP (Alex-MP-20) | 33.3% | 0.104 | 63.3% | 66.9% |
| CDVAE | 14.0% | 0.359 | 19.3% | 92.0% |

Relative to CDVAE, MatterGen (Alex-MP-20) more than doubles the rate of SUN materials (38.6% vs. 14.0%) and generates structures that are more than ten times closer to their local energy minimum, as indicated by the lower average root-mean-square deviation (RMSD) after DFT relaxation (0.021 Å vs. 0.359 Å) [13]. This is a substantial improvement in proposing viable candidates for synthesis.
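The SUN metric and the ratios quoted from the table can be checked with a few lines of arithmetic. In this sketch the candidate records are invented, the dictionary keys are hypothetical, and the 0.1 eV/atom stability threshold follows the table header.

```python
def sun_rate(candidates, stability_threshold=0.1):
    """Fraction of candidates that are stable, unique, and new."""
    hits = sum(1 for c in candidates
               if c["e_hull"] < stability_threshold and c["unique"] and c["novel"])
    return hits / len(candidates)

# Invented candidates: only the first satisfies all three criteria.
candidates = [
    {"e_hull": 0.02, "unique": True, "novel": True},
    {"e_hull": 0.15, "unique": True, "novel": True},   # not stable
    {"e_hull": 0.00, "unique": True, "novel": False},  # already known
    {"e_hull": 0.05, "unique": False, "novel": True},  # duplicate
]
example_rate = sun_rate(candidates)

# Ratios between MatterGen (Alex-MP-20) and CDVAE, from the table values.
sun_ratio = 38.6 / 14.0     # SUN percentage ratio
rmsd_ratio = 0.359 / 0.021  # average RMSD ratio
```

With the table values, the SUN ratio comes to roughly 2.8 and the RMSD ratio to roughly 17, consistent with the "more than doubles" and "more than ten times" statements.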

Performance in Property-Conditioned Design

MatterGen's ability to generate materials under constraint was tested against traditional baselines like substitution and random structure search (RSS).

Table 2: Performance in property-constrained design. MatterGen is fine-tuned and then generates candidates for target chemical systems or properties, outperforming established baselines [13].

| Design Target | Method | Performance |
|---|---|---|
| Target Chemical System | MatterGen (fine-tuned) | Generates more stable, novel materials in the target system than baselines. |
| Target Chemical System | Substitution & RSS | Saturates quickly, limited to known structural prototypes. |
| High Bulk Modulus (>400 GPa) | MatterGen (fine-tuned) | Continues to generate novel, high-modulus candidates. |
| High Bulk Modulus (>400 GPa) | Computational Screening | Saturates due to exhausting known candidates in databases. |

Experimental Protocols and Validation

This section outlines the key methodologies for training, generating, and validating materials with MatterGen.

Model Training and Fine-Tuning Protocol

- Base Model Pretraining: The foundational MatterGen model is trained on the Alex-MP-20 dataset, a curated collection of 607,683 stable structures with up to 20 atoms, recomputed from the Materials Project (MP) and Alexandria databases [13] [34].

- Fine-Tuning for Property Conditioning: For a target property (e.g., bulk modulus, magnetic density), the pretrained base model is fine-tuned on a smaller, labeled dataset using adapter modules. This process is data-efficient, requiring far fewer labeled examples than the initial pretraining [13].

Structure Generation and Evaluation Protocol

- Unconditional Generation: To sample novel materials without constraints, the base model is run starting from a fully noisy crystal. The model refines atom types, coordinates, and the lattice over multiple denoising steps [34].

- Property-Conditioned Generation: To generate materials for a specific application, the fine-tuned model is used with classifier-free guidance. The target property value (e.g.,